Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 5 to 112) | repo_url (string, length 34 to 141) | action (string, 3 classes) | title (string, length 1 to 855) | labels (string, length 4 to 721) | body (string, length 1 to 261k) | index (string, 13 classes) | text_combine (string, length 96 to 261k) | label (string, 2 classes) | text (string, length 96 to 240k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

741,724 | 25,814,585,605 | IssuesEvent | 2022-12-12 03:10:59 | containerd/nerdctl | https://api.github.com/repos/containerd/nerdctl | closed | [Rootful + SELinux] [BuildKit] RUN command throws error code 139 on centos | bug priority/high kind/external | ### Description

Cannot build using containerfile with RUN command on centos. The same containerfile works fine on ubuntu.

### Steps to reproduce the issue

1. Have a simple Containter file:

FROM alpine

RUN ls

2. Build it

### Describe the results you received and expected

nerdctl build -t test:1 .

[+] Building 1.5s (4/5)

[+] Building 1.6s (5/5) FINISHED

=> [internal] load build definition from Containerfile 0.0s

=> => transferring dockerfile: 118B 0.0s

=> [internal] load .dockerignore 0.0s

=> => transferring context: 2B 0.0s

=> [internal] load metadata for docker.io/library/alpine:latest 1.0s

=> [1/2] FROM docker.io/library/alpine@sha256:bc41182d7ef5ffc53a40b044e725193bc10142a1243f395ee852a8d9730fc2ad 0.3s

=> => resolve docker.io/library/alpine@sha256:bc41182d7ef5ffc53a40b044e725193bc10142a1243f395ee852a8d9730fc2ad 0.0s

=> => sha256:213ec9aee27d8be045c6a92b7eac22c9a64b44558193775a1a7f626352392b49 2.81MB / 2.81MB 0.1s

=> => extracting sha256:213ec9aee27d8be045c6a92b7eac22c9a64b44558193775a1a7f626352392b49 0.1s

=> ERROR [2/2] RUN ls 0.2s

------

> [2/2] RUN ls:

------

Containerfile:2

--------------------

1 | FROM alpine

2 | >>> RUN ls

3 |

--------------------

error: failed to solve: process "/bin/sh -c ls" did not complete successfully: exit code: 139

FATA[0001] unrecognized image format

### What version of nerdctl are you using?

nerdctl version 0.23.0

### Are you using a variant of nerdctl? (e.g., Rancher Desktop)

_No response_

### Host information

Client:

Namespace: default

Debug Mode: false

Server:

Server Version: v1.6.8

Storage Driver: overlayfs

Logging Driver: json-file

Cgroup Driver: cgroupfs

Cgroup Version: 1

Plugins:

Log: fluentd journald json-file

Storage: native overlayfs

Security Options:

seccomp

Profile: default

Kernel Version: 4.18.0-408.el8.x86_64

Operating System: CentOS Stream 8

OSType: linux

Architecture: x86_64

CPUs: 2

Total Memory: 14.47GiB

Name: pcr-nerdctl-tst2

ID: 2908f464-ed9f-4476-99cc-205a294e6e51

WARNING: bridge-nf-call-iptables is disabled

WARNING: bridge-nf-call-ip6tables is disabled

| 1.0 | [Rootful + SELinux] [BuildKit] RUN command throws error code 139 on centos - ### Description

Cannot build using containerfile with RUN command on centos. The same containerfile works fine on ubuntu.

### Steps to reproduce the issue

1. Have a simple Containter file:

FROM alpine

RUN ls

2. Build it

### Describe the results you received and expected

nerdctl build -t test:1 .

[+] Building 1.5s (4/5)

[+] Building 1.6s (5/5) FINISHED

=> [internal] load build definition from Containerfile 0.0s

=> => transferring dockerfile: 118B 0.0s

=> [internal] load .dockerignore 0.0s

=> => transferring context: 2B 0.0s

=> [internal] load metadata for docker.io/library/alpine:latest 1.0s

=> [1/2] FROM docker.io/library/alpine@sha256:bc41182d7ef5ffc53a40b044e725193bc10142a1243f395ee852a8d9730fc2ad 0.3s

=> => resolve docker.io/library/alpine@sha256:bc41182d7ef5ffc53a40b044e725193bc10142a1243f395ee852a8d9730fc2ad 0.0s

=> => sha256:213ec9aee27d8be045c6a92b7eac22c9a64b44558193775a1a7f626352392b49 2.81MB / 2.81MB 0.1s

=> => extracting sha256:213ec9aee27d8be045c6a92b7eac22c9a64b44558193775a1a7f626352392b49 0.1s

=> ERROR [2/2] RUN ls 0.2s

------

> [2/2] RUN ls:

------

Containerfile:2

--------------------

1 | FROM alpine

2 | >>> RUN ls

3 |

--------------------

error: failed to solve: process "/bin/sh -c ls" did not complete successfully: exit code: 139

FATA[0001] unrecognized image format

### What version of nerdctl are you using?

nerdctl version 0.23.0

### Are you using a variant of nerdctl? (e.g., Rancher Desktop)

_No response_

### Host information

Client:

Namespace: default

Debug Mode: false

Server:

Server Version: v1.6.8

Storage Driver: overlayfs

Logging Driver: json-file

Cgroup Driver: cgroupfs

Cgroup Version: 1

Plugins:

Log: fluentd journald json-file

Storage: native overlayfs

Security Options:

seccomp

Profile: default

Kernel Version: 4.18.0-408.el8.x86_64

Operating System: CentOS Stream 8

OSType: linux

Architecture: x86_64

CPUs: 2

Total Memory: 14.47GiB

Name: pcr-nerdctl-tst2

ID: 2908f464-ed9f-4476-99cc-205a294e6e51

WARNING: bridge-nf-call-iptables is disabled

WARNING: bridge-nf-call-ip6tables is disabled

| priority | run command throws error code on centos description cannot build using containerfile with run command on centos the same containerfile works fine on ubuntu steps to reproduce the issue have a simple containter file from alpine run ls build it describe the results you received and expected nerdctl build t test building building finished load build definition from containerfile transferring dockerfile load dockerignore transferring context load metadata for docker io library alpine latest from docker io library alpine resolve docker io library alpine extracting error run ls run ls containerfile from alpine run ls error failed to solve process bin sh c ls did not complete successfully exit code fata unrecognized image format what version of nerdctl are you using nerdctl version are you using a variant of nerdctl e g rancher desktop no response host information client namespace default debug mode false server server version storage driver overlayfs logging driver json file cgroup driver cgroupfs cgroup version plugins log fluentd journald json file storage native overlayfs security options seccomp profile default kernel version operating system centos stream ostype linux architecture cpus total memory name pcr nerdctl id warning bridge nf call iptables is disabled warning bridge nf call is disabled | 1 |
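A note on the exit code in the record above: shells conventionally report a process terminated by signal N as exit status 128 + N, so 139 corresponds to signal 11, which is SIGSEGV on Linux. The following is a minimal Python sketch of that arithmetic only; `describe_exit_code` is a hypothetical helper name, not part of nerdctl or BuildKit, and it says nothing about why the process crashed.

```python
import signal


def describe_exit_code(code: int) -> str:
    """Interpret a shell-style exit status (hypothetical helper for illustration)."""
    # By shell convention, a status above 128 means
    # "terminated by signal number (code - 128)".
    if code > 128:
        signum = code - 128
        try:
            name = signal.Signals(signum).name
        except ValueError:
            # The number does not map to a signal known on this platform.
            name = f"signal {signum}"
        return f"killed by {name}"
    return f"exited with status {code}"


print(describe_exit_code(139))
```

This only decodes the number; finding the cause of the crash inside the build container would still need the host's SELinux and kernel logs.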

566,104 | 16,796,077,732 | IssuesEvent | 2021-06-16 03:52:40 | sodafoundation/multi-cloud | https://api.github.com/repos/sodafoundation/multi-cloud | opened | AccessKey , SecretKey to be created from API | High Priority | **Issue/Feature Description:**

AccessKey , SecretKey to be created from API - To enable users to create AKSK from the API , rather than the UI.

AkSk from API will enable users to create the AKSK from the API and integrate to their Clients.

**Why this issue to fixed / feature is needed(give scenarios or use cases):**

AkSk from API will enable users to create the AKSK from the API and integrate to their Clients.

**How to reproduce, in case of a bug:**

N/A

**Other Notes / Environment Information: (Please give the env information, log link or any useful information for this issue)**

| 1.0 | AccessKey , SecretKey to be created from API - **Issue/Feature Description:**

AccessKey , SecretKey to be created from API - To enable users to create AKSK from the API , rather than the UI.

AkSk from API will enable users to create the AKSK from the API and integrate to their Clients.

**Why this issue to fixed / feature is needed(give scenarios or use cases):**

AkSk from API will enable users to create the AKSK from the API and integrate to their Clients.

**How to reproduce, in case of a bug:**

N/A

**Other Notes / Environment Information: (Please give the env information, log link or any useful information for this issue)**

| priority | accesskey secretkey to be created from api issue feature description accesskey secretkey to be created from api to enable users to create aksk from the api rather than the ui aksk from api will enable users to create the aksk from the api and integrate to their clients why this issue to fixed feature is needed give scenarios or use cases aksk from api will enable users to create the aksk from the api and integrate to their clients how to reproduce in case of a bug n a other notes environment information please give the env information log link or any useful information for this issue | 1 |

96,091 | 3,964,411,317 | IssuesEvent | 2016-05-03 00:45:32 | daronco/test-issue-migrate2 | https://api.github.com/repos/daronco/test-issue-migrate2 | closed | Set a numeric voiceBridge for every room | Priority: High Status: Resolved Type: Bug | ---

Author Name: **Leonardo Daronco** (@daronco)

Original Redmine Issue: 145, http://dev.mconf.org/redmine/issues/145

Original Assignee: Leonardo Daronco

---

@Google user: leonardo...@gmail.com@

Use a numeric "voiceBridge" param when creating a room.

From the BigBlueButton API documentation: "we recommend you always pass a 5 digit voiceBridge parameter -- and have it begin with the digit '7' if you are using the default FreeSWITCH setup"

So the voiceBridge should have 5 digits, the first one being a '7' and the last ones can be taken from the meetingID (after implementing "/p/mconf/issues/detail?id=38": issue #38 ) or can be random (but unique).

| 1.0 | Set a numeric voiceBridge for every room - ---

Author Name: **Leonardo Daronco** (@daronco)

Original Redmine Issue: 145, http://dev.mconf.org/redmine/issues/145

Original Assignee: Leonardo Daronco

---

@Google user: leonardo...@gmail.com@

Use a numeric "voiceBridge" param when creating a room.

From the BigBlueButton API documentation: "we recommend you always pass a 5 digit voiceBridge parameter -- and have it begin with the digit '7' if you are using the default FreeSWITCH setup"

So the voiceBridge should have 5 digits, the first one being a '7' and the last ones can be taken from the meetingID (after implementing "/p/mconf/issues/detail?id=38": issue #38 ) or can be random (but unique).

| priority | set a numeric voicebridge for every room author name leonardo daronco daronco original redmine issue original assignee leonardo daronco google user leonardo gmail com use a numeric voicebridge param when creating a room from the bigbluebutton api documentation we recommend you always pass a digit voicebridge parameter and have it begin with the digit if you are using the default freeswitch setup so the voicebridge should have digits the first one being a and the last ones can be taken from the meetingid after implementing p mconf issues detail id issue or can be random but unique | 1 |
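The rule quoted in the record above (five digits, first digit '7', unique per room) can be sketched as follows, taking the "random but unique" option. This is a minimal Python illustration under stated assumptions: `make_voice_bridge` and the `used` set are hypothetical names, and the sketch does not reflect how Mconf or BigBlueButton actually store or allocate the value.

```python
import random


def make_voice_bridge(used: set) -> str:
    """Return an unused 5-digit voiceBridge starting with '7' (hypothetical helper).

    `used` holds the voiceBridge strings already assigned to rooms;
    the chosen value is added to it before returning.
    """
    # Candidates run from 70000 to 79999, i.e. '7' plus four zero-padded digits.
    free = [c for c in (f"7{n:04d}" for n in range(10_000)) if c not in used]
    if not free:
        raise RuntimeError("all 10,000 voiceBridge numbers are in use")
    candidate = random.choice(free)
    used.add(candidate)
    return candidate


assigned = set()
print(make_voice_bridge(assigned))
```

Deriving the last four digits from the meetingID, the other option mentioned in the issue, would replace the random choice but keep the same uniqueness check.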

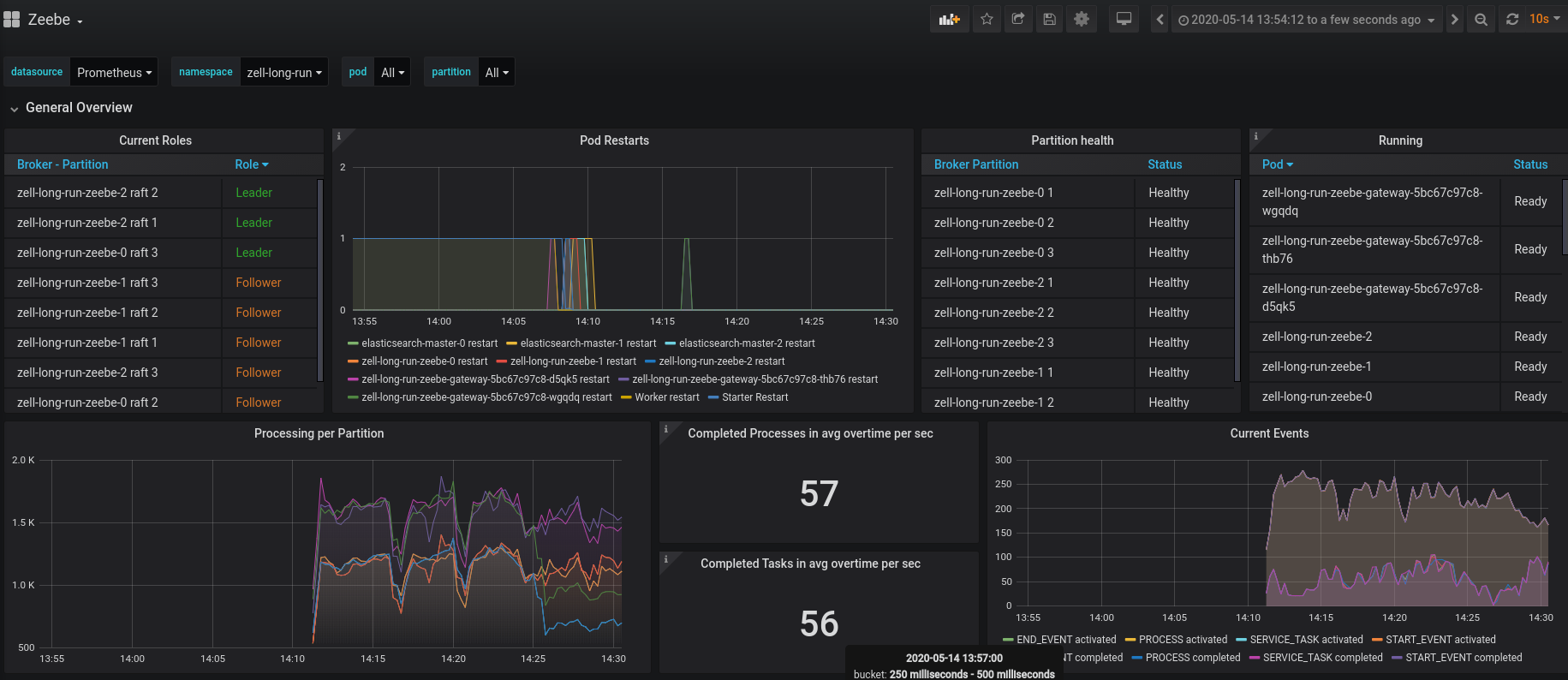

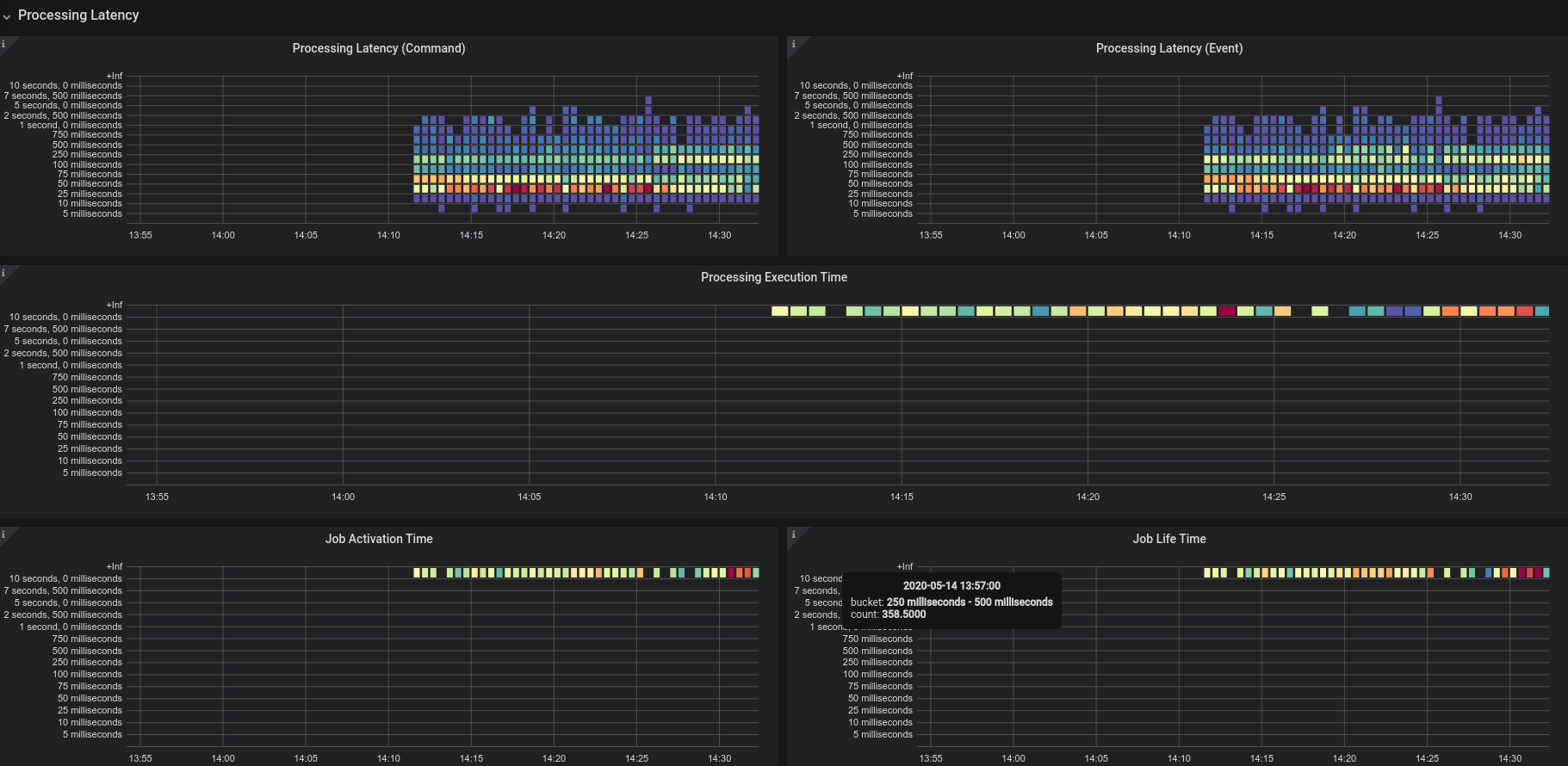

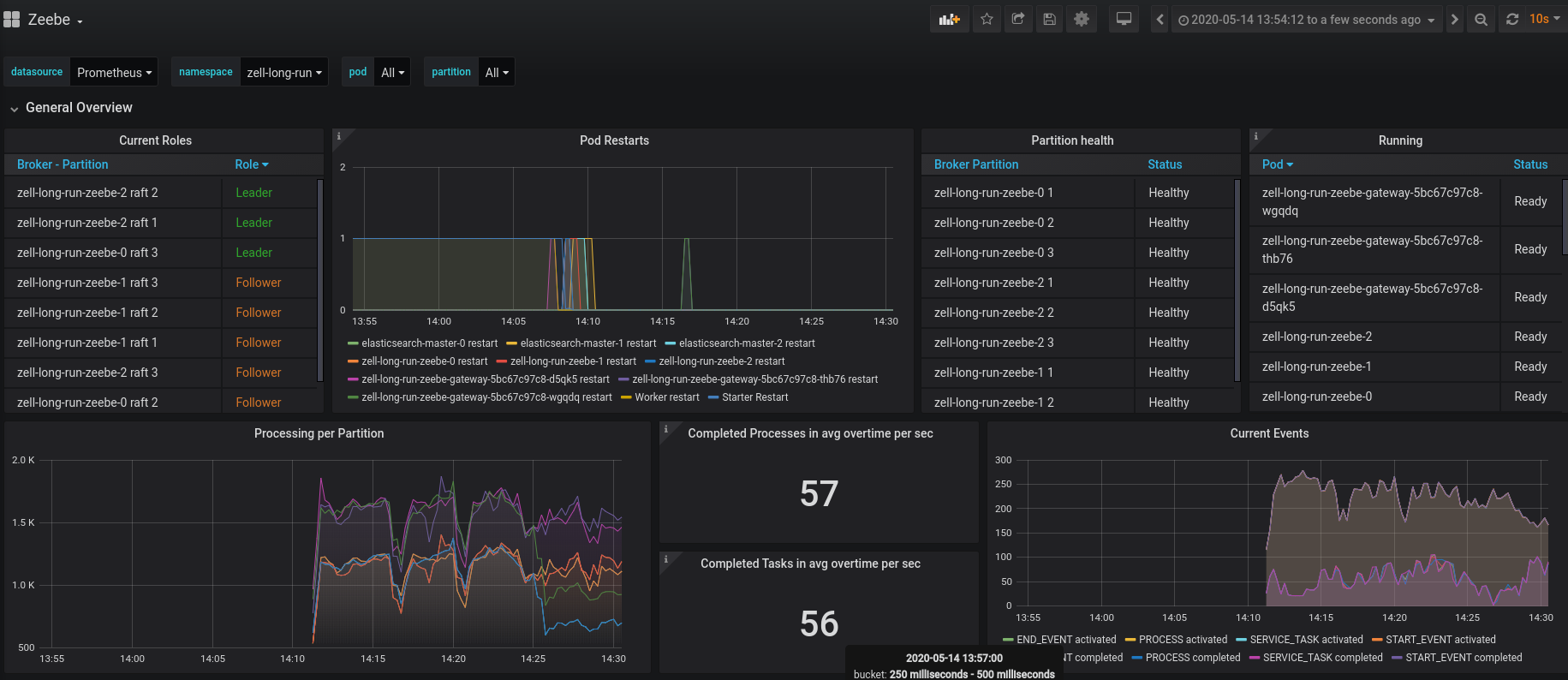

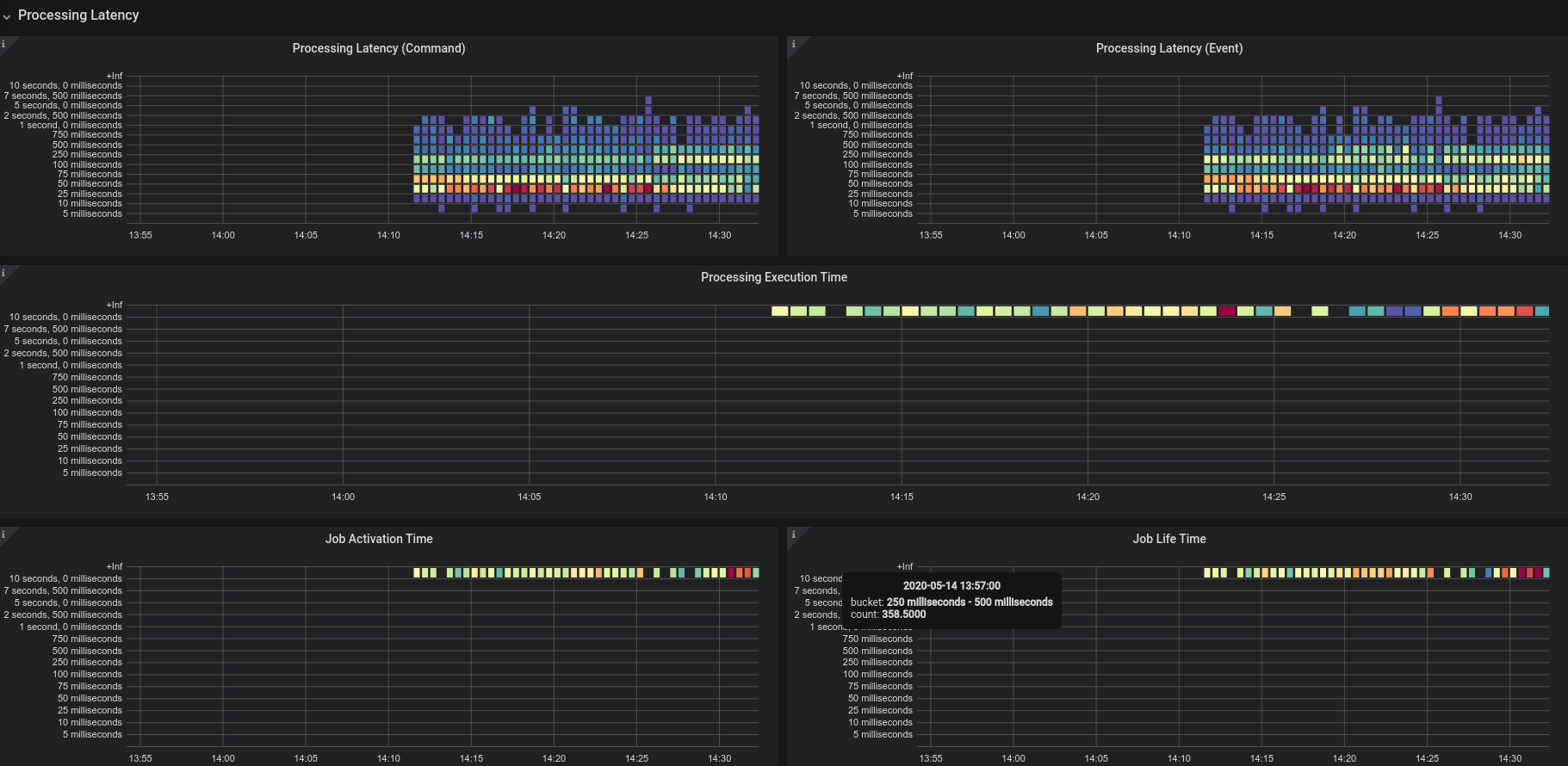

425,104 | 12,335,783,449 | IssuesEvent | 2020-05-14 12:34:46 | zeebe-io/zeebe | https://api.github.com/repos/zeebe-io/zeebe | opened | Possible regression in job activation | Impact: Performance Scope: broker Severity: High Status: Needs Priority Type: Bug | **Describe the bug**

I observed a big drop in throughput on running our normal benchmark.

I would normally expect ~200 workflows and task to be completed.

It seems that job activation is the problem since the activation latency is quite high.

The standalone gateway also throws endless the following timeouts:

```

2020-05-14 12:31:23.930 [io.zeebe.gateway.impl.broker.BrokerRequestManager] [gateway-scheduler-zb-actors-0] ERROR io.zeebe.gateway - Error handling gRPC request

io.grpc.StatusRuntimeException: DEADLINE_EXCEEDED: Time out between gateway and broker: Request type command-api-1 timed out in 15000 milliseconds

at io.grpc.Status.asRuntimeException(Status.java:524) ~[grpc-api-1.29.0.jar:1.29.0]

at io.zeebe.gateway.EndpointManager.convertThrowable(EndpointManager.java:397) ~[zeebe-gateway-0.24.0-SNAPSHOT.jar:0.24.0-SNAPSHOT]

at io.zeebe.gateway.EndpointManager.lambda$sendRequest$3(EndpointManager.java:311) ~[zeebe-gateway-0.24.0-SNAPSHOT.jar:0.24.0-SNAPSHOT]

at io.zeebe.gateway.impl.broker.BrokerRequestManager.lambda$sendRequest$3(BrokerRequestManager.java:148) ~[zeebe-gateway-0.24.0-SNAPSHOT.jar:0.24.0-SNAPSHOT]

at io.zeebe.gateway.impl.broker.BrokerRequestManager.lambda$sendRequestInternal$5(BrokerRequestManager.java:191) ~[zeebe-gateway-0.24.0-SNAPSHOT.jar:0.24.0-SNAPSHOT]

at io.zeebe.util.sched.future.FutureContinuationRunnable.run(FutureContinuationRunnable.java:33) [zeebe-util-0.24.0-SNAPSHOT.jar:0.24.0-SNAPSHOT]

at io.zeebe.util.sched.ActorJob.invoke(ActorJob.java:76) [zeebe-util-0.24.0-SNAPSHOT.jar:0.24.0-SNAPSHOT]

at io.zeebe.util.sched.ActorJob.execute(ActorJob.java:39) [zeebe-util-0.24.0-SNAPSHOT.jar:0.24.0-SNAPSHOT]

at io.zeebe.util.sched.ActorTask.execute(ActorTask.java:118) [zeebe-util-0.24.0-SNAPSHOT.jar:0.24.0-SNAPSHOT]

at io.zeebe.util.sched.ActorThread.executeCurrentTask(ActorThread.java:107) [zeebe-util-0.24.0-SNAPSHOT.jar:0.24.0-SNAPSHOT]

at io.zeebe.util.sched.ActorThread.doWork(ActorThread.java:91) [zeebe-util-0.24.0-SNAPSHOT.jar:0.24.0-SNAPSHOT]

at io.zeebe.util.sched.ActorThread.run(ActorThread.java:204) [zeebe-util-0.24.0-SNAPSHOT.jar:0.24.0-SNAPSHOT]

Caused by: java.util.concurrent.TimeoutException: Request type command-api-1 timed out in 15000 milliseconds

at io.atomix.cluster.messaging.impl.AbstractClientConnection$Callback.timeout(AbstractClientConnection.java:163) ~[atomix-cluster-0.24.0-SNAPSHOT.jar:0.24.0-SNAPSHOT]

at java.util.concurrent.Executors$RunnableAdapter.call(Unknown Source) ~[?:?]

at java.util.concurrent.FutureTask.run(Unknown Source) ~[?:?]

at java.util.concurrent.ScheduledThreadPoolExecutor$ScheduledFutureTask.run(Unknown Source) ~[?:?]

at java.util.concurrent.ThreadPoolExecutor.runWorker(Unknown Source) ~[?:?]

at java.util.concurrent.ThreadPoolExecutor$Worker.run(Unknown Source) ~[?:?]

at java.lang.Thread.run(Unknown Source) ~[?:?]

```

**To Reproduce**

Run the helm chart (v100) with our benchmark,

**Expected behavior**

Around 200 workflows are completed per second.

| 1.0 | Possible regression in job activation - **Describe the bug**

I observed a big drop in throughput on running our normal benchmark.

I would normally expect ~200 workflows and task to be completed.

It seems that job activation is the problem since the activation latency is quite high.

The standalone gateway also throws endless the following timeouts:

```

2020-05-14 12:31:23.930 [io.zeebe.gateway.impl.broker.BrokerRequestManager] [gateway-scheduler-zb-actors-0] ERROR io.zeebe.gateway - Error handling gRPC request

io.grpc.StatusRuntimeException: DEADLINE_EXCEEDED: Time out between gateway and broker: Request type command-api-1 timed out in 15000 milliseconds

at io.grpc.Status.asRuntimeException(Status.java:524) ~[grpc-api-1.29.0.jar:1.29.0]

at io.zeebe.gateway.EndpointManager.convertThrowable(EndpointManager.java:397) ~[zeebe-gateway-0.24.0-SNAPSHOT.jar:0.24.0-SNAPSHOT]

at io.zeebe.gateway.EndpointManager.lambda$sendRequest$3(EndpointManager.java:311) ~[zeebe-gateway-0.24.0-SNAPSHOT.jar:0.24.0-SNAPSHOT]

at io.zeebe.gateway.impl.broker.BrokerRequestManager.lambda$sendRequest$3(BrokerRequestManager.java:148) ~[zeebe-gateway-0.24.0-SNAPSHOT.jar:0.24.0-SNAPSHOT]

at io.zeebe.gateway.impl.broker.BrokerRequestManager.lambda$sendRequestInternal$5(BrokerRequestManager.java:191) ~[zeebe-gateway-0.24.0-SNAPSHOT.jar:0.24.0-SNAPSHOT]

at io.zeebe.util.sched.future.FutureContinuationRunnable.run(FutureContinuationRunnable.java:33) [zeebe-util-0.24.0-SNAPSHOT.jar:0.24.0-SNAPSHOT]

at io.zeebe.util.sched.ActorJob.invoke(ActorJob.java:76) [zeebe-util-0.24.0-SNAPSHOT.jar:0.24.0-SNAPSHOT]

at io.zeebe.util.sched.ActorJob.execute(ActorJob.java:39) [zeebe-util-0.24.0-SNAPSHOT.jar:0.24.0-SNAPSHOT]

at io.zeebe.util.sched.ActorTask.execute(ActorTask.java:118) [zeebe-util-0.24.0-SNAPSHOT.jar:0.24.0-SNAPSHOT]

at io.zeebe.util.sched.ActorThread.executeCurrentTask(ActorThread.java:107) [zeebe-util-0.24.0-SNAPSHOT.jar:0.24.0-SNAPSHOT]

at io.zeebe.util.sched.ActorThread.doWork(ActorThread.java:91) [zeebe-util-0.24.0-SNAPSHOT.jar:0.24.0-SNAPSHOT]

at io.zeebe.util.sched.ActorThread.run(ActorThread.java:204) [zeebe-util-0.24.0-SNAPSHOT.jar:0.24.0-SNAPSHOT]

Caused by: java.util.concurrent.TimeoutException: Request type command-api-1 timed out in 15000 milliseconds

at io.atomix.cluster.messaging.impl.AbstractClientConnection$Callback.timeout(AbstractClientConnection.java:163) ~[atomix-cluster-0.24.0-SNAPSHOT.jar:0.24.0-SNAPSHOT]

at java.util.concurrent.Executors$RunnableAdapter.call(Unknown Source) ~[?:?]

at java.util.concurrent.FutureTask.run(Unknown Source) ~[?:?]

at java.util.concurrent.ScheduledThreadPoolExecutor$ScheduledFutureTask.run(Unknown Source) ~[?:?]

at java.util.concurrent.ThreadPoolExecutor.runWorker(Unknown Source) ~[?:?]

at java.util.concurrent.ThreadPoolExecutor$Worker.run(Unknown Source) ~[?:?]

at java.lang.Thread.run(Unknown Source) ~[?:?]

```

**To Reproduce**

Run the helm chart (v100) with our benchmark,

**Expected behavior**

Around 200 workflows are completed per second.

| priority | possible regression in job activation describe the bug i observed a big drop in throughput on running our normal benchmark i would normally expect workflows and task to be completed it seems that job activation is the problem since the activation latency is quite high the standalone gateway also throws endless the following timeouts error io zeebe gateway error handling grpc request io grpc statusruntimeexception deadline exceeded time out between gateway and broker request type command api timed out in milliseconds at io grpc status asruntimeexception status java at io zeebe gateway endpointmanager convertthrowable endpointmanager java at io zeebe gateway endpointmanager lambda sendrequest endpointmanager java at io zeebe gateway impl broker brokerrequestmanager lambda sendrequest brokerrequestmanager java at io zeebe gateway impl broker brokerrequestmanager lambda sendrequestinternal brokerrequestmanager java at io zeebe util sched future futurecontinuationrunnable run futurecontinuationrunnable java at io zeebe util sched actorjob invoke actorjob java at io zeebe util sched actorjob execute actorjob java at io zeebe util sched actortask execute actortask java at io zeebe util sched actorthread executecurrenttask actorthread java at io zeebe util sched actorthread dowork actorthread java at io zeebe util sched actorthread run actorthread java caused by java util concurrent timeoutexception request type command api timed out in milliseconds at io atomix cluster messaging impl abstractclientconnection callback timeout abstractclientconnection java at java util concurrent executors runnableadapter call unknown source at java util concurrent futuretask run unknown source at java util concurrent scheduledthreadpoolexecutor scheduledfuturetask run unknown source at java util concurrent threadpoolexecutor runworker unknown source at java util concurrent threadpoolexecutor worker run unknown source at java lang thread run unknown source to reproduce run the 
helm chart with our benchmark expected behavior around workflows are completed per second | 1 |

619,804 | 19,535,416,577 | IssuesEvent | 2021-12-31 05:08:00 | ajayyy/SponsorBlock | https://api.github.com/repos/ajayyy/SponsorBlock | closed | Better-sqlite3 and other improvements | HIGH PRIORITY | [better-sqlite](https://github.com/JoshuaWise/better-sqlite3) is faster than the more popular `sqlite3` node library especially when load increases. The reason is that sqlite3 is "asynchronous" even though all the computation still happens on the same thread. This means the only thing it being async does is it increases the overhead significantly. (The reason it's more popular is as far as I know only because node devs fear stuff that is synchronous without really understanding what happens behind the scenes.) | 1.0 | Better-sqlite3 and other improvements - [better-sqlite](https://github.com/JoshuaWise/better-sqlite3) is faster than the more popular `sqlite3` node library especially when load increases. The reason is that sqlite3 is "asynchronous" even though all the computation still happens on the same thread. This means the only thing it being async does is it increases the overhead significantly. (The reason it's more popular is as far as I know only because node devs fear stuff that is synchronous without really understanding what happens behind the scenes.) | priority | better and other improvements is faster than the more popular node library especially when load increases the reason is that is asynchronous even though all the computation still happens on the same thread this means the only thing it being async does is it increases the overhead significantly the reason it s more popular is as far as i know only because node devs fear stuff that is synchronous without really understanding what happens behind the scenes | 1 |

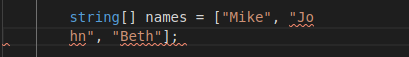

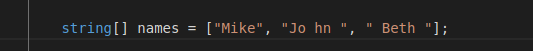

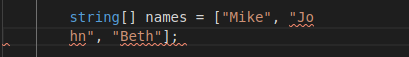

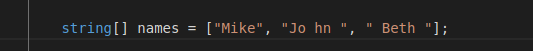

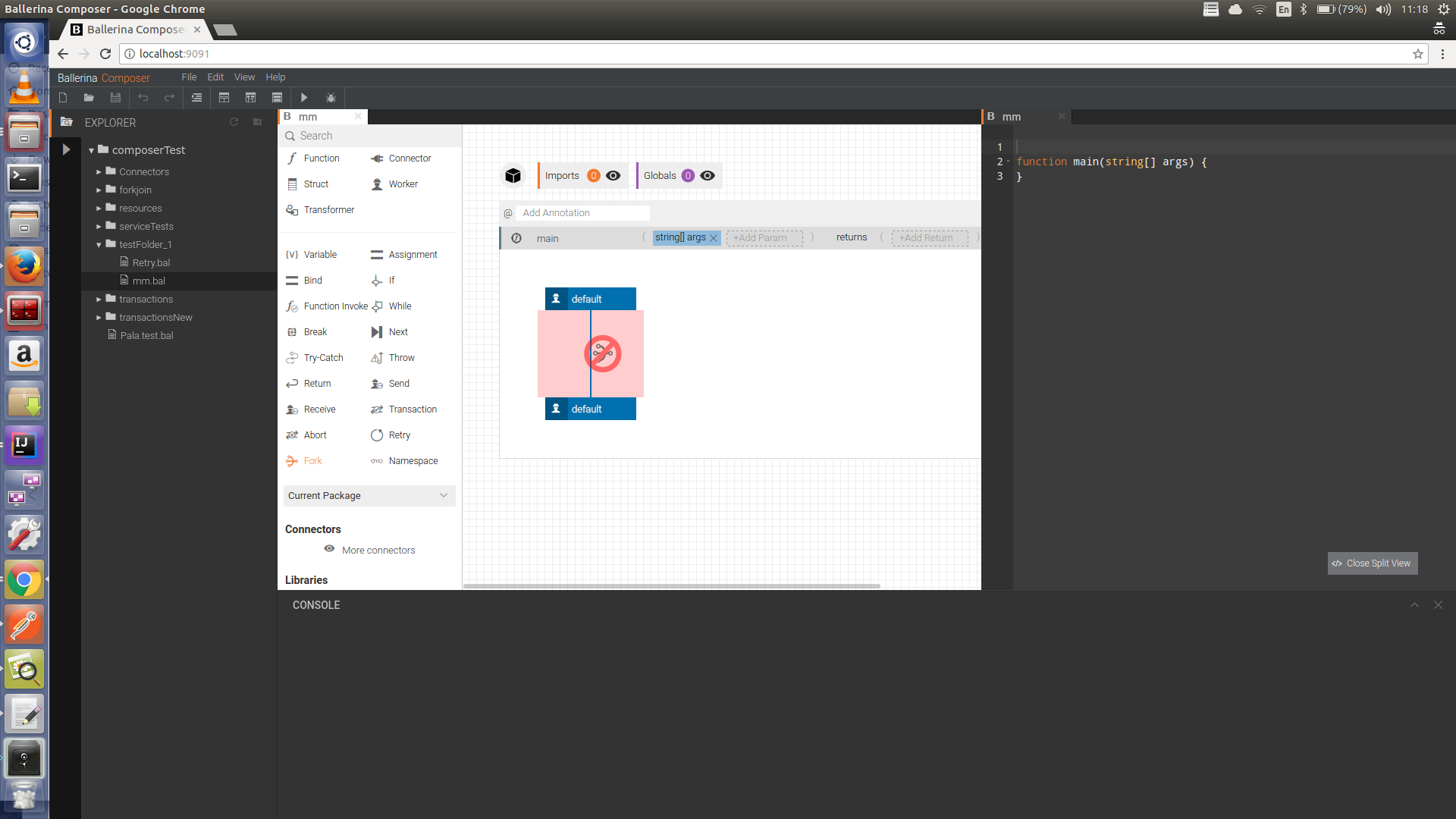

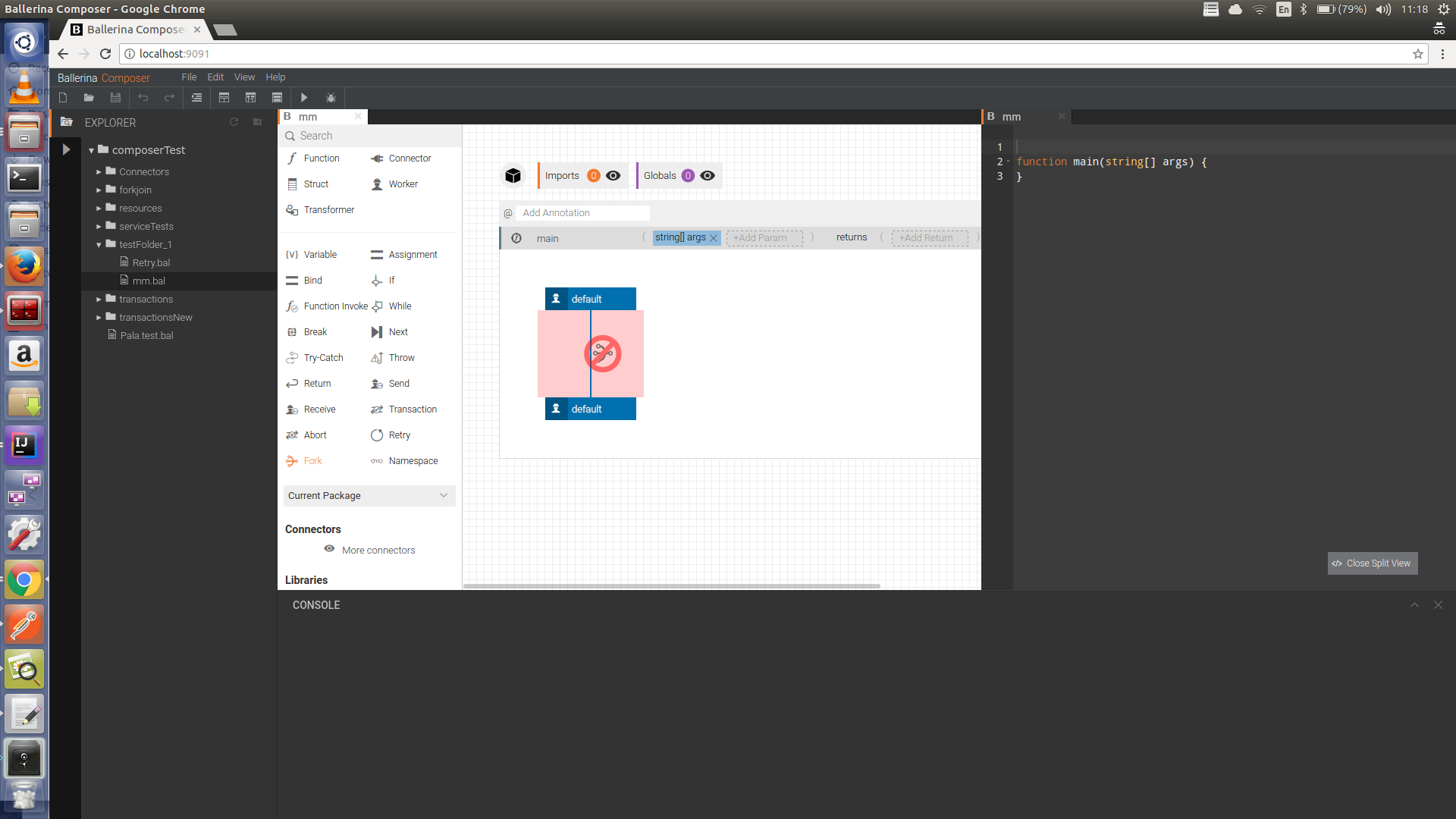

523,524 | 15,184,319,187 | IssuesEvent | 2021-02-15 09:24:54 | ballerina-platform/ballerina-lang | https://api.github.com/repos/ballerina-platform/ballerina-lang | closed | [Formatter] Formatting doesn't work properly when a part of the string is in a different line | Area/Formatting Priority/High Team/Tooling Type/Bug | **Description:**

Please consider the following scenario

When I format the above code snippet additional spaces are added to the string values as given below.

**Steps to reproduce:**

**Affected Versions:**

SLP9-snapshot

**OS, DB, other environment details and versions:**

**Related Issues (optional):**

<!-- Any related issues such as sub tasks, issues reported in other repositories (e.g component repositories), similar problems, etc. -->

**Suggested Labels (optional):**

<!-- Optional comma separated list of suggested labels. Non committers can’t assign labels to issues, so this will help issue creators who are not a committer to suggest possible labels-->

**Suggested Assignees (optional):**

<!--Optional comma separated list of suggested team members who should attend the issue. Non committers can’t assign issues to assignees, so this will help issue creators who are not a committer to suggest possible assignees-->

| 1.0 | [Formatter] Formatting doesn't work properly when a part of the string is in a different line - **Description:**

Please consider the following scenario

When I format the above code snippet additional spaces are added to the string values as given below.

**Steps to reproduce:**

**Affected Versions:**

SLP9-snapshot

**OS, DB, other environment details and versions:**

**Related Issues (optional):**

<!-- Any related issues such as sub tasks, issues reported in other repositories (e.g component repositories), similar problems, etc. -->

**Suggested Labels (optional):**

<!-- Optional comma separated list of suggested labels. Non committers can’t assign labels to issues, so this will help issue creators who are not a committer to suggest possible labels-->

**Suggested Assignees (optional):**

<!--Optional comma separated list of suggested team members who should attend the issue. Non committers can’t assign issues to assignees, so this will help issue creators who are not a committer to suggest possible assignees-->

| priority | formatting doesn t work properly when a part of the string is in a different line description please consider the following scenario when i format the above code snippet additional spaces are added to the string values as given below steps to reproduce affected versions snapshot os db other environment details and versions related issues optional suggested labels optional suggested assignees optional | 1 |

393,662 | 11,623,112,634 | IssuesEvent | 2020-02-27 08:15:58 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | Map water doesnt not always load on web interface | Priority: High Status: Fixed | Repeatedly reload any page with a map, sometimes the water does not load correctly.

https://gyazo.com/ce4aae1286389ebf4692477e4c662d25 | 1.0 | Map water doesnt not always load on web interface - Repeatedly reload any page with a map, sometimes the water does not load correctly.

https://gyazo.com/ce4aae1286389ebf4692477e4c662d25 | priority | map water doesnt not always load on web interface repeatedly reload any page with a map sometimes the water does not load correctly | 1 |

65,004 | 3,222,593,846 | IssuesEvent | 2015-10-09 02:33:50 | cs2103aug2015-t11-4j/main | https://api.github.com/repos/cs2103aug2015-t11-4j/main | closed | Parser support for update task | :parser priority.high type.task | Takes in string value from Logic and edit the certain task object and return the object to Logic | 1.0 | Parser support for update task - Takes in string value from Logic and edit the certain task object and return the object to Logic | priority | parser support for update task takes in string value from logic and edit the certain task object and return the object to logic | 1 |

398,475 | 11,741,500,717 | IssuesEvent | 2020-03-11 21:54:13 | SacredDuckwhale/Rarity | https://api.github.com/repos/SacredDuckwhale/Rarity | opened | The Combat-log based attempts detection doesn't work properly when used to detect outdoor world bosses | complexity: moderate module:core priority:high status:accepted type:bug | Verified for Dunegorger Kraulok. After looking at the code, it appears any UNIT_DIED event is now triggering the detection, when only those that are caused by the player or their party/raid should count.

The implementation appears to rely on bit flags for a certain srcFlag bitmap field (see https://wow.gamepedia.com/API_CombatLogGetCurrentEventInfo), but those are no longer working? The value is always -2147483648 and so ALL kills count regardless of who actually caused them.

Potential solutions:

* Repair the bit flag detection, if Blizzard hasn't removed/broken it

* Failing that, it might be possible to rely on the defeat detection (loot lockout) and count an attempt if and only if the player isn't yet logged out

Notes:

* MOP world bosses are probably affected, as well | 1.0 | The Combat-log based attempts detection doesn't work properly when used to detect outdoor world bosses - Verified for Dunegorger Kraulok. After looking at the code, it appears any UNIT_DIED event is now triggering the detection, when only those that are caused by the player or their party/raid should count.

The implementation appears to rely on bit flags for a certain srcFlag bitmap field (see https://wow.gamepedia.com/API_CombatLogGetCurrentEventInfo), but those are no longer working? The value is always -2147483648 and so ALL kills count regardless of who actually caused them.

Potential solutions:

* Repair the bit flag detection, if Blizzard hasn't removed/broken it

* Failing that, it might be possible to rely on the defeat detection (loot lockout) and count an attempt if and only if the player isn't yet logged out

Notes:

* MOP world bosses are probably affected, as well | priority | the combat log based attempts detection doesn t work properly when used to detect outdoor world bosses verified for dunegorger kraulok after looking at the code it appears any unit died event is now triggering the detection when only those that are caused by the player or their party raid should count the implementation appears to rely on bit flags for a certain srcflag bitmap field see but those are no longer working the value is always and so all kills count regardless of who actually caused them potential solutions repair the bit flag detection if blizzard hasn t removed broken it failing that it might be possible to rely on the defeat detection loot lockout and count an attempt if and only if the player isn t yet logged out notes mop world bosses are probably affected as well | 1 |
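The bit-flag check discussed in the record above can be illustrated with a short Python sketch. The constant values are assumptions taken to match the `COMBATLOG_OBJECT_AFFILIATION_*` flags documented for the WoW API and should be verified there; `caused_by_group` is a hypothetical name and is not Rarity's actual code. The sketch also shows that -2147483648 is the signed 32-bit reading of the bit pattern 0x80000000, in which none of the low affiliation bits are set.

```python
# Assumed flag values (to be verified against the WoW API documentation).
AFFILIATION_MINE = 0x1
AFFILIATION_PARTY = 0x2
AFFILIATION_RAID = 0x4
GROUP_MASK = AFFILIATION_MINE | AFFILIATION_PARTY | AFFILIATION_RAID


def caused_by_group(flags: int) -> bool:
    """Return True if the flags mark the unit as the player, party, or raid.

    Hypothetical helper for illustration only.
    """
    # Mask to 32 bits so a signed value such as -2147483648 is
    # treated as its raw bit pattern (0x80000000).
    raw = flags & 0xFFFFFFFF
    return (raw & GROUP_MASK) != 0


print(hex(-2147483648 & 0xFFFFFFFF))
print(caused_by_group(-2147483648))
print(caused_by_group(0x511))
```

If the flags field really is always 0x80000000 for these events, a mask test like this can never succeed, which is consistent with the issue's suggestion to fall back on a different signal such as the loot lockout.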

810,374 | 30,239,070,418 | IssuesEvent | 2023-07-06 12:22:40 | huridocs/uwazi | https://api.github.com/repos/huridocs/uwazi | closed | Cypress image snapshots not working | Bug :lady_beetle: Sprint Priority: High Frontend :sunglasses: | **Describe the bug**

Cypress image snapshots are likely to be misconfigured since they are not properly reporting changes to the UI.

**To Reproduce**

Steps to reproduce the behavior:

- In the new translations UI, in the component for the translations lists, change the type for the action buttons, so that they visually change.

- Run the E2E relevant to translations.

- There’s no error for the visual change.

**Expected behavior**

We should have a very low threshold of tolerance for visual changes in the UI and the test should fail.

| 1.0 | Cypress image snapshots not working - **Describe the bug**

Cypress image snapshots are likely to be misconfigured since they are not properly reporting changes to the UI.

**To Reproduce**

Steps to reproduce the behavior:

- In the new translations UI, in the component for the translations lists, change the type for the action buttons, so that they visually change.

- Run the E2E relevant to translations.

- There’s no error for the visual change.

**Expected behavior**

We should have a very low threshold of tolerance for visual changes in the UI and the test should fail.

| priority | cypress image snapshots not working describe the bug cypress image snapshots are likely to be misconfigured since they are not properly reporting changes to the ui to reproduce steps to reproduce the behavior in the new translations ui in the component for the translations lists change the type for the action buttons so that they visually change run the relevant to translations there’s no error for the visual change expected behavior we should have a very low threshold of tolerance for visual changes in the ui and the test should fail | 1 |

423,632 | 12,299,364,335 | IssuesEvent | 2020-05-11 12:15:21 | bounswe/bounswe2020group1 | https://api.github.com/repos/bounswe/bounswe2020group1 | closed | Implement the functions for "find similar words" API | priority:high type:implementation | Implementing functions that use the API provided by Datamuse. | 1.0 | Implement the functions for "find similar words" API - Implementing functions that use the API provided by Datamuse. | priority | implement the functions for find similar words api implementing functions that use the api provided by datamuse | 1 |

808,195 | 30,037,669,465 | IssuesEvent | 2023-06-27 13:43:39 | tum-esm/hermes | https://api.github.com/repos/tum-esm/hermes | closed | Send in-flow sensor measurement with every CO2 measurement over MQTT | type:feature status:implemented high-priority scope:sensor | The frequency of the in-flow measurements needs to be higher. (Current implementation every 2 minutes)

Change to directly integrate the in-flow sensor data into the CO2 measurement MQTT data stream. This also makes it more visible what sensor data the CO2 sensor received to perform the correction and allows us to do it ourselves in the future.

Physical Background: The pump creates a flow between 0.4-0.6 ppm. Depending on the flow the underpressure in the system changes. This change has a direct influence on the correction based on pressure in the Vaisala GMP343. | 1.0 | Send in-flow sensor measurement with every CO2 measurement over MQTT - The frequency of the in-flow measurements needs to be higher. (Current implementation every 2 minutes)

Change to directly integrate the in-flow sensor data into the CO2 measurement MQTT data stream. This also makes it more visible what sensor data the CO2 sensor received to perform the correction and allows us to do it ourselves in the future.

Physical Background: The pump creates a flow between 0.4-0.6 ppm. Depending on the flow the underpressure in the system changes. This change has a direct influence on the correction based on pressure in the Vaisala GMP343. | priority | send in flow sensor measurement with every measurement over mqtt the frequency of the in flow measurements needs to be higher current implementation every minutes change to directly integrate the in flow sensor data into the measurement mqtt data stream this also makes it more visible what sensor data the sensor received to perform the correction and allows us to do it ourselves in the future physical background the pump creates a flow between ppm depending on the flow the underpressure in the system changes this change has a direct influence on the correction based on pressure in the vaisala | 1 |

792,351 | 27,956,882,081 | IssuesEvent | 2023-03-24 13:03:33 | ballerina-platform/ballerina-lang | https://api.github.com/repos/ballerina-platform/ballerina-lang | closed | Needs to get the default value of an input parameter in a resource function | Type/Improvement Priority/High Team/jBallerina | **Description:**

When there is an input parameter with a default value in a resource function, there should be a way to get the default value of this parameter. This is a requirement for the Ballerina GraphQL package implementation.

Related: #27417, https://github.com/ballerina-platform/ballerina-standard-library/issues/1266 | 1.0 | Needs to get the default value of an input parameter in a resource function - **Description:**

When there is an input parameter with a default value in a resource function, there should be a way to get the default value of this parameter. This is a requirement for the Ballerina GraphQL package implementation.

Related: #27417, https://github.com/ballerina-platform/ballerina-standard-library/issues/1266 | priority | needs to get the default value of an input parameter in a resource function description when there is an input parameter with a default value in a resource function there should be a way to get the default value of this parameter this is a requirement for the ballerina graphql package implementation related | 1 |

484,359 | 13,938,471,195 | IssuesEvent | 2020-10-22 15:18:24 | wso2/product-is | https://api.github.com/repos/wso2/product-is | opened | Pattern based anomaly detection engine for the cloud | Complexity/High Component/Analytics Priority/Low gateway research | **Is your feature request related to a problem? Please describe.**

$subject allows monitoring an Identity Server cloud deployment for unusual behaviors, based on pattern-based anomalies of various signals coming from the deployment. The engine can be used to trigger alerts in near-realtime, on potential malicious requests, attacks, and other unexpected activities.

**Describe the solution you would prefer**

Come up with an anomaly detection engine PoC, where various signals from the Identity Server deployment (request rate, authentication events, etc.) can be analyzed and compared with patterns observed in the past to identify anomalies. The engine should support various unsupervised analysis mechanisms, due to the vast amount of data that a cloud deployment can generate.
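
As a possible starting point for such a PoC, comparing a current signal against past observations could be sketched with a simple z-score check. This is only an illustrative unsupervised baseline: the function name, the threshold, and the sample request rates are assumptions and are not taken from Identity Server.

```python
from statistics import mean, stdev


def is_anomalous(history, current, threshold=3.0):
    """Flag `current` if it deviates from past observations by more than
    `threshold` standard deviations (hypothetical baseline detector)."""
    if len(history) < 2:
        return False  # not enough history to estimate the spread
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # All past values were identical, so any change counts as a deviation.
        return current != mu
    return abs(current - mu) / sigma > threshold


# Requests per minute observed in the past (made-up sample values).
past_request_rates = [100, 104, 98, 101, 97, 103, 99, 102]

print(is_anomalous(past_request_rates, 101))  # close to the usual rate
print(is_anomalous(past_request_rates, 450))  # sudden spike
```

A real engine would likely need seasonality-aware baselines and streaming state, which this sketch deliberately leaves out.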

**Additional context**

-

| 1.0 | Pattern based anomaly detection engine for the cloud - **Is your feature request related to a problem? Please describe.**

$subject allows monitoring an Identity Server cloud deployment for unusual behaviors, based on pattern-based anomalies of various signals coming from the deployment. The engine can be used to trigger alerts in near-realtime, on potential malicious requests, attacks, and other unexpected activities.

**Describe the solution you would prefer**

Come up with an anomaly detection engine PoC, where various signals from the Identity Server deployment (request rate, authentication events, etc.) can be analyzed and compared with patterns observed in the past to identify anomalies. The engine should support various unsupervised analysis mechanisms, due to the vast amount of data that a cloud deployment can generate.

**Additional context**

-

| priority | pattern based anomaly detection engine for the cloud is your feature request related to a problem please describe subject allows monitoring an identity server cloud deployment for unusual behaviors based on pattern based anomalies of various signals coming from the deployment the engine can be used to trigger alerts in near realtime on potential malicious requests attacks and other unexpected activities describe the solution you would prefer come up with an anomaly detection engine poc where various signals from the identity server deployment request rate authentication events etc can be analyzed in general and compare it with the patterns observed in the past to identify anomalies the engine should support various un supervised analysis mechanisms due to the vast amount of data that can be generated from a cloud deployment additional context | 1 |

184,914 | 6,717,386,738 | IssuesEvent | 2017-10-14 20:35:20 | semperfiwebdesign/all-in-one-seo-pack | https://api.github.com/repos/semperfiwebdesign/all-in-one-seo-pack | opened | Exclude Pages option no longer working | Initial Review Priority | High | As reported by Albert Belzer (belzer9@gmail.com) on October 14, 2017.

In his original e-mail, Albert states that both the "Disable SEO for this post/page option" setting on the Edit screen and the "Exclude Pages" setting in the General Settings are not working.

I checked this and although the former is working, the latter seems like it's not. | 1.0 | Exclude Pages option no longer working - As reported by Albert Belzer (belzer9@gmail.com) on October 14, 2017.

In his original e-mail, Albert states that both the "Disable SEO for this post/page option" setting on the Edit screen and the "Exclude Pages" setting in the General Settings are not working.

I checked this and although the former is working, the latter seems like it's not. | priority | exclude pages option no longer working as reported by albert belzer gmail com on october in his original e mail albert states that both the disable seo for this post page option setting on the edit screen and the exlude pages setting in the general settings are not working i checked this and although the former is working the latter seems like it s not | 1 |

382,199 | 11,302,244,285 | IssuesEvent | 2020-01-17 17:12:56 | wso2/product-apim | https://api.github.com/repos/wso2/product-apim | reopened | Prototype APIs can't be used for API products | 3.1.0 Priority/Highest Resolution/Fixed Type/Bug | $subject.

The inline script is not copied to the API product synapse. Hence invocation fails.

| 1.0 | Prototype APIs can't be used for API products - $subject.

The inline script is not copied to the API product synapse. Hence invocation fails.

| priority | prototype apis can t be used for api products subject the inline script is not copied to the api product synapse hense invocation fails | 1 |

382,645 | 11,309,760,056 | IssuesEvent | 2020-01-19 15:16:11 | FederatedAI/FATE | https://api.github.com/repos/FederatedAI/FATE | closed | Support for secret sharing scheme | enhancement priority:high research | **Is your feature request related to a problem? Please describe.**

A secret sharing scheme is a must-have for the FATE project.

**Describe the solution you'd like**

Do R&D on implementing secret sharing operations, such as:

1. Create Beaver triples

2. Add, Multiply, Divide, Compare, and others

Once the secret sharing operations have been created:

1. Implement secret-sharing-based LR

2. Implement secret-sharing-based FTL

This work does not need to be full-fledged for industrial applications. However, it should help us create various secure federated learning algorithms/prototypes.
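
To make the requested operations concrete, here is a minimal sketch of additive secret sharing with Beaver-triple multiplication. It is a single-process simulation under stated assumptions: the modulus `P`, the trusted-dealer triple generation, and all function names are illustrative choices and are not FATE APIs.

```python
import secrets

# Assumed prime modulus for the share arithmetic (2**61 - 1 is a Mersenne prime).
P = 2**61 - 1


def share(x, n=2):
    """Split x into n additive shares that sum to x modulo P."""
    shares = [secrets.randbelow(P) for _ in range(n - 1)]
    shares.append((x - sum(shares)) % P)
    return shares


def reconstruct(shares):
    """Recombine additive shares into the secret."""
    return sum(shares) % P


def beaver_triple(n=2):
    """Simulate a trusted dealer producing shares of (a, b, c) with c = a * b."""
    a = secrets.randbelow(P)
    b = secrets.randbelow(P)
    return share(a, n), share(b, n), share(a * b % P, n)


def add(xs, ys):
    """Addition is local: each party adds its own two shares."""
    return [(x + y) % P for x, y in zip(xs, ys)]


def multiply(xs, ys, triple):
    """Multiply two shared values using one Beaver triple."""
    a_s, b_s, c_s = triple
    # The parties open the masked values d = x - a and e = y - b.
    d = reconstruct([(x - a) % P for x, a in zip(xs, a_s)])
    e = reconstruct([(y - b) % P for y, b in zip(ys, b_s)])
    # x * y = c + d*b + e*a + d*e; the public term d*e is added by one party only.
    zs = [(c + d * b + e * a) % P for a, b, c in zip(a_s, b_s, c_s)]
    zs[0] = (zs[0] + d * e) % P
    return zs


print(reconstruct(add(share(3), share(4))))
print(reconstruct(multiply(share(6), share(7), beaver_triple())))
```

Division and comparison need additional protocols (for example fixed-point encoding and bit decomposition) and are intentionally not covered here.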

| 1.0 | Support for secret sharing scheme - **Is your feature request related to a problem? Please describe.**

A secret sharing scheme is a must-have for the FATE project.

**Describe the solution you'd like**

Do R&D on implementing secret sharing operations, such as:

1. Create Beaver triples

2. Add, Multiply, Divide, Compare, and others

Once the secret sharing operations have been created:

1. Implement secret-sharing-based LR

2. Implement secret-sharing-based FTL

This work does not need to be full-fledged for industrial applications. However, it should help us create various secure federated learning algorithms/prototypes.

| priority | support for secret sharing scheme is your feature request related to a problem please describe secret sharing scheme is a must have for fate project describe the solution you d like do r d on implementing secret sharing operations such as create beaver triple add multiply division compare and others having secret sharing operations been created then implement secret sharing based lr implement secret sharing based ftl these works do not need to be full fledged for industrial applications however they should be able to help us create various secure federated learning algorithms prototypes | 1 |

72,470 | 3,386,257,735 | IssuesEvent | 2015-11-27 16:22:22 | CosmosOS/Cosmos | https://api.github.com/repos/CosmosOS/Cosmos | closed | Dup tries to pop more stuff from analytical stack than there is! | area_compiler complexity_medium pending_verification priority_high | Log:

```

4> Error: Exception: System.Exception: Error compiling method 'SystemVoidKernelCommandsInputCommand': System.Exception: OpCode IL_014D: Dup tries to pop more stuff from analytical stack than there is!

4> at Cosmos.IL2CPU.ILOpCode.InterpretStackTypes(IDictionary`2 aOpCodes, Stack`1 aStack, Boolean& aSituationChanged, Int32 aMaxRecursionDepth) in C:\Users\Luka\Desktop\Cosmos-master\source\Cosmos.IL2CPU\ILOpCode.cs:line 369

4> at Cosmos.IL2CPU.AppAssembler.InterpretInstructionsToDetermineStackTypes(List`1 aCurrentGroup) in C:\Users\Luka\Desktop\Cosmos-master\source\Cosmos.IL2CPU\AppAssembler.cs:line 714

4> at Cosmos.IL2CPU.AppAssembler.EmitInstructions(MethodInfo aMethod, List`1 aCurrentGroup, Boolean& emitINT3) in C:\Users\Luka\Desktop\Cosmos-master\source\Cosmos.IL2CPU\AppAssembler.cs:line 557

4> at Cosmos.IL2CPU.AppAssembler.ProcessMethod(MethodInfo aMethod, List`1 aOpCodes) in C:\Users\Luka\Desktop\Cosmos-master\source\Cosmos.IL2CPU\AppAssembler.cs:line 514 ---> System.Exception: OpCode IL_014D: Dup tries to pop more stuff from analytical stack than there is!

4> at Cosmos.IL2CPU.ILOpCode.InterpretStackTypes(IDictionary`2 aOpCodes, Stack`1 aStack, Boolean& aSituationChanged, Int32 aMaxRecursionDepth) in C:\Users\Luka\Desktop\Cosmos-master\source\Cosmos.IL2CPU\ILOpCode.cs:line 369

4> at Cosmos.IL2CPU.AppAssembler.InterpretInstructionsToDetermineStackTypes(List`1 aCurrentGroup) in C:\Users\Luka\Desktop\Cosmos-master\source\Cosmos.IL2CPU\AppAssembler.cs:line 714

4> at Cosmos.IL2CPU.AppAssembler.EmitInstructions(MethodInfo aMethod, List`1 aCurrentGroup, Boolean& emitINT3) in C:\Users\Luka\Desktop\Cosmos-master\source\Cosmos.IL2CPU\AppAssembler.cs:line 557

4> at Cosmos.IL2CPU.AppAssembler.ProcessMethod(MethodInfo aMethod, List`1 aOpCodes) in C:\Users\Luka\Desktop\Cosmos-master\source\Cosmos.IL2CPU\AppAssembler.cs:line 514

4> --- End of inner exception stack trace ---

4> at Cosmos.IL2CPU.AppAssembler.ProcessMethod(MethodInfo aMethod, List`1 aOpCodes) in C:\Users\Luka\Desktop\Cosmos-master\source\Cosmos.IL2CPU\AppAssembler.cs:line 529

4> at Cosmos.IL2CPU.ILScanner.Assemble() in C:\Users\Luka\Desktop\Cosmos-master\source\Cosmos.IL2CPU\ILScanner.cs:line 944

4> at Cosmos.IL2CPU.ILScanner.Execute(MethodBase aStartMethod) in C:\Users\Luka\Desktop\Cosmos-master\source\Cosmos.IL2CPU\ILScanner.cs:line 256

4> at Cosmos.IL2CPU.CompilerEngine.Execute() in C:\Users\Luka\Desktop\Cosmos-master\source\Cosmos.IL2CPU\CompilerEngine.cs:line 238

```

And here is the code where it happens:

```C#

public static void InputCommand()

{

Console.Write("D:/command>");

comd = Console.ReadLine();

comd = comd.ToLower();

if (comd == "reboot") h.Power.Restart();

else if (comd == "shutdown") h.Power.Shutdown();

else if (comd == "echo")

{

Console.Write("Echo>");

arg = Console.ReadLine();

Console.WriteLine(arg);

}

else if (comd == "notepad")

{

System.CLI.Applications.Notepad();

}

else if (comd == "cls")

{

Console.Clear();

Console.WriteLine("TriangleOS");

Console.WriteLine("=============================");

}

else if (comd == "soundtest")

{

Console.Write("Frequency>");

arg = Console.ReadLine();

Console.Write("Duration>");

optarg = Console.ReadLine();

Console.Write("Eh, this isn't implemted right now.");

//h.Multimedia.Speakers.CallSound(int.Parse(arg), int.Parse(optarg));

}

else if (comd == "boot")

{

Console.WriteLine("Starting TriangleOS.Drivers . . .");

//ProcessManager.Process Audio = new ProcessManager.Process();

//ProcessManager.Process Graphics = new ProcessManager.Process();

//Graphics.ProcessThread = new System.Threading.Thread(

h.Graphics.LowLevel.init();

//);

//ProcessManager.Process Mouse = new ProcessManager.Process();

//Mouse.ProcessThread = new System.Threading.Thread(

h.Mouse.InitMouse();

//);

//Graphics.Start();

//Mouse.Start();

//Audio.ProcessThread = new System.Threading.Thread(

h.Multimedia.Speakers.IntailizeAudio();

//);

Kernel.GUI();

}

else if (comd == "cliboot")

{

System.CLI.Controls.TextBox Text = new System.CLI.Controls.TextBox();

Text.x = 1;

Text.y = 1;

Text.length = 20;

Text.DrawTextBox();

Text.TypeInto();

System.CLI.Controls.Button OK = new System.CLI.Controls.Button();

OK.y = 22;

OK.x = 1;

OK.width = 6;

OK.height = 1;

OK.text = "OK";

OK.DrawButton();

}

else if (comd == "calculator")

{

System.CLI.Applications.Calculator();

}

else if (comd == "cd")

{

h.Graphics.Console.ErrO("Impossible operation performed. Can't request I/O while it isn't running!");

}

else if (comd == "dir")

{

Console.WriteLine("This isn't folder. you can use <cd> to go up folder.");

}

else if (comd == "paint")

{

System.CLI.Applications.Paint();

}

else if (comd == "changelog")

{

Console.WriteLine("You are running version 0.0.3 Dev. Only Luka see the DEV!");

Console.WriteLine("v0.0.2:");

Console.WriteLine("Blue screen with cursor. not clearing.");

Console.WriteLine("v0.0.3:");

Console.WriteLine("Command Line Shell with Broken CLI, but working unresponsive GUI, but with DOS-like Shell. I/O, Audio, Multithreading, Shutdown doesn't work.");

}

else if (comd == "help")

{

Console.WriteLine("Copyright 2015 Thontelix TriangleOS. Special thanks to Cosmos .net ASM Compiler.");

Console.WriteLine("type CHANGELOG to get version changes.");

Console.WriteLine("How to use:");

Console.WriteLine("After every typed command, press enter. the output of command will be detailed. If there is blinking bottom line cursor, then you need to input something, if it doesn't give any feedback, then its operating a activity. If you want GUI, type 'boot' and press enter.");

Console.WriteLine("Commands:");

Console.WriteLine("shutdown - Gives you ability to safe turn off PC");

Console.WriteLine("boot - Boots you into TriangleOS");

Console.WriteLine("reboot - Reboots your PC");

Console.WriteLine("cd - Travel through directories");

Console.WriteLine("dir - Read content of directory");

Console.WriteLine("echo - Backs string you enter");

Console.WriteLine("shutdown - Backs string you enter");

Console.WriteLine("soundtest - Speakers Drivers. Doesnt work for now");

Console.WriteLine("help - Gives you list of commands.");

Console.WriteLine("paint - Console Paint App. CAUTION:Not for epilepsy persons.");

}

else

{

Console.WriteLine("That command doesn't exist. :(");

}

}

``` | 1.0 | Dup tries to pop more stuff from analytical stack than there is! - Log:

```

4> Error: Exception: System.Exception: Error compiling method 'SystemVoidKernelCommandsInputCommand': System.Exception: OpCode IL_014D: Dup tries to pop more stuff from analytical stack than there is!

4> at Cosmos.IL2CPU.ILOpCode.InterpretStackTypes(IDictionary`2 aOpCodes, Stack`1 aStack, Boolean& aSituationChanged, Int32 aMaxRecursionDepth) in C:\Users\Luka\Desktop\Cosmos-master\source\Cosmos.IL2CPU\ILOpCode.cs:line 369

4> at Cosmos.IL2CPU.AppAssembler.InterpretInstructionsToDetermineStackTypes(List`1 aCurrentGroup) in C:\Users\Luka\Desktop\Cosmos-master\source\Cosmos.IL2CPU\AppAssembler.cs:line 714

4> at Cosmos.IL2CPU.AppAssembler.EmitInstructions(MethodInfo aMethod, List`1 aCurrentGroup, Boolean& emitINT3) in C:\Users\Luka\Desktop\Cosmos-master\source\Cosmos.IL2CPU\AppAssembler.cs:line 557

4> at Cosmos.IL2CPU.AppAssembler.ProcessMethod(MethodInfo aMethod, List`1 aOpCodes) in C:\Users\Luka\Desktop\Cosmos-master\source\Cosmos.IL2CPU\AppAssembler.cs:line 514 ---> System.Exception: OpCode IL_014D: Dup tries to pop more stuff from analytical stack than there is!

4> at Cosmos.IL2CPU.ILOpCode.InterpretStackTypes(IDictionary`2 aOpCodes, Stack`1 aStack, Boolean& aSituationChanged, Int32 aMaxRecursionDepth) in C:\Users\Luka\Desktop\Cosmos-master\source\Cosmos.IL2CPU\ILOpCode.cs:line 369

4> at Cosmos.IL2CPU.AppAssembler.InterpretInstructionsToDetermineStackTypes(List`1 aCurrentGroup) in C:\Users\Luka\Desktop\Cosmos-master\source\Cosmos.IL2CPU\AppAssembler.cs:line 714

4> at Cosmos.IL2CPU.AppAssembler.EmitInstructions(MethodInfo aMethod, List`1 aCurrentGroup, Boolean& emitINT3) in C:\Users\Luka\Desktop\Cosmos-master\source\Cosmos.IL2CPU\AppAssembler.cs:line 557

4> at Cosmos.IL2CPU.AppAssembler.ProcessMethod(MethodInfo aMethod, List`1 aOpCodes) in C:\Users\Luka\Desktop\Cosmos-master\source\Cosmos.IL2CPU\AppAssembler.cs:line 514

4> --- End of inner exception stack trace ---

4> at Cosmos.IL2CPU.AppAssembler.ProcessMethod(MethodInfo aMethod, List`1 aOpCodes) in C:\Users\Luka\Desktop\Cosmos-master\source\Cosmos.IL2CPU\AppAssembler.cs:line 529

4> at Cosmos.IL2CPU.ILScanner.Assemble() in C:\Users\Luka\Desktop\Cosmos-master\source\Cosmos.IL2CPU\ILScanner.cs:line 944

4> at Cosmos.IL2CPU.ILScanner.Execute(MethodBase aStartMethod) in C:\Users\Luka\Desktop\Cosmos-master\source\Cosmos.IL2CPU\ILScanner.cs:line 256

4> at Cosmos.IL2CPU.CompilerEngine.Execute() in C:\Users\Luka\Desktop\Cosmos-master\source\Cosmos.IL2CPU\CompilerEngine.cs:line 238

```

And here is the code where it happens:

```C#

public static void InputCommand()

{

Console.Write("D:/command>");

comd = Console.ReadLine();

comd = comd.ToLower();

if (comd == "reboot") h.Power.Restart();

else if (comd == "shutdown") h.Power.Shutdown();

else if (comd == "echo")

{

Console.Write("Echo>");

arg = Console.ReadLine();

Console.WriteLine(arg);

}

else if (comd == "notepad")

{

System.CLI.Applications.Notepad();

}

else if (comd == "cls")

{

Console.Clear();

Console.WriteLine("TriangleOS");

Console.WriteLine("=============================");

}

else if (comd == "soundtest")

{

Console.Write("Frequency>");

arg = Console.ReadLine();

Console.Write("Duration>");

optarg = Console.ReadLine();

Console.Write("Eh, this isn't implemted right now.");

//h.Multimedia.Speakers.CallSound(int.Parse(arg), int.Parse(optarg));

}

else if (comd == "boot")

{

Console.WriteLine("Starting TriangleOS.Drivers . . .");

//ProcessManager.Process Audio = new ProcessManager.Process();

//ProcessManager.Process Graphics = new ProcessManager.Process();

//Graphics.ProcessThread = new System.Threading.Thread(

h.Graphics.LowLevel.init();

//);

//ProcessManager.Process Mouse = new ProcessManager.Process();

//Mouse.ProcessThread = new System.Threading.Thread(

h.Mouse.InitMouse();

//);

//Graphics.Start();

//Mouse.Start();

//Audio.ProcessThread = new System.Threading.Thread(

h.Multimedia.Speakers.IntailizeAudio();

//);

Kernel.GUI();

}

else if (comd == "cliboot")

{

System.CLI.Controls.TextBox Text = new System.CLI.Controls.TextBox();

Text.x = 1;

Text.y = 1;

Text.length = 20;

Text.DrawTextBox();

Text.TypeInto();

System.CLI.Controls.Button OK = new System.CLI.Controls.Button();

OK.y = 22;

OK.x = 1;

OK.width = 6;

OK.height = 1;

OK.text = "OK";

OK.DrawButton();

}

else if (comd == "calculator")

{

System.CLI.Applications.Calculator();

}

else if (comd == "cd")

{

h.Graphics.Console.ErrO("Impossible operation performed. Can't request I/O while it isn't running!");

}

else if (comd == "dir")

{

Console.WriteLine("This isn't folder. you can use <cd> to go up folder.");

}

else if (comd == "paint")

{

System.CLI.Applications.Paint();

}

else if (comd == "changelog")

{

Console.WriteLine("You are running version 0.0.3 Dev. Only Luka see the DEV!");

Console.WriteLine("v0.0.2:");

Console.WriteLine("Blue screen with cursor. not clearing.");

Console.WriteLine("v0.0.3:");

Console.WriteLine("Command Line Shell with Broken CLI, but working unresponsive GUI, but with DOS-like Shell. I/O, Audio, Multithreading, Shutdown doesn't work.");

}

else if (comd == "help")

{

Console.WriteLine("Copyright 2015 Thontelix TriangleOS. Special thanks to Cosmos .net ASM Compiler.");

Console.WriteLine("type CHANGELOG to get version changes.");

Console.WriteLine("How to use:");

Console.WriteLine("After every typed command, press enter. the output of command will be detailed. If there is blinking bottom line cursor, then you need to input something, if it doesn't give any feedback, then its operating a activity. If you want GUI, type 'boot' and press enter.");

Console.WriteLine("Commands:");

Console.WriteLine("shutdown - Gives you ability to safe turn off PC");

Console.WriteLine("boot - Boots you into TriangleOS");

Console.WriteLine("reboot - Reboots your PC");

Console.WriteLine("cd - Travel through directories");

Console.WriteLine("dir - Read content of directory");

Console.WriteLine("echo - Backs string you enter");

Console.WriteLine("shutdown - Backs string you enter");

Console.WriteLine("soundtest - Speakers Drivers. Doesnt work for now");

Console.WriteLine("help - Gives you list of commands.");

Console.WriteLine("paint - Console Paint App. CAUTION:Not for epilepsy persons.");

}

else

{

Console.WriteLine("That command doesn't exist. :(");

}

}

``` | priority | dup tries to pop more stuff from analytical stack than there is log error exception system exception error compiling method systemvoidkernelcommandsinputcommand system exception opcode il dup tries to pop more stuff from analytical stack than there is at cosmos ilopcode interpretstacktypes idictionary aopcodes stack astack boolean asituationchanged amaxrecursiondepth in c users luka desktop cosmos master source cosmos ilopcode cs line at cosmos appassembler interpretinstructionstodeterminestacktypes list acurrentgroup in c users luka desktop cosmos master source cosmos appassembler cs line at cosmos appassembler emitinstructions methodinfo amethod list acurrentgroup boolean in c users luka desktop cosmos master source cosmos appassembler cs line at cosmos appassembler processmethod methodinfo amethod list aopcodes in c users luka desktop cosmos master source cosmos appassembler cs line system exception opcode il dup tries to pop more stuff from analytical stack than there is at cosmos ilopcode interpretstacktypes idictionary aopcodes stack astack boolean asituationchanged amaxrecursiondepth in c users luka desktop cosmos master source cosmos ilopcode cs line at cosmos appassembler interpretinstructionstodeterminestacktypes list acurrentgroup in c users luka desktop cosmos master source cosmos appassembler cs line at cosmos appassembler emitinstructions methodinfo amethod list acurrentgroup boolean in c users luka desktop cosmos master source cosmos appassembler cs line at cosmos appassembler processmethod methodinfo amethod list aopcodes in c users luka desktop cosmos master source cosmos appassembler cs line end of inner exception stack trace at cosmos appassembler processmethod methodinfo amethod list aopcodes in c users luka desktop cosmos master source cosmos appassembler cs line at cosmos ilscanner assemble in c users luka desktop cosmos master source cosmos ilscanner cs line at cosmos ilscanner execute methodbase astartmethod in c users luka 
desktop cosmos master source cosmos ilscanner cs line at cosmos compilerengine execute in c users luka desktop cosmos master source cosmos compilerengine cs line and there is code where it heappen c public static void inputcommand console write d command comd console readline comd comd tolower if comd reboot h power restart else if comd shutdown h power shutdown else if comd echo console write echo arg console readline console writeline arg else if comd notepad system cli applications notepad else if comd cls console clear console writeline triangleos console writeline else if comd soundtest console write frequency arg console readline console write duration optarg console readline console write eh this isn t implemted right now h multimedia speakers callsound int parse arg int parse optarg else if comd boot console writeline starting triangleos drivers processmanager process audio new processmanager process processmanager process graphics new processmanager process graphics processthread new system threading thread h graphics lowlevel init processmanager process mouse new processmanager process mouse processthread new system threading thread h mouse initmouse graphics start mouse start audio processthread new system threading thread h multimedia speakers intailizeaudio kernel gui else if comd cliboot system cli controls textbox text new system cli controls textbox text x text y text length text drawtextbox text typeinto system cli controls button ok new system cli controls button ok y ok x ok width ok height ok text ok ok drawbutton else if comd calculator system cli applications calculator else if comd cd h graphics console erro impossible operation performed can t request i o while it isn t running else if comd dir console writeline this isn t folder you can use to go up folder else if comd paint system cli applications paint else if comd changelog console writeline you are running version dev only luka see the dev console writeline console writeline blue screen 
with cursor not clearing console writeline console writeline command line shell with broken cli but working unresponsive gui but with dos like shell i o audio multithreading shutdown doesn t work else if comd help console writeline copyright thontelix triangleos special thanks to cosmos net asm compiler console writeline type changelog to get version changes console writeline how to use console writeline after every typed command press enter the output of command will be detailed if there is blinking bottom line cursor then you need to input something if it doesn t give any feedback then its operating a activity if you want gui type boot and press enter console writeline commands console writeline shutdown gives you ability to safe turn off pc console writeline boot boots you into triangleos console writeline reboot reboots your pc console writeline cd travel through directories console writeline dir read content of directory console writeline echo backs string you enter console writeline shutdown backs string you enter console writeline soundtest speakers drivers doesnt work for now console writeline help gives you list of commands console writeline paint console paint app caution not for epilepsy persons else console writeline that command doesn t exist | 1 |

240,185 | 7,800,589,404 | IssuesEvent | 2018-06-09 11:19:53 | tine20/Tine-2.0-Open-Source-Groupware-and-CRM | https://api.github.com/repos/tine20/Tine-2.0-Open-Source-Groupware-and-CRM | closed | 0010214:

improve calendar performance by reducing the number of recurring events fetched | Calendar Mantis high priority | **Reported by pschuele on 5 Sep 2014 16:16**

**Version:** Collin (2013.10.8crowdfunding2)

improve calendar performance by reducing the number of recurring events fetched

-> because we load all related data for every recurring candidate even if it does not match the period filter
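
One way to read this proposal is as a cheap pre-filter that runs before any related data is loaded. The sketch below is written in Python purely for illustration; the `RecurCandidate` fields and function names are hypothetical and are not the project's actual data model.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class RecurCandidate:
    # Hypothetical minimal view of a recurring base event.
    event_id: int
    dtstart: datetime
    rrule_until: Optional[datetime]  # None means the series never ends


def may_occur_in_period(candidate, period_from, period_until):
    """Cheap check using only the base event's own columns.

    Simplification: event duration is ignored, so a long occurrence that
    starts before the period and runs into it is not considered.
    """
    if candidate.dtstart >= period_until:
        return False  # the series starts after the requested period
    if candidate.rrule_until is not None and candidate.rrule_until < period_from:
        return False  # the series ended before the requested period
    return True


def candidates_needing_related_data(candidates, period_from, period_until):
    """Keep only candidates for which related data is worth loading."""
    return [c for c in candidates if may_occur_in_period(c, period_from, period_until)]


period_from = datetime(2014, 9, 1)
period_until = datetime(2014, 10, 1)
candidates = [
    RecurCandidate(1, datetime(2014, 1, 6), None),                   # open-ended series
    RecurCandidate(2, datetime(2013, 3, 1), datetime(2013, 12, 31)),  # ended last year
    RecurCandidate(3, datetime(2015, 1, 1), None),                   # starts next year
]
kept = candidates_needing_related_data(candidates, period_from, period_until)
print([c.event_id for c in kept])
```

Only the surviving candidates would then go through the expensive step of loading related data and computing occurrences.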

| 1.0 | 0010214:

improve calendar performance by reducing the number of recurring events fetched - **Reported by pschuele on 5 Sep 2014 16:16**

**Version:** Collin (2013.10.8crowdfunding2)

improve calendar performance by reducing the number of recurring events fetched

-> because we load all related data for every recurring candidate even if it does not match the period filter

| priority | improve calendar performance by reducing the number of recurring events fetched reported by pschuele on sep version collin improve calendar performance by reducing the number of recurring events fetched gt because we load all related data for every recurring candidate even if it does not match the period filter | 1 |

82,368 | 3,605,944,718 | IssuesEvent | 2016-02-04 08:57:04 | TrinityCore/TrinityCore | https://api.github.com/repos/TrinityCore/TrinityCore | closed | [3.3.5] [Script] ICC Emblem of Frost bug | Branch-3.3.5a Comp-Core Priority-High | Dead characters get frost without raid save !

How to reproduce:

1- Need 2 characters. Create a raid and enter ICC.

2- Before the first boss, let one of the characters die and release spirit. Don't resurrect.

3- Kill the first boss with the other character.

4- Both characters get the frost, but the dead character didn't get a save and can do it again!

This also worked for the next bosses.

rev: c04c409 | 1.0 | [3.3.5] [Script] ICC Emblem of Frost bug - Dead characters get frost without raid save !

How to reproduce:

1- Need 2 characters. Create a raid and enter ICC.

2- Before the first boss, let one of the characters die and release spirit. Don't resurrect.

3- Kill the first boss with the other character.

4- Both characters get the frost, but the dead character didn't get a save and can do it again!

This also worked for the next bosses.

rev: c04c409 | priority | icc emblem of frost bug dead characters get frost without raid save how to reproduce need character create a raid and enter into icc before first boss let s one of characters die and realese spirit don t resurrect kill first boss with another character two characters get the frost but the dead character did nt get save and can do it again this worked for next bosses rev | 1 |

757,617 | 26,521,577,842 | IssuesEvent | 2023-01-19 03:30:49 | pibolib/hack16-2 | https://api.github.com/repos/pibolib/hack16-2 | closed | Implement enemy dodge system | enhancement gameplay priority:high | This requires a rewrite to the way player bullets are handled in regards to hurting the enemy.

Specifications:

1. Remove direct call to Enemy:take_damage()

2. ~~Create signal on Enemy for bullet_collide~~

**Just calls the collision function directly, no signal needed**

4. ~~On bullet_collide, handle on a case by case (inherited class specific) basis~~

**Handles movement on a case by case basis, dodge() super function to always be called in inherited classes**

6. Always handle dodges before taking damage | 1.0 | Implement enemy dodge system - This requires a rewrite to the way player bullets are handled in regards to hurting the enemy.

Specifications:

1. Remove direct call to Enemy:take_damage()

2. ~~Create signal on Enemy for bullet_collide~~

**Just calls the collision function directly, no signal needed**

4. ~~On bullet_collide, handle on a case by case (inherited class specific) basis~~

**Handles movement on a case by case basis, dodge() super function to always be called in inherited classes**

6. Always handle dodges before taking damage | priority | implement enemy dodge system this requires a rewrite to the way player bullets are handled in regards to hurting the enemy specifications remove direct call to enemy take damage create signal on enemy for bullet collide just calls the collision function directly no signal needed on bullet collide handle on a case by case inherited class specific basis handles movement on a case by case basis dodge super function to always be called in inherited classes always handle dodges before taking damage | 1 |

766,406 | 26,882,646,626 | IssuesEvent | 2023-02-05 20:28:24 | sczerwinski/wavefront-obj-intellij-plugin | https://api.github.com/repos/sczerwinski/wavefront-obj-intellij-plugin | closed | Objects without material (or with non-existing material) not rendered | type:bug resolution:done priority:high component:3d | ### Steps

1. Remove all material references from OBJ file.

OR

2. Remove the material used in an OBJ file.

### Result

The OBJ file is not rendered.

### Expected Result

The OBJ file should be normally rendered, just without a material (default textures/colours).
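
The expected behaviour amounts to a material lookup with a fallback. The sketch below is in Python for illustration only; the `Material` type, the default colour, and `resolve_material` are hypothetical names and do not come from the plugin's code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Material:
    name: str
    diffuse: tuple = (0.8, 0.8, 0.8)  # assumed neutral grey default colour


DEFAULT_MATERIAL = Material(name="<default>")


def resolve_material(materials, name):
    """Return the named material, or a default one when the OBJ file has no
    material reference or refers to a material that does not exist."""
    if name is None:
        return DEFAULT_MATERIAL
    return materials.get(name, DEFAULT_MATERIAL)


# Materials as they might be parsed from an MTL file (sample data).
materials = {"red": Material("red", (1.0, 0.0, 0.0))}

print(resolve_material(materials, "red").name)
print(resolve_material(materials, None).name)
print(resolve_material(materials, "missing").name)
```

With a fallback like this, the renderer never has to skip an object because its material is absent.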

| 1.0 | Objects without material (or with non-existing material) not rendered - ### Steps

1. Remove all material references from OBJ file.

OR

2. Remove the material used in an OBJ file.

### Result

The OBJ file is not rendered.

### Expected Result

The OBJ file should be normally rendered, just without a material (default textures/colours).

| priority | objects without material or with non existing material not rendered steps remove all material references from obj file or remove the material used in an obj file result the obj file is not rendered expected result the obj file should be normally rendered just without a material default textures colours | 1 |
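
The expected behaviour above amounts to a fallback lookup. The sketch below is in Python for illustration only and does not reflect the plugin's actual code; `Material`, `DEFAULT_MATERIAL`, and `resolve_material` are hypothetical names, and the grey default colour is an assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Material:
    name: str
    diffuse: tuple = (0.8, 0.8, 0.8)  # assumed neutral grey default


DEFAULT_MATERIAL = Material(name="<default>")


def resolve_material(name, materials):
    """Return the named material, or the default when the name is missing or unknown."""
    if name is None:
        return DEFAULT_MATERIAL
    return materials.get(name, DEFAULT_MATERIAL)
```

With a lookup like this, faces that reference no material, or a material that no longer exists, still reach the renderer with usable values instead of being skipped.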

95,990 | 3,962,917,705 | IssuesEvent | 2016-05-02 18:33:44 | fgpv-vpgf/fgpv-vpgf | https://api.github.com/repos/fgpv-vpgf/fgpv-vpgf | closed | Support for Esri Feature Service Symbology | addition: feature priority: high | The Esri Feature Service does not contain a legend endpoint which is where the viewer will by default attempt to obtain symbology for use in the legend/layer selector. Instead, the viewer should interrogate the service and interpret the Esri symbology values to generate an appropriate graphic.

Range, Unique Value and Simple rendering should be supported. | 1.0 | Support for Esri Feature Service Symbology - The Esri Feature Service does not contain a legend endpoint which is where the viewer will by default attempt to obtain symbology for use in the legend/layer selector. Instead, the viewer should interrogate the service and interpret the Esri symbology values to generate an appropriate graphic.

Range, Unique Value and Simple rendering should be supported. | priority | support for esri feature service symbology the esri feature service does not contain a legend endpoint which is where the viewer will by default attempt to obtain symbology for use in the legend layer selector instead the viewer should interrogate the service and interpret the esri symbology values to generate an appropriate graphic range unique value and simple rendering should be supported | 1 |
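
One way to interpret the three renderer kinds named above is sketched below in Python. It is an illustration under assumptions, not the viewer's implementation: `legend_entries` is a hypothetical helper, the input is assumed to be the renderer object from a layer's drawing info, and the key names (`simple`, `uniqueValue`, `classBreaks`, `uniqueValueInfos`, `classBreakInfos`, `defaultSymbol`, `defaultLabel`) follow the ArcGIS REST renderer JSON as I understand it and should be checked against the actual service response.

```python
def legend_entries(renderer):
    """Return a list of (label, symbol) pairs for a renderer dictionary."""
    kind = renderer.get("type")
    if kind == "simple":
        # A simple renderer draws every feature with one symbol.
        return [(renderer.get("label", ""), renderer["symbol"])]
    if kind == "uniqueValue":
        infos = renderer.get("uniqueValueInfos", [])
    elif kind == "classBreaks":  # the "Range" case in the issue text
        infos = renderer.get("classBreakInfos", [])
    else:
        raise ValueError(f"unsupported renderer type: {kind!r}")

    entries = [(info.get("label", ""), info["symbol"]) for info in infos]

    # Features matching no value or range fall back to the default symbol, if any.
    default_symbol = renderer.get("defaultSymbol")
    if default_symbol is not None:
        entries.append((renderer.get("defaultLabel", ""), default_symbol))
    return entries
```

Each returned symbol would still need to be turned into a graphic; that step is left out here.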

80,367 | 3,561,152,316 | IssuesEvent | 2016-01-23 16:13:02 | cuckoosandbox/cuckoo | https://api.github.com/repos/cuckoosandbox/cuckoo | closed | Memory dump only readable by root | Bug (to verify) High Priority | We are running cuckoo from git on ubuntu 14.04, and when a memory dump is taken by libvirt the file is only readable by root, which causes problems for volatility.

Just creating the file before calling libvirt seems to solve the problem.

My ugly hack in cuckoo/lib/common/abstracts.py from line 422:

    def dump_memory(self, label, path):
        """Takes a memory dump.
        @param path: path to where to store the memory dump.
        """
        log.debug("Dumping memory for machine %s", label)
        # Creating file before call to libvirt to get read permissions for user after dump
        open(path, 'w').write('')
        conn = self._connect()
        try:
            self.vms[label].coreDump(path, flags=libvirt.VIR_DUMP_MEMORY_ONLY)
        except libvirt.libvirtError as e:
            raise CuckooMachineError("Error dumping memory virtual machine "
                                     "{0}: {1}".format(label, e))
        finally:
            self._disconnect(conn)

| 1.0 | Memory dump only readable by root - We are running cuckoo from git on ubuntu 14.04, and when a memory dump is taken by libvirt the file is only readable by root, which causes problems for volatility.

Just creating the file before calling libvirt seems to solve the problem.

My ugly hack in cuckoo/lib/common/abstracts.py from line 422:

    def dump_memory(self, label, path):
        """Takes a memory dump.
        @param path: path to where to store the memory dump.
        """
        log.debug("Dumping memory for machine %s", label)
        # Creating file before call to libvirt to get read permissions for user after dump
        open(path, 'w').write('')
        conn = self._connect()
        try:
            self.vms[label].coreDump(path, flags=libvirt.VIR_DUMP_MEMORY_ONLY)
        except libvirt.libvirtError as e:
            raise CuckooMachineError("Error dumping memory virtual machine "
                                     "{0}: {1}".format(label, e))
        finally:
            self._disconnect(conn)

| priority | memory dump only readable by root we are running cuckoo from git on ubuntu and when a memory dump is taken by libvirt the file is only readable by root which causes problems for volatility just creating the file before calling libvirt seems to solve the problem my ugly hack in cuckoo lib common abstracts py from line def dump memory self label path takes a memory dump param path path to where to store the memory dump log debug dumping memory for machine s label creating file before call to libvirt to get read permissions for user after dump open path w write conn self connect try self vms coredump path flags libvirt vir dump memory only except libvirt libvirterror as e raise cuckoomachineerror error dumping memory virtual machine format label e finally self disconnect conn | 1 |
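
The workaround above (creating the file before libvirt writes to it) could be made a little more explicit, as in the sketch below. `precreate_dump_file` is a hypothetical helper, not part of Cuckoo, and the `0o644` mode is an assumption. Whether libvirt keeps the ownership and permissions of an existing file depends on the host configuration, so treat this as something to test rather than a guaranteed fix.

```python
import os


def precreate_dump_file(path, mode=0o644):
    """Create (or truncate) an empty file owned by the current user.

    The mode is applied with fchmod so the result does not depend on the umask.
    """
    fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_TRUNC, mode)
    try:
        os.fchmod(fd, mode)
    finally:
        os.close(fd)
```

Unlike `open(path, 'w').write('')`, this closes the file descriptor explicitly and sets the permissions deliberately instead of relying on defaults.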

175,772 | 6,553,922,830 | IssuesEvent | 2017-09-06 01:58:47 | kinueng/bluetooth-scale | https://api.github.com/repos/kinueng/bluetooth-scale | closed | Start scanning after disconnecting from BLE device | enhancement priority/high status/inprogress | The end goal is to allow the app to always be running, but the BLE device can come and go as it pleases. | 1.0 | Start scanning after disconnecting from BLE device - The end goal is to allow the app to always be running, but the BLE device can come and go as it pleases. | priority | start scanning after disconnecting from ble device the end goal is to allow the app to always be running but the ble device can come and go as it pleases | 1 |

73,375 | 3,411,272,070 | IssuesEvent | 2015-12-05 00:57:49 | Ecotrust/COMPASS | https://api.github.com/repos/Ecotrust/COMPASS | closed | info icon on 'Active Tab' layers does nothing | bug High Priority | Either create info-dropdowns (like first tab) or remove icons. | 1.0 | info icon on 'Active Tab' layers does nothing - Either create info-dropdowns (like first tab) or remove icons. | priority | info icon on active tab layers does nothing either create info dropdowns like first tab or remove icons | 1 |

304,248 | 9,329,409,585 | IssuesEvent | 2019-03-28 02:15:41 | InQuest/ThreatKB | https://api.github.com/repos/InQuest/ThreatKB | closed | Dynamically update the title. | high-priority | Let the title be "ThreatKB" everywhere, unless, you're viewing or editing an artifact (C2 IP, C2 DNS, YARA). In that case, make the titles:

* KB: $IP

* KB: $Domain

* KB: YARA Signature Name

Will help identify the appropriate tabs at a glance, when we have multiple open. | 1.0 | Dynamically update the title. - Let the title be "ThreatKB" everywhere, unless, you're viewing or editing an artifact (C2 IP, C2 DNS, YARA). In that case, make the titles:

* KB: $IP

* KB: $Domain

* KB: YARA Signature Name

Will help identify the appropriate tabs at a glance, when we have multiple open. | priority | dynamically update the title let the title be threatkb everywhere unless you re viewing or editing an artifact ip dns yara in that case make the titles kb ip kb domain kb yara signature name will help identify the appropriate tabs at a glance when we have multiple open | 1 |
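
The title rule above can be written as a small function. This Python sketch is only an illustration of the rule; `page_title` and the artifact type keys (`c2ip`, `c2dns`, `yara`) are hypothetical and do not come from the ThreatKB code base.

```python
ARTIFACT_TYPES = {"c2ip", "c2dns", "yara"}  # assumed identifiers for the three artifact kinds


def page_title(artifact_type=None, artifact_name=None):
    """Return 'KB: <name>' when viewing or editing an artifact, else 'ThreatKB'."""
    if artifact_type in ARTIFACT_TYPES and artifact_name:
        return f"KB: {artifact_name}"
    return "ThreatKB"
```

For example, `page_title("c2ip", "203.0.113.7")` gives `KB: 203.0.113.7`, while any page that is not an artifact view falls back to `ThreatKB`.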

793,110 | 27,983,086,942 | IssuesEvent | 2023-03-26 11:46:15 | AY2223S2-CS2103T-W12-1/tp | https://api.github.com/repos/AY2223S2-CS2103T-W12-1/tp | closed | Update isSamePerson check to check for equality of nric only | type.Bug priority.High severity.Low | Currently, isSamePerson checks for equality of name and nric.

This may not match expectations as nric should uniquely identify a person and each person should only have one main name. | 1.0 | Update isSamePerson check to check for equality of nric only - Currently, isSamePerson checks for equality of name and nric.

This may not match expectations as nric should uniquely identify a person and each person should only have one main name. | priority | update issameperson check to check for equality of nric only currently issameperson checks for equality of name and nric this may not match expectations as nric should uniquely identify a person and each person should only have one main name | 1 |
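
The proposed check can be sketched as follows. The project itself is not written in Python; this is an illustration of the comparison only, with a hypothetical `Person` class and an exact string match on `nric` assumed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Person:
    name: str
    nric: str

    def is_same_person(self, other):
        """Two records refer to the same person exactly when their NRICs match."""
        if other is None:
            return False
        return self.nric == other.nric
```

Under this rule, two records with the same NRIC but different names count as the same person, and two records with the same name but different NRICs do not.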

530,370 | 15,421,828,030 | IssuesEvent | 2021-03-05 13:36:29 | Systems-Learning-and-Development-Lab/MMM | https://api.github.com/repos/Systems-Learning-and-Development-Lab/MMM | closed | Remove existing ball traces if balls are erased | enhancement priority-high | If user clicks on buttons in the UI to remove balls, then traces should be removed as well.

In response to [comment](https://github.com/Systems-Learning-and-Development-Lab/MMM/issues/40#issuecomment-789879108) | 1.0 | Remove existing ball traces if balls are erased - If user clicks on buttons in the UI to remove balls, then traces should be removed as well.

In response to [comment](https://github.com/Systems-Learning-and-Development-Lab/MMM/issues/40#issuecomment-789879108) | priority | remove existing ball traces if balls are erased if user clicks on buttons in the ui to remove balls then traces should be removed as well in response to | 1 |

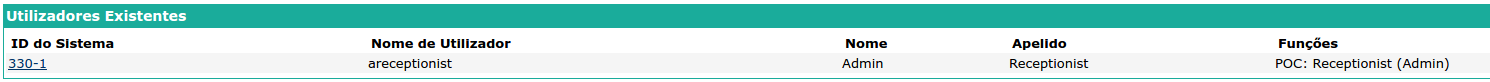

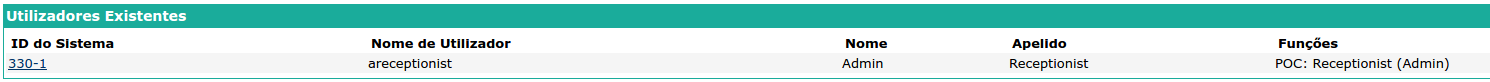

230,200 | 7,605,351,971 | IssuesEvent | 2018-04-30 08:35:34 | esaude/esaude-emr-poc | https://api.github.com/repos/esaude/esaude-emr-poc | closed | [Access Control] Cannot login with a user with receptionist (admin) role only | High Priority bug | Actual Results

--

The system is not permitting the login of a user with receptionist (admin) role only

Expected results

--

Should allow the login for all the POC roles

Steps to reproduce

--

In OpenMRS admin page create a user with POC: Receptionist (Admin) role only:

Screenshot/Attachment (Optional)

--

A visual description of the unexpected behaviour.

| 1.0 | [Access Control] Cannot login with a user with receptionist (admin) role only - Actual Results

--

The system is not permitting the login of a user with receptionist (admin) role only

Expected results

--

Should allow the login for all the POC roles

Steps to reproduce

--

In OpenMRS admin page create a user with POC: Receptionist (Admin) role only:

Screenshot/Attachment (Optional)

--

A visual description of the unexpected behaviour.

| priority | cannot login with a user with receptionist admin role only actual results the system is not permitting the login of a user with receptionist admin role only expected results should allow the login for all the poc roles steps to reproduce in openmrs admin page create a user with poc receptionist admin role only screenshot attachment optional a visual description of the unexpected behaviour | 1 |

82,151 | 3,603,541,996 | IssuesEvent | 2016-02-03 19:26:57 | dart-lang/sdk | https://api.github.com/repos/dart-lang/sdk | closed | ListMixin map/extend generic method comments | analyzer-strong-mode area-analyzer priority-high | Right now they don't match the generic method comment signatures on Iterable, so this is an error:

```dart

class _FbList<E> extends Object with ListMixin<E> implements List<E>

```

Marked high-pri because this is easy to hit.

CC @leafpetersen, as this is related to SDK working with strong mode.

(this is sort of area-sdk, but only affects Analyzer strong mode. Not sure best way to label it.) | 1.0 | ListMixin map/extend generic method comments - Right now they don't match the generic method comment signatures on Iterable, so this is an error:

```dart

class _FbList<E> extends Object with ListMixin<E> implements List<E>

```

Marked high-pri because this is easy to hit.

CC @leafpetersen, as this is related to SDK working with strong mode.

(this is sort of area-sdk, but only affects Analyzer strong mode. Not sure best way to label it.) | priority | listmixin map extend generic method comments right now they don t match the generic method comment signatures on iterable so this is an error dart class fblist extends object with listmixin implements list marked high pri because this is easy to hit cc leafpetersen as this is related to sdk working with strong mode this is sort of area sdk but only affects analyzer strong mode not sure best way to label it | 1 |