Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 855 | labels stringlengths 4 721 | body stringlengths 1 261k | index stringclasses 13 values | text_combine stringlengths 96 261k | label stringclasses 2 values | text stringlengths 96 240k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

725,614 | 24,968,216,343 | IssuesEvent | 2022-11-01 21:27:22 | jesseClegg/Calorie-Tracker-Client-Software-Engineering | https://api.github.com/repos/jesseClegg/Calorie-Tracker-Client-Software-Engineering | closed | Auth | high priority | Need a login page and full auth integration into the app.

This would also be a good time to add Joey's landing page.

We also should add joey as collab | 1.0 | Auth - Need a login page and full auth integration into the app.

This would also be a good time to add Joey's landing page.

We also should add joey as collab | priority | auth need a login page and full auth integration into the app this would also be a good time to add joey s landing page we also should add joey as collab | 1 |

186,021 | 6,732,801,509 | IssuesEvent | 2017-10-18 12:54:23 | ballerinalang/composer | https://api.github.com/repos/ballerinalang/composer | closed | The text boxes are not well formatted in try-it | 0.94-pre-release Priority/High Severity/Minor Type/Bug | The text boxes are not well formatted in try-it

| 1.0 | The text boxes are not well formatted in try-it - The text boxes are not well formatted in try-it

| priority | the text boxes are not well formatted in try it the text boxes are not well formatted in try it | 1 |

268,994 | 8,418,923,178 | IssuesEvent | 2018-10-15 03:45:02 | CS2103-AY1819S1-W17-4/main | https://api.github.com/repos/CS2103-AY1819S1-W17-4/main | closed | Filter/search by 'and'/'or' composite predicates | priority.High type.Enhancement | - Support 'and' and 'or' operations, e.g. "tag>CS2101 or tag>CS2103T" | 1.0 | Filter/search by 'and'/'or' composite predicates - - Support 'and' and 'or' operations, e.g. "tag>CS2101 or tag>CS2103T" | priority | filter search by and or composite predicates support and and or operations e g tag or tag | 1 |
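
A minimal sketch of how 'and'/'or' composite predicates could be built, written in Python purely for illustration (the project itself may use a different language). The helpers `tag_is`, `all_of`, `any_of` and `names_matching`, and the dict-shaped records, are hypothetical and not taken from the project's code.

```python
from typing import Callable, List

Predicate = Callable[[dict], bool]


def tag_is(tag: str) -> Predicate:
    """Hypothetical leaf predicate: true when the record carries the tag."""
    return lambda record: tag in record.get("tags", [])


def all_of(*predicates: Predicate) -> Predicate:
    """'and' composition: every sub-predicate must hold."""
    return lambda record: all(p(record) for p in predicates)


def any_of(*predicates: Predicate) -> Predicate:
    """'or' composition: at least one sub-predicate must hold."""
    return lambda record: any(p(record) for p in predicates)


def names_matching(predicate: Predicate, records: List[dict]) -> List[str]:
    """Return the names of the records accepted by the predicate, in order."""
    return [r["name"] for r in records if predicate(r)]


# Made-up sample data for the sketch.
records = [
    {"name": "Alice", "tags": ["CS2101"]},
    {"name": "Bob", "tags": ["CS2103T"]},
    {"name": "Carol", "tags": ["CS2101", "CS2103T"]},
    {"name": "Dave", "tags": []},
]

# Corresponds to the query "tag>CS2101 or tag>CS2103T" from the issue.
either = any_of(tag_is("CS2101"), tag_is("CS2103T"))
both = all_of(tag_is("CS2101"), tag_is("CS2103T"))
```

Because each combinator returns another predicate, nested queries such as `any_of(both, tag_is("CS2040"))` compose without special cases.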

560,479 | 16,597,592,343 | IssuesEvent | 2021-06-01 15:07:23 | airbytehq/airbyte | https://api.github.com/repos/airbytehq/airbyte | closed | make normalization's hash inclusion in unnested table names optional | SM priority/high type/enhancement | The release of this change depends on https://github.com/airbytehq/airbyte/issues/3522

Consumers of tables produced by normalization cannot easily use nested tables due to the presence of hashes (used for collision prevention). These hashes are generally unnecessary outside of Postgres/similar dbs with low char limits for table names.

We should make this optional and set this to off by default except for Postgres/similar.
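
As a rough sketch of what an opt-in hash could look like (this is not Airbyte's actual normalization code): the function `nested_table_name`, its parameters, and the 3-character MD5 suffix are all hypothetical. The only outside fact assumed is that Postgres truncates identifiers to 63 bytes by default.

```python
import hashlib


def nested_table_name(parent: str, child: str,
                      include_hash: bool = False,
                      max_length: int = 63) -> str:
    """Build a table name for a nested stream (hypothetical helper).

    With include_hash=False the name stays readable for consumers.
    With include_hash=True a short hash suffix is appended so that two
    long names that truncate to the same prefix do not collide.
    """
    name = f"{parent}_{child}"
    if include_hash:
        digest = hashlib.md5(f"{parent}.{child}".encode("utf-8")).hexdigest()
        suffix = digest[:3]
        # Leave room for "_" plus the suffix inside the length limit.
        name = f"{name[: max_length - len(suffix) - 1]}_{suffix}"
    return name[:max_length]
```

Under this sketch, a destination with a low identifier limit would pass `include_hash=True`, while others would keep the default and get plain `parent_child` names.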

┆Issue is synchronized with this [Asana task](https://app.asana.com/0/1200367912513076/1200368129700092) by [Unito](https://www.unito.io)

| 1.0 | make normalization's hash inclusion in unnested table names optional - The release of this change depends on https://github.com/airbytehq/airbyte/issues/3522

Consumers of tables produced by normalization cannot easily use nested tables due to the presence of hashes (used for collision prevention). These hashes are generally unnecessary outside of Postgres/similar dbs with low char limits for table names.

We should make this optional and set this to off by default except for Postgres/similar.

┆Issue is synchronized with this [Asana task](https://app.asana.com/0/1200367912513076/1200368129700092) by [Unito](https://www.unito.io)

| priority | make normalization s hash inclusion in unnested table names optional the release of this change depends on consumers of tables produced by normalization cannot easily use nested tables due to the presence of hashes used for collision prevention these hashes are generally unnecessary outside of postgres similar dbs with low char limits for table names we should make this optional and set this to off by default except for postgres similar ┆issue is synchronized with this by | 1 |

355,014 | 10,575,628,246 | IssuesEvent | 2019-10-07 16:05:41 | arjo129/darpasubt | https://api.github.com/repos/arjo129/darpasubt | closed | [UGV] Chassis protection w/ cable management | priority.high ugv1 | A proper, polished chassis protection is required for qualification video.

This also includes having adequate cable management for safety and robustness against external elements. | 1.0 | [UGV] Chassis protection w/ cable management - A proper, polished chassis protection is required for qualification video.

This also includes having adequate cable management for safety and robustness against external elements. | priority | chassis protection w cable management a proper polished chassis protection is required for qualification video this also includes having adequate cable management for safety and robustness against external elements | 1 |

344,787 | 10,349,640,108 | IssuesEvent | 2019-09-04 23:18:11 | oslc-op/jira-migration-landfill | https://api.github.com/repos/oslc-op/jira-migration-landfill | closed | literal_value of the oslc_where syntax is not well-defined | Core: Query Priority: High Xtra: Jira | The spec is not clear on how to interpret the literals w/o the xsd data type.

E.g.

The terms boolean and decimal are short forms for typed literals. For example, true is a short form for "true"^^xsd:boolean, 42 is a short form for "42"^^xsd:integer and 3.14159 is a short form for "3.14159"^^xsd:decimal.

does not specify how I am supposed to know whether 42 is an integer but 3.14 is a decimal (or a single-precision float?), let alone how I am supposed to ensure that `true` is a boolean True, not a "true" string literal.
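
One possible reading, as a sketch only: the rule that an unquoted number without a decimal point is an xsd:integer and one with a decimal point is an xsd:decimal is an assumption drawn from the quoted examples, not something the spec states. The function name `expand_literal` is hypothetical.

```python
import re

_INTEGER = re.compile(r"[+-]?\d+")
_DECIMAL = re.compile(r"[+-]?\d*\.\d+")


def expand_literal(token: str) -> str:
    """Expand a short-form literal into an explicit typed literal.

    Assumed rules (not confirmed by the spec):
      - bare true/false         -> xsd:boolean
      - digits only             -> xsd:integer
      - digits with a '.'       -> xsd:decimal
      - anything else (quoted strings, already typed literals) is
        returned unchanged.
    """
    if token in ("true", "false"):
        return f'"{token}"^^xsd:boolean'
    if _INTEGER.fullmatch(token):
        return f'"{token}"^^xsd:integer'
    if _DECIMAL.fullmatch(token):
        return f'"{token}"^^xsd:decimal'
    return token
```

Under these assumed rules the bare token `true` and the quoted string `"true"` stay distinguishable, because only the unquoted form is expanded.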

---

_Migrated from https://issues.oasis-open.org/browse/OSLCCORE-134 (opened by @berezovskyi; previously assigned to @oslc-bot)_

| 1.0 | literal_value of the oslc_where syntax is not well-defined - The spec is not clear on how to interpret the literals w/o the xsd data type.

E.g.

The terms boolean and decimal are short forms for typed literals. For example, true is a short form for "true"^^xsd:boolean, 42 is a short form for "42"^^xsd:integer and 3.14159 is a short form for "3.14159"^^xsd:decimal.

does not specify how I am supposed to know whether 42 is an integer but 3.14 is a decimal (or a single-precision float?), let alone how I am supposed to ensure that `true` is a boolean True, not a "true" string literal.

---

_Migrated from https://issues.oasis-open.org/browse/OSLCCORE-134 (opened by @berezovskyi; previously assigned to @oslc-bot)_

| priority | literal value of the oslc where syntax is not well defined the spec is not clear on how to interpret the literals w o the xsd data type e g the terms boolean and decimal are short forms for typed literals for example true is a short form for true xsd booleancode is a short form for xsd integer and is a short form for xsd decimal does not specify how i am supposed to know whether is an integer but is a decimal or a single precision float let alone how i am supposed to ensure that ‘true‘ is a boolean true not a true string literal migrated from opened by berezovskyi previously assigned to oslc bot | 1 |

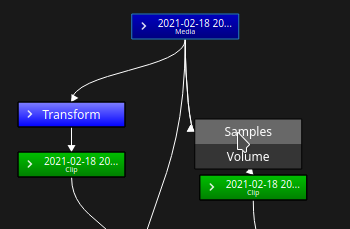

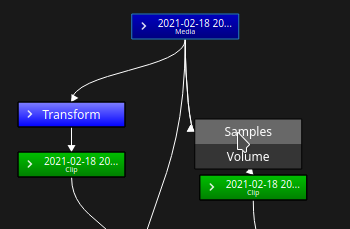

559,550 | 16,565,564,489 | IssuesEvent | 2021-05-29 10:29:28 | olive-editor/olive | https://api.github.com/repos/olive-editor/olive | closed | [NODES] Video and/or audio breaks when reconnecting nodes | High Priority Nodes/Compositing | <!-- ⚠ Do not delete this issue template! ⚠ -->

**Commit Hash** <!-- 8 character string of letters/numbers in title bar or Help > About dialog (e.g. 3ea173c9) -->

a0200f22

**Platform** <!-- e.g. Windows 10, Ubuntu 20.04 or macOS 10.15 -->

Kubuntu 21.04

**Summary**

When reconnecting nodes by CTRL + Dragging to override a connection with another identical connection, or just deleting the node connection and making a new one, audio and/or video breaks (depending upon which connection you change). I'm talking specifically about the connection from the media node to the transform and/or volume node.

Reconnecting from the volume or transform nodes to their respective clip nodes causes no issues.

Please let me know if you need more information, but I think this should be pretty reproducible for testing/debugging purposes.

| 1.0 | [NODES] Video and/or audio breaks when reconnecting nodes - <!-- ⚠ Do not delete this issue template! ⚠ -->

**Commit Hash** <!-- 8 character string of letters/numbers in title bar or Help > About dialog (e.g. 3ea173c9) -->

a0200f22

**Platform** <!-- e.g. Windows 10, Ubuntu 20.04 or macOS 10.15 -->

Kubuntu 21.04

**Summary**

When reconnecting nodes by CTRL + Dragging to override a connection with another identical connection, or just deleting the node connection and making a new one, audio and/or video breaks (depending upon which connection you change). I'm talking specifically about the connection from the media node to the transform and/or volume node.

Reconnecting from the volume or transform nodes to their respective clip nodes causes no issues.

Please let me know if you need more information, but I think this should be pretty reproducible for testing/debugging purposes.

| priority | video and or audio breaks when reconnecting nodes commit hash about dialog e g platform kubuntu summary when reconnecting nodes by ctrl dragging to override a connection with another identical connection or just deleting the node connect and making a new one audio and or video breaks depending upon which connection you change i m talking specifically about the connection from the media node to the transform and or volume node reconnecting from the volume or transform nodes to their respective clip nodes causes no issues please let me know if you need more information but i think this should be pretty reproducible for testing debugging purposes | 1 |

328,387 | 9,994,130,713 | IssuesEvent | 2019-07-11 16:53:01 | duo-labs/cloudmapper | https://api.github.com/repos/duo-labs/cloudmapper | closed | KeyError: 'PublicIPAddress' during prepare command | HighPriority bug | I experienced the following KeyError exception during a "prepare" run:

```

$ python cloudmapper.py prepare --config [CONFIG] --account [ACCOUNT]

[...]

Traceback (most recent call last):

File "cloudmapper.py", line 73, in <module>

main()

File "cloudmapper.py", line 67, in main

commands[command].run(arguments)

File "[PATH]/cloudmapper/commands/prepare.py", line 649, in run

prepare(account, config, outputfilter)

File "[PATH]/cloudmapper/commands/prepare.py", line 569, in prepare

cytoscape_json = build_data_structure(account, config, outputfilter)

File "[PATH]/cloudmapper/commands/prepare.py", line 479, in build_data_structure

for c, reasons in get_connections(cidrs, vpc, outputfilter).items():

File "[PATH]/cloudmapper/commands/prepare.py", line 211, in get_connections

for ip in sourceInstance.ips:

File "[PATH]/cloudmapper/shared/nodes.py", line 685, in ips

ips.append(cluster_node['PublicIPAddress'])

KeyError: 'PublicIPAddress'

```

I just added a try-except in the _shared/nodes.py_ file as workaround, which solved it for the moment:

```

@property
def ips(self):
    ips = []
    for cluster_node in self._json_blob['ClusterNodes']:
        try:
            ips.append(cluster_node['PrivateIPAddress'])
            ips.append(cluster_node['PublicIPAddress'])
        except:
            continue
    return ips

```
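
A narrower variant of this workaround, as a sketch: instead of a bare `except`, which would also hide unrelated errors, each address key is read only if it is present. `RedshiftStub` is a hypothetical stand-in for the node class in _shared/nodes.py_, reduced to the `_json_blob` attribute used above, and the public address in the sample data is made up.

```python
class RedshiftStub:
    """Hypothetical stand-in for the Redshift node class in shared/nodes.py."""

    def __init__(self, json_blob):
        self._json_blob = json_blob

    @property
    def ips(self):
        ips = []
        for cluster_node in self._json_blob.get("ClusterNodes", []):
            # Nodes in a private subnet carry no 'PublicIPAddress' key,
            # so collect only the address keys that actually exist.
            for key in ("PrivateIPAddress", "PublicIPAddress"):
                if key in cluster_node:
                    ips.append(cluster_node[key])
        return ips


# Sample data shaped like the describe-clusters output; values are made up.
cluster = RedshiftStub({
    "ClusterNodes": [
        {"NodeRole": "LEADER", "PrivateIPAddress": "10.0.0.1"},
        {"NodeRole": "COMPUTE-0", "PrivateIPAddress": "10.0.0.3",
         "PublicIPAddress": "203.0.113.7"},
    ]
})
```

This keeps the private addresses for nodes without a public one, as the try-except version does, but any other exception still surfaces.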

EDIT:

It indeed seems to expect both private and public IPs to be assigned to Redshift cluster nodes:

```

$ cat account-data/[ACCOUNT]/eu-central-1/redshift-describe-clusters.json

{

"Clusters": [

{

"AllowVersionUpgrade": true,

"AutomatedSnapshotRetentionPeriod": 5,

"AvailabilityZone": "eu-central-1b",

"ClusterCreateTime": "2019-01-27T00:47:29.584000+00:00",

"ClusterIdentifier": "CLUSTERNAME",

"ClusterNodes": [

{

"NodeRole": "LEADER",

"PrivateIPAddress": "10.0.0.1"

},

{

"NodeRole": "COMPUTE-0",

"PrivateIPAddress": "10.0.0.3"

},

{

"NodeRole": "COMPUTE-1",

"PrivateIPAddress": "10.0.0.2"

},

{

"NodeRole": "COMPUTE-2",

"PrivateIPAddress": "10.0.0.4"

},

{

"NodeRole": "COMPUTE-3",

"PrivateIPAddress": "10.0.0.5"

}

],

[...]

``` | 1.0 | KeyError: 'PublicIPAddress' during prepare command - I experienced the following KeyError exception during a "prepare" run:

```

$ python cloudmapper.py prepare --config [CONFIG] --account [ACCOUNT]

[...]

Traceback (most recent call last):

File "cloudmapper.py", line 73, in <module>

main()

File "cloudmapper.py", line 67, in main

commands[command].run(arguments)

File "[PATH]/cloudmapper/commands/prepare.py", line 649, in run

prepare(account, config, outputfilter)

File "[PATH]/cloudmapper/commands/prepare.py", line 569, in prepare

cytoscape_json = build_data_structure(account, config, outputfilter)

File "[PATH]/cloudmapper/commands/prepare.py", line 479, in build_data_structure

for c, reasons in get_connections(cidrs, vpc, outputfilter).items():

File "[PATH]/cloudmapper/commands/prepare.py", line 211, in get_connections

for ip in sourceInstance.ips:

File "[PATH]/cloudmapper/shared/nodes.py", line 685, in ips

ips.append(cluster_node['PublicIPAddress'])

KeyError: 'PublicIPAddress'

```

I just added a try-except in the _shared/nodes.py_ file as workaround, which solved it for the moment:

```

@property
def ips(self):
    ips = []
    for cluster_node in self._json_blob['ClusterNodes']:
        try:
            ips.append(cluster_node['PrivateIPAddress'])
            ips.append(cluster_node['PublicIPAddress'])
        except:
            continue
    return ips

```

EDIT:

It indeed seems to expect both private and public IPs to be assigned to Redshift cluster nodes:

```

$ cat account-data/[ACCOUNT]/eu-central-1/redshift-describe-clusters.json

{

"Clusters": [

{

"AllowVersionUpgrade": true,

"AutomatedSnapshotRetentionPeriod": 5,

"AvailabilityZone": "eu-central-1b",

"ClusterCreateTime": "2019-01-27T00:47:29.584000+00:00",

"ClusterIdentifier": "CLUSTERNAME",

"ClusterNodes": [

{

"NodeRole": "LEADER",

"PrivateIPAddress": "10.0.0.1"

},

{

"NodeRole": "COMPUTE-0",

"PrivateIPAddress": "10.0.0.3"

},

{

"NodeRole": "COMPUTE-1",

"PrivateIPAddress": "10.0.0.2"

},

{

"NodeRole": "COMPUTE-2",

"PrivateIPAddress": "10.0.0.4"

},

{

"NodeRole": "COMPUTE-3",

"PrivateIPAddress": "10.0.0.5"

}

],

[...]

``` | priority | keyerror publicipaddress during prepare command i experienced the following keyerror exception during a prepare run python cloudmapper py prepare config account traceback most recent call last file cloudmapper py line in main file cloudmapper py line in main commands run arguments file cloudmapper commands prepare py line in run prepare account config outputfilter file cloudmapper commands prepare py line in prepare cytoscape json build data structure account config outputfilter file cloudmapper commands prepare py line in build data structure for c reasons in get connections cidrs vpc outputfilter items file cloudmapper commands prepare py line in get connections for ip in sourceinstance ips file cloudmapper shared nodes py line in ips ips append cluster node keyerror publicipaddress i just added a try except in the shared nodes py file as workaround which solved it for the moment property def ips self ips for cluster node in self json blob try ips append cluster node ips append cluster node except continue return ips edit it indeed seems to expect both private and public ips to be assigned to redshift cluster nodes cat account data eu central redshift describe clusters json clusters allowversionupgrade true automatedsnapshotretentionperiod availabilityzone eu central clustercreatetime clusteridentifier clustername clusternodes noderole leader privateipaddress noderole compute privateipaddress noderole compute privateipaddress noderole compute privateipaddress noderole compute privateipaddress | 1 |

261,155 | 8,224,963,104 | IssuesEvent | 2018-09-06 14:59:57 | CCAFS/MARLO | https://api.github.com/repos/CCAFS/MARLO | closed | Update Struts Version (2.5.17) | Priority - High Type - Enhancement Type -Task | Apache announced a possible vulnerability in the Struts versions (2.5.1 - 2.5.16)

https://nvd.nist.gov/vuln/detail/CVE-2018-11776

For security, we need to change the version to 2.5.17. | 1.0 | Update Struts Version (2.5.17) - Apache announced a possible vulnerability in the Struts versions (2.5.1 - 2.5.16)

https://nvd.nist.gov/vuln/detail/CVE-2018-11776

For security, we need to change the version to 2.5.17. | priority | update struts version apache announced a possible vulnerability in the struts versions for security we need to change the version to | 1 |

543,994 | 15,888,408,256 | IssuesEvent | 2021-04-10 07:11:30 | AY2021S2-CS2113-W10-3/tp | https://api.github.com/repos/AY2021S2-CS2113-W10-3/tp | closed | [PE-D] List Function Does Not Follow The Conventional Format | priority.High severity.VeryLow type.Bug | "list" function does not follow the format used in other functions, i.e. "list p/PROJECT_NAME".

<!--session: 1617437683676-c79db70e-3b76-47c5-b72f-e5698e9ed087-->

-------------

Labels: `severity.VeryLow` `type.FeatureFlaw`

original: baggiiiie/ped#6 | 1.0 | [PE-D] List Function Does Not Follow The Conventional Format - "list" function does not follow the format used in other functions, i.e. "list p/PROJECT_NAME".

<!--session: 1617437683676-c79db70e-3b76-47c5-b72f-e5698e9ed087-->

-------------

Labels: `severity.VeryLow` `type.FeatureFlaw`

original: baggiiiie/ped#6 | priority | list function does not follow the conventional format list function does not follow the format used in other functions i e list p project name labels severity verylow type featureflaw original baggiiiie ped | 1 |

506,083 | 14,658,050,865 | IssuesEvent | 2020-12-28 16:58:13 | bounswe/bounswe2020group8 | https://api.github.com/repos/bounswe/bounswe2020group8 | closed | Add Main Product not adding parameter values correctly | Priority: High bug web | **Describe the bug**

When vendor adds a main product, the parameter values are added as one string instead of array.

<!---

If not found, remove this part

--->

**Possible code location**

createMainProduct method in screens/VendorAccount/AddProduct.js

**To Reproduce**

Steps to reproduce the behavior:

1. Sign in with a vendor

2. Go to Add Product in the side menu in My Products

3. Scroll down to Create Main Product

4. Fill in form with a parameter name and value

5. Check Main Products

**Expected behavior**

The parameter values should be listed as an array.

**Additional context**

This should be solved if the parameter values are stored as an array. | 1.0 | Add Main Product not adding parameter values correctly - **Describe the bug**

When vendor adds a main product, the parameter values are added as one string instead of array.

<!---

If not found, remove this part

--->

**Possible code location**

createMainProduct method in screens/VendorAccount/AddProduct.js

**To Reproduce**

Steps to reproduce the behavior:

1. Sign in with a vendor

2. Go to Add Product in the side menu in My Products

3. Scroll down to Create Main Product

4. Fill in form with a parameter name and value

5. Check Main Products

**Expected behavior**

The parameter values should be listed as an array.

**Additional context**

This should be solved if the parameter values are stored as an array. | priority | add main product not adding parameter values correctly describe the bug when vendor adds a main product the parameter values are added as one string instead of array if not found remove this part possible code location createmainproduct method in screens vendoraccount addproduct js to reproduce steps to reproduce the behavior sign in with a vendor go to add product in the side menu in my products scroll down to create main product fill in form with a parameter name and value check main products expected behavior the parameter values should be listed as an array additional context this should be solved if the parameter values are stored as an array | 1 |

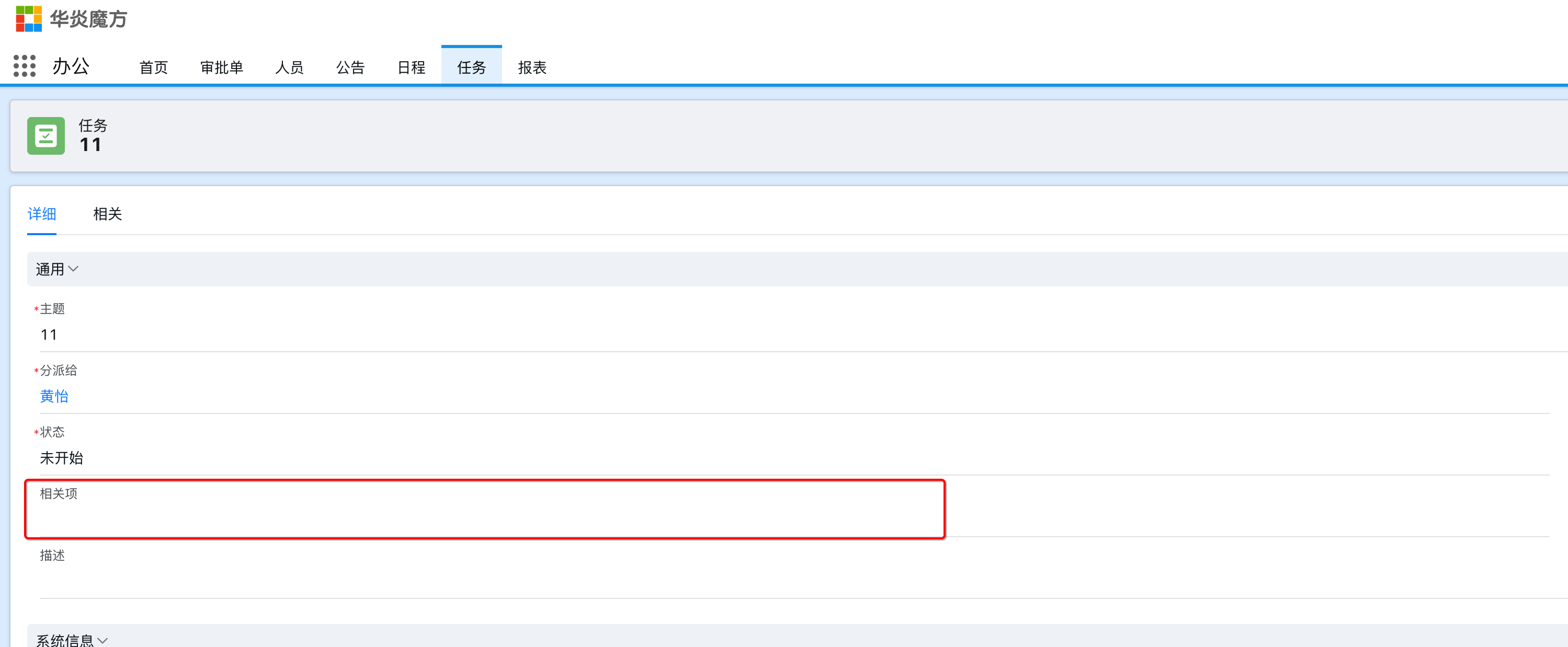

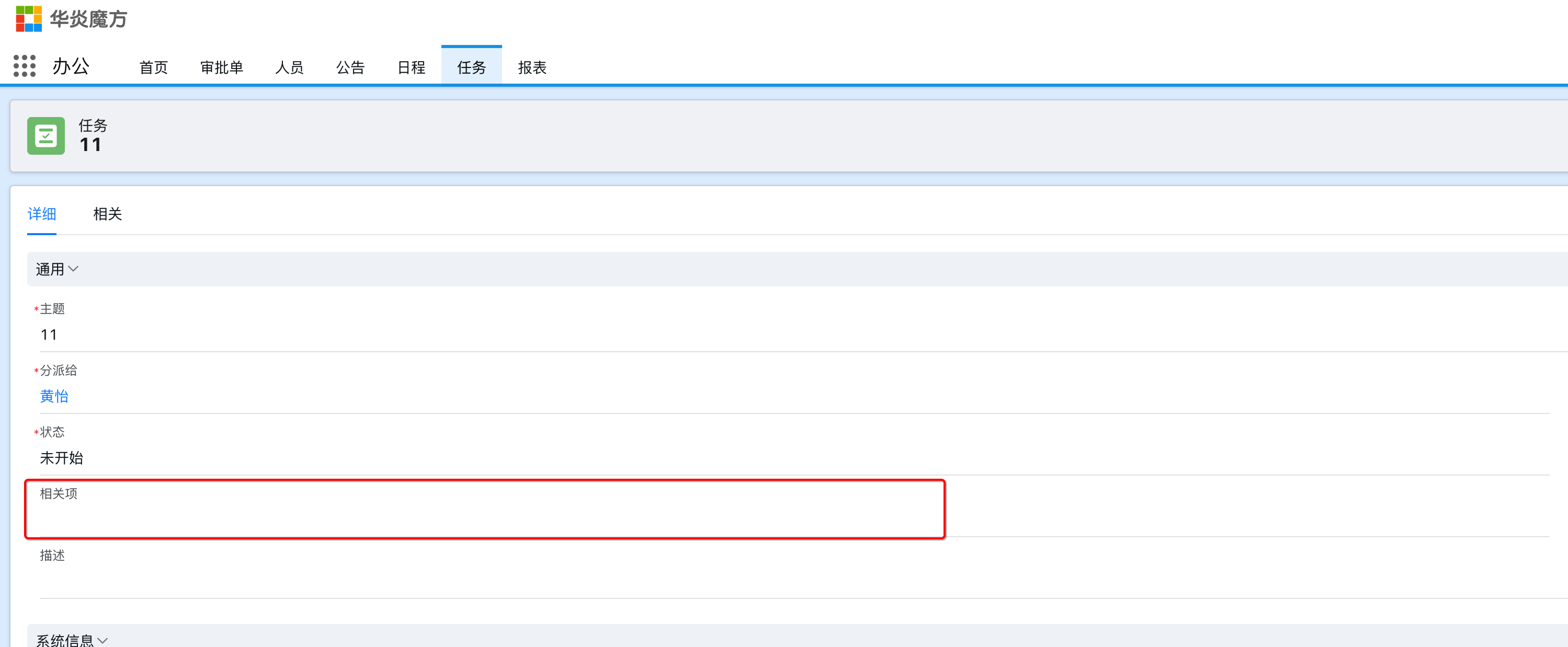

798,099 | 28,236,141,172 | IssuesEvent | 2023-04-06 00:47:44 | steedos/steedos-platform | https://api.github.com/repos/steedos/steedos-platform | closed | [Bug]: Related-item field (type: lookup, reference_to: !<tag:yaml.org,2002:js/function>) is not displayed on the task detail page | bug done priority: High | ### Description

### Steps To Reproduce

1. Create a new task in the Office module

### Version

2.4.8 | 1.0 | [Bug]: Related-item field (type: lookup, reference_to: !<tag:yaml.org,2002:js/function>) is not displayed on the task detail page - ### Description

### Steps To Reproduce

1. Create a new task in the Office module

### Version

2.4.8 | priority | related item field type lookup reference to is not displayed on the task detail page description steps to reproduce create a new task in the office module version | 1 |

40,940 | 2,868,956,043 | IssuesEvent | 2015-06-05 22:11:16 | dart-lang/pub | https://api.github.com/repos/dart-lang/pub | closed | Convert "pub build" to use barback | enhancement Fixed Priority-High | <a href="https://github.com/munificent"><img src="https://avatars.githubusercontent.com/u/46275?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [munificent](https://github.com/munificent)**

_Originally opened as dart-lang/sdk#13880_

----

Right now, it has some hardcoded stuff. It should use barback and transformers for its back-end. | 1.0 | Convert "pub build" to use barback - <a href="https://github.com/munificent"><img src="https://avatars.githubusercontent.com/u/46275?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [munificent](https://github.com/munificent)**

_Originally opened as dart-lang/sdk#13880_

----

Right now, it has some hardcoded stuff. It should use barback and transformers for its back-end. | priority | convert pub build to use barback issue by originally opened as dart lang sdk right now it has some hardcoded stuff it should use barback and transformers for its back end | 1 |

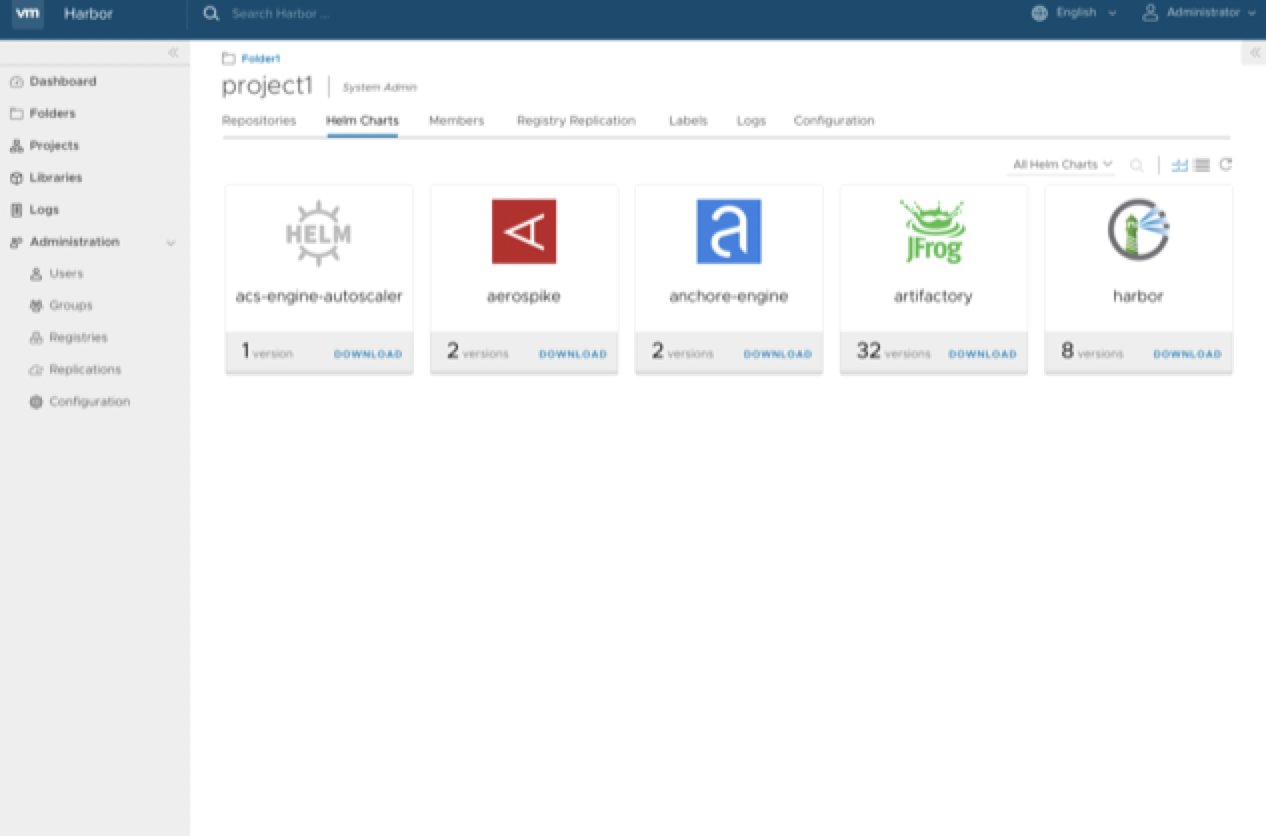

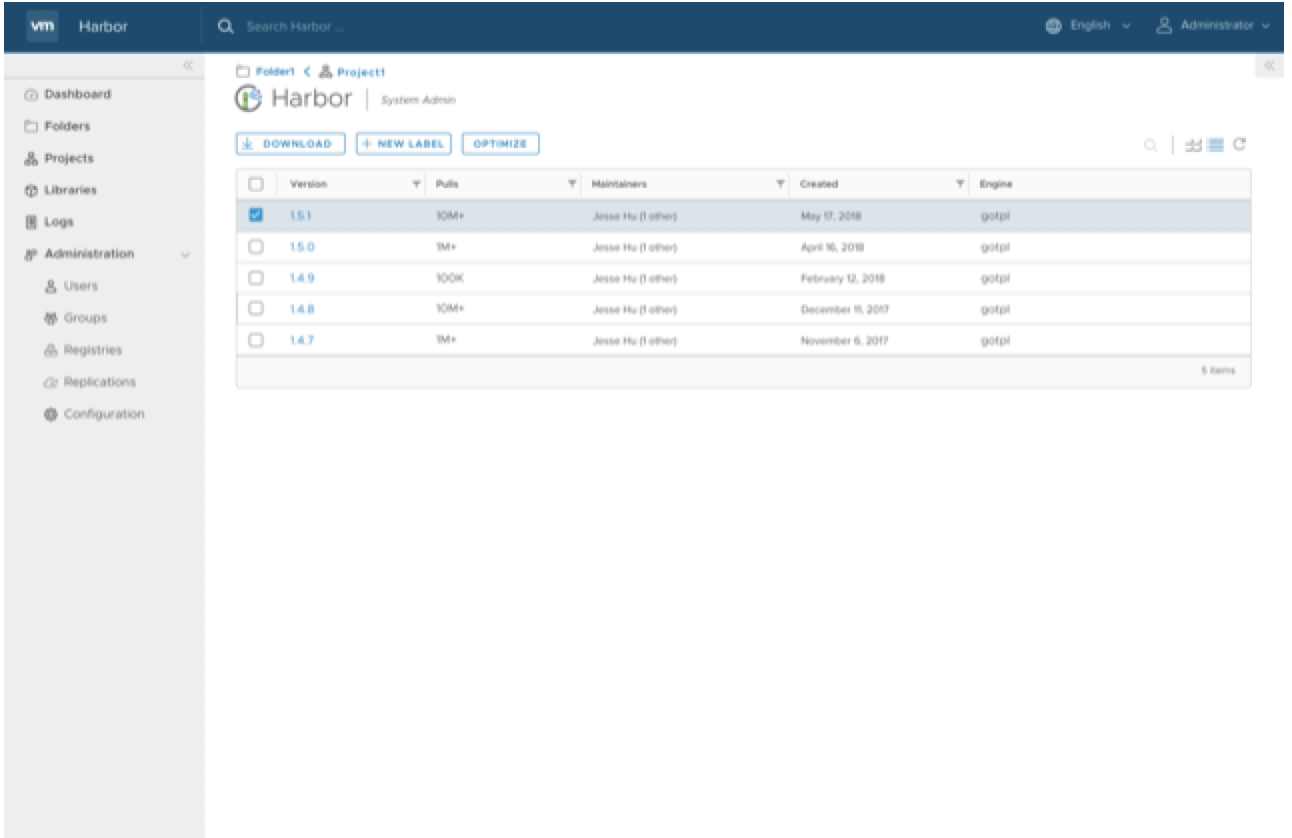

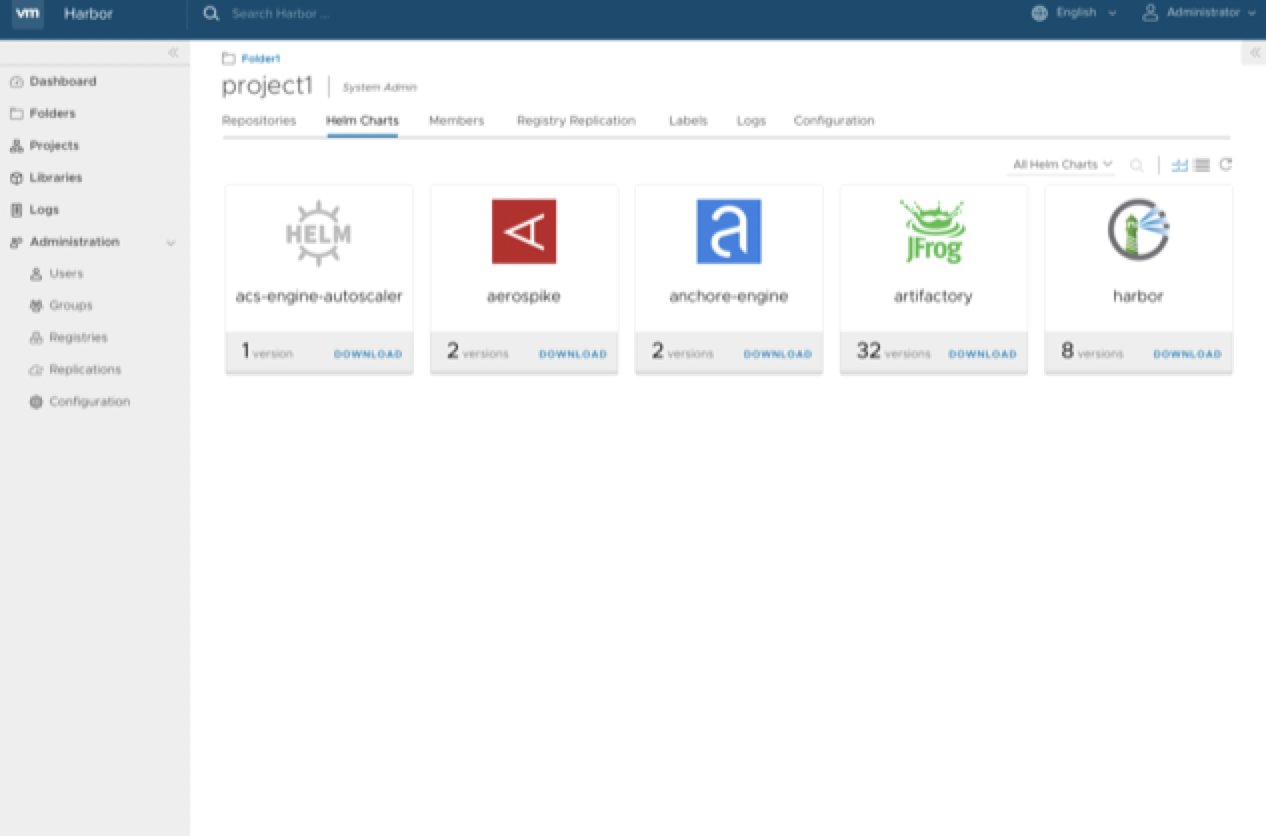

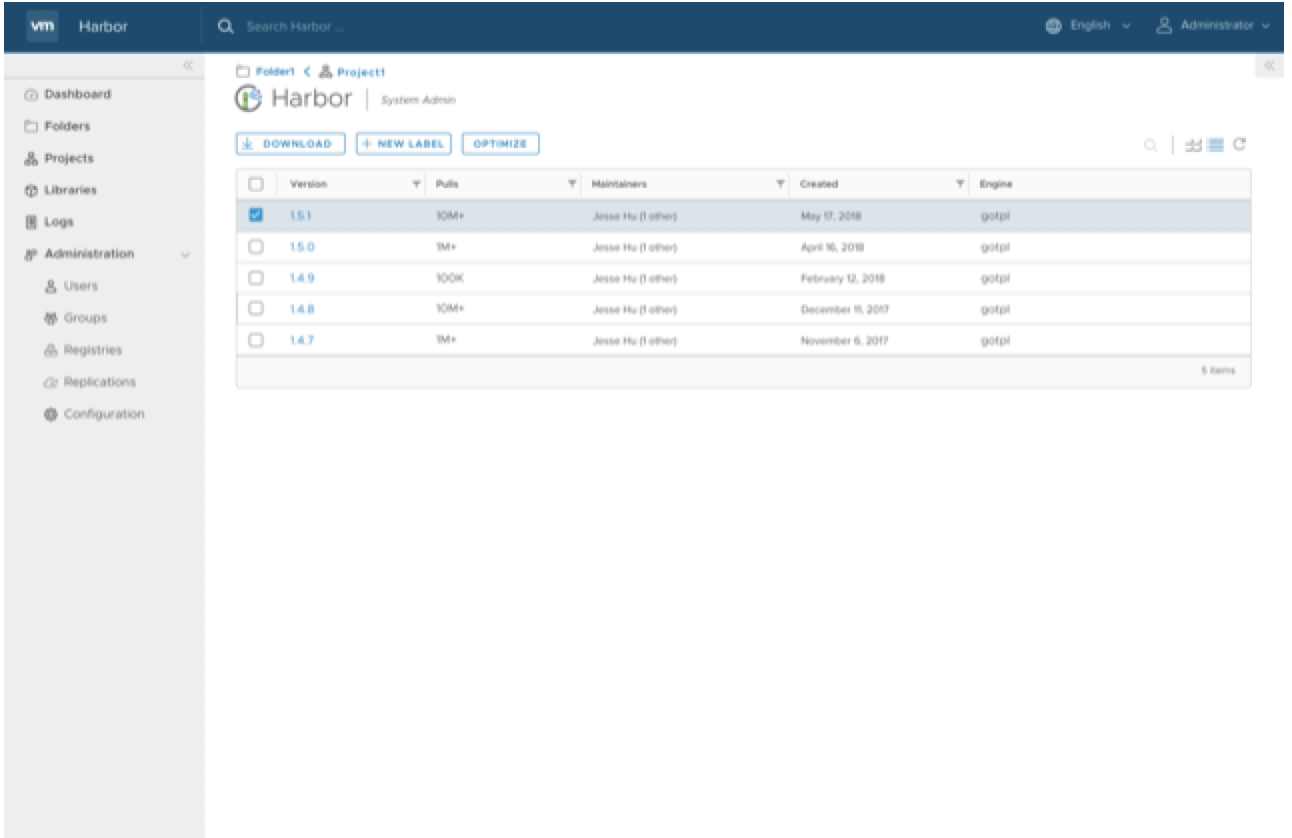

254,654 | 8,081,373,798 | IssuesEvent | 2018-08-08 03:06:24 | vmware/harbor | https://api.github.com/repos/vmware/harbor | closed | Helm Chart Card View Refactor | priority/high target/1.6.0 | 1. card view of chart

2. card view of chart versions

| 1.0 | Helm Chart Card View Refactor - 1. card view of chart

2. card view of chart versions

| priority | helm chart card view refactor card view of chart card view of chart versions | 1 |

170,953 | 6,475,268,698 | IssuesEvent | 2017-08-17 20:00:12 | semperfiwebdesign/all-in-one-seo-pack | https://api.github.com/repos/semperfiwebdesign/all-in-one-seo-pack | closed | Sorry, you are not allowed to access this page when activating Social Meta module | Bug Priority | High | When activating the Social Meta module, the first time you click on the Social Meta menu item you get a Sorry, you are not allowed to access this page error.

This is because the menu link is http://testsite.dev/wp-admin/admin.php?page=all-in-one-seo-pack/modules/aioseop_opengraph.php when it should be http://testsite.dev/wp-admin/admin.php?page=aiosp_opengraph

Need to find when this broke. | 1.0 | Sorry, you are not allowed to access this page when activating Social Meta module - When activating the Social Meta module, the first time you click on the Social Meta menu item you get a Sorry, you are not allowed to access this page error.

This is because the menu link is http://testsite.dev/wp-admin/admin.php?page=all-in-one-seo-pack/modules/aioseop_opengraph.php when it should be http://testsite.dev/wp-admin/admin.php?page=aiosp_opengraph

Need to find when this broke. | priority | sorry you are not allowed to access this page when activating social meta module when activating the social meta module the first time you click on the social meta menu item you get a sorry you are not allowed to access this page error this is because the menu link is when it should be need to find when this broke | 1 |

664,856 | 22,290,958,622 | IssuesEvent | 2022-06-12 11:01:14 | h-dt/hola-clone | https://api.github.com/repos/h-dt/hola-clone | opened | [dev, update] Add Comment and Skill logic to Board Read | enhancement status: in progress priority:high | ## Body

### Elements to add or improve

Add Comment and Skill logic to Board Read

### Detailed requirements

- Add Comment and Skill logic to the existing logic, which was built for Board only

## issue-number

> #6

| 1.0 | [dev, update] Add Comment and Skill logic to Board Read - ## Body

### Elements to add or improve

Add Comment and Skill logic to Board Read

### Detailed requirements

- Add Comment and Skill logic to the existing logic, which was built for Board only

## issue-number

> #6

| priority | add comment and skill logic to board read body elements to add or improve add comment and skill logic to board read detailed requirements add comment and skill logic to the existing logic which was built for board only issue number | 1 |

699,999 | 24,041,139,083 | IssuesEvent | 2022-09-16 02:00:44 | wintercms/winter | https://api.github.com/repos/wintercms/winter | closed | [v1.2] CMS editor doesn't save all components added to page/layout/etc | Type: Bug Priority: High | ### Winter CMS Build

dev-develop

### PHP Version

8.0

### Database engine

MySQL/MariaDB

### Plugins installed

Winter.Pages

### Issue description

Adding multiple components to a layout/page from the CMS editor does not work, and only the last added component is saved to the actual file itself. All other components are lost.

### Steps to replicate

1. Install fresh winter cms project

2. Install Winter.Pages plugin

3. Add `static page` and `static menu` components to the default layout.

4. Save

5. View the `layouts/default.htm` file from your IDE/editor and see only one component is actually saved to the file.

### Workaround

Add components manually from your editor/IDE without using the backend. | 1.0 | [v1.2] CMS editor doesn't save all components added to page/layout/etc - ### Winter CMS Build

dev-develop

### PHP Version

8.0

### Database engine

MySQL/MariaDB

### Plugins installed

Winter.Pages

### Issue description

Adding multiple components to a layout/page from the CMS editor does not work, and only the last added component is saved to the actual file itself. All other components are lost.

### Steps to replicate

1. Install fresh winter cms project

2. Install Winter.Pages plugin

3. Add `static page` and `static menu` components to the default layout.

4. Save

5. View the `layouts/default.htm` file from your IDE/editor and see only one component is actually saved to the file.

### Workaround

Add components manually from your editor/IDE without using the backend. | priority | cms editor doesn t save all components added to page layout etc winter cms build dev develop php version database engine mysql mariadb plugins installed winter pages issue description adding multiple components to a layout page from the cms editor does not work and only the last added component is saved to the actual file itself all other components are lost steps to replicate install fresh winter cms project install winter pages plugin add static page and static menu components to the default layout save view the layouts default htm file from your ide editor and see only one component is actually saved to the file workaround add components manually from your editor ide without using the backend | 1 |

695,170 | 23,847,510,958 | IssuesEvent | 2022-09-06 15:03:20 | Qiskit/qiskit-ibm-runtime | https://api.github.com/repos/Qiskit/qiskit-ibm-runtime | closed | Sampler returns SamplerResult with dict | bug priority: high | **Describe the bug**

Sampler returns SamplerResult with a list of dict. However, it should be SamplerResult with a list of QuasiDistribution.

See https://github.com/Qiskit/qiskit-terra/blob/df54ae26d6125bee671ad27f4d412a92912aad1a/qiskit/primitives/sampler_result.py#L42 for detail.

====

This may cause bugs in the future. In particular, applications will be written assuming the type.

| 1.0 | Sampler returns SamplerResult with dict - **Describe the bug**

Sampler returns SamplerResult with a list of dict. However, it should be SamplerResult with a list of QuasiDistribution.

See https://github.com/Qiskit/qiskit-terra/blob/df54ae26d6125bee671ad27f4d412a92912aad1a/qiskit/primitives/sampler_result.py#L42 for detail.

====

This may cause bugs in the future. In particular, applications will be written assuming the type.

| priority | sampler returns samplerresult with dict describe the bug sampler returns samplerresult with a list of dict however it should be samplerresult with a list of quasidistribution see for detail this may cause bugs in the future in particular applications will be written assuming the type | 1 |

832,387 | 32,078,257,919 | IssuesEvent | 2023-09-25 12:27:16 | risingwavelabs/risingwave | https://api.github.com/repos/risingwavelabs/risingwave | opened | timestamp panic when selecting from kafka source with _rw_kafka_timestamp | type/bug priority/high | ```

create source test2 (v int) with (

connector = 'kafka',

topic = 'test',

properties.bootstrap.server = '127.0.0.1:29092',

scan.start_up.mode = 'earliest',

) FORMAT PLAIN ENCODE JSON

dev=> select * from test2 where _rw_kafka_timestamp >= '2023-09-25 10:34:33.000000+00:00';

v

---

1

2

(2 rows)

```

This is OK

But:

```

dev=> select * from test2 where _rw_kafka_timestamp >= '2023-09-25 10:34:33.000000';

ERROR: Panicked when processing: called `Result::unwrap()` on an `Err` value: Expr error: Unsupported function: cast(varchar) -> timestamptz

0: std::backtrace_rs::backtrace::libunwind::trace

at /rustc/62ebe3a2b177d50ec664798d731b8a8d1a9120d1/library/std/src/../../backtrace/src/backtrace/libunwind.rs:93:5

1: std::backtrace_rs::backtrace::trace_unsynchronized

at /rustc/62ebe3a2b177d50ec664798d731b8a8d1a9120d1/library/std/src/../../backtrace/src/backtrace/mod.rs:66:5

2: std::backtrace::Backtrace::create

at /rustc/62ebe3a2b177d50ec664798d731b8a8d1a9120d1/library/std/src/backtrace.rs:331:13

3: <risingwave_common::error::RwError as core::convert::From<risingwave_common::error::ErrorCode>>::from

at ./src/common/src/error.rs:174:33

4: <T as core::convert::Into<U>>::into

at /rustc/62ebe3a2b177d50ec664798d731b8a8d1a9120d1/library/core/src/convert/mod.rs:716:9

5: risingwave_expr::error::<impl core::convert::From<risingwave_expr::error::ExprError> for risingwave_common::error::RwError>::from

at ./src/expr/src/error.rs:91:9

6: <core::result::Result<T,F> as core::ops::try_trait::FromResidual<core::result::Result<core::convert::Infallible,E>>>::from_residual

at /rustc/62ebe3a2b177d50ec664798d731b8a8d1a9120d1/library/core/src/result.rs:1962:27

7: risingwave_frontend::expr::ExprImpl::eval_row::{{closure}}

at ./src/frontend/src/expr/mod.rs:312:28

8: futures_util::future::future::FutureExt::now_or_never

at /Users/martin/.cargo/registry/src/index.crates.io-6f17d22bba15001f/futures-util-0.3.28/src/future/future/mod.rs:605:15

9: risingwave_frontend::expr::ExprImpl::try_fold_const

at ./src/frontend/src/expr/mod.rs:324:13

10: risingwave_frontend::optimizer::plan_node::logical_source::expr_to_kafka_timestamp_range::{{closure}}

at ./src/frontend/src/optimizer/plan_node/logical_source.rs:342:46

11: risingwave_frontend::optimizer::plan_node::logical_source::expr_to_kafka_timestamp_range

at ./src/frontend/src/optimizer/plan_node/logical_source.rs:372:58

```

or

```

dev=> select * from test2 where _rw_kafka_timestamp >= TO_TIMESTAMP('2023-09-25 10:34:33.000000', 'YYYY-MM-DD HH24:MI:SS.US');

ERROR: Panicked when processing: called `Result::unwrap()` on an `Err` value: Expr error: Unsupported function: to_timestamp should have been rewritten to include timezone

0: std::backtrace_rs::backtrace::libunwind::trace

at /rustc/62ebe3a2b177d50ec664798d731b8a8d1a9120d1/library/std/src/../../backtrace/src/backtrace/libunwind.rs:93:5

1: std::backtrace_rs::backtrace::trace_unsynchronized

at /rustc/62ebe3a2b177d50ec664798d731b8a8d1a9120d1/library/std/src/../../backtrace/src/backtrace/mod.rs:66:5

2: std::backtrace::Backtrace::create

at /rustc/62ebe3a2b177d50ec664798d731b8a8d1a9120d1/library/std/src/backtrace.rs:331:13

3: <risingwave_common::error::RwError as core::convert::From<risingwave_common::error::ErrorCode>>::from

at ./src/common/src/error.rs:174:33

4: <T as core::convert::Into<U>>::into

at /rustc/62ebe3a2b177d50ec664798d731b8a8d1a9120d1/library/core/src/convert/mod.rs:716:9

5: risingwave_expr::error::<impl core::convert::From<risingwave_expr::error::ExprError> for risingwave_common::error::RwError>::from

at ./src/expr/src/error.rs:91:9

6: <core::result::Result<T,F> as core::ops::try_trait::FromResidual<core::result::Result<core::convert::Infallible,E>>>::from_residual

at /rustc/62ebe3a2b177d50ec664798d731b8a8d1a9120d1/library/core/src/result.rs:1962:27

7: risingwave_frontend::expr::ExprImpl::eval_row::{{closure}}

at ./src/frontend/src/expr/mod.rs:312:28

8: futures_util::future::future::FutureExt::now_or_never

```
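For clients that build the query string themselves, the form that works above (a literal with an explicit offset) could be produced along these lines. This is only a sketch: the helper name `to_timestamptz_literal` is made up for illustration, and it assumes the naive timestamp is meant as UTC, which the report does not state.

```python
from datetime import datetime, timezone


def to_timestamptz_literal(naive_text: str) -> str:
    """Append an explicit UTC offset to a naive 'YYYY-MM-DD HH:MM:SS.ffffff' string.

    Assumption: the naive value is in UTC. Adjust tzinfo if it is not.
    """
    naive = datetime.strptime(naive_text, "%Y-%m-%d %H:%M:%S.%f")
    aware = naive.replace(tzinfo=timezone.utc)
    # sep=" " keeps the space between date and time; timespec keeps all six
    # fractional digits even when they are zero.
    return aware.isoformat(sep=" ", timespec="microseconds")


literal = to_timestamptz_literal("2023-09-25 10:34:33.000000")
query = f"select * from test2 where _rw_kafka_timestamp >= '{literal}';"
print(query)
```

The resulting literal has the same shape as the one in the query that succeeds.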

Note that the last query is generated automatically by the users' Superset, so the workaround used in the first query (an explicit time zone offset) cannot be applied there. | 1.0 | timestamp panic when selecting from kafka source with _rw_kafka_timestamp - ```

create source test2 (v int) with (

connector = 'kafka',

topic = 'test',

properties.bootstrap.server = '127.0.0.1:29092',

scan.start_up.mode = 'earliest',

) FORMAT PLAIN ENCODE JSON

dev=> select * from test2 where _rw_kafka_timestamp >= '2023-09-25 10:34:33.000000+00:00';

v

---

1

2

(2 rows)

```

This is OK

But:

```

dev=> select * from test2 where _rw_kafka_timestamp >= '2023-09-25 10:34:33.000000';

ERROR: Panicked when processing: called `Result::unwrap()` on an `Err` value: Expr error: Unsupported function: cast(varchar) -> timestamptz

0: std::backtrace_rs::backtrace::libunwind::trace

at /rustc/62ebe3a2b177d50ec664798d731b8a8d1a9120d1/library/std/src/../../backtrace/src/backtrace/libunwind.rs:93:5

1: std::backtrace_rs::backtrace::trace_unsynchronized

at /rustc/62ebe3a2b177d50ec664798d731b8a8d1a9120d1/library/std/src/../../backtrace/src/backtrace/mod.rs:66:5

2: std::backtrace::Backtrace::create

at /rustc/62ebe3a2b177d50ec664798d731b8a8d1a9120d1/library/std/src/backtrace.rs:331:13

3: <risingwave_common::error::RwError as core::convert::From<risingwave_common::error::ErrorCode>>::from

at ./src/common/src/error.rs:174:33

4: <T as core::convert::Into<U>>::into

at /rustc/62ebe3a2b177d50ec664798d731b8a8d1a9120d1/library/core/src/convert/mod.rs:716:9

5: risingwave_expr::error::<impl core::convert::From<risingwave_expr::error::ExprError> for risingwave_common::error::RwError>::from

at ./src/expr/src/error.rs:91:9

6: <core::result::Result<T,F> as core::ops::try_trait::FromResidual<core::result::Result<core::convert::Infallible,E>>>::from_residual

at /rustc/62ebe3a2b177d50ec664798d731b8a8d1a9120d1/library/core/src/result.rs:1962:27

7: risingwave_frontend::expr::ExprImpl::eval_row::{{closure}}

at ./src/frontend/src/expr/mod.rs:312:28

8: futures_util::future::future::FutureExt::now_or_never

at /Users/martin/.cargo/registry/src/index.crates.io-6f17d22bba15001f/futures-util-0.3.28/src/future/future/mod.rs:605:15

9: risingwave_frontend::expr::ExprImpl::try_fold_const

at ./src/frontend/src/expr/mod.rs:324:13

10: risingwave_frontend::optimizer::plan_node::logical_source::expr_to_kafka_timestamp_range::{{closure}}

at ./src/frontend/src/optimizer/plan_node/logical_source.rs:342:46

11: risingwave_frontend::optimizer::plan_node::logical_source::expr_to_kafka_timestamp_range

at ./src/frontend/src/optimizer/plan_node/logical_source.rs:372:58

```

or

```

dev=> select * from test2 where _rw_kafka_timestamp >= TO_TIMESTAMP('2023-09-25 10:34:33.000000', 'YYYY-MM-DD HH24:MI:SS.US');

ERROR: Panicked when processing: called `Result::unwrap()` on an `Err` value: Expr error: Unsupported function: to_timestamp should have been rewritten to include timezone

0: std::backtrace_rs::backtrace::libunwind::trace

at /rustc/62ebe3a2b177d50ec664798d731b8a8d1a9120d1/library/std/src/../../backtrace/src/backtrace/libunwind.rs:93:5

1: std::backtrace_rs::backtrace::trace_unsynchronized

at /rustc/62ebe3a2b177d50ec664798d731b8a8d1a9120d1/library/std/src/../../backtrace/src/backtrace/mod.rs:66:5

2: std::backtrace::Backtrace::create

at /rustc/62ebe3a2b177d50ec664798d731b8a8d1a9120d1/library/std/src/backtrace.rs:331:13

3: <risingwave_common::error::RwError as core::convert::From<risingwave_common::error::ErrorCode>>::from

at ./src/common/src/error.rs:174:33

4: <T as core::convert::Into<U>>::into

at /rustc/62ebe3a2b177d50ec664798d731b8a8d1a9120d1/library/core/src/convert/mod.rs:716:9

5: risingwave_expr::error::<impl core::convert::From<risingwave_expr::error::ExprError> for risingwave_common::error::RwError>::from

at ./src/expr/src/error.rs:91:9

6: <core::result::Result<T,F> as core::ops::try_trait::FromResidual<core::result::Result<core::convert::Infallible,E>>>::from_residual

at /rustc/62ebe3a2b177d50ec664798d731b8a8d1a9120d1/library/core/src/result.rs:1962:27

7: risingwave_frontend::expr::ExprImpl::eval_row::{{closure}}

at ./src/frontend/src/expr/mod.rs:312:28

8: futures_util::future::future::FutureExt::now_or_never

```

We remark that the last query is generated by the users' Superset automatically, so the first query, as the workaround, cannot work. | priority | timestamp panic when selecting from kafka source with rw kafka timestamp create source v int with connector kafka topic test properties bootstrap server scan start up mode earliest format plain encode json dev select from where rw kafka timestamp v rows this is ok but dev select from where rw kafka timestamp error panicked when processing called result unwrap on an err value expr error unsupported function cast varchar timestamptz std backtrace rs backtrace libunwind trace at rustc library std src backtrace src backtrace libunwind rs std backtrace rs backtrace trace unsynchronized at rustc library std src backtrace src backtrace mod rs std backtrace backtrace create at rustc library std src backtrace rs from at src common src error rs into at rustc library core src convert mod rs risingwave expr error for risingwave common error rwerror from at src expr src error rs as core ops try trait fromresidual from residual at rustc library core src result rs risingwave frontend expr exprimpl eval row closure at src frontend src expr mod rs futures util future future futureext now or never at users martin cargo registry src index crates io futures util src future future mod rs risingwave frontend expr exprimpl try fold const at src frontend src expr mod rs risingwave frontend optimizer plan node logical source expr to kafka timestamp range closure at src frontend src optimizer plan node logical source rs risingwave frontend optimizer plan node logical source expr to kafka timestamp range at src frontend src optimizer plan node logical source rs or dev select from where rw kafka timestamp to timestamp yyyy mm dd mi ss us error panicked when processing called result unwrap on an err value expr error unsupported function to timestamp should have been rewritten to include timezone std backtrace rs backtrace libunwind trace at rustc 
library std src backtrace src backtrace libunwind rs std backtrace rs backtrace trace unsynchronized at rustc library std src backtrace src backtrace mod rs std backtrace backtrace create at rustc library std src backtrace rs from at src common src error rs into at rustc library core src convert mod rs risingwave expr error for risingwave common error rwerror from at src expr src error rs as core ops try trait fromresidual from residual at rustc library core src result rs risingwave frontend expr exprimpl eval row closure at src frontend src expr mod rs futures util future future futureext now or never we remark that the last query is generated by the users superset automatically so the first query as the workaround cannot work | 1 |

756,580 | 26,477,466,520 | IssuesEvent | 2023-01-17 12:17:34 | codersforcauses/poops | https://api.github.com/repos/codersforcauses/poops | closed | Add vet concerns to notes | backend enhancement priority::high point::2 | **Is your feature request related to a problem? Please describe.**

Vet concerns are currently not added to the notes of the specific visit.

**Describe the solution you'd like**

Vet concerns should be added to the notes of the specific visit.

And also add the vet concerns to a collection called `vet_concerns`.

The schema for the document should be:

```yaml

/vet_concerns

/{vet_concern}

- user_uid: string

- user_name: string

- user_email: string

- user_phone?: integer

- client_name: string

- pet_name: string

- visit_time: timestamp

- visit_id: string

- detail: string

- created_at: timestamp

```
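As a rough illustration of the schema above, a document could be assembled like this before it is written to the collection. The class and function names are hypothetical, the field types are a guess at how the schema maps to Python, and no database client is shown.

```python
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class VetConcern:
    # Field names follow the schema; `user_phone` is the only optional one.
    user_uid: str
    user_name: str
    user_email: str
    client_name: str
    pet_name: str
    visit_time: datetime
    visit_id: str
    detail: str
    created_at: datetime
    user_phone: Optional[int] = None


def to_document(concern: VetConcern) -> dict:
    """Convert to a plain dict, leaving out `user_phone` when it is not set."""
    doc = asdict(concern)
    if doc["user_phone"] is None:
        del doc["user_phone"]
    return doc


# Example values are invented for illustration.
example = VetConcern(
    user_uid="uid-123",
    user_name="Alex",
    user_email="alex@example.com",
    client_name="Sam",
    pet_name="Rex",
    visit_time=datetime(2023, 1, 10, 9, 30, tzinfo=timezone.utc),
    visit_id="visit-42",
    detail="Limping on the left hind leg",
    created_at=datetime.now(timezone.utc),
)
document = to_document(example)
```

Dropping the unset optional field keeps the stored document aligned with the `user_phone?` marker in the schema.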

**Describe alternatives you've considered**

A clear and concise description of any alternative solutions or features you've considered.

**Additional context**

Add any other context or screenshots about the feature request here.

| 1.0 | Add vet concerns to notes - **Is your feature request related to a problem? Please describe.**

Vet concerns are currently not added to the notes of the specific visit.

**Describe the solution you'd like**

Vet concerns should be added to the notes of the specific visit.

And also add the vet concerns to a collection called `vet_concerns`.

The schema for the document should be:

```yaml

/vet_concerns

/{vet_concern}

- user_uid: string

- user_name: string

- user_email: string

- user_phone?: integer

- client_name: string

- pet_name: string

- visit_time: timestamp

- visit_id: string

- detail: string

- created_at: timestamp

```

**Describe alternatives you've considered**

A clear and concise description of any alternative solutions or features you've considered.

**Additional context**

Add any other context or screenshots about the feature request here.

| priority | add vet concerns to notes is your feature request related to a problem please describe vet concerns are currently not added to the notes of the specific visit describe the solution you d like vet concerns should be added to the notes of the specific visit and also add the vet concerns to a collection called vet concerns the schema for the document should be yaml vet concerns vet concern user uid string user name string user email string user phone integer client name string pet name string visit time timestamp visit id string detail string created at timestamp describe alternatives you ve considered a clear and concise description of any alternative solutions or features you ve considered additional context add any other context or screenshots about the feature request here | 1 |

121,832 | 4,821,931,266 | IssuesEvent | 2016-11-05 16:00:51 | California-Planet-Search/radvel | https://api.github.com/repos/California-Planet-Search/radvel | closed | "per tc e w k" fitting basis not working | bug priority:high | Fitted values are crazy when working in this basis. Need to check the basis conversions.

| 1.0 | "per tc e w k" fitting basis not working - Fitted values are crazy when working in this basis. Need to check the basis conversions.

| priority | per tc e w k fitting basis not working fitted values are crazy when working in this basis need to check the basis conversions | 1 |

512,811 | 14,910,081,835 | IssuesEvent | 2021-01-22 09:03:39 | airbytehq/airbyte | https://api.github.com/repos/airbytehq/airbyte | closed | Airbyte should fail when instance version doesn't match data version | priority/high type/enhancement | ## Tell us about the problem you're trying to solve

* If a user upgrades the version of Airbyte but doesn't run the appropriate migration of the underlying data, then the behavior is undefined and potentially will corrupt data.

## Describe the solution you’d like

* Airbyte should not allow starting up when data version and airbyte version are incompatible. | 1.0 | Airbyte should fail when instance version doesn't match data version - ## Tell us about the problem you're trying to solve

* If a user upgrades the version of Airbyte but doesn't run the appropriate migration of the underlying data, then the behavior is undefined and potentially will corrupt data.

## Describe the solution you’d like

* Airbyte should not allow starting up when data version and airbyte version are incompatible. | priority | airbyte should fail when instance version doesn t match data version tell us about the problem you re trying to solve if a user upgrades the version of airbyte but doesn t run the appropriate migration of the underlying data then the behavior is undefined and potentially will corrupt data describe the solution you’d like airbyte should not allow starting up when data version and airbyte version are incompatible | 1 |

344,420 | 10,344,410,025 | IssuesEvent | 2019-09-04 11:09:26 | openshift/odo | https://api.github.com/repos/openshift/odo | closed | "odo service create" doesn't set parameters correctly unless used in interactive mode | priority/High | [kind/bug]

<!--

Welcome! - We kindly ask you to:

1. Fill out the issue template below

2. Use the Google group if you have a question rather than a bug or feature request.

The group is at: https://groups.google.com/forum/#!forum/odo-users

Thanks for understanding, and for contributing to the project!

-->

## What versions of software are you using?

- Operating System: Fedora

- Output of `odo version`: master

## How did you run odo exactly?

- Interactively create a service using just "odo service create" command and set the parameters as requested interactively. For example:

```sh

$ odo service create

? Which kind of service do you wish to create database

? Which database service class should we use dh-postgresql-apb

? Which service plan should we use dev

? Enter a value for string property postgresql_database (PostgreSQL Database Name): mydata

? Enter a value for string property postgresql_password (PostgreSQL Password): secret

? Enter a value for string property postgresql_user (PostgreSQL User): luke

? Enter a value for string property postgresql_version (PostgreSQL Version): 10

? How should we name your service dh-postgresql-apb

? Output the non-interactive version of the selected options Yes

? Wait for the service to be ready No

✓ Creating service [80ms]

✓ Service 'dh-postgresql-apb' was created

Progress of the provisioning will not be reported and might take a long time.

You can see the current status by executing 'odo service list'

Equivalent command:

odo service create dh-postgresql-apb dh-postgresql-apb --plan dev -p postgresql_database=mydata -p postgresql_password=secret -p postgresql_user=luke -p postgresql_version=10

$ oc describe po/postgresql-289609a5-c4b1-11e9-85e6-0242ac11000d-1-x2vhq | grep -A3 Env

Environment:

POSTGRESQL_PASSWORD: secret

POSTGRESQL_USER: luke

POSTGRESQL_DATABASE: mydata

```

- Use the "Equivalent command" printed in the output above to create the service:

```sh

$ odo service create dh-postgresql-apb dh-postgresql-apb --plan dev -p postgresql_database=mydata -p postgresql_password=secret -p postgresql_user=luke -p postgresql_version=10

$ oc describe po/postgresql-a210cbb2-c4bd-11e9-85e6-0242ac11000d-1-n895l | grep -A3 Env

Environment:

POSTGRESQL_PASSWORD: changeme

POSTGRESQL_USER: admin

POSTGRESQL_DATABASE: admin

```

## Actual behavior

Environment variables are not set correctly when spinning up the service using the full `odo service create` command in non-interactive mode.

## Expected behavior

Environment variables should be set to the same values whether the service is created in interactive mode or with the full command.

## Any logs, error output, etc?

| 1.0 | "odo service create" doesn't set parameters correctly unless used in interactive mode - [kind/bug]

<!--

Welcome! - We kindly ask you to:

1. Fill out the issue template below

2. Use the Google group if you have a question rather than a bug or feature request.

The group is at: https://groups.google.com/forum/#!forum/odo-users

Thanks for understanding, and for contributing to the project!

-->

## What versions of software are you using?

- Operating System: Fedora

- Output of `odo version`: master

## How did you run odo exactly?

- Interactively create a service using just "odo service create" command and set the parameters as requested interactively. For example:

```sh

$ odo service create

? Which kind of service do you wish to create database

? Which database service class should we use dh-postgresql-apb

? Which service plan should we use dev

? Enter a value for string property postgresql_database (PostgreSQL Database Name): mydata

? Enter a value for string property postgresql_password (PostgreSQL Password): secret

? Enter a value for string property postgresql_user (PostgreSQL User): luke

? Enter a value for string property postgresql_version (PostgreSQL Version): 10

? How should we name your service dh-postgresql-apb

? Output the non-interactive version of the selected options Yes

? Wait for the service to be ready No

✓ Creating service [80ms]

✓ Service 'dh-postgresql-apb' was created

Progress of the provisioning will not be reported and might take a long time.

You can see the current status by executing 'odo service list'

Equivalent command:

odo service create dh-postgresql-apb dh-postgresql-apb --plan dev -p postgresql_database=mydata -p postgresql_password=secret -p postgresql_user=luke -p postgresql_version=10

$ oc describe po/postgresql-289609a5-c4b1-11e9-85e6-0242ac11000d-1-x2vhq | grep -A3 Env

Environment:

POSTGRESQL_PASSWORD: secret

POSTGRESQL_USER: luke

POSTGRESQL_DATABASE: mydata

```

- Use the "Equivalent command" printed in the output above to create the service:

```sh

$ odo service create dh-postgresql-apb dh-postgresql-apb --plan dev -p postgresql_database=mydata -p postgresql_password=secret -p postgresql_user=luke -p postgresql_version=10

$ oc describe po/postgresql-a210cbb2-c4bd-11e9-85e6-0242ac11000d-1-n895l | grep -A3 Env

Environment:

POSTGRESQL_PASSWORD: changeme

POSTGRESQL_USER: admin

POSTGRESQL_DATABASE: admin

```

## Actual behavior

Environment variables are not set correctly when spinning up the service using the full `odo service create` command in non-interactive mode.

## Expected behavior

Environment variables should be set to the same values whether the service is created in interactive mode or with the full command.

## Any logs, error output, etc?

| priority | odo service create doesn t set parameters correctly unless used in interactive mode welcome we kindly ask you to fill out the issue template below use the google group if you have a question rather than a bug or feature request the group is at thanks for understanding and for contributing to the project what versions of software are you using operating system fedora output of odo version master how did you run odo exactly interactively create a service using just odo service create command and set the parameters as requested interactively for example sh odo service create which kind of service do you wish to create database which database service class should we use dh postgresql apb which service plan should we use dev enter a value for string property postgresql database postgresql database name mydata enter a value for string property postgresql password postgresql password secret enter a value for string property postgresql user postgresql user luke enter a value for string property postgresql version postgresql version how should we name your service dh postgresql apb output the non interactive version of the selected options yes wait for the service to be ready no ✓ creating service ✓ service dh postgresql apb was created progress of the provisioning will not be reported and might take a long time you can see the current status by executing odo service list equivalent command odo service create dh postgresql apb dh postgresql apb plan dev p postgresql database mydata p postgresql password secret p postgresql user luke p postgresql version oc describe po postgresql grep env environment postgresql password secret postgresql user luke postgresql database mydata use the equivalent command printed in above output to create the service sh odo service create dh postgresql apb dh postgresql apb plan dev p postgresql database mydata p postgresql password secret p postgresql user luke p postgresql version oc describe po postgresql grep env environment 
postgresql password changeme postgresql user admin postgresql database admin actual behavior environment variables are not set correctly when spinning up the service using full odo service create command in non interactive mode expected behavior environment variables should be set to same values when creating service using either interactive mode or full command any logs error output etc | 1 |

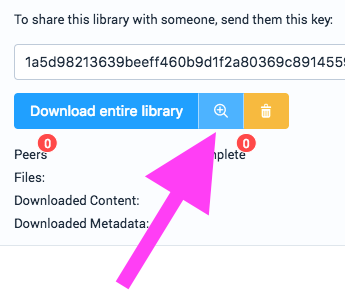

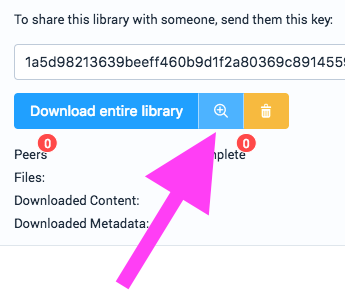

168,324 | 6,369,390,637 | IssuesEvent | 2017-08-01 11:45:46 | e-e-e/dat-library | https://api.github.com/repos/e-e-e/dat-library | closed | It's not clear that the zoom icon on a library is actually 'show in finder' | enhancement high priority | I had no idea what that button would do.

May I suggest that the text "show in finder" would be more useful than the icon here (for MacOS at least, I'm sure other OS's have an equivalent concept). It would help people discover that feature without having to click the button.

Also the common meaning of that icon might not easily sit conceptually with what the button does—but this is my first time using the app (great work by the way ❤️ ). | 1.0 | It's not clear that the zoom icon on a library is actually 'show in finder' - I had no idea what that button would do.

May I suggest that the text "show in finder" would be more useful than the icon here (for MacOS at least, I'm sure other OS's have an equivalent concept). It would help people discover that feature without having to click the button.

Also the common meaning of that icon might not easily sit conceptually with what the button does—but this is my first time using the app (great work by the way ❤️ ). | priority | it s not clear that the zoom icon on a library is actually show in finder i had no idea what that button would do may i suggest that the text show in finder would be more useful that the icon here for macos at least i m sure other os s have an equivalent concept it would help people discover that feature without having to click the button also the common meaning of that icon might not easily sit conceptually with what the button does—but this is my first time using the app great work by the way ❤️ | 1 |

298,439 | 9,200,274,609 | IssuesEvent | 2019-03-07 16:41:53 | rstudio/shinycannon | https://api.github.com/repos/rstudio/shinycannon | closed | When run with no args should show usage | Difficulty: Intermediate Effort: Low Priority: High Type: Enhancement | Currently, `shinycannon` displays detailed help without any usage instructions when run without args. Instead, it should display minimal help and demonstrate usage. | 1.0 | When run with no args should show usage - Currently, `shinycannon` displays detailed help without any usage instructions when run without args. Instead, it should display minimal help and demonstrate usage. | priority | when run with no args should show usage currently shinycannon displays detailed help without any usage instructions when run without args instead it should display minimal help and demonstrate usage | 1 |

196,139 | 6,924,907,169 | IssuesEvent | 2017-11-30 14:24:38 | fusetools/fuselibs-public | https://api.github.com/repos/fusetools/fuselibs-public | opened | Updates to property object are not being updated | Priority: High Severity: Bug | In the below project you can edit the right-hand values of the items. This should update the values in the view part as well, but it does not.

It's uncertain if this is a problem with using ux:Property, or some other defect.

Project: https://github.com/mortoray/fork-sandbox/tree/master/Nov2017/ItemListing | 1.0 | Updates to property object are not being updated - In the below project you can edit the right-hand values of the items. This should update the values in the view part as well, but it does not.

It's uncertain if this is a problem with using ux:Property, or some other defect.

Project: https://github.com/mortoray/fork-sandbox/tree/master/Nov2017/ItemListing | priority | updates to property object are not being updated in the below project you can edit the right hand values of the items this should update the values in the view part as well but it does not it s uncertain if this is a problem with using ux property or so other defect project | 1 |

76,552 | 3,489,213,944 | IssuesEvent | 2016-01-03 17:53:08 | benbaptist/benbot | https://api.github.com/repos/benbaptist/benbot | closed | No Access to BenBot Dashboard | bug dashboard high priority | Whatup man! So BenBot (aka CowCowBot) is in my chat (beam.pro/misterjoker) but won't do any of the commands. So far deactivate and activate work, but none of the custom commands. Also, going to http://dashboard.benbot.rocks/ automatically puts me at http://dashboard.benbot.rocks/switch

And when I click on my channel, it doesn't do anything. I've restarted the browser, I've logged out, and logged in, I've restarted. And, like I said prior, I !deactivate_bot and !activate the bot with no luck.

Also tested this during a stream as well with no luck whatsoever.

Thanks!

-mj | 1.0 | No Access to BenBot Dashboard - Whatup man! So BenBot (aka CowCowBot) is in my chat (beam.pro/misterjoker) but won't do any of the commands. So far deactivate and activate work, but none of the custom commands. Also, going to http://dashboard.benbot.rocks/ automatically puts me at http://dashboard.benbot.rocks/switch

And when I click on my channel, it doesn't do anything. I've restarted the browser, I've logged out, and logged in, I've restarted. And, like I said prior, I !deactivate_bot and !activate the bot with no luck.

Also tested this during a stream as well with no luck whatsoever.

Thanks!

-mj | priority | no access to benbot dashboard whatup man so benbot aka cowcowbot is in my chat beam pro misterjoker but won t do any of the commands so far deactivate and activate work but none of the custom commands also going to automatically puts me at and when i click on my channel it doesn t do anything i ve restarted the browser i ve logged out and logged in i ve restarted and like i said prior i deactivate bot and activate the bot with no luck also tested this during a stream as well with no luck whatsoever thanks mj | 1 |

127,539 | 5,032,032,778 | IssuesEvent | 2016-12-16 09:47:22 | fossasia/gci16.fossasia.org | https://api.github.com/repos/fossasia/gci16.fossasia.org | closed | Travis build fails | help wanted Priority: HIGH size/S | Why does [the current travis build](https://travis-ci.org/fossasia/gci16.fossasia.org/builds/184473563) fail?

Error:

```

/home/travis/.rvm/gems/ruby-2.3.3/gems/safe_yaml-1.0.4/lib/safe_yaml/load.rb:143:in `parse':

(/home/travis/build/fossasia/gci16.fossasia.org/_data/logosv2.yml):

did not find expected '-' indicator while parsing a block collection at line 2 column 1 (Psych::SyntaxError)

```

But if I look at the [file](https://github.com/fossasia/gci16.fossasia.org/blob/gh-pages/_data/logosv2.yml), I see this:

```

- author: Nguyen Chanh Dai

img: NguyenChanhDai.svg

```
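One common cause of this message in a file that is a top-level list is a key that starts at column 1 instead of being indented under its `- ` item. Whether that is what happened here cannot be told from the excerpt, so the following is only a heuristic sketch (not a YAML parser, and the function name is made up) for spotting such lines:

```python
def find_unindented_keys(yaml_text: str) -> list:
    """Return 1-based numbers of lines that start at column 1 but are not list items.

    Heuristic only: assumes the file is a top-level block sequence, so every
    non-blank, non-comment line should start with '-' or with indentation.
    """
    suspects = []
    for number, line in enumerate(yaml_text.splitlines(), start=1):
        stripped = line.strip()
        if not stripped or stripped.startswith("#"):
            continue  # blank lines and comments are fine anywhere
        if line.startswith(("-", " ", "\t")):
            continue  # list item, document marker, or indented continuation
        suspects.append(number)
    return suspects


broken = "- author: Nguyen Chanh Dai\nimg: NguyenChanhDai.svg\n"
fixed = "- author: Nguyen Chanh Dai\n  img: NguyenChanhDai.svg\n"
print(find_unindented_keys(broken))
print(find_unindented_keys(fixed))
```

Any line it reports would be worth comparing against the line and column given in the Travis error.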

Where is the problem? How can we fix travis? | 1.0 | Travis build fails - Why does [the current travis build](https://travis-ci.org/fossasia/gci16.fossasia.org/builds/184473563) fail?

Error:

```

/home/travis/.rvm/gems/ruby-2.3.3/gems/safe_yaml-1.0.4/lib/safe_yaml/load.rb:143:in `parse':

(/home/travis/build/fossasia/gci16.fossasia.org/_data/logosv2.yml):

did not find expected '-' indicator while parsing a block collection at line 2 column 1 (Psych::SyntaxError)

```

But if I look at the [file](https://github.com/fossasia/gci16.fossasia.org/blob/gh-pages/_data/logosv2.yml), I see this:

```

- author: Nguyen Chanh Dai

img: NguyenChanhDai.svg

```

Where is the problem? How can we fix travis? | priority | travis build fails why does fail error home travis rvm gems ruby gems safe yaml lib safe yaml load rb in parse home travis build fossasia fossasia org data yml did not find expected indicator while parsing a block collection at line column psych syntaxerror but if i look at the i see this author nguyen chanh dai img nguyenchanhdai svg where is the problem how can we fix travis | 1 |

434,530 | 12,519,686,692 | IssuesEvent | 2020-06-03 14:47:32 | DeadlyBossMods/DBM-Classic | https://api.github.com/repos/DeadlyBossMods/DBM-Classic | opened | GUI Issues | ⚠️ High Priority 🐛 Bug | Main tracking on retail DBM project here:

https://github.com/DeadlyBossMods/DeadlyBossMods/issues/204

Tracked here because the same issues would still impact DBM-Classic | 1.0 | GUI Issues - Main tracking on retail DBM project here:

https://github.com/DeadlyBossMods/DeadlyBossMods/issues/204

Tracked here because the same issues would still impact DBM-Classic | priority | gui issues main tracking on retail dbm project here tracked here because the same issues would still impact dbm classic | 1 |

287,320 | 8,809,161,808 | IssuesEvent | 2018-12-27 18:09:17 | comfortleaf/Legacy-WP | https://api.github.com/repos/comfortleaf/Legacy-WP | closed | Email Follow Up Campaigns | High Priority | Email Campaign / Drips are here:

https://comfortleaf.activehosted.com/series/9

-------

- [x] A message from our CEO

- [x] Comfort Leaf's Product Ingredients

- [x] Is CBD Legal? Legal Status of CBD in 50 States

- [x] Top 20 CBD Myths Debunked

- [x] This is how CBD is touching lives

- [x] Your Endocannabinoid System (Did you know?)

- [x] Comfort Leaf's Product Line

- [x] CBD Compliance, Lab Testing & Safety

- [x] What Doctors & Physicians are saying about CBD

- [x] 10 Little Known Uses For CBD

- [x] How to use CBD? Picking the best Product for me?

- [x] Have questions about CBD? Need assistance?

- [x] Have you had a chance to try CBD? (Free Sample) | 1.0 | Email Follow Up Campaigns - Email Campaign / Drips are here:

https://comfortleaf.activehosted.com/series/9

-------

- [x] A message from our CEO

- [x] Comfort Leaf's Product Ingredients

- [x] Is CBD Legal? Legal Status of CBD in 50 States

- [x] Top 20 CBD Myths Debunked

- [x] This is how CBD is touching lives

- [x] Your Endocannabinoid System (Did you know?)

- [x] Comfort Leaf's Product Line

- [x] CBD Compliance, Lab Testing & Safety

- [x] What Doctors & Physicians are saying about CBD

- [x] 10 Little Known Uses For CBD

- [x] How to use CBD? Picking the best Product for me?

- [x] Have questions about CBD? Need assistance?

- [x] Have you had a chance to try CBD? (Free Sample) | priority | email follow up campaigns email campaign drips are here a message from our ceo comfort leaf s product ingredients is cbd legal legal status of cbd in states top cbd myths debunked this is how cbd is touching lives your endocannabinoid system did you know comfort leaf s product line cbd compliance lab testing safety what doctors physicians are saying about cbd little known uses for cbd how to use cbd picking the best product for me have questions about cbd need assistance have you had a chance to try cbd free sample | 1 |

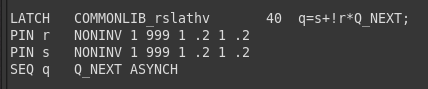

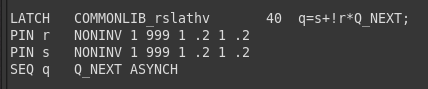

655,767 | 21,708,272,436 | IssuesEvent | 2022-05-10 11:42:22 | workcraft/workcraft | https://api.github.com/repos/workcraft/workcraft | closed | Incorrect extraction of set/reset functions from GenLib latch function | bug priority:high tag:model:circuit status:confirmed | Please answer these questions before submitting your issue. Thanks!

1. What version of Workcraft are you using?

Workcraft 3.3.7

2. What operating system are you using?

CentOS release 7.9.2009

3. What did you do? If possible, provide a list of steps to reproduce

the error.

I defined a gate library in the SIS Genlib format which includes a SR latch, as shown in the image below. I'm using it for technology mapping with MPSat.

4. What did you expect to see?

The SR latch is defined as follows:

When both inputs are high the output is supposed to be high as well.

5. What did you see instead?

When using the Initialisation Analyser with a circuit that uses these latches and both inputs are initialised to high, the output is not shown as propagated high as I was expecting.

| 1.0 | Incorrect extraction of set/reset functions from GenLib latch function - Please answer these questions before submitting your issue. Thanks!

1. What version of Workcraft are you using?

Workcraft 3.3.7

2. What operating system are you using?

CentOS release 7.9.2009

3. What did you do? If possible, provide a list of steps to reproduce the error.

I defined a gate library in the SIS Genlib format which includes a SR latch, as shown in the image below. I'm using it for technology mapping with MPSat.

4. What did you expect to see?

The SR latch is defined as follows:

When both inputs are high the output is supposed to be high as well.

5. What did you see instead?

When using the Initialisation Analyser with a circuit that uses these latches and both inputs are initialised to high, the output is not shown as propagated high as I was expecting.

| priority | incorrect extraction of set reset functions from genlib latch function please answer these questions before submitting your issue thanks what version of workcraft are you using workcraft what operating system are you using centos release what did you do if possible provide a list of steps to reproduce the error i defined a gate library in the sis genlib format which includes a sr latch as shown in the image below i m using it for technology mapping with mpsat what did you expect to see the sr latch is defined as follows when both inputs are high the output is supposed to be high as well what did you see instead when using the initialisation analyser with a circuit that uses these latches and both inputs are initialised to high the output is not shown as propagated high as i was expecting | 1 |
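
The Workcraft report above expects the latch output to be high when both inputs are high, which is the behaviour of a set-dominant SR latch. The actual GenLib latch definition is not included in the issue text, so the sketch below assumes the next-state function `Q' = S + !R*Q`. The names `next_state`, `set_fn` and `reset_fn` are hypothetical and are not Workcraft or GenLib APIs. It derives the set and reset conditions by fixing the current output to 0 and 1 respectively, which is one common way to split a latch function and not necessarily what Workcraft does internally.

```python
from itertools import product


def next_state(s: bool, r: bool, q: bool) -> bool:
    """Assumed set-dominant SR latch: Q' = S + !R*Q."""
    return s or ((not r) and q)


def set_fn(s: bool, r: bool) -> bool:
    """Inputs that force the output high even if it is currently low (Q = 0)."""
    return next_state(s, r, False)


def reset_fn(s: bool, r: bool) -> bool:
    """Inputs that force the output low even if it is currently high (Q = 1)."""
    return not next_state(s, r, True)


if __name__ == "__main__":
    # Print the set/reset conditions for every input combination.
    for s, r in product([False, True], repeat=2):
        print(f"S={int(s)} R={int(r)} set={int(set_fn(s, r))} reset={int(reset_fn(s, r))}")
```

Under this assumption the set function reduces to `S` and the reset function to `!S*R`, so an initialisation with both inputs high should propagate a high output.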

128,066 | 5,048,135,928 | IssuesEvent | 2016-12-20 11:48:48 | Financial-Times/n-storylines | https://api.github.com/repos/Financial-Times/n-storylines | closed | Scroll on Mobile | High Priority | On Default and Small devices, the timeline should travel off the page to indicate that it can be scrolled:

<img width="506" alt="screen shot 2016-12-13 at 17 07 00" src="https://cloud.githubusercontent.com/assets/8199751/21152703/5e259ea2-c15f-11e6-9e15-cd183e745ce3.png"> | 1.0 | Scroll on Mobile - On Default and Small devices, the timeline should travel off the page to indicate that it can be scrolled:

<img width="506" alt="screen shot 2016-12-13 at 17 07 00" src="https://cloud.githubusercontent.com/assets/8199751/21152703/5e259ea2-c15f-11e6-9e15-cd183e745ce3.png"> | priority | scroll on mobile on default and small devices the timeline should travel off the page to indicate that it can be scrolled img width alt screen shot at src | 1 |

141,802 | 5,444,621,619 | IssuesEvent | 2017-03-07 03:37:00 | david-gay/2340 | https://api.github.com/repos/david-gay/2340 | closed | As a non-banned user, I want to create new water source reports, so that I can contribute to the community of people in search of water | Feature HighPriority | Must have some kind of input screen for the report where all the information is captured. The report should be stored somewhere in the model.

**Requirements**

- [x] After login, application should display the main screen of the application

- [x] You should have a way to navigate to the submit report screen

- [x] The submit report should prompt for all required information

- [x] Canceling the report does not save any information

- [x] Submitting the report should store it in the model

- [x] Need a way to view a list of all reports in the system | 1.0 | As a non-banned user, I want to create new water source reports, so that I can contribute to the community of people in search of water - Must have some kind of input screen for the report where all the information is captured. The report should be stored somewhere in the model.

**Requirements**

- [x] After login, application should display the main screen of the application

- [x] You should have a way to navigate to the submit report screen

- [x] The submit report should prompt for all required information

- [x] Canceling the report does not save any information

- [x] Submitting the report should store it in the model

- [x] Need a way to view a list of all reports in the system | priority | as a non banned user i want to create new water source reports so that i can contribute to the community of people in search of water must have some kind of input screen for the report where all the information is captured the report should be stored somewhere in the model requirements after login application should display the main screen of the application you should have a way to navigate to the submit report screen the submit report should prompt for all required information canceling the report does not save any information submitting the report should store it in the model need a way to view a list of all reports in the system | 1 |

521,278 | 15,106,928,538 | IssuesEvent | 2021-02-08 14:51:57 | cabouman/svmbir | https://api.github.com/repos/cabouman/svmbir | opened | package versioning | Priority High | As we transition from the "wild west" approach, as Charlie puts it, we need to establish a protocol for versioning the package. We should especially do this before putting this up on pypi.

This is a useful reference contributors should read:

https://nvie.com/posts/a-successful-git-branching-model/ | 1.0 | package versioning - As we transition from the "wild west" approach, as Charlie puts it, we need to establish a protocol for versioning the package. We should especially do this before putting this up on pypi.

This is a useful reference contributors should read:

https://nvie.com/posts/a-successful-git-branching-model/ | priority | package versioning as we transition from the wild west approach as charlie puts it we need to establish a protocol for versioning the package we should especially do this before putting this up on pypi this is a useful reference contributors should read | 1 |

70,172 | 3,320,823,572 | IssuesEvent | 2015-11-09 02:57:50 | web-cat/code-workout | https://api.github.com/repos/web-cat/code-workout | closed | Figure out VT login instructions | priority:high | How should VT users login? Perhaps we should just use VT G-mail credentials for logging in students? We need to figure out the procedure and instructions so students can begin using CodeWorkout in summer. | 1.0 | Figure out VT login instructions - How should VT users login? Perhaps we should just use VT G-mail credentials for logging in students? We need to figure out the procedure and instructions so students can begin using CodeWorkout in summer. | priority | figure out vt login instructions how should vt users login perhaps we should just use vt g mail credentials for logging in students we need to figure out the procedure and instructions so students can begin using codeworkout in summer | 1 |

352,077 | 10,531,547,272 | IssuesEvent | 2019-10-01 08:46:42 | canonical-web-and-design/ubuntu.com | https://api.github.com/repos/canonical-web-and-design/ubuntu.com | closed | Mobile connectivity takeover + landing page misuse “in favour of” | Priority: High | 1\. Go to [ubuntu.com](https://ubuntu.com/).

2\. If necessary, reload the page until you see “The future of mobile connectivity”.

3\. Follow the link to [the whitepaper page](https://ubuntu.com/engage/ubuntu-lime-telco?utm_source=Takeover&utm_medium=Takeover&utm_campaign=FY19_IOT_UbuntuCore_Whitepaper_LimeSDR).

What happens:

2, 3. Both pages have a subhed: “Are mobile operators ready to adopt open source in favour of proprietary technology?”

What’s wrong with this: It says the opposite of what’s intended.

The whitepaper page says: “Rather than rely on costly proprietary hardware and operating models, the use of open source technologies offers the ability to commoditise and democratise the wireless network infrastructure.” So, more open-source stuff, _less_ proprietary stuff.

But “in favour of” means “approving”, “to the benefit of”, or “to show preference for”. (References: [Collins](https://www.collinsdictionary.com/dictionary/english/in-favour-of), [Merriam-Webster](https://www.merriam-webster.com/dictionary/favor).) So “in favour of proprietary technology” would mean adopting _more_ proprietary technology.

What should happen: A simple fix would be to change “in favour of” to “rather than” or “instead of”.

---

*Reported from: https://ubuntu.com/* | 1.0 | Mobile connectivity takeover + landing page misuse “in favour of” - 1\. Go to [ubuntu.com](https://ubuntu.com/).

2\. If necessary, reload the page until you see “The future of mobile connectivity”.

3\. Follow the link to [the whitepaper page](https://ubuntu.com/engage/ubuntu-lime-telco?utm_source=Takeover&utm_medium=Takeover&utm_campaign=FY19_IOT_UbuntuCore_Whitepaper_LimeSDR).

What happens:

2, 3. Both pages have a subhed: “Are mobile operators ready to adopt open source in favour of proprietary technology?”

What’s wrong with this: It says the opposite of what’s intended.

The whitepaper page says: “Rather than rely on costly proprietary hardware and operating models, the use of open source technologies offers the ability to commoditise and democratise the wireless network infrastructure.” So, more open-source stuff, _less_ proprietary stuff.

But “in favour of” means “approving”, “to the benefit of”, or “to show preference for”. (References: [Collins](https://www.collinsdictionary.com/dictionary/english/in-favour-of), [Merriam-Webster](https://www.merriam-webster.com/dictionary/favor).) So “in favour of proprietary technology” would mean adopting _more_ proprietary technology.

What should happen: A simple fix would be to change “in favour of” to “rather than” or “instead of”.

---

*Reported from: https://ubuntu.com/* | priority | mobile connectivity takeover landing page misuse “in favour of” go to if necessary reload the page until you see “the future of mobile connectivity” follow the link to what happens both pages have a subhed “are mobile operators ready to adopt open source in favour of proprietary technology ” what’s wrong with this it says the opposite of what’s intended the whitepaper page says “rather than rely on costly proprietary hardware and operating models the use of open source technologies offers the ability to commoditise and democratise the wireless network infrastructure ” so more open source stuff less proprietary stuff but “in favour of” means “approving” “to the benefit of” or “to show preference for” references so “in favour of proprietary technology” would mean adopting more proprietary technology what should happen a simple fix would be to change “in favour of” to “rather than” or “instead of” reported from | 1 |

135,931 | 5,266,951,892 | IssuesEvent | 2017-02-04 17:51:41 | senderle/topic-modeling-tool | https://api.github.com/repos/senderle/topic-modeling-tool | closed | Fix MalformedInputException for metadata caused by Excel output | priority-high | CSV output from Excel on (at least) Macs uses a file encoding that breaks Java. This will probably require using the stream API.

| 1.0 | Fix MalformedInputException for metadata caused by Excel output - CSV output from Excel on (at least) Macs uses a file encoding that breaks Java. This will probably require using the stream API.

| priority | fix malformedinputexception for metadata caused by excel output csv output from excel on at least macs uses a file encoding that breaks java this will probably require using the stream api | 1 |
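
The topic-modeling-tool report above describes a decoding failure on CSV files saved by Excel on a Mac. The tool itself is written in Java; the Python sketch below only illustrates the general idea of trying strict UTF-8 first and falling back to a legacy encoding. It assumes the file is in Mac OS Roman, which the issue does not state, and `decode_csv_bytes` is a hypothetical helper, not part of the tool.

```python
def decode_csv_bytes(raw: bytes, encodings=("utf-8", "mac_roman")) -> str:
    """Decode CSV bytes, trying each candidate encoding in order.

    The candidate list is an assumption for illustration; adjust it to the
    encodings actually seen in the input files.
    """
    for encoding in encodings:
        try:
            return raw.decode(encoding)
        except UnicodeDecodeError:
            # Strict decoding failed; try the next candidate.
            continue
    # Last resort: keep going, replacing undecodable bytes.
    return raw.decode("utf-8", errors="replace")


if __name__ == "__main__":
    legacy = "café,1\n".encode("mac_roman")  # not valid UTF-8
    print(decode_csv_bytes(legacy))
```

Trying UTF-8 first matters because a single-byte encoding such as Mac OS Roman accepts any byte sequence, so it would silently mis-decode valid UTF-8 if it were tried first.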

149,728 | 5,724,747,510 | IssuesEvent | 2017-04-20 15:08:33 | metasfresh/metasfresh-webui-frontend | https://api.github.com/repos/metasfresh/metasfresh-webui-frontend | closed | frontend: refactor /process/start response | enhancement high priority integrated release-candidate | We refactored the /process/start endpoint's response as described here: https://github.com/metasfresh/metasfresh-webui-api/issues/294 .

Pls adapt the frontend to it, because more "action"s will come.