Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 855 | labels stringlengths 4 721 | body stringlengths 1 261k | index stringclasses 13 values | text_combine stringlengths 96 261k | label stringclasses 2 values | text stringlengths 96 240k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

466,654 | 13,430,857,528 | IssuesEvent | 2020-09-07 05:51:52 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | app.getpocket.com - desktop site instead of mobile site | browser-firefox engine-gecko ml-needsdiagnosis-false ml-probability-high priority-normal | <!-- @browser: Firefox 81.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:81.0) Gecko/20100101 Firefox/81.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/57745 -->

**URL**: https://app.getpocket.com/

**Browser / Version**: Firefox 81.0

**Operating System**: Windows 10

**Tested Another Browser**: Yes Edge

**Problem type**: Desktop site instead of mobile site

**Description**: Desktop site instead of mobile site

**Steps to Reproduce**:

<details>

<summary>View the screenshot</summary>

<img alt="Screenshot" src="https://webcompat.com/uploads/2020/9/9c82f483-788f-487f-858e-38568f16cd23.jpeg">

</details>

<details>

<summary>Browser Configuration</summary>

<ul>

<li>gfx.webrender.all: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>buildID: 20200829200810</li><li>channel: aurora</li><li>hasTouchScreen: true</li><li>mixed active content blocked: false</li><li>mixed passive content blocked: false</li><li>tracking content blocked: false</li>

</ul>

</details>

[View console log messages](https://webcompat.com/console_logs/2020/9/9489329d-ab4f-4b90-a113-6c1eeb9764c0)

_From [webcompat.com](https://webcompat.com/) with ❤️_ | 1.0 | app.getpocket.com - desktop site instead of mobile site - <!-- @browser: Firefox 81.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:81.0) Gecko/20100101 Firefox/81.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/57745 -->

**URL**: https://app.getpocket.com/

**Browser / Version**: Firefox 81.0

**Operating System**: Windows 10

**Tested Another Browser**: Yes Edge

**Problem type**: Desktop site instead of mobile site

**Description**: Desktop site instead of mobile site

**Steps to Reproduce**:

<details>

<summary>View the screenshot</summary>

<img alt="Screenshot" src="https://webcompat.com/uploads/2020/9/9c82f483-788f-487f-858e-38568f16cd23.jpeg">

</details>

<details>

<summary>Browser Configuration</summary>

<ul>

<li>gfx.webrender.all: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>buildID: 20200829200810</li><li>channel: aurora</li><li>hasTouchScreen: true</li><li>mixed active content blocked: false</li><li>mixed passive content blocked: false</li><li>tracking content blocked: false</li>

</ul>

</details>

[View console log messages](https://webcompat.com/console_logs/2020/9/9489329d-ab4f-4b90-a113-6c1eeb9764c0)

_From [webcompat.com](https://webcompat.com/) with ❤️_ | priority | app getpocket com desktop site instead of mobile site url browser version firefox operating system windows tested another browser yes edge problem type desktop site instead of mobile site description desktop site instead of mobile site steps to reproduce view the screenshot img alt screenshot src browser configuration gfx webrender all false gfx webrender blob images true gfx webrender enabled false image mem shared true buildid channel aurora hastouchscreen true mixed active content blocked false mixed passive content blocked false tracking content blocked false from with ❤️ | 1 |

397,380 | 11,727,643,889 | IssuesEvent | 2020-03-10 16:15:16 | privacytoolsIO/privacytools.io | https://api.github.com/repos/privacytoolsIO/privacytools.io | closed | Add steps to disable WebRTC in Safari | high priority 👁️ browsers 📝 correction | Please update the WebRTC section regarding Safari support. The current version of Safari has just started supporting WebRTC.

EDIT: Sorry for the bad labeling, but you don’t have a good label for outdated info on the site, and this seemed like a close enough label 😅

| 1.0 | Add steps to disable WebRTC in Safari - Please update the WebRTC section regarding Safari support. The current version of Safari has just started supporting WebRTC.

EDIT: Sorry for the bad labeling, but you don’t have a good label for outdated info on the site, and this seemed like a close enough label 😅

| priority | add steps to disable webrtc in safari please update the webrtc section about the safari support current version of safari just started working with webrtc edit sorry for the bad labeling but you don’t have a good label for outdated info on the site and this seemed like a close enough label 😅 | 1 |

153,467 | 5,892,761,782 | IssuesEvent | 2017-05-17 20:16:35 | denniscarr/dada | https://api.github.com/repos/denniscarr/dada | closed | More tasks | enhancement High priority | Tasks with more diversity, such as killing NPCs, equipping objects, or storing a certain number of things or a specific object in the visor | 1.0 | More tasks - Tasks with more diversity, such as killing NPCs, equipping objects, or storing a certain number of things or a specific object in the visor | priority | more tasks tasks with more diversity such as killing npcs equipping objects or storing a certain number of things or a specific object in the visor | 1 |

623,208 | 19,663,214,363 | IssuesEvent | 2022-01-10 19:17:48 | Thorium-Sim/thorium | https://api.github.com/repos/Thorium-Sim/thorium | opened | Selecting Video Cards Crashes the Server | type/bug priority/high | ### Requested By: Alex DeBirk

### Priority: High

### Version: 3.5.1

When creating a timeline step that changes the viewscreen cards, selecting the card typically resets the server until it crashes.

### Steps to Reproduce

Using Linux Server, Windows Thorium editor, and Chrome. I create a timeline step that uses the Viewscreen: Change Viewscreen Card, and try to select the video. The card does not select. Any attempt to do so results in the previous step that I created being reset. Eventually the server just crashes. | 1.0 | Selecting Video Cards Crashes the Server - ### Requested By: Alex DeBirk

### Priority: High

### Version: 3.5.1

When creating a timeline step that changes the viewscreen cards, selecting the card typically resets the server until it crashes.

### Steps to Reproduce

Using Linux Server, Windows Thorium editor, and Chrome. I create a timeline step that uses the Viewscreen: Change Viewscreen Card, and try to select the video. The card does not select. Any attempt to do so results in the previous step that I created being reset. Eventually the server just crashes. | priority | selecting video cards crashes the server requested by alex debirk priority high version when creating a timeline step that changes the viewscreen cards selecting the card typically resets the server until it crashes steps to reproduce using linux server windows thorium editor and chrome i create a timeline step that uses the viewscreen change viewscreen card and try to select the video the card does not select any attempt to do so results in the previous step that i created being reset eventually the server just crashes | 1 |

562,392 | 16,659,011,773 | IssuesEvent | 2021-06-06 02:39:54 | ut-code/utmap-times | https://api.github.com/repos/ut-code/utmap-times | closed | Image is not displayed for the event article type / intern article type | Priority: High enhancement | The image is not displayed for the event article type / intern article type. Please make it display.

<img width="491" alt="Screenshot 2021-04-28 13 44 55" src="https://user-images.githubusercontent.com/80612908/116347829-ebe86900-a827-11eb-965a-a2d9e87ac65d.png">

| 1.0 | Image is not displayed for the event article type / intern article type - The image is not displayed for the event article type / intern article type. Please make it display.

<img width="491" alt="Screenshot 2021-04-28 13 44 55" src="https://user-images.githubusercontent.com/80612908/116347829-ebe86900-a827-11eb-965a-a2d9e87ac65d.png">

| priority | image is not displayed for the event article type intern article type the image is not displayed for the event article type intern article type please make it display img width alt screenshot src | 1 |

157,463 | 6,001,310,433 | IssuesEvent | 2017-06-05 08:49:25 | commons-app/apps-android-commons | https://api.github.com/repos/commons-app/apps-android-commons | closed | Tutorial messages use line breaks instead of '\n' after being translated | bug high priority localization | Tutorial messages that use `\n` for stylistic purposes actually appear in https://translatewiki.net as if having actual line breaks. Here's an example original message:

```

<string name="tutorial_2_subtext">- Natural objects (flowers, animals, mountains)\n- Useful objects (bicycles, train stations)\n- Famous people (your mayor, Olympic athletes you met)</string>

```

Shows as https://i.imgur.com/PYVYX4A.png

And naturally gets translated into:

```

<string name="tutorial_2_subtext">- Objets naturels (fleurs, animaux, montagnes)

- Objets utiles (bicyclettes, gares ferroviaires)

- Personnes célèbres (votre maire, les athlètes olympiques que vous avez rencontrés)</string>

```

The problem? It seems that Android's text layout only respects `\n` and not actual line breaks, which means that the dashes that are supposed to be list bullets appear _mid-line_.

Live example (using a different string, because it looks worse): https://i.imgur.com/SYYvbU7.png

Since translations come from https://translatewiki.net, it could be a problem on their side, but it may be easy to work around this for now. | 1.0 | Tutorial messages use line breaks instead of '\n' after being translated - Tutorial messages that use `\n` for stylistic purposes actually appear in https://translatewiki.net as if having actual line breaks. Here's an example original message:

```

<string name="tutorial_2_subtext">- Natural objects (flowers, animals, mountains)\n- Useful objects (bicycles, train stations)\n- Famous people (your mayor, Olympic athletes you met)</string>

```

Shows as https://i.imgur.com/PYVYX4A.png

And naturally gets translated into:

```

<string name="tutorial_2_subtext">- Objets naturels (fleurs, animaux, montagnes)

- Objets utiles (bicyclettes, gares ferroviaires)

- Personnes célèbres (votre maire, les athlètes olympiques que vous avez rencontrés)</string>

```

The problem? It seems that Android's text layout only respects `\n` and not actual line breaks, which means that the dashes that are supposed to be list bullets appear _mid-line_.

Live example (using a different string, because it looks worse): https://i.imgur.com/SYYvbU7.png

Since translations come from https://translatewiki.net, it could be a problem on their side, but it may be easy to work around this for now. | priority | tutorial messages use line breaks instead of n after being translated tutorial messages that use n for stylistic purposes actually appear in as if having actual line breaks here s an example original message natural objects flowers animals mountains n useful objects bicycles train stations n famous people your mayor olympic athletes you met shows as and naturally gets translated into objets naturels fleurs animaux montagnes objets utiles bicyclettes gares ferroviaires personnes célèbres votre maire les athlètes olympiques que vous avez rencontrés the problem it seems that the thing that lays out text in android only respects n and not actual line breaks which means that the dashes that supposed to be list bullets appear mid line live example using a different string because it looks worse since translations come from it could be a problem on their side but it may be easy to work around this for now | 1 |
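
The workaround hinted at in the issue above could look roughly like the following. This is a minimal sketch, not the project's actual fix: it assumes translated string values can be post-processed before they are written to `strings.xml`, and the helper name `escape_line_breaks` is hypothetical. It turns each real line break (together with adjacent whitespace) into the literal two-character sequence `\n`.

```python
import re


def escape_line_breaks(value: str) -> str:
    """Return value with real line breaks replaced by a literal backslash-n.

    Hypothetical helper: each run of whitespace that contains a line break
    is collapsed into the two characters '\\' and 'n', so consecutive blank
    lines become a single escaped break.
    """
    # r"\\n" in the replacement yields a literal backslash followed by 'n'.
    return re.sub(r"\s*\r?\n\s*", r"\\n", value.strip())


translated = (
    "- Objets naturels (fleurs, animaux, montagnes)\n"
    "- Objets utiles (bicyclettes, gares ferroviaires)\n"
    "- Personnes célèbres"
)
fixed = escape_line_breaks(translated)
```

Applied to the French example, the three bullet lines end up on a single line joined by literal `\n` sequences, matching the shape of the original English string.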

651,981 | 21,517,411,500 | IssuesEvent | 2022-04-28 11:14:09 | ProsperityGH/VrijwilligersHuis-Opdracht | https://api.github.com/repos/ProsperityGH/VrijwilligersHuis-Opdracht | closed | Form frontend | enhancement High Priority | - [x] form layout CSS

- [x] contact details

- [x] categories

- [x] location

- [x] quantity

- [x] comments | 1.0 | Form frontend - - [x] form layout CSS

- [x] contact details

- [x] categories

- [x] location

- [x] quantity

- [x] comments | priority | form frontend form layout css contact details categories location quantity comments | 1 |

311,130 | 9,528,991,865 | IssuesEvent | 2019-04-29 09:58:08 | Abwasserrohr/SKYBLOCK.SK | https://api.github.com/repos/Abwasserrohr/SKYBLOCK.SK | closed | Island creation process still uses old lore newline | bug priority:high | Because of this, lava is unusable on new islands. Make the newline using a list instead of ||. | 1.0 | Island creation process still uses old lore newline - Because of this, lava is unusable on new islands. Make the newline using a list instead of ||. | priority | island creation process still uses old lore newline because of this lava is unusable on new islands make the newline using a list instead of | 1 |

740,042 | 25,733,704,703 | IssuesEvent | 2022-12-07 22:30:54 | coder/code-server | https://api.github.com/repos/coder/code-server | closed | [Bug]: Invalid version in about window after 4.9.0 upgrade | bug high-priority | ### Is there an existing issue for this?

- [X] I have searched the existing issues

### OS/Web Information

- Web Browser: Chrome

- Local OS: Arch Linux

- Remote OS: Debian sid

- Remote Architecture: amd64

- `code-server --version`: 4.9.0 0502dfa1ff42ab8a43adb911f7bf21f8b09ee25f with Code 1.73.1

### Steps to Reproduce

1. Open code-server

2. Navigate to Menu->Help->About

### Expected

Expected to see `code-server: v4.9.0` in the About dialog.

Expected not to see the blue icon in the status bar suggesting to download code-server.

### Actual

The dialog shows `code-server: v$VERSION` instead.

Also there's a blue icon in status bar suggesting to download code-server, despite having launched with `--disable-update-check`

### Logs

_No response_

### Screenshot/Video

_No response_

### Does this issue happen in VS Code or GitHub Codespaces?

- [X] I cannot reproduce this in VS Code.

- [X] I cannot reproduce this in GitHub Codespaces.

### Are you accessing code-server over HTTPS?

- [X] I am using HTTPS.

### Notes

_No response_ | 1.0 | [Bug]: Invalid version in about window after 4.9.0 upgrade - ### Is there an existing issue for this?

- [X] I have searched the existing issues

### OS/Web Information

- Web Browser: Chrome

- Local OS: Arch Linux

- Remote OS: Debian sid

- Remote Architecture: amd64

- `code-server --version`: 4.9.0 0502dfa1ff42ab8a43adb911f7bf21f8b09ee25f with Code 1.73.1

### Steps to Reproduce

1. Open code-server

2. Navigate to Menu->Help->About

### Expected

Expected to see `code-server: v4.9.0` in the About dialog.

Expected not to see the blue icon in the status bar suggesting to download code-server.

### Actual

The dialog shows `code-server: v$VERSION` instead.

Also there's a blue icon in status bar suggesting to download code-server, despite having launched with `--disable-update-check`

### Logs

_No response_

### Screenshot/Video

_No response_

### Does this issue happen in VS Code or GitHub Codespaces?

- [X] I cannot reproduce this in VS Code.

- [X] I cannot reproduce this in GitHub Codespaces.

### Are you accessing code-server over HTTPS?

- [X] I am using HTTPS.

### Notes

_No response_ | priority | invalid version in about window after upgrade is there an existing issue for this i have searched the existing issues os web information web browser chrome local os arch linux remote os debian sid remote architecture code server version with code steps to reproduce open code server navigate to menu help about expected expected to see code server in about dialog expected to no see the blue icon in status bar suggesting to download code server actual there a code server v version also there s a blue icon in status bar suggesting to download code server despite having launched with disable update check logs no response screenshot video no response does this issue happen in vs code or github codespaces i cannot reproduce this in vs code i cannot reproduce this in github codespaces are you accessing code server over https i am using https notes no response | 1 |

58,168 | 3,087,882,916 | IssuesEvent | 2015-08-25 14:11:48 | PeerSay/Atlas | https://api.github.com/repos/PeerSay/Atlas | opened | Add an Avg. PeerSay Grade column for every product in the decision table | enhancement Priority: High | To the right of the current Grade field. | 1.0 | Add an Avg. PeerSay Grade column for every product in the decision table - To the right of the current Grade field. | priority | add an avg peersay grade column for every product in the decision table to the right of the current grade field | 1 |

372,592 | 11,017,387,510 | IssuesEvent | 2019-12-05 08:18:48 | ballerina-platform/ballerina-lang | https://api.github.com/repos/ballerina-platform/ballerina-lang | closed | Add goto definition support for annotations and errors | Area/Tooling Component/LanguageServer Points/3 Priority/High Type/Task | **Description**

Add the goto Definition support for annotations and errors

**Affected Versions**

v1.0.0 at least | 1.0 | Add goto definition support for annotations and errors - **Description**

Add the goto Definition support for annotations and errors

**Affected Versions**

v1.0.0 at least | priority | add goto definition support for annotations and errors description add the goto definition support for annotations and errors affected versions at least | 1 |

101,278 | 4,112,019,170 | IssuesEvent | 2016-06-07 08:55:03 | scalan/scalan | https://api.github.com/repos/scalan/scalan | closed | Generate separate nodes and short names for StructElems in GraphVizExport | high priority | Non-trivial structs make graph nodes very wide. | 1.0 | Generate separate nodes and short names for StructElems in GraphVizExport - Non-trivial structs make graph nodes very wide. | priority | generate separate nodes and short names for structelems in graphvizexport non trivial structs make graph nodes very wide | 1 |

782,044 | 27,484,810,055 | IssuesEvent | 2023-03-04 01:21:29 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | closed | Fused AdamW causes NaN loss | high priority module: optimizer triaged | ### 🐛 Describe the bug

There has already been an extended discussion of this issue on the nanoGPT repository:

- karpathy/nanoGPT#167

I have been encouraged to submit a separate bug report here, since the issue seems to lie with PyTorch. I have prepared a preconfigured [fork of nanoGPT](https://github.com/oddlama/nanoGPT_nan) containing my configuration and the part of my dataset that triggers the issue. It now produces NaNs immediately after the first training step, but only if fused AdamW is in use.

The settings are basically the nanoGPT shakespeare configuration, but with a block size of 343 and a vocab size of 2006. The data is quite sparse (only 1.3% is not 0, which makes the issue appear much sooner). I've included 1000 batches of my own real data so you will have the same conditions as I do. I'm training on a single 2080 Ti. Please look at the diff of the latest commit in this repository to see exactly what I changed; it's not much.

```bash

> git clone https://github.com/oddlama/nanoGPT_nan

> cd nanoGPT_nan

```

```bash

> python train.py config/nan.py --allow_fused=True

[...]

number of parameters: 11.39M

using fused AdamW: True

compiling the model... (takes a ~minute)

step 0: train loss 6.6010, val loss 6.6009

[2023-03-01 15:19:43,485] torch._inductor.utils: [WARNING] using triton random, expect difference from eager

/projects/venv/lib/python3.10/site-packages/torch/_functorch/aot_autograd.py:1251: UserWarning: Your compiler for AOTAutograd is returning a a function that doesn't take boxed arguments. Please wrap it with functorch.compile.make_boxed_func or handle the boxed arguments yourself. See https://github.com/pytorch/pytorch/pull/83137#issuecomment-1211320670 for rationale.

warnings.warn(

iter 0: loss 6.6793, time 22316.39ms, mfu -100.00%

iter 1: loss nan, time 3249.91ms, mfu -100.00%

```

```bash

> python train.py config/nan.py --allow_fused=False

[...]

number of parameters: 11.39M

using fused AdamW: False

compiling the model... (takes a ~minute)

step 0: train loss 6.6010, val loss 6.6009

[2023-03-01 15:20:26,110] torch._inductor.utils: [WARNING] using triton random, expect difference from eager

/projects/nanoGPT_nan/venv/lib/python3.10/site-packages/torch/_functorch/aot_autograd.py:1251: UserWarning: Your compiler for AOTAutograd is returning a a function that doesn't take boxed arguments. Please wrap it with functorch.compile.make_boxed_func or handle the boxed arguments yourself. See https://github.com/pytorch/pytorch/pull/83137#issuecomment-1211320670 for rationale.

warnings.warn(

iter 0: loss 6.6793, time 22286.14ms, mfu -100.00%

iter 1: loss 6.6811, time 3534.44ms, mfu -100.00%

iter 2: loss 6.6770, time 3365.06ms, mfu -100.00%

iter 3: loss 4.7208, time 3366.52ms, mfu -100.00%

iter 4: loss 3.0927, time 3365.22ms, mfu -100.00%

iter 5: loss 2.0694, time 3362.80ms, mfu 6.51%

iter 6: loss 1.4752, time 3360.81ms, mfu 6.51%

# continues to work fine

```

Hope this helps.

P.S.:

Can anyone explain what the warning means? Doesn't seem to be related to the issue, but is it a user error that should be fixed?

```

[2023-03-01 15:19:43,485] torch._inductor.utils: [WARNING] using triton random, expect difference from eager

/projects/venv/lib/python3.10/site-packages/torch/_functorch/aot_autograd.py:1251: UserWarning: Your compiler for AOTAutograd is returning a a function that doesn't take boxed arguments. Please wrap it with functorch.compile.make_boxed_func or handle the boxed arguments yourself. See https://github.com/pytorch/pytorch/pull/83137#issuecomment-1211320670 for rationale.

warnings.warn(

```

### Versions

```

Collecting environment information...

PyTorch version: 2.0.0.dev20230220+cu118

Is debug build: False

CUDA used to build PyTorch: 11.8

ROCM used to build PyTorch: N/A

OS: Arch Linux (x86_64)

GCC version: (GCC) 12.2.1 20230201

Clang version: 15.0.7

CMake version: version 3.25.0

Libc version: glibc-2.37

Python version: 3.10.9 (main, Dec 19 2022, 17:35:49) [GCC 12.2.0] (64-bit runtime)

Python platform: Linux-5.15.79.1-microsoft-standard-WSL2-x86_64-with-glibc2.37

Is CUDA available: True

CUDA runtime version: 11.8.89

CUDA_MODULE_LOADING set to: LAZY

GPU models and configuration: GPU 0: NVIDIA GeForce RTX 2080 Ti

Nvidia driver version: 516.94

cuDNN version: Could not collect

HIP runtime version: N/A

MIOpen runtime version: N/A

Is XNNPACK available: True

CPU:

Architecture: x86_64

CPU op-mode(s): 32-bit, 64-bit

Address sizes: 48 bits physical, 48 bits virtual

Byte Order: Little Endian

CPU(s): 24

On-line CPU(s) list: 0-23

Vendor ID: AuthenticAMD

Model name: AMD Ryzen 9 5900X 12-Core Processor

CPU family: 25

Model: 33

Thread(s) per core: 2

Core(s) per socket: 12

Socket(s): 1

Stepping: 0

BogoMIPS: 7400.01

Flags: fpu vme de pse tsc msr pae mce cx8 apic sep mtrr pge mca cmov pat pse36 clflush mmx fxsr sse sse2 ht syscall nx mmxext fxsr_opt pdpe1gb rdtscp lm constant_tsc rep_good nopl tsc_reliable nonstop_tsc cpuid extd_apicid pni pclmulqdq ssse3 fma cx16 sse4_1 sse4_2 movbe popcnt aes xsave avx f16c rdrand hypervisor lahf_lm cmp_legacy cr8_legacy abm sse4a misalignsse 3dnowprefetch osvw topoext ibrs ibpb stibp vmmcall fsgsbase bmi1 avx2 smep bmi2 erms rdseed adx smap clflushopt clwb sha_ni xsaveopt xsavec xgetbv1 xsaves clzero xsaveerptr arat umip vaes vpclmulqdq rdpid fsrm

Hypervisor vendor: Microsoft

Virtualization type: full

L1d cache: 384 KiB (12 instances)

L1i cache: 384 KiB (12 instances)

L2 cache: 6 MiB (12 instances)

L3 cache: 32 MiB (1 instance)

Vulnerability Itlb multihit: Not affected

Vulnerability L1tf: Not affected

Vulnerability Mds: Not affected

Vulnerability Meltdown: Not affected

Vulnerability Mmio stale data: Not affected

Vulnerability Retbleed: Not affected

Vulnerability Spec store bypass: Vulnerable

Vulnerability Spectre v1: Mitigation; usercopy/swapgs barriers and __user pointer sanitization

Vulnerability Spectre v2: Mitigation; Retpolines, IBPB conditional, IBRS_FW, STIBP conditional, RSB filling, PBRSB-eIBRS Not affected

Vulnerability Srbds: Not affected

Vulnerability Tsx async abort: Not affected

Versions of relevant libraries:

[pip3] mypy-extensions==1.0.0

[pip3] numpy==1.24.1

[pip3] pytorch-triton==2.0.0+d54c04abe2

[pip3] torch==2.0.0.dev20230220+cu118

[pip3] torchaudio==2.0.0.dev20230223+cu118

[pip3] torchinfo==1.7.2

[pip3] torchsummary==1.5.1

[pip3] torchvision==0.15.0.dev20230223+cu118

[conda] Could not collect

```

cc @ezyang @gchanan @zou3519 @vincentqb @jbschlosser @albanD @janeyx99 | 1.0 | Fused AdamW causes NaN loss - ### 🐛 Describe the bug

There has already been an extended discussion of this issue on the nanoGPT repository:

- karpathy/nanoGPT#167

I have been encouraged to submit a separate bug report here, since the issue seems to lie with PyTorch. I have prepared a preconfigured [fork of nanoGPT](https://github.com/oddlama/nanoGPT_nan) containing my configuration and the part of my dataset that triggers the issue. It now produces NaNs immediately after the first training step, but only if fused AdamW is in use.

The settings are basically the nanoGPT shakespeare configuration, but with a block size of 343 and a vocab size of 2006. The data is quite sparse (only 1.3% is not 0, which makes the issue appear much sooner). I've included 1000 batches of my own real data so you will have the same conditions as I do. I'm training on a single 2080 Ti. Please look at the diff of the latest commit in this repository to see exactly what I changed; it's not much.

```bash

> git clone https://github.com/oddlama/nanoGPT_nan

> cd nanoGPT_nan

```

```bash

> python train.py config/nan.py --allow_fused=True

[...]

number of parameters: 11.39M

using fused AdamW: True

compiling the model... (takes a ~minute)

step 0: train loss 6.6010, val loss 6.6009

[2023-03-01 15:19:43,485] torch._inductor.utils: [WARNING] using triton random, expect difference from eager

/projects/venv/lib/python3.10/site-packages/torch/_functorch/aot_autograd.py:1251: UserWarning: Your compiler for AOTAutograd is returning a a function that doesn't take boxed arguments. Please wrap it with functorch.compile.make_boxed_func or handle the boxed arguments yourself. See https://github.com/pytorch/pytorch/pull/83137#issuecomment-1211320670 for rationale.

warnings.warn(

iter 0: loss 6.6793, time 22316.39ms, mfu -100.00%

iter 1: loss nan, time 3249.91ms, mfu -100.00%

```

```bash

> python train.py config/nan.py --allow_fused=False

[...]

number of parameters: 11.39M

using fused AdamW: False

compiling the model... (takes a ~minute)

step 0: train loss 6.6010, val loss 6.6009

[2023-03-01 15:20:26,110] torch._inductor.utils: [WARNING] using triton random, expect difference from eager

/projects/nanoGPT_nan/venv/lib/python3.10/site-packages/torch/_functorch/aot_autograd.py:1251: UserWarning: Your compiler for AOTAutograd is returning a a function that doesn't take boxed arguments. Please wrap it with functorch.compile.make_boxed_func or handle the boxed arguments yourself. See https://github.com/pytorch/pytorch/pull/83137#issuecomment-1211320670 for rationale.

warnings.warn(

iter 0: loss 6.6793, time 22286.14ms, mfu -100.00%

iter 1: loss 6.6811, time 3534.44ms, mfu -100.00%

iter 2: loss 6.6770, time 3365.06ms, mfu -100.00%

iter 3: loss 4.7208, time 3366.52ms, mfu -100.00%

iter 4: loss 3.0927, time 3365.22ms, mfu -100.00%

iter 5: loss 2.0694, time 3362.80ms, mfu 6.51%

iter 6: loss 1.4752, time 3360.81ms, mfu 6.51%

# continues to work fine

```

Hope this helps.

P.S.:

Can anyone explain what the warning means? Doesn't seem to be related to the issue, but is it a user error that should be fixed?

```

[2023-03-01 15:19:43,485] torch._inductor.utils: [WARNING] using triton random, expect difference from eager

/projects/venv/lib/python3.10/site-packages/torch/_functorch/aot_autograd.py:1251: UserWarning: Your compiler for AOTAutograd is returning a a function that doesn't take boxed arguments. Please wrap it with functorch.compile.make_boxed_func or handle the boxed arguments yourself. See https://github.com/pytorch/pytorch/pull/83137#issuecomment-1211320670 for rationale.

warnings.warn(

```

### Versions

```

Collecting environment information...

PyTorch version: 2.0.0.dev20230220+cu118

Is debug build: False

CUDA used to build PyTorch: 11.8

ROCM used to build PyTorch: N/A

OS: Arch Linux (x86_64)

GCC version: (GCC) 12.2.1 20230201

Clang version: 15.0.7

CMake version: version 3.25.0

Libc version: glibc-2.37

Python version: 3.10.9 (main, Dec 19 2022, 17:35:49) [GCC 12.2.0] (64-bit runtime)

Python platform: Linux-5.15.79.1-microsoft-standard-WSL2-x86_64-with-glibc2.37

Is CUDA available: True

CUDA runtime version: 11.8.89

CUDA_MODULE_LOADING set to: LAZY

GPU models and configuration: GPU 0: NVIDIA GeForce RTX 2080 Ti

Nvidia driver version: 516.94

cuDNN version: Could not collect

HIP runtime version: N/A

MIOpen runtime version: N/A

Is XNNPACK available: True

CPU:

Architecture: x86_64

CPU op-mode(s): 32-bit, 64-bit

Address sizes: 48 bits physical, 48 bits virtual

Byte Order: Little Endian

CPU(s): 24

On-line CPU(s) list: 0-23

Vendor ID: AuthenticAMD

Model name: AMD Ryzen 9 5900X 12-Core Processor

CPU family: 25

Model: 33

Thread(s) per core: 2

Core(s) per socket: 12

Socket(s): 1

Stepping: 0

BogoMIPS: 7400.01

Flags: fpu vme de pse tsc msr pae mce cx8 apic sep mtrr pge mca cmov pat pse36 clflush mmx fxsr sse sse2 ht syscall nx mmxext fxsr_opt pdpe1gb rdtscp lm constant_tsc rep_good nopl tsc_reliable nonstop_tsc cpuid extd_apicid pni pclmulqdq ssse3 fma cx16 sse4_1 sse4_2 movbe popcnt aes xsave avx f16c rdrand hypervisor lahf_lm cmp_legacy cr8_legacy abm sse4a misalignsse 3dnowprefetch osvw topoext ibrs ibpb stibp vmmcall fsgsbase bmi1 avx2 smep bmi2 erms rdseed adx smap clflushopt clwb sha_ni xsaveopt xsavec xgetbv1 xsaves clzero xsaveerptr arat umip vaes vpclmulqdq rdpid fsrm

Hypervisor vendor: Microsoft

Virtualization type: full

L1d cache: 384 KiB (12 instances)

L1i cache: 384 KiB (12 instances)

L2 cache: 6 MiB (12 instances)

L3 cache: 32 MiB (1 instance)

Vulnerability Itlb multihit: Not affected

Vulnerability L1tf: Not affected

Vulnerability Mds: Not affected

Vulnerability Meltdown: Not affected

Vulnerability Mmio stale data: Not affected

Vulnerability Retbleed: Not affected

Vulnerability Spec store bypass: Vulnerable

Vulnerability Spectre v1: Mitigation; usercopy/swapgs barriers and __user pointer sanitization

Vulnerability Spectre v2: Mitigation; Retpolines, IBPB conditional, IBRS_FW, STIBP conditional, RSB filling, PBRSB-eIBRS Not affected

Vulnerability Srbds: Not affected

Vulnerability Tsx async abort: Not affected

Versions of relevant libraries:

[pip3] mypy-extensions==1.0.0

[pip3] numpy==1.24.1

[pip3] pytorch-triton==2.0.0+d54c04abe2

[pip3] torch==2.0.0.dev20230220+cu118

[pip3] torchaudio==2.0.0.dev20230223+cu118

[pip3] torchinfo==1.7.2

[pip3] torchsummary==1.5.1

[pip3] torchvision==0.15.0.dev20230223+cu118

[conda] Could not collect

```

cc @ezyang @gchanan @zou3519 @vincentqb @jbschlosser @albanD @janeyx99 | priority | fused adamw causes nan loss 🐛 describe the bug there already has been an extended discussion of this issue over on the nanogpt repository karpathy nanogpt i have been encouraged to submit a separate bug report here since the issue seems to lie with pytorch i have prepared a preconfigured where i provided my configuration and part of my dataset which causes the issue to appear it now immediately produces nans after the first training step but only if fused adam is in use the settings are basically the nanogpt shakespeare configuration but using a blocksize of and vocab size of the data is quite sparse only is not which significantly accelerates getting to the issue i ve included batches of my own real data so you will have the same conditions as i do i m training on a single please look at a diff of the latest commit in this repository to see what i changed exactly it s not much bash git clone cd nanogpt nan bash python train py config nan py allow fused true number of parameters using fused adamw true compiling the model takes a minute step train loss val loss torch inductor utils using triton random expect difference from eager projects venv lib site packages torch functorch aot autograd py userwarning your compiler for aotautograd is returning a a function that doesn t take boxed arguments please wrap it with functorch compile make boxed func or handle the boxed arguments yourself see for rationale warnings warn iter loss time mfu iter loss nan time mfu bash python train py config nan py allow fused false number of parameters using fused adamw false compiling the model takes a minute step train loss val loss torch inductor utils using triton random expect difference from eager projects nanogpt nan venv lib site packages torch functorch aot autograd py userwarning your compiler for aotautograd is returning a a function that doesn t take boxed arguments please wrap it with functorch 
compile make boxed func or handle the boxed arguments yourself see for rationale warnings warn iter loss time mfu iter loss time mfu iter loss time mfu iter loss time mfu iter loss time mfu iter loss time mfu iter loss time mfu continues to work fine hope this helps p s can anyone explain what the warning means doesn t seem to be related to the issue but is it a user error that should be fixed torch inductor utils using triton random expect difference from eager projects venv lib site packages torch functorch aot autograd py userwarning your compiler for aotautograd is returning a a function that doesn t take boxed arguments please wrap it with functorch compile make boxed func or handle the boxed arguments yourself see for rationale warnings warn versions collecting environment information pytorch version is debug build false cuda used to build pytorch rocm used to build pytorch n a os arch linux gcc version gcc clang version cmake version version libc version glibc python version main dec bit runtime python platform linux microsoft standard with is cuda available true cuda runtime version cuda module loading set to lazy gpu models and configuration gpu nvidia geforce rtx ti nvidia driver version cudnn version could not collect hip runtime version n a miopen runtime version n a is xnnpack available true cpu architecture cpu op mode s bit bit address sizes bits physical bits virtual byte order little endian cpu s on line cpu s list vendor id authenticamd model name amd ryzen core processor cpu family model thread s per core core s per socket socket s stepping bogomips flags fpu vme de pse tsc msr pae mce apic sep mtrr pge mca cmov pat clflush mmx fxsr sse ht syscall nx mmxext fxsr opt rdtscp lm constant tsc rep good nopl tsc reliable nonstop tsc cpuid extd apicid pni pclmulqdq fma movbe popcnt aes xsave avx rdrand hypervisor lahf lm cmp legacy legacy abm misalignsse osvw topoext ibrs ibpb stibp vmmcall fsgsbase smep erms rdseed adx smap clflushopt clwb sha ni 
xsaveopt xsavec xsaves clzero xsaveerptr arat umip vaes vpclmulqdq rdpid fsrm hypervisor vendor microsoft virtualization type full cache kib instances cache kib instances cache mib instances cache mib instance vulnerability itlb multihit not affected vulnerability not affected vulnerability mds not affected vulnerability meltdown not affected vulnerability mmio stale data not affected vulnerability retbleed not affected vulnerability spec store bypass vulnerable vulnerability spectre mitigation usercopy swapgs barriers and user pointer sanitization vulnerability spectre mitigation retpolines ibpb conditional ibrs fw stibp conditional rsb filling pbrsb eibrs not affected vulnerability srbds not affected vulnerability tsx async abort not affected versions of relevant libraries mypy extensions numpy pytorch triton torch torchaudio torchinfo torchsummary torchvision could not collect cc ezyang gchanan vincentqb jbschlosser alband | 1 |

173,671 | 6,529,399,966 | IssuesEvent | 2017-08-30 11:28:35 | donniedarkoparko/app | https://api.github.com/repos/donniedarkoparko/app | closed | [Both] - Place - 2x messages when signing out and back in | bug priority: high | It seems like the Place listeners aren't getting detached on sign out. | 1.0 | [Both] - Place - 2x messages when signing out and back in - It seems like the Place listeners aren't getting detached on sign out. | priority | place messages when signing out and back in it seems like the place listeners aren t getting detached on sign out | 1 |

117,568 | 4,718,547,436 | IssuesEvent | 2016-10-17 03:08:26 | google/paco | https://api.github.com/repos/google/paco | closed | iOS - After joining show Post Join Install Instructions | Priority-High | The experiment provides a field called postJoinInstallInstructions that contains instructions to show the user once they consent to the informed consent.

This text should be shown above the Edit Alert Times text. It might scroll, but, we still need to show the Edit Alert Times button. Also, this is where we might ask for the location permission for an experiment. | 1.0 | iOS - After joining show Post Join Install Instructions - The experiment provides a field called postJoinInstallInstructions that contains instructions to show the user once they consent to the informed consent.

This text should be shown above the Edit Alert Times text. It might scroll, but, we still need to show the Edit Alert Times button. Also, this is where we might ask for the location permission for an experiment. | priority | ios after joining show post join install instructions the experiment provides a field called postjoininstallinstructions that contains instructions to show the user once they consent to the informed consent this text should be shown above the edit alert times text it might scroll but we still need to show the edit alert times button also this is where we might ask for the location permission for an experiment | 1 |

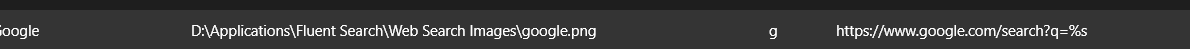

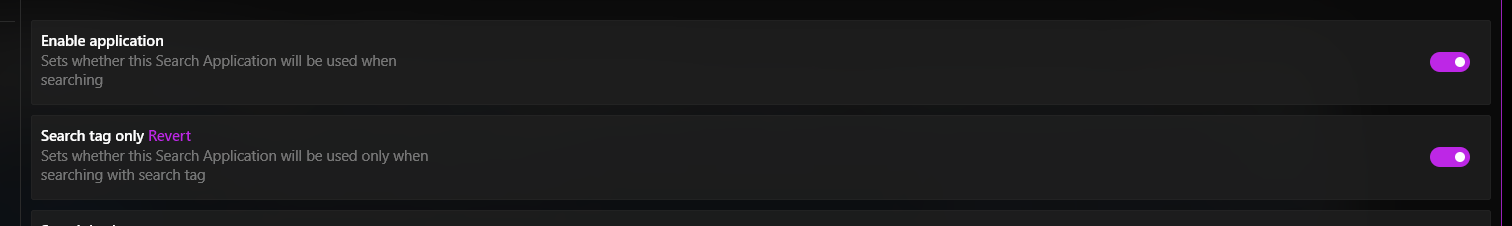

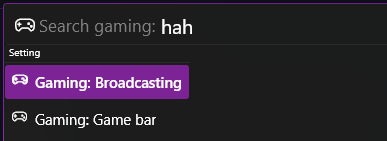

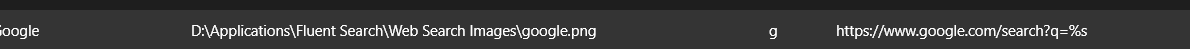

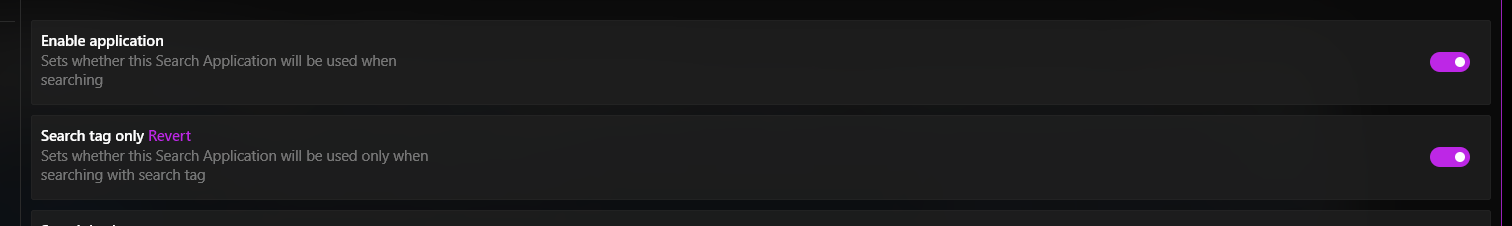

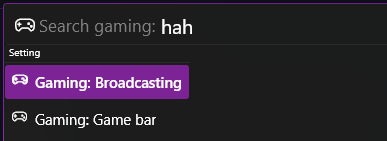

562,662 | 16,666,305,913 | IssuesEvent | 2021-06-07 04:44:25 | adirh3/Fluent-Search | https://api.github.com/repos/adirh3/Fluent-Search | closed | High Priority: Web Searches broken | High Priority bug | **Describe the bug**

Web searches are not triggering even if they're enabled. It might have broke the version _before_ 0.9.85.1

**To Reproduce**

Steps to reproduce the behavior:

1. Type "g <search string>

2. Observe that FS doesn't search google.

I have google in web search:

Search turned on:

**Expected behavior**

Google search should be triggered

**Screenshots**

Instead, I get this:

100% reproducible

**Desktop (please complete the following information):**

- Windows 10 LTSC v1809

- Fluent Search Version [e.g. 0.9.85.1] although it might have broken version before.

| 1.0 | High Priority: Web Searches broken - **Describe the bug**

Web searches are not triggering even if they're enabled. It might have broke the version _before_ 0.9.85.1

**To Reproduce**

Steps to reproduce the behavior:

1. Type "g <search string>

2. Observe that FS doesn't search google.

I have google in web search:

Search turned on:

**Expected behavior**

Google search should be triggered

**Screenshots**

Instead, I get this:

100% reproducible

**Desktop (please complete the following information):**

- Windows 10 LTSC v1809

- Fluent Search Version [e.g. 0.9.85.1] although it might have broken version before.

| priority | high priority web searches broken describe the bug web searches are not triggering even if they re enabled it might have broke the version before to reproduce steps to reproduce the behavior type g observe that fs doesn t search google i have google in web search search turned on expected behavior google search should be triggered screenshots instead i get this reproducible desktop please complete the following information windows ltsc fluent search version although it might have broken version before | 1 |

726,016 | 24,984,619,235 | IssuesEvent | 2022-11-02 14:15:03 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | opened | ASAN shard 4 started to OOM after unrelated commit | high priority module: ci triaged | ### 🐛 Describe the bug

See https://hud.pytorch.org/hud/pytorch/pytorch/master/1?per_page=50&name_filter=asan%20%2F%20test%20(default%2C%204%2C%205

Error looks as follows:

```

test_serialization_offset_filelike_weights_only_False (__main__.TestOldSerialization) ... =================================================================

==1033==ERROR: AddressSanitizer: allocator is out of memory trying to allocate 0x80000000 bytes

```

First commit (clearly unrelated): https://hud.pytorch.org/pytorch/pytorch/commit/a51da28551e9f13a7afca5bbc829a8d9abced44e

### Versions

CI | 1.0 | ASAN shard 4 started to OOM after unrelated commit - ### 🐛 Describe the bug

See https://hud.pytorch.org/hud/pytorch/pytorch/master/1?per_page=50&name_filter=asan%20%2F%20test%20(default%2C%204%2C%205

Error looks as follows:

```

test_serialization_offset_filelike_weights_only_False (__main__.TestOldSerialization) ... =================================================================

==1033==ERROR: AddressSanitizer: allocator is out of memory trying to allocate 0x80000000 bytes

```

First commit (clearly unrelated): https://hud.pytorch.org/pytorch/pytorch/commit/a51da28551e9f13a7afca5bbc829a8d9abced44e

### Versions

CI | priority | asan shard started to oom after unrelated commit 🐛 describe the bug see error looks as follows test serialization offset filelike weights only false main testoldserialization error addresssanitizer allocator is out of memory trying to allocate bytes first commit clearly unrelated versions ci | 1 |

354,255 | 10,564,549,243 | IssuesEvent | 2019-10-05 02:56:06 | python/mypy | https://api.github.com/repos/python/mypy | closed | mypy 0.730 no longer recognizes x**y literals to be ints | bug needs discussion priority-0-high | I noticed a regression when switching from 0.720 to 0.730. Here's the code:

```py

def f() -> int:

size = 2 ** 20

reveal_type(size)

return size

```

**Expected behavior**

With mypy 0.720 (no matter whether the new or the old semantic analyzer is used), I get:

```

$ mypy --strict f.py

f.py:3: note: Revealed type is 'builtins.int'

```

**Actual behavior**

With mypy 0.730 (Python 3.6.8), I get:

```

$ mypy --strict f.py

f.py:3: note: Revealed type is 'Any'

f.py:4: error: Returning Any from function declared to return "int"

```

**Notes**

The behavior from 0.720 is what I'd expect.

One thing I noticed is that the typeshed indeed declares that `__pow__` for two `int`s returns `Any`. This makes sense because `2 ** -2` is a `float`. The only recent change which I can see in the typeshed though is the following:

```diff

--- a/stdlib/2and3/builtins.pyi

+++ b/stdlib/2and3/builtins.pyi

@@ -168,7 +168,7 @@ class int:

def __rtruediv__(self, x: int) -> float: ...

def __rmod__(self, x: int) -> int: ...

def __rdivmod__(self, x: int) -> Tuple[int, int]: ...

- def __pow__(self, x: int) -> Any: ... # Return type can be int or float, depending on x.

+ def __pow__(self, __x: int, __modulo: Optional[int] = ...) -> Any: ... # Return type can be int or float, depending on x.

def __rpow__(self, x: int) -> Any: ...

def __and__(self, n: int) -> int: ...

```

(see: https://github.com/python/typeshed/commit/b2cd972b1760d850694772fe763e3027f131f223)

This means that the `Any` return type has always been there but `mypy` used to correctly recognize that `2 ** 20` is an `int`. It's difficult for me to tell whether the behavior in 0.730 is a regression compared to 0.720 or actually the desired behavior – it seems to me that `mypy` follows the typeshed more precisely here. However, maybe it's worth to handle literals like `2 ** 30` in a way that `mypy` knows that this is always `int`? | 1.0 | mypy 0.730 no longer recognizes x**y literals to be ints - I noticed a regression when switching from 0.720 to 0.730. Here's the code:

```py

def f() -> int:

size = 2 ** 20

reveal_type(size)

return size

```

**Expected behavior**

With mypy 0.720 (no matter whether the new or the old semantic analyzer is used), I get:

```

$ mypy --strict f.py

f.py:3: note: Revealed type is 'builtins.int'

```

**Actual behavior**

With mypy 0.730 (Python 3.6.8), I get:

```

$ mypy --strict f.py

f.py:3: note: Revealed type is 'Any'

f.py:4: error: Returning Any from function declared to return "int"

```

**Notes**

The behavior from 0.720 is what I'd expect.

One thing I noticed is that the typeshed indeed declares that `__pow__` for two `int`s returns `Any`. This makes sense because `2 ** -2` is a `float`. The only recent change which I can see in the typeshed though is the following:

```diff

--- a/stdlib/2and3/builtins.pyi

+++ b/stdlib/2and3/builtins.pyi

@@ -168,7 +168,7 @@ class int:

def __rtruediv__(self, x: int) -> float: ...

def __rmod__(self, x: int) -> int: ...

def __rdivmod__(self, x: int) -> Tuple[int, int]: ...

- def __pow__(self, x: int) -> Any: ... # Return type can be int or float, depending on x.

+ def __pow__(self, __x: int, __modulo: Optional[int] = ...) -> Any: ... # Return type can be int or float, depending on x.

def __rpow__(self, x: int) -> Any: ...

def __and__(self, n: int) -> int: ...

```

(see: https://github.com/python/typeshed/commit/b2cd972b1760d850694772fe763e3027f131f223)

This means that the `Any` return type has always been there but `mypy` used to correctly recognize that `2 ** 20` is an `int`. It's difficult for me to tell whether the behavior in 0.730 is a regression compared to 0.720 or actually the desired behavior – it seems to me that `mypy` follows the typeshed more precisely here. However, maybe it's worth to handle literals like `2 ** 30` in a way that `mypy` knows that this is always `int`? | priority | mypy no longer recognizes x y literals to be ints i noticed a regression when switching from to here s the code py def f int size reveal type size return size expected behavior with mypy no matter whether the new or the old semantic analyzer is used i get mypy strict f py f py note revealed type is builtins int actual behavior with mypy python i get mypy strict f py f py note revealed type is any f py error returning any from function declared to return int notes the behavior from is what i d expect one thing i noticed is that the typeshed indeed declares that pow for two int s returns any this makes sense because is a float the only recent change which i can see in the typeshed though is the following diff a stdlib builtins pyi b stdlib builtins pyi class int def rtruediv self x int float def rmod self x int int def rdivmod self x int tuple def pow self x int any return type can be int or float depending on x def pow self x int modulo optional any return type can be int or float depending on x def rpow self x int any def and self n int int see this means that the any return type has always been there but mypy used to correctly recognize that is an int it s difficult for me to tell whether the behavior in is a regression compared to or actually the desired behavior – it seems to me that mypy follows the typeshed more precisely here however maybe it s worth to handle literals like in a way that mypy knows that this is always int | 1 |
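
The runtime behaviour behind the `Any` annotation discussed in the record above can be checked with a minimal sketch. The helper name `pow_result_type` is hypothetical and used only for illustration. It shows why `int.__pow__` cannot be annotated as returning only `int`: the result type depends on the sign of the exponent.

```python
def pow_result_type(base: int, exponent: int) -> str:
    """Return the runtime type name of base ** exponent."""
    result = base ** exponent
    return type(result).__name__


# A non-negative integer exponent keeps the result an int.
print(pow_result_type(2, 20))
# A negative integer exponent produces a float (2 ** -2 == 0.25).
print(pow_result_type(2, -2))
```

For a literal such as `2 ** 20`, both operands are known statically, which is the case the reporter suggests a type checker could special-case.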

173,854 | 6,533,216,377 | IssuesEvent | 2017-08-31 04:49:11 | JujaLabs/gamification-slack-bot | https://api.github.com/repos/JujaLabs/gamification-slack-bot | closed | Refactoring SlackCommandController and DefaultGamificationService | enhancement High priority | move slackNameHandlerService from GamificationSlackCommandController to DefaultGamificationService

sample code in the keeper-slack-bot | 1.0 | Refactoring SlackCommandController and DefaultGamificationService - move slackNameHandlerService from GamificationSlackCommandController to DefaultGamificationService

sample code in the keeper-slack-bot | priority | refactoring slackcommandcontroller and defaultgamificationservice move slacknamehandlerservice from gamificationslackcommandcontroller to defaultgamificationservice sample code in the keeper slack bot | 1 |

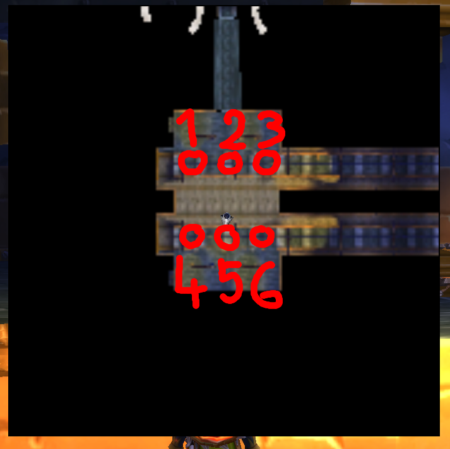

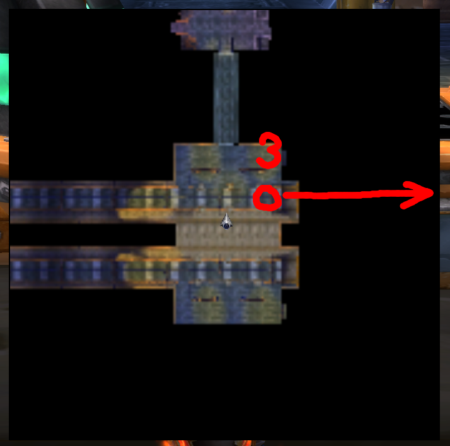

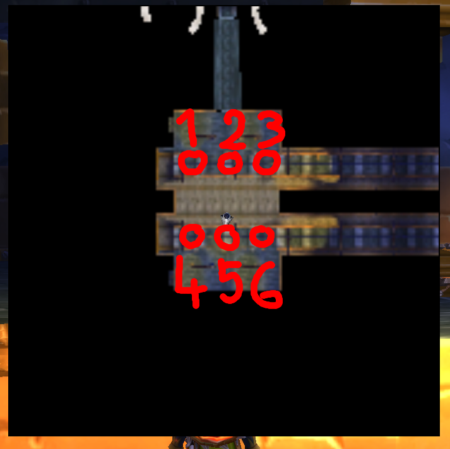

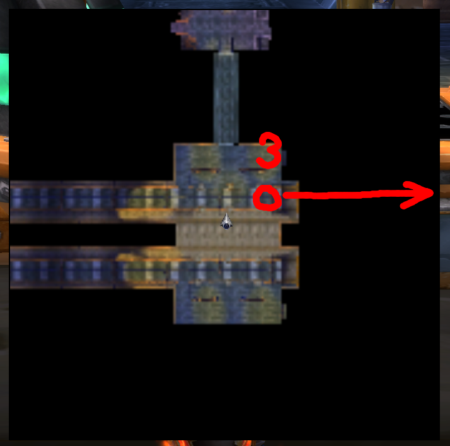

304,844 | 9,336,446,078 | IssuesEvent | 2019-03-28 21:15:38 | thebarrensissues/Issues | https://api.github.com/repos/thebarrensissues/Issues | closed | Deeprun Tram movement bugged. Trams moving through walls/other Trams | bug gamebreaking gameobject high priority | **Description:**

The movement of the Deeprun Trams is bugged, causing trams to disappear into walls or fly through other trams before disappearing into walls.

This will cause players to be flung into walls and dropped to the ground.

Fig1. _map of stormwind side tram station. trams numbered._

**Current behaviour:**

Please refer to Fig1.

Trams 1,2,4,5 all have correct movement and behaviour.

Tram 3 will move to the **west** when on the ironforge side, causing it to move into a wall.

Tram 6 will move to the **east** when on the stormwind side, causing it to clip through trams 4 and 5 before moving into a wall.

Tram 3 will not be present on the stormwind side station.

Tram 6 will not be present on the ironforge side station.

**Expected behaviour:**

All trams should not intercept each other nor move into walls.

**Steps to reproduce the problem:**

1. Go to the Deeprun tram, either at ironforge or stormwind

2. Observe the northern-most tram (1,2,3)

3. Observe the southern-most tram (4,5,6)

4. notice how trams 3 and 6 are bugged

**rev. hash/commit:**

**rev. 10725** | 1.0 | Deeprun Tram movement bugged. Trams moving through walls/other Trams - **Description:**

The movement of the Deeprun Trams is bugged, causing trams to disappear into walls or fly through other trams before disappearing into walls.

This will cause players to be flung into walls and dropped to the ground.

Fig1. _map of stormwind side tram station. trams numbered._

**Current behaviour:**

Please refer to Fig1.

Trams 1,2,4,5 all have correct movement and behaviour.

Tram 3 will move to the **west** when on the ironforge side, causing it to move into a wall.

Tram 6 will move to the **east** when on the stormwind side, causing it to clip through trams 4 and 5 before moving into a wall.

Tram 3 will not be present on the stormwind side station.

Tram 6 will not be present on the ironforge side station.

**Expected behaviour:**

All trams should not intercept each other nor move into walls.

**Steps to reproduce the problem:**

1. Go to the Deeprun tram, either at ironforge or stormwind

2. Observe the northern-most tram (1,2,3)

3. Observe the southern-most tram (4,5,6)

4. notice how trams 3 and 6 are bugged

**rev. hash/commit:**

**rev. 10725** | priority | deeprun tram movement bugged trams moving through walls other trams description the movement of the deeprun trams is bugged causing trams to disappear into walls or fly through other trams before disappearing into walls this will cause players to be flung into walls and dropped to the ground map of stormwind side tram station trams numbered current behaviour please refer to trams all have correct movement and behaviour tram will move to the west when on the ironforge side causing it to move into a wall tram will move to the east when on the stormwind side causing it to clip through trams and before moving into a wall tram will not be present on the stormwind side station tram will not be present on the ironforge side station expected behaviour all trams should not intercept each other nor move into walls steps to reproduce the problem go to the deeprun tram either at ironforge or stormwind observe the northern most tram observe the southern most tram notice how trams and are bugged rev hash commit rev | 1 |

122,242 | 4,828,699,874 | IssuesEvent | 2016-11-07 16:54:29 | meumobi/sitebuilder | https://api.github.com/repos/meumobi/sitebuilder | opened | Push not received | bug high priority push notification | I've sent push on various sites, with segmented audience and not, but never received push on my ios.

Can't digg further on my investigation due to issue #431 | 1.0 | Push not received - I've sent push on various sites, with segmented audience and not, but never received push on my ios.

Can't digg further on my investigation due to issue #431 | priority | push not received i ve sent push on various sites with segmented audience and not but never received push on my ios can t digg further on my investigation due to issue | 1 |

771,727 | 27,090,642,002 | IssuesEvent | 2023-02-14 20:44:34 | larray-project/larray | https://api.github.com/repos/larray-project/larray | closed | add some axes info in error message on invalid label | enhancement difficulty: low priority: high work in progress size: small | Having something **similar** to AxisCollection.info added to the "%r is not a valid label for any axis" message would very helpful. I have actually hacked my version several times to do just that and found it very helpful. I would only avoid displaying the first line with the array shape. | 1.0 | add some axes info in error message on invalid label - Having something **similar** to AxisCollection.info added to the "%r is not a valid label for any axis" message would very helpful. I have actually hacked my version several times to do just that and found it very helpful. I would only avoid displaying the first line with the array shape. | priority | add some axes info in error message on invalid label having something similar to axiscollection info added to the r is not a valid label for any axis message would very helpful i have actually hacked my version several times to do just that and found it very helpful i would only avoid displaying the first line with the array shape | 1 |

807,032 | 29,932,709,297 | IssuesEvent | 2023-06-22 10:34:57 | NikkelM/Random-YouTube-Video | https://api.github.com/repos/NikkelM/Random-YouTube-Video | closed | [Bug] If fetching videos takes too long, the background worker shuts down | Bug Priority: High | Example channel as reported on the Chrome Web store: https://www.youtube.com/@EpicSkillshot | 1.0 | [Bug] If fetching videos takes too long, the background worker shuts down - Example channel as reported on the Chrome Web store: https://www.youtube.com/@EpicSkillshot | priority | if fetching videos takes too long the background worker shuts down example channel as reported on the chrome web store | 1 |

493,708 | 14,236,987,033 | IssuesEvent | 2020-11-18 16:39:15 | craftercms/craftercms | https://api.github.com/repos/craftercms/craftercms | closed | CRAFTERCMS-2185: Cropping an image should create a new image with a name that indicates the crop details | new feature priority: high | Original JIRA Fix Versions:

2.5.2, Original JIRA Components:

Studio,

----------

Original JIRA Description: :

----------

Original JIRA Comments:

Update Crop Service code to create a custom filename if supplied

Update dialog to use custom name, if name exists offer to rename (but pre-populate field with value)

=======================

import java.awt.image.BufferedImage

import java.io.ByteArrayOutputStream

import java.io.ByteArrayInputStream

import java.io.File

import scripts.api.ContentServices

import javax.imageio.ImageIO

def req = request

def site = params.site

def imgPath = params.path

def newName = params.newname

def t = params.t.toInteger()

def l = params.l.toInteger()

def w = params.w.toInteger()

def h = params.h.toInteger()

def newName = params.newFileName

def imgToCrop = null

def imgCropped = null

def imgCroppedOutStream = null

def imgCroppedInStream = null

def imgType = imgPath.substring(imgPath.indexOf(".")+1)

def imgPathOnly = imgPath.substring(0, imgPath.lastIndexOf("/"))

def imgFilename = imgPath.substring(imgPath.lastIndexOf("/")+1)

def newImgFilename =(newFileName!=null) ? newFileName: imgFilename

if (newName) {

imgFilename = newName;

}

def context = ContentServices.createContext(applicationContext, request)

imgToCrop = ImageIO.read(ContentServices.getContentAsStream(site, imgPath, context))

imgCropped = imgToCrop.getSubimage(l, t, w, h)

imgCroppedOutStream = new ByteArrayOutputStream()

ImageIO.write(imgCropped, imgType, imgCroppedOutStream)

imgCroppedInStream = new ByteArrayInputStream(imgCroppedOutStream.toByteArray())

def result = ContentServices.writeContentAsset(context, site, imgPathOnly, newImgFilename, imgCroppedInStream, "true", "", "", "", "false", "true", null);

return result

----------

UI should recommend file name

imgFilenameNoExt+"-t"+t+"-l"+l+"-h"+h+"-w"+w+"."+imgType

----------

Original JIRA:

http://issues.craftercms.org/browse/CRAFTERCMS-2185

---------- | 1.0 | CRAFTERCMS-2185: Cropping an image should create a new image with a name that indicates the crop details - Original JIRA Fix Versions:

2.5.2, Original JIRA Components:

Studio,

----------

Original JIRA Description: :

----------

Original JIRA Comments:

Update Crop Service code to create a custom filename if supplied

Update dialog to use custom name, if name exists offer to rename (but pre-populate field with value)

=======================

import java.awt.image.BufferedImage

import java.io.ByteArrayOutputStream

import java.io.ByteArrayInputStream

import java.io.File

import scripts.api.ContentServices

import javax.imageio.ImageIO

def req = request

def site = params.site

def imgPath = params.path

def newName = params.newname

def t = params.t.toInteger()

def l = params.l.toInteger()

def w = params.w.toInteger()

def h = params.h.toInteger()

def newName = params.newFileName

def imgToCrop = null

def imgCropped = null

def imgCroppedOutStream = null

def imgCroppedInStream = null

def imgType = imgPath.substring(imgPath.indexOf(".")+1)

def imgPathOnly = imgPath.substring(0, imgPath.lastIndexOf("/"))

def imgFilename = imgPath.substring(imgPath.lastIndexOf("/")+1)

def newImgFilename =(newFileName!=null) ? newFileName: imgFilename

if (newName) {

imgFilename = newName;

}

def context = ContentServices.createContext(applicationContext, request)

imgToCrop = ImageIO.read(ContentServices.getContentAsStream(site, imgPath, context))

imgCropped = imgToCrop.getSubimage(l, t, w, h)

imgCroppedOutStream = new ByteArrayOutputStream()

ImageIO.write(imgCropped, imgType, imgCroppedOutStream)

imgCroppedInStream = new ByteArrayInputStream(imgCroppedOutStream.toByteArray())

def result = ContentServices.writeContentAsset(context, site, imgPathOnly, newImgFilename, imgCroppedInStream, "true", "", "", "", "false", "true", null);

return result

----------

UI should recommend file name

imgFilenameNoExt+"-t"+t+"-l"+l+"-h"+h+"-w"+w+"."+imgType

----------

Original JIRA:

http://issues.craftercms.org/browse/CRAFTERCMS-2185

---------- | priority | craftercms cropping an image should create a new image with a name that indicates the crop details original jira fix versions original jira components studio original jira description original jira comments update crop service code to create a custom filename if supplied update dialog to use custom name if name exists offer to rename but pre populate field with value import java awt image bufferedimage import java io bytearrayoutputstream import java io bytearrayinputstream import java io file import scripts api contentservices import javax imageio imageio def req request def site params site def imgpath params path def newname params newname def t params t tointeger def l params l tointeger def w params w tointeger def h params h tointeger def newname params newfilename def imgtocrop null def imgcropped null def imgcroppedoutstream null def imgcroppedinstream null def imgtype imgpath substring imgpath indexof def imgpathonly imgpath substring imgpath lastindexof def imgfilename imgpath substring imgpath lastindexof def newimgfilename newfilename null newfilename imgfilename if newname imgfilename newname def context contentservices createcontext applicationcontext request imgtocrop imageio read contentservices getcontentasstream site imgpath context imgcropped imgtocrop getsubimage l t w h imgcroppedoutstream new bytearrayoutputstream imageio write imgcropped imgtype imgcroppedoutstream imgcroppedinstream new bytearrayinputstream imgcroppedoutstream tobytearray def result contentservices writecontentasset context site imgpathonly newimgfilename imgcroppedinstream true false true null return result ui should recommend file name imgfilenamenoext t t l l h h w w imgtype original jira | 1 |
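
The file-name recommendation in the record above (`imgFilenameNoExt+"-t"+t+"-l"+l+"-h"+h+"-w"+w+"."+imgType`) can be sketched as follows. This is an illustration in Python rather than the Groovy used by the original script, and the function name `suggested_crop_name` is an assumption, not part of Crafter CMS. One deliberate difference is that the extension is split at the last dot, so file names containing several dots keep their full stem.

```python
import os


def suggested_crop_name(filename: str, t: int, l: int, w: int, h: int) -> str:
    """Build a name that encodes the crop box: top, left, height, width."""
    # splitext splits at the last dot; ext keeps its leading "." (e.g. ".png").
    stem, ext = os.path.splitext(filename)
    return f"{stem}-t{t}-l{l}-h{h}-w{w}{ext}"


print(suggested_crop_name("banner.png", t=10, l=20, w=300, h=150))
```

A dialog could pre-populate its file-name field with this value and let the author change it before saving.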

752,170 | 26,275,853,657 | IssuesEvent | 2023-01-06 21:55:26 | Team-Betise/Lunchline | https://api.github.com/repos/Team-Betise/Lunchline | reopened | Table for menu | enhancement high-priority | Table to store the items currently in menu

- ItemName

- ItemCost

- Desc

- Rating

- CurrentAvailability (Set automatically according to Availability, can be changed by vendor)

- AvailabilityTimes (Array with start and end times) | 1.0 | Table for menu - Table to store the items currently in menu

- ItemName

- ItemCost

- Desc

- Rating

- CurrentAvailability (Set automatically according to Availability, can be changed by vendor)

- AvailabilityTimes (Array with start and end times) | priority | table for menu table to store the items currently in menu itemname itemcost desc rating currentavailability set automatically according to availability can be changed by vendor availabilitytimes array with start and end times | 1 |
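
The menu fields listed in the record above could be modelled roughly as below. This is a sketch under assumptions: the issue does not say which storage technology is used, so a plain Python dataclass stands in for the table, and the `vendor_override` field is a hypothetical way to express "can be changed by vendor".

```python
from dataclasses import dataclass, field
from datetime import time
from typing import List, Optional, Tuple


@dataclass
class MenuItem:
    item_name: str
    item_cost: float
    desc: str = ""
    rating: Optional[float] = None
    # Each entry is a (start, end) window during which the item is served.
    availability_times: List[Tuple[time, time]] = field(default_factory=list)
    # None means "follow the schedule"; True or False is a manual vendor override.
    vendor_override: Optional[bool] = None

    def currently_available(self, now: time) -> bool:
        """Derive CurrentAvailability from the schedule unless overridden."""
        if self.vendor_override is not None:
            return self.vendor_override
        return any(start <= now < end for start, end in self.availability_times)


lunch = MenuItem("Pasta", 4.5, availability_times=[(time(11, 0), time(14, 0))])
print(lunch.currently_available(time(12, 30)))
```

Keeping the override separate from the schedule means the automatic value can be restored by resetting `vendor_override` to `None`.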

145,595 | 5,578,391,441 | IssuesEvent | 2017-03-28 12:18:00 | w3c/wai-people-use-web | https://api.github.com/repos/w3c/wai-people-use-web | closed | [Principles] update references | High Priority | From Judy Brewer:

> The references to UAAG 1.0 and ATAG 1.0 are seriously outdated and should be updated. Additionally, in general comments on this page it states that WAI develops UAAG and ATAG. That also needs to be updated. | 1.0 | [Principles] update references - From Judy Brewer:

> The references to UAAG 1.0 and ATAG 1.0 are seriously outdated and should be updated. Additionally, in general comments on this page it states that WAI develops UAAG and ATAG. That also needs to be updated. | priority | update references from judy brewer the references to uaag and atag are seriously outdated and should be updated additionally in general comments on this page it states that wai develops uaag and atag that also needs to be updated | 1 |

544,983 | 15,933,216,389 | IssuesEvent | 2021-04-14 07:08:22 | rootless-containers/rootlesskit | https://api.github.com/repos/rootless-containers/rootlesskit | opened | [regression in Docker 20.10.6] slirp4netns port driver fails: "Timed out proxy starting the userland proxy." | bug priority/high | Rootless Docker 20.10.6 + RootlessKit v0.14.1 + slirp4netns port driver fails

```console

$ cat ~/.config/systemd/user/docker.service.d/override.conf

[Service]

Environment=DOCKERD_ROOTLESS_ROOTLESSKIT_PORT_DRIVER="slirp4netns"

$ docker --context=rootless run --rm -p 8080:80 nginx:alpine

docker: Error response from daemon: driver failed programming external connectivity on endpoint dreamy_gauss (93092ec62bc18d4190f18c05f188505c11acce4feef7ea2eb259805c18edfd85): Timed out proxy starting the userland proxy.

```

builtin port driver works.

The both drivers were working with Docker v20.10.5.

| 1.0 | [regression in Docker 20.10.6] slirp4netns port driver fails: "Timed out proxy starting the userland proxy." - Rootless Docker 20.10.6 + RootlessKit v0.14.1 + slirp4netns port driver fails

```console

$ cat ~/.config/systemd/user/docker.service.d/override.conf

[Service]

Environment=DOCKERD_ROOTLESS_ROOTLESSKIT_PORT_DRIVER="slirp4netns"

$ docker --context=rootless run --rm -p 8080:80 nginx:alpine

docker: Error response from daemon: driver failed programming external connectivity on endpoint dreamy_gauss (93092ec62bc18d4190f18c05f188505c11acce4feef7ea2eb259805c18edfd85): Timed out proxy starting the userland proxy.

```

builtin port driver works.

The both drivers were working with Docker v20.10.5.

| priority | port driver fails timed out proxy starting the userland proxy rootless docker rootlesskit port driver fails console cat config systemd user docker service d override conf environment dockerd rootless rootlesskit port driver docker context rootless run rm p nginx alpine docker error response from daemon driver failed programming external connectivity on endpoint dreamy gauss timed out proxy starting the userland proxy builtin port driver works the both drivers were working with docker | 1 |

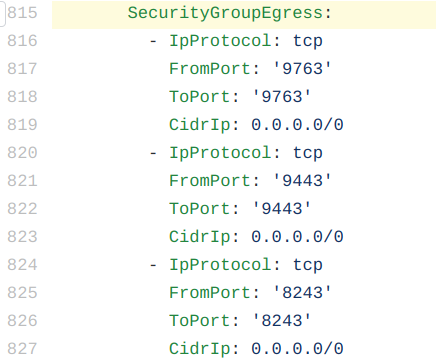

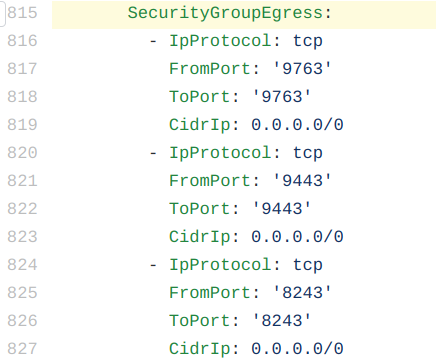

211,186 | 7,199,048,842 | IssuesEvent | 2018-02-05 14:51:08 | wso2/cloudformation-apim | https://api.github.com/repos/wso2/cloudformation-apim | opened | Current Egress policy is set to allowAll for 9763/9443/8243 ports | Priority/High Severity/Major Type/Bug | **Description:**

Currently, the AWS::EC2::SecurityGroup egress policy in the cloudformation-apim scripts [1] allows all destinations (0.0.0.0/0 CIDR block) on ports 9763, 9443, and 8243.

Since this allows unrestricted internet access via these ports, shouldn't we restrict it?

[1] https://github.com/wso2/cloudformation-apim/blob/master/patterns/pattern-1/pattern-1-cloudformation.template.yaml#L815

**Suggested Labels:**

<!-- Optional comma separated list of suggested labels. Non committers can’t assign labels to issues, so this will help issue creators who are not a committer to suggest possible labels-->

**Suggested Assignees:**

<!--Optional comma separated list of suggested team members who should attend the issue. Non committers can’t assign issues to assignees, so this will help issue creators who are not a committer to suggest possible assignees-->

**Affected Product Version:**

**OS, DB, other environment details and versions:**

**Steps to reproduce:**

**Related Issues:**

<!-- Any related issues such as sub tasks, issues reported in other repositories (e.g component repositories), similar problems, etc. --> | 1.0 | Current Egress policy is set to allowAll for 9763/9443/8243 ports - **Description:**

Currently, the AWS::EC2::SecurityGroup egress policy in the cloudformation-apim scripts [1] allows all destinations (0.0.0.0/0 CIDR block) on ports 9763, 9443, and 8243.

Since this allows unrestricted internet access via these ports, shouldn't we restrict it?

[1] https://github.com/wso2/cloudformation-apim/blob/master/patterns/pattern-1/pattern-1-cloudformation.template.yaml#L815

**Suggested Labels:**

<!-- Optional comma separated list of suggested labels. Non committers can’t assign labels to issues, so this will help issue creators who are not a committer to suggest possible labels-->

**Suggested Assignees:**

<!--Optional comma separated list of suggested team members who should attend the issue. Non committers can’t assign issues to assignees, so this will help issue creators who are not a committer to suggest possible assignees-->

**Affected Product Version:**

**OS, DB, other environment details and versions:**

**Steps to reproduce:**

**Related Issues:**

<!-- Any related issues such as sub tasks, issues reported in other repositories (e.g component repositories), similar problems, etc. --> | priority | current egress policy is set to allowall for ports description atm aws securitygroup egress policy in cloudformation apim scripts is set to allowall cidr block for ports since this allows complete internet access via these ports we need to restrict it right suggested labels suggested assignees affected product version os db other environment details and versions steps to reproduce related issues | 1 |
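
The check the egress issue above asks for can be sketched in a few lines. This is a minimal illustration, not code from the cloudformation-apim repository: it assumes the security group's egress entries have already been parsed into dictionaries using the CloudFormation property names `IpProtocol`, `FromPort`, `ToPort`, and `CidrIp`. The helper names and the `10.0.0.0/16` range are hypothetical.

```python
import ipaddress

# Ports named in the issue.
WATCHED_PORTS = {9763, 9443, 8243}


def is_open_to_world(cidr: str) -> bool:
    """Return True when the CIDR block covers every address (prefix length 0)."""
    return ipaddress.ip_network(cidr, strict=False).prefixlen == 0


def find_open_egress(rules):
    """Return the watched ports whose egress rule targets the whole internet."""
    flagged = set()
    for rule in rules:
        if not is_open_to_world(rule["CidrIp"]):
            continue
        port_range = range(rule["FromPort"], rule["ToPort"] + 1)
        flagged.update(port for port in WATCHED_PORTS if port in port_range)
    return sorted(flagged)


# Hypothetical, simplified egress entries: one open rule, one restricted rule.
rules = [
    {"IpProtocol": "tcp", "FromPort": 9443, "ToPort": 9443, "CidrIp": "0.0.0.0/0"},
    {"IpProtocol": "tcp", "FromPort": 8243, "ToPort": 8243, "CidrIp": "10.0.0.0/16"},
]
print(find_open_egress(rules))
```

A check like this could run against the parsed template before deployment; the actual fix would still be to narrow `CidrIp` in the template itself.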

810,111 | 30,225,627,844 | IssuesEvent | 2023-07-06 00:04:02 | shieldworks/aegis | https://api.github.com/repos/shieldworks/aegis | closed | Ability to swap age encryption with a FIPS-compliant alternative | priority:high | Age encryption is not FIPS-compliant.

If that part is turned into a plugin, then we can offer different implementations at various compliance levels. | 1.0 | Ability to swap age encryption with a FIPS-compliant alternative - Age encryption is not FIPS-compliant.

If that part is turned into a plugin, then we can offer different implementations at various compliance levels. | priority | ability to swap age encryption with a fips compliant alternative age encryption is not fips compliant if that part is turned into a plugin then we can offer different implementations at various compliance levels | 1 |
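
One way to picture the plugin idea in the issue above is a small registry of interchangeable cipher backends. The sketch below is an assumption-laden illustration, not Aegis's actual design: the `Cipher` protocol, `register`, `get_cipher`, and the `placeholder` backend are all hypothetical names, and the placeholder performs no real encryption.

```python
from typing import Callable, Dict, Protocol


class Cipher(Protocol):
    """Interface every encryption backend would have to satisfy (hypothetical)."""

    def encrypt(self, plaintext: bytes) -> bytes: ...

    def decrypt(self, ciphertext: bytes) -> bytes: ...


# Maps a backend name to a factory that builds the backend.
_REGISTRY: Dict[str, Callable[[], Cipher]] = {}


def register(name: str, factory: Callable[[], Cipher]) -> None:
    """Make a backend selectable by name."""
    _REGISTRY[name] = factory


def get_cipher(name: str) -> Cipher:
    """Build the backend registered under `name`, or fail loudly."""
    try:
        return _REGISTRY[name]()
    except KeyError:
        raise ValueError(f"unknown cipher backend: {name!r}") from None


class PlaceholderCipher:
    """Stand-in backend that only reverses bytes. NOT encryption."""

    def encrypt(self, plaintext: bytes) -> bytes:
        return plaintext[::-1]

    def decrypt(self, ciphertext: bytes) -> bytes:
        return ciphertext[::-1]


register("placeholder", PlaceholderCipher)

cipher = get_cipher("placeholder")
print(cipher.decrypt(cipher.encrypt(b"secret")) == b"secret")
```

With this shape, a FIPS-validated backend and the existing age-based one would each register under their own name, and the rest of the system would depend only on the `Cipher` interface.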

175,821 | 6,554,270,275 | IssuesEvent | 2017-09-06 04:35:11 | wso2/product-ei | https://api.github.com/repos/wso2/product-ei | opened | Changing port offset in deployment.yaml does not change the broker bind addresses | Component/Broker Priority/High Severity/Major Type/Bug | ### Steps to reproduce

1. Unzip wso2ei-7.0.0-SNAPSHOT.zip to instance-1 and instance-2

1. Change `wso2.carbon.offset` to 1 in instance-2

The following exception was observed even after changing `wso2.carbon.offset` to 1 in instance-2.

```

[2017-09-06 09:37:38,155] ERROR {org.wso2.andes.server.Main} - Exception during startup. Triggering shutdown org.wso2.andes.kernel.AndesException: Unable to initialise application registry

at org.wso2.andes.server.Broker.startupImpl(Broker.java:307)

at org.wso2.andes.server.Broker.startup(Broker.java:110)

at org.wso2.andes.server.Main.startBroker(Main.java:217)

at org.wso2.andes.server.Main.execute(Main.java:206)

at org.wso2.andes.server.Main.<init>(Main.java:54)

at org.wso2.andes.server.Main.main(Main.java:47)

at org.wso2.carbon.business.messaging.core.internal.BrokerServiceComponent.startAndesBroker(BrokerServiceComponent.java:280)

at org.wso2.carbon.business.messaging.core.internal.BrokerServiceComponent.start(BrokerServiceComponent.java:142)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.eclipse.equinox.internal.ds.model.ServiceComponent.activate(ServiceComponent.java:235)

at org.eclipse.equinox.internal.ds.model.ServiceComponentProp.activate(ServiceComponentProp.java:146)

at org.eclipse.equinox.internal.ds.model.ServiceComponentProp.build(ServiceComponentProp.java:345)

at org.eclipse.equinox.internal.ds.InstanceProcess.buildComponent(InstanceProcess.java:620)

at org.eclipse.equinox.internal.ds.InstanceProcess.buildComponents(InstanceProcess.java:197)

at org.eclipse.equinox.internal.ds.Resolver.getEligible(Resolver.java:343)

at org.eclipse.equinox.internal.ds.SCRManager.serviceChanged(SCRManager.java:222)

at org.eclipse.osgi.internal.serviceregistry.FilteredServiceListener.serviceChanged(FilteredServiceListener.java:109)

at org.eclipse.osgi.internal.framework.BundleContextImpl.dispatchEvent(BundleContextImpl.java:915)

at org.eclipse.osgi.framework.eventmgr.EventManager.dispatchEvent(EventManager.java:230)

at org.eclipse.osgi.framework.eventmgr.ListenerQueue.dispatchEventSynchronous(ListenerQueue.java:148)

at org.eclipse.osgi.internal.serviceregistry.ServiceRegistry.publishServiceEventPrivileged(ServiceRegistry.java:862)

at org.eclipse.osgi.internal.serviceregistry.ServiceRegistry.publishServiceEvent(ServiceRegistry.java:801)

at org.eclipse.osgi.internal.serviceregistry.ServiceRegistrationImpl.register(ServiceRegistrationImpl.java:127)

at org.eclipse.osgi.internal.serviceregistry.ServiceRegistry.registerService(ServiceRegistry.java:225)

at org.eclipse.osgi.internal.framework.BundleContextImpl.registerService(BundleContextImpl.java:464)

at org.eclipse.osgi.internal.framework.BundleContextImpl.registerService(BundleContextImpl.java:482)

at org.eclipse.osgi.internal.framework.BundleContextImpl.registerService(BundleContextImpl.java:999)

at org.wso2.carbon.datasource.core.internal.DataSourceListenerComponent.onAllRequiredCapabilitiesAvailable(DataSourceListenerComponent.java:101)

at org.wso2.carbon.kernel.internal.startupresolver.StartupComponentManager.lambda$notifySatisfiableComponents$51(StartupComponentManager.java:238)

at java.util.ArrayList.forEach(ArrayList.java:1249)

at org.wso2.carbon.kernel.internal.startupresolver.StartupComponentManager.notifySatisfiableComponents(StartupComponentManager.java:224)

at org.wso2.carbon.kernel.internal.startupresolver.StartupOrderResolver$1.run(StartupOrderResolver.java:204)

at java.util.TimerThread.mainLoop(Timer.java:555)

at java.util.TimerThread.run(Timer.java:505)

Caused by: java.rmi.server.ExportException: Port already in use: 8999; nested exception is:

java.net.BindException: Address already in use (Bind failed)

at sun.rmi.transport.tcp.TCPTransport.listen(TCPTransport.java:341)

at sun.rmi.transport.tcp.TCPTransport.exportObject(TCPTransport.java:249)

at sun.rmi.transport.tcp.TCPEndpoint.exportObject(TCPEndpoint.java:411)

at sun.rmi.transport.LiveRef.exportObject(LiveRef.java:147)

at sun.rmi.server.UnicastServerRef.exportObject(UnicastServerRef.java:236)

at sun.rmi.registry.RegistryImpl.setup(RegistryImpl.java:213)

at sun.rmi.registry.RegistryImpl.<init>(RegistryImpl.java:173)

at sun.rmi.registry.RegistryImpl.<init>(RegistryImpl.java:144)

at java.rmi.registry.LocateRegistry.createRegistry(LocateRegistry.java:239)

at org.wso2.andes.server.management.JMXManagedObjectRegistry.start(JMXManagedObjectRegistry.java:205)

at org.wso2.andes.server.registry.ApplicationRegistry.initialise(ApplicationRegistry.java:251)

at org.wso2.andes.server.registry.ApplicationRegistry.initialise(ApplicationRegistry.java:147)

at org.wso2.andes.server.Broker.startupImpl(Broker.java:274)

... 36 more

Caused by: java.net.BindException: Address already in use (Bind failed)

at java.net.PlainSocketImpl.socketBind(Native Method)

at java.net.AbstractPlainSocketImpl.bind(AbstractPlainSocketImpl.java:387)

at java.net.ServerSocket.bind(ServerSocket.java:375)

at java.net.ServerSocket.<init>(ServerSocket.java:237)

at java.net.ServerSocket.<init>(ServerSocket.java:128)

at org.wso2.andes.server.management.JMXManagedObjectRegistry$CustomRMIServerSocketFactory$NoLocalAddressServerSocket.<init>(JMXManagedObjectRegistry.java:332)

at org.wso2.andes.server.management.JMXManagedObjectRegistry$CustomRMIServerSocketFactory.createServerSocket(JMXManagedObjectRegistry.java:325)

at sun.rmi.transport.tcp.TCPEndpoint.newServerSocket(TCPEndpoint.java:666)

at sun.rmi.transport.tcp.TCPTransport.listen(TCPTransport.java:330)

... 48 more

``` | 1.0 | Changing port offset in deployment.yaml does not change the broker bind addresses - ### Steps to reproduce

1. Unzip wso2ei-7.0.0-SNAPSHOT.zip to instance-1 and instance-2

1. Change `wso2.carbon.offset` to 1 in instance-2

The following exception was observed even after changing `wso2.carbon.offset` to 1 in instance-2.

```

[2017-09-06 09:37:38,155] ERROR {org.wso2.andes.server.Main} - Exception during startup. Triggering shutdown org.wso2.andes.kernel.AndesException: Unable to initialise application registry

at org.wso2.andes.server.Broker.startupImpl(Broker.java:307)

at org.wso2.andes.server.Broker.startup(Broker.java:110)

at org.wso2.andes.server.Main.startBroker(Main.java:217)

at org.wso2.andes.server.Main.execute(Main.java:206)

at org.wso2.andes.server.Main.<init>(Main.java:54)

at org.wso2.andes.server.Main.main(Main.java:47)