| Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

310,955 | 9,526,388,025 | IssuesEvent | 2019-04-28 19:36:48 | zanderbowen/AAE560-SP2019 | https://api.github.com/repos/zanderbowen/AAE560-SP2019 | closed | General Work Order Class Items | high priority stochastic | - [x] Add a column to obj.routing.Edges for TotalActual in the WorkOrder constructor method

- [x] Write a method to sum the actual delivery time and actual hours worked time. This will need to run after each cycle to update accordingly. | 1.0 | General Work Order Class Items - - [x] Add a column to obj.routing.Edges for TotalActual in the WorkOrder constructor method

- [x] Write a method to sum the actual delivery time and actual hours worked time. This will need to run after each cycle to update accordingly. | priority | general work order class items add a column to obj routing edges for totalactual in the workorder constructor method write a method to sum the actual delivery time and actual hours worked time this will need to run after each cycle to update accordingly | 1 |

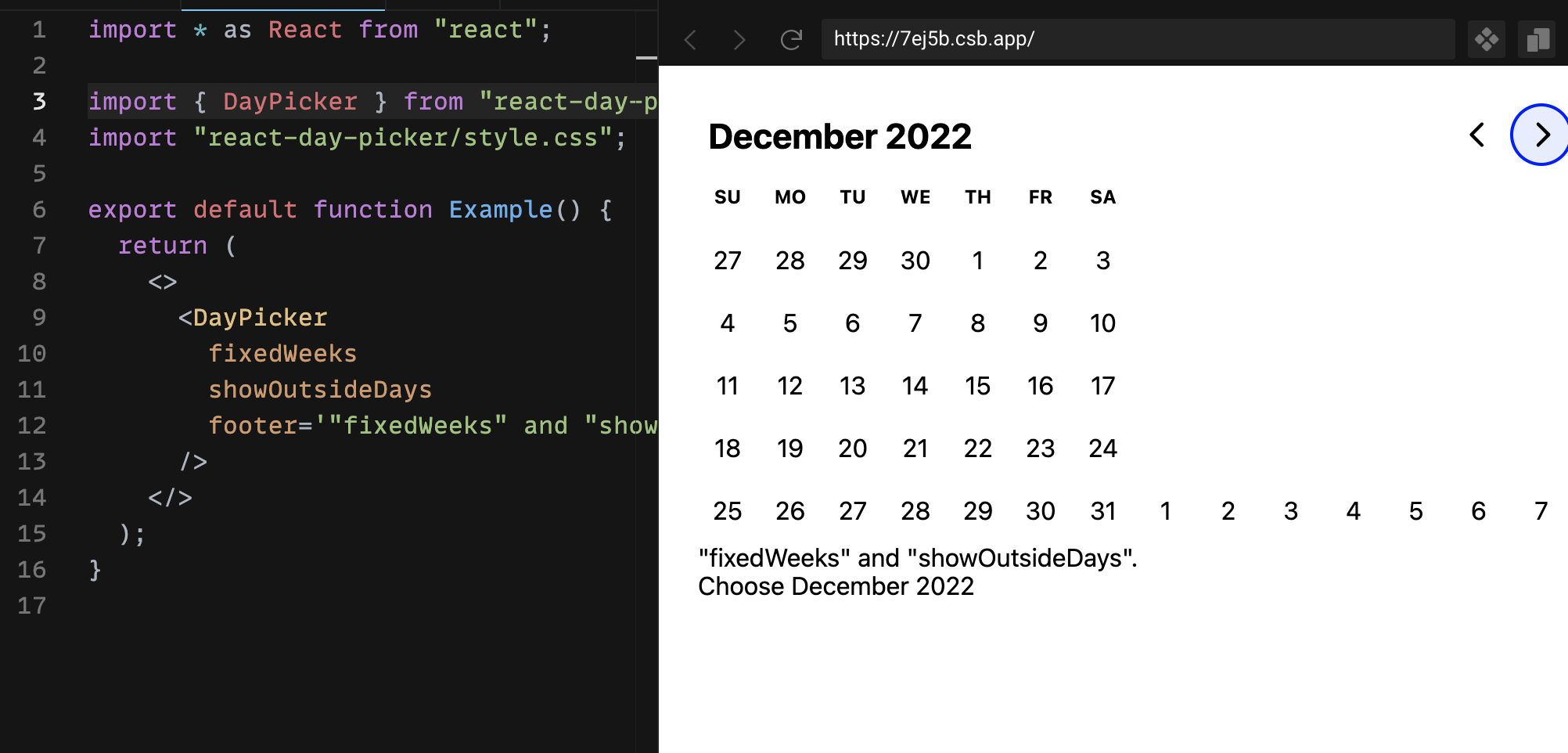

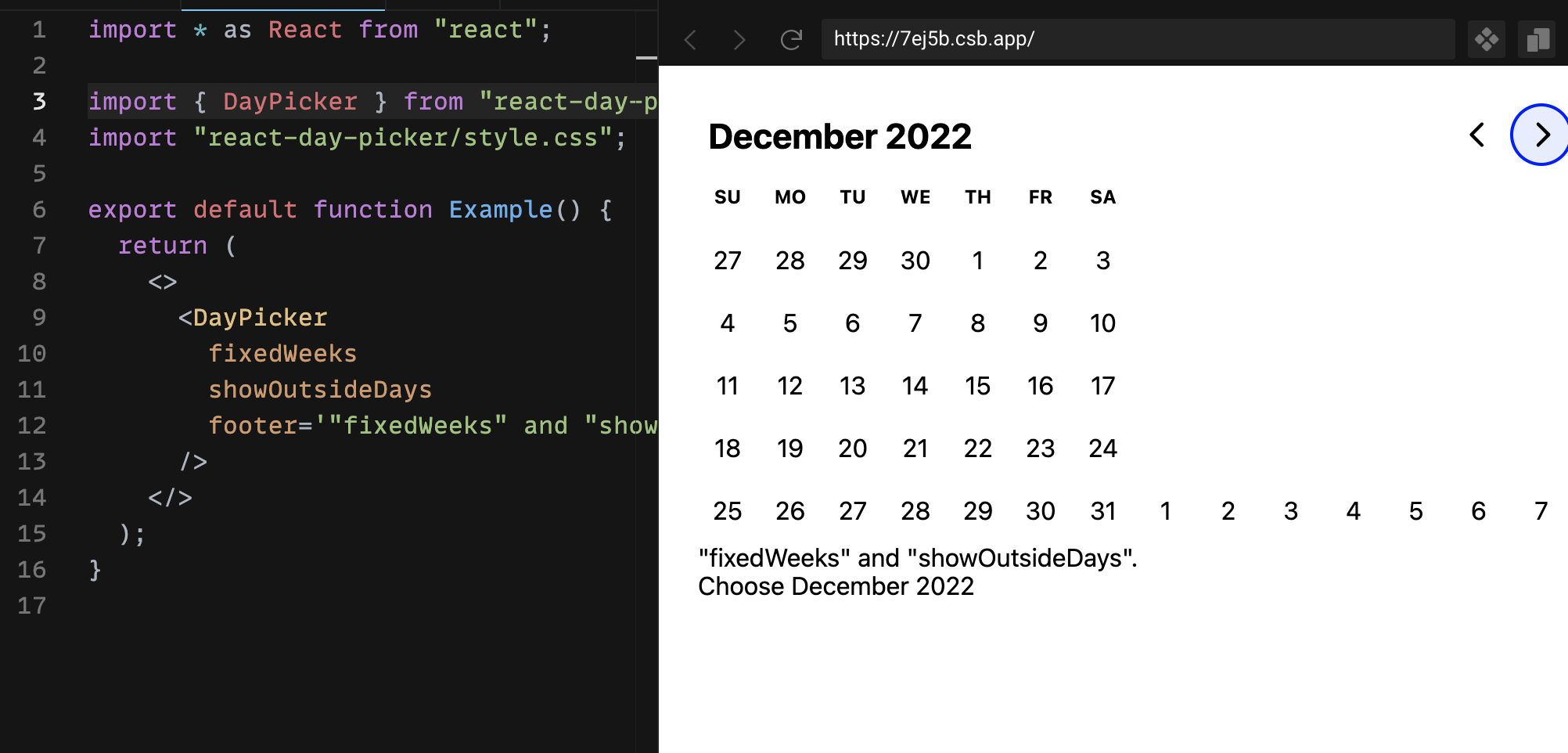

606,587 | 18,765,545,118 | IssuesEvent | 2021-11-05 23:10:16 | gpbl/react-day-picker | https://api.github.com/repos/gpbl/react-day-picker | closed | v8. Markup is broken with "showOutsideDays" and "fixedWeeks" options in December 2022 | Type: Bug Priority: High | **Describe the bug**

Layout is broken with `"react-day-picker": "8.0.0-beta.29"` under certain conditions.

**To Reproduce**

reproduced here:

https://codesandbox.io/s/daypicker-basicscustomization-weeknumber-example-forked-7ej5b?file=/example.tsx

using next month button scroll to December 2022. While other months are rendered just fine, this one breaks layout

**Screenshots**

**Additional context**

used beta 29 (as 30 doesn't seem to be published to npm at the moment of writing)

| 1.0 | v8. Markup is broken with "showOutsideDays" and "fixedWeeks" options in December 2022 - **Describe the bug**

Layout is broken with `"react-day-picker": "8.0.0-beta.29"` under certain conditions.

**To Reproduce**

reproduced here:

https://codesandbox.io/s/daypicker-basicscustomization-weeknumber-example-forked-7ej5b?file=/example.tsx

using next month button scroll to December 2022. While other months are rendered just fine, this one breaks layout

**Screenshots**

**Additional context**

used beta 29 (as 30 doesn't seem to be published to npm at the moment of writing)

| priority | markup is broken with showoutsidedays and fixedweeks options in december describe the bug layout is broken with react day picker beta under certain conditions to reproduce reproduced here using next month button scroll to december while other months are rendered just fine this one breaks layout screenshots additional context used beta as doesn t seem to be published to npm at the moment of writing | 1 |

512,646 | 14,906,265,190 | IssuesEvent | 2021-01-22 00:10:03 | netlify/next-on-netlify | https://api.github.com/repos/netlify/next-on-netlify | closed | _redirects sorted wrong when using catch all route and dynamic routes | priority: high type: bug | The change [#145](https://github.com/netlify/next-on-netlify/pull/145) "revert route/redirect sorting logic to static then dynamic" causes the `_redirects` file to be sorted wrong when using an optional catch all route and dynamic paths.

## How to reproduce

This problem happens when we have the following routes:

```

pages/_app.js

pages/[[...any]].js

pages/[bar]/test.js

pages/test.js

```

Clone https://github.com/amuttsch/non-routes

Execute the following command

```bash

yarn install

yarn netlify

```

### Problem

The resulting `_redirects` file looks like this on version `^2.8.3`

```

# Next-on-Netlify Redirects

/_next/data/tveFS5JTA64mmnqiHIWfA/test.json /.netlify/functions/next_test 200

/_next/data/tveFS5JTA64mmnqiHIWfA/index.json /.netlify/functions/next_any 200

/_next/data/tveFS5JTA64mmnqiHIWfA/* /.netlify/functions/next_any 200 <------ catches ":bar/test.json"

/api/hello /.netlify/functions/next_api_hello 200

/test /.netlify/functions/next_test 200

/_next/data/tveFS5JTA64mmnqiHIWfA/:bar/test.json /.netlify/functions/next_bar_test 200

/_next/image* url=:url w=:width q=:quality /.netlify/functions/next_image?url=:url&w=:width&q=:quality 200

/:bar/test /.netlify/functions/next_bar_test 200

/ /.netlify/functions/next_any 200

/_next/* /_next/:splat 200

/* /.netlify/functions/next_any 200

```

The data routes `/_next/data` are split into two groups. `/_next/data/tveFS5JTA64mmnqiHIWfA/* /.netlify/functions/next_any 200` catches any data route, but `/_next/data/tveFS5JTA64mmnqiHIWfA/:bar/test.json /.netlify/functions/next_bar_test 200` is below this redirect. This causes our application to fail to load data when the user does a client side transition.

### Expected

Previous to `2.8.3` the file looks like this:

```

# Next-on-Netlify Redirects

/_next/data/xcFf_YKEf4dQzabGKlRTV/test.json /.netlify/functions/next_test 200

/_next/data/xcFf_YKEf4dQzabGKlRTV/:bar/test.json /.netlify/functions/next_bar_test 200

/_next/data/xcFf_YKEf4dQzabGKlRTV/index.json /.netlify/functions/next_any 200

/_next/data/xcFf_YKEf4dQzabGKlRTV/* /.netlify/functions/next_any 200

/_next/image* url=:url w=:width q=:quality /.netlify/functions/next_image?url=:url&w=:width&q=:quality 200

/api/hello /.netlify/functions/next_api_hello 200

/test /.netlify/functions/next_test 200

/:bar/test /.netlify/functions/next_bar_test 200

/ /.netlify/functions/next_any 200

/_next/* /_next/:splat 200

/* /.netlify/functions/next_any 200

```

Workaround: Downgrade `next-on-netlify` to `2.8.2`. | 1.0 | _redirects sorted wrong when using catch all route and dynamic routes - The change [#145](https://github.com/netlify/next-on-netlify/pull/145) "revert route/redirect sorting logic to static then dynamic" causes the `_redirects` file to be sorted wrong when using an optional catch all route and dynamic paths.

## How to reproduce

This problem happens when we have the following routes:

```

pages/_app.js

pages/[[...any]].js

pages/[bar]/test.js

pages/test.js

```

Clone https://github.com/amuttsch/non-routes

Execute the following command

```bash

yarn install

yarn netlify

```

### Problem

The resulting `_redirects` file looks like this on version `^2.8.3`

```

# Next-on-Netlify Redirects

/_next/data/tveFS5JTA64mmnqiHIWfA/test.json /.netlify/functions/next_test 200

/_next/data/tveFS5JTA64mmnqiHIWfA/index.json /.netlify/functions/next_any 200

/_next/data/tveFS5JTA64mmnqiHIWfA/* /.netlify/functions/next_any 200 <------ catches ":bar/test.json"

/api/hello /.netlify/functions/next_api_hello 200

/test /.netlify/functions/next_test 200

/_next/data/tveFS5JTA64mmnqiHIWfA/:bar/test.json /.netlify/functions/next_bar_test 200

/_next/image* url=:url w=:width q=:quality /.netlify/functions/next_image?url=:url&w=:width&q=:quality 200

/:bar/test /.netlify/functions/next_bar_test 200

/ /.netlify/functions/next_any 200

/_next/* /_next/:splat 200

/* /.netlify/functions/next_any 200

```

The data routes `/_next/data` are split into two groups. `/_next/data/tveFS5JTA64mmnqiHIWfA/* /.netlify/functions/next_any 200` catches any data route, but `/_next/data/tveFS5JTA64mmnqiHIWfA/:bar/test.json /.netlify/functions/next_bar_test 200` is below this redirect. This causes our application to fail to load data when the user does a client side transition.

### Expected

Previous to `2.8.3` the file looks like this:

```

# Next-on-Netlify Redirects

/_next/data/xcFf_YKEf4dQzabGKlRTV/test.json /.netlify/functions/next_test 200

/_next/data/xcFf_YKEf4dQzabGKlRTV/:bar/test.json /.netlify/functions/next_bar_test 200

/_next/data/xcFf_YKEf4dQzabGKlRTV/index.json /.netlify/functions/next_any 200

/_next/data/xcFf_YKEf4dQzabGKlRTV/* /.netlify/functions/next_any 200

/_next/image* url=:url w=:width q=:quality /.netlify/functions/next_image?url=:url&w=:width&q=:quality 200

/api/hello /.netlify/functions/next_api_hello 200

/test /.netlify/functions/next_test 200

/:bar/test /.netlify/functions/next_bar_test 200

/ /.netlify/functions/next_any 200

/_next/* /_next/:splat 200

/* /.netlify/functions/next_any 200

```

Workaround: Downgrade `next-on-netlify` to `2.8.2`. | priority | redirects sorted wrong when using catch all route and dynamic routes the change revert route redirect sorting logic to static then dynamic causes the redirects file to be sorted wrong when using an optional catch all route and dynamic paths how to reproduce this problem happens when we have the following routes pages app js pages js pages test js pages test js clone execute the following command bash yarn install yarn netlify problem the resulting redirects file looks like this on version next on netlify redirects next data test json netlify functions next test next data index json netlify functions next any next data netlify functions next any catches bar test json api hello netlify functions next api hello test netlify functions next test next data bar test json netlify functions next bar test next image url url w width q quality netlify functions next image url url w width q quality bar test netlify functions next bar test netlify functions next any next next splat netlify functions next any the data routes next data are split into two groups next data netlify functions next any catches any data route but next data bar test json netlify functions next bar test is below this redirect this causes our application to fail to load data when the user does a client side transition expected previous to the file looks like this next on netlify redirects next data xcff test json netlify functions next test next data xcff bar test json netlify functions next bar test next data xcff index json netlify functions next any next data xcff netlify functions next any next image url url w width q quality netlify functions next image url url w width q quality api hello netlify functions next api hello test netlify functions next test bar test netlify functions next bar test netlify functions next any next next splat netlify functions next any workaround downgrade next on netlify to | 1 |
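The failure described in this record follows from Netlify processing `_redirects` rules top to bottom and applying the first match. The snippet below is a minimal sketch of that behaviour. It uses a simplified matcher, which is an assumption and not Netlify's engine: `:name` matches one path segment, and a trailing `*` matches the rest. It also uses a hypothetical `specificity` sort key that moves splat rules after static and placeholder rules. This is not next-on-netlify's actual sorting code. The function names are illustrative, and the build ID is copied from the issue's output.

```python
from typing import List, Optional, Tuple

Rule = Tuple[str, str]  # (source pattern, target function name)

BUILD = "tveFS5JTA64mmnqiHIWfA"  # build ID copied from the issue's 2.8.3 output


def matches(pattern: str, path: str) -> bool:
    """Simplified Netlify-style matcher (an assumption, not Netlify's engine)."""
    p_parts = pattern.strip("/").split("/")
    s_parts = path.strip("/").split("/")
    for i, part in enumerate(p_parts):
        if part == "*":
            return True  # a trailing splat swallows all remaining segments
        if i >= len(s_parts):
            return False
        if part.startswith(":"):
            continue  # a placeholder matches any single segment
        if part != s_parts[i]:
            return False
    return len(p_parts) == len(s_parts)


def resolve(rules: List[Rule], path: str) -> Optional[str]:
    """Return the target of the first matching rule (first match wins)."""
    for pattern, target in rules:
        if matches(pattern, path):
            return target
    return None


def specificity(rule: Rule) -> Tuple[bool, int]:
    """Hypothetical sort key: splat rules last, then fewer placeholders first."""
    parts = rule[0].strip("/").split("/")
    return (parts[-1] == "*", sum(p.startswith(":") for p in parts))


# Data-route order produced by 2.8.3 in the issue, trimmed to the /_next/data rules.
BROKEN: List[Rule] = [
    (f"/_next/data/{BUILD}/test.json", "next_test"),
    (f"/_next/data/{BUILD}/index.json", "next_any"),
    (f"/_next/data/{BUILD}/*", "next_any"),
    (f"/_next/data/{BUILD}/:bar/test.json", "next_bar_test"),
]

# sorted() is stable, so rules with equal keys keep their relative order.
FIXED = sorted(BROKEN, key=specificity)

if __name__ == "__main__":
    path = f"/_next/data/{BUILD}/foo/test.json"
    print(resolve(BROKEN, path))  # next_any: the splat shadows :bar/test.json
    print(resolve(FIXED, path))   # next_bar_test
```

Sorting by a key like this restores the property the issue's expected (pre-2.8.3) output has: every specific `/_next/data` rule precedes the data splat. Client-side transitions to `/:bar/test` would then reach `next_bar_test` instead of `next_any`.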

636,378 | 20,598,670,187 | IssuesEvent | 2022-03-05 23:02:01 | localstack/localstack | https://api.github.com/repos/localstack/localstack | closed | bug: SSL certificate issue since v0.12.18 | bug priority-high needs-triaging infra-startup networking | ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Current Behavior

LocalStack is taking a long time to start up since updating to v0.12.18 due to timing out when attempting to pull down the local test SSL certificate. This works fine on v0.12.17.

```

localstack_1 | Starting edge router (https port 4566)...

localstack_1 | 2021-11-11T09:33:23:INFO:bootstrap.py: Execution of "load_plugin_from_path" took 2430.59ms

localstack_1 | 2021-11-11T09:33:23:INFO:bootstrap.py: Execution of "load_plugins" took 2431.15ms

localstack_1 | Waiting for all LocalStack services to be ready

localstack_1 | ...

localstack_1 | Waiting for all LocalStack services to be ready

localstack_1 | 2021-11-11T09:46:28:INFO:localstack_ext.bootstrap.install: Unable to download local test SSL certificate from https://cdn.jsdelivr.net/gh/localstack/localstack-artifacts@master/local-certs/server.key to /tmp/localstack/server.test.pem: MyHTTPSConnectionPool(host='cdn.jsdelivr.net', port=443): Max retries exceeded with url: /gh/localstack/localstack-artifacts@master/local-certs/server.key (Caused by NewConnectionError('<urllib3.connection.HTTPSConnection object at 0x7f5ad9f41cd0>: Failed to establish a new connection: [Errno 110] Operation timed out')) Traceback (most recent call last):

localstack_1 | File "/opt/code/localstack/.venv/lib/python3.7/site-packages/urllib3/connection.py", line 175, in _new_conn

localstack_1 | (self._dns_host, self.port), self.timeout, **extra_kw

localstack_1 | File "/opt/code/localstack/.venv/lib/python3.7/site-packages/urllib3/util/connection.py", line 96, in create_connection

localstack_1 | raise err

localstack_1 | File "/opt/code/localstack/.venv/lib/python3.7/site-packages/urllib3/util/connection.py", line 86, in create_connection

localstack_1 | sock.connect(sa)

localstack_1 | TimeoutError: [Errno 110] Operation timed out

localstack_1 |

localstack_1 | During handling of the above exception, another exception occurred:

localstack_1 |

localstack_1 | Traceback (most recent call last):

localstack_1 | File "/opt/code/localstack/.venv/lib/python3.7/site-packages/urllib3/connectionpool.py", line 706, in urlopen

localstack_1 | chunked=chunked,

localstack_1 | File "/opt/code/localstack/.venv/lib/python3.7/site-packages/urllib3/connectionpool.py", line 382, in _make_request

localstack_1 | self._validate_conn(conn)

localstack_1 | File "/opt/code/localstack/.venv/lib/python3.7/site-packages/urllib3/connectionpool.py", line 1010, in _validate_conn

localstack_1 | conn.connect()

localstack_1 | File "/opt/code/localstack/.venv/lib/python3.7/site-packages/urllib3/connection.py", line 358, in connect

localstack_1 | conn = self._new_conn()

localstack_1 | File "/opt/code/localstack/.venv/lib/python3.7/site-packages/urllib3/connection.py", line 187, in _new_conn

localstack_1 | self, "Failed to establish a new connection: %s" % e

localstack_1 | urllib3.exceptions.NewConnectionError: <urllib3.connection.HTTPSConnection object at 0x7f5ad9f41cd0>: Failed to establish a new connection: [Errno 110] Operation timed out

localstack_1 |

localstack_1 | During handling of the above exception, another exception occurred:

localstack_1 |

localstack_1 | Traceback (most recent call last):

localstack_1 | File "/opt/code/localstack/.venv/lib/python3.7/site-packages/requests/adapters.py", line 449, in send

localstack_1 | timeout=timeout

localstack_1 | File "/opt/code/localstack/.venv/lib/python3.7/site-packages/urllib3/connectionpool.py", line 756, in urlopen

localstack_1 | method, url, error=e, _pool=self, _stacktrace=sys.exc_info()[2]

localstack_1 | File "/opt/code/localstack/.venv/lib/python3.7/site-packages/urllib3/util/retry.py", line 574, in increment

localstack_1 | raise MaxRetryError(_pool, url, error or ResponseError(cause))

localstack_1 | urllib3.exceptions.MaxRetryError: MyHTTPSConnectionPool(host='cdn.jsdelivr.net', port=443): Max retries exceeded with url: /gh/localstack/localstack-artifacts@master/local-certs/server.key (Caused by NewConnectionError('<urllib3.connection.HTTPSConnection object at 0x7f5ad9f41cd0>: Failed to establish a new connection: [Errno 110] Operation timed out'))

localstack_1 |

localstack_1 | During handling of the above exception, another exception occurred:

localstack_1 |

localstack_1 | Traceback (most recent call last):

localstack_1 | File "/opt/code/localstack/.venv/lib/python3.7/site-packages/localstack_ext/bootstrap/install.py", line 87, in do_download

localstack_1 | download(url,target_file)

localstack_1 | File "/opt/code/localstack/localstack/utils/common.py", line 1072, in download

localstack_1 | r = s.get(url, stream=True, verify=os.getenv("REQUESTS_CA_BUNDLE", verify_ssl))

localstack_1 | File "/opt/code/localstack/.venv/lib/python3.7/site-packages/requests/sessions.py", line 555, in get

localstack_1 | return self.request('GET', url, **kwargs)

localstack_1 | File "/opt/code/localstack/.venv/lib/python3.7/site-packages/requests/sessions.py", line 542, in request

localstack_1 | resp = self.send(prep, **send_kwargs)

localstack_1 | File "/opt/code/localstack/.venv/lib/python3.7/site-packages/requests/sessions.py", line 655, in send

localstack_1 | r = adapter.send(request, **kwargs)

localstack_1 | File "/opt/code/localstack/.venv/lib/python3.7/site-packages/requests/adapters.py", line 516, in send

localstack_1 | raise ConnectionError(e, request=request)

localstack_1 | requests.exceptions.ConnectionError: MyHTTPSConnectionPool(host='cdn.jsdelivr.net', port=443): Max retries exceeded with url: /gh/localstack/localstack-artifacts@master/local-certs/server.key (Caused by NewConnectionError('<urllib3.connection.HTTPSConnection object at 0x7f5ad9f41cd0>: Failed to establish a new connection: [Errno 110] Operation timed out'))

```

I expect this has something to do with this line in the release notes (but not 100%)

> add startup logic to install prebuilt SSL cert if available

### Expected Behavior

LocalStack to start up in a normal amount of time

### How are you starting LocalStack?

With a docker-compose file

### Steps To Reproduce

#### How are you starting localstack (e.g., `bin/localstack` command, arguments, or `docker-compose.yml`)

Using a custom docker-compose that starts localstack up as part of a suite of services

#### Client commands (e.g., AWS SDK code snippet, or sequence of "awslocal" commands)

N/A

### Environment

```markdown

- OS:

- LocalStack: v0.12.18 to latest

```

### Anything else?

The localstack image works fine on my local machine, but does not work when running on the CI server. The CI server is more locked down and lacks external connectivity without a proxy, so this may be causing the issue. | 1.0 | bug: SSL certificate issue since v0.12.18 - ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Current Behavior

LocalStack is taking a long time to start up since updating to v0.12.18 due to timing out when attempting to pull down the local test SSL certificate. This works fine on v0.12.17.

```

localstack_1 | Starting edge router (https port 4566)...

localstack_1 | 2021-11-11T09:33:23:INFO:bootstrap.py: Execution of "load_plugin_from_path" took 2430.59ms

localstack_1 | 2021-11-11T09:33:23:INFO:bootstrap.py: Execution of "load_plugins" took 2431.15ms

localstack_1 | Waiting for all LocalStack services to be ready

localstack_1 | ...

localstack_1 | Waiting for all LocalStack services to be ready

localstack_1 | 2021-11-11T09:46:28:INFO:localstack_ext.bootstrap.install: Unable to download local test SSL certificate from https://cdn.jsdelivr.net/gh/localstack/localstack-artifacts@master/local-certs/server.key to /tmp/localstack/server.test.pem: MyHTTPSConnectionPool(host='cdn.jsdelivr.net', port=443): Max retries exceeded with url: /gh/localstack/localstack-artifacts@master/local-certs/server.key (Caused by NewConnectionError('<urllib3.connection.HTTPSConnection object at 0x7f5ad9f41cd0>: Failed to establish a new connection: [Errno 110] Operation timed out')) Traceback (most recent call last):

localstack_1 | File "/opt/code/localstack/.venv/lib/python3.7/site-packages/urllib3/connection.py", line 175, in _new_conn

localstack_1 | (self._dns_host, self.port), self.timeout, **extra_kw

localstack_1 | File "/opt/code/localstack/.venv/lib/python3.7/site-packages/urllib3/util/connection.py", line 96, in create_connection

localstack_1 | raise err

localstack_1 | File "/opt/code/localstack/.venv/lib/python3.7/site-packages/urllib3/util/connection.py", line 86, in create_connection

localstack_1 | sock.connect(sa)

localstack_1 | TimeoutError: [Errno 110] Operation timed out

localstack_1 |

localstack_1 | During handling of the above exception, another exception occurred:

localstack_1 |

localstack_1 | Traceback (most recent call last):

localstack_1 | File "/opt/code/localstack/.venv/lib/python3.7/site-packages/urllib3/connectionpool.py", line 706, in urlopen

localstack_1 | chunked=chunked,

localstack_1 | File "/opt/code/localstack/.venv/lib/python3.7/site-packages/urllib3/connectionpool.py", line 382, in _make_request

localstack_1 | self._validate_conn(conn)

localstack_1 | File "/opt/code/localstack/.venv/lib/python3.7/site-packages/urllib3/connectionpool.py", line 1010, in _validate_conn

localstack_1 | conn.connect()

localstack_1 | File "/opt/code/localstack/.venv/lib/python3.7/site-packages/urllib3/connection.py", line 358, in connect

localstack_1 | conn = self._new_conn()

localstack_1 | File "/opt/code/localstack/.venv/lib/python3.7/site-packages/urllib3/connection.py", line 187, in _new_conn

localstack_1 | self, "Failed to establish a new connection: %s" % e

localstack_1 | urllib3.exceptions.NewConnectionError: <urllib3.connection.HTTPSConnection object at 0x7f5ad9f41cd0>: Failed to establish a new connection: [Errno 110] Operation timed out

localstack_1 |

localstack_1 | During handling of the above exception, another exception occurred:

localstack_1 |

localstack_1 | Traceback (most recent call last):

localstack_1 | File "/opt/code/localstack/.venv/lib/python3.7/site-packages/requests/adapters.py", line 449, in send

localstack_1 | timeout=timeout

localstack_1 | File "/opt/code/localstack/.venv/lib/python3.7/site-packages/urllib3/connectionpool.py", line 756, in urlopen

localstack_1 | method, url, error=e, _pool=self, _stacktrace=sys.exc_info()[2]

localstack_1 | File "/opt/code/localstack/.venv/lib/python3.7/site-packages/urllib3/util/retry.py", line 574, in increment

localstack_1 | raise MaxRetryError(_pool, url, error or ResponseError(cause))

localstack_1 | urllib3.exceptions.MaxRetryError: MyHTTPSConnectionPool(host='cdn.jsdelivr.net', port=443): Max retries exceeded with url: /gh/localstack/localstack-artifacts@master/local-certs/server.key (Caused by NewConnectionError('<urllib3.connection.HTTPSConnection object at 0x7f5ad9f41cd0>: Failed to establish a new connection: [Errno 110] Operation timed out'))

localstack_1 |

localstack_1 | During handling of the above exception, another exception occurred:

localstack_1 |

localstack_1 | Traceback (most recent call last):

localstack_1 | File "/opt/code/localstack/.venv/lib/python3.7/site-packages/localstack_ext/bootstrap/install.py", line 87, in do_download

localstack_1 | download(url,target_file)

localstack_1 | File "/opt/code/localstack/localstack/utils/common.py", line 1072, in download

localstack_1 | r = s.get(url, stream=True, verify=os.getenv("REQUESTS_CA_BUNDLE", verify_ssl))

localstack_1 | File "/opt/code/localstack/.venv/lib/python3.7/site-packages/requests/sessions.py", line 555, in get

localstack_1 | return self.request('GET', url, **kwargs)

localstack_1 | File "/opt/code/localstack/.venv/lib/python3.7/site-packages/requests/sessions.py", line 542, in request

localstack_1 | resp = self.send(prep, **send_kwargs)

localstack_1 | File "/opt/code/localstack/.venv/lib/python3.7/site-packages/requests/sessions.py", line 655, in send

localstack_1 | r = adapter.send(request, **kwargs)

localstack_1 | File "/opt/code/localstack/.venv/lib/python3.7/site-packages/requests/adapters.py", line 516, in send

localstack_1 | raise ConnectionError(e, request=request)

localstack_1 | requests.exceptions.ConnectionError: MyHTTPSConnectionPool(host='cdn.jsdelivr.net', port=443): Max retries exceeded with url: /gh/localstack/localstack-artifacts@master/local-certs/server.key (Caused by NewConnectionError('<urllib3.connection.HTTPSConnection object at 0x7f5ad9f41cd0>: Failed to establish a new connection: [Errno 110] Operation timed out'))

```

I expect this has something to do with this line in the release notes (but not 100%)

> add startup logic to install prebuilt SSL cert if available

### Expected Behavior

LocalStack to start up in a normal amount of time

### How are you starting LocalStack?

With a docker-compose file

### Steps To Reproduce

#### How are you starting localstack (e.g., `bin/localstack` command, arguments, or `docker-compose.yml`)

Using a custom docker-compose that starts localstack up as part of a suite of services

#### Client commands (e.g., AWS SDK code snippet, or sequence of "awslocal" commands)

N/A

### Environment

```markdown

- OS:

- LocalStack: v0.12.18 to latest

```

### Anything else?

The localstack image works fine on my local machine, but does not work when running on the CI server. The CI server is more locked down and lacks external connectivity without a proxy, so this may be causing the issue. | priority | bug ssl certificate issue since is there an existing issue for this i have searched the existing issues current behavior localstack is taking a long time to start up since updating to due to timing out when attempting to pull down the local test ssl certificate this works fine on localstack starting edge router https port localstack info bootstrap py execution of load plugin from path took localstack info bootstrap py execution of load plugins took localstack waiting for all localstack services to be ready localstack localstack waiting for all localstack services to be ready localstack info localstack ext bootstrap install unable to download local test ssl certificate from to tmp localstack server test pem myhttpsconnectionpool host cdn jsdelivr net port max retries exceeded with url gh localstack localstack artifacts master local certs server key caused by newconnectionerror failed to establish a new connection operation timed out traceback most recent call last localstack file opt code localstack venv lib site packages connection py line in new conn localstack self dns host self port self timeout extra kw localstack file opt code localstack venv lib site packages util connection py line in create connection localstack raise err localstack file opt code localstack venv lib site packages util connection py line in create connection localstack sock connect sa localstack timeouterror operation timed out localstack localstack during handling of the above exception another exception occurred localstack localstack traceback most recent call last localstack file opt code localstack venv lib site packages connectionpool py line in urlopen localstack chunked chunked localstack file opt code localstack venv lib site packages connectionpool py 
line in make request localstack self validate conn conn localstack file opt code localstack venv lib site packages connectionpool py line in validate conn localstack conn connect localstack file opt code localstack venv lib site packages connection py line in connect localstack conn self new conn localstack file opt code localstack venv lib site packages connection py line in new conn localstack self failed to establish a new connection s e localstack exceptions newconnectionerror failed to establish a new connection operation timed out localstack localstack during handling of the above exception another exception occurred localstack localstack traceback most recent call last localstack file opt code localstack venv lib site packages requests adapters py line in send localstack timeout timeout localstack file opt code localstack venv lib site packages connectionpool py line in urlopen localstack method url error e pool self stacktrace sys exc info localstack file opt code localstack venv lib site packages util retry py line in increment localstack raise maxretryerror pool url error or responseerror cause localstack exceptions maxretryerror myhttpsconnectionpool host cdn jsdelivr net port max retries exceeded with url gh localstack localstack artifacts master local certs server key caused by newconnectionerror failed to establish a new connection operation timed out localstack localstack during handling of the above exception another exception occurred localstack localstack traceback most recent call last localstack file opt code localstack venv lib site packages localstack ext bootstrap install py line in do download localstack download url target file localstack file opt code localstack localstack utils common py line in download localstack r s get url stream true verify os getenv requests ca bundle verify ssl localstack file opt code localstack venv lib site packages requests sessions py line in get localstack return self request get url kwargs localstack file 
opt code localstack venv lib site packages requests sessions py line in request localstack resp self send prep send kwargs localstack file opt code localstack venv lib site packages requests sessions py line in send localstack r adapter send request kwargs localstack file opt code localstack venv lib site packages requests adapters py line in send localstack raise connectionerror e request request localstack requests exceptions connectionerror myhttpsconnectionpool host cdn jsdelivr net port max retries exceeded with url gh localstack localstack artifacts master local certs server key caused by newconnectionerror failed to establish a new connection operation timed out i expect this has something to do with this line in the release notes but not add startup logic to install prebuilt ssl cert if available expected behavior localstack to start up in a normal amount of time how are you starting localstack with a docker compose file steps to reproduce how are you starting localstack e g bin localstack command arguments or docker compose yml using a custom docker compose that starts localstack up as part of a suite of services client commands e g aws sdk code snippet or sequence of awslocal commands n a environment markdown os localstack to latest anything else the localstack image works fine on my local machine but does not work when running on the ci server the ci server is more locked down and lacks external connectivity without a proxy so this may be causing the issue | 1 |

451,235 | 13,031,722,226 | IssuesEvent | 2020-07-28 02:06:56 | scprogramming/Open-Source-Scan | https://api.github.com/repos/scprogramming/Open-Source-Scan | opened | Add a way to clear scans and all non cpe/cve data | Analysis High Priority | I should have a way to delete scans, clear all scans, projects, etc to preserve cpe/cve data, but allow for clearning unneeded data. | 1.0 | Add a way to clear scans and all non cpe/cve data - I should have a way to delete scans, clear all scans, projects, etc to preserve cpe/cve data, but allow for clearning unneeded data. | priority | add a way to clear scans and all non cpe cve data i should have a way to delete scans clear all scans projects etc to preserve cpe cve data but allow for clearning unneeded data | 1 |

236,909 | 7,753,586,792 | IssuesEvent | 2018-05-31 01:33:49 | Gloirin/m2gTest | https://api.github.com/repos/Gloirin/m2gTest | closed | 0006634:

custom fields missing in XLS export | Addressbook bug high priority | **Reported by cweiss on 18 Jun 2012 07:49**

**Version:** Milan (2012-03-3)

custom fields missing in XLS export

| 1.0 | 0006634:

custom fields missing in XLS export - **Reported by cweiss on 18 Jun 2012 07:49**

**Version:** Milan (2012-03-3)

custom fields missing in XLS export

| priority | custom fields missing in xls export reported by cweiss on jun version milan custom fields missing in xls export | 1 |

718,537 | 24,721,619,448 | IssuesEvent | 2022-10-20 11:08:43 | harvester/harvester | https://api.github.com/repos/harvester/harvester | closed | [FEATURE]Dedicated storage network | kind/enhancement area/ui area/installer area/network priority/0 area/storage highlight blocker area/longhorn-related require-ui/small | We would like to have a dedicated storage network interface that can be specified (either as single nic or bonded NICs) to separate the storage traffic from the overlay traffic.

Depends on https://github.com/longhorn/longhorn/issues/2285 and #1048 | 1.0 | [FEATURE]Dedicated storage network - We would like to have a dedicated storage network interface that can be specified (either as single nic or bonded NICs) to separate the storage traffic from the overlay traffic.

Depends on https://github.com/longhorn/longhorn/issues/2285 and #1048 | priority | dedicated storage network we would like to have a dedicated storage network interface that can be specified either as single nic or bonded nics to separate the storage traffic from the overlay traffic depends on and | 1 |

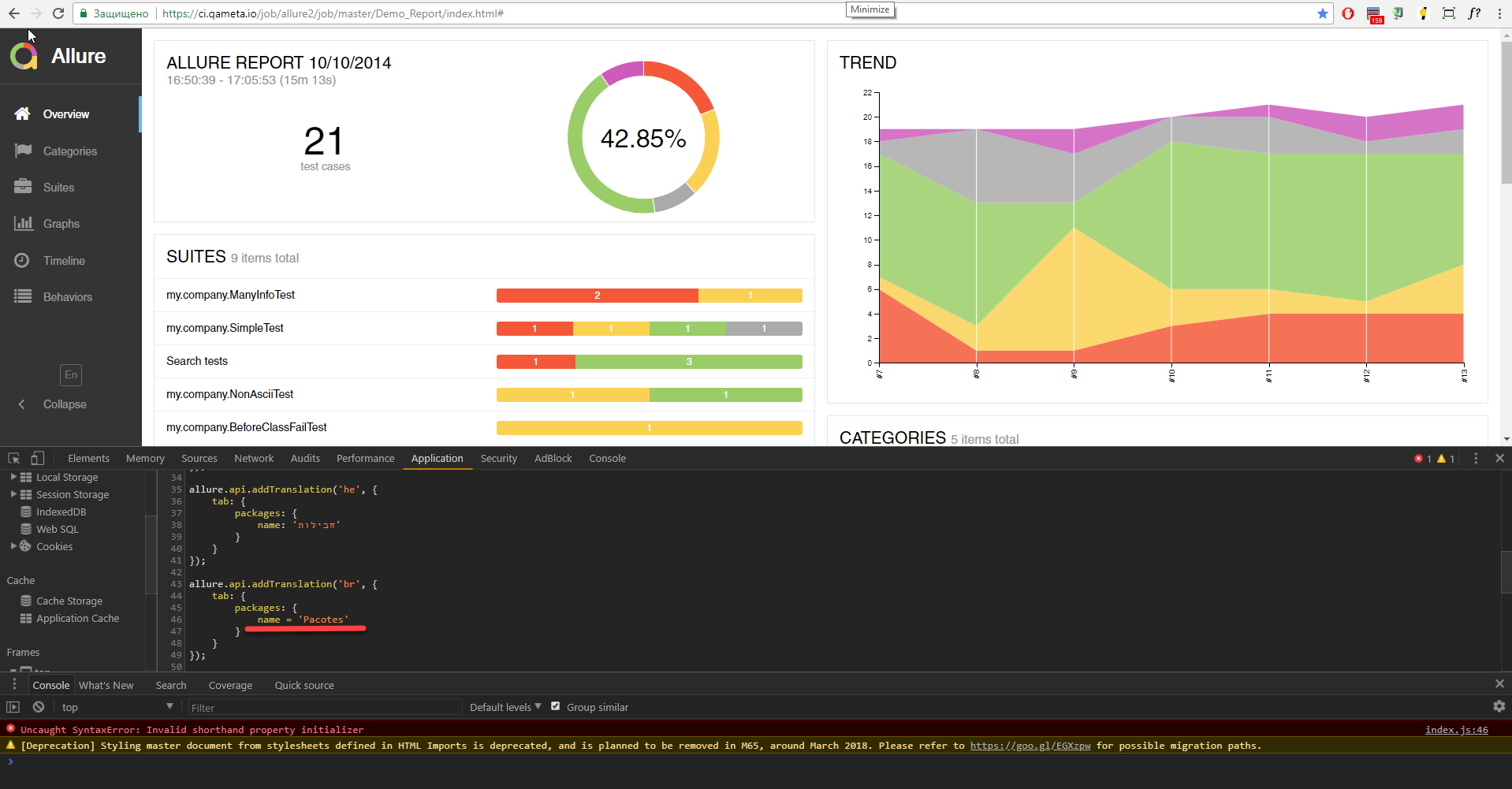

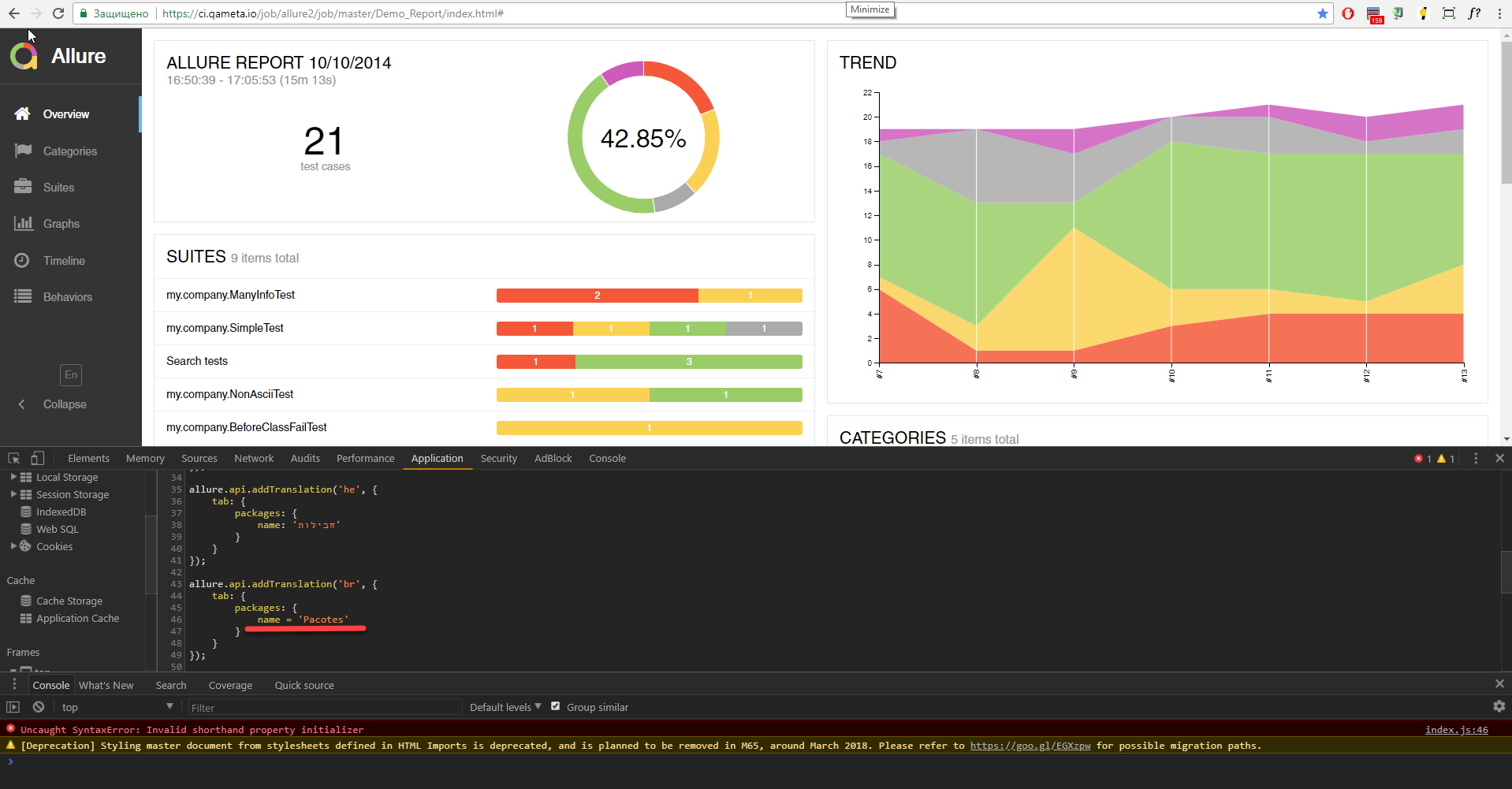

212,122 | 7,228,799,288 | IssuesEvent | 2018-02-11 13:41:13 | allure-framework/allure2 | https://api.github.com/repos/allure-framework/allure2 | closed | Packages tab is not shown in demo report due to invalid initializer | priority:high theme:ui type:bug work:review | [//]: # (

. Note: for support questions, please use Stackoverflow or Gitter**.

. This repository's issues are reserved for feature requests and bug reports.

.

. In case of any problems with Allure Jenkins plugin** please use the following repository

. to create an issue: https://github.com/jenkinsci/allure-plugin/issues

.

. Make sure you have a clear name for your issue. The name should start with a capital

. letter and no dot is required in the end of the sentence. An example of good issue names:

.

. - The report is broken in IE11

. - Add an ability to disable default plugins

. - Support emoji in test descriptions

)

#### I'm submitting a ...

- [x] bug report

- [ ] feature request

- [ ] support request => Please do not submit support request here, see note at the top of this template.

#### What is the current behavior?

#### If the current behavior is a bug, please provide the steps to reproduce and if possible a minimal demo of the problem

Screenshot is attached.

#### What is the expected behavior?

Correct identifier, Packages tab is shown

#### What is the motivation / use case for changing the behavior?

#### Please tell us about your environment:

| Allure version | 2.2.0 |

| --- | --- |

| Test framework | testng@6.8 |

| Allure adaptor | allure-testng@2.0-BETA11 |

| Generate report using | allure-maven@2.18 |

#### Other information

[//]: # (

. e.g. detailed explanation, stacktraces, related issues, suggestions

. how to fix, links for us to have more context, eg. Stackoverflow, Gitter etc

)

<!-- Love allure-report? Please consider supporting our collective:

👉 https://opencollective.com/allure-report/donate --> | 1.0 | Packages tab is not shown in demo report due to invalid initializer - [//]: # (

. Note: for support questions, please use Stackoverflow or Gitter**.

. This repository's issues are reserved for feature requests and bug reports.

.

. In case of any problems with Allure Jenkins plugin** please use the following repository

. to create an issue: https://github.com/jenkinsci/allure-plugin/issues

.

. Make sure you have a clear name for your issue. The name should start with a capital

. letter and no dot is required in the end of the sentence. An example of good issue names:

.

. - The report is broken in IE11

. - Add an ability to disable default plugins

. - Support emoji in test descriptions

)

#### I'm submitting a ...

- [x] bug report

- [ ] feature request

- [ ] support request => Please do not submit support request here, see note at the top of this template.

#### What is the current behavior?

#### If the current behavior is a bug, please provide the steps to reproduce and if possible a minimal demo of the problem

Screenshot is attached.

#### What is the expected behavior?

Correct identifier, Packages tab is shown

#### What is the motivation / use case for changing the behavior?

#### Please tell us about your environment:

| Allure version | 2.2.0 |

| --- | --- |

| Test framework | testng@6.8 |

| Allure adaptor | allure-testng@2.0-BETA11 |

| Generate report using | allure-maven@2.18 |

#### Other information

[//]: # (

. e.g. detailed explanation, stacktraces, related issues, suggestions

. how to fix, links for us to have more context, eg. Stackoverflow, Gitter etc

)

<!-- Love allure-report? Please consider supporting our collective:

👉 https://opencollective.com/allure-report/donate --> | priority | packages tab is not shown in demo report due to invalid initializer note for support questions please use stackoverflow or gitter this repository s issues are reserved for feature requests and bug reports in case of any problems with allure jenkins plugin please use the following repository to create an issue make sure you have a clear name for your issue the name should start with a capital letter and no dot is required in the end of the sentence an example of good issue names the report is broken in add an ability to disable default plugins support emoji in test descriptions i m submitting a bug report feature request support request please do not submit support request here see note at the top of this template what is the current behavior if the current behavior is a bug please provide the steps to reproduce and if possible a minimal demo of the problem screenshot is attached what is the expected behavior correct identifier packages tab is shown what is the motivation use case for changing the behavior please tell us about your environment allure version test framework testng allure adaptor allure testng generate report using allure maven other information e g detailed explanation stacktraces related issues suggestions how to fix links for us to have more context eg stackoverflow gitter etc love allure report please consider supporting our collective 👉 | 1 |

628,798 | 20,014,459,241 | IssuesEvent | 2022-02-01 10:35:21 | kubermatic/kubeone | https://api.github.com/repos/kubermatic/kubeone | opened | Test Cilium in kube-proxy mode -- Test Release 1.4 | priority/high sig/cluster-management | Instructions:

* Download the latest KubeOne 1.4.0 release candidate

* Follow the [Create a Kubernetes cluster tutorial](https://docs.kubermatic.com/kubeone/master/tutorials/creating_clusters/) to create your cluster

* Make sure to have Cilium enabled:

```yaml

clusterNetwork:

cni:

cilium:

enableHubble: true

```

* Wait for machine-controller-managed nodes to join the cluster

* Ensure all pods are Running

* Create a LoadBalancer Service and point to some test pod (e.g. Nginx pod). The LB should be reachable and serve the content as expected

* Ensure Hubble is running and reachable (you might need to port-forward to it)

This test can be done on a single cloud provider, on a single operating system, with a single Kubernetes version (e.g. 1.23.3 on Ubuntu on AWS). | 1.0 | Test Cilium in kube-proxy mode -- Test Release 1.4 - Instructions:

* Download the latest KubeOne 1.4.0 release candidate

* Follow the [Create a Kubernetes cluster tutorial](https://docs.kubermatic.com/kubeone/master/tutorials/creating_clusters/) to create your cluster

* Make sure to have Cilium enabled:

```yaml

clusterNetwork:

cni:

cilium:

enableHubble: true

```

* Wait for machine-controller-managed nodes to join the cluster

* Ensure all pods are Running

* Create a LoadBalancer Service and point to some test pod (e.g. Nginx pod). The LB should be reachable and serve the content as expected

* Ensure Hubble is running and reachable (you might need to port-forward to it)

This test can be done on a single cloud provider, on a single operating system, with a single Kubernetes version (e.g. 1.23.3 on Ubuntu on AWS). | priority | test cilium in kube proxy mode test release instructions download the latest kubeone release candidate follow the to create your cluster make sure to have cilium enabled yaml clusternetwork cni cilium enablehubble true wait for machine controller managed nodes to join the cluster ensure all pods are running create a loadbalancer service and point to some test pod e g nginx pod the lb should be reachable and serve the content as expected ensure hubble is running and reachable you might need to port forward to it this test can be done on a single cloud provider on a single operating system with a single kubernetes version e g on ubuntu on aws | 1 |

86,263 | 3,704,411,907 | IssuesEvent | 2016-03-01 00:04:24 | Macainian/Django-Maced | https://api.github.com/repos/Macainian/Django-Maced | opened | When items are modified, they only propagate select name/value changes. | Bug High Priority | This is an extension of issue #52 | 1.0 | When items are modified, they only propagate select name/value changes. - This is an extension of issue #52 | priority | when items are modified they only propagate select name value changes this is an extension of issue | 1 |

441,199 | 12,709,385,628 | IssuesEvent | 2020-06-23 12:16:34 | AlonDiskin/Visuals | https://api.github.com/repos/AlonDiskin/Visuals | closed | Videos and recycle bin state update error | bug high priority | reproduce steps:

- move some videos to recycle bin

- restore all trash items from recycle bin browser screen

- trash more videos from videos browser

- videos browser not update items as removed

- the now empty recycle bin browser do not show the new items as expected | 1.0 | Videos and recycle bin state update error - reproduce steps:

- move some videos to recycle bin

- restore all trash items from recycle bin browser screen

- trash more videos from videos browser

- videos browser not update items as removed

- the now empty recycle bin browser do not show the new items as expected | priority | videos and recycle bin state update error reproduce steps move some videos to recycle bin restore all trash items from recycle bin browser screen trash more videos from videos browser videos browser not update items as removed the now empty recycle bin browser do not show the new items as expected | 1 |

9,932 | 2,608,937,862 | IssuesEvent | 2015-02-26 11:05:57 | CSSE1001/MyPyTutor | https://api.github.com/repos/CSSE1001/MyPyTutor | closed | Verify installer behaviour for directories with spaces on Windows | bug priority: high | The installer (new and old) need to be checked on Windows to ensure that they correctly handle directories with spaces.

I've had trouble with this issue in the past, especially with the use of `os.execv`; Windows sometimes seems to like screwing up the components of the vector, even though they're given as a list. | 1.0 | Verify installer behaviour for directories with spaces on Windows - The installer (new and old) need to be checked on Windows to ensure that they correctly handle directories with spaces.

I've had trouble with this issue in the past, especially with the use of `os.execv`; Windows sometimes seems to like screwing up the components of the vector, even though they're given as a list. | priority | verify installer behaviour for directories with spaces on windows the installer new and old need to be checked on windows to ensure that they correctly handle directories with spaces i ve had trouble with this issue in the past especially with the use of os execv windows sometimes seems to like screwing up the components of the vector even though they re given as a list | 1 |

144,859 | 5,547,067,858 | IssuesEvent | 2017-03-23 03:45:42 | CS2103JAN2017-T16-B4/main | https://api.github.com/repos/CS2103JAN2017-T16-B4/main | closed | As a user I want to edit the deadline, name and schedule of any task | priority.high type.story | Update any task as required, as well as marking it as done and deleting it | 1.0 | As a user I want to edit the deadline, name and schedule of any task - Update any task as required, as well as marking it as done and deleting it | priority | as a user i want to edit the deadline name and schedule of any task update any task as required as well as marking it as done and deleting it | 1 |

266,999 | 8,378,159,735 | IssuesEvent | 2018-10-06 11:03:58 | rit-sse/OneRepoToRuleThemAll | https://api.github.com/repos/rit-sse/OneRepoToRuleThemAll | closed | Pin Down Node Version | High Priority | In our `Dockerfile` we say `FROM node` which will install the latest node version. It appears that this is installing node v10.

We should pin down the version (eg. `FROM node:carbon`) to the LTS release so that we're running on a consistent node version, not running on odd numbered Node releases, and can have a consistent upgrade process for our dependencies (not having them break under us).

Node v10 will become LTS this month (October 2018), so we can wait until it does to pin down the version.

We can also delete the `Dockerfile.edge` file as it's out-of-date and no longer needed. | 1.0 | Pin Down Node Version - In our `Dockerfile` we say `FROM node` which will install the latest node version. It appears that this is installing node v10.

We should pin down the version (eg. `FROM node:carbon`) to the LTS release so that we're running on a consistent node version, not running on odd numbered Node releases, and can have a consistent upgrade process for our dependencies (not having them break under us).

Node v10 will become LTS this month (October 2018), so we can wait until it does to pin down the version.

We can also delete the `Dockerfile.edge` file as it's out-of-date and no longer needed. | priority | pin down node version in our dockerfile we say from node which will install the latest node version it appears that this is installing node we should pin down the version eg from node carbon to the lts release so that we re running on a consistent node version not running on odd numbered node releases and can have a consistent upgrade process for our dependencies not having them break under us node will become lts this month october so we can wait until it does to pin down the version we can also delete the dockerfile edge file as it s out of date and no longer needed | 1 |

732,954 | 25,282,044,689 | IssuesEvent | 2022-11-16 16:25:50 | leka/LekaOS | https://api.github.com/repos/leka/LekaOS | closed | [Story] - LekaOS BLE v1.0.0 | 01 - type: story 90 - priority: high | # Introduction

BLE communication with the iPad is one of the most important feature of LekaOS. Without it the robot is useless and its presence allow us to:

- control the robot

- debug/log what's going on

- tests new functionalities (FOTA, scheduling, etc.)

## Roadmap

1. Setup a basic working example using mbed

1. Test the integration with timed tasks and scheduling

1. Implement [Leka Communication Specifications](https://github.com/leka/LKAlphaComSpecs)

## 1. Setup a basic working example using mbed

This first step is really easy: use the examples from mbed-os to create a simple working example of BLE for Leka to pave the way for future developments

This must include:

- [x] read, write and notifications services/characteristics

- [x] the use of predefined services (battery) and proprietary services (temperature, firmware version, basic command)

## 2. Test integration with timed tasks and scheduling

Through out our development we will need to start tasks and stop them. Gaining a deeper understanding about how this will work between BLE and mbed os is paramount as all future development will rely on that.

More information in #1 - https://github.com/leka/LekaOS/issues/1#issuecomment-557007922

This must include:

- [x] starting a long running thread

- [x] stoping a long running thread anytime

- [x] starting again from the beginning

- [x] starting again where we left off (if possible)

## 3. Implement Leka Communication Specifications

We'll review, update and implement the Leka Communication Specifications in parallel with the iOS app.

The main goal is to have the two perfectly synchronised.

This must include:

- [x] implement all the services/characteristics

- [x] implement the command analyser

- [x] a lot of testing

## 4. Mandatory commands

- [x] implement reset command and test in case of infinite loop | 1.0 | [Story] - LekaOS BLE v1.0.0 - # Introduction

BLE communication with the iPad is one of the most important feature of LekaOS. Without it the robot is useless and its presence allow us to:

- control the robot

- debug/log what's going on

- tests new functionalities (FOTA, scheduling, etc.)

## Roadmap

1. Setup a basic working example using mbed

1. Test the integration with timed tasks and scheduling

1. Implement [Leka Communication Specifications](https://github.com/leka/LKAlphaComSpecs)

## 1. Setup a basic working example using mbed

This first step is really easy: use the examples from mbed-os to create a simple working example of BLE for Leka to pave the way for future developments

This must include:

- [x] read, write and notifications services/characteristics

- [x] the use of predefined services (battery) and proprietary services (temperature, firmware version, basic command)

## 2. Test integration with timed tasks and scheduling

Through out our development we will need to start tasks and stop them. Gaining a deeper understanding about how this will work between BLE and mbed os is paramount as all future development will rely on that.

More information in #1 - https://github.com/leka/LekaOS/issues/1#issuecomment-557007922

This must include:

- [x] starting a long running thread

- [x] stoping a long running thread anytime

- [x] starting again from the beginning

- [x] starting again where we left off (if possible)

## 3. Implement Leka Communication Specifications

We'll review, update and implement the Leka Communication Specifications in parallel with the iOS app.

The main goal is to have the two perfectly synchronised.

This must include:

- [x] implement all the services/characteristics

- [x] implement the command analyser

- [x] a lot of testing

## 4. Mandatory commands

- [x] implement reset command and test in case of infinite loop | priority | lekaos ble introduction ble communication with the ipad is one of the most important feature of lekaos without it the robot is useless and its presence allow us to control the robot debug log what s going on tests new functionalities fota scheduling etc roadmap setup a basic working example using mbed test the integration with timed tasks and scheduling implement setup a basic working example using mbed this first step is really easy use the examples from mbed os to create a simple working example of ble for leka to pave the way for future developments this must include read write and notifications services characteristics the use of predefined services battery and proprietary services temperature firmware version basic command test integration with timed tasks and scheduling through out our development we will need to start tasks and stop them gaining a deeper understanding about how this will work between ble and mbed os is paramount as all future development will rely on that more information in this must include starting a long running thread stoping a long running thread anytime starting again from the beginning starting again where we left off if possible implement leka communication specifications we ll review update and implement the leka communication specifications in parallel with the ios app the main goal is to have the two perfectly synchronised this must include implement all the services characteristics implement the command analyser a lot of testing mandatory commands implement reset command and test in case of infinite loop | 1 |

788,885 | 27,771,807,270 | IssuesEvent | 2023-03-16 14:53:35 | janus-idp/software-templates | https://api.github.com/repos/janus-idp/software-templates | opened | Various updates in the 6 Janus GPTs | kind/enhancement priority/high | 1. Rename the GPTs titles.

ie

From: `.NET Frontend Golden Path Template`

To: `Create a .NET Frontend application with CI/CD`

2. Show the CI step before the CD step. The CI step will become step 3 and Argo will be step 4.

3. Update the current description in the CI section.

From: `This action will create a simple CI based on chosen method`

To: `This action will create a CI pipeline for your application based on chosen method`

4. In the ArgoCD step:

- Select Quay registry as default

- Move the image url before the namespace

5. Make sure the ports default are correct. It seems that most templates are using 5000 as default which is incorrect. | 1.0 | Various updates in the 6 Janus GPTs - 1. Rename the GPTs titles.

ie

From: `.NET Frontend Golden Path Template`

To: `Create a .NET Frontend application with CI/CD`

2. Show the CI step before the CD step. The CI step will become step 3 and Argo will be step 4.

3. Update the current description in the CI section.

From: `This action will create a simple CI based on chosen method`

To: `This action will create a CI pipeline for your application based on chosen method`

4. In the ArgoCD step:

- Select Quay registry as default

- Move the image url before the namespace

5. Make sure the ports default are correct. It seems that most templates are using 5000 as default which is incorrect. | priority | various updates in the janus gpts rename the gpts titles ie from net frontend golden path template to create a net frontend application with ci cd show the ci step before the cd step the ci step will become step and argo will be step update the current description in the ci section from this action will create a simple ci based on chosen method to this action will create a ci pipeline for your application based on chosen method in the argocd step select quay registry as default move the image url before the namespace make sure the ports default are correct it seems that most templates are using as default which is incorrect | 1 |

197,744 | 6,963,453,986 | IssuesEvent | 2017-12-08 17:26:59 | python/mypy | https://api.github.com/repos/python/mypy | closed | Slow incremental run when single module has errors | bug priority-0-high topic-daemon topic-incremental | `mypy -i` is slower than expected in the following scenario:

* Check out fresh mypy repository

* Create tiny module `mypy.x` that generates an error: `echo '1 + ""' > mypy/x.py`

* Run `mypy -i mypy`

* This takes a while, generates an error for `mypy/x.py` as expected

* Run `mypy -i mypy`

* This is as slow as the previous run, but caching should make this much faster

| 1.0 | Slow incremental run when single module has errors - `mypy -i` is slower than expected in the following scenario:

* Check out fresh mypy repository

* Create tiny module `mypy.x` that generates an error: `echo '1 + ""' > mypy/x.py`

* Run `mypy -i mypy`

* This takes a while, generates an error for `mypy/x.py` as expected

* Run `mypy -i mypy`

* This is as slow as the previous run, but caching should make this much faster

| priority | slow incremental run when single module has errors mypy i is slower than expected in the following scenario check out fresh mypy repository create tiny module mypy x that generates an error echo mypy x py run mypy i mypy this takes a while generates an error for mypy x py as expected run mypy i mypy this is as slow as the previous run but caching should make this much faster | 1 |

529,381 | 15,387,560,930 | IssuesEvent | 2021-03-03 09:41:22 | mantidproject/mantid | https://api.github.com/repos/mantidproject/mantid | closed | Coverity - High Impact Outstanding issues in MDEvent files | Framework High Priority Stale | This issue was originally [TRAC 9938](http://trac.mantidproject.org/mantid/ticket/9938)

There are 17 Coverity high impact outstanding issued in the `MDEvent` module. Distribute as you see fit.

| 1.0 | Coverity - High Impact Outstanding issues in MDEvent files - This issue was originally [TRAC 9938](http://trac.mantidproject.org/mantid/ticket/9938)

There are 17 Coverity high impact outstanding issued in the `MDEvent` module. Distribute as you see fit.

| priority | coverity high impact outstanding issues in mdevent files this issue was originally there are coverity high impact outstanding issued in the mdevent module distribute as you see fit | 1 |

509,616 | 14,740,532,189 | IssuesEvent | 2021-01-07 09:14:23 | canonical-web-and-design/vanilla-framework | https://api.github.com/repos/canonical-web-and-design/vanilla-framework | closed | Fieldset: Transparent border on fieldset results in border colour spilling outside element | Bug 🐛 Priority: High | **Describe the bug**

The transparent border currently applied to fieldsets causes a strange bug in chrome where the border color fills the window.

Replacing the fieldset with a div and copyign all fieldset styles onto the div doesn't seem to trigger the bug, so it seems highly specific to the fieldset or an inherent property of it:

**To Reproduce**

Paste this [markup](https://pastebin.canonical.com/p/2pnRKXPPkR/) in any site using vanilla.

- Device: Mac

- OS: Latest

- Browser Version 87.0.4280.67 (Official Build) (x86_64) | 1.0 | Fieldset: Transparent border on fieldset results in border colour spilling outside element - **Describe the bug**

The transparent border currently applied to fieldsets causes a strange bug in chrome where the border color fills the window.

Replacing the fieldset with a div and copyign all fieldset styles onto the div doesn't seem to trigger the bug, so it seems highly specific to the fieldset or an inherent property of it:

**To Reproduce**

Paste this [markup](https://pastebin.canonical.com/p/2pnRKXPPkR/) in any site using vanilla.

- Device: Mac

- OS: Latest

- Browser Version 87.0.4280.67 (Official Build) (x86_64) | priority | fieldset transparent border on fieldset results in border colour spilling outside element describe the bug the transparent border currently applied to fieldsets causes a strange bug in chrome where the border color fills the window replacing the fieldset with a div and copyign all fieldset styles onto the div doesn t seem to trigger the bug so it seems highly specific to the fieldset or an inherent property of it to reproduce paste this in any site using vanilla device mac os latest browser version official build | 1 |

155,746 | 5,960,139,006 | IssuesEvent | 2017-05-29 13:16:15 | Aurorastation/Aurora.3 | https://api.github.com/repos/Aurorastation/Aurora.3 | opened | SMC: Mapped in power connections do not appear to be updated properly | bug:confirmed flag:development flag:high priority | Okay. Long story short.

We've been running into issues in new map meme where connections that are valid, and exist since round start, are not flagged properly until an admin invokes `make-powernets`. Example cases of this:

* Tesla setup. This is all mapped in to be 100% functional (as far as wiring goes). However, after starting the engine, power does not flow until `make-powernets` or a local powernet update (I presume, haven't tried) is invoked.

* #2345 . Where mapped in z-wires do not move power until `make-powernets` is invoked. In this case, not even remaking the wires helps reliably, thus it's possible that local updates fail at times. Whether or not this is specific to z-wires should be confirmed.

* Possibly #2520 . Needs confirmation.

@Lohikar since this is something you've worked on, could you investigate during the week? | 1.0 | SMC: Mapped in power connections do not appear to be updated properly - Okay. Long story short.

We've been running into issues in new map meme where connections that are valid, and exist since round start, are not flagged properly until an admin invokes `make-powernets`. Example cases of this:

* Tesla setup. This is all mapped in to be 100% functional (as far as wiring goes). However, after starting the engine, power does not flow until `make-powernets` or a local powernet update (I presume, haven't tried) is invoked.

* #2345 . Where mapped in z-wires do not move power until `make-powernets` is invoked. In this case, not even remaking the wires helps reliably, thus it's possible that local updates fail at times. Whether or not this is specific to z-wires should be confirmed.

* Possibly #2520 . Needs confirmation.

@Lohikar since this is something you've worked on, could you investigate during the week? | priority | smc mapped in power connections do not appear to be updated properly okay long story short we ve been running into issues in new map meme where connections that are valid and exist since round start are not flagged properly until an admin invokes make powernets example cases of this tesla setup this is all mapped in to be functional as far as wiring goes however after starting the engine power does not flow until make powernets or a local powernet update i presume haven t tried is invoked where mapped in z wires do not move power until make powernets is invoked in this case not even remaking the wires helps reliably thus it s possible that local updates fail at times whether or not this is specific to z wires should be confirmed possibly needs confirmation lohikar since this is something you ve worked on could you investigate during the week | 1 |

272,410 | 8,508,152,747 | IssuesEvent | 2018-10-30 21:06:11 | isawnyu/isaw.web | https://api.github.com/repos/isawnyu/isaw.web | opened | Unable to edit images | bug high priority | I'm getting a blank dialog box this afternoon when I attempt to edit an image. It looks like this:

<img width="985" alt="screen shot 2018-10-30 at 4 57 29 pm" src="https://user-images.githubusercontent.com/7882896/47750546-00067c00-dc66-11e8-8be3-ac5886561082.png">

I am using Firefox (Mac). | 1.0 | Unable to edit images - I'm getting a blank dialog box this afternoon when I attempt to edit an image. It looks like this:

<img width="985" alt="screen shot 2018-10-30 at 4 57 29 pm" src="https://user-images.githubusercontent.com/7882896/47750546-00067c00-dc66-11e8-8be3-ac5886561082.png">

I am using Firefox (Mac). | priority | unable to edit images i m getting a blank dialog box this afternoon when i attempt to edit an image it looks like this img width alt screen shot at pm src i am using firefox mac | 1 |

739,995 | 25,731,498,609 | IssuesEvent | 2022-12-07 20:41:38 | fecgov/fec-cms | https://api.github.com/repos/fecgov/fec-cms | closed | Update meeting page template to change default open meeting and executive session time | Work: Front-end High priority | ### Summary

**What we're after:**

_OCS has indicated that meeting times will shift to start meetings at 10:30. We need to update the default time in the meeting page template so that users interested in Commission meetings have accurate information._

### Completion criteria

- [ ] Default time is changed on the meeting page template to 10:30. | 1.0 | Update meeting page template to change default open meeting and executive session time - ### Summary

**What we're after:**

_OCS has indicated that meeting times will shift to start meetings at 10:30. We need to update the default time in the meeting page template so that users interested in Commission meetings have accurate information._

### Completion criteria

- [ ] Default time is changed on the meeting page template to 10:30. | priority | update meeting page template to change default open meeting and executive session time summary what we re after ocs has indicated that meeting times will shift to start meetings at we need to update the default time in the meeting page template so that users interested in commission meetings have accurate information completion criteria default time is changed on the meeting page template to | 1 |

740,879 | 25,771,981,009 | IssuesEvent | 2022-12-09 08:48:15 | sorrowcode/taesch- | https://api.github.com/repos/sorrowcode/taesch- | closed | feature - Verbindung NearShopsScreen und MapScreen | USP - 5 feature 0 - highest priority | Als Nutzer möchte ich, dass ich beim Aufrufen eines Ladens eine Möglichkeit habe, dass ich zur Karte geführt werde, damit ich sehen kann, wo genau der Laden in meiner Nähe sich befindet.

Hier soll eine Strategie ausgearbeitet und implementiert werden, wodurch die Möglichkeit besteht, dynamisch zwischen NearShopsScreen und MapScreen zu navigieren und möglicherweise einzelne Läden anzeigen zu lassen. | 1.0 | feature - Verbindung NearShopsScreen und MapScreen - Als Nutzer möchte ich, dass ich beim Aufrufen eines Ladens eine Möglichkeit habe, dass ich zur Karte geführt werde, damit ich sehen kann, wo genau der Laden in meiner Nähe sich befindet.

Hier soll eine Strategie ausgearbeitet und implementiert werden, wodurch die Möglichkeit besteht, dynamisch zwischen NearShopsScreen und MapScreen zu navigieren und möglicherweise einzelne Läden anzeigen zu lassen. | priority | feature verbindung nearshopsscreen und mapscreen als nutzer möchte ich dass ich beim aufrufen eines ladens eine möglichkeit habe dass ich zur karte geführt werde damit ich sehen kann wo genau der laden in meiner nähe sich befindet hier soll eine strategie ausgearbeitet und implementiert werden wodurch die möglichkeit besteht dynamisch zwischen nearshopsscreen und mapscreen zu navigieren und möglicherweise einzelne läden anzeigen zu lassen | 1 |

586,988 | 17,601,238,335 | IssuesEvent | 2021-08-17 12:08:16 | gitpod-io/gitpod | https://api.github.com/repos/gitpod-io/gitpod | opened | Agent Smith signature check fails because of permission issues | type: bug component: agent-smith priority: highest (user impact) | ### Bug description

Agent Smith cannot check signatures of running processes at all because of permission issues. All violations that are found are found because if blacklisted commands, not exec signatures.

Log [link](https://cloudlogging.app.goo.gl/KcAc7153HjjXA6uE8)

Example log output:

```

{"@type":"type.googleapis.com/google.devtools.clouderrorreporting.v1beta1.ReportedErrorEvent","error":"open /proc/560401/exe: permission denied","level":"warning","message":"cannot open executable to check signatures","path":"id","serviceContext":{"service":"agent-smith","version":""},"severity":"WARNING","time":"2021-08-17T10:59:18Z"}

```

### Steps to reproduce

- Create a preview environment

- start a workspace

- check log of (relevant) agent smith daemon

### Expected behavior

Agent smith should be able to check signatures of all processes running inside a workspace.

### Example repository

_No response_

### Anything else?

_No response_ | 1.0 | Agent Smith signature check fails because of permission issues - ### Bug description

Agent Smith cannot check signatures of running processes at all because of permission issues. All violations that are found are found because if blacklisted commands, not exec signatures.

Log [link](https://cloudlogging.app.goo.gl/KcAc7153HjjXA6uE8)

Example log output:

```

{"@type":"type.googleapis.com/google.devtools.clouderrorreporting.v1beta1.ReportedErrorEvent","error":"open /proc/560401/exe: permission denied","level":"warning","message":"cannot open executable to check signatures","path":"id","serviceContext":{"service":"agent-smith","version":""},"severity":"WARNING","time":"2021-08-17T10:59:18Z"}

```

### Steps to reproduce

- Create a preview environment

- start a workspace

- check log of (relevant) agent smith daemon

### Expected behavior

Agent smith should be able to check signatures of all processes running inside a workspace.

### Example repository

_No response_

### Anything else?

_No response_ | priority | agent smith signature check fails because of permission issues bug description agent smith cannot check signatures of running processes at all because of permission issues all violations that are found are found because if blacklisted commands not exec signatures log example log output type type googleapis com google devtools clouderrorreporting reportederrorevent error open proc exe permission denied level warning message cannot open executable to check signatures path id servicecontext service agent smith version severity warning time steps to reproduce create a preview environment start a workspace check log of relevant agent smith daemon expected behavior agent smith should be able to check signatures of all processes running inside a workspace example repository no response anything else no response | 1 |

800,379 | 28,363,711,686 | IssuesEvent | 2023-04-12 12:36:37 | flowforge/flowforge | https://api.github.com/repos/flowforge/flowforge | closed | Cancel button application/settings edit page doesn't work | bug area:frontend priority:high | ### Current Behavior

```

ntime-core.esm-bundler.js:169 [Vue warn]: Property "cancelEditName" was accessed during render but is not defined on instance.

at <ProjectSettings application= Object instances= Array(1) is-visiting-admin=false ... >

at <RouterView application= Object instances= Array(1) is-visiting-admin=false ... >

at <ProjectPage onVnodeUnmounted=fn<onVnodeUnmounted> ref=Ref< Proxy(Object) > >

at <RouterView>

at <FfLayoutPlatform key=0 >

at <App>

w

```

### Expected Behavior

_No response_

### Steps To Reproduce

_No response_

### Environment

- FlowForge version:

- Node.js version:

- npm version:

- Platform/OS:

- Browser:

| 1.0 | Cancel button application/settings edit page doesn't work - ### Current Behavior

```

ntime-core.esm-bundler.js:169 [Vue warn]: Property "cancelEditName" was accessed during render but is not defined on instance.

at <ProjectSettings application= Object instances= Array(1) is-visiting-admin=false ... >

at <RouterView application= Object instances= Array(1) is-visiting-admin=false ... >

at <ProjectPage onVnodeUnmounted=fn<onVnodeUnmounted> ref=Ref< Proxy(Object) > >

at <RouterView>

at <FfLayoutPlatform key=0 >

at <App>

w

```

### Expected Behavior

_No response_

### Steps To Reproduce

_No response_

### Environment

- FlowForge version:

- Node.js version:

- npm version:

- Platform/OS:

- Browser:

| priority | cancel button application settings edit page doesn t work current behavior ntime core esm bundler js property canceleditname was accessed during render but is not defined on instance at at at ref ref at at at w expected behavior no response steps to reproduce no response environment flowforge version node js version npm version platform os browser | 1 |

772,523 | 27,125,714,699 | IssuesEvent | 2023-02-16 05:01:02 | FastcampusMini/mini-project | https://api.github.com/repos/FastcampusMini/mini-project | closed | Basket API | For: API Priority: High Status: In Progress Type: Feature | ## Title

장바구니 기능

## Description

장바구니

## Tasks

- [x] 장바구니 repo, service, dto 구현

| 1.0 | Basket API - ## Title

장바구니 기능

## Description

장바구니

## Tasks

- [x] 장바구니 repo, service, dto 구현

| priority | basket api title 장바구니 기능 description 장바구니 tasks 장바구니 repo service dto 구현 | 1 |

777,656 | 27,289,606,690 | IssuesEvent | 2023-02-23 15:43:58 | inverse-inc/packetfence | https://api.github.com/repos/inverse-inc/packetfence | closed | PFacct issue in cluster | Type: Bug Priority: High | PacketFence 12.2.

Switching from standalone to cluster when creating a cluster, the load balancer does not want to start because PF acct is already listening.

| 1.0 | PFacct issue in cluster - PacketFence 12.2.

Switching from standalone to cluster when creating a cluster, the load balancer does not want to start because PF acct is already listening.

| priority | pfacct issue in cluster packetfence switching from standalone to cluster when creating a cluster the load balancer does not want to start because pf acct is already listening | 1 |

329,670 | 10,022,953,619 | IssuesEvent | 2019-07-16 17:58:54 | ArtskydJ/comicsrss.com | https://api.github.com/repos/ArtskydJ/comicsrss.com | closed | Some gocomics strips are not showing up | high-priority | From @megadr01d, originally posted in #86

Hope you don't mind... I removed your other comment since I see these as two separate issues.

-----------------------------------

Why are some GoComics comics are not available?

Like these two, which I'd love to see in the future:

https://www.gocomics.com/how-to-cat

https://www.gocomics.com/redmeat | 1.0 | Some gocomics strips are not showing up - From @megadr01d, originally posted in #86

Hope you don't mind... I removed your other comment since I see these as two separate issues.

-----------------------------------

Why are some GoComics comics are not available?

Like these two, which I'd love to see in the future:

https://www.gocomics.com/how-to-cat

https://www.gocomics.com/redmeat | priority | some gocomics strips are not showing up from originally posted in hope you don t mind i removed your other comment since i see these as two separate issues why are some gocomics comics are not available like these two which i d love to see in the future | 1 |

530,309 | 15,420,784,113 | IssuesEvent | 2021-03-05 12:05:53 | apluslms/a-plus | https://api.github.com/repos/apluslms/a-plus | closed | Latest course instance redirection must pick the latest visible instance | O1 needs area: UX student effort: hours experience: good first issue priority: high requester: Aalto teacher type: bug | Pull request #772 added the redirection view to the latest course instance: https://plus.cs.aalto.fi/o1/

However, if the latest instance is hidden from students, then this redirection becomes useless and confusing to students. It is common that the latest instance is hidden before it has begun because it is still work in progress. The redirection should always pick the latest VISIBLE instance. | 1.0 | Latest course instance redirection must pick the latest visible instance - Pull request #772 added the redirection view to the latest course instance: https://plus.cs.aalto.fi/o1/

However, if the latest instance is hidden from students, then this redirection becomes useless and confusing to students. It is common that the latest instance is hidden before it has begun because it is still work in progress. The redirection should always pick the latest VISIBLE instance. | priority | latest course instance redirection must pick the latest visible instance pull request added the redirection view to the latest course instance however if the latest instance is hidden from students then this redirection becomes useless and confusing to students it is common that the latest instance is hidden before it has begun because it is still work in progress the redirection should always pick the latest visible instance | 1 |

566,102 | 16,796,056,019 | IssuesEvent | 2021-06-16 03:49:18 | parallel-finance/parallel | https://api.github.com/repos/parallel-finance/parallel | closed | tokio-runtime-worker runtime::timestamp: `pallet_timestamp::UnixTime::now` is called at genesis, invalid value returned: 0 | high priority | <img width="1297" alt="image" src="https://user-images.githubusercontent.com/33961674/121112081-81711280-c842-11eb-9f7d-3391524261f4.png">

| 1.0 | tokio-runtime-worker runtime::timestamp: `pallet_timestamp::UnixTime::now` is called at genesis, invalid value returned: 0 - <img width="1297" alt="image" src="https://user-images.githubusercontent.com/33961674/121112081-81711280-c842-11eb-9f7d-3391524261f4.png">

| priority | tokio runtime worker runtime timestamp pallet timestamp unixtime now is called at genesis invalid value returned img width alt image src | 1 |

138,583 | 5,344,628,530 | IssuesEvent | 2017-02-17 15:01:22 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | closed | Feature Request: torch 'module' object has no attribute '__version__' | enhancement high priority | could you please add `__version__` to the `torch` module! all the cool modules have it! ;) | 1.0 | Feature Request: torch 'module' object has no attribute '__version__' - could you please add `__version__` to the `torch` module! all the cool modules have it! ;) | priority | feature request torch module object has no attribute version could you please add version to the torch module all the cool modules have it | 1 |

761,872 | 26,700,578,959 | IssuesEvent | 2023-01-27 14:05:46 | mantidproject/mantid | https://api.github.com/repos/mantidproject/mantid | closed | Error when Renaming Workspaces with Plots | High Priority Bug | **Describe the bug**

Workbench throws errors when a workspace is renamed with a plot of its data open.

**To Reproduce**

1. Load data

2. Plot a spectrum

3. Rename the workspace

**Expected behaviour**

Title of the plot is changed and no errors are thrown.

**Platform/Version (please complete the following information):**

- OS: All

- Mantid Version: 6.6 Nightly (2023-01-24)

**Additional context**

```

Error occurred in handler:

Traceback (most recent call last):

File "/home/conor/repos/mantid/qt/applications/workbench/workbench/plotting/figuremanager.py", line 62, in wrapper

func(*args, **kwargs)

File "/home/conor/repos/mantid/qt/applications/workbench/workbench/plotting/figuremanager.py", line 162, in renameHandle

self.canvas.manager.set_window_title(_replace_workspace_name_in_string(oldName, newName, self.canvas.get_window_title()))

AttributeError: 'MantidFigureCanvas' object has no attribute 'get_window_title'

```

| 1.0 | Error when Renaming Workspaces with Plots - **Describe the bug**

Workbench throws errors when a workspace is renamed with a plot of its data open.