Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 855 | labels stringlengths 4 721 | body stringlengths 1 261k | index stringclasses 13 values | text_combine stringlengths 96 261k | label stringclasses 2 values | text stringlengths 96 240k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

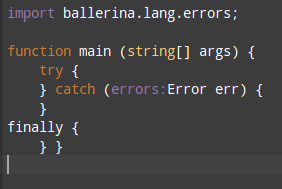

783,692 | 27,542,285,010 | IssuesEvent | 2023-03-07 09:23:13 | ballerina-platform/ballerina-lang | https://api.github.com/repos/ballerina-platform/ballerina-lang | closed | Remove `createRecordFromMap()` by desugaring the logic internally | Type/Task Priority/High Team/CompilerFE Area/Desugar Deferred | **Description:**

With https://github.com/ballerina-platform/ballerina-lang/pull/30525/ we introduced `createRecordFromMap()` `langlib.internal` function to create a record value from a map.

Creating a map and calling `value:cloneWithType` would not work since `value:cloneWithType` only supports anydata https://raw.githubusercontent.com/ballerina-platform/ballerina-spec/master/lang/lib/value.bal

**Steps to reproduce:**

**Affected Versions:**

**OS, DB, other environment details and versions:**

**Related Issues (optional):**

<!-- Any related issues such as sub tasks, issues reported in other repositories (e.g component repositories), similar problems, etc. -->

**Suggested Labels (optional):**

<!-- Optional comma separated list of suggested labels. Non committers can’t assign labels to issues, so this will help issue creators who are not a committer to suggest possible labels-->

**Suggested Assignees (optional):**

<!--Optional comma separated list of suggested team members who should attend the issue. Non committers can’t assign issues to assignees, so this will help issue creators who are not a committer to suggest possible assignees-->

| 1.0 | Remove `createRecordFromMap()` by desugaring the logic internally - **Description:**

With https://github.com/ballerina-platform/ballerina-lang/pull/30525/ we introduced `createRecordFromMap()` `langlib.internal` function to create a record value from a map.

Creating a map and calling `value:cloneWithType` would not work since `value:cloneWithType` only supports anydata https://raw.githubusercontent.com/ballerina-platform/ballerina-spec/master/lang/lib/value.bal

**Steps to reproduce:**

**Affected Versions:**

**OS, DB, other environment details and versions:**

**Related Issues (optional):**

<!-- Any related issues such as sub tasks, issues reported in other repositories (e.g component repositories), similar problems, etc. -->

**Suggested Labels (optional):**

<!-- Optional comma separated list of suggested labels. Non committers can’t assign labels to issues, so this will help issue creators who are not a committer to suggest possible labels-->

**Suggested Assignees (optional):**

<!--Optional comma separated list of suggested team members who should attend the issue. Non committers can’t assign issues to assignees, so this will help issue creators who are not a committer to suggest possible assignees-->

| priority | remove createrecordfrommap by desugaring the logic internally description with we introduced createrecordfrommap langlib internal function to create a record value from a map creating a map and calling value clonewithtype would not work since value clonewithtype only supports anydata steps to reproduce affected versions os db other environment details and versions related issues optional suggested labels optional suggested assignees optional | 1 |

792,888 | 27,976,476,191 | IssuesEvent | 2023-03-25 16:46:38 | AY2223S2-CS2103T-T14-3/tp | https://api.github.com/repos/AY2223S2-CS2103T-T14-3/tp | opened | Update Developer Guide | priority.High | To update the developer guide:

- Introduction

- Include GUI information

- Add in feature implementation for `upcoming` and `copy`. Update `delete` and `find` accordingly.

- Add in use cases

- Add in test cases for manual testing | 1.0 | Update Developer Guide - To update the developer guide:

- Introduction

- Include GUI information

- Add in feature implementation for `upcoming` and `copy`. Update `delete` and `find` accordingly.

- Add in use cases

- Add in test cases for manual testing | priority | update developer guide to update the developer guide introduction include gui information add in feature implementation for upcoming and copy update delete and find accordingly add in use cases add in test cases for manual testing | 1 |

711,742 | 24,473,541,551 | IssuesEvent | 2022-10-07 23:43:51 | zulip/zulip | https://api.github.com/repos/zulip/zulip | closed | Improve app title bar for stream and topic views | help wanted area: misc in progress priority: high | We should make the following changes in the app title bar:

- [ ] For stream views, display the stream name as `#stream`, rather than `stream`.

- [ ] For topic views, display the topic as `#stream > topic`, not just `topic`.

This will make the title bar more clear and consistent with other parts of the Zulip app.

[CZO discussion](https://chat.zulip.org/#narrow/stream/101-design/topic/app.20title.20bar/near/1434948)

Current UI (note that one can't tell a stream view from a topic view):

| 1.0 | Improve app title bar for stream and topic views - We should make the following changes in the app title bar:

- [ ] For stream views, display the stream name as `#stream`, rather than `stream`.

- [ ] For topic views, display the topic as `#stream > topic`, not just `topic`.

This will make the title bar more clear and consistent with other parts of the Zulip app.

[CZO discussion](https://chat.zulip.org/#narrow/stream/101-design/topic/app.20title.20bar/near/1434948)

Current UI (note that one can't tell a stream view from a topic view):

| priority | improve app title bar for stream and topic views we should make the following changes in the app title bar for stream views display the stream name as stream rather than stream for topic views display the topic as stream topic not just topic this will make the title bar more clear and consistent with other parts of the zulip app current ui note that one can t tell a stream view from a topic view | 1 |

239,973 | 7,800,247,239 | IssuesEvent | 2018-06-09 06:59:06 | tine20/Tine-2.0-Open-Source-Groupware-and-CRM | https://api.github.com/repos/tine20/Tine-2.0-Open-Source-Groupware-and-CRM | closed | 0008136:

create accounts in update script | Bug HumanResources Mantis high priority | **Reported by astintzing on 4 Apr 2013 10:35**

create accounts in update script

| 1.0 | 0008136:

create accounts in update script - **Reported by astintzing on 4 Apr 2013 10:35**

create accounts in update script

| priority | create accounts in update script reported by astintzing on apr create accounts in update script | 1 |

495,558 | 14,283,969,220 | IssuesEvent | 2020-11-23 11:48:44 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | yandex.ru - see bug description | browser-fenix engine-gecko ml-needsdiagnosis-false ml-probability-high priority-critical | <!-- @browser: Firefox Mobile 85.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 9; Mobile; rv:85.0) Gecko/85.0 Firefox/85.0 -->

<!-- @reported_with: android-components-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/62336 -->

<!-- @extra_labels: browser-fenix -->

**URL**: https://yandex.ru/

**Browser / Version**: Firefox Mobile 85.0

**Operating System**: Android

**Tested Another Browser**: No

**Problem type**: Something else

**Description**: not opening

**Steps to Reproduce**:

<details>

<summary>View the screenshot</summary>

<img alt="Screenshot" src="https://webcompat.com/uploads/2020/11/092128ae-2e63-49f1-a12b-c503b29e95ce.jpeg">

</details>

<details>

<summary>Browser Configuration</summary>

<ul>

<li>gfx.webrender.all: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>buildID: 20201122093438</li><li>channel: nightly</li><li>hasTouchScreen: true</li><li>mixed active content blocked: false</li><li>mixed passive content blocked: false</li><li>tracking content blocked: false</li>

</ul>

</details>

[View console log messages](https://webcompat.com/console_logs/2020/11/ce7500c9-d73a-46e3-bb05-ef19a3cd2f0c)

_From [webcompat.com](https://webcompat.com/) with ❤️_ | 1.0 | yandex.ru - see bug description - <!-- @browser: Firefox Mobile 85.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 9; Mobile; rv:85.0) Gecko/85.0 Firefox/85.0 -->

<!-- @reported_with: android-components-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/62336 -->

<!-- @extra_labels: browser-fenix -->

**URL**: https://yandex.ru/

**Browser / Version**: Firefox Mobile 85.0

**Operating System**: Android

**Tested Another Browser**: No

**Problem type**: Something else

**Description**: not opening

**Steps to Reproduce**:

<details>

<summary>View the screenshot</summary>

<img alt="Screenshot" src="https://webcompat.com/uploads/2020/11/092128ae-2e63-49f1-a12b-c503b29e95ce.jpeg">

</details>

<details>

<summary>Browser Configuration</summary>

<ul>

<li>gfx.webrender.all: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>buildID: 20201122093438</li><li>channel: nightly</li><li>hasTouchScreen: true</li><li>mixed active content blocked: false</li><li>mixed passive content blocked: false</li><li>tracking content blocked: false</li>

</ul>

</details>

[View console log messages](https://webcompat.com/console_logs/2020/11/ce7500c9-d73a-46e3-bb05-ef19a3cd2f0c)

_From [webcompat.com](https://webcompat.com/) with ❤️_ | priority | yandex ru see bug description url browser version firefox mobile operating system android tested another browser no problem type something else description not opening steps to reproduce view the screenshot img alt screenshot src browser configuration gfx webrender all false gfx webrender blob images true gfx webrender enabled false image mem shared true buildid channel nightly hastouchscreen true mixed active content blocked false mixed passive content blocked false tracking content blocked false from with ❤️ | 1 |

152,562 | 5,849,169,235 | IssuesEvent | 2017-05-10 22:54:43 | Polymer/polymer-bundler | https://api.github.com/repos/Polymer/polymer-bundler | closed | Lazy Imports not getting resolved correctly in bundles | Priority: High Status: Review Needed Type: Bug | **Steps to Reproduce:**

1. Clone https://github.com/jay8t6/lazy-import-bundle

2. Run `npm i;bower i`

3. Run `npm run build`

4. Run `polymer serve build/ -o`

5. Open console

**Expected Result:** `paper-button` will lazily import into the side bar

**Actual Result:** `paper-button` doesn't import. You can see in the console that the import failed and resolved to `bower_components/bower_components/paper-button/paper-button.html`

@usergenic | 1.0 | Lazy Imports not getting resolved correctly in bundles - **Steps to Reproduce:**

1. Clone https://github.com/jay8t6/lazy-import-bundle

2. Run `npm i;bower i`

3. Run `npm run build`

4. Run `polymer serve build/ -o`

5. Open console

**Expected Result:** `paper-button` will lazily import into the side bar

**Actual Result:** `paper-button` doesn't import. You can see in the console that the import failed and resolved to `bower_components/bower_components/paper-button/paper-button.html`

@usergenic | priority | lazy imports not getting resolved correctly in bundles steps to reproduce clone run npm i bower i run npm run build run polymer serve build o open console expected result paper button will lazily import into the side bar actual result paper button doesn t import you can see in the console that the import failed and resolved to bower components bower components paper button paper button html usergenic | 1 |

726,490 | 25,000,784,634 | IssuesEvent | 2022-11-03 07:40:39 | submariner-io/enhancements | https://api.github.com/repos/submariner-io/enhancements | closed | Epic: Support OCP with submariner on VMWare | enhancement priority:high | **What would you like to be added**:

Proposal is to fully support connecting multiple OCP clusters on VMWare using Submariner.

**Why is this needed**:

Aim of this proposal is to list and track steps needed to fill any gaps and allow full support for such deployments.

**UPDATE**

Investigations have confirmed that there is nothing that needs to be done to prepare cloud for VM Ware, in subctl or OCM. Expectation is that VMWare should work without any changes, except when using NSX which is for now outside scope of this EPIC.

**Work Items**

Based on results of investigations, only effort required is testing.

- [ ] Add VMWare to Submariner Testday

- [ ] Run QE on VMWare

| 1.0 | Epic: Support OCP with submariner on VMWare - **What would you like to be added**:

Proposal is to fully support connecting multiple OCP clusters on VMWare using Submariner.

**Why is this needed**:

Aim of this proposal is to list and track steps needed to fill any gaps and allow full support for such deployments.

**UPDATE**

Investigations have confirmed that there is nothing that needs to be done to prepare cloud for VM Ware, in subctl or OCM. Expectation is that VMWare should work without any changes, except when using NSX which is for now outside scope of this EPIC.

**Work Items**

Based on results of investigations, only effort required is testing.

- [ ] Add VMWare to Submariner Testday

- [ ] Run QE on VMWare

| priority | epic support ocp with submariner on vmware what would you like to be added proposal is to fully support connecting multiple ocp clusters on vmware using submariner why is this needed aim of this proposal is to list and track steps needed to fill any gaps and allow full support for such deployments update investigations have confirmed that there is nothing that needs to be done to prepare cloud for vm ware in subctl or ocm expectation is that vmware should work without any changes except when using nsx which is for now outside scope of this epic work items based on results of investigations only effort required is testing add vmware to submariner testday run qe on vmware | 1 |

310,588 | 9,516,196,635 | IssuesEvent | 2019-04-26 08:14:12 | status-im/status-react | https://api.github.com/repos/status-im/status-react | opened | Delete options "Fetch 48-60h", "Fetch 84-96h" from release | high-priority release | With https://github.com/status-im/status-react/pull/8025 accidentally to `release` branch were included options "Fetch 48-60h", "Fetch 84-96h".

Please remove it and merge this PR to `develop` and to `release` branches | 1.0 | Delete options "Fetch 48-60h", "Fetch 84-96h" from release - With https://github.com/status-im/status-react/pull/8025 accidentally to `release` branch were included options "Fetch 48-60h", "Fetch 84-96h".

Please remove it and merge this PR to `develop` and to `release` branches | priority | delete options fetch fetch from release with accidentally to release branch were included options fetch fetch please remove it and merge this pr to develop and to release branches | 1 |

484,335 | 13,938,172,759 | IssuesEvent | 2020-10-22 14:57:57 | enso-org/ide | https://api.github.com/repos/enso-org/ide | opened | It's not possible to read file by selecting methods in searcher | Priority: High Type: Bug | <!--

Please ensure that you are using the latest version of Enso IDE before reporting

the bug! It may have been fixed since.

-->

### General Summary

<!--

- Please include a high-level description of your bug here.

-->

In specification the proper way of reading file from the data folder in the project directory is:

```

file1 = Enso_Project.data / "sample.json"

content1 = file1.read

```

or if user want to use absolute path:

```

file2 = File.new "/foo/bar/baz/sample.json"

content2 = file2.read

```

Unfortunately it is not possible to read file by using searcher and selecting `read` method in either of those cases

### Steps to Reproduce

<!--

Please list the reproduction steps for your bug.

-->

Open IDE

add node ` File.new "/foo/bar/baz/sample.json"` with proper filepath to existing file

open searcher with first node selected

### Expected Result

<!--

- A description of the results you expected from the reproduction steps.

-->

hint for reading file available

### Actual Result

<!--

- A description of what actually happens when you perform these steps.

- Please include any error output if relevant.

-->

hints for text or empty searcher

### Enso Version

<!--

- Please include the version of Enso IDE you are using here.

-->

core : 2.0.0-alpha.0

build : 51e09ff

electron : 8.1.1

chrome : 80.0.3987.141 | 1.0 | It's not possible to read file by selecting methods in searcher - <!--

Please ensure that you are using the latest version of Enso IDE before reporting

the bug! It may have been fixed since.

-->

### General Summary

<!--

- Please include a high-level description of your bug here.

-->

In specification the proper way of reading file from the data folder in the project directory is:

```

file1 = Enso_Project.data / "sample.json"

content1 = file1.read

```

or if user want to use absolute path:

```

file2 = File.new "/foo/bar/baz/sample.json"

content2 = file2.read

```

Unfortunately it is not possible to read file by using searcher and selecting `read` method in either of those cases

### Steps to Reproduce

<!--

Please list the reproduction steps for your bug.

-->

Open IDE

add node ` File.new "/foo/bar/baz/sample.json"` with proper filepath to existing file

open searcher with first node selected

### Expected Result

<!--

- A description of the results you expected from the reproduction steps.

-->

hint for reading file available

### Actual Result

<!--

- A description of what actually happens when you perform these steps.

- Please include any error output if relevant.

-->

hints for text or empty searcher

### Enso Version

<!--

- Please include the version of Enso IDE you are using here.

-->

core : 2.0.0-alpha.0

build : 51e09ff

electron : 8.1.1

chrome : 80.0.3987.141 | priority | it s not possible to read file by selecting methods in searcher please ensure that you are using the latest version of enso ide before reporting the bug it may have been fixed since general summary please include a high level description of your bug here in specification the proper way of reading file from the data folder in the project directory is enso project data sample json read or if user want to use absolute path file new foo bar baz sample json read unfortunately it is not possible to read file by using searcher and selecting read method in either of those cases steps to reproduce please list the reproduction steps for your bug open ide add node file new foo bar baz sample json with proper filepath to existing file open searcher with first node selected expected result a description of the results you expected from the reproduction steps hint for reading file available actual result a description of what actually happens when you perform these steps please include any error output if relevant hints for text or empty searcher enso version please include the version of enso ide you are using here core alpha build electron chrome | 1 |

236,712 | 7,752,129,914 | IssuesEvent | 2018-05-30 19:16:46 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | USER ISSUE: World creation fails | High Priority | **Version:** 0.7.3.3 beta

**Steps to Reproduce:**

1. Start the game on Linux using Steam

2. Create a new World and tick "Generate New"

3. Click "Start"

Sad that the game still does not work, I bought the game half a year ago, and it still does not work and has many bugs.

**Expected behavior:**

Generate a world I guess?

**Actual behavior:**

Error: Could not start server: Object reference not set to an instance of an object | 1.0 | USER ISSUE: World creation fails - **Version:** 0.7.3.3 beta

**Steps to Reproduce:**

1. Start the game on Linux using Steam

2. Create a new World and tick "Generate New"

3. Click "Start"

Sad that the game still does not work, I bought the game half a year ago, and it still does not work and has many bugs.

**Expected behavior:**

Generate a world I guess?

**Actual behavior:**

Error: Could not start server: Object reference not set to an instance of an object | priority | user issue world creation fails version beta steps to reproduce start the game on linux using steam create a new world and tick generate new click start sad that the game still does not work i bought the game half a year ago and it still does not work and has many bugs expected behavior generate a world i guess actual behavior error could not start server object reference not set to an instance of an object | 1 |

428,291 | 12,406,027,490 | IssuesEvent | 2020-05-21 18:21:58 | ClinGen/clincoded | https://api.github.com/repos/ClinGen/clincoded | closed | Add ClinVar Submitter IDs to VCEP affiliations | VCI affiliation backend feature priority: high | For all VCEP affiliations we need to find out and store their ClinVar submitter IDs in their affiliation files. | 1.0 | Add ClinVar Submitter IDs to VCEP affiliations - For all VCEP affiliations we need to find out and store their ClinVar submitter IDs in their affiliation files. | priority | add clinvar submitter ids to vcep affiliations for all vcep affiliations we need to find out and store their clinvar submitter ids in their affiliation files | 1 |

439,280 | 12,680,613,246 | IssuesEvent | 2020-06-19 14:00:38 | mlr-org/mlr3hyperband | https://api.github.com/repos/mlr-org/mlr3hyperband | closed | Implement private .assign_result function | Priority: High | The optimizer class offers an automatic way to assign the result. It just chooses the evaluation that gave the best result. However, for hyperband you might not want to return a result that is based on a lower fidelity. | 1.0 | Implement private .assign_result function - The optimizer class offers an automatic way to assign the result. It just chooses the evaluation that gave the best result. However, for hyperband you might not want to return a result that is based on a lower fidelity. | priority | implement private assign result function the optimizer class offers an automatic way to assign the result it just chooses the evaluation that gave the best result however for hyperband you might not want to return a result that is based on a lower fidelity | 1 |

269,533 | 8,439,909,552 | IssuesEvent | 2018-10-18 04:34:38 | CS2103-AY1819S1-F11-1/main | https://api.github.com/repos/CS2103-AY1819S1-F11-1/main | closed | Correct mistakes in V1.1 review | priority.High status.Ongoing type.Bug | Do feel free to add on

#82 (solves below):

-----

Other things to correct (if not done yet):

-------

| 1.0 | Correct mistakes in V1.1 review - Do feel free to add on

#82 (solves below):

-----

Other things to correct (if not done yet):

-------

| priority | correct mistakes in review do feel free to add on solves below other things to correct if not done yet | 1 |

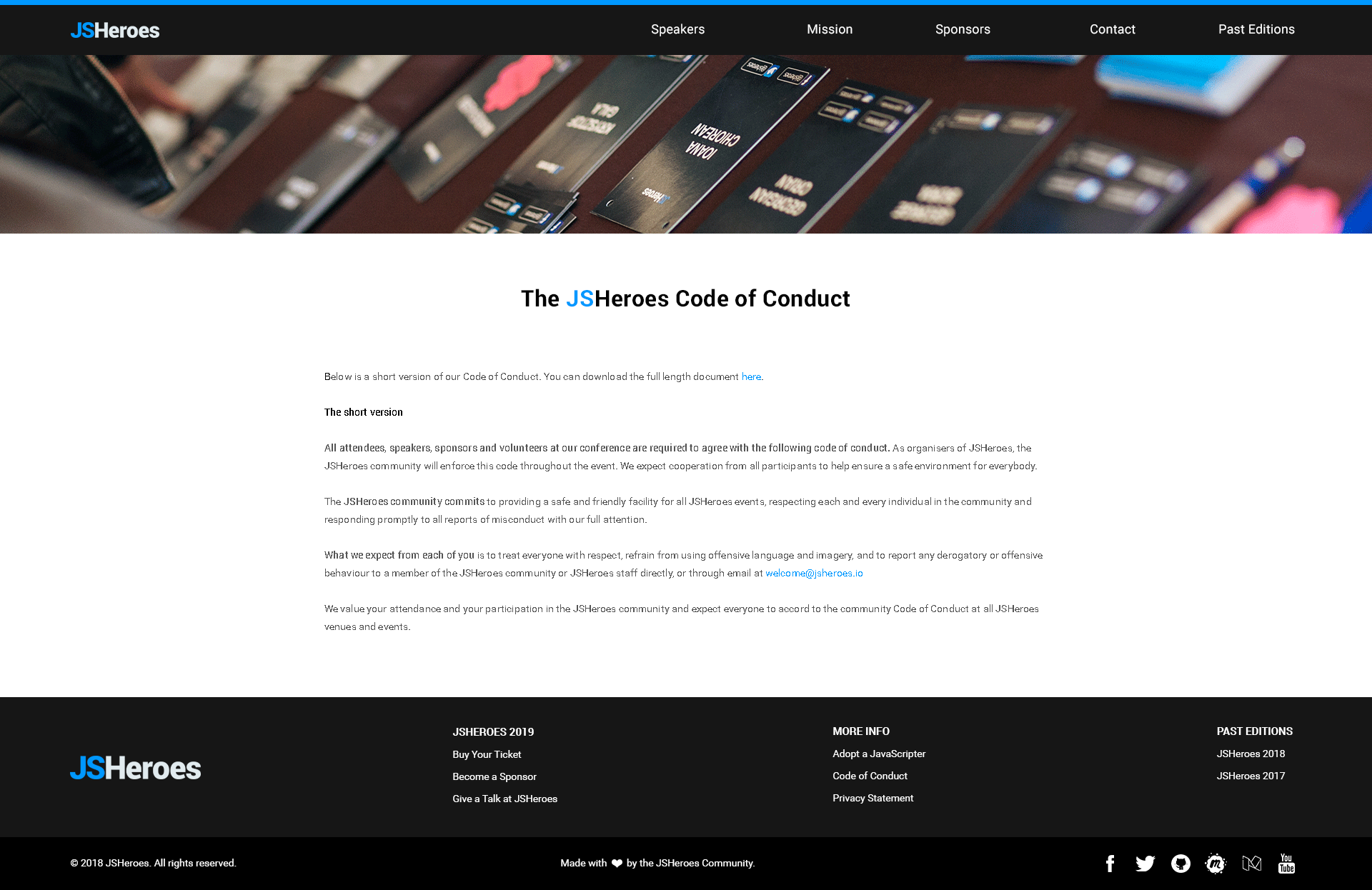

275,504 | 8,576,376,194 | IssuesEvent | 2018-11-12 20:11:16 | jsheroes/jsheroes.io | https://api.github.com/repos/jsheroes/jsheroes.io | closed | Code of Conduct redesign | good first issue high-priority | Redesigned the Code of Conduct page, replaced "Cluj JavaScripters" with "JSHeroes" in the text.

Attached:

- the mockups

- the photo used

- the PDF with the long version & corrected text:

[code-of-conduct-jsheroes-long-version.pdf](https://github.com/jsheroes/jsheroes.io/files/2461837/code-of-conduct-jsheroes-long-version.pdf)

Here is the text on the mockups:

### **The JSHeroes Code of Conduct**

Below is a short version of our Code of Conduct. You can download the full length document [here](https://drive.google.com/file/d/0B9mBUlTNHZJNRnhGWE5LRERud00/edit).

**The short version**

**All attendees, speakers, sponsors and volunteers at our conference are required to agree with the following code of conduct.** As organisers of JSHeroes, the JSHeroes community will enforce this code throughout the event. We expect cooperation from all participants to help ensure a safe environment for everybody.

**The JSHeroes community commits** to providing a safe and friendly facility for all JSHeroes events, respecting each and every individual in the community and responding promptly to all reports of misconduct with our full attention.

**What we expect from each of you** is to treat everyone with respect, refrain from using offensive language and imagery, and to report any derogatory or offensive behaviour to a member of the JSHeroes community or JSHeroes staff directly, or through email at [welcome@jsheroes.io](mailto:welcome@jsheroes.io)

We value your attendance and your participation in the JSHeroes community and expect everyone to accord to the community Code of Conduct at all JSHeroes venues and events.

| 1.0 | Code of Conduct redesign - Redesigned the Code of Conduct page, replaced "Cluj JavaScripters" with "JSHeroes" in the text.

Attached:

- the mockups

- the photo used

- the PDF with the long version & corrected text:

[code-of-conduct-jsheroes-long-version.pdf](https://github.com/jsheroes/jsheroes.io/files/2461837/code-of-conduct-jsheroes-long-version.pdf)

Here is the text on the mockups:

### **The JSHeroes Code of Conduct**

Below is a short version of our Code of Conduct. You can download the full length document [here](https://drive.google.com/file/d/0B9mBUlTNHZJNRnhGWE5LRERud00/edit).

**The short version**

**All attendees, speakers, sponsors and volunteers at our conference are required to agree with the following code of conduct.** As organisers of JSHeroes, the JSHeroes community will enforce this code throughout the event. We expect cooperation from all participants to help ensure a safe environment for everybody.

**The JSHeroes community commits** to providing a safe and friendly facility for all JSHeroes events, respecting each and every individual in the community and responding promptly to all reports of misconduct with our full attention.

**What we expect from each of you** is to treat everyone with respect, refrain from using offensive language and imagery, and to report any derogatory or offensive behaviour to a member of the JSHeroes community or JSHeroes staff directly, or through email at [welcome@jsheroes.io](mailto:welcome@jsheroes.io)

We value your attendance and your participation in the JSHeroes community and expect everyone to accord to the community Code of Conduct at all JSHeroes venues and events.

| priority | code of conduct redesign redesigned the code of conduct page replaced cluj javascripters with jsheroes in the text attached the mockups the photo used the pdf with the long version corrected text here is the text on the mockups the jsheroes code of conduct below is a short version of our code of conduct you can download the full length document the short version all attendees speakers sponsors and volunteers at our conference are required to agree with the following code of conduct as organisers of jsheroes the jsheroes community will enforce this code throughout the event we expect cooperation from all participants to help ensure a safe environment for everybody the jsheroes community commits to providing a safe and friendly facility for all jsheroes events respecting each and every individual in the community and responding promptly to all reports of misconduct with our full attention what we expect from each of you is to treat everyone with respect refrain from using offensive language and imagery and to report any derogatory or offensive behaviour to a member of the jsheroes community or jsheroes staff directly or through email at mailto welcome jsheroes io we value your attendance and your participation in the jsheroes community and expect everyone to accord to the community code of conduct at all jsheroes venues and events | 1 |

101,857 | 4,146,806,117 | IssuesEvent | 2016-06-15 02:26:18 | benbaptist/minecraft-wrapper | https://api.github.com/repos/benbaptist/minecraft-wrapper | closed | Don't understand why api.getStorage objects fluctuate | high priority | I am dealing with one object where I am storing warp data.. and it is constantly fluctuating.. items i deleted a day ago are back in the list.. and at any given moment, the list changes (I am the only one who can add/delete warps).. all the api.getstorages do this... why?

Without any input from me, it changes from this:

'''

{"warps": {"p2": [31883.323637433674, 67.0, 25338.20901793392], "portals": [3270.5307348580477, 103.0, 2669.668092340251, "staff", -90.60082244873047, -4.050037384033203, false], "p1": [31826.95201823558, 71.0, 25412.453195705904], "stronghold1": [741.2230532498089, 33.0, 0.6999999880790738], "OProom": [235.84977598355078, 56.0, -63.3245829276579], "Jeeskarox": [8117.1371682263925, 68.15457878522446, 63.444568633883726], "alex_chestroom": [3017.820089214752, 50.83829140374222, 8739.171687385], "myregion": [1521.9157604505936, 67.22955103477543, 301.3688044397513], "nether_pearltest": [115.53734140378518, 102.0, 83.46924722296535], "spawntest": [3271.685257982652, 103.0, 2673.5810949049383, "staff"], "swamp": [-464.845840532287, 84.31968973049136, -29.682383729993838], "wason4-1": [-13811.410204106456, 81.15052866660584, 9933.376722944515], "mystiques": [-5388.5, 72.0, -10011.5], "jail": [3263.5089288523445, 98.0, 2662.511479645375], "water_temple": [197.30000001192093, 44.0, -5605.519227954707], "hacker_hell": [7483.5, 0.8873451333870699, 7817.5], "zombieSpawner": [1614.5325514656352, 11.419156430429029, 580.6350509094153], "jacklare": [-1407.0923962382235, 70.7393572271209, 4541.348465524757]}}'''

to this:

'''

{"warps": {"OProom": [235.84977598355078, 56.0, -63.3245829276579], "alex_chestroom": [3017.820089214752, 50.83829140374222, 8739.171687385], "hacker_hell": [7483.5, 0.8873451333870699, 7817.5], "jacklare": [-1407.0923962382235, 70.7393572271209, 4541.348465524757]}}'''

to this:

'''

{"warps": {"alex_chestroom": [3017.820089214752, 50.83829140374222, 8739.171687385], "hacker_hell": [7483.5, 0.8873451333870699, 7817.5], "stronghold1": [740.1556378918563, 33.0, 1.5982858292301847, "staff", 186.7499542236328, 34.949989318847656, false], "jacklare": [-1396.0239155914612, 67.0, 4533.811520126322, "staff", 72.29999542236328, 25.149999618530273, false]}}''' | 1.0 | Don't understand why api.getStorage objects fluctuate - I am dealing with one object where I am storing warp data.. and it is constantly fluctuating.. items i deleted a day ago are back in the list.. and at any given moment, the list changes (I am the only one who can add/delete warps).. all the api.getstorages do this... why?

Without any input from me, it changes from this:

'''

{"warps": {"p2": [31883.323637433674, 67.0, 25338.20901793392], "portals": [3270.5307348580477, 103.0, 2669.668092340251, "staff", -90.60082244873047, -4.050037384033203, false], "p1": [31826.95201823558, 71.0, 25412.453195705904], "stronghold1": [741.2230532498089, 33.0, 0.6999999880790738], "OProom": [235.84977598355078, 56.0, -63.3245829276579], "Jeeskarox": [8117.1371682263925, 68.15457878522446, 63.444568633883726], "alex_chestroom": [3017.820089214752, 50.83829140374222, 8739.171687385], "myregion": [1521.9157604505936, 67.22955103477543, 301.3688044397513], "nether_pearltest": [115.53734140378518, 102.0, 83.46924722296535], "spawntest": [3271.685257982652, 103.0, 2673.5810949049383, "staff"], "swamp": [-464.845840532287, 84.31968973049136, -29.682383729993838], "wason4-1": [-13811.410204106456, 81.15052866660584, 9933.376722944515], "mystiques": [-5388.5, 72.0, -10011.5], "jail": [3263.5089288523445, 98.0, 2662.511479645375], "water_temple": [197.30000001192093, 44.0, -5605.519227954707], "hacker_hell": [7483.5, 0.8873451333870699, 7817.5], "zombieSpawner": [1614.5325514656352, 11.419156430429029, 580.6350509094153], "jacklare": [-1407.0923962382235, 70.7393572271209, 4541.348465524757]}}'''

to this:

'''

{"warps": {"OProom": [235.84977598355078, 56.0, -63.3245829276579], "alex_chestroom": [3017.820089214752, 50.83829140374222, 8739.171687385], "hacker_hell": [7483.5, 0.8873451333870699, 7817.5], "jacklare": [-1407.0923962382235, 70.7393572271209, 4541.348465524757]}}'''

to this:

'''

{"warps": {"alex_chestroom": [3017.820089214752, 50.83829140374222, 8739.171687385], "hacker_hell": [7483.5, 0.8873451333870699, 7817.5], "stronghold1": [740.1556378918563, 33.0, 1.5982858292301847, "staff", 186.7499542236328, 34.949989318847656, false], "jacklare": [-1396.0239155914612, 67.0, 4533.811520126322, "staff", 72.29999542236328, 25.149999618530273, false]}}''' | priority | don t understand why api getstorage objects fluctuate i am dealing with one object where i am storing warp data and it is constantly fluctuating items i deleted a day ago are back in the list and at any given moment the list changes i am the only one who can add delete warps all the api getstorages do this why without any input from me it changes from this warps portals oproom jeeskarox alex chestroom myregion nether pearltest spawntest swamp mystiques jail water temple hacker hell zombiespawner jacklare to this warps oproom alex chestroom hacker hell jacklare to this warps alex chestroom hacker hell jacklare | 1 |
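To pin down which warps actually change between two dumps like the ones above, the snapshots can be diffed by key. The following is only a sketch: `diff_warps` is a hypothetical helper, not part of any plugin API, and the two sample strings are trimmed-down versions of the second and third dumps in the report. It does not explain why the storage changes; it only shows whether the object flips between a few fixed versions, which would be worth knowing before looking for a cause.

```python
import json


def diff_warps(before_json, after_json):
    """Compare two storage snapshots and return (added, removed, changed) warp names."""
    before = json.loads(before_json)["warps"]
    after = json.loads(after_json)["warps"]
    added = sorted(set(after) - set(before))
    removed = sorted(set(before) - set(after))
    # A warp counts as changed when it exists in both snapshots with different data.
    changed = sorted(n for n in set(before) & set(after) if before[n] != after[n])
    return added, removed, changed


# Trimmed-down copies of the second and third dumps from the report.
second = (
    '{"warps": {"OProom": [235.84977598355078, 56.0, -63.3245829276579], '
    '"hacker_hell": [7483.5, 0.8873451333870699, 7817.5], '
    '"jacklare": [-1407.0923962382235, 70.7393572271209, 4541.348465524757]}}'
)
third = (
    '{"warps": {"hacker_hell": [7483.5, 0.8873451333870699, 7817.5], '
    '"stronghold1": [740.1556378918563, 33.0, 1.5982858292301847, "staff", '
    '186.7499542236328, 34.949989318847656, false], '
    '"jacklare": [-1396.0239155914612, 67.0, 4533.811520126322, "staff", '
    '72.29999542236328, 25.149999618530273, false]}}'
)

added, removed, changed = diff_warps(second, third)
print("added:", added)      # added: ['stronghold1']
print("removed:", removed)  # removed: ['OProom']
print("changed:", changed)  # changed: ['jacklare']
```

Logging this diff each time the storage is read would show whether entries reappear in the same groups every time.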

285,886 | 8,780,576,899 | IssuesEvent | 2018-12-19 17:43:22 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | opened | [8.0.0 Steam Beta] Missing Icons | High Priority | Missing following icons: AdvancedBakingFocusedWorkflowTalentGroup, AdvancedBakingFrugalWorkspaceTalentGroup, AdvancedBakingLavishWorkspaceTalentGroup, AdvancedBakingParrallelProcessingTalentGroup, AdvancedCampfireCookingFocusedWorkflowTalentGroup, AdvancedCampfireCookingFrugalWorkspaceTalentGroup, AdvancedCampfireCookingLavishWorkspaceTalentGroup, AdvancedCampfireCookingParrallelProcessingTalentGroup, AdvancedCookingFocusedWorkflowTalentGroup, AdvancedCookingFrugalWorkspaceTalentGroup, AdvancedCookingLavishWorkspaceTalentGroup, AdvancedCookingParrallelProcessingTalentGroup, AdvancedSmeltingFocusedWorkflowTalentGroup, AdvancedSmeltingFrugalWorkspaceTalentGroup, AdvancedSmeltingLavishWorkspaceTalentGroup, AdvancedSmeltingParrallelProcessingTalentGroup, BakingFocusedWorkflowTalentGroup, BakingFrugalWorkspaceTalentGroup, BakingLavishWorkspaceTalentGroup, BakingParrallelProcessingTalentGroup, BasicEngineeringFocusedWorkflowTalentGroup, BasicEngineeringFrugalWorkspaceTalentGroup, BasicEngineeringLavishWorkspaceTalentGroup, BasicEngineeringParrallelProcessingTalentGroup, BricklayingFocusedWorkflowTalentGroup, BricklayingFrugalWorkspaceTalentGroup, BricklayingLavishWorkspaceTalentGroup, BricklayingParrallelProcessingTalentGroup, ButcheryFocusedWorkflowTalentGroup, ButcheryFrugalWorkspaceTalentGroup, ButcheryLavishWorkspaceTalentGroup, ButcheryParrallelProcessingTalentGroup, CampfireFocusedWorkflowTalentGroup, CampfireFrugalWorkspaceTalentGroup, CampfireLavishWorkspaceTalentGroup, CampfireParrallelProcessingTalentGroup, CementFocusedWorkflowTalentGroup, CementFrugalWorkspaceTalentGroup, CementLavishWorkspaceTalentGroup, CementParrallelProcessingTalentGroup, CookingFocusedWorkflowTalentGroup, CookingFrugalWorkspaceTalentGroup, CookingLavishWorkspaceTalentGroup, 
CookingParrallelProcessingTalentGroup, CuttingEdgeCookingFocusedWorkflowTalentGroup, CuttingEdgeCookingFrugalWorkspaceTalentGroup, CuttingEdgeCookingLavishWorkspaceTalentGroup, CuttingEdgeCookingParrallelProcessingTalentGroup, ElectronicsFocusedWorkflowTalentGroup, ElectronicsFrugalWorkspaceTalentGroup, ElectronicsLavishWorkspaceTalentGroup, ElectronicsParrallelProcessingTalentGroup, FertilizersFocusedWorkflowTalentGroup, FertilizersFrugalWorkspaceTalentGroup, FertilizersLavishWorkspaceTalentGroup, FertilizersParrallelProcessingTalentGroup, GatheringExperiencedFarmhandTalentGroup, GatheringNaturalGathererTalentGroup, GatheringToolEfficiencyTalentGroup, GatheringToolStrengthTalentGroup, GlassworkingFocusedWorkflowTalentGroup, GlassworkingFrugalWorkspaceTalentGroup, GlassworkingLavishWorkspaceTalentGroup, GlassworkingParrallelProcessingTalentGroup, HewingFocusedWorkflowTalentGroup, HewingFrugalWorkspaceTalentGroup, HewingLavishWorkspaceTalentGroup, HewingParrallelProcessingTalentGroup, HuntingAggressiveAnglerTalentGroup, HuntingArrowRecoveryTalentGroup, HuntingDeadeyeTalentGroup, HuntingFishermanTalentGroup, IndustryFocusedWorkflowTalentGroup, IndustryFrugalWorkspaceTalentGroup, IndustryLavishWorkspaceTalentGroup, IndustryParrallelProcessingTalentGroup, LoggingCleanupCrewTalentGroup, LoggingLoggersLuckTalentGroup, LoggingToolEfficiencyTalentGroup, LoggingToolStrengthTalentGroup, LumberFocusedWorkflowTalentGroup, LumberFrugalWorkspaceTalentGroup, LumberLavishWorkspaceTalentGroup, LumberParrallelProcessingTalentGroup, MechanicsFocusedWorkflowTalentGroup, MechanicsFrugalWorkspaceTalentGroup, MechanicsLavishWorkspaceTalentGroup, MechanicsParrallelProcessingTalentGroup, MetalConstructionFocusedWorkflowTalentGroup, MetalConstructionFrugalWorkspaceTalentGroup, MetalConstructionLavishWorkspaceTalentGroup, MetalConstructionParrallelProcessingTalentGroup, MillingFocusedWorkflowTalentGroup, MillingFrugalWorkspaceTalentGroup, MillingLavishWorkspaceTalentGroup, 
MillingParrallelProcessingTalentGroup, MiningLuckyBreakTalentGroup, MiningSweepingHandsTalentGroup, MiningToolEfficiencyTalentGroup, MiningToolStrengthTalentGroup, MortaringFocusedWorkflowTalentGroup, MortaringFrugalWorkspaceTalentGroup, MortaringLavishWorkspaceTalentGroup, MortaringParrallelProcessingTalentGroup, OilDrillingFocusedWorkflowTalentGroup, OilDrillingFrugalWorkspaceTalentGroup, OilDrillingLavishWorkspaceTalentGroup, OilDrillingParrallelProcessingTalentGroup, PaperMillingFocusedWorkflowTalentGroup, PaperMillingFrugalWorkspaceTalentGroup, PaperMillingLavishWorkspaceTalentGroup, PaperMillingParrallelProcessingTalentGroup, SelfImprovementDiverTalentGroup, SelfImprovementNatureAdventurerTalentGroup, SelfImprovementSprinterTalentGroup, SelfImprovementUrbanTravellerTalentGroup, SmeltingFocusedWorkflowTalentGroup, SmeltingFrugalWorkspaceTalentGroup, SmeltingLavishWorkspaceTalentGroup, SmeltingParrallelProcessingTalentGroup, TailoringFocusedWorkflowTalentGroup, TailoringFrugalWorkspaceTalentGroup, TailoringLavishWorkspaceTalentGroup, TailoringParrallelProcessingTalentGroup | 1.0 | [8.0.0 Steam Beta] Missing Icons - Missing following icons: AdvancedBakingFocusedWorkflowTalentGroup, AdvancedBakingFrugalWorkspaceTalentGroup, AdvancedBakingLavishWorkspaceTalentGroup, AdvancedBakingParrallelProcessingTalentGroup, AdvancedCampfireCookingFocusedWorkflowTalentGroup, AdvancedCampfireCookingFrugalWorkspaceTalentGroup, AdvancedCampfireCookingLavishWorkspaceTalentGroup, AdvancedCampfireCookingParrallelProcessingTalentGroup, AdvancedCookingFocusedWorkflowTalentGroup, AdvancedCookingFrugalWorkspaceTalentGroup, AdvancedCookingLavishWorkspaceTalentGroup, AdvancedCookingParrallelProcessingTalentGroup, AdvancedSmeltingFocusedWorkflowTalentGroup, AdvancedSmeltingFrugalWorkspaceTalentGroup, AdvancedSmeltingLavishWorkspaceTalentGroup, AdvancedSmeltingParrallelProcessingTalentGroup, BakingFocusedWorkflowTalentGroup, BakingFrugalWorkspaceTalentGroup, BakingLavishWorkspaceTalentGroup, 
BakingParrallelProcessingTalentGroup, BasicEngineeringFocusedWorkflowTalentGroup, BasicEngineeringFrugalWorkspaceTalentGroup, BasicEngineeringLavishWorkspaceTalentGroup, BasicEngineeringParrallelProcessingTalentGroup, BricklayingFocusedWorkflowTalentGroup, BricklayingFrugalWorkspaceTalentGroup, BricklayingLavishWorkspaceTalentGroup, BricklayingParrallelProcessingTalentGroup, ButcheryFocusedWorkflowTalentGroup, ButcheryFrugalWorkspaceTalentGroup, ButcheryLavishWorkspaceTalentGroup, ButcheryParrallelProcessingTalentGroup, CampfireFocusedWorkflowTalentGroup, CampfireFrugalWorkspaceTalentGroup, CampfireLavishWorkspaceTalentGroup, CampfireParrallelProcessingTalentGroup, CementFocusedWorkflowTalentGroup, CementFrugalWorkspaceTalentGroup, CementLavishWorkspaceTalentGroup, CementParrallelProcessingTalentGroup, CookingFocusedWorkflowTalentGroup, CookingFrugalWorkspaceTalentGroup, CookingLavishWorkspaceTalentGroup, CookingParrallelProcessingTalentGroup, CuttingEdgeCookingFocusedWorkflowTalentGroup, CuttingEdgeCookingFrugalWorkspaceTalentGroup, CuttingEdgeCookingLavishWorkspaceTalentGroup, CuttingEdgeCookingParrallelProcessingTalentGroup, ElectronicsFocusedWorkflowTalentGroup, ElectronicsFrugalWorkspaceTalentGroup, ElectronicsLavishWorkspaceTalentGroup, ElectronicsParrallelProcessingTalentGroup, FertilizersFocusedWorkflowTalentGroup, FertilizersFrugalWorkspaceTalentGroup, FertilizersLavishWorkspaceTalentGroup, FertilizersParrallelProcessingTalentGroup, GatheringExperiencedFarmhandTalentGroup, GatheringNaturalGathererTalentGroup, GatheringToolEfficiencyTalentGroup, GatheringToolStrengthTalentGroup, GlassworkingFocusedWorkflowTalentGroup, GlassworkingFrugalWorkspaceTalentGroup, GlassworkingLavishWorkspaceTalentGroup, GlassworkingParrallelProcessingTalentGroup, HewingFocusedWorkflowTalentGroup, HewingFrugalWorkspaceTalentGroup, HewingLavishWorkspaceTalentGroup, HewingParrallelProcessingTalentGroup, HuntingAggressiveAnglerTalentGroup, HuntingArrowRecoveryTalentGroup, 
HuntingDeadeyeTalentGroup, HuntingFishermanTalentGroup, IndustryFocusedWorkflowTalentGroup, IndustryFrugalWorkspaceTalentGroup, IndustryLavishWorkspaceTalentGroup, IndustryParrallelProcessingTalentGroup, LoggingCleanupCrewTalentGroup, LoggingLoggersLuckTalentGroup, LoggingToolEfficiencyTalentGroup, LoggingToolStrengthTalentGroup, LumberFocusedWorkflowTalentGroup, LumberFrugalWorkspaceTalentGroup, LumberLavishWorkspaceTalentGroup, LumberParrallelProcessingTalentGroup, MechanicsFocusedWorkflowTalentGroup, MechanicsFrugalWorkspaceTalentGroup, MechanicsLavishWorkspaceTalentGroup, MechanicsParrallelProcessingTalentGroup, MetalConstructionFocusedWorkflowTalentGroup, MetalConstructionFrugalWorkspaceTalentGroup, MetalConstructionLavishWorkspaceTalentGroup, MetalConstructionParrallelProcessingTalentGroup, MillingFocusedWorkflowTalentGroup, MillingFrugalWorkspaceTalentGroup, MillingLavishWorkspaceTalentGroup, MillingParrallelProcessingTalentGroup, MiningLuckyBreakTalentGroup, MiningSweepingHandsTalentGroup, MiningToolEfficiencyTalentGroup, MiningToolStrengthTalentGroup, MortaringFocusedWorkflowTalentGroup, MortaringFrugalWorkspaceTalentGroup, MortaringLavishWorkspaceTalentGroup, MortaringParrallelProcessingTalentGroup, OilDrillingFocusedWorkflowTalentGroup, OilDrillingFrugalWorkspaceTalentGroup, OilDrillingLavishWorkspaceTalentGroup, OilDrillingParrallelProcessingTalentGroup, PaperMillingFocusedWorkflowTalentGroup, PaperMillingFrugalWorkspaceTalentGroup, PaperMillingLavishWorkspaceTalentGroup, PaperMillingParrallelProcessingTalentGroup, SelfImprovementDiverTalentGroup, SelfImprovementNatureAdventurerTalentGroup, SelfImprovementSprinterTalentGroup, SelfImprovementUrbanTravellerTalentGroup, SmeltingFocusedWorkflowTalentGroup, SmeltingFrugalWorkspaceTalentGroup, SmeltingLavishWorkspaceTalentGroup, SmeltingParrallelProcessingTalentGroup, TailoringFocusedWorkflowTalentGroup, TailoringFrugalWorkspaceTalentGroup, TailoringLavishWorkspaceTalentGroup, 
TailoringParrallelProcessingTalentGroup | priority | missing icons missing following icons advancedbakingfocusedworkflowtalentgroup advancedbakingfrugalworkspacetalentgroup advancedbakinglavishworkspacetalentgroup advancedbakingparrallelprocessingtalentgroup advancedcampfirecookingfocusedworkflowtalentgroup advancedcampfirecookingfrugalworkspacetalentgroup advancedcampfirecookinglavishworkspacetalentgroup advancedcampfirecookingparrallelprocessingtalentgroup advancedcookingfocusedworkflowtalentgroup advancedcookingfrugalworkspacetalentgroup advancedcookinglavishworkspacetalentgroup advancedcookingparrallelprocessingtalentgroup advancedsmeltingfocusedworkflowtalentgroup advancedsmeltingfrugalworkspacetalentgroup advancedsmeltinglavishworkspacetalentgroup advancedsmeltingparrallelprocessingtalentgroup bakingfocusedworkflowtalentgroup bakingfrugalworkspacetalentgroup bakinglavishworkspacetalentgroup bakingparrallelprocessingtalentgroup basicengineeringfocusedworkflowtalentgroup basicengineeringfrugalworkspacetalentgroup basicengineeringlavishworkspacetalentgroup basicengineeringparrallelprocessingtalentgroup bricklayingfocusedworkflowtalentgroup bricklayingfrugalworkspacetalentgroup bricklayinglavishworkspacetalentgroup bricklayingparrallelprocessingtalentgroup butcheryfocusedworkflowtalentgroup butcheryfrugalworkspacetalentgroup butcherylavishworkspacetalentgroup butcheryparrallelprocessingtalentgroup campfirefocusedworkflowtalentgroup campfirefrugalworkspacetalentgroup campfirelavishworkspacetalentgroup campfireparrallelprocessingtalentgroup cementfocusedworkflowtalentgroup cementfrugalworkspacetalentgroup cementlavishworkspacetalentgroup cementparrallelprocessingtalentgroup cookingfocusedworkflowtalentgroup cookingfrugalworkspacetalentgroup cookinglavishworkspacetalentgroup cookingparrallelprocessingtalentgroup cuttingedgecookingfocusedworkflowtalentgroup cuttingedgecookingfrugalworkspacetalentgroup cuttingedgecookinglavishworkspacetalentgroup 
cuttingedgecookingparrallelprocessingtalentgroup electronicsfocusedworkflowtalentgroup electronicsfrugalworkspacetalentgroup electronicslavishworkspacetalentgroup electronicsparrallelprocessingtalentgroup fertilizersfocusedworkflowtalentgroup fertilizersfrugalworkspacetalentgroup fertilizerslavishworkspacetalentgroup fertilizersparrallelprocessingtalentgroup gatheringexperiencedfarmhandtalentgroup gatheringnaturalgatherertalentgroup gatheringtoolefficiencytalentgroup gatheringtoolstrengthtalentgroup glassworkingfocusedworkflowtalentgroup glassworkingfrugalworkspacetalentgroup glassworkinglavishworkspacetalentgroup glassworkingparrallelprocessingtalentgroup hewingfocusedworkflowtalentgroup hewingfrugalworkspacetalentgroup hewinglavishworkspacetalentgroup hewingparrallelprocessingtalentgroup huntingaggressiveanglertalentgroup huntingarrowrecoverytalentgroup huntingdeadeyetalentgroup huntingfishermantalentgroup industryfocusedworkflowtalentgroup industryfrugalworkspacetalentgroup industrylavishworkspacetalentgroup industryparrallelprocessingtalentgroup loggingcleanupcrewtalentgroup loggingloggerslucktalentgroup loggingtoolefficiencytalentgroup loggingtoolstrengthtalentgroup lumberfocusedworkflowtalentgroup lumberfrugalworkspacetalentgroup lumberlavishworkspacetalentgroup lumberparrallelprocessingtalentgroup mechanicsfocusedworkflowtalentgroup mechanicsfrugalworkspacetalentgroup mechanicslavishworkspacetalentgroup mechanicsparrallelprocessingtalentgroup metalconstructionfocusedworkflowtalentgroup metalconstructionfrugalworkspacetalentgroup metalconstructionlavishworkspacetalentgroup metalconstructionparrallelprocessingtalentgroup millingfocusedworkflowtalentgroup millingfrugalworkspacetalentgroup millinglavishworkspacetalentgroup millingparrallelprocessingtalentgroup miningluckybreaktalentgroup miningsweepinghandstalentgroup miningtoolefficiencytalentgroup miningtoolstrengthtalentgroup mortaringfocusedworkflowtalentgroup mortaringfrugalworkspacetalentgroup 
mortaringlavishworkspacetalentgroup mortaringparrallelprocessingtalentgroup oildrillingfocusedworkflowtalentgroup oildrillingfrugalworkspacetalentgroup oildrillinglavishworkspacetalentgroup oildrillingparrallelprocessingtalentgroup papermillingfocusedworkflowtalentgroup papermillingfrugalworkspacetalentgroup papermillinglavishworkspacetalentgroup papermillingparrallelprocessingtalentgroup selfimprovementdivertalentgroup selfimprovementnatureadventurertalentgroup selfimprovementsprintertalentgroup selfimprovementurbantravellertalentgroup smeltingfocusedworkflowtalentgroup smeltingfrugalworkspacetalentgroup smeltinglavishworkspacetalentgroup smeltingparrallelprocessingtalentgroup tailoringfocusedworkflowtalentgroup tailoringfrugalworkspacetalentgroup tailoringlavishworkspacetalentgroup tailoringparrallelprocessingtalentgroup | 1 |

486,985 | 14,017,446,544 | IssuesEvent | 2020-10-29 15:39:54 | GQCG/GQCP | https://api.github.com/repos/GQCG/GQCP | opened | Enable the evaluation of `RSQOperators` in a full spin-resolved ONV basis | C++ complexity: intermediate priority: high refactor | In recent refactors (#688), we had to temporarily disable some of the CI functionality. This issue tracks the re-enabling of the API to evaluate restricted operators in a full spin-resolved ONV basis. | 1.0 | Enable the evaluation of `RSQOperators` in a full spin-resolved ONV basis - In recent refactors (#688), we had to temporarily disable some of the CI functionality. This issue tracks the re-enabling of the API to evaluate restricted operators in a full spin-resolved ONV basis. | priority | enable the evaluation of rsqoperators in a full spin resolved onv basis in recent refactors we had to temporarily disable some of the ci functionality this issue tracks the re enabling of the api to evaluate restricted operators in a full spin resolved onv basis | 1 |

483,339 | 13,923,065,141 | IssuesEvent | 2020-10-21 14:00:37 | tomav/docker-mailserver | https://api.github.com/repos/tomav/docker-mailserver | closed | dovecot horked on {7.1.0,latest} versions. | bug postfix / dovecot related priority 1 [HIGH] script related | Horked is a technical term for broken :)

Seriously though -- Dovecot configuration works correctly up to and including version `7.0.1`. Upgrading to the `latest` or `release-v7.1.0` image results in dovecot not starting on docker-mailserver, and consequently rejecting IMAP connections.

Using the same configuration on images up to 7.0.1 works appropriately.

**Expectations**: A working configuration from 7.0.1 should work in 7.0.1+, unless explicit changes are called out in the [Announcements section](https://github.com/tomav/docker-mailserver#announcements).

## Context

Pretty standard postfix/dovecot setup using imap/ssl w/ letsencrypt, dmarc, dkim, etc.

docker-compose.yml (redacted and paths simplified):

```yaml
version: "3"

networks:
  mail:
    driver: bridge
    ipam:
      config:
        - subnet: {MAIL NET}/24
  db_db:
    external: true

services:
  mail:
    image: tvial/docker-mailserver:release-v7.0.1
    restart: "always"
    stop_grace_period: "1m"
    networks:
      mail:
        ipv4_address: {MAIL IP}
    ports:
      - "25:25/tcp"
      - "587:587/tcp"
      - "993:993/tcp"
    hostname: "mail"
    domainname: "{REDACTED}"
    container_name: "mail"
    environment:
      - "DEFAULT_RELAY_HOST=''"
      - "DMS_DEBUG=1"
      - "DOVECOT_MAILBOX_FORMAT=maildir"
      - "ENABLE_CLAMAV=0"
      - "ENABLE_ELK_FORWARDER=0"
      - "ENABLE_FAIL2BAN=0"
      - "ENABLE_FETCHMAIL=0"
      - "ENABLE_LDAP=''"
      - "ENABLE_MANAGESIEVE=1"
      - "ENABLE_POP3=''"
      - "ENABLE_POSTFIX_VIRTUAL_TRANSPORT=''"
      - "ENABLE_POSTGREY=1"
      - "ENABLE_QUOTAS=0"
      - "ENABLE_SASLAUTHD=0"
      - "ENABLE_SPAMASSASSIN=1"
      - "ENABLE_SRS=1"
      - "LOGROTATE_INTERVAL=weekly"
      - "LOGWATCH_INTERVAL=weekly"
      - "ONE_DIR=1"
      - "PERMIT_DOCKER=host"
      - "PFLOGSUMM_TRIGGER=logrotate"
      - "POSTFIX_DAGENT=''"
      - "POSTFIX_INET_PROTOCOLS=ipv4"
      - "POSTFIX_MAILBOX_SIZE_LIMIT=0"
      - "POSTFIX_MESSAGE_SIZE_LIMIT=10480000"
      - "POSTGREY_AUTO_WHITELIST_CLIENTS=0"
      - "POSTGREY_DELAY=300"
      - "POSTGREY_MAX_AGE=35"
      - "POSTGREY_TEXT=Delayed by postgrey"
      - "POSTMASTER_ADDRESS=postmaster@{REDACTED}"
      - "POSTSCREEN_ACTION=enforce"
      - "RELAY_HOST=''"
      - "SA_KILL=6.31"
      - "SA_SPAM_SUBJECT=***SPAM***"
      - "SA_TAG2=6.31"
      - "SA_TAG=3.0"
      - "SASL_PASSWD=''"
      - "SASLAUTHD_MECH_OPTIONS=''"
      - "SASLAUTHD_MECHANISMS=''"
      - "SMTP_ONLY=''"
      - "SPOOF_PROTECTION=1"
      - "SRS_EXCLUDE_DOMAINS=''"
      - "SRS_SENDER_CLASSES=envelope_sender,header_sender"
      - "SSL_TYPE=letsencrypt"
      - "TLS_LEVEL=modern"
      - "TZ=America/Los_Angeles"
      - "VIRUSMAILS_DELETE_DELAY=7"
    volumes:
      - "/d/mail:/var/mail"
      - "/d/config:/tmp/docker-mailserver"
      - "/d/90-sieve.conf:/etc/dovecot/conf.d/90-sieve.conf"
      - "/d/letsencrypt:/etc/letsencrypt:ro"
      - "/var/log/docker/mail:/var/log/mail"
      - "/etc/localtime:/etc/localtime:ro"
```

With `release-v7.0.1` (and all earlier versions), IMAP/SSL works fine and I can log in without issue.

```bash

$ openssl s_client -starttls imap -connect mail.{REDACTED}:993

CONNECTED(00000005)

```

Dovecot is running on the server:

```bash

# ps -ef

UID PID PPID C STIME TTY TIME CMD

root 1 0 1 23:05 ? 00:00:00 /usr/bin/python2 /usr/bin/supervisord -c /etc/supervisor/supervisord.conf

root 8 1 0 23:05 ? 00:00:00 /bin/bash /usr/local/bin/start-mailserver.sh

root 457 0 0 23:05 pts/0 00:00:00 /bin/sh

root 525 1 0 23:05 ? 00:00:00 /usr/sbin/cron -f

root 527 1 0 23:05 ? 00:00:00 /usr/sbin/rsyslogd -n

root 533 1 0 23:05 ? 00:00:00 /usr/sbin/dovecot -F -c /etc/dovecot/dovecot.conf

dovecot 536 533 0 23:05 ? 00:00:00 dovecot/anvil

root 537 533 0 23:05 ? 00:00:00 dovecot/log

root 538 533 0 23:05 ? 00:00:00 dovecot/config

opendkim 540 1 0 23:05 ? 00:00:00 /usr/sbin/opendkim -f

opendkim 542 540 0 23:05 ? 00:00:00 /usr/sbin/opendkim -f

opendma+ 548 1 0 23:05 ? 00:00:00 /usr/sbin/opendmarc -f -p inet:8893@localhost -P /var/run/opendmarc/opendmarc.pid

postgrey 556 1 1 23:05 ? 00:00:00 postgrey --inet=127.0.0.1:10023 --syslog-facility=mail --delay=300 --max-age=35 --auto-whitelist-clients=0 --g

root 558 1 0 23:05 ? 00:00:00 bash /usr/local/bin/postfix-wrapper.sh

amavis 567 1 16 23:05 ? 00:00:01 /usr/sbin/amavisd-new (master)

root 569 8 0 23:05 ? 00:00:00 tail -fn 0 /var/log/mail/mail.log

postsrsd 661 1 0 23:05 ? 00:00:00 /usr/sbin/postsrsd -f 10001 -r 10002 -d {REDACTED} -s /etc/postsrsd.secret -a = -n 4 -N 4 -u postsrsd -p /var/r

root 1206 1 0 23:05 ? 00:00:00 /usr/lib/postfix/sbin/master

postfix 1208 1206 0 23:05 ? 00:00:00 pickup -l -t fifo -u -c -o content_filter= -o receive_override_options=no_header_body_checks

postfix 1209 1206 0 23:05 ? 00:00:00 qmgr -l -t unix -u

amavis 1210 567 0 23:05 ? 00:00:00 /usr/sbin/amavisd-new (virgin child)

amavis 1211 567 0 23:05 ? 00:00:00 /usr/sbin/amavisd-new (virgin child)

root 1214 558 0 23:05 ? 00:00:00 sleep 5

root 1215 457 0 23:05 pts/0 00:00:00 ps -ef

```

### *What* is affected by this bug?

Upgrading beyond `7.0.1` without any changes causes dovecot **not** to run, and therefore, IMAP/SSL to fail.

docker-compose.yml (same as above, just version bump):

```yaml
...
services:
  mail:
    image: tvial/docker-mailserver:release-v7.1.0
    ...
```

IMAP/SSL fails.

```bash

$ openssl s_client -starttls imap -connect mail.{REDACTED}:993

140671805964736:error:0200206F:system library:connect:Connection refused:../crypto/bio/b_sock2.c:110:

140671805964736:error:2008A067:BIO routines:BIO_connect:connect error:../crypto/bio/b_sock2.c:111:

connect:errno=111

```

Dovecot is **not** running on the server:

```bash

# ps -ef

UID PID PPID C STIME TTY TIME CMD

root 1 0 2 23:03 ? 00:00:00 /usr/bin/python2 /usr/bin/supervisord -c /etc/supervisor/supervisord.conf

root 8 1 0 23:03 ? 00:00:00 /bin/bash /usr/local/bin/start-mailserver.sh

root 552 1 0 23:03 ? 00:00:00 /usr/sbin/cron -f

root 554 1 0 23:03 ? 00:00:00 /usr/sbin/rsyslogd -n

opendkim 558 1 0 23:03 ? 00:00:00 /usr/sbin/opendkim -f

opendkim 560 558 0 23:03 ? 00:00:00 /usr/sbin/opendkim -f

opendma+ 566 1 0 23:03 ? 00:00:00 /usr/sbin/opendmarc -f -p inet:8893@localhost -P /var/run/opendmarc/opendmarc.pid

postgrey 574 1 3 23:03 ? 00:00:00 postgrey --inet=127.0.0.1:10023 --syslog-facility=mail --delay=300 --max-age=35 --auto-whitelist-clients=0 --g

root 576 1 0 23:03 ? 00:00:00 bash /usr/local/bin/postfix-wrapper.sh

amavis 585 1 44 23:03 ? 00:00:01 /usr/sbin/amavisd-new (master)

root 587 8 0 23:03 ? 00:00:00 tail -fn 0 /var/log/mail/mail.log

root 596 0 1 23:03 pts/0 00:00:00 /bin/sh

postsrsd 626 1 0 23:03 ? 00:00:00 /usr/sbin/postsrsd -f 10001 -r 10002 -d {REDACTED} -s /etc/postsrsd.secret -a = -n 4 -N 4 -u postsrsd -p /var/r

amavis 1141 585 0 23:04 ? 00:00:00 /usr/sbin/amavisd-new (virgin child)

amavis 1142 585 0 23:04 ? 00:00:00 /usr/sbin/amavisd-new (virgin child)

root 1225 1 0 23:04 ? 00:00:00 /usr/lib/postfix/sbin/master

root 1226 576 0 23:04 ? 00:00:00 sleep 5

postfix 1227 1225 0 23:04 ? 00:00:00 pickup -l -t fifo -u -c -o content_filter= -o receive_override_options=no_header_body_checks

postfix 1228 1225 0 23:04 ? 00:00:00 qmgr -l -t unix -u

root 1229 596 0 23:04 pts/0 00:00:00 ps -ef

```

roundcube (web UI using IMAP/SSL) is not happy either:

/var/log/syslog:

```bash

Oct 12 20:18:47 {SERVER} roundcube[7483]: errors: <eb34b0a6> IMAP Error: Login failed for {USER} against {REDACTED} from {GATEWAY IP}(X-Real-IP:

{CLIENT IP},X-Forwarded-For: {CLIENT IP}). Could not connect to ssl://{REDACTED}:993: Unknown reason in /var/www/html/program/lib/Roundcube/rcube_imap.php on line 200 (POST /?_task=login&_action=login)

```

### *When* does this occur?

Any version beyond `7.0.1`

## *How* do we replicate the issue?

See above for replication. I can provide additional files for postfix conf and dovecot sieve, but that doesn't seem to be the issue.

## Actual Behavior

IMAP/SSL logins fail. Dovecot not running on the server.
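A note on the connectivity check used above: as far as I can tell, `openssl s_client -starttls imap` is meant for the plaintext IMAP port (usually 143), whereas port 993 normally speaks TLS from the first byte, so a `CONNECTED` line on 993 only confirms that the TCP connection was accepted. The sketch below is one way to separate "connection refused" (nothing listening, as in the failing case) from "TLS up and IMAP greeting received". `probe_imaps` is a hypothetical helper written for illustration and is not part of docker-mailserver.

```python
import socket
import ssl


def probe_imaps(host, port=993, timeout=5.0):
    """Classify an implicit-TLS IMAP endpoint as 'ok', 'refused', or 'error: ...'."""
    try:
        raw = socket.create_connection((host, port), timeout=timeout)
    except ConnectionRefusedError:
        # Nothing is listening on the port, e.g. dovecot never started.
        return "refused"
    except OSError as exc:
        # DNS failures, timeouts, unreachable hosts, and similar.
        return f"error: {exc}"
    try:
        context = ssl.create_default_context()
        # The TLS handshake happens first; only then does the server send its greeting.
        with context.wrap_socket(raw, server_hostname=host) as tls:
            greeting = tls.recv(1024).decode("ascii", errors="replace")
    except (ssl.SSLError, OSError) as exc:
        raw.close()
        return f"error: {exc}"
    if greeting.startswith("* OK"):
        return "ok"
    return f"error: unexpected greeting {greeting!r}"
```

Calling `probe_imaps("mail.example.com")` (placeholder hostname) against the 7.1.0 container should return `"refused"` if dovecot is not listening, which matches the `errno=111` shown above.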

## Expected behavior (i.e. solution)

**Expectations**: A working configuration from 7.0.1 should work in 7.0.1+, unless explicit changes are called out in the [Announcements section](https://github.com/tomav/docker-mailserver#announcements).

## Your Environment

* Amount of RAM available: 128GB

* Mailserver version used:

* working: release-v7.0.0, release-v7.0.1

* non-working: release-v7.1.0, latest

* Docker version used: Docker version 19.03.13, build 4484c46d9d

* Environment settings relevant to the config: See config.

* Any relevant stack traces ("Full trace" preferred):


| 1.0 | dovecot horked on {7.1.0,latest} versions. - Horked is a technical term for broken :)

Seriously though -- Dovecot configuration works correctly up to and including version `7.0.1`. Upgrading to the `latest` or `release-v7.1.0` image results in dovecot not starting on docker-mailserver, and consequently rejecting IMAP connections.

Using the same configuration on images up to 7.0.1 works appropriately.

**Expectations**: A working configuration from 7.0.1 should work in 7.0.1+, unless explicit changes are called out in the [Announcements section](https://github.com/tomav/docker-mailserver#announcements).

## Context

Pretty standard postfix/dovecot setup using imap/ssl w/ letsencrypt, dmarc, dkim, etc.

docker-compose.yml (redacted and paths simplified):

```yaml
version: "3"

networks:
  mail:
    driver: bridge
    ipam:
      config:
        - subnet: {MAIL NET}/24
  db_db:
    external: true

services:
  mail:
    image: tvial/docker-mailserver:release-v7.0.1
    restart: "always"
    stop_grace_period: "1m"
    networks:
      mail:
        ipv4_address: {MAIL IP}
    ports:
      - "25:25/tcp"
      - "587:587/tcp"
      - "993:993/tcp"
    hostname: "mail"
    domainname: "{REDACTED}"
    container_name: "mail"
    environment:
      - "DEFAULT_RELAY_HOST=''"
      - "DMS_DEBUG=1"
      - "DOVECOT_MAILBOX_FORMAT=maildir"
      - "ENABLE_CLAMAV=0"
      - "ENABLE_ELK_FORWARDER=0"
      - "ENABLE_FAIL2BAN=0"
      - "ENABLE_FETCHMAIL=0"
      - "ENABLE_LDAP=''"
      - "ENABLE_MANAGESIEVE=1"
      - "ENABLE_POP3=''"
      - "ENABLE_POSTFIX_VIRTUAL_TRANSPORT=''"
      - "ENABLE_POSTGREY=1"
      - "ENABLE_QUOTAS=0"
      - "ENABLE_SASLAUTHD=0"
      - "ENABLE_SPAMASSASSIN=1"
      - "ENABLE_SRS=1"
      - "LOGROTATE_INTERVAL=weekly"
      - "LOGWATCH_INTERVAL=weekly"
      - "ONE_DIR=1"
      - "PERMIT_DOCKER=host"
      - "PFLOGSUMM_TRIGGER=logrotate"
      - "POSTFIX_DAGENT=''"
      - "POSTFIX_INET_PROTOCOLS=ipv4"
      - "POSTFIX_MAILBOX_SIZE_LIMIT=0"
      - "POSTFIX_MESSAGE_SIZE_LIMIT=10480000"
      - "POSTGREY_AUTO_WHITELIST_CLIENTS=0"
      - "POSTGREY_DELAY=300"
      - "POSTGREY_MAX_AGE=35"
      - "POSTGREY_TEXT=Delayed by postgrey"
      - "POSTMASTER_ADDRESS=postmaster@{REDACTED}"
      - "POSTSCREEN_ACTION=enforce"
      - "RELAY_HOST=''"
      - "SA_KILL=6.31"
      - "SA_SPAM_SUBJECT=***SPAM***"
      - "SA_TAG2=6.31"
      - "SA_TAG=3.0"
      - "SASL_PASSWD=''"
      - "SASLAUTHD_MECH_OPTIONS=''"
      - "SASLAUTHD_MECHANISMS=''"
      - "SMTP_ONLY=''"
      - "SPOOF_PROTECTION=1"
      - "SRS_EXCLUDE_DOMAINS=''"
      - "SRS_SENDER_CLASSES=envelope_sender,header_sender"
      - "SSL_TYPE=letsencrypt"
      - "TLS_LEVEL=modern"
      - "TZ=America/Los_Angeles"
      - "VIRUSMAILS_DELETE_DELAY=7"
    volumes:
      - "/d/mail:/var/mail"
      - "/d/config:/tmp/docker-mailserver"
      - "/d/90-sieve.conf:/etc/dovecot/conf.d/90-sieve.conf"
      - "/d/letsencrypt:/etc/letsencrypt:ro"
      - "/var/log/docker/mail:/var/log/mail"
      - "/etc/localtime:/etc/localtime:ro"
```

With `release-v7.0.1` (and all earlier versions), IMAP/SSL works fine and I can log in without issue.

```bash

$ openssl s_client -starttls imap -connect mail.{REDACTED}:993

CONNECTED(00000005)

```

Dovecot is running on the server:

```bash

# ps -ef

UID PID PPID C STIME TTY TIME CMD

root 1 0 1 23:05 ? 00:00:00 /usr/bin/python2 /usr/bin/supervisord -c /etc/supervisor/supervisord.conf

root 8 1 0 23:05 ? 00:00:00 /bin/bash /usr/local/bin/start-mailserver.sh

root 457 0 0 23:05 pts/0 00:00:00 /bin/sh

root 525 1 0 23:05 ? 00:00:00 /usr/sbin/cron -f

root 527 1 0 23:05 ? 00:00:00 /usr/sbin/rsyslogd -n

root 533 1 0 23:05 ? 00:00:00 /usr/sbin/dovecot -F -c /etc/dovecot/dovecot.conf

dovecot 536 533 0 23:05 ? 00:00:00 dovecot/anvil

root 537 533 0 23:05 ? 00:00:00 dovecot/log

root 538 533 0 23:05 ? 00:00:00 dovecot/config

opendkim 540 1 0 23:05 ? 00:00:00 /usr/sbin/opendkim -f

opendkim 542 540 0 23:05 ? 00:00:00 /usr/sbin/opendkim -f

opendma+ 548 1 0 23:05 ? 00:00:00 /usr/sbin/opendmarc -f -p inet:8893@localhost -P /var/run/opendmarc/opendmarc.pid

postgrey 556 1 1 23:05 ? 00:00:00 postgrey --inet=127.0.0.1:10023 --syslog-facility=mail --delay=300 --max-age=35 --auto-whitelist-clients=0 --g

root 558 1 0 23:05 ? 00:00:00 bash /usr/local/bin/postfix-wrapper.sh

amavis 567 1 16 23:05 ? 00:00:01 /usr/sbin/amavisd-new (master)

root 569 8 0 23:05 ? 00:00:00 tail -fn 0 /var/log/mail/mail.log

postsrsd 661 1 0 23:05 ? 00:00:00 /usr/sbin/postsrsd -f 10001 -r 10002 -d {REDACTED} -s /etc/postsrsd.secret -a = -n 4 -N 4 -u postsrsd -p /var/r

root 1206 1 0 23:05 ? 00:00:00 /usr/lib/postfix/sbin/master

postfix 1208 1206 0 23:05 ? 00:00:00 pickup -l -t fifo -u -c -o content_filter= -o receive_override_options=no_header_body_checks

postfix 1209 1206 0 23:05 ? 00:00:00 qmgr -l -t unix -u

amavis 1210 567 0 23:05 ? 00:00:00 /usr/sbin/amavisd-new (virgin child)

amavis 1211 567 0 23:05 ? 00:00:00 /usr/sbin/amavisd-new (virgin child)

root 1214 558 0 23:05 ? 00:00:00 sleep 5

root 1215 457 0 23:05 pts/0 00:00:00 ps -ef

```

### *What* is affected by this bug?

Upgrading beyond `7.0.1` without any changes causes dovecot **not** to run, and therefore, IMAP/SSL to fail.

docker-compose.yml (same as above, just version bump):

```yaml
...
services:
  mail:
    image: tvial/docker-mailserver:release-v7.1.0
    ...
```

IMAP/SSL fails.

```bash

$ openssl s_client -starttls imap -connect mail.{REDACTED}:993

140671805964736:error:0200206F:system library:connect:Connection refused:../crypto/bio/b_sock2.c:110:

140671805964736:error:2008A067:BIO routines:BIO_connect:connect error:../crypto/bio/b_sock2.c:111:

connect:errno=111

```

Dovecot is **not** running on the server:

```bash

# ps -ef

UID PID PPID C STIME TTY TIME CMD

root 1 0 2 23:03 ? 00:00:00 /usr/bin/python2 /usr/bin/supervisord -c /etc/supervisor/supervisord.conf

root 8 1 0 23:03 ? 00:00:00 /bin/bash /usr/local/bin/start-mailserver.sh

root 552 1 0 23:03 ? 00:00:00 /usr/sbin/cron -f

root 554 1 0 23:03 ? 00:00:00 /usr/sbin/rsyslogd -n

opendkim 558 1 0 23:03 ? 00:00:00 /usr/sbin/opendkim -f

opendkim 560 558 0 23:03 ? 00:00:00 /usr/sbin/opendkim -f

opendma+ 566 1 0 23:03 ? 00:00:00 /usr/sbin/opendmarc -f -p inet:8893@localhost -P /var/run/opendmarc/opendmarc.pid

postgrey 574 1 3 23:03 ? 00:00:00 postgrey --inet=127.0.0.1:10023 --syslog-facility=mail --delay=300 --max-age=35 --auto-whitelist-clients=0 --g

root 576 1 0 23:03 ? 00:00:00 bash /usr/local/bin/postfix-wrapper.sh

amavis 585 1 44 23:03 ? 00:00:01 /usr/sbin/amavisd-new (master)

root 587 8 0 23:03 ? 00:00:00 tail -fn 0 /var/log/mail/mail.log

root 596 0 1 23:03 pts/0 00:00:00 /bin/sh

postsrsd 626 1 0 23:03 ? 00:00:00 /usr/sbin/postsrsd -f 10001 -r 10002 -d {REDACTED} -s /etc/postsrsd.secret -a = -n 4 -N 4 -u postsrsd -p /var/r

amavis 1141 585 0 23:04 ? 00:00:00 /usr/sbin/amavisd-new (virgin child)

amavis 1142 585 0 23:04 ? 00:00:00 /usr/sbin/amavisd-new (virgin child)

root 1225 1 0 23:04 ? 00:00:00 /usr/lib/postfix/sbin/master

root 1226 576 0 23:04 ? 00:00:00 sleep 5

postfix 1227 1225 0 23:04 ? 00:00:00 pickup -l -t fifo -u -c -o content_filter= -o receive_override_options=no_header_body_checks

postfix 1228 1225 0 23:04 ? 00:00:00 qmgr -l -t unix -u

root 1229 596 0 23:04 pts/0 00:00:00 ps -ef

```
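The missing process can also be confirmed mechanically rather than by scanning the listing. A minimal sketch, assuming `ps -ef`-style output on stdin with the command starting in the eighth column; the `has_process` helper is hypothetical and not part of docker-mailserver:

```shell
#!/usr/bin/env bash
# has_process NAME: read `ps -ef`-style output on stdin and succeed (exit 0)
# if the CMD column (field 8 onward) of any non-header line contains NAME.
# Hypothetical helper for illustration only.
has_process() {
  local name="$1"
  awk -v name="$name" '
    NR > 1 {                                   # skip the header line
      cmd = ""
      for (i = 8; i <= NF; i++) cmd = cmd " " $i
      if (index(cmd, name) > 0) found = 1
    }
    END { exit(found ? 0 : 1) }
  '
}
```

Usage, assuming the container is named `mail` as in the compose file: `docker exec mail ps -ef | has_process /usr/sbin/dovecot && echo running || echo "not running"`. Running `ps` inside the container and the filter on the host keeps the filter's own command line out of the listing.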

Roundcube (web UI using IMAP/SSL) fails to log in as well:

/var/log/syslog:

```bash

Oct 12 20:18:47 {SERVER} roundcube[7483]: errors: <eb34b0a6> IMAP Error: Login failed for {USER} against {REDACTED} from {GATEWAY IP}(X-Real-IP: {CLIENT IP},X-Forwarded-For: {CLIENT IP}). Could not connect to ssl://{REDACTED}:993: Unknown reason in /var/www/html/program/lib/Roundcube/rcube_imap.php on line 200 (POST /?_task=login&_action=login)

```

### *When* does this occur?

Any version beyond `7.0.1`

### *How* do we replicate the issue?

See above for replication: run the same configuration with the image tag bumped past `release-v7.0.1`. I can provide additional files for the postfix conf and dovecot sieve, but they don't appear to be the cause.

## Actual Behavior

IMAP/SSL logins fail. Dovecot is not running on the server.

## Expected behavior (i.e. solution)

**Expectations**: A working configuration from 7.0.1 should work in 7.0.1+, unless explicit changes are called out in the [Announcements section](https://github.com/tomav/docker-mailserver#announcements).

## Your Environment

* Amount of RAM available: 128GB

* Mailserver version used:

  * working: release-v7.0.0, release-v7.0.1

  * non-working: release-v7.1.0, latest

* Docker version used: Docker version 19.03.13, build 4484c46d9d

* Environment settings relevant to the config: See config.

* Any relevant stack traces ("Full trace" preferred):


| priority | dovecot horked on latest versions horked is a technical termed for broken seriously though dovecot configuration works appropriately up to version and before upgrading to the latest or release image results in dovecot not starting on docker mailserver and consequently rejecting imap connections using the same configuration on images up to works appropriately expectations a working configuration from should work in unless explicit changes are called out in the context pretty standard postfix dovecot setup using imap ssl w letsencrypt dmarc dkim etc docker compose yml redacted and paths simplified yaml version networks mail driver bridge ipam config subnet mail net db db external true services mail image tvial docker mailserver release restart always stop grace period networks mail address mail ip ports tcp tcp tcp hostname mail domainname redacted container name mail environment default relay host dms debug dovecot mailbox format maildir enable clamav enable elk forwarder enable enable fetchmail enable ldap enable managesieve enable enable postfix virtual transport enable postgrey enable quotas enable saslauthd enable spamassassin enable srs logrotate interval weekly logwatch interval weekly one dir permit docker host pflogsumm trigger logrotate postfix dagent postfix inet protocols postfix mailbox size limit postfix message size limit postgrey auto whitelist clients postgrey delay postgrey max age postgrey text delayed by postgrey postmaster address postmaster redacted postscreen action enforce relay host sa kill sa spam subject spam sa sa tag sasl passwd saslauthd mech options saslauthd mechanisms smtp only spoof protection srs exclude domains srs sender classes envelope sender header sender ssl type letsencrypt tls level modern tz america los angeles virusmails delete delay volumes d mail var mail d config tmp docker mailserver d sieve conf etc dovecot conf d sieve conf d letsencrypt etc letsencrypt ro var log docker mail var log mail etc localtime 
etc localtime ro imap ssl works fine can login without issue and all versions before this bash openssl s client starttls imap connect mail redacted connected dovecot is running on the server bash ps ef uid pid ppid c stime tty time cmd root usr bin usr bin supervisord c etc supervisor supervisord conf root bin bash usr local bin start mailserver sh root pts bin sh root usr sbin cron f root usr sbin rsyslogd n root usr sbin dovecot f c etc dovecot dovecot conf dovecot dovecot anvil root dovecot log root dovecot config opendkim usr sbin opendkim f opendkim usr sbin opendkim f opendma usr sbin opendmarc f p inet localhost p var run opendmarc opendmarc pid postgrey postgrey inet syslog facility mail delay max age auto whitelist clients g root bash usr local bin postfix wrapper sh amavis usr sbin amavisd new master root tail fn var log mail mail log postsrsd usr sbin postsrsd f r d redacted s etc postsrsd secret a n n u postsrsd p var r root usr lib postfix sbin master postfix pickup l t fifo u c o content filter o receive override options no header body checks postfix qmgr l t unix u amavis usr sbin amavisd new virgin child amavis usr sbin amavisd new virgin child root sleep root pts ps ef what is affected by this bug upgrading beyond without any changes causes dovecot not to run and therefore imap ssl to fail docker compose yml same as above just version bump yaml services mail image tvial docker mailserver release imap ssl fails bash openssl s client starttls imap connect mail redacted error system library connect connection refused crypto bio b c error bio routines bio connect connect error crypto bio b c connect errno dovecot is not running on the server bash ps ef uid pid ppid c stime tty time cmd root usr bin usr bin supervisord c etc supervisor supervisord conf root bin bash usr local bin start mailserver sh root usr sbin cron f root usr sbin rsyslogd n opendkim usr sbin opendkim f opendkim usr sbin opendkim f opendma usr sbin opendmarc f p inet localhost p var 
run opendmarc opendmarc pid postgrey postgrey inet syslog facility mail delay max age auto whitelist clients g root bash usr local bin postfix wrapper sh amavis usr sbin amavisd new master root tail fn var log mail mail log root pts bin sh postsrsd usr sbin postsrsd f r d redacted s etc postsrsd secret a n n u postsrsd p var r amavis usr sbin amavisd new virgin child amavis usr sbin amavisd new virgin child root usr lib postfix sbin master root sleep postfix pickup l t fifo u c o content filter o receive override options no header body checks postfix qmgr l t unix u root pts ps ef roundcube web ui using imap ssl is not happy either var log syslog bash oct server roundcube errors imap error login failed for user against redacted from gateway ip x real ip client ip x forwarded for client ip could not connect to ssl redacted unknown reason in var www html program lib roundcube rcube imap php on line post task login action login when does this occur any version beyond how do we replicate the issue see above for replication i can provide additional files for postfix conf and dovecot sieve but that doesn t seem to be the issue actual behavior imap ssl logins fail dovecot not running on the server expected behavior i e solution expectations a working configuration from should work in unless explicit changes are called out in the your environment amount of ram available mailserver version used working release release non working release latest docker version used docker version build environment settings relevant to the config see config any relevant stack traces full trace preferred please remember to format code using triple backticks so that it is neatly formatted when the issue is posted spoilers are recommended for readability click me to expand sh echo hello world | 1 |

202,049 | 7,043,566,956 | IssuesEvent | 2017-12-31 08:42:25 | commons-app/apps-android-commons | https://api.github.com/repos/commons-app/apps-android-commons | opened | [Urgent] Crashes in v2.6.5 | bug high priority | We are getting a lot of crashes in v2.6.5 production, with a crash rate of 4.27%, more than in previous versions. This has unfortunately led to a lot of uninstalls. :( The majority of the crashes seem to be related to Dagger. I have pasted them below:

9 reports, 6 users:

```

java.lang.RuntimeException:

at android.app.ActivityThread.handleCreateService (ActivityThread.java:3349)

at android.app.ActivityThread.-wrap4 (Unknown Source)

at android.app.ActivityThread$H.handleMessage (ActivityThread.java:1677)

at android.os.Handler.dispatchMessage (Handler.java:106)

at android.os.Looper.loop (Looper.java:164)

at android.app.ActivityThread.main (ActivityThread.java:6494)

at java.lang.reflect.Method.invoke (Native Method)

at com.android.internal.os.RuntimeInit$MethodAndArgsCaller.run (RuntimeInit.java:438)

at com.android.internal.os.ZygoteInit.main (ZygoteInit.java:807)

Caused by: java.lang.RuntimeException:

at dagger.android.AndroidInjection.inject (AndroidInjection.java:134)

at dagger.android.DaggerService.onCreate (DaggerService.java:27)

at fr.free.nrw.commons.HandlerService.onCreate (HandlerService.java:55)

at fr.free.nrw.commons.upload.UploadService.onCreate (UploadService.java:122)

at android.app.ActivityThread.handleCreateService (ActivityThread.java:3339)

```

8 reports, 4 users:

```

java.lang.RuntimeException:

at android.app.ActivityThread.handleCreateService (ActivityThread.java:3544)

at android.app.ActivityThread.-wrap6 (ActivityThread.java)

at android.app.ActivityThread$H.handleMessage (ActivityThread.java:1732)

at android.os.Handler.dispatchMessage (Handler.java:102)

at android.os.Looper.loop (Looper.java:154)

at android.app.ActivityThread.main (ActivityThread.java:6776)

at java.lang.reflect.Method.invoke (Native Method)

at com.android.internal.os.ZygoteInit$MethodAndArgsCaller.run (ZygoteInit.java:1496)

at com.android.internal.os.ZygoteInit.main (ZygoteInit.java:1386)

Caused by: java.lang.RuntimeException:

at dagger.android.AndroidInjection.inject (AndroidInjection.java:134)

at dagger.android.DaggerService.onCreate (DaggerService.java:27)

at fr.free.nrw.commons.HandlerService.onCreate (HandlerService.java:55)

at fr.free.nrw.commons.upload.UploadService.onCreate (UploadService.java:122)

at android.app.ActivityThread.handleCreateService (ActivityThread.java:3534)

```

5 reports, 2 users:

```

java.lang.RuntimeException:

at fr.free.nrw.commons.contributions.ContributionsActivity$1.onServiceDisconnected (ContributionsActivity.java:97)

at android.app.LoadedApk$ServiceDispatcher.doConnected (LoadedApk.java:1457)

at android.app.LoadedApk$ServiceDispatcher$RunConnection.run (LoadedApk.java:1489)

at android.os.Handler.handleCallback (Handler.java:754)

at android.os.Handler.dispatchMessage (Handler.java:95)

at android.os.Looper.loop (Looper.java:163)

at android.app.ActivityThread.main (ActivityThread.java:6361)

at java.lang.reflect.Method.invoke (Native Method)

at com.android.internal.os.ZygoteInit$MethodAndArgsCaller.run (ZygoteInit.java:904)

at com.android.internal.os.ZygoteInit.main (ZygoteInit.java:794)

```

8 reports, 3 users:

```

java.lang.RuntimeException:

at android.app.ActivityThread.handleCreateService (ActivityThread.java:3193)

at android.app.ActivityThread.-wrap5 (ActivityThread.java)

at android.app.ActivityThread$H.handleMessage (ActivityThread.java:1563)

at android.os.Handler.dispatchMessage (Handler.java:102)

at android.os.Looper.loop (Looper.java:154)