Unnamed: 0 (int64) | id (float64) | type (string) | created_at (string) | repo (string) | repo_url (string) | action (string) | title (string) | labels (string) | body (string) | index (string) | text_combine (string) | label (string) | text (string) | binary_label (int64)

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

88,104 | 3,773,110,643 | IssuesEvent | 2016-03-17 00:02:42 | nilsschmidt1337/ldparteditor | https://api.github.com/repos/nilsschmidt1337/ldparteditor | closed | Is there a way to move the Manipolator icons to a toolbar? | enhancement high-priority |

Well... its a mess IMHO :-1:

| 1.0 | Is there a way to move the Manipolator icons to a toolbar? -

Well... its a mess IMHO :-1:

| priority | is there a way to move the manipolator icons to a toolbar well its a mess imho | 1 |

391,980 | 11,581,509,168 | IssuesEvent | 2020-02-21 22:51:35 | craftercms/craftercms | https://api.github.com/repos/craftercms/craftercms | closed | [engine] Support passing filters as variables in graphql queries | CI enhancement priority: high | **Is your feature request related to a problem? Please describe.**

GraphQL allows a consumer to pass a filter as a parameter in a graphQL query. Crafter does not currently support this.

**Describe the solution you'd like**

Support the ability to pass filters as variables in a GraphQL query

Example of passing a filter as a parameter:

```
Query:
query MyQuery($disabled: BooleanFilters) {
  page_entry {
    items {
      disabled(filter: $disabled)
    }
  }
}

Parameter:
{ "disabled": { "equals": true } }
```

| 1.0 | [engine] Support passing filters as variables in graphql queries - **Is your feature request related to a problem? Please describe.**

GraphQL allows a consumer to pass a filter as a parameter in a graphQL query. Crafter does not currently support this.

**Describe the solution you'd like**

Support the ability to pass filters as variables in a GraphQL query

Example of passing a filter as a parameter:

```
Query:
query MyQuery($disabled: BooleanFilters) {
  page_entry {
    items {
      disabled(filter: $disabled)
    }
  }
}

Parameter:
{ "disabled": { "equals": true } }
```

| priority | support passing filters as variables in graphql queries is your feature request related to a problem please describe graphql allows a consumer to pass a filter as a parameter in a graphql query crafter does not currently support this describe the solution you d like support the ability to pass filters as variables in a graphql query example of passing a filter as a parameter query query myquery disabled booleanfilters page entry items disabled filter disabled parameter disabled equals true | 1 |

654,488 | 21,653,993,932 | IssuesEvent | 2022-05-06 12:24:43 | epiforecasts/EpiNow2 | https://api.github.com/repos/epiforecasts/EpiNow2 | closed | Review use of futile.logger and error catching in package | bug help wanted high priority | The current implementation of `futile.logger`/error catching in `EpiNow2` makes it somewhat difficult to find errors or debug with R's normal tools (as I understand them) as it stands and could do with another dev pass.

This is due both to the complexity of the package and the evolution of `EpiNow2` without updating the supporting in package logging. This is blocking us from rapidly iterating in `covid-rt-estimates` and makes development of `EpiNow2` somewhat frustrating due to a lack of familiarity with the logging package/appropriate and robust error catching.

Support is needed to design a logging system that is user friendly, that catches errors where they happen to ease debugging, that does not saturate the code with too many logging updates, and that presents summaries to users appropriately when running large runs in a useful format without overwhelming them with log calls. Ideally this should primarily be implemented into EpiNow2 rather than upstream so that all users can benefit. | 1.0 | Review use of futile.logger and error catching in package - The current implementation of `futile.logger`/error catching in `EpiNow2` makes it somewhat difficult to find errors or debug with R's normal tools (as I understand them) as it stands and could do with another dev pass.

This is due both to the complexity of the package and the evolution of `EpiNow2` without updating the supporting in package logging. This is blocking us from rapidly iterating in `covid-rt-estimates` and makes development of `EpiNow2` somewhat frustrating due to a lack of familiarity with the logging package/appropriate and robust error catching.

Support is needed to design a logging system that is user friendly, that catches errors where they happen to ease debugging, that does not saturate the code with too many logging updates, and that presents summaries to users appropriately when running large runs in a useful format without overwhelming them with log calls. Ideally this should primarily be implemented into EpiNow2 rather than upstream so that all users can benefit. | priority | review use of futile logger and error catching in package the current implementation of futile logger error catching in makes it somewhat difficult to find errors or debug with r s normal tools as i understand them as it stands and could do with another dev pass this is due both to the complexity of the package and the evolution of without updating the supporting in package logging this is blocking us from rapidly iterating in covid rt estimates and makes development of somewhat frustrating due to a lack of familiarity with the logging package appropriate and robust error catching support is needed to design a logging system that is user friendly that catches errors where they happen to ease debugging that does not saturate the code with too many logging updates and that presents summaries to users appropriately when running large runs in a useful format without overwhelming them with log calls ideally this should primarily be implemented into rather than upstream so that all users can benefit | 1 |

632,083 | 20,171,261,536 | IssuesEvent | 2022-02-10 10:37:21 | ballerina-platform/ballerina-update-tool | https://api.github.com/repos/ballerina-platform/ballerina-update-tool | closed | Current version (not published) is not showing as installed in `bal dist list` | Type/Bug Priority/High | **Description:**

When we are having different distribution versions (e.g. 2201.0.1-SNAPSHOT), the `bal dist list` command is not showing the installed version as it has been installed. Reason is that the current version has not been published yet.

<img width="834" alt="Screenshot 2022-02-10 at 14 57 56" src="https://user-images.githubusercontent.com/51471998/153378906-3fa0db98-f7fe-43fd-8ea7-26d5658370d8.png">

**Steps to reproduce:**

Download the installer (ubuntu deb/rpm, macos pkg, windows msi) from the daily build in ballerina-distribution or build the ballerina-distribution and generate installer from the pack.

Install ballerina from the installer and run the `bal dist list`.

**Affected Versions:**

All the versions

**OS, DB, other environment details and versions:**

All the Operating systems.

| 1.0 | Current version (not published) is not showing as installed in `bal dist list` - **Description:**

When we are having different distribution versions (e.g. 2201.0.1-SNAPSHOT), the `bal dist list` command is not showing the installed version as it has been installed. Reason is that the current version has not been published yet.

<img width="834" alt="Screenshot 2022-02-10 at 14 57 56" src="https://user-images.githubusercontent.com/51471998/153378906-3fa0db98-f7fe-43fd-8ea7-26d5658370d8.png">

**Steps to reproduce:**

Download the installer (ubuntu deb/rpm, macos pkg, windows msi) from the daily build in ballerina-distribution or build the ballerina-distribution and generate installer from the pack.

Install ballerina from the installer and run the `bal dist list`.

**Affected Versions:**

All the versions

**OS, DB, other environment details and versions:**

All the Operating systems.

| priority | current version not published is not showing as installed in bal dist list description when we are having different distribution versions e g snapshot the bal dist list command is not showing the installed version as it has been installed reason is that the current version has not been published yet img width alt screenshot at src steps to reproduce download the installer ubuntu deb rpm macos pkg windows msi from the daily build in ballerina distribution or build the ballerina distribution and generate installer from the pack install ballerina from the installer and run the bal dist list affected versions all the versions os db other environment details and versions all the operating systems | 1 |

580,421 | 17,243,677,397 | IssuesEvent | 2021-07-21 04:50:29 | rizinorg/rizin | https://api.github.com/repos/rizinorg/rizin | opened | Fix PE: corkami 65535sects.exe - section list, entrypoint, open and analyze with ASAN build | PE RzAnalysis high-priority optimization | It is unnecessarily slow. Could be and should be optimized. It fails on our CI

```
[XX] db/formats/pe/65535sects PE: corkami 65535sects.exe - section list, entrypoint, open and analyze
RZ_NOPLUGINS=1 rizin -escr.utf8=0 -escr.color=0 -escr.interactive=0 -N -Qc 'aa
om~?
s
pi 1
q!
' bins/pe/65535sects.exe
-- stdout
--- expected
+++ actual
@@ -1,3 +1,0 @@
-65536
-0x291120
-mov edi, 0x7027aff9
-- stderr
[ ] Analyze all flags starting with sym. and entry0 (aa)
[
[x] Analyze all flags starting with sym. and entry0 (aa)
-- exit status: -1
``` | 1.0 | Fix PE: corkami 65535sects.exe - section list, entrypoint, open and analyze with ASAN build - It is unnecessarily slow. Could be and should be optimized. It fails on our CI

```
[XX] db/formats/pe/65535sects PE: corkami 65535sects.exe - section list, entrypoint, open and analyze
RZ_NOPLUGINS=1 rizin -escr.utf8=0 -escr.color=0 -escr.interactive=0 -N -Qc 'aa
om~?
s
pi 1
q!
' bins/pe/65535sects.exe
-- stdout
--- expected
+++ actual
@@ -1,3 +1,0 @@
-65536
-0x291120
-mov edi, 0x7027aff9
-- stderr
[ ] Analyze all flags starting with sym. and entry0 (aa)
[
[x] Analyze all flags starting with sym. and entry0 (aa)
-- exit status: -1
``` | priority | fix pe corkami exe section list entrypoint open and analyze with asan build it is unnecessarily slow could be and should be optimized it fails on our ci db formats pe pe corkami exe section list entrypoint open and analyze rz noplugins rizin escr escr color escr interactive n qc aa om s pi q bins pe exe stdout expected actual mov edi stderr analyze all flags starting with sym and aa analyze all flags starting with sym and aa exit status | 1 |

650,621 | 21,411,200,362 | IssuesEvent | 2022-04-22 06:13:08 | lifestream-friendship-network/feedback | https://api.github.com/repos/lifestream-friendship-network/feedback | closed | Paginated Friends, blocks, invite queries | high priority next deployment | All of these were placeholder "return everything" situations, and that's no good once it scales up a bit. | 1.0 | Paginated Friends, blocks, invite queries - All of these were placeholder "return everything" situations, and that's no good once it scales up a bit. | priority | paginated friends blocks invite queries all of these were placeholder return everything situations and that s no good once it scales up a bit | 1 |

556,066 | 16,473,573,652 | IssuesEvent | 2021-05-23 22:16:38 | meanmedianmoge/zoia_lib | https://api.github.com/repos/meanmedianmoge/zoia_lib | opened | No Patch Names or Details | bug high priority | **Describe the bug**

There's no info about any patches

**To Reproduce**

Steps to reproduce the behavior:

1. download the app and run it on ubuntu 20.04

**Expected behavior**

Expect to see some patch names, from the screenshots in the manual this is what I expect

**Screenshots**

**Desktop:**

Ubuntu 20.04

| 1.0 | No Patch Names or Details - **Describe the bug**

There's no info about any patches

**To Reproduce**

Steps to reproduce the behavior:

1. download the app and run it on ubuntu 20.04

**Expected behavior**

Expect to see some patch names, from the screenshots in the manual this is what I expect

**Screenshots**

**Desktop:**

Ubuntu 20.04

| priority | no patch names or details describe the bug there s no info about any patches to reproduce steps to reproduce the behavior download the app and run it on ubuntu expected behavior expect to see some patch names from the screenshots in the manual this is what i expect screenshots desktop ubuntu | 1 |

545,448 | 15,950,809,054 | IssuesEvent | 2021-04-15 09:05:37 | sopra-fs21-group-4/client | https://api.github.com/repos/sopra-fs21-group-4/client | opened | submit meme title request and implementation in the backend | high priority task | submit meme title request and implementation in the backend

Time estimate 8h

User story: #9 | 1.0 | submit meme title request and implementation in the backend - submit meme title request and implementation in the backend

Time estimate 8h

User story: #9 | priority | submit meme title request and implementation in the backend submit meme title request and implementation in the backend time estimate user story | 1 |

804,197 | 29,478,738,953 | IssuesEvent | 2023-06-02 02:16:34 | cypress-io/cypress | https://api.github.com/repos/cypress-io/cypress | closed | Make sure when Electron crashes there is a crash report | OS: mac browser: electron type: user experience stage: backlog priority: high stale | To debug issues like #1434 we should make sure there is Electron crash report saved (see https://electronjs.org/docs/api/crash-reporter)

- [ ] how to reliably crash Cypress on Mac? Really need segmentation fault like this one

```
Node[1] 29936 segmentation fault ./node_modules/cypress/dist/Cypress.app/Contents/MacOS/Cypress
```

- [ ] does it write message about the crash into `/var/log/system.log`?

- [ ] make sure the report file is saved

- [ ] put the report and instructions to the user in the error message shown from the CLI | 1.0 | Make sure when Electron crashes there is a crash report - To debug issues like #1434 we should make sure there is Electron crash report saved (see https://electronjs.org/docs/api/crash-reporter)

- [ ] how to reliably crash Cypress on Mac? Really need segmentation fault like this one

```
Node[1] 29936 segmentation fault ./node_modules/cypress/dist/Cypress.app/Contents/MacOS/Cypress
```

- [ ] does it write message about the crash into `/var/log/system.log`?

- [ ] make sure the report file is saved

- [ ] put the report and instructions to the user in the error message shown from the CLI | priority | make sure when electron crashes there is a crash report to debug issues like we should make sure there is electron crash report saved see how to reliably crash cypress on mac really need segmentation fault like this one node segmentation fault node modules cypress dist cypress app contents macos cypress does it write message about the crash into var log system log make sure the report file is saved put the report and instructions to the user in the error message shown from the cli | 1 |

656,242 | 21,724,213,399 | IssuesEvent | 2022-05-11 05:41:11 | jordan-sullivan/flashcards-2.5 | https://api.github.com/repos/jordan-sullivan/flashcards-2.5 | opened | create takeTurn Method | high priority | takeTurn: method that updates turns count, evaluates guesses, gives feedback, and stores ids of incorrect guesses

When a guess is made, a new Turn instance is created.

The turns count is updated, regardless of whether the guess is correct or incorrect

The next card becomes current card

Guess is evaluated/recorded. Incorrect guesses will be stored (via the id) in an array of incorrectGuesses

Feedback is returned regarding whether the guess is incorrect or correct | 1.0 | create takeTurn Method - takeTurn: method that updates turns count, evaluates guesses, gives feedback, and stores ids of incorrect guesses

When a guess is made, a new Turn instance is created.

The turns count is updated, regardless of whether the guess is correct or incorrect

The next card becomes current card

Guess is evaluated/recorded. Incorrect guesses will be stored (via the id) in an array of incorrectGuesses

Feedback is returned regarding whether the guess is incorrect or correct | priority | create taketurn method taketurn method that updates turns count evaluates guesses gives feedback and stores ids of incorrect guesses when a guess is made a new turn instance is created the turns count is updated regardless of whether the guess is correct or incorrect the next card becomes current card guess is evaluated recorded incorrect guesses will be stored via the id in an array of incorrectguesses feedback is returned regarding whether the guess is incorrect or correct | 1 |

21,603 | 2,641,718,336 | IssuesEvent | 2015-03-11 19:20:16 | chrsmith/html5rocks | https://api.github.com/repos/chrsmith/html5rocks | closed | Commit 129 | Milestone-2 Priority-High Type-Review | Original [issue 74](https://code.google.com/p/html5rocks/issues/detail?id=74) created by chrsmith on 2010-07-28T00:14:17.000Z:

<b>Link to revision:</b>

http://code.google.com/p/html5rocks/source/detail?r=129

<b>Purpose of code changes:</b>

Adding web workers tutorial

| 1.0 | Commit 129 - Original [issue 74](https://code.google.com/p/html5rocks/issues/detail?id=74) created by chrsmith on 2010-07-28T00:14:17.000Z:

<b>Link to revision:</b>

http://code.google.com/p/html5rocks/source/detail?r=129

<b>Purpose of code changes:</b>

Adding web workers tutorial

| priority | commit original created by chrsmith on link to revision purpose of code changes adding web workers tutorial | 1 |

734,725 | 25,360,597,676 | IssuesEvent | 2022-11-20 21:04:44 | bounswe/bounswe2022group7 | https://api.github.com/repos/bounswe/bounswe2022group7 | closed | [BE] Get User Endpoints Implementation | Status: Completed Priority: High Difficulty: Medium Type: Implementation Target: Backend | We should implement an endpoint that returns user related information in order to provide data of the profile pages to the frontend team.

We are going to have two different endpoints:

1. /profile returns the whole registered user class which should be extracted from the token. Token is required, no additional body or variable required.

2. /profile/{username} returns the account info information of the user corresponding the username. Token is not required, only takes username path variable.

@sabrimete is assigned to this implementation.

**Reviewer:** @askabderon

**Deadline:** 22/11/2022 23.59 | 1.0 | [BE] Get User Endpoints Implementation - We should implement an endpoint that returns user related information in order to provide data of the profile pages to the frontend team.

We are going to have two different endpoints:

1. /profile returns the whole registered user class which should be extracted from the token. Token is required, no additional body or variable required.

2. /profile/{username} returns the account info information of the user corresponding the username. Token is not required, only takes username path variable.

@sabrimete is assigned to this implementation.

**Reviewer:** @askabderon

**Deadline:** 22/11/2022 23.59 | priority | get user endpoints implementation we should implement an endpoint that returns user related information in order to provide data of the profile pages to the frontend team we are going to have two different endpoints profile returns the whole registered user class which should be extracted from the token token is required no additional body or variable required profile username returns the account info information of the user corresponding the username token is not required only takes username path variable sabrimete is assigned to this implementation reviewer askabderon deadline | 1 |

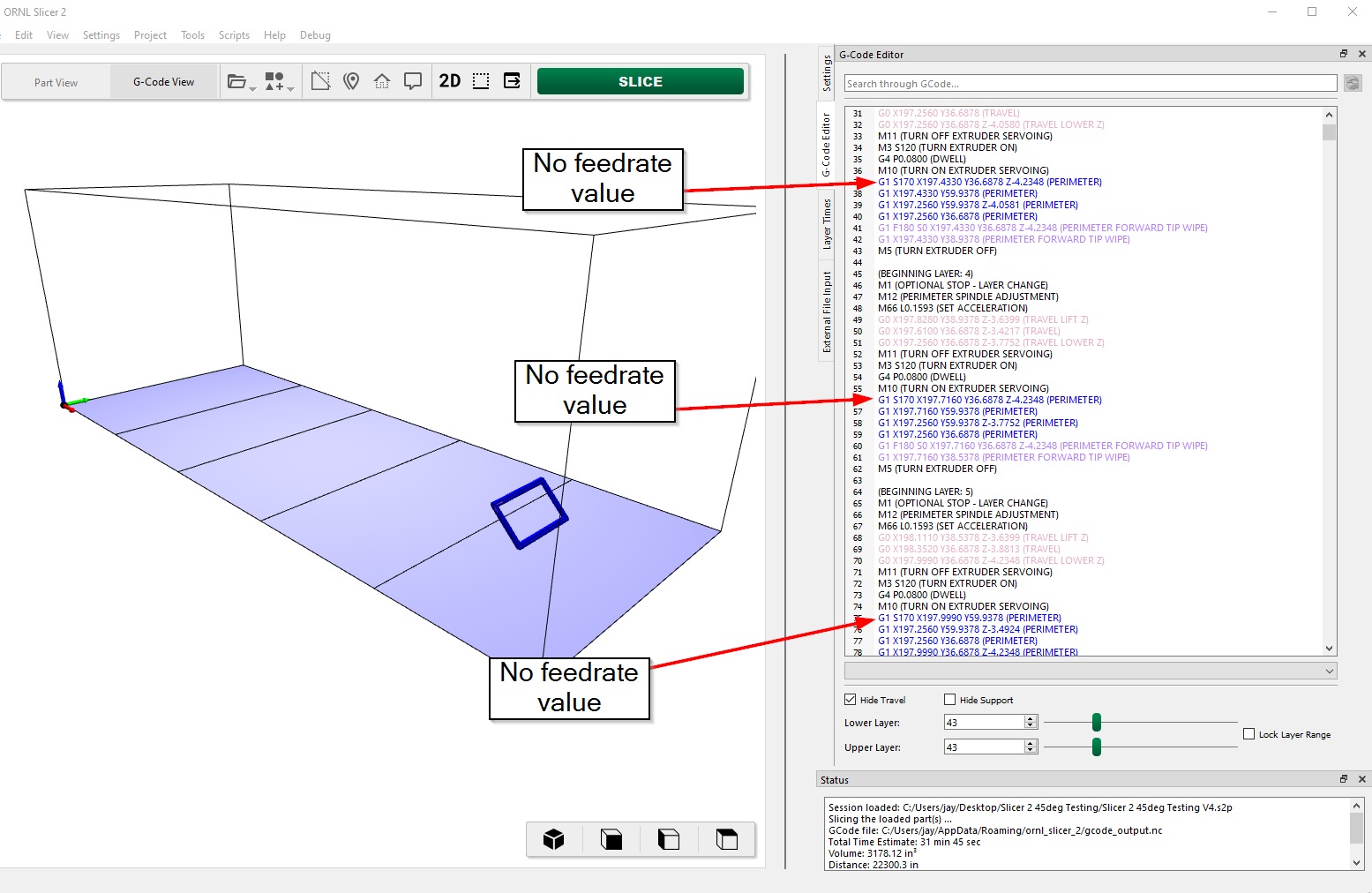

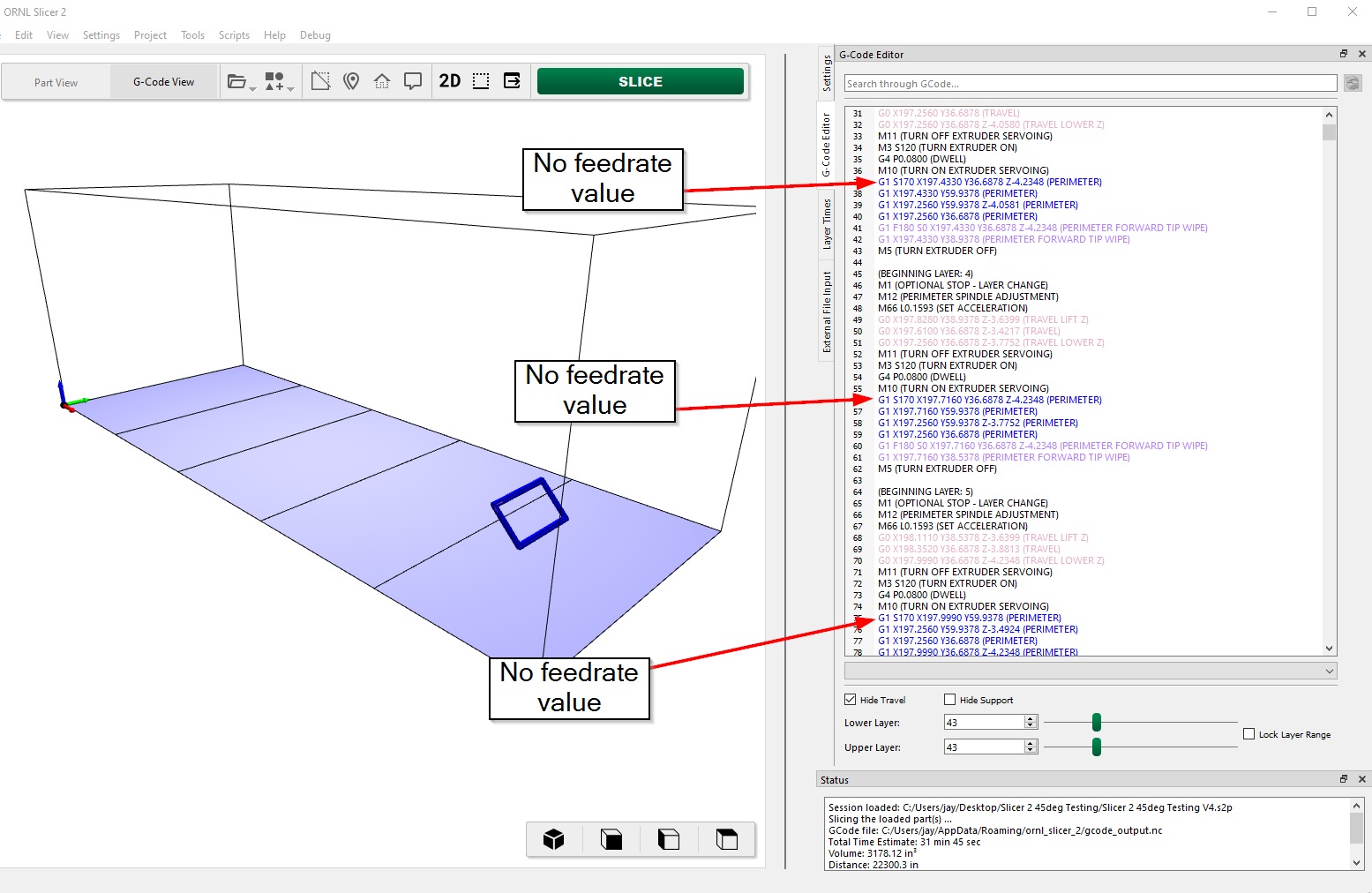

651,304 | 21,473,107,620 | IssuesEvent | 2022-04-26 11:21:14 | mdfbaam/ORNL-Slicer-2-Issue-Tracker | https://api.github.com/repos/mdfbaam/ORNL-Slicer-2-Issue-Tracker | closed | Slicer does not output F values when slicing | bug core-slicing high-priority | ## Expected Behavior

Self-Explanatory.

## Actual Behavior

Normally there would be FXXX right in front of all the "S" commands (like S170). However, I came across this bug when slicing at an angle. Even after restarting the computer and putting the slice angle back to zero it still did not output F values for the normal print moves. You'll notice it DOES output F values for the tip wipe, so that seems to be operating normally. However, it is missing from all the other lines.

## Possible Solution

Self-Explanatory

## Steps to Reproduce the Problem

Self-Explanatory. Ideally, bullet or numbered list.

## Specifications

Platform, machine specs, anything else you think might be relevant.

| 1.0 | Slicer does not output F values when slicing - ## Expected Behavior

Self-Explanatory.

## Actual Behavior

Normally there would be FXXX right in front of all the "S" commands (like S170). However, I came across this bug when slicing at an angle. Even after restarting the computer and putting the slice angle back to zero it still did not output F values for the normal print moves. You'll notice it DOES output F values for the tip wipe, so that seems to be operating normally. However, it is missing from all the other lines.

## Possible Solution

Self-Explanatory

## Steps to Reproduce the Problem

Self-Explanatory. Ideally, bullet or numbered list.

## Specifications

Platform, machine specs, anything else you think might be relevant.

| priority | slicer does not output f values when slicing expected behavior self explanatory actual behavior normally there would be fxxx right in front of all the s commands like however i came across this bug when slicing at an angle even after restarting the computer and putting the slice angle back to zero it still did not output f values for the normal print moves you ll notice it does output f values for the tip wipe so that seems to be operating normally however it is missing from all the other lines possible solution self explanatory steps to reproduce the problem self explanatory ideally bullet or numbered list specifications platform machine specs anything else you think might be relevant | 1 |

547,315 | 16,041,109,201 | IssuesEvent | 2021-04-22 08:00:39 | 46elks/praktik-apiskolan | https://api.github.com/repos/46elks/praktik-apiskolan | closed | Add link to live webpage in "about" section of repository | enhancement good first issue high priority | The future link to the live website should be provided in the "about" section, which also contains a description and topics | 1.0 | Add link to live webpage in "about" section of repository - The future link to the live website should be provided in the "about" section, which also contains a description and topics | priority | add link to live webpage in about section of repository the future link to the live website should be provided in the about section which also contains a description and topics | 1 |

191,441 | 6,828,965,161 | IssuesEvent | 2017-11-08 22:18:10 | crowdAI/crowdai | https://api.github.com/repos/crowdAI/crowdai | closed | Participant qualification - multi round challenge | feature high priority | Reject submissions from participants if they have not qualified for stage 2 (with an appropriate message)

Some view of the qualified stage 2 participants

Re: https://github.com/crowdAI/crowdai/issues/340 | 1.0 | Participant qualification - multi round challenge - Reject submissions from participants if they have not qualified for stage 2 (with an appropriate message)

Some view of the qualified stage 2 participants

Re: https://github.com/crowdAI/crowdai/issues/340 | priority | participant qualification multi round challenge reject submissions from participants if they have not qualified for stage with an appropriate message some view of the qualified stage participants re | 1 |

751,446 | 26,245,424,703 | IssuesEvent | 2023-01-05 14:54:26 | status-im/status-desktop | https://api.github.com/repos/status-im/status-desktop | closed | Unread chat badge shown on muted channels on reboot | bug Chat priority 1: high E:Bugfixes S:2 messenger | # Bug Report

## Description

If you have a muted public chat, receive messages in it, don't read them, close the app and reopen, the unread badge will be shown on the section icon on the left

See video below for example

## Steps to reproduce

1. Mute a channel and put it as inactive

2. In another account, send messages in that muted channel (this works fine, no badge is shown)

3. Close app no. 1 and reopen

Result: the badge is shown on the left and you don't even know why

#### Expected behavior

No badge is ever shown for a muted channel

### Additional Information

- Status desktop version: 0.9.0RC1

[Screencast 2023-01-03 16:15:45-unread.webm](https://user-images.githubusercontent.com/11926403/210442700-2a3817ab-e903-413d-b066-41937bc4486e.webm)

| 1.0 | Unread chat badge shown on muted channels on reboot - # Bug Report

## Description

If you have a muted public chat, receive messages in it, don't read them, close the app and reopen, the unread badge will be shown on the section icon on the left

See video below for example

## Steps to reproduce

1. Mute a channel and put it as inactive

2. In another account, send messages in that muted channel (this works fine, no badge is shown)

3. Close app no. 1 and reopen

Result: the badge is shown on the left and you don't even know why

#### Expected behavior

No badge is ever shown for a muted channel

### Additional Information

- Status desktop version: 0.9.0RC1

[Screencast 2023-01-03 16:15:45-unread.webm](https://user-images.githubusercontent.com/11926403/210442700-2a3817ab-e903-413d-b066-41937bc4486e.webm)

| priority | unread chat badge shown on muted channels on reboot bug report description if you have a muted public chat receive messages in it don t read them close the app and reopen the unread badge will be shown on the section icon on the left see video below for example steps to reproduce mute a channel and put it as inactive in another account send messages in that muted channel this works fine no badge is shown close app no and reopen result the badge is shown on the left and you don t even know why expected behavior no badge is ever shown for a muted channel additional information status desktop version | 1 |

125,652 | 4,959,184,694 | IssuesEvent | 2016-12-02 12:29:22 | hpi-swt2/workshop-portal | https://api.github.com/repos/hpi-swt2/workshop-portal | opened | Start page organizer view | High Priority team-helene | **As**

organizer

**I want to**

have a top page menu bar consisting of Start, Veranstaltungen, Anfragen and a drop down under my name with Profilinfo, Mein Profil (former Nutzer in the pupil view), Ausloggen

**in order to**

have smooth navigation

- [ ] | 1.0 | Start page organizer view - **As**

organizer

**I want to**

have a top page menu bar consisting of Start, Veranstaltungen, Anfragen and a drop down under my name with Profilinfo, Mein Profil (former Nutzer in the pupil view), Ausloggen

**in order to**

have smooth navigation

- [ ] | priority | start page organizer view as organizer i want to have a top page menu bar consisting of start veranstaltungen anfragen and a drop down under my name with profilinfo mein profil former nutzer in the pupil view ausloggen in order to have smooth navigation | 1 |

502,993 | 14,576,998,838 | IssuesEvent | 2020-12-18 00:52:45 | neuropoly/spinalcordtoolbox | https://api.github.com/repos/neuropoly/spinalcordtoolbox | closed | Created labels are not integer | API: labels.py bug priority:HIGH sct_label_vertebrae | ### Description

When creating manual labels, the created labels do not have the value asked for: they are output in float (it should be integer) and the value is slightly different (precision issue).

User forum: https://forum.spinalcordmri.org/t/sct-register-to-template-source-and-destination-landmarks-are-not-the-same/590/4

### Steps to Reproduce

Data: [t2.nii.gz](https://github.com/neuropoly/spinalcordtoolbox/files/5704767/t2.nii.gz)

~~~
sct_label_utils -i t2.nii.gz -create-viewer 3,9 -ldisc t2_labels_disc_manual.nii.gz
sct_label_utils -i t2_labels_disc_manual.nii.gz -display
~~~

output:

~~~
Position=(214,298,7) -- Value= 2.999999999301508
Position=(199,103,7) -- Value= 8.999999997904524
~~~

Expected output:

~~~
Position=(214,298,7) -- Value= 3
Position=(199,103,7) -- Value= 9
~~~

Interestingly, when doing the same experiment on the `sct_testing_data/t2/t2.nii.gz`, it works as expected.

| 1.0 | Created labels are not integer - ### Description

When creating manual labels, the created labels do not have the value asked for: they are output in float (it should be integer) and the value is slightly different (precision issue).

User forum: https://forum.spinalcordmri.org/t/sct-register-to-template-source-and-destination-landmarks-are-not-the-same/590/4

### Steps to Reproduce

Data: [t2.nii.gz](https://github.com/neuropoly/spinalcordtoolbox/files/5704767/t2.nii.gz)

~~~
sct_label_utils -i t2.nii.gz -create-viewer 3,9 -ldisc t2_labels_disc_manual.nii.gz
sct_label_utils -i t2_labels_disc_manual.nii.gz -display
~~~

output:

~~~
Position=(214,298,7) -- Value= 2.999999999301508
Position=(199,103,7) -- Value= 8.999999997904524
~~~

Expected output:

~~~
Position=(214,298,7) -- Value= 3
Position=(199,103,7) -- Value= 9
~~~

Interestingly, when doing the same experiment on the `sct_testing_data/t2/t2.nii.gz`, it works as expected.

| priority | created labels are not integer description when creating manual labels the created labels do not have the value asked for they are output in float it should be integer and the value is slightly different precision issue user forum steps to reproduce data sct label utils i nii gz create viewer ldisc labels disc manual nii gz sct label utils i labels disc manual nii gz display output position value position value expected output position value position value interestingly when doing the same experiment on the sct testing data nii gz it works as expected | 1 |

219,456 | 7,342,566,809 | IssuesEvent | 2018-03-07 08:24:33 | wso2/product-is | https://api.github.com/repos/wso2/product-is | closed | Filter claims from SSO consent approval for OIDC | Priority/Highest Severity/Blocker Type/Improvement | Output user information of ID tokens, userinfo requests should be restricted based on the user consent | 1.0 | Filter claims from SSO consent approval for OIDC - Output user information of ID tokens, userinfo requests should be restricted based on the user consent | priority | filter claims from sso consent approval for oidc output user information of id tokens userinfo requests should be restricted based on the user consent | 1 |

211,280 | 7,199,985,813 | IssuesEvent | 2018-02-05 17:32:39 | DrylandEcology/rSFSW2 | https://api.github.com/repos/DrylandEcology/rSFSW2 | closed | No slot of name "MonthlyProductionValues_grass" | bug high priority in progress | All rSFSW2 simulations are failing due to recent commits to rSOILWAT2's master branch.

```
[1] "Datafile 'sw_input_climscen_values' contains zero rows. 'Label's of the master input file 'SWRunInformation' are used to populate rows and 'Label's of the datafile."
Error in slot(prod_default, paste0("MonthlyProductionValues_", tolower(fg))) :
no slot of name "MonthlyProductionValues_grass" for this object of class "swProd"
```

The rSFSW2 automated builds did not catch this, because the last run on master was before the commits that caused this failure. I restarted rSFSW2's automated build on master and replicated the error, along with the other two pull requests open on rSFSW2.

---------------------

**The issue is very simple: the function `update_biomass` in `Vegetation.R` needs to be updated to match the new slot layout in `swProd`.**

I tried changing it from this:

```

temp <- slot(prod_default, paste0("MonthlyProductionValues_", tolower(fg)))

```

To this:

```

if (fg == "Grass") temp <- rSOILWAT2::swProd_MonProd_grass(prod_default)

else if (fg == "Shrub") temp <- rSOILWAT2::swProd_MonProd_shrub(prod_default)

else if (fg == "Tree") temp <- rSOILWAT2::swProd_MonProd_tree(prod_default)

else if (fg == "Forb") temp <- rSOILWAT2::swProd_MonProd_forb(prod_default)

```

But it was unsuccessful:

```

Error in object@MonthlyVeg[[rSW2_glovars[["kSOILWAT2"]][["VegTypes"]][["SW_TREES"]]]] :

attempt to select less than one element in integerOneIndex

```

So, I am going to continue working on what I was assigned to and am instead assigning @dschlaep because he made the changes to rSOILWAT2. In the meantime, commit `e0fa1acd62c2d17c961e58ff2a1eed982a347937` on rSOILWAT2 does not have this issue. | 1.0 | No slot of name "MonthlyProductionValues_grass" - All rSFSW2 simulations are failing due to recent commits to rSOILWAT2's master branch.

```

[1] "Datafile 'sw_input_climscen_values' contains zero rows. 'Label's of the master input file 'SWRunInformation' are used to populate rows and 'Label's of the datafile."

Error in slot(prod_default, paste0("MonthlyProductionValues_", tolower(fg))) :

no slot of name "MonthlyProductionValues_grass" for this object of class "swProd"

```

The rSFSW2 automated builds did not catch this, because the last run on master was before the commits that caused this failure. I restarted rSFSW2's automated build on master and replicated the error, along with the other two pull requests open on rSFSW2.

---------------------

**The issue is very simple: the function `update_biomass` in `Vegetation.R` needs to be updated to match the new slot layout in `swProd`.**

I tried changing it from this:

```

temp <- slot(prod_default, paste0("MonthlyProductionValues_", tolower(fg)))

```

To this:

```

if (fg == "Grass") temp <- rSOILWAT2::swProd_MonProd_grass(prod_default)

else if (fg == "Shrub") temp <- rSOILWAT2::swProd_MonProd_shrub(prod_default)

else if (fg == "Tree") temp <- rSOILWAT2::swProd_MonProd_tree(prod_default)

else if (fg == "Forb") temp <- rSOILWAT2::swProd_MonProd_forb(prod_default)

```

But it was unsuccessful:

```

Error in object@MonthlyVeg[[rSW2_glovars[["kSOILWAT2"]][["VegTypes"]][["SW_TREES"]]]] :

attempt to select less than one element in integerOneIndex

```

So, I am going to continue working on what I was assigned to and am instead assigning @dschlaep because he made the changes to rSOILWAT2. In the meantime, commit `e0fa1acd62c2d17c961e58ff2a1eed982a347937` on rSOILWAT2 does not have this issue. | priority | no slot of name monthlyproductionvalues grass all simulations are failing due to recent commits to s master branch datafile sw input climscen values contains zero rows label s of the master input file swruninformation are used to populate rows and label s of the datafile error in slot prod default monthlyproductionvalues tolower fg no slot of name monthlyproductionvalues grass for this object of class swprod the automated builds did not catch this because the last run on master was before the commits that caused this failure i restarted s automated build on master and replicated the error along with the other two pull requests open on the issue is very simple the function update biomass in vegetation r needs to be updated to match the new slot layout in swprod i tried changing it from this temp slot prod default monthlyproductionvalues tolower fg to this if fg grass temp swprod monprod grass prod default else if fg shrub temp swprod monprod shrub prod default else if fg tree temp swprod monprod tree prod default else if fg forb temp swprod monprod forb prod default but it was unsuccessful error in object monthlyveg attempt to select less than one element in integeroneindex so i am going to continue working on what i was assigned to and am instead assigning dschlaep because he made the changes to in the meantime commit on does not have this issue | 1 |

699,488 | 24,018,339,351 | IssuesEvent | 2022-09-15 04:32:43 | MathMarEcol/WSMPA2 | https://api.github.com/repos/MathMarEcol/WSMPA2 | closed | Order of features and targets | High Priority | In the `prioritizr::problem` call, ensure that we have a check for the order of features matching the order of targets. Otherwise the incorrect target could be applied.

They should all stay in the correct order but worth checking. | 1.0 | Order of features and targets - In the `prioritizr::problem` call, ensure that we have a check for the order of features matching the order of targets. Otherwise the incorrect target could be applied.

They should all stay in the correct order but worth checking. | priority | order of features and targets in the prioritizr problem call ensure that we have a check for the order of features matching the order of targets otherwise the incorrect target could be applied they should all stay in the correct order but worth checking | 1 |

163,910 | 6,216,692,020 | IssuesEvent | 2017-07-08 06:46:12 | tkh44/emotion | https://api.github.com/repos/tkh44/emotion | closed | Don't pass innerRef to DOM component | beginner friendly bug help wanted high priority | I have a StyledInput and use `innerRef` to get the input element. But React will display this annoying warning.

`Warning: Unknown prop `innerRef` on <input> tag. Remove this prop from the element. For details, see https://fb.me/react-unknown-prop`

We should remove the innerRef after pass it to ref. Or better, filter unknown props like styled-component, but this comes with a cost of bigger runtime | 1.0 | Don't pass innerRef to DOM component - I have a StyledInput and use `innerRef` to get the input element. But React will display this annoying warning.

`Warning: Unknown prop `innerRef` on <input> tag. Remove this prop from the element. For details, see https://fb.me/react-unknown-prop`

We should remove the innerRef after pass it to ref. Or better, filter unknown props like styled-component, but this comes with a cost of bigger runtime | priority | don t pass innerref to dom component i have a styledinput and use innerref to get the input element but react will display this annoying warning warning unknown prop innerref on tag remove this prop from the element for details see we should remove the innerref after pass it to ref or better filter unknown props like styled component but this comes with a cost of bigger runtime | 1 |

188,189 | 6,773,825,638 | IssuesEvent | 2017-10-27 08:00:54 | vincentrk/quadrodoodle | https://api.github.com/repos/vincentrk/quadrodoodle | closed | Stop sending JS and Throttle messages during calibration | high priority | Currently these messages are being sent but should not be.

Look into why/where they are being sent from and stop them for calibration mode | 1.0 | Stop sending JS and Throttle messages during calibration - Currently these messages are being sent but should not be.

Look into why/where they are being sent from and stop them for calibration mode | priority | stop sending js and throttle messages during calibration currently these messages are being sent but should not be look into why where they are being sent from and stop them for calibration mode | 1 |

440,058 | 12,692,411,987 | IssuesEvent | 2020-06-21 22:21:23 | cds-snc/covid-shield-mobile | https://api.github.com/repos/cds-snc/covid-shield-mobile | closed | Turn on Bluetooth button doesn't work | bluetooth high priority upstream | If bluetooth is turned off globally, the button should take you to the correct screen to turn it on. | 1.0 | Turn on Bluetooth button doesn't work - If bluetooth is turned off globally, the button should take you to the correct screen to turn it on. | priority | turn on bluetooth button doesn t work if bluetooth is turned off globally the button should take you to the correct screen to turn it on | 1 |

227,895 | 7,543,956,738 | IssuesEvent | 2018-04-17 16:56:53 | GingerWalnut/SQ5.0Public | https://api.github.com/repos/GingerWalnut/SQ5.0Public | closed | Arenstad Server Transfer Glitch | Priority High Ships Bug | When I flew out of Arenstad, I spawned too close to Arenstad and we're stuck outside of the planet as server jump is failing. Additionally, it says that we are encountering an obstacle about 5000 blocks away from where we are so... | 1.0 | Arenstad Server Transfer Glitch - When I flew out of Arenstad, I spawned too close to Arenstad and we're stuck outside of the planet as server jump is failing. Additionally, it says that we are encountering an obstacle about 5000 blocks away from where we are so... | priority | arenstad server transfer glitch when i flew out of arenstad i spawned too close to arenstad and we re stuck outside of the planet as server jump is failing additionally it says that we are encountering an obstacle about blocks away from where we are so | 1 |

118,808 | 4,756,261,887 | IssuesEvent | 2016-10-24 13:32:16 | IQSS/dataverse | https://api.github.com/repos/IQSS/dataverse | closed | Widgets - Embedding of Dataverse Metrics | Component: Dataverse General Info Priority: High Status: Triaged Type: Feature | @dancabral would like to embed the dataverse metrics onto the IQSS website.

Is is possible to add this data as a embeddable widget so that it can be updated continuously?

Specifically these elements:

If a JS embed is not practical, we could use a custom created iframe as long as the data is available at a URI.

| 1.0 | Widgets - Embedding of Dataverse Metrics - @dancabral would like to embed the dataverse metrics onto the IQSS website.

Is is possible to add this data as a embeddable widget so that it can be updated continuously?

Specifically these elements:

If a JS embed is not practical, we could use a custom created iframe as long as the data is available at a URI.

| priority | widgets embedding of dataverse metrics dancabral would like to embed the dataverse metrics onto the iqss website is is possible to add this data as a embeddable widget so that it can be updated continuously specifically these elements if a js embed is not practical we could use a custom created iframe as long as the data is available at a uri | 1 |

657,344 | 21,790,899,968 | IssuesEvent | 2022-05-14 22:08:46 | bounswe/bounswe2022group9 | https://api.github.com/repos/bounswe/bounswe2022group9 | closed | Practice App: Adding sign in functionality | Priority: High In Progress Practice Application | Deadline: 15.05.2022 23.59

TODO:

- [ ] Sign in function should be added to views.py.

- [ ] An html should be prepared for sign in. | 1.0 | Practice App: Adding sign in functionality - Deadline: 15.05.2022 23.59

TODO:

- [ ] Sign in function should be added to views.py.

- [ ] An html should be prepared for sign in. | priority | practice app adding sign in functionality deadline todo sign in function should be added to views py an html should be prepared for sign in | 1 |

123,469 | 4,863,427,937 | IssuesEvent | 2016-11-14 15:26:34 | mgoral/subconvert | https://api.github.com/repos/mgoral/subconvert | opened | Move to tox+pytest | High Priority Request | Just pretend that autotools never happened, ok?

One crazy thing is how we compile and install translations. I'm thinking about extracting these to a separate repository and handling their installation there (probably via autotools or some dead-simple script).

Another thing: to keep backward compatibility, subconvert should remove the old distribution from $PREFIX and link/install a start script and .desktop file. Or maybe it shouldn't do anything? | 1.0 | Move to tox+pytest - Just pretend that autotools never happened, ok?

One crazy thing is how we compile and install translations. I'm thinking about extracting these to a separate repository and handling their installation there (probably via autotools or some dead-simple script).

Another thing: to keep backward compatibility, subconvert should remove the old distribution from $PREFIX and link/install a start script and .desktop file. Or maybe it shouldn't do anything? | priority | move to tox pytest just pretend that autotools never happened ok one crazy thing is how we compile and install translations i m thinking about extracting these to a separate repository and handling their installation there probably via autotools or some dead simple script another thing to keep backward compatibility subconvert should remove the old distribution from prefix and link install a start script and desktop file or maybe it shouldn t do anything | 1 |

490,701 | 14,139,008,939 | IssuesEvent | 2020-11-10 09:15:24 | wso2/product-is | https://api.github.com/repos/wso2/product-is | opened | Add Country attribute to SCIM2 user core dialect | Priority/Highest Severity/Major improvement | **Describe the issue:**

At the moment even though we have the attribute http://wso2.org/claims/country in the local dialect we don't have a SCIM attribute aligned with it. So it's better to add a new SCIM attribute and map it to the local attribute. | 1.0 | Add Country attribute to SCIM2 user core dialect - **Describe the issue:**

At the moment even though we have the attribute http://wso2.org/claims/country in the local dialect we don't have a SCIM attribute aligned with it. So it's better to add a new SCIM attribute and map it to the local attribute. | priority | add country attribute to user core dialect describe the issue at the moment even though we have the attribute in the local dialect we don t have a scim attribute aligned with it so it s better to add a new scim attribute and map it to the local attribute | 1 |

737,870 | 25,535,667,603 | IssuesEvent | 2022-11-29 11:47:18 | aau-giraf/web-api | https://api.github.com/repos/aau-giraf/web-api | closed | Integration tests change values in the database for localhost or live server | priority: high | ## Description

When running the integration tests more than once, the number of failed tests increases from 46 to 100+. The integration tests access the actual database used in production or what is used locally and change it. Since the tests fail, the database is changed, so it doesn't work as intended anymore.

**Possible Suggested Solution**

Change, so the tests run in an temporary database that will be deleted after the tests have been completed.

**This issue will be expanded upon when further information is discovered.** | 1.0 | Integration tests change values in the database for localhost or live server - ## Description

When running the integration tests more than once, the number of failed tests increases from 46 to 100+. The integration tests access the actual database used in production or what is used locally and change it. Since the tests fail, the database is changed, so it doesn't work as intended anymore.

**Possible Suggested Solution**

Change, so the tests run in an temporary database that will be deleted after the tests have been completed.

**This issue will be expanded upon when further information is discovered.** | priority | integration tests change values in the database for localhost or live server description when running the integration tests more than once the number of failed tests increases from to the integration tests access the actual database used in production or what is used locally and change it since the tests fail the database is changed so it doesn t work as intended anymore possible suggested solution change so the tests run in an temporary database that will be deleted after the tests have been completed this issue will be expanded upon when further information is discovered | 1 |

195,206 | 6,905,165,668 | IssuesEvent | 2017-11-27 05:16:17 | tkanezaki/phoenix | https://api.github.com/repos/tkanezaki/phoenix | closed | APIレスポンスのソート修正 | bug priority:high | 以下の通りになるよう修正

**GET /messages**

Message.weight (Desc),

Message.displayStartDate (Desc),

Message.messageID (Desc)

**GET /feeds, GET /merchandises, GET /leaflets**

Article.weight (Desc),

Article.displayStartDate (Desc),

Article.articleId (Desc)

**GET /feeds/clipped**

~ArticleClip.createdAt (Desc)~

ArticleClip.id (Desc)

**GET /feed-labelgroups, GET /merchandise-labelgroups**

LabelGroup.weight (Desc),

LabelGroup.labelGroupId (Asc),

[ Label.weight (Desc),

Label.labelId (Asc) ]

**GET /merchandise-categories**

Label.weight (Desc),

Label.labelId (Asc)

**GET /shops**

Shop.weight (Desc),

Shop.subdivisionISO (Asc),

Shop.shopId (Desc)

**GET /shops/clipped**

~ShopClip.createdAt (Desc)~

ShopClip.id (Desc)

**GET /shops/coodinates**

distance (Asc)

**GET /shops/bounds**

Shop.shopId (Asc)

**GET /shop-attributegroups**

AttributeGroup.weight (Desc),

AttributeGroup.attributeGroupId (Asc),

[ Attribute.weight (Desc),

Attribute.attributeId (Asc) ]

**GET /checkin-histories**

~CheckinHistory.createdAt (Desc)~

CheckinHistory.id (Desc)

**GET /coupons**

Coupon.weight (Desc),

Coupon.applicationStartDate (Desc)

Coupon.couponId (Desc)

**GET /coupon-categories**

CouponCategory.weight (Desc),

CouponCategory.couponCategoryId (Asc)

**GET /coupons/clipped**

~CouponClip.createdAt (Desc)~

CouponClip.id (Desc) | 1.0 | APIレスポンスのソート修正 - 以下の通りになるよう修正

**GET /messages**

Message.weight (Desc),

Message.displayStartDate (Desc),

Message.messageID (Desc)

**GET /feeds, GET /merchandises, GET /leaflets**

Article.weight (Desc),

Article.displayStartDate (Desc),

Article.articleId (Desc)

**GET /feeds/clipped**

~ArticleClip.createdAt (Desc)~

ArticleClip.id (Desc)

**GET /feed-labelgroups, GET /merchandise-labelgroups**

LabelGroup.weight (Desc),

LabelGroup.labelGroupId (Asc),

[ Label.weight (Desc),

Label.labelId (Asc) ]

**GET /merchandise-categories**

Label.weight (Desc),

Label.labelId (Asc)

**GET /shops**

Shop.weight (Desc),

Shop.subdivisionISO (Asc),

Shop.shopId (Desc)

**GET /shops/clipped**

~ShopClip.createdAt (Desc)~

ShopClip.id (Desc)

**GET /shops/coodinates**

distance (Asc)

**GET /shops/bounds**

Shop.shopId (Asc)

**GET /shop-attributegroups**

AttributeGroup.weight (Desc),

AttributeGroup.attributeGroupId (Asc),

[ Attribute.weight (Desc),

Attribute.attributeId (Asc) ]

**GET /checkin-histories**

~CheckinHistory.createdAt (Desc)~

CheckinHistory.id (Desc)

**GET /coupons**

Coupon.weight (Desc),

Coupon.applicationStartDate (Desc)

Coupon.couponId (Desc)

**GET /coupon-categories**

CouponCategory.weight (Desc),

CouponCategory.couponCategoryId (Asc)

**GET /coupons/clipped**

~CouponClip.createdAt (Desc)~

CouponClip.id (Desc) | priority | apiレスポンスのソート修正 以下の通りになるよう修正 get messages message weight desc message displaystartdate desc message messageid desc get feeds get merchandises get leaflets article weight desc article displaystartdate desc article articleid desc get feeds clipped articleclip createdat desc articleclip id desc get feed labelgroups get merchandise labelgroups labelgroup weight desc labelgroup labelgroupid asc label weight desc label labelid asc get merchandise categories label weight desc label labelid asc get shops shop weight desc shop subdivisioniso asc shop shopid desc get shops clipped shopclip createdat desc shopclip id desc get shops coodinates distance asc get shops bounds shop shopid asc get shop attributegroups attributegroup weight desc attributegroup attributegroupid asc attribute weight desc attribute attributeid asc get checkin histories checkinhistory createdat desc checkinhistory id desc get coupons coupon weight desc coupon applicationstartdate desc coupon couponid desc get coupon categories couponcategory weight desc couponcategory couponcategoryid asc get coupons clipped couponclip createdat desc couponclip id desc | 1 |

155,484 | 5,956,336,562 | IssuesEvent | 2017-05-28 15:52:20 | bhwarren/Sutta-Data-Manager | https://api.github.com/repos/bhwarren/Sutta-Data-Manager | opened | Change sutta selector to something more descriptive | priority: High Todo UI | Like SC. Also put most recently edited above everything for quick access | 1.0 | Change sutta selector to something more descriptive - Like SC. Also put most recently edited above everything for quick access | priority | change sutta selector to something more descriptive like sc also put most recently edited above everything for quick access | 1 |

443,052 | 12,758,807,561 | IssuesEvent | 2020-06-29 03:39:23 | Azure/ARO-RP | https://api.github.com/repos/Azure/ARO-RP | closed | c# serialisation bug | priority-high size-small | I don't have all the details, but when running a k8s createorupdate to update an object, I think I posted

```yaml

...

metadata:

generation: 2

...

```

and I got some error back from k8s that makes me think that the yaml->json c# incorrectly serialised that to `"metadata": {"generation": "2"}` (i.e. "2" as a string, not a number).

are you able to recreate this?

| 1.0 | c# serialisation bug - I don't have all the details, but when running a k8s createorupdate to update an object, I think I posted

```yaml

...

metadata:

generation: 2

...

```

and I got some error back from k8s that makes me think that the yaml->json c# incorrectly serialised that to `"metadata": {"generation": "2"}` (i.e. "2" as a string, not a number).

are you able to recreate this?

| priority | c serialisation bug i don t have all the details but when running a createorupdate to update an object i think i posted yaml metadata generation and i got some error back from that makes me think that the yaml json c incorrectly serialised that to metadata generation i e as a string not a number are you able to recreate this | 1 |

658,058 | 21,877,019,979 | IssuesEvent | 2022-05-19 11:07:31 | OpenNebula/one | https://api.github.com/repos/OpenNebula/one | closed | Can't instantiate VMTemplates when setting VM.instantiate_name to false | Category: Sunstone Type: Bug Sponsored Status: Accepted Priority: High | **Description**

When setting VM.instantiate_name to false, users can't instantiate VM templates.

**To Reproduce**

Go to the view YAML configuration file and set `VM.instantiate_name` to false.

**Expected behavior**

To be able to instantiate VMTemplates when this setting is disabled.

**Details**

- Affected Component: Sunstone

- Hypervisor: [e.g. KVM]

- Version: 6.4, development

<!--////////////////////////////////////////////-->

<!-- THIS SECTION IS FOR THE DEVELOPMENT TEAM -->

<!-- BOTH FOR BUGS AND ENHANCEMENT REQUESTS -->

<!-- PROGRESS WILL BE REFLECTED HERE -->

<!--////////////////////////////////////////////-->

## Progress Status

- [x] Code commited

- [ ] Testing - QA

- [x] Documentation (Release notes - resolved issues, compatibility, known issues) | 1.0 | Can't instantiate VMTemplates when setting VM.instantiate_name to false - **Description**

When setting VM.instantiate_name to false, users can't instantiate VM templates.

**To Reproduce**

Go to the view YAML configuration file and set `VM.instantiate_name` to false.

**Expected behavior**

To be able to instantiate VMTemplates when this setting is disabled.

**Details**

- Affected Component: Sunstone

- Hypervisor: [e.g. KVM]

- Version: 6.4, development

<!--////////////////////////////////////////////-->

<!-- THIS SECTION IS FOR THE DEVELOPMENT TEAM -->

<!-- BOTH FOR BUGS AND ENHANCEMENT REQUESTS -->

<!-- PROGRESS WILL BE REFLECTED HERE -->

<!--////////////////////////////////////////////-->

## Progress Status

- [x] Code commited

- [ ] Testing - QA

- [x] Documentation (Release notes - resolved issues, compatibility, known issues) | priority | can t instantiate vmtemplates when setting vm instantiate name to false description when setting vm instantiate name to false users can t instantiate vm templates to reproduce go to the view yaml configuration file and set vm instantiate name to false expected behavior to be able to instantiate vmtemplates when this setting is disabled details affected component sunstone hypervisor version development progress status code commited testing qa documentation release notes resolved issues compatibility known issues | 1 |

443,414 | 12,794,123,254 | IssuesEvent | 2020-07-02 06:09:01 | wso2/product-apim | https://api.github.com/repos/wso2/product-apim | closed | Need a load API Policies to memory for subscription validation | Priority/Highest Type/Improvement | ### Describe your problem(s)

To cater in memory subscription validation, We need a separate REST API to retrieve API Policies and need to store them in a Map. | 1.0 | Need a load API Policies to memory for subscription validation - ### Describe your problem(s)

To cater in memory subscription validation, We need a separate REST API to retrieve API Policies and need to store them in a Map. | priority | need a load api policies to memory for subscription validation describe your problem s to cater in memory subscription validation we need a separate rest api to retrieve api policies and need to store them in a map | 1 |

719,043 | 24,743,671,152 | IssuesEvent | 2022-10-21 07:56:40 | AY2223S1-CS2103T-W13-1/tp | https://api.github.com/repos/AY2223S1-CS2103T-W13-1/tp | closed | Update Developer Guide | type.DG priority.high | Update implementation details for each user.

- [ ] Po-Hsien

- [ ] Bao Bin

- [ ] Zizheng

- [ ] Sheyuan

- [ ] Silas | 1.0 | Update Developer Guide - Update implementation details for each user.

- [ ] Po-Hsien

- [ ] Bao Bin

- [ ] Zizheng

- [ ] Sheyuan

- [ ] Silas | priority | update developer guide update implementation details for each user po hsien bao bin zizheng sheyuan silas | 1 |

273,606 | 8,550,783,126 | IssuesEvent | 2018-11-07 16:20:57 | CypherpunkArmory/UserLAnd | https://api.github.com/repos/CypherpunkArmory/UserLAnd | closed | unable to start userland | high priority | userland 0.3.4 (17)

Samsung Galaxy Tab 2

Android 7.1.2

LineageOS 14.1-20180131-UNOFFICIAL-espressowifi

While loading the assets Userland always hangs on the part about busybox:

"Extracting: Exec: Failed to execute command [../support/busybox, sh, -c, ../support/execInPro..."

Userland then just hangs, and I have to manually end it.

The PRoot debug log is attached.

[PRoot_Debug_Log.txt](https://github.com/CypherpunkArmory/UserLAnd/files/2346830/PRoot_Debug_Log.txt)

| 1.0 | unable to start userland - userland 0.3.4 (17)

Samsung Galaxy Tab 2

Android 7.1.2

LineageOS 14.1-20180131-UNOFFICIAL-espressowifi

While loading the assets Userland always hangs on the part about busybox:

"Extracting: Exec: Failed to execute command [../support/busybox, sh, -c, ../support/execInPro..."

Userland then just hangs, and I have to manually end it.

The PRoot debug log is attached.

[PRoot_Debug_Log.txt](https://github.com/CypherpunkArmory/UserLAnd/files/2346830/PRoot_Debug_Log.txt)

| priority | unable to start userland userland samsung galaxy tab android lineageos unofficial espressowifi while loading the assets userland always hangs on the part about busybox extracting exec failed to execute command support busybox sh c support execinpro userland then just hangs and i have to manually end it the proot debug log is attached | 1 |

616,401 | 19,301,650,733 | IssuesEvent | 2021-12-13 06:41:28 | OpenTabletDriver/OpenTabletDriver | https://api.github.com/repos/OpenTabletDriver/OpenTabletDriver | closed | Valid inputs being dropped - Hover distance and area issues | bug priority:high | ## Description

<!-- Describe the issue below -->

Many valid inputs are being ignored leading certain tablets to have their input cut off before it should be. This can lead to lowered hover distance, and certain parts of areas (specifically some parts of the area deadzones on wacom tablets) being unreachable or having input cut off too soon.

## System Information:

<!-- Please fill out this information -->

| Name | Value |

| ---------------- | ----- |

| OpenTabletDriver Version | 0.6.0 Pre-release

| Tablet | Tested on CTL-480 but many others likely affected

| 1.0 | Valid inputs being dropped - Hover distance and area issues - ## Description

<!-- Describe the issue below -->

Many valid inputs are being ignored leading certain tablets to have their input cut off before it should be. This can lead to lowered hover distance, and certain parts of areas (specifically some parts of the area deadzones on wacom tablets) being unreachable or having input cut off too soon.

## System Information:

<!-- Please fill out this information -->

| Name | Value |

| ---------------- | ----- |

| OpenTabletDriver Version | 0.6.0 Pre-release

| Tablet | Tested on CTL-480 but many others likely affected

| priority | valid inputs being dropped hover distance and area issues description many valid inputs are being ignored leading certain tablets to have their input cut off before it should be this can lead to lowered hover distance and certain parts of areas specifically some parts of the area deadzones on wacom tablets being unreachable or having input cut off too soon system information name value opentabletdriver version pre release tablet tested on ctl but many others likely affected | 1 |

633,201 | 20,247,686,467 | IssuesEvent | 2022-02-14 15:08:25 | VulcanWM/munity | https://api.github.com/repos/VulcanWM/munity | closed | Lyrics | TYPE: bug PRIORITY: high PROGRESS: completed | - ~~Remove the `embed` and the number in the lyric~~

- ~~Remove the one word lyrics~~

- ~~Remove the songs with edit in it~~ | 1.0 | Lyrics - - ~~Remove the `embed` and the number in the lyric~~

- ~~Remove the one word lyrics~~

- ~~Remove the songs with edit in it~~ | priority | lyrics remove the embed and the number in the lyric remove the one word lyrics remove the songs with edit in it | 1 |

116,711 | 4,705,609,065 | IssuesEvent | 2016-10-13 14:56:52 | geosolutions-it/evo-odas | https://api.github.com/repos/geosolutions-it/evo-odas | closed | ImageMosaic date ingestion from landsat files | duplicate Priority: High task | Landsat images filenames follow this pattern:

LC81390452014295LGN00

Where 2014295 is the julian day (format=YYYYDDD). This needs to be ingested correctly in the mosaic as time dimension | 1.0 | ImageMosaic date ingestion from landsat files - Landsat images filenames follow this pattern:

LC81390452014295LGN00

Where 2014295 is the julian day (format=YYYYDDD). This needs to be ingested correctly in the mosaic as time dimension | priority | imagemosaic date ingestion from landsat files landsat images filenames follow this pattern where is the julian day format yyyyddd this needs to be ingested correctly in the mosaic as time dimension | 1 |

230,434 | 7,610,142,021 | IssuesEvent | 2018-05-01 06:05:08 | connectedbusiness/eshopconnected | https://api.github.com/repos/connectedbusiness/eshopconnected | closed | Magento: Downloaded orders that are already completed | bug high priority | #151904 - eShopConnected downloaded orders that are already completed in Magento

Details: After applying the patch for eShopConnected, it started to download the orders from Magento however it downloaded ALL the orders including the COMPLETED orders instead of OPEN orders only.

David Nelson

www.dynenttech.com | 1.0 | Magento: Downloaded orders that are already completed - #151904 - eShopConnected downloaded orders that are already completed in Magento

Details: After applying the patch for eShopConnected, it started to download the orders from Magento however it downloaded ALL the orders including the COMPLETED orders instead of OPEN orders only.

David Nelson

www.dynenttech.com | priority | magento downloaded orders that are already completed eshopconnected downloaded orders that are already completed in magento details after applying the patch for eshopconnected it started to download the orders from magento however it downloaded all the orders including the completed orders instead of open orders only david nelson | 1 |

807,927 | 30,025,568,117 | IssuesEvent | 2023-06-27 05:42:49 | EESSI/eessi-bot-software-layer | https://api.github.com/repos/EESSI/eessi-bot-software-layer | reopened | job manager crash due to "Bad credentials" | difficulty:medium priority:high bug | We should make the communication with GitHub in `process_running_jobs` in the job manager a bit more robust, and retry in case something went wrong?

This could be some kind of rate limiting thing in GitHub (the job manager doesn't use a GitHub token)

```

Traceback (most recent call last):

File "/usr/lib64/python3.6/runpy.py", line 193, in _run_module_as_main

"__main__", mod_spec)

File "/usr/lib64/python3.6/runpy.py", line 85, in _run_code

exec(code, run_globals)

File "/mnt/shared/home/bot/eessi-bot-software-layer/eessi_bot_job_manager.py", line 715, in <module>

main()

File "/mnt/shared/home/bot/eessi-bot-software-layer/eessi_bot_job_manager.py", line 680, in main

job_manager.process_running_jobs(known_jobs[rj])

File "/mnt/shared/home/bot/eessi-bot-software-layer/eessi_bot_job_manager.py", line 351, in process_running_jobs

pullrequest = repo.get_pull(int(pr_number))

File "/mnt/shared/home/bot/.local/lib/python3.6/site-packages/github/Repository.py", line 2792, in get_pull

"GET", f"{self.url}/pulls/{number}"

File "/mnt/shared/home/bot/.local/lib/python3.6/site-packages/github/Requester.py", line 355, in requestJsonAndCheck

verb, url, parameters, headers, input, self.__customConnection(url)

File "/mnt/shared/home/bot/.local/lib/python3.6/site-packages/github/Requester.py", line 378, in __check

raise self.__createException(status, responseHeaders, output)

github.GithubException.BadCredentialsException: 401 {"message": "Bad credentials", "documentation_url": "https://docs.github.com/rest"}

```

tail of job manager log file when crash happened:

```

[20230115-T00:13:37] job manager main loop: iteration 3097

[20230115-T00:13:37] job manager main loop: known_jobs='3311'

[20230115-T00:13:37] run_subprocess(): 'get_current_jobs(): squeue command' by running '/usr/bin/squeue --long --user=bot' in directory '/mnt/shared/home/bot/eessi-bot-software-layer'

[20230115-T00:13:37] run_cmd(): Result for running '/usr/bin/squeue --long --user=bot' in 'None

stdout 'Sun Jan 15 00:13:37 2023

JOBID PARTITION NAME USER STATE TIME TIME_LIMI NODES NODELIST(REASON)

3311 compute eessi-bo bot RUNNING 9:02:11 UNLIMITED 1 fair-mastodon-c5-2xlarge-0001

'

stderr ''

exit code 0

[20230115-T00:13:37] job manager main loop: current_jobs='3311'

[20230115-T00:13:37] job manager main loop: new_jobs=''

[20230115-T00:13:37] job manager main loop: running_jobs='3311'

[20230115-T00:13:37] Found metadata file at /mnt/shared/home/bot/eessi-bot-software-layer/jobs/submitted/3311/_bot_job3311.metadata

``` | 1.0 | job manager crash due to "Bad credentials" - We should make the communication with GitHub in `process_running_jobs` in the job manager a bit more robust, and retry in case something went wrong?

This could be some kind of rate limiting thing in GitHub (the job manager doesn't use a GitHub token)

```

Traceback (most recent call last):

File "/usr/lib64/python3.6/runpy.py", line 193, in _run_module_as_main

"__main__", mod_spec)

File "/usr/lib64/python3.6/runpy.py", line 85, in _run_code

exec(code, run_globals)

File "/mnt/shared/home/bot/eessi-bot-software-layer/eessi_bot_job_manager.py", line 715, in <module>

main()

File "/mnt/shared/home/bot/eessi-bot-software-layer/eessi_bot_job_manager.py", line 680, in main

job_manager.process_running_jobs(known_jobs[rj])

File "/mnt/shared/home/bot/eessi-bot-software-layer/eessi_bot_job_manager.py", line 351, in process_running_jobs

pullrequest = repo.get_pull(int(pr_number))

File "/mnt/shared/home/bot/.local/lib/python3.6/site-packages/github/Repository.py", line 2792, in get_pull

"GET", f"{self.url}/pulls/{number}"

File "/mnt/shared/home/bot/.local/lib/python3.6/site-packages/github/Requester.py", line 355, in requestJsonAndCheck

verb, url, parameters, headers, input, self.__customConnection(url)

File "/mnt/shared/home/bot/.local/lib/python3.6/site-packages/github/Requester.py", line 378, in __check

raise self.__createException(status, responseHeaders, output)

github.GithubException.BadCredentialsException: 401 {"message": "Bad credentials", "documentation_url": "https://docs.github.com/rest"}

```

tail of job manager log file when crash happened:

```

[20230115-T00:13:37] job manager main loop: iteration 3097

[20230115-T00:13:37] job manager main loop: known_jobs='3311'

[20230115-T00:13:37] run_subprocess(): 'get_current_jobs(): squeue command' by running '/usr/bin/squeue --long --user=bot' in directory '/mnt/shared/home/bot/eessi-bot-software-layer'

[20230115-T00:13:37] run_cmd(): Result for running '/usr/bin/squeue --long --user=bot' in 'None

stdout 'Sun Jan 15 00:13:37 2023

JOBID PARTITION NAME USER STATE TIME TIME_LIMI NODES NODELIST(REASON)

3311 compute eessi-bo bot RUNNING 9:02:11 UNLIMITED 1 fair-mastodon-c5-2xlarge-0001

'

stderr ''

exit code 0

[20230115-T00:13:37] job manager main loop: current_jobs='3311'

[20230115-T00:13:37] job manager main loop: new_jobs=''

[20230115-T00:13:37] job manager main loop: running_jobs='3311'

[20230115-T00:13:37] Found metadata file at /mnt/shared/home/bot/eessi-bot-software-layer/jobs/submitted/3311/_bot_job3311.metadata

``` | priority | job manager crash due to bad credentials we should make the communication with github in process running jobs in the job manager a bit more robust and retry in case something went wrong this could be some kind of rate limiting thing in github the job manager doesn t use a github token traceback most recent call last file usr runpy py line in run module as main main mod spec file usr runpy py line in run code exec code run globals file mnt shared home bot eessi bot software layer eessi bot job manager py line in main file mnt shared home bot eessi bot software layer eessi bot job manager py line in main job manager process running jobs known jobs file mnt shared home bot eessi bot software layer eessi bot job manager py line in process running jobs pullrequest repo get pull int pr number file mnt shared home bot local lib site packages github repository py line in get pull get f self url pulls number file mnt shared home bot local lib site packages github requester py line in requestjsonandcheck verb url parameters headers input self customconnection url file mnt shared home bot local lib site packages github requester py line in check raise self createexception status responseheaders output github githubexception badcredentialsexception message bad credentials documentation url tail of job manager log file when crash happened job manager main loop iteration job manager main loop known jobs run subprocess get current jobs squeue command by running usr bin squeue long user bot in directory mnt shared home bot eessi bot software layer run cmd result for running usr bin squeue long user bot in none stdout sun jan jobid partition name user state time time limi nodes nodelist reason compute eessi bo bot running unlimited fair mastodon stderr exit code job manager main loop current jobs job manager main loop new jobs job manager main loop running jobs found metadata file at mnt shared home bot eessi bot software layer jobs submitted bot metadata | 1 |

380,104 | 11,253,901,857 | IssuesEvent | 2020-01-11 19:30:44 | CodeletApp/codelet-app | https://api.github.com/repos/CodeletApp/codelet-app | closed | Question Process: Step 1 | High Priority | - What sub-components/features can we split the question list view into?

- The sub-components should be reusable for any question data.

### Sub Components

- Approaches.js

- Footer/Next Button | 1.0 | Question Process: Step 1 - - What sub-components/features can we split the question list view into?

- The sub-components should be reusable for any question data.

### Sub Components

- Approaches.js

- Footer/Next Button | priority | question process step what sub components features can we split the question list view into the sub components should be reusable for any question data sub components approaches js footer next button | 1 |

144,497 | 5,541,476,739 | IssuesEvent | 2017-03-22 12:58:42 | Osslack/HANA_SSBM | https://api.github.com/repos/Osslack/HANA_SSBM | closed | Allgemeine Informationen | Priority_high | Allgemeine statistische informationen auswerten:

- Durchschnitt

- Median

- Minimum

- Maximum

- Standard Abweichung

- Insgesamt | 1.0 | Allgemeine Informationen - Allgemeine statistische informationen auswerten:

- Durchschnitt

- Median

- Minimum

- Maximum

- Standard Abweichung

- Insgesamt | priority | allgemeine informationen allgemeine statistische informationen auswerten durchschnitt median minimum maximum standard abweichung insgesamt | 1 |

624,870 | 19,711,228,382 | IssuesEvent | 2022-01-13 05:42:31 | rich-iannone/pointblank | https://api.github.com/repos/rich-iannone/pointblank | closed | `col_vals_XXX` fails on SQL Server connection | Type: ☹︎ Bug Difficulty: [3] Advanced Effort: [3] High Priority: [3] High | ## Prework

* [x] Read and agree to the [code of conduct](https://www.contributor-covenant.org/version/2/0/code_of_conduct/) and [contributing guidelines](https://github.com/rich-iannone/pointblank/blob/master/.github/CONTRIBUTING.md).

* [x] If there is [already a relevant issue](https://github.com/rich-iannone/pointblank/issues), whether open or closed, comment on the existing thread instead of posting a new issue.

* [ ] Post a [minimal reproducible example](https://www.tidyverse.org/help/) so the maintainer can troubleshoot the problems you identify. A reproducible example is:

* [ ] **Runnable**: post enough R code and data so any onlooker can create the error on their own computer.

* [ ] **Minimal**: reduce runtime wherever possible and remove complicated details that are irrelevant to the issue at hand.

* [x] **Readable**: format your code according to the [tidyverse style guide](https://style.tidyverse.org/).

## Description

Connecting to one of our databases and trying to run `interrrogate()` throws an error when using the `col_vals_XXX` family of functions. So in example below, the `col_is_date()` runs fine, but `col_vals_between()` does not. I can't really figure out why?

If I collect the needed columns using `dplyr::collect()` and run the exact same chunk everything works fine. Same goes for a similar interrogation on the `small_table_duckdb`.

NOTE: I'm aware that the example is a pseudo reprex - I included it here, so you can see what I did. If there is anyway I can improve the example code, please just let me know!

## Reproducible example

``` r

library(pointblank)

small_table_duckdb <-

db_tbl(

table = small_table,

dbname = ":memory:",

dbtype = "duckdb"

)

stdb_agent <- create_agent(read_fn = ~ small_table_duckdb) |>

col_is_character(vars(b)) |>

col_vals_between(vars(a), left = 0, right = 1000000) |>

interrogate()

con <- DBI::dbConnect(odbc::odbc(),

driver = "ODBC Driver 17 for SQL Server",

server = <OUR SERVER>,

database = <OUR DATABASE>,

uid = <THE UID>,

pwd = <THE PWD>)

kemi <- dplyr::tbl(con, dbplyr::in_schema("data", "kemi"))

kemi_agent <- create_agent(read_fn = ~ kemi) |>

col_is_date(vars(dato)) |>

col_vals_between(

columns = vars(jord_C),

left = 0.1,

right = 30,

na_pass = TRUE

) |>

interrogate()

```

#> Error: nanodbc/nanodbc.cpp:1655: 00000: [Microsoft][ODBC Driver 17 for SQL Server][SQL Server]An expression of non-boolean type specified in a context where a condition is expected, near ')'. [Microsoft][ODBC Driver 17 for SQL Server][SQL Server]Incorrect syntax near 'jord_C'. [Microsoft][ODBC Driver 17 for SQL Server][SQL Server]Statement(s) could not be prepared.

#> <SQL> 'SELECT "n"

#> FROM (SELECT COUNT(*) AS "n"