Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 855 | labels stringlengths 4 721 | body stringlengths 1 261k | index stringclasses 13 values | text_combine stringlengths 96 261k | label stringclasses 2 values | text stringlengths 96 240k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

473,122 | 13,637,162,152 | IssuesEvent | 2020-09-25 07:21:35 | buger/goreplay | https://api.github.com/repos/buger/goreplay | closed | [1.2.0] panic: runtime error: slice bounds out of range [13:12] | Priority: High bug | ```

panic: runtime error: slice bounds out of range [13:12]

goroutine 7 [running]:

github.com/buger/goreplay/proto.HasResponseTitle(0xc00027ec66, 0xb7, 0xb7, 0xc002610200)

/go/src/github.com/buger/goreplay/proto/proto.go:357 +0x242

main.startHint(0xc002c12370, 0xc0003a0630)

/go/src/github.com/buger/goreplay/input_raw.go:203 +0x7c

github.com/buger/goreplay/tcp.(*MessagePool).Handler(0xc0003a2a40, 0x1124fc0, 0xc003d5da20)

/go/src/github.com/buger/goreplay/tcp/tcp_message.go:176 +0x9fa

github.com/buger/goreplay/capture.(*Listener).Listen(0xc00034f4a0, 0x1118860, 0xc0003a2a80, 0xc000380400, 0x0, 0x0)

/go/src/github.com/buger/goreplay/capture/capture.go:163 +0x163

github.com/buger/goreplay/capture.(*Listener).ListenBackground.func1(0xc00011efc0, 0xc00034f4a0, 0x1118860, 0xc0003a2a80, 0xc000380400)

/go/src/github.com/buger/goreplay/capture/capture.go:173 +0x7b

created by github.com/buger/goreplay/capture.(*Listener).ListenBackground

/go/src/github.com/buger/goreplay/capture/capture.go:171 +0x84

``` | 1.0 | [1.2.0] panic: runtime error: slice bounds out of range [13:12] - ```

panic: runtime error: slice bounds out of range [13:12]

goroutine 7 [running]:

github.com/buger/goreplay/proto.HasResponseTitle(0xc00027ec66, 0xb7, 0xb7, 0xc002610200)

/go/src/github.com/buger/goreplay/proto/proto.go:357 +0x242

main.startHint(0xc002c12370, 0xc0003a0630)

/go/src/github.com/buger/goreplay/input_raw.go:203 +0x7c

github.com/buger/goreplay/tcp.(*MessagePool).Handler(0xc0003a2a40, 0x1124fc0, 0xc003d5da20)

/go/src/github.com/buger/goreplay/tcp/tcp_message.go:176 +0x9fa

github.com/buger/goreplay/capture.(*Listener).Listen(0xc00034f4a0, 0x1118860, 0xc0003a2a80, 0xc000380400, 0x0, 0x0)

/go/src/github.com/buger/goreplay/capture/capture.go:163 +0x163

github.com/buger/goreplay/capture.(*Listener).ListenBackground.func1(0xc00011efc0, 0xc00034f4a0, 0x1118860, 0xc0003a2a80, 0xc000380400)

/go/src/github.com/buger/goreplay/capture/capture.go:173 +0x7b

created by github.com/buger/goreplay/capture.(*Listener).ListenBackground

/go/src/github.com/buger/goreplay/capture/capture.go:171 +0x84

``` | priority | panic runtime error slice bounds out of range panic runtime error slice bounds out of range goroutine github com buger goreplay proto hasresponsetitle go src github com buger goreplay proto proto go main starthint go src github com buger goreplay input raw go github com buger goreplay tcp messagepool handler go src github com buger goreplay tcp tcp message go github com buger goreplay capture listener listen go src github com buger goreplay capture capture go github com buger goreplay capture listener listenbackground go src github com buger goreplay capture capture go created by github com buger goreplay capture listener listenbackground go src github com buger goreplay capture capture go | 1 |

226,129 | 7,504,242,725 | IssuesEvent | 2018-04-10 02:28:36 | bugfroggy/Quickplay2.0 | https://api.github.com/repos/bugfroggy/Quickplay2.0 | closed | Premium .jars for 1.9-1.12.2 have invalid accepted versions | Bug Premium Priority: HIGH | When the latest .jars were compiled, I forgot to update the accepted versions. That means any clients running them won't start, claiming the version is invalid. | 1.0 | Premium .jars for 1.9-1.12.2 have invalid accepted versions - When the latest .jars were compiled, I forgot to update the accepted versions. That means any clients running them won't start, claiming the version is invalid. | priority | premium jars for have invalid accepted versions when the latest jars were compiled i forgot to update the accepted versions that means any clients running them won t start claiming the version is invalid | 1 |

130,470 | 5,116,693,492 | IssuesEvent | 2017-01-07 07:05:47 | HuskieRobotics/roborioExpansion | https://api.github.com/repos/HuskieRobotics/roborioExpansion | closed | Create ReadDIO poly VI to read a single DIO line? | Feature High-Priority labview roboRIO low-priority | Should we create a ReadDIO polymorphic VI similar to the ReadAI polymorphic VI that makes it easy to read a single DIO channel? | 2.0 | Create ReadDIO poly VI to read a single DIO line? - Should we create a ReadDIO polymorphic VI similar to the ReadAI polymorphic VI that makes it easy to read a single DIO channel? | priority | create readdio poly vi to read a single dio line should we create a readdio polymorphic vi similar to the readai polymorphic vi that makes it easy to read a single dio channel | 1 |

350,381 | 10,483,276,066 | IssuesEvent | 2019-09-24 13:45:43 | bmp-git/PPS-18-scala-mqtt | https://api.github.com/repos/bmp-git/PPS-18-scala-mqtt | opened | As a client I want to publish a message in a topic and subscribe to a topic. | priority: high | - [ ] Develop publish/subscribe packet parser.

- [ ] Develop publish/subscribe packet builder

- [ ] Develop MQTT publish/subscribe logic in Protocol Manager (Qos 0)

- [ ] Implement topic’s matching logic | 1.0 | As a client I want to publish a message in a topic and subscribe to a topic. - - [ ] Develop publish/subscribe packet parser.

- [ ] Develop publish/subscribe packet builder

- [ ] Develop MQTT publish/subscribe logic in Protocol Manager (Qos 0)

- [ ] Implement topic’s matching logic | priority | as a client i want to publish a message in a topic and subscribe to a topic develop publish subscribe packet parser develop publish subscribe packet builder develop mqtt publish subscribe logic in protocol manager qos implement topic’s matching logic | 1 |

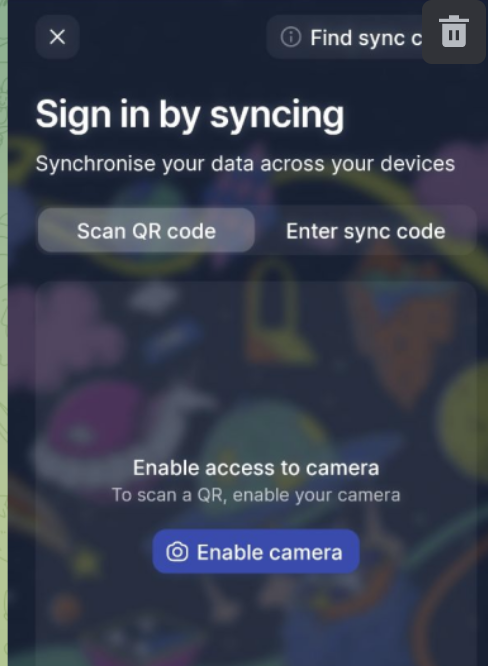

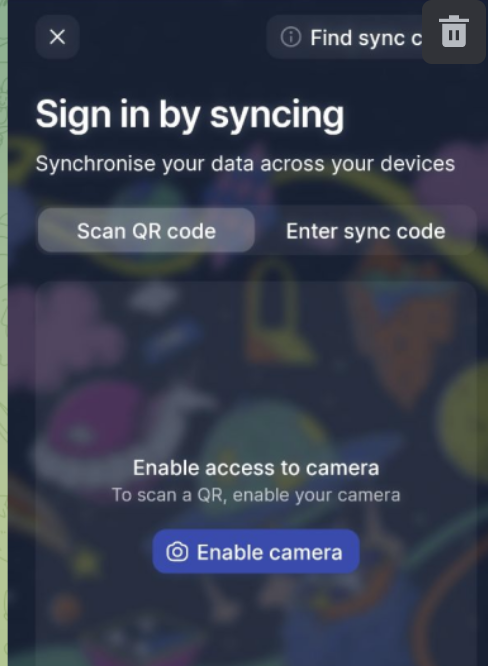

794,491 | 28,038,108,983 | IssuesEvent | 2023-03-28 16:23:58 | status-im/status-mobile | https://api.github.com/repos/status-im/status-mobile | closed | The user is not navigated to 'Sign in by syncing page' if user with existing multi account taps 'Add existing Status profile' option | bug high-priority onboarding | #### Problem:

The user is not able to open 'Sign in by syncing page' flow If the current user already has a multi-account

#### Preconditions:

User is logged in

#### Steps to reproduce:

1. Sign Out

2. Tap [+]

3. Select 'Add existing Status profile' option

#### Actual result:

The user is navigated to the "I'm new to Status" flow

https://user-images.githubusercontent.com/52490791/228255311-3fac3a58-9c1d-4813-a61b-80beb243a4f6.mp4

#### Expected result:

The user is navigated to the 'Sign in by sync' flow

https://www.figma.com/file/o4qG1bnFyuyFOvHQVGgeFY/Onboarding-for-Mobile?node-id=4357-629321&t=yGoIqMSJielr7hwI-0

#### ENV:

Nightly 28 Mar 2023 | 1.0 | The user is not navigated to 'Sign in by syncing page' if user with existing multi account taps 'Add existing Status profile' option - #### Problem:

The user is not able to open 'Sign in by syncing page' flow If the current user already has a multi-account

#### Preconditions:

User is logged in

#### Steps to reproduce:

1. Sign Out

2. Tap [+]

3. Select 'Add existing Status profile' option

#### Actual result:

The user is navigated to the "I'm new to Status" flow

https://user-images.githubusercontent.com/52490791/228255311-3fac3a58-9c1d-4813-a61b-80beb243a4f6.mp4

#### Expected result:

The user is navigated to the 'Sign in by sync' flow

https://www.figma.com/file/o4qG1bnFyuyFOvHQVGgeFY/Onboarding-for-Mobile?node-id=4357-629321&t=yGoIqMSJielr7hwI-0

#### ENV:

Nightly 28 Mar 2023 | priority | the user is not navigated to sign in by syncing page if user with existing multi account taps add existing status profile option problem the user is not able to open sign in by syncing page flow if the current user already has a multi account preconditions user is logged in steps to reproduce sign out tap select add existing status profile option actual result the user is navigated to the i m new to status flow expected result the user is navigated to the sign in by sync flow env nightly mar | 1 |

184,052 | 6,700,607,494 | IssuesEvent | 2017-10-11 06:02:20 | ocf/slackbridge | https://api.github.com/repos/ocf/slackbridge | closed | Create a new IRC bot when a new user joins Slack | enhancement high-priority | Currently they would not be able to talk from Slack -> IRC until the bridge restarts.

There is an [API method for when a new user joins Slack](https://api.slack.com/events/team_join), and messages are also sent when a user joins/quits a channel (with subtypes of `channel_join` and `channel_leave`), so we should be able to hook into those. | 1.0 | Create a new IRC bot when a new user joins Slack - Currently they would not be able to talk from Slack -> IRC until the bridge restarts.

There is an [API method for when a new user joins Slack](https://api.slack.com/events/team_join), and messages are also sent when a user joins/quits a channel (with subtypes of `channel_join` and `channel_leave`), so we should be able to hook into those. | priority | create a new irc bot when a new user joins slack currently they would not be able to talk from slack irc until the bridge restarts there is an and messages are also sent when a user joins quits a channel with subtypes of channel join and channel leave so we should be able to hook into those | 1 |

659,947 | 21,945,464,259 | IssuesEvent | 2022-05-23 23:40:41 | DIT113-V22/group-14 | https://api.github.com/repos/DIT113-V22/group-14 | opened | Add unique QR code signpost | enhancement sprint #4 High Priority | - [ ] Create unique texture for each signpost

- [ ] Edit signpost asset to be slimmer so it can sit closer to pot (aids QR code processing)

- [ ] Import signposts

- [ ] Position signposts | 1.0 | Add unique QR code signpost - - [ ] Create unique texture for each signpost

- [ ] Edit signpost asset to be slimmer so it can sit closer to pot (aids QR code processing)

- [ ] Import signposts

- [ ] Position signposts | priority | add unique qr code signpost create unique texture for each signpost edit signpost asset to be slimmer so it can sit closer to pot aids qr code processing import signposts position signposts | 1 |

417,817 | 12,179,634,927 | IssuesEvent | 2020-04-28 11:02:07 | Warcraft-GoA-Development-Team/Warcraft-Guardians-of-Azeroth | https://api.github.com/repos/Warcraft-GoA-Development-Team/Warcraft-Guardians-of-Azeroth | closed | [BUG] | Vrukul Blood trait | :beetle: bug :beetle: :exclamation: priority high |

**DO NOT REMOVE PRE-EXISTING LINES**

------------------------------------------------------------------------------------------------------------

-->

**Your mod version is: master branch**

**What expansions do you have installed?

All **

**Please explain your issue in as much detail as possible:**

I have Vrykul blood trait in father and mother

Vrykul blood trait not inherited

**Upload an attachment below: .zip of your save, or screenshots:**

| 1.0 | [BUG] | Vrukul Blood trait -

**DO NOT REMOVE PRE-EXISTING LINES**

------------------------------------------------------------------------------------------------------------

-->

**Your mod version is: master branch**

**What expansions do you have installed?

All **

**Please explain your issue in as much detail as possible:**

I have Vrykul blood trait in father and mother

Vrykul blood trait not inherited

**Upload an attachment below: .zip of your save, or screenshots:**

| priority | vrukul blood trait do not remove pre existing lines your mod version is master branch what expansions do you have installed all please explain your issue in as much detail as possible i have vrykul blood trait in father and mother vrykul blood trait not inherited upload an attachment below zip of your save or screenshots | 1 |

553,491 | 16,372,869,161 | IssuesEvent | 2021-05-15 13:59:33 | GeekyEggo/SoundDeck | https://api.github.com/repos/GeekyEggo/SoundDeck | opened | New action, "Play Folder" | priority: high type: feature | ## Purpose

This issue aims to centralize the discussion of adding a new action that allows users to play audio from a folder.

## Overview

It should be possible, from a new action, to select a folder, and then play audio from that folder when pressing the action. The action should provide a lot of the functionality seen within the existing "Play Audio" action, i.e. device selection and playback action.

## Options

- Playback Device

- Should include "Default" and "Default (Communications)"

- Show list all other playback devices.

- Folder

- The folder that contains the audio files.

- Files

- All(default)

- First (fulfils #38)

- Last

- Order

- Date created

- Date modified

- File name (default)

- Random

- Title (ID3 tag)

- Track Order (ID3 tag)

- Action

- Play / Next (default)

- Play / Stop

- Play All / Stop ¹

- Loop / Stop

- Loop All / Stop ¹

- Loop All / Stop (Reset) ¹

¹ _Only available when the files selection is "All"_

## Requirements

- The action must be able to play audio similar to the "Play Audio" action.

- The action must automatically detect file changes for the selected folder.

- The action must support the following file formats

- MP3, *.mp3, *.mpga

- OGG, *.oga, *.ogg, *.opus

- WAV, *.wav

## References

- #25 Play Audio - Play from Folder

- #38 Play first file in folder | 1.0 | New action, "Play Folder" - ## Purpose

This issue aims to centralize the discussion of adding a new action that allows users to play audio from a folder.

## Overview

It should be possible, from a new action, to select a folder, and then play audio from that folder when pressing the action. The action should provide a lot of the functionality seen within the existing "Play Audio" action, i.e. device selection and playback action.

## Options

- Playback Device

- Should include "Default" and "Default (Communications)"

- Show list all other playback devices.

- Folder

- The folder that contains the audio files.

- Files

- All(default)

- First (fulfils #38)

- Last

- Order

- Date created

- Date modified

- File name (default)

- Random

- Title (ID3 tag)

- Track Order (ID3 tag)

- Action

- Play / Next (default)

- Play / Stop

- Play All / Stop ¹

- Loop / Stop

- Loop All / Stop ¹

- Loop All / Stop (Reset) ¹

¹ _Only available when the files selection is "All"_

## Requirements

- The action must be able to play audio similar to the "Play Audio" action.

- The action must automatically detect file changes for the selected folder.

- The action must support the following file formats

- MP3, *.mp3, *.mpga

- OGG, *.oga, *.ogg, *.opus

- WAV, *.wav

## References

- #25 Play Audio - Play from Folder

- #38 Play first file in folder | priority | new action play folder purpose this issue aims to centralize the discussion of adding a new action that allows users to play audio from a folder overview it should be possible from a new action to select a folder and then play audio from that folder when pressing the action the action should provide a lot of the functionality seen within the existing play audio action i e device selection and playback action options playback device should include default and default communications show list all other playback devices folder the folder that contains the audio files files all default first fulfils last order date created date modified file name default random title tag track order tag action play next default play stop play all stop ¹ loop stop loop all stop ¹ loop all stop reset ¹ ¹ only available when the files selection is all requirements the action must be able to play audio similar to the play audio action the action must automatically detect file changes for the selected folder the action must support the following file formats mpga ogg oga ogg opus wav wav references play audio play from folder play first file in folder | 1 |

109,825 | 4,414,396,674 | IssuesEvent | 2016-08-13 11:57:25 | OpenCollective/OpenCollective | https://api.github.com/repos/OpenCollective/OpenCollective | closed | Github oauth is broken | bug high priority | It errors out with redirect-uri-mismatch.

On github, we have registered `https://api.opencollective.com/connected-accounts/github/callback`.

In our requests, we are sending `https://prod-opencollective-api.herokuapp.com/connected-accounts/github/callback`.

The `herokuapp` url is coming from `API_URL` in heroku settings. Changing that to `api.opencollective.com` triggers a cloudflare alert breaking the flow altogether:

`You've requested a page on a website (api.opencollective.com) that is on the CloudFlare network. Unfortunately, it is resolving to an IP address that is creating a conflict within CloudFlare's system.` | 1.0 | Github oauth is broken - It errors out with redirect-uri-mismatch.

On github, we have registered `https://api.opencollective.com/connected-accounts/github/callback`.

In our requests, we are sending `https://prod-opencollective-api.herokuapp.com/connected-accounts/github/callback`.

The `herokuapp` url is coming from `API_URL` in heroku settings. Changing that to `api.opencollective.com` triggers a cloudflare alert breaking the flow altogether:

`You've requested a page on a website (api.opencollective.com) that is on the CloudFlare network. Unfortunately, it is resolving to an IP address that is creating a conflict within CloudFlare's system.` | priority | github oauth is broken it errors out with redirect uri mismatch on github we have registered in our requests we are sending the herokuapp url is coming from api url in heroku settings changing that to api opencollective com triggers a cloudflare alert breaking the flow altogether you ve requested a page on a website api opencollective com that is on the cloudflare network unfortunately it is resolving to an ip address that is creating a conflict within cloudflare s system | 1 |

604,595 | 18,715,299,100 | IssuesEvent | 2021-11-03 03:12:50 | craftercms/craftercms | https://api.github.com/repos/craftercms/craftercms | closed | History revert option is enabled for path without write permission | bug priority: high CI | ### Bug Report

#### Crafter CMS Version

3.1.x

#### Describe the bug

Using Editorial blueprint, with the following role, a user can see the revert option on the History dialog for some paths.

```

<role name="new-role">

<rule regex="/site/website/articles/2016/12/top-books-for-young-women/index.xml">

<allowed-permissions>

<permission>Read</permission>

<permission>Write</permission>

</allowed-permissions>

</rule>

</role>

```

#### To Reproduce

Steps to reproduce the behavior:

1. Create a new site with the Editorial blueprint

2. Update role as above

3. Create a new user with `new-role` only

4. Login as a user (user1) with 'new-role' permission.

5. check history of the file - top-books-for-young-women

Here you can see the revert option. This is acceptable.

6. Now check history for other pages. eg- Coffee is Good for Your Health

Expected behaviour - The user user1 should not see any 'revert' option.

Actual Behaviour - The user can see revert option. Refer image ref-1.

#### Logs

#### Screenshots

<img width="1445" alt="ref-1" src="https://user-images.githubusercontent.com/2996543/139823286-a8572b20-b73f-45c5-a918-9bf89e8033e3.png">

| 1.0 | History revert option is enabled for path without write permission - ### Bug Report

#### Crafter CMS Version

3.1.x

#### Describe the bug

Using Editorial blueprint, with the following role, a user can see the revert option on the History dialog for some paths.

```

<role name="new-role">

<rule regex="/site/website/articles/2016/12/top-books-for-young-women/index.xml">

<allowed-permissions>

<permission>Read</permission>

<permission>Write</permission>

</allowed-permissions>

</rule>

</role>

```

#### To Reproduce

Steps to reproduce the behavior:

1. Create a new site with the Editorial blueprint

2. Update role as above

3. Create a new user with `new-role` only

4. Login as a user (user1) with 'new-role' permission.

5. check history of the file - top-books-for-young-women

Here you can see the revert option. This is acceptable.

6. Now check history for other pages. eg- Coffee is Good for Your Health

Expected behaviour - The user user1 should not see any 'revert' option.

Actual Behaviour - The user can see revert option. Refer image ref-1.

#### Logs

#### Screenshots

<img width="1445" alt="ref-1" src="https://user-images.githubusercontent.com/2996543/139823286-a8572b20-b73f-45c5-a918-9bf89e8033e3.png">

| priority | history revert option is enabled for path without write permission bug report crafter cms version x describe the bug using editorial blueprint with the following role a user can see the revert option on the history dialog for some paths read write to reproduce steps to reproduce the behavior create a new site with the editorial blueprint update role as above create a new user with new role only login as a user with new role permission check history of the file top books for young women here you can see the revert option this is acceptable now check history for other pages eg coffee is good for your health expected behaviour the user should not see any revert option actual behaviour the user can see revert option refer image ref logs screenshots img width alt ref src | 1 |

492,944 | 14,223,222,395 | IssuesEvent | 2020-11-17 17:54:40 | aims-group/metagrid | https://api.github.com/repos/aims-group/metagrid | closed | Update GitHub Actions frontend workflow to use Environment Files instead of set-env | Priority: High Type: Configuration Type: DevOps | **Is your feature request related to a problem? Please describe.**

A clear and concise description of what the problem is. Ex. I'm always frustrated when [...]

The `set-env` command is deprecated and will be disabled soon. Please upgrade to using Environment Files.

https://github.blog/changelog/2020-10-01-github-actions-deprecating-set-env-and-add-path-commands/

https://docs.github.com/en/free-pro-team@latest/actions/reference/workflow-commands-for-github-actions#environment-files

https://stackoverflow.com/questions/61117865/how-to-set-environment-variable-in-node-js-process-when-deploying-with-github-ac

**Describe the solution you'd like**

A clear and concise description of what you want to happen.

Update GitHub Actions build files

**Describe alternatives you've considered**

A clear and concise description of any alternative solutions or features you've considered.

**Additional context**

Add any other context or screenshots about the feature request here.

| 1.0 | Update GitHub Actions frontend workflow to use Environment Files instead of set-env - **Is your feature request related to a problem? Please describe.**

A clear and concise description of what the problem is. Ex. I'm always frustrated when [...]

The `set-env` command is deprecated and will be disabled soon. Please upgrade to using Environment Files.

https://github.blog/changelog/2020-10-01-github-actions-deprecating-set-env-and-add-path-commands/

https://docs.github.com/en/free-pro-team@latest/actions/reference/workflow-commands-for-github-actions#environment-files

https://stackoverflow.com/questions/61117865/how-to-set-environment-variable-in-node-js-process-when-deploying-with-github-ac

**Describe the solution you'd like**

A clear and concise description of what you want to happen.

Update GitHub Actions build files

**Describe alternatives you've considered**

A clear and concise description of any alternative solutions or features you've considered.

**Additional context**

Add any other context or screenshots about the feature request here.

| priority | update github actions frontend workflow to use environment files instead of set env is your feature request related to a problem please describe a clear and concise description of what the problem is ex i m always frustrated when the set env command is deprecated and will be disabled soon please upgrade to using environment files describe the solution you d like a clear and concise description of what you want to happen update github actions build files describe alternatives you ve considered a clear and concise description of any alternative solutions or features you ve considered additional context add any other context or screenshots about the feature request here | 1 |

208,224 | 7,137,165,075 | IssuesEvent | 2018-01-23 10:03:05 | noavish/24event | https://api.github.com/repos/noavish/24event | opened | get request - all events | high-priority server-side | - [ ] build get request for all events when the page loads - work with fetch (client side) | 1.0 | get request - all events - - [ ] build get request for all events when the page loads - work with fetch (client side) | priority | get request all events build get request for all events when the page loads work with fetch client side | 1 |

817,227 | 30,631,993,235 | IssuesEvent | 2023-07-24 15:05:35 | vscentrum/vsc-software-stack | https://api.github.com/repos/vscentrum/vsc-software-stack | closed | PICRUSt2 | difficulty: easy new priority: high Python site:ugent conda | * link to support ticket: [#2023060960000701](https://otrsdict.ugent.be/otrs/index.pl?Action=AgentTicketZoom;TicketID=122238)

* website: https://github.com/picrust/picrust2

* installation docs: https://github.com/picrust/picrust2/wiki/Installation

* toolchain: `foss/2022b`

* easyblock to use: `PythonBundle`

* required dependencies:

* see https://github.com/picrust/picrust2/blob/master/setup.py

* notes:

* ...

* effort: *(TBD)*

* other install methods

* conda: yes (https://github.com/picrust/picrust2/wiki/Installation#install-from-bioconda)

* container image: no

* pre-built binaries (RHEL8 Linux x86_64): no

* easyconfig outside EasyBuild: no

| 1.0 | PICRUSt2 - * link to support ticket: [#2023060960000701](https://otrsdict.ugent.be/otrs/index.pl?Action=AgentTicketZoom;TicketID=122238)

* website: https://github.com/picrust/picrust2

* installation docs: https://github.com/picrust/picrust2/wiki/Installation

* toolchain: `foss/2022b`

* easyblock to use: `PythonBundle`

* required dependencies:

* see https://github.com/picrust/picrust2/blob/master/setup.py

* notes:

* ...

* effort: *(TBD)*

* other install methods

* conda: yes (https://github.com/picrust/picrust2/wiki/Installation#install-from-bioconda)

* container image: no

* pre-built binaries (RHEL8 Linux x86_64): no

* easyconfig outside EasyBuild: no

| priority | link to support ticket website installation docs toolchain foss easyblock to use pythonbundle required dependencies see notes effort tbd other install methods conda yes container image no pre built binaries linux no easyconfig outside easybuild no | 1 |

5,017 | 2,570,444,483 | IssuesEvent | 2015-02-10 09:32:53 | UnifiedViews/Core | https://api.github.com/repos/UnifiedViews/Core | closed | Backend stopped | priority: High severity: bug | Now I am not sure what caused backend to stop, whether the exceptions or the DPU update, which is the last log line (developUK branch)

<pre><code>

2014-11-20 07:20:47,783 [dpu: [INTLIB] PSP Extractor] WARN exec:84 dpu:2008 c.c.m.x.odcs.backend.db.SQLDatabaseReconnectAspect - failureTolerant has caught exception

org.springframework.transaction.TransactionSystemException: Could not commit JPA transaction; nested exception is javax.persistence.RollbackException: Exception [EclipseLink-4002] (Eclipse Persistence Services - 2.5.1.v20130918-f2b9fc5): org.eclipse.persistence.exceptions.DatabaseException

Internal Exception: com.mysql.jdbc.exceptions.jdbc4.CommunicationsException: The last packet successfully received from the server was72817 seconds ago.The last packet sent successfully to the server was 72817 seconds ago, which is longer than the server configured value of 'wait_timeout'. You should consider either expiring and/or testing connection validity before use in your application, increasing the server configured values for client timeouts, or using the Connector/J connection property 'autoReconnect=true' to avoid this problem.

Error Code: 0

Call: SELECT ID FROM dpu_instance WHERE (ID = ?)

bind => [2008]

Query: DoesExistQuery(referenceClass=DPUInstanceRecord sql="SELECT ID FROM dpu_instance WHERE (ID = ?)")

at org.springframework.orm.jpa.JpaTransactionManager.doCommit(JpaTransactionManager.java:521) ~[spring-orm-3.1.4.RELEASE.jar:3.1.4.RELEASE]

at org.springframework.transaction.support.AbstractPlatformTransactionManager.processCommit(AbstractPlatformTransactionManager.java:754) ~[spring-tx-3.1.4.RELEASE.jar:3.1.4.RELEASE]

at org.springframework.transaction.support.AbstractPlatformTransactionManager.commit(AbstractPlatformTransactionManager.java:723) ~[spring-tx-3.1.4.RELEASE.jar:3.1.4.RELEASE]

at org.springframework.transaction.interceptor.TransactionAspectSupport.commitTransactionAfterReturning(TransactionAspectSupport.java:387) ~[spring-tx-3.1.4.RELEASE.jar:3.1.4.RELEASE]

at org.springframework.transaction.aspectj.AbstractTransactionAspect.ajc$afterReturning$org_springframework_transaction_aspectj_AbstractTransactionAspect$3$2a73e96c(AbstractTransactionAspect.aj:78) ~[spring-aspects-3.1.4.RELEASE.jar:3.1.4.RELEASE]

at cz.cuni.mff.xrg.odcs.commons.app.facade.DPUFacadeImpl.save(DPUFacadeImpl.java:270) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at sun.reflect.GeneratedMethodAccessor18.invoke(Unknown Source) ~[na:na]

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43) ~[na:1.7.0_72]

at java.lang.reflect.Method.invoke(Method.java:606) ~[na:1.7.0_72]

at org.springframework.aop.support.AopUtils.invokeJoinpointUsingReflection(AopUtils.java:319) ~[spring-aop-3.1.4.RELEASE.jar:3.1.4.RELEASE]

at org.springframework.aop.framework.ReflectiveMethodInvocation.invokeJoinpoint(ReflectiveMethodInvocation.java:183) [spring-aop-3.1.4.RELEASE.jar:3.1.4.RELEASE]

at org.springframework.aop.framework.ReflectiveMethodInvocation.proceed(ReflectiveMethodInvocation.java:150) [spring-aop-3.1.4.RELEASE.jar:3.1.4.RELEASE]

at org.springframework.aop.aspectj.MethodInvocationProceedingJoinPoint.proceed(MethodInvocationProceedingJoinPoint.java:80) ~[spring-aop-3.1.4.RELEASE.jar:3.1.4.RELEASE]

at cz.cuni.mff.xrg.odcs.backend.db.SQLDatabaseReconnectAspect.failureTolerant(SQLDatabaseReconnectAspect.java:101) ~[backend-1.4.1-SNAPSHOT.jar:na]

at sun.reflect.GeneratedMethodAccessor12.invoke(Unknown Source) ~[na:na]

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43) ~[na:1.7.0_72]

at java.lang.reflect.Method.invoke(Method.java:606) ~[na:1.7.0_72]

at org.springframework.aop.aspectj.AbstractAspectJAdvice.invokeAdviceMethodWithGivenArgs(AbstractAspectJAdvice.java:621) [spring-aop-3.1.4.RELEASE.jar:3.1.4.RELEASE]

at org.springframework.aop.aspectj.AbstractAspectJAdvice.invokeAdviceMethod(AbstractAspectJAdvice.java:610) [spring-aop-3.1.4.RELEASE.jar:3.1.4.RELEASE]

at org.springframework.aop.aspectj.AspectJAroundAdvice.invoke(AspectJAroundAdvice.java:65) [spring-aop-3.1.4.RELEASE.jar:3.1.4.RELEASE]

at org.springframework.aop.framework.ReflectiveMethodInvocation.proceed(ReflectiveMethodInvocation.java:172) [spring-aop-3.1.4.RELEASE.jar:3.1.4.RELEASE]

at org.springframework.aop.interceptor.ExposeInvocationInterceptor.invoke(ExposeInvocationInterceptor.java:90) [spring-aop-3.1.4.RELEASE.jar:3.1.4.RELEASE]

at org.springframework.aop.framework.ReflectiveMethodInvocation.proceed(ReflectiveMethodInvocation.java:172) [spring-aop-3.1.4.RELEASE.jar:3.1.4.RELEASE]

at org.springframework.aop.framework.JdkDynamicAopProxy.invoke(JdkDynamicAopProxy.java:202) [spring-aop-3.1.4.RELEASE.jar:3.1.4.RELEASE]

at com.sun.proxy.$Proxy30.save(Unknown Source) [na:na]

at cz.cuni.mff.xrg.odcs.backend.EventListenerDatabase.onDPUEvent(EventListenerDatabase.java:29) [backend-1.4.1-SNAPSHOT.jar:na]

at cz.cuni.mff.xrg.odcs.backend.EventListenerDatabase.onApplicationEvent(EventListenerDatabase.java:46) [backend-1.4.1-SNAPSHOT.jar:na]

at org.springframework.context.event.SimpleApplicationEventMulticaster.multicastEvent(SimpleApplicationEventMulticaster.java:97) [spring-context-3.1.4.RELEASE.jar:3.1.4.RELEASE]

at org.springframework.context.support.AbstractApplicationContext.publishEvent(AbstractApplicationContext.java:327) [spring-context-3.1.4.RELEASE.jar:3.1.4.RELEASE]

at cz.cuni.mff.xrg.odcs.backend.context.Context.sendMessage(Context.java:280) [backend-1.4.1-SNAPSHOT.jar:na]

at cz.cuni.mff.xrg.odcs.backend.context.Context.sendMessage(Context.java:264) [backend-1.4.1-SNAPSHOT.jar:na]

at cz.cuni.mff.xrg.odcs.backend.context.Context.sendMessage(Context.java:257) [backend-1.4.1-SNAPSHOT.jar:na]

at cz.opendata.linked.psp_cz.metadata.Extractor.innerExecute(Extractor.java:113) [bundlefile:na]

at cz.cuni.mff.xrg.uv.boost.dpu.advanced.DpuAdvancedBase.execute(DpuAdvancedBase.java:223) [boost-dpu-1.1.2.jar:na]

at cz.cuni.mff.xrg.odcs.backend.execution.dpu.DPUExecutor.executeInstance(DPUExecutor.java:231) [backend-1.4.1-SNAPSHOT.jar:na]

at cz.cuni.mff.xrg.odcs.backend.execution.dpu.DPUExecutor.execute(DPUExecutor.java:369) [backend-1.4.1-SNAPSHOT.jar:na]

at cz.cuni.mff.xrg.odcs.backend.execution.dpu.DPUExecutor.run(DPUExecutor.java:451) [backend-1.4.1-SNAPSHOT.jar:na]

at java.lang.Thread.run(Thread.java:745) [na:1.7.0_72]

Caused by: javax.persistence.RollbackException: Exception [EclipseLink-4002] (Eclipse Persistence Services - 2.5.1.v20130918-f2b9fc5): org.eclipse.persistence.exceptions.DatabaseException

Internal Exception: com.mysql.jdbc.exceptions.jdbc4.CommunicationsException: The last packet successfully received from the server was72817 seconds ago.The last packet sent successfully to the server was 72817 seconds ago, which is longer than the server configured value of 'wait_timeout'. You should consider either expiring and/or testing connection validity before use in your application, increasing the server configured values for client timeouts, or using the Connector/J connection property 'autoReconnect=true' to avoid this problem.

Error Code: 0

Call: SELECT ID FROM dpu_instance WHERE (ID = ?)

bind => [2008]

Query: DoesExistQuery(referenceClass=DPUInstanceRecord sql="SELECT ID FROM dpu_instance WHERE (ID = ?)")

at org.eclipse.persistence.internal.jpa.transaction.EntityTransactionImpl.commit(EntityTransactionImpl.java:157) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.springframework.orm.jpa.JpaTransactionManager.doCommit(JpaTransactionManager.java:512) ~[spring-orm-3.1.4.RELEASE.jar:3.1.4.RELEASE]

... 37 common frames omitted

Caused by: org.eclipse.persistence.exceptions.DatabaseException:

Internal Exception: com.mysql.jdbc.exceptions.jdbc4.CommunicationsException: The last packet successfully received from the server was72817 seconds ago.The last packet sent successfully to the server was 72817 seconds ago, which is longer than the server configured value of 'wait_timeout'. You should consider either expiring and/or testing connection validity before use in your application, increasing the server configured values for client timeouts, or using the Connector/J connection property 'autoReconnect=true' to avoid this problem.

Error Code: 0

Call: SELECT ID FROM dpu_instance WHERE (ID = ?)

bind => [2008]

Query: DoesExistQuery(referenceClass=DPUInstanceRecord sql="SELECT ID FROM dpu_instance WHERE (ID = ?)")

at org.eclipse.persistence.exceptions.DatabaseException.sqlException(DatabaseException.java:340) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.databaseaccess.DatabaseAccessor.processExceptionForCommError(DatabaseAccessor.java:1611) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.databaseaccess.DatabaseAccessor.basicExecuteCall(DatabaseAccessor.java:674) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.databaseaccess.DatabaseAccessor.executeCall(DatabaseAccessor.java:558) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.sessions.AbstractSession.basicExecuteCall(AbstractSession.java:1991) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.sessions.server.ServerSession.executeCall(ServerSession.java:570) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.sessions.server.ClientSession.executeCall(ClientSession.java:250) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.queries.DatasourceCallQueryMechanism.executeCall(DatasourceCallQueryMechanism.java:242) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.queries.DatasourceCallQueryMechanism.executeCall(DatasourceCallQueryMechanism.java:228) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.queries.DatasourceCallQueryMechanism.selectRowForDoesExist(DatasourceCallQueryMechanism.java:736) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.queries.DoesExistQuery.executeDatabaseQuery(DoesExistQuery.java:241) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.queries.DatabaseQuery.execute(DatabaseQuery.java:899) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.queries.DatabaseQuery.executeInUnitOfWork(DatabaseQuery.java:798) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.sessions.UnitOfWorkImpl.internalExecuteQuery(UnitOfWorkImpl.java:2896) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.sessions.AbstractSession.executeQuery(AbstractSession.java:1793) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.sessions.AbstractSession.executeQuery(AbstractSession.java:1775) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.sessions.AbstractSession.executeQuery(AbstractSession.java:1726) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.sessions.UnitOfWorkImpl.checkForUnregisteredExistingObject(UnitOfWorkImpl.java:785) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.sessions.UnitOfWorkImpl.discoverAndPersistUnregisteredNewObjects(UnitOfWorkImpl.java:4194) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.mappings.ObjectReferenceMapping.cascadeDiscoverAndPersistUnregisteredNewObjects(ObjectReferenceMapping.java:949) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.mappings.ObjectReferenceMapping.cascadeDiscoverAndPersistUnregisteredNewObjects(ObjectReferenceMapping.java:927) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.descriptors.ObjectBuilder.cascadeDiscoverAndPersistUnregisteredNewObjects(ObjectBuilder.java:2514) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.sessions.UnitOfWorkImpl.discoverAndPersistUnregisteredNewObjects(UnitOfWorkImpl.java:4207) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.sessions.RepeatableWriteUnitOfWork.discoverUnregisteredNewObjects(RepeatableWriteUnitOfWork.java:305) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.sessions.UnitOfWorkImpl.calculateChanges(UnitOfWorkImpl.java:723) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.sessions.UnitOfWorkImpl.commitToDatabaseWithChangeSet(UnitOfWorkImpl.java:1516) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.sessions.RepeatableWriteUnitOfWork.commitRootUnitOfWork(RepeatableWriteUnitOfWork.java:277) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.sessions.UnitOfWorkImpl.commitAndResume(UnitOfWorkImpl.java:1169) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.jpa.transaction.EntityTransactionImpl.commit(EntityTransactionImpl.java:132) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

... 38 common frames omitted

Caused by: com.mysql.jdbc.exceptions.jdbc4.CommunicationsException: The last packet successfully received from the server was72817 seconds ago.The last packet sent successfully to the server was 72817 seconds ago, which is longer than the server configured value of 'wait_timeout'. You should consider either expiring and/or testing connection validity before use in your application, increasing the server configured values for client timeouts, or using the Connector/J connection property 'autoReconnect=true' to avoid this problem.

at sun.reflect.GeneratedConstructorAccessor215.newInstance(Unknown Source) ~[na:na]

at sun.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:45) ~[na:1.7.0_72]

at java.lang.reflect.Constructor.newInstance(Constructor.java:526) ~[na:1.7.0_72]

at com.mysql.jdbc.Util.handleNewInstance(Util.java:406) ~[mysql-connector-java-5.1.6.jar:na]

at com.mysql.jdbc.SQLError.createCommunicationsException(SQLError.java:1074) ~[mysql-connector-java-5.1.6.jar:na]

at com.mysql.jdbc.MysqlIO.send(MysqlIO.java:3246) ~[mysql-connector-java-5.1.6.jar:na]

at com.mysql.jdbc.MysqlIO.sendCommand(MysqlIO.java:1917) ~[mysql-connector-java-5.1.6.jar:na]

at com.mysql.jdbc.MysqlIO.sqlQueryDirect(MysqlIO.java:2060) ~[mysql-connector-java-5.1.6.jar:na]

at com.mysql.jdbc.ConnectionImpl.execSQL(ConnectionImpl.java:2542) ~[mysql-connector-java-5.1.6.jar:na]

at com.mysql.jdbc.PreparedStatement.executeInternal(PreparedStatement.java:1734) ~[mysql-connector-java-5.1.6.jar:na]

at com.mysql.jdbc.PreparedStatement.executeQuery(PreparedStatement.java:1885) ~[mysql-connector-java-5.1.6.jar:na]

at org.apache.commons.dbcp.DelegatingPreparedStatement.executeQuery(DelegatingPreparedStatement.java:96) ~[commons-dbcp-1.4.jar:1.4]

at org.apache.commons.dbcp.DelegatingPreparedStatement.executeQuery(DelegatingPreparedStatement.java:96) ~[commons-dbcp-1.4.jar:1.4]

at org.eclipse.persistence.internal.databaseaccess.DatabaseAccessor.executeSelect(DatabaseAccessor.java:1007) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.databaseaccess.DatabaseAccessor.basicExecuteCall(DatabaseAccessor.java:642) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

... 64 common frames omitted

Caused by: java.net.SocketException: Broken pipe

at java.net.SocketOutputStream.socketWrite0(Native Method) ~[na:1.7.0_72]

at java.net.SocketOutputStream.socketWrite(SocketOutputStream.java:113) ~[na:1.7.0_72]

at java.net.SocketOutputStream.write(SocketOutputStream.java:159) ~[na:1.7.0_72]

at java.io.BufferedOutputStream.flushBuffer(BufferedOutputStream.java:82) ~[na:1.7.0_72]

at java.io.BufferedOutputStream.flush(BufferedOutputStream.java:140) ~[na:1.7.0_72]

at com.mysql.jdbc.MysqlIO.send(MysqlIO.java:3227) ~[mysql-connector-java-5.1.6.jar:na]

... 73 common frames omitted

2014-11-20 07:20:47,784 [dpu: [INTLIB] PSP Extractor] WARN exec:84 dpu:2008 c.c.m.x.odcs.backend.db.SQLDatabaseReconnectAspect - Database is down after 1 attempts.

2014-11-20 07:20:53,719 [taskScheduler-6] TRACE exec: dpu: c.c.mff.xrg.odcs.commons.app.scheduling.Schedule - onTimeCheck started

2014-11-20 07:21:06,113 [taskScheduler-2] DEBUG exec: dpu: cz.cuni.mff.xrg.odcs.backend.execution.Engine - >>> Entering checkJobs()

2014-11-20 07:32:46,991 [File notifier server] DEBUG exec: dpu: c.c.m.x.o.c.app.module.osgi.OSGIChangeManager - Udating DPU in: extractor_rdfa_distiller

</code></pre> | 1.0 | Backend stopped - I am not sure what caused the backend to stop: the exceptions or the DPU update, which is the last log line (developUK branch)

<pre><code>

2014-11-20 07:20:47,783 [dpu: [INTLIB] PSP Extractor] WARN exec:84 dpu:2008 c.c.m.x.odcs.backend.db.SQLDatabaseReconnectAspect - failureTolerant has caught exception

org.springframework.transaction.TransactionSystemException: Could not commit JPA transaction; nested exception is javax.persistence.RollbackException: Exception [EclipseLink-4002] (Eclipse Persistence Services - 2.5.1.v20130918-f2b9fc5): org.eclipse.persistence.exceptions.DatabaseException

Internal Exception: com.mysql.jdbc.exceptions.jdbc4.CommunicationsException: The last packet successfully received from the server was72817 seconds ago.The last packet sent successfully to the server was 72817 seconds ago, which is longer than the server configured value of 'wait_timeout'. You should consider either expiring and/or testing connection validity before use in your application, increasing the server configured values for client timeouts, or using the Connector/J connection property 'autoReconnect=true' to avoid this problem.

Error Code: 0

Call: SELECT ID FROM dpu_instance WHERE (ID = ?)

bind => [2008]

Query: DoesExistQuery(referenceClass=DPUInstanceRecord sql="SELECT ID FROM dpu_instance WHERE (ID = ?)")

at org.springframework.orm.jpa.JpaTransactionManager.doCommit(JpaTransactionManager.java:521) ~[spring-orm-3.1.4.RELEASE.jar:3.1.4.RELEASE]

at org.springframework.transaction.support.AbstractPlatformTransactionManager.processCommit(AbstractPlatformTransactionManager.java:754) ~[spring-tx-3.1.4.RELEASE.jar:3.1.4.RELEASE]

at org.springframework.transaction.support.AbstractPlatformTransactionManager.commit(AbstractPlatformTransactionManager.java:723) ~[spring-tx-3.1.4.RELEASE.jar:3.1.4.RELEASE]

at org.springframework.transaction.interceptor.TransactionAspectSupport.commitTransactionAfterReturning(TransactionAspectSupport.java:387) ~[spring-tx-3.1.4.RELEASE.jar:3.1.4.RELEASE]

at org.springframework.transaction.aspectj.AbstractTransactionAspect.ajc$afterReturning$org_springframework_transaction_aspectj_AbstractTransactionAspect$3$2a73e96c(AbstractTransactionAspect.aj:78) ~[spring-aspects-3.1.4.RELEASE.jar:3.1.4.RELEASE]

at cz.cuni.mff.xrg.odcs.commons.app.facade.DPUFacadeImpl.save(DPUFacadeImpl.java:270) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at sun.reflect.GeneratedMethodAccessor18.invoke(Unknown Source) ~[na:na]

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43) ~[na:1.7.0_72]

at java.lang.reflect.Method.invoke(Method.java:606) ~[na:1.7.0_72]

at org.springframework.aop.support.AopUtils.invokeJoinpointUsingReflection(AopUtils.java:319) ~[spring-aop-3.1.4.RELEASE.jar:3.1.4.RELEASE]

at org.springframework.aop.framework.ReflectiveMethodInvocation.invokeJoinpoint(ReflectiveMethodInvocation.java:183) [spring-aop-3.1.4.RELEASE.jar:3.1.4.RELEASE]

at org.springframework.aop.framework.ReflectiveMethodInvocation.proceed(ReflectiveMethodInvocation.java:150) [spring-aop-3.1.4.RELEASE.jar:3.1.4.RELEASE]

at org.springframework.aop.aspectj.MethodInvocationProceedingJoinPoint.proceed(MethodInvocationProceedingJoinPoint.java:80) ~[spring-aop-3.1.4.RELEASE.jar:3.1.4.RELEASE]

at cz.cuni.mff.xrg.odcs.backend.db.SQLDatabaseReconnectAspect.failureTolerant(SQLDatabaseReconnectAspect.java:101) ~[backend-1.4.1-SNAPSHOT.jar:na]

at sun.reflect.GeneratedMethodAccessor12.invoke(Unknown Source) ~[na:na]

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43) ~[na:1.7.0_72]

at java.lang.reflect.Method.invoke(Method.java:606) ~[na:1.7.0_72]

at org.springframework.aop.aspectj.AbstractAspectJAdvice.invokeAdviceMethodWithGivenArgs(AbstractAspectJAdvice.java:621) [spring-aop-3.1.4.RELEASE.jar:3.1.4.RELEASE]

at org.springframework.aop.aspectj.AbstractAspectJAdvice.invokeAdviceMethod(AbstractAspectJAdvice.java:610) [spring-aop-3.1.4.RELEASE.jar:3.1.4.RELEASE]

at org.springframework.aop.aspectj.AspectJAroundAdvice.invoke(AspectJAroundAdvice.java:65) [spring-aop-3.1.4.RELEASE.jar:3.1.4.RELEASE]

at org.springframework.aop.framework.ReflectiveMethodInvocation.proceed(ReflectiveMethodInvocation.java:172) [spring-aop-3.1.4.RELEASE.jar:3.1.4.RELEASE]

at org.springframework.aop.interceptor.ExposeInvocationInterceptor.invoke(ExposeInvocationInterceptor.java:90) [spring-aop-3.1.4.RELEASE.jar:3.1.4.RELEASE]

at org.springframework.aop.framework.ReflectiveMethodInvocation.proceed(ReflectiveMethodInvocation.java:172) [spring-aop-3.1.4.RELEASE.jar:3.1.4.RELEASE]

at org.springframework.aop.framework.JdkDynamicAopProxy.invoke(JdkDynamicAopProxy.java:202) [spring-aop-3.1.4.RELEASE.jar:3.1.4.RELEASE]

at com.sun.proxy.$Proxy30.save(Unknown Source) [na:na]

at cz.cuni.mff.xrg.odcs.backend.EventListenerDatabase.onDPUEvent(EventListenerDatabase.java:29) [backend-1.4.1-SNAPSHOT.jar:na]

at cz.cuni.mff.xrg.odcs.backend.EventListenerDatabase.onApplicationEvent(EventListenerDatabase.java:46) [backend-1.4.1-SNAPSHOT.jar:na]

at org.springframework.context.event.SimpleApplicationEventMulticaster.multicastEvent(SimpleApplicationEventMulticaster.java:97) [spring-context-3.1.4.RELEASE.jar:3.1.4.RELEASE]

at org.springframework.context.support.AbstractApplicationContext.publishEvent(AbstractApplicationContext.java:327) [spring-context-3.1.4.RELEASE.jar:3.1.4.RELEASE]

at cz.cuni.mff.xrg.odcs.backend.context.Context.sendMessage(Context.java:280) [backend-1.4.1-SNAPSHOT.jar:na]

at cz.cuni.mff.xrg.odcs.backend.context.Context.sendMessage(Context.java:264) [backend-1.4.1-SNAPSHOT.jar:na]

at cz.cuni.mff.xrg.odcs.backend.context.Context.sendMessage(Context.java:257) [backend-1.4.1-SNAPSHOT.jar:na]

at cz.opendata.linked.psp_cz.metadata.Extractor.innerExecute(Extractor.java:113) [bundlefile:na]

at cz.cuni.mff.xrg.uv.boost.dpu.advanced.DpuAdvancedBase.execute(DpuAdvancedBase.java:223) [boost-dpu-1.1.2.jar:na]

at cz.cuni.mff.xrg.odcs.backend.execution.dpu.DPUExecutor.executeInstance(DPUExecutor.java:231) [backend-1.4.1-SNAPSHOT.jar:na]

at cz.cuni.mff.xrg.odcs.backend.execution.dpu.DPUExecutor.execute(DPUExecutor.java:369) [backend-1.4.1-SNAPSHOT.jar:na]

at cz.cuni.mff.xrg.odcs.backend.execution.dpu.DPUExecutor.run(DPUExecutor.java:451) [backend-1.4.1-SNAPSHOT.jar:na]

at java.lang.Thread.run(Thread.java:745) [na:1.7.0_72]

Caused by: javax.persistence.RollbackException: Exception [EclipseLink-4002] (Eclipse Persistence Services - 2.5.1.v20130918-f2b9fc5): org.eclipse.persistence.exceptions.DatabaseException

Internal Exception: com.mysql.jdbc.exceptions.jdbc4.CommunicationsException: The last packet successfully received from the server was72817 seconds ago.The last packet sent successfully to the server was 72817 seconds ago, which is longer than the server configured value of 'wait_timeout'. You should consider either expiring and/or testing connection validity before use in your application, increasing the server configured values for client timeouts, or using the Connector/J connection property 'autoReconnect=true' to avoid this problem.

Error Code: 0

Call: SELECT ID FROM dpu_instance WHERE (ID = ?)

bind => [2008]

Query: DoesExistQuery(referenceClass=DPUInstanceRecord sql="SELECT ID FROM dpu_instance WHERE (ID = ?)")

at org.eclipse.persistence.internal.jpa.transaction.EntityTransactionImpl.commit(EntityTransactionImpl.java:157) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.springframework.orm.jpa.JpaTransactionManager.doCommit(JpaTransactionManager.java:512) ~[spring-orm-3.1.4.RELEASE.jar:3.1.4.RELEASE]

... 37 common frames omitted

Caused by: org.eclipse.persistence.exceptions.DatabaseException:

Internal Exception: com.mysql.jdbc.exceptions.jdbc4.CommunicationsException: The last packet successfully received from the server was72817 seconds ago.The last packet sent successfully to the server was 72817 seconds ago, which is longer than the server configured value of 'wait_timeout'. You should consider either expiring and/or testing connection validity before use in your application, increasing the server configured values for client timeouts, or using the Connector/J connection property 'autoReconnect=true' to avoid this problem.

Error Code: 0

Call: SELECT ID FROM dpu_instance WHERE (ID = ?)

bind => [2008]

Query: DoesExistQuery(referenceClass=DPUInstanceRecord sql="SELECT ID FROM dpu_instance WHERE (ID = ?)")

at org.eclipse.persistence.exceptions.DatabaseException.sqlException(DatabaseException.java:340) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.databaseaccess.DatabaseAccessor.processExceptionForCommError(DatabaseAccessor.java:1611) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.databaseaccess.DatabaseAccessor.basicExecuteCall(DatabaseAccessor.java:674) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.databaseaccess.DatabaseAccessor.executeCall(DatabaseAccessor.java:558) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.sessions.AbstractSession.basicExecuteCall(AbstractSession.java:1991) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.sessions.server.ServerSession.executeCall(ServerSession.java:570) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.sessions.server.ClientSession.executeCall(ClientSession.java:250) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.queries.DatasourceCallQueryMechanism.executeCall(DatasourceCallQueryMechanism.java:242) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.queries.DatasourceCallQueryMechanism.executeCall(DatasourceCallQueryMechanism.java:228) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.queries.DatasourceCallQueryMechanism.selectRowForDoesExist(DatasourceCallQueryMechanism.java:736) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.queries.DoesExistQuery.executeDatabaseQuery(DoesExistQuery.java:241) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.queries.DatabaseQuery.execute(DatabaseQuery.java:899) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.queries.DatabaseQuery.executeInUnitOfWork(DatabaseQuery.java:798) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.sessions.UnitOfWorkImpl.internalExecuteQuery(UnitOfWorkImpl.java:2896) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.sessions.AbstractSession.executeQuery(AbstractSession.java:1793) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.sessions.AbstractSession.executeQuery(AbstractSession.java:1775) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.sessions.AbstractSession.executeQuery(AbstractSession.java:1726) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.sessions.UnitOfWorkImpl.checkForUnregisteredExistingObject(UnitOfWorkImpl.java:785) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.sessions.UnitOfWorkImpl.discoverAndPersistUnregisteredNewObjects(UnitOfWorkImpl.java:4194) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.mappings.ObjectReferenceMapping.cascadeDiscoverAndPersistUnregisteredNewObjects(ObjectReferenceMapping.java:949) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.mappings.ObjectReferenceMapping.cascadeDiscoverAndPersistUnregisteredNewObjects(ObjectReferenceMapping.java:927) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.descriptors.ObjectBuilder.cascadeDiscoverAndPersistUnregisteredNewObjects(ObjectBuilder.java:2514) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.sessions.UnitOfWorkImpl.discoverAndPersistUnregisteredNewObjects(UnitOfWorkImpl.java:4207) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.sessions.RepeatableWriteUnitOfWork.discoverUnregisteredNewObjects(RepeatableWriteUnitOfWork.java:305) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.sessions.UnitOfWorkImpl.calculateChanges(UnitOfWorkImpl.java:723) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.sessions.UnitOfWorkImpl.commitToDatabaseWithChangeSet(UnitOfWorkImpl.java:1516) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.sessions.RepeatableWriteUnitOfWork.commitRootUnitOfWork(RepeatableWriteUnitOfWork.java:277) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.sessions.UnitOfWorkImpl.commitAndResume(UnitOfWorkImpl.java:1169) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.jpa.transaction.EntityTransactionImpl.commit(EntityTransactionImpl.java:132) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

... 38 common frames omitted

Caused by: com.mysql.jdbc.exceptions.jdbc4.CommunicationsException: The last packet successfully received from the server was72817 seconds ago.The last packet sent successfully to the server was 72817 seconds ago, which is longer than the server configured value of 'wait_timeout'. You should consider either expiring and/or testing connection validity before use in your application, increasing the server configured values for client timeouts, or using the Connector/J connection property 'autoReconnect=true' to avoid this problem.

at sun.reflect.GeneratedConstructorAccessor215.newInstance(Unknown Source) ~[na:na]

at sun.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:45) ~[na:1.7.0_72]

at java.lang.reflect.Constructor.newInstance(Constructor.java:526) ~[na:1.7.0_72]

at com.mysql.jdbc.Util.handleNewInstance(Util.java:406) ~[mysql-connector-java-5.1.6.jar:na]

at com.mysql.jdbc.SQLError.createCommunicationsException(SQLError.java:1074) ~[mysql-connector-java-5.1.6.jar:na]

at com.mysql.jdbc.MysqlIO.send(MysqlIO.java:3246) ~[mysql-connector-java-5.1.6.jar:na]

at com.mysql.jdbc.MysqlIO.sendCommand(MysqlIO.java:1917) ~[mysql-connector-java-5.1.6.jar:na]

at com.mysql.jdbc.MysqlIO.sqlQueryDirect(MysqlIO.java:2060) ~[mysql-connector-java-5.1.6.jar:na]

at com.mysql.jdbc.ConnectionImpl.execSQL(ConnectionImpl.java:2542) ~[mysql-connector-java-5.1.6.jar:na]

at com.mysql.jdbc.PreparedStatement.executeInternal(PreparedStatement.java:1734) ~[mysql-connector-java-5.1.6.jar:na]

at com.mysql.jdbc.PreparedStatement.executeQuery(PreparedStatement.java:1885) ~[mysql-connector-java-5.1.6.jar:na]

at org.apache.commons.dbcp.DelegatingPreparedStatement.executeQuery(DelegatingPreparedStatement.java:96) ~[commons-dbcp-1.4.jar:1.4]

at org.apache.commons.dbcp.DelegatingPreparedStatement.executeQuery(DelegatingPreparedStatement.java:96) ~[commons-dbcp-1.4.jar:1.4]

at org.eclipse.persistence.internal.databaseaccess.DatabaseAccessor.executeSelect(DatabaseAccessor.java:1007) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

at org.eclipse.persistence.internal.databaseaccess.DatabaseAccessor.basicExecuteCall(DatabaseAccessor.java:642) ~[commons-app-1.4.1-SNAPSHOT.jar:na]

... 64 common frames omitted

Caused by: java.net.SocketException: Broken pipe

at java.net.SocketOutputStream.socketWrite0(Native Method) ~[na:1.7.0_72]

at java.net.SocketOutputStream.socketWrite(SocketOutputStream.java:113) ~[na:1.7.0_72]

at java.net.SocketOutputStream.write(SocketOutputStream.java:159) ~[na:1.7.0_72]

at java.io.BufferedOutputStream.flushBuffer(BufferedOutputStream.java:82) ~[na:1.7.0_72]

at java.io.BufferedOutputStream.flush(BufferedOutputStream.java:140) ~[na:1.7.0_72]

at com.mysql.jdbc.MysqlIO.send(MysqlIO.java:3227) ~[mysql-connector-java-5.1.6.jar:na]

... 73 common frames omitted

2014-11-20 07:20:47,784 [dpu: [INTLIB] PSP Extractor] WARN exec:84 dpu:2008 c.c.m.x.odcs.backend.db.SQLDatabaseReconnectAspect - Database is down after 1 attempts.

2014-11-20 07:20:53,719 [taskScheduler-6] TRACE exec: dpu: c.c.mff.xrg.odcs.commons.app.scheduling.Schedule - onTimeCheck started

2014-11-20 07:21:06,113 [taskScheduler-2] DEBUG exec: dpu: cz.cuni.mff.xrg.odcs.backend.execution.Engine - >>> Entering checkJobs()

2014-11-20 07:32:46,991 [File notifier server] DEBUG exec: dpu: c.c.m.x.o.c.app.module.osgi.OSGIChangeManager - Udating DPU in: extractor_rdfa_distiller

</code></pre> | priority | backend stopped now i am not sure what caused backend to stop whether the exceptions or the dpu update which is the last log line developuk branch psp extractor warn exec dpu c c m x odcs backend db sqldatabasereconnectaspect failuretolerant has caught exception org springframework transaction transactionsystemexception could not commit jpa transaction nested exception is javax persistence rollbackexception exception eclipse persistence services org eclipse persistence exceptions databaseexception internal exception com mysql jdbc exceptions communicationsexception the last packet successfully received from the server seconds ago the last packet sent successfully to the server was seconds ago which is longer than the server configured value of wait timeout you should consider either expiring and or testing connection validity before use in your application increasing the server configured values for client timeouts or using the connector j connection property autoreconnect true to avoid this problem error code call select id from dpu instance where id bind query doesexistquery referenceclass dpuinstancerecord sql select id from dpu instance where id at org springframework orm jpa jpatransactionmanager docommit jpatransactionmanager java at org springframework transaction support abstractplatformtransactionmanager processcommit abstractplatformtransactionmanager java at org springframework transaction support abstractplatformtransactionmanager commit abstractplatformtransactionmanager java at org springframework transaction interceptor transactionaspectsupport committransactionafterreturning transactionaspectsupport java at org springframework transaction aspectj abstracttransactionaspect ajc afterreturning org springframework transaction aspectj abstracttransactionaspect abstracttransactionaspect aj at cz cuni mff xrg odcs commons app facade dpufacadeimpl save dpufacadeimpl java at sun reflect invoke unknown source at sun reflect 
delegatingmethodaccessorimpl invoke delegatingmethodaccessorimpl java at java lang reflect method invoke method java at org springframework aop support aoputils invokejoinpointusingreflection aoputils java at org springframework aop framework reflectivemethodinvocation invokejoinpoint reflectivemethodinvocation java at org springframework aop framework reflectivemethodinvocation proceed reflectivemethodinvocation java at org springframework aop aspectj methodinvocationproceedingjoinpoint proceed methodinvocationproceedingjoinpoint java at cz cuni mff xrg odcs backend db sqldatabasereconnectaspect failuretolerant sqldatabasereconnectaspect java at sun reflect invoke unknown source at sun reflect delegatingmethodaccessorimpl invoke delegatingmethodaccessorimpl java at java lang reflect method invoke method java at org springframework aop aspectj abstractaspectjadvice invokeadvicemethodwithgivenargs abstractaspectjadvice java at org springframework aop aspectj abstractaspectjadvice invokeadvicemethod abstractaspectjadvice java at org springframework aop aspectj aspectjaroundadvice invoke aspectjaroundadvice java at org springframework aop framework reflectivemethodinvocation proceed reflectivemethodinvocation java at org springframework aop interceptor exposeinvocationinterceptor invoke exposeinvocationinterceptor java at org springframework aop framework reflectivemethodinvocation proceed reflectivemethodinvocation java at org springframework aop framework jdkdynamicaopproxy invoke jdkdynamicaopproxy java at com sun proxy save unknown source at cz cuni mff xrg odcs backend eventlistenerdatabase ondpuevent eventlistenerdatabase java at cz cuni mff xrg odcs backend eventlistenerdatabase onapplicationevent eventlistenerdatabase java at org springframework context event simpleapplicationeventmulticaster multicastevent simpleapplicationeventmulticaster java at org springframework context support abstractapplicationcontext publishevent abstractapplicationcontext java at cz 
cuni mff xrg odcs backend context context sendmessage context java at cz cuni mff xrg odcs backend context context sendmessage context java at cz cuni mff xrg odcs backend context context sendmessage context java at cz opendata linked psp cz metadata extractor innerexecute extractor java at cz cuni mff xrg uv boost dpu advanced dpuadvancedbase execute dpuadvancedbase java at cz cuni mff xrg odcs backend execution dpu dpuexecutor executeinstance dpuexecutor java at cz cuni mff xrg odcs backend execution dpu dpuexecutor execute dpuexecutor java at cz cuni mff xrg odcs backend execution dpu dpuexecutor run dpuexecutor java at java lang thread run thread java caused by javax persistence rollbackexception exception eclipse persistence services org eclipse persistence exceptions databaseexception internal exception com mysql jdbc exceptions communicationsexception the last packet successfully received from the server seconds ago the last packet sent successfully to the server was seconds ago which is longer than the server configured value of wait timeout you should consider either expiring and or testing connection validity before use in your application increasing the server configured values for client timeouts or using the connector j connection property autoreconnect true to avoid this problem error code call select id from dpu instance where id bind query doesexistquery referenceclass dpuinstancerecord sql select id from dpu instance where id at org eclipse persistence internal jpa transaction entitytransactionimpl commit entitytransactionimpl java at org springframework orm jpa jpatransactionmanager docommit jpatransactionmanager java common frames omitted caused by org eclipse persistence exceptions databaseexception internal exception com mysql jdbc exceptions communicationsexception the last packet successfully received from the server seconds ago the last packet sent successfully to the server was seconds ago which is longer than the server configured value of 
wait timeout you should consider either expiring and or testing connection validity before use in your application increasing the server configured values for client timeouts or using the connector j connection property autoreconnect true to avoid this problem error code call select id from dpu instance where id bind query doesexistquery referenceclass dpuinstancerecord sql select id from dpu instance where id at org eclipse persistence exceptions databaseexception sqlexception databaseexception java at org eclipse persistence internal databaseaccess databaseaccessor processexceptionforcommerror databaseaccessor java at org eclipse persistence internal databaseaccess databaseaccessor basicexecutecall databaseaccessor java at org eclipse persistence internal databaseaccess databaseaccessor executecall databaseaccessor java at org eclipse persistence internal sessions abstractsession basicexecutecall abstractsession java at org eclipse persistence sessions server serversession executecall serversession java at org eclipse persistence sessions server clientsession executecall clientsession java at org eclipse persistence internal queries datasourcecallquerymechanism executecall datasourcecallquerymechanism java at org eclipse persistence internal queries datasourcecallquerymechanism executecall datasourcecallquerymechanism java at org eclipse persistence internal queries datasourcecallquerymechanism selectrowfordoesexist datasourcecallquerymechanism java at org eclipse persistence queries doesexistquery executedatabasequery doesexistquery java at org eclipse persistence queries databasequery execute databasequery java at org eclipse persistence queries databasequery executeinunitofwork databasequery java at org eclipse persistence internal sessions unitofworkimpl internalexecutequery unitofworkimpl java at org eclipse persistence internal sessions abstractsession executequery abstractsession java at org eclipse persistence internal sessions abstractsession 
executequery abstractsession java at org eclipse persistence internal sessions abstractsession executequery abstractsession java at org eclipse persistence internal sessions unitofworkimpl checkforunregisteredexistingobject unitofworkimpl java at org eclipse persistence internal sessions unitofworkimpl discoverandpersistunregisterednewobjects unitofworkimpl java at org eclipse persistence mappings objectreferencemapping cascadediscoverandpersistunregisterednewobjects objectreferencemapping java at org eclipse persistence mappings objectreferencemapping cascadediscoverandpersistunregisterednewobjects objectreferencemapping java at org eclipse persistence internal descriptors objectbuilder cascadediscoverandpersistunregisterednewobjects objectbuilder java at org eclipse persistence internal sessions unitofworkimpl discoverandpersistunregisterednewobjects unitofworkimpl java at org eclipse persistence internal sessions repeatablewriteunitofwork discoverunregisterednewobjects repeatablewriteunitofwork java at org eclipse persistence internal sessions unitofworkimpl calculatechanges unitofworkimpl java at org eclipse persistence internal sessions unitofworkimpl committodatabasewithchangeset unitofworkimpl java at org eclipse persistence internal sessions repeatablewriteunitofwork commitrootunitofwork repeatablewriteunitofwork java at org eclipse persistence internal sessions unitofworkimpl commitandresume unitofworkimpl java at org eclipse persistence internal jpa transaction entitytransactionimpl commit entitytransactionimpl java common frames omitted caused by com mysql jdbc exceptions communicationsexception the last packet successfully received from the server seconds ago the last packet sent successfully to the server was seconds ago which is longer than the server configured value of wait timeout you should consider either expiring and or testing connection validity before use in your application increasing the server configured values for client timeouts or using 
the connector j connection property autoreconnect true to avoid this problem at sun reflect newinstance unknown source at sun reflect delegatingconstructoraccessorimpl newinstance delegatingconstructoraccessorimpl java at java lang reflect constructor newinstance constructor java at com mysql jdbc util handlenewinstance util java at com mysql jdbc sqlerror createcommunicationsexception sqlerror java at com mysql jdbc mysqlio send mysqlio java at com mysql jdbc mysqlio sendcommand mysqlio java at com mysql jdbc mysqlio sqlquerydirect mysqlio java at com mysql jdbc connectionimpl execsql connectionimpl java at com mysql jdbc preparedstatement executeinternal preparedstatement java at com mysql jdbc preparedstatement executequery preparedstatement java at org apache commons dbcp delegatingpreparedstatement executequery delegatingpreparedstatement java at org apache commons dbcp delegatingpreparedstatement executequery delegatingpreparedstatement java at org eclipse persistence internal databaseaccess databaseaccessor executeselect databaseaccessor java at org eclipse persistence internal databaseaccess databaseaccessor basicexecutecall databaseaccessor java common frames omitted caused by java net socketexception broken pipe at java net socketoutputstream native method at java net socketoutputstream socketwrite socketoutputstream java at java net socketoutputstream write socketoutputstream java at java io bufferedoutputstream flushbuffer bufferedoutputstream java at java io bufferedoutputstream flush bufferedoutputstream java at com mysql jdbc mysqlio send mysqlio java common frames omitted psp extractor warn exec dpu c c m x odcs backend db sqldatabasereconnectaspect database is down after attempts common frames omitted psp extractor warn exec dpu c c m x odcs backend db sqldatabasereconnectaspect database is down after attempts trace exec dpu c c mff xrg odcs commons app scheduling schedule ontimecheck started debug exec dpu cz cuni mff xrg odcs backend execution 
engine entering checkjobs debug exec dpu c c m x o c app module osgi osgichangemanager udating dpu in extractor rdfa distiller | 1 |

554,033 | 16,387,847,413 | IssuesEvent | 2021-05-17 12:51:16 | gammapy/gammapy | https://api.github.com/repos/gammapy/gammapy | closed | Clean up model names | cleanup priority-high | This is a reminder issue for myself to clean up the model class names, once #2319 is done.

Moving the models broke all user scripts & notebooks anyways, so now is a good time to do renames of models.

Plan for names is to add "Source", "Spatial", "Spectral" and "Time" in a uniform way: https://github.com/gammapy/gammapy/blob/master/docs/development/pigs/pig-016.rst#introduce-gammapymodeling

| 1.0 | Clean up model names - This is a reminder issue for myself to clean up the model class names, once #2319 is done.

Moving the models broke all user scripts & notebooks anyways, so now is a good time to do renames of models.

Plan for names is to add "Source", "Spatial", "Spectral" and "Time" in a uniform way: https://github.com/gammapy/gammapy/blob/master/docs/development/pigs/pig-016.rst#introduce-gammapymodeling

| priority | clean up model names this is a reminder issue for myself to clean up the model class names once is done moving the models broke all user scripts notebooks anyways so now is a good time to do renames of models plan for names is to add source spatial spectral and time in a uniform way | 1 |

528,108 | 15,360,232,594 | IssuesEvent | 2021-03-01 16:42:32 | mantidproject/mantid | https://api.github.com/repos/mantidproject/mantid | closed | ISIS reduction always crashes | Bug Direct Inelastic High Priority Stale | <!-- TEMPLATE FOR BUG REPORTS -->

**Original reporter:** [ISIS excitation group]/[DaaaS testers]. <!--If the issue was raised by a user they should be named here.-->

Att of: @martyngigg; @NickDraper

### Expected behavior

### Actual behavior

The ISIS reduction script always crashes on all Unix machines when it runs long enough. There appears to be a memory leak. Windows machines are also affected, but the problem is more difficult to diagnose there.

### Steps to reproduce the behavior

Typical ISIS reduction scripts demonstrating this behaviour are located at:

1) [LET/ReductionWrapper_withPerformance.py](https://github.com/mantidproject/scriptrepository/blob/master/direct_inelastic/LET/ReductionWrapper_withPerformance.py) -- the logger,

This contains the normal ISIS inelastic data reduction cycle, with an added facility to measure the performance and memory usage of the reduction. It currently uses the `free -m` Unix command and the Python `resource` module to measure memory usage. The performance logs are stored in the script directory as text files whose names are built from the instrument name and the host name.

and:

2) [MERLINReduction_2018_2WithPerformance.py](https://github.com/mantidproject/scriptrepository/blob/master/direct_inelastic/MERLIN/MERLINReduction_2018_2WithPerformance.py)

An executor script: a normal ISIS reduction script modified to use module (1). Other executors are available, but this one is the most efficient.

The less memory a machine has, the sooner the issue occurs. However, isiscompute, with 0.5Tb of memory, is also susceptible when multiple users occupy a substantial chunk of its memory.

To demonstrate the issue, place these two files in a single folder on a Unix machine that has the archive mounted at root. The machine should also have the ISIS instrument parameters in /usr/local/mprogs/InstrumentFiles. See rows 150-151 of the executor script, where the necessary paths to the data are specified. The results and the log files are written to the script folder.

The issue is best reproduced on a 32Gb DaaaS virtual machine ([https://isis.analysis.stfc.ac.uk/#/login](https://isis.analysis.stfc.ac.uk/#/login)) as follows.

The reduction script starts and has the following memory and performance characteristics, as printed in the log file:

>

*** Test started: 29/10/2018 at 17:39

*** Self Memory: 487.6640625Kb; Kids memory 136.0234375Kb

*** total used free shared buff/cache available

*** Mem: 32169 700 30789 13 679 31027 -- **these are the values returned by free -m**

*** Swap: 2047 647 1400

*** ---> File MER41624.nxs processed in 76.16sec

*** Self Memory: 15167.0Kb; Kids memory 305.0Kb -- **these are the values retrieved from resources**

*** Mem: 32169 15322 16087 13 758 16401

*** Swap: 2047 647 1400

*** ---> File MER41625.nxs processed in 33.68sec

*** Self Memory: 18495.0Kb; Kids memory 14860.0Kb

*** Can not launch subprocess to evaluate free memory.

*** ---> File MER41626.nxs processed in 34.54sec

*** Self Memory: 18495.0Kb; Kids memory 14860.0Kb

*** Mem: 32169 5221 26103 13 844 26502

*** Swap: 2047 647 1400

The memory reported by the **resource** module starts creeping up.