Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

31,497 | 2,733,156,095 | IssuesEvent | 2015-04-17 12:09:56 | tjcsl/cslbot | https://api.github.com/repos/tjcsl/cslbot | closed | gizoogle filter strips anything after an opening HTML (<) tag | bot bug high priority | No description given.

Issue created by jwoglom!~jwoglom@unaffiliated/jwoglom | 1.0 | gizoogle filter strips anything after an opening HTML (<) tag - No description given.

Issue created by jwoglom!~jwoglom@unaffiliated/jwoglom | priority | gizoogle filter strips anything after an opening html tag no description given issue created by jwoglom jwoglom unaffiliated jwoglom | 1 |

304,193 | 9,322,383,408 | IssuesEvent | 2019-03-27 08:00:55 | rucio/rucio | https://api.github.com/repos/rucio/rucio | opened | traceback error in conveyor-submitter and judge* | Priority: High bug | Motivation

----------

When no scheme is specified in the configuration, the conveyor fails to find a common scheme for the source and destination files, here `gsiftp`:

```

2019-03-27 08:51:47,386 3214 CRITICAL Exception happened when trying to get transfer for request 5d9c6d99ebf04465ad3bef4d8078219c: Traceback (most recent call last):

File "/usr/local/lib/python2.7/dist-packages/rucio/core/transfer.py", line 624, in get_transfer_requests_and_source_replicas

'schemes': __add_compatible_schemes(schemes=[matching_scheme[0]], allowed_schemes=current_schemes),

File "/usr/local/lib/python2.7/dist-packages/rucio/core/transfer.py", line 923, in __add_compatible_schemes

if scheme in allowed_schemes:

TypeError: argument of type 'NoneType' is not iterable

```

The respective rules stay in the STUCK state when asked for reevaluation:

```

2019-03-27 08:49:23,677 3213 INFO rule_repairer[0/0]: Repairing rule 32d6775ead104b2c8be50cc946f01782

2019-03-27 08:49:23,749 3213 INFO Rule 32d6775ead104b2c8be50cc946f01782 [0/0/1] state=STUCK

```

| 1.0 | traceback error in conveyor-submitter and judge* - Motivation

----------

When no scheme is specified in the configuration, the conveyor fails to find a common scheme for the source and destination files, here `gsiftp`:

```

2019-03-27 08:51:47,386 3214 CRITICAL Exception happened when trying to get transfer for request 5d9c6d99ebf04465ad3bef4d8078219c: Traceback (most recent call last):

File "/usr/local/lib/python2.7/dist-packages/rucio/core/transfer.py", line 624, in get_transfer_requests_and_source_replicas

'schemes': __add_compatible_schemes(schemes=[matching_scheme[0]], allowed_schemes=current_schemes),

File "/usr/local/lib/python2.7/dist-packages/rucio/core/transfer.py", line 923, in __add_compatible_schemes

if scheme in allowed_schemes:

TypeError: argument of type 'NoneType' is not iterable

```

The respective rules stay in the STUCK state when asked for reevaluation:

```

2019-03-27 08:49:23,677 3213 INFO rule_repairer[0/0]: Repairing rule 32d6775ead104b2c8be50cc946f01782

2019-03-27 08:49:23,749 3213 INFO Rule 32d6775ead104b2c8be50cc946f01782 [0/0/1] state=STUCK

```

| priority | traceback error in conveyor submitter and judge motivation when no scheme is specified in the configuration the conveyor fails to find a common scheme for the source and destination files here gsiftp critical exception happened when trying to get transfer for request traceback most recent call last file usr local lib dist packages rucio core transfer py line in get transfer requests and source replicas schemes add compatible schemes schemes allowed schemes current schemes file usr local lib dist packages rucio core transfer py line in add compatible schemes if scheme in allowed schemes typeerror argument of type nonetype is not iterable the respective rules stay in the stuck state when asked for reevaluation info rule repairer repairing rule info rule state stuck | 1 |
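
The traceback in the record above ends in a membership test against `None` (`if scheme in allowed_schemes`). A minimal sketch of a guard for that case follows. It is not the Rucio implementation: the function name `filter_allowed_schemes` is hypothetical, and treating `None` as "no restriction configured" is an assumption made only for illustration.

```python
def filter_allowed_schemes(schemes, allowed_schemes=None):
    """Return the entries of `schemes` that are permitted.

    Hypothetical helper, not part of Rucio. Assumption: a value of None
    for `allowed_schemes` means that no scheme restriction is configured,
    so every candidate scheme is kept.
    """
    if allowed_schemes is None:
        # Without this guard, `scheme in None` raises TypeError,
        # which is the failure shown in the traceback.
        return list(schemes)
    return [scheme for scheme in schemes if scheme in allowed_schemes]


if __name__ == "__main__":
    print(filter_allowed_schemes(["gsiftp"], None))
    print(filter_allowed_schemes(["gsiftp", "srm"], ["srm"]))
```

Whether an unset configuration should allow every scheme or none is a policy decision for the project; the sketch only shows where the `None` check would sit.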

173,031 | 6,519,215,041 | IssuesEvent | 2017-08-28 11:47:49 | CanberraOceanRacingClub/namadgi3 | https://api.github.com/repos/CanberraOceanRacingClub/namadgi3 | opened | Bar crossing SOP | priority 1: High | Many members will be unfamiliar with crossing river bars in a large vessel. The Clyde River Bar presents a special problem for CORC member. | 1.0 | Bar crossing SOP - Many members will be unfamiliar with crossing river bars in a large vessel. The Clyde River Bar presents a special problem for CORC member. | priority | bar crossing sop many members will be unfamiliar with crossing river bars in a large vessel the clyde river bar presents a special problem for corc member | 1 |

637,571 | 20,672,128,676 | IssuesEvent | 2022-03-10 04:11:41 | wso2/product-apim | https://api.github.com/repos/wso2/product-apim | closed | Cannot subscribe to webhook APIs in APIM configured with Postgres | Type/Bug Severity/Blocker Priority/Highest WUM Affected/4.0.0 | ### Description:

Cannot subscribe to webhook API. the following error is thrown. The reason for this error is timestamp is set to null in WebhooksDAO.addSubscription() in https://github.com/wso2/carbon-apimgt/blob/master/components/apimgt/org.wso2.carbon.apimgt.impl/src/main/java/org/wso2/carbon/apimgt/impl/dao/WebhooksDAO.java#L182

**SecretValidationTestCase** and **WebSubAPITestCase** test cases are failing due to this

[2021-10-13 12:08:29,456] ERROR - NotifyApiServiceImpl Error while processing notification

org.wso2.carbon.apimgt.api.APIManagementException: Error while adding subscriptions request for callbackhttp://www.google.com for the API 35e130c1-d5a4-47b5-9e7d-8909e4fdebbe

at org.wso2.carbon.apimgt.impl.dao.WebhooksDAO.addSubscription_aroundBody10(WebhooksDAO.java:187) ~[org.wso2.carbon.apimgt.impl_9.0.174.51.jar:?]

at org.wso2.carbon.apimgt.impl.dao.WebhooksDAO.addSubscription(WebhooksDAO.java:163) ~[org.wso2.carbon.apimgt.impl_9.0.174.51.jar:?]

at org.wso2.carbon.apimgt.impl.dao.WebhooksDAO.addSubscription_aroundBody2(WebhooksDAO.java:87) ~[org.wso2.carbon.apimgt.impl_9.0.174.51.jar:?]

at org.wso2.carbon.apimgt.impl.dao.WebhooksDAO.addSubscription(WebhooksDAO.java:75) ~[org.wso2.carbon.apimgt.impl_9.0.174.51.jar:?]

at org.wso2.carbon.apimgt.notification.WebhooksSubscriptionEventHandler.handleEvent(WebhooksSubscriptionEventHandler.java:51) ~[org.wso2.carbon.apimgt.notification_9.0.174.jar:?]

at org.wso2.carbon.apimgt.notification.NotificationEventService.processEvent(NotificationEventService.java:44) ~[org.wso2.carbon.apimgt.notification_9.0.174.jar:?]

at org.wso2.carbon.apimgt.internal.service.impl.NotifyApiServiceImpl.notifyPost(NotifyApiServiceImpl.java:29) [classes/:?]

at org.wso2.carbon.apimgt.internal.service.NotifyApi.notifyPost(NotifyApi.java:47) [classes/:?]

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method) ~[?:1.8.0_161]

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62) ~[?:1.8.0_161]

:

:

at org.apache.tomcat.util.threads.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:659) [tomcat_9.0.52.wso2v2.jar:?]

at org.apache.tomcat.util.threads.TaskThread$WrappingRunnable.run(TaskThread.java:61) [tomcat_9.0.52.wso2v2.jar:?]

at java.lang.Thread.run(Thread.java:748) [?:1.8.0_161]

Caused by: org.postgresql.util.PSQLException: ERROR: column "delivered_at" is of type timestamp without time zone but expression is of type character varying

Hint: You will need to rewrite or cast the expression.

Position: 228

at org.postgresql.core.v3.QueryExecutorImpl.receiveErrorResponse(QueryExecutorImpl.java:2284) ~[postgresql-9.4.1208.jar:9.4.1208]

at org.postgresql.core.v3.QueryExecutorImpl.processResults(QueryExecutorImpl.java:2003) ~[postgresql-9.4.1208.jar:9.4.1208]

at org.postgresql.core.v3.QueryExecutorImpl.execute(QueryExecutorImpl.java:200) ~[postgresql-9.4.1208.jar:9.4.1208]

at org.postgresql.jdbc.PgStatement.execute(PgStatement.java:424) ~[postgresql-9.4.1208.jar:9.4.1208]

at org.postgresql.jdbc.PgPreparedStatement.executeWithFlags(PgPreparedStatement.java:161) ~[postgresql-9.4.1208.jar:9.4.1208]

at org.postgresql.jdbc.PgPreparedStatement.executeUpdate(PgPreparedStatement.java:133) ~[postgresql-9.4.1208.jar:9.4.1208]

at sun.reflect.GeneratedMethodAccessor143.invoke(Unknown Source) ~[?:?]

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43) ~[?:1.8.0_161]

at java.lang.reflect.Method.invoke(Method.java:498) ~[?:1.8.0_161]

at org.apache.tomcat.jdbc.pool.StatementFacade$StatementProxy.invoke(StatementFacade.java:114) ~[jdbc-pool_9.0.35.wso2v1.jar:?]

at com.sun.proxy.$Proxy47.executeUpdate(Unknown Source) ~[?:?]

at org.wso2.carbon.apimgt.impl.dao.WebhooksDAO.addSubscription_aroundBody10(WebhooksDAO.java:185) ~[org.wso2.carbon.apimgt.impl_9.0.174.51.jar:?]

... 59 more

### Steps to reproduce:

1. Setup webook and subscribe as mentioned in https://apim.docs.wso2.com/en/latest/tutorials/streaming-api/create-and-publish-websub-api/

### Affected Product Version:

APIM 4.0.0

### Environment details (with versions):

DB: Postgres 10.x

| 1.0 | Cannot subscribe to webhook APIs in APIM configured with Postgres - ### Description:

Cannot subscribe to webhook API. the following error is thrown. The reason for this error is timestamp is set to null in WebhooksDAO.addSubscription() in https://github.com/wso2/carbon-apimgt/blob/master/components/apimgt/org.wso2.carbon.apimgt.impl/src/main/java/org/wso2/carbon/apimgt/impl/dao/WebhooksDAO.java#L182

**SecretValidationTestCase** and **WebSubAPITestCase** test cases are failing due to this

[2021-10-13 12:08:29,456] ERROR - NotifyApiServiceImpl Error while processing notification

org.wso2.carbon.apimgt.api.APIManagementException: Error while adding subscriptions request for callbackhttp://www.google.com for the API 35e130c1-d5a4-47b5-9e7d-8909e4fdebbe

at org.wso2.carbon.apimgt.impl.dao.WebhooksDAO.addSubscription_aroundBody10(WebhooksDAO.java:187) ~[org.wso2.carbon.apimgt.impl_9.0.174.51.jar:?]

at org.wso2.carbon.apimgt.impl.dao.WebhooksDAO.addSubscription(WebhooksDAO.java:163) ~[org.wso2.carbon.apimgt.impl_9.0.174.51.jar:?]

at org.wso2.carbon.apimgt.impl.dao.WebhooksDAO.addSubscription_aroundBody2(WebhooksDAO.java:87) ~[org.wso2.carbon.apimgt.impl_9.0.174.51.jar:?]

at org.wso2.carbon.apimgt.impl.dao.WebhooksDAO.addSubscription(WebhooksDAO.java:75) ~[org.wso2.carbon.apimgt.impl_9.0.174.51.jar:?]

at org.wso2.carbon.apimgt.notification.WebhooksSubscriptionEventHandler.handleEvent(WebhooksSubscriptionEventHandler.java:51) ~[org.wso2.carbon.apimgt.notification_9.0.174.jar:?]

at org.wso2.carbon.apimgt.notification.NotificationEventService.processEvent(NotificationEventService.java:44) ~[org.wso2.carbon.apimgt.notification_9.0.174.jar:?]

at org.wso2.carbon.apimgt.internal.service.impl.NotifyApiServiceImpl.notifyPost(NotifyApiServiceImpl.java:29) [classes/:?]

at org.wso2.carbon.apimgt.internal.service.NotifyApi.notifyPost(NotifyApi.java:47) [classes/:?]

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method) ~[?:1.8.0_161]

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62) ~[?:1.8.0_161]

:

:

at org.apache.tomcat.util.threads.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:659) [tomcat_9.0.52.wso2v2.jar:?]

at org.apache.tomcat.util.threads.TaskThread$WrappingRunnable.run(TaskThread.java:61) [tomcat_9.0.52.wso2v2.jar:?]

at java.lang.Thread.run(Thread.java:748) [?:1.8.0_161]

Caused by: org.postgresql.util.PSQLException: ERROR: column "delivered_at" is of type timestamp without time zone but expression is of type character varying

Hint: You will need to rewrite or cast the expression.

Position: 228

at org.postgresql.core.v3.QueryExecutorImpl.receiveErrorResponse(QueryExecutorImpl.java:2284) ~[postgresql-9.4.1208.jar:9.4.1208]

at org.postgresql.core.v3.QueryExecutorImpl.processResults(QueryExecutorImpl.java:2003) ~[postgresql-9.4.1208.jar:9.4.1208]

at org.postgresql.core.v3.QueryExecutorImpl.execute(QueryExecutorImpl.java:200) ~[postgresql-9.4.1208.jar:9.4.1208]

at org.postgresql.jdbc.PgStatement.execute(PgStatement.java:424) ~[postgresql-9.4.1208.jar:9.4.1208]

at org.postgresql.jdbc.PgPreparedStatement.executeWithFlags(PgPreparedStatement.java:161) ~[postgresql-9.4.1208.jar:9.4.1208]

at org.postgresql.jdbc.PgPreparedStatement.executeUpdate(PgPreparedStatement.java:133) ~[postgresql-9.4.1208.jar:9.4.1208]

at sun.reflect.GeneratedMethodAccessor143.invoke(Unknown Source) ~[?:?]

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43) ~[?:1.8.0_161]

at java.lang.reflect.Method.invoke(Method.java:498) ~[?:1.8.0_161]

at org.apache.tomcat.jdbc.pool.StatementFacade$StatementProxy.invoke(StatementFacade.java:114) ~[jdbc-pool_9.0.35.wso2v1.jar:?]

at com.sun.proxy.$Proxy47.executeUpdate(Unknown Source) ~[?:?]

at org.wso2.carbon.apimgt.impl.dao.WebhooksDAO.addSubscription_aroundBody10(WebhooksDAO.java:185) ~[org.wso2.carbon.apimgt.impl_9.0.174.51.jar:?]

... 59 more

### Steps to reproduce:

1. Setup webook and subscribe as mentioned in https://apim.docs.wso2.com/en/latest/tutorials/streaming-api/create-and-publish-websub-api/

### Affected Product Version:

APIM 4.0.0

### Environment details (with versions):

DB: Postgres 10.x

| priority | cannot subscribe to webhook apis in apim configured with postgres description cannot subscribe to webhook api the following error is thrown the reason for this error is timestamp is set to null in webhooksdao addsubscription in secretvalidationtestcase and websubapitestcase test cases are failing due to this error notifyapiserviceimpl error while processing notification org carbon apimgt api apimanagementexception error while adding subscriptions request for callback for the api at org carbon apimgt impl dao webhooksdao addsubscription webhooksdao java at org carbon apimgt impl dao webhooksdao addsubscription webhooksdao java at org carbon apimgt impl dao webhooksdao addsubscription webhooksdao java at org carbon apimgt impl dao webhooksdao addsubscription webhooksdao java at org carbon apimgt notification webhookssubscriptioneventhandler handleevent webhookssubscriptioneventhandler java at org carbon apimgt notification notificationeventservice processevent notificationeventservice java at org carbon apimgt internal service impl notifyapiserviceimpl notifypost notifyapiserviceimpl java at org carbon apimgt internal service notifyapi notifypost notifyapi java at sun reflect nativemethodaccessorimpl native method at sun reflect nativemethodaccessorimpl invoke nativemethodaccessorimpl java at org apache tomcat util threads threadpoolexecutor worker run threadpoolexecutor java at org apache tomcat util threads taskthread wrappingrunnable run taskthread java at java lang thread run thread java caused by org postgresql util psqlexception error column delivered at is of type timestamp without time zone but expression is of type character varying hint you will need to rewrite or cast the expression position at org postgresql core queryexecutorimpl receiveerrorresponse queryexecutorimpl java at org postgresql core queryexecutorimpl processresults queryexecutorimpl java at org postgresql core queryexecutorimpl execute queryexecutorimpl java at org postgresql 
jdbc pgstatement execute pgstatement java at org postgresql jdbc pgpreparedstatement executewithflags pgpreparedstatement java at org postgresql jdbc pgpreparedstatement executeupdate pgpreparedstatement java at sun reflect invoke unknown source at sun reflect delegatingmethodaccessorimpl invoke delegatingmethodaccessorimpl java at java lang reflect method invoke method java at org apache tomcat jdbc pool statementfacade statementproxy invoke statementfacade java at com sun proxy executeupdate unknown source at org carbon apimgt impl dao webhooksdao addsubscription webhooksdao java more steps to reproduce setup webook and subscribe as mentioned in affected product version apim environment details with versions db postgres x | 1 |

578,829 | 17,155,783,599 | IssuesEvent | 2021-07-14 06:36:35 | wso2/product-is | https://api.github.com/repos/wso2/product-is | closed | The User_Code in OAuth Device Flow cannot be configured to have a user defined keyset | Component/OAuth Priority/High Severity/Major improvement | **Description:**

The User_Code in device flow is generated from a static key set [1] and hence, cannot be configured by the admin.

[1] - https://github.com/wso2-extensions/identity-inbound-auth-oauth/blob/b211bbcddaa6006ae0b40f89c363ea4480a009e9/components/org.wso2.carbon.identity.oauth/src/main/java/org/wso2/carbon/identity/oauth2/device/constants/Constants.java#L59

| 1.0 | The User_Code in OAuth Device Flow cannot be configured to have a user defined keyset - **Description:**

The User_Code in device flow is generated from a static key set [1] and hence, cannot be configured by the admin.

[1] - https://github.com/wso2-extensions/identity-inbound-auth-oauth/blob/b211bbcddaa6006ae0b40f89c363ea4480a009e9/components/org.wso2.carbon.identity.oauth/src/main/java/org/wso2/carbon/identity/oauth2/device/constants/Constants.java#L59

| priority | the user code in oauth device flow cannot be configured to have a user defined keyset description the user code in device flow is generated from a static key set and hence cannot be configured by the admin | 1 |

603,993 | 18,675,296,000 | IssuesEvent | 2021-10-31 13:05:25 | FantasticoFox/DataAccounting | https://api.github.com/repos/FantasticoFox/DataAccounting | opened | [Feature] Implement Chunking to keep remote-verification feasable | high priority ux | It takes time to verify revisions, it takes longer time with Signatures as it is an additional compute step and it takes longest with remote lockups on-chain to check if a time-stamp is correct.

If a page has more then 50 revisions this can take 30 s+ on a remote server (especially if the server is on the other side of the world and you have ~250 ms latency). To avoid unmanageable chain-verification issues we can 'chunk' every 50 revisions (it's arbitrary but goes along with the blob of loading 50 revisions in MW).

Implementation specification:

* There is a manual way to do chunking by just 'moving a page and renaming it with the last page_verification hash in the title as an identifier' - this allows when linking the old page to have the state of the page as well. If 'correct linking' is implemented this becomes obsolete as the pages will link with page_verification hashes.

* An automated implemented would detect how many verified revisions exist and move the page as a consequence of succeeding 50 pages with auto-rename and should be turned into 'read only' [Action:Protect] to restrict the page from any further manipulation. The original page should have (in the content section) a new footer added called 'Data Accounting Chunking' with a drop down menu, implemented like in the signatures.

| 1.0 | [Feature] Implement Chunking to keep remote-verification feasable - It takes time to verify revisions, it takes longer time with Signatures as it is an additional compute step and it takes longest with remote lockups on-chain to check if a time-stamp is correct.

If a page has more then 50 revisions this can take 30 s+ on a remote server (especially if the server is on the other side of the world and you have ~250 ms latency). To avoid unmanageable chain-verification issues we can 'chunk' every 50 revisions (it's arbitrary but goes along with the blob of loading 50 revisions in MW).

Implementation specification:

* There is a manual way to do chunking by just 'moving a page and renaming it with the last page_verification hash in the title as an identifier' - this allows when linking the old page to have the state of the page as well. If 'correct linking' is implemented this becomes obsolete as the pages will link with page_verification hashes.

* An automated implemented would detect how many verified revisions exist and move the page as a consequence of succeeding 50 pages with auto-rename and should be turned into 'read only' [Action:Protect] to restrict the page from any further manipulation. The original page should have (in the content section) a new footer added called 'Data Accounting Chunking' with a drop down menu, implemented like in the signatures.

| priority | implement chunking to keep remote verification feasable it takes time to verify revisions it takes longer time with signatures as it is an additional compute step and it takes longest with remote lockups on chain to check if a time stamp is correct if a page has more then revisions this can take s on a remote server especially if the server is on the other side of the world and you have ms latency to avoid unmanageable chain verification issues we can chunk every revisions it s arbitrary but goes along with the blob of loading revisions in mw implementation specification there is a manual way to do chunking by just moving a page and renaming it with the last page verification hash in the title as an identifier this allows when linking the old page to have the state of the page as well if correct linking is implemented this becomes obsolete as the pages will link with page verification hashes an automated implemented would detect how many verified revisions exist and move the page as a consequence of succeeding pages with auto rename and should be turned into read only to restrict the page from any further manipulation the original page should have in the content section a new footer added called data accounting chunking with a drop down menu implemented like in the signatures | 1 |
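
The chunking idea in the record above (a new chunk after every 50 verified revisions) can be sketched as a plain list split. This is only an illustration under assumptions: the function name `chunk_revisions` and the representation of revisions as a list of identifiers are hypothetical, and the sketch leaves out the page move, rename, and protection steps the issue describes.

```python
def chunk_revisions(revision_ids, chunk_size=50):
    """Split an ordered list of revision ids into consecutive chunks.

    Hypothetical helper for illustration. The default of 50 follows the
    figure given in the issue, which the issue itself calls arbitrary.
    """
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    return [
        revision_ids[start:start + chunk_size]
        for start in range(0, len(revision_ids), chunk_size)
    ]


if __name__ == "__main__":
    chunks = chunk_revisions(list(range(120)))
    # Each chunk could then be verified independently.
    print([len(chunk) for chunk in chunks])
```

With 120 revisions this yields two full chunks and one partial chunk, so a verifier would need to walk at most 50 revisions per page.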

348,709 | 10,451,736,885 | IssuesEvent | 2019-09-19 13:26:43 | ahmedkaludi/accelerated-mobile-pages | https://api.github.com/repos/ahmedkaludi/accelerated-mobile-pages | closed | Custom post description migrates to image description | Urgent [Priority: HIGH] bug | Excerpts are showing in captions

To re-occur, import WordPress.2019-08-28.xml from the attachments,

and see the post "Стратегии на E3 2019"

and then view it's AMP version. You will find this text added below the image as caption "Обзор всех новостей стратегий с выставки" - THis text is actually the excerpt of the post.

[Downloads.zip](https://github.com/ahmedkaludi/accelerated-mobile-pages/files/3562443/Downloads.zip)

REF: https://wordpress.org/support/topic/custom-post-description-migrates-to-image-description/#post-11875201 | 1.0 | Custom post description migrates to image description - Excerpts are showing in captions

To re-occur, import WordPress.2019-08-28.xml from the attachments,

and see the post "Стратегии на E3 2019"

and then view it's AMP version. You will find this text added below the image as caption "Обзор всех новостей стратегий с выставки" - THis text is actually the excerpt of the post.

[Downloads.zip](https://github.com/ahmedkaludi/accelerated-mobile-pages/files/3562443/Downloads.zip)

REF: https://wordpress.org/support/topic/custom-post-description-migrates-to-image-description/#post-11875201 | priority | custom post description migrates to image description excerpts are showing in captions to re occur import wordpress xml from the attachments and see the post стратегии на and then view it s amp version you will find this text added below the image as caption обзор всех новостей стратегий с выставки this text is actually the excerpt of the post ref | 1 |

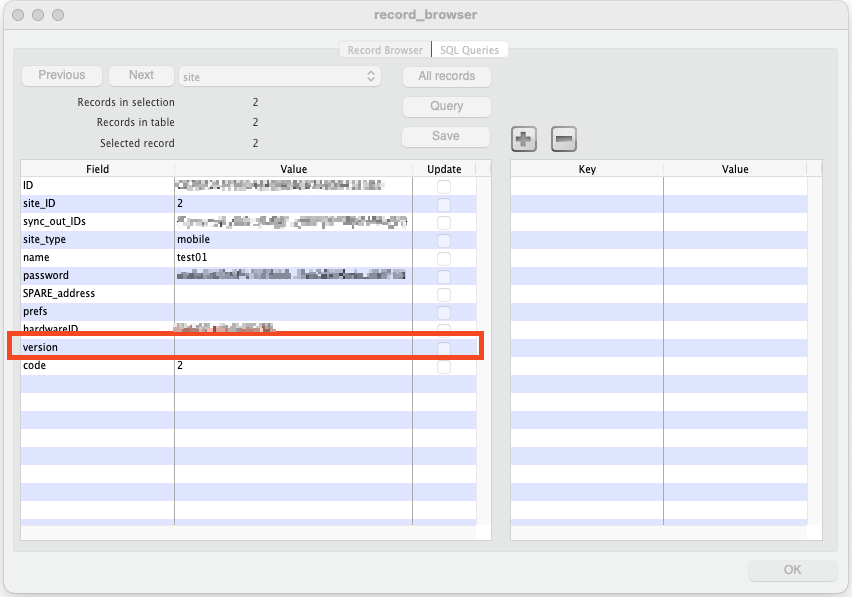

742,237 | 25,844,469,958 | IssuesEvent | 2022-12-13 04:45:29 | openmsupply/mobile | https://api.github.com/repos/openmsupply/mobile | closed | Mobile version is blank in mSupply on the fresh install | Priority: high Bug: production Effort: small Solution: Proposed | ## Describe the bug

There are many mobile sites that don't have version information on the desktop.

In the desktop, the mobile version is populated from message.toVersionString with type = 'mobile_upgrade'

As discussed with @sworup when the mobile is newly installed, no message seems to send to the desktop. Only when the mobile is upgraded, the message is sent.

### To reproduce

Steps to reproduce the behavior:

1. Do a fresh install of mSupply mobile and enter the sync username password to sync data

2. At this point the mobile app should have a site set.

3. In mSupply desktop go to site table to find the site of the current mobile app

4. See current mobile app version is empty

### Expected behavior

Even during a fresh install, the desktop site table record for the mobile app's site should have the `version` field populated with the current version of the mobile app.

### Proposed Solution

We have to do two things:

- During fresh install, the mobile version should be sent through message to desktop to update `site.version` properly.

- For fresh install too, add a ` createRecord(database, 'UpgradeMessage', fromVersion, toVersion);` call where fromVersion would be an empty string and toVersion would be the current version. This will tell the dashboard user on which day a fresh installation was done, along with showing the current version.

At this part of the code:

https://github.com/openmsupply/mobile/blob/9ecf8683e74d9cad797191f44c500ca3cd6f5fe6/src/dataMigration.js#L35-L53

Make sure the line below is not triggering a return.

https://github.com/openmsupply/mobile/blob/9ecf8683e74d9cad797191f44c500ca3cd6f5fe6/src/dataMigration.js#L39

If it is triggering a return, then the update of the Setting would not trigger, hence not triggering the message that would update `site.version` on desktop.

https://github.com/openmsupply/mobile/blob/9ecf8683e74d9cad797191f44c500ca3cd6f5fe6/src/dataMigration.js#L54-L56

This is where I would start.

### Version and device info

- App version: 8.6.1

- Tablet model:

- OS version:

### Additional context

Add any other context about the problem here.

| 1.0 | Mobile version is blank in mSupply on the fresh install - ## Describe the bug

There are many mobile sites that don't have version information on the desktop.

In the desktop, the mobile version is populated from message.toVersionString with type = 'mobile_upgrade'

As discussed with @sworup when the mobile is newly installed, no message seems to send to the desktop. Only when the mobile is upgraded, the message is sent.

### To reproduce

Steps to reproduce the behavior:

1. Do a fresh install of mSupply mobile and enter the sync username password to sync data

2. At this point the mobile app should have a site set.

3. In mSupply desktop go to site table to find the site of the current mobile app

4. See current mobile app version is empty

### Expected behavior

Even during a fresh install, the desktop site table record for the mobile app's site should have the `version` field populated with the current version of the mobile app.

### Proposed Solution

We have to do two things:

- During fresh install, the mobile version should be sent through message to desktop to update `site.version` properly.

- For fresh install too, add a ` createRecord(database, 'UpgradeMessage', fromVersion, toVersion);` call where fromVersion would be an empty string and toVersion would be the current version. This will tell the dashboard user on which day a fresh installation was done, along with showing the current version.

At this part of the code:

https://github.com/openmsupply/mobile/blob/9ecf8683e74d9cad797191f44c500ca3cd6f5fe6/src/dataMigration.js#L35-L53

Make sure the line below is not triggering a return.

https://github.com/openmsupply/mobile/blob/9ecf8683e74d9cad797191f44c500ca3cd6f5fe6/src/dataMigration.js#L39

If it is triggering a return, then the update of the Setting would not trigger, hence not triggering the message that would update `site.version` on desktop.

https://github.com/openmsupply/mobile/blob/9ecf8683e74d9cad797191f44c500ca3cd6f5fe6/src/dataMigration.js#L54-L56

This is where I would start.

### Version and device info

- App version: 8.6.1

- Tablet model:

- OS version:

### Additional context

Add any other context about the problem here.

| priority | mobile version is blank in msupply on the fresh install describe the bug there are many mobile sites that don t have version information on the desktop in the desktop the mobile version is populated from message toversionstring with type mobile upgrade as discussed with sworup when the mobile is newly installed no message seems to send to the desktop only when the mobile is upgraded the message is sent to reproduce steps to reproduce the behavior do a fresh install of msupply mobile and enter the sync username password to sync data at this point the mobile app should have a site set in msupply desktop go to site table to find the site of the current mobile app see current mobile app version is empty expected behavior even during a fresh install the desktop site table record for the mobile app s site should have the version field populated with the current version of the mobile app proposed solution we have to do two things during fresh install the mobile version should be sent through message to desktop to update site version properly for fresh install too add a createrecord database upgrademessage fromversion toversion call where fromversion would be an empty string and toversion would be the current version this will tell the dashboard user on which day a fresh installation was done along with showing the current version at this part of the code make sure the line below is not triggering a return if it is triggering a return then the update of the setting would not trigger hence not triggering the message that would update site version on desktop this is where i would start version and device info app version tablet model os version additional context add any other context about the problem here | 1 |

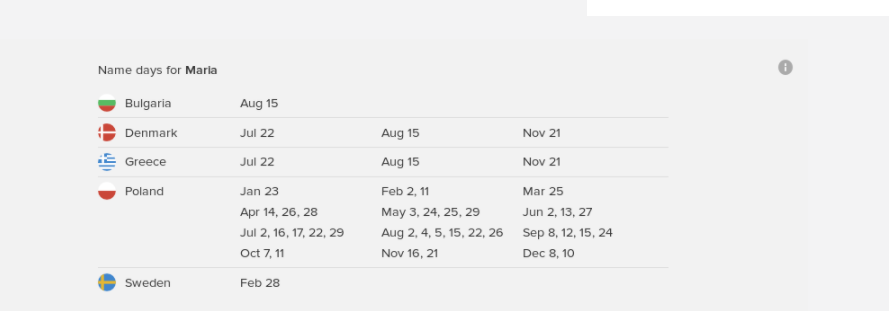

178,951 | 6,620,332,716 | IssuesEvent | 2017-09-21 15:14:14 | duckduckgo/zeroclickinfo-goodies | https://api.github.com/repos/duckduckgo/zeroclickinfo-goodies | closed | Name days: Convert to structured answer and move code to template | Category: Highest Impact Tasks Improvement Priority: High Topic: Other | ### Description

<!-- Describe the bug or suggestion in detail -->

The name days IA needs to be updated. It's got a lot of legacy code, injecting html into the duckbar which shouldn't be happening. For some reason the styles are messed up now (below is what it should look like)

Can you please update this @kirkins?

## Steps to recreate

<!-- Describe the steps, or provide a link to an example search -->

## People to notify

<!-- Please @mention any relevant people/organizations here:-->

<!-- LANGUAGE LEADERS ONLY: REMOVE THIS LINE

## Get Started

- [ ] 1) Claim this issue by commenting below

- [ ] 2) Review our [Contributing Guide](https://github.com/duckduckgo/zeroclickinfo-goodies/blob/master/CONTRIBUTING.md)

- [ ] 3) [Set up your development environment](https://docs.duckduckhack.com/welcome/setup-dev-environment.html), and fork this repository

- [ ] 4) Create a Pull Request

## Resources

- Join [DuckDuckHack Slack](https://quackslack.herokuapp.com/) to ask questions

- Join the [DuckDuckHack Forum](https://forum.duckduckhack.com/) to discuss project planning and Instant Answer metrics

- Read the [DuckDuckHack Documentation](https://docs.duckduckhack.com/) for technical help

<!-- DO NOT REMOVE -->

---

<!-- The Instant Answer ID can be found by clicking the `?` icon beside the Instant Answer result on DuckDuckGo.com -->

Instant Answer Page: https://duck.co/ia/view/name_days

<!-- FILL THIS IN: ^^^^ -->

| 1.0 | Name days: Convert to structured answer and move code to template - ### Description

<!-- Describe the bug or suggestion in detail -->

The name days IA needs to be updated. It's got a lot of legacy code, injecting html into the duckbar which shouldn't be happening. For some reason the styles are messed up now (below is what it should look like)

Can you please update this @kirkins?

## Steps to recreate

<!-- Describe the steps, or provide a link to an example search -->

## People to notify

<!-- Please @mention any relevant people/organizations here:-->

<!-- LANGUAGE LEADERS ONLY: REMOVE THIS LINE

## Get Started

- [ ] 1) Claim this issue by commenting below

- [ ] 2) Review our [Contributing Guide](https://github.com/duckduckgo/zeroclickinfo-goodies/blob/master/CONTRIBUTING.md)

- [ ] 3) [Set up your development environment](https://docs.duckduckhack.com/welcome/setup-dev-environment.html), and fork this repository

- [ ] 4) Create a Pull Request

## Resources

- Join [DuckDuckHack Slack](https://quackslack.herokuapp.com/) to ask questions

- Join the [DuckDuckHack Forum](https://forum.duckduckhack.com/) to discuss project planning and Instant Answer metrics

- Read the [DuckDuckHack Documentation](https://docs.duckduckhack.com/) for technical help

<!-- DO NOT REMOVE -->

---

<!-- The Instant Answer ID can be found by clicking the `?` icon beside the Instant Answer result on DuckDuckGo.com -->

Instant Answer Page: https://duck.co/ia/view/name_days

<!-- FILL THIS IN: ^^^^ -->

| priority | name days convert to structured answer and move code to template description the name days ia needs to be updated it s got a lot of legacy code injecting html into the duckbar which shouldn t be happening for some reason the styles are messed up now below is what it should look like can you please update this kirkins steps to recreate people to notify language leaders only remove this line get started claim this issue by commenting below review our and fork this repository create a pull request resources join to ask questions join the to discuss project planning and instant answer metrics read the for technical help instant answer page | 1 |

402,715 | 11,813,428,877 | IssuesEvent | 2020-03-19 22:23:09 | sqlalchemy/alembic | https://api.github.com/repos/sqlalchemy/alembic | closed | more regressions with type comparisons | autogenerate - detection bug high priority | this comparison should return no change, because "Unicode" is the generic type:

```

diff --git a/tests/test_autogen_diffs.py b/tests/test_autogen_diffs.py

index e1e5c8d..7fa4465 100644

--- a/tests/test_autogen_diffs.py

+++ b/tests/test_autogen_diffs.py

@@ -795,6 +795,8 @@ class CompareMetadataToInspectorTest(TestBase):

(Unicode(32), VARCHAR(32), False, config.requirements.unicode_string),

(VARCHAR(6), VARCHAR(12), True),

(VARCHAR(6), String(12), True),

+ (mysql.VARCHAR(200, charset='utf8'), Unicode(200), False, ),

+ (String(255, collation='utf8_bin'), String(255), False)

)

def test_string_comparisons(self, cola, colb, expect_changes):

is_(self._compare_columns(cola, colb), expect_changes)

``` | 1.0 | more regressions with type comparisons - this comparison should return no change, because "Unicode" is the generic type:

```

diff --git a/tests/test_autogen_diffs.py b/tests/test_autogen_diffs.py

index e1e5c8d..7fa4465 100644

--- a/tests/test_autogen_diffs.py

+++ b/tests/test_autogen_diffs.py

@@ -795,6 +795,8 @@ class CompareMetadataToInspectorTest(TestBase):

(Unicode(32), VARCHAR(32), False, config.requirements.unicode_string),

(VARCHAR(6), VARCHAR(12), True),

(VARCHAR(6), String(12), True),

+ (mysql.VARCHAR(200, charset='utf8'), Unicode(200), False, ),

+ (String(255, collation='utf8_bin'), String(255), False)

)

def test_string_comparisons(self, cola, colb, expect_changes):

is_(self._compare_columns(cola, colb), expect_changes)

``` | priority | more regressions with type comparisons this comparison should return no change because unicode is the generic type diff git a tests test autogen diffs py b tests test autogen diffs py index a tests test autogen diffs py b tests test autogen diffs py class comparemetadatatoinspectortest testbase unicode varchar false config requirements unicode string varchar varchar true varchar string true mysql varchar charset unicode false string collation bin string false def test string comparisons self cola colb expect changes is self compare columns cola colb expect changes | 1 |
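
The expectations in the added test cases can be read as: a length difference counts as a change, while a charset or collation present on only one side does not. A minimal sketch of that rule follows, assuming nothing about how Alembic actually compares types. `StringSpec` and `string_types_differ` are hypothetical names used only for illustration; they are not SQLAlchemy or Alembic APIs.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class StringSpec:
    """Hypothetical stand-in for a string column type (not a SQLAlchemy class)."""

    length: Optional[int]
    charset: Optional[str] = None
    collation: Optional[str] = None


def string_types_differ(type_a: StringSpec, type_b: StringSpec) -> bool:
    """Report a change only when the declared lengths differ.

    charset and collation are deliberately ignored; that is an assumption of
    this sketch, taken from the expected results in the test cases above.
    """
    return type_a.length != type_b.length


# Cases shaped like the ones in the diff: (type_a, type_b, expect_changes).
cases = [
    (StringSpec(6), StringSpec(12), True),
    (StringSpec(200, charset="utf8"), StringSpec(200), False),
    (StringSpec(255, collation="utf8_bin"), StringSpec(255), False),
]
results = [string_types_differ(a, b) for a, b, _ in cases]
```

Each entry in `results` can be checked against the third element of the matching case, in the same way the parametrized test compares against `expect_changes`.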

379,221 | 11,217,732,466 | IssuesEvent | 2020-01-07 09:54:22 | godotengine/godot | https://api.github.com/repos/godotengine/godot | closed | Godot 3.1 RC2 create_trimesh_collision() and create_convex_collision() lead to instant crash on Android | bug high priority platform:android topic:core | <!-- Please search existing issues for potential duplicates before filing yours:

https://github.com/godotengine/godot/issues?q=is%3Aissue

-->

**Godot version:**

3.1 RC2

**OS/device including version:**

Oukitel K6000 Pro, Android 6.0

Samsung Galaxy J5, Android 7

**Issue description:**

The methods `create_convex_collision()` and `create_trimesh_collision()` of `MeshInstance` (https://docs.godotengine.org/en/latest/classes/class_meshinstance.html) lead to an instant crash on Android.

The last version I tested was 3.1 beta 7, where it worked, so the regression seems to have been introduced in one of the later betas.

I think this is the relevant error description from logcat:

>

> 03-12 17:30:47.191 32301 32317 E godot : **ERROR**: OpenGL ES 2.0 does not allow retrieving mesh array data

> 03-12 17:30:47.191 32301 32317 E godot : At: drivers/gles2/rasterizer_storage_gles2.cpp:2541:mesh_surface_get_array() - OpenGL ES 2.0 does not allow retrieving mesh array data

> 03-12 17:30:47.191 32301 32317 E godot : **ERROR**: Condition ' vertex_data.size() == 0 ' is true. returned: Array()

> 03-12 17:30:47.191 32301 32317 E godot : At: servers/visual_server.cpp:1606:mesh_surface_get_arrays() - Condition ' vertex_data.size() == 0 ' is true. returned: Array()

> 03-12 17:30:47.191 32301 32317 E godot : **ERROR**: FATAL: Index p_index=0 out of size (((Vector<T> *)(this))->_cowdata.size()=0)

> 03-12 17:30:47.191 32301 32317 E godot : At: ./core/vector.h:49:operator[]() - FATAL: Index p_index=0 out of size (((Vector<T> *)(this))->_cowdata.size()=0)

> 03-12 17:30:47.277 32343 32343 I AEE/AED : #00 pc 000000000164c5d8 /data/app/com.example.crashTest-1/lib/arm64/libgodot_android.so

> 03-12 17:30:47.277 32343 32343 I AEE/AED : #01 pc 00000000010946ac /data/app/com.example.crashTest-1/lib/arm64/libgodot_android.so

> 03-12 17:30:47.277 32343 32343 I AEE/AED : #02 pc 0000000000c1270c /data/app/com.example.crashTest-1/lib/arm64/libgodot_android.so

> 03-12 17:30:47.277 32343 32343 I AEE/AED : #03 pc 0000000000c12854 /data/app/com.example.crashTest-1/lib/arm64/libgodot_android.so

> 03-12 17:30:47.277 32343 32343 I AEE/AED : #04 pc 000000000034c1e4 /data/app/com.example.crashTest-1/lib/arm64/libgodot_android.so

> 03-12 17:30:47.277 32343 32343 I AEE/AED : #05 pc 00000000016ce8b8 /data/app/com.example.crashTest-1/lib/arm64/libgodot_android.so

> 03-12 17:30:47.277 32343 32343 I AEE/AED : #06 pc 0000000001781c80 /data/app/com.example.crashTest-1/lib/arm64/libgodot_android.so

> 03-12 17:30:47.278 32343 32343 I AEE/AED : #07 pc 000000000028d6e0 /data/app/com.example.crashTest-1/lib/arm64/libgodot_android.so

> 03-12 17:30:47.278 32343 32343 I AEE/AED : #08 pc 000000000024a738 /data/app/com.example.crashTest-1/lib/arm64/libgodot_android.so

> 03-12 17:30:47.278 32343 32343 I AEE/AED : #09 pc 00000000008b58fc /data/app/com.example.crashTest-1/lib/arm64/libgodot_android.so

> 03-12 17:30:47.278 32343 32343 I AEE/AED : #10 pc 0000000000bdd92c /data/app/com.example.crashTest-1/lib/arm64/libgodot_android.so

> 03-12 17:30:47.278 32343 32343 I AEE/AED : #11 pc 0000000000c15654 /data/app/com.example.crashTest-1/lib/arm64/libgodot_android.so

> 03-12 17:30:47.278 32343 32343 I AEE/AED : #12 pc 00000000016cb16c /data/app/com.example.crashTest-1/lib/arm64/libgodot_android.so

> 03-12 17:30:47.278 32343 32343 I AEE/AED : #13 pc 00000000008b72dc /data/app/com.example.crashTest-1/lib/arm64/libgodot_android.so

> 03-12 17:30:47.278 32343 32343 I AEE/AED : #14 pc 00000000008b726c /data/app/com.example.crashTest-1/lib/arm64/libgodot_android.so

> 03-12 17:30:47.278 32343 32343 I AEE/AED : #15 pc 00000000008b726c /data/app/com.example.crashTest-1/lib/arm64/libgodot_android.so

> 03-12 17:30:47.278 32343 32343 I AEE/AED : #16 pc 00000000008bc6a0 /data/app/com.example.crashTest-1/lib/arm64/libgodot_android.so

> 03-12 17:30:47.278 32343 32343 I AEE/AED : #17 pc 00000000008e97b8 /data/app/com.example.crashTest-1/lib/arm64/libgodot_android.so

> 03-12 17:30:47.278 32343 32343 I AEE/AED : #18 pc 00000000001a5a84 /data/app/com.example.crashTest-1/lib/arm64/libgodot_android.so (Java_org_godotengine_godot_GodotLib_step+80)

> 03-12 17:30:47.278 32343 32343 I AEE/AED : #19 pc 000000000027d0a8 /data/app/com.example.crashTest-1/oat/arm64/base.odex (offset 0x147000) (void org.godotengine.godot.GodotLib.step()+124)

> 03-12 17:30:47.279 32343 32343 I AEE/AED : #20 pc 0000000000281f80 /data/app/com.example.crashTest-1/oat/arm64/base.odex (offset 0x147000) (void org.godotengine.godot.GodotView$Renderer.onDrawFrame(javax.microedition.khronos.opengles.GL10)+84)

> 03-12 17:30:47.594 942 32344 W ActivityManager: Force finishing activity com.example.crashTest/org.godotengine.godot.Godot

> 03-12 17:30:47.649 942 959 V WindowManager: Changing focus from Window{e32c239 u0 com.example.crashTest/org.godotengine.godot.Godot} to Window{3be8bb7 u0 Application Error: com.example.crashTest} Callers=com.android.server.wm.WindowManagerService.addWindow:2821 com.android.server.wm.Session.addToDisplay:171 android.view.ViewRootImpl.setView:647 android.view.WindowManagerGlobal.addView:319

> 03-12 17:30:47.649 942 963 I WindowManager: Focus moving from Window{e32c239 u0 com.example.crashTest/org.godotengine.godot.Godot} to Window{3be8bb7 u0 Application Error: com.example.crashTest}

> 03-12 17:30:47.703 942 963 I WindowManager: Losing delayed focus: Window{e32c239 u0 com.example.crashTest/org.godotengine.godot.Godot}

> 03-12 17:30:47.714 268 970 I BufferQueueProducer: [com.example.crashTest/org.godotengine.godot.Godot](this:0x7f847fb800,id:2482,api:1,p:-1,c:268) disconnect(P): api 1

> 03-12 17:30:47.715 268 970 I BufferQueueConsumer: [com.example.crashTest/org.godotengine.godot.Godot](this:0x7f847fb800,id:2482,api:1,p:-1,c:268) getReleasedBuffers: returning mask 0xffffffffffffffff

> 03-12 17:30:47.717 942 1815 I WindowState: WIN DEATH: Window{e32c239 u0 com.example.crashTest/org.godotengine.godot.Godot}

> 03-12 17:30:47.777 942 1678 V WindowManager: Removing focused app token:AppWindowToken{edbb8cd token=Token{df0be64 ActivityRecord{97c8ff7 u0 com.example.crashTest/org.godotengine.godot.Godot t148}}}

> 03-12 17:30:47.837 268 268 I BufferQueueConsumer: [com.example.crashTest/org.godotengine.godot.Godot](this:0x7f847fb800,id:2482,api:1,p:-1,c:268) setDefaultBufferSize: width=1080 height=1920

> 03-12 17:30:47.838 942 1678 W WindowState: Failed to report 'resized' to the client of Window{e32c239 u0 com.example.crashTest/org.godotengine.godot.Godot}, removing this window.

> 03-12 17:30:47.841 268 384 D SurfaceFlinger: remove: com.example.crashTest/org.godotengine.godot.Godot

> 03-12 17:30:47.855 268 268 I BufferQueueConsumer: [com.example.crashTest/org.godotengine.godot.Godot](this:0x7f847fb800,id:2482,api:1,p:-1,c:-1) disconnect(C)

> 03-12 17:30:47.857 268 268 I BufferQueue: [com.example.crashTest/org.godotengine.godot.Godot](this:0x7f847fb800,id:2482,api:1,p:-1,c:-1) ~BufferQueueCore

>

**Steps to reproduce:**

- Create a new project

- Add a MeshInstance Object to the tree

- Execute `create_convex_collision()` or `create_trimesh_collision()`

**Minimal reproduction project:**

<!-- Recommended as it greatly speeds up debugging. Drag and drop a zip archive to upload it. -->

[AndroidCrash.zip](https://github.com/godotengine/godot/files/2957886/AndroidCrash.zip)

| 1.0 | Godot 3.1 RC2 create_trimesh_collision() and create_convex_collision() lead to instant crash on Android - <!-- Please search existing issues for potential duplicates before filing yours:

https://github.com/godotengine/godot/issues?q=is%3Aissue

-->

**Godot version:**

3.1 RC2

**OS/device including version:**

Oukitel K6000 Pro, Android 6.0

Samsung Galaxy J5, Android 7

**Issue description:**

The methods `create_convex_collision()` and `create_trimesh_collision()` of `MeshInstance` (https://docs.godotengine.org/en/latest/classes/class_meshinstance.html) lead to an instant crash on Android.

The last version I tested was 3.1 beta 7, where it worked, so the regression seems to have been introduced in one of the later betas.

I think this is the relevant error description from logcat:

>

> 03-12 17:30:47.191 32301 32317 E godot : **ERROR**: OpenGL ES 2.0 does not allow retrieving mesh array data

> 03-12 17:30:47.191 32301 32317 E godot : At: drivers/gles2/rasterizer_storage_gles2.cpp:2541:mesh_surface_get_array() - OpenGL ES 2.0 does not allow retrieving mesh array data

> 03-12 17:30:47.191 32301 32317 E godot : **ERROR**: Condition ' vertex_data.size() == 0 ' is true. returned: Array()

> 03-12 17:30:47.191 32301 32317 E godot : At: servers/visual_server.cpp:1606:mesh_surface_get_arrays() - Condition ' vertex_data.size() == 0 ' is true. returned: Array()

> 03-12 17:30:47.191 32301 32317 E godot : **ERROR**: FATAL: Index p_index=0 out of size (((Vector<T> *)(this))->_cowdata.size()=0)

> 03-12 17:30:47.191 32301 32317 E godot : At: ./core/vector.h:49:operator[]() - FATAL: Index p_index=0 out of size (((Vector<T> *)(this))->_cowdata.size()=0)

> 03-12 17:30:47.277 32343 32343 I AEE/AED : #00 pc 000000000164c5d8 /data/app/com.example.crashTest-1/lib/arm64/libgodot_android.so

> 03-12 17:30:47.277 32343 32343 I AEE/AED : #01 pc 00000000010946ac /data/app/com.example.crashTest-1/lib/arm64/libgodot_android.so

> 03-12 17:30:47.277 32343 32343 I AEE/AED : #02 pc 0000000000c1270c /data/app/com.example.crashTest-1/lib/arm64/libgodot_android.so

> 03-12 17:30:47.277 32343 32343 I AEE/AED : #03 pc 0000000000c12854 /data/app/com.example.crashTest-1/lib/arm64/libgodot_android.so

> 03-12 17:30:47.277 32343 32343 I AEE/AED : #04 pc 000000000034c1e4 /data/app/com.example.crashTest-1/lib/arm64/libgodot_android.so

> 03-12 17:30:47.277 32343 32343 I AEE/AED : #05 pc 00000000016ce8b8 /data/app/com.example.crashTest-1/lib/arm64/libgodot_android.so

> 03-12 17:30:47.277 32343 32343 I AEE/AED : #06 pc 0000000001781c80 /data/app/com.example.crashTest-1/lib/arm64/libgodot_android.so

> 03-12 17:30:47.278 32343 32343 I AEE/AED : #07 pc 000000000028d6e0 /data/app/com.example.crashTest-1/lib/arm64/libgodot_android.so

> 03-12 17:30:47.278 32343 32343 I AEE/AED : #08 pc 000000000024a738 /data/app/com.example.crashTest-1/lib/arm64/libgodot_android.so

> 03-12 17:30:47.278 32343 32343 I AEE/AED : #09 pc 00000000008b58fc /data/app/com.example.crashTest-1/lib/arm64/libgodot_android.so

> 03-12 17:30:47.278 32343 32343 I AEE/AED : #10 pc 0000000000bdd92c /data/app/com.example.crashTest-1/lib/arm64/libgodot_android.so

> 03-12 17:30:47.278 32343 32343 I AEE/AED : #11 pc 0000000000c15654 /data/app/com.example.crashTest-1/lib/arm64/libgodot_android.so

> 03-12 17:30:47.278 32343 32343 I AEE/AED : #12 pc 00000000016cb16c /data/app/com.example.crashTest-1/lib/arm64/libgodot_android.so

> 03-12 17:30:47.278 32343 32343 I AEE/AED : #13 pc 00000000008b72dc /data/app/com.example.crashTest-1/lib/arm64/libgodot_android.so

> 03-12 17:30:47.278 32343 32343 I AEE/AED : #14 pc 00000000008b726c /data/app/com.example.crashTest-1/lib/arm64/libgodot_android.so

> 03-12 17:30:47.278 32343 32343 I AEE/AED : #15 pc 00000000008b726c /data/app/com.example.crashTest-1/lib/arm64/libgodot_android.so

> 03-12 17:30:47.278 32343 32343 I AEE/AED : #16 pc 00000000008bc6a0 /data/app/com.example.crashTest-1/lib/arm64/libgodot_android.so

> 03-12 17:30:47.278 32343 32343 I AEE/AED : #17 pc 00000000008e97b8 /data/app/com.example.crashTest-1/lib/arm64/libgodot_android.so

> 03-12 17:30:47.278 32343 32343 I AEE/AED : #18 pc 00000000001a5a84 /data/app/com.example.crashTest-1/lib/arm64/libgodot_android.so (Java_org_godotengine_godot_GodotLib_step+80)

> 03-12 17:30:47.278 32343 32343 I AEE/AED : #19 pc 000000000027d0a8 /data/app/com.example.crashTest-1/oat/arm64/base.odex (offset 0x147000) (void org.godotengine.godot.GodotLib.step()+124)

> 03-12 17:30:47.279 32343 32343 I AEE/AED : #20 pc 0000000000281f80 /data/app/com.example.crashTest-1/oat/arm64/base.odex (offset 0x147000) (void org.godotengine.godot.GodotView$Renderer.onDrawFrame(javax.microedition.khronos.opengles.GL10)+84)

> 03-12 17:30:47.594 942 32344 W ActivityManager: Force finishing activity com.example.crashTest/org.godotengine.godot.Godot

> 03-12 17:30:47.649 942 959 V WindowManager: Changing focus from Window{e32c239 u0 com.example.crashTest/org.godotengine.godot.Godot} to Window{3be8bb7 u0 Application Error: com.example.crashTest} Callers=com.android.server.wm.WindowManagerService.addWindow:2821 com.android.server.wm.Session.addToDisplay:171 android.view.ViewRootImpl.setView:647 android.view.WindowManagerGlobal.addView:319

> 03-12 17:30:47.649 942 963 I WindowManager: Focus moving from Window{e32c239 u0 com.example.crashTest/org.godotengine.godot.Godot} to Window{3be8bb7 u0 Application Error: com.example.crashTest}

> 03-12 17:30:47.703 942 963 I WindowManager: Losing delayed focus: Window{e32c239 u0 com.example.crashTest/org.godotengine.godot.Godot}

> 03-12 17:30:47.714 268 970 I BufferQueueProducer: [com.example.crashTest/org.godotengine.godot.Godot](this:0x7f847fb800,id:2482,api:1,p:-1,c:268) disconnect(P): api 1

> 03-12 17:30:47.715 268 970 I BufferQueueConsumer: [com.example.crashTest/org.godotengine.godot.Godot](this:0x7f847fb800,id:2482,api:1,p:-1,c:268) getReleasedBuffers: returning mask 0xffffffffffffffff

> 03-12 17:30:47.717 942 1815 I WindowState: WIN DEATH: Window{e32c239 u0 com.example.crashTest/org.godotengine.godot.Godot}

> 03-12 17:30:47.777 942 1678 V WindowManager: Removing focused app token:AppWindowToken{edbb8cd token=Token{df0be64 ActivityRecord{97c8ff7 u0 com.example.crashTest/org.godotengine.godot.Godot t148}}}

> 03-12 17:30:47.837 268 268 I BufferQueueConsumer: [com.example.crashTest/org.godotengine.godot.Godot](this:0x7f847fb800,id:2482,api:1,p:-1,c:268) setDefaultBufferSize: width=1080 height=1920

> 03-12 17:30:47.838 942 1678 W WindowState: Failed to report 'resized' to the client of Window{e32c239 u0 com.example.crashTest/org.godotengine.godot.Godot}, removing this window.

> 03-12 17:30:47.841 268 384 D SurfaceFlinger: remove: com.example.crashTest/org.godotengine.godot.Godot

> 03-12 17:30:47.855 268 268 I BufferQueueConsumer: [com.example.crashTest/org.godotengine.godot.Godot](this:0x7f847fb800,id:2482,api:1,p:-1,c:-1) disconnect(C)

> 03-12 17:30:47.857 268 268 I BufferQueue: [com.example.crashTest/org.godotengine.godot.Godot](this:0x7f847fb800,id:2482,api:1,p:-1,c:-1) ~BufferQueueCore

>

**Steps to reproduce:**

- Create a new project

- Add a MeshInstance Object to the tree

- Execute `create_convex_collision()` or `create_trimesh_collision()`

**Minimal reproduction project:**

<!-- Recommended as it greatly speeds up debugging. Drag and drop a zip archive to upload it. -->

[AndroidCrash.zip](https://github.com/godotengine/godot/files/2957886/AndroidCrash.zip)

| priority | godot create trimesh collision and create convex collision lead to instant crash on android please search existing issues for potential duplicates before filing yours godot version os device including version oukitel pro android samsung galaxy android issue description the methods create convex collision and create trimesh collision of meshinstance lead to an instant crash on android the last version i tested was beta there it worked so it seems it was introduced in some of the last betas i think this is the relevant error description from logcat e godot error opengl es does not allow retrieving mesh array data e godot at drivers rasterizer storage cpp mesh surface get array opengl es does not allow retrieving mesh array data e godot error condition vertex data size is true returned array e godot at servers visual server cpp mesh surface get arrays condition vertex data size is true returned array e godot error fatal index p index out of size vector this cowdata size e godot at core vector h operator fatal index p index out of size vector this cowdata size i aee aed pc data app com example crashtest lib libgodot android so i aee aed pc data app com example crashtest lib libgodot android so i aee aed pc data app com example crashtest lib libgodot android so i aee aed pc data app com example crashtest lib libgodot android so i aee aed pc data app com example crashtest lib libgodot android so i aee aed pc data app com example crashtest lib libgodot android so i aee aed pc data app com example crashtest lib libgodot android so i aee aed pc data app com example crashtest lib libgodot android so i aee aed pc data app com example crashtest lib libgodot android so i aee aed pc data app com example crashtest lib libgodot android so i aee aed pc data app com example crashtest lib libgodot android so i aee aed pc data app com example crashtest lib libgodot android so i aee aed pc data app com example crashtest lib libgodot android so i aee aed pc data app com 
example crashtest lib libgodot android so i aee aed pc data app com example crashtest lib libgodot android so i aee aed pc data app com example crashtest lib libgodot android so i aee aed pc data app com example crashtest lib libgodot android so i aee aed pc data app com example crashtest lib libgodot android so i aee aed pc data app com example crashtest lib libgodot android so java org godotengine godot godotlib step i aee aed pc data app com example crashtest oat base odex offset void org godotengine godot godotlib step i aee aed pc data app com example crashtest oat base odex offset void org godotengine godot godotview renderer ondrawframe javax microedition khronos opengles w activitymanager force finishing activity com example crashtest org godotengine godot godot v windowmanager changing focus from window com example crashtest org godotengine godot godot to window application error com example crashtest callers com android server wm windowmanagerservice addwindow com android server wm session addtodisplay android view viewrootimpl setview android view windowmanagerglobal addview i windowmanager focus moving from window com example crashtest org godotengine godot godot to window application error com example crashtest i windowmanager losing delayed focus window com example crashtest org godotengine godot godot i bufferqueueproducer this id api p c disconnect p api i bufferqueueconsumer this id api p c getreleasedbuffers returning mask i windowstate win death window com example crashtest org godotengine godot godot v windowmanager removing focused app token appwindowtoken token token activityrecord com example crashtest org godotengine godot godot i bufferqueueconsumer this id api p c setdefaultbuffersize width height w windowstate failed to report resized to the client of window com example crashtest org godotengine godot godot removing this window d surfaceflinger remove com example crashtest org godotengine godot godot i bufferqueueconsumer this id api p c 
disconnect c i bufferqueue this id api p c bufferqueuecore steps to reproduce create a new project add a meshinstance object to the tree execute create convex collision or create trimesh collision minimal reproduction project | 1 |
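
The logcat output above suggests a chain: the GLES2 backend refuses to return mesh array data, the caller receives an empty array, and indexing element 0 of that empty container triggers the fatal error. A minimal sketch of the kind of guard that would avoid the last step follows, written in Python rather than engine code. `collision_faces` and its argument layout are hypothetical and are not Godot APIs; the actual engine fix may look quite different.

```python
from typing import List, Optional, Sequence, Tuple

Vertex = Tuple[float, float, float]
Face = Tuple[Vertex, Vertex, Vertex]


def collision_faces(surface_arrays: Sequence[Sequence[Vertex]]) -> Optional[List[Face]]:
    """Build triangle faces from the vertex array of a surface.

    Returns None when the backend handed back no array data at all, instead
    of indexing into an empty container.
    """
    if len(surface_arrays) == 0:
        # Nothing was retrievable (as in the GLES2 error above): bail out early.
        return None
    vertices = surface_arrays[0]  # assumed here: slot 0 holds the vertex list
    usable = len(vertices) - len(vertices) % 3  # drop any incomplete trailing triangle
    return [
        (vertices[i], vertices[i + 1], vertices[i + 2])
        for i in range(0, usable, 3)
    ]


empty_result = collision_faces([])
triangle = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
one_face = collision_faces([triangle])
```

With this shape, a caller can treat `None` as "no collision shape could be built" and report an error rather than crash.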

264,672 | 8,318,215,548 | IssuesEvent | 2018-09-25 14:10:14 | layersoflondon/application | https://api.github.com/repos/layersoflondon/application | opened | Map: Map popover doesn't feel very clickable | High priority | I've seen and heard about users not expecting to click through to the full record. | 1.0 | Map: Map popover doesn't feel very clickable - I've seen and heard about users not expecting to click through to the full record. | priority | map map popover doesn t feel very clickable i ve seen and heard about users not expecting to click through to the full record | 1 |

288,091 | 8,824,985,716 | IssuesEvent | 2019-01-02 19:07:46 | spacetelescope/specviz | https://api.github.com/repos/spacetelescope/specviz | closed | specviz crash: model fitting | bug priority-high | If I load a spectrum (e.g. example_stis.fits), select a region, click on add model, and select Const1d, specviz crashes with the following message:

```

Traceback (most recent call last):

File "/Users/gderosa/Desktop/specviz/specviz/plugins/model_editor/model_editor.py", line 41, in <lambda>

action.triggered.connect(lambda x, m=v: self._add_fittable_model(m))

File "/Users/gderosa/Desktop/specviz/specviz/plugins/model_editor/model_editor.py", line 88, in _add_fittable_model

idx = self.model_tree_view.model().add_model(model())

AttributeError: 'NoneType' object has no attribute 'add_model'

Abort trap: 6

```

| 1.0 | specviz crash: model fitting - If I load a spectrum (e.g. example_stis.fits), select a region, click on add model, and select Const1d, specviz crashes with the following message:

```

Traceback (most recent call last):

File "/Users/gderosa/Desktop/specviz/specviz/plugins/model_editor/model_editor.py", line 41, in <lambda>

action.triggered.connect(lambda x, m=v: self._add_fittable_model(m))

File "/Users/gderosa/Desktop/specviz/specviz/plugins/model_editor/model_editor.py", line 88, in _add_fittable_model

idx = self.model_tree_view.model().add_model(model())

AttributeError: 'NoneType' object has no attribute 'add_model'

Abort trap: 6

```

| priority | specviz crash model fitting if i load a spectrum e g example stis fits select a region click on add model and select specviz crashes with the following message traceback most recent call last file users gderosa desktop specviz specviz plugins model editor model editor py line in action triggered connect lambda x m v self add fittable model m file users gderosa desktop specviz specviz plugins model editor model editor py line in add fittable model idx self model tree view model add model model attributeerror nonetype object has no attribute add model abort trap | 1 |
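
The traceback shows `self.model_tree_view.model()` returning `None`, so the chained `.add_model(...)` call raises `AttributeError`. A minimal sketch of a defensive version follows, assuming the view simply has no model attached yet when the menu action fires. `TreeView`, `FittableModelList`, and `add_fittable_model` are hypothetical stand-ins that need no Qt installation; they are not specviz classes, and this is not necessarily how the project resolved the issue.

```python
class TreeView:
    """Hypothetical stand-in for a Qt view: model() is None until one is set."""

    def __init__(self):
        self._model = None

    def setModel(self, model):
        self._model = model

    def model(self):
        return self._model


class FittableModelList:
    """Hypothetical item model that stores fittable models in a list."""

    def __init__(self):
        self.items = []

    def add_model(self, fittable):
        self.items.append(fittable)
        return len(self.items) - 1  # index of the newly added entry


def add_fittable_model(view, model_factory):
    """Add a new fittable model, or return None if the view has no model yet."""
    tree_model = view.model()
    if tree_model is None:
        # No model attached: skip instead of raising AttributeError.
        return None
    return tree_model.add_model(model_factory())


view = TreeView()
before = add_fittable_model(view, dict)  # no model attached yet
view.setModel(FittableModelList())
after = add_fittable_model(view, dict)  # model attached, entry added
```

Here `dict` only plays the role of a model class that can be instantiated without arguments; in the real plugin the factory would be the selected fittable model class.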

445,166 | 12,827,115,688 | IssuesEvent | 2020-07-06 17:50:41 | unitymakesus/ednc-2020 | https://api.github.com/repos/unitymakesus/ednc-2020 | closed | Articles only showing up for authors on localhost?? | Priority: High Type: Bug | Local Test: https://ednc.test/author/mrash/

Localhost (`yarn start`): https://localhost:3000/author/mrash/

| 1.0 | Articles only showing up for authors on localhost?? - Local Test: https://ednc.test/author/mrash/

Localhost (`yarn start`): https://localhost:3000/author/mrash/

| priority | articles only showing up for authors on localhost local test localhost yarn start | 1 |

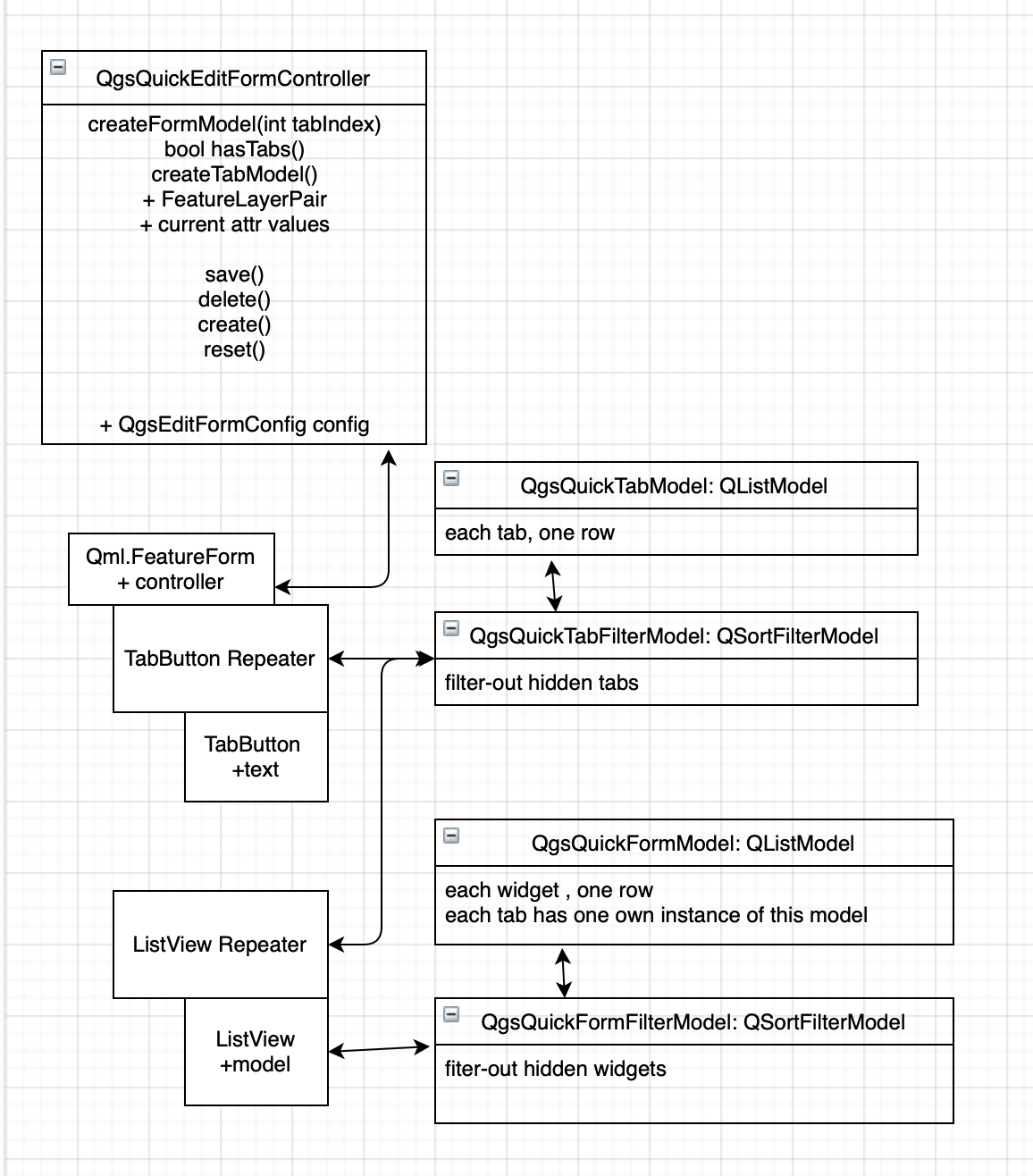

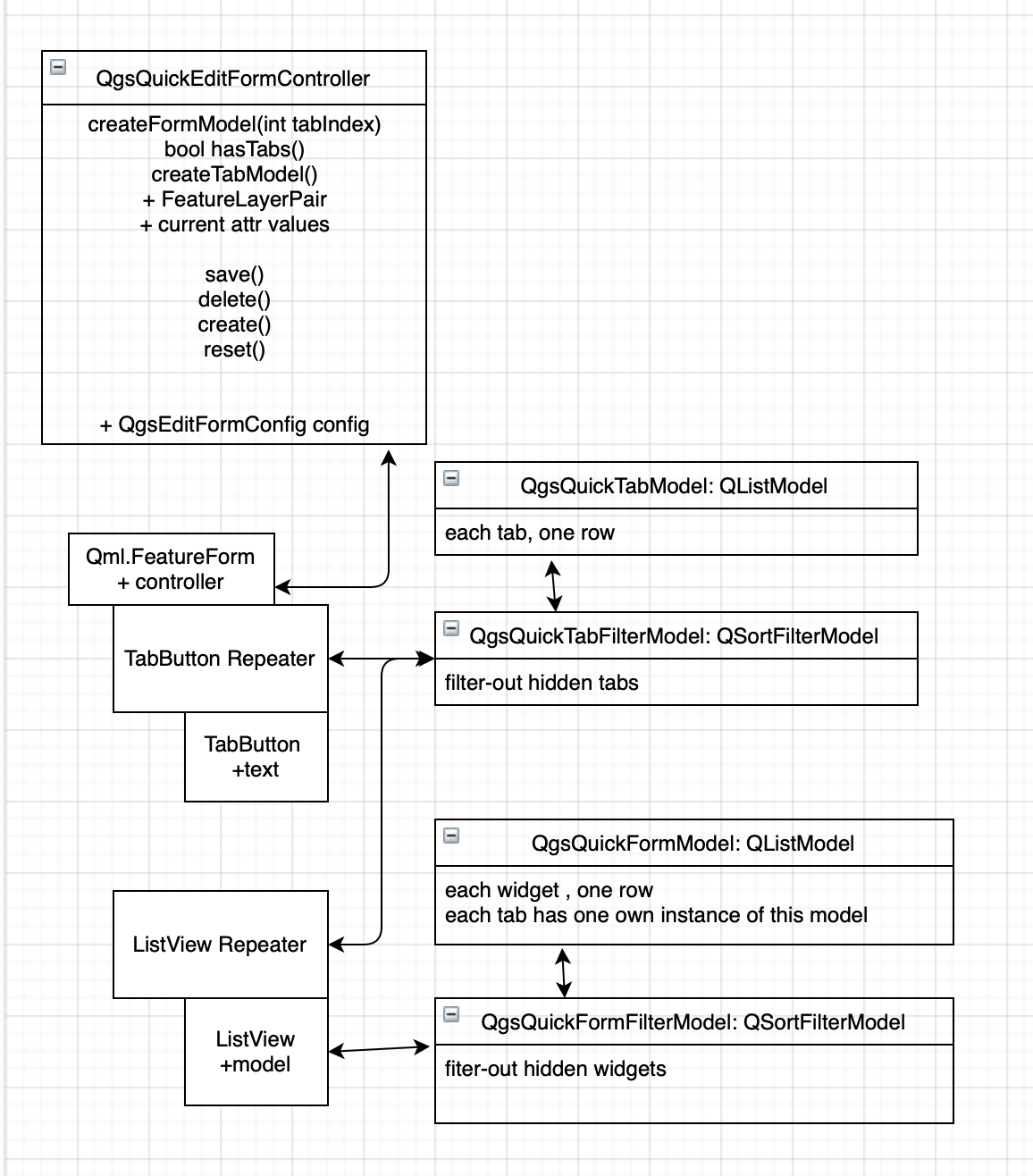

553,003 | 16,332,804,168 | IssuesEvent | 2021-05-12 11:23:21 | lutraconsulting/input | https://api.github.com/repos/lutraconsulting/input | closed | Refactor form-related models | enhancement forms high priority | There are several models linked together making things quite complex - and buggy in some more advanced scenarios, e.g. when using conditional visibility. It would be good to simplify the whole approach - maybe something like this:

- do not link models together - e.g. the dreaded QgsQuickSubModel

- use just simple list models (not hierarchical)

- have one central controller class for all the form logic to avoid spaghetti of signals

- remove QgsQuickAttributeModel if possible - if we don't need that item model then let's keep the logic in the central controller

| 1.0 | Refactor form-related models - There are several models linked together making things quite complex - and buggy in some more advanced scenarios, e.g. when using conditional visibility. It would be good to simplify the whole approach - maybe something like this:

- do not link models together - e.g. the dreaded QgsQuickSubModel

- use just simple list models (not hierarchical)

- have one central controller class for all the form logic to avoid spaghetti of signals

- remove QgsQuickAttributeModel if possible - if we don't need that item model then let's keep the logic in the central controller

| priority | refactor form related models there are several models linked together making things quite complex and buggy in some more advanced scenarios e g when using conditional visibility it would be good to simplify the whole approach maybe something like this do not link models together e g the dreaded qgsquicksubmodel use just simple list models not hierarchical have one central controller class for all the form logic to avoid spaghetti of signals remove qgsquickattributemodel if possible if we don t need that item model then let s keep the logic in the central controller | 1 |

559,239 | 16,553,432,079 | IssuesEvent | 2021-05-28 11:15:33 | ChainSafe/chainbridge-utils | https://api.github.com/repos/ChainSafe/chainbridge-utils | closed | Update deprecated package "golang.org/x/crypto/ssh/terminal" --> "golang.org/x/term" | Priority: 2 - High bug | The package `golang.org/x/crypto/ssh/terminal` has been deprecated and should be replaced by `golang.org/x/term`.

The deprecation of this package **may** be linked to an [issue](https://discord.com/channels/593655374469660673/713076180374519859/846056268489424896) experienced by a Discord user (XanMan) whereby he encounters an error when attempting to input the password for his encrypted Keystore:

```bash

invalid input: The handle is invalid.

Enter password to encrypt keystore file:

```

## Expected Behavior

Program should accept command line input without error

## Current Behavior

**Example command:**

`chainbridge accounts generate`

- This successfully creates the `keys` directory within ChainBridge repository root, then fails upon prompting the user for password (input).

The program throws the error `The handle is invalid`, which originates from the `golang.org/x/crypto/ssh/terminal` package and is propagated by our method `keystore.GetPassword`, as shown [here](https://github.com/ChainSafe/chainbridge-utils/blob/2aba2e18b4cb636b58c353d7d9cd4820393d49bb/keystore/encrypt.go#L112).

## Possible Solution

Update package `golang.org/x/crypto/ssh/terminal` to `golang.org/x/term`.

## Steps to Reproduce (for bugs)

**Use a Windows machine**

1. `chainbridge accounts generate`

2. attempt inputting keystore password

## Related

[Gossamer-1599](https://github.com/ChainSafe/gossamer/issues/1599)

## Versions

ChainBridge commit (or docker tag): `v1.1.1`

chainbridge-solidity version:

chainbridge-substrate version:

Go version: `1.16.4`

| 1.0 | Update deprecated package "golang.org/x/crypto/ssh/terminal" --> "golang.org/x/term" - The package `golang.org/x/crypto/ssh/terminal` has been deprecated and should be replaced by `golang.org/x/term`.

The deprecation of this package **may** be linked to an [issue](https://discord.com/channels/593655374469660673/713076180374519859/846056268489424896) experienced by a Discord user (XanMan) whereby he encounters an error when attempting to input the password for his encrypted Keystore:

```bash

invalid input: The handle is invalid.

Enter password to encrypt keystore file:

```

## Expected Behavior

Program should accept command line input without error

## Current Behavior

**Example command:**

`chainbridge accounts generate`

- This successfully creates the `keys` directory within ChainBridge repository root, then fails upon prompting the user for password (input).

The program throws the error `The handle is invalid`, which originates from the `golang.org/x/crypto/ssh/terminal` package and is propagated by our method `keystore.GetPassword`, as shown [here](https://github.com/ChainSafe/chainbridge-utils/blob/2aba2e18b4cb636b58c353d7d9cd4820393d49bb/keystore/encrypt.go#L112).

## Possible Solution

Update package `golang.org/x/crypto/ssh/terminal` to `golang.org/x/term`.

## Steps to Reproduce (for bugs)

**Use a Windows machine**

1. `chainbridge accounts generate`

2. attempt inputting keystore password

## Related

[Gossamer-1599](https://github.com/ChainSafe/gossamer/issues/1599)

## Versions

ChainBridge commit (or docker tag): `v1.1.1`

chainbridge-solidity version:

chainbridge-substrate version:

Go version: `1.16.4`

| priority | update deprecated package golang org x crypto ssh terminal golang org x term the package golang org x crypto ssh terminal has been deprecated and should be replaced by golang org x term the deprecation of this package may be linked to an experienced by a discord user xanman whereby he encounters an error when attempting to input the password for his encrypted keystore bash invalid input the handle is invalid enter password to encrypt keystore file expected behavior program should accept command line input without error current behavior example command chainbridge accounts generate this successfully creates the keys directory within chainbridge repository root then fails upon prompting the user for password input program is throwing error the handle is invalid which is originating from the golang org x crypto ssh terminal package and propagated by our method keystore getpassword as shown possible solution update package golang org x crypto ssh terminal to golang org x term steps to reproduce for bugs use windows machine chainbridge accounts generate attempt inputting keystore password related versions chainbridge commit or docker tag chainbridge solidity version chainbridge substrate version go version | 1 |

468,158 | 13,462,412,482 | IssuesEvent | 2020-09-09 16:02:15 | AY2021S1-CS2103-W14/tp | https://api.github.com/repos/AY2021S1-CS2103-W14/tp | opened | As a new/forgetful user, I want to access the command list/user guide | priority.High type.Story | ... easily refer to instructions for commands and usage instructions

| 1.0 | As a new/forgetful user, I want to access the command list/user guide - ... easily refer to instructions for commands and usage instructions

| priority | as a new forgetful user i want to access the command list user guide easily refer to instructions for commands and usage instructions | 1 |

205,916 | 7,107,248,082 | IssuesEvent | 2018-01-16 19:18:25 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | Placing blocks against a wall makes a second rock appear, and the placed block cannot be picked up. | High Priority | Something to do with prediction allowing placement on walls, but the server not.

| 1.0 | Placing blocks against a wall makes a second rock appear, and the placed block cannot be picked up. - Something to do with prediction allowing placement on walls, but the server not.

| priority | placing blocks against a wall makes a second rock appear and the placed block cannot be picked up something to do with prediction allowing placement on walls but the server not | 1 |

774,248 | 27,189,333,233 | IssuesEvent | 2023-02-19 16:01:46 | AY2223S2-CS2103T-T09-4/tp | https://api.github.com/repos/AY2223S2-CS2103T-T09-4/tp | opened | Add FAQ and command summary to the User Guide for v1.1 | type.Story priority.High guide.User | As a user, I can see the FAQ and command summary in the User Guide. | 1.0 | Add FAQ and command summary to the User Guide for v1.1 - As a user, I can see the FAQ and command summary in the User Guide. | priority | add faq and command summary to the user guide for as a user i can see the faq and command summary in the user guide | 1 |

270,103 | 8,452,349,577 | IssuesEvent | 2018-10-20 02:38:07 | Sage-Bionetworks/Agora | https://api.github.com/repos/Sage-Bionetworks/Agora | closed | Update Background Images | high priority | When I was exporting the smaller images I noticed some inconsistencies, so here are the updated files for all of the backgrounds. Let me know if you need another format. You can also find these in the Images page of Figma.

[UpdatedImages.zip](https://github.com/Sage-Bionetworks/Agora/files/2493057/UpdatedImages.zip)

| 1.0 | Update Background Images - When I was exporting the smaller images I noticed some inconsistencies, so here are the updated files for all of the backgrounds. Let me know if you need another format. You can also find these in the Images page of Figma.

[UpdatedImages.zip](https://github.com/Sage-Bionetworks/Agora/files/2493057/UpdatedImages.zip)

| priority | update background images when i was exporting the smaller images i noticed so inconsistencies so here s the updated files for all of the backgrounds let me know if you need another format etc you can also find these in the images page of figma | 1 |

394,764 | 11,648,464,001 | IssuesEvent | 2020-03-01 20:52:03 | openmsupply/mobile | https://api.github.com/repos/openmsupply/mobile | closed | Two decimal places showing | Bug: development Docs: not needed Effort: small Module: dispensary Priority: high | ## Describe the bug

When paying for a prescription, two decimal places show instead of one in the payment amount

### To reproduce

1. Go to pay for a prescription

2. See error

### Expected behaviour

Should only show one

### Proposed Solution

Show one

### Version and device info

N/A

### Additional context

N/A

| 1.0 | Two decimal places showing - ## Describe the bug

When paying for a prescription, two decimal places show instead of one in the payment amount

### To reproduce

1. Go to pay for a prescription

2. See error

### Expected behaviour

Should only show one

### Proposed Solution

Show one

### Version and device info

N/A

### Additional context

N/A

| priority | two decimal places showing describe the bug when paying for a prescription two decimal places show instead of one in the payment amount to reproduce go to pay for a prescription see error expected behaviour should only show one proposed solution show one version and device info n a additional context nn a | 1 |

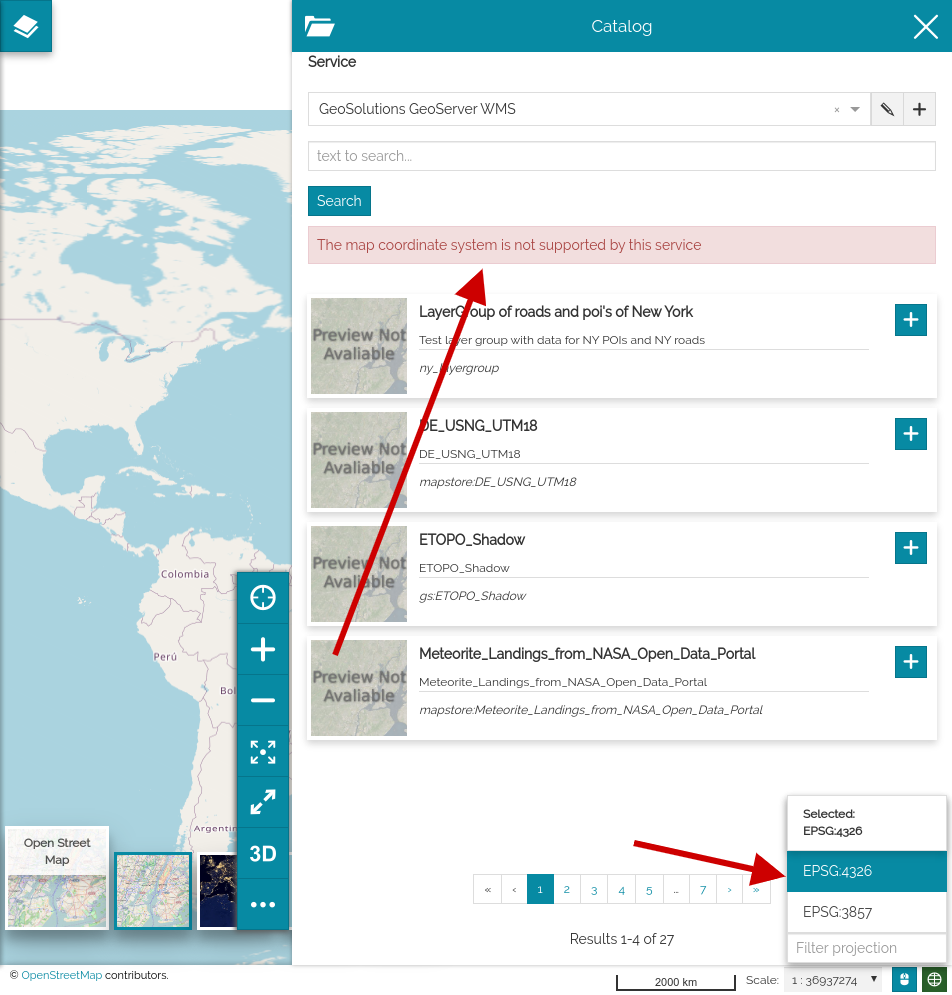

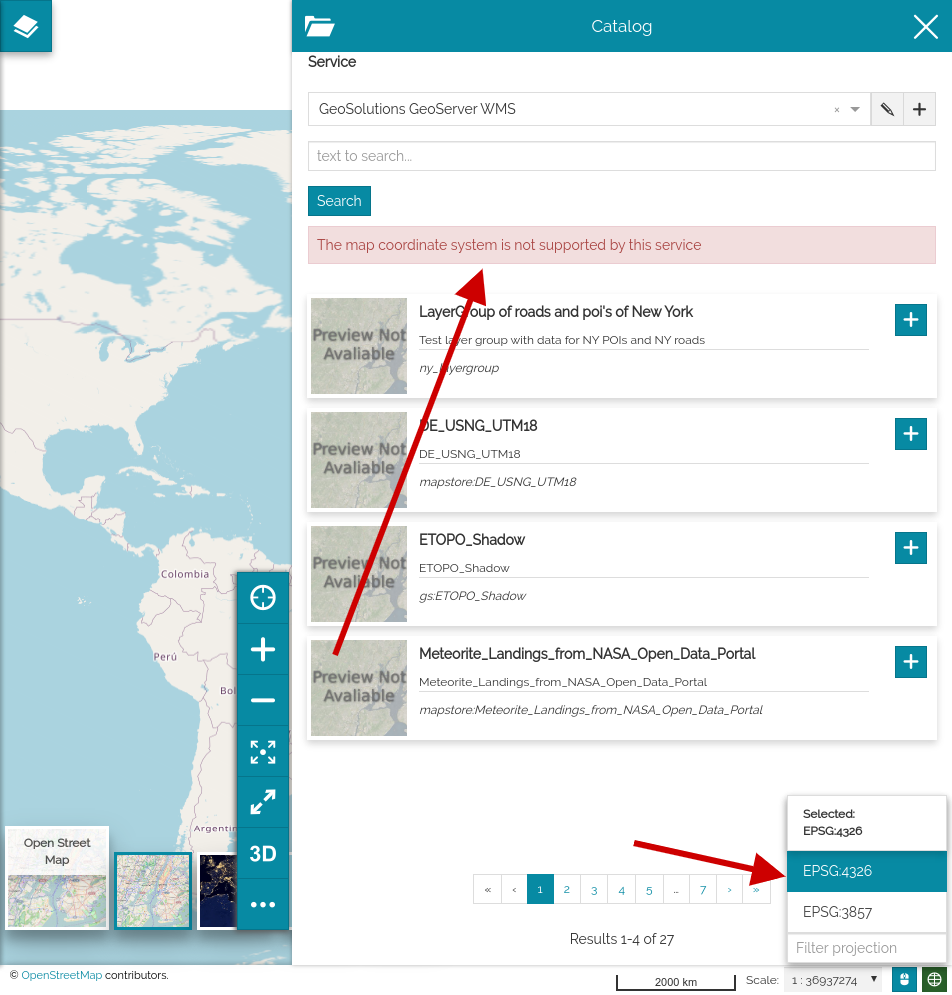

424,902 | 12,324,780,283 | IssuesEvent | 2020-05-13 14:11:41 | geosolutions-it/MapStore2 | https://api.github.com/repos/geosolutions-it/MapStore2 | closed | Can not add WMS background in WGS84 | Priority: High bug | ## Description

When you have a map in WGS84, adding a background shows an error:

It works with CSW or WMTS catalogs.

## How to reproduce

- Login as admin (to show crs selector)

- create a new map and switch to WGS84

- Try to add a new background from WMS service (tested with https://gs-stable.geo-solutions.it/geoserver/wms)

*Expected Result*

The layer is added if the CRS is supported

*Current Result*

An error reports that the CRS is not compatible

- [x] Not browser related

<details><summary> <b>Browser info</b> </summary>

<!-- If browser related, please compile the following table -->

<!-- If your browser is not in the list please add a new row to the table with the version -->

(use this site: <a href="https://www.whatsmybrowser.org/">https://www.whatsmybrowser.org/</a> for non expert users)

| Browser Affected | Version |

|---|---|

|Internet Explorer| |

|Edge| |

|Chrome| |

|Firefox| |

|Safari| |

</details>

| 1.0 | Can not add WMS background in WGS84 - ## Description

When you have a map in WGS84, adding a background shows an error:

It works with CSW or WMTS catalogs.

## How to reproduce

- Login as admin (to show crs selector)

- create a new map and switch to WGS84

- Try to add a new background from WMS service (tested with https://gs-stable.geo-solutions.it/geoserver/wms)

*Expected Result*

The layer is added if the CRS is supported

*Current Result*

An error reports that the CRS is not compatible

- [x] Not browser related

<details><summary> <b>Browser info</b> </summary>

<!-- If browser related, please compile the following table -->

<!-- If your browser is not in the list please add a new row to the table with the version -->

(use this site: <a href="https://www.whatsmybrowser.org/">https://www.whatsmybrowser.org/</a> for non expert users)

| Browser Affected | Version |

|---|---|

|Internet Explorer| |

|Edge| |

|Chrome| |

|Firefox| |

|Safari| |

</details>

| priority | can not add wms background in description when you have a map in adding a background shows an error it works with csw or wmts catalogs how to reproduce login as admin to show crs selector create a new map and switch to try to add a new background from wms service tested with expected result the layer is added if the crs is supported current result an error tells the crs is not compatible not browser related browser info use this site a href for non expert users browser affected version internet explorer edge chrome firefox safari | 1 |

127,694 | 5,038,507,733 | IssuesEvent | 2016-12-18 09:23:30 | fossasia/asksusi.com | https://api.github.com/repos/fossasia/asksusi.com | closed | Responsive UI for asksusi | bug enhancement High Priority | Currently the css is not mobile compatible.

The css needs to be tested on various devices and make use of bootstrap.

| 1.0 | Responsive UI for asksusi - Currently the css is not mobile compatible.

The css needs to be tested on various devices and make use of bootstrap.

| priority | responsive ui for asksusi currently the css is not mobile compatible the css needs to be tested on various devices and make use of bootstrap | 1 |

739,254 | 25,587,753,662 | IssuesEvent | 2022-12-01 10:36:32 | nf-core/tools | https://api.github.com/repos/nf-core/tools | opened | Module lint: Check container syntax | linting high-priority | ### Description of feature

x-ref https://github.com/nf-core/tools/issues/1627

Check that the `container` syntax is correct and can be parsed properly. eg. single quotes vs double quotes. | 1.0 | Module lint: Check container syntax - ### Description of feature

x-ref https://github.com/nf-core/tools/issues/1627

Check that the `container` syntax is correct and can be parsed properly. eg. single quotes vs double quotes. | priority | module lint check container syntax description of feature x ref check that the container syntax is correct and can be parsed properly eg single quotes vs double quotes | 1 |
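The issue above does not say which quoting style is required, so the following is only a minimal sketch of one possible check, not the nf-core/tools implementation. It assumes single-line `container` directives and no escaped quotes, and the function name `container_quote_problems` and the image name `example.org/tool:1.0` are hypothetical.

```python
def container_quote_problems(module_text: str) -> list[str]:
    """Return human-readable problems found in `container` directive lines.

    Assumption: each directive sits on a single line and contains no
    escaped quotes. Multi-line directives are not handled by this sketch.
    """
    problems = []
    for lineno, line in enumerate(module_text.splitlines(), start=1):
        stripped = line.strip()
        if not stripped.startswith("container "):
            continue
        value = stripped[len("container "):].strip()
        # The value is expected to start with a single or double quote.
        if not value or value[0] not in "'\"":
            problems.append(f"line {lineno}: container value is not quoted")
            continue
        # An odd number of either quote character means a quote was never closed.
        for quote in ("'", '"'):
            if value.count(quote) % 2 != 0:
                problems.append(f"line {lineno}: unbalanced {quote} quote")
    return problems
```

A lint step could call this on the text of a module file and report each returned string as a warning. A real check would also need to handle directives that span several lines.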

685,590 | 23,461,649,250 | IssuesEvent | 2022-08-16 13:34:49 | kubermatic/dashboard | https://api.github.com/repos/kubermatic/dashboard | closed | Remove the warning for delete AKS/GKE external cluster dialog | kind/bug priority/high sig/ui externalcluster | ### What happened

Remove the warning for AKS/GKE external cluster deletion:

- [ ] change the warning sign to an information sign

- [ ] keep the first line informing user of attached mds

- [ ] remove the line `Please delete nodegroups....`

** No changes required for EKS

### Expected behavior

### How to reproduce

### Environment

- UI Version:

- API Version:

- Domain:

- Others:

### Current workaround

### Affected user persona

### Business goal to be improved

### Metric to be improved

| 1.0 | Remove the warning for delete AKS/GKE external cluster dialog - ### What happened

Remove the warning for AKS/GKE external cluster deletion:

- [ ] change the warning sign to an information sign

- [ ] keep the first line informing user of attached mds

- [ ] remove the line `Please delete nodegroups....`

** No changes required for EKS

### Expected behavior

### How to reproduce

### Environment

- UI Version:

- API Version:

- Domain:

- Others:

### Current workaround

### Affected user persona

### Business goal to be improved

### Metric to be improved

| priority | remove the warning for delete aks gke external cluster dialog what happened remove the warning fro aks gke externalcluster delete remove the warning sign to information sign keep the first line informing user of attached mds remove the line please delete nodegroups no changes required for eks expected behavior how to reproduce environment ui version api version domain others current workaround affected user persona business goal to be improved metric to be improved | 1 |

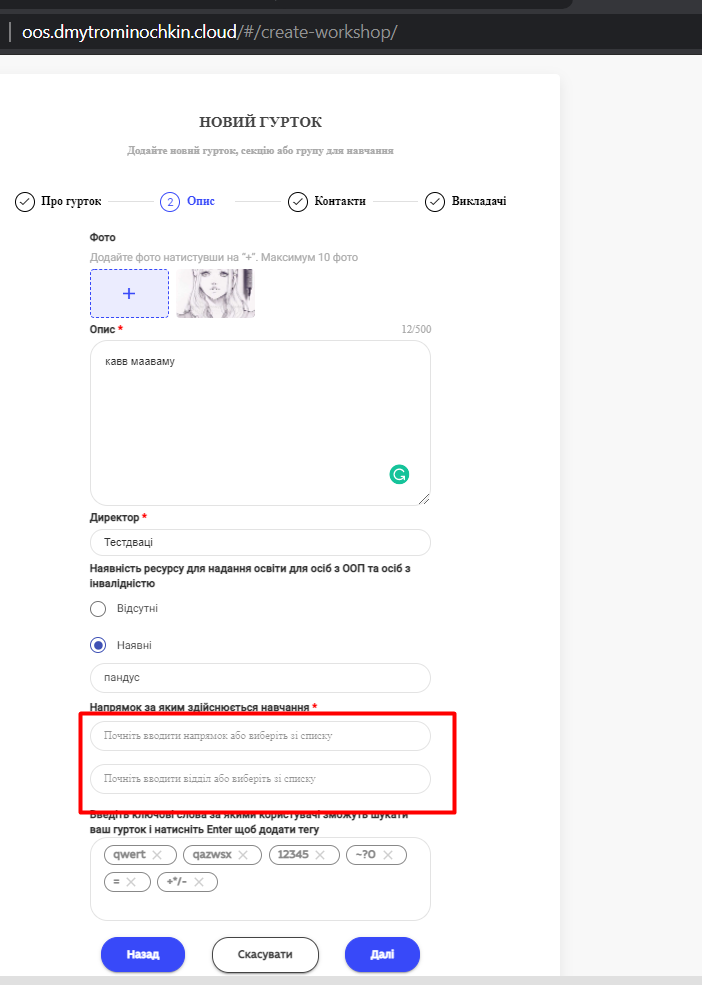

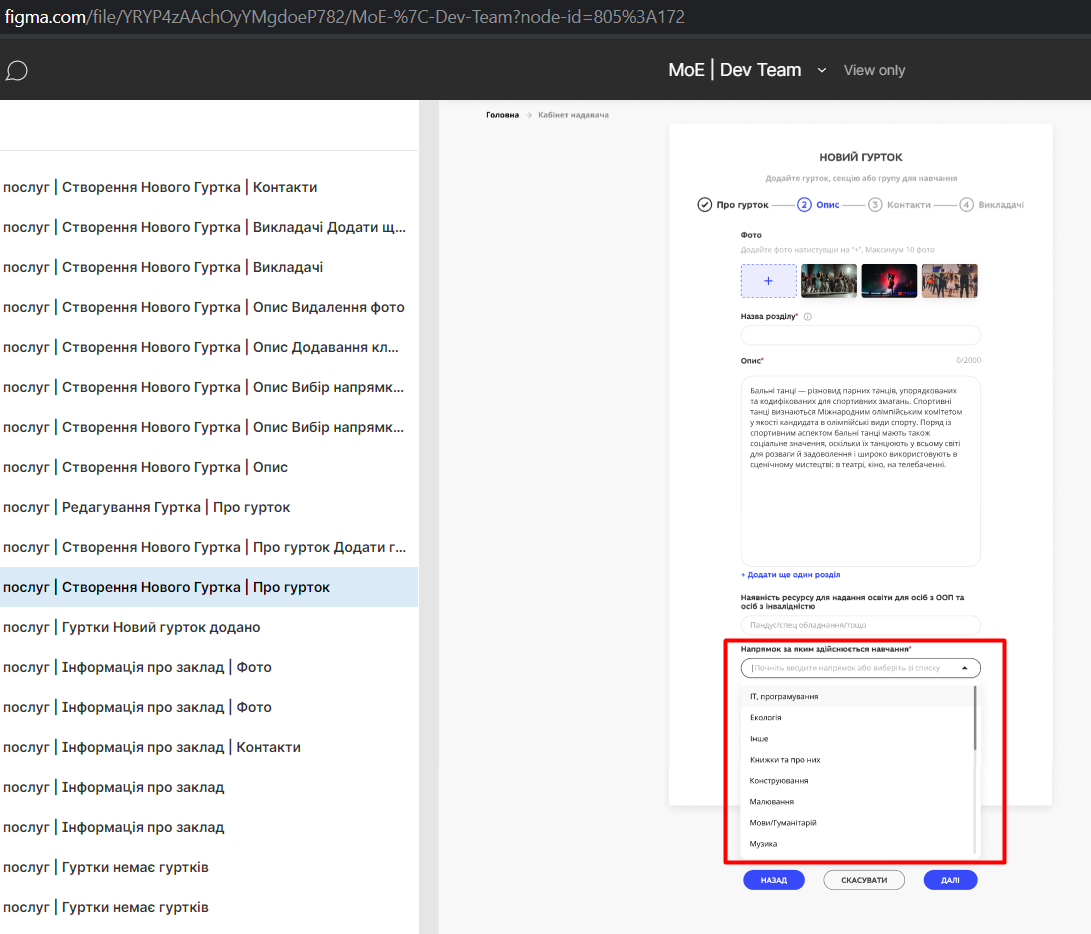

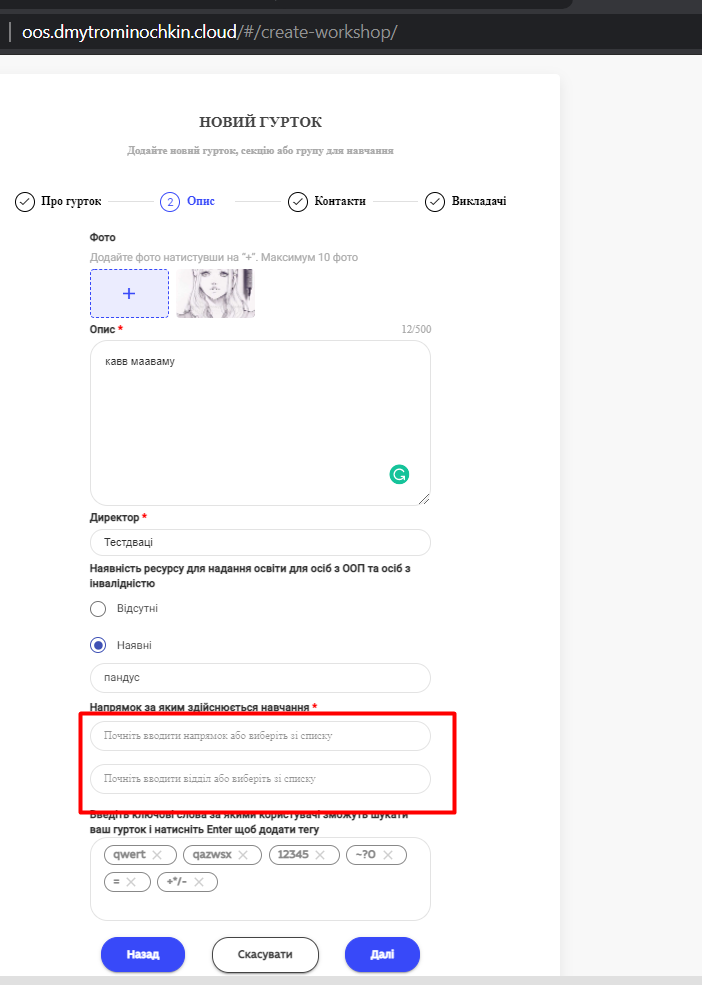

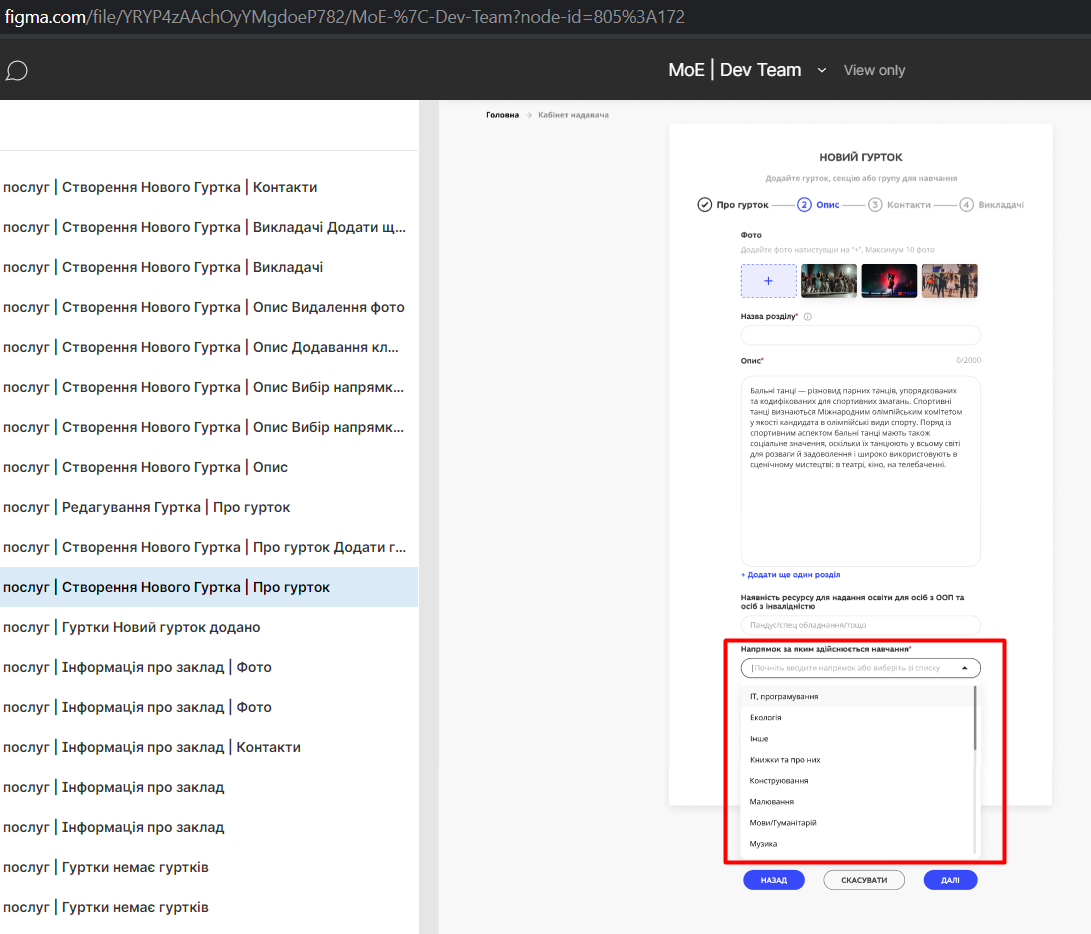

590,690 | 17,784,728,542 | IssuesEvent | 2021-08-31 09:39:28 | ita-social-projects/OoS-Backend | https://api.github.com/repos/ita-social-projects/OoS-Backend | opened | [SP Class] Drop-down box with data is missing on the 'Новий гурток' create page in 'Опис' tab in 'Напрямок за яким здійснюється навчання' field | bug priority:high sev:minor Type:Functional | **Environment:** Windows 10 Home, Google Chrome 92.0.4515.159.

**Reproducible:** always.

**Build found:** 31/08/2021 12:26

**Preconditions**

A user (Service Provider) has been registered already in the system.

(e.g.Login/pass=7gakor46280@asmm5.com/Qwer1234?)

**Steps to reproduce**

1. Log in as Service Provider.

2. Click on the drop-down arrow to the right of the 'User Name' -> 'Мої гуртки'.

3. Click on the 'Додати гурток' button.

4. Fill mandatory fields on the 'Про гурток' tab -> click on 'Опис' tab.

5. Take a look at 'Напрямок за яким здійснюється навчання' field.

**Actual result**