Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 855 | labels stringlengths 4 721 | body stringlengths 1 261k | index stringclasses 13 values | text_combine stringlengths 96 261k | label stringclasses 2 values | text stringlengths 96 240k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

24,902 | 2,674,557,099 | IssuesEvent | 2015-03-25 04:06:01 | bowdidge/switchlist | https://api.github.com/repos/bowdidge/switchlist | closed | Mark industry track capacity, and don't send cars to a track if there's no space. | auto-migrated Milestone-Release0.9 Priority-High Type-Enhancement | ```

Currently, SwitchList doesn't have any idea about the capacity of each

industry. If the cargo rates are set wrong so that too many cars are sent to

the industry, there might not be space for new cars.

SwitchList ought to keep track of the length of each car and the space at each

industry, and avoid directing cars to an industry if there is no space.

This will require changing the UI and file database to store car sizes and

capacity, add switches so that users can ignore the feature if necessary, and

change the car assignment algorithm to do the right thing when a track

overflows.

The workaround is to match the cargo rates to the track size better; maybe

there's some simple UI or hints to help users understand what a given "cars per

week" means in terms of the total cars likely to arrive on the track.

```

-----

Original issue reported on code.google.com by `rwbowdi...@gmail.com` on 23 Apr 2011 at 5:37 | 1.0 | Mark industry track capacity, and don't send cars to a track if there's no space. - ```

Currently, SwitchList doesn't have any idea about the capacity of each

industry. If the cargo rates are set wrong so that too many cars are sent to

the industry, there might not be space for new cars.

SwitchList ought to keep track of the length of each car and the space at each

industry, and avoid directing cars to an industry if there is no space.

This will require changing the UI and file database to store car sizes and

capacity, add switches so that users can ignore the feature if necessary, and

change the car assignment algorithm to do the right thing when a track

overflows.

The workaround is to match the cargo rates to the track size better; maybe

there's some simple UI or hints to help users understand what a given "cars per

week" means in terms of the total cars likely to arrive on the track.

```

-----

Original issue reported on code.google.com by `rwbowdi...@gmail.com` on 23 Apr 2011 at 5:37 | priority | mark industry track capacity and don t send cars to a track if there s no space currently switchlist doesn t have any idea about the capacity of each industry if the rates for cargos is set wrong so too many cars are sent to the industry there might not be space for new cars switchlist ought to keep track of the length of each car and the space at each industry and avoid directing cars to an industry if there is no space this will require changing the ui and file database to store car sizes and capacity add switches so that users can ignore the feature if necessary and change the car assignment algorithm to do the right thing when a track overflows the workaround is to match the cargo rates to the track size better maybe there s some simple ui or hints to help users understand what a given cars per week means in terms of the total cars likely to arrive on the track original issue reported on code google com by rwbowdi gmail com on apr at | 1 |
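
The capacity check requested in the row above could be sketched as follows. This is an illustration in Python, not SwitchList's own code: the `Car` and `Industry` classes, their field names, the use of feet as the unit, and the `enforce_capacity` switch are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Car:
    name: str
    length_ft: int  # hypothetical unit; the issue does not name one


@dataclass
class Industry:
    name: str
    track_length_ft: int  # hypothetical capacity field
    cars: list = field(default_factory=list)

    def free_space(self) -> int:
        # Remaining track length after the cars already spotted here.
        return self.track_length_ft - sum(c.length_ft for c in self.cars)

    def can_accept(self, car: Car) -> bool:
        return car.length_ft <= self.free_space()


def assign(car: Car, industry: Industry, enforce_capacity: bool = True) -> bool:
    """Place car at industry; return False when capacity blocks it."""
    if enforce_capacity and not industry.can_accept(car):
        return False
    industry.cars.append(car)
    return True
```

Passing `enforce_capacity=False` stands in for the switch the issue mentions that lets users ignore the feature.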

681,265 | 23,303,209,083 | IssuesEvent | 2022-08-07 16:32:16 | OpenCubicChunks/CubicChunks2 | https://api.github.com/repos/OpenCubicChunks/CubicChunks2 | closed | Implement a real HeightmapStorage implementation | High priority | This should also come with tests that verify nothing changed on save/load | 1.0 | Implement a real HeightmapStorage implementation - This should also come with tests that verify nothing changed on save/load | priority | implement a real heightmapstorage implementation this should also come with tests that verify nothing changed on save load | 1 |

108,559 | 4,347,353,964 | IssuesEvent | 2016-07-29 19:12:01 | codebuddiesdotorg/cb-v2-scratch | https://api.github.com/repos/codebuddiesdotorg/cb-v2-scratch | closed | On the profile page, hangouts that the user did not participate in show up on the lefthand side. | bug help wanted high-priority ready | The # of hangouts attended count is correct, but ALL the hangouts show up below.

Check: server/hangouts/methods.js | 1.0 | On the profile page, hangouts that the user did not participate in show up on the lefthand side. - The # of hangouts attended count is correct, but ALL the hangouts show up below.

Check: server/hangouts/methods.js | priority | on the profile page hangouts that the user did not participate in show up on the lefthand side the of hangouts attended count is correct but all the hangouts show up below check server hangouts methods js | 1 |

128,488 | 5,065,597,947 | IssuesEvent | 2016-12-23 13:04:48 | DiCarloLab-Delft/PycQED_py3 | https://api.github.com/repos/DiCarloLab-Delft/PycQED_py3 | opened | Kernel object robustness and simplification | enhancement priority: must/high | The current kernel object handles predistortions. However, there are several other points that also handle distortions leading to two problems.

1. It is unclear what information is stored where, leading to human mistakes

2. The different allocation of information leads to inefficiencies in calculating the convolutions. This leads to ~10-20 s of unneeded convolution time every time we upload a sequence.

The following points are improvements to address these issues. Note I do not intend to do all of these at once.

- calculate only if parameters changed

- Add RT corrections to the kernel object.

- Rename distortions class

- path for saving the kernels should not be the notebook directory

- test saving and loading

- Change parameters to SI units

- Automatically pick order of distortions to speed

up convolutions

- Add shortening of kernel if possible

- Clear functions to interact with the distortions (instead of the 4 snippered ones there are now). | 1.0 | Kernel object robustness and simplification - The current kernel object handles predistortions. However, there are several other points that also handle distortions leading to two problems.

1. It is unclear what information is stored where, leading to human mistakes

2. The different allocation of information leads to inefficiencies in calculating the convolutions. This leads to ~10-20 s of unneeded convolution time every time we upload a sequence.

The following points are improvements to address these issues. Note I do not intend to do all of these at once.

- calculate only if parameters changed

- Add RT corrections to the kernel object.

- Rename distortions class

- path for saving the kernels should not be the notebook directory

- test saving and loading

- Change parameters to SI units

- Automatically pick order of distortions to speed

up convolutions

- Add shortening of kernel if possible

- Clear functions to interact with the distortions (instead of the 4 snippered ones there are now). | priority | kernel object robustness and simplification the current kernel object handles predistortions however there are several other points that also handle distortions leading to two problems it is unclear what information is stored where leading to human mistakes the different allocation of information leads to inefficiencies in calculating the convolutions this leads to a of unneeded convolution time everytime we upload a sequence the following points are improvements to address these issues note i do not intend to do all of these at once calculate only if parameters changed add rt corrections to the kernel object rename distortions class path for saving the kernels should not be the notebook directory test saving and loading change parameters to si units automatically pick order of distortions to speed up convolutions add shortening of kernel if possible clear functions to interact with the distortions instead of the snippered ones there are now | 1 |
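
The first improvement listed in the row above, "calculate only if parameters changed", might look roughly like this. It is a sketch under stated assumptions, not PycQED's kernel object: the `CachedKernel` class, its `get` method, and the `n_computations` counter are hypothetical names, and it assumes the kernel parameters are JSON-serializable.

```python
import hashlib
import json


class CachedKernel:
    """Recompute a kernel only when its parameters change (hypothetical helper)."""

    def __init__(self, compute):
        self._compute = compute   # function that does the expensive work
        self._key = None          # fingerprint of the last parameter set
        self._value = None        # last computed kernel
        self.n_computations = 0   # counter, only here to make the effect visible

    def get(self, **params):
        # Fingerprint the parameters in a stable order.
        blob = json.dumps(params, sort_keys=True).encode()
        key = hashlib.sha256(blob).hexdigest()
        if key != self._key:
            self._value = self._compute(**params)
            self._key = key
            self.n_computations += 1
        return self._value
```

Calling `get` twice with the same parameters returns the stored result, so the convolution would run once per distinct parameter set rather than on every upload.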

291,623 | 8,940,966,749 | IssuesEvent | 2019-01-24 02:05:37 | mRemoteNG/mRemoteNG | https://api.github.com/repos/mRemoteNG/mRemoteNG | closed | putty panel not fitted into window (you can drag it around like a mdichild) | Bug High Priority Verified | <!--- Provide a general summary of the issue in the Title above -->

Reference #1261

## Expected Behavior

<!--- If you're describing a bug, tell us what should happen -->

<!--- If you're suggesting a change/improvement, tell us how it should work -->

## Current Behavior

<!--- If describing a bug, tell us what happens instead of the expected behavior -->

<!--- If suggesting a change/improvement, explain the difference from current behavior -->

## Possible Solution

<!--- Not obligatory, but suggest a fix/reason for the bug, -->

<!--- or ideas how to implement the addition or change -->

## Steps to Reproduce (for bugs)

<!--- Provide an unambiguous set of steps to reproduce -->

<!--- this bug. Include code to reproduce, if relevant -->

1. Open putty connection

2. See a sliver of the putty window

3. this can be dragged within the connection panel

## Context

<!--- How has this issue affected you? What are you trying to accomplish? -->

<!--- Providing context helps us come up with a solution that is most useful in the real world -->

## Your Environment

<!--- Include as many relevant details about the environment you experienced the bug in -->

* Version used:

* Operating System and version (e.g. Windows 10 1709 x64):

| 1.0 | putty panel not fitted into window (you can drag it around like a mdichild) - <!--- Provide a general summary of the issue in the Title above -->

Reference #1261

## Expected Behavior

<!--- If you're describing a bug, tell us what should happen -->

<!--- If you're suggesting a change/improvement, tell us how it should work -->

## Current Behavior

<!--- If describing a bug, tell us what happens instead of the expected behavior -->

<!--- If suggesting a change/improvement, explain the difference from current behavior -->

## Possible Solution

<!--- Not obligatory, but suggest a fix/reason for the bug, -->

<!--- or ideas how to implement the addition or change -->

## Steps to Reproduce (for bugs)

<!--- Provide an unambiguous set of steps to reproduce -->

<!--- this bug. Include code to reproduce, if relevant -->

1. Open putty connection

2. See a sliver of the putty window

3. this can be dragged within the connection panel

## Context

<!--- How has this issue affected you? What are you trying to accomplish? -->

<!--- Providing context helps us come up with a solution that is most useful in the real world -->

## Your Environment

<!--- Include as many relevant details about the environment you experienced the bug in -->

* Version used:

* Operating System and version (e.g. Windows 10 1709 x64):

| priority | putty panel not fitted into window you can drag it around like a mdichild reference expected behavior current behavior possible solution steps to reproduce for bugs open putty connection see a sliver of the putty window this can be dragged within the connection panel context your environment version used operating system and version e g windows | 1 |

693,032 | 23,759,997,241 | IssuesEvent | 2022-09-01 08:04:32 | redhat-developer/odo | https://api.github.com/repos/redhat-developer/odo | closed | devfile variables inside kubernetes component are not replaced | kind/bug triage/duplicate priority/High | Devfile variables inside `kubernetes` component files referenced by `uri` should work the same way as they do when `inline` is used

```yaml

#devfile.yaml

commands:

- exec:

commandLine: npm install

component: runtime

group:

isDefault: true

kind: build

workingDir: $PROJECT_SOURCE

id: install

- exec:

commandLine: npm start

component: runtime

group:

isDefault: true

kind: run

workingDir: $PROJECT_SOURCE

id: run

- id: build-image

apply:

component: prod-image

- id: deployk8s

apply:

component: outerloop-deploy

- id: deploy

composite:

commands:

- build-image

- deployk8s

group:

kind: deploy

isDefault: true

components:

- container:

endpoints:

- name: http-3000

targetPort: 3000

image: registry.access.redhat.com/ubi8/nodejs-14:latest

memoryLimit: 1024Mi

mountSources: true

name: runtime

- name: prod-image

image:

imageName: "{{CONTAINER_IMAGE}}"

dockerfile:

uri: ./Dockerfile

buildContext: ${PROJECT_SOURCE}

- name: outerloop-deploy

kubernetes:

uri: kubernetes/deployment.yaml

variables:

CONTAINER_IMAGE: quay.io/tkral/test:latest

metadata:

language: javascript

name: nodejs-nodejs-kkty

projectType: nodejs

schemaVersion: 2.2.0

starterProjects:

- git:

remotes:

origin: https://github.com/odo-devfiles/nodejs-ex.git

name: nodejs-starter

```

```yaml

# kubernetes/deployment.yaml

kind: Deployment

apiVersion: apps/v1

metadata:

name: mynode

spec:

replicas: 1

selector:

matchLabels:

app: node-app

template:

metadata:

labels:

app: node-app

spec:

containers:

- name: main

image: "{{CONTAINER_IMAGE}}"

resources: {}

```

```

odo deploy

```

```

$ k get deployment mynode -o yaml

apiVersion: apps/v1

kind: Deployment

metadata:

annotations:

deployment.kubernetes.io/revision: "1"

creationTimestamp: "2021-11-23T08:58:43Z"

generation: 1

labels:

app.kubernetes.io/managed-by: odo

name: mynode

namespace: test

resourceVersion: "31444"

uid: 98f8a389-0a3d-4abf-b9f1-c691ad7c4cf0

spec:

progressDeadlineSeconds: 600

replicas: 1

revisionHistoryLimit: 10

selector:

matchLabels:

app: node-app

strategy:

rollingUpdate:

maxSurge: 25%

maxUnavailable: 25%

type: RollingUpdate

template:

metadata:

creationTimestamp: null

labels:

app: node-app

spec:

containers:

- image: '{{ CONTAINER_IMAGE }}'

imagePullPolicy: IfNotPresent

name: main

resources: {}

terminationMessagePath: /dev/termination-log

terminationMessagePolicy: File

dnsPolicy: ClusterFirst

restartPolicy: Always

schedulerName: default-scheduler

securityContext: {}

terminationGracePeriodSeconds: 30

status:

conditions:

- lastTransitionTime: "2021-11-23T08:58:43Z"

lastUpdateTime: "2021-11-23T08:58:43Z"

message: Deployment does not have minimum availability.

reason: MinimumReplicasUnavailable

status: "False"

type: Available

- lastTransitionTime: "2021-11-23T09:08:44Z"

lastUpdateTime: "2021-11-23T09:08:44Z"

message: ReplicaSet "mynode-5db6864ffd" has timed out progressing.

reason: ProgressDeadlineExceeded

status: "False"

type: Progressing

observedGeneration: 1

replicas: 1

unavailableReplicas: 1

updatedReplicas: 1

```

/kind bug

/priority high

| 1.0 | devfile variables inside kubernetes component are not replaced - Devfile variables inside `kubernetes` component files referenced by `uri` should work the same way as they do when `inline` is used

```yaml

#devfile.yaml

commands:

- exec:

commandLine: npm install

component: runtime

group:

isDefault: true

kind: build

workingDir: $PROJECT_SOURCE

id: install

- exec:

commandLine: npm start

component: runtime

group:

isDefault: true

kind: run

workingDir: $PROJECT_SOURCE

id: run

- id: build-image

apply:

component: prod-image

- id: deployk8s

apply:

component: outerloop-deploy

- id: deploy

composite:

commands:

- build-image

- deployk8s

group:

kind: deploy

isDefault: true

components:

- container:

endpoints:

- name: http-3000

targetPort: 3000

image: registry.access.redhat.com/ubi8/nodejs-14:latest

memoryLimit: 1024Mi

mountSources: true

name: runtime

- name: prod-image

image:

imageName: "{{CONTAINER_IMAGE}}"

dockerfile:

uri: ./Dockerfile

buildContext: ${PROJECT_SOURCE}

- name: outerloop-deploy

kubernetes:

uri: kubernetes/deployment.yaml

variables:

CONTAINER_IMAGE: quay.io/tkral/test:latest

metadata:

language: javascript

name: nodejs-nodejs-kkty

projectType: nodejs

schemaVersion: 2.2.0

starterProjects:

- git:

remotes:

origin: https://github.com/odo-devfiles/nodejs-ex.git

name: nodejs-starter

```

```yaml

# kubernetes/deployment.yaml

kind: Deployment

apiVersion: apps/v1

metadata:

name: mynode

spec:

replicas: 1

selector:

matchLabels:

app: node-app

template:

metadata:

labels:

app: node-app

spec:

containers:

- name: main

image: "{{CONTAINER_IMAGE}}"

resources: {}

```

```

odo deploy

```

```

$ k get deployment mynode -o yaml

apiVersion: apps/v1

kind: Deployment

metadata:

annotations:

deployment.kubernetes.io/revision: "1"

creationTimestamp: "2021-11-23T08:58:43Z"

generation: 1

labels:

app.kubernetes.io/managed-by: odo

name: mynode

namespace: test

resourceVersion: "31444"

uid: 98f8a389-0a3d-4abf-b9f1-c691ad7c4cf0

spec:

progressDeadlineSeconds: 600

replicas: 1

revisionHistoryLimit: 10

selector:

matchLabels:

app: node-app

strategy:

rollingUpdate:

maxSurge: 25%

maxUnavailable: 25%

type: RollingUpdate

template:

metadata:

creationTimestamp: null

labels:

app: node-app

spec:

containers:

- image: '{{ CONTAINER_IMAGE }}'

imagePullPolicy: IfNotPresent

name: main

resources: {}

terminationMessagePath: /dev/termination-log

terminationMessagePolicy: File

dnsPolicy: ClusterFirst

restartPolicy: Always

schedulerName: default-scheduler

securityContext: {}

terminationGracePeriodSeconds: 30

status:

conditions:

- lastTransitionTime: "2021-11-23T08:58:43Z"

lastUpdateTime: "2021-11-23T08:58:43Z"

message: Deployment does not have minimum availability.

reason: MinimumReplicasUnavailable

status: "False"

type: Available

- lastTransitionTime: "2021-11-23T09:08:44Z"

lastUpdateTime: "2021-11-23T09:08:44Z"

message: ReplicaSet "mynode-5db6864ffd" has timed out progressing.

reason: ProgressDeadlineExceeded

status: "False"

type: Progressing

observedGeneration: 1

replicas: 1

unavailableReplicas: 1

updatedReplicas: 1

```

/kind bug

/priority high

| priority | devfile variables inside kubernetes component are not replaced devfile variables inside kubernetes component files referenced by uri should work the same way as they do when inline is used yaml devfile yaml commands exec commandline npm install component runtime group isdefault true kind build workingdir project source id install exec commandline npm start component runtime group isdefault true kind run workingdir project source id run id build image apply component prod image id apply component outerloop deploy id deploy composite commands build image group kind deploy isdefault true components container endpoints name http targetport image registry access redhat com nodejs latest memorylimit mountsources true name runtime name prod image image imagename container image dockerfile uri dockerfile buildcontext project source name outerloop deploy kubernetes uri kubernetes deployment yaml variables container image quay io tkral test latest metadata language javascript name nodejs nodejs kkty projecttype nodejs schemaversion starterprojects git remotes origin name nodejs starter yaml kubernetes deployment yaml kind deployment apiversion apps metadata name mynode spec replicas selector matchlabels app node app template metadata labels app node app spec containers name main image container image resources odo deploy k get deployment mynode o yaml apiversion apps kind deployment metadata annotations deployment kubernetes io revision creationtimestamp generation labels app kubernetes io managed by odo name mynode namespace test resourceversion uid spec progressdeadlineseconds replicas revisionhistorylimit selector matchlabels app node app strategy rollingupdate maxsurge maxunavailable type rollingupdate template metadata creationtimestamp null labels app node app spec containers image container image imagepullpolicy ifnotpresent name main resources terminationmessagepath dev termination log terminationmessagepolicy file dnspolicy clusterfirst restartpolicy 
always schedulername default scheduler securitycontext terminationgraceperiodseconds status conditions lasttransitiontime lastupdatetime message deployment does not have minimum availability reason minimumreplicasunavailable status false type available lasttransitiontime lastupdatetime message replicaset mynode has timed out progressing reason progressdeadlineexceeded status false type progressing observedgeneration replicas unavailablereplicas updatedreplicas kind bug priority high | 1 |
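
The behaviour the row above expects, replacing `{{NAME}}` placeholders in a manifest loaded through `uri`, can be illustrated with a small Python sketch. This is not odo's implementation (odo is written in Go); the `substitute` function is hypothetical, and leaving unknown variables untouched is an assumption of the example.

```python
import re

# Matches {{NAME}} and {{ NAME }}; both forms appear in the report above.
_VAR = re.compile(r"\{\{\s*([A-Za-z_][A-Za-z0-9_]*)\s*\}\}")


def substitute(text: str, variables: dict) -> str:
    """Replace each {{NAME}} with variables[NAME]; keep unknown names as-is."""
    def repl(match):
        name = match.group(1)
        return variables.get(name, match.group(0))
    return _VAR.sub(repl, text)
```

Applied to the contents of `kubernetes/deployment.yaml` with the `variables` map from the devfile, the `image` field would carry `quay.io/tkral/test:latest` instead of the literal placeholder seen in the deployed object.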

558,010 | 16,524,296,367 | IssuesEvent | 2021-05-26 17:57:49 | Javacord/Javacord | https://api.github.com/repos/Javacord/Javacord | opened | Add support for components | high priority | Discord now has components (buttons) for messages and responses,

https://github.com/discord/discord-api-docs/pull/3007 | 1.0 | Add support for components - Discord now has components (buttons) for messages and responses,

https://github.com/discord/discord-api-docs/pull/3007 | priority | add support for components discord now has components buttons for messages and responses | 1 |

479,245 | 13,793,433,470 | IssuesEvent | 2020-10-09 14:56:52 | eclipse-glsp/glsp | https://api.github.com/repos/eclipse-glsp/glsp | closed | Split public API from internal implementations | high-priority | A generic point that we should start thinking about is to make it clear (by package name) what is internal and public API meant to be directly used and extended by clients. This will give us more flexibility in the future when doing modifications as we'll know what we can change without affecting existing language-specific client implementations.

[migrated from https://github.com/eclipsesource/graphical-lsp/issues/363] | 1.0 | Split public API from internal implementations - A generic point that we should start thinking about is to make it clear (by package name) what is internal and public API meant to be directly used and extended by clients. This will give us more flexibility in the future when doing modifications as we'll know what we can change without affecting existing language-specific client implementations.

[migrated from https://github.com/eclipsesource/graphical-lsp/issues/363] | priority | split public api from internal implementations a generic point that we should start thinking about is to make it clear by package name what is internal and public api meant to be directly used and extended by clients this will give us more flexibility in the future when doing modifications as we ll know what we can change without affecting existing language specific client implementations | 1 |

130,346 | 5,114,437,591 | IssuesEvent | 2017-01-06 18:29:29 | aayaffe/SailingRaceCourseManager | https://api.github.com/repos/aayaffe/SailingRaceCourseManager | opened | Admin revoked | Priority: High Type: Bug | Admin options are not available after using other features of the phone (such as camera) and then returning to map activity.

Closing and opening, Fixes issue | 1.0 | Admin revoked - Admin options are not available after using other features of the phone (such as camera) and then returning to map activity.

Closing and opening, Fixes issue | priority | admin revoked admin options are not available after using other features of the phone such as camera and then returning to map activity closing and opening fixes issue | 1 |

241,784 | 7,834,436,134 | IssuesEvent | 2018-06-16 13:59:16 | kaytotes/ImprovedBlizzardUI | https://api.github.com/repos/kaytotes/ImprovedBlizzardUI | closed | Kill Feed | 8.0 high priority ptr | The Kill Feed is no longer functional and causes instant errors on the PTR.

| 1.0 | Kill Feed - The Kill Feed is no longer functional and causes instant errors on the PTR.

| priority | kill feed the kill feed is no longer functional and causes instant errors on the ptr | 1 |

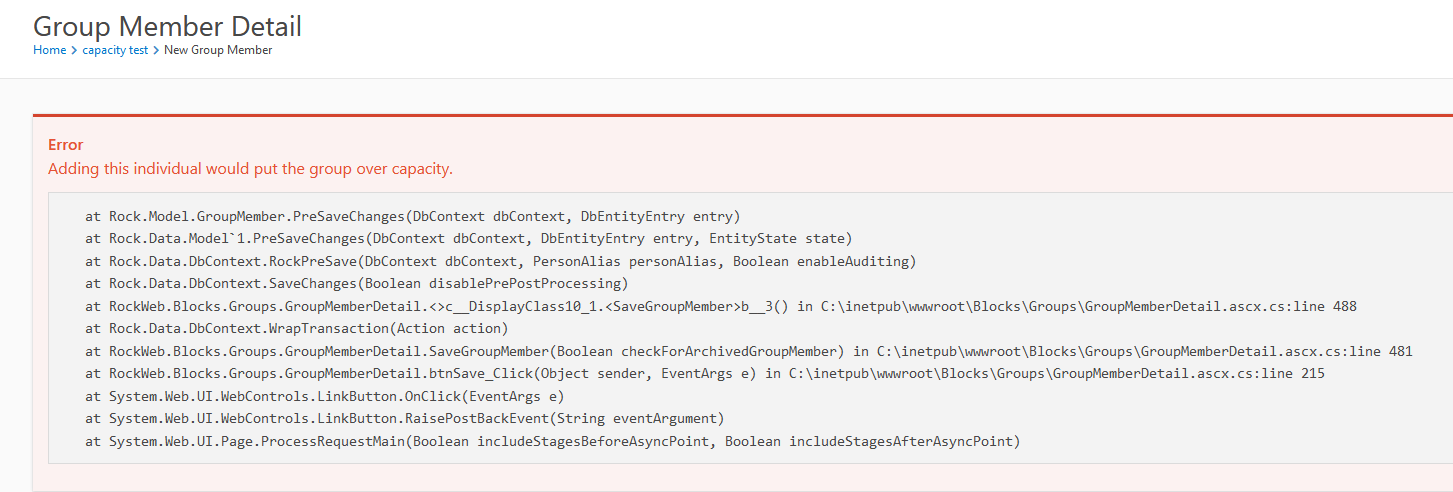

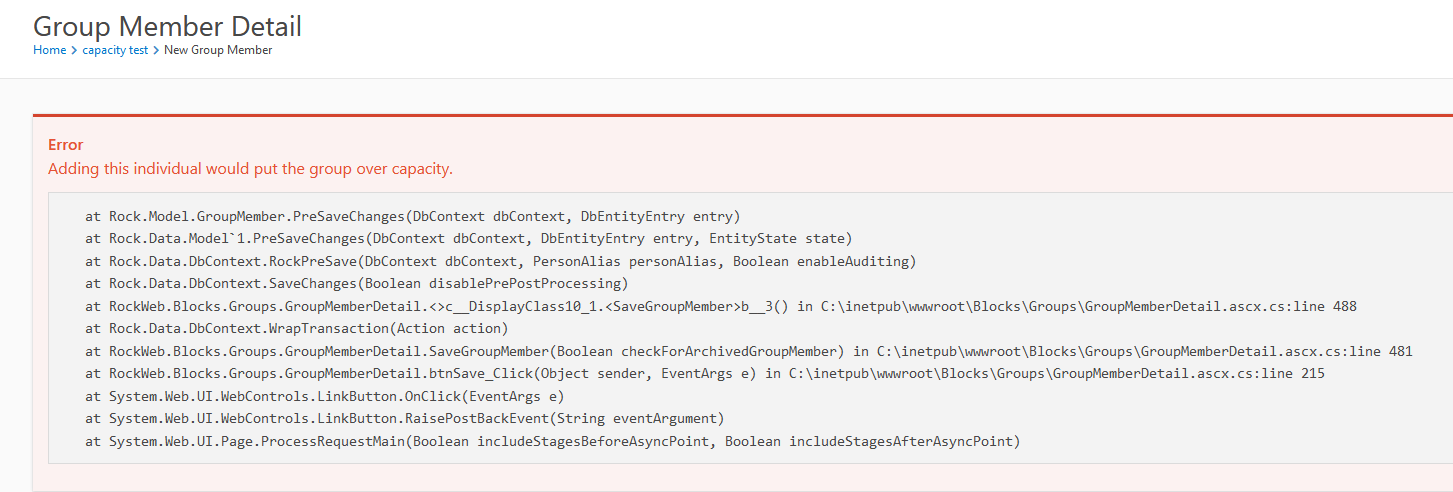

420,449 | 12,238,258,513 | IssuesEvent | 2020-05-04 19:29:18 | SparkDevNetwork/Rock | https://api.github.com/repos/SparkDevNetwork/Rock | closed | Group Capacity Off By One Exception | Fixed in v10.3 Priority: High Status: Confirmed Topic: Group Type: Bug | ### Description

On a Group Type with a _Group Capacity_ rule of _Hard_, the defined Capacity is not honored and an exception is thrown when trying to add someone that would make the group AT capacity, but NOT over it.

### Steps to Reproduce

1. Identify a group type that has a Group Capacity rule of Hard. (Or just edit Small Group type and add that capacity rule)

2. Go to Group Viewer and create a new group of that type.

3. Edit the new group and set capacity to 1

4. Add someone to the group and observe the exception.

**Expected behavior:**

Group capacity would be honored and you would be able to add people up TO the defined group capacity.

**Actual behavior:**

An exception is thrown when adding a member that would make the group AT the defined capacity. To work around the issue you must increase the desired capacity by 1 to allow the group to be at the desired capacity. This is new behavior as of 10.x and didn't occur in 9.4.

Duped on demo and prealpha.

### Versions

* **Rock Version:** 10.0-11.0 | 1.0 | Group Capacity Off By One Exception - ### Description

On a Group Type with a _Group Capacity_ rule of _Hard_, the defined Capacity is not honored and an exception is thrown when trying to add someone that would make the group AT capacity, but NOT over it.

### Steps to Reproduce

1. Identify a group type that has a Group Capacity rule of Hard. (Or just edit Small Group type and add that capacity rule)

2. Go to Group Viewer and create a new group of that type.

3. Edit the new group and set capacity to 1

4. Add someone to the group and observe the exception.

**Expected behavior:**

Group capacity would be honored and you would be able to add people up TO the defined group capacity.

**Actual behavior:**

An exception is thrown when adding a member that would make the group AT the defined capacity. To work around the issue you must increase the desired capacity by 1 to allow the group to be at the desired capacity. This is new behavior as of 10.x and didn't occur in 9.4.

Duped on demo and prealpha.

### Versions

* **Rock Version:** 10.0-11.0 | priority | group capacity off by one exception description on a group type with a group capacity rule of hard the defined capacity is not honored and an exception is thrown when trying to add someone that would make the group at capacity but not over it steps to reproduce identify a group type that has a group capacity rule of hard or just edit small group type and add that capacity rule go to group viewer and create a new group of that type edit the new group and set capacity to add someone to the group and observe the exception expected behavior group capacity would be honored and you would be able to add people up to the defined group capacity actual behavior an exception is thrown when adding a member that would make the group at the defined capacity to work around the issue you must increase the desired capacity by to allow the group to be at the desired capacity this is new behavior as of x and didn t occur in duped on demo and prealpha versions rock version | 1 |
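
The symptom in the row above is typical of a boundary comparison that is off by one. The sketch below contrasts the expected rule with a variant that would reproduce the report. It is written in Python for illustration only; Rock itself is C#, the issue does not show the actual check, and both function names are hypothetical.

```python
def can_add_member(current_count: int, capacity) -> bool:
    """Hard capacity rule: allow additions up to and including capacity."""
    if capacity is None:  # no capacity defined for the group
        return True
    return current_count + 1 <= capacity


def can_add_member_off_by_one(current_count: int, capacity: int) -> bool:
    # Rejects the member who would bring the group exactly to capacity,
    # which matches the behaviour described in the report.
    return current_count + 1 < capacity
```

With capacity 1 and an empty group, the first function accepts the member and the second rejects it, which is why raising the capacity by one works around the problem.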

253,512 | 8,057,055,843 | IssuesEvent | 2018-08-02 14:26:00 | Linaro/squad | https://api.github.com/repos/Linaro/squad | closed | Be able to specify baseline when using email api | enhancement high priority | When calling the API like so:

https://qa-reports.linaro.org/api/builds/8275/email/

Note the line "compared to build v4.14.56-93-gec86d5e19e14".

Squad chooses the baseline (build v4.14.56-93-gec86d5e19e14 in this case) by naively using the previous build. However, my API client is able to determine a better baseline because it has knowledge of the project.

If the email endpoint allowed a 'baseline' build to be specified, perhaps like so: "https://qa-reports.linaro.org/api/builds/8275/email?baseline=8156", then the fixes and regressions that an email report produces would be much more useful. | 1.0 | Be able to specify baseline when using email api - When calling the API like so:

https://qa-reports.linaro.org/api/builds/8275/email/

Note the line "compared to build v4.14.56-93-gec86d5e19e14".

Squad chooses the baseline (build v4.14.56-93-gec86d5e19e14 in this case) by naively using the previous build. However, my API client is able to determine a better baseline because it has knowledge of the project.

If the email endpoint allowed a 'baseline' build to be specified, perhaps like so: "https://qa-reports.linaro.org/api/builds/8275/email?baseline=8156", then the fixes and regressions that an email report produces would be much more useful. | priority | be able to specify baseline when using email api when calling the api like so note the line compared to build squad chooses the baseline build in this case by naively using the previous build however my api client is able to determine a better baseline because it has knowledge of the project if the email endpoint allowed a baseline build to be specified perhaps like so then the fixes and regressions that an email report produces would be much more useful | 1 |
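
A client-side sketch of the request proposed in the row above. The `baseline` query parameter is the issue's suggestion, not necessarily something the Squad API supports, and `email_report_url` is a hypothetical helper.

```python
from urllib.parse import urlencode


def email_report_url(base: str, build_id: int, baseline=None) -> str:
    """Build the email-report URL, optionally naming a baseline build."""
    url = f"{base}/api/builds/{build_id}/email"
    if baseline is None:
        return url + "/"
    # 'baseline' is the parameter proposed in the issue.
    return url + "?" + urlencode({"baseline": baseline})
```

For example, `email_report_url("https://qa-reports.linaro.org", 8275, baseline=8156)` yields the URL quoted in the issue.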

386,837 | 11,451,406,386 | IssuesEvent | 2020-02-06 11:32:10 | balena-io/balena-supervisor | https://api.github.com/repos/balena-io/balena-supervisor | opened | The supervisor can report a spurious target state when moving between applications | High priority | This exhibited itself as the supervisor complaining it did not support multiple applications, and the state endpoint had correctly only reported a single app as the target state.

It seems to be related to volatile state, as when the healthcheck kicked in, the supervisor self-recovered. | 1.0 | The supervisor can report a spurious target state when moving between applications - This exhibited itself as the supervisor complaining it did not support multiple applications, and the state endpoint had correctly only reported a single app as the target state.

It seems to be related to volatile state, as when the healthcheck kicked in, the supervisor self-recovered. | priority | the supervisor can report a spurious target state when moving between applications this exhibited itself as the supervisor complaining it did not support multiple applications and the state endpoint had correctly only reported a single app as the target state it seems to be related to volatile state as when the healthcheck kicked in the supervisor self recovered | 1 |

303,730 | 9,310,061,007 | IssuesEvent | 2019-03-25 17:51:52 | ConsenSys/mythril-classic | https://api.github.com/repos/ConsenSys/mythril-classic | closed | Refactor: mythril/mythril.py | Priority: High Review maintenance | ## Description

This issue tracks maintenance of mythril/mythril.py

## Checkpoints:

- [ ] Cleanup Code

- [ ] Ensure 80% code coverage

- [ ] Ensure all public functions have been outfitted with the proper documentation | 1.0 | Refactor: mythril/mythril.py - ## Description

This issue tracks maintenance of mythril/mythril.py

## Checkpoints:

- [ ] Cleanup Code

- [ ] Ensure 80% code coverage

- [ ] Ensure all public functions have been outfitted with the proper documentation | priority | refactor mythril mythril py description this issue tracks maintenance of mythril mythril py checkpoints cleanup code ensure code coverage ensure all public functions have been outfitted with the proper documentation | 1 |

594,690 | 18,051,507,232 | IssuesEvent | 2021-09-19 20:34:35 | robotcoral/coral-app | https://api.github.com/repos/robotcoral/coral-app | opened | Flags can be set underneath a slab | bug High Priority | **Describe the bug**

If karol stands on 1 or more slabs he can place a flag on the floor underneath the slabs.

**To Reproduce**

Steps to reproduce the behavior:

1. Put down one or more slabs as karol

2. Move karol such that he stands on the slabs

3. Place a flag on the current position

**Expected behavior**

Karol places the flag on top of the slabs.

**Additional context**

Version 0.1.10 nightly

| 1.0 | Flags can be set underneath a slab - **Describe the bug**

If karol stands on 1 or more slabs he can place a flag on the floor underneath the slabs.

**To Reproduce**

Steps to reproduce the behavior:

1. Put down one or more slabs as karol

2. Move karol such that he stands on the slabs

3. Place a flag on the current position

**Expected behavior**

Karol places the flag on top of the slabs.

**Additional context**

Version 0.1.10 nightly

| priority | flags can be set underneath a slab describe the bug if karol stands on or more slabs he can place a flag on the floor underneath the slabs to reproduce steps to reproduce the behavior put down one or more slabs as karol move karol such that he stands on the slabs place a flag on the current position expected behavior karol places the flag on top of the slabs additional context version nightly | 1 |

534,762 | 15,648,429,126 | IssuesEvent | 2021-03-23 05:42:57 | TerryCavanagh/diceydungeons.com | https://api.github.com/repos/TerryCavanagh/diceydungeons.com | closed | When Super Magician appears as a boss due to Frog's rule, he doesn't have increased HP | High Priority reported in v1.11 | Just missing a field in the frog HP modifiers file I believe | 1.0 | When Super Magician appears as a boss due to Frog's rule, he doesn't have increased HP - Just missing a field in the frog HP modifiers file I believe | priority | when super magician appears as a boss due to frog s rule he doesn t have increased hp just missing a field in the frog hp modifiers file i believe | 1 |

511,993 | 14,886,685,660 | IssuesEvent | 2021-01-20 17:16:08 | ChainSafe/gossamer | https://api.github.com/repos/ChainSafe/gossamer | opened | fix out of memory panic when handling block body | Priority: 2 - High Type: Bug | ## Describe the bug

<!-- A clear and concise description of what the bug is. -->

- node panics with "out of memory" when handling block body: https://github.com/paritytech/cumulus/blob/master/consensus/src/lib.rs

- fix this and re-enable

- may be due to decoding issues

- not sure if this happens with just gossamer, may only be with kusama

<!-- Thank you 🙏 --> | 1.0 | fix out of memory panic when handling block body - ## Describe the bug

<!-- A clear and concise description of what the bug is. -->

- node panics with "out of memory" when handling block body: https://github.com/paritytech/cumulus/blob/master/consensus/src/lib.rs

- fix this and re-enable

- may be due to decoding issues

- not sure if this happens with just gossamer, may only be with kusama

<!-- Thank you 🙏 --> | priority | fix out of memory panic when handling block body describe the bug node panics with out of memory when handling block body fix this and re enable may be due to decoding issues not sure if this happens with just gossamer may only be with kusama | 1 |

497,471 | 14,371,307,330 | IssuesEvent | 2020-12-01 12:22:48 | FEUP-ESOF-2020-21/open-cx-t4g2-codemasters | https://api.github.com/repos/FEUP-ESOF-2020-21/open-cx-t4g2-codemasters | closed | US: As a user I want to be able to rate a talk | conference manager high priority iteration-3 user-story | As a user I want to be able to rate a talk.

Scenario: Rate a talk.

Given: A conference that I have attended

When: I tap “Rate this talk”

Then: I give a score between 0 and 10

Value: Must have

Effort: XL | 1.0 | US: As a user I want to be able to rate a talk - As a user I want to be able to rate a talk.

Scenario: Rate a talk.

Given: A conference that I have attended

When: I tap “Rate this talk”

Then: I give a score between 0 and 10

Value: Must have

Effort: XL | priority | us as a user i want to be able to rate a talk as a user i want to be able to rate a talk scenario rate a talk given a conference that i have attended when i tap “rate this talk” then i give a score between and value must have effort xl | 1 |

552,216 | 16,218,476,649 | IssuesEvent | 2021-05-06 00:26:35 | lalitpagaria/obsei | https://api.github.com/repos/lalitpagaria/obsei | closed | HTTP Sink is not working due to date time serialization issue on AppStore and PlayStore Scrapper Sources | bug high priority | below issue is coming :

TypeError: datetime.datetime(...) is not JSON serializable

**To Reproduce**

Select PlayStore & AppStore Scrapper and use some HTTP mock server or HTTP local server to receive sentiments data.

**Expected behavior**

Should work with any date time format

**Stacktrace**

TypeError: datetime.datetime(...) is not JSON serializable

**Please complete the following information:**

- OS: windows

- Version:

**Additional context**

Add any other context about the problem here.
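
One possible direction, sketched under assumptions: the standard-library `json` encoder raises `TypeError` for `datetime` objects, and a `default` hook can convert them to ISO 8601 strings before the payload is posted. The helper names `json_default` and `to_json` and the sample `review` payload are hypothetical; they are not taken from the obsei code base.

```python
import json
from datetime import date, datetime


def json_default(value):
    """Fallback used by json.dumps for objects it cannot encode itself."""
    if isinstance(value, (datetime, date)):
        # ISO 8601 strings are a common, unambiguous wire format for timestamps.
        return value.isoformat()
    raise TypeError(f"{type(value).__name__} is not JSON serializable")


def to_json(payload):
    """Serialize a payload that may contain datetime/date values (hypothetical helper)."""
    return json.dumps(payload, default=json_default)


# Hypothetical record resembling a scraped review with a timestamp.
review = {"app": "example", "posted_at": datetime(2021, 5, 1, 12, 30)}
print(to_json(review))
```

Whether the sink should emit ISO 8601 strings or another date format is a design decision for the maintainers; the sketch only shows where such a conversion could hook in.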

| 1.0 | HTTP Sink is not working due to date time serialization issue on AppStore and PlayStore Scrapper Sources - The following error occurs:

TypeError: datetime.datetime(...) is not JSON serializable

**To Reproduce**

Select PlayStore & AppStore Scrapper and use some HTTP mock server or HTTP local server to receive sentiments data.

**Expected behavior**

Should work with any date time format

**Stacktrace**

TypeError: datetime.datetime(...) is not JSON serializable

**Please complete the following information:**

- OS: windows

- Version:

**Additional context**

Add any other context about the problem here.

| priority | http sink is not working due to date time serialization issue on appstore and playstore scrapper sources below issue is coming typeerror datetime datetime is not json serializable to reproduce select playstore appstore scrapper and use some http mock server or http local server to receive sentiments data expected behavior should work with any date time format stacktrace typeerror datetime datetime is not json serializable please complete the following information os windows version additional context add any other context about the problem here | 1 |

185,726 | 6,727,089,271 | IssuesEvent | 2017-10-17 12:25:48 | ballerinalang/composer | https://api.github.com/repos/ballerinalang/composer | closed | Undo action removes all latest added attributes after an immediate add of an attribute | 0.94-pre-release Priority/High | Browser: Chrome Version 61.0.3163.100 (Official Build) (64-bit)

**Steps**

1. Add one or two attributes (att1, att2) with values for any configuration

2. Add another attribute (att3)

3. Click undo or press Ctrl+Z

**Actual result**

All attributes including att1, att2 get undone

**Expected result**

Only att3 should be undone | 1.0 | Undo action removes all latest added attributes after an immediate add of an attribute - Browser: Chrome Version 61.0.3163.100 (Official Build) (64-bit)

**Steps**

1. Add one or two attributes (att1, att2) with values for any configuration

2. Add another attribute (att3)

3. Click undo or press Ctrl+Z

**Actual result**

All attributes including att1, att2 get undone

**Expected result**

Only att3 should be undone | priority | undo action removes all latest added attributes after an immediate add of an attribute browser chrome version official build bit steps add one or two attributes with values for any configuration add another attribute click undo or press ctrl z acutal result all attributes including get undone expected result only should be undone | 1 |

99,676 | 4,059,209,061 | IssuesEvent | 2016-05-25 08:45:29 | icatproject/python-icat | https://api.github.com/repos/icatproject/python-icat | closed | Ensure compatibility with ICAT 4.7 and IDS 1.6 | blocked enhancement in progress Priority-High | The first snapshot releases for icat.server 4.7.0 and ids.server 1.6.0 are out. Need to make sure python-icat copes well with these upcoming versions and supports all important new features. ICAT 4.7 is supposed to have some minor schema changes, so at least this will require changes in python-icat.

Blocked until the final release versions of icat.server 4.7.0 and ids.server 1.6.0 are available for testing. | 1.0 | Ensure compatibility with ICAT 4.7 and IDS 1.6 - The first snapshot releases for icat.server 4.7.0 and ids.server 1.6.0 are out. Need to make sure python-icat copes well with these upcoming versions and supports all important new features. ICAT 4.7 is supposed to have some minor schema changes, so at least this will require changes in python-icat.

Blocked until the final release versions of icat.server 4.7.0 and ids.server 1.6.0 are available for testing. | priority | ensure compatibility with icat and ids the first snapshot releases for icat server and ids server are out need to make sure python icat copes well with these upcoming versions and supports all important new features icat is supposed to have some minor schema changes so at least this will require changes in python icat blocked until the final release versions of icat server and ids server are available for testing | 1 |

487,458 | 14,047,112,968 | IssuesEvent | 2020-11-02 06:28:43 | wso2/product-apim-tooling | https://api.github.com/repos/wso2/product-apim-tooling | closed | Revamp the existing "apictl" commands and the new commands to be added to API Controller | Affected/3.1.0 Next Release - 4.x Priority/High Type/Improvement | **Description:**

There are two (2) types of command signatures (structures) in API Controller such as **apictl [verb] [noun] [flags]** and **apictl [command] [flags]**. Below are the commands belonging to those two (2) categories.

<table>

<tbody>

<tr>

<th>apictl [verb] [noun] [flags]</th>

<th>apictl [command] [flags]</th>

</tr>

<tr>

<td valign="top">

<p><strong>Existing commands:</strong></p>

<ul>

<li>apictl list apis [flags]</li>

<li>apictl list apps [flags]</li>

<li>apictl login &lt;env-name&gt; [flags]</li>

<li>apictl logout &lt;env-name&gt; [flags]</li>

<li>apictl install api-operator [flags]</li>

<li>apictl uninstall api-operator [flags]</li>

<li>apictl change registry [flags]</li>

<li>apictl version &lt;---- apictl noun only</li>

<li>apictl help &lt;----- apictl verb only</li>

</ul>

<br />

<p><strong>Newly added commands:</strong></p>

<ul>

<li>apictl list api-products [flags]</li>

</ul>

</td>

<td>

<p><strong>Existing commands:</strong></p>

<ul>

<li >apictl add [flags]</li>

<li>apictl add-env [flags]</li>

<li>apictl remove-env [flags]</li>

<li>apictl export-api [flags]</li>

<li>apictl export-apis [flags]</li>

<li>apictl export-app [flags]</li>

<li>apictl import-api [flags]</li>

<li>apictl import-app [flags]</li>

<li>apictl init [flags]</li>

<li>apictl get-keys [flags]</li>

<li>apictl set [flags]</li>

<li>apictl update [flags]</li>

</ul>

<br />

<p><strong>Newly added commands:</strong></p>

<ul>

<li>apictl delete-api [flags]</li>

<li>apictl change-api-status [flags]</li>

<li>apictl delete-api-product [flag]</li>

</ul>

<br />

<p><strong>Commands to be added:</strong></p>

<ul>

<li>apictl import-api-product [flags]</li>

<li>apictl export-api-product [flags]</li>

</ul>

</td>

</tr>

</tbody>

</table>

It would be better if all the commands could be revamped into a single structure, so that they are more consistent.

**Suggested new structure:** _**apictl [verb] [noun] [flags]** (The structure that already has been used in the left column)_

The recently added new commands (check right column **Newly added commands:**) and the commands to be added (check right column **Commands to be added:**) can be easily restructured to the suggested new structure.

The existing commands (check right column **Existing commands:**) should be migrated in a manner without breaking any user functionality. These existing commands can be deprecated first without directly removing them which will address the backward compatibility.

**Suggested Labels:**

Type/Improvement

Affected/3.1.0

**Affected Product Version:**

APICTL 3.1.0 | 1.0 | Revamp the existing "apictl" commands and the new commands to be added to API Controller - **Description:**

There are two (2) types of command signatures (structures) in API Controller such as **apictl [verb] [noun] [flags]** and **apictl [command] [flags]**. Below are the commands belonging to those two (2) categories.

<table>

<tbody>

<tr>

<th>apictl [verb] [noun] [flags]</th>

<th>apictl [command] [flags]</th>

</tr>

<tr>

<td valign="top">

<p><strong>Existing commands:</strong></p>

<ul>

<li>apictl list apis [flags]</li>

<li>apictl list apps [flags]</li>

<li>apictl login &lt;env-name&gt; [flags]</li>

<li>apictl logout &lt;env-name&gt; [flags]</li>

<li>apictl install api-operator [flags]</li>

<li>apictl uninstall api-operator [flags]</li>

<li>apictl change registry [flags]</li>

<li>apictl version &lt;---- apictl noun only</li>

<li>apictl help &lt;----- apictl verb only</li>

</ul>

<br />

<p><strong>Newly added commands:</strong></p>

<ul>

<li>apictl list api-products [flags]</li>

</ul>

</td>

<td>

<p><strong>Existing commands:</strong></p>

<ul>

<li >apictl add [flags]</li>

<li>apictl add-env [flags]</li>

<li>apictl remove-env [flags]</li>

<li>apictl export-api [flags]</li>

<li>apictl export-apis [flags]</li>

<li>apictl export-app [flags]</li>

<li>apictl import-api [flags]</li>

<li>apictl import-app [flags]</li>

<li>apictl init [flags]</li>

<li>apictl get-keys [flags]</li>

<li>apictl set [flags]</li>

<li>apictl update [flags]</li>

</ul>

<br />

<p><strong>Newly added commands:</strong></p>

<ul>

<li>apictl delete-api [flags]</li>

<li>apictl change-api-status [flags]</li>

<li>apictl delete-api-product [flag]</li>

</ul>

<br />

<p><strong>Commands to be added:</strong></p>

<ul>

<li>apictl import-api-product [flags]</li>

<li>apictl export-api-product [flags]</li>

</ul>

</td>

</tr>

</tbody>

</table>

It would be better if all the commands could be revamped into a single structure, so that they are more consistent.

**Suggested new structure:** _**apictl [verb] [noun] [flags]** (The structure that already has been used in the left column)_

The recently added new commands (check right column **Newly added commands:**) and the commands to be added (check right column **Commands to be added:**) can be easily restructured to the suggested new structure.

The existing commands (check right column **Existing commands:**) should be migrated in a manner without breaking any user functionality. These existing commands can be deprecated first without directly removing them which will address the backward compatibility.

**Suggested Labels:**

Type/Improvement

Affected/3.1.0

**Affected Product Version:**

APICTL 3.1.0 | priority | revamp the existing apictl commands and the new commands to be added to api controller description there are two types of command signatures structures in api controller such as apictl and apictl below are the commands belonging to those two categories apictl apictl existing commands apictl list apis apictl list apps apictl login lt env name gt apictl logout lt env name gt apictl install api operator apictl uninstall api operator apictl change registry apictl version lt apictl noun only apictl help nbsp nbsp lt apictl verb only newly added nbsp commands apictl list api products existing commands apictl add apictl add env apictl remove env apictl export api apictl export apis apictl export app apictl import api apictl import app apictl init apictl get keys apictl set apictl update newly added commands apictl delete api apictl change api status apictl delete api product commands to be added apictl import api product apictl export api product it would be better if all the commands can be revamped into one then all the commands will be more consistent suggested new structure apictl the structure that already has been used in the left column the recently added new commands check right column newly added commands and the commands to be added check right column commands to be added can be easily restructured to the suggested new structure the existing commands check right column existing commands should be migrated in a manner without breaking any user functionality these existing commands can be deprecated first without directly removing them which will address the backward compatibility suggested labels type improvement affected affected product version apictl | 1 |

463,541 | 13,283,379,793 | IssuesEvent | 2020-08-24 03:00:55 | yidongnan/grpc-spring-boot-starter | https://api.github.com/repos/yidongnan/grpc-spring-boot-starter | closed | Stub creation has no fallback | bug high priority | I believe this to be a blocking regression issue.

**The context**

Use [grpc-kotlin](https://github.com/grpc/grpc-kotlin) library to make gRPC calls.

**The bug**

In `grpc-spring-boot-starter:v2.9.0.RELEASE`, `GrpcClientBeanPostProcessor` tries to figure out (reflectively) the appropriate `static` method to create a stub. If it fails, it **falls back** to using the constructor (which is assumed as `private`, but may not be, as in the case of `grpc-kotlin`). In `grpc-spring-boot-starter:v2.10.0.RELEASE`, the fallback is not present, and thus, the stub injection blows up with an error `java.lang.IllegalArgumentException: Unsupported stub type`. See the class hierarchy below:

```

class ServiceNameCoroutineStub @JvmOverloads constructor(

channel: Channel,

callOptions: CallOptions = DEFAULT

) : AbstractCoroutineStub<ServiceNameCoroutineStub>(channel, callOptions)

```

```

abstract class AbstractCoroutineStub<S: AbstractCoroutineStub<S>>(

channel: Channel,

callOptions: CallOptions = CallOptions.DEFAULT

): AbstractStub<S>(channel, callOptions)

```

The problem, that already existed in `grpc-spring-boot-starter:v2.9.0.RELEASE` but was hidden by the fallback, is that there's no code in `GrpcClientBeanPostProcessor` to check for `AbstractStub` subclasses. I think the fallback to using the constructor directly is ok, as seen in the [example code](https://github.com/grpc/grpc-kotlin/blob/master/examples/src/main/kotlin/io/grpc/examples/helloworld/HelloWorldClient.kt#L31), and should not be removed. However, the constructor can be assumed `public` and there's no need to try to change its visibility.

Note that this too, will fail, if grpc-kotlin makes the constructor `private` and adds a static method (I created https://github.com/grpc/grpc-kotlin/issues/163). The futureproof way to fix this is not to check for the stub type, but to check for the existence of static methods named `new*Stub`, and then comparing the return types with the declared stub type.

**Stacktrace and logs**

```

Caused by: org.springframework.beans.BeanInstantiationException: Failed to instantiate [com.mycompany.ServiceNameGrpcKt$ServiceNameCoroutineStub]: Unsupported stub type: com.mycompany.ServiceNameGrpcKt$ServiceNameCoroutineStub -> Please report this issue.

at net.devh.boot.grpc.client.inject.GrpcClientBeanPostProcessor.lambda$createStub$1(GrpcClientBeanPostProcessor.java:244)

at java.base/java.util.Optional.orElseThrow(Optional.java:408)

at net.devh.boot.grpc.client.inject.GrpcClientBeanPostProcessor.createStub(GrpcClientBeanPostProcessor.java:243)

at net.devh.boot.grpc.client.inject.GrpcClientBeanPostProcessor.valueForMember(GrpcClientBeanPostProcessor.java:218)

at net.devh.boot.grpc.client.inject.GrpcClientBeanPostProcessor.processInjectionPoint(GrpcClientBeanPostProcessor.java:127)

at net.devh.boot.grpc.client.inject.GrpcClientBeanPostProcessor.postProcessBeforeInitialization(GrpcClientBeanPostProcessor.java:83)

at org.springframework.beans.factory.support.AbstractAutowireCapableBeanFactory.applyBeanPostProcessorsBeforeInitialization(AbstractAutowireCapableBeanFactory.java:416)

at org.springframework.beans.factory.support.AbstractAutowireCapableBeanFactory.initializeBean(AbstractAutowireCapableBeanFactory.java:1788)

at org.springframework.beans.factory.support.AbstractAutowireCapableBeanFactory.doCreateBean(AbstractAutowireCapableBeanFactory.java:595)

```

**Steps to Reproduce**

N/A

**The application's environment**

Which versions do you use?

* Spring (boot): 2.3.1.RELEASE

* grpc-java: 1.30.2

* grpc-spring-boot-starter: 2.9.0.RELEASE

**Additional context**

* Did it ever work before? Yes.

* Do you have a demo? No.

| 1.0 | Stub creation has no fallback - I believe this to be a blocking regression issue.

**The context**

Use [grpc-kotlin](https://github.com/grpc/grpc-kotlin) library to make gRPC calls.

**The bug**

In `grpc-spring-boot-starter:v2.9.0.RELEASE`, `GrpcClientBeanPostProcessor` tries to figure out (reflectively) the appropriate `static` method to create a stub. If it fails, it **falls back** to using the constructor (which is assumed as `private`, but may not be, as in the case of `grpc-kotlin`). In `grpc-spring-boot-starter:v2.10.0.RELEASE`, the fallback is not present, and thus, the stub injection blows up with an error `java.lang.IllegalArgumentException: Unsupported stub type`. See the class hierarchy below:

```

class ServiceNameCoroutineStub @JvmOverloads constructor(

channel: Channel,

callOptions: CallOptions = DEFAULT

) : AbstractCoroutineStub<ServiceNameCoroutineStub>(channel, callOptions)

```

```

abstract class AbstractCoroutineStub<S: AbstractCoroutineStub<S>>(

channel: Channel,

callOptions: CallOptions = CallOptions.DEFAULT

): AbstractStub<S>(channel, callOptions)

```

The problem, that already existed in `grpc-spring-boot-starter:v2.9.0.RELEASE` but was hidden by the fallback, is that there's no code in `GrpcClientBeanPostProcessor` to check for `AbstractStub` subclasses. I think the fallback to using the constructor directly is ok, as seen in the [example code](https://github.com/grpc/grpc-kotlin/blob/master/examples/src/main/kotlin/io/grpc/examples/helloworld/HelloWorldClient.kt#L31), and should not be removed. However, the constructor can be assumed `public` and there's no need to try to change its visibility.

Note that this too, will fail, if grpc-kotlin makes the constructor `private` and adds a static method (I created https://github.com/grpc/grpc-kotlin/issues/163). The futureproof way to fix this is not to check for the stub type, but to check for the existence of static methods named `new*Stub`, and then comparing the return types with the declared stub type.

**Stacktrace and logs**

```

Caused by: org.springframework.beans.BeanInstantiationException: Failed to instantiate [com.mycompany.ServiceNameGrpcKt$ServiceNameCoroutineStub]: Unsupported stub type: com.mycompany.ServiceNameGrpcKt$ServiceNameCoroutineStub -> Please report this issue.

at net.devh.boot.grpc.client.inject.GrpcClientBeanPostProcessor.lambda$createStub$1(GrpcClientBeanPostProcessor.java:244)

at java.base/java.util.Optional.orElseThrow(Optional.java:408)

at net.devh.boot.grpc.client.inject.GrpcClientBeanPostProcessor.createStub(GrpcClientBeanPostProcessor.java:243)

at net.devh.boot.grpc.client.inject.GrpcClientBeanPostProcessor.valueForMember(GrpcClientBeanPostProcessor.java:218)

at net.devh.boot.grpc.client.inject.GrpcClientBeanPostProcessor.processInjectionPoint(GrpcClientBeanPostProcessor.java:127)

at net.devh.boot.grpc.client.inject.GrpcClientBeanPostProcessor.postProcessBeforeInitialization(GrpcClientBeanPostProcessor.java:83)

at org.springframework.beans.factory.support.AbstractAutowireCapableBeanFactory.applyBeanPostProcessorsBeforeInitialization(AbstractAutowireCapableBeanFactory.java:416)

at org.springframework.beans.factory.support.AbstractAutowireCapableBeanFactory.initializeBean(AbstractAutowireCapableBeanFactory.java:1788)

at org.springframework.beans.factory.support.AbstractAutowireCapableBeanFactory.doCreateBean(AbstractAutowireCapableBeanFactory.java:595)

```

**Steps to Reproduce**

N/A

**The application's environment**

Which versions do you use?

* Spring (boot): 2.3.1.RELEASE

* grpc-java: 1.30.2

* grpc-spring-boot-starter: 2.9.0.RELEASE

**Additional context**

* Did it ever work before? Yes.

* Do you have a demo? No.

| priority | stub creation has no fallback i believe this to be a blocking regression issue the context use library to make grpc calls the bug in grpc spring boot starter release grpcclientbeanpostprocessor tries to figure out reflectively the appropriate static method to create a stub if it fails it falls back to using the constructor which is assumed as private but may not be as in the case of grpc kotlin in grpc spring boot starter release the fallback is not present and thus the stub injection blows up with an error java lang illegalargumentexception unsupported stub type see the class hierarchy below class servicenamecoroutinestub jvmoverloads constructor channel channel calloptions calloptions default abstractcoroutinestub channel calloptions abstract class abstractcoroutinestub channel channel calloptions calloptions calloptions default abstractstub channel calloptions the problem that already existed in grpc spring boot starter release but was hidden by the fallback is that there s no code in grpcclientbeanpostprocessor to check for abstractstub subclasses i think the fallback to using the constructor directly is ok as seen in the and should not be removed however the constructor can be assumed public and there s no need to try to change its visibility note that this too will fail if grpc kotlin makes the constructor private and adds a static method i created the futureproof way to fix this is not to check for the stub type but to check for the existence of static methods named new stub and then comparing the return types with the declared stub type stacktrace and logs caused by org springframework beans beaninstantiationexception failed to instantiate unsupported stub type com mycompany servicenamegrpckt servicenamecoroutinestub please report this issue at net devh boot grpc client inject grpcclientbeanpostprocessor lambda createstub grpcclientbeanpostprocessor java at java base java util optional orelsethrow optional java at net devh boot grpc client 
inject grpcclientbeanpostprocessor createstub grpcclientbeanpostprocessor java at net devh boot grpc client inject grpcclientbeanpostprocessor valueformember grpcclientbeanpostprocessor java at net devh boot grpc client inject grpcclientbeanpostprocessor processinjectionpoint grpcclientbeanpostprocessor java at net devh boot grpc client inject grpcclientbeanpostprocessor postprocessbeforeinitialization grpcclientbeanpostprocessor java at org springframework beans factory support abstractautowirecapablebeanfactory applybeanpostprocessorsbeforeinitialization abstractautowirecapablebeanfactory java at org springframework beans factory support abstractautowirecapablebeanfactory initializebean abstractautowirecapablebeanfactory java at org springframework beans factory support abstractautowirecapablebeanfactory docreatebean abstractautowirecapablebeanfactory java steps to reproduce n a the application s environment which versions do you use spring boot release grpc java grpc spring boot starter release additional context did it ever work before yes do you have a demo no | 1 |

517,831 | 15,020,327,818 | IssuesEvent | 2021-02-01 14:35:31 | Ameelio/pathways-client | https://api.github.com/repos/Ameelio/pathways-client | opened | [Calls] Inc people are shown a notification when they receive a DOC alert | good first issue high-priority | **Is your feature request related to a problem? Please describe.**

Alerts should be given prominence, since they can lead to call termination.

Currently, they only show up on the regular chat interface

**Describe the solution you'd like**

When users receive a DOC message, it should also be shown as an alert

**Additional context**

There's a `openwithnotification` util function that triggers a notif

| 1.0 | [Calls] Inc people are shown a notification when they receive a DOC alert - **Is your feature request related to a problem? Please describe.**

Alerts should be given prominence, since they can lead to call termination.

Currently, they only show up on the regular chat interface

**Describe the solution you'd like**

When users receive a DOC message, it should also be shown as an alert

**Additional context**

There's a `openwithnotification` util function that triggers a notif

| priority | inc people are shown a notification when they receive a doc alert is your feature request related to a problem please describe alerts should be given the prominence given that they can lead to a call termination currently they only show up on the regular chat interface describe the solution you d like when users receive a doc message it should also be shown as an alert additional context there s a openwithnotification util function that triggers a notif | 1 |

268,814 | 8,414,909,390 | IssuesEvent | 2018-10-13 08:40:50 | Pack4Duck/ClassicUO | https://api.github.com/repos/Pack4Duck/ClassicUO | closed | ClassicUO fails to load if you're missing an anim*.idx file | Priority: high bug | With the installation of UOR, the following files exist:

- anim.idx

- anim4.idx

- anim5.idx

In Animation.cs, lines 140-149 assume that you have anim, anim2, anim3, anim4, and anim5.

Steps to reproduce the behavior:

1. Delete/rename one of the anim*.idx files

2. Run ClassicUO

3. Observe error

**Expected behavior**

ClassicUO can handle data directories that don't come with all of the anim* files.

**Desktop (please complete the following information):**

- OS: Win10

- Version: master branch, 5.0.8.3 of client from UOR
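
As an illustration of the expected behavior, here is a minimal sketch that probes for the index files that are actually present instead of assuming all of them exist. ClassicUO itself is written in C#, so this Python version is only a language-neutral outline; the file names `anim2.idx` and `anim3.idx` are inferred from the list above, and `find_index_files` is a hypothetical helper, not part of the client.

```python
from pathlib import Path

# Candidate index files. Only anim.idx, anim4.idx and anim5.idx are confirmed
# by the report; anim2.idx and anim3.idx are assumed from the naming pattern.
CANDIDATE_INDEX_FILES = ["anim.idx", "anim2.idx", "anim3.idx", "anim4.idx", "anim5.idx"]


def find_index_files(data_dir):
    """Return the candidate index files that exist in data_dir, in order."""
    root = Path(data_dir)
    return [root / name for name in CANDIDATE_INDEX_FILES if (root / name).is_file()]
```

A loader built this way would iterate over the returned paths and skip the missing ones rather than failing on startup.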

| 1.0 | ClassicUO fails to load if you're missing an anim*.idx file - With the installation of UOR, the following files exist:

- anim.idx

- anim4.idx

- anim5.idx

In Animation.cs, lines 140-149 assume that you have anim, anim2, anim3, anim4, and anim5.

Steps to reproduce the behavior:

1. Delete/rename one of the anim*.idx files

2. Run ClassicUO

3. Observe error

**Expected behavior**

ClassicUO can handle data directories that don't come with all of the anim* files.

**Desktop (please complete the following information):**

- OS: Win10

- Version: master branch, 5.0.8.3 of client from UOR

| priority | classicuo fails to load if you re missing an anim idx file with the installation of uor the following files exist anim idx idx idx in animation cs lines assume that you have anim and steps to reproduce the behavior delete rename of of the anim idx files run classicuo observe error expected behavior classicuo can handle data directories that don t come with all of the anim files desktop please complete the following information os version master branch of client from uor | 1 |

604,503 | 18,685,695,906 | IssuesEvent | 2021-11-01 12:09:00 | betagouv/service-national-universel | https://api.github.com/repos/betagouv/service-national-universel | opened | fix(inscription): availability screen configuration | enhancement priority-HIGH inscription | ### Is the feature related to a problem?

_No response_

### Feature

**February**

- February availability - Geography: all of France, plus overseas territories limited to Guadeloupe, Guyane and Martinique

- February availability - School level: excludes 1ère and Terminale

- February availability: born between 25/02/2004 and 14/02/2007 (excluding 1ère and Terminale)

**June**

- June availability: born between 24/06/2004 and 13/06/2007 (excluding 1ère and Terminale)

- June availability - School level: excludes 1ère and Terminale

- June availability - School level: exclude 3e and 2nde pro? (to be confirmed)

**July**

- July availability: born between 15/07/2004 and 04/07/2007

### Comments

_No response_ | 1.0 | fix(inscription): availability screen configuration - ### Feature related to a problem?

_No response_

### Feature

**February**

- February availability - Geography: all of France + overseas territories limited to Guadeloupe, Guyane and Martinique

- February availability - School level: 1ère and Terminale excluded

- February availability: born between 25/02/2004 and 14/02/2007 (excluding 1ère and Terminale)

**June**

- June availability: born between 24/06/2004 and 13/06/2007 (excluding 1ère and Terminale)

- June availability - School level: 1ère and Terminale excluded

- June availability - School level: exclude 3e and 2nde pro? (to be confirmed)

**July**

- July availability: born between 15/07/2004 and 04/07/2007

### Comments

_No response_ | priority | fix inscription parametrage écran disponibilité fonctionnalité liée à un problème no response fonctionnalité fevrier dispo février géo france entière outre mer seulement guadeloupe guyane et martinique dispo février niveau scolaire exclusion des et terminale dispo février nés entre le et le hors et term juin dispo juin nés entre le et le hors et term dispo juin niveau scolaire exclusion des et terminale dispo juin niveau scolaire exclusion et pro à confirmer juillet dispo juillet nés entre le et le commentaires no response | 1 |
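
The availability rules in the issue above can be read as a small lookup table. The following is only a minimal sketch of that reading, not the project's implementation: the names `SessionRule`, `RULES` and `available_sessions` are hypothetical, the date bounds are assumed to be inclusive, the February geography restriction is left out, and the unconfirmed 3e / 2nde pro exclusion for June is deliberately not encoded.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class SessionRule:
    """Hypothetical container for one session's eligibility rule."""
    born_from: date             # earliest accepted birth date (assumed inclusive)
    born_to: date               # latest accepted birth date (assumed inclusive)
    excluded_grades: frozenset  # school levels that cannot pick this session


# Dates and exclusions copied from the issue; everything else is an assumption.
RULES = {
    "february": SessionRule(date(2004, 2, 25), date(2007, 2, 14),
                            frozenset({"1ère", "Terminale"})),
    "june": SessionRule(date(2004, 6, 24), date(2007, 6, 13),
                        frozenset({"1ère", "Terminale"})),
    "july": SessionRule(date(2004, 7, 15), date(2007, 7, 4), frozenset()),
}


def available_sessions(birth_date, grade):
    """Return the names of the sessions a young person may be offered."""
    return [
        name
        for name, rule in RULES.items()
        if rule.born_from <= birth_date <= rule.born_to
        and grade not in rule.excluded_grades
    ]
```

Keeping the rules in one table means a later decision on the open June question only changes data, not the check itself.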

404,677 | 11,861,489,924 | IssuesEvent | 2020-03-25 16:25:25 | levelkdev/BC-DAPP | https://api.github.com/repos/levelkdev/BC-DAPP | closed | Wrong use of units in dapp | bug high-priority | At the moment the dapp always uses WEI units. WEI units should be used only when we communicate with the contracts; the UI should always display ETH units (which use 18 decimals by default).

To fix this, I propose using the BN library provided by web3, accessible via `web3.utils.BN`, together with the methods `web3.utils.fromWei` and `web3.utils.toWei`. | 1.0 | Wrong use of units in dapp - At the moment the dapp always uses WEI units. WEI units should be used only when we communicate with the contracts; the UI should always display ETH units (which use 18 decimals by default).

To fix this, I propose using the BN library provided by web3, accessible via `web3.utils.BN`, together with the methods `web3.utils.fromWei` and `web3.utils.toWei`. | priority | wrong use of units in dapp at this moment the dapp is always using wei units where wei units should be use only when we communicate to the contracts and in the ui it should always display eth units which uses decimals by default in order to fix i propose to use the bn library provided by accessible by utils bn and use the methods utils fromwei and utils towei | 1 |
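
The issue proposes web3's own `fromWei` / `toWei` helpers. The sketch below is not that API; it is a dependency-free Python illustration of the same 18-decimal conversion, using integers for wei and strings for ETH so that no floating-point rounding creeps in. The function names `to_wei` and `from_wei` and the restriction to non-negative amounts are assumptions made for the example.

```python
ETH_DECIMALS = 18
WEI_PER_ETH = 10 ** ETH_DECIMALS


def to_wei(eth_amount: str) -> int:
    """Convert a non-negative decimal ETH string (UI side) to integer wei (contract side)."""
    whole, _, frac = eth_amount.partition(".")
    if not (whole + frac).isdigit() or len(frac) > ETH_DECIMALS:
        raise ValueError(f"not a valid ETH amount: {eth_amount!r}")
    # Pad the fractional part to exactly 18 digits so it can be read as wei.
    return int(whole or "0") * WEI_PER_ETH + int(frac.ljust(ETH_DECIMALS, "0"))


def from_wei(wei_amount: int) -> str:
    """Convert non-negative integer wei to a decimal ETH string for display."""
    if wei_amount < 0:
        raise ValueError("negative amounts are not handled in this sketch")
    whole, frac = divmod(wei_amount, WEI_PER_ETH)
    if frac == 0:
        return str(whole)
    # Zero-pad to 18 digits, then drop trailing zeros for a compact display value.
    return f"{whole}.{frac:018d}".rstrip("0")
```

The design point is the boundary: values stay in wei as integers everywhere except at the moment they are shown to or read from the user.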

111,922 | 4,494,880,802 | IssuesEvent | 2016-08-31 08:11:48 | code-corps/code-corps-phoenix | https://api.github.com/repos/code-corps/code-corps-phoenix | closed | Add in post_type filtering to the posts endpoint | awaiting review high priority | We don't have any `post_type` filtering on the posts endpoint right now. We need to add that filtering in to our queries for the `index` actions. | 1.0 | Add in post_type filtering to the posts endpoint - We don't have any `post_type` filtering on the posts endpoint right now. We need to add that filtering in to our queries for the `index` actions. | priority | add in post type filtering to the posts endpoint we don t have any post type filtering on the posts endpoint right now we need to add that filtering in to our queries for the index actions | 1 |

558,419 | 16,533,021,614 | IssuesEvent | 2021-05-27 08:32:33 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | myspace.com - design is broken | browser-firefox-ios bugbug-probability-high os-ios priority-normal | <!-- @browser: Firefox iOS 33.1 -->

<!-- @ua_header: Mozilla/5.0 (iPhone; CPU OS 14_4 like Mac OS X) AppleWebKit/605.1.15 (KHTML, like Gecko) FxiOS/33.1 Mobile/15E148 Safari/605.1.15 -->

<!-- @reported_with: mobile-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/75143 -->

**URL**: https://myspace.com/nscrepresenta/mixes/classic-my-photos-464417/photo/193447851

**Browser / Version**: Firefox iOS 33.1

**Operating System**: iOS 14.4

**Tested Another Browser**: Yes Chrome

**Problem type**: Design is broken

**Description**: Images not loaded

**Steps to Reproduce**:

Picture didn’t load. Need old pictures

<details>

<summary>Browser Configuration</summary>

<ul>

<li>None</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_ | 1.0 | myspace.com - design is broken - <!-- @browser: Firefox iOS 33.1 -->

<!-- @ua_header: Mozilla/5.0 (iPhone; CPU OS 14_4 like Mac OS X) AppleWebKit/605.1.15 (KHTML, like Gecko) FxiOS/33.1 Mobile/15E148 Safari/605.1.15 -->

<!-- @reported_with: mobile-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/75143 -->

**URL**: https://myspace.com/nscrepresenta/mixes/classic-my-photos-464417/photo/193447851

**Browser / Version**: Firefox iOS 33.1

**Operating System**: iOS 14.4

**Tested Another Browser**: Yes Chrome

**Problem type**: Design is broken

**Description**: Images not loaded

**Steps to Reproduce**:

Picture didn’t load. Need old pictures

<details>

<summary>Browser Configuration</summary>

<ul>

<li>None</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_ | priority | myspace com design is broken url browser version firefox ios operating system ios tested another browser yes chrome problem type design is broken description images not loaded steps to reproduce picture didn’t load need old pictures browser configuration none from with ❤️ | 1 |

320,140 | 9,770,022,415 | IssuesEvent | 2019-06-06 09:56:17 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | closed | CTCLoss produces NaNs in some situations | high priority module: cuda module: nn topic: determinism triaged | ## 🐛 Bug

When I train a CNN-RNN-CTC text recognition model, the loss becomes NaN after some iterations, but the same training works fine on PyTorch 0.4 with warpctc.

## To Reproduce

Steps to reproduce the behavior:

1. Download the code from https://github.com/WenmuZhou/crnn.pytorch

2. Change the CTC loss from warpctc to `nn.CTCLoss()`

3. Run the code

- PyTorch Version (e.g., 1.0): 1.0.0.dev20181115

- OS (e.g., Linux): ubuntu 16.04

- How you installed PyTorch (`conda`, `pip`, source): pip3

- Build command you used (if compiling from source):

- Python version: 3.5.2

- CUDA/cuDNN version: 8.0/6.0

- GPU models and configuration: 1080ti

- Any other relevant information: | 1.0 | CTCLoss produces NaNs in some situations - ## 🐛 Bug

When I train a CNN-RNN-CTC text recognition model, the loss becomes NaN after some iterations, but the same training works fine on PyTorch 0.4 with warpctc.

## To Reproduce

Steps to reproduce the behavior:

1. Download the code from https://github.com/WenmuZhou/crnn.pytorch

2. Change the CTC loss from warpctc to `nn.CTCLoss()`

3. Run the code

- PyTorch Version (e.g., 1.0): 1.0.0.dev20181115

- OS (e.g., Linux): ubuntu 16.04

- How you installed PyTorch (`conda`, `pip`, source): pip3

- Build command you used (if compiling from source):

- Python version: 3.5.2

- CUDA/cuDNN version: 8.0/6.0

- GPU models and configuration: 1080ti

- Any other relevant information: | priority | ctcloss produces nans in some situations 🐛 bug when i train a cnn rnn ctc text recognize model i meet nan loss after some iters but it s ok at pytorch with warpctc to reproduce steps to reproduce the behavior download the code from change the ctc loss from warpctc to nn ctcloss run pytorch version e g os e g linux ubuntu how you installed pytorch conda pip source build command you used if compiling from source python version cuda cudnn version gpu models and configuration any other relevant information | 1 |
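
The report does not identify what triggers the NaNs. One condition worth ruling out when debugging any CTC setup is an input sequence that is too short to be aligned to its target, since the CTC loss is infinite in that case: an alignment needs one frame per label plus one blank frame between every pair of adjacent identical labels. The helper below is a pure-Python sketch of that check; the function names are hypothetical and nothing here is taken from the linked repository.

```python
def min_ctc_input_length(target):
    """Smallest number of input frames that can be aligned to `target` under CTC."""
    # Each adjacent repeated label needs a blank frame in between.
    repeats = sum(1 for a, b in zip(target, target[1:]) if a == b)
    return len(target) + repeats


def infeasible_samples(input_lengths, targets):
    """Return indices of batch items whose input is too short for their target."""
    return [
        i
        for i, (length, target) in enumerate(zip(input_lengths, targets))
        if length < min_ctc_input_length(target)
    ]
```

If this returns an empty list for every batch, input length is not the explanation and the cause lies elsewhere.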

525,658 | 15,257,740,422 | IssuesEvent | 2021-02-21 03:01:24 | aneuhold/BestCommunityService | https://api.github.com/repos/aneuhold/BestCommunityService | closed | External Services Page | priority: High | A page for external services.

AC 1: It has something that shows a number to call for in-home services.

AC 2: A description of each thing that is available

| 1.0 | External Services Page - A page for external services.

AC 1: It has something that shows a number to call for in-home services.

AC 2: A description of each thing that is available

| priority | external services page a page for external services ac it has something that shows a number to call for in home services ac a description of each thing that is available | 1 |

270,842 | 8,471,068,560 | IssuesEvent | 2018-10-24 07:21:01 | CS2113-AY1819S1-T12-1/main | https://api.github.com/repos/CS2113-AY1819S1-T12-1/main | opened | [1.3] Check OOP for new method design | priority.high | Check design for new method and class related to export command, sort and new filter | 1.0 | [1.3] Check OOP for new method design - Check design for new method and class related to export command, sort and new filter | priority | check oop for new method design check design for new method and class related to export command sort and new filter | 1 |

636,900 | 20,612,445,224 | IssuesEvent | 2022-03-07 09:58:24 | NicholasG04/Prom | https://api.github.com/repos/NicholasG04/Prom | closed | Lack of discreet axolotl references | bug enhancement UI User Panel Admin Panel High Priority | There is an atrocious lack of hidden, inconspicuous axolotl references throughout the website, this must be corrected immediately for the safety and wellbeing of the human population. | 1.0 | Lack of discreet axolotl references - There is an atrocious lack of hidden, inconspicuous axolotl references throughout the website, this must be corrected immediately for the safety and wellbeing of the human population. | priority | lack of discrete axolotl references there is an atrocious lack of hidden inconspicuous axolotl references throughout the website this must be corrected immediately for the safety and wellbeing of the human population | 1 |

665,063 | 22,298,163,795 | IssuesEvent | 2022-06-13 05:41:42 | wso2/product-is | https://api.github.com/repos/wso2/product-is | closed | ERROR {org.wso2.carbon.is.migration.MigrationClientImpl} - Migration process was stopped. while scanning a simple key in 'reader', line 367 when generating dry run reports in migration | Priority/High Severity/Critical bug Component/Migration 6.0.0-Migration Affected-6.0.0 QA-Reported | **How to reproduce:**

1. Get IS 5.11.0 U2 updated for latest level 151

2. Get IS 6.0.0 m3 pack

3. Point the databases to MSSQL 2019

```

[server]

hostname = "localhost"

node_ip = "127.0.0.1"

base_path = "https://$ref{server.hostname}:${carbon.management.port}"

offset = "1"

[super_admin]

username = "admin"

password = "admin"

create_admin_account = true

[user_store]

type = "database_unique_id"

[database.identity_db]

url = "jdbc:sqlserver://localhost:1433;databaseName=migrt4;SendStringParametersAsUnicode=false"

username = "sa"

password = "MyPassword001"

driver = "com.microsoft.sqlserver.jdbc.SQLServerDriver"

[database.identity_db.pool_options]

maxActive = "80"

maxWait="6000"

minIdle ="5"

testOnBorrow = true

validationQuery="SELECT 1"

validationInterval="30000"

defaultAutoCommit=false

[database.shared_db]

url = "jdbc:sqlserver://localhost:1433;databaseName=migrt4;SendStringParametersAsUnicode=false"

username = "sa"

password = "MyPassword001"

driver = "com.microsoft.sqlserver.jdbc.SQLServerDriver"

[database.shared_db.pool_options]

maxActive = "80"

maxWait="6000"

minIdle ="5"

testOnBorrow = true

validationQuery="SELECT 1"

validationInterval="30000"

defaultAutoCommit=false

[keystore.primary]

file_name = "wso2carbon.jks"

password = "wso2carbon"

[truststore]