Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 855 | labels stringlengths 4 721 | body stringlengths 1 261k | index stringclasses 13 values | text_combine stringlengths 96 261k | label stringclasses 2 values | text stringlengths 96 240k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

363,101 | 10,737,648,196 | IssuesEvent | 2019-10-29 13:29:56 | AY1920S1-CS2113T-W17-4/main | https://api.github.com/repos/AY1920S1-CS2113T-W17-4/main | closed | As a Computing student, I can view my tasks for the week in calendar format | priority.High type.Epic type.Story | so that I can plan my time for the week. | 1.0 | As a Computing student, I can view my tasks for the week in calendar format - so that I can plan my time for the week. | priority | as a computing student i can view my tasks for the week in calendar format so that i can plan my time for the week | 1 |

614,129 | 19,142,811,069 | IssuesEvent | 2021-12-02 02:07:54 | teamc0/heart-muscle-be | https://api.github.com/repos/teamc0/heart-muscle-be | opened | - [Style]: Re-upload the project under the name "heart-muscle" | priority:high status : In progress status : to do | ### Issue Type list

- [ ] style: code formatting changes, adding semicolons, unifying variable names, etc. (no change to business logic)

## Body

- [ ] Upload the project under the name "heart-muscle"

| 1.0 | - [Style]: Re-upload the project under the name "heart-muscle" - ### Issue Type list

- [ ] style: code formatting changes, adding semicolons, unifying variable names, etc. (no change to business logic)

## Body

- [ ] Upload the project under the name "heart-muscle"

| priority | re upload the project under the name heart muscle issue type list style code formatting changes adding semicolons unifying variable names etc no change to business logic body upload the project under the name heart muscle | 1 |

187,087 | 6,744,757,770 | IssuesEvent | 2017-10-20 16:50:51 | canonical-websites/www.ubuntu.com | https://api.github.com/repos/canonical-websites/www.ubuntu.com | closed | The spelling of GNOME is inconsistent | Priority: High Type: Bug | ## Summary

If someone navigates to https://www.ubuntu.com/desktop/1710 and reads the text, GNOME is spelled like "Gnome" but further down it is spelled like "GNOME." [As per the official GNOME website](https://www.gnome.org/), the spelling is "GNOME." Please correct this.

(I don't feel the other headers are necessary because this is a pretty simple problem and using those would be redundant...)

| 1.0 | The spelling of GNOME is inconsistent - ## Summary

If someone navigates to https://www.ubuntu.com/desktop/1710 and reads the text, GNOME is spelled like "Gnome" but further down it is spelled like "GNOME." [As per the official GNOME website](https://www.gnome.org/), the spelling is "GNOME." Please correct this.

(I don't feel the other headers are necessary because this is a pretty simple problem and using those would be redundant...)

| priority | the spelling of gnome is inconsistent summary if someone navigates to and reads the text gnome is spelled like gnome but further down it is spelled like gnome the spelling is gnome please correct this i don t feel the other headers are necessary because this is a pretty simple problem and using those would be redundant | 1 |

388,805 | 11,492,716,010 | IssuesEvent | 2020-02-11 21:33:09 | DynamicProgrammingEECS441/PicassoXS | https://api.github.com/repos/DynamicProgrammingEECS441/PicassoXS | opened | General Filter & Portrait Mode Filter - Preprocess data from client | Back End Feature CORE Feature Type::Skeletal Product Sprint::High Priority | ## Info

1. get client request, download image, load image into tensor

2. may require using extra web server | 1.0 | General Filter & Portrait Mode Filter - Preprocess data from client - ## Info

1. get client request, download image, load image into tensor

2. may require using extra web server | priority | general filter portrait mode filter preprocess data from client info get client request download image load image into tensor may require using extra web server | 1 |

467,846 | 13,456,659,448 | IssuesEvent | 2020-09-09 08:09:54 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.ebay.com - see bug description | browser-firefox engine-gecko ml-needsdiagnosis-false ml-probability-high priority-critical | <!-- @browser: Firefox 81.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 6.1; Win64; x64; rv:81.0) Gecko/20100101 Firefox/81.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/57966 -->

**URL**: https://www.ebay.com/

**Browser / Version**: Firefox 81.0

**Operating System**: Windows 7

**Tested Another Browser**: Yes Internet Explorer

**Problem type**: Something else

**Description**: no GUI just listing of information and selection of boxes.

**Steps to Reproduce**:

The website would open and work slowly. The website "Reverb.com" would not cooperate in explorer.

<details>

<summary>View the screenshot</summary>

<img alt="Screenshot" src="https://webcompat.com/uploads/2020/9/138a01f7-4433-4718-98bf-1e34f0c6ac5b.jpg">

</details>

<details>

<summary>Browser Configuration</summary>

<ul>

<li>gfx.webrender.all: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>buildID: 20200906164749</li><li>channel: beta</li><li>hasTouchScreen: false</li><li>mixed active content blocked: false</li><li>mixed passive content blocked: false</li><li>tracking content blocked: false</li>

</ul>

</details>

[View console log messages](https://webcompat.com/console_logs/2020/9/38d01030-7167-4204-b8a5-1ea8a68bf42e)

_From [webcompat.com](https://webcompat.com/) with ❤️_ | 1.0 | www.ebay.com - see bug description - <!-- @browser: Firefox 81.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 6.1; Win64; x64; rv:81.0) Gecko/20100101 Firefox/81.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/57966 -->

**URL**: https://www.ebay.com/

**Browser / Version**: Firefox 81.0

**Operating System**: Windows 7

**Tested Another Browser**: Yes Internet Explorer

**Problem type**: Something else

**Description**: no GUI just listing of information and selection of boxes.

**Steps to Reproduce**:

The website would open and work slowly. The website "Reverb.com" would not cooperate in explorer.

<details>

<summary>View the screenshot</summary>

<img alt="Screenshot" src="https://webcompat.com/uploads/2020/9/138a01f7-4433-4718-98bf-1e34f0c6ac5b.jpg">

</details>

<details>

<summary>Browser Configuration</summary>

<ul>

<li>gfx.webrender.all: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>buildID: 20200906164749</li><li>channel: beta</li><li>hasTouchScreen: false</li><li>mixed active content blocked: false</li><li>mixed passive content blocked: false</li><li>tracking content blocked: false</li>

</ul>

</details>

[View console log messages](https://webcompat.com/console_logs/2020/9/38d01030-7167-4204-b8a5-1ea8a68bf42e)

_From [webcompat.com](https://webcompat.com/) with ❤️_ | priority | see bug description url browser version firefox operating system windows tested another browser yes internet explorer problem type something else description no gui just listing of information and selection of boxes steps to reproduce the website would open and work slowly the website reverb com would not cooperate in explorer view the screenshot img alt screenshot src browser configuration gfx webrender all false gfx webrender blob images true gfx webrender enabled false image mem shared true buildid channel beta hastouchscreen false mixed active content blocked false mixed passive content blocked false tracking content blocked false from with ❤️ | 1 |

718,178 | 24,706,471,823 | IssuesEvent | 2022-10-19 19:32:09 | opendatahub-io/odh-dashboard | https://api.github.com/repos/opendatahub-io/odh-dashboard | closed | [DSG]: Hook up Prometheus | kind/enhancement priority/high feature/dsg | ### Feature description

Our current backend makes a call to prometheus using the NodeJS `https` library. We should try to make this call from the frontend if we can. This may be problematic with the needing of the user token... if we can get that we should be able to make the call as OpenShift Console does it.

### Describe alternatives you've considered

We can fall back on a special backend route that is for Prometheus. It won't need to be secured as everything will be done as the user as if they were on OpenShift Console.

### Anything else?

However we do this, we should make utilities and isolated code for only reaching out to Prometheus. Ideally whatever that code is is then wrapped with use-cases (likely on the frontend in either design).

The coder who calls these methods shouldn't need to have to reconstruct query language for Prometheus in order to use the hook. | 1.0 | [DSG]: Hook up Prometheus - ### Feature description

Our current backend makes a call to prometheus using the NodeJS `https` library. We should try to make this call from the frontend if we can. This may be problematic with the needing of the user token... if we can get that we should be able to make the call as OpenShift Console does it.

### Describe alternatives you've considered

We can fall back on a special backend route that is for Prometheus. It won't need to be secured as everything will be done as the user as if they were on OpenShift Console.

### Anything else?

However we do this, we should make utilities and isolated code for only reaching out to Prometheus. Ideally whatever that code is is then wrapped with use-cases (likely on the frontend in either design).

The coder who calls these methods shouldn't need to have to reconstruct query language for Prometheus in order to use the hook. | priority | hook up prometheus feature description our current backend makes a call to prometheus using the nodejs https library we should try to make this call from the frontend if we can this may be problematic with the needing of the user token if we can get that we should be able to make the call as openshift console does it describe alternatives you ve considered we can fall back on a special backend route that is for prometheus it won t need to be secured as everything will be done as the user as if they were on openshift console anything else however we do this we should make utilities and isolated code for only reaching out to prometheus ideally whatever that code is is then wrapped with use cases likely on the frontend in either design the coder who calls these methods shouldn t need to have to reconstruct query language for prometheus in order to use the hook | 1 |
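
The last request in the issue above (callers should not have to write Prometheus query language themselves) could be met with small use-case helpers. The TypeScript below is only a minimal sketch under stated assumptions, not code from odh-dashboard: the function names, the example host, and the choice of `kubelet_volume_stats_used_bytes` as the use-case are all hypothetical. The only external detail relied on is the Prometheus HTTP API instant-query path `/api/v1/query` with its `query` parameter.

```typescript
// Hypothetical helpers: none of these names come from odh-dashboard.

/** Escape a value for use inside a double-quoted PromQL label matcher. */
function escapeLabelValue(value: string): string {
  return value.replace(/\\/g, "\\\\").replace(/"/g, '\\"');
}

/** Build the URL for an instant query against the Prometheus HTTP API. */
function buildInstantQueryUrl(baseUrl: string, promQl: string): string {
  // Drop trailing slashes so the path is not doubled up.
  const trimmed = baseUrl.replace(/\/+$/, "");
  return `${trimmed}/api/v1/query?query=${encodeURIComponent(promQl)}`;
}

/** Use-case wrapper: callers pass plain values and never write PromQL. */
function pvcUsedBytesQuery(namespace: string, pvcName: string): string {
  const ns = escapeLabelValue(namespace);
  const pvc = escapeLabelValue(pvcName);
  // Metric and label names are assumed for illustration.
  return `kubelet_volume_stats_used_bytes{namespace="${ns}",persistentvolumeclaim="${pvc}"}`;
}

// Example host and resource names are placeholders.
const exampleUrl = buildInstantQueryUrl(
  "https://prometheus.example.com/",
  pvcUsedBytesQuery("my-project", "my-notebook-pvc"),
);
console.log(exampleUrl);
```

Keeping the query text inside one module means a frontend hook or a backend route can share the same builders, whichever of the two designs discussed in the issue is chosen.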

322,353 | 9,816,768,347 | IssuesEvent | 2019-06-13 15:17:36 | roboticslab-uc3m/vision | https://api.github.com/repos/roboticslab-uc3m/vision | closed | Further improvements on yarp::dev::IRGBDSensor | priority: high | I left some ideas in https://github.com/roboticslab-uc3m/vision/pull/86 for further development and enhancement of the current depth-frame apps. Since that PR was aimed to solve a bug, I have split the underlying low-priority tasks into this issue:

- ~~we no longer create the RGBD device locally, a client device is opened instead (current default: *RGBDSensorClient*) and connects to the corresponding network wrapper -> partially restore previous behavior and allow local devices, too (e.g. *depthCamera*)~~

- [x] fetch intrinsic/extrinsic camera parameters from device via `IRGBDSensor`'s getters (https://github.com/roboticslab-uc3m/vision/commit/cf963808bbbb2cfce2f4f866198bc0127eb23d15)

- [x] generate convenient .ini files at `share/` for each camera+mode (old OpenNI2DeviceServer plugin had several preconfigured modes, [investigate](https://github.com/roboticslab-uc3m/teo-configuration-files/blob/b37b041ddcfddfe1347cfb4d5c22737d0aa069d2/share/teoBase/scripts/teoBase.xml#L36)) -> https://github.com/roboticslab-uc3m/teo-configuration-files/issues/16

- [x] update the [installation guides](https://github.com/roboticslab-uc3m/installation-guides/blob/4969a46fe95f32ddd9710296a720adbc67c1afcb/install-yarp.md), which currently cover the installation of old OpenNI2-based YARP plugins (https://github.com/roboticslab-uc3m/installation-guides/commit/2491869375820d9dfa1492305ade2e119135c2b8)

Expanding on the intrinsic/extrinsic params stuff:

https://github.com/roboticslab-uc3m/vision/blob/5c7709b9f03c38aa4fd2058451502c0de7c07194/programs/colorRegionDetection/main.cpp#L32-L39

Now, such parameters belong to (are required by) the *depthCamera* device and should be loaded via .ini file: http://www.yarp.it/classyarp_1_1dev_1_1depthCameraDriver.html#details. Note that YARP 3 introduces `RGBDSensorParamParser` (https://github.com/robotology/yarp/pull/1634).

Config settings for old `OpenNI2DeviceServer` device: http://wiki.icub.org/wiki/OpenNI2. | 1.0 | Further improvements on yarp::dev::IRGBDSensor - I left some ideas in https://github.com/roboticslab-uc3m/vision/pull/86 for further development and enhancement of the current depth-frame apps. Since that PR was aimed to solve a bug, I have split the underlying low-priority tasks into this issue:

- ~~we no longer create the RGBD device locally, a client device is opened instead (current default: *RGBDSensorClient*) and connects to the corresponding network wrapper -> partially restore previous behavior and allow local devices, too (e.g. *depthCamera*)~~

- [x] fetch intrinsic/extrinsic camera parameters from device via `IRGBDSensor`'s getters (https://github.com/roboticslab-uc3m/vision/commit/cf963808bbbb2cfce2f4f866198bc0127eb23d15)

- [x] generate convenient .ini files at `share/` for each camera+mode (old OpenNI2DeviceServer plugin had several preconfigured modes, [investigate](https://github.com/roboticslab-uc3m/teo-configuration-files/blob/b37b041ddcfddfe1347cfb4d5c22737d0aa069d2/share/teoBase/scripts/teoBase.xml#L36)) -> https://github.com/roboticslab-uc3m/teo-configuration-files/issues/16

- [x] update the [installation guides](https://github.com/roboticslab-uc3m/installation-guides/blob/4969a46fe95f32ddd9710296a720adbc67c1afcb/install-yarp.md), which currently cover the installation of old OpenNI2-based YARP plugins (https://github.com/roboticslab-uc3m/installation-guides/commit/2491869375820d9dfa1492305ade2e119135c2b8)

Expanding on the intrinsic/extrinsic params stuff:

https://github.com/roboticslab-uc3m/vision/blob/5c7709b9f03c38aa4fd2058451502c0de7c07194/programs/colorRegionDetection/main.cpp#L32-L39

Now, such parameters belong to (are required by) the *depthCamera* device and should be loaded via .ini file: http://www.yarp.it/classyarp_1_1dev_1_1depthCameraDriver.html#details. Note that YARP 3 introduces `RGBDSensorParamParser` (https://github.com/robotology/yarp/pull/1634).

Config settings for old `OpenNI2DeviceServer` device: http://wiki.icub.org/wiki/OpenNI2. | priority | further improvements on yarp dev irgbdsensor i left some ideas in for further development and enhancement of the current depth frame apps since that pr was aimed to solve a bug i have split the underlying low priority tasks into this issue we no longer create the rgbd device locally a client device is opened instead current default rgbdsensorclient and connects to the corresponding network wrapper partially restore previous behavior and allow local devices too e g depthcamera fetch intrinsic extrinsic camera parameters from device via irgbdsensor s getters generate convenient ini files at share for each camera mode old plugin had several preconfigured modes update the which currently cover the installation of old based yarp plugins expanding on the intrinsic extrinsic params stuff now such parameters belong to are required by the depthcamera device and should be loaded via ini file note that yarp introduces rgbdsensorparamparser config settings for old device | 1 |

239,770 | 7,800,012,427 | IssuesEvent | 2018-06-09 03:27:13 | tine20/Tine-2.0-Open-Source-Groupware-and-CRM | https://api.github.com/repos/tine20/Tine-2.0-Open-Source-Groupware-and-CRM | closed | 0006680: folder permissions dialog is broken | Bug Filemanager Mantis high priority | **Reported by pschuele on 27 Jun 2012 10:00**

**Version:** Milan (2012.03.5)

folder permissions dialog is broken

- it shows object [object] and no permissions can be set

| 1.0 | 0006680: folder permissions dialog is broken - **Reported by pschuele on 27 Jun 2012 10:00**

**Version:** Milan (2012.03.5)

folder permissions dialog is broken

- it shows object [object] and no permissions can be set

| priority | folder permissions dialog is broken reported by pschuele on jun version milan folder permissions dialog is broken it shows object and no permissions can be set | 1 |

397,308 | 11,726,590,784 | IssuesEvent | 2020-03-10 14:44:14 | AY1920S2-CS2103T-W16-2/main | https://api.github.com/repos/AY1920S2-CS2103T-W16-2/main | opened | As a user I want to keep track of how many repetitions per exercise | priority.High type.Epic | ... so that I know the details of each exercise. | 1.0 | As a user I want to keep track of how many repetitions per exercise - ... so that I know the details of each exercise. | priority | as a user i want to keep track of how many repetitions per exercise so that i know the details of each exercise | 1 |

617,144 | 19,344,034,112 | IssuesEvent | 2021-12-15 08:57:28 | ls1intum/Artemis | https://api.github.com/repos/ls1intum/Artemis | closed | Nullpointer Exception while Trigger All | bug component:Programming priority:high | ### Describe the bug

We had to trigger all build plans, since we've updates some test cases.

I've marked it as high, since we need this feature if we have to adapt some test cases due to student responses.

What happened:

* Not all Builds have been triggered

* Got this exception in the BE

```

2021-12-07 10:07:22.610 ERROR 12 --- [ artemis-task-1] .a.i.SimpleAsyncUncaughtExceptionHandler : Unexpected exception occurred invoking async method: public void de.tum.in.www1.artemis.service.programming.ProgrammingSubmissionService.triggerInstructorBuildForExercise(java.lang.Long) throws de.tum.in.www1.artemis.web.rest.errors.EntityNotFoundException

java.lang.NullPointerException: Cannot invoke "org.eclipse.jgit.lib.ObjectId.getName()" because the return value of "de.tum.in.www1.artemis.service.connectors.GitService.getLastCommitHash(de.tum.in.www1.artemis.domain.VcsRepositoryUrl)" is null

at de.tum.in.www1.artemis.service.programming.ProgrammingSubmissionService.getLastCommitHashForParticipation(ProgrammingSubmissionService.java:368)

at de.tum.in.www1.artemis.service.programming.ProgrammingSubmissionService.getOrCreateSubmissionWithLastCommitHashForParticipation(ProgrammingSubmissionService.java:359)

at de.tum.in.www1.artemis.service.programming.ProgrammingSubmissionService.triggerBuildAndNotifyUser(ProgrammingSubmissionService.java:429)

at de.tum.in.www1.artemis.service.programming.ProgrammingSubmissionService.triggerBuildForParticipations(ProgrammingSubmissionService.java:316)

at de.tum.in.www1.artemis.service.programming.ProgrammingSubmissionService.triggerInstructorBuildForExercise(ProgrammingSubmissionService.java:291)

at de.tum.in.www1.artemis.service.programming.ProgrammingSubmissionService$$FastClassBySpringCGLIB$$fadb5611.invoke(<generated>)

at org.springframework.cglib.proxy.MethodProxy.invoke(MethodProxy.java:218)

at org.springframework.aop.framework.CglibAopProxy$CglibMethodInvocation.invokeJoinpoint(CglibAopProxy.java:783)

at org.springframework.aop.framework.ReflectiveMethodInvocation.proceed(ReflectiveMethodInvocation.java:163)

at org.springframework.aop.framework.CglibAopProxy$CglibMethodInvocation.proceed(CglibAopProxy.java:753)

at org.springframework.aop.interceptor.AsyncExecutionInterceptor.lambda$invoke$0(AsyncExecutionInterceptor.java:115)

at java.base/java.util.concurrent.FutureTask.run(FutureTask.java:264)

at tech.jhipster.async.ExceptionHandlingAsyncTaskExecutor.lambda$createWrappedRunnable$1(ExceptionHandlingAsyncTaskExecutor.java:78)

at java.base/java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1130)

at java.base/java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:630)

at java.base/java.lang.Thread.run(Thread.java:831)

```

### To Reproduce

1. Trigger All for a Programming Task (Unclear what the internal state of all participations must be)

### Expected behavior

Trigger all should not fail. And should trigger all participations.

### Screenshots

_No response_

### What browsers are you seeing the problem on?

Firefox

### Additional context

_No response_

### Relevant log output

_No response_ | 1.0 | Nullpointer Exception while Trigger All - ### Describe the bug

We had to trigger all build plans, since we've updates some test cases.

I've marked it as high, since we need this feature if we have to adapt some test cases due to student responses.

What happened:

* Not all Builds have been triggered

* Got this exception in the BE

```

2021-12-07 10:07:22.610 ERROR 12 --- [ artemis-task-1] .a.i.SimpleAsyncUncaughtExceptionHandler : Unexpected exception occurred invoking async method: public void de.tum.in.www1.artemis.service.programming.ProgrammingSubmissionService.triggerInstructorBuildForExercise(java.lang.Long) throws de.tum.in.www1.artemis.web.rest.errors.EntityNotFoundException

java.lang.NullPointerException: Cannot invoke "org.eclipse.jgit.lib.ObjectId.getName()" because the return value of "de.tum.in.www1.artemis.service.connectors.GitService.getLastCommitHash(de.tum.in.www1.artemis.domain.VcsRepositoryUrl)" is null

at de.tum.in.www1.artemis.service.programming.ProgrammingSubmissionService.getLastCommitHashForParticipation(ProgrammingSubmissionService.java:368)

at de.tum.in.www1.artemis.service.programming.ProgrammingSubmissionService.getOrCreateSubmissionWithLastCommitHashForParticipation(ProgrammingSubmissionService.java:359)

at de.tum.in.www1.artemis.service.programming.ProgrammingSubmissionService.triggerBuildAndNotifyUser(ProgrammingSubmissionService.java:429)

at de.tum.in.www1.artemis.service.programming.ProgrammingSubmissionService.triggerBuildForParticipations(ProgrammingSubmissionService.java:316)

at de.tum.in.www1.artemis.service.programming.ProgrammingSubmissionService.triggerInstructorBuildForExercise(ProgrammingSubmissionService.java:291)

at de.tum.in.www1.artemis.service.programming.ProgrammingSubmissionService$$FastClassBySpringCGLIB$$fadb5611.invoke(<generated>)

at org.springframework.cglib.proxy.MethodProxy.invoke(MethodProxy.java:218)

at org.springframework.aop.framework.CglibAopProxy$CglibMethodInvocation.invokeJoinpoint(CglibAopProxy.java:783)

at org.springframework.aop.framework.ReflectiveMethodInvocation.proceed(ReflectiveMethodInvocation.java:163)

at org.springframework.aop.framework.CglibAopProxy$CglibMethodInvocation.proceed(CglibAopProxy.java:753)

at org.springframework.aop.interceptor.AsyncExecutionInterceptor.lambda$invoke$0(AsyncExecutionInterceptor.java:115)

at java.base/java.util.concurrent.FutureTask.run(FutureTask.java:264)

at tech.jhipster.async.ExceptionHandlingAsyncTaskExecutor.lambda$createWrappedRunnable$1(ExceptionHandlingAsyncTaskExecutor.java:78)

at java.base/java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1130)

at java.base/java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:630)

at java.base/java.lang.Thread.run(Thread.java:831)

```

### To Reproduce

1. Trigger All for a Programming Task (Unclear what the internal state of all participations must be)

### Expected behavior

Trigger all should not fail. And should trigger all participations.

### Screenshots

_No response_

### What browsers are you seeing the problem on?

Firefox

### Additional context

_No response_

### Relevant log output

_No response_ | priority | nullpointer exception while trigger all describe the bug we had to trigger all build plans since we ve updates some test cases i ve marked it as high since we need this feature if we have to adapt some test cases due to student responses what happened not all builds have been triggered got this exception in the be error a i simpleasyncuncaughtexceptionhandler unexpected exception occurred invoking async method public void de tum in artemis service programming programmingsubmissionservice triggerinstructorbuildforexercise java lang long throws de tum in artemis web rest errors entitynotfoundexception java lang nullpointerexception cannot invoke org eclipse jgit lib objectid getname because the return value of de tum in artemis service connectors gitservice getlastcommithash de tum in artemis domain vcsrepositoryurl is null at de tum in artemis service programming programmingsubmissionservice getlastcommithashforparticipation programmingsubmissionservice java at de tum in artemis service programming programmingsubmissionservice getorcreatesubmissionwithlastcommithashforparticipation programmingsubmissionservice java at de tum in artemis service programming programmingsubmissionservice triggerbuildandnotifyuser programmingsubmissionservice java at de tum in artemis service programming programmingsubmissionservice triggerbuildforparticipations programmingsubmissionservice java at de tum in artemis service programming programmingsubmissionservice triggerinstructorbuildforexercise programmingsubmissionservice java at de tum in artemis service programming programmingsubmissionservice fastclassbyspringcglib invoke at org springframework cglib proxy methodproxy invoke methodproxy java at org springframework aop framework cglibaopproxy cglibmethodinvocation invokejoinpoint cglibaopproxy java at org springframework aop framework reflectivemethodinvocation proceed reflectivemethodinvocation java at org springframework aop framework cglibaopproxy 
cglibmethodinvocation proceed cglibaopproxy java at org springframework aop interceptor asyncexecutioninterceptor lambda invoke asyncexecutioninterceptor java at java base java util concurrent futuretask run futuretask java at tech jhipster async exceptionhandlingasynctaskexecutor lambda createwrappedrunnable exceptionhandlingasynctaskexecutor java at java base java util concurrent threadpoolexecutor runworker threadpoolexecutor java at java base java util concurrent threadpoolexecutor worker run threadpoolexecutor java at java base java lang thread run thread java to reproduce trigger all for a programming task unclear what the internal state of all participations must be expected behavior trigger all should not fail and should trigger all participations screenshots no response what browsers are you seeing the problem on firefox additional context no response relevant log output no response | 1 |

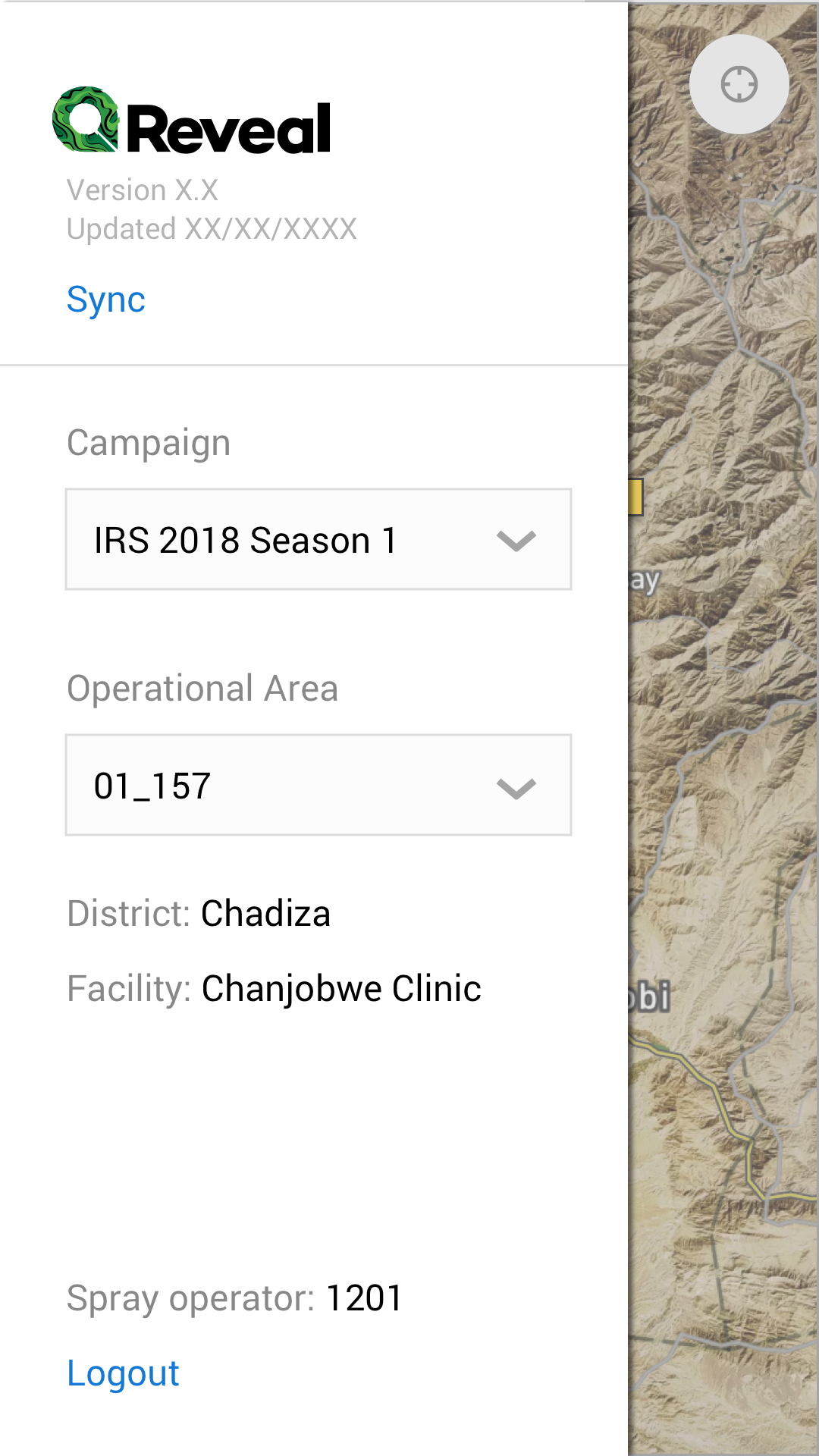

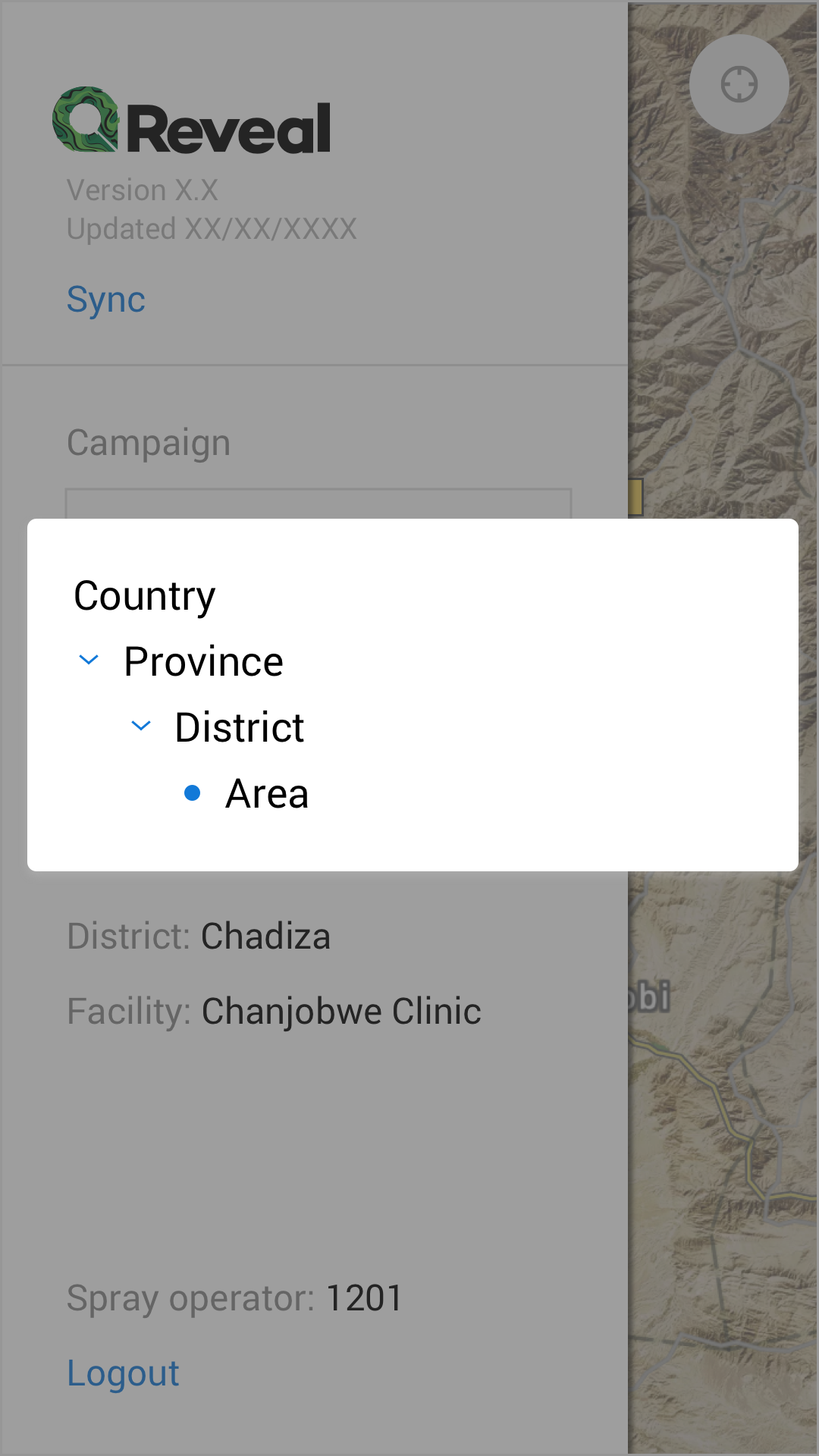

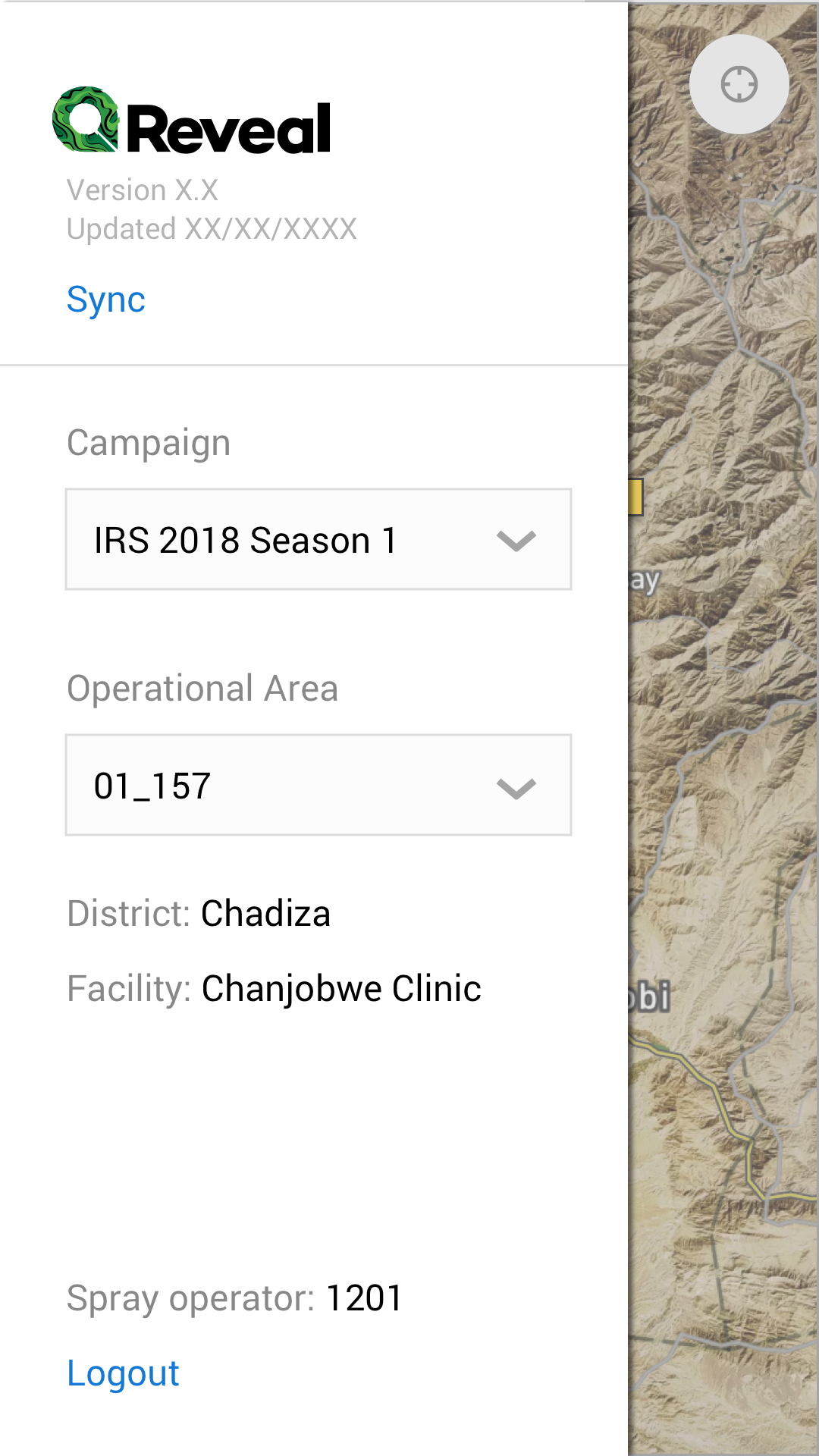

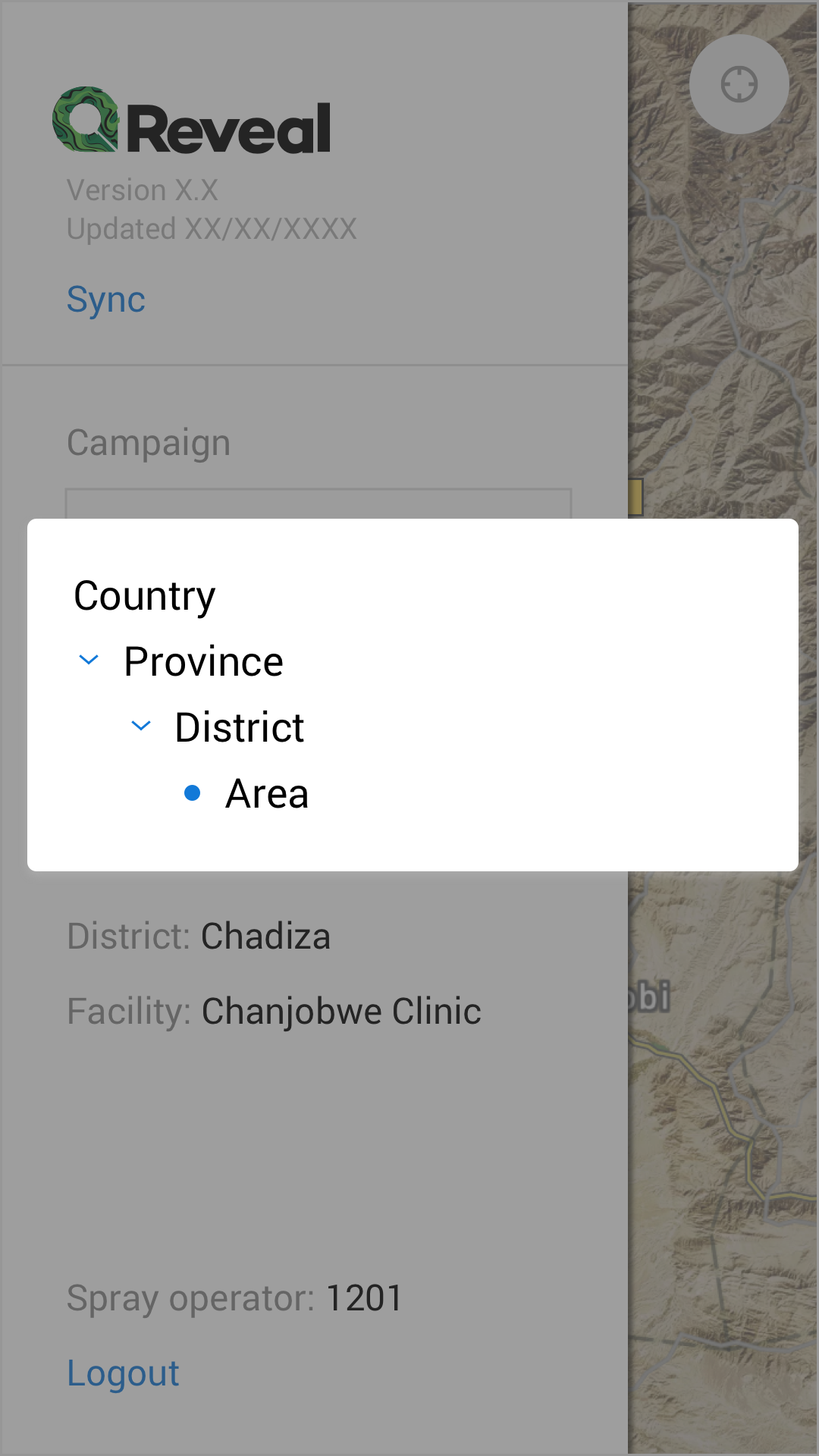

291,944 | 8,951,649,647 | IssuesEvent | 2019-01-25 14:33:04 | OpenSRP/opensrp-client-reveal | https://api.github.com/repos/OpenSRP/opensrp-client-reveal | closed | Implement Hamburger menu | Android Client Priority: High | The Reveal app has a hamburger menu that slides from the left side of the app.

Navigation:

- [x] This menu displays when the user touches the hamburger menu on the map view.

- [x] Users can close this menu by sliding it from right to left

- [x] This view acts as an overlay that makes the underlying map navigation unresponsive. We don't want users accidentally moving the map around when they try to close this menu.

Data:

The data on this screen needs to act as a filter for the map:

- [x] Campaign is a dropdown menu that filters the available tasks by campaign.

- [x] Operational Area is a hierarchy menu that filters the operational areas that have been downloaded on the Android client for that user. This filters the task by taskGroup.

Actions:

- [x] The user can touch the word "Sync" to close this menu and perform a sync operation

- [x] The user can touch the word "Logout" to close this menu and logout of the app | 1.0 | Implement Hamburger menu - The Reveal app has a hamburger menu that slides from the left side of the app.

Navigation:

- [x] This menu displays when the user touches the hamburger menu on the map view.

- [x] Users can close this menu by sliding it from right to left

- [x] This view acts as an overlay that makes the underlying map navigation unresponsive. We don't want users accidentally moving the map around when they try to close this menu.

Data:

The data on this screen needs to act as a filter for the map:

- [x] Campaign is a dropdown menu that filters the available tasks by campaign.

- [x] Operational Area is a hierarchy menu that filters the operational areas that have been downloaded on the Android client for that user. This filters the task by taskGroup.

Actions:

- [x] The user can touch the word "Sync" to close this menu and perform a sync operation

- [x] The user can touch the word "Logout" to close this menu and logout of the app | priority | implement hamburger menu the reveal app has a hamburger menu that slides from the left side of the app navigation this menu displays when the user touches the hamburger menu on the map view users can close this menu by sliding it from right to left this view acts as an overlay that makes the underlying map navigation unresponsive we don t want users accidentally moving the map around when they try to close this menu data the data on this screen needs to act as a filter for the map campaign is a dropdown menu that filters the available tasks by campaign operational area is a hierarchy menu that filters the operational areas that have been downloaded on the android client for that user this filters the task by taskgroup actions the user can touch the word sync to close this menu and perform a sync operation the user can touch the word logout to close this menu and logout of the app | 1 |

310,109 | 9,485,868,048 | IssuesEvent | 2019-04-22 12:01:31 | strapi/strapi | https://api.github.com/repos/strapi/strapi | closed | Delete entry with an Media relation error Parameter "obj" to Document() | priority: high status: confirmed type: bug 🐛 | **Informations**

- **Node.js version**: 10.15.0

- **NPM version**: 6.4.1

- **Strapi version**: v3.0.0-alpha.18

- **Database**: MongoDB 3.6.9

- **Operating system**: Debian 9

**What is the current behavior?**

Issuing delete requests causes 500 internal error. but the entry gets deleted anyway. in a non-custom install. only some content types added

The delete request: /products/5c3a52af383fe663810f0abc

```

(node:613) DeprecationWarning: collection.findAndModify is deprecated. Use findOneAndUpdate, findOneAndReplace or findOneAndDelete instead.

{ ObjectParameterError: Parameter "obj" to Document() must be an object, got 5c3a995fc4859602655bfe33

at new ObjectParameterError

```

**Steps to reproduce the problem**

Set up Insomnia, create a content type, and issue a delete request.

**What is the expected behavior?**

No internal error

**Suggested solutions**

No idea atm. | 1.0 | Delete entry with an Media relation error Parameter "obj" to Document() - **Informations**

- **Node.js version**: 10.15.0

- **NPM version**: 6.4.1

- **Strapi version**: v3.0.0-alpha.18

- **Database**: MongoDB 3.6.9

- **Operating system**: Debian 9

**What is the current behavior?**

Issuing delete requests causes 500 internal error. but the entry gets deleted anyway. in a non-custom install. only some content types added

The delete request: /products/5c3a52af383fe663810f0abc

```

(node:613) DeprecationWarning: collection.findAndModify is deprecated. Use findOneAndUpdate, findOneAndReplace or findOneAndDelete instead.

{ ObjectParameterError: Parameter "obj" to Document() must be an object, got 5c3a995fc4859602655bfe33

at new ObjectParameterError

```

**Steps to reproduce the problem**

Set up Insomnia, create a content type, and issue a delete request.

**What is the expected behavior?**

No internal error

**Suggested solutions**

No idea atm. | priority | delete entry with an media relation error parameter obj to document informations node js version npm version strapi version alpha database mongodb operating system debian what is the current behavior issuing delete requests causes internal error but the entry gets deleted anyway in a non custom install only some content types added the delete request products node deprecationwarning collection findandmodify is deprecated use findoneandupdate findoneandreplace or findoneanddelete instead objectparametererror parameter obj to document must be an object got at new objectparametererror steps to reproduce the problem setup insomina create content type issue delete request what is the expected behavior no internal error suggested solutions no idea atm | 1 |

201,946 | 7,042,690,440 | IssuesEvent | 2017-12-30 16:46:41 | tripl3dogdare/scjson | https://api.github.com/repos/tripl3dogdare/scjson | closed | Get ScJson on SBT package management systems | enhancement priority:high | Currently, ScJson can only be installed via unmanaged dependencies or direct source addition. This needs to be dealt with sooner than later. | 1.0 | Get ScJson on SBT package management systems - Currently, ScJson can only be installed via unmanaged dependencies or direct source addition. This needs to be dealt with sooner than later. | priority | get scjson on sbt package management systems currently scjson can only be installed via unmanaged dependencies or direct source addition this needs to be dealt with sooner than later | 1 |

794,372 | 28,033,554,508 | IssuesEvent | 2023-03-28 13:49:43 | scaleway/scaleway-cli | https://api.github.com/repos/scaleway/scaleway-cli | closed | shell: completion description is missing with positional arguments | bug shell priority:high | <!--- Please keep this note for the community --->

### Community Note

* Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the community and maintainers prioritize this request

* Please do not leave "+1" or other comments that do not add relevant new information or questions, they generate extra noise for issue followers and do not help prioritize the request

* If you are interested in working on this issue or have submitted a pull request, please leave a comment

<!--- Thank you for keeping this note for the community --->

## Command attempted

```
lb backend update 18b068f7-4ad8-4fb7-8101-f01e399cdb29 forward-port-algorith
```

### Expected Behavior

Field should be documented

### Actual Behavior

Field description is "command not found"

## More info

<!-- output of `scw version`, your OS version, steps to reproduce, etc. -->

| 1.0 | shell: completion description is missing with positional arguments - <!--- Please keep this note for the community --->

### Community Note

* Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the community and maintainers prioritize this request

* Please do not leave "+1" or other comments that do not add relevant new information or questions, they generate extra noise for issue followers and do not help prioritize the request

* If you are interested in working on this issue or have submitted a pull request, please leave a comment

<!--- Thank you for keeping this note for the community --->

## Command attempted

```
lb backend update 18b068f7-4ad8-4fb7-8101-f01e399cdb29 forward-port-algorith
```

### Expected Behavior

Field should be documented

### Actual Behavior

Field description is "command not found"

## More info

<!-- output of `scw version`, your OS version, steps to reproduce, etc. -->

| priority | shell completion description is missing with positional arguments community note please vote on this issue by adding a 👍 to the original issue to help the community and maintainers prioritize this request please do not leave or other comments that do not add relevant new information or questions they generate extra noise for issue followers and do not help prioritize the request if you are interested in working on this issue or have submitted a pull request please leave a comment command attempted lb backend update forward port algorith expected behavior field should be documented actual behavior field description is command not found more info | 1 |

787,013 | 27,701,797,677 | IssuesEvent | 2023-03-14 08:35:22 | Signbank/Global-signbank | https://api.github.com/repos/Signbank/Global-signbank | reopened | Problem when playing an uploaded video | high priority | An error occurs in the drag and drop code, or the form that it uses, when opening a gloss detail page

See: https://signbank.cls.ru.nl/dictionary/gloss/44008/

Might be a browser problem, or maybe it is saved somewhere different than in my local setup, or it is something else.

Check why "id_videofile" never appears as an 'id' and is only used in a 'for' attribute. | 1.0 | Problem when playing an uploaded video - An error occurs in the drag and drop code, or the form that it uses, when opening a gloss detail page

See: https://signbank.cls.ru.nl/dictionary/gloss/44008/

Might be a browser problem, or maybe it is saved somewhere different than in my local setup, or it is something else.

Check why "id_videofile" never appears as an 'id' and is only used in a 'for' attribute. | priority | problem when playing an uploaded video an error occurs in the drag and drop code or the form that it uses when opening a gloss detail page see might be a browser problem or maybe it is saved somewhere different than in my local setup or it is something else check why id videofile is nowhere as id but is only used for for | 1 |

678,469 | 23,198,665,018 | IssuesEvent | 2022-08-01 19:02:43 | PenPow/Sentry | https://api.github.com/repos/PenPow/Sentry | closed | Interactions: Not Clearing Out Prior / Commands on Post | bug priority:high semver:major managers | # Overview

Not clearing old slash commands when deployed

also make it singular if only giving one command, it was annoying me | 1.0 | Interactions: Not Clearing Out Prior / Commands on Post - # Overview

Not clearing old slash commands when deployed

also make it singular if only giving one command, it was annoying me | priority | interactions not clearing out prior commands on post overview not clearing old slash commands when deployed also make it singular if only giving one command it was annoying me | 1 |

196,558 | 6,935,118,206 | IssuesEvent | 2017-12-03 03:54:02 | vmware/harbor | https://api.github.com/repos/vmware/harbor | closed | Harbor will be started multiple times in the bosh release/tile | area/bosh-release area/tile kind/bug priority/high target/pks-0.8 | Here are logs:

-------------------

[Fri Dec 1 10:48:10 UTC 2017] Starting Harbor 1.2.0 at https://testing.habor.vmware.com

[Fri Dec 1 10:48:10 UTC 2017] Loading docker images ...

Loaded image: vmware/mariadb-photon:10.2.8

Loaded image: vmware/harbor-ui:v1.2.0-219-gdb6def3

Loaded image: vmware/harbor-jobservice:v1.2.0-219-gdb6def3

Loaded image: vmware/nginx-photon:1.11.13

Loaded image: vmware/postgresql:9.6.5-photon

Loaded image: vmware/harbor-db:v1.2.0-219-gdb6def3

Loaded image: vmware/photon:1.0

Loaded image: vmware/clair:v2.0.1-photon

Loaded image: vmware/harbor-adminserver:v1.2.0-219-gdb6def3

Loaded image: vmware/registry:2.6.2-photon

Loaded image: vmware/notary-photon:server-0.5.1

Loaded image: vmware/notary-photon:signer-0.5.1

Loaded image: vmware/harbor-log:v1.2.0-219-gdb6def3

[Fri Dec 1 10:50:59 UTC 2017] Launching docker-compose up ...

[Fri Dec 1 10:50:59 UTC 2017] Waiting 120 seconds for Harbor Service to be ready ...

[Fri Dec 1 10:51:11 UTC 2017] Starting Harbor 1.2.0 at https://testing.habor.vmware.com

[Fri Dec 1 10:51:11 UTC 2017] Loading docker images ...

Loaded image: vmware/mariadb-photon:10.2.8

Loaded image: vmware/harbor-ui:v1.2.0-219-gdb6def3

Loaded image: vmware/harbor-jobservice:v1.2.0-219-gdb6def3

Loaded image: vmware/nginx-photon:1.11.13

Loaded image: vmware/postgresql:9.6.5-photon

Loaded image: vmware/harbor-db:v1.2.0-219-gdb6def3

Loaded image: vmware/photon:1.0

Loaded image: vmware/clair:v2.0.1-photon

Loaded image: vmware/harbor-adminserver:v1.2.0-219-gdb6def3

Loaded image: vmware/registry:2.6.2-photon

Loaded image: vmware/notary-photon:server-0.5.1

Loaded image: vmware/notary-photon:signer-0.5.1

Loaded image: vmware/harbor-log:v1.2.0-219-gdb6def3

[Fri Dec 1 10:52:45 UTC 2017] Launching docker-compose up ...

[Fri Dec 1 10:52:45 UTC 2017] Waiting 120 seconds for Harbor Service to be ready ...

[Fri Dec 1 10:52:56 UTC 2017] Starting Harbor 1.2.0 at https://testing.habor.vmware.com

[Fri Dec 1 10:52:56 UTC 2017] Loading docker images ...

Loaded image: vmware/mariadb-photon:10.2.8

Loaded image: vmware/harbor-ui:v1.2.0-219-gdb6def3

Loaded image: vmware/harbor-jobservice:v1.2.0-219-gdb6def3

Loaded image: vmware/nginx-photon:1.11.13

Loaded image: vmware/postgresql:9.6.5-photon

Loaded image: vmware/harbor-db:v1.2.0-219-gdb6def3

Loaded image: vmware/photon:1.0

Loaded image: vmware/clair:v2.0.1-photon

Loaded image: vmware/harbor-adminserver:v1.2.0-219-gdb6def3

Loaded image: vmware/registry:2.6.2-photon

Loaded image: vmware/notary-photon:server-0.5.1

Loaded image: vmware/notary-photon:signer-0.5.1

Loaded image: vmware/harbor-log:v1.2.0-219-gdb6def3

[Fri Dec 1 10:54:35 UTC 2017] Launching docker-compose up ...

[Fri Dec 1 10:54:35 UTC 2017] Waiting 120 seconds for Harbor Service to be ready ...

[Fri Dec 1 10:55:16 UTC 2017] Error: Harbor Service failed to start in 120 seconds

[Fri Dec 1 10:55:26 UTC 2017] Error: Harbor Service failed to start in 120 seconds

[Fri Dec 1 10:56:32 UTC 2017] Error: Harbor Service failed to start in 120 seconds

| 1.0 | Harbor will be started multiple times in the bosh release/tile - Here are logs:

-------------------

[Fri Dec 1 10:48:10 UTC 2017] Starting Harbor 1.2.0 at https://testing.habor.vmware.com

[Fri Dec 1 10:48:10 UTC 2017] Loading docker images ...

Loaded image: vmware/mariadb-photon:10.2.8

Loaded image: vmware/harbor-ui:v1.2.0-219-gdb6def3

Loaded image: vmware/harbor-jobservice:v1.2.0-219-gdb6def3

Loaded image: vmware/nginx-photon:1.11.13

Loaded image: vmware/postgresql:9.6.5-photon

Loaded image: vmware/harbor-db:v1.2.0-219-gdb6def3

Loaded image: vmware/photon:1.0

Loaded image: vmware/clair:v2.0.1-photon

Loaded image: vmware/harbor-adminserver:v1.2.0-219-gdb6def3

Loaded image: vmware/registry:2.6.2-photon

Loaded image: vmware/notary-photon:server-0.5.1

Loaded image: vmware/notary-photon:signer-0.5.1

Loaded image: vmware/harbor-log:v1.2.0-219-gdb6def3

[Fri Dec 1 10:50:59 UTC 2017] Launching docker-compose up ...

[Fri Dec 1 10:50:59 UTC 2017] Waiting 120 seconds for Harbor Service to be ready ...

[Fri Dec 1 10:51:11 UTC 2017] Starting Harbor 1.2.0 at https://testing.habor.vmware.com

[Fri Dec 1 10:51:11 UTC 2017] Loading docker images ...

Loaded image: vmware/mariadb-photon:10.2.8

Loaded image: vmware/harbor-ui:v1.2.0-219-gdb6def3

Loaded image: vmware/harbor-jobservice:v1.2.0-219-gdb6def3

Loaded image: vmware/nginx-photon:1.11.13

Loaded image: vmware/postgresql:9.6.5-photon

Loaded image: vmware/harbor-db:v1.2.0-219-gdb6def3

Loaded image: vmware/photon:1.0

Loaded image: vmware/clair:v2.0.1-photon

Loaded image: vmware/harbor-adminserver:v1.2.0-219-gdb6def3

Loaded image: vmware/registry:2.6.2-photon

Loaded image: vmware/notary-photon:server-0.5.1

Loaded image: vmware/notary-photon:signer-0.5.1

Loaded image: vmware/harbor-log:v1.2.0-219-gdb6def3

[Fri Dec 1 10:52:45 UTC 2017] Launching docker-compose up ...

[Fri Dec 1 10:52:45 UTC 2017] Waiting 120 seconds for Harbor Service to be ready ...

[Fri Dec 1 10:52:56 UTC 2017] Starting Harbor 1.2.0 at https://testing.habor.vmware.com

[Fri Dec 1 10:52:56 UTC 2017] Loading docker images ...

Loaded image: vmware/mariadb-photon:10.2.8

Loaded image: vmware/harbor-ui:v1.2.0-219-gdb6def3

Loaded image: vmware/harbor-jobservice:v1.2.0-219-gdb6def3

Loaded image: vmware/nginx-photon:1.11.13

Loaded image: vmware/postgresql:9.6.5-photon

Loaded image: vmware/harbor-db:v1.2.0-219-gdb6def3

Loaded image: vmware/photon:1.0

Loaded image: vmware/clair:v2.0.1-photon

Loaded image: vmware/harbor-adminserver:v1.2.0-219-gdb6def3

Loaded image: vmware/registry:2.6.2-photon

Loaded image: vmware/notary-photon:server-0.5.1

Loaded image: vmware/notary-photon:signer-0.5.1

Loaded image: vmware/harbor-log:v1.2.0-219-gdb6def3

[Fri Dec 1 10:54:35 UTC 2017] Launching docker-compose up ...

[Fri Dec 1 10:54:35 UTC 2017] Waiting 120 seconds for Harbor Service to be ready ...

[Fri Dec 1 10:55:16 UTC 2017] Error: Harbor Service failed to start in 120 seconds

[Fri Dec 1 10:55:26 UTC 2017] Error: Harbor Service failed to start in 120 seconds

[Fri Dec 1 10:56:32 UTC 2017] Error: Harbor Service failed to start in 120 seconds

| priority | harbor will be started multiple times in the bosh release tile here are logs starting harbor at loading docker images loaded image vmware mariadb photon loaded image vmware harbor ui loaded image vmware harbor jobservice loaded image vmware nginx photon loaded image vmware postgresql photon loaded image vmware harbor db loaded image vmware photon loaded image vmware clair photon loaded image vmware harbor adminserver loaded image vmware registry photon loaded image vmware notary photon server loaded image vmware notary photon signer loaded image vmware harbor log launching docker compose up waiting seconds for harbor service to be ready starting harbor at loading docker images loaded image vmware mariadb photon loaded image vmware harbor ui loaded image vmware harbor jobservice loaded image vmware nginx photon loaded image vmware postgresql photon loaded image vmware harbor db loaded image vmware photon loaded image vmware clair photon loaded image vmware harbor adminserver loaded image vmware registry photon loaded image vmware notary photon server loaded image vmware notary photon signer loaded image vmware harbor log launching docker compose up waiting seconds for harbor service to be ready starting harbor at loading docker images loaded image vmware mariadb photon loaded image vmware harbor ui loaded image vmware harbor jobservice loaded image vmware nginx photon loaded image vmware postgresql photon loaded image vmware harbor db loaded image vmware photon loaded image vmware clair photon loaded image vmware harbor adminserver loaded image vmware registry photon loaded image vmware notary photon server loaded image vmware notary photon signer loaded image vmware harbor log launching docker compose up waiting seconds for harbor service to be ready error harbor service failed to start in seconds error harbor service failed to start in seconds error harbor service failed to start in seconds | 1 |

829,675 | 31,886,415,501 | IssuesEvent | 2023-09-17 01:23:46 | primaryodors/primarydock | https://api.github.com/repos/primaryodors/primarydock | opened | Increase memory robustness. | high priority | There is a segfault that's sometimes happening during predictions with no clear pattern for reproducibility. It is not happening on the dev machines where the code can be stepped through, and running the faulty dock under valgrind would probably take days. Let's see if it resolves on its own after applying some better practices to memory management.

All functions that return any type of pointer to pointers must be changed to not do this. When such a function is called, it allocates a pointer array on the heap and sets the elements to point to persistent objects that must remain active throughout program execution. If the array is deallocated with delete[], it frees up memory that's still being used and leads to segfaults and corrupted data. If deallocated with delete, it causes a "mismatched free/delete" error. If not deallocated, it causes a memory leak.

Where performance is not critical, it is helpful to use std::vector and std::shared_ptr. Where there is a known limit to the size of the returned array, a stack allocated array can be passed in as a pointer argument. Another option is to use arrays of Star objects. | 1.0 | Increase memory robustness. - There is a segfault that's sometimes happening during predictions with no clear pattern for reproducibility. It is not happening on the dev machines where the code can be stepped through, and running the faulty dock under valgrind would probably take days. Let's see if it resolves on its own after applying some better practices to memory management.

All functions that return any type of pointer to pointers must be changed to not do this. When such a function is called, it allocates a pointer array on the heap and sets the elements to point to persistent objects that must remain active throughout program execution. If the array is deallocated with delete[], it frees up memory that's still being used and leads to segfaults and corrupted data. If deallocated with delete, it causes a "mismatched free/delete" error. If not deallocated, it causes a memory leak.

Where performance is not critical, it is helpful to use std::vector and std::shared_ptr. Where there is a known limit to the size of the returned array, a stack allocated array can be passed in as a pointer argument. Another option is to use arrays of Star objects. | priority | increase memory robustness there is a segfault that s sometimes happening during predictions with no clear pattern for reproducibility it is not happening on the dev machines where the code can be stepped through and running the faulty dock under valgrind would probably take days let s see if it resolves on its own after applying some better practices to memory management all functions that return any type of pointer to pointers must be changed to not do this when such a function is called it allocates a pointer array on the heap and sets the elements to point to persistent objects that must remain active throughout program execution if the array is deallocated with delete it frees up memory that s still being used and leads to segfaults and corrupted data if deallocated with delete it causes a mismatched free delete error if not deallocated it causes a memory leak where performance is not critical it is helpful to use std vector and std shared ptr where there is a known limit to the size of the returned array a stack allocated array can be passed in as a pointer argument another option is to use arrays of star objects | 1 |

109,502 | 4,388,624,023 | IssuesEvent | 2016-08-08 19:29:05 | DistrictDataLabs/partisan-discourse | https://api.github.com/repos/DistrictDataLabs/partisan-discourse | opened | Model Management View: Listing all the models in the system and for your user | priority: high ready type: feature | To increase the value of `partisan-discourse` as a tool to demonstrate a model management system and as a system to learn more about ML, we want to incorporate a model display view in the UI that showcases to a user the currently stored models, particularly as a breakdown between the global models and a user's models.

This issue is closed when a user can log in, navigate to a model display page, and there see a listing of their user's models (and their respective scoring data) and the global (aka null user) models and their respective scoring data. The page design is pretty open-ended, but for whoever takes this issue, we can brainstorm on it a bit together as needed. | 1.0 | Model Management View: Listing all the models in the system and for your user - To increase the value of `partisan-discourse` as a tool to demonstrate a model management system and as a system to learn more about ML, we want to incorporate a model display view in the UI that showcases to a user the currently stored models, particularly as a breakdown between the global models and a user's models.

This issue is closed when a user can log in, navigate to a model display page, and there see a listing of their user's models (and their respective scoring data) and the global (aka null user) models and their respective scoring data. The page design is pretty open-ended, but for whoever takes this issue, we can brainstorm on it a bit together as needed. | priority | model management view listing all the models in the system and for your user to increase the value of partisan discourse as a tool to demonstrate a model management system and as a system to learn more about ml we want to incorporate a model display view in the ui that showcases to a user the currently stored models particularly as a breakdown between the global models and a user s models this issue is closed when a user can login navigate to a model display page and there see a listing of their user s models and their respective scoring data and the global aka null user models and their respective scoring data the page design is pretty open ended but for whomever takes this issue we can brainstorm on it a bit together as needed | 1 |

522,037 | 15,147,445,165 | IssuesEvent | 2021-02-11 09:09:27 | BirminghamConservatoire/IntegraLive | https://api.github.com/repos/BirminghamConservatoire/IntegraLive | closed | Integra Live won't launch on macOS Big Sur | Mac-only priority high | Pablo Furman at San José State University reported last week that IL won't launch on Macs running macOS Big Sur. A message comes up requesting an updated version. Is there an easy way to fix this?

I'm still running on High Sierra and students here use mostly Catalina without problems, but I'll try to reproduce on an extra machine if I can get hold of one. | 1.0 | Integra Live won't launch on macOS Big Sur - Pablo Furman at San José State University reported last week that IL won't launch on Macs running macOS Big Sur. A message comes up requesting an updated version. Is there an easy way to fix this?

I'm still running on High Sierra and students here use mostly Catalina without problems, but I'll try to reproduce on an extra machine if I can get hold of one. | priority | integra live won t launch on macos big sur pablo furman at san josé state university reported last week that il won t launch on macs running macos big sur a message comes up requesting an updated version is there an easy way to fix this i m still running on high sierra and students here use mostly catalina without problems but i ll try to reproduce on an extra machine if i can get hold of one | 1 |

500,185 | 14,492,260,988 | IssuesEvent | 2020-12-11 06:36:30 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | m.youtube.com - see bug description | browser-focus-geckoview engine-gecko ml-needsdiagnosis-false ml-probability-high priority-critical | <!-- @browser: Firefox Mobile 83.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 6.0; Mobile; rv:83.0) Gecko/83.0 Firefox/83.0 -->

<!-- @reported_with: unknown -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/63447 -->

<!-- @extra_labels: browser-focus-geckoview -->

**URL**: https://m.youtube.com/watch?v=EWjZOxs87yg

**Browser / Version**: Firefox Mobile 83.0

**Operating System**: Android 6.0

**Tested Another Browser**: Yes Other

**Problem type**: Something else

**Description**: Sound quality

**Steps to Reproduce**:

The problem with YouTube audio quality

<details>

<summary>Browser Configuration</summary>

<ul>

<li>None</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_ | 1.0 | m.youtube.com - see bug description - <!-- @browser: Firefox Mobile 83.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 6.0; Mobile; rv:83.0) Gecko/83.0 Firefox/83.0 -->

<!-- @reported_with: unknown -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/63447 -->

<!-- @extra_labels: browser-focus-geckoview -->

**URL**: https://m.youtube.com/watch?v=EWjZOxs87yg

**Browser / Version**: Firefox Mobile 83.0

**Operating System**: Android 6.0

**Tested Another Browser**: Yes Other

**Problem type**: Something else

**Description**: Sound quality

**Steps to Reproduce**:

The problem with YouTube audio quality

<details>

<summary>Browser Configuration</summary>

<ul>

<li>None</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_ | priority | m youtube com see bug description url browser version firefox mobile operating system android tested another browser yes other problem type something else description sound quality steps to reproduce the problem with youtube audio quality browser configuration none from with ❤️ | 1 |

465,827 | 13,392,874,270 | IssuesEvent | 2020-09-03 02:36:20 | WordPress/learn | https://api.github.com/repos/WordPress/learn | closed | Search results: Differentiate between CPTs | [Component] Learn Theme [Priority] High | The search function searches the lesson plans and the workshops, but they are displayed in a single list with no visual distinction - example: https://learn.wordpress.org/?s=block

Could this be updated to show some sort of visual differentiator? Either a highlight of some kind or, better yet, display results in two columns/areas - one for each CPT. | 1.0 | Search results: Differentiate between CPTs - The search function searches the lesson plans and the workshops, but they are displayed in a single list with no visual distinction - example: https://learn.wordpress.org/?s=block

Could this be updated to show some sort of visual differentiator? Either a highlight of some kind or, better yet, display results in two columns/areas - one for each CPT. | priority | search results differentiate between cpts the search function searches the lesson plans and the workshops but they are displayed in a single list with no visual distinction example could this be updated to show some sort of visual differentiator either a highlight of some kind or better yet display results in two columns areas one for each cpt | 1 |

268,660 | 8,409,993,796 | IssuesEvent | 2018-10-12 09:11:55 | hajkmap/Hajk | https://api.github.com/repos/hajkmap/Hajk | closed | Change it so that all plugins that have a panel render in a drawer | High priority | Change it so that all plugins that have a panel render in a drawer | 1.0 | Change it so that all plugins that have a panel render in a drawer - Change it so that all plugins that have a panel render in a drawer | priority | change it so that all plugins that have a panel render in a drawer change it so that all plugins that have a panel render in a drawer | 1 |

735,839 | 25,443,345,671 | IssuesEvent | 2022-11-24 02:04:41 | Automattic/abacus | https://api.github.com/repos/Automattic/abacus | closed | Add new analysis columns | [!priority] high [type] enhancement [section] experiment results [!team] explat [!milestone] current | After adding absolute impact to Abacus (https://github.com/Automattic/abacus/pull/772), I think we can change the way we show the results of the experiment:

- [ ] Display baseline interval below metric name p10gg3-aFD-p2#comment-33110.

- [ ] Rename "absolute change" to "estimated difference"

- [x] Rename "relative change (lift)" to "estimated impact". Done in https://github.com/Automattic/abacus/pull/772

- [ ] Reduce the font size and font color to a grey `#828282` of absolute change and relative change

- [x] Add absolute impact to "estimated impact" with medium weight. Done in https://github.com/Automattic/abacus/pull/772

- [ ] Adjust analysis text to be medium weight

- [ ] Align absolute change and relative change to be on the same horizontal ruler

- [ ] The gap between absolute impact and relative change should be vertically aligned with metric name and analysis text

<img width="1194" alt="Screen Shot 2022-09-16 at 3 18 32 PM" src="https://user-images.githubusercontent.com/4505888/190815505-bf891f65-2fd8-4dfa-aba8-973976b90063.png"> | 1.0 | Add new analysis columns - After adding absolute impact to Abacus (https://github.com/Automattic/abacus/pull/772), I think we can change the way we show the results of the experiment:

- [ ] Display baseline interval below metric name p10gg3-aFD-p2#comment-33110.

- [ ] Rename "absolute change" to "estimated difference"

- [x] Rename "relative change (lift)" to "estimated impact". Done in https://github.com/Automattic/abacus/pull/772

- [ ] Reduce the font size and font color to a grey `#828282` of absolute change and relative change

- [x] Add absolute impact to "estimated impact" with medium weight. Done in https://github.com/Automattic/abacus/pull/772

- [ ] Adjust analysis text to be medium weight

- [ ] Align absolute change and relative change to be on the same horizontal ruler

- [ ] The gap between absolute impact and relative change should be vertically aligned with metric name and analysis text

<img width="1194" alt="Screen Shot 2022-09-16 at 3 18 32 PM" src="https://user-images.githubusercontent.com/4505888/190815505-bf891f65-2fd8-4dfa-aba8-973976b90063.png"> | priority | add new analysis columns after adding absolute impact to abacus i think we can change the way we show the results of the experiment display baseline interval below metric name afd comment rename absolute change to estimated difference rename relative change lift to estimated impact done in reduce the font size and font color to a grey of absolute change and relative change add absolute impact to estimated impact with medium weight done in adjust analysis text to be medium weight align absolute change and relative change to be on the same horizontal ruler the gap between absolute impact and relative change should be vertically aligned with metric name and analysis text img width alt screen shot at pm src | 1 |

468,631 | 13,487,133,479 | IssuesEvent | 2020-09-11 10:28:53 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.zoho.com - site is not usable | browser-firefox engine-gecko ml-needsdiagnosis-false ml-probability-high priority-important | <!-- @browser: Firefox 81.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:81.0) Gecko/20100101 Firefox/81.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/58073 -->

**URL**: https://www.zoho.com/meeting/login.html

**Browser / Version**: Firefox 81.0

**Operating System**: Windows 10

**Tested Another Browser**: Yes Chrome

**Problem type**: Site is not usable

**Description**: Page not loading correctly

**Steps to Reproduce**:

The site has certificate issues

<details>

<summary>View the screenshot</summary>

<img alt="Screenshot" src="https://webcompat.com/uploads/2020/9/bd33879d-87a3-45f1-9214-ca1e23a1f5c2.jpeg">

</details>

<details>

<summary>Browser Configuration</summary>

<ul>

<li>gfx.webrender.all: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>buildID: 20200910180444</li><li>channel: beta</li><li>hasTouchScreen: false</li><li>mixed active content blocked: false</li><li>mixed passive content blocked: false</li><li>tracking content blocked: false</li>

</ul>

</details>

[View console log messages](https://webcompat.com/console_logs/2020/9/0f2d2807-a9b8-4d15-bef9-cc9ad8b0b895)

_From [webcompat.com](https://webcompat.com/) with ❤️_ | 1.0 | www.zoho.com - site is not usable - <!-- @browser: Firefox 81.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:81.0) Gecko/20100101 Firefox/81.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/58073 -->

**URL**: https://www.zoho.com/meeting/login.html

**Browser / Version**: Firefox 81.0

**Operating System**: Windows 10

**Tested Another Browser**: Yes Chrome

**Problem type**: Site is not usable

**Description**: Page not loading correctly

**Steps to Reproduce**:

The site has certificate issues

<details>

<summary>View the screenshot</summary>

<img alt="Screenshot" src="https://webcompat.com/uploads/2020/9/bd33879d-87a3-45f1-9214-ca1e23a1f5c2.jpeg">

</details>

<details>

<summary>Browser Configuration</summary>

<ul>

<li>gfx.webrender.all: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>buildID: 20200910180444</li><li>channel: beta</li><li>hasTouchScreen: false</li><li>mixed active content blocked: false</li><li>mixed passive content blocked: false</li><li>tracking content blocked: false</li>

</ul>

</details>

[View console log messages](https://webcompat.com/console_logs/2020/9/0f2d2807-a9b8-4d15-bef9-cc9ad8b0b895)

_From [webcompat.com](https://webcompat.com/) with ❤️_ | priority | site is not usable url browser version firefox operating system windows tested another browser yes chrome problem type site is not usable description page not loading correctly steps to reproduce the site has certificate issues view the screenshot img alt screenshot src browser configuration gfx webrender all false gfx webrender blob images true gfx webrender enabled false image mem shared true buildid channel beta hastouchscreen false mixed active content blocked false mixed passive content blocked false tracking content blocked false from with ❤️ | 1 |

412,898 | 12,057,864,772 | IssuesEvent | 2020-04-15 16:30:11 | hobbit-project/platform | https://api.github.com/repos/hobbit-project/platform | closed | Platform should make sure that container names are valid | component: controller priority: high type: bug | ## Problem

For some images, the generated container name is too long:

```

2018-05-08 09:49:36,381 ERROR [org.hobbit.controller.docker.ContainerManagerImpl] - <Couldn't create Docker container. Returning null.>

com.spotify.docker.client.exceptions.DockerRequestException: Request error: POST unix://localhost:80/services/create: 400, body: {"message":"rpc error: code = InvalidArgument desc = name must be 63 characters or

fewer"}

at com.spotify.docker.client.DefaultDockerClient.propagate(DefaultDockerClient.java:2702)

at com.spotify.docker.client.DefaultDockerClient.request(DefaultDockerClient.java:2652)

at com.spotify.docker.client.DefaultDockerClient.createService(DefaultDockerClient.java:1848)

at org.hobbit.controller.docker.ContainerManagerImpl.createContainer(ContainerManagerImpl.java:499)

at org.hobbit.controller.docker.ContainerManagerImpl.startContainer(ContainerManagerImpl.java:559)

at org.hobbit.controller.docker.ContainerManagerImpl.startContainer(ContainerManagerImpl.java:575)

at org.hobbit.controller.ExperimentManager.createNextExperiment(ExperimentManager.java:229)

at org.hobbit.controller.ExperimentManager$1.run(ExperimentManager.java:128)

at java.util.TimerThread.mainLoop(Timer.java:555)

at java.util.TimerThread.run(Timer.java:505)

Caused by: javax.ws.rs.BadRequestException: HTTP 400 Bad Request

at org.glassfish.jersey.client.JerseyInvocation.convertToException(JerseyInvocation.java:999)

at org.glassfish.jersey.client.JerseyInvocation.translate(JerseyInvocation.java:816)

at org.glassfish.jersey.client.JerseyInvocation.access$700(JerseyInvocation.java:92)

at org.glassfish.jersey.client.JerseyInvocation$5.completed(JerseyInvocation.java:773)

at org.glassfish.jersey.client.ClientRuntime.processResponse(ClientRuntime.java:198)

at org.glassfish.jersey.client.ClientRuntime.access$300(ClientRuntime.java:79)

at org.glassfish.jersey.client.ClientRuntime$2.run(ClientRuntime.java:180)

at org.glassfish.jersey.internal.Errors$1.call(Errors.java:271)

at org.glassfish.jersey.internal.Errors$1.call(Errors.java:267)

at org.glassfish.jersey.internal.Errors.process(Errors.java:315)

at org.glassfish.jersey.internal.Errors.process(Errors.java:297)

at org.glassfish.jersey.internal.Errors.process(Errors.java:267)

at org.glassfish.jersey.process.internal.RequestScope.runInScope(RequestScope.java:340)

at org.glassfish.jersey.client.ClientRuntime$3.run(ClientRuntime.java:210)

at java.util.concurrent.Executors$RunnableAdapter.call(Executors.java:511)

at java.util.concurrent.FutureTask.run(FutureTask.java:266)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)

at java.lang.Thread.run(Thread.java:748)

2018-05-08 09:49:36,383 ERROR [org.hobbit.controller.ExperimentManager] - <Exception while trying to start a new benchmark. Removing it from the queue.>

java.lang.Exception: Couldn't create benchmark controller http://w3id.org/gerbil/qa/hobbit/vocab#GerbilBenchmarkTask3Testing

at org.hobbit.controller.ExperimentManager.createNextExperiment(ExperimentManager.java:239)

at org.hobbit.controller.ExperimentManager$1.run(ExperimentManager.java:128)

at java.util.TimerThread.mainLoop(Timer.java:555)

at java.util.TimerThread.run(Timer.java:505)

``` | 1.0 | Platform should make sure that container names are valid - ## Problem

For some images, the generated container name is too long:

```

2018-05-08 09:49:36,381 ERROR [org.hobbit.controller.docker.ContainerManagerImpl] - <Couldn't create Docker container. Returning null.>

com.spotify.docker.client.exceptions.DockerRequestException: Request error: POST unix://localhost:80/services/create: 400, body: {"message":"rpc error: code = InvalidArgument desc = name must be 63 characters or

fewer"}

at com.spotify.docker.client.DefaultDockerClient.propagate(DefaultDockerClient.java:2702)

at com.spotify.docker.client.DefaultDockerClient.request(DefaultDockerClient.java:2652)

at com.spotify.docker.client.DefaultDockerClient.createService(DefaultDockerClient.java:1848)

at org.hobbit.controller.docker.ContainerManagerImpl.createContainer(ContainerManagerImpl.java:499)

at org.hobbit.controller.docker.ContainerManagerImpl.startContainer(ContainerManagerImpl.java:559)

at org.hobbit.controller.docker.ContainerManagerImpl.startContainer(ContainerManagerImpl.java:575)

at org.hobbit.controller.ExperimentManager.createNextExperiment(ExperimentManager.java:229)

at org.hobbit.controller.ExperimentManager$1.run(ExperimentManager.java:128)

at java.util.TimerThread.mainLoop(Timer.java:555)

at java.util.TimerThread.run(Timer.java:505)

Caused by: javax.ws.rs.BadRequestException: HTTP 400 Bad Request

at org.glassfish.jersey.client.JerseyInvocation.convertToException(JerseyInvocation.java:999)

at org.glassfish.jersey.client.JerseyInvocation.translate(JerseyInvocation.java:816)

at org.glassfish.jersey.client.JerseyInvocation.access$700(JerseyInvocation.java:92)

at org.glassfish.jersey.client.JerseyInvocation$5.completed(JerseyInvocation.java:773)

at org.glassfish.jersey.client.ClientRuntime.processResponse(ClientRuntime.java:198)

at org.glassfish.jersey.client.ClientRuntime.access$300(ClientRuntime.java:79)

at org.glassfish.jersey.client.ClientRuntime$2.run(ClientRuntime.java:180)

at org.glassfish.jersey.internal.Errors$1.call(Errors.java:271)

at org.glassfish.jersey.internal.Errors$1.call(Errors.java:267)

at org.glassfish.jersey.internal.Errors.process(Errors.java:315)

at org.glassfish.jersey.internal.Errors.process(Errors.java:297)

at org.glassfish.jersey.internal.Errors.process(Errors.java:267)

at org.glassfish.jersey.process.internal.RequestScope.runInScope(RequestScope.java:340)

at org.glassfish.jersey.client.ClientRuntime$3.run(ClientRuntime.java:210)

at java.util.concurrent.Executors$RunnableAdapter.call(Executors.java:511)

at java.util.concurrent.FutureTask.run(FutureTask.java:266)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)

at java.lang.Thread.run(Thread.java:748)

2018-05-08 09:49:36,383 ERROR [org.hobbit.controller.ExperimentManager] - <Exception while trying to start a new benchmark. Removing it from the queue.>

java.lang.Exception: Couldn't create benchmark controller http://w3id.org/gerbil/qa/hobbit/vocab#GerbilBenchmarkTask3Testing

at org.hobbit.controller.ExperimentManager.createNextExperiment(ExperimentManager.java:239)

at org.hobbit.controller.ExperimentManager$1.run(ExperimentManager.java:128)

at java.util.TimerThread.mainLoop(Timer.java:555)

at java.util.TimerThread.run(Timer.java:505)

``` | priority | platform should make sure that container names are valid problem for some images the generated container name is too long error com spotify docker client exceptions dockerrequestexception request error post unix localhost services create body message rpc error code invalidargument desc name must be characters or fewer at com spotify docker client defaultdockerclient propagate defaultdockerclient java at com spotify docker client defaultdockerclient request defaultdockerclient java at com spotify docker client defaultdockerclient createservice defaultdockerclient java at org hobbit controller docker containermanagerimpl createcontainer containermanagerimpl java at org hobbit controller docker containermanagerimpl startcontainer containermanagerimpl java at org hobbit controller docker containermanagerimpl startcontainer containermanagerimpl java at org hobbit controller experimentmanager createnextexperiment experimentmanager java at org hobbit controller experimentmanager run experimentmanager java at java util timerthread mainloop timer java at java util timerthread run timer java caused by javax ws rs badrequestexception http bad request at org glassfish jersey client jerseyinvocation converttoexception jerseyinvocation java at org glassfish jersey client jerseyinvocation translate jerseyinvocation java at org glassfish jersey client jerseyinvocation access jerseyinvocation java at org glassfish jersey client jerseyinvocation completed jerseyinvocation java at org glassfish jersey client clientruntime processresponse clientruntime java at org glassfish jersey client clientruntime access clientruntime java at org glassfish jersey client clientruntime run clientruntime java at org glassfish jersey internal errors call errors java at org glassfish jersey internal errors call errors java at org glassfish jersey internal errors process errors java at org glassfish jersey internal errors process errors java at org glassfish jersey internal errors 
process errors java at org glassfish jersey process internal requestscope runinscope requestscope java at org glassfish jersey client clientruntime run clientruntime java at java util concurrent executors runnableadapter call executors java at java util concurrent futuretask run futuretask java at java util concurrent threadpoolexecutor runworker threadpoolexecutor java at java util concurrent threadpoolexecutor worker run threadpoolexecutor java at java lang thread run thread java error java lang exception couldn t create benchmark controller at org hobbit controller experimentmanager createnextexperiment experimentmanager java at org hobbit controller experimentmanager run experimentmanager java at java util timerthread mainloop timer java at java util timerthread run timer java | 1 |

189,274 | 6,795,935,153 | IssuesEvent | 2017-11-01 17:18:17 | drewrehfeld/opened | https://api.github.com/repos/drewrehfeld/opened | opened | style issue on comment thread | bug HIGHEST! P1 (highest priority) | Something weird has happened with the spacing on posts on the newsfeed

current style:

what it should look like:

| 1.0 | style issue on comment thread - Something weird has happened with the spacing on posts on the newsfeed

current style:

what it should look like:

| priority | style issue on comment thread something weird has happened with the spacing on posts on the newsfeed current style what it should look like | 1 |

551,959 | 16,191,894,096 | IssuesEvent | 2021-05-04 09:39:17 | openshift/odo | https://api.github.com/repos/openshift/odo | closed | use Devfiles for s2i components | kind/cleanup points/2 priority/High | Use devfile.yml for s2i components instead of LocalConfig.yaml

# Motivation

Odo has two types of components: Devfile and s2i. Currently, each component type has its own separate code path. This means that each command needs to know how to perform its action for Devfile and also for s2i. Technically, it is possible to create a Devfile that mimics what odo does with s2i. We already have this in `odo utils convert`. Odo should leverage that logic and start treating s2i components as regular Devfile components. This will reduce the odo code base and make code maintenance a lot simpler.

## `odo create --s2i`

The `odo create --s2i` command would no longer generate `LocalConfig` (`./odo/config.yml`). Instead, it should just generate `devfile.yml` (and optionally `./odo/env.yml`, depending on what is required).

## all other commands

The rest of the commands (like `odo storage`, `odo url`, etc.) should not need to know anything about s2i. For them, it will be just another Devfile component.
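
A rough sketch of the idea, not odo's actual implementation: once an s2i component has been converted to a Devfile, a command such as `odo url` can work on the Devfile alone. Everything here is an assumption made for illustration: the function names `s2i_to_devfile` and `add_url`, the keys of the input config, the image name, and the use of the default s2i script locations. Only the Devfile field names follow the Devfile 2.0 schema.

```python
# Hypothetical sketch: none of these helper names come from the odo code base.
from typing import Any, Dict


def s2i_to_devfile(local_config: Dict[str, Any]) -> Dict[str, Any]:
    """Build a Devfile-like dict from an s2i-style local config (illustrative only)."""
    component = "s2i-builder"  # assumed component name
    return {
        "schemaVersion": "2.0.0",
        "metadata": {"name": local_config["name"]},
        "components": [
            {
                "name": component,
                "container": {
                    "image": local_config["builder_image"],
                    "endpoints": [
                        {"name": f"port-{port}", "targetPort": port}
                        for port in local_config.get("ports", [])
                    ],
                },
            }
        ],
        "commands": [
            # Assumed: the default s2i script locations; odo may use other scripts.
            {
                "id": "s2i-assemble",
                "exec": {
                    "component": component,
                    "commandLine": "/usr/libexec/s2i/assemble",
                    "group": {"kind": "build", "isDefault": True},
                },
            },
            {
                "id": "s2i-run",
                "exec": {
                    "component": component,
                    "commandLine": "/usr/libexec/s2i/run",
                    "group": {"kind": "run", "isDefault": True},
                },
            },
        ],
    }


def add_url(devfile: Dict[str, Any], name: str, port: int) -> None:
    """Add an endpoint to the first container; note there is no s2i-specific branch."""
    container = devfile["components"][0]["container"]
    container["endpoints"].append({"name": name, "targetPort": port})


# Placeholder config and image name, used only to exercise the sketch.
devfile = s2i_to_devfile(
    {"name": "myapp", "builder_image": "example.invalid/nodejs-s2i:latest", "ports": [8080]}
)
add_url(devfile, "admin", 9090)
```

The point of the sketch is that `add_url` never checks how the component was created, which is the single code path this proposal is after.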

## Acceptance Criteria