Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 855 | labels stringlengths 4 721 | body stringlengths 1 261k | index stringclasses 13 values | text_combine stringlengths 96 261k | label stringclasses 2 values | text stringlengths 96 240k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

338,627 | 10,232,453,098 | IssuesEvent | 2019-08-18 17:37:04 | futurismo-org/titan | https://api.github.com/repos/futurismo-org/titan | closed | Handling the Apple rejection (5th time) | high priority | It was not reviewed properly, so I will explain.

1.1 Safety: Objectionable Content

1.2 Safety: User Generated Content | 1.0 | Handling the Apple rejection (5th time) - It was not reviewed properly, so I will explain.

1.1 Safety: Objectionable Content

1.2 Safety: User Generated Content | priority | handling the apple rejection it was not reviewed properly so i will explain safety objectionable content safety user generated content | 1 |

43,879 | 2,893,715,337 | IssuesEvent | 2015-06-15 19:26:02 | SCIInstitute/shapeworks | https://api.github.com/repos/SCIInstitute/shapeworks | reopened | Final Release | High Priority IBBM | No more features to add at this point. Only bugs that are important for IBBM allowed after this. | 1.0 | Final Release - No more features to add at this point. Only bugs that are important for IBBM allowed after this. | priority | final release no more features to add at this point only bugs that are important for ibbm allowed after this | 1 |

186,230 | 6,734,519,625 | IssuesEvent | 2017-10-18 18:20:16 | resin-io/resin-cli | https://api.github.com/repos/resin-io/resin-cli | closed | resin device register only does the old long UUIDs | priority:high type:bug | With resinOS 2.0, the device UUIDs became half the length (~31~ 32 chars, down from 62 characters). Running `resin device register AppName` is only able to generate the original 62-character version. I'm not sure whether it's a problem, just highlighting it, because this way images freshly downloaded from the Dashboard and images configured with the CLI will behave differently for 2.0.

What would be the right default behaviour? Or does this just not have any real consequences?

<img src="https://frontapp.com/assets/img/icons/favicon-32x32.png" height="16" width="16" alt="Front logo" /> [Front conversations](https://app.frontapp.com/open/top_20dt) | 1.0 | resin device register only does the old long UUIDs - With resinOS 2.0, the device UUIDs became half the length (~31~ 32 chars down from 62 characters). Running `resin device register AppName` only able to do the original 62 character version. Not sure if it's a problem, just highlight it, because this way images freshly downloaded from the Dashboard and images configured with the CLI will have different behaviour for 2.0.

What would be the right default behaviour? Or does this just not have any real consequences?

<img src="https://frontapp.com/assets/img/icons/favicon-32x32.png" height="16" width="16" alt="Front logo" /> [Front conversations](https://app.frontapp.com/open/top_20dt) | priority | resin device register only does the old long uuids with resinos the device uuids became half the length chars down from characters running resin device register appname only able to do the original character version not sure if it s a problem just highlight it because this way images freshly downloaded from the dashboard and images configured with the cli will have different behaviour for what would be the right default behaviour or this just doesn t have any real consequences | 1 |

3,912 | 2,542,061,046 | IssuesEvent | 2015-01-28 14:06:33 | bethlakshmi/GBE2 | https://api.github.com/repos/bethlakshmi/GBE2 | closed | Update Your Bio | High Priority | Your bio, which is now live on the site, reads:

Betty has been doing this too long.

She also ported the data.

You might want to change that... | 1.0 | Update Your Bio - Your bio, which is now live on the site, reads:

Betty has been doing this too long.

She also ported the data.

You might want to change that... | priority | update your bio your bio which is now live on the site reads betty has been doing this too long she also ported the data you might want to change that | 1 |

628,072 | 19,974,919,871 | IssuesEvent | 2022-01-29 00:52:10 | rstudio/gt | https://api.github.com/repos/rstudio/gt | closed | Option to set locale globally | Difficulty: [2] Intermediate Effort: [3] High Priority: ♨︎ Critical Type: ★ Enhancement | ### Setting locale for fmt_\* globally

I'm using gt increasingly in HTML reports in Europe, so I would like to set the locale globally, for example via an option that switches every standard gt fmt_\* function to the locale specified in that option.

That would make gt code in non-US locales less verbose and reduce the need to use purrr::partial.

| 1.0 | Option to set locale globally - ### Setting locale for fmt_\* globally

I'm using gt increasingly in HTML reports in Europe, so I would like to set the locale globally, for example via an option that switches every standard gt fmt_\* function to the locale specified in that option.

That would make gt code in non-US locales less verbose and reduce the need to use purrr::partial.

| priority | option to set locale globally setting locale for fmt globally im using gt increasingly in html reports in europe therefore i would like to set the locale globally for example via an option that switches every standard gt fmt to use the locale specified in the option that would make working with gt code in non us locales less verbose and reduces the need to use purrr partial | 1 |

528,599 | 15,370,530,770 | IssuesEvent | 2021-03-02 08:55:35 | Mobsya/aseba | https://api.github.com/repos/Mobsya/aseba | opened | ThymioSuite on Big Sur do not seen robots, problem of Discovery | Mac OS specific Thymio Device Manager bug high priority | Some users reported that on Big Sur (and some on 10.15.7) robots are not detected. A lot of investigation was done with users and Apple around correct packaging and notarization. That resolved the problem for some users, because TDM had been blocked by Gatekeeper.

The bug remains for some Big Sur users for whom the package was not the problem. We finally discovered that TDM cannot advertise itself through the discovery process (Bonjour): the library returns a "bad parameters" error (<https://github.com/Mobsya/aseba/blob/6a557425761f494f2536ba725889acad54840215/aseba/thymio-device-manager/aseba_node_registery.cpp#L138>).

This behaviour has been seen on two users' computers but could not be reproduced.

| 1.0 | ThymioSuite on Big Sur do not seen robots, problem of Discovery - Some users reported that on Big Sur (and some on 10.15.7) robots are not detected. A lot of investigation was done with users and Apple around correct packaging and notarization. That resolved the problem for some users, because TDM had been blocked by Gatekeeper.

The bug remains for some Big Sur users for whom the package was not the problem. We finally discovered that TDM cannot advertise itself through the discovery process (Bonjour): the library returns a "bad parameters" error (<https://github.com/Mobsya/aseba/blob/6a557425761f494f2536ba725889acad54840215/aseba/thymio-device-manager/aseba_node_registery.cpp#L138>).

This behaviour has been seen on two users' computers but could not be reproduced.

| priority | thymiosuite on big sur do not seen robots problem of discovery some user reported that on big sur some on robot are not seen lot of investigation was made with users and apple around a correct packaging and notarization that s resolved the problem for some user because tdm was blocked by gatekeeper finally bug remains with some big sur user where the package was not the problem finally we discovered that tdm cannot show itself with the discovery process bonjour a error came back from the library with bad parameters url this behaviour has been seen on two user computer but cannot be reproduced | 1 |

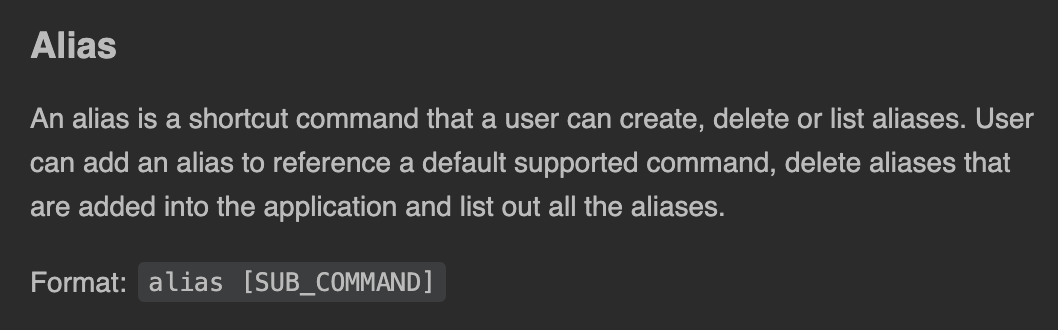

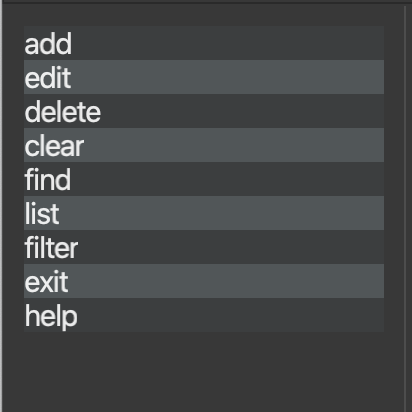

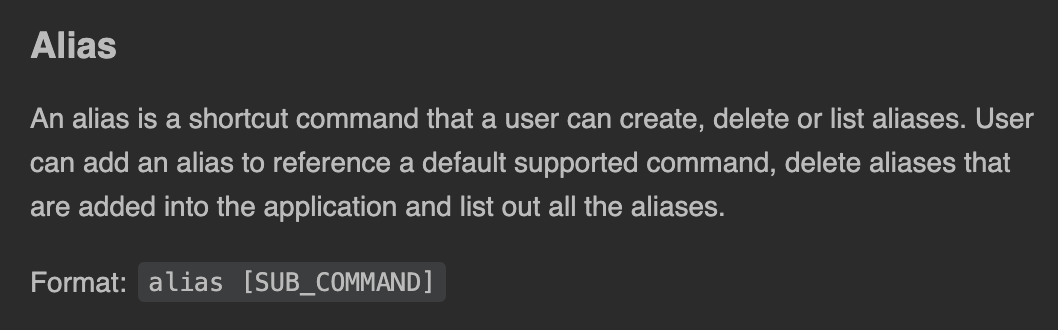

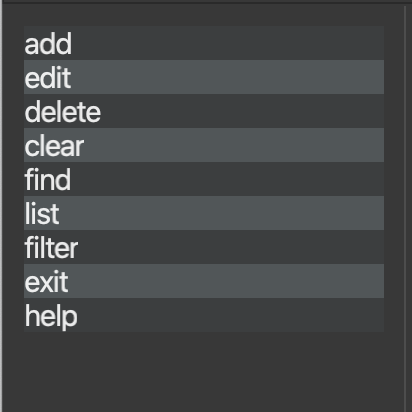

533,233 | 15,586,878,098 | IssuesEvent | 2021-03-18 02:59:41 | AY2021S2-CS2103T-T12-3/tp | https://api.github.com/repos/AY2021S2-CS2103T-T12-3/tp | opened | Alias command is not found in autocomplete panel | priority.High | A possible reason is that **getAutoCompleteCommands()** is not pulling that command yet.

**Alias command as found in UG.**

**Alias command not found.**

| 1.0 | Alias command is not found in autocomplete panel - A possible reason is that **getAutoCompleteCommands()** is not pulling that command yet.

**Alias command as found in UG.**

**Alias command not found.**

| priority | alias command is not found in autocomplete panel a possible reason is that getautocompletecommands is not pulling that command yet alias command as found in ug alias command not found | 1 |

478,479 | 13,780,041,736 | IssuesEvent | 2020-10-08 14:28:05 | carbon-design-system/ibm-dotcom-library | https://api.github.com/repos/carbon-design-system/ibm-dotcom-library | closed | Web Component: Develop Table of Contents of the React version - Group 2 | Airtable Done dev package: web components priority: high | #### User Story

<!-- {{Provide a detailed description of the user's need here, but avoid any type of solutions}} -->

> As a `[user role below]`:

IBM.com Library developer

> I need to:

create the `Table of Contents`

> so that I can:

provide ibm.com adopter developers a web component version for every react version available in the ibm.com Library

#### Additional information

<!-- {{Please provide any additional information or resources for reference}} -->

- Story within Storybook with corresponding knobs

- Utilize Carbon

- Create with Shadow DOM and Custom Elements standards

- **See the Epic for the Design and Functional specs information**

- [React canary environment](https://ibmdotcom-react-canary.mybluemix.net/?path=/docs/overview-getting-started--page)

- Prod QA testing issue (#3631)

#### Acceptance criteria

- [ ] Include README for the web component and corresponding styles

- [ ] Create Web Components styles in styles package

- [ ] No custom styles in web-components package

- [ ] Do not create knobs in Storybook that include JSON objects

- [ ] Break out Storybook stories into multiple variation stories, if applicable

- [ ] Create codesandbox example under `/packages/web-components/examples/codesandbox` and include in README

- [ ] Minimum 80% unit test coverage

- [ ] A comment is posted in the Prod QA issue, tagging Praveen when development is finished

| 1.0 | Web Component: Develop Table of Contents of the React version - Group 2 - #### User Story

<!-- {{Provide a detailed description of the user's need here, but avoid any type of solutions}} -->

> As a `[user role below]`:

IBM.com Library developer

> I need to:

create the `Table of Contents`

> so that I can:

provide ibm.com adopter developers a web component version for every react version available in the ibm.com Library

#### Additional information

<!-- {{Please provide any additional information or resources for reference}} -->

- Story within Storybook with corresponding knobs

- Utilize Carbon

- Create with Shadow DOM and Custom Elements standards

- **See the Epic for the Design and Functional specs information**

- [React canary environment](https://ibmdotcom-react-canary.mybluemix.net/?path=/docs/overview-getting-started--page)

- Prod QA testing issue (#3631)

#### Acceptance criteria

- [ ] Include README for the web component and corresponding styles

- [ ] Create Web Components styles in styles package

- [ ] No custom styles in web-components package

- [ ] Do not create knobs in Storybook that include JSON objects

- [ ] Break out Storybook stories into multiple variation stories, if applicable

- [ ] Create codesandbox example under `/packages/web-components/examples/codesandbox` and include in README

- [ ] Minimum 80% unit test coverage

- [ ] A comment is posted in the Prod QA issue, tagging Praveen when development is finished

| priority | web component develop table of contents of the react version group user story as a ibm com library developer i need to create the table of contents so that i can provide ibm com adopter developers a web component version for every react version available in the ibm com library additional information story within storybook with corresponding knobs utilize carbon create with shadow dom and custom elements standards see the epic for the design and functional specs information prod qa testing issue acceptance criteria include readme for the web component and corresponding styles create web components styles in styles package no custom styles in web components package do not create knobs in storybook that include json objects break out storybook stories into multiple variation stories if applicable create codesandbox example under packages web components examples codesandbox and include in readme minimum unit test coverage a comment is posted in the prod qa issue tagging praveen when development is finished | 1 |

759,362 | 26,591,525,305 | IssuesEvent | 2023-01-23 09:06:52 | codelab-app/builder | https://api.github.com/repos/codelab-app/builder | closed | Proposal for component slots | priority: high | ## The Problem

The current component system works for only basic templating. For example, you can't create a useful layout component right now.

## The solution

We need what's the equivalent of slots in templating tools. In Vue they are called slots, in Rails this is done through partials, in Laravel you have component slots. And in React this functionality is filled mostly by render props or by passing components as props/in context.

## Implementation

I can imagine 2 ways to do this

### 1. The explicit way

Users explicitly define an API for their components, similar to how we have an api for Atoms props. For example:

<img width="962" alt="image" src="https://user-images.githubusercontent.com/57956282/187932904-ee36b004-bbb9-4671-9119-4faf59705218.png">

For slots we can use existing types, like RenderPropsType, ReactNodeType, ElementType.

This API serves as the source of truth for defining the inputs that a component takes.

The benefit is that this applies not only to slots: we can also assign other props to the component, such as strings, numbers, etc.

We use this interface to generate a form for the component, just like we do for atoms.

The next part is to be able to assign this slot to a particular element

One way to do that is to bind it to props. Say that we have a Div atom with this API

<img width="959" alt="image" src="https://user-images.githubusercontent.com/57956282/187933534-e081da3a-0039-45b5-99b1-8e831d7adfdf.png">

Now we only need to connect `heroContent` from the Layout's API to the `children` of the Div's API. The easiest way I can imagine is to bind it the same way we bind global state variables.

<img width="1908" alt="image" src="https://user-images.githubusercontent.com/57956282/187933790-70b0db34-6773-4a60-9dda-604fc74fb4db.png">

This would require modifying the prop evaluating code to take into account the current component that the element is in and its props.

### 2. The implicit way

We create a new Atom Type, for example named `Slot`.

The user creates a new element as usual and assigns it an Atom with type Slot:

<img width="1919" alt="image" src="https://user-images.githubusercontent.com/57956282/187934585-3e701b16-624b-48ab-aef7-989c6c449acc.png">

Then on the component instance, we render a form that has all of the elements inside it with atom type Slot and we allow the user to pick a Component to render for them.

<img width="959" alt="image" src="https://user-images.githubusercontent.com/57956282/187935140-50fc67cc-ab9d-425f-8c5b-dd23bd7f4494.png">

The data from this form is stored on the component instance either as a separate field or as a special prop. It has the shape of a key-value object where the key is the id of the Slot-atomed element and the value is the component id to render. This is then used when evaluating the props to render the specific component instead of the slot-atomed element.

This approach seems simpler, but it's less flexible since the user can't define other component props other than slots.

#### Note

In both implementations, we can additionally add the ability to directly drag and drop an element to the slot to avoid creating a component for it.

Any thoughts or other ideas?

| 1.0 | Proposal for component slots - ## The Problem

The current component system works for only basic templating. For example, you can't create a useful layout component right now.

## The solution

We need what's the equivalent of slots in templating tools. In Vue they are called slots, in Rails this is done through partials, in Laravel you have component slots. And in React this functionality is filled mostly by render props or by passing components as props/in context.

## Implementation

I can imagine 2 ways to do this

### 1. The explicit way

Users explicitly define an API for their components, similar to how we have an api for Atoms props. For example:

<img width="962" alt="image" src="https://user-images.githubusercontent.com/57956282/187932904-ee36b004-bbb9-4671-9119-4faf59705218.png">

For slots we can use existing types, like RenderPropsType, ReactNodeType, ElementType.

This API serves as the source of truth for defining the inputs that a component takes.

The benefit is that this applies not only to slots: we can also assign other props to the component, such as strings, numbers, etc.

We use this interface to generate a form for the component, just like we do for atoms.

The next part is to be able to assign this slot to a particular element

One way to do that is to bind it to props. Say that we have a Div atom with this API

<img width="959" alt="image" src="https://user-images.githubusercontent.com/57956282/187933534-e081da3a-0039-45b5-99b1-8e831d7adfdf.png">

Now we only need to connect `heroContent` from the Layout's API to the `children` of the Div's API. The easiest way I can imagine is to bind it the same way we bind global state variables.

<img width="1908" alt="image" src="https://user-images.githubusercontent.com/57956282/187933790-70b0db34-6773-4a60-9dda-604fc74fb4db.png">

This would require modifying the prop evaluating code to take into account the current component that the element is in and its props.

### 2. The implicit way

We create a new Atom Type, for example named `Slot`.

The user creates a new element as usual and assigns it an Atom with type Slot:

<img width="1919" alt="image" src="https://user-images.githubusercontent.com/57956282/187934585-3e701b16-624b-48ab-aef7-989c6c449acc.png">

Then on the component instance, we render a form that has all of the elements inside it with atom type Slot and we allow the user to pick a Component to render for them.

<img width="959" alt="image" src="https://user-images.githubusercontent.com/57956282/187935140-50fc67cc-ab9d-425f-8c5b-dd23bd7f4494.png">

The data from this form is stored on the component instance either as a separate field or as a special prop. It has the shape of a key-value object where the key is the id of the Slot-atomed element and the value is the component id to render. This is then used when evaluating the props to render the specific component instead of the slot-atomed element.

This approach seems simpler, but it's less flexible since the user can't define other component props other than slots.

#### Note

In both implementations, we can additionally add the ability to directly drag and drop an element to the slot to avoid creating a component for it.

Any thoughts or other ideas?

| priority | proposal for component slots the problem the current component system works for only basic templating for example you can t create a useful layout component right now the solution we need what s the equivalent of slots in templating tools in vue they are called slots in rails this is done through partials in laravel you have component slots and in react this functionality is filled mostly by render props or by passing components as props in context implementation i can imagine ways to do this the explicit way users explicitly define an api for their components similar to how we have an api for atoms props for example img width alt image src for slots we can use existing types like renderpropstype reactnodetype elementtype this api serves as the place of truth for defining the inputs that a component takes the benefit of this is that that s not only applicable for slots but we can also assign other props to the component like strings numbers etc we use this interface to generate a form for the component just like we do for atoms the next part is to be able to assign this slot to a particular element one way to do that is to bind it to props say that we have a div atom with this api img width alt image src now we only need to connect herocontent from the layout s api to the children of the divs api the easiest way i imagine is to bind it as we bind global state variables img width alt image src this would require modifying the prop evaluating code to take into account the current component that the element is in and its props the implicit way we create a new atom type for example named slot the user creates a new element as usual and assigns it an atom with type slot img width alt image src then on the component instance we render a form that has all of the elements inside it with atom type slot and we allow the user to pick a component to render for them img width alt image src the data from this form is stored on the component instance either as a 
separate field or as a special prop it has the shape of a key value object where the key is the id of the slot atomed element and the value is the component id to render this is then used when evaluating the props to render the specific component instead of the slot atomed element this approach seems simpler but it s less flexible since the user can t define other component props other than slots note in both implementations we can additionally add the ability to directly drag and drop an element to the slot to avoid creating a component for it any thoughts or other ideas | 1 |
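
The implicit approach in the component-slots proposal above stores a key-value object on the component instance, mapping the id of each Slot-atomed element to the id of the component to render in its place. A minimal Python sketch of that lookup might look as follows. `Element`, `resolve_slots`, and all ids are hypothetical names chosen for illustration; they are not taken from the codelab builder codebase.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Element:
    """Hypothetical element node; `atom_type` is "Slot" for slot placeholders."""
    id: str
    atom_type: str
    children: List["Element"] = field(default_factory=list)
    component_id: Optional[str] = None  # filled in when a slot is assigned


def resolve_slots(root: Element, slot_map: Dict[str, str]) -> Element:
    """Return a copy of the tree in which every Slot element that has an
    entry in `slot_map` carries the id of the component to render instead."""
    resolved = Element(
        id=root.id,
        atom_type=root.atom_type,
        component_id=slot_map.get(root.id) if root.atom_type == "Slot" else None,
    )
    resolved.children = [resolve_slots(child, slot_map) for child in root.children]
    return resolved


# A layout with two slots; only the "hero" slot is assigned on this instance.
layout = Element("layout", "Div", [Element("hero", "Slot"), Element("footer", "Slot")])
rendered = resolve_slots(layout, {"hero": "component-hero-banner"})
```

Keeping the mapping on the instance rather than on the component definition means two instances of the same layout can fill the same slot differently, and an unassigned slot simply stays empty.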

687,341 | 23,522,443,739 | IssuesEvent | 2022-08-19 07:34:34 | roq-trading/roq-issues | https://api.github.com/repos/roq-trading/roq-issues | closed | [roq-server] Using an invalid account causes crash | bug high priority support | Validation is done correctly and an `OrderAck` with the reject is prepared for sending.

When sending the `OrderAck`, the gateway needs to find the `account_id` and that's where it fails.

There's a low-level optimization that allows clients to only process updates where `account_id`'s (as known to the gateway) are used for filtering.

The client filtering is based on the subscription configuration and managed inside the `roq-client` library, i.e. not in the "user" code.

This is therefore a problem -- an `account_id` is needed for the filtering.

Somehow we need to allow for missing `account_id` as well. | 1.0 | [roq-server] Using an invalid account causes crash - Validation is done correctly and an `OrderAck` with the reject is prepared for sending.

When sending the `OrderAck`, the gateway needs to find the `account_id` and that's where it fails.

There's a low-level optimization that allows clients to only process updates where `account_id`'s (as known to the gateway) are used for filtering.

The client filtering is based on the subscription configuration and managed inside the `roq-client` library, i.e. not in the "user" code.

This is therefore a problem -- an `account_id` is needed for the filtering.

Somehow we need to allow for missing `account_id` as well. | priority | using an invalid account causes crash validation is done correctly and an orderack with the reject is prepared for sending when sending the orderack the gateway needs to find the account id and that s where it fails there s a low level optimization that allows clients to only process updates where account id s as known to the gateway are used for filtering the client filtering is based on the subscription configuration and managed inside the roq client library i e not in the user code this is therefore a problem an account id is needed for the filtering somehow we need to allow for missing account id as well | 1 |
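
One conceivable way to "allow for missing `account_id`" in the roq-server row above is to treat the id as optional and route account-less updates only to the client that sent the request. The Python sketch below illustrates that idea only; roq is not written in Python, and `ACCOUNTS`, `lookup_account_id`, and `should_deliver` are hypothetical names rather than the gateway's or `roq-client`'s real API.

```python
from typing import Dict, Optional, Set

# Hypothetical gateway-side table of configured accounts.
ACCOUNTS: Dict[str, int] = {"A1": 0, "A2": 1}


def lookup_account_id(account: str) -> Optional[int]:
    """Return the gateway's id for `account`, or None when it is unknown."""
    return ACCOUNTS.get(account)


def should_deliver(subscribed: Set[int], account_id: Optional[int], is_origin: bool) -> bool:
    """Decide whether a client receives an update.

    Updates without an account id (for example a reject for an invalid
    account) bypass the account filter and go only to the requesting client.
    """
    if account_id is None:
        return is_origin
    return account_id in subscribed


reject_id = lookup_account_id("NOT_CONFIGURED")
delivered_to_sender = should_deliver({0}, reject_id, is_origin=True)
delivered_to_other = should_deliver({0, 1}, reject_id, is_origin=False)
```

The point of the sketch is that the lookup returns "no id" instead of failing, so the reject can still be sent without weakening the per-account filtering for ordinary updates.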

393,638 | 11,622,676,081 | IssuesEvent | 2020-02-27 07:10:26 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Cannot commit edits to spatialite layers in QGIS 3.12 | Bug Data Provider High Priority Regression | <!--

Bug fixing and feature development is a community responsibility, and not the responsibility of the QGIS project alone.

If this bug report or feature request is high-priority for you, we suggest engaging a QGIS developer or support organisation and financially sponsoring a fix

Checklist before submitting

- [ ] Search through existing issue reports and gis.stackexchange.com to check whether the issue already exists

- [ ] Test with a [clean new user profile](https://docs.qgis.org/testing/en/docs/user_manual/introduction/qgis_configuration.html?highlight=profile#working-with-user-profiles).

- [ ] Create a light and self-contained sample dataset and project file which demonstrates the issue

If the issue concerns a **third party plugin**, then it **cannot** be fixed by the QGIS team. Please raise your issue in the dedicated bug tracker for that specific plugin (as listed in the plugin's description). -->

**Describe the bug**

When I try to commit edits to a spatialite layer I get a yellow warning saying the following:

`Could not commit changes to layer Facilities`

`Errors: ERROR: 5 feature(s) not added.`

`Provider errors:`

`SQLite error: unknown cause`

`SQL: INSERT INTO "facilities"("geometry",,"type","comment") VALUES (GeomFromWKB(?, 2157),,?,?)`

and a red warning saying the following:

`Layer Facilities: SQLite error: unknown cause SQL: INSERT INTO "facilities"("geometry",,"type","comment") VALUES (GeomFromWKB(?, 2157),,?,?)`

This prevents me from making any edits to spatialite layers.

**How to Reproduce**

1. Create a new spatialite database and layer

2. Enable editing, add features, click `save edits`

3. See error(s)

**QGIS and OS versions**

<!-- In the QGIS menu help/about, click in the dialog, Ctrl+A and then Ctrl+C. Finally paste here -->

QGIS version

3.12.0-București

QGIS code revision

cd141490ec

Compiled against Qt

5.11.2

Running against Qt

5.11.2

Compiled against GDAL/OGR

3.0.4

Running against GDAL/OGR

3.0.4

Compiled against GEOS

3.8.0-CAPI-1.13.1

Running against GEOS

3.8.0-CAPI-1.13.1

Compiled against SQLite

3.29.0

Running against SQLite

3.29.0

PostgreSQL Client Version

11.5

SpatiaLite Version

4.3.0

QWT Version

6.1.3

QScintilla2 Version

2.10.8

Compiled against PROJ

6.3.1

Running against PROJ

Rel. 6.3.1, February 10th, 2020

OS Version

Windows 10 (10.0)

**Test Project and Database**

[test_project_and_db.zip](https://github.com/qgis/QGIS/files/4256866/test_project_and_db.zip)

| 1.0 | Cannot commit edits to spatialite layers in QGIS 3.12 - <!--

Bug fixing and feature development is a community responsibility, and not the responsibility of the QGIS project alone.

If this bug report or feature request is high-priority for you, we suggest engaging a QGIS developer or support organisation and financially sponsoring a fix

Checklist before submitting

- [ ] Search through existing issue reports and gis.stackexchange.com to check whether the issue already exists

- [ ] Test with a [clean new user profile](https://docs.qgis.org/testing/en/docs/user_manual/introduction/qgis_configuration.html?highlight=profile#working-with-user-profiles).

- [ ] Create a light and self-contained sample dataset and project file which demonstrates the issue

If the issue concerns a **third party plugin**, then it **cannot** be fixed by the QGIS team. Please raise your issue in the dedicated bug tracker for that specific plugin (as listed in the plugin's description). -->

**Describe the bug**

When I try to commit edits to a spatialite layer I get a yellow warning saying the following:

`Could not commit changes to layer Facilities`

`Errors: ERROR: 5 feature(s) not added.`

`Provider errors:`

`SQLite error: unknown cause`

`SQL: INSERT INTO "facilities"("geometry",,"type","comment") VALUES (GeomFromWKB(?, 2157),,?,?)`

and a red warning saying the following:

`Layer Facilities: SQLite error: unknown cause SQL: INSERT INTO "facilities"("geometry",,"type","comment") VALUES (GeomFromWKB(?, 2157),,?,?)`

This prevents me from making any edits to spatialite layers.

**How to Reproduce**

1. Create a new spatialite database and layer

2. Enable editing, add features, click `save edits`

3. See error(s)

**QGIS and OS versions**

<!-- In the QGIS menu help/about, click in the dialog, Ctrl+A and then Ctrl+C. Finally paste here -->

QGIS version

3.12.0-București

QGIS code revision

cd141490ec

Compiled against Qt

5.11.2

Running against Qt

5.11.2

Compiled against GDAL/OGR

3.0.4

Running against GDAL/OGR

3.0.4

Compiled against GEOS

3.8.0-CAPI-1.13.1

Running against GEOS

3.8.0-CAPI-1.13.1

Compiled against SQLite

3.29.0

Running against SQLite

3.29.0

PostgreSQL Client Version

11.5

SpatiaLite Version

4.3.0

QWT Version

6.1.3

QScintilla2 Version

2.10.8

Compiled against PROJ

6.3.1

Running against PROJ

Rel. 6.3.1, February 10th, 2020

OS Version

Windows 10 (10.0)

**Test Project and Database**

[test_project_and_db.zip](https://github.com/qgis/QGIS/files/4256866/test_project_and_db.zip)

| priority | cannot commit edits to spatialite layers in qgis bug fixing and feature development is a community responsibility and not the responsibility of the qgis project alone if this bug report or feature request is high priority for you we suggest engaging a qgis developer or support organisation and financially sponsoring a fix checklist before submitting search through existing issue reports and gis stackexchange com to check whether the issue already exists test with a create a light and self contained sample dataset and project file which demonstrates the issue if the issue concerns a third party plugin then it cannot be fixed by the qgis team please raise your issue in the dedicated bug tracker for that specific plugin as listed in the plugin s description describe the bug when i try to commit edits to a spatialite layer i get a yellow warning saying the following could not commit changes to layer facilities errors error feature s not added provider errors sqlite error unknown cause sql insert into facilities geometry type comment values geomfromwkb and a red warning saying the following layer facilities sqlite error unknown cause sql insert into facilities geometry type comment values geomfromwkb this prevents me from making any edits to spatialite layers how to reproduce create a new spatialite database and layer enable editing add features click save edits see error s qgis and os versions qgis version bucurești qgis code revision compiled against qt running against qt compiled against gdal ogr running against gdal ogr compiled against geos capi running against geos capi compiled against sqlite running against sqlite postgresql client version spatialite version qwt version version compiled against proj running against proj rel february os version windows test project and database | 1 |
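
The SQL quoted in the QGIS row above contains an empty entry in both the column list and the value list (`"geometry",,"type"` and `GeomFromWKB(?, 2157),,?`), which SQLite cannot parse. The sketch below reproduces that kind of statement with Python's standard `sqlite3` module. It assumes, purely for illustration, that an empty field name reached a naive statement builder; the actual cause inside the QGIS provider is not stated in the report. `build_insert` and `build_insert_checked` are hypothetical helpers, and a plain `?` stands in for `GeomFromWKB(?, 2157)` so that no SpatiaLite extension is required.

```python
import sqlite3


def build_insert(table, columns):
    """Naive builder: an empty column name leaves an empty slot behind."""
    names = ",".join(f'"{c}"' if c else "" for c in columns)
    marks = ",".join("?" if c else "" for c in columns)
    return f'INSERT INTO "{table}"({names}) VALUES ({marks})'


def build_insert_checked(table, columns):
    """Same builder, but empty column names are dropped first."""
    return build_insert(table, [c for c in columns if c])


conn = sqlite3.connect(":memory:")
conn.execute('CREATE TABLE "facilities" ("geometry" BLOB, "type" TEXT, "comment" TEXT)')

fields = ["geometry", "", "type", "comment"]  # note the empty name
bad_sql = build_insert("facilities", fields)
good_sql = build_insert_checked("facilities", fields)

try:
    conn.execute(bad_sql, (b"", "well", "n/a"))
    bad_failed = False
except sqlite3.Error:
    # SQLite rejects the doubled comma while preparing the statement.
    bad_failed = True

conn.execute(good_sql, (b"", "well", "n/a"))
row_count = conn.execute('SELECT COUNT(*) FROM "facilities"').fetchone()[0]
```

Running the unfiltered statement fails before any row is written, which matches the "feature(s) not added" symptom in the report, while the filtered statement inserts normally.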

444,298 | 12,809,386,262 | IssuesEvent | 2020-07-03 15:32:04 | cds-snc/covid-shield-mobile | https://api.github.com/repos/cds-snc/covid-shield-mobile | reopened | "Share your random codes" notification - Screen in background on infinite load state | bug high priority | Environment :

Pixel 3XL, Android v9 (BrowserStack), version v14

Scenario :

- Go to the "Enter your code" screen from the menu

- Enter an 8-digit code and submit

- Click on "Agree" on the upload code screen

Expected :

A notification about "share your random codes" is displayed on top of the upload code screen

Issue :

The screen behind the notification is stuck in an infinite loading state

| 1.0 | "Share your random codes" notification - Screen in background on infinite load state - Environment :

Pixel 3XL, Android v9 (BrowserStack), version v14

Scenario :

- Go to the "Enter your code" screen from the menu

- Enter an 8-digit code and submit

- Click on "Agree" on the upload code screen

Expected :

A notification about "share your random codes" is displayed on top of the upload code screen

Issue :

The screen behind the notification is stuck in an infinite loading state

| priority | share your random codes notification screen in background on infinite load state environment pixel android browserstack version scenario go to enter you code screen from the menu enter a digit code and submit click on agree on the upload code screen expected a notification about share you random codes is displayed on top of the upload code screen issue the screen in the background of the notification is on an infinite load state | 1 |

354,862 | 10,573,840,280 | IssuesEvent | 2019-10-07 12:53:28 | AY1920S1-CS2103T-W11-1/main | https://api.github.com/repos/AY1920S1-CS2103T-W11-1/main | opened | As a user, I want to mark tasks as done/undone | priority.High status.Ongoing type.Story | ... so I can manage my progress in the training plans. | 1.0 | As a user, I want to mark tasks as done/undone - ... so I can manage my progress in the training plans. | priority | as a user i want to mark tasks as done undone so i can manage my progress in the training plans | 1 |

394,718 | 11,647,939,654 | IssuesEvent | 2020-03-01 17:53:29 | Rammelkast/AntiCheatReloaded | https://api.github.com/repos/Rammelkast/AntiCheatReloaded | closed | Speed bypass | bypass help wanted high priority | Video:

https://youtu.be/H9u6GS0jNes

Code:

```

package AppleClient.modules.movement;

import org.lwjgl.input.Keyboard;

import AppleClient.events.EventTarget;

import AppleClient.events.events.EventMove;

import AppleClient.events.events.EventTick;

import AppleClient.modules.Module;

import net.minecraft.util.MovementInput;

public class MemeSpeed extends Module

{

public MemeSpeed() {

super("ACRSpeed", Keyboard.KEY_Z, 7733063, Category.MOVEMENT, "memes", new String[] {"2fast4uboi"}, true);

}

@Override

public void onEnable() {

if(mc.thePlayer != null) {

}

super.onEnable();

}

public void setSpeed(double speed) {

final MovementInput movementInput = mc.thePlayer.movementInput;

float forward = movementInput.moveForward;

float strafe = movementInput.moveStrafe;

float yaw = mc.thePlayer.rotationYaw;

if (forward == 0.0f && strafe == 0.0f) {

mc.thePlayer.motionX = 0.0;

mc.thePlayer.motionZ = 0.0;

}

else if (forward != 0.0f) {

if (strafe >= 1.0f) {

yaw += ((forward > 0.0f) ? -45 : 45);

strafe = 0.0f;

}

else if (strafe <= -1.0f) {

yaw += ((forward > 0.0f) ? 45 : -45);

strafe = 0.0f;

}

if (forward > 0.0f) {

forward = 1.0f;

}

else if (forward < 0.0f) {

forward = -1.0f;

}

}

final double mx = Math.cos(Math.toRadians(yaw + 90.0f));

final double mz = Math.sin(Math.toRadians(yaw + 90.0f));

mc.thePlayer.motionX = forward * speed * mx + strafe * speed * mz;

mc.thePlayer.motionZ = forward * speed * mz - strafe * speed * mx;

if (forward == 0.0f && strafe == 0.0f) {

mc.thePlayer.motionX = 0.0;

mc.thePlayer.motionZ = 0.0;

}

}

@EventTarget

private void onUpdate(EventTick event) {

if((mc.thePlayer.moveForward != 0.0D || mc.thePlayer.moveStrafing != 0.0D) && mc.thePlayer.onGround) {

mc.thePlayer.motionY = 0.4D;

}

}

@EventTarget

public void onMove(EventMove event) {

// Here's how it alternates between speeds each tick to bypass detection.

boolean hack = mc.thePlayer.ticksExisted % 2 == 0;

MemeSpeed.this.setSpeed(hack ? 0.06D : 1.6D);

}

}

```

What it does is change between a slow speed, then goes fast for a short amount of time, before the anticheat can recognize it. | 1.0 | Speed bypass - Video:

https://youtu.be/H9u6GS0jNes

Code:

```

package AppleClient.modules.movement;

import org.lwjgl.input.Keyboard;

import AppleClient.events.EventTarget;

import AppleClient.events.events.EventMove;

import AppleClient.events.events.EventTick;

import AppleClient.modules.Module;

import net.minecraft.util.MovementInput;

public class MemeSpeed extends Module

{

public MemeSpeed() {

super("ACRSpeed", Keyboard.KEY_Z, 7733063, Category.MOVEMENT, "memes", new String[] {"2fast4uboi"}, true);

}

@Override

public void onEnable() {

if(mc.thePlayer != null) {

}

super.onEnable();

}

public void setSpeed(double speed) {

final MovementInput movementInput = mc.thePlayer.movementInput;

float forward = movementInput.moveForward;

float strafe = movementInput.moveStrafe;

float yaw = mc.thePlayer.rotationYaw;

if (forward == 0.0f && strafe == 0.0f) {

mc.thePlayer.motionX = 0.0;

mc.thePlayer.motionZ = 0.0;

}

else if (forward != 0.0f) {

if (strafe >= 1.0f) {

yaw += ((forward > 0.0f) ? -45 : 45);

strafe = 0.0f;

}

else if (strafe <= -1.0f) {

yaw += ((forward > 0.0f) ? 45 : -45);

strafe = 0.0f;

}

if (forward > 0.0f) {

forward = 1.0f;

}

else if (forward < 0.0f) {

forward = -1.0f;

}

}

final double mx = Math.cos(Math.toRadians(yaw + 90.0f));

final double mz = Math.sin(Math.toRadians(yaw + 90.0f));

mc.thePlayer.motionX = forward * speed * mx + strafe * speed * mz;

mc.thePlayer.motionZ = forward * speed * mz - strafe * speed * mx;

if (forward == 0.0f && strafe == 0.0f) {

mc.thePlayer.motionX = 0.0;

mc.thePlayer.motionZ = 0.0;

}

}

@EventTarget

private void onUpdate(EventTick event) {

if((mc.thePlayer.moveForward != 0.0D || mc.thePlayer.moveStrafing != 0.0D) && mc.thePlayer.onGround) {

mc.thePlayer.motionY = 0.4D;

}

}

@EventTarget

public void onMove(EventMove event) {

// Here's how it alternates between speeds each tick to bypass detection.

boolean hack = mc.thePlayer.ticksExisted % 2 == 0;

MemeSpeed.this.setSpeed(hack ? 0.06D : 1.6D);

}

}

```

What it does is change between a slow speed, then goes fast for a short amount of time, before the anticheat can recognize it. | priority | speed bypass video code package appleclient modules movement import org lwjgl input keyboard import appleclient events eventtarget import appleclient events events eventmove import appleclient events events eventtick import appleclient modules module import net minecraft util movementinput public class memespeed extends module public memespeed super acrspeed keyboard key z category movement memes new string true override public void onenable if mc theplayer null super onenable public void setspeed double speed final movementinput movementinput mc theplayer movementinput float forward movementinput moveforward float strafe movementinput movestrafe float yaw mc theplayer rotationyaw if forward strafe mc theplayer motionx mc theplayer motionz else if forward if strafe yaw forward strafe else if strafe yaw forward strafe if forward forward else if forward forward final double mx math cos math toradians yaw final double mz math sin math toradians yaw mc theplayer motionx forward speed mx strafe speed mz mc theplayer motionz forward speed mz strafe speed mx if forward strafe mc theplayer motionx mc theplayer motionz eventtarget private void onupdate eventtick event if mc theplayer moveforward mc theplayer movestrafing mc theplayer onground mc theplayer motiony eventtarget public void onmove eventmove event heres how it changes between speeds to bypass boolean hack mc theplayer ticksexisted memespeed this setspeed hack what it does is change between a slow speed then goes fast for a short amount of time before the anticheat can recognize it | 1 |
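
The report above describes a client that alternates between a slow and a fast speed on successive ticks so that no sustained speed is observed. One possible countermeasure, sketched here only as an illustration, is to average horizontal movement over a window of ticks instead of judging single ticks. The class name `SpeedWindowCheck`, the window size, and the threshold are all hypothetical and are not taken from AntiCheatReloaded.

```java
import java.util.ArrayDeque;
import java.util.Deque;

/**
 * Hypothetical helper (not part of AntiCheatReloaded): keeps the last
 * N per-tick horizontal distances and compares their average to a limit.
 */
final class SpeedWindowCheck {
    private final Deque<Double> window = new ArrayDeque<>();
    private final int windowSize;     // number of ticks to average over (assumed value chosen by caller)
    private final double maxAverage;  // allowed average distance per tick (assumed threshold)
    private double sum = 0.0;

    SpeedWindowCheck(int windowSize, double maxAverage) {
        this.windowSize = windowSize;
        this.maxAverage = maxAverage;
    }

    /**
     * Records one tick's horizontal distance. Returns true only when the
     * window is full and its average exceeds the configured limit.
     */
    boolean record(double distance) {
        window.addLast(distance);
        sum += distance;
        if (window.size() > windowSize) {
            // Drop the oldest sample so the window stays at windowSize entries.
            sum -= window.removeFirst();
        }
        return window.size() == windowSize && sum / windowSize > maxAverage;
    }
}
```

With the values from the report (0.06 and 1.6 alternating), a ten-tick window averages 0.83 per tick, which stays well above a limit such as 0.4 even though half of the individual ticks look slow. A real check would also need to account for legitimate bursts such as knockback or speed effects.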

153,971 | 5,906,750,331 | IssuesEvent | 2017-05-19 15:52:52 | cdnjs/cdnjs | https://api.github.com/repos/cdnjs/cdnjs | closed | [Request] Add jmespath | High Priority in progress Library - Request to Add/Update | **Library name:** jmespath

**Git repository url:** https://github.com/jmespath/jmespath.js

**npm package name or url** (if there is one): https://www.npmjs.com/package/jmespath

**License (List them all if it's multiple):** Apache License, Version 2.0

**Official homepage:** http://jmespath.org/

**Wanna say something? Leave message here:**

=====================

Notes from cdnjs maintainer:

Please read the [README.md](https://github.com/cdnjs/cdnjs#cdnjs-library-repository) and [CONTRIBUTING.md](https://github.com/cdnjs/cdnjs/blob/master/CONTRIBUTING.md) document first.

We encourage you to add a library via sending pull request,

it'll be faster than just opening a request issue,

since there are tons of issues, please wait with patience,

and please don't forget to read the guidelines for contributing, thanks!!

| 1.0 | [Request] Add jmespath - **Library name:** jmespath

**Git repository url:** https://github.com/jmespath/jmespath.js

**npm package name or url** (if there is one): https://www.npmjs.com/package/jmespath

**License (List them all if it's multiple):** Apache License, Version 2.0

**Official homepage:** http://jmespath.org/

**Wanna say something? Leave message here:**

=====================

Notes from cdnjs maintainer:

Please read the [README.md](https://github.com/cdnjs/cdnjs#cdnjs-library-repository) and [CONTRIBUTING.md](https://github.com/cdnjs/cdnjs/blob/master/CONTRIBUTING.md) document first.

We encourage you to add a library via sending pull request,

it'll be faster than just opening a request issue,

since there are tons of issues, please wait with patience,

and please don't forget to read the guidelines for contributing, thanks!!

| priority | add jmespath library name jmespath git repository url npm package name or url if there is one license list them all if it s multiple apache license version official homepage wanna say something leave message here notes from cdnjs maintainer please read the and document first we encourage you to add a library via sending pull request it ll be faster than just opening a request issue since there are tons of issues please wait with patience and please don t forget to read the guidelines for contributing thanks | 1 |

161,676 | 6,132,993,337 | IssuesEvent | 2017-06-25 09:21:29 | play2-maven-plugin/play2-maven-plugin | https://api.github.com/repos/play2-maven-plugin/play2-maven-plugin | closed | Upgrade Play! version from 2.6.0-RC2 to 2.6.0 | Component-Maven-Plugin Component-Provider26 Priority-High Type-Task | Upgrade:

- `play` version from `2.6.0-RC2` to `2.6.0`

- `twirl` version from `1.3.0` to `1.3.2`

- `ebean-agent` version from `10.1.7` to `10.3.1` (used in `play-ebean` version `4.0.2`)

Upgrade in documentation and test projects:

- `akka-*` dependencies versions to `2.5.3`

- `play-ebean` version to `4.0.2`

- `play-json` version to `2.6.0`

- `play-slick` version to `3.0.0`

- `hibernate-entitymanager` version to `5.2.10.Final`

- `scalatestplus-play` version to `3.0.0`

| 1.0 | Upgrade Play! version from 2.6.0-RC2 to 2.6.0 - Upgrade:

- `play` version from `2.6.0-RC2` to `2.6.0`

- `twirl` version from `1.3.0` to `1.3.2`

- `ebean-agent` version from `10.1.7` to `10.3.1` (used in `play-ebean` version `4.0.2`)

Upgrade in documentation and test projects:

- `akka-*` dependencies versions to `2.5.3`

- `play-ebean` version to `4.0.2`

- `play-json` version to `2.6.0`

- `play-slick` version to `3.0.0`

- `hibernate-entitymanager` version to `5.2.10.Final`

- `scalatestplus-play` version to `3.0.0`

| priority | upgrade play version from to upgrade play version from to twirl version from to ebean agent version from to used in play ebean version upgrade in documentation and test projects akka dependencies versions to play ebean version to play json version to play slick version to hibernate entitymanager version to final scalatestplus play version to | 1 |

681,150 | 23,298,773,054 | IssuesEvent | 2022-08-07 01:45:32 | zot4plan/Zot4Plan | https://api.github.com/repos/zot4plan/Zot4Plan | opened | INS-15 Tracking taken GE courses | Priority: high Type: feature request | **Story**

Users want to know how many and which GE courses have been taken.

**Requirement**

1. Each GE category has a badge that displays the number of taken courses

2. Show the list of taken courses when hovering over the badge

| 1.0 | INS-15 Tracking taken GE courses - **Story**

Users want to know how many and which GE courses have been taken.

**Requirement**

1. Each GE category has a badge that displays the number of taken courses

2. Show the list of taken courses when hovering over the badge

| priority | ins tracking taken ge courses story users want to know how many and which ge courses have been taken requirement each ge category has a badge which display the number of taken courses show list of taken courses when hovering the badge | 1 |

449,301 | 12,966,629,447 | IssuesEvent | 2020-07-21 01:08:52 | dhowe/Website | https://api.github.com/repos/dhowe/Website | closed | Ready new design to go live | priority: high | Let's get the new website design pushed live:

- [x] check resolution of all images and replace any pixelated/low-res

- [x] check display in all mobile | 1.0 | Ready new design to go live - Let's get the new website design pushed live:

- [x] check resolution of all images and replace any pixelated/low-res

- [x] check display in all mobile | priority | ready new design to go live lets get the new website design pushed live check resolution of all images and replace any pixelated low res check display in all mobile | 1 |

374,480 | 11,091,183,010 | IssuesEvent | 2019-12-15 10:28:58 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | excise.wb.gov.in - see bug description | browser-firefox engine-gecko ml-needsdiagnosis-false ml-probability-high priority-normal | <!-- @browser: Firefox 72.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 6.1; Win64; x64; rv:72.0) Gecko/20100101 Firefox/72.0 -->

<!-- @reported_with: desktop-reporter -->

**URL**: https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Common/NIC_Home.aspx

**Browser / Version**: Firefox 72.0

**Operating System**: Windows 7

**Tested Another Browser**: Unknown

**Problem type**: Something else

**Description**: The page I was working on disappeared and a new page started

**Steps to Reproduce**:

I lost the page I was working on.

[](https://webcompat.com/uploads/2019/12/151a4e01-abc0-4731-a07e-41417c726d87.jpeg)

<details>

<summary>Browser Configuration</summary>

<ul>

<li>gfx.webrender.all: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>buildID: 20191210230245</li><li>channel: beta</li><li>hasTouchScreen: false</li><li>mixed active content blocked: false</li><li>mixed passive content blocked: false</li><li>tracking content blocked: false</li>

</ul>

<p>Console Messages:</p>

<pre>

[{'level': 'error', 'log': ["SyntaxError: expected expression, got '}'"], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/CSS/menu.js', 'pos': '216:10'}, {'level': 'warn', 'log': ['This page uses the non standard property zoom. Consider using calc() in the relevant property values, or using transform along with transform-origin: 0 0.'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Common/NIC_Home.aspx', 'pos': '0:0'}, {'level': 'warn', 'log': ['The script from https://excise.wb.gov.in/WBSBCL/Bevco/NIC/js/jquery.simplyscroll.js was loaded even though its MIME type () is not a valid JavaScript MIME type.'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Common/NIC_Home.aspx', 'pos': '0:0'}, {'level': 'warn', 'log': ['Loading failed for the <script> with source https://excise.wb.gov.in/WBSBCL/Bevco/NIC/js/jquery.simplyscroll.js.'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Common/NIC_Home.aspx', 'pos': '9:1'}, {'level': 'warn', 'log': ['The script from https://excise.wb.gov.in/WBSBCL/Bevco/NIC/js/jquery.jtweetsanywhere.js was loaded even though its MIME type () is not a valid JavaScript MIME type.'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Common/NIC_Home.aspx', 'pos': '0:0'}, {'level': 'warn', 'log': ['The script from https://excise.wb.gov.in/WBSBCL/Bevco/NIC/js/jquery.simplyscroll2.js was loaded even though its MIME type () is not a valid JavaScript MIME type.'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Common/NIC_Home.aspx', 'pos': '0:0'}, {'level': 'warn', 'log': ['Loading failed for the <script> with source https://excise.wb.gov.in/WBSBCL/Bevco/NIC/js/jquery.simplyscroll2.js.'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Common/NIC_Home.aspx', 'pos': '10:1'}, {'level': 'warn', 'log': ['The script from https://excise.wb.gov.in/WBSBCL/Bevco/NIC/js/jquery.bxSlider.js was loaded even though its MIME type () is not a valid JavaScript MIME type.'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Common/NIC_Home.aspx', 
'pos': '0:0'}, {'level': 'warn', 'log': ['The script from https://excise.wb.gov.in/themeroller/themeswitchertool/ was loaded even though its MIME type () is not a valid JavaScript MIME type.'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Common/NIC_Home.aspx', 'pos': '0:0'}, {'level': 'warn', 'log': ['The script from https://excise.wb.gov.in/js/demos.js was loaded even though its MIME type () is not a valid JavaScript MIME type.'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Common/NIC_Home.aspx', 'pos': '0:0'}, {'level': 'warn', 'log': ['The script from https://excise.wb.gov.in/WBSBCL/Bevco/NIC/js/jquery.jtweetsanywhere.js was loaded even though its MIME type () is not a valid JavaScript MIME type.'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Common/NIC_Home.aspx', 'pos': '0:0'}, {'level': 'warn', 'log': ['Loading failed for the <script> with source https://excise.wb.gov.in/WBSBCL/Bevco/NIC/js/jquery.jtweetsanywhere.js.'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Common/NIC_Home.aspx', 'pos': '11:1'}, {'level': 'warn', 'log': ['The script from https://excise.wb.gov.in/WBSBCL/Bevco/NIC/js/jquery.bxSlider.js was loaded even though its MIME type () is not a valid JavaScript MIME type.'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Common/NIC_Home.aspx', 'pos': '0:0'}, {'level': 'warn', 'log': ['Loading failed for the <script> with source https://excise.wb.gov.in/WBSBCL/Bevco/NIC/js/jquery.bxSlider.js.'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Common/NIC_Home.aspx', 'pos': '12:1'}, {'level': 'warn', 'log': ['The script from https://excise.wb.gov.in/js/demos.js was loaded even though its MIME type () is not a valid JavaScript MIME type.'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Common/NIC_Home.aspx', 'pos': '0:0'}, {'level': 'warn', 'log': ['Loading failed for the <script> with source https://excise.wb.gov.in/js/demos.js.'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Common/NIC_Home.aspx', 'pos': '21:1'}, 
{'level': 'warn', 'log': ['The script from https://excise.wb.gov.in/themeroller/themeswitchertool/ was loaded even though its MIME type () is not a valid JavaScript MIME type.'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Common/NIC_Home.aspx', 'pos': '0:0'}, {'level': 'warn', 'log': ['Loading failed for the <script> with source https://excise.wb.gov.in/themeroller/themeswitchertool/.'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Common/NIC_Home.aspx', 'pos': '22:1'}, {'level': 'error', 'log': ['TypeError: $(...).simplyScroll is not a function'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Common/NIC_Home.aspx', 'pos': '153:28'}, {'level': 'log', 'log': ['[cycle] terminating; zero elements found by selector'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Rectangle_Box/jquery.cycle.all.2.749e1a.js?oo10gf', 'pos': '19:18'}, {'level': 'log', 'log': ['[cycle] terminating; zero elements found by selector'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Rectangle_Box/jquery.cycle.all.2.749e1a.js?oo10gf', 'pos': '19:18'}, {'level': 'log', 'log': ['[cycle] terminating; zero elements found by selector'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Rectangle_Box/jquery.cycle.all.2.749e1a.js?oo10gf', 'pos': '19:18'}, {'level': 'log', 'log': ['[cycle] terminating; zero elements found by selector'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Rectangle_Box/jquery.cycle.all.2.749e1a.js?oo10gf', 'pos': '19:18'}, {'level': 'log', 'log': ['[cycle] terminating; zero elements found by selector'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Rectangle_Box/jquery.cycle.all.2.749e1a.js?oo10gf', 'pos': '19:18'}, {'level': 'error', 'log': ['ReferenceError: theme_path is not defined'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Rectangle_Box/nic9e1a.js?oo10gf', 'pos': '221:5'}, {'level': 'error', 'log': ['TypeError: this.mqo is null'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Javascript/marquee.js', 'pos': '14:202'}]

</pre>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_ | 1.0 | excise.wb.gov.in - see bug description - <!-- @browser: Firefox 72.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 6.1; Win64; x64; rv:72.0) Gecko/20100101 Firefox/72.0 -->

<!-- @reported_with: desktop-reporter -->

**URL**: https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Common/NIC_Home.aspx

**Browser / Version**: Firefox 72.0

**Operating System**: Windows 7

**Tested Another Browser**: Unknown

**Problem type**: Something else

**Description**: The page I was working on disappeared and a new page started

**Steps to Reproduce**:

I lost the page I was working on.

[](https://webcompat.com/uploads/2019/12/151a4e01-abc0-4731-a07e-41417c726d87.jpeg)

<details>

<summary>Browser Configuration</summary>

<ul>

<li>gfx.webrender.all: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>buildID: 20191210230245</li><li>channel: beta</li><li>hasTouchScreen: false</li><li>mixed active content blocked: false</li><li>mixed passive content blocked: false</li><li>tracking content blocked: false</li>

</ul>

<p>Console Messages:</p>

<pre>

[{'level': 'error', 'log': ["SyntaxError: expected expression, got '}'"], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/CSS/menu.js', 'pos': '216:10'}, {'level': 'warn', 'log': ['This page uses the non standard property zoom. Consider using calc() in the relevant property values, or using transform along with transform-origin: 0 0.'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Common/NIC_Home.aspx', 'pos': '0:0'}, {'level': 'warn', 'log': ['The script from https://excise.wb.gov.in/WBSBCL/Bevco/NIC/js/jquery.simplyscroll.js was loaded even though its MIME type () is not a valid JavaScript MIME type.'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Common/NIC_Home.aspx', 'pos': '0:0'}, {'level': 'warn', 'log': ['Loading failed for the <script> with source https://excise.wb.gov.in/WBSBCL/Bevco/NIC/js/jquery.simplyscroll.js.'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Common/NIC_Home.aspx', 'pos': '9:1'}, {'level': 'warn', 'log': ['The script from https://excise.wb.gov.in/WBSBCL/Bevco/NIC/js/jquery.jtweetsanywhere.js was loaded even though its MIME type () is not a valid JavaScript MIME type.'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Common/NIC_Home.aspx', 'pos': '0:0'}, {'level': 'warn', 'log': ['The script from https://excise.wb.gov.in/WBSBCL/Bevco/NIC/js/jquery.simplyscroll2.js was loaded even though its MIME type () is not a valid JavaScript MIME type.'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Common/NIC_Home.aspx', 'pos': '0:0'}, {'level': 'warn', 'log': ['Loading failed for the <script> with source https://excise.wb.gov.in/WBSBCL/Bevco/NIC/js/jquery.simplyscroll2.js.'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Common/NIC_Home.aspx', 'pos': '10:1'}, {'level': 'warn', 'log': ['The script from https://excise.wb.gov.in/WBSBCL/Bevco/NIC/js/jquery.bxSlider.js was loaded even though its MIME type () is not a valid JavaScript MIME type.'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Common/NIC_Home.aspx', 
'pos': '0:0'}, {'level': 'warn', 'log': ['The script from https://excise.wb.gov.in/themeroller/themeswitchertool/ was loaded even though its MIME type () is not a valid JavaScript MIME type.'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Common/NIC_Home.aspx', 'pos': '0:0'}, {'level': 'warn', 'log': ['The script from https://excise.wb.gov.in/js/demos.js was loaded even though its MIME type () is not a valid JavaScript MIME type.'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Common/NIC_Home.aspx', 'pos': '0:0'}, {'level': 'warn', 'log': ['The script from https://excise.wb.gov.in/WBSBCL/Bevco/NIC/js/jquery.jtweetsanywhere.js was loaded even though its MIME type () is not a valid JavaScript MIME type.'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Common/NIC_Home.aspx', 'pos': '0:0'}, {'level': 'warn', 'log': ['Loading failed for the <script> with source https://excise.wb.gov.in/WBSBCL/Bevco/NIC/js/jquery.jtweetsanywhere.js.'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Common/NIC_Home.aspx', 'pos': '11:1'}, {'level': 'warn', 'log': ['The script from https://excise.wb.gov.in/WBSBCL/Bevco/NIC/js/jquery.bxSlider.js was loaded even though its MIME type () is not a valid JavaScript MIME type.'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Common/NIC_Home.aspx', 'pos': '0:0'}, {'level': 'warn', 'log': ['Loading failed for the <script> with source https://excise.wb.gov.in/WBSBCL/Bevco/NIC/js/jquery.bxSlider.js.'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Common/NIC_Home.aspx', 'pos': '12:1'}, {'level': 'warn', 'log': ['The script from https://excise.wb.gov.in/js/demos.js was loaded even though its MIME type () is not a valid JavaScript MIME type.'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Common/NIC_Home.aspx', 'pos': '0:0'}, {'level': 'warn', 'log': ['Loading failed for the <script> with source https://excise.wb.gov.in/js/demos.js.'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Common/NIC_Home.aspx', 'pos': '21:1'}, 
{'level': 'warn', 'log': ['The script from https://excise.wb.gov.in/themeroller/themeswitchertool/ was loaded even though its MIME type () is not a valid JavaScript MIME type.'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Common/NIC_Home.aspx', 'pos': '0:0'}, {'level': 'warn', 'log': ['Loading failed for the <script> with source https://excise.wb.gov.in/themeroller/themeswitchertool/.'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Common/NIC_Home.aspx', 'pos': '22:1'}, {'level': 'error', 'log': ['TypeError: $(...).simplyScroll is not a function'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Common/NIC_Home.aspx', 'pos': '153:28'}, {'level': 'log', 'log': ['[cycle] terminating; zero elements found by selector'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Rectangle_Box/jquery.cycle.all.2.749e1a.js?oo10gf', 'pos': '19:18'}, {'level': 'log', 'log': ['[cycle] terminating; zero elements found by selector'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Rectangle_Box/jquery.cycle.all.2.749e1a.js?oo10gf', 'pos': '19:18'}, {'level': 'log', 'log': ['[cycle] terminating; zero elements found by selector'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Rectangle_Box/jquery.cycle.all.2.749e1a.js?oo10gf', 'pos': '19:18'}, {'level': 'log', 'log': ['[cycle] terminating; zero elements found by selector'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Rectangle_Box/jquery.cycle.all.2.749e1a.js?oo10gf', 'pos': '19:18'}, {'level': 'log', 'log': ['[cycle] terminating; zero elements found by selector'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Rectangle_Box/jquery.cycle.all.2.749e1a.js?oo10gf', 'pos': '19:18'}, {'level': 'error', 'log': ['ReferenceError: theme_path is not defined'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Rectangle_Box/nic9e1a.js?oo10gf', 'pos': '221:5'}, {'level': 'error', 'log': ['TypeError: this.mqo is null'], 'uri': 'https://excise.wb.gov.in/WBSBCL/Bevco/NIC/Javascript/marquee.js', 'pos': '14:202'}]

</pre>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_ | priority | excise wb gov in see bug description url browser version firefox operating system windows tested another browser unknown problem type something else description the page whichi was working dssapeared and new page started steps to reproduce i lost the page which iwas working browser configuration gfx webrender all false gfx webrender blob images true gfx webrender enabled false image mem shared true buildid channel beta hastouchscreen false mixed active content blocked false mixed passive content blocked false tracking content blocked false console messages uri pos level warn log uri pos level warn log uri pos level warn log uri pos level warn log uri pos level warn log uri pos level warn log uri pos level warn log uri pos level warn log uri pos level warn log uri pos level warn log uri pos level warn log uri pos level warn log uri pos level warn log uri pos level warn log uri pos level warn log uri pos level warn log uri pos level warn log uri pos level error log uri pos level log log terminating zero elements found by selector uri pos level log log terminating zero elements found by selector uri pos level log log terminating zero elements found by selector uri pos level log log terminating zero elements found by selector uri pos level log log terminating zero elements found by selector uri pos level error log uri pos level error log uri pos from with ❤️ | 1 |

545,354 | 15,948,800,697 | IssuesEvent | 2021-04-15 06:29:08 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | [0.9.3 release-184] Can't migrate White tiger cycle 10 | Category: Tech Priority: High Squad: Wild Turkey Status: Fixed Type: Bug | https://drive.google.com/file/d/1vBiHPylX02eiHy53IfosJ8ZJSu-62x9p/view?usp=sharing

[log_210408120622.log](https://github.com/StrangeLoopGames/EcoIssues/files/6277913/log_210408120622.log)

```

Failed to start the server. Exception was Exception: NullReferenceException

Message:Object reference not set to an instance of an object.

Source:Eco.Gameplay

System.NullReferenceException: Object reference not set to an instance of an object.

at Eco.Gameplay.Items.WorkOrder.get_Product()

at Eco.Gameplay.Items.WorkOrder.UILinkContent()

at Eco.Gameplay.Systems.TextLinks.UILinkExtensions.UILink(ILinkable linkable)

at Eco.Gameplay.Utils.SimpleEntry.get_MarkedUpName()

at Eco.Core.Utils.PropertyScanning.PropertyScanner.SetupValidity(ScanScope scope, ScanSettings settings, ScanResults results)

at Eco.Core.Utils.PropertyScanning.PropertyScanner.ScanObj(Object root, ScanSettings settings)

at Eco.Core.Utils.PropertyScanning.PropertyScanner.Scan(ScanSettings settings)

at Eco.Gameplay.Utils.SimpleEntry.Initialize()

at Eco.Gameplay.Economy.WorkParties.WorkParty.Initialize()

at Eco.Core.Systems.Registrar.Initialize()

at Eco.Core.Systems.Registrars.Init()

at Eco.Core.Utils.Initializer.Initialize()

at Eco.Server.PluginManager.InitializeAsync(StartupInfo startupInfo)

at Eco.Server.Startup.StartAsync(StartupInfo startupInfo)

``` | 1.0 | [0.9.3 release-184] Can't migrate White tiger cycle 10 - https://drive.google.com/file/d/1vBiHPylX02eiHy53IfosJ8ZJSu-62x9p/view?usp=sharing

[log_210408120622.log](https://github.com/StrangeLoopGames/EcoIssues/files/6277913/log_210408120622.log)

```

Failed to start the server. Exception was Exception: NullReferenceException

Message:Object reference not set to an instance of an object.

Source:Eco.Gameplay

System.NullReferenceException: Object reference not set to an instance of an object.

at Eco.Gameplay.Items.WorkOrder.get_Product()

at Eco.Gameplay.Items.WorkOrder.UILinkContent()

at Eco.Gameplay.Systems.TextLinks.UILinkExtensions.UILink(ILinkable linkable)

at Eco.Gameplay.Utils.SimpleEntry.get_MarkedUpName()

at Eco.Core.Utils.PropertyScanning.PropertyScanner.SetupValidity(ScanScope scope, ScanSettings settings, ScanResults results)

at Eco.Core.Utils.PropertyScanning.PropertyScanner.ScanObj(Object root, ScanSettings settings)

at Eco.Core.Utils.PropertyScanning.PropertyScanner.Scan(ScanSettings settings)

at Eco.Gameplay.Utils.SimpleEntry.Initialize()

at Eco.Gameplay.Economy.WorkParties.WorkParty.Initialize()

at Eco.Core.Systems.Registrar.Initialize()

at Eco.Core.Systems.Registrars.Init()

at Eco.Core.Utils.Initializer.Initialize()

at Eco.Server.PluginManager.InitializeAsync(StartupInfo startupInfo)

at Eco.Server.Startup.StartAsync(StartupInfo startupInfo)

``` | priority | can t migrate white tiger cycle failed to start the server exception was exception nullreferenceexception message object reference not set to an instance of an object source eco gameplay system nullreferenceexception object reference not set to an instance of an object at eco gameplay items workorder get product at eco gameplay items workorder uilinkcontent at eco gameplay systems textlinks uilinkextensions uilink ilinkable linkable at eco gameplay utils simpleentry get markedupname at eco core utils propertyscanning propertyscanner setupvalidity scanscope scope scansettings settings scanresults results at eco core utils propertyscanning propertyscanner scanobj object root scansettings settings at eco core utils propertyscanning propertyscanner scan scansettings settings at eco gameplay utils simpleentry initialize at eco gameplay economy workparties workparty initialize at eco core systems registrar initialize at eco core systems registrars init at eco core utils initializer initialize at eco server pluginmanager initializeasync startupinfo startupinfo at eco server startup startasync startupinfo startupinfo | 1 |

585,670 | 17,514,187,860 | IssuesEvent | 2021-08-11 03:42:12 | encorelab/ck-board | https://api.github.com/repos/encorelab/ck-board | closed | Move post modification buttons on post | bug high priority | Move edit and delete buttons on the post objects themselves, instead of having them on the side toolbar. | 1.0 | Move post modification buttons on post - Move edit and delete buttons on the post objects themselves, instead of having them on the side toolbar. | priority | move post modification buttons on post move edit and delete buttons on the post objects themselves instead of having them on the side toolbar | 1 |

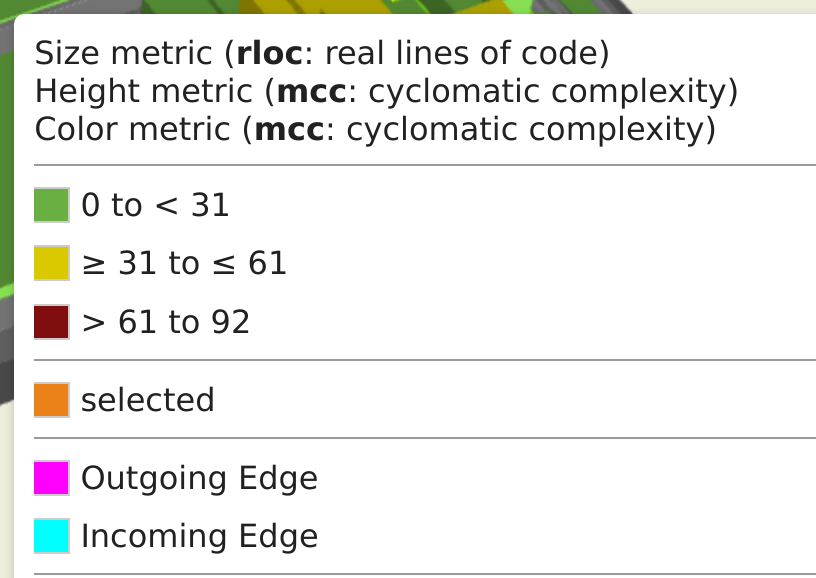

582,870 | 17,372,779,126 | IssuesEvent | 2021-07-30 16:07:18 | MaibornWolff/codecharta | https://api.github.com/repos/MaibornWolff/codecharta | opened | Legend improvements | difficulty:low feature javascript pr-visualization priority:high | # Feature request

## Description

As a user, I want the legend to be as useful as possible so that I have all necessary information without any information that is not needed or being distracted by anything.

## Acceptance criteria

- Change the background color of the legend to be identical to the background of the map (it should be 100% transparent)

- Remove the bounding box of the legend so that the content is visible without anything distracting the user

- Remove "Outgoing" and "Incoming" Edge from the legend in case there is no such metric available

- Add all used metrics to the legend and add a description in case we know what the abbreviation stands for

## Development notes (optional Task Breakdown)

- [ ] Remove the bounding box and change the background color

- [ ] Remove "outgoing" and "incoming" edges from the legend, if not applicable

- [ ] Add all used metrics to the legend

- [ ] Add a list of known metric descriptions to the frontend and show a translation next to the entry

| 1.0 | Legend improvements - # Feature request

## Description

As a user, I want the legend to be as useful as possible so that I have all necessary information without any information that is not needed or being distracted by anything.

## Acceptance criteria

- Change the background color of the legend to be identical to the background of the map (it should be 100% transparent)

- Remove the bounding box of the legend so that the content is visible without anything distracting the user

- Remove "Outgoing" and "Incoming" Edge from the legend in case there is no such metric available

- Add all used metrics to the legend and add a description in case we know what the abbreviation stands for

## Development notes (optional Task Breakdown)

- [ ] Remove the bounding box and change the background color

- [ ] Remove "outgoing" and "incoming" edges from the legend, if not applicable

- [ ] Add all used metrics to the legend

- [ ] Add a list of known metric descriptions to the frontend and show a translation next to the entry

| priority | legend improvements feature request description as a user i want the legend to be as useful as possible so that i have all necessary information without any information that is not needed or being distracted by anything acceptance criteria change the background color of the legend to be identical to the background of the map it should be transparent remove the bounding box of the legend so that the content is visible without anything distracting the user remove outgoing and incoming edge from the legend in case there is no such metric available add all used metrics to the legend and add a description in case we know what the abbreviation stands for development notes optional task breakdown remove the bounding box and changing the background color remove outgoing and incoming edges from the legend if not applicable add all used metrics to the legend add a list of known metric descriptions to the frontend and show a translation next to the entry | 1 |

563,454 | 16,685,275,210 | IssuesEvent | 2021-06-08 07:22:38 | nlpsandbox/nlpsandbox.io | https://api.github.com/repos/nlpsandbox/nlpsandbox.io | closed | Create multi-site compatible leaderboard | Priority: High | - [x] Select the columns in the leaderboard (@tschaffter )

- [ ] Implement the leaderboard (@thomasyu888 ) | 1.0 | Create multi-site compatible leaderboard - - [x] Select the columns in the leaderboard (@tschaffter )

- [ ] Implement the leaderboard (@thomasyu888 ) | priority | create multi site compatible leaderboard select the columns in the leaderboard tschaffter implement the leaderboard | 1 |

794,481 | 28,037,790,952 | IssuesEvent | 2023-03-28 16:11:17 | asastats/channel | https://api.github.com/repos/asastats/channel | opened | ASA Stats is displaying a different swap price for Tinyman | bug high priority | Typing the same ASA amount in Tinyman gives a different swap value. | 1.0 | ASA Stats is displaying a different swap price for Tinyman - Typing the same ASA amount in Tinyman gives a different swap value. | priority | asa stats is displaying a different swap price for tinyman typing the same asa amount in tinyman gives a different swap value | 1 |

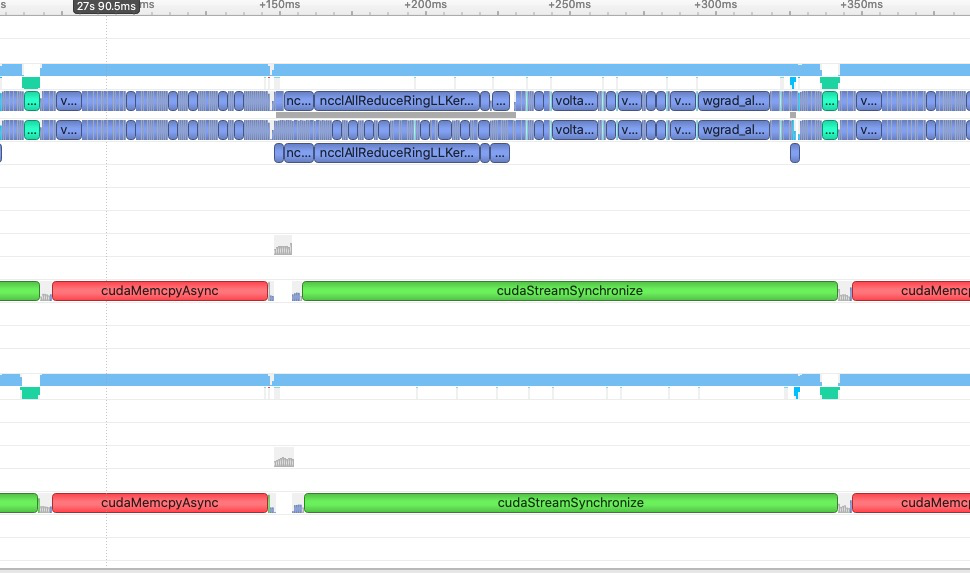

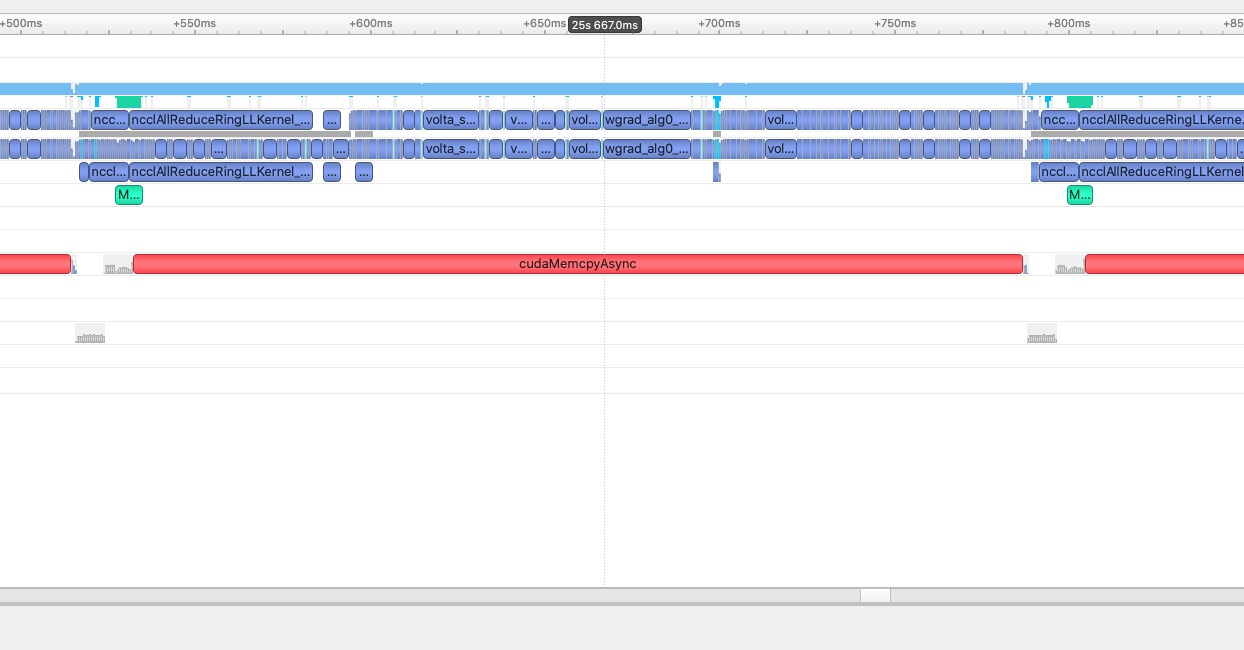

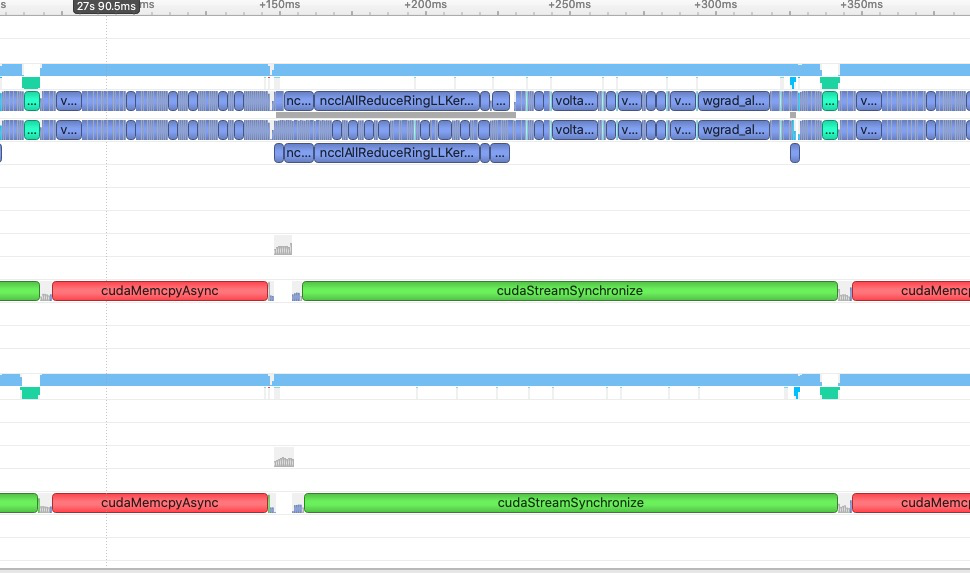

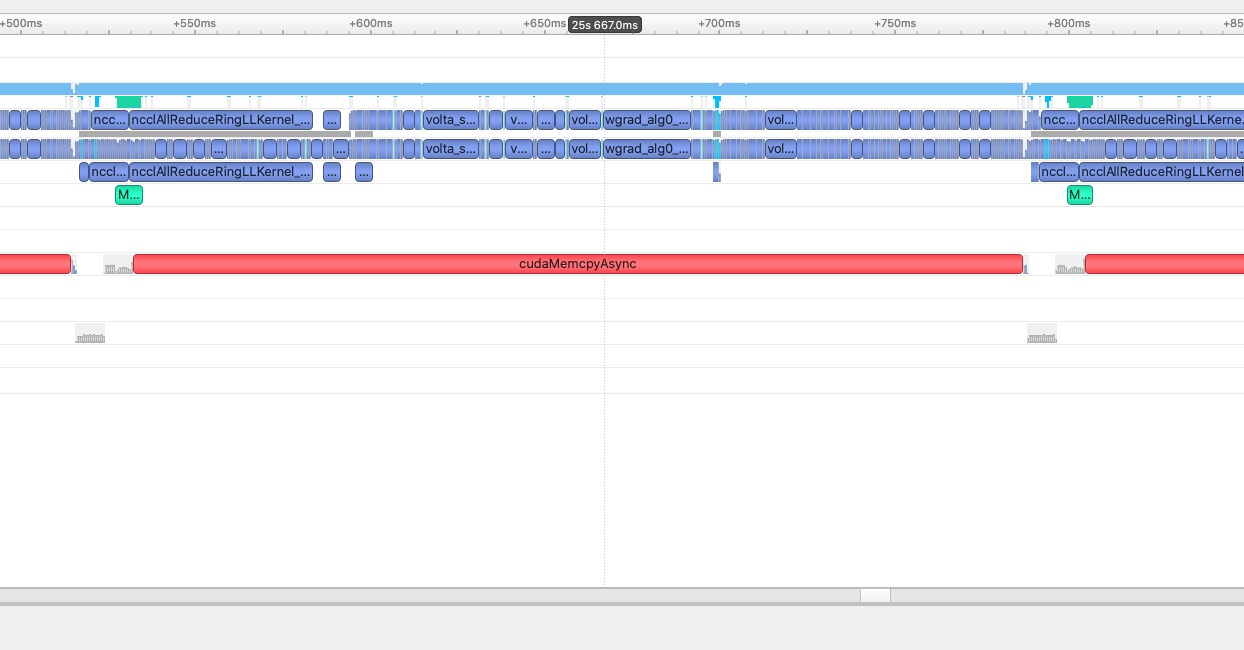

524,799 | 15,223,629,826 | IssuesEvent | 2021-02-18 03:09:21 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | closed | Data copy from CPU to GPU use default stream in nightly version. | high priority module: cuda oncall: distributed | ## 🐛 Bug

After https://github.com/pytorch/pytorch/pull/46304, the per-iteration data copy from CPU to GPU can no longer overlap with the previous iteration's computation/communication.

Here are my test results, which show different behavior for literally the same code.

nightly:

1.5.1 release:

## To Reproduce

Steps to reproduce the behavior:

1. Run the same code on the nightly build and on the 1.5.1 release build, and profile both with nsys.

My test code is listed below; you can use it to run some tests. I think the cause of the different behavior is clear, though. Is this a new feature? If so, I don't think it's a good one.
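
For context, here is a minimal sketch of the kind of overlap this report is about: issuing the host-to-device copy from pinned memory on a separate CUDA stream, so that it can run concurrently with kernels queued on the default stream. The helper name `copy_on_side_stream` is hypothetical and is not taken from PyTorch or from the PR linked above; it only illustrates the stream pattern and falls back to a plain copy when no GPU is present.

```python
import torch


def copy_on_side_stream(batch, device, stream=None):
    """Copy a CPU tensor to `device`, using a side CUDA stream when possible.

    Hypothetical helper for illustration only.
    """
    if device.type != "cuda":
        # No GPU: a plain (synchronous) copy is all we can do.
        return batch.to(device)

    if stream is None:
        stream = torch.cuda.Stream(device=device)

    # Pinned (page-locked) host memory is required for a truly async copy.
    pinned = batch.pin_memory()
    with torch.cuda.stream(stream):
        out = pinned.to(device, non_blocking=True)

    # The consumer stream must wait for the copy before reading `out`.
    current = torch.cuda.current_stream(device)
    current.wait_stream(stream)
    # Tell the caching allocator that `out` is also used on `current`.
    out.record_stream(current)
    return out


device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
x = torch.arange(6.0).reshape(2, 3)
y = copy_on_side_stream(x, device)
```

When the copy is instead issued on the default stream, it is serialized with whatever kernels are already queued there, which matches the loss of overlap described above.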

```

import os

import time

import sys

import random

import traceback

import numpy as np

import torch

import torch.nn as nn

import torch.nn.parallel

import torch.distributed as dist

import torch.optim

import torch.utils.data

import torch.utils.data.distributed

import torchvision.transforms as transforms

import torchvision.datasets as datasets

import torchvision.models as models

from torch.multiprocessing import Pool, Process

import argparse

class AverageMeter(object):
    """Computes and stores the average and current value"""

    def __init__(self):
        self.reset()

    def reset(self):
        self.val = 0
        self.avg = 0
        self.sum = 0
        self.count = 0

    def update(self, val, n=1):
        self.val = val
        self.sum += val * n
        self.count += n
        self.avg = self.sum / self.count

def accuracy(output, target, topk=(1,)):
    """Computes the precision@k for the specified values of k"""
    with torch.no_grad():
        maxk = max(topk)
        batch_size = target.size(0)

        _, pred = output.topk(maxk, 1, True, True)
        pred = pred.t()
        correct = pred.eq(target.view(1, -1).expand_as(pred))

        res = []
        for k in topk:
            correct_k = correct[:k].contiguous().view(-1).float().sum(0, keepdim=True)
            res.append(correct_k.mul_(100.0 / batch_size))
        return res

def train(train_loader, model, criterion, optimizer, epoch, batch_size):
    batch_time = AverageMeter()
    data_time = AverageMeter()
    losses = AverageMeter()
    top1 = AverageMeter()
    top5 = AverageMeter()
    local_rank = os.environ['LOCAL_RANK']

    # switch to train mode
    model.train()

    end = time.time()
    for i, (input, target) in enumerate(train_loader):
        # measure data loading time
        data_time.update(time.time() - end)

        # Create non_blocking tensors for distributed training
        # input = input.cuda(non_blocking=True)
        target = target.cuda(non_blocking=True)

        # compute output
        output = model(input)
        loss = criterion(output, target)

        # measure accuracy and record loss
        prec1, prec5 = accuracy(output, target, topk=(1, 5))
        losses.update(loss.item(), input.size(0))
        top1.update(prec1[0], input.size(0))
        top5.update(prec5[0], input.size(0))

        # compute gradients in a backward pass
        optimizer.zero_grad()
        loss.backward()

        # Call step of optimizer to update model params
        optimizer.step()

        # measure elapsed time
        if i >= 10:
            batch_time.update(time.time() - end)
        end = time.time()

        if local_rank == '0' and i % 10 == 0 and i > 10:
        # if local_rank == '0' and i > 0:
            print('Epoch: [{0}][{1}/{2}]\t'
                  'Speed {speed_now:.3f} ({speed_avg:.3f})\t'
                  'Time {batch_time.val:.3f} ({batch_time.avg:.3f})\t'
                  'Data {data_time.val:.3f} ({data_time.avg:.3f})\t'
                  'Loss {loss.val:.4f} ({loss.avg:.4f})\t'
                  'Prec@1 {top1.val:.3f} ({top1.avg:.3f})\t'
                  'Prec@5 {top5.val:.3f} ({top5.avg:.3f})'.format(
                      epoch, i, len(train_loader), batch_time=batch_time,
                      speed_now=batch_size/batch_time.val, speed_avg=batch_size/batch_time.avg,
                      data_time=data_time, loss=losses, top1=top1, top5=top5))

def adjust_learning_rate(initial_lr, optimizer, epoch):
    """Sets the learning rate to the initial LR decayed by 10 every 30 epochs"""
    lr = initial_lr * (0.1 ** (epoch // 30))
    for param_group in optimizer.param_groups:
        param_group['lr'] = lr

def validate(val_loader, model, criterion):
    batch_time = AverageMeter()
    losses = AverageMeter()
    top1 = AverageMeter()
    top5 = AverageMeter()

    # switch to evaluate mode
    model.eval()

    with torch.no_grad():
        end = time.time()
        for i, (input, target) in enumerate(val_loader):
            input = input.cuda(non_blocking=True)
            target = target.cuda(non_blocking=True)

            # compute output
            output = model(input)
            loss = criterion(output, target)

            # measure accuracy and record loss
            prec1, prec5 = accuracy(output, target, topk=(1, 5))
            losses.update(loss.item(), input.size(0))
            top1.update(prec1[0], input.size(0))
            top5.update(prec5[0], input.size(0))

            # measure elapsed time
            torch.cuda.synchronize()
            batch_time.update(time.time() - end)
            end = time.time()

            if i % 100 == 0:
                print('Test: [{0}/{1}]\t'
                      'Time {batch_time.val:.3f} ({batch_time.avg:.3f})\t'
                      'Loss {loss.val:.4f} ({loss.avg:.4f})\t'
                      'Prec@1 {top1.val:.3f} ({top1.avg:.3f})\t'
                      'Prec@5 {top5.val:.3f} ({top5.avg:.3f})'.format(
                          i, len(val_loader), batch_time=batch_time, loss=losses,
                          top1=top1, top5=top5))

        print(' * Prec@1 {top1.avg:.3f} Prec@5 {top5.avg:.3f}'
              .format(top1=top1, top5=top5))

    return top1.avg

def start(backend):
    print("Collect Inputs...")

    # Batch Size for training and testing
    batch_size = 64

    # Number of additional worker processes for dataloading
    workers = 8

    # Number of epochs to train for
    num_epochs = 1

    # Starting Learning Rate