Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 855 | labels stringlengths 4 721 | body stringlengths 1 261k | index stringclasses 13 values | text_combine stringlengths 96 261k | label stringclasses 2 values | text stringlengths 96 240k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

683,633 | 23,389,473,981 | IssuesEvent | 2022-08-11 16:23:22 | archesproject/arches | https://api.github.com/repos/archesproject/arches | closed | i18n language switcher broken | Type: Bug Priority: High | After the webpack build merged, I can no longer switch languages with the language switcher in 7.x. After talking with @chrabyrd this may have to do with a missing i18n_patterns call in urls.py - more research is needed. | 1.0 | i18n language switcher broken - After the webpack build merged, I can no longer switch languages with the language switcher in 7.x. After talking with @chrabyrd this may have to do with a missing i18n_patterns call in urls.py - more research is needed. | priority | language switcher broken after the webpack build merged i can no longer switch languages with the language switcher in x after talking with chrabyrd this may have to do with a missing patterns call in urls py more research is needed | 1 |

765,825 | 26,862,475,805 | IssuesEvent | 2023-02-03 19:45:54 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | closed | Foreach op don't follow aten level debug asserts | high priority triage review triaged actionable module: mta | Since https://github.com/pytorch/pytorch/pull/91846 has moved to make torch.nn.utils.clip_grad_norm_() use foreach ops, it throws the error message:

*** RuntimeError: t.storage().use_count() == 1 INTERNAL ASSERT FAILED at "caffe2/torch/csrc/autograd/autograd_not_implemented_fallback.cpp":189, please report a bug to PyTorch.

when PyTorch is built with debug asserts.

Given the assert, the error is that this foreach op should be returning a brand new Tensor but it is actually returning a Tensor that shares storage with at least another one.

- If this is done on purpose for a good reason, we should remove this assert

- If that is not expected, then it can be a bug in the implementation where it returns a view where it should not.

cc @ezyang @gchanan @zou3519 @crcrpar @mcarilli @ngimel | 1.0 | Foreach op don't follow aten level debug asserts - Since https://github.com/pytorch/pytorch/pull/91846 has moved to make torch.nn.utils.clip_grad_norm_() use foreach ops, it throws the error message:

*** RuntimeError: t.storage().use_count() == 1 INTERNAL ASSERT FAILED at "caffe2/torch/csrc/autograd/autograd_not_implemented_fallback.cpp":189, please report a bug to PyTorch.

when PyTorch is built with debug asserts.

Given the assert, the error is that this foreach op should be returning a brand new Tensor but it is actually returning a Tensor that shares storage with at least another one.

- If this is done on purpose for a good reason, we should remove this assert

- If that is not expected, then it can be a bug in the implementation where it returns a view where it should not.

cc @ezyang @gchanan @zou3519 @crcrpar @mcarilli @ngimel | priority | foreach op don t follow aten level debug asserts since has moved to make torch nn utils clip grad norm use foreach ops it throws the error message runtimeerror t storage use count internal assert failed at torch csrc autograd autograd not implemented fallback cpp please report a bug to pytorch when pytorch is built with debug asserts given the assert the error is that this foreach op should be returning a brand new tensor but it is actually returning a tensor that shares storage with at least another one if this is done on purpose for a good reason we should remove this assert if that is not expected then it can be a bug in the implementation where it returns a view where it should not cc ezyang gchanan crcrpar mcarilli ngimel | 1 |

794,956 | 28,056,412,012 | IssuesEvent | 2023-03-29 09:39:39 | projectdiscovery/naabu | https://api.github.com/repos/projectdiscovery/naabu | closed | exclude `IP` / `Port` / `Host` option is not working after last update | Priority: High Status: Completed Type: Bug | Hello,

When I try to exclude ports using -ep or -exclude-ports,

the output still includes the excluded ports.

```console

naabu -host hackerone.com -ep 80

__

___ ___ ___ _/ / __ __

/ _ \/ _ \/ _ \/ _ \/ // /

/_//_/\_,_/\_,_/_.__/\_,_/

projectdiscovery.io

[INF] Current naabu version 2.1.4 (latest)

[INF] Running CONNECT scan with non root privileges

[INF] Found 1 ports on host hackerone.com (104.16.100.52)

hackerone.com:80

```

tried more than once.

Thanks | 1.0 | exclude `IP` / `Port` / `Host` option is not working after last update - Hello,

When I try to exclude ports using -ep or -exclude-ports,

the output still includes the excluded ports.

```console

naabu -host hackerone.com -ep 80

__

___ ___ ___ _/ / __ __

/ _ \/ _ \/ _ \/ _ \/ // /

/_//_/\_,_/\_,_/_.__/\_,_/

projectdiscovery.io

[INF] Current naabu version 2.1.4 (latest)

[INF] Running CONNECT scan with non root privileges

[INF] Found 1 ports on host hackerone.com (104.16.100.52)

hackerone.com:80

```

tried more than once.

Thanks | priority | exclude ip port host option is not working after last update hello when i try to exclude ports using ep or exclude ports the outpust still have excluded ports on it console naabu host hackerone com ep projectdiscovery io current naabu version latest running connect scan with non root privileges found ports on host hackerone com hackerone com tried more than once thanks | 1 |

364,081 | 10,758,645,096 | IssuesEvent | 2019-10-31 15:18:52 | smallhadroncollider/taskell | https://api.github.com/repos/smallhadroncollider/taskell | closed | Allow sub tasks reordering | enhancement high priority in progress | Would be possible to allow sub tasks to move up or down with K and J? | 1.0 | Allow sub tasks reordering - Would be possible to allow sub tasks to move up or down with K and J? | priority | allow sub tasks reordering would be possible to allow sub tasks to move up or down with k and j | 1 |

547,637 | 16,044,143,832 | IssuesEvent | 2021-04-22 11:41:47 | apluslms/mooc-grader | https://api.github.com/repos/apluslms/mooc-grader | closed | Question choice label does not connect to the input | area: UX student area: questionnaire effort: hours experience: good first issue priority: high requester: internal type: bug | Questionnaire HTML forms have labels that have outdated `for` attribute values. This concerns at least checkbox and radio button questions. The `for` value is not the same as the `id` attribute of the input and thus, clicking on the label does not tick the checkbox.

The id attributes were changed in A+ v1.8 (January 2021). The new id contains a random part so that the id is unique in the DOM even when the page (A+ content chapter) includes multiple exercises of the same type. The current label `for` values match the old ids that are not used any longer (the old ids were probably the Django default values).

Relevant code:

https://github.com/apluslms/mooc-grader/blob/2497d7874335e5cb65dea3fe50c6304874d14a8c/access/templates/access/graded_form.html#L124

https://github.com/apluslms/mooc-grader/blob/2497d7874335e5cb65dea3fe50c6304874d14a8c/access/types/forms.py#L46

| 1.0 | Question choice label does not connect to the input - Questionnaire HTML forms have labels that have outdated `for` attribute values. This concerns at least checkbox and radio button questions. The `for` value is not the same as the `id` attribute of the input and thus, clicking on the label does not tick the checkbox.

The id attributes were changed in A+ v1.8 (January 2021). The new id contains a random part so that the id is unique in the DOM even when the page (A+ content chapter) includes multiple exercises of the same type. The current label `for` values match the old ids that are not used any longer (the old ids were probably the Django default values).

Relevant code:

https://github.com/apluslms/mooc-grader/blob/2497d7874335e5cb65dea3fe50c6304874d14a8c/access/templates/access/graded_form.html#L124

https://github.com/apluslms/mooc-grader/blob/2497d7874335e5cb65dea3fe50c6304874d14a8c/access/types/forms.py#L46

| priority | question choice label does not connect to the input questionnaire html forms have labels that have outdated for attribute values this concerns at least checkbox and radio button questions the for value is not the same as the id attribute of the input and thus clicking on the label does not tick the checkbox the id attributes were changed in a january the new id contains a random part so that the id is unique in the dom even when the page a content chapter includes multiple exercises of the same type the current label for values match the old ids that are not used any longer the old ids were probably the django default values relevant code | 1 |

503,409 | 14,591,271,037 | IssuesEvent | 2020-12-19 12:09:18 | space-wizards/RobustToolbox | https://api.github.com/repos/space-wizards/RobustToolbox | closed | Defer move events out of TransformComponent.HandleComponentState() | Feature: Entities Feature: Networking Priority: 1-high Type: Bug | I just had a crash because an entity with a snap grid component got its states applied before the grid, and the snap grid's event handler for updating position hit an assert because the grid hadn't been given data yet.

Reproduction is to apply the following change, doing `cvar net.pvs 0` server side and then run a `restartround`, as far as I can tell:

```diff

index 91de2d007..ed8046ce1 100644

--- a/Robust.Server/GameObjects/ServerEntityManager.cs

+++ b/Robust.Server/GameObjects/ServerEntityManager.cs

@@ -12,6 +12,7 @@ using Robust.Server.Interfaces.Timing;

using Robust.Shared;

using Robust.Shared.GameObjects;

using Robust.Shared.GameObjects.Components;

+using Robust.Shared.GameObjects.Components.Map;

using Robust.Shared.GameObjects.Components.Transform;

using Robust.Shared.Interfaces.Configuration;

using Robust.Shared.Interfaces.GameObjects;

@@ -165,7 +166,7 @@ namespace Robust.Server.GameObjects

}

// no point sending an empty collection

- return stateEntities.Count == 0 ? default : stateEntities;

+ return stateEntities.Count == 0 ? default : stateEntities.OrderByDescending(e => GetEntity(e.Uid).HasComponent<IMapGridComponent>()).ToList();

}

private readonly Dictionary<IPlayerSession, SortedSet<EntityUid>> _seenMovers

``` | 1.0 | Defer move events out of TransformComponent.HandleComponentState() - I just had a crash because an entity with a snap grid component got its states applied before the grid, and the snap grid's event handler for updating position hit an assert because the grid hadn't been given data yet.

Reproduction is to apply the following change, doing `cvar net.pvs 0` server side and then run a `restartround`, as far as I can tell:

```diff

index 91de2d007..ed8046ce1 100644

--- a/Robust.Server/GameObjects/ServerEntityManager.cs

+++ b/Robust.Server/GameObjects/ServerEntityManager.cs

@@ -12,6 +12,7 @@ using Robust.Server.Interfaces.Timing;

using Robust.Shared;

using Robust.Shared.GameObjects;

using Robust.Shared.GameObjects.Components;

+using Robust.Shared.GameObjects.Components.Map;

using Robust.Shared.GameObjects.Components.Transform;

using Robust.Shared.Interfaces.Configuration;

using Robust.Shared.Interfaces.GameObjects;

@@ -165,7 +166,7 @@ namespace Robust.Server.GameObjects

}

// no point sending an empty collection

- return stateEntities.Count == 0 ? default : stateEntities;

+ return stateEntities.Count == 0 ? default : stateEntities.OrderByDescending(e => GetEntity(e.Uid).HasComponent<IMapGridComponent>()).ToList();

}

private readonly Dictionary<IPlayerSession, SortedSet<EntityUid>> _seenMovers

``` | priority | defer move events out of transformcomponent handlecomponentstate i just had a crash because an entity with a snap grid component got its states applied before the grid and the snap grid s event handler for updating position hit an assert because the grid hadn t been given data yet reproduction is to apply the following change doing cvar net pvs server side and then run a restartround as far as i can tell diff index a robust server gameobjects serverentitymanager cs b robust server gameobjects serverentitymanager cs using robust server interfaces timing using robust shared using robust shared gameobjects using robust shared gameobjects components using robust shared gameobjects components map using robust shared gameobjects components transform using robust shared interfaces configuration using robust shared interfaces gameobjects namespace robust server gameobjects no point sending an empty collection return stateentities count default stateentities return stateentities count default stateentities orderbydescending e getentity e uid hascomponent tolist private readonly dictionary seenmovers | 1 |

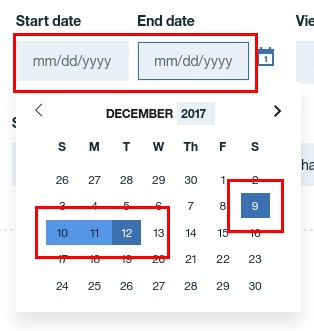

206,789 | 7,121,239,178 | IssuesEvent | 2018-01-19 06:36:28 | carbon-design-system/carbon-components-react | https://api.github.com/repos/carbon-design-system/carbon-components-react | closed | Is there any way to reset range DatePicker ? | bug priority: high | <!-- Feel free to remove sections that aren't relevant.

## Title line template: [Title]: Brief description

-->

## Detailed description

Describe in detail the issue you're having. Is this a feature request (new component, new icon), a bug, or a general issue?

I need to reset a range-type DatePicker: after I select a date range, I need to reset it to its initial state without re-rendering the component.

> Is this issue related to a specific component?

DatePicker/DatePickerInput

> What did you expect to happen? What happened instead? What would you like to see changed?

`<DatePickerInput

labelText="Start date"

onChange={this.handleDateChange}

onClick={this.handleFromToClick}

placeholder="mm/dd/yyyy"

id="fromDate"

value={this.state.startDate}

/>`

I bind the `value` property to a `startDate` property in local state. When I reset `startDate` in local state, the input text is cleared, but the date range is still selected in the popup. The expected behavior is that there should be a way to completely reset the component, so that the date range in the popup is reset as well.

> What browser are you working in?

Chrome

> What version of the Carbon Design System are you using?

7.26.10

> What offering/product do you work on? Any pressing ship or release dates we should be aware of?

Yes. It is better to be fixed ASAP.

## Steps to reproduce the issue

## Additional information

* Screenshots or code

* Notes

## Add labels

Please choose the appropriate label(s) from our existing label list to ensure that your issue is properly categorized. This will help us to better understand and address your issue.

| 1.0 | Is there any way to reset range DatePicker ? - <!-- Feel free to remove sections that aren't relevant.

## Title line template: [Title]: Brief description

-->

## Detailed description

Describe in detail the issue you're having. Is this a feature request (new component, new icon), a bug, or a general issue?

I need to reset a range-type DatePicker: after I select a date range, I need to reset it to its initial state without re-rendering the component.

> Is this issue related to a specific component?

DatePicker/DatePickerInput

> What did you expect to happen? What happened instead? What would you like to see changed?

`<DatePickerInput

labelText="Start date"

onChange={this.handleDateChange}

onClick={this.handleFromToClick}

placeholder="mm/dd/yyyy"

id="fromDate"

value={this.state.startDate}

/>`

I bind the `value` property to a `startDate` property in local state. When I reset `startDate` in local state, the input text is cleared, but the date range is still selected in the popup. The expected behavior is that there should be a way to completely reset the component, so that the date range in the popup is reset as well.

> What browser are you working in?

Chrome

> What version of the Carbon Design System are you using?

7.26.10

> What offering/product do you work on? Any pressing ship or release dates we should be aware of?

Yes. It is better to be fixed ASAP.

## Steps to reproduce the issue

## Additional information

* Screenshots or code

* Notes

## Add labels

Please choose the appropriate label(s) from our existing label list to ensure that your issue is properly categorized. This will help us to better understand and address your issue.

| priority | is there any way to reset range datepicker feel free to remove sections that aren t relevant title line template brief description detailed description describe in detail the issue you re having is this a feature request new component new icon a bug or a general issue now i need to reset datapicker range type it means after i select a date range i need to reset it to the initial state without re rendering the component is this issue related to a specific component datepicker datepickerinput what did you expect to happen what happened instead what would you like to see changed datepickerinput labeltext start date onchange this handledatechange onclick this handlefromtoclick placeholder mm dd yyyy id fromdate value this state startdate now i bind a property value with a property startdate in local state by reset startdate value in local state the input text is gone but in the popup the date range is still there the expected behavior is there should be a way to completely reset the component the date range in popup should be reset as well what browser are you working in chrome what version of the carbon design system are you using what offering product do you work on any pressing ship or release dates we should be aware of yes it is better to be fixed asap steps to reproduce the issue additional information screenshots or code notes add labels please choose the appropriate label s from our existing label list to ensure that your issue is properly categorized this will help us to better understand and address your issue | 1 |

413,804 | 12,092,366,727 | IssuesEvent | 2020-04-19 15:22:49 | Rammelkast/AntiCheatReloaded | https://api.github.com/repos/Rammelkast/AntiCheatReloaded | closed | False positive with Elytra | false-positive high priority | When sprintjumping and subsequently using an elytra, ACR sometimes gives the following false positive:

USER ascended 8 times in a row (max = 8)

caused by false positive in the checkAscension method (Backend.java line 408)

| 1.0 | False positive with Elytra - When sprintjumping and subsequently using an elytra, ACR sometimes gives the following false positive:

USER ascended 8 times in a row (max = 8)

caused by false positive in the checkAscension method (Backend.java line 408)

| priority | false positive with elytra when sprintjumping and subsequently using an elytra acr sometimes gives the following false positive user ascended times in a row max caused by false positive in the checkascension method backend java line | 1 |

747,735 | 26,096,859,135 | IssuesEvent | 2022-12-26 21:37:38 | bounswe/bounswe2022group4 | https://api.github.com/repos/bounswe/bounswe2022group4 | closed | Backend: Create tests for the text annotations | Category - Enhancement Priority - High Status: Completed Difficulty - Hard Backend Team - Backend | Description:

Tests are essential in the software development lifecycle. We need to create tests for the text annotation endpoints.

Steps:

- Create a test for TextAnnotationAPIView. It has only one endpoint GET. We can check the status code and the content of the response.

- Create a test for PostTextAnnotationAPIView. It has two endpoints GET and POST. We can check the status codes and the content of the responses.

Deadline: 25.12.2022 23.59 | 1.0 | Backend: Create tests for the text annotations - Description:

Tests are essential in the software development lifecycle. We need to create tests for the text annotation endpoints.

Steps:

- Create a test for TextAnnotationAPIView. It has only one endpoint GET. We can check the status code and the content of the response.

- Create a test for PostTextAnnotationAPIView. It has two endpoints GET and POST. We can check the status codes and the content of the responses.

Deadline: 25.12.2022 23.59 | priority | backend create tests for the text annotations description tests are essential in the software development lifecycle we need to create tests for the text annotation endpoints steps create a test for textannotationapiview it has only one endpoint get we can check the status code and the content of the response create a test for posttextannotationapiview it has two endpoints get and post we can check the status codes and the content of the responses deadline | 1 |

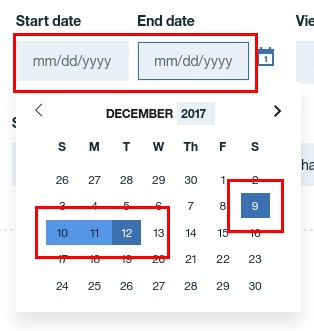

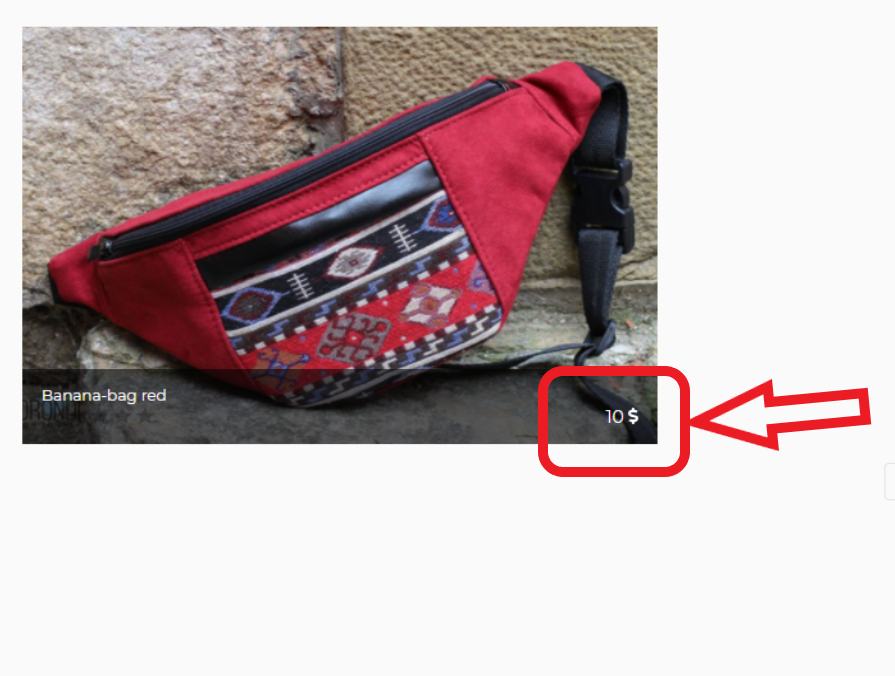

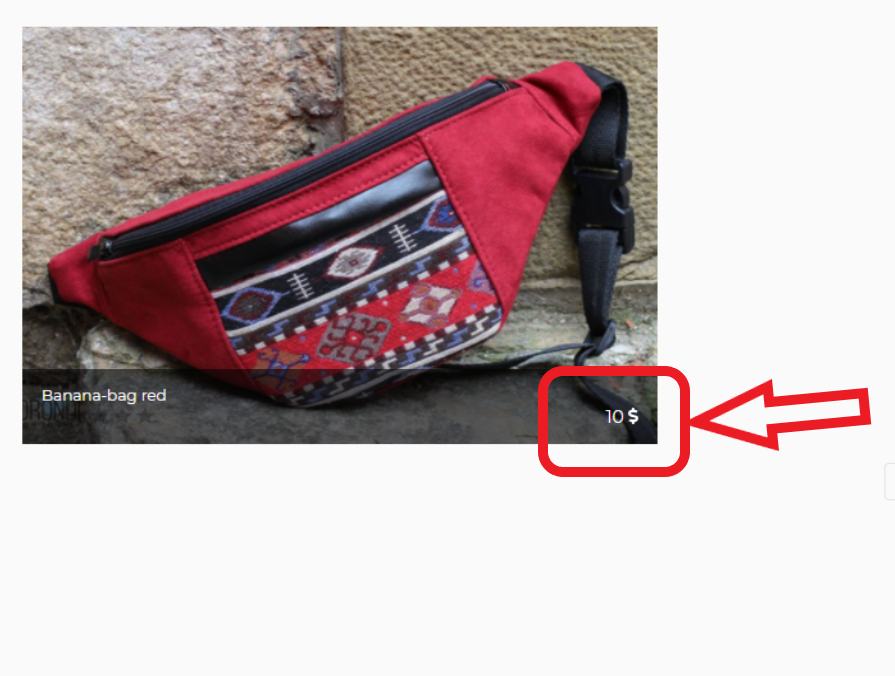

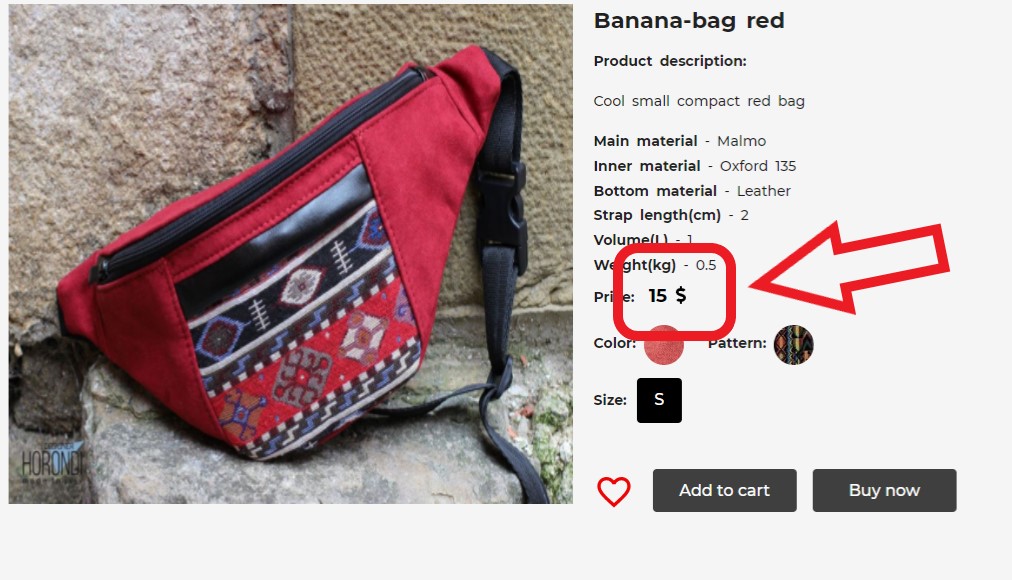

631,427 | 20,151,624,509 | IssuesEvent | 2022-02-09 12:58:43 | ita-social-projects/horondi_client_fe | https://api.github.com/repos/ita-social-projects/horondi_client_fe | closed | [Product Detail Page] Inconsistent of product prices on Category page and Product detail page | bug UI priority: high severity: trivial | **Environment:** Windows 10 64bit, Firefox 91.0.4472.124 64bit

**Reproducible:** always

**Preconditions**

Go to: https://horondi-front-staging.azurewebsites.net/

**Description:**

**Steps to reproduce**

1. Click to the menu on the left corner on the top of the pages

2. Choose any point on "BAGS" tab (e.g. BANANA BAGS)

3. Pay attention to the price of the good (e.g. Banana bag-red)

4. Open "Banana bag-red" product detail page

5. Pay attention to the price of the good

**Actual result**

Inconsistent prices are displayed

**Expected result**

Product prices are appropriate

| 1.0 | [Product Detail Page] Inconsistent of product prices on Category page and Product detail page - **Environment:** Windows 10 64bit, Firefox 91.0.4472.124 64bit

**Reproducible:** always

**Preconditions**

Go to: https://horondi-front-staging.azurewebsites.net/

**Description:**

**Steps to reproduce**

1. Click to the menu on the left corner on the top of the pages

2. Choose any point on "BAGS" tab (e.g. BANANA BAGS)

3. Pay attention to the price of the good (e.g. Banana bag-red)

4. Open "Banana bag-red" product detail page

5. Pay attention to the price of the good

**Actual result**

Inconsistent prices are displayed

**Expected result**

Product prices are appropriate

| priority | inconsistent of product prices on category page and product detail page environment windows firefox reproducible always preconditions go to description steps to reproduce click to the menu on the left corner on the top of the pages choose any point on bags tab e g banana bags pay attention to the price of the good e g banana bag red open banana bag red product detail page pay attention to the price of the good actual result inconsistent prices are displayed expected result product prices are appropriate | 1 |

416,429 | 12,146,313,969 | IssuesEvent | 2020-04-24 10:54:37 | luna/enso | https://api.github.com/repos/luna/enso | opened | Build the Transitive Closure of Invalidated External IDs | Category: Compiler Change: Non-Breaking Difficulty: Core Contributor Priority: High Type: Enhancement | ### Summary

<!--

- A summary of the task.

-->

### Value

<!--

- This section should describe the value of this task.

- This value can be for users, to the team, etc.

-->

### Specification

- [ ] On change, store the previous version of the IR for the module(s) being changed.

- [ ] From the change, determine the internal identifier 'root' that has been changed.

- [ ] Traverse the _previous_ IR representation, and gather the transitive closure of _external_ IDs that have been invalidated (using the results of dataflow analysis).

- [ ] If a node has an external ID, it should be added to the result set.

### Acceptance Criteria & Test Cases

<!--

- Any criteria that must be satisfied for the task to be accepted.

- The test plan for the feature, related to the acceptance criteria.

-->

| 1.0 | Build the Transitive Closure of Invalidated External IDs - ### Summary

<!--

- A summary of the task.

-->

### Value

<!--

- This section should describe the value of this task.

- This value can be for users, to the team, etc.

-->

### Specification

- [ ] On change, store the previous version of the IR for the module(s) being changed.

- [ ] From the change, determine the internal identifier 'root' that has been changed.

- [ ] Traverse the _previous_ IR representation, and gather the transitive closure of _external_ IDs that have been invalidated (using the results of dataflow analysis).

- [ ] If a node has an external ID, it should be added to the result set.

### Acceptance Criteria & Test Cases

<!--

- Any criteria that must be satisfied for the task to be accepted.

- The test plan for the feature, related to the acceptance criteria.

-->

| priority | build the transitive closure of invalidated external ids summary a summary of the task value this section should describe the value of this task this value can be for users to the team etc specification on change store the previous version of the ir for the module s being changed from the change determine the internal identifier root that has been changed traverse the previous ir representation and gather the transitive closure of external ids that have been invalidated using the results of dataflow analysis if a node has an external id it should be added to the result set acceptance criteria test cases any criteria that must be satisfied for the task to be accepted the test plan for the feature related to the acceptance criteria | 1 |

760,812 | 26,657,510,618 | IssuesEvent | 2023-01-25 18:02:28 | ThinkR-open/checkhelper | https://api.github.com/repos/ThinkR-open/checkhelper | closed | Final checks for the CRAN submission | priority: high chore project ok | ## Validation criteria

- [ ] Arthur added to DESCRIPTION

- [ ] `check_clean_userspace`

- [ ] has a default parameter

- [ ] has an example with a variable

- [ ] The instructions/checks in the `dev_history.Rmd` file have been run and pass:

## How, technically?

Run through the entire contents of `dev_history.Rmd`

```

# Check package coverage

covr::package_coverage()

# _Check in interactive test-inflate for templates and Addins

pkgload::load_all()

devtools::test()

testthat::test_dir("tests/testthat/")

# Test no output generated in the user files

# Run examples in interactive mode too

devtools::run_examples()

# Check that the state is clean after check

checkhelper::check_clean_userspace(pkg = ".")

# Check package as CRAN

rcmdcheck::rcmdcheck(args = c("--no-manual", "--as-cran"))

# devtools::check(args = c("--no-manual", "--as-cran"))

# Check content

# remotes::install_github("ThinkR-open/checkhelper")

checkhelper::find_missing_tags() # Every function must have either `@noRd` or `@export`

checkhelper::check_clean_userspace(pkg = ".")

checkhelper::check_as_cran()

# Check spelling

# usethis::use_spell_check()

spelling::spell_check_package()

# Check URL are correct

# remotes::install_github("r-lib/urlchecker")

urlchecker::url_check()

urlchecker::url_update()

# Upgrade version number

usethis::use_version(which = c("patch", "minor", "major", "dev")[2])

# check on other distributions

# _rhub

# devtools::check_rhub()

rhub::platforms()

rhub::check_on_windows(check_args = "--force-multiarch", show_status = FALSE)

rhub::check_on_solaris(show_status = FALSE)

rhub::check(platform = "debian-clang-devel", show_status = FALSE)

rhub::check(platform = "debian-gcc-devel", show_status = FALSE)

rhub::check(platform = "fedora-clang-devel", show_status = FALSE)

rhub::check(platform = "macos-highsierra-release-cran", show_status = FALSE)

rhub::check_for_cran(show_status = FALSE)

# _win devel

devtools::check_win_devel()

devtools::check_win_release()

# remotes::install_github("r-lib/devtools")

devtools::check_mac_release() # Need to follow the URL proposed to see the results

# Update NEWS

# Bump version manually and add list of changes

# Add comments for CRAN

# Need to .gitignore this file

usethis::use_cran_comments(open = rlang::is_interactive())

# Upgrade version number if necessary

usethis::use_version(which = c("patch", "minor", "major", "dev")[1])

# Verify you're ready for release, and release

devtools::release()

```

| 1.0 | Final checks for the CRAN submission - ## Validation criteria

- [ ] Arthur added to DESCRIPTION

- [ ] `check_clean_userspace`

- [ ] has a default parameter

- [ ] has an example with a variable

- [ ] The instructions/checks in the `dev_history.Rmd` file have been run and pass:

## How, technically?

Run through the entire contents of `dev_history.Rmd`

```

# Check package coverage

covr::package_coverage()

# _Check in interactive test-inflate for templates and Addins

pkgload::load_all()

devtools::test()

testthat::test_dir("tests/testthat/")

# Test no output generated in the user files

# Run examples in interactive mode too

devtools::run_examples()

# Check that the state is clean after check

checkhelper::check_clean_userspace(pkg = ".")

# Check package as CRAN

rcmdcheck::rcmdcheck(args = c("--no-manual", "--as-cran"))

# devtools::check(args = c("--no-manual", "--as-cran"))

# Check content

# remotes::install_github("ThinkR-open/checkhelper")

checkhelper::find_missing_tags() # Every function must have either `@noRd` or `@export`

checkhelper::check_clean_userspace(pkg = ".")

checkhelper::check_as_cran()

# Check spelling

# usethis::use_spell_check()

spelling::spell_check_package()

# Check URL are correct

# remotes::install_github("r-lib/urlchecker")

urlchecker::url_check()

urlchecker::url_update()

# Upgrade version number

usethis::use_version(which = c("patch", "minor", "major", "dev")[2])

# check on other distributions

# _rhub

# devtools::check_rhub()

rhub::platforms()

rhub::check_on_windows(check_args = "--force-multiarch", show_status = FALSE)

rhub::check_on_solaris(show_status = FALSE)

rhub::check(platform = "debian-clang-devel", show_status = FALSE)

rhub::check(platform = "debian-gcc-devel", show_status = FALSE)

rhub::check(platform = "fedora-clang-devel", show_status = FALSE)

rhub::check(platform = "macos-highsierra-release-cran", show_status = FALSE)

rhub::check_for_cran(show_status = FALSE)

# _win devel

devtools::check_win_devel()

devtools::check_win_release()

# remotes::install_github("r-lib/devtools")

devtools::check_mac_release() # Need to follow the URL proposed to see the results

# Update NEWS

# Bump version manually and add list of changes

# Add comments for CRAN

# Need to .gitignore this file

usethis::use_cran_comments(open = rlang::is_interactive())

# Upgrade version number if necessary

usethis::use_version(which = c("patch", "minor", "major", "dev")[1])

# Verify you're ready for release, and release

devtools::release()

```

| priority | final checks for the cran submission critères de validation arthur ajouté dans description check clean userspace a un paramètre par défaut a un exemple avec une variable les instructions checks du fichier dev history rmd sont exécutes et sont ok comment technique faire passer tout le contenu de dev history rmd check package coverage covr package coverage check in interactive test inflate for templates and addins pkgload load all devtools test testthat test dir tests testthat test no output generated in the user files run examples in interactive mode too devtools run examples check that the state is clean after check checkhelper check clean userspace pkg check package as cran rcmdcheck rcmdcheck args c no manual as cran devtools check args c no manual as cran check content remotes install github thinkr open checkhelper checkhelper find missing tags toutes les fonctions doivent avoir soit nord soit un export checkhelper check clean userspace pkg checkhelper check as cran check spelling usethis use spell check spelling spell check package check url are correct remotes install github r lib urlchecker urlchecker url check urlchecker url update upgrade version number usethis use version which c patch minor major dev check on other distributions rhub devtools check rhub rhub platforms rhub check on windows check args force multiarch show status false rhub check on solaris show status false rhub check platform debian clang devel show status false rhub check platform debian gcc devel show status false rhub check platform fedora clang devel show status false rhub check platform macos highsierra release cran show status false rhub check for cran show status false win devel devtools check win devel devtools check win release remotes install github r lib devtools devtools check mac release need to follow the url proposed to see the results update news bump version manually and add list of changes add comments for cran need to gitignore this file usethis use cran 
comments open rlang is interactive upgrade version number if necessary usethis use version which c patch minor major dev verify you re ready for release and release devtools release | 1 |

102,811 | 4,161,044,162 | IssuesEvent | 2016-06-17 15:18:03 | ALitttleBitDifferent/AmbientPrologueBugs | https://api.github.com/repos/ALitttleBitDifferent/AmbientPrologueBugs | opened | teleport through solid objects | bug Exploit High Priority | **Submitted by:** Zil

**Description:**

It is possible to make astrums horn poke through a wall/door, allowing spells to be cast through.

**How to reproduce:**

When standing with a wall or door on the right, looking straight down (or sometimes up) while in casting mode will put astrums head though it slightly, allowing player to teleport into the wall/dor and then once again into locked or hidden rooms. | 1.0 | teleport through solid objects - **Submitted by:** Zil

**Description:**

It is possible to make astrums horn poke through a wall/door, allowing spells to be cast through.

**How to reproduce:**

When standing with a wall or door on the right, looking straight down (or sometimes up) while in casting mode will put astrums head though it slightly, allowing player to teleport into the wall/dor and then once again into locked or hidden rooms. | priority | teleport through solid objects submitted by zil description it is possible to make astrums horn poke through a wall door allowing spells to be cast through how to reproduce when standing with a wall or door on the right looking straight down or sometimes up while in casting mode will put astrums head though it slightly allowing player to teleport into the wall dor and then once again into locked or hidden rooms | 1 |

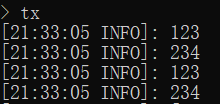

659,091 | 21,916,230,304 | IssuesEvent | 2022-05-21 21:42:08 | SkriptLang/Skript | https://api.github.com/repos/SkriptLang/Skript | closed | Loop Issue | bug priority: high completed | ### Skript/Server Version

```markdown

[21:34:25 INFO]: [Skript] Skript's aliases can be found here: https://github.com/SkriptLang/skript-aliases

[21:34:25 INFO]: [Skript] Skript's documentation can be found here: https://skriptlang.github.io/Skript

[21:34:25 INFO]: [Skript] Server Version: git-Paper-794 (MC: 1.16.5)

[21:34:25 INFO]: [Skript] Skript Version: 2.6.1

[21:34:25 INFO]: [Skript] Installed Skript Addons:

[21:34:25 INFO]: [Skript] - Repuska v2.8.8

[21:34:25 INFO]: [Skript] Installed dependencies:

[21:34:25 INFO]: [Skript] - Vault v1.7.3-b131

[21:34:25 INFO]: [Skript] - WorldGuard v7.0.5+3827266

```

### Bug Description

Here is my code of looping:

```

command /tx:

trigger:

set {_x} to "123"

set {_y} to "234"

loop {_x} and {_y}:

send loop-value to console

```

the output should be "123" and "234"

but the actual console output is:

### Expected Behavior

Times of looping multiple variables should be equal to the index of variables not "index of variables^2"

### Steps to Reproduce

```

command /tx:

trigger:

set {_x} to "123"

set {_y} to "234"

set {_z} to "345"

loop {_x}, {_y} and {_z}:

send loop-value to console

```

output screenshot:

```

command /tx:

trigger:

set {_x} to "123"

set {_y} to "234"

loop {_x} and {_y}:

send loop-value to console

```

output screenshot:

![Uploading image.png…]()

### Errors or Screenshots

None

### Other

_No response_

### Agreement

- [X] I have read the guidelines above and affirm I am following them with this report. | 1.0 | Loop Issue - ### Skript/Server Version

```markdown

[21:34:25 INFO]: [Skript] Skript's aliases can be found here: https://github.com/SkriptLang/skript-aliases

[21:34:25 INFO]: [Skript] Skript's documentation can be found here: https://skriptlang.github.io/Skript

[21:34:25 INFO]: [Skript] Server Version: git-Paper-794 (MC: 1.16.5)

[21:34:25 INFO]: [Skript] Skript Version: 2.6.1

[21:34:25 INFO]: [Skript] Installed Skript Addons:

[21:34:25 INFO]: [Skript] - Repuska v2.8.8

[21:34:25 INFO]: [Skript] Installed dependencies:

[21:34:25 INFO]: [Skript] - Vault v1.7.3-b131

[21:34:25 INFO]: [Skript] - WorldGuard v7.0.5+3827266

```

### Bug Description

Here is my code of looping:

```

command /tx:

trigger:

set {_x} to "123"

set {_y} to "234"

loop {_x} and {_y}:

send loop-value to console

```

the output should be "123" and "234"

but the actual console output is:

### Expected Behavior

Times of looping multiple variables should be equal to the index of variables not "index of variables^2"

### Steps to Reproduce

```

command /tx:

trigger:

set {_x} to "123"

set {_y} to "234"

set {_z} to "345"

loop {_x}, {_y} and {_z}:

send loop-value to console

```

output screenshot:

```

command /tx:

trigger:

set {_x} to "123"

set {_y} to "234"

loop {_x} and {_y}:

send loop-value to console

```

output screenshot:

![Uploading image.png…]()

### Errors or Screenshots

None

### Other

_No response_

### Agreement

- [X] I have read the guidelines above and affirm I am following them with this report. | priority | loop issue skript server version markdown skript s aliases can be found here skript s documentation can be found here server version git paper mc skript version installed skript addons repuska installed dependencies vault worldguard bug description here is my code of looping command tx trigger set x to set y to loop x and y send loop value to console the output should be and but the actual console output is expected behavior times of looping multiple variables should be equal to the index of variables not index of variables steps to reproduce command tx trigger set x to set y to set z to loop x y and z send loop value to console output screenshot command tx trigger set x to set y to loop x and y send loop value to console output screenshot errors or screenshots none other no response agreement i have read the guidelines above and affirm i am following them with this report | 1 |

284,023 | 8,729,624,825 | IssuesEvent | 2018-12-10 20:50:57 | ilmtest/search-engine | https://api.github.com/repos/ilmtest/search-engine | opened | Book coverage stats do not take into consideration to_page | bug priority/high technical-logic | Only checks from_page. It should also take into account to_page-from_page+1 to know how many pages were actually covered. | 1.0 | Book coverage stats do not take into consideration to_page - Only checks from_page. It should also take into account to_page-from_page+1 to know how many pages were actually covered. | priority | book coverage stats do not take into consideration to page only checks from page it should also take into account to page from page to know how many pages were actually covered | 1 |
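The page-count arithmetic described in this record can be sketched as follows. This is only an illustration under stated assumptions: the function names and the `(from_page, to_page)` tuple layout are hypothetical and are not taken from the ilmtest/search-engine code.

```python
def pages_covered(from_page, to_page=None):
    """Count the pages covered by one entry, inclusive of both ends."""
    if to_page is None:
        # Hypothetical convention: an entry without to_page covers one page.
        return 1
    if to_page < from_page:
        raise ValueError("to_page must not be smaller than from_page")
    return to_page - from_page + 1


def book_coverage(entries, total_pages):
    """Return the fraction of distinct pages covered by all entries."""
    covered = set()
    for from_page, to_page in entries:
        last = from_page if to_page is None else to_page
        # A set keeps overlapping ranges from being counted twice.
        covered.update(range(from_page, last + 1))
    return len(covered) / total_pages
```

Counting distinct pages rather than summing per-entry counts is a design choice made here so that overlapping entries do not inflate the statistic; the issue itself does not say how overlaps should be treated.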

247,703 | 7,922,565,196 | IssuesEvent | 2018-07-05 11:16:46 | cilium/cilium | https://api.github.com/repos/cilium/cilium | closed | Request fails in scale out scenario for pod to pod networking | area/datapath kind/bug kind/community-report priority/high |

## Bug reports

I have tried to communicate from a pod to pods which are scaling out at a moment using Service's Cluster IP. In Kubernetes, grouped Endpoints as one Service can be scaled out in some cases.(either manually and auto-scaling).

In this case, when pods are scaling out, requests fail must happen.

Request fails should not be happened in scaling out scenario. If connection sync is not correct in HTTP, web server sends RST-packet.. then request fails. (It doesn't happen in Calico)

---

In my polite opinion, it happens because of hash algorithm.

--> https://github.com/cilium/cilium/blob/master/bpf/lib/lb.h#L184

### Title

Request fails in scale out scenario for pod to pod networking

### General Information

- Cilium version (run `cilium version`

```

Client: 1.0.90 aef051d 2018-05-23T12:01:01-07:00 go version go1.9 linux/amd64

Daemon: 1.0.90 aef051d 2018-05-23T12:01:01-07:00 go version go1.9 linux/amd64

```

- Kernel version (run `uname -a`)

```

4.12.2-1.20180114.el7.centos.x86_64

```

- Orchestration system version in use (e.g. `kubectl version`, Mesos, ...)

```

Kubernetes v1.9.6

```

### How to reproduce the issue

1. Make 2 sets of Deployment and target Service(ClusterIP) in Kubernetes

2. Generate packets(I used locust - https://github.com/kubernetes/charts/tree/master/stable/locust) from a pod to the ClusterIP.

3. Check correct connectivity

4. Try to change Replica in a Deployment. The target Deployment is web servers(SimpleNodeJS...just helloworld)

5. Check request fails

---

If you have any discussion, then please contact me anytime. I'm very interested in Cillium.

Thank you.

| 1.0 | Request fails in scale out scenario for pod to pod networking -

## Bug reports

I have tried to communicate from a pod to pods which are scaling out at a moment using Service's Cluster IP. In Kubernetes, grouped Endpoints as one Service can be scaled out in some cases.(either manually and auto-scaling).

In this case, when pods are scaling out, requests fail must happen.

Request fails should not be happened in scaling out scenario. If connection sync is not correct in HTTP, web server sends RST-packet.. then request fails. (It doesn't happen in Calico)

---

In my polite opinion, it happens because of hash algorithm.

--> https://github.com/cilium/cilium/blob/master/bpf/lib/lb.h#L184

### Title

Request fails in scale out scenario for pod to pod networking

### General Information

- Cilium version (run `cilium version`

```

Client: 1.0.90 aef051d 2018-05-23T12:01:01-07:00 go version go1.9 linux/amd64

Daemon: 1.0.90 aef051d 2018-05-23T12:01:01-07:00 go version go1.9 linux/amd64

```

- Kernel version (run `uname -a`)

```

4.12.2-1.20180114.el7.centos.x86_64

```

- Orchestration system version in use (e.g. `kubectl version`, Mesos, ...)

```

Kubernetes v1.9.6

```

### How to reproduce the issue

1. Make 2 sets of Deployment and target Service(ClusterIP) in Kubernetes

2. Generate packets(I used locust - https://github.com/kubernetes/charts/tree/master/stable/locust) from a pod to the ClusterIP.

3. Check correct connectivity

4. Try to change Replica in a Deployment. The target Deployment is web servers(SimpleNodeJS...just helloworld)

5. Check request fails

---

If you have any discussion, then please contact me anytime. I'm very interested in Cillium.

Thank you.

| priority | request fails in scale out scenario for pod to pod networking bug reports i have tried to communicate from a pod to pods which are scaling out at a moment using service s cluster ip in kubernetes grouped endpoints as one service can be scaled out in some cases either manually and auto scaling in this case when pods are scaling out requests fail must happen request fails should not be happened in scaling out scenario if connection sync is not correct in http web server sends rst packet then request fails it doesn t happen in calico in my polite opinion it happens because of hash algorithm title request fails in scale out scenario for pod to pod networking general information cilium version run cilium version client go version linux daemon go version linux kernel version run uname a centos orchestration system version in use e g kubectl version mesos kubernetes how to reproduce the issue make sets of deployment and target service clusterip in kubernetes generate packets i used locust from a pod to the clusterip check correct connectivity try to change replica in a deployment the target deployment is web servers simplenodejs just helloworld check request fails if you have any discussion then please contact me anytime i m very interested in cillium thank you | 1 |
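The reporter's suspicion about the hash algorithm can be illustrated with a small sketch. This is not Cilium's implementation; it only assumes, hypothetically, that a backend is chosen as `hash(flow) % backend_count`, and shows that under that assumption growing the backend list can move flows that were already established to a different backend.

```python
import zlib


def pick_backend(flow_key, backends):
    """Pick a backend by hashing the flow key modulo the backend count."""
    slot = zlib.crc32(flow_key.encode("utf-8")) % len(backends)
    return backends[slot]


def remapped_flows(flow_keys, before, after):
    """Return the flows whose chosen backend changes between two backend lists."""
    return [
        key
        for key in flow_keys
        if pick_backend(key, before) != pick_backend(key, after)
    ]
```

If an established TCP connection is remapped this way, the new backend has no state for it and may answer with a reset, which would match the failures described in the report. Whether this is the actual cause is left open in the issue.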

54,005 | 3,058,455,089 | IssuesEvent | 2015-08-14 08:19:35 | OCHA-DAP/hdx-ckan | https://api.github.com/repos/OCHA-DAP/hdx-ckan | closed | Location page: random number of datasets on page | bug Priority-High | For different locations there is a random number of datasets that are displayed on each page (same for testing and prod servers)

| 1.0 | Location page: random number of datasets on page - For different locations there is a random number of datasets that are displayed on each page (same for testing and prod servers)

| priority | location page random number of datasets on page for different locations there is a random number of datasets that are displayed on each page same for testing and prod servers | 1 |

683,625 | 23,389,183,601 | IssuesEvent | 2022-08-11 16:08:33 | insightsengineering/tern.mmrm | https://api.github.com/repos/insightsengineering/tern.mmrm | closed | [UAT] Test fitting of rank-deficient models | sme high priority | UAT the option `accept_singular` in `fit_mmrm()` which allows to estimate rank-deficient models (similar as `lm()` and `gls()` do) by omitting singular coefficients.

To do:

- [x] Use pre-release branch on enableR (see Dinakar's instructions)

- [x] See `?fit_mmrm` for description of the `accept_singular` and check if that is clear

- [ ] Play around with the argument and results and see if that works - will need very small data sets, or artificially introduced collinear columns in the formula, to see the effect. | 1.0 | [UAT] Test fitting of rank-deficient models - UAT the option `accept_singular` in `fit_mmrm()` which allows to estimate rank-deficient models (similar as `lm()` and `gls()` do) by omitting singular coefficients.

To do:

- [x] Use pre-release branch on enableR (see Dinakar's instructions)

- [x] See `?fit_mmrm` for description of the `accept_singular` and check if that is clear

- [ ] Play around with the argument and results and see if that works - will need very small data sets, or artificially introduced collinear columns in the formula, to see the effect. | priority | test fitting of rank deficient models uat the option accept singular in fit mmrm which allows to estimate rank deficient models similar as lm and gls do by omitting singular coefficients to do use pre release branch on enabler see dinakar s instructions see fit mmrm for description of the accept singular and check if that is clear play around with the argument and results and see if that works will need very small data sets or artificially introduced collinear columns in the formula to see the effect | 1 |

468,751 | 13,489,793,535 | IssuesEvent | 2020-09-11 14:19:09 | tal3898/Hummus | https://api.github.com/repos/tal3898/Hummus | closed | add support button, in the bottom left | Done new feature priority - high | add a floating button in the bottom left of the windows.

display there my name, and my email (טל?? + ctrl + k)

make a "chatbot" there, that writes only:

חמור איך אני יכול לענות לך פה? תשלח לי מייל

| 1.0 | add support button, in the bottom left - add a floating button in the bottom left of the windows.

display there my name, and my email (טל?? + ctrl + k)

make a "chatbot" there, that writes only:

חמור איך אני יכול לענות לך פה? תשלח לי מייל

| priority | add support button in the bottom left add a floating button in the bottom left of the windows display there my name and my email טל ctrl k make a chatbot there that writes only חמור איך אני יכול לענות לך פה תשלח לי מייל | 1 |

705,153 | 24,223,552,953 | IssuesEvent | 2022-09-26 12:51:10 | joomlahenk/fabrik | https://api.github.com/repos/joomlahenk/fabrik | closed | Overloading no longer work in J!4.2.2. Please test. | help wanted High Priority | List filters were working in J!4.1.5, but no longer works in J!4.2.2. I also get:

Notice: Indirect modification of overloaded property FabrikFEModelList::$_whereSQL has no effect in .../components/com_fabrik/models/list.php on line 3579

Changed all $this->_whereSQL into $whereSQL and added property public $whereSQL = array();

Now list filters work again, but I am not sure if this is a final solution. May cause other issues?

| 1.0 | Overloading no longer work in J!4.2.2. Please test. - List filters were working in J!4.1.5, but no longer works in J!4.2.2. I also get:

Notice: Indirect modification of overloaded property FabrikFEModelList::$_whereSQL has no effect in .../components/com_fabrik/models/list.php on line 3579

Changed all $this->_whereSQL into $whereSQL and added property public $whereSQL = array();

Now list filters work again, but I am not sure if this is a final solution. May cause other issues?

| priority | overloading no longer work in j please test list filters were working in j but no longer works in j i also get notice indirect modification of overloaded property fabrikfemodellist wheresql has no effect in components com fabrik models list php on line changed all this wheresql into wheresql and added property public wheresql array now list filters work again but i am not sure if this is a final solution may cause other issues | 1 |

45,656 | 2,938,000,670 | IssuesEvent | 2015-07-01 07:52:49 | ndomar/megasoft-13 | https://api.github.com/repos/ndomar/megasoft-13 | closed | As a designer I should be able to react to events by adding a navigation link, animation or alert. | Component-1 Points-5 Priority-High | @Hoss93 @MennaAshraf @mayaammar @ahmadsoliman

This story is concerned with the events panel in terms of adding different actions to react to various events.

The following has to be added:

1- Listing different animations and events.

2- Defining an animation on a certain property

3- Validate the entered data.

Success scenarios:

1- Event and action are saved into the component's html.

Failure Scenario:

1- The action is not applied if it doesn't pass the validation procedure. | 1.0 | As a designer I should be able to react to events by adding a navigation link, animation or alert. - @Hoss93 @MennaAshraf @mayaammar @ahmadsoliman

This story is concerned with the events panel in terms of adding different actions to react to various events.

The following has to be added:

1- Listing different animations and events.

2- Defining an animation on a certain property

3- Validate the entered data.

Success scenarios:

1- Event and action are saved into the component's html.

Failure Scenario:

1- The action is not applied if it doesn't pass the validation procedure. | priority | as a designer i should be able to react to events by adding a navigation link animation or alert mennaashraf mayaammar ahmadsoliman this story is concerned with the events panel in terms of adding different actions to react to various events the following has to be added listing different animations and events defining an animation on a certain property validate the entered data success scenarios event and action are saved into the component s html failure scenario the action is not applied if it doesn t pass the validation procedure | 1 |

484,308 | 13,937,802,228 | IssuesEvent | 2020-10-22 14:33:03 | prysmaticlabs/prysm | https://api.github.com/repos/prysmaticlabs/prysm | closed | Beacon Node Still Returns Out of Order Blocks From Range Requests | Bug Priority: High Sync | # 🐞 Bug Report

### Description

Currently Lighthouse Peers score Prysm Nodes down due to the fact that prysm nodes occasionally

send back out of order blocks.

### Has this worked before in a previous version?

Supposed to.

## 🔬 Minimal Reproduction

Run a Prysm Node in medalla, and after a while lighthouse nodes start banning them.

## 🔥 Error

```

DEBUG sync: Peer has sent a goodbye message Reason=unknown goodbye value of 251 Received

```

Consistent goodbyes received from lighthouse nodes.

## 🌍 Your Environment

**Operating System:**

Ubuntu

**What version of Prysm are you running? (Which release)**

https://github.com/prysmaticlabs/prysm/commit/390a589afb6a38925b2d6da25a3df3d4f84a9bf7

**Anything else relevant (validator index / public key)?**

| 1.0 | Beacon Node Still Returns Out of Order Blocks From Range Requests - # 🐞 Bug Report

### Description

Currently Lighthouse Peers score Prysm Nodes down due to the fact that prysm nodes occasionally

send back out of order blocks.

### Has this worked before in a previous version?

Supposed to.

## 🔬 Minimal Reproduction

Run a Prysm Node in medalla, and after a while lighthouse nodes start banning them.

## 🔥 Error

```

DEBUG sync: Peer has sent a goodbye message Reason=unknown goodbye value of 251 Received

```

Consistent goodbyes received from lighthouse nodes.

## 🌍 Your Environment

**Operating System:**

Ubuntu

**What version of Prysm are you running? (Which release)**

https://github.com/prysmaticlabs/prysm/commit/390a589afb6a38925b2d6da25a3df3d4f84a9bf7

**Anything else relevant (validator index / public key)?**

| priority | beacon node still returns out of order blocks from range requests 🐞 bug report description currently lighthouse peers score prysm nodes down due to the fact that prysm nodes occasionally send back out of order blocks has this worked before in a previous version supposed to 🔬 minimal reproduction run a prysm node in medalla and after a while lighthouse nodes start banning them 🔥 error debug sync peer has sent a goodbye message reason unknown goodbye value of received consistent goodbyes received from lighthouse nodes 🌍 your environment operating system ubuntu what version of prysm are you running which release anything else relevant validator index public key | 1 |
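A minimal sketch of the ordering check implied by this report, assuming that blocks in a range response are expected to arrive in strictly increasing slot order. The function name and the plain list of slot numbers are hypothetical and are not Prysm or Lighthouse APIs.

```python
def first_out_of_order(slots):
    """Return the index of the first slot that breaks increasing order, or None."""
    for i in range(1, len(slots)):
        # A repeated or smaller slot means the response is out of order.
        if slots[i] <= slots[i - 1]:
            return i
    return None
```

A receiving peer could run a check like this before processing a batch, and a sending node could run it as a guard before writing the response.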

141,526 | 5,437,233,518 | IssuesEvent | 2017-03-06 05:54:55 | uryoya/molt | https://api.github.com/repos/uryoya/molt | closed | Fix magic numbers hard-coded in the source code | high priority refactoring | - [x] Redis Server HOST

- [x] Redis Server PORT

- [x] Flask DEBUG mode

- [x] Flask TEST mode

- [x] Flask HOST

- [x] Flask PORT

I will add more here if I find others. | 1.0 | Fix magic numbers hard-coded in the source code - - [x] Redis Server HOST

- [x] Redis Server PORT

- [x] Flask DEBUG mode

- [x] Flask TEST mode

- [x] Flask HOST

- [x] Flask PORT

I will add more here if I find others. | priority | fix magic numbers hard coded in the source code redis server host redis server port flask debug mode flask test mode flask host flask port i will add more here if i find others | 1 |
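One common way to move the six values in this record (Redis host and port, Flask debug and test mode, Flask host and port) out of the source is to read them from the environment with defaults. The sketch below is an assumption about how that could look: the `MOLT_*` variable names, the defaults, and `load_config` itself are hypothetical and do not come from the molt repository.

```python
import os


def load_config(environ=None):
    """Build a settings dict from environment variables, with defaults."""
    env = os.environ if environ is None else environ

    def as_bool(name, default):
        # Treat a few common spellings as true; everything else is false.
        raw = env.get(name)
        if raw is None:
            return default
        return raw.strip().lower() in ("1", "true", "yes", "on")

    return {
        "REDIS_HOST": env.get("MOLT_REDIS_HOST", "localhost"),
        "REDIS_PORT": int(env.get("MOLT_REDIS_PORT", "6379")),
        "FLASK_DEBUG": as_bool("MOLT_FLASK_DEBUG", False),
        "FLASK_TESTING": as_bool("MOLT_FLASK_TESTING", False),
        "FLASK_HOST": env.get("MOLT_FLASK_HOST", "127.0.0.1"),
        "FLASK_PORT": int(env.get("MOLT_FLASK_PORT", "5000")),
    }
```

Passing a plain dict as `environ` makes the function easy to exercise in tests without touching the real process environment.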

89,966 | 3,807,427,595 | IssuesEvent | 2016-03-25 08:17:42 | Captianrock/android_PV | https://api.github.com/repos/Captianrock/android_PV | closed | User Authentication | High Priority New Feature | As a user of the web client, I would like to authenticate myself when logging in. | 1.0 | User Authentication - As a user of the web client, I would like to authenticate myself when logging in. | priority | user authentication as a user of the web client i would like to authenticate myself when logging in | 1 |

559,342 | 16,556,541,149 | IssuesEvent | 2021-05-28 14:31:51 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | profile.snapchat.com - site is not usable | browser-firefox-ios bugbug-probability-high os-ios priority-normal | <!-- @browser: Firefox iOS 33.1 -->

<!-- @ua_header: Mozilla/5.0 (iPhone; CPU OS 13_3 like Mac OS X) AppleWebKit/605.1.15 (KHTML, like Gecko) FxiOS/33.1 Mobile/15E148 Safari/605.1.15 -->

<!-- @reported_with: mobile-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/74607 -->

**URL**: https://profile.snapchat.com/_popup?redirect=https%3A%2F%2Faccounts.snapchat.com%2Faccounts%2Flogin%3Fclient_id%3Dads-api%26referrer%3Dhttps%25253A%25252F%25252Fprofile.snapchat.com%25252F_popup%25253FparentId%25253D3d81e660-c9e9-409e-b35a-94a047fdc23d%252526close%25253Dtrue%252526callback%25253DonPopupCalled%26business%3Dtrue%26skip_login%3Dtrue%26multi_user%3Dtrue%26ignore_welcome_email%3Dfalse

**Browser / Version**: Firefox iOS 33.1

**Operating System**: iOS 13.3

**Tested Another Browser**: Yes Safari

**Problem type**: Site is not usable

**Description**: Page not loading correctly

**Steps to Reproduce**:

Won’t load at all just blank page

<details>

<summary>Browser Configuration</summary>

<ul>

<li>None</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_ | 1.0 | profile.snapchat.com - site is not usable - <!-- @browser: Firefox iOS 33.1 -->

<!-- @ua_header: Mozilla/5.0 (iPhone; CPU OS 13_3 like Mac OS X) AppleWebKit/605.1.15 (KHTML, like Gecko) FxiOS/33.1 Mobile/15E148 Safari/605.1.15 -->

<!-- @reported_with: mobile-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/74607 -->

**URL**: https://profile.snapchat.com/_popup?redirect=https%3A%2F%2Faccounts.snapchat.com%2Faccounts%2Flogin%3Fclient_id%3Dads-api%26referrer%3Dhttps%25253A%25252F%25252Fprofile.snapchat.com%25252F_popup%25253FparentId%25253D3d81e660-c9e9-409e-b35a-94a047fdc23d%252526close%25253Dtrue%252526callback%25253DonPopupCalled%26business%3Dtrue%26skip_login%3Dtrue%26multi_user%3Dtrue%26ignore_welcome_email%3Dfalse

**Browser / Version**: Firefox iOS 33.1

**Operating System**: iOS 13.3

**Tested Another Browser**: Yes Safari

**Problem type**: Site is not usable

**Description**: Page not loading correctly

**Steps to Reproduce**:

Won’t load at all just blank page

<details>

<summary>Browser Configuration</summary>

<ul>

<li>None</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_ | priority | profile snapchat com site is not usable url browser version firefox ios operating system ios tested another browser yes safari problem type site is not usable description page not loading correctly steps to reproduce won’t load at all just blank page browser configuration none from with ❤️ | 1 |

404,273 | 11,854,543,154 | IssuesEvent | 2020-03-25 01:10:45 | art-community/ART | https://api.github.com/repos/art-community/ART | closed | Bug with HTTP interceptors | HTTP server bug good first issue help wanted high priority |

When HTTP interceptor returns 'STOP_HANDLING' strategy, then no one from subsequent HTTP Filters (include servlets) will be called.

In this issue needs to refactor HttpServer class and replace next result holders

```

private final static ThreadLocal<InterceptionStrategy> lastRequestInterceptionResult = new ThreadLocal<>();

private final static ThreadLocal<InterceptionStrategy> lastResponseInterceptionResult = new ThreadLocal<>();

```

for something safe and simple.

We need to clear last interception strategy between requests. | 1.0 | Bug with HTTP interceptors -

When HTTP interceptor returns 'STOP_HANDLING' strategy, then no one from subsequent HTTP Filters (include servlets) will be called.

In this issue needs to refactor HttpServer class and replace next result holders

```

private final static ThreadLocal<InterceptionStrategy> lastRequestInterceptionResult = new ThreadLocal<>();

private final static ThreadLocal<InterceptionStrategy> lastResponseInterceptionResult = new ThreadLocal<>();

```

for something safe and simple.

We need to clear last interception strategy between requests. | priority | bug with http interceptors when http interceptor returns stop handling strategy then no one from subsequent http filters include servlets will be called in this issue needs to refactor httpserver class and replace next result holders private final static threadlocal lastrequestinterceptionresult new threadlocal private final static threadlocal lastresponseinterceptionresult new threadlocal for something safe and simple we need to clear last interception strategy between requests | 1 |

295,762 | 9,100,872,949 | IssuesEvent | 2019-02-20 09:41:39 | ESA-VirES/WebClient-Framework | https://api.github.com/repos/ESA-VirES/WebClient-Framework | closed | 'time selection outside the model validity' warning not displayed for the new Swarm SHA L2 models. | bug priority high | The recently added Swarm L2 SHA models, like the older models, are limited by the validity period.

A warning should be displayed when the time selection exceeds this validity period, just like it is done for the older models.

| 1.0 | 'time selection outside the model validity' warning not displayed for the new Swarm SHA L2 models. - The recently added Swarm L2 SHA models, like the older models, are limited by the validity period.

A warning should be displayed when the time selection exceeds this validity period, just like it is done for the older models.

| priority | time selection outside the model validity warning not displayed for the new swarm sha models the recently added swarm sha models like the older models are limited by the validity period a warning should be displayed when the time selection exceeds this validity period just like it is done for the older models | 1 |

470,015 | 13,529,699,799 | IssuesEvent | 2020-09-15 18:43:07 | HEXRD/hexrdgui | https://api.github.com/repos/HEXRD/hexrdgui | closed | distortion overhaul | enhancement hedm high priority llnl | I'll put you on notice that I am finally fixing the distortion, which remains to be `None` or hard coded the the 6-parameter GE-style function that is defined in distortion.py

Summary of the changes:

1. distortion.py is moving to a package

2. defining an abc to provide a single interface for the distortion application

3. start a registry of different adapters for the various distortion functions (which have different length parameter lists)

Questions:

- do we want to always have a distortion class generated and just have a null case rather than using `None`?

- different distortion functions will have different length parameter lists; I recognize this creates issues for the GUI -- do we need to generate a separate widget for handing the distortion? Tree view might not care, but the forms view will...

This is related to #418

I'm working this now... | 1.0 | distortion overhaul - I'll put you on notice that I am finally fixing the distortion, which remains to be `None` or hard coded the the 6-parameter GE-style function that is defined in distortion.py

Summary of the changes:

1. distortion.py is moving to a package

2. defining an abc to provide a single interface for the distortion application

3. start a registry of different adapters for the various distortion functions (which have different length parameter lists)

Questions:

- do we want to always have a distortion class generated and just have a null case rather than using `None`?

- different distortion functions will have different length parameter lists; I recognize this creates issues for the GUI -- do we need to generate a separate widget for handing the distortion? Tree view might not care, but the forms view will...

This is related to #418

I'm working this now... | priority | distortion overhaul i ll put you on notice that i am finally fixing the distortion which remains to be none or hard coded the the parameter ge style function that is defined in distortion py summary of the changes distortion py is moving to a package defining an abc to provide a single interface for the distortion application start a registry of different adapters for the various distortion functions which have different length parameter lists questions do we want to always have a distortion class generated and just have a null case rather than using none different distortion functions will have different length parameter lists i recognize this creates issues for the gui do we need to generate a separate widget for handing the distortion tree view might not care but the forms view will this is related to i m working this now | 1 |
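The three changes summarized in this record (a package, an abstract base class with a single interface, and a registry of adapters with differently sized parameter lists) could be sketched roughly as below. Every name here is hypothetical and the `ScaleDistortion` class is a toy stand-in; none of it is hexrd's actual API or the 6-parameter GE-style function. The `NullDistortion` class shows one possible answer to the first open question, a null case instead of `None`.

```python
from abc import ABC, abstractmethod

_REGISTRY = {}


def register(name):
    """Class decorator that records a distortion class under a name."""
    def decorator(cls):
        _REGISTRY[name] = cls
        return cls
    return decorator


class DistortionBase(ABC):
    """Single interface for all distortion functions."""

    def __init__(self, params=()):
        self.params = list(params)

    @abstractmethod
    def apply(self, xy):
        """Map a list of (x, y) points to distorted (x, y) points."""


@register("null")
class NullDistortion(DistortionBase):
    """No-op case, usable where the code currently passes None."""

    def apply(self, xy):
        return list(xy)


@register("scale")
class ScaleDistortion(DistortionBase):
    """Toy two-parameter example: independent x and y scale factors."""

    def apply(self, xy):
        sx, sy = self.params
        return [(x * sx, y * sy) for x, y in xy]


def make_distortion(name, params=()):
    """Look up a registered distortion by name and instantiate it."""
    return _REGISTRY[name](params)
```

With a registry like this, a GUI could query the length of `params` for the selected entry to decide how many fields to show, which bears on the second open question about widgets.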

166,909 | 6,314,524,325 | IssuesEvent | 2017-07-24 11:05:36 | geosolutions-it/MapStore2 | https://api.github.com/repos/geosolutions-it/MapStore2 | opened | Intermittent icon status in layer tree when loading | enhancement Priority: High task | The animated gif below to clarify the issue:

This issue could be fixed during the TOC review ( issue #2025 )

| 1.0 | Intermittent icon status in layer tree when loading - The animated gif below to clarify the issue:

This issue could be fixed during the TOC review ( issue #2025 )

| priority | intermittent icon status in layer tree when loading the animated gif below to clarify the issue this issue could be fixed during the toc review issue | 1 |

513,805 | 14,926,888,446 | IssuesEvent | 2021-01-24 13:24:14 | bounswe/bounswe2020group4 | https://api.github.com/repos/bounswe/bounswe2020group4 | closed | (BKND) Push Notifications | Backend Coding Effort: High Priority: High Status: In-Progress Task: Assignment | Realtime push notifications should be sent to clients.

Notifications should be stored to be returned in an endpoint.

Deadline: 24/01/21 | 1.0 | (BKND) Push Notifications - Realtime push notifications should be sent to clients.

Notifications should be stored to be returned in an endpoint.

Deadline: 24/01/21 | priority | bknd push notifications realtime push notifications should be sent to clients notifications should be stored to be returned in an endpoint deadline | 1 |

240,789 | 7,805,843,624 | IssuesEvent | 2018-06-11 12:18:35 | ColoredCow/employee-portal | https://api.github.com/repos/ColoredCow/employee-portal | opened | Applicant and college association | HR priority : high | An applicant can belong to a college. Currently, we're tracking the college names. We'll have to associate with the id instead once #414 is done. | 1.0 | Applicant and college association - An applicant can belong to a college. Currently, we're tracking the college names. We'll have to associate with the id instead once #414 is done. | priority | applicant and college association an applicant can belong to a college currently we re tracking the college names we ll have to associate with the id instead once is done | 1 |

563,747 | 16,704,905,501 | IssuesEvent | 2021-06-09 08:50:08 | enix/dothill-csi | https://api.github.com/repos/enix/dothill-csi | opened | volume is considered already unpublished as soon as lsblk cannot find one of the devices | priority/high type/bug | ```

DEBUG: 2021/06/09 08:45:50 iscsi.go:454: An error occured while looking info about SCSI devices: lsblk: /dev/sdd: not a block device, (exit status 64)

W0609 08:45:50.834330 1 node.go:224] assuming that ISCSI connection is already closed: lsblk: /dev/sdd: not a block device, (exit status 64)

``` | 1.0 | volume is considered already unpublished as soon as lsblk cannot find one of the devices - ```

DEBUG: 2021/06/09 08:45:50 iscsi.go:454: An error occured while looking info about SCSI devices: lsblk: /dev/sdd: not a block device, (exit status 64)

W0609 08:45:50.834330 1 node.go:224] assuming that ISCSI connection is already closed: lsblk: /dev/sdd: not a block device, (exit status 64)

``` | priority | volume is considered already unpublished as soon as lsblk cannot find one of the devices debug iscsi go an error occured while looking info about scsi devices lsblk dev sdd not a block device exit status node go assuming that iscsi connection is already closed lsblk dev sdd not a block device exit status | 1 |

52,780 | 3,029,469,683 | IssuesEvent | 2015-08-04 12:49:36 | PolarisSS13/Polaris | https://api.github.com/repos/PolarisSS13/Polaris | opened | You can join as jobs that don't exist or have roundstart markers | NRV Dauntless Priority: High |

Jobs that don't exist:

>Librarian

>Atmos Tech (General Engineer as replacement)

>Cargo Tech

>IA Agent

>Chaplain

>Warden

>Xenobiologist

>RD

>Psych

>Geneticist

>Gardener | 1.0 | You can join as jobs that don't exist or have roundstart markers -

Jobs that don't exist:

>Librarian

>Atmos Tech (General Engineer as replacement)

>Cargo Tech

>IA Agent

>Chaplain

>Warden

>Xenobiologist

>RD

>Psych

>Geneticist

>Gardener | priority | you can join as jobs that don t exist or have roundstart markers jobs that don t exist librarian atmos tech general engineer as replacement cargo tech ia agent chaplain warden xenobiologist rd psych geneticist gardener | 1 |

292,445 | 8,958,093,881 | IssuesEvent | 2019-01-27 11:22:04 | stancl/tenancy | https://api.github.com/repos/stancl/tenancy | opened | HTTPS certificates | enhancement high priority | When the `yourclient.yourapp.com`, `yourclient2.yourapp.com` model is used, a wildcard cert can take care of HTTPS. However, when the `yourapp.yourclient.com`, `yourapp.yourclient2.com` model is used, there needs to be some feature for HTTPS management. Luckily file-based verification can be used with Let's Encrypt, so perhaps creating a route to verify the domain ownership is sufficient? Auto renewal etc could be added too. | 1.0 | HTTPS certificates - When the `yourclient.yourapp.com`, `yourclient2.yourapp.com` model is used, a wildcard cert can take care of HTTPS. However, when the `yourapp.yourclient.com`, `yourapp.yourclient2.com` model is used, there needs to be some feature for HTTPS management. Luckily file-based verification can be used with Let's Encrypt, so perhaps creating a route to verify the domain ownership is sufficient? Auto renewal etc could be added too. | priority | https certificates when the yourclient yourapp com yourapp com model is used a wildcard cert can take care of https however when the yourapp yourclient com yourapp com model is used there needs to be some feature for https management luckily file based verification can be used with let s encrypt so perhaps creating a route to verify the domain ownership is sufficient auto renewal etc could be added too | 1 |

234,420 | 7,720,826,640 | IssuesEvent | 2018-05-24 01:29:52 | AtlasOfLivingAustralia/layers-service | https://api.github.com/repos/AtlasOfLivingAustralia/layers-service | closed | Load Layer: States and Territories Polygons 2011 | enhancement priority-high status-started | _migrated from:_ https://code.google.com/p/ala/issues/detail?id=58

_date:_ Thu Aug 8 05:31:55 2013

_author:_ moyesyside

---

Original Issue - [https://code.google.com/p/alageospatialportal/issues/detail?id=801](https://code.google.com/p/alageospatialportal/issues/detail?id=801)

Reported by d...@dougashton.com, Nov 29, 2011

load "layers->political->Australia States and Territories" and then look at border area. I looked at the border between Canberra and Queanbeyan and also Cameron Corner [http://en.wikipedia.org/wiki/Cameron_Corner](http://en.wikipedia.org/wiki/Cameron_Corner). The Google supplied borders matched the base layers but "layers->political->Australia States and Territories" matched neither.

The end result is confusing if you are trying to work out visually which state a point close to the border is in. Important for understanding the operation of Sensitive data rules.

In addition the "layers->political->Australia States and Territories" shows a dog leg in the border near Cameron Corner that I have not been able to see on other maps.

Unfortunately the "View metadata for Australian States .." page [http://spatial.ala.org.au/alaspatial/layers/22](http://spatial.ala.org.au/alaspatial/layers/22) has broken links.

The same issues apply to the LGA Boundaries layer as well

Nov 29, 2011 `#1` d...@dougashton.com

I have just tested "layers->Marine->boundaries->States including coastal waters" and the State boundaries it includes match very closely with the google boundaries and base layers.

This layer also has excellent metadata associated with it and appears authoritative.

Nov 29, 2011 `#2` ajay.ranipeta

setting to Lee for triage

Owner: leebel...@gmail.com Nov 29, 2011 Project Member `#3` leebel...@gmail.com

It boils down to a need for a better States and Territories layer and fixing the broken links in the layer table. Meanwhile, the states/territories with coastal waters will need to suffice.

There is a 2011 states/territories shapefile at [http://abs.gov.au/AUSSTATS/abs@.nsf/DetailsPage/1270.0.55.001July%202011?OpenDocument](http://abs.gov.au/AUSSTATS/abs@.nsf/DetailsPage/1270.0.55.001July%202011?OpenDocument). Metadata? Who knows.

Status: Accepted Owner: yuanfang...@gmail.com Cc: leebel...@gmail.com Nov 29, 2011 Project Member `#4` leebel...@gmail.com

(No comment was entered for this change.)

Cc: d...@dougashton.com Feb 15, 2012 `#5` ajay.ranipeta

Should this be done by Chris as he is set to update the LGA boundaries? [Issue 670](https://code.google.com/p/ala/issues/detail?id=670)

Feb 15, 2012 Project Member `#6` leebel...@gmail.com

Yes. Over to Chris to lead. Added the updated states/territories to the ListOfOutstandingLayersToBeIngested spreadsheet on Google Docs.

Owner: chris.fl...@gmail.com Labels: -Priority-Medium Priority-High Apr 24, 2012 Project Member `#7` chris.fl...@gmail.com

Changing title to reflect that this layer needs to be loaded.

Summary: LOAD LAYER: States and Territories Polygons 2011 Apr 25, 2012 Project Member `#8` leebel...@gmail.com

(No comment was entered for this change.)

Labels: -Priority-High Priority-Low Jul 10, 2013 Project Member `#9` leebel...@gmail.com

This is a basic layer used in many applications and there appears to be an offset of up to 1km on the ACT boundary so it would be a good idea to update this layer asap. Agree with Doug that the layer "States and territories including coastal waters" is far more accurate

Cc: -d...@dougashton.com moyesyside Labels: -Priority-Low Priority-High

| 1.0 | Load Layer: States and Territories Polygons 2011 - _migrated from:_ https://code.google.com/p/ala/issues/detail?id=58

_date:_ Thu Aug 8 05:31:55 2013

_author:_ moyesyside

---

Original Issue - [https://code.google.com/p/alageospatialportal/issues/detail?id=801](https://code.google.com/p/alageospatialportal/issues/detail?id=801)

Reported by d...@dougashton.com, Nov 29, 2011

load "layers->political->Australia States and Territories" and then look at border area. I looked at the border between Canberra and Queanbeyan and also Cameron Corner [http://en.wikipedia.org/wiki/Cameron_Corner](http://en.wikipedia.org/wiki/Cameron_Corner). The Google supplied borders matched the base layers but "layers->political->Australia States and Territories" matched neither.

The end result is confusing if you are trying to work out visually which state a point close to the border is in. Important for understanding the operation of Sensitive data rules.

In addition the "layers->political->Australia States and Territories" shows a dog leg in the border near Cameron Corner that I have not been able to see on other maps.

Unfortunately the "View metadata for Australian States .." page [http://spatial.ala.org.au/alaspatial/layers/22](http://spatial.ala.org.au/alaspatial/layers/22) has broken links.

The same issues apply to the LGA Boundaries layer as well

Nov 29, 2011 `#1` d...@dougashton.com

I have just tested "layers->Marine->boundaries->States including coastal waters" and the State boundaries it includes match very closely with the google boundaries and base layers.

This layer also has excellent metadata associated with it and appears authoritative.

Nov 29, 2011 `#2` ajay.ranipeta

setting to Lee for triage

Owner: leebel...@gmail.com Nov 29, 2011 Project Member `#3` leebel...@gmail.com

It boils down to a need for a better States and Territories layer and fixing the broken links in the layer table. Meanwhile, the states/territories with coastal waters will need to suffice.