Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 855 | labels stringlengths 4 721 | body stringlengths 1 261k | index stringclasses 13 values | text_combine stringlengths 96 261k | label stringclasses 2 values | text stringlengths 96 240k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

735,798 | 25,414,725,724 | IssuesEvent | 2022-11-22 22:30:34 | VEuPathDB/web-eda | https://api.github.com/repos/VEuPathDB/web-eda | closed | Bug: Scatterplot has legend issue on QA site | bug high priority | There is now a bug with the legend when an overlay variable is added to the plot. See this GEMS1 example.

**On the live site it looks fine**

<img width="944" alt="image" src="https://user-images.githubusercontent.com/25450900/202033718-9af0b401-1d74-41ad-82d5-ef6390709e6d.png">

**On the QA site we are getting a gradient color map but the colors don't match what is on the plot and this is a categorical variable**

<img width="980" alt="image" src="https://user-images.githubusercontent.com/25450900/202033790-ad59b01e-2166-493b-9b2f-9466253286d5.png">

| 1.0 | Bug: Scatterplot has legend issue on QA site - There is now a bug with the legend when an overlay variable is added to the plot. See this GEMS1 example.

**On the live site it looks fine**

<img width="944" alt="image" src="https://user-images.githubusercontent.com/25450900/202033718-9af0b401-1d74-41ad-82d5-ef6390709e6d.png">

**On the QA site we are getting a gradient color map but the colors don't match what is on the plot and this is a categorical variable**

<img width="980" alt="image" src="https://user-images.githubusercontent.com/25450900/202033790-ad59b01e-2166-493b-9b2f-9466253286d5.png">

| priority | bug scatterplot has legend issue on qa site there is now a bug with the legend when an overlay variable is added to the plot see this example on the live site it looks fine img width alt image src on the qa site we are getting a gradient color map but the colors don t match what is on the plot and this is a categorical variable img width alt image src | 1 |

816,855 | 30,614,577,860 | IssuesEvent | 2023-07-24 01:06:21 | steedos/steedos-platform | https://api.github.com/repos/steedos/steedos-platform | closed | [Bug]: Application tabs fail to display | bug done priority: High | ### Description

<img width="1083" alt="image" src="https://github.com/steedos/steedos-platform/assets/6194462/d1fc0a59-b658-4d67-abd9-fd3384ce93a9">

### Steps To Reproduce

Application tabs created before 2.5.8 do not display correctly in version 2.5.8.

### Version

2.5.8 | 1.0 | [Bug]: Application tabs fail to display - ### Description

<img width="1083" alt="image" src="https://github.com/steedos/steedos-platform/assets/6194462/d1fc0a59-b658-4d67-abd9-fd3384ce93a9">

### Steps To Reproduce

Application tabs created before 2.5.8 do not display correctly in version 2.5.8.

### Version

2.5.8 | priority | application tabs fail to display description img width alt image src steps to reproduce application tabs created before do not display correctly in version version | 1 |

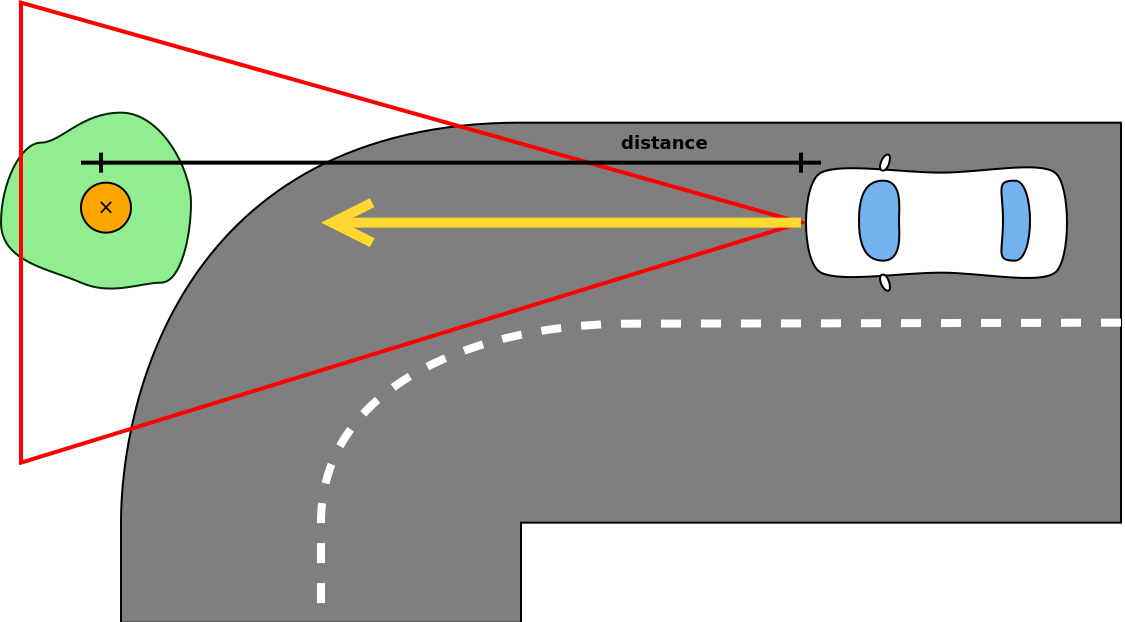

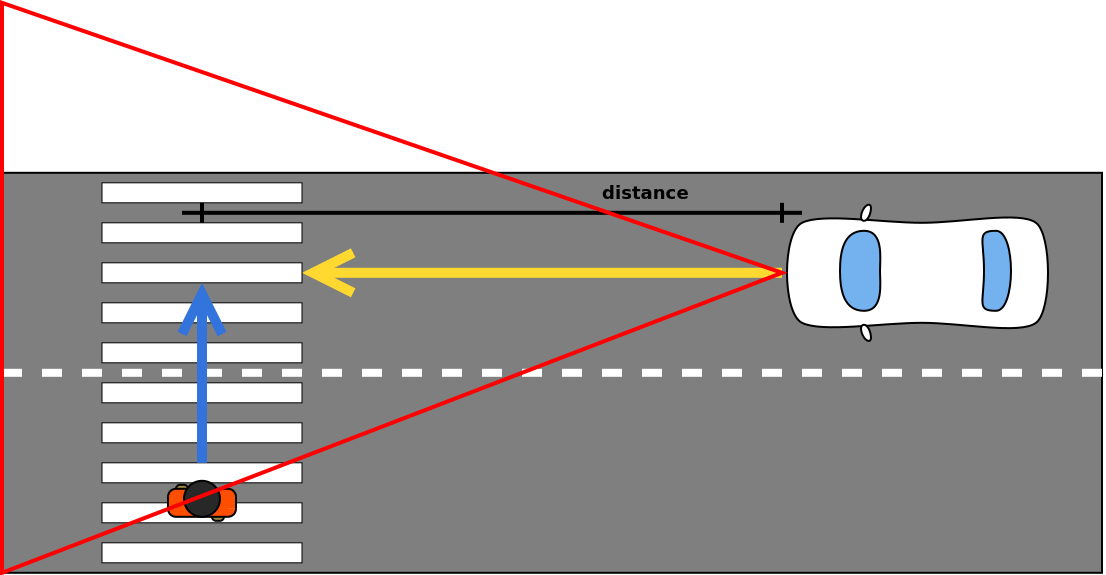

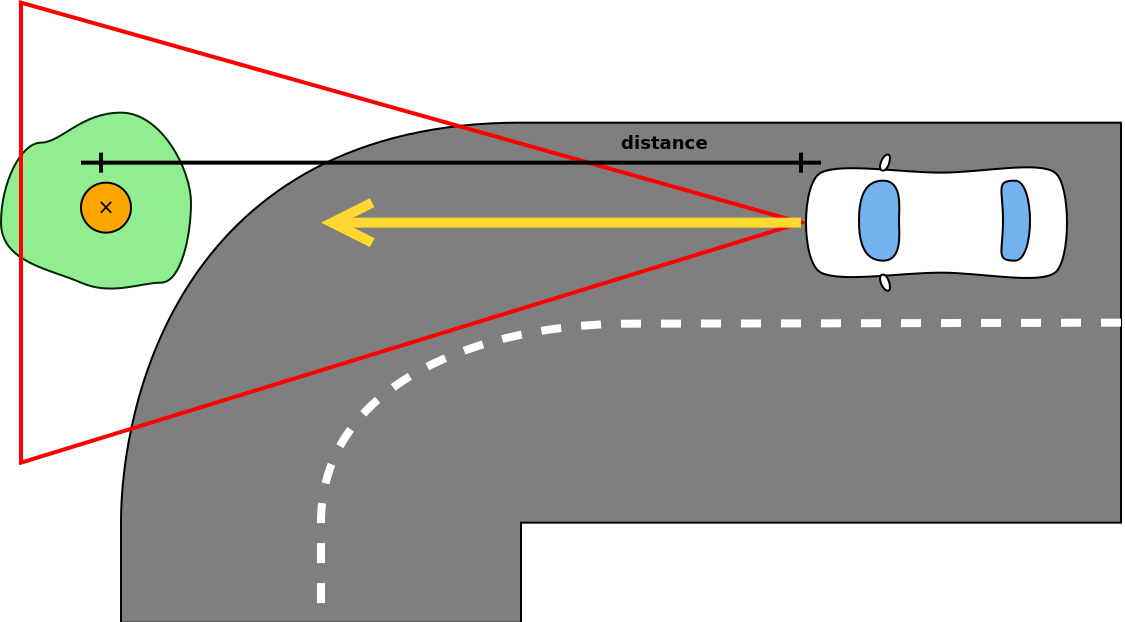

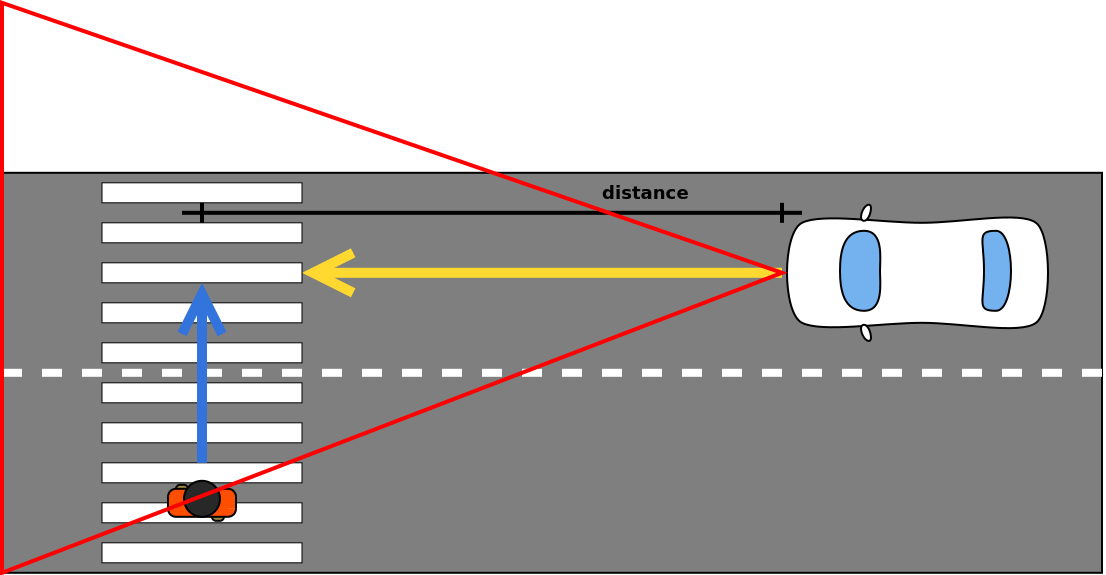

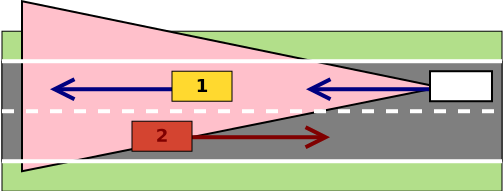

787,957 | 27,737,077,438 | IssuesEvent | 2023-03-15 11:59:44 | SzFMV2023-Tavasz/AutomatedCar-A | https://api.github.com/repos/SzFMV2023-Tavasz/AutomatedCar-A | opened | Emergency brake | effort: high priority: critical type: user story | The module is responsible for implementing the [automatic emergency braking system](https://szfmv2023-tavasz.github.io/handout/functions.html#autonóm-vészfékező-rendszer-automatic-emergency-brake---aeb) built on the radar sensor. Emergency braking is a critical safety function, so it cannot be switched off manually, but it only operates up to 70 km/h. Its operation breaks down into two cases: collision with a static object and collision with a dynamic object.

The former is the simpler case, because the position of the object that poses the hazard does not change.

The module has to decide whether the car, given its current heading vector, will collide with the object. If so, the car's known speed can be used to compute how much time is left until the collision and how much deceleration is needed to avoid it.

The radar returns the data (distance, speed) of the nearest relevant object in front of the car, and these are the values to compute with. From the distance and the car's speed, it can be determined what deceleration the car needs in order to stop in time without exceeding \\( 9 m/s^2 \\).

If the collision is avoidable, a visual warning must be shown telling the driver to brake. If the driver does not react, i.e. the car is still on a collision course and the collision can now only be avoided by emergency braking, an emergency braking input must be sent to the powertrain. This input applies the maximum permitted deceleration of \\( 9 m/s^2 \\) (a greater deceleration is dangerous for the passengers).

If nothing else works, trial and error must be used to determine how long a maximum braking input (100% pedal position) takes to bring the car to a standstill from a given speed.

The module controls the car with the same kind of triggers that come from the keypress handler (brake pedal position).

For dynamic objects, the emergency braking principle is the same, but determining the collision course is more complex.

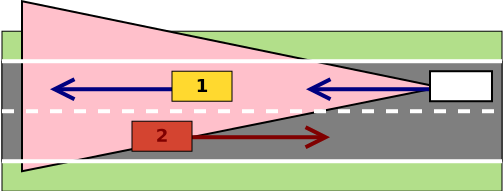

An oncoming car in another lane must not trigger emergency braking, so the module has to be able to decide that in that case there is no collision course.

### Definition of Done

- [ ] Visual warning to the driver when a collision is avoidable

- [ ] If the driver does not intervene, automatic braking (at the last moment at which the collision can still be avoided)

- [ ] The strength of automatic braking is proportional to the speed, but it cannot exceed \\( 9 m/s^2 \\)

- [ ] Above 70 km/h, a warning that the AEB cannot handle every situation

- [ ] The controlled car does not hit pedestrians or run into trees

- [ ] No emergency braking for irrelevant objects (false positives), such as an oncoming car | 1.0 | Emergency brake - The module is responsible for implementing the [automatic emergency braking system](https://szfmv2023-tavasz.github.io/handout/functions.html#autonóm-vészfékező-rendszer-automatic-emergency-brake---aeb) built on the radar sensor. Emergency braking is a critical safety function, so it cannot be switched off manually, but it only operates up to 70 km/h. Its operation breaks down into two cases: collision with a static object and collision with a dynamic object.

The former is the simpler case, because the position of the object that poses the hazard does not change.

The module has to decide whether the car, given its current heading vector, will collide with the object. If so, the car's known speed can be used to compute how much time is left until the collision and how much deceleration is needed to avoid it.

The radar returns the data (distance, speed) of the nearest relevant object in front of the car, and these are the values to compute with. From the distance and the car's speed, it can be determined what deceleration the car needs in order to stop in time without exceeding \\( 9 m/s^2 \\).

If the collision is avoidable, a visual warning must be shown telling the driver to brake. If the driver does not react, i.e. the car is still on a collision course and the collision can now only be avoided by emergency braking, an emergency braking input must be sent to the powertrain. This input applies the maximum permitted deceleration of \\( 9 m/s^2 \\) (a greater deceleration is dangerous for the passengers).

If nothing else works, trial and error must be used to determine how long a maximum braking input (100% pedal position) takes to bring the car to a standstill from a given speed.

The module controls the car with the same kind of triggers that come from the keypress handler (brake pedal position).

For dynamic objects, the emergency braking principle is the same, but determining the collision course is more complex.

An oncoming car in another lane must not trigger emergency braking, so the module has to be able to decide that in that case there is no collision course.

### Definition of Done

- [ ] Visual warning to the driver when a collision is avoidable

- [ ] If the driver does not intervene, automatic braking (at the last moment at which the collision can still be avoided)

- [ ] The strength of automatic braking is proportional to the speed, but it cannot exceed \\( 9 m/s^2 \\)

- [ ] Above 70 km/h, a warning that the AEB cannot handle every situation

- [ ] The controlled car does not hit pedestrians or run into trees

- [ ] No emergency braking for irrelevant objects (false positives), such as an oncoming car | priority | emergency brake the module is responsible for implementing the built on the radar sensor emergency braking is a critical safety function so it cannot be switched off manually but it only operates up to km h its operation breaks down into two cases collision with a static object and collision with a dynamic object the former is the simpler case because the position of the object that poses the hazard does not change the module has to decide whether the car given its current heading vector will collide with the object if so the car s known speed can be used to compute how much time is left until the collision and how much deceleration is needed to avoid it the radar returns the data distance speed of the nearest relevant object in front of the car and these are the values to compute with from the distance and the car s speed it can be determined what deceleration the car needs in order to stop in time without exceeding m s if the collision is avoidable a visual warning must be shown telling the driver to brake if the driver does not react i e the car is still on a collision course and the collision can now only be avoided by emergency braking an emergency braking input must be sent to the powertrain this input applies the maximum permitted deceleration of m s a greater deceleration is dangerous for the passengers if nothing else works trial and error must be used to determine how long a maximum braking input pedal position takes to bring the car to a standstill from a given speed the module controls the car with the same kind of triggers that come from the keypress handler brake pedal position for dynamic objects the emergency braking principle is the same but determining the collision course is more complex an oncoming car in another lane must not trigger emergency braking so the module has to be able to decide that in that case there is no collision course definition of done visual warning to the driver when a collision is avoidable if the driver does not intervene automatic braking at the last moment at which the collision can still be avoided the strength of automatic braking is proportional to the speed but it cannot exceed m s above km h a warning that the aeb cannot handle every situation the controlled car does not hit pedestrians or run into trees no emergency braking for irrelevant objects false positives such as an oncoming car | 1 |
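The stopping-distance reasoning in the AutomatedCar-A emergency-braking issue can be illustrated with a minimal Python sketch. It is not the project's code: it assumes the radar already reports only the nearest relevant object in the car's path (so an oncoming car in another lane is filtered out upstream), that this object keeps a constant speed along the car's heading (0 for a static object), and that a hypothetical 3 m/s² threshold triggers the visual warning. The required constant deceleration is v²/(2d), where v is the closing speed and d the distance; braking is triggered once that value reaches the 9 m/s² cap, which corresponds to the last moment at which braking can still avoid the collision.

```python
from dataclasses import dataclass
from typing import Optional

A_MAX = 9.0                # m/s^2, deceleration cap stated in the issue
AEB_MAX_SPEED = 70 / 3.6   # m/s, AEB only operates up to 70 km/h
WARN_DECEL = 3.0           # m/s^2, hypothetical threshold for the visual warning


@dataclass
class AebDecision:
    action: str                         # "none", "warn", "brake" or "disabled"
    required_decel: float               # m/s^2 needed to stop just short of the object
    brake_decel: float                  # m/s^2 requested from the powertrain
    time_to_collision: Optional[float]  # s, None when the gap is not closing


def required_deceleration(closing_speed: float, distance: float) -> float:
    """Constant deceleration that cancels closing_speed within distance: v^2 / (2d)."""
    if closing_speed <= 0:
        return 0.0            # not approaching the object
    if distance <= 0:
        return float("inf")   # already at the object: no finite deceleration suffices
    return closing_speed ** 2 / (2 * distance)


def aeb_step(own_speed: float, object_speed: float, distance: float) -> AebDecision:
    """Evaluate one radar reading of the nearest relevant object ahead.

    Speeds are along the car's heading in m/s, distance is in metres.
    """
    if own_speed > AEB_MAX_SPEED:
        # Above 70 km/h the AEB is inactive; the driver only gets a notice.
        return AebDecision("disabled", 0.0, 0.0, None)
    closing = own_speed - object_speed
    a_req = required_deceleration(closing, distance)
    ttc = distance / closing if closing > 0 else None
    if a_req >= A_MAX:
        # Last moment: braking at the cap is the only option left
        # (if a_req exceeds it, the impact can only be mitigated).
        return AebDecision("brake", a_req, A_MAX, ttc)
    if a_req >= WARN_DECEL:
        # Still avoidable by the driver: show the visual warning.
        return AebDecision("warn", a_req, 0.0, ttc)
    return AebDecision("none", a_req, 0.0, ttc)
```

For example, at 15 m/s (54 km/h) toward a static object 12.5 m ahead, the required deceleration is 15² / (2 · 12.5) = 9 m/s², so `aeb_step(15.0, 0.0, 12.5)` returns the `"brake"` action.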

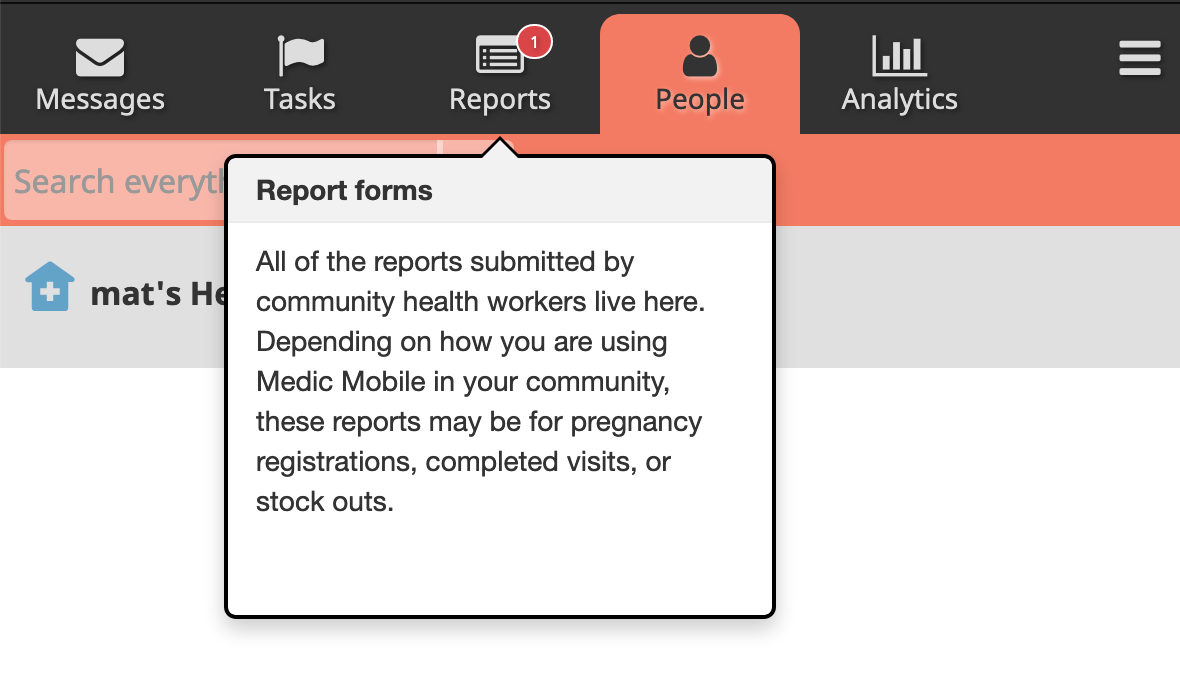

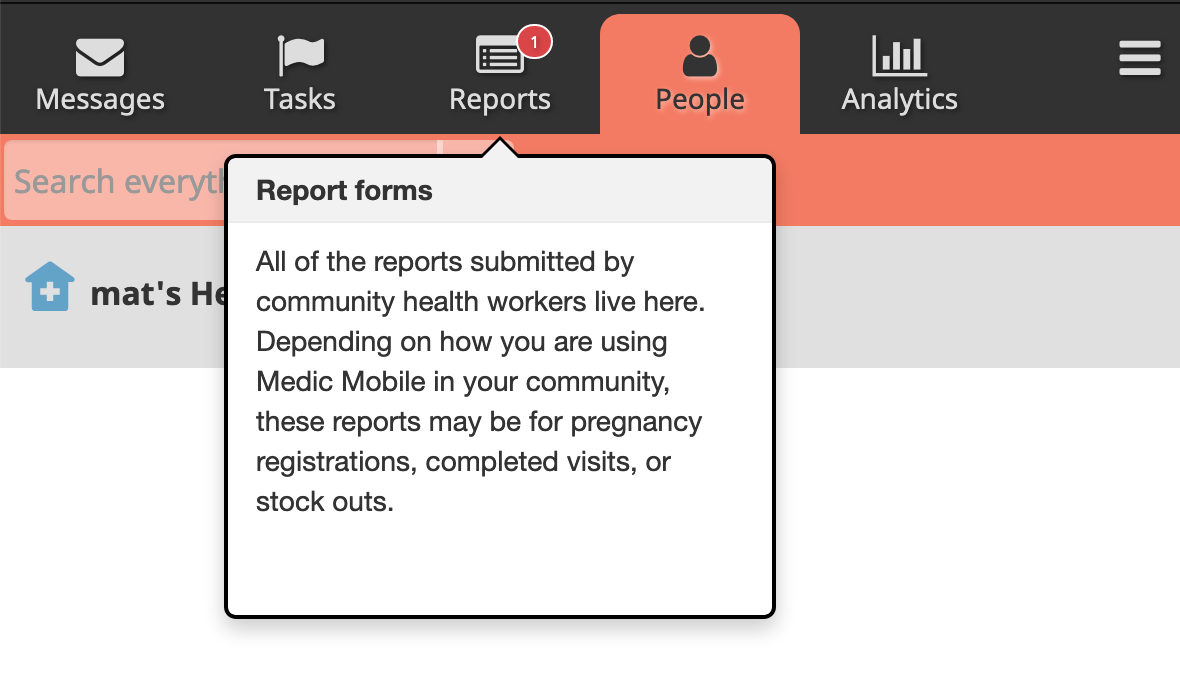

350,374 | 10,483,067,677 | IssuesEvent | 2019-09-24 13:16:31 | medic/medic | https://api.github.com/repos/medic/medic | closed | Guided Tour does not show control buttons | Priority: 1 - High Type: Bug | **Describe the bug**

There are no control buttons (previous, next, end tour).

**To Reproduce**

Steps to reproduce the behavior:

1. Go to Menu >>

2. Click on 'Guided Tour'

3. Pick any option

**Observed behavior**

There are no control buttons (previous, next, end tour). No way to move between tabs or to exit the tour.

**Expected behavior**

The dialog box should show 3 buttons (`previous`, `next`, `end tour`).

**Logs**

No errors in console.

**Screenshots**

**Environment**

- Instance: local

- Browser: Firefox, Chrome

- Client platform: MacOS

- App: webapp, admin

- Version: 3.6.*

| 1.0 | Guided Tour does not show control buttons - **Describe the bug**

There are no control buttons (previous, next, end tour).

**To Reproduce**

Steps to reproduce the behavior:

1. Go to Menu >>

2. Click on 'Guided Tour'

3. Pick any option

**Observed behavior**

There are no control buttons (previous, next, end tour). No way to move between tabs or to exit the tour.

**Expected behavior**

The dialog box should show 3 buttons (`previous`, `next`, `end tour`).

**Logs**

No errors in console.

**Screenshots**

**Environment**

- Instance: local

- Browser: Firefox, Chrome

- Client platform: MacOS

- App: webapp, admin

- Version: 3.6.*

| priority | guided tour does not show control buttons describe the bug there are no control buttons previous next end tour to reproduce steps to reproduce the behavior go to menu click on guided tour pick any option observed behavior there are no control buttons previous next end tour no way to move between tabs or to exit the tour expected behavior the dialog box should show buttons previous next end tour logs no errors in console screenshots environment instance local browser firefox chrome client platform macos app webapp admin version | 1 |

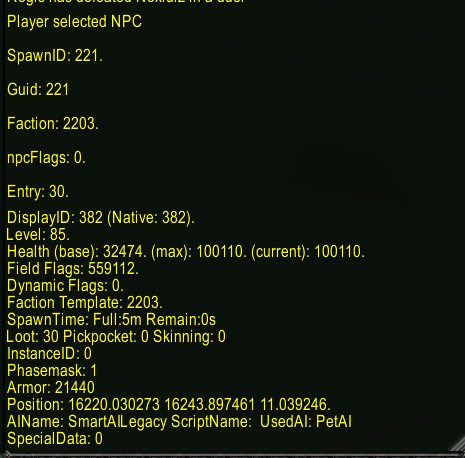

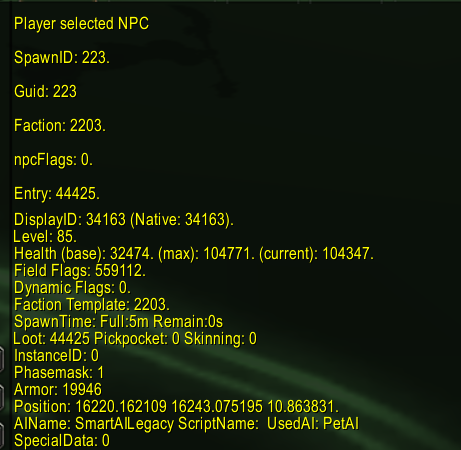

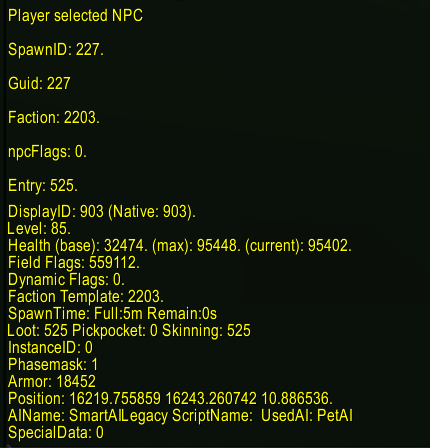

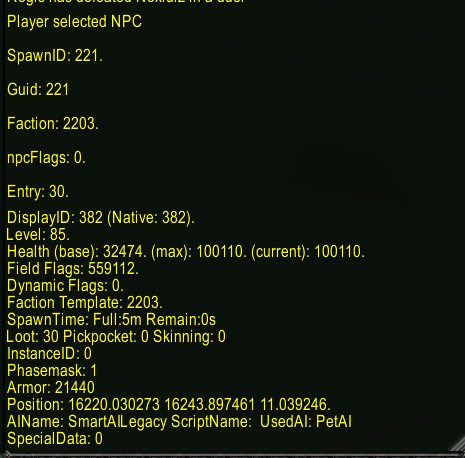

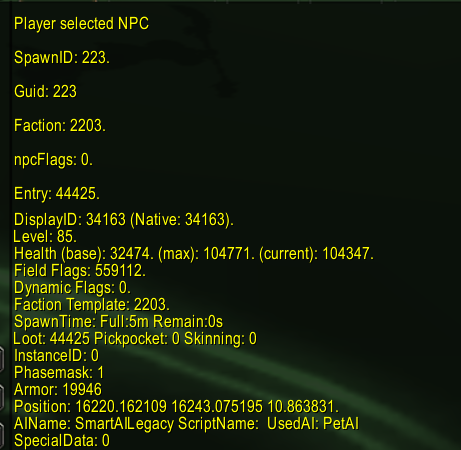

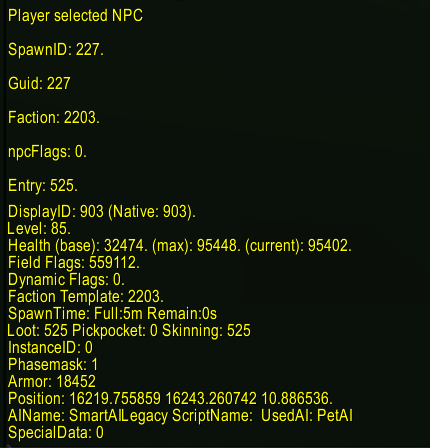

773,225 | 27,150,638,167 | IssuesEvent | 2023-02-17 00:45:19 | gamefreedomgit/Maelstrom | https://api.github.com/repos/gamefreedomgit/Maelstrom | closed | [Moved from Discord] Pets are not stacking Focus fire | Class: Hunter Pet Talent Priority: High Status: Confirmed | The pets with the IDs below have been observed not to stack focus fire when attacking; their talents have been checked.

| 1.0 | [Moved from Discord] Pets are not stacking Focus fire - The pets with the IDs below have been observed not to stack focus fire when attacking; their talents have been checked.

| priority | pets are not stacking focus fire pet ids below are shown not to stack focus fire when attacking talents have been checked | 1 |

318,485 | 9,693,278,828 | IssuesEvent | 2019-05-24 15:41:07 | geosolutions-it/MapStore2-C027 | https://api.github.com/repos/geosolutions-it/MapStore2-C027 | closed | LDAP support - MS2 | Priority: High Project: C027 | At the moment, MapStore's LDAP support does not allow reading users within nested groups. Implementing this feature in MapStore, GS, and GF will make it possible to set auth rules with greater granularity in GF; in any case, MS should allow authenticating users within nested domain groups in LDAP.

The configuration currently deployed in the client's infrastructure is composed of MapStore, GeoServer, and GeoFence, each independently connected to the same LDAP path with the same configuration. On the GeoServer side, authentication for OGC requests is managed through an Authkey generated by MapStore.

The client's MS version is the stable 2018.01.xx release of 13 Feb 2018; the proposal is [here](https://docs.google.com/document/d/1IbKi3dWXvxzVf_sR3iv3HwoJdGJGbrNwqoFKIyz5t0E/edit#heading=h.hpvkr3wxmvs0) | 1.0 | LDAP support - MS2 - At the moment, MapStore's LDAP support does not allow reading users within nested groups. Implementing this feature in MapStore, GS, and GF will make it possible to set auth rules with greater granularity in GF; in any case, MS should allow authenticating users within nested domain groups in LDAP.

The configuration currently deployed in the client's infrastructure is composed of MapStore, GeoServer, and GeoFence, each independently connected to the same LDAP path with the same configuration. On the GeoServer side, authentication for OGC requests is managed through an Authkey generated by MapStore.

The client's MS version is the stable 2018.01.xx release of 13 Feb 2018; the proposal is [here](https://docs.google.com/document/d/1IbKi3dWXvxzVf_sR3iv3HwoJdGJGbrNwqoFKIyz5t0E/edit#heading=h.hpvkr3wxmvs0) | priority | ldap support at the moment mapstore s ldap support does not allow reading users within nested groups implementing this feature in mapstore gs and gf will make it possible to set auth rules with greater granularity in gf in any case ms should allow authenticating users within nested domain groups in ldap the configuration currently deployed in the client s infrastructure is composed of mapstore geoserver and geofence each independently connected to the same ldap path with the same configuration on the geoserver side authentication for ogc requests is managed through an authkey generated by mapstore the client s ms version is the stable xx release of feb the proposal is | 1 |
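The nested-group requirement in the MapStore2-C027 LDAP issue can be made concrete with a minimal Python sketch of transitive group expansion. It does not use any MapStore, GeoServer, or GeoFence API. It assumes the directory has already been read into a hypothetical `members` dictionary that maps each group DN to the DNs of its direct members; a real implementation would issue LDAP searches instead. The breadth-first walk records visited groups, so circular nesting cannot cause an infinite loop.

```python
from collections import deque
from typing import Dict, List, Set


def resolve_nested_users(root: str, members: Dict[str, List[str]]) -> Set[str]:
    """Return every user reachable from `root` through nested group membership.

    `members` maps a group DN to its direct member DNs. Any DN that is itself a
    key of `members` is treated as a group; every other DN is treated as a user.
    """
    users: Set[str] = set()
    seen: Set[str] = {root}
    queue = deque([root])
    while queue:
        group = queue.popleft()
        for member in members.get(group, []):
            if member in members:
                # Nested group: expand it later, but only once.
                if member not in seen:
                    seen.add(member)
                    queue.append(member)
            else:
                users.add(member)
    return users
```

With this expansion, a user who belongs only to a sub-group still counts as a member of every enclosing group, which is the behaviour the issue asks MapStore to support.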

33,988 | 2,774,188,062 | IssuesEvent | 2015-05-04 06:14:02 | punongbayan-araullo/tickets | https://api.github.com/repos/punongbayan-araullo/tickets | opened | Move ePayroll website from Diadem 3 to Diadem 6 | priority - high status - accepted system - paysql | Move ePayroll website from Diadem 3 to Diadem 6 | 1.0 | Move ePayroll website from Diadem 3 to Diadem 6 - Move ePayroll website from Diadem 3 to Diadem 6 | priority | move epayroll website from diadem to diadem move epayroll website from diadem to diadem | 1 |

174,457 | 6,540,249,251 | IssuesEvent | 2017-09-01 14:45:13 | envistaInteractive/itagroup-ecommerce-template | https://api.github.com/repos/envistaInteractive/itagroup-ecommerce-template | opened | scss template variable customization | CSS Module enhancement High Priority | Restructure scss scaffolding to allow for customizable scss. | 1.0 | scss template variable customization - Restructure scss scaffolding to allow for customizable scss. | priority | scss template variable customization restructure scss scaffolding to allow for customizable scss | 1 |

24,634 | 2,671,258,850 | IssuesEvent | 2015-03-24 04:02:15 | thedouglenz/ape | https://api.github.com/repos/thedouglenz/ape | closed | Controllerize the outer page stuffs | High Priority | The outer stuff shows up on all pages and needs the nav bar to be inside it, but the nav bar needs some program logic to hide and show the login/logout/register buttons when necessary | 1.0 | Controllerize the outer page stuffs - The outer stuff shows up on all pages and needs the nav bar to be inside it, but the nav bar needs some program logic to hide and show the login/logout/register buttons when necessary | priority | controllerize the outer page stuffs the outer stuff shows up on all pages and needs the nav bar to be inside it but the nav bar needs some program logic to hide and show the login logout register buttons when necessary | 1 |

274,470 | 8,561,732,492 | IssuesEvent | 2018-11-09 08:16:57 | CS2113-AY1819S1-W13-2/main | https://api.github.com/repos/CS2113-AY1819S1-W13-2/main | reopened | "edit" command allows ending time to be earlier than starting time | duplicate priority.high type.bug | **Describe the bug**

When I entered the command "edit 1 te/0670" (a nonsense time format which is also earlier than the starting time), the update is successful.

**To Reproduce**

Steps to reproduce the behavior:

1. Go to input textbox.

2. Type "edit 1 te/0670".

3. Press enter.

4. Click on entry #1.

5. See error.

**Expected behavior**

Program should prevent nonsense updates/entries.

**Screenshots**

<hr>

**Reported by:** @ongweekeong

**Severity:** `Low`

<sub>[original: nusCS2113-AY1819S1/pe-1#610]</sub> | 1.0 | "edit" command allows ending time to be earlier than starting time - **Describe the bug**

When I entered the command "edit 1 te/0670" (a nonsense time format which is also earlier than the starting time), the update is successful.

**To Reproduce**

Steps to reproduce the behavior:

1. Go to input textbox.

2. Type "edit 1 te/0670".

3. Press enter.

4. Click on entry #1.

5. See error.

**Expected behavior**

Program should prevent nonsense updates/entries.

**Screenshots**

<hr>

**Reported by:** @ongweekeong

**Severity:** `Low`

<sub>[original: nusCS2113-AY1819S1/pe-1#610]</sub> | priority | edit command allows ending time to be earlier than starting time describe the bug when i entered the command edit te a nonsense time format which is also earlier than the starting time the update is successful to reproduce steps to reproduce the behavior go to input textbox type edit te press enter click on entry see error expected behavior program should prevent nonsense updates entries screenshots reported by ongweekeong severity low | 1 |

376,923 | 11,158,178,858 | IssuesEvent | 2019-12-25 18:22:52 | clabe45/vidar | https://api.github.com/repos/clabe45/vidar | closed | Cache properties | priority:high type:bug type:enhancement | ~~**Blocked by #15**~~

Because function properties don't have to be deterministic (for a given time in the movie), yet they should be constant for each frame, they should be cached per-frame. This way, multiple references to one property won't yield different results in one frame. | 1.0 | Cache properties - ~~**Blocked by #15**~~

Because function properties don't have to be deterministic (for a given time in the movie), yet they should be constant for each frame, they should be cached per-frame. This way, multiple references to one property won't yield different results in one frame. | priority | cache properties blocked by because function properties don t have to be deterministic for a given time in the movie yet they should be constant for each frame they should be cached per frame this way multiple references to one property won t yield different results in one frame | 1 |
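The per-frame caching idea in the vidar issue can be illustrated with a small, library-agnostic Python sketch. `FrameCache`, its `value` method and the `fps`-based frame index are hypothetical names, not vidar's API. A property that is a function of time is evaluated at most once per frame. The cache is cleared whenever the frame number changes, so repeated reads within one frame return the same value even if the function is non-deterministic.

```python
import math
from typing import Any, Dict, Optional


class FrameCache:
    """Evaluates time-dependent properties at most once per frame (hypothetical helper)."""

    def __init__(self, fps: float):
        self.fps = fps
        self._frame: Optional[int] = None
        self._values: Dict[str, Any] = {}

    def value(self, name: str, prop: Any, time: float) -> Any:
        if not callable(prop):
            return prop                      # constant property: nothing to cache
        frame = math.floor(time * self.fps)  # index of the frame containing `time`
        if frame != self._frame:
            # A new frame started: values computed for the previous one are stale.
            self._frame = frame
            self._values.clear()
        if name not in self._values:
            self._values[name] = prop(time)  # first read of this property in this frame
        return self._values[name]
```

Keeping only the current frame's values bounds memory use. The trade-off is that seeking back to an earlier frame re-evaluates its properties, which may then produce different values for non-deterministic functions.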

580,158 | 17,211,143,157 | IssuesEvent | 2021-07-19 04:44:59 | ballerina-platform/ballerina-lang | https://api.github.com/repos/ballerina-platform/ballerina-lang | opened | [Linter] Detect possible usage of check | Area/CompilerLinter Priority/High Team/CompilerFE Type/Improvement | Rule: Whenever possible we should use check to handle errors. Give a `hint` for those occurrences.

Anti-pattern:

As much as possible, avoid using `T|error` in variable declarations. Instead, use `check` and add `error?` to the return type.

For example, do NOT do this:

```ballerina
import ballerina/io;

public function main() {
    string|error res = doSomething();
    if res is error {
        io:println(res.toString());
    } else {
        io:println("The result is ", res);
    }
}
```

Instead, do this:

```ballerina
import ballerina/io;

public function main() returns error? {
    string res = check doSomething();
    io:println("The result is ", res);
}

``` | 1.0 | [Linter] Detect possible usage of check - Rule: Whenever possible we should use check to handle errors. Give a `hint` for those occurrences.

Anti-pattern:

As much as possible, avoid using `T|error` in variable declarations. Instead, use `check` and add `error?` to the return type.

For example, do NOT do this:

```ballerina
import ballerina/io;

public function main() {
    string|error res = doSomething();
    if res is error {
        io:println(res.toString());
    } else {
        io:println("The result is ", res);
    }
}
```

Instead, do this:

```ballerina
import ballerina/io;

public function main() returns error? {
    string res = check doSomething();
    io:println("The result is ", res);
}

``` | priority | detect possible usage of check rule whenever possible we should use check to handle errors give a hint for those occurrences anti pattern as much as possible avoid use of t error in variable declarations instead use check and add “error ” to the return type for example do not do public main string error res dosomething if res is error io println res tostring else io println the result is res instead do public main returns error string res check dosomething io println the result is res | 1 |

65,686 | 3,237,609,649 | IssuesEvent | 2015-10-14 12:51:52 | nextgis/ngm_clink_monitoring | https://api.github.com/repos/nextgis/ngm_clink_monitoring | closed | Проблема со статусом | High Priority question | Несмотря на то, что уже есть данные с мобильного приложения, статус объекта почему-то не переходит в статус «Идет строительство». | 1.0 | Проблема со статусом - Несмотря на то, что уже есть данные с мобильного приложения, статус объекта почему-то не переходит в статус «Идет строительство». | priority | проблема со статусом несмотря на то что уже есть данные с мобильного приложения статус объекта почему то не переходит в статус «идет строительство» | 1 |

97,132 | 3,985,387,707 | IssuesEvent | 2016-05-07 21:01:27 | emencia/emencia-django-bazar | https://api.github.com/repos/emencia/emencia-django-bazar | closed | Missing package requirement | bug high-priority | At least setup lack requirement of "django-localflavor".

Also there is some additional package that may be optional but actually allways used but not mandated as required:

* rstview and djangocodemirror (related to formatting);

* sendfile (related to note attachments);

| 1.0 | Missing package requirement - At least setup lack requirement of "django-localflavor".

Also there is some additional package that may be optional but actually allways used but not mandated as required:

* rstview and djangocodemirror (related to formatting);

* sendfile (related to note attachments);

| priority | missing package requirement at least setup lack requirement of django localflavor also there is some additional package that may be optional but actually allways used but not mandated as required rstview and djangocodemirror related to formatting sendfile related to note attachments | 1 |

20,807 | 2,631,260,588 | IssuesEvent | 2015-03-07 00:08:26 | okTurtles/okturtles.com | https://api.github.com/repos/okTurtles/okturtles.com | closed | Get rid of TypeKit | high priority | People don't like it for privacy reasons (valid concern), and many of our visitors block TypeKit (breaking the site):

https://www.reddit.com/r/privacy/comments/2xjy13/love_this_idea_for_fixing_https_with_a/cp12d63

Need to find a good alternative font that looks similar to Proxima Nova.

<bountysource-plugin>

---

Want to back this issue? **[Post a bounty on it!](https://www.bountysource.com/issues/9093083-get-rid-of-typekit?utm_campaign=plugin&utm_content=tracker%2F835003&utm_medium=issues&utm_source=github)** We accept bounties via [Bountysource](https://www.bountysource.com/?utm_campaign=plugin&utm_content=tracker%2F835003&utm_medium=issues&utm_source=github).

</bountysource-plugin> | 1.0 | Get rid of TypeKit - People don't like it for privacy reasons (valid concern), and many of our visitors block TypeKit (breaking the site):

https://www.reddit.com/r/privacy/comments/2xjy13/love_this_idea_for_fixing_https_with_a/cp12d63

Need to find a good alternative font that looks similar to Proxima Nova.

<bountysource-plugin>

---

Want to back this issue? **[Post a bounty on it!](https://www.bountysource.com/issues/9093083-get-rid-of-typekit?utm_campaign=plugin&utm_content=tracker%2F835003&utm_medium=issues&utm_source=github)** We accept bounties via [Bountysource](https://www.bountysource.com/?utm_campaign=plugin&utm_content=tracker%2F835003&utm_medium=issues&utm_source=github).

</bountysource-plugin> | priority | get rid of typekit people don t like it for privacy reasons valid concern and many of our visitors block typekit breaking the site need to find a good alternative font that looks similar to proxima nova want to back this issue we accept bounties via | 1 |

474,398 | 13,668,920,471 | IssuesEvent | 2020-09-29 00:24:10 | canonical-web-and-design/maas-ui | https://api.github.com/repos/canonical-web-and-design/maas-ui | closed | Main navigation loses active indicator in some subpages | Bug 🐛 Priority: High | Going to `/subnets` highlights the nav, but going to `/fabric` does not. | 1.0 | Main navigation loses active indicator in some subpages - Going to `/subnets` highlights the nav, but going to `/fabric` does not. | priority | main navigation loses active indicator in some subpages going to subnets highlights the nav but going to fabric does not | 1 |

176,891 | 6,568,951,158 | IssuesEvent | 2017-09-09 00:20:47 | open-austin/iced-coffee | https://api.github.com/repos/open-austin/iced-coffee | closed | Membership -> Bylaws | high priority | @seandellis and I talked about this today!

Review our agenda and notes here:

https://docs.google.com/document/d/1P8wbVHkb8Df1LKXiiJjD1nwg1axKNqvhJ9noxe4_4OA/edit?usp=sharing

Bylaws here:

https://docs.google.com/document/d/1D6mhqrxokBCXG8g9I5XXKtBvUyjUCJS4_njqc1N9DVs/edit?usp=sharing

Vickies notes here:

https://docs.google.com/document/d/1P8wbVHkb8Df1LKXiiJjD1nwg1axKNqvhJ9noxe4_4OA/edit?usp=sharing

To-Do

- [x] Determine necessary information to be an affiliate member #257 // @seandellis + Me to create google doc

- [x] Translate Vickie's Doc to bylaws and have it reviewed by Jon + Leadership at next monthly meeting // Me

- [x] Create affiliate form #257 // @seandellis

- [ ] Determine best/easiest way to create and update a "profile". Thinking Slack // Me

| 1.0 | Membership -> Bylaws - @seandellis and I talked about this today!

Review our agenda and notes here:

https://docs.google.com/document/d/1P8wbVHkb8Df1LKXiiJjD1nwg1axKNqvhJ9noxe4_4OA/edit?usp=sharing

Bylaws here:

https://docs.google.com/document/d/1D6mhqrxokBCXG8g9I5XXKtBvUyjUCJS4_njqc1N9DVs/edit?usp=sharing

Vickies notes here:

https://docs.google.com/document/d/1P8wbVHkb8Df1LKXiiJjD1nwg1axKNqvhJ9noxe4_4OA/edit?usp=sharing

To-Do

- [x] Determine necessary information to be an affiliate member #257 // @seandellis + Me to create google doc

- [x] Translate Vickie's Doc to bylaws and have it reviewed by Jon + Leadership at next monthly meeting // Me

- [x] Create affiliate form #257 // @seandellis

- [ ] Determine best/easiest way to create and update a "profile". Thinking Slack // Me

| priority | membership bylaws seandellis and i talked about this today review our agenda and notes here bylaws here vickies notes here to do determine necessary information to be an affiliate member seandellis me to create google doc translate vickie s doc to bylaws and have it reviewed by jon leadership at next monthly meeting me create affiliate form seandellis determine best easiest way to create and update a profile thinking slack me | 1 |

200,226 | 7,001,602,813 | IssuesEvent | 2017-12-18 10:50:53 | meumobi/AMS.Connect | https://api.github.com/repos/meumobi/AMS.Connect | closed | revenu net is not computed from unplugged records of the day | bug high-priority | ### Expected behaviour

Tell us what should happen

### Actual behaviour

margin is setted on Admargin daily file

but when get row margin value is empty

```

[2017-12-16 13:57:26] lumen.DEBUG: Get 'revenu net' row {"margin":"","revenu":0,"Margin is numeric: ":false,"Revenue is numeric: ":true} []

[2017-12-16 13:57:26] lumen.DEBUG: Get 'revenu net' row {"revenu net":"Unknown"} []

```

### Steps to reproduce

1.

2.

3.

### Expected responses

- Why it happens

- How to fix it

- How to test

| 1.0 | revenu net is not computed from unplugged records of the day - ### Expected behaviour

Tell us what should happen

### Actual behaviour

margin is setted on Admargin daily file

but when get row margin value is empty

```

[2017-12-16 13:57:26] lumen.DEBUG: Get 'revenu net' row {"margin":"","revenu":0,"Margin is numeric: ":false,"Revenue is numeric: ":true} []

[2017-12-16 13:57:26] lumen.DEBUG: Get 'revenu net' row {"revenu net":"Unknown"} []

```

### Steps to reproduce

1.

2.

3.

### Expected responses

- Why it happens

- How to fix it

- How to test

| priority | revenu net is not computed from unplugged records of the day expected behaviour tell us what should happen actual behaviour margin is setted on admargin daily file but when get row margin value is empty lumen debug get revenu net row margin revenu margin is numeric false revenue is numeric true lumen debug get revenu net row revenu net unknown steps to reproduce expected responses why it happens how to fix it how to test | 1 |

765,669 | 26,856,781,148 | IssuesEvent | 2023-02-03 15:11:10 | unlock-protocol/unlock | https://api.github.com/repos/unlock-protocol/unlock | closed | Enable claim for Goerli locks | 🚨 High Priority | It looks like we have not enabled free claims on Goerli? [Try here](https://app.unlock-protocol.com/checkout?paywallConfig=%7B%22locks%22%3A%7B%220x24a61c3afe7cdd992eed28ffbe62a7a262496928%22%3A%7B%22network%22%3A5%2C%22skipRecipient%22%3Atrue%7D%7D%2C%22pessimistic%22%3Atrue%2C%22skipRecipient%22%3Atrue%7D)

We should add that as it is a useful flow to "debug" things!

<img width="535" alt="Screenshot 2023-02-02 at 3 58 55 PM" src="https://user-images.githubusercontent.com/17735/216448161-9bcfe65e-5424-4574-bde7-135c5dd952f9.png">

| 1.0 | Enable claim for Goerli locks - It looks like we have not enabled free claims on Goerli? [Try here](https://app.unlock-protocol.com/checkout?paywallConfig=%7B%22locks%22%3A%7B%220x24a61c3afe7cdd992eed28ffbe62a7a262496928%22%3A%7B%22network%22%3A5%2C%22skipRecipient%22%3Atrue%7D%7D%2C%22pessimistic%22%3Atrue%2C%22skipRecipient%22%3Atrue%7D)

We should add that as it is a useful flow to "debug" things!

<img width="535" alt="Screenshot 2023-02-02 at 3 58 55 PM" src="https://user-images.githubusercontent.com/17735/216448161-9bcfe65e-5424-4574-bde7-135c5dd952f9.png">

| priority | enable claim for goerli locks it looks like we have not enabled free claims on goerli we should add that as it is a useful flow to debug things img width alt screenshot at pm src | 1 |

123,650 | 4,866,419,567 | IssuesEvent | 2016-11-14 23:43:51 | phetsims/tasks | https://api.github.com/repos/phetsims/tasks | opened | A better way to pass through configuration | priority:2-high | While working on adding nested options to NumberControl, @pixelzoom said:

> I still think there must be a better approach (than options) to customizing UI components.

I want to take a look at an idea I had for this. | 1.0 | A better way to pass through configuration - While working on adding nested options to NumberControl, @pixelzoom said:

> I still think there must be a better approach (than options) to customizing UI components.

I want to take a look at an idea I had for this. | priority | a better way to pass through configuration while working on adding nested options to numbercontrol pixelzoom said i still think there must be a better approach than options to customizing ui components i want to take a look at an idea i had for this | 1 |

442,115 | 12,739,792,465 | IssuesEvent | 2020-06-26 00:15:02 | ubclaunchpad/rocket2 | https://api.github.com/repos/ubclaunchpad/rocket2 | closed | `/rocket help' logs 'app command triggered incorrectly' | bug high priority | **Describe the bug**

Running `/rocket help` gives the help text correctly, but later also logs that an `app command triggered incorrectly`, which is obviously not the case.

**To Reproduce**

Steps to reproduce the behavior:

1. `/rocket help`

**Expected behavior**

No errors should be logged.

**Screenshots**

```console

2020-06-20T00:37:35.404290984Z {Time: 2020-06-20 00:37:35, Level: [INFO], function: server.handle_commands():104, message: Slash command received}

2020-06-20T00:37:35.405382493Z {Time: 2020-06-20 00:37:35, Level: [INFO], function: server.handle_commands():110, message: Slack signature verified}

2020-06-20T00:37:35.406558906Z {Time: 2020-06-20 00:37:35, Level: [INFO], function: server.handle_commands():113, message: @UCXF0357H: /rocket help}

2020-06-20T00:37:35.409130177Z {Time: 2020-06-20 00:37:35, Level: [INFO], function: parser.handle_app_command():62, message: Help command was called}

2020-06-20T00:37:35.410432442Z {Time: 2020-06-20 00:37:35, Level: [ERROR], function: parser.handle_app_command():70, message: app command triggered incorrectly}

``` | 1.0 | `/rocket help' logs 'app command triggered incorrectly' - **Describe the bug**

Running `/rocket help` gives the help text correctly, but later also logs that an `app command triggered incorrectly`, which is obviously not the case.

**To Reproduce**

Steps to reproduce the behavior:

1. `/rocket help`

**Expected behavior**

No errors should be logged.

**Screenshots**

```console

2020-06-20T00:37:35.404290984Z {Time: 2020-06-20 00:37:35, Level: [INFO], function: server.handle_commands():104, message: Slash command received}

2020-06-20T00:37:35.405382493Z {Time: 2020-06-20 00:37:35, Level: [INFO], function: server.handle_commands():110, message: Slack signature verified}

2020-06-20T00:37:35.406558906Z {Time: 2020-06-20 00:37:35, Level: [INFO], function: server.handle_commands():113, message: @UCXF0357H: /rocket help}

2020-06-20T00:37:35.409130177Z {Time: 2020-06-20 00:37:35, Level: [INFO], function: parser.handle_app_command():62, message: Help command was called}

2020-06-20T00:37:35.410432442Z {Time: 2020-06-20 00:37:35, Level: [ERROR], function: parser.handle_app_command():70, message: app command triggered incorrectly}

``` | priority | rocket help logs app command triggered incorrectly describe the bug running rocket help gives the help text correctly but later also logs that an app command triggered incorrectly which is obviously not the case to reproduce steps to reproduce the behavior rocket help expected behavior no errors should be logged screenshots console time level function server handle commands message slash command received time level function server handle commands message slack signature verified time level function server handle commands message rocket help time level function parser handle app command message help command was called time level function parser handle app command message app command triggered incorrectly | 1 |

240,138 | 7,800,472,774 | IssuesEvent | 2018-06-09 09:50:55 | tine20/Tine-2.0-Open-Source-Groupware-and-CRM | https://api.github.com/repos/tine20/Tine-2.0-Open-Source-Groupware-and-CRM | closed | 0009464:

user grid does not refresh after ctx menu action | Admin Bug Mantis high priority | **Reported by pschuele on 27 Dec 2013 13:56**

**Version:** Kristina (2013.03.8)

user grid does not refresh after ctx menu action like enable/disable user

| 1.0 | 0009464:

user grid does not refresh after ctx menu action - **Reported by pschuele on 27 Dec 2013 13:56**

**Version:** Kristina (2013.03.8)

user grid does not refresh after ctx menu action like enable/disable user

| priority | user grid does not refresh after ctx menu action reported by pschuele on dec version kristina user grid does not refresh after ctx menu action like enable disable user | 1 |

802,853 | 29,047,588,390 | IssuesEvent | 2023-05-13 19:19:02 | exellian/rex | https://api.github.com/repos/exellian/rex | closed | DRAFT: Template rendering function design | draft priority: high | A really important problem which needs to be solved is the design of the rendering function of a template. This function should not only render an arbitrary string instead it should be able to be used also for hydration and rendering a template to different objects. Therefore the function must be generated in a way that is very generic.

idea:

A template is built out of tree structure called Nodes (Tags, VoidTags, Text and Expressions):

```html

<div>

{ 0 + 3 } // Expression

<div> //Tag with child nodes

...

</div>

this is the text of a text node

</div>

```

So each node is either a leaf (TextNode, VoidTag, Expressions) or a Node with children (TagNodes, Expressions).

The real problem is that an Expression can not only be a leaf but also a node in this tree. In the following the individual cases are shown where Expressions are nodes with children:

```html

<div>

{ <div> hallo </div> } // Expression which contains a tag with children. // Type: Node

{ // Expression which contains a for loop // Type: Array<Node>

for x in 0..10 {

<div></div>

}

}

{ // Expression which contains a for loop // Type: Array<Array<Node>>

for x in 0..10 {

for x in 0..10 {

<div></div>

}

}

}

{ // Expression which has an if block // Type: Node

if success {

<div>success!</div>

} else {

8 + 9 // Any other datatype which is not of type Node or Array<Node> gets automatically converted to a TextNode

}

}

{ (<div></div>) } // We could allow a Node inside a group, but this should probably be discussed later on!

{ x.test } //

```

So basically an expression embedded directly into the template can only have the type Node, Array<Node>, Array<Array<Node>>, Array<Array<Array<Node>>>, ...

Because we don't want to have different possible types of expressions the idea is to transform expressions in a way so that simply every expression has type Array<Node>. To do this we have to generate for every non Node expression a make_text_node statement, for every simple Node a make_vector_from_one_element statement, for every Array<Node> statement nothing and if we have a for loop expression, we have to perform a flat_map from Array<Array<Node>> to Array<Node>

| 1.0 | DRAFT: Template rendering function design - A really important problem which needs to be solved is the design of the rendering function of a template. This function should not only render an arbitrary string instead it should be able to be used also for hydration and rendering a template to different objects. Therefore the function must be generated in a way that is very generic.

idea:

A template is built out of tree structure called Nodes (Tags, VoidTags, Text and Expressions):

```html

<div>

{ 0 + 3 } // Expression

<div> //Tag with child nodes

...

</div>

this is the text of a text node

</div>

```

So each node is either a leaf (TextNode, VoidTag, Expressions) or a Node with children (TagNodes, Expressions).

The real problem is that an Expression can not only be a leaf but also a node in this tree. In the following the individual cases are shown where Expressions are nodes with children:

```html

<div>

{ <div> hallo </div> } // Expression which contains a tag with children. // Type: Node

{ // Expression which contains a for loop // Type: Array<Node>

for x in 0..10 {

<div></div>

}

}

{ // Expression which contains a for loop // Type: Array<Array<Node>>

for x in 0..10 {

for x in 0..10 {

<div></div>

}

}

}

{ // Expression which has an if block // Type: Node

if success {

<div>success!</div>

} else {

8 + 9 // Any other datatype which is not of type Node or Array<Node> gets automatically converted to a TextNode

}

}

{ (<div></div>) } // We could allow a Node inside a group, but this should probably be discussed later on!

{ x.test } //

```

So basically an expression embedded directly into the template can only have the type Node, Array<Node>, Array<Array<Node>>, Array<Array<Array<Node>>>, ...

Because we don't want to have different possible types of expressions the idea is to transform expressions in a way so that simply every expression has type Array<Node>. To do this we have to generate for every non Node expression a make_text_node statement, for every simple Node a make_vector_from_one_element statement, for every Array<Node> statement nothing and if we have a for loop expression, we have to perform a flat_map from Array<Array<Node>> to Array<Node>

| priority | draft template rendering function design a really important problem which needs to be solved is the design of the rendering function of a template this function should not only render an arbitrary string instead it should be able to be used also for hydration and rendering a template to different objects therefore the function must be generated in a way that is very generic idea a template is built out of tree structure called nodes tags voidtags text and expressions html expression tag with child nodes this is the text of a text node so each node is either a leaf textnode voidtag expressions or a node with children tagnodes expressions the real problem is that an expression can not only be a leaf but also a node in this tree in the following the individual cases are shown were expressions are nodes with children html hallo expression which contains a tag with children type node expression which contains a for loop type array for x in expression which contains a for loop type array for x in for x in expression which has an if block type node if success success else any other datatype which is not of type node or array gets automatically converted to a textnode we could allow a node inside a group but this should probably discussed later on x test so basically an expression embedded directly into the template can only have the type node array array array because we don t want to have different possible types of expressions the idea is to transform expressions in a way so that simply every expression has type array to do this we have to generate for every non node expression a make text node statement for every simply node a make vector from one element statement for every array statement nothing and if we have a for loop expression we have to perform a flat map from array to array | 1 |

419,434 | 12,223,588,717 | IssuesEvent | 2020-05-02 18:16:49 | Broken-Gem-Studio/Broken-Engine | https://api.github.com/repos/Broken-Gem-Studio/Broken-Engine | closed | Dt delay | Bug High Priority | ## Bug Description

dt has a delay of some kind, time is not advancing properly

## Type of Bug

Select the type of bug with and "x" ([x])

* [ ] Visual

* [ ] Physics

* [ ] Audio

* [ ] Particles

* [ ] Resource Management & Save/Load

* [ ] Materials

* [ ] Components

* [ ] Game Objects

* [ ] UI/UX

* [X] Scripting

* [X] Other

## Severity

Select the severity of bug affection and mark with "x" ([x])

- [ ] Crash

- [X] Game stopper/slower

- [ ] Cosmetic

## Reproduction

Steps to reproduce the behavior:

1. just enter play mode, or use time in a script

2.

3.

4.

## Frequency

Select the frequency with which the bug appears and mark it "x" ([x])

* [X] Always

* [ ] Very Often

* [ ] Usually

* [ ] Few Times

* [ ] Few Times under specific conditions

## Conduct

### Expected result:

time works correctly

### Actual result:

time advances slowly

## Screenshots and Illustrations:

## Build

- **Please specify the build:** ``Insert the build here``

latest dev commit 2225

## Observations and Additional Information

| 1.0 | Dt delay - ## Bug Description

dt has a delay of some kind, time is not advancing properly

## Type of Bug

Select the type of bug with and "x" ([x])

* [ ] Visual

* [ ] Physics

* [ ] Audio

* [ ] Particles

* [ ] Resource Management & Save/Load

* [ ] Materials

* [ ] Components

* [ ] Game Objects

* [ ] UI/UX

* [X] Scripting

* [X] Other

## Severity

Select the severity of bug affection and mark with "x" ([x])

- [ ] Crash

- [X] Game stopper/slower

- [ ] Cosmetic

## Reproduction

Steps to reproduce the behavior:

1. just enter play mode, or use time in a script

2.

3.

4.

## Frequency

Select the frequency with which the bug appears and mark it "x" ([x])

* [X] Always

* [ ] Very Often

* [ ] Usually

* [ ] Few Times

* [ ] Few Times under specific conditions

## Conduct

### Expected result:

time works correctly

### Actual result:

time advances slowly

## Screenshots and Illustrations:

## Build

- **Please specify the build:** ``Insert the build here``

latest dev commit 2225

## Observations and Additional Information

| priority | dt delay bug description dt has a delay of some kind time is not advancing properly type of bug select the type of bug with and x visual physics audio particles resource management save load materials components game objects ui ux scripting other severity select the severity of bug affection and mark with x crash game stopper slower cosmetic reproduction steps to reproduce the behavior just enter play mode or use time in a script frequency select the frequency with which the bug appears and mark it x always very often usually few times few times under specific conditions conduct expected result time works correctly actual result time advances slowly screenshots and illustrations build please specify the build insert the build here latest dev commit observations and additional information | 1 |

146,991 | 5,631,853,766 | IssuesEvent | 2017-04-05 15:22:27 | nus-mtp/nus-oracle | https://api.github.com/repos/nus-mtp/nus-oracle | closed | [SignIn Page] Clicking on any Modal will render wrong loading msg subsequently when logging in | bug high priority UI | * Because no setState for loadingMsgs.LOAD_LOGGING_IN was set when user hid any of the Modal windows for signing up/forget password | 1.0 | [SignIn Page] Clicking on any Modal will render wrong loading msg subsequently when logging in - * Because no setState for loadingMsgs.LOAD_LOGGING_IN was set when user hid any of the Modal windows for signing up/forget password | priority | clicking on any modal will render wrong loading msg subsequently when logging in because no setstate for loadingmsgs load logging in was set when user hid any of the modal windows for signing up forget password | 1 |

526,501 | 15,294,359,233 | IssuesEvent | 2021-02-24 02:18:27 | rdsaliba/notorious-eng | https://api.github.com/repos/rdsaliba/notorious-eng | closed | (F10) Evaluating RUL models for specific asset type | High Priority user story | As a user, I would like to see an RUL model performance for a specific asset type.

Description:

Given a static file filled with asset measurement data from the asset type that the user is consulting, the cbms should be able to train the different models for that asset type with the data provided and should be able to generate the evaluation performance of each model comparing to real RUL values. This is to calculate the RMSE.

Acceptance criteria:

- [x] The system reads data from archived assets for a specific asset type

- [x] The user can specify which ones are for testing and which ones are for training

- [x] Display the number of maximum assets available

- [x] Validate that the number of specified assets is under the maximum

- [x] The system has a generate models button to evaluate all models

- [x] The system has a generate model button for each individual model

- [x] The system generates the trained models and displays the evaluation of each model (RMSE) | 1.0 | (F10) Evaluating RUL models for specific asset type - As a user, I would like to see an RUL model performance for a specific asset type.

Description:

Given a static file filled with asset measurement data from the asset type that the user is consulting, the cbms should be able to train the different models for that asset type with the data provided and should be able to generate the evaluation performance of each model comparing to real RUL values. This is to calculate the RMSE.

Acceptance criteria:

- [x] The system reads data from archived assets for a specific asset type

- [x] The user can specify which ones are for testing and which ones are for training

- [x] Display the number of maximum assets available

- [x] Validate that the number of specified assets is under the maximum

- [x] The system has a generate models button to evaluate all models

- [x] The system has a generate model button for each individual model

- [x] The system generates the trained models and displays the evaluation of each model (RMSE) | priority | evaluating rul models for specific asset type as a user i would like to see an rul model performance for a specific asset type description given a static file filled with asset measurement data from the asset type that the user is consulting the cbms should be able to train the different models for that asset type with the data provided and should be able to generate the evaluation performance of each model comparing to real rul values this is to calculate the rmse acceptance criteria the system reads data from archived assets for a specific asset type the user can specify which ones are for testing and which ones are for training display the number of maximum assets available validate that the number of specified assets is under the maximum the system has a generate models button to evaluate all models the system has a generate model button for each individual model the system generates the trained models and displays the evaluation of each model rmse | 1 |

193,318 | 6,883,924,638 | IssuesEvent | 2017-11-21 11:05:34 | BaselLaserMouse/BakingTray | https://api.github.com/repos/BaselLaserMouse/BakingTray | closed | Extracting imaging FOV from ScanImage is slow. Should cache it. | high priority | It takes about 200 ms to read the imaging FOV each time `SIBT.returnScanSettings` is called and sometimes this is repeatedly. To minimise this reading, we should cache all scan settings in the scanner class and only re-read if any settings that might alter the imaging FOV have changed. i.e. The scan angle, scan angle multipliers, number of microns per optical degree, and the fill-fraction. | 1.0 | Extracting imaging FOV from ScanImage is slow. Should cache it. - It takes about 200 ms to read the imaging FOV each time `SIBT.returnScanSettings` is called and sometimes this is repeatedly. To minimise this reading, we should cache all scan settings in the scanner class and only re-read if any settings that might alter the imaging FOV have changed. i.e. The scan angle, scan angle multipliers, number of microns per optical degree, and the fill-fraction. | priority | extracting imaging fov from scanimage is slow should cache it it takes about ms to read the imaging fov each time sibt returnscansettings is called and sometimes this is repeatedly to minimise this reading we should cache all scan settings in the scanner class and only re read if any settings that might alter the imaging fov have changed i e the scan angle scan angle multipliers number of microns per optical degree and the fill fraction | 1 |

437,287 | 12,566,705,353 | IssuesEvent | 2020-06-08 11:42:38 | CatalogueOfLife/clearinghouse-ui | https://api.github.com/repos/CatalogueOfLife/clearinghouse-ui | closed | Need a place to release a catalogue draft and watch its progress | API change assembly high priority | Releasing a (draft) catalogue requires a button to kick that off. It also needs a place to monitor the long running process. Maybe its wise to think about the generic long running monitoring idea Geoff had? | 1.0 | Need a place to release a catalogue draft and watch its progress - Releasing a (draft) catalogue requires a button to kick that off. It also needs a place to monitor the long running process. Maybe its wise to think about the generic long running monitoring idea Geoff had? | priority | need a place to release a catalogue draft and watch its progress releasing a draft catalogue requires a button to kick that off it also needs a place to monitor the long running process maybe its wise to think about the generic long running monitoring idea geoff had | 1 |

667,611 | 22,494,017,137 | IssuesEvent | 2022-06-23 05:33:45 | jhudsl/intro_to_r | https://api.github.com/repos/jhudsl/intro_to_r | closed | statistics updates | high priority | - update broom usage

- resources for classes on models in R??

- case studies have lots of stats

- tidyverse chapter series on modeling/ML (some from jhudatascience.org and data trail)

| 1.0 | statistics updates - - update broom usage

- resources for classes on models in R??

- case studies have lots of stats

- tidyverse chapter series on modeling/ML (some from jhudatascience.org and data trail)

| priority | statistics updates update broom usage resources for classes on models in r case studies have lots of stats tidyverse chapter series on modeling ml some from jhudatascience org and data trail | 1 |

286,114 | 8,784,043,771 | IssuesEvent | 2018-12-20 08:38:01 | projectacrn/acrn-hypervisor | https://api.github.com/repos/projectacrn/acrn-hypervisor | closed | GPU Mediator shall support virtual displays for guest domains | area: hypervisor priority: high status: closed type: feature | GPU Mediator shall support virtual displays for guest domains Some domains on the platform may have no assigned display pipes or planes and yet may be producing output that will be directed to some display (local through indirect display or remote) With that in mind the domain will require some means of determining the parameters of the physical display target (i.e. virtual VBT or cached EDID information) and GPU mediator will need to in some way provide MMIO state the driver requires to determine a display device is present (when it is not physically) and that the graphics stack should produce a display output buffer. | 1.0 | GPU Mediator shall support virtual displays for guest domains - GPU Mediator shall support virtual displays for guest domains Some domains on the platform may have no assigned display pipes or planes and yet may be producing output that will be directed to some display (local through indirect display or remote) With that in mind the domain will require some means of determining the parameters of the physical display target (i.e. virtual VBT or cached EDID information) and GPU mediator will need to in some way provide MMIO state the driver requires to determine a display device is present (when it is not physically) and that the graphics stack should produce a display output buffer. 
| priority | gpu mediator shall support virtual displays for guest domains gpu mediator shall support virtual displays for guest domains some domains on the platform may have no assigned display pipes or planes and yet may be producing output that will be directed to some display local through indirect display or remote with that in mind the domain will require some means of determining the parameters of the physical display target i e virtual vbt or cached edid information and gpu mediator will need to in some way provide mmio state the driver requires to determine a display device is present when it is not physically and that the graphics stack should produce a display output buffer | 1 |

767,899 | 26,946,641,997 | IssuesEvent | 2023-02-08 08:38:41 | telstra/open-kilda | https://api.github.com/repos/telstra/open-kilda | opened | flow_id in the request line is ignored while flow update | bug area/api priority/1-highest | Steps

case 1

1) create flow (e.g. with flow_id = flow1) - POST [/v2/flows]

2) make **wrong** put request to update it - - PUT [/v2/flows/flow_not_match]

where body of the request contains flow_id = flow1

note: system shouldn't contain any flow with "flow_not_match" id

actual result: flow is updated successfully

expected result: 404 with the corresponding error message like "flow not found"

case 2

1) create flow (e.g. with flow_id = flow1) - POST [/v2/flows]

2) make put request to update it - - PUT [/v2/flows/flow1]

where body of the request **doesn't contains** flow_id = flow1

e.g. body should contain flow_id = flow_not_match

note: system shouldn't contain any flow with "flow_not_match" id

actual result: 404 with the corresponding error message like "flow not found"

expected result: flow should be updated, flow_id from the body should be ignored

actually we are taking flow_id from body, but it should be taken from the request line

also flow_id update is not supported, so it can be set as nullable in the body

| 1.0 | flow_id in the request line is ignored while flow update - Steps

case 1

1) create flow (e.g. with flow_id = flow1) - POST [/v2/flows]

2) make **wrong** put request to update it - - PUT [/v2/flows/flow_not_match]

where body of the request contains flow_id = flow1

note: system shouldn't contain any flow with "flow_not_match" id

actual result: flow is updated successfully

expected result: 404 with the corresponding error message like "flow not found"

case 2

1) create flow (e.g. with flow_id = flow1) - POST [/v2/flows]

2) make put request to update it - - PUT [/v2/flows/flow1]

where body of the request **doesn't contains** flow_id = flow1

e.g. body should contain flow_id = flow_not_match

note: system shouldn't contain any flow with "flow_not_match" id

actual result: 404 with the corresponding error message like "flow not found"

expected result: flow should be updated, flow_id from the body should be ignored

actually we are taking flow_id from body, but it should be taken from the request line

also flow_id update is not supported, so it can be set as nullable in the body

| priority | flow id in the request line is ignored while flow update steps case create flow e g with flow id post make wrong put request to update it put where body of the request contains flow id note system shouldn t contain any flow with flow not match id actual result flow is updated successfully expected result with the corresponding error message like flow not found case create flow e g with flow id post make put request to update it put where body of the request doesn t contains flow id e g body should contain flow id flow not match note system shouldn t contain any flow with flow not match id actual result with the corresponding error message like flow not found expected result flow should be updated flow id from the body should be ignored actually we are taking flow id from body but it should be taken from the request line also flow id update is not supported so it can be set as nullable in the body | 1 |

632,082 | 20,171,234,560 | IssuesEvent | 2022-02-10 10:35:58 | neo4j/graphql | https://api.github.com/repos/neo4j/graphql | closed | *ConnectOrCreateFieldInputOnCreate and *CreateFieldInput are completely identical | confirmed bug report high priority | While harmonizing the logic for schema augmentation in java, I realized, that the types for `${prefix}ConnectOrCreateFieldInputOnCreate` and `${prefix}CreateFieldInput` are identical.

For example the following schema:

```graphql

type Movie {

title: String!

id: ID! @id

}

type Actor {

name: String!

movies: [Movie!]! @relationship(type: "ACTED_IN", direction: OUT)

}

```

will generate the following types among others:

```graphql

input ActorMoviesConnectOrCreateFieldInputOnCreate {

node: MovieCreateInput!

}

input ActorMoviesCreateFieldInput {

node: MovieCreateInput!

}

```

And if there are relation properties like:

```graphql

type Movie {

title: String!

isan: String! @unique

}

type Actor {

name: String!

movies: [Movie!]! @relationship(type: "ACTED_IN", direction: OUT, properties: "ActedIn")

}

interface ActedIn {

screentime: Int!

characterName: String

}

```

The following types are generated among others:

```graphql

input ActorMoviesConnectOrCreateFieldInputOnCreate {

edge: ActedInCreateInput!

node: MovieCreateInput!

}

input ActorMoviesCreateFieldInput {

edge: ActedInCreateInput!

node: MovieCreateInput!

}

```

| 1.0 | *ConnectOrCreateFieldInputOnCreate and *CreateFieldInput are completely identical - While harmonizing the logic for schema augmentation in java, I realized, that the types for `${prefix}ConnectOrCreateFieldInputOnCreate` and `${prefix}CreateFieldInput` are identical.

For example the following schema:

```graphql

type Movie {

title: String!

id: ID! @id

}

type Actor {

name: String!

movies: [Movie!]! @relationship(type: "ACTED_IN", direction: OUT)

}

```

will generate the following types among others:

```graphql

input ActorMoviesConnectOrCreateFieldInputOnCreate {

node: MovieCreateInput!

}

input ActorMoviesCreateFieldInput {

node: MovieCreateInput!

}

```

And if there are relation properties like:

```graphql

type Movie {

title: String!

isan: String! @unique

}

type Actor {

name: String!

movies: [Movie!]! @relationship(type: "ACTED_IN", direction: OUT, properties: "ActedIn")

}

interface ActedIn {

screentime: Int!

characterName: String

}

```

The following types are generated among others:

```graphql

input ActorMoviesConnectOrCreateFieldInputOnCreate {

edge: ActedInCreateInput!

node: MovieCreateInput!

}

input ActorMoviesCreateFieldInput {

edge: ActedInCreateInput!

node: MovieCreateInput!

}

```

| priority | connectorcreatefieldinputoncreate and createfieldinput are completely identical while harmonizing the logic for schema augmentation in java i realized that the types for prefix connectorcreatefieldinputoncreate and prefix createfieldinput are identical for example the following schema graphql type movie title string id id id type actor name string movies relationship type acted in direction out will generate the following types among others graphql input actormoviesconnectorcreatefieldinputoncreate node moviecreateinput input actormoviescreatefieldinput node moviecreateinput and if there are relation properties like graphql type movie title string isan string unique type actor name string movies relationship type acted in direction out properties actedin interface actedin screentime int charactername string the following types are generated among others graphql input actormoviesconnectorcreatefieldinputoncreate edge actedincreateinput node moviecreateinput input actormoviescreatefieldinput edge actedincreateinput node moviecreateinput | 1 |

442,477 | 12,746,087,829 | IssuesEvent | 2020-06-26 15:19:38 | a2000-erp-team/WEBERP | https://api.github.com/repos/a2000-erp-team/WEBERP | opened | Remove extra numbers behind memory in GJ transaction | ABIGAIL High Priority | FINANCE-LEDGER-GLOPERATION-Edit [GJ] transaction

| 1.0 | Remove extra numbers behind memory in GJ transaction - FINANCE-LEDGER-GLOPERATION-Edit [GJ] transaction

| priority | remove extra numbers behind memory in gj transaction finance ledger gloperation edit transaction | 1 |

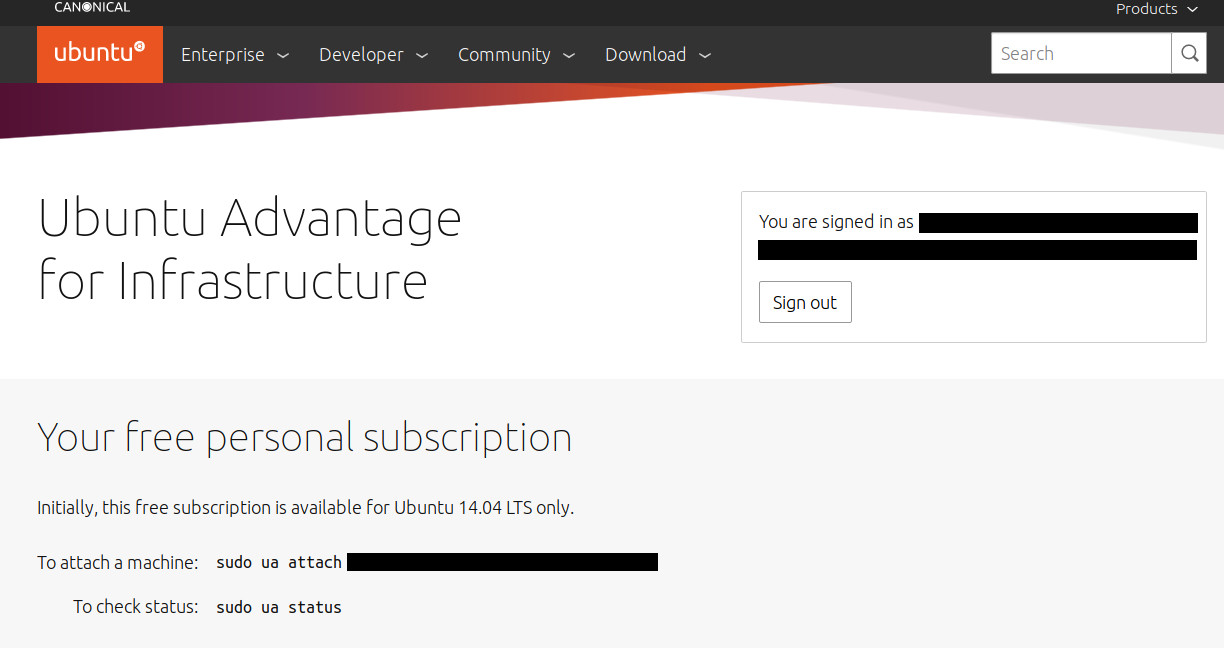

369,274 | 10,894,956,385 | IssuesEvent | 2019-11-19 09:44:02 | canonical-web-and-design/ubuntu.com | https://api.github.com/repos/canonical-web-and-design/ubuntu.com | closed | /livepatch incorrectly points to https://ubuntu.com/advantage | Priority: High | https://ubuntu.com/livepatch currently, amongst other, states this:

> Free for personal use

>

> All you need is an Ubuntu One account. Free for 3 machines.

>

> [Get Livepatch](https://ubuntu.com/advantage)

After reading this text and clicking the button, a random user will expect to find information on how to enable live kernel patching on any currently supported Ubuntu release.

Instead, what they currently find at /advantage is a statement that

> Initially, free subscription is available for Ubuntu 14.04 LTS only.

The location where, today, someone wants who wants to enable live kernel patching, should be pointed to, seems to rather be https://auth.livepatch.canonical.com/ though.

---

*Reported from: https://ubuntu.com/livepatch* | 1.0 | /livepatch incorrectly points to https://ubuntu.com/advantage - https://ubuntu.com/livepatch currently, amongst other, states this:

> Free for personal use

>

> All you need is an Ubuntu One account. Free for 3 machines.

>

> [Get Livepatch](https://ubuntu.com/advantage)

After reading this text and clicking the button, a random user will expect to find information on how to enable live kernel patching on any currently supported Ubuntu release.

Instead, what they currently find at /advantage is a statement that

> Initially, free subscription is available for Ubuntu 14.04 LTS only.

The location where, today, someone wants who wants to enable live kernel patching, should be pointed to, seems to rather be https://auth.livepatch.canonical.com/ though.

---

*Reported from: https://ubuntu.com/livepatch* | priority | livepatch incorrectly points to currently amongst other states this free for personal use all you need is an ubuntu one account free for machines after reading this text and clicking the button a random user will expect to find information on how to enable live kernel patching on any currently supported ubuntu release instead what they currently find at advantage is a statement that initially free subscription is available for ubuntu lts only the location where today someone wants who wants to enable live kernel patching should be pointed to seems to rather be though reported from | 1 |

252,098 | 8,031,831,326 | IssuesEvent | 2018-07-28 07:20:36 | opencv/opencv | https://api.github.com/repos/opencv/opencv | opened | Nightly: test_imgproc(Imgproc_InitUndistortMap.accuracy) failure | bug category: imgproc priority: high | [The first failed build](http://pullrequest.opencv.org/buildbot/builders/3_4_coverage-lin64-debug/builds/102/steps/test_imgproc/logs/stdio):

```

[ RUN ] Imgproc_InitUndistortMap.accuracy

/build/3_4_coverage-lin64-debug/opencv/modules/ts/src/ts.cpp:549: Failure

Failed

failure reason: Arithmetic exception

test case #12

seed: 09491e2273330ddc

-----------------------------------

LOG:

General failure:

Arithmetic exception (-6)

-----------------------------------

CONSOLE: .

-----------------------------------

[ FAILED ] Imgproc_InitUndistortMap.accuracy (71 ms)

``` | 1.0 | Nightly: test_imgproc(Imgproc_InitUndistortMap.accuracy) failure - [The first failed build](http://pullrequest.opencv.org/buildbot/builders/3_4_coverage-lin64-debug/builds/102/steps/test_imgproc/logs/stdio):

```

[ RUN ] Imgproc_InitUndistortMap.accuracy

/build/3_4_coverage-lin64-debug/opencv/modules/ts/src/ts.cpp:549: Failure

Failed

failure reason: Arithmetic exception

test case #12

seed: 09491e2273330ddc

-----------------------------------

LOG:

General failure:

Arithmetic exception (-6)

-----------------------------------

CONSOLE: .

-----------------------------------

[ FAILED ] Imgproc_InitUndistortMap.accuracy (71 ms)

``` | priority | nightly test imgproc imgproc initundistortmap accuracy failure imgproc initundistortmap accuracy build coverage debug opencv modules ts src ts cpp failure failed failure reason arithmetic exception test case seed log general failure arithmetic exception console imgproc initundistortmap accuracy ms | 1 |

482,282 | 13,903,942,442 | IssuesEvent | 2020-10-20 07:57:26 | bryntum/support | https://api.github.com/repos/bryntum/support | closed | Calendar sync doesn't work | bug forum high-priority resolved | [Forum post](https://www.bryntum.com/forum/viewtopic.php?f=54&t=15105)

Use this config for Basic Calendar demo. No sync is called after creating a new event.

```

window.calendar = new Calendar({

crudManager : {

eventStore : {

fields : [

{ name : 'room' }

]

},

transport : {

load : {

url : 'data/data.json'

},

sync : {

url : 'data/sync.json'

}

},

autoLoad : true,

autoSync : true

},

``` | 1.0 | Calendar sync doesn't work - [Forum post](https://www.bryntum.com/forum/viewtopic.php?f=54&t=15105)

Use this config for Basic Calendar demo. No sync is called after creating a new event.

```

window.calendar = new Calendar({

crudManager : {

eventStore : {

fields : [

{ name : 'room' }

]

},

transport : {

load : {

url : 'data/data.json'

},

sync : {

url : 'data/sync.json'

}

},

autoLoad : true,

autoSync : true

},

``` | priority | calendar sync doesn t work use this config for basic calendar demo no sync is called after creating a new event window calendar new calendar crudmanager eventstore fields name room transport load url data data json sync url data sync json autoload true autosync true | 1 |

375,719 | 11,133,375,577 | IssuesEvent | 2019-12-20 09:15:28 | projectacrn/acrn-hypervisor | https://api.github.com/repos/projectacrn/acrn-hypervisor | closed | acrn failed to boot when generate hypervisor config source from config app | priority: P2-High type: bug | Environment

git clone https://github.com/projectacrn/acrn-hypervisor.git

HW/Board

KBLNUC

Build link

build from source

Image info

1fe1afd4 (HEAD -> master, origin/master, origin/HEAD) acrn-config: Add ramdisk tag parsing support

Steps

git clone https://github.com/projectacrn/acrn-hypervisor.git

follow guide to launch config app: https://projectacrn.github.io/latest/tutorials/acrn_configuration_tool.html#use-the-acrn-configuration-app

import default board info from misc/acrn-config/xmls/board-xmls/nuc7i7dnb.xml

select scenario: industry

click "generate board src" and "generate scenario src"

make all BOARD=nuc7i7dnb SCENARIO=industry

replace acrn.efi into kblnuc, and launch acrn

Expected result

acrn and sos can launch successfully

Actual result

acrn launch failed

Reproduce rate

3/3

Debugging info

debug logs could be put here or attached in an attachment. | 1.0 | acrn failed to boot when generate hypervisor config source from config app - Environment

git clone https://github.com/projectacrn/acrn-hypervisor.git

HW/Board

KBLNUC

Build link

build from source

Image info

1fe1afd4 (HEAD -> master, origin/master, origin/HEAD) acrn-config: Add ramdisk tag parsing support

Steps

git clone https://github.com/projectacrn/acrn-hypervisor.git

follow guide to launch config app: https://projectacrn.github.io/latest/tutorials/acrn_configuration_tool.html#use-the-acrn-configuration-app

import default board info from misc/acrn-config/xmls/board-xmls/nuc7i7dnb.xml

select scenario: industry

click "generate board src" and "generate scenario src"

make all BOARD=nuc7i7dnb SCENARIO=industry

replace acrn.efi into kblnuc, and launch acrn

Expected result

acrn and sos can launch successfully

Actual result

acrn launch failed

Reproduce rate

3/3

Debugging info

debug logs could be put here or attached in an attachment. | priority | acrn failed to boot when generate hypervisor config source from config app environment git clone hw board kblnuc build link build from source image info head master origin master origin head acrn config add ramdisk tag parsing support steps git clone follow guide to launch config app import default board info from misc acrn config xmls board xmls xml select scenario industry click generate board src and generate scenario src make all board scenario industry replace acrn efi into kblnuc and launch acrn expected result acrn and sos can launch successfully actual result acrn launch failed reproduce rate debugging info debug logs could be put here or attached in an attachment | 1 |

601,434 | 18,408,692,428 | IssuesEvent | 2021-10-13 01:01:13 | gambitph/Stackable | https://api.github.com/repos/gambitph/Stackable | opened | Content Vertical Align in Style tab is not working in backend | bug high priority [version] V3 | <!--

Before posting, make sure that:

1. you are running the latest version of Stackable, and

2. you have searched whether your issue has already been reported

-->

**To Reproduce**

Steps to reproduce the behavior:

1. Add a v3 Feature > Default layout

2. Set block alignment to full width

3. upload an image and set image height to at least 700px

4. Go to Feature > Style tab > Container Size & Spacing

5. Set Content Vertical Align to flex-end

6. see bug in backend (this works in frontend)

this is applicable to all blocks with Container in Style tab

https://user-images.githubusercontent.com/28699204/137048941-3ce51deb-4c75-4389-a253-461d70e9ad64.mov

| 1.0 | Content Vertical Align in Style tab is not working in backend - <!--

Before posting, make sure that:

1. you are running the latest version of Stackable, and

2. you have searched whether your issue has already been reported

-->

**To Reproduce**

Steps to reproduce the behavior:

1. Add a v3 Feature > Default layout

2. Set block alignment to full width

3. upload an image and set image height to at least 700px

4. Go to Feature > Style tab > Container Size & Spacing

5. Set Content Vertical Align to flex-end

6. see bug in backend (this works in frontend)

this is applicable to all blocks with Container in Style tab

https://user-images.githubusercontent.com/28699204/137048941-3ce51deb-4c75-4389-a253-461d70e9ad64.mov

| priority | content vertical align in style tab is not working in backend before posting make sure that you are running the latest version of stackable and you have searched whether your issue has already been reported to reproduce steps to reproduce the behavior add a feature default layout set block alignment to full width upload an image and set image height to at least go to feature style tab container size spacing set content vertical align to flex end see bug in backend this works in frontend this is applicable to all blocks with container in style tab | 1 |

561,168 | 16,612,379,447 | IssuesEvent | 2021-06-02 13:06:03 | telstra/open-kilda | https://api.github.com/repos/telstra/open-kilda | closed | Re-implement Feature toggles in separate topology or Northbound. | area/arch priority/2-high | The feature toggles need to be re-designed and re-implemented as they were disabled in #1128. | 1.0 | Re-implement Feature toggles in separate topology or Northbound. - The feature toggles need to be re-designed and re-implemented as they were disabled in #1128. | priority | re implement feature toggles in separate topology or northbound the feature toggles need to be re designed and re implemented as they were disabled in | 1 |

364,380 | 10,763,452,701 | IssuesEvent | 2019-11-01 04:03:52 | ppy/osu | https://api.github.com/repos/ppy/osu | closed | osu!mania doesn't register misses at high scroll speeds | gameplay high priority osu!mania osu!taiko replay | Dunno if already reported but most Osu!mania Notes are getting ignored when missing them with scrollspeed 50 , sliders are working.

Video: