Unnamed: 0

int64 0

832k

| id

float64 2.49B

32.1B

| type

stringclasses 1

value | created_at

stringlengths 19

19

| repo

stringlengths 5

112

| repo_url

stringlengths 34

141

| action

stringclasses 3

values | title

stringlengths 1

855

| labels

stringlengths 4

721

| body

stringlengths 1

261k

| index

stringclasses 13

values | text_combine

stringlengths 96

261k

| label

stringclasses 2

values | text

stringlengths 96

240k

| binary_label

int64 0

1

|

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

22,030

| 2,644,424,706

|

IssuesEvent

|

2015-03-12 16:52:02

|

TabakoffLab/PhenoGen

|

https://api.github.com/repos/TabakoffLab/PhenoGen

|

closed

|

Logging in directly to view expression data of dataset results in errors

|

bug High Priority

|

multiple errors occur when you try to directly log into view expresison data of a dataset

|

1.0

|

Logging in directly to view expression data of dataset results in errors - multiple errors occur when you try to directly log into view expresison data of a dataset

|

priority

|

logging in directly to view expression data of dataset results in errors multiple errors occur when you try to directly log into view expresison data of a dataset

| 1

|

206,481

| 7,112,714,286

|

IssuesEvent

|

2018-01-17 17:55:38

|

IfyAniefuna/experiment_metadata

|

https://api.github.com/repos/IfyAniefuna/experiment_metadata

|

opened

|

loading multiple metadata files then entering info into form has weird behavior

|

bug high priority

|

Sample time keeps on erasing itself after being entered.

This can be avoided if you program this drag-and-drop to immediately download a spreadsheet after multiple metadata files have been drag-and-dropped into the form.

|

1.0

|

loading multiple metadata files then entering info into form has weird behavior - Sample time keeps on erasing itself after being entered.

This can be avoided if you program this drag-and-drop to immediately download a spreadsheet after multiple metadata files have been drag-and-dropped into the form.

|

priority

|

loading multiple metadata files then entering info into form has weird behavior sample time keeps on erasing itself after being entered this can be avoided if you program this drag and drop to immediately download a spreadsheet after multiple metadata files have been drag and dropped into the form

| 1

|

631,514

| 20,153,072,162

|

IssuesEvent

|

2022-02-09 14:13:27

|

kubermatic/kubeone

|

https://api.github.com/repos/kubermatic/kubeone

|

closed

|

Test changing the maximum container log size and files -- Test Release 1.4

|

priority/high sig/cluster-management

|

Instructions:

* Download the latest KubeOne 1.4.0 release candidate

* Follow the [Create a Kubernetes cluster tutorial](https://docs.kubermatic.com/kubeone/master/tutorials/creating_clusters/) to create your cluster

* Make sure to add the following stanza to your KubeOneCluster manifest before applying the cluster for the first time (feel free to change values as appropriate):

```yaml

…

loggingConfig:

containerLogMaxSize: “100Ki”

containerLogMaxFiles: 3

```

* Make sure to add the following stanza depending on container runtime that you’re testing.

* For Docker:

```yaml

…

containerRuntime:

docker: {}

```

* For containerd:

```yaml

…

containerRuntime:

containerd: {}

```

* Wait for machine-controller-managed nodes to join the cluster

* Ensure all pods are Running

* Ensure that the container logs are rotated when size reaches provided value (e.g. 100Ki) and that the expected number of files is kept

* For Docker clusters, logs are located in `/var/lib/docker/containers/<container id>/<container id>-json.log `

* For containerd clusters, logs are located in `/var/log/containers/`

This test should be done for both Docker and containerd (as instructed above). Kubernetes version, operating system, and cloud provider don’t matter.

* [x] Docker

* [x] containerd

|

1.0

|

Test changing the maximum container log size and files -- Test Release 1.4 - Instructions:

* Download the latest KubeOne 1.4.0 release candidate

* Follow the [Create a Kubernetes cluster tutorial](https://docs.kubermatic.com/kubeone/master/tutorials/creating_clusters/) to create your cluster

* Make sure to add the following stanza to your KubeOneCluster manifest before applying the cluster for the first time (feel free to change values as appropriate):

```yaml

…

loggingConfig:

containerLogMaxSize: “100Ki”

containerLogMaxFiles: 3

```

* Make sure to add the following stanza depending on container runtime that you’re testing.

* For Docker:

```yaml

…

containerRuntime:

docker: {}

```

* For containerd:

```yaml

…

containerRuntime:

containerd: {}

```

* Wait for machine-controller-managed nodes to join the cluster

* Ensure all pods are Running

* Ensure that the container logs are rotated when size reaches provided value (e.g. 100Ki) and that the expected number of files is kept

* For Docker clusters, logs are located in `/var/lib/docker/containers/<container id>/<container id>-json.log `

* For containerd clusters, logs are located in `/var/log/containers/`

This test should be done for both Docker and containerd (as instructed above). Kubernetes version, operating system, and cloud provider don’t matter.

* [x] Docker

* [x] containerd

|

priority

|

test changing the maximum container log size and files test release instructions download the latest kubeone release candidate follow the to create your cluster make sure to add the following stanza to your kubeonecluster manifest before applying the cluster for the first time feel free to change values as appropriate yaml … loggingconfig containerlogmaxsize “ ” containerlogmaxfiles make sure to add the following stanza depending on container runtime that you’re testing for docker yaml … containerruntime docker for containerd yaml … containerruntime containerd wait for machine controller managed nodes to join the cluster ensure all pods are running ensure that the container logs are rotated when size reaches provided value e g and that the expected number of files is kept for docker clusters logs are located in var lib docker containers json log for containerd clusters logs are located in var log containers this test should be done for both docker and containerd as instructed above kubernetes version operating system and cloud provider don’t matter docker containerd

| 1

|

468,033

| 13,460,217,419

|

IssuesEvent

|

2020-09-09 13:20:37

|

onaio/reveal-frontend

|

https://api.github.com/repos/onaio/reveal-frontend

|

closed

|

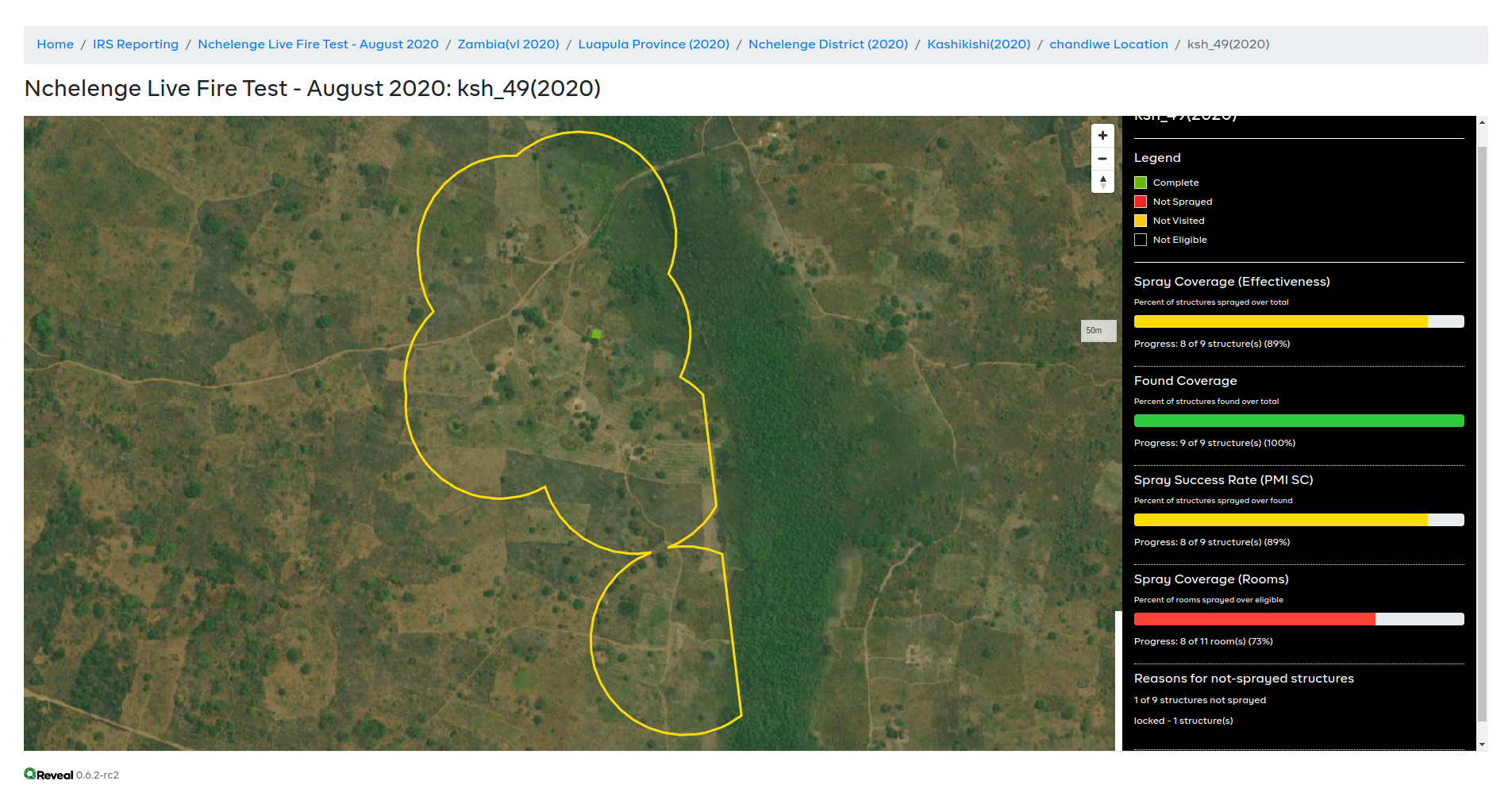

IRS reports map not showing all structures

|

Priority: High

|

Consider this report https://web.reveal-stage.smartregister.org/intervention/irs/report/5640fcc2-772a-5e06-9e00-491e3aa544f5/6be2f032-ab8e-4f0d-999c-d951f7040418/map which should be displaying 9 structures on the map, but only shows 1.

The data is [there](https://superset.reveal-stage.smartregister.org/superset/slice_json/592?form_data={%22adhoc_filters%22:[{%22clause%22:%22WHERE%22,%22expressionType%22:%22SIMPLE%22,%22comparator%22:%226be2f032-ab8e-4f0d-999c-d951f7040418%22,%22operator%22:%22==%22,%22subject%22:%22jurisdiction_id%22},{%22clause%22:%22WHERE%22,%22expressionType%22:%22SIMPLE%22,%22comparator%22:%225640fcc2-772a-5e06-9e00-491e3aa544f5%22,%22operator%22:%22==%22,%22subject%22:%22plan_id%22}],%22row_limit%22:15000}).

|

1.0

|

IRS reports map not showing all structures - Consider this report https://web.reveal-stage.smartregister.org/intervention/irs/report/5640fcc2-772a-5e06-9e00-491e3aa544f5/6be2f032-ab8e-4f0d-999c-d951f7040418/map which should be displaying 9 structures on the map, but only shows 1.

The data is [there](https://superset.reveal-stage.smartregister.org/superset/slice_json/592?form_data={%22adhoc_filters%22:[{%22clause%22:%22WHERE%22,%22expressionType%22:%22SIMPLE%22,%22comparator%22:%226be2f032-ab8e-4f0d-999c-d951f7040418%22,%22operator%22:%22==%22,%22subject%22:%22jurisdiction_id%22},{%22clause%22:%22WHERE%22,%22expressionType%22:%22SIMPLE%22,%22comparator%22:%225640fcc2-772a-5e06-9e00-491e3aa544f5%22,%22operator%22:%22==%22,%22subject%22:%22plan_id%22}],%22row_limit%22:15000}).

|

priority

|

irs reports map not showing all structures consider this report which should be displaying structures on the map but only shows the data is limit

| 1

|

424,747

| 12,322,772,322

|

IssuesEvent

|

2020-05-13 10:56:44

|

godotengine/godot

|

https://api.github.com/repos/godotengine/godot

|

closed

|

Update gamepad remapping system to reflect changes in SDL code.

|

bug hero wanted! high priority topic:input

|

**Operating system or device - Godot version:**

All

**Issue description:**

It seems like SDL2 has been updated and now has the ability to map only half of a gamepad axis.

https://hg.libsdl.org/SDL/rev/5ea5f198879f

While I haven't found any mappings in the wild using this feature yet, we should implement it in order to keep compatibility. Also it's a very useful feature imho :)

|

1.0

|

Update gamepad remapping system to reflect changes in SDL code. - **Operating system or device - Godot version:**

All

**Issue description:**

It seems like SDL2 has been updated and now has the ability to map only half of a gamepad axis.

https://hg.libsdl.org/SDL/rev/5ea5f198879f

While I haven't found any mappings in the wild using this feature yet, we should implement it in order to keep compatibility. Also it's a very useful feature imho :)

|

priority

|

update gamepad remapping system to reflect changes in sdl code operating system or device godot version all issue description it seems like has been updated and now has the ability to map only half of a gamepad axis while i haven t found any mappings in the wild using this feature yet we should implement it in order to keep compatibility also it s a very useful feature imho

| 1

|

425,620

| 12,343,118,774

|

IssuesEvent

|

2020-05-15 02:58:13

|

juntofoundation/junto-mobile

|

https://api.github.com/repos/juntofoundation/junto-mobile

|

closed

|

Placeholders for CachedNetworkImage isn't showing up

|

High Priority

|

i.e. MemberAvatar & MemberAvatarPlaceholder

|

1.0

|

Placeholders for CachedNetworkImage isn't showing up - i.e. MemberAvatar & MemberAvatarPlaceholder

|

priority

|

placeholders for cachednetworkimage isn t showing up i e memberavatar memberavatarplaceholder

| 1

|

608,069

| 18,797,944,903

|

IssuesEvent

|

2021-11-09 01:43:59

|

Eclipse-Station/NEV-Northern-Light

|

https://api.github.com/repos/Eclipse-Station/NEV-Northern-Light

|

closed

|

Radiation Collectors are not accepting Phoron Tanks

|

bug Priority: High :warning: Severity: S-2 Major :busts_in_silhouette: Impact: I-3 Some

|

None of the Radiation collectors are accepting phoron tanks, rendering them non-functional.

As part of troubleshooting, I have confirmed that I am using the correct tanks (handheld phoron tanks) and that they are filled to 1013 Kpa.

The rad collectors are wrenched down on top of wire knots. (See provided image).

I am able to extend the array, but without a tank inside, they generate no power.

|

1.0

|

Radiation Collectors are not accepting Phoron Tanks - None of the Radiation collectors are accepting phoron tanks, rendering them non-functional.

As part of troubleshooting, I have confirmed that I am using the correct tanks (handheld phoron tanks) and that they are filled to 1013 Kpa.

The rad collectors are wrenched down on top of wire knots. (See provided image).

I am able to extend the array, but without a tank inside, they generate no power.

|

priority

|

radiation collectors are not accepting phoron tanks none of the radiation collectors are accepting phoron tanks rendering them non functional as part of troubleshooting i have confirmed that i am using the correct tanks handheld phoron tanks and that they are filled to kpa the rad collectors are wrenched down on top of wire knots see provided image i am able to extend the array but without a tank inside they generate no power

| 1

|

619,708

| 19,532,979,937

|

IssuesEvent

|

2021-12-30 21:06:43

|

levovix0/DMusic

|

https://api.github.com/repos/levovix0/DMusic

|

closed

|

DMusic process stops immediately

|

bug High priority

|

**Describe the bug**

When run DMusic.exe process stops immediately. DMusic window don't show

**To Reproduce**

Steps to reproduce the behavior:

1. Download Release archive

2. Unpacked the archive

3. Run DMusic.exe

4. DMusic window don't show

**Expected behavior**

I can see DMusic window

OS: [Windows 10/11 x64]

Version [Release 0.2]

|

1.0

|

DMusic process stops immediately - **Describe the bug**

When run DMusic.exe process stops immediately. DMusic window don't show

**To Reproduce**

Steps to reproduce the behavior:

1. Download Release archive

2. Unpacked the archive

3. Run DMusic.exe

4. DMusic window don't show

**Expected behavior**

I can see DMusic window

OS: [Windows 10/11 x64]

Version [Release 0.2]

|

priority

|

dmusic process stops immediately describe the bug when run dmusic exe process stops immediately dmusic window don t show to reproduce steps to reproduce the behavior download release archive unpacked the archive run dmusic exe dmusic window don t show expected behavior i can see dmusic window os version

| 1

|

383,072

| 11,349,525,282

|

IssuesEvent

|

2020-01-24 05:21:04

|

clappr/clappr

|

https://api.github.com/repos/clappr/clappr

|

closed

|

Fullscreen on Android Chrome closed when clicked on player.

|

bug high-priority

|

**Browser**: Chrome 55.0.2883.91

**OS**: Android 6.0.1

**Clappr Version**: latest (http://cdn.clappr.io/latest/clappr.js on http://cdn.clappr.io)

**Steps to reproduce**:

* open http://cdn.clappr.io in Chrome on Android

* play video and resize to fullscreen

* click on player container

* I was expecting "pause without exit from fullscreen" but instead it shows "exit from fullscreen without pause"

This reproduced at http://cdn.clappr.io/

|

1.0

|

Fullscreen on Android Chrome closed when clicked on player. - **Browser**: Chrome 55.0.2883.91

**OS**: Android 6.0.1

**Clappr Version**: latest (http://cdn.clappr.io/latest/clappr.js on http://cdn.clappr.io)

**Steps to reproduce**:

* open http://cdn.clappr.io in Chrome on Android

* play video and resize to fullscreen

* click on player container

* I was expecting "pause without exit from fullscreen" but instead it shows "exit from fullscreen without pause"

This reproduced at http://cdn.clappr.io/

|

priority

|

fullscreen on android chrome closed when clicked on player browser chrome os android clappr version latest on steps to reproduce open in chrome on android play video and resize to fullscreen click on player container i was expecting pause without exit from fullscreen but instead it shows exit from fullscreen without pause this reproduced at

| 1

|

264,072

| 8,304,904,548

|

IssuesEvent

|

2018-09-21 23:41:40

|

python/mypy

|

https://api.github.com/repos/python/mypy

|

opened

|

mypy ignores type errors inside `list` and `dict` calls

|

bug priority-0-high

|

In the following program:

```

from typing import Union, Iterable, Tuple

class A:

def foo(self) -> Iterable[Tuple[int, int]]: pass

def bar(x: int) -> Union[A, int]: ...

list(bar('lol').foo()) # No errors!

dict(bar('lol').foo()) # No errors!

tuple(bar('lol').foo()) # Does error

set(bar('lol').foo()) # Does error

```

two errors ought to be generated for each call (one for `int` not having `.foo`, one for `'lol'` being the wrong type of argument). These errors seem to be suppressed while checking `list` and `dict`, which get filled with `Any`s.

|

1.0

|

mypy ignores type errors inside `list` and `dict` calls - In the following program:

```

from typing import Union, Iterable, Tuple

class A:

def foo(self) -> Iterable[Tuple[int, int]]: pass

def bar(x: int) -> Union[A, int]: ...

list(bar('lol').foo()) # No errors!

dict(bar('lol').foo()) # No errors!

tuple(bar('lol').foo()) # Does error

set(bar('lol').foo()) # Does error

```

two errors ought to be generated for each call (one for `int` not having `.foo`, one for `'lol'` being the wrong type of argument). These errors seem to be suppressed while checking `list` and `dict`, which get filled with `Any`s.

|

priority

|

mypy ignores type errors inside list and dict calls in the following program from typing import union iterable tuple class a def foo self iterable pass def bar x int union list bar lol foo no errors dict bar lol foo no errors tuple bar lol foo does error set bar lol foo does error two errors ought to be generated for each call one for int not having foo one for lol being the wrong type of argument these errors seem to be suppressed while checking list and dict which get filled with any s

| 1

|

336,232

| 10,173,685,568

|

IssuesEvent

|

2019-08-08 13:38:04

|

MAIF/otoroshi

|

https://api.github.com/repos/MAIF/otoroshi

|

opened

|

Identity aware TCP forwarding over HTTPS

|

beyond corp. feature identity aware proxy priority:high security tcp

|

Like in GCP IAP. The idea here is to provide a client that will expose a local port for TCP connections. This client will wrap every tcp packet in an https connection and send it to Otoroshi. Otoroshi will verify if the connection is okay (user, etc ...) and then unwrap packet and forward it to the target tcp service.

To do that we need to

* write a client (node js or rust) based on https://github.com/mathieuancelin/node-httptunnel

* can establish a connection with a public service

* can establish a connection with a private service (apikey)

* can establish a connection with a secured service (auth. modules)

* write the logic to unwrap packets and send it to target service in `handler.scala`

* add special event log with identity

* support private app session id extraction from places other than cookies (#202)

* header

* query param

* config. will be set in auth. module config.

* Support private apps redirection to `urn:ietf:wg:oauth:2.0:oob` (#297)

* Support full OAuth2 lifecyle through private apps (#298)

* TCP forwarding over https will allow to

* setup a target address and port (tls flag)

* get address and or port from headers or query params (flag)

## Docs

* https://cloud.google.com/blog/products/identity-security/cloud-iap-enables-context-aware-access-to-vms-via-ssh-and-rdp-without-bastion-hosts

* https://cloud.google.com/iap/docs/using-tcp-forwarding

* https://cloud.google.com/solutions/building-internet-connectivity-for-private-vms

|

1.0

|

Identity aware TCP forwarding over HTTPS - Like in GCP IAP. The idea here is to provide a client that will expose a local port for TCP connections. This client will wrap every tcp packet in an https connection and send it to Otoroshi. Otoroshi will verify if the connection is okay (user, etc ...) and then unwrap packet and forward it to the target tcp service.

To do that we need to

* write a client (node js or rust) based on https://github.com/mathieuancelin/node-httptunnel

* can establish a connection with a public service

* can establish a connection with a private service (apikey)

* can establish a connection with a secured service (auth. modules)

* write the logic to unwrap packets and send it to target service in `handler.scala`

* add special event log with identity

* support private app session id extraction from places other than cookies (#202)

* header

* query param

* config. will be set in auth. module config.

* Support private apps redirection to `urn:ietf:wg:oauth:2.0:oob` (#297)

* Support full OAuth2 lifecyle through private apps (#298)

* TCP forwarding over https will allow to

* setup a target address and port (tls flag)

* get address and or port from headers or query params (flag)

## Docs

* https://cloud.google.com/blog/products/identity-security/cloud-iap-enables-context-aware-access-to-vms-via-ssh-and-rdp-without-bastion-hosts

* https://cloud.google.com/iap/docs/using-tcp-forwarding

* https://cloud.google.com/solutions/building-internet-connectivity-for-private-vms

|

priority

|

identity aware tcp forwarding over https like in gcp iap the idea here is to provide a client that will expose a local port for tcp connections this client will wrap every tcp packet in an https connection and send it to otoroshi otoroshi will verify if the connection is okay user etc and then unwrap packet and forward it to the target tcp service to do that we need to write a client node js or rust based on can establish a connection with a public service can establish a connection with a private service apikey can establish a connection with a secured service auth modules write the logic to unwrap packets and send it to target service in handler scala add special event log with identity support private app session id extraction from places other than cookies header query param config will be set in auth module config support private apps redirection to urn ietf wg oauth oob support full lifecyle through private apps tcp forwarding over https will allow to setup a target address and port tls flag get address and or port from headers or query params flag docs

| 1

|

348,367

| 10,441,671,823

|

IssuesEvent

|

2019-09-18 11:23:07

|

wso2/product-apim

|

https://api.github.com/repos/wso2/product-apim

|

opened

|

[Store] Cannot invoke api from the store swagger console

|

3.0.0 Priority/Highest Severity/Critical Store

|

Tried it on the latest build (18 th sep). Seems like a CORS issue

<img width="731" alt="Screen Shot 2019-09-18 at 4 51 16 PM" src="https://user-images.githubusercontent.com/4861150/65144311-b7e67880-da34-11e9-9ea6-2bc31adcd9a9.png">

When wirelogs are enabled, I could see that the Access-Control-Request-Headers is set as null

[2019-09-18 16:39:02,149] DEBUG - wire HTTPS-Listener I/O dispatcher-8 >> "Access-Control-Request-Headers: null[\r][\n]"

|

1.0

|

[Store] Cannot invoke api from the store swagger console - Tried it on the latest build (18 th sep). Seems like a CORS issue

<img width="731" alt="Screen Shot 2019-09-18 at 4 51 16 PM" src="https://user-images.githubusercontent.com/4861150/65144311-b7e67880-da34-11e9-9ea6-2bc31adcd9a9.png">

When wirelogs are enabled, I could see that the Access-Control-Request-Headers is set as null

[2019-09-18 16:39:02,149] DEBUG - wire HTTPS-Listener I/O dispatcher-8 >> "Access-Control-Request-Headers: null[\r][\n]"

|

priority

|

cannot invoke api from the store swagger console tried it on the latest build th sep seems like a cors issue img width alt screen shot at pm src when wirelogs are enabled i could see that the access control request headers is set as null debug wire https listener i o dispatcher access control request headers null

| 1

|

665,490

| 22,319,999,903

|

IssuesEvent

|

2022-06-14 04:57:58

|

opencrvs/opencrvs-core

|

https://api.github.com/repos/opencrvs/opencrvs-core

|

closed

|

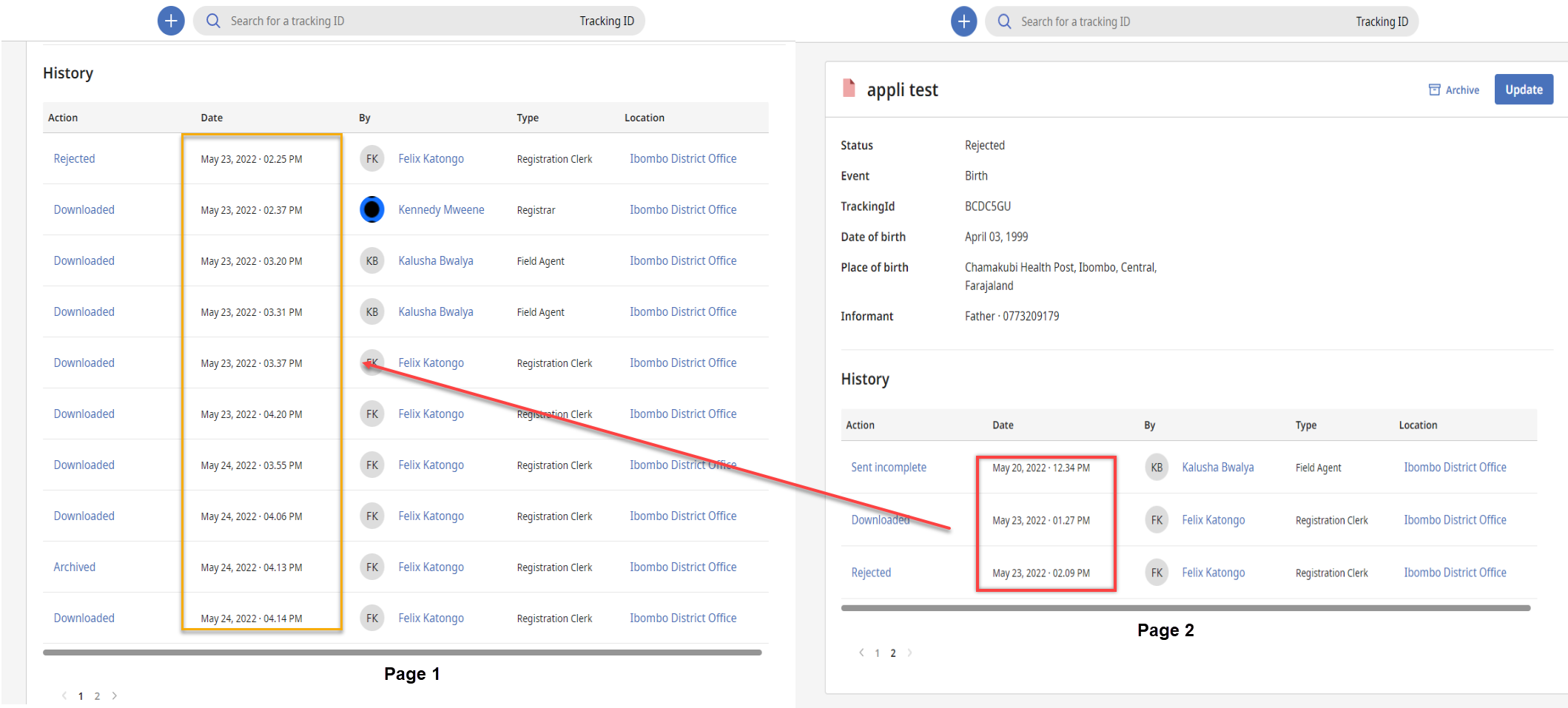

Sorting is not correct in History page

|

👹Bug Priority: high

|

**Bug Description:**

The history of any birth/death application is not sorted correctly in the history screen

**Steps:**

1. Log in as a Registration clerk

2. Navigate to requires Update

3. Click on an Application

4. Download the application

5. Add more than 10 records in the history of the application

6. navigate to the 2nd page of the history

**Actual Result:**

- History is sorted incorrectly. Record which should show on 1st page is showing on the 2nd page

**Expected Result:**

- History should show the most recent record in the first.

**Screenshot:**

**Tested on:**

https://login.farajaland-qa.opencrvs.org/

**Username & Password Used:**

- Username: felix.katongo

- password: test

**Desktop:**

- OS: Windows 10

- Browser: Chrome

|

1.0

|

Sorting is not correct in History page - **Bug Description:**

The history of any birth/death application is not sorted correctly in the history screen

**Steps:**

1. Log in as a Registration clerk

2. Navigate to requires Update

3. Click on an Application

4. Download the application

5. Add more than 10 records in the history of the application

6. navigate to the 2nd page of the history

**Actual Result:**

- History is sorted incorrectly. Record which should show on 1st page is showing on the 2nd page

**Expected Result:**

- History should show the most recent record in the first.

**Screenshot:**

**Tested on:**

https://login.farajaland-qa.opencrvs.org/

**Username & Password Used:**

- Username: felix.katongo

- password: test

**Desktop:**

- OS: Windows 10

- Browser: Chrome

|

priority

|

sorting is not correct in history page bug description the history of any birth death application is not sorted correctly in the history screen steps log in as a registration clerk navigate to requires update click on an application download the application add more than records in the history of the application navigate to the page of the history actual result history is sorted incorrectly record which should show on page is showing on the page expected result history should show the most recent record in the first screenshot tested on username password used username felix katongo password test desktop os windows browser chrome

| 1

|

636,178

| 20,594,447,093

|

IssuesEvent

|

2022-03-05 08:57:11

|

kubesphere/ks-devops

|

https://api.github.com/repos/kubesphere/ks-devops

|

closed

|

Request to refine Role Templates related to DevOps

|

kind/feature priority/high

|

### What is version of KubeSphere DevOps has the issue?

latest

### How did you install the Kubernetes? Or what is the Kubernetes distribution?

_No response_

### Describe this feature

Recently, we added a little DevOps APIs with version v1alpha1, please see blow:

- https://github.com/kubesphere/ks-devops/pull/468

- https://github.com/kubesphere/ks-devops/pull/467

- https://github.com/kubesphere/ks-devops/pull/460

If we hadn't defined Role Templates at [here](https://github.com/kubesphere/ks-installer/blob/915f2ce8690ff6ea0e1f9201a56ffdf4e005cde0/roles/ks-core/prepare/files/ks-init/role-templates.yaml), non-admin users could not access those new resources in the [console](https://github.com/kubesphere/console).

So I request to refine Role Templates related to DevOps

### Additional information

/cc @kubesphere/sig-devops

|

1.0

|

Request to refine Role Templates related to DevOps - ### What is version of KubeSphere DevOps has the issue?

latest

### How did you install the Kubernetes? Or what is the Kubernetes distribution?

_No response_

### Describe this feature

Recently, we added a little DevOps APIs with version v1alpha1, please see blow:

- https://github.com/kubesphere/ks-devops/pull/468

- https://github.com/kubesphere/ks-devops/pull/467

- https://github.com/kubesphere/ks-devops/pull/460

If we hadn't defined Role Templates at [here](https://github.com/kubesphere/ks-installer/blob/915f2ce8690ff6ea0e1f9201a56ffdf4e005cde0/roles/ks-core/prepare/files/ks-init/role-templates.yaml), non-admin users could not access those new resources in the [console](https://github.com/kubesphere/console).

So I request to refine Role Templates related to DevOps

### Additional information

/cc @kubesphere/sig-devops

|

priority

|

request to refine role templates related to devops what is version of kubesphere devops has the issue latest how did you install the kubernetes or what is the kubernetes distribution no response describe this feature recently we added a little devops apis with version please see blow if we hadn t defined role templates at non admin users could not access those new resources in the so i request to refine role templates related to devops additional information cc kubesphere sig devops

| 1

|

629,189

| 20,025,527,303

|

IssuesEvent

|

2022-02-01 20:51:38

|

patternfly/patternfly-elements

|

https://api.github.com/repos/patternfly/patternfly-elements

|

closed

|

[Bug] When navigating through the accordion panels with the arrow keys the panels activate automatically

|

accessibility priority: high functionality

|

<!-- Hello! Please read the [Contributing Guidelines](CONTRIBUTING.md) before submitting an issue. -->

## Description of the issue

<!-- A clear and concise description of what the bug is. -->

When you navigate through the accordion with assistive tech and the keyboard, pressing the arrow keys to switch to different accordion panels is also activating the panels. The keyboard pattern for accordions is that the arrow keys ONLY shift the focus to the next or previous panel.

### Impacted component(s)

- [pfe-accordion](https://patternflyelements.org/components/accordion/)

### Steps to reproduce

1. Go to https://patternflyelements.org/components/accordion/

2. Use the tab key to navigate to the first accordion panel on the page

3. Press the left, right, up, or down arrow keys to navigate through the panels

4. You will see that the panels open automatically when they receive focus via the arrow keys

### Expected behavior

<!-- A clear and concise description of what you expected to happen. -->

The expected behavior is that the arrow keys only allow users to navigate through the accordion panels. The enter key and spacebar key are the only methods used to activate the panels and display the content. This method must be activated by the user expressly and should not happen automatically.

#### See

- [WCAG Accordion Example](https://www.w3.org/TR/wai-aria-practices/examples/accordion/accordion.html)

- [Carnegie Museums Accordion Example](http://web-accessibility.carnegiemuseums.org/code/accordions/)

### Screenshots

<!-- If applicable, add screenshots to help demonstrate the issue. -->

<!--

Please update the labels for this component to reflect the topic of the issue: accessibility, doc / demo, functionality, integration, styles-only, tests, tools.

Note also the severity level; all new issues default to severity level 1 which is low priority. If you feel this issue deserves more attention, please set the label to sev-2 or sev-3.

-->

|

1.0

|

[Bug] When navigating through the accordion panels with the arrow keys the panels activate automatically - <!-- Hello! Please read the [Contributing Guidelines](CONTRIBUTING.md) before submitting an issue. -->

## Description of the issue

<!-- A clear and concise description of what the bug is. -->

When you navigate through the accordion with assistive tech and the keyboard, pressing the arrow keys to switch to different accordion panels is also activating the panels. The keyboard pattern for accordions is that the arrow keys ONLY shift the focus to the next or previous panel.

### Impacted component(s)

- [pfe-accordion](https://patternflyelements.org/components/accordion/)

### Steps to reproduce

1. Go to https://patternflyelements.org/components/accordion/

2. Use the tab key to navigate to the first accordion panel on the page

3. Press the left, right, up, or down arrow keys to navigate through the panels

4. You will see that the panels open automatically when they receive focus via the arrow keys

### Expected behavior

<!-- A clear and concise description of what you expected to happen. -->

The expected behavior is that the arrow keys only allow users to navigate through the accordion panels. The enter key and spacebar key are the only methods used to activate the panels and display the content. This method must be activated by the user expressly and should not happen automatically.

#### See

- [WCAG Accordion Example](https://www.w3.org/TR/wai-aria-practices/examples/accordion/accordion.html)

- [Carnegie Museums Accordion Example](http://web-accessibility.carnegiemuseums.org/code/accordions/)

### Screenshots

<!-- If applicable, add screenshots to help demonstrate the issue. -->

<!--

Please update the labels for this component to reflect the topic of the issue: accessibility, doc / demo, functionality, integration, styles-only, tests, tools.

Note also the severity level; all new issues default to severity level 1 which is low priority. If you feel this issue deserves more attention, please set the label to sev-2 or sev-3.

-->

|

priority

|

when navigating through the accordion panels with the arrow keys the panels activate automatically description of the issue when you navigate through the accordion with assistive tech and the keyboard pressing the arrow keys to switch to different accordion panels is also activating the panels the keyboard pattern for accordions is that the arrow keys only shift the focus to the next or previous panel impacted component s steps to reproduce go to use the tab key to navigate to the first accordion panel on the page press the left right up or down arrow keys to navigate through the panels you will see that the panels open automatically when they receive focus via the arrow keys expected behavior the expected behavior is that the arrow keys only allow users to navigate through the accordion panels the enter key and spacebar key are the only methods used to activate the panels and display the content this method must be activated by the user expressly and should not happen automatically see screenshots please update the labels for this component to reflect the topic of the issue accessibility doc demo functionality integration styles only tests tools note also the severity level all new issues default to severity level which is low priority if you feel this issue deserves more attention please set the label to sev or sev

| 1

|

796,731

| 28,126,308,079

|

IssuesEvent

|

2023-03-31 18:02:49

|

python-graphblas/python-graphblas

|

https://api.github.com/repos/python-graphblas/python-graphblas

|

closed

|

[pyos] Installation from PyPI only supports some platforms

|

highpriority upstream

|

Looking at the installation instructions ([readme](https://github.com/python-graphblas/python-graphblas#install) or [docs](https://python-graphblas.readthedocs.io/en/stable/getting_started/#installation)), I got the impression that a simple `pip install python-graphblas` would work for most users, but this does not appear to be the case. Originally I installed from Conda-Forge, so I did not notice this.

It seems that the essential dependency [suitesparse-graphblas](https://pypi.org/project/suitesparse-graphblas/) only provides binaries for Linux. Thus a simple `pip install python-graphblas` will fail for macOS and Windows users, and I expect most of them will be confused.

Can you please explain this situation better, so that the installation experience is as smooth as possible? I.e.,

- Ideally, provide binaries for all platforms.

- If this is not possible, point out the issues and their solutions.

- A simple solution is to use Anaconda.

- A more complicated one is to compile `suitesparse-graphblas` from source. There should be instructions for this.

https://github.com/pyOpenSci/software-submission/issues/81

|

1.0

|

[pyos] Installation from PyPI only supports some platforms - Looking at the installation instructions ([readme](https://github.com/python-graphblas/python-graphblas#install) or [docs](https://python-graphblas.readthedocs.io/en/stable/getting_started/#installation)), I got the impression that a simple `pip install python-graphblas` would work for most users, but this does not appear to be the case. Originally I installed from Conda-Forge, so I did not notice this.

It seems that the essential dependency [suitesparse-graphblas](https://pypi.org/project/suitesparse-graphblas/) only provides binaries for Linux. Thus a simple `pip install python-graphblas` will fail for macOS and Windows users, and I expect most of them will be confused.

Can you please explain this situation better, so that the installation experience is as smooth as possible? I.e.,

- Ideally, provide binaries for all platforms.

- If this is not possible, point out the issues and their solutions.

- A simple solution is to use Anaconda.

- A more complicated one is to compile `suitesparse-graphblas` from source. There should be instructions for this.

https://github.com/pyOpenSci/software-submission/issues/81

|

priority

|

installation from pypi only supports some platforms looking at the installation instructions or i got the impression that a simple pip install python graphblas would work for most users but this does not appear to be the case originally i installed from conda forge so i did not notice this it seems that the essential dependency only provides binaries for linux thus a simple pip install python graphblas will fail for macos and windows users and i expect most of them will be confused can you please explain this situation better so that the installation experience is as smooth as possible i e ideally provide binaries for all platforms if this is not possible point out the issues and their solutions a simple solution is to use anaconda a more complicated one is to compile suitesparse graphblas from source there should be instructions for this

| 1

|

608,110

| 18,798,684,924

|

IssuesEvent

|

2021-11-09 03:09:08

|

ngageoint/hootenanny

|

https://api.github.com/repos/ngageoint/hootenanny

|

closed

|

MultipleChangesetProvider combine changeset changes

|

Type: Bug Type: Task Category: Core Priority: High

|

`MultipleChangesetProvider` combines two changesets together, one for geometry changes and the other for tag changes. These are output in order so elements with both geometry and tag changes are output twice, neither one is correct. Combine both of the changes in `MultipleChangesetProvider::readNextChange()`.

|

1.0

|

MultipleChangesetProvider combine changeset changes - `MultipleChangesetProvider` combines two changesets together, one for geometry changes and the other for tag changes. These are output in order so elements with both geometry and tag changes are output twice, neither one is correct. Combine both of the changes in `MultipleChangesetProvider::readNextChange()`.

|

priority

|

multiplechangesetprovider combine changeset changes multiplechangesetprovider combines two changesets together one for geometry changes and the other for tag changes these are output in order so elements with both geometry and tag changes are output twice neither one is correct combine both of the changes in multiplechangesetprovider readnextchange

| 1

|

4,934

| 2,566,394,811

|

IssuesEvent

|

2015-02-08 14:02:19

|

chessmasterhong/WaterEmblem

|

https://api.github.com/repos/chessmasterhong/WaterEmblem

|

opened

|

Create new bosses

|

enhancement high priority

|

For each chapter, a boss will be needed. For the sake of the game plot, two new bosses will need to be made from scratch (meaning their animations and such, since I never put them together), and we'll be re-using some old bosses such as the `King` entity.

|

1.0

|

Create new bosses - For each chapter, a boss will be needed. For the sake of the game plot, two new bosses will need to be made from scratch (meaning their animations and such, since I never put them together), and we'll be re-using some old bosses such as the `King` entity.

|

priority

|

create new bosses for each chapter a boss will be needed for the sake of the game plot two new bosses will need to be made from scratch meaning their animations and such since i never put them together and we ll be re using some old bosses such as the king entity

| 1

|

221,963

| 7,404,083,913

|

IssuesEvent

|

2018-03-20 02:31:56

|

PaulL48/SOEN341-SC4

|

https://api.github.com/repos/PaulL48/SOEN341-SC4

|

closed

|

Refactor acceptance tests

|

enhancement priority: high project management risk: low sp 3

|

The TA has suggested we use a template style as he showed us to have a finer granularity of acceptance tests.

|

1.0

|

Refactor acceptance tests - The TA has suggested we use a template style as he showed us to have a finer granularity of acceptance tests.

|

priority

|

refactor acceptance tests the ta has suggested we use a template style as he showed us to have a finer granularity of acceptance tests

| 1

|

478,667

| 13,783,122,334

|

IssuesEvent

|

2020-10-08 18:42:25

|

fossasia/open-event-frontend

|

https://api.github.com/repos/fossasia/open-event-frontend

|

opened

|

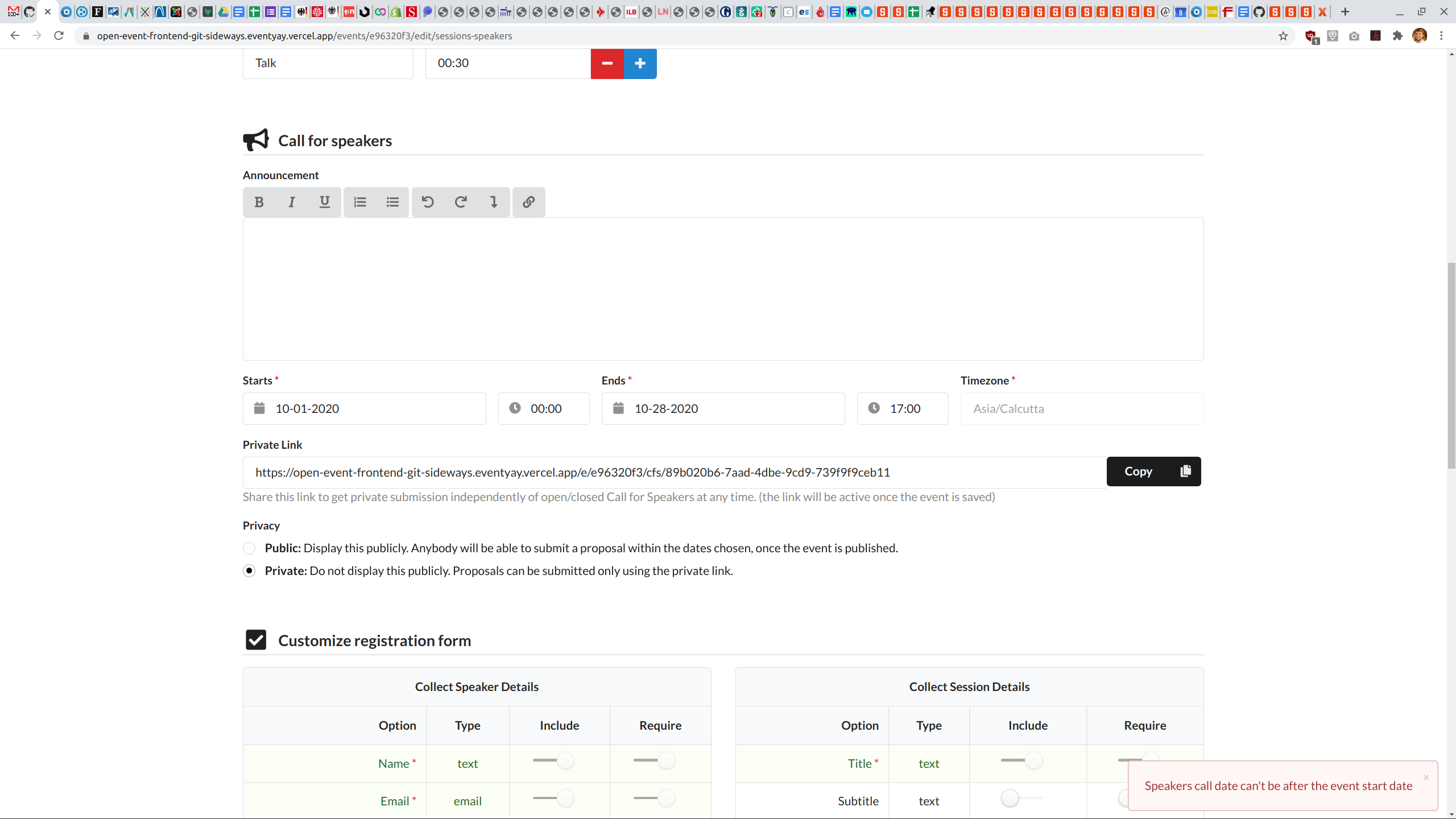

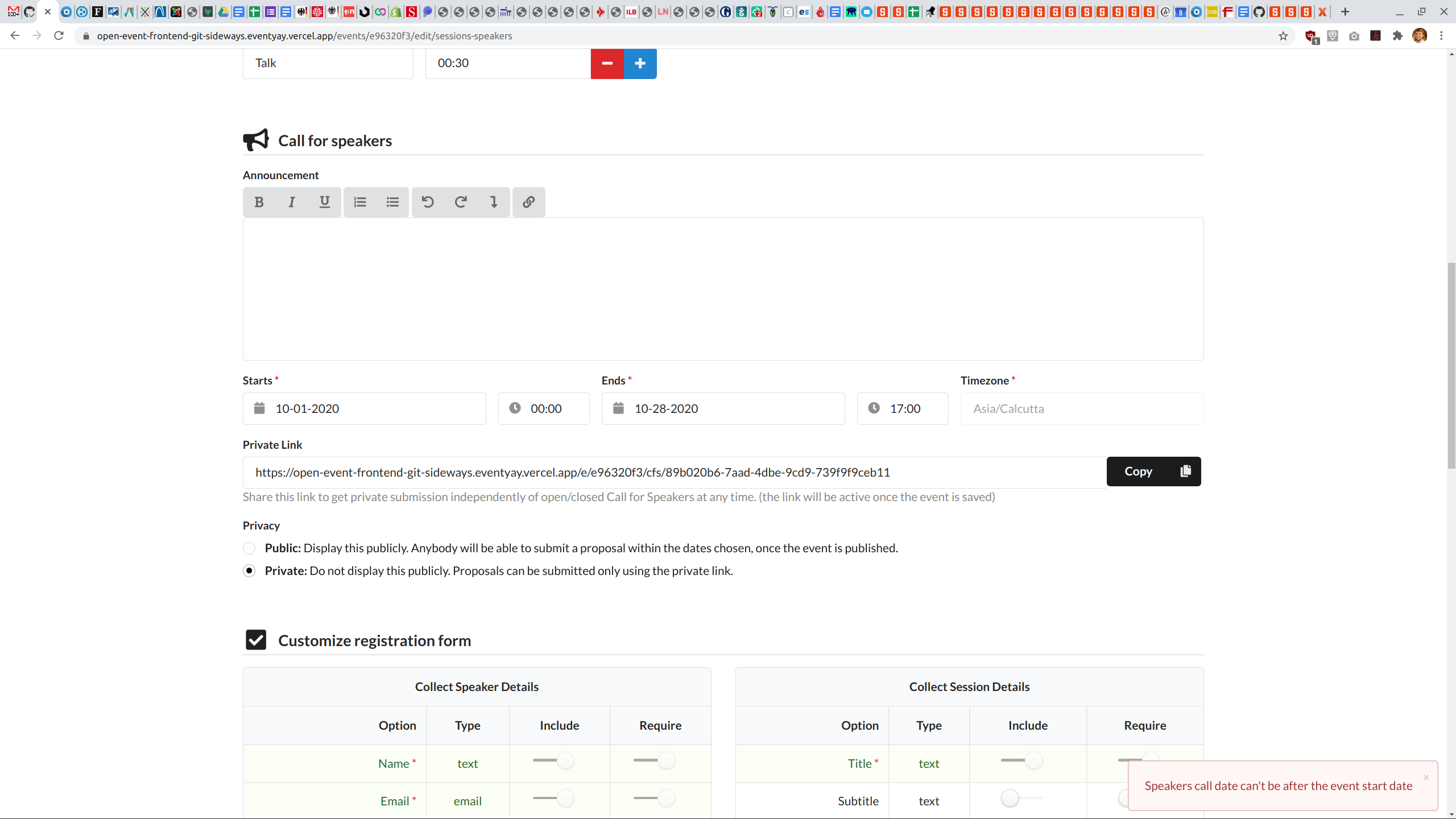

Wizard Step 5: Error Message "speakers call data can't be after the event start date" even though call date is before event

|

Priority: High Priority: Urgent bug

|

When an organizer plays around with the call date and chooses the wrong date an error message appears. When the organizers fixes it the error message still appears "speakers call data can't be after the event start date". Sometimes the error message does not appear even if the dates for "Call for Speakers" are after the event.

Compare: http://eventyay.com/events/e96320f3/edit/sessions-speakers

|

2.0

|

Wizard Step 5: Error Message "speakers call data can't be after the event start date" even though call date is before event - When an organizer plays around with the call date and chooses the wrong date an error message appears. When the organizers fixes it the error message still appears "speakers call data can't be after the event start date". Sometimes the error message does not appear even if the dates for "Call for Speakers" are after the event.

Compare: http://eventyay.com/events/e96320f3/edit/sessions-speakers

|

priority

|

wizard step error message speakers call data can t be after the event start date even though call date is before event when an organizer plays around with the call date and chooses the wrong date an error message appears when the organizers fixes it the error message still appears speakers call data can t be after the event start date sometimes the error message does not appear even if the dates for call for speakers are after the event compare

| 1

|

103,007

| 4,163,881,170

|

IssuesEvent

|

2016-06-18 11:53:09

|

gama-platform/gama

|

https://api.github.com/repos/gama-platform/gama

|

closed

|

Basic highlight does not work in Java2D displays

|

> Bug Affects Usability Concerns Simulation Display Java2D OS All Priority High Version Git

|

### Steps to reproduce

1. Run a simulation with a Java2D display, where the species displayed do not possess the special aspect called `highlighted`

2. Select an agent and choose to highlight it. Although it is correctly set in the inspector (if you inspect it also), its default color is not changed.

3. Define the same display as an OpenGL display. It works correctly.

### Expected behavior

The agent should change color in answer to the `Highlight` command.

### Actual behavior

Nothing is changed.

### System and version

GAMA Git version, MacOS X

|

1.0

|

Basic highlight does not work in Java2D displays - ### Steps to reproduce

1. Run a simulation with a Java2D display, where the species displayed do not possess the special aspect called `highlighted`

2. Select an agent and choose to highlight it. Although it is correctly set in the inspector (if you inspect it also), its default color is not changed.

3. Define the same display as an OpenGL display. It works correctly.

### Expected behavior

The agent should change color in answer to the `Highlight` command.

### Actual behavior

Nothing is changed.

### System and version

GAMA Git version, MacOS X

|

priority

|

basic highlight does not work in displays steps to reproduce run a simulation with a display where the species displayed do not possess the special aspect called highlighted select an agent and choose to highlight it although it is correctly set in the inspector if you inspect it also its default color is not changed define the same display as an opengl display it works correctly expected behavior the agent should change color in answer to the highlight command actual behavior nothing is changed system and version gama git version macos x

| 1

|

590,349

| 17,776,740,338

|

IssuesEvent

|

2021-08-30 20:16:12

|

Simon-Initiative/oli-torus

|

https://api.github.com/repos/Simon-Initiative/oli-torus

|

closed

|

Trying to access an activity after page title change fails

|

bug High Priority

|

**Describe the bug**

After creating an activity on a page and clicking "Edit ...", an error is thrown.

**To Reproduce**

Steps to reproduce the behavior:

1. Go to a page

2. Change the title

2. Create an activity (Multiple Choice, for example)

2. Immediately click "Edit Multiple Choice"

3. An error will be thrown

**Expected behavior**

A user should be able to edit an activity after changing the page title

**Screenshots**

<img width="1526" alt="Screen Shot 2021-03-22 at 12 57 03 PM" src="https://user-images.githubusercontent.com/6248894/112028328-6ed22180-8b0e-11eb-96e7-239b42d9c4fc.png">

**Environment (please complete the following information):**

- OS: macOS 11.2.3

- Browser Chrome

- Version 89

**Additional context**

I've seen this a couple times, usually when creating the first activity in a project.

|

1.0

|

Trying to access an activity after page title change fails - **Describe the bug**

After creating an activity on a page and clicking "Edit ...", an error is thrown.

**To Reproduce**

Steps to reproduce the behavior:

1. Go to a page

2. Change the title

2. Create an activity (Multiple Choice, for example)

2. Immediately click "Edit Multiple Choice"

3. An error will be thrown

**Expected behavior**

A user should be able to edit an activity after changing the page title

**Screenshots**

<img width="1526" alt="Screen Shot 2021-03-22 at 12 57 03 PM" src="https://user-images.githubusercontent.com/6248894/112028328-6ed22180-8b0e-11eb-96e7-239b42d9c4fc.png">

**Environment (please complete the following information):**

- OS: macOS 11.2.3

- Browser Chrome

- Version 89

**Additional context**

I've seen this a couple times, usually when creating the first activity in a project.

|

priority

|

trying to access an activity after page title change fails describe the bug after creating an activity on a page and clicking edit an error is thrown to reproduce steps to reproduce the behavior go to a page change the title create an activity multiple choice for example immediately click edit multiple choice an error will be thrown expected behavior a user should be able to edit an activity after changing the page title screenshots img width alt screen shot at pm src environment please complete the following information os macos browser chrome version additional context i ve seen this a couple times usually when creating the first activity in a project

| 1

|

87,495

| 3,755,443,460

|

IssuesEvent

|

2016-03-12 17:23:10

|

BradWBeer/clinch

|

https://api.github.com/repos/BradWBeer/clinch

|

opened

|

Setting sdl2:gl-set-attr :*-size breaks opengl context

|

bug High Priority

|

Found on TatriX's machine.

* sbcl 1.3.1-1

* arch linux x64

*sdl2 2.0.4-2

|

1.0

|

Setting sdl2:gl-set-attr :*-size breaks opengl context - Found on TatriX's machine.

* sbcl 1.3.1-1

* arch linux x64

*sdl2 2.0.4-2

|

priority

|

setting gl set attr size breaks opengl context found on tatrix s machine sbcl arch linux

| 1

|

513,463

| 14,922,177,396

|

IssuesEvent

|

2021-01-23 13:42:53

|

ihhub/fheroes2

|

https://api.github.com/repos/ihhub/fheroes2

|

closed

|

Game doesn't run main menu music theme when we load game

|

bug high priority sound

|

Only in multiplayer hot seat mode.

When we press load game, while playing at the adventure map and return to main menu we still continue hearing music from the adventure map until we cancel loading or enter the list of savegames.

|

1.0

|

Game doesn't run main menu music theme when we load game - Only in multiplayer hot seat mode.

When we press load game, while playing at the adventure map and return to main menu we still continue hearing music from the adventure map until we cancel loading or enter the list of savegames.

|

priority

|

game doesn t run main menu music theme when we load game only in multiplayer hot seat mode when we press load game while playing at the adventure map and return to main menu we still continue hearing music from the adventure map until we cancel loading or enter the list of savegames

| 1

|

599,717

| 18,281,267,364

|

IssuesEvent

|

2021-10-05 03:56:24

|

wso2-attic/docker-das

|

https://api.github.com/repos/wso2-attic/docker-das

|

closed

|

Simplify Data Analytics Server Dockerfile

|

Type/Task Priority/High

|

**Description:**

The existing WSO2 Dockerfiles use a complex set of bash scripts and Puppet for building the Docker images. These bash scripts have been used for improving certain aspects of Docker image build process and Puppet has been used for configuration management. Nevertheless, with our experience and the feedback received from our users we found that it would be much better to have plain Dockerfiles for building WSO2 Docker images than incorporating such features.

Above approach has already been followed in kubernetes-apim repository for building API Manager Docker images including API Manager Analytics. We can take that as the baseline and update Data Analytics Server Dockerfile accordingly.

**Affected Product Version:**

Data Analytics Server 3.1.0

**Related Issues:**

https://github.com/wso2/docker-is/issues/15, https://github.com/wso2/docker-apim/issues/61, https://github.com/wso2/docker-ei/issues/12

|

1.0

|

Simplify Data Analytics Server Dockerfile - **Description:**

The existing WSO2 Dockerfiles use a complex set of bash scripts and Puppet for building the Docker images. These bash scripts have been used for improving certain aspects of Docker image build process and Puppet has been used for configuration management. Nevertheless, with our experience and the feedback received from our users we found that it would be much better to have plain Dockerfiles for building WSO2 Docker images than incorporating such features.

Above approach has already been followed in kubernetes-apim repository for building API Manager Docker images including API Manager Analytics. We can take that as the baseline and update Data Analytics Server Dockerfile accordingly.

**Affected Product Version:**

Data Analytics Server 3.1.0

**Related Issues:**

https://github.com/wso2/docker-is/issues/15, https://github.com/wso2/docker-apim/issues/61, https://github.com/wso2/docker-ei/issues/12

|

priority

|

simplify data analytics server dockerfile description the existing dockerfiles use a complex set of bash scripts and puppet for building the docker images these bash scripts have been used for improving certain aspects of docker image build process and puppet has been used for configuration management nevertheless with our experience and the feedback received from our users we found that it would be much better to have plain dockerfiles for building docker images than incorporating such features above approach has already been followed in kubernetes apim repository for building api manager docker images including api manager analytics we can take that as the baseline and update data analytics server dockerfile accordingly affected product version data analytics server related issues

| 1

|

478,488

| 13,780,251,708

|

IssuesEvent

|

2020-10-08 14:42:45

|

AY2021S1-CS2103-F10-4/tp

|

https://api.github.com/repos/AY2021S1-CS2103-F10-4/tp

|

closed

|

List all available shifts

|

class.Shift priority.High type.Story

|

As a user I can see all available shifts and their details so that I know which shifts need workers.

|

1.0

|

List all available shifts - As a user I can see all available shifts and their details so that I know which shifts need workers.

|

priority

|

list all available shifts as a user i can see all available shifts and their details so that i know which shifts need workers

| 1

|

318,490

| 9,693,308,927

|

IssuesEvent

|

2019-05-24 15:45:27

|

geosolutions-it/MapStore2-C027

|

https://api.github.com/repos/geosolutions-it/MapStore2-C027

|

closed

|

Supporto LDAP - GeoServer

|

Priority: High Project: C027 [zube]: Ready enhancement in progress

|

Allow in GeoServer the possibility to read LDAP users within a herarchy of nested groups.

The current configuration deployed in the client's infrastructure is composed by MapStore, GeoServer and GeoFence independently connected to the same LDAP path with the same configuration. The authentications GeoServer side for OGC requests are managed through Authkey generated by MapStore.

The Client's GS version is 2.13.2, the proposal [here](https://docs.google.com/document/d/1IbKi3dWXvxzVf_sR3iv3HwoJdGJGbrNwqoFKIyz5t0E/edit#heading=h.hpvkr3wxmvs0)

|

1.0

|

Supporto LDAP - GeoServer - Allow in GeoServer the possibility to read LDAP users within a herarchy of nested groups.

The current configuration deployed in the client's infrastructure is composed by MapStore, GeoServer and GeoFence independently connected to the same LDAP path with the same configuration. The authentications GeoServer side for OGC requests are managed through Authkey generated by MapStore.

The Client's GS version is 2.13.2, the proposal [here](https://docs.google.com/document/d/1IbKi3dWXvxzVf_sR3iv3HwoJdGJGbrNwqoFKIyz5t0E/edit#heading=h.hpvkr3wxmvs0)

|

priority

|

supporto ldap geoserver allow in geoserver the possibility to read ldap users within a herarchy of nested groups the current configuration deployed in the client s infrastructure is composed by mapstore geoserver and geofence independently connected to the same ldap path with the same configuration the authentications geoserver side for ogc requests are managed through authkey generated by mapstore the client s gs version is the proposal

| 1

|

34,529

| 2,782,138,632

|

IssuesEvent

|

2015-05-06 16:38:16

|

CenterForOpenScience/osf.io

|

https://api.github.com/repos/CenterForOpenScience/osf.io

|

closed

|

Add clarifying message when Registering a Project re: It's components

|

5 - Pending Review enhancement Priority - High

|

Similar to language added to Component (sub-project) Registration #2615, let's add the following message when a user clicks to Register a Project:

'You are about to Register the project "Parent Project Name" and everything that is inside it. If you would prefer to register just a particular component of "Parent Project Name", please click back and navigate to that component and then initiate registration.'

|

1.0

|

Add clarifying message when Registering a Project re: It's components - Similar to language added to Component (sub-project) Registration #2615, let's add the following message when a user clicks to Register a Project:

'You are about to Register the project "Parent Project Name" and everything that is inside it. If you would prefer to register just a particular component of "Parent Project Name", please click back and navigate to that component and then initiate registration.'

|

priority

|

add clarifying message when registering a project re it s components similar to language added to component sub project registration let s add the following message when a user clicks to register a project you are about to register the project parent project name and everything that is inside it if you would prefer to register just a particular component of parent project name please click back and navigate to that component and then initiate registration

| 1

|

435,608

| 12,536,611,829

|

IssuesEvent

|

2020-06-05 00:39:06

|

ROCmSoftwarePlatform/MIOpen

|

https://api.github.com/repos/ROCmSoftwarePlatform/MIOpen

|

opened

|

[ROCm3.5][MP Winograd] miopenGcnAsmWinogradXformData_7_7_2_2: Memory access fault by GPU node-2

|

complexity_high priority_blocker

|

ROCm3.5, Radeon VII, develop at e65e04ac1df4f. CMake command line:

```bash

CXX=/opt/rocm/llvm/bin/clang++ \

CXXFLAGS=-O0 \

cmake \

-DBUILD_DEV=On \

-DCMAKE_BUILD_TYPE=debug \

-DMIOPEN_GPU_SYNC=On \

-DCMAKE_CXX_FLAGS_DEBUG=-g \

-fno-omit-frame-pointer \

-fsanitize=undefined \

-fno-sanitize-recover=undefined \

-DMIOPEN_TEST_FLAGS=--disable-verification-cache ../..

```

Failing config:

```

$ ./bin/MIOpenDriver conv -n 1 -c 3 -H 32 -W 32 -k 1 -c 3 -y 7 -x 7 -p 0 -q 0 -u 1 -v 1 -l 1 -j 1 -V 0 -F 0

...

Memory access fault by GPU node-2 (Agent handle: 0x247cb00) on address (nil). Reason: Page not present or supervisor privilege.

```

## Triaging

- The issue disappears with `-F 4` (WrW only):

```

MIOpen(HIP): Info [FindConvBwdWeightsAlgorithm] ConvBinWinogradRxS: miopenSp3AsmConvRxSf3x2: 0.11072 < 3.40282e+38

MIOpen(HIP): Info [FindConvBwdWeightsAlgorithm] ConvBinWinogradRxSf2x3: miopenSp3AsmConv_group_20_5_23_M_stride1: 0.06672 < 0.11072

MIOpen(HIP): Info [FindConvBwdWeightsAlgorithm] ConvWinograd3x3MultipassWrW<7-2>: miopenGcnAsmWinogradXformData_7_7_2_2/miopenGcnAsmWinogradXformFilter_7_7_2_2/miopenGcnAsmWinogradXformOut_7_7_2_2: 0.09536 >= 0.06672

MIOpen(HIP): Info [FindConvBwdWeightsAlgorithm] ConvWinograd3x3MultipassWrW<7-3>: miopenGcnAsmWinogradXformData_7_7_3_3/miopenGcnAsmWinogradXformFilter_7_7_3_3/miopenGcnAsmWinogradXformOut_7_7_3_3: 0.0712 >= 0.06672

MIOpen(HIP): Info [FindConvBwdWeightsAlgorithm] Selected: ConvBinWinogradRxSf2x3: miopenSp3AsmConv_group_20_5_23_M_stride1: 0.06672, workspce_sz = 0

```

[pass.log](https://github.com/ROCmSoftwarePlatform/MIOpen/files/4733293/pass.log)

[fail.log](https://github.com/ROCmSoftwarePlatform/MIOpen/files/4733294/fail.log)

- Disabling all algos except Winograd doesn't help. I.e. when run with `-F 0`, driver fails at WrW Find phase, after evaluating `ConvBinWinogradRxS` and `ConvBinWinogradRxSf2x3` (during evaluation of `ConvWinograd3x3MultipassWrW<7-2>`). Of course, the problem disappears with `-F 4`. Settings:

```

export MIOPEN_DEBUG_CONV_GEMM=0

export MIOPEN_DEBUG_CONV_DIRECT=0

export MIOPEN_DEBUG_CONV_FFT=0

export MIOPEN_DEBUG_CONV_IMPLICIT_GEMM=0

```

Then I played with enabling/disabling individual Winograd Solvers and stopped with the following settings:

```

MIOPEN_DEBUG_AMD_WINOGRAD_RXS=0

MIOPEN_DEBUG_AMD_WINOGRAD_RXS_F2X3=0

MIOPEN_DEBUG_AMD_WINOGRAD_RXS_F3X2=0

MIOPEN_DEBUG_CONV_DIRECT=0

MIOPEN_DEBUG_CONV_GEMM=1

MIOPEN_DEBUG_CONV_IMPLICIT_GEMM=0

````

and got the following (no failure):

```

...Info [FindConvFwdAlgorithm] FW Chosen Algorithm: gemm , 397488, 0.05904...

...Info [FindConvBwdDataAlgorithm] BWD Chosen Algorithm: gemm , 397488, 0.06784...

...Info [FindConvBwdWeightsAlgorithm] BWrW Chosen Algorithm: ConvWinograd3x3MultipassWrW<7-3> , 158268, 0.06304...

```

- At this point enabling either of Winograd RxS solvers brings the `Memory access fault by GPU node-2` failure back.

- Weird effect: enabling `MIOPEN_DEBUG_AMD_WINOGRAD_RXS` or `MIOPEN_DEBUG_AMD_WINOGRAD_RXS_F2X3` also leads to ___very strange failure, as if this somehow disables GEMM___:

```

...Info [FindConvFwdAlgorithm] FW Chosen Algorithm: ConvBinWinogradRxS , 0, 0.04016...

!!! MIOpen Error: /home/atamazov/github/MLOpen1/src/ocl/convolutionocl.cpp:2632: Backward Data Algo cannot be executed !!! ...

```

:red_circle: All the above suggests that the library is somehow messed up.

|

1.0

|

[ROCm3.5][MP Winograd] miopenGcnAsmWinogradXformData_7_7_2_2: Memory access fault by GPU node-2 - ROCm3.5, Radeon VII, develop at e65e04ac1df4f. CMake command line:

```bash

CXX=/opt/rocm/llvm/bin/clang++ \

CXXFLAGS=-O0 \

cmake \

-DBUILD_DEV=On \

-DCMAKE_BUILD_TYPE=debug \

-DMIOPEN_GPU_SYNC=On \

-DCMAKE_CXX_FLAGS_DEBUG=-g \

-fno-omit-frame-pointer \

-fsanitize=undefined \

-fno-sanitize-recover=undefined \

-DMIOPEN_TEST_FLAGS=--disable-verification-cache ../..

```

Failing config:

```

$ ./bin/MIOpenDriver conv -n 1 -c 3 -H 32 -W 32 -k 1 -c 3 -y 7 -x 7 -p 0 -q 0 -u 1 -v 1 -l 1 -j 1 -V 0 -F 0

...

Memory access fault by GPU node-2 (Agent handle: 0x247cb00) on address (nil). Reason: Page not present or supervisor privilege.

```

## Triaging

- The issue disappears with `-F 4` (WrW only):

```

MIOpen(HIP): Info [FindConvBwdWeightsAlgorithm] ConvBinWinogradRxS: miopenSp3AsmConvRxSf3x2: 0.11072 < 3.40282e+38

MIOpen(HIP): Info [FindConvBwdWeightsAlgorithm] ConvBinWinogradRxSf2x3: miopenSp3AsmConv_group_20_5_23_M_stride1: 0.06672 < 0.11072

MIOpen(HIP): Info [FindConvBwdWeightsAlgorithm] ConvWinograd3x3MultipassWrW<7-2>: miopenGcnAsmWinogradXformData_7_7_2_2/miopenGcnAsmWinogradXformFilter_7_7_2_2/miopenGcnAsmWinogradXformOut_7_7_2_2: 0.09536 >= 0.06672

MIOpen(HIP): Info [FindConvBwdWeightsAlgorithm] ConvWinograd3x3MultipassWrW<7-3>: miopenGcnAsmWinogradXformData_7_7_3_3/miopenGcnAsmWinogradXformFilter_7_7_3_3/miopenGcnAsmWinogradXformOut_7_7_3_3: 0.0712 >= 0.06672

MIOpen(HIP): Info [FindConvBwdWeightsAlgorithm] Selected: ConvBinWinogradRxSf2x3: miopenSp3AsmConv_group_20_5_23_M_stride1: 0.06672, workspce_sz = 0

```

[pass.log](https://github.com/ROCmSoftwarePlatform/MIOpen/files/4733293/pass.log)

[fail.log](https://github.com/ROCmSoftwarePlatform/MIOpen/files/4733294/fail.log)

- Disabling all algos except Winograd doesn't help. I.e. when run with `-F 0`, driver fails at WrW Find phase, after evaluating `ConvBinWinogradRxS` and `ConvBinWinogradRxSf2x3` (during evaluation of `ConvWinograd3x3MultipassWrW<7-2>`). Of course, the problem disappears with `-F 4`. Settings:

```

export MIOPEN_DEBUG_CONV_GEMM=0

export MIOPEN_DEBUG_CONV_DIRECT=0

export MIOPEN_DEBUG_CONV_FFT=0

export MIOPEN_DEBUG_CONV_IMPLICIT_GEMM=0

```

Then I played with enabling/disabling individual Winograd Solvers and stopped with the following settings:

```

MIOPEN_DEBUG_AMD_WINOGRAD_RXS=0

MIOPEN_DEBUG_AMD_WINOGRAD_RXS_F2X3=0

MIOPEN_DEBUG_AMD_WINOGRAD_RXS_F3X2=0

MIOPEN_DEBUG_CONV_DIRECT=0

MIOPEN_DEBUG_CONV_GEMM=1

MIOPEN_DEBUG_CONV_IMPLICIT_GEMM=0

````

and got the following (no failure):

```

...Info [FindConvFwdAlgorithm] FW Chosen Algorithm: gemm , 397488, 0.05904...

...Info [FindConvBwdDataAlgorithm] BWD Chosen Algorithm: gemm , 397488, 0.06784...

...Info [FindConvBwdWeightsAlgorithm] BWrW Chosen Algorithm: ConvWinograd3x3MultipassWrW<7-3> , 158268, 0.06304...

```

- At this point enabling either of Winograd RxS solvers brings the `Memory access fault by GPU node-2` failure back.

- Weird effect: enabling `MIOPEN_DEBUG_AMD_WINOGRAD_RXS` or `MIOPEN_DEBUG_AMD_WINOGRAD_RXS_F2X3` also leads to ___very strange failure, as if this somehow disables GEMM___:

```

...Info [FindConvFwdAlgorithm] FW Chosen Algorithm: ConvBinWinogradRxS , 0, 0.04016...

!!! MIOpen Error: /home/atamazov/github/MLOpen1/src/ocl/convolutionocl.cpp:2632: Backward Data Algo cannot be executed !!! ...

```

:red_circle: All the above suggests that the library is somehow messed up.

|

priority

|

miopengcnasmwinogradxformdata memory access fault by gpu node radeon vii develop at cmake command line bash cxx opt rocm llvm bin clang cxxflags cmake dbuild dev on dcmake build type debug dmiopen gpu sync on dcmake cxx flags debug g fno omit frame pointer fsanitize undefined fno sanitize recover undefined dmiopen test flags disable verification cache failing config bin miopendriver conv n c h w k c y x p q u v l j v f memory access fault by gpu node agent handle on address nil reason page not present or supervisor privilege triaging the issue disappears with f wrw only miopen hip info convbinwinogradrxs miopen hip info group m miopen hip info miopengcnasmwinogradxformdata miopengcnasmwinogradxformfilter miopengcnasmwinogradxformout miopen hip info miopengcnasmwinogradxformdata miopengcnasmwinogradxformfilter miopengcnasmwinogradxformout miopen hip info selected group m workspce sz disabling all algos except winograd doesn t help i e when run with f driver fails at wrw find phase after evaluating convbinwinogradrxs and during evaluation of of course the problem disappears with f settings export miopen debug conv gemm export miopen debug conv direct export miopen debug conv fft export miopen debug conv implicit gemm then i played with enabling disabling individual winograd solvers and stopped with the following settings miopen debug amd winograd rxs miopen debug amd winograd rxs miopen debug amd winograd rxs miopen debug conv direct miopen debug conv gemm miopen debug conv implicit gemm and got the following no failure info fw chosen algorithm gemm info bwd chosen algorithm gemm info bwrw chosen algorithm at this point enabling either of winograd rxs solvers brings the memory access fault by gpu node failure back weird effect enabling miopen debug amd winograd rxs or miopen debug amd winograd rxs also leads to very strange failure as if this somehow disables gemm info fw chosen algorithm convbinwinogradrxs miopen error home atamazov github src ocl convolutionocl cpp backward data algo cannot be executed red circle all the above suggests that the library is somehow messed up

| 1

|

108,345

| 4,337,515,629

|

IssuesEvent

|

2016-07-28 00:47:16

|

koding/koding

|

https://api.github.com/repos/koding/koding

|

closed

|

Different teams/organizations might have different period of free trials

|

A-Feature Priority-High

|

### Overview

We want to be able to provide a way to change the period of free trials if they register/create the team with a promotional code.

### Goals

- Koding admins (mainly marketing/business related people) can create a promotional event.

- This event will have a specific code/token, and amount of time that it extends the trial.

- This token must be present in the team creation process to make use of this extended trial.

- Teams created with this token, will have extended amount of trial.

|

1.0

|

Different teams/organizations might have different period of free trials - ### Overview

We want to be able to provide a way to change the period of free trials if they register/create the team with a promotional code.

### Goals

- Koding admins (mainly marketing/business related people) can create a promotional event.

- This event will have a specific code/token, and amount of time that it extends the trial.

- This token must be present in the team creation process to make use of this extended trial.

- Teams created with this token, will have extended amount of trial.

|

priority

|

different teams organizations might have different period of free trials overview we want to be able to provide a way to change the period of free trials if they register create the team with a promotional code goals koding admins mainly marketing business related people can create a promotional event this event will have a specific code token and amount of time that it extends the trial this token must be present in the team creation process to make use of this extended trial teams created with this token will have extended amount of trial

| 1

|

421,217

| 12,255,080,188

|

IssuesEvent

|

2020-05-06 09:35:14

|

red-hat-storage/ocs-ci

|

https://api.github.com/repos/red-hat-storage/ocs-ci

|

closed

|

modify ocp installer url for downloads

|

High Priority team/ecosystem

|

From Trevor: ( He pointed out for clients but I see the installer as well, the directory structure differs from the existing one we have in the code)

```

https://mirror.openshift.com/pub/openshift-v4/clients/ , on the other hand, is something we point customers at, so that (and our other mirrors) should be reliable

```

|

1.0

|

modify ocp installer url for downloads - From Trevor: ( He pointed out for clients but I see the installer as well, the directory structure differs from the existing one we have in the code)

```

https://mirror.openshift.com/pub/openshift-v4/clients/ , on the other hand, is something we point customers at, so that (and our other mirrors) should be reliable

```

|

priority

|

modify ocp installer url for downloads from trevor he pointed out for clients but i see the installer as well the directory structure differs from the existing one we have in the code on the other hand is something we point customers at so that and our other mirrors should be reliable

| 1

|

595,473

| 18,067,548,020

|

IssuesEvent

|

2021-09-20 21:04:18

|

OpenMandrivaAssociation/test2

|

https://api.github.com/repos/OpenMandrivaAssociation/test2

|

closed

|

Packages info says that "it's an official Mandriva package" (Bugzilla Bug 92)

|

bug high priority major

|

This issue was created automatically with bugzilla2github

# Bugzilla Bug 92

Date: 2013-08-20 00:24:35 +0000

From: Gus Ballan <<siriustheking@yahoo.com>>

To: OpenMandriva QA <<bugs@openmandriva.org>>

CC: @benbullard79, @itchka, @robxu9

Last updated: 2013-09-15 19:33:36 +0000

## Comment 449

Date: 2013-08-20 00:24:35 +0000

From: Gus Ballan <<siriustheking@yahoo.com>>

From MCC/Software Management you can select a package and some info appears. In a lot of packages, that info includes a reference to Mandriva instead of OpenMandriva.

One example:

Selecting "drakconf-kde4" in MCC/Software Management shows:

-----------

drakconf-kde4 - Drakx tools implementaion for KDE4 Control Center Aviso: Éste es un paquete oficial soportado por Mandriva

This metapackage install needing KCM plugins for run Drakx utilites via KDE Control Center. It's install Rpmdrake, XFdrake, Firewall, Userdrake and other utilites.

------------

Approximate translation of spanish line:

"Warning: This is an package officially supported by Mandriva"

Note that this is ONLY ONE example. A lot of packages show the same behaviour.

The line that says "Mandriva" doesn't appear from console:

[username@localhost ~]$ urpmq -i drakconf-kde4

http://abf-downloads.rosalinux.ru/cooker/repository/i586/media/main/release/media_info/info.xml.lzma

Name : drakconf-kde4

Version : 2013.0

Release : 2

Group : System/Base

Size : 0 Architecture: noarch

Source RPM : drakconf-kde4-2013.0-2.src.rpm

Summary : Drakx tools implementaion for KDE4 Control Center

Description :

This metapackage install needing KCM plugins for run Drakx utilites

via KDE Control Center. It's install Rpmdrake, XFdrake, Firewall,

Userdrake and other utilites.

Name : drakconf-kde4

Version : 2013.0

Release : 2

Group : System/Base

Size : 0 Architecture: noarch

Source RPM : drakconf-kde4-2013.0-2.src.rpm

Summary : Drakx tools implementaion for KDE4 Control Center

Description :

This metapackage install needing KCM plugins for run Drakx utilites

via KDE Control Center. It's install Rpmdrake, XFdrake, Firewall,

Userdrake and other utilites.

## Comment 451

Date: 2013-08-20 00:57:06 +0000

From: @benbullard79

Wow, this needs to be corrected or we'll all land in jail in Paris. Hey I'm being serious here. This is a must fix.

## Comment 452

Date: 2013-08-20 03:50:30 +0000

From: @robxu9

already fixed in source; still waiting on other mirror stuff

granted, I'm more worried about the lack of translations this might cause...

## Comment 574

Date: 2013-08-26 08:37:50 +0000

From: @itchka

Robert, Can we close this.

Colin

## Comment 587

Date: 2013-08-26 13:09:15 +0000

From: @robxu9

Not yet.

## Comment 866

Date: 2013-09-15 19:33:36 +0000

From: @robxu9

fixed in rpmdrake

|

1.0

|

Packages info says that "it's an official Mandriva package" (Bugzilla Bug 92) - This issue was created automatically with bugzilla2github

# Bugzilla Bug 92

Date: 2013-08-20 00:24:35 +0000

From: Gus Ballan <<siriustheking@yahoo.com>>

To: OpenMandriva QA <<bugs@openmandriva.org>>

CC: @benbullard79, @itchka, @robxu9

Last updated: 2013-09-15 19:33:36 +0000

## Comment 449

Date: 2013-08-20 00:24:35 +0000

From: Gus Ballan <<siriustheking@yahoo.com>>

From MCC/Software Management you can select a package and some info appears. In a lot of packages, that info includes a reference to Mandriva instead of OpenMandriva.

One example:

Selecting "drakconf-kde4" in MCC/Software Management shows:

-----------