Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 5 to 112) | repo_url (stringlengths, 34 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 855) | labels (stringlengths, 4 to 721) | body (stringlengths, 1 to 261k) | index (stringclasses, 13 values) | text_combine (stringlengths, 96 to 261k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 240k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

344,099

| 10,339,995,360

|

IssuesEvent

|

2019-09-03 20:44:33

|

oslc-op/jira-migration-landfill

|

https://api.github.com/repos/oslc-op/jira-migration-landfill

|

closed

|

Some form of subscription and/or update notification may be required

|

Domain: CfgM Priority: High Status: Deferred Xtra: Jira

|

If a Global Configuration server is to handle local contributions to local configurations, or if subsidiary layers of global configuration servers are to be supported, then a leaf node in the contribution tree might not be an immediate contribution to a GC in that first GC server. In that case, that first GC server must either be notified of the change to the contribution tree, or must delegate component resolution to the next layer down (the subsidiary GC server, or the local configuration server with multiple levels of contributed configurations).

The current draft spec does not address delegated layers of component resolution.

---

_Migrated from https://issues.oasis-open.org/browse/OSLCCORE-120 (opened by @oslc-bot; previously assigned to @undefined)_

|

1.0

|

Some form of subscription and/or update notification may be required - If a Global Configuration server is to handle local contributions to local configurations, or if subsidiary layers of global configuration servers are to be supported, then a leaf node in the contribution tree might not be an immediate contribution to a GC in that first GC server. In that case, that first GC server must either be notified of the change to the contribution tree, or must delegate component resolution to the next layer down (the subsidiary GC server, or the local configuration server with multiple levels of contributed configurations).

The current draft spec does not address delegated layers of component resolution.

---

_Migrated from https://issues.oasis-open.org/browse/OSLCCORE-120 (opened by @oslc-bot; previously assigned to @undefined)_

|

priority

|

some form of subscription and or update notification may be required if a global configuration server is to handle local contributions to local configurations or if subsidiary layers of global configuration servers are to be supported then a leaf node in the contribution tree might not be an immediate contribution to a gc in that first gc server in that case that first gc server must either be notified of the change to the contribution tree or must delegate component resolution to the next layer down the subsidiary gc server or the local configuration server with multiple levels of contributed configurations the current draft spec does not address delegated layers of component resolution migrated from opened by oslc bot previously assigned to undefined

| 1

|

755,497

| 26,430,600,096

|

IssuesEvent

|

2023-01-14 19:17:08

|

LiteLDev/LiteLoaderBDS

|

https://api.github.com/repos/LiteLDev/LiteLoaderBDS

|

closed

|

LLSE ob.setScore / pl.setScore do not automatically create the target in the scoreboard

|

type: bug module: script engine priority: high

|

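### Affected module

ScriptEngine

### Operating system

Windows 10

### LiteLoader version

LiteLoaderBDS 2.7.2+2b8c54d25

### BDS version

Version 1.19.31.01(ProtocolVersion 554)

### What happened?

In LLSE JS (not Node.js, which I have not tested):

Neither `ob.setScore` nor `pl.setScore` automatically creates the scoreboard target, that is, the player passed as the first argument of the first function, or `pl` in the second.

For example, `ob.setScore(player,1);` (I am certain that `ob` is not null and is a valid scoreboard objective, that the scoreboard exists and its name is correct, and that `player` is a real, valid player object).

When this call runs it does not return null and the console reports no error, yet the in-game scoreboard (shown in the sidebar on the right) is empty apart from its title.

If the player is first added to the scoreboard with the command `/scoreboard players add XXX jifenban`, the function works normally (the score is set correctly).

If the first argument of `ob.setScore()` is a string (and that string target is not yet in the scoreboard), calling the function produces an error in the console.

**Summary: the core problem is that in earlier versions this function (and perhaps other scoreboard functions) checked for the scoreboard target and created it before setting its score, but it no longer does so (here "scoreboard target" means a player name in the scoreboard).**

### Steps to reproduce

My script is attached for reference.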

```javascript

/*
 * Developer - CNGEGE
 */
(function(){
    //logger.setConsole(true);
    let ob = null;
    let obname = "onlineplayers";
    let obshowname = "在线玩家(ping)"; // display name, "Online players (ping)"
    let timerid = -1;
    let timeout = 1000 * 5;

    function ServerStarted(){
        ob = mc.getScoreObjective(obname);
        if(ob == null){
            logger.log("Scoreboard objective is null");
            ob = mc.newScoreObjective(obname,obshowname);
            ob.setDisplay("sidebar");
        }else if(ob.displayName != obshowname){
            logger.log("Scoreboard display name does not match");
            mc.removeScoreObjective(obname);
            ob = mc.newScoreObjective(obname,obshowname);
            ob.setDisplay("sidebar");
        }
        logger.log("Service started");
    }

    function Join(player){
        //logger.log("Player joined the game");
        //logger.log("Current player count:",mc.getOnlinePlayers().length.toString());
        let device = player.getDevice();
        player.setScore(obname,device.avgPing);
        // If this is the only player online, start a timer that updates every player's ping every 5 s; stop it once nobody is online
        if(mc.getOnlinePlayers().length == 1){
            timerid = setInterval(()=>{
                let players = mc.getOnlinePlayers();
                if(players.length == 0){
                    clearInterval(timerid);
                }else{
                    // declare the loop counter instead of leaking an implicit global
                    for(let i=0;i<players.length;i++){
                        let dev = players[i].getDevice();
                        //logger.log("Player name: "+players[i].name+" latency: "+dev.lastPing.toString());
                        //players[i].setScore(obname,dev.lastPing);
                        if(ob.setScore(players[i],dev.lastPing) == null){
                            logger.log("Failed");
                        }
                    }
                }
            },timeout);
        }
    }

    function Left(player){
        //logger.log("Player left the game");
        player.deleteScore(obname);
    }

    mc.listen("onJoin",Join);
    mc.listen("onLeft",Left);
    mc.listen("onServerStarted",ServerStarted);
})()

```

### Related logs/output

_No response_

### Plugin list

```raw

22:20:58 INFO [Server] Plugin list [12]

22:20:58 INFO [Server] - AutoFishing [v1.2.1] (AutoFishing.dll)

22:20:58 INFO [Server] LL edition: fully automatic AFK fishing for BDS servers

22:20:58 INFO [Server] - DispenserGetLavaFromCauldron [v3.2.1] (DispenserGetLavaFromCauldron.dll)

22:20:58 INFO [Server] Use a dispenser to fire an empty bucket at a lava-filled cauldron to collect the lava, and vice versa

22:20:58 INFO [Server] - TreeCuttingAndMining [v2.1.0] (TreeCuttingAndMining.dll)

22:20:58 INFO [Server] Tree-cutting and mining plugin

22:20:58 INFO [Server] - DispenserDestroyBlock [v2.1.1] (DispenserDestroyBlock.dll)

22:20:58 INFO [Server] Activated dispensers break blocks using tools

22:20:58 INFO [Server] - LLMoney [v2.7.0] (LLMoney.dll)

22:20:58 INFO [Server] EconomyCore for LiteLoaderBDS

22:20:58 INFO [Server] - Hundred_Times [v1.0.0] (Hundred_Times.dll)

22:20:58 INFO [Server] Hundredfold drops by CNGEGE

22:20:58 INFO [Server] - PlayerKB [v1.2.0] (PlayerKB.dll)

22:20:58 INFO [Server] Player knockback & interval control

22:20:58 INFO [Server] - PermissionAPI [v2.7.0] (PermissionAPI.dll)

22:20:58 INFO [Server] Builtin & Powerful permission API for LiteLoaderBDS

22:20:58 INFO [Server] - ScriptEngine-QuickJs [v2.7.2] (LiteLoader.Js.dll)

22:20:58 INFO [Server] Javascript ScriptEngine for LiteLoaderBDS

22:20:58 INFO [Server] - onLinePlayer [v1.0.0] (onLinePlayer.js)

22:20:58 INFO [Server] onLinePlayer

22:20:58 INFO [Server] - ScriptEngine-Lua [v2.7.2] (LiteLoader.Lua.dll)

22:20:58 INFO [Server] Lua ScriptEngine for LiteLoaderBDS

22:20:58 INFO [Server] - ScriptEngine-NodeJs [v2.7.2] (LiteLoader.NodeJs.dll)

22:20:58 INFO [Server] Node.js ScriptEngine for LiteLoaderBDS

22:20:58 INFO [Server]

22:20:58 INFO [Server]

```

|

1.0

|

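LLSE ob.setScore / pl.setScore do not automatically create the target in the scoreboard - ### Affected module

ScriptEngine

### Operating system

Windows 10

### LiteLoader version

LiteLoaderBDS 2.7.2+2b8c54d25

### BDS version

Version 1.19.31.01(ProtocolVersion 554)

### What happened?

In LLSE JS (not Node.js, which I have not tested):

Neither `ob.setScore` nor `pl.setScore` automatically creates the scoreboard target, that is, the player passed as the first argument of the first function, or `pl` in the second.

For example, `ob.setScore(player,1);` (I am certain that `ob` is not null and is a valid scoreboard objective, that the scoreboard exists and its name is correct, and that `player` is a real, valid player object).

When this call runs it does not return null and the console reports no error, yet the in-game scoreboard (shown in the sidebar on the right) is empty apart from its title.

If the player is first added to the scoreboard with the command `/scoreboard players add XXX jifenban`, the function works normally (the score is set correctly).

If the first argument of `ob.setScore()` is a string (and that string target is not yet in the scoreboard), calling the function produces an error in the console.

**Summary: the core problem is that in earlier versions this function (and perhaps other scoreboard functions) checked for the scoreboard target and created it before setting its score, but it no longer does so (here "scoreboard target" means a player name in the scoreboard).**

### Steps to reproduce

My script is attached for reference.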

```javascript

/*
 * Developer - CNGEGE
 */
(function(){
    //logger.setConsole(true);
    let ob = null;
    let obname = "onlineplayers";
    let obshowname = "在线玩家(ping)"; // display name, "Online players (ping)"
    let timerid = -1;
    let timeout = 1000 * 5;

    function ServerStarted(){
        ob = mc.getScoreObjective(obname);
        if(ob == null){
            logger.log("Scoreboard objective is null");
            ob = mc.newScoreObjective(obname,obshowname);
            ob.setDisplay("sidebar");
        }else if(ob.displayName != obshowname){
            logger.log("Scoreboard display name does not match");
            mc.removeScoreObjective(obname);
            ob = mc.newScoreObjective(obname,obshowname);
            ob.setDisplay("sidebar");
        }
        logger.log("Service started");
    }

    function Join(player){
        //logger.log("Player joined the game");
        //logger.log("Current player count:",mc.getOnlinePlayers().length.toString());
        let device = player.getDevice();
        player.setScore(obname,device.avgPing);
        // If this is the only player online, start a timer that updates every player's ping every 5 s; stop it once nobody is online
        if(mc.getOnlinePlayers().length == 1){
            timerid = setInterval(()=>{
                let players = mc.getOnlinePlayers();
                if(players.length == 0){
                    clearInterval(timerid);
                }else{
                    // declare the loop counter instead of leaking an implicit global
                    for(let i=0;i<players.length;i++){
                        let dev = players[i].getDevice();
                        //logger.log("Player name: "+players[i].name+" latency: "+dev.lastPing.toString());
                        //players[i].setScore(obname,dev.lastPing);
                        if(ob.setScore(players[i],dev.lastPing) == null){
                            logger.log("Failed");
                        }
                    }
                }
            },timeout);
        }
    }

    function Left(player){
        //logger.log("Player left the game");
        player.deleteScore(obname);
    }

    mc.listen("onJoin",Join);
    mc.listen("onLeft",Left);
    mc.listen("onServerStarted",ServerStarted);
})()

```

### Related logs/output

_No response_

### Plugin list

```raw

22:20:58 INFO [Server] Plugin list [12]

22:20:58 INFO [Server] - AutoFishing [v1.2.1] (AutoFishing.dll)

22:20:58 INFO [Server] LL edition: fully automatic AFK fishing for BDS servers

22:20:58 INFO [Server] - DispenserGetLavaFromCauldron [v3.2.1] (DispenserGetLavaFromCauldron.dll)

22:20:58 INFO [Server] Use a dispenser to fire an empty bucket at a lava-filled cauldron to collect the lava, and vice versa

22:20:58 INFO [Server] - TreeCuttingAndMining [v2.1.0] (TreeCuttingAndMining.dll)

22:20:58 INFO [Server] Tree-cutting and mining plugin

22:20:58 INFO [Server] - DispenserDestroyBlock [v2.1.1] (DispenserDestroyBlock.dll)

22:20:58 INFO [Server] Activated dispensers break blocks using tools

22:20:58 INFO [Server] - LLMoney [v2.7.0] (LLMoney.dll)

22:20:58 INFO [Server] EconomyCore for LiteLoaderBDS

22:20:58 INFO [Server] - Hundred_Times [v1.0.0] (Hundred_Times.dll)

22:20:58 INFO [Server] Hundredfold drops by CNGEGE

22:20:58 INFO [Server] - PlayerKB [v1.2.0] (PlayerKB.dll)

22:20:58 INFO [Server] Player knockback & interval control

22:20:58 INFO [Server] - PermissionAPI [v2.7.0] (PermissionAPI.dll)

22:20:58 INFO [Server] Builtin & Powerful permission API for LiteLoaderBDS

22:20:58 INFO [Server] - ScriptEngine-QuickJs [v2.7.2] (LiteLoader.Js.dll)

22:20:58 INFO [Server] Javascript ScriptEngine for LiteLoaderBDS

22:20:58 INFO [Server] - onLinePlayer [v1.0.0] (onLinePlayer.js)

22:20:58 INFO [Server] onLinePlayer

22:20:58 INFO [Server] - ScriptEngine-Lua [v2.7.2] (LiteLoader.Lua.dll)

22:20:58 INFO [Server] Lua ScriptEngine for LiteLoaderBDS

22:20:58 INFO [Server] - ScriptEngine-NodeJs [v2.7.2] (LiteLoader.NodeJs.dll)

22:20:58 INFO [Server] Node.js ScriptEngine for LiteLoaderBDS

22:20:58 INFO [Server]

22:20:58 INFO [Server]

```

|

priority

|

llse ob setscore pl setscore 不会自动在计分板中创建目标 异常模块 scriptengine 脚本引擎 操作系统 windows liteloader 版本 liteloaderbds bds 版本 version protocolversion 发生了什么 llse js中(非nodejs ,这里面没测试) ob setscore 和 pl setscore 均不能自动创建计分板目标 即第一个函数里面的第一个参数 玩家,第二个函数里面的pl 比如 ob setscore player (我确定这里的ob 不为null 且是有效的计分板对象,计分板也存在 计分板名称也无误,且player是真实有效的玩家对象) 这句函数在执行的时候 不会返回null 控制台也没有报错,游戏中的计分板(在右侧显示)除了显示标题空空如也 如果使用命令 scoreboard players add xxx jifenban 将player玩家添加到该计分板中,则这条函数可以正常使用(正常设置分数) 如果 ob setscore 的第一个参数为字符串(这个字符串目标不在该计分板中的时候),执行函数控制台会报错 总结 :核心的问题是,这条函数(或许还有其他计分板函数)在之前的版本中是可以检测并创建计分板目标后然后再设置目标分数的,但现在并不会检测并创建计分板目标了(这里的计分板目标指的是计分板中的玩家名) 复现此问题的步骤 附上我的脚本作为参考 javescript 开发者 cngege function logger setconsole true let ob null let obname onlineplayers let obshowname 在线玩家 ping let timerid let timeout function serverstarted ob mc getscoreobjective obname if ob null logger log 计分板为空 ob mc newscoreobjective obname obshowname ob setdisplay sidebar else if ob displayname obshowname logger log 计分板名字不对应 mc removescoreobjective obname ob mc newscoreobjective obname obshowname ob setdisplay sidebar logger log 服务开启 function join player logger log 玩家加入游戏 logger log 当前玩家数量 mc getonlineplayers length tostring let device player getdevice player setscore obname device avgping 如果 当前只有这一位玩家 则开启一个计时器, 直到没有玩家在线了,则关闭计时器 if mc getonlineplayers length timerid setinterval let players mc getonlineplayers if players length clearinterval timerid else for i i players length i let dev players getdevice logger log 玩家名字 players name 延迟 dev lastping tostring players setscore obname dev lastping if ob setscore players dev lastping null logger log 失败 timeout function left player logger log 玩家退出游戏 player deletescore obname mc listen onjoin join mc listen onleft left mc listen onserverstarted serverstarted 有关的日志 输出 no response 插件列表 raw info 插件列表 info autofishing autofishing dll info ll版 bds服务器全自动挂机钓鱼 info dispensergetlavafromcauldron dispensergetlavafromcauldron dll info 用发射器向装有岩浆的炼药锅发射空桶以装岩浆 反之亦然 info treecuttingandmining 
treecuttingandmining dll info 砍树与挖矿插件 info dispenserdestroyblock dispenserdestroyblock dll info 激活发射器利用工具破坏方块 info llmoney llmoney dll info economycore for liteloaderbds info hundred times hundred times dll info 百倍掉落物 by cngege info playerkb playerkb dll info 玩家击退 间隔控制 info permissionapi permissionapi dll info builtin powerful permission api for liteloaderbds info scriptengine quickjs liteloader js dll info javascript scriptengine for liteloaderbds info onlineplayer onlineplayer js info onlineplayer info scriptengine lua liteloader lua dll info lua scriptengine for liteloaderbds info scriptengine nodejs liteloader nodejs dll info node js scriptengine for liteloaderbds info info

| 1

|

488,429

| 14,077,269,881

|

IssuesEvent

|

2020-11-04 11:45:29

|

CLIxIndia-Dev/clixoer

|

https://api.github.com/repos/CLIxIndia-Dev/clixoer

|

opened

|

Filter Option Needs Tool Tip Explaining Types as Explained in SRS Slide

|

backend enhancement highpriority

|

**Is your feature request related to a problem? Please describe.**

Filter Option Needs Tool Tip Explaining Types as Explained in SRS Slide [Here](https://docs.google.com/presentation/d/1ivAPOnd9aXzfNQCtjQuRFx2Iw9nk7PWfM7mNTe9D7XU/edit#slide=id.g74b266a43a_0_22)

**Describe the solution you'd like**

Add tooltips explaining what the filters are

|

1.0

|

Filter Option Needs Tool Tip Explaining Types as Explained in SRS Slide - **Is your feature request related to a problem? Please describe.**

Filter Option Needs Tool Tip Explaining Types as Explained in SRS Slide [Here](https://docs.google.com/presentation/d/1ivAPOnd9aXzfNQCtjQuRFx2Iw9nk7PWfM7mNTe9D7XU/edit#slide=id.g74b266a43a_0_22)

**Describe the solution you'd like**

Add tooltips explaining what the filters are

|

priority

|

filter option needs tool tip explaining types as explained in srs slide is your feature request related to a problem please describe filter option needs tool tip explaining types as explained in srs slide describe the solution you d like add too tips explaining what filters are

| 1

|

193,589

| 6,886,245,620

|

IssuesEvent

|

2017-11-21 18:46:58

|

GoogleCloudPlatform/google-cloud-eclipse

|

https://api.github.com/repos/GoogleCloudPlatform/google-cloud-eclipse

|

closed

|

Convert to App Engine Standard menu should configure Java 8 instead of Java 7

|

enhancement high priority

|

Currently, it's always Java 7.

It may be OK for Java 7 projects to remain on the Java 7 runtime, although they will have no problem being deployed to the Java 8 runtime.

|

1.0

|

Convert to App Engine Standard menu should configure Java 8 instead of Java 7 - Currently, it's always Java 7.

It may be OK for Java 7 projects to remain on the Java 7 runtime, although they will have no problem being deployed to the Java 8 runtime.

|

priority

|

convert to app engine standard menu should configure java instead of java currently it s always java it may be ok for java projects to remain on the java runtime although they will have no problem being deployed to the java runtime

| 1

|

361,435

| 10,708,890,879

|

IssuesEvent

|

2019-10-24 20:43:45

|

opencv/opencv

|

https://api.github.com/repos/opencv/opencv

|

closed

|

Incorrect window size on Mac OS X Lion

|

auto-transferred bug category: highgui-gui priority: normal

|

Transferred from http://code.opencv.org/issues/2189

```

|| Jan Dlabal on 2012-07-24 17:26

|| Priority: Normal

|| Affected: None

|| Category: highgui-gui

|| Tracker: Bug

|| Difficulty: None

|| PR: None

|| Platform: None / None

```

## Incorrect window size on Mac OS X Lion

```

See http://stackoverflow.com/questions/11635842/opencv-not-filling-entire-image.

Basically this:

@

cv::Mat cvSideDepthImage1(150, 150, CV_8UC1, cv::Scalar(100));

cv::imshow("side1", cvSideDepthImage1);

@

Creates this 200x150px window:

!http://i.stack.imgur.com/HetAA.png!

When it should just show a gray 150x150 square.

This is OpenCV 2.4.9 on OS X Lion (all updates installed).

```

## History

##### Marina Kolpakova on 2012-07-24 18:05

```

- Category set to highgui-images

```

##### Andrey Kamaev on 2012-08-15 13:42

```

- Assignee set to Vadim Pisarevsky

```

##### Andrey Kamaev on 2012-08-16 15:42

```

- Category changed from highgui-images to highgui-gui

```

|

1.0

|

Incorrect window size on Mac OS X Lion - Transferred from http://code.opencv.org/issues/2189

```

|| Jan Dlabal on 2012-07-24 17:26

|| Priority: Normal

|| Affected: None

|| Category: highgui-gui

|| Tracker: Bug

|| Difficulty: None

|| PR: None

|| Platform: None / None

```

## Incorrect window size on Mac OS X Lion

```

See http://stackoverflow.com/questions/11635842/opencv-not-filling-entire-image.

Basically this:

@

cv::Mat cvSideDepthImage1(150, 150, CV_8UC1, cv::Scalar(100));

cv::imshow("side1", cvSideDepthImage1);

@

Creates this 200x150px window:

!http://i.stack.imgur.com/HetAA.png!

When it should just show a gray 150x150 square.

This is OpenCV 2.4.9 on OS X Lion (all updates installed).

```

## History

##### Marina Kolpakova on 2012-07-24 18:05

```

- Category set to highgui-images

```

##### Andrey Kamaev on 2012-08-15 13:42

```

- Assignee set to Vadim Pisarevsky

```

##### Andrey Kamaev on 2012-08-16 15:42

```

- Category changed from highgui-images to highgui-gui

```

|

priority

|

incorrect window size on mac os x lion transferred from jan dlabal on priority normal affected none category highgui gui tracker bug difficulty none pr none platform none none incorrect window size on mac os x lion see basically this cv mat cv cv scalar cv imshow creates this window when it should just show a gray square this is opencv on os x lion all updates installed history marina kolpakova on category set to highgui images andrey kamaev on assignee set to vadim pisarevsky andrey kamaev on category changed from highgui images to highgui gui

| 1

|

250,942

| 7,992,991,238

|

IssuesEvent

|

2018-07-20 05:18:47

|

AtlasOfLivingAustralia/fieldcapture

|

https://api.github.com/repos/AtlasOfLivingAustralia/fieldcapture

|

closed

|

Support for multiple project funding sources

|

priority-high status-new type-enhancement

|

_migrated from:_ https://code.google.com/p/ala/issues/detail?id=735

_date:_ Sat Jul 5 06:10:04 2014

_author:_ CoolDa...@gmail.com

---

New doE programmes require projects to be apportioned across multiple funding streams. This is also a requirement of the generalised system as many projects undertaken by organisations involve multiple funding partners.

A simple multi-row table in the Admin>Project information tab should be adequate for this purpose.

|

1.0

|

Support for multiple project funding sources - _migrated from:_ https://code.google.com/p/ala/issues/detail?id=735

_date:_ Sat Jul 5 06:10:04 2014

_author:_ CoolDa...@gmail.com

---

New doE programmes require projects to be apportioned across multiple funding streams. This is also a requirement of the generalised system as many projects undertaken by organisations involve multiple funding partners.

A simple multi-row table in the Admin>Project information tab should be adequate for this purpose.

|

priority

|

support for multiple project funding sources migrated from date sat jul author coolda gmail com new doe programmes require projects to be apportioned across multiple funding streams this is also a requirement of the generalised system as many projects undertaken by organisations involve multiple funding partners a simple multi row table in the admin project information tab should be adequate for this putpose

| 1

|

168,478

| 6,376,387,998

|

IssuesEvent

|

2017-08-02 07:18:12

|

leo-project/leofs

|

https://api.github.com/repos/leo-project/leofs

|

closed

|

Consistency Problem with Async. Deletion

|

Bug Priority-HIGH v1.3 _leo_storage

|

## Description

Asynchronous deletion can cause a consistency problem if another modification occurs before the async deletion is handled

## Root Cause

Directory Deletion

- The object list under the directory is pulled when `leo_storage` handles the deletion, so the list may have changed by that time

- The time stamp of the deletion request is not taken into account, so a re-created file could be incorrectly deleted

Object Deletion

- The time stamp of the deletion request is recorded as the time `leo_storage` starts handling it, not the time of the original request

## Action to take

Clarify the consistency model, especially the mix of sync and async. operations, the time stamp record for reconciliation

## Related Issue

Spark first cleans up the temporary folder and then starts writing data into it: https://github.com/leo-project/leofs/issues/595

|

1.0

|

Consistency Problem with Async. Deletion - ## Description

Asynchronous deletion can cause a consistency problem if another modification occurs before the async deletion is handled

## Root Cause

Directory Deletion

- The object list under the directory is pulled when `leo_storage` handles the deletion, so the list may have changed by that time

- The time stamp of the deletion request is not taken into account, so a re-created file could be incorrectly deleted

Object Deletion

- The time stamp of the deletion request is recorded as the time `leo_storage` starts handling it, not the time of the original request

## Action to take

Clarify the consistency model, especially the mix of sync and async. operations, the time stamp record for reconciliation

## Related Issue

Spark first cleans up the temporary folder and then starts writing data into it: https://github.com/leo-project/leofs/issues/595

|

priority

|

consistency problem with async deletion description asynchronous deletion would cause consistency problem if another modification has occurred before the async deletion is handled root cause directory deletion object list under the directory is pull when leo storage handles the deletion the list of objects could have been changed by the time time stamp of the deletion request is not taken into account re created file could be incorrectly deleted object deletion time stamp of the deletion request is recorded as the time leo storage starts to handle not the origin request time action to take clarify the consistency model especially the mix of sync and async operations the time stamp record for reconciliation related issue spark first cleanup the temporary folder and then start to write data into the folder

| 1

|

134,853

| 5,238,529,692

|

IssuesEvent

|

2017-01-31 05:26:26

|

axsh/openvdc

|

https://api.github.com/repos/axsh/openvdc

|

closed

|

Scheduler needs configuration file to run on multi-host environment

|

Priority : High Type : Feature

|

### Problem

It is currently not possible to start scheduler through systemd and have it interact with zookeeper, api, etc. when those are installed on another host.

Also if `openvdc-cli` isn't installed on the same host, scheduler will not start at all because an EnvironmentFile was mistakenly added to the `openvdc-cli` package instead.

### Solution

* Have scheduler accept a configuration file similar to https://github.com/axsh/openvdc/pull/91.

* Remove the EnvironmentFile in favour of the new configuration file.

|

1.0

|

Scheduler needs configuration file to run on multi-host environment - ### Problem

It is currently not possible to start scheduler through systemd and have it interact with zookeeper, api, etc. when those are installed on another host.

Also if `openvdc-cli` isn't installed on the same host, scheduler will not start at all because an EnvironmentFile was mistakenly added to the `openvdc-cli` package instead.

### Solution

* Have scheduler accept a configuration file similar to https://github.com/axsh/openvdc/pull/91.

* Remove the EnvironmentFile in favour of the new configuration file.

|

priority

|

scheduler needs configuration file to run on multi host environment problem it is currently not possible to start scheduler through systemd and have it interact with zookeeper api etc when those are installed on another host also if openvdc cli isn t installed on the same host scheduler will not start at all because an environmentfile was mistakenly added to the openvdc cli package instead solution have scheduler accept a configuration file similar to remove the environmentfile in favour of the new configuration file

| 1

|

792,046

| 27,943,861,482

|

IssuesEvent

|

2023-03-24 00:15:40

|

evo-lua/evo-runtime

|

https://api.github.com/repos/evo-lua/evo-runtime

|

closed

|

Integrate a UUID generation library (to generate IDs for connected WebSocket clients)

|

Priority: High Complexity: Moderate Scope: Dependencies Scope: Runtime Status: In Progress Type: New Feature

|

This looks decent enough at first glance: https://github.com/mariusbancila/stduuid

In the current uws prototype (#73) I'm using the remote address of the connected peer as an ID, but that could go wrong. It's also somewhat wasteful (since it's currently a string value that is replicated for every event). I guess using a UUID value and only converting it to a Lua string when needed (via FFI bindings) would solve both of these issues.

Goals:

- [x] Can generate uuid strings (Lua and cdata)

Roadmap:

- [x] Add submodule

- [x] Integrate in build process

- [x] Add version to help command

- [x] Static exports table

- [x] FFI bindings library

- [x] Unit tests

- [x] API documentation

|

1.0

|

Integrate a UUID generation library (to generate IDs for connected WebSocket clients) - This looks decent enough at first glance: https://github.com/mariusbancila/stduuid

In the current uws prototype (#73) I'm using the remote address of the connected peer as an ID, but that could go wrong. It's also somewhat wasteful (since it's currently a string value that is replicated for every event). I guess using a UUID value and only converting it to a Lua string when needed (via FFI bindings) would solve both of these issues.

Goals:

- [x] Can generate uuid strings (Lua and cdata)

Roadmap:

- [x] Add submodule

- [x] Integrate in build process

- [x] Add version to help command

- [x] Static exports table

- [x] FFI bindings library

- [x] Unit tests

- [x] API documentation

|

priority

|

integrate a uuid generation library to generate ids for connected websocket clients this looks decent enough at first glance in the current uws prototype i m using the remote address of the connected peer as an id but that could go wrong it s also somewhat wasteful since it s currently a string value that is replicated for every event i guess using an uuid value and only converting it to a lua string when needed via ffi bindings would solve both of these issues goals can generate uuid strings lua and cdata roadmap add submodule integrate in build process add version to help command static exports table ffi bindings library unit tests api documentation

| 1

|

384,110

| 11,383,671,793

|

IssuesEvent

|

2020-01-29 06:51:06

|

ahmedkaludi/accelerated-mobile-pages

|

https://api.github.com/repos/ahmedkaludi/accelerated-mobile-pages

|

closed

|

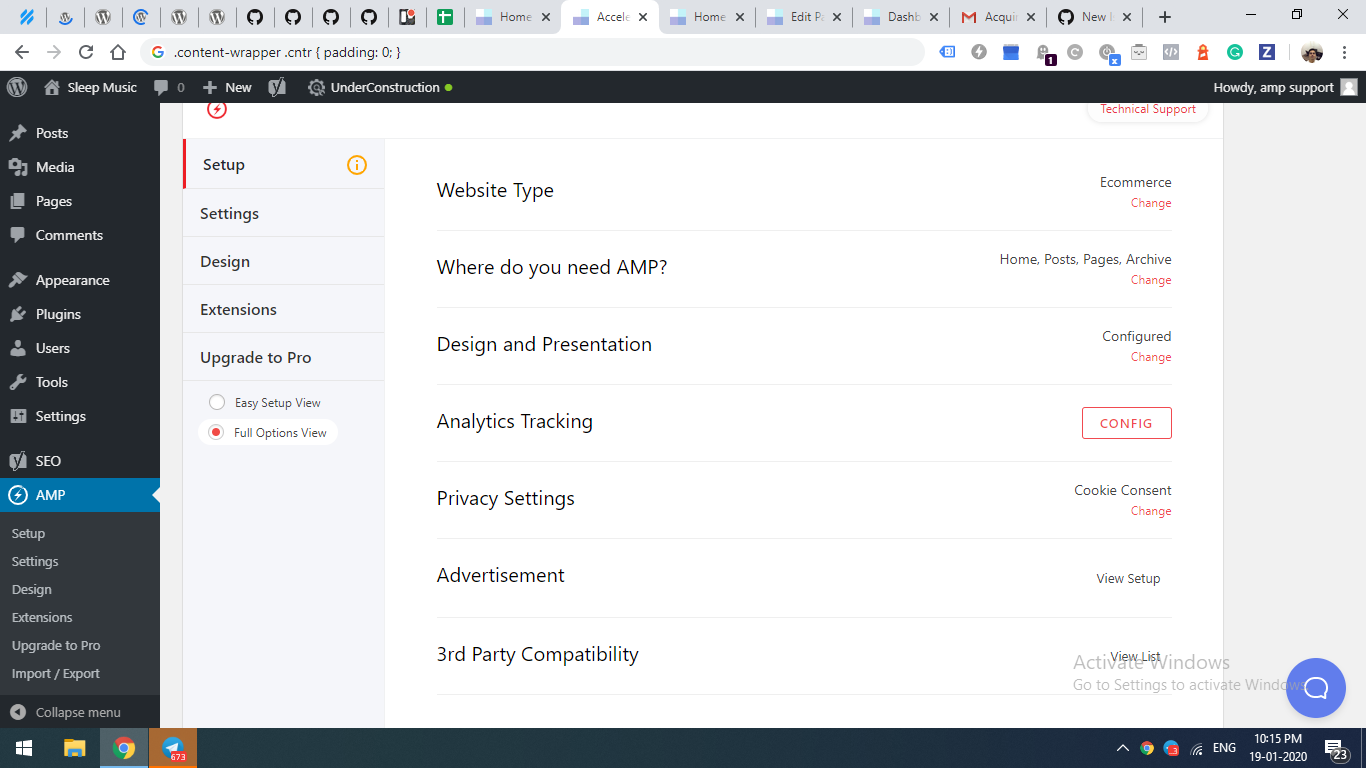

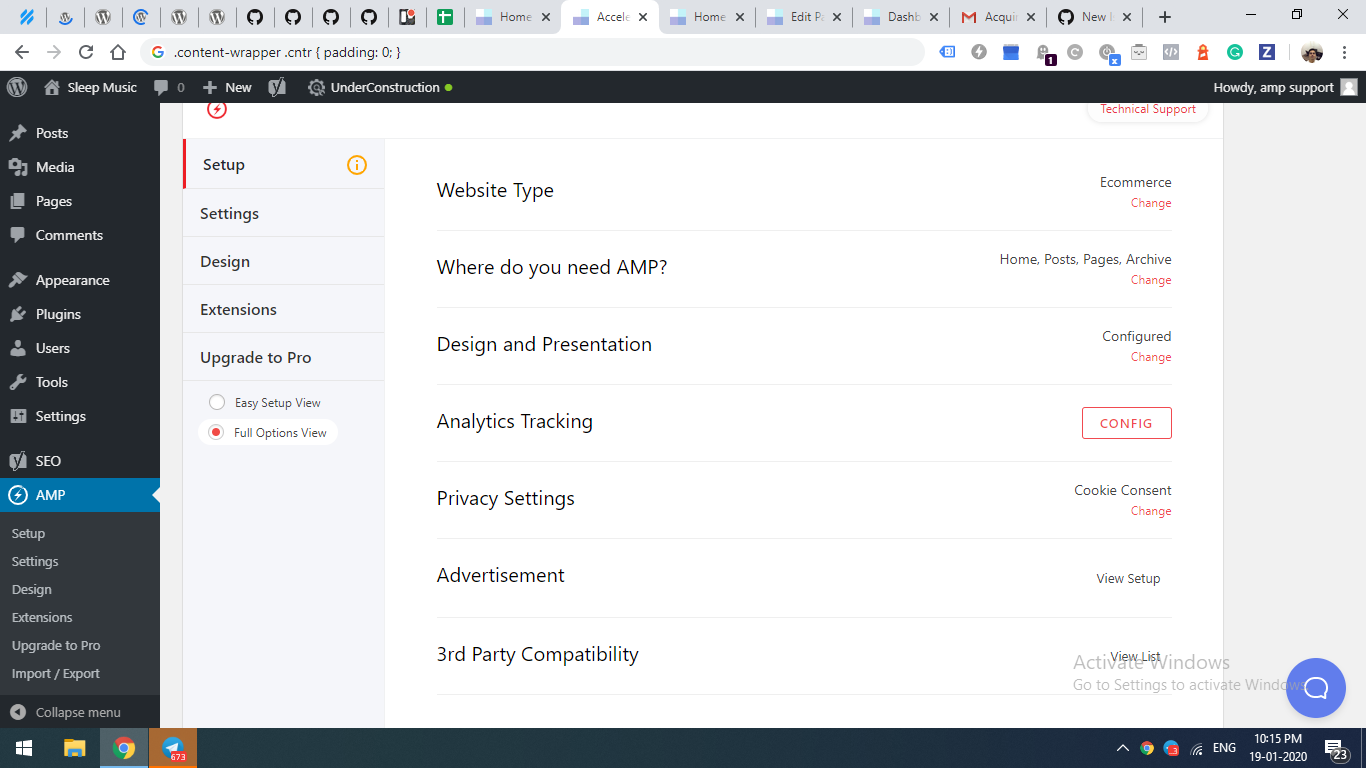

Your setup is not completed. Please setup for better AMP Experience.

|

NEXT UPDATE [Priority: HIGH] bug

|

What am I supposed to do?

|

1.0

|

Your setup is not completed. Please setup for better AMP Experience. - What am I supposed to do?

|

priority

|

your setup is not completed please setup for better amp experience what am i supposed to do

| 1

|

518,563

| 15,030,424,501

|

IssuesEvent

|

2021-02-02 07:25:42

|

red-hat-storage/ocs-ci

|

https://api.github.com/repos/red-hat-storage/ocs-ci

|

closed

|

Deployments to vsphere stuck after initializing terraform work directory

|

High Priority bug

|

Multiple vsphere deployments have become stuck after initializing the terraform work directory. Jobs stuck at this point will remain here until aborted or until the jenkins slave is shut down. The nodes that have been brought up do not appear to be in a running state / created successfully by terraform so they will need to be cleaned up manually through the vsphere console. I have experienced this myself on multiple different datacenters so I know the issue isn't isolated to one. I am still unsure if it is a vsphere issue or a jenkins slave issue.

Logs while job is stuck:

```

18:09:45 - MainThread - ocs_ci.deployment.terraform - INFO - Initializing terraform work directory

18:09:45 - MainThread - ocs_ci.utility.utils - INFO - Executing command: terraform init /home/jenkins/workspace/qe-deploy-ocs-cluster/ocs-ci/external/installer/upi/vsphere/

18:10:04 - MainThread - ocs_ci.utility.utils - INFO - Executing command: terraform apply '-var-file=/home/jenkins/current-cluster-dir/openshift-cluster-dir/terraform_data/terraform.tfvars' -auto-approve '/home/jenkins/workspace/qe-deploy-ocs-cluster/ocs-ci/external/installer/upi/vsphere/'

```

After shutting down the jenkins slave:

```

Cannot contact temp-slave-jnk-pr1377-b976: hudson.remoting.ChannelClosedException: Channel "unknown": Remote call on temp-slave-jnk-pr1377-b976 failed. The channel is closing down or has closed down

```

Jenkins job: https://ocs4-jenkins.rhev-ci-vms.eng.rdu2.redhat.com/job/qe-deploy-ocs-cluster/4128/console

|

1.0

|

Deployments to vsphere stuck after initializing terraform work directory - Multiple vsphere deployments have become stuck after initializing the terraform work directory. Jobs stuck at this point will remain here until aborted or until the jenkins slave is shut down. The nodes that have been brought up do not appear to be in a running state / created successfully by terraform so they will need to be cleaned up manually through the vsphere console. I have experienced this myself on multiple different datacenters so I know the issue isn't isolated to one. I am still unsure if it is a vsphere issue or a jenkins slave issue.

Logs while job is stuck:

```

18:09:45 - MainThread - ocs_ci.deployment.terraform - INFO - Initializing terraform work directory

18:09:45 - MainThread - ocs_ci.utility.utils - INFO - Executing command: terraform init /home/jenkins/workspace/qe-deploy-ocs-cluster/ocs-ci/external/installer/upi/vsphere/

18:10:04 - MainThread - ocs_ci.utility.utils - INFO - Executing command: terraform apply '-var-file=/home/jenkins/current-cluster-dir/openshift-cluster-dir/terraform_data/terraform.tfvars' -auto-approve '/home/jenkins/workspace/qe-deploy-ocs-cluster/ocs-ci/external/installer/upi/vsphere/'

```

After shutting down the jenkins slave:

```

Cannot contact temp-slave-jnk-pr1377-b976: hudson.remoting.ChannelClosedException: Channel "unknown": Remote call on temp-slave-jnk-pr1377-b976 failed. The channel is closing down or has closed down

```

Jenkins job: https://ocs4-jenkins.rhev-ci-vms.eng.rdu2.redhat.com/job/qe-deploy-ocs-cluster/4128/console

|

priority

|

deployments to vsphere stuck after initializing terraform work directory multiple vsphere deployments have become stuck after initializing the terraform work directory jobs stuck at this point will remain here until aborted or until the jenkins slave is shut down the nodes that have been brought up do not appear to be in a running state created successfully by terraform so they will need to be cleaned up manually through the vsphere console i have experienced this myself on multiple different datacenters so i know the issue isn t isolated to one i am still unsure if it is a vsphere issue or a jenkins slave issue logs while job is stuck mainthread ocs ci deployment terraform info initializing terraform work directory mainthread ocs ci utility utils info executing command terraform init home jenkins workspace qe deploy ocs cluster ocs ci external installer upi vsphere mainthread ocs ci utility utils info executing command terraform apply var file home jenkins current cluster dir openshift cluster dir terraform data terraform tfvars auto approve home jenkins workspace qe deploy ocs cluster ocs ci external installer upi vsphere after shutting down the jenkins slave cannot contact temp slave jnk hudson remoting channelclosedexception channel unknown remote call on temp slave jnk failed the channel is closing down or has closed down jenkins job

| 1

|

515,966

| 14,973,097,785

|

IssuesEvent

|

2021-01-28 00:16:57

|

DoobDev/Doob

|

https://api.github.com/repos/DoobDev/Doob

|

closed

|

starboard duplication glitch

|

High Priority Stale bug

|

if you keep spamming the star, the number just goes up, it never removes stars.

|

1.0

|

starboard duplication glitch - if you keep spamming the star, the number just goes up, it never removes stars.

|

priority

|

starboard duplication glitch if you keep spamming the star the number just goes up it never removes stars

| 1

|

658,591

| 21,898,043,474

|

IssuesEvent

|

2022-05-20 10:34:31

|

zephyrproject-rtos/zephyr

|

https://api.github.com/repos/zephyrproject-rtos/zephyr

|

closed

|

K_ESSENTIAL option doesn't have any effect on k_create_thread

|

bug priority: high area: Kernel

|

**Describe the bug**

In our application we spawn a thread at init that runs a loop for the entire lifecycle of the app. If that thread stops executing or exits for any reason, we want the whole system to restart. To achieve this, we configure the thread with the **K_ESSENTIAL** option, which according to the documentation:

*This option tags the thread as an essential thread. This instructs the kernel to treat the termination or aborting of the thread as a fatal system error.*

What we observe is that configuring the **K_ESSENTIAL** option has no effect whatsoever and that if the thread stops the rest of the app keeps running.

**To Reproduce**

Here is a simple app to reproduce the problem:

```

#include <zephyr.h>
#include <sys/printk.h>

#define LOOP2_STACK_SIZE 1024
#define LOOP2_PRIORITY 1

struct k_thread loop2_thread;
K_THREAD_STACK_DEFINE(loop2_area, LOOP2_STACK_SIZE);

/* Thread entry point: prints a few times, then returns (the thread exits). */
void loop2(void *one, void *two, void *three)
{
    int i = 0;

    while (1) {
        printk("LOOP 2\n");
        i++;
        if (i > 3) {
            printk("LOOP 2 EXITING\n");
            return;
        }
        k_sleep(K_SECONDS(1));
    }
}

void main(void)
{
    printk("Hello World! %s\n", CONFIG_BOARD);

    /* K_ESSENTIAL is the thread option under test. */
    k_tid_t loop2_thread_id = k_thread_create(&loop2_thread, loop2_area,
                                              K_THREAD_STACK_SIZEOF(loop2_area),
                                              loop2,
                                              NULL, NULL, NULL,
                                              LOOP2_PRIORITY, K_ESSENTIAL, K_NO_WAIT);

    while (1) {
        printk("LOOP 1\n");
        k_sleep(K_SECONDS(2));
    }
}

```

**Expected behavior**

When the spawned thread exits, the app should be restarted (or halted, depending on the platform).

**Impact**

This feature is a good fit for our application: the devices running it are geographically dispersed, and it adds robustness to the design.

**Environment (please complete the following information):**

We have observed this behavior when testing on qemu_cortex as well as on our hardware board, which is based on the Nordic Semiconductor nRF9160.

We are using Nordic SDK 1.9.1 which ships with Zephyr OS v2.7.99

|

1.0

|

K_ESSENTIAL option doesn't have any effect on k_create_thread - **Describe the bug**

In our application we spawn a thread at init that runs a loop for the entire lifecycle of the app. If that thread stops executing or exits for any reason, we want the whole system to restart. To achieve this, we configure the thread with the **K_ESSENTIAL** option, which according to the documentation:

*This option tags the thread as an essential thread. This instructs the kernel to treat the termination or aborting of the thread as a fatal system error.*

What we observe is that configuring the **K_ESSENTIAL** option has no effect whatsoever and that if the thread stops the rest of the app keeps running.

**To Reproduce**

Here is a simple app to reproduce the problem:

```

#include <zephyr.h>
#include <sys/printk.h>

#define LOOP2_STACK_SIZE 1024
#define LOOP2_PRIORITY 1

struct k_thread loop2_thread;
K_THREAD_STACK_DEFINE(loop2_area, LOOP2_STACK_SIZE);

/* Thread entry point: prints a few times, then returns (the thread exits). */
void loop2(void *one, void *two, void *three)
{
    int i = 0;

    while (1) {
        printk("LOOP 2\n");
        i++;
        if (i > 3) {
            printk("LOOP 2 EXITING\n");
            return;
        }
        k_sleep(K_SECONDS(1));
    }
}

void main(void)
{
    printk("Hello World! %s\n", CONFIG_BOARD);

    /* K_ESSENTIAL is the thread option under test. */
    k_tid_t loop2_thread_id = k_thread_create(&loop2_thread, loop2_area,
                                              K_THREAD_STACK_SIZEOF(loop2_area),
                                              loop2,
                                              NULL, NULL, NULL,
                                              LOOP2_PRIORITY, K_ESSENTIAL, K_NO_WAIT);

    while (1) {
        printk("LOOP 1\n");
        k_sleep(K_SECONDS(2));
    }
}

```

**Expected behavior**

When the spawned thread exits, the app should be restarted (or halted, depending on the platform).

**Impact**

This feature is a good fit for our application: the devices running it are geographically dispersed, and it adds robustness to the design.

**Environment (please complete the following information):**

We have observed this behavior when testing on qemu_cortex as well as on our hardware board, which is based on the Nordic Semiconductor nRF9160.

We are using Nordic SDK 1.9.1 which ships with Zephyr OS v2.7.99

|

priority

|

k essential option doesn t have any effect on k create thread describe the bug in our application we spawn a thread at innit that will be running a loop during all the lifecycle of the app if that thread stops execution or exits for any reason we want the whole system to restart to achieve this we configure the thread with the option k essential which according to the documentation this option tags the thread as an essential thread this instructs the kernel to treat the termination or aborting of the thread as a fatal system error what we observe is that configuring the k essential option has no effect whatsoever and that if the thread stops the rest of the app keeps running to reproduce here a simple app to reproduce the problem include include define stack size define priority struct k thread thread k thread stack define area stack size void void one void two void three int i while printk loop n i if i printk loop exiting n return k sleep k seconds void main void printk hello world s n config board k tid t thread id k thread create thread area k thread stack sizeof area null null null priority k essential k no wait while printk loop n k sleep k seconds expected behavior when the spawned thread exits the app should be restarted or halted depending on the platform impact this is a good fit for our application since the devices that are running it are spread geographically and adds robustness to the design environment please complete the following information we have observed this behavior when testing on qemu cortex as well as on our hardware board which is a nordic semiconductors based one we are using nordic sdk which ships with zephyr os

| 1

|

282,054

| 8,703,250,234

|

IssuesEvent

|

2018-12-05 16:13:29

|

opencollective/opencollective

|

https://api.github.com/repos/opencollective/opencollective

|

closed

|

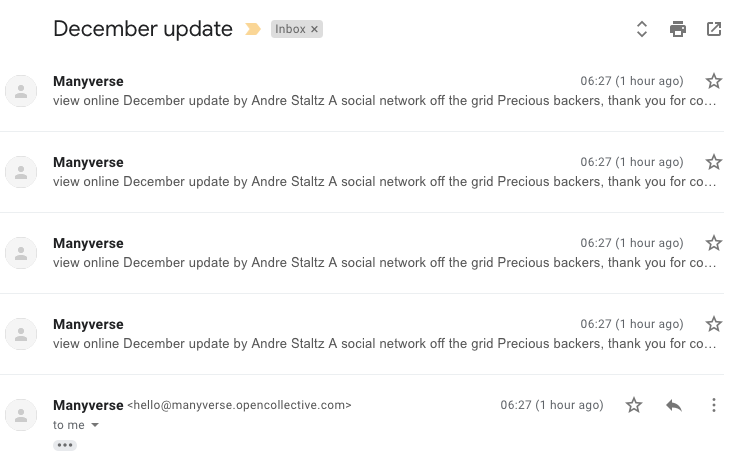

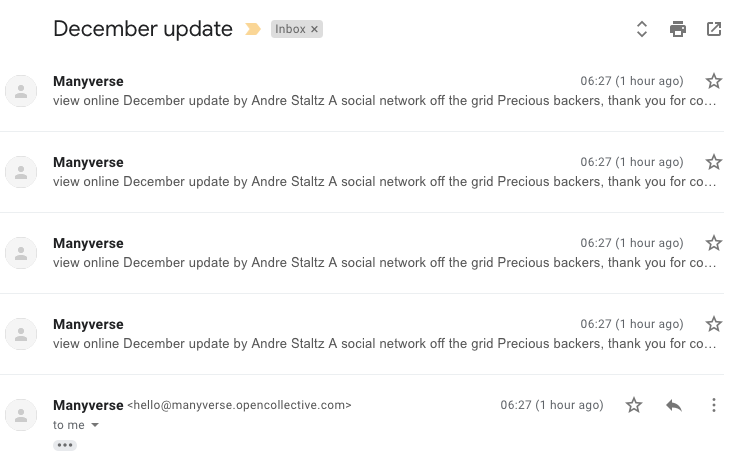

Duplicated emails received by backers on Collective updates

|

api backend bug high priority

|

Some users (for example, I got the same 4 emails from `Manyverse` and @piamancini got 6) are receiving multiple emails through the Collectives Updates:

|

1.0

|

Duplicated emails received by backers on Collective updates - Some users (for example, I got the same 4 emails from `Manyverse` and @piamancini got 6) are receiving multiple emails through the Collectives Updates:

|

priority

|

duplicated emails received by backers on collective updates some users example i got the same emails from manyverse and piamancini got are receiving multiple emails through the collectives updates

| 1

|

395,215

| 11,672,653,204

|

IssuesEvent

|

2020-03-04 07:13:41

|

fontforge/fontforge

|

https://api.github.com/repos/fontforge/fontforge

|

closed

|

GUI version of fontforge fails when used within in a script (but didn't used to)

|

High Priority

|

### When reporting a bug/issue:

- [ ] The FontForge version and the operating system you're using

Debian testing, version 1:20190801~dfsg-2

- [ ] The behavior you expect to see, and the actual behavior

I am the maintainer of [mftrace](https://github.com/hanwen/mftrace) for Debian. mftrace uses fontforge to do part of its job, and begins by testing that fontforge is present and working by running "fontforge --version". This ran without issue using older versions of the fontforge package (the most recent such Debian package was 1:20170731~dfsg-2; after that, they jumped to version 20190801), but not with the current version - it just exits with an error. Tracking this back, when the GUI-enabled version of fontforge is run without a valid DISPLAY, even if just running "fontforge --version" or "fontforge --help", it bombs out. The older version did not do this.

I am aware that there is a non-GUI version of fontforge, but the GUI-enabled version used to work for non-GUI tasks in a non-GUI environment, but it no longer does. Furthermore, scripts are used to using plain "fontforge", without caring whether it is GUI-enabled or not (and there is no simple way to check for this), but this change in behaviour has broken at least mftrace, and quite possibly other scripts too.

I have not isolated the change that caused this change in behaviour, though; at a quick glance, the code does not look dramatically different between 20170731 and 20190801.

- [ ] Steps to reproduce the behavior

Run "fontforge --version" or "fontforge --help" using the GUI-enabled fontforge in a terminal without a valid DISPLAY.

- [ ] Possible solution/fix/workaround

I'm not sure where this change in behaviour was introduced, but perhaps there could be a check in `fontforgeexe/startui.c` for whether there is a valid GUI, and to revert to the behaviour of `fontforgeexe/startnoui.c` if there is not instead of crashing.
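
The suggested fallback can be sketched as a small decision function. This is only an illustration in Python of the logic proposed above, not FontForge's actual code (which is C); `choose_frontend` and `INFO_ONLY_ARGS` are hypothetical names, and checking `DISPLAY` alone is an assumption taken from the report.

```python
import os
from typing import Mapping, Optional, Sequence

# Arguments that only print information and never need a window
# (the two named in the report).
INFO_ONLY_ARGS = {"--version", "--help"}


def choose_frontend(
    argv: Sequence[str], env: Optional[Mapping[str, str]] = None
) -> str:
    """Return "noui" when no GUI is needed or available, otherwise "ui"."""
    env = os.environ if env is None else env
    if any(arg in INFO_ONLY_ARGS for arg in argv):
        return "noui"
    if not env.get("DISPLAY"):
        # No usable display: fall back instead of exiting with an error.
        return "noui"
    return "ui"
```

Under this sketch, a script calling the GUI-enabled binary with `--version` in a terminal without `DISPLAY` would take the non-GUI path rather than fail.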

|

1.0

|

GUI version of fontforge fails when used within in a script (but didn't used to) - ### When reporting a bug/issue:

- [ ] The FontForge version and the operating system you're using

Debian testing, version 1:20190801~dfsg-2

- [ ] The behavior you expect to see, and the actual behavior

I am the maintainer of [mftrace](https://github.com/hanwen/mftrace) for Debian. mftrace uses fontforge to do part of its job, and begins by testing that fontforge is present and working by running "fontforge --version". This ran without issue using older versions of the fontforge package (the most recent such Debian package was 1:20170731~dfsg-2; after that, they jumped to version 20190801), but not with the current version - it just exits with an error. Tracking this back, when the GUI-enabled version of fontforge is run without a valid DISPLAY, even if just running "fontforge --version" or "fontforge --help", it bombs out. The older version did not do this.

I am aware that there is a non-GUI version of fontforge, but the GUI-enabled version used to work for non-GUI tasks in a non-GUI environment, but it no longer does. Furthermore, scripts are used to using plain "fontforge", without caring whether it is GUI-enabled or not (and there is no simple way to check for this), but this change in behaviour has broken at least mftrace, and quite possibly other scripts too.

I have not isolated the change that caused this change in behaviour, though; at a quick glance, the code does not look dramatically different between 20170731 and 20190801.

- [ ] Steps to reproduce the behavior

Run "fontforge --version" or "fontforge --help" using the GUI-enabled fontforge in a terminal without a valid DISPLAY.

- [ ] Possible solution/fix/workaround

I'm not sure where this change in behaviour was introduced, but perhaps there could be a check in `fontforgeexe/startui.c` for whether there is a valid GUI, and to revert to the behaviour of `fontforgeexe/startnoui.c` if there is not instead of crashing.

|

priority

|

gui version of fontforge fails when used within in a script but didn t used to when reporting a bug issue the fontforge version and the operating system you re using debian testing version dfsg the behavior you expect to see and the actual behavior i am the maintainer of for debian mftrace uses fontforge to do part of its job and begins by testing that fontforge is present and working by running fontforge version this ran without issue using older versions of the fontforge package the most recent such debian package was dfsg after that they jumped to version but not with the current version it just exits with an error tracking this back when the gui enabled version of fontforge is run without a valid display even if just running fontforge version or fontforge help it bombs out the older version did not do this i am aware that there is a non gui version of fontforge but the gui enabled version used to work for non gui tasks in a non gui environment but it no longer does furthermore scripts are used to using plain fontforge without caring whether it is gui enabled or not and there is no simple way to check for this but this change in behaviour has broken at least mftrace and quite possibly other scripts too i have not isolated the change that caused this change in behaviour though at a quick glance the code does not look dramatically different between and steps to reproduce the behavior run fontforge version or fontforge help using the gui enabled fontforge in a terminal without a valid display possible solution fix workaround i m not sure where this change in behaviour was introduced but perhaps there could be a check in fontforgeexe startui c for whether there is a valid gui and to revert to the behaviour of fontforgeexe startnoui c if there is not instead of crashing

| 1

|

231,438

| 7,632,506,884

|

IssuesEvent

|

2018-05-05 16:02:30

|

tootsuite/mastodon

|

https://api.github.com/repos/tootsuite/mastodon

|

closed

|

Video modal extends beyond screen

|

bug priority - high ui

|

Referencing #5956 and @yuntan , hoping you can help fix this as you were working on the modal last.

Check this as an example of a large-dimension video. When opened in a modal it extends beyond the screen:

https://mastodon.social/@WAHa_06x36/99972837564881692

To reproduce: paste the link in search, open the video by pressing the <- -> arrows next to fullscreen, then press play. When you press play, the video pops outside the screen (as per the 2nd screenshot).

* * * *

- [x] I searched or browsed the repo’s other issues to ensure this is not a duplicate.

- [x] This bug happens on a [tagged release](https://github.com/tootsuite/mastodon/releases) and not on `master` (If you're a user, don't worry about this).

|

1.0

|

Video modal extends beyond screen - Referencing #5956 and @yuntan , hoping you can help fix this as you were working on the modal last.

Check this as an example of a large-dimension video. When opened in a modal it extends beyond the screen:

https://mastodon.social/@WAHa_06x36/99972837564881692

To reproduce: paste the link in search, open the video by pressing the <- -> arrows next to fullscreen, then press play. When you press play, the video pops outside the screen (as per the 2nd screenshot).

* * * *

- [x] I searched or browsed the repo’s other issues to ensure this is not a duplicate.

- [x] This bug happens on a [tagged release](https://github.com/tootsuite/mastodon/releases) and not on `master` (If you're a user, don't worry about this).

|

priority

|

video modal extends beyond screen referencing and yuntan hoping you can help fix this as you were working on the modal last check this as an example of a large dimension video when opened in a modal it extends beyond the screen to reproduce paste link in search open by pressing arrows next to fullscreen then press play when pressing play it pops outside as per screenshot i searched or browsed the repo’s other issues to ensure this is not a duplicate this bug happens on a and not on master if you re a user don t worry about this

| 1

|

531,652

| 15,501,840,759

|

IssuesEvent

|

2021-03-11 11:03:02

|

glennmdt/sdmx-ml

|

https://api.github.com/repos/glennmdt/sdmx-ml

|

closed

|

Metadata Provision Agreement and Metadata Provider Scheme missing from XML Schemas

|

High Priority bug

|

Two structures are missing from the schemas:

* Metadata Provision Agreement

* Metadata Provider Scheme

They are essentially the same as the data equivalents except a Metadata Provision Agreement can link to any other artefact.

See section 7.3 in the following:

https://metatechltd.sharepoint.com/:w:/s/SDMX30TechnicalSpecifications/EflcBha7_PxBuFkeXu_dqRMBISBAy9IsjqXHjYkNHq321Q?e=ZCiUyI

|

1.0

|

Metadata Provision Agreement and Metadata Provider Scheme missing from XML Schemas - Two structures are missing from the schemas:

* Metadata Provision Agreement

* Metadata Provider Scheme

They are essentially the same as the data equivalents except a Metadata Provision Agreement can link to any other artefact.

See section 7.3 in the following:

https://metatechltd.sharepoint.com/:w:/s/SDMX30TechnicalSpecifications/EflcBha7_PxBuFkeXu_dqRMBISBAy9IsjqXHjYkNHq321Q?e=ZCiUyI

|

priority

|

metadata provision agreement and metadata provider scheme missing from xml schemas two structures are missing from the schemas metadata provision agreement metadata provider scheme they are essentially the same as the data equivalents except a metadata provision agreement can link to any other artefact see section in the following url

| 1

|

234,601

| 7,723,682,964

|

IssuesEvent

|

2018-05-24 13:11:16

|

zephyrproject-rtos/zephyr

|

https://api.github.com/repos/zephyrproject-rtos/zephyr

|

closed

|

Kernel tests failing at runtime on frdm_kw41z

|

area: Kernel bug priority: high

|

Several kernel tests are failing at runtime on frdm_kw41z on commit e7509c1 (v1.12.0-rc1)

```

$ sanitycheck -T tests/kernel/ -p frdm_kw41z --device-testing --device-serial /dev/ttyACM0

Cleaning output directory /home/maureen/zephyr/sanity-out

Building testcase defconfigs...

63 tests selected, 8604 tests discarded due to filters

total complete: 26/ 63 41% failed: 0

frdm_kw41z tests/kernel/fatal/kernel.common.stack_sentinel FAILED: failed

see: sanity-out/frdm_kw41z/tests/kernel/fatal/kernel.common.stack_sentinel/handler.log

total complete: 32/ 63 50% failed: 1

frdm_kw41z tests/kernel/mem_protect/stackprot/kernel.memory_protection FAILED: timeout

see: sanity-out/frdm_kw41z/tests/kernel/mem_protect/stackprot/kernel.memory_protection/handler.log

total complete: 36/ 63 57% failed: 2

frdm_kw41z tests/kernel/mem_protect/stack_random/kernel.memory_protection.stack_random FAILED: timeout

see: sanity-out/frdm_kw41z/tests/kernel/mem_protect/stack_random/kernel.memory_protection.stack_random/handler.log

total complete: 63/ 63 100% failed: 3

60 of 63 tests passed with 0 warnings in 896 seconds

```

|

1.0

|

Kernel tests failing at runtime on frdm_kw41z - Several kernel tests are failing at runtime on frdm_kw41z on commit e7509c1 (v1.12.0-rc1)

```

$ sanitycheck -T tests/kernel/ -p frdm_kw41z --device-testing --device-serial /dev/ttyACM0

Cleaning output directory /home/maureen/zephyr/sanity-out

Building testcase defconfigs...

63 tests selected, 8604 tests discarded due to filters

total complete: 26/ 63 41% failed: 0

frdm_kw41z tests/kernel/fatal/kernel.common.stack_sentinel FAILED: failed

see: sanity-out/frdm_kw41z/tests/kernel/fatal/kernel.common.stack_sentinel/handler.log

total complete: 32/ 63 50% failed: 1

frdm_kw41z tests/kernel/mem_protect/stackprot/kernel.memory_protection FAILED: timeout

see: sanity-out/frdm_kw41z/tests/kernel/mem_protect/stackprot/kernel.memory_protection/handler.log

total complete: 36/ 63 57% failed: 2

frdm_kw41z tests/kernel/mem_protect/stack_random/kernel.memory_protection.stack_random FAILED: timeout

see: sanity-out/frdm_kw41z/tests/kernel/mem_protect/stack_random/kernel.memory_protection.stack_random/handler.log

total complete: 63/ 63 100% failed: 3

60 of 63 tests passed with 0 warnings in 896 seconds

```

|

priority

|

kernel tests failing at runtime on frdm several kernel tests are failing at runtime on frdm on commit sanitycheck t tests kernel p frdm device testing device serial dev cleaning output directory home maureen zephyr sanity out building testcase defconfigs tests selected tests discarded due to filters total complete failed frdm tests kernel fatal kernel common stack sentinel failed failed see sanity out frdm tests kernel fatal kernel common stack sentinel handler log total complete failed frdm tests kernel mem protect stackprot kernel memory protection failed timeout see sanity out frdm tests kernel mem protect stackprot kernel memory protection handler log total complete failed frdm tests kernel mem protect stack random kernel memory protection stack random failed timeout see sanity out frdm tests kernel mem protect stack random kernel memory protection stack random handler log total complete failed of tests passed with warnings in seconds

| 1

|

538,550

| 15,771,905,197

|

IssuesEvent

|

2021-03-31 21:06:59

|

myRutgers/Web

|

https://api.github.com/repos/myRutgers/Web

|

closed

|

myNews Drawer Article Sponsors

|

High Priority enhancement myNews

|

The article news sites and search bar are hard coded and we need the actual data to show the real ones. Also doesn't have any functionality right now

|

1.0

|

myNews Drawer Article Sponsors - The article news sites and search bar are hard coded and we need the actual data to show the real ones. Also doesn't have any functionality right now

|

priority

|

mynews drawer article sponsors the article news sites and search bar are hard coded and we need the actual data to show the real ones also doesn t have any functionality right now

| 1

|

708,363

| 24,339,774,845

|

IssuesEvent

|

2022-10-01 14:58:23

|

worldmaking/mischmasch

|

https://api.github.com/repos/worldmaking/mischmasch

|

closed

|

dealing with feedback connections

|

Priority: High Audio V0.5.x // Node

|

@grrrwaaa will attempt to handle this directly within Audio.js rather than using the connection cable='history' in patch.document

|

1.0

|

dealing with feedback connections - @grrrwaaa will attempt to handle this directly within Audio.js rather than using the connection cable='history' in patch.document

|

priority

|

dealing with feedback connections grrrwaaa will attempt to handle this directly within audio js rather than using the connection cable history in patch document

| 1

|

624,752

| 19,706,286,633

|

IssuesEvent

|

2022-01-12 22:28:45

|

E3SM-Project/scream

|

https://api.github.com/repos/E3SM-Project/scream

|

closed

|

Need to rethink (or extend) how scream does IO on dyn grid

|

I/O priority:high dynamics

|

Restart files _require_ a Discontinuous Galerkin (DG) version of the dyn grid data (meaning that the corresponding edge dofs on bordering elements need to be separately saved), while right now, our IO on dyn grid assumes a Continuous Galerkin (CG) field (meaning that of the corresponding edge dofs on bordering elements, only one is saved). E.g., for output, we do a dyn_grid->phys_gll remap, and then write the phys_gll field (where the "remap" is simply "picking" one of the occurrences of each dof in the GLL grid).

Some thoughts below.

- The SEGrids class already stores nelem * np * np dofs. The way we currently set it up in `dynamics/homme/interface/dyn_grid_mod.F90`, we replicate edge dofs gids, so that the global gids are still the same as in the corresponding unique PointGrid. However, I don't think we ever use this fact, so we could easily change SEGrid, to store nelem * np * np unique gids (I believe that's what Homme stores in `elem(ie)%gdofP`, so we can just use those).

- The model restart files require dyn grid fields to be restarted in a DG-like fashion, which means IO classes need to be able to handle SEGrid in a DG-like way. However, normal output might still be processed the way we currently do it (i.e., remap dyn->physGLL and then save, and vice versa for input). So we might want to handle both.

- Scorpio IO classes _assume_ that (partitioned) dofs have a layout of the form `(ncols[,...])`. For instance, for a 3d vector quantity, the layout is assumed to be `(ncols,dim,nlevs)`. The natural dyn layout of a 3d field is `(nelem,dim,np,np,nlev)`. We can either make IO handle the latter, or we can output/load the field as a "point-grid-like" field, with layout `(nelem*np*np, dim, nlev)`. The latter is what EAM does, in a way. But we'd have to make the IO class switch based on a) grid type, and b) if SEGrid, whether to output a DG or CG field.
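
The "point-grid-like" option from the last bullet can be sketched as a plain layout conversion. This is a minimal illustration in pure Python on nested lists, not SCREAM or Scorpio code; `dyn_to_point_layout` is a hypothetical name, and the ordering of points within an element (row-major over the two `np` indices) is an assumption.

```python
from typing import List


def dyn_to_point_layout(field: List) -> List:
    """Convert nested lists shaped (nelem, dim, np, np, nlev)
    into nested lists shaped (nelem*np*np, dim, nlev)."""
    out = []
    for elem in field:  # loop over elements
        dim = len(elem)
        npts = len(elem[0])
        for ip in range(npts):
            for jp in range(npts):
                # One "column" per GLL point of each element. Edge points
                # shared by neighbouring elements are NOT merged, so the
                # result keeps the DG-like duplication needed for restart.
                out.append([list(elem[d][ip][jp]) for d in range(dim)])
    return out
```

Here column `ie*np*np + ip*np + jp` holds the data of point `(ip, jp)` in element `ie`, which is the shape the `(ncols, dim, nlevs)` assumption expects. A CG output would need an extra step that keeps only one occurrence of each shared dof.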

|

1.0

|

Need to rethink (or extend) how scream does IO on dyn grid - Restart files _require_ a Discontinuous Galerkin (DG) version of the dyn grid data (meaning that the corresponding edge dofs on bordering elements need to be separately saved), while right now, our IO on dyn grid assumes a Continuous Galerkin (CG) field (meaning that of the corresponding edge dofs on bordering elements, only one is saved). E.g., for output, we do a dyn_grid->phys_gll remap, and then write the phys_gll field (where the "remap" is simply "picking" one of the occurrences of each dof in the GLL grid).

Some thoughts below.

- The SEGrids class already stores nelem * np * np dofs. The way we currently set it up in `dynamics/homme/interface/dyn_grid_mod.F90`, we replicate edge dofs gids, so that the global gids are still the same as in the corresponding unique PointGrid. However, I don't think we ever use this fact, so we could easily change SEGrid, to store nelem * np * np unique gids (I believe that's what Homme stores in `elem(ie)%gdofP`, so we can just use those).

- The model restart files require dyn grid fields to be restarted in a DG-like fashion, which means IO classes need to be able to handle SEGrid in a DG-like way. However, normal output might still be processed the way we currently do it (i.e., remap dyn->physGLL and then save, and vice versa for input). So we might want to handle both.

- Scorpio IO classes _assume_ that (partitioned) dofs have a layout of the form `(ncols[,...])`. For instance, for a 3d vector quantity, the layout is assumed to be `(ncols,dim,nlevs)`. The natural dyn layout of a 3d field is `(nelem,dim,np,np,nlev)`. We can either make IO handle the latter, or we can output/load the field as a "point-grid-like" field, with layout `(nelem*np*np, dim, nlev)`. The latter is what EAM does, in a way. But we'd have to make the IO class switch based on a) grid type, and b) if SEGrid, whether to output a DG or CG field.

|

priority

|

need to rethink or extend how scream does io on dyn grid restart files require a discontinuous galerkin dg version of the dyn grid data meaning that the corresponding edge dofs on bordering elements need to be separately saved while right now our io on dyn grid assumes a continuous galerkin cg field meaning that of the corresponding edge dofs on bordering elements only one is saved e g for output we do a dyn grid phys gll remap and then write the phys gll field where the remap is simply picking one of the occurrences of each dof in the gll grid some thoughts below the segrids class already stores nelem np np dofs the way we currently set it up in dynamics homme interface dyn grid mod we replicate edge dofs gids so that the global gids are still the same as in the corresponding unique pointgrid however i don t think we ever use this fact so we could easily change segrid to store nelem np np unique gids i believe that s what homme stores in elem ie gdofp so we can just use those the model restart files require to restart dyn grid field in a dg like fashion which means io classes need to be able to handle segrid in a dg like way however normal output might still be processed in the way we currently do i e remap dyn physgll and then save and viceversa for input so we might want to handle both scorpio io classes assume that partitioned dofs have a layout of the form ncols for instance for a vector quantity the layout is assumed to be ncols dim nlevs the natural dyn layout of a field is nelem dim np np nlev we can either make io handle the latter or we can output load the field as a point grid like field with layout nelem np np dim nlev the latter is what eam does in a way but we d have to make the io class switch based on a grid type and b if segrid whether to output a dg or cg field

| 1

|

404,234

| 11,854,119,261

|

IssuesEvent

|

2020-03-24 23:49:03

|

monarch-initiative/mondo

|

https://api.github.com/repos/monarch-initiative/mondo

|

closed

|

remove comment from MONDO_0005597 'cystic renal cell carcinoma'

|

high priority

|

there is a comment on MONDO_0005597 'cystic renal cell carcinoma' to merge MONDO_0005597 with MONDO:0003010 multilocular clear cell renal cell carcinoma, but this has already been done. Need to remove comment.

|

1.0

|

remove comment from MONDO_0005597 'cystic renal cell carcinoma' - there is a comment on MONDO_0005597 'cystic renal cell carcinoma' to merge MONDO_0005597 with MONDO:0003010 multilocular clear cell renal cell carcinoma, but this has already been done. Need to remove comment.

|

priority

|

remove comment from mondo cystic renal cell carcinoma there is a comment on mondo cystic renal cell carcinoma to merge mondo with mondo multilocular clear cell renal cell carcinoma but this has already been done need to remove comment

| 1

|

387,009

| 11,454,589,990

|

IssuesEvent

|

2020-02-06 17:21:32

|

openforcefield/openforcefield

|

https://api.github.com/repos/openforcefield/openforcefield

|

closed

|

Add functionality to create OFFMols with specific indexing

|

effort:high priority:high

|

**Is your feature request related to a problem? Please describe.**

Many people have requested the ability to create OFFMols with specified atom orderings. Most commonly, this is in the context of [Canonical, isomeric, explicit hydrogen, mapped SMILES](https://github.com/openforcefield/cmiles#how-to-use-cmiles), which we attach to OFF-submitted molecules in QCArchive. However, people have also requested the ability to reorder the atoms from a molecule that already has an indexing system (like, an SDF).

**Describe the solution you'd like**

This is two similar requests in one:

* `Molecule.from_object(obj, index_map={current_idx: new_idx})`: Be able to read a molecule with a _defined_ indexing system (like, from SDF), but have the created OFFMol have a different atom/bond ordering.

* `Molecule.from_mapped_smiles(mapped_smiles)`: Read an explicit-hydrogen, fully mapped SMILES, and create an OFFMol with that atom ordering.

* Needs to check+fail if H's aren't explicit.

For both functions, we'll need to resolve what to do if the map indices don't begin at 1, or if the numbering system has gaps. For now, a reasonable behavior is probably just "fail".
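
The "fail" behavior and the two kinds of reordering can be sketched without any toolkit. This is a minimal illustration on plain Python lists, not the openforcefield API; `order_from_map_indices` and `reorder` are hypothetical helpers, and treating the `index_map` keys and values as 0-based positions is an assumption.

```python
from typing import Dict, List, Sequence


def order_from_map_indices(map_indices: Sequence[int]) -> List[int]:
    """Return current atom positions listed in their new (mapped) order.

    map_indices[i] is the 1-based map index on the atom currently at
    position i. Fails unless the indices are exactly 1..N.
    """
    n = len(map_indices)
    if sorted(map_indices) != list(range(1, n + 1)):
        raise ValueError(
            f"map indices must be exactly 1..{n} with no gaps or repeats"
        )
    new_order = [0] * n
    for current_idx, map_idx in enumerate(map_indices):
        new_order[map_idx - 1] = current_idx
    return new_order


def reorder(items: Sequence[str], index_map: Dict[int, int]) -> List[str]:
    """Apply a {current_idx: new_idx} map (0-based) to a list of atoms."""
    n = len(items)
    expected = list(range(n))
    if sorted(index_map) != expected or sorted(index_map.values()) != expected:
        raise ValueError("index_map must be a permutation of 0..N-1")
    out = [""] * n
    for current_idx, new_idx in index_map.items():
        out[new_idx] = items[current_idx]
    return out
```

Rejecting anything that is not a complete permutation covers both open questions above (indices not starting at 1, and gaps) with a single check.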

|

1.0

|

Add functionality to create OFFMols with specific indexing - **Is your feature request related to a problem? Please describe.**

Many people have requested the ability to create OFFMols with specified atom orderings. Most commonly, this is in the context of [Canonical, isomeric, explicit hydrogen, mapped SMILES](https://github.com/openforcefield/cmiles#how-to-use-cmiles), which we attach to OFF-submitted molecules in QCArchive. However, people have also requested the ability to reorder the atoms from a molecule that already has an indexing system (like, an SDF).

**Describe the solution you'd like**

This is two similar requests in one:

* `Molecule.from_object(obj, index_map=dict(current_idx:new_idx))`: Be able to read a molecule with a _defined_ indexing system (like, from SDF), but have the created OFFMol have a different atom/bond ordering.

* `Molecule.from_mapped_smiles(mapped_smiles)`: Read an explicit-hydrogen, fully mapped SMILES, and create an OFFMol with that atom ordering.

* Needs to check+fail if H's aren't explicit.

For both functions, we'll need to resolve what to do if the map indices don't begin at 1, or if the numbering system has gaps. For now, a reasonable behavior is probably just "fail".

|

priority

|

add functionality to create offmols with specific indexing is your feature request related to a problem please describe many people have requested the ability to create offmols with specified atom orderings most commonly this is in the context of which we attach to off submitted molecules in qcarchive however people have also requested the ability to reorder the atoms from a molecule that already has an indexing system like an sdf describe the solution you d like this is two similar requests in one molecule from object obj index map dict current idx new idx be able to read a molecule with a defined indexing system like from sdf but have the created offmol have a different atom bond ordering molecule from mapped smiles mapped smiles read an explicit hydrogen fully mapped smiles and create an offmol with that atom ordering needs to check fail if h s aren t explicit for both functions we ll need to resolve what to do if the map indices don t begin at or if the numbering system has gaps for now a reasonable behavior is probably just fail

| 1

|

6,673

| 2,590,834,331

|

IssuesEvent

|

2015-02-18 21:21:43

|

chessmasterhong/WaterEmblem

|

https://api.github.com/repos/chessmasterhong/WaterEmblem

|

closed

|

Continuing a suspended game does not restore game back to a playable state

|

bug high priority

|

Due to the large number of changes made to the codebase since the suspend game feature was last updated, suspending the game does not save all the data necessary to bring the game back to a playable state upon continuing the suspended game. Some data were overlooked and either were not saved when the game was suspended or were not properly loaded when it was continued.

|

1.0

|

Continuing a suspended game does not restore game back to a playable state - Due to the large number of changes made to the codebase since the suspend game feature was last updated, suspending the game does not save all the data necessary to bring the game back to a playable state upon continuing the suspended game. Some data were overlooked and either were not saved when the game was suspended or were not properly loaded when it was continued.

|

priority

|

continuing a suspended game does not restore game back to a playable state due to the large number of changes done to the codebase since the suspend game feature was last updated suspending the game will not save all data necessary to bring the game back to a playable state upon continuing the suspended game some data were overlooked and were not saved in the game suspending or were not properly loaded during the game continuing process

| 1

|

802,540

| 28,966,322,640

|

IssuesEvent

|

2023-05-10 08:10:23

|

gamefreedomgit/Maelstrom

|

https://api.github.com/repos/gamefreedomgit/Maelstrom

|

closed

|

Mana regeneration (any class)

|

Core Status: Confirmed Priority: High

|

**Description:**

Mana regeneration on any class is not acting as expected, with the count regressing every few milliseconds.

**How to reproduce:**

Cast any spell and observe your mana go "backwards" every few milliseconds.

Example:

**How it should work:**

Mana regeneration should be continuous without regressing.

|

1.0

|

Mana regeneration (any class) - **Description:**

Mana regeneration on any class is not acting as expected, with the count regressing every few milliseconds.

**How to reproduce:**

Cast any spell and observe your mana go "backwards" every few milliseconds.

Example:

**How it should work:**

Mana regeneration should be continuous without regressing.

|

priority

|

mana regeneration any class description mana regeneration on any class is not acting as expected with the count regressing every few milliseconds how to reproduce cast any spell and observe your mana go backwards every few milliseconds example how it should work mana regeneration should be continuous without regressing

| 1

|

164,593

| 6,229,530,428

|

IssuesEvent

|

2017-07-11 04:23:14

|

NREL/EnergyPlus

|

https://api.github.com/repos/NREL/EnergyPlus

|

closed

|

User file not calculating zone volumes correctly

|

Priority2 S1 - High

|

Helpdesk ticket 11140

User expected similar loads from four similar zones, but they varied significantly. The four zones have similar floor area and the same ceiling height but greatly different zone volumes:

The difference in volumes causes the infiltration and ventilation flows to be different, because both were specified in ACH.

Workaround is to put hard values for Volume in the Zone objects.
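
To illustrate why the volume matters: a rate given in air changes per hour (ACH) converts to a volumetric flow as ACH × volume / 3600. The short sketch below uses made-up volumes and a made-up ACH value (the actual figures from the user file are not reproduced here), and `ach_to_flow_m3_per_s` is a hypothetical helper, not an EnergyPlus function.

```python
def ach_to_flow_m3_per_s(ach, zone_volume_m3):
    """Convert air changes per hour to a volumetric flow rate in m3/s."""
    return ach * zone_volume_m3 / 3600.0


# Assumed values for illustration only: same ACH, different computed volumes.
ach = 0.5
flow_small = ach_to_flow_m3_per_s(ach, 300.0)  # zone volume computed as 300 m3
flow_large = ach_to_flow_m3_per_s(ach, 900.0)  # zone volume computed as 900 m3

# The flow scales linearly with volume, so a 3x volume error gives a 3x flow error.
ratio = flow_large / flow_small
```

With identical ACH inputs, any error in the calculated zone volume carries straight through to the infiltration and ventilation flows, and from there to the loads.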

|

1.0

|

User file not calculating zone volumes correctly - Helpdesk ticket 11140

User expected similar loads from four similar zones, but they varied significantly. The four zones have similar floor area and the same ceiling height but greatly different zone volumes:

The difference in volumes causes the infiltration and ventilation flows to be different, because both were specified in ACH.

Workaround is to put hard values for Volume in the Zone objects.

|

priority

|

user file not calculating zone volumes correctly helpdesk ticket user expected similar loads from four similar zones but they varied significantly the four zones have similar floor area and the same ceiling height but greatly different zone volumes the difference in volumes causes the infiltration and ventilation flows to be different because both were specified in ach workaround is to put hard values for volume in the zone objects

| 1

|

134,952

| 5,240,692,673

|

IssuesEvent

|

2017-01-31 13:53:26

|

SIMRacingApps/SIMRacingApps

|

https://api.github.com/repos/SIMRacingApps/SIMRacingApps

|

closed

|

Tire Measurements Don't Change on all Dallara tires

|

bug high priority

|

When I'm running laps in iRacing, I click the "fuel and 4 tires" button, and when I pit, my crew changes the tires and fills the fuel, but the app only tells me my right rear tire was changed and still says I ran *amount of laps* on the other three tires. Please help me fix this.

|

1.0

|

Tire Measurements Don't Change on all Dallara tires - When I'm running laps in iRacing, I click the "fuel and 4 tires" button, and when I pit, my crew changes the tires and fills the fuel, but the app only tells me my right rear tire was changed and still says I ran *amount of laps* on the other three tires. Please help me fix this.

|

priority

|

tire measurements don t change on all dallara tires when i m running laps in iracing i click the fuel and tires button and when i pit my crew changes the tires and fills the fuel but the app only tells me my right rear tire was changed and still says i ran amount of laps on the other three tires please help me fix this

| 1

|

771,802

| 27,092,857,176

|

IssuesEvent

|

2023-02-14 22:47:27

|

asastats/channel

|

https://api.github.com/repos/asastats/channel

|

closed

|

Add new Yieldly's NFT prize game

|

feature high priority addressed

|

By @SCN9A in [GitHub](https://github.com/asastats/channel/discussions/130#discussioncomment-4970939):

> Yieldly announced new NFT prize game

> https://twitter.com/YieldlyFinance/status/1625471570054418437

> Escrow for YLDY staked to join NFT Prize game is

https://algoexplorer.io/address/E3SJQLV7PHEL6J4MX3EESXG7JP5KI35ORAOOX55OLIE2QOK4YMT3UBPA6Y

|

1.0

|

Add new Yieldly's NFT prize game - By @SCN9A in [GitHub](https://github.com/asastats/channel/discussions/130#discussioncomment-4970939):

> Yieldly announced new NFT prize game