Unnamed: 0

int64 0

832k

| id

float64 2.49B

32.1B

| type

stringclasses 1

value | created_at

stringlengths 19

19

| repo

stringlengths 5

112

| repo_url

stringlengths 34

141

| action

stringclasses 3

values | title

stringlengths 1

855

| labels

stringlengths 4

721

| body

stringlengths 1

261k

| index

stringclasses 13

values | text_combine

stringlengths 96

261k

| label

stringclasses 2

values | text

stringlengths 96

240k

| binary_label

int64 0

1

|

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

782,631

| 27,501,581,730

|

IssuesEvent

|

2023-03-05 18:58:27

|

renovatebot/renovate

|

https://api.github.com/repos/renovatebot/renovate

|

closed

|

docker digestPin is no longer working for multiple images in same file

|

type:bug priority-2-high manager:dockerfile status:in-progress reproduction:provided

|

### How are you running Renovate?

Mend Renovate hosted app on github.com

### If you're self-hosting Renovate, tell us what version of Renovate you run.

_No response_

### If you're self-hosting Renovate, select which platform you are using.

None

### If you're self-hosting Renovate, tell us what version of the platform you run.

_No response_

### Was this something which used to work for you, and then stopped?

It used to work, and then stopped

### Describe the bug

I noticed today that when using docker:digestPin, renovate is no longer updating all image/from statements within the dockerfile. It would appear that it is only updating the last dependency, although the PR body table contains all of the dependencies and updates

Minimal reproduction

- repository - https://github.com/setchy/renovate-docker-pinning-issue

- pin PR with the two dependencies correctly identified in issue body - https://github.com/setchy/renovate-docker-pinning-issue/pull/5

- only the last dependency was pinned - https://github.com/setchy/renovate-docker-pinning-issue/pull/5/files

### Relevant debug logs

<details><summary>Logs</summary>

```

Copy/paste the relevant log(s) here, between the starting and ending backticks

```

</details>

### Have you created a minimal reproduction repository?

I have linked to a minimal reproduction repository in the bug description

|

1.0

|

docker digestPin is no longer working for multiple images in same file - ### How are you running Renovate?

Mend Renovate hosted app on github.com

### If you're self-hosting Renovate, tell us what version of Renovate you run.

_No response_

### If you're self-hosting Renovate, select which platform you are using.

None

### If you're self-hosting Renovate, tell us what version of the platform you run.

_No response_

### Was this something which used to work for you, and then stopped?

It used to work, and then stopped

### Describe the bug

I noticed today that when using docker:digestPin, renovate is no longer updating all image/from statements within the dockerfile. It would appear that it is only updating the last dependency, although the PR body table contains all of the dependencies and updates

Minimal reproduction

- repository - https://github.com/setchy/renovate-docker-pinning-issue

- pin PR with the two dependencies correctly identified in issue body - https://github.com/setchy/renovate-docker-pinning-issue/pull/5

- only the last dependency was pinned - https://github.com/setchy/renovate-docker-pinning-issue/pull/5/files

### Relevant debug logs

<details><summary>Logs</summary>

```

Copy/paste the relevant log(s) here, between the starting and ending backticks

```

</details>

### Have you created a minimal reproduction repository?

I have linked to a minimal reproduction repository in the bug description

|

priority

|

docker digestpin is no longer working for multiple images in same file how are you running renovate mend renovate hosted app on github com if you re self hosting renovate tell us what version of renovate you run no response if you re self hosting renovate select which platform you are using none if you re self hosting renovate tell us what version of the platform you run no response was this something which used to work for you and then stopped it used to work and then stopped describe the bug i noticed today that when using docker digestpin renovate is no longer updating all image from statements within the dockerfile it would appear that it is only updating the last dependency although the pr body table contains all of the dependencies and updates minimal reproduction repository pin pr with the two dependencies correctly identified in issue body only the last dependency was pinned relevant debug logs logs copy paste the relevant log s here between the starting and ending backticks have you created a minimal reproduction repository i have linked to a minimal reproduction repository in the bug description

| 1

|

71,133

| 3,352,374,944

|

IssuesEvent

|

2015-11-17 22:27:56

|

minetest-LOTT/Lord-of-the-Test

|

https://api.github.com/repos/minetest-LOTT/Lord-of-the-Test

|

closed

|

Clothes aren't updated on the player model

|

bug high priority

|

I don't know what is happening internally, but new clothes chosen after commit 233c3ee3500a82b190caa4b6c11ebb854263630d don't appear on the player model.

|

1.0

|

Clothes aren't updated on the player model - I don't know what is happening internally, but new clothes chosen after commit 233c3ee3500a82b190caa4b6c11ebb854263630d don't appear on the player model.

|

priority

|

clothes aren t updated on the player model i don t know what is happening internally but new clothes chosen after commit don t appear on the player model

| 1

|

204,338

| 7,087,021,698

|

IssuesEvent

|

2018-01-11 16:29:55

|

inverse-inc/packetfence

|

https://api.github.com/repos/inverse-inc/packetfence

|

closed

|

DAL: Blocked attempts to insert duplicate nodes by pfqueue when processing DHCP packets

|

Priority: High Status: For review Type: Bug

|

Seems related to #2823

```

Dec 12 07:53:19 pf-julien pfqueue: pfqueue(4507) ERROR: [mac:94:db:c9:38:85:5b] Database query failed with non retryable error: Duplicate entry '94:db:c9:38:85:5b' for key 'PRIMARY' (errno: 1062) [INSERT INTO `node` ( `autoreg`, `bandwidth_balance`, `bypass_role_id`, `bypass_vlan`, `category_id`, `computername`, `detect_date`, `device_class`, `device_score`, `device_type`, `device_version`, `dhcp6_enterprise`, `dhcp6_fingerprint`, `dhcp_fingerprint`, `dhcp_vendor`, `last_arp`, `last_dhcp`, `last_seen`, `lastskip`, `mac`, `machine_account`, `notes`, `pid`, `regdate`, `sessionid`, `status`, `time_balance`, `unregdate`, `user_agent`, `voip`) VALUES ( ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ? )]{no, NULL, NULL, , 1, not-zammits-pc, 2017-12-11 15:14:53, Linux, 50, Ubuntu/Debian 5/Knoppix 6, NULL, , , 1,28,2,3,15,6,119,12,44,47,26,121,42, , 0000-00-00 00:00:00, 2017-12-12 07:53:18, 2017-12-12 07:53:18, 0000-00-00 00:00:00, 94:db:c9:38:85:5b, NULL, , jsemaan, 0000-00-00 00:00:00, , unreg, NULL, 0000-00-00 00:00:00, , no} (pf::dal::db_execute)

```

|

1.0

|

DAL: Blocked attempts to insert duplicate nodes by pfqueue when processing DHCP packets - Seems related to #2823

```

Dec 12 07:53:19 pf-julien pfqueue: pfqueue(4507) ERROR: [mac:94:db:c9:38:85:5b] Database query failed with non retryable error: Duplicate entry '94:db:c9:38:85:5b' for key 'PRIMARY' (errno: 1062) [INSERT INTO `node` ( `autoreg`, `bandwidth_balance`, `bypass_role_id`, `bypass_vlan`, `category_id`, `computername`, `detect_date`, `device_class`, `device_score`, `device_type`, `device_version`, `dhcp6_enterprise`, `dhcp6_fingerprint`, `dhcp_fingerprint`, `dhcp_vendor`, `last_arp`, `last_dhcp`, `last_seen`, `lastskip`, `mac`, `machine_account`, `notes`, `pid`, `regdate`, `sessionid`, `status`, `time_balance`, `unregdate`, `user_agent`, `voip`) VALUES ( ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ? )]{no, NULL, NULL, , 1, not-zammits-pc, 2017-12-11 15:14:53, Linux, 50, Ubuntu/Debian 5/Knoppix 6, NULL, , , 1,28,2,3,15,6,119,12,44,47,26,121,42, , 0000-00-00 00:00:00, 2017-12-12 07:53:18, 2017-12-12 07:53:18, 0000-00-00 00:00:00, 94:db:c9:38:85:5b, NULL, , jsemaan, 0000-00-00 00:00:00, , unreg, NULL, 0000-00-00 00:00:00, , no} (pf::dal::db_execute)

```

|

priority

|

dal blocked attempts to insert duplicate nodes by pfqueue when processing dhcp packets seems related to dec pf julien pfqueue pfqueue error database query failed with non retryable error duplicate entry db for key primary errno no null null not zammits pc linux ubuntu debian knoppix null db null jsemaan unreg null no pf dal db execute

| 1

|

204,564

| 7,088,765,036

|

IssuesEvent

|

2018-01-11 22:48:51

|

terascope/teraslice

|

https://api.github.com/repos/terascope/teraslice

|

reopened

|

Roles of Job vs Execution Context

|

bug priority:high

|

This has been bothering me for a while and I know I've brought it up before but now I think I realize the reason the way we currently store and validate jobs is not quite right given that we also have this notion of the execution context. The thing that bothers me is that a users job gets validated and expanded and stored as a "Job" and then copied over as an execution context. I suggest that we change how this works and only ever store the users "raw" (unvalidated) job on the job object and then do the validation and expansion on the execution context. Conceptually speaking the job represents the users unmodified request and the execution context represents the the expansion of the job and thing that actually gets executed.

The realization I had was that the validation/expansion step then more tightly couples the job to implementation details of a specific version of the code. You could be left with jobs that don't run after upgrading teraslice or with defaults from earlier versions. Whereas if the job had not been validated/expanded it would be more likely to run after code changes. Now, I don't consider this a real driving reason to make this change, it is more of a hint or clue that the current behavior is not quite right.

I realize this complicates the validation at submission time but that is probably manageable.

|

1.0

|

Roles of Job vs Execution Context - This has been bothering me for a while and I know I've brought it up before but now I think I realize the reason the way we currently store and validate jobs is not quite right given that we also have this notion of the execution context. The thing that bothers me is that a users job gets validated and expanded and stored as a "Job" and then copied over as an execution context. I suggest that we change how this works and only ever store the users "raw" (unvalidated) job on the job object and then do the validation and expansion on the execution context. Conceptually speaking the job represents the users unmodified request and the execution context represents the the expansion of the job and thing that actually gets executed.

The realization I had was that the validation/expansion step then more tightly couples the job to implementation details of a specific version of the code. You could be left with jobs that don't run after upgrading teraslice or with defaults from earlier versions. Whereas if the job had not been validated/expanded it would be more likely to run after code changes. Now, I don't consider this a real driving reason to make this change, it is more of a hint or clue that the current behavior is not quite right.

I realize this complicates the validation at submission time but that is probably manageable.

|

priority

|

roles of job vs execution context this has been bothering me for a while and i know i ve brought it up before but now i think i realize the reason the way we currently store and validate jobs is not quite right given that we also have this notion of the execution context the thing that bothers me is that a users job gets validated and expanded and stored as a job and then copied over as an execution context i suggest that we change how this works and only ever store the users raw unvalidated job on the job object and then do the validation and expansion on the execution context conceptually speaking the job represents the users unmodified request and the execution context represents the the expansion of the job and thing that actually gets executed the realization i had was that the validation expansion step then more tightly couples the job to implementation details of a specific version of the code you could be left with jobs that don t run after upgrading teraslice or with defaults from earlier versions whereas if the job had not been validated expanded it would be more likely to run after code changes now i don t consider this a real driving reason to make this change it is more of a hint or clue that the current behavior is not quite right i realize this complicates the validation at submission time but that is probably manageable

| 1

|

127,826

| 5,039,710,834

|

IssuesEvent

|

2016-12-18 23:17:45

|

nohharri/GroupGenius

|

https://api.github.com/repos/nohharri/GroupGenius

|

opened

|

firebase/angular slow down

|

high priority

|

something is slow and not replacing as fast as it used to. Like on the public page, the group placeholder image sticks for about .2s after selecting a group. Same between the switch of "join a group" and "request pending"

|

1.0

|

firebase/angular slow down - something is slow and not replacing as fast as it used to. Like on the public page, the group placeholder image sticks for about .2s after selecting a group. Same between the switch of "join a group" and "request pending"

|

priority

|

firebase angular slow down something is slow and not replacing as fast as it used to like on the public page the group placeholder image sticks for about after selecting a group same between the switch of join a group and request pending

| 1

|

298,298

| 9,198,455,118

|

IssuesEvent

|

2019-03-07 12:41:01

|

CMDT/TimeSeriesDataCapture

|

https://api.github.com/repos/CMDT/TimeSeriesDataCapture

|

closed

|

Hardcoded values for credentials in BrowseData

|

Browse API High Priority bug

|

This will alleviate problems requiring fixes in [AliceLiveProjects](https://github.com/aliceliveprojects/TimeSeriesDataCapture_BrowseData/commits/master):

https://github.com/aliceliveprojects/TimeSeriesDataCapture_BrowseData/commit/50ed1ebce2d0d2d19c2c2117698d18a29e3cc606

https://github.com/aliceliveprojects/TimeSeriesDataCapture_BrowseData/commit/0943822bf3643e410da2897e1653d9437aee8e14

|

1.0

|

Hardcoded values for credentials in BrowseData - This will alleviate problems requiring fixes in [AliceLiveProjects](https://github.com/aliceliveprojects/TimeSeriesDataCapture_BrowseData/commits/master):

https://github.com/aliceliveprojects/TimeSeriesDataCapture_BrowseData/commit/50ed1ebce2d0d2d19c2c2117698d18a29e3cc606

https://github.com/aliceliveprojects/TimeSeriesDataCapture_BrowseData/commit/0943822bf3643e410da2897e1653d9437aee8e14

|

priority

|

hardcoded values for credentials in browsedata this will alleviate problems requiring fixes in

| 1

|

354,487

| 10,568,144,470

|

IssuesEvent

|

2019-10-06 10:50:04

|

netdata/netdata

|

https://api.github.com/repos/netdata/netdata

|

closed

|

Slaves not connecting to master

|

bug priority/high

|

Hi!

Here is a new one:

Master stopped receiving stream data from half of slaves. And of course "I didn't touch anything!" (apart from memory mode = dbengine on Master) :)

Here are last logs from aforementioned v17 Slave

```

2019-09-25 12:09:19: netdata INFO : STREAM_SENDER[wtdb1] : STREAM wtdb1 [send to _MASTER_IP_:19999]: connecting...

2019-09-25 12:09:19: netdata INFO : STREAM_SENDER[wtdb1] : STREAM wtdb1 [send to _MASTER_IP_:19999]: initializing communication...

2019-09-25 12:09:19: netdata INFO : STREAM_SENDER[wtdb1] : STREAM wtdb1 [send to _MASTER_IP_:19999]: waiting response from remote netdata...

2019-09-25 12:09:19: netdata ERROR : STREAM_SENDER[wtdb1] : STREAM wtdb1 [send to _MASTER_IP_:19999]: server is not replying properly (is it a netdata?).

2019-09-25 12:09:24: netdata INFO : STREAM_SENDER[wtdb1] : STREAM wtdb1 [send to _MASTER_IP_:19999]: connecting...

2019-09-25 12:09:24: netdata INFO : STREAM_SENDER[wtdb1] : STREAM wtdb1 [send to _MASTER_IP_:19999]: initializing communication...

2019-09-25 12:09:24: netdata INFO : STREAM_SENDER[wtdb1] : STREAM wtdb1 [send to _MASTER_IP_:19999]: waiting response from remote netdata...

2019-09-25 12:09:24: netdata ERROR : STREAM_SENDER[wtdb1] : STREAM wtdb1 [send to _MASTER_IP_:19999]: server is not replying properly (is it a netdata?).

2019-09-25 12:09:29: netdata INFO : STREAM_SENDER[wtdb1] : STREAM wtdb1 [send to _MASTER_IP_:19999]: connecting...

2019-09-25 12:09:29: netdata INFO : STREAM_SENDER[wtdb1] : STREAM wtdb1 [send to _MASTER_IP_:19999]: initializing communication...

2019-09-25 12:09:29: netdata INFO : STREAM_SENDER[wtdb1] : STREAM wtdb1 [send to _MASTER_IP_:19999]: waiting response from remote netdata...

2019-09-25 12:09:29: netdata ERROR : STREAM_SENDER[wtdb1] : STREAM wtdb1 [send to _MASTER_IP_:19999]: server is not replying properly (is it a netdata?).

2019-09-25 12:09:34: netdata INFO : STREAM_SENDER[wtdb1] : STREAM wtdb1 [send to _MASTER_IP_:19999]: connecting...

2019-09-25 12:09:34: netdata INFO : STREAM_SENDER[wtdb1] : STREAM wtdb1 [send to _MASTER_IP_:19999]: initializing communication...

2019-09-25 12:09:34: netdata INFO : STREAM_SENDER[wtdb1] : STREAM wtdb1 [send to _MASTER_IP_:19999]: waiting response from remote netdata...

2019-09-25 12:09:34: netdata ERROR : STREAM_SENDER[wtdb1] : STREAM wtdb1 [send to _MASTER_IP_:19999]: server is not replying properly (is it a netdata?).

```

And same from one of the missing v13 Slaves:

```

2019-09-25 12:09:22: netdata INFO : STREAM_SENDER[wtapp1] : STREAM wtapp1 [send to _MASTER_IP_:19999]: connecting...

2019-09-25 12:09:22: netdata INFO : STREAM_SENDER[wtapp1] : STREAM wtapp1 [send to _MASTER_IP_:19999]: initializing communication...

2019-09-25 12:09:22: netdata INFO : STREAM_SENDER[wtapp1] : STREAM wtapp1 [send to _MASTER_IP_:19999]: waiting response from remote netdata...

2019-09-25 12:09:22: netdata ERROR : STREAM_SENDER[wtapp1] : STREAM wtapp1 [send to _MASTER_IP_:19999]: server is not replying properly (is it a netdata?).

2019-09-25 12:09:27: netdata INFO : STREAM_SENDER[wtapp1] : STREAM wtapp1 [send to _MASTER_IP_:19999]: connecting...

2019-09-25 12:09:27: netdata INFO : STREAM_SENDER[wtapp1] : STREAM wtapp1 [send to _MASTER_IP_:19999]: initializing communication...

2019-09-25 12:09:27: netdata INFO : STREAM_SENDER[wtapp1] : STREAM wtapp1 [send to _MASTER_IP_:19999]: waiting response from remote netdata...

2019-09-25 12:09:27: netdata ERROR : STREAM_SENDER[wtapp1] : STREAM wtapp1 [send to _MASTER_IP_:19999]: server is not replying properly (is it a netdata?).

2019-09-25 12:09:32: netdata INFO : STREAM_SENDER[wtapp1] : STREAM wtapp1 [send to _MASTER_IP_:19999]: connecting...

2019-09-25 12:09:32: netdata INFO : STREAM_SENDER[wtapp1] : STREAM wtapp1 [send to _MASTER_IP_:19999]: initializing communication...

2019-09-25 12:09:32: netdata INFO : STREAM_SENDER[wtapp1] : STREAM wtapp1 [send to _MASTER_IP_:19999]: waiting response from remote netdata...

2019-09-25 12:09:32: netdata ERROR : STREAM_SENDER[wtapp1] : STREAM wtapp1 [send to _MASTER_IP_:19999]: server is not replying properly (is it a netdata?).

2019-09-25 12:09:37: netdata INFO : STREAM_SENDER[wtapp1] : STREAM wtapp1 [send to _MASTER_IP_:19999]: connecting...

2019-09-25 12:09:37: netdata INFO : STREAM_SENDER[wtapp1] : STREAM wtapp1 [send to _MASTER_IP_:19999]: initializing communication...

2019-09-25 12:09:37: netdata INFO : STREAM_SENDER[wtapp1] : STREAM wtapp1 [send to _MASTER_IP_:19999]: waiting response from remote netdata...

2019-09-25 12:09:37: netdata ERROR : STREAM_SENDER[wtapp1] : STREAM wtapp1 [send to _MASTER_IP_:19999]: server is not replying properly (is it a netdata?).

```

Then I restarted netdata v17 Master and list of available slaves changed. Some became available again, others became unavailable.

Should I start a new issue?

Thanks!

_Originally posted by @noobiek in https://github.com/netdata/netdata/issues/6852#issuecomment-534935227_

|

1.0

|

Slaves not connecting to master - Hi!

Here is a new one:

Master stopped receiving stream data from half of slaves. And of course "I didn't touch anything!" (apart from memory mode = dbengine on Master) :)

Here are last logs from aforementioned v17 Slave

```

2019-09-25 12:09:19: netdata INFO : STREAM_SENDER[wtdb1] : STREAM wtdb1 [send to _MASTER_IP_:19999]: connecting...

2019-09-25 12:09:19: netdata INFO : STREAM_SENDER[wtdb1] : STREAM wtdb1 [send to _MASTER_IP_:19999]: initializing communication...

2019-09-25 12:09:19: netdata INFO : STREAM_SENDER[wtdb1] : STREAM wtdb1 [send to _MASTER_IP_:19999]: waiting response from remote netdata...

2019-09-25 12:09:19: netdata ERROR : STREAM_SENDER[wtdb1] : STREAM wtdb1 [send to _MASTER_IP_:19999]: server is not replying properly (is it a netdata?).

2019-09-25 12:09:24: netdata INFO : STREAM_SENDER[wtdb1] : STREAM wtdb1 [send to _MASTER_IP_:19999]: connecting...

2019-09-25 12:09:24: netdata INFO : STREAM_SENDER[wtdb1] : STREAM wtdb1 [send to _MASTER_IP_:19999]: initializing communication...

2019-09-25 12:09:24: netdata INFO : STREAM_SENDER[wtdb1] : STREAM wtdb1 [send to _MASTER_IP_:19999]: waiting response from remote netdata...

2019-09-25 12:09:24: netdata ERROR : STREAM_SENDER[wtdb1] : STREAM wtdb1 [send to _MASTER_IP_:19999]: server is not replying properly (is it a netdata?).

2019-09-25 12:09:29: netdata INFO : STREAM_SENDER[wtdb1] : STREAM wtdb1 [send to _MASTER_IP_:19999]: connecting...

2019-09-25 12:09:29: netdata INFO : STREAM_SENDER[wtdb1] : STREAM wtdb1 [send to _MASTER_IP_:19999]: initializing communication...

2019-09-25 12:09:29: netdata INFO : STREAM_SENDER[wtdb1] : STREAM wtdb1 [send to _MASTER_IP_:19999]: waiting response from remote netdata...

2019-09-25 12:09:29: netdata ERROR : STREAM_SENDER[wtdb1] : STREAM wtdb1 [send to _MASTER_IP_:19999]: server is not replying properly (is it a netdata?).

2019-09-25 12:09:34: netdata INFO : STREAM_SENDER[wtdb1] : STREAM wtdb1 [send to _MASTER_IP_:19999]: connecting...

2019-09-25 12:09:34: netdata INFO : STREAM_SENDER[wtdb1] : STREAM wtdb1 [send to _MASTER_IP_:19999]: initializing communication...

2019-09-25 12:09:34: netdata INFO : STREAM_SENDER[wtdb1] : STREAM wtdb1 [send to _MASTER_IP_:19999]: waiting response from remote netdata...

2019-09-25 12:09:34: netdata ERROR : STREAM_SENDER[wtdb1] : STREAM wtdb1 [send to _MASTER_IP_:19999]: server is not replying properly (is it a netdata?).

```

And same from one of the missing v13 Slaves:

```

2019-09-25 12:09:22: netdata INFO : STREAM_SENDER[wtapp1] : STREAM wtapp1 [send to _MASTER_IP_:19999]: connecting...

2019-09-25 12:09:22: netdata INFO : STREAM_SENDER[wtapp1] : STREAM wtapp1 [send to _MASTER_IP_:19999]: initializing communication...

2019-09-25 12:09:22: netdata INFO : STREAM_SENDER[wtapp1] : STREAM wtapp1 [send to _MASTER_IP_:19999]: waiting response from remote netdata...

2019-09-25 12:09:22: netdata ERROR : STREAM_SENDER[wtapp1] : STREAM wtapp1 [send to _MASTER_IP_:19999]: server is not replying properly (is it a netdata?).

2019-09-25 12:09:27: netdata INFO : STREAM_SENDER[wtapp1] : STREAM wtapp1 [send to _MASTER_IP_:19999]: connecting...

2019-09-25 12:09:27: netdata INFO : STREAM_SENDER[wtapp1] : STREAM wtapp1 [send to _MASTER_IP_:19999]: initializing communication...

2019-09-25 12:09:27: netdata INFO : STREAM_SENDER[wtapp1] : STREAM wtapp1 [send to _MASTER_IP_:19999]: waiting response from remote netdata...

2019-09-25 12:09:27: netdata ERROR : STREAM_SENDER[wtapp1] : STREAM wtapp1 [send to _MASTER_IP_:19999]: server is not replying properly (is it a netdata?).

2019-09-25 12:09:32: netdata INFO : STREAM_SENDER[wtapp1] : STREAM wtapp1 [send to _MASTER_IP_:19999]: connecting...

2019-09-25 12:09:32: netdata INFO : STREAM_SENDER[wtapp1] : STREAM wtapp1 [send to _MASTER_IP_:19999]: initializing communication...

2019-09-25 12:09:32: netdata INFO : STREAM_SENDER[wtapp1] : STREAM wtapp1 [send to _MASTER_IP_:19999]: waiting response from remote netdata...

2019-09-25 12:09:32: netdata ERROR : STREAM_SENDER[wtapp1] : STREAM wtapp1 [send to _MASTER_IP_:19999]: server is not replying properly (is it a netdata?).

2019-09-25 12:09:37: netdata INFO : STREAM_SENDER[wtapp1] : STREAM wtapp1 [send to _MASTER_IP_:19999]: connecting...

2019-09-25 12:09:37: netdata INFO : STREAM_SENDER[wtapp1] : STREAM wtapp1 [send to _MASTER_IP_:19999]: initializing communication...

2019-09-25 12:09:37: netdata INFO : STREAM_SENDER[wtapp1] : STREAM wtapp1 [send to _MASTER_IP_:19999]: waiting response from remote netdata...

2019-09-25 12:09:37: netdata ERROR : STREAM_SENDER[wtapp1] : STREAM wtapp1 [send to _MASTER_IP_:19999]: server is not replying properly (is it a netdata?).

```

Then I restarted netdata v17 Master and list of available slaves changed. Some became available again, others became unavailable.

Should I start a new issue?

Thanks!

_Originally posted by @noobiek in https://github.com/netdata/netdata/issues/6852#issuecomment-534935227_

|

priority

|

slaves not connecting to master hi here is a new one master stopped receiving stream data from half of slaves and of course i didn t touch anything apart from memory mode dbengine on master here are last logs from aforementioned slave netdata info stream sender stream connecting netdata info stream sender stream initializing communication netdata info stream sender stream waiting response from remote netdata netdata error stream sender stream server is not replying properly is it a netdata netdata info stream sender stream connecting netdata info stream sender stream initializing communication netdata info stream sender stream waiting response from remote netdata netdata error stream sender stream server is not replying properly is it a netdata netdata info stream sender stream connecting netdata info stream sender stream initializing communication netdata info stream sender stream waiting response from remote netdata netdata error stream sender stream server is not replying properly is it a netdata netdata info stream sender stream connecting netdata info stream sender stream initializing communication netdata info stream sender stream waiting response from remote netdata netdata error stream sender stream server is not replying properly is it a netdata and same from one of the missing slaves netdata info stream sender stream connecting netdata info stream sender stream initializing communication netdata info stream sender stream waiting response from remote netdata netdata error stream sender stream server is not replying properly is it a netdata netdata info stream sender stream connecting netdata info stream sender stream initializing communication netdata info stream sender stream waiting response from remote netdata netdata error stream sender stream server is not replying properly is it a netdata netdata info stream sender stream connecting netdata info stream sender stream initializing communication netdata info stream sender stream waiting response from remote netdata netdata error stream sender stream server is not replying properly is it a netdata netdata info stream sender stream connecting netdata info stream sender stream initializing communication netdata info stream sender stream waiting response from remote netdata netdata error stream sender stream server is not replying properly is it a netdata then i restarted netdata master and list of available slaves changed some became available again others became unavailable should i start a new issue thanks originally posted by noobiek in

| 1

|

213,989

| 7,262,411,470

|

IssuesEvent

|

2018-02-19 05:47:43

|

wso2/testgrid

|

https://api.github.com/repos/wso2/testgrid

|

opened

|

AWS region can be configured via infrastructureConfig now.

|

Priority/Highest Severity/Blocker Type/Bug

|

**Description:**

This can be done by setting the inputParameter 'region' under the `infrastructureConfig -> scripts`.

However, this does not work ATM. We need to look at why this does not happen.

**Affected Product Version:**

0.9.0-m14

**OS, DB, other environment details and versions:**

**Steps to reproduce:**

Run `cloudformation-is` with the following testgrid.yaml.

```yaml

version: '0.9'

infrastructureConfig:

iacProvider: CLOUDFORMATION

infrastructureProvider: AWS

containerOrchestrationEngine: None

parameters:

- JDK : ORACLE_JDK8

provisioners:

- name: 01-two-node-deployment

description: Provision Infra for a two node IS cluster

dir: cloudformation-templates/pattern-1

scripts:

- name: infra-for-two-node-deployment

description: Creates infrastructure for a IS two node deployment.

type: CLOUDFORMATION

file: pattern-1-with-puppet-cloudformation.template.yml

inputParameters:

parseInfrastructureScript: false

region: us-east-2

DBPassword: "DB_Password"

EC2KeyPair: "testgrid-key"

ALBCertificateARN: "arn:aws:acm:us-east-1:809489900555:certificate/2ab5aded-5df1-4549-9f7e-91639ff6634e"

scenarioConfig:

scenarios:

- "scenario02"

- "scenario12"

- "scenario21"

```

**Related Issues:**

<!-- Any related issues such as sub tasks, issues reported in other repositories (e.g component repositories), similar problems, etc. -->

|

1.0

|

AWS region can be configured via infrastructureConfig now. - **Description:**

This can be done by setting the inputParameter 'region' under the `infrastructureConfig -> scripts`.

However, this does not work ATM. We need to look at why this does not happen.

**Affected Product Version:**

0.9.0-m14

**OS, DB, other environment details and versions:**

**Steps to reproduce:**

Run `cloudformation-is` with the following testgrid.yaml.

```yaml

version: '0.9'

infrastructureConfig:

iacProvider: CLOUDFORMATION

infrastructureProvider: AWS

containerOrchestrationEngine: None

parameters:

- JDK : ORACLE_JDK8

provisioners:

- name: 01-two-node-deployment

description: Provision Infra for a two node IS cluster

dir: cloudformation-templates/pattern-1

scripts:

- name: infra-for-two-node-deployment

description: Creates infrastructure for a IS two node deployment.

type: CLOUDFORMATION

file: pattern-1-with-puppet-cloudformation.template.yml

inputParameters:

parseInfrastructureScript: false

region: us-east-2

DBPassword: "DB_Password"

EC2KeyPair: "testgrid-key"

ALBCertificateARN: "arn:aws:acm:us-east-1:809489900555:certificate/2ab5aded-5df1-4549-9f7e-91639ff6634e"

scenarioConfig:

scenarios:

- "scenario02"

- "scenario12"

- "scenario21"

```

**Related Issues:**

<!-- Any related issues such as sub tasks, issues reported in other repositories (e.g component repositories), similar problems, etc. -->

|

priority

|

aws region can be configured via infrastructureconfig now description this can be done by setting the inputparameter region under the infrastructureconfig scripts however this does not work atm we need to look at why this does not happen affected product version os db other environment details and versions steps to reproduce run cloudformation is with the following testgrid yaml yaml version infrastructureconfig iacprovider cloudformation infrastructureprovider aws containerorchestrationengine none parameters jdk oracle provisioners name two node deployment description provision infra for a two node is cluster dir cloudformation templates pattern scripts name infra for two node deployment description creates infrastructure for a is two node deployment type cloudformation file pattern with puppet cloudformation template yml inputparameters parseinfrastructurescript false region us east dbpassword db password testgrid key albcertificatearn arn aws acm us east certificate scenarioconfig scenarios related issues

| 1

|

595,991

| 18,093,441,481

|

IssuesEvent

|

2021-09-22 06:08:35

|

zulip/zulip-mobile

|

https://api.github.com/repos/zulip/zulip-mobile

|

opened

|

Make typeahead boxes more visible

|

help wanted a-compose/send P1 high-priority

|

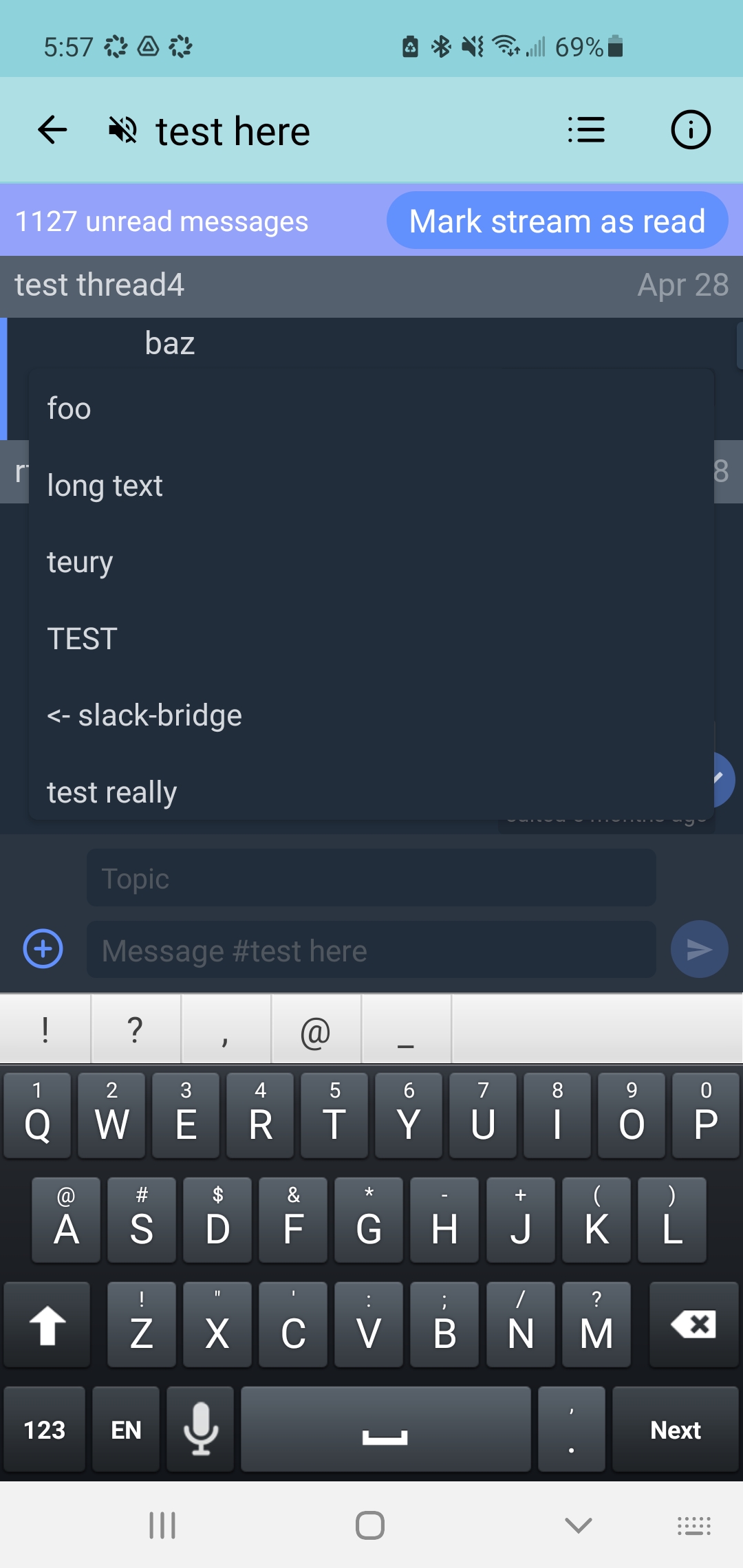

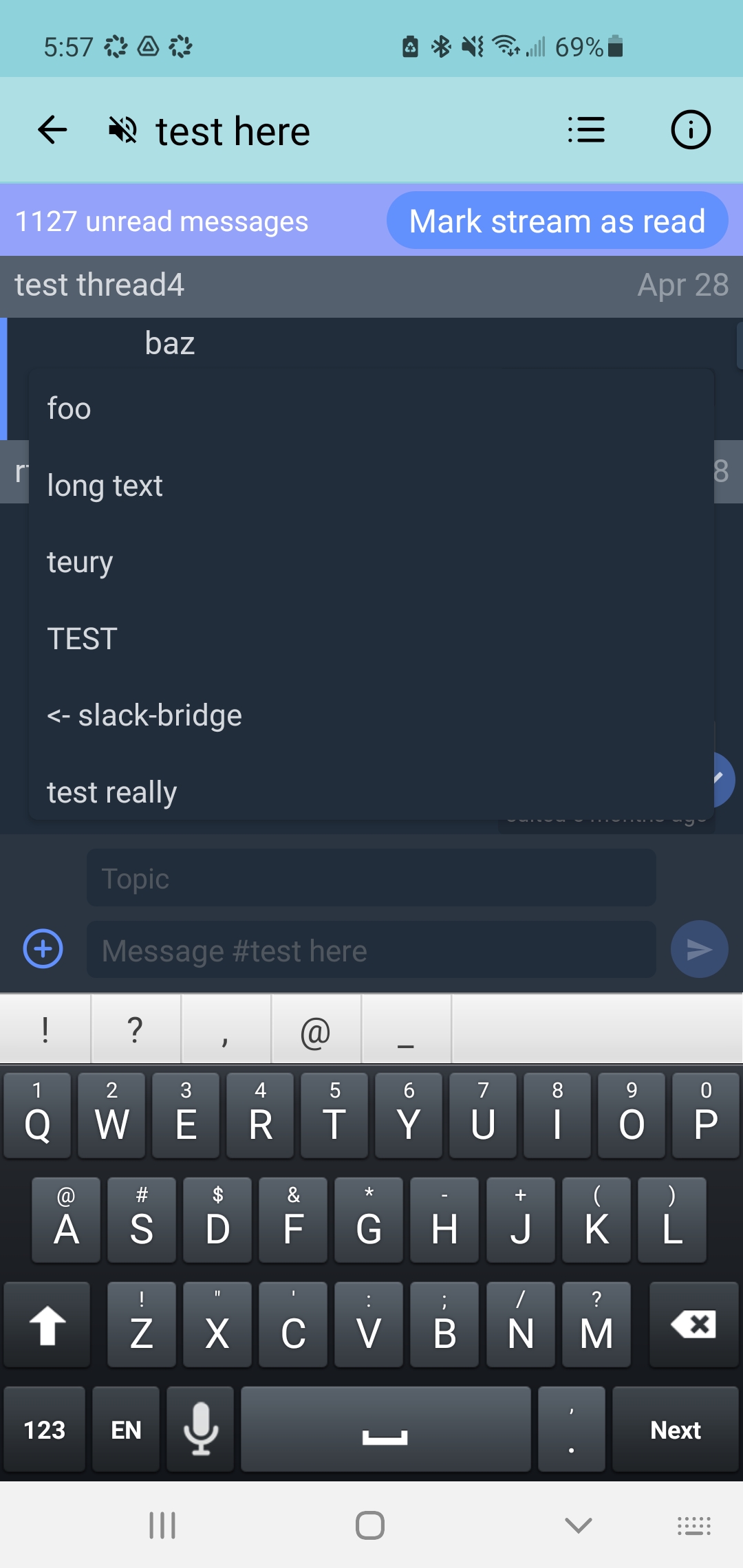

At present, typeahead boxes are not visually distinct from the background in night mode. This is especially problematic when the box opens automatically when the user does not expect it, as it now does for topic typeahead when sending a message from an interleaved view.

Possible solutions are to give the box more of a border or a different background color. The light mode typeahead box has a more clear boundary, but perhaps would benefit from more distinct styling as well.

Screenshot:

[CZO thread](https://chat.zulip.org/#narrow/stream/243-mobile-team/topic/compose.20topic.20and.20navigation)

|

1.0

|

Make typeahead boxes more visible - At present, typeahead boxes are not visually distinct from the background in night mode. This is especially problematic when the box opens automatically when the user does not expect it, as it now does for topic typeahead when sending a message from an interleaved view.

Possible solutions are to give the box more of a border or a different background color. The light mode typeahead box has a more clear boundary, but perhaps would benefit from more distinct styling as well.

Screenshot:

[CZO thread](https://chat.zulip.org/#narrow/stream/243-mobile-team/topic/compose.20topic.20and.20navigation)

|

priority

|

make typeahead boxes more visible at present typeahead boxes are not visually distinct from the background in night mode this is especially problematic when the box opens automatically when the user does not expect it as it now does for topic typeahead when sending a message from an interleaved view possible solutions are to give the box more of a border or a different background color the light mode typeahead box has a more clear boundary but perhaps would benefit from more distinct styling as well screenshot

| 1

|

492,737

| 14,218,872,755

|

IssuesEvent

|

2020-11-17 12:28:21

|

Scholar-6/brillder

|

https://api.github.com/repos/Scholar-6/brillder

|

closed

|

Correct word highlighting showing as incorrect in book, categorise showing all green when some incorrect

|

High Level Priority

|

<img width="1506" alt="Screenshot 2020-11-17 at 11 51 42" src="https://user-images.githubusercontent.com/59654112/99381401-68ffd180-28cb-11eb-88db-272b61f20565.png">

- [ ] highlighting validation/display

<img width="1180" alt="Screenshot 2020-11-17 at 11 51 54" src="https://user-images.githubusercontent.com/59654112/99381412-6d2bef00-28cb-11eb-8348-e763792eb720.png">ng

- [x] categorise validation/display

https://brillder.scholar6.org/post-play/brick/308/13

|

1.0

|

Correct word highlighting showing as incorrect in book, categorise showing all green when some incorrect - <img width="1506" alt="Screenshot 2020-11-17 at 11 51 42" src="https://user-images.githubusercontent.com/59654112/99381401-68ffd180-28cb-11eb-88db-272b61f20565.png">

- [ ] highlighting validation/display

<img width="1180" alt="Screenshot 2020-11-17 at 11 51 54" src="https://user-images.githubusercontent.com/59654112/99381412-6d2bef00-28cb-11eb-8348-e763792eb720.png">ng

- [x] categorise validation/display

https://brillder.scholar6.org/post-play/brick/308/13

|

priority

|

correct word highlighting showing as incorrect in book categorise showing all green when some incorrect img width alt screenshot at src highlighting validation display img width alt screenshot at src categorise validation display

| 1

|

688,108

| 23,548,667,965

|

IssuesEvent

|

2022-08-21 13:58:30

|

proveuswrong/webapp-news

|

https://api.github.com/repos/proveuswrong/webapp-news

|

opened

|

Implement Dispute Flow

|

priority: high type: enhancement

|

- Period tracking

- Evidence browsing and submission

- Appeal status and funding

- Pass arbitrator period

- Execute arbitrator ruling

- Draw jury

|

1.0

|

Implement Dispute Flow - - Period tracking

- Evidence browsing and submission

- Appeal status and funding

- Pass arbitrator period

- Execute arbitrator ruling

- Draw jury

|

priority

|

implement dispute flow period tracking evidence browsing and submission appeal status and funding pass arbitrator period execute arbitrator ruling draw jury

| 1

|

475,337

| 13,691,297,893

|

IssuesEvent

|

2020-09-30 15:22:38

|

CCAFS/MARLO

|

https://api.github.com/repos/CCAFS/MARLO

|

closed

|

[MR] (KDS-MARLO) Satisfaction Survey summary

|

Priority - High Type -Task

|

Analysis of the results of the MARLO satisfaction survey

- [x] Export the results

- [x] Made the analysis

- [x] Share the results

**Move to Review when:** share the results with Hector and team

**Move to Closed when:** send the email to MARLO Family

https://docs.google.com/document/d/1-8ZOLustcz3juFSr8KcCsMi2sXvEqpcL4bJiWY-JVuY/edit?usp=sharing

|

1.0

|

[MR] (KDS-MARLO) Satisfaction Survey summary - Analysis of the results of the MARLO satisfaction survey

- [x] Export the results

- [x] Made the analysis

- [x] Share the results

**Move to Review when:** share the results with Hector and team

**Move to Closed when:** send the email to MARLO Family

https://docs.google.com/document/d/1-8ZOLustcz3juFSr8KcCsMi2sXvEqpcL4bJiWY-JVuY/edit?usp=sharing

|

priority

|

kds marlo satisfaction survey summary analysis of the results of the marlo satisfaction survey export the results made the analysis share the results move to review when share the results with hector and team move to closed when send the email to marlo family

| 1

|

140,154

| 5,398,003,557

|

IssuesEvent

|

2017-02-27 15:58:27

|

jazzsequence/museum-core

|

https://api.github.com/repos/jazzsequence/museum-core

|

closed

|

fix call to 'register_sidebar'

|

deprecated priority-high

|

> The first call to 'register_sidebar' does not define an 'id' which is required as of WP 4.2.2 (or somewhere thereabout).

|

1.0

|

fix call to 'register_sidebar' - > The first call to 'register_sidebar' does not define an 'id' which is required as of WP 4.2.2 (or somewhere thereabout).

|

priority

|

fix call to register sidebar the first call to register sidebar does not define an id which is required as of wp or somewhere thereabout

| 1

|

19,572

| 2,622,153,867

|

IssuesEvent

|

2015-03-04 00:07:17

|

byzhang/terrastore

|

https://api.github.com/repos/byzhang/terrastore

|

closed

|

Upgrade to Terracotta 3.2.0.

|

auto-migrated Milestone-0.4 Priority-High Type-Enhancement

|

```

Upgrade to the latest Terracotta 3.2.0.

```

Original issue reported on code.google.com by `sergio.b...@gmail.com` on 14 Jan 2010 at 9:08

|

1.0

|

Upgrade to Terracotta 3.2.0. - ```

Upgrade to the latest Terracotta 3.2.0.

```

Original issue reported on code.google.com by `sergio.b...@gmail.com` on 14 Jan 2010 at 9:08

|

priority

|

upgrade to terracotta upgrade to the latest terracotta original issue reported on code google com by sergio b gmail com on jan at

| 1

|

352,630

| 10,544,327,717

|

IssuesEvent

|

2019-10-02 16:41:19

|

fac-17/My-Body-Back

|

https://api.github.com/repos/fac-17/My-Body-Back

|

closed

|

Create File Structure

|

Feature High Priority

|

- [x] Clone this repo

- [x] Create React App

- [x] Create folders & gitkeep files for initial push

Should be done after researching React Router #48

|

1.0

|

Create File Structure - - [x] Clone this repo

- [x] Create React App

- [x] Create folders & gitkeep files for initial push

Should be done after researching React Router #48

|

priority

|

create file structure clone this repo create react app create folders gitkeep files for initial push should be done after researching react router

| 1

|

788,508

| 27,755,304,120

|

IssuesEvent

|

2023-03-16 01:38:47

|

quickwit-oss/tantivy

|

https://api.github.com/repos/quickwit-oss/tantivy

|

closed

|

More Documents like this

|

good first issue high priority

|

It would be great to have a "MoreLikeThis" feature in Tantivy.

An efficient, effective "more-like-this" query generator would be a great contribution.

Elasticsearch and Lucene both support it:

https://www.elastic.co/guide/en/elasticsearch/reference/current/query-dsl-mlt-query.html

https://lucene.apache.org/core/7_2_0/queries/org/apache/lucene/queries/mlt/MoreLikeThis.html

> Note: this came up when trying to add support for Tantivy in [Django-Haystack](https://django-haystack.readthedocs.io/en/master/backend_support.html#backend-support-matrix)

If it's helpful, here's how Whoosh (pure search engine implemented in Python) is doing it:

https://github.com/mchaput/whoosh/blob/main/src/whoosh/searching.py#L543-L585

|

1.0

|

More Documents like this - It would be great to have a "MoreLikeThis" feature in Tantivy.

An efficient, effective "more-like-this" query generator would be a great contribution.

Elasticsearch and Lucene both support it:

https://www.elastic.co/guide/en/elasticsearch/reference/current/query-dsl-mlt-query.html

https://lucene.apache.org/core/7_2_0/queries/org/apache/lucene/queries/mlt/MoreLikeThis.html

> Note: this came up when trying to add support for Tantivy in [Django-Haystack](https://django-haystack.readthedocs.io/en/master/backend_support.html#backend-support-matrix)

If it's helpful, here's how Whoosh (pure search engine implemented in Python) is doing it:

https://github.com/mchaput/whoosh/blob/main/src/whoosh/searching.py#L543-L585

|

priority

|

more documents like this it would be great to have a morelikethis feature in tantivy an efficient effective more like this query generator would be a great contribution elasticsearch and lucene both support it note this came up when trying to add support for tantivy in if it s helpful here s how whoosh pure search engine implemented in python is doing it

| 1

|

101,005

| 4,105,744,350

|

IssuesEvent

|

2016-06-06 04:04:53

|

idevelopment/RingMe

|

https://api.github.com/repos/idevelopment/RingMe

|

opened

|

Get all agents on the index page

|

enhancement High Priority

|

Get all agents on the index page

When the customer clicks on the `callback button` we need to verify if the customer is registered.

If the customer is registered send the form data to the db and notify the agent to call the customer.

|

1.0

|

Get all agents on the index page - Get all agents on the index page

When the customer clicks on the `callback button` we need to verify if the customer is registered.

If the customer is registered send the form data to the db and notify the agent to call the customer.

|

priority

|

get all agents on the index page get all agents on the index page when the customer clicks on the callback button we need to verify if the customer is registered if the customer is registered send the form data to the db and notify the agent to call the customer

| 1

|

461,487

| 13,231,066,982

|

IssuesEvent

|

2020-08-18 10:57:59

|

CHOMPStation2/CHOMPStation2

|

https://api.github.com/repos/CHOMPStation2/CHOMPStation2

|

closed

|

Plethora of issues related to using_map.get_map_levels (maybe) making programs function on only one z-level

|

High Priority

|

Basically a lot of "check x over multiple z levels" programs now only function on the z-level said program (i.e. the computer using said program) is located in. From my testing so far I believe this is related to using_map.get_map_levels(). From my testing so far this includes:

-Crew monitor

-Atmosphere alarms

-Power monitor

-Network cards (modular computer wifi)

-Alarm handler

-Space z-level transferring at the edge

I have not tested everything which calls using_map.get_map_levels but suspect the list of broken programs is significantly longer.

This causes #470

|

1.0

|

Plethora of issues related to using_map.get_map_levels (maybe) making programs function on only one z-level - Basically a lot of "check x over multiple z levels" programs now only function on the z-level said program (i.e. the computer using said program) is located in. From my testing so far I believe this is related to using_map.get_map_levels(). From my testing so far this includes:

-Crew monitor

-Atmosphere alarms

-Power monitor

-Network cards (modular computer wifi)

-Alarm handler

-Space z-level transferring at the edge

I have not tested everything which calls using_map.get_map_levels but suspect the list of broken programs is significantly longer.

This causes #470

|

priority

|

plethora of issues related to using map get map levels maybe making programs function on only one z level basically a lot of check x over multiple z levels programs now only function on the z level said program i e the computer using said program is located in from my testing so far i believe this is related to using map get map levels from my testing so far this includes crew monitor atmosphere alarms power monitor network cards modular computer wifi alarm handler space z level transferring at the edge i have not tested everything which calls using map get map levels but suspect the list of broken programs is significantly longer this causes

| 1

|

141,951

| 5,447,436,314

|

IssuesEvent

|

2017-03-07 13:35:22

|

duckduckgo/zeroclickinfo-fathead

|

https://api.github.com/repos/duckduckgo/zeroclickinfo-fathead

|

closed

|

C++: CPP Reference Fathead

|

Difficulty: High Improvement Mission: Programming Priority: High Status: Needs a Developer Topic: C++

|

# Recreate the CPP Reference Fathead Instant Answer

Help us make DuckDuckGo the best search engine for programmers!

### What do I need to know?

You'll need to know how to code in **Perl**, **Python**, **Ruby**, or **JavaScript**.

### What am I doing?

You will write a script that scrapes or downloads the data source below, and generates an **output.txt** file containing the parsed documentation. You can learn more about Fatheads and the `output.txt` syntax [**here**](https://docs.duckduckhack.com/resources/fathead-overview.html).

**Bonus Info** 🚀 : This Fathead already exists, and it's awesome! We have decided to make it a candidate for deprecation so we can align the code to mirror other Fatheads. Further, we don't wish to rely on external parties to provide the data.

**Data source**: The same, but open to discussion.

<!-- ^^^ FILL THIS IN ^^^ -->

**Instant Answer Page**: https://duck.co/ia/view/cppreference_doc

<!-- ^^^ FILL THIS IN, AFTER ISSUE IS CLAIMED ^^^ -->

### What is the Goal?

As part of our [Programming Mission](https://forum.duckduckhack.com/t/duckduckhack-programming-mission-overview/53), we're aiming to reach 100% Instant Answer (IA) coverage for searches related to programming languages by creating new Instant Answers, and improving existing ones.

Here are some Fathead examples:

- Ruby Docs

- [Code](https://github.com/duckduckgo/zeroclickinfo-fathead/tree/master/lib/fathead/ruby) | [Example Query](https://duckduckgo.com/?q=array+bsearch&ia=about)

- MDN CSS

- [Code](https://github.com/duckduckgo/zeroclickinfo-fathead/tree/master/lib/fathead/mdn_css) | [Example Query](https://duckduckgo.com/?q=css+background-position&ia=about)

[See more related Instant Answers](https://duck.co/ia?repo=fathead)

## Get Started

- [ ] 1) Claim this issue by commenting below

- [ ] 2) Review our [Contributing Guide](https://github.com/duckduckgo/zeroclickinfo-fathead/blob/master/CONTRIBUTING.md)

- [ ] 3) [Set up your development environment](https://docs.duckduckhack.com/welcome/setup-dev-environment.html), and fork this repository

- [ ] 4) Create a new Instant Answer Page: https://duck.co/ia/new_ia (then let us know, here!)

- [ ] 5) Create the Fathead

- [ ] 6) Create a Pull Request

- [ ] 7) Ping @sahildua2305 for a review

<!-- ^^^ FILL THIS IN ^^^ -->

## Resources

- Join [DuckDuckHack Slack](https://quackslack.herokuapp.com/) to ask questions

- Join the [DuckDuckHack Forum](https://forum.duckduckhack.com/) to discuss project planning and Instant Answer metrics

- Read the [DuckDuckHack Documentation](https://docs.duckduckhack.com/) for technical help

|

1.0

|

C++: CPP Reference Fathead - # Recreate the CPP Reference Fathead Instant Answer

Help us make DuckDuckGo the best search engine for programmers!

### What do I need to know?

You'll need to know how to code in **Perl**, **Python**, **Ruby**, or **JavaScript**.

### What am I doing?

You will write a script that scrapes or downloads the data source below, and generates an **output.txt** file containing the parsed documentation. You can learn more about Fatheads and the `output.txt` syntax [**here**](https://docs.duckduckhack.com/resources/fathead-overview.html).

**Bonus Info** 🚀 : This Fathead already exists, and it's awesome! We have decided to make it a candidate for deprecation so we can align the code to mirror other Fatheads. Further, we don't wish to rely on external parties to provide the data.

**Data source**: The same, but open to discussion.

<!-- ^^^ FILL THIS IN ^^^ -->

**Instant Answer Page**: https://duck.co/ia/view/cppreference_doc

<!-- ^^^ FILL THIS IN, AFTER ISSUE IS CLAIMED ^^^ -->

### What is the Goal?

As part of our [Programming Mission](https://forum.duckduckhack.com/t/duckduckhack-programming-mission-overview/53), we're aiming to reach 100% Instant Answer (IA) coverage for searches related to programming languages by creating new Instant Answers, and improving existing ones.

Here are some Fathead examples:

- Ruby Docs

- [Code](https://github.com/duckduckgo/zeroclickinfo-fathead/tree/master/lib/fathead/ruby) | [Example Query](https://duckduckgo.com/?q=array+bsearch&ia=about)

- MDN CSS

- [Code](https://github.com/duckduckgo/zeroclickinfo-fathead/tree/master/lib/fathead/mdn_css) | [Example Query](https://duckduckgo.com/?q=css+background-position&ia=about)

[See more related Instant Answers](https://duck.co/ia?repo=fathead)

## Get Started

- [ ] 1) Claim this issue by commenting below

- [ ] 2) Review our [Contributing Guide](https://github.com/duckduckgo/zeroclickinfo-fathead/blob/master/CONTRIBUTING.md)

- [ ] 3) [Set up your development environment](https://docs.duckduckhack.com/welcome/setup-dev-environment.html), and fork this repository

- [ ] 4) Create a new Instant Answer Page: https://duck.co/ia/new_ia (then let us know, here!)

- [ ] 5) Create the Fathead

- [ ] 6) Create a Pull Request

- [ ] 7) Ping @sahildua2305 for a review

<!-- ^^^ FILL THIS IN ^^^ -->

## Resources

- Join [DuckDuckHack Slack](https://quackslack.herokuapp.com/) to ask questions

- Join the [DuckDuckHack Forum](https://forum.duckduckhack.com/) to discuss project planning and Instant Answer metrics

- Read the [DuckDuckHack Documentation](https://docs.duckduckhack.com/) for technical help

|

priority

|

c cpp reference fathead recreate the cpp reference fathead instant answer help us make duckduckgo the best search engine for programmers what do i need to know you ll need to know how to code in perl python ruby or javascript what am i doing you will write a script that scrapes or downloads the data source below and generates an output txt file containing the parsed documentation you can learn more about fatheads and the output txt syntax bonus info 🚀 this fathead already exists and it s awesome we have decided to make it a candidate for deprecation so we can align the code to mirror other fatheads further we don t wish to rely on external parties to provide the data data source the same but open to discussion instant answer page what is the goal as part of our we re aiming to reach instant answer ia coverage for searches related to programming languages by creating new instant answers and improving existing ones here are some fathead examples ruby docs mdn css get started claim this issue by commenting below review our and fork this repository create a new instant answer page then let us know here create the fathead create a pull request ping for a review resources join to ask questions join the to discuss project planning and instant answer metrics read the for technical help

| 1

|

817,171

| 30,629,046,098

|

IssuesEvent

|

2023-07-24 13:27:46

|

bigbluebutton/bigbluebutton

|

https://api.github.com/repos/bigbluebutton/bigbluebutton

|

closed

|

Whiteboard tools are working only on a small area of the presentation

|

priority: high module: client

|

**Describe the bug**

All whiteboard tools works only on small area of the entire whiteboard in Google Chrome browser. Problem presist with default presentation and with uploaded one. It started with Chrome latest update and it apears on every PC of my team with updated chrome browser. Issue is not found on Edge, Opera and Mozilla.

**Actual behavior**

moving the mouse otside the marked area of the screenshoot it look like the whiteboard tools are desactivated and for example the hand changes to the usual mouse cursor.

**Screenshots**

**BBB version:**

BigBlueButton Server 2.3.10 (2419)

**Desktop :**

- OS: Windows 10

- Browser Chrome

- Version 114.0.5735.90 (Official Build) (64-bit)

|

1.0

|

Whiteboard tools are working only on a small area of the presentation - **Describe the bug**

All whiteboard tools works only on small area of the entire whiteboard in Google Chrome browser. Problem presist with default presentation and with uploaded one. It started with Chrome latest update and it apears on every PC of my team with updated chrome browser. Issue is not found on Edge, Opera and Mozilla.

**Actual behavior**

moving the mouse otside the marked area of the screenshoot it look like the whiteboard tools are desactivated and for example the hand changes to the usual mouse cursor.

**Screenshots**

**BBB version:**

BigBlueButton Server 2.3.10 (2419)

**Desktop :**

- OS: Windows 10

- Browser Chrome

- Version 114.0.5735.90 (Official Build) (64-bit)

|

priority

|

whiteboard tools are working only on a small area of the presentation describe the bug all whiteboard tools works only on small area of the entire whiteboard in google chrome browser problem presist with default presentation and with uploaded one it started with chrome latest update and it apears on every pc of my team with updated chrome browser issue is not found on edge opera and mozilla actual behavior moving the mouse otside the marked area of the screenshoot it look like the whiteboard tools are desactivated and for example the hand changes to the usual mouse cursor screenshots bbb version bigbluebutton server desktop os windows browser chrome version official build bit

| 1

|

381,638

| 11,277,535,180

|

IssuesEvent

|

2020-01-15 03:11:49

|

medic/cht-core

|

https://api.github.com/repos/medic/cht-core

|

closed

|

Supervisors download all task documents from CHWs they supervise

|

Priority: 1 - High Type: Bug

|

**Describe the bug**

As part of Rules-Engine v2, tasks are now stored to disk and replicated up and down.

However, a stored task has this structure:

```

{

"type": "task",

"authoredOn": "<timestamp>",

"state": "<some_task_state>"

"stateHistory": [{ "state": "<some_task_state>", "timestamp": "<timestamp>" }, ...],

"user": "<users's contact document id>",

"requester": "<taskEmission.doc.contact._id>",

"owner": "<taskEmission.contact._id>",

"emission": { ... emission data }

}

```

relevant code here: https://github.com/medic/cht-core/blob/master/shared-libs/rules-engine/src/transform-task-emission-to-doc.js#L26

The `user` field is used for determining replication permissions for the doc and is the value emitted in `medic/docs_by_replication_key`: https://github.com/medic/cht-core/blob/master/ddocs/medic/views/docs_by_replication_key/map.js#L71 .

Because the field contains the uuid of the user's contact doc, supervisors are most likely allowed to view the people they supervise, hence they will download all the tasks of these users.

**To Reproduce**

Using "legacy" hierarchy:

1. Create a CHW under a `health_center`, add some families and people to the families and add at least one report that would generate a task. Sync!

2. Create a Supervisor above the CHW, in `district_hospital` level and give it `replication_depth` = 2. This means that he will download the `health_center`, the CHW Contact document and the families, but none of the people in the families or their reports.

3. Log in with the Supervisor account and check the tasks tab. Notice that you see the same task as the "CHW".

**Expected behavior**

I believe it is intended for supervisors not to download supervisee tasks, as that could prove severely detrimental for their devices performance.

**Environment**

- App: webapp

- Version: 3.8.x

**Additional context**

It appears that simply switching the `task.user` field to contain the actual user-settings document id (`org:couchdb:user:<username>`) would solve the replication issue, as only the users themselves have access to the user-settings docs.

If, in the future, we want to have the ability to generate tasks for other users, not based on their username, we could additionally have `medic/docs_by_replication_key` emit an additional value that would send the task to the correct people - for example emit the `owner` and have the task downloaded to everyone who sees the person.

|

1.0

|

Supervisors download all task documents from CHWs they supervise -

**Describe the bug**

As part of Rules-Engine v2, tasks are now stored to disk and replicated up and down.

However, a stored task has this structure:

```

{

"type": "task",

"authoredOn": "<timestamp>",

"state": "<some_task_state>"

"stateHistory": [{ "state": "<some_task_state>", "timestamp": "<timestamp>" }, ...],

"user": "<users's contact document id>",

"requester": "<taskEmission.doc.contact._id>",

"owner": "<taskEmission.contact._id>",

"emission": { ... emission data }

}

```

relevant code here: https://github.com/medic/cht-core/blob/master/shared-libs/rules-engine/src/transform-task-emission-to-doc.js#L26

The `user` field is used for determining replication permissions for the doc and is the value emitted in `medic/docs_by_replication_key`: https://github.com/medic/cht-core/blob/master/ddocs/medic/views/docs_by_replication_key/map.js#L71 .

Because the field contains the uuid of the user's contact doc, supervisors are most likely allowed to view the people they supervise, hence they will download all the tasks of these users.

**To Reproduce**

Using "legacy" hierarchy:

1. Create a CHW under a `health_center`, add some families and people to the families and add at least one report that would generate a task. Sync!

2. Create a Supervisor above the CHW, in `district_hospital` level and give it `replication_depth` = 2. This means that he will download the `health_center`, the CHW Contact document and the families, but none of the people in the families or their reports.

3. Log in with the Supervisor account and check the tasks tab. Notice that you see the same task as the "CHW".

**Expected behavior**

I believe it is intended for supervisors not to download supervisee tasks, as that could prove severely detrimental for their devices performance.

**Environment**

- App: webapp

- Version: 3.8.x

**Additional context**

It appears that simply switching the `task.user` field to contain the actual user-settings document id (`org:couchdb:user:<username>`) would solve the replication issue, as only the users themselves have access to the user-settings docs.

If, in the future, we want to have the ability to generate tasks for other users, not based on their username, we could additionally have `medic/docs_by_replication_key` emit an additional value that would send the task to the correct people - for example emit the `owner` and have the task downloaded to everyone who sees the person.

|

priority

|

supervisors download all task documents from chws they supervise describe the bug as part of rules engine tasks are now stored to disk and replicated up and down however a stored task has this structure type task authoredon state statehistory user requester owner emission emission data relevant code here the user field is used for determining replication permissions for the doc and is the value emitted in medic docs by replication key because the field contains the uuid of the user s contact doc supervisors are most likely allowed to view the people they supervise hence they will download all the tasks of these users to reproduce using legacy hierarchy create a chw under a health center add some families and people to the families and add at least one report that would generate a task sync create a supervisor above the chw in district hospital level and give it replication depth this means that he will download the health center the chw contact document and the families but none of the people in the families or their reports log in with the supervisor account and check the tasks tab notice that you see the same task as the chw expected behavior i believe it is intended for supervisors not to download supervisee tasks as that could prove severely detrimental for their devices performance environment app webapp version x additional context it appears that simply switching the task user field to contain the actual user settings document id org couchdb user would solve the replication issue as only the users themselves have access to the user settings docs if in the future we want to have the ability to generate tasks for other users not based on their username we could additionally have medic docs by replication key emit an additional value that would send the task to the correct people for example emit the owner and have the task downloaded to everyone who sees the person

| 1

|

192,973

| 6,877,599,646

|

IssuesEvent

|

2017-11-20 08:44:39

|

OpenNebula/one

|

https://api.github.com/repos/OpenNebula/one

|

opened

|

Add Sunstone option to start websocketproxy.py with -v

|

Category: Sunstone Priority: High Status: Pending Tracker: Backlog

|

---

Author Name: **Arnold Bechtoldt** (Arnold Bechtoldt)

Original Redmine Issue: 3613, https://dev.opennebula.org/issues/3613

Original Date: 2015-02-18

---

None

|

1.0

|

Add Sunstone option to start websocketproxy.py with -v - ---

Author Name: **Arnold Bechtoldt** (Arnold Bechtoldt)

Original Redmine Issue: 3613, https://dev.opennebula.org/issues/3613

Original Date: 2015-02-18

---

None

|

priority

|

add sunstone option to start websocketproxy py with v author name arnold bechtoldt arnold bechtoldt original redmine issue original date none

| 1

|

98,826

| 4,031,734,195

|

IssuesEvent

|

2016-05-18 18:10:50

|

SuLab/mark2cure

|

https://api.github.com/repos/SuLab/mark2cure

|

closed

|

Fix concept definition links for relation training

|

high priority ux

|

@x0xMaximus can you please add these links to this page https://mark2cure.org/training/relation/3/step/1/? They are modals and there is some sort of inheritance thing that is causing problems to add these.

This is what the page should look like:

links are the relation modals for each concept like this: https://github.com/SuLab/mark2cure/blob/master/mark2cure/instructions/templates/instructions/drug-definitions-relation-modal.jade

|

1.0

|

Fix concept definition links for relation training - @x0xMaximus can you please add these links to this page https://mark2cure.org/training/relation/3/step/1/? They are modals and there is some sort of inheritance thing that is causing problems to add these.

This is what the page should look like:

links are the relation modals for each concept like this: https://github.com/SuLab/mark2cure/blob/master/mark2cure/instructions/templates/instructions/drug-definitions-relation-modal.jade

|

priority

|

fix concept definition links for relation training can you please add these links to this page they are modals and there is some sort of inheritance thing that is causing problems to add these this is what the page should look like links are the relation modals for each concept like this

| 1

|

577,024

| 17,102,009,990

|

IssuesEvent

|

2021-07-09 12:39:38

|

Codethulhu03/UAV

|

https://api.github.com/repos/Codethulhu03/UAV

|

closed

|

Reset Button in connect drone ui: What is its function? It crashes the program

|

bug gui high priority question

|

line 297, in reset

if self.simulationCheck.isChecked():

AttributeError: 'Ui_ConnectDrone' object has no attribute 'simulationCheck'

|

1.0

|

Reset Button in connect drone ui: What is its function? It crashes the program - line 297, in reset

if self.simulationCheck.isChecked():

AttributeError: 'Ui_ConnectDrone' object has no attribute 'simulationCheck'

|

priority

|

reset button in connect drone ui what is its function it crashes the program line in reset if self simulationcheck ischecked attributeerror ui connectdrone object has no attribute simulationcheck

| 1

|

149,753

| 5,725,152,301

|

IssuesEvent

|

2017-04-20 15:57:03

|

fedora-infra/bodhi

|

https://api.github.com/repos/fedora-infra/bodhi

|

closed

|

[RFE] Expire overrides using CLI.

|

Client High priority RFE

|

It seems it is not possible to expire override using CLI. The web UI seems to be the only option ATM. Please consider adding this feature.

```

$ rpm -q bodhi-client

bodhi-client-2.2.4-1.fc26.noarch

```

I noted this originally here:

https://bugzilla.redhat.com/show_bug.cgi?id=1366114#c6

|

1.0

|

[RFE] Expire overrides using CLI. - It seems it is not possible to expire override using CLI. The web UI seems to be the only option ATM. Please consider adding this feature.

```

$ rpm -q bodhi-client

bodhi-client-2.2.4-1.fc26.noarch

```

I noted this originally here:

https://bugzilla.redhat.com/show_bug.cgi?id=1366114#c6

|

priority

|

expire overrides using cli it seems it is not possible to expire override using cli the web ui seems to be the only option atm please consider adding this feature rpm q bodhi client bodhi client noarch i noted this originally here

| 1

|

487,728

| 14,058,813,836

|

IssuesEvent

|

2020-11-03 01:14:12

|

metwork-framework/mfdata

|

https://api.github.com/repos/metwork-framework/mfdata

|

closed

|

[switch_rules:alwaystrue] with multiple steps - Questions

|

Priority: High Status: In Progress Type: Bug backport-to-1.0

|

I'm trying to use [switch_rules:alwaystrue] in my foo plugin with multiple steps : main and other

My config.ini (according to the documentation with multiple steps)

[switch_rules:alwaystrue]

*=main, other

With this configuration, switch fails : Bad syntax

```

2020-10-29T10:26:51.214605Z [ERROR] (switch/rules#13532) bad action [other] for section [switch_rules_foo:alwaystrue] and pattern: * {path=/home/dearith10/metwork/mfdata/tmp/config_auto/plugin_switch_rules.ini}

Traceback (most recent call last):

File "/opt/metwork-mfext-1.0/opt/python3/bin/switch_step", line 11, in <module>

sys.exit(main())

File "/opt/metwork-mfext-1.0/opt/python3/lib/python3.7/site-packages/acquisition/switch_step.py", line 110, in main

x.run()

File "/opt/metwork-mfext-1.0/opt/python3/lib/python3.7/site-packages/acquisition/step.py", line 526, in run

self._init()

File "/opt/metwork-mfext-1.0/opt/python3/lib/python3.7/site-packages/acquisition/switch_step.py", line 35, in _init

r, self.args.switch_section_prefix)

File "/opt/metwork-mfext-1.0/opt/python3/lib/python3.7/site-packages/acquisition/switch_rules.py", line 305, in read

raise BadSyntax()

acquisition.switch_rules.BadSyntax

```

The plugin_switch_rules.ini :

```

# GENERATED FILE

# <CONTRIBUTION OF foo PLUGIN>

[switch_rules_foo:alwaystrue]

* = foo/main, other

# </CONTRIBUTION OF foo PLUGIN>

# <CONTRIBUTION OF ungzip PLUGIN>

[switch_rules_ungzip:fnmatch:latest.guess_file_type.main.system_magic]

gzip compressed data* = ungzip/main

# </CONTRIBUTION OF ungzip PLUGIN>

```

Now, if I set my switch rules like this:

```

[switch_rules:alwaystrue]

*=foo/main, foo/other

```

It works,

Is it an issue or am I wrong ? What is the correct way to configure rules with mutiple steps ?

|

1.0

|

[switch_rules:alwaystrue] with multiple steps - Questions - I'm trying to use [switch_rules:alwaystrue] in my foo plugin with multiple steps : main and other

My config.ini (according to the documentation with multiple steps)

[switch_rules:alwaystrue]

*=main, other

With this configuration, switch fails : Bad syntax

```

2020-10-29T10:26:51.214605Z [ERROR] (switch/rules#13532) bad action [other] for section [switch_rules_foo:alwaystrue] and pattern: * {path=/home/dearith10/metwork/mfdata/tmp/config_auto/plugin_switch_rules.ini}

Traceback (most recent call last):

File "/opt/metwork-mfext-1.0/opt/python3/bin/switch_step", line 11, in <module>

sys.exit(main())

File "/opt/metwork-mfext-1.0/opt/python3/lib/python3.7/site-packages/acquisition/switch_step.py", line 110, in main

x.run()

File "/opt/metwork-mfext-1.0/opt/python3/lib/python3.7/site-packages/acquisition/step.py", line 526, in run

self._init()

File "/opt/metwork-mfext-1.0/opt/python3/lib/python3.7/site-packages/acquisition/switch_step.py", line 35, in _init

r, self.args.switch_section_prefix)

File "/opt/metwork-mfext-1.0/opt/python3/lib/python3.7/site-packages/acquisition/switch_rules.py", line 305, in read

raise BadSyntax()

acquisition.switch_rules.BadSyntax

```

The plugin_switch_rules.ini :

```

# GENERATED FILE

# <CONTRIBUTION OF foo PLUGIN>

[switch_rules_foo:alwaystrue]

* = foo/main, other

# </CONTRIBUTION OF foo PLUGIN>

# <CONTRIBUTION OF ungzip PLUGIN>

[switch_rules_ungzip:fnmatch:latest.guess_file_type.main.system_magic]

gzip compressed data* = ungzip/main

# </CONTRIBUTION OF ungzip PLUGIN>

```

Now, if I set my switch rules like this:

```

[switch_rules:alwaystrue]

*=foo/main, foo/other

```

It works,

Is it an issue or am I wrong ? What is the correct way to configure rules with mutiple steps ?

|

priority

|

with multiple steps questions i m trying to use in my foo plugin with multiple steps main and other my config ini according to the documentation with multiple steps main other with this configuration switch fails bad syntax switch rules bad action for section and pattern path home metwork mfdata tmp config auto plugin switch rules ini traceback most recent call last file opt metwork mfext opt bin switch step line in sys exit main file opt metwork mfext opt lib site packages acquisition switch step py line in main x run file opt metwork mfext opt lib site packages acquisition step py line in run self init file opt metwork mfext opt lib site packages acquisition switch step py line in init r self args switch section prefix file opt metwork mfext opt lib site packages acquisition switch rules py line in read raise badsyntax acquisition switch rules badsyntax the plugin switch rules ini generated file foo main other gzip compressed data ungzip main now if i set my switch rules like this foo main foo other it works is it an issue or am i wrong what is the correct way to configure rules with mutiple steps

| 1

|

443,848

| 12,800,328,759

|

IssuesEvent

|

2020-07-02 16:53:38

|

ahmedkaludi/accelerated-mobile-pages

|

https://api.github.com/repos/ahmedkaludi/accelerated-mobile-pages

|

closed

|

When gdpr option is enabled the site is becoming unclickable in browser Safari on IOS and MacOS

|

Urgent [Priority: HIGH] bug

|

When testing with Google Chrome and Microsoft Edge everything works fine with the GDPR popup

It´s only when using the browsers Safari on IOS and MacOS the problem exists.

https://secure.helpscout.net/conversation/1191220977/135300?folderId=2632030

The issue is occuirng in our staing as well. when gdpr is enabled the site is becoming unclikable

https://monosnap.com/file/6vm5nUGhKdm4lZ3zq2zL2qafkE0rtl

https://wordpress-123147-847862.cloudwaysapps.com/amp/

|

1.0

|

When gdpr option is enabled the site is becoming unclickable in browser Safari on IOS and MacOS - When testing with Google Chrome and Microsoft Edge everything works fine with the GDPR popup

It´s only when using the browsers Safari on IOS and MacOS the problem exists.

https://secure.helpscout.net/conversation/1191220977/135300?folderId=2632030

The issue is occuirng in our staing as well. when gdpr is enabled the site is becoming unclikable

https://monosnap.com/file/6vm5nUGhKdm4lZ3zq2zL2qafkE0rtl

https://wordpress-123147-847862.cloudwaysapps.com/amp/

|

priority

|

when gdpr option is enabled the site is becoming unclickable in browser safari on ios and macos when testing with google chrome and microsoft edge everything works fine with the gdpr popup it´s only when using the browsers safari on ios and macos the problem exists the issue is occuirng in our staing as well when gdpr is enabled the site is becoming unclikable

| 1

|

6,544

| 2,589,165,544

|

IssuesEvent

|