| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 5 to 112) | repo_url (string, length 34 to 141) | action (string, 3 classes) | title (string, length 1 to 855) | labels (string, length 4 to 721) | body (string, length 1 to 261k) | index (string, 13 classes) | text_combine (string, length 96 to 261k) | label (string, 2 classes) | text (string, length 96 to 240k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

370,067

| 10,924,843,727

|

IssuesEvent

|

2019-11-22 11:02:29

|

bounswe/bounswe2019group10

|

https://api.github.com/repos/bounswe/bounswe2019group10

|

closed

|

Implement endpoint for users to learn their writing scores

|

Priority: High Relation: Backend

|

Currently the writing endpoint does not support the user to learn their scores. Implement the endpoint so that users can learn their scores.

|

1.0

|

Implement endpoint for users to learn their writing scores - Currently the writing endpoint does not support the user to learn their scores. Implement the endpoint so that users can learn their scores.

|

priority

|

implement endpoint for users to learn their writing scores currently the writing endpoint does not support the user to learn their scores implement the endpoint so that users can learn their scores

| 1

|

182,094

| 6,666,863,886

|

IssuesEvent

|

2017-10-03 10:03:48

|

Maslosoft/IlmatarWidgets

|

https://api.github.com/repos/Maslosoft/IlmatarWidgets

|

closed

|

Action grid needs to prevent links clicking

|

bug have-workaround high-priority important

|

This has to be done either:

1. **Remove <s>Link and </s>Action cell decorators (preferred)**

2. Overwrite events (is possible)

|

1.0

|

Action grid needs to prevent links clicking - This has to be done either:

1. **Remove <s>Link and </s>Action cell decorators (preferred)**

2. Overwrite events (is possible)

|

priority

|

action grid needs to prevent links clicking this has to be done either remove link and action cell decorators preferred overwrite events is possible

| 1

|

800,676

| 28,374,805,850

|

IssuesEvent

|

2023-04-12 19:55:58

|

VoltanFr/memcheck

|

https://api.github.com/repos/VoltanFr/memcheck

|

closed

|

Create function-based indexes on db text fields for optimized search

|

database performance complexity-high priority-high page-search

|

By default, we search case insensitively.

In the [`SearchCards` class](https://github.com/VoltanFr/memcheck/blob/master/MemCheck.Application/Searching/SearchCards.cs):

```cs

cardsFilteredWithExludedTags.Where(card =>

EF.Functions.Like(card.FrontSide, $"%{request.RequiredText}%")

|| EF.Functions.Like(card.BackSide, $"%{request.RequiredText}%")

|| EF.Functions.Like(card.AdditionalInfo, $"%{request.RequiredText}%")

|| EF.Functions.Like(card.References, $"%{request.RequiredText}%")

)

```

Try creating function-based indexes (aka _computed columns_) on the text fields to improve performance. Confirm that the gain outweighs the cost, then create them. For the cost, we need to consider the storage volume used and the maintenance of the computed columns.

By the way, there is currently no index on the text fields (as per the code above, that would be useless).

Resources...

- [Introduction to function-based indexes](https://use-the-index-luke.com/sql/where-clause/functions/case-insensitive-search)

- [Case-insensitive Search Operations](https://techcommunity.microsoft.com/t5/sql-server-blog/case-insensitive-search-operations/ba-p/383199)

Note that the second resource link says `While this example presents a solution for a case insensitive search using the UPPER function, the solution can be easily be extended for use with other functions as well`. I don't know what that means, what other types of computed columns we could use, but this might be worth exploring.
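As a rough illustration of what a function-based index buys, here is a minimal sketch. It uses Python's built-in `sqlite3` as a stand-in (the project above targets SQL Server through EF Core, so the exact DDL would differ), and the table `cards`, the column `front_side`, and the index name are hypothetical. It shows that an equality test on `upper(front_side)` can be served by an index on that same expression. A `LIKE` pattern with a leading `%`, like the ones in the snippet above, generally cannot be answered by an index seek, which is worth measuring before adding any index.

```python
import sqlite3


def build_demo_db() -> sqlite3.Connection:
    """Create an in-memory table with an index on the expression upper(front_side).

    Table, column, and index names are hypothetical and only serve the demo.
    """
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE cards (id INTEGER PRIMARY KEY, front_side TEXT)")
    conn.executemany(
        "INSERT INTO cards (front_side) VALUES (?)",
        [("Paris",), ("paris",), ("Berlin",)],
    )
    # Index on an expression: SQLite's counterpart of a function-based index.
    conn.execute("CREATE INDEX idx_cards_front_upper ON cards (upper(front_side))")
    return conn


def query_plan(conn: sqlite3.Connection, sql: str, params=()) -> str:
    """Return the planner's description of how it would run the query."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    # The human-readable detail is the last column of each plan row.
    return " | ".join(str(row[-1]) for row in rows)


# Case-insensitive equality: the WHERE expression matches the indexed expression.
EQUALITY_SQL = "SELECT id FROM cards WHERE upper(front_side) = ?"
# Case-insensitive "contains": the leading wildcard rules out an index seek.
CONTAINS_SQL = "SELECT id FROM cards WHERE upper(front_side) LIKE ?"

if __name__ == "__main__":
    conn = build_demo_db()
    print(query_plan(conn, EQUALITY_SQL, ("PARIS",)))
    print(query_plan(conn, CONTAINS_SQL, ("%ARI%",)))
```

Comparing the two printed plans on a realistic data volume is one way to confirm whether the index would pay for its storage and maintenance cost.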

|

1.0

|

Create function-based indexes on db text fields for optimized search - By default, we search case insensitively.

In the [`SearchCards` class](https://github.com/VoltanFr/memcheck/blob/master/MemCheck.Application/Searching/SearchCards.cs):

```cs

cardsFilteredWithExludedTags.Where(card =>

EF.Functions.Like(card.FrontSide, $"%{request.RequiredText}%")

|| EF.Functions.Like(card.BackSide, $"%{request.RequiredText}%")

|| EF.Functions.Like(card.AdditionalInfo, $"%{request.RequiredText}%")

|| EF.Functions.Like(card.References, $"%{request.RequiredText}%")

)

```

Try creating function-based indexes (aka _computed columns_) on the text fields to improve performance. Confirm that the gain outweighs the cost, then create them. For the cost, we need to consider the storage volume used and the maintenance of the computed columns.

By the way, there is currently no index on the text fields (as per the code above, that would be useless).

Resources...

- [Introduction to function-based indexes](https://use-the-index-luke.com/sql/where-clause/functions/case-insensitive-search)

- [Case-insensitive Search Operations](https://techcommunity.microsoft.com/t5/sql-server-blog/case-insensitive-search-operations/ba-p/383199)

Note that the second resource link says `While this example presents a solution for a case insensitive search using the UPPER function, the solution can be easily be extended for use with other functions as well`. I don't know what that means, what other types of computed columns we could use, but this might be worth exploring.

|

priority

|

create function based indexes on db text fields for optimized search by default we search case insensitively in the cs cardsfilteredwithexludedtags where card ef functions like card frontside request requiredtext ef functions like card backside request requiredtext ef functions like card additionalinfo request requiredtext ef functions like card references request requiredtext give a try to creating function based indexes aka computed columns on the text fields to improve perf confirm that it will improve more than cost and create them about cost we need to consider the volume used and the maintaining of the computed columns by the way there is currently no index on the text fields as per the code above that would be useless resources note that the second resource link says while this example presents a solution for a case insensitive search using the upper function the solution can be easily be extended for use with other functions as well i don t know what that means what other types of computed columns we could use but this might be worth exploring

| 1

|

645,112

| 20,994,884,939

|

IssuesEvent

|

2022-03-29 12:43:26

|

cryostatio/cryostat

|

https://api.github.com/repos/cryostatio/cryostat

|

closed

|

Label for recording template/type is incompatible with GraphQL filtering/k8s label selectors

|

bug high-priority

|

> @ebaron I just realized we have a grammar incompatibility between k8s label selectors and our current form of template specifier. We use ex. `template=Profiling,type=TARGET` to specify the event template to use to start a recording, and we capture that string verbatim and store it in the metadata labels attached to a recording (active or archived), with the map key `template`. For example:

```json

{

"data": {

"environmentNodes": [

{

"descendantTargets": [

{

"recordings": {

"active": [

{

"metadata": {

"labels": {

"template": "template=Profiling,type=TARGET"

}

},

"name": "foo",

"state": "RUNNING"

}

],

"archived": []

}

}

],

"labels": {},

"name": "JDP",

"nodeType": "Realm"

}

]

}

}

```

> This is incompatible with both the equality-based and set-based k8s label selector syntaxes. The equality style expression would look like `template = template=Profiling,type=TARGET` and the set style expression would look like `template in (template=Profiling,type=TARGET)`. Both of these are ambiguous and invalid because our `template=Foo[,type=TYPE]` expression is not a valid k8s label value.

> Possible solutions:

> 1. Maintain the existing `templateLabel` special case label selector style, where the filter input simply takes the expected value of the label, and not a full label selector expression. All other kinds of label can be selected normally with k8s selector style.

> 2. Change our `template=Foo[,type=TYPE]` syntax. Doesn't seem appropriate for 2.1.0.

> 2b. We could alternately maintain the same input syntax, but replace the delimiters to something k8s label selector compatible when persisting them into recording metadata.

> 3. Implement some more specialized parser for the label selectors that can handle this ambiguous case for our specific scenario, but this is pretty hacky and can still end up being ambiguous with our template specifier syntax anyway.

> Thoughts? For 2.1.0, option 1 seems to make the most sense to me, or maybe 2b. For 3.0 we can change the event specifier syntax and avoid this issue entirely.

_Originally posted by @andrewazores in https://github.com/cryostatio/cryostat/issues/825#issuecomment-1079222248_
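The incompatibility and option 2b above can be sketched as follows. This is only an illustration, not Cryostat's implementation: the validity check follows the Kubernetes rule for label values (at most 63 characters, empty or beginning and ending with an alphanumeric, with `-`, `_`, `.` and alphanumerics in between), while `encode_template_specifier` and its choice of replacement delimiters are hypothetical.

```python
import re

# Kubernetes label values: at most 63 characters; either empty, or starting and
# ending with an alphanumeric, with '-', '_', '.' and alphanumerics in between.
LABEL_VALUE_RE = re.compile(r"(([A-Za-z0-9][-A-Za-z0-9_.]*)?[A-Za-z0-9])?")


def is_valid_label_value(value: str) -> bool:
    """Check a string against the Kubernetes label value syntax."""
    return len(value) <= 63 and LABEL_VALUE_RE.fullmatch(value) is not None


def encode_template_specifier(specifier: str) -> str:
    """Hypothetical encoding for option 2b: swap '=' and ',' for characters
    that are legal inside a label value. The mapping is an assumption and is
    ambiguous if template names themselves contain '-' or '.'.
    """
    return specifier.replace("=", "-").replace(",", ".")


if __name__ == "__main__":
    raw = "template=Profiling,type=TARGET"
    print(raw, is_valid_label_value(raw))
    encoded = encode_template_specifier(raw)
    print(encoded, is_valid_label_value(encoded))
```

A reversible encoding would need delimiters that cannot occur in template names, which is part of why the choice is left open here.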

|

1.0

|

Label for recording template/type is incompatible with GraphQL filtering/k8s label selectors - > @ebaron I just realized we have a grammar incompatibility between k8s label selectors and our current form of template specifier. We use ex. `template=Profiling,type=TARGET` to specify the event template to use to start a recording, and we capture that string verbatim and store it in the metadata labels attached to a recording (active or archived), with the map key `template`. For example:

```json

{

"data": {

"environmentNodes": [

{

"descendantTargets": [

{

"recordings": {

"active": [

{

"metadata": {

"labels": {

"template": "template=Profiling,type=TARGET"

}

},

"name": "foo",

"state": "RUNNING"

}

],

"archived": []

}

}

],

"labels": {},

"name": "JDP",

"nodeType": "Realm"

}

]

}

}

```

> This is incompatible with both the equality-based and set-based k8s label selector syntaxes. The equality style expression would look like `template = template=Profiling,type=TARGET` and the set style expression would look like `template in (template=Profiling,type=TARGET)`. Both of these are ambiguous and invalid because our `template=Foo[,type=TYPE]` expression is not a valid k8s label value.

> Possible solutions:

> 1. Maintain the existing `templateLabel` special case label selector style, where the filter input simply takes the expected value of the label, and not a full label selector expression. All other kinds of label can be selected normally with k8s selector style.

> 2. Change our `template=Foo[,type=TYPE]` syntax. Doesn't seem appropriate for 2.1.0.

> 2b. We could alternately maintain the same input syntax, but replace the delimiters to something k8s label selector compatible when persisting them into recording metadata.

> 3. Implement some more specialized parser for the label selectors that can handle this ambiguous case for our specific scenario, but this is pretty hacky and can still end up being ambiguous with our template specifier syntax anyway.

> Thoughts? For 2.1.0, option 1 seems to make the most sense to me, or maybe 2b. For 3.0 we can change the event specifier syntax and avoid this issue entirely.

_Originally posted by @andrewazores in https://github.com/cryostatio/cryostat/issues/825#issuecomment-1079222248_

|

priority

|

label for recording template type is incompatible with graphql filtering label selectors ebaron i just realized we have a grammar incompatibility between label selectors and our current form of template specifier we use ex template profiling type target to specify the event template to use to start a recording and we capture that string verbatim and store it in the metadata labels attached to a recording active or archived with the map key template for example json data environmentnodes descendanttargets recordings active metadata labels template template profiling type target name foo state running archived labels name jdp nodetype realm this is incompatible with both the equality based and set based label selector syntaxes the equality style expression would look like template template profiling type target and the set style expression would look like template in template profiling type target both of these are ambiguous and invalid because our template foo expression is not a valid label value possible solutions maintain the existing templatelabel special case label selector style where the filter input simply takes the expected value of the label and not a full label selector expression all other kinds of label can be selected normally with selector style change our template foo syntax doesn t seem appropriate for we could alternately maintain the same input syntax but replace the delimiters to something label selector compatible when persisting them into recording metadata implement some more specialized parser for the label selectors that can handle this ambiguous case for our specific scenario but this is pretty hacky and can still end up being ambiguous with our template specifier syntax anyway thoughts for option seems to make the most sense to me or maybe for we can change the event specifier syntax and avoid this issue entirely originally posted by andrewazores in

| 1

|

294,374

| 9,022,794,953

|

IssuesEvent

|

2019-02-07 03:33:49

|

RoboJackets/robocup-software

|

https://api.github.com/repos/RoboJackets/robocup-software

|

opened

|

Path planner occasionally doesn't create a continuous path in the velocity state

|

area / planning-motion exp / master (4) priority / high status / new type / bug

|

This is specific to partial paths and re-planning every single frame. I haven't tested anything else.

When there is a path from the previous frame, the first X seconds are taken off and used as the initial part of the next path. This sometimes produces a situation where the second path and the first path have a discontinuity in the velocity state. It seems specific to large changes in the path target where the previous was a straight line path and the second path being appended is a hard turn in any of the directions.

Here is a sample of saddle points, where the first point pair is the last pos/vel of the previous path and the second point pair is the first pos/vel of the appended path.

```

Run 1

Point(-1.13272, 1.78457)Point(-0.12078, -0.297487) - Prev path

Point(-1.13272, 1.78457)Point(-0.231728, -0.570758) - Appended path

```

```

Run 2

Point(-1.1357, 1.77774)Point(-0.109906, -0.254372) - Prev path

Point(-1.1357, 1.77774)Point(-0.175222, -0.405543) - Appended path

```

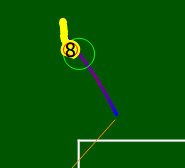

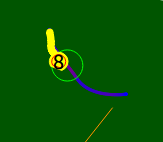

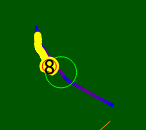

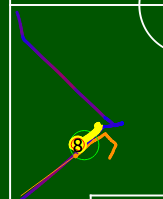

This picture doesn't correspond to the above data, it's from a separate run, but it shows the previous path, the new path, and a later picture of the velocity discontinuity.

Initial path

Next frames appended path

The discontinuity at a later point

Here is another picture of it happening over a larger path. You can see the fast transitions from red to a deep blue.

[Here](https://github.com/RoboJackets/robocup-software/pull/1180/files?utf8=%E2%9C%93&diff=unified#diff-bfec79fac28b5f67abeb89d135d4b0c8R136) is the previous path snipping.

[Here](https://github.com/RoboJackets/robocup-software/pull/1180/files?utf8=%E2%9C%93&diff=unified#diff-bfec79fac28b5f67abeb89d135d4b0c8R229) is where we create the new path.

[Here](https://github.com/RoboJackets/robocup-software/pull/1180/files?utf8=%E2%9C%93&diff=unified#diff-bfec79fac28b5f67abeb89d135d4b0c8R236) is where we combine the two paths together.

And finally [here](https://github.com/RoboJackets/robocup-software/pull/1180/files?utf8=%E2%9C%93&diff=unified#diff-bfec79fac28b5f67abeb89d135d4b0c8R241) is the returning of the path.

My first thought is that we may be trying to command an impossible path and it's losing the initial velocity constraint in order to solve it.
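A check like the following could flag the discontinuity at the junction automatically. It is a minimal sketch, not code from the planner: the function names and both tolerances are hypothetical, and the only real data are the pos/vel pairs quoted in the two runs above.

```python
import math


def gap(a, b):
    """Euclidean distance between two 2D tuples."""
    return math.hypot(a[0] - b[0], a[1] - b[1])


def check_junction(prev_end, next_start, pos_tol=1e-6, vel_tol=1e-3):
    """Compare the last state of the previous path with the first state of the
    appended path. Each state is ((x, y), (vx, vy)). The tolerances are
    assumptions chosen for illustration only.
    """
    pos_gap = gap(prev_end[0], next_start[0])
    vel_gap = gap(prev_end[1], next_start[1])
    continuous = pos_gap <= pos_tol and vel_gap <= vel_tol
    return pos_gap, vel_gap, continuous


# States copied from the runs reported above.
RUN_1 = (
    ((-1.13272, 1.78457), (-0.12078, -0.297487)),    # prev path end
    ((-1.13272, 1.78457), (-0.231728, -0.570758)),   # appended path start
)
RUN_2 = (
    ((-1.1357, 1.77774), (-0.109906, -0.254372)),    # prev path end
    ((-1.1357, 1.77774), (-0.175222, -0.405543)),    # appended path start
)

if __name__ == "__main__":
    for name, (prev_end, next_start) in (("Run 1", RUN_1), ("Run 2", RUN_2)):
        print(name, check_junction(prev_end, next_start))
```

In both runs the positions coincide while the velocities differ, so a check of this kind would report the junction as discontinuous in velocity only.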

|

1.0

|

Path planner occasionally doesn't create a continuous path in the velocity state - This is specific to partial paths and re-planning every single frame. I haven't tested anything else.

When there is a path from the previous frame, the first X seconds are taken off and used as the initial part of the next path. This sometimes produces a situation where the second path and the first path have a discontinuity in the velocity state. It seems specific to large changes in the path target where the previous was a straight line path and the second path being appended is a hard turn in any of the directions.

Here is a sample of saddle points, where the first point pair is the last pos/vel of the previous path and the second point pair is the first pos/vel of the appended path.

```

Run 1

Point(-1.13272, 1.78457)Point(-0.12078, -0.297487) - Prev path

Point(-1.13272, 1.78457)Point(-0.231728, -0.570758) - Appended path

```

```

Run 2

Point(-1.1357, 1.77774)Point(-0.109906, -0.254372) - Prev path

Point(-1.1357, 1.77774)Point(-0.175222, -0.405543) - Appended path

```

This picture doesn't correspond to the above data, it's from a separate run, but it shows the previous path, the new path, and a later picture of the velocity discontinuity.

Initial path

Next frames appended path

The discontinuity at a later point

Here is another picture of it happening over a larger path. You can see the fast transitions from red to a deep blue.

[Here](https://github.com/RoboJackets/robocup-software/pull/1180/files?utf8=%E2%9C%93&diff=unified#diff-bfec79fac28b5f67abeb89d135d4b0c8R136) is the previous path snipping.

[Here](https://github.com/RoboJackets/robocup-software/pull/1180/files?utf8=%E2%9C%93&diff=unified#diff-bfec79fac28b5f67abeb89d135d4b0c8R229) is where we create the new path.

[Here](https://github.com/RoboJackets/robocup-software/pull/1180/files?utf8=%E2%9C%93&diff=unified#diff-bfec79fac28b5f67abeb89d135d4b0c8R236) is where we combine the two paths together.

And finally [here](https://github.com/RoboJackets/robocup-software/pull/1180/files?utf8=%E2%9C%93&diff=unified#diff-bfec79fac28b5f67abeb89d135d4b0c8R241) is the returning of the path.

My first thought is that we may be trying to command an impossible path and it's losing the initial velocity constraint in order to solve it.

|

priority

|

path planner occasionally doesn t create a continuous path in the velocity state this is specific with partial paths and re planning every single frame i haven t tested anything else when there is a path from the previous frame the first x seconds are taken off and used as the initial part of the next path this sometimes produces a situation where the second path and the first path have a discontinuity in the velocity state it seems specific to large changes in the path target where the previous was a straight line path and the second path being appended is a hard turn in any of the directions here are a sample of saddle points where the first point set is the last pos vel of the previous path and the second set point is the first pos vel of the appended path run point point prev path point point appended path run point point prev path point point appended path this picture doesn t correspond to the above data it s from a separate run but it shows the previous path the new path and a later picture of the velocity discontinuity initial path next frames appended path the discontinuity at a later point here is another picture of it happening over a larger path you can see the fast transitions from red to a deep blue is the previous path snipping is where we create the new path is where we combine the two paths together and finally is the returning of the path my first thought is that we may be trying to command an impossible path and it s loosing the initial velocity constraint in order to solve it

| 1

|

462,692

| 13,251,720,486

|

IssuesEvent

|

2020-08-20 03:02:22

|

phetsims/gravity-and-orbits

|

https://api.github.com/repos/phetsims/gravity-and-orbits

|

closed

|

Use DragListener instead of SimpleDragHandler and MovableDragHandler

|

priority:2-high status:ready-for-review

|

Use DragListener instead of SimpleDragHandler and MovableDragHandler. Not necessary for dev version but would be nice for production RC.

|

1.0

|

Use DragListener instead of SimpleDragHandler and MovableDragHandler - Use DragListener instead of SimpleDragHandler and MovableDragHandler. Not necessary for dev version but would be nice for production RC.

|

priority

|

use draglistener instead of simpledraghandler and movabledraghandler use draglistener instead of simpledraghandler and movabledraghandler not necessary for dev version but would be nice for production rc

| 1

|

343,287

| 10,327,548,077

|

IssuesEvent

|

2019-09-02 07:18:02

|

StrangeLoopGames/EcoIssues

|

https://api.github.com/repos/StrangeLoopGames/EcoIssues

|

closed

|

Inventory breaks and rejects new contents, saying it's full, when there is nothing in the carried inventory. [master branch/9.0]

|

High Priority

|

Often times I'll give myself a block, place it down, then try to give myself another and it will say my inventory is full.

The only way to fix it that I've found is using /dump then giving myself the item again.

|

1.0

|

Inventory breaks and rejects new contents, saying it's full, when there is nothing in the carried inventory. [master branch/9.0] -

Often times I'll give myself a block, place it down, then try to give myself another and it will say my inventory is full.

The only way to fix it that I've found is using /dump then giving myself the item again.

|

priority

|

inventory breaks and rejects new contents saying it s full when there is nothing in the carried inventory often times i ll give myself a block place it down then try to give myself another and it will say my inventory is full the only way to fix it that i ve found is using dump then giving myself the item again

| 1

|

224,714

| 7,472,057,069

|

IssuesEvent

|

2018-04-03 11:21:39

|

wso2/product-ei

|

https://api.github.com/repos/wso2/product-ei

|

opened

|

Provide the link to download connectors

|

Priority/High Type/Docs

|

**Description:**

Provide the link to download connectors in the doc [1] under the below instruction

`If you have already downloaded the connectors, select the Connector location option and browse to the connector file from the file system. Click Finish.`

[1] https://docs.wso2.com/display/EI611/Working+with+Connectors+via+Tooling

|

1.0

|

Provide the link to download connectors - **Description:**

Provide the link to download connectors in the doc [1] under the below instruction

`If you have already downloaded the connectors, select the Connector location option and browse to the connector file from the file system. Click Finish.`

[1] https://docs.wso2.com/display/EI611/Working+with+Connectors+via+Tooling

|

priority

|

provide the link to download connectors description provide the link to download connectors in the doc under the below instruction if you have already downloaded the connectors select the connector location option and browse to the connector file from the file system click finish

| 1

|

436,473

| 12,550,692,124

|

IssuesEvent

|

2020-06-06 12:06:21

|

zairza-cetb/bench-routes

|

https://api.github.com/repos/zairza-cetb/bench-routes

|

closed

|

Board: release alpha-3

|

next-release priority:high

|

The following is the list of features we would love to cover in the alpha-3 version:

### API

- [ ] Start or stop collector through the dashboard

### Persistent connection

- [ ] live updates in dashboard

### Dashboard

- [ ] notifications as a toast

- [ ] Collector support in graphs

### TSDB

- [ ] saving time-series as binary data instead of JSON (for improving space complexity)

**Notes:**

1. We plan to stick to v1.0 to alpha-3 release. However, alpha-4 or alpha-5 should have v1.1 dashboard.

Please feel free to comment and update this board.

|

1.0

|

Board: release alpha-3 - The following is the list of features we would love to cover in the alpha-3 version:

### API

- [ ] Start or stop collector through the dashboard

### Persistent connection

- [ ] live updates in dashboard

### Dashboard

- [ ] notifications as a toast

- [ ] Collector support in graphs

### TSDB

- [ ] saving time-series as binary data instead of JSON (for improving space complexity)

**Notes:**

1. We plan to stick to v1.0 to alpha-3 release. However, alpha-4 or alpha-5 should have v1.1 dashboard.

Please feel free to comment and update this board.

|

priority

|

board release alpha following are the list of features we would love to cover for alpha version api start or stop collector through the dashboard persistent connection live updates in dashboard dashboard notifications as a toast collector support in graphs tsdb saving time series as binary data instead of json for improving space complexity notes we plan to stick to to alpha release however alpha or alpha should have dashboard please feel free to comment and update this board

| 1

|

581,466

| 17,294,227,392

|

IssuesEvent

|

2021-07-25 11:49:27

|

BlueBubblesApp/BlueBubbles-Android-App

|

https://api.github.com/repos/BlueBubblesApp/BlueBubbles-Android-App

|

opened

|

Fix grey screen bug in server settings

|

Bug Difficulty: Easy Difficulty: Medium UX priority: high

|

Not sure why this happened/happens. Joel said it happened for him when he couldn't connect to the server.

The screenshot Joel shared suggests the issue is due to the socket stream/stream builder widget we use, simply because that's where the grey box starts.

|

1.0

|

Fix grey screen bug in server settings - Not sure why this happened/happens. Joel said it happened for him when he couldn't connect to the server.

The screenshot Joel shared suggests the issue is due to the socket stream/stream builder widget we use, simply because that's where the grey box starts.

|

priority

|

fix grey screen bug in server settings not sure why this happened happens joel said it happened for him when he couldn t connect to the server the screenshot joal shared suggests the issue is due to the socket stream stream builder widget we use just because that s where the grey box starts

| 1

|

738,052

| 25,543,317,690

|

IssuesEvent

|

2022-11-29 16:47:20

|

Canadian-Geospatial-Platform/geoview

|

https://api.github.com/repos/Canadian-Geospatial-Platform/geoview

|

opened

|

WMS layer URL error

|

bug-type: broken use case priority: high

|

https://geo.weather.gc.ca/geomet?lang=en&service=WMS&request=GetCapabilities&layers=REPS.DIAG.6_PRMM.ERGE10

This URL should be able to load, but the layer stepper says it is not valid.

|

1.0

|

WMS layer URL error - https://geo.weather.gc.ca/geomet?lang=en&service=WMS&request=GetCapabilities&layers=REPS.DIAG.6_PRMM.ERGE10

This URL should be able to load, but the layer stepper says it is not valid.

|

priority

|

wms layer url error this url should be abler to load but the layer stepper says it is not valid

| 1

|

559,093

| 16,549,849,444

|

IssuesEvent

|

2021-05-28 07:17:13

|

bryntum/support

|

https://api.github.com/repos/bryntum/support

|

closed

|

Memory leak when replacing project instance

|

bug high-priority premium resolved

|

https://www.bryntum.com/forum/viewtopic.php?p=84601#p84601

https://www.bryntum.com/forum/viewtopic.php?p=84602#p84602

http://lh/bryntum-suite/scheduler/examples/bigdataset/

Take a snapshot

<img width="1989" alt="Снимок экрана 2021-03-26 в 14 08 24" src="https://user-images.githubusercontent.com/57486733/112624121-3bf59980-8e3e-11eb-9843-9e1d3ff67ebf.png">

Click 10K and take a snapshot. See 11K in Memory

<img width="1986" alt="Снимок экрана 2021-03-26 в 14 10 05" src="https://user-images.githubusercontent.com/57486733/112624129-3ef08a00-8e3e-11eb-83c7-621009751da3.png">

Click about 10 times 5K and 10K. Take a snapshot. See all records are in memory.

<img width="1988" alt="Снимок экрана 2021-03-26 в 14 11 44" src="https://user-images.githubusercontent.com/57486733/112624126-3e57f380-8e3e-11eb-8733-0c9d1a12d844.png">

|

1.0

|

Memory leak when replacing project instance - https://www.bryntum.com/forum/viewtopic.php?p=84601#p84601

https://www.bryntum.com/forum/viewtopic.php?p=84602#p84602

http://lh/bryntum-suite/scheduler/examples/bigdataset/

Take a snapshot

<img width="1989" alt="Снимок экрана 2021-03-26 в 14 08 24" src="https://user-images.githubusercontent.com/57486733/112624121-3bf59980-8e3e-11eb-9843-9e1d3ff67ebf.png">

Click 10K and take a snapshot. See 11K in Memory

<img width="1986" alt="Снимок экрана 2021-03-26 в 14 10 05" src="https://user-images.githubusercontent.com/57486733/112624129-3ef08a00-8e3e-11eb-83c7-621009751da3.png">

Click about 10 times 5K and 10K. Take a snapshot. See all records are in memory.

<img width="1988" alt="Снимок экрана 2021-03-26 в 14 11 44" src="https://user-images.githubusercontent.com/57486733/112624126-3e57f380-8e3e-11eb-8733-0c9d1a12d844.png">

|

priority

|

memory leak when replacing project instance take a snapshot img width alt снимок экрана в src click and take a snapshot see in memory img width alt снимок экрана в src click about times and take a snapshot see all records are in memory img width alt снимок экрана в src

| 1

|

216,688

| 7,310,947,580

|

IssuesEvent

|

2018-02-28 16:21:23

|

getcanoe/canoe

|

https://api.github.com/repos/getcanoe/canoe

|

opened

|

Remove 'Import Wallet' Page from Behind The Password Wall

|

Priority: High

|

For users to be able to recover their wallets if they forgot their passwords.

|

1.0

|

Remove 'Import Wallet' Page from Behind The Password Wall - For users to be able to recover their wallets if they forgot their passwords.

|

priority

|

remove import wallet page from behind the password wall for users to be able to recover their wallets if they forgot their passwords

| 1

|

168,167

| 6,363,382,632

|

IssuesEvent

|

2017-07-31 17:16:47

|

robertgarrigos/ubercart

|

https://api.github.com/repos/robertgarrigos/ubercart

|

closed

|

Replace entity_flush_caches() function

|

priority - high type - bug

|

entity_flush_caches() function does not exist in backdrop anymore. What would be the best solution? Is there a backdrop function which could be used to replace entity_flush_caches()?

|

1.0

|

Replace entity_flush_caches() function - entity_flush_caches() function does not exist in backdrop anymore. What would be the best solution? Is there a backdrop function which could be used to replace entity_flush_caches()?

|

priority

|

replace entity flush caches function entity flush caches function does not exist in backdrop anymore what would be the best solution is there a backdrop function which could be used to replace entity flush caches

| 1

|

731,456

| 25,216,870,035

|

IssuesEvent

|

2022-11-14 09:49:00

|

ballerina-platform/ballerina-dev-website

|

https://api.github.com/repos/ballerina-platform/ballerina-dev-website

|

closed

|

Add the Pilcrow Sign to the Topics on the Home Page

|

Priority/High Area/UIUX Type/Improvement Area/CommonPages

|

**Description:**

Add the pilcrow sign to the topics on the home page.

**Related website/documentation area**

<!--Add one of the following: `Area/BBEs`, `Area/HomePageSamples`, `Area/LearnPages`, `Area/Blog`, `Area/CommonPages`,` Area/Backend`, `Area/UIUX`, and `Area/Workflows` -->

**Describe the problem(s)**

**Describe your solution(s)**

**Related Issues (optional):**

<!-- Any related issues such as sub tasks, issues reported in other repositories (e.g component repositories), similar problems, etc. -->

**Suggested Labels (optional):**

<!-- Optional comma separated list of suggested labels. Non committers can’t assign labels to issues, so this will help issue creators who are not a committer to suggest possible labels-->

**Suggested Assignees (optional):**

<!--Optional comma separated list of suggested team members who should attend the issue. Non committers can’t assign issues to assignees, so this will help issue creators who are not a committer to suggest possible assignees-->

|

1.0

|

Add the Pilcrow Sign to the Topics on the Home Page - **Description:**

Add the pilcrow sign to the topics on the home page.

**Related website/documentation area**

<!--Add one of the following: `Area/BBEs`, `Area/HomePageSamples`, `Area/LearnPages`, `Area/Blog`, `Area/CommonPages`,` Area/Backend`, `Area/UIUX`, and `Area/Workflows` -->

**Describe the problem(s)**

**Describe your solution(s)**

**Related Issues (optional):**

<!-- Any related issues such as sub tasks, issues reported in other repositories (e.g component repositories), similar problems, etc. -->

**Suggested Labels (optional):**

<!-- Optional comma separated list of suggested labels. Non committers can’t assign labels to issues, so this will help issue creators who are not a committer to suggest possible labels-->

**Suggested Assignees (optional):**

<!--Optional comma separated list of suggested team members who should attend the issue. Non committers can’t assign issues to assignees, so this will help issue creators who are not a committer to suggest possible assignees-->

|

priority

|

add the pilcrow sign to the topics on the home page description add the pilcrow sign to the topics on the home page related website documentation area describe the problem s describe your solution s related issues optional suggested labels optional suggested assignees optional

| 1

|

668,022

| 22,549,233,863

|

IssuesEvent

|

2022-06-27 02:27:52

|

solunareclipse1/ExtraPets2

|

https://api.github.com/repos/solunareclipse1/ExtraPets2

|

closed

|

biometric security on overseer rifle desync

|

bug high priority

|

no idea why. can cause fake damage to other players for clients. i hate networking

|

1.0

|

biometric security on overseer rifle desync - no idea why. can cause fake damage to other players for clients. i hate networking

|

priority

|

biometric security on overseer rifle desync no idea why can cause fake damage to other players for clients i hate networking

| 1

|

112,261

| 4,514,689,942

|

IssuesEvent

|

2016-09-05 01:03:42

|

idevelopment/RingMe

|

https://api.github.com/repos/idevelopment/RingMe

|

opened

|

Staff registration failed

|

bug High Priority

|

When I fill in the registration form and press save, it just redirects me back to the registration form.

Also, the user is not added to the database.

|

1.0

|

Staff registration failed - When I fill in the registration form and press save, it just redirects me back to the registration form.

Also, the user is not added to the database.

|

priority

|

staff registration failed when i fill in the registration form and press save it just redirects me to the registration form also the user is not added to the database

| 1

|

141,981

| 5,447,880,271

|

IssuesEvent

|

2017-03-07 14:40:42

|

fossasia/open-event-orga-server

|

https://api.github.com/repos/fossasia/open-event-orga-server

|

closed

|

Public Schedule Calendar View: Decrease width of header to 200px

|

enhancement Priority: High scheduling

|

Let's save some space to show more info on the screen. Please decrease the header of the table in the public schedule calendar view to 200px width.

|

1.0

|

Public Schedule Calendar View: Decrease width of header to 200px - Let's save some space to show more info on the screen. Please decrease the header of the table in the public schedule calendar view to 200px width.

|

priority

|

public schedule calendar view decrease width of header to let s save some space to show more info on the screen please decrease the header of the table in the public schedule calendar view to width

| 1

|

353,441

| 10,552,779,831

|

IssuesEvent

|

2019-10-03 15:48:24

|

kiwicom/schemathesis

|

https://api.github.com/repos/kiwicom/schemathesis

|

closed

|

Runner. Provide a way to setup authorization

|

Priority: High Type: Enhancement

|

At the moment there is no way to set up auth in `runner._execute_all_tests`, but it should be configurable.

We can start with these options:

- basic auth (for the CLI we can follow the cURL convention with a `--user` option)

- custom header (again, we can follow the cURL convention with a `--header` option) so users can add an auth header manually

As the next step, it would be nice to have more flexible code in `runner` via e.g. passing arguments to the `requests.Session` or auth callbacks to solve the case when a token expires (and it should be refreshed)
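The two starting options above can be sketched as follows. This is a minimal illustration, not the project's implementation: the helper names `parse_user`, `parse_header`, and `build_request_options` are hypothetical, and the only assumption taken from the issue is that the inputs follow the cURL-style `USER:PASSWORD` and `Name: value` formats.

```python
from typing import Dict, Iterable, Optional, Tuple


def parse_user(value: str) -> Tuple[str, str]:
    """Split a cURL-style ``USER:PASSWORD`` string into a (user, password) pair."""
    user, sep, password = value.partition(":")
    if not sep:
        raise ValueError("expected USER:PASSWORD")
    return user, password


def parse_header(value: str) -> Tuple[str, str]:
    """Split a cURL-style ``Name: value`` string into a (name, value) pair."""
    name, sep, content = value.partition(":")
    if not sep or not name.strip():
        raise ValueError("expected 'Name: value'")
    return name.strip(), content.strip()


def build_request_options(
    user: Optional[str] = None, headers: Iterable[str] = ()
) -> Dict[str, object]:
    """Collect auth settings into a dict (hypothetical helper for illustration)."""
    options: Dict[str, object] = {
        "headers": dict(parse_header(item) for item in headers)
    }
    if user is not None:
        # Only add basic-auth credentials when the user supplied them.
        options["auth"] = parse_user(user)
    return options
```

The resulting `auth` tuple and `headers` dict could then be applied to a `requests.Session` (for example via its `auth` attribute and `headers.update(...)`); how the runner would actually receive them is left open here.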

|

1.0

|

Runner. Provide a way to setup authorization - At the moment there is no way to set up auth in `runner._execute_all_tests`, but it should be configurable.

We can start with these options:

- basic auth (for the CLI we can follow the cURL convention with a `--user` option)

- custom header (again, we can follow the cURL convention with a `--header` option) so users can add an auth header manually

As the next step, it would be nice to have more flexible code in `runner` via e.g. passing arguments to the `requests.Session` or auth callbacks to solve the case when a token expires (and it should be refreshed)

|

priority

|

runner provide a way to setup authorization at the moment there is no way to setup auth in runner execute all tests but it should be configurable we can start with these options basic auth for cli we can follow curl convention user option custom header we can add again a curl convention header so users can add auth header manually as the next step it would be nice to have more flexible code in runner via e g passing arguments to the requests session or auth callbacks to solve the case when a token expires and it should be refreshed

| 1

|

75,805

| 3,476,154,828

|

IssuesEvent

|

2015-12-26 14:59:57

|

mlhwang/monsterappetite

|

https://api.github.com/repos/mlhwang/monsterappetite

|

closed

|

Condition button's actions

|

Priority - High Snackazon _bug

|

Even without a snack selection you can click on the "Made my choice" button and move on to the next page. This should not happen. It is REQUIRED to make a snack selection.

|

1.0

|

Condition button's actions - Even without a snack selection you can click on the "Made my choice" button and move on to the next page. This should not happen. It is REQUIRED to make a snack selection.

|

priority

|

condition button s actions even without a snack selection you can click on the made my choice button and move on to the next page this should not happen it is required to make a snack selection

| 1

|

249,487

| 7,962,458,268

|

IssuesEvent

|

2018-07-13 14:22:36

|

PushTracker/EvalApp

|

https://api.github.com/repos/PushTracker/EvalApp

|

closed

|

in iOS, ActionBar shifts up after a navigation back to home occurs from a view that experienced an orientation change.

|

bug high-priority ios

|

Changing orientation fixes the ActionBar back to normal position.

|

1.0

|

in iOS, ActionBar shifts up after a navigation back to home occurs from a view that experienced an orientation change. - Changing orientation fixes the ActionBar back to normal position.

|

priority

|

in ios actionbar shifts up after a navigation back to home occurs from a view that experienced an orientation change changing orientation fixes the actionbar back to normal position

| 1

|

636,753

| 20,608,170,294

|

IssuesEvent

|

2022-03-07 04:32:29

|

zulip/zulip

|

https://api.github.com/repos/zulip/zulip

|

opened

|

Improve image title and tooltips

|

help wanted area: general UI priority: high area: message feed display

|

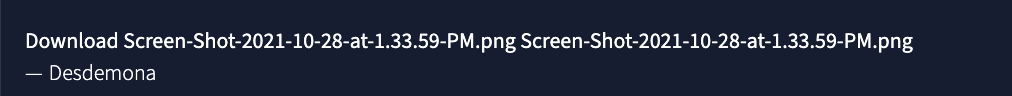

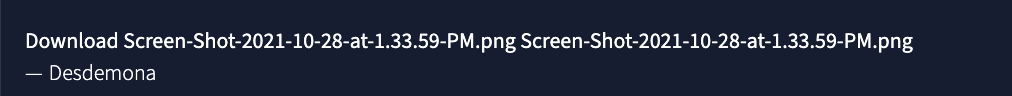

In the image lightbox, the title we currently display in the upper left is: `Download <file name> <image name>`. This looks quite odd/confusing, especially in the pretty common case when the file name and image name are the same (i.e. the user didn't rename the image after uploading):

To address this, we should do the following.

## Lightbox title:

1. Remove the word "Download".

2. If the image name is not empty, show just the image name, and not the file name.

3. If the image name is empty, show just the file name.

## Tooltip on lightbox title:

1. Change to a tippy tooltip

2. Update content to say:

```

<image name>

File name: <file name>

```

## Tooltip on image preview:

(This is currently the same as the tooltip in the lightbox.)

1. Change to a tippy tooltip. (We may want to play with the delay if it feels too invasive.)

2. Update content to say: `View or download <image title>`

[CZO discussion thread](https://chat.zulip.org/#narrow/stream/101-design/topic/image.20title.20in.20lightbox)
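The title and tooltip rules listed above can be sketched as plain string functions. This is only an illustration in Python rather than the project's frontend code; the function names `lightbox_title`, `lightbox_tooltip`, and `preview_tooltip` are hypothetical, and "empty" is assumed to mean an empty string.

```python
def lightbox_title(image_name: str, file_name: str) -> str:
    """Prefer the image name; fall back to the file name when it is empty."""
    return image_name if image_name else file_name


def lightbox_tooltip(image_name: str, file_name: str) -> str:
    """Two-line tooltip: the image name, then the file name on its own line."""
    return f"{image_name}\nFile name: {file_name}"


def preview_tooltip(image_title: str) -> str:
    """Tooltip shown on the image preview in the message feed."""
    return f"View or download {image_title}"
```

For an image uploaded as `IMG_1.png` and never renamed, `lightbox_title("", "IMG_1.png")` yields just the file name, which avoids showing the same name twice.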

|

1.0

|

Improve image title and tooltips - In the image lightbox, the title we currently display in the upper left is: `Download <file name> <image name>`. This looks quite odd/confusing, especially in the pretty common case when the file name and image name are the same (i.e. the user didn't rename the image after uploading):

To address this, we should do the following.

## Lightbox title:

1. Remove the word "Download".

2. If the image name is not empty, show just the image name, and not the file name.

3. If the image name is empty, show just the file name.

## Tooltip on lightbox title:

1. Change to a tippy tooltip

2. Update content to say:

```

<image name>

File name: <file name>

```

## Tooltip on image preview:

(This is currently the same as the tooltip in the lightbox.)

1. Change to a tippy tooltip. (We may want to play with the delay if it feels too invasive.)

2. Update content to say: `View or download <image title>`

[CZO discussion thread](https://chat.zulip.org/#narrow/stream/101-design/topic/image.20title.20in.20lightbox)

|

priority

|

improve image title and tooltips in the image lightbox the title we currently display in the upper left is download this looks quite odd confusing especially in the pretty common case when the file name and image name are the same i e the user didn t rename the image after uploading to address this we should do the following lightbox title remove the word download if the image name is not empty show just the image name and not the file name if the image name is empty show just the file name tooltip on lightbox title change to a tippy tooltip update content to say file name tooltip on image preview this is currently the same as the tooltip in the lightbox change to a tippy tooltip we may want to play with the delay if it feels too invasive update content to say view or download

| 1

|

707,112

| 24,295,756,512

|

IssuesEvent

|

2022-09-29 09:54:41

|

bigbio/pmultiqc

|

https://api.github.com/repos/bigbio/pmultiqc

|

closed

|

Delta mass plot labelling wrong

|

bug high-priority

|

In the beginning, "count" is selected but "frequency" is shown.

Tooltips always show "frequency".

|

1.0

|

Delta mass plot labelling wrong - In the beginning, "count" is selected but "frequency" is shown.

Tooltips always show "frequency".

|

priority

|

delta mass plot labelling wrong in the beginning count is selected but frequency is shown tooltips always show frequency

| 1

|

41,556

| 2,869,060,625

|

IssuesEvent

|

2015-06-05 23:00:52

|

dart-lang/observe

|

https://api.github.com/repos/dart-lang/observe

|

closed

|

reconcile package:observe and observe-js API

|

Area-Polymer enhancement Fixed Priority-High

|

<a href="https://github.com/jmesserly"><img src="https://avatars.githubusercontent.com/u/1081711?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [jmesserly](https://github.com/jmesserly)**

_Originally opened as dart-lang/sdk#13554_

----

Now that Polymer.js includes observe-js (it was previously just a set of utilities at https://github.com/rafaelw/ChangeSummary), it would be nice to align APIs with Dart's package:observe where it makes sense.

\* PathObserver is in pretty good shape.

\* CompoundBinding should be CompoundPathObserver, refactor APIs

\* ListPathObserver should be ListReduction and get the reduceFn.

\* add/expose: Observer, ListObserver, ObjectObserver

\* expose: ListSplice

\* possibly expose Path... but needs a new name.

|

1.0

|

reconcile package:observe and observe-js API - <a href="https://github.com/jmesserly"><img src="https://avatars.githubusercontent.com/u/1081711?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [jmesserly](https://github.com/jmesserly)**

_Originally opened as dart-lang/sdk#13554_

----

Now that Polymer.js includes observe-js (it was previously just a set of utilities at https://github.com/rafaelw/ChangeSummary), it would be nice to align APIs with Dart's package:observe where it makes sense.

\* PathObserver is in pretty good shape.

\* CompoundBinding should be CompoundPathObserver, refactor APIs

\* ListPathObserver should be ListReduction and get the reduceFn.

\* add/expose: Observer, ListObserver, ObjectObserver

\* expose: ListSplice

\* possibly expose Path... but needs a new name.

|

priority

|

reconcile package observe and observe js api issue by originally opened as dart lang sdk now that polymer js includes observe js it was previously just a set of utilities at it would be nice to align apis with dart s package observe where it makes sense pathobserver is in pretty good shape compoundbinding should be compoundpathobserver refactor apis listpathobserver should be listreduction and get the reducefn add expose observer listobserver objectobserver expose listsplice possibly expose path but needs a new name

| 1

|

714,129

| 24,551,922,892

|

IssuesEvent

|

2022-10-12 13:14:43

|

owncloud/web

|

https://api.github.com/repos/owncloud/web

|

closed

|

Shared via link > "parent folder" shows "Resource not found" on click

|

Type:Bug Priority:p2-high GA-Blocker

|

### Steps to reproduce

1. receive a share

2. go to "shares" > "shared with me" > create a link for the file that was shared with you

3. go to "shares" > "shared via link" > click on the parent folder of the shared resource

4. View shows "Resource not found"

https://user-images.githubusercontent.com/26610733/181061204-6d205bdf-f99c-486a-b65b-cc2e813cad9a.mp4

### Expected behaviour

show "Shares" > "Shared with me" > "highlight resource"

### Actual behaviour

View shows "Resource not found"

|

1.0

|

Shared via link > "parent folder" shows "Resource not found" on click -

### Steps to reproduce

1. receive a share

2. go to "shares" > "shared with me" > create a link for the file that was shared with you

3. go to "shares" > "shared via link" > click on the parent folder of the shared resource

4. View shows "Resource not found"

https://user-images.githubusercontent.com/26610733/181061204-6d205bdf-f99c-486a-b65b-cc2e813cad9a.mp4

### Expected behaviour

show "Shares" > "Shared with me" > "highlight resource"

### Actual behaviour

View shows "Resource not found"

|

priority

|

shared via link parent folder shows resource not found on click steps to reproduce receive a share go to shares shared with me create a link for the with you shared file go to shares shared via link click on the parent folder of the shared resource view shows resource not found expected behaviour show shares shared with me highlight resource actual behaviour view shows resource not found

| 1

|

5,795

| 2,579,760,544

|

IssuesEvent

|

2015-02-13 13:08:33

|

pufexi/multiorder

|

https://api.github.com/repos/pufexi/multiorder

|

opened

|

Remove the ID from order entry via the select box

|

easy task :-) high priority

|

It's great that items can now be entered by ID, but please drop the ID from the select box after all, because then items can be entered in the select box by typing the first letter of the item name on the keyboard; if the person entering the order doesn't know the ID, they won't have to scroll like crazy.

It would also be nice if ENTER for adding an order worked in the SELECTBOX as well; right now it only works in the 2 inputs. If that's needlessly difficult, don't bother.

|

1.0

|

Remove the ID from order entry via the select box - It's great that items can now be entered by ID, but please drop the ID from the select box after all, because then items can be entered in the select box by typing the first letter of the item name on the keyboard; if the person entering the order doesn't know the ID, they won't have to scroll like crazy.

It would also be nice if ENTER for adding an order worked in the SELECTBOX as well; right now it only works in the 2 inputs. If that's needlessly difficult, don't bother.

|

priority

|

remove the id from order entry via the select box it s great that items can now be entered by id but please drop the id from the select box after all because then items can be entered in the select box by typing the first letter of the item name on the keyboard if the person entering the order doesn t know the id they won t have to scroll like crazy it would also be nice if enter for adding an order worked in the selectbox as well right now it only works in the inputs if that s needlessly difficult don t bother

| 1

|

347,600

| 10,431,897,447

|

IssuesEvent

|

2019-09-17 10:02:44

|

OpenSRP/opensrp-client-chw-anc

|

https://api.github.com/repos/OpenSRP/opensrp-client-chw-anc

|

closed

|

Birth vaccines should not appear as a PNC task if birth vaccines are recorded in Pregnancy Outcome form

|

High Priority PNC bug

|

This was reported by the client.

Steps to reproduce:

- Open a record for an ANC woman and open the Pregnancy Outcome form

- Record that the outcome is a Live Birth and, at the bottom of the form, record that the two birth vaccines, BCG and OPV 0, were given (enter a date for each vaccine)

- (Note: make sure you put the delivery date as 3 days ago, so that the first PNC home visit shows up)

- Save the form, then open the PNC profile for the woman and open the PNC visit form

- The birth vaccines are shown as a task in the PNC home visit, when they shouldn't be, because the birth vaccines were already recorded.

To pass QA:

- [x] The above steps should result in no birth vaccine task in the first PNC home visit

- [x] This should work in both English and French versions

|

1.0

|

Birth vaccines should not appear as a PNC task if birth vaccines are recorded in Pregnancy Outcome form - This was reported by the client.

Steps to reproduce:

- Open a record for an ANC woman and open the Pregnancy Outcome form

- Record that the outcome is a Live Birth and, at the bottom of the form, record that the two birth vaccines, BCG and OPV 0, were given (enter a date for each vaccine)

- (Note: make sure you put the delivery date as 3 days ago, so that the first PNC home visit shows up)

- Save the form, then open the PNC profile for the woman and open the PNC visit form

- The birth vaccines are shown as a task in the PNC home visit, when they shouldn't be, because the birth vaccines were already recorded.

To pass QA:

- [x] The above steps should result in no birth vaccine task in the first PNC home visit

- [x] This should work in both English and French versions

|

priority

|

birth vaccines should not appear as a pnc task if birth vaccines are recorded in pregnancy outcome form this was reported by the client steps to reproduce open a record for an anc woman and open the pregnancy outcome form record the outcome is a live birth and at the bottom of the form record that the two birth vaccines bcg and opv were given enter a date for each vaccine note make sure you put the delivery date as days ago so that the first pnc home visit shows up save the form then open the pnc profile for the woman and open the pnc visit form the birth vaccines are shown as a task in the pnc home visit when they shouldn t be because the birth vaccines were already recorded to pass qa the above steps should result in no birth vaccine task in the first pnc home visit this should work in both english and french versions

| 1

|

351,324

| 10,515,687,738

|

IssuesEvent

|

2019-09-28 11:59:47

|

HW-PlayersPatch/Development

|

https://api.github.com/repos/HW-PlayersPatch/Development

|

closed

|

SP Voiceactor Code

|

Priority1: High Status3: Actionable Type2: Bug Type4: Campaign

|

# commands.lua / SpeechRaceHelper

-race.lua is written by SpeechRaceHelper(), which is currently only run by MP, not SP.

-commands.lua first looks for race.lua (SP and MP); if it doesn't exist, it uses the default table written in commands.lua.

-The problem is that any other mod could also write to race.lua with different races. A user playing SP would then get scrambled audio if the races are in a different order (I tested on 9/28 and confirmed). To fix this, every SP mission MUST call SpeechRaceHelper(), or we need some other solution.

# Extra Races (skip)

Maybe make dual command and observer races filtered out from SP via tags or whatever it is. I was thinking SP only races are filtered out from MP, but maybe not per the logs below?

HwRM.log SP:

Race Filtering: SINGLEPLAYER rules - @SinglePlayer

17 Races Discovered

HwRM.log MP:

17 Races Discovered

Race Filtering: DEATHMATCH rules - @Deathmatch,Extras

Edit: ya forget the extra races thing, no filtering outside of the MP gamemodes. SP only loads the races needed for the mission so no baggage.

|

1.0

|

SP Voiceactor Code - # commands.lua / SpeechRaceHelper

-race.lua is written by SpeechRaceHelper(), which is currently only run by MP, not SP.

-commands.lua first looks for race.lua (SP and MP); if it doesn't exist, it uses the default table written in commands.lua.

-The problem is that any other mod could also write to race.lua with different races. A user playing SP would then get scrambled audio if the races are in a different order (I tested on 9/28 and confirmed). To fix this, every SP mission MUST call SpeechRaceHelper(), or we need some other solution.

# Extra Races (skip)

Maybe make dual command and observer races filtered out from SP via tags or whatever it is. I was thinking SP only races are filtered out from MP, but maybe not per the logs below?

HwRM.log SP:

Race Filtering: SINGLEPLAYER rules - @SinglePlayer

17 Races Discovered

HwRM.log MP:

17 Races Discovered

Race Filtering: DEATHMATCH rules - @Deathmatch,Extras

Edit: ya forget the extra races thing, no filtering outside of the MP gamemodes. SP only loads the races needed for the mission so no baggage.

|

priority

|

sp voiceactor code commands lua speechracehelper race lua is written by speechracehelper which is currently only ran by mp not sp commands lua first looks for race lua sp and mp if it doesn t exist it uses the default table written in commands lua the problem is any other mod could also write to race lua with different races then the user playing sp would have screwed up audio if the races are in a different order i tested on and confirmed to fix every sp mission must call speechracehelper or some other solution extra races skip maybe make dual command and observer races filtered out from sp via tags or whatever it is i was thinking sp only races are filtered out from mp but maybe not per the logs below hwrm log sp race filtering singleplayer rules singleplayer races discovered hwrm log mp races discovered race filtering deathmatch rules deathmatch extras edit ya forget the extra races thing no filtering outside of the mp gamemodes sp only loads the races needed for the mission so no baggage

| 1

|

424,372

| 12,309,849,170

|

IssuesEvent

|

2020-05-12 09:37:33

|

geocollections/sarv-edit

|

https://api.github.com/repos/geocollections/sarv-edit

|

closed

|

Cannot edit taxon_list records and other issues

|

HIGH PRIORITY bug

|

In the sample view, in the taxon subform, the user cannot edit records; the error is 'cant change field preparation'.

The preparation field is correct in the config, but preparation_number should be removed from the app, since it is not in the models. Example record: https://edit2.geocollections.info/sample/174106

- In autocomplete fields the selection list opens behind the popup and cannot be seen. This is a recent bug affecting all forms.

- The taxon field is not required, but currently the edit button is disabled if taxon is empty. It should be possible to save a record without a related taxon.

- Also, the taxon and taxon_txt fields should be on separate rows and take the full popup width.

|

1.0

|

Cannot edit taxon_list records and other issues - In the sample view, in the taxon subform, the user cannot edit records; the error is 'cant change field preparation'.

The preparation field is correct in the config, but preparation_number should be removed from the app, since it is not in the models. Example record: https://edit2.geocollections.info/sample/174106

- In autocomplete fields the selection list opens behind the popup and cannot be seen. This is a recent bug affecting all forms.

- The taxon field is not required, but currently the edit button is disabled if taxon is empty. It should be possible to save a record without a related taxon.

- Also, the taxon and taxon_txt fields should be on separate rows and take the full popup width.

|

priority

|

cannot edit taxon list records and other issues in sample view in taxon subform user cannot edit records with error cant change field preparation preparation field is correct in config but preparation number should be removed from app it is not in the models example record in autocomplete field the selection opens behind popup and cannot be seen this is recent bug affecting all forms taxon field is not required but currently edit button is disabled if taxon is empty should be possible to save record without related taxon also taxon and taxon txt fields should be on separate rows and take full popup width

| 1

|

150,077

| 5,735,949,591

|

IssuesEvent

|

2017-04-22 03:13:28

|

Angblah/Comparator

|

https://api.github.com/repos/Angblah/Comparator

|

closed

|

Adding/Deleting Item/Attribute UI

|

Priority: High Stack: UI Status: Completed Type: Feature

|

Updating the UI for adding and deleting things from the workspace.

|

1.0

|

Adding/Deleting Item/Attribute UI - Updating the UI for adding and deleting things from the workspace.

|

priority

|

adding deleting item attribute ui updating the ui for adding and deleting things from the workspace

| 1

|

539,003

| 15,782,041,919

|

IssuesEvent

|

2021-04-01 12:15:47

|

michaelrsweet/htmldoc

|

https://api.github.com/repos/michaelrsweet/htmldoc

|

closed

|

AddressSanitizer: heap-buffer-overflow on render_table_row() ps-pdf.cxx:6123:34

|

bug priority-high

|

Hello, while fuzzing htmldoc, I found a heap-buffer-overflow in render_table_row() at ps-pdf.cxx:6123:34.

- test platform

htmldoc Version 1.9.12 git [master 6898d0a]

OS :Ubuntu 20.04.1 LTS x86_64

kernel: 5.4.0-53-generic

compiler: clang version 10.0.0-4ubuntu1

reproduced:

htmldoc -f demo.pdf poc7.html

poc (zipped for upload):

[poc7.zip](https://github.com/michaelrsweet/htmldoc/files/5872217/poc7.zip)

```

=================================================================

==38248==ERROR: AddressSanitizer: heap-buffer-overflow on address 0x625000002100 at pc 0x00000059260e bp 0x7fffa3362670 sp 0x7fffa3362668

READ of size 8 at 0x625000002100 thread T0

#0 0x59260d in render_table_row(hdtable_t&, tree_str***, int, unsigned char*, float, float, float, float, float*, float*, int*) /home//htmldoc_sani/htmldoc/ps-pdf.cxx:6123:34

#1 0x588630 in parse_table(tree_str*, float, float, float, float, float*, float*, int*, int) /home//htmldoc_sani/htmldoc/ps-pdf.cxx:7081:5

#2 0x558013 in parse_doc(tree_str*, float*, float*, float*, float*, float*, float*, int*, tree_str*, int*) /home//htmldoc_sani/htmldoc/ps-pdf.cxx:4167:11

#3 0x556c54 in parse_doc(tree_str*, float*, float*, float*, float*, float*, float*, int*, tree_str*, int*) /home//htmldoc_sani/htmldoc/ps-pdf.cxx:4081:9

#4 0x556c54 in parse_doc(tree_str*, float*, float*, float*, float*, float*, float*, int*, tree_str*, int*) /home//htmldoc_sani/htmldoc/ps-pdf.cxx:4081:9

#5 0x54f90e in pspdf_export /home//htmldoc_sani/htmldoc/ps-pdf.cxx:803:3

#6 0x53c845 in main /home//htmldoc_sani/htmldoc/htmldoc.cxx:1291:3

#7 0x7f52a6b3e0b2 in __libc_start_main /build/glibc-eX1tMB/glibc-2.31/csu/../csu/libc-start.c:308:16

#8 0x41f8bd in _start (/home//htmldoc_sani/htmldoc/htmldoc+0x41f8bd)

0x625000002100 is located 32 bytes to the right of 8160-byte region [0x625000000100,0x6250000020e0)

allocated by thread T0 here:

#0 0x4eea4e in realloc /home/goushi/work/libfuzzer-workshop/src/llvm/projects/compiler-rt/lib/asan/asan_malloc_linux.cc:165

#1 0x55d96b in check_pages(int) /home//htmldoc_sani/htmldoc/ps-pdf.cxx:8804:24

SUMMARY: AddressSanitizer: heap-buffer-overflow /home//htmldoc_sani/htmldoc/ps-pdf.cxx:6123:34 in render_table_row(hdtable_t&, tree_str***, int, unsigned char*, float, float, float, float, float*, float*, int*)

Shadow bytes around the buggy address:

0x0c4a7fff83d0: 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00

0x0c4a7fff83e0: 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00

0x0c4a7fff83f0: 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00

0x0c4a7fff8400: 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00

0x0c4a7fff8410: 00 00 00 00 00 00 00 00 00 00 00 00 fa fa fa fa

=>0x0c4a7fff8420:[fa]fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa

0x0c4a7fff8430: fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa

0x0c4a7fff8440: fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa

0x0c4a7fff8450: fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa

0x0c4a7fff8460: fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa

0x0c4a7fff8470: fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa

Shadow byte legend (one shadow byte represents 8 application bytes):

Addressable: 00

Partially addressable: 01 02 03 04 05 06 07

Heap left redzone: fa

Freed heap region: fd

Stack left redzone: f1

Stack mid redzone: f2

Stack right redzone: f3

Stack after return: f5

Stack use after scope: f8

Global redzone: f9

Global init order: f6

Poisoned by user: f7

Container overflow: fc

Array cookie: ac

Intra object redzone: bb

ASan internal: fe

Left alloca redzone: ca

Right alloca redzone: cb

Shadow gap: cc

==38248==ABORTING

```

```

──── source:ps-pdf.cxx+8754 ────

8749 break;

8750 }

8751

8752 if (insert)

8753 {

→ 8754 if (insert->prev)

8755 insert->prev->next = r;

8756 else

8757 pages[page].start = r;

8758

8759 r->prev = insert->prev;

─ threads ────

[#0] Id 1, Name: "htmldoc", stopped 0x415e8c in new_render (), reason: SIGSEGV

── trace ────

[#0] 0x415e8c → new_render(page=0x14, type=0x2, x=0, y=-6.3660128850113321e+24, width=1.2732025770022664e+25, height=6.3660128850113321e+24, data=0x7fffffff6a40, insert=0x682881c800000000)

[#1] 0x4267e2 → render_table_row(table=@0x7fffffff6d98, cells=<optimized out>, row=<optimized out>, height_var=<optimized out>, left=0, right=0, bottom=<optimized out>, top=<optimized out>, x=<optimized out>, y=<optimized out>, page=<optimized out>)

[#2] 0x424519 → parse_table(t=<optimized out>, left=<optimized out>, right=<optimized out>, bottom=<optimized out>, top=<optimized out>, x=<optimized out>, y=<optimized out>, page=<optimized out>, needspace=<optimized out>)

[#3] 0x4157c0 → parse_doc(t=0x918c20, left=0x7fffffffb6e8, right=0x7fffffffb6e4, bottom=0x7fffffffb6ac, top=<optimized out>, x=<optimized out>, y=0x7fffffffb674, page=0x7fffffffb684, cpara=0x917cc0, needspace=0x7fffffffb6d4)

[#4] 0x414964 → parse_doc(t=0x918390, left=<optimized out>, right=<optimized out>, bottom=<optimized out>, top=0x7fffffffb69c, x=0x7fffffffb6ec, y=<optimized out>, page=<optimized out>, cpara=<optimized out>, needspace=<optimized out>)

[#5] 0x414964 → parse_doc(t=0x9171d0, left=<optimized out>, right=<optimized out>, bottom=<optimized out>, top=0x7fffffffb69c, x=0x7fffffffb6ec, y=<optimized out>, page=<optimized out>, cpara=<optimized out>, needspace=<optimized out>)

[#6] 0x411980 → pspdf_export(document=<optimized out>, toc=<optimized out>)

[#7] 0x408e89 → main(argc=<optimized out>, argv=<optimized out>)

──

```

|

1.0

|

AddressSanitizer: heap-buffer-overflow on render_table_row() ps-pdf.cxx:6123:34 - Hello, while fuzzing htmldoc, I found a heap-buffer-overflow in render_table_row() at ps-pdf.cxx:6123:34.

- test platform

htmldoc Version 1.9.12 git [master 6898d0a]

OS :Ubuntu 20.04.1 LTS x86_64

kernel: 5.4.0-53-generic

compiler: clang version 10.0.0-4ubuntu1

reproduced:

htmldoc -f demo.pdf poc7.html

poc (zipped for upload):

[poc7.zip](https://github.com/michaelrsweet/htmldoc/files/5872217/poc7.zip)

```

=================================================================

==38248==ERROR: AddressSanitizer: heap-buffer-overflow on address 0x625000002100 at pc 0x00000059260e bp 0x7fffa3362670 sp 0x7fffa3362668

READ of size 8 at 0x625000002100 thread T0

#0 0x59260d in render_table_row(hdtable_t&, tree_str***, int, unsigned char*, float, float, float, float, float*, float*, int*) /home//htmldoc_sani/htmldoc/ps-pdf.cxx:6123:34

#1 0x588630 in parse_table(tree_str*, float, float, float, float, float*, float*, int*, int) /home//htmldoc_sani/htmldoc/ps-pdf.cxx:7081:5

#2 0x558013 in parse_doc(tree_str*, float*, float*, float*, float*, float*, float*, int*, tree_str*, int*) /home//htmldoc_sani/htmldoc/ps-pdf.cxx:4167:11

#3 0x556c54 in parse_doc(tree_str*, float*, float*, float*, float*, float*, float*, int*, tree_str*, int*) /home//htmldoc_sani/htmldoc/ps-pdf.cxx:4081:9

#4 0x556c54 in parse_doc(tree_str*, float*, float*, float*, float*, float*, float*, int*, tree_str*, int*) /home//htmldoc_sani/htmldoc/ps-pdf.cxx:4081:9

#5 0x54f90e in pspdf_export /home//htmldoc_sani/htmldoc/ps-pdf.cxx:803:3

#6 0x53c845 in main /home//htmldoc_sani/htmldoc/htmldoc.cxx:1291:3

#7 0x7f52a6b3e0b2 in __libc_start_main /build/glibc-eX1tMB/glibc-2.31/csu/../csu/libc-start.c:308:16

#8 0x41f8bd in _start (/home//htmldoc_sani/htmldoc/htmldoc+0x41f8bd)

0x625000002100 is located 32 bytes to the right of 8160-byte region [0x625000000100,0x6250000020e0)

allocated by thread T0 here:

#0 0x4eea4e in realloc /home/goushi/work/libfuzzer-workshop/src/llvm/projects/compiler-rt/lib/asan/asan_malloc_linux.cc:165

#1 0x55d96b in check_pages(int) /home//htmldoc_sani/htmldoc/ps-pdf.cxx:8804:24

SUMMARY: AddressSanitizer: heap-buffer-overflow /home//htmldoc_sani/htmldoc/ps-pdf.cxx:6123:34 in render_table_row(hdtable_t&, tree_str***, int, unsigned char*, float, float, float, float, float*, float*, int*)

Shadow bytes around the buggy address:

0x0c4a7fff83d0: 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00

0x0c4a7fff83e0: 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00

0x0c4a7fff83f0: 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00

0x0c4a7fff8400: 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00

0x0c4a7fff8410: 00 00 00 00 00 00 00 00 00 00 00 00 fa fa fa fa

=>0x0c4a7fff8420:[fa]fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa

0x0c4a7fff8430: fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa

0x0c4a7fff8440: fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa

0x0c4a7fff8450: fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa

0x0c4a7fff8460: fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa

0x0c4a7fff8470: fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa

Shadow byte legend (one shadow byte represents 8 application bytes):

Addressable: 00

Partially addressable: 01 02 03 04 05 06 07

Heap left redzone: fa

Freed heap region: fd

Stack left redzone: f1

Stack mid redzone: f2

Stack right redzone: f3

Stack after return: f5

Stack use after scope: f8

Global redzone: f9

Global init order: f6

Poisoned by user: f7

Container overflow: fc

Array cookie: ac

Intra object redzone: bb

ASan internal: fe

Left alloca redzone: ca

Right alloca redzone: cb

Shadow gap: cc

==38248==ABORTING

```

```

──── source:ps-pdf.cxx+8754 ────

8749 break;

8750 }

8751

8752 if (insert)

8753 {

→ 8754 if (insert->prev)

8755 insert->prev->next = r;

8756 else

8757 pages[page].start = r;

8758

8759 r->prev = insert->prev;

─ threads ────

[#0] Id 1, Name: "htmldoc", stopped 0x415e8c in new_render (), reason: SIGSEGV

── trace ────

[#0] 0x415e8c → new_render(page=0x14, type=0x2, x=0, y=-6.3660128850113321e+24, width=1.2732025770022664e+25, height=6.3660128850113321e+24, data=0x7fffffff6a40, insert=0x682881c800000000)

[#1] 0x4267e2 → render_table_row(table=@0x7fffffff6d98, cells=<optimized out>, row=<optimized out>, height_var=<optimized out>, left=0, right=0, bottom=<optimized out>, top=<optimized out>, x=<optimized out>, y=<optimized out>, page=<optimized out>)

[#2] 0x424519 → parse_table(t=<optimized out>, left=<optimized out>, right=<optimized out>, bottom=<optimized out>, top=<optimized out>, x=<optimized out>, y=<optimized out>, page=<optimized out>, needspace=<optimized out>)

[#3] 0x4157c0 → parse_doc(t=0x918c20, left=0x7fffffffb6e8, right=0x7fffffffb6e4, bottom=0x7fffffffb6ac, top=<optimized out>, x=<optimized out>, y=0x7fffffffb674, page=0x7fffffffb684, cpara=0x917cc0, needspace=0x7fffffffb6d4)

[#4] 0x414964 → parse_doc(t=0x918390, left=<optimized out>, right=<optimized out>, bottom=<optimized out>, top=0x7fffffffb69c, x=0x7fffffffb6ec, y=<optimized out>, page=<optimized out>, cpara=<optimized out>, needspace=<optimized out>)

[#5] 0x414964 → parse_doc(t=0x9171d0, left=<optimized out>, right=<optimized out>, bottom=<optimized out>, top=0x7fffffffb69c, x=0x7fffffffb6ec, y=<optimized out>, page=<optimized out>, cpara=<optimized out>, needspace=<optimized out>)

[#6] 0x411980 → pspdf_export(document=<optimized out>, toc=<optimized out>)

[#7] 0x408e89 → main(argc=<optimized out>, argv=<optimized out>)

──

```

|

priority

|

addresssanitizer heap buffer overflow on render table row ps pdf cxx hello while fuzzing htmldoc i found a heap buffer overflow in the render table row ps pdf cxx test platform htmldoc version git os ubuntu lts kernel generic compiler clang version reproduced htmldoc f demo pdf html poc zipped for update error addresssanitizer heap buffer overflow on address at pc bp sp read of size at thread in render table row hdtable t tree str int unsigned char float float float float float float int home htmldoc sani htmldoc ps pdf cxx in parse table tree str float float float float float float int int home htmldoc sani htmldoc ps pdf cxx in parse doc tree str float float float float float float int tree str int home htmldoc sani htmldoc ps pdf cxx in parse doc tree str float float float float float float int tree str int home htmldoc sani htmldoc ps pdf cxx in parse doc tree str float float float float float float int tree str int home htmldoc sani htmldoc ps pdf cxx in pspdf export home htmldoc sani htmldoc ps pdf cxx in main home htmldoc sani htmldoc htmldoc cxx in libc start main build glibc glibc csu csu libc start c in start home htmldoc sani htmldoc htmldoc is located bytes to the right of byte region allocated by thread here in realloc home goushi work libfuzzer workshop src llvm projects compiler rt lib asan asan malloc linux cc in check pages int home htmldoc sani htmldoc ps pdf cxx summary addresssanitizer heap buffer overflow home htmldoc sani htmldoc ps pdf cxx in render table row hdtable t tree str int unsigned char float float float float float float int shadow bytes around the buggy address fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa fa shadow byte legend one shadow byte represents application bytes addressable 
partially addressable heap left redzone fa freed heap region fd stack left redzone stack mid redzone stack right redzone stack after return stack use after scope global redzone global init order poisoned by user container overflow fc array cookie ac intra object redzone bb asan internal fe left alloca redzone ca right alloca redzone cb shadow gap cc aborting ──── source ps pdf cxx ──── break if insert → if insert prev insert prev next r else pages start r r prev insert prev ─ threads ──── id name htmldoc stopped in new render reason sigsegv ── trace ──── → new render page type x y width height data insert → render table row table cells row height var left right bottom top x y page → parse table t left right bottom top x y page needspace → parse doc t left right bottom top x y page cpara needspace → parse doc t left right bottom top x y page cpara needspace → parse doc t left right bottom top x y page cpara needspace → pspdf export document toc → main argc argv ──

| 1

|

725,856

| 24,978,227,531

|

IssuesEvent

|

2022-11-02 09:38:05

|

rmlockwood/FLExTrans

|

https://api.github.com/repos/rmlockwood/FLExTrans

|

closed

|

[Settings] The settings tool picks up the vernacular title instead of the analysis lang. title

|