Unnamed: 0

int64 0

832k

| id

float64 2.49B

32.1B

| type

stringclasses 1

value | created_at

stringlengths 19

19

| repo

stringlengths 5

112

| repo_url

stringlengths 34

141

| action

stringclasses 3

values | title

stringlengths 1

855

| labels

stringlengths 4

721

| body

stringlengths 1

261k

| index

stringclasses 13

values | text_combine

stringlengths 96

261k

| label

stringclasses 2

values | text

stringlengths 96

240k

| binary_label

int64 0

1

|

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

620,665

| 19,566,906,951

|

IssuesEvent

|

2022-01-04 02:40:42

|

bounswe/2021SpringGroup12

|

https://api.github.com/repos/bounswe/2021SpringGroup12

|

closed

|

Add Assignee Feature to Entities - Android

|

enhancement priority: high android

|

**Description**

- Add assignee part to the entity pages to be used in group goal

- Connect it with the code so that it is only active if the entity belongs to a group goal

- Change the entity data as well or create new entity data to be used for group goal

- It should have an option to add and remove assignees

- Make necessary server connections.

|

1.0

|

Add Assignee Feature to Entities - Android - **Description**

- Add assignee part to the entity pages to be used in group goal

- Connect it with the code so that it is only active if the entity belongs to a group goal

- Change the entity data as well or create new entity data to be used for group goal

- It should have an option to add and remove assignees

- Make necessary server connections.

|

priority

|

add assignee feature to entities android description add assignee part to the entity pages to be used in group goal connect it with the code so that it is only active if the entity belongs to a group goal change the entity data as well or create new entity data to be used for group goal it should have an option to add and remove assignees make necessary server connections

| 1

|

674,213

| 23,043,031,115

|

IssuesEvent

|

2022-07-23 13:00:09

|

Daniel123643/RTIBot

|

https://api.github.com/repos/Daniel123643/RTIBot

|

opened

|

Slash Command: /invite

|

feature high priority slash commands

|

**Details:**

* `/invite user <user>` invites a given `<user>` to the raid channel, giving them permissions to read and read message history.

* `/invite participants <channel>` invites everyone who signed up as a participant to a raid in the given `<channel>`.

- **NOTE:** This is completely new functionality!

* `/invite trainingrequests` is a subcommand group.

- `/invite trainingrequests wing <wing> [exclusive]` invites all people with training requests for the given `<wing>`, optionally with the `[exclusive]` argument that states people that need only THAT wing get invited **(autocompleted to Wing 1, Wing 2, Wing 3, Wing 4, Wing 5, Wing 6, Wing 7, or EoD Strikes)**.

- **NOTE:** This is new functionality, but it's similar to `TrainingRequestsByExclusiveWingsCommand`!

- /invite trainingrequests boss <boss> [exclusive]` invites all people with training requests for the given `<boss>`, optionally with the `[exclusive]` argument that states that people that need only THAT boss in the wing get invited **(autocompleted to all bosses we support in our training requests)**.

- **NOTE:** This is new functionality, but it's similar to `TrainingRequestsByBossCommand` and `TrainingRequestsByExclusiveBossCommand`!

**Current functionality:**

* `RaidInviteCommand`

**Notes:**

* Ensure that there's validation on the `<user>` and `<channel>` parameters (i.e. they accept only Discord users or channels).

* Error messages should be ephemeral.

|

1.0

|

Slash Command: /invite - **Details:**

* `/invite user <user>` invites a given `<user>` to the raid channel, giving them permissions to read and read message history.

* `/invite participants <channel>` invites everyone who signed up as a participant to a raid in the given `<channel>`.

- **NOTE:** This is completely new functionality!

* `/invite trainingrequests` is a subcommand group.

- `/invite trainingrequests wing <wing> [exclusive]` invites all people with training requests for the given `<wing>`, optionally with the `[exclusive]` argument that states people that need only THAT wing get invited **(autocompleted to Wing 1, Wing 2, Wing 3, Wing 4, Wing 5, Wing 6, Wing 7, or EoD Strikes)**.

- **NOTE:** This is new functionality, but it's similar to `TrainingRequestsByExclusiveWingsCommand`!

- /invite trainingrequests boss <boss> [exclusive]` invites all people with training requests for the given `<boss>`, optionally with the `[exclusive]` argument that states that people that need only THAT boss in the wing get invited **(autocompleted to all bosses we support in our training requests)**.

- **NOTE:** This is new functionality, but it's similar to `TrainingRequestsByBossCommand` and `TrainingRequestsByExclusiveBossCommand`!

**Current functionality:**

* `RaidInviteCommand`

**Notes:**

* Ensure that there's validation on the `<user>` and `<channel>` parameters (i.e. they accept only Discord users or channels).

* Error messages should be ephemeral.

|

priority

|

slash command invite details invite user invites a given to the raid channel giving them permissions to read and read message history invite participants invites everyone who signed up as a participant to a raid in the given note this is completely new functionality invite trainingrequests is a subcommand group invite trainingrequests wing invites all people with training requests for the given optionally with the argument that states people that need only that wing get invited autocompleted to wing wing wing wing wing wing wing or eod strikes note this is new functionality but it s similar to trainingrequestsbyexclusivewingscommand invite trainingrequests boss invites all people with training requests for the given optionally with the argument that states that people that need only that boss in the wing get invited autocompleted to all bosses we support in our training requests note this is new functionality but it s similar to trainingrequestsbybosscommand and trainingrequestsbyexclusivebosscommand current functionality raidinvitecommand notes ensure that there s validation on the and parameters i e they accept only discord users or channels error messages should be ephemeral

| 1

|

512,029

| 14,887,144,023

|

IssuesEvent

|

2021-01-20 17:54:43

|

ballerina-platform/ballerina-lang

|

https://api.github.com/repos/ballerina-platform/ballerina-lang

|

closed

|

Add documentation codeaction generates invalid cont for resources and methods

|

Area/LanguageServer Priority/High Team/Tooling Type/Bug

|

**Description:**

Add documentation codeaction generates invalid cont for resources and methods

**Steps to reproduce:**

**Affected Versions:**

2.0.0-SLP9-SNAPSHOT

**OS, DB, other environment details and versions:**

|

1.0

|

Add documentation codeaction generates invalid cont for resources and methods - **Description:**

Add documentation codeaction generates invalid cont for resources and methods

**Steps to reproduce:**

**Affected Versions:**

2.0.0-SLP9-SNAPSHOT

**OS, DB, other environment details and versions:**

|

priority

|

add documentation codeaction generates invalid cont for resources and methods description add documentation codeaction generates invalid cont for resources and methods steps to reproduce affected versions snapshot os db other environment details and versions

| 1

|

94,514

| 3,926,983,885

|

IssuesEvent

|

2016-04-23 08:10:42

|

sensebox/OpenSenseMap

|

https://api.github.com/repos/sensebox/OpenSenseMap

|

closed

|

Replace green location markers

|

priority high

|

Replace the green with red ones. People are thinking it means "running" and "not running".

|

1.0

|

Replace green location markers - Replace the green with red ones. People are thinking it means "running" and "not running".

|

priority

|

replace green location markers replace the green with red ones people are thinking it means running and not running

| 1

|

741,252

| 25,785,924,022

|

IssuesEvent

|

2022-12-09 20:26:44

|

uclahs-cds/public-tool-PipeVal

|

https://api.github.com/repos/uclahs-cds/public-tool-PipeVal

|

closed

|

Ubuntu 20.04 update Dockerfile fix

|

bug high priority

|

Fix Dockerfile to successfully build with the recently updated Ubuntu 20.04 version. There are certain issues with gcc and proper dependencies/libraries for building HTSlib

|

1.0

|

Ubuntu 20.04 update Dockerfile fix - Fix Dockerfile to successfully build with the recently updated Ubuntu 20.04 version. There are certain issues with gcc and proper dependencies/libraries for building HTSlib

|

priority

|

ubuntu update dockerfile fix fix dockerfile to successfully build with the recently updated ubuntu version there are certain issues with gcc and proper dependencies libraries for building htslib

| 1

|

279,524

| 8,667,202,970

|

IssuesEvent

|

2018-11-29 07:50:58

|

StrangeLoopGames/EcoIssues

|

https://api.github.com/repos/StrangeLoopGames/EcoIssues

|

reopened

|

[7.8.0 #Be3effa9] Not all species were present

|

High Priority Not reproduced

|

It's a QA-Pass Task and for the first time i got several species that haven't even been created (web page shows there never has been one):

<img width="361" alt="species" src="https://user-images.githubusercontent.com/25908592/48198277-9fa7c680-e358-11e8-8dfe-7b7d47c4bf87.png">

|

1.0

|

[7.8.0 #Be3effa9] Not all species were present - It's a QA-Pass Task and for the first time i got several species that haven't even been created (web page shows there never has been one):

<img width="361" alt="species" src="https://user-images.githubusercontent.com/25908592/48198277-9fa7c680-e358-11e8-8dfe-7b7d47c4bf87.png">

|

priority

|

not all species were present it s a qa pass task and for the first time i got several species that haven t even been created web page shows there never has been one img width alt species src

| 1

|

123,202

| 4,858,722,470

|

IssuesEvent

|

2016-11-13 08:50:53

|

vnaskos/lajarus

|

https://api.github.com/repos/vnaskos/lajarus

|

opened

|

Create the item model and entity

|

point: 1 priority: highest type: feature

|

Create the item model and entity classes which should have the same fields as in the item database table

|

1.0

|

Create the item model and entity - Create the item model and entity classes which should have the same fields as in the item database table

|

priority

|

create the item model and entity create the item model and entity classes which should have the same fields as in the item database table

| 1

|

310,885

| 9,525,303,589

|

IssuesEvent

|

2019-04-28 11:14:19

|

code4romania/monitorizare-vot-android

|

https://api.github.com/repos/code4romania/monitorizare-vot-android

|

closed

|

We need a way to notify the user that the answer was saved

|

android enhancement good first issue help wanted high priority may-release

|

After filling in the response for a question and moving to the next one, the answer is stored locally, but there is no feedback for the user. The user might get confused if they don't know that the answer was saved.

The answers are also synced with the server when the user returns to the forms list.

Add toast message / snackbar when the answer is saved locally (when the user navigates to the next question) with the text "Answer saved" / "Raspuns salvat"

Add toast message / snackbar when the answers are sent to the server (when the user returns to the forms screen) with the text "Answers synced with server" / "Raspunsuri sincronizate cu serverul"

Add toast message / snackbar when a note is saved with the text "Note saved" / "Nota salvata"

|

1.0

|

We need a way to notify the user that the answer was saved - After filling in the response for a question and moving to the next one, the answer is stored locally, but there is no feedback for the user. The user might get confused if they don't know that the answer was saved.

The answers are also synced with the server when the user returns to the forms list.

Add toast message / snackbar when the answer is saved locally (when the user navigates to the next question) with the text "Answer saved" / "Raspuns salvat"

Add toast message / snackbar when the answers are sent to the server (when the user returns to the forms screen) with the text "Answers synced with server" / "Raspunsuri sincronizate cu serverul"

Add toast message / snackbar when a note is saved with the text "Note saved" / "Nota salvata"

|

priority

|

we need a way to notify the user that the answer was saved after filling in the response for a question and moving to the next one the answer is stored locally but there is no feedback for the user the user might get confused if they don t know that the answer was saved the answers are also synced with the server when the user returns to the forms list add toast message snackbar when the answer is saved locally when the user navigates to the next question with the text answer saved raspuns salvat add toast message snackbar when the answers are sent to the server when the user returns to the forms screen with the text answers synced with server raspunsuri sincronizate cu serverul add toast message snackbar when a note is saved with the text note saved nota salvata

| 1

|

822,330

| 30,865,371,226

|

IssuesEvent

|

2023-08-03 07:40:02

|

red-hat-storage/ocs-ci

|

https://api.github.com/repos/red-hat-storage/ocs-ci

|

closed

|

s3 sync of the /test_objects dir at the s3cli pod fails with "File not found" errors

|

High Priority MCG Squad/Red

|

As described in [this BZ](https://bugzilla.redhat.com/show_bug.cgi?id=2227162), many MCG tests are failing while trying to upload files from the /test_objects dir in the s3cli pod:

```

Error during execution of command: oc -n openshift-storage rsh s3cli-0 sh -c "AWS_CA_BUNDLE=/cert/service-ca.crt AWS_ACCESS_KEY_ID=***** AWS_SECRET_ACCESS_KEY=***** AWS_DEFAULT_REGION=us-east-2 aws s3 --endpoint=***** sync /test_objects/ s3://oc-bucket-71316705b4034a0b9296ec69854586".

2023-07-28 12:36:58 Error is warning: Skipping file /test_objects/airbus.jpg. File does not exist.

2023-07-28 12:36:58 warning: Skipping file /test_objects/apple.mp4. File does not exist.

2023-07-28 12:36:58 warning: Skipping file /test_objects/bolder.jpg. File does not exist.

2023-07-28 12:36:58 warning: Skipping file /test_objects/book.txt. File does not exist.

2023-07-28 12:36:58 warning: Skipping file /test_objects/canada.jpg. File does not exist.

2023-07-28 12:36:58 warning: Skipping file /test_objects/danny.webm. File does not exist.

2023-07-28 12:36:58 warning: Skipping file /test_objects/enwik8. File does not exist.

```

For some unclear reason this directory does not have the executable permissions, which evidently causes this error (local attempts to s3 sync/cp files from a dir with 777 permission for example have succeeded)

|

1.0

|

s3 sync of the /test_objects dir at the s3cli pod fails with "File not found" errors - As described in [this BZ](https://bugzilla.redhat.com/show_bug.cgi?id=2227162), many MCG tests are failing while trying to upload files from the /test_objects dir in the s3cli pod:

```

Error during execution of command: oc -n openshift-storage rsh s3cli-0 sh -c "AWS_CA_BUNDLE=/cert/service-ca.crt AWS_ACCESS_KEY_ID=***** AWS_SECRET_ACCESS_KEY=***** AWS_DEFAULT_REGION=us-east-2 aws s3 --endpoint=***** sync /test_objects/ s3://oc-bucket-71316705b4034a0b9296ec69854586".

2023-07-28 12:36:58 Error is warning: Skipping file /test_objects/airbus.jpg. File does not exist.

2023-07-28 12:36:58 warning: Skipping file /test_objects/apple.mp4. File does not exist.

2023-07-28 12:36:58 warning: Skipping file /test_objects/bolder.jpg. File does not exist.

2023-07-28 12:36:58 warning: Skipping file /test_objects/book.txt. File does not exist.

2023-07-28 12:36:58 warning: Skipping file /test_objects/canada.jpg. File does not exist.

2023-07-28 12:36:58 warning: Skipping file /test_objects/danny.webm. File does not exist.

2023-07-28 12:36:58 warning: Skipping file /test_objects/enwik8. File does not exist.

```

For some unclear reason this directory does not have the executable permissions, which evidently causes this error (local attempts to s3 sync/cp files from a dir with 777 permission for example have succeeded)

|

priority

|

sync of the test objects dir at the pod fails with file not found errors as described in many mcg tests are failing while trying to upload files from the test objects dir in the pod error during execution of command oc n openshift storage rsh sh c aws ca bundle cert service ca crt aws access key id aws secret access key aws default region us east aws endpoint sync test objects oc bucket error is warning skipping file test objects airbus jpg file does not exist warning skipping file test objects apple file does not exist warning skipping file test objects bolder jpg file does not exist warning skipping file test objects book txt file does not exist warning skipping file test objects canada jpg file does not exist warning skipping file test objects danny webm file does not exist warning skipping file test objects file does not exist for some unclear reason this directory does not have the executable permissions which evidently causes this error local attempts to sync cp files from a dir with permission for example have succeeded

| 1

|

373,024

| 11,031,999,102

|

IssuesEvent

|

2019-12-06 19:06:45

|

jumptrading/FluentTerminal

|

https://api.github.com/repos/jumptrading/FluentTerminal

|

closed

|

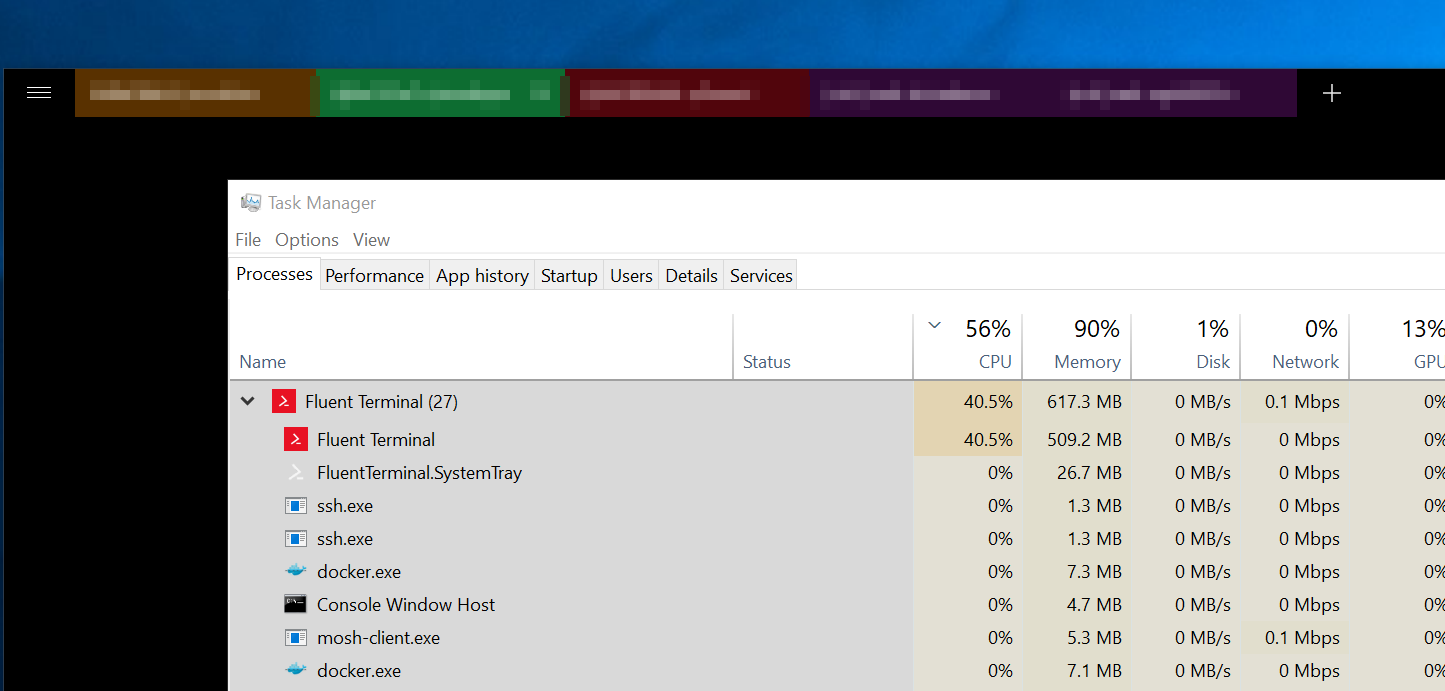

FluentTerminal starts using significant CPU after being left for some time

|

bug high-priority not reproducible

|

From @cjw296:

> hmm, weird one, haven't used my laptop much this afternoon, it's been docked and locked

came back to this:

> I get a blue spinning circle on the fluent window

See also logs in Slack thread

|

1.0

|

FluentTerminal starts using significant CPU after being left for some time - From @cjw296:

> hmm, weird one, haven't used my laptop much this afternoon, it's been docked and locked

came back to this:

> I get a blue spinning circle on the fluent window

See also logs in Slack thread

|

priority

|

fluentterminal starts using significant cpu after being left for some time from hmm weird one haven t used my laptop much this afternoon it s been docked and locked came back to this i get a blue spinning circle on the fluent window see also logs in slack thread

| 1

|

272,468

| 8,513,910,041

|

IssuesEvent

|

2018-10-31 17:10:18

|

CS2113-AY1819S1-W12-3/main

|

https://api.github.com/repos/CS2113-AY1819S1-W12-3/main

|

opened

|

App data/initialisation files not created at root folder [Ubuntu 18.04 LTS]

|

priority.high status.ongoing type.bug

|

On Ubuntu 18.04 LTS systems, files that are created when the app is first ran are not created at the root folder where the .jar file resides.

Instead, the files are always created at the user's home folder. (ie /home/user)

|

1.0

|

App data/initialisation files not created at root folder [Ubuntu 18.04 LTS] - On Ubuntu 18.04 LTS systems, files that are created when the app is first ran are not created at the root folder where the .jar file resides.

Instead, the files are always created at the user's home folder. (ie /home/user)

|

priority

|

app data initialisation files not created at root folder on ubuntu lts systems files that are created when the app is first ran are not created at the root folder where the jar file resides instead the files are always created at the user s home folder ie home user

| 1

|

352,397

| 10,541,607,053

|

IssuesEvent

|

2019-10-02 11:13:42

|

code4romania/monitorizare-vot-ios

|

https://api.github.com/repos/code4romania/monitorizare-vot-ios

|

closed

|

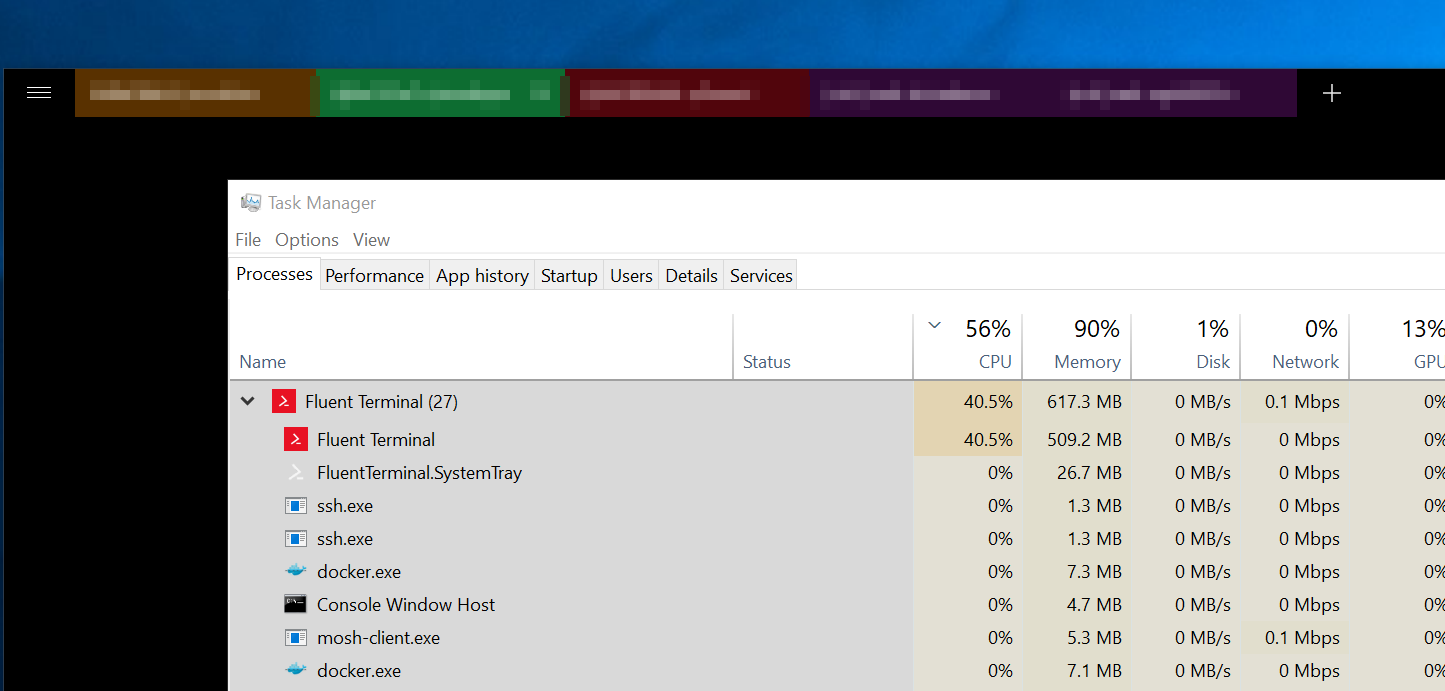

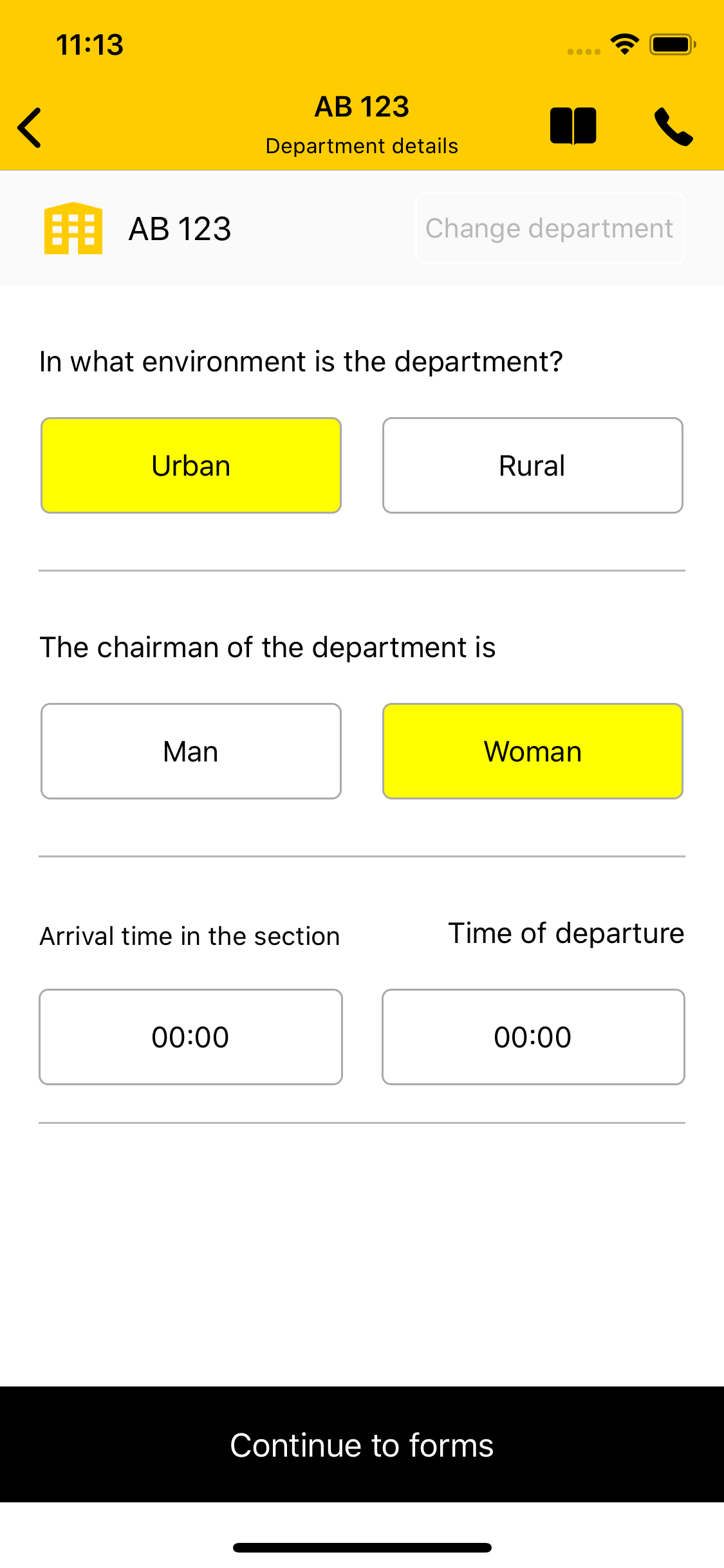

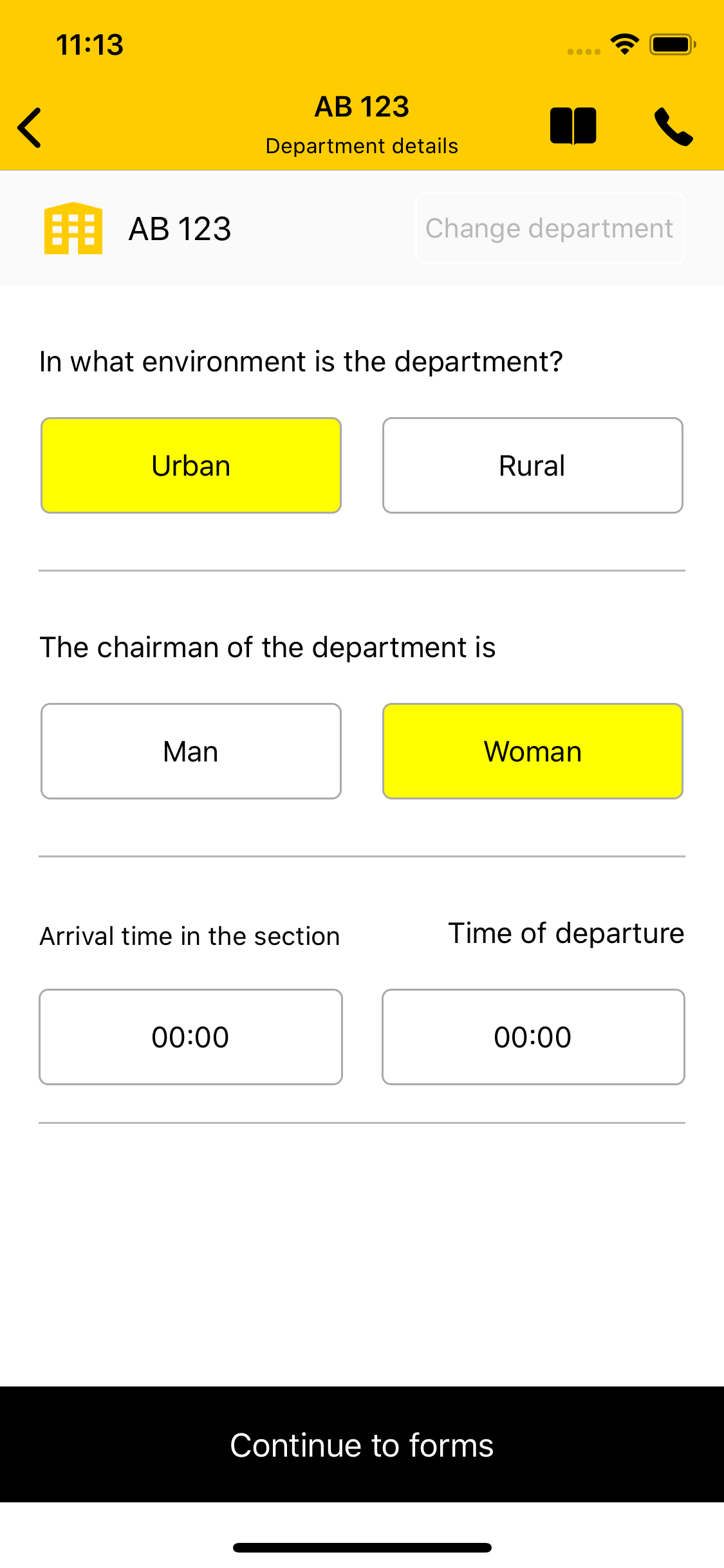

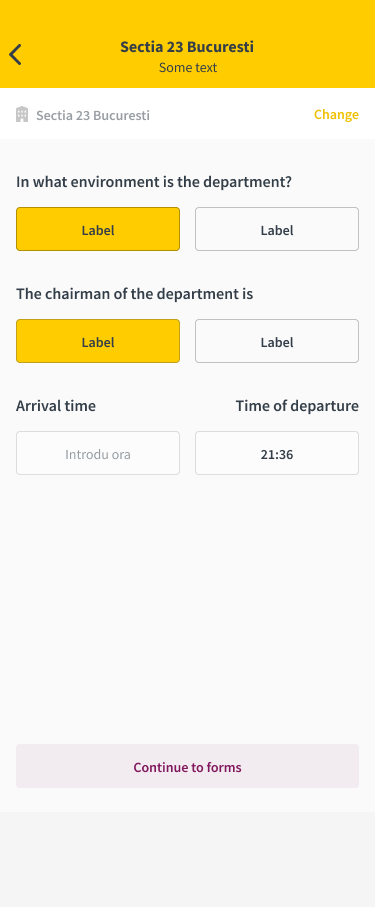

[UI] Update section details screen

|

enhancement help wanted high priority ios

|

Notes:

- [x] Refactor into MvvM

- [x] Use nibs instead of story boards, it makes collaboration easier

- [x] Remove Romanian names from class name, variables etc

- [x] Remove hard coded values, move them into configuration objects/plist/constants

- [ ] Implement a nicer hour picker view controller to handle the time updates (and that we will reuse in all other areas)

You can find the UI document [here](https://www.figma.com/file/21x1ui3YZJrGnpZNVnEuuQ/DRAFT-MV---mobile?node-id=15%3A331&viewport=-134%2C2369%2C0.4088895320892334).

Old UI:

New UI:

|

1.0

|

[UI] Update section details screen - Notes:

- [x] Refactor into MvvM

- [x] Use nibs instead of story boards, it makes collaboration easier

- [x] Remove Romanian names from class name, variables etc

- [x] Remove hard coded values, move them into configuration objects/plist/constants

- [ ] Implement a nicer hour picker view controller to handle the time updates (and that we will reuse in all other areas)

You can find the UI document [here](https://www.figma.com/file/21x1ui3YZJrGnpZNVnEuuQ/DRAFT-MV---mobile?node-id=15%3A331&viewport=-134%2C2369%2C0.4088895320892334).

Old UI:

New UI:

|

priority

|

update section details screen notes refactor into mvvm use nibs instead of story boards it makes collaboration easier remove romanian names from class name variables etc remove hard coded values move them into configuration objects plist constants implement a nicer hour picker view controller to handle the time updates and that we will reuse in all other areas you can find the ui document old ui new ui

| 1

|

514,643

| 14,941,943,455

|

IssuesEvent

|

2021-01-25 20:31:32

|

jesus-collective/mobile

|

https://api.github.com/repos/jesus-collective/mobile

|

opened

|

Episode previously saved suddenly unsaved

|

High Priority resources

|

As seen below, the episode titled "new title" was previously named "The Greatest" and it was saved and displaying in the series page. Suddenly, it's disappeared and "new title" has replaced it.

|

1.0

|

Episode previously saved suddenly unsaved - As seen below, the episode titled "new title" was previously named "The Greatest" and it was saved and displaying in the series page. Suddenly, it's disappeared and "new title" has replaced it.

|

priority

|

episode previously saved suddenly unsaved as seen below the episode titled new title was previously named the greatest and it was saved and displaying in the series page suddenly it s disappeared and new title has replaced it

| 1

|

733,207

| 25,293,712,778

|

IssuesEvent

|

2022-11-17 03:53:10

|

nimblehq/android-templates

|

https://api.github.com/repos/nimblehq/android-templates

|

closed

|

Update Kotlin Version

|

type : chore status : essential priority : high

|

## Why

Change the Kotlin version:

- `kotlin-stdlib` : 1.5.21 > 1.7.0 or higher

- `kotlinx-coroutines-android` : 1.5.0 > 1.6.3 or higher

- `kotlinx-coroutines-test` : 1.5.0 > 1.6.3 or higher

- `kotlin-reflect` : 1.5.10 > 1.7.0 or higher

## Who Benefits?

Developers will benefit from new features and performance improvements:

- https://github.com/JetBrains/kotlin/releases

- https://github.com/Kotlin/kotlinx.coroutines/releases

- https://kotlinlang.org/docs/whatsnew16.html

I think this is essential, as it will bring a good experience for the developers themselves

|

1.0

|

Update Kotlin Version - ## Why

Change the Kotlin version:

- `kotlin-stdlib` : 1.5.21 > 1.7.0 or higher

- `kotlinx-coroutines-android` : 1.5.0 > 1.6.3 or higher

- `kotlinx-coroutines-test` : 1.5.0 > 1.6.3 or higher

- `kotlin-reflect` : 1.5.10 > 1.7.0 or higher

## Who Benefits?

Developers will benefit from new features and performance improvements:

- https://github.com/JetBrains/kotlin/releases

- https://github.com/Kotlin/kotlinx.coroutines/releases

- https://kotlinlang.org/docs/whatsnew16.html

I think this is essential, as it will bring a good experience for the developers themselves

|

priority

|

update kotlin version why change the kotlin version kotlin stdlib or higher kotlinx coroutines android or higher kotlinx coroutines test or higher kotlin reflect or higher who benefits developers will benefit from new features and performance improvements i think this is essential as it will bring a good experience for the developers themselves

| 1

|

268,052

| 8,402,735,946

|

IssuesEvent

|

2018-10-11 07:44:52

|

pupil-labs/pupil

|

https://api.github.com/repos/pupil-labs/pupil

|

closed

|

iMotions Exporter: Support world-less recordings

|

bug priority:high

|

Currently, the iMotions Exporter crashes if no world video is present.

|

1.0

|

iMotions Exporter: Support world-less recordings - Currently, the iMotions Exporter crashes if no world video is present.

|

priority

|

imotions exporter support world less recordings currently the imotions exporter crashes if no world video is present

| 1

|

544,797

| 15,897,287,986

|

IssuesEvent

|

2021-04-11 20:29:07

|

wso2/product-apim

|

https://api.github.com/repos/wso2/product-apim

|

closed

|

Enforce API LC based permission validation

|

API-M 4.0.0 Feature/Lifecycle Priority/Highest T2 Type/Bug

|

### Description:

When an API is in Published state, API is directly visible in developer portal. Hence, changes to the API should not be allowed for API Creators.

This includes both UI and REST API side validations.

|

1.0

|

Enforce API LC based permission validation - ### Description:

When an API is in Published state, API is directly visible in developer portal. Hence, changes to the API should not be allowed for API Creators.

This includes both UI and REST API side validations.

|

priority

|

enforce api lc based permission validation description when an api is in published state api is directly visible in developer portal hence changes to the api should not be allowed for api creators this includes both ui and rest api side validations

| 1

|

591,763

| 17,860,701,309

|

IssuesEvent

|

2021-09-05 22:48:29

|

ankidroid/Anki-Android

|

https://api.github.com/repos/ankidroid/Anki-Android

|

closed

|

[Bug] Some sort of issue from mailing list about image changes causing problems

|

Priority-High Help Wanted Needs Triage

|

###### Reproduction Steps

Just prose, not sure it has repro steps. Reporter did not want to sign up for github

----

I'm very familiar with Anki Droid, and use it daily. I have always done all of the check media and check database commands. This problem is nothing that I'm doing, as I haven't changed my interactions with AnkiDroid for years, and my card collection is not corrupted in any way. I check it daily with all the error checking functions.

Once I have replaced the media file in Anki Droid and then attempt to synchronize, I get the error 400 message. When I go out to Anki desktop in Windows, it says that there is a card expecting a media file which is not there. So there is something about a simultaneous erasure of a media file and a creation of a new one in ankicard that now suddenly confuses the sync function, or the hub, or desktop anki. I don't know what it is.

Good luck on it. I don't know what a GitHub is, or how to create a problem report, so I hope you can take care of that end of things.

The problem that Ankidroid is now creating, every day, with different cards, after trying a lot of different things, is that I have to completely uninstall ankidroid, erase all of my material, reinstall ankidroid, and download everything from the hub. It's a big deal as I have about twelve thousand cards in 49 decks, and lots of media. I will have to use only desktop anki until this problem gets fixed within ankiDroid.

My drastic approach fixes things until the next time that I try to change a media file. Then the new card that I have changed the media file on is the one that causes the sync not to work.

I hope this more complete description helps you! Thanks for looking at the problem.

Frederick

Sent from my T-Mobile 5G Device

-------- Original message --------

Date: 9/3/21 1:54 PM (GMT-08:00)

Subject: Re: [Ankidroid] AnkiDroid Flashcards

Hi there! Can you open an issue in our github (there's a link from the help menu in the app) with as many details + steps to reproduce + filenames (maybe the name is a problem?) as possible?

You might try deck list --> menu --> check database and check media as well to see if that helps

-Mike

> Something has changed in the most recent version that is causing error 400.

>

> What seems to be happening is that when I replace a sound file with a different sound file on a card, when I go to synchronize it with Anki, I get error 400. And I can no longer synchronize.

>

###### Expected Result

###### Actual Result

###### Debug info

Refer to the [support page](https://ankidroid.org/docs/help.html) if you are unsure where to get the "debug info".

###### Research

*Enter an [x] character to confirm the points below:*

- [ ] I have read the [support page](https://ankidroid.org/docs/help.html) and am reporting a bug or enhancement request specific to AnkiDroid

- [ ] I have checked the [manual](https://ankidroid.org/docs/manual.html) and the [FAQ](https://github.com/ankidroid/Anki-Android/wiki/FAQ) and could not find a solution to my issue

- [ ] I have searched for similar existing issues here and on the user forum

- [ ] (Optional) I have confirmed the issue is not resolved in the latest alpha release ([instructions](https://docs.ankidroid.org/manual.html#betaTesting))

|

1.0

|

[Bug] Some sort of issue from mailing list about image changes causing problems - ###### Reproduction Steps

Just prose, not sure it has repro steps. Reporter did not want to sign up for github

----

I'm very familiar with Anki Droid, and use it daily. I have always done all of the check media and check database commands. This problem is nothing that I'm doing, as I haven't changed my interactions with AnkiDroid for years, and my card collection is not corrupted in any way. I check it daily with all the error checking functions.

Once I have replaced the media file in Anki Droid and then attempt to synchronize, I get the error 400 message. When I go out to Anki desktop in Windows, it says that there is a card expecting a media file which is not there. So there is something about a simultaneous erasure of a media file and a creation of a new one in ankicard that now suddenly confuses the sync function, or the hub, or desktop anki. I don't know what it is.

Good luck on it. I don't know what a GitHub is, or how to create a problem report, so I hope you can take care of that end of things.

The problem that Ankidroid is now creating, every day, with different cards, after trying a lot of different things, is that I have to completely uninstall ankidroid, erase all of my material, reinstall ankidroid, and download everything from the hub. It's a big deal as I have about twelve thousand cards in 49 decks, and lots of media. I will have to use only desktop anki until this problem gets fixed within ankiDroid.

My drastic approach fixes things until the next time that I try to change a media file. Then the new card that I have changed the media file on is the one that causes the sync not to work.

I hope this more complete description helps you! Thanks for looking at the problem.

Frederick

Sent from my T-Mobile 5G Device

-------- Original message --------

Date: 9/3/21 1:54 PM (GMT-08:00)

Subject: Re: [Ankidroid] AnkiDroid Flashcards

Hi there! Can you open an issue in our github (there's a link from the help menu in the app) with as many details + steps to reproduce + filenames (maybe the name is a problem?) as possible?

You might try deck list --> menu --> check database and check media as well to see if that helps

-Mike

> Something has changed in the most recent version that is causing error 400.

>

> What seems to be happening is that when I replace a sound file with a different sound file on a card, when I go to synchronize it with Anki, I get error 400. And I can no longer synchronize.

>

###### Expected Result

###### Actual Result

###### Debug info

Refer to the [support page](https://ankidroid.org/docs/help.html) if you are unsure where to get the "debug info".

###### Research

*Enter an [x] character to confirm the points below:*

- [ ] I have read the [support page](https://ankidroid.org/docs/help.html) and am reporting a bug or enhancement request specific to AnkiDroid

- [ ] I have checked the [manual](https://ankidroid.org/docs/manual.html) and the [FAQ](https://github.com/ankidroid/Anki-Android/wiki/FAQ) and could not find a solution to my issue

- [ ] I have searched for similar existing issues here and on the user forum

- [ ] (Optional) I have confirmed the issue is not resolved in the latest alpha release ([instructions](https://docs.ankidroid.org/manual.html#betaTesting))

|

priority

|

some sort of issue from mailing list about image changes causing problems reproduction steps just prose not sure it has repro steps reporter did not want to sign up for github i m very familiar with anki droid and use it daily i have always done all of the check media and check database commands this problem is nothing that i m doing as i haven t changed my interactions with ankidroid for years and my card collection is not corrupted in any way i check it daily with all the error checking functions once i have replaced the media file in anki droid and then attempt to synchronize i get the error message when i go out to anki desktop in windows it says that there is a card expecting a media file which is not there so there is something about a simultaneous erasure of a media file and a creation of a new one in ankicard that now suddenly confuses the sync function or the hub or desktop anki i don t know what it is good luck on it i don t know what a github is or how to create a problem report so i hope you can take care of that end of things the problem that ankidroid is now creating every day with different cards after trying a lot of different things is that i have to completely uninstall ankidroid erase all of my material reinstall ankidroid and download everything from the hub it s a big deal as i have about twelve thousand cards in decks and lots of media i will have to use only desktop anki until this problem gets fixed within ankidroid my drastic approach fixes things until the next time that i try to change a media file then the new card that i have changed the media file on is the one that causes the sync not to work i hope this more complete description helps you thanks for looking at the problem frederick sent from my t mobile device original message date pm gmt subject re ankidroid flashcards hi there can you open an issue in our github there s a link from the help menu in the app with as many details steps to reproduce filenames maybe the name is a problem as possible you might try deck list menu check database and check media as well to see if that helps mike something has changed in the most recent version that is causing error what seems to be happening is that when i replace a sound file with a different sound file on a card when i go to synchronize it with anki i get error and i can no longer synchronize expected result actual result debug info refer to the if you are unsure where to get the debug info research enter an character to confirm the points below i have read the and am reporting a bug or enhancement request specific to ankidroid i have checked the and the and could not find a solution to my issue i have searched for similar existing issues here and on the user forum optional i have confirmed the issue is not resolved in the latest alpha release

| 1

|

181,232

| 6,657,380,009

|

IssuesEvent

|

2017-09-30 04:33:00

|

yourWaifu/sleepy-discord

|

https://api.github.com/repos/yourWaifu/sleepy-discord

|

opened

|

Automation of setting up the library

|

Feature Request High Priority

|

Compiling or setting up the library should be a more painless process. Once the library is downloaded, the user should just need to do a few things like maybe running a script and then hit compile to get up and running.

There are few things I need for this to work the way I want it to. Here's a list of things I want to have for automating the seting up the deps folder

- Users should be able to choose libraries (like CPR, Websocketpp, or uWebSockets)

- Users can choose to use no libraries if they wish

- Works on Windows, macOS, and Linux

- Download the libraries for the user and the libraries' needed libraries and compile them if they need to be compiled to compile Sleepy Discord

Compiling Sleepy Discord should be separate, because you only need to set up the deps folder once and Visual Studio has already automated compiling the library.

There have been a few people that tried tackling this issue, however none of them hits all of the above.

|

1.0

|

Automation of setting up the library - Compiling or setting up the library should be a more painless process. Once the library is downloaded, the user should just need to do a few things like maybe running a script and then hit compile to get up and running.

There are few things I need for this to work the way I want it to. Here's a list of things I want to have for automating the seting up the deps folder

- Users should be able to choose libraries (like CPR, Websocketpp, or uWebSockets)

- Users can choose to use no libraries if they wish

- Works on Windows, macOS, and Linux

- Download the libraries for the user and the libraries' needed libraries and compile them if they need to be compiled to compile Sleepy Discord

Compiling Sleepy Discord should be separate, because you only need to set up the deps folder once and Visual Studio has already automated compiling the library.

There have been a few people that tried tackling this issue, however none of them hits all of the above.

|

priority

|

automation of setting up the library compiling or setting up the library should be a more painless process once the library is downloaded the user should just need to do a few things like maybe running a script and then hit compile to get up and running there are few things i need for this to work the way i want it to here s a list of things i want to have for automating the seting up the deps folder users should be able to choose libraries like cpr websocketpp or uwebsockets users can choose to use no libraries if they wish works on windows macos and linux download the libraries for the user and the libraries needed libraries and compile them if they need to be compiled to compile sleepy discord compiling sleepy discord should be separate because you only need to set up the deps folder once and visual studio has already automated compiling the library there have been a few people that tried tackling this issue however none of them hits all of the above

| 1

|

367,135

| 10,840,896,436

|

IssuesEvent

|

2019-11-12 09:21:04

|

input-output-hk/ouroboros-network

|

https://api.github.com/repos/input-output-hk/ouroboros-network

|

closed

|

Different byron proxies for the same network do not use the same chain?

|

consensus priority high

|

In order to sync mainnet more quickly I configured my node to use a locally running byron proxy using:

```

$ nix-build -A scripts.mainnet.node -o launch_mainnet_node --arg customConfig '{ useProxy = true;

}'

$ ./launch_mainnet_node

```

which allowed me to sync all the way to the current chain tip.

If I now rebuild it to use the default remote proxy and then run that using:

```

$ nix-build -A scripts.mainnet.node -o launch_mainnet_node

$ ./launch_mainnet_node

```

I get a "ForkTooDeep" error:

```

[iohk.cardano.node.CoreId 0.ChainSyncClient:Warning:82] [2019-10-02 04:48:21.21 UTC] {"exception":"ForkTooDeep (Point {getPoint = Origin}) (Tip {tipPoint = Point {getPoint = At (Block {blockPointSlot = SlotNo {unSlotNo = 1500206}, blockPointHash = ByronHash {unByronHash = AbstractHash d814b9e4d6cf69f751340563dc2c9875400ac700c9f45416551b25dfa0743d7d}})}, tipBlockNo = BlockNo {unBlockNo = 1499903}})","kind":"ChainSyncClientEvent.TraceException"}

```

and it fails to sync.

If I then rebuild (using top `nix-build` command) to use my local byron proxy it is perfectly happy to continue on the existing chain.

I have already confirmed that these are using the same genesis hash and genesis JSON file.

|

1.0

|

Different byron proxies for the same network do not use the same chain? - In order to sync mainnet more quickly I configured my node to use a locally running byron proxy using:

```

$ nix-build -A scripts.mainnet.node -o launch_mainnet_node --arg customConfig '{ useProxy = true;

}'

$ ./launch_mainnet_node

```

which allowed me to sync all the way to the current chain tip.

If I now rebuild it to use the default remote proxy and then run that using:

```

$ nix-build -A scripts.mainnet.node -o launch_mainnet_node

$ ./launch_mainnet_node

```

I get a "ForkTooDeep" error:

```

[iohk.cardano.node.CoreId 0.ChainSyncClient:Warning:82] [2019-10-02 04:48:21.21 UTC] {"exception":"ForkTooDeep (Point {getPoint = Origin}) (Tip {tipPoint = Point {getPoint = At (Block {blockPointSlot = SlotNo {unSlotNo = 1500206}, blockPointHash = ByronHash {unByronHash = AbstractHash d814b9e4d6cf69f751340563dc2c9875400ac700c9f45416551b25dfa0743d7d}})}, tipBlockNo = BlockNo {unBlockNo = 1499903}})","kind":"ChainSyncClientEvent.TraceException"}

```

and it fails to sync.

If I then rebuild (using top `nix-build` command) to use my local byron proxy it is perfectly happy to continue on the existing chain.

I have already confirmed that these are using the same genesis hash and genesis JSON file.

|

priority

|

different byron proxies for the same network do not use the same chain in order to sync mainnet more quickly i configured my node to use a locally running byron proxy using nix build a scripts mainnet node o launch mainnet node arg customconfig useproxy true launch mainnet node which allowed me to sync all the way to the current chain tip if i now rebuild it to use the default remote proxy and then run that using nix build a scripts mainnet node o launch mainnet node launch mainnet node i get a forktoodeep error exception forktoodeep point getpoint origin tip tippoint point getpoint at block blockpointslot slotno unslotno blockpointhash byronhash unbyronhash abstracthash tipblockno blockno unblockno kind chainsyncclientevent traceexception and it fails to sync if i then rebuild using top nix build command to use my local byron proxy it is perfectly happy to continue on the existing chain i have already confirmed that these are using the same genesis hash and genesis json file

| 1

|

164,929

| 6,259,031,399

|

IssuesEvent

|

2017-07-14 17:02:48

|

juauer/modelcars

|

https://api.github.com/repos/juauer/modelcars

|

closed

|

Master wieder zum Master machen

|

HIGH PRIORITY

|

Ich habe beschlossen, dass die ausführlichen Includes aus f861ba1bea6f91ce9d7266690d02326a008f47a2 der einfachste Weg sind, das Kompilier-Problem zu lösen und habe den Commit in den Master gecherry-picked.

Ich habe den Commit danch (f861ba1bea6f91ce9d7266690d02326a008f47a2) auch geholt, aber etwas verändert:

* das Profil gab es schon (genau so) man soll aber crosscompile/install benutzen, damit der Pfad zum Toolchain File nicht absolut angegeben werden muss. Bei jedem anderen außer dem Autor würde es sonst nicht kompilieren (falscher Pfad) ;) Siehe auch 2b2bcd2c8c29742a5c414a4d1b522f3afb01e8ea !

* die anderen Files habe ich nach / geschoben. Fragen / Issues dazu:

* was ist mit diesem .txt file? Ist das nicht eigentlich ein Launchfile?

* der environment 'Hack' muss weg, sonst kann das ja außer uns nie jemand starten / verstehen / warten

* replace.sh muss bestimmt angepasst werden, nach den von mir vorgenommenen Änderungen. Erbitte review.

Den Branch können wir löschen !?

|

1.0

|

Master wieder zum Master machen - Ich habe beschlossen, dass die ausführlichen Includes aus f861ba1bea6f91ce9d7266690d02326a008f47a2 der einfachste Weg sind, das Kompilier-Problem zu lösen und habe den Commit in den Master gecherry-picked.

Ich habe den Commit danch (f861ba1bea6f91ce9d7266690d02326a008f47a2) auch geholt, aber etwas verändert:

* das Profil gab es schon (genau so) man soll aber crosscompile/install benutzen, damit der Pfad zum Toolchain File nicht absolut angegeben werden muss. Bei jedem anderen außer dem Autor würde es sonst nicht kompilieren (falscher Pfad) ;) Siehe auch 2b2bcd2c8c29742a5c414a4d1b522f3afb01e8ea !

* die anderen Files habe ich nach / geschoben. Fragen / Issues dazu:

* was ist mit diesem .txt file? Ist das nicht eigentlich ein Launchfile?

* der environment 'Hack' muss weg, sonst kann das ja außer uns nie jemand starten / verstehen / warten

* replace.sh muss bestimmt angepasst werden, nach den von mir vorgenommenen Änderungen. Erbitte review.

Den Branch können wir löschen !?

|

priority

|

master wieder zum master machen ich habe beschlossen dass die ausführlichen includes aus der einfachste weg sind das kompilier problem zu lösen und habe den commit in den master gecherry picked ich habe den commit danch auch geholt aber etwas verändert das profil gab es schon genau so man soll aber crosscompile install benutzen damit der pfad zum toolchain file nicht absolut angegeben werden muss bei jedem anderen außer dem autor würde es sonst nicht kompilieren falscher pfad siehe auch die anderen files habe ich nach geschoben fragen issues dazu was ist mit diesem txt file ist das nicht eigentlich ein launchfile der environment hack muss weg sonst kann das ja außer uns nie jemand starten verstehen warten replace sh muss bestimmt angepasst werden nach den von mir vorgenommenen änderungen erbitte review den branch können wir löschen

| 1

|

406,704

| 11,901,719,013

|

IssuesEvent

|

2020-03-30 12:55:30

|

AY1920S2-CS2103-W14-3/main

|

https://api.github.com/repos/AY1920S2-CS2103-W14-3/main

|

closed

|

As a busy university student with many events to attend and friends to catch up with I want to be able to keep track of all the events that I need to attend

|

priority.High type.Story

|

so that I do not miss any meetings and anger anyone.

|

1.0

|

As a busy university student with many events to attend and friends to catch up with I want to be able to keep track of all the events that I need to attend - so that I do not miss any meetings and anger anyone.

|

priority

|

as a busy university student with many events to attend and friends to catch up with i want to be able to keep track of all the events that i need to attend so that i do not miss any meetings and anger anyone

| 1

|

538,926

| 15,780,932,093

|

IssuesEvent

|

2021-04-01 10:37:29

|

ita-social-projects/TeachUA

|

https://api.github.com/repos/ita-social-projects/TeachUA

|

opened

|

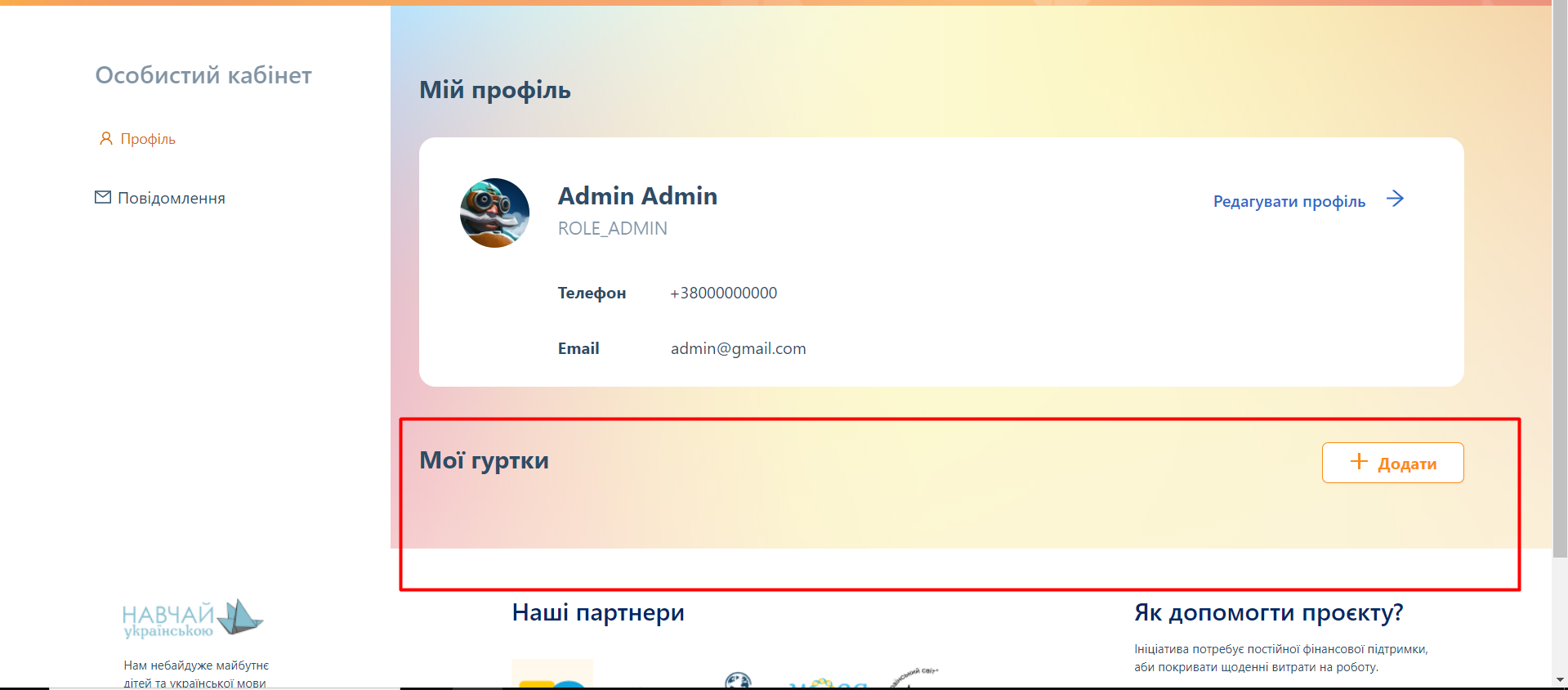

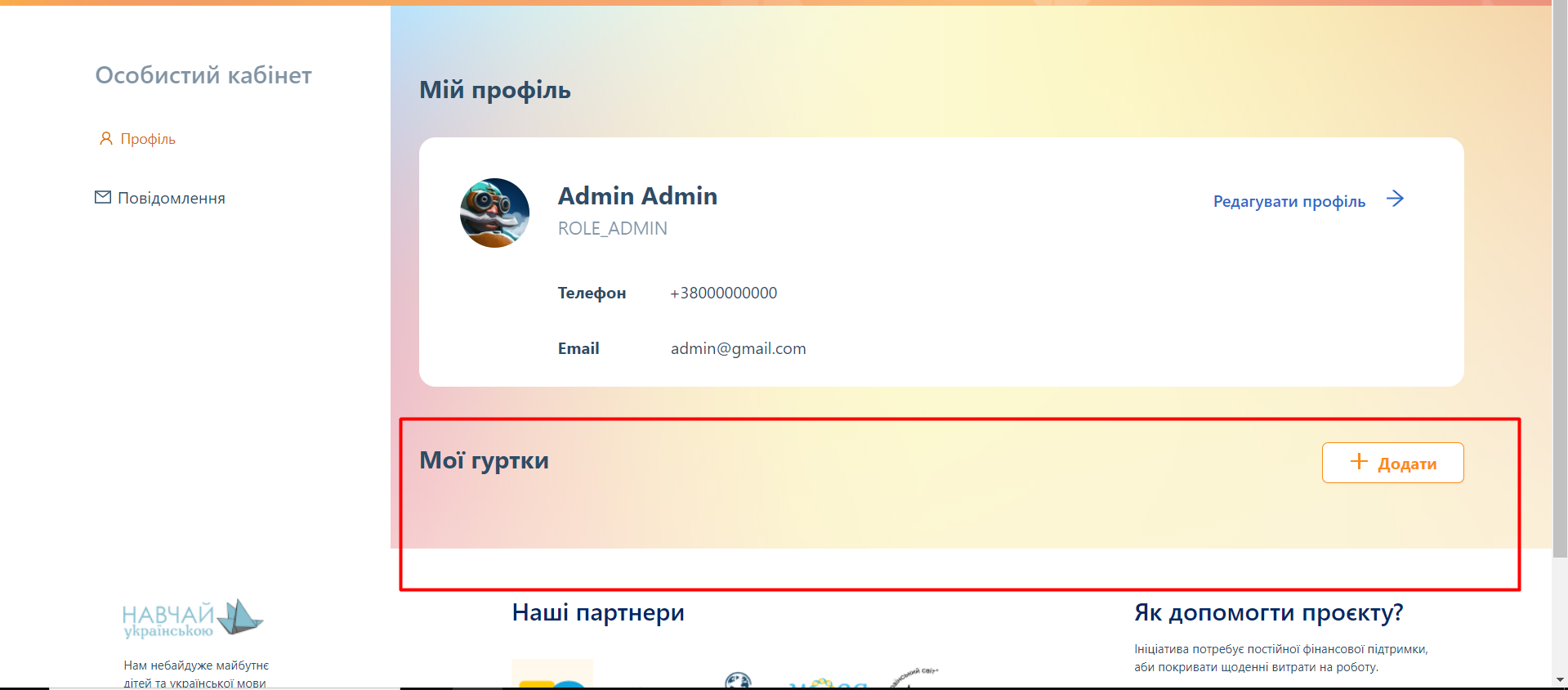

[Особистий кабінет] Clubs that was added by a user aren't shown in 'Мої гуртки' section

|

Priority: High bug

|

**Environment:** Windows, Google Chrome 88.0.4324.190 (64-bit).

**Reproducible:** always.

**Build found:** last commit from https://speak-ukrainian.org.ua/dev/clubs

**Steps to reproduce**

1. Log into the system (email: admin@gmail.com password: admin)

2. Go to 'Мій профіль'

3. Click on '+Додати' button and click on 'Додати гурток' in a drop down-list

4. Fill all mandatory fields on all steps of the pop-up 'Додати гурток' and add a new club

5. Pay attention to 'Мої гуртки' section on 'Мій профіль' page

**Actual result**

An added club isn't shown in 'Мої гуртки' section

**Expected result**

Added clubs should be shown in 'Мої гуртки' section

**Labels to be added**

"Bug", Priority ("pri: high"), Severity ("severity: major"), Type ("Functional").

|

1.0

|

[Особистий кабінет] Clubs that was added by a user aren't shown in 'Мої гуртки' section - **Environment:** Windows, Google Chrome 88.0.4324.190 (64-bit).

**Reproducible:** always.

**Build found:** last commit from https://speak-ukrainian.org.ua/dev/clubs

**Steps to reproduce**

1. Log into the system (email: admin@gmail.com password: admin)

2. Go to 'Мій профіль'

3. Click on '+Додати' button and click on 'Додати гурток' in a drop down-list

4. Fill all mandatory fields on all steps of the pop-up 'Додати гурток' and add a new club

5. Pay attention to 'Мої гуртки' section on 'Мій профіль' page

**Actual result**

An added club isn't shown in 'Мої гуртки' section

**Expected result**

Added clubs should be shown in 'Мої гуртки' section

**Labels to be added**

"Bug", Priority ("pri: high"), Severity ("severity: major"), Type ("Functional").

|

priority

|

clubs that was added by a user aren t shown in мої гуртки section environment windows google chrome bit reproducible always build found last commit from steps to reproduce log into the system email admin gmail com password admin go to мій профіль click on додати button and click on додати гурток in a drop down list fill all mandatory fields on all steps of the pop up додати гурток and add a new club pay attention to мої гуртки section on мій профіль page actual result an added club isn t shown in мої гуртки section expected result added clubs should be shown in мої гуртки section labels to be added bug priority pri high severity severity major type functional

| 1

|

601,174

| 18,389,395,099

|

IssuesEvent

|

2021-10-12 02:10:58

|

yjunechoe/ggtrace

|

https://api.github.com/repos/yjunechoe/ggtrace

|

closed

|

quosures should be passed around instead of expressions

|

bug priority: high

|

util functions need to be able to see the env in which the ggproto method was defined, in case it becomes inaccessible from the util function's local scope

|

1.0

|

quosures should be passed around instead of expressions - util functions need to be able to see the env in which the ggproto method was defined, in case it becomes inaccessible from the util function's local scope

|

priority

|

quosures should be passed around instead of expressions util functions need to be able to see the env in which the ggproto method was defined in case it becomes inaccessible from the util function s local scope

| 1

|

528,868

| 15,376,168,049

|

IssuesEvent

|

2021-03-02 15:41:46

|

ctm/mb2-doc

|

https://api.github.com/repos/ctm/mb2-doc

|

opened

|

use finished_at rather than started_at to flag events that never started

|

chore easy high priority

|

When an event is to be abandoned, set `finished_at` to the current time and leave `started_at` as `NULL`. This also means that when we're looking for upcoming events, we'll want to skip over any events that have `finished_at` set.

Currently when mb2 decides to abandon an event due to lack of participants, it sets `started_at` to the time that it's abandoned and leaves `finished_at` as `NULL`. That has worked fine, but it's a bit misleading, since that's the same thing that we get if mb2 is crashes (or taken down) during an event.

To do this properly, we'll want.a migration that updates all the pre-existing rows in the `events` table that were abandoned (rather than interrupted).

FWIW, I ran into this while working on ring games (#88) because I'm adding a `starting_chips` column to the `entries` table and was momentarily puzzled by why my SQL wasn't detecting starting chips for some tourneys. It's because those never started…

|

1.0

|

use finished_at rather than started_at to flag events that never started - When an event is to be abandoned, set `finished_at` to the current time and leave `started_at` as `NULL`. This also means that when we're looking for upcoming events, we'll want to skip over any events that have `finished_at` set.

Currently when mb2 decides to abandon an event due to lack of participants, it sets `started_at` to the time that it's abandoned and leaves `finished_at` as `NULL`. That has worked fine, but it's a bit misleading, since that's the same thing that we get if mb2 is crashes (or taken down) during an event.

To do this properly, we'll want.a migration that updates all the pre-existing rows in the `events` table that were abandoned (rather than interrupted).

FWIW, I ran into this while working on ring games (#88) because I'm adding a `starting_chips` column to the `entries` table and was momentarily puzzled by why my SQL wasn't detecting starting chips for some tourneys. It's because those never started…

|

priority

|

use finished at rather than started at to flag events that never started when an event is to be abandoned set finished at to the current time and leave started at as null this also means that when we re looking for upcoming events we ll want to skip over any events that have finished at set currently when decides to abandon an event due to lack of participants it sets started at to the time that it s abandoned and leaves finished at as null that has worked fine but it s a bit misleading since that s the same thing that we get if is crashes or taken down during an event to do this properly we ll want a migration that updates all the pre existing rows in the events table that were abandoned rather than interrupted fwiw i ran into this while working on ring games because i m adding a starting chips column to the entries table and was momentarily puzzled by why my sql wasn t detecting starting chips for some tourneys it s because those never started hellip

| 1

|

33,512

| 2,766,309,832

|

IssuesEvent

|

2015-04-30 03:43:44

|

neurosynth/neurosynth-web

|

https://api.github.com/repos/neurosynth/neurosynth-web

|

closed

|

Some custom analyses display 0 studies

|

bug priority:high

|

Some custom analysis records have an n_studies value of 0. This appears to happen if the analysis is saved with 0 studies, and then later updated--the n_studies field isn't updated.

|

1.0

|

Some custom analyses display 0 studies - Some custom analysis records have an n_studies value of 0. This appears to happen if the analysis is saved with 0 studies, and then later updated--the n_studies field isn't updated.

|

priority

|

some custom analyses display studies some custom analysis records have an n studies value of this appears to happen if the analysis is saved with studies and then later updated the n studies field isn t updated

| 1

|

830,967

| 32,032,932,467

|

IssuesEvent

|

2023-09-22 13:31:37

|

puyu-pe/puka

|

https://api.github.com/repos/puyu-pe/puka

|

opened

|

Implementar panel de acciones en trayicon

|

point: 2 priority: high type:feature

|

# **🚀 Feature Request**

## **Is your feature request related to a problem? Please describe.**

<!-- A clear and concise description of what the problem is. Ex. I'm always frustrated when [...] -->

* Al hacer click en el trayicon se debe habrir un panel donde el usuario podra volver a imprimir los tickets que quedaron en cola de impresión.

* El nuevo panel de acciones tiene que ser el componente padre del trayicon

|

1.0

|

Implementar panel de acciones en trayicon - # **🚀 Feature Request**

## **Is your feature request related to a problem? Please describe.**

<!-- A clear and concise description of what the problem is. Ex. I'm always frustrated when [...] -->

* Al hacer click en el trayicon se debe habrir un panel donde el usuario podra volver a imprimir los tickets que quedaron en cola de impresión.

* El nuevo panel de acciones tiene que ser el componente padre del trayicon

|

priority

|

implementar panel de acciones en trayicon 🚀 feature request is your feature request related to a problem please describe al hacer click en el trayicon se debe habrir un panel donde el usuario podra volver a imprimir los tickets que quedaron en cola de impresión el nuevo panel de acciones tiene que ser el componente padre del trayicon

| 1

|

534,520

| 15,624,736,488

|

IssuesEvent

|

2021-03-21 04:14:49

|

pc2ccs/pc2v9

|

https://api.github.com/repos/pc2ccs/pc2v9

|

closed

|

Setting Allow multiple logins per team - de-selected when other settings are updated

|

bug duplicate high priority

|

**Describe the issue**:

When certain settings on the Settings tab are updated

the Setting Allow multiple logins per team is unchecked/ de-selected

There may be a number of Settings fields that cause

this bug.

**To Reproduce**:

1. Start server, start admin

1. check ```Allow multiple logins per team ```

1. Update (to save it)

1. Edit Scoring Properties, Update then Update

**Expected behavior**:

Allow multiple logins per team should be checked

**Actual behavior**:

Allow multiple logins per team it NOT checked

**Environment**:

**Log Info**:

**Screenshots**:

**Additional context**:

|

1.0

|

Setting Allow multiple logins per team - de-selected when other settings are updated - **Describe the issue**:

When certain settings on the Settings tab are updated

the Setting Allow multiple logins per team is unchecked/ de-selected

There may be a number of Settings fields that cause

this bug.

**To Reproduce**:

1. Start server, start admin

1. check ```Allow multiple logins per team ```

1. Update (to save it)

1. Edit Scoring Properties, Update then Update

**Expected behavior**:

Allow multiple logins per team should be checked

**Actual behavior**:

Allow multiple logins per team it NOT checked

**Environment**:

**Log Info**:

**Screenshots**:

**Additional context**:

|

priority

|

setting allow multiple logins per team de selected when other settings are updated describe the issue when certain settings on the settings tab are updated the setting allow multiple logins per team is unchecked de selected there may be a number of settings fields that cause this bug to reproduce start server start admin check allow multiple logins per team update to save it edit scoring properties update then update expected behavior allow multiple logins per team should be checked actual behavior allow multiple logins per team it not checked environment log info screenshots additional context

| 1

|

730,484

| 25,174,901,013

|

IssuesEvent

|

2022-11-11 08:21:06

|

coursier/coursier

|

https://api.github.com/repos/coursier/coursier

|

closed

|

Scala 2.12.16 version of sbt-coursier

|

high priority

|

I am probably going to publish sbt 1.8.0 RC or milestone so people can start using scala-xml, but could we also maintain a branch with Scala 2.12.17 as well for 1.7.x series until 1.8.x matures?

Thanks!

|

1.0

|

Scala 2.12.16 version of sbt-coursier - I am probably going to publish sbt 1.8.0 RC or milestone so people can start using scala-xml, but could we also maintain a branch with Scala 2.12.17 as well for 1.7.x series until 1.8.x matures?

Thanks!

|

priority

|

scala version of sbt coursier i am probably going to publish sbt rc or milestone so people can start using scala xml but could we also maintain a branch with scala as well for x series until x matures thanks

| 1

|

223,899

| 7,463,360,427

|

IssuesEvent

|

2018-04-01 03:56:02

|

uber/pyro

|

https://api.github.com/repos/uber/pyro

|

closed

|

latest dev docs broken

|

high priority

|

```

/home/docs/checkouts/readthedocs.org/user_builds/pyro-ppl/checkouts/dev/docs/source/contrib.gp.rst:6: WARNING: autodoc: failed to import module u'pyro.contrib.gp'; the following exception was raised:

Traceback (most recent call last):

File "/home/docs/checkouts/readthedocs.org/user_builds/pyro-ppl/envs/dev/local/lib/python2.7/site-packages/sphinx/ext/autodoc.py", line 658, in import_object

__import__(self.modname)

File "/home/docs/checkouts/readthedocs.org/user_builds/pyro-ppl/checkouts/dev/pyro/__init__.py", line 14, in <module>

import pyro.infer as infer

File "/home/docs/checkouts/readthedocs.org/user_builds/pyro-ppl/checkouts/dev/pyro/infer/__init__.py", line 9, in <module>

from pyro.infer.advi import ADVI, ADVIMultivariateNormal, ADVIDiagonalNormal

File "/home/docs/checkouts/readthedocs.org/user_builds/pyro-ppl/checkouts/dev/pyro/infer/advi.py", line 16, in <module>

from contextlib2 import ExitStack # python 2

ImportError: No module named contextlib2

/home/docs/checkouts/readthedocs.org/user_builds/pyro-ppl/checkouts/dev/docs/source/contrib.gp.rst:15: WARNING: autodoc: failed to import module u'pyro.contrib.gp.models.model'; the following exception was raised:

Traceback (most recent call last):

File "/home/docs/checkouts/readthedocs.org/user_builds/pyro-ppl/envs/dev/local/lib/python2.7/site-packages/sphinx/ext/autodoc.py", line 658, in import_object

__import__(self.modname)

File "/home/docs/checkouts/readthedocs.org/user_builds/pyro-ppl/checkouts/dev/pyro/__init__.py", line 13, in <module>

import pyro.distributions as dist

AttributeError: 'module' object has no attribute 'distributions'

```

may possibly be from #961 and/or #951. can we error on these warnings in travis? because the docs build succeeds even if exceptions are thrown.

|

1.0

|

latest dev docs broken - ```

/home/docs/checkouts/readthedocs.org/user_builds/pyro-ppl/checkouts/dev/docs/source/contrib.gp.rst:6: WARNING: autodoc: failed to import module u'pyro.contrib.gp'; the following exception was raised:

Traceback (most recent call last):

File "/home/docs/checkouts/readthedocs.org/user_builds/pyro-ppl/envs/dev/local/lib/python2.7/site-packages/sphinx/ext/autodoc.py", line 658, in import_object

__import__(self.modname)

File "/home/docs/checkouts/readthedocs.org/user_builds/pyro-ppl/checkouts/dev/pyro/__init__.py", line 14, in <module>

import pyro.infer as infer

File "/home/docs/checkouts/readthedocs.org/user_builds/pyro-ppl/checkouts/dev/pyro/infer/__init__.py", line 9, in <module>

from pyro.infer.advi import ADVI, ADVIMultivariateNormal, ADVIDiagonalNormal

File "/home/docs/checkouts/readthedocs.org/user_builds/pyro-ppl/checkouts/dev/pyro/infer/advi.py", line 16, in <module>

from contextlib2 import ExitStack # python 2

ImportError: No module named contextlib2

/home/docs/checkouts/readthedocs.org/user_builds/pyro-ppl/checkouts/dev/docs/source/contrib.gp.rst:15: WARNING: autodoc: failed to import module u'pyro.contrib.gp.models.model'; the following exception was raised:

Traceback (most recent call last):

File "/home/docs/checkouts/readthedocs.org/user_builds/pyro-ppl/envs/dev/local/lib/python2.7/site-packages/sphinx/ext/autodoc.py", line 658, in import_object

__import__(self.modname)

File "/home/docs/checkouts/readthedocs.org/user_builds/pyro-ppl/checkouts/dev/pyro/__init__.py", line 13, in <module>

import pyro.distributions as dist

AttributeError: 'module' object has no attribute 'distributions'

```

may possibly be from #961 and/or #951. can we error on these warnings in travis? because the docs build succeeds even if exceptions are thrown.

|

priority

|

latest dev docs broken home docs checkouts readthedocs org user builds pyro ppl checkouts dev docs source contrib gp rst warning autodoc failed to import module u pyro contrib gp the following exception was raised traceback most recent call last file home docs checkouts readthedocs org user builds pyro ppl envs dev local lib site packages sphinx ext autodoc py line in import object import self modname file home docs checkouts readthedocs org user builds pyro ppl checkouts dev pyro init py line in import pyro infer as infer file home docs checkouts readthedocs org user builds pyro ppl checkouts dev pyro infer init py line in from pyro infer advi import advi advimultivariatenormal advidiagonalnormal file home docs checkouts readthedocs org user builds pyro ppl checkouts dev pyro infer advi py line in from import exitstack python importerror no module named home docs checkouts readthedocs org user builds pyro ppl checkouts dev docs source contrib gp rst warning autodoc failed to import module u pyro contrib gp models model the following exception was raised traceback most recent call last file home docs checkouts readthedocs org user builds pyro ppl envs dev local lib site packages sphinx ext autodoc py line in import object import self modname file home docs checkouts readthedocs org user builds pyro ppl checkouts dev pyro init py line in import pyro distributions as dist attributeerror module object has no attribute distributions may possibly be from and or can we error on these warnings in travis because the docs build succeeds even if exceptions are thrown

| 1

|

192,991

| 6,877,707,298

|

IssuesEvent

|

2017-11-20 09:11:11

|

ballerinalang/composer

|

https://api.github.com/repos/ballerinalang/composer

|

closed

|

Too many options for the same functionality when debugging which is complicated

|

Priority/High Severity/Major Type/Improvement UX

|

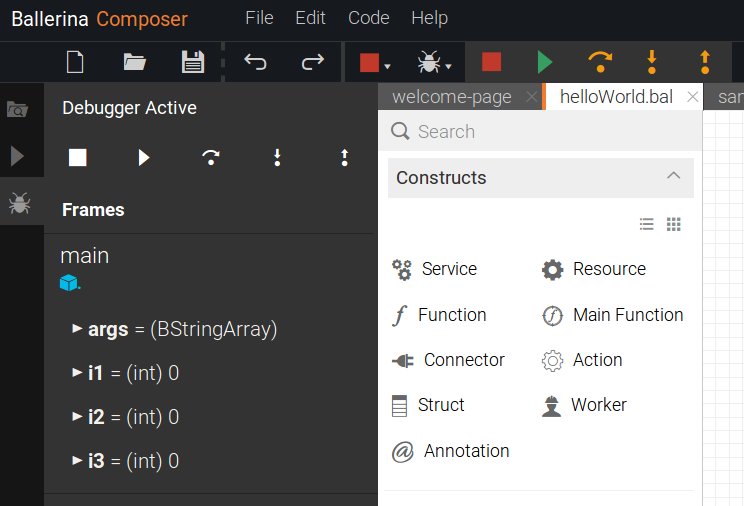

Pack - 23/08/2017

The same debugging functions can be seen at two places which confuses a first time user as to which one to use. Also, one option is with colors and the other without colors.

|

1.0

|

Too many options for the same functionality when debugging which is complicated - Pack - 23/08/2017

The same debugging functions can be seen at two places which confuses a first time user as to which one to use. Also, one option is with colors and the other without colors.

|

priority

|

too many options for the same functionality when debugging which is complicated pack the same debugging functions can be seen at two places which confuses a first time user as to which one to use also one option is with colors and the other without colors

| 1

|

521,763

| 15,115,250,054

|

IssuesEvent

|

2021-02-09 03:58:29

|

onaio/reveal-frontend

|

https://api.github.com/repos/onaio/reveal-frontend

|

closed

|

Plans are showing different status on Action plan page and on the Monitor page for Thailand Production

|

Priority: High

|

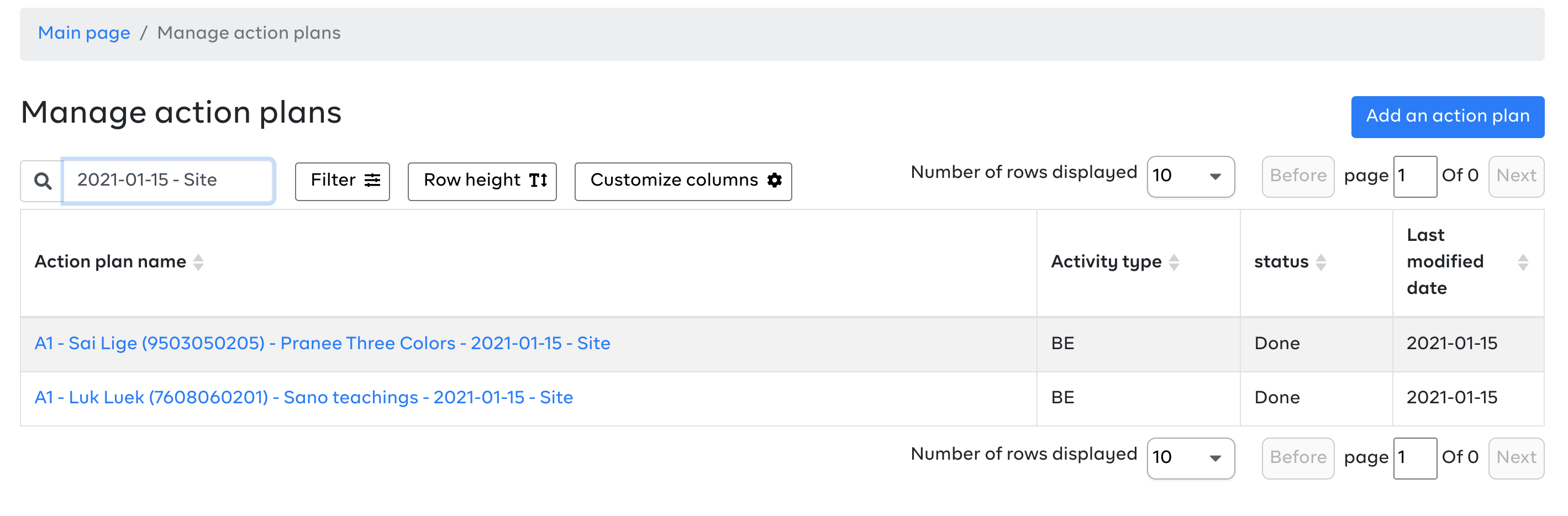

Thailand has reported instances where the same plan shows different status on the action plan page and on the monitoring page. A case in point is the plan with the details below:

- plan : A1 - โป่งลึก (7608060201) - โสน คำสอน - 2021-01-15 - Site

- plan id: b618ca2b-1469-4221-8ff3-a36e08ec8f8c

- status in 'manage plans' (web UI) = complete. [Here](https://mhealth.ddc.moph.go.th/focus-investigation/map/8876ab40-e2cd-5790-950f-ef15e6267bb5

) is a link to the WebUI.

- status in 'monitor' (web UI) = active

See Sample Screenshots below:

**Action Page** - Status reads - Done

**Monitor Page** - Status reads - Active

The expectation is that plans should have the same status irrespective on which page its accessed from.

|

1.0

|

Plans are showing different status on Action plan page and on the Monitor page for Thailand Production - Thailand has reported instances where the same plan shows different status on the action plan page and on the monitoring page. A case in point is the plan with the details below:

- plan : A1 - โป่งลึก (7608060201) - โสน คำสอน - 2021-01-15 - Site

- plan id: b618ca2b-1469-4221-8ff3-a36e08ec8f8c

- status in 'manage plans' (web UI) = complete. [Here](https://mhealth.ddc.moph.go.th/focus-investigation/map/8876ab40-e2cd-5790-950f-ef15e6267bb5

) is a link to the WebUI.

- status in 'monitor' (web UI) = active

See Sample Screenshots below:

**Action Page** - Status reads - Done

**Monitor Page** - Status reads - Active

The expectation is that plans should have the same status irrespective on which page its accessed from.

|

priority

|

plans are showing different status on action plan page and on the monitor page for thailand production thailand has reported instances where the same plan shows different status on the action plan page and on the monitoring page a case in point is the plan with the details below plan โป่งลึก โสน คำสอน site plan id status in manage plans web ui complete is a link to the webui status in monitor web ui active see sample screenshots below action page status reads done monitor page status reads active the expectation is that plans should have the same status irrespective on which page its accessed from

| 1

|

174,385

| 6,539,634,264

|

IssuesEvent

|

2017-09-01 12:15:55

|

DOAJ/doaj

|

https://api.github.com/repos/DOAJ/doaj

|

closed

|

xml processing failed 0214-0071

|

article xml high priority

|

I am attaching two xmls. I checked with a validator and they seemed fine.

Could you have a look please?

[xml_cienciasdeporte.zip](https://github.com/DOAJ/doaj/files/893981/xml_cienciasdeporte.zip)

|

1.0

|

xml processing failed 0214-0071 - I am attaching two xmls. I checked with a validator and they seemed fine.

Could you have a look please?

[xml_cienciasdeporte.zip](https://github.com/DOAJ/doaj/files/893981/xml_cienciasdeporte.zip)

|

priority

|

xml processing failed i am attaching two xmls i checked with a validator and they seemed fine could you have a look please

| 1

|

568,627

| 16,984,618,152

|

IssuesEvent

|

2021-06-30 13:07:26

|

Qiskit/qiskit-terra

|

https://api.github.com/repos/Qiskit/qiskit-terra

|

closed

|

Support PauliGate in qpy

|

bug priority: high

|

<!-- ⚠️ If you do not respect this template, your issue will be closed -->

<!-- ⚠️ Make sure to browse the opened and closed issues -->

### Information

- **Qiskit Terra version**:

- **Python version**:

- **Operating system**:

### What is the current behavior?

Serializing a circuit with a PauliGate fails with

```

File "/Users/jessieyu/Documents/Q/Github/qiskit-terra/qiskit/circuit/qpy_serialization.py", line 824, in dump

_write_circuit(file_obj, circuit)

File "/Users/jessieyu/Documents/Q/Github/qiskit-terra/qiskit/circuit/qpy_serialization.py", line 867, in _write_circuit

_write_instruction(instruction_buffer, instruction, custom_instructions, index_map)

File "/Users/jessieyu/Documents/Q/Github/qiskit-terra/qiskit/circuit/qpy_serialization.py", line 724, in _write_instruction

raise TypeError(

TypeError: Invalid parameter type <qiskit.circuit.library.generalized_gates.pauli.PauliGate object at 0x13bde8730> for gate <class 'str'>,

```

### Steps to reproduce the problem

```

circ = (X ^ Y ^ Z).to_circuit_op().to_circuit()

qpy_serialization.dump(buff, circ)

```

### What is the expected behavior?

The circuit can be serialized.

Also the error message seems backwards - should have said `Invalid parameter type 'str' for gate 'PauliGate'` instead.

### Suggested solutions

Allow string as a valid parameter for gates.

|

1.0

|

Support PauliGate in qpy - <!-- ⚠️ If you do not respect this template, your issue will be closed -->

<!-- ⚠️ Make sure to browse the opened and closed issues -->

### Information

- **Qiskit Terra version**:

- **Python version**:

- **Operating system**:

### What is the current behavior?

Serializing a circuit with a PauliGate fails with

```

File "/Users/jessieyu/Documents/Q/Github/qiskit-terra/qiskit/circuit/qpy_serialization.py", line 824, in dump

_write_circuit(file_obj, circuit)

File "/Users/jessieyu/Documents/Q/Github/qiskit-terra/qiskit/circuit/qpy_serialization.py", line 867, in _write_circuit

_write_instruction(instruction_buffer, instruction, custom_instructions, index_map)

File "/Users/jessieyu/Documents/Q/Github/qiskit-terra/qiskit/circuit/qpy_serialization.py", line 724, in _write_instruction

raise TypeError(

TypeError: Invalid parameter type <qiskit.circuit.library.generalized_gates.pauli.PauliGate object at 0x13bde8730> for gate <class 'str'>,

```

### Steps to reproduce the problem

```

circ = (X ^ Y ^ Z).to_circuit_op().to_circuit()

qpy_serialization.dump(buff, circ)

```

### What is the expected behavior?

The circuit can be serialized.

Also the error message seems backwards - should have said `Invalid parameter type 'str' for gate 'PauliGate'` instead.

### Suggested solutions

Allow string as a valid parameter for gates.

|

priority

|