| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 5 to 112) | repo_url (stringlengths, 34 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 855) | labels (stringlengths, 4 to 721) | body (stringlengths, 1 to 261k) | index (stringclasses, 13 values) | text_combine (stringlengths, 96 to 261k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 240k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

1,048

| 2,507,024,719

|

IssuesEvent

|

2015-01-12 15:39:29

|

G-Node/GCA-Web

|

https://api.github.com/repos/G-Node/GCA-Web

|

closed

|

Editor does not work in IE

|

bug high priority

|

The editor does not update the abstract properly in internet explorer (version 11 and others).

|

1.0

|

Editor does not work in IE - The editor does not update the abstract properly in internet explorer (version 11 and others).

|

priority

|

editor does not work in ie the editor does not update the abstract properly in internet explorer version and others

| 1

|

239,601

| 7,799,873,036

|

IssuesEvent

|

2018-06-09 01:30:38

|

tine20/Tine-2.0-Open-Source-Groupware-and-CRM

|

https://api.github.com/repos/tine20/Tine-2.0-Open-Source-Groupware-and-CRM

|

closed

|

0005720:

'my contacts' favorite does not work correctly

|

Addressbook Bug Mantis high priority

|

**Reported by pschuele on 15 Feb 2012 14:41**

**Version:** Milan (2012-03) Beta 4

'my contacts' favorite does not work correctly

it looks like the server does not send the correct node info, the users displayname is shown in the filter instead of the node/path ...

|

1.0

|

0005720:

'my contacts' favorite does not work correctly - **Reported by pschuele on 15 Feb 2012 14:41**

**Version:** Milan (2012-03) Beta 4

'my contacts' favorite does not work correctly

it looks like the server does not send the correct node info, the users displayname is shown in the filter instead of the node/path ...

|

priority

|

my contacts favorite does not work correctly reported by pschuele on feb version milan beta my contacts favorite does not work correctly it looks like the server does not send the correct node info the users displayname is shown in the filter instead of the node path

| 1

|

390,186

| 11,527,787,139

|

IssuesEvent

|

2020-02-16 00:23:26

|

tempor1s/oracli

|

https://api.github.com/repos/tempor1s/oracli

|

closed

|

Require user auth to be pulled from header

|

enhancement help wanted high priority

|

Currently, you need to send the token over the body, but I would prefer if we can move this over to the token being sent in the header as it is a much cleaner implementation of authentication :)

|

1.0

|

Require user auth to be pulled from header - Currently, you need to send the token over the body, but I would prefer if we can move this over to the token being sent in the header as it is a much cleaner implementation of authentication :)

|

priority

|

require user auth to be pulled from header currently you need to send the token over the body but i would prefer if we can move this over to the token being sent in the header as it is a much cleaner implementation of authentication

| 1

|

712,312

| 24,490,137,051

|

IssuesEvent

|

2022-10-09 23:48:10

|

python/mypy

|

https://api.github.com/repos/python/mypy

|

closed

|

Give an error if there is a variable annotation within a function but no signature

|

feature priority-0-high topic-usability good-first-issue

|

Mypy should perhaps give an error about a variable annotation in an otherwise unanotated function. Example:

```py

def f():

    a: int = 'x' # Maybe this annotation should be an error?

```

The rationale is that mypy will ignore the type annotation since the function is still considered unannotated, but this is confusing because there is an annotation *within* the function so it can appear to be annotated.

If `--check-untyped-defs` is being used this error shouldn't be generated.

Originally reported in #3945.

|

1.0

|

Give an error if there is a variable annotation within a function but no signature - Mypy should perhaps give an error about a variable annotation in an otherwise unanotated function. Example:

```py

def f():

    a: int = 'x' # Maybe this annotation should be an error?

```

The rationale is that mypy will ignore the type annotation since the function is still considered unannotated, but this is confusing because there is an annotation *within* the function so it can appear to be annotated.

If `--check-untyped-defs` is being used this error shouldn't be generated.

Originally reported in #3945.

|

priority

|

give an error if there is a variable annotation within a function but no signature mypy should perhaps give an error about a variable annotation in an otherwise unanotated function example py def f a int x maybe this annotation should be an error the rationale is that mypy will ignore the type annotation since the function is still considered unannotated but this is confusing because there is an annotation within the function so it can appear to be annotated if check untyped defs is being used this error shouldn t be generated originally reported in

| 1

|

716,371

| 24,630,274,496

|

IssuesEvent

|

2022-10-17 00:57:10

|

adisve/tumble-for-kronox

|

https://api.github.com/repos/adisve/tumble-for-kronox

|

closed

|

German and French translation

|

enhancement High Priority

|

Update to the translations with the new strings that were added.

|

1.0

|

German and French translation - Update to the translations with the new strings that were added.

|

priority

|

german and french translation update to the translations with the new strings that were added

| 1

|

260,961

| 8,221,636,317

|

IssuesEvent

|

2018-09-06 03:06:55

|

craftercms/craftercms

|

https://api.github.com/repos/craftercms/craftercms

|

closed

|

[studio] items are not deleted in stage on delete

|

CI bug priority: high

|

### Expected behavior

Deletes should be pushed to both live and staging

### Actual behavior

In 3.0.x delete is an immediate deploy to live. When staging is enabled, the delete takes place on live but not staging.

### Steps to reproduce the problem

* Create an a page

* Publish it to staging

* Publish it to live

* Delete the item

* Not that it still exists in staging

### Log/stack trace (use https://gist.github.com)

N/A

### Specs

#### Version

Studio Version Number: 3.0.16-SNAPSHOT-4c989c

Build Number: 4c989c7cb50201155c988637a6db453257b7a1cc

Build Date/Time: 08-02-2018 14:57:36 -0400

#### OS

Any

#### Browser

Any

|

1.0

|

[studio] items are not deleted in stage on delete - ### Expected behavior

Deletes should be pushed to both live and staging

### Actual behavior

In 3.0.x delete is an immediate deploy to live. When staging is enabled, the delete takes place on live but not staging.

### Steps to reproduce the problem

* Create an a page

* Publish it to staging

* Publish it to live

* Delete the item

* Not that it still exists in staging

### Log/stack trace (use https://gist.github.com)

N/A

### Specs

#### Version

Studio Version Number: 3.0.16-SNAPSHOT-4c989c

Build Number: 4c989c7cb50201155c988637a6db453257b7a1cc

Build Date/Time: 08-02-2018 14:57:36 -0400

#### OS

Any

#### Browser

Any

|

priority

|

items are not deleted in stage on delete expected behavior deletes should be pushed to both live and staging actual behavior in x delete is an immediate deploy to live when staging is enabled the delete takes place on live but not staging steps to reproduce the problem create an a page publish it to staging publish it to live delete the item not that it still exists in staging log stack trace use n a specs version studio version number snapshot build number build date time os any browser any

| 1

|

290,459

| 8,895,463,430

|

IssuesEvent

|

2019-01-16 08:45:24

|

telstra/open-kilda

|

https://api.github.com/repos/telstra/open-kilda

|

closed

|

New API: Reroute all flows which go through a particular ISL

|

area/api area/arch feature priority/2-high

|

Initiate a reroute for all flows on this ISL.

API: PATCH `links/flows/reroute?<params>`

Related: #1548 (Maintenance mode for ISL)

|

1.0

|

New API: Reroute all flows which go through a particular ISL - Initiate a reroute for all flows on this ISL.

API: PATCH `links/flows/reroute?<params>`

Related: #1548 (Maintenance mode for ISL)

|

priority

|

new api reroute all flows which go through a particular isl initiate a reroute for all flows on this isl api patch links flows reroute related maintenance mode for isl

| 1

|

369,920

| 10,919,947,713

|

IssuesEvent

|

2019-11-21 20:08:49

|

flextype/flextype

|

https://api.github.com/repos/flextype/flextype

|

closed

|

Flextype Core: Forms - add ability to hide fieldsets from entries type select

|

priority: high type: feature

|

We should have ability to hide fieldsets from entries type select, this will be useful for nested fieldsets. Possible we should add new property `hide` - by default is `false`

Logic:

if **hide** property is **true** then hide fieldsets from entries type select.

if **hide** property is **false** then show fieldsets from entries type select.

if **hide** property is **is not exists** then show fieldsets from entries type select.

|

1.0

|

Flextype Core: Forms - add ability to hide fieldsets from entries type select - We should have ability to hide fieldsets from entries type select, this will be useful for nested fieldsets. Possible we should add new property `hide` - by default is `false`

Logic:

if **hide** property is **true** then hide fieldsets from entries type select.

if **hide** property is **false** then show fieldsets from entries type select.

if **hide** property is **is not exists** then show fieldsets from entries type select.

|

priority

|

flextype core forms add ability to hide fieldsets from entries type select we should have ability to hide fieldsets from entries type select this will be useful for nested fieldsets possible we should add new property hide by default is false logic if hide property is true then hide fieldsets from entries type select if hide property is false then show fieldsets from entries type select if hide property is is not exists then show fieldsets from entries type select

| 1

|

139,443

| 5,375,479,836

|

IssuesEvent

|

2017-02-23 05:02:26

|

ArctosDB/documentation-wiki

|

https://api.github.com/repos/ArctosDB/documentation-wiki

|

opened

|

remove hyphens from anchor links when creating from <h3>subtitles

|

bug Priority: High question

|

Here's the problem:

in the current site the anchors were not necessarily the subheader/subtitle phrase:

https://arctosdb.org/documentation/agent/#namesearch

BUT the subheader is "Searching Agents"

Opt#1 I can go through and update the subheaders to match but in some cases like this one is leaves wtih some awkward phrasing.

Opt#2? Anyway to create anchors not from <h3>tags?

In any case we need to get rid of hyphens when constructing the anchors from subtitles

(readability pfft!)

This blocks the launch if we dont have a solid fix

|

1.0

|

remove hyphens from anchor links when creating from <h3>subtitles - Here's the problem:

in the current site the anchors were not necessarily the subheader/subtitle phrase:

https://arctosdb.org/documentation/agent/#namesearch

BUT the subheader is "Searching Agents"

Opt#1 I can go through and update the subheaders to match but in some cases like this one is leaves wtih some awkward phrasing.

Opt#2? Anyway to create anchors not from <h3>tags?

In any case we need to get rid of hyphens when constructing the anchors from subtitles

(readability pfft!)

This blocks the launch if we dont have a solid fix

|

priority

|

remove hyphens from anchor links when creating from subtitles here s the problem in the current site the anchors were not necessarily the subheader subtitle phrase but the subheader is searching agents opt i can go through and update the subheaders to match but in some cases like this one is leaves wtih some awkward phrasing opt anyway to create anchors not from tags in any case we need to get rid of hyphens when constructing the anchors from subtitles readability pfft this blocks the launch if we dont have a solid fix

| 1

|

396,193

| 11,705,177,872

|

IssuesEvent

|

2020-03-07 14:22:28

|

localstack/localstack

|

https://api.github.com/repos/localstack/localstack

|

closed

|

Missing required parameter in input: "FunctionName"

|

bug needs-triaging priority-high

|

While creating a lambda using CFN, my command fails with the following message:

```

2019-11-08 15:52:29,358:API: Error on request:

Traceback (most recent call last):

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/werkzeug/serving.py", line 304, in run_wsgi

execute(self.server.app)

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/werkzeug/serving.py", line 292, in execute

application_iter = app(environ, start_response)

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/moto/server.py", line 132, in __call__

return backend_app(environ, start_response)

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/flask/app.py", line 2309, in __call__

return self.wsgi_app(environ, start_response)

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/flask/app.py", line 2295, in wsgi_app

response = self.handle_exception(e)

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/flask_cors/extension.py", line 161, in wrapped_function

return cors_after_request(app.make_response(f(*args, **kwargs)))

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/flask/app.py", line 1741, in handle_exception

reraise(exc_type, exc_value, tb)

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/flask/_compat.py", line 35, in reraise

raise value

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/flask/app.py", line 2292, in wsgi_app

response = self.full_dispatch_request()

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/flask/app.py", line 1815, in full_dispatch_request

rv = self.handle_user_exception(e)

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/flask_cors/extension.py", line 161, in wrapped_function

return cors_after_request(app.make_response(f(*args, **kwargs)))

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/flask/app.py", line 1718, in handle_user_exception

reraise(exc_type, exc_value, tb)

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/flask/_compat.py", line 35, in reraise

raise value

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/flask/app.py", line 1813, in full_dispatch_request

rv = self.dispatch_request()

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/flask/app.py", line 1799, in dispatch_request

return self.view_functions[rule.endpoint](**req.view_args)

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/moto/core/utils.py", line 140, in __call__

result = self.callback(request, request.url, {})

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/moto/core/responses.py", line 168, in dispatch

return cls()._dispatch(*args, **kwargs)

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/moto/core/responses.py", line 259, in _dispatch

return self.call_action()

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/moto/core/responses.py", line 340, in call_action

response = method()

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/moto/core/utils.py", line 264, in _wrapper

response = f(*args, **kwargs)

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/moto/cloudformation/responses.py", line 108, in create_change_set

change_set_type=update_or_create,

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/moto/cloudformation/models.py", line 452, in create_change_set

cross_stack_resources=self.exports

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/moto/cloudformation/models.py", line 295, in __init__

create_change_set=True,

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/moto/cloudformation/models.py", line 187, in __init__

self.resource_map = self._create_resource_map()

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/moto/cloudformation/models.py", line 195, in _create_resource_map

resource_map.create()

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/moto/cloudformation/parsing.py", line 482, in create

if isinstance(self[resource], ec2_models.TaggedEC2Resource):

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/moto/cloudformation/parsing.py", line 415, in __getitem__

resource_logical_id, resource_json, self, self._region_name)

File "/opt/code/localstack/localstack/services/cloudformation/cloudformation_starter.py", line 173, in parse_and_create_resource

return _parse_and_create_resource(logical_id, resource_json, resources_map, region_name)

File "/opt/code/localstack/localstack/services/cloudformation/cloudformation_starter.py", line 260, in _parse_and_create_resource

result = deploy_func(logical_id, resource_wrapped, stack_name=stack_name)

File "/opt/code/localstack/localstack/utils/cloudformation/template_deployer.py", line 671, in deploy_resource

result = deploy_resource_via_sdk_function(resource_id, resources, resource_type, func, stack_name)

File "/opt/code/localstack/localstack/utils/cloudformation/template_deployer.py", line 741, in deploy_resource_via_sdk_function

raise e

File "/opt/code/localstack/localstack/utils/cloudformation/template_deployer.py", line 738, in deploy_resource_via_sdk_function

result = function(**params)

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/botocore/client.py", line 357, in _api_call

return self._make_api_call(operation_name, kwargs)

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/botocore/client.py", line 634, in _make_api_call

api_params, operation_model, context=request_context)

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/botocore/client.py", line 682, in _convert_to_request_dict

api_params, operation_model)

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/botocore/validate.py", line 297, in serialize_to_request

raise ParamValidationError(report=report.generate_report())

botocore.exceptions.ParamValidationError: Parameter validation failed:

Missing required parameter in input: "FunctionName"

```

Considering the fact that the `FunctionName` property is not mandatory from CFN perspective (it's even worse, since having it or not has significant implications), is it possible to create a name on the fly?

|

1.0

|

Missing required parameter in input: "FunctionName" - While creating a lambda using CFN, my command fails with the following message:

```

2019-11-08 15:52:29,358:API: Error on request:

Traceback (most recent call last):

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/werkzeug/serving.py", line 304, in run_wsgi

execute(self.server.app)

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/werkzeug/serving.py", line 292, in execute

application_iter = app(environ, start_response)

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/moto/server.py", line 132, in __call__

return backend_app(environ, start_response)

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/flask/app.py", line 2309, in __call__

return self.wsgi_app(environ, start_response)

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/flask/app.py", line 2295, in wsgi_app

response = self.handle_exception(e)

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/flask_cors/extension.py", line 161, in wrapped_function

return cors_after_request(app.make_response(f(*args, **kwargs)))

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/flask/app.py", line 1741, in handle_exception

reraise(exc_type, exc_value, tb)

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/flask/_compat.py", line 35, in reraise

raise value

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/flask/app.py", line 2292, in wsgi_app

response = self.full_dispatch_request()

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/flask/app.py", line 1815, in full_dispatch_request

rv = self.handle_user_exception(e)

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/flask_cors/extension.py", line 161, in wrapped_function

return cors_after_request(app.make_response(f(*args, **kwargs)))

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/flask/app.py", line 1718, in handle_user_exception

reraise(exc_type, exc_value, tb)

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/flask/_compat.py", line 35, in reraise

raise value

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/flask/app.py", line 1813, in full_dispatch_request

rv = self.dispatch_request()

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/flask/app.py", line 1799, in dispatch_request

return self.view_functions[rule.endpoint](**req.view_args)

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/moto/core/utils.py", line 140, in __call__

result = self.callback(request, request.url, {})

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/moto/core/responses.py", line 168, in dispatch

return cls()._dispatch(*args, **kwargs)

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/moto/core/responses.py", line 259, in _dispatch

return self.call_action()

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/moto/core/responses.py", line 340, in call_action

response = method()

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/moto/core/utils.py", line 264, in _wrapper

response = f(*args, **kwargs)

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/moto/cloudformation/responses.py", line 108, in create_change_set

change_set_type=update_or_create,

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/moto/cloudformation/models.py", line 452, in create_change_set

cross_stack_resources=self.exports

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/moto/cloudformation/models.py", line 295, in __init__

create_change_set=True,

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/moto/cloudformation/models.py", line 187, in __init__

self.resource_map = self._create_resource_map()

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/moto/cloudformation/models.py", line 195, in _create_resource_map

resource_map.create()

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/moto/cloudformation/parsing.py", line 482, in create

if isinstance(self[resource], ec2_models.TaggedEC2Resource):

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/moto/cloudformation/parsing.py", line 415, in __getitem__

resource_logical_id, resource_json, self, self._region_name)

File "/opt/code/localstack/localstack/services/cloudformation/cloudformation_starter.py", line 173, in parse_and_create_resource

return _parse_and_create_resource(logical_id, resource_json, resources_map, region_name)

File "/opt/code/localstack/localstack/services/cloudformation/cloudformation_starter.py", line 260, in _parse_and_create_resource

result = deploy_func(logical_id, resource_wrapped, stack_name=stack_name)

File "/opt/code/localstack/localstack/utils/cloudformation/template_deployer.py", line 671, in deploy_resource

result = deploy_resource_via_sdk_function(resource_id, resources, resource_type, func, stack_name)

File "/opt/code/localstack/localstack/utils/cloudformation/template_deployer.py", line 741, in deploy_resource_via_sdk_function

raise e

File "/opt/code/localstack/localstack/utils/cloudformation/template_deployer.py", line 738, in deploy_resource_via_sdk_function

result = function(**params)

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/botocore/client.py", line 357, in _api_call

return self._make_api_call(operation_name, kwargs)

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/botocore/client.py", line 634, in _make_api_call

api_params, operation_model, context=request_context)

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/botocore/client.py", line 682, in _convert_to_request_dict

api_params, operation_model)

File "/opt/code/localstack/.venv/lib/python3.6/site-packages/botocore/validate.py", line 297, in serialize_to_request

raise ParamValidationError(report=report.generate_report())

botocore.exceptions.ParamValidationError: Parameter validation failed:

Missing required parameter in input: "FunctionName"

```

Considering the fact that the `FunctionName` property is not mandatory from CFN perspective (it's even worse, since having it or not has significant implications), is it possible to create a name on the fly?

|

priority

|

missing required parameter in input functionname while creating a lambda using cfn my command fails with the following message api error on request traceback most recent call last file opt code localstack venv lib site packages werkzeug serving py line in run wsgi execute self server app file opt code localstack venv lib site packages werkzeug serving py line in execute application iter app environ start response file opt code localstack venv lib site packages moto server py line in call return backend app environ start response file opt code localstack venv lib site packages flask app py line in call return self wsgi app environ start response file opt code localstack venv lib site packages flask app py line in wsgi app response self handle exception e file opt code localstack venv lib site packages flask cors extension py line in wrapped function return cors after request app make response f args kwargs file opt code localstack venv lib site packages flask app py line in handle exception reraise exc type exc value tb file opt code localstack venv lib site packages flask compat py line in reraise raise value file opt code localstack venv lib site packages flask app py line in wsgi app response self full dispatch request file opt code localstack venv lib site packages flask app py line in full dispatch request rv self handle user exception e file opt code localstack venv lib site packages flask cors extension py line in wrapped function return cors after request app make response f args kwargs file opt code localstack venv lib site packages flask app py line in handle user exception reraise exc type exc value tb file opt code localstack venv lib site packages flask compat py line in reraise raise value file opt code localstack venv lib site packages flask app py line in full dispatch request rv self dispatch request file opt code localstack venv lib site packages flask app py line in dispatch request return self view functions req view args file opt code localstack 
venv lib site packages moto core utils py line in call result self callback request request url file opt code localstack venv lib site packages moto core responses py line in dispatch return cls dispatch args kwargs file opt code localstack venv lib site packages moto core responses py line in dispatch return self call action file opt code localstack venv lib site packages moto core responses py line in call action response method file opt code localstack venv lib site packages moto core utils py line in wrapper response f args kwargs file opt code localstack venv lib site packages moto cloudformation responses py line in create change set change set type update or create file opt code localstack venv lib site packages moto cloudformation models py line in create change set cross stack resources self exports file opt code localstack venv lib site packages moto cloudformation models py line in init create change set true file opt code localstack venv lib site packages moto cloudformation models py line in init self resource map self create resource map file opt code localstack venv lib site packages moto cloudformation models py line in create resource map resource map create file opt code localstack venv lib site packages moto cloudformation parsing py line in create if isinstance self models file opt code localstack venv lib site packages moto cloudformation parsing py line in getitem resource logical id resource json self self region name file opt code localstack localstack services cloudformation cloudformation starter py line in parse and create resource return parse and create resource logical id resource json resources map region name file opt code localstack localstack services cloudformation cloudformation starter py line in parse and create resource result deploy func logical id resource wrapped stack name stack name file opt code localstack localstack utils cloudformation template deployer py line in deploy resource result deploy resource via sdk function 
resource id resources resource type func stack name file opt code localstack localstack utils cloudformation template deployer py line in deploy resource via sdk function raise e file opt code localstack localstack utils cloudformation template deployer py line in deploy resource via sdk function result function params file opt code localstack venv lib site packages botocore client py line in api call return self make api call operation name kwargs file opt code localstack venv lib site packages botocore client py line in make api call api params operation model context request context file opt code localstack venv lib site packages botocore client py line in convert to request dict api params operation model file opt code localstack venv lib site packages botocore validate py line in serialize to request raise paramvalidationerror report report generate report botocore exceptions paramvalidationerror parameter validation failed missing required parameter in input functionname considering the fact that the functionname property is not mandatory from cfn perspective it s even worse since having it or not has significant implications is it possible to create a name on the fly

| 1

|

319,617

| 9,747,282,420

|

IssuesEvent

|

2019-06-03 14:05:46

|

dojot/dojot

|

https://api.github.com/repos/dojot/dojot

|

closed

|

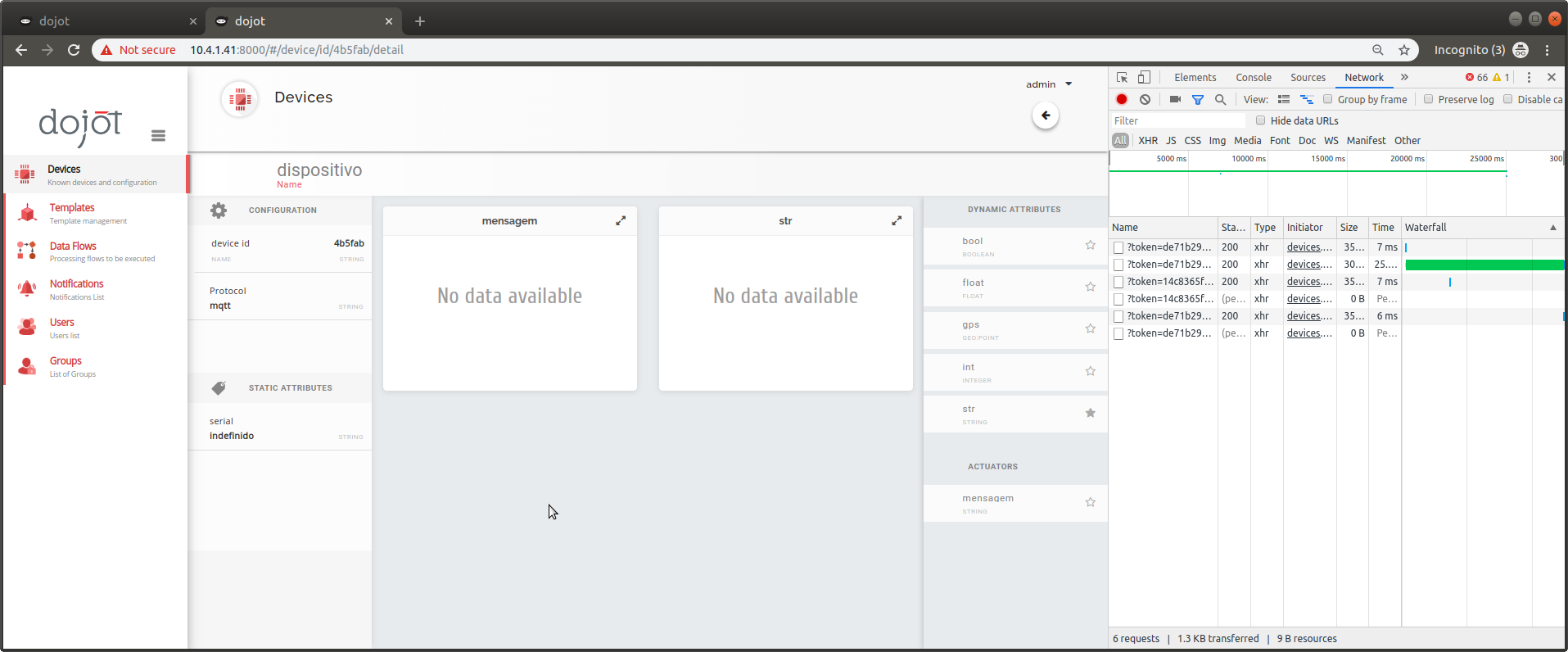

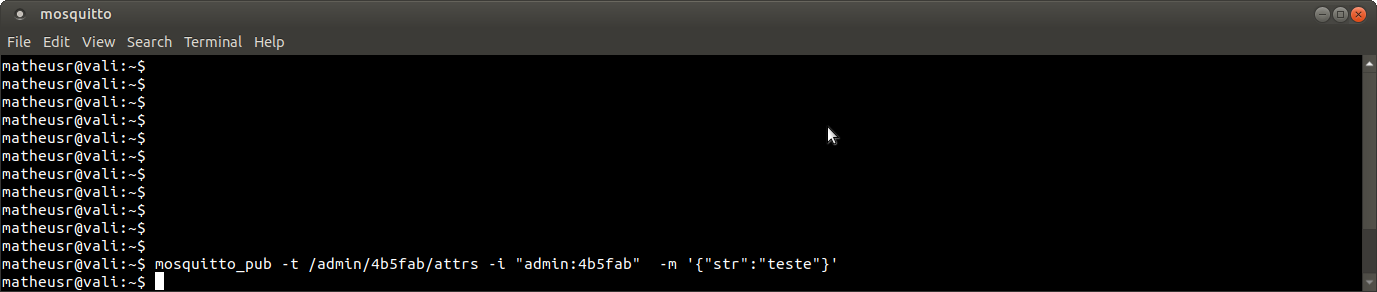

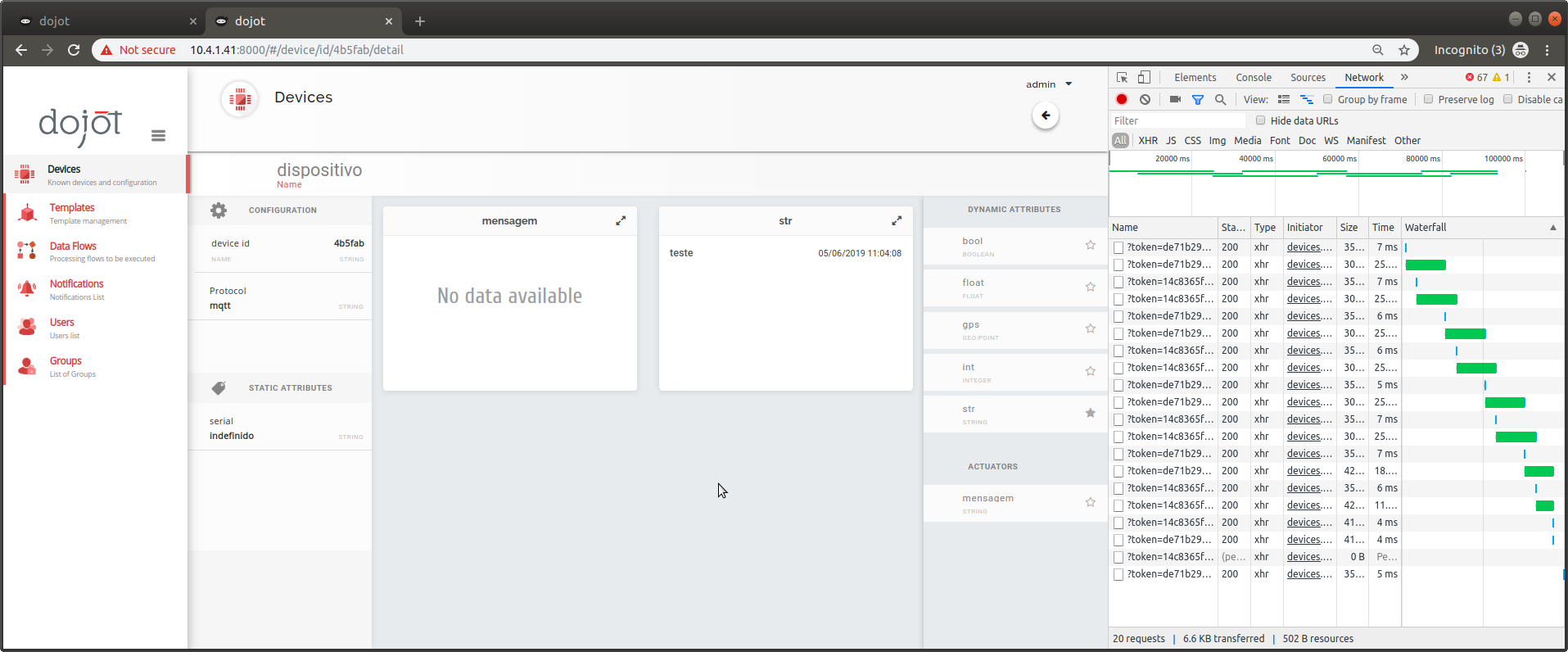

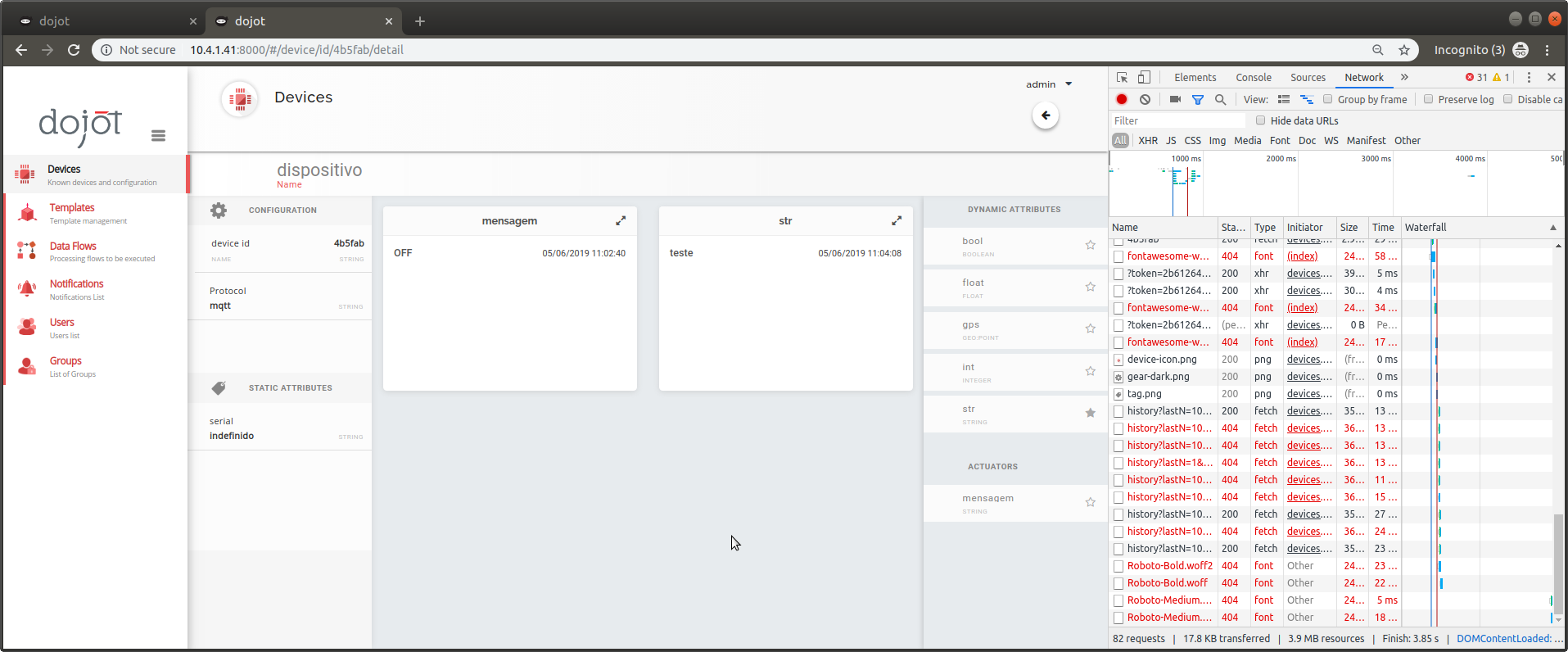

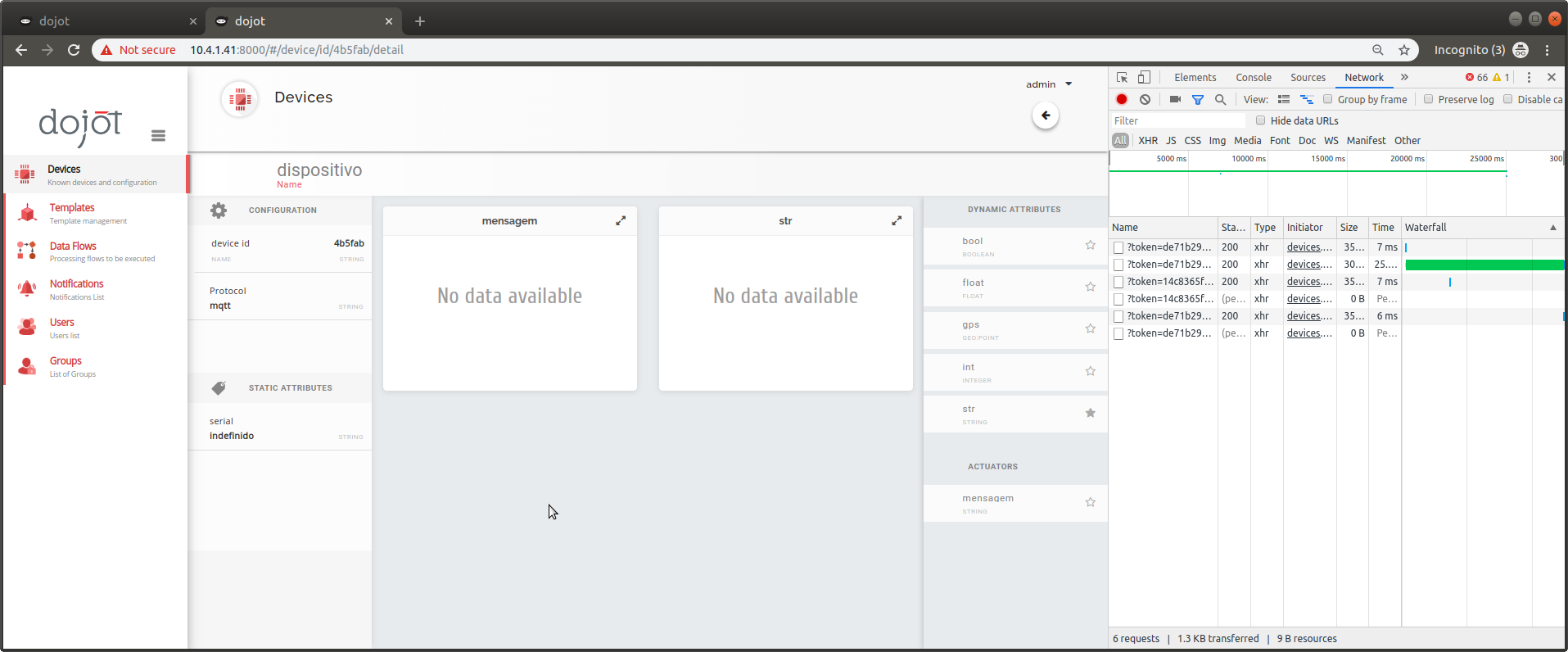

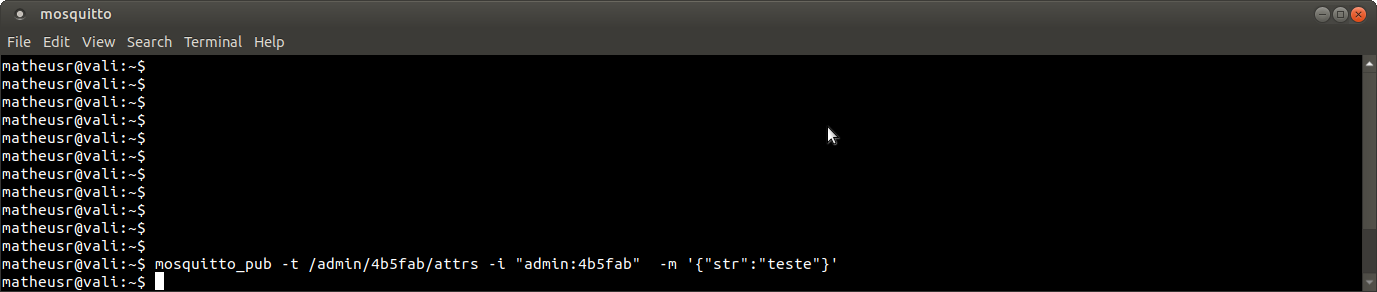

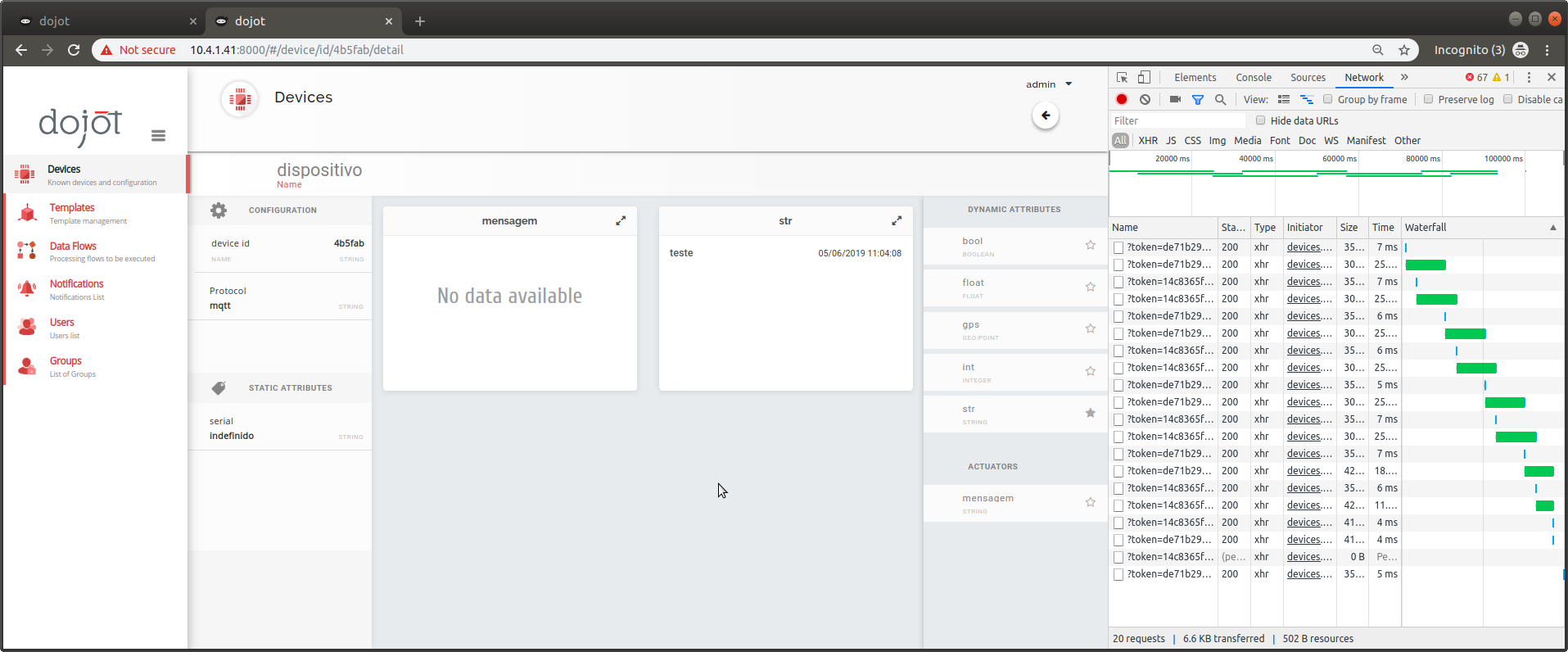

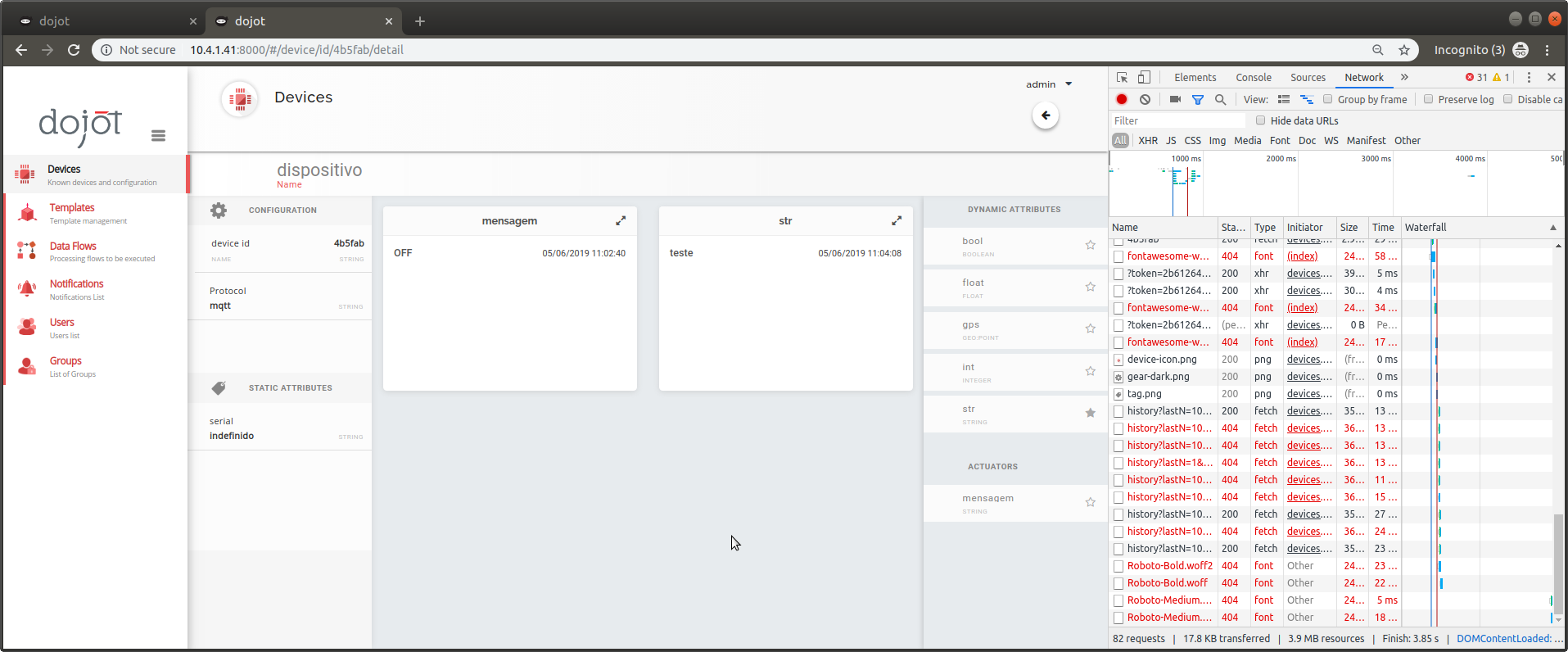

[DataBroker, device-manager, history] Real-time does not work on actuate attributes

|

Priority:High Status:In Progress Team:Backend Type:Bug

|

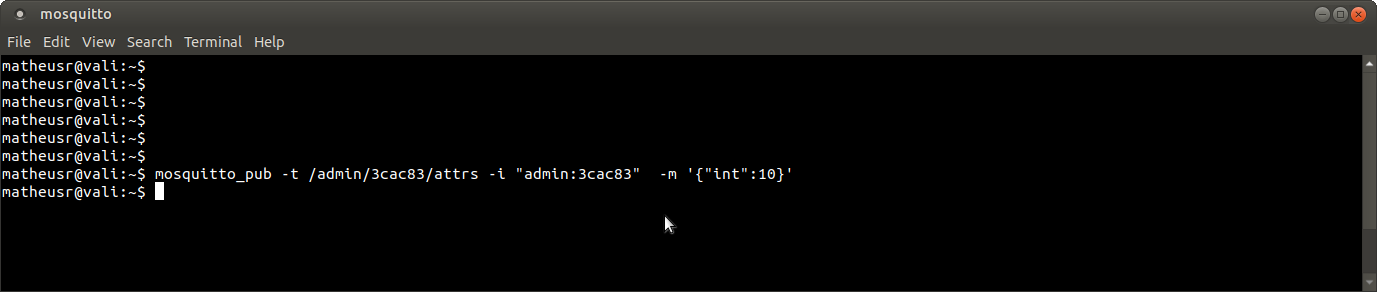

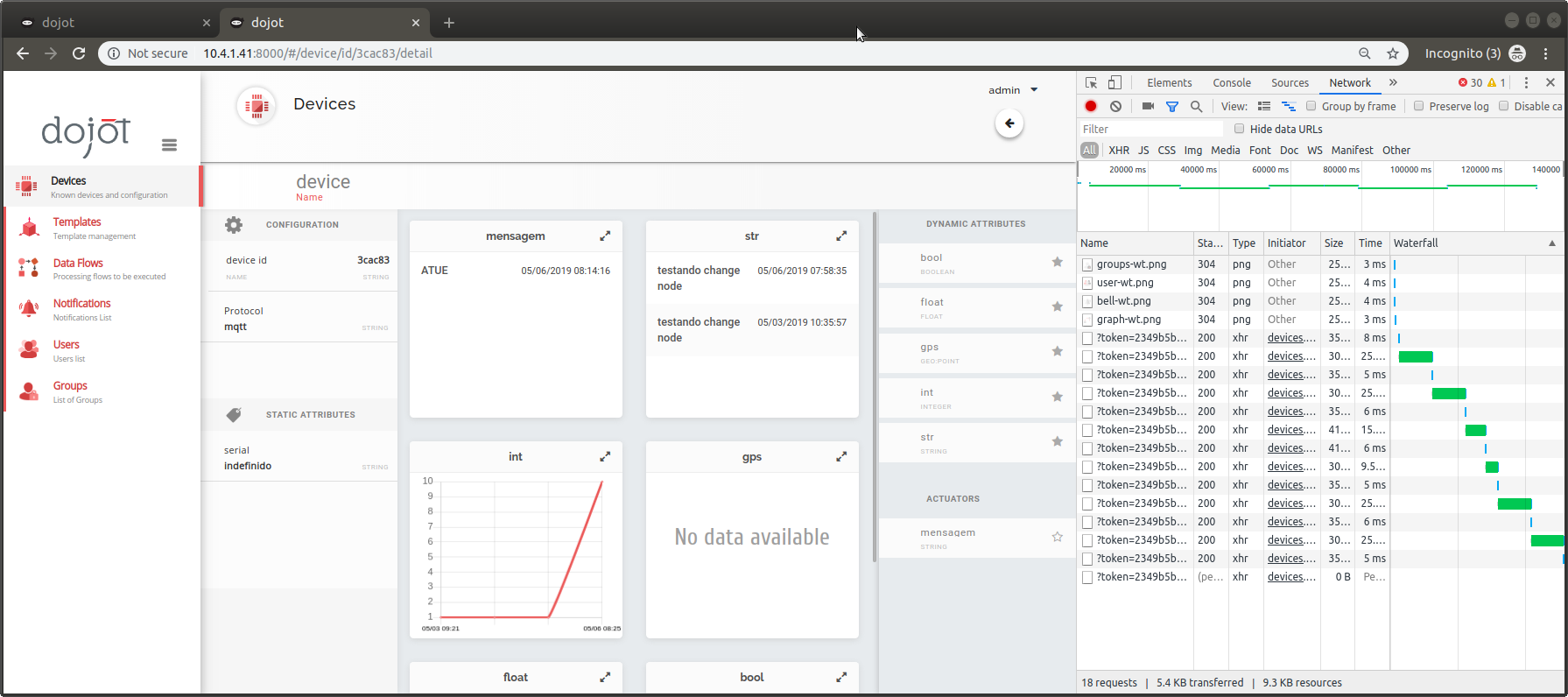

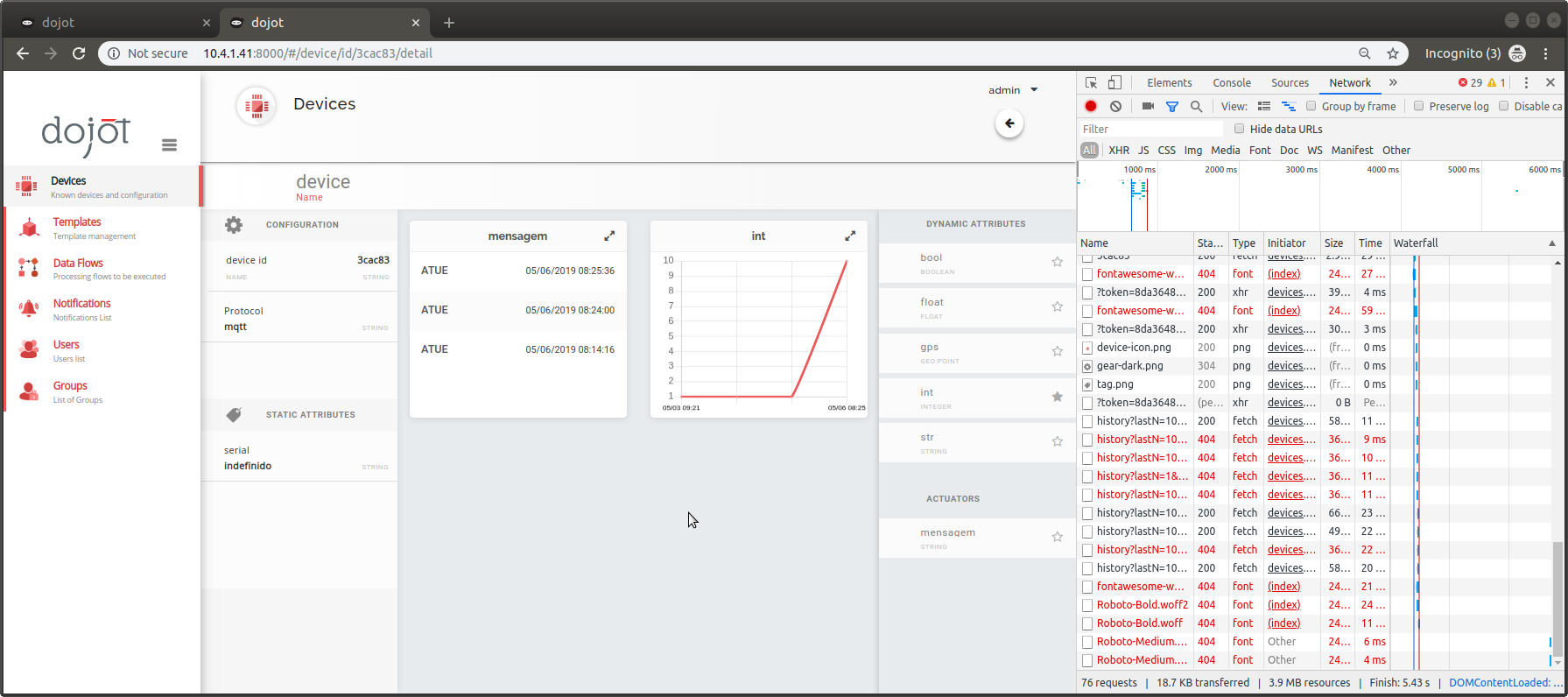

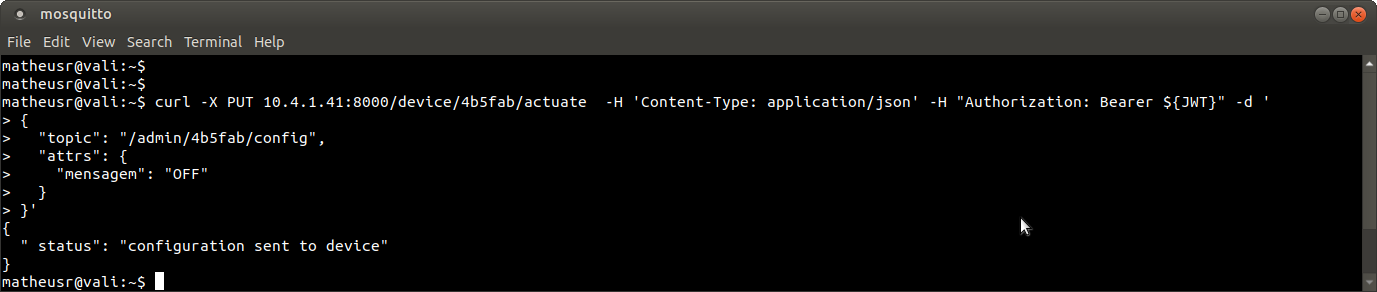

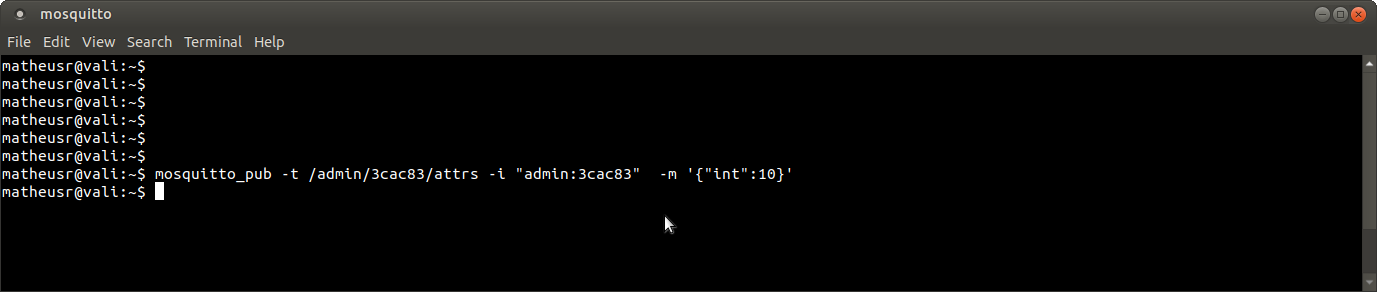

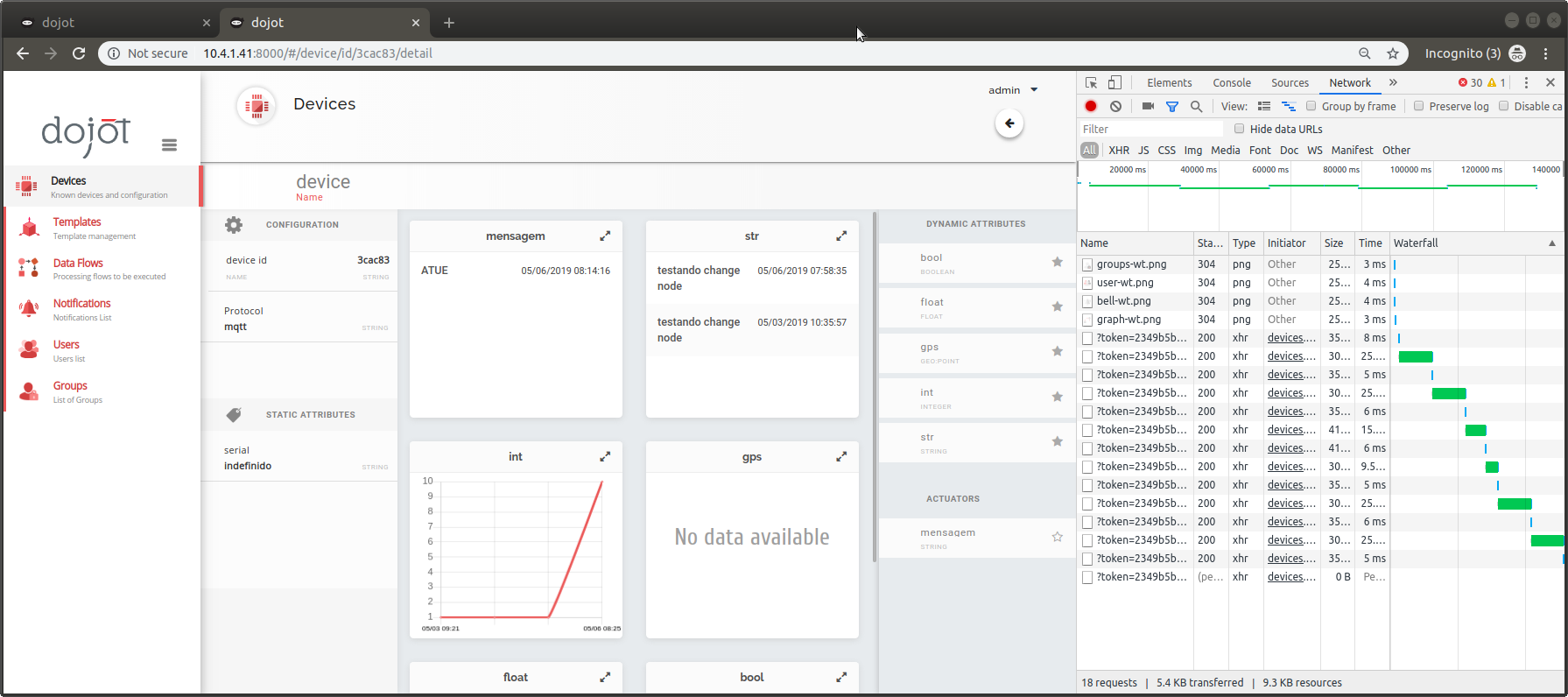

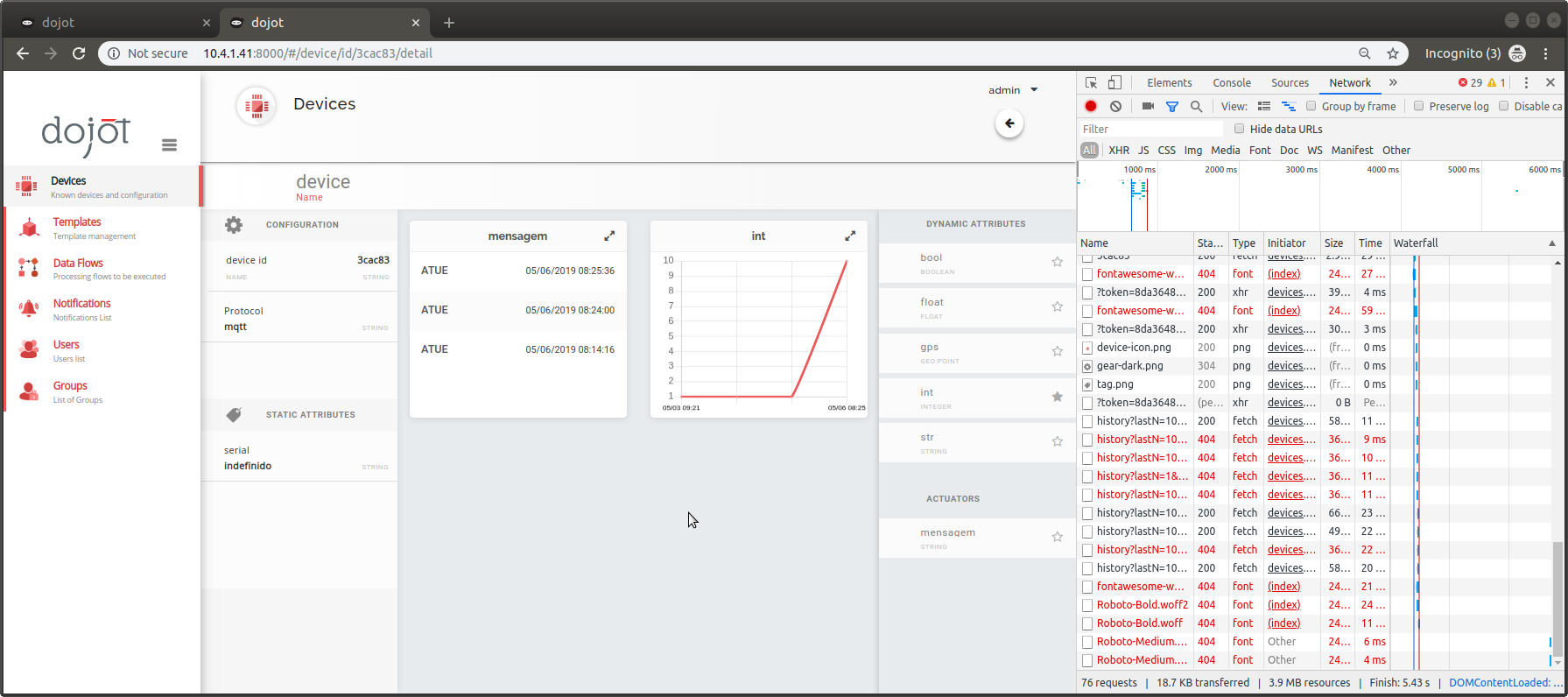

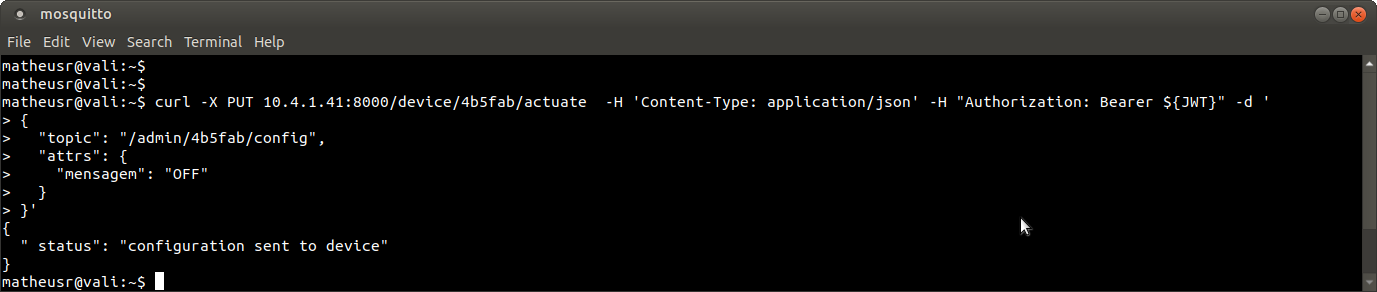

**Steps to reproduce the problem:**

_test 1_:

1. create a flow with attribute of actuation

2. activate the flow

3. view attribute detail

4. After F5

_test 2_:

1. actuate on the device

2. view attribute detail

3. publish data from another attribute

4. After F5

**Affected Version**: 61.1-20190423

|

1.0

|

[DataBroker, device-manager, history] Real-time does not work on actuate attributes - **Steps to reproduce the problem:**

_test 1_:

1. create a flow with attribute of actuation

2. activate the flow

3. view attribute detail

4. After F5

_test 2_:

1. actuate on the device

2. view attribute detail

3. publish data from another attribute

4. After F5

**Affected Version**: 61.1-20190423

|

priority

|

real time does not work on actuate attributes steps to reproduce the problem test create a flow with attribute of actuation activate the flow view attribute detail after test actuate on the device view attribute detail publish data from another attribute after affected version

| 1

|

530,665

| 15,435,525,670

|

IssuesEvent

|

2021-03-07 09:15:45

|

VelvetThePanda/Silk

|

https://api.github.com/repos/VelvetThePanda/Silk

|

closed

|

Daily command for newcomers doesn't save balance correctly

|

Bugged Priority: HIGH

|

**Describe the bug**

If you're new, and you run the daily command, it will say you've collected $500, but in reality it saves 0 dollars. However, if you have an account, it saves correctly.

**To Reproduce**

- Be new

- Run daily command

- Run cash command

- Observe

**Expected behavior**

An account is created, and $500 is deposited.

**Actual behavior**

An account is created, but $0 is saved.

|

1.0

|

Daily command for newcomers doesn't save balance correctly - **Describe the bug**

If you're new, and you run the daily command, it will say you've collected $500, but in reality it saves 0 dollars. However, if you have an account, it saves correctly.

**To Reproduce**

- Be new

- Run daily command

- Run cash command

- Observe

**Expected behavior**

An account is created, and $500 is deposited.

**Actual behavior**

An account is created, but $0 is saved.

|

priority

|

daily command for newcomers doesn t save balance correctly describe the bug if you re new and you run the daily command it will say you ve collected but in reality it saves dollars however if you have an account it saves correctly to reproduce be new run daily command run cash command observe expected behavior an account is created and is deposited actual behavior an account is created but is saved

| 1

|

580,146

| 17,210,729,901

|

IssuesEvent

|

2021-07-19 03:42:53

|

pytorch/pytorch

|

https://api.github.com/repos/pytorch/pytorch

|

reopened

|

Allow negative learning rates

|

enhancement high priority module: optimizer triage review triaged

|

## 🚀 Feature

Currently, optimizers throw an assertion error when negative learning rates are supplied at construction. This proposal suggests removing this restriction.

## Motivation

Since maximization is equivalent to minimizing a negative loss function, negative learning rates are useful and make sense. For example, in GANs, the generator and discriminator can be trained adversarially by giving the discriminator a negative learning rate. This avoids having two backward passes, which improves both computational efficiency and conceptual clarity.

## Pitch

As learning rates are typically parameterized by constants, providing a negative rate by accident is highly unlikely. I argue that making this mistake is much less likely than wanting a negative learning rate, and so the defensive assertion is better removed.

## Alternatives

It is currently possible to set a negative LR by looping over the optimizer's `param_groups`, which is ugly. An alternative would be to provide a cleaner way to do so, e.g., a `maximize=True` flag on optimizer construction.
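To illustrate the equivalence this proposal relies on, here is a minimal pure-Python sketch. It does not use PyTorch; `sgd_step`, `grad_f`, and `maximize_with_negative_lr` are hypothetical names chosen for this example, and the objective is an arbitrary toy function.

```python
def sgd_step(params, grads, lr):
    """Plain SGD update: p <- p - lr * g.

    With a negative lr this moves along the gradient instead of against it,
    i.e. it performs gradient ascent.
    """
    return [p - lr * g for p, g in zip(params, grads)]


def grad_f(x):
    # Gradient of the toy objective f(x) = -(x - 3)^2, whose maximum is at x = 3.
    return -2.0 * (x - 3.0)


def maximize_with_negative_lr(x0, lr=-0.1, steps=200):
    # Repeatedly apply the SGD update with a negative learning rate.
    x = x0
    for _ in range(steps):
        (x,) = sgd_step([x], [grad_f(x)], lr)
    return x


if __name__ == "__main__":
    # A step with lr = -a on gradient g equals a step with lr = +a on -g.
    assert sgd_step([1.0], [0.5], -0.1) == sgd_step([1.0], [-0.5], 0.1)
    print(maximize_with_negative_lr(0.0))
```

Under these assumptions, a single update rule covers both minimization and maximization; only the sign of the learning rate changes.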

cc @ezyang @gchanan @zou3519 @bdhirsh @jbschlosser @anjali411 @vincentqb @iramazanli

|

1.0

|

Allow negative learning rates - ## 🚀 Feature

Currently, optimizers throw an assertion error when negative learning rates are supplied at construction. This proposal suggests removing this restriction.

## Motivation

Since maximization is equivalent to minimizing a negative loss function, negative learning rates are useful and make sense. For example, in GANs, the generator and discriminator can be trained adversarially by giving the discriminator a negative learning rate. This avoids having two backward passes, which improves both computational efficiency and conceptual clarity.

## Pitch

As learning rates are typically parameterized by constants, providing a negative rate by accident is highly unlikely. I argue that making this mistake is much less likely than wanting a negative learning rate, and so the defensive assertion is better removed.

## Alternatives

It is currently possible to set a negative LR by looping over the optimizer's `param_groups`, which is ugly. An alternative would be to provide a cleaner way to do so, e.g., a `maximize=True` flag on optimizer construction.

cc @ezyang @gchanan @zou3519 @bdhirsh @jbschlosser @anjali411 @vincentqb @iramazanli

|

priority

|

allow negative learning rates 🚀 feature currently optimizers throw an assertion error when negative learning rates are supplied at construction this proposal suggests removing this restriction motivation since maximization is equivalent to minimizing a negative loss function negative learning rates are useful and make sense for example in gans the generator and discriminator can be trained adversarially by giving the discriminator a negative learning rate this avoids having two backward passes which improves both computational efficiency and conceptual clarity pitch as learning rates are typically parameterized by constants providing a negative rate by accident is highly unlikely i argue that making this mistake is much less likely than wanting a negative learning rate and so the defensive assertion is better removed alternatives it is currently possible to set a negative lr through an ugly loop through the optimizer s param group an alternative would be to provide a cleaner way to do so i e a maximize true flag on optimizer construction cc ezyang gchanan bdhirsh jbschlosser vincentqb iramazanli

| 1

|

760,111

| 26,629,051,264

|

IssuesEvent

|

2023-01-24 16:28:26

|

valantic/vue-template

|

https://api.github.com/repos/valantic/vue-template

|

closed

|

Update api documentation

|

enhancement high priority vue-3

|

All attributes on the api helper are documented as required. But most of them are optional (e.g. `[notificationOptions]`). Update documentation and wrap optional attributes in square brackets.

|

1.0

|

Update api documentation - All attributes on the api helper are documented as required. But most of them are optional (e.g. `[notificationOptions]`). Update documentation and wrap optional attributes in square brackets.

|

priority

|

update api documentation all attributes on the api helper are documented as required but most of them are optional e g update documentation and wrap optional attributes in square brackets

| 1

|

423,538

| 12,298,203,675

|

IssuesEvent

|

2020-05-11 10:06:46

|

MyDataTaiwan/mylog14

|

https://api.github.com/repos/MyDataTaiwan/mylog14

|

reopened

|

[Todo] Style adjustment for daily-overview page

|

enhancement priority-high

|

# Issue description

1. Center the Main Header title

2. Fix the lower part of the countdown animation overlapping the dividing line

|

1.0

|

[Todo] Style adjustment for daily-overview page - # Issue description

1. Center the Main Header title

2. Fix the lower part of the countdown animation overlapping the dividing line

|

priority

|

style adjustment for daily overview page issue description center the main header title fix that the lower part of the countdown animation overlap with the dividing line

| 1

|

178,636

| 6,613,080,570

|

IssuesEvent

|

2017-09-20 07:51:23

|

OpenWebslides/OpenWebslides

|

https://api.github.com/repos/OpenWebslides/OpenWebslides

|

opened

|

Docker race condition

|

bug high priority operations

|

When restarting Docker/rebooting server, the NGINX container cannot reach the Docker-defined `app` host.

|

1.0

|

Docker race condition - When restarting Docker/rebooting server, the NGINX container cannot reach the Docker-defined `app` host.

|

priority

|

docker race condition when restarting docker rebooting server the nginx container cannot reach the docker defined app host

| 1

|

556,187

| 16,477,192,564

|

IssuesEvent

|

2021-05-24 07:14:51

|

MathiasReker/Delfinen

|

https://api.github.com/repos/MathiasReker/Delfinen

|

closed

|

View Member info

|

feature request high priority required

|

Feature Request

Display Member information nicely in terminal.

- [x] The user must choose which member he wants to view a. The User searches on ID or name

* If the user searches on ID, and it exists, the user is directed to the "member view"

* If there is more than one match, the matches are displayed, and the user must choose by picking a corresponding number

- [x] The system displays member information (Name, Age, etc.)

- [x] The system displays different options

* Update Member info

* Anonymize the member

|

1.0

|

View Member info - Feature Request

Display Member information nicely in terminal.

- [x] The user must choose which member he wants to view a. The User searches on ID or name

* If the user searches on ID, and it exists, the user is directed to the "member view"

* If there is more than one match, the matches are displayed, and the user must choose by picking a corresponding number

- [x] The system displays member information (Name, Age, etc.)

- [x] The system displays different options

* Update Member info

* Anonymize the member

|

priority

|

view member info feature request display member infomation nicely in terminal the user must choose which member he wants to view a the user searches on id or name if the user searches on id and it exists the user is directed to the member view if there is more than one match the matches are displayed and the user must choose by picking a corresponding number the system displays member information name age etc the system displays different options update member info anonymize the member

| 1

|

291,505

| 8,926,061,704

|

IssuesEvent

|

2019-01-22 02:16:03

|

SalvatoreTosti/spicy-bingo

|

https://api.github.com/repos/SalvatoreTosti/spicy-bingo

|

opened

|

Update validation on user-inputted name fields

|

high priority

|

User-inputted fields should be validated to ensure they conform to the back-end specifications for that information.

Ex. space checking on new room names.
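The back-end rules are not spelled out in this issue, so the following is only a sketch under an assumption: a room name is taken to be valid when it is non-empty and contains no whitespace. `is_valid_room_name` is a hypothetical helper, not an existing function in the project.

```python
def is_valid_room_name(name):
    """Return True if the name is non-empty and has no whitespace characters.

    Assumption: the back end rejects empty names and names containing spaces,
    tabs, or newlines. The real rules may be stricter.
    """
    return bool(name) and not any(ch.isspace() for ch in name)


if __name__ == "__main__":
    for candidate in ["lobby", "my room", ""]:
        print(repr(candidate), is_valid_room_name(candidate))
```

Running the same check on the client before submitting and again on the server would keep the two sides consistent.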

|

1.0

|

Update validation on user-inputted name fields - User-inputted fields should be validated to ensure they conform to the back-end specifications for that information.

Ex. space checking on new room names.

|

priority

|

update validation on user inputted name fields user inputted fields should be checked to ensure they conform to the back end specifications of the information ex space checking on new room names

| 1

|

348,771

| 10,452,589,496

|

IssuesEvent

|

2019-09-19 14:57:06

|

ansible/galaxy

|

https://api.github.com/repos/ansible/galaxy

|

opened

|

Security Updates

|

area/backend priority/high status/new type/bug

|

[ ] Update packages/dependencies to fix failing security audit

[ ] Address the concerns identified by @chrismeyersfsu

|

1.0

|

Security Updates - [ ] Update packages/dependencies to fix failing security audit

[ ] Address the concerns identified by @chrismeyersfsu

|

priority

|

security updates update packages dependencies to fix failing security audit address the concerns identified by chrismeyersfsu

| 1

|

458,086

| 13,168,399,022

|

IssuesEvent

|

2020-08-11 12:03:46

|

kubesphere/kubesphere

|

https://api.github.com/repos/kubesphere/kubesphere

|

closed

|

custom project role permission denied

|

area/iam kind/bug kind/need-to-verify priority/high

|

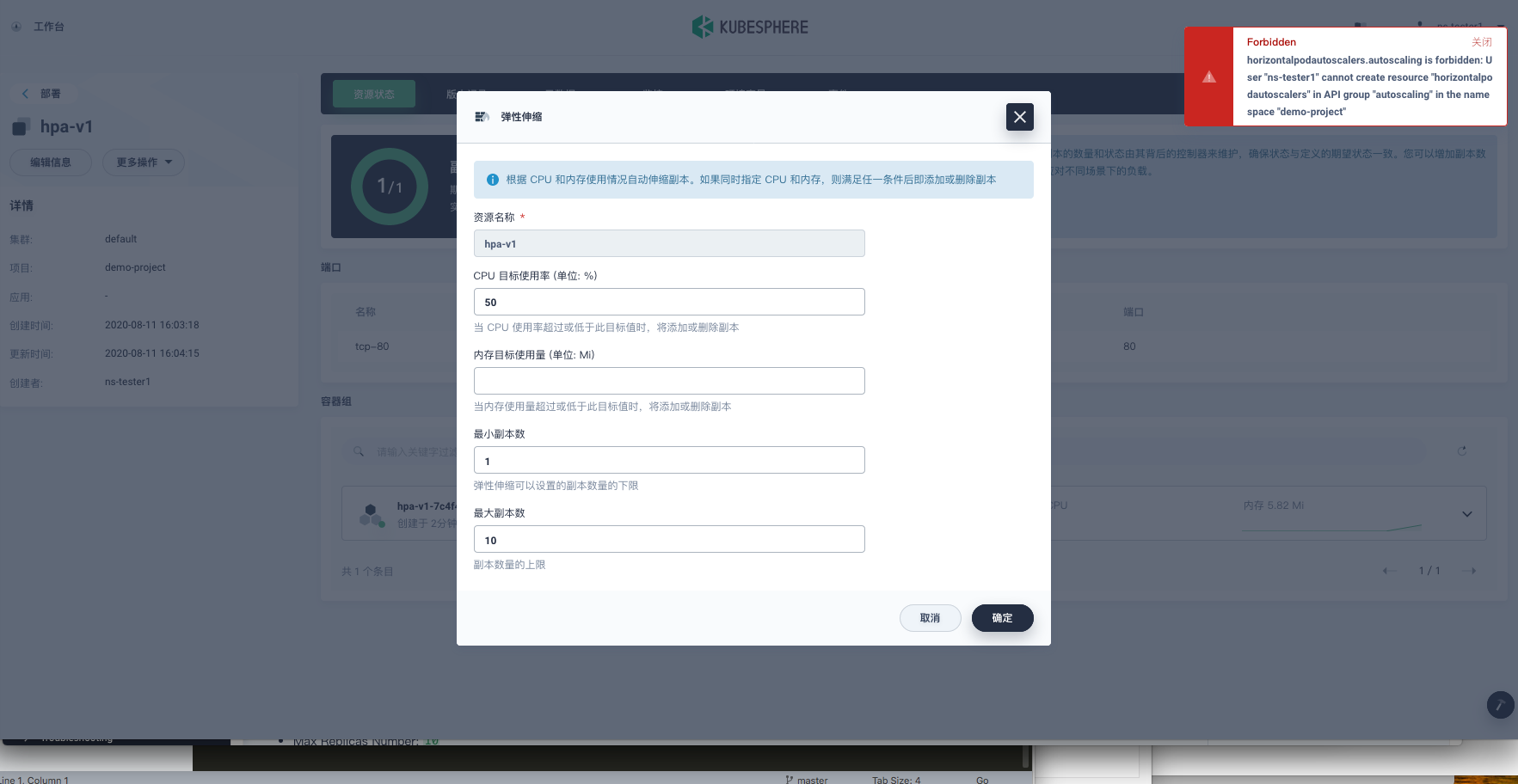

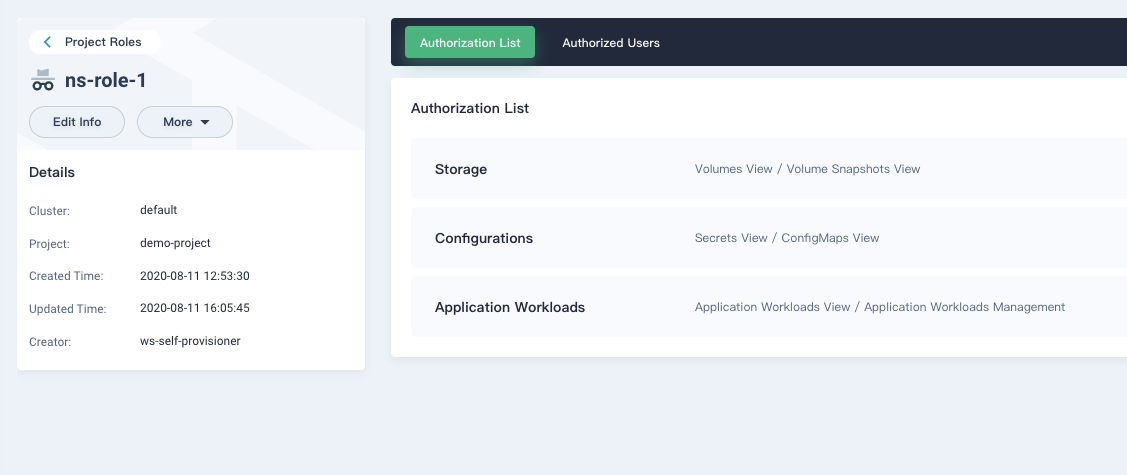

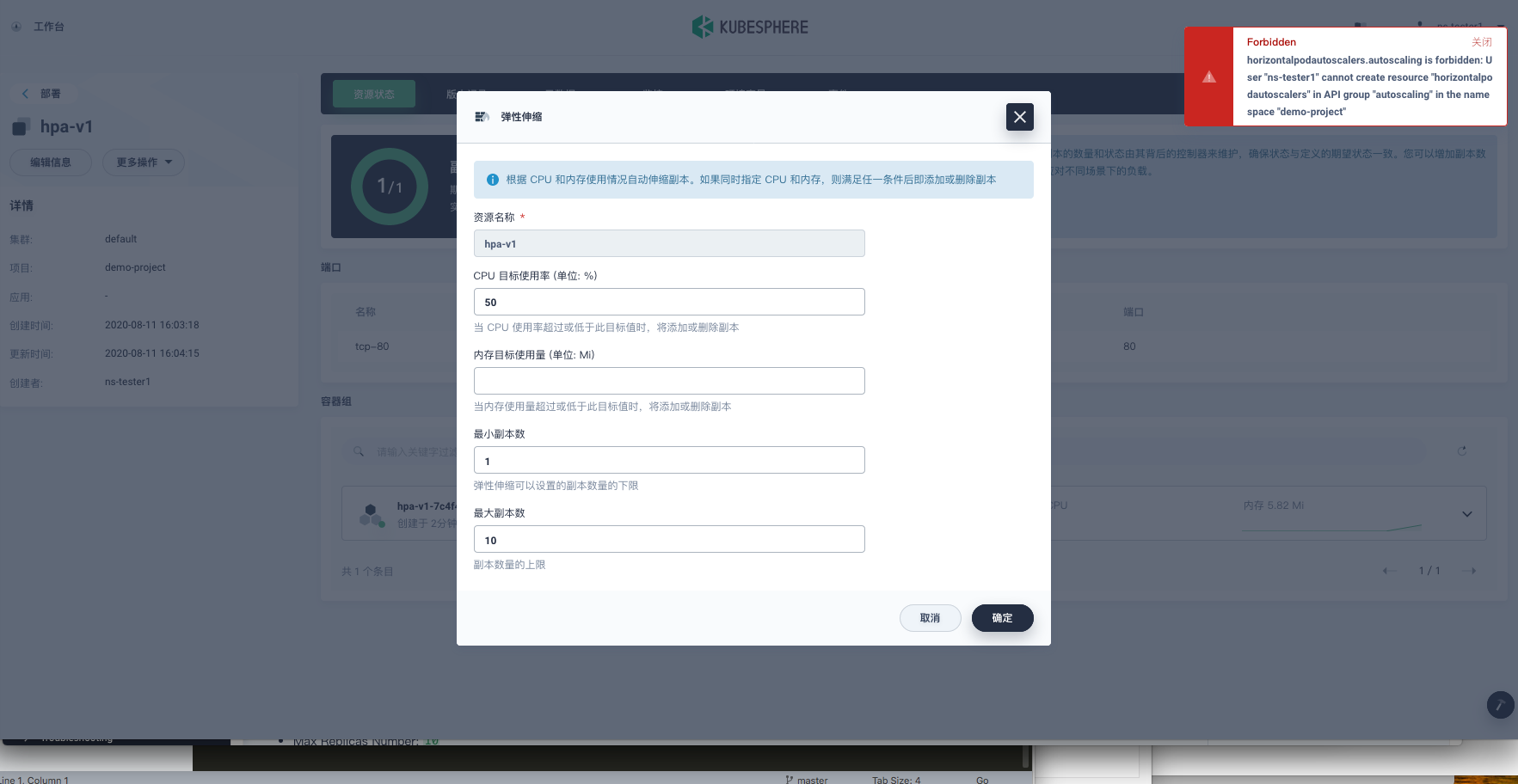

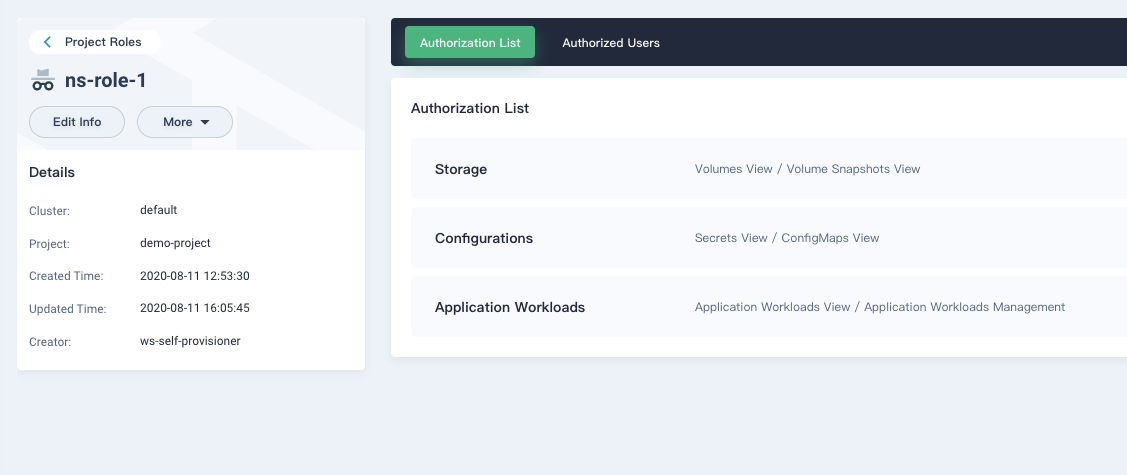

**Describe the Bug**

A user with a custom project role that includes `Application Workloads View / Application Workloads Management` tried to configure HPA but was denied.

**Versions Used**

KubeSphere: 3.0.0 (2020-08-11)

|

1.0

|

custom project role permission denied - **Describe the Bug**

A user with a custom project role that includes `Application Workloads View / Application Workloads Management` tried to configure HPA but was denied.

**Versions Used**

KubeSphere: 3.0.0 (2020-08-11)

|

priority

|

custom project role permission denied describe the bug a custom project role with application workloads view application workloads management configured hpa but denied versions used kubesphere

| 1

|

425,412

| 12,339,932,104

|

IssuesEvent

|

2020-05-14 19:00:13

|

canonical-web-and-design/jaas-dashboard

|

https://api.github.com/repos/canonical-web-and-design/jaas-dashboard

|

closed

|

Empty table warning is partly in header

|

Bug 🐛 Models Listing Priority: High

|

To reproduce, run the dashboard with no models.

|

1.0

|

Empty table warning is partly in header -

To reproduce, run the dashboard with no models.

|

priority

|

empty table warning is partly in header to reproduce run the dashboard with no models

| 1

|

631,396

| 20,151,422,599

|

IssuesEvent

|

2022-02-09 12:47:28

|

Blosc/caterva

|

https://api.github.com/repos/Blosc/caterva

|

closed

|

Implement a resize functionality

|

enhancement high priority

|

This would allow extending or shrinking an array along different dimensions. I suggest a new function with a signature similar to this:

```

/**

* @brief Resize a caterva array

*

* Changes the shape of the caterva array by growing or shrinking one or more dimensions.

*

* @param ctx The caterva context to be used.

* @param array The caterva array.

* @param new_dims New dimensions of the array.

*

* @return An error code

*/

int caterva_resize(caterva_ctx_t *ctx, caterva_array_t *array, int *new_dims);

```

|

1.0

|

Implement a resize functionality - This would allow extending or shrinking an array along different dimensions. I suggest a new function with a signature similar to this:

```

/**

* @brief Resize a caterva array

*

* Changes the shape of the caterva array by growing or shrinking one or more dimensions.

*

* @param ctx The caterva context to be used.

* @param array The caterva array.

* @param new_dims New dimensions of the array.

*

* @return An error code

*/

int caterva_resize(caterva_ctx_t *ctx, caterva_array_t *array, int *new_dims);

```

|

priority

|

implement a resize functionality this would allow to extend shrink an array in different dimensions i suggest a new function with a signature similar to this brief resize a caterva array changes the shape of the caterva array by growing or shrinking one or more dimensions param ctx the caterva context to be used param array the caterva array param new dims new dimensions of the array return an error code int caterva resize caterva ctx t ctx caterva array t array int new dims

| 1

|

659,192

| 21,919,302,097

|

IssuesEvent

|

2022-05-22 10:20:07

|

OpenRefine/OpenRefine

|

https://api.github.com/repos/OpenRefine/OpenRefine

|

closed

|

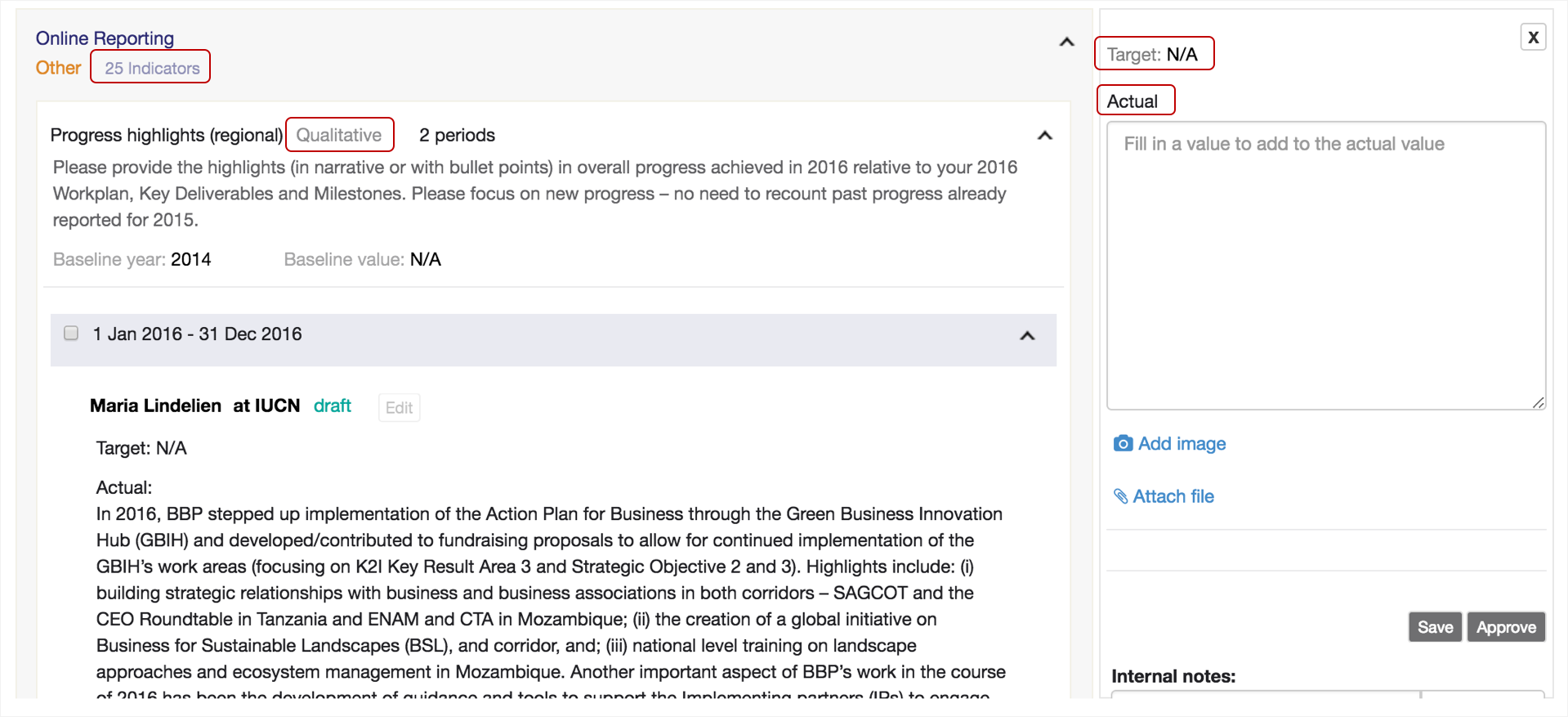

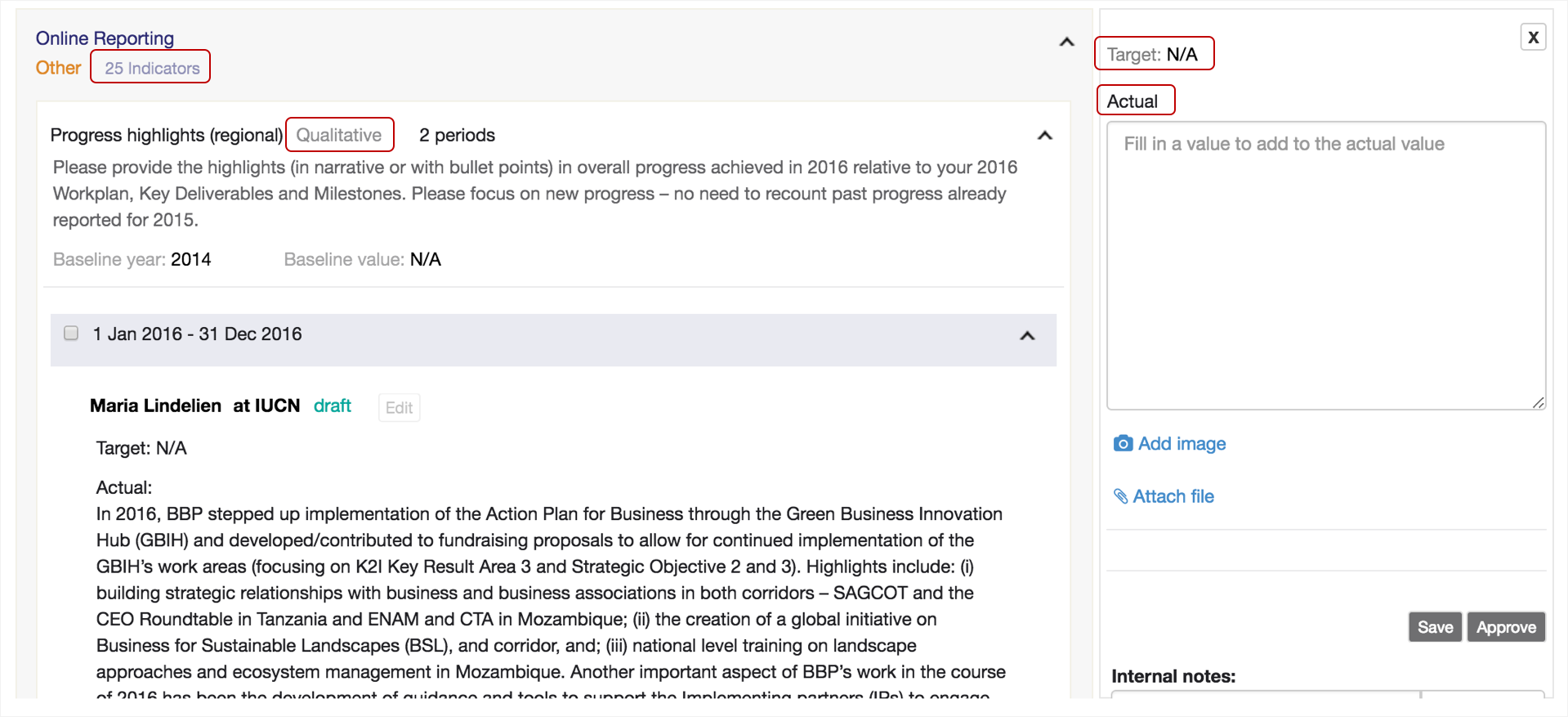

Snapshot releases (Mac and Linux versions) on MacOS are not properly loading

|

bug priority: High packaging

|

When opening the Mac OS (.dmg) snapshot release, it doesn't load OpenRefine any further than the logo, as seen in this screenshot:

When I try with the Linux download, that one doesn't even open a new browser tab at all.

### Expected Behavior

OpenRefine should fully load.

### Context

Terminal log when trying to start the MacOS .dmg (pretty minimal):

```

Sandras-MacBook-Air:~ fokky$ /Applications/OpenRefine\ snapshot\ 20220408.app/Contents/MacOS/JavaAppLauncher ; exit;

08:15:29.649 [ refine_server] Starting Server bound to '127.0.0.1:3333' (0ms)

08:15:29.717 [ refine_server] Initializing context: '/' from '/Applications/OpenRefine snapshot 20220408.app/Contents/Resources/webapp' (68ms)

08:15:31.931 [ refine] Starting OpenRefine 3.6-SNAPSHOT [0ba3b92]... (2214ms)

08:15:31.937 [ refine] initializing FileProjectManager with dir (6ms)

08:15:31.937 [ refine] /Users/fokky/Library/Application Support/OpenRefine (0ms)

```

When I try to open the Linux version of the snapshot release, that one doesn't even start up a new browser tab. Terminal log for that one is:

```

miniMac:~ fokky$ /Users/fokky/Desktop/openrefine-3.6-SNAPSHOT\ 3/refine ; exit;

Using refine.ini for configuration

cat: refine.ini: No such file or directory

-------------------------------------------------------------------------------------------------

You have 812M of free memory.

Your current configuration is set to use 1024M of memory.

OpenRefine can run better when given more memory. Read our FAQ on how to allocate more memory here:

https://docs.openrefine.org/manual/installing#increasing-memory-allocation

-------------------------------------------------------------------------------------------------

ls: server/target/lib: No such file or directory

\nCould not find Maven locally, starting download for Maven ...

% Total % Received % Xferd Average Speed Time Time Time Current

Dload Upload Total Spent Left Speed

100 8469k 100 8469k 0 0 10.7M 0 --:--:-- --:--:-- --:--:-- 10.7M

/Users/fokky/Desktop/openrefine-3.6-SNAPSHOT 3/refine: line 294: cd: main/webapp: No such file or directory

[INFO] Scanning for projects...

[INFO] ------------------------------------------------------------------------

[INFO] BUILD FAILURE

[INFO] ------------------------------------------------------------------------

[INFO] Total time: 0.300 s

[INFO] Finished at: 2022-04-10T09:26:43+02:00

[INFO] ------------------------------------------------------------------------

[ERROR] The goal you specified requires a project to execute but there is no POM in this directory (/Users/fokky/Desktop). Please verify you invoked Maven from the correct directory. -> [Help 1]

[ERROR]

[ERROR] To see the full stack trace of the errors, re-run Maven with the -e switch.

[ERROR] Re-run Maven using the -X switch to enable full debug logging.

[ERROR]

[ERROR] For more information about the errors and possible solutions, please read the following articles:

[ERROR] [Help 1] http://cwiki.apache.org/confluence/display/MAVEN/MissingProjectException

[INFO] Scanning for projects...

[INFO] ------------------------------------------------------------------------

[INFO] BUILD FAILURE

[INFO] ------------------------------------------------------------------------

[INFO] Total time: 0.426 s

[INFO] Finished at: 2022-04-10T09:26:47+02:00

[INFO] ------------------------------------------------------------------------

[ERROR] The goal you specified requires a project to execute but there is no POM in this directory (/Users/fokky/Desktop). Please verify you invoked Maven from the correct directory. -> [Help 1]

[ERROR]

[ERROR] To see the full stack trace of the errors, re-run Maven with the -e switch.

[ERROR] Re-run Maven using the -X switch to enable full debug logging.

[ERROR]

[ERROR] For more information about the errors and possible solutions, please read the following articles:

[ERROR] [Help 1] http://cwiki.apache.org/confluence/display/MAVEN/MissingProjectException

Error: Could not find or load main class com.google.refine.Refine

Caused by: java.lang.ClassNotFoundException: com.google.refine.Refine

logout

Saving session...

...copying shared history...

...saving history...truncating history files...

...completed.

[Process completed]

```

### Versions

- Operating System: MacOS 10.13.6, MacOS 12.2.1

- Browser Version: Chrome

- JRE or JDK Version: n/a?

- OpenRefine: 3.6 snapshot release

### Files used

I just tried with the April 8 and April 10 snapshot releases, but the problem has been around for longer (several weeks?).

I heard from a Linux user (via the Structured Data on Commons Telegram group) that they have the same issue of OpenRefine snapshot releases not loading for them.

|

1.0

|

Snapshot releases (Mac and Linux versions) on MacOS are not properly loading - When opening the Mac OS (.dmg) snapshot release, it doesn't load OpenRefine any further than the logo, as seen in this screenshot:

When I try with the Linux download, that one doesn't even open a new browser tab at all.

### Expected Behavior

OpenRefine should fully load.

### Context

Terminal log when trying to start the MacOS .dmg (pretty minimal):

```

Sandras-MacBook-Air:~ fokky$ /Applications/OpenRefine\ snapshot\ 20220408.app/Contents/MacOS/JavaAppLauncher ; exit;

08:15:29.649 [ refine_server] Starting Server bound to '127.0.0.1:3333' (0ms)

08:15:29.717 [ refine_server] Initializing context: '/' from '/Applications/OpenRefine snapshot 20220408.app/Contents/Resources/webapp' (68ms)

08:15:31.931 [ refine] Starting OpenRefine 3.6-SNAPSHOT [0ba3b92]... (2214ms)

08:15:31.937 [ refine] initializing FileProjectManager with dir (6ms)

08:15:31.937 [ refine] /Users/fokky/Library/Application Support/OpenRefine (0ms)

```

When I try to open the Linux version of the snapshot release, that one doesn't even start up a new browser tab. Terminal log for that one is:

```

miniMac:~ fokky$ /Users/fokky/Desktop/openrefine-3.6-SNAPSHOT\ 3/refine ; exit;

Using refine.ini for configuration

cat: refine.ini: No such file or directory

-------------------------------------------------------------------------------------------------

You have 812M of free memory.

Your current configuration is set to use 1024M of memory.

OpenRefine can run better when given more memory. Read our FAQ on how to allocate more memory here:

https://docs.openrefine.org/manual/installing#increasing-memory-allocation

-------------------------------------------------------------------------------------------------

ls: server/target/lib: No such file or directory

\nCould not find Maven locally, starting download for Maven ...

% Total % Received % Xferd Average Speed Time Time Time Current

Dload Upload Total Spent Left Speed

100 8469k 100 8469k 0 0 10.7M 0 --:--:-- --:--:-- --:--:-- 10.7M

/Users/fokky/Desktop/openrefine-3.6-SNAPSHOT 3/refine: line 294: cd: main/webapp: No such file or directory

[INFO] Scanning for projects...

[INFO] ------------------------------------------------------------------------

[INFO] BUILD FAILURE

[INFO] ------------------------------------------------------------------------

[INFO] Total time: 0.300 s

[INFO] Finished at: 2022-04-10T09:26:43+02:00

[INFO] ------------------------------------------------------------------------

[ERROR] The goal you specified requires a project to execute but there is no POM in this directory (/Users/fokky/Desktop). Please verify you invoked Maven from the correct directory. -> [Help 1]

[ERROR]

[ERROR] To see the full stack trace of the errors, re-run Maven with the -e switch.

[ERROR] Re-run Maven using the -X switch to enable full debug logging.

[ERROR]

[ERROR] For more information about the errors and possible solutions, please read the following articles:

[ERROR] [Help 1] http://cwiki.apache.org/confluence/display/MAVEN/MissingProjectException

[INFO] Scanning for projects...

[INFO] ------------------------------------------------------------------------

[INFO] BUILD FAILURE

[INFO] ------------------------------------------------------------------------

[INFO] Total time: 0.426 s

[INFO] Finished at: 2022-04-10T09:26:47+02:00

[INFO] ------------------------------------------------------------------------

[ERROR] The goal you specified requires a project to execute but there is no POM in this directory (/Users/fokky/Desktop). Please verify you invoked Maven from the correct directory. -> [Help 1]

[ERROR]

[ERROR] To see the full stack trace of the errors, re-run Maven with the -e switch.

[ERROR] Re-run Maven using the -X switch to enable full debug logging.

[ERROR]

[ERROR] For more information about the errors and possible solutions, please read the following articles:

[ERROR] [Help 1] http://cwiki.apache.org/confluence/display/MAVEN/MissingProjectException

Error: Could not find or load main class com.google.refine.Refine

Caused by: java.lang.ClassNotFoundException: com.google.refine.Refine

logout

Saving session...

...copying shared history...

...saving history...truncating history files...

...completed.

[Process completed]

```

### Versions

- Operating System: MacOS 10.13.6, MacOS 12.2.1

- Browser Version: Chrome

- JRE or JDK Version: n/a?

- OpenRefine: 3.6 snapshot release

### Files used

I just tried with the April 8 and April 10 snapshot releases, but the problem has been around for longer (several weeks?).

I heard from a Linux user (via the Structured Data on Commons Telegram group) that they have the same issue of OpenRefine snapshot releases not loading for them.

|

priority

|

snapshot releases mac and linux versions on macos are not properly loading when opening the mac os dmg snapshot release it doesn t load openrefine any further than the logo as seen in this screenshot when i try with the linux download that one doesn t even open a new browser tab at all expected behavior openrefine should fully load context terminal log when trying to start the macos dmg pretty minimal sandras macbook air fokky applications openrefine snapshot app contents macos javaapplauncher exit starting server bound to initializing context from applications openrefine snapshot app contents resources webapp starting openrefine snapshot initializing fileprojectmanager with dir users fokky library application support openrefine when i try to open the linux version of the snapshot release that one doesn t even start up a new browser tab terminal log for that one is minimac fokky users fokky desktop openrefine snapshot refine exit using refine ini for configuration cat refine ini no such file or directory you have of free memory your current configuration is set to use of memory openrefine can run better when given more memory read our faq on how to allocate more memory here ls server target lib no such file or directory ncould not find maven locally starting download for maven total received xferd average speed time time time current dload upload total spent left speed users fokky desktop openrefine snapshot refine line cd main webapp no such file or directory scanning for projects build failure total time s finished at the goal you specified requires a project to execute but there is no pom in this directory users fokky desktop please verify you invoked maven from the correct directory to see the full stack trace of the errors re run maven with the e switch re run maven using the x switch to enable full debug logging for more information about the errors and possible solutions please read the following articles scanning for projects build failure total time s 
finished at the goal you specified requires a project to execute but there is no pom in this directory users fokky desktop please verify you invoked maven from the correct directory to see the full stack trace of the errors re run maven with the e switch re run maven using the x switch to enable full debug logging for more information about the errors and possible solutions please read the following articles error could not find or load main class com google refine refine caused by java lang classnotfoundexception com google refine refine logout saving session copying shared history saving history truncating history files completed versions operating system macos macos browser version chrome jre or jdk version n a openrefine snapshot release files used i just tried with the april and april snapshot releases but the problem has been around for longer several weeks i heard from a linux user via the structured data on commons telegram group that they have the same issue of openrefine snapshot releases not loading for them

| 1

|

510,546

| 14,792,652,967

|

IssuesEvent

|

2021-01-12 15:00:06

|

staxrip/staxrip

|

https://api.github.com/repos/staxrip/staxrip

|

closed

|

Staxrip will try to append CHUNKS even if chunks = 1

|

added/fixed/done bug priority high

|

**BUG:**

When there are files called file_chunk2.hevc and file_chunk3.hevc (...) in the temp folder, StaxRip will attempt to append them when muxing, even if chunk encoding is not activated (i.e., chunks = 1).

These files could happen to be there due to a previous encoding.

**Expected behaviour**

If chunks = 1 then those files should be ignored, and muxing should not append them.

Muxing should work SMARTLY.

If chunks = 1, look for file_out.hevc, ignore any other

If chunks = 2, look for file_out.hevc and file_chunk2.hevc, ignore any other

If chunks = 3, look for file_out.hevc, file_chunk2.hevc and file_chunk3.hevc, ignore any other
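The rule above can be sketched as a small function. This is only an illustration of the expected behaviour, not StaxRip's actual code; `expected_chunk_files` and its `base` parameter are hypothetical names, and the file naming follows the `file_out.hevc` / `file_chunkN.hevc` pattern described in this report.

```python
def expected_chunk_files(base, chunks):
    """Return the only files the muxer should look for, in append order.

    The first chunk is '<base>_out.hevc'; chunks 2..N are '<base>_chunkN.hevc'.
    Any other leftover chunk files in the temp folder are ignored.
    """
    if chunks < 1:
        raise ValueError("chunks must be at least 1")
    files = [f"{base}_out.hevc"]
    files += [f"{base}_chunk{i}.hevc" for i in range(2, chunks + 1)]
    return files


if __name__ == "__main__":
    for n in (1, 2, 3):
        print(n, expected_chunk_files("file", n))
```

Building the list from the configured chunk count, rather than from whatever matching files exist on disk, is what keeps stale chunks from a previous encode out of the mux.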

Here is the complete log: it shows that NO second chunk was encoded, but the muxer appended a chunk left over from a previous run.

```

------------------------- System Environment -------------------------

StaxRip : 2.1.7.1

Windows : Windows 10 Home 2004

Language : English (United States)

CPU : Intel(R) Core(TM) i7-6700HQ CPU @ 2.60GHz

GPU : Intel(R) HD Graphics 530, NVIDIA GeForce GTX 960M

Resolution : 1920 x 1080

DPI : 96

----------------------- Media Info Source File -----------------------

D:\Downloads\_IDM Downloads\Timecode sample - 25fps.mp4

General

Complete name : D:\Downloads\_IDM Downloads\Timecode sample - 25fps.mp4

Format : MPEG-4

Format profile : Base Media / Version 2

Codec ID : mp42 (isom/mp42)

File size : 4.88 MiB

Duration : 1 min 18 s

Overall bit rate mode : Variable

Overall bit rate : 522 kb/s

Encoded date : UTC 2017-12-17 15:18:14

Tagged date : UTC 2017-12-17 15:18:14

gsst : 0

gstd : 78460

Video

ID : 1

Format : AVC

Format/Info : Advanced Video Codec

Format profile : High@L3.1

Format settings : CABAC / 1 Ref Frames

Format, CABAC : Yes

Format, Reference frames : 1 frame

Format, GOP : M=1, N=60

Codec ID : avc1

Codec ID/Info : Advanced Video Coding

Duration : 1 min 18 s

Bit rate : 327 kb/s

Maximum bit rate : 471 kb/s

Width : 1 280 pixels

Height : 720 pixels

Display aspect ratio : 16:9

Frame rate mode : Constant

Frame rate : 25.000 FPS

Color space : YUV

Chroma subsampling : 4:2:0

Bit depth : 8 bits

Scan type : Progressive

Bits/(Pixel*Frame) : 0.014

Stream size : 3.06 MiB (63%)

Tagged date : UTC 2017-12-17 15:18:15

Color range : Limited

Color primaries : BT.709

Transfer characteristics : BT.709

Matrix coefficients : BT.709

Codec configuration box : avcC

Audio

ID : 2

Format : AAC LC

Format/Info : Advanced Audio Codec Low Complexity

Codec ID : mp4a-40-2

Duration : 1 min 18 s

Bit rate mode : Variable

Bit rate : 192 kb/s

Maximum bit rate : 201 kb/s

Channel(s) : 2 channels

Channel layout : L R

Sampling rate : 44.1 kHz

Frame rate : 43.066 FPS (1024 SPF)

Compression mode : Lossy

Stream size : 1.79 MiB (37%)

Title : IsoMedia File Produced by Google, 5-11-2011

Encoded date : UTC 2017-12-17 15:18:15

Tagged date : UTC 2017-12-17 15:18:15

----------------------------- Demux audio -----------------------------

MP4Box 1.1.0-DEV-rev390-g4228658a9-x64-gcc10.2.0 Patman86

"C:\Program Files\StaxRip\Apps\Support\MP4Box\MP4Box.exe" -single 2 -out "C:\Temp\_StaxRip\Timecode sample - 25fps_temp\ID1 {IsoMedia File Produced by Google, 5-11-2011}.m4a" "D:\Downloads\_IDM Downloads\Timecode sample - 25fps.mp4"

Start: 11:12:51

End: 11:12:51

Duration: 00:00:00

General

Complete name : C:\Temp\_StaxRip\Timecode sample - 25fps_temp\ID1 {IsoMedia File Produced by Google, 5-11-2011}.m4a

Format : MPEG-4

Format profile : Base Media

Codec ID : isom (isom)

File size : 1.81 MiB

Duration : 1 min 18 s

Overall bit rate mode : Variable

Overall bit rate : 194 kb/s

Encoded date : UTC 2021-01-11 09:12:51

Tagged date : UTC 2021-01-11 09:12:51

Audio

ID : 2

Format : AAC LC

Format/Info : Advanced Audio Codec Low Complexity

Format profile : AAC@L2

Codec ID : mp4a-40-2

Duration : 1 min 18 s

Bit rate mode : Variable

Bit rate : 192 kb/s

Nominal bit rate : 728 b/s

Maximum bit rate : 201 kb/s

Channel(s) : 2 channels

Channel layout : L R

Sampling rate : 44.1 kHz

Frame rate : 43.066 FPS (1024 SPF)

Compression mode : Lossy

Stream size : 1.79 MiB (99%)

Encoded date : UTC 2021-01-11 09:12:51

Tagged date : UTC 2021-01-11 09:12:51

---------------------- Indexing using ffmsindex ----------------------

"C:\Program Files\StaxRip\Apps\Plugins\Dual\ffms2\ffmsindex.exe" "D:\Downloads\_IDM Downloads\Timecode sample - 25fps.mp4" "C:\Temp\_StaxRip\Timecode sample - 25fps_temp\temp.ffindex"

Writing index... done.

Start: 11:12:52

End: 11:12:52

Duration: 00:00:00

---------------------------- Configuration ----------------------------

Template : ZK x265 480p BT709 Base

Video Encoder Profile : x265

Container/Muxer Profile : MKV (mkvmerge)

--------------------------- AviSynth Script ---------------------------

AddAutoloadDir("C:\Program Files\StaxRip\Apps\FrameServer\AviSynth\plugins")

AddAutoloadDir("C:\Program Files\Staxrip\Settings\Plugins\AviSynth")

AddAutoloadDir("C:\Program Files\Staxrip\Settings\Plugins\Dual")

LoadPlugin("C:\Program Files\StaxRip\Apps\Plugins\Dual\ffms2\ffms2.dll")

LoadPlugin("C:\Program Files\StaxRip\Apps\Plugins\AVS\JPSDR\Plugins_JPSDR.dll")

FFVideoSource("D:\Downloads\_IDM Downloads\Timecode sample - 25fps.mp4", cachefile="C:\Temp\_StaxRip\Timecode sample - 25fps_temp\temp.ffindex")

#AssumeFPS(25)

LanczosResizeMT(856, 480, prefetch=4)

Trim(100, 145) + Trim(1375, 1420)

------------------------- Source Script Info -------------------------

Width : 1280

Height : 720

Frames : 1960

Time : 01:18.400

Framerate : 25 (25/1)

Format : YUV420P8

------------------------- Target Script Info -------------------------

Width : 856

Height : 480

Frames : 92

Time : 00:03.680

Framerate : 25 (25/1)

Format : YUV420P8

--------------------------- Video encoding ---------------------------

x265 M-3.4+35-772bb4c84-x64-gcc10.2.0 Patman86

"C:\Program Files\StaxRip\Apps\Encoders\x265\x265.exe" --crf 24 --preset slow --output-depth 10 --output "C:\Temp\_StaxRip\Timecode sample - 25fps_temp\Timecode sample - 25fps_out.hevc" "C:\Temp\_StaxRip\Timecode sample - 25fps_temp\Timecode sample - 25fps.avs"

avs+ [info]: AviSynth+ 3.6.2 (r3341, master, x86_64)

avs+ [info]: Video colorspace: YUV420 (YV12)

avs+ [info]: Video depth: 8

avs+ [info]: Video resolution: 856x480

avs+ [info]: Video framerate: 25/1

avs+ [info]: Video framecount: 92

avs+ [info]: 856x480 fps 25/1 i420p8 frames 0 - 91 of 92

raw [info]: output file: C:\Temp\_StaxRip\Timecode sample - 25fps_temp\Timecode sample - 25fps_out.hevc

x265 [info]: HEVC encoder version x265M 3.4+35-772bb4c84

x265 [info]: build info [Windows][MSVC 1928][64 bit] 10bit

x265 [info]: using cpu capabilities: MMX2 SSE2Fast LZCNT SSSE3 SSE4.2 AVX FMA3 BMI2 AVX2

x265 [info]: Main 10 profile, Level-3 (Main tier)

x265 [info]: Thread pool created using 8 threads

x265 [info]: Slices : 1

x265 [info]: frame threads / pool features : 3 / wpp(8 rows)

x265 [warning]: Source height < 720p; disabling lookahead-slices

x265 [info]: Coding QT: max CU size, min CU size : 64 / 8

x265 [info]: Residual QT: max TU size, max depth : 32 / 1 inter / 1 intra

x265 [info]: ME / range / subpel / merge : star / 57 / 3 / 3

x265 [info]: Keyframe min / max / scenecut / bias : 25 / 250 / 40 / 5.00

x265 [info]: Lookahead / bframes / badapt : 25 / 4 / 2

x265 [info]: b-pyramid / weightp / weightb : 1 / 1 / 0

x265 [info]: References / ref-limit cu / depth : 4 / on / on

x265 [info]: AQ: mode / str / qg-size / cu-tree : 2 / 1.0 / 32 / 1

x265 [info]: Rate Control / qCompress : CRF-24.0 / 0.60

x265 [info]: tools: rect limit-modes rd=4 psy-rd=2.00 rdoq=2 psy-rdoq=1.00

x265 [info]: tools: rskip mode=1 signhide tmvp strong-intra-smoothing deblock

x265 [info]: tools: sao

x265 [info]: frame I: 1, Avg QP:22.44 kb/s: 1123.60

x265 [info]: frame P: 20, Avg QP:27.63 kb/s: 119.84

x265 [info]: frame B: 71, Avg QP:31.24 kb/s: 58.88

x265 [info]: Weighted P-Frames: Y:0.0% UV:0.0%

x265 [info]: consecutive B-frames: 9.5% 4.8% 4.8% 0.0% 81.0%

encoded 92 frames in 1.98s (46.46 fps), 83.71 kb/s, Avg QP:30.36

Start: 23:13:42

End: 23:13:45

Duration: 00:00:02

General

Complete name : C:\Temp\_StaxRip\Timecode sample - 25fps_temp\Timecode sample - 25fps_out.hevc

Format : HEVC

Format/Info : High Efficiency Video Coding

File size : 40.3 KiB

Duration : 640 ms

Overall bit rate : 516 kb/s

Writing library : x265M - 3.4+35-772bb4c84:[Windows][MSVC 1928][64 bit] 10bit

Video

Format : HEVC

Format/Info : High Efficiency Video Coding

Format profile : Main 10@L3@Main

Duration : 640 ms

Width : 856 pixels

Height : 480 pixels

Display aspect ratio : 16:9

Frame rate : 25.000 FPS

Color space : YUV

Chroma subsampling : 4:2:0

Bit depth : 10 bits

Writing library : x265M - 3.4+35-772bb4c84:[Windows][MSVC 1928][64 bit] 10bit

---------------------------- Muxing to MKV ----------------------------

mkvmerge 52

"C:\Program Files\StaxRip\Apps\Support\MKVToolNix\mkvmerge.exe" -o "R:\Timecode sample - 25fps.mkv" "C:\Temp\_StaxRip\Timecode sample - 25fps_temp\Timecode sample - 25fps_out.hevc" + "C:\Temp\_StaxRip\Timecode sample - 25fps_temp\Timecode sample - 25fps_out_chunk2.hevc" --ui-language en --title ""

mkvmerge v52.0.0 ('Secret For The Mad') 64-bit

'C:\Temp\_StaxRip\Timecode sample - 25fps_temp\Timecode sample - 25fps_out.hevc': Using the demultiplexer for the format 'HEVC/H.265'.

'C:\Temp\_StaxRip\Timecode sample - 25fps_temp\Timecode sample - 25fps_out_chunk2.hevc': Using the demultiplexer for the format 'HEVC/H.265'.

'C:\Temp\_StaxRip\Timecode sample - 25fps_temp\Timecode sample - 25fps_out.hevc' track 0: Using the output module for the format 'HEVC/H.265 (unframed)'.

'C:\Temp\_StaxRip\Timecode sample - 25fps_temp\Timecode sample - 25fps_out_chunk2.hevc' track 0: Using the output module for the format 'HEVC/H.265 (unframed)'.

No append mapping was given for the file no. 1 ('C:\Temp\_StaxRip\Timecode sample - 25fps_temp\Timecode sample - 25fps_out_chunk2.hevc'). A default mapping of 1:0:0:0 will be used instead. Please keep that in mind if mkvmerge aborts with an error message regarding invalid '--append-to' options.

The file 'R:\Timecode sample - 25fps.mkv' has been opened for writing.

Appending track 0 from file no. 1 ('C:\Temp\_StaxRip\Timecode sample - 25fps_temp\Timecode sample - 25fps_out_chunk2.hevc') to track 0 from file no. 0 ('C:\Temp\_StaxRip\Timecode sample - 25fps_temp\Timecode sample - 25fps_out.hevc').

The cue entries (the index) are being written...

Multiplexing took 0 seconds.

Start: 23:13:45

End: 23:13:45

Duration: 00:00:00

General

Complete name : R:\Timecode sample - 25fps.mkv

Format : Matroska

Format version : Version 4

File size : 73.1 KiB

Duration : 5 s 520 ms

Overall bit rate : 108 kb/s

Encoded date : UTC 2021-01-11 21:13:45

Writing application : mkvmerge v52.0.0 ('Secret For The Mad') 64-bit

Writing library : libebml v1.4.1 + libmatroska v1.6.2

Video

ID : 1

Format : HEVC

Format/Info : High Efficiency Video Coding

Format profile : Main 10@L3@Main

Codec ID : V_MPEGH/ISO/HEVC

Duration : 5 s 520 ms

Bit rate : 95.0 kb/s

Width : 856 pixels

Height : 480 pixels

Display aspect ratio : 16:9

Frame rate mode : Constant

Frame rate : 25.000 FPS

Color space : YUV

Chroma subsampling : 4:2:0

Bit depth : 10 bits

Bits/(Pixel*Frame) : 0.009

Stream size : 64.0 KiB (88%)

Writing library : x265M - 3.4+35-772bb4c84:[Windows][MSVC 1928][64 bit] 10bit

Default : Yes

Forced : No

---------------------------- Job Complete ----------------------------

Start: 23:13:42

End: 23:13:45

Duration: 00:00:03

```

|

1.0

|

StaxRip will try to append chunks even if chunks = 1 - **BUG:**

When there are files called file_chunk2.hevc and file_chunk3.hevc (...) in the temp folder, StaxRip will attempt to append them when muxing, even if chunk encoding is not activated (i.e. chunks = 1).

These files may be left over from a previous encode.

**Expected behaviour**

If chunks = 1, those files should be ignored and muxing should not append them.

Muxing should pick up only the files that belong to the current job:

If chunks = 1, look for file_out.hevc and ignore any other.

If chunks = 2, look for file_out.hevc and file_chunk2.hevc and ignore any other.

If chunks = 3, look for file_out.hevc, file_chunk2.hevc and file_chunk3.hevc and ignore any other.
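The selection rule above can be sketched in a few lines. This is only an illustration in Python, not StaxRip's actual code: the function names `expected_chunk_files` and `select_mux_inputs` are hypothetical, and the `<name>_out.hevc` / `<name>_out_chunk<N>.hevc` naming is an assumption taken from the file names that appear in the log.

```python
from typing import Iterable, List


def expected_chunk_files(base_name: str, chunks: int) -> List[str]:
    """Return the file names the muxer should look for, in append order.

    Assumed naming (taken from the log, not from StaxRip's source):
    chunk 1 is '<base>_out.hevc', chunk N is '<base>_out_chunk<N>.hevc'.
    """
    if chunks < 1:
        raise ValueError("chunks must be at least 1")
    names = [f"{base_name}_out.hevc"]
    names += [f"{base_name}_out_chunk{i}.hevc" for i in range(2, chunks + 1)]
    return names


def select_mux_inputs(files_in_temp: Iterable[str], base_name: str, chunks: int) -> List[str]:
    """Keep only the expected chunk files; leftovers from earlier jobs are ignored."""
    present = set(files_in_temp)
    return [name for name in expected_chunk_files(base_name, chunks) if name in present]
```

With a stale `clip_out_chunk2.hevc` in the temp folder and chunks = 1, `select_mux_inputs` would return only `clip_out.hevc`, so nothing from the earlier job would be appended.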

Here is the complete log. It shows that no second chunk was encoded, yet the muxer appended a chunk left over from a previous job.

```

------------------------- System Environment -------------------------

StaxRip : 2.1.7.1

Windows : Windows 10 Home 2004

Language : English (United States)

CPU : Intel(R) Core(TM) i7-6700HQ CPU @ 2.60GHz

GPU : Intel(R) HD Graphics 530, NVIDIA GeForce GTX 960M

Resolution : 1920 x 1080

DPI : 96

----------------------- Media Info Source File -----------------------

D:\Downloads\_IDM Downloads\Timecode sample - 25fps.mp4

General

Complete name : D:\Downloads\_IDM Downloads\Timecode sample - 25fps.mp4

Format : MPEG-4

Format profile : Base Media / Version 2

Codec ID : mp42 (isom/mp42)

File size : 4.88 MiB