Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 5 to 112) | repo_url (stringlengths, 34 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 855) | labels (stringlengths, 4 to 721) | body (stringlengths, 1 to 261k) | index (stringclasses, 13 values) | text_combine (stringlengths, 96 to 261k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 240k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

651,921

| 21,514,959,924

|

IssuesEvent

|

2022-04-28 09:02:33

|

nf-core/tools

|

https://api.github.com/repos/nf-core/tools

|

closed

|

README logo not being rendered

|

bug high-priority

|

### Description of the bug

It appears that the path to the logo used in the README isn't being found and hence the image isn't being rendered. If we look at the template PR for rnaseq [here](https://github.com/nf-core/rnaseq/tree/nf-core-template-merge-2.3.2) the path to the image at the top of the README is:

```

#

```

However it should be:

```

#

```

Note, it should be `nf-core-rnaseq_logo*` and not `nf-core/rnaseq_logo*`.

In the pipeline template the logo based Jinja variables are `logo_light` and `logo_dark`. These will need to be fixed and checked wherever else they are being used.

### Command used and terminal output

_No response_

### System information

_No response_

|

1.0

|

README logo not being rendered - ### Description of the bug

It appears that the path to the logo used in the README isn't being found and hence the image isn't being rendered. If we look at the template PR for rnaseq [here](https://github.com/nf-core/rnaseq/tree/nf-core-template-merge-2.3.2) the path to the image at the top of the README is:

```

#

```

However it should be:

```

#

```

Note, it should be `nf-core-rnaseq_logo*` and not `nf-core/rnaseq_logo*`.

In the pipeline template the logo based Jinja variables are `logo_light` and `logo_dark`. These will need to be fixed and checked wherever else they are being used.

### Command used and terminal output

_No response_

### System information

_No response_

|

priority

|

readme logo not being rendered description of the bug it appears that the path to the logo used in the readme isn t being found and hence the image isn t being rendered if we look at the template pr for rnaseq the path to the image at the top of the readme is docs images nf core rnaseq logo light png gh light mode only docs images nf core rnaseq logo dark png gh dark mode only however it should be docs images nf core rnaseq logo light png gh light mode only docs images nf core rnaseq logo dark png gh dark mode only note it should be nf core rnaseq logo and not nf core rnaseq logo in the pipeline template the logo based jinja variables are logo light and logo dark these will need to be fixed and checked wherever else they are being used command used and terminal output no response system information no response

| 1

|

424,515

| 12,312,363,767

|

IssuesEvent

|

2020-05-12 13:50:09

|

hotosm/tasking-manager

|

https://api.github.com/repos/hotosm/tasking-manager

|

closed

|

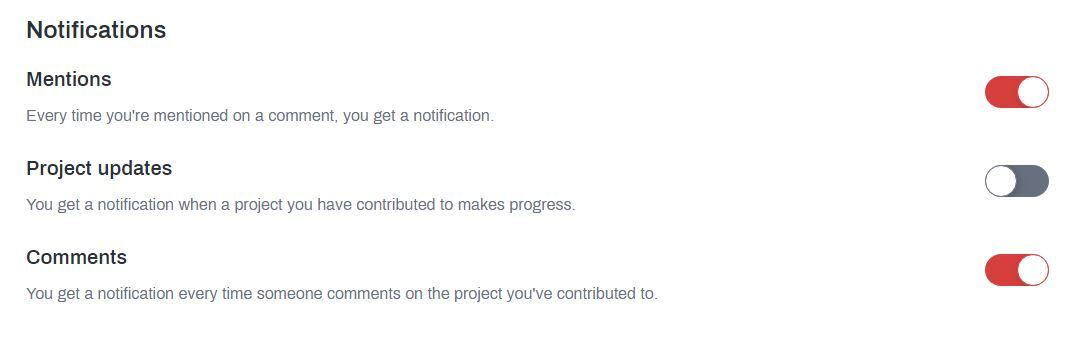

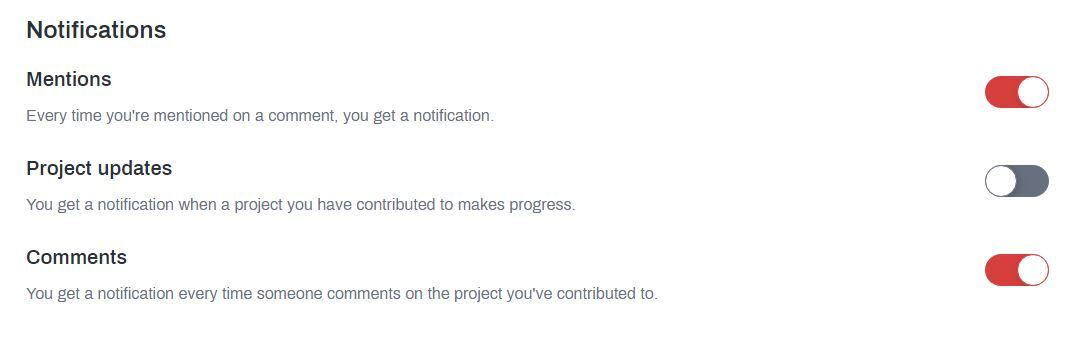

Check on notification options

|

Priority: High Status: Needs implementation Type: Bug

|

Users reported that the options on their profile aren't having effects on the messages they receive:

|

1.0

|

Check on notification options - Users reported that the options on their profile aren't having effects on the messages they receive:

|

priority

|

check on notification options users reported that the options on their profile aren t having effects on the messages they receive

| 1

|

237,661

| 7,762,875,603

|

IssuesEvent

|

2018-06-01 14:49:46

|

martchellop/Entretenibit

|

https://api.github.com/repos/martchellop/Entretenibit

|

closed

|

Add the cards in the select page

|

enhancement priority: high

|

Add the cards in the select page, they are 2 per line and should always be centralized in the middle.

|

1.0

|

Add the cards in the select page - Add the cards in the select page, they are 2 per line and should always be centralized in the middle.

|

priority

|

add the cards in the select page add the cards in the select page they are per line and should always be centralized in the middle

| 1

|

779,445

| 27,353,091,433

|

IssuesEvent

|

2023-02-27 10:57:19

|

sebastien-d-me/SebBlog

|

https://api.github.com/repos/sebastien-d-me/SebBlog

|

opened

|

Comment validation system

|

Priority: High Statut: Not started Type : Back-end

|

#### Description:

Creation of the comment validation system.

------------

###### Estimated time: 2 day(s)

###### Difficulty: ⭐⭐

|

1.0

|

Comment validation system - #### Description:

Creation of the comment validation system.

------------

###### Estimated time: 2 day(s)

###### Difficulty: ⭐⭐

|

priority

|

comment validation system description creation of the comment validation system estimated time day s difficulty ⭐⭐

| 1

|

501,076

| 14,520,680,097

|

IssuesEvent

|

2020-12-14 05:58:40

|

a2000-erp-team/WEBERP

|

https://api.github.com/repos/a2000-erp-team/WEBERP

|

opened

|

Upon saving of report maintenance, system will save and refresh the screen to the first line. Please edit this to fix the screen to where user has saved and NOT to refresh to the 1st line

|

ABIGAIL High Priority

|

|

1.0

|

Upon saving of report maintenance, system will save and refresh the screen to the first line. Please edit this to fix the screen to where user has saved and NOT to refresh to the 1st line -

|

priority

|

upon saving of report maintenance system will save and refresh the screen to the first line please edit this to fix the screen to where user has saved and not to refresh to the line

| 1

|

714,692

| 24,570,597,760

|

IssuesEvent

|

2022-10-13 08:20:45

|

fractal-analytics-platform/fractal-server

|

https://api.github.com/repos/fractal-analytics-platform/fractal-server

|

closed

|

`sqlite3.OperationalError: no such table: applyworkflow`

|

High Priority

|

When running the server (from `main`), and submitting a workflow via the client, I get the error:

```python traceback

INFO: 127.0.0.1:53570 - "POST /api/v1/project/apply/ HTTP/1.1" 500 Internal Server Error

ERROR: Exception in ASGI application

Traceback (most recent call last):

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/engine/base.py", line 1819, in _execute_context

self.dialect.do_execute(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/engine/default.py", line 732, in do_execute

cursor.execute(statement, parameters)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/dialects/sqlite/aiosqlite.py", line 100, in execute

self._adapt_connection._handle_exception(error)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/dialects/sqlite/aiosqlite.py", line 229, in _handle_exception

raise error

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/dialects/sqlite/aiosqlite.py", line 82, in execute

self.await_(_cursor.execute(operation, parameters))

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/util/_concurrency_py3k.py", line 76, in await_only

return current.driver.switch(awaitable)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/util/_concurrency_py3k.py", line 129, in greenlet_spawn

value = await result

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/aiosqlite/cursor.py", line 37, in execute

await self._execute(self._cursor.execute, sql, parameters)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/aiosqlite/cursor.py", line 31, in _execute

return await self._conn._execute(fn, *args, **kwargs)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/aiosqlite/core.py", line 129, in _execute

return await future

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/aiosqlite/core.py", line 102, in run

result = function()

sqlite3.OperationalError: no such table: applyworkflow

The above exception was the direct cause of the following exception:

Traceback (most recent call last):

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/uvicorn/protocols/http/h11_impl.py", line 404, in run_asgi

result = await app( # type: ignore[func-returns-value]

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/uvicorn/middleware/proxy_headers.py", line 78, in __call__

return await self.app(scope, receive, send)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/fastapi/applications.py", line 269, in __call__

await super().__call__(scope, receive, send)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/starlette/applications.py", line 124, in __call__

await self.middleware_stack(scope, receive, send)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/starlette/middleware/errors.py", line 184, in __call__

raise exc

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/starlette/middleware/errors.py", line 162, in __call__

await self.app(scope, receive, _send)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/starlette/middleware/cors.py", line 84, in __call__

await self.app(scope, receive, send)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/starlette/exceptions.py", line 93, in __call__

raise exc

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/starlette/exceptions.py", line 82, in __call__

await self.app(scope, receive, sender)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/fastapi/middleware/asyncexitstack.py", line 21, in __call__

raise e

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/fastapi/middleware/asyncexitstack.py", line 18, in __call__

await self.app(scope, receive, send)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/starlette/routing.py", line 670, in __call__

await route.handle(scope, receive, send)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/starlette/routing.py", line 266, in handle

await self.app(scope, receive, send)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/starlette/routing.py", line 65, in app

response = await func(request)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/fastapi/routing.py", line 227, in app

raw_response = await run_endpoint_function(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/fastapi/routing.py", line 160, in run_endpoint_function

return await dependant.call(**values)

File "/home/tommaso/Fractal/fractal-server/fractal_server/app/api/v1/project.py", line 191, in apply_workflow

await db.commit()

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/ext/asyncio/session.py", line 578, in commit

return await greenlet_spawn(self.sync_session.commit)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/util/_concurrency_py3k.py", line 136, in greenlet_spawn

result = context.switch(value)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/orm/session.py", line 1431, in commit

self._transaction.commit(_to_root=self.future)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/orm/session.py", line 829, in commit

self._prepare_impl()

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/orm/session.py", line 808, in _prepare_impl

self.session.flush()

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/orm/session.py", line 3363, in flush

self._flush(objects)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/orm/session.py", line 3503, in _flush

transaction.rollback(_capture_exception=True)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/util/langhelpers.py", line 70, in __exit__

compat.raise_(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/util/compat.py", line 207, in raise_

raise exception

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/orm/session.py", line 3463, in _flush

flush_context.execute()

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/orm/unitofwork.py", line 456, in execute

rec.execute(self)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/orm/unitofwork.py", line 630, in execute

util.preloaded.orm_persistence.save_obj(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/orm/persistence.py", line 245, in save_obj

_emit_insert_statements(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/orm/persistence.py", line 1238, in _emit_insert_statements

result = connection._execute_20(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/engine/base.py", line 1631, in _execute_20

return meth(self, args_10style, kwargs_10style, execution_options)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/sql/elements.py", line 325, in _execute_on_connection

return connection._execute_clauseelement(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/engine/base.py", line 1498, in _execute_clauseelement

ret = self._execute_context(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/engine/base.py", line 1862, in _execute_context

self._handle_dbapi_exception(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/engine/base.py", line 2043, in _handle_dbapi_exception

util.raise_(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/util/compat.py", line 207, in raise_

raise exception

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/engine/base.py", line 1819, in _execute_context

self.dialect.do_execute(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/engine/default.py", line 732, in do_execute

cursor.execute(statement, parameters)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/dialects/sqlite/aiosqlite.py", line 100, in execute

self._adapt_connection._handle_exception(error)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/dialects/sqlite/aiosqlite.py", line 229, in _handle_exception

raise error

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/dialects/sqlite/aiosqlite.py", line 82, in execute

self.await_(_cursor.execute(operation, parameters))

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/util/_concurrency_py3k.py", line 76, in await_only

return current.driver.switch(awaitable)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/util/_concurrency_py3k.py", line 129, in greenlet_spawn

value = await result

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/aiosqlite/cursor.py", line 37, in execute

await self._execute(self._cursor.execute, sql, parameters)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/aiosqlite/cursor.py", line 31, in _execute

return await self._conn._execute(fn, *args, **kwargs)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/aiosqlite/core.py", line 129, in _execute

return await future

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/aiosqlite/core.py", line 102, in run

result = function()

sqlalchemy.exc.OperationalError: (sqlite3.OperationalError) no such table: applyworkflow

[SQL: INSERT INTO applyworkflow (project_id, input_dataset_id, output_dataset_id, workflow_id, overwrite_input, worker_init, start_timestamp, status) VALUES (?, ?, ?, ?, ?, ?, ?, ?)]

[parameters: (1, 1, 2, 10, 0, None, '2022-10-13 07:53:48.577775', <StatusType.SUBMITTED: 'submitted'>)]

(Background on this error at: https://sqlalche.me/e/14/e3q8)

2022-10-13 09:53:48,579; ERROR; Exception in ASGI application

Traceback (most recent call last):

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/engine/base.py", line 1819, in _execute_context

self.dialect.do_execute(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/engine/default.py", line 732, in do_execute

cursor.execute(statement, parameters)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/dialects/sqlite/aiosqlite.py", line 100, in execute

self._adapt_connection._handle_exception(error)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/dialects/sqlite/aiosqlite.py", line 229, in _handle_exception

raise error

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/dialects/sqlite/aiosqlite.py", line 82, in execute

self.await_(_cursor.execute(operation, parameters))

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/util/_concurrency_py3k.py", line 76, in await_only

return current.driver.switch(awaitable)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/util/_concurrency_py3k.py", line 129, in greenlet_spawn

value = await result

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/aiosqlite/cursor.py", line 37, in execute

await self._execute(self._cursor.execute, sql, parameters)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/aiosqlite/cursor.py", line 31, in _execute

return await self._conn._execute(fn, *args, **kwargs)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/aiosqlite/core.py", line 129, in _execute

return await future

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/aiosqlite/core.py", line 102, in run

result = function()

sqlite3.OperationalError: no such table: applyworkflow

The above exception was the direct cause of the following exception:

Traceback (most recent call last):

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/uvicorn/protocols/http/h11_impl.py", line 404, in run_asgi

result = await app( # type: ignore[func-returns-value]

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/uvicorn/middleware/proxy_headers.py", line 78, in __call__

return await self.app(scope, receive, send)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/fastapi/applications.py", line 269, in __call__

await super().__call__(scope, receive, send)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/starlette/applications.py", line 124, in __call__

await self.middleware_stack(scope, receive, send)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/starlette/middleware/errors.py", line 184, in __call__

raise exc

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/starlette/middleware/errors.py", line 162, in __call__

await self.app(scope, receive, _send)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/starlette/middleware/cors.py", line 84, in __call__

await self.app(scope, receive, send)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/starlette/exceptions.py", line 93, in __call__

raise exc

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/starlette/exceptions.py", line 82, in __call__

await self.app(scope, receive, sender)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/fastapi/middleware/asyncexitstack.py", line 21, in __call__

raise e

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/fastapi/middleware/asyncexitstack.py", line 18, in __call__

await self.app(scope, receive, send)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/starlette/routing.py", line 670, in __call__

await route.handle(scope, receive, send)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/starlette/routing.py", line 266, in handle

await self.app(scope, receive, send)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/starlette/routing.py", line 65, in app

response = await func(request)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/fastapi/routing.py", line 227, in app

raw_response = await run_endpoint_function(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/fastapi/routing.py", line 160, in run_endpoint_function

return await dependant.call(**values)

File "/home/tommaso/Fractal/fractal-server/fractal_server/app/api/v1/project.py", line 191, in apply_workflow

await db.commit()

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/ext/asyncio/session.py", line 578, in commit

return await greenlet_spawn(self.sync_session.commit)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/util/_concurrency_py3k.py", line 136, in greenlet_spawn

result = context.switch(value)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/orm/session.py", line 1431, in commit

self._transaction.commit(_to_root=self.future)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/orm/session.py", line 829, in commit

self._prepare_impl()

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/orm/session.py", line 808, in _prepare_impl

self.session.flush()

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/orm/session.py", line 3363, in flush

self._flush(objects)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/orm/session.py", line 3503, in _flush

transaction.rollback(_capture_exception=True)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/util/langhelpers.py", line 70, in __exit__

compat.raise_(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/util/compat.py", line 207, in raise_

raise exception

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/orm/session.py", line 3463, in _flush

flush_context.execute()

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/orm/unitofwork.py", line 456, in execute

rec.execute(self)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/orm/unitofwork.py", line 630, in execute

util.preloaded.orm_persistence.save_obj(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/orm/persistence.py", line 245, in save_obj

_emit_insert_statements(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/orm/persistence.py", line 1238, in _emit_insert_statements

result = connection._execute_20(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/engine/base.py", line 1631, in _execute_20

return meth(self, args_10style, kwargs_10style, execution_options)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/sql/elements.py", line 325, in _execute_on_connection

return connection._execute_clauseelement(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/engine/base.py", line 1498, in _execute_clauseelement

ret = self._execute_context(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/engine/base.py", line 1862, in _execute_context

self._handle_dbapi_exception(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/engine/base.py", line 2043, in _handle_dbapi_exception

util.raise_(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/util/compat.py", line 207, in raise_

raise exception

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/engine/base.py", line 1819, in _execute_context

self.dialect.do_execute(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/engine/default.py", line 732, in do_execute

cursor.execute(statement, parameters)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/dialects/sqlite/aiosqlite.py", line 100, in execute

self._adapt_connection._handle_exception(error)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/dialects/sqlite/aiosqlite.py", line 229, in _handle_exception

raise error

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/dialects/sqlite/aiosqlite.py", line 82, in execute

self.await_(_cursor.execute(operation, parameters))

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/util/_concurrency_py3k.py", line 76, in await_only

return current.driver.switch(awaitable)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/util/_concurrency_py3k.py", line 129, in greenlet_spawn

value = await result

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/aiosqlite/cursor.py", line 37, in execute

await self._execute(self._cursor.execute, sql, parameters)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/aiosqlite/cursor.py", line 31, in _execute

return await self._conn._execute(fn, *args, **kwargs)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/aiosqlite/core.py", line 129, in _execute

return await future

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/aiosqlite/core.py", line 102, in run

result = function()

sqlalchemy.exc.OperationalError: (sqlite3.OperationalError) no such table: applyworkflow

```

|

1.0

|

`sqlite3.OperationalError: no such table: applyworkflow` - When running the server (from `main`), and submitting a workflow via the client, I get the error:

```python traceback

INFO: 127.0.0.1:53570 - "POST /api/v1/project/apply/ HTTP/1.1" 500 Internal Server Error

ERROR: Exception in ASGI application

Traceback (most recent call last):

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/engine/base.py", line 1819, in _execute_context

self.dialect.do_execute(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/engine/default.py", line 732, in do_execute

cursor.execute(statement, parameters)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/dialects/sqlite/aiosqlite.py", line 100, in execute

self._adapt_connection._handle_exception(error)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/dialects/sqlite/aiosqlite.py", line 229, in _handle_exception

raise error

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/dialects/sqlite/aiosqlite.py", line 82, in execute

self.await_(_cursor.execute(operation, parameters))

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/util/_concurrency_py3k.py", line 76, in await_only

return current.driver.switch(awaitable)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/util/_concurrency_py3k.py", line 129, in greenlet_spawn

value = await result

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/aiosqlite/cursor.py", line 37, in execute

await self._execute(self._cursor.execute, sql, parameters)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/aiosqlite/cursor.py", line 31, in _execute

return await self._conn._execute(fn, *args, **kwargs)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/aiosqlite/core.py", line 129, in _execute

return await future

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/aiosqlite/core.py", line 102, in run

result = function()

sqlite3.OperationalError: no such table: applyworkflow

The above exception was the direct cause of the following exception:

Traceback (most recent call last):

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/uvicorn/protocols/http/h11_impl.py", line 404, in run_asgi

result = await app( # type: ignore[func-returns-value]

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/uvicorn/middleware/proxy_headers.py", line 78, in __call__

return await self.app(scope, receive, send)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/fastapi/applications.py", line 269, in __call__

await super().__call__(scope, receive, send)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/starlette/applications.py", line 124, in __call__

await self.middleware_stack(scope, receive, send)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/starlette/middleware/errors.py", line 184, in __call__

raise exc

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/starlette/middleware/errors.py", line 162, in __call__

await self.app(scope, receive, _send)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/starlette/middleware/cors.py", line 84, in __call__

await self.app(scope, receive, send)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/starlette/exceptions.py", line 93, in __call__

raise exc

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/starlette/exceptions.py", line 82, in __call__

await self.app(scope, receive, sender)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/fastapi/middleware/asyncexitstack.py", line 21, in __call__

raise e

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/fastapi/middleware/asyncexitstack.py", line 18, in __call__

await self.app(scope, receive, send)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/starlette/routing.py", line 670, in __call__

await route.handle(scope, receive, send)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/starlette/routing.py", line 266, in handle

await self.app(scope, receive, send)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/starlette/routing.py", line 65, in app

response = await func(request)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/fastapi/routing.py", line 227, in app

raw_response = await run_endpoint_function(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/fastapi/routing.py", line 160, in run_endpoint_function

return await dependant.call(**values)

File "/home/tommaso/Fractal/fractal-server/fractal_server/app/api/v1/project.py", line 191, in apply_workflow

await db.commit()

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/ext/asyncio/session.py", line 578, in commit

return await greenlet_spawn(self.sync_session.commit)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/util/_concurrency_py3k.py", line 136, in greenlet_spawn

result = context.switch(value)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/orm/session.py", line 1431, in commit

self._transaction.commit(_to_root=self.future)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/orm/session.py", line 829, in commit

self._prepare_impl()

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/orm/session.py", line 808, in _prepare_impl

self.session.flush()

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/orm/session.py", line 3363, in flush

self._flush(objects)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/orm/session.py", line 3503, in _flush

transaction.rollback(_capture_exception=True)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/util/langhelpers.py", line 70, in __exit__

compat.raise_(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/util/compat.py", line 207, in raise_

raise exception

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/orm/session.py", line 3463, in _flush

flush_context.execute()

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/orm/unitofwork.py", line 456, in execute

rec.execute(self)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/orm/unitofwork.py", line 630, in execute

util.preloaded.orm_persistence.save_obj(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/orm/persistence.py", line 245, in save_obj

_emit_insert_statements(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/orm/persistence.py", line 1238, in _emit_insert_statements

result = connection._execute_20(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/engine/base.py", line 1631, in _execute_20

return meth(self, args_10style, kwargs_10style, execution_options)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/sql/elements.py", line 325, in _execute_on_connection

return connection._execute_clauseelement(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/engine/base.py", line 1498, in _execute_clauseelement

ret = self._execute_context(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/engine/base.py", line 1862, in _execute_context

self._handle_dbapi_exception(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/engine/base.py", line 2043, in _handle_dbapi_exception

util.raise_(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/util/compat.py", line 207, in raise_

raise exception

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/engine/base.py", line 1819, in _execute_context

self.dialect.do_execute(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/engine/default.py", line 732, in do_execute

cursor.execute(statement, parameters)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/dialects/sqlite/aiosqlite.py", line 100, in execute

self._adapt_connection._handle_exception(error)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/dialects/sqlite/aiosqlite.py", line 229, in _handle_exception

raise error

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/dialects/sqlite/aiosqlite.py", line 82, in execute

self.await_(_cursor.execute(operation, parameters))

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/util/_concurrency_py3k.py", line 76, in await_only

return current.driver.switch(awaitable)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/util/_concurrency_py3k.py", line 129, in greenlet_spawn

value = await result

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/aiosqlite/cursor.py", line 37, in execute

await self._execute(self._cursor.execute, sql, parameters)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/aiosqlite/cursor.py", line 31, in _execute

return await self._conn._execute(fn, *args, **kwargs)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/aiosqlite/core.py", line 129, in _execute

return await future

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/aiosqlite/core.py", line 102, in run

result = function()

sqlalchemy.exc.OperationalError: (sqlite3.OperationalError) no such table: applyworkflow

[SQL: INSERT INTO applyworkflow (project_id, input_dataset_id, output_dataset_id, workflow_id, overwrite_input, worker_init, start_timestamp, status) VALUES (?, ?, ?, ?, ?, ?, ?, ?)]

[parameters: (1, 1, 2, 10, 0, None, '2022-10-13 07:53:48.577775', <StatusType.SUBMITTED: 'submitted'>)]

(Background on this error at: https://sqlalche.me/e/14/e3q8)

2022-10-13 09:53:48,579; ERROR; Exception in ASGI application

Traceback (most recent call last):

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/engine/base.py", line 1819, in _execute_context

self.dialect.do_execute(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/engine/default.py", line 732, in do_execute

cursor.execute(statement, parameters)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/dialects/sqlite/aiosqlite.py", line 100, in execute

self._adapt_connection._handle_exception(error)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/dialects/sqlite/aiosqlite.py", line 229, in _handle_exception

raise error

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/dialects/sqlite/aiosqlite.py", line 82, in execute

self.await_(_cursor.execute(operation, parameters))

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/util/_concurrency_py3k.py", line 76, in await_only

return current.driver.switch(awaitable)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/util/_concurrency_py3k.py", line 129, in greenlet_spawn

value = await result

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/aiosqlite/cursor.py", line 37, in execute

await self._execute(self._cursor.execute, sql, parameters)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/aiosqlite/cursor.py", line 31, in _execute

return await self._conn._execute(fn, *args, **kwargs)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/aiosqlite/core.py", line 129, in _execute

return await future

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/aiosqlite/core.py", line 102, in run

result = function()

sqlite3.OperationalError: no such table: applyworkflow

The above exception was the direct cause of the following exception:

Traceback (most recent call last):

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/uvicorn/protocols/http/h11_impl.py", line 404, in run_asgi

result = await app( # type: ignore[func-returns-value]

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/uvicorn/middleware/proxy_headers.py", line 78, in __call__

return await self.app(scope, receive, send)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/fastapi/applications.py", line 269, in __call__

await super().__call__(scope, receive, send)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/starlette/applications.py", line 124, in __call__

await self.middleware_stack(scope, receive, send)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/starlette/middleware/errors.py", line 184, in __call__

raise exc

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/starlette/middleware/errors.py", line 162, in __call__

await self.app(scope, receive, _send)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/starlette/middleware/cors.py", line 84, in __call__

await self.app(scope, receive, send)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/starlette/exceptions.py", line 93, in __call__

raise exc

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/starlette/exceptions.py", line 82, in __call__

await self.app(scope, receive, sender)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/fastapi/middleware/asyncexitstack.py", line 21, in __call__

raise e

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/fastapi/middleware/asyncexitstack.py", line 18, in __call__

await self.app(scope, receive, send)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/starlette/routing.py", line 670, in __call__

await route.handle(scope, receive, send)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/starlette/routing.py", line 266, in handle

await self.app(scope, receive, send)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/starlette/routing.py", line 65, in app

response = await func(request)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/fastapi/routing.py", line 227, in app

raw_response = await run_endpoint_function(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/fastapi/routing.py", line 160, in run_endpoint_function

return await dependant.call(**values)

File "/home/tommaso/Fractal/fractal-server/fractal_server/app/api/v1/project.py", line 191, in apply_workflow

await db.commit()

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/ext/asyncio/session.py", line 578, in commit

return await greenlet_spawn(self.sync_session.commit)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/util/_concurrency_py3k.py", line 136, in greenlet_spawn

result = context.switch(value)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/orm/session.py", line 1431, in commit

self._transaction.commit(_to_root=self.future)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/orm/session.py", line 829, in commit

self._prepare_impl()

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/orm/session.py", line 808, in _prepare_impl

self.session.flush()

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/orm/session.py", line 3363, in flush

self._flush(objects)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/orm/session.py", line 3503, in _flush

transaction.rollback(_capture_exception=True)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/util/langhelpers.py", line 70, in __exit__

compat.raise_(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/util/compat.py", line 207, in raise_

raise exception

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/orm/session.py", line 3463, in _flush

flush_context.execute()

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/orm/unitofwork.py", line 456, in execute

rec.execute(self)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/orm/unitofwork.py", line 630, in execute

util.preloaded.orm_persistence.save_obj(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/orm/persistence.py", line 245, in save_obj

_emit_insert_statements(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/orm/persistence.py", line 1238, in _emit_insert_statements

result = connection._execute_20(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/engine/base.py", line 1631, in _execute_20

return meth(self, args_10style, kwargs_10style, execution_options)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/sql/elements.py", line 325, in _execute_on_connection

return connection._execute_clauseelement(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/engine/base.py", line 1498, in _execute_clauseelement

ret = self._execute_context(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/engine/base.py", line 1862, in _execute_context

self._handle_dbapi_exception(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/engine/base.py", line 2043, in _handle_dbapi_exception

util.raise_(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/util/compat.py", line 207, in raise_

raise exception

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/engine/base.py", line 1819, in _execute_context

self.dialect.do_execute(

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/engine/default.py", line 732, in do_execute

cursor.execute(statement, parameters)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/dialects/sqlite/aiosqlite.py", line 100, in execute

self._adapt_connection._handle_exception(error)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/dialects/sqlite/aiosqlite.py", line 229, in _handle_exception

raise error

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/dialects/sqlite/aiosqlite.py", line 82, in execute

self.await_(_cursor.execute(operation, parameters))

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/util/_concurrency_py3k.py", line 76, in await_only

return current.driver.switch(awaitable)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/sqlalchemy/util/_concurrency_py3k.py", line 129, in greenlet_spawn

value = await result

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/aiosqlite/cursor.py", line 37, in execute

await self._execute(self._cursor.execute, sql, parameters)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/aiosqlite/cursor.py", line 31, in _execute

return await self._conn._execute(fn, *args, **kwargs)

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/aiosqlite/core.py", line 129, in _execute

return await future

File "/home/tommaso/miniconda3/envs/fractal/lib/python3.8/site-packages/aiosqlite/core.py", line 102, in run

result = function()

sqlalchemy.exc.OperationalError: (sqlite3.OperationalError) no such table: applyworkflow

```
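
The final `OperationalError` means the INSERT reached a SQLite database that has no `applyworkflow` table. A minimal sketch with Python's standard `sqlite3` module reproduces the message and shows that the same statement succeeds once the table exists. The two-column schema is a simplification for illustration, not the project's real model, and the log does not establish whether the actual cause here is a stale database file or a skipped table-creation step.

```python
import sqlite3

# In-memory database, so nothing touches a real server database file.
conn = sqlite3.connect(":memory:")

INSERT_SQL = "INSERT INTO applyworkflow (project_id, workflow_id) VALUES (?, ?)"

# 1. Inserting before the table exists raises the same sqlite3 error as in the log.
try:
    conn.execute(INSERT_SQL, (1, 10))
    error_message = None
except sqlite3.OperationalError as exc:
    error_message = str(exc)
print(error_message)

# 2. Hypothetical, simplified schema: only two of the columns from the logged INSERT.
conn.execute(
    "CREATE TABLE applyworkflow ("
    "id INTEGER PRIMARY KEY, project_id INTEGER, workflow_id INTEGER)"
)

# 3. The same INSERT now succeeds.
conn.execute(INSERT_SQL, (1, 10))
row_count = conn.execute("SELECT COUNT(*) FROM applyworkflow").fetchone()[0]
print(row_count)
conn.close()
```

If the table is expected to exist, checking which database file the server is actually pointed at is a reasonable first step.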

|

priority

|

operationalerror no such table applyworkflow when running the server from main and submitting a workflow via the client i get the error python traceback info post api project apply http internal server error error exception in asgi application traceback most recent call last file home tommaso envs fractal lib site packages sqlalchemy engine base py line in execute context self dialect do execute file home tommaso envs fractal lib site packages sqlalchemy engine default py line in do execute cursor execute statement parameters file home tommaso envs fractal lib site packages sqlalchemy dialects sqlite aiosqlite py line in execute self adapt connection handle exception error file home tommaso envs fractal lib site packages sqlalchemy dialects sqlite aiosqlite py line in handle exception raise error file home tommaso envs fractal lib site packages sqlalchemy dialects sqlite aiosqlite py line in execute self await cursor execute operation parameters file home tommaso envs fractal lib site packages sqlalchemy util concurrency py line in await only return current driver switch awaitable file home tommaso envs fractal lib site packages sqlalchemy util concurrency py line in greenlet spawn value await result file home tommaso envs fractal lib site packages aiosqlite cursor py line in execute await self execute self cursor execute sql parameters file home tommaso envs fractal lib site packages aiosqlite cursor py line in execute return await self conn execute fn args kwargs file home tommaso envs fractal lib site packages aiosqlite core py line in execute return await future file home tommaso envs fractal lib site packages aiosqlite core py line in run result function operationalerror no such table applyworkflow the above exception was the direct cause of the following exception traceback most recent call last file home tommaso envs fractal lib site packages uvicorn protocols http impl py line in run asgi result await app type ignore file home tommaso envs fractal lib site 
packages uvicorn middleware proxy headers py line in call return await self app scope receive send file home tommaso envs fractal lib site packages fastapi applications py line in call await super call scope receive send file home tommaso envs fractal lib site packages starlette applications py line in call await self middleware stack scope receive send file home tommaso envs fractal lib site packages starlette middleware errors py line in call raise exc file home tommaso envs fractal lib site packages starlette middleware errors py line in call await self app scope receive send file home tommaso envs fractal lib site packages starlette middleware cors py line in call await self app scope receive send file home tommaso envs fractal lib site packages starlette exceptions py line in call raise exc file home tommaso envs fractal lib site packages starlette exceptions py line in call await self app scope receive sender file home tommaso envs fractal lib site packages fastapi middleware asyncexitstack py line in call raise e file home tommaso envs fractal lib site packages fastapi middleware asyncexitstack py line in call await self app scope receive send file home tommaso envs fractal lib site packages starlette routing py line in call await route handle scope receive send file home tommaso envs fractal lib site packages starlette routing py line in handle await self app scope receive send file home tommaso envs fractal lib site packages starlette routing py line in app response await func request file home tommaso envs fractal lib site packages fastapi routing py line in app raw response await run endpoint function file home tommaso envs fractal lib site packages fastapi routing py line in run endpoint function return await dependant call values file home tommaso fractal fractal server fractal server app api project py line in apply workflow await db commit file home tommaso envs fractal lib site packages sqlalchemy ext asyncio session py line in commit return await 
greenlet spawn self sync session commit file home tommaso envs fractal lib site packages sqlalchemy util concurrency py line in greenlet spawn result context switch value file home tommaso envs fractal lib site packages sqlalchemy orm session py line in commit self transaction commit to root self future file home tommaso envs fractal lib site packages sqlalchemy orm session py line in commit self prepare impl file home tommaso envs fractal lib site packages sqlalchemy orm session py line in prepare impl self session flush file home tommaso envs fractal lib site packages sqlalchemy orm session py line in flush self flush objects file home tommaso envs fractal lib site packages sqlalchemy orm session py line in flush transaction rollback capture exception true file home tommaso envs fractal lib site packages sqlalchemy util langhelpers py line in exit compat raise file home tommaso envs fractal lib site packages sqlalchemy util compat py line in raise raise exception file home tommaso envs fractal lib site packages sqlalchemy orm session py line in flush flush context execute file home tommaso envs fractal lib site packages sqlalchemy orm unitofwork py line in execute rec execute self file home tommaso envs fractal lib site packages sqlalchemy orm unitofwork py line in execute util preloaded orm persistence save obj file home tommaso envs fractal lib site packages sqlalchemy orm persistence py line in save obj emit insert statements file home tommaso envs fractal lib site packages sqlalchemy orm persistence py line in emit insert statements result connection execute file home tommaso envs fractal lib site packages sqlalchemy engine base py line in execute return meth self args kwargs execution options file home tommaso envs fractal lib site packages sqlalchemy sql elements py line in execute on connection return connection execute clauseelement file home tommaso envs fractal lib site packages sqlalchemy engine base py line in execute clauseelement ret self execute 
context file home tommaso envs fractal lib site packages sqlalchemy engine base py line in execute context self handle dbapi exception file home tommaso envs fractal lib site packages sqlalchemy engine base py line in handle dbapi exception util raise file home tommaso envs fractal lib site packages sqlalchemy util compat py line in raise raise exception file home tommaso envs fractal lib site packages sqlalchemy engine base py line in execute context self dialect do execute file home tommaso envs fractal lib site packages sqlalchemy engine default py line in do execute cursor execute statement parameters file home tommaso envs fractal lib site packages sqlalchemy dialects sqlite aiosqlite py line in execute self adapt connection handle exception error file home tommaso envs fractal lib site packages sqlalchemy dialects sqlite aiosqlite py line in handle exception raise error file home tommaso envs fractal lib site packages sqlalchemy dialects sqlite aiosqlite py line in execute self await cursor execute operation parameters file home tommaso envs fractal lib site packages sqlalchemy util concurrency py line in await only return current driver switch awaitable file home tommaso envs fractal lib site packages sqlalchemy util concurrency py line in greenlet spawn value await result file home tommaso envs fractal lib site packages aiosqlite cursor py line in execute await self execute self cursor execute sql parameters file home tommaso envs fractal lib site packages aiosqlite cursor py line in execute return await self conn execute fn args kwargs file home tommaso envs fractal lib site packages aiosqlite core py line in execute return await future file home tommaso envs fractal lib site packages aiosqlite core py line in run result function sqlalchemy exc operationalerror operationalerror no such table applyworkflow background on this error at error exception in asgi application traceback most recent call last file home tommaso envs fractal lib site packages 
sqlalchemy engine base py line in execute context self dialect do execute file home tommaso envs fractal lib site packages sqlalchemy engine default py line in do execute cursor execute statement parameters file home tommaso envs fractal lib site packages sqlalchemy dialects sqlite aiosqlite py line in execute self adapt connection handle exception error file home tommaso envs fractal lib site packages sqlalchemy dialects sqlite aiosqlite py line in handle exception raise error file home tommaso envs fractal lib site packages sqlalchemy dialects sqlite aiosqlite py line in execute self await cursor execute operation parameters file home tommaso envs fractal lib site packages sqlalchemy util concurrency py line in await only return current driver switch awaitable file home tommaso envs fractal lib site packages sqlalchemy util concurrency py line in greenlet spawn value await result file home tommaso envs fractal lib site packages aiosqlite cursor py line in execute await self execute self cursor execute sql parameters file home tommaso envs fractal lib site packages aiosqlite cursor py line in execute return await self conn execute fn args kwargs file home tommaso envs fractal lib site packages aiosqlite core py line in execute return await future file home tommaso envs fractal lib site packages aiosqlite core py line in run result function operationalerror no such table applyworkflow the above exception was the direct cause of the following exception traceback most recent call last file home tommaso envs fractal lib site packages uvicorn protocols http impl py line in run asgi result await app type ignore file home tommaso envs fractal lib site packages uvicorn middleware proxy headers py line in call return await self app scope receive send file home tommaso envs fractal lib site packages fastapi applications py line in call await super call scope receive send file home tommaso envs fractal lib site packages starlette applications py line in call await self 
middleware stack scope receive send file home tommaso envs fractal lib site packages starlette middleware errors py line in call raise exc file home tommaso envs fractal lib site packages starlette middleware errors py line in call await self app scope receive send file home tommaso envs fractal lib site packages starlette middleware cors py line in call await self app scope receive send file home tommaso envs fractal lib site packages starlette exceptions py line in call raise exc file home tommaso envs fractal lib site packages starlette exceptions py line in call await self app scope receive sender file home tommaso envs fractal lib site packages fastapi middleware asyncexitstack py line in call raise e file home tommaso envs fractal lib site packages fastapi middleware asyncexitstack py line in call await self app scope receive send file home tommaso envs fractal lib site packages starlette routing py line in call await route handle scope receive send file home tommaso envs fractal lib site packages starlette routing py line in handle await self app scope receive send file home tommaso envs fractal lib site packages starlette routing py line in app response await func request file home tommaso envs fractal lib site packages fastapi routing py line in app raw response await run endpoint function file home tommaso envs fractal lib site packages fastapi routing py line in run endpoint function return await dependant call values file home tommaso fractal fractal server fractal server app api project py line in apply workflow await db commit file home tommaso envs fractal lib site packages sqlalchemy ext asyncio session py line in commit return await greenlet spawn self sync session commit file home tommaso envs fractal lib site packages sqlalchemy util concurrency py line in greenlet spawn result context switch value file home tommaso envs fractal lib site packages sqlalchemy orm session py line in commit self transaction commit to root self future file home 
tommaso envs fractal lib site packages sqlalchemy orm session py line in commit self prepare impl file home tommaso envs fractal lib site packages sqlalchemy orm session py line in prepare impl self session flush file home tommaso envs fractal lib site packages sqlalchemy orm session py line in flush self flush objects file home tommaso envs fractal lib site packages sqlalchemy orm session py line in flush transaction rollback capture exception true file home tommaso envs fractal lib site packages sqlalchemy util langhelpers py line in exit compat raise file home tommaso envs fractal lib site packages sqlalchemy util compat py line in raise raise exception file home tommaso envs fractal lib site packages sqlalchemy orm session py line in flush flush context execute file home tommaso envs fractal lib site packages sqlalchemy orm unitofwork py line in execute rec execute self file home tommaso envs fractal lib site packages sqlalchemy orm unitofwork py line in execute util preloaded orm persistence save obj file home tommaso envs fractal lib site packages sqlalchemy orm persistence py line in save obj emit insert statements file home tommaso envs fractal lib site packages sqlalchemy orm persistence py line in emit insert statements result connection execute file home tommaso envs fractal lib site packages sqlalchemy engine base py line in execute return meth self args kwargs execution options file home tommaso envs fractal lib site packages sqlalchemy sql elements py line in execute on connection return connection execute clauseelement file home tommaso envs fractal lib site packages sqlalchemy engine base py line in execute clauseelement ret self execute context file home tommaso envs fractal lib site packages sqlalchemy engine base py line in execute context self handle dbapi exception file home tommaso envs fractal lib site packages sqlalchemy engine base py line in handle dbapi exception util raise file home tommaso envs fractal lib site packages sqlalchemy util 
compat py line in raise raise exception file home tommaso envs fractal lib site packages sqlalchemy engine base py line in execute context self dialect do execute file home tommaso envs fractal lib site packages sqlalchemy engine default py line in do execute cursor execute statement parameters file home tommaso envs fractal lib site packages sqlalchemy dialects sqlite aiosqlite py line in execute self adapt connection handle exception error file home tommaso envs fractal lib site packages sqlalchemy dialects sqlite aiosqlite py line in handle exception raise error file home tommaso envs fractal lib site packages sqlalchemy dialects sqlite aiosqlite py line in execute self await cursor execute operation parameters file home tommaso envs fractal lib site packages sqlalchemy util concurrency py line in await only return current driver switch awaitable file home tommaso envs fractal lib site packages sqlalchemy util concurrency py line in greenlet spawn value await result file home tommaso envs fractal lib site packages aiosqlite cursor py line in execute await self execute self cursor execute sql parameters file home tommaso envs fractal lib site packages aiosqlite cursor py line in execute return await self conn execute fn args kwargs file home tommaso envs fractal lib site packages aiosqlite core py line in execute return await future file home tommaso envs fractal lib site packages aiosqlite core py line in run result function sqlalchemy exc operationalerror operationalerror no such table applyworkflow

| 1

|

637,038

| 20,618,407,707

|

IssuesEvent

|

2022-03-07 15:15:30

|

owid/covid-19-data

|

https://api.github.com/repos/owid/covid-19-data

|

closed

|

Invalid data in vaccinations-by-manufacturer.csv

|

bug dom:vaccinations priority:high report

|

### Country

Uruguay

### Domain

Vaccinations

### Which data is inaccurate or missing?

In file: [vaccinations-by-manufacturer.csv](https://raw.githubusercontent.com/owid/covid-19-data/master/public/data/vaccinations/vaccinations-by-manufacturer.csv)

E.g.:

See the bottom lines, which have no date:

Uruguay,2022-03-06,Oxford/AstraZeneca,89635

Uruguay,2022-03-06,Pfizer/BioNTech,2398276

Uruguay,2022-03-06,Sinovac,3247639

Uruguay,,Oxford/AstraZeneca,89635

Uruguay,,Pfizer/BioNTech,2362759

Uruguay,,Sinovac,3247636

### Why do you think the data is inaccurate or missing?

Either the data is not available, or there was a human error or an error in the automatic processing of the data.
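
The rows with an empty date field can be found with a short check. This is a minimal sketch in Python using the sample rows quoted above. The header line (`location,date,vaccine,total_vaccinations`) is an assumption, since the report does not show it, and `rows_missing_date` is a hypothetical helper name.

```python
import csv
import io

# Sample rows copied from the report; the header line is an assumption.
SAMPLE = """location,date,vaccine,total_vaccinations
Uruguay,2022-03-06,Oxford/AstraZeneca,89635
Uruguay,2022-03-06,Pfizer/BioNTech,2398276
Uruguay,2022-03-06,Sinovac,3247639
Uruguay,,Oxford/AstraZeneca,89635
Uruguay,,Pfizer/BioNTech,2362759
Uruguay,,Sinovac,3247636
"""


def rows_missing_date(csv_text):
    """Return the rows whose date field is empty or only whitespace."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return [row for row in reader if not (row["date"] or "").strip()]


bad_rows = rows_missing_date(SAMPLE)
for row in bad_rows:
    print(row["location"], row["vaccine"], row["total_vaccinations"])
```

Running the same function over the full file contents would list every affected row, not only those for Uruguay.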

|

1.0

|

Invalid data in vaccinations-by-manufacturer.csv - ### Country

Uruguay

### Domain

Vaccinations

### Which data is inaccurate or missing?

In file: [vaccinations-by-manufacturer.csv](https://raw.githubusercontent.com/owid/covid-19-data/master/public/data/vaccinations/vaccinations-by-manufacturer.csv)

E.g.:

See the bottom lines, which have no date:

Uruguay,2022-03-06,Oxford/AstraZeneca,89635

Uruguay,2022-03-06,Pfizer/BioNTech,2398276

Uruguay,2022-03-06,Sinovac,3247639

Uruguay,,Oxford/AstraZeneca,89635

Uruguay,,Pfizer/BioNTech,2362759

Uruguay,,Sinovac,3247636

### Why do you think the data is inaccurate or missing?

Either the data is not available, or there was a human error or an error in the automatic processing of the data.

|

priority

|

invalid data in vaccinations by manufacturer csv country uruguay domain vaccinations which data is inaccurate or missing in file e g see the bottom lines which do not have data uruguay oxford astrazeneca uruguay pfizer biontech uruguay sinovac uruguay oxford astrazeneca uruguay pfizer biontech uruguay sinovac why do you think the data is inaccurate or missing either the data is not available or there is human error or error of automatic processing of the data

| 1

|

825,268

| 31,301,744,637

|

IssuesEvent

|

2023-08-23 00:33:37

|

SurajPratap10/Imagine_AI

|

https://api.github.com/repos/SurajPratap10/Imagine_AI

|

closed

|

Adding a blogs section containing the latest AI news

|

gssoc23 High Priority 🔥 ⭐ goal: addition level3

|

Similar to this, it will help users get the latest, up-to-date news about AI.

Please assign this issue to me under the GSSOC'23 label.

@SurajPratap10

|

1.0

|

Adding a blogs section containing the latest AI news - Similar to this, it will help users get the latest, up-to-date news about AI.

Please assign this issue to me under the GSSOC'23 label.

@SurajPratap10

|

priority

|

adding a blogs section containg lastes ai news similar to this it will help the user to get the updated and the latest news about ai pls assign me this issue under gssoc label

| 1

|

25,289

| 2,678,805,008

|

IssuesEvent

|

2015-03-26 13:29:08

|

andresriancho/w3af

|

https://api.github.com/repos/andresriancho/w3af

|

closed

|

JSON fuzzing error @ return self.get_token().set_value(value)

|

bug priority:high

|

It has something to do with the delay controllers used in blind SQL injection, eval, and OS commanding detection.

I suspect the cause is that copying a mutant does not preserve its token value.

```

********************************************************************************

mutant.get_token(): <DataToken for (u'object-external_reference-string',): "">

trivial_mutant.get_token(): None

********************************************************************************

```

```python

trivial_mutant = mutant.copy()

print '*' * 80

print 'mutant.get_token(): %r' % mutant.get_token()

print 'trivial_mutant.get_token(): %r' % trivial_mutant.get_token()

print '*' * 80

trivial_mutant.set_token_value(payload)

```
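
The suspected failure mode can be sketched in isolation. Everything below is a hypothetical stand-in, not w3af's actual classes: `LossyMutant`, `PreservingMutant`, and the `token_name` attribute are invented for illustration, and the sketch is written for Python 3 so it runs standalone.

```python
import copy


class DataToken:
    """Hypothetical stand-in for the field that receives the payload."""

    def __init__(self, name, value=""):
        self.name = name
        self.value = value

    def set_value(self, value):
        self.value = value


class LossyMutant:
    """Hypothetical mutant whose copy() forgets which field is the token."""

    def __init__(self, fields, token_name=None):
        self.fields = fields
        self.token_name = token_name

    def get_token(self):
        if self.token_name is None:
            return None
        return self.fields[self.token_name]

    def set_token_value(self, value):
        # Fails with AttributeError when get_token() returns None.
        return self.get_token().set_value(value)

    def copy(self):
        # Assumed bug: the fields are copied, the token marker is not.
        return LossyMutant(copy.deepcopy(self.fields))


class PreservingMutant(LossyMutant):
    def copy(self):
        # Carry the token marker over along with the copied fields.
        return PreservingMutant(copy.deepcopy(self.fields), self.token_name)


fields = {"external_reference": DataToken("external_reference")}

lossy_copy = LossyMutant(fields, "external_reference").copy()
try:
    lossy_copy.set_token_value("payload")
    lossy_error = None
except AttributeError as exc:
    lossy_error = str(exc)

good = PreservingMutant(fields, "external_reference")
good_copy = good.copy()
good_copy.set_token_value("payload")
```

If the real `copy()` behaves like the lossy variant, that would match both the debug output above and the `'NoneType' object has no attribute 'set_value'` error in the traceback.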

## Version Information

```

Python version: 2.7.6 (default, Mar 22 2014, 22:59:56) [GCC 4.8.2]

GTK version: 2.24.23

PyGTK version: 2.24.0

w3af version:

w3af - Web Application Attack and Audit Framework

Version: 1.6.48

Revision: cbd26a6993 - 25 mar 2015 10:49

Branch: detached HEAD

Local changes: Yes

Author: Andres Riancho and the w3af team.

```

## Traceback

```pytb

A "AttributeError" exception was found while running audit.generic on "Method: POST | https://domain/path/foo/ | JSON: (object-external_reference-string, object-token-string, object-reason-string, object-payment_method_id-string)". The exception was: "'NoneType' object has no attribute 'set_value'" at mutant.py:set_token_value():106.The full traceback is:

File "/home/user/pch/w3af/w3af/core/controllers/core_helpers/consumers/audit.py", line 110, in _audit

plugin.audit_with_copy(fuzzable_request, orig_resp)

File "/home/user/pch/w3af/w3af/core/controllers/plugins/audit_plugin.py", line 139, in audit_with_copy

return self.audit(fuzzable_request, orig_resp)

File "/home/user/pch/w3af/w3af/plugins/audit/generic.py", line 92, in audit

m.set_token_value(error_string)

File "/home/user/pch/w3af/w3af/core/data/fuzzer/mutants/mutant.py", line 106, in set_token_value

return self.get_token().set_value(value)

```

## Enabled Plugins

```python

{'attack': {},

'audit': {u'blind_sqli': <OptionList: eq_limit>,

u'buffer_overflow': <OptionList: >,

u'cors_origin': <OptionList: origin_header_value>,

u'csrf': <OptionList: >,

u'dav': <OptionList: >,

u'eval': <OptionList: use_time_delay|use_echo>,

u'file_upload': <OptionList: extensions>,

u'format_string': <OptionList: >,

u'frontpage': <OptionList: >,

u'generic': <OptionList: diff_ratio>,

u'global_redirect': <OptionList: >,

u'htaccess_methods': <OptionList: >,

u'ldapi': <OptionList: >,

u'lfi': <OptionList: >,

u'memcachei': <OptionList: >,

u'mx_injection': <OptionList: >,

u'os_commanding': <OptionList: >,

u'phishing_vector': <OptionList: >,

u'preg_replace': <OptionList: >,

u'redos': <OptionList: >,

u'response_splitting': <OptionList: >,

u'rfd': <OptionList: >,

u'rfi': <OptionList: listen_address|listen_port|use_w3af_site>,

u'shell_shock': <OptionList: >,

u'sqli': <OptionList: >,

u'ssi': <OptionList: >,

u'ssl_certificate': <OptionList: minExpireDays|caFileName>,

u'un_ssl': <OptionList: >,

u'xpath': <OptionList: >,

u'xss': <OptionList: persistent_xss>,

u'xst': <OptionList: >},

'auth': {},

'bruteforce': {},

'crawl': {u'spider_man': <OptionList: listen_address|listen_port>},

'evasion': {},

'grep': {'error_500': {}},

'infrastructure': {'allowed_methods': {},

'frontpage_version': {},

'server_header': {}},

'mangle': {},

'output': {u'console': <OptionList: verbose>}}

```

|

1.0

|

JSON fuzzing error @ return self.get_token().set_value(value) - It has something to do with the delay controllers used in blind SQL injection, eval, and OS commanding detection.

I suspect the cause is that copying a mutant does not preserve its token value.

```

********************************************************************************

mutant.get_token(): <DataToken for (u'object-external_reference-string',): "">

trivial_mutant.get_token(): None

********************************************************************************

```

```python

trivial_mutant = mutant.copy()

print '*' * 80

print 'mutant.get_token(): %r' % mutant.get_token()

print 'trivial_mutant.get_token(): %r' % trivial_mutant.get_token()

print '*' * 80

trivial_mutant.set_token_value(payload)

```

## Version Information

```

Python version: 2.7.6 (default, Mar 22 2014, 22:59:56) [GCC 4.8.2]

GTK version: 2.24.23

PyGTK version: 2.24.0

w3af version:

w3af - Web Application Attack and Audit Framework

Version: 1.6.48

Revision: cbd26a6993 - 25 mar 2015 10:49

Branch: detached HEAD

Local changes: Yes

Author: Andres Riancho and the w3af team.

```

## Traceback

```pytb

A "AttributeError" exception was found while running audit.generic on "Method: POST | https://domain/path/foo/ | JSON: (object-external_reference-string, object-token-string, object-reason-string, object-payment_method_id-string)". The exception was: "'NoneType' object has no attribute 'set_value'" at mutant.py:set_token_value():106.The full traceback is:

File "/home/user/pch/w3af/w3af/core/controllers/core_helpers/consumers/audit.py", line 110, in _audit

plugin.audit_with_copy(fuzzable_request, orig_resp)

File "/home/user/pch/w3af/w3af/core/controllers/plugins/audit_plugin.py", line 139, in audit_with_copy

return self.audit(fuzzable_request, orig_resp)

File "/home/user/pch/w3af/w3af/plugins/audit/generic.py", line 92, in audit

m.set_token_value(error_string)

File "/home/user/pch/w3af/w3af/core/data/fuzzer/mutants/mutant.py", line 106, in set_token_value

return self.get_token().set_value(value)

```

## Enabled Plugins

```python

{'attack': {},

'audit': {u'blind_sqli': <OptionList: eq_limit>,

u'buffer_overflow': <OptionList: >,

u'cors_origin': <OptionList: origin_header_value>,

u'csrf': <OptionList: >,

u'dav': <OptionList: >,

u'eval': <OptionList: use_time_delay|use_echo>,

u'file_upload': <OptionList: extensions>,

u'format_string': <OptionList: >,

u'frontpage': <OptionList: >,

u'generic': <OptionList: diff_ratio>,

u'global_redirect': <OptionList: >,

u'htaccess_methods': <OptionList: >,

u'ldapi': <OptionList: >,

u'lfi': <OptionList: >,

u'memcachei': <OptionList: >,

u'mx_injection': <OptionList: >,

u'os_commanding': <OptionList: >,

u'phishing_vector': <OptionList: >,

u'preg_replace': <OptionList: >,

u'redos': <OptionList: >,

u'response_splitting': <OptionList: >,

u'rfd': <OptionList: >,

u'rfi': <OptionList: listen_address|listen_port|use_w3af_site>,

u'shell_shock': <OptionList: >,

u'sqli': <OptionList: >,

u'ssi': <OptionList: >,

u'ssl_certificate': <OptionList: minExpireDays|caFileName>,

u'un_ssl': <OptionList: >,

u'xpath': <OptionList: >,

u'xss': <OptionList: persistent_xss>,

u'xst': <OptionList: >},

'auth': {},

'bruteforce': {},

'crawl': {u'spider_man': <OptionList: listen_address|listen_port>},

'evasion': {},

'grep': {'error_500': {}},

'infrastructure': {'allowed_methods': {},

'frontpage_version': {},

'server_header': {}},

'mangle': {},

'output': {u'console': <OptionList: verbose>}}

```

|

priority

|

json fuzzing error return self get token set value value it has something to do with the delay controllers used in blind sql injection eval os commanding detection i suspect it s something with the copying of mutants not maintaining the token value mutant get token trivial mutant get token none python trivial mutant mutant copy print print mutant get token r mutant get token print trivial mutant get token r trivial mutant get token print trivial mutant set token value payload version information python version default mar gtk version pygtk version version web application attack and audit framework version revision mar branch detached head local changes yes author andres riancho and the team traceback pytb a attributeerror exception was found while running audit generic on method post json object external reference string object token string object reason string object payment method id string the exception was nonetype object has no attribute set value at mutant py set token value the full traceback is file home user pch core controllers core helpers consumers audit py line in audit plugin audit with copy fuzzable request orig resp file home user pch core controllers plugins audit plugin py line in audit with copy return self audit fuzzable request orig resp file home user pch plugins audit generic py line in audit m set token value error string file home user pch core data fuzzer mutants mutant py line in set token value return self get token set value value enabled plugins python attack audit u blind sqli u buffer overflow u cors origin u csrf u dav u eval u file upload u format string u frontpage u generic u global redirect u htaccess methods u ldapi u lfi u memcachei u mx injection u os commanding u phishing vector u preg replace u redos u response splitting u rfd u rfi u shell shock u sqli u ssi u ssl certificate u un ssl u xpath u xss u xst auth bruteforce crawl u spider man evasion grep error infrastructure allowed methods frontpage version server header 
mangle output u console

| 1

|

611,325

| 18,952,167,810

|

IssuesEvent

|

2021-11-18 16:11:26

|

dmwm/CRABServer

|

https://api.github.com/repos/dmwm/CRABServer

|

closed

|

Error during code cleanup

|