Unnamed: 0

int64 0

832k

| id

float64 2.49B

32.1B

| type

stringclasses 1

value | created_at

stringlengths 19

19

| repo

stringlengths 5

112

| repo_url

stringlengths 34

141

| action

stringclasses 3

values | title

stringlengths 1

855

| labels

stringlengths 4

721

| body

stringlengths 1

261k

| index

stringclasses 13

values | text_combine

stringlengths 96

261k

| label

stringclasses 2

values | text

stringlengths 96

240k

| binary_label

int64 0

1

|

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

202,292

| 7,046,311,220

|

IssuesEvent

|

2018-01-02 06:51:04

|

OperationCode/operationcode_frontend

|

https://api.github.com/repos/OperationCode/operationcode_frontend

|

closed

|

Stop directing traffic to Discourse

|

beginner friendly Priority: High Status: Available Type: Feature

|

<!-- Please fill out one of the sections below based on the type of issue you're creating -->

# Feature

## Why is this feature being added?

Discourse is not gaining traction. Let's double down on Slack and email marketing.

## What should your feature do?

Don't shut down Discourse yet - instead, find all areas where it's referenced in the frontend and backend (either Discourse, or community.operationcode.org) and remove them.

|

1.0

|

Stop directing traffic to Discourse - <!-- Please fill out one of the sections below based on the type of issue you're creating -->

# Feature

## Why is this feature being added?

Discourse is not gaining traction. Let's double down on Slack and email marketing.

## What should your feature do?

Don't shut down Discourse yet - instead, find all areas where it's referenced in the frontend and backend (either Discourse, or community.operationcode.org) and remove them.

|

priority

|

stop directing traffic to discourse feature why is this feature being added discourse is not gaining traction let s double down on slack and email marketing what should your feature do don t shut down discourse yet instead find all areas where it s referenced in the frontend and backend either discourse or community operationcode org and remove them

| 1

|

228,429

| 7,550,851,003

|

IssuesEvent

|

2018-04-18 18:13:06

|

umple/umple

|

https://api.github.com/repos/umple/umple

|

closed

|

variables of associations are not accessible in subclasses

|

Diffic-Med Priority-VHigh associations bug

|

Please consider the following example,

```

class A{

0..1 sth-- * B;

}

class B{

}

class D{

isA A;

0..1 sth -- * B;

}

```

It generates the following code

```

public B getB_B(int index)

{

B aB = (B)bs.get(index);

return aB;

}

```

The variable `bs` is not accessible in the class D, so there is an error. It's a private varibale in the superclass `A`.

There several other errors in other APIs in association with the variable `bs`

|

1.0

|

variables of associations are not accessible in subclasses - Please consider the following example,

```

class A{

0..1 sth-- * B;

}

class B{

}

class D{

isA A;

0..1 sth -- * B;

}

```

It generates the following code

```

public B getB_B(int index)

{

B aB = (B)bs.get(index);

return aB;

}

```

The variable `bs` is not accessible in the class D, so there is an error. It's a private varibale in the superclass `A`.

There several other errors in other APIs in association with the variable `bs`

|

priority

|

variables of associations are not accessible in subclasses please consider the following example class a sth b class b class d isa a sth b it generates the following code public b getb b int index b ab b bs get index return ab the variable bs is not accessible in the class d so there is an error it s a private varibale in the superclass a there several other errors in other apis in association with the variable bs

| 1

|

374,954

| 11,097,729,015

|

IssuesEvent

|

2019-12-16 13:56:26

|

bounswe/bounswe2019group10

|

https://api.github.com/repos/bounswe/bounswe2019group10

|

opened

|

Need endpoint for notification

|

Priority: High Relation: Backend

|

Need a new notification endpoint that sets all notifications read status to true. Currently checking the number of notifications on the header causes to become red preventing user from seeing new notifications.

|

1.0

|

Need endpoint for notification - Need a new notification endpoint that sets all notifications read status to true. Currently checking the number of notifications on the header causes to become red preventing user from seeing new notifications.

|

priority

|

need endpoint for notification need a new notification endpoint that sets all notifications read status to true currently checking the number of notifications on the header causes to become red preventing user from seeing new notifications

| 1

|

517,247

| 14,997,928,152

|

IssuesEvent

|

2021-01-29 17:37:20

|

visit-dav/visit

|

https://api.github.com/repos/visit-dav/visit

|

closed

|

We need to switch the development branch to use python 3.

|

enhancement impact high likelihood high priority reviewed

|

We are currently still building against python 2 on quartz and running the test suite using python 2. The third party libraries on quartz should be rebuilt with python 3 and the config site file updated. The CI should also be built with python 3.

We should remove the python 2 stuff at some later date once we have confidence that the python 3 stuff is solid. This may be in a few months.

|

1.0

|

We need to switch the development branch to use python 3. - We are currently still building against python 2 on quartz and running the test suite using python 2. The third party libraries on quartz should be rebuilt with python 3 and the config site file updated. The CI should also be built with python 3.

We should remove the python 2 stuff at some later date once we have confidence that the python 3 stuff is solid. This may be in a few months.

|

priority

|

we need to switch the development branch to use python we are currently still building against python on quartz and running the test suite using python the third party libraries on quartz should be rebuilt with python and the config site file updated the ci should also be built with python we should remove the python stuff at some later date once we have confidence that the python stuff is solid this may be in a few months

| 1

|

605,549

| 18,736,770,411

|

IssuesEvent

|

2021-11-04 08:44:07

|

AY2122S1-CS2103T-F13-2/tp

|

https://api.github.com/repos/AY2122S1-CS2103T-F13-2/tp

|

closed

|

[PE-D] Deleting elderly does not remove all traces of his name

|

priority.High

|

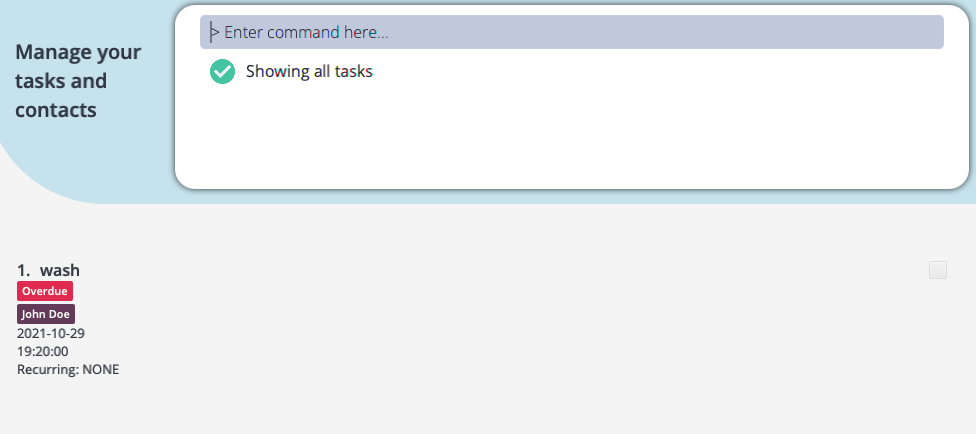

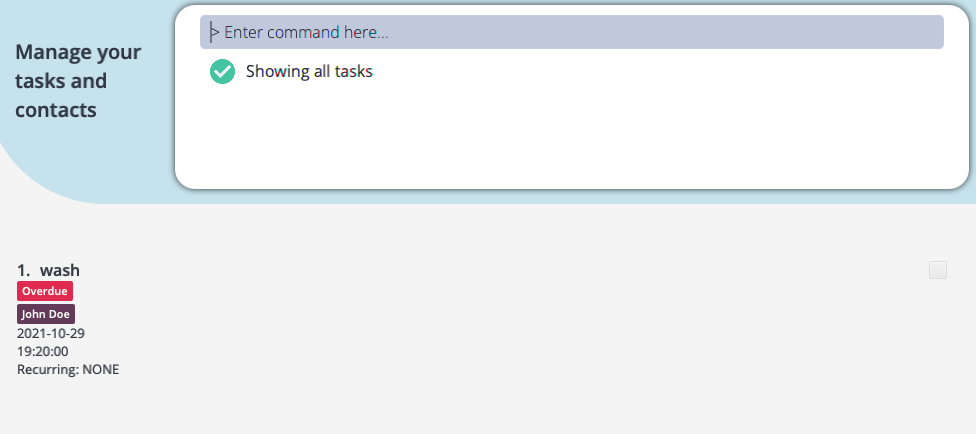

steps to reproduce:

1. addElderly en/John Doe a/40 g/M r/53 nn/John Beckham rs/Father p/98765432 e/mary@example.com t/friends t/owesMoney

2. addTask en/John Doe desc/wash date/2021-10-29 time/19:20

3. deleteElderly 1

The elderly is related to the task, assuming the task is meant to be completed for that elderly, but when the elderly is deleted, the tag with his name remains in the task as shown.

<!--session: 1635516837992-8cbce362-68fb-4d9f-97d5-b66f233b2e1c--><!--Version: Web v3.4.1-->

-------------

Labels: `severity.Low` `type.FunctionalityBug`

original: amzhy/ped#19

|

1.0

|

[PE-D] Deleting elderly does not remove all traces of his name -

steps to reproduce:

1. addElderly en/John Doe a/40 g/M r/53 nn/John Beckham rs/Father p/98765432 e/mary@example.com t/friends t/owesMoney

2. addTask en/John Doe desc/wash date/2021-10-29 time/19:20

3. deleteElderly 1

The elderly is related to the task, assuming the task is meant to be completed for that elderly, but when the elderly is deleted, the tag with his name remains in the task as shown.

<!--session: 1635516837992-8cbce362-68fb-4d9f-97d5-b66f233b2e1c--><!--Version: Web v3.4.1-->

-------------

Labels: `severity.Low` `type.FunctionalityBug`

original: amzhy/ped#19

|

priority

|

deleting elderly does not remove all traces of his name steps to reproduce addelderly en john doe a g m r nn john beckham rs father p e mary example com t friends t owesmoney addtask en john doe desc wash date time deleteelderly the elderly is related to the task assuming the task is meant to be completed for that elderly but when the elderly is deleted the tag with his name remains in the task as shown labels severity low type functionalitybug original amzhy ped

| 1

|

223,927

| 7,463,655,459

|

IssuesEvent

|

2018-04-01 08:39:10

|

CS2103JAN2018-W14-B1/main

|

https://api.github.com/repos/CS2103JAN2018-W14-B1/main

|

closed

|

List command to accept arguments to request different types of contacts to be listed

|

Priority.high component: logic

|

List command to accept a "TYPE" argument for a "contacts" list or a "student" list

|

1.0

|

List command to accept arguments to request different types of contacts to be listed - List command to accept a "TYPE" argument for a "contacts" list or a "student" list

|

priority

|

list command to accept arguments to request different types of contacts to be listed list command to accept a type argument for a contacts list or a student list

| 1

|

650,925

| 21,443,500,969

|

IssuesEvent

|

2022-04-25 01:59:44

|

Bishop-Laboratory/RLoop-QC-Meta-Analysis-Miller-2022

|

https://api.github.com/repos/Bishop-Laboratory/RLoop-QC-Meta-Analysis-Miller-2022

|

closed

|

Analysis benefit of QC on downstream analysis

|

analysis needed high-priority

|

One of the primary distinctions between our approach and that of [R-loopBase](https://academic.oup.com/nar/advance-article/doi/10.1093/nar/gkab1103/6430826) is that they did not consider data quality. In fact, roughly 31% of the data in their analysis was marked as NEG by our quality model. To better emphasize this distinction, we will perform an analysis which demonstrates the importance of our QC approach on the downstream results in our study. We will calculate the consensus R-loop peaks +/- our high-confidence QC filter and then regenerate the following figures:

- [ ] 4F

- [ ] 4G

- [ ] 4I

- [ ] 5A

- [ ] 6A

|

1.0

|

Analysis benefit of QC on downstream analysis - One of the primary distinctions between our approach and that of [R-loopBase](https://academic.oup.com/nar/advance-article/doi/10.1093/nar/gkab1103/6430826) is that they did not consider data quality. In fact, roughly 31% of the data in their analysis was marked as NEG by our quality model. To better emphasize this distinction, we will perform an analysis which demonstrates the importance of our QC approach on the downstream results in our study. We will calculate the consensus R-loop peaks +/- our high-confidence QC filter and then regenerate the following figures:

- [ ] 4F

- [ ] 4G

- [ ] 4I

- [ ] 5A

- [ ] 6A

|

priority

|

analysis benefit of qc on downstream analysis one of the primary distinctions between our approach and that of is that they did not consider data quality in fact roughly of the data in their analysis was marked as neg by our quality model to better emphasize this distinction we will perform an analysis which demonstrates the importance of our qc approach on the downstream results in our study we will calculate the consensus r loop peaks our high confidence qc filter and then regenerate the following figures

| 1

|

702,582

| 24,126,897,502

|

IssuesEvent

|

2022-09-21 01:55:40

|

4paradigm/OpenMLDB

|

https://api.github.com/repos/4paradigm/OpenMLDB

|

closed

|

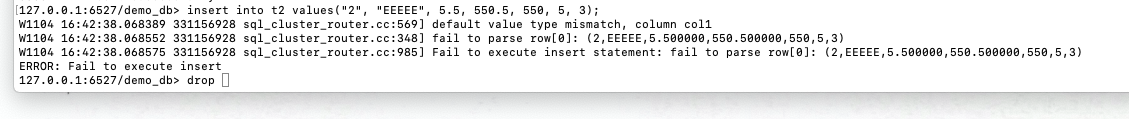

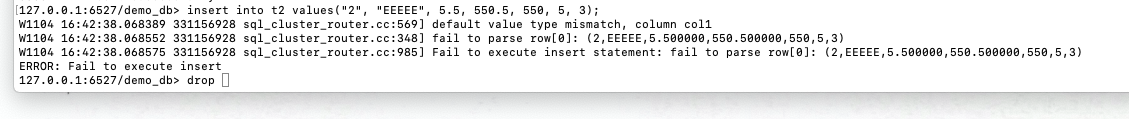

Delete GLOG message in sql_cluster_router

|

bug enhancement high-priority storage-engine

|

Log message display on CLI directly is not well.

|

1.0

|

Delete GLOG message in sql_cluster_router - Log message display on CLI directly is not well.

|

priority

|

delete glog message in sql cluster router log message display on cli directly is not well

| 1

|

300,503

| 9,211,292,185

|

IssuesEvent

|

2019-03-09 14:08:59

|

qgisissuebot/QGIS

|

https://api.github.com/repos/qgisissuebot/QGIS

|

closed

|

Crach on closing

|

Bug Priority: high Regression

|

---

Author Name: **Matjaž Mori** (Matjaž Mori)

Original Redmine Issue: 21416, https://issues.qgis.org/issues/21416

---

## User Feedback

This crash happens everytime i close the program.

## Report Details

*Crash ID*: a037e3dd18301dbfb420623bbdf24000bc039583

*Stack Trace*

```

QgsMapToolExtent::~QgsMapToolExtent :

PyInit__gui :

QObjectPrivate::deleteChildren :

QWidget::~QWidget :

QgsVectorLayerProperties::`default constructor closure' :

QgisApp::~QgisApp :

CPLStringList::operator char const * __ptr64 const * __ptr64 :

main :

BaseThreadInitThunk :

RtlUserThreadStart :

```

*QGIS Info*

QGIS Version: 3.4.5-Madeira

QGIS code revision: commit:89ee6f6e23

Compiled against Qt: 5.11.2

Running against Qt: 5.11.2

Compiled against GDAL: 2.4.0

Running against GDAL: 2.4.0

*System Info*

CPU Type: x86_64

Kernel Type: winnt

Kernel Version: 6.1.7601

|

1.0

|

Crach on closing - ---

Author Name: **Matjaž Mori** (Matjaž Mori)

Original Redmine Issue: 21416, https://issues.qgis.org/issues/21416

---

## User Feedback

This crash happens everytime i close the program.

## Report Details

*Crash ID*: a037e3dd18301dbfb420623bbdf24000bc039583

*Stack Trace*

```

QgsMapToolExtent::~QgsMapToolExtent :

PyInit__gui :

QObjectPrivate::deleteChildren :

QWidget::~QWidget :

QgsVectorLayerProperties::`default constructor closure' :

QgisApp::~QgisApp :

CPLStringList::operator char const * __ptr64 const * __ptr64 :

main :

BaseThreadInitThunk :

RtlUserThreadStart :

```

*QGIS Info*

QGIS Version: 3.4.5-Madeira

QGIS code revision: commit:89ee6f6e23

Compiled against Qt: 5.11.2

Running against Qt: 5.11.2

Compiled against GDAL: 2.4.0

Running against GDAL: 2.4.0

*System Info*

CPU Type: x86_64

Kernel Type: winnt

Kernel Version: 6.1.7601

|

priority

|

crach on closing author name matjaž mori matjaž mori original redmine issue user feedback this crash happens everytime i close the program report details crash id stack trace qgsmaptoolextent qgsmaptoolextent pyinit gui qobjectprivate deletechildren qwidget qwidget qgsvectorlayerproperties default constructor closure qgisapp qgisapp cplstringlist operator char const const main basethreadinitthunk rtluserthreadstart qgis info qgis version madeira qgis code revision commit compiled against qt running against qt compiled against gdal running against gdal system info cpu type kernel type winnt kernel version

| 1

|

666,670

| 22,363,117,771

|

IssuesEvent

|

2022-06-15 23:08:06

|

microsoft/AdaptiveCards

|

https://api.github.com/repos/microsoft/AdaptiveCards

|

closed

|

[Accessibility] CalendarReminder: Focus order is not logical as focus is going on 'Snooze' button instead of 'Dropdown' menu items by using swipe.

|

Bug Area-Renderers Platform-iOS High Priority Area-Accessibility A11ySev2 HCL-E+D Product-AC HCL-AdaptiveCards-iOS

|

### Target Platforms

iOS

### SDK Version

App Version: Version 1.0 (2.3.1-beta.20210603.1)

### Application Name

Visualizer

### Problem Description

[33752716](https://microsoft.visualstudio.com/OS/_workitems/edit/33752716)

Focus order is not logical in swipe navigation on "CalendarReminder.JSON” page. As after performing right swipe from the 'Snooze for' label it is going on the 'Snooze' button.

### Screenshots

_No response_

### Card JSON

[CalendarReminder.json](https://github.com/microsoft/AdaptiveCards/blob/main/samples/v1.0/Scenarios/CalendarReminder.json)

### Sample Code Language

_No response_

### Sample Code

_No response_

|

1.0

|

[Accessibility] CalendarReminder: Focus order is not logical as focus is going on 'Snooze' button instead of 'Dropdown' menu items by using swipe. - ### Target Platforms

iOS

### SDK Version

App Version: Version 1.0 (2.3.1-beta.20210603.1)

### Application Name

Visualizer

### Problem Description

[33752716](https://microsoft.visualstudio.com/OS/_workitems/edit/33752716)

Focus order is not logical in swipe navigation on "CalendarReminder.JSON” page. As after performing right swipe from the 'Snooze for' label it is going on the 'Snooze' button.

### Screenshots

_No response_

### Card JSON

[CalendarReminder.json](https://github.com/microsoft/AdaptiveCards/blob/main/samples/v1.0/Scenarios/CalendarReminder.json)

### Sample Code Language

_No response_

### Sample Code

_No response_

|

priority

|

calendarreminder focus order is not logical as focus is going on snooze button instead of dropdown menu items by using swipe target platforms ios sdk version app version version beta application name visualizer problem description focus order is not logical in swipe navigation on calendarreminder json” page as after performing right swipe from the snooze for label it is going on the snooze button screenshots no response card json sample code language no response sample code no response

| 1

|

189,140

| 6,794,594,034

|

IssuesEvent

|

2017-11-01 12:50:53

|

metasfresh/metasfresh

|

https://api.github.com/repos/metasfresh/metasfresh

|

closed

|

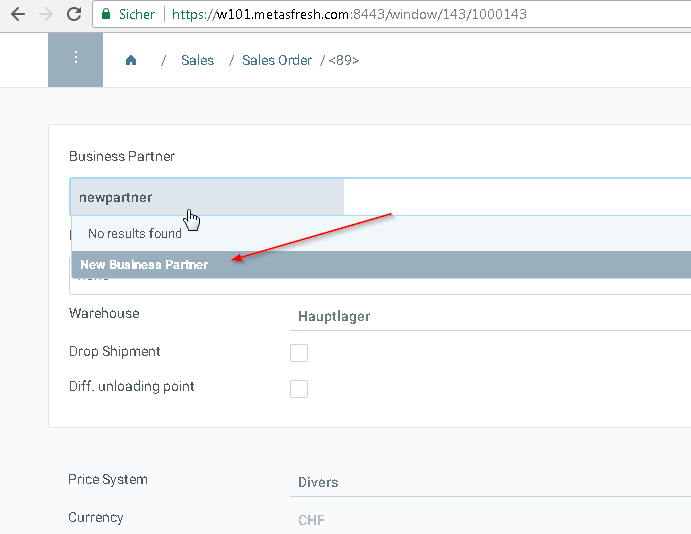

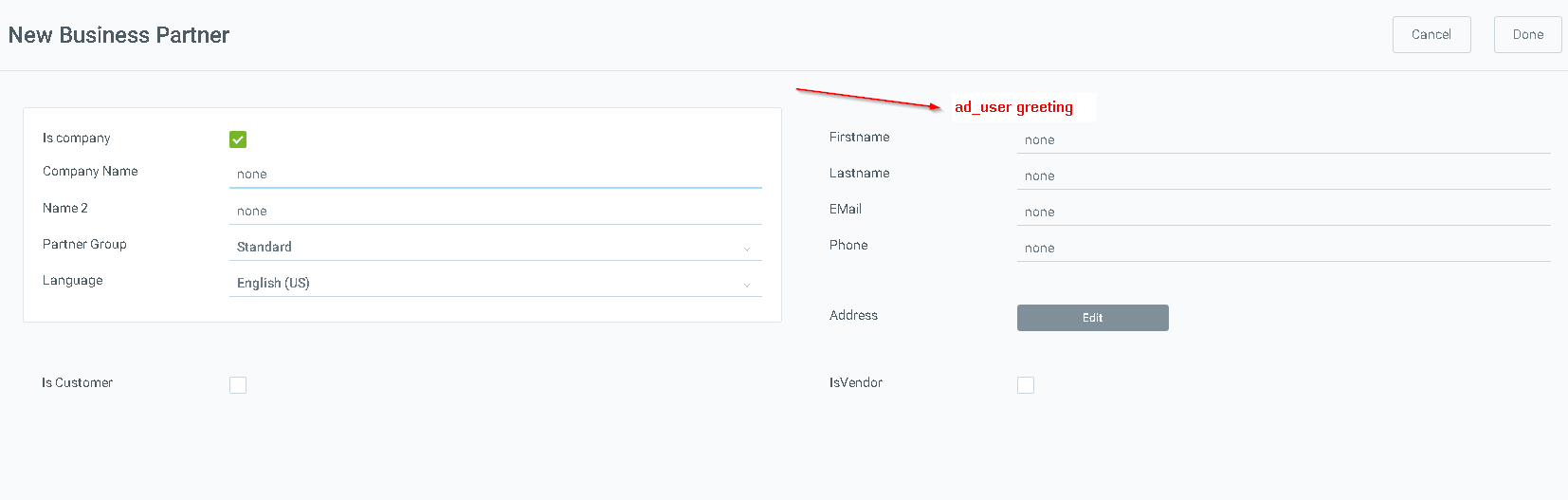

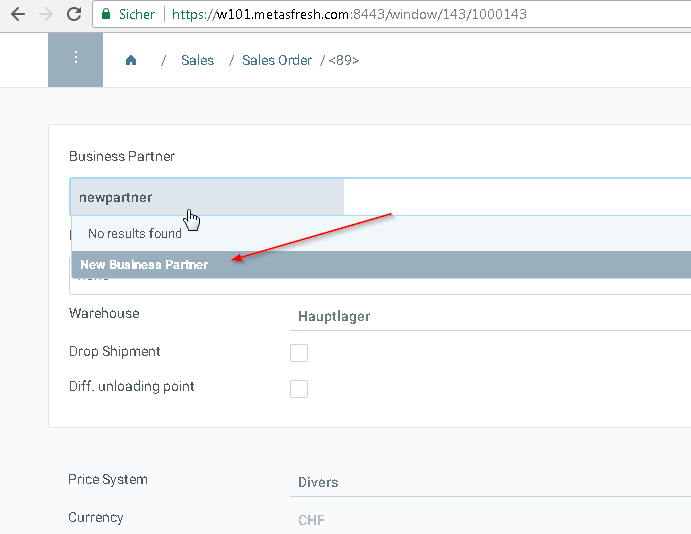

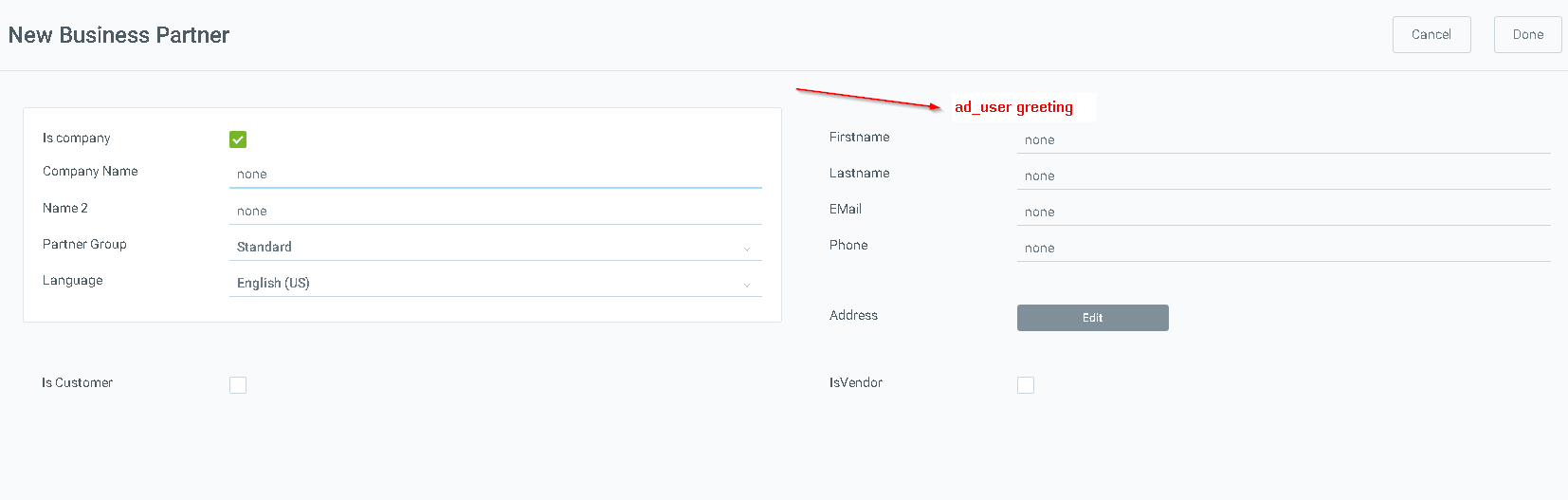

Add greeting to partner quick creation from order

|

branch:master priority:high type:enhancement

|

### Is this a bug or feature request?

FR

### What is the current behavior?

field is not there

#### Which are the steps to reproduce?

### What is the expected or desired behavior?

|

1.0

|

Add greeting to partner quick creation from order - ### Is this a bug or feature request?

FR

### What is the current behavior?

field is not there

#### Which are the steps to reproduce?

### What is the expected or desired behavior?

|

priority

|

add greeting to partner quick creation from order is this a bug or feature request fr what is the current behavior field is not there which are the steps to reproduce what is the expected or desired behavior

| 1

|

458,310

| 13,173,068,905

|

IssuesEvent

|

2020-08-11 19:36:11

|

metrumresearchgroup/rbabylon

|

https://api.github.com/repos/metrumresearchgroup/rbabylon

|

closed

|

Add model_summary() info to run_log()

|

enhancement priority: high risk: medium

|

# Summary

As a user, I would like to be able to summarize multiple models in batch and have some subset of the information in those summaries extracted into a tibble similar to `bbi_run_log_df` (the tibble output from `run_log()`). I would also like to be able to easily append that table onto a `bbi_run_log_df`.

## Technical specification

This will be accomplished with the following functions:

* **summary_log()** -- Return a new tibble with the "absolute_model_path" column as the primary key, plus the columns extracted from `model_summaries()`.

* **add_summary()** -- Return the input tibble, with the columns extracted from `model_summaries()` joined onto it.

## Fields extracted to tibble

* objective function value

* model estimation method

* number of (non-fixed) parameters

* number of patients/observations

* the boolean heuristics that are currently in `bbi_nonmem_summary$run_heuristics`

## Related Issues

Note that this functionality is dependent on functionality described in https://github.com/metrumresearchgroup/rbabylon/issues/53 and will be implemented with that in mind.

# Tests

- tests/testthat/test-summary-log.R

- summary_log() errors with malformed YAML

- summary_log() returns NULL and warns when no YAML found

- summary_log() works correctly with nested dirs

- summary_log(.recurse = FALSE) works

- add_summary() works correctly

- summary_log works some failed summaries

- summary_log works all failed summaries

|

1.0

|

Add model_summary() info to run_log() - # Summary

As a user, I would like to be able to summarize multiple models in batch and have some subset of the information in those summaries extracted into a tibble similar to `bbi_run_log_df` (the tibble output from `run_log()`). I would also like to be able to easily append that table onto a `bbi_run_log_df`.

## Technical specification

This will be accomplished with the following functions:

* **summary_log()** -- Return a new tibble with the "absolute_model_path" column as the primary key, plus the columns extracted from `model_summaries()`.

* **add_summary()** -- Return the input tibble, with the columns extracted from `model_summaries()` joined onto it.

## Fields extracted to tibble

* objective function value

* model estimation method

* number of (non-fixed) parameters

* number of patients/observations

* the boolean heuristics that are currently in `bbi_nonmem_summary$run_heuristics`

## Related Issues

Note that this functionality is dependent on functionality described in https://github.com/metrumresearchgroup/rbabylon/issues/53 and will be implemented with that in mind.

# Tests

- tests/testthat/test-summary-log.R

- summary_log() errors with malformed YAML

- summary_log() returns NULL and warns when no YAML found

- summary_log() works correctly with nested dirs

- summary_log(.recurse = FALSE) works

- add_summary() works correctly

- summary_log works some failed summaries

- summary_log works all failed summaries

|

priority

|

add model summary info to run log summary as a user i would like to be able to summarize multiple models in batch and have some subset of the information in those summaries extracted into a tibble similar to bbi run log df the tibble output from run log i would also like to be able to easily append that table onto a bbi run log df technical specification this will be accomplished with the following functions summary log return a new tibble with the absolute model path column as the primary key plus the columns extracted from model summaries add summary return the input tibble with the columns extracted from model summaries joined onto it fields extracted to tibble objective function value model estimation method number of non fixed parameters number of patients observations the boolean heuristics that are currently in bbi nonmem summary run heuristics related issues note that this functionality is dependent on functionality described in and will be implemented with that in mind tests tests testthat test summary log r summary log errors with malformed yaml summary log returns null and warns when no yaml found summary log works correctly with nested dirs summary log recurse false works add summary works correctly summary log works some failed summaries summary log works all failed summaries

| 1

|

706,625

| 24,279,756,761

|

IssuesEvent

|

2022-09-28 16:21:22

|

7thbeatgames/rd

|

https://api.github.com/repos/7thbeatgames/rd

|

closed

|

Change the window movement to "One Screen" the first time OBS is detected to be running at the same time as RD.

|

Comp: In-game Priority: High Suggestion

|

### Please Check

- [X] I searched for the issues, and made sure there were no duplicates.

- [X] I agree the terms, and understand that my suggestion is not guaranteed to be added or addressed.

### What problem motivated you to submit the suggestion?

Pretty much every youtuber or live streamer who plays RD will have to fumble around with the window movement settings the first time they reach 2-X. This interrupts the flow of the game and can be annoying to find.

### Suggestion / Solution

The first time RD detects OBS being open at the same time as RD itself, it will automatically change the window dance settings to One Screen (only if window dance has not been activated before). The player can always change it back later and it won't be annoying for recording if 2-X has already been played.

### Alternatives & Workarounds

_No response_

### Demo & Mockup

_No response_

### Note

_No response_

|

1.0

|

Change the window movement to "One Screen" the first time OBS is detected to be running at the same time as RD. - ### Please Check

- [X] I searched for the issues, and made sure there were no duplicates.

- [X] I agree the terms, and understand that my suggestion is not guaranteed to be added or addressed.

### What problem motivated you to submit the suggestion?

Pretty much every youtuber or live streamer who plays RD will have to fumble around with the window movement settings the first time they reach 2-X. This interrupts the flow of the game and can be annoying to find.

### Suggestion / Solution

The first time RD detects OBS being open at the same time as RD itself, it will automatically change the window dance settings to One Screen (only if window dance has not been activated before). The player can always change it back later and it won't be annoying for recording if 2-X has already been played.

### Alternatives & Workarounds

_No response_

### Demo & Mockup

_No response_

### Note

_No response_

|

priority

|

change the window movement to one screen the first time obs is detected to be running at the same time as rd please check i searched for the issues and made sure there were no duplicates i agree the terms and understand that my suggestion is not guaranteed to be added or addressed what problem motivated you to submit the suggestion pretty much every youtuber or live streamer who plays rd will have to fumble around with the window movement settings the first time they reach x this interrupts the flow of the game and can be annoying to find suggestion solution the first time rd detects obs being open at the same time as rd itself it will automatically change the window dance settings to one screen only if window dance has not been activated before the player can always change it back later and it won t be annoying for recording if x has already been played alternatives workarounds no response demo mockup no response note no response

| 1

|

701,422

| 24,097,673,896

|

IssuesEvent

|

2022-09-19 20:22:04

|

Azordev/did-admin-panel

|

https://api.github.com/repos/Azordev/did-admin-panel

|

closed

|

crear funcionalidad del perfil de provedor

|

EE-2 priority high QA check

|

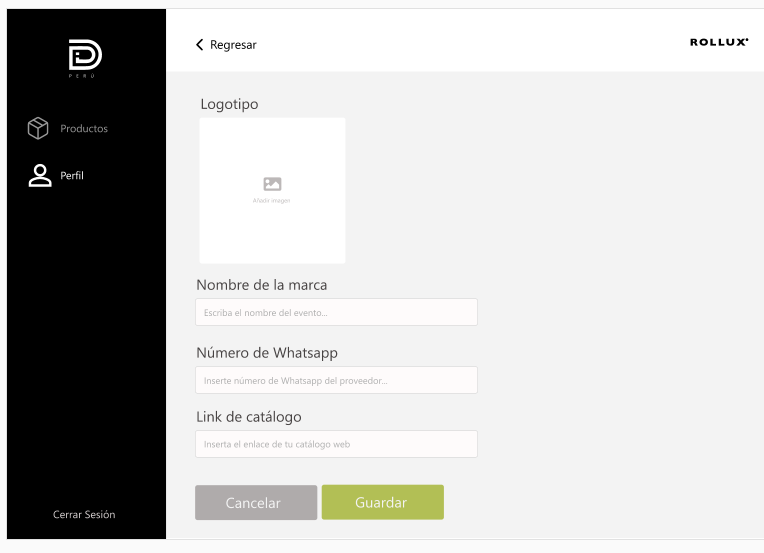

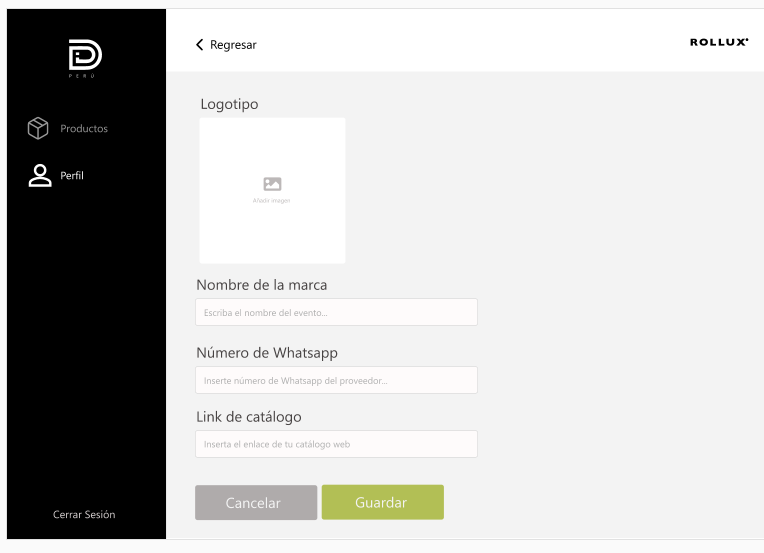

# EXPLANATION

Al ingresar con credenciales de proveedor se abre una vista d pantalla donde esta un formulario ,este formulario se debe llenar con los datos para crear un perfil como proveedor pero al ingresar los dato no guarda la información no guarda el imagen no tiene funcionalidad

# SCREESHOTS

1 Diseño asignado esta es la vista de pantalla de perfil

2 Crear la funcionalidad de logotipo al ingresar un imagen debe guardarse y mostrarse cuando se guarde la infomacion como un logotipo de la empresa en su perfil

3 las validaciones "Cancelar" y "Guardar" deben funcionar

# USER STORY

Como proveedor quiero ingresar una imagen como logo de la empresa

Como proveedor quiero crea mi perfil al seleccionar la opción "Guardar"

|

1.0

|

crear funcionalidad del perfil de provedor - # EXPLANATION

Al ingresar con credenciales de proveedor se abre una vista d pantalla donde esta un formulario ,este formulario se debe llenar con los datos para crear un perfil como proveedor pero al ingresar los dato no guarda la información no guarda el imagen no tiene funcionalidad

# SCREESHOTS

1 Diseño asignado esta es la vista de pantalla de perfil

2 Crear la funcionalidad de logotipo al ingresar un imagen debe guardarse y mostrarse cuando se guarde la infomacion como un logotipo de la empresa en su perfil

3 las validaciones "Cancelar" y "Guardar" deben funcionar

# USER STORY

Como proveedor quiero ingresar una imagen como logo de la empresa

Como proveedor quiero crea mi perfil al seleccionar la opción "Guardar"

|

priority

|

crear funcionalidad del perfil de provedor explanation al ingresar con credenciales de proveedor se abre una vista d pantalla donde esta un formulario este formulario se debe llenar con los datos para crear un perfil como proveedor pero al ingresar los dato no guarda la información no guarda el imagen no tiene funcionalidad screeshots diseño asignado esta es la vista de pantalla de perfil crear la funcionalidad de logotipo al ingresar un imagen debe guardarse y mostrarse cuando se guarde la infomacion como un logotipo de la empresa en su perfil las validaciones cancelar y guardar deben funcionar user story como proveedor quiero ingresar una imagen como logo de la empresa como proveedor quiero crea mi perfil al seleccionar la opción guardar

| 1

|

504,324

| 14,616,757,892

|

IssuesEvent

|

2020-12-22 13:46:03

|

SAP/ownid-webapp

|

https://api.github.com/repos/SAP/ownid-webapp

|

closed

|

Passcode login/register

|

Priority: High

|

- Once the Passcode is collected, it is being used as the encryption key to the private key (so no need to store it anywhere)

- Can have unlimited attempts for entering the right Passcode in login. The design has a link to reset Passcode (we have another task for that)

- Passcode set to one website should be used also for other websites. Dor suggested that can keep a string maybe in the IndexedDB that can be decrypted with the Passcode to validate the Passcode. Using user email as the string force using the same email to all websites. Other option can be to store in user profile as a hash string.

Tasks:

- Collect, store the PIN

- Use the PIN to replace the encryption cookies

SoW:

1. Implement Passcode for Login/Register

|

1.0

|

Passcode login/register - - Once the Passcode is collected, it is being used as the encryption key to the private key (so no need to store it anywhere)

- Can have unlimited attempts for entering the right Passcode in login. The design has a link to reset Passcode (we have another task for that)

- Passcode set to one website should be used also for other websites. Dor suggested that can keep a string maybe in the IndexedDB that can be decrypted with the Passcode to validate the Passcode. Using user email as the string force using the same email to all websites. Other option can be to store in user profile as a hash string.

Tasks:

- Collect, store the PIN

- Use the PIN to replace the encryption cookies

SoW:

1. Implement Passcode for Login/Register

|

priority

|

passcode login register once the passcode is collected it is being used as the encryption key to the private key so no need to store it anywhere can have unlimited attempts for entering the right passcode in login the design has a link to reset passcode we have another task for that passcode set to one website should be used also for other websites dor suggested that can keep a string maybe in the indexeddb that can be decrypted with the passcode to validate the passcode using user email as the string force using the same email to all websites other option can be to store in user profile as a hash string tasks collect store the pin use the pin to replace the encryption cookies sow implement passcode for login register

| 1

|

404,771

| 11,862,993,151

|

IssuesEvent

|

2020-03-25 18:53:59

|

domialex/Sidekick

|

https://api.github.com/repos/domialex/Sidekick

|

closed

|

Gem level filter is set to a value lower than the gem level when price checking a gem

|

Priority: High Status: Available Type: Bug

|

If I price check a gem level 21, it sets 16 in the Minimum field (0.7.0-beta). I'm pretty sure the previous version price checked using the same level.

|

1.0

|

Gem level filter is set to a value lower than the gem level when price checking a gem - If I price check a gem level 21, it sets 16 in the Minimum field (0.7.0-beta). I'm pretty sure the previous version price checked using the same level.

|

priority

|

gem level filter is set to a value lower than the gem level when price checking a gem if i price check a gem level it sets in the minimum field beta i m pretty sure the previous version price checked using the same level

| 1

|

185,956

| 6,732,308,681

|

IssuesEvent

|

2017-10-18 10:57:54

|

gear54rus/RESTED-APS

|

https://api.github.com/repos/gear54rus/RESTED-APS

|

opened

|

Check integration with history, collections and exports/imports

|

priority: high type: enhancement

|

The fork added a lot of APS fields, need to check that they are saved, restored and imported/exported properly.

Sending both APS and main requests should save history,

|

1.0

|

Check integration with history, collections and exports/imports - The fork added a lot of APS fields, need to check that they are saved, restored and imported/exported properly.

Sending both APS and main requests should save history,

|

priority

|

check integration with history collections and exports imports the fork added a lot of aps fields need to check that they are saved restored and imported exported properly sending both aps and main requests should save history

| 1

|

200,524

| 7,008,760,165

|

IssuesEvent

|

2017-12-19 16:41:25

|

kleros/kleros-interaction

|

https://api.github.com/repos/kleros/kleros-interaction

|

opened

|

Submit a ERC for the evidence standard

|

high priority

|

Following ERC792 https://github.com/ethereum/EIPs/issues/792 for the arbitration standard,

we now need to create a standard for the way to handle evidence.

|

1.0

|

Submit a ERC for the evidence standard - Following ERC792 https://github.com/ethereum/EIPs/issues/792 for the arbitration standard,

we now need to create a standard for the way to handle evidence.

|

priority

|

submit a erc for the evidence standard following for the arbitration standard we now need to create a standard for the way to handle evidence

| 1

|

68,607

| 3,291,434,735

|

IssuesEvent

|

2015-10-30 09:05:03

|

radike/issue-tracker

|

https://api.github.com/repos/radike/issue-tracker

|

closed

|

Split the main project into more assemblies

|

enhancement priority HIGH

|

e.g. create Entity project, use ViewModels, and use auto-mapper to map Entities and ViewModels

|

1.0

|

Split the main project into more assemblies - e.g. create Entity project, use ViewModels, and use auto-mapper to map Entities and ViewModels

|

priority

|

split the main project into more assemblies e g create entity project use viewmodels and use auto mapper to map entities and viewmodels

| 1

|

125,774

| 4,964,665,886

|

IssuesEvent

|

2016-12-03 22:03:58

|

vnaskos/lajarus

|

https://api.github.com/repos/vnaskos/lajarus

|

closed

|

Refactor every create method on server

|

point: 2 priority: highest type: refactor

|

Every "create" method should accept RequestBody parameters, which will contain all the necessary info. They also has to be linked with validation checks.

|

1.0

|

Refactor every create method on server - Every "create" method should accept RequestBody parameters, which will contain all the necessary info. They also has to be linked with validation checks.

|

priority

|

refactor every create method on server every create method should accept requestbody parameters which will contain all the necessary info they also has to be linked with validation checks

| 1

|

237,269

| 7,757,974,617

|

IssuesEvent

|

2018-05-31 18:03:26

|

martchellop/Entretenibit

|

https://api.github.com/repos/martchellop/Entretenibit

|

opened

|

Get robot working on everyones PC: specify requirements.txt

|

priority: high

|

The basic robot mentioned in #66 has already been done and now #67 needs to be done.

I am trying to work o n #67 but the robot isn't working out of the box.

As such, I was thinking of defining the necessary libraries and putting them in a requirements.txt witch can them in the second sprint be added to a more permanent docker solution.

|

1.0

|

Get robot working on everyones PC: specify requirements.txt - The basic robot mentioned in #66 has already been done and now #67 needs to be done.

I am trying to work o n #67 but the robot isn't working out of the box.

As such, I was thinking of defining the necessary libraries and putting them in a requirements.txt witch can them in the second sprint be added to a more permanent docker solution.

|

priority

|

get robot working on everyones pc specify requirements txt the basic robot mentioned in has already been done and now needs to be done i am trying to work o n but the robot isn t working out of the box as such i was thinking of defining the necessary libraries and putting them in a requirements txt witch can them in the second sprint be added to a more permanent docker solution

| 1

|

687,016

| 23,511,340,929

|

IssuesEvent

|

2022-08-18 16:50:37

|

responsible-ai-collaborative/aiid

|

https://api.github.com/repos/responsible-ai-collaborative/aiid

|

closed

|

Images 404ing

|

Type:Bug Effort: Low Priority:High

|

Two images on incident 66 are 404ing.

<img width="480" alt="Screen Shot 2022-06-21 at 10 13 45 PM" src="https://user-images.githubusercontent.com/64780/174948812-8650ebfa-a85a-4533-a3bd-cae887d8b177.png">

<img width="862" alt="Screen Shot 2022-06-21 at 10 12 48 PM" src="https://user-images.githubusercontent.com/64780/174948725-b7315b23-7917-4f07-a152-6493c96c840d.png">

|

1.0

|

Images 404ing - Two images on incident 66 are 404ing.

<img width="480" alt="Screen Shot 2022-06-21 at 10 13 45 PM" src="https://user-images.githubusercontent.com/64780/174948812-8650ebfa-a85a-4533-a3bd-cae887d8b177.png">

<img width="862" alt="Screen Shot 2022-06-21 at 10 12 48 PM" src="https://user-images.githubusercontent.com/64780/174948725-b7315b23-7917-4f07-a152-6493c96c840d.png">

|

priority

|

images two images on incident are img width alt screen shot at pm src img width alt screen shot at pm src

| 1

|

711,751

| 24,473,808,493

|

IssuesEvent

|

2022-10-08 00:20:47

|

eugenemel/maven

|

https://api.github.com/repos/eugenemel/maven

|

closed

|

Updates to support additional information in SRMTransition (GUI, peakdetector)

|

high_priority QQQ peakdetector

|

Sometimes, in addition to a `precursorMz` and ` productMz`, `SRMTransition`s may also have a `transitionId` number. In that case, these transitions should be split out into different groups.

This has largely bee updated for peakdetector, but has not been corrected in the MAVEN gui.

|

1.0

|

Updates to support additional information in SRMTransition (GUI, peakdetector) - Sometimes, in addition to a `precursorMz` and ` productMz`, `SRMTransition`s may also have a `transitionId` number. In that case, these transitions should be split out into different groups.

This has largely bee updated for peakdetector, but has not been corrected in the MAVEN gui.

|

priority

|

updates to support additional information in srmtransition gui peakdetector sometimes in addition to a precursormz and productmz srmtransition s may also have a transitionid number in that case these transitions should be split out into different groups this has largely bee updated for peakdetector but has not been corrected in the maven gui

| 1

|

686,572

| 23,496,118,695

|

IssuesEvent

|

2022-08-18 01:44:50

|

ploomber/ploomber

|

https://api.github.com/repos/ploomber/ploomber

|

closed

|

compatibility with IPython 8

|

bug good first issue high priority med effort

|

IPython just had a major release and one of the tests is breaking so I pinned the version in `setup.py`. Ploomber actually works fine, it seems like some of the testing config is incompatible with the new IPython internals.

Even a diagnosis of next steps will be useful here!

|

1.0

|

compatibility with IPython 8 - IPython just had a major release and one of the tests is breaking so I pinned the version in `setup.py`. Ploomber actually works fine, it seems like some of the testing config is incompatible with the new IPython internals.

Even a diagnosis of next steps will be useful here!

|

priority

|

compatibility with ipython ipython just had a major release and one of the tests is breaking so i pinned the version in setup py ploomber actually works fine it seems like some of the testing config is incompatible with the new ipython internals even a diagnosis of next steps will be useful here

| 1

|

43,118

| 2,882,788,440

|

IssuesEvent

|

2015-06-11 08:06:40

|

HubTurbo/HubTurbo

|

https://api.github.com/repos/HubTurbo/HubTurbo

|

closed

|

Selection should not change when switching back from bView to pView

|

priority.high type.enhancement

|

Currently, the selection seems to be based on index rather than a unique identifier. For example, if the 3rd issue was selected originally, the 3rd issue will be selected after switching to pView even if the originally selected issue is no longer the 3rd issue.

|

1.0

|

Selection should not change when switching back from bView to pView - Currently, the selection seems to be based on index rather than a unique identifier. For example, if the 3rd issue was selected originally, the 3rd issue will be selected after switching to pView even if the originally selected issue is no longer the 3rd issue.

|

priority

|

selection should not change when switching back from bview to pview currently the selection seems to be based on index rather than a unique identifier for example if the issue was selected originally the issue will be selected after switching to pview even if the originally selected issue is no longer the issue

| 1

|

106,384

| 4,271,382,688

|

IssuesEvent

|

2016-07-13 10:53:16

|

GluuFederation/oxAuth

|

https://api.github.com/repos/GluuFederation/oxAuth

|

closed

|

SuperGluu Interception Script Incorrectly Processess Registration

|

bug High priority

|

There is a problem with the service that processes the QR code request from the phone.

Here is the scenario we tested in a two step authentication

1) User mike: enroll phone 1.... logout

2) Login user mike 2nd time

3) Push notification goes to mike, but Will uses his phone to scan QR code.

4) Will's phone gets "Authentication Success" and the authentication proceeds.

The problem is this: post enrollment of a device, only the registered phone should be accepted. The phone should have been informed "Key does not match--authentication failed" And the oxAuth session should be terminated.

I think this should be a quick fix.

|

1.0

|

SuperGluu Interception Script Incorrectly Processess Registration - There is a problem with the service that processes the QR code request from the phone.

Here is the scenario we tested in a two step authentication

1) User mike: enroll phone 1.... logout

2) Login user mike 2nd time

3) Push notification goes to mike, but Will uses his phone to scan QR code.

4) Will's phone gets "Authentication Success" and the authentication proceeds.

The problem is this: post enrollment of a device, only the registered phone should be accepted. The phone should have been informed "Key does not match--authentication failed" And the oxAuth session should be terminated.

I think this should be a quick fix.

|

priority

|

supergluu interception script incorrectly processess registration there is a problem with the service that processes the qr code request from the phone here is the scenario we tested in a two step authentication user mike enroll phone logout login user mike time push notification goes to mike but will uses his phone to scan qr code will s phone gets authentication success and the authentication proceeds the problem is this post enrollment of a device only the registered phone should be accepted the phone should have been informed key does not match authentication failed and the oxauth session should be terminated i think this should be a quick fix

| 1

|

489,404

| 14,105,947,813

|

IssuesEvent

|

2020-11-06 14:14:58

|

onaio/reveal-frontend

|

https://api.github.com/repos/onaio/reveal-frontend

|

opened

|

Missing Plan for User on Thailand Local Production

|

Priority: High

|

The user **vbdu_12.1.4-1** on Thailand Local Production cannot find [this](https://mhealth.ddc.moph.go.th/plans/update/3dd52eae-2d16-5d92-ac16-b35b5d90b503) plan in monitor tab.

|

1.0

|

Missing Plan for User on Thailand Local Production - The user **vbdu_12.1.4-1** on Thailand Local Production cannot find [this](https://mhealth.ddc.moph.go.th/plans/update/3dd52eae-2d16-5d92-ac16-b35b5d90b503) plan in monitor tab.

|

priority

|

missing plan for user on thailand local production the user vbdu on thailand local production cannot find plan in monitor tab

| 1

|

146,488

| 5,622,897,007

|

IssuesEvent

|

2017-04-04 13:52:48

|

CS2103JAN2017-W14-B1/main

|

https://api.github.com/repos/CS2103JAN2017-W14-B1/main

|

closed

|

[UNDO] If redo is input after a command that's not undo, the state shows incorrect data

|

priority.high type.bug

|

Clear redo stack if the command entered is not "undo"

|

1.0

|

[UNDO] If redo is input after a command that's not undo, the state shows incorrect data - Clear redo stack if the command entered is not "undo"

|

priority

|

if redo is input after a command that s not undo the state shows incorrect data clear redo stack if the command entered is not undo

| 1

|

448,428

| 12,950,691,967

|

IssuesEvent

|

2020-07-19 14:09:50

|

crytic/slither

|

https://api.github.com/repos/crytic/slither

|

closed

|

Crash on nested try/catch if/then/else

|

High Priority bug

|

```solidity

interface I{

function f() external returns(bool);

}

contract C{

function f(I i) public returns(bool){

bool fail;

try i.f() returns (bool success){

if(success){

fail = false;

}

else{

fail = true;

}

} catch Error(string memory reason){

fail = true;

}

if(fail){

return fail;

}

}

}

```

Related: https://github.com/crytic/slither/issues/511

|

1.0

|

Crash on nested try/catch if/then/else - ```solidity

interface I{

function f() external returns(bool);

}

contract C{

function f(I i) public returns(bool){

bool fail;

try i.f() returns (bool success){

if(success){

fail = false;

}

else{

fail = true;

}

} catch Error(string memory reason){

fail = true;

}

if(fail){

return fail;

}

}

}

```

Related: https://github.com/crytic/slither/issues/511

|

priority

|

crash on nested try catch if then else solidity interface i function f external returns bool contract c function f i i public returns bool bool fail try i f returns bool success if success fail false else fail true catch error string memory reason fail true if fail return fail related

| 1

|

313,103

| 9,557,060,348

|

IssuesEvent

|

2019-05-03 10:16:59

|

geosolutions-it/smb-portal

|

https://api.github.com/repos/geosolutions-it/smb-portal

|

closed

|

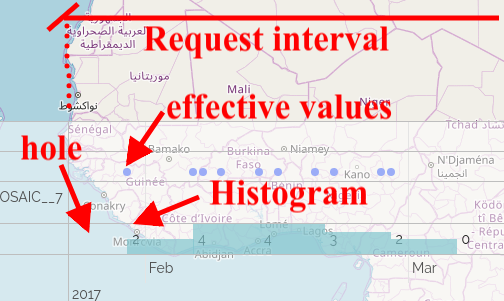

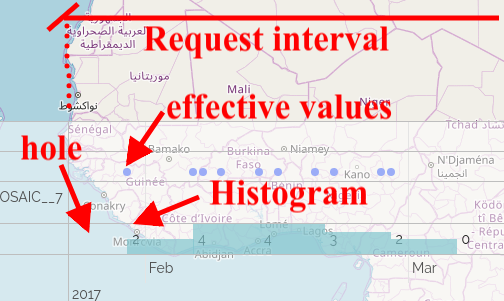

GetHistogram should calculate histogram on the required interval

|

Priority: High backlog geoserver

|

At the moment the histogram start point is calculated as the first value available on the server, instead of initial value indicated in the request.

For instance this request:

```

http://cloudsdi.geo-solutions.it:80/geoserver/gwc/service/wmts?service=WMTS&REQUEST=GetHistogram&resolution=P1W&histogram=time&version=1.1.0&layer=landsat8:B3&tileMatrixSet=EPSG:4326&time=2017-01-31T00:48:07.404Z/2017-07-04T21:00:22.692Z

```

Returns this response:

```

<?xml version="1.0" encoding="UTF-8"?><Histogram xmlns="http://demo.geo-solutions.it/share/wmts-multidim/wmts_multi_dimensional.xsd" xmlns:ows="http://www.opengis.net/ows/1.1">

<ows:Identifier>time</ows:Identifier>

<Domain>2017-02-06T00:00:00.000Z/2017-04-11T00:00:00.000Z/P1W</Domain>

<Values>4,4,3,2,0,3,2,1,1,2</Values>

</Histogram>

```

So the client requires `2017-01-31T00:48:07.404Z/2017-07-04T21:00:22.692Z` and the server reply with `2017-02-06T00:00:00.000Z/2017-04-11T00:00:00.000Z/P1W`.

So if you try to draw an histogram you can only draw this

We should allow to start the histogram from the provided interval or add a parameter to define effective interval where to calculate histogram .

|

1.0

|

GetHistogram should calculate histogram on the required interval - At the moment the histogram start point is calculated as the first value available on the server, instead of initial value indicated in the request.

For instance this request:

```

http://cloudsdi.geo-solutions.it:80/geoserver/gwc/service/wmts?service=WMTS&REQUEST=GetHistogram&resolution=P1W&histogram=time&version=1.1.0&layer=landsat8:B3&tileMatrixSet=EPSG:4326&time=2017-01-31T00:48:07.404Z/2017-07-04T21:00:22.692Z

```

Returns this response:

```

<?xml version="1.0" encoding="UTF-8"?><Histogram xmlns="http://demo.geo-solutions.it/share/wmts-multidim/wmts_multi_dimensional.xsd" xmlns:ows="http://www.opengis.net/ows/1.1">

<ows:Identifier>time</ows:Identifier>

<Domain>2017-02-06T00:00:00.000Z/2017-04-11T00:00:00.000Z/P1W</Domain>

<Values>4,4,3,2,0,3,2,1,1,2</Values>

</Histogram>

```

So the client requires `2017-01-31T00:48:07.404Z/2017-07-04T21:00:22.692Z` and the server reply with `2017-02-06T00:00:00.000Z/2017-04-11T00:00:00.000Z/P1W`.

So if you try to draw an histogram you can only draw this

We should allow to start the histogram from the provided interval or add a parameter to define effective interval where to calculate histogram .

|

priority

|

gethistogram should calculate histogram on the required interval at the moment the histogram start point is calculated as the first value available on the server instead of initial value indicated in the request for instance this request returns this response histogram xmlns xmlns ows time so the client requires and the server reply with so if you try to draw an histogram you can only draw this we should allow to start the histogram from the provided interval or add a parameter to define effective interval where to calculate histogram

| 1

|

779,313

| 27,348,722,145

|

IssuesEvent

|

2023-02-27 07:55:29

|

xKDR/Survey.jl

|

https://api.github.com/repos/xKDR/Survey.jl

|

opened

|

Urgent main test failing

|

high priority

|

```julia

Test Summary: | Pass Total Time

makeshort | 6 6 0.1s

gdf1 = 3×2 DataFrame

Row │ stype nrow

│ String1 Int64

─────┼────────────────

1 │ H 14

2 │ E 144

3 │ M 25

gdf2 = 2×2 DataFrame

Row │ schwide nrow

│ String3 Int64

─────┼────────────────

1 │ Yes 160

2 │ No 23

ratio.jl: Error During Test at /home/runner/work/Survey.jl/Survey.jl/test/raking.jl:1

Got exception outside of a @test

ArgumentError: invalid index: "maxit" of type String

```

I dont understand why, but some tests are failing.

|

1.0

|

Urgent main test failing - ```julia

Test Summary: | Pass Total Time

makeshort | 6 6 0.1s

gdf1 = 3×2 DataFrame

Row │ stype nrow

│ String1 Int64

─────┼────────────────

1 │ H 14

2 │ E 144

3 │ M 25

gdf2 = 2×2 DataFrame

Row │ schwide nrow

│ String3 Int64

─────┼────────────────

1 │ Yes 160

2 │ No 23

ratio.jl: Error During Test at /home/runner/work/Survey.jl/Survey.jl/test/raking.jl:1

Got exception outside of a @test

ArgumentError: invalid index: "maxit" of type String

```

I dont understand why, but some tests are failing.

|

priority

|

urgent main test failing julia test summary pass total time makeshort × dataframe row │ stype nrow │ ─────┼──────────────── │ h │ e │ m × dataframe row │ schwide nrow │ ─────┼──────────────── │ yes │ no ratio jl error during test at home runner work survey jl survey jl test raking jl got exception outside of a test argumenterror invalid index maxit of type string i dont understand why but some tests are failing

| 1

|

503,061

| 14,578,604,572

|

IssuesEvent

|

2020-12-18 05:19:22

|

dogpineapple/tackyboard

|

https://api.github.com/repos/dogpineapple/tackyboard

|

closed

|

Navigation Bar

|

frontend high priority

|

Navigation Bar needs:

- [ ] logout || sign-in, login

- [ ] user settings (to allow user account deletion)

- [ ] button to add new job post

- [ ] the tackyboard logo that does absolutely nothing OR it should direct the user back to the dashboard if logged in. landing page if not logged in.

|

1.0

|

Navigation Bar - Navigation Bar needs:

- [ ] logout || sign-in, login

- [ ] user settings (to allow user account deletion)

- [ ] button to add new job post

- [ ] the tackyboard logo that does absolutely nothing OR it should direct the user back to the dashboard if logged in. landing page if not logged in.

|

priority

|

navigation bar navigation bar needs logout sign in login user settings to allow user account deletion button to add new job post the tackyboard logo that does absolutely nothing or it should direct the user back to the dashboard if logged in landing page if not logged in

| 1

|

469,733

| 13,524,598,915

|

IssuesEvent

|

2020-09-15 11:48:32

|

inverse-inc/packetfence

|

https://api.github.com/repos/inverse-inc/packetfence

|

closed

|

Using the quick search of the switch groups searches for invalid ranges

|

Priority: High Type: Bug

|

**Describe the bug**

If you use the quick search of the switches in the left menu and search for a switch range (ex: 10.0.0.0/8), it searches for '10.0.0.0' and omits the mask which yields no results at all

**To Reproduce**

1. Create a switch with identifier '10.0.0.0/8'

1. Try to use the quick search in the left menu of the load page to search for it

**Expected behavior**

Should search for '10.0.0.0/8'

|

1.0

|

Using the quick search of the switch groups searches for invalid ranges - **Describe the bug**

If you use the quick search of the switches in the left menu and search for a switch range (ex: 10.0.0.0/8), it searches for '10.0.0.0' and omits the mask which yields no results at all

**To Reproduce**

1. Create a switch with identifier '10.0.0.0/8'

1. Try to use the quick search in the left menu of the load page to search for it

**Expected behavior**

Should search for '10.0.0.0/8'

|

priority

|

using the quick search of the switch groups searches for invalid ranges describe the bug if you use the quick search of the switches in the left menu and search for a switch range ex it searches for and omits the mask which yields no results at all to reproduce create a switch with identifier try to use the quick search in the left menu of the load page to search for it expected behavior should search for

| 1

|

526,006

| 15,278,009,983

|

IssuesEvent

|

2021-02-23 00:30:26

|

jcsnorlax97/rentr

|

https://api.github.com/repos/jcsnorlax97/rentr

|

opened

|

[TASK] Cleaning up naming conventions & Update Listing entity table

|

High Priority backend database dev-task

|

- [ ] Rename `getUser()` to be `getUserViaId()` in UserController & UserDao

- [ ] Rename `unAuthenticated()` to be `unauthenticated()`

- [ ] Replace `res.status(401).json(...)` in `authenticateUser()` in `UserController` with `ApiError.unauthenticated(...)`

- [ ] Ensure the following attributes are in the "Listing" entity:

- [ ] Number of bedrooms (String)

- [ ] Number of washrooms (String)

- [ ] Price (String)

- [ ] Laundry Room? (Boolean)

- [ ] Pet Allowed? (Boolean)

- [ ] Description (String)

- [ ] Title (String)

- [ ] Image (Url/Base64) (String)

- [ ] Parking Available? (Boolean)

|

1.0

|

[TASK] Cleaning up naming conventions & Update Listing entity table - - [ ] Rename `getUser()` to be `getUserViaId()` in UserController & UserDao

- [ ] Rename `unAuthenticated()` to be `unauthenticated()`

- [ ] Replace `res.status(401).json(...)` in `authenticateUser()` in `UserController` with `ApiError.unauthenticated(...)`

- [ ] Ensure the following attributes are in the "Listing" entity:

- [ ] Number of bedrooms (String)

- [ ] Number of washrooms (String)

- [ ] Price (String)

- [ ] Laundry Room? (Boolean)

- [ ] Pet Allowed? (Boolean)

- [ ] Description (String)

- [ ] Title (String)

- [ ] Image (Url/Base64) (String)

- [ ] Parking Available? (Boolean)

|

priority

|

cleaning up naming conventions update listing entity table rename getuser to be getuserviaid in usercontroller userdao rename unauthenticated to be unauthenticated replace res status json in authenticateuser in usercontroller with apierror unauthenticated ensure the following attributes are in the listing entity number of bedrooms string number of washrooms string price string laundry room boolean pet allowed boolean description string title string image url string parking available boolean

| 1

|

537,941

| 15,757,773,562

|

IssuesEvent

|

2021-03-31 05:49:57

|

garden-io/garden

|

https://api.github.com/repos/garden-io/garden

|

closed

|

Get rid of NFS dependency for in-cluster building

|

enhancement priority:high stale

|

## Background

One of the most frustrating issues with our remote building feature is the reliance on shareable (ReadWriteMany) volumes, which currently works through NFS at the moment, and optionally other RWX-capable volume provisioners such as EFS. This has turned out to cost a lot of maintenance burden and operational issues for our users.

The reason we currently have this requirement is that in order to sync code from the user's local build staging directory, we rsync to an in-cluster volume, that needs to be mountable by the in-cluster builder, whether that is an in-cluster docker daemon, or kaniko.

At the time we didn't see an alternative, that would be reasonably performant. We also had a performance goal in mind that may simply not be as important anymore. The raw efficiency of rsync is appealing, but in many (even most) cases the sync of code is only a small part of the overall build time.

## Proposed solution

We can avoid this requirement altogether, through some added code complexity in the actual build flows, but in turn avoiding the complexity relating to managing finicky storage providers.

We still rsync over to the cluster, but instead of directly mounting the sync volume, we modify the flow to allow using a simpler RWO volume.

### Build flow

Depending on the build mode, we do the following:

- cluster-docker

- We add an rsync container to the cluster-docker Pod spec, and instead of referencing a shared PVC for the build sync volume, we have an RWO PVC that the main docker container and the rsync container share.

- When building, we do the exact same thing as previously, but instead of syncing to a separate build sync Pod, we sync to the rsync container in the cluster-docker Pod, before executing the build.

- Kaniko

- We still create a build sync Deployment and a PVC, but the PVC can now be RWO, and we only have one sync Pod, much like the in-cluster registry.

- We still sync to the build-sync Pod ahead of the build.

- In the Kaniko build Pod, we create an init container, that rsyncs *from* the build-sync Pod to the container filesystem, replacing the shared build-sync PVC reference with a shared emptyDir volume within the Pod.

- The Kaniko build Pod does what it did previously.

### Migration

We change the name of the `build-sync` service to `build-sync-v2`, and `docker-daemon` to `docker-daemon-v2`(and the helm release names accordingly). This is to avoid conflicts during rollout since they both function differently from the prior versions, and cannot cover both client versions simultaneously.

Users need to be instructed to remove the old `build-sync` and `docker-daemon` deployments and volumes manually, as well as the NFS provisioner, when their team has updated to the new version. Or uninstall and re-init completely, of course. We can print out a message to this effect in the cluster-init command.

The current `storage.sync` parameters still apply to the new `build-sync-v2` volume without any changes.

We still always install `build-sync-v2`, even though it isn't necessary when using the `cluster-docker` build mode, in order to avoid headaches around using cluster-docker and kaniko in different scenarios on the same cluster.

If it is installed, the `cleanup-cluster-registry` script now also executes through the rsync container in the docker daemon Pod, in addition to the `build-sync-v2` Pod.

## Benefits

1. Far simpler requirements for in-cluster building.

2. Fewer bugs and support headaches.

3. Less operation overhead for customers.

4. New Kaniko flow can be made to work without even re-running cluster-init (falling back to older build-sync volume if it's available).

## Drawbacks

1. The two build modes change a bit and have somewhat different flows after the transition.

2. There is a slightly cumbersome transition, having to instruct users to clean up older system services manually, and potentially in parallel for a bit.

## Prioritization

I'd say sooner is better for this, since this causes annoying operational issues for users, and support issues for the Garden team by extension.

|

1.0

|

Get rid of NFS dependency for in-cluster building - ## Background

One of the most frustrating issues with our remote building feature is the reliance on shareable (ReadWriteMany) volumes, which currently works through NFS at the moment, and optionally other RWX-capable volume provisioners such as EFS. This has turned out to cost a lot of maintenance burden and operational issues for our users.

The reason we currently have this requirement is that in order to sync code from the user's local build staging directory, we rsync to an in-cluster volume, that needs to be mountable by the in-cluster builder, whether that is an in-cluster docker daemon, or kaniko.

At the time we didn't see an alternative, that would be reasonably performant. We also had a performance goal in mind that may simply not be as important anymore. The raw efficiency of rsync is appealing, but in many (even most) cases the sync of code is only a small part of the overall build time.

## Proposed solution

We can avoid this requirement altogether, through some added code complexity in the actual build flows, but in turn avoiding the complexity relating to managing finicky storage providers.

We still rsync over to the cluster, but instead of directly mounting the sync volume, we modify the flow to allow using a simpler RWO volume.

### Build flow

Depending on the build mode, we do the following:

- cluster-docker

- We add an rsync container to the cluster-docker Pod spec, and instead of referencing a shared PVC for the build sync volume, we have an RWO PVC that the main docker container and the rsync container share.

- When building, we do the exact same thing as previously, but instead of syncing to a separate build sync Pod, we sync to the rsync container in the cluster-docker Pod, before executing the build.

- Kaniko

- We still create a build sync Deployment and a PVC, but the PVC can now be RWO, and we only have one sync Pod, much like the in-cluster registry.

- We still sync to the build-sync Pod ahead of the build.

- In the Kaniko build Pod, we create an init container, that rsyncs *from* the build-sync Pod to the container filesystem, replacing the shared build-sync PVC reference with a shared emptyDir volume within the Pod.

- The Kaniko build Pod does what it did previously.

### Migration

We change the name of the `build-sync` service to `build-sync-v2`, and `docker-daemon` to `docker-daemon-v2`(and the helm release names accordingly). This is to avoid conflicts during rollout since they both function differently from the prior versions, and cannot cover both client versions simultaneously.

Users need to be instructed to remove the old `build-sync` and `docker-daemon` deployments and volumes manually, as well as the NFS provisioner, when their team has updated to the new version. Or uninstall and re-init completely, of course. We can print out a message to this effect in the cluster-init command.

The current `storage.sync` parameters still apply to the new `build-sync-v2` volume without any changes.

We still always install `build-sync-v2`, even though it isn't necessary when using the `cluster-docker` build mode, in order to avoid headaches around using cluster-docker and kaniko in different scenarios on the same cluster.

If it is installed, the `cleanup-cluster-registry` script now also executes through the rsync container in the docker daemon Pod, in addition to the `build-sync-v2` Pod.

## Benefits

1. Far simpler requirements for in-cluster building.

2. Fewer bugs and support headaches.

3. Less operation overhead for customers.

4. New Kaniko flow can be made to work without even re-running cluster-init (falling back to older build-sync volume if it's available).

## Drawbacks

1. The two build modes change a bit and have somewhat different flows after the transition.

2. There is a slightly cumbersome transition, having to instruct users to clean up older system services manually, and potentially in parallel for a bit.

## Prioritization

I'd say sooner is better for this, since this causes annoying operational issues for users, and support issues for the Garden team by extension.

|

priority

|

get rid of nfs dependency for in cluster building background one of the most frustrating issues with our remote building feature is the reliance on shareable readwritemany volumes which currently works through nfs at the moment and optionally other rwx capable volume provisioners such as efs this has turned out to cost a lot of maintenance burden and operational issues for our users the reason we currently have this requirement is that in order to sync code from the user s local build staging directory we rsync to an in cluster volume that needs to be mountable by the in cluster builder whether that is an in cluster docker daemon or kaniko at the time we didn t see an alternative that would be reasonably performant we also had a performance goal in mind that may simply not be as important anymore the raw efficiency of rsync is appealing but in many even most cases the sync of code is only a small part of the overall build time proposed solution we can avoid this requirement altogether through some added code complexity in the actual build flows but in turn avoiding the complexity relating to managing finicky storage providers we still rsync over to the cluster but instead of directly mounting the sync volume we modify the flow to allow using a simpler rwo volume build flow depending on the build mode we do the following cluster docker we add an rsync container to the cluster docker pod spec and instead of referencing a shared pvc for the build sync volume we have an rwo pvc that the main docker container and the rsync container share when building we do the exact same thing as previously but instead of syncing to a separate build sync pod we sync to the rsync container in the cluster docker pod before executing the build kaniko we still create a build sync deployment and a pvc but the pvc can now be rwo and we only have one sync pod much like the in cluster registry we still sync to the build sync pod ahead of the build in the kaniko build pod we create an init container that rsyncs from the build sync pod to the container filesystem replacing the shared build sync pvc reference with a shared emptydir volume within the pod the kaniko build pod does what it did previously migration we change the name of the build sync service to build sync and docker daemon to docker daemon and the helm release names accordingly this is to avoid conflicts during rollout since they both function differently from the prior versions and cannot cover both client versions simultaneously users need to be instructed to remove the old build sync and docker daemon deployments and volumes manually as well as the nfs provisioner when their team has updated to the new version or uninstall and re init completely of course we can print out a message to this effect in the cluster init command the current storage sync parameters still apply to the new build sync volume without any changes we still always install build sync even though it isn t necessary when using the cluster docker build mode in order to avoid headaches around using cluster docker and kaniko in different scenarios on the same cluster if it is installed the cleanup cluster registry script now also executes through the rsync container in the docker daemon pod in addition to the build sync pod benefits far simpler requirements for in cluster building fewer bugs and support headaches less operation overhead for customers new kaniko flow can be made to work without even re running cluster init falling back to older build sync volume if it s available drawbacks the two build modes change a bit and have somewhat different flows after the transition there is a slightly cumbersome transition having to instruct users to clean up older system services manually and potentially in parallel for a bit prioritization i d say sooner is better for this since this causes annoying operational issues for users and support issues for the garden team by extension

| 1

|

98,053

| 4,016,260,425

|

IssuesEvent

|

2016-05-15 13:48:32

|

loklak/loklak_webclient

|

https://api.github.com/repos/loklak/loklak_webclient

|

closed

|

Show all tweets created by loklak with rich content attachments within the search results.

|

Attachments Feature ongoing Priority 1 - High Search

|

While the search results should be identical to twitter search result views, we want to make use of our own abilities and show the rich content of special tweets that are developed in https://github.com/loklak/loklak_webclient/issues/63

This means, you should share your work to visualize the rich-content tweets. This affects the following formats:

- identify and attach exact geographic coordinates and maps

- attach video and/or audio content

- attach larger texts with simple markdown (i.e. https://guides.github.com/features/mastering-markdown/) as used in github or maybe wikitext, code citations (with code pretty-print) and other typical text rendering

- attach better images (i.e. animated gif or images with EXIF data attached)

|

1.0

|

Show all tweets created by loklak with rich content attachments within the search results. - While the search results should be identical to twitter search result views, we want to make use of our own abilities and show the rich content of special tweets that are developed in https://github.com/loklak/loklak_webclient/issues/63

This means, you should share your work to visualize the rich-content tweets. This affects the following formats:

- identify and attach exact geographic coordinates and maps

- attach video and/or audio content

- attach larger texts with simple markdown (i.e. https://guides.github.com/features/mastering-markdown/) as used in github or maybe wikitext, code citations (with code pretty-print) and other typical text rendering

- attach better images (i.e. animated gif or images with EXIF data attached)

|

priority

|

show all tweets created by loklak with rich content attachments within the search results while the search results should be identical to twitter search result views we want to make use of our own abilities and show the rich content of special tweets that are developed in this means you should share your work to visualize the rich content tweets this affects the following formats identify and attach exact geographic coordinates and maps attach video and or audio content attach larger texts with simple markdown i e as used in github or maybe wikitext code citations with code pretty print and other typical text rendering attach better images i e animated gif or images with exif data attached

| 1

|

539,840

| 15,795,727,254

|

IssuesEvent

|

2021-04-02 13:40:51

|

wso2/product-apim

|

https://api.github.com/repos/wso2/product-apim

|

closed

|

Deploy Sample API option available after deploying the sample API

|

API-M 4.0.0 Priority/High React-UI Type/Bug

|

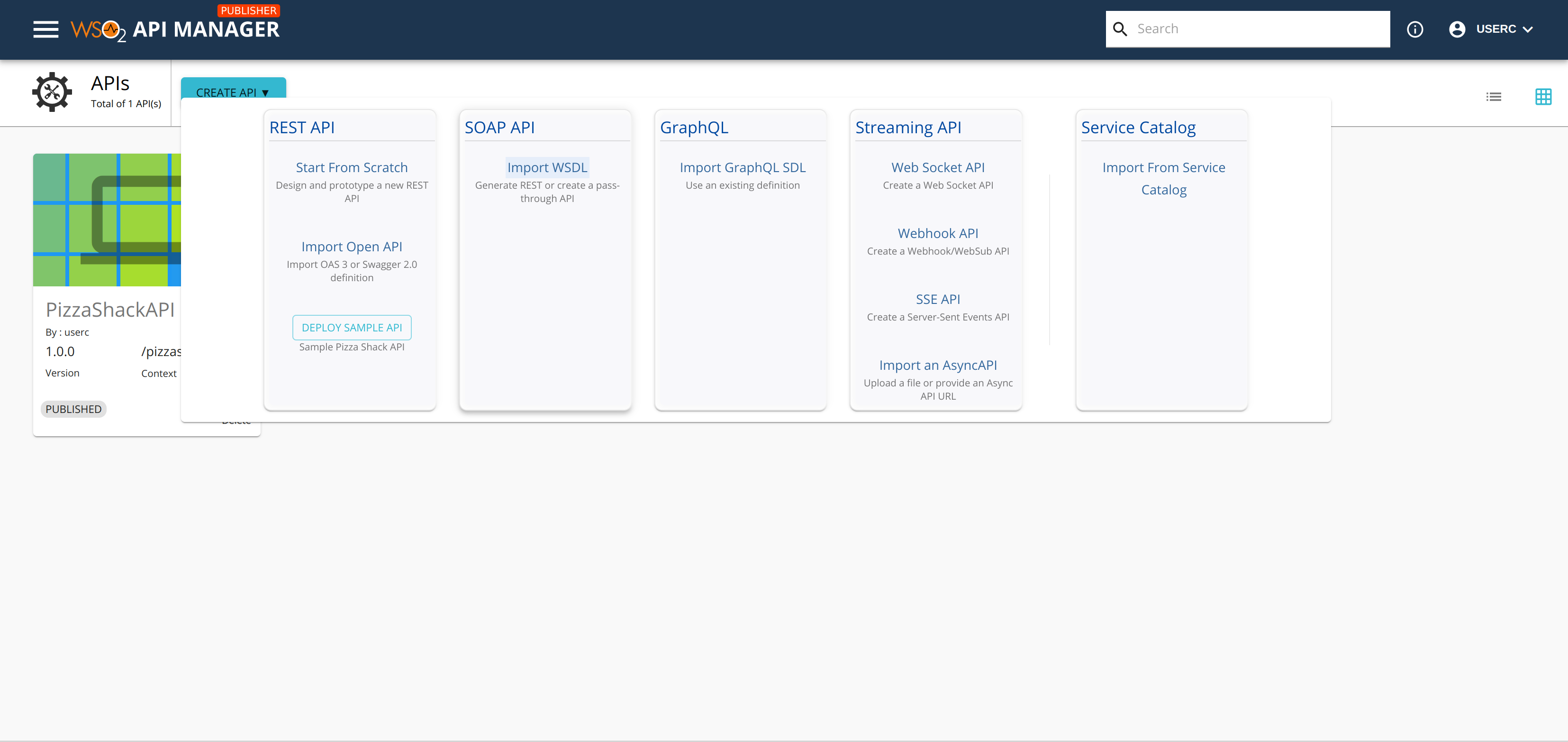

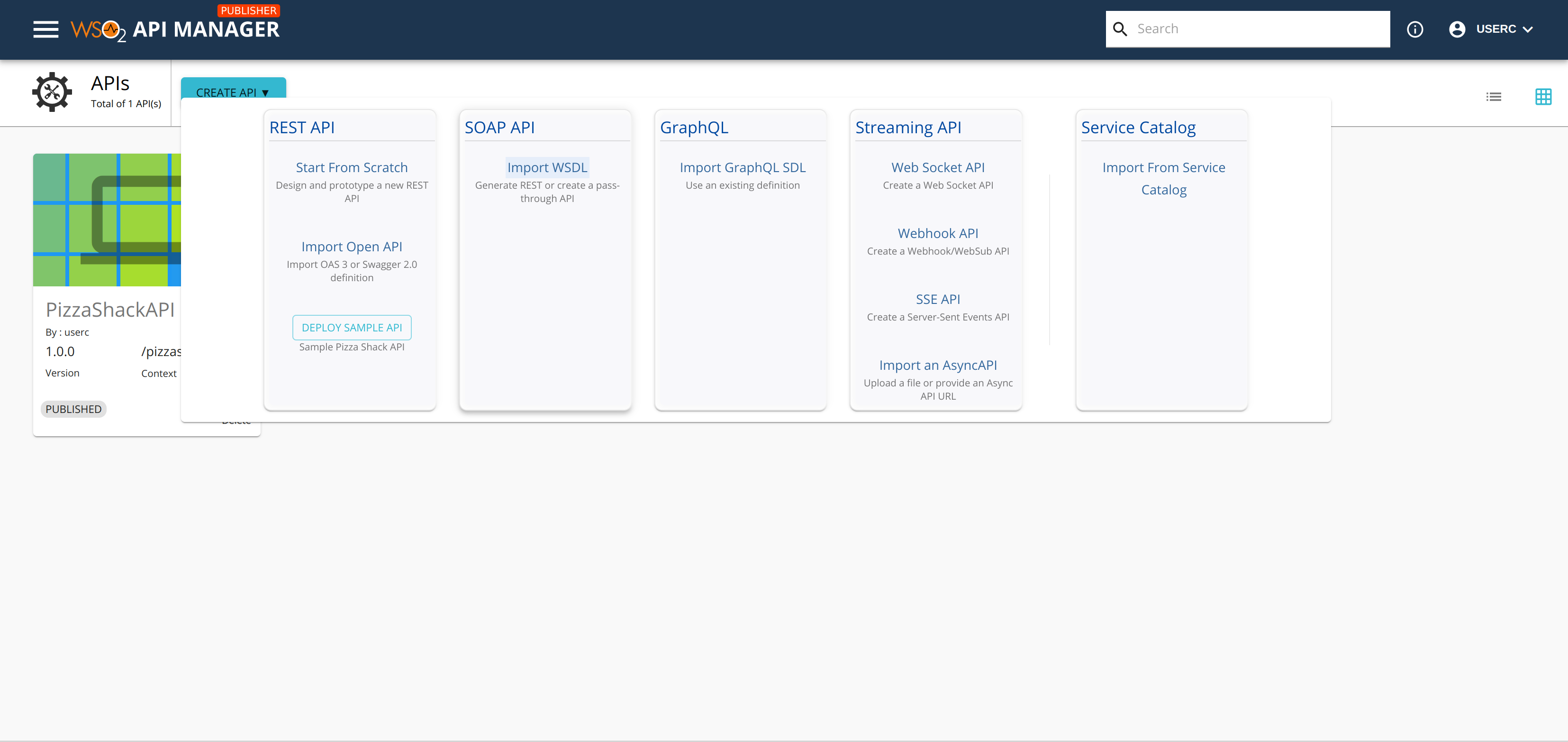

### Description:

Deploy Sample API option is available even after deploying the PIzzaShackAPI. Clicking on this would cause the following error in the console and the Publisher will be loading indefinitely.

```