Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

718,745

| 24,730,464,917

|

IssuesEvent

|

2022-10-20 17:07:39

|

Sphereserver/Source-X

|

https://api.github.com/repos/Sphereserver/Source-X

|

closed

|

Delete character issue

|

Status-Bug: Confirmed Priority: High

|

When deleting a character, it appears that the character list on your screen does not update until you reload it. This should happen automatically after deleting the char. I believe this is the cause of a few players accidentally deleting chars they didn't mean to, in an attempt to delete the character that appears to stay on the char list.

|

1.0

|

Delete character issue - When deleting a character, it appears that the character list on your screen does not update until you reload it. This should happen automatically after deleting the char. I believe this is the cause of a few players accidentally deleting chars they didn't mean to, in an attempt to delete the character that appears to stay on the char list.

|

priority

|

delete character issue when deleting a character it appears that the character list on your screen does not update until you reload it this should happen automatically after deleting the char i believe this is the cause of a few players accidentally deleting chars they didn t mean to in an attempt to delete the character that appears to stay on the char list

| 1

|

537,787

| 15,737,106,606

|

IssuesEvent

|

2021-03-30 02:10:29

|

ctm/mb2-doc

|

https://api.github.com/repos/ctm/mb2-doc

|

closed

|

clean up unwrap and [] from nick_mapper

|

chore easy high priority

|

Yesterday's crashes (#336) were from the same bug where nick_mapper was dereferencing a hash element that no longer existed.

There are other places where nick_mapper does a blind deref where it shouldn't. There are places where we can do something other than crash if an expected value is not there. I will fix those.

|

1.0

|

clean up unwrap and [] from nick_mapper - Yesterday's crashes (#336) were from the same bug where nick_mapper was dereferencing a hash element that no longer existed.

There are other places where nick_mapper does a blind deref where it shouldn't. There are places where we can do something other than crash if an expected value is not there. I will fix those.

|

priority

|

clean up unwrap and from nick mapper yesterday s crashes were from the same bug where nick mapper was dereferencing a hash element that no longer existed there are other places where nick mapper does a blind deref where it shouldn t there are places where we can do something other than crash if an expected value is not there i will fix those

| 1

|
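The issue describes replacing blind dereferences (`[]` and `unwrap`) with lookups that tolerate a missing entry. Below is a minimal, language-neutral sketch of that pattern in Python, assuming nick_mapper is essentially a map from a player id to a nick. `NickMapper`, `nick_blind`, `nick` and `display_name` are hypothetical names, a `KeyError` stands in for the panic that a Rust-style `[]` or `unwrap()` would cause, and the placeholder string is only one possible "something other than crash".

```python
from typing import Dict, Optional


class NickMapper:
    """Hypothetical stand-in for nick_mapper: maps a player id to a nickname."""

    def __init__(self) -> None:
        self._nicks: Dict[int, str] = {}

    def insert(self, player_id: int, nick: str) -> None:
        self._nicks[player_id] = nick

    def remove(self, player_id: int) -> None:
        # pop() with a default does not raise if the entry is already gone
        self._nicks.pop(player_id, None)

    def nick_blind(self, player_id: int) -> str:
        # "Blind deref": raises KeyError (the analogue of a Rust panic from
        # `map[&key]` or `.unwrap()`) if the entry no longer exists
        return self._nicks[player_id]

    def nick(self, player_id: int) -> Optional[str]:
        # Checked lookup (like Rust's `map.get(&key)`): None when missing
        return self._nicks.get(player_id)


def display_name(mapper: NickMapper, player_id: int) -> str:
    """Fall back to a placeholder instead of crashing when the nick is missing."""
    nick = mapper.nick(player_id)
    if nick is None:
        return f"<unknown player {player_id}>"
    return nick
```

Whether a call site should substitute a placeholder, skip the message, or log and continue is a per-case decision; the point of the sketch is that the missing-entry case becomes an explicit branch rather than a crash.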

678,371

| 23,195,463,815

|

IssuesEvent

|

2022-08-01 15:58:48

|

phetsims/joist

|

https://api.github.com/repos/phetsims/joist

|

closed

|

"Read me" buttons should never be interrupted by sim voicing alerts

|

priority:2-high status:ready-for-review dev:voicing

|

@terracoda reported that it is possible to have alerts from the sim interrupt read-me buttons. @jessegreenberg and I thought that we could use `priority` to accomplish this since it just needs to be done for voicing.

|

1.0

|

"Read me" buttons should never be interrupted by sim voicing alerts - @terracoda reported that it is possible to have alerts from the sim interrupt read-me buttons. @jessegreenberg and I thought that we could use `priority` to accomplish this since it just needs to be done for voicing.

|

priority

|

read me buttons should never be interrupted by sim voicing alerts terracoda reported that it is possible to have alerts from the sim interrupt read me buttons jessegreenberg and i thought that we could use priority to accomplish this since it just needs to be done for voicing

| 1

|
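The idea of using `priority` so that sim alerts cannot interrupt a "Read me" button can be modeled with a toy queue. This is a sketch under stated assumptions, not PhET's voicing API: `Utterance`, `VoicingQueue`, the integer priorities, and the rule that an equal-or-higher priority utterance interrupts the current one are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Utterance:
    text: str
    priority: int  # hypothetical convention: larger number = more important


@dataclass
class VoicingQueue:
    """Toy model: one utterance speaks at a time; a new utterance may interrupt
    the current one only if its priority is at least as high."""

    speaking: Optional[Utterance] = None
    pending: List[Utterance] = field(default_factory=list)

    def add(self, utterance: Utterance) -> None:
        if self.speaking is None:
            self.speaking = utterance
        elif utterance.priority >= self.speaking.priority:
            # Equal or higher priority replaces (interrupts) the current utterance
            self.speaking = utterance
        else:
            # Lower priority (e.g. a sim alert during a "Read me" button) waits
            self.pending.append(utterance)

    def finish_current(self) -> None:
        # When speech ends, start the highest-priority waiting utterance, if any
        if self.pending:
            best = max(self.pending, key=lambda u: u.priority)
            self.pending.remove(best)
            self.speaking = best
        else:
            self.speaking = None
```

This toy model queues lower-priority alerts instead of dropping them, and discards an utterance once it has been interrupted; either choice could reasonably go the other way.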

659,425

| 21,926,810,357

|

IssuesEvent

|

2022-05-23 05:39:57

|

factly/dega

|

https://api.github.com/repos/factly/dega

|

opened

|

Inconsistencies retrieving users in policies

|

priority:high

|

I am unable to retrieve a list of all the users to assign for policies. Harsha, who is assigned the same permissions, is able to see all the users as expected.

|

1.0

|

Inconsistencies retrieving users in policies - I am unable to retrieve a list of all the users to assign for policies. Harsha, who is assigned the same permissions, is able to see all the users as expected.

|

priority

|

inconsistencies retrieving users in policies i am unable to retrieve a list of all the users to assign for policies harsha who is assigned the same permissions is able to see all the users as expected

| 1

|

458,833

| 13,182,624,665

|

IssuesEvent

|

2020-08-12 16:05:08

|

geosolutions-it/MapStore2

|

https://api.github.com/repos/geosolutions-it/MapStore2

|

closed

|

Cannot update the detail card for certain maps

|

Priority: High bug

|

### Description

A map whose thumbnail was removed cannot update its detail card.

You also have to apply some changes to the metadata for the Save functionality to work.

### In case of Bug (otherwise remove this paragraph)

*Browser Affected*

any

*Steps to reproduce*

- Create a new map with a thumbnail and a detail card

- Edit the map you created, removing the thumbnail, then save again

- Edit the map again and try to edit the detail card

- Try to save

*Expected Result*

- The map is saved again with the changes to the detail card

*Current Result*

- Clicking the Save button has no effect. You also have to edit the title or description to make it work.

### Other useful information (optional):

**dev notes**

The actual save is triggered by this code:

https://github.com/geosolutions-it/MapStore2/blob/master/web/client/components/maps/modals/MetadataModal.jsx#L190

And then by this:

https://github.com/geosolutions-it/MapStore2/blob/master/web/client/components/maps/forms/Thumbnail.jsx#L159

When `this.props.map.newThumbnail` is "NODATA" instead of undefined, the if block is skipped, so the map is not saved.

That code is more complicated than needed and may require some refactoring. We should also evaluate whether #2908 may solve this and other issues by finalizing the refactor of this part, which could be dropped in favor of the dashboard's implementation, which is more stable and better tested.

|

1.0

|

Cannot update the detail card for certain maps - ### Description

A map whose thumbnail was removed cannot update its detail card.

You also have to apply some changes to the metadata for the Save functionality to work.

### In case of Bug (otherwise remove this paragraph)

*Browser Affected*

any

*Steps to reproduce*

- Create a new map with a thumbnail and a detail card

- Edit the map you created, removing the thumbnail, then save again

- Edit the map again and try to edit the detail card

- Try to save

*Expected Result*

- The map is saved again with the changes to the detail card

*Current Result*

- Clicking the Save button has no effect. You also have to edit the title or description to make it work.

### Other useful information (optional):

**dev notes**

The actual save is triggered by this code:

https://github.com/geosolutions-it/MapStore2/blob/master/web/client/components/maps/modals/MetadataModal.jsx#L190

And then by this:

https://github.com/geosolutions-it/MapStore2/blob/master/web/client/components/maps/forms/Thumbnail.jsx#L159

When `this.props.map.newThumbnail` is "NODATA" instead of undefined, the if block is skipped, so the map is not saved.

That code is more complicated than needed and may require some refactoring. We should also evaluate whether #2908 may solve this and other issues by finalizing the refactor of this part, which could be dropped in favor of the dashboard's implementation, which is more stable and better tested.

|

priority

|

cannot update the detail card for certain maps description a map whose thumbnail was removed cannot update its detail card you also have to apply some changes to the metadata for the save functionality to work in case of bug otherwise remove this paragraph browser affected any steps to reproduce create a new map with a thumbnail and a detail card edit the map you created removing the thumbnail then save again edit the map again and try to edit the detail card try to save expected result the map is saved again with the changes to the detail card current result clicking the save button has no effect you also have to edit the title or description to make it work other useful information optional dev notes the actual save is triggered by this code and then by this when this props map newthumbnail is nodata instead of undefined the if block is skipped so the map is not saved that code is more complicated than needed and may require some refactoring we should also evaluate whether may solve this and other issues by finalizing the refactor of this part which could be dropped in favor of the dashboard s implementation which is more stable and better tested

| 1

|
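The dev notes pin the failure on a check that treats only `undefined` as "no new thumbnail", so the `"NODATA"` sentinel left behind by a removed thumbnail skips the save. The following hypothetical reduction in Python models `undefined` as `None`; the function names are illustrative and are not taken from `Thumbnail.jsx`, and normalizing the sentinel is only one possible fix (the issue itself also points at a larger refactor via #2908).

```python
from typing import Optional

NODATA = "NODATA"  # sentinel value reported in the issue for a removed thumbnail


def saves_directly_buggy(new_thumbnail: Optional[str]) -> bool:
    # Hypothetical reduction of the reported check: the direct-save branch runs
    # only when newThumbnail is undefined (None), so "NODATA" skips the save.
    return new_thumbnail is None


def normalize_thumbnail(new_thumbnail: Optional[str]) -> Optional[str]:
    # Treat the "NODATA" sentinel the same as "no new thumbnail"
    return None if new_thumbnail == NODATA else new_thumbnail


def saves_directly_fixed(new_thumbnail: Optional[str]) -> bool:
    # A real new thumbnail is still left to the (hypothetical) upload-then-save path
    return normalize_thumbnail(new_thumbnail) is None
```

Normalizing at a single point keeps both spellings of "no thumbnail" from diverging again in other branches.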

205,975

| 7,107,793,147

|

IssuesEvent

|

2018-01-16 21:18:05

|

cockroachdb/cockroach

|

https://api.github.com/repos/cockroachdb/cockroach

|

closed

|

storage: unexpected GC queue activity immediately after DROP

|

bug high priority

|

Experimentation notes. I'm running single-node release-1.1 with a tpch.lineitem (SF 1) table restore. Without changing the TTL, I dropped this table last night. The "live bytes" fell to ~zero within 30 minutes (i.e., it took 30 minutes for all keys to be deleted, but not yet cleared), while on disk we're now using 1.7GB instead of 1.3GB (which makes sense, since we wrote lots of MVCC tombstones).

What stuck out is that while this was going on, I saw lots of unexpected GC runs that didn't get to delete data. I initially thought those must have been triggered by the "intent age" (which spikes as the range deletion puts down many, many intents that are only cleaned up after commit; they're likely visible for too long and get the replica queued). But what speaks against this theory is that all night, GC was running in circles, apparently always triggered but never successful at reducing the score. This strikes me as quite odd and needs more investigation.

This morning, I changed the TTL to 100s and am seeing steady GC queue activity, each run clearing out a whole range and making steady progress. Annoyingly, the consistency checker is also running all the time, which can't help performance.

The GC queue took around 18 minutes to clean up ~1.3GB worth of on-disk data, which seems OK. After the run, the data directory stabilized at 200-300MB, which drops to 8MB after an offline compaction.

RocksDB seems to be running compactions, since the data directory (at the time of writing) has dropped to 613MB, and within another minute to 419MB (with some jitter). Logging output is quiet and memory usage is stable, though I'm sometimes seeing 25 GC runs logged in the runtime stats, which I think is higher than I am used to seeing (the GC queue is not allocation-efficient, so that makes some sense to me).

Running the experiment again to look specifically into the first part.

|

1.0

|

storage: unexpected GC queue activity immediately after DROP - Experimentation notes. I'm running single-node release-1.1 with a tpch.lineitem (SF 1) table restore. Without changing the TTL, I dropped this table last night. The "live bytes" fell to ~zero within 30 minutes (i.e., it took 30 minutes for all keys to be deleted, but not yet cleared), while on disk we're now using 1.7GB instead of 1.3GB (which makes sense, since we wrote lots of MVCC tombstones).

What stuck out is that while this was going on, I saw lots of unexpected GC runs that didn't get to delete data. I initially thought those must have been triggered by the "intent age" (which spikes as the range deletion puts down many, many intents that are only cleaned up after commit; they're likely visible for too long and get the replica queued). But what speaks against this theory is that all night, GC was running in circles, apparently always triggered but never successful at reducing the score. This strikes me as quite odd and needs more investigation.

This morning, I changed the TTL to 100s and am seeing steady GC queue activity, each run clearing out a whole range and making steady progress. Annoyingly, the consistency checker is also running all the time, which can't help performance.

The GC queue took around 18 minutes to clean up ~1.3GB worth of on-disk data, which seems OK. After the run, the data directory stabilized at 200-300MB, which drops to 8MB after an offline compaction.

RocksDB seems to be running compactions, since the data directory (at the time of writing) has dropped to 613MB, and within another minute to 419MB (with some jitter). Logging output is quiet and memory usage is stable, though I'm sometimes seeing 25 GC runs logged in the runtime stats, which I think is higher than I am used to seeing (the GC queue is not allocation-efficient, so that makes some sense to me).

Running the experiment again to look specifically into the first part.

|

priority

|

storage unexpected gc queue activity immediately after drop experimentation notes i m running single node release with a tpch lineitem sf table restore without changing the ttl i dropped this table last night the live bytes fell to zero within minutes i e it took minutes for all keys to be deleted but not cleared yet while on disk we re now using instead of makes sense since we wrote lots of mvcc tombstones what stuck out is that while this was going on i saw lots of unexpected gc runs that didn t get to delete data i initially thought those must have been triggered by the intent age which spikes as the range deletion puts down many many intents that are only cleaned up after commit they re likely visible for too long and get the replica queued but what speaks against this theory is that all night gc was running in circles apparently always triggered but never successful at reducing the score this strikes me as quite odd and needs more investigation this morning i changed the ttl to and am seeing steady gc queue activity each run clearing out a whole range and making steady progress annoyingly the consistency checker is also running all the time which can t help performance the gc queue took around minutes to clean up on disk data worth of data which seems ok after the run the data directory stabilized at which after an offline compaction drops to rocksdb seems to be running compactions since the data directory at the time of writing has dropped to and within a minute more to with some jittering logging output is quiet memory usage is stable though i m sometimes seeing gc runs logged in the runtime stats which i think is higher than i am used to seeing the gc queue is not allocation efficient so that makes some sense to me running the experiment again to look specifically into the first part

| 1

|

616,185

| 19,295,844,816

|

IssuesEvent

|

2021-12-12 15:21:48

|

fvh-P/assaultlily-rdf

|

https://api.github.com/repos/fvh-P/assaultlily-rdf

|

closed

|

Migrate data format to Turtle

|

Priority-High in progress

|

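Currently, everything except the schema definition and constraint definition files is written in XML. However, XML is hard to read, and XML will not be supported if we later try to adopt the extended syntax called RDF-star, so we will migrate little by little.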

|

1.0

|

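Migrate data format to Turtle - Currently, everything except the schema definition and constraint definition files is written in XML. However, XML is hard to read, and XML will not be supported if we later try to adopt the extended syntax called RDF-star, so we will migrate little by little.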

|

priority

|

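migrate data format to turtle currently everything except the schema definition and constraint definition files is written in xml however xml is hard to read and xml will not be supported if we later try to adopt the extended syntax called rdf star so we will migrate little by little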

| 1

|

141,199

| 5,431,675,911

|

IssuesEvent

|

2017-03-04 02:25:48

|

ampproject/amphtml

|

https://api.github.com/repos/ampproject/amphtml

|

closed

|

amp-social-share fails in PWA

|

P1: High Priority

|

```
<amp-social-share
    type="twitter"
    layout="container"
    data-param-text="..."
    class="...">
</amp-social-share>
```

```
Uncaught Error: No ampdoc found for [object HTMLElement]
    at qa (log.js:438)
    at oa.f.createError (log.js:239)
    at rf.getAmpDoc (ampdoc-impl.js:153)
    at HTMLElement.Sf.b.connectedCallback (custom-element.js:928)
    at A.define (document-register-element.node.js:1209)
    at Nf (custom-element.js:1662)
    at Pf (custom-element.js:183)
    at $i.f.loadExtension (custom-element.js:180)
    at Dj (runtime.js:757)
    at zj.attachShadowDoc (runtime.js:640)
```

|

1.0

|

amp-social-share fails in PWA - ```
<amp-social-share
    type="twitter"
    layout="container"
    data-param-text="..."
    class="...">
</amp-social-share>
```

```
Uncaught Error: No ampdoc found for [object HTMLElement]
    at qa (log.js:438)
    at oa.f.createError (log.js:239)
    at rf.getAmpDoc (ampdoc-impl.js:153)
    at HTMLElement.Sf.b.connectedCallback (custom-element.js:928)
    at A.define (document-register-element.node.js:1209)
    at Nf (custom-element.js:1662)
    at Pf (custom-element.js:183)
    at $i.f.loadExtension (custom-element.js:180)
    at Dj (runtime.js:757)
    at zj.attachShadowDoc (runtime.js:640)
```

|

priority

|

amp social share fails in pwa amp social share type twitter layout container data param text class uncaught error no ampdoc found for at qa log js at oa f createerror log js at rf getampdoc ampdoc impl js at htmlelement sf b connectedcallback custom element js at a define document register element node js at nf custom element js at pf custom element js at i f loadextension custom element js at dj runtime js at zj attachshadowdoc runtime js

| 1

|

536,097

| 15,703,939,666

|

IssuesEvent

|

2021-03-26 14:26:45

|

epiphany-platform/epiphany

|

https://api.github.com/repos/epiphany-platform/epiphany

|

opened

|

[BUG] Epicli apply fails on updating in-cluster configuration after upgrading from older version

|

area/kubernetes priority/high status/grooming-needed type/bug

|

**Describe the bug**

Re-applying configuration after upgrading from version 0.6 to develop fails on TASK [kubernetes_common : Update in-cluster configuration].

**How to reproduce**

Steps to reproduce the behavior:

1. Deploy a 0.6 cluster with Kubernetes master and node components enabled (at least 1 VM): execute `epicli apply` from the v0.6 branch

2. Upgrade the cluster to the develop branch: execute `epicli upgrade` from the develop branch

3. Adjust the config YAML to be compatible with the develop version by adding/enabling the repository VM

4. Execute `epicli apply` from the develop branch

**Expected behavior**

The configuration has been successfully applied.

**Environment**

- Cloud provider: [all]

- OS: [all]

**epicli version**: [`epicli --version`]

v0.6 -> develop

**Additional context**

```
2021-03-26T14:04:11.6836483Z 14:04:11 INFO cli.engine.ansible.AnsibleCommand - TASK [kubernetes_common : Update in-cluster configuration] *********************
2021-03-26T14:04:42.3211765Z 14:04:42 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (30 retries left).
2021-03-26T14:05:22.8164666Z 14:05:22 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (29 retries left).
2021-03-26T14:05:43.4044389Z 14:05:43 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (28 retries left).
2021-03-26T14:06:19.4157736Z 14:06:19 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (27 retries left).
2021-03-26T14:06:40.0400051Z 14:06:40 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (26 retries left).
2021-03-26T14:07:20.5517770Z 14:07:20 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (25 retries left).
2021-03-26T14:07:41.2678359Z 14:07:41 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (24 retries left).
2021-03-26T14:08:02.7200544Z 14:08:01 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (23 retries left).
2021-03-26T14:08:22.4268007Z 14:08:22 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (22 retries left).
2021-03-26T14:08:53.0409911Z 14:08:53 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (21 retries left).
2021-03-26T14:09:13.6231207Z 14:09:13 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (20 retries left).
2021-03-26T14:09:34.2449222Z 14:09:34 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (19 retries left).
2021-03-26T14:09:54.8584134Z 14:09:54 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (18 retries left).
2021-03-26T14:10:15.6237479Z 14:10:15 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (17 retries left).
2021-03-26T14:10:36.2497736Z 14:10:36 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (16 retries left).
2021-03-26T14:10:56.8413822Z 14:10:56 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (15 retries left).
2021-03-26T14:11:17.4373229Z 14:11:17 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (14 retries left).
2021-03-26T14:11:38.0264108Z 14:11:38 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (13 retries left).
2021-03-26T14:12:18.1581608Z 14:12:18 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (12 retries left).
2021-03-26T14:12:38.7525803Z 14:12:38 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (11 retries left).
2021-03-26T14:12:59.3522341Z 14:12:59 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (10 retries left).
2021-03-26T14:13:19.9730043Z 14:13:19 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (9 retries left).
2021-03-26T14:13:40.5855881Z 14:13:40 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (8 retries left).
2021-03-26T14:14:02.0216303Z 14:14:02 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (7 retries left).
2021-03-26T14:14:22.6657500Z 14:14:22 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (6 retries left).
2021-03-26T14:14:43.3398206Z 14:14:43 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (5 retries left).
2021-03-26T14:15:03.9312202Z 14:15:03 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (4 retries left).
2021-03-26T14:15:24.5243491Z 14:15:24 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (3 retries left).
2021-03-26T14:15:45.1148318Z 14:15:45 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (2 retries left).
2021-03-26T14:16:05.7873626Z 14:16:05 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (1 retries left).
2021-03-26T14:16:26.3828175Z 14:16:26 ERROR cli.engine.ansible.AnsibleCommand - fatal: [ec2-xx-xx-xx-xx.eu-west-1.compute.amazonaws.com]: FAILED! => {"attempts": 30, "changed": true, "cmd": "kubeadm init phase upload-config kubeadm --config /etc/kubeadm/kubeadm-config.yml\n", "delta": "0:00:09.978625", "end": "2021-03-26 14:16:26.296787", "msg": "non-zero return code", "rc": 1, "start": "2021-03-26 14:16:16.318162", "stderr": "W0326 14:16:16.361302 11488 configset.go:202] WARNING: kubeadm cannot validate component configs for API groups [kubelet.config.k8s.io kubeproxy.config.k8s.io]\nerror execution phase upload-config/kubeadm: error uploading the kubeadm ClusterConfiguration: Post https://10.1.2.49:6443/api/v1/namespaces/kube-system/configmaps?timeout=10s: dial tcp 10.1.2.49:6443: connect: connection refused\nTo see the stack trace of this error execute with --v=5 or higher", "stderr_lines": ["W0326 14:16:16.361302 11488 configset.go:202] WARNING: kubeadm cannot validate component configs for API groups [kubelet.config.k8s.io kubeproxy.config.k8s.io]", "error execution phase upload-config/kubeadm: error uploading the kubeadm ClusterConfiguration: Post https://10.1.2.49:6443/api/v1/namespaces/kube-system/configmaps?timeout=10s: dial tcp 10.1.2.49:6443: connect: connection refused", "To see the stack trace of this error execute with --v=5 or higher"], "stdout": "[upload-config] Storing the configuration used in ConfigMap \"kubeadm-config\" in the \"kube-system\" Namespace", "stdout_lines": ["[upload-config] Storing the configuration used in ConfigMap \"kubeadm-config\" in the \"kube-system\" Namespace"]}
```

---

**DoD checklist**

* [ ] Changelog updated (if affected version was released)
* [ ] COMPONENTS.md updated / doesn't need to be updated
* [ ] Automated tests passed (QA pipelines)
  * [ ] apply
  * [ ] upgrade
* [ ] Case covered by automated test (if possible)
* [ ] Idempotency tested
* [ ] Documentation updated / doesn't need to be updated
* [ ] All conversations in PR resolved

|

1.0

|

[BUG] Epicli apply fails on updating in-cluster configuration after upgrading from older version - **Describe the bug**

Re-applying configuration after upgrading from version 0.6 to develop fails on TASK [kubernetes_common : Update in-cluster configuration].

**How to reproduce**

Steps to reproduce the behavior:

1. Deploy a 0.6 cluster with Kubernetes master and node components enabled (at least 1 VM): execute `epicli apply` from the v0.6 branch

2. Upgrade the cluster to the develop branch: execute `epicli upgrade` from the develop branch

3. Adjust the config YAML to be compatible with the develop version by adding/enabling the repository VM

4. Execute `epicli apply` from the develop branch

**Expected behavior**

The configuration has been successfully applied.

**Environment**

- Cloud provider: [all]

- OS: [all]

**epicli version**: [`epicli --version`]

v0.6 -> develop

**Additional context**

```
2021-03-26T14:04:11.6836483Z 14:04:11 INFO cli.engine.ansible.AnsibleCommand - TASK [kubernetes_common : Update in-cluster configuration] *********************
2021-03-26T14:04:42.3211765Z 14:04:42 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (30 retries left).
2021-03-26T14:05:22.8164666Z 14:05:22 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (29 retries left).
2021-03-26T14:05:43.4044389Z 14:05:43 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (28 retries left).
2021-03-26T14:06:19.4157736Z 14:06:19 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (27 retries left).
2021-03-26T14:06:40.0400051Z 14:06:40 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (26 retries left).
2021-03-26T14:07:20.5517770Z 14:07:20 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (25 retries left).
2021-03-26T14:07:41.2678359Z 14:07:41 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (24 retries left).
2021-03-26T14:08:02.7200544Z 14:08:01 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (23 retries left).
2021-03-26T14:08:22.4268007Z 14:08:22 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (22 retries left).
2021-03-26T14:08:53.0409911Z 14:08:53 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (21 retries left).
2021-03-26T14:09:13.6231207Z 14:09:13 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (20 retries left).
2021-03-26T14:09:34.2449222Z 14:09:34 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (19 retries left).
2021-03-26T14:09:54.8584134Z 14:09:54 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (18 retries left).
2021-03-26T14:10:15.6237479Z 14:10:15 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (17 retries left).
2021-03-26T14:10:36.2497736Z 14:10:36 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (16 retries left).
2021-03-26T14:10:56.8413822Z 14:10:56 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (15 retries left).
2021-03-26T14:11:17.4373229Z 14:11:17 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (14 retries left).
2021-03-26T14:11:38.0264108Z 14:11:38 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (13 retries left).
2021-03-26T14:12:18.1581608Z 14:12:18 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (12 retries left).
2021-03-26T14:12:38.7525803Z 14:12:38 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (11 retries left).
2021-03-26T14:12:59.3522341Z 14:12:59 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (10 retries left).
2021-03-26T14:13:19.9730043Z 14:13:19 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (9 retries left).
2021-03-26T14:13:40.5855881Z 14:13:40 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (8 retries left).
2021-03-26T14:14:02.0216303Z 14:14:02 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (7 retries left).
2021-03-26T14:14:22.6657500Z 14:14:22 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (6 retries left).
2021-03-26T14:14:43.3398206Z 14:14:43 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (5 retries left).
2021-03-26T14:15:03.9312202Z 14:15:03 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (4 retries left).
2021-03-26T14:15:24.5243491Z 14:15:24 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (3 retries left).
2021-03-26T14:15:45.1148318Z 14:15:45 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (2 retries left).
2021-03-26T14:16:05.7873626Z 14:16:05 ERROR cli.engine.ansible.AnsibleCommand - FAILED - RETRYING: Update in-cluster configuration (1 retries left).
2021-03-26T14:16:26.3828175Z 14:16:26 ERROR cli.engine.ansible.AnsibleCommand - fatal: [ec2-xx-xx-xx-xx.eu-west-1.compute.amazonaws.com]: FAILED! => {"attempts": 30, "changed": true, "cmd": "kubeadm init phase upload-config kubeadm --config /etc/kubeadm/kubeadm-config.yml\n", "delta": "0:00:09.978625", "end": "2021-03-26 14:16:26.296787", "msg": "non-zero return code", "rc": 1, "start": "2021-03-26 14:16:16.318162", "stderr": "W0326 14:16:16.361302 11488 configset.go:202] WARNING: kubeadm cannot validate component configs for API groups [kubelet.config.k8s.io kubeproxy.config.k8s.io]\nerror execution phase upload-config/kubeadm: error uploading the kubeadm ClusterConfiguration: Post https://10.1.2.49:6443/api/v1/namespaces/kube-system/configmaps?timeout=10s: dial tcp 10.1.2.49:6443: connect: connection refused\nTo see the stack trace of this error execute with --v=5 or higher", "stderr_lines": ["W0326 14:16:16.361302 11488 configset.go:202] WARNING: kubeadm cannot validate component configs for API groups [kubelet.config.k8s.io kubeproxy.config.k8s.io]", "error execution phase upload-config/kubeadm: error uploading the kubeadm ClusterConfiguration: Post https://10.1.2.49:6443/api/v1/namespaces/kube-system/configmaps?timeout=10s: dial tcp 10.1.2.49:6443: connect: connection refused", "To see the stack trace of this error execute with --v=5 or higher"], "stdout": "[upload-config] Storing the configuration used in ConfigMap \"kubeadm-config\" in the \"kube-system\" Namespace", "stdout_lines": ["[upload-config] Storing the configuration used in ConfigMap \"kubeadm-config\" in the \"kube-system\" Namespace"]}
```

---

**DoD checklist**

* [ ] Changelog updated (if affected version was released)

* [ ] COMPONENTS.md updated / doesn't need to be updated

* [ ] Automated tests passed (QA pipelines)

* [ ] apply

* [ ] upgrade

* [ ] Case covered by automated test (if possible)

* [ ] Idempotency tested

* [ ] Documentation updated / doesn't need to be updated

* [ ] All conversations in PR resolved

|

priority

|

epicli apply fails on updating in cluster configuration after upgrading from older version describe the bug re applying configuration after upgrading from version to develop fails on task how to reproduce steps to reproduce the behavior deploy a cluster with kubernetes master and node components enabled at least vm execute epicli apply from branch upgrade the cluster to the develop branch execute epicli upgrade from develop branch adjust config yaml to be compatible with the develop version by adding enabling the repository vm execute epicli apply from develop branch expected behavior the configuration has been successfully applied environment cloud provider os epicli version develop additional context error cli engine ansible ansiblecommand failed retrying update in cluster configuration retries left error cli engine ansible ansiblecommand failed retrying update in cluster configuration retries left error cli engine ansible ansiblecommand failed retrying update in cluster configuration retries left error cli engine ansible ansiblecommand failed retrying update in cluster configuration retries left error cli engine ansible ansiblecommand failed retrying update in cluster configuration retries left error cli engine ansible ansiblecommand failed retrying update in cluster configuration retries left error cli engine ansible ansiblecommand failed retrying update in cluster configuration retries left error cli engine ansible ansiblecommand failed retrying update in cluster configuration retries left error cli engine ansible ansiblecommand failed retrying update in cluster configuration retries left error cli engine ansible ansiblecommand failed retrying update in cluster configuration retries left error cli engine ansible ansiblecommand failed retrying update in cluster configuration retries left error cli engine ansible ansiblecommand failed retrying update in cluster configuration retries left error cli engine ansible ansiblecommand failed retrying update in cluster 
configuration retries left error cli engine ansible ansiblecommand failed retrying update in cluster configuration retries left error cli engine ansible ansiblecommand failed retrying update in cluster configuration retries left error cli engine ansible ansiblecommand failed retrying update in cluster configuration retries left error cli engine ansible ansiblecommand failed retrying update in cluster configuration retries left error cli engine ansible ansiblecommand failed retrying update in cluster configuration retries left error cli engine ansible ansiblecommand failed retrying update in cluster configuration retries left error cli engine ansible ansiblecommand failed retrying update in cluster configuration retries left error cli engine ansible ansiblecommand failed retrying update in cluster configuration retries left error cli engine ansible ansiblecommand failed retrying update in cluster configuration retries left error cli engine ansible ansiblecommand failed retrying update in cluster configuration retries left error cli engine ansible ansiblecommand failed retrying update in cluster configuration retries left error cli engine ansible ansiblecommand failed retrying update in cluster configuration retries left error cli engine ansible ansiblecommand failed retrying update in cluster configuration retries left error cli engine ansible ansiblecommand failed retrying update in cluster configuration retries left error cli engine ansible ansiblecommand failed retrying update in cluster configuration retries left error cli engine ansible ansiblecommand failed retrying update in cluster configuration retries left error cli engine ansible ansiblecommand failed retrying update in cluster configuration retries left failed attempts changed true cmd kubeadm init phase upload config kubeadm config etc kubeadm kubeadm config yml n delta end msg non zero return code rc start stderr configset go warning kubeadm cannot validate component configs for api groups nerror 
execution phase upload config kubeadm error uploading the kubeadm clusterconfiguration post dial tcp connect connection refused nto see the stack trace of this error execute with v or higher stderr lines warning kubeadm cannot validate component configs for api groups error execution phase upload config kubeadm error uploading the kubeadm clusterconfiguration post dial tcp connect connection refused to see the stack trace of this error execute with v or higher stdout storing the configuration used in configmap kubeadm config in the kube system namespace stdout lines storing the configuration used in configmap kubeadm config in the kube system namespace dod checklist changelog updated if affected version was released components md updated doesn t need to be updated automated tests passed qa pipelines apply upgrade case covered by automated test if possible idempotency tested documentation updated doesn t need to be updated all conversations in pr resolved

| 1

|

184,122

| 6,705,779,008

|

IssuesEvent

|

2017-10-12 02:35:12

|

syndesisio/syndesis-ui

|

https://api.github.com/repos/syndesisio/syndesis-ui

|

closed

|

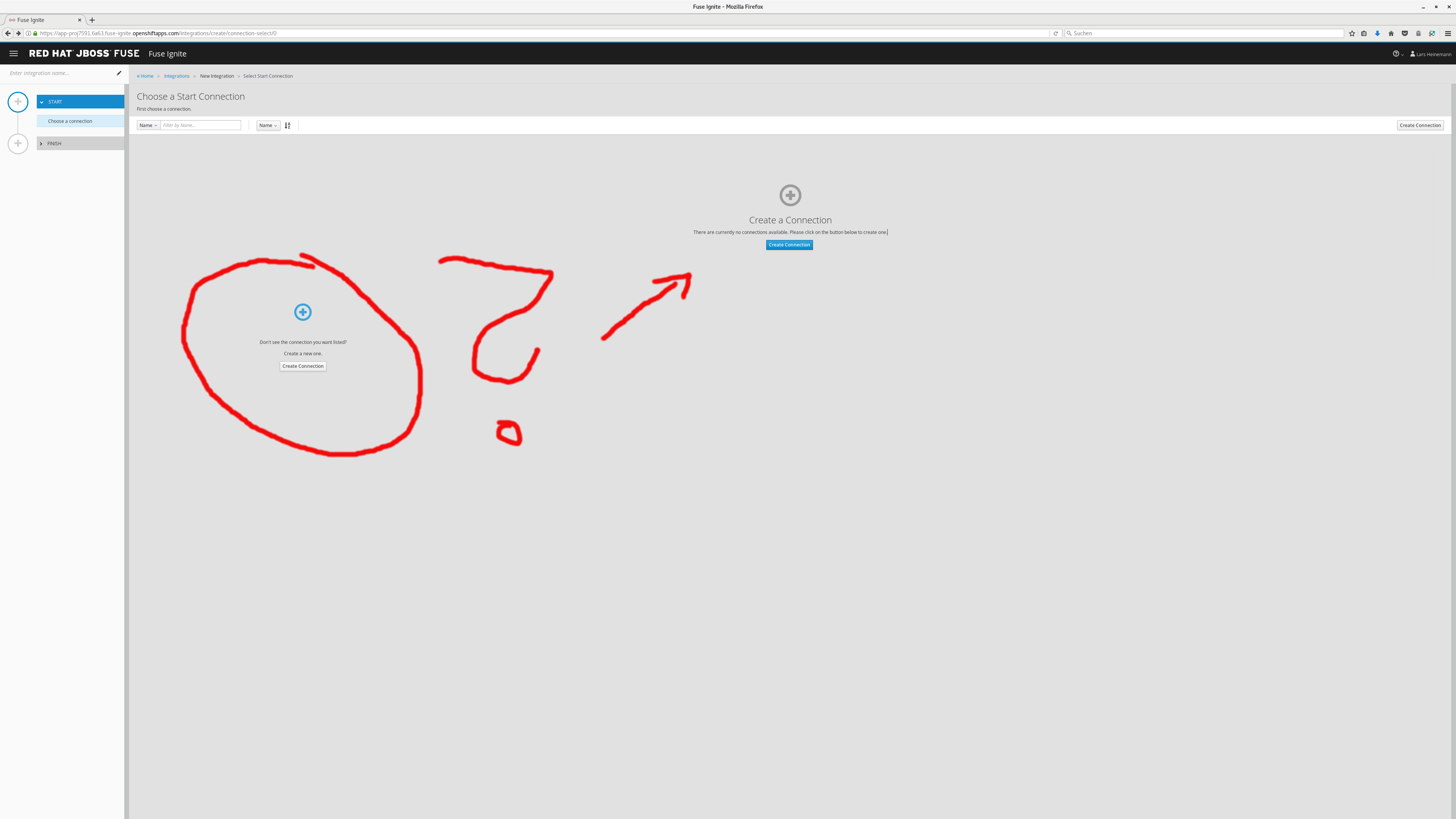

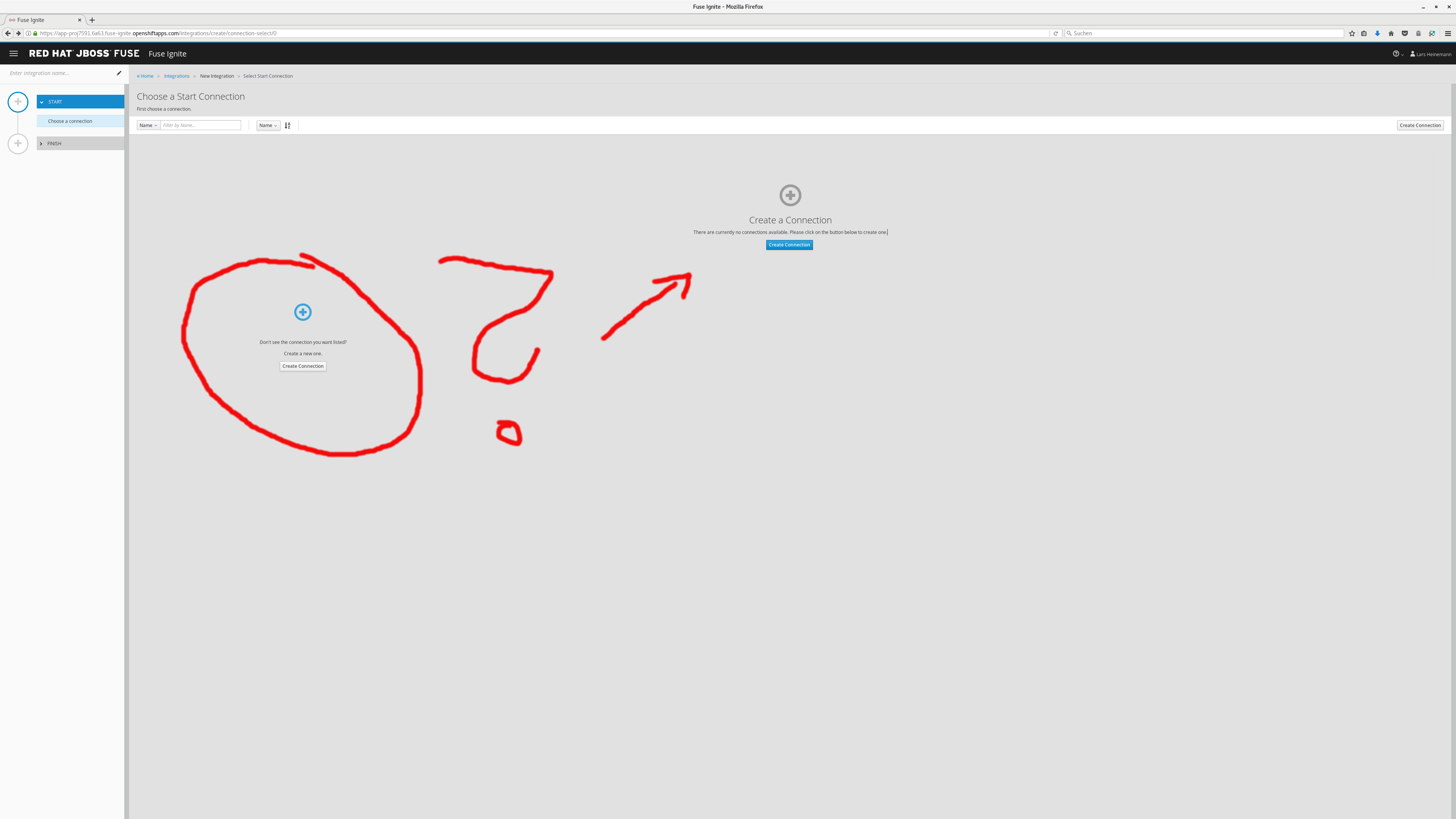

Strange UI for new integration when no connection is available

|

bug Priority - High

|

Just reported by Lars:

IMO there should be only the lower 'create connection', or 'Create integration' should even be disabled when no connections are defined.

|

1.0

|

Strange UI for new integration when no connection is available - Just reported by Lars:

IMO there should be only the lower 'create connection', or 'Create integration' should even be disabled when no connections are defined.

|

priority

|

strange ui for new integration when no connection is available just reported by lars imo there should be only the lower create connection or even having create integration disabled when there are no connections defined

| 1

|

308,410

| 9,438,919,973

|

IssuesEvent

|

2019-04-14 05:23:53

|

CS2103-AY1819S2-W15-4/main

|

https://api.github.com/repos/CS2103-AY1819S2-W15-4/main

|

closed

|

Handle exception when list of people are cleared and schedule is called.

|

priority.High

|

How to get error: clear list of people. Call "Schedule".

|

1.0

|

Handle exception when list of people are cleared and schedule is called. - How to get error: clear list of people. Call "Schedule".

|

priority

|

handle exception when list of people are cleared and schedule is called how to get error clear list of people call schedule

| 1

|

64,422

| 3,211,550,484

|

IssuesEvent

|

2015-10-06 11:26:38

|

CoderDojo/community-platform

|

https://api.github.com/repos/CoderDojo/community-platform

|

closed

|

Verified Dojo missing from new database

|

bug high priority question

|

This Dojo is missing:

`Brecht @ de bib Brecht`

It is not in the new db. It is owned by the same user as this Dojo: http://zen.coderdojo.com/dashboard/dojo/be/kerklei-2-brecht/dojo-brecht

Was there an issue with the migration where one user owned more than one Dojo?

|

1.0

|

Verified Dojo missing from new database - This Dojo is missing:

`Brecht @ de bib Brecht`

It is not in the new db. It is owned by the same user as this Dojo: http://zen.coderdojo.com/dashboard/dojo/be/kerklei-2-brecht/dojo-brecht

Was there an issue with the migration where one user owned more than one Dojo?

|

priority

|

verified dojo missing from new database this dojo is missing brecht de bib brecht it is not in the new db it is owned by the same user as this dojo was there an issue with the migration where one user owned more than one dojo

| 1

|

735,569

| 25,403,908,969

|

IssuesEvent

|

2022-11-22 14:03:39

|

netdata/netdata-cloud

|

https://api.github.com/repos/netdata/netdata-cloud

|

opened

|

[Bug]: Chart only present on child agent isn't synced to cloud

|

bug FOSS agent priority/high visualizations-team

|

### Bug description

In a parent/child setup where the child streams data to the cloud through a claimed parent (only the parent is claimed to Netdata Cloud, NOT the child), a chart X that exists on the child but not on the parent **doesn't sync to the cloud, although it appears on the agent dashboard**.

### Expected behavior

All charts should sync to cloud eventually.

### Steps to reproduce

1. Set up parent and child agents

2. Claim parent to netdata cloud

3. Create a chart on the child agent (e.g. connect a USB flash drive to the child host, so that the chart doesn't exist on the parent)

4. Chart should sync to cloud and appear in charts list

### Screenshots

_No response_

### Error Logs

Logs on child's side

```

2022-11-22 09:33:30: STREAM: 377 from 'parentd3tqypgcj2v:19999' for host 'child_one_d3phxg4jzio': REPLAY_CHART "mock_test.mock-area" "true" 0 0

2022-11-22 09:33:30: STREAM: 377 from 'parentd3tqypgcj2v:19999' for host 'child_one_d3phxg4jzio': REPLAY_CHART "netdata.runtime_mock_test" "true" 0 0

```

Parent is full of

`sending empty replication because first entry of the child is invalid (0)`

even though both have been claimed and streaming for more than 2 mins

### Desktop

OS: ubuntu

Browser: Chrome

Browser version: 106

### Additional context

This seems to be an agent issue that affects syncing of charts to the cloud. The agent dashboard seems to work as expected.

The agent version is the latest nightly. Issues started appearing on 22/11/2022.

|

1.0

|

[Bug]: Chart only present on child agent isn't synced to cloud - ### Bug description

In a parent/child setup where the child streams data to the cloud through a claimed parent (only the parent is claimed to Netdata Cloud, NOT the child), a chart X that exists on the child but not on the parent **doesn't sync to the cloud, although it appears on the agent dashboard**.

### Expected behavior

All charts should sync to cloud eventually.

### Steps to reproduce

1. Set up parent and child agents

2. Claim parent to netdata cloud

3. Create a chart on the child agent (e.g. connect a USB flash drive to the child host, so that the chart doesn't exist on the parent)

4. Chart should sync to cloud and appear in charts list

### Screenshots

_No response_

### Error Logs

Logs on child's side

```

2022-11-22 09:33:30: STREAM: 377 from 'parentd3tqypgcj2v:19999' for host 'child_one_d3phxg4jzio': REPLAY_CHART "mock_test.mock-area" "true" 0 0

2022-11-22 09:33:30: STREAM: 377 from 'parentd3tqypgcj2v:19999' for host 'child_one_d3phxg4jzio': REPLAY_CHART "netdata.runtime_mock_test" "true" 0 0

```

Parent is full of

`sending empty replication because first entry of the child is invalid (0)`

even though both have been claimed and streaming for more than 2 mins

### Desktop

OS: ubuntu

Browser: Chrome

Browser version: 106

### Additional context

This seems to be an agent issue that affects syncing of charts to the cloud. The agent dashboard seems to work as expected.

The agent version is the latest nightly. Issues started appearing on 22/11/2022.

|

priority

|

chart only present on child agent isn t synced to cloud bug description in a parent child setup where child is streaming data in cloud through a claimed parent only parent is claimed to netdata cloud not the child a chart x that lives on child but doesn t exist on parent doesn t sync in cloud although it exists on agent dashboard expected behavior all charts should sync to cloud eventually steps to reproduce setup parent and child agents claim parent to netdata cloud create a chart in child agent e g connect a usb flashdrive on the child host which doesn t exist on parent chart should sync to cloud and appear in charts list screenshots no response error logs logs on child s side stream from for host child one replay chart mock test mock area true stream from for host child one replay chart netdata runtime mock test true parent is full of sending empty replication because first entry of the child is invalid even though both have been claimed and streaming for more than mins desktop os ubuntu browser chrome browser version additional context this seems to be an agent issue that affects syncing of charts in cloud agent dashboard seems to work as expected agent version is the latest nightly issues started appearing on

| 1

|

402,622

| 11,812,163,222

|

IssuesEvent

|

2020-03-19 19:36:27

|

ClinGen/clincoded

|

https://api.github.com/repos/ClinGen/clincoded

|

closed

|

Delete the GDM - Wrong MONDO ID

|

EP request GCI curation edit priority: high

|

https://curation.clinicalgenome.org/curation-central/?gdm=cdd07931-9249-49a5-b918-9f363cceda58&pmid=10700180

The new MONDO ID hasn't been published yet, although it was due for a January release.

|

1.0

|

Delete the GDM - Wrong MONDO ID - https://curation.clinicalgenome.org/curation-central/?gdm=cdd07931-9249-49a5-b918-9f363cceda58&pmid=10700180

The new MONDO ID hasn't been published yet, although it was due for a January release.

|

priority

|

delete the gdm wrong mondo id new mondo id hasn t yet been published however it was due for a january release

| 1

|

442,282

| 12,743,051,236

|

IssuesEvent

|

2020-06-26 09:38:31

|

wso2/micro-integrator

|

https://api.github.com/repos/wso2/micro-integrator

|

closed

|

[External user store][Ldap][Management API]Get Users return 404

|

Priority/High Severity/Blocker

|

**Description:**

When the server is connected to an external user store (LDAP), the Get Users call returns 404.

Doc: https://ei.docs.wso2.com/en/7.1.0/micro-integrator/administer-and-observe/working-with-management-api/#get-users

**Steps to reproduce:**

1. Create the following ldap user store.

[userstore-ldif.zip](https://github.com/wso2/micro-integrator/files/4829475/userstore-ldif.zip)

2. Add the below configurations to the deployment.toml file.

```

[internal_apis.file_user_store]

enable = false

[user_store]

type = "read_only_ldap"

class = "org.wso2.micro.integrator.security.user.core.ldap.ReadOnlyLDAPUserStoreManager"

connection_url = "ldap://localhost:10389"

connection_name = "uid=admin,ou=system"

connection_password = "secret"

user_search_base = "ou=system"

```

3. Start the micro integrator as below.

`./micro-integrator.sh -DenableManagementApi`

4. Log in to the server as below to obtain the access token.

```

curl -X GET "https://localhost:9164/management/login" -H "accept: application/json" -H "Authorization: Basic YWRtaW46c2VjcmV0" -k -i

```

5. Now try to list users and add users as below.

```

curl -X GET "https://localhost:9164/management/users?pattern=”*us*”&role=”role”" -H "accept: application/json" -H "Authorization: Bearer %AccessToken%" -k -i

```

```

curl -X POST -d @user "https://localhost:9164/management/users" -H "accept: application/json" -H "Content-Type: application/json" -H "Authorization: Bearer %AccessToken% " -k -i

```

https://ei.docs.wso2.com/en/7.1.0/micro-integrator/administer-and-observe/working-with-management-api/#add-users

**Expected**: List the users or add users

**Actual**: Getting the following error.

```

HTTP/1.1 404 Not Found

Authorization: Bearer eyJraWQiOiI0M2QyYzhiZi1mOTM0LTRhY2MtOWFkYS0xY2IxODJkZTdmZjYiLCJhbGciOiJSUzI1NiJ9.eyJzdWIiOiJhZG1pbiIsImlzcyI6Imh0dHBzOlwvXC8xMjcuMC4wLjE6OTE2NFwvIiwiZXhwIjoxNTkzMDcxNjAyfQ.WPza3dOJH5WAd0m9-oYSK1eysg72_YaBBk7L0XxPKA4cjLP2L0O08E0wHkqwW6CaJFbeX0rpodtq5YQSkFMRgLjhanrObT4ZMn0L5oGWcOQhvqb95goGn_WOqHZDt5yVLaXcaCRzyE-N_i1CK-5Y2uLAiW3hvMzlwqYpSu1dczoFGcvMkKxx_F3IFqeOu__zsOvag6QvX395SJ-Ll0-iKDXMej9OQKIycagtBtGJr5M68uKJ8XADLfJxb0YCvShh14p91bsp-a48y7Q0WA8cCgI2AW7BRRuk6JctfRMzRjx2XnuNk3dYUzBoNoGDePRciDTi1UhmW3LMuT_HrEbPyg

Access-Control-Allow-Origin: *

Access-Control-Allow-Methods: GET, POST, PUT, DELETE,OPTIONS, PATCH

Host: localhost:9164

Access-Control-Allow-Headers: Authorization, Content-Type

accept: application/json

Date: Thu, 25 Jun 2020 06:54:12 GMT

Transfer-Encoding: chunked

```

Carbon logs

```

[2020-06-25 12:24:12,488] ERROR {AbstractUserStoreManager} - Error occurred while accessing Java Security Manager Privilege Block when called by method getRoleListOfUser with 1 length of Objects and argTypes [class java.lang.String]

[2020-06-25 12:24:12,489] ERROR {AuthorizationHandler} - Error initializing the user store org.wso2.micro.integrator.security.user.core.UserStoreException: Error occurred while accessing Java Security Manager Privilege Block when called by method getRoleListOfUser with 1 length of Objects and argTypes [class java.lang.String]

at org.wso2.micro.integrator.security.user.core.common.AbstractUserStoreManager.callSecure(AbstractUserStoreManager.java:192)

at org.wso2.micro.integrator.security.user.core.common.AbstractUserStoreManager.getRoleListOfUser(AbstractUserStoreManager.java:4271)

at org.wso2.micro.integrator.management.apis.security.handler.AuthorizationHandler.authorize(AuthorizationHandler.java:109)

at org.wso2.micro.integrator.management.apis.security.handler.AuthorizationHandler.processAuthorizationWithCarbonUserStore(AuthorizationHandler.java:99)

at org.wso2.micro.integrator.management.apis.security.handler.AuthorizationHandler.authorize(AuthorizationHandler.java:79)

at org.wso2.micro.integrator.management.apis.security.handler.AuthorizationHandlerAdapter.handle(AuthorizationHandlerAdapter.java:50)

at org.wso2.micro.integrator.management.apis.security.handler.SecurityHandlerAdapter.invoke(SecurityHandlerAdapter.java:120)

at org.wso2.micro.integrator.management.apis.security.handler.AuthorizationHandler.invoke(AuthorizationHandler.java:59)

at org.wso2.carbon.inbound.endpoint.internal.http.api.InternalAPIDispatcher.dispatch(InternalAPIDispatcher.java:75)

at org.wso2.carbon.inbound.endpoint.protocol.http.InboundHttpServerWorker.run(InboundHttpServerWorker.java:109)

at org.apache.axis2.transport.base.threads.NativeWorkerPool$1.run(NativeWorkerPool.java:172)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)

at java.lang.Thread.run(Thread.java:748)

Caused by: java.security.PrivilegedActionException: java.lang.reflect.InvocationTargetException

at java.security.AccessController.doPrivileged(Native Method)

at org.wso2.micro.integrator.security.user.core.common.AbstractUserStoreManager.callSecure(AbstractUserStoreManager.java:171)

... 13 more

Caused by: java.lang.reflect.InvocationTargetException

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.wso2.micro.integrator.security.user.core.common.AbstractUserStoreManager$2.run(AbstractUserStoreManager.java:174)

... 15 more

Caused by: java.lang.NullPointerException

at org.wso2.micro.integrator.security.user.core.common.AbstractUserStoreManager.doGetInternalRoleListOfUser(AbstractUserStoreManager.java:386)

at org.wso2.micro.integrator.security.user.core.common.AbstractUserStoreManager.doGetRoleListOfUser(AbstractUserStoreManager.java:5719)

at org.wso2.micro.integrator.security.user.core.common.AbstractUserStoreManager.getRoleListOfUser(AbstractUserStoreManager.java:4295)

... 20 more

[2020-06-25 12:24:12,490] ERROR {AuthorizationHandler} - User admin cannot be authorized

```

**Note**:

However, the same access token can be used to access other resources such as APIs, carbon applications, etc.

```

HTTP/1.1 200 OK

Authorization: Bearer eyJraWQiOiI0M2QyYzhiZi1mOTM0LTRhY2MtOWFkYS0xY2IxODJkZTdmZjYiLCJhbGciOiJSUzI1NiJ9.eyJzdWIiOiJhZG1pbiIsImlzcyI6Imh0dHBzOlwvXC8xMjcuMC4wLjE6OTE2NFwvIiwiZXhwIjoxNTkzMDcxNjAyfQ.WPza3dOJH5WAd0m9-oYSK1eysg72_YaBBk7L0XxPKA4cjLP2L0O08E0wHkqwW6CaJFbeX0rpodtq5YQSkFMRgLjhanrObT4ZMn0L5oGWcOQhvqb95goGn_WOqHZDt5yVLaXcaCRzyE-N_i1CK-5Y2uLAiW3hvMzlwqYpSu1dczoFGcvMkKxx_F3IFqeOu__zsOvag6QvX395SJ-Ll0-iKDXMej9OQKIycagtBtGJr5M68uKJ8XADLfJxb0YCvShh14p91bsp-a48y7Q0WA8cCgI2AW7BRRuk6JctfRMzRjx2XnuNk3dYUzBoNoGDePRciDTi1UhmW3LMuT_HrEbPyg

Access-Control-Allow-Origin: *

Access-Control-Allow-Methods: GET, POST, PUT, DELETE,OPTIONS, PATCH

Host: localhost:9164

Access-Control-Allow-Headers: Authorization, Content-Type

accept: application/json

Content-Type: application/json; charset=UTF-8

Date: Thu, 25 Jun 2020 06:55:40 GMT

Transfer-Encoding: chunked

{"count":2,"list":[{"name":"Extract","url":"http://localhost:8290/extract"},{"name":"TestGoogle","url":"http://localhost:8290/search"}]}

```

Further, invoking secured REST APIs also works with the existing users in the external user store.

|

1.0

|

[External user store][Ldap][Management API]Get Users return 404 - **Description:**

When the server is connected to an external user store (LDAP), the Get Users call returns 404.

Doc: https://ei.docs.wso2.com/en/7.1.0/micro-integrator/administer-and-observe/working-with-management-api/#get-users

**Steps to reproduce:**

1. Create the following ldap user store.

[userstore-ldif.zip](https://github.com/wso2/micro-integrator/files/4829475/userstore-ldif.zip)

2. Add the below configurations to the deployment.toml file.

```

[internal_apis.file_user_store]

enable = false

[user_store]

type = "read_only_ldap"

class = "org.wso2.micro.integrator.security.user.core.ldap.ReadOnlyLDAPUserStoreManager"

connection_url = "ldap://localhost:10389"

connection_name = "uid=admin,ou=system"

connection_password = "secret"

user_search_base = "ou=system"

```

3. Start the micro integrator as below.

`./micro-integrator.sh -DenableManagementApi`

4. Log in to the server as below to obtain the access token.

```

curl -X GET "https://localhost:9164/management/login" -H "accept: application/json" -H "Authorization: Basic YWRtaW46c2VjcmV0" -k -i

```

5. Now try to list users and add users as below.

```

curl -X GET "https://localhost:9164/management/users?pattern=”*us*”&role=”role”" -H "accept: application/json" -H "Authorization: Bearer %AccessToken%" -k -i

```

```

curl -X POST -d @user "https://localhost:9164/management/users" -H "accept: application/json" -H "Content-Type: application/json" -H "Authorization: Bearer %AccessToken% " -k -i

```

https://ei.docs.wso2.com/en/7.1.0/micro-integrator/administer-and-observe/working-with-management-api/#add-users

**Expected**: List the users or add users

**Actual**: Getting the following error.

```

HTTP/1.1 404 Not Found

Authorization: Bearer eyJraWQiOiI0M2QyYzhiZi1mOTM0LTRhY2MtOWFkYS0xY2IxODJkZTdmZjYiLCJhbGciOiJSUzI1NiJ9.eyJzdWIiOiJhZG1pbiIsImlzcyI6Imh0dHBzOlwvXC8xMjcuMC4wLjE6OTE2NFwvIiwiZXhwIjoxNTkzMDcxNjAyfQ.WPza3dOJH5WAd0m9-oYSK1eysg72_YaBBk7L0XxPKA4cjLP2L0O08E0wHkqwW6CaJFbeX0rpodtq5YQSkFMRgLjhanrObT4ZMn0L5oGWcOQhvqb95goGn_WOqHZDt5yVLaXcaCRzyE-N_i1CK-5Y2uLAiW3hvMzlwqYpSu1dczoFGcvMkKxx_F3IFqeOu__zsOvag6QvX395SJ-Ll0-iKDXMej9OQKIycagtBtGJr5M68uKJ8XADLfJxb0YCvShh14p91bsp-a48y7Q0WA8cCgI2AW7BRRuk6JctfRMzRjx2XnuNk3dYUzBoNoGDePRciDTi1UhmW3LMuT_HrEbPyg

Access-Control-Allow-Origin: *

Access-Control-Allow-Methods: GET, POST, PUT, DELETE,OPTIONS, PATCH

Host: localhost:9164

Access-Control-Allow-Headers: Authorization, Content-Type

accept: application/json

Date: Thu, 25 Jun 2020 06:54:12 GMT

Transfer-Encoding: chunked

```

Carbon logs

```

[2020-06-25 12:24:12,488] ERROR {AbstractUserStoreManager} - Error occurred while accessing Java Security Manager Privilege Block when called by method getRoleListOfUser with 1 length of Objects and argTypes [class java.lang.String]

[2020-06-25 12:24:12,489] ERROR {AuthorizationHandler} - Error initializing the user store org.wso2.micro.integrator.security.user.core.UserStoreException: Error occurred while accessing Java Security Manager Privilege Block when called by method getRoleListOfUser with 1 length of Objects and argTypes [class java.lang.String]

at org.wso2.micro.integrator.security.user.core.common.AbstractUserStoreManager.callSecure(AbstractUserStoreManager.java:192)

at org.wso2.micro.integrator.security.user.core.common.AbstractUserStoreManager.getRoleListOfUser(AbstractUserStoreManager.java:4271)

at org.wso2.micro.integrator.management.apis.security.handler.AuthorizationHandler.authorize(AuthorizationHandler.java:109)

at org.wso2.micro.integrator.management.apis.security.handler.AuthorizationHandler.processAuthorizationWithCarbonUserStore(AuthorizationHandler.java:99)

at org.wso2.micro.integrator.management.apis.security.handler.AuthorizationHandler.authorize(AuthorizationHandler.java:79)

at org.wso2.micro.integrator.management.apis.security.handler.AuthorizationHandlerAdapter.handle(AuthorizationHandlerAdapter.java:50)

at org.wso2.micro.integrator.management.apis.security.handler.SecurityHandlerAdapter.invoke(SecurityHandlerAdapter.java:120)

at org.wso2.micro.integrator.management.apis.security.handler.AuthorizationHandler.invoke(AuthorizationHandler.java:59)

at org.wso2.carbon.inbound.endpoint.internal.http.api.InternalAPIDispatcher.dispatch(InternalAPIDispatcher.java:75)

at org.wso2.carbon.inbound.endpoint.protocol.http.InboundHttpServerWorker.run(InboundHttpServerWorker.java:109)

at org.apache.axis2.transport.base.threads.NativeWorkerPool$1.run(NativeWorkerPool.java:172)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)

at java.lang.Thread.run(Thread.java:748)

Caused by: java.security.PrivilegedActionException: java.lang.reflect.InvocationTargetException

at java.security.AccessController.doPrivileged(Native Method)

at org.wso2.micro.integrator.security.user.core.common.AbstractUserStoreManager.callSecure(AbstractUserStoreManager.java:171)

... 13 more

Caused by: java.lang.reflect.InvocationTargetException

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.wso2.micro.integrator.security.user.core.common.AbstractUserStoreManager$2.run(AbstractUserStoreManager.java:174)

... 15 more

Caused by: java.lang.NullPointerException

at org.wso2.micro.integrator.security.user.core.common.AbstractUserStoreManager.doGetInternalRoleListOfUser(AbstractUserStoreManager.java:386)

at org.wso2.micro.integrator.security.user.core.common.AbstractUserStoreManager.doGetRoleListOfUser(AbstractUserStoreManager.java:5719)

at org.wso2.micro.integrator.security.user.core.common.AbstractUserStoreManager.getRoleListOfUser(AbstractUserStoreManager.java:4295)

... 20 more

[2020-06-25 12:24:12,490] ERROR {AuthorizationHandler} - User admin cannot be authorized

```

**Note**:

However, the same access token can be used to access other resources such as APIs, carbon applications, etc.

```

HTTP/1.1 200 OK

Authorization: Bearer eyJraWQiOiI0M2QyYzhiZi1mOTM0LTRhY2MtOWFkYS0xY2IxODJkZTdmZjYiLCJhbGciOiJSUzI1NiJ9.eyJzdWIiOiJhZG1pbiIsImlzcyI6Imh0dHBzOlwvXC8xMjcuMC4wLjE6OTE2NFwvIiwiZXhwIjoxNTkzMDcxNjAyfQ.WPza3dOJH5WAd0m9-oYSK1eysg72_YaBBk7L0XxPKA4cjLP2L0O08E0wHkqwW6CaJFbeX0rpodtq5YQSkFMRgLjhanrObT4ZMn0L5oGWcOQhvqb95goGn_WOqHZDt5yVLaXcaCRzyE-N_i1CK-5Y2uLAiW3hvMzlwqYpSu1dczoFGcvMkKxx_F3IFqeOu__zsOvag6QvX395SJ-Ll0-iKDXMej9OQKIycagtBtGJr5M68uKJ8XADLfJxb0YCvShh14p91bsp-a48y7Q0WA8cCgI2AW7BRRuk6JctfRMzRjx2XnuNk3dYUzBoNoGDePRciDTi1UhmW3LMuT_HrEbPyg

Access-Control-Allow-Origin: *

Access-Control-Allow-Methods: GET, POST, PUT, DELETE,OPTIONS, PATCH

Host: localhost:9164

Access-Control-Allow-Headers: Authorization, Content-Type

accept: application/json

Content-Type: application/json; charset=UTF-8

Date: Thu, 25 Jun 2020 06:55:40 GMT

Transfer-Encoding: chunked

{"count":2,"list":[{"name":"Extract","url":"http://localhost:8290/extract"},{"name":"TestGoogle","url":"http://localhost:8290/search"}]}

```

Further, invoking secured REST APIs also works with the existing users in the external user store.

|

priority

|

get users return description when it is connected to an external user store ldap get users return doc steps to reproduce create the following ldap user store add the below configurations to the deployment toml file enable false type read only ldap class org micro integrator security user core ldap readonlyldapuserstoremanager connection url ldap localhost connection name uid admin ou system connection password secret user search base ou system start the micro integrator as below micro integrator sh denablemanagementapi login to the server as below by obtaining the access token curl x get h accept application json h authorization basic k i now try to invoke the users and add users as below curl x get h accept application json h authorization bearer accesstoken k i curl x post d user h accept application json h content type application json h authorization bearer accesstoken k i expected list the users or add users actual getting the following error http not found authorization bearer n hrebpyg access control allow origin access control allow methods get post put delete options patch host localhost access control allow headers authorization content type accept application json date thu jun gmt transfer encoding chunked carbon logs error abstractuserstoremanager error occurred while accessing java security manager privilege block when called by method getrolelistofuser with length of objects and argtypes error authorizationhandler error initializing the user store org micro integrator security user core userstoreexception error occurred while accessing java security manager privilege block when called by method getrolelistofuser with length of objects and argtypes at org micro integrator security user core common abstractuserstoremanager callsecure abstractuserstoremanager java at org micro integrator security user core common abstractuserstoremanager getrolelistofuser abstractuserstoremanager java at org micro integrator management apis security handler 
authorizationhandler authorize authorizationhandler java at org micro integrator management apis security handler authorizationhandler processauthorizationwithcarbonuserstore authorizationhandler java at org micro integrator management apis security handler authorizationhandler authorize authorizationhandler java at org micro integrator management apis security handler authorizationhandleradapter handle authorizationhandleradapter java at org micro integrator management apis security handler securityhandleradapter invoke securityhandleradapter java at org micro integrator management apis security handler authorizationhandler invoke authorizationhandler java at org carbon inbound endpoint internal http api internalapidispatcher dispatch internalapidispatcher java at org carbon inbound endpoint protocol http inboundhttpserverworker run inboundhttpserverworker java at org apache transport base threads nativeworkerpool run nativeworkerpool java at java util concurrent threadpoolexecutor runworker threadpoolexecutor java at java util concurrent threadpoolexecutor worker run threadpoolexecutor java at java lang thread run thread java caused by java security privilegedactionexception java lang reflect invocationtargetexception at java security accesscontroller doprivileged native method at org micro integrator security user core common abstractuserstoremanager callsecure abstractuserstoremanager java more caused by java lang reflect invocationtargetexception at sun reflect nativemethodaccessorimpl native method at sun reflect nativemethodaccessorimpl invoke nativemethodaccessorimpl java at sun reflect delegatingmethodaccessorimpl invoke delegatingmethodaccessorimpl java at java lang reflect method invoke method java at org micro integrator security user core common abstractuserstoremanager run abstractuserstoremanager java more caused by java lang nullpointerexception at org micro integrator security user core common abstractuserstoremanager dogetinternalrolelistofuser 
abstractuserstoremanager java at org micro integrator security user core common abstractuserstoremanager dogetrolelistofuser abstractuserstoremanager java at org micro integrator security user core common abstractuserstoremanager getrolelistofuser abstractuserstoremanager java more error authorizationhandler user admin cannot be authorized note but using the same above access token can access the resources such as apis carbon applications and etc http ok authorization bearer n hrebpyg access control allow origin access control allow methods get post put delete options patch host localhost access control allow headers authorization content type accept application json content type application json charset utf date thu jun gmt transfer encoding chunked count list further invoking secured rest apis too work with the existing users in the external user store

| 1

|

370,105

| 10,925,415,318

|

IssuesEvent

|

2019-11-22 12:27:50

|

ubtue/DatenProbleme

|

https://api.github.com/repos/ubtue/DatenProbleme

|

opened

|

ISSN 2159-6808 Journal of Religion and Violence Reviews

|

high priority

|

Reviews are not tagged with 655.

They are located in the Book Reviews section.

|

1.0

|

ISSN 2159-6808 Journal of Religion and Violence Reviews - Reviews are not tagged with 655.

They are located in the Book Reviews section.

|

priority

|

issn journal of religion and violence reviews reviews are not tagged with they are located in the book reviews section

| 1

|

75,214

| 3,460,290,365

|

IssuesEvent

|

2015-12-19 02:26:34

|

notsecure/uTox

|

https://api.github.com/repos/notsecure/uTox

|

closed

|

uTox automatically accepts group invites without asking

|

bug groups high_priority Security

|

When being invited into a group chat, uTox automatically accepts an invite. It should ask the user if they want to join the groupchat first.

|

1.0

|

uTox automatically accepts group invites without asking - When being invited into a group chat, uTox automatically accepts an invite. It should ask the user if they want to join the groupchat first.

|

priority

|

utox automatically accepts group invites without asking when being invited into a group chat utox automatically accepts an invite it should ask the user if they want to join the groupchat first

| 1

|

380,397

| 11,259,998,519

|

IssuesEvent

|

2020-01-13 09:38:28

|

ahmedkaludi/accelerated-mobile-pages

|

https://api.github.com/repos/ahmedkaludi/accelerated-mobile-pages

|

closed

|

Calculated fields form plugin is generating validation error on AMP pages.

|

[Priority: HIGH] bug

|

Missing URL for attribute 'src' in tag 'amp-img'.

Ref;-https://secure.helpscout.net/conversation/1049505523/105584?folderId=1060556

|

1.0

|

Calculated fields form plugin is generating validation error on AMP pages. - Missing URL for attribute 'src' in tag 'amp-img'.

Ref;-https://secure.helpscout.net/conversation/1049505523/105584?folderId=1060556

|

priority

|

calculated fields form plugin is generating validation error on amp pages missing url for attribute src in tag amp img ref

| 1

|

195,566

| 6,913,136,118

|

IssuesEvent

|

2017-11-28 14:25:40

|

smartchicago/chicago-early-learning

|

https://api.github.com/repos/smartchicago/chicago-early-learning

|

closed

|

Add map images to Family Resource Centers page

|

High Priority Waiting on Merge

|

We still need to add the map image assets to the Family Resource Centers page as seen in the original design - #828.

|

1.0

|

Add map images to Family Resource Centers page - We still need to add the map image assets to the Family Resource Centers page as seen in the original design - #828.

|

priority

|

add map images to family resource centers page we still need to add the map image assets to the family resource centers page as seen in the original design

| 1

|

337,263

| 10,212,850,371

|

IssuesEvent

|

2019-08-14 20:31:23

|

hydroshare/hydroshare

|

https://api.github.com/repos/hydroshare/hydroshare

|

opened

|

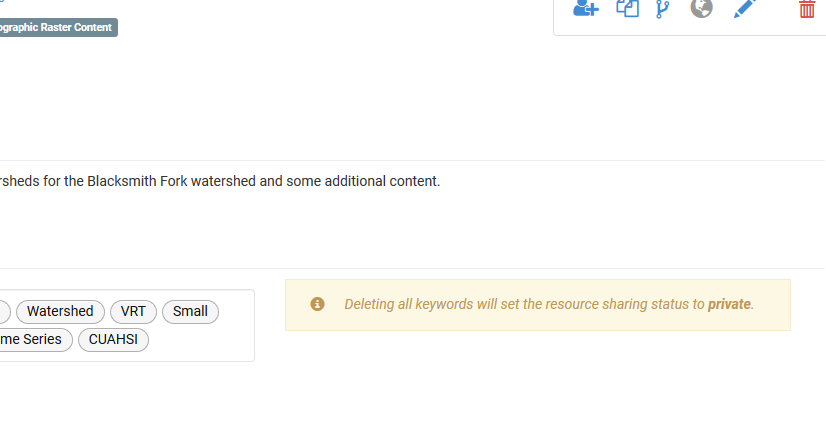

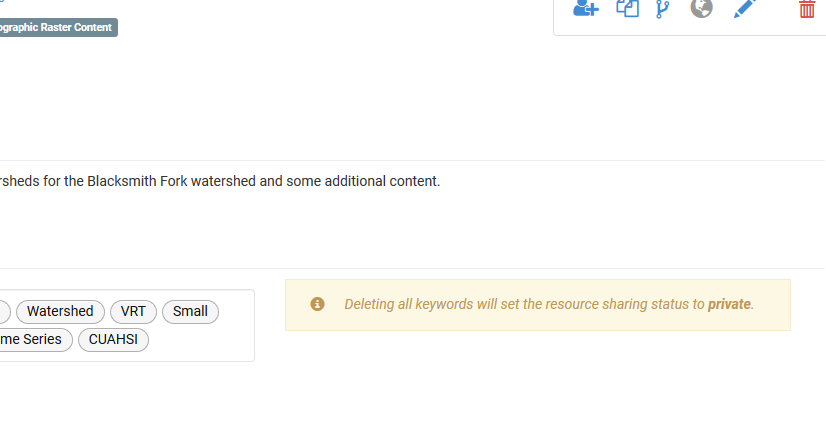

"Edit" message for keywords shows in "view" mode.

|

High Priority page state

|

Take a look at just about any public resource to reproduce. I was able to reproduce this issue on this resource without even being logged in.

https://www.hydroshare.org/resource/5586e9524b114c30a1a29d58f4c98355/

|

1.0

|

"Edit" message for keywords shows in "view" mode. - Take a look at just about any public resource to reproduce. I was able to reproduce this issue on this resource without even being logged in.

https://www.hydroshare.org/resource/5586e9524b114c30a1a29d58f4c98355/

|

priority

|

edit message for keywords shows in view mode take a look at just about any public resource to reproduce i was able to reproduce this issue on this resource without even being logged in

| 1

|

750,106

| 26,188,976,650

|

IssuesEvent

|

2023-01-03 06:35:41

|

factly/kavach

|

https://api.github.com/repos/factly/kavach

|

opened

|

Posthog not working on Kavach

|

priority:high

|

Environment variable passed to kavach-web image is not being taken up using process.env ( example - for kavach-web I am passing posthog_api_url and posthog_api_key and its not being taken because of which it is not showing the authentication events. )

|

1.0

|

Posthog not working on Kavach - Environment variable passed to kavach-web image is not being taken up using process.env ( example - for kavach-web I am passing posthog_api_url and posthog_api_key and its not being taken because of which it is not showing the authentication events. )

|

priority

|

posthog not working on kavach environment variable passed to kavach web image is not being taken up using process env example for kavach web i am passing posthog api url and posthog api key and its not being taken because of which it is not showing the authentication events

| 1

|

337,435

| 10,218,007,628

|

IssuesEvent

|

2019-08-15 14:57:37

|

wso2/streaming-integrator-tooling

|

https://api.github.com/repos/wso2/streaming-integrator-tooling

|

closed

|

change startup log to streaming integrator

|

Priority/Highest Severity/Critical

|

**Description:**

Currently startup log prints as 'WSO2 Stream Processor started in 3.979 sec' We need to change this.

**Suggested Labels:**

<!-- Optional comma separated list of suggested labels. Non committers can’t assign labels to issues, so this will help issue creators who are not a committer to suggest possible labels-->

**Suggested Assignees:**

<!--Optional comma separated list of suggested team members who should attend the issue. Non committers can’t assign issues to assignees, so this will help issue creators who are not a committer to suggest possible assignees-->

**Affected Product Version:**

**OS, DB, other environment details and versions:**

**Steps to reproduce:**

**Related Issues:**

<!-- Any related issues such as sub tasks, issues reported in other repositories (e.g component repositories), similar problems, etc. -->

|

1.0

|

change startup log to streaming integrator - **Description:**

Currently startup log prints as 'WSO2 Stream Processor started in 3.979 sec' We need to change this.

**Suggested Labels:**

<!-- Optional comma separated list of suggested labels. Non committers can’t assign labels to issues, so this will help issue creators who are not a committer to suggest possible labels-->

**Suggested Assignees:**

<!--Optional comma separated list of suggested team members who should attend the issue. Non committers can’t assign issues to assignees, so this will help issue creators who are not a committer to suggest possible assignees-->

**Affected Product Version:**

**OS, DB, other environment details and versions:**

**Steps to reproduce:**

**Related Issues:**

<!-- Any related issues such as sub tasks, issues reported in other repositories (e.g component repositories), similar problems, etc. -->

|

priority

|

change startup log to streaming integrator description currently startup log prints as stream processor started in sec we need to change this suggested labels suggested assignees affected product version os db other environment details and versions steps to reproduce related issues

| 1

|

587,663

| 17,628,424,134

|