Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 5 to 112) | repo_url (stringlengths, 34 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 855) | labels (stringlengths, 4 to 721) | body (stringlengths, 1 to 261k) | index (stringclasses, 13 values) | text_combine (stringlengths, 96 to 261k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 240k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

172,660

| 6,515,025,566

|

IssuesEvent

|

2017-08-26 09:40:13

|

BuckleScript/bucklescript

|

https://api.github.com/repos/BuckleScript/bucklescript

|

closed

|

Userland bettererror handling

|

discussion PRIORITY:HIGH

|

I think I've found a process that lets us iterate on BetterErrors in userland while upstreaming the stable, generalizable better error reporting into BS in the future. Upon compilation error, bsb gets the path of the file and find the dependency (third/first-party)'s customError.ml/re file and invoke it while passing the file name, the error location, message, etc. The script returns the properly explained error which the compile displays (or the default, if none).

- That single file is a nice default and ensures that we don't get too crazy with dragging in 10 deps into. file to analyze error, as to stay fast. Helps us dogfood the future bs stdlib stuff too.

- The third-party is also able to provide much more specific message than the default compiler ones. E.g. ReasonReact has some "variable cannot be generalized", "foo would escape its scope" pitfalls which are intimidating to newcomers but which can be very trivially explained away using ReasonReact-specific explanations.

- This way we get to iterate on a clean version of BetterErrors in userland, including better error report, etc., inside ReasonReact itself. E.g. we could show a visual diff of type. Other dependencies interested in such thing can copy paste our logic. Then upstream if relevant.

Implementation-wise, I think this is feasible with only a few changes to the generated ninja file. Correct me if I'm missing something.

cc @jaredly @sanderspies @glennsl

_Btw, there's been some positive internal changes (that we'll discuss in private) that makes it so that better error reporting is bumped all the way to the top of our priorities_.

|

1.0

|

Userland bettererror handling - I think I've found a process that lets us iterate on BetterErrors in userland while upstreaming the stable, generalizable better error reporting into BS in the future. Upon compilation error, bsb gets the path of the file and find the dependency (third/first-party)'s customError.ml/re file and invoke it while passing the file name, the error location, message, etc. The script returns the properly explained error which the compile displays (or the default, if none).

- That single file is a nice default and ensures that we don't get too crazy with dragging in 10 deps into. file to analyze error, as to stay fast. Helps us dogfood the future bs stdlib stuff too.

- The third-party is also able to provide much more specific message than the default compiler ones. E.g. ReasonReact has some "variable cannot be generalized", "foo would escape its scope" pitfalls which are intimidating to newcomers but which can be very trivially explained away using ReasonReact-specific explanations.

- This way we get to iterate on a clean version of BetterErrors in userland, including better error report, etc., inside ReasonReact itself. E.g. we could show a visual diff of type. Other dependencies interested in such thing can copy paste our logic. Then upstream if relevant.

Implementation-wise, I think this is feasible with only a few changes to the generated ninja file. Correct me if I'm missing something.

cc @jaredly @sanderspies @glennsl

_Btw, there's been some positive internal changes (that we'll discuss in private) that makes it so that better error reporting is bumped all the way to the top of our priorities_.

|

priority

|

userland bettererror handling i think i ve found a process that lets us iterate on bettererrors in userland while upstreaming the stable generalizable better error reporting into bs in the future upon compilation error bsb gets the path of the file and find the dependency third first party s customerror ml re file and invoke it while passing the file name the error location message etc the script returns the properly explained error which the compile displays or the default if none that single file is a nice default and ensures that we don t get too crazy with dragging in deps into file to analyze error as to stay fast helps us dogfood the future bs stdlib stuff too the third party is also able to provide much more specific message than the default compiler ones e g reasonreact has some variable cannot be generalized foo would escape its scope pitfalls which are intimidating to newcomers but which can be very trivially explained away using reasonreact specific explanations this way we get to iterate on a clean version of bettererrors in userland including better error report etc inside reasonreact itself e g we could show a visual diff of type other dependencies interested in such thing can copy paste our logic then upstream if relevant implementation wise i think this is feasible with only a few changes to the generated ninja file correct me if i m missing something cc jaredly sanderspies glennsl btw there s been some positive internal changes that we ll discuss in private that makes it so that better error reporting is bumped all the way to the top of our priorities

| 1

|

491,236

| 14,147,479,140

|

IssuesEvent

|

2020-11-10 20:52:44

|

re-vault/practical-revault

|

https://api.github.com/repos/re-vault/practical-revault

|

reopened

|

Unvault tx fixed feerate of 253perkw isn't a good idea

|

High priority

|

Not crucial security-wise as it's not a revocation tx (to which we attach fee-bumping inputs so it's fine), but if the rolling feerate of nodes' mempools get above that (as it is at the time i'm writing this) then the unvault transaction won't be relayed (feefilter protocol message).

TL;DR: unvault tx fees should not be static (or at least not that low but it does not count as a *solution*)

|

1.0

|

Unvault tx fixed feerate of 253perkw isn't a good idea - Not crucial security-wise as it's not a revocation tx (to which we attach fee-bumping inputs so it's fine), but if the rolling feerate of nodes' mempools get above that (as it is at the time i'm writing this) then the unvault transaction won't be relayed (feefilter protocol message).

TL;DR: unvault tx fees should not be static (or at least not that low but it does not count as a *solution*)

|

priority

|

unvault tx fixed feerate of isn t a good idea not crucial security wise as it s not a revocation tx to which we attach fee bumping inputs so it s fine but if the rolling feerate of nodes mempools get above that as it is at the time i m writing this then the unvault transaction won t be relayed feefilter protocol message tl dr unvault tx fees should not be static or at least not that low but it does not count as a solution

| 1

|

55,807

| 3,074,836,106

|

IssuesEvent

|

2015-08-20 09:53:24

|

agda/agda

|

https://api.github.com/repos/agda/agda

|

closed

|

Caching: Internal error in getConstructorData

|

auto-migrated bug Caching master Priority-Highest

|

```

Recipe to reproduce in emacs:

0. start with empty .agda file

1. restart Agda (clearing the cache)

2. paste the following code

record R : Set1 where

field

f : Set

3. load

4. append the following code

foo = record { f = {!!} }

5. load

An internal error has occurred. Please report this as a bug.

Location of the error: src/full/Agda/TypeChecking/Datatypes.hs:59

```

Original issue reported on code.google.com by `andreas....@gmail.com` on 24 Feb 2015 at 7:35

|

1.0

|

Caching: Internal error in getConstructorData - ```

Recipe to reproduce in emacs:

0. start with empty .agda file

1. restart Agda (clearing the cache)

2. paste the following code

record R : Set1 where

field

f : Set

3. load

4. append the following code

foo = record { f = {!!} }

5. load

An internal error has occurred. Please report this as a bug.

Location of the error: src/full/Agda/TypeChecking/Datatypes.hs:59

```

Original issue reported on code.google.com by `andreas....@gmail.com` on 24 Feb 2015 at 7:35

|

priority

|

caching internal error in getconstructordata recipe to reproduce in emacs start with empty agda file restart agda clearing the cache paste the following code record r where field f set load append the following code foo record f load an internal error has occurred please report this as a bug location of the error src full agda typechecking datatypes hs original issue reported on code google com by andreas gmail com on feb at

| 1

|

258,937

| 8,181,058,202

|

IssuesEvent

|

2018-08-28 21:29:13

|

craftercms/craftercms

|

https://api.github.com/repos/craftercms/craftercms

|

opened

|

[studio] Updated Global Menu config so that it uses the correct permissions

|

bug priority: high

|

After https://github.com/craftercms/craftercms/issues/2387 and the correct user/group permissions are defined, please update the `global-menu-config.xml` with the right permissions.

|

1.0

|

[studio] Updated Global Menu config so that it uses the correct permissions - After https://github.com/craftercms/craftercms/issues/2387 and the correct user/group permissions are defined, please update the `global-menu-config.xml` with the right permissions.

|

priority

|

updated global menu config so that it uses the correct permissions after and the correct user group permissions are defined please update the global menu config xml with the right permissions

| 1

|

761,549

| 26,685,423,913

|

IssuesEvent

|

2023-01-26 21:28:58

|

nikkistorme/shelf

|

https://api.github.com/repos/nikkistorme/shelf

|

closed

|

Improve shelf sorting

|

high priority 2 hours Shelves

|

# Sort by:

- [x] Page count

- [x] Date added to Library

- [x] Name

- [x] Author

- [x] Finished date (hide this on the Unread shelf)

|

1.0

|

Improve shelf sorting - # Sort by:

- [x] Page count

- [x] Date added to Library

- [x] Name

- [x] Author

- [x] Finished date (hide this on the Unread shelf)

|

priority

|

improve shelf sorting sort by page count date added to library name author finished date hide this on the unread shelf

| 1

|

523,750

| 15,189,045,427

|

IssuesEvent

|

2021-02-15 15:53:28

|

MapColonies/discrete-layer-client

|

https://api.github.com/repos/MapColonies/discrete-layer-client

|

closed

|

Write CSW catalog service API for JSON

|

enhancement priority: high

|

as csw catalog supports XML protocol and we need to send a JSON from the client we need to write NPM package that will support transforming the JSON to csw/xml protocol

|

1.0

|

Write CSW catalog service API for JSON - as csw catalog supports XML protocol and we need to send a JSON from the client we need to write NPM package that will support transforming the JSON to csw/xml protocol

|

priority

|

write csw catalog service api for json as csw catalog supports xml protocol and we need to send a json from the client we need to write npm package that will support transforming the json to csw xml protocol

| 1

|

451,998

| 13,044,641,660

|

IssuesEvent

|

2020-07-29 05:20:05

|

WaifuHarem/waifu-server

|

https://api.github.com/repos/WaifuHarem/waifu-server

|

opened

|

[tests/parsertest.js] Implement parsertest

|

High Priority

|

parsertest.js is currently an empty file. A test suite needs to be created for the module which will test all implemented parser operations with valid and invalid inputs.

|

1.0

|

[tests/parsertest.js] Implement parsertest - parsertest.js is currently an empty file. A test suite needs to be created for the module which will test all implemented parser operations with valid and invalid inputs.

|

priority

|

implement parsertest parsertest js is currently an empty file a test suite needs to be created for the module which will test all implemented parser operations with valid and invalid inputs

| 1

|

543,306

| 15,879,709,393

|

IssuesEvent

|

2021-04-09 12:49:34

|

Adyen/adyen-magento2

|

https://api.github.com/repos/Adyen/adyen-magento2

|

closed

|

[PW-2029] Charged currency is incorrect

|

Bug report Issue: Confirmed Priority: high Progress: in progress

|

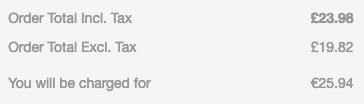

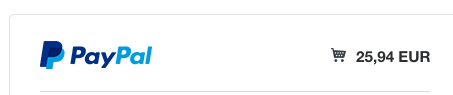

**Magento version**: 2.3.1

**Plugin version**: 2.4.0

**Description**

When I configure Magento to display a local currency, this currency is sent to Adyen as the currency the customer has to complete the payment with.

This behaviour is different than other (built in) payment providers in Magento.

Magento itself also displays a notice that the currency that the customer will be charged for will be different:

If I complete this payment with a payment method provided by the Adyen plugin, the customer will be charged for £23.98 instead of €25.94

|

1.0

|

[PW-2029] Charged currency is incorrect - **Magento version**: 2.3.1

**Plugin version**: 2.4.0

**Description**

When I configure Magento to display a local currency, this currency is sent to Adyen as the currency the customer has to complete the payment with.

This behaviour is different than other (built in) payment providers in Magento.

Magento itself also displays a notice that the currency that the customer will be charged for will be different:

If I complete this payment with a payment method provided by the Adyen plugin, the customer will be charged for £23.98 instead of €25.94

|

priority

|

charged currency is incorrect magento version plugin version description when i configure magento to display a local currency this currency is sent to adyen as the currency the customer has to complete the payment with this behaviour is different than other built in payment providers in magento magento itself also displays a notice that the currency that the customer will be charged for will be different if i complete this payment with a payment method provided by the adyen plugin the customer will be charged for £ instead of €

| 1

|

660,221

| 21,957,294,584

|

IssuesEvent

|

2022-05-24 13:10:57

|

ut-issl/s2e-core

|

https://api.github.com/repos/ut-issl/s2e-core

|

closed

|

Improve updating time in SimTime

|

priority::high

|

## Overview

Improve implementation of updating simulation time to not skip time for realtime simulation.

## Details

Relevant code: [SimTime.cpp](https://github.com/ut-issl/s2e-core/blob/13d443f2806d983e2288290add1e5c8d0f0a9d56/src/Environment/Global/SimTime.cpp#L85)

Relevant discussion: https://arkedge-space.slack.com/archives/C02JRCY0A5V/p1646816850264289

### Cause

When running realtime (or faster, step_sec >= 1) simulation, normally your computer is fast enough.

But sometimes it needs more calculation than normal steps, then computing time may exceeds realtime.

In that case S2E assesses your computer performance is not enough and forcibly skips time.

### Problem

- S2E's time became faster and faster than C2A's time because [this code](https://github.com/ut-issl/s2e-core/blob/13d443f2806d983e2288290add1e5c8d0f0a9d56/src/Environment/Global/SimTime.cpp#L92) skips time, and we cannot properly compare S2E's logged value and C2A's telemetry value.

- Error message "Error: the specified step_sec is too small for this computer." is continuously displayed dispite your computer is fast enough.

### Possible improvement

Use average wait time over a short term (one second or something) instead of single step to check the computer is able to compute simulation steps in realtime.

## Conditions for close

When the implementation is complete or another solution is found.

## Supplement

NA

## Note

NA

|

1.0

|

Improve updating time in SimTime - ## Overview

Improve implementation of updating simulation time to not skip time for realtime simulation.

## Details

Relevant code: [SimTime.cpp](https://github.com/ut-issl/s2e-core/blob/13d443f2806d983e2288290add1e5c8d0f0a9d56/src/Environment/Global/SimTime.cpp#L85)

Relevant discussion: https://arkedge-space.slack.com/archives/C02JRCY0A5V/p1646816850264289

### Cause

When running realtime (or faster, step_sec >= 1) simulation, normally your computer is fast enough.

But sometimes it needs more calculation than normal steps, then computing time may exceeds realtime.

In that case S2E assesses your computer performance is not enough and forcibly skips time.

### Problem

- S2E's time became faster and faster than C2A's time because [this code](https://github.com/ut-issl/s2e-core/blob/13d443f2806d983e2288290add1e5c8d0f0a9d56/src/Environment/Global/SimTime.cpp#L92) skips time, and we cannot properly compare S2E's logged value and C2A's telemetry value.

- Error message "Error: the specified step_sec is too small for this computer." is continuously displayed dispite your computer is fast enough.

### Possible improvement

Use average wait time over a short term (one second or something) instead of single step to check the computer is able to compute simulation steps in realtime.

## Conditions for close

When the implementation is complete or another solution is found.

## Supplement

NA

## Note

NA

|

priority

|

improve updating time in simtime overview improve implementation of updating simulation time to not skip time for realtime simulation details relevant code relevant discussion cause when running realtime or faster step sec simulation normally your computer is fast enough but sometimes it needs more calculation than normal steps then computing time may exceeds realtime in that case assesses your computer performance is not enough and forcibly skips time problem s time became faster and faster than s time because skips time and we cannot properly compare s logged value and s telemetry value error message error the specified step sec is too small for this computer is continuously displayed dispite your computer is fast enough possible improvement use average wait time over a short term one second or something instead of single step to check the computer is able to compute simulation steps in realtime conditions for close when the implementation is complete or another solution is found supplement na note na

| 1

|

98,465

| 4,021,731,188

|

IssuesEvent

|

2016-05-16 23:18:06

|

shelljs/shelljs

|

https://api.github.com/repos/shelljs/shelljs

|

opened

|

Big picture shelljs@1.0.0 discussion

|

chore feat help wanted high priority meeting question

|

Hi Everyone,

As discussed in #443, I'd like to start discussing `shelljs@1.0.0` features on a high level.

`v1.0.0` features [@shelljs/contributors, feel free to edit]:

- [ ] Async versions of all commands (#402, #387)

- [ ] Plugin System (#391)

- [ ] It would be quite nice to impliment all built in commands as plugins.

- [ ] Code Style Guidelines (#317)

- [ ] Miscellaneous cleanup/refactor of everything.

Thoughts?

|

1.0

|

Big picture shelljs@1.0.0 discussion - Hi Everyone,

As discussed in #443, I'd like to start discussing `shelljs@1.0.0` features on a high level.

`v1.0.0` features [@shelljs/contributors, feel free to edit]:

- [ ] Async versions of all commands (#402, #387)

- [ ] Plugin System (#391)

- [ ] It would be quite nice to impliment all built in commands as plugins.

- [ ] Code Style Guidelines (#317)

- [ ] Miscellaneous cleanup/refactor of everything.

Thoughts?

|

priority

|

big picture shelljs discussion hi everyone as discussed in i d like to start discussing shelljs features on a high level features async versions of all commands plugin system it would be quite nice to impliment all built in commands as plugins code style guidelines miscellaneous cleanup refactor of everything thoughts

| 1

|

814,072

| 30,485,052,458

|

IssuesEvent

|

2023-07-18 00:57:36

|

calcom/cal.com

|

https://api.github.com/repos/calcom/cal.com

|

closed

|

[CAL-2170] Wrong slot starting times for timezones that are not full hours

|

🐛 bug High priority

|

The slot starting times aren't correct for timezones like Asia/Kolkata (+5:30). Hourly events would start at half-hour slots instead of full-hour slots.

<sub>From [SyncLinear.com](https://synclinear.com) | [CAL-2170](https://linear.app/calcom/issue/CAL-2170/wrong-slot-starting-times-for-timezones-that-are-not-a-full-hour)</sub>

|

1.0

|

[CAL-2170] Wrong slot starting times for timezones that are not full hours - The slot starting times aren't correct for timezones like Asia/Kolkata (+5:30). Hourly events would start at half-hour slots instead of full-hour slots.

<sub>From [SyncLinear.com](https://synclinear.com) | [CAL-2170](https://linear.app/calcom/issue/CAL-2170/wrong-slot-starting-times-for-timezones-that-are-not-a-full-hour)</sub>

|

priority

|

wrong slot starting times for timezones that are not full hours the slot starting times aren t correct for timezones like asia kolkata hourly events would start at half hour slots instead of full hour slots from

| 1

|

284,178

| 8,736,331,295

|

IssuesEvent

|

2018-12-11 19:14:36

|

aowen87/TicketTester

|

https://api.github.com/repos/aowen87/TicketTester

|

closed

|

Huge memory leaks in GUI adding, deleting, hiding, showing plots

|

bug likelihood medium priority reviewed severity high

|

Allen Sanderson reported a huge memory leak in the VisIt GUI when he was adding, deleting, hiding and showing plots. He had about 400 variables in his file and the memory jumped by about 100Mb each time such an operation was performed. This could cause the GUI to quickly exceed several Gb.

-----------------------REDMINE MIGRATION-----------------------

This ticket was migrated from Redmine. As such, not all

information was able to be captured in the transition. Below is

a complete record of the original redmine ticket.

Ticket number: 3108

Status: Resolved

Project: VisIt

Tracker: Bug

Priority: Immediate

Subject: Huge memory leaks in GUI adding, deleting, hiding, showing plots

Assigned to: Mark Miller

Category:

Target version: 2.13.2

Author: Eric Brugger

Start: 05/16/2018

Due date:

% Done: 0

Estimated time: 6.0

Created: 05/16/2018 12:51 pm

Updated: 05/22/2018 07:02 pm

Likelihood: 3 - Occasional

Severity: 5 - Very Serious

Found in version: 2.13.0

Impact:

Expected Use:

OS: All

Support Group: Any

Description:

Allen Sanderson reported a huge memory leak in the VisIt GUI when he was adding, deleting, hiding and showing plots. He had about 400 variables in his file and the memory jumped by about 100Mb each time such an operation was performed. This could cause the GUI to quickly exceed several Gb.

Comments:

|

1.0

|

Huge memory leaks in GUI adding, deleting, hiding, showing plots - Allen Sanderson reported a huge memory leak in the VisIt GUI when he was adding, deleting, hiding and showing plots. He had about 400 variables in his file and the memory jumped by about 100Mb each time such an operation was performed. This could cause the GUI to quickly exceed several Gb.

-----------------------REDMINE MIGRATION-----------------------

This ticket was migrated from Redmine. As such, not all

information was able to be captured in the transition. Below is

a complete record of the original redmine ticket.

Ticket number: 3108

Status: Resolved

Project: VisIt

Tracker: Bug

Priority: Immediate

Subject: Huge memory leaks in GUI adding, deleting, hiding, showing plots

Assigned to: Mark Miller

Category:

Target version: 2.13.2

Author: Eric Brugger

Start: 05/16/2018

Due date:

% Done: 0

Estimated time: 6.0

Created: 05/16/2018 12:51 pm

Updated: 05/22/2018 07:02 pm

Likelihood: 3 - Occasional

Severity: 5 - Very Serious

Found in version: 2.13.0

Impact:

Expected Use:

OS: All

Support Group: Any

Description:

Allen Sanderson reported a huge memory leak in the VisIt GUI when he was adding, deleting, hiding and showing plots. He had about 400 variables in his file and the memory jumped by about 100Mb each time such an operation was performed. This could cause the GUI to quickly exceed several Gb.

Comments:

|

priority

|

huge memory leaks in gui adding deleting hiding showing plots allen sanderson reported a huge memory leak in the visit gui when he was adding deleting hiding and showing plots he had about variables in his file and the memory jumped by about each time such an operation was performed this could cause the gui to quickly exceed several gb redmine migration this ticket was migrated from redmine as such not all information was able to be captured in the transition below is a complete record of the original redmine ticket ticket number status resolved project visit tracker bug priority immediate subject huge memory leaks in gui adding deleting hiding showing plots assigned to mark miller category target version author eric brugger start due date done estimated time created pm updated pm likelihood occasional severity very serious found in version impact expected use os all support group any description allen sanderson reported a huge memory leak in the visit gui when he was adding deleting hiding and showing plots he had about variables in his file and the memory jumped by about each time such an operation was performed this could cause the gui to quickly exceed several gb comments

| 1

|

272,777

| 8,517,643,032

|

IssuesEvent

|

2018-11-01 08:50:24

|

egoist/bili

|

https://api.github.com/repos/egoist/bili

|

closed

|

Not working when using top-level node_modules (like Yarn Workspaces)

|

bug priority: high

|

Hi,

Bili 3.1.2 complains about `× Cannot find plugin "rollup-plugin-typescript2" in current directory!`, but I do have it in my `package.json`. Indeed, I see [the `require()` is done relative to the current dir](https://github.com/egoist/bili/blob/bafeef9e26a192f2c887067e4dfd16c5cbfdc50b/src/index.js#L647).

In my case, I have the following tree:

```

node_modules/

packages/

--a

----package.json

--b

----package.json

...

```

All dependencies are installed by in the parent `node_modules/` by [Yarn Workspaces](https://yarnpkg.com/lang/en/docs/workspaces/), which is perfectly valid:

> Node will look for your modules in special folders named node_modules. A node_modules folder can be on the same level as the current file, or higher up in the directory chain. Node will walk up the directory chain, looking through each node_modules until it finds the module you tried to load.

|

1.0

|

Not working when using top-level node_modules (like Yarn Workspaces) - Hi,

Bili 3.1.2 complains about `× Cannot find plugin "rollup-plugin-typescript2" in current directory!`, but I do have it in my `package.json`. Indeed, I see [the `require()` is done relative to the current dir](https://github.com/egoist/bili/blob/bafeef9e26a192f2c887067e4dfd16c5cbfdc50b/src/index.js#L647).

In my case, I have the following tree:

```

node_modules/

packages/

--a

----package.json

--b

----package.json

...

```

All dependencies are installed by in the parent `node_modules/` by [Yarn Workspaces](https://yarnpkg.com/lang/en/docs/workspaces/), which is perfectly valid:

> Node will look for your modules in special folders named node_modules. A node_modules folder can be on the same level as the current file, or higher up in the directory chain. Node will walk up the directory chain, looking through each node_modules until it finds the module you tried to load.

|

priority

|

not working when using top level node modules like yarn workspaces hi bili complains about × cannot find plugin rollup plugin in current directory but i do have it in my package json indeed i see in my case i have the following tree node modules packages a package json b package json all dependencies are installed by in the parent node modules by which is perfectly valid node will look for your modules in special folders named node modules a node modules folder can be on the same level as the current file or higher up in the directory chain node will walk up the directory chain looking through each node modules until it finds the module you tried to load

| 1

|

791,229

| 27,856,527,629

|

IssuesEvent

|

2023-03-20 23:41:56

|

ArctosDB/arctos

|

https://api.github.com/repos/ArctosDB/arctos

|

opened

|

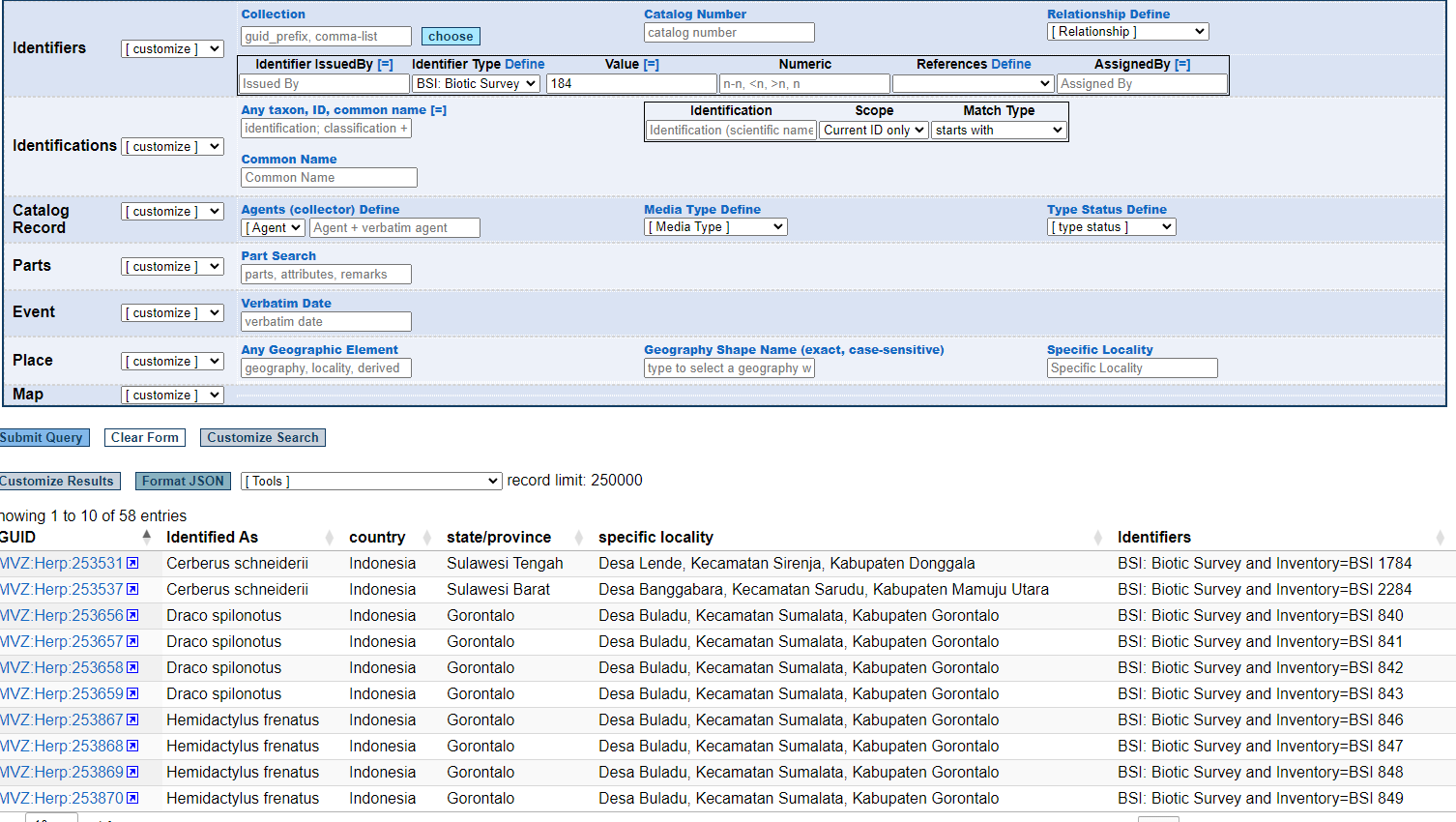

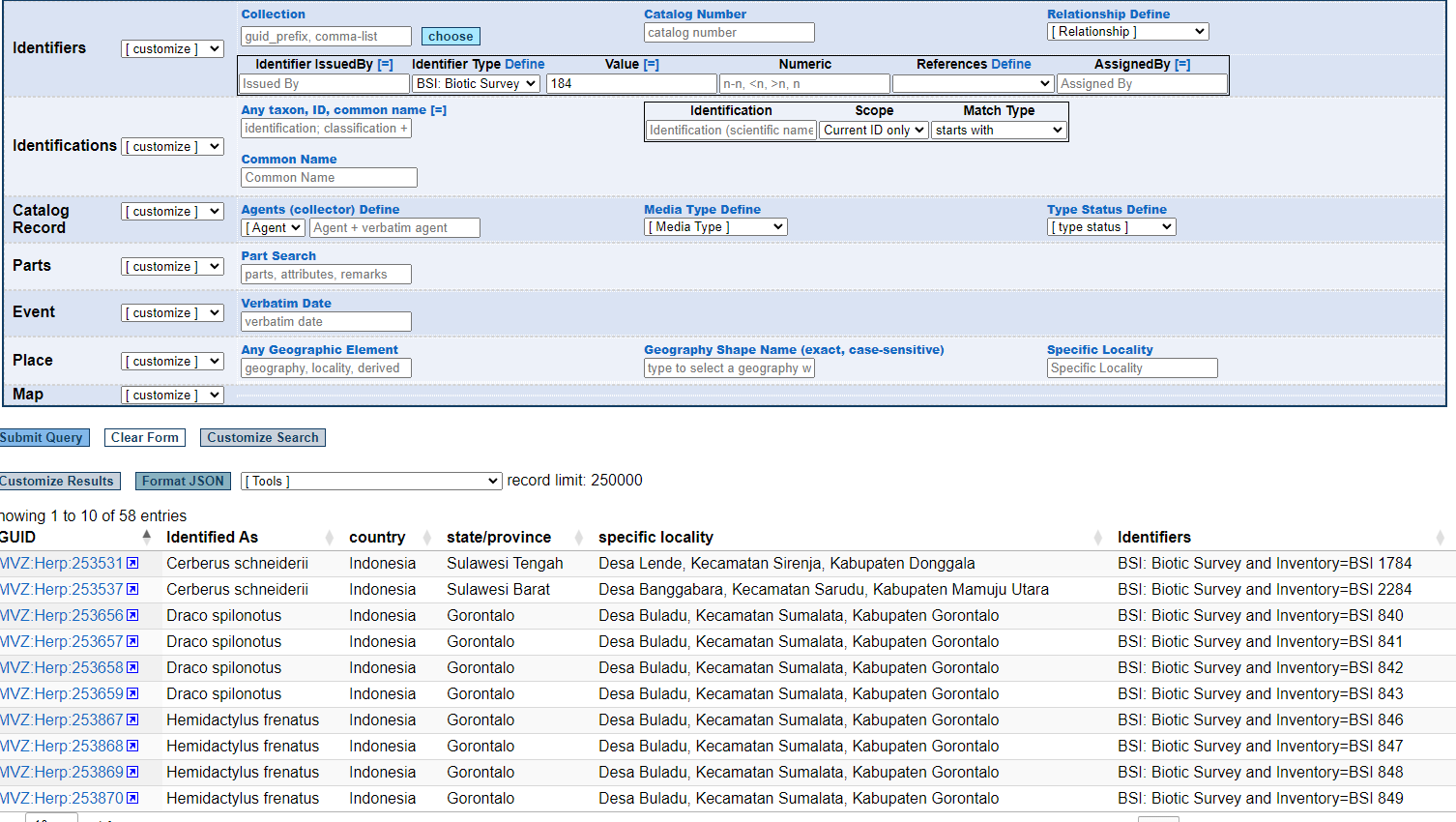

other identifier search on numeric is wonky

|

Priority-High (Needed for work) Function-SearchOrDownload Bug

|

See the search and results below--

search is for identifier = BSI + numeric= 184

results includes BSI values that include the last 2 digits? even with the ```=``` qualifier

So what is up?

|

1.0

|

other identifier search on numeric is wonky - See the search and results below--

search is for identifier = BSI + numeric= 184

results includes BSI values that include the last 2 digits? even with the ```=``` qualifier

So what is up?

|

priority

|

other identifier search on numeric is wonky see the search and results below search is for identifier bsi numeric results includes bsi values that include the last digits even with the qualifier so what is up

| 1

|

382,600

| 11,308,876,281

|

IssuesEvent

|

2020-01-19 09:15:46

|

troop-370/troop370

|

https://api.github.com/repos/troop-370/troop370

|

closed

|

New River Trek date has the wrong month

|

priority: high update

|

From the committee chair:

> Please correct dates from June 4-11 to July 4-11

|

1.0

|

New River Trek date has the wrong month - From the committee chair:

> Please correct dates from June 4-11 to July 4-11

|

priority

|

new river trek date has the wrong month from the committee chair please correct dates from june to july

| 1

|

363,783

| 10,755,115,746

|

IssuesEvent

|

2019-10-31 08:23:53

|

onaio/rdt-standard

|

https://api.github.com/repos/onaio/rdt-standard

|

closed

|

Update Bahasa Translation Hamburger Menu and Name

|

Android Client Priority - high bug

|

Please see translations for hamburger menu below:

<string name="drawer_menu_item_sync">Sinkronisasi</string>

<string name="drawer_menu_item_logout">Keluar</string>

<string name="drawer_menu_item_image_sync">Sinkronisasi gambar</string>

<string name="search_hint">Cari</string>

</resources>

|

1.0

|

Update Bahasa Translation Hamburger Menu and Name - Please see translations for hamburger menu below:

<string name="drawer_menu_item_sync">Sinkronisasi</string>

<string name="drawer_menu_item_logout">Keluar</string>

<string name="drawer_menu_item_image_sync">Sinkronisasi gambar</string>

<string name="search_hint">Cari</string>

</resources>

|

priority

|

update bahasa translation hamburger menu and name please see translations for hamburger menu below sinkronisasi keluar sinkronisasi gambar cari

| 1

|

635,754

| 20,508,166,138

|

IssuesEvent

|

2022-03-01 01:34:32

|

ArctosDB/arctos

|

https://api.github.com/repos/ArctosDB/arctos

|

closed

|

Data Entry - make fields in Identification and Identification Extras identical

|

Priority-High (Needed for work) Enhancement Display/Interface

|

Issue Documentation is http://handbook.arctosdb.org/how_to/How-to-Use-Issues-in-Arctos.html

**Is your feature request related to a problem? Please describe.**

The fields in the Identification section are not the same in the Identification Extra section.

<img width="804" alt="Screen Shot 2022-02-20 at 11 54 59 AM" src="https://user-images.githubusercontent.com/15368365/154859338-9a9ca7ff-be23-4aeb-a66e-eeb5c7c6953f.png">

<img width="1038" alt="Screen Shot 2022-02-20 at 12 01 00 PM" src="https://user-images.githubusercontent.com/15368365/154859544-366ff67f-4434-4dcc-a130-706e95c208d7.png">

**Describe what you're trying to accomplish**

1. We need to be able to "build" taxonomic names in the Identification Extra section.

2. We need to be able to leave the date field blank as in the primary identification.

**Describe the solution you'd like**

Duplicate the Identification fields in the Identification Extras section.

**Describe alternatives you've considered**

We have to correct the record after it is uploaded, which is a wonderful opportunity to create errors and omissions.

**Additional context**

It would also be nice if the fields had the same names in each section and were in the same order.

Agent 1 vs. Identifying Agent

NatureofID vs. Nature of ID

ID Date vs. MadeDate

ID Confidence vs. Confidence

Remarks vs. ID Remarks

Volunteers who database for a few hours a week really need UI consistency.

**Priority**

We're running into problems daily, so it's high priority for us.

|

1.0

|

Data Entry - make fields in Identification and Identification Extras identical - Issue Documentation is http://handbook.arctosdb.org/how_to/How-to-Use-Issues-in-Arctos.html

**Is your feature request related to a problem? Please describe.**

The fields in the Identification section are not the same in the Identification Extra section.

<img width="804" alt="Screen Shot 2022-02-20 at 11 54 59 AM" src="https://user-images.githubusercontent.com/15368365/154859338-9a9ca7ff-be23-4aeb-a66e-eeb5c7c6953f.png">

<img width="1038" alt="Screen Shot 2022-02-20 at 12 01 00 PM" src="https://user-images.githubusercontent.com/15368365/154859544-366ff67f-4434-4dcc-a130-706e95c208d7.png">

**Describe what you're trying to accomplish**

1. We need to be able to "build" taxonomic names in the Identification Extra section.

2. We need to be able to leave the date field blank as in the primary identification.

**Describe the solution you'd like**

Duplicate the Identification fields in the Identification Extras section.

**Describe alternatives you've considered**

We have to correct the record after it is uploaded, which is a wonderful opportunity to create errors and omissions.

**Additional context**

It would also be nice if the fields had the same names in each section and were in the same order.

Agent 1 vs. Identifying Agent

NatureofID vs. Nature of ID

ID Date vs. MadeDate

ID Confidence vs. Confidence

Remarks vs. ID Remarks

Volunteers who database for a few hours a week really need UI consistency.

**Priority**

We're running into problems daily, so it's high priority for us.

|

priority

|

data entry make fields in identification and identification extras identical issue documentation is is your feature request related to a problem please describe the fields in the identification section are not the same in the identification extra section img width alt screen shot at am src img width alt screen shot at pm src describe what you re trying to accomplish we need to be able to build taxonomic names in the identification extra section we need to be able to leave the date field blank as in the primary identification describe the solution you d like duplicate the identification fields in the identification extras section describe alternatives you ve considered we have to correct the record after it is uploaded which is a wonderful opportunity create errors and omissions additional context it would also be nice if the fields had the same names in each section and were in the same order agent vs identifying agent natureofid vs nature of id id date vs madedate id confidence vs confidence remarks vs id remarks volunteers who database for a few hours a week really need ui consistency priority we re running into problems daily so it s high priority for us

| 1

|

804,559

| 29,492,948,892

|

IssuesEvent

|

2023-06-02 14:43:04

|

yugabyte/yugabyte-db

|

https://api.github.com/repos/yugabyte/yugabyte-db

|

opened

|

[YCQL] Add some of tablet, table, partition hash, and tserver UUID to the slow query log

|

kind/new-feature area/docdb priority/high area/ycql status/awaiting-triage jira-originated

|

Jira Link: [DB-6781](https://yugabyte.atlassian.net/browse/DB-6781)

|

1.0

|

[YCQL] Add some of tablet, table, partition hash, and tserver UUID to the slow query log - Jira Link: [DB-6781](https://yugabyte.atlassian.net/browse/DB-6781)

|

priority

|

add some of tablet table partition hash and tserver uuid to the slow query log jira link

| 1

|

444,599

| 12,814,866,806

|

IssuesEvent

|

2020-07-04 21:36:41

|

GeyserMC/Geyser

|

https://api.github.com/repos/GeyserMC/Geyser

|

closed

|

Memory leak w/ ping passthrough

|

Confirmed Bug Priority: High

|

**Describe the bug**

<!--- A clear and concise description of what the bug is. -->

Enabling any feature that enables ping passthrough will cause the proxy to memory leak.

**To Reproduce**

Enable `passthrough-motd` or `passthrough-player-counts` in the config.

**Geyser Version**

Standalone git-master-e81c6f2

**Additional Context**

Heap dump: https://www.mediafire.com/file/bqyuz2sum1mpn2u/java_pid30423.hprof/file

|

1.0

|

Memory leak w/ ping passthrough - **Describe the bug**

<!--- A clear and concise description of what the bug is. -->

Enabling any feature that enables ping passthrough will cause the proxy to memory leak.

**To Reproduce**

Enable `passthrough-motd` or `passthrough-player-counts` in the config.

**Geyser Version**

Standalone git-master-e81c6f2

**Additional Context**

Heap dump: https://www.mediafire.com/file/bqyuz2sum1mpn2u/java_pid30423.hprof/file

|

priority

|

memory leak w ping passthrough describe the bug enabling any feature that enables ping passthrough will cause the proxy to memory leak to reproduce enable passthrough motd or passthrough player counts in the config geyser version standalone git master additional context heap dump

| 1

|

72,332

| 3,381,326,705

|

IssuesEvent

|

2015-11-26 01:52:43

|

neuropoly/spinalcordtoolbox

|

https://api.github.com/repos/neuropoly/spinalcordtoolbox

|

closed

|

harmonize flags

|

priority: high

|

Please refer to: https://github.com/neuropoly/spinalcordtoolbox/wiki/flags

Important: if you change a flag, please use deprecated mode and indicate the change in this thread.

If it is not possible to use deprecated mode, please list potential conflicts for each file.

If the script has no parser, add one.

**Sara**

- [x] sct_apply_transfo

- "-c" changed to "-crop"

- [x] sct_average_data_within_mask

- added parser

- "-t" was used for the number of volumes to use if 4D. Changed it to "-nvol".

- [x] sct_check_atlas_integrity

- added parser

- "-m" was used for GM segmentation. Changed it to "-gm"

- "-t" was used for atlas threshold. Changed it to "-thr"

- "-g" was used for GM threshold. Changed it to "-thrgm" (to match with "-thr")

- [x] sct_check_dependences

- added parser

- "-l" was used for log file option. Changed it to "-log"

- [x] sct_compute_ernst_angle

- added get_parser() method.

- "-d" was used for display option. Changed it to "-v"

- [x] sct_compute_hausdorff_distance

- added get_parser() method.

- "-t" was used for thinning. Changed it to "-thinning"

- "-r" was used for second input (reference). Changed it to "-d"

- [x] sct_compute_mtr

- added parser

- changed "-i" and "-j" to "-mt0" and "-mt1" to be more explicit.

- [x] sct_concat_transfo

- added parser

- [x] sct_convert (already up to date with flags convention)

- [x] sct_segment_graymatter

- "-l" was for vertebral level file. Changed to "-vert"

- [x] sct_register_to_template

- '-p' replaced by '-param'

- [x] sct_resample

- added get_parser() function

- [x] sct_smooth_spinalcord

- added parser

- changed flag '-s' to '-smooth' **WARNING: -s is not deprecated to -smooth**

- changed "-c" (centerline or seg) to "-s"

- [x] sct_straighten_spinalcord

- changed flag '-p' to '-pad'

- changed flag '-params' to '-param'

- changed flag "-c" to "-s"

- [x] sct_testing

- added parser

- [x] sct_warp_template

- changed flag -o to -ofolder

**Simon**

- [x] sct_create_mask

- added parser

- "-m" was for method. Changed to "-p"

- "-s" was for size, changed for "-size"

- [x] sct_crop_image

- added get_parser function.

- [x] sct_dice_coefficient

- wrote a wrapper for the sct_dice_coefficient binary (now isct_dice...)

- input files now have flags -i and -d **WARNING: you will need to change this in your scripts; there were no flags before, so there is no deprecated option ...**

- [x] sct_dmri_compute_dti (already up to date with flags convention)

- [x] sct_dmri_concat_bvals (already up to date with flags convention)

- [x] sct_dmri_concat_bvecs (already up to date with flags convention)

- [x] sct_dmri_get_bvalue

- added parser

- [x] sct_dmri_moco

- added parser

- '-b' was for bvecs file, changed to '-bvec'

- '-p' was for param, changed to '-param'

- '-t' was for an otsu threshold, changed to '-thr'

- '-o' was for output folder, changed to '-ofolder'

**Benjamin**

- [x] sct_dmri_separate_b0_and_dwi

- "-m" was used for bvals. Changed it to "-bval".

- "-b" was used for bvecs. Changed it to "-bvec".

- "-o" was used for output folder. Changed it to "-ofolder".

- [x] sct_dmri_transpose_bvecs

- "-i" was used for bvec file. Changed it to "-bvec". "-i" is now deprecated.

- [x] sct_download_data

- [x] sct_extract_metric

- No parser.

- '-p' changed to '-param'

- [x] sct_flatten_sagittal

- No parser.

- "-c" changed to "-s"

- "-s" changed to "-x" **WARNING: -s is not deprecated to -x**

- [x] sct_fmri_compute_tsnr

- added get_parser function.

- [x] sct_fmri_moco

- No parser.

- '-p' changed to '-param'

- [x] sct_get_centerline

- "-t" option was used for contrast. It is now deprecated by "-c".

- "-p" option was used for a file containing a point. It is now deprecated by "-point".

**Olivier**

- [x] sct_image

- [x] sct_label_utils

- "-r" was used to reference an image. Now deprecated by "-ref"

- "-level" was used for vertebral level. Now deprecated by "-vert"

- "-t" --> changed for -p (julien)

- [x] sct_label_vertebrae

- "-t" was used for processes, now using -p

- "-seg" for segmentation file is now "-s"

- [x] sct_maths

- [x] sct_process_segmentation

- "-l" switched to "-vert" for vertebral levels

- "-s" replaced by "-size"

- No Parser

- [x] sct_propseg

- "-t" option is for contrast

- [x] sct_register_multimodal

- "-p" was used for params, now -param
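The "deprecated mode" requested above can be sketched roughly as follows. This is only an illustration built on Python's standard `argparse`, not the toolbox's own parser; the names `DeprecatedAlias`, `new_flag`, and `sct_example` are hypothetical. It shows one way an old flag such as `-p` could keep working while warning users to switch to `-param`.

```python
import argparse
import warnings


class DeprecatedAlias(argparse.Action):
    """Hypothetical action: accept an old flag, warn, and store under the new flag's dest."""

    def __init__(self, option_strings, dest, new_flag=None, **kwargs):
        self.new_flag = new_flag
        super().__init__(option_strings, dest, **kwargs)

    def __call__(self, parser, namespace, values, option_string=None):
        # Tell the user which flag replaces the one they typed.
        warnings.warn(
            f"{option_string} is deprecated; use {self.new_flag} instead",
            DeprecationWarning,
            stacklevel=2,
        )
        setattr(namespace, self.dest, values)


def get_parser():
    # "sct_example" is a placeholder script name, not a real toolbox script.
    parser = argparse.ArgumentParser(prog="sct_example")
    parser.add_argument("-param", dest="param", default=None,
                        help="Parameters (new flag name).")
    # The old short flag writes to the same destination and is hidden from --help.
    parser.add_argument("-p", dest="param", action=DeprecatedAlias,
                        new_flag="-param", help=argparse.SUPPRESS)
    return parser
```

With this layout, scripts that still pass `-p` keep running and see a warning, while new scripts use `-param` directly. Cases flagged above as "not deprecated" (where the old letter is reused for a different meaning) cannot be handled this way and still need a manual script update.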

|

1.0

|

harmonize flags - Please refer to: https://github.com/neuropoly/spinalcordtoolbox/wiki/flags

Important: if you change a flag, please use deprecated mode and indicate the change in this thread.

If it is not possible to use deprecated mode, please list potential conflicts for each file.

If the script has no parser, add one.

**Sara**

- [x] sct_apply_transfo

- "-c" changed to "-crop"

- [x] sct_average_data_within_mask

- added parser

- "-t" was used for the number of volumes to use if 4D. Changed it to "-nvol".

- [x] sct_check_atlas_integrity

- added parser

- "-m" was used for GM segmentation. Changed it to "-gm"

- "-t" was used for atlas threshold. Changed it to "-thr"

- "-g" was used for GM threshold. Changed it to "-thrgm" (to match with "-thr")

- [x] sct_check_dependences

- added parser

- "-l" was used for log file option. Changed it to "-log"

- [x] sct_compute_ernst_angle

- added get_parser() method.

- "-d" was used for display option. Changed it to "-v"

- [x] sct_compute_hausdorff_distance

- added get_parser() method.

- "-t" was used for thinning. Changed it to "-thinning"

- "-r" was used for second input (reference). Changed it to "-d"

- [x] sct_compute_mtr

- added parser

- changed "-i" and "-j" to "-mt0" and "-mt1" to be more explicit.

- [x] sct_concat_transfo

- added parser

- [x] sct_convert (already up to date with flags convention)

- [x] sct_segment_graymatter

- "-l" was for vertebral level file. Changed to "-vert"

- [x] sct_register_to_template

- '-p' replaced by '-param'

- [x] sct_resample

- added get_parser() function

- [x] sct_smooth_spinalcord

- added parser

- changed flag '-s' to '-smooth' **WARNING: -s is not deprecated to -smooth**

- changed "-c" (centerline or seg) to "-s"

- [x] sct_straighten_spinalcord

- changed flag '-p' to '-pad'

- changed flag '-params' to '-param'

- changed flag "-c" to "-s"

- [x] sct_testing

- added parser

- [x] sct_warp_template

- changed flag -o to -ofolder

**Simon**

- [x] sct_create_mask

- added parser

- "-m" was for method. Changed to "-p"

- "-s" was for size, changed for "-size"

- [x] sct_crop_image

- added get_parser function.

- [x] sct_dice_coefficient

- wrote a wrapper for the sct_dice_coefficient binary (now isct_dice...)

- input files now have flags -i and -d **WARNING: you will need to change this in your scripts; there were no flags before, so there is no deprecated option ...**

- [x] sct_dmri_compute_dti (already up to date with flags convention)

- [x] sct_dmri_concat_bvals (already up to date with flags convention)

- [x] sct_dmri_concat_bvecs (already up to date with flags convention)

- [x] sct_dmri_get_bvalue

- added parser

- [x] sct_dmri_moco

- added parser

- '-b' was for bvecs file, changed to '-bvec'

- '-p' was for param, changed to '-param'

- '-t' was for an otsu threshold, changed to '-thr'

- '-o' was for output folder, changed to '-ofolder'

**Benjamin**

- [x] sct_dmri_separate_b0_and_dwi

- "-m" was used for bvals. Changed it to "-bval".

- "-b" was used for bvecs. Changed it to "-bvec".

- "-o" was used for output folder. Changed it to "-ofolder".

- [x] sct_dmri_transpose_bvecs

- "-i" was used for bvec file. Changed it to "-bvec". "-i" is now deprecated.

- [x] sct_download_data

- [x] sct_extract_metric

- No parser.

- '-p' changed to '-param'

- [x] sct_flatten_sagittal

- No parser.

- "-c" changed to "-s"

- "-s" changed to "-x" **WARNING: -s is not deprecated to -x**

- [x] sct_fmri_compute_tsnr

- added get_parser function.

- [x] sct_fmri_moco

- No parser.

- '-p' changed to '-param'

- [x] sct_get_centerline

- "-t" option was used for contrast. It is now deprecated by "-c".

- "-p" option was used for a file containing a point. It is now deprecated by "-point".

**Olivier**

- [x] sct_image

- [x] sct_label_utils

- "-r" was used to reference an image. Now deprecated by "-ref"

- "-level" was used for vertebral level. Now deprecated by "-vert"

- "-t" --> changed for -p (julien)

- [x] sct_label_vertebrae

- "-t" was used for processes, now using -p

- "-seg" for segmentation file is now "-s"

- [x] sct_maths

- [x] sct_process_segmentation

- "-l" switched to "-vert" for vertebral levels

- "-s" replaced by "-size"

- No Parser

- [x] sct_propseg

- "-t" option is for contrast

- [x] sct_register_multimodal

- "-p" was used for params, now -param

|

priority

|

harmonize flags please refer to important if you make change to a flag please use deprecated mode and indicate in this thread if not possible to use deprecated please list potential conflicts for each file if no parser in the script add it sara sct apply transfo c changed to crop sct average data within mask added parser t was used to number of volume to use if changed it to nvol sct check atlas integrity added parser m was used for gm segmentation changed it to gm t was use for atlas threshold changed it to thr g was use for gm threshold changed it to thrgm to match with thr sct check dependences added parser l was used for log file option changed it to log sct compute ernst angle added get parser method d was used for display option changed it to v sct compute hausdorff distance added get parser method t was used for thinning changed it to thinning r was used for second input reference changed it to d sct compute mtr added parser changed i and j to and to be more explicit sct concat transfo added parser sct convert already up to date with flags convention sct segment graymatter l was for vertebral level file changed to vert sct register to template p replaced by param sct resample added get parser function sct smooth spinalcord added parser changed flag s to smooth warning s is not deprecated to smooth changed c centerline or seg to s sct straighten spinalcord changed flag p to pad changed flag params to param changed flag c to s sct testing added parser sct warp template changed flag o to ofolder simon sct create mask added parser m was for method changed to p s was for size changed for size sct crop image added get parser function sct dice coefficient did a warper for the sct dice coefficient binary now isct dice input files now have flags i and d warning you will need to change this in your scripts there was no flags before so there is no deprecated option sct dmri compute dti already up to date with flags convention sct dmri concat bvals already up to date 
with flags convention sct dmri concat bvecs already up to date with flags convention sct dmri get bvalue added parser sct dmri moco added parser b was for bvecs file changed to bvec p was for param changed to param t was for an otsu threshold changed to thr o was for output folder changed to ofolder benjamin sct dmri separate and dwi m was used for bvals changed it to bval b was used for bvecs changed it to bvec o was used for output folder changed it to ofolder sct dmri transpose bvecs i was used for bvec file changed it to bvec i is now deprecated sct download data sct extract metric no parser p changed to param sct flatten sagittal no parser c changed to s s changed to x warning s is not depredated to x sct fmri compute tsnr added get parser function sct fmri moco no parser p changed to param sct get centerline t option was used for contrast it is now deprecated by c p option was used for a file containing a point it is now deprecated by point olivier sct image sct label utils r was used to reference an image now deprecated by ref level was used for vertebral level now deprecated by vert t changed for p julien sct label vertebrae t was used for processes now using p seg for segmentation file is now s sct maths sct process segmentation l switched to vert for vertebral levels s replaced by size no parser sct propseg t option is for contrast sct register multimodal p was used for params now param

| 1

|

653,369

| 21,580,637,830

|

IssuesEvent

|

2022-05-02 18:19:22

|

WordPress/openverse-frontend

|

https://api.github.com/repos/WordPress/openverse-frontend

|

opened

|

Cannot close global audio player after clicking on the waveform

|

🟧 priority: high 🚦 status: awaiting triage 🛠 goal: fix 🕹 aspect: interface

|

## Description

<!-- Concisely describe the bug. Compare your experience with what you expected to happen. -->

<!-- For example: "I clicked the 'submit' button and instead of seeing a thank you message, I saw a blank page." -->

The global audio player's close button becomes non-interactive after you click on the waveform.

## Reproduction

<!-- Provide detailed steps to reproduce the bug. -->

1. <!-- Step 1 ... -->Open the global audio player.

2. <!-- Step 2 ... -->Click on the waveform.

3. <!-- Step 3 ... -->Try closing the global audio player by clicking on the x button. It doesn't work.

## Screenshots

<!-- Add screenshots to show the problem; or delete the section entirely. -->

## Environment

<!-- Please complete this, unless you are certain the problem is not environment specific. -->

- Device: <!-- (_eg._ iPhone Xs; laptop) -->

- OS: <!-- (_eg._ iOS 13.5; Fedora 32) -->

- Browser: <!-- (_eg._ Safari; Firefox) -->

- Version: <!-- (_eg._ 13; 73) -->

- Other info: <!-- (_eg._ display resolution, ease-of-access settings) -->

## Additional context

<!-- Add any other context about the problem here; or delete the section entirely. -->

## Resolution

<!-- Replace the [ ] with [x] to check the box. -->

- [ ] 🙋 I would be interested in resolving this bug.

|

1.0

|

Cannot close global audio player after clicking on the waveform - ## Description

<!-- Concisely describe the bug. Compare your experience with what you expected to happen. -->

<!-- For example: "I clicked the 'submit' button and instead of seeing a thank you message, I saw a blank page." -->

The global audio player's close button becomes non-interactive after you click on the waveform.

## Reproduction

<!-- Provide detailed steps to reproduce the bug. -->

1. <!-- Step 1 ... -->Open the global audio player.

2. <!-- Step 2 ... -->Click on the waveform.

3. <!-- Step 3 ... -->Try closing the global audio player by clicking on the x button. It doesn't work.

## Screenshots

<!-- Add screenshots to show the problem; or delete the section entirely. -->

## Environment

<!-- Please complete this, unless you are certain the problem is not environment specific. -->

- Device: <!-- (_eg._ iPhone Xs; laptop) -->

- OS: <!-- (_eg._ iOS 13.5; Fedora 32) -->

- Browser: <!-- (_eg._ Safari; Firefox) -->

- Version: <!-- (_eg._ 13; 73) -->

- Other info: <!-- (_eg._ display resolution, ease-of-access settings) -->

## Additional context

<!-- Add any other context about the problem here; or delete the section entirely. -->

## Resolution

<!-- Replace the [ ] with [x] to check the box. -->

- [ ] 🙋 I would be interested in resolving this bug.

|

priority

|

cannot close global audio player after clicking on the waveform description global audio player close button becomes not interactive after you ve clicked on the waveform reproduction open the global audio player click on the waveform try closing the global audio player by clicking on the x button it doesn t work screenshots environment device os browser version other info additional context resolution 🙋 i would be interested in resolving this bug

| 1

|

634,122

| 20,326,542,082

|

IssuesEvent

|

2022-02-18 06:27:08

|

avneesh0612/react-nextjs-snippets

|

https://api.github.com/repos/avneesh0612/react-nextjs-snippets

|

closed

|

[FEATURE] Add imports and hooks to Typescript in React

|

good first issue 🕹 aspect: interface 🏁 status: ready for dev ⭐ goal: addition 🟧 priority: high

|

### Description

The React imports and hooks don't work in TypeScript `tsx` files, so we just need to add these imports to the TS snippets JSON and add types where needed.

### Screenshots

_No response_

### Additional information

_No response_

|

1.0

|

[FEATURE] Add imports and hooks to Typescript in React - ### Description

The React imports and hooks don't work in TypeScript `tsx` files, so we just need to add these imports to the TS snippets JSON and add types where needed.

### Screenshots

_No response_

### Additional information

_No response_

|

priority

|

add imports and hooks to typescript in react description the react imports and hooks don t work in typescript tsx files so we just need to add these imports in ts snippets json and add types if and where needed screenshots no response additional information no response

| 1

|

229,829

| 7,595,693,532

|

IssuesEvent

|

2018-04-27 06:52:53

|

wso2/product-is

|

https://api.github.com/repos/wso2/product-is

|

opened

|

Setting local claims for federated users is not handled

|

Component/Auth Framework Priority/High Type/Bug

|

When calling user.localClaims["<claim_uri>"] = "value"; this should check for the remote claim from dialect or claim mapping and set the corresponding claim value.
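The requested behavior might look something like the sketch below. It is written in Python purely for illustration and does not use any real identity-server API; `set_local_claim`, `claim_mapping`, and the example claim URIs are all hypothetical. The idea shown is only that a write to a local claim is translated through the claim mapping and lands on the corresponding remote claim.

```python
from typing import Dict


def set_local_claim(
    remote_claims: Dict[str, str],
    claim_mapping: Dict[str, str],
    local_uri: str,
    value: str,
) -> None:
    """Hypothetical helper: set a federated user's claim via its local claim URI.

    claim_mapping maps local claim URIs to remote claim URIs.
    """
    remote_uri = claim_mapping.get(local_uri)
    if remote_uri is None:
        # No mapping configured: surface the problem instead of silently dropping the value.
        raise KeyError(f"no remote claim mapped to local claim {local_uri!r}")
    remote_claims[remote_uri] = value


# Usage with made-up URIs.
mapping = {"http://example.org/claims/local/email": "remote_email"}
claims: Dict[str, str] = {}
set_local_claim(claims, mapping, "http://example.org/claims/local/email", "user@example.com")
```

Whether an unmapped claim should raise, be ignored, or be stored locally is a design decision the issue leaves open; raising is just the choice made in this sketch.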

|

1.0

|

Setting local claims for federated users is not handled - When calling user.localClaims["<claim_uri>"] = "value"; this should check for the remote claim from dialect or claim mapping and set the corresponding claim value.

|

priority

|

setting local claims for federated users is not handled when calling user localclaims value this should check for the remote claim from dialect or claim mapping and set the corresponding claim value

| 1

|

328,474

| 9,995,182,280

|

IssuesEvent

|

2019-07-11 19:35:21

|

rstudio/gt

|

https://api.github.com/repos/rstudio/gt

|

closed

|

Rename the `cells_styles()` function as `cell_text()`; create `cell_fill()` fcn

|

Difficulty: ① Novice Effort: ① Low Priority: ③ High Type: ★ Enhancement

|

Rename the `cells_styles()` function as `cell_text()`. This helper function should only be concerned with cell text so all `text_*` args should be renamed to lose the `text_` part.

|

1.0

|

Rename the `cells_styles()` function as `cell_text()`; create `cell_fill()` fcn - Rename the `cells_styles()` function as `cell_text()`. This helper function should only be concerned with cell text so all `text_*` args should be renamed to lose the `text_` part.

|

priority

|

rename the cells styles function as cell text create cell fill fcn rename the cells styles function as cell text this helper function should only be concerned with cell text so all text args should be renamed to lose the text part

| 1

|

482,488

| 13,907,692,671

|

IssuesEvent

|

2020-10-20 12:56:28

|

ctm/mb2-doc

|

https://api.github.com/repos/ctm/mb2-doc

|

closed

|

Mexican poker log strings are incorrect

|

chore easy high priority

|

When up and down are chosen in Mexican poker, the text that gets printed talks about discarding. Ugh.

That may be a little tricky to solve cleanly, but I can almost definitely hack in something that's better even if it's not a general solution.

|

1.0

|

Mexican poker log strings are incorrect - When up and down are chosen in Mexican poker, the text that gets printed talks about discarding. Ugh.

That may be a little tricky to solve cleanly, but I can almost definitely hack in something that's better even if it's not a general solution.

|

priority

|

mexican poker log strings are incorrect when up and down are chosen in mexican poker the text that gets printed talks about discarding ugh that may be a little tricky to solve cleanly but i can almost definitely hack in something that s better even if it s not a general solution

| 1

|

615,216

| 19,250,014,456

|

IssuesEvent

|

2021-12-09 03:17:19

|

matrixorigin/matrixone

|

https://api.github.com/repos/matrixorigin/matrixone

|

opened

|

add AOE RFC documents

|

component/aoe priority/high kind/feature severity/critical

|

1. Overall architecture

2. WAL

3. Buffer manager

4. Metadata

5. Data and index management

6. MVCC

7. Logstore

|

1.0

|

add AOE RFC documents - 1. Overall architecture

2. WAL

3. Buffer manager

4. Metadata

5. Data and index management

6. MVCC

7. Logstore

|

priority

|

add aoe rfc documents overall architecture wal buffer manager metadata data and index management mvcc logstore

| 1

|

591,959

| 17,866,597,354

|

IssuesEvent

|

2021-09-06 10:10:54

|

ballerina-platform/ballerina-lang

|

https://api.github.com/repos/ballerina-platform/ballerina-lang

|

closed

|

Add an API for finding symbol references in a given document only

|

Type/Improvement Priority/High Team/CompilerFETools Points/4 Area/SemanticAPI SwanLakeDump

|

**Description:**

Currently, to find references we access all the constructs in a given BLangPackage; in a larger project, that means iterating through hundreds of constructs in a single run.

Since we use the references API to implement the semantic token feature, we also spend cycles finding references in unnecessary documents and related constructs. By exposing an API that captures the references in a given document, we can improve performance.

**Describe your solution(s)**

Introduce an API to find the references in a single document

|

1.0

|

Add an API for finding symbol references in a given document only - **Description:**

Currently, to find references we access all the constructs in a given BLangPackage; in a larger project, that means iterating through hundreds of constructs in a single run.

Since we use the references API to implement the semantic token feature, we also spend cycles finding references in unnecessary documents and related constructs. By exposing an API that captures the references in a given document, we can improve performance.

**Describe your solution(s)**

Introduce an API to find the references in a single document

|

priority

|

add an api for finding symbol references in a given document only description currently in order to find the references we access all the constructs in a given blangpackage and when it comes to a larger project we iterate through around hundreds of constructs in a single run since we use the references api to implement the semantic token feature we spend cycles to find the references in unnecessary documents and related constructs as well exposing an api to capture the references in a given document we can improve the performance describe your solution s introduce an api to find the references in a single document

| 1

|

602,369

| 18,467,769,356

|

IssuesEvent

|

2021-10-17 07:22:29

|

SmallMolecules/small-molecules

|

https://api.github.com/repos/SmallMolecules/small-molecules

|

closed

|

Create particle object

|

Priority: High Size: Medium

|

Need to create a basic particle object following the mind map that was made earlier.

|

1.0

|

Create particle object - Need to create a basic particle object following the mind map that was made earlier.

|

priority

|

create particle object need to create a basic particle object following the mind map that was made earlier

| 1

|

209,937

| 7,181,373,248

|

IssuesEvent

|

2018-02-01 04:33:51

|

Mawerh/OOPP

|

https://api.github.com/repos/Mawerh/OOPP

|

closed

|

Function for weather api

|

Complexity High Priority High

|

As a person who is out most of the time, I wish there were some way for my windows to close and open based on the weather.

|

1.0

|

Function for weather api - As a person who is out most of the time, I wish there were some way for my windows to close and open based on the weather.

|

priority

|

function for weather api as a person who is out most of the time i wish for there to be some way my windows will close and open based on the weather

| 1

|

281,105

| 8,690,956,575

|

IssuesEvent

|

2018-12-03 23:15:56

|

zulip/zulip

|

https://api.github.com/repos/zulip/zulip

|

closed

|

send_email: Add support for passing in additional `To` addresses

|

area: emails area: refactoring help wanted in progress priority: high

|

For the recent "realm reactivation request" feature (https://github.com/zulip/zulip/pull/10816) and likely several similar features we may add in the future where we want to email all organization administrators, we ideally want to offer a `send_email` wrapper/variant, perhaps called `send_email_to_admins`, that takes a realm and sends the provided email to all the organization administrators of the realm in a single email (so that, e.g., those users replying to each other works well).
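A rough sketch of the "one email, several `To` addresses" idea follows. It deliberately avoids the project's real models and `send_email` signature, which are not shown here; `User`, `Realm`, and `build_email_to_admins` are hypothetical stand-ins, and only the standard library's `email.message.EmailMessage` is used to build the message.

```python
from dataclasses import dataclass, field
from email.message import EmailMessage
from typing import List


@dataclass
class User:  # hypothetical stand-in for a user record
    email: str
    is_admin: bool = False


@dataclass
class Realm:  # hypothetical stand-in for an organization
    name: str
    users: List[User] = field(default_factory=list)


def build_email_to_admins(realm: Realm, subject: str, body: str,
                          from_address: str) -> EmailMessage:
    """Build ONE message addressed to every administrator of the realm."""
    admin_emails = [u.email for u in realm.users if u.is_admin]
    if not admin_emails:
        raise ValueError(f"realm {realm.name!r} has no administrators")
    msg = EmailMessage()
    msg["From"] = from_address
    # A single To header listing all admins, so reply-all reaches everyone.
    msg["To"] = ", ".join(admin_emails)
    msg["Subject"] = subject
    msg.set_content(body)
    return msg


# Usage with made-up addresses.
realm = Realm("example", [
    User("alice@example.com", is_admin=True),
    User("bob@example.com", is_admin=True),
    User("carol@example.com"),
])
message = build_email_to_admins(realm, "Reactivate your organization",
                                "Follow the link to reactivate.",
                                "noreply@example.com")
```

Putting every administrator in the same `To` header, rather than sending one copy per admin, is what lets the recipients reply to each other, which is the property the issue asks for.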

|

1.0

|

send_email: Add support for passing in additional `To` addresses - For the recent "realm reactivation request" feature (https://github.com/zulip/zulip/pull/10816) and likely several similar features we may add in the future where we want to email all organization administrators, we ideally want to offer a `send_email` wrapper/variant, perhaps called `send_email_to_admins`, that takes a realm and sends the provided email to all the organization administrators of the realm in a single email (so that, e.g., those users replying to each other works well).

|

priority

|

send email add support for passing in additional to addresses for the recent realm reactivation request feature and likely several similar features we may add in the future where we want to email all organization administrators we ideally want to off a send email wrapper variant called maybe send email to admins that take a realm and sends the provided email to all the organization administrators of the realm but in a single email which means that e g those users replying to each other works in a good way

| 1

|

797,869

| 28,182,081,430

|

IssuesEvent

|

2023-04-04 03:56:36

|

Snapmaker/Luban

|

https://api.github.com/repos/Snapmaker/Luban

|

closed

|

Bug: Variable line width skeleton production error due to polygon problem

|

Type: Bug/Bug Fix Priority: High Software: Slicer

|

## Description

This is a slicing engine problem.

In the slicing of the above model there is a certain possibility of slicing failure at different layer thickness parameters. This issue was also found in Cura Engine and was not fully resolved in 5.3.

|

1.0

|

Bug: Variable line width skeleton production error due to polygon problem - ## Description

This is a slicing engine problem.

When slicing the above model, slicing can fail at some layer thickness settings. This issue was also found in Cura Engine and was not fully resolved in 5.3.

|

priority

|

bug variable line width skeleton production error due to polygon problem description this is a slicing engine problem in the slicing of the above model there is a certain possibility of slicing failure at different layer thickness parameters this issue was also found in cura engine and was not fully resolved in

| 1

|

276,649

| 8,607,013,169

|

IssuesEvent

|

2018-11-17 17:58:31

|

josephroqueca/bowling-companion

|

https://api.github.com/repos/josephroqueca/bowling-companion

|

opened

|

Update Kotlin to 1.3

|

high priority

|

A new stable release of Kotlin, with coroutines hitting version 1.0.0, means this will need more than a simple version bump.

|

1.0

|

Update Kotlin to 1.3 - A new stable release of Kotlin, with coroutines hitting version 1.0.0, means this will need more than a simple version bump.

|

priority

|

update kotlin to new stable release of kotlin with coroutines hitting version means this will need more than just a simple version bump

| 1

|

196,479

| 6,928,382,417

|

IssuesEvent

|

2017-12-01 04:29:42

|

craftercms/craftercms

|

https://api.github.com/repos/craftercms/craftercms

|

closed

|

[studio-ui] Insert component, layout and stub RTE dropdowns wrap early and do not allow scroll

|

bug priority: high

|

### Expected behavior

* Dropdown should resize the width to match the text.

* Dropdown should be scrollable after it reaches 80% of the length of the window.

### Actual behavior

### Steps to reproduce the problem

* Configure a lot of components to the RTE insert stubs

* Open RTE

Applies to 2.x and 3.x

|

1.0

|

[studio-ui] Insert component, layout and stub RTE dropdowns wrap early and do not allow scroll - ### Expected behavior

* Dropdown should resize the width to match the text.

* Dropdown should be scrollable after it reaches 80% of the length of the window.

### Actual behavior

### Steps to reproduce the problem

* Configure a lot of components to the RTE insert stubs

* Open RTE

Applies to 2.x and 3.x

|

priority

|

insert component layout and stub rte dropdowns wrap early and do not allow scroll expected behavior dropdown should resize the width to match the text dropdown should be scrollable after it reaches of the length of the window actual behavior steps to reproduce the problem configure a lot of components to the rte insert stubs open rte applies to x and x

| 1

|

514,911

| 14,946,811,036

|

IssuesEvent

|

2021-01-26 07:33:26

|

ProjectSidewalk/SidewalkWebpage

|

https://api.github.com/repos/ProjectSidewalk/SidewalkWebpage

|

closed

|

Sidewalk Gallery: Cards in "All" should be shuffled randomly

|

Priority: High bug sidewalkgallery

|

Currently, while the cards of each label type are chosen randomly, they appear on the "All" page in a grouped order (i.e., Others, Occlusions, No Sidewalk...). This is not ideal and we probably should shuffle them more.

A quick fix is just to shuffle the list of labels queried in the `getAssortedLabels()` method in `LabelTable.scala` before returning.
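
The quick fix can be sketched as follows. This is an illustration in Python, not the project's Scala code: `shuffled_labels` and its parameters are hypothetical names, and the input is assumed to be the per-type groups of labels that were already chosen at random. In Scala, the equivalent step would presumably be a call to `scala.util.Random.shuffle` on the list before it is returned.

```python
import random
from typing import List, Optional, Sequence, TypeVar

T = TypeVar("T")


def shuffled_labels(
    grouped_labels: Sequence[Sequence[T]],
    rng: Optional[random.Random] = None,
) -> List[T]:
    """Flatten per-type label groups and shuffle them into one mixed list."""
    rng = rng or random.Random()
    # Concatenating the groups reproduces the grouped order described above.
    flat = [label for group in grouped_labels for label in group]
    # Shuffle in place so label types are interleaved on the "All" page.
    rng.shuffle(flat)
    return flat
```

Passing a seeded `random.Random` makes the order reproducible, which is convenient in tests; production code would normally omit it.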

|

1.0

|

Sidewalk Gallery: Cards in "All" should be shuffled randomly - Currently, while the cards of each label type are chosen randomly, they appear on the "All" page in a grouped order (i.e., Others, Occlusions, No Sidewalk...). This is not ideal and we probably should shuffle them more.

A quick fix is just to shuffle the list of labels queried in the `getAssortedLabels()` method in `LabelTable.scala` before returning.

|

priority

|

sidewalk gallery cards in all should be shuffled randomly currently while the cards of each label type are chosen randomly they appear on the all page in a grouped order i e others occlusions no sidewalk this is not ideal and we probably should shuffle them more a quick fix is just to shuffle the list of labels queried in the getassortedlabels method in labeltable scala before returning

| 1

|

150,959

| 5,794,236,336

|

IssuesEvent

|

2017-05-02 14:29:56

|

GSA/fpki-guides

|

https://api.github.com/repos/GSA/fpki-guides

|

closed

|

Trust Store Management Guide

|

Audience - Engineers general overview Priority - High

|

@dasgituser (Dave Silver) and @tkpk (Giuseppe Cimmino) are converting the FPKI Management Authority's Trust Store Management Guide to a playbook. The Federal Public Key Infrastructure Management Authority designed and created the Trust Store Management Guide as an education resource for Department, Agency, corporate, and other organizational system level administrators and managers who use the Federal Public Key Infrastructure (FPKI) as part of regular business practices.

|

1.0

|

Trust Store Management Guide - @dasgituser (Dave Silver) and @tkpk (Giuseppe Cimmino) are converting the FPKI Management Authority's Trust Store Management Guide to a playbook. The Federal Public Key Infrastructure Management Authority designed and created the Trust Store Management Guide as an education resource for Department, Agency, corporate, and other organizational system level administrators and managers who use the Federal Public Key Infrastructure (FPKI) as part of regular business practices.

|

priority

|

trust store management guide dasgituser dave silver and tkpk giuseppe cimmino are converting the fpki management authority s trust store management guide to a playbook the federal public key infrastructure management authority designed and created the trust store management guide as an education resource for department agency corporate and other organizational system level administrators and managers who use the federal public key infrastructure fpki as part of regular business practices

| 1

|

773,108

| 27,146,514,137

|

IssuesEvent

|

2023-02-16 20:24:18

|

IDAES/idaes-pse

|

https://api.github.com/repos/IDAES/idaes-pse

|

closed

|

Cubic EoS model fails to initialize at high pressures.

|

Priority:High

|

An external user brought a use case to my attention where the Cubic EoS model failed to converge at high pressures.

The issue appears to be that the deviation between the ideal initial guess and the actual solution for bubble temperature is large enough to cause the solver to fail (scaling might also be an issue).

Test case files attached.

[C1C2C3_CEOS.txt](https://github.com/IDAES/idaes-dev/files/5189179/C1C2C3_CEOS.txt)

[mainfile_test_cubic_eos_PR.txt](https://github.com/IDAES/idaes-dev/files/5189180/mainfile_test_cubic_eos_PR.txt)

|

1.0

|

Cubic EoS model fails to initialize at high pressures. - An external user brought a use case to my attention where the Cubic EoS model failed to converge at high pressures.