Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 855 | labels stringlengths 4 721 | body stringlengths 1 261k | index stringclasses 13 values | text_combine stringlengths 96 261k | label stringclasses 2 values | text stringlengths 96 240k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

608,013 | 18,796,008,366 | IssuesEvent | 2021-11-08 22:25:43 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | opened | 1D convolution is broken for mkldnn tensors | high priority module: nn module: convolution module: mkldnn module: correctness (silent) | ## 🐛 Bug

1D convolution is broken for mkldnn tensors and a badly-worded error is thrown for this case.

## To Reproduce

```python

import torch

input = torch.randn(2, 3, 10).to_mkldnn()

weight = torch.randn(3, 3, 3).to_mkldnn()

bias = torch.randn(3).to_mkldnn()

output = torch.nn.functional.conv1d(input, weight, bias)

```

```

RuntimeError: opaque tensors do not have strides

```

## Expected behavior

Either the correct output is returned for mkldnn tensor inputs or a proper error is thrown indicating the lack of support for 1D convolution with mkldnn tensors.

## Additional Context

The problem occurs when trying to view 1D spatial input / weight as 2D. This is done for other backends (e.g. cuDNN) that don't support 1D spatial input directly. However, the [mkldnn convolution docs](https://oneapi-src.github.io/oneDNN/v1.0/dev_guide_convolution.html) indicate that 1D spatial inputs are supported directly, so it should be an easy fix to avoid the view for the mkldnn case.
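To make "viewing 1D spatial input / weight as 2D" concrete, here is a minimal pure-Python sketch. It is not PyTorch code: the helper names `conv1d_ref`, `conv2d_ref`, `view_1d_as_2d` and `conv1d_via_2d` are invented for this illustration, and it assumes a single sample, no bias, stride 1 and no padding. It shows that a 1D convolution gives the same result as a 2D convolution once a height-1 axis is inserted, which is the kind of view that fails for opaque mkldnn tensors.

```python
def conv1d_ref(x, w):
    """Cross-correlate x[c_in][length] with w[c_out][c_in][k] (stride 1, no padding)."""
    c_in, length = len(x), len(x[0])
    k = len(w[0][0])
    return [
        [
            sum(x[c][i + j] * w_o[c][j] for c in range(c_in) for j in range(k))
            for i in range(length - k + 1)
        ]
        for w_o in w
    ]


def conv2d_ref(x, w):
    """Cross-correlate x[c_in][h][width] with w[c_out][c_in][kh][kw] (stride 1, no padding)."""
    c_in, h, width = len(x), len(x[0]), len(x[0][0])
    kh, kw = len(w[0][0]), len(w[0][0][0])
    return [
        [
            [
                sum(
                    x[c][r + a][i + b] * w_o[c][a][b]
                    for c in range(c_in)
                    for a in range(kh)
                    for b in range(kw)
                )
                for i in range(width - kw + 1)
            ]
            for r in range(h - kh + 1)
        ]
        for w_o in w
    ]


def view_1d_as_2d(t):
    """Insert a height-1 axis before the last axis: [n][m] -> [n][1][m]."""
    return [[row] for row in t]


def conv1d_via_2d(x, w):
    """Compute a 1D convolution by routing it through the 2D implementation."""
    x2d = view_1d_as_2d(x)                    # [c_in][1][length]
    w2d = [view_1d_as_2d(w_o) for w_o in w]   # [c_out][c_in][1][k]
    out2d = conv2d_ref(x2d, w2d)              # [c_out][1][out_len]
    return [plane[0] for plane in out2d]      # drop the height-1 axis again


x = [[1.0, 2.0, 3.0, 4.0], [0.0, 1.0, 0.0, 1.0]]   # 2 input channels, length 4
w = [[[1.0, 0.0, -1.0], [2.0, 2.0, 2.0]]]           # 1 output channel, kernel size 3
direct = conv1d_ref(x, w)
via_2d = conv1d_via_2d(x, w)
```

Both paths produce the same values here, so a backend that supports 1D spatial inputs natively can skip the view without changing results.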

https://github.com/pytorch/pytorch/blob/a1d733ae8ca4bbad8fa22a4a532b67915114ae93/aten/src/ATen/native/Convolution.cpp#L889-L897 | 1.0 | 1D convolution is broken for mkldnn tensors - ## 🐛 Bug

1D convolution is broken for mkldnn tensors and a badly-worded error is thrown for this case.

## To Reproduce

```python

import torch

input = torch.randn(2, 3, 10).to_mkldnn()

weight = torch.randn(3, 3, 3).to_mkldnn()

bias = torch.randn(3).to_mkldnn()

output = torch.nn.functional.conv1d(input, weight, bias)

```

```

RuntimeError: opaque tensors do not have strides

```

## Expected behavior

Either the correct output is returned for mkldnn tensor inputs or a proper error is thrown indicating the lack of support for 1D convolution with mkldnn tensors.

## Additional Context

The problem occurs when trying to view 1D spatial input / weight as 2D. This is done for other backends (e.g. cuDNN) that don't support 1D spatial input directly. However, the [mkldnn convolution docs](https://oneapi-src.github.io/oneDNN/v1.0/dev_guide_convolution.html) indicate that 1D spatial inputs are supported directly, so it should be an easy fix to avoid the view for the mkldnn case.

https://github.com/pytorch/pytorch/blob/a1d733ae8ca4bbad8fa22a4a532b67915114ae93/aten/src/ATen/native/Convolution.cpp#L889-L897 | priority | convolution is broken for mkldnn tensors 🐛 bug convolution is broken for mkldnn tensors and a badly worded error is thrown for this case to reproduce python import torch input torch randn to mkldnn weight torch randn to mkldnn bias torch randn to mkldnn output torch nn functional input weight bias runtimeerror opaque tensors do not have strides expected behavior either the correct output is returned for mkldnn tensor inputs or a proper error is thrown indicating the lack of support for convolution with mkldnn tensors additional context the problem occurs when trying to view spatial input weight as this is done for other backends e g cudnn that don t support spatial input directly however the indicate that spatial inputs are supported directly so it should be an easy fix to avoid the view for the mkldnn case | 1 |

690,214 | 23,650,668,131 | IssuesEvent | 2022-08-26 06:12:33 | zulip/zulip | https://api.github.com/repos/zulip/zulip | closed | Add a Drafts tab for drafts in current narrow | help wanted area: compose priority: high | As [discussed on CZO](https://chat.zulip.org/#narrow/stream/101-design/topic/save.20and.20clear.20button.20design/near/1359699), it would be helpful to be able to view drafts addressed to the current narrow, especially in light of #18555.

To address this, we should make a tabbed drafts UI with the following tabs:

* **This conversation**: Drafts for the current narrow, i.e. the compose box narrow if compose box is open, or otherwise the narrow that `r` would refer to.

* **All**: Same as the drafts view we have today.

We should try being smart about which tab to show when the user opens drafts:

- Open the "All" tab and disable the "This conversation" tab when there are no drafts in the current context.

- Open the "This conversation" tab from the compose box Drafts link.

- (perhaps) Open the "All" tab from the left sidebar link, or maybe make it dependent on whether the compose box is open or closed. We'll need to experiment here.
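One possible reading of these rules, as a small Python sketch rather than actual Zulip code. The function name `choose_drafts_tab` and the labels `"compose_box"` / `"left_sidebar"` are hypothetical. It also assumes that the "no drafts in the current context" rule takes precedence, and that the left sidebar always opens "All", which is exactly the part that still needs experimenting.

```python
def choose_drafts_tab(opened_from, drafts_in_current_narrow):
    """Return (tab_to_open, this_conversation_enabled).

    opened_from: "compose_box" or "left_sidebar" (hypothetical labels).
    drafts_in_current_narrow: number of drafts addressed to the current narrow.
    """
    # No drafts for the current narrow: show "All" and disable the other tab.
    if drafts_in_current_narrow == 0:
        return ("all", False)
    # The compose box Drafts link opens the narrow-specific tab.
    if opened_from == "compose_box":
        return ("this_conversation", True)
    # Assumption: every other entry point (e.g. the left sidebar) opens "All".
    return ("all", True)
```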

#20971 should be implemented as a "Scheduled" tab under Drafts. We can implement the "Scheduled" tab and the "This conversation" tab in either order.

| 1.0 | Add a Drafts tab for drafts in current narrow - As [discussed on CZO](https://chat.zulip.org/#narrow/stream/101-design/topic/save.20and.20clear.20button.20design/near/1359699), it would be helpful to be able to view drafts addressed to the current narrow, especially in light of #18555.

To address this, we should make a tabbed drafts UI with the following tabs:

* **This conversation**: Drafts for the current narrow, i.e. the compose box narrow if compose box is open, or otherwise the narrow that `r` would refer to.

* **All**: Same as the drafts view we have today.

We should try being smart about which tab to show when the user opens drafts:

- Open the "All" tab and disable the "This conversation" tab when there are no drafts in the current context.

- Open the "This conversation" tab from the compose box Drafts link.

- (perhaps) Open the "All" tab from the left sidebar link, or maybe make it dependent on whether the compose box is open or closed. We'll need to experiment here.

#20971 should be implemented as a "Scheduled" tab under Drafts. We can implement the "Scheduled" tab and the "This conversation" tab in either order.

| priority | add a drafts tab for drafts in current narrow as it would be helpful to be able to view drafts addressed to the current narrow especially in light of to address this we should make a tabbed drafts ui with the following tabs this conversation drafts for the current narrow i e the compose box narrow if compose box is open or otherwise the narrow that r would refer to all same as the drafts view we have today we should try being smart about which tab to show when the user opens drafts open the all tab and disable the this conversation tab when there are no drafts in the current context open the this conversation tab from the compose box drafts link perhaps open the all tab from the left sidebar link or maybe make it dependent on whether the compose box is open of closed we ll need to experiment here should be implemented as a scheduled tab under drafts we can implement the scheduled tab and the this conversation tab in either order | 1 |

75,788 | 3,475,865,732 | IssuesEvent | 2015-12-26 06:24:58 | speedovation/kiwi | https://api.github.com/repos/speedovation/kiwi | closed | Php Heredoc and Nowdoc support | 3 - Done High Priority | Add support for

Heredoc and Nowdoc with proper syntax highlighting

* HTML

* CSS

* JS

* SQL


| 1.0 | Php Heredoc and Nowdoc support - Add support for

Heredoc and Nowdoc with proper syntax highlighting

* HTML

* CSS

* JS

* SQL


| priority | php heredoc and nowdoc support add support for heredoc and nowodc with proper syntax highlighting html css js sql huboard milestone order order custom state | 1 |

307,573 | 9,418,850,975 | IssuesEvent | 2019-04-10 20:19:04 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | Object of type 'Eco.Gameplay.Items.AuthorizationInventory' cannot be converted to type 'Eco.Gameplay.Items.ItemStack | Fixed High Priority Push Candidate |

[log.txt](https://github.com/StrangeLoopGames/EcoIssues/files/2973960/log.txt)

Got this twice when trying to drag stuff out of a stockpile at the same time as someone else, 0.8.0.7

Server encountered an exception:

<size=60.00%>Exception: ArgumentException

Message:Object of type 'Eco.Gameplay.Items.AuthorizationInventory' cannot be converted to type 'Eco.Gameplay.Items.ItemStack'.

Source:mscorlib

System.ArgumentException: Object of type 'Eco.Gameplay.Items.AuthorizationInventory' cannot be converted to type 'Eco.Gameplay.Items.ItemStack'.

at System.RuntimeType.CheckValue (System.Object value, System.Reflection.Binder binder, System.Globalization.CultureInfo culture, System.Reflection.BindingFlags invokeAttr) [0x00071] in <2943701620b54f86b436d3ffad010412>:0

at System.Reflection.MonoMethod.ConvertValues (System.Reflection.Binder binder, System.Object[] args, System.Reflection.ParameterInfo[] pinfo, System.Globalization.CultureInfo culture, System.Reflection.BindingFlags invokeAttr) [0x00069] in <2943701620b54f86b436d3ffad010412>:0

at System.Reflection.MonoMethod.Invoke (System.Object obj, System.Reflection.BindingFlags invokeAttr, System.Reflection.Binder binder, System.Object[] parameters, System.Globalization.CultureInfo culture) [0x00011] in <2943701620b54f86b436d3ffad010412>:0

at System.Reflection.MethodBase.Invoke (System.Object obj, System.Object[] parameters) [0x00000] in <2943701620b54f86b436d3ffad010412>:0

at Eco.Shared.Networking.RPCManager.TryInvoke (Eco.Shared.Networking.INetClient client, System.Object target, System.String methodname, Eco.Shared.Serialization.BSONObject bsonArgs, System.Object& result) [0x00074] in <e2ef92c2851e48349a7bb563b39facd2>:0

at Eco.Shared.Networking.RPCManager.InvokeOn (Eco.Shared.Networking.INetClient client, Eco.Shared.Serialization.BSONObject bson, System.Object target, System.String methodname) [0x00056] in <e2ef92c2851e48349a7bb563b39facd2>:0

at Eco.Core.Controller.ControllerManager.HandleViewRPC (Eco.Shared.Networking.INetClient client, System.Int32 controllerID, System.String methodname, Eco.Shared.Serialization.BSONObject bson) [0x00007] in <e12b9c3fd01845b4ba12cb89bfab028b>:0

at Eco.Plugins.Networking.Client.ViewRPC (Eco.Shared.Networking.INetClient client, System.Int32 id, System.String methodname, Eco.Shared.Serialization.BSONObject bson) [0x00000] in <aad317c740ad4ca1b489827c0eec9b2f>:0

at (wrapper managed-to-native) System.Reflection.MonoMethod.InternalInvoke(System.Reflection.MonoMethod,object,object[],System.Exception&)

at System.Reflection.MonoMethod.Invoke (System.Object obj, System.Reflection.BindingFlags invokeAttr, System.Reflection.Binder binder, System.Object[] parameters, System.Globalization.CultureInfo culture) [0x0003b] in <2943701620b54f86b436d3ffad010412>:0</size>

| 1.0 | Object of type 'Eco.Gameplay.Items.AuthorizationInventory' cannot be converted to type 'Eco.Gameplay.Items.ItemStack -

[log.txt](https://github.com/StrangeLoopGames/EcoIssues/files/2973960/log.txt)

Got this twice when trying to drag stuff out of a stockpile at the same time as someone else, 0.8.0.7

Server encountered an exception:

<size=60.00%>Exception: ArgumentException

Message:Object of type 'Eco.Gameplay.Items.AuthorizationInventory' cannot be converted to type 'Eco.Gameplay.Items.ItemStack'.

Source:mscorlib

System.ArgumentException: Object of type 'Eco.Gameplay.Items.AuthorizationInventory' cannot be converted to type 'Eco.Gameplay.Items.ItemStack'.

at System.RuntimeType.CheckValue (System.Object value, System.Reflection.Binder binder, System.Globalization.CultureInfo culture, System.Reflection.BindingFlags invokeAttr) [0x00071] in <2943701620b54f86b436d3ffad010412>:0

at System.Reflection.MonoMethod.ConvertValues (System.Reflection.Binder binder, System.Object[] args, System.Reflection.ParameterInfo[] pinfo, System.Globalization.CultureInfo culture, System.Reflection.BindingFlags invokeAttr) [0x00069] in <2943701620b54f86b436d3ffad010412>:0

at System.Reflection.MonoMethod.Invoke (System.Object obj, System.Reflection.BindingFlags invokeAttr, System.Reflection.Binder binder, System.Object[] parameters, System.Globalization.CultureInfo culture) [0x00011] in <2943701620b54f86b436d3ffad010412>:0

at System.Reflection.MethodBase.Invoke (System.Object obj, System.Object[] parameters) [0x00000] in <2943701620b54f86b436d3ffad010412>:0

at Eco.Shared.Networking.RPCManager.TryInvoke (Eco.Shared.Networking.INetClient client, System.Object target, System.String methodname, Eco.Shared.Serialization.BSONObject bsonArgs, System.Object& result) [0x00074] in <e2ef92c2851e48349a7bb563b39facd2>:0

at Eco.Shared.Networking.RPCManager.InvokeOn (Eco.Shared.Networking.INetClient client, Eco.Shared.Serialization.BSONObject bson, System.Object target, System.String methodname) [0x00056] in <e2ef92c2851e48349a7bb563b39facd2>:0

at Eco.Core.Controller.ControllerManager.HandleViewRPC (Eco.Shared.Networking.INetClient client, System.Int32 controllerID, System.String methodname, Eco.Shared.Serialization.BSONObject bson) [0x00007] in <e12b9c3fd01845b4ba12cb89bfab028b>:0

at Eco.Plugins.Networking.Client.ViewRPC (Eco.Shared.Networking.INetClient client, System.Int32 id, System.String methodname, Eco.Shared.Serialization.BSONObject bson) [0x00000] in <aad317c740ad4ca1b489827c0eec9b2f>:0

at (wrapper managed-to-native) System.Reflection.MonoMethod.InternalInvoke(System.Reflection.MonoMethod,object,object[],System.Exception&)

at System.Reflection.MonoMethod.Invoke (System.Object obj, System.Reflection.BindingFlags invokeAttr, System.Reflection.Binder binder, System.Object[] parameters, System.Globalization.CultureInfo culture) [0x0003b] in <2943701620b54f86b436d3ffad010412>:0</size>

| priority | object of type eco gameplay items authorizationinventory cannot be converted to type eco gameplay items itemstack got this twice when trying to drag stuff out of a stockpile the same time as someone else server encountered an exception exception argumentexception message object of type eco gameplay items authorizationinventory cannot be converted to type eco gameplay items itemstack source mscorlib system argumentexception object of type eco gameplay items authorizationinventory cannot be converted to type eco gameplay items itemstack at system runtimetype checkvalue system object value system reflection binder binder system globalization cultureinfo culture system reflection bindingflags invokeattr in at system reflection monomethod convertvalues system reflection binder binder system object args system reflection parameterinfo pinfo system globalization cultureinfo culture system reflection bindingflags invokeattr in at system reflection monomethod invoke system object obj system reflection bindingflags invokeattr system reflection binder binder system object parameters system globalization cultureinfo culture in at system reflection methodbase invoke system object obj system object parameters in at eco shared networking rpcmanager tryinvoke eco shared networking inetclient client system object target system string methodname eco shared serialization bsonobject bsonargs system object result in at eco shared networking rpcmanager invokeon eco shared networking inetclient client eco shared serialization bsonobject bson system object target system string methodname in at eco core controller controllermanager handleviewrpc eco shared networking inetclient client system controllerid system string methodname eco shared serialization bsonobject bson in at eco plugins networking client viewrpc eco shared networking inetclient client system id system string methodname eco shared serialization bsonobject bson in at wrapper managed to native system reflection 
monomethod internalinvoke system reflection monomethod object object system exception at system reflection monomethod invoke system object obj system reflection bindingflags invokeattr system reflection binder binder system object parameters system globalization cultureinfo culture in | 1 |

550,913 | 16,134,488,209 | IssuesEvent | 2021-04-29 09:58:10 | sopra-fs21-group-26/client | https://api.github.com/repos/sopra-fs21-group-26/client | closed | Implement Leave Lobby | high priority task | <h2>Sub-Tasks:</h2>

- [x] CSS

- [x] Leave Button

~~- [ ] Admin can leave~~

- [x] Player can leave

<h2>Estimate: 2h</h2> | 1.0 | Implement Leave Lobby - <h2>Sub-Tasks:</h2>

- [x] CSS

- [x] Leave Button

~~- [ ] Admin can leave~~

- [x] Player can leave

<h2>Estimate: 2h</h2> | priority | implement leave lobby sub tasks css leave button admin can leave player can leave estimate | 1 |

297,308 | 9,166,899,843 | IssuesEvent | 2019-03-02 08:12:24 | Luca1152/gravity-box | https://api.github.com/repos/Luca1152/gravity-box | closed | Save/load functionality | Priority: High Status: In Progress Type: Enhancement | ## Description

The player should be able to save the maps they create in the level editor and load them back.

## Tasks

- [x] Define what a map file would look like

- [x] Add save to JSON functionality

- [x] Fix the id of map objects to be unique, and not 0

- [x] Add load from JSON functionality | 1.0 | Save/load functionality - ## Description

The player should be able to save the maps they create in the level editor and load them back.

## Tasks

- [x] Define what a map file would look like

- [x] Add save to JSON functionality

- [x] Fix the id of map objects to be unique, and not 0

- [x] Add load from JSON functionality | priority | save load functionality description the player should be able to save the maps he creates in the level editor but also load them back tasks define how a maps file would look like add save to json functionality fix the id of map objects to be unique and not add load from json functionality | 1 |

625,683 | 19,760,767,026 | IssuesEvent | 2022-01-16 11:27:24 | MattTheLegoman/RealmsInExile | https://api.github.com/repos/MattTheLegoman/RealmsInExile | closed | Finalise terrain and heightmap painting | priority: high mapping | Finalise terrain painting, particularly:

- Oases and variety in the Dune Sea

- Adding source hills for Harnen tributary streams

- Fixing terrain clipping in shallow seas (particularly near Tulwang)

- Water colour map (particularly visible line in south and river colouring) | 1.0 | Finalise terrain and heightmap painting - Finalise terrain painting, particularly:

- Oases and variety in the Dune Sea

- Adding source hills for Harnen tributary streams

- Fixing terrain clipping in shallow seas (particularly near Tulwang)

- Water colour map (particularly visible line in south and river colouring) | priority | finalise terrain and heightmap painting finalise terrain painting particularly oases and variety in the dune sea adding source hills for harnen tributary streams fixing terrain clipping in shallow seas particularly near tulwang water colour map particularly visible line in south and river colouring | 1 |

399,137 | 11,743,465,076 | IssuesEvent | 2020-03-12 04:37:03 | AY1920S2-CS2103T-F10-2/main | https://api.github.com/repos/AY1920S2-CS2103T-F10-2/main | opened | As a user I want to tag each application with a status | priority.High type.Story | ... so that I can track my internship application phase | 1.0 | As a user I want to tag each application with a status - ... so that I can track my internship application phase | priority | as a user i want to tag each application with a status so that i can track my internship application phase | 1 |

142,843 | 5,477,929,740 | IssuesEvent | 2017-03-12 13:36:55 | CS2103JAN2017-T15-B1/main | https://api.github.com/repos/CS2103JAN2017-T15-B1/main | closed | Update PersonCard so that it shows task fields and not person fields | priority.high type.task | To fulfill #11, #14

Update, including its name, so that it no longer lists

- address, phone and email

and instead lists

- (optional) deadline

- priority

- description | 1.0 | Update PersonCard so that it shows task fields and not person fields - To fulfill #11, #14

Update, including its name, so that it no longer lists

- address, phone and email

and instead lists

- (optional) deadline

- priority

- description | priority | update personcard so that it shows task fields and not person fields to fulfill update including its name so that it no longer lists address phone and email and instread lists optional deadline priority description | 1 |

51,977 | 3,016,274,387 | IssuesEvent | 2015-07-30 00:56:14 | pombase/pombase-chado | https://api.github.com/repos/pombase/pombase-chado | closed | Store expression correctly | high priority | The expression of an allele is now separate from the rest of the allele data in the Canto JSON export file. It should now be stored as a `feature_relationshipprop` on the relationship between the genotypes and the alleles, not as a `featureprop` of the allele. | 1.0 | Store expression correctly - The expression of an allele is now separate from the rest of the allele data in the Canto JSON export file. It should now be stored as a `feature_relationshipprop` on the relationship between the genotypes and the alleles, not as a `featureprop` of the allele. | priority | store expression correctly the expression of an allele is now separate from the rest of the allele data in the canto json export flie it should now be stored as a feature relationshipprop on the relationship between the genotypes and the alleles not as a featureprop of the allele | 1 |

358,975 | 10,652,345,979 | IssuesEvent | 2019-10-17 12:26:03 | AY1920S1-CS2103T-F11-3/main | https://api.github.com/repos/AY1920S1-CS2103T-F11-3/main | closed | Add feature for file encryption and decryption | priority.High type.Epic | Add commands to support file encryption and decryption, as well as the data model and logic necessary to keep track of encrypted files. | 1.0 | Add feature for file encryption and decryption - Add commands to support file encryption and decryption, as well as the data model and logic necessary to keep track of encrypted files. | priority | add feature for file encryption and decryption add commands to support file encryption and decryption as well as the data model and logic necessary to keep track of encrypted files | 1 |

747,890 | 26,101,938,486 | IssuesEvent | 2022-12-27 08:18:46 | bounswe/bounswe2022group4 | https://api.github.com/repos/bounswe/bounswe2022group4 | closed | Mobile: User and Post Search | Category - To Do Category - Enhancement Priority - High Status: In Progress Difficulty - Medium Language - Kotlin Mobile | ### Description:

Users should be able to search for users and posts.

### What to do:

- [ ] The search button on the home fragment should open a new search fragment

- [ ] After an input longer than 3 characters, the post and user searches should be called and their results displayed

### Deadline

12.26.2022, 22.00(GMT+3)

| 1.0 | Mobile: User and Post Search - ### Description:

Users should be able to search for users and posts.

### What to do:

- [ ] The search button on the home fragment should open a new search fragment

- [ ] After an input longer than 3 characters, the post and user searches should be called and their results displayed

### Deadline

12.26.2022, 22.00(GMT+3)

| priority | mobile user and post search description users should be able to search users and posts what to do search button on home fragment should open a new fragment search fragment after an input that long than char should call post and user search and display them deadline gmt | 1 |

709,739 | 24,388,874,730 | IssuesEvent | 2022-10-04 13:51:58 | kubeshop/testkube | https://api.github.com/repos/kubeshop/testkube | closed | Throttling homebrew-core PR submissions | bug 🐛 high-priority | Hi 👋, I'm raising this issue to flag a spam ban on the homebrew-core side; it would be nice if you could throttle the PR submissions. Thanks!

relates to

- https://github.com/Homebrew/homebrew-core/pull/111524

- https://github.com/Homebrew/homebrew-core/pull/107721

| 1.0 | Throttling homebrew-core PR submissions - Hi 👋, I'm raising this issue to flag a spam ban on the homebrew-core side; it would be nice if you could throttle the PR submissions. Thanks!

relates to

- https://github.com/Homebrew/homebrew-core/pull/111524

- https://github.com/Homebrew/homebrew-core/pull/107721

| priority | throttling homebrew core pr submissions hi 👋 just to raise an issue here to notify some spam ban on the homebrew core side it would be nice that you folks can throttle the pr submissions thanks relates to | 1 |

192,959 | 6,877,593,277 | IssuesEvent | 2017-11-20 08:42:59 | OpenNebula/one | https://api.github.com/repos/OpenNebula/one | opened | TLS LDAP does not support STARTTLS | Category: Drivers - Auth Priority: High Status: Pending Tracker: Backlog | ---

Author Name: **EOLE Team** (EOLE Team)

Original Redmine Issue: 3482, https://dev.opennebula.org/issues/3482

Original Date: 2015-01-03

---

Hello,

Using ONE 4.8 and 4.10, I tried to switch the LDAP authentication to TLS and found that only LDAP over SSL (by default on port **`636`**) works.

First, a note should be added to the documentation about this issue.

I propose to modify the **`encryption`** option in the [configuration file](http://docs.opennebula.org/4.10/administration/authentication/ldap.html#configuration) with the following possibilities:

* **`:null`** to disable encryption (the default)

* **`:simple_tls`** to use LDAP over SSL

* **`:starttls`** to use [STARTTLS](https://en.wikipedia.org/wiki/STARTTLS)

With the following configuration example:

```

server 1:

[...]

# Ldap server

:host: localhost

# No encryption by default on standard port

# Uncomment this line to use STARTTLS

#:encryption: :starttls

:port: 389

# Uncomment these lines to use LDAP over SSL on ldaps port

#:encryption: :simple_tls

#:port: 636

[...]

```
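To spell out the intended semantics of the three values, here is a minimal Python sketch. It is not the OpenNebula driver code; `resolve_ldap_transport` and the returned keys are hypothetical names, and the default ports are the conventional 389 (plain LDAP and STARTTLS) and 636 (LDAP over SSL).

```python
# Conventional default ports per encryption mode (None means no encryption).
DEFAULT_PORTS = {None: 389, "starttls": 389, "simple_tls": 636}


def resolve_ldap_transport(encryption=None, port=None):
    """Map the proposed encryption setting to connection parameters."""
    if encryption not in DEFAULT_PORTS:
        raise ValueError(f"unsupported encryption mode: {encryption!r}")
    return {
        # Only LDAP over SSL uses the ldaps scheme from the first byte.
        "scheme": "ldaps" if encryption == "simple_tls" else "ldap",
        # An explicit port always wins over the conventional default.
        "port": port if port is not None else DEFAULT_PORTS[encryption],
        # STARTTLS connects in clear text, then upgrades the same connection.
        "upgrade_with_starttls": encryption == "starttls",
    }
```

With this reading, `:starttls` keeps port 389 and differs from the unencrypted default only in the upgrade step.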

Thanks.

| 1.0 | TLS LDAP does not support STARTTLS - ---

Author Name: **EOLE Team** (EOLE Team)

Original Redmine Issue: 3482, https://dev.opennebula.org/issues/3482

Original Date: 2015-01-03

---

Hello,

Using ONE 4.8 and 4.10, I tried to switch the LDAP authentication to TLS and found that only LDAP over SSL (by default on port **`636`**) works.

First, a note should be added to the documentation about this issue.

I propose to modify the **`encryption`** option in the [configuration file](http://docs.opennebula.org/4.10/administration/authentication/ldap.html#configuration) with the following possibilities:

* **`:null`** to disable encryption (the default)

* **`:simple_tls`** to use LDAP over SSL

* **`:starttls`** to use [STARTTLS](https://en.wikipedia.org/wiki/STARTTLS)

With the following configuration example:

```

server 1:

[...]

# Ldap server

:host: localhost

# No encryption by default on standard port

# Uncomment this line to use STARTTLS

#:encryption: :starttls

:port: 389

# Uncomment these lines to use LDAP over SSL on ldaps port

#:encryption: :simple_tls

#:port: 636

[...]

```

Thanks.

| priority | tls ldap does not support starttls author name eole team eole team original redmine issue original date hello using one and i try to switch the ldap authentication to tls and found that only ldap over ssl by default on port is working first a note should be added to the documentation about this issue i propose to modify the encryption in configuration file with the following possibilities null to disable encryption by default simple tls to use ldap over ssl starttls to use the starttls with the following configuration example server ldap server host localhost no encryption by default on standart port uncomment this line to use starttls encryption starttls port uncomment this lines to use ldap over ssl on ldaps port encryption simple tls port thanks | 1 |

578,988 | 17,169,628,902 | IssuesEvent | 2021-07-15 01:05:45 | parallel-finance/parallel | https://api.github.com/repos/parallel-finance/parallel | closed | check on-chain staking's missing parts | high priority | - [x] amount to stake, unstake

- [x] Exchange Rate

- [x] leverage staking

- [x] Staking APY

- [x] Total Stakers

- [x] xKSM Market Cap

- [x] Bonded Ratio (not sure to understand)

- [x] Staking Fee

- [x] Pending Unstake

- [x] Validator Sets

- [x] Delivery date

<img width="671" alt="image" src="https://user-images.githubusercontent.com/33961674/122855363-b94e7e80-d347-11eb-92e5-8166f747f54c.png"> | 1.0 | check on-chain staking's missing parts - - [x] amount to stake, unstake

- [x] Exchange Rate

- [x] leverage staking

- [x] Staking APY

- [x] Total Stakers

- [x] xKSM Market Cap

- [x] Bonded Ratio (not sure to understand)

- [x] Staking Fee

- [x] Pending Unstake

- [x] Validator Sets

- [x] Delivery date

<img width="671" alt="image" src="https://user-images.githubusercontent.com/33961674/122855363-b94e7e80-d347-11eb-92e5-8166f747f54c.png"> | priority | check on chain staking s missing parts amount to stake unstake exchange rate leverage staking staking apy total stakers xksm market cap bonded ratio not sure to understand staking fee pending unstake validator sets delivery date img width alt image src | 1 |

585,296 | 17,484,440,828 | IssuesEvent | 2021-08-09 09:07:42 | faktaoklimatu/web-core | https://api.github.com/repos/faktaoklimatu/web-core | opened | Remove cache workaround | bug 3: high priority | Currently, production cache is dropped to 10 minutes (0ddb93d) due to publishing AR6 infographic updates.

Discuss if such situations are going to be more frequent. If so, consider adjusting image loading. | 1.0 | Remove cache workaround - Currently, production cache is dropped to 10 minutes (0ddb93d) due to publishing AR6 infographic updates.

Discuss if such situations are going to be more frequent. If so, consider adjusting image loading. | priority | remove cache workaround currently production cache is dropped to minutes due to publishing infographic updates discuss if such situations are going to be more frequent if so consider adjusting image loading | 1 |

708,594 | 24,347,340,747 | IssuesEvent | 2022-10-02 13:49:34 | AY2223S1-CS2103T-T13-2/tp | https://api.github.com/repos/AY2223S1-CS2103T-T13-2/tp | closed | Delete client information | type.Story priority.High | ### User story

As a financial advisor, I want to be able to delete client information, so that I do not have unwanted clients stored.

### Acceptance criteria

- [ ] Update command syntax

| 1.0 | Delete client information - ### User story

As a financial advisor, I want to be able to delete client information, so that I do not have unwanted clients stored.

### Acceptance criteria

- [ ] Update command syntax

| priority | delete client information user story as a financial advisor i want to be able to delete client information so that i do not have unwanted clients stored acceptance criteria update command syntax | 1 |

190,162 | 6,810,476,796 | IssuesEvent | 2017-11-05 06:10:31 | localstack/localstack | https://api.github.com/repos/localstack/localstack | closed | S3api put-bucket-notification-configuration does not add filters from aws CLI | bug feature-missing priority-high | localstack does not add filters while configuring S3 bucket Events for QueueConfiguration

command which i am using:

```

aws --endpoint-url http://192.168.99.100:9072 s3api put-bucket-notification-configuration --bucket 800a2c5d-9b64-440a-9e70-836e673b8fa6 --notification-configuration file://C:/Persnl/LocalStack/notification.json

notification.json:

{

"QueueConfigurations": [

{

"Id": "1",

"QueueArn": "arn:aws:sqs:us-east-1:123456789012:gehc-cds-local-test",

"Events": ["s3:ObjectCreated:*"],

"Filter": {

"Key": {

"FilterRules": [

{

"Name": "prefix",

"Value": "upload/"

},

{

"Name": "suffix",

"Value": "upload-manifest.cos"

}

]

}

}

}

]

}

```

But when I run the command to see the applied configuration:

```

aws --endpoint-url http://192.168.99.100:9072 s3api get-bucket-notification-configuration --bucket 800a2c5d-9b64-440a-9e70-836e673b8fa6

```

I get output without the filter:

```

{

"QueueConfigurations": [

{

"Id": "6cfb6e5f-667e-451c-8a10-4d5589ab5d25",

"QueueArn": "arn:aws:sqs:us-east-1:123456789012:gehc-cds-local-test",

"Events": [

"s3:ObjectCreated:*"

]

}

]

}

``` | 1.0 | S3api put-bucket-notification-configuration does not add filters from aws CLI - localstack does not add filters while configuring S3 bucket Events for QueueConfiguration

Command I am using:

```

aws --endpoint-url http://192.168.99.100:9072 s3api put-bucket-notification-configuration --bucket 800a2c5d-9b64-440a-9e70-836e673b8fa6 --notification-configuration file://C:/Persnl/LocalStack/notification.json

notification.json:

{

"QueueConfigurations": [

{

"Id": "1",

"QueueArn": "arn:aws:sqs:us-east-1:123456789012:gehc-cds-local-test",

"Events": ["s3:ObjectCreated:*"],

"Filter": {

"Key": {

"FilterRules": [

{

"Name": "prefix",

"Value": "upload/"

},

{

"Name": "suffix",

"Value": "upload-manifest.cos"

}

]

}

}

}

]

}

```

But when I run the command to see the applied configuration:

```

aws --endpoint-url http://192.168.99.100:9072 s3api get-bucket-notification-configuration --bucket 800a2c5d-9b64-440a-9e70-836e673b8fa6

```

I get output without the filter:

```

{

"QueueConfigurations": [

{

"Id": "6cfb6e5f-667e-451c-8a10-4d5589ab5d25",

"QueueArn": "arn:aws:sqs:us-east-1:123456789012:gehc-cds-local-test",

"Events": [

"s3:ObjectCreated:*"

]

}

]

}

``` | priority | put bucket notification configuration does not add filters from aws cli localstack does not add filters while configuring bucket events for queueconfiguration command which i am using aws endpoint url put bucket notification configuration bucket notification configuration file c persnl localstack notification json notification json queueconfigurations id queuearn arn aws sqs us east gehc cds local test events filter key filterrules name prefix value upload name suffix value upload manifest cos but when i am running command to see applied configuration aws endpoint url get bucket notification configuration bucket i am getting output without filter queueconfigurations id queuearn arn aws sqs us east gehc cds local test events objectcreated | 1 |
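The mismatch reported in this issue can be checked mechanically. The following is a minimal sketch, assuming the configuration that was sent and the one that was returned have already been loaded as Python dictionaries. The helper name `queues_missing_filter` is hypothetical and is not part of localstack or the AWS CLI. Because the returned `Id` differs from the one that was sent, entries are matched on `QueueArn` instead.

```python
def queues_missing_filter(sent, returned):
    """Return the QueueArn of every sent queue configuration whose Filter
    is absent from, or different in, the returned configuration."""
    returned_by_arn = {
        q["QueueArn"]: q for q in returned.get("QueueConfigurations", [])
    }
    missing = []
    for q in sent.get("QueueConfigurations", []):
        if "Filter" not in q:
            continue  # nothing to verify for this entry
        got = returned_by_arn.get(q["QueueArn"])
        if got is None or got.get("Filter") != q["Filter"]:
            missing.append(q["QueueArn"])
    return missing


ARN = "arn:aws:sqs:us-east-1:123456789012:gehc-cds-local-test"

# Configuration passed to put-bucket-notification-configuration (from the report).
sent = {
    "QueueConfigurations": [
        {
            "Id": "1",
            "QueueArn": ARN,
            "Events": ["s3:ObjectCreated:*"],
            "Filter": {
                "Key": {
                    "FilterRules": [
                        {"Name": "prefix", "Value": "upload/"},
                        {"Name": "suffix", "Value": "upload-manifest.cos"},
                    ]
                }
            },
        }
    ]
}

# Configuration returned by get-bucket-notification-configuration (from the report).
returned = {
    "QueueConfigurations": [
        {
            "Id": "6cfb6e5f-667e-451c-8a10-4d5589ab5d25",
            "QueueArn": ARN,
            "Events": ["s3:ObjectCreated:*"],
        }
    ]
}

missing = queues_missing_filter(sent, returned)
print(missing)
```

With the two documents from the report, the queue ARN is listed as missing its filter, which matches the behaviour described.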

341,601 | 10,299,280,383 | IssuesEvent | 2019-08-28 13:11:43 | UniversityOfHelsinkiCS/fuksilaiterekisteri | https://api.github.com/repos/UniversityOfHelsinkiCS/fuksilaiterekisteri | closed | make sure checker scripts don't blow up the system during oodi maintenance | enhancement high priority | test to see if checker scripts blow up when oodi is down

next downtime is (presumably) on 1st of Sep | 1.0 | make sure checker scripts don't blow up the system during oodi maintenance - test to see if checker scripts blow up when oodi is down

next downtime is (presumably) on 1st of Sep | priority | make sure checker scripts don t blow up the system during oodi maintenance test to see if checker scripts blow up when oodi is down next downtime is presumably on of sep | 1 |

170,128 | 6,424,714,187 | IssuesEvent | 2017-08-09 14:05:13 | mreishman/Log-Hog | https://api.github.com/repos/mreishman/Log-Hog | closed | Force refresh all every x poll requests | enhancement Priority - 1 - Very High | - [x] Option to force refresh all files every x poll requests

- [x] Option to force refresh also by unlocking poll request if hasn't changed in x poll requests

(Default every 120) - 1 min for 500ms, 2 min for 1000ms | 1.0 | Force refresh all every x poll requests - - [x] Option to force refresh all files every x poll requests

- [x] Option to force refresh also by unlocking poll request if hasn't changed in x poll requests

(Default every 120) - 1 min for 500ms, 2 min for 1000ms | priority | force refresh all every x poll requests option to force refresh all files every x poll requests option to force refresh also by unlocking poll request if hasn t changed in x poll requests default every min for min for | 1 |
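The default quoted above is simple arithmetic: the number of poll requests between forced refreshes multiplied by the poll interval. A small sketch of that calculation follows; the function and parameter names are illustrative assumptions, not actual Log-Hog settings.

```python
def seconds_between_forced_refreshes(poll_interval_ms, polls_per_refresh=120):
    """Time in seconds between forced refreshes when one is triggered
    every `polls_per_refresh` poll requests."""
    return poll_interval_ms * polls_per_refresh / 1000


fast = seconds_between_forced_refreshes(500)    # 500 ms polling
slow = seconds_between_forced_refreshes(1000)   # 1000 ms polling
print(fast, slow)
```

This gives 60 seconds at a 500 ms poll interval and 120 seconds at 1000 ms, in line with the figures in the issue.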

528,902 | 15,376,774,664 | IssuesEvent | 2021-03-02 16:20:34 | Systems-Learning-and-Development-Lab/MMM | https://api.github.com/repos/Systems-Learning-and-Development-Lab/MMM | closed | Button קבוצה | priority-high | Until the bug with group name will be solved, remove button

Comment: as far as I understand we don't need it now, but if we need so leave it and explain why.

@Ron-Teller

| 1.0 | Button קבוצה - Until the bug with group name will be solved, remove button

Comment: as far as I understand we don't need it now, but if we need so leave it and explain why.

@Ron-Teller

| priority | button קבוצה until the bug with group name will be solved remove button comment as far as i understand we don t need it now but if we need so leave it and explain why ron teller | 1 |

469,102 | 13,501,535,564 | IssuesEvent | 2020-09-13 03:05:41 | wise-old-man/wise-old-man | https://api.github.com/repos/wise-old-man/wise-old-man | closed | Duration undefined in competition created discord event | bug priority-high | This is because this event, unlike all the others, doesn't get the full competition info from `getDetails`, which calculates the duration. | 1.0 | Duration undefined in competition created discord event - This is because this event, unlike all the others, doesn't get the full competition info from `getDetails`, which calculates the duration. | priority | duration undefined in competition created discord event this is because this event unlike all the others doesn t get the full competition info from getdetails which calculates the duration | 1 |

392,322 | 11,590,135,120 | IssuesEvent | 2020-02-24 05:32:01 | ncssar/sign-in | https://api.github.com/repos/ncssar/sign-in | closed | inactivity timers | Priority: High enhancement forNextMeeting | - from lookup screen, return to keypad screen after n seconds of inactivity

- from sign-in / sign-out screen, start flashing to get user's attention after very short inactivity, then return to keypad screen after a bit more inactivity (if signing out, it's probably acceptable to leave them as dnso (did not sign out) since dnso is a lesser problem than accidentally signing out someone else who is actually still here or in the field; but, how should this be handled for signing in?) | 1.0 | inactivity timers - - from lookup screen, return to keypad screen after n seconds of inactivity

- from sign-in / sign-out screen, start flashing to get user's attention after very short inactivity, then return to keypad screen after a bit more inactivity (if signing out, it's probably acceptable to leave them as dnso (did not sign out) since dnso is a lesser problem than accidentally signing out someone else who is actually still here or in the field; but, how should this be handled for signing in?) | priority | inactivity timers from lookup screen return to keypad screen after n seconds of inactivity from sign in sign out screen start flashing to get user s attention after very short inactivity then return to keypad screen after a bit more inactivity if signing out it s probably acceptable to leave them as dnso did not sign out since dnso is a lesser problem than accidentally signing out someone else who is actually still here or in the field but how should this be handled for signing in | 1 |

300,650 | 9,211,575,562 | IssuesEvent | 2019-03-09 16:32:11 | qgisissuebot/QGIS | https://api.github.com/repos/qgisissuebot/QGIS | closed | QGIS Crashed Windows when close qis | Bug Priority: high | ---

Author Name: **Martín Fernando Ortiz** (Martín Fernando Ortiz)

Original Redmine Issue: 20970, https://issues.qgis.org/issues/20970

Original Date: 2019-01-11T10:36:47.354Z

Affected QGIS version: 3.4.2

---

## User Feedback

It didn't crash, but whenever I close QGIS the "QGIS Crashed" window appears.

## Report Details

*Crash ID*: a82e7e07e3c0e2dbce4f2adf5452fe54341e44a9

*Stack Trace*

```

proj_lpz_dist :

proj_lpz_dist :

QgsCoordinateTransform::transformPolygon :

QgsCoordinateTransform::transformPolygon :

QgsCoordinateTransform::~QgsCoordinateTransform :

QgsFirstRunDialog::tr :

QObjectPrivate::deleteChildren :

QWidget::~QWidget :

CPLStringList::operator[] :

main :

BaseThreadInitThunk :

RtlUserThreadStart :

```

*QGIS Info*

QGIS Version: 3.4.2-Madeira

QGIS code revision: 22034aa070

Compiled against Qt: 5.11.2

Running against Qt: 5.11.2

Compiled against GDAL: 2.3.2

Running against GDAL: 2.3.2

*System Info*

CPU Type: x86_64

Kernel Type: winnt

Kernel Version: 10.0.17134

| 1.0 | QGIS Crashed Windows when close qis - ---

Author Name: **Martín Fernando Ortiz** (Martín Fernando Ortiz)

Original Redmine Issue: 20970, https://issues.qgis.org/issues/20970

Original Date: 2019-01-11T10:36:47.354Z

Affected QGIS version: 3.4.2

---

## User Feedback

It didn't crash, but whenever I close QGIS the "QGIS Crashed" window appears.

## Report Details

*Crash ID*: a82e7e07e3c0e2dbce4f2adf5452fe54341e44a9

*Stack Trace*

```

proj_lpz_dist :

proj_lpz_dist :

QgsCoordinateTransform::transformPolygon :

QgsCoordinateTransform::transformPolygon :

QgsCoordinateTransform::~QgsCoordinateTransform :

QgsFirstRunDialog::tr :

QObjectPrivate::deleteChildren :

QWidget::~QWidget :

CPLStringList::operator[] :

main :

BaseThreadInitThunk :

RtlUserThreadStart :

```

*QGIS Info*

QGIS Version: 3.4.2-Madeira

QGIS code revision: 22034aa070

Compiled against Qt: 5.11.2

Running against Qt: 5.11.2

Compiled against GDAL: 2.3.2

Running against GDAL: 2.3.2

*System Info*

CPU Type: x86_64

Kernel Type: winnt

Kernel Version: 10.0.17134

| priority | qgis crashed windows when close qis author name martín fernando ortiz martín fernando ortiz original redmine issue original date affected qgis version user feedback it didnt crash but when i closed qgis always appear the qgis crashed windows report details crash id stack trace proj lpz dist proj lpz dist qgscoordinatetransform transformpolygon qgscoordinatetransform transformpolygon qgscoordinatetransform qgscoordinatetransform qgsfirstrundialog tr qobjectprivate deletechildren qwidget qwidget cplstringlist operator main basethreadinitthunk rtluserthreadstart qgis info qgis version madeira qgis code revision compiled against qt running against qt compiled against gdal running against gdal system info cpu type kernel type winnt kernel version | 1 |

669,582 | 22,632,444,302 | IssuesEvent | 2022-06-30 15:41:53 | netdata/netdata | https://api.github.com/repos/netdata/netdata | closed | [Bug]: Lot of timeouts waiting for agent reply, part of #incident-41 | bug priority/high area/ACLK | ### Bug description

an unusually high amount of timeouts on queries to the agent from the cloud

this also causes initial sync to be way too slow

additional issues found during incident-41 have to be investigated/fixed separately

### Expected behavior

no timeouts if the network connection is OK

### Steps to reproduce

1. claim new agent with mqtt5 enabled

2.

3.

...

### Installation method

other

### System info

```shell

Linux

```

### Netdata build info

```shell

mqtt5 enabled

```

### Additional info

_No response_ | 1.0 | [Bug]: Lot of timeouts waiting for agent reply, part of #incident-41 - ### Bug description

an unusually high amount of timeouts on queries to the agent from the cloud

this also causes initial sync to be way too slow

additional issues found during incident-41 have to be investigated/fixed separately

### Expected behavior

no timeouts if the network connection is OK

### Steps to reproduce

1. claim new agent with mqtt5 enabled

2.

3.

...

### Installation method

other

### System info

```shell

Linux

```

### Netdata build info

```shell

mqtt5 enabled

```

### Additional info

_No response_ | priority | lot of timeouts waiting for agent reply part of incident bug description an unusually high amount of timeouts on queries to the agent from the cloud this also causes initial sync to be way too slow additional issues found during incident have to be investigated fixed separately expected behavior no timeouts if the network connection is ok steps to reproduce claim new agent with enabled installation method other system info shell linux netdata build info shell enabled additional info no response | 1 |

444,587 | 12,814,753,167 | IssuesEvent | 2020-07-04 20:49:19 | ctm/mb2-doc | https://api.github.com/repos/ctm/mb2-doc | opened | MeMyself still has table height issues | can't reproduce chore high priority | > memyself: Cliff, is fixing the height of the game window still on your list? It still opens with the bell and timer buttons below the Windows 10 taskbar.

> memyself: by below, I mean underneath. I have to resize the window to see everything.

> deadhead: That one fell off my radar. Does the lobby show up underneath?

> deadhead: I did make an adjustment a while back, do you remember things changing at all?

> memyself: The lobby changed size, but the game window didn't.

commit ade14a1f9 subtracts 30 from avail_height, but that either isn't happening or wasn't enough.

I'll fiddle around locally and try changing the 30 to 100. Hopefully that won't hose anyone.

| 1.0 | MeMyself still has table height issues - > memyself: Cliff, is fixing the height of the game window still on your list? It still opens with the bell and timer buttons below the Windows 10 taskbar.

> memyself: by below, I mean underneath. I have to resize the window to see everything.

> deadhead: That one fell off my radar. Does the lobby show up underneath?

> deadhead: I did make an adjustment a while back, do you remember things changing at all?

> memyself: The lobby changed size, but the game window didn't.

commit ade14a1f9 subtracts 30 from avail_height, but that either isn't happening or wasn't enough.

I'll fiddle around locally and try changing the 30 to 100. Hopefully that won't hose anyone.

| priority | memyself still has table height issues memyself cliff is fixing the height of the game window still on your list it still opens with the bell and timer buttons below the windows taskbar memyself by below i mean underneath i have to resize the window to see everything deadhead that one fell off my radar does the lobby show up underneath deadhead i did make an adjustment a while back do you remember things changing at all memyself the lobby changed size but the game window didn t commit subtracts from avail height but that either isn t happening or wasn t enough i ll fiddle around locally and try changing the to hopefully that won t hose anyone | 1 |

373,291 | 11,038,546,033 | IssuesEvent | 2019-12-08 14:44:23 | jonfroehlich/makeabilitylabwebsite | https://api.github.com/repos/jonfroehlich/makeabilitylabwebsite | closed | Our auto-capitalization function is a bit too aggressive | Priority: High bug | Some paper titles need capitalization like 'BodyVis' or 'SIG' but this is mangled with new auto-capitalization functionality.

| 1.0 | Our auto-capitalization function is a bit too aggressive - Some paper titles need capitalization like 'BodyVis' or 'SIG' but this is mangled with new auto-capitalization functionality.

| priority | our auto capitalization function is a bit too aggressive some paper titles need capitalization like bodyvis or sig but this is mangled with new auto capitalization functionality | 1 |
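One possible direction for the problem described in this issue, sketched under assumptions: capitalize only words that carry no capitals after their first letter, and leave mixed-case or all-caps words such as 'BodyVis' and 'SIG' untouched. The function name `capitalize_title` is hypothetical, and this is not the site's actual implementation.

```python
def capitalize_title(title):
    """Capitalize the first letter of each word, but keep any word that
    already contains a capital after its first character unchanged."""
    words = []
    for word in title.split(" "):
        if any(ch.isupper() for ch in word[1:]):
            # Intentional casing such as "BodyVis" or "SIG": do not touch.
            words.append(word)
        else:
            words.append(word[:1].upper() + word[1:])
    return " ".join(words)


# For comparison, Python's built-in str.title() lowercases the rest of each
# word, which is the kind of mangling the issue describes.
mangled = "BodyVis".title()
preserved = capitalize_title("BodyVis: a new approach")
print(mangled, "|", preserved)
```

This sketch capitalizes every remaining word, including short ones such as "a" or "of"; a real fix would probably also need a stop-word list.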

155,643 | 5,958,505,515 | IssuesEvent | 2017-05-29 08:09:20 | GeekyAnts/NativeBase | https://api.github.com/repos/GeekyAnts/NativeBase | closed | Flatlist instead of ListView in List Component | enhancement high priority | ## Version Info

* react-native:0.42.0

* react: 15.4.2

* native-base: ^2.0.11

## Expected behaviour: Performance Improvement

## Actual behaviour: Slow loading

## Issue Type:

- [x] Feature

- [ ] Bug

## Performance Issue:

Android

iOS.

If You could do this it would make the rendering of the lists faster and would also help to handle large or bad data in the list's dataSource | 1.0 | Flatlist instead of ListView in List Component - ## Version Info

* react-native:0.42.0

* react: 15.4.2

* native-base: ^2.0.11

## Expected behaviour: Performance Improvement

## Actual behaviour: Slow loading

## Issue Type:

- [x] Feature

- [ ] Bug

## Performance Issue:

Android

iOS.

If You could do this it would make the rendering of the lists faster and would also help to handle large or bad data in the list's dataSource | priority | flatlist instead of listview in list component version info react native react native base expected behaviour performance improvement actual behaviour slow loading issue type feature bug performance issue android ios if you could do this it would make the rendering of the lists faster and would also help to handle large or bad data in the list s datasource | 1 |

715,338 | 24,594,850,343 | IssuesEvent | 2022-10-14 07:24:14 | AY2223S1-CS2103T-W16-3/tp | https://api.github.com/repos/AY2223S1-CS2103T-W16-3/tp | closed | As a hospital staff I can assign diagnoses to patients in their appointments | type.Story priority.High | ...so that I can store the diagnosis results and prescribed medication for each appointment separately. | 1.0 | As a hospital staff I can assign diagnoses to patients in their appointments - ...so that I can store the diagnosis results and prescribed medication for each appointment separately. | priority | as a hospital staff i can assign diagnoses to patients in their appointments so that i can store the diagnosis results and prescribed medication for each appointment separately | 1 |

119,082 | 4,760,870,171 | IssuesEvent | 2016-10-25 05:37:19 | csasf/members | https://api.github.com/repos/csasf/members | opened | Mailgun: Hook up password reset emails | high-priority | Devise (our authentication solution) has the ability to send out password reset emails. We should use this as a first test of mailgun at a broad scale. | 1.0 | Mailgun: Hook up password reset emails - Devise (our authentication solution) has the ability to send out password reset emails. We should use this as a first test of mailgun at a broad scale. | priority | mailgun hook up password reset emails devise our authentication solution has the ability to send out password reset emails we should use this as a first test of mailgun at a broad scale | 1 |

100,501 | 4,097,482,830 | IssuesEvent | 2016-06-03 01:53:49 | dhowe/AdNauseam | https://api.github.com/repos/dhowe/AdNauseam | closed | Cross-platform handing of private-mode | Needs-verification PRIORITY: High | How to detect whether a window is in private-mode (incognito)?

CHROME

http://developer.chrome.com/dev/extensions/extension.html#property-inIncognitoContext

FF(via JSM)

https://developer.mozilla.org/EN/docs/Supporting_per-window_private_browsing

FF(via SDK)

` if (require("sdk/private-browsing").isPrivate(wnd)) { }` | 1.0 | Cross-platform handing of private-mode - How to detect whether a window is in private-mode (incognito)?

CHROME

http://developer.chrome.com/dev/extensions/extension.html#property-inIncognitoContext

FF(via JSM)

https://developer.mozilla.org/EN/docs/Supporting_per-window_private_browsing

FF(via SDK)

` if (require("sdk/private-browsing").isPrivate(wnd)) { }` | priority | cross platform handing of private mode how to detect whether a window is in private mode incognito chrome ff via jsm ff via sdk if require sdk private browsing isprivate wnd | 1 |

377,084 | 11,163,387,688 | IssuesEvent | 2019-12-26 22:19:52 | phillipmonroe/Tabletop-Master | https://api.github.com/repos/phillipmonroe/Tabletop-Master | opened | Choose Campaign | priority-high story | As a user I would like to be able to choose which campaign I would like so that I can access the proper resources

**Acceptance Criteria:**

- [ ] Given a user is logged in to a valid account, when the user first logs in then they will be greeted with a page to select which campaign they would like to use.

- [ ] Given a user is logged in to a valid account, when the user selects on a campaign, then they will be directed to that campaign and it's resources. | 1.0 | Choose Campaign - As a user I would like to be able to choose which campaign I would like so that I can access the proper resources

**Acceptance Criteria:**

- [ ] Given a user is logged in to a valid account, when the user first logs in then they will be greeted with a page to select which campaign they would like to use.

- [ ] Given a user is logged in to a valid account, when the user selects on a campaign, then they will be directed to that campaign and it's resources. | priority | choose campaign as a user i would like to be able to choose which campaign i would like so that i can access the proper resources acceptance criteria given a user is logged in to a valid account when the user first logs in then they will be greeted with a page to select which campaign they would like to use given a user is logged in to a valid account when the user selects on a campaign then they will be directed to that campaign and it s resources | 1 |

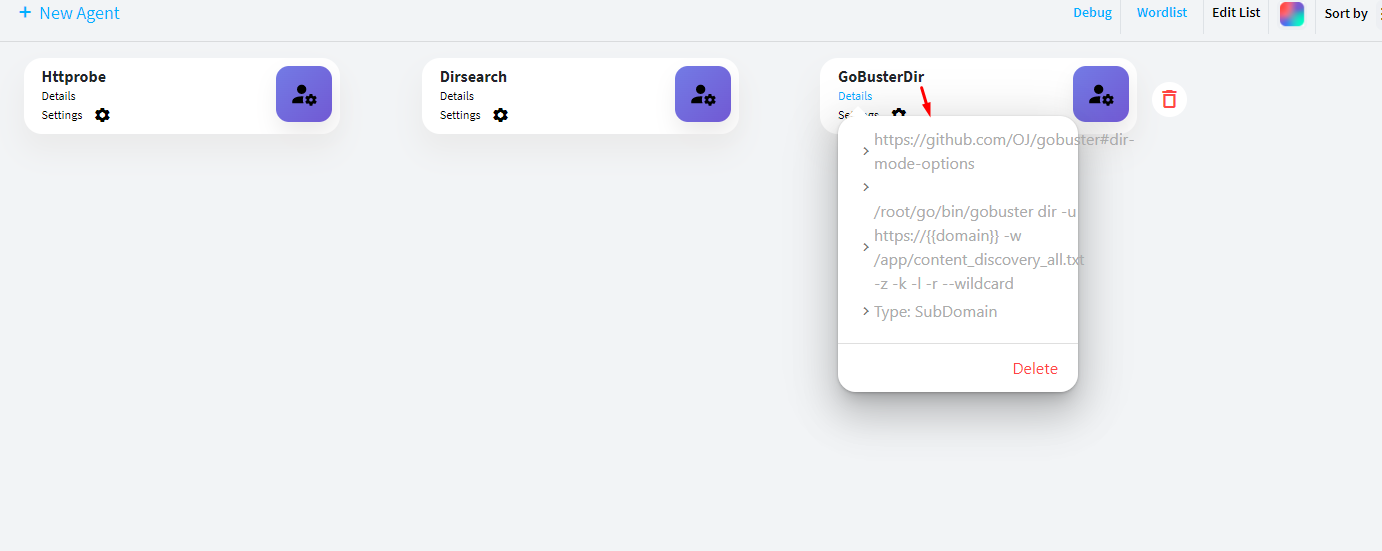

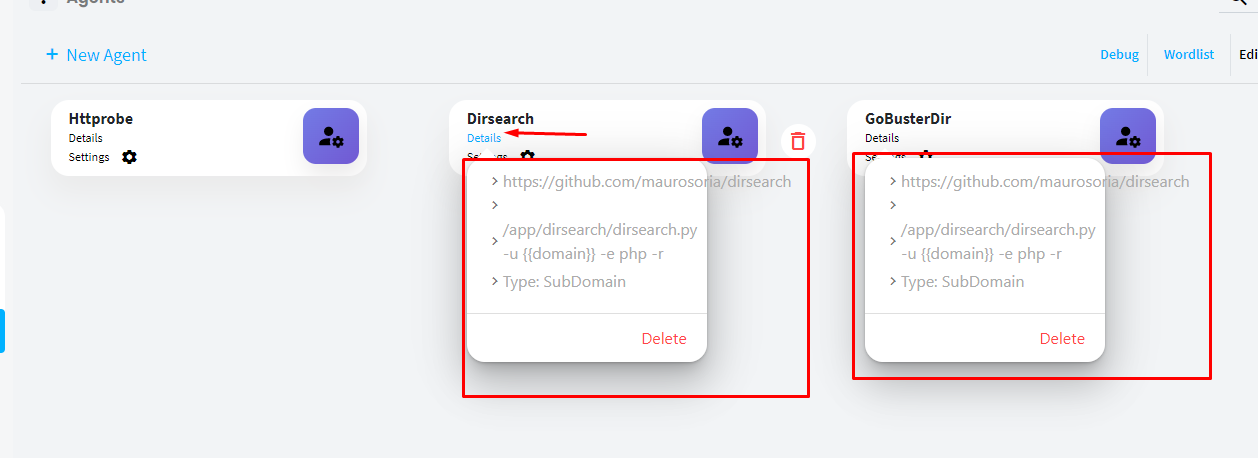

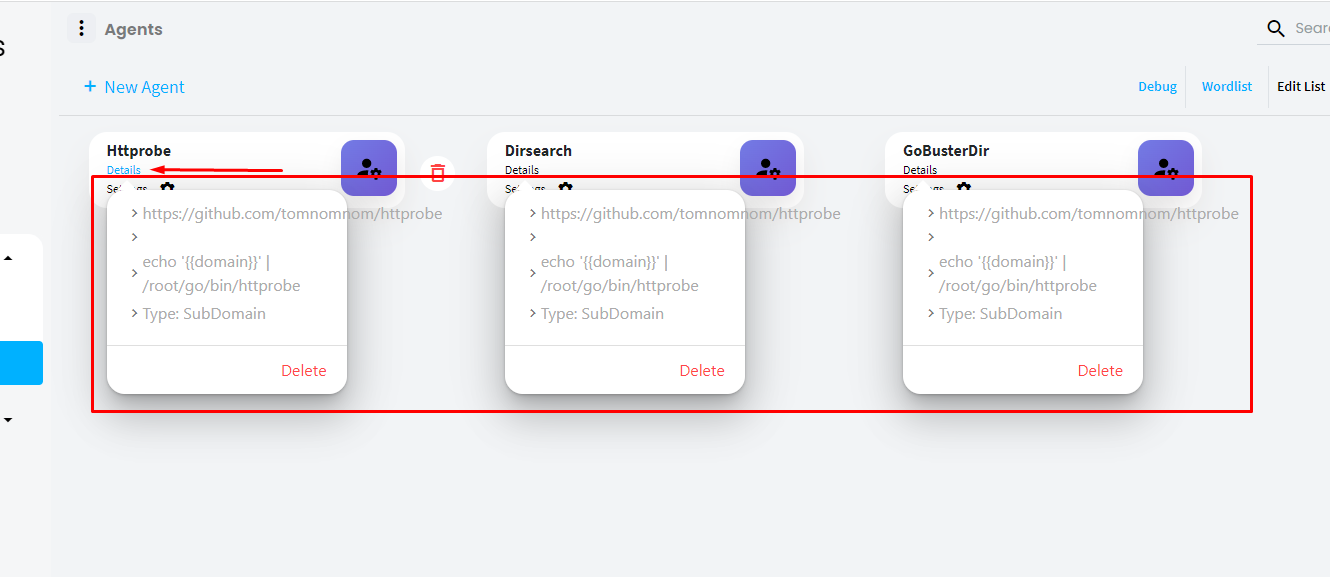

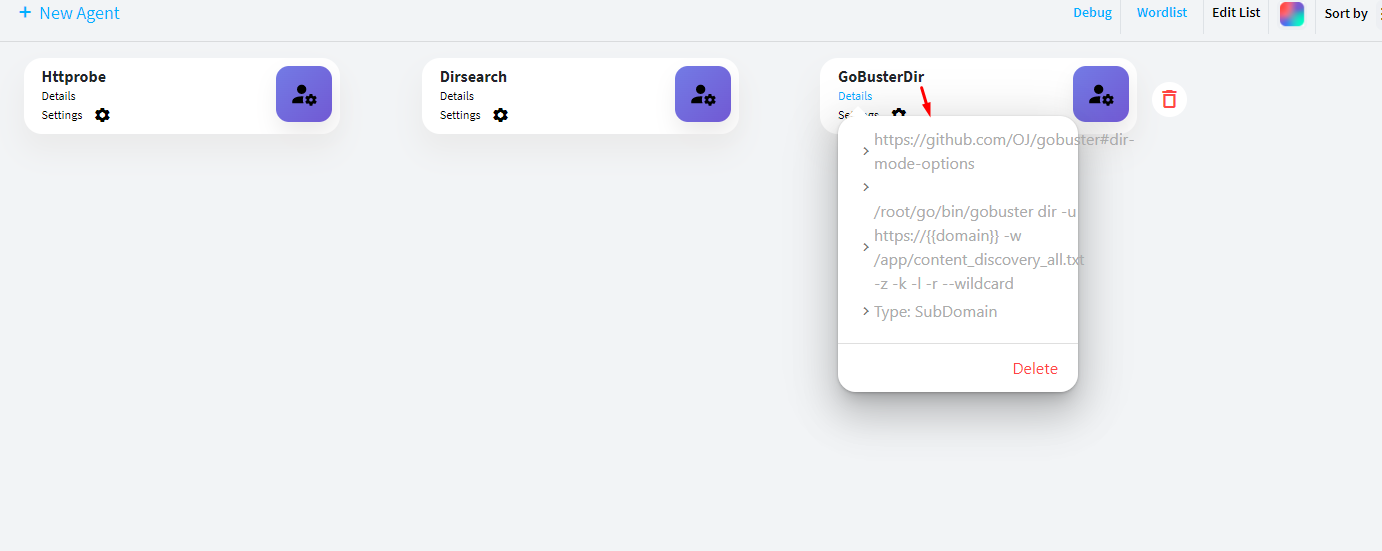

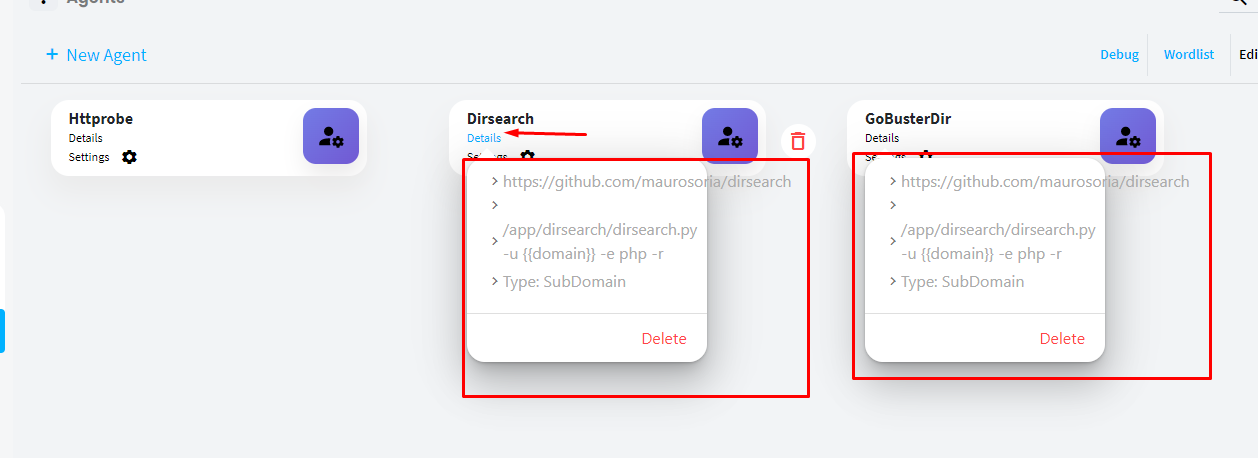

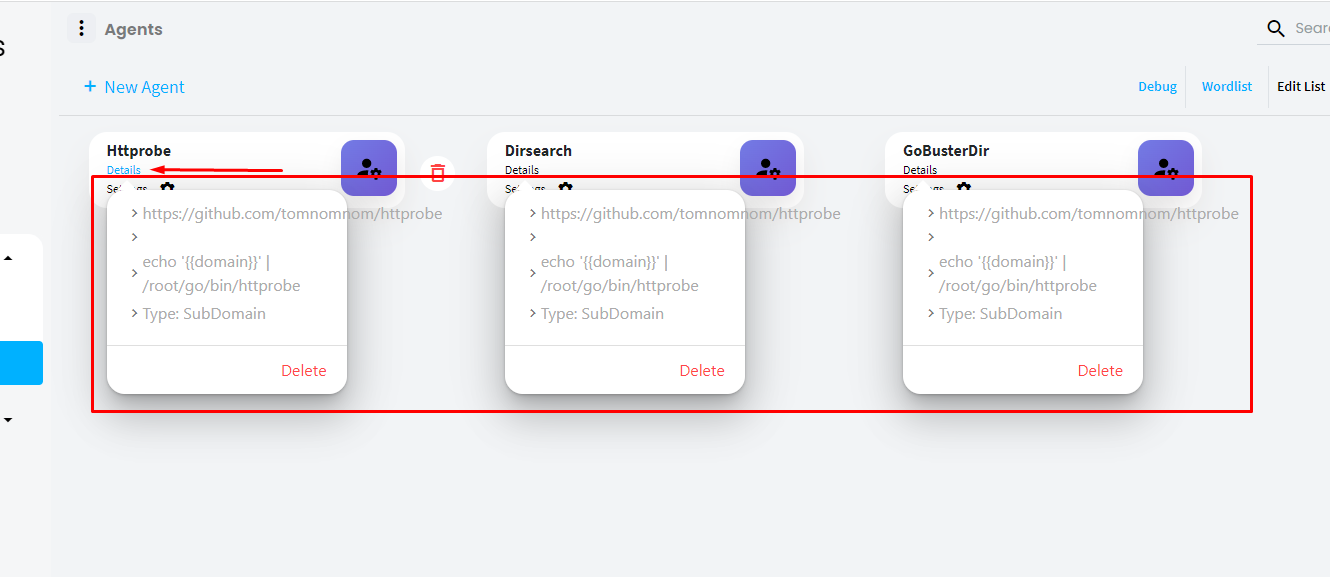

632,152 | 20,175,133,716 | IssuesEvent | 2022-02-10 13:55:41 | reconness/reconness-frontend | https://api.github.com/repos/reconness/reconness-frontend | closed | [BUG] Details popup changes its content in Agent list | bug priority: high severity: major | **Describe the bug**

In the Agent list, clicking different Details links causes the displayed information to change every time

**To Reproduce**

Steps to reproduce the behavior:

1. Go to Agent list

2. Click on Details in any agent item and a popup will be displayed with selected Agent information

3. Click on Details in another agent item without closing the first agent's Details popup. You will see that both Details popups display the information of the last clicked agent item

4. Repeat in another agent item and now the 3 details popups will display the same info, related to the last clicked agent item

5. Also, notice that the text is not contained inside the Details popup container

**Expected behavior**

Any open Details popup should be closed when the user clicks another agent item's Details link or, if all Details popups remain open, each should display the content related to its own agent item and not change every time

**Screenshots**

| 1.0 | [BUG] Details popup changes its content in Agent list - **Describe the bug**

In the Agent list, clicking different Details links causes the displayed information to change every time

**To Reproduce**

Steps to reproduce the behavior:

1. Go to Agent list

2. Click on Details in any agent item and a popup will be displayed with selected Agent information

3. Click on Details in another agent item without closing the first agent's Details popup. You will see that both Details popups display the information of the last clicked agent item

4. Repeat in another agent item and now the 3 details popups will display the same info, related to the last clicked agent item

5. Also, notice that the text is not contained inside the Details popup container

**Expected behavior**

Any open Details popup should be closed when the user clicks another agent item's Details link or, if all Details popups remain open, each should display the content related to its own agent item and not change every time

**Screenshots**

| priority | details popup changes its content in agent list describe the bug in agent list clicking in different details links will cause information changes everytime to reproduce steps to reproduce the behavior go to agent list click on details in any agent item and a popup will be displayed with selected agent information cick on details in another agent item without closing the firt aget details popup you will see that both details popups will display the information of the last clicked agent item repeat in another agent item and now the details popups will display the same info related to the last clicked agent item also notice that the text is not contained inside the details popup container expected behavior any open details popup should be closed when user clicks in another agent item details link or if all details popup will remain open they should display the content related to its agent item and not change everytime screenshots | 1 |

527,191 | 15,325,428,378 | IssuesEvent | 2021-02-26 01:17:31 | jcsnorlax97/rentr | https://api.github.com/repos/jcsnorlax97/rentr | closed | [TASK] Fix Tool bar styling issue on dev | High Priority dev-task frontend | Post ad button should be displayed after user's logged in, and should be on the same line | 1.0 | [TASK] Fix Tool bar styling issue on dev - Post ad button should be displayed after user's logged in, and should be on the same line | priority | fix tool bar styling issue on dev post ad button should be displayed after user s logged in and should be on the same line | 1 |

52,003 | 3,016,743,607 | IssuesEvent | 2015-07-30 06:24:24 | earwig/mwparserfromhell | https://api.github.com/repos/earwig/mwparserfromhell | closed | Automatically build Windows Wheels | aspect: other priority: high | We can probably auto-build wheels on release using http://www.appveyor.com/ ; I'll fiddle around with it sometime soon(tm). | 1.0 | Automatically build Windows Wheels - We can probably auto-build wheels on release using http://www.appveyor.com/ ; I'll fiddle around with it sometime soon(tm). | priority | automatically build windows wheels we can probably auto build wheels on release using i ll fiddle around with it sometime soon tm | 1 |

272,535 | 8,514,546,784 | IssuesEvent | 2018-10-31 18:52:40 | GCTC-NTGC/TalentCloud | https://api.github.com/repos/GCTC-NTGC/TalentCloud | opened | BUG - Manager - Multilingual fields on job poster are not being saved | BED High Priority Medium Complexity | # Description

When creating a job, any fields that must be saved in both french and english (eg title, impact) are not being saved. This is happening whether its created through a form OR through db:seed. | 1.0 | BUG - Manager - Multilingual fields on job poster are not being saved - # Description

When creating a job, any fields that must be saved in both french and english (eg title, impact) are not being saved. This is happening whether its created through a form OR through db:seed. | priority | bug manager multilingual fields on job poster are not being saved description when creating a job any fields that must be saved in both french and english eg title impact are not being saved this is happening whether its created through a form or through db seed | 1 |

241,841 | 7,834,958,923 | IssuesEvent | 2018-06-16 20:53:03 | tgstation/tgstation | https://api.github.com/repos/tgstation/tgstation | closed | Briefcase teleporters have no limit on how many items they can teleport at a time, and can absolutely destroy the server. | Bug Priority: High | [Round ID]: # 89253

[Reproduction]: # Buy briefcase teleporter. Put a large amount of items on it. Teleport them back and forth, and watch the server freeze for increasing amounts of time. It's likely due to the massive amount of sparks this creates rather than the teleporting itself, so maybe it's fixable by just reducing the sparks to 1 per teleport, instead of 1 per item?

This was discovered in VR where a syndie research thingy person brought a couple hundred carp and were teleporting them back and forth until they were found and deleted. I don't even think this stuff is logged. | 1.0 | Briefcase teleporters have no limit on how many items they can teleport at a time, and can absolutely destroy the server. - [Round ID]: # 89253

[Reproduction]: # Buy briefcase teleporter. Put a large amount of items on it. Teleport them back and forth, and watch the server freeze for increasing amounts of time. It's likely due to the massive amount of sparks this creates rather than the teleporting itself, so maybe it's fixable by just reducing the sparks to 1 per teleport, instead of 1 per item?

This was discovered in VR where a syndie research thingy person brought a couple hundred carp and were teleporting them back and forth until they were found and deleted. I don't even think this stuff is logged. | priority | briefcase teleporters have no limit on how many items they can teleport at a time and can absolutely destroy the server buy briefcase teleporter put a large amount of items on it teleport them back and forth and watch the server freeze for increasing amounts of time it s likely due to the massive amount of sparks this creates rather than the teleporting itself so maybe it s fixable by just reducing the sparks to per teleport instead of per item this was discovered in vr where a syndie research thingy person brought a couple hundred carp and were teleporting them back and forth until they were found and deleted i don t even think this stuff is logged | 1 |

408,842 | 11,953,109,898 | IssuesEvent | 2020-04-03 20:13:13 | seung-lab/neuroglancer | https://api.github.com/repos/seung-lab/neuroglancer | closed | Cannot get shareable raw link without VPN | Priority: High Realm: SeungLab Type: Bug | When I am using Neuroglancer outside of the lab and without the use of the VPN, I cannot get the legacy style link. After hitting share I get a message "Posting state to dynamicannotationserver...". I shouldn't need to have access to the link shortening server to get the raw link.

Edit: It seems like after a minute, the request does time out and I can hit "cancel" to get the raw link. This is still a bug though because the time to wait is way too long and having to hit cancel to get a raw link is not intuitive. | 1.0 | Cannot get shareable raw link without VPN - When I am using Neuroglancer outside of the lab and without the use of the VPN, I cannot get the legacy style link. After hitting share I get a message "Posting state to dynamicannotationserver...". I shouldn't need to have access to the link shortening server to get the raw link.

Edit: It seems like after a minute, the request does time out and I can hit "cancel" to get the raw link. This is still a bug though because the time to wait is way too long and having to hit cancel to get a raw link is not intuitive. | priority | cannot get shareable raw link without vpn when i am using neuroglancer outside of the lab and without the use of the vpn i cannot get the legacy style link after hitting share i get a message posting state to dynamicannotationserver i shouldn t need to have access to the link shortening server to get the raw link edit it seems like after a minute the request does time out and i can hit cancel to get the raw link this is still a bug though because the time to wait is way too long and having to hit cancel to get a raw link is not intuitive | 1 |

478,825 | 13,786,227,317 | IssuesEvent | 2020-10-09 01:10:23 | colab-coop/hello-voter | https://api.github.com/repos/colab-coop/hello-voter | closed | Remove "Technical Support" button from Help page | Highest Priority | - Remove "Technical Support" button from the Help page for all organizations | 1.0 | Remove "Technical Support" button from Help page - - Remove "Technical Support" button from the Help page for all organizations | priority | remove technical support button from help page remove technical support button from the help page for all organizations | 1 |

559,001 | 16,547,215,949 | IssuesEvent | 2021-05-28 02:30:32 | The-Academic-Observatory/observatory-platform | https://api.github.com/repos/The-Academic-Observatory/observatory-platform | closed | OAeBU Dashboard Mockup in Kibana/ES | OAeBU_Mellon: Elasticsearch_Kibana Priority: High Type: Enhancement | Below is an overview of steps for creating the Mellon/OAeBN Mock-up dashboard.

1. **Create Ver 1.0 of a combined OAEBU schema based on HERMIOS and the 4 data sources from the 2 partners**. – Alkim and I worked on this on Friday but did not finish this completely today. He and I will have another chat Monday about the schema. I am sure we will need to iterate, as this schema is just a starting point, and I know everyone has been thinking about this a lot so far. I expect it to evolve a lot as the whole ID/parts issues are addressed.

2. **Create Dummy Datasets for ANU and UCL using the above schema**. Alkim and I will revisit on Monday as part of locking in the schema. The understanding is that the raw fields from [Google Analytics, GoogleBooks, OAPEN, and JSTOR] will need processing to extract the required data. Although down the track the Telescopes etc. will end up in BigQuery, for this Dummy Data we are just creating this in GoogleSheets/Excel, as that is now suitable for the import into ES.

3. **Import the Dummy dataset into Elasticsearch using the new schema**: using the manual CSV upload option (instead of the Python/JSON-mapping method used in the OP). When uploading we will need to make sure that the mappings have suitable types for aggregation and that text fields are mapped suitably for filtering, as this is an issue with the current data. Titles were a particular topic to watch out for. https://www.elastic.co/blog/importing-csv-and-log-data-into-elasticsearch-with-file-data-visualizer

4. **Update the ANU/UCL Dashboards to match the Mock-up data**. This will hopefully address the issue with being able to filter by title AND time

5. **Check Security is OK** for the new read-only roles I have created (names on Slack). Alkim has accounts already set up that map to these.

6. In the New spaces on K-Dev (i.e. “oaebu-anu-press” and “oaebu-ucl-press”), I have updated the advanced settings to make the spaces a bit more user-friendly (see my tech notes linked to on Slack).

7. Iterate if needed on the above :-)

Solving this issue will make obsolete #346 and #349

| 1.0 | OAeBU Dashboard Mockup in Kibana/ES - Below is an overview of steps for creating the Mellon/OAeBN Mock-up dashboard.

1. **Create Ver 1.0 of a combined OAEBU schema based on HERMIOS and the 4 data sources from the 2 partners**. – Alkim and I worked on this on Friday but did not finish this completely today. He and I will have another chat Monday about the schema. I am sure we will need to iterate, as this schema is just a starting point, and I know everyone has been thinking about this a lot so far. I expect it to evolve a lot as the whole ID/parts issues are addressed.

2. **Create Dummy Datasets for ANU and UCL using the above schema**. Alkim and I will revisit on Monday as part of locking in the schema. The understanding is that the raw fields from [Google Analytics, GoogleBooks, OAPEN, and JSTOR] will need processing to extract the required data. Although down the track the Telescopes etc. will end up in BigQuery, for this Dummy Data we are just creating this in GoogleSheets/Excel, as that is now suitable for the import into ES.

3. **Import the Dummy dataset into Elasticsearch using the new schema**: using the manual CSV upload option (instead of the Python/JSON-mapping method used in the OP). When uploading we will need to make sure that the mappings have suitable types for aggregation and that text fields are mapped suitably for filtering, as this is an issue with the current data. Titles were a particular topic to watch out for. https://www.elastic.co/blog/importing-csv-and-log-data-into-elasticsearch-with-file-data-visualizer

4. **Update the ANU/UCL Dashboards to match the Mock-up data**. This will hopefully address the issue with being able to filter by title AND time

5. **Check Security is OK** for the new read-only roles I have created (names on Slack). Alkim has accounts already set up that map to these.

6. In the New spaces on K-Dev (i.e. “oaebu-anu-press” and “oaebu-ucl-press”), I have updated the advanced settings to make the spaces a bit more user-friendly (see my tech notes linked to on Slack).

7. Iterate if needed on the above :-)

Solving this issue will make obsolete #346 and #349

| priority | oaebu dashboard mockup in kibana es below is an overview of steps for creating the mellon oaebn mock up dashboard create ver of a combined oaebu schema based on hermios and the data source from the partners – alkim and i worked on this on friday but did not finish this completely today he and i will have another chat monday about the schema i am sure will need to iterate as this schema is just a starting point and i know everyone has been thinking about this a lot so far i expect it to evolve a lot as the whole id parts issues are addressed create dummy datasets for anu and ucl using the above schema alkim and i will revisit on monday as part of locking in the schema the understanding is that the raw fields from will need processing to extract the required data although down the track the telescopes etc will end up in bigquery for this dummy data we are just creating this is googlesheets excel as that is now suitable for the import into es import the dummy dataset into elasticsearch using the new schema using the manual csv upload option instead of the python json mapping method used in the op when uploading we will need to make sure that the mappings have suitable types for aggregation and text fields are mapped suitable for filtering as this is an issue with the current data titles were a particular topic to watch out for update the anu ucl dashboards to match the mock up data this will hopefully address the issue with being able to filter by title and time check security is ok the new read only roles i have created called names on slack alkim has accounts already set up that map to these in the new spaces on k dev i e “oaebu anu press” and “oaebu ucl press” i have updated the advance settings to make the spaces a bit more user friendly see my tech notes linked to on slack iterate if needed on the above solving this issue will make obsolete and | 1 |

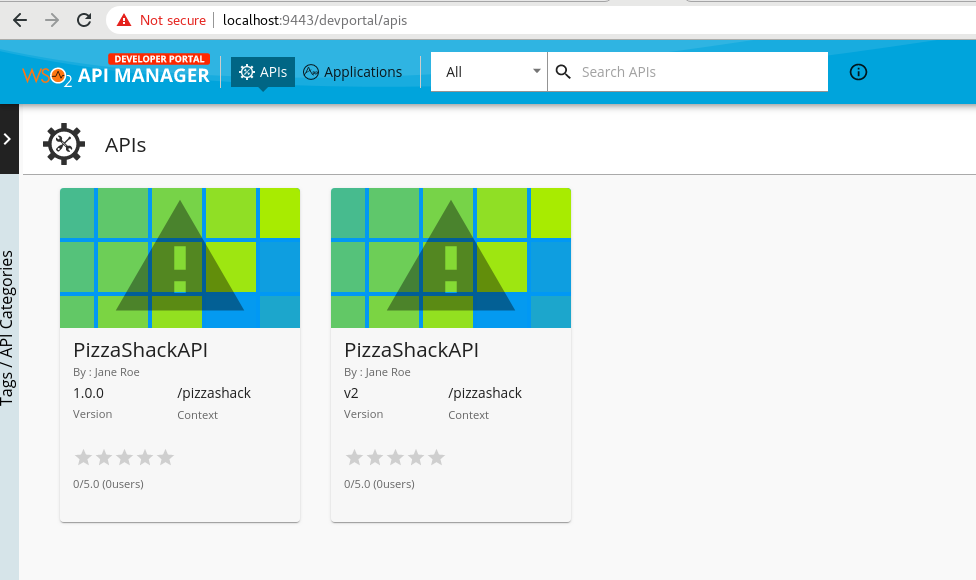

529,737 | 15,394,733,253 | IssuesEvent | 2021-03-03 18:17:30 | wso2/product-apim | https://api.github.com/repos/wso2/product-apim | closed | Developer Portal shows all the versions of the API regardless of display_multiple_versions | API-M 4.0.0 Priority/High Type/Bug | ### Description:

Originally reported by @tharindu1st

## Steps to reproduce:

1. Create a few versions of an API and Publish them

2. Check from the developer portal and all the versions are shown even though `apim.devportal.display_multiple_versions` is set to false (default value)

| 1.0 | Developer Portal shows all the versions of the API regardless of display_multiple_versions - ### Description:

Originally reported by @tharindu1st

## Steps to reproduce:

1. Create a few versions of an API and Publish them

2. Check from the developer portal and all the versions are shown even though `apim.devportal.display_multiple_versions` is set to false (default value)

| priority | developer portal shows all the versions of the api regardless of display multiple versions description originally reported by steps to reproduce create a few versions of an api and publish them check from the developer portal and all the versions are shown even though apim devportal display multiple versions is set to false default value | 1 |

751,559 | 26,250,128,771 | IssuesEvent | 2023-01-05 18:30:05 | AleoHQ/leo | https://api.github.com/repos/AleoHQ/leo | closed | [Feature] Clarify `input` Access Namescope | feature priority-high | ## Feature

This is a discussion on whether `input` access calls should be allowed outside the main function.

## Motivation

This impacts the decision between how the following issues are resolved:

* #1291

* #1297 | 1.0 | [Feature] Clarify `input` Access Namescope - ## Feature

This is a discussion on whether `input` access calls should be allowed outside the main function.

## Motivation

This impacts the decision between how the following issues are resolved:

* #1291

* #1297 | priority | clarify input access namescope feature this is a discussion on whether input access calls should be allowed outside the main function motivation this impacts the decision between how the following issues are resolved | 1 |

505,258 | 14,630,736,492 | IssuesEvent | 2020-12-23 18:20:35 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | ewaybillgst.gov.in - desktop site instead of mobile site | browser-firefox engine-gecko ml-needsdiagnosis-false ml-probability-high priority-normal | <!-- @browser: Firefox 83.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 6.1; rv:83.0) Gecko/20100101 Firefox/83.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/64224 -->

**URL**: https://ewaybillgst.gov.in/CustomError.aspx

**Browser / Version**: Firefox 83.0

**Operating System**: Windows 7

**Tested Another Browser**: No

**Problem type**: Desktop site instead of mobile site

**Description**: Desktop site instead of mobile site

**Steps to Reproduce**:

<details>

<summary>View the screenshot</summary>

<img alt="Screenshot" src="https://webcompat.com/uploads/2020/12/6fe45ae8-e952-4ba8-a1fe-a6d009e62c34.jpeg">

</details>

<details>

<summary>Browser Configuration</summary>

<ul>

<li>gfx.webrender.all: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>buildID: 20201105203649</li><li>channel: beta</li><li>hasTouchScreen: false</li><li>mixed active content blocked: false</li><li>mixed passive content blocked: false</li><li>tracking content blocked: false</li>

</ul>

</details>

[View console log messages](https://webcompat.com/console_logs/2020/12/833c37db-97c5-45e7-9a85-a82fb2966ce6)

_From [webcompat.com](https://webcompat.com/) with ❤️_ | 1.0 | ewaybillgst.gov.in - desktop site instead of mobile site - <!-- @browser: Firefox 83.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 6.1; rv:83.0) Gecko/20100101 Firefox/83.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/64224 -->

**URL**: https://ewaybillgst.gov.in/CustomError.aspx

**Browser / Version**: Firefox 83.0

**Operating System**: Windows 7

**Tested Another Browser**: No

**Problem type**: Desktop site instead of mobile site

**Description**: Desktop site instead of mobile site

**Steps to Reproduce**:

<details>

<summary>View the screenshot</summary>

<img alt="Screenshot" src="https://webcompat.com/uploads/2020/12/6fe45ae8-e952-4ba8-a1fe-a6d009e62c34.jpeg">

</details>

<details>

<summary>Browser Configuration</summary>

<ul>

<li>gfx.webrender.all: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>buildID: 20201105203649</li><li>channel: beta</li><li>hasTouchScreen: false</li><li>mixed active content blocked: false</li><li>mixed passive content blocked: false</li><li>tracking content blocked: false</li>

</ul>

</details>

[View console log messages](https://webcompat.com/console_logs/2020/12/833c37db-97c5-45e7-9a85-a82fb2966ce6)

_From [webcompat.com](https://webcompat.com/) with ❤️_ | priority | ewaybillgst gov in desktop site instead of mobile site url browser version firefox operating system windows tested another browser no problem type desktop site instead of mobile site description desktop site instead of mobile site steps to reproduce view the screenshot img alt screenshot src browser configuration gfx webrender all false gfx webrender blob images true gfx webrender enabled false image mem shared true buildid channel beta hastouchscreen false mixed active content blocked false mixed passive content blocked false tracking content blocked false from with ❤️ | 1 |

796,042 | 28,097,371,779 | IssuesEvent | 2023-03-30 16:44:36 | opendatahub-io/odh-dashboard | https://api.github.com/repos/opendatahub-io/odh-dashboard | opened | Pipeline Import | priority/high feature/ds-pipelines | The only ability in v1 to create a Pipeline from the Dashboard.

- In #1031 an `ImportPipelineButton` was created that just needs a modal

- Implement the Import Modal

Mocks: https://www.sketch.com/s/6c72b6b2-2c32-45ff-b07c-381d9d8c8267/a/Oebd322 | 1.0 | Pipeline Import - The only ability in v1 to create a Pipeline from the Dashboard.

- In #1031 an `ImportPipelineButton` was created that just needs a modal

- Implement the Import Modal