Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 855 | labels stringlengths 4 721 | body stringlengths 1 261k | index stringclasses 13 values | text_combine stringlengths 96 261k | label stringclasses 2 values | text stringlengths 96 240k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

667,020 | 22,406,524,506 | IssuesEvent | 2022-06-18 03:34:37 | containerd/nerdctl | https://api.github.com/repos/containerd/nerdctl | closed | Running `nerdctl compose up` immediately exists with "no such file or directory" on -json.log file | bug priority/high area/logging | ### Description

Running `nerdctl compose up` (without the `-d` detach flag) immediately exits with an error that the container *-json.log* file does not exist.

Running `nerdctl compose up -d; sleep 2; nerdctl compose logs -f` works; the containers are created and the log files exist and are tailed to stdout.
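
The fixed `sleep 2` in the workaround above could be replaced by polling for the log file. This is a sketch only: it assumes the failure is a race between attaching to the logs and the creation of the `-json.log` file, which the report suggests but does not confirm. `wait_for_file` is a hypothetical helper and not part of nerdctl.

```python
import os
import time


def wait_for_file(path: str, timeout: float = 5.0, interval: float = 0.1) -> bool:
    """Poll until `path` exists; return False if `timeout` seconds pass first."""
    deadline = time.monotonic() + timeout
    while True:
        if os.path.exists(path):
            return True
        if time.monotonic() >= deadline:
            return False
        # Sleep briefly so the loop does not spin on the filesystem.
        time.sleep(interval)
```

A caller would pass the container's log path and only start tailing once the function returns `True`.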

This behaviour occurs with both v0.20.0 (as installed by Lima) and current master (ddad5009be1618b213d0da43b03afd3df1e41852) compiled from source.

### Steps to reproduce the issue

1. Create `docker-compose.yml` file as below

2. Run `nerdctl compose up`

```

version: '3.1'

services:
  db:
    image: postgres:latest
    environment:
      POSTGRES_PASSWORD: postgres
    ports:
      - 5432:5432

```

### Describe the results you received and expected

Expected results:

Container starts and log file is tailed to stdout.

Received results:

nerdctl immediately exits, with the following output (with the `--debug` flag):

```

dave@lima-default psql-example % nerdctl compose up --debug

DEBU[0000] stateDir: /run/user/501/containerd-rootless

DEBU[0000] rootless parent main: executing "/usr/bin/nsenter" with [-r/ -w/home/dave.linux/dev/containers/psql-example --preserve-credentials -m -n -U -t 1610 -F nerdctl compose up --debug]

INFO[0000] Ensuring image postgres:latest

DEBU[0000] filters: [labels."com.docker.compose.project"==psql-example,labels."com.docker.compose.service"==db]

INFO[0000] Creating container psql-example_db_1

DEBU[0000] failed to run [aa-exec -p nerdctl-default -- true]: "aa-exec: ERROR: profile 'nerdctl-default' does not exist\n\n" error="exit status 1"

DEBU[0000] verification process skipped

DEBU[0000] creating anonymous volume "43cd122bfc77156a7e186835af920a0ece71334be29f77c931860b073a872ca7", for "VOLUME /var/lib/postgresql/data"

DEBU[0000] final cOpts is [0x6e5a40 0xaafa50 0x6e5e60 0x6e5bc0 0x6e58b0 0xab0e00 0xab1fa0 0x6e62f0]

INFO[0000] Attaching to logs

DEBU[0000] filters: [labels."com.docker.compose.project"==psql-example,labels."com.docker.compose.service"==db]

db_1 |time="2022-05-20T20:39:51Z" level=fatal msg="failed to open \"/home/dave.linux/.local/share/nerdctl/1935db59/containers/default/d3c03ca1e0c65d0bba6e43d24ab8bd5fc5820d276cf9ebda97894d07b2991d57/d3c03ca1e0c65d0bba6e43d24ab8bd5fc5820d276cf9ebda97894d07b2991d57-json.log\", container is not created with `nerdctl run -d`?: stat /home/dave.linux/.local/share/nerdctl/1935db59/containers/default/d3c03ca1e0c65d0bba6e43d24ab8bd5fc5820d276cf9ebda97894d07b2991d57/d3c03ca1e0c65d0bba6e43d24ab8bd5fc5820d276cf9ebda97894d07b2991d57-json.log: no such file or directory"

INFO[0000] Container "psql-example_db_1" exited

INFO[0000] All the containers have exited

INFO[0000] Stopping containers (forcibly)

INFO[0000] Stopping container psql-example_db_1

```

### What version of nerdctl are you using?

Client:

Version: v0.20.0

OS/Arch: linux/arm64

Git commit: e77e05b5fd252274e3727e0439e9a2d45622ccb9

Server:

containerd:

Version: v1.6.4

GitCommit: 212e8b6fa2f44b9c21b2798135fc6fb7c53efc16

### Are you using a variant of nerdctl? (e.g., Rancher Desktop)

Lima

### Host information

Client:

Namespace: default

Debug Mode: false

Server:

Server Version: v1.6.4

Storage Driver: fuse-overlayfs

Logging Driver: json-file

Cgroup Driver: systemd

Cgroup Version: 2

Plugins:

Log: json-file

Storage: native overlayfs stargz fuse-overlayfs

Security Options:

apparmor

seccomp

Profile: default

cgroupns

rootless

Kernel Version: 5.10.0-13-arm64

Operating System: Debian GNU/Linux 11 (bullseye)

OSType: linux

Architecture: aarch64

CPUs: 4

Total Memory: 3.831GiB

Name: lima-default

ID: a2e0f2fe-8b65-4b55-b746-945a35e37c9f

WARNING: AppArmor profile "nerdctl-default" is not loaded.

Use 'sudo nerdctl apparmor load' if you prefer to use AppArmor with rootless mode.

This warning is negligible if you do not intend to use AppArmor.

WARNING: bridge-nf-call-iptables is disabled

WARNING: bridge-nf-call-ip6tables is disabled | 1.0 | Running `nerdctl compose up` immediately exists with "no such file or directory" on -json.log file - ### Description

Running `nerdctl compose up` (without the `-d` detach flag) immediately exits with an error that the container *-json.log* file does not exist.

Running `nerdctl compose up -d; sleep 2; nerdctl compose logs -f` works; the containers are created and the log files exist and are tailed to stdout.

This behaviour occurs with both v0.20.0 (as installed by Lima) and current master (ddad5009be1618b213d0da43b03afd3df1e41852) compiled from source.

### Steps to reproduce the issue

1. Create `docker-compose.yml` file as below

2. Run `nerdctl compose up`

```

version: '3.1'

services:
  db:
    image: postgres:latest
    environment:
      POSTGRES_PASSWORD: postgres
    ports:
      - 5432:5432

```

### Describe the results you received and expected

Expected results:

Container starts and log file is tailed to stdout.

Received results:

nerdctl immediately exits, with the following output (with the `--debug` flag):

```

dave@lima-default psql-example % nerdctl compose up --debug

DEBU[0000] stateDir: /run/user/501/containerd-rootless

DEBU[0000] rootless parent main: executing "/usr/bin/nsenter" with [-r/ -w/home/dave.linux/dev/containers/psql-example --preserve-credentials -m -n -U -t 1610 -F nerdctl compose up --debug]

INFO[0000] Ensuring image postgres:latest

DEBU[0000] filters: [labels."com.docker.compose.project"==psql-example,labels."com.docker.compose.service"==db]

INFO[0000] Creating container psql-example_db_1

DEBU[0000] failed to run [aa-exec -p nerdctl-default -- true]: "aa-exec: ERROR: profile 'nerdctl-default' does not exist\n\n" error="exit status 1"

DEBU[0000] verification process skipped

DEBU[0000] creating anonymous volume "43cd122bfc77156a7e186835af920a0ece71334be29f77c931860b073a872ca7", for "VOLUME /var/lib/postgresql/data"

DEBU[0000] final cOpts is [0x6e5a40 0xaafa50 0x6e5e60 0x6e5bc0 0x6e58b0 0xab0e00 0xab1fa0 0x6e62f0]

INFO[0000] Attaching to logs

DEBU[0000] filters: [labels."com.docker.compose.project"==psql-example,labels."com.docker.compose.service"==db]

db_1 |time="2022-05-20T20:39:51Z" level=fatal msg="failed to open \"/home/dave.linux/.local/share/nerdctl/1935db59/containers/default/d3c03ca1e0c65d0bba6e43d24ab8bd5fc5820d276cf9ebda97894d07b2991d57/d3c03ca1e0c65d0bba6e43d24ab8bd5fc5820d276cf9ebda97894d07b2991d57-json.log\", container is not created with `nerdctl run -d`?: stat /home/dave.linux/.local/share/nerdctl/1935db59/containers/default/d3c03ca1e0c65d0bba6e43d24ab8bd5fc5820d276cf9ebda97894d07b2991d57/d3c03ca1e0c65d0bba6e43d24ab8bd5fc5820d276cf9ebda97894d07b2991d57-json.log: no such file or directory"

INFO[0000] Container "psql-example_db_1" exited

INFO[0000] All the containers have exited

INFO[0000] Stopping containers (forcibly)

INFO[0000] Stopping container psql-example_db_1

```

### What version of nerdctl are you using?

Client:

Version: v0.20.0

OS/Arch: linux/arm64

Git commit: e77e05b5fd252274e3727e0439e9a2d45622ccb9

Server:

containerd:

Version: v1.6.4

GitCommit: 212e8b6fa2f44b9c21b2798135fc6fb7c53efc16

### Are you using a variant of nerdctl? (e.g., Rancher Desktop)

Lima

### Host information

Client:

Namespace: default

Debug Mode: false

Server:

Server Version: v1.6.4

Storage Driver: fuse-overlayfs

Logging Driver: json-file

Cgroup Driver: systemd

Cgroup Version: 2

Plugins:

Log: json-file

Storage: native overlayfs stargz fuse-overlayfs

Security Options:

apparmor

seccomp

Profile: default

cgroupns

rootless

Kernel Version: 5.10.0-13-arm64

Operating System: Debian GNU/Linux 11 (bullseye)

OSType: linux

Architecture: aarch64

CPUs: 4

Total Memory: 3.831GiB

Name: lima-default

ID: a2e0f2fe-8b65-4b55-b746-945a35e37c9f

WARNING: AppArmor profile "nerdctl-default" is not loaded.

Use 'sudo nerdctl apparmor load' if you prefer to use AppArmor with rootless mode.

This warning is negligible if you do not intend to use AppArmor.

WARNING: bridge-nf-call-iptables is disabled

WARNING: bridge-nf-call-ip6tables is disabled | priority | running nerdctl compose up immediately exists with no such file or directory on json log file description running nerdctl compose up without the d detach flag immediately exits with an error that the container json log file does not exist running nerdctl compose up d sleep nerdctl compose logs f works the containers are created and the log files exist and are tailed to stdout this behaviour occurs with both as installed by lima and current master compiled from source steps to reproduce the issue create docker compose yml file as below run nerdctl compose up version services db image postgres latest environment postgres password postgres ports describe the results you received and expected expected results container starts and log file is tailed to stdout received results nerdctl immediately exists with the following output with debug flag dave lima default psql example nerdctl compose up debug debu statedir run user containerd rootless debu rootless parent main executing usr bin nsenter with info ensuring image postgres latest debu filters info creating container psql example db debu failed to run aa exec error profile nerdctl default does not exist n n error exit status debu verification process skipped debu creating anonymous volume for volume var lib postgresql data debu final copts is info attaching to logs debu filters db time level fatal msg failed to open home dave linux local share nerdctl containers default json log container is not created with nerdctl run d stat home dave linux local share nerdctl containers default json log no such file or directory info container psql example db exited info all the containers have exited info stopping containers forcibly info stopping container psql example db what version of nerdctl are you using client version os arch linux git commit server containerd version gitcommit are you using a variant of nerdctl e g rancher desktop lima host information client 
namespace default debug mode false server server version storage driver fuse overlayfs logging driver json file cgroup driver systemd cgroup version plugins log json file storage native overlayfs stargz fuse overlayfs security options apparmor seccomp profile default cgroupns rootless kernel version operating system debian gnu linux bullseye ostype linux architecture cpus total memory name lima default id warning apparmor profile nerdctl default is not loaded use sudo nerdctl apparmor load if you prefer to use apparmor with rootless mode this warning is negligible if you do not intend to use apparmor warning bridge nf call iptables is disabled warning bridge nf call is disabled | 1 |

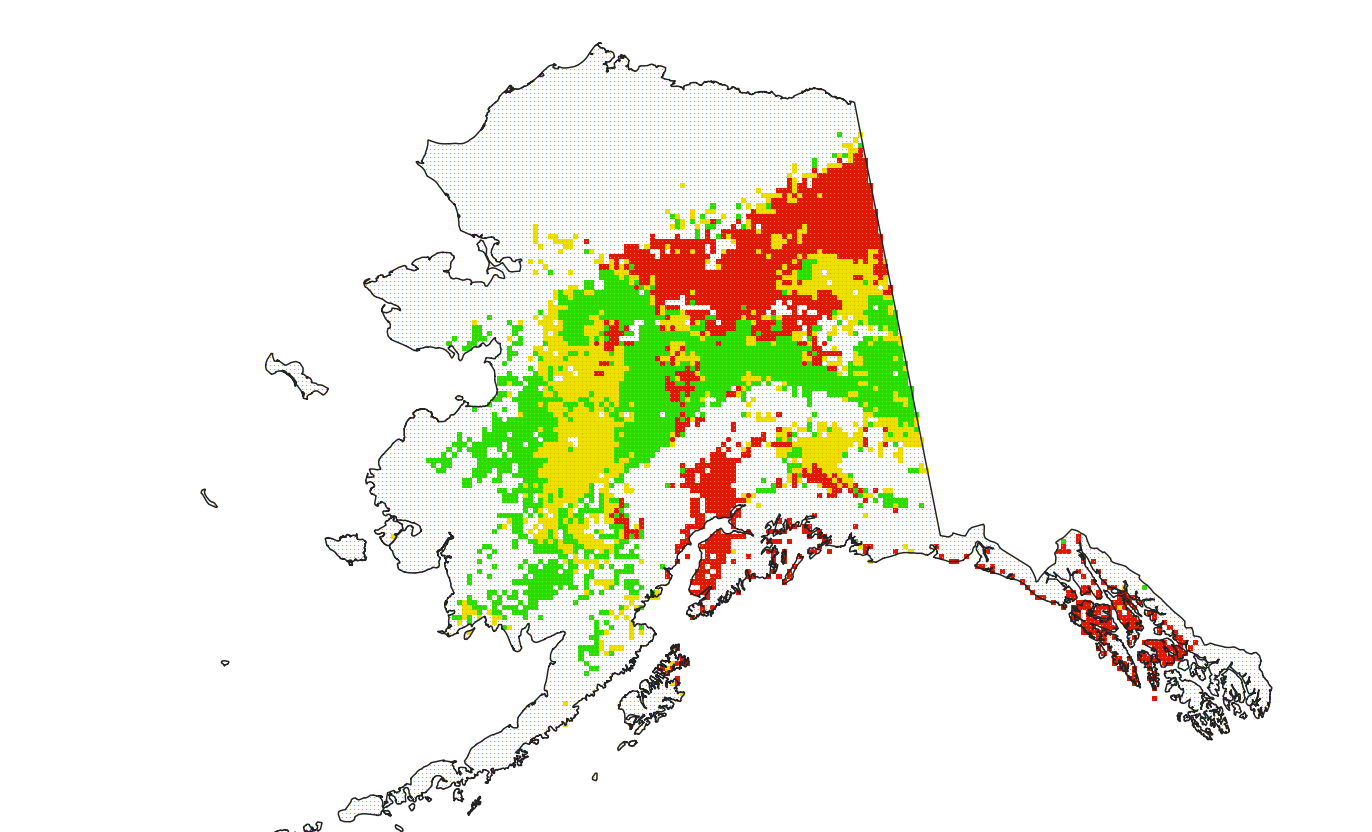

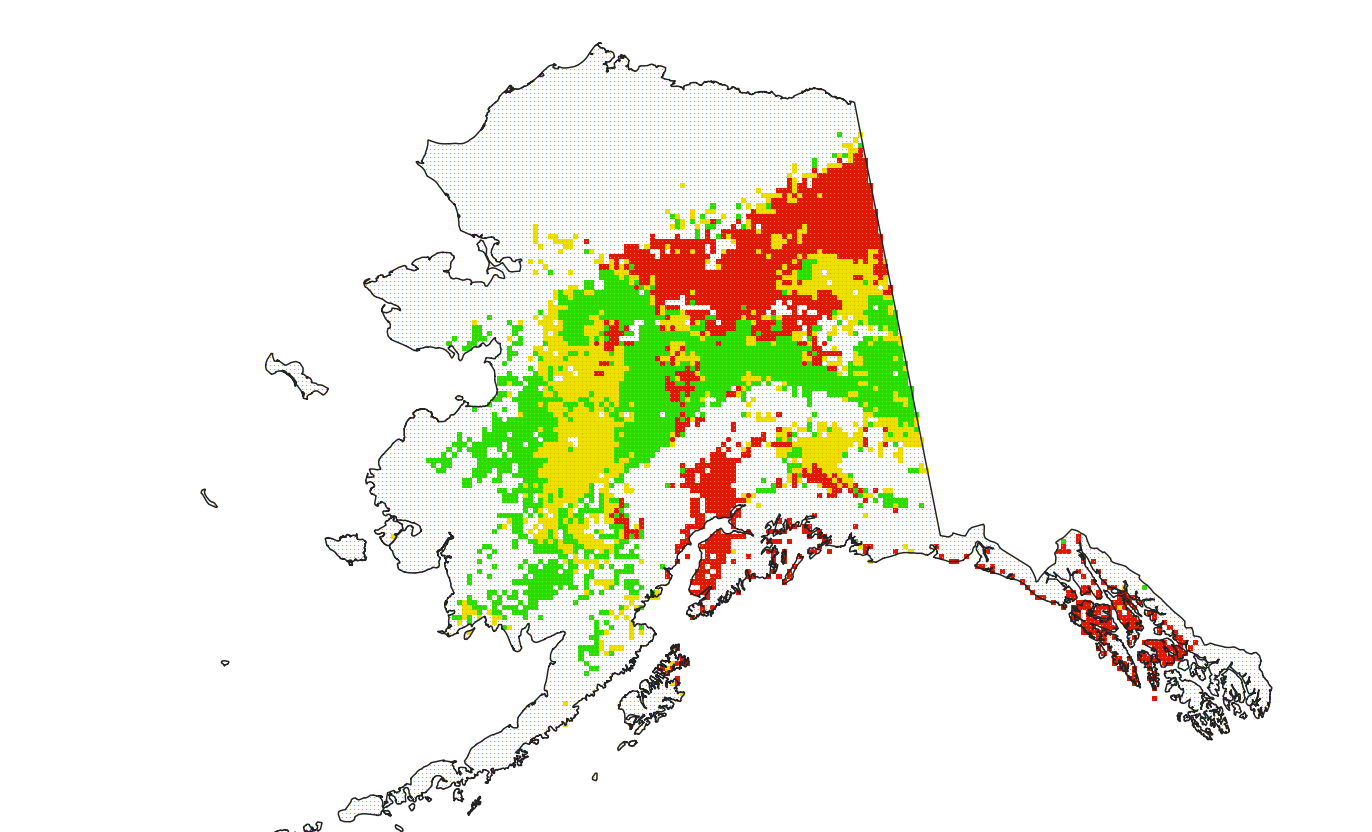

80,798 | 3,574,630,821 | IssuesEvent | 2016-01-27 12:48:09 | leeensminger/OED_Wetlands | https://api.github.com/repos/leeensminger/OED_Wetlands | closed | Program Mitigation Summary report - Status per Watershed does not display values | bug - high priority | Mitigation Status per Watershed does not display values. The report should be displaying each Mitigation Status per watershed.

There are 7 pages to the report, which include all the 8 digit watersheds. I've reviewed each page looking for data, but here's a snippet:

| 1.0 | Program Mitigation Summary report - Status per Watershed does not display values - Mitigation Status per Watershed does not display values. The report should be displaying each Mitigation Status per watershed.

There are 7 pages to the report, which include all the 8 digit watersheds. I've reviewed each page looking for data, but here's a snippet:

| priority | program mitigation summary report status per watershed does not display values mitigation status per watershed does not display values the report should be displaying each mitigation status per watershed there are pages to the report which include all the digit watersheds i ve reviewed each page looking for data but here s a snippet | 1 |

670,203 | 22,679,846,502 | IssuesEvent | 2022-07-04 08:55:02 | opensrp/opensrp-client-anc | https://api.github.com/repos/opensrp/opensrp-client-anc | closed | [Ona Support Request]: Adding new questions to forms | 🚧 WIP high priority Tech Partner (SID Team) | ### Affected App or Server Version

local Indonesian apk

### What kind of support do you need?

ONA team has shared related documentation but the SID team is facing problems in implementing it. The SID team will share the latest code used for it

### What is the acceptance criteria for your support request?

The SID team can add, remove, and modify questions on the client app

### Relevant Information

_No response_ | 1.0 | [Ona Support Request]: Adding new questions to forms - ### Affected App or Server Version

local Indonesian apk

### What kind of support do you need?

ONA team has shared related documentation but the SID team is facing problems in implementing it. The SID team will share the latest code used for it

### What is the acceptance criteria for your support request?

The SID team can add, remove, and modify questions on the client app

### Relevant Information

_No response_ | priority | adding new questions to forms affected app or server version local indonesian apk what kind of support do you need ona team has shared related documentation but the sid team is facing problems in implementing it the sid team will share the latest code used for it what is the acceptance criteria for your support request the sid team can add remove and modify questions on the client app relevant information no response | 1 |

516,870 | 14,989,766,386 | IssuesEvent | 2021-01-29 04:41:22 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | closed | torch.cuda.memory_allocated() doesn't correctly work until the context is initialized | high priority module: cuda module: docs module: memory usage triaged | ## 🐛 Bug

The manpage https://pytorch.org/docs/stable/cuda.html#torch.cuda.memory_allocated advertises that it's possible to pass an `int` argument but it doesn't work.

And even if I create a device argument it doesn't work correctly in multi-gpu settings - giving me stats for the first device for all other devices.

## To Reproduce

Steps to reproduce the behavior:

```

python -c 'import torch; print(torch.cuda.memory_allocated(0))'

Traceback (most recent call last):

File "<string>", line 1, in <module>

File "/home/stas/anaconda3/envs/main-38/lib/python3.8/site-packages/torch/cuda/memory.py", line 306, in memory_allocated

return memory_stats(device=device)["allocated_bytes.all.current"]

File "/home/stas/anaconda3/envs/main-38/lib/python3.8/site-packages/torch/cuda/memory.py", line 187, in memory_stats

stats = memory_stats_as_nested_dict(device=device)

File "/home/stas/anaconda3/envs/main-38/lib/python3.8/site-packages/torch/cuda/memory.py", line 197, in memory_stats_as_nested_dict

return torch._C._cuda_memoryStats(device)

RuntimeError: Invalid device argument.

```

If I make use of the context manager device as a device, then it works:

```

python -c 'import torch; print(torch.cuda.memory_allocated(torch.cuda.device("cuda:0")))'

0

```

It also starts working if I first call `torch.cuda.get_device_name(0)`:

```

python -c 'import torch; print(torch.cuda.get_device_name(0)); print(torch.cuda.memory_allocated(0))'

```

It also seems to just give device 0 stats regardless of what I pass to it, e.g. with 2 gpus:

```

python -c 'import torch; x=torch.ones(10<<20).to(0); y=torch.ones(10).to(1);print([torch.cuda.memory_allocated(torch.cuda.device(f"cuda:{id}")) for id in range(torch.cuda.device_count())])'

[41943040, 41943040]

```

`torch.cuda.memory_allocated` for device 1 returns the same stats as device 0.

If I replace `torch.cuda.device` with `torch.device` it works correctly. But then fails again if I don't do the `to()` calls:

```

python -c 'import torch; x=torch.ones(10<<10); y=torch.ones(10);print([torch.cuda.memory_allocated(torch.device(f"cuda:{id}")) for id in range(torch.cuda.device_count())]);'

Traceback (most recent call last):

File "<string>", line 1, in <module>

File "<string>", line 1, in <listcomp>

File "/home/stas/anaconda3/envs/main-38/lib/python3.8/site-packages/torch/cuda/memory.py", line 306, in memory_allocated

return memory_stats(device=device)["allocated_bytes.all.current"]

File "/home/stas/anaconda3/envs/main-38/lib/python3.8/site-packages/torch/cuda/memory.py", line 187, in memory_stats

stats = memory_stats_as_nested_dict(device=device)

File "/home/stas/anaconda3/envs/main-38/lib/python3.8/site-packages/torch/cuda/memory.py", line 197, in memory_stats_as_nested_dict

return torch._C._cuda_memoryStats(device)

RuntimeError: Invalid device argument.

```

A pattern appears that this function only works once some operation on the desired device has been performed, and then it reports correctly only for that device and not others.

I'm seeing other ops having the same problem: e.g. [this](https://github.com/NVIDIA/apex/issues/1022) and [this](https://github.com/pytorch/pytorch/issues/21819) and the fix is to run some other safe op like `torch.cuda.set_device` which then fixes these unsafe ops.

It appears that the only way I can take a snapshot of memory allocations per device is with context manager:

```

per_device_memory = []

for id in range(torch.cuda.device_count()):
    with torch.cuda.device(id):
        per_device_memory.append(torch.cuda.memory_allocated())

```

and this is not possible:

```

per_device_memory = [torch.cuda.memory_allocated(id) for id in range(torch.cuda.device_count())]

```

which is very odd.
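
The context-manager loop above can be wrapped in a small helper. This is only a sketch: `per_device_memory_allocated` is a hypothetical name, and the `cuda` parameter is assumed to be the `torch.cuda` module, passed in so the helper can be exercised without a GPU. It relies only on `device_count()`, `device(index)` used as a context manager, and `memory_allocated()` with no argument, which are the calls reported above to work.

```python
def per_device_memory_allocated(cuda):
    """Return the bytes currently allocated on each visible CUDA device.

    `cuda` is assumed to be the `torch.cuda` module (hypothetical helper, not
    part of PyTorch). Each device is made current before it is queried, which
    is the pattern reported above to give per-device numbers.
    """
    allocated = []
    for index in range(cuda.device_count()):
        # Make the device current, then query without a device argument.
        with cuda.device(index):
            allocated.append(cuda.memory_allocated())
    return allocated


# Intended usage (requires a CUDA-enabled PyTorch install):
#   import torch
#   print(per_device_memory_allocated(torch.cuda))
```

Passing the module in, rather than importing it inside the helper, is a design choice made here purely so the loop can be tested against a stand-in object.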

## Expected behavior

1. Either the documentation is wrong, or there is a bug and it should return the memory usage for an `int` device number.

2. The behavior seems to be inconsistent depending on what cuda functions are called prior to this function

3. `torch.device` and `torch.cuda.device` seem to be interchangeable in some situations but not others

4. it must not report memory usage for 0th device when it's being asked to report memory usage for other devices

## Environment

```

PyTorch version: 1.8.0.dev20201219+cu110

Is debug build: False

CUDA used to build PyTorch: 11.0

ROCM used to build PyTorch: N/A

OS: Ubuntu 20.04.1 LTS (x86_64)

GCC version: (Ubuntu 9.3.0-17ubuntu1~20.04) 9.3.0

Clang version: 10.0.0-4ubuntu1

CMake version: version 3.18.2

Python version: 3.8 (64-bit runtime)

Is CUDA available: True

CUDA runtime version: Could not collect

GPU models and configuration:

GPU 0: GeForce GTX 1070 Ti

GPU 1: GeForce RTX 3090

Nvidia driver version: 455.45.01

cuDNN version: Probably one of the following:

/usr/lib/x86_64-linux-gnu/libcudnn.so.7.6.5

/usr/lib/x86_64-linux-gnu/libcudnn.so.8.0.4

/usr/lib/x86_64-linux-gnu/libcudnn_adv_infer.so.8.0.4

/usr/lib/x86_64-linux-gnu/libcudnn_adv_train.so.8.0.4

/usr/lib/x86_64-linux-gnu/libcudnn_cnn_infer.so.8.0.4

/usr/lib/x86_64-linux-gnu/libcudnn_cnn_train.so.8.0.4

/usr/lib/x86_64-linux-gnu/libcudnn_ops_infer.so.8.0.4

/usr/lib/x86_64-linux-gnu/libcudnn_ops_train.so.8.0.4

HIP runtime version: N/A

MIOpen runtime version: N/A

Versions of relevant libraries:

[pip3] numpy==1.19.4

[pip3] pytorch-lightning==1.1.1rc0

[pip3] pytorch-memlab==0.2.2

[pip3] torch==1.8.0.dev20201219+cu110

[pip3] torchtext==0.6.0

[pip3] torchvision==0.9.0a0+f80b83e

[conda] blas 1.0 mkl

[conda] magma-cuda111 2.5.2 1 pytorch

[conda] mkl 2020.2 256

[conda] mkl-include 2020.2 256

[conda] mkl-service 2.3.0 py38he904b0f_0

[conda] mkl_fft 1.2.0 py38h23d657b_0

[conda] mkl_random 1.1.1 py38h0573a6f_0

[conda] numpy 1.19.4 pypi_0 pypi

[conda] pytorch-lightning 1.1.1rc0 dev_0 <develop>

[conda] pytorch-memlab 0.2.2 pypi_0 pypi

[conda] torch 1.8.0.dev20201219+cu110 pypi_0 pypi

[conda] torchtext 0.6.0 pypi_0 pypi

[conda] torchvision 0.9.0a0+f80b83e dev_0 <develop>

```

cc @ezyang @gchanan @zou3519 @bdhirsh @jbschlosser @ngimel @jlin27 @mruberry | 1.0 | torch.cuda.memory_allocated() doesn't correctly work until the context is initialized - ## 🐛 Bug

The manpage https://pytorch.org/docs/stable/cuda.html#torch.cuda.memory_allocated advertises that it's possible to pass an `int` argument but it doesn't work.

And even if I create a device argument it doesn't work correctly in multi-gpu settings - giving me stats for the first device for all other devices.

## To Reproduce

Steps to reproduce the behavior:

```

python -c 'import torch; print(torch.cuda.memory_allocated(0))'

Traceback (most recent call last):

File "<string>", line 1, in <module>

File "/home/stas/anaconda3/envs/main-38/lib/python3.8/site-packages/torch/cuda/memory.py", line 306, in memory_allocated

return memory_stats(device=device)["allocated_bytes.all.current"]

File "/home/stas/anaconda3/envs/main-38/lib/python3.8/site-packages/torch/cuda/memory.py", line 187, in memory_stats

stats = memory_stats_as_nested_dict(device=device)

File "/home/stas/anaconda3/envs/main-38/lib/python3.8/site-packages/torch/cuda/memory.py", line 197, in memory_stats_as_nested_dict

return torch._C._cuda_memoryStats(device)

RuntimeError: Invalid device argument.

```

If I make use of the context manager device as a device, then it works:

```

python -c 'import torch; print(torch.cuda.memory_allocated(torch.cuda.device("cuda:0")))'

0

```

It also starts working if I first call `torch.cuda.get_device_name(0)`:

```

python -c 'import torch; print(torch.cuda.get_device_name(0)); print(torch.cuda.memory_allocated(0))'

```

It also seems to just give device 0 stats regardless of what I pass to it, e.g. with 2 gpus:

```

python -c 'import torch; x=torch.ones(10<<20).to(0); y=torch.ones(10).to(1);print([torch.cuda.memory_allocated(torch.cuda.device(f"cuda:{id}")) for id in range(torch.cuda.device_count())])'

[41943040, 41943040]

```

`torch.cuda.memory_allocated` for device 1 returns the same stats as device 0.

If I replace `torch.cuda.device` with `torch.device` it works correctly. But then fails again if I don't do the `to()` calls:

```

python -c 'import torch; x=torch.ones(10<<10); y=torch.ones(10);print([torch.cuda.memory_allocated(torch.device(f"cuda:{id}")) for id in range(torch.cuda.device_count())]);'

Traceback (most recent call last):

File "<string>", line 1, in <module>

File "<string>", line 1, in <listcomp>

File "/home/stas/anaconda3/envs/main-38/lib/python3.8/site-packages/torch/cuda/memory.py", line 306, in memory_allocated

return memory_stats(device=device)["allocated_bytes.all.current"]

File "/home/stas/anaconda3/envs/main-38/lib/python3.8/site-packages/torch/cuda/memory.py", line 187, in memory_stats

stats = memory_stats_as_nested_dict(device=device)

File "/home/stas/anaconda3/envs/main-38/lib/python3.8/site-packages/torch/cuda/memory.py", line 197, in memory_stats_as_nested_dict

return torch._C._cuda_memoryStats(device)

RuntimeError: Invalid device argument.

```

A pattern appears that this function only works once some operation on the desired device has been performed, and then it reports correctly only for that device and not others.

I'm seeing other ops having the same problem: e.g. [this](https://github.com/NVIDIA/apex/issues/1022) and [this](https://github.com/pytorch/pytorch/issues/21819) and the fix is to run some other safe op like `torch.cuda.set_device` which then fixes these unsafe ops.

It appears that the only way I can take a snapshot of memory allocations per device is with context manager:

```

per_device_memory = []

for id in range(torch.cuda.device_count()):
    with torch.cuda.device(id):
        per_device_memory.append(torch.cuda.memory_allocated())

```

and this is not possible:

```

per_device_memory = [torch.cuda.memory_allocated(id) for id in range(torch.cuda.device_count())]

```

which is very odd.

## Expected behavior

1. Either the documentation is wrong, or there is a bug and it should return the memory usage for an `int` device number.

2. The behavior seems to be inconsistent depending on what cuda functions are called prior to this function

3. `torch.device` and `torch.cuda.device` seem to be interchangeable in some situations but not others

4. it must not report memory usage for 0th device when it's being asked to report memory usage for other devices

## Environment

```

PyTorch version: 1.8.0.dev20201219+cu110

Is debug build: False

CUDA used to build PyTorch: 11.0

ROCM used to build PyTorch: N/A

OS: Ubuntu 20.04.1 LTS (x86_64)

GCC version: (Ubuntu 9.3.0-17ubuntu1~20.04) 9.3.0

Clang version: 10.0.0-4ubuntu1

CMake version: version 3.18.2

Python version: 3.8 (64-bit runtime)

Is CUDA available: True

CUDA runtime version: Could not collect

GPU models and configuration:

GPU 0: GeForce GTX 1070 Ti

GPU 1: GeForce RTX 3090

Nvidia driver version: 455.45.01

cuDNN version: Probably one of the following:

/usr/lib/x86_64-linux-gnu/libcudnn.so.7.6.5

/usr/lib/x86_64-linux-gnu/libcudnn.so.8.0.4

/usr/lib/x86_64-linux-gnu/libcudnn_adv_infer.so.8.0.4

/usr/lib/x86_64-linux-gnu/libcudnn_adv_train.so.8.0.4

/usr/lib/x86_64-linux-gnu/libcudnn_cnn_infer.so.8.0.4

/usr/lib/x86_64-linux-gnu/libcudnn_cnn_train.so.8.0.4

/usr/lib/x86_64-linux-gnu/libcudnn_ops_infer.so.8.0.4

/usr/lib/x86_64-linux-gnu/libcudnn_ops_train.so.8.0.4

HIP runtime version: N/A

MIOpen runtime version: N/A

Versions of relevant libraries:

[pip3] numpy==1.19.4

[pip3] pytorch-lightning==1.1.1rc0

[pip3] pytorch-memlab==0.2.2

[pip3] torch==1.8.0.dev20201219+cu110

[pip3] torchtext==0.6.0

[pip3] torchvision==0.9.0a0+f80b83e

[conda] blas 1.0 mkl

[conda] magma-cuda111 2.5.2 1 pytorch

[conda] mkl 2020.2 256

[conda] mkl-include 2020.2 256

[conda] mkl-service 2.3.0 py38he904b0f_0

[conda] mkl_fft 1.2.0 py38h23d657b_0

[conda] mkl_random 1.1.1 py38h0573a6f_0

[conda] numpy 1.19.4 pypi_0 pypi

[conda] pytorch-lightning 1.1.1rc0 dev_0 <develop>

[conda] pytorch-memlab 0.2.2 pypi_0 pypi

[conda] torch 1.8.0.dev20201219+cu110 pypi_0 pypi

[conda] torchtext 0.6.0 pypi_0 pypi

[conda] torchvision 0.9.0a0+f80b83e dev_0 <develop>

```

cc @ezyang @gchanan @zou3519 @bdhirsh @jbschlosser @ngimel @jlin27 @mruberry | priority | torch cuda memory allocated doesn t correctly work until the context is initialized 🐛 bug the manpage advertises that it s possible to pass an int argument but it doesn t work and even if i create a device argument it doesn t work correctly in multi gpu settings giving me stats for the first device for all other devices to reproduce steps to reproduce the behavior python c import torch print torch cuda memory allocated traceback most recent call last file line in file home stas envs main lib site packages torch cuda memory py line in memory allocated return memory stats device device file home stas envs main lib site packages torch cuda memory py line in memory stats stats memory stats as nested dict device device file home stas envs main lib site packages torch cuda memory py line in memory stats as nested dict return torch c cuda memorystats device runtimeerror invalid device argument if i make use of the context manager device as a device then it works python c import torch print torch cuda memory allocated torch cuda device cuda it also starts working if i first call torch cuda get device name python c import torch print torch cuda get device name print torch cuda memory allocated it also seems to just give device stats regardless of what i pass to it e g with gpus python c import torch x torch ones to y torch ones to print torch cuda memory allocated for device returns the same stats as device if i replace torch cuda device with torch device it works correctly but then fails again if i don t do the to calls python c import torch x torch ones y torch ones print traceback most recent call last file line in file line in file home stas envs main lib site packages torch cuda memory py line in memory allocated return memory stats device device file home stas envs main lib site packages torch cuda memory py line in memory stats stats memory stats as nested dict device device 
file home stas envs main lib site packages torch cuda memory py line in memory stats as nested dict return torch c cuda memorystats device runtimeerror invalid device argument a pattern appears that this function only works once some operation on the desired device has been performed and then it reports correctly only for that device and not others i m seeing other ops having the same problem e g and and the fix is to run some other safe op like torch cuda set device which then fixes these unsafe ops it appears that the only way i can take a snapshot of memory allocations per device is with context manager per device memory for id in range torch cuda device count with torch cuda device id per device memory append torch cuda memory allocated and this is not possible per device memory which is very odd expected behavior either the documentation is wrong or there is a bug and it should return the memory usage for an int device number the behavior seems to be inconsistent depending on what cuda functions are called prior to this function torch device and torch cuda device seem to be interchangeable in some situations but not others it must not report memory usage for device when it s being asked to report memory usage for other devices environment pytorch version is debug build false cuda used to build pytorch rocm used to build pytorch n a os ubuntu lts gcc version ubuntu clang version cmake version version python version bit runtime is cuda available true cuda runtime version could not collect gpu models and configuration gpu geforce gtx ti gpu geforce rtx nvidia driver version cudnn version probably one of the following usr lib linux gnu libcudnn so usr lib linux gnu libcudnn so usr lib linux gnu libcudnn adv infer so usr lib linux gnu libcudnn adv train so usr lib linux gnu libcudnn cnn infer so usr lib linux gnu libcudnn cnn train so usr lib linux gnu libcudnn ops infer so usr lib linux gnu libcudnn ops train so hip runtime version n a miopen runtime version n a 
versions of relevant libraries numpy pytorch lightning pytorch memlab torch torchtext torchvision blas mkl magma pytorch mkl mkl include mkl service mkl fft mkl random numpy pypi pypi pytorch lightning dev pytorch memlab pypi pypi torch pypi pypi torchtext pypi pypi torchvision dev cc ezyang gchanan bdhirsh jbschlosser ngimel mruberry | 1 |

608,942 | 18,851,666,071 | IssuesEvent | 2021-11-11 21:47:38 | nothingworksright/api.recipe.report | https://api.github.com/repos/nothingworksright/api.recipe.report | closed | Better status code responses | 🚀 Enhancement 🧑 Complexity 3 - Some ⚡ Priority 3 - High 🤫 Severity 1 - Mild | For now most things are either 200 or 500. There should be better specific codes and 400 codes depending on the reason for the error. | 1.0 | Better status code responses - For now most things are either 200 or 500. There should be better specific codes and 400 codes depending on the reason for the error. | priority | better status code responses for now most things are either or there should be better specific codes and codes depending on the reason for the error | 1 |
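
One way to read the request above is a single mapping from error category to HTTP status, with 500 kept only as the fallback. The sketch below is in Python for illustration and is not taken from the api.recipe.report code base; the exception class names and `status_for` are hypothetical.

```python
from http import HTTPStatus


class ValidationError(Exception):
    """Hypothetical: the request body or parameters are malformed."""


class AuthenticationError(Exception):
    """Hypothetical: credentials are missing or invalid."""


class PermissionDeniedError(Exception):
    """Hypothetical: the caller is authenticated but not allowed."""


class NotFoundError(Exception):
    """Hypothetical: the requested resource does not exist."""


class ConflictError(Exception):
    """Hypothetical: the request conflicts with existing state."""


# Each known error category maps to one specific 4xx status.
_STATUS_BY_ERROR = {
    ValidationError: HTTPStatus.BAD_REQUEST,
    AuthenticationError: HTTPStatus.UNAUTHORIZED,
    PermissionDeniedError: HTTPStatus.FORBIDDEN,
    NotFoundError: HTTPStatus.NOT_FOUND,
    ConflictError: HTTPStatus.CONFLICT,
}


def status_for(error: Exception) -> int:
    """Return the HTTP status code for `error`, falling back to 500."""
    for error_type, status in _STATUS_BY_ERROR.items():
        if isinstance(error, error_type):
            return int(status)
    return int(HTTPStatus.INTERNAL_SERVER_ERROR)
```

Keeping the mapping in one table means a new error category only needs one new entry, and anything unrecognised still surfaces as a 500.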

524,742 | 15,222,655,247 | IssuesEvent | 2021-02-18 00:47:36 | canonical-web-and-design/maas-ui | https://api.github.com/repos/canonical-web-and-design/maas-ui | closed | Searching(GUI) for machines with the name starting with number does not work in version 2.8.2 | Priority: High | Bug originally filed by pedrovlf at https://bugs.launchpad.net/bugs/1912529

In version 2.8.2 (snap) of MAAS, using the GUI to search for a machine whose name starts with a number returns no results (search-maas1.png).

e.g. Machine named 87ontario2-compute016 does not work

When trying to search using hostname:=87ontario2-compute016 this works. | 1.0 | Searching(GUI) for machines with the name starting with number does not work in version 2.8.2 - Bug originally filed by pedrovlf at https://bugs.launchpad.net/bugs/1912529

In version 2.8.2 (snap) of MAAS, using the GUI to search for a machine whose name starts with a number returns no results (search-maas1.png).

e.g. Machine named 87ontario2-compute016 does not work

When trying to search using hostname:=87ontario2-compute016 this works. | priority | searching gui for machines with the name starting with number does not work in version bug originally filed by pedrovlf at in version snap of maas when trying to use the gui to search for a machine that starts with a name it doesn t bring any results it doesn t work search png e g machine named does not work when trying to search using hostname this works | 1 |

76,572 | 3,489,313,363 | IssuesEvent | 2016-01-03 19:42:45 | horaklukas/posterator | https://api.github.com/repos/horaklukas/posterator | opened | Unsaved titles changes | enhancement priority-high | Add a popup window warning about unsaved changes in the poster whenever an action that could cause data loss happens. For better protection against data loss, unsaved changes could be saved in some cache (e.g. cookies), and restoring them would be offered when the app starts.

| 1.0 | Unsaved titles changes - Add a popup window warning about unsaved changes in the poster whenever an action that could cause data loss happens. For better protection against data loss, unsaved changes could be saved in some cache (e.g. cookies), and restoring them would be offered when the app starts.

| priority | unsaved titles changes add popup window warning about unsaved changes in poster when any action that could cause data lost happen for better protection against data lost unsaved changes could be saved in some cache eg cookies and when app start restoring of them would be offered | 1 |

239,296 | 7,788,422,276 | IssuesEvent | 2018-06-07 04:34:54 | hackoregon/civic-devops | https://api.github.com/repos/hackoregon/civic-devops | closed | Implement smoke test for containers that currently deploy with only dummy tests | Priority: high bug | Some API projects have been implemented with dummy tests that just call `pass`. This was done to get around a Travis issue where it would fail every job when it detected 0 tests in the project.

Since then we've had many containers that deploy without being able to pass the basic "health check" from ALB (which is implemented as a GET request for the base route for each API project, looking for a 200-299 HTTP response). e.g. for the Local Elections API, ALB requests `/local-elections/` and is currently receiving a 404 Not Found response (see [bug 3](https://github.com/hackoregon/elections-2018-backend/issues/3) for details). (Also see #140, and ignore the fact I closed that issue without addressing it.)

So to prevent these jank containers from deploying, and causing a lot of ECS thrash (cf. #149), we need at least a bare minimum test that validates the following:

- gunicorn is running

- Django code isn’t crashing, and

- there’s a 200 responding on at least the tested endpoint

@BrianHGrant is the Django man - as always, he volunteered up the perfect snippet:

```

from django.test import TestCase
from rest_framework.test import APIClient, RequestsClient


class RootTest(TestCase):
    """ Test for Root Endpoint"""

    def setUp(self):
        pass


class RootEndpointsTestCase(TestCase):

    def setUp(self):
        # DRF test client; requests are served in-process by the Django app.
        self.client = APIClient()

    def test_list_200_response(self):
        # The base route must answer 200, mirroring the ALB health check.
        response = self.client.get('/transportation-systems/')
        assert response.status_code == 200

```

I realize it’s not actually inspecting the response to see that it’s Django, nor whether the response is a valid output e.g. good HTML, valid JSON, anything like that. However, what with the `pass` “tests” we’ve implemented in most projects to get past the test phase of Travis, this has to be far better, and should catch some of the Django startup issues that we’ve been screwed by, before they get deployed to ECS.
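
The two checks mentioned here, the ALB rule that any 200-299 response counts as healthy and the not-yet-implemented check that the body is valid JSON, could be sketched with the standard library alone. The helper names `is_healthy` and `looks_like_json` are hypothetical and are not part of any Hack Oregon project; this is an illustration, not the deployed health check.

```python
import json


def is_healthy(status_code: int) -> bool:
    """Mirror the ALB rule described above: any 2xx response is healthy."""
    return 200 <= status_code <= 299


def looks_like_json(body: bytes) -> bool:
    """Return True if the response body decodes as UTF-8 and parses as JSON."""
    try:
        json.loads(body.decode("utf-8"))
    except (UnicodeDecodeError, ValueError):
        # json.JSONDecodeError is a subclass of ValueError.
        return False
    return True
```

In a Django test these could be applied to `response.status_code` and `response.content`, assuming the endpoint is expected to return JSON.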

Let's get this into all the API projects:

- [x] [disaster-resilience-backend](https://github.com/hackoregon/disaster-resilience-backend)

- [x] [elections-2018-backend](https://github.com/hackoregon/elections-2018-backend)

- [x] [housing-2018](https://github.com/hackoregon/housing-2018)

- [x] [neighborhoods-2018](https://github.com/hackoregon/neighborhoods-2018)

- [x] [transportation-systems-backend-2018](https://github.com/hackoregon/transportation-systems-backend-2018)

| 1.0 | Implement smoke test for containers that currently deploy with only dummy tests - Some API projects have been implemented with dummy tests that just call `pass`. This was done to get around a Travis issue where it would fail every job when it detected 0 tests in the project.

Since then we've had many containers that deploy without being able to pass the basic "health check" from ALB (which is implemented as a GET request for the base route for each API project, looking for a 200-299 HTTP response). e.g. for the Local Elections API, ALB requests `/local-elections/` and is currently receiving a 404 Not Found response (see [bug 3](https://github.com/hackoregon/elections-2018-backend/issues/3) for details). (Also see #140, and ignore the fact I closed that issue without addressing it.)

So to prevent these jank containers from deploying, and causing a lot of ECS thrash (cf. #149), we need at least a bare minimum test that validates the following:

- gunicorn is running

- Django code isn’t crashing, and

- there’s a 200 responding on at least the tested endpoint

@BrianHGrant is the Django man - as always, he volunteered up the perfect snippet:

```

from django.test import TestCase
from rest_framework.test import APIClient, RequestsClient


class RootTest(TestCase):
    """ Test for Root Endpoint"""

    def setUp(self):
        pass


class RootEndpointsTestCase(TestCase):

    def setUp(self):
        # DRF test client; requests are served in-process by the Django app.
        self.client = APIClient()

    def test_list_200_response(self):
        # The base route must answer 200, mirroring the ALB health check.
        response = self.client.get('/transportation-systems/')
        assert response.status_code == 200

```

I realize it’s not actually inspecting the response to see that it’s Django, nor whether the response is a valid output e.g. good HTML, valid JSON, anything like that. However, what with the `pass` “tests” we’ve implemented in most projects to get past the test phase of Travis, this has to be far better, and should catch some of the Django startup issues that we’ve been screwed by, before they get deployed to ECS.

Let's get this into all the API projects:

- [x] [disaster-resilience-backend](https://github.com/hackoregon/disaster-resilience-backend)

- [x] [elections-2018-backend](https://github.com/hackoregon/elections-2018-backend)

- [x] [housing-2018](https://github.com/hackoregon/housing-2018)

- [x] [neighborhoods-2018](https://github.com/hackoregon/neighborhoods-2018)

- [x] [transportation-systems-backend-2018](https://github.com/hackoregon/transportation-systems-backend-2018)

| priority | implement smoke test for containers that currently deploy with only dummy tests some api projects have been implemented with dummy tests that just call pass this was done to get around a travis issue where it would fail every job when it detected tests in the project since then we ve had many containers that deploy without being able to pass the basic health check from alb which is implemented as a get request for the base route for each api project looking for a http response e g for the local elections api alb requests local elections and is currently receiving a not found response see for details also see and ignore the fact i closed that issue without addressing it so to prevent these jank containers from deploying and causing a lot of ecs thrash cf we need at least a bare minimum test that validates the following gunicorn is running django code isn’t crashing and there’s a responding on at least the tested endpoint brianhgrant is the django man as always he volunteered up the perfect snippet from django test import testcase from rest framework test import apiclient requestsclient class roottest testcase test for root endpoint def setup self pass class rootendpointstestcase testcase def setup self self client apiclient def test list response self response self client get transportation systems assert response status code i realize it’s not actually inspecting the response to see that it’s django nor whether the response is a valid output e g good html valid json anything like that however what with the pass “tests” we’ve implemented in most projects to get past the test phase of travis this has to be far better and should catch some of the django startup issues that we’ve been screwed by before they get deployed to ecs let s get this into all the api projects | 1 |

779,889 | 27,370,313,420 | IssuesEvent | 2023-02-27 22:49:39 | Automattic/woocommerce-payments | https://api.github.com/repos/Automattic/woocommerce-payments | opened | Fatal errors with WC Subscriptions installed | type: bug priority: high component: wc subscriptions integration | ### Describe the bug

If WC Subscriptions detects an old version of WooCommerce's database it deactivates itself. If WCPay is also in use this can cause fatal errors.

```

Fatal error: Uncaught Error: Class "WC_Subscriptions_Cart" not found in woocommerce-payments/includes/compat/subscriptions/trait-wc-payments-subscriptions-utilities.php on line 87

```

```

Fatal error: Uncaught Error: Class 'WC_Subscriptions_Product' not found in woocommerce-payments/includes/subscriptions/class-wc-payments-product-service.php:192

```

### To Reproduce

Fatal errors can be triggered by manually updating $wc_minimum_required_version in woocommerce-subscriptions/woocommerce-subscriptions.php to something above the current WC version eg 7.5

### Additional context

<!-- Any additional context or details you think might be helpful. -->

<!-- Ticket numbers/links, plugin versions, system statuses etc. -->

5924491-zd-woothemes

5921622-zd-woothemes

https://wordpress.org/support/topic/fatal-error-4375/ | 1.0 | Fatal errors with WC Subscriptions installed - ### Describe the bug

If WC Subscriptions detects an old version of WooCommerce's database it deactivates itself. If WCPay is also in use this can cause fatal errors.

```

Fatal error: Uncaught Error: Class "WC_Subscriptions_Cart" not found in woocommerce-payments/includes/compat/subscriptions/trait-wc-payments-subscriptions-utilities.php on line 87

```

```

Fatal error: Uncaught Error: Class 'WC_Subscriptions_Product' not found in woocommerce-payments/includes/subscriptions/class-wc-payments-product-service.php:192

```

### To Reproduce

Fatal errors can be triggered by manually updating $wc_minimum_required_version in woocommerce-subscriptions/woocommerce-subscriptions.php to something above the current WC version eg 7.5

### Additional context

<!-- Any additional context or details you think might be helpful. -->

<!-- Ticket numbers/links, plugin versions, system statuses etc. -->

5924491-zd-woothemes

5921622-zd-woothemes

https://wordpress.org/support/topic/fatal-error-4375/ | priority | fatal errors with wc subscriptions installed describe the bug if wc subscriptions detects an old version of woocommerce s database it deactivates itself if wcpay is also in use this can cause fatal errors fatal error uncaught error class wc subscriptions cart not found in woocommerce payments includes compat subscriptions trait wc payments subscriptions utilities php on line fatal error uncaught error class wc subscriptions product not found in woocommerce payments includes subscriptions class wc payments product service php to reproduce fatal errors can be triggered by manually updating wc minimum required version in woocommerce subscriptions woocommerce subscriptions php to something above the current wc version eg additional context zd woothemes zd woothemes | 1 |

274,385 | 8,560,378,001 | IssuesEvent | 2018-11-09 00:56:26 | OpenSRP/opensrp-server-web | https://api.github.com/repos/OpenSRP/opensrp-server-web | opened | Create a postgres data warehouse database on Amazon RDS | Data Warehouse Priority: High | We need a data storage mechanism for the data warehouse. The demo, field testing and pilot system will need to be run by Ona. This ticket focuses on setting up an Amazon RDS instance for Reveal's data warehouse.

- [ ] Create an RDS instance on AWS and add it to the appropriate setup scripts. | 1.0 | Create a postgres data warehouse database on Amazon RDS - We need a data storage mechanism for the data warehouse. The demo, field testing and pilot system will need to be run by Ona. This ticket focuses on setting up an Amazon RDS instance for Reveal's data warehouse.

- [ ] Create an RDS instance on AWS and add it to the appropriate setup scripts. | priority | create a postgres data warehouse database on amazon rds we need a data storage mechanism for the data warehouse the demo field testing and pilot system will need to be run by ona this ticket focuses on setting up an amazon rds instance for reveal s data warehouse create an rds instance on aws and add it to the appropriate setup scripts | 1 |

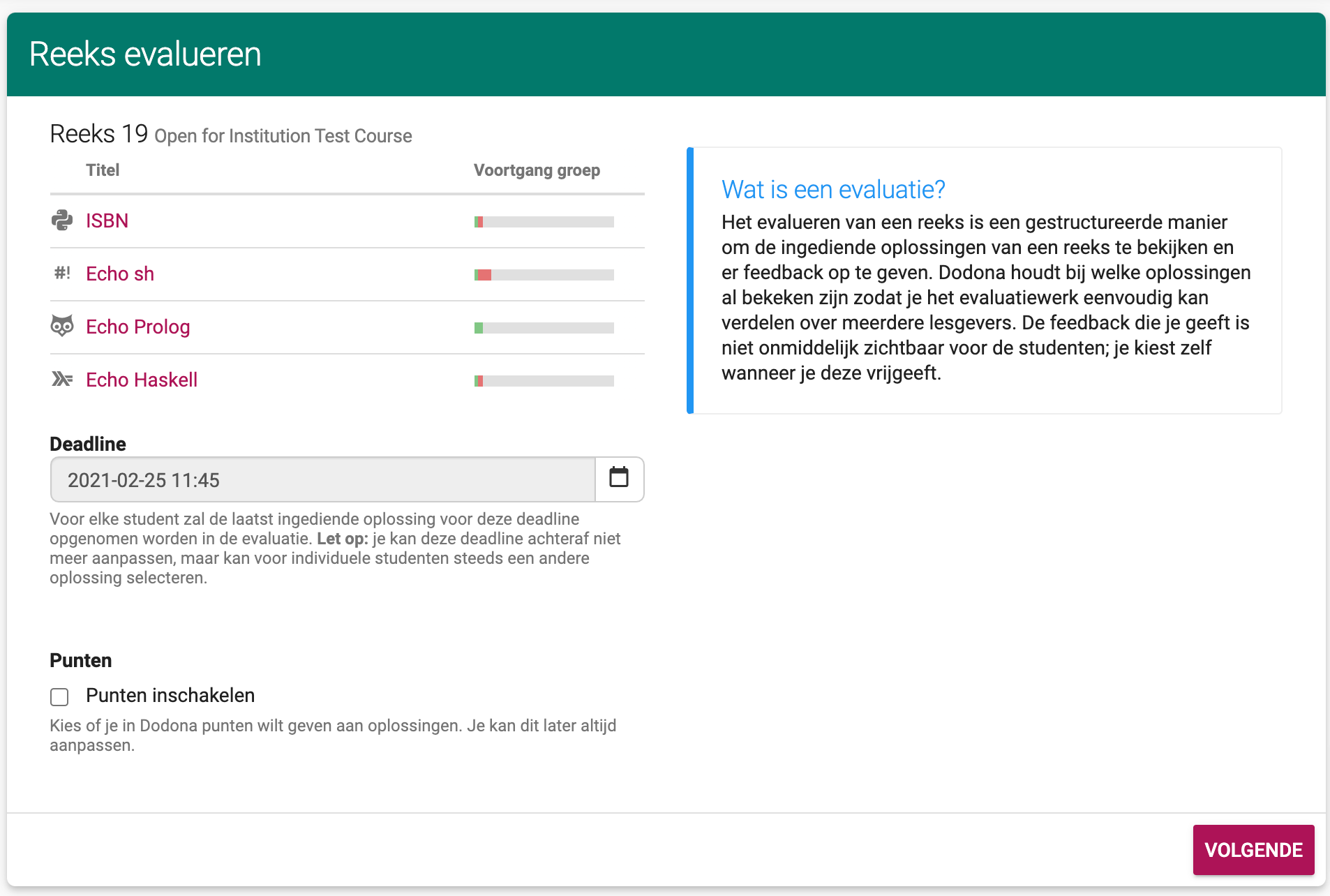

592,764 | 17,929,692,004 | IssuesEvent | 2021-09-10 07:32:38 | dodona-edu/dodona | https://api.github.com/repos/dodona-edu/dodona | closed | Use stepper component in evaluation wizard | feature high priority |

This is the first screen a user gets when starting an evaluation and this might be optimized a bit. A user has to set a deadline here and indicate if he wants to enable grades. The rest is just extra information (eg, the series overview) but this isn't super clear.

- include something about grades in the info box

- make it clearer that the user has to pick a deadline and can enable grades. Some ideas which may or may not work:

- slightly change the wording, eg Deadline -> Pick a deadline

- add a "Settings" header to separate the top part of the page from the bottom part

- Add some text to the top of the series overview that makes it clear that the listing of exercises is just informational

- Maybe also tweak the card title to make it more clear that we started some sort of wizard, eg "Configuring a series evaluation"

_Originally posted by @bmesuere in https://github.com/dodona-edu/dodona/issues/2462#issuecomment-785808920_

_The score-related options have been removed from this page, but we can still check if we can apply some of the other suggestions_. | 1.0 | Use stepper component in evaluation wizard -

This is the first screen a user gets when starting an evaluation and this might be optimized a bit. A user has to set a deadline here and indicate if he wants to enable grades. The rest is just extra information (eg, the series overview) but this isn't super clear.

- include something about grades in the info box

- make it clearer that the user has to pick a deadline and can enable grades. Some ideas which may or may not work:

- slightly change the wording, eg Deadline -> Pick a deadline

- add a "Settings" header to separate the top part of the page from the bottom part

- Add some text to the top of the series overview that makes it clear that the listing of exercises is just informational

- Maybe also tweak the card title to make it more clear that we started some sort of wizard, eg "Configuring a series evaluation"

_Originally posted by @bmesuere in https://github.com/dodona-edu/dodona/issues/2462#issuecomment-785808920_

_The score-related options have been removed from this page, but we can still check if we can apply some of the other suggestions_. | priority | use stepper component in evaluation wizard this is the first screen a user gets when starting an evaluation and this might be optimized a bit a user has to set a deadline here and indicate if he wants to enable grades the rest is just extra information eg the series overview but this isn t super clear include something about grades in the info box make it clearer that the user has to pick a deadline and can enable grades some ideas which may or may not work slightly change the wording eg deadline pick a deadline add a settings header to separate the top part of the page from the bottom part add some text to the top of the series overview that makes it clear that the listing of exercises is just informational maybe also tweak the card title to make it more clear that we started some sort of wizard eg configuring a series evaluation originally posted by bmesuere in the score related options have been removed from this page but we can still check if we can apply some of the other suggestions | 1 |

100,056 | 4,075,913,597 | IssuesEvent | 2016-05-29 14:57:40 | moria0525/MadeinJLM-students | https://api.github.com/repos/moria0525/MadeinJLM-students | closed | Profile development | 1 - Ready Dor-H moria0525 Points: 8 Priority: Very High sh00ki |

## User Story Template

- As a user

- I want a profile with all my information

- So that companies will know more about me

## Bug Template

#### Expected behavior

#### Actual behavior

#### Steps to reproduce the behavior

<!---

@huboard:{"order":27.0,"milestone_order":45,"custom_state":""}

-->

| 1.0 | Profile development -

## User Story Template

- As a user

- I want a profile with all my information

- So that companies will know more about me

## Bug Template

#### Expected behavior

#### Actual behavior

#### Steps to reproduce the behavior

<!---

@huboard:{"order":27.0,"milestone_order":45,"custom_state":""}

-->

| priority | profile development user story template as a user i want a profile with all my information so that companies will know more about me bug template expected behavior actual behavior steps to reproduce the behavior huboard order milestone order custom state | 1 |

779,381 | 27,351,061,717 | IssuesEvent | 2023-02-27 09:35:34 | orden-gg/fireball | https://api.github.com/repos/orden-gg/fireball | opened | Move ClientContext to store | enhancement priority: high | Right now `ClientContext` has a lot of complexity and unclearness, would be good to move it into a store to separate business logic and reduce complexity. | 1.0 | Move ClientContext to store - Right now `ClientContext` has a lot of complexity and unclearness, would be good to move it into a store to separate business logic and reduce complexity. | priority | move clientcontext to store right now clientcontext has a lot of complexity and unclearness would be good to move it into a store to separate business logic and reduce complexity | 1 |

403,333 | 11,839,349,720 | IssuesEvent | 2020-03-23 17:01:08 | ansible/galaxy_ng | https://api.github.com/repos/ansible/galaxy_ng | opened | Importer: build image using pulp_container | area/importer priority/high status/new type/enhancement | Subtask of #6

When running the import process outside of c.rh.c, use `pulp_container` to integrate with Buildah and create the needed container image. | 1.0 | Importer: build image using pulp_container - Subtask of #6

When running the import process outside of c.rh.c, use `pulp_container` to integrate with Buildah and create the needed container image. | priority | importer build image using pulp container subtask of when running the import process outside of c rh c use pulp container to integrate with buildah and create the needed container image | 1 |

405,582 | 11,879,175,695 | IssuesEvent | 2020-03-27 08:11:43 | Baschdl/match4healthcare | https://api.github.com/repos/Baschdl/match4healthcare | opened | Log-In Form: Add note concerning username and e-mail delivery time | dev-ready high-priority | Please add a note to the user form:

> Your e-mail address is also your username. In individual cases it may take a while for the confirmation e-mail to reach you, because some e-mail providers do not let it through immediately.

Reason:

https://match4healthcare.slack.com/archives/C010K4T5YGZ/p1585245475001000

(Plus further reports) | 1.0 | Log-In Form: Add note concerning username and e-mail delivery time - Please add a note to the user form:

> Your e-mail address is also your username. In individual cases it may take a while for the confirmation e-mail to reach you, because some e-mail providers do not let it through immediately.

Reason:

https://match4healthcare.slack.com/archives/C010K4T5YGZ/p1585245475001000

(Plus further reports) | priority | log in form add note concerning username and e mail delivery time please add a note to the user form your e mail address is also your username in individual cases it may take a while for the confirmation e mail to reach you because some e mail providers do not let it through immediately reason plus further reports | 1 |

494,378 | 14,256,423,249 | IssuesEvent | 2020-11-20 00:58:38 | phetsims/chipper | https://api.github.com/repos/phetsims/chipper | reopened | [mac specific] No Xcode or CLT version detected | meeting:developer priority:2-high type:bug | Related to https://github.com/phetsims/chipper/issues/990

After updating to Node 14, then cleaning node_modules and running `npm install`, I see these errors:

```

~/apache-document-root/main/energy-skate-park$ cd ../chipper/

~/apache-document-root/main/chipper$ rm -rf node_modules/

~/apache-document-root/main/chipper$ npm install

> fsevents@1.2.13 install /Users/samreid/apache-document-root/main/chipper/node_modules/watchpack-chokidar2/node_modules/fsevents

> node install.js

No receipt for 'com.apple.pkg.CLTools_Executables' found at '/'.

No receipt for 'com.apple.pkg.DeveloperToolsCLILeo' found at '/'.

No receipt for 'com.apple.pkg.DeveloperToolsCLI' found at '/'.

gyp: No Xcode or CLT version detected!

gyp ERR! configure error

gyp ERR! stack Error: `gyp` failed with exit code: 1

gyp ERR! stack at ChildProcess.onCpExit (/Users/samreid/.npm-global/lib/node_modules/npm/node_modules/node-gyp/lib/configure.js:351:16)

gyp ERR! stack at ChildProcess.emit (events.js:315:20)

gyp ERR! stack at Process.ChildProcess._handle.onexit (internal/child_process.js:277:12)

gyp ERR! System Darwin 19.6.0

gyp ERR! command "/usr/local/bin/node" "/Users/samreid/.npm-global/lib/node_modules/npm/node_modules/node-gyp/bin/node-gyp.js" "rebuild"

gyp ERR! cwd /Users/samreid/apache-document-root/main/chipper/node_modules/watchpack-chokidar2/node_modules/fsevents

gyp ERR! node -v v14.15.0

gyp ERR! node-gyp -v v5.1.0

gyp ERR! not ok

> fsevents@1.2.13 install /Users/samreid/apache-document-root/main/chipper/node_modules/webpack-dev-server/node_modules/fsevents

> node install.js

No receipt for 'com.apple.pkg.CLTools_Executables' found at '/'.

No receipt for 'com.apple.pkg.DeveloperToolsCLILeo' found at '/'.

No receipt for 'com.apple.pkg.DeveloperToolsCLI' found at '/'.

gyp: No Xcode or CLT version detected!

gyp ERR! configure error

gyp ERR! stack Error: `gyp` failed with exit code: 1

gyp ERR! stack at ChildProcess.onCpExit (/Users/samreid/.npm-global/lib/node_modules/npm/node_modules/node-gyp/lib/configure.js:351:16)

gyp ERR! stack at ChildProcess.emit (events.js:315:20)

gyp ERR! stack at Process.ChildProcess._handle.onexit (internal/child_process.js:277:12)

gyp ERR! System Darwin 19.6.0

gyp ERR! command "/usr/local/bin/node" "/Users/samreid/.npm-global/lib/node_modules/npm/node_modules/node-gyp/bin/node-gyp.js" "rebuild"

gyp ERR! cwd /Users/samreid/apache-document-root/main/chipper/node_modules/webpack-dev-server/node_modules/fsevents

gyp ERR! node -v v14.15.0

gyp ERR! node-gyp -v v5.1.0

gyp ERR! not ok

> puppeteer@2.1.1 install /Users/samreid/apache-document-root/main/chipper/node_modules/puppeteer

> node install.js

Downloading Chromium r722234 - 116.4 Mb [====================] 100% 0.0s

Chromium downloaded to /Users/samreid/apache-document-root/main/chipper/node_modules/puppeteer/.local-chromium/mac-722234

added 1053 packages from 1388 contributors and audited 1055 packages in 23.514s

44 packages are looking for funding

run `npm fund` for details

found 4 vulnerabilities (1 low, 1 moderate, 2 high)

run `npm audit fix` to fix them, or `npm audit` for details

~/apache-document-root/main/chipper$ cd ../energy-skate-park

~/apache-document-root/main/energy-skate-park$ grunt

(node:215) Warning: Accessing non-existent property 'padLevels' of module exports inside circular dependency

(Use `node --trace-warnings ...` to show where the warning was created)

Running "lint-all" task

Running "report-media" task

Running "clean" task

Running "build" task

Building runnable repository (energy-skate-park, brands: phet, phet-io)

Building brand: phet

>> Webpack build complete: 2778ms

>> Unused images module: energy-skate-park/images/skater-icon_png.js

>> Production minification complete: 19184ms (2172328 bytes)

>> Debug minification complete: 0ms (6656430 bytes)

Building brand: phet-io

>> Webpack build complete: 2079ms

>> Unused images module: energy-skate-park/images/skater-icon_png.js

>> Production minification complete: 15328ms (2186122 bytes)

>> Debug minification complete: 16000ms (2453198 bytes)

>> No client guides found at ../phet-io-client-guides/energy-skate-park/, no guides being built.

Done.

```

Assigning to @ariel-phet to prioritize and delegate. | 1.0 | [mac specific] No Xcode or CLT version detected - Related to https://github.com/phetsims/chipper/issues/990

After updating to Node 14, then cleaning node_modules and running `npm install`, I see these errors:

```

~/apache-document-root/main/energy-skate-park$ cd ../chipper/

~/apache-document-root/main/chipper$ rm -rf node_modules/

~/apache-document-root/main/chipper$ npm install

> fsevents@1.2.13 install /Users/samreid/apache-document-root/main/chipper/node_modules/watchpack-chokidar2/node_modules/fsevents

> node install.js

No receipt for 'com.apple.pkg.CLTools_Executables' found at '/'.

No receipt for 'com.apple.pkg.DeveloperToolsCLILeo' found at '/'.

No receipt for 'com.apple.pkg.DeveloperToolsCLI' found at '/'.

gyp: No Xcode or CLT version detected!

gyp ERR! configure error

gyp ERR! stack Error: `gyp` failed with exit code: 1

gyp ERR! stack at ChildProcess.onCpExit (/Users/samreid/.npm-global/lib/node_modules/npm/node_modules/node-gyp/lib/configure.js:351:16)

gyp ERR! stack at ChildProcess.emit (events.js:315:20)

gyp ERR! stack at Process.ChildProcess._handle.onexit (internal/child_process.js:277:12)

gyp ERR! System Darwin 19.6.0

gyp ERR! command "/usr/local/bin/node" "/Users/samreid/.npm-global/lib/node_modules/npm/node_modules/node-gyp/bin/node-gyp.js" "rebuild"

gyp ERR! cwd /Users/samreid/apache-document-root/main/chipper/node_modules/watchpack-chokidar2/node_modules/fsevents

gyp ERR! node -v v14.15.0

gyp ERR! node-gyp -v v5.1.0

gyp ERR! not ok

> fsevents@1.2.13 install /Users/samreid/apache-document-root/main/chipper/node_modules/webpack-dev-server/node_modules/fsevents

> node install.js

No receipt for 'com.apple.pkg.CLTools_Executables' found at '/'.

No receipt for 'com.apple.pkg.DeveloperToolsCLILeo' found at '/'.

No receipt for 'com.apple.pkg.DeveloperToolsCLI' found at '/'.

gyp: No Xcode or CLT version detected!

gyp ERR! configure error

gyp ERR! stack Error: `gyp` failed with exit code: 1

gyp ERR! stack at ChildProcess.onCpExit (/Users/samreid/.npm-global/lib/node_modules/npm/node_modules/node-gyp/lib/configure.js:351:16)

gyp ERR! stack at ChildProcess.emit (events.js:315:20)

gyp ERR! stack at Process.ChildProcess._handle.onexit (internal/child_process.js:277:12)

gyp ERR! System Darwin 19.6.0

gyp ERR! command "/usr/local/bin/node" "/Users/samreid/.npm-global/lib/node_modules/npm/node_modules/node-gyp/bin/node-gyp.js" "rebuild"

gyp ERR! cwd /Users/samreid/apache-document-root/main/chipper/node_modules/webpack-dev-server/node_modules/fsevents

gyp ERR! node -v v14.15.0

gyp ERR! node-gyp -v v5.1.0

gyp ERR! not ok

> puppeteer@2.1.1 install /Users/samreid/apache-document-root/main/chipper/node_modules/puppeteer

> node install.js

Downloading Chromium r722234 - 116.4 Mb [====================] 100% 0.0s

Chromium downloaded to /Users/samreid/apache-document-root/main/chipper/node_modules/puppeteer/.local-chromium/mac-722234

added 1053 packages from 1388 contributors and audited 1055 packages in 23.514s

44 packages are looking for funding

run `npm fund` for details

found 4 vulnerabilities (1 low, 1 moderate, 2 high)

run `npm audit fix` to fix them, or `npm audit` for details

~/apache-document-root/main/chipper$ cd ../energy-skate-park

~/apache-document-root/main/energy-skate-park$ grunt

(node:215) Warning: Accessing non-existent property 'padLevels' of module exports inside circular dependency

(Use `node --trace-warnings ...` to show where the warning was created)

Running "lint-all" task

Running "report-media" task

Running "clean" task

Running "build" task

Building runnable repository (energy-skate-park, brands: phet, phet-io)

Building brand: phet

>> Webpack build complete: 2778ms

>> Unused images module: energy-skate-park/images/skater-icon_png.js

>> Production minification complete: 19184ms (2172328 bytes)

>> Debug minification complete: 0ms (6656430 bytes)

Building brand: phet-io

>> Webpack build complete: 2079ms

>> Unused images module: energy-skate-park/images/skater-icon_png.js

>> Production minification complete: 15328ms (2186122 bytes)

>> Debug minification complete: 16000ms (2453198 bytes)

>> No client guides found at ../phet-io-client-guides/energy-skate-park/, no guides being built.

Done.

```

Assigning to @ariel-phet to prioritize and delegate. | priority | no xcode or clt version detected related to after updating to node then cleaning node modules and running npm install i see these errors apache document root main energy skate park cd chipper apache document root main chipper rm rf node modules apache document root main chipper npm install fsevents install users samreid apache document root main chipper node modules watchpack node modules fsevents node install js no receipt for com apple pkg cltools executables found at no receipt for com apple pkg developertoolsclileo found at no receipt for com apple pkg developertoolscli found at gyp no xcode or clt version detected gyp err configure error gyp err stack error gyp failed with exit code gyp err stack at childprocess oncpexit users samreid npm global lib node modules npm node modules node gyp lib configure js gyp err stack at childprocess emit events js gyp err stack at process childprocess handle onexit internal child process js gyp err system darwin gyp err command usr local bin node users samreid npm global lib node modules npm node modules node gyp bin node gyp js rebuild gyp err cwd users samreid apache document root main chipper node modules watchpack node modules fsevents gyp err node v gyp err node gyp v gyp err not ok fsevents install users samreid apache document root main chipper node modules webpack dev server node modules fsevents node install js no receipt for com apple pkg cltools executables found at no receipt for com apple pkg developertoolsclileo found at no receipt for com apple pkg developertoolscli found at gyp no xcode or clt version detected gyp err configure error gyp err stack error gyp failed with exit code gyp err stack at childprocess oncpexit users samreid npm global lib node modules npm node modules node gyp lib configure js gyp err stack at childprocess emit events js gyp err stack at process childprocess handle onexit internal child process js gyp err system darwin 
gyp err command usr local bin node users samreid npm global lib node modules npm node modules node gyp bin node gyp js rebuild gyp err cwd users samreid apache document root main chipper node modules webpack dev server node modules fsevents gyp err node v gyp err node gyp v gyp err not ok puppeteer install users samreid apache document root main chipper node modules puppeteer node install js downloading chromium mb chromium downloaded to users samreid apache document root main chipper node modules puppeteer local chromium mac added packages from contributors and audited packages in packages are looking for funding run npm fund for details found vulnerabilities low moderate high run npm audit fix to fix them or npm audit for details apache document root main chipper cd energy skate park apache document root main energy skate park grunt node warning accessing non existent property padlevels of module exports inside circular dependency use node trace warnings to show where the warning was created running lint all task running report media task running clean task running build task building runnable repository energy skate park brands phet phet io building brand phet webpack build complete unused images module energy skate park images skater icon png js production minification complete bytes debug minification complete bytes building brand phet io webpack build complete unused images module energy skate park images skater icon png js production minification complete bytes debug minification complete bytes no client guides found at phet io client guides energy skate park no guides being built done assigning to ariel phet to prioritize and delegate | 1 |

612,530 | 19,024,640,556 | IssuesEvent | 2021-11-24 00:52:28 | microsoft/fluentui | https://api.github.com/repos/microsoft/fluentui | closed | Label needs ARIA hidden on red asterisk | Priority 1: High Component: Label Status: In PR Fluent UI vNext Fit and Finish | This was originally opened by @yvonne-chien-ms in #20139. Splitting into separate issues for each bug logged in the original issue.

### ARIA hidden on red asterisk

#### Current

No `aria-hidden` on red asterisk, which means screen reader will read out asterisk as "star".

#### Requested update

Use `aria-hidden="true"` on red asterisk, then put the `required` attribute on the Input/Combobox/etc itself to programmatically mark the field as required. (This may warrant a discussion.)
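
As a starting point for that discussion, here is a minimal sketch of the requested markup. It is plain string rendering, not the Fluent UI `Label` API; `renderRequiredField`, `escapeHtml`, and the `required-mark` class name are hypothetical names chosen for illustration.

```typescript
// Hypothetical helpers for illustration only; this is not Fluent UI code.

// Escape characters that are unsafe inside HTML text or attribute values.
function escapeHtml(value: string): string {
  return value
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// Render a label plus text input for a required field.
function renderRequiredField(id: string, labelText: string): string {
  const safeId = escapeHtml(id);
  // The asterisk is purely visual, so it is hidden from assistive technology.
  const asterisk = '<span class="required-mark" aria-hidden="true">*</span>';
  const label = `<label for="${safeId}">${escapeHtml(labelText)} ${asterisk}</label>`;
  // The control itself carries the required state programmatically.
  const input = `<input id="${safeId}" type="text" required>`;
  return `${label}\n${input}`;
}

console.log(renderRequiredField("email", "Email"));
```

With this shape a screen reader would be expected to skip the asterisk and rely on the `required` attribute of the input to announce the field as required; how each component exposes that attribute is still the open question above.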

#### Priority

Normal

| 1.0 | Label needs ARIA hidden on red asterisk - This was originally opened by @yvonne-chien-ms in #20139. Splitting into separate issues for each bug logged in the original issue.

### ARIA hidden on red asterisk

#### Current

No `aria-hidden` on red asterisk, which means screen reader will read out asterisk as "star".

#### Requested update

Use `aria-hidden="true"` on red asterisk, then put the `required` attribute on the Input/Combobox/etc itself to programmatically mark the field as required. (This may warrant a discussion.)

#### Priority

Normal

| priority | label needs aria hidden on red asterisk this was originally opened by yvonne chien ms in splitting into separate issues for each bug logged in the original issue aria hidden on red asterisk current no aria hidden on red asterisk which means screen reader will read out asterisk as star requested update use aria hidden true on red asterisk then put the required attribute on the input combobox etc itself to programmatically mark the field as required this may warrant a discussion priority normal | 1 |

135,132 | 5,242,727,798 | IssuesEvent | 2017-01-31 18:49:55 | nextgis/nextgisweb_compulink | https://api.github.com/repos/nextgis/nextgisweb_compulink | closed | Field names in the ОС table | enhancement High Priority | To the names of the two fields «Начало/Окончание сдачи заказчику» ("Start/End of handover to the customer"), append

the following text in the bottom panel: «в эксплуатацию» ("into operation"):

| 1.0 | Field names in the ОС table - To the names of the two fields «Начало/Окончание сдачи заказчику» ("Start/End of handover to the customer"), append

the following text in the bottom panel: «в эксплуатацию» ("into operation"):

| priority | field names in the ос table to the names of the two fields «начало окончание сдачи заказчику» start end of handover to the customer append the following text in the bottom panel «в эксплуатацию» into operation | 1 |

343,004 | 10,324,336,702 | IssuesEvent | 2019-09-01 08:12:55 | OpenSRP/opensrp-client-chw | https://api.github.com/repos/OpenSRP/opensrp-client-chw | closed | Change all French translations of "PCV" vaccine to "Pneumo" | enhancement high priority | We need to update the French translation of the PCV vaccine. The French translation should be changed to `Pneumo` in all instances in the French version of all the WCARO apps. | 1.0 | Change all French translations of "PCV" vaccine to "Pneumo" - We need to update the French translation of the PCV vaccine. The French translation should be changed to `Pneumo` in all instances in the French version of all the WCARO apps. | priority | change all french translations of pcv vaccine to pneumo we need to update the french translation of the pcv vaccine the french translation should be changed to pneumo in all instances in the french version of all the wcaro apps | 1 |

136,489 | 5,283,673,299 | IssuesEvent | 2017-02-07 21:59:39 | DCLP/dclpxsltbox | https://api.github.com/repos/DCLP/dclpxsltbox | closed | inconsistencies in biblScope markup not handled by XSLT | bug priority: high tweak XSLT | found by @leoba and split out from #146:

In Bibl files, if page numbers for journal articles are only in @from and @to values on biblScope and not in the content of biblScope, they do not display. Compare:

https://github.com/DCLP/idp.data/blob/master/Biblio/81/80929.xml#L7 (HTML: https://github.com/DCLP/dclpxsltbox/blob/master/output/dclp/65/64713.html#L46)

http://litpap.info/dclp/64713

with

https://github.com/DCLP/idp.data/blob/master/Biblio/66/65876.xml#L13

(HTML: https://github.com/DCLP/dclpxsltbox/blob/master/output/dclp/101/100149.html#L46)

http://litpap.info/dclp/100149

Is this an XSLT issue or an XML issue?
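
Whichever side ends up owning the fix, the display rule being asked for can be sketched as below. This is an illustration of the fallback logic only, not the project's XSLT; the `BiblScope` shape and the `displayPages` function are hypothetical, and the hyphen separator is an assumption.

```typescript
// Hypothetical parsed form of a <biblScope> element; field names are illustrative.
interface BiblScope {
  from?: string; // value of the @from attribute, if present
  to?: string;   // value of the @to attribute, if present
  text?: string; // text content of the element, if any
}

// Prefer the element's own text; otherwise fall back to the @from/@to attributes.
function displayPages(scope: BiblScope): string {
  const text = (scope.text ?? "").trim();
  if (text !== "") return text;

  const from = (scope.from ?? "").trim();
  const to = (scope.to ?? "").trim();
  // A real range needs both ends and they must differ.
  if (from !== "" && to !== "" && from !== to) return `${from}-${to}`;
  // Otherwise show whichever single value exists (or nothing at all).
  return from !== "" ? from : to;
}

console.log(displayPages({ from: "10", to: "15" }));
```

If the XSLT applied an equivalent fallback, records that carry page numbers only in the attributes would still display them, and records that already have text content would be unaffected.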

| 1.0 | inconsistencies in biblScope markup not handled by XSLT - found by @leoba and split out from #146:

In Bibl files, if page numbers for journal articles are only in @from and @to values on biblScope and not in the content of biblScope, they do not display. Compare:

https://github.com/DCLP/idp.data/blob/master/Biblio/81/80929.xml#L7 (HTML: https://github.com/DCLP/dclpxsltbox/blob/master/output/dclp/65/64713.html#L46)

http://litpap.info/dclp/64713

with

https://github.com/DCLP/idp.data/blob/master/Biblio/66/65876.xml#L13

(HTML: https://github.com/DCLP/dclpxsltbox/blob/master/output/dclp/101/100149.html#L46)

http://litpap.info/dclp/100149

Is this an XSLT issue or an XML issue?

| priority | inconsistencies in biblscope markup not handled by xslt found by leoba and split out from in bibl files if page numbers for journal articles are only in from and to values on biblscope and not in the content of biblscope they do not display compare html with html is this an xslt issue or an xml issue | 1 |

391,265 | 11,571,547,389 | IssuesEvent | 2020-02-20 21:48:07 | googlefonts/typography | https://api.github.com/repos/googlefonts/typography | closed | Get Google Analytics tracking code | high priority | Not sure who has to create this, but guessing we'll want to track it :) | 1.0 | Get Google Analytics tracking code - Not sure who has to create this, but guessing we'll want to track it :) | priority | get google analytics tracking code not sure who has to create this but guessing we ll want to track it | 1 |

280,642 | 8,684,407,629 | IssuesEvent | 2018-12-03 02:06:23 | AlexRaubach/ListFortress | https://api.github.com/repos/AlexRaubach/ListFortress | closed | Import Tournaments from Cryodex and Tabletop.to | High Priority enhancement | Need to build a tournament and participants from the incoming json. | 1.0 | Import Tournaments from Cryodex and Tabletop.to - Need to build a tournament and participants from the incoming json. | priority | import tournaments from cryodex and tabletop to need to build a tournament and participants from the incoming json | 1 |

534,376 | 15,615,370,887 | IssuesEvent | 2021-03-19 19:05:18 | ucb-rit/coldfront | https://api.github.com/repos/ucb-rit/coldfront | closed | Improvements to Home Pages | high priority |

Let's add these three buttons to the user HOME pages:

1) Review and Sign Cluster Access Agreement, keep this persistent.

Show the timestamp of when the user agreement has been signed. Both on the home page and also under the user profile page.

2) Join a Project.

(We will revisit this later for further enhancements) | 1.0 | Improvements to Home Pages -

Let's add these three buttons to the user HOME pages:

1) Review and Sign Cluster Access Agreement, keep this persistent.

Show the timestamp of when the user agreement has been signed. Both on the home page and also under the user profile page.

2) Join a Project.

(We will revisit this later for further enhancements) | priority | improvements to home pages lets add these three buttons to the user home pages review and sign cluster access agreement keep this persistent show the timestamp of when the user agreement has been signed both on the home page and also under the user profile page join a project we will revisit this later for further enhancements | 1 |

203,379 | 7,060,602,698 | IssuesEvent | 2018-01-05 09:32:34 | wso2-incubator/testgrid | https://api.github.com/repos/wso2-incubator/testgrid | closed | PROD environment dashboard points to dev API endpoints | Priority/Highest Severity/Critical Type/Bug | **Description:**

The dashboard always calls API endpoints in the dev environment. The PROD dashboard should point to PROD API endpoints.

| 1.0 | PROD environment dashboard points to dev API endpoints - **Description:**

The dashboard always calls API endpoints in the dev environment. The PROD dashboard should point to PROD API endpoints.

| priority | prod environment dashboard points to dev api endpoints description the dashboard always calls api endpoints in the dev environment the prod dashboard should point to prod api endpoints | 1 |

137,273 | 5,301,278,874 | IssuesEvent | 2017-02-10 09:03:54 | geosolutions-it/MapStore2 | https://api.github.com/repos/geosolutions-it/MapStore2 | closed | Upgrade documentation to Jekyll | in progress Priority: High task | Documentation folder needs to be updated and upgraded to use Jekyll as documentation generator | 1.0 | Upgrade documentation to Jekyll - Documentation folder needs to be updated and upgraded to use Jekyll as documentation generator | priority | upgrade documentation to jekyll documentation folder needs to be updated and upgraded to use jekyll as documentation generator | 1 |

656,630 | 21,769,600,087 | IssuesEvent | 2022-05-13 07:43:50 | woocommerce/woocommerce-ios | https://api.github.com/repos/woocommerce/woocommerce-ios | closed | [Coupons] - Can't search for subcategories | type: bug priority: high feature: coupons | **Describe the bug**

On Edit Product Categories, looks like we can't search for subcategories, it will return results not found.

**To Reproduce**

Steps to reproduce the behavior:

1. Turn on Coupon Management experimental feature

2. Select a coupon

3. Edit the coupon code and go to Edit Product Categories

4. On search, search for a subcategory

5. See that it will show results not found

**Screenshots**

https://user-images.githubusercontent.com/17252150/168043287-b017cc32-346f-49c1-825e-5f122009317c.MP4

**Expected behavior**

We should be able to search for both categories and subcategories using the search bar

**Mobile Environment**

Device: iPhone 13

iOS version: 15.3.1

WooCommerce iOS version: AppCenter build pr6809-84ae456

| 1.0 | [Coupons] - Can't search for subcategories - **Describe the bug**

On Edit Product Categories, looks like we can't search for subcategories, it will return results not found.

**To Reproduce**

Steps to reproduce the behavior:

1. Turn on Coupon Management experimental feature

2. Select a coupon

3. Edit the coupon code and go to Edit Product Categories

4. On search, search for a subcategory