Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

18,787 | 13,103,727,978 | IssuesEvent | 2020-08-04 09:03:27 | NUTFes/group-manager-2 | https://api.github.com/repos/NUTFes/group-manager-2 | closed | 管理者アプリの作成 | backend frontend infrastructure | - group-manager-2

- api

- view

- admin_api (new)

- admin_view (new)

- docs

- docker-compose.yml

| 1.0 | 管理者アプリの作成 - - group-manager-2

- api

- view

- admin_api (new)

- admin_view (new)

- docs

- docker-compose.yml

| infrastructure | 管理者アプリの作成 group manager api view admin api new admin view new docs docker compose yml | 1 |

266 | 2,602,005,035 | IssuesEvent | 2015-02-24 03:06:58 | jquery/esprima | https://api.github.com/repos/jquery/esprima | closed | Unit Tests: break into much smaller files | easy enhancement infrastructure | We should take a page from espree here and break out the test files into much smaller pieces organized by the main language feature they are testing.

Right now it is unwieldily working with the giant file, and intimidating to newer contributors, like myself. | 1.0 | Unit Tests: break into much smaller files - We should take a page from espree here and break out the test files into much smaller pieces organized by the main language feature they are testing.

Right now it is unwieldily working with the giant file, and intimidating to newer contributors, like myself. | infrastructure | unit tests break into much smaller files we should take a page from espree here and break out the test files into much smaller pieces organized by the main language feature they are testing right now it is unwieldily working with the giant file and intimidating to newer contributors like myself | 1 |

27,583 | 21,941,905,938 | IssuesEvent | 2022-05-23 19:03:28 | cloud-native-toolkit/software-everywhere | https://api.github.com/repos/cloud-native-toolkit/software-everywhere | closed | Request access: {Sarang/Dinesh/Dileep} New ROKS Cluster with Portworx for cp4ba(Business Automation) | category:infrastructure access_request | **Email address**

@saratrip @dineshchandrapandey @DileepPaul ,brahm.singh@ibm.com , mverma17@in.ibm.com

**Cloud environment**

IBM Cloud account,

**Purpose**

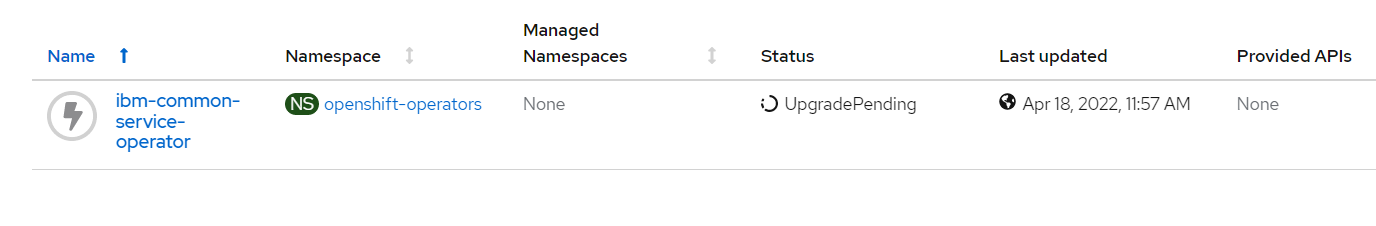

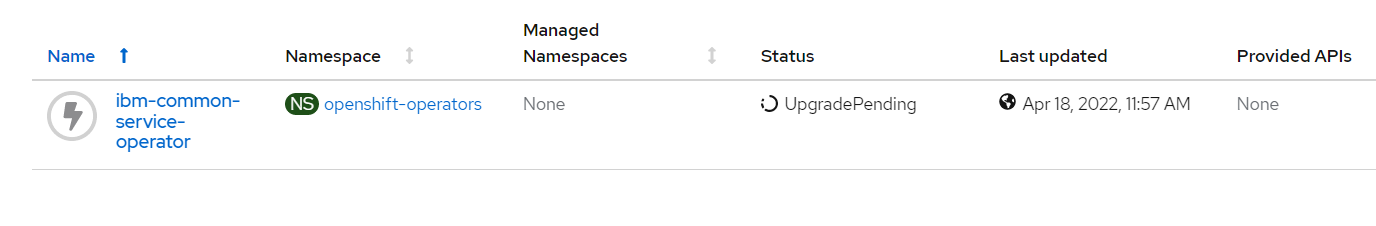

Request to create new ROKS Cluster with Portworx for cp4ba(Business Automation). We are using OCP48 gitops cluster but are unable to do test on the cluster because the common service operator is giving issues/not installed. We are unable to proceed and it is major blocker for us.

**Duration of access**

3 Months

| 1.0 | Request access: {Sarang/Dinesh/Dileep} New ROKS Cluster with Portworx for cp4ba(Business Automation) - **Email address**

@saratrip @dineshchandrapandey @DileepPaul ,brahm.singh@ibm.com , mverma17@in.ibm.com

**Cloud environment**

IBM Cloud account,

**Purpose**

Request to create new ROKS Cluster with Portworx for cp4ba(Business Automation). We are using OCP48 gitops cluster but are unable to do test on the cluster because the common service operator is giving issues/not installed. We are unable to proceed and it is major blocker for us.

**Duration of access**

3 Months

| infrastructure | request access sarang dinesh dileep new roks cluster with portworx for business automation email address saratrip dineshchandrapandey dileeppaul brahm singh ibm com in ibm com cloud environment ibm cloud account purpose request to create new roks cluster with portworx for business automation we are using gitops cluster but are unable to do test on the cluster because the common service operator is giving issues not installed we are unable to proceed and it is major blocker for us duration of access months | 1 |

30,793 | 25,083,464,283 | IssuesEvent | 2022-11-07 21:26:49 | ProjectPythiaCookbooks/cookbook-template | https://api.github.com/repos/ProjectPythiaCookbooks/cookbook-template | closed | Build via binderbot | infrastructure | Following successful experiments in https://github.com/ProjectPythiaCookbooks/cmip6-cookbook/pull/27 and https://github.com/ProjectPythia/pythia-foundations/pull/322, it's time to build the binderbot functionality into the template and (once it's working) push those changes out to all cookbook repos.

My recent refactor of the infrastructure makes this much easier. Most (all?) the changes will actually occur in the reusable workflows over at https://github.com/ProjectPythiaCookbooks/cookbook-actions.

What I have in mind is a python script that parses `_config.yml` and `_toc.yml` to get things needed for the call to binderbot:

- the link to the binder (stored in the field `binderhub_url:` in `_config.yml`)

- the list of all notebook files to be executed (from `_toc.yml`)

That would all happen within the reusable https://github.com/ProjectPythiaCookbooks/cookbook-actions/blob/main/.github/workflows/build-book.yaml

One question is whether this should be automatic (i.e. every Cookbook executes this way), or whether there should be a switch for the individual Cookbook to choose whether to execute via binderbot or on GitHub Actions. | 1.0 | Build via binderbot - Following successful experiments in https://github.com/ProjectPythiaCookbooks/cmip6-cookbook/pull/27 and https://github.com/ProjectPythia/pythia-foundations/pull/322, it's time to build the binderbot functionality into the template and (once it's working) push those changes out to all cookbook repos.

My recent refactor of the infrastructure makes this much easier. Most (all?) the changes will actually occur in the reusable workflows over at https://github.com/ProjectPythiaCookbooks/cookbook-actions.

What I have in mind is a python script that parses `_config.yml` and `_toc.yml` to get things needed for the call to binderbot:

- the link to the binder (stored in the field `binderhub_url:` in `_config.yml`)

- the list of all notebook files to be executed (from `_toc.yml`)

That would all happen within the reusable https://github.com/ProjectPythiaCookbooks/cookbook-actions/blob/main/.github/workflows/build-book.yaml

One question is whether this should be automatic (i.e. every Cookbook executes this way), or whether there should be a switch for the individual Cookbook to choose whether to execute via binderbot or on GitHub Actions. | infrastructure | build via binderbot following successful experiments in and it s time to build the binderbot functionality into the template and once it s working push those changes out to all cookbook repos my recent refactor of the infrastructure makes this much easier most all the changes will actually occur in the reusable workflows over at what i have in mind is a python script that parses config yml and toc yml to get things needed for the call to binderbot the link to the binder stored in the field binderhub url in config yml the list of all notebook files to be executed from toc yml that would all happen within the reusable one question is whether this should be automatic i e every cookbook executes this way or whether there should be a switch for the individual cookbook to choose whether to execute via binderbot or on github actions | 1 |
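The parsing step proposed in this issue could look roughly like the sketch below. It works on the dictionaries you get from loading `_config.yml` and `_toc.yml`, for example with PyYAML's `yaml.safe_load`. That call is left out so the snippet has no third-party dependency. In Jupyter Book configs `binderhub_url` usually sits under `launch_buttons`, so the sketch searches nested keys. The function names are hypothetical. Resolving each `root`/`file` entry in `_toc.yml` to an existing `.ipynb` file under the book directory is also an assumption, and the binderbot call itself is not shown.

```python
from pathlib import Path
from typing import Any, Iterator, Optional


def find_binderhub_url(config: dict) -> Optional[str]:
    """Return the first `binderhub_url` found anywhere in a parsed _config.yml."""
    stack: list = [config]
    while stack:
        node = stack.pop()
        if isinstance(node, dict):
            if "binderhub_url" in node:
                return node["binderhub_url"]
            stack.extend(node.values())
        elif isinstance(node, list):
            stack.extend(node)
    return None


def iter_toc_files(toc: Any) -> Iterator[str]:
    """Yield every `root`/`file` entry of a parsed _toc.yml in document order."""
    if isinstance(toc, dict):
        for key in ("root", "file"):
            if key in toc:
                yield toc[key]
        # Recurse into nested parts/chapters/sections.
        for value in toc.values():
            if isinstance(value, (dict, list)):
                yield from iter_toc_files(value)
    elif isinstance(toc, list):
        for item in toc:
            yield from iter_toc_files(item)


def notebook_paths(toc: Any, book_dir: str = ".") -> list:
    """Entries that resolve to an existing .ipynb file (assumed mapping)."""
    found = []
    for entry in iter_toc_files(toc):
        candidate = Path(book_dir, entry).with_suffix(".ipynb")
        if candidate.is_file():  # skips Markdown pages such as README.md
            found.append(str(candidate))
    return found
```

Filtering on files that exist on disk keeps Markdown-only pages out of the execution list. With this approach, a per-Cookbook switch could be one more key read from the same config dictionary.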

7,550 | 6,989,427,913 | IssuesEvent | 2017-12-14 16:08:37 | seqan/lambda | https://api.github.com/repos/seqan/lambda | closed | refactor codebase | infrastructure | - [ ] make one binary with different "actions" (mkindex, search)

- [ ] resort the source code | 1.0 | refactor codebase - - [ ] make one binary with different "actions" (mkindex, search)

- [ ] resort the source code | infrastructure | refactor codebase make one binary with different actions mkindex search resort the source code | 1 |

7,545 | 6,988,084,042 | IssuesEvent | 2017-12-14 11:32:51 | h-da/geli | https://api.github.com/repos/h-da/geli | opened | Introduce API-Docu, build automatically | api enhancement infrastructure | # User Story

### As a:

Developer

### I want:

to have an API-Documentation

### so that:

I can see all URL-Paths and their functions.

## Acceptance criteria:

- [ ] All API-Functions are commented

- [ ] Build the Documentation on build time

- [ ] The documentation should be generated only on tagged builds

- [ ] Publish the build (as GH Pages)

- [ ] The version of the docu should be switched

## Additional info:

https://pages.github.com/

------

_Please tag this issue if you are sure to which tag(s) it belongs._

| 1.0 | Introduce API-Docu, build automatically - # User Story

### As a:

Developer

### I want:

to have an API-Documentation

### so that:

I can see all URL-Paths and their functions.

## Acceptance criteria:

- [ ] All API-Functions are commented

- [ ] Build the Documentation on build time

- [ ] The documentation should be generated only on tagged builds

- [ ] Publish the build (as GH Pages)

- [ ] The version of the docu should be switched

## Additional info:

https://pages.github.com/

------

_Please tag this issue if you are sure to which tag(s) it belongs._

| infrastructure | introduce api docu build automatically user story as a developer i want to have an api documentation so that i can see all url paths and their functions acceptance criteria all api functions are commented build the documentation on build time the documentation should be generated only on tagged builds publish the build as gh pages the version of the docu should be switched additional info please tag this issue if you are sure to which tag s it belongs | 1 |

12,790 | 9,956,684,231 | IssuesEvent | 2019-07-05 14:33:48 | elastic/beats | https://api.github.com/repos/elastic/beats | closed | generator.py fails silently if you specify an invalid --type | :Generator :infrastructure | For confirmed bugs, please report:

- Version: master

- Operating System: any

- Steps to Reproduce:

```

python ${GOPATH}/src/github.com/elastic/beats/script/generate.py --type=fakebeat --project_name=examplebeat

```

Since `fakebeat` doesn't exist, nothing happens. The script exits zero, no message is printed.

This is presumably because the python script is iterating over a directory that doesn't exist:

```

for root, dirs, files in os.walk(template_path + '/' + beat_type + '/{beat}'):

```

According to the pydoc, `os.walk` will ignore errors by default. We should either specify some error handling for `os.walk`, or check the validity of the type before hand.

| 1.0 | generator.py fails silently if you specify an invalid --type - For confirmed bugs, please report:

- Version: master

- Operating System: any

- Steps to Reproduce:

```

python ${GOPATH}/src/github.com/elastic/beats/script/generate.py --type=fakebeat --project_name=examplebeat

```

Since `fakebeat` doesn't exist, nothing happens. The script exits zero, no message is printed.

This is presumably because the python script is iterating over a directory that doesn't exist:

```

for root, dirs, files in os.walk(template_path + '/' + beat_type + '/{beat}'):

```

According to the pydoc, `os.walk` will ignore errors by default. We should either specify some error handling for `os.walk`, or check the validity of the type before hand.

| infrastructure | generator py fails silently if you specify an invalid type for confirmed bugs please report version master operating system any steps to reproduce python gopath src github com elastic beats script generate py type fakebeat project name examplebeat since fakebeat doesn t exist nothing happens the script exits zero no message is printed this is presumably because the python script is iterating over a directory that doesn t exist for root dirs files in os walk template path beat type beat according to the pydoc os walk will ignore errors by default we should either specify some error handling for os walk or check the validity of the type before hand | 1 |
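Both fixes suggested in this issue can be combined. The first validates `--type` against the template directories before walking. The second passes an `onerror` callback so that `os.walk` re-raises scan errors instead of silently yielding nothing, which is its documented default. This is a minimal sketch that assumes the `<template_path>/<beat_type>/{beat}` layout from the snippet above. The helper names are hypothetical and are not taken from `generate.py`.

```python
import os


def list_beat_types(template_path: str) -> list:
    """Template subdirectories that are valid values for --type."""
    return sorted(
        name for name in os.listdir(template_path)
        if os.path.isdir(os.path.join(template_path, name))
    )


def walk_template(template_path: str, beat_type: str):
    """Validate beat_type eagerly, then return an os.walk that fails loudly."""
    available = list_beat_types(template_path)
    if beat_type not in available:
        raise ValueError(
            f"unknown --type {beat_type!r}; expected one of: {', '.join(available)}"
        )

    def fail(err: OSError) -> None:
        # os.walk ignores scandir() errors unless onerror is given.
        raise err

    return os.walk(os.path.join(template_path, beat_type, "{beat}"), onerror=fail)
```

The script's entry point would catch `ValueError`/`OSError`, print the message to stderr, and exit with a non-zero status, so a mistyped `--type` no longer looks like success.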

12,719 | 9,935,404,424 | IssuesEvent | 2019-07-02 16:27:55 | raiden-network/raiden-services | https://api.github.com/repos/raiden-network/raiden-services | closed | Deployment guide | Infrastructure :office: | We need better docs for *Configuration and instructions for running Raiden Services*

A good example is the transport repo: https://github.com/raiden-network/raiden-transport/ | 1.0 | Deployment guide - We need better docs for *Configuration and instructions for running Raiden Services*

A good example is the transport repo: https://github.com/raiden-network/raiden-transport/ | infrastructure | deployment guide we need better docs for configuration and instructions for running raiden services a good example is the transport repo | 1 |

25,092 | 18,105,043,650 | IssuesEvent | 2021-09-22 18:15:07 | dotnet/fsharp | https://api.github.com/repos/dotnet/fsharp | closed | Remove IVT in our language service and consume it as other editors do | Area-Infrastructure | This is a tracking issue.

We are currently IVT our own language service. This makes things awkward, because we can consume our language service differently from other editors. It's also a real pain to deal with. We need to remove IVTs and consume our language service just like any other editor. | 1.0 | Remove IVT in our language service and consume it as other editors do - This is a tracking issue.

We are currently IVT our own language service. This makes things awkward, because we can consume our language service differently from other editors. It's also a real pain to deal with. We need to remove IVTs and consume our language service just like any other editor. | infrastructure | remove ivt in our language service and consume it as other editors do this is a tracking issue we are currently ivt our own language service this makes things awkward because we can consume our language service differently from other editors it s also a real pain to deal with we need to remove ivts and consume our language service just like any other editor | 1 |

9,724 | 3,314,774,350 | IssuesEvent | 2015-11-06 08:04:43 | gbv/paia | https://api.github.com/repos/gbv/paia | closed | URL | documentation question | After implementing DAIA in Bibdia for SLB Potsdam/KOBV I thought I'd have a look at the next interface PAIA. Why on earth does the specfication insist on explicit URLs? And why is the patron identifier also in the URL? First the explicit URLs mean I'd have to map /core internally into a URL to Bibdia. The site CANNOT use /core for anything else but PAIA. Secondly why is the patron identifier plain for all to see in the URL? Once again I'd have to rewrite this into a more conventional URL which, for example, DAIA uses. In DAIA the URL is not specified, just the parameters to it. OAI follows a similar procedure, so does NCIP via HTTPS. And why the patron ID is part of the URL instead of just simply a parameter (and then in a POST body) is beyond me, since the patron is actually a parameter and not a resource identifier. The last part of the URL is similarly a verb. | 1.0 | URL - After implementing DAIA in Bibdia for SLB Potsdam/KOBV I thought I'd have a look at the next interface PAIA. Why on earth does the specfication insist on explicit URLs? And why is the patron identifier also in the URL? First the explicit URLs mean I'd have to map /core internally into a URL to Bibdia. The site CANNOT use /core for anything else but PAIA. Secondly why is the patron identifier plain for all to see in the URL? Once again I'd have to rewrite this into a more conventional URL which, for example, DAIA uses. In DAIA the URL is not specified, just the parameters to it. OAI follows a similar procedure, so does NCIP via HTTPS. And why the patron ID is part of the URL instead of just simply a parameter (and then in a POST body) is beyond me, since the patron is actually a parameter and not a resource identifier. The last part of the URL is similarly a verb. 
| non_infrastructure | url after implementing daia in bibdia for slb potsdam kobv i thought i d have a look at the next interface paia why on earth does the specfication insist on explicit urls and why is the patron identifier also in the url first the explicit urls mean i d have to map core internally into a url to bibdia the site cannot use core for anything else but paia secondly why is the patron identifier plain for all to see in the url once again i d have to rewrite this into a more conventional url which for example daia uses in daia the url is not specified just the parameters to it oai follows a similar procedure so does ncip via https and why the patron id is part of the url instead of just simply a parameter and then in a post body is beyond me since the patron is actually a parameter and not a resource identifier the last part of the url is similarly a verb | 0 |

273,877 | 29,831,109,382 | IssuesEvent | 2023-06-18 09:33:23 | RG4421/ampere-centos-kernel | https://api.github.com/repos/RG4421/ampere-centos-kernel | closed | CVE-2019-19927 (Medium) detected in linuxv5.2 - autoclosed | Mend: dependency security vulnerability | ## CVE-2019-19927 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv5.2</b></p></summary>

<p>

<p>Linux kernel source tree</p>

<p>Library home page: <a href=https://github.com/torvalds/linux.git>https://github.com/torvalds/linux.git</a></p>

<p>Found in base branch: <b>amp-centos-8.0-kernel</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (2)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/drivers/gpu/drm/ttm/ttm_page_alloc.c</b>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/drivers/gpu/drm/ttm/ttm_page_alloc.c</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png?' width=19 height=20> Vulnerability Details</summary>

<p>

In the Linux kernel 5.0.0-rc7 (as distributed in ubuntu/linux.git on kernel.ubuntu.com), mounting a crafted f2fs filesystem image and performing some operations can lead to slab-out-of-bounds read access in ttm_put_pages in drivers/gpu/drm/ttm/ttm_page_alloc.c. This is related to the vmwgfx or ttm module.

<p>Publish Date: 2019-12-31

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2019-19927>CVE-2019-19927</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.0</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: High

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Release Date: 2020-05-14</p>

<p>Fix Resolution: v5.1-rc6</p>

</p>

</details>

<p></p>

| True | CVE-2019-19927 (Medium) detected in linuxv5.2 - autoclosed - ## CVE-2019-19927 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv5.2</b></p></summary>

<p>

<p>Linux kernel source tree</p>

<p>Library home page: <a href=https://github.com/torvalds/linux.git>https://github.com/torvalds/linux.git</a></p>

<p>Found in base branch: <b>amp-centos-8.0-kernel</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (2)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/drivers/gpu/drm/ttm/ttm_page_alloc.c</b>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/drivers/gpu/drm/ttm/ttm_page_alloc.c</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png?' width=19 height=20> Vulnerability Details</summary>

<p>

In the Linux kernel 5.0.0-rc7 (as distributed in ubuntu/linux.git on kernel.ubuntu.com), mounting a crafted f2fs filesystem image and performing some operations can lead to slab-out-of-bounds read access in ttm_put_pages in drivers/gpu/drm/ttm/ttm_page_alloc.c. This is related to the vmwgfx or ttm module.

<p>Publish Date: 2019-12-31

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2019-19927>CVE-2019-19927</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.0</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: High

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Release Date: 2020-05-14</p>

<p>Fix Resolution: v5.1-rc6</p>

</p>

</details>

<p></p>

| non_infrastructure | cve medium detected in autoclosed cve medium severity vulnerability vulnerable library linux kernel source tree library home page a href found in base branch amp centos kernel vulnerable source files drivers gpu drm ttm ttm page alloc c drivers gpu drm ttm ttm page alloc c vulnerability details in the linux kernel as distributed in ubuntu linux git on kernel ubuntu com mounting a crafted filesystem image and performing some operations can lead to slab out of bounds read access in ttm put pages in drivers gpu drm ttm ttm page alloc c this is related to the vmwgfx or ttm module publish date url a href cvss score details base score metrics exploitability metrics attack vector local attack complexity low privileges required high user interaction none scope unchanged impact metrics confidentiality impact high integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version release date fix resolution | 0 |

23,893 | 16,677,437,563 | IssuesEvent | 2021-06-07 18:05:06 | onnx/onnx | https://api.github.com/repos/onnx/onnx | closed | Time to upgrade protobuf | infrastructure | I got the error message below about a deprecated Python feature when running ONNX tests locally. It seems that it's time to upgrade ONNX to use newer protobuf version. Otherwise, our code might not work with Python 3.8.

```

c:\programdata\anaconda3\lib\site-packages\google\protobuf\descriptor.py:47

c:\programdata\anaconda3\lib\site-packages\google\protobuf\descriptor.py:47:

DeprecationWarning: Using or importing the ABCs from 'collections' instead of from 'collections.abc' is deprecated, and in 3.8 it will stop working

from google.protobuf.pyext import _message

```

Here is how it can be reproduced.

1. pip install -e onnx

2. cd onnx

3. pytest

Not using recent versions of protobuf also causes [problem](https://github.com/conda-forge/onnx-feedstock/issues/29) and [problem](https://github.com/conda-forge/onnx-feedstock/issues/14) to other packages. | 1.0 | Time to upgrade protobuf - I got the error message below about a deprecated Python feature when running ONNX tests locally. It seems that it's time to upgrade ONNX to use newer protobuf version. Otherwise, our code might not work with Python 3.8.

```

c:\programdata\anaconda3\lib\site-packages\google\protobuf\descriptor.py:47

c:\programdata\anaconda3\lib\site-packages\google\protobuf\descriptor.py:47:

DeprecationWarning: Using or importing the ABCs from 'collections' instead of from 'collections.abc' is deprecated, and in 3.8 it will stop working

from google.protobuf.pyext import _message

```

Here is how it can be reproduced.

1. pip install -e onnx

2. cd onnx

3. pytest

Not using recent versions of protobuf also causes [problem](https://github.com/conda-forge/onnx-feedstock/issues/29) and [problem](https://github.com/conda-forge/onnx-feedstock/issues/14) to other packages. | infrastructure | time to upgrade protobuf i got the error message below about a deprecated python feature when running onnx tests locally it seems that it s time to upgrade onnx to use newer protobuf version otherwise our code might not work with python c programdata lib site packages google protobuf descriptor py c programdata lib site packages google protobuf descriptor py deprecationwarning using or importing the abcs from collections instead of from collections abc is deprecated and in it will stop working from google protobuf pyext import message here is how it can be reproduced pip install e onnx cd onnx pytest not using recent versions of protobuf also causes and to other packages | 1 |
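The warning comes from a Python-level change rather than from ONNX itself. The container ABCs live in `collections.abc`, and the deprecated `collections.<ABC>` aliases were eventually removed in Python 3.10, later than the 3.8 date the warning gives. The fix proposed in this issue is still to upgrade protobuf. The sketch below only illustrates the difference, and its helper names are hypothetical.

```python
import collections
import collections.abc


def legacy_abc_alias_available(name: str) -> bool:
    """True if the deprecated `collections.<name>` alias still resolves."""
    # On Python 3.7-3.9 this lookup emits the DeprecationWarning quoted above;
    # on 3.10+ the alias no longer exists.
    return hasattr(collections, name)


def is_mapping(obj: object) -> bool:
    # Forward-compatible spelling: always take the ABCs from collections.abc.
    return isinstance(obj, collections.abc.Mapping)
```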

12,680 | 9,914,035,429 | IssuesEvent | 2019-06-28 13:27:39 | pulibrary/drds_sprints | https://api.github.com/repos/pulibrary/drds_sprints | closed | Cantaloupe | [zube]: Planned infrastructure planned | Setup Cantaloupe (either on the new image server, or alongside Loris) and configure Figgy to use it. | 1.0 | Cantaloupe - Setup Cantaloupe (either on the new image server, or alongside Loris) and configure Figgy to use it. | infrastructure | cantaloupe setup cantaloupe either on the new image server or alongside loris and configure figgy to use it | 1 |

144 | 2,537,005,822 | IssuesEvent | 2015-01-26 17:40:06 | dinyar/uGMTfirmware | https://api.github.com/repos/dinyar/uGMTfirmware | opened | Allow one write op via IPbus to fill more than one value in memories | infrastructure | Most values in the internal memories are significantly smaller than 32 bit while IPbus uses 32 bit words. To speed up the configuration step we can fit several of these smaller values into one IPbus write word. | 1.0 | Allow one write op via IPbus to fill more than one value in memories - Most values in the internal memories are significantly smaller than 32 bit while IPbus uses 32 bit words. To speed up the configuration step we can fit several of these smaller values into one IPbus write word. | infrastructure | allow one write op via ipbus to fill more than one value in memories most values in the internal memories are significantly smaller than bit while ipbus uses bit words to speed up the configuration step we can fit several of these smaller values into one ipbus write word | 1 |
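Host-side packing for this idea could look like the minimal sketch below. It assumes unsigned values placed lowest slot first, with no value straddling a 32-bit word boundary. The actual memory layout must match the firmware, and the issue does not specify it. The function names are hypothetical.

```python
WORD_BITS = 32  # IPbus data word width


def pack_words(values: list, width: int) -> list:
    """Pack unsigned `width`-bit values into 32-bit words, lowest slot first."""
    if not 0 < width <= WORD_BITS:
        raise ValueError(f"width must be in 1..{WORD_BITS}, got {width}")
    per_word = WORD_BITS // width  # values per word without straddling
    mask = (1 << width) - 1
    words = []
    for start in range(0, len(values), per_word):
        word = 0
        for slot, value in enumerate(values[start:start + per_word]):
            if value & ~mask:  # rejects negatives and values wider than `width`
                raise ValueError(f"value {value} does not fit in {width} bits")
            word |= value << (slot * width)
        words.append(word)
    return words


def unpack_words(words: list, width: int, count: int) -> list:
    """Inverse of pack_words: recover the first `count` values."""
    per_word = WORD_BITS // width
    mask = (1 << width) - 1
    values = []
    for word in words:
        for slot in range(per_word):
            if len(values) == count:
                return values
            values.append((word >> (slot * width)) & mask)
    return values
```

With 8-bit values, for example, five entries need two IPbus writes instead of five.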

187,375 | 6,756,598,817 | IssuesEvent | 2017-10-24 07:46:34 | threefoldfoundation/app_backend | https://api.github.com/repos/threefoldfoundation/app_backend | closed | Wallet on Dashboard | priority_minor state_verification type_feature | - see list of users + search

- detail of user for transaction history + balance of tokens

payment admins should be able to grant tokens in detail page of a user | 1.0 | Wallet on Dashboard - - see list of users + search

- detail of user for transaction history + balance of tokens

payment admins should be able to grant tokens in detail page of a user | non_infrastructure | wallet on dashboard see list of users search detail of user for transaction history balance of tokens payment admins should be able to grant tokens in detail page of a user | 0 |

24,778 | 17,773,022,285 | IssuesEvent | 2021-08-30 15:40:53 | google/iree | https://api.github.com/repos/google/iree | closed | Providing IREE Snapshot "releases" as a branch | infrastructure 🛠️ | Chatting with @hcindyl about integrating IREE into a project that uses [git-repo](https://gerrit.googlesource.com/git-repo). They're interested in having a less frequently updated version of IREE than ToT. Not necessarily stable, but updated less frequently. We discussed using the [snapshot release](https://github.com/google/iree/blob/main/.github/workflows/schedule_snapshot_release.yml), which creates a release twice daily. The wrinkle is that git-repo apparently has spotty support for fetching from tags, so they'd like a branch instead. I think that's something we could do reasonably easily. It would also be nice if this didn't just snapshot latest main, but instead took the last commit where all checks passed. I can look into how we would query this with the GitHub API.

@stellaraccident, since you created this snapshot. Can you comment on its stability and whether I could piggy back off this?

| 1.0 | Providing IREE Snapshot "releases" as a branch - Chatting with @hcindyl about integrating IREE into a project that uses [git-repo](https://gerrit.googlesource.com/git-repo). They're interested in having a less frequently updated version of IREE than ToT. Not necessarily stable, but updated less frequently. We discussed using the [snapshot release](https://github.com/google/iree/blob/main/.github/workflows/schedule_snapshot_release.yml), which creates a release twice daily. The wrinkle is that git-repo apparently has spotty support for fetching from tags, so they'd like a branch instead. I think that's something we could do reasonably easily. It would also be nice if this didn't just snapshot latest main, but instead took the last commit where all checks passed. I can look into how we would query this with the GitHub API.

@stellaraccident, since you created this snapshot. Can you comment on its stability and whether I could piggy back off this?

| infrastructure | providing iree snapshot releases as a branch chatting with hcindyl about integrating iree into a project that uses they re interested in having a less frequently updated version of iree than tot not necessarily stable but updated less frequently we discussed using the which creates a release twice daily the wrinkle is that git repo apparently has spotty support for fetching from tags so they d like a branch instead i think that s something we could do reasonably easily it would also be nice if this didn t just snapshot latest main but instead took the last commit where all checks passed i can look into how we would query this with the github api stellaraccident since you created this snapshot can you comment on its stability and whether i could piggy back off this | 1 |

29,971 | 24,444,029,474 | IssuesEvent | 2022-10-06 16:25:59 | PostHog/posthog | https://api.github.com/repos/PostHog/posthog | closed | Couldn't find a package.json file in "/code" in web container on hobby 1.40.0 | bug infrastructure | ## Bug description

On 1.40.0 the web package is not initialized so the server never starts. rolling back to 1.39.1 solves this

## How to reproduce

1. upgrade to 1.40.0 and start hobby with docker compose

2. check web container logs

3.

## Environment

- [ ] PostHog Cloud

- [x] self-hosted PostHog, version/commit: please provide

- hobby 1.40.0

## Additional context

(internal workspace: https://posthog.slack.com/archives/C03KZUU124U/p1664371893124139)

#### *Thank you* for your bug report – we love squashing them!

| 1.0 | Couldn't find a package.json file in "/code" in web container on hobby 1.40.0 - ## Bug description

On 1.40.0 the web package is not initialized so the server never starts. rolling back to 1.39.1 solves this

## How to reproduce

1. upgrade to 1.40.0 and start hobby with docker compose

2. check web container logs

3.

## Environment

- [ ] PostHog Cloud

- [x] self-hosted PostHog, version/commit: please provide

- hobby 1.40.0

## Additional context

(internal workspace: https://posthog.slack.com/archives/C03KZUU124U/p1664371893124139)

#### *Thank you* for your bug report – we love squashing them!

| infrastructure | couldn t find a package json file in code in web container on hobby bug description on the web package is not initialized so the server never starts rolling back to solves this how to reproduce upgrade to and start hobby with docker compose check web container logs environment posthog cloud self hosted posthog version commit please provide hobby additional context internal workspace thank you for your bug report – we love squashing them | 1 |

32,807 | 27,006,283,106 | IssuesEvent | 2023-02-10 11:57:25 | arduino/arduino-create-agent | https://api.github.com/repos/arduino/arduino-create-agent | closed | The CI should upload a json with version and checksum to S3, for the new update logic | type: enhancement os: macos topic: infrastructure | Similar to https://github.com/arduino/arduino-create-agent/issues/736, the json file has to be placed in https://downloads.arduino.cc/CreateAgent/Stable/darwin-arm64.json and should contain:

```

{

"Version":"1.2.8",

"Sha256": "3ahZ78JAs3cNfK60jofS/PsWRQiJvV1sZUchGvCqyLY="

}

``` | 1.0 | The CI should upload a json with version and checksum to S3, for the new update logic - Similar to https://github.com/arduino/arduino-create-agent/issues/736, the json file has to be placed in https://downloads.arduino.cc/CreateAgent/Stable/darwin-arm64.json and should contain:

```

{

"Version":"1.2.8",

"Sha256": "3ahZ78JAs3cNfK60jofS/PsWRQiJvV1sZUchGvCqyLY="

}

``` | infrastructure | the ci should upload a json with version and checksum to for the new update logic similar to the json file has to be placed in and should contain version | 1 |

28,623 | 23,395,929,268 | IssuesEvent | 2022-08-11 23:32:23 | grpc/grpc.io | https://api.github.com/repos/grpc/grpc.io | opened | Netlify: select a new build image | p1-high p2-medium infrastructure e0-minutes | As reported via deploy logs:

```nocode

---------------------------------------------------------------------

DEPRECATION NOTICE: Builds using the Xenial build image will fail after November 15th, 2022.

The build image for this site uses Ubuntu 16.04 Xenial Xerus, which is no longer supported.

All Netlify builds using the Xenial build image will begin failing in the week of November 15th, 2022.

To avoid service disruption, please select a newer build image at the following link:

https://app.netlify.com/sites/grpc-io/settings/deploys#build-image-selection

For more details, visit the build image migration guide:

https://answers.netlify.com/t/please-read-end-of-support-for-xenial-build-image-everything-you-need-to-know/68239

---------------------------------------------------------------------

| 1.0 | Netlify: select a new build image - As reported via deploy logs:

```nocode

---------------------------------------------------------------------

DEPRECATION NOTICE: Builds using the Xenial build image will fail after November 15th, 2022.

The build image for this site uses Ubuntu 16.04 Xenial Xerus, which is no longer supported.

All Netlify builds using the Xenial build image will begin failing in the week of November 15th, 2022.

To avoid service disruption, please select a newer build image at the following link:

https://app.netlify.com/sites/grpc-io/settings/deploys#build-image-selection

For more details, visit the build image migration guide:

https://answers.netlify.com/t/please-read-end-of-support-for-xenial-build-image-everything-you-need-to-know/68239

---------------------------------------------------------------------

| infrastructure | netlify select a new build image as reported via deploy logs nocode deprecation notice builds using the xenial build image will fail after november the build image for this site uses ubuntu xenial xerus which is no longer supported all netlify builds using the xenial build image will begin failing in the week of november to avoid service disruption please select a newer build image at the following link for more details visit the build image migration guide | 1 |

3,749 | 4,540,579,230 | IssuesEvent | 2016-09-09 15:03:29 | jquery/esprima | https://api.github.com/repos/jquery/esprima | opened | Drop support for io.js, Node.js v0.12 and v5 | infrastructure | This is to continue what has been started earlier (#1528).

* io.js: merged back to Node.js project long time ago

* Node.js v0.12: active LTS ended on 2016-04-01, maintenace will end on 2016-12-31

* Node.js v5: https://nodejs.org/en/blog/community/v5-to-v7/

Reference: https://github.com/nodejs/LTS | 1.0 | Drop support for io.js, Node.js v0.12 and v5 - This is to continue what has been started earlier (#1528).

* io.js: merged back to Node.js project long time ago

* Node.js v0.12: active LTS ended on 2016-04-01, maintenace will end on 2016-12-31

* Node.js v5: https://nodejs.org/en/blog/community/v5-to-v7/

Reference: https://github.com/nodejs/LTS | infrastructure | drop support for io js node js and this is to continue what has been started earlier io js merged back to node js project long time ago node js active lts ended on maintenace will end on node js reference | 1 |

146,655 | 23,099,754,473 | IssuesEvent | 2022-07-27 00:33:39 | Australian-Imaging-Service/pipelines | https://api.github.com/repos/Australian-Imaging-Service/pipelines | opened | [STORY] Create Pydra Task interface for dwi2response MRtrix3 command | pipelines-stream story analysis-design shallow 3pt ready | ### Description

As a pipeline developer, I would like to be able to use a ready built Pydra task interface for the `dwi2response` MRtrix3 command, so that I can call that function conveniently from Arcana.

### Acceptance Criteria

- [ ] dwi2response can be successfully called from Pydra

- [ ] all options can be set via the input interface

- [ ] all outputs can be retrieved from output interface

| 1.0 | [STORY] Create Pydra Task interface for dwi2response MRtrix3 command - ### Description

As a pipeline developer, I would like to be able to use a ready built Pydra task interface for the `dwi2response` MRtrix3 command, so that I can call that function conveniently from Arcana.

### Acceptance Criteria

- [ ] dwi2response can be successfully called from Pydra

- [ ] all options can be set via the input interface

- [ ] all outputs can be retrieved from output interface

| non_infrastructure | create pydra task interface for command description as a pipeline developer i would like to be able to use a ready built pydra task interface for the command so that i can call that function conveniently from arcana acceptance criteria can be successfully called from pydra all options can be set via the input interface all outputs can be retrieved from output interface | 0 |

81,113 | 7,768,116,691 | IssuesEvent | 2018-06-03 14:38:26 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | investigate flaky parallel/test-crypto-dh-leak | CI / flaky test crypto | <!--

Thank you for reporting an issue.

This issue tracker is for bugs and issues found within Node.js core.

If you require more general support please file an issue on our help

repo. https://github.com/nodejs/help

Please fill in as much of the template below as you're able.

Version: output of `node -v`

Platform: output of `uname -a` (UNIX), or version and 32 or 64-bit (Windows)

Subsystem: if known, please specify affected core module name

If possible, please provide code that demonstrates the problem, keeping it as

simple and free of external dependencies as you are able.

-->

* **Version**: v11.0.0-pre (master)

* **Platform**: ubuntu1604_sharedlibs_debug_x64

* **Subsystem**: test crypto

<!-- Enter your issue details below this comment. -->

https://ci.nodejs.org/job/node-test-commit-linux-containered/4846/nodes=ubuntu1604_sharedlibs_debug_x64/console

```console

03:13:14 not ok 414 parallel/test-crypto-dh-leak

03:13:14 ---

03:13:14 duration_ms: 2.717

03:13:14 severity: fail

03:13:14 exitcode: 1

03:13:14 stack: |-

03:13:14 assert.js:270

03:13:14 throw err;

03:13:14 ^

03:13:14

03:13:14 AssertionError [ERR_ASSERTION]: The expression evaluated to a falsy value:

03:13:14

03:13:14 assert(after - before < 5 << 20)

03:13:14

03:13:14 at Object.<anonymous> (/home/iojs/build/workspace/node-test-commit-linux-containered/nodes/ubuntu1604_sharedlibs_debug_x64/test/parallel/test-crypto-dh-leak.js:26:1)

03:13:14 at Module._compile (internal/modules/cjs/loader.js:702:30)

03:13:14 at Object.Module._extensions..js (internal/modules/cjs/loader.js:713:10)

03:13:14 at Module.load (internal/modules/cjs/loader.js:612:32)

03:13:14 at tryModuleLoad (internal/modules/cjs/loader.js:551:12)

03:13:14 at Function.Module._load (internal/modules/cjs/loader.js:543:3)

03:13:14 at Function.Module.runMain (internal/modules/cjs/loader.js:744:10)

03:13:14 at startup (internal/bootstrap/node.js:261:19)

03:13:14 at bootstrapNodeJSCore (internal/bootstrap/node.js:595:3)

03:13:14 ...

``` | 1.0 | investigate flaky parallel/test-crypto-dh-leak - <!--

Thank you for reporting an issue.

This issue tracker is for bugs and issues found within Node.js core.

If you require more general support please file an issue on our help

repo. https://github.com/nodejs/help

Please fill in as much of the template below as you're able.

Version: output of `node -v`

Platform: output of `uname -a` (UNIX), or version and 32 or 64-bit (Windows)

Subsystem: if known, please specify affected core module name

If possible, please provide code that demonstrates the problem, keeping it as

simple and free of external dependencies as you are able.

-->

* **Version**: v11.0.0-pre (master)

* **Platform**: ubuntu1604_sharedlibs_debug_x64

* **Subsystem**: test crypto

<!-- Enter your issue details below this comment. -->

https://ci.nodejs.org/job/node-test-commit-linux-containered/4846/nodes=ubuntu1604_sharedlibs_debug_x64/console

```console

03:13:14 not ok 414 parallel/test-crypto-dh-leak

03:13:14 ---

03:13:14 duration_ms: 2.717

03:13:14 severity: fail

03:13:14 exitcode: 1

03:13:14 stack: |-

03:13:14 assert.js:270

03:13:14 throw err;

03:13:14 ^

03:13:14

03:13:14 AssertionError [ERR_ASSERTION]: The expression evaluated to a falsy value:

03:13:14

03:13:14 assert(after - before < 5 << 20)

03:13:14

03:13:14 at Object.<anonymous> (/home/iojs/build/workspace/node-test-commit-linux-containered/nodes/ubuntu1604_sharedlibs_debug_x64/test/parallel/test-crypto-dh-leak.js:26:1)

03:13:14 at Module._compile (internal/modules/cjs/loader.js:702:30)

03:13:14 at Object.Module._extensions..js (internal/modules/cjs/loader.js:713:10)

03:13:14 at Module.load (internal/modules/cjs/loader.js:612:32)

03:13:14 at tryModuleLoad (internal/modules/cjs/loader.js:551:12)

03:13:14 at Function.Module._load (internal/modules/cjs/loader.js:543:3)

03:13:14 at Function.Module.runMain (internal/modules/cjs/loader.js:744:10)

03:13:14 at startup (internal/bootstrap/node.js:261:19)

03:13:14 at bootstrapNodeJSCore (internal/bootstrap/node.js:595:3)

03:13:14 ...

``` | non_infrastructure | investigate flaky parallel test crypto dh leak thank you for reporting an issue this issue tracker is for bugs and issues found within node js core if you require more general support please file an issue on our help repo please fill in as much of the template below as you re able version output of node v platform output of uname a unix or version and or bit windows subsystem if known please specify affected core module name if possible please provide code that demonstrates the problem keeping it as simple and free of external dependencies as you are able version pre master platform sharedlibs debug subsystem test crypto console not ok parallel test crypto dh leak duration ms severity fail exitcode stack assert js throw err assertionerror the expression evaluated to a falsy value assert after before at object home iojs build workspace node test commit linux containered nodes sharedlibs debug test parallel test crypto dh leak js at module compile internal modules cjs loader js at object module extensions js internal modules cjs loader js at module load internal modules cjs loader js at trymoduleload internal modules cjs loader js at function module load internal modules cjs loader js at function module runmain internal modules cjs loader js at startup internal bootstrap node js at bootstrapnodejscore internal bootstrap node js | 0 |

36,698 | 17,867,692,281 | IssuesEvent | 2021-09-06 11:32:59 | getsentry/sentry-java | https://api.github.com/repos/getsentry/sentry-java | closed | Add support for tracing origins | performance | Currently the [SentryOkHttpInterceptor](https://github.com/getsentry/sentry-java/blob/aca78d9ca024c75225e59c36e35622055d2f6cbf/sentry-android-okhttp/src/main/java/io/sentry/android/okhttp/SentryOkHttpInterceptor.kt#L33-L35) adds the [sentry-trace HTTP header](https://develop.sentry.dev/sdk/performance/#header-sentry-trace) to every HTTP request. Some HTTP requests are targeting backends that don't have support for the sentry-trace HTTP header. Therefore JavaScript has the concept of [tracingOrigins](https://docs.sentry.io/platforms/javascript/performance/instrumentation/automatic-instrumentation/#tracingorigins), which is a list of URLs to which the integration should append the sentry-trace HTTP header. | True | Add support for tracing origins - Currently the [SentryOkHttpInterceptor](https://github.com/getsentry/sentry-java/blob/aca78d9ca024c75225e59c36e35622055d2f6cbf/sentry-android-okhttp/src/main/java/io/sentry/android/okhttp/SentryOkHttpInterceptor.kt#L33-L35) adds the [sentry-trace HTTP header](https://develop.sentry.dev/sdk/performance/#header-sentry-trace) to every HTTP request. Some HTTP requests are targeting backends that don't have support for the sentry-trace HTTP header. Therefore JavaScript has the concept of [tracingOrigins](https://docs.sentry.io/platforms/javascript/performance/instrumentation/automatic-instrumentation/#tracingorigins), which is a list of URLs to which the integration should append the sentry-trace HTTP header. 
| non_infrastructure | add support for tracing origins currently the adds the to every http request some http requests are targeting backends that don t have support for the sentry trace http header therefore javascript has the concept of which is a list of urls to which the integration should append the sentry trace http header | 0 |

38,770 | 2,850,254,371 | IssuesEvent | 2015-05-31 12:14:52 | damonkohler/android-scripting | https://api.github.com/repos/damonkohler/android-scripting | closed | PIL module please | auto-migrated Priority-Medium Type-Enhancement | ```

What should be supported?

Python Imaging Library (PIL)

I'm looking for a way to convert .jpg into .pcx

```

Original issue reported on code.google.com by `paulherweg` on 17 Jan 2013 at 7:45 | 1.0 | PIL module please - ```

What should be supported?

Python Imaging Library (PIL)

I'm looking for a way to convert .jpg into .pcx

```

Original issue reported on code.google.com by `paulherweg` on 17 Jan 2013 at 7:45 | non_infrastructure | pil module please what should be supported python imaging library pil i m looking for a way to convert jpg into pcx original issue reported on code google com by paulherweg on jan at | 0 |

25,545 | 18,846,523,583 | IssuesEvent | 2021-11-11 15:31:07 | replab/replab | https://api.github.com/repos/replab/replab | closed | Use MATLAB profiler for code coverage | Infrastructure Priority: Low | We could remove MoCOV from the dependencies (it's quite fragile) | 1.0 | Use MATLAB profiler for code coverage - We could remove MoCOV from the dependencies (it's quite fragile) | infrastructure | use matlab profiler for code coverage we could remove mocov from the dependencies it s quite fragile | 1 |

27,635 | 22,053,136,869 | IssuesEvent | 2022-05-30 10:25:37 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | Fix ConnectTest.ConnectAsync_CancellationRequestedAfterConnect_ThrowsOperationCanceledException on NodeJS | arch-wasm area-Infrastructure-mono in-pr | Running test on NodeJS causes uncaught exception

```

info: Error: read ECONNRESET

info: at TCP.onStreamRead (node:internal/stream_base_commons:220:20) {

info: errno: -4077,

info: code: 'ECONNRESET',

info: syscall: 'read'

info: }

``` | 1.0 | Fix ConnectTest.ConnectAsync_CancellationRequestedAfterConnect_ThrowsOperationCanceledException on NodeJS - Running test on NodeJS causes uncaught exception

```

info: Error: read ECONNRESET

info: at TCP.onStreamRead (node:internal/stream_base_commons:220:20) {

info: errno: -4077,

info: code: 'ECONNRESET',

info: syscall: 'read'

info: }

``` | infrastructure | fix connecttest connectasync cancellationrequestedafterconnect throwsoperationcanceledexception on nodejs running test on nodejs causes uncaught exception info error read econnreset info at tcp onstreamread node internal stream base commons info errno info code econnreset info syscall read info | 1 |

415,110 | 12,124,932,745 | IssuesEvent | 2020-04-22 14:51:01 | opendifferentialprivacy/whitenoise-core | https://api.github.com/repos/opendifferentialprivacy/whitenoise-core | opened | License, Gitter, communication for Build | Effort 1 - Small :coffee: Priority 1: High | - Placeholder for communication with MS

- [ ] Add license

- [ ] When complete, add communication channels to README

- [ ] link to the minisite | 1.0 | License, Gitter, communication for Build - - Placeholder for communication with MS

- [ ] Add license

- [ ] When complete, add communication channels to README

- [ ] link to the minisite | non_infrastructure | license gitter communication for build placeholder for communication with ms add license when complete add communication channels to readme link to the minisite | 0 |

10,727 | 8,697,288,458 | IssuesEvent | 2018-12-04 19:50:38 | elastic/beats | https://api.github.com/repos/elastic/beats | closed | Breaking field changes for 6.0 | :infrastructure Metricbeat discuss meta module | Metricbeat uses the a convention for all its fields names: https://www.elastic.co/guide/en/beats/libbeat/master/event-conventions.html This convention evolves over time and gets improvements. Some of the older fields do not follow the full convention anymore and should be updated. The main issue with updating the fields is that it can break backward compatibility. That means if we do these changes they can only be done in a major relaese.

This issue is intended to track the fields which are not up-to-date and should potentially be changed for 6.0 and discuss potential migration paths.

Fields (current -> new)

* system.process.cpu.user -> system.process.cpu.user.ticks

* system.process.cpu.system -> system.process.cpu.system.ticks

| 1.0 | Breaking field changes for 6.0 - Metricbeat uses the a convention for all its fields names: https://www.elastic.co/guide/en/beats/libbeat/master/event-conventions.html This convention evolves over time and gets improvements. Some of the older fields do not follow the full convention anymore and should be updated. The main issue with updating the fields is that it can break backward compatibility. That means if we do these changes they can only be done in a major relaese.

This issue is intended to track the fields which are not up-to-date and should potentially be changed for 6.0 and discuss potential migration paths.

Fields (current -> new)

* system.process.cpu.user -> system.process.cpu.user.ticks

* system.process.cpu.system -> system.process.cpu.system.ticks

| infrastructure | breaking field changes for metricbeat uses the a convention for all its fields names this convention evolves over time and gets improvements some of the older fields do not follow the full convention anymore and should be updated the main issue with updating the fields is that it can break backward compatibility that means if we do these changes they can only be done in a major relaese this issue is intended to track the fields which are not up to date and should potentially be changed for and discuss potential migration paths fields current new system process cpu user system process cpu user ticks system process cpu system system process cpu system ticks | 1 |

631,978 | 20,166,907,954 | IssuesEvent | 2022-02-10 06:04:03 | space-wizards/space-station-14 | https://api.github.com/repos/space-wizards/space-station-14 | opened | Ahelp 'kick' button immediately kicks, no confirmation | Type: Bug Priority: 2-Before Release Difficulty: 1-Easy | ## Description

<!-- Explain your issue in detail, including the steps to reproduce it if applicable. Issues without proper explanation are liable to be closed by maintainers.-->

accidentally kicked a guy this way and it was awkward | 1.0 | Ahelp 'kick' button immediately kicks, no confirmation - ## Description

<!-- Explain your issue in detail, including the steps to reproduce it if applicable. Issues without proper explanation are liable to be closed by maintainers.-->

accidentally kicked a guy this way and it was awkward | non_infrastructure | ahelp kick button immediately kicks no confirmation description accidentally kicked a guy this way and it was awkward | 0 |

119,077 | 25,464,553,049 | IssuesEvent | 2022-11-25 01:47:47 | objectos/objectos | https://api.github.com/repos/objectos/objectos | closed | Field declarations TC06: array initializer | a:objectos-code | Objectos Code:

```java

field(t(_int(), dim()), id("a"), a());

field(t(_int(), dim()), id("b"), a(i(0)));

field(t(_int(), dim()), id("c"), a(i(0), i(1)));

```

Should generate:

```java

int[] a = {};

int[] b = {0};

int[] c = {0, 1};

``` | 1.0 | Field declarations TC06: array initializer - Objectos Code:

```java

field(t(_int(), dim()), id("a"), a());

field(t(_int(), dim()), id("b"), a(i(0)));

field(t(_int(), dim()), id("c"), a(i(0), i(1)));

```

Should generate:

```java

int[] a = {};

int[] b = {0};

int[] c = {0, 1};

``` | non_infrastructure | field declarations array initializer objectos code java field t int dim id a a field t int dim id b a i field t int dim id c a i i should generate java int a int b int c | 0 |

3,592 | 4,426,976,176 | IssuesEvent | 2016-08-16 19:59:44 | coherence-community/coherence-incubator | https://api.github.com/repos/coherence-community/coherence-incubator | closed | Create Coherence Incubator 13 (bridging release) based on Coherence 12.2.1+ | Module: Command Pattern Module: Common Module: Event Distribution Pattern Module: Functor Pattern Module: JVisualVM Module: Messaging Pattern Module: Processing Pattern Module: Push Replication Pattern Priority: Major Type: Infrastructure | To support live migration and compatibility with the latest Coherence 12.2.1+ releases, we need to create a new release of the Coherence Incubator.

This will support using Coherence Incubator 12 features, on Coherence 12.2.1+ releases, while applications migrate to use the latest Coherence 12.2.1 features, namely Federated Caching instead of Push Replication. | 1.0 | Create Coherence Incubator 13 (bridging release) based on Coherence 12.2.1+ - To support live migration and compatibility with the latest Coherence 12.2.1+ releases, we need to create a new release of the Coherence Incubator.

This will support using Coherence Incubator 12 features, on Coherence 12.2.1+ releases, while applications migrate to use the latest Coherence 12.2.1 features, namely Federated Caching instead of Push Replication. | infrastructure | create coherence incubator bridging release based on coherence to support live migration and compatibility with the latest coherence releases we need to create a new release of the coherence incubator this will support using coherence incubator features on coherence releases while applications migrate to use the latest coherence features namely federated caching instead of push replication | 1 |

154,112 | 19,710,788,344 | IssuesEvent | 2022-01-13 04:55:46 | ChoeMinji/react-17.0.2 | https://api.github.com/repos/ChoeMinji/react-17.0.2 | opened | CVE-2020-15215 (Medium) detected in electron-9.1.0.tgz | security vulnerability | ## CVE-2020-15215 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>electron-9.1.0.tgz</b></p></summary>

<p>Build cross platform desktop apps with JavaScript, HTML, and CSS</p>

<p>Library home page: <a href="https://registry.npmjs.org/electron/-/electron-9.1.0.tgz">https://registry.npmjs.org/electron/-/electron-9.1.0.tgz</a></p>

<p>

Dependency Hierarchy:

- react-devtools-4.8.2.tgz (Root Library)

- :x: **electron-9.1.0.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/ChoeMinji/react-17.0.2/commit/4669645897ed4ebcd4ee037f4dabb509ed4754c7">4669645897ed4ebcd4ee037f4dabb509ed4754c7</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Electron before versions 11.0.0-beta.6, 10.1.2, 9.3.1 or 8.5.2 is vulnerable to a context isolation bypass. Apps using both `contextIsolation` and `sandbox: true` are affected. Apps using both `contextIsolation` and `nodeIntegrationInSubFrames: true` are affected. This is a context isolation bypass, meaning that code running in the main world context in the renderer can reach into the isolated Electron context and perform privileged actions.

<p>Publish Date: 2020-10-06

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-15215>CVE-2020-15215</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.6</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: Low

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/electron/electron/security/advisories/GHSA-56pc-6jqp-xqj8">https://github.com/electron/electron/security/advisories/GHSA-56pc-6jqp-xqj8</a></p>

<p>Release Date: 2020-10-19</p>

<p>Fix Resolution: v8.5.2, v9.3.1, v10.1.2, v11.0.0-beta.6</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2020-15215 (Medium) detected in electron-9.1.0.tgz - ## CVE-2020-15215 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>electron-9.1.0.tgz</b></p></summary>

<p>Build cross platform desktop apps with JavaScript, HTML, and CSS</p>

<p>Library home page: <a href="https://registry.npmjs.org/electron/-/electron-9.1.0.tgz">https://registry.npmjs.org/electron/-/electron-9.1.0.tgz</a></p>

<p>

Dependency Hierarchy:

- react-devtools-4.8.2.tgz (Root Library)

- :x: **electron-9.1.0.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/ChoeMinji/react-17.0.2/commit/4669645897ed4ebcd4ee037f4dabb509ed4754c7">4669645897ed4ebcd4ee037f4dabb509ed4754c7</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Electron before versions 11.0.0-beta.6, 10.1.2, 9.3.1 or 8.5.2 is vulnerable to a context isolation bypass. Apps using both `contextIsolation` and `sandbox: true` are affected. Apps using both `contextIsolation` and `nodeIntegrationInSubFrames: true` are affected. This is a context isolation bypass, meaning that code running in the main world context in the renderer can reach into the isolated Electron context and perform privileged actions.

<p>Publish Date: 2020-10-06

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-15215>CVE-2020-15215</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.6</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: Low

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/electron/electron/security/advisories/GHSA-56pc-6jqp-xqj8">https://github.com/electron/electron/security/advisories/GHSA-56pc-6jqp-xqj8</a></p>

<p>Release Date: 2020-10-19</p>

<p>Fix Resolution: v8.5.2, v9.3.1, v10.1.2, v11.0.0-beta.6</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_infrastructure | cve medium detected in electron tgz cve medium severity vulnerability vulnerable library electron tgz build cross platform desktop apps with javascript html and css library home page a href dependency hierarchy react devtools tgz root library x electron tgz vulnerable library found in head commit a href found in base branch master vulnerability details electron before versions beta or is vulnerable to a context isolation bypass apps using both contextisolation and sandbox true are affected apps using both contextisolation and nodeintegrationinsubframes true are affected this is a context isolation bypass meaning that code running in the main world context in the renderer can reach into the isolated electron context and perform privileged actions publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity high privileges required none user interaction none scope unchanged impact metrics confidentiality impact low integrity impact low availability impact low for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution beta step up your open source security game with whitesource | 0 |

10,949 | 8,826,818,059 | IssuesEvent | 2019-01-03 05:15:26 | astroML/astroML | https://api.github.com/repos/astroML/astroML | closed | deprecation decorator | infrastructure question | For version 0.4 a few things need to be deprecated. We don't have our own infrastructure to do it nicely, but:

* `astropy.utils.deprecated` is a very powerful decorator, but it issues an `AstropyDeprecationWarning`. If there isn't an easy way to swap the warning to either an `AstroMLDeprecationWarning` or just a simple `DeprecationWarning` we may just write a lightweight wrapper around the astropy one.

* An alternative would be to use `sklearn.utils.deprecated`. | 1.0 | deprecation decorator - For version 0.4 a few things need to be deprecated. We don't have our own infrastructure to do it nicely, but:

* `astropy.utils.deprecated` is a very powerful decorator, but it issues an `AstropyDeprecationWarning`. If there isn't an easy way to swap the warning to either an `AstroMLDeprecationWarning` or just a simple `DeprecationWarning` we may just write a lightweight wrapper around the astropy one.

* An alternative would be to use `sklearn.utils.deprecated`. | infrastructure | deprecation decorator for version a few things need to be deprecated we don t have our own infrastructure to do it nicely but astropy utils deprecated is a very powerful decorator but it issues an astropydeprecationwarning if there isn t an easy way to swap the warning to either an astromldeprecationwarning or just a simple deprecationwarning we may just write a lightweight wrapper around the astropy one an alternative would be to use sklearn utils deprecated | 1 |

428,122 | 12,403,271,123 | IssuesEvent | 2020-05-21 13:37:48 | yalla-coop/tempo | https://api.github.com/repos/yalla-coop/tempo | closed | Apple Phone Design Issues | backlog bug priority-5 | Apple Phone devices are throwing up a few design issues for us to look at

- [x] Bug 187 - After sending TC's successfully to another member, the graphic above the congratulations message is a bit squashed and stretched

- [x] Bug 188 - On the member's homepage the gift graphic in the pink 'Share the love…' box doesn't load properly. Have tried reloading multiple times and restarting the browser and relogging in

- [x] Bug 193 - Similar issue to #187 - after I send TC's to my group the graphic is stretched above the 'Gift Sent!' header. The page also opens too zoomed in here so the full sub-heading message isn't displayed.

| 1.0 | Apple Phone Design Issues - Apple Phone devices are throwing up a few design issues for us to look at

- [x] Bug 187 - After sending TC's successfully to another member, the graphic above the congratulations message is a bit squashed and stretched

- [x] Bug 188 - On the member's homepage the gift graphic in the pink 'Share the love…' box doesn't load properly. Have tried reloading multiple times and restarting the browser and relogging in

- [x] Bug 193 - Similar issue to #187 - after I send TC's to my group the graphic is stretched above the 'Gift Sent!' header. The page also opens too zoomed in here so the full sub-heading message isn't displayed.

| non_infrastructure | apple phone design issues apple phone devices are throwing up a few design issues for us to look at bug after sending tc s successfully to another member the graphic above the congratulations message is a bit squashed and stretched bug on the member s homepage the gift graphic in the pink share the love… box doesn t load properly have tried reloading multiple times and restarting the browser and relogging in bug similar issue to after i send tc s to my group the graphic is stretched above the gift sent header the page also opens too zoomed in here so the full sub heading message isn t displayed | 0 |

10,658 | 8,665,737,595 | IssuesEvent | 2018-11-29 00:41:01 | astroML/astroML | https://api.github.com/repos/astroML/astroML | opened | Fix pytest 4 compatibility | infrastructure | We got quite a few `RemovedInPytest4Warning` that needs some attention first, and there are also issues that looks similar to https://github.com/astropy/astropy/issues/6025 | 1.0 | Fix pytest 4 compatibility - We got quite a few `RemovedInPytest4Warning` that needs some attention first, and there are also issues that looks similar to https://github.com/astropy/astropy/issues/6025 | infrastructure | fix pytest compatibility we got quite a few that needs some attention first and there are also issues that looks similar to | 1 |

6,795 | 6,612,650,606 | IssuesEvent | 2017-09-20 05:29:57 | archco/moss-ui | https://api.github.com/repos/archco/moss-ui | closed | Change scss directory structure. | css infrastructure | `scss/components/` 에 같이 있는 scss-components와 vue-components를 분리한다. scss-components를 scss-parts로 변경한다.

```yml

scss:

- components # vue components.

- helpers # scss helper utilities.

- lib # libraries and functions.

- mixins # scss mixins.

- parts # scss parts.

``` | 1.0 | Change scss directory structure. - `scss/components/` 에 같이 있는 scss-components와 vue-components를 분리한다. scss-components를 scss-parts로 변경한다.

```yml

scss:

- components # vue components.

- helpers # scss helper utilities.

- lib # libraries and functions.

- mixins # scss mixins.

- parts # scss parts.

``` | infrastructure | change scss directory structure scss components 에 같이 있는 scss components와 vue components를 분리한다 scss components를 scss parts로 변경한다 yml scss components vue components helpers scss helper utilities lib libraries and functions mixins scss mixins parts scss parts | 1 |

16,824 | 12,152,117,272 | IssuesEvent | 2020-04-24 21:26:40 | BCDevOps/developer-experience | https://api.github.com/repos/BCDevOps/developer-experience | opened | Better sync with lab & prod templates eg.; jenkins | Infrastructure action-required | https://trello.com/c/XwDDreQy/31-better-sync-with-lab-prod-templates-eg-jenkins

Things like custom jenkins templates in Prod don't live in Lab. There should be some sync between these two. | 1.0 | Better sync with lab & prod templates eg.; jenkins - https://trello.com/c/XwDDreQy/31-better-sync-with-lab-prod-templates-eg-jenkins

Things like custom jenkins templates in Prod don't live in Lab. There should be some sync between these two. | infrastructure | better sync with lab prod templates eg jenkins things like custom jenkins templates in prod don t live in lab there should be some sync between these two | 1 |

20,303 | 13,797,400,778 | IssuesEvent | 2020-10-09 22:09:21 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | osx-arm64 enable CoreCLR native component builds in CI. | arch-arm64 area-Infrastructure-coreclr os-mac-os-x-big-sur | Once #40435 merges we need to enable CI builds of CoreCLR osx-arm64 native components.

Blocked on #41133 | 1.0 | osx-arm64 enable CoreCLR native component builds in CI. - Once #40435 merges we need to enable CI builds of CoreCLR osx-arm64 native components.

Blocked on #41133 | infrastructure | osx enable coreclr native component builds in ci once merges we need to enable ci builds of coreclr osx native components blocked on | 1 |

11,939 | 9,529,591,196 | IssuesEvent | 2019-04-29 11:46:05 | buildit/gravity-ui-web | https://api.github.com/repos/buildit/gravity-ui-web | opened | Add tests! | :bulb: idea good first issue infrastructure ui | **Is your feature request related to a problem? Please describe.**

We do some linting on our SASS code, but other than that, there is currently no testing on the `gravity-ui-web` library. We ought to fix that!

**Describe the solution you'd like**

Off the top of my head, these are all kinds of tests we should consider adding:

* Directly on the SASS code:

* Testing our SASS mixins and functions using something like [`sass-true`](https://github.com/oddbird/true)

* Testing that the SASS files from our ITCSS `settings` and `tools` layers do not output any CSS. (I think this would currently fail due to `gravy`, but nonetheless it would be good to have a test covering this, so we can fix that and avoid future regressions)

* Directly on the client-side JS code

* Bog-standard unit-tests using Jest or whatever.

* Note, currently we only have a teeny bit of JS code for the toggle button, but I anticipate this will grow in future releases, so having some testing infrastructure in place would be good

* Via the pattern library:

* Run a11y tests (using either [pa11y](http://pa11y.org/) or [aXe](https://www.deque.com/axe/) per component (if you look at the Pattern Lab output in `dist/`, you can see that each pattern is output to its own HTML file. We could potentially just load each of those in turn and run our tests on them

* Run visual regression tests per component.

* While we fully expect things to render subtly differently in different browsers, components should render identically in the same browser - unless we've intentionally changed something. Therefore, running visual regression tests in one or more representative browsers would flag up any unintentional styling regressions.

* Since some components change appearance across breakpoints, we ought to test each component at a selection of viewport sizes.

* The reference images could be quite large, so we may want to explore storing them somewhere other than our git repo

* Maybe do snapshot testing of the rendered HTML?

* Similar to the visual regression testing, our HTML structures shouldn't really change by accident. So, something like Jest's snapshot testing might be a convenient way of checking against a reference and warning when that changes.

* E2E tests

* Being able to test the behaviour of any interactive components (currently only toggle button - but there are likely to be more in future) in a browser would be good.

* Tools like Cypress or something that runs over WebDriver would be good

* Personally, I'd prefer the latter because we could then run our tests across many different browsers (e.g. via BrowserStack or similar). Cypress is convenient but is tied to the Blink engine (i.e. Chromium). I think we should make an effort to test in other browser engines too.

I'm sure there's more - but you get the idea. Feel free to add comments and suggestions in the comments below.

I wouldn't expect all of these right away. Each category probably deserves its own PR (and deeper discussion before implementing), but I wanted to brain-dump my thoughts somewhere :-D

I think the SASS testing would be a good place to start as that's going to give us the most value in the short term. | 1.0 | Add tests! - **Is your feature request related to a problem? Please describe.**

We do some linting on our SASS code, but other than that, there is currently no testing on the `gravity-ui-web` library. We ought to fix that!

**Describe the solution you'd like**

Off the top of my head, these are all kinds of tests we should consider adding:

* Directly on the SASS code:

* Testing our SASS mixins and functions using something like [`sass-true`](https://github.com/oddbird/true)

* Testing that the SASS files from our ITCSS `settings` and `tools` layers do not output any CSS. (I think this would currently fail due to `gravy`, but nonetheless it would be good to have a test covering this, so we can fix that and avoid future regressions)

* Directly on the client-side JS code

* Bog-standard unit-tests using Jest or whatever.

* Note, currently we only have a teeny bit of JS code for the toggle button, but I anticipate this will grow in future releases, so having some testing infrastructure in place would be good

* Via the pattern library:

* Run a11y tests (using either [pa11y](http://pa11y.org/) or [aXe](https://www.deque.com/axe/) per component (if you look at the Pattern Lab output in `dist/`, you can see that each pattern is output to its own HTML file. We could potentially just load each of those in turn and run our tests on them

* Run visual regression tests per component.

* While we fully expect things to render subtly differently in different browsers, components should render identically in the same browser - unless we've intentionally changed something. Therefore, running visual regression tests in one or more representative browsers would flag up any unintentional styling regressions.

* Since some components change appearance across breakpoints, we ought to test each component at a selection of viewport sizes.

* The reference images could be quite large, so we may want to explore storing them somewhere other than our git repo

* Maybe do snapshot testing of the rendered HTML?

* Similar to the visual regression testing, our HTML structures shouldn't really change by accident. So, something like Jest's snapshot testing might be a convenient way of checking against a reference and warning when that changes.

* E2E tests

* Being able to test the behaviour of any interactive components (currently only toggle button - but there are likely to be more in future) in a browser would be good.

* Tools like Cypress or something that runs over WebDriver would be good