Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 2 to 665) | labels (string, length 4 to 554) | body (string, length 3 to 235k) | index (string, 6 classes) | text_combine (string, length 96 to 235k) | label (string, 2 classes) | text (string, length 96 to 196k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

66,244 | 3,251,412,545 | IssuesEvent | 2015-10-19 09:39:55 | kubernetes/kubernetes | https://api.github.com/repos/kubernetes/kubernetes | opened | ReplicationController failed to notice Pod Eviction | kind/bug priority/P0 team/CSI team/node | TL;DR: I don't have a reliable repro yet, it might be a one-off flake. If it's not it can be serious, as we can permanently kill a Pod.

I was running 250 Node tests (denisty 30') looking at heapster resource consumption. The first run was close to the limit (3G), so the second one was likely to end in OOM. To nobody's surprise it did. Because it was eating by far most memory it was (most likely) killed by the sys oom killer, which, for some reason ended up in:

```

Mon, 19 Oct 2015 11:11:11 +0200 Mon, 19 Oct 2015 11:11:11 +0200 1 heapster-v10-j8a4y Pod NodeControllerEviction {controllermanager } Marking for deletion Pod heapster-v10-j8a4y from Node e2e-test-gmarek-minion-9ei

```

(Node itself was healthy at the moment)

Small problem is that the Pod is not running anywhere now:

```

kubectl --namespace=kube-system get pod | grep heapster

```

returns an empty result.

The real question is why this is the case:

```

gmarek@breakwater:~/go/src/k8s.io/kubernetes$ kubectl --namespace=kube-system describe rc heapster-v10

Name: heapster-v10

Namespace: kube-system

Image(s): gcr.io/google_containers/heapster:v0.18.2

Selector: k8s-app=heapster,version=v10

Labels: k8s-app=heapster,kubernetes.io/cluster-service=true,version=v10

Replicas: 1 current / 1 desired

Pods Status: 0 Running / 0 Waiting / 0 Succeeded / 0 Failed

No events.

```

cc @wojtek-t @fgrzadkowski @davidopp @lavalamp @dchen1107 | 1.0 | ReplicationController failed to notice Pod Eviction - TL;DR: I don't have a reliable repro yet, it might be a one-off flake. If it's not it can be serious, as we can permanently kill a Pod.

I was running 250 Node tests (denisty 30') looking at heapster resource consumption. The first run was close to the limit (3G), so the second one was likely to end in OOM. To nobody's surprise it did. Because it was eating by far most memory it was (most likely) killed by the sys oom killer, which, for some reason ended up in:

```

Mon, 19 Oct 2015 11:11:11 +0200 Mon, 19 Oct 2015 11:11:11 +0200 1 heapster-v10-j8a4y Pod NodeControllerEviction {controllermanager } Marking for deletion Pod heapster-v10-j8a4y from Node e2e-test-gmarek-minion-9ei

```

(Node itself was healthy at the moment)

Small problem is that the Pod is not running anywhere now:

```

kubectl --namespace=kube-system get pod | grep heapster

```

returns an empty result.

The real question is why this is the case:

```

gmarek@breakwater:~/go/src/k8s.io/kubernetes$ kubectl --namespace=kube-system describe rc heapster-v10

Name: heapster-v10

Namespace: kube-system

Image(s): gcr.io/google_containers/heapster:v0.18.2

Selector: k8s-app=heapster,version=v10

Labels: k8s-app=heapster,kubernetes.io/cluster-service=true,version=v10

Replicas: 1 current / 1 desired

Pods Status: 0 Running / 0 Waiting / 0 Succeeded / 0 Failed

No events.

```

cc @wojtek-t @fgrzadkowski @davidopp @lavalamp @dchen1107 | non_infrastructure | replicationcontroller failed to notice pod eviction tl dr i don t have a reliable repro yet it might be a one off flake if it s not it can be serious as we can permanently kill a pod i was running node tests denisty looking at heapster resource consumption the first run was close to the limit so the second one was likely to end in oom to nobody s surprise it did because it was eating by far most memory it was most likely killed by the sys oom killer which for some reason ended up in mon oct mon oct heapster pod nodecontrollereviction controllermanager marking for deletion pod heapster from node test gmarek minion node itself was healthy at the moment small problem is that the pod is not running anywhere now kubectl namespace kube system get pod grep heapster returns an empty result the real question is why this is the case gmarek breakwater go src io kubernetes kubectl namespace kube system describe rc heapster name heapster namespace kube system image s gcr io google containers heapster selector app heapster version labels app heapster kubernetes io cluster service true version replicas current desired pods status running waiting succeeded failed no events cc wojtek t fgrzadkowski davidopp lavalamp | 0 |

220,992 | 7,372,742,906 | IssuesEvent | 2018-03-13 15:28:45 | idaholab/raven | https://api.github.com/repos/idaholab/raven | opened | RAVEN interface and ExternalXML | improvement priority_normal | --------

Issue Description

--------

##### What did you expect to see happen?

When using the RAVEN interface:

If the "inner" RAVEN uses ExteranlXML, the outer run should not be affected.

##### What did you see instead?

If, for example, the OutStreams are in the main "inner" file and the DataObjects are in an ExternalXML file, the RAVENparser will crash with (for example):

```

IOError: RAVEN_PARSER ERROR: The OutStream of type "Print" named "dumpOPT" is linked to not existing DataObject!

```

##### Do you have a suggested fix for the development team?

Expand the necessary ExternalXML nodes before parsing the XML.

----------------

For Change Control Board: Issue Review

----------------

This review should occur before any development is performed as a response to this issue.

- [x] 1. Is it tagged with a type: defect or improvement?

- [x] 2. Is it tagged with a priority: critical, normal or minor?

- [x] 3. If it will impact requirements or requirements tests, is it tagged with requirements?

- [x] 4. If it is a defect, can it cause wrong results for users? If so an email needs to be sent to the users.

- [x] 5. Is a rationale provided? (Such as explaining why the improvement is needed or why current code is wrong.)

-------

For Change Control Board: Issue Closure

-------

This review should occur when the issue is imminently going to be closed.

- [ ] 1. If the issue is a defect, is the defect fixed?

- [ ] 2. If the issue is a defect, is the defect tested for in the regression test system? (If not explain why not.)

- [ ] 3. If the issue can impact users, has an email to the users group been written (the email should specify if the defect impacts stable or master)?

- [ ] 4. If the issue is a defect, does it impact the latest stable branch? If yes, is there any issue tagged with stable (create if needed)?

- [ ] 5. If the issue is being closed without a merge request, has an explanation of why it is being closed been provided?

| 1.0 | RAVEN interface and ExternalXML - --------

Issue Description

--------

##### What did you expect to see happen?

When using the RAVEN interface:

If the "inner" RAVEN uses ExteranlXML, the outer run should not be affected.

##### What did you see instead?

If, for example, the OutStreams are in the main "inner" file and the DataObjects are in an ExternalXML file, the RAVENparser will crash with (for example):

```

IOError: RAVEN_PARSER ERROR: The OutStream of type "Print" named "dumpOPT" is linked to not existing DataObject!

```

##### Do you have a suggested fix for the development team?

Expand the necessary ExternalXML nodes before parsing the XML.

----------------

For Change Control Board: Issue Review

----------------

This review should occur before any development is performed as a response to this issue.

- [x] 1. Is it tagged with a type: defect or improvement?

- [x] 2. Is it tagged with a priority: critical, normal or minor?

- [x] 3. If it will impact requirements or requirements tests, is it tagged with requirements?

- [x] 4. If it is a defect, can it cause wrong results for users? If so an email needs to be sent to the users.

- [x] 5. Is a rationale provided? (Such as explaining why the improvement is needed or why current code is wrong.)

-------

For Change Control Board: Issue Closure

-------

This review should occur when the issue is imminently going to be closed.

- [ ] 1. If the issue is a defect, is the defect fixed?

- [ ] 2. If the issue is a defect, is the defect tested for in the regression test system? (If not explain why not.)

- [ ] 3. If the issue can impact users, has an email to the users group been written (the email should specify if the defect impacts stable or master)?

- [ ] 4. If the issue is a defect, does it impact the latest stable branch? If yes, is there any issue tagged with stable (create if needed)?

- [ ] 5. If the issue is being closed without a merge request, has an explanation of why it is being closed been provided?

| non_infrastructure | raven interface and externalxml issue description what did you expect to see happen when using the raven interface if the inner raven uses exteranlxml the outer run should not be affected what did you see instead if for example the outstreams are in the main inner file and the dataobjects are in an externalxml file the ravenparser will crash with for example ioerror raven parser error the outstream of type print named dumpopt is linked to not existing dataobject do you have a suggested fix for the development team expand the necessary externalxml nodes before parsing the xml for change control board issue review this review should occur before any development is performed as a response to this issue is it tagged with a type defect or improvement is it tagged with a priority critical normal or minor if it will impact requirements or requirements tests is it tagged with requirements if it is a defect can it cause wrong results for users if so an email needs to be sent to the users is a rationale provided such as explaining why the improvement is needed or why current code is wrong for change control board issue closure this review should occur when the issue is imminently going to be closed if the issue is a defect is the defect fixed if the issue is a defect is the defect tested for in the regression test system if not explain why not if the issue can impact users has an email to the users group been written the email should specify if the defect impacts stable or master if the issue is a defect does it impact the latest stable branch if yes is there any issue tagged with stable create if needed if the issue is being closed without a merge request has an explanation of why it is being closed been provided | 0 |

249,084 | 7,953,757,763 | IssuesEvent | 2018-07-12 03:37:42 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | Dedicated steam eco server not showing in list | Medium Priority | I have been searching to fix the same problem firewall is open on eco and eco server , port are forwarded correctly and I am running the server as an administrator . In steam server list can't even see my server as active with internal ip as a localhost or with my web address but I can access my server in-game but my friends cannot . tried to link my steam account on eco server but the link is not working for now.

please help fix this ! | 1.0 | Dedicated steam eco server not showing in list - I have been searching to fix the same problem firewall is open on eco and eco server , port are forwarded correctly and I am running the server as an administrator . In steam server list can't even see my server as active with internal ip as a localhost or with my web address but I can access my server in-game but my friends cannot . tried to link my steam account on eco server but the link is not working for now.

please help fix this ! | non_infrastructure | dedicated steam eco server not showing in list i have been searching to fix the same problem firewall is open on eco and eco server port are forwarded correctly and i am running the server as an administrator in steam server list can t even see my server as active with internal ip as a localhost or with my web address but i can access my server in game but my friends cannot tried to link my steam account on eco server but the link is not working for now please help fix this | 0 |

172,098 | 21,031,333,808 | IssuesEvent | 2022-03-31 01:21:26 | srivatsamarichi/spring-petclinic | https://api.github.com/repos/srivatsamarichi/spring-petclinic | opened | CVE-2022-22950 (Medium) detected in spring-expression-5.3.6.jar | security vulnerability | ## CVE-2022-22950 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>spring-expression-5.3.6.jar</b></p></summary>

<p>Spring Expression Language (SpEL)</p>

<p>Library home page: <a href="https://github.com/spring-projects/spring-framework">https://github.com/spring-projects/spring-framework</a></p>

<p>Path to dependency file: /pom.xml</p>

<p>Path to vulnerable library: /home/wss-scanner/.m2/repository/org/springframework/spring-expression/5.3.6/spring-expression-5.3.6.jar</p>

<p>

Dependency Hierarchy:

- spring-boot-starter-web-2.4.5.jar (Root Library)

- spring-webmvc-5.3.6.jar

- :x: **spring-expression-5.3.6.jar** (Vulnerable Library)

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

In Spring Framework versions 5.3.0 - 5.3.16 and older unsupported versions, it is possible for a user to provide a specially crafted SpEL expression that may cause a denial of service condition

<p>Publish Date: 2022-01-11

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2022-22950>CVE-2022-22950</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.4</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: Low

- Availability Impact: Low

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://tanzu.vmware.com/security/cve-2022-22950">https://tanzu.vmware.com/security/cve-2022-22950</a></p>

<p>Release Date: 2022-01-11</p>

<p>Fix Resolution: org.springframework:spring-expression:5.3.17</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2022-22950 (Medium) detected in spring-expression-5.3.6.jar - ## CVE-2022-22950 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>spring-expression-5.3.6.jar</b></p></summary>

<p>Spring Expression Language (SpEL)</p>

<p>Library home page: <a href="https://github.com/spring-projects/spring-framework">https://github.com/spring-projects/spring-framework</a></p>

<p>Path to dependency file: /pom.xml</p>

<p>Path to vulnerable library: /home/wss-scanner/.m2/repository/org/springframework/spring-expression/5.3.6/spring-expression-5.3.6.jar</p>

<p>

Dependency Hierarchy:

- spring-boot-starter-web-2.4.5.jar (Root Library)

- spring-webmvc-5.3.6.jar

- :x: **spring-expression-5.3.6.jar** (Vulnerable Library)

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

In Spring Framework versions 5.3.0 - 5.3.16 and older unsupported versions, it is possible for a user to provide a specially crafted SpEL expression that may cause a denial of service condition

<p>Publish Date: 2022-01-11

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2022-22950>CVE-2022-22950</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.4</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: Low

- Availability Impact: Low

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://tanzu.vmware.com/security/cve-2022-22950">https://tanzu.vmware.com/security/cve-2022-22950</a></p>

<p>Release Date: 2022-01-11</p>

<p>Fix Resolution: org.springframework:spring-expression:5.3.17</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_infrastructure | cve medium detected in spring expression jar cve medium severity vulnerability vulnerable library spring expression jar spring expression language spel library home page a href path to dependency file pom xml path to vulnerable library home wss scanner repository org springframework spring expression spring expression jar dependency hierarchy spring boot starter web jar root library spring webmvc jar x spring expression jar vulnerable library found in base branch master vulnerability details in spring framework versions and older unsupported versions it is possible for a user to provide a specially crafted spel expression that may cause a denial of service condition publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction required scope unchanged impact metrics confidentiality impact none integrity impact low availability impact low for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution org springframework spring expression step up your open source security game with whitesource | 0 |

795,103 | 28,061,574,959 | IssuesEvent | 2023-03-29 12:58:37 | unlock-protocol/unlock | https://api.github.com/repos/unlock-protocol/unlock | closed | Walletless "registration" for events | 🚨 High Priority Events | On free events where we subsidize gas, we should be able to not require users to use their wallets when they checkout by just using the walletless airdrops.

Note: we need to make sure the event does **not** use hooks either.

| 1.0 | Walletless "registration" for events - On free events where we subsidize gas, we should be able to not require users to use their wallets when they checkout by just using the walletless airdrops.

Note: we need to make sure the event does **not** use hooks either.

| non_infrastructure | walletless registration for events on free events where we subsidize gas we should be able to not require users to use their wallets when they checkout by just using the walletless airdrops note we need to make sure the event does not use hooks either | 0 |

194,503 | 15,434,177,507 | IssuesEvent | 2021-03-07 01:46:48 | wds9601/film-club | https://api.github.com/repos/wds9601/film-club | opened | Some films don't have release dates 😒 | bug documentation | Has caused an error server-side: https://github.com/gstro/film-club-server/issues/38

This ticket captures work to be done to update the API spec, and also as a reminder to check for `null` in the client code when using `releaseDate`. | 1.0 | Some films don't have release dates 😒 - Has caused an error server-side: https://github.com/gstro/film-club-server/issues/38

This ticket captures work to be done to update the API spec, and also as a reminder to check for `null` in the client code when using `releaseDate`. | non_infrastructure | some films don t have release dates 😒 has caused an error server side this ticket captures work to be done to update the api spec and also as a reminder to check for null in the client code when using releasedate | 0 |

29,773 | 24,259,591,287 | IssuesEvent | 2022-09-27 21:06:29 | JupiterBroadcasting/jupiterbroadcasting.com | https://api.github.com/repos/JupiterBroadcasting/jupiterbroadcasting.com | opened | Testing `develop` branch workflow | dev infrastructure | Testing part of the workflow for our (soon) eventual move to a [Gitflow](https://www.atlassian.com/git/tutorials/comparing-workflows/gitflow-workflow) workflow to protect main and offer - in the future - live full-production testing of the develop branch.

We'll see if I understand all of this well ; )

Steps for this test:

* [x] do a PR #431 to merge `main` -> `develop` to bring `develop` in sync w `main`

* [ ] have someone merge said PR (thanks @elreydetoda !)

* [ ] apply PR #400 to `develop`

* [ ] see how the E2E Tests do w that one (for fun!). Not expecting anything PR #400 specific other than a green light..

* [ ] If satisfied, do a PR from `develop` to `main`

| 1.0 | Testing `develop` branch workflow - Testing part of the workflow for our (soon) eventual move to a [Gitflow](https://www.atlassian.com/git/tutorials/comparing-workflows/gitflow-workflow) workflow to protect main and offer - in the future - live full-production testing of the develop branch.

We'll see if I understand all of this well ; )

Steps for this test:

* [x] do a PR #431 to merge `main` -> `develop` to bring `develop` in sync w `main`

* [ ] have someone merge said PR (thanks @elreydetoda !)

* [ ] apply PR #400 to `develop`

* [ ] see how the E2E Tests do w that one (for fun!). Not expecting anything PR #400 specific other than a green light..

* [ ] If satisfied, do a PR from `develop` to `main`

| infrastructure | testing develop branch workflow testing part of the workflow for our soon eventual move to a workflow to protect main and offer in the future live full production testing of the develop branch we ll see if i understand all of this well steps for this test do a pr to merge main develop to bring develop in sync w main have someone merge said pr thanks elreydetoda apply pr to develop see how the tests do w that one for fun not expecting anything pr specific other than a green light if satisfied do a pr from develop to main | 1 |

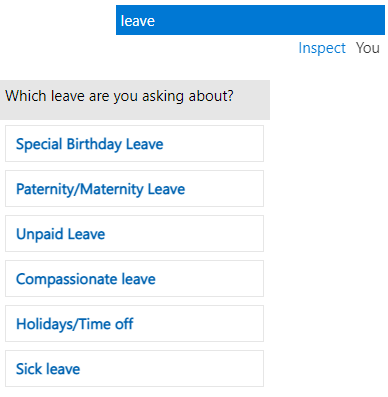

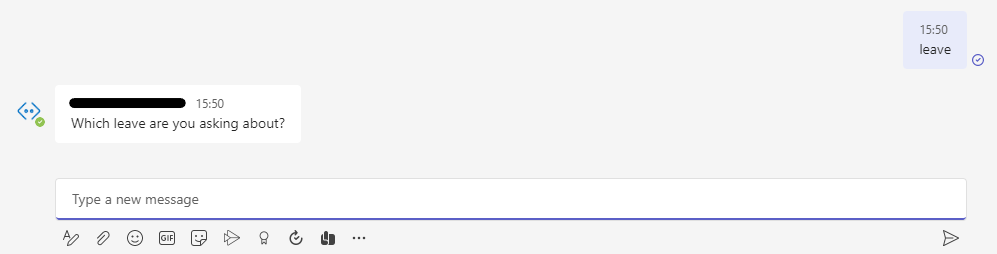

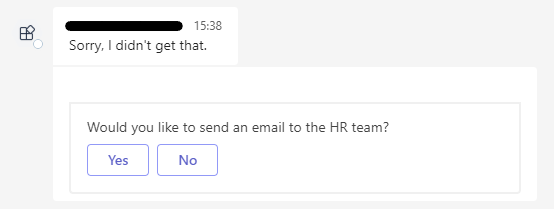

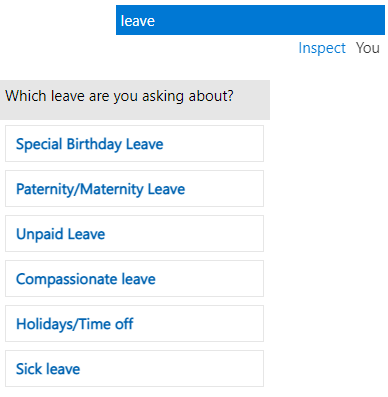

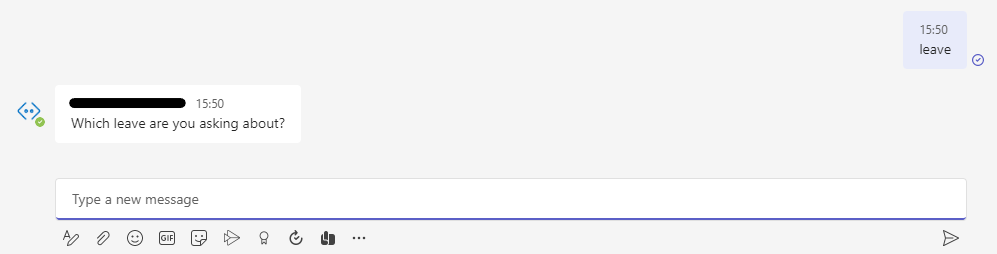

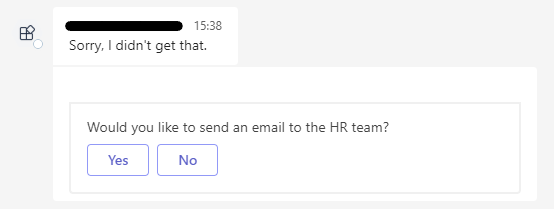

53,162 | 22,637,806,746 | IssuesEvent | 2022-06-30 20:57:50 | microsoft/BotFramework-Composer | https://api.github.com/repos/microsoft/BotFramework-Composer | closed | QnAmaker multi-turn prompts don't show on Teams, Composer prompts do | customer-reported Bot Services | I am making a chatbot using Composer that calls a QnAMaker knowledge base that contains multi-turn prompts. These prompts work fine when tested in Composer, Web Chat, and QnAMaker itself:

When testing the bot in Teams, however, the prompts don't appear:

I have tried using the Teams channel in the bot resource, as well as connecting it to a Teams app through the App Studio, and neither of them produce the prompts. I can't find anything specific to multi-turn integration with Teams in the documentation so am unsure why this would be happening?

Prompts created within Composer do appear in Teams:

So it is only QnAMaker multi-turn prompts that are causing this problem. However, there are no settings in QnAmaker or Teams that relate to this, so it must be something that needs changing in Composer to get it to work. Any help would be appreciated. | 1.0 | QnAmaker multi-turn prompts don't show on Teams, Composer prompts do - I am making a chatbot using Composer that calls a QnAMaker knowledge base that contains multi-turn prompts. These prompts work fine when tested in Composer, Web Chat, and QnAMaker itself:

When testing the bot in Teams, however, the prompts don't appear:

I have tried using the Teams channel in the bot resource, as well as connecting it to a Teams app through the App Studio, and neither of them produce the prompts. I can't find anything specific to multi-turn integration with Teams in the documentation so am unsure why this would be happening?

Prompts created within Composer do appear in Teams:

So it is only QnAMaker multi-turn prompts that are causing this problem. However, there are no settings in QnAmaker or Teams that relate to this, so it must be something that needs changing in Composer to get it to work. Any help would be appreciated. | non_infrastructure | qnamaker multi turn prompts don t show on teams composer prompts do i am making a chatbot using composer that calls a qnamaker knowledge base that contains multi turn prompts these prompts work fine when tested in composer web chat and qnamaker itself when testing the bot in teams however the prompts don t appear i have tried using the teams channel in the bot resource as well as connecting it to a teams app through the app studio and neither of them produce the prompts i can t find anything specific to multi turn integration with teams in the documentation so am unsure why this would be happening prompts created within composer do appear in teams so it is only qnamaker multi turn prompts that are causing this problem however there are no settings in qnamaker or teams that relate to this so it must be something that needs changing in composer to get it to work any help would be appreciated | 0 |

20,002 | 13,624,179,737 | IssuesEvent | 2020-09-24 07:39:29 | globaldothealth/list | https://api.github.com/repos/globaldothealth/list | closed | Fix 60-second request timeout / socket hang up in dev/prod | Infrastructure P1 Launch blocker | **Describe the bug**

Requests in dev/prod time out after 60 seconds.

**To Reproduce**

Send an API request, say to batch upsert, with a large amount of data (>10k rows).

**Expected behavior**

Requests, at least batch upsert, should allow more time to complete.

**Environment (please complete the following information):**

Only occurs in dev/prod -- can't repro locally. I've seen the issue both for bulk upload and ADI, so the issue isn't specific to the UI/browser.

I've previously tweaked our nginx config to avoid 504s, but in these cases, the API client gets `500: socket hang up`. | 1.0 | Fix 60-second request timeout / socket hang up in dev/prod - **Describe the bug**

Requests in dev/prod time out after 60 seconds.

**To Reproduce**

Send an API request, say to batch upsert, with a large amount of data (>10k rows).

**Expected behavior**

Requests, at least batch upsert, should allow more time to complete.

**Environment (please complete the following information):**

Only occurs in dev/prod -- can't repro locally. I've seen the issue both for bulk upload and ADI, so the issue isn't specific to the UI/browser.

I've previously tweaked our nginx config to avoid 504s, but in these cases, the API client gets `500: socket hang up`. | infrastructure | fix second request timeout socket hang up in dev prod describe the bug requests in dev prod time out after seconds to reproduce send an api request say to batch upsert with a large amount of data rows expected behavior requests at least batch upsert should allow more time to complete environment please complete the following information only occurs in dev prod can t repro locally i ve seen the issue both for bulk upload and adi so the issue isn t specific to the ui browser i ve previously tweaked our nginx config to avoid but in these cases the api client gets socket hang up | 1 |

22,562 | 15,279,611,689 | IssuesEvent | 2021-02-23 04:25:50 | LLNL/maestrowf | https://api.github.com/repos/LLNL/maestrowf | opened | Tests for DataStructures package | Infrastructure | Create tests that increase coverage for the maestrowf/datastructures package modules. | 1.0 | Tests for DataStructures package - Create tests that increase coverage for the maestrowf/datastructures package modules. | infrastructure | tests for datastructures package create tests that increase coverage for the maestrowf datastructures package modules | 1 |

28,977 | 23,645,587,925 | IssuesEvent | 2022-08-25 21:43:44 | meltano/squared | https://api.github.com/repos/meltano/squared | closed | Rerunning Paritially Completed CI Fails at dbt | data/Infrastructure data/Product Dogfooding | In this CI run https://github.com/meltano/squared/actions/runs/2784683224 github had some API errors but following a re-run it succeeded, probably a throttling thing. The problem is that the re-run caused the CI_BRANCH variable to update to the next increment so half of the EL sources werent available for the transform tests to pass.

The original purpose of adding a run ID and run attempt `CI_BRANCH: 'b${{ github.RUN_ID }}_${{ github.RUN_ATTEMPT }}'` as part of the branch unique id was to make different changes on the same branch test in isolation. For example if I push up a branch with a bug, it failed, then I push another change I'd want that second change to run in isolation from scratch otherwise the "fix" changes could cause a separate uncaught error.

I dont think our unique id is doing what we want. What we really want is to use the branch name plus the commit hash of the most recent commit, so after new commits are added to the branch our CI environment is reset but retries using the same commits should re-use whats already created. | 1.0 | Rerunning Paritially Completed CI Fails at dbt - In this CI run https://github.com/meltano/squared/actions/runs/2784683224 github had some API errors but following a re-run it succeeded, probably a throttling thing. The problem is that the re-run caused the CI_BRANCH variable to update to the next increment so half of the EL sources werent available for the transform tests to pass.

The original purpose of adding a run ID and run attempt `CI_BRANCH: 'b${{ github.RUN_ID }}_${{ github.RUN_ATTEMPT }}'` as part of the branch unique id was to make different changes on the same branch test in isolation. For example if I push up a branch with a bug, it failed, then I push another change I'd want that second change to run in isolation from scratch otherwise the "fix" changes could cause a separate uncaught error.

I dont think our unique id is doing what we want. What we really want is to use the branch name plus the commit hash of the most recent commit, so after new commits are added to the branch our CI environment is reset but retries using the same commits should re-use whats already created. | infrastructure | rerunning paritially completed ci fails at dbt in this ci run github had some api errors but following a re run it succeeded probably a throttling thing the problem is that the re run caused the ci branch variable to update to the next increment so half of the el sources werent available for the transform tests to pass the original purpose of adding a run id and run attempt ci branch b github run id github run attempt as part of the branch unique id was to make different changes on the same branch test in isolation for example if i push up a branch with a bug it failed then i push another change i d want that second change to run in isolation from scratch otherwise the fix changes could cause a separate uncaught error i dont think our unique id is doing what we want what we really want is to use the branch name plus the commit hash of the most recent commit so after new commits are added to the branch our ci environment is reset but retries using the same commits should re use whats already created | 1 |

34,933 | 30,595,915,513 | IssuesEvent | 2023-07-21 22:04:28 | OpenXRay/xray-16 | https://api.github.com/repos/OpenXRay/xray-16 | opened | Actions improvements | Enhancement Help wanted Portability Infrastructure Player Experience Developer Experience Linux good first issue macOS | TODO:

- [ ] Output binaries for macOS builds

- [ ] Provide AppImage package | 1.0 | Actions improvements - TODO:

- [ ] Output binaries for macOS builds

- [ ] Provide AppImage package | infrastructure | actions improvements todo output binaries for macos builds provide appimage package | 1 |

29,161 | 23,764,483,296 | IssuesEvent | 2022-09-01 11:41:05 | wellcomecollection/platform | https://api.github.com/repos/wellcomecollection/platform | closed | Point libsys external DNS to III hosted Sierra | 🚧 Infrastructure 📚Catalogue | ### Background

Sierra is being migrated from on-premises to hosting from III. This is happening on Tuesday 30th August. I have confirmed that there will be no IP whitelisting for the REST API and so the only change we need to make is to point the external DNS for libsys.wellcomelibrary.org to the new hosted server from III.

### Details

- This should not be done until we have had the notification from LSS that the migration has started.

- External DNS for libsys.wellcomelibrary.org should CNAME to welli.iii.com

- Once Sierra is back up, we should check the adapter is working. This will likely be the next working day due to time zone differences. | 1.0 | Point libsys external DNS to III hosted Sierra - ### Background

Sierra is being migrated from on-premises to hosting from III. This is happening on Tuesday 30th August. I have confirmed that there will be no IP whitelisting for the REST API and so the only change we need to make is to point the external DNS for libsys.wellcomelibrary.org to the new hosted server from III.

### Details

- This should not be done until we have had the notification from LSS that the migration has started.

- External DNS for libsys.wellcomelibrary.org should CNAME to welli.iii.com

- Once Sierra is back up, we should check the adapter is working. This will likely be the next working day due to time zone differences. | infrastructure | point libsys external dns to iii hosted sierra background sierra is being migrated from on premises to hosting from iii this is happening on tuesday august i have confirmed that there will be no ip whitelisting for the rest api and so the only change we need to make is to point the external dns for libsys wellcomelibrary org to the new hosted server from iii details this should not be done until we have had the notification from lss that the migration has started external dns for libsys wellcomelibrary org should cname to welli iii com once sierra is back up we should check the adapter is working this will likely be the next working day due to time zone differences | 1 |

19,979 | 11,354,113,964 | IssuesEvent | 2020-01-24 16:53:29 | terraform-providers/terraform-provider-aws | https://api.github.com/repos/terraform-providers/terraform-provider-aws | closed | Recently enabled EFS service in AWS China regions is using incorrect domain name. | partition/aws-cn service/efs | <!---

Please note the following potential times when an issue might be in Terraform core:

* [Configuration Language](https://www.terraform.io/docs/configuration/index.html) or resource ordering issues

* [State](https://www.terraform.io/docs/state/index.html) and [State Backend](https://www.terraform.io/docs/backends/index.html) issues

* [Provisioner](https://www.terraform.io/docs/provisioners/index.html) issues

* [Registry](https://registry.terraform.io/) issues

* Spans resources across multiple providers

If you are running into one of these scenarios, we recommend opening an issue in the [Terraform core repository](https://github.com/hashicorp/terraform/) instead.

--->

<!--- Please keep this note for the community --->

### Community Note

* Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the community and maintainers prioritize this request

* Please do not leave "+1" or other comments that do not add relevant new information or questions, they generate extra noise for issue followers and do not help prioritize the request

* If you are interested in working on this issue or have submitted a pull request, please leave a comment

<!--- Thank you for keeping this note for the community --->

### Terraform Version

<!--- Please run `terraform -v` to show the Terraform core version and provider version(s). If you are not running the latest version of Terraform or the provider, please upgrade because your issue may have already been fixed. [Terraform documentation on provider versioning](https://www.terraform.io/docs/configuration/providers.html#provider-versions). --->

```

Terraform v0.12.19

+ provider.aws v2.45.0

+ provider.template v2.1.2

```

### Affected Resource(s)

<!--- Please list the affected resources and data sources. --->

* aws_efs_file_system

### Terraform Configuration Files

<!--- Information about code formatting: https://help.github.com/articles/basic-writing-and-formatting-syntax/#quoting-code --->

```hcl

resource "aws_efs_file_system" "efs" {

performance_mode = "generalPurpose"

encrypted = true

}

```

### Debug Output

<!---

Please provide a link to a GitHub Gist containing the complete debug output. Please do NOT paste the debug output in the issue; just paste a link to the Gist.

To obtain the debug output, see the [Terraform documentation on debugging](https://www.terraform.io/docs/internals/debugging.html).

--->

### Panic Output

<!--- If Terraform produced a panic, please provide a link to a GitHub Gist containing the output of the `crash.log`. --->

### Expected Behavior

<!--- What should have happened? --->

```hcl

$ terraform state show module.dbsdevlsp.aws_efs_file_system.efs\[0\]

# module.dbsdevlsp.aws_efs_file_system.efs[0]:

resource "aws_efs_file_system" "efs" {

arn = "arn:aws-cn:elasticfilesystem:cn-north-1:xxxxxxxxxx:file-system/fs-abcdefgh"

creation_token = "terraform-20200124105304526000000001"

dns_name = "fs-abcdefgh.efs.cn-north-1.amazonaws.com.cn"

encrypted = true

id = "fs-abcdefgh"

kms_key_id = "arn:aws-cn:kms:cn-north-1:xxxxxxxxxx:key/abcdefgh-1234-5678-90ab-cdefghijklmn"

performance_mode = "generalPurpose"

provisioned_throughput_in_mibps = 0

throughput_mode = "bursting"

}

```

### Actual Behavior

<!--- What actually happened? --->

```hcl

$ terraform state show module.dbsdevlsp.aws_efs_file_system.efs\[0\]

# module.dbsdevlsp.aws_efs_file_system.efs[0]:

resource "aws_efs_file_system" "efs" {

arn = "arn:aws-cn:elasticfilesystem:cn-north-1:xxxxxxxxxx:file-system/fs-abcdefgh"

creation_token = "terraform-20200124105304526000000001"

dns_name = "fs-abcdefgh.efs.cn-north-1.amazonaws.com"

encrypted = true

id = "fs-abcdefgh"

kms_key_id = "arn:aws-cn:kms:cn-north-1:xxxxxxxxxx:key/abcdefgh-1234-5678-90ab-cdefghijklmn"

performance_mode = "generalPurpose"

provisioned_throughput_in_mibps = 0

throughput_mode = "bursting"

}

```

### Steps to Reproduce

<!--- Please list the steps required to reproduce the issue. --->

1. `terraform apply`

### Important Factoids

<!--- Are there anything atypical about your accounts that we should know? For example: Running in EC2 Classic? --->

This is in AWS China region cn-north-1.

### References

<!---

Information about referencing Github Issues: https://help.github.com/articles/basic-writing-and-formatting-syntax/#referencing-issues-and-pull-requests

Are there any other GitHub issues (open or closed) or pull requests that should be linked here? Vendor documentation? For example:

--->

* It seems to me that aws/resource_aws_efs_file_system.go line 396: `func resourceAwsEfsDnsName(fileSystemId, region string) string` uses improper hardcoding for the domain name ("amazonaws.com") while the actual domain name used in cn-north-1 seems to be "amazonaws.com.cn".

| 1.0 | Recently enabled EFS service in AWS China regions is using incorrect domain name. - <!---

Please note the following potential times when an issue might be in Terraform core:

* [Configuration Language](https://www.terraform.io/docs/configuration/index.html) or resource ordering issues

* [State](https://www.terraform.io/docs/state/index.html) and [State Backend](https://www.terraform.io/docs/backends/index.html) issues

* [Provisioner](https://www.terraform.io/docs/provisioners/index.html) issues

* [Registry](https://registry.terraform.io/) issues

* Spans resources across multiple providers

If you are running into one of these scenarios, we recommend opening an issue in the [Terraform core repository](https://github.com/hashicorp/terraform/) instead.

--->

<!--- Please keep this note for the community --->

### Community Note

* Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the community and maintainers prioritize this request

* Please do not leave "+1" or other comments that do not add relevant new information or questions, they generate extra noise for issue followers and do not help prioritize the request

* If you are interested in working on this issue or have submitted a pull request, please leave a comment

<!--- Thank you for keeping this note for the community --->

### Terraform Version

<!--- Please run `terraform -v` to show the Terraform core version and provider version(s). If you are not running the latest version of Terraform or the provider, please upgrade because your issue may have already been fixed. [Terraform documentation on provider versioning](https://www.terraform.io/docs/configuration/providers.html#provider-versions). --->

```

Terraform v0.12.19

+ provider.aws v2.45.0

+ provider.template v2.1.2

```

### Affected Resource(s)

<!--- Please list the affected resources and data sources. --->

* aws_efs_file_system

### Terraform Configuration Files

<!--- Information about code formatting: https://help.github.com/articles/basic-writing-and-formatting-syntax/#quoting-code --->

```hcl

resource "aws_efs_file_system" "efs" {

performance_mode = "generalPurpose"

encrypted = true

}

```

### Debug Output

<!---

Please provide a link to a GitHub Gist containing the complete debug output. Please do NOT paste the debug output in the issue; just paste a link to the Gist.

To obtain the debug output, see the [Terraform documentation on debugging](https://www.terraform.io/docs/internals/debugging.html).

--->

### Panic Output

<!--- If Terraform produced a panic, please provide a link to a GitHub Gist containing the output of the `crash.log`. --->

### Expected Behavior

<!--- What should have happened? --->

```hcl

$ terraform state show module.dbsdevlsp.aws_efs_file_system.efs\[0\]

# module.dbsdevlsp.aws_efs_file_system.efs[0]:

resource "aws_efs_file_system" "efs" {

arn = "arn:aws-cn:elasticfilesystem:cn-north-1:xxxxxxxxxx:file-system/fs-abcdefgh"

creation_token = "terraform-20200124105304526000000001"

dns_name = "fs-abcdefgh.efs.cn-north-1.amazonaws.com.cn"

encrypted = true

id = "fs-abcdefgh"

kms_key_id = "arn:aws-cn:kms:cn-north-1:xxxxxxxxxx:key/abcdefgh-1234-5678-90ab-cdefghijklmn"

performance_mode = "generalPurpose"

provisioned_throughput_in_mibps = 0

throughput_mode = "bursting"

}

```

### Actual Behavior

<!--- What actually happened? --->

```hcl

$ terraform state show module.dbsdevlsp.aws_efs_file_system.efs\[0\]

# module.dbsdevlsp.aws_efs_file_system.efs[0]:

resource "aws_efs_file_system" "efs" {

arn = "arn:aws-cn:elasticfilesystem:cn-north-1:xxxxxxxxxx:file-system/fs-abcdefgh"

creation_token = "terraform-20200124105304526000000001"

dns_name = "fs-abcdefgh.efs.cn-north-1.amazonaws.com"

encrypted = true

id = "fs-abcdefgh"

kms_key_id = "arn:aws-cn:kms:cn-north-1:xxxxxxxxxx:key/abcdefgh-1234-5678-90ab-cdefghijklmn"

performance_mode = "generalPurpose"

provisioned_throughput_in_mibps = 0

throughput_mode = "bursting"

}

```

### Steps to Reproduce

<!--- Please list the steps required to reproduce the issue. --->

1. `terraform apply`

### Important Factoids

<!--- Are there anything atypical about your accounts that we should know? For example: Running in EC2 Classic? --->

This is in AWS China region cn-north-1.

### References

<!---

Information about referencing Github Issues: https://help.github.com/articles/basic-writing-and-formatting-syntax/#referencing-issues-and-pull-requests

Are there any other GitHub issues (open or closed) or pull requests that should be linked here? Vendor documentation? For example:

--->

* It seems to me that aws/resource_aws_efs_file_system.go line 396: `func resourceAwsEfsDnsName(fileSystemId, region string) string` uses improper hardcoding for the domain name ("amazonaws.com") while the actual domain name used in cn-north-1 seems to be "amazonaws.com.cn".

| non_infrastructure | recently enabled efs service in aws china regions is using incorrect domain name please note the following potential times when an issue might be in terraform core or resource ordering issues and issues issues issues spans resources across multiple providers if you are running into one of these scenarios we recommend opening an issue in the instead community note please vote on this issue by adding a 👍 to the original issue to help the community and maintainers prioritize this request please do not leave or other comments that do not add relevant new information or questions they generate extra noise for issue followers and do not help prioritize the request if you are interested in working on this issue or have submitted a pull request please leave a comment terraform version terraform provider aws provider template affected resource s aws efs file system terraform configuration files hcl resource aws efs file system efs performance mode generalpurpose encrypted true debug output please provide a link to a github gist containing the complete debug output please do not paste the debug output in the issue just paste a link to the gist to obtain the debug output see the panic output expected behavior hcl terraform state show module dbsdevlsp aws efs file system efs module dbsdevlsp aws efs file system efs resource aws efs file system efs arn arn aws cn elasticfilesystem cn north xxxxxxxxxx file system fs abcdefgh creation token terraform dns name fs abcdefgh efs cn north amazonaws com cn encrypted true id fs abcdefgh kms key id arn aws cn kms cn north xxxxxxxxxx key abcdefgh cdefghijklmn performance mode generalpurpose provisioned throughput in mibps throughput mode bursting actual behavior hcl terraform state show module dbsdevlsp aws efs file system efs module dbsdevlsp aws efs file system efs resource aws efs file system efs arn arn aws cn elasticfilesystem cn north xxxxxxxxxx file system fs abcdefgh creation token terraform dns name fs 
abcdefgh efs cn north amazonaws com encrypted true id fs abcdefgh kms key id arn aws cn kms cn north xxxxxxxxxx key abcdefgh cdefghijklmn performance mode generalpurpose provisioned throughput in mibps throughput mode bursting steps to reproduce terraform apply important factoids this is in aws china region cn north references information about referencing github issues are there any other github issues open or closed or pull requests that should be linked here vendor documentation for example it seems to me that aws resource aws efs file system go line func resourceawsefsdnsname filesystemid region string string uses improper hardcoding for the domain name amazonaws com while the actual domain name used in cn north seems to be amazonaws com cn | 0 |
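
The difference between the expected and actual `dns_name` values in the issue above can be illustrated with a small sketch. This is not the provider's Go code: `efs_dns_name` is a hypothetical Python helper, and the rule that regions starting with `cn-` use the `amazonaws.com.cn` suffix is an assumption drawn from the expected output reported in the issue. A real fix would more likely take the suffix from the partition metadata than from a prefix check.

```python
def efs_dns_name(file_system_id: str, region: str) -> str:
    """Return an EFS DNS name with a partition-aware domain suffix.

    Hypothetical helper for illustration only.
    """
    # Assumption: China regions ("cn-" prefix) use "amazonaws.com.cn";
    # all other regions are treated as using "amazonaws.com".
    suffix = "amazonaws.com.cn" if region.startswith("cn-") else "amazonaws.com"
    return f"{file_system_id}.efs.{region}.{suffix}"
```

With the placeholder id from the issue, `efs_dns_name("fs-abcdefgh", "cn-north-1")` yields the value shown under Expected Behavior, while a hardcoded `amazonaws.com` suffix yields the value shown under Actual Behavior.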

9,241 | 7,881,705,981 | IssuesEvent | 2018-06-26 20:00:26 | great-lakes/project-egypt | https://api.github.com/repos/great-lakes/project-egypt | closed | Create instruction for running tests | infrastructure | Parent #24

- [x] Write new MD or add to README.md explaining how to create a new test and how to run tests | 1.0 | Create instruction for running tests - Parent #24

- [x] Write new MD or add to README.md explaining how to create a new test and how to run tests | infrastructure | create instruction for running tests parent write new md or add to readme md explaining how to create a new test and how to run tests | 1 |

383,143 | 11,351,316,590 | IssuesEvent | 2020-01-24 10:55:42 | ooni/ooni.org | https://api.github.com/repos/ooni/ooni.org | closed | Perform data analysis of India websites for OONI fellow | data analysis effort/L priority/high | This entails looking at web_connectivity measurements from a specific set of report_ids and looking at the blocking of websites depending on the target region. | 1.0 | Perform data analysis of India websites for OONI fellow - This entails looking at web_connectivity measurements from a specific set of report_ids and looking at the blocking of websites depending on the target region. | non_infrastructure | perform data analysis of india websites for ooni fellow this entails looking at web connectivity measurements from a specific set of report ids and looking at the blocking of websites depending on the target region | 0 |

5,453 | 5,660,769,105 | IssuesEvent | 2017-04-10 15:48:48 | vmware/docker-volume-vsphere | https://api.github.com/repos/vmware/docker-volume-vsphere | closed | VIB Installation failures in CI | component/test-infrastructure kind/test P1 | The following failures are seen intermittently in CI:

For example, https://ci.vmware.run/vmware/docker-volume-vsphere/2036

```

=> Deploying to ESX root@192.168.31.62 Fri Mar 31 18:49:47 UTC 2017

Connection to 192.168.31.62 closed by remote host. <<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<

Installation Result:

Message: Operation finished successfully.

Reboot Required: false

VIBs Installed: VMWare_bootbank_esx-vmdkops-service_0.13.03aa2c0-0.0.1

VIBs Removed:

VIBs Skipped:

=> deployESXInstall: Installation hit an error on root@192.168.31.62 Fri Mar 31 18:50:04 UTC 2017

make[1]: *** [deploy-esx] Error 2

make: *** [deploy-esx] Error 2

``` | 1.0 | VIB Installation failures in CI - The following failures are seen intermittently in CI:

For example, https://ci.vmware.run/vmware/docker-volume-vsphere/2036

```

=> Deploying to ESX root@192.168.31.62 Fri Mar 31 18:49:47 UTC 2017

Connection to 192.168.31.62 closed by remote host. <<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<

Installation Result:

Message: Operation finished successfully.

Reboot Required: false

VIBs Installed: VMWare_bootbank_esx-vmdkops-service_0.13.03aa2c0-0.0.1

VIBs Removed:

VIBs Skipped:

=> deployESXInstall: Installation hit an error on root@192.168.31.62 Fri Mar 31 18:50:04 UTC 2017

make[1]: *** [deploy-esx] Error 2

make: *** [deploy-esx] Error 2

``` | infrastructure | vib installation failures in ci intermittently seen following failures in ci for e g deploying to esx root fri mar utc connection to closed by remote host installation result message operation finished successfully reboot required false vibs installed vmware bootbank esx vmdkops service vibs removed vibs skipped deployesxinstall installation hit an error on root fri mar utc make error make error | 1 |

684,995 | 23,441,049,745 | IssuesEvent | 2022-08-15 14:54:40 | LinkNacional/wc_cielo_payment_gateway | https://api.github.com/repos/LinkNacional/wc_cielo_payment_gateway | closed | Implementation of installment payments for purchases | enhancement priority | - [x] Add a setting that enables installment payments for purchases;

- [x] Implement the installment selector;

- [x] Integrate with API 3.0. | 1.0 | Implementation of installment payments for purchases - - [x] Add a setting that enables installment payments for purchases;

- [x] Implement the installment selector;

- [x] Integrate with API 3.0. | non_infrastructure | implementation of installment payments for purchases add a setting that enables installment payments for purchases implement the installment selector integrate with api | 0 |

263,377 | 8,288,726,023 | IssuesEvent | 2018-09-19 12:54:37 | regardscitoyens/the-law-factory-parser | https://api.github.com/repos/regardscitoyens/the-law-factory-parser | closed | Add last pending step (including CC) to all textes en cours | bug priority | Handle cases where texts are not published in the right order :

- for instance CC published but not final texte adopté

cf https://github.com/regardscitoyens/the-law-factory-parser/commit/dea0565f3406d5203863f35c179c021679ef5331

~I thought it was already the case, but it seems it is not always the case, for instance here:

https://www.lafabriquedelaloi.fr/articles.html?loi=ppl17-337 with the TA AN hémicycle is awaiting publication http://www.assemblee-nationale.fr/15/ta/ta0164.asp

pjl17-249~

| 1.0 | Add last pending step (including CC) to all textes en cours - Handle cases where texts are not published in the right order :

- for instance CC published but not final texte adopté

cf https://github.com/regardscitoyens/the-law-factory-parser/commit/dea0565f3406d5203863f35c179c021679ef5331

~I thought it was already the case, but it seems it is not always the case, for instance here:

https://www.lafabriquedelaloi.fr/articles.html?loi=ppl17-337 with the TA AN hémicycle is awaiting publication http://www.assemblee-nationale.fr/15/ta/ta0164.asp

pjl17-249~

| non_infrastructure | add last pending step including cc to all textes en cours handle cases where texts are not published in the right order for instance cc published but not final texte adopté cf i thought it was already the case but it seems it is not always the case for instance here with the ta an hémicycle is awaiting publication | 0 |

17,158 | 12,238,393,412 | IssuesEvent | 2020-05-04 19:43:49 | apple/turicreate | https://api.github.com/repos/apple/turicreate | opened | Temporarily disable s3 upload test for SFrame | S3 infrastructure | The current internal S3 proxy service doesn't allow us to delete directories. When SFrame uploads files, it will first check the files and then delete them all. This check and deletion action is not atomic and causes a lot of build failures when multiple machines try to upload at the same time.

After we move to a service that allows us to delete directories, we can use a `uuid` for each runner to let them upload without interfering with other runners. | 1.0 | Temporarily disable s3 upload test for SFrame - The current internal S3 proxy service doesn't allow us to delete directories. When SFrame uploads files, it will first check the files and then delete them all. This check and deletion action is not atomic and causes a lot of build failures when multiple machines try to upload at the same time.

After we move to a service that allows us to delete directories, we can use a `uuid` for each runner to let them upload without interfering with other runners. | infrastructure | temporarily disable upload test for sframe the current internal proxy service doesn t allow up delete directories when sframe uploads files it will first check the files and then delete them all this check and deletion action is not atomic and causes a lot of build failures when multiple machines try to upload at the same time after we move to a service that can allow up delete directories we can use uuid for each runner to let them upload without interfering with other runners | 1 |
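
The per-runner `uuid` idea mentioned in the issue above could look roughly like the sketch below. It is only a guess at the shape of such a helper: `runner_upload_prefix`, its parameters, and the example bucket path are hypothetical and do not come from the turicreate test suite.

```python
import uuid
from typing import Optional


def runner_upload_prefix(base_prefix: str, runner_id: Optional[str] = None) -> str:
    """Return an upload prefix that is unique to one test runner.

    Hypothetical helper: each runner writes under its own directory, so the
    check-then-delete step of one runner cannot touch another runner's files.
    """
    # Generate a random id when the caller does not supply one.
    runner_id = runner_id or uuid.uuid4().hex
    return f"{base_prefix.rstrip('/')}/{runner_id}/"
```

For instance, `runner_upload_prefix("s3://example-bucket/sframe-tests")` (an illustrative bucket name) gives each invocation its own directory, which is only useful once the storage service allows those directories to be deleted afterwards.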

172,881 | 6,517,332,546 | IssuesEvent | 2017-08-27 21:54:10 | robertsanseries/ciano | https://api.github.com/repos/robertsanseries/ciano | closed | Conversion list | Priority 2 - [Normal] Status 4 - [Confirmed] Status 5 - [In Progress] Status 6 - [Finished] Type 3 - [Enhancement] | Conversion list should equal torrential application.

- [x] Name of the file selected for conversion

- [x] ProgressBar

- [x] Time

- [x] Size

- [x] Display for which type is to be converted

- [x] Button cancel and remove line

| 1.0 | Conversion list - Conversion list should equal torrential application.

- [x] Name of the file selected for conversion

- [x] ProgressBar

- [x] Time

- [x] Size

- [x] Display for which type is to be converted

- [x] Button cancel and remove line

| non_infrastructure | conversion list conversion list should equal torrential application name of the file selected for conversion progressbar time size display for which type is to be converted button cancel and remove line | 0 |

6,873 | 24,005,697,465 | IssuesEvent | 2022-09-14 14:39:33 | tm24fan8/Home-Assistant-Configs | https://api.github.com/repos/tm24fan8/Home-Assistant-Configs | closed | Add 2-hour delay and cancellation modes for school | enhancement lighting security convenience presence detection automation TTS | Need a couple buttons to easily change scheduling for when the schools decide to cause havoc | 1.0 | Add 2-hour delay and cancellation modes for school - Need a couple buttons to easily change scheduling for when the schools decide to cause havoc | non_infrastructure | add hour delay and cancellation modes for school need a couple buttons to easily change scheduling for when the schools decide to cause havoc | 0 |

6,395 | 6,379,321,995 | IssuesEvent | 2017-08-02 14:32:09 | scikit-beam/scikit-beam | https://api.github.com/repos/scikit-beam/scikit-beam | closed | Switch to py.test | infrastructure | It would be great if we could switch to py.test for our testing framework for a number of reasons.

1. It is very easy to use

2. It has a very large number of plugins

3. Errybody doin' it

4. ...and many others.

| 1.0 | Switch to py.test - It would be great if we could switch to py.test for our testing framework for a number of reasons.

1. It is very easy to use

2. It has a very large number of plugins

3. Errybody doin' it

4. ...and many others.

| infrastructure | switch to py test it would be great if we could switch to py test for our testing framework for a number of reasons it is very easy to use it has a very large number of plugins errybody doin it and many others | 1 |

16,730 | 12,129,377,697 | IssuesEvent | 2020-04-22 22:26:25 | 18F/tts-tech-portfolio | https://api.github.com/repos/18F/tts-tech-portfolio | closed | decommission `Department of Labor - Wage and Hour - Section 14c` repository | epic: software and infrastructure grooming: draft - initial | https://github.com/18F/dol-whd-14c

Seems the 18F work has ended, but the issues are still active. Doesn't make sense for it to stay under the 18F org as is.

- [ ] Figure out 18F stakeholders, if any remain

- [ ] [Transfer repository](https://handbook.18f.gov/github/#rules) to Department of Labor, or

- [ ] Archive the repository

- [ ] Remove the GitHub [users](https://github.com/orgs/18F/teams/dol-whd-partner/members) (that aren't TTS staff) and [team](https://github.com/orgs/18F/teams/dol-whd-partner)

See email thread `Zenhub Problem`.

cc @18F/dol-whd-partner | 1.0 | decommission `Department of Labor - Wage and Hour - Section 14c` repository - https://github.com/18F/dol-whd-14c

Seems the 18F work has ended, but the issues are still active. Doesn't make sense for it to stay under the 18F org as is.

- [ ] Figure out 18F stakeholders, if any remain

- [ ] [Transfer repository](https://handbook.18f.gov/github/#rules) to Department of Labor, or

- [ ] Archive the repository

- [ ] Remove the GitHub [users](https://github.com/orgs/18F/teams/dol-whd-partner/members) (that aren't TTS staff) and [team](https://github.com/orgs/18F/teams/dol-whd-partner)

See email thread `Zenhub Problem`.

cc @18F/dol-whd-partner | infrastructure | decommission department of labor wage and hour section repository seems the work has ended but the issues are still active doesn t make sense for it to stay under the org as is figure out stakeholders if any remain to department of labor or archive the repository remove the github that aren t tts staff and see email thread zenhub problem cc dol whd partner | 1 |

24,753 | 24,235,808,936 | IssuesEvent | 2022-09-26 23:06:07 | simonw/datasette | https://api.github.com/repos/simonw/datasette | closed | Preserve query on timeout | enhancement usability | If a query hits the timeout it shows a message like:

> SQL query took too long. The time limit is controlled by the [sql_time_limit_ms](https://docs.datasette.io/en/stable/settings.html#sql-time-limit-ms) configuration option.

But the query is lost. Hitting the browser back button shows the query _before_ the one that errored.

It would be nice if the query that errored was preserved for more tweaking. This would make it similar to how "invalid syntax" works since #1346 / #619. | True | Preserve query on timeout - If a query hits the timeout it shows a message like:

> SQL query took too long. The time limit is controlled by the [sql_time_limit_ms](https://docs.datasette.io/en/stable/settings.html#sql-time-limit-ms) configuration option.

But the query is lost. Hitting the browser back button shows the query _before_ the one that errored.

It would be nice if the query that errored was preserved for more tweaking. This would make it similar to how "invalid syntax" works since #1346 / #619. | non_infrastructure | preserve query on timeout if a query hits the timeout it shows a message like sql query took too long the time limit is controlled by the configuration option but the query is lost hitting the browser back button shows the query before the one that errored it would be nice if the query that errored was preserved for more tweaking this would make it similar to how invalid syntax works since | 0 |

30,250 | 24,700,174,928 | IssuesEvent | 2022-10-19 14:46:55 | dotnet/dotnet-docker | https://api.github.com/repos/dotnet/dotnet-docker | closed | Support pre-release servicing drops | bug area-infrastructure | We should be providing updates of our nightly images whenever possible for servicing releases. These would be drops that are not MSRC-related and thus would be publicly available. For example, 5.0.1 is not a MSRC release so it has builds available at `https://dotnetcli.blob.core.windows.net/dotnet/sdk/5.0.1-servicing.<build>`. Doing this for servicing drops is important for the same reason it's important for preview releases: it provides an additional level of validation of the release and specifically for container environments.

There are a few issues that need to be resolved in order to provide these updates:

- The Dockerfile templates do not currently support the path syntax required to reference these servicing drops. The path includes the build version for the directory name but only the product version for the filename (e.g. https://dotnetcli.blob.core.windows.net/dotnet/Sdk/5.0.101-servicing.20601.5/dotnet-sdk-5.0.101-win-x64.zip). But the Dockerfile templates use the same version in both the directory name and the filename: https://github.com/dotnet/dotnet-docker/blob/eb12720ccea648c2e543ffa1c358f47ba0cc292d/eng/dockerfile-templates/sdk/5.0/Dockerfile.nanoserver#L14 The Dockerfile template needs the ability to provide distinct values for the version specified between the directory and filename.

- The same issue exists for the update-dependencies tool for the URL that it constructs in order to retrieve the SHA values of the files. While it does have logic to provide distinct version values between the directory and filename: https://github.com/dotnet/dotnet-docker/blob/eb12720ccea648c2e543ffa1c358f47ba0cc292d/eng/update-dependencies/DockerfileShaUpdater.cs#L36 It doesn't actually set those variables to distinct values for servicing drops due to its special case logic which only accounts for RTM releases: https://github.com/dotnet/dotnet-docker/blob/eb12720ccea648c2e543ffa1c358f47ba0cc292d/eng/update-dependencies/DockerfileShaUpdater.cs#L101

- In order to automate the creation of PRs that update the Dockerfile for new servicing drops, there need to be changes to the build pipeline which does this. Currently, the pipeline can only handle two channels: one for the core .NET product (runtime, aspnet, sdk) and one for the .NET monitor tool. These are represented as stages within the pipeline: https://github.com/dotnet/dotnet-docker/blob/eb12720ccea648c2e543ffa1c358f47ba0cc292d/eng/pipelines/update-dependencies.yml#L12 In order to be able to support nightly updates for both preview releases and servicing releases, there needs to be support for handling an additional channel.

| 1.0 | Support pre-release servicing drops - We should be providing updates of our nightly images whenever possible for servicing releases. These would be drops that are not MSRC-related and thus would be publicly available. For example, 5.0.1 is not a MSRC release so it has builds available at `https://dotnetcli.blob.core.windows.net/dotnet/sdk/5.0.1-servicing.<build>`. Doing this for servicing drops is important for the same reason it's important for preview releases: it provides an additional level of validation of the release and specifically for container environments.

There are a few issues that need to be resolved in order to provide these updates:

- The Dockerfile templates do not currently support the path syntax required to reference these servicing drops. The path includes the build version for the directory name but only the product version for the filename (e.g. https://dotnetcli.blob.core.windows.net/dotnet/Sdk/5.0.101-servicing.20601.5/dotnet-sdk-5.0.101-win-x64.zip). But the Dockerfile templates use the same version in both the directory name and the filename: https://github.com/dotnet/dotnet-docker/blob/eb12720ccea648c2e543ffa1c358f47ba0cc292d/eng/dockerfile-templates/sdk/5.0/Dockerfile.nanoserver#L14 The Dockerfile template needs the ability to provide distinct values for the version specified between the directory and filename.

- The same issue exists for the update-dependencies tool for the URL that it constructs in order to retrieve the SHA values of the files. While it does have logic to provide distinct version values between the directory and filename: https://github.com/dotnet/dotnet-docker/blob/eb12720ccea648c2e543ffa1c358f47ba0cc292d/eng/update-dependencies/DockerfileShaUpdater.cs#L36 It doesn't actually set those variables to distinct values for servicing drops due to its special case logic which only accounts for RTM releases: https://github.com/dotnet/dotnet-docker/blob/eb12720ccea648c2e543ffa1c358f47ba0cc292d/eng/update-dependencies/DockerfileShaUpdater.cs#L101

- To automate the creation of PRs that update the Dockerfiles for new servicing drops, the build pipeline that creates those PRs needs to change. Currently, the pipeline can only handle two channels: one for the core .NET product (runtime, aspnet, sdk) and one for the .NET monitor tool. These are represented as stages within the pipeline: https://github.com/dotnet/dotnet-docker/blob/eb12720ccea648c2e543ffa1c358f47ba0cc292d/eng/pipelines/update-dependencies.yml#L12 To support nightly updates for both preview releases and servicing releases, the pipeline needs to handle an additional channel.

| infrastructure | support pre release servicing drops we should be providing updates of our nightly images whenever possible for servicing releases these would be drops that are not msrc related and thus would be publicly available for example is not a msrc release so it has builds available at doing this for servicing drops is important for the same reason it s important for preview releases it provides an additional level of validation of the release and specifically for container environments there are a few issues that need to be resolved in order to provide these updates the dockerfile templates do not currently support the path syntax required to reference these servicing drops the path includes the build version for the directory name but only the product version for the filename e g but the dockerfile templates use the same version in both the directory name and the filename the dockerfile template needs the ability to provide distinct values for the version specified between the directory and filename the same issue exists for the update dependencies tool for the url that it constructs in order to retrieve the sha values of the files while it does have logic to provide distinct version values between the directory and filename it doesn t actually set those variables to distinct values for servicing drops due to its special case logic which only accounts for rtm releases in order to automate the creation of prs that update the dockerfile for new servicing drops there needs to be changes to the build pipeline which does this currently the pipeline can only handle two channels one for the the core net product runtime aspnet sdk and one for the net monitor tool these are represented as stages within the pipeline in order to be able to support nightly updates for both preview releases and servicing releases there needs to be support for handling an additional channel | 1 |

4,901 | 5,325,930,827 | IssuesEvent | 2017-02-15 01:39:26 | mirai-audio/mir | https://api.github.com/repos/mirai-audio/mir | opened | mir release script | infrastructure | # Goal

Easily create a tagged release with a release branch off master.

### Expected Behavior

When a release has been tested, QA'ed, and is ready for launch:

```bash

./run-release 1.3.4

```

* tags master with release number

* creates release branch

* pushes both to github
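
The three steps above could be sketched roughly as follows. This is a minimal sketch, not the project's script: the function name `run_release`, the `v<version>` tag format, the `release/<version>` branch name, and the default remote `origin` are all assumptions made for illustration.

```bash
#!/usr/bin/env bash
# Minimal sketch of a release helper; all naming conventions are assumptions.
set -euo pipefail

# run_release VERSION [REMOTE]
run_release() {
  local version="$1"
  local remote="${2:-origin}"          # assumed default remote
  local tag="v${version}"              # assumed tag naming
  local branch="release/${version}"    # assumed branch naming

  # Tag the tip of master with the release number (annotated tag).
  git tag -a "$tag" -m "Release ${version}" master

  # Create the release branch at the tagged commit.
  git branch "$branch" "$tag"

  # Push both the tag and the branch to the remote.
  git push "$remote" "$tag" "$branch"
}
```

With the function loaded, `run_release 1.3.4` would correspond to the `./run-release 1.3.4` invocation shown earlier. Pointing the second argument at a throwaway remote is one way to try it without pushing to GitHub.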

## Considerations

```bash

# Generate release notes from last MINOR release tag, crediting each author per commit.

git log `git describe --abbrev=0 --tags`.. --pretty=format:"* %s - @%an"

# Generate release notes from last MINOR release tag, rollup commits to each author

git shortlog `git describe --abbrev=0 --tags`..

```

Creation of git tags, see https://github.com/0xadada/dockdj/blob/master/bin/deploy#L86

## Tasks

List all of the subtasks that will contribute to completion of this issue. Once

all subtasks are complete, that will indicate the issue is "done".

* [ ] Create bash script

* [ ] Test on a test repo w/o pushing

| 1.0 | mir release script - # Goal

Easily create a tagged release with a release branch off master.

### Expected Behavior

When a release has been tested, QA'ed, and is ready for launch:

```bash

./run-release 1.3.4

```

* tags master with release number

* creates release branch

* pushes both to github

## Considerations

```bash

# Generate release notes from last MINOR release tag, crediting each author per commit.

git log `git describe --abbrev=0 --tags`.. --pretty=format:"* %s - @%an"

# Generate release notes from last MINOR release tag, rollup commits to each author

git shortlog `git describe --abbrev=0 --tags`..

```

Creation of git tags, see https://github.com/0xadada/dockdj/blob/master/bin/deploy#L86

## Tasks

List all of the subtasks that will contribute to completion of this issue. Once

all subtasks are complete, that will indicate the issue is "done".

* [ ] Create bash script

* [ ] Test on a test repo w/o pushing

| infrastructure | mir release script goal easily create tagged release with a release branch off master expected behavior when a release has been tested qa ed and ready for launch bash run release tags master with release number creates release branch pushes both to github considerations bash generate release notes from last minor release tag crediting each author per commit git log git describe abbrev tags pretty format s an generate release notes from last minor release tag rollup commits to each author git shortlog git describe abbrev tags creation of git tags see tasks list all of the subtasks that will contribute to completion of this issue once all subtasks are complete that will indicate the issue is done create bash script test on a test repo w o pushing | 1 |

62,817 | 8,641,245,989 | IssuesEvent | 2018-11-24 15:43:40 | OpenAPITools/openapi-generator | https://api.github.com/repos/OpenAPITools/openapi-generator | closed | [Documentation] Missing doc export from default codegen | Feature: Documentation | The documentation export is missing options that DefaultCodegen exports, such as modelNamePrefix.

##### Related issues/PRs

<!-- has a similar issue/PR been reported/opened before? Please do a search in https://github.com/openapitools/openapi-generator/issues?utf8=%E2%9C%93&q=is%3Aissue%20 -->

#932

| 1.0 | [Documentation] Missing doc export from default codegen - The documentation export is missing options that DefaultCodegen exports, such as modelNamePrefix.

##### Related issues/PRs

<!-- has a similar issue/PR been reported/opened before? Please do a search in https://github.com/openapitools/openapi-generator/issues?utf8=%E2%9C%93&q=is%3Aissue%20 -->

#932

| non_infrastructure | missing doc export from default codegen missing options which are exported by the defaultcodegen like modelnameprefix for example related issues prs | 0 |

377,852 | 11,185,420,267 | IssuesEvent | 2020-01-01 01:31:26 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | br.hao123.com - see bug description | browser-firefox engine-gecko ml-needsdiagnosis-false ml-probability-high priority-critical | <!-- @browser: Firefox 73.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 6.3; Win64; x64; rv:73.0) Gecko/20100101 Firefox/73.0 -->

<!-- @reported_with: desktop-reporter -->

**URL**: http://br.hao123.com/?tn=sft_hp_hao123_br

**Browser / Version**: Firefox 73.0

**Operating System**: Windows 8.1

**Tested Another Browser**: Yes

**Problem type**: Something else

**Description**: It doesn't disappear

**Steps to Reproduce**:

Even though I keep trying to change my home page, this site continues to appear

[](https://webcompat.com/uploads/2020/1/f53090b1-0130-4d25-bb93-a87bfde12d9d.jpeg)

<details>

<summary>Browser Configuration</summary>

<ul>

<li>gfx.webrender.all: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>buildID: 20191231213920</li><li>channel: nightly</li><li>hasTouchScreen: false</li><li>mixed active content blocked: false</li><li>mixed passive content blocked: false</li><li>tracking content blocked: false</li>

</ul>

</details>

[View console log messages](https://webcompat.com/console_logs/2020/1/b8a9ccc3-c807-4bb6-be20-bc25d5986928)

_From [webcompat.com](https://webcompat.com/) with ❤️_ | 1.0 | br.hao123.com - see bug description - <!-- @browser: Firefox 73.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 6.3; Win64; x64; rv:73.0) Gecko/20100101 Firefox/73.0 -->

<!-- @reported_with: desktop-reporter -->

**URL**: http://br.hao123.com/?tn=sft_hp_hao123_br

**Browser / Version**: Firefox 73.0

**Operating System**: Windows 8.1

**Tested Another Browser**: Yes

**Problem type**: Something else

**Description**: It doesn't disappear

**Steps to Reproduce**:

Even though I keep trying to change my home page, this site continues to appear

[](https://webcompat.com/uploads/2020/1/f53090b1-0130-4d25-bb93-a87bfde12d9d.jpeg)

<details>

<summary>Browser Configuration</summary>

<ul>

<li>gfx.webrender.all: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>buildID: 20191231213920</li><li>channel: nightly</li><li>hasTouchScreen: false</li><li>mixed active content blocked: false</li><li>mixed passive content blocked: false</li><li>tracking content blocked: false</li>

</ul>

</details>

[View console log messages](https://webcompat.com/console_logs/2020/1/b8a9ccc3-c807-4bb6-be20-bc25d5986928)

_From [webcompat.com](https://webcompat.com/) with ❤️_ | non_infrastructure | br com see bug description url browser version firefox operating system windows tested another browser yes problem type something else description it doesn t disapear steps to reproduce even though i keep trying to change my home page this site continues to appear browser configuration gfx webrender all false gfx webrender blob images true gfx webrender enabled false image mem shared true buildid channel nightly hastouchscreen false mixed active content blocked false mixed passive content blocked false tracking content blocked false from with ❤️ | 0 |