Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 2 to 665) | labels (string, length 4 to 554) | body (string, length 3 to 235k) | index (string, 6 classes) | text_combine (string, length 96 to 235k) | label (string, 2 classes) | text (string, length 96 to 196k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

34,355 | 29,513,219,644 | IssuesEvent | 2023-06-04 07:06:53 | bllendev/kalibre | https://api.github.com/repos/bllendev/kalibre | opened | react infra - Integrate React App with Django | infrastructure | - Set up the necessary configuration so that the React app can be served from the Django project. This can involve configuring Django to serve the React build files and setting up proxying for API requests during development. | 1.0 | react infra - Integrate React App with Django - - Set up the necessary configuration so that the React app can be served from the Django project. This can involve configuring Django to serve the React build files and setting up proxying for API requests during development. | infrastructure | react infra integrate react app with django set up the necessary configuration so that the react app can be served from the django project this can involve configuring django to serve the react build files and setting up proxying for api requests during development | 1 |

198,056 | 6,969,227,225 | IssuesEvent | 2017-12-11 03:44:57 | gw2efficiency/issues | https://api.github.com/repos/gw2efficiency/issues | closed | Gifts (for legendary armor) only visible when in bank | Bug Priority B Stalled: Discussion Needed | Hi.

If i place gifts (bones/dust etc) on my character, it is not showing on item search (and thus it is not calculated for crafting as 'owned material'. If i place this items in bank, they are found and properly calculated.

Browsing specific character inventory (not searching) properly shows item in question in their bag slots though.

It might be related to [this issue](https://github.com/gw2efficiency/issues/issues/752)

Items which i tested and are affected:

- Gift of Dust

- Gift of Bones

- Gift of Scales

- Gift of Fangs

- Gift of Blood

- Gift of Totems

- Gift of Claws

- Gift of Venom

- Gift of Dedication

- Eldritch Scroll

- Legendary Insight

Haven't tested more, all of those are used for Legendary Armor which i happend to be crafting when i noticed it. | 1.0 | Gifts (for legendary armor) only visible when in bank - Hi.

If i place gifts (bones/dust etc) on my character, it is not showing on item search (and thus it is not calculated for crafting as 'owned material'. If i place this items in bank, they are found and properly calculated.

Browsing specific character inventory (not searching) properly shows item in question in their bag slots though.

It might be related to [this issue](https://github.com/gw2efficiency/issues/issues/752)

Items which i tested and are affected:

- Gift of Dust

- Gift of Bones

- Gift of Scales

- Gift of Fangs

- Gift of Blood

- Gift of Totems

- Gift of Claws

- Gift of Venom

- Gift of Dedication

- Eldritch Scroll

- Legendary Insight

Haven't tested more, all of those are used for Legendary Armor which i happend to be crafting when i noticed it. | non_infrastructure | gifts for legendary armor only visible when in bank hi if i place gifts bones dust etc on my character it is not showing on item search and thus it is not calculated for crafting as owned material if i place this items in bank they are found and properly calculated browsing specific character inventory not searching properly shows item in question in their bag slots though it might be related to items which i tested and are affected gift of dust gift of bones gift of scales gift of fangs gift of blood gift of totems gift of claws gift of venom gift of dedication eldritch scroll legendary insight haven t tested more all of those are used for legendary armor which i happend to be crafting when i noticed it | 0 |

225,456 | 24,840,466,850 | IssuesEvent | 2022-10-26 12:20:38 | dotnet/aspnetcore | https://api.github.com/repos/dotnet/aspnetcore | closed | aspnet:5.0-alpine docker image has reference to zlib-1.2.12-r0 which has vulnerability CVE-2022-37434 | Security | ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Describe the bug

We are using docker image [mcr.microsoft.com/dotnet/aspnet:5.0-alpine] and we found out that it has a zlib-1.2.12-r0 package with vulnerability CVE-2022-37434.

We have confirmed that by reviewing the packages in this docker images using the command:

`docker run --rm mcr.microsoft.com/dotnet/aspnet:5.0-alpine apk list`

Checking the history of the docker image:

`docker image history --no-trunc mcr.microsoft.com/dotnet/aspnet:5.0-alpine`

we can see that this image hasn't been rebuilt for 5 months, and therefore it wouldn't have picked the latest zlib version (1.2.13) that has fixed the vulnerability

### Expected Behavior

The aspnet:5.0-alpine docker image should be rebuilt to get the latest fixed zlib package. Ideally this should have been done automatically

### Steps To Reproduce

We run aspnet:5.0-alpine docker image on Azure App Service for Containers, and the Azure Cloud Defender raised a vulnerability alert that it has a CVE-2022-37434 vulnerability

### Exceptions (if any)

ASP.Net docker images should be updated with the latest vulnerability fixes especially when the fixed packages have been available for over 2 months

### .NET Version

_No response_

### Anything else?

_No response_ | True | aspnet:5.0-alpine docker image has reference to zlib-1.2.12-r0 which has vulnerability CVE-2022-37434 - ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Describe the bug

We are using docker image [mcr.microsoft.com/dotnet/aspnet:5.0-alpine] and we found out that it has a zlib-1.2.12-r0 package with vulnerability CVE-2022-37434.

We have confirmed that by reviewing the packages in this docker images using the command:

`docker run --rm mcr.microsoft.com/dotnet/aspnet:5.0-alpine apk list`

Checking the history of the docker image:

`docker image history --no-trunc mcr.microsoft.com/dotnet/aspnet:5.0-alpine`

we can see that this image hasn't been rebuilt for 5 months, and therefore it wouldn't have picked the latest zlib version (1.2.13) that has fixed the vulnerability

### Expected Behavior

The aspnet:5.0-alpine docker image should be rebuilt to get the latest fixed zlib package. Ideally this should have been done automatically

### Steps To Reproduce

We run aspnet:5.0-alpine docker image on Azure App Service for Containers, and the Azure Cloud Defender raised a vulnerability alert that it has a CVE-2022-37434 vulnerability

### Exceptions (if any)

ASP.Net docker images should be updated with the latest vulnerability fixes especially when the fixed packages have been available for over 2 months

### .NET Version

_No response_

### Anything else?

_No response_ | non_infrastructure | aspnet alpine docker image has reference to zlib which has vulnerability cve is there an existing issue for this i have searched the existing issues describe the bug we are using docker image and we found out that it has a zlib package with vulnerability cve we have confirmed that by reviewing the packages in this docker images using the command docker run rm mcr microsoft com dotnet aspnet alpine apk list checking the history of the docker image docker image history no trunc mcr microsoft com dotnet aspnet alpine we can see that this image hasn t been rebuilt for months and therefore it wouldn t have picked the latest zlib version that has fixed the vulnerability expected behavior the aspnet alpine docker image should be rebuilt to get the latest fixed zlib package ideally this should have been done automatically steps to reproduce we run aspnet alpine docker image on azure app service for containers and the azure cloud defender raised a vulnerability alert that it has a cve vulnerability exceptions if any asp net docker images should be updated with the latest vulnerability fixes especially when the fixed packages have been available for over months net version no response anything else no response | 0 |
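
The check described in the record above (is the installed zlib older than the fixed 1.2.13?) can be sketched in a few lines. This is only an illustration: `parse_apk_version` and `is_older_than` are hypothetical helper names, and the sketch assumes a purely numeric `name-x.y.z-rN` string such as the `zlib-1.2.12-r0` quoted in the issue; Alpine versions with letter suffixes are not handled.

```python
import re

# Hypothetical helpers, not part of any Alpine or Docker tooling.
_APK_NAME_VERSION = re.compile(
    r"(?P<name>.+)-(?P<ver>\d+(?:\.\d+)*)-r(?P<rel>\d+)"
)


def parse_apk_version(name_version):
    """Split e.g. 'zlib-1.2.12-r0' into (name, version tuple, release number)."""
    match = _APK_NAME_VERSION.fullmatch(name_version)
    if match is None:
        raise ValueError(f"unrecognised package string: {name_version!r}")
    version = tuple(int(part) for part in match["ver"].split("."))
    return match["name"], version, int(match["rel"])


def is_older_than(name_version, fixed_version):
    """Return True if the package's version tuple sorts before fixed_version."""
    _, version, _ = parse_apk_version(name_version)
    return version < fixed_version
```

For example, `is_older_than("zlib-1.2.12-r0", (1, 2, 13))` compares the tuple `(1, 2, 12)` against `(1, 2, 13)`, so the package reported in the issue would be flagged as predating the fix.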

10,251 | 8,452,988,349 | IssuesEvent | 2018-10-20 10:53:33 | TeamBravo2018/cloned-rfid-card-detection | https://api.github.com/repos/TeamBravo2018/cloned-rfid-card-detection | opened | Setup Message Broker | backlog item infrastructure messaging test production | ### Description ###

Setup HiveMq. Make sure that the deployments on PCF can communicate with the broker.

| 1.0 | Setup Message Broker - ### Description ###

Setup HiveMq. Make sure that the deployments on PCF can communicate with the broker.

| infrastructure | setup message broker description setup hivemq make sure that the deployments on pcf can communicate with the broker | 1 |

354,069 | 10,562,585,292 | IssuesEvent | 2019-10-04 18:42:20 | grpc/grpc | https://api.github.com/repos/grpc/grpc | reopened | MacOS Basic Tests C/C++ and Node Time Out | kind/bug lang/c++ lang/node priority/P1 | Basic Tests C/C++ MacOS and Basic Tests Node MacOS flakes.

When they fail, I see a bunch of messages saying "file has vanished"

> file has vanished: "/tmpfs/src/github/grpc/workspace_c_macos_opt_native/tools/run_tests/sanity/check_qps_scenario_changes.py"

file has vanished: "/tmpfs/src/github/grpc/workspace_c_macos_opt_native/tools/run_tests/sanity/check_shellcheck.sh"

file has vanished: "/tmpfs/src/github/grpc/workspace_c_macos_opt_native/tools/run_tests/sanity/check_submodules.sh"

file has vanished: "/tmpfs/src/github/grpc/workspace_c_macos_opt_native/tools/run_tests/sanity/check_test_filtering.py"

file has vanished: "/tmpfs/src/github/grpc/workspace_c_macos_opt_native/tools/run_tests/sanity/check_tracer_sanity.py"

file has vanished: "/tmpfs/src/github/grpc/workspace_c_macos_opt_native/tools/run_tests/sanity/check_version.py"

file has vanished: "/tmpfs/src/github/grpc/workspace_c_macos_opt_native/tools/run_tests/sanity/core_banned_functions.py"

file has vanished: "/tmpfs/src/github/grpc/workspace_c_macos_opt_native/tools/run_tests/sanity/core_untyped_structs.sh"

file has vanished: "/tmpfs/src/github/grpc/workspace_c_macos_opt_native/tools/run_tests/sanity/sanity_tests.yaml"

rsync warning: some files vanished before they could be transferred (code 24) at main.c(1677) [generator=3.1.3]

https://source.cloud.google.com/results/invocations/9b4b867c-54ba-436a-b188-4e281627fe0d/targets

https://source.cloud.google.com/results/invocations/adb1dbdc-5999-4ad0-a2a0-2a6a2a4fb518/targets | 1.0 | MacOS Basic Tests C/C++ and Node Time Out - Basic Tests C/C++ MacOS and Basic Tests Node MacOS flakes.

When they fail, I see a bunch of messages saying "file has vanished"

> file has vanished: "/tmpfs/src/github/grpc/workspace_c_macos_opt_native/tools/run_tests/sanity/check_qps_scenario_changes.py"

file has vanished: "/tmpfs/src/github/grpc/workspace_c_macos_opt_native/tools/run_tests/sanity/check_shellcheck.sh"

file has vanished: "/tmpfs/src/github/grpc/workspace_c_macos_opt_native/tools/run_tests/sanity/check_submodules.sh"

file has vanished: "/tmpfs/src/github/grpc/workspace_c_macos_opt_native/tools/run_tests/sanity/check_test_filtering.py"

file has vanished: "/tmpfs/src/github/grpc/workspace_c_macos_opt_native/tools/run_tests/sanity/check_tracer_sanity.py"

file has vanished: "/tmpfs/src/github/grpc/workspace_c_macos_opt_native/tools/run_tests/sanity/check_version.py"

file has vanished: "/tmpfs/src/github/grpc/workspace_c_macos_opt_native/tools/run_tests/sanity/core_banned_functions.py"

file has vanished: "/tmpfs/src/github/grpc/workspace_c_macos_opt_native/tools/run_tests/sanity/core_untyped_structs.sh"

file has vanished: "/tmpfs/src/github/grpc/workspace_c_macos_opt_native/tools/run_tests/sanity/sanity_tests.yaml"

rsync warning: some files vanished before they could be transferred (code 24) at main.c(1677) [generator=3.1.3]

https://source.cloud.google.com/results/invocations/9b4b867c-54ba-436a-b188-4e281627fe0d/targets

https://source.cloud.google.com/results/invocations/adb1dbdc-5999-4ad0-a2a0-2a6a2a4fb518/targets | non_infrastructure | macos basic tests c c and node time out basic tests c c macos and basic tests node macos flakes when they fail i see a bunch of messages saying file has vanished file has vanished tmpfs src github grpc workspace c macos opt native tools run tests sanity check qps scenario changes py file has vanished tmpfs src github grpc workspace c macos opt native tools run tests sanity check shellcheck sh file has vanished tmpfs src github grpc workspace c macos opt native tools run tests sanity check submodules sh file has vanished tmpfs src github grpc workspace c macos opt native tools run tests sanity check test filtering py file has vanished tmpfs src github grpc workspace c macos opt native tools run tests sanity check tracer sanity py file has vanished tmpfs src github grpc workspace c macos opt native tools run tests sanity check version py file has vanished tmpfs src github grpc workspace c macos opt native tools run tests sanity core banned functions py file has vanished tmpfs src github grpc workspace c macos opt native tools run tests sanity core untyped structs sh file has vanished tmpfs src github grpc workspace c macos opt native tools run tests sanity sanity tests yaml rsync warning some files vanished before they could be transferred code at main c | 0 |

201,502 | 15,802,437,385 | IssuesEvent | 2021-04-03 09:44:08 | nowknowing/ped | https://api.github.com/repos/nowknowing/ped | opened | UG: add_person and add_booking lacking critical details | severity.High type.DocumentationBug | The mention of "multi-step" without further details of what the multi-step consists of is not acceptable.

Perhaps give at least one example of the "steps" i.e. commands that follow.

<!--session: 1617429926787-c5fe719d-2acf-4ed4-8116-9865b2720c99--> | 1.0 | UG: add_person and add_booking lacking critical details - The mention of "multi-step" without further details of what the multi-step consists of is not acceptable.

Perhaps give at least one example of the "steps" i.e. commands that follow.

<!--session: 1617429926787-c5fe719d-2acf-4ed4-8116-9865b2720c99--> | non_infrastructure | ug add person and add booking lacking critical details the mention of multi step without further details of what the multi step consists of is not acceptable perhaps give at least one example of the steps i e commands that follow | 0 |

15,840 | 11,727,540,313 | IssuesEvent | 2020-03-10 16:05:45 | reapit/foundations | https://api.github.com/repos/reapit/foundations | closed | Create Properties broker | feature infrastructure platform-team | Create properties broker function that fronts the properties service and offers facility to embed the following entities

- Images

- Documents

- Offers

- Negotiator

- Offices

- Department

- Vendor

- Area

Project and CI/CD pipelines should also be initialised as part of this ticket. Broker should become the entry point for the properties service which should no longer be directly public

The properties service should be updated to include the `embed` parameter option on the GET endpoints with the supported options listed above | 1.0 | Create Properties broker - Create properties broker function that fronts the properties service and offers facility to embed the following entities

- Images

- Documents

- Offers

- Negotiator

- Offices

- Department

- Vendor

- Area

Project and CI/CD pipelines should also be initialised as part of this ticket. Broker should become the entry point for the properties service which should no longer be directly public

The properties service should be updated to include the `embed` parameter option on the GET endpoints with the supported options listed above | infrastructure | create properties broker create properties broker function that fronts the properties service and offers facility to embed the following entities images documents offers negotiator offices department vendor area project and ci cd pipelines should also be initialised as part of this ticket broker should become the entry point for the properties service which should no longer be directly public the properties service should be updated to include the embed parameter option on the get endpoints with the supported options listed above | 1 |

11,161 | 8,969,351,239 | IssuesEvent | 2019-01-29 10:34:38 | GFDRR/open-risk-data-dashboard | https://api.github.com/repos/GFDRR/open-risk-data-dashboard | opened | Profile unnecessary fileds FE / BE | backend frontend infrastructure | The fileds First Name, Last Name, Title and Institution were removed from FE side (Registration form, My Profile & Admin User Profile manage).

In this moment in FE side the User profile Object contains this fileds because BE side requires them.

We must to remove all references to this fields from FE and BE side.

| 1.0 | Profile unnecessary fileds FE / BE - The fileds First Name, Last Name, Title and Institution were removed from FE side (Registration form, My Profile & Admin User Profile manage).

In this moment in FE side the User profile Object contains this fileds because BE side requires them.

We must to remove all references to this fields from FE and BE side.

| infrastructure | profile unnecessary fileds fe be the fileds first name last name title and institution were removed from fe side registration form my profile admin user profile manage in this moment in fe side the user profile object contains this fileds because be side requires them we must to remove all references to this fields from fe and be side | 1 |

6,309 | 6,311,744,469 | IssuesEvent | 2017-07-23 22:14:50 | twosigma/beakerx | https://api.github.com/repos/twosigma/beakerx | opened | set versions automatically | Enhancement Infrastructure | npm gets the version from package.json

and python gets it from _version.py

would be nice if they got it from git.

i think versioneer works for python, what about npm? | 1.0 | set versions automatically - npm gets the version from package.json

and python gets it from _version.py

would be nice if they got it from git.

i think versioneer works for python, what about npm? | infrastructure | set versions automatically npm gets the version from package json and python gets it from version py would be nice if they got it from git i think versioneer works for python what about npm | 1 |
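
One way to read the request in the record above is to derive the version string from `git describe` output instead of a hand-edited file. The sketch below is an assumption about how that could look, not versioneer's actual code: `version_from_describe` and `current_git_version` are hypothetical names, and it assumes tags of the form `v1.2.3` and the `<tag>-<distance>-g<sha>` shape that `git describe --tags --long` prints.

```python
import re
import subprocess

# Matches '<tag>-<commits since tag>-g<abbreviated sha>', with an optional 'v' prefix.
_DESCRIBE = re.compile(r"^v?(?P<tag>.+)-(?P<distance>\d+)-g(?P<sha>[0-9a-f]+)$")


def version_from_describe(described):
    """Turn 'v0.1.0-3-gabc1234' into '0.1.0+3.gabc1234' (or '0.1.0' on the tag itself)."""
    match = _DESCRIBE.match(described.strip())
    if match is None:
        raise ValueError(f"unexpected git describe output: {described!r}")
    tag = match["tag"]
    distance = int(match["distance"])
    if distance == 0:
        return tag
    return f"{tag}+{distance}.g{match['sha']}"


def current_git_version():
    """Ask git for the description of HEAD; requires a repository with at least one tag."""
    described = subprocess.check_output(
        ["git", "describe", "--tags", "--long"], text=True
    )
    return version_from_describe(described)
```

The same derived string could then be written wherever each packaging tool expects it, which is the part the issue leaves open for npm.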

279,541 | 24,233,458,469 | IssuesEvent | 2022-09-26 20:29:17 | brave/brave-browser | https://api.github.com/repos/brave/brave-browser | closed | [Desktop] Clicking on Brave icon with no windows open should open a new window | tests OS/macOS closed/stale OS/Desktop | Carried over from https://github.com/brave/browser-laptop/issues/167

Test would do the following:

- [ ] have no windows open

- [ ] click on Brave icon in dock

- [ ] new window should be opened | 1.0 | [Desktop] Clicking on Brave icon with no windows open should open a new window - Carried over from https://github.com/brave/browser-laptop/issues/167

Test would do the following:

- [ ] have no windows open

- [ ] click on Brave icon in dock

- [ ] new window should be opened | non_infrastructure | clicking on brave icon with no windows open should open a new window carried over from test would do the following have no windows open click on brave icon in dock new window should be opened | 0 |

193,831 | 6,888,420,896 | IssuesEvent | 2017-11-22 05:49:05 | zulip/zulip | https://api.github.com/repos/zulip/zulip | closed | Remove simplejson dependency from zulip | area: dependencies priority: high | Zulip still uses `simplejson` (Rather than the built-into-Python-3 `json` module or the even faster `ujson` module) for one thing: writing the `page_params` object into a `<script>` tag in `zerver/views/home.py`.

It'd be really great to be able to finish removing `simplejson` as a dependency. However, it is not trivial to do so, since we're using `simplejson.encoder.JSONEncoderForHTML`, and that doesn't seem to exist in `zerver/views/`

This is a great issue for someone who knows Python well, since I think a solution is likely to involve implementing a version of `simplejson.encoder.JSONEncoderForHTML` on top of the standard library.

| 1.0 | Remove simplejson dependency from zulip - Zulip still uses `simplejson` (Rather than the built-into-Python-3 `json` module or the even faster `ujson` module) for one thing: writing the `page_params` object into a `<script>` tag in `zerver/views/home.py`.

It'd be really great to be able to finish removing `simplejson` as a dependency. However, it is not trivial to do so, since we're using `simplejson.encoder.JSONEncoderForHTML`, and that doesn't seem to exist in `zerver/views/`

This is a great issue for someone who knows Python well, since I think a solution is likely to involve implementing a version of `simplejson.encoder.JSONEncoderForHTML` on top of the standard library.

| non_infrastructure | remove simplejson dependency from zulip zulip still uses simplejson rather than the built into python json module or the even faster ujson module for one thing writing the page params object into a tag in zerver views home py it d be really great to be able to finish removing simplejson as a dependency however it is not trivial to do so since we re using simplejson encoder jsonencoderforhtml and that doesn t seem to exist in zerver views this is a great issue for someone who knows python well since i think a solution is likely to involve implementing a version of simplejson encoder jsonencoderforhtml on top of the standard library | 0 |
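
The approach suggested in the record above, an HTML-safe encoder built on the standard library, can be sketched as follows. This is a minimal illustration under the assumption that escaping `&`, `<` and `>` as `\uXXXX` sequences is the behaviour needed; `dumps_for_html` is a hypothetical name, not a Zulip or simplejson function.

```python
import json


def dumps_for_html(obj):
    """Serialize obj to JSON that is safe to embed inside a <script> tag.

    In valid JSON the characters '&', '<' and '>' can only occur inside
    string values, so replacing them with \\uXXXX escapes changes how the
    text looks but not what it decodes to.
    """
    # ensure_ascii=True (the default) already escapes U+2028 and U+2029.
    text = json.dumps(obj, ensure_ascii=True)
    return (
        text.replace("&", "\\u0026")
        .replace("<", "\\u003c")
        .replace(">", "\\u003e")
    )
```

Because the escapes are ordinary JSON string escapes, `json.loads(dumps_for_html(value))` round-trips to the original value, while a payload such as `"</script>"` no longer contains a literal closing tag.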

3,406 | 4,290,594,489 | IssuesEvent | 2016-07-18 10:23:50 | Microsoft/visualfsharp | https://api.github.com/repos/Microsoft/visualfsharp | closed | Add jenkins build by branch and os | infrastructure | Current jenkins ci builds `master` branch in `Debug` and `Release` configuration in `Windows`.

Like http://dotnet-ci.cloudapp.net/job/Microsoft_visualfsharp/job/release_windows_nt/

Others useful build configuration:

- OS: Windows/Macosx/Linux, because are needed by xplat (coreclr)

- branch: `master`, `coreclr`, `vs2015` are active branches built by appveyor

Proposed name in jenkins `branch_conf_osname` . Actual is `conf_osname`

ref [dotnet jenkins new new branch model](https://github.com/dotnet/cli/pull/1386#issue-133290544)

/cc @otawfik-ms @TyOverby | 1.0 | Add jenkins build by branch and os - Current jenkins ci builds `master` branch in `Debug` and `Release` configuration in `Windows`.

Like http://dotnet-ci.cloudapp.net/job/Microsoft_visualfsharp/job/release_windows_nt/

Others useful build configuration:

- OS: Windows/Macosx/Linux, because are needed by xplat (coreclr)

- branch: `master`, `coreclr`, `vs2015` are active branches built by appveyor

Proposed name in jenkins `branch_conf_osname` . Actual is `conf_osname`

ref [dotnet jenkins new new branch model](https://github.com/dotnet/cli/pull/1386#issue-133290544)

/cc @otawfik-ms @TyOverby | infrastructure | add jenkins build by branch and os current jenkins ci builds master branch in debug and release configuration in windows like others useful build configuration os windows macosx linux because are needed by xplat coreclr branch master coreclr are active branches built by appveyor proposed name in jenkins branch conf osname actual is conf osname ref cc otawfik ms tyoverby | 1 |

134,315 | 29,994,273,485 | IssuesEvent | 2023-06-26 03:13:31 | FerretDB/FerretDB | https://api.github.com/repos/FerretDB/FerretDB | opened | Support `maxPoolSize` and `minPoolSize` connection option | code/chore not ready | ### What should be done?

https://www.mongodb.com/docs/manual/reference/connection-string/#mongodb-urioption-urioption.maxPoolSize

https://www.mongodb.com/docs/manual/reference/connection-string/#mongodb-urioption-urioption.minPoolSize

See the issue number added in the PR https://github.com/FerretDB/FerretDB/pull/2878

### Where?

https://github.com/FerretDB/FerretDB/tree/main/internal/clientconn

### Definition of Done

- all handlers updated;

- unit tests added/updated;

- integration/compatibility tests added/updated;

- spot refactorings done;

- user documentation updated or an issue to create documentation created;

- something else?

| 1.0 | Support `maxPoolSize` and `minPoolSize` connection option - ### What should be done?

https://www.mongodb.com/docs/manual/reference/connection-string/#mongodb-urioption-urioption.maxPoolSize

https://www.mongodb.com/docs/manual/reference/connection-string/#mongodb-urioption-urioption.minPoolSize

See the issue number added in the PR https://github.com/FerretDB/FerretDB/pull/2878

### Where?

https://github.com/FerretDB/FerretDB/tree/main/internal/clientconn

### Definition of Done

- all handlers updated;

- unit tests added/updated;

- integration/compatibility tests added/updated;

- spot refactorings done;

- user documentation updated or an issue to create documentation created;

- something else?

| non_infrastructure | support maxpoolsize and minpoolsize connection option what should be done see the issue number added in the pr where definition of done all handlers updated unit tests added updated integration compatibility tests added updated spot refactorings done user documentation updated or an issue to create documentation created something else | 0 |

9,539 | 8,029,904,361 | IssuesEvent | 2018-07-27 17:40:19 | brave/browser-laptop | https://api.github.com/repos/brave/browser-laptop | closed | npm install fails with npm 5.4 and higher | dev-setup infrastructure stale | ### Description

`npm install` fails with:

```bash

Finished generating code

test.vcxproj -> C:\dev\browser-laptop\node_modules\tracking-protection\node_modules\cppunitlite\build\Release\\test.exe

test.vcxproj -> C:\dev\browser-laptop\node_modules\tracking-protection\node_modules\cppunitlite\build\Release\test.pdb (Full PDB)

+ cppunitlite@1.0.0

added 1 package in 4.797s

npm ERR! Cannot read property 'pause' of undefined

```

### Steps to Reproduce

1. update npm to 5.4

2. get code

3. run `npm install`

**Actual result:**

```bash

Finished generating code

test.vcxproj -> C:\dev\browser-laptop\node_modules\tracking-protection\node_modules\cppunitlite\build\Release\\test.exe

test.vcxproj -> C:\dev\browser-laptop\node_modules\tracking-protection\node_modules\cppunitlite\build\Release\test.pdb (Full PDB)

+ cppunitlite@1.0.0

added 1 package in 4.797s

npm ERR! Cannot read property 'pause' of undefined

```

**Expected result:**

success

**Reproduces how often:** [What percentage of the time does it reproduce?]

100%

### Brave Version

0.21.0

### Additional Information

temporarily had to downgrade npm to version 5.3.0

| 1.0 | npm install fails with npm 5.4 and higher - ### Description

`npm install` fails with:

```bash

Finished generating code

test.vcxproj -> C:\dev\browser-laptop\node_modules\tracking-protection\node_modules\cppunitlite\build\Release\\test.exe

test.vcxproj -> C:\dev\browser-laptop\node_modules\tracking-protection\node_modules\cppunitlite\build\Release\test.pdb (Full PDB)

+ cppunitlite@1.0.0

added 1 package in 4.797s

npm ERR! Cannot read property 'pause' of undefined

```

### Steps to Reproduce

1. update npm to 5.4

2. get code

3. run `npm install`

**Actual result:**

```bash

Finished generating code

test.vcxproj -> C:\dev\browser-laptop\node_modules\tracking-protection\node_modules\cppunitlite\build\Release\\test.exe

test.vcxproj -> C:\dev\browser-laptop\node_modules\tracking-protection\node_modules\cppunitlite\build\Release\test.pdb (Full PDB)

+ cppunitlite@1.0.0

added 1 package in 4.797s

npm ERR! Cannot read property 'pause' of undefined

```

**Expected result:**

success

**Reproduces how often:** [What percentage of the time does it reproduce?]

100%

### Brave Version

0.21.0

### Additional Information

temporarily had to downgrade npm to version 5.3.0

| infrastructure | npm install fails with npm and higher description npm install fails with bash finished generating code test vcxproj c dev browser laptop node modules tracking protection node modules cppunitlite build release test exe test vcxproj c dev browser laptop node modules tracking protection node modules cppunitlite build release test pdb full pdb cppunitlite added package in npm err cannot read property pause of undefined steps to reproduce update npm to get code run npm install actual result bash finished generating code test vcxproj c dev browser laptop node modules tracking protection node modules cppunitlite build release test exe test vcxproj c dev browser laptop node modules tracking protection node modules cppunitlite build release test pdb full pdb cppunitlite added package in npm err cannot read property pause of undefined expected result success reproduces how often brave version additional information temporarily had to downgrade npm to version | 1 |

22,890 | 15,604,457,466 | IssuesEvent | 2021-03-19 03:58:08 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | Tracking issue: enable more MSVC warnings | area-Infrastructure-coreclr in pr up-for-grabs | *Initial cost estimate*: 1 week

*Initial contacts*: @trylek, @GrabYourPitchforks, @janvorli

We enable the following warning codes (as error) on some internal runs as part of compliance checks. The warnings are reviewed by hand. The manual reviews always show that we're ok, but it would be nice if we could turn these on as part of the public build. There's a little bit of work involved here to make things compile cleanly. But it would save us the trouble of running through manual review every release.

```cmake

add_compile_options(/we4018)

add_compile_options(/we4055)

add_compile_options(/we4146)

add_compile_options(/we4242)

add_compile_options(/we4244)

add_compile_options(/we4267)

add_compile_options(/we4302)

add_compile_options(/we4308)

add_compile_options(/we4509)

add_compile_options(/we4510)

add_compile_options(/we4532)

add_compile_options(/we4533)

add_compile_options(/we4610)

add_compile_options(/we4611)

add_compile_options(/we4700)

add_compile_options(/we4701)

add_compile_options(/we4703)

add_compile_options(/we4789)

add_compile_options(/we4995)

add_compile_options(/we4996)

``` | 1.0 | Tracking issue: enable more MSVC warnings - *Initial cost estimate*: 1 week

*Initial contacts*: @trylek, @GrabYourPitchforks, @janvorli

We enable the following warning codes (as error) on some internal runs as part of compliance checks. The warnings are reviewed by hand. The manual reviews always show that we're ok, but it would be nice if we could turn these on as part of the public build. There's a little bit of work involved here to make things compile cleanly. But it would save us the trouble of running through manual review every release.

```cmake

add_compile_options(/we4018)

add_compile_options(/we4055)

add_compile_options(/we4146)

add_compile_options(/we4242)

add_compile_options(/we4244)

add_compile_options(/we4267)

add_compile_options(/we4302)

add_compile_options(/we4308)

add_compile_options(/we4509)

add_compile_options(/we4510)

add_compile_options(/we4532)

add_compile_options(/we4533)

add_compile_options(/we4610)

add_compile_options(/we4611)

add_compile_options(/we4700)

add_compile_options(/we4701)

add_compile_options(/we4703)

add_compile_options(/we4789)

add_compile_options(/we4995)

add_compile_options(/we4996)

``` | infrastructure | tracking issue enable more msvc warnings initial cost estimate week initial contacts trylek grabyourpitchforks janvorli we enable the following warning codes as error on some internal runs as part of compliance checks the warnings are reviewed by hand the manual reviews always show that we re ok but it would be nice if we could turn these on as part of the public build there s a little bit of work involved here to make things compile cleanly but it would save us the trouble of running through manual review every release cmake add compile options add compile options add compile options add compile options add compile options add compile options add compile options add compile options add compile options add compile options add compile options add compile options add compile options add compile options add compile options add compile options add compile options add compile options add compile options add compile options | 1 |

23,254 | 16,011,757,327 | IssuesEvent | 2021-04-20 11:31:47 | TeamFranka/affinity | https://api.github.com/repos/TeamFranka/affinity | opened | Registration Email test | infrastructure reliability | We have a test to do a signup, let's improve it by:

- [ ] adding [mailhog based email backend to our docker-compose](https://kreuzwerker.de/post/e2e-testing-of-emails-in-mailhog-using-cypress)

- [ ] hook it up to the parse-platform in the docker-compose

- [ ] advance the tests to:

- [ ] check for the email-required toast-message

- [ ] open the email, find the link, click it

- [ ] refresh the app and make sure the toast is gone

- [ ] add a second test

- [ ] check for the email-required toast-message

- [ ] use it to ask for the email again

- [ ] open that new email, find the link, click it

- [ ] refresh the app and make sure the toast is gone | 1.0 | Registration Email test - We have a test to do a signup, let's improve it by:

- [ ] adding [mailhog based email backend to our docker-compose](https://kreuzwerker.de/post/e2e-testing-of-emails-in-mailhog-using-cypress)

- [ ] hook it up to the parse-platform in the docker-compose

- [ ] advance the tests to:

- [ ] check for the email-required toast-message

- [ ] open the email, find the link, click it

- [ ] refresh the app and make sure the toast is gone

- [ ] add a second test

- [ ] check for the email-required toast-message

- [ ] use it to ask for the email again

- [ ] open that new email, find the link, click it

- [ ] refresh the app and make sure the toast is gone | infrastructure | registration email test we have a test to do a signup let s improve it by adding hook it up to the parse platform in the docker compose advance the tests to check for the email required toast message open the email find the link click it refresh the app and make sure the toast is gone add a second tests check for the email required toast message use it to ask for the email again open that new email find the link click it refresh the app and make sure the toast is gone | 1 |

354,474 | 25,167,677,129 | IssuesEvent | 2022-11-10 22:35:15 | notaryproject/notation | https://api.github.com/repos/notaryproject/notation | closed | Plugin config directory varies based on OS and should be clearer with docs | documentation | the [os.UserConfigDir()](https://github.com/notaryproject/notation/blob/c61102cdb414a9aa55980bd113496abef3fcf784/pkg/config/path.go#L59) varies and therefore isn't the same based on OS.

For example, on Ubuntu `~/.config/notation/plugins/azure-kv` works fine.

on a mac it is `~/Library/Application Support/notation/plugins` | 1.0 | Plugin config directory varies based on OS and should be clearer with docs - the [os.UserConfigDir()](https://github.com/notaryproject/notation/blob/c61102cdb414a9aa55980bd113496abef3fcf784/pkg/config/path.go#L59) varies and therefore isn't the same based on OS.

For example, on Ubuntu `~/.config/notation/plugins/azure-kv` works fine.

on a mac it is `~/Library/Application Support/notation/plugins` | non_infrastructure | plugin config directory varies based on os and should be clearer with docs the varies and therefore isn t the same based on os i e on ubuntu config notation plugins azure kv works fine on a mac it is library application support notation plugins | 0 |
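
The per-OS difference described in this issue can be sketched as follows. This is a minimal illustration in Python, not the notation code itself: the helper names `user_config_dir` and `plugin_dir` are hypothetical, and the rules only cover the two platforms the issue mentions, following what Go documents for `os.UserConfigDir` (`$XDG_CONFIG_HOME` or `$HOME/.config` on Linux, `$HOME/Library/Application Support` on macOS).

```python
def user_config_dir(goos, env):
    """Approximate the documented per-OS rules of Go's os.UserConfigDir.

    `goos` is a Go-style OS name ("linux" or "darwin"); `env` is a dict of
    environment variables. Both parameters are assumptions of this sketch.
    """
    if goos == "darwin":
        return env["HOME"] + "/Library/Application Support"
    if goos == "linux":
        xdg = env.get("XDG_CONFIG_HOME", "")
        # Fall back to ~/.config when XDG_CONFIG_HOME is unset or empty.
        return xdg if xdg else env["HOME"] + "/.config"
    raise ValueError("OS not covered by this sketch: " + goos)


def plugin_dir(goos, env):
    """Hypothetical helper: where the plugins directory would end up."""
    return user_config_dir(goos, env) + "/notation/plugins"
```

Calling `plugin_dir("linux", {"HOME": "/home/alice"})` and `plugin_dir("darwin", {"HOME": "/Users/alice"})` gives the two different locations the issue asks the docs to spell out.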

22,486 | 15,217,773,177 | IssuesEvent | 2021-02-17 17:00:03 | airyhq/airy | https://api.github.com/repos/airyhq/airy | opened | Re-evaluate the hostnames for Airy Core | cli infrastructure needs discussion | It will be more convenient for local deployment to have one hostname and instead of additional subdomains, have a path in the request.

This will impact the ingress and probably the path will only be needed for the `frontend` services. An example mapping of the requests:

```

api.airy.core/* -> airy.core/*

webhooks.airy.core/* -> airy.core/*

ui.airy.core/* -> airy.core/ui/*

chatplugin.airy.core/* -> airy.core/ui/chatplugin/*

tools.airy.core/* -> airy.core/tools/*

``` | 1.0 | Re-evaluate the hostnames for Airy Core - It will be more convenient for local deployment to have one hostname and instead of additional subdomains, have a path in the request.

This will impact the ingress and probably the path will only be needed for the `frontend` services. An example mapping of the requests:

```

api.airy.core/* -> airy.core/*

webhooks.airy.core/* -> airy.core/*

ui.airy.core/* -> airy.core/ui/*

chatplugin.airy.core/* -> airy.core/ui/chatplugin/*

tools.airy.core/* -> airy.core/tools/*

``` | infrastructure | re evaluate the hostnames for airy core it will be more convenient for local deployment to have one hostname and instead of additional subdomains have a path in the request this will impact the ingress and probably the path will only be needed for the frontend services an example mapping of the requests api airy core airy core webhooks airy core airy core ui airy core airy core ui chatplugin airy core airy core ui chatplugin tools airy core airy core tools | 1 |
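
The proposed host-to-path mapping in this issue could be expressed roughly as below. This is only a sketch of the rewrite rule in Python, not the ingress configuration itself; the function name `to_single_host` and the `base` parameter are assumptions made for illustration.

```python
# Path prefix each former subdomain would receive, taken from the mapping in the issue.
PREFIX_BY_SUBDOMAIN = {
    "api": "",
    "webhooks": "",
    "ui": "/ui",
    "chatplugin": "/ui/chatplugin",
    "tools": "/tools",
}


def to_single_host(host, path, base="airy.core"):
    """Rewrite (subdomain host, path) to (single host, prefixed path)."""
    suffix = "." + base
    if not host.endswith(suffix):
        raise ValueError("unexpected host: " + host)
    subdomain = host[: -len(suffix)]
    if subdomain not in PREFIX_BY_SUBDOMAIN:
        raise ValueError("unknown subdomain: " + subdomain)
    if not path.startswith("/"):
        path = "/" + path
    return base, PREFIX_BY_SUBDOMAIN[subdomain] + path
```

Note that `api` and `webhooks` both map to the root path in the proposal, so their routes would need to stay distinguishable by path alone.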

18,964 | 13,181,067,673 | IssuesEvent | 2020-08-12 13:47:22 | pymor/pymor | https://api.github.com/repos/pymor/pymor | closed | Gitlab CI replaces branch refs for docs upload | bug infrastructure | turns 2020.1.1 into 2020-1-1 so I manually had to set a symlink in the docs repo for the release just now | 1.0 | Gitlab CI replaces branch refs for docs upload - turns 2020.1.1 into 2020-1-1 so I manually had to set a symlink in the docs repo for the release just now | infrastructure | gitlab ci replaces branch refs for docs upload turns into so i manually had to set a symlink in the docs repo for the release just now | 1 |
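
The rewrite reported in this issue matches how GitLab documents its `CI_COMMIT_REF_SLUG` variable: the ref name lowercased, shortened to 63 bytes, with every character outside `0-9` and `a-z` replaced by `-`, and no leading or trailing `-`. The issue does not say which variable the docs upload uses, so treating the slug as the cause is an assumption; the sketch below only reproduces the documented rule, and `ref_slug` is a hypothetical name.

```python
import re


def ref_slug(ref_name):
    """Approximate GitLab's documented CI_COMMIT_REF_SLUG transformation."""
    slug = re.sub(r"[^0-9a-z]", "-", ref_name.lower())
    # Shorten first, then drop any leading or trailing dashes.
    return slug[:63].strip("-")
```

Under this rule `ref_slug("2020.1.1")` yields `2020-1-1`, which is why a symlink (or using the unmodified ref name) is needed for release docs.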

29,430 | 24,007,621,770 | IssuesEvent | 2022-09-14 15:56:47 | department-of-veterans-affairs/va.gov-team | https://api.github.com/repos/department-of-veterans-affairs/va.gov-team | closed | Infrastructure Monitoring: Migrate DataDog to GovCloud instance | Epic operations monitoring platform-initiative infrastructure crew-platform team-platform-infrastructure FY22-Q3 | **Note: This initiative should move to recently complete. @jhouse-solvd 9/14**

## Description

DataDog is moving to a dedicated installation in VA's GovCloud. The DOTS team has offered to help migrate dashboards, alerts, and monitors, but cannot migrate historical data.

## Details

- The DOTS team is migrating DataDog from its current SaaS instance (datadoghq.com) to GovCloud (ddog-gov.com)

- Authentication methods for this service will change (will be using Ablevets Okta)

- Authentication to Okta will be with a username and password

- DataDog engineers are available in the VA OIT DevOps Slack workspace to discuss technical details

- **All historic data will be lost and unable to be migrated**

- DataDog agents are being re-deployed to point to the new instance

- There will be a new subdomain: vagov.ddog-gov.com

- Once the account is migrated, the existing account will remain active for a short time but will be sunset by June 30, 2022

- The Platform Infrastructure Team is meeting w/ the DOTS team to gather add'l details

## Add'l considerations

- Authentication method will apply to the whole DataDog account, not per user.

- DataDog in GovCloud will still be accessible from anywhere (even off of the VA network)

## Definition of done

- Platform Crew users are able to access the new instance

- DataDog agents are sending metrics to the new instance

- DataDog monitors are sending notifications

- to Slack

- to PagerDuty

- DataDog resources have been migrated to the new instance

- Documentation exists that highlights the following:

- How to request access to the new DataDog instance

- How to access the new DataDog instance

- How to migrate resources to the new DataDog instance | 2.0 | Infrastructure Monitoring: Migrate DataDog to GovCloud instance - **Note: This initiative should move to recently complete. @jhouse-solvd 9/14**

## Description

DataDog is moving to a dedicated installation in VA's GovCloud. The DOTS team has offered to help migrate dashboards, alerts, and monitors, but cannot migrate historical data.

## Details

- The DOTS team is migrating DataDog from its current SaaS instance (datadoghq.com) to GovCloud (ddog-gov.com)

- Authentication methods for this service will change (will be using Ablevets Okta)

- Authentication to Okta will be with a username and password

- DataDog engineers are available in the VA OIT DevOps Slack workspace to discuss technical details

- **All historic data will be lost and unable to be migrated**

- DataDog agents are being re-deployed to point to the new instance

- There will be a new subdomain: vagov.ddog-gov.com

- Once the account is migrated, the existing account will remain active for a short time but will be sunset by June 30, 2022

- The Platform Infrastructure Team is meeting w/ the DOTS team to gather add'l details

## Add'l considerations

- Authentication method will apply to the whole DataDog account, not per user.

- DataDog in GovCloud will still be accessible from anywhere (even off of the VA network)

## Definition of done

- Platform Crew users are able to access the new instance

- DataDog agents are sending metrics to the new instance

- DataDog monitors are sending notifications

- to Slack

- to PagerDuty

- DataDog resources have been migrated to the new instance

- Documentation exists that highlights the following:

- How to request access to the new DataDog instance

- How to access the new DataDog instance

- How to migrate resources to the new DataDog instance | infrastructure | infrastructure monitoring migrate datadog to govcloud instance note this initiative should move to recently complete jhouse solvd description datadog is moving to a dedicated installation in va s govcloud the dots team has offered to help migrate dashboards alerts and monitors but cannot migrate historical data details the dots team is migrating datadog from its current saas instance datadoghq com to govcloud ddog gov com authentication methods for this service will change will be using ablevets okta authentication to okta will be with a username and password datadog engineers are available in the va oit devops slack workspace to discuss technical details all historic data will be lost and unable to be migrated datadog agents are being re deployed to point to the new instance there will be a new subdomain vagov ddog gov com once the account is migrated the existing account will remain active for a short time but will be sunset by june the platform infrastructure team is meeting w the dots team to gather add l details add l considerations authentication method will apply to the whole datadog account not per user datadog in govcloud will still be accessible from anywhere even off of the va network definition of done platform crew users are able to access the new instance datadog agents are sending metrics to the new instance datadog monitors are sending notifications to slack to pagerduty datadog resources have been migrated to the new instance documentation exists that highlights the following how to request access to the new datadog instance how to access the new datadog instance how to migrate resources to the new datadog instance | 1 |

26,607 | 20,325,109,096 | IssuesEvent | 2022-02-18 04:33:50 | pixiebrix/pixiebrix-extension | https://api.github.com/repos/pixiebrix/pixiebrix-extension | closed | Additional error patterns to exclude from Rollbar reporting | infrastructure developer experience | These errors are plentiful and clogging up our Rollbar tubes. I think they're generally benign errors from the messenger?

If they were having business impact we'd see them as errors in brick/extension point execution?

* No frame with id 453 in tab 81.

* The frame was removed.

* Extension context invalidated.

The last one might be fixed already. I'm only seeing error telemetry for it in Rollbar for 1.5.3. It's in the list of [CONNECTION_ERROR_PATTERNS](http://github.com/pixiebrix/pixiebrix-extension/blob/afe1c8d8608bf90c692413d0157ff33f7db8e1da/src/errors.ts#L269-L269)

| 1.0 | Additional error patterns to exclude from Rollbar reporting - These errors are plentiful and clogging up our Rollbar tubes. I think they're generally benign errors from the messenger?

If they were having business impact we'd see them as errors in brick/extension point execution?

* No frame with id 453 in tab 81.

* The frame was removed.

* Extension context invalidated.

The last one might be fixed already. I'm only seeing error telemetry for it in Rollbar for 1.5.3. It's in the list of [CONNECTION_ERROR_PATTERNS](http://github.com/pixiebrix/pixiebrix-extension/blob/afe1c8d8608bf90c692413d0157ff33f7db8e1da/src/errors.ts#L269-L269)

| infrastructure | additional error patterns to exclude from rollbar reporting these errors are plentiful and clogging up our rollbar tubes i think they re generally benign errors from the messenger if they were having business impact we d see them as errors in brick extension point execution no frame with id in tab the frame was removed extension context invalidated the last one might be fixed already i m only seeing error telemetry for it in rollbar for it s in the list of | 1 |
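
One way to filter the three messages listed in this issue is a small set of regular expressions. The project itself is TypeScript; the Python below is only a language-neutral sketch, and `BENIGN_ERROR_PATTERNS` and `is_benign_error` are hypothetical names, not part of the extension's code.

```python
import re

# Patterns for the messages quoted in the issue; the numeric ids vary per occurrence.
BENIGN_ERROR_PATTERNS = [
    re.compile(r"No frame with id \d+ in tab \d+"),
    re.compile(r"The frame was removed"),
    re.compile(r"Extension context invalidated"),
]


def is_benign_error(message):
    """Return True when the message matches one of the excluded patterns."""
    return any(pattern.search(message) for pattern in BENIGN_ERROR_PATTERNS)
```

A reporter could call `is_benign_error(str(error))` before forwarding to Rollbar and skip the report when it returns `True`.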

390,167 | 11,525,776,335 | IssuesEvent | 2020-02-15 10:55:24 | wso2/product-is | https://api.github.com/repos/wso2/product-is | opened | Challenge questions API does not work with scope based access control | Affected/5.10.0-Beta2 Priority/High Type/Bug | Steps to reproduce

As configured in identity.xml, the challenge question API is secured with the following scopes:

```

<Resource context="(.*)/api/users/v1/(.*)/challenges(.*)" secured="true" http-method="all">

<Permissions>/permission/admin/manage/identity</Permissions>

<Scopes>internal_identity_mgt_view</Scopes>

<Scopes>internal_identity_mgt_update</Scopes>

<Scopes>internal_identity_mgt_create</Scopes>

<Scopes>internal_identity_mgt_delete</Scopes>

</Resource>

<Resource context="(.*)/api/users/v1/(.*)/challenge-answers(.*)" secured="true" http-method="all">

<Permissions>/permission/admin/manage/identity</Permissions>

<Scopes>internal_identity_mgt_view</Scopes>

<Scopes>internal_identity_mgt_update</Scopes>

<Scopes>internal_identity_mgt_create</Scopes>

<Scopes>internal_identity_mgt_delete</Scopes>

</Resource>

```

Retrieve an access token for the scope **internal_identity_mgt_view**

Use the access token in bearer header and try to view the available challenge questions for a user using https://localhost:9443/t/wso2.com/api/users/v1/8022efd8-01cf-4f29-80fd-9e20641ff6bc/challenges

It returns 403 forbidden error | 1.0 | Challenge questions API does not work with scope based access control - Steps to reproduce

As configured in identity.xml, the challenge question API is secured with the following scopes:

```

<Resource context="(.*)/api/users/v1/(.*)/challenges(.*)" secured="true" http-method="all">

<Permissions>/permission/admin/manage/identity</Permissions>

<Scopes>internal_identity_mgt_view</Scopes>

<Scopes>internal_identity_mgt_update</Scopes>

<Scopes>internal_identity_mgt_create</Scopes>

<Scopes>internal_identity_mgt_delete</Scopes>

</Resource>

<Resource context="(.*)/api/users/v1/(.*)/challenge-answers(.*)" secured="true" http-method="all">

<Permissions>/permission/admin/manage/identity</Permissions>

<Scopes>internal_identity_mgt_view</Scopes>

<Scopes>internal_identity_mgt_update</Scopes>

<Scopes>internal_identity_mgt_create</Scopes>

<Scopes>internal_identity_mgt_delete</Scopes>

</Resource>

```

Retrieve an access token for the scope **internal_identity_mgt_view**

Use the access token in bearer header and try to view the available challenge questions for a user using https://localhost:9443/t/wso2.com/api/users/v1/8022efd8-01cf-4f29-80fd-9e20641ff6bc/challenges

It returns 403 forbidden error | non_infrastructure | challenge questions api does not work with scope based access control steps to reproduce as provided in identity xml challenge question api is secured with following scopes permission admin manage identity internal identity mgt view internal identity mgt update internal identity mgt create internal identity mgt delete permission admin manage identity internal identity mgt view internal identity mgt update internal identity mgt create internal identity mgt delete retrieve an access token for the scope internal identity mgt view use the access token in bearer header and try to view the available challenge questions for a user using it returns forbidden error | 0 |

13,266 | 10,171,670,742 | IssuesEvent | 2019-08-08 08:56:05 | dart-lang/sdk | https://api.github.com/repos/dart-lang/sdk | closed | Webgl teximage tests timing out flakily on Firefox linux | P3 area-infrastructure type-bug web-libraries | All the webgl tests dealing with textures seem to be timing out flakily on Firefox linux. Marking all tests in fast/canvas/webgl as Timeout, Pass to handle this.

examples of tests that have been seen timing out:

co19/LayoutTests/fast/canvas/webgl/texImage2DImageDataTest_t01

co19/LayoutTests/fast/canvas/webgl/gl-teximage_t01

co19/LayoutTests/fast/canvas/webgl/tex-image-and-sub-image-2d-with-video-rgba4444_t01

co19/LayoutTests/fast/canvas/webgl/tex-image-and-sub-image-2d-with-image-rgb565_t01

| 1.0 | Webgl teximage tests timing out flakily on Firefox linux - All the webgl tests dealing with textures seem to be timing out flakily on Firefox linux. Marking all tests in fast/canvas/webgl as Timeout, Pass to handle this.

examples of tests that have been seen timing out:

co19/LayoutTests/fast/canvas/webgl/texImage2DImageDataTest_t01

co19/LayoutTests/fast/canvas/webgl/gl-teximage_t01

co19/LayoutTests/fast/canvas/webgl/tex-image-and-sub-image-2d-with-video-rgba4444_t01

co19/LayoutTests/fast/canvas/webgl/tex-image-and-sub-image-2d-with-image-rgb565_t01

| infrastructure | webgl teximage tests timing out flakily on firefox linux all the webgl tests dealing with textures seem to be timing out flakily on firefox linux marking all tests in fast canvas webgl as timeout pass to handle this examples of tests that have been seen timing out layouttests fast canvas webgl layouttests fast canvas webgl gl teximage layouttests fast canvas webgl tex image and sub image with video layouttests fast canvas webgl tex image and sub image with image | 1 |

19,177 | 13,199,480,535 | IssuesEvent | 2020-08-14 05:59:39 | aodn/aodn-portal | https://api.github.com/repos/aodn/aodn-portal | opened | Portal is not displaying links from GN3 correctly on step 3 | T1 - IMOS T2 - O&M - Continuous Improvement T3 Information Infrastructure | This issue tracker is only for AODN Portal issues.

### Steps to reproduce the issue

1. Add https://portal.aodn.org.au/search?uuid=e850651b-d65d-495b-8182-5dde35919616 to the Portal

2. Proceed to Info tab on step 2 look at link to acoustic data viewer

3. Proceed to Step 3 look at link to acoustic data viewer

### Expected behaviour

The link on step 3 should be displayed like Acoustic Data Viewer (preview and download raw data) as it is on step 2

### Actual behaviour

It is displayed as simply acoustic.aodn.org.au

| 1.0 | Portal is not displaying links from GN3 correctly on step 3 - This issue tracker is only for AODN Portal issues.

### Steps to reproduce the issue

1. Add https://portal.aodn.org.au/search?uuid=e850651b-d65d-495b-8182-5dde35919616 to the Portal

2. Proceed to Info tab on step 2 look at link to acoustic data viewer

3. Proceed to Step 3 look at link to acoustic data viewer

### Expected behaviour

The link on step 3 should be displayed like Acoustic Data Viewer (preview and download raw data) as it is on step 2

### Actual behaviour

It is displayed as simply acoustic.aodn.org.au

| infrastructure | portal is not displaying links from correctly on step this issue tracker is only for aodn portal issues steps to reproduce the issue add to the portal proceed to info tab on step look at link to acoustic data viewer proceed to step look at link to acoustic data viewer expected behaviour the link on step should be displayed like acoustic data viewer preview and download raw data as it is on step actual behaviour it is displayed as simply acoustic aodn org au | 1 |

24,278 | 17,079,464,759 | IssuesEvent | 2021-07-08 01:28:53 | zowe/docs-site | https://api.github.com/repos/zowe/docs-site | closed | Enhancement - Dark Theme option | area: site-infrastructure type: enhancement | Add a button to site for switching between Regular Theme (light) and Dark Theme. We could use a package like this one (which didn't seem to work with our site when importing it in module.exports in config.js by default, just tried quickly) https://www.npmjs.com/package/vuepress-theme-dark-new

OR borrow ideas from other style sheets to define our own dark theme.

Site would switch CSS styles it reads from based on the state of the button.

Very low priority, just a cool idea, especially for a project focused on developers who I find often prefer dark themes. | 1.0 | Enhancement - Dark Theme option - Add a button to site for switching between Regular Theme (light) and Dark Theme. We could use a package like this one (which didn't seem to work with our site when importing it in module.exports in config.js by default, just tried quickly) https://www.npmjs.com/package/vuepress-theme-dark-new

OR borrow ideas from other style sheets to define our own dark theme.

Site would switch CSS styles it reads from based on the state of the button.

Very low priority, just a cool idea, especially for a project focused on developers who I find often prefer dark themes. | infrastructure | enhancement dark theme option add a button to site for switching between regular theme light and dark theme we could use a package like this one which didn t seem to work with our site when importing it in module exports in config js by default just tried quickly or borrow ideas from other style sheets to define our own dark theme site would switch css styles it reads from based on the state of the button very low priority just a cool idea especially for a project focused on developers who i find often prefer dark themes | 1 |

29,905 | 24,381,438,241 | IssuesEvent | 2022-10-04 08:11:03 | thinktecture/relayserver | https://api.github.com/repos/thinktecture/relayserver | opened | Consolidate logging | enhancement cosmetic infrastructure | Make logging similar to what is done in .NET:

One `LoggerExtensions` class with all `[LoggerMessage]` definitions on it. EventId is counted from 1 per logging extension class.

Extensions class is scoped per project or even per single class when there are a lot of messages.

Examples: See HttpConnectionDispatcher.Log and HttpConnectionManager.Log in SignalR Core. | 1.0 | Consolidate logging - Make logging similar to what is done in .NET:

One `LoggerExtensions` class with all `[LoggerMessage]` definitions on it. EventId is counted from 1 per logging extension class.

Extensions class is scoped per project or even per single class when there are a lot of messages.

Examples: See HttpConnectionDispatcher.Log and HttpConnectionManager.Log in SignalR Core. | infrastructure | consolidate logging make logging similar to what is done in net one loggerextensions class with all definitions on it eventid is counted from per logging extension class extensions class is scoped per project or even per single class when there are a lot of messages examples see httpconnectiondispatcher log and httpconnectionmanager log in signalr core | 1 |

17,238 | 12,260,326,934 | IssuesEvent | 2020-05-06 18:06:24 | NeuronRobotics/nrjavaserial | https://api.github.com/repos/NeuronRobotics/nrjavaserial | closed | Get Gradle to build with Java 1.5 compatibility | infrastructure | #21 asked us to compile for Java 1.5, and we've maintained that between then and now (I think). However, Gradle doesn't run on Java 1.5 – it has a minimum of 1.6. Before we build a new JAR for the 3.12.0 release, we need to figure out the configuration required to get Gradle to target 1.5 from a 1.6 compiler, or to get Gradle to invoke a 1.5 `javac` when running under 1.6.

| 1.0 | Get Gradle to build with Java 1.5 compatibility - #21 asked us to compile for Java 1.5, and we've maintained that between then and now (I think). However, Gradle doesn't run on Java 1.5 – it has a minimum of 1.6. Before we build a new JAR for the 3.12.0 release, we need to figure out the configuration required to get Gradle to target 1.5 from a 1.6 compiler, or to get Gradle to invoke a 1.5 `javac` when running under 1.6.

| infrastructure | get gradle to build with java compatibility asked us to compile for java and we ve maintained that between then and now i think however gradle doesn t run on java – it has a minimum of before we build a new jar for the release we need to figure out the configuration required to get gradle to target from a compiler or to get gradle to invoke a javac when running under | 1 |

30,919 | 25,169,276,242 | IssuesEvent | 2022-11-11 00:42:32 | astropy/astroquery | https://api.github.com/repos/astropy/astroquery | closed | ENH: Add linkcheck to CI | testing infrastructure | As suggested by @bsipocz in https://github.com/astropy/astroquery/pull/1784#discussion_r463344856 .

Might need pinning like https://github.com/astropy/astropy/blob/d9ad8d486e6f792595f7d40bc9951389e5e57992/tox.ini#L82-L86 and then do https://github.com/astropy/astropy/blob/d9ad8d486e6f792595f7d40bc9951389e5e57992/tox.ini#L115 in the `docs` directory. Adapt those to however the CI is set up if you don't use `tox`. | 1.0 | ENH: Add linkcheck to CI - As suggested by @bsipocz in https://github.com/astropy/astroquery/pull/1784#discussion_r463344856 .

Might need pinning like https://github.com/astropy/astropy/blob/d9ad8d486e6f792595f7d40bc9951389e5e57992/tox.ini#L82-L86 and then do https://github.com/astropy/astropy/blob/d9ad8d486e6f792595f7d40bc9951389e5e57992/tox.ini#L115 in the `docs` directory. Adapt those to however the CI is set up if you don't use `tox`. | infrastructure | enh add linkcheck to ci as suggested by bsipocz in might need pinning like and then do in the docs directory adapt those to however the ci is set up if you don t use tox | 1 |

32,512 | 26,747,553,160 | IssuesEvent | 2023-01-30 17:01:08 | opendatahub-io/odh-dashboard | https://api.github.com/repos/opendatahub-io/odh-dashboard | closed | [Feature Request]: Remove Python 2 dependency | kind/enhancement infrastructure priority/normal dependencies | ### Feature description

In order to improve our building process we should find a way to not require installing python 2:

https://github.com/opendatahub-io/odh-dashboard/blob/aaebc79eb2b24d7024e4e97e9b95b75e70eb764c/Dockerfile#L27

Python 2 is needed because it is a dependency of [node-gyp](https://github.com/opendatahub-io/odh-dashboard/blob/main/frontend/package-lock.json#L11757-L11783) which is required by [node-sass](https://github.com/opendatahub-io/odh-dashboard/blob/main/frontend/package-lock.json#L11862-L11884).

[Node-sass is deprecated](https://sass-lang.com/blog/libsass-is-deprecated); an alternative library may not require Python 2.

| 1.0 | [Feature Request]: Remove Python 2 dependency - ### Feature description

In order to improve our building process we should find a way to not require installing python 2:

https://github.com/opendatahub-io/odh-dashboard/blob/aaebc79eb2b24d7024e4e97e9b95b75e70eb764c/Dockerfile#L27

Python 2 is needed because it is a dependency of [node-gyp](https://github.com/opendatahub-io/odh-dashboard/blob/main/frontend/package-lock.json#L11757-L11783) which is required by [node-sass](https://github.com/opendatahub-io/odh-dashboard/blob/main/frontend/package-lock.json#L11862-L11884).

[Node-sass is deprecated](https://sass-lang.com/blog/libsass-is-deprecated); an alternative library may not require Python 2.

| infrastructure | remove python dependency feature description in order to improve our building process we should find a way to not require installing python python is needed because it is a dependency of which is required by finding an alternative library may not require python | 1 |

18,776 | 13,099,116,587 | IssuesEvent | 2020-08-03 20:54:02 | patternfly/patternfly-org | https://api.github.com/repos/patternfly/patternfly-org | closed | Implement new context switcher styles | infrastructure | @redallen commented on [Wed Nov 06 2019](https://github.com/patternfly/gatsby-theme-patternfly-org/issues/102)

Possibly implement this design by @mceledonia. The Core and React versions of the site would just have the dropdown disabled.

Framework - 14px secondary text color RH text medium

React/HTML - 24px primary text color RH text bold

View repo - 14px link text color (#06c) RH text regular

| 1.0 | Implement new context switcher styles - @redallen commented on [Wed Nov 06 2019](https://github.com/patternfly/gatsby-theme-patternfly-org/issues/102)

Possibly implement this design by @mceledonia. The Core and React versions of the site would just have the dropdown disabled.

Framework - 14px secondary text color RH text medium

React/HTML - 24px primary text color RH text bold

View repo - 14px link text color (#06c) RH text regular

| infrastructure | implement new context switcher styles redallen commented on possibly implement this design by mceledonia the core and react versions of the site would just have the dropdown disabled framework secondary text color rh text medium react html primary text color rh text bold view repo link text color rh text regular | 1 |

134,796 | 10,932,208,128 | IssuesEvent | 2019-11-23 16:07:24 | Students-of-the-city-of-Kostroma/Ray-of-hope | https://api.github.com/repos/Students-of-the-city-of-Kostroma/Ray-of-hope | opened | Test the mockup for publishing a new post for WEB | O5 PR4 Post Sprint 7 Testing WEB-frontend | Epic #97

Story #98

Test the WEB post mockups created while completing task #116 for compliance with the requirements.

File bugs as they are found.

| 1.0 | Test the mockup for publishing a new post for WEB - Epic #97

Story #98

Test the WEB post mockups created while completing task #116 for compliance with the requirements.

File bugs as they are found.

| non_infrastructure | test the mockup for publishing a new post for web epic story test the web post mockups created while completing task for compliance with the requirements file bugs as they are found | 0 |

18,223 | 12,837,640,677 | IssuesEvent | 2020-07-07 16:05:54 | blockframes/blockframes | https://api.github.com/repos/blockframes/blockframes | closed | Setup Firebase Local UI Emulator | Infrastructure | Setup the Firebase Local UI Emulator

&

Run the app against this.

| 1.0 | Setup Firebase Local UI Emulator - Setup the Firebase Local UI Emulator

&

Run the app against this.

| infrastructure | setup firebase local ui emulator setup the firebase local ui emulator run the app against this | 1 |

5,302 | 5,557,638,810 | IssuesEvent | 2017-03-24 12:41:32 | datasciencebr/serenata-de-amor | https://api.github.com/repos/datasciencebr/serenata-de-amor | closed | Plugin to use Neo4j within Jupyter notebooks | hackathon infrastructure medium | This is not an issue that will generate code for Serenata de Amor, but will create a whole new package.

Using a graph database will help us in Serenata de Amor, and I ran into @nicolewhite's repo that makes great dataviz using Neo4j inside Jupyter notebooks (check this [example](http://nicolewhite.github.io/neo4j-jupyter/hello-world.html) and the main [repo](https://github.com/nicolewhite/neo4j-jupyter)).

My idea (and via tweet Nicole said she's fine with that) is to pack her code into a package installable via `pip`. By adding the module to the Serenata de Amor repo, we can use the dataviz in our notebooks. That's the idea. | 1.0 | Plugin to use Neo4j within Jupyter notebooks - This is not an issue that will generate code for Serenata de Amor, but will create a whole new package.

Using a graph database will help us in Serenata de Amor, and I ran into @nicolewhite's repo that makes great dataviz using Neo4j inside Jupyter notebooks (check this [example](http://nicolewhite.github.io/neo4j-jupyter/hello-world.html) and the main [repo](https://github.com/nicolewhite/neo4j-jupyter)).

My idea (and via tweet Nicole said she's fine with that) is to pack her code into a package installable via `pip`. By adding the module to the Serenata de Amor repo, we can use the dataviz in our notebooks. That's the idea. | infrastructure | plugin to use within jupyter notebooks this is not an issue that will generate code for serenata de amor but will create a whole new package using a graph database will help us in serenata de amor and i ran into nicolewhite s repo that makes great dataviz using inside jupyter notebooks check this and the main my idea and via tweet nicole said she s fine with that is to pack her code into a package installable via pip by adding the module to the serenata de amor repo we can use the dataviz in our notebooks that s the idea | 1 |

8,706 | 7,573,419,161 | IssuesEvent | 2018-04-23 17:43:08 | dart-lang/site-www | https://api.github.com/repos/dart-lang/site-www | opened | Document how to link to sites, search for non-macro links | Infrastructure | I think we need a tiny wiki doc (or section of a doc) about how to link to various sites. E.g. I just approved a PR that links directly to webdev.dartlang.org, but it would be nice to be able to point contributors to a clear list of the macros to use instead. It'd be similar to https://github.com/dart-lang/site-www/wiki/Referring-to-API-docs. Maybe it would incorporate it.

(And, obviously, we should search for that webdev.dartlang.org link and fix it. It wasn't worth holding up the PR for.)

Not sure whether this doc ultimately belongs in site-shared or here.

/cc @chalin | 1.0 | Document how to link to sites, search for non-macro links - I think we need a tiny wiki doc (or section of a doc) about how to link to various sites. E.g. I just approved a PR that links directly to webdev.dartlang.org, but it would be nice to be able to point contributors to a clear list of the macros to use instead. It'd be similar to https://github.com/dart-lang/site-www/wiki/Referring-to-API-docs. Maybe it would incorporate it.

(And, obviously, we should search for that webdev.dartlang.org link and fix it. It wasn't worth holding up the PR for.)

Not sure whether this doc ultimately belongs in site-shared or here.

/cc @chalin | infrastructure | document how to link to sites search for non macro links i think we need a tiny wiki doc or section of a doc about how to link to various sites e g i just approved a pr that links directly to webdev dartlang org but it would be nice to be able to point contributors to a clear list of the macros to use instead it d be similar to maybe it would incorporate it and obviously we should search for that webdev dartlang org link and fix it it wasn t worth holding up the pr for not sure whether this doc ultimately belongs in site shared or here cc chalin | 1 |

29,087 | 23,709,188,084 | IssuesEvent | 2022-08-30 06:09:25 | UnitTestBot/UTBotJava | https://api.github.com/repos/UnitTestBot/UTBotJava | closed | Night Statistics Monitoring | enhancement infrastructure | **Description**

We want to develop and improve our product and, of course, some changes and their combinations can, according to some statistics, make UTBot worse.

The main idea is to collect statistics after changes are made. However, collecting statistics on a huge project takes too long to do after each push to master, so we will do it every night, when no one is making changes.

We also need to visualize the collected statistics so they are easy to analyze.

| 1.0 | Night Statistics Monitoring - **Description**

We want to develop and improve our product and, of course, some changes and their combinations can, according to some statistics, make UTBot worse.

The main idea is to collect statistics after changes are made. However, collecting statistics on a huge project takes too long to do after each push to master, so we will do it every night, when no one is making changes.

We also need to visualize the collected statistics so they are easy to analyze.

| infrastructure | night statistics monitoring description we want to develop and improve our product and of course some changes and their combinations can according to some statistics make utbot worse the main idea is to collect statistics after changes are made however collecting statistics on a huge project takes too long to do after each push to master so we will do it every night when no one is making changes we also need to visualize the collected statistics so they are easy to analyze | 1 |

8,681 | 7,558,925,878 | IssuesEvent | 2018-04-20 00:53:17 | connormlewis/idb | https://api.github.com/repos/connormlewis/idb | closed | Refine acceptance testing | infrastructure | Need to add acceptance tests that test searching, sorting, and filtering. | 1.0 | Refine acceptance testing - Need to add acceptance tests that test searching, sorting, and filtering. | infrastructure | refine acceptance testing need to add acceptance tests that test searching sorting and filtering | 1 |

12,335 | 9,708,890,720 | IssuesEvent | 2019-05-28 08:50:55 | ethersphere/go-ethereum | https://api.github.com/repos/ethersphere/go-ethereum | closed | Kubernetes: Add grafana dashboards to visualise disk usage | infrastructure | Moved from issue: ethereum/swarm-cluster#77

@nonsense wrote:

>We need to add disk i/o utilisation graphs to both persistent volumes and local vm disks, so that we track disk i/o usage on Swarm.

>We are having a lot of issues with it, with Swarm using 100% of available IOPS on current setup. It would be difficult to improve this and add benchmarking tests unless we can monitor it on K8s. | 1.0 | Kubernetes: Add grafana dashboards to visualise disk usage - Moved from issue: ethereum/swarm-cluster#77

@nonsense wrote:

>We need to add disk i/o utilisation graphs to both persistent volumes and local vm disks, so that we track disk i/o usage on Swarm.

>We are having a lot of issues with it, with Swarm using 100% of available IOPS on current setup. It would be difficult to improve this and add benchmarking tests unless we can monitor it on K8s. | infrastructure | kubernetes add grafana dashboards to visualise disk usage moved from issue ethereum swarm cluster nonsense wrote we need to add disk i o utilisation graphs to both persistent volumes and local vm disks so that we track disk i o usage on swarm we are having a lot of issues with it with swarm using of available iops on current setup it would be difficult to improve this and add benchmarking tests unless we can monitor it on | 1 |

240,134 | 18,293,519,354 | IssuesEvent | 2021-10-05 17:51:09 | FlukeAndFeather/stickleback | https://api.github.com/repos/FlukeAndFeather/stickleback | opened | GIF links broken in PyPI README | documentation | See https://pypi.org/project/stickleback/0.1.1/, section "Visualize sensor and event data". Use absolute URLs or something instead? | 1.0 | GIF links broken in PyPI README - See https://pypi.org/project/stickleback/0.1.1/, section "Visualize sensor and event data". Use absolute URLs or something instead? | non_infrastructure | gif links broken in pypi readme see section visualize sensor and event data use absolute urls or something instead | 0 |

5,513 | 5,714,936,500 | IssuesEvent | 2017-04-19 11:49:58 | m-labs/artiq | https://api.github.com/repos/m-labs/artiq | closed | conda leaves build files around | area:infrastructure | After a build, files are left over in ``/var/lib/buildbot/slaves/debian-stretch-amd64-2/miniconda/conda-bld``. This wastes disk space, and more importantly randomly breaks builds when the file names conflict, resulting in errors like:

``fatal: destination path '/var/lib/buildbot/slaves/debian-stretch-amd64-2/miniconda/conda-bld/artiq-kc705-nist_clock_1491023827445/work' already exists and is not an empty directory.`` | 1.0 | conda leaves build files around - After a build, files are left over in ``/var/lib/buildbot/slaves/debian-stretch-amd64-2/miniconda/conda-bld``. This wastes disk space, and more importantly randomly breaks builds when the file names conflict, resulting in errors like:

``fatal: destination path '/var/lib/buildbot/slaves/debian-stretch-amd64-2/miniconda/conda-bld/artiq-kc705-nist_clock_1491023827445/work' already exists and is not an empty directory.`` | infrastructure | conda leaves build files around after a build files are left over in var lib buildbot slaves debian stretch miniconda conda bld this wastes disk space and more importantly randomly breaks builds when the file names conflict resulting in errors like fatal destination path var lib buildbot slaves debian stretch miniconda conda bld artiq nist clock work already exists and is not an empty directory | 1 |

14,657 | 10,209,341,898 | IssuesEvent | 2019-08-14 12:29:51 | microsoft/azure-pipelines-tasks | https://api.github.com/repos/microsoft/azure-pipelines-tasks | closed | Add support for multiple deployments in parallel in AzureRmWebAppDeploymentV4 | Area: AzureAppService Area: Release enhancement | ## Required Information

**Question, Bug, or Feature?**

*Type*: Feature

**Enter Task Name**: AzureRmWebAppDeployment

## Environment

- Server - Azure Pipelines or TFS on-premises?

- Azure Pipelines

- Agent - Hosted or Private:

- Private with Windows

## Issue Description

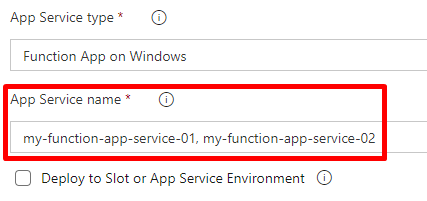

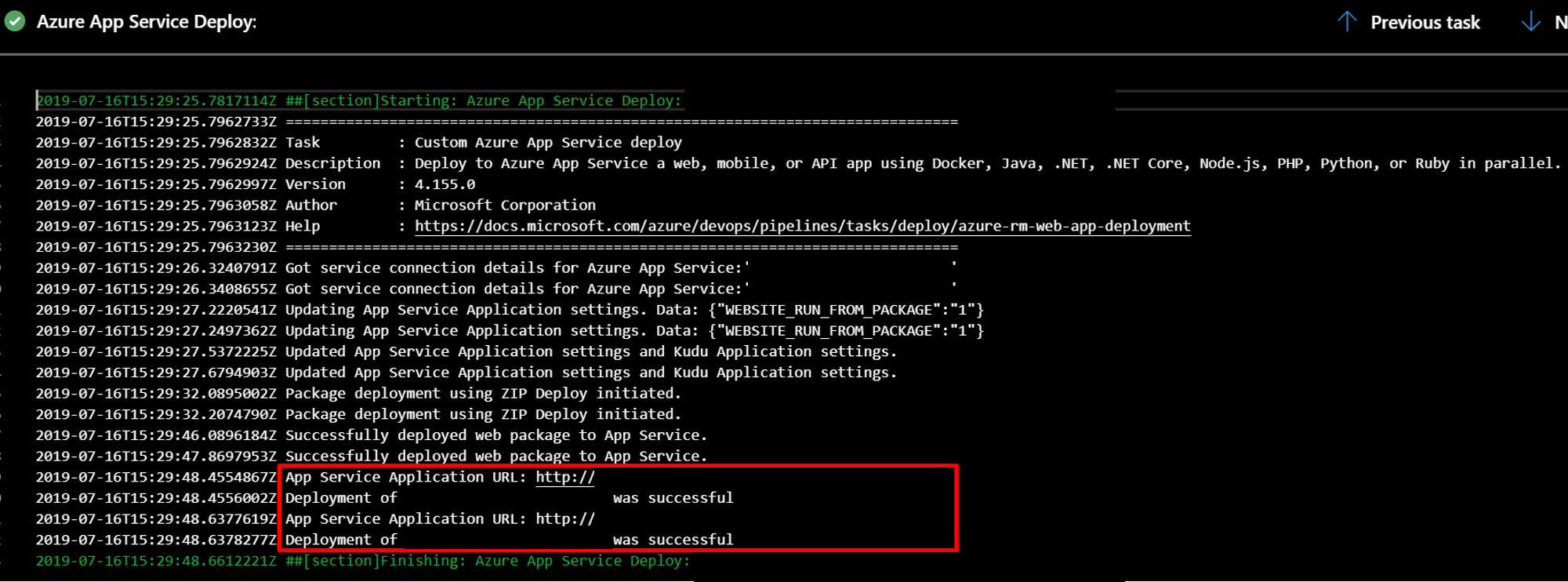

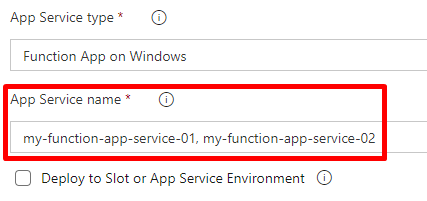

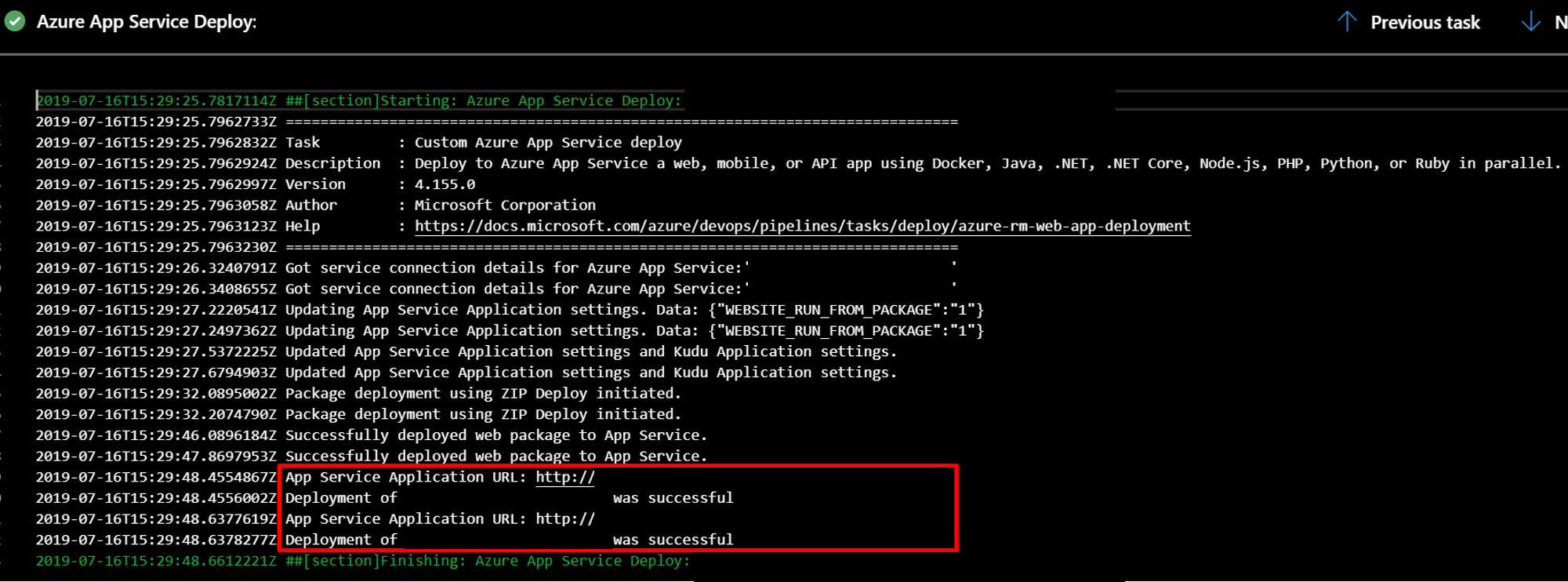

Currently, the AzureRmWebAppDeploymentV4 task allows deploying to only a single Azure App Service. We created a pull request to update it to allow parallel deployments to multiple Azure App Services. Link to the PR: [10909](https://github.com/microsoft/azure-pipelines-tasks/pull/10909)

This pull request adds support for deploying to multiple Azure App Services in one task execution. The services are specified in the App Service name field of the task separated by commas.

The task reads the list of names and deploys the app to the services in parallel.

A pre-release version was tested locally and in Azure Pipelines. The names of the apps were hidden from the picture:

Thank you for your time. | 1.0 | Add support for multiple deployments in parallel in AzureRmWebAppDeploymentV4 - ## Required Information

**Question, Bug, or Feature?**

*Type*: Feature

**Enter Task Name**: AzureRmWebAppDeployment

## Environment

- Server - Azure Pipelines or TFS on-premises?

- Azure Pipelines

- Agent - Hosted or Private:

- Private with Windows

## Issue Description

Currently, the AzureRmWebAppDeploymentV4 task allows deploying to only a single Azure App Service. We created a pull request to update it to allow parallel deployments to multiple Azure App Services. Link to the PR: [10909](https://github.com/microsoft/azure-pipelines-tasks/pull/10909)

This pull request adds support for deploying to multiple Azure App Services in one task execution. The services are specified in the App Service name field of the task separated by commas.

The task reads the list of names and deploys the app to the services in parallel.

A pre-release version was tested locally and in Azure Pipelines. The names of the apps were hidden from the picture:

Thank you for your time. | non_infrastructure | add support for multiple deployments in parallel in required information question bug or feature type feature enter task name azurermwebappdeployment environment server azure pipelines or tfs on premises azure pipelines agent hosted or private private with windows issue description currently the task allows deploying to only a single azure app service we created a pull request to update it to allow parallel deployments to multiple azure app services link to the pr this pull request adds support for deploying to multiple azure app services in one task execution the services are specified in the app service name field of the task separated by commas the task reads the list of names and deploys the app to the services in parallel a pre release version was tested locally and in azure pipelines the names of the apps were hidden from the picture thank you for your time | 0 |