Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

30,253 | 24,701,374,935 | IssuesEvent | 2022-10-19 15:31:01 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | build-android-rootfs.sh Must be fix the "termux" URL | help wanted area-Infrastructure-coreclr | The

https://github.com/dotnet/runtime/blob/main/eng/common/cross/build-android-rootfs.sh

Go to line 100.

Find the string:

http://termux.net/

Replace all occurrences with:

https://packages.termux.dev/termux-main-21/

Test that the build succeeds:

./build.sh --os Android

or

ROOTFS_DIR=$(realpath /Home/runtime_linux/.tools/android-rootfs/android-ndk-r21/sysroot) ./build.sh --cross --arch arm64 --subset mono

@steveisok | 1.0 | build-android-rootfs.sh Must be fix the "termux" URL - The

https://github.com/dotnet/runtime/blob/main/eng/common/cross/build-android-rootfs.sh

Go to line 100.

Find the string:

http://termux.net/

Replace all occurrences with:

https://packages.termux.dev/termux-main-21/

Test that the build succeeds:

./build.sh --os Android

or

ROOTFS_DIR=$(realpath /Home/runtime_linux/.tools/android-rootfs/android-ndk-r21/sysroot) ./build.sh --cross --arch arm64 --subset mono

@steveisok | infrastructure | build android rootfs sh must be fix the termux url the go line find string replace all to build succeeded test build sh os android or rootfs dir realpath home runtime linux tools android rootfs android ndk sysroot build sh cross arch subset mono steveisok | 1 |

85,900 | 16,759,626,193 | IssuesEvent | 2021-06-13 14:15:14 | joomla/joomla-cms | https://api.github.com/repos/joomla/joomla-cms | closed | [com_fields] Field type media. Wrong default value/path in article edit. | J3 Issue No Code Attached Yet | ### Steps to reproduce the issue

- Create a custom field for articles of type `Media`

- Select a directory and enter an existing image name inside that directory as Default Value

- Save the field

- Open an article and see:

- **The path is wrong. It should be `images/banners/shop-ad.jpg`**

- Don't change the media field and save the article.

- See the article in frontend.

### Expected result

- Field resolves the directory path correctly.

### Actual result

- The directory path is ignored, and one has to select the media again (in any article).

| 1.0 | [com_fields] Field type media. Wrong default value/path in article edit. - ### Steps to reproduce the issue

- Create a custom field for articles of type `Media`

- Select a directory and enter an existing image name inside that directory as Default Value

- Save the field

- Open an article and see:

- **The path is wrong. It should be `images/banners/shop-ad.jpg`**

- Don't change the media field and save the article.

- See the article in frontend.

### Expected result

- Field resolves the directory path correctly.

### Actual result

- The directory path is ignored, and one has to select the media again (in any article).

| non_infrastructure | field type media wrong default value path in article edit steps to reproduce the issue create a custom field for articles of type media select a directory and enter an existing image name inside that directory as default value save the field open an article and see the path is wrong it should be images banners shop ad jpg don t change the media field and save the article see the article in frontend expected result field resolves the directory path correctly actual result directory path ignored and one have to select the media again in any article | 0 |

301,435 | 26,047,941,148 | IssuesEvent | 2022-12-22 15:55:14 | openhab/openhab-core | https://api.github.com/repos/openhab/openhab-core | opened | ThreadPoolManagerTest unstable | test | This test failed in a GHA Windows build: https://github.com/wborn/openhab-core/actions/runs/3758246710/jobs/6386349876

```

[ERROR] Tests run: 8, Failures: 1, Errors: 0, Skipped: 0, Time elapsed: 3.194 s <<< FAILURE! - in org.openhab.core.common.ThreadPoolManagerTest

[ERROR] org.openhab.core.common.ThreadPoolManagerTest.testGetPoolShutdown Time elapsed: 1.57 s <<< FAILURE!

org.opentest4j.AssertionFailedError: Checking if thread pool Test works ==> expected: <true> but was: <false>

at org.junit.jupiter.api.AssertionUtils.fail(AssertionUtils.java:55)

at org.junit.jupiter.api.AssertTrue.assertTrue(AssertTrue.java:40)

at org.junit.jupiter.api.Assertions.assertTrue(Assertions.java:210)

at org.openhab.core.common.ThreadPoolManagerTest.checkThreadPoolWorks(ThreadPoolManagerTest.java:128)

at org.openhab.core.common.ThreadPoolManagerTest.testGetPoolShutdown(ThreadPoolManagerTest.java:108)

``` | 1.0 | ThreadPoolManagerTest unstable - This test failed in a GHA Windows build: https://github.com/wborn/openhab-core/actions/runs/3758246710/jobs/6386349876

```

[ERROR] Tests run: 8, Failures: 1, Errors: 0, Skipped: 0, Time elapsed: 3.194 s <<< FAILURE! - in org.openhab.core.common.ThreadPoolManagerTest

[ERROR] org.openhab.core.common.ThreadPoolManagerTest.testGetPoolShutdown Time elapsed: 1.57 s <<< FAILURE!

org.opentest4j.AssertionFailedError: Checking if thread pool Test works ==> expected: <true> but was: <false>

at org.junit.jupiter.api.AssertionUtils.fail(AssertionUtils.java:55)

at org.junit.jupiter.api.AssertTrue.assertTrue(AssertTrue.java:40)

at org.junit.jupiter.api.Assertions.assertTrue(Assertions.java:210)

at org.openhab.core.common.ThreadPoolManagerTest.checkThreadPoolWorks(ThreadPoolManagerTest.java:128)

at org.openhab.core.common.ThreadPoolManagerTest.testGetPoolShutdown(ThreadPoolManagerTest.java:108)

``` | non_infrastructure | threadpoolmanagertest unstable this test failed in a gha windows build tests run failures errors skipped time elapsed s failure in org openhab core common threadpoolmanagertest org openhab core common threadpoolmanagertest testgetpoolshutdown time elapsed s failure org assertionfailederror checking if thread pool test works expected but was at org junit jupiter api assertionutils fail assertionutils java at org junit jupiter api asserttrue asserttrue asserttrue java at org junit jupiter api assertions asserttrue assertions java at org openhab core common threadpoolmanagertest checkthreadpoolworks threadpoolmanagertest java at org openhab core common threadpoolmanagertest testgetpoolshutdown threadpoolmanagertest java | 0 |

475,010 | 13,685,839,211 | IssuesEvent | 2020-09-30 07:48:25 | zeebe-io/zeebe | https://api.github.com/repos/zeebe-io/zeebe | closed | Detect issues when upgrading the broker to a new version | Impact: Availability Impact: Data Priority: Critical Scope: broker Status: In Progress Status: Planned Type: Enhancement | **Is your feature request related to a problem? Please describe.**

Currently, it can happen that the upgrade of the broker to a new version fails (e.g. #5268). As a user, it is not clear how to detect these failures and how to solve them.

**Describe the solution you'd like**

On startup with a new version, the broker detects if there are any issues that prevent a successful upgrade.

If it detects an issue, then it informs the user how to solve it. This can be a good error message and documentation that explains the procedure in detail.

**Describe alternatives you've considered**

* fix the upgrade issues on-the-fly: would be nice but this is complicated and error-prone

* build a tool for the detection: it requires too much internal logic from the workflow engine that can't be extracted

**Additional context**

Current upgrade issues: #5268, #5251

| 1.0 | Detect issues when upgrading the broker to a new version - **Is your feature request related to a problem? Please describe.**

Currently, it can happen that the upgrade of the broker to a new version fails (e.g. #5268). As a user, it is not clear how to detect these failures and how to solve them.

**Describe the solution you'd like**

On startup with a new version, the broker detects if there are any issues that prevent a successful upgrade.

If it detects an issue, then it informs the user how to solve it. This can be a good error message and documentation that explains the procedure in detail.

**Describe alternatives you've considered**

* fix the upgrade issues on-the-fly: would be nice but this is complicated and error-prone

* build a tool for the detection: it requires too much internal logic from the workflow engine that can't be extracted

**Additional context**

Current upgrade issues: #5268, #5251

| non_infrastructure | detect issues when upgrading the broker to a new version is your feature request related to a problem please describe currently it can happen that the upgrade of the broker to a new version fails e g as a user it is not clear how to detect these failures and how to solve them describe the solution you d like on startup with a new version the broker detects if there are any issues that prevent a successful upgrade if it detects an issue then it informs the user how to solve this issue this can be a good error message and documentation that explain the procedure in detail describe alternatives you ve considered fix the upgrade issues on the fly would be nice but this is complicated and error prone build a tool for the detection it requires too much internal logic from the workflow engine that can t be extracted additional context current upgrade issues | 0 |

6,110 | 6,159,733,973 | IssuesEvent | 2017-06-29 01:38:39 | vmware/docker-volume-vsphere | https://api.github.com/repos/vmware/docker-volume-vsphere | opened | Need test binary to enable verbose mode of gocheck | component/test-infrastructure kind/enhancement | To have better time reporting of our e2e tests, we need to enable verbose mode

(done through -check.v flag).

Doing this will allow us to print more suite-level information (time required and whether each individual test suite passed or failed).

We can then use it to print an overall summary at the end of the test-all target.

We also need this kind of binary to properly emit coverage info, so this binary serves two purposes.

CC @shuklanirdesh82 | 1.0 | Need test binary to enable verbose mode of gocheck - To have better time reporting of our e2e tests, we need to enable verbose mode

(done through -check.v flag).

Doing this will allow us to print more suite-level information (time required and whether each individual test suite passed or failed).

We can then use it to print an overall summary at the end of the test-all target.

We also need this kind of binary to properly emit coverage info, so this binary serves two purposes.

CC @shuklanirdesh82 | infrastructure | need test binary to enable verbose mode of gocheck to have better time reporting of our tests we need to enable verbose mode done through check v flag doing this will allow us to spit more suite level information time required and individual test suite pass or fail we can then use it to print overall summary at the end of test all target we also need this kind of binary to properly spit out coverage info so two things are served from this binary cc | 1 |

23,977 | 11,994,922,013 | IssuesEvent | 2020-04-08 14:26:32 | wellcomecollection/platform | https://api.github.com/repos/wellcomecollection/platform | closed | Bag verifier: one of the tests is flaky | 🐛 Bug 📦 Storage service | As seen in https://api.travis-ci.org/v3/job/601215760/log.txt on https://github.com/wellcometrust/storage-service/pull/388:

> 11:23:18.022 [default-akka.actor.default-dispatcher-3] ERROR u.a.w.p.a.c.s.m.IngestStepWorker$$anon$1 - DeterministicFailure(java.lang.Throwable: Payload-Oxum has the wrong number of payload files: 26, but bag manifest has 27,Some(root=qmtisvty/UwHIqF8C/fCmzS9nM/v6, status=incomplete, ingestId=94f2402d-6ee8-44aa-bb9f-cf826a9d78bb, duration=PT0.039472S, durationSeconds=0))

| 1.0 | Bag verifier: one of the tests is flaky - As seen in https://api.travis-ci.org/v3/job/601215760/log.txt on https://github.com/wellcometrust/storage-service/pull/388:

> 11:23:18.022 [default-akka.actor.default-dispatcher-3] ERROR u.a.w.p.a.c.s.m.IngestStepWorker$$anon$1 - DeterministicFailure(java.lang.Throwable: Payload-Oxum has the wrong number of payload files: 26, but bag manifest has 27,Some(root=qmtisvty/UwHIqF8C/fCmzS9nM/v6, status=incomplete, ingestId=94f2402d-6ee8-44aa-bb9f-cf826a9d78bb, duration=PT0.039472S, durationSeconds=0))

| non_infrastructure | bag verifier one of the tests is flaky as seen in on error u a w p a c s m ingeststepworker anon deterministicfailure java lang throwable payload oxum has the wrong number of payload files but bag manifest has some root qmtisvty status incomplete ingestid duration durationseconds | 0 |

18,854 | 13,136,016,219 | IssuesEvent | 2020-08-07 04:47:36 | kubeflow/kfserving | https://api.github.com/repos/kubeflow/kfserving | closed | Separate AdmissionControllers to a different deployment | area/engprod area/infrastructure-feature area/operator kind/feature priority/p2 | /kind feature

**Describe the solution you'd like**

[A clear and concise description of what you want to happen.]

We need to be able to autoscale our admission controller separately to support large volatile clusters with lots of Pod CRUDs.

**Anything else you would like to add:**

[Miscellaneous information that will assist in solving the issue.]

This should be done when upgrading ControllerRuntime to avoid wasted effort.

| 1.0 | Separate AdmissionControllers to a different deployment - /kind feature

**Describe the solution you'd like**

[A clear and concise description of what you want to happen.]

We need to be able to autoscale our admission controller separately to support large volatile clusters with lots of Pod CRUDs.

**Anything else you would like to add:**

[Miscellaneous information that will assist in solving the issue.]

This should be done when upgrading ControllerRuntime to avoid wasted effort.

| infrastructure | separate admissioncontrollers to a different deployment kind feature describe the solution you d like we need to be able to autoscale our admission controller separately to support large volatile clusters with lots of pod cruds anything else you would like to add this should be done when upgrading controllerruntime to avoid wasted effort | 1 |

14,821 | 11,172,377,858 | IssuesEvent | 2019-12-29 05:27:56 | Blacksmoke16/oq | https://api.github.com/repos/Blacksmoke16/oq | closed | oq does not work in a docker container | kind:bug kind:infrastructure status:wip | I have built a Docker container like so:

FROM crystallang/crystal:latest

RUN git clone https://github.com/Blacksmoke16/oq.git

WORKDIR /oq

RUN shards build --production

RUN chmod +x /oq/bin/oq

RUN cp /oq/bin/oq /bin/

ENV PATH /bin/:$PATH

RUN oq --help

------------

I get an error when running oq --help

Sending build context to Docker daemon 5.632kB

Step 1/8 : FROM crystallang/crystal:latest

---> e9906ad8c49f

Step 2/8 : RUN git clone https://github.com/Blacksmoke16/oq.git

---> Using cache

---> 9989d5d29ddb

Step 3/8 : WORKDIR /oq

---> Using cache

---> 9a3f277c8558

Step 4/8 : RUN shards build --production

---> Using cache

---> 1ed78db07894

Step 5/8 : RUN chmod +x /oq/bin/oq

---> Using cache

---> 0ebaf7c94a18

Step 6/8 : RUN cp /oq/bin/oq /bin/

---> Using cache

---> e02d9d996fff

Step 7/8 : ENV PATH /bin/:$PATH

---> Using cache

---> 6bc2e585cc6e

Step 8/8 : RUN oq --help

---> Running in d0822a0f3af4

Failed to raise an exception: END_OF_STACK

[0x490bb6] *CallStack::print_backtrace:Int32 +118

[0x46d466] __crystal_raise +86

[0x46d98e] ???

[0x4bbab6] *Crystal::System::File::open<String, String, File::Permissions>:Int32 +214

[0x4b7ec3] *File::new<String, String, File::Permissions, Nil, Nil>:File +67

[0x48785d] *CallStack::read_dwarf_sections:(Array(Tuple(UInt64, UInt64, String)) | Nil) +109

[0x4875ed] *CallStack::decode_line_number<UInt64>:Tuple(String, Int32, Int32) +45

[0x486d78] *CallStack#decode_backtrace:Array(String) +296

[0x486c32] *CallStack#printable_backtrace:Array(String) +50

[0x4f049d] *Exception+ +77

[0x4f02e8] *Exception+ +120

[0x4ec07a] *AtExitHandlers::run<Int32>:Int32 +490

[0x55510b] *Crystal::main<Int32, Pointer(Pointer(UInt8))>:Int32 +139

[0x477e76] main +6

[0x7f0eb572e830] __libc_start_main +240

[0x46ba19] _start +41

[0x0] ???

The command '/bin/sh -c oq --help' returned a non-zero code: 5

------------

Please can you help identify the issue. Thanks. | 1.0 | oq does not work in a docker container - I have built a Docker container like so:

FROM crystallang/crystal:latest

RUN git clone https://github.com/Blacksmoke16/oq.git

WORKDIR /oq

RUN shards build --production

RUN chmod +x /oq/bin/oq

RUN cp /oq/bin/oq /bin/

ENV PATH /bin/:$PATH

RUN oq --help

------------

I get an error when running oq --help

Sending build context to Docker daemon 5.632kB

Step 1/8 : FROM crystallang/crystal:latest

---> e9906ad8c49f

Step 2/8 : RUN git clone https://github.com/Blacksmoke16/oq.git

---> Using cache

---> 9989d5d29ddb

Step 3/8 : WORKDIR /oq

---> Using cache

---> 9a3f277c8558

Step 4/8 : RUN shards build --production

---> Using cache

---> 1ed78db07894

Step 5/8 : RUN chmod +x /oq/bin/oq

---> Using cache

---> 0ebaf7c94a18

Step 6/8 : RUN cp /oq/bin/oq /bin/

---> Using cache

---> e02d9d996fff

Step 7/8 : ENV PATH /bin/:$PATH

---> Using cache

---> 6bc2e585cc6e

Step 8/8 : RUN oq --help

---> Running in d0822a0f3af4

Failed to raise an exception: END_OF_STACK

[0x490bb6] *CallStack::print_backtrace:Int32 +118

[0x46d466] __crystal_raise +86

[0x46d98e] ???

[0x4bbab6] *Crystal::System::File::open<String, String, File::Permissions>:Int32 +214

[0x4b7ec3] *File::new<String, String, File::Permissions, Nil, Nil>:File +67

[0x48785d] *CallStack::read_dwarf_sections:(Array(Tuple(UInt64, UInt64, String)) | Nil) +109

[0x4875ed] *CallStack::decode_line_number<UInt64>:Tuple(String, Int32, Int32) +45

[0x486d78] *CallStack#decode_backtrace:Array(String) +296

[0x486c32] *CallStack#printable_backtrace:Array(String) +50

[0x4f049d] *Exception+ +77

[0x4f02e8] *Exception+ +120

[0x4ec07a] *AtExitHandlers::run<Int32>:Int32 +490

[0x55510b] *Crystal::main<Int32, Pointer(Pointer(UInt8))>:Int32 +139

[0x477e76] main +6

[0x7f0eb572e830] __libc_start_main +240

[0x46ba19] _start +41

[0x0] ???

The command '/bin/sh -c oq --help' returned a non-zero code: 5

------------

Please can you help identify the issue. Thanks. | infrastructure | oq does not work in a docker container i have build a docker container like so from crystallang crystal latest run git clone workdir oq run shards build production run chmod x oq bin oq run cp oq bin oq bin env path bin path run oq help i get an error when running oq help sending build context to docker daemon step from crystallang crystal latest step run git clone using cache step workdir oq using cache step run shards build production using cache step run chmod x oq bin oq using cache step run cp oq bin oq bin using cache step env path bin path using cache step run oq help running in failed to raise an exception end of stack callstack print backtrace crystal raise crystal system file open file new file callstack read dwarf sections array tuple string nil callstack decode line number tuple string callstack decode backtrace array string callstack printable backtrace array string exception exception atexithandlers run crystal main main libc start main start the command bin sh c oq help returned a non zero code please can you help identify the issue thanks | 1 |

24,394 | 17,191,179,005 | IssuesEvent | 2021-07-16 11:11:44 | google/site-kit-wp | https://api.github.com/repos/google/site-kit-wp | opened | Add viewContext for all top-level React apps | P1 Type: Infrastructure | ## Feature Description

The `Root` component that we use for all top-level React apps has a `viewContext` prop that was introduced initially for providing view/screen context for [feature tours](https://github.com/google/site-kit-wp/issues/2649). It was later also integrated with our `ErrorHandler` (boundary) to provide more contextual error codes for tracking React errors.

However, the prop was initially optional because we only needed feature tours in a few select places so it didn't make sense at the time to define this in all instances. For errors however, we do see these raised on all screens (for one reason or another) and this results in less contextual error codes because it has `null` where there would otherwise be a named view context.

---------------

_Do not alter or remove anything below. The following sections will be managed by moderators only._

## Acceptance criteria

* The `viewContext` prop of the `Root` component should be promoted to be a required prop

* [View context constants](https://github.com/google/site-kit-wp/blob/6123efaea980ad2c4179a2995b1d7c6178b010da/assets/js/googlesitekit/constants.js) should be added for all missing contexts (please list in IB)

* All instances of `Root` missing this prop should be updated to provide it via the respective constant

## Implementation Brief

* <!-- One or more bullet points for how to technically implement the feature. -->

### Test Coverage

* <!-- One or more bullet points for how to implement automated tests to verify the feature works. -->

### Visual Regression Changes

* <!-- One or more bullet points describing how the feature will affect visual regression tests, if applicable. -->

## QA Brief

* <!-- One or more bullet points for how to test that the feature works as expected. -->

## Changelog entry

* <!-- One sentence summarizing the PR, to be used in the changelog. -->

| 1.0 | Add viewContext for all top-level React apps - ## Feature Description

The `Root` component that we use for all top-level React apps has a `viewContext` prop that was introduced initially for providing view/screen context for [feature tours](https://github.com/google/site-kit-wp/issues/2649). It was later also integrated with our `ErrorHandler` (boundary) to provide more contextual error codes for tracking React errors.

However, the prop was initially optional because we only needed feature tours in a few select places so it didn't make sense at the time to define this in all instances. For errors however, we do see these raised on all screens (for one reason or another) and this results in less contextual error codes because it has `null` where there would otherwise be a named view context.

---------------

_Do not alter or remove anything below. The following sections will be managed by moderators only._

## Acceptance criteria

* The `viewContext` prop of the `Root` component should be promoted to be a required prop

* [View context constants](https://github.com/google/site-kit-wp/blob/6123efaea980ad2c4179a2995b1d7c6178b010da/assets/js/googlesitekit/constants.js) should be added for all missing contexts (please list in IB)

* All instances of `Root` missing this prop should be updated to provide it via the respective constant

## Implementation Brief

* <!-- One or more bullet points for how to technically implement the feature. -->

### Test Coverage

* <!-- One or more bullet points for how to implement automated tests to verify the feature works. -->

### Visual Regression Changes

* <!-- One or more bullet points describing how the feature will affect visual regression tests, if applicable. -->

## QA Brief

* <!-- One or more bullet points for how to test that the feature works as expected. -->

## Changelog entry

* <!-- One sentence summarizing the PR, to be used in the changelog. -->

| infrastructure | add viewcontext for all top level react apps feature description the root component that we use for all top level react apps has a viewcontext prop that was introduced initially for providing view screen context for it was later also integrated with our errorhandler boundary to provide more contextual error codes for tracking react errors however the prop was initially optional because we only needed feature tours in a few select places so it didn t make sense at the time to define this in all instances for errors however we do see these raised on all screens for one reason or another and this results in less contextual error codes because it has null where there would otherwise be a named view context do not alter or remove anything below the following sections will be managed by moderators only acceptance criteria the viewcontext prop of the root component should be promoted to be a required prop should be added for all missing contexts please list in ib all instances of root missing this prop should be updated to provide it via the respective constant implementation brief test coverage visual regression changes qa brief changelog entry | 1 |

120,568 | 17,644,231,593 | IssuesEvent | 2021-08-20 02:00:55 | fbennets/HCLC-GDPR-Bot | https://api.github.com/repos/fbennets/HCLC-GDPR-Bot | opened | CVE-2021-29522 (Medium) detected in tensorflow-2.1.0-cp27-cp27mu-manylinux2010_x86_64.whl | security vulnerability | ## CVE-2021-29522 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tensorflow-2.1.0-cp27-cp27mu-manylinux2010_x86_64.whl</b></p></summary>

<p>TensorFlow is an open source machine learning framework for everyone.</p>

<p>Library home page: <a href="https://files.pythonhosted.org/packages/ef/73/205b5e7f8fe086ffe4165d984acb2c49fa3086f330f03099378753982d2e/tensorflow-2.1.0-cp27-cp27mu-manylinux2010_x86_64.whl">https://files.pythonhosted.org/packages/ef/73/205b5e7f8fe086ffe4165d984acb2c49fa3086f330f03099378753982d2e/tensorflow-2.1.0-cp27-cp27mu-manylinux2010_x86_64.whl</a></p>

<p>Path to dependency file: HCLC-GDPR-Bot/requirements.txt</p>

<p>Path to vulnerable library: HCLC-GDPR-Bot/requirements.txt</p>

<p>

Dependency Hierarchy:

- tensorflow_addons-0.7.1-cp27-cp27mu-manylinux2010_x86_64.whl (Root Library)

- :x: **tensorflow-2.1.0-cp27-cp27mu-manylinux2010_x86_64.whl** (Vulnerable Library)

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

TensorFlow is an end-to-end open source platform for machine learning. The `tf.raw_ops.Conv3DBackprop*` operations fail to validate that the input tensors are not empty. In turn, this would result in a division by 0. This is because the implementation(https://github.com/tensorflow/tensorflow/blob/a91bb59769f19146d5a0c20060244378e878f140/tensorflow/core/kernels/conv_grad_ops_3d.cc#L430-L450) does not check that the divisor used in computing the shard size is not zero. Thus, if attacker controls the input sizes, they can trigger a denial of service via a division by zero error. The fix will be included in TensorFlow 2.5.0. We will also cherrypick this commit on TensorFlow 2.4.2, TensorFlow 2.3.3, TensorFlow 2.2.3 and TensorFlow 2.1.4, as these are also affected and still in supported range.

<p>Publish Date: 2021-05-14

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-29522>CVE-2021-29522</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/tensorflow/tensorflow/security/advisories/GHSA-c968-pq7h-7fxv">https://github.com/tensorflow/tensorflow/security/advisories/GHSA-c968-pq7h-7fxv</a></p>

<p>Release Date: 2021-05-14</p>

<p>Fix Resolution: tensorflow - 2.5.0, tensorflow-cpu - 2.5.0, tensorflow-gpu - 2.5.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2021-29522 (Medium) detected in tensorflow-2.1.0-cp27-cp27mu-manylinux2010_x86_64.whl - ## CVE-2021-29522 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tensorflow-2.1.0-cp27-cp27mu-manylinux2010_x86_64.whl</b></p></summary>

<p>TensorFlow is an open source machine learning framework for everyone.</p>

<p>Library home page: <a href="https://files.pythonhosted.org/packages/ef/73/205b5e7f8fe086ffe4165d984acb2c49fa3086f330f03099378753982d2e/tensorflow-2.1.0-cp27-cp27mu-manylinux2010_x86_64.whl">https://files.pythonhosted.org/packages/ef/73/205b5e7f8fe086ffe4165d984acb2c49fa3086f330f03099378753982d2e/tensorflow-2.1.0-cp27-cp27mu-manylinux2010_x86_64.whl</a></p>

<p>Path to dependency file: HCLC-GDPR-Bot/requirements.txt</p>

<p>Path to vulnerable library: HCLC-GDPR-Bot/requirements.txt</p>

<p>

Dependency Hierarchy:

- tensorflow_addons-0.7.1-cp27-cp27mu-manylinux2010_x86_64.whl (Root Library)

- :x: **tensorflow-2.1.0-cp27-cp27mu-manylinux2010_x86_64.whl** (Vulnerable Library)

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

TensorFlow is an end-to-end open source platform for machine learning. The `tf.raw_ops.Conv3DBackprop*` operations fail to validate that the input tensors are not empty. In turn, this would result in a division by 0. This is because the implementation(https://github.com/tensorflow/tensorflow/blob/a91bb59769f19146d5a0c20060244378e878f140/tensorflow/core/kernels/conv_grad_ops_3d.cc#L430-L450) does not check that the divisor used in computing the shard size is not zero. Thus, if attacker controls the input sizes, they can trigger a denial of service via a division by zero error. The fix will be included in TensorFlow 2.5.0. We will also cherrypick this commit on TensorFlow 2.4.2, TensorFlow 2.3.3, TensorFlow 2.2.3 and TensorFlow 2.1.4, as these are also affected and still in supported range.

<p>Publish Date: 2021-05-14

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-29522>CVE-2021-29522</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/tensorflow/tensorflow/security/advisories/GHSA-c968-pq7h-7fxv">https://github.com/tensorflow/tensorflow/security/advisories/GHSA-c968-pq7h-7fxv</a></p>

<p>Release Date: 2021-05-14</p>

<p>Fix Resolution: tensorflow - 2.5.0, tensorflow-cpu - 2.5.0, tensorflow-gpu - 2.5.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_infrastructure | cve medium detected in tensorflow whl cve medium severity vulnerability vulnerable library tensorflow whl tensorflow is an open source machine learning framework for everyone library home page a href path to dependency file hclc gdpr bot requirements txt path to vulnerable library hclc gdpr bot requirements txt dependency hierarchy tensorflow addons whl root library x tensorflow whl vulnerable library found in base branch master vulnerability details tensorflow is an end to end open source platform for machine learning the tf raw ops operations fail to validate that the input tensors are not empty in turn this would result in a division by this is because the implementation does not check that the divisor used in computing the shard size is not zero thus if attacker controls the input sizes they can trigger a denial of service via a division by zero error the fix will be included in tensorflow we will also cherrypick this commit on tensorflow tensorflow tensorflow and tensorflow as these are also affected and still in supported range publish date url a href cvss score details base score metrics exploitability metrics attack vector local attack complexity low privileges required low user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution tensorflow tensorflow cpu tensorflow gpu step up your open source security game with whitesource | 0 |
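
The advisory in the record above describes a missing check on the divisor used to compute a shard size. The following is only a minimal Python sketch of that kind of guard, not TensorFlow's actual C++ kernel code; the function name `shard_size` and both parameter names are hypothetical.

```python
def shard_size(target_working_set_bytes: int, work_unit_bytes: int) -> int:
    """Return how many work units fit in one shard (hypothetical helper)."""
    # An empty input tensor would make the per-unit size zero, so reject it
    # before it is used as a divisor.
    if work_unit_bytes <= 0:
        raise ValueError("input tensors must be non-empty")
    # Always process at least one unit per shard.
    return max(1, target_working_set_bytes // work_unit_bytes)
```

Rejecting the input up front turns what would be a crash into an ordinary, recoverable error.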

714,400 | 24,560,388,149 | IssuesEvent | 2022-10-12 19:41:11 | interaction-lab/MoveToCode | https://api.github.com/repos/interaction-lab/MoveToCode | closed | Make tutor Kuri communication more salient? | high priority | - [ ] test out new location for text

- [ ] test out voice -> possibly Polly if not just our voice | 1.0 | Make tutor Kuri communication more salient? - - [ ] test out new location for text

- [ ] test out voice -> possibly Polly if not just our voice | non_infrastructure | make tutor kuri communication more salient test out new location for text test out voice possibly polly if not just our voice | 0 |

20,903 | 14,233,776,305 | IssuesEvent | 2020-11-18 12:40:09 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | Unable to build a custom version of the dotnet/runtime repository with a suffix. | area-Infrastructure-coreclr untriaged |

### Description

At Criteo, we build our own custom CLR. We do this because we have changes in the CLR that are specific to our use cases and won't be accepted upstream (already discussed).

To have my _dotnet-runtime-5.0.0-criteo1.tar.gz_ artifact, I use the command:

`./build.sh -c Release /p:VersionSuffix=criteo1`

Then I looked at the _artifacts/packages/Release/Shipping/_ folder to get the artifact and use it.

Steps to reproduce (without applying the patches):

```

git clone https://github.com/dotnet/runtime

cd runtime

git checkout -b 5.0.0-rtm-criteo1 v5.0.0-rtm.20519.4

./build.sh -c Release /p:VersionSuffix=criteo1

```

Since the RTM, I got these errors

```

Build FAILED.

/build/runtime/src/installer/pkg/projects/netcoreapp/sfx/Microsoft.NETCore.App.SharedFx.sfxproj : error NU1605: Detected package downgrade: Microsoft.NETCore.DotNetHostPolicy from 5.0.0 to 5.0.0-criteo1. Reference the package directly from the project to select a different version.

/build/runtime/src/installer/pkg/projects/netcoreapp/sfx/Microsoft.NETCore.App.SharedFx.sfxproj : error NU1605: unused -> Microsoft.NETCore.App.Internal 5.0.0-criteo1 -> Microsoft.NETCore.DotNetHostPolicy (>= 5.0.0)

/build/runtime/src/installer/pkg/projects/netcoreapp/sfx/Microsoft.NETCore.App.SharedFx.sfxproj : error NU1605: unused -> Microsoft.NETCore.DotNetHostPolicy (>= 5.0.0-criteo1)

/build/runtime/src/installer/pkg/projects/netcoreapp/sfx/Microsoft.NETCore.App.SharedFx.sfxproj : error NU1605: Detected package downgrade: Microsoft.NETCore.DotNetHostResolver from 5.0.0 to 5.0.0-criteo1. Reference the package directly from the project to select a different version.

/build/runtime/src/installer/pkg/projects/netcoreapp/sfx/Microsoft.NETCore.App.SharedFx.sfxproj : error NU1605: unused -> Microsoft.NETCore.DotNetHostPolicy 5.0.0-criteo1 -> Microsoft.NETCore.DotNetHostResolver (>= 5.0.0)

/build/runtime/src/installer/pkg/projects/netcoreapp/sfx/Microsoft.NETCore.App.SharedFx.sfxproj : error NU1605: unused -> Microsoft.NETCore.DotNetHostResolver (>= 5.0.0-criteo1)

/build/runtime/src/installer/pkg/projects/netcoreapp/sfx/Microsoft.NETCore.App.SharedFx.sfxproj : error NU1605: Detected package downgrade: Microsoft.NETCore.DotNetAppHost from 5.0.0 to 5.0.0-criteo1. Reference the package directly from the project to select a different version.

/build/runtime/src/installer/pkg/projects/netcoreapp/sfx/Microsoft.NETCore.App.SharedFx.sfxproj : error NU1605: unused -> Microsoft.NETCore.DotNetHostResolver 5.0.0-criteo1 -> Microsoft.NETCore.DotNetAppHost (>= 5.0.0)

/build/runtime/src/installer/pkg/projects/netcoreapp/sfx/Microsoft.NETCore.App.SharedFx.sfxproj : error NU1605: unused -> Microsoft.NETCore.DotNetAppHost (>= 5.0.0-criteo1)

/build/runtime/src/installer/pkg/packaging/installers.proj(50,5): error MSB4181: The "MSBuild" task returned false but did not log an error.

0 Warning(s)

4 Error(s)

```

### Configuration

* We use the CentOS 7 Docker image to build the CLR

### Regression?

I was able to build and publish the dotnet-runtime-5.0.0-criteo1.tar.gz artifact until the RTM release (example: for the RC1 https://github.com/criteo-forks/runtime/releases/tag/v5.0.0-rc1-criteo1)

| 1.0 | Unable to build a custom version of the dotnet/runtime repository with a suffix. -

### Description

At Criteo, we build our own custom CLR. We do this because we have changes in the CLR that are specific to our use cases and won't be accepted upstream (already discussed).

To have my _dotnet-runtime-5.0.0-criteo1.tar.gz_ artifact, I use the command:

`./build.sh -c Release /p:VersionSuffix=criteo1`

Then I looked at the _artifacts/packages/Release/Shipping/_ folder to get the artifact and use it.

Steps to reproduce (without applying the patches):

```

git clone https://github.com/dotnet/runtime

cd runtime

git checkout -b 5.0.0-rtm-criteo1 v5.0.0-rtm.20519.4

./build.sh -c Release /p:VersionSuffix=criteo1

```

Since the RTM, I got these errors

```

Build FAILED.

/build/runtime/src/installer/pkg/projects/netcoreapp/sfx/Microsoft.NETCore.App.SharedFx.sfxproj : error NU1605: Detected package downgrade: Microsoft.NETCore.DotNetHostPolicy from 5.0.0 to 5.0.0-criteo1. Reference the package directly from the project to select a different version.

/build/runtime/src/installer/pkg/projects/netcoreapp/sfx/Microsoft.NETCore.App.SharedFx.sfxproj : error NU1605: unused -> Microsoft.NETCore.App.Internal 5.0.0-criteo1 -> Microsoft.NETCore.DotNetHostPolicy (>= 5.0.0)

/build/runtime/src/installer/pkg/projects/netcoreapp/sfx/Microsoft.NETCore.App.SharedFx.sfxproj : error NU1605: unused -> Microsoft.NETCore.DotNetHostPolicy (>= 5.0.0-criteo1)

/build/runtime/src/installer/pkg/projects/netcoreapp/sfx/Microsoft.NETCore.App.SharedFx.sfxproj : error NU1605: Detected package downgrade: Microsoft.NETCore.DotNetHostResolver from 5.0.0 to 5.0.0-criteo1. Reference the package directly from the project to select a different version.

/build/runtime/src/installer/pkg/projects/netcoreapp/sfx/Microsoft.NETCore.App.SharedFx.sfxproj : error NU1605: unused -> Microsoft.NETCore.DotNetHostPolicy 5.0.0-criteo1 -> Microsoft.NETCore.DotNetHostResolver (>= 5.0.0)

/build/runtime/src/installer/pkg/projects/netcoreapp/sfx/Microsoft.NETCore.App.SharedFx.sfxproj : error NU1605: unused -> Microsoft.NETCore.DotNetHostResolver (>= 5.0.0-criteo1)

/build/runtime/src/installer/pkg/projects/netcoreapp/sfx/Microsoft.NETCore.App.SharedFx.sfxproj : error NU1605: Detected package downgrade: Microsoft.NETCore.DotNetAppHost from 5.0.0 to 5.0.0-criteo1. Reference the package directly from the project to select a different version.

/build/runtime/src/installer/pkg/projects/netcoreapp/sfx/Microsoft.NETCore.App.SharedFx.sfxproj : error NU1605: unused -> Microsoft.NETCore.DotNetHostResolver 5.0.0-criteo1 -> Microsoft.NETCore.DotNetAppHost (>= 5.0.0)

/build/runtime/src/installer/pkg/projects/netcoreapp/sfx/Microsoft.NETCore.App.SharedFx.sfxproj : error NU1605: unused -> Microsoft.NETCore.DotNetAppHost (>= 5.0.0-criteo1)

/build/runtime/src/installer/pkg/packaging/installers.proj(50,5): error MSB4181: The "MSBuild" task returned false but did not log an error.

0 Warning(s)

4 Error(s)

```

### Configuration

* We use the CentOS 7 Docker image to build the CLR

### Regression?

I was able to build and publish the dotnet-runtime-5.0.0-criteo1.tar.gz artifact until the RTM release (example: for the RC1 https://github.com/criteo-forks/runtime/releases/tag/v5.0.0-rc1-criteo1)

| infrastructure | unable to build a custom version of the dotnet runtime repository with a suffix description at criteo we build our own custom clr we do this because we have changes in the clr that are specifics to our use cases and it won t be accepted upstream already discussed to have my dotnet runtime tar gz artifact i use the command build sh c release p versionsuffix then i looked at the artifacts packages release shipping folder to get the artifact and use it steps to reproduce without applying the patches git clone cd runtime git checkout b rtm rtm build sh c release p versionsuffix since the rtm i got these errors build failed build runtime src installer pkg projects netcoreapp sfx microsoft netcore app sharedfx sfxproj error detected package downgrade microsoft netcore dotnethostpolicy from to reference the package directly from the project to select a different version build runtime src installer pkg projects netcoreapp sfx microsoft netcore app sharedfx sfxproj error unused microsoft netcore app internal microsoft netcore dotnethostpolicy build runtime src installer pkg projects netcoreapp sfx microsoft netcore app sharedfx sfxproj error unused microsoft netcore dotnethostpolicy build runtime src installer pkg projects netcoreapp sfx microsoft netcore app sharedfx sfxproj error detected package downgrade microsoft netcore dotnethostresolver from to reference the package directly from the project to select a different version build runtime src installer pkg projects netcoreapp sfx microsoft netcore app sharedfx sfxproj error unused microsoft netcore dotnethostpolicy microsoft netcore dotnethostresolver build runtime src installer pkg projects netcoreapp sfx microsoft netcore app sharedfx sfxproj error unused microsoft netcore dotnethostresolver build runtime src installer pkg projects netcoreapp sfx microsoft netcore app sharedfx sfxproj error detected package downgrade microsoft netcore dotnetapphost from to reference the package directly from the 
project to select a different version build runtime src installer pkg projects netcoreapp sfx microsoft netcore app sharedfx sfxproj error unused microsoft netcore dotnethostresolver microsoft netcore dotnetapphost build runtime src installer pkg projects netcoreapp sfx microsoft netcore app sharedfx sfxproj error unused microsoft netcore dotnetapphost build runtime src installer pkg packaging installers proj error the msbuild task returned false but did not log an error warning s error s configuration we use the docker image to build the clr regression i was able to build and publish the dotnet runtime tar gz artifact until the rtm release example for the | 1 |
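
The NU1605 messages in the record above call `5.0.0-criteo1` a downgrade from `5.0.0`. A likely reading is that the suffix is treated as a prerelease label, and under Semantic Versioning precedence a prerelease sorts before the release with the same numbers. The sketch below illustrates only that ordering rule; the function names are hypothetical, and the comparison of two prerelease labels is simplified to a plain string comparison rather than the full SemVer rule.

```python
def parse(version: str):
    """Split 'X.Y.Z-label' into a numeric tuple and a prerelease label."""
    core, _, prerelease = version.partition("-")
    numbers = tuple(int(part) for part in core.split("."))
    return numbers, prerelease


def precedes(a: str, b: str) -> bool:
    """Return True if version a sorts strictly before version b."""
    (nums_a, pre_a), (nums_b, pre_b) = parse(a), parse(b)
    if nums_a != nums_b:
        return nums_a < nums_b
    # Same numbers: a version with a prerelease label sorts before one without.
    if pre_a and not pre_b:
        return True
    if pre_b and not pre_a:
        return False
    # Simplification: compare the labels as plain strings.
    return pre_a < pre_b


print(precedes("5.0.0-criteo1", "5.0.0"))
```

Under this ordering, a package built as `5.0.0-criteo1` cannot satisfy a dependency declared as `>= 5.0.0`, which matches the shape of the errors quoted in the issue.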

14,941 | 11,255,833,875 | IssuesEvent | 2020-01-12 12:19:43 | stylelint/stylelint | https://api.github.com/repos/stylelint/stylelint | closed | Increase flow coverage | help wanted type: infrastructure | A few places we could gain some ground adding Flow annotations, without diving into the rules.

- [x] lib/utils/getCacheFile

- [x] lib/utils/hash.js

- [x] lib/utils/isAfterSingleLineComment.js

- [x] lib/utils/whitespaceChecker.js

- [x] lib/formatters/needlessDisablesStringFormatter.js

- [ ] lib/formatters/stringFormatter.js

- [x] lib/formatters/verboseFormatter.js

| 1.0 | Increase flow coverage - A few places we could gain some ground adding Flow annotations, without diving into the rules.

- [x] lib/utils/getCacheFile

- [x] lib/utils/hash.js

- [x] lib/utils/isAfterSingleLineComment.js

- [x] lib/utils/whitespaceChecker.js

- [x] lib/formatters/needlessDisablesStringFormatter.js

- [ ] lib/formatters/stringFormatter.js

- [x] lib/formatters/verboseFormatter.js

| infrastructure | increase flow coverage a few places we could gain some ground adding flow annotations without diving into the rules lib utils getcachefile lib utils hash js lib utils isaftersinglelinecomment js lib utils whitespacechecker js lib formatters needlessdisablesstringformatter js lib formatters stringformatter js lib formatters verboseformatter js | 1 |

10,122 | 8,377,499,671 | IssuesEvent | 2018-10-06 01:56:12 | RITlug/TigerOS | https://api.github.com/repos/RITlug/TigerOS | closed | Document Fedora Upgrade Process | infrastructure priority:med | Currently we are upgrading to Fedora 28. However, we don't have a process on how to properly do this.

It would be nice if we had the documentation available to look at with each 6-month release cycle Fedora follows. | 1.0 | Document Fedora Upgrade Process - Currently we are upgrading to Fedora 28. However, we don't have a process on how to properly do this.

It would be nice if we had the documentation available to look at with each 6-month release cycle Fedora follows. | infrastructure | document fedora upgrade process currently we are upgrading to fedora however we don t have a process on how to properly do this it would be nice if we had the documentation available to look at with each month release cycle fedora follows | 1 |

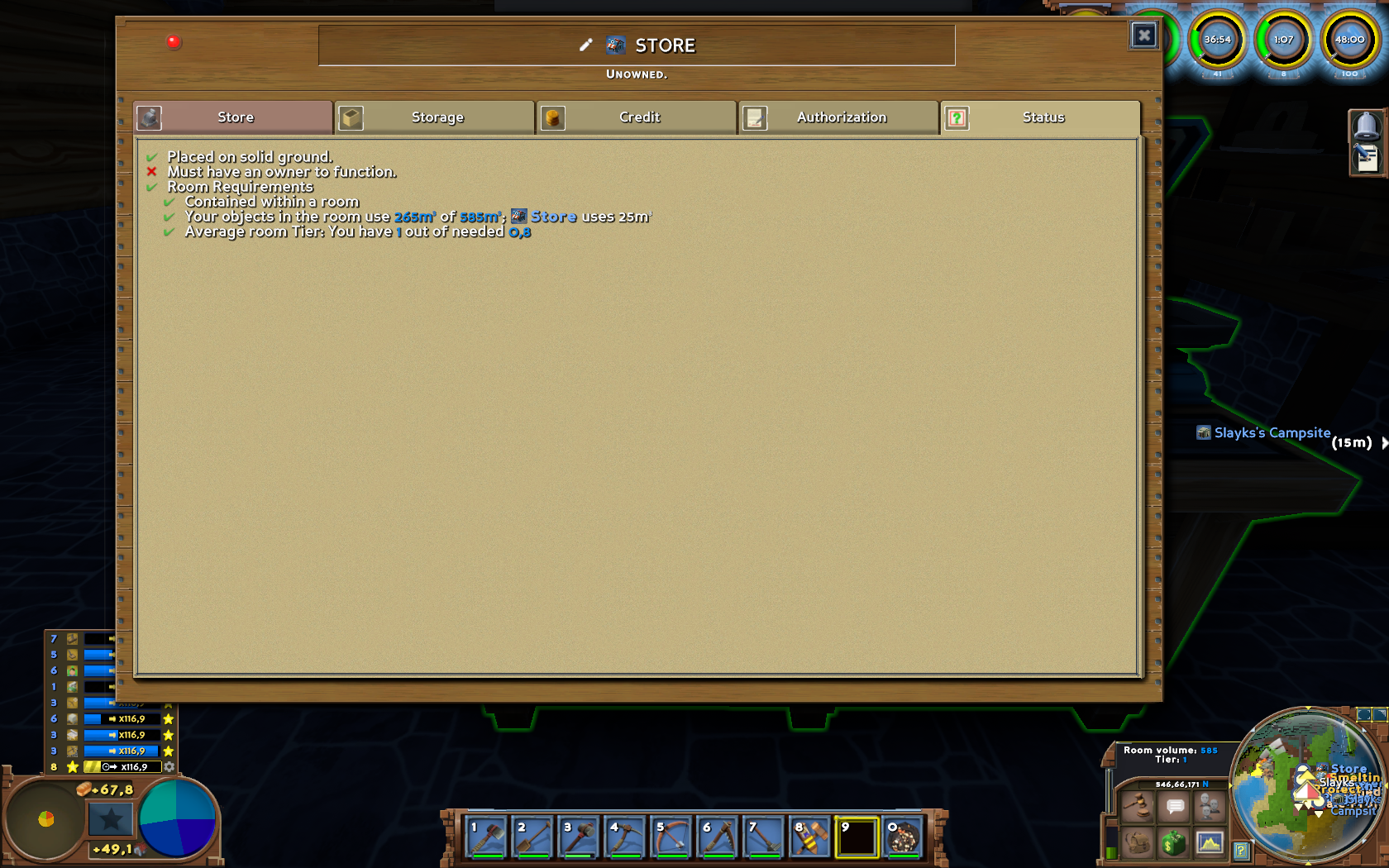

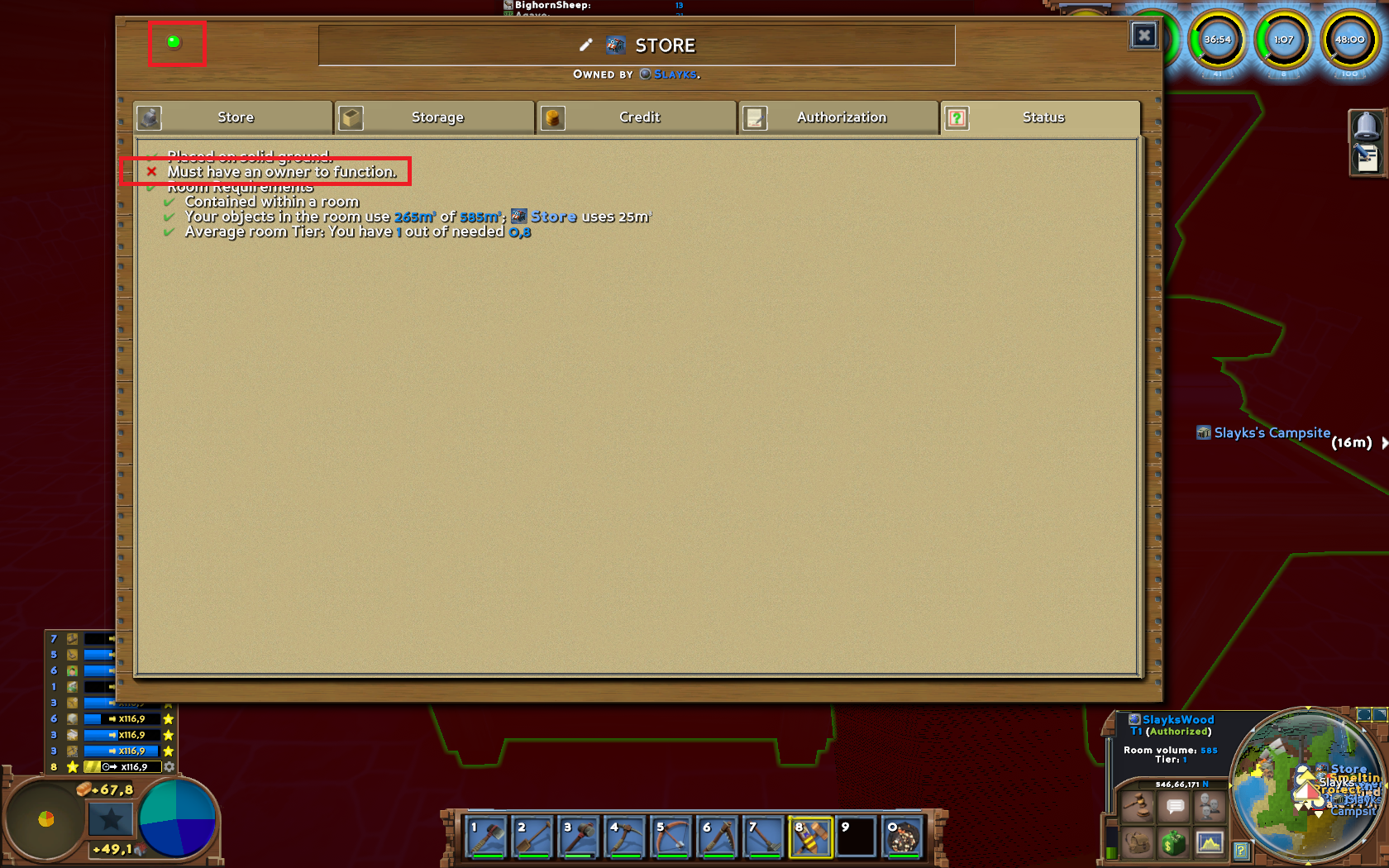

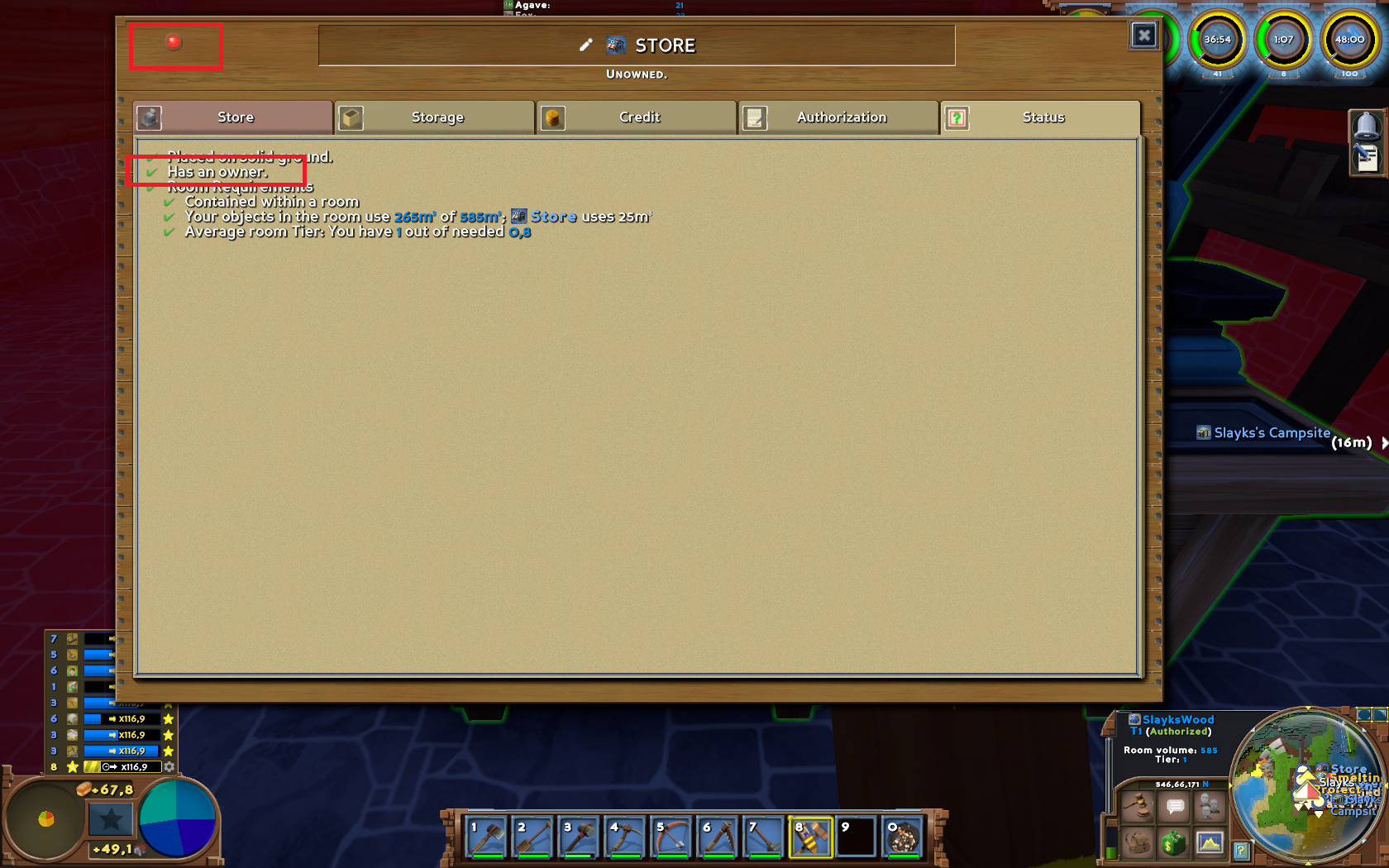

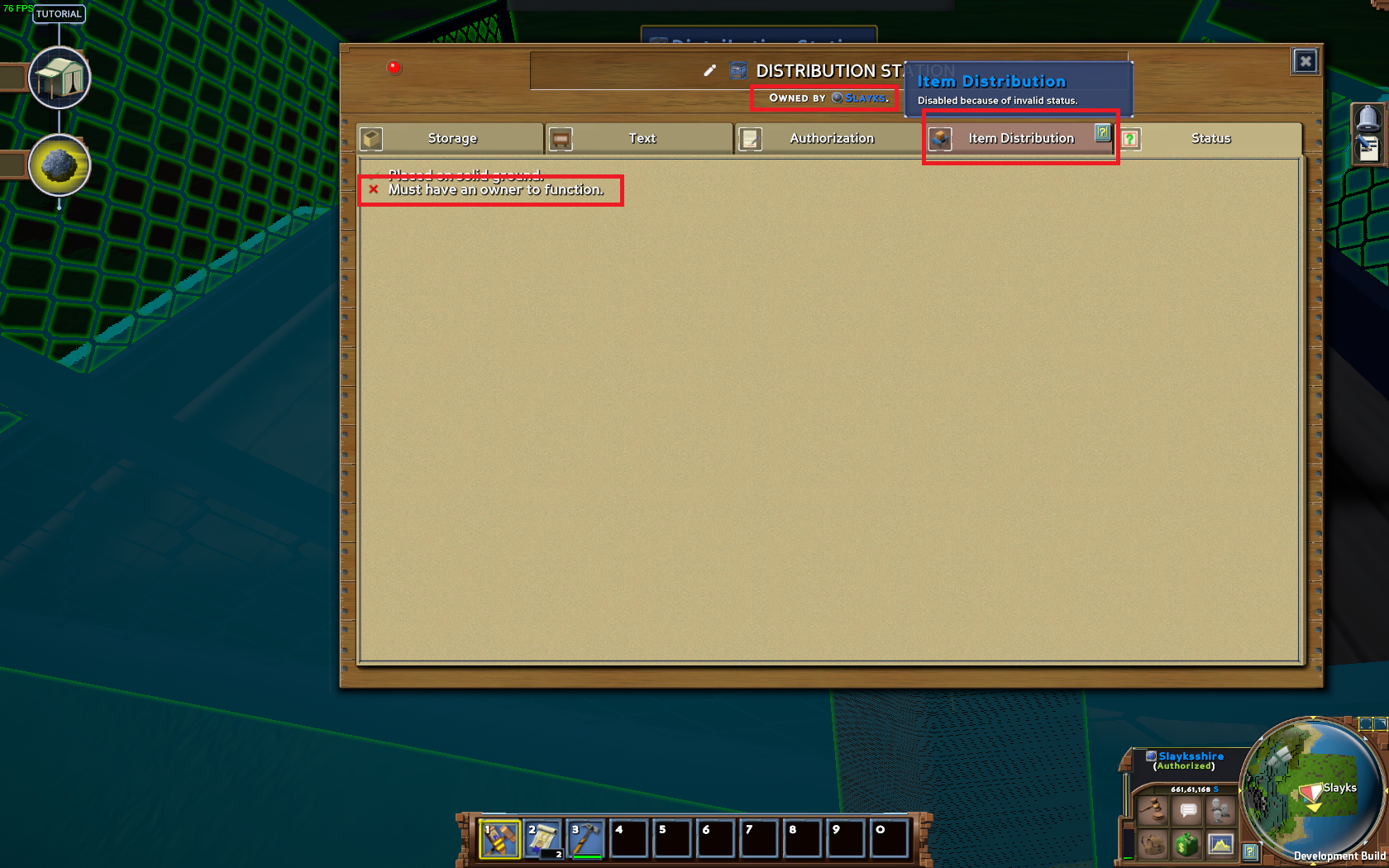

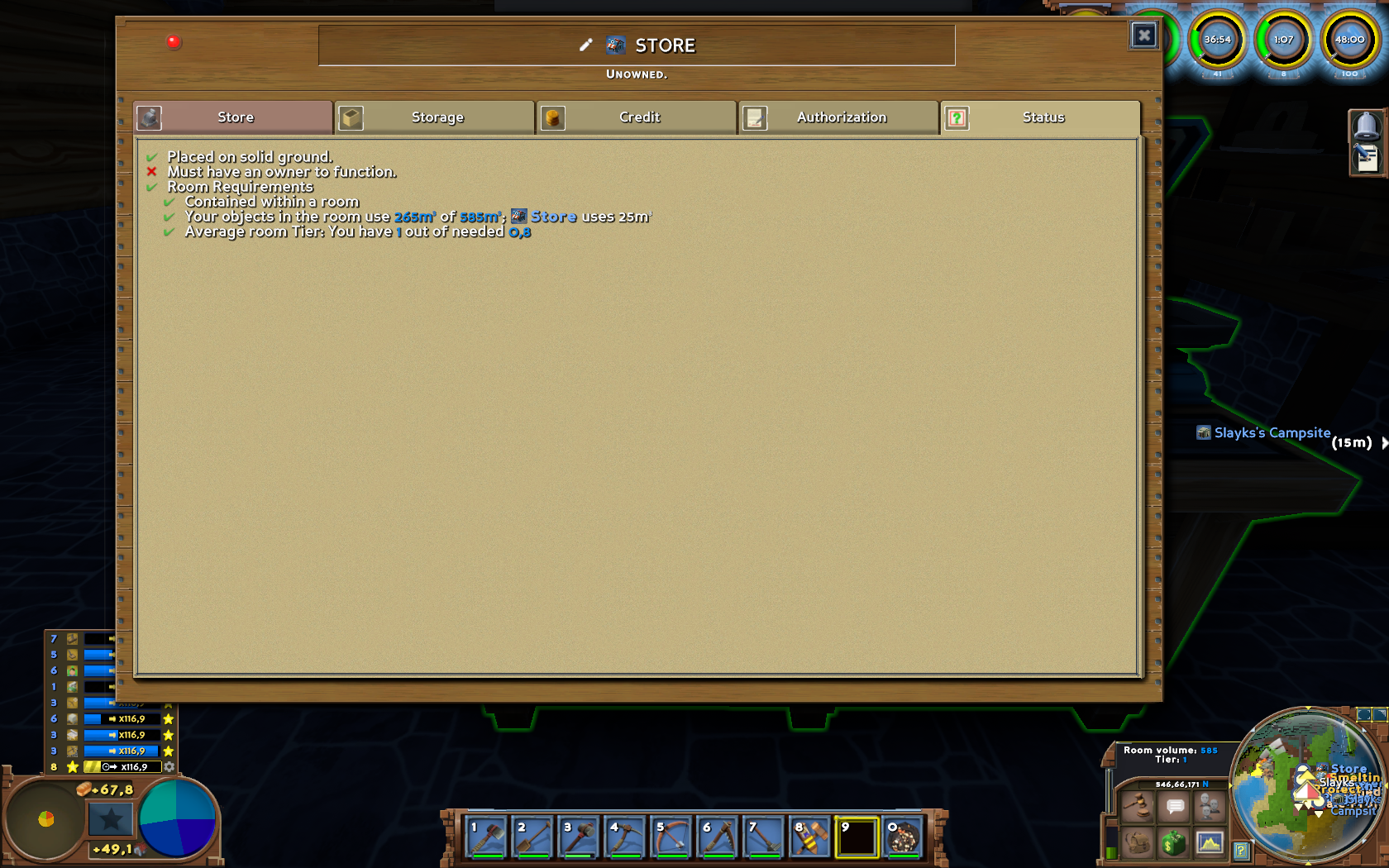

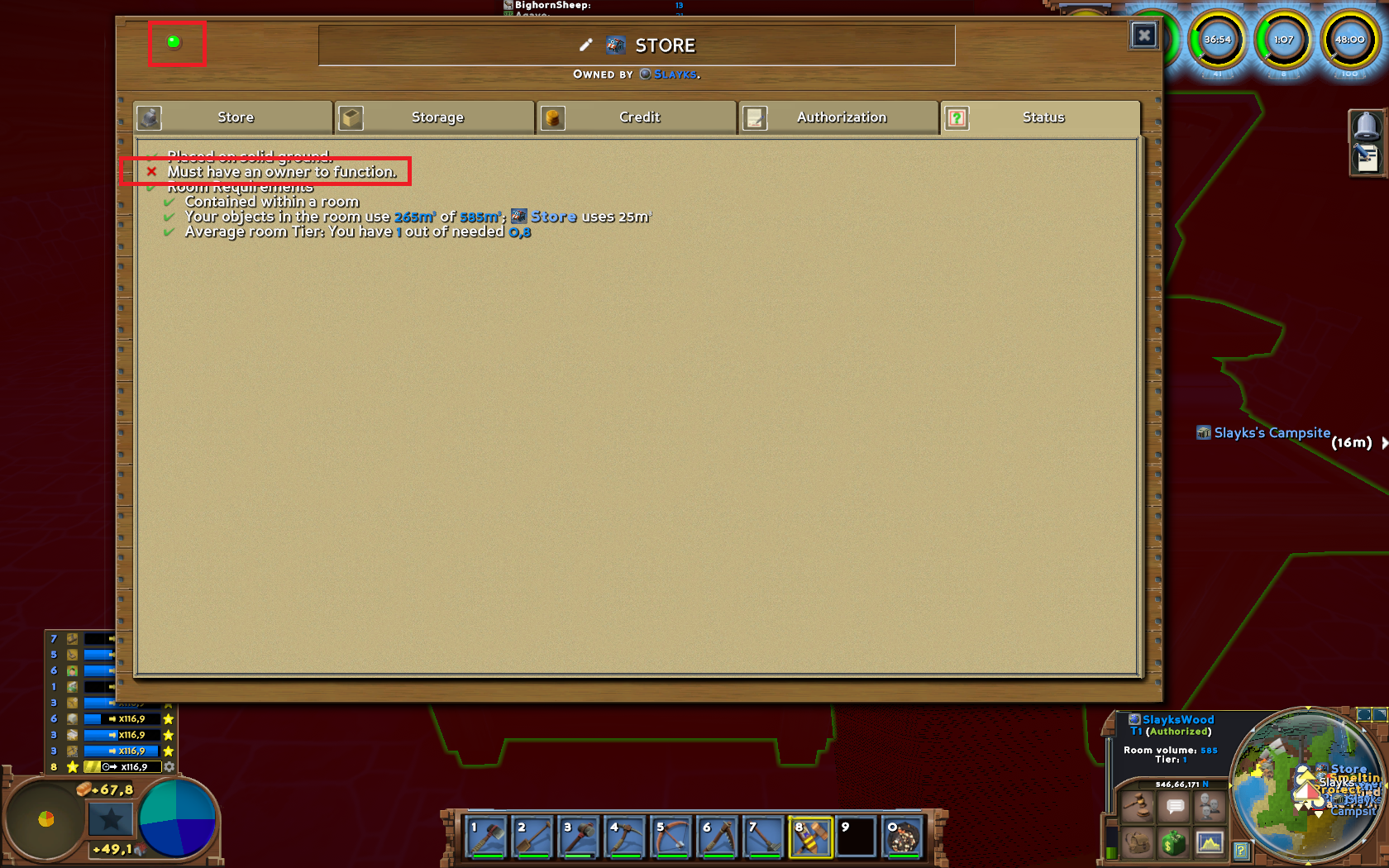

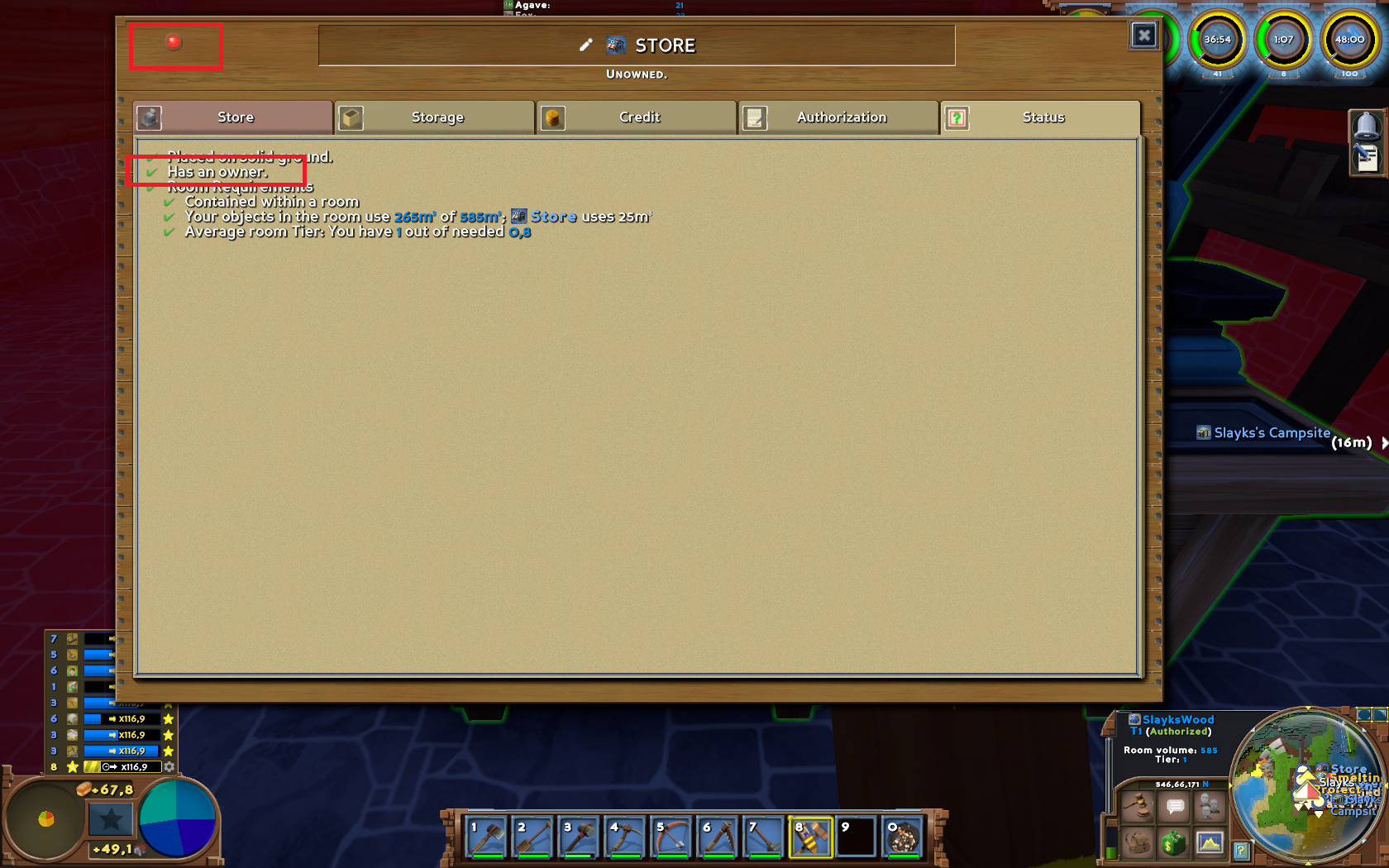

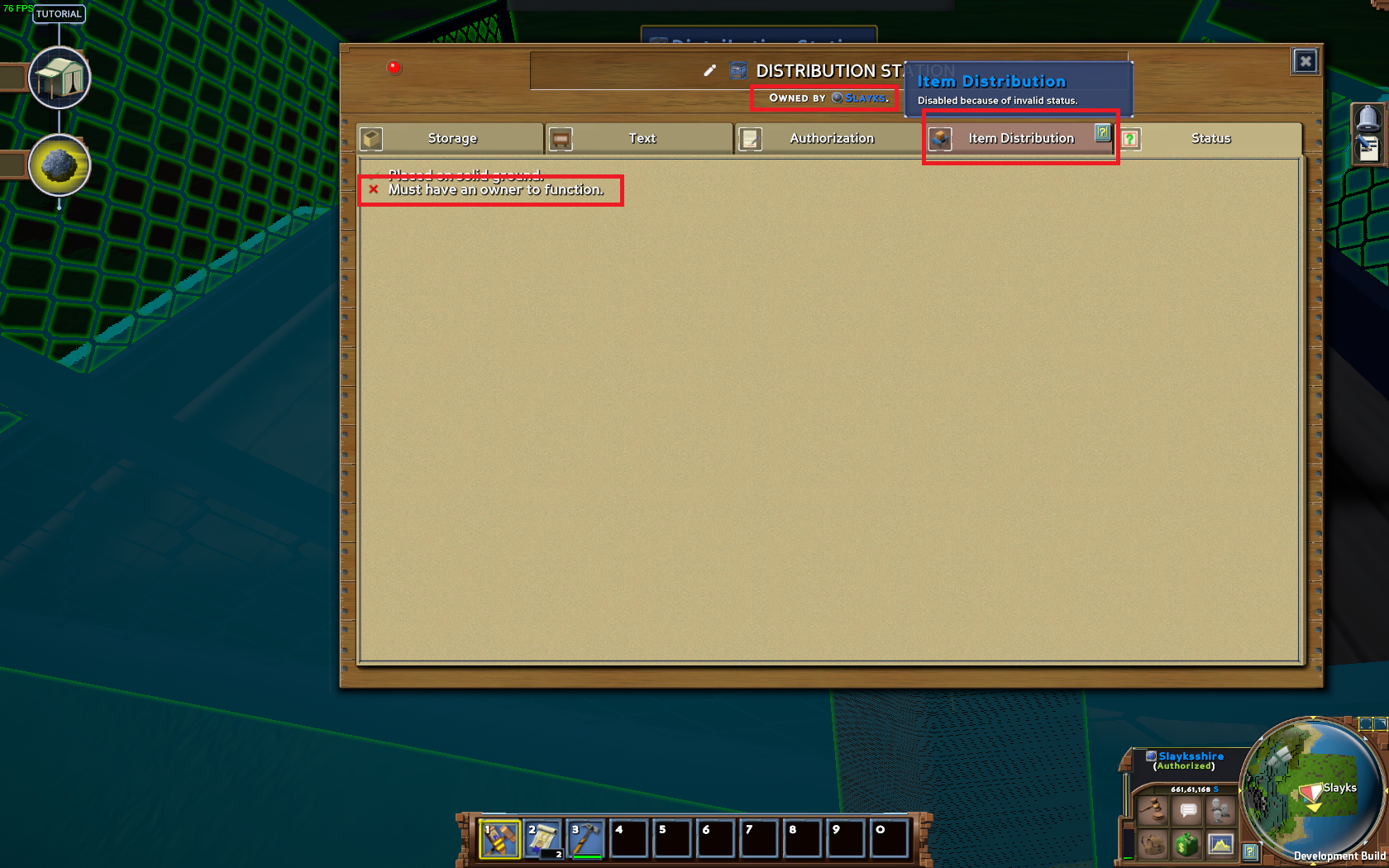

442,181 | 12,741,231,488 | IssuesEvent | 2020-06-26 05:25:53 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | [0.9.0 staging-1595] Claiming troubles | Category: Gameplay Priority: Medium Status: Fixed Week Task | Step to reproduce:

- place store on unowned property:

- claim this store:

- unclaim:

Same with the distribution station, but you can't use it at all, because if you don't have an owner you can't add items:

| 1.0 | [0.9.0 staging-1595] Claiming troubles - Step to reproduce:

- place store on unowned property:

- claim this store:

- unclaim:

Same with the distribution station, but you can't use it at all, because if you don't have an owner you can't add items:

| non_infrastructure | claiming troubles step to reproduce place store on unowned property claim this store unclaim same with distribution station but you can use it at all because if you don t have an owner you can t add items | 0 |

10,878 | 8,781,857,381 | IssuesEvent | 2018-12-19 21:46:41 | elleFlorio/scalachain | https://api.github.com/repos/elleFlorio/scalachain | opened | Integrate sbt Native Packager docker image creation | enhancement good first issue infrastructure | The docker image defined in the [/docker](https://github.com/elleFlorio/scalachain/tree/master/docker) folder is good for development purposes.

It would be good to integrate the sbt plugin for [sbt Native Packager](https://www.scala-sbt.org/sbt-native-packager/), in order to create a docker container running the Scalachain node binary.

The image should have the correct name - `elleflorio/scalachain` - and the correct tags.

This issue can be implemented inside the `docker-integration` branch. | 1.0 | Integrate sbt Native Packager docker image creation - The docker image defined in the [/docker](https://github.com/elleFlorio/scalachain/tree/master/docker) folder is good for development purposes.

It would be good to integrate the sbt plugin for [sbt Native Packager](https://www.scala-sbt.org/sbt-native-packager/), in order to create a docker container running the Scalachain node binary.

The image should have the correct name - `elleflorio/scalachain` - and the correct tags.

This issue can be implemented inside the `docker-integration` branch. | infrastructure | integrate sbt native packager docker image creation the docker image defined in the folder is good for development purposes it would be good to integrate the sbt plugin for in order to create a docker container running the scalachain node binary the image should have the correct name elleflorio scalachain and the correct tags this issue can be implemented inside the docker integration branch | 1 |

71,165 | 13,625,638,695 | IssuesEvent | 2020-09-24 09:47:35 | drafthub/drafthub | https://api.github.com/repos/drafthub/drafthub | closed | enhance code quality of `core.models` | code quality good first issue help wanted | here are some complaints reported by pylint.

```

$ docker-compose exec web python check.py lint | grep -v docstring | grep core | grep models

************* Module drafthub.core.models

drafthub/core/models.py:45:0: C0305: Trailing newlines (trailing-newlines)

drafthub/core/models.py:10:4: E0307: __str__ does not return str (invalid-str-returned)

drafthub/core/models.py:15:8: C0103: Variable name "Draft" doesn't conform to snake_case naming style (invalid-name)

drafthub/core/models.py:15:16: E1101: Instance of 'Blog' has no 'my_drafts' member (no-member)

drafthub/core/models.py:23:8: C0103: Variable name "Draft" doesn't conform to snake_case naming style (invalid-name)

drafthub/core/models.py:23:16: E1101: Instance of 'Blog' has no 'my_drafts' member (no-member)

drafthub/core/models.py:31:8: C0103: Variable name "Draft" doesn't conform to snake_case naming style (invalid-name)

drafthub/core/models.py:31:16: E1101: Instance of 'Blog' has no 'my_drafts' member (no-member)

drafthub/core/models.py:42:4: R0903: Too few public methods (0/2) (too-few-public-methods)

```

See how you can contribute: [CONTRIBUTING.md](https://github.com/drafthub/drafthub/blob/master/CONTRIBUTING.md) | 1.0 | enhance code quality of `core.models` - here are some complaints reported by pylint.

```

$ docker-compose exec web python check.py lint | grep -v docstring | grep core | grep models

************* Module drafthub.core.models

drafthub/core/models.py:45:0: C0305: Trailing newlines (trailing-newlines)

drafthub/core/models.py:10:4: E0307: __str__ does not return str (invalid-str-returned)

drafthub/core/models.py:15:8: C0103: Variable name "Draft" doesn't conform to snake_case naming style (invalid-name)

drafthub/core/models.py:15:16: E1101: Instance of 'Blog' has no 'my_drafts' member (no-member)

drafthub/core/models.py:23:8: C0103: Variable name "Draft" doesn't conform to snake_case naming style (invalid-name)

drafthub/core/models.py:23:16: E1101: Instance of 'Blog' has no 'my_drafts' member (no-member)

drafthub/core/models.py:31:8: C0103: Variable name "Draft" doesn't conform to snake_case naming style (invalid-name)

drafthub/core/models.py:31:16: E1101: Instance of 'Blog' has no 'my_drafts' member (no-member)

drafthub/core/models.py:42:4: R0903: Too few public methods (0/2) (too-few-public-methods)

```

See how you can contribute: [CONTRIBUTING.md](https://github.com/drafthub/drafthub/blob/master/CONTRIBUTING.md) | non_infrastructure | enhance code quality of core models here are some complaints reported by pylint docker compose exec web python check py lint grep v docstring grep core grep models module drafthub core models drafthub core models py trailing newlines trailing newlines drafthub core models py str does not return str invalid str returned drafthub core models py variable name draft doesn t conform to snake case naming style invalid name drafthub core models py instance of blog has no my drafts member no member drafthub core models py variable name draft doesn t conform to snake case naming style invalid name drafthub core models py instance of blog has no my drafts member no member drafthub core models py variable name draft doesn t conform to snake case naming style invalid name drafthub core models py instance of blog has no my drafts member no member drafthub core models py too few public methods too few public methods see how you can contribute | 0 |
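
Two of the pylint messages quoted in the record above, E0307 (`__str__` does not return str) and C0103 (variable name is not snake_case), can be illustrated with a short sketch. The `Blog` class here is a plain-Python stand-in and not the project's actual Django model; the `username` attribute and the `count_drafts` method are hypothetical.

```python
class Blog:
    """Plain-Python stand-in for a model class (hypothetical)."""

    def __init__(self, username, drafts=()):
        self.username = username
        self.my_drafts = list(drafts)

    def __str__(self) -> str:
        # E0307: __str__ must return a str, so convert explicitly.
        return str(self.username)

    def count_drafts(self) -> int:
        # C0103: local variables use snake_case ("drafts", not "Draft").
        drafts = self.my_drafts
        return len(drafts)
```

The E1101 (no-member) messages usually need a different approach, because pylint cannot see attributes that a framework adds at runtime.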

21,094 | 14,360,999,872 | IssuesEvent | 2020-11-30 17:37:09 | servo/servo | https://api.github.com/repos/servo/servo | closed | Permanent timeout in test-android-startup job | A-infrastructure I-bustage | ```

+ ./mach test-android-startup --release

Assuming --target i686-linux-android

Couldn't statvfs() path: No such file or directory

emulator: Requested console port 5580: Inferring adb port 5581.

emulator: WARNING: cannot read adb public key file: /root/.android/adbkey.pub

emulator: WARNING: Your AVD has been configured with an in-guest renderer, but the system image does not support guest rendering.Falling back to 'swiftshader_indirect' mode.

qemu-system-x86_64: warning: host doesn't support requested feature: CPUID.80000001H:ECX.abm [bit 5]

qemu-system-x86_64: warning: host doesn't support requested feature: CPUID.80000001H:ECX.abm [bit 5]

pulseaudio: pa_context_connect() failed

pulseaudio: Reason: Connection refused

pulseaudio: Failed to initialize PA contextaudio: Could not init `pa' audio driver

### WARNING: could not find /usr/share/zoneinfo/ directory. unable to determine host timezone

emulator: Cold boot: requested by the user

* daemon not running; starting now at tcp:5037

* daemon started successfully

### WARNING: could not find /usr/share/zoneinfo/ directory. unable to determine host timezone

emulator: INFO: boot completed

### WARNING: could not find /usr/share/zoneinfo/ directory. unable to determine host timezone

Success

Starting: Intent { act=android.intent.action.MAIN cat=[android.intent.category.LAUNCHER] cmp=org.mozilla.servo/.MainActivity (has extras) }

--------- beginning of system

--------- beginning of main

--------- beginning of crash

[taskcluster:error] Task timeout after 1800 seconds. Force killing container.

```

https://tools.taskcluster.net/groups/X33ARXIMS5WDXIOk0PKwHg/tasks/LjWoG6vwQwSkWVwokP9dvA/runs/0/logs/public%2Flogs%2Flive.log

cc @SimonSapin

I have seen this on two jobs today so far. | 1.0 | Permanent timeout in test-android-startup job - ```

+ ./mach test-android-startup --release

Assuming --target i686-linux-android

Couldn't statvfs() path: No such file or directory

emulator: Requested console port 5580: Inferring adb port 5581.

emulator: WARNING: cannot read adb public key file: /root/.android/adbkey.pub

emulator: WARNING: Your AVD has been configured with an in-guest renderer, but the system image does not support guest rendering.Falling back to 'swiftshader_indirect' mode.

qemu-system-x86_64: warning: host doesn't support requested feature: CPUID.80000001H:ECX.abm [bit 5]

qemu-system-x86_64: warning: host doesn't support requested feature: CPUID.80000001H:ECX.abm [bit 5]

pulseaudio: pa_context_connect() failed

pulseaudio: Reason: Connection refused

pulseaudio: Failed to initialize PA contextaudio: Could not init `pa' audio driver

### WARNING: could not find /usr/share/zoneinfo/ directory. unable to determine host timezone

emulator: Cold boot: requested by the user

* daemon not running; starting now at tcp:5037

* daemon started successfully

### WARNING: could not find /usr/share/zoneinfo/ directory. unable to determine host timezone

emulator: INFO: boot completed

### WARNING: could not find /usr/share/zoneinfo/ directory. unable to determine host timezone

Success

Starting: Intent { act=android.intent.action.MAIN cat=[android.intent.category.LAUNCHER] cmp=org.mozilla.servo/.MainActivity (has extras) }

--------- beginning of system

--------- beginning of main

--------- beginning of crash

[taskcluster:error] Task timeout after 1800 seconds. Force killing container.

```

https://tools.taskcluster.net/groups/X33ARXIMS5WDXIOk0PKwHg/tasks/LjWoG6vwQwSkWVwokP9dvA/runs/0/logs/public%2Flogs%2Flive.log

cc @SimonSapin

I have seen this on two jobs today so far. | infrastructure | permanent timeout in test android startup job mach test android startup release assuming target linux android couldn t statvfs path no such file or directory emulator requested console port inferring adb port emulator warning cannot read adb public key file root android adbkey pub emulator warning your avd has been configured with an in guest renderer but the system image does not support guest rendering falling back to swiftshader indirect mode qemu system warning host doesn t support requested feature cpuid ecx abm qemu system warning host doesn t support requested feature cpuid ecx abm pulseaudio pa context connect failed pulseaudio reason connection refused pulseaudio failed to initialize pa contextaudio could not init pa audio driver warning could not find usr share zoneinfo directory unable to determine host timezone emulator cold boot requested by the user daemon not running starting now at tcp daemon started successfully warning could not find usr share zoneinfo directory unable to determine host timezone emulator info boot completed warning could not find usr share zoneinfo directory unable to determine host timezone success starting intent act android intent action main cat cmp org mozilla servo mainactivity has extras beginning of system beginning of main beginning of crash task timeout after seconds force killing container cc simonsapin i have seen this on two jobs today so far | 1 |

12,477 | 9,798,904,061 | IssuesEvent | 2019-06-11 13:24:23 | astropy/regions | https://api.github.com/repos/astropy/regions | reopened | Example breaks windows testing | bug infrastructure testing | #263 enabled doctests, and as a result the AppVeyor build complains about a file access error because the file is being used by another process. (The weird thing is that all tests pass, so it would be nice to be able to turn this strictness off).

The workaround I ended up with is to allow the failures of the appveyor jobs to let the release procedure for 0.4 proceed.

cc @pllim @astrofrog @saimn as you are the ones who solved similar issues in astropy before.

```

============= 955 passed, 8 skipped, 13 xfailed in 35.62 seconds ==============

Traceback (most recent call last):

File "<string>", line 1, in <module>

File "C:\conda\envs\test\lib\site-packages\astropy\utils\decorators.py", line 860, in test

func = make_function_with_signature(func, name=name, **wrapped_args)

File "C:\conda\envs\test\lib\site-packages\astropy\tests\runner.py", line 260, in test

return runner.run_tests(**kwargs)

File "C:\conda\envs\test\lib\site-packages\astropy\tests\runner.py", line 605, in run_tests

return super().run_tests(**kwargs)

File "C:\conda\envs\test\lib\site-packages\astropy\tests\runner.py", line 242, in run_tests

return pytest.main(args=args, plugins=plugins)

File "C:\conda\envs\test\lib\site-packages\astropy\config\paths.py", line 182, in __exit__

shutil.rmtree(self._path)

File "C:\conda\envs\test\lib\shutil.py", line 513, in rmtree

return _rmtree_unsafe(path, onerror)

File "C:\conda\envs\test\lib\shutil.py", line 392, in _rmtree_unsafe

_rmtree_unsafe(fullname, onerror)

File "C:\conda\envs\test\lib\shutil.py", line 392, in _rmtree_unsafe

_rmtree_unsafe(fullname, onerror)

File "C:\conda\envs\test\lib\shutil.py", line 392, in _rmtree_unsafe

_rmtree_unsafe(fullname, onerror)

File "C:\conda\envs\test\lib\shutil.py", line 397, in _rmtree_unsafe

onerror(os.unlink, fullname, sys.exc_info())

File "C:\conda\envs\test\lib\shutil.py", line 395, in _rmtree_unsafe

os.unlink(fullname)

PermissionError: [WinError 32] The process cannot access the file because it is being used by another process: 'C:\\Users\\appveyor\\AppData\\Local\\Temp\\1\\tmppexf4t80astropy_cache\\astropy\\download\\py3\\2c9202ae878ecfcb60878ceb63837f5f'

``` | 1.0 | Example breaks windows testing - #263 enabled doctests, and as a result the AppVeyor build complains about a file access error because the file is being used by another process. (The weird thing is that all tests pass, so it would be nice to be able to turn this strictness off).

The workaround I ended up with is to allow the failures of the appveyor jobs to let the release procedure for 0.4 proceed.

cc @pllim @astrofrog @saimn as you are the ones who solved similar issues in astropy before.

```

============= 955 passed, 8 skipped, 13 xfailed in 35.62 seconds ==============

Traceback (most recent call last):

File "<string>", line 1, in <module>

File "C:\conda\envs\test\lib\site-packages\astropy\utils\decorators.py", line 860, in test

func = make_function_with_signature(func, name=name, **wrapped_args)

File "C:\conda\envs\test\lib\site-packages\astropy\tests\runner.py", line 260, in test

return runner.run_tests(**kwargs)

File "C:\conda\envs\test\lib\site-packages\astropy\tests\runner.py", line 605, in run_tests

return super().run_tests(**kwargs)

File "C:\conda\envs\test\lib\site-packages\astropy\tests\runner.py", line 242, in run_tests

return pytest.main(args=args, plugins=plugins)

File "C:\conda\envs\test\lib\site-packages\astropy\config\paths.py", line 182, in __exit__

shutil.rmtree(self._path)

File "C:\conda\envs\test\lib\shutil.py", line 513, in rmtree

return _rmtree_unsafe(path, onerror)

File "C:\conda\envs\test\lib\shutil.py", line 392, in _rmtree_unsafe

_rmtree_unsafe(fullname, onerror)

File "C:\conda\envs\test\lib\shutil.py", line 392, in _rmtree_unsafe

_rmtree_unsafe(fullname, onerror)

File "C:\conda\envs\test\lib\shutil.py", line 392, in _rmtree_unsafe

_rmtree_unsafe(fullname, onerror)

File "C:\conda\envs\test\lib\shutil.py", line 397, in _rmtree_unsafe

onerror(os.unlink, fullname, sys.exc_info())

File "C:\conda\envs\test\lib\shutil.py", line 395, in _rmtree_unsafe

os.unlink(fullname)

PermissionError: [WinError 32] The process cannot access the file because it is being used by another process: 'C:\\Users\\appveyor\\AppData\\Local\\Temp\\1\\tmppexf4t80astropy_cache\\astropy\\download\\py3\\2c9202ae878ecfcb60878ceb63837f5f'

``` | infrastructure | example breaks windows testing has enable doctests and as a result the appveyor build is complaining file access error as it s being used by another process the weird thing is that all tests pass so it would be nice to be able to turn this strictness off the workaround i ended up with is to allow the failures of the appveyor jobs to let the release procedure for proceed cc pllim astrofrog saimn as you are the ones who solved similar issues in astropy before passed skipped xfailed in seconds traceback most recent call last file line in file c conda envs test lib site packages astropy utils decorators py line in test func make function with signature func name name wrapped args file c conda envs test lib site packages astropy tests runner py line in test return runner run tests kwargs file c conda envs test lib site packages astropy tests runner py line in run tests return super run tests kwargs file c conda envs test lib site packages astropy tests runner py line in run tests return pytest main args args plugins plugins file c conda envs test lib site packages astropy config paths py line in exit shutil rmtree self path file c conda envs test lib shutil py line in rmtree return rmtree unsafe path onerror file c conda envs test lib shutil py line in rmtree unsafe rmtree unsafe fullname onerror file c conda envs test lib shutil py line in rmtree unsafe rmtree unsafe fullname onerror file c conda envs test lib shutil py line in rmtree unsafe rmtree unsafe fullname onerror file c conda envs test lib shutil py line in rmtree unsafe onerror os unlink fullname sys exc info file c conda envs test lib shutil py line in rmtree unsafe os unlink fullname permissionerror the process cannot access the file because it is being used by another process c users appveyor appdata local temp cache astropy download | 1 |
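
The traceback in the record above ends in `shutil.rmtree` raising `PermissionError` because a cached file is still open when the temporary directory is cleaned up. One possible way to keep such cleanup from failing an otherwise passing run, sketched here under the assumption that leaving a locked file behind is acceptable, is best-effort removal. The helper name is hypothetical and this is not astropy's code.

```python
import os
import shutil
import tempfile


def remove_tree_best_effort(path: str) -> bool:
    """Try to delete a directory tree; return True if it is gone afterwards."""
    # ignore_errors=True suppresses errors such as a file locked by
    # another process instead of raising them.
    shutil.rmtree(path, ignore_errors=True)
    return not os.path.exists(path)


# Usage: create a scratch directory with one file, then clean it up.
scratch = tempfile.mkdtemp()
with open(os.path.join(scratch, "cache.bin"), "wb") as handle:
    handle.write(b"data")
print(remove_tree_best_effort(scratch))
```

The return value lets the caller log leftover directories rather than silently ignoring them.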

22,499 | 15,224,201,844 | IssuesEvent | 2021-02-18 04:38:16 | hyphacoop/organizing | https://api.github.com/repos/hyphacoop/organizing | opened | Identify existing implicit roles | wg:business-planning wg:finance wg:governance wg:infrastructure wg:operations | <sup>_This initial comment is collaborative and open to modification by all._</sup>

📅 **Due date:** March 1, 2021

## Task Summary

Each WG to discuss and identify any implicit roles currently operating in the WG, then list them here in a comment or add them directly to the miro board: https://miro.com/app/board/o9J_lVt9EFQ=/. Associated with the Call me Chrysalis initiative.

## To Do for each WG, check off when complete

- [ ] bizdev

- [ ] gov

- [ ] ops

- [ ] infra

- [ ] finance

- [ ] cmc: add to miro board | 1.0 | Identify existing implicit roles - <sup>_This initial comment is collaborative and open to modification by all._</sup>

📅 **Due date:** March 1, 2021

## Task Summary

Each WG to discuss and identify any implicit roles currently operating in the WG, then list them here in a comment or add them directly to the miro board: https://miro.com/app/board/o9J_lVt9EFQ=/. Associated with the Call me Chrysalis initiative.

## To Do for each WG, check off when complete

- [ ] bizdev

- [ ] gov

- [ ] ops

- [ ] infra

- [ ] finance

- [ ] cmc: add to miro board | infrastructure | identify existing implicit roles this initial comment is collaborative and open to modification by all 📅 due date march task summary each wg to discuss and identify any implicit roles currently operating in the wg the can list them here in a comment or add them directly to the miro board associated with call me chrysalis initiative to do for each wg check off when complete bizdev gov ops infra finance cmc add to miro board | 1 |

26,108 | 19,668,650,882 | IssuesEvent | 2022-01-11 03:08:30 | APSIMInitiative/ApsimX | https://api.github.com/repos/APSIMInitiative/ApsimX | closed | Apsim should be able to download soil descriptions from web services | newfeature interface/infrastructure | ASRIS and ISRIC provide web services which yield soil descriptions for any specified location. Apsim should be able to access these web services to populate its soil descriptions. | 1.0 | Apsim should be able to download soil descriptions from web services - ASRIS and ISRIC provide web services which yield soil descriptions for any specified location. Apsim should be able to access these web services to populate its soil descriptions. | infrastructure | apsim should be able to download soil descriptions from web services asris and isric provide web services which yield soil descriptions for any specified location apsim should be able to access these web services to populate its soil descriptions | 1 |

344 | 2,652,902,416 | IssuesEvent | 2015-03-16 19:58:04 | mroth/emojitrack | https://api.github.com/repos/mroth/emojitrack | closed | admin pages bootstrap 3 transition | infrastructure | and redesign a little to be more legible on mobile, so i can check up on things remotely more effectively | 1.0 | admin pages bootstrap 3 transition - and redesign a little to be more legible on mobile, so i can check up on things remotely more effectively | infrastructure | admin pages bootstrap transition and redesign a little to be more legible on mobile so i can check up on things remotely more effectively | 1 |

476,368 | 13,737,377,410 | IssuesEvent | 2020-10-05 13:06:46 | root-project/root | https://api.github.com/repos/root-project/root | opened | [TMVA] Provide support in MethodPyKeras for tensorflow.keras | affects:6.22 affects:master in:TMVA new feature priority:critical | Currently in ROOT version 6.22 >Tensorflow is supported but with a standalone keras (with Keras version >= 2.3)

The keras shipped with tensorflow (tf.keras), however, is not supported.

Support for it is now needed because LCG software distributions (from LCG 98) no longer include Keras

The keras shipped with tensorflow (tf.keras), however, is not supported.

Support for it is now needed because LCG software distributions (from LCG 98) no longer include Keras | non_infrastructure | provide support in methodpykeras for tensorflow keras currently in root version tensorflow is supported but with a standalone keras with keras version the keras shipped with tensorflow tf keras is instead not supported it is now needed since lcg software distributions from lcg do not have anymore keras | 0 |

11,421 | 9,187,415,754 | IssuesEvent | 2019-03-06 02:47:31 | ansible/ansible | https://api.github.com/repos/ansible/ansible | closed | module gunicorn hardcodes path to temp directory | affects_2.3 bug module support:community web_infrastructure | <!---

Verify first that your issue/request is not already reported on GitHub.

Also test if the latest release, and devel branch are affected too.

-->

##### ISSUE TYPE

<!--- Pick one below and delete the rest -->

- Bug Report

##### COMPONENT NAME

<!---

Name of the module, plugin, task or feature

Do not include extra details here, e.g. "vyos_command" not "the network module vyos_command" or the full path

-->

gunicorn

##### ANSIBLE VERSION

<!--- Paste verbatim output from "ansible --version" between quotes below -->

```

ansible 2.3.1.0

config file =

configured module search path = Default w/o overrides

python version = 2.7.13 (default, Feb 1 2017, 13:04:42) [GCC 4.2.1 Compatible Apple LLVM 8.0.0 (clang-800.0.42.1)]

```

##### CONFIGURATION

<!---

If using Ansible 2.4 or above, paste the results of "ansible-config dump --only-changed"

Otherwise, mention any settings you have changed/added/removed in ansible.cfg

(or using the ANSIBLE_* environment variables).

-->

##### OS / ENVIRONMENT

<!---

Mention the OS you are running Ansible from, and the OS you are

managing, or say "N/A" for anything that is not platform-specific.

Also mention the specific version of what you are trying to control,

e.g. if this is a network bug the version of firmware on the network device.

-->

Mac OS X 10.12.6

##### SUMMARY

<!--- Explain the problem briefly -->

The gunicorn module hardcodes references to a '/tmp' directory instead of using Python's tempfile module.

It assumes that /tmp is always the temporary directory, which is not the case.

See https://github.com/ansible/ansible/blob/devel/lib/ansible/modules/web_infrastructure/gunicorn.py#L134
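
A minimal sketch of the kind of change this report suggests, assuming the module only needs a file path inside the system temp directory. The helper name `gunicorn_tmp_path` and the file name `gunicorn.log` are hypothetical and are not taken from the module's source; only `tempfile.gettempdir()` and `os.path.join()` are standard-library calls.

```python
import os
import tempfile


def gunicorn_tmp_path(filename):
    """Return a path for `filename` inside the platform's temp directory.

    Hypothetical helper for illustration only; it is not part of the
    actual gunicorn module.
    """
    # tempfile.gettempdir() consults the TMPDIR, TEMP and TMP environment
    # variables before falling back to platform defaults, so the result
    # is not tied to a literal '/tmp'.
    return os.path.join(tempfile.gettempdir(), filename)


# Hypothetical file name, used only to show the call.
error_log = gunicorn_tmp_path("gunicorn.log")
```

On a system where TMPDIR points elsewhere, `error_log` follows that setting rather than '/tmp'.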

##### STEPS TO REPRODUCE

<!---

For bugs, show exactly how to reproduce the problem, using a minimal test-case.

For new features, show how the feature would be used.

-->

N/A - found using code inspection

<!--- Paste example playbooks or commands between quotes below -->

```yaml

```

<!--- You can also paste gist.github.com links for larger files -->

##### EXPECTED RESULTS

<!--- What did you expect to happen when running the steps above? -->

##### ACTUAL RESULTS

<!--- What actually happened? If possible run with extra verbosity (-vvvv) -->

<!--- Paste verbatim command output between quotes below -->

```

```

| 1.0 | module gunicorn hardcodes path to temp directory - <!---

Verify first that your issue/request is not already reported on GitHub.

Also test if the latest release, and devel branch are affected too.

-->

##### ISSUE TYPE

<!--- Pick one below and delete the rest -->

- Bug Report

##### COMPONENT NAME

<!---

Name of the module, plugin, task or feature

Do not include extra details here, e.g. "vyos_command" not "the network module vyos_command" or the full path

-->

gunicorn

##### ANSIBLE VERSION

<!--- Paste verbatim output from "ansible --version" between quotes below -->

```

ansible 2.3.1.0

config file =

configured module search path = Default w/o overrides

python version = 2.7.13 (default, Feb 1 2017, 13:04:42) [GCC 4.2.1 Compatible Apple LLVM 8.0.0 (clang-800.0.42.1)]

```

##### CONFIGURATION

<!---

If using Ansible 2.4 or above, paste the results of "ansible-config dump --only-changed"

Otherwise, mention any settings you have changed/added/removed in ansible.cfg

(or using the ANSIBLE_* environment variables).

-->

##### OS / ENVIRONMENT

<!---

Mention the OS you are running Ansible from, and the OS you are

managing, or say "N/A" for anything that is not platform-specific.

Also mention the specific version of what you are trying to control,

e.g. if this is a network bug the version of firmware on the network device.

-->

Mac OS X 10.12.6

##### SUMMARY

<!--- Explain the problem briefly -->

The gunicorn module hardcodes references to a '/tmp' directory instead of using Python's tempfile module.

It assumes that /tmp is always the temporary directory, which is not the case.

See https://github.com/ansible/ansible/blob/devel/lib/ansible/modules/web_infrastructure/gunicorn.py#L134

##### STEPS TO REPRODUCE

<!---

For bugs, show exactly how to reproduce the problem, using a minimal test-case.

For new features, show how the feature would be used.

-->

N/A - found using code inspection

<!--- Paste example playbooks or commands between quotes below -->

```yaml

```

<!--- You can also paste gist.github.com links for larger files -->

##### EXPECTED RESULTS

<!--- What did you expect to happen when running the steps above? -->

##### ACTUAL RESULTS

<!--- What actually happened? If possible run with extra verbosity (-vvvv) -->

<!--- Paste verbatim command output between quotes below -->

```

```

| infrastructure | module gunicorn hardcodes path to temp directory verify first that your issue request is not already reported on github also test if the latest release and devel branch are affected too issue type bug report component name name of the module plugin task or feature do not include extra details here e g vyos command not the network module vyos command or the full path gunicorn ansible version ansible config file configured module search path default w o overrides python version default feb configuration if using ansible or above paste the results of ansible config dump only changed otherwise mention any settings you have changed added removed in ansible cfg or using the ansible environment variables os environment mention the os you are running ansible from and the os you are managing or say n a for anything that is not platform specific also mention the specific version of what you are trying to control e g if this is a network bug the version of firmware on the network device mac os x summary the gunicorn module is hardcoding references to a tmp directory instead of using python s tempfile it assumes that tmp is the always the tmp directory which is not the case see steps to reproduce for bugs show exactly how to reproduce the problem using a minimal test case for new features show how the feature would be used n a found using code inspection yaml expected results actual results | 1 |

2,322 | 3,618,426,913 | IssuesEvent | 2016-02-08 11:31:55 | jaagameister/learning | https://api.github.com/repos/jaagameister/learning | opened | application logging | infrastructure | basically every user input and state change should be logged with a time stamp

- new skill

- new mission

| 1.0 | application logging - basically every user input and state change should be logged with a time stamp

- new skill

- new mission

| infrastructure | application logging basically every user input and state change should be logged with a time stamp new skill new mission | 1 |