Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 7 112 | repo_url stringlengths 36 141 | action stringclasses 3 values | title stringlengths 2 665 | labels stringlengths 4 554 | body stringlengths 3 235k | index stringclasses 6 values | text_combine stringlengths 96 235k | label stringclasses 2 values | text stringlengths 96 196k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

49,225 | 10,331,090,553 | IssuesEvent | 2019-09-02 16:33:29 | scorelab/TensorMap | https://api.github.com/repos/scorelab/TensorMap | opened | Find an issue in TensorMap and open an issue | Google Code-In | Setup project then find an issue in the project and open an issue in the project’s GitHub page | 1.0 | Find an issue in TensorMap and open an issue - Setup project then find an issue in the project and open an issue in the project’s GitHub page | non_infrastructure | find an issue in tensormap and open an issue setup project then find an issue in the project and open an issue in the project’s github page | 0 |

3,476 | 4,330,141,814 | IssuesEvent | 2016-07-26 19:03:01 | yale-web-technologies/mirador-annotations | https://api.github.com/repos/yale-web-technologies/mirador-annotations | closed | Enforce unique IDs | infrastructure | Please enforce unique IDs on all objects. Any attempt to create a new object with an existing ID should cause an error and handled appropriately.

| 1.0 | Enforce unique IDs - Please enforce unique IDs on all objects. Any attempt to create a new object with an existing ID should cause an error and handled appropriately.

| infrastructure | enforce unique ids please enforce unique ids on all objects any attempt to create a new object with an existing id should cause an error and handled appropriately | 1 |

11,765 | 9,418,050,570 | IssuesEvent | 2019-04-10 18:14:44 | dotnet/dotnet-docker | https://api.github.com/repos/dotnet/dotnet-docker | closed | Update PowerShell test to not rely on Get-Date | area:infrastructure bug triaged | The VerifySDKImage_PowerShellScenario test attempts to verify that PowerShell works in the container by calling Get-Date and comparing the output value to the current date from the test code. There can be a difference in time between when the value is calculated in the container versus when the value is calculated in test code. If the time difference crosses over midnight, then the two values will correspond to different dates and fail the test. Another possibility is that the container and the test runner are running in different time zones and also cross the midnight time boundary.

The test should be updated to make use of a PowerShell command that is not date/time-dependent. | 1.0 | Update PowerShell test to not rely on Get-Date - The VerifySDKImage_PowerShellScenario test attempts to verify that PowerShell works in the container by calling Get-Date and comparing the output value to the current date from the test code. There can be a difference in time between when the value is calculated in the container versus when the value is calculated in test code. If the time difference crosses over midnight, then the two values will correspond to different dates and fail the test. Another possibility is that the container and the test runner are running in different time zones and also cross the midnight time boundary.

The test should be updated to make use of a PowerShell command that is not date/time-dependent. | infrastructure | update powershell test to not rely on get date the verifysdkimage powershellscenario test attempts to verify that powershell works in the container by calling get date and comparing the output value to the current date from the test code there can be a difference in time between when the value is calculated in the container versus when the value is calculated in test code if the time difference crosses over midnight then the two values will correspond to different dates and fail the test another possibility is that the container and the test runner are running in different time zones and also cross the midnight time boundary the test should be updated to make use of a powershell command that is not date time dependent | 1 |

5,442 | 5,656,500,947 | IssuesEvent | 2017-04-10 01:58:32 | openwhisk/openwhisk-wskdeploy | https://api.github.com/repos/openwhisk/openwhisk-wskdeploy | closed | Proposal: Enable the travis support with openwhisk and add the structure for integration tests | high infrastructure | In order to run integration tests against an openwhisk deployment, openwhisk-wskdeploy needs to enable travis support with core openwhisk installation. Currently this is missing in this project.

openwhisk-wskdeploy needs to have an integration tests folder containing all the integration use cases supported by OpenWhisk. These test cases should pass within the travis to assure the code quality. Currently there is no integration test available in this project.

As the first iteration, the supported integration test cases can start with an easy one: install a basic action and a basic package with manifest and deployment files, verify if they have been installed in openwhisk, remove them in openwhisk, and verify if they have been removed in openwhisk.

For further iterations, the integration test cases need to be documented as the basic features for wskdeploy to support.

| 1.0 | Proposal: Enable the travis support with openwhisk and add the structure for integration tests - In order to run integration tests against an openwhisk deployment, openwhisk-wskdeploy needs to enable travis support with core openwhisk installation. Currently this is missing in this project.

openwhisk-wskdeploy needs to have an integration tests folder containing all the integration use cases supported by OpenWhisk. These test cases should pass within the travis to assure the code quality. Currently there is no integration test available in this project.

As the first iteration, the supported integration test cases can start with an easy one: install a basic action and a basic package with manifest and deployment files, verify if they have been installed in openwhisk, remove them in openwhisk, and verify if they have been removed in openwhisk.

For further iterations, the integration test cases need to be documented as the basic features for wskdeploy to support.

| infrastructure | proposal enable the travis support with openwhisk and add the structure for integration tests in order to run integration tests against an openwhisk deployment openwhisk wskdeploy needs to enable travis support with core openwhisk installation currently this is missing in this project openwhisk wskdeploy needs to have an integration tests folder containing all the integration use cases supported by openwhisk these test cases should pass within the travis to assure the code quality currently there is no integration test available in this project as the first iteration the supported integration test cases can start with an easy one install a basic action and a basic package with manifest and deployment files verify if they have been installed in openwhisk remove them in openwhisk and verify if they have been removed in openwhisk for further iterations the integration test cases need to be documented as the basic features for wskdeploy to support | 1 |

8,974 | 7,753,803,342 | IssuesEvent | 2018-05-31 02:52:07 | dotnet/corefxlab | https://api.github.com/repos/dotnet/corefxlab | closed | Packages don't get generated when the build has warnings | area-Infrastructure | But we need to support warnings for some cases, e.g. ObsoleteAttribute. | 1.0 | Packages don't get generated when the build has warnings - But we need to support warnings for some cases, e.g. ObsoleteAttribute. | infrastructure | packages don t get generated when the build has warnings but we need to support warnings for some cases e g obsoleteattribute | 1 |

63,263 | 15,529,840,684 | IssuesEvent | 2021-03-13 16:45:24 | opencv/opencv | https://api.github.com/repos/opencv/opencv | closed | PPC64: Unable to detect CPU features when optimization is disabled | affected: 3.4 bug category: build/install category: core platform: ppc (PowerPC) | which leads to a fatal error during initializing the core module if build option `DCV_ENABLE_INTRINSICS` was off.

#### Error message:

```Bash

******************************************************************

* FATAL ERROR: *

* This OpenCV build doesn't support current CPU/HW configuration *

* *

* Use OPENCV_DUMP_CONFIG=1 environment variable for details *

******************************************************************

Required baseline features:

ID=200 (VSX) - NOT AVAILABLE

terminate called after throwing an instance of 'cv::Exception'

what(): OpenCV(4.5.2-pre) /opencv/opencv/modules/core/src/system.cpp:625: error: (-215:Assertion failed) Missing support for required CPU baseline features. Check OpenCV build configuration and required CPU/HW setup. in function 'initialize

````

#### steps to produce

build OpenCV with `-DDCV_ENABLE_INTRINSICS=OFF`

#### workaround

skip baseline validation via environment variable `OPENCV_SKIP_CPU_BASELINE_CHECK` | 1.0 | PPC64: Unable to detect CPU features when optimization is disabled - which leads to a fatal error during initializing the core module if build option `DCV_ENABLE_INTRINSICS` was off.

#### Error message:

```Bash

******************************************************************

* FATAL ERROR: *

* This OpenCV build doesn't support current CPU/HW configuration *

* *

* Use OPENCV_DUMP_CONFIG=1 environment variable for details *

******************************************************************

Required baseline features:

ID=200 (VSX) - NOT AVAILABLE

terminate called after throwing an instance of 'cv::Exception'

what(): OpenCV(4.5.2-pre) /opencv/opencv/modules/core/src/system.cpp:625: error: (-215:Assertion failed) Missing support for required CPU baseline features. Check OpenCV build configuration and required CPU/HW setup. in function 'initialize

````

#### steps to produce

build OpenCV with `-DDCV_ENABLE_INTRINSICS=OFF`

#### workaround

skip baseline validation via environment variable `OPENCV_SKIP_CPU_BASELINE_CHECK` | non_infrastructure | unable to detect cpu features when optimization is disabled which leads to a fatal error during initializing the core module if build option dcv enable intrinsics was off error message bash fatal error this opencv build doesn t support current cpu hw configuration use opencv dump config environment variable for details required baseline features id vsx not available terminate called after throwing an instance of cv exception what opencv pre opencv opencv modules core src system cpp error assertion failed missing support for required cpu baseline features check opencv build configuration and required cpu hw setup in function initialize steps to produce build opencv with ddcv enable intrinsics off workaround skip baseline validation via environment variable opencv skip cpu baseline check | 0 |

645,399 | 21,003,685,252 | IssuesEvent | 2022-03-29 20:05:25 | feast-dev/feast | https://api.github.com/repos/feast-dev/feast | closed | feast apply fails for Spark Offline Store once the registry has been created | kind/bug priority/p2 | ## Expected Behavior

It should not throw any exception

## Current Behavior

feast apply throws an exception saying "ValueError: Could not identify the source type being added."

Error Log:

```$ feast apply

/grid/1/cremo/venvs/feast-spark/lib/python3.7/site-packages/scipy/sparse/sputils.py:16: DeprecationWarning: `np.typeDict` is a deprecated alias for `np.sctypeDict`.

supported_dtypes = [np.typeDict[x] for x in supported_dtypes]

/grid/1/cremo/venvs/feast-spark/lib/python3.7/site-packages/scipy/fftpack/__init__.py:103: DeprecationWarning: The module numpy.dual is deprecated. Instead of using dual, use the functions directly from numpy or scipy.

from numpy.dual import register_func

/grid/1/cremo/venvs/feast-spark/lib/python3.7/site-packages/feast/infra/offline_stores/contrib/spark_offline_store/spark_source.py:61: RuntimeWarning: The spark data source API is an experimental feature in alpha development. This API is unstable and it could and most probably will be changed in the future.

RuntimeWarning,

Setting default log level to "WARN".

To adjust logging level use sc.setLogLevel(newLevel). For SparkR, use setLogLevel(newLevel).

2022-03-15 08:09:23,416 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

2022-03-15 08:09:48,173 WARN yarn.Client: Neither spark.yarn.jars nor spark.yarn.archive is set, falling back to uploading libraries under SPARK_HOME.

2022-03-15 08:10:47,117 WARN util.package: Truncated the string representation of a plan since it was too large. This behavior can be adjusted by setting 'spark.sql.debug.maxToStringFields'.

Traceback (most recent call last):

File "/grid/1/cremo/venvs/feast-spark/bin/feast", line 10, in <module>

sys.exit(cli())

File "/grid/1/cremo/venvs/feast-spark/lib/python3.7/site-packages/click/core.py", line 1128, in __call__

return self.main(*args, **kwargs)

File "/grid/1/cremo/venvs/feast-spark/lib/python3.7/site-packages/click/core.py", line 1053, in main

rv = self.invoke(ctx)

File "/grid/1/cremo/venvs/feast-spark/lib/python3.7/site-packages/click/core.py", line 1659, in invoke

return _process_result(sub_ctx.command.invoke(sub_ctx))

File "/grid/1/cremo/venvs/feast-spark/lib/python3.7/site-packages/click/core.py", line 1395, in invoke

return ctx.invoke(self.callback, **ctx.params)

File "/grid/1/cremo/venvs/feast-spark/lib/python3.7/site-packages/click/core.py", line 754, in invoke

return __callback(*args, **kwargs)

File "/grid/1/cremo/venvs/feast-spark/lib/python3.7/site-packages/click/decorators.py", line 26, in new_func

return f(get_current_context(), *args, **kwargs)

File "/grid/1/cremo/venvs/feast-spark/lib/python3.7/site-packages/feast/cli.py", line 439, in apply_total_command

apply_total(repo_config, repo, skip_source_validation)

File "/grid/1/cremo/venvs/feast-spark/lib/python3.7/site-packages/feast/usage.py", line 269, in wrapper

return func(*args, **kwargs)

File "/grid/1/cremo/venvs/feast-spark/lib/python3.7/site-packages/feast/repo_operations.py", line 251, in apply_total

store, project, registry, repo, skip_source_validation

File "/grid/1/cremo/venvs/feast-spark/lib/python3.7/site-packages/feast/repo_operations.py", line 210, in apply_total_with_repo_instance

registry_diff, infra_diff, new_infra = store._plan(repo)

File "/grid/1/cremo/venvs/feast-spark/lib/python3.7/site-packages/feast/usage.py", line 280, in wrapper

raise exc.with_traceback(traceback)

File "/grid/1/cremo/venvs/feast-spark/lib/python3.7/site-packages/feast/usage.py", line 269, in wrapper

return func(*args, **kwargs)

File "/grid/1/cremo/venvs/feast-spark/lib/python3.7/site-packages/feast/feature_store.py", line 543, in _plan

self._registry, self.project, desired_repo_contents

File "/grid/1/cremo/venvs/feast-spark/lib/python3.7/site-packages/feast/diff/registry_diff.py", line 215, in diff_between

registry, current_project, desired_repo_contents

File "/grid/1/cremo/venvs/feast-spark/lib/python3.7/site-packages/feast/diff/registry_diff.py", line 172, in extract_objects_for_keep_delete_update_add

] = FeastObjectType.get_objects_from_registry(registry, current_project)

File "/grid/1/cremo/venvs/feast-spark/lib/python3.7/site-packages/feast/registry.py", line 84, in get_objects_from_registry

FeastObjectType.DATA_SOURCE: registry.list_data_sources(project=project),

File "/grid/1/cremo/venvs/feast-spark/lib/python3.7/site-packages/feast/registry.py", line 302, in list_data_sources

data_sources.append(DataSource.from_proto(data_source_proto))

File "/grid/1/cremo/venvs/feast-spark/lib/python3.7/site-packages/feast/data_source.py", line 252, in from_proto

raise ValueError("Could not identify the source type being added.")

ValueError: Could not identify the source type being added.```

## Steps to reproduce

For Spark Offline Store,

- Create the registry using feast apply

- Update registry using feast ap[ply again, with or without any change in example.py

### Specifications

- Version: 0.19.3

- Platform: linux

- Subsystem:

## Possible Solution

class DataSource(ABC):

def from_proto(data_source: DataSourceProto) -> Any:

# we should add check datasource and identify of it is of SparkSource type and use SparkSource.from_proto() | 1.0 | feast apply fails for Spark Offline Store once the registry has been created - ## Expected Behavior

It should not throw any exception

## Current Behavior

feast apply throws an exception saying "ValueError: Could not identify the source type being added."

Error Log:

```$ feast apply

/grid/1/cremo/venvs/feast-spark/lib/python3.7/site-packages/scipy/sparse/sputils.py:16: DeprecationWarning: `np.typeDict` is a deprecated alias for `np.sctypeDict`.

supported_dtypes = [np.typeDict[x] for x in supported_dtypes]

/grid/1/cremo/venvs/feast-spark/lib/python3.7/site-packages/scipy/fftpack/__init__.py:103: DeprecationWarning: The module numpy.dual is deprecated. Instead of using dual, use the functions directly from numpy or scipy.

from numpy.dual import register_func

/grid/1/cremo/venvs/feast-spark/lib/python3.7/site-packages/feast/infra/offline_stores/contrib/spark_offline_store/spark_source.py:61: RuntimeWarning: The spark data source API is an experimental feature in alpha development. This API is unstable and it could and most probably will be changed in the future.

RuntimeWarning,

Setting default log level to "WARN".

To adjust logging level use sc.setLogLevel(newLevel). For SparkR, use setLogLevel(newLevel).

2022-03-15 08:09:23,416 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

2022-03-15 08:09:48,173 WARN yarn.Client: Neither spark.yarn.jars nor spark.yarn.archive is set, falling back to uploading libraries under SPARK_HOME.

2022-03-15 08:10:47,117 WARN util.package: Truncated the string representation of a plan since it was too large. This behavior can be adjusted by setting 'spark.sql.debug.maxToStringFields'.

Traceback (most recent call last):

File "/grid/1/cremo/venvs/feast-spark/bin/feast", line 10, in <module>

sys.exit(cli())

File "/grid/1/cremo/venvs/feast-spark/lib/python3.7/site-packages/click/core.py", line 1128, in __call__

return self.main(*args, **kwargs)

File "/grid/1/cremo/venvs/feast-spark/lib/python3.7/site-packages/click/core.py", line 1053, in main

rv = self.invoke(ctx)

File "/grid/1/cremo/venvs/feast-spark/lib/python3.7/site-packages/click/core.py", line 1659, in invoke

return _process_result(sub_ctx.command.invoke(sub_ctx))

File "/grid/1/cremo/venvs/feast-spark/lib/python3.7/site-packages/click/core.py", line 1395, in invoke

return ctx.invoke(self.callback, **ctx.params)

File "/grid/1/cremo/venvs/feast-spark/lib/python3.7/site-packages/click/core.py", line 754, in invoke

return __callback(*args, **kwargs)

File "/grid/1/cremo/venvs/feast-spark/lib/python3.7/site-packages/click/decorators.py", line 26, in new_func

return f(get_current_context(), *args, **kwargs)

File "/grid/1/cremo/venvs/feast-spark/lib/python3.7/site-packages/feast/cli.py", line 439, in apply_total_command

apply_total(repo_config, repo, skip_source_validation)

File "/grid/1/cremo/venvs/feast-spark/lib/python3.7/site-packages/feast/usage.py", line 269, in wrapper

return func(*args, **kwargs)

File "/grid/1/cremo/venvs/feast-spark/lib/python3.7/site-packages/feast/repo_operations.py", line 251, in apply_total

store, project, registry, repo, skip_source_validation

File "/grid/1/cremo/venvs/feast-spark/lib/python3.7/site-packages/feast/repo_operations.py", line 210, in apply_total_with_repo_instance

registry_diff, infra_diff, new_infra = store._plan(repo)

File "/grid/1/cremo/venvs/feast-spark/lib/python3.7/site-packages/feast/usage.py", line 280, in wrapper

raise exc.with_traceback(traceback)

File "/grid/1/cremo/venvs/feast-spark/lib/python3.7/site-packages/feast/usage.py", line 269, in wrapper

return func(*args, **kwargs)

File "/grid/1/cremo/venvs/feast-spark/lib/python3.7/site-packages/feast/feature_store.py", line 543, in _plan

self._registry, self.project, desired_repo_contents

File "/grid/1/cremo/venvs/feast-spark/lib/python3.7/site-packages/feast/diff/registry_diff.py", line 215, in diff_between

registry, current_project, desired_repo_contents

File "/grid/1/cremo/venvs/feast-spark/lib/python3.7/site-packages/feast/diff/registry_diff.py", line 172, in extract_objects_for_keep_delete_update_add

] = FeastObjectType.get_objects_from_registry(registry, current_project)

File "/grid/1/cremo/venvs/feast-spark/lib/python3.7/site-packages/feast/registry.py", line 84, in get_objects_from_registry

FeastObjectType.DATA_SOURCE: registry.list_data_sources(project=project),

File "/grid/1/cremo/venvs/feast-spark/lib/python3.7/site-packages/feast/registry.py", line 302, in list_data_sources

data_sources.append(DataSource.from_proto(data_source_proto))

File "/grid/1/cremo/venvs/feast-spark/lib/python3.7/site-packages/feast/data_source.py", line 252, in from_proto

raise ValueError("Could not identify the source type being added.")

ValueError: Could not identify the source type being added.```

## Steps to reproduce

For Spark Offline Store,

- Create the registry using feast apply

- Update registry using feast ap[ply again, with or without any change in example.py

### Specifications

- Version: 0.19.3

- Platform: linux

- Subsystem:

## Possible Solution

class DataSource(ABC):

def from_proto(data_source: DataSourceProto) -> Any:

# we should add check datasource and identify of it is of SparkSource type and use SparkSource.from_proto() | non_infrastructure | feast apply fails for spark offline store once the registry has been created expected behavior it should not throw any exception current behavior feast apply throws an exception saying valueerror could not identify the source type being added error log feast apply grid cremo venvs feast spark lib site packages scipy sparse sputils py deprecationwarning np typedict is a deprecated alias for np sctypedict supported dtypes for x in supported dtypes grid cremo venvs feast spark lib site packages scipy fftpack init py deprecationwarning the module numpy dual is deprecated instead of using dual use the functions directly from numpy or scipy from numpy dual import register func grid cremo venvs feast spark lib site packages feast infra offline stores contrib spark offline store spark source py runtimewarning the spark data source api is an experimental feature in alpha development this api is unstable and it could and most probably will be changed in the future runtimewarning setting default log level to warn to adjust logging level use sc setloglevel newlevel for sparkr use setloglevel newlevel warn util nativecodeloader unable to load native hadoop library for your platform using builtin java classes where applicable warn yarn client neither spark yarn jars nor spark yarn archive is set falling back to uploading libraries under spark home warn util package truncated the string representation of a plan since it was too large this behavior can be adjusted by setting spark sql debug maxtostringfields traceback most recent call last file grid cremo venvs feast spark bin feast line in sys exit cli file grid cremo venvs feast spark lib site packages click core py line in call return self main args kwargs file grid cremo venvs feast spark lib site packages click core py line in main rv self invoke ctx file grid cremo venvs feast spark lib site packages click core py line in invoke return process result sub ctx command invoke sub ctx file grid cremo venvs feast spark lib site packages click core py line in invoke return ctx invoke self callback ctx params file grid cremo venvs feast spark lib site packages click core py line in invoke return callback args kwargs file grid cremo venvs feast spark lib site packages click decorators py line in new func return f get current context args kwargs file grid cremo venvs feast spark lib site packages feast cli py line in apply total command apply total repo config repo skip source validation file grid cremo venvs feast spark lib site packages feast usage py line in wrapper return func args kwargs file grid cremo venvs feast spark lib site packages feast repo operations py line in apply total store project registry repo skip source validation file grid cremo venvs feast spark lib site packages feast repo operations py line in apply total with repo instance registry diff infra diff new infra store plan repo file grid cremo venvs feast spark lib site packages feast usage py line in wrapper raise exc with traceback traceback file grid cremo venvs feast spark lib site packages feast usage py line in wrapper return func args kwargs file grid cremo venvs feast spark lib site packages feast feature store py line in plan self registry self project desired repo contents file grid cremo venvs feast spark lib site packages feast diff registry diff py line in diff between registry current project desired repo contents file grid cremo venvs feast spark lib site packages feast diff registry diff py line in extract objects for keep delete update add feastobjecttype get objects from registry registry current project file grid cremo venvs feast spark lib site packages feast registry py line in get objects from registry feastobjecttype data source registry list data sources project project file grid cremo venvs feast spark lib site packages feast registry py line in list data sources data sources append datasource from proto data source proto file grid cremo venvs feast spark lib site packages feast data source py line in from proto raise valueerror could not identify the source type being added valueerror could not identify the source type being added steps to reproduce for spark offline store create the registry using feast apply update registry using feast ap ply again with or without any change in example py specifications version platform linux subsystem possible solution class datasource abc def from proto data source datasourceproto any we should add check datasource and identify of it is of sparksource type and use sparksource from proto | 0 |

9,547 | 8,032,641,218 | IssuesEvent | 2018-07-28 17:44:00 | ionide/ionide-vscode-fsharp | https://api.github.com/repos/ionide/ionide-vscode-fsharp | closed | Can't start in devMode | infrastructure | I start FsAutoComplete from CLI:

```shell

➜ FsAutoComplete git:(master) ✗ dotnet src/FsAutoComplete.netcore/bin/Debug/netcoreapp2.0/fsautocomplete.dll --mode http

[21:32:52 INF] Smooth! Suave listener started in 40,563 with binding 127.0.0.1:8088

```

I set `devMode = true`.

And now, when `LanguageService` try to start it's failing.

```fs

doRetry startByDevMode

|> Promise.onSuccess (fun _ ->

printfn "success"

socketNotify <- startSocket "notify"

socketNotifyWorkspace <- startSocket "notifyWorkspace"

()

)

|> Promise.onFail (fun _ ->

printfn "Failed"

)

```

I only see `"Failed"` in the output. Any idea ? | 1.0 | Can't start in devMode - I start FsAutoComplete from CLI:

```shell

➜ FsAutoComplete git:(master) ✗ dotnet src/FsAutoComplete.netcore/bin/Debug/netcoreapp2.0/fsautocomplete.dll --mode http

[21:32:52 INF] Smooth! Suave listener started in 40,563 with binding 127.0.0.1:8088

```

I set `devMode = true`.

And now, when `LanguageService` try to start it's failing.

```fs

doRetry startByDevMode

|> Promise.onSuccess (fun _ ->

printfn "success"

socketNotify <- startSocket "notify"

socketNotifyWorkspace <- startSocket "notifyWorkspace"

()

)

|> Promise.onFail (fun _ ->

printfn "Failed"

)

```

I only see `"Failed"` in the output. Any idea ? | infrastructure | can t start in devmode i start fsautocomplete from cli shell ➜ fsautocomplete git master ✗ dotnet src fsautocomplete netcore bin debug fsautocomplete dll mode http smooth suave listener started in with binding i set devmode true and now when languageservice try to start it s failing fs doretry startbydevmode promise onsuccess fun printfn success socketnotify startsocket notify socketnotifyworkspace startsocket notifyworkspace promise onfail fun printfn failed i only see failed in the output any idea | 1 |

544,419 | 15,893,411,081 | IssuesEvent | 2021-04-11 05:41:57 | remnoteio/remnote-issues | https://api.github.com/repos/remnoteio/remnote-issues | closed | indelible strange line in rem | fixed-in-remnote-1.3 priority=3 | ERROR: type should be string, got "\r\n\r\nhttps://user-images.githubusercontent.com/20538090/105758826-c31eb200-5f60-11eb-8b79-4915893c348b.mp4\r\n\r\n\r\nI think the reason of that line is because parent is multiline card" | 1.0 | indelible strange line in rem -

https://user-images.githubusercontent.com/20538090/105758826-c31eb200-5f60-11eb-8b79-4915893c348b.mp4

I think the reason of that line is because parent is multiline card | non_infrastructure | indelible strange line in rem i think the reason of that line is because parent is multiline card | 0 |

26,410 | 11,305,769,003 | IssuesEvent | 2020-01-18 08:41:26 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | security: allow users to enable password auth for 'root' | A-security C-enhancement | tldr; this issue proposes to enable:

- setting/changing the password of the `root` user;

- allowing root to log in using their password on the UI;

- allowing root to log in using their password on SQL, subject to regular rules in the HBA configuration.

It also fixes the [regression](https://github.com/cockroachdb/cockroach/issues/43847#issuecomment-572615646) introduced by #42563, by enabling `root` authentication on the admin UI to obtain a login cookie.

### Background

CockroachDB currently offers 5 separate guardrails to ensure that `root` is always able to connect even when the authentication configuration is botched:

1. when running `--insecure`, anyone can log in without auth.

2. the crdb [HBA configuration](https://www.postgresql.org/docs/current/auth-pg-hba-conf.html) enforces that `host all root all cert` is always the first rule, meaning that clients can log in as `root` if and only if they present a valid TLS client cert for `root` (and because the method is `cert` and not `cert-password`, password auth is rejected for `root` in any case).

3. `ALTER USER WITH PASSWORD` is disallowed for `root`, so root cannot have a password

4. the internal "check password" mechanism, shared by both SQL and HTTP connections, to compare a client-provided password with a user record fails with an error if the user is `root`.

5. the UI `UserLogin` HTTP API reports an error if the user is `root`.

6. the cockroach CLI commands using SQL connections report an error if the user is `root` and a password is supplied.

Meanwhile, there is a separate, unrelated (but relevant) rule:

7. if a user's password is unset/empty, then this user is not able to use password authentication.

### Proposal

This issue proposes to **remove rules 3-6** specifically, without changing the others.

This proposal would not change the security rules for a cluster using the default configuration: by default, root would not be able to log in using password anywhere (by default, the root account has no password so rule 6 applies) and is required to present a cert on SQL (due to rule 2).

### Non-Pitfalls

A possible counter-argument to this proposal is that CockroachdB uses `root` internally to establish SQL client connections towards itself.

This is not an obstacle: in insecure clusters, the password would be ignored anyway; in secure clusters, CockroachDB's internal connections use a valid client cert. | True | security: allow users to enable password auth for 'root' - tldr; this issue proposes to enable:

- setting/changing the password of the `root` user;

- allowing root to log in using their password on the UI;

- allowing root to log in using their password on SQL, subject to regular rules in the HBA configuration.

It also fixes the [regression](https://github.com/cockroachdb/cockroach/issues/43847#issuecomment-572615646) introduced by #42563, by enabling `root` authentication on the admin UI to obtain a login cookie.

### Background

CockroachDB currently offers 5 separate guardrails to ensure that `root` is always able to connect even when the authentication configuration is botched:

1. when running `--insecure`, anyone can log in without auth.

2. the crdb [HBA configuration](https://www.postgresql.org/docs/current/auth-pg-hba-conf.html) enforces that `host all root all cert` is always the first rule, meaning that clients can log in as `root` if and only if they present a valid TLS client cert for `root` (and because the method is `cert` and not `cert-password`, password auth is rejected for `root` in any case).

3. `ALTER USER WITH PASSWORD` is disallowed for `root`, so root cannot have a password

4. the internal "check password" mechanism, shared by both SQL and HTTP connections, to compare a client-provided password with a user record fails with an error if the user is `root`.

5. the UI `UserLogin` HTTP API reports an error if the user is `root`.

6. the cockroach CLI commands using SQL connections report an error if the user is `root` and a password is supplied.

Meanwhile, there is a separate, unrelated (but relevant) rule:

7. if a user's password is unset/empty, then this user is not able to use password authentication.

### Proposal

This issue proposes to **remove rules 3-6** specifically, without changing the others.

This proposal would not change the security rules for a cluster using the default configuration: by default, root would not be able to log in using password anywhere (by default, the root account has no password so rule 6 applies) and is required to present a cert on SQL (due to rule 2).

### Non-Pitfalls

A possible counter-argument to this proposal is that CockroachdB uses `root` internally to establish SQL client connections towards itself.

This is not an obstacle: in insecure clusters, the password would be ignored anyway; in secure clusters, CockroachDB's internal connections use a valid client cert. | non_infrastructure | security allow users to enable password auth for root tldr this issue proposes to enable setting changing the password of the root user allowing root to log in using their password on the ui allowing root to log in using their password on sql subject to regular rules in the hba configuration it also fixes the introduced by by enabling root authentication on the admin ui to obtain a login cookie background cockroachdb currently offers separate guardrails to ensure that root is always able to connect even when the authentication configuration is botched when running insecure anyone can log in without auth the crdb enforces that host all root all cert is always the first rule meaning that clients can log in as root if and only if they present a valid tls client cert for root and because the method is cert and not cert password password auth is rejected for root in any case alter user with password is disallowed for root so root cannot have a password the internal check password mechanism shared by both sql and http connections to compare a client provided password with a user record fails with an error if the user is root the ui userlogin http api reports an error if the user is root the cockroach cli commands using sql connections report an error if the user is root and a password is supplied meanwhile there is a separate unrelated but relevant rule if a user s password is unset empty then this user is not able to use password authentication proposal this issue proposes to remove rules specifically without changing the others this proposal would not change the security rules for a cluster using the default configuration by default root would not be able to log in using password anywhere by default the root account has no password so rule applies and is required to present a cert on sql due to rule non pitfalls a possible counter argument to this proposal is that cockroachdb uses root internally to establish sql client connections towards itself this is not an obstacle in insecure clusters the password would be ignored anyway in secure clusters cockroachdb s internal connections use a valid client cert | 0 |

22,625 | 15,326,022,258 | IssuesEvent | 2021-02-26 02:39:36 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | Threading.Tasks.DataFlow and ComponentModel.Annotations are not building NetCoreAppCurrent | area-Infrastructure-libraries easy | These libraries are included in the shared framework, but we aren't building them for `$(NetCoreAppCurrent)`.

https://github.com/dotnet/runtime/blob/8a3fd5a065b019cc70a600a759ea0c87c14cae8f/src/libraries/System.Threading.Tasks.Dataflow/src/System.Threading.Tasks.Dataflow.csproj#L3

https://github.com/dotnet/runtime/blob/8a3fd5a065b019cc70a600a759ea0c87c14cae8f/src/libraries/System.ComponentModel.Annotations/src/System.ComponentModel.Annotations.csproj#L3

This causes the following problems:

1. These libraries have `<Nullable>enable</Nullable>` in them, but they are building against netstandard APIs, which don't have nullablility annotations.

2. When someone wants to use a new API (or new attributes, like `DynamicDependency` and `UnconditionalSuppressMessage`), they are not available.

We should add a `$(NetCoreAppCurrent)` target for these libraries, and ship that in the shared framework / runtimepack. I analyzed a recent shared framework, and these were the only 2 libraries (outside of `System.Runtime.CompilerServices.Unsafe`) that didn't target `net6.0`.

cc @LakshanF @joperezr @safern @ViktorHofer @ericstj | 1.0 | Threading.Tasks.DataFlow and ComponentModel.Annotations are not building NetCoreAppCurrent - These libraries are included in the shared framework, but we aren't building them for `$(NetCoreAppCurrent)`.

https://github.com/dotnet/runtime/blob/8a3fd5a065b019cc70a600a759ea0c87c14cae8f/src/libraries/System.Threading.Tasks.Dataflow/src/System.Threading.Tasks.Dataflow.csproj#L3

https://github.com/dotnet/runtime/blob/8a3fd5a065b019cc70a600a759ea0c87c14cae8f/src/libraries/System.ComponentModel.Annotations/src/System.ComponentModel.Annotations.csproj#L3

This causes the following problems:

1. These libraries have `<Nullable>enable</Nullable>` in them, but they are building against netstandard APIs, which don't have nullablility annotations.

2. When someone wants to use a new API (or new attributes, like `DynamicDependency` and `UnconditionalSuppressMessage`), they are not available.

We should add a `$(NetCoreAppCurrent)` target for these libraries, and ship that in the shared framework / runtimepack. I analyzed a recent shared framework, and these were the only 2 libraries (outside of `System.Runtime.CompilerServices.Unsafe`) that didn't target `net6.0`.

cc @LakshanF @joperezr @safern @ViktorHofer @ericstj | infrastructure | threading tasks dataflow and componentmodel annotations are not building netcoreappcurrent these libraries are included in the shared framework but we aren t building them for netcoreappcurrent this causes the following problems these libraries have enable in them but they are building against netstandard apis which don t have nullablility annotations when someone wants to use a new api or new attributes like dynamicdependency and unconditionalsuppressmessage they are not available we should add a netcoreappcurrent target for these libraries and ship that in the shared framework runtimepack i analyzed a recent shared framework and these were the only libraries outside of system runtime compilerservices unsafe that didn t target cc lakshanf joperezr safern viktorhofer ericstj | 1 |

32,440 | 26,700,726,940 | IssuesEvent | 2023-01-27 14:11:56 | open-telemetry/opentelemetry.io | https://api.github.com/repos/open-telemetry/opentelemetry.io | opened | npm run serve misbehaving | bug infrastructure | It seems that `netlify-cli` serve is misbehaving. For example, page refresh, after a change doesn't always show the resulting change.

As a workaround, view the site served at http://localhost:1313/, which is the site as served by Hugo rather than Netlify dev. | 1.0 | npm run serve misbehaving - It seems that `netlify-cli` serve is misbehaving. For example, page refresh, after a change doesn't always show the resulting change.

As a workaround, view the site served at http://localhost:1313/, which is the site as served by Hugo rather than Netlify dev. | infrastructure | npm run serve misbehaving it seems that netlify cli serve is misbehaving for example page refresh after a change doesn t always show the resulting change as a workaround view the site served at which is the site as served by hugo rather than netlify dev | 1 |

18,674 | 13,066,059,137 | IssuesEvent | 2020-07-30 20:55:05 | icecube-trac/tix2 | https://api.github.com/repos/icecube-trac/tix2 | closed | HitSpool interface: report running component (Trac #944) | Migrated from Trac enhancement infrastructure | The cronjob installed on expcont for doing an hourly:

fab hs_status

that reports running or not running comonents to i3live is still not working properly.

Migrated from https://code.icecube.wisc.edu/ticket/944

```json

{

"status": "closed",

"changetime": "2015-08-10T22:35:05",

"description": "The cronjob installed on expcont for doing an hourly:\n\nfab hs_status\n\nthat reports running or not running comonents to i3live is still not working properly. ",

"reporter": "dheereman",

"cc": "dheereman",

"resolution": "wontfix",

"_ts": "1439246105293024",

"component": "infrastructure",

"summary": "HitSpool interface: report running component",

"priority": "normal",

"keywords": "hitspool",

"time": "2015-04-21T09:52:00",

"milestone": "",

"owner": "dheereman",

"type": "enhancement"

}

```

| 1.0 | HitSpool interface: report running component (Trac #944) - The cronjob installed on expcont for doing an hourly:

fab hs_status

that reports running or not running comonents to i3live is still not working properly.

Migrated from https://code.icecube.wisc.edu/ticket/944

```json

{

"status": "closed",

"changetime": "2015-08-10T22:35:05",

"description": "The cronjob installed on expcont for doing an hourly:\n\nfab hs_status\n\nthat reports running or not running comonents to i3live is still not working properly. ",

"reporter": "dheereman",

"cc": "dheereman",

"resolution": "wontfix",

"_ts": "1439246105293024",

"component": "infrastructure",

"summary": "HitSpool interface: report running component",

"priority": "normal",

"keywords": "hitspool",

"time": "2015-04-21T09:52:00",

"milestone": "",

"owner": "dheereman",

"type": "enhancement"

}

```

| infrastructure | hitspool interface report running component trac the cronjob installed on expcont for doing an hourly fab hs status that reports running or not running comonents to is still not working properly migrated from json status closed changetime description the cronjob installed on expcont for doing an hourly n nfab hs status n nthat reports running or not running comonents to is still not working properly reporter dheereman cc dheereman resolution wontfix ts component infrastructure summary hitspool interface report running component priority normal keywords hitspool time milestone owner dheereman type enhancement | 1 |

58,787 | 6,620,564,310 | IssuesEvent | 2017-09-21 15:56:24 | Microsoft/vscode | https://api.github.com/repos/Microsoft/vscode | opened | Improved --wait behaviour | testplan-item | Refs: https://github.com/Microsoft/vscode/issues/24327

- [ ] Win

- [ ] Mac

- [ ] Linux

Complexity: 2

Running code with `--wait` parameter from the command line makes the command line process wait until the window that opens is closed. During this milestone we are now tracking the file to open as argument and also terminate the calling process when all the files are closed. This allows to reuse an existing Code instance for this purpose.

* verify you can use `--wait` with 0, 1 or many files and the calling process terminates when the files close

* verify the command line will terminate also when the target window closes | 1.0 | Improved --wait behaviour - Refs: https://github.com/Microsoft/vscode/issues/24327

- [ ] Win

- [ ] Mac

- [ ] Linux

Complexity: 2

Running code with `--wait` parameter from the command line makes the command line process wait until the window that opens is closed. During this milestone we are now tracking the file to open as argument and also terminate the calling process when all the files are closed. This allows to reuse an existing Code instance for this purpose.

* verify you can use `--wait` with 0, 1 or many files and the calling process terminates when the files close

* verify the command line will terminate also when the target window closes | non_infrastructure | improved wait behaviour refs win mac linux complexity running code with wait parameter from the command line makes the command line process wait until the window that opens is closed during this milestone we are now tracking the file to open as argument and also terminate the calling process when all the files are closed this allows to reuse an existing code instance for this purpose verify you can use wait with or many files and the calling process terminates when the files close verify the command line will terminate also when the target window closes | 0 |

64,204 | 8,718,340,856 | IssuesEvent | 2018-12-07 20:06:00 | publiclab/plots2 | https://api.github.com/repos/publiclab/plots2 | opened | Move some files from root directory into subfolders if possible | documentation help-wanted | We have many different files in the root directory, but they push down the README quite far when viewed on GitHub: https://github.com/publiclab/plots2

Some files seem like they could be kept in a subfolder to save vertical space -- some have already been moved to `.github/` -- but it'll take research to tell which we can move without breaking anything. Let's do some careful research and paste in links in the comments for any docs showing where we could stash these files.

Thanks! | 1.0 | Move some files from root directory into subfolders if possible - We have many different files in the root directory, but they push down the README quite far when viewed on GitHub: https://github.com/publiclab/plots2

Some files seem like they could be kept in a subfolder to save vertical space -- some have already been moved to `.github/` -- but it'll take research to tell which we can move without breaking anything. Let's do some careful research and paste in links in the comments for any docs showing where we could stash these files.

Thanks! | non_infrastructure | move some files from root directory into subfolders if possible we have many different files in the root directory but they push down the readme quite far when viewed on github some files seem like they could be kept in a subfolder to save vertical space some have already been moved to github but it ll take research to tell which we can move without breaking anything let s do some careful research and paste in links in the comments for any docs showing where we could stash these files thanks | 0 |

4,715 | 5,243,726,911 | IssuesEvent | 2017-01-31 21:29:55 | github/VisualStudio | https://api.github.com/repos/github/VisualStudio | closed | Update step 126 in Test Manifest to reflect maintainer workflow | infrastructure | It's currently:

> Clicking on a pull request title opens browser window to pull request on .com

But now clicking on the title displays a detailed view of the pull request | 1.0 | Update step 126 in Test Manifest to reflect maintainer workflow - It's currently:

> Clicking on a pull request title opens browser window to pull request on .com

But now clicking on the title displays a detailed view of the pull request | infrastructure | update step in test manifest to reflect maintainer workflow it s currently clicking on a pull request title opens browser window to pull request on com but now clicking on the title displays a detailed view of the pull request | 1 |

65,001 | 14,707,130,779 | IssuesEvent | 2021-01-04 21:04:23 | OpenLiberty/open-liberty | https://api.github.com/repos/OpenLiberty/open-liberty | opened | microProfile-4.0 performance issue with appSecurity-3.0/jaspic. | in:MicroProfile performance team:Core Security | I am noticing this extra time spent in an mp-4.0 app compared to a mp-3.3 app. (JaspiServiceImpl.isAnyProviderRegistered)

```

Parent 0 0.11 2.26 7 147 J:com/ibm/ws/webcontainer/security/WebAppSecurityCollaboratorImpl.performSecurityChecks(Ljavax/servlet/http/HttpServletRequest;Ljavax/servlet/http/HttpServletResponse;Ljavax/security/auth/Subject;Lcom/ibm/ws/webcontainer/security/WebSecurityContext;)V

Self 0 0.11 2.26 7 147 J:com/ibm/ws/security/jaspi/JaspiServiceImpl.isAnyProviderRegistered(Lcom/ibm/ws/webcontainer/security/WebRequest;)Z

Child 0 1.22 2.08 79 135 J:com/ibm/ws/security/javaeesec/BridgeBuilderImpl.buildBridgeIfNeeded(Ljava/lang/String;Ljavax/security/auth/message/config/AuthConfigFactory;)V

Child 0 0.06 0.06 4 4 J:com/ibm/wsspi/kernel/service/utils/AtomicServiceReference.getService()Ljava/lang/Object;

Child 0 0.00 0.02 0 1 J:com/ibm/ws/security/jaspi/JaspiServiceImpl.getAuthConfigFactory()Ljavax/security/auth/message/config/AuthConfigFactory;

```

It looks like mpJwt-1.2 pulls in appSecurity-3.0 (while mp3.3 used appSecurity-2.0), so now isJapsiEnabled is true here, which leads to the extra 2.26% time seen above:

https://github.com/OpenLiberty/open-liberty/blob/c2b0154dcec9728aea7bf3bb72b8c7d99f18b7d7/dev/com.ibm.ws.webcontainer.security/src/com/ibm/ws/webcontainer/security/WebAppSecurityCollaboratorImpl.java#L667

Is there anyway to disable jaspic since I am not using it, or is this something spec-wise we’re stuck with? I also wonder why it is doing the buildBridgeIfNeeded on every request,

| True | microProfile-4.0 performance issue with appSecurity-3.0/jaspic. - I am noticing this extra time spent in an mp-4.0 app compared to a mp-3.3 app. (JaspiServiceImpl.isAnyProviderRegistered)

```

Parent 0 0.11 2.26 7 147 J:com/ibm/ws/webcontainer/security/WebAppSecurityCollaboratorImpl.performSecurityChecks(Ljavax/servlet/http/HttpServletRequest;Ljavax/servlet/http/HttpServletResponse;Ljavax/security/auth/Subject;Lcom/ibm/ws/webcontainer/security/WebSecurityContext;)V

Self 0 0.11 2.26 7 147 J:com/ibm/ws/security/jaspi/JaspiServiceImpl.isAnyProviderRegistered(Lcom/ibm/ws/webcontainer/security/WebRequest;)Z

Child 0 1.22 2.08 79 135 J:com/ibm/ws/security/javaeesec/BridgeBuilderImpl.buildBridgeIfNeeded(Ljava/lang/String;Ljavax/security/auth/message/config/AuthConfigFactory;)V

Child 0 0.06 0.06 4 4 J:com/ibm/wsspi/kernel/service/utils/AtomicServiceReference.getService()Ljava/lang/Object;

Child 0 0.00 0.02 0 1 J:com/ibm/ws/security/jaspi/JaspiServiceImpl.getAuthConfigFactory()Ljavax/security/auth/message/config/AuthConfigFactory;

```

It looks like mpJwt-1.2 pulls in appSecurity-3.0 (while mp3.3 used appSecurity-2.0), so now isJapsiEnabled is true here, which leads to the extra 2.26% time seen above:

https://github.com/OpenLiberty/open-liberty/blob/c2b0154dcec9728aea7bf3bb72b8c7d99f18b7d7/dev/com.ibm.ws.webcontainer.security/src/com/ibm/ws/webcontainer/security/WebAppSecurityCollaboratorImpl.java#L667

Is there anyway to disable jaspic since I am not using it, or is this something spec-wise we’re stuck with? I also wonder why it is doing the buildBridgeIfNeeded on every request,

| non_infrastructure | microprofile performance issue with appsecurity jaspic i am noticing this extra time spent in an mp app compared to a mp app jaspiserviceimpl isanyproviderregistered parent j com ibm ws webcontainer security webappsecuritycollaboratorimpl performsecuritychecks ljavax servlet http httpservletrequest ljavax servlet http httpservletresponse ljavax security auth subject lcom ibm ws webcontainer security websecuritycontext v self j com ibm ws security jaspi jaspiserviceimpl isanyproviderregistered lcom ibm ws webcontainer security webrequest z child j com ibm ws security javaeesec bridgebuilderimpl buildbridgeifneeded ljava lang string ljavax security auth message config authconfigfactory v child j com ibm wsspi kernel service utils atomicservicereference getservice ljava lang object child j com ibm ws security jaspi jaspiserviceimpl getauthconfigfactory ljavax security auth message config authconfigfactory it looks like mpjwt pulls in appsecurity while used appsecurity so now isjapsienabled is true here which leads to the extra time seen above is there anyway to disable jaspic since i am not using it or is this something spec wise we’re stuck with i also wonder why it is doing the buildbridgeifneeded on every request | 0 |

6,551 | 6,510,168,559 | IssuesEvent | 2017-08-25 01:17:48 | proudcity/wp-proudcity | https://api.github.com/repos/proudcity/wp-proudcity | closed | Build in GKE Triggers instead of Jenkins? | infrastructure ready | @aschmoe With the switch to the bigger VMs, we got put on a newer version of Kubernetes that seems to have broken docker building in Jenkins (see last build in https://jenkins.proudcity.com/job/proudcity-api-swagger/). It sounds like the fix is to just rebuild the Jenkins task pod with the latest from the dind (docker in docker) image. https://github.com/jenkinsci/docker-jnlp-slave/issues/40

This got me testing the Build Triggers in JCE > Container Registry > Build Triggers. It was simple to setup and it just seems to work: https://console.cloud.google.com/gcr/builds/44aefd1e-67f8-48e1-83fc-348781d3549e?project=proudcity-1184&authuser=0.

@todo:

* discuss with @aschmoe

* evaluate pricing

* migrate over all docker build processes

* disable jenkins tasks (or at least stop git watching)

This would just be for the docker build tasks. The other tasks (build.sh, cmd.sh, etc) would still use Jenkins. | 1.0 | Build in GKE Triggers instead of Jenkins? - @aschmoe With the switch to the bigger VMs, we got put on a newer version of Kubernetes that seems to have broken docker building in Jenkins (see last build in https://jenkins.proudcity.com/job/proudcity-api-swagger/). It sounds like the fix is to just rebuild the Jenkins task pod with the latest from the dind (docker in docker) image. https://github.com/jenkinsci/docker-jnlp-slave/issues/40

This got me testing the Build Triggers in JCE > Container Registry > Build Triggers. It was simple to setup and it just seems to work: https://console.cloud.google.com/gcr/builds/44aefd1e-67f8-48e1-83fc-348781d3549e?project=proudcity-1184&authuser=0.

@todo:

* discuss with @aschmoe

* evaluate pricing

* migrate over all docker build processes

* disable jenkins tasks (or at least stop git watching)

This would just be for the docker build tasks. The other tasks (build.sh, cmd.sh, etc) would still use Jenkins. | infrastructure | build in gke triggers instead of jenkins aschmoe with the switch to the bigger vms we got put on a newer version of kubernetes that seems to have broken docker building in jenkins see last build in it sounds like the fix is to just rebuild the jenkins task pod with the latest from the dind docker in docker image this got me testing the build triggers in jce container registry build triggers it was simple to setup and it just seems to work todo discuss with aschmoe evaluate pricing migrate over all docker build processes disable jenkins tasks or at least stop git watching this would just be for the docker build tasks the other tasks build sh cmd sh etc would still use jenkins | 1 |

130,042 | 18,154,707,110 | IssuesEvent | 2021-09-26 21:38:39 | ghc-dev/Rachel-Christian | https://api.github.com/repos/ghc-dev/Rachel-Christian | opened | CVE-2019-16869 (High) detected in netty-codec-http-4.1.39.Final.jar | security vulnerability | ## CVE-2019-16869 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>netty-codec-http-4.1.39.Final.jar</b></p></summary>

<p>Netty is an asynchronous event-driven network application framework for

rapid development of maintainable high performance protocol servers and

clients.</p>

<p>Library home page: <a href="https://netty.io/">https://netty.io/</a></p>

<p>Path to dependency file: Rachel-Christian/build.gradle</p>

<p>Path to vulnerable library: ches/modules-2/files-2.1/io.netty/netty-codec-http/4.1.39.Final/732d06961162e27fa3ae5989541c4460853745d3/netty-codec-http-4.1.39.Final.jar</p>

<p>

Dependency Hierarchy:

- :x: **netty-codec-http-4.1.39.Final.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/ghc-dev/Rachel-Christian/commit/b737b027c17d2099f7597b2b0401681337cf2af5">b737b027c17d2099f7597b2b0401681337cf2af5</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Netty before 4.1.42.Final mishandles whitespace before the colon in HTTP headers (such as a "Transfer-Encoding : chunked" line), which leads to HTTP request smuggling.

<p>Publish Date: 2019-09-26

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2019-16869>CVE-2019-16869</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: High

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-16869">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-16869</a></p>

<p>Release Date: 2019-09-26</p>

<p>Fix Resolution: io.netty:netty-all:4.1.42.Final,io.netty:netty-codec-http:4.1.42.Final</p>

</p>

</details>

<p></p>

***

:rescue_worker_helmet: Automatic Remediation is available for this issue

<!-- <REMEDIATE>{"isOpenPROnVulnerability":true,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"Java","groupId":"io.netty","packageName":"netty-codec-http","packageVersion":"4.1.39.Final","packageFilePaths":["/build.gradle"],"isTransitiveDependency":false,"dependencyTree":"io.netty:netty-codec-http:4.1.39.Final","isMinimumFixVersionAvailable":true,"minimumFixVersion":"io.netty:netty-all:4.1.42.Final,io.netty:netty-codec-http:4.1.42.Final"}],"baseBranches":["master"],"vulnerabilityIdentifier":"CVE-2019-16869","vulnerabilityDetails":"Netty before 4.1.42.Final mishandles whitespace before the colon in HTTP headers (such as a \"Transfer-Encoding : chunked\" line), which leads to HTTP request smuggling.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2019-16869","cvss3Severity":"high","cvss3Score":"7.5","cvss3Metrics":{"A":"None","AC":"Low","PR":"None","S":"Unchanged","C":"None","UI":"None","AV":"Network","I":"High"},"extraData":{}}</REMEDIATE> --> | True | CVE-2019-16869 (High) detected in netty-codec-http-4.1.39.Final.jar - ## CVE-2019-16869 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>netty-codec-http-4.1.39.Final.jar</b></p></summary>

<p>Netty is an asynchronous event-driven network application framework for

rapid development of maintainable high performance protocol servers and

clients.</p>

<p>Library home page: <a href="https://netty.io/">https://netty.io/</a></p>

<p>Path to dependency file: Rachel-Christian/build.gradle</p>

<p>Path to vulnerable library: ches/modules-2/files-2.1/io.netty/netty-codec-http/4.1.39.Final/732d06961162e27fa3ae5989541c4460853745d3/netty-codec-http-4.1.39.Final.jar</p>

<p>

Dependency Hierarchy:

- :x: **netty-codec-http-4.1.39.Final.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/ghc-dev/Rachel-Christian/commit/b737b027c17d2099f7597b2b0401681337cf2af5">b737b027c17d2099f7597b2b0401681337cf2af5</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Netty before 4.1.42.Final mishandles whitespace before the colon in HTTP headers (such as a "Transfer-Encoding : chunked" line), which leads to HTTP request smuggling.

<p>Publish Date: 2019-09-26

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2019-16869>CVE-2019-16869</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: High

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-16869">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-16869</a></p>

<p>Release Date: 2019-09-26</p>

<p>Fix Resolution: io.netty:netty-all:4.1.42.Final,io.netty:netty-codec-http:4.1.42.Final</p>

</p>

</details>

<p></p>

***

:rescue_worker_helmet: Automatic Remediation is available for this issue

<!-- <REMEDIATE>{"isOpenPROnVulnerability":true,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"Java","groupId":"io.netty","packageName":"netty-codec-http","packageVersion":"4.1.39.Final","packageFilePaths":["/build.gradle"],"isTransitiveDependency":false,"dependencyTree":"io.netty:netty-codec-http:4.1.39.Final","isMinimumFixVersionAvailable":true,"minimumFixVersion":"io.netty:netty-all:4.1.42.Final,io.netty:netty-codec-http:4.1.42.Final"}],"baseBranches":["master"],"vulnerabilityIdentifier":"CVE-2019-16869","vulnerabilityDetails":"Netty before 4.1.42.Final mishandles whitespace before the colon in HTTP headers (such as a \"Transfer-Encoding : chunked\" line), which leads to HTTP request smuggling.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2019-16869","cvss3Severity":"high","cvss3Score":"7.5","cvss3Metrics":{"A":"None","AC":"Low","PR":"None","S":"Unchanged","C":"None","UI":"None","AV":"Network","I":"High"},"extraData":{}}</REMEDIATE> --> | non_infrastructure | cve high detected in netty codec http final jar cve high severity vulnerability vulnerable library netty codec http final jar netty is an asynchronous event driven network application framework for rapid development of maintainable high performance protocol servers and clients library home page a href path to dependency file rachel christian build gradle path to vulnerable library ches modules files io netty netty codec http final netty codec http final jar dependency hierarchy x netty codec http final jar vulnerable library found in head commit a href found in base branch master vulnerability details netty before final mishandles whitespace before the colon in http headers such as a transfer encoding chunked line which leads to http request smuggling publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact high availability impact none for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution io netty netty all final io netty netty codec http final rescue worker helmet automatic remediation is available for this issue isopenpronvulnerability true ispackagebased true isdefaultbranch true packages istransitivedependency false dependencytree io netty netty codec http final isminimumfixversionavailable true minimumfixversion io netty netty all final io netty netty codec http final basebranches vulnerabilityidentifier cve vulnerabilitydetails netty before final mishandles whitespace before the colon in http headers such as a transfer encoding chunked line which leads to http request smuggling vulnerabilityurl | 0 |

28,174 | 23,070,209,336 | IssuesEvent | 2022-07-25 17:17:50 | Zilliqa/scilla | https://api.github.com/repos/Zilliqa/scilla | closed | `make gold` should not update JSON gold files if it's only whitespace change | infrastructure tests | The Yojson library's pretty-printer is not stable across different versions of the library. We should check that the new JSON AST is actually different before updating the "gold" files, otherwise `make gold` may produce too many unrelated changes. | 1.0 | `make gold` should not update JSON gold files if it's only whitespace change - The Yojson library's pretty-printer is not stable across different versions of the library. We should check that the new JSON AST is actually different before updating the "gold" files, otherwise `make gold` may produce too many unrelated changes. | infrastructure | make gold should not update json gold files if it s only whitespace change the yojson library s pretty printer is not stable across different versions of the library we should check that the new json ast is actually different before updating the gold files otherwise make gold may produce too many unrelated changes | 1 |

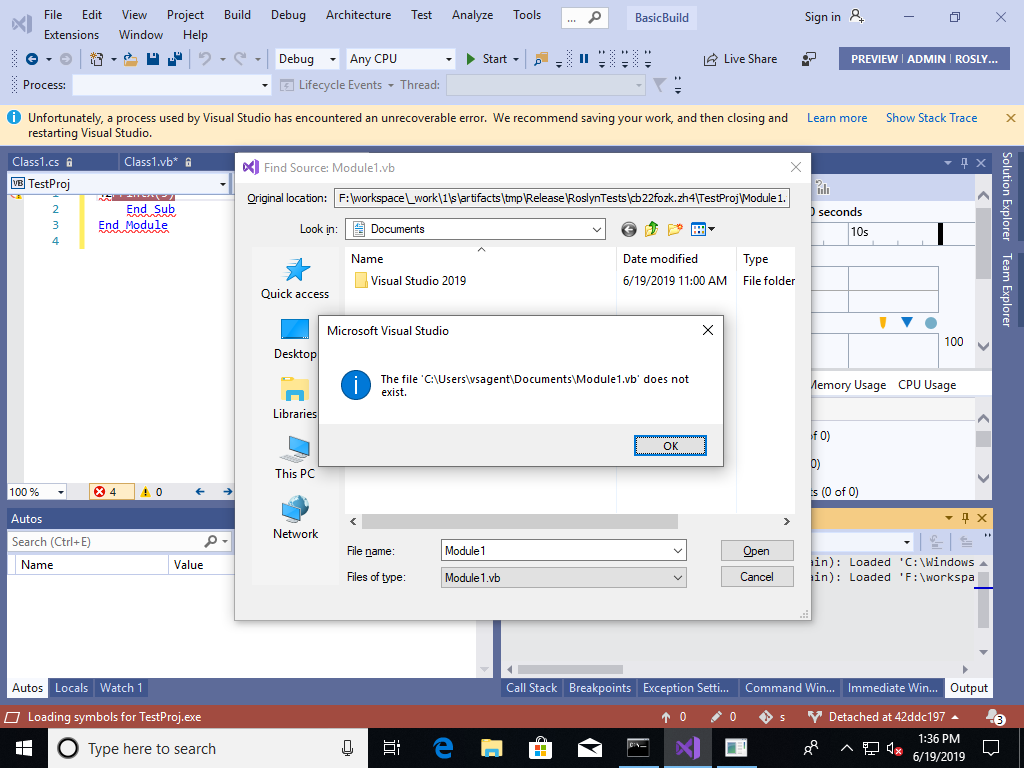

15,940 | 11,778,018,289 | IssuesEvent | 2020-03-16 15:40:53 | dotnet/aspnetcore | https://api.github.com/repos/dotnet/aspnetcore | closed | Debug issue of aspnetcore source on VS2019 | area-infrastructure | I was able to successfully build aspnetcore code base by following the instructions at (https://github.com/dotnet/aspnetcore/blob/master/docs/BuildFromSource.md). But when I run any of the sample projects in debug mode and set a break point, none of the breakpoints are being hit and I get "The application is in Break mode" message.( Screenshot attached).

I want to point out that I am **not** referring to stepping into aspnetcore code from my application using source linking and loading debug symbols as mentioned here

https://www.stevejgordon.co.uk/debugging-asp-net-core-2-source

I am struggling to debug the actual aspnet core source code on my local machine after i downloaded the source from git.

What am I missing?

| 1.0 | Debug issue of aspnetcore source on VS2019 - I was able to successfully build aspnetcore code base by following the instructions at (https://github.com/dotnet/aspnetcore/blob/master/docs/BuildFromSource.md). But when I run any of the sample projects in debug mode and set a break point, none of the breakpoints are being hit and I get "The application is in Break mode" message.( Screenshot attached).

I want to point out that I am **not** referring to stepping into aspnetcore code from my application using source linking and loading debug symbols as mentioned here

https://www.stevejgordon.co.uk/debugging-asp-net-core-2-source

I am struggling to debug the actual aspnet core source code on my local machine after i downloaded the source from git.

What am I missing?

| infrastructure | debug issue of aspnetcore source on i was able to successfully build aspnetcore code base by following the instructions at but when i run any of the sample projects in debug mode and set a break point none of the breakpoints are being hit and i get the application is in break mode message screenshot attached i want to point out that i am not referring to stepping into aspnetcore code from my application using source linking and loading debug symbols as mentioned here i am struggling to debug the actual aspnet core source code on my local machine after i downloaded the source from git what am i missing | 1 |

4,848 | 5,294,623,079 | IssuesEvent | 2017-02-09 11:21:08 | twingly/twingly-search-api-ruby | https://api.github.com/repos/twingly/twingly-search-api-ruby | opened | Make Travis test gem installation | enhancement infrastructure small | Seen on https://github.com/sickill/rainbow/commit/30904393e61844304abc0f9f00226497fc1b8201

```yml

matrix:

include:

- rvm: 2.0.0

...

- rvm: 2.2.6

install: true # This skips 'bundle install'

script: gem build rainbow && gem install *.gem

``` | 1.0 | Make Travis test gem installation - Seen on https://github.com/sickill/rainbow/commit/30904393e61844304abc0f9f00226497fc1b8201

```yml

matrix:

include:

- rvm: 2.0.0

...

- rvm: 2.2.6

install: true # This skips 'bundle install'

script: gem build rainbow && gem install *.gem

``` | infrastructure | make travis test gem installation seen on yml matrix include rvm rvm install true this skips bundle install script gem build rainbow gem install gem | 1 |

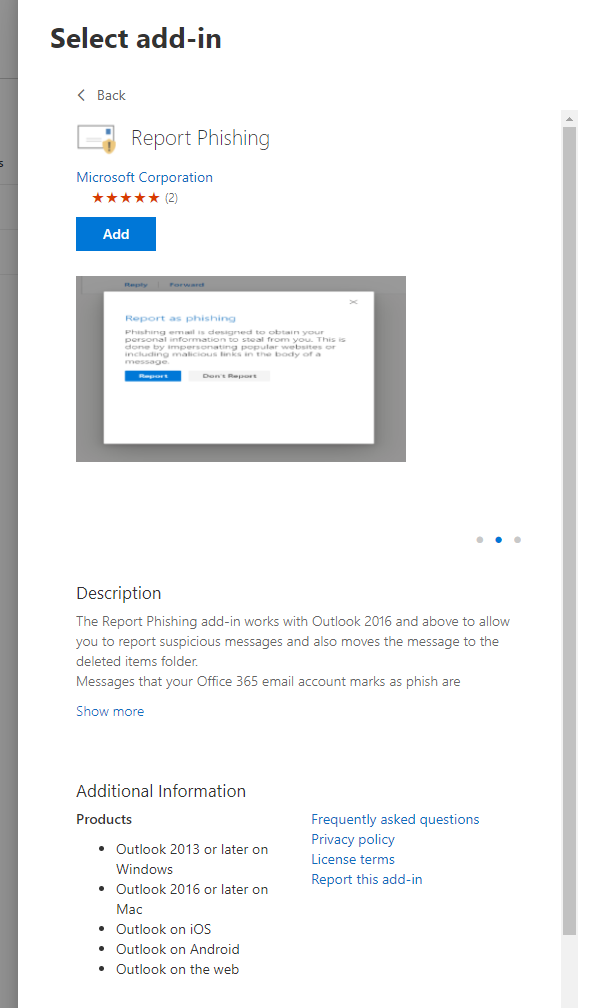

81,019 | 15,597,889,913 | IssuesEvent | 2021-03-18 17:26:09 | MicrosoftDocs/microsoft-365-docs | https://api.github.com/repos/MicrosoftDocs/microsoft-365-docs | closed | Update versions information for Outlook client | security |

The information on the docs site and in the M365 admin center, "Deploy a new add-in" > "Choose from the Store" pages contradicts. At the link below, it shows that the "Report Phishing" button is only compatible with Outlook 2016 for Mac, but the Store shows "Outlook 2016 or later on Mac".

https://docs.microsoft.com/en-us/microsoft-365/security/office-365-security/enable-the-report-phish-add-in?view=o365-worldwide#what-do-you-need-to-know-before-you-begin

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 214e9296-0909-0946-57b0-dca5f22ee854

* Version Independent ID: 689f3c32-eeb9-9c60-5d7c-78ebd6dcb165

* Content: [Enable the Report Phish add-in - Office 365](https://docs.microsoft.com/en-us/microsoft-365/security/office-365-security/enable-the-report-phish-add-in?view=o365-worldwide#get-and-enable-the-report-phishing-add-in-for-your-organization)

* Content Source: [microsoft-365/security/office-365-security/enable-the-report-phish-add-in.md](https://github.com/MicrosoftDocs/microsoft-365-docs/blob/public/microsoft-365/security/office-365-security/enable-the-report-phish-add-in.md)

* Service: **o365-seccomp**

* GitHub Login: @siosulli

* Microsoft Alias: **siosulli** | True | Update versions information for Outlook client -

The information on the docs site and in the M365 admin center, "Deploy a new add-in" > "Choose from the Store" pages contradicts. At the link below, it shows that the "Report Phishing" button is only compatible with Outlook 2016 for Mac, but the Store shows "Outlook 2016 or later on Mac".

https://docs.microsoft.com/en-us/microsoft-365/security/office-365-security/enable-the-report-phish-add-in?view=o365-worldwide#what-do-you-need-to-know-before-you-begin

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 214e9296-0909-0946-57b0-dca5f22ee854

* Version Independent ID: 689f3c32-eeb9-9c60-5d7c-78ebd6dcb165

* Content: [Enable the Report Phish add-in - Office 365](https://docs.microsoft.com/en-us/microsoft-365/security/office-365-security/enable-the-report-phish-add-in?view=o365-worldwide#get-and-enable-the-report-phishing-add-in-for-your-organization)

* Content Source: [microsoft-365/security/office-365-security/enable-the-report-phish-add-in.md](https://github.com/MicrosoftDocs/microsoft-365-docs/blob/public/microsoft-365/security/office-365-security/enable-the-report-phish-add-in.md)

* Service: **o365-seccomp**

* GitHub Login: @siosulli

* Microsoft Alias: **siosulli** | non_infrastructure | update versions information for outlook client the information on the docs site and in the admin center deploy a new add in choose from the store pages contradicts at the link below it shows that the report phishing button is only compatible with outlook for mac but the store shows outlook or later on mac document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version independent id content content source service seccomp github login siosulli microsoft alias siosulli | 0 |

47,732 | 6,062,897,010 | IssuesEvent | 2017-06-14 10:34:13 | owncloud/client | https://api.github.com/repos/owncloud/client | opened | [Sharing Dialog] Include "Show file listing" checkbox on public link section on folders | Design & UX Enhancement Sharing | See: https://github.com/owncloud/core/pull/27548 - `permissions` is set to `4` (shouldn't it be `6`: `CREATE` & `UPDATE` instead? cc/ @PVince81)

- [ ] Show file listing checkbox: https://github.com/owncloud/core/pull/27548#issuecomment-299473915

- [ ] Do not display the "Direct download" option on non-listed directories: https://github.com/owncloud/core/pull/27548#issuecomment-300431656 | 1.0 | [Sharing Dialog] Include "Show file listing" checkbox on public link section on folders - See: https://github.com/owncloud/core/pull/27548 - `permissions` is set to `4` (shouldn't it be `6`: `CREATE` & `UPDATE` instead? cc/ @PVince81)

- [ ] Show file listing checkbox: https://github.com/owncloud/core/pull/27548#issuecomment-299473915

- [ ] Do not display the "Direct download" option on non-listed directories: https://github.com/owncloud/core/pull/27548#issuecomment-300431656 | non_infrastructure | include show file listing checkbox on public link section on folders see permissions is set to shouldn t it be create update instead cc show file listing checkbox do not display the direct download option on non listed directories | 0 |

17,206 | 12,247,906,652 | IssuesEvent | 2020-05-05 16:36:48 | enarx/enarx | https://api.github.com/repos/enarx/enarx | opened | Set the log level to 'error' for our cargo-make 'deny' task | good first issue infrastructure | One of of the side effects of emulating the workspace with `cargo-make` is that `cargo-deny` helpfully points out that some of the crates it is looking at don't contain all of the licenses listed in `deny.toml`. These are spurious and tend to clutter the CI output. The `deny.toml` is correct _overall_ throughout our emulated workspace but `cargo-deny` looks at each crate in a vacuum with `deny.toml`.

For example:

```

[cargo-make][1] INFO - Execute Command: "cargo" "deny" "check" "licenses"

warning: license was not encountered

┌── /__w/enarx/enarx/deny.toml:13:5 ───

│

13 │ "BSD-3-Clause",

│ ^^^^^^^^^^^^^^ no crate used this license

│

warning: license was not encountered

┌── /__w/enarx/enarx/deny.toml:11:5 ───

│

11 │ "MIT",

│ ^^^^^ no crate used this license

│

```

Check out `cargo deny --help` to see how to set the log level. Update the `deny` task in the `Makefile.toml` to supply the argument. | 1.0 | Set the log level to 'error' for our cargo-make 'deny' task - One of of the side effects of emulating the workspace with `cargo-make` is that `cargo-deny` helpfully points out that some of the crates it is looking at don't contain all of the licenses listed in `deny.toml`. These are spurious and tend to clutter the CI output. The `deny.toml` is correct _overall_ throughout our emulated workspace but `cargo-deny` looks at each crate in a vacuum with `deny.toml`.

For example:

```

[cargo-make][1] INFO - Execute Command: "cargo" "deny" "check" "licenses"

warning: license was not encountered

┌── /__w/enarx/enarx/deny.toml:13:5 ───

│

13 │ "BSD-3-Clause",

│ ^^^^^^^^^^^^^^ no crate used this license

│

warning: license was not encountered

┌── /__w/enarx/enarx/deny.toml:11:5 ───

│

11 │ "MIT",

│ ^^^^^ no crate used this license

│

```