Unnamed: 0 int64 1 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 7 112 | repo_url stringlengths 36 141 | action stringclasses 3 values | title stringlengths 3 438 | labels stringlengths 4 308 | body stringlengths 7 254k | index stringclasses 7 values | text_combine stringlengths 96 254k | label stringclasses 2 values | text stringlengths 96 246k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

5,675 | 29,513,460,559 | IssuesEvent | 2023-06-04 07:50:17 | debanjum/khoj | https://api.github.com/repos/debanjum/khoj | opened | Create benchmarks for search | maintain | We need a consistent mechanism for evaluating search quality across different data sources over time for quality assessment. | True | Create benchmarks for search - We need a consistent mechanism for evaluating search quality across different data sources over time for quality assessment. | main | create benchmarks for search we need a consistent mechanism for evaluating search quality across different data sources over time for quality assessment | 1 |

3,105 | 11,868,460,111 | IssuesEvent | 2020-03-26 09:14:04 | chocolatey-community/chocolatey-package-requests | https://api.github.com/repos/chocolatey-community/chocolatey-package-requests | closed | RFM - freac.portable | Status: Available For Maintainer(s) | ## Current Maintainer

- [x] I am the maintainer of the package and wish to pass it to someone else;

## I DON'T Want To Become The Maintainer

- [x] I have followed the Package Triage Process and I do NOT want to become maintainer of the package;

- [x] There is no existing open maintainer request for this package;

## Checklist

- [x] Issue title starts with 'RFM - '

## Existing Package Details

Package URL: https://chocolatey.org/packages/freac.portable

Package source URL: https://github.com/abejenaru/chocolatey-packages/tree/master/automatic/freac.portable

| True | RFM - freac.portable - ## Current Maintainer

- [x] I am the maintainer of the package and wish to pass it to someone else;

## I DON'T Want To Become The Maintainer

- [x] I have followed the Package Triage Process and I do NOT want to become maintainer of the package;

- [x] There is no existing open maintainer request for this package;

## Checklist

- [x] Issue title starts with 'RFM - '

## Existing Package Details

Package URL: https://chocolatey.org/packages/freac.portable

Package source URL: https://github.com/abejenaru/chocolatey-packages/tree/master/automatic/freac.portable

| main | rfm freac portable current maintainer i am the maintainer of the package and wish to pass it to someone else i don t want to become the maintainer i have followed the package triage process and i do not want to become maintainer of the package there is no existing open maintainer request for this package checklist issue title starts with rfm existing package details package url package source url | 1 |

33,824 | 2,772,815,007 | IssuesEvent | 2015-05-03 01:37:56 | synergy/synergy | https://api.github.com/repos/synergy/synergy | reopened | Auto-start GUI setting on Linux | bug priority-soon | **Imported issue:**

* Author: Nick Bolton

* Date: 2011-12-06 15:48:52

* Legacy ID: 3025

| 1.0 | Auto-start GUI setting on Linux - **Imported issue:**

* Author: Nick Bolton

* Date: 2011-12-06 15:48:52

* Legacy ID: 3025

| non_main | auto start gui setting on linux imported issue author nick bolton date legacy id | 0 |

49,254 | 6,019,188,633 | IssuesEvent | 2017-06-07 14:02:51 | sbsdev/daisyproducer | https://api.github.com/repos/sbsdev/daisyproducer | closed | Import of GD productions from ABACUS fails | deployed on testing | The XML from ABACUS now contains a new element `<drucker/>`. That change makes the validation fail, and therefore the import fails as well.

| 1.0 | Import of GD productions from ABACUS fails - The XML from ABACUS now contains a new element `<drucker/>`. That change makes the validation fail, and therefore the import fails as well.

| non_main | import of gd productions from abacus fails the xml from abacus now contains an new element that change makes the validation fail and hence the import | 0 |

73,844 | 19,842,831,676 | IssuesEvent | 2022-01-21 00:29:32 | tsunamayo/Starship-EVO | https://api.github.com/repos/tsunamayo/Starship-EVO | opened | [New build - DEFAULT] 22w03c: Space Battle Editor | Build Release Note | The "spawn NPC" F8 menu evolved into a full-blown Space Battle editor.

This is mainly to help me improve, balance and optimize space combat, but also to design larger battles to be added with faction encounters.

- Spawn several ships at the same time

- Choose the NPC behaviour: foe / friend.

- Set a spawning distance.

- Export a given space battle blueprint.

You will need to be flying a ship for it to work correctly.

Hotfixes:

#4488 #3416 Decals are not mirrored correctly in some orientations.

#4491 Rotor preview is incorrect at various grid sizes.

#4495 #4496 Child entities can appear in the wrong place after a blueprint deletion. | 1.0 | [New build - DEFAULT] 22w03c: Space Battle Editor - The "spawn NPC" F8 menu evolved into a full-blown Space Battle editor.

This is mainly to help me improve, balance and optimize space combat, but also to design larger battles to be added with faction encounters.

- Spawn several ships at the same time

- Choose the NPC behaviour: foe / friend.

- Set a spawning distance.

- Export a given space battle blueprint.

You will need to be flying a ship for it to work correctly.

Hotfixes:

#4488 #3416 Decals are not mirrored correctly in some orientations.

#4491 Rotor preview is incorrect at various grid sizes.

#4495 #4496 Child entities can appear in the wrong place after a blueprint deletion. | non_main | space battle editor the spawn npc menu evolved in a full blown space battle editor this is mainly to help me improve balance and optimize space combat but also to design larger battles to be added with faction encounters spawn several ships at the same time choose the npc behaviour foe friend set a spawning distance export a given space battle blueprint you will need to be flying a ship for it to work correctly hotfixes decals are not mirrored correctly on some orientations rotor preview incorrect at various grid size children entity can appears at the wrong place after a blueprint deletion | 0 |

479,681 | 13,804,541,105 | IssuesEvent | 2020-10-11 09:29:09 | AY2021S1-TIC4001-2/tp | https://api.github.com/repos/AY2021S1-TIC4001-2/tp | closed | Add saveIncomeCategories method to Storage class | priority.High type.Task | ... for storing the income categories in the income category list to the hard disk. | 1.0 | Add saveIncomeCategories method to Storage class - ... for storing the income categories in the income category list to the hard disk. | non_main | add saveincomecategories method to storage class for storing the income categories in the income category list to the harddisk | 0 |

2,869 | 10,275,929,668 | IssuesEvent | 2019-08-24 12:47:57 | arcticicestudio/arctic | https://api.github.com/repos/arcticicestudio/arctic | closed | ESLint | context-workflow scope-dx scope-maintainability scope-quality scope-stability type-feature | <p align="center"><img src="https://user-images.githubusercontent.com/7836623/63634799-a8555900-c65b-11e9-8988-dd0bc5d9c03b.png" /></p>

Integrate [ESLint][], the _pluggable_ and de-facto standard linting utility for JavaScript.

### Configuration Preset

The configuration preset that will be used is [@arcticicestudio/eslint-config][pr], which implements the [Arctic Ice Studio JavaScript Style][stg-js]. It comes with the following peer dependencies:

- [eslint][esl-gh]

- [babel-eslint][esl-pr-b]

It is built on top of [@arcticicestudio/eslint-config-base][pr-b], which includes various rules from the following plugins and rule presets; these are therefore also required peer dependencies:

- [@typescript-eslint/eslint-plugin][esl-ts-p]

- [@typescript-eslint/parser][esl-ts-pa]

- [eslint-config-prettier][esl-c-pr]

- [eslint-plugin-babel][esl-p-b]

- [eslint-plugin-import][esl-p-i]

- [eslint-plugin-jsx-a11y][esl-p-a11y]

- [eslint-plugin-prettier][esl-p-pr]

- [eslint-plugin-react-hooks][esl-p-r-h]

- [eslint-plugin-react][esl-p-r]

Since _arctic_ will be built with [TypeScript][ts], the [@arcticicestudio/eslint-config-typescript][pr-ts] preset will be extended to add support for _TypeScript_ source file linting and compatibility with [Prettier][] through the [`@arcticicestudio/eslint-config-typescript/prettier` extension entry point][pr-ts-d#ep]. This preset requires the following peer dependencies:

- [@typescript-eslint/eslint-plugin][esl-ts-p]

- [@typescript-eslint/parser][esl-ts-pa]

- [typescript][gh-ts]

Since the custom presets are still at major version `0`, note that the version range should be `>=0.x.x <1.0.0` to avoid the “SemVer Major Zero Caveat”. When package versions are defined with the caret `^` or tilde `~` range selector, new minor versions of packages with a major version of `0` are not matched: _yarn_ will only resolve these packages within the same minor version until the major version is greater than or equal to `1`.

To avoid this caveat, the more explicit version range `>=0.x.x <1.0.0` should be used to resolve all versions greater than or equal to `0.x.x` but less than `1.0.0`. This always uses the latest `0.x.x` version and removes the need to increment the version manually on each new release.

To lint TypeScript code, the `@typescript-eslint/parser` parser will be used and [specified as parser][esl-d-parser] next to the main [Babel parser][esl-pr-b]. To make use of the latest experimental Babel features and proposals, [eslint-plugin-babel][esl-p-b] will also be added with the following rule configurations:

- `babel/camelcase` with level `error` - doesn't complain about optional chaining (`let foo = bar?.a_b;`). Note that the [core rule `camelcase`][esl-r-cc] must be disabled!

- `babel/no-unused-expressions` with level `error` - doesn't fail when using `do` expressions or optional chaining (`a?.b()`). Note that the [core rule `no-unused-expressions`][esl-r-nue] must be disabled!

See the [documentation of the provided rules][esl-p-b#r] and the configurations required to use them.

The `.eslintrc.js` configuration file will be placed in the project root next to the `.eslintignore` file, which defines the ignore patterns.

#### Webpack Import Resolving Strategy

To prepare for a better developer experience with Webpack (which will be used later on through Gatsby), the [resolvers of the eslint-plugin-import][esl-p-i#res] will be configured for the `src` and `src/components` paths.

### Package Script

To allow running the JavaScript linting separately, a `lint:js` npm script/task will be added and included in the main `lint` script flow. To use the great [auto-fixing][esl-d-cli#af] feature, another `format:js` script/task will be added.

## Tasks

- [x] Install the required packages as development dependencies:

- [@arcticicestudio/eslint-config-typescript][npm-esl-c-ais-ts]

- [@arcticicestudio/eslint-config][npm-esl-c-ais]

- [eslint-config-prettier][npm-esl-c-pr]

- [eslint-plugin-babel][npm-esl-p-b]

- [eslint-plugin-import][npm-esl-p-i]

- [eslint-plugin-jsx-a11y][npm-esl-p-a11y]

- [eslint-plugin-prettier][npm-esl-p-pr]

- [eslint-plugin-react-hooks][npm-esl-p-r-h]

- [eslint-plugin-react][npm-esl-p-r]

- [eslint][npm-esl]

- [typescript][npm-ts]

- [x] Implement `.eslintrc.js` configuration file.

- [x] Extend installed presets.

- [x] [Prepare Webpack](#webpack-import-resolving-strategy) compatibility with resolvers.

- [x] Integrate [eslint-plugin-babel][npm-esl-p-b]

- [x] Enable `babel/no-unused-expressions` and `babel/camelcase` rules including the deactivation of their associated core rules.

- [x] Add `babel` to the array of enabled plugins.

- [x] Implement `.eslintignore` ignore pattern file.

- [x] Implement npm `format:fix-js`, `format:fix-ts`, `lint:js` and `lint:ts` scripts.

- [x] Lint the current code base for the first time and fix any JavaScript style guide violations.

[esl-c-pr]: https://github.com/prettier/eslint-config-prettier

[esl-d-cli#af]: https://eslint.org/docs/user-guide/command-line-interface#fixing-problems

[esl-d-parser]: https://eslint.org/docs/user-guide/configuring#specifying-parser

[esl-p-a11y]: https://github.com/evcohen/eslint-plugin-jsx-a11y

[esl-p-b]: https://github.com/babel/eslint-plugin-babel

[esl-p-b#r]: https://github.com/babel/eslint-plugin-babel#rules

[esl-p-i]: https://github.com/benmosher/eslint-plugin-import

[esl-p-i#res]: https://github.com/benmosher/eslint-plugin-import#resolvers

[esl-p-pr]: https://github.com/prettier/eslint-plugin-prettier

[esl-p-r-h]: https://github.com/facebook/react/tree/master/packages/eslint-plugin-react-hooks

[esl-p-r]: https://github.com/yannickcr/eslint-plugin-react

[esl-pr-b]: https://github.com/babel/babel-eslint

[esl-r-cc]: https://eslint.org/docs/rules/camelcase

[esl-r-nue]: https://eslint.org/docs/rules/no-unused-expressions

[esl-ts-p]: https://github.com/typescript-eslint/typescript-eslint/tree/master/packages/eslint-plugin

[esl-ts-pa]: https://github.com/typescript-eslint/typescript-eslint/tree/master/packages/parser

[eslint]: https://eslint.org

[esl-gh]: https://github.com/eslint/eslint

[gh-ts]: https://github.com/Microsoft/TypeScript

[npm-esl-c-ais-ts]: https://www.npmjs.com/package/%40arcticicestudio/eslint-config-typescript

[npm-esl-c-ais]: https://www.npmjs.com/package/%40arcticicestudio/eslint-config

[npm-esl-c-pr]: https://www.npmjs.com/package/eslint-config-prettier

[npm-esl-p-a11y]: https://www.npmjs.com/package/eslint-plugin-jsx-a11y

[npm-esl-p-b]: https://www.npmjs.com/package/eslint-plugin-babel

[npm-esl-p-i]: https://www.npmjs.com/package/eslint-plugin-import

[npm-esl-p-pr]: https://www.npmjs.com/package/eslint-plugin-prettier

[npm-esl-p-r-h]: https://www.npmjs.com/package/eslint-plugin-react-hooks

[npm-esl-p-r]: https://www.npmjs.com/package/eslint-plugin-react

[npm-esl]: https://www.npmjs.com/package/eslint

[npm-ts]: https://www.npmjs.com/package/typescript

[pr-b]: https://github.com/arcticicestudio/styleguide-javascript/tree/develop/packages/%40arcticicestudio/eslint-config-base

[pr-ts-d#ep]: https://github.com/arcticicestudio/styleguide-javascript/blob/develop/packages/%40arcticicestudio/eslint-config-typescript/README.md#entry-points

[pr-ts]: https://github.com/arcticicestudio/styleguide-javascript/tree/develop/packages/%40arcticicestudio/eslint-config-typescript

[pr]: https://github.com/arcticicestudio/styleguide-javascript/tree/develop/packages/%40arcticicestudio/eslint-config

[prettier]: https://prettier.io

[stg-js]: https://arcticicestudio.github.io/styleguide-javascript

[ts]: https://www.typescriptlang.org

| True | ESLint - <p align="center"><img src="https://user-images.githubusercontent.com/7836623/63634799-a8555900-c65b-11e9-8988-dd0bc5d9c03b.png" /></p>

Integrate [ESLint][], the _pluggable_ and de-facto standard linting utility for JavaScript.

### Configuration Preset

The configuration preset that will be used is [@arcticicestudio/eslint-config][pr], which implements the [Arctic Ice Studio JavaScript Style][stg-js]. It comes with the following peer dependencies:

- [eslint][esl-gh]

- [babel-eslint][esl-pr-b]

It is built on top of [@arcticicestudio/eslint-config-base][pr-b], which includes various rules from the following plugins and rule presets; these are therefore also required peer dependencies:

- [@typescript-eslint/eslint-plugin][esl-ts-p]

- [@typescript-eslint/parser][esl-ts-pa]

- [eslint-config-prettier][esl-c-pr]

- [eslint-plugin-babel][esl-p-b]

- [eslint-plugin-import][esl-p-i]

- [eslint-plugin-jsx-a11y][esl-p-a11y]

- [eslint-plugin-prettier][esl-p-pr]

- [eslint-plugin-react-hooks][esl-p-r-h]

- [eslint-plugin-react][esl-p-r]

Since _arctic_ will be built with [TypeScript][ts], the [@arcticicestudio/eslint-config-typescript][pr-ts] preset will be extended to add support for _TypeScript_ source file linting and compatibility with [Prettier][] through the [`@arcticicestudio/eslint-config-typescript/prettier` extension entry point][pr-ts-d#ep]. This preset requires the following peer dependencies:

- [@typescript-eslint/eslint-plugin][esl-ts-p]

- [@typescript-eslint/parser][esl-ts-pa]

- [typescript][gh-ts]

Since the custom presets are still at major version `0`, note that the version range should be `>=0.x.x <1.0.0` to avoid the “SemVer Major Zero Caveat”. When package versions are defined with the caret `^` or tilde `~` range selector, new minor versions of packages with a major version of `0` are not matched: _yarn_ will only resolve these packages within the same minor version until the major version is greater than or equal to `1`.

To avoid this caveat, the more explicit version range `>=0.x.x <1.0.0` should be used to resolve all versions greater than or equal to `0.x.x` but less than `1.0.0`. This always uses the latest `0.x.x` version and removes the need to increment the version manually on each new release.

To lint TypeScript code, the `@typescript-eslint/parser` parser will be used and [specified as parser][esl-d-parser] next to the main [Babel parser][esl-pr-b]. To make use of the latest experimental Babel features and proposals, [eslint-plugin-babel][esl-p-b] will also be added with the following rule configurations:

- `babel/camelcase` with level `error` - doesn't complain about optional chaining (`let foo = bar?.a_b;`). Note that the [core rule `camelcase`][esl-r-cc] must be disabled!

- `babel/no-unused-expressions` with level `error` - doesn't fail when using `do` expressions or optional chaining (`a?.b()`). Note that the [core rule `no-unused-expressions`][esl-r-nue] must be disabled!

See the [documentation of the provided rules][esl-p-b#r] and the configurations required to use them.

The `.eslintrc.js` configuration file will be placed in the project root next to the `.eslintignore` file, which defines the ignore patterns.

#### Webpack Import Resolving Strategy

To prepare for a better developer experience with Webpack (which will be used later on through Gatsby), the [resolvers of the eslint-plugin-import][esl-p-i#res] will be configured for the `src` and `src/components` paths.

### Package Script

To allow running the JavaScript linting separately, a `lint:js` npm script/task will be added and included in the main `lint` script flow. To use the great [auto-fixing][esl-d-cli#af] feature, another `format:js` script/task will be added.

## Tasks

- [x] Install the required packages as development dependencies:

- [@arcticicestudio/eslint-config-typescript][npm-esl-c-ais-ts]

- [@arcticicestudio/eslint-config][npm-esl-c-ais]

- [eslint-config-prettier][npm-esl-c-pr]

- [eslint-plugin-babel][npm-esl-p-b]

- [eslint-plugin-import][npm-esl-p-i]

- [eslint-plugin-jsx-a11y][npm-esl-p-a11y]

- [eslint-plugin-prettier][npm-esl-p-pr]

- [eslint-plugin-react-hooks][npm-esl-p-r-h]

- [eslint-plugin-react][npm-esl-p-r]

- [eslint][npm-esl]

- [typescript][npm-ts]

- [x] Implement `.eslintrc.js` configuration file.

- [x] Extend installed presets.

- [x] [Prepare Webpack](#webpack-import-resolving-strategy) compatibility with resolvers.

- [x] Integrate [eslint-plugin-babel][npm-esl-p-b]

- [x] Enable `babel/no-unused-expressions` and `babel/camelcase` rules including the deactivation of their associated core rules.

- [x] Add `babel` to the array of enabled plugins.

- [x] Implement `.eslintignore` ignore pattern file.

- [x] Implement npm `format:fix-js`, `format:fix-ts`, `lint:js` and `lint:ts` scripts.

- [x] Lint the current code base for the first time and fix any JavaScript style guide violations.

[esl-c-pr]: https://github.com/prettier/eslint-config-prettier

[esl-d-cli#af]: https://eslint.org/docs/user-guide/command-line-interface#fixing-problems

[esl-d-parser]: https://eslint.org/docs/user-guide/configuring#specifying-parser

[esl-p-a11y]: https://github.com/evcohen/eslint-plugin-jsx-a11y

[esl-p-b]: https://github.com/babel/eslint-plugin-babel

[esl-p-b#r]: https://github.com/babel/eslint-plugin-babel#rules

[esl-p-i]: https://github.com/benmosher/eslint-plugin-import

[esl-p-i#res]: https://github.com/benmosher/eslint-plugin-import#resolvers

[esl-p-pr]: https://github.com/prettier/eslint-plugin-prettier

[esl-p-r-h]: https://github.com/facebook/react/tree/master/packages/eslint-plugin-react-hooks

[esl-p-r]: https://github.com/yannickcr/eslint-plugin-react

[esl-pr-b]: https://github.com/babel/babel-eslint

[esl-r-cc]: https://eslint.org/docs/rules/camelcase

[esl-r-nue]: https://eslint.org/docs/rules/no-unused-expressions

[esl-ts-p]: https://github.com/typescript-eslint/typescript-eslint/tree/master/packages/eslint-plugin

[esl-ts-pa]: https://github.com/typescript-eslint/typescript-eslint/tree/master/packages/parser

[eslint]: https://eslint.org

[esl-gh]: https://github.com/eslint/eslint

[gh-ts]: https://github.com/Microsoft/TypeScript

[npm-esl-c-ais-ts]: https://www.npmjs.com/package/%40arcticicestudio/eslint-config-typescript

[npm-esl-c-ais]: https://www.npmjs.com/package/%40arcticicestudio/eslint-config

[npm-esl-c-pr]: https://www.npmjs.com/package/eslint-config-prettier

[npm-esl-p-a11y]: https://www.npmjs.com/package/eslint-plugin-jsx-a11y

[npm-esl-p-b]: https://www.npmjs.com/package/eslint-plugin-babel

[npm-esl-p-i]: https://www.npmjs.com/package/eslint-plugin-import

[npm-esl-p-pr]: https://www.npmjs.com/package/eslint-plugin-prettier

[npm-esl-p-r-h]: https://www.npmjs.com/package/eslint-plugin-react-hooks

[npm-esl-p-r]: https://www.npmjs.com/package/eslint-plugin-react

[npm-esl]: https://www.npmjs.com/package/eslint

[npm-ts]: https://www.npmjs.com/package/typescript

[pr-b]: https://github.com/arcticicestudio/styleguide-javascript/tree/develop/packages/%40arcticicestudio/eslint-config-base

[pr-ts-d#ep]: https://github.com/arcticicestudio/styleguide-javascript/blob/develop/packages/%40arcticicestudio/eslint-config-typescript/README.md#entry-points

[pr-ts]: https://github.com/arcticicestudio/styleguide-javascript/tree/develop/packages/%40arcticicestudio/eslint-config-typescript

[pr]: https://github.com/arcticicestudio/styleguide-javascript/tree/develop/packages/%40arcticicestudio/eslint-config

[prettier]: https://prettier.io

[stg-js]: https://arcticicestudio.github.io/styleguide-javascript

[ts]: https://www.typescriptlang.org

| main | eslint integrate the pluggable and de facto standard linting utility for javascript configuration preset the configuration presets that will be used are that implements the it comes with the following peer dependencies it it built on top of that includes various rules of the following plugins and rule presets that are therefore also required peer dependencies since arctic will be built with the preset will be extended to add support for typescript source file linting and compatibility with through the this preset requires the following peer dependencies since the custom presets are still in major version note that the version range should be x x to avoid the “semver major zero caveat” when defining package versions with the the carat or tilde range selector it won t affect packages with a major version of yarn will resolve these packages to their exact version until the major version is greater or equal to to avoid this caveat the more detailed version range x x should be used to resolve all versions greater or equal to x x but less than this will always use the latest x x version and removes the need to increment the version manually on each new release to allow to lint typescript code the typescript eslint parser parser will be used and next to the main also to make use of the latest experimental babel features and proposals will be added with the following rule configurations babel camelcase with level error doesn t complain about optional chaining let foo bar a b note that the must be disabled babel no unused expressions with level error doesn t fail when using do expressions or optional chaining a b note that the must be disabled see the and required configurations to use them the eslintrc js configuration file will be placed in the project root next to the eslintignore file to define ignore pattern webpack import resolving strategy to prepare for a better developer experience with webpack that will be used later on through gatsby the will be configured for the src and src components paths package script to allow to run the javascript linting separately a lint js npm script task will be added to be included in the main lint script flow to use the great feature another format js script task will be added tasks install required packages to as development dependencies implement eslintrc js configuration file extend installed presets webpack preparations compatibility with resolvers integrate enable babel no unused expressions and babel camelcase rules including the deactivation of their associated core rules add babel to the array of enabled plugins implement eslintignore ignore pattern file implement npm format fix js format fix ts lint js and lint ts scripts lint current code base for the first time and fix possible javascript style guide violations | 1 |

3,145 | 12,059,001,120 | IssuesEvent | 2020-04-15 18:28:37 | arcticicestudio/igloo | https://api.github.com/repos/arcticicestudio/igloo | closed | “taskwarrior“ & “timewarrior“ snowblock decommission | scope-maintainability snowblock-taskwarrior snowblock-timewarrior type-task | Related to #248

---

Both _snowblocks_ for [Taskwarrior][] and [Timewarrior][] are no longer required, since both tools have been replaced with my own custom 💙 [Go][] application, which is currently private/closed source but planned to be open-sourced later on.

Both tools are great and provide a lot of features, but they are also somewhat overloaded with unused and unnecessary functions. I also missed the ability to integrate the data and API into my other Go applications as well as into web-based projects with a more modern _tech stack_ ([Protocol Buffers][proto], [NATS][] messaging, [React][] SPA, etc.).

Therefore, the _snowblocks_ will be removed, while their data remains available through the [_Git_ repository history/logs][git-docs-hist].

[git-docs-hist]: https://git-scm.com/book/en/v2/Git-Basics-Viewing-the-Commit-History

[go]: https://go.dev

[nats]: https://nats.io

[proto]: https://developers.google.com/protocol-buffers

[react]: https://reactjs.org

[taskwarrior]: https://taskwarrior.org

[timewarrior]: https://timewarrior.net | True | “taskwarrior“ & “timewarrior“ snowblock decommission - Related to #248

---

Both _snowblocks_ for [Taskwarrior][] and [Timewarrior][] are no longer required, since both tools have been replaced with my own custom 💙 [Go][] application, which is currently private/closed source but planned to be open-sourced later on.

Both tools are great and provide a lot of features, but they are also somewhat overloaded with unused and unnecessary functions. I also missed the ability to integrate the data and API into my other Go applications as well as into web-based projects with a more modern _tech stack_ ([Protocol Buffers][proto], [NATS][] messaging, [React][] SPA, etc.).

Therefore, the _snowblocks_ will be removed, while their data remains available through the [_Git_ repository history/logs][git-docs-hist].

[git-docs-hist]: https://git-scm.com/book/en/v2/Git-Basics-Viewing-the-Commit-History

[go]: https://go.dev

[nats]: https://nats.io

[proto]: https://developers.google.com/protocol-buffers

[react]: https://reactjs.org

[taskwarrior]: https://taskwarrior.org

[timewarrior]: https://timewarrior.net | main | “taskwarrior“ “timewarrior“ snowblock decommission related to both snowblocks for and are not required anymore since they have been replaced with my own custom 💙 application that is currently private closed source but planned to be open sourced later on both tools are great and provide a lot of features but are also kind of overloaded with unused and unnecessary functions i also missed the possibility to integrate the data and api into my other go applications as well as web based projects with a quite more modern techstack messaging spa etc therefore the snowblocks will be removed while the data is still available through the | 1 |

266,547 | 28,379,520,106 | IssuesEvent | 2023-04-13 01:04:29 | AlexRogalskiy/AlexRogalskiy | https://api.github.com/repos/AlexRogalskiy/AlexRogalskiy | opened | CVE-2023-26964 (Medium) detected in h2-0.1.26.crate, hyper-0.12.35.crate | Mend: dependency security vulnerability | ## CVE-2023-26964 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>h2-0.1.26.crate</b>, <b>hyper-0.12.35.crate</b></p></summary>

<p>

<details><summary><b>h2-0.1.26.crate</b></p></summary>

<p>An HTTP/2.0 client and server</p>

<p>Library home page: <a href="https://crates.io/api/v1/crates/h2/0.1.26/download">https://crates.io/api/v1/crates/h2/0.1.26/download</a></p>

<p>

Dependency Hierarchy:

- rss-1.9.0.crate (Root Library)

- reqwest-0.9.24.crate

- hyper-0.12.35.crate

- :x: **h2-0.1.26.crate** (Vulnerable Library)

</details>

<details><summary><b>hyper-0.12.35.crate</b></p></summary>

<p>A fast and correct HTTP library.</p>

<p>Library home page: <a href="https://crates.io/api/v1/crates/hyper/0.12.35/download">https://crates.io/api/v1/crates/hyper/0.12.35/download</a></p>

<p>

Dependency Hierarchy:

- rss-1.9.0.crate (Root Library)

- reqwest-0.9.24.crate

- :x: **hyper-0.12.35.crate** (Vulnerable Library)

</details>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

An issue was discovered in hyper v0.13.7. h2-0.2.4 Stream stacking occurs when the H2 component processes HTTP2 RST_STREAM frames. As a result, the memory and CPU usage are high which can lead to a Denial of Service (DoS).

<p>Publish Date: 2023-04-11

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2023-26964>CVE-2023-26964</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2023-26964 (Medium) detected in h2-0.1.26.crate, hyper-0.12.35.crate - ## CVE-2023-26964 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>h2-0.1.26.crate</b>, <b>hyper-0.12.35.crate</b></p></summary>

<p>

<details><summary><b>h2-0.1.26.crate</b></p></summary>

<p>An HTTP/2.0 client and server</p>

<p>Library home page: <a href="https://crates.io/api/v1/crates/h2/0.1.26/download">https://crates.io/api/v1/crates/h2/0.1.26/download</a></p>

<p>

Dependency Hierarchy:

- rss-1.9.0.crate (Root Library)

- reqwest-0.9.24.crate

- hyper-0.12.35.crate

- :x: **h2-0.1.26.crate** (Vulnerable Library)

</details>

<details><summary><b>hyper-0.12.35.crate</b></p></summary>

<p>A fast and correct HTTP library.</p>

<p>Library home page: <a href="https://crates.io/api/v1/crates/hyper/0.12.35/download">https://crates.io/api/v1/crates/hyper/0.12.35/download</a></p>

<p>

Dependency Hierarchy:

- rss-1.9.0.crate (Root Library)

- reqwest-0.9.24.crate

- :x: **hyper-0.12.35.crate** (Vulnerable Library)

</details>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

An issue was discovered in hyper v0.13.7. h2-0.2.4 Stream stacking occurs when the H2 component processes HTTP2 RST_STREAM frames. As a result, the memory and CPU usage are high which can lead to a Denial of Service (DoS).

<p>Publish Date: 2023-04-11

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2023-26964>CVE-2023-26964</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_main | cve medium detected in crate hyper crate cve medium severity vulnerability vulnerable libraries crate hyper crate crate an http client and server library home page a href dependency hierarchy rss crate root library reqwest crate hyper crate x crate vulnerable library hyper crate a fast and correct http library library home page a href dependency hierarchy rss crate root library reqwest crate x hyper crate vulnerable library found in base branch master vulnerability details an issue was discovered in hyper stream stacking occurs when the component processes rst stream frames as a result the memory and cpu usage are high which can lead to a denial of service dos publish date url a href cvss score details base score metrics exploitability metrics attack vector local attack complexity low privileges required none user interaction required scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href step up your open source security game with mend | 0 |

269,553 | 28,960,216,928 | IssuesEvent | 2023-05-10 01:24:13 | shahul01/nlk-socket-simple-demo | https://api.github.com/repos/shahul01/nlk-socket-simple-demo | opened | socket.io-4.5.4.tgz: 1 vulnerabilities (highest severity is: 6.5) | Mend: dependency security vulnerability | <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>socket.io-4.5.4.tgz</b></p></summary>

<p></p>

<p>Path to dependency file: /server/package.json</p>

<p>Path to vulnerable library: /server/node_modules/engine.io/package.json</p>

<p>

</details>

## Vulnerabilities

| CVE | Severity | <img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS | Dependency | Type | Fixed in (socket.io version) | Remediation Available |

| ------------- | ------------- | ----- | ----- | ----- | ------------- | --- |

| [CVE-2023-31125](https://www.mend.io/vulnerability-database/CVE-2023-31125) | <img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png?' width=19 height=20> Medium | 6.5 | engine.io-6.2.1.tgz | Transitive | N/A* | ❌ |

<p>*For some transitive vulnerabilities, there is no version of direct dependency with a fix. Check the "Details" section below to see if there is a version of transitive dependency where vulnerability is fixed.</p>

## Details

<details>

<summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png?' width=19 height=20> CVE-2023-31125</summary>

### Vulnerable Library - <b>engine.io-6.2.1.tgz</b></p>

<p>The realtime engine behind Socket.IO. Provides the foundation of a bidirectional connection between client and server</p>

<p>Library home page: <a href="https://registry.npmjs.org/engine.io/-/engine.io-6.2.1.tgz">https://registry.npmjs.org/engine.io/-/engine.io-6.2.1.tgz</a></p>

<p>Path to dependency file: /server/package.json</p>

<p>Path to vulnerable library: /server/node_modules/engine.io/package.json</p>

<p>

Dependency Hierarchy:

- socket.io-4.5.4.tgz (Root Library)

- :x: **engine.io-6.2.1.tgz** (Vulnerable Library)

<p>Found in base branch: <b>master</b></p>

</p>

<p></p>

### Vulnerability Details

<p>

Engine.IO is the implementation of transport-based cross-browser/cross-device bi-directional communication layer for Socket.IO. An uncaught exception vulnerability was introduced in version 5.1.0 and included in version 4.1.0 of the `socket.io` parent package. Older versions are not impacted. A specially crafted HTTP request can trigger an uncaught exception on the Engine.IO server, thus killing the Node.js process. This impacts all the users of the `engine.io` package, including those who use depending packages like `socket.io`. This issue was fixed in version 6.4.2 of Engine.IO. There is no known workaround except upgrading to a safe version.

<p>Publish Date: 2023-05-08

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2023-31125>CVE-2023-31125</a></p>

</p>

<p></p>

### CVSS 3 Score Details (<b>6.5</b>)

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

<p></p>

### Suggested Fix

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.cve.org/CVERecord?id=CVE-2023-31125">https://www.cve.org/CVERecord?id=CVE-2023-31125</a></p>

<p>Release Date: 2023-05-08</p>

<p>Fix Resolution: engine.io - 6.4.2</p>

</p>

<p></p>

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

</details> | True | socket.io-4.5.4.tgz: 1 vulnerabilities (highest severity is: 6.5) - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>socket.io-4.5.4.tgz</b></p></summary>

<p></p>

<p>Path to dependency file: /server/package.json</p>

<p>Path to vulnerable library: /server/node_modules/engine.io/package.json</p>

<p>

</details>

## Vulnerabilities

| CVE | Severity | <img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS | Dependency | Type | Fixed in (socket.io version) | Remediation Available |

| ------------- | ------------- | ----- | ----- | ----- | ------------- | --- |

| [CVE-2023-31125](https://www.mend.io/vulnerability-database/CVE-2023-31125) | <img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png?' width=19 height=20> Medium | 6.5 | engine.io-6.2.1.tgz | Transitive | N/A* | ❌ |

<p>*For some transitive vulnerabilities, there is no version of direct dependency with a fix. Check the "Details" section below to see if there is a version of transitive dependency where vulnerability is fixed.</p>

## Details

<details>

<summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png?' width=19 height=20> CVE-2023-31125</summary>

### Vulnerable Library - <b>engine.io-6.2.1.tgz</b></p>

<p>The realtime engine behind Socket.IO. Provides the foundation of a bidirectional connection between client and server</p>

<p>Library home page: <a href="https://registry.npmjs.org/engine.io/-/engine.io-6.2.1.tgz">https://registry.npmjs.org/engine.io/-/engine.io-6.2.1.tgz</a></p>

<p>Path to dependency file: /server/package.json</p>

<p>Path to vulnerable library: /server/node_modules/engine.io/package.json</p>

<p>

Dependency Hierarchy:

- socket.io-4.5.4.tgz (Root Library)

- :x: **engine.io-6.2.1.tgz** (Vulnerable Library)

<p>Found in base branch: <b>master</b></p>

</p>

<p></p>

### Vulnerability Details

<p>

Engine.IO is the implementation of transport-based cross-browser/cross-device bi-directional communication layer for Socket.IO. An uncaught exception vulnerability was introduced in version 5.1.0 and included in version 4.1.0 of the `socket.io` parent package. Older versions are not impacted. A specially crafted HTTP request can trigger an uncaught exception on the Engine.IO server, thus killing the Node.js process. This impacts all the users of the `engine.io` package, including those who use depending packages like `socket.io`. This issue was fixed in version 6.4.2 of Engine.IO. There is no known workaround except upgrading to a safe version.

<p>Publish Date: 2023-05-08

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2023-31125>CVE-2023-31125</a></p>

</p>

<p></p>

### CVSS 3 Score Details (<b>6.5</b>)

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

<p></p>

### Suggested Fix

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.cve.org/CVERecord?id=CVE-2023-31125">https://www.cve.org/CVERecord?id=CVE-2023-31125</a></p>

<p>Release Date: 2023-05-08</p>

<p>Fix Resolution: engine.io - 6.4.2</p>

</p>

<p></p>

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

</details> | non_main | socket io tgz vulnerabilities highest severity is vulnerable library socket io tgz path to dependency file server package json path to vulnerable library server node modules engine io package json vulnerabilities cve severity cvss dependency type fixed in socket io version remediation available medium engine io tgz transitive n a for some transitive vulnerabilities there is no version of direct dependency with a fix check the details section below to see if there is a version of transitive dependency where vulnerability is fixed details cve vulnerable library engine io tgz the realtime engine behind socket io provides the foundation of a bidirectional connection between client and server library home page a href path to dependency file server package json path to vulnerable library server node modules engine io package json dependency hierarchy socket io tgz root library x engine io tgz vulnerable library found in base branch master vulnerability details engine io is the implementation of transport based cross browser cross device bi directional communication layer for socket io an uncaught exception vulnerability was introduced in version and included in version of the socket io parent package older versions are not impacted a specially crafted http request can trigger an uncaught exception on the engine io server thus killing the node js process this impacts all the users of the engine io package including those who use depending packages like socket io this issue was fixed in version of engine io there is no known workaround except upgrading to a safe version publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required low user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date 
fix resolution engine io step up your open source security game with mend | 0 |

5,000 | 25,723,359,976 | IssuesEvent | 2022-12-07 15:06:40 | centerofci/mathesar | https://api.github.com/repos/centerofci/mathesar | opened | Table not found error | type: bug work: frontend status: ready restricted: maintainers | ## Steps to reproduce

1. Navigate to http://localhost:8000/mathesar_tables/

1. Click on "Library Management" to open schema with id `217` (or similar)

1. On the "Authors" table card, hover the "Go to Table" hyperlink. Observe that it uses schema `217` (or equivalent) in the URL. Good.

1. In the navigation header, open the "Choose a Schema" dropdown, and click on "public", navigating to http://localhost:8000/mathesar_tables/1/

1. Observe that table cards now use schema id `1` in their URLs. Good.

1. Use the same schema switcher to navigate back to "Library Management".

1. Hover the "Go to Table" hyperlinks for various tables.

1. Expect these URLs to use schema `217` (or equivalent).

1. Instead observe the table URLs to use schema `1`.

1. Clicking on one of these URLs rightly displays an error message like:

> Table with id 1267 not found.

| True | Table not found error - ## Steps to reproduce

1. Navigate to http://localhost:8000/mathesar_tables/

1. Click on "Library Management" to open schema with id `217` (or similar)

1. On the "Authors" table card, hover the "Go to Table" hyperlink. Observe that it uses schema `217` (or equivalent) in the URL. Good.

1. In the navigation header, open the "Choose a Schema" dropdown, and click on "public", navigating to http://localhost:8000/mathesar_tables/1/

1. Observe that table cards now use schema id `1` in their URLs. Good.

1. Use the same schema switcher to navigate back to "Library Management".

1. Hover the "Go to Table" hyperlinks for various tables.

1. Expect these URLs to use schema `217` (or equivalent).

1. Instead observe the table URLs to use schema `1`.

1. Clicking on one of these URLs rightly displays an error message like:

> Table with id 1267 not found.

| main | table not found error steps to reproduce navigate to click on library management to open schema with id or similar on the authors table card hover the go to table hyperlink observe that it uses schema or equivalent in the url good in the navigation header open the choose a schema dropdown and click on public navigating to observe that table cards now use schema id in their urls good use the same schema switcher to navigate back to library management hover the go to table hyperlinks for various tables expect these urls to use schema or equivalent instead observe the table urls to use schema clicking on one of these urls rightly displays an error message like table with id not found | 1 |

1,304 | 5,495,033,343 | IssuesEvent | 2017-03-15 02:18:33 | Code4SocialGood/C4SG | https://api.github.com/repos/Code4SocialGood/C4SG | closed | Replace CSS Framework | Architecture Front-End | - **Currently using**

Materialize CSS (http://materializecss.com/) +

Angular2-Material (https://github.com/InfomediaLtd/angular2-materialize)

- **Replace with**

Angular/Material 2 (https://material.angular.io/) | 1.0 | Replace CSS Framework - - **Currently using**

Materialize CSS (http://materializecss.com/) +

Angular2-Material (https://github.com/InfomediaLtd/angular2-materialize)

- **Replace with**

Angular/Material 2 (https://material.angular.io/) | non_main | replace css framework currently using materialize css material replace with angular material | 0 |

2,458 | 8,639,897,895 | IssuesEvent | 2018-11-23 22:30:02 | F5OEO/rpitx | https://api.github.com/repos/F5OEO/rpitx | closed | why rpitx developed for transceiver? | V1 related (not maintained) | Hi, it is great. Is it possible to develop it into a receiver and transmitter tool?

Can anyone offer any ideas?

best regards stackprogramer

| True | why rpitx developed for transceiver? - Hi, it is great. Is it possible to develop it into a receiver and transmitter tool?

Can anyone offer any ideas?

best regards stackprogramer

| main | why rpitx developed for transceiver hi it is great it is possible for developing it for receiver and transmitter tool can anyone offer any idea best regards stackprogramer | 1 |

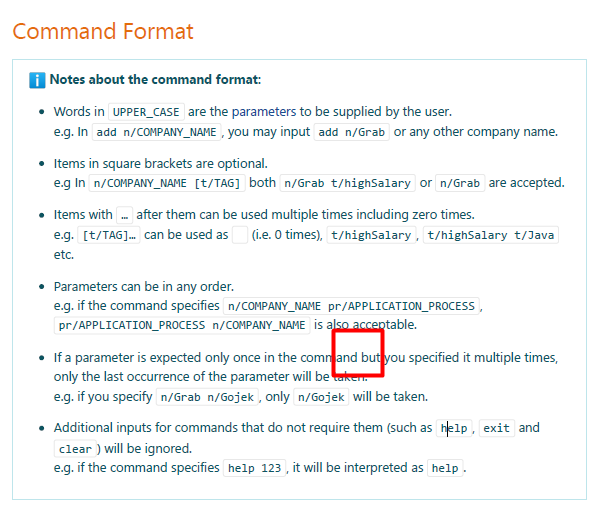

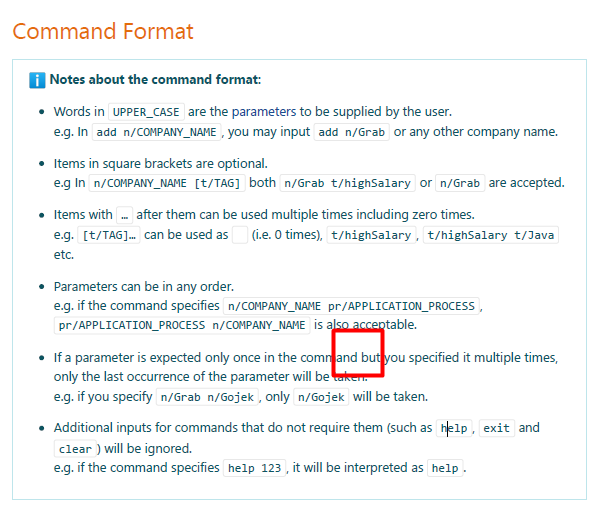

357,417 | 25,176,382,458 | IssuesEvent | 2022-11-11 09:37:55 | PangKuangWei/pe | https://api.github.com/repos/PangKuangWei/pe | opened | UG Bug: Grammar Mistake (Comma Usage for 2 independent clauses) | severity.VeryLow type.DocumentationBug | There is a missing comma before the word `but` shown in the screenshot below. There should be a comma here because these 2 parts are independent clauses.

<!--session: 1668152656976-1bbc2a95-284a-4da4-b139-83a160afbe25-->

<!--Version: Web v3.4.4--> | 1.0 | UG Bug: Grammar Mistake (Comma Usage for 2 independent clauses) - There is a missing comma before the word `but` shown in the screenshot below. There should be a comma here because these 2 parts are independent clauses.

<!--session: 1668152656976-1bbc2a95-284a-4da4-b139-83a160afbe25-->

<!--Version: Web v3.4.4--> | non_main | ug bug grammar mistake comma usage for independent clauses there is a missing comma before the word but shown in the screenshot below there should be a comma here because these parts are independent clauses | 0 |

827,355 | 31,767,119,845 | IssuesEvent | 2023-09-12 09:26:42 | oceanbase/odc | https://api.github.com/repos/oceanbase/odc | opened | [Bug]: When importing the file, the id is undefined and does not exist. | type-bug priority-medium | ### ODC version

odc421

### OB version

Oceanbase410

### What happened?

When importing the file, the id is undefined and does not exist.

### What did you expect to happen?

Execute normally

### How can we reproduce it (as minimally and precisely as possible)?

1. Import the file in the import panel, uncheck Ignore the first line

2. Select the corresponding library and table

3. Select next

4. Go back to the previous step and check Ignore first line

5. Click again

### Anything else we need to know?

_No response_

### Cloud

_No response_ | 1.0 | [Bug]: When importing the file, the id is undefined and does not exist. - ### ODC version

odc421

### OB version

Oceanbase410

### What happened?

When importing the file, the id is undefined and does not exist.

### What did you expect to happen?

Execute normally

### How can we reproduce it (as minimally and precisely as possible)?

1. Import the file in the import panel, uncheck Ignore the first line

2. Select the corresponding library and table

3. Select next

4. Go back to the previous step and check Ignore first line

5. Click again

### Anything else we need to know?

_No response_

### Cloud

_No response_ | non_main | when importing the file the id is undefined and does not exist odc version ob version what happened when importing the file the id is undefined and does not exist what did you expect to happen execute normally how can we reproduce it as minimally and precisely as possible import the file in the import panel uncheck ignore the first line select the corresponding library and table select next go back to the previous step and check ignore first line click again anything else we need to know no response cloud no response | 0 |

4,654 | 24,096,902,233 | IssuesEvent | 2022-09-19 19:37:04 | aws/aws-sam-cli | https://api.github.com/repos/aws/aws-sam-cli | closed | Running sam sync with esbuild does not include dependencies | stage/bug-repro maintainer/need-followup | ### Description:

Running sam sync with esbuild does not include the dependencies.

Running sam deploy works.

### Steps to reproduce:

1. Include a dependency:

```json

{

"name": "hello_world",

"main": "app.js",

"dependencies": {

"uuidv4": "^6.2.12"

},

...

```

2. Include it in your app.ts

```ts

import type { APIGatewayProxyHandler } from 'aws-lambda';

import { uuid } from 'uuidv4';

export const lambdaHandler: APIGatewayProxyHandler = async (event) => {

try {

console.log('event', event);

return {

body: JSON.stringify({

message: 'hello ' + uuid() + process.env.FLAVOR,

}),

statusCode: 200,

};

} catch (err) {

console.log(err);

return {

body: JSON.stringify({

message: 'some error happened',

}),

statusCode: 500,

};

}

};

```

3. Use esbuild as the BuildMethod:

```yaml

HelloWorldFunction:

Type: AWS::Serverless::Function # More info about Function Resource: https://github.com/awslabs/serverless-application-model/blob/master/versions/2016-10-31.md#awsserverlessfunction

Properties:

CodeUri: hello/

Handler: app.lambdaHandler

Runtime: nodejs14.x

Architectures:

- x86_64

Environment:

Variables:

FLAVOR: !Ref FLAVOR

Events:

HelloWorld:

Type: Api # More info about API Event Source: https://github.com/awslabs/serverless-application-model/blob/master/versions/2016-10-31.md#api

Properties:

Path: /hello

Method: get

Metadata: # Manage esbuild properties

BuildMethod: esbuild

BuildProperties:

Minify: true

Target: 'es2020'

Sourcemap: true

EntryPoints:

- app.ts

```

4. Run:

```console

sam sync --stack-name ... --watch

```

### Observed result:

There is no error in the terminal:

```console

2022-03-03 16:05:33 - Waiting for stack create/update to complete

CloudFormation events from stack operations

-----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

ResourceStatus ResourceType LogicalResourceId ResourceStatusReason

-----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

UPDATE_IN_PROGRESS AWS::CloudFormation::Stack test-app Transformation succeeded

CREATE_IN_PROGRESS AWS::CloudFormation::Stack AwsSamAutoDependencyLayerNestedStack -

CREATE_IN_PROGRESS AWS::CloudFormation::Stack AwsSamAutoDependencyLayerNestedStack Resource creation Initiated

CREATE_COMPLETE AWS::CloudFormation::Stack AwsSamAutoDependencyLayerNestedStack -

UPDATE_IN_PROGRESS AWS::Lambda::Function HelloWorldFunction -

UPDATE_COMPLETE AWS::Lambda::Function HelloWorldFunction -

UPDATE_COMPLETE AWS::CloudFormation::Stack test-app -

UPDATE_COMPLETE_CLEANUP_IN_PROGRESS AWS::CloudFormation::Stack test-app -

-----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

CloudFormation outputs from deployed stack

------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

Outputs

------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

Key HelloWorldFunctionIamRole

Description Implicit IAM Role created for Hello World function

Value arn:aws:iam::102512246328:role/test-app-HelloWorldFunctionRole-IFB6LCDCZFB8

Key HelloWorldApi

Description API Gateway endpoint URL for Prod stage for Hello World function

Value https://xfi7je9i27.execute-api.eu-north-1.amazonaws.com/Prod/hello/

Key HelloWorldFunction

Description Hello World Lambda Function ARN

Value arn:aws:lambda:eu-north-1:102512246328:function:test-app-HelloWorldFunction-mJ9kipF6iwEi

------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

Stack update succeeded. Sync infra completed.

{'StackId': 'arn:aws:cloudformation:eu-north-1:102512246328:stack/test-app/0c378cc0-9960-11ec-9d59-0e1e6bf4f0ce', 'ResponseMetadata': {'RequestId': '041daa8a-5770-4086-b8d2-3ddf51d2c7f9', 'HTTPStatusCode': 200, 'HTTPHeaders': {'x-amzn-requestid': '041daa8a-5770-4086-b8d2-3ddf51d2c7f9', 'content-type': 'text/xml', 'content-length': '379', 'date': 'Thu, 03 Mar 2022 15:05:29 GMT'}, 'RetryAttempts': 0}}

Infra sync completed.

```

But in CloudWatch I get this:

```json

{

"errorType": "Runtime.ImportModuleError",

"errorMessage": "Error: Cannot find module 'uuidv4'\nRequire stack:\n- /var/task/app.js\n- /var/runtime/UserFunction.js\n- /var/runtime/index.js",

"stack": [

"Runtime.ImportModuleError: Error: Cannot find module 'uuidv4'",

"Require stack:",

"- /var/task/app.js",

"- /var/runtime/UserFunction.js",

"- /var/runtime/index.js",

" at _loadUserApp (/var/runtime/UserFunction.js:202:13)",

" at Object.module.exports.load (/var/runtime/UserFunction.js:242:17)",

" at Object.<anonymous> (/var/runtime/index.js:43:30)",

" at Module._compile (internal/modules/cjs/loader.js:1085:14)",

" at Object.Module._extensions..js (internal/modules/cjs/loader.js:1114:10)",

" at Module.load (internal/modules/cjs/loader.js:950:32)",

" at Function.Module._load (internal/modules/cjs/loader.js:790:12)",

" at Function.executeUserEntryPoint [as runMain] (internal/modules/run_main.js:76:12)",

" at internal/main/run_main_module.js:17:47"

]

}

```

### Expected result:

The Lambda function should not crash when using `sam sync`.

### Additional environment details (Ex: Windows, Mac, Amazon Linux etc)

1. OS: Windows x64

3. `sam --version`: 1.40.1

6. AWS region: eu-north-1 | True | Running sam sync with esbuild does not include dependencies - ### Description:

Running sam sync with esbuild does not include the dependencies.

Running sam deploy works.

### Steps to reproduce:

1. Include a dependency:

```json

{

"name": "hello_world",

"main": "app.js",

"dependencies": {

"uuidv4": "^6.2.12"

},

...

```

2. Include it in your app.ts

```ts

import type { APIGatewayProxyHandler } from 'aws-lambda';

import { uuid } from 'uuidv4';

export const lambdaHandler: APIGatewayProxyHandler = async (event) => {

try {

console.log('event', event);

return {

body: JSON.stringify({

message: 'hello ' + uuid() + process.env.FLAVOR,

}),

statusCode: 200,

};

} catch (err) {

console.log(err);

return {

body: JSON.stringify({

message: 'some error happened',

}),

statusCode: 500,

};

}

};

```

3. Use esbuild as the BuildMethod:

```yaml

HelloWorldFunction:

Type: AWS::Serverless::Function # More info about Function Resource: https://github.com/awslabs/serverless-application-model/blob/master/versions/2016-10-31.md#awsserverlessfunction

Properties:

CodeUri: hello/

Handler: app.lambdaHandler

Runtime: nodejs14.x

Architectures:

- x86_64

Environment:

Variables:

FLAVOR: !Ref FLAVOR

Events:

HelloWorld:

Type: Api # More info about API Event Source: https://github.com/awslabs/serverless-application-model/blob/master/versions/2016-10-31.md#api

Properties:

Path: /hello

Method: get

Metadata: # Manage esbuild properties

BuildMethod: esbuild

BuildProperties:

Minify: true

Target: 'es2020'

Sourcemap: true

EntryPoints:

- app.ts

```

4. Run:

```console

sam sync --stack-name ... --watch

```

### Observed result:

There is no error in the terminal:

```console

2022-03-03 16:05:33 - Waiting for stack create/update to complete

CloudFormation events from stack operations

-----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

ResourceStatus ResourceType LogicalResourceId ResourceStatusReason

-----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

UPDATE_IN_PROGRESS AWS::CloudFormation::Stack test-app Transformation succeeded

CREATE_IN_PROGRESS AWS::CloudFormation::Stack AwsSamAutoDependencyLayerNestedStack -

CREATE_IN_PROGRESS AWS::CloudFormation::Stack AwsSamAutoDependencyLayerNestedStack Resource creation Initiated

CREATE_COMPLETE AWS::CloudFormation::Stack AwsSamAutoDependencyLayerNestedStack -

UPDATE_IN_PROGRESS AWS::Lambda::Function HelloWorldFunction -

UPDATE_COMPLETE AWS::Lambda::Function HelloWorldFunction -

UPDATE_COMPLETE AWS::CloudFormation::Stack test-app -

UPDATE_COMPLETE_CLEANUP_IN_PROGRESS AWS::CloudFormation::Stack test-app -

-----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

CloudFormation outputs from deployed stack

------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

Outputs

------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

Key HelloWorldFunctionIamRole

Description Implicit IAM Role created for Hello World function

Value arn:aws:iam::102512246328:role/test-app-HelloWorldFunctionRole-IFB6LCDCZFB8

Key HelloWorldApi

Description API Gateway endpoint URL for Prod stage for Hello World function

Value https://xfi7je9i27.execute-api.eu-north-1.amazonaws.com/Prod/hello/

Key HelloWorldFunction

Description Hello World Lambda Function ARN

Value arn:aws:lambda:eu-north-1:102512246328:function:test-app-HelloWorldFunction-mJ9kipF6iwEi

------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

Stack update succeeded. Sync infra completed.

{'StackId': 'arn:aws:cloudformation:eu-north-1:102512246328:stack/test-app/0c378cc0-9960-11ec-9d59-0e1e6bf4f0ce', 'ResponseMetadata': {'RequestId': '041daa8a-5770-4086-b8d2-3ddf51d2c7f9', 'HTTPStatusCode': 200, 'HTTPHeaders': {'x-amzn-requestid': '041daa8a-5770-4086-b8d2-3ddf51d2c7f9', 'content-type': 'text/xml', 'content-length': '379', 'date': 'Thu, 03 Mar 2022 15:05:29 GMT'}, 'RetryAttempts': 0}}

Infra sync completed.

```

But in CloudWatch I get this:

```json

{

"errorType": "Runtime.ImportModuleError",

"errorMessage": "Error: Cannot find module 'uuidv4'\nRequire stack:\n- /var/task/app.js\n- /var/runtime/UserFunction.js\n- /var/runtime/index.js",

"stack": [

"Runtime.ImportModuleError: Error: Cannot find module 'uuidv4'",

"Require stack:",

"- /var/task/app.js",

"- /var/runtime/UserFunction.js",

"- /var/runtime/index.js",

" at _loadUserApp (/var/runtime/UserFunction.js:202:13)",

" at Object.module.exports.load (/var/runtime/UserFunction.js:242:17)",

" at Object.<anonymous> (/var/runtime/index.js:43:30)",

" at Module._compile (internal/modules/cjs/loader.js:1085:14)",

" at Object.Module._extensions..js (internal/modules/cjs/loader.js:1114:10)",

" at Module.load (internal/modules/cjs/loader.js:950:32)",

" at Function.Module._load (internal/modules/cjs/loader.js:790:12)",

" at Function.executeUserEntryPoint [as runMain] (internal/modules/run_main.js:76:12)",

" at internal/main/run_main_module.js:17:47"

]

}

```

### Expected result:

The Lambda function should not crash when using `sam sync`.

### Additional environment details (Ex: Windows, Mac, Amazon Linux etc)

1. OS: Windows x64

3. `sam --version`: 1.40.1

6. AWS region: eu-north-1 | main | running sam sync with esbuild does not include dependencies description running sam sync with esbuild does not include the dependencies running sam deploy works steps to reproduce include a dependency json name hello world main app js dependencies include it in your app ts ts import type apigatewayproxyhandler from aws lambda import uuid from export const lambdahandler apigatewayproxyhandler async event try console log event event return body json stringify message hello uuid process env flavor statuscode catch err console log err return body json stringify message some error happened statuscode use esbuild as the buildmethod yaml helloworldfunction type aws serverless function more info about function resource properties codeuri hello handler app lambdahandler runtime x architectures environment variables flavor ref flavor events helloworld type api more info about api event source properties path hello method get metadata manage esbuild properties buildmethod esbuild buildproperties minify true target sourcemap true entrypoints app ts run console sam sync stack name watch observed result there is no error in the terminal console waiting for stack create update to complete cloudformation events from stack operations resourcestatus resourcetype logicalresourceid resourcestatusreason update in progress aws cloudformation stack test app transformation succeeded create in progress aws cloudformation stack awssamautodependencylayernestedstack create in progress aws cloudformation stack awssamautodependencylayernestedstack resource creation initiated create complete aws cloudformation stack awssamautodependencylayernestedstack update in progress aws lambda function helloworldfunction update complete aws lambda function helloworldfunction update complete aws cloudformation stack test app update complete cleanup in progress aws cloudformation stack test app cloudformation outputs from deployed stack outputs key helloworldfunctioniamrole 
description implicit iam role created for hello world function value arn aws iam role test app helloworldfunctionrole key helloworldapi description api gateway endpoint url for prod stage for hello world function value key helloworldfunction description hello world lambda function arn value arn aws lambda eu north function test app helloworldfunction stack update succeeded sync infra completed stackid arn aws cloudformation eu north stack test app responsemetadata requestid httpstatuscode httpheaders x amzn requestid content type text xml content length date thu mar gmt retryattempts infra sync completed but in cloud watch i get this json errortype runtime importmoduleerror errormessage error cannot find module nrequire stack n var task app js n var runtime userfunction js n var runtime index js stack runtime importmoduleerror error cannot find module require stack var task app js var runtime userfunction js var runtime index js at loaduserapp var runtime userfunction js at object module exports load var runtime userfunction js at object var runtime index js at module compile internal modules cjs loader js at object module extensions js internal modules cjs loader js at module load internal modules cjs loader js at function module load internal modules cjs loader js at function executeuserentrypoint internal modules run main js at internal main run main module js expected result have the lambda function not crashing when using sam sync additional environment details ex windows mac amazon linux etc os windows sam version aws region eu north | 1 |

4,083 | 19,285,831,764 | IssuesEvent | 2021-12-11 00:45:43 | aws/aws-sam-cli | https://api.github.com/repos/aws/aws-sam-cli | closed | Multiple queryStringParameters ignored in sam local start-api for HTTP APIs | area/local/start-api stage/needs-investigation stage/bug-repro maintainer/need-followup | <!-- Make sure we don't have an existing Issue that reports the bug you are seeing (both open and closed).

If you do find an existing Issue, re-open or add a comment to that Issue instead of creating a new one. -->

### Description:

<!-- Briefly describe the bug you are facing.-->

I have a simple lambda function which takes multiple query string parameters, however only the last query string parameter is kept.

### Steps to reproduce:

<!-- Provide detailed steps to replicate the bug, including steps from third party tools (CDK, etc.) -->

I have defined a function as follows:

```yaml

TestFunction:

Type: AWS::Serverless::Function

Properties:

CodeUri: rest_api/test/

Events:

GetTest:

Type: HttpApi

Properties:

Path: /api/test

Method: get

```

Then run the application with `sam local start-api --debug`

Then sent a query to it using postman as a GET request: http://localhost:3000/api/test?test_ids=1&test_ids=2

### Observed result:

<!-- Please provide command output with `--debug` flag set. -->

The event output for the function is the following

```json

Constructed String representation of Event Version 2.0 to invoke Lambda. Event:

{

"version": "2.0",

"routeKey": "GET /api/test",

"rawPath": "/api/test",

"rawQueryString": "test_ids=1&test_ids=2",

"cookies": [],

"headers": {

"Content-Type": "application/json",

"User-Agent": "PostmanRuntime/7.28.3",

"Accept": "*/*",

"Postman-Token": "cb0df40e-920d-4f8d-bbf8-9381f9ee5e45",

"Host": "localhost:3000",

"Accept-Encoding": "gzip, deflate, br",

"Connection": "keep-alive",

"Content-Length": "21",

"X-Forwarded-Proto": "http",

"X-Forwarded-Port": "3000"

},

"queryStringParameters": {

"test_ids": "2"

},

"requestContext": {

"accountId": "123456789012",

"apiId": "1234567890",

"http": {

"method": "GET",

"path": "/api/test",

"protocol": "HTTP/1.1",

"sourceIp": "127.0.0.1",

"userAgent": "Custom User Agent String"

},

"requestId": "46b22e74-8e33-4f90-8f5c-3af887356c8e",

"routeKey": "GET /api/test",

"stage": null

},

"body": "",

"pathParameters": {},

"stageVariables": null,

"isBase64Encoded": false

}

```

Critically:

```json

"queryStringParameters": {

"test_ids": "2"

},

```

### Expected result:

<!-- Describe what you expected. -->

I expected the following parsing for the queryStringParameters, as per the documentation

```json

"queryStringParameters": {

"test_ids": "1,2"

},

```

### Additional environment details (Ex: Windows, Mac, Amazon Linux etc)

1. OS: Windows 10

2. `sam --version`: 1.29.0

3. AWS region: ap-southeast-2

`Add --debug flag to command you are running`

| True | Multiple queryStringParameters ignored in sam local start-api for HTTP APIs - <!-- Make sure we don't have an existing Issue that reports the bug you are seeing (both open and closed).

If you do find an existing Issue, re-open or add a comment to that Issue instead of creating a new one. -->

### Description:

<!-- Briefly describe the bug you are facing.-->

I have a simple lambda function which takes multiple query string parameters, however only the last query string parameter is kept.

### Steps to reproduce:

<!-- Provide detailed steps to replicate the bug, including steps from third party tools (CDK, etc.) -->

I have defined a function as follows:

```yaml

TestFunction:

Type: AWS::Serverless::Function

Properties:

CodeUri: rest_api/test/

Events:

GetTest:

Type: HttpApi

Properties:

Path: /api/test

Method: get

```

Then run the application with `sam local start-api --debug`

Then sent a query to it using postman as a GET request: http://localhost:3000/api/test?test_ids=1&test_ids=2

### Observed result:

<!-- Please provide command output with `--debug` flag set. -->

The event output for the function is the following

```json

Constructed String representation of Event Version 2.0 to invoke Lambda. Event:

{

"version": "2.0",

"routeKey": "GET /api/test",

"rawPath": "/api/test",

"rawQueryString": "test_ids=1&test_ids=2",

"cookies": [],

"headers": {

"Content-Type": "application/json",

"User-Agent": "PostmanRuntime/7.28.3",

"Accept": "*/*",

"Postman-Token": "cb0df40e-920d-4f8d-bbf8-9381f9ee5e45",

"Host": "localhost:3000",

"Accept-Encoding": "gzip, deflate, br",

"Connection": "keep-alive",

"Content-Length": "21",

"X-Forwarded-Proto": "http",

"X-Forwarded-Port": "3000"

},

"queryStringParameters": {

"test_ids": "2"

},

"requestContext": {

"accountId": "123456789012",

"apiId": "1234567890",

"http": {

"method": "GET",

"path": "/api/test",

"protocol": "HTTP/1.1",

"sourceIp": "127.0.0.1",

"userAgent": "Custom User Agent String"

},

"requestId": "46b22e74-8e33-4f90-8f5c-3af887356c8e",

"routeKey": "GET /api/test",

"stage": null

},

"body": "",

"pathParameters": {},

"stageVariables": null,

"isBase64Encoded": false

}

```

Critically:

```json

"queryStringParameters": {

"test_ids": "2"

},

```

### Expected result:

<!-- Describe what you expected. -->

I expected the following parsing for the queryStringParameters, as per the documentation

```json

"queryStringParameters": {

"test_ids": "1,2"

},

```

### Additional environment details (Ex: Windows, Mac, Amazon Linux etc)

1. OS: Windows 10

2. `sam --version`: 1.29.0

3. AWS region: ap-southeast-2

`Add --debug flag to command you are running`

| main | multiple querystringparameters ignored in sam local start api for http apis make sure we don t have an existing issue that reports the bug you are seeing both open and closed if you do find an existing issue re open or add a comment to that issue instead of creating a new one description i have a simple lambda function which takes multiple query string parameters however only the last query string parameter is kept steps to reproduce i have defined a function as follows yaml testfunction type aws serverless function properties codeuri rest api test events gettest type httpapi properties path api test method get then run the application with sam local start api debug then sent a query to it using postman as a get request observed result the event output for the function is the following json constructed string representation of event version to invoke lambda event version routekey get api test rawpath api test rawquerystring test ids test ids cookies headers content type application json user agent postmanruntime accept postman token host localhost accept encoding gzip deflate br connection keep alive content length x forwarded proto http x forwarded port querystringparameters test ids requestcontext accountid apiid http method get path api test protocol http sourceip useragent custom user agent string requestid routekey get api test stage null body pathparameters stagevariables null false critically json querystringparameters test ids expected result i expected the following parsing for the querystringparameters as per the documentation json querystringparameters test ids additional environment details ex windows mac amazon linux etc os windows sam version aws region ap southeast add debug flag to command you are running | 1 |

41,914 | 6,953,504,736 | IssuesEvent | 2017-12-06 21:16:54 | prometheus/prometheus | https://api.github.com/repos/prometheus/prometheus | closed | Integrations link broken | component/documentation kind/bug low hanging fruit | On the Prometheus configuration page in the file discovery section:

https://prometheus.io/docs/prometheus/latest/configuration/configuration/#file_sd_config

There's a link to integrations that resolves to: