Unnamed: 0 (int64, 1 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 3 to 438) | labels (string, length 4 to 308) | body (string, length 7 to 254k) | index (string, 7 classes) | text_combine (string, length 96 to 254k) | label (string, 2 classes) | text (string, length 96 to 246k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

60,390 | 14,792,286,649 | IssuesEvent | 2021-01-12 14:34:42 | DaemonEngine/Daemon | https://api.github.com/repos/DaemonEngine/Daemon | closed | Various loud CMake warnings when compiling. | A-Build T-Enhancement | Compiling Unvanquished with a recent CMake version (3.19) produces a lot of warnings about behavior being deprecated soon. I'd like to take a look at this to make the build nicer on my machine. Assigning to myself as I'll make a PR for this shortly. | 1.0 | Various loud CMake warnings when compiling. - Compiling Unvanquished with a recent CMake version (3.19) produces a lot of warnings about behavior being deprecated soon. I'd like to take a look at this to make the build nicer on my machine. Assigning to myself as I'll make a PR for this shortly. | non_main | various loud cmake warnings when compiling compiling unvanquished with a recent cmake version produces a lot of warnings about behavior being deprecated soon i d like to take a look at this to make the build nicer on my machine assigning to myself as i ll make a pr for this shortly | 0 |

3,027 | 11,201,203,024 | IssuesEvent | 2020-01-04 01:19:53 | amyjko/faculty | https://api.github.com/repos/amyjko/faculty | closed | Move images in to sub-directories | maintainability | The image directory is getting unruly. Move blog post images into a dedicated directory, as well as other image types. | True | Move images in to sub-directories - The image directory is getting unruly. Move blog post images into a dedicated directory, as well as other image types. | main | move images in to sub directories the image directory is getting unruly move blog post images into a dedicated directory as well as other image types | 1 |

3,054 | 11,440,030,367 | IssuesEvent | 2020-02-05 08:49:02 | precice/precice | https://api.github.com/repos/precice/precice | opened | Interleaved Output of Assertions | good first issue maintainability | # Problem description

Hitting an assertion on all ranks results in chunk-wise interleaved output.

These chunks cannot be associated with the rank.

# Solution

Buffer the entire resulting assertion text and output it in one go.

# Relevant Information

https://github.com/precice/precice/blob/develop/src/utils/assertion.hpp

| True | Interleaved Output of Assertions - # Problem description

Hitting an assertion on all ranks results in chunk-wise interleaved output.

These chunks cannot be associated with the rank.

# Solution

Buffer the entire resulting assertion text and output it in one go.

# Relevant Information

https://github.com/precice/precice/blob/develop/src/utils/assertion.hpp

| main | interleaved output of assertions problem description hitting an assertion on all ranks results in chunk wise interleaved output these chunks cannot be associated with the rank solution buffer the entire resulting assertion text and output it in one go relevant information | 1 |

465,559 | 13,388,035,802 | IssuesEvent | 2020-09-02 16:48:13 | RobotLocomotion/drake | https://api.github.com/repos/RobotLocomotion/drake | closed | binder: version of Drake binary is not guaranteed to be the latest | component: jupyter priority: medium team: kitware | I posted in the issue linked below today, and opened up a new Binder session. I noticed that I got an old version of `drake`:

https://github.com/RobotLocomotion/drake/issues/11457#issuecomment-622985019

> Version info (from notebook):

> ```

> In [3]: !cat /opt/drake/share/doc/drake/VERSION.TXT

> Out [3]: 20200420074541 b02ff6e7b75d1a9a3ae7d4cf21fe76fa558cff74

> ```

FTR, my session URL was: (not that you'll be able to visit it?)

`https://notebooks.gesis.org/binder/jupyter/user/robotlocomotion-drake-2q29xfvv/tree/tutorials`

When posting this issue, I wanted to make sure I could do this from scratch, so I opened another new Binder session:

- Visit: https://mybinder.org/v2/gh/RobotLocomotion/drake/nightly-release?filepath=tutorials

- Get a newly provisioned session

- Execute `!cat /opt/drake/share/doc/drake/VERSION.TXT` in a notebook.

However, when I opened a new session, I got a different version:

```

In [1]: !cat /opt/drake/share/doc/drake/VERSION.TXT

Out [1]: 20200430074549 26c38c92d871567f333eb8ece2677bcfc4ab165f

```

FTR, this new session URL was:

`https://hub.gke.mybinder.org/user/robotlocomotion-drake-zp1zbpov/tree/tutorials`

I think you predicted something like this might happen due to our usage of a fixed branch name. My guess is it's a combination of optimization of server-side w/ JupyterHub's side, possibly coupled with user's local browser cache.

Note that the URLs come from different hosts entirely, `notebooks.gesis.org` vs. `hub.gke.mybinder.org`.

Is there a possible (easy) mitigation?

Perhaps advice to users, like Ctrl+Shift+R (fully refresh cache) when visiting the Binder provisioning page? https://mybinder.org/v2/gh/RobotLocomotion/drake/nightly-release?filepath=tutorials

EDIT: Mentioned in Drake Slack, `#python` channel:

https://drakedevelopers.slack.com/archives/C2CK4CWE7/p1588440496027800 | 1.0 | binder: version of Drake binary is not guaranteed to be the latest - I posted in the issue linked below today, and opened up a new Binder session. I noticed that I got an old version of `drake`:

https://github.com/RobotLocomotion/drake/issues/11457#issuecomment-622985019

> Version info (from notebook):

> ```

> In [3]: !cat /opt/drake/share/doc/drake/VERSION.TXT

> Out [3]: 20200420074541 b02ff6e7b75d1a9a3ae7d4cf21fe76fa558cff74

> ```

FTR, my session URL was: (not that you'll be able to visit it?)

`https://notebooks.gesis.org/binder/jupyter/user/robotlocomotion-drake-2q29xfvv/tree/tutorials`

When posting this issue, I wanted to make sure I could do this from scratch, so I opened another new Binder session:

- Visit: https://mybinder.org/v2/gh/RobotLocomotion/drake/nightly-release?filepath=tutorials

- Get a newly provisioned session

- Execute `!cat /opt/drake/share/doc/drake/VERSION.TXT` in a notebook.

However, when I opened a new session, I got a different version:

```

In [1]: !cat /opt/drake/share/doc/drake/VERSION.TXT

Out [1]: 20200430074549 26c38c92d871567f333eb8ece2677bcfc4ab165f

```

FTR, this new session URL was:

`https://hub.gke.mybinder.org/user/robotlocomotion-drake-zp1zbpov/tree/tutorials`

I think you predicted something like this might happen due to our usage of a fixed branch name. My guess is it's a combination of optimization of server-side w/ JupyterHub's side, possibly coupled with user's local browser cache.

Note that the URLs come from different hosts entirely, `notebooks.gesis.org` vs. `hub.gke.mybinder.org`.

Is there a possible (easy) mitigation?

Perhaps advice to users, like Ctrl+Shift+R (fully refresh cache) when visiting the Binder provisioning page? https://mybinder.org/v2/gh/RobotLocomotion/drake/nightly-release?filepath=tutorials

EDIT: Mentioned in Drake Slack, `#python` channel:

https://drakedevelopers.slack.com/archives/C2CK4CWE7/p1588440496027800 | non_main | binder version of drake binary is not guaranteed to be the latest i posted in the issue linked below today and opened up a new binder session i noticed that i got an old version of drake version info from notebook in cat opt drake share doc drake version txt out ftr my session url was not that you ll be able to visit it when posting this issue i wanted to make sure i could do this from scratch so i opened another new binder session visit get a newly provisioned session execute cat opt drake share doc drake version txt in a notebook however when i opened a new session i got a different version in cat opt drake share doc drake version txt out ftr this new session url was i think you predicted something like this might happen due to our usage of a fixed branch name my guess is it s a combination of optimization of server side w jupyterhub s side possibly coupled with user s local browser cache note that the urls come from different hosts entirely notebooks gesis org vs hub gke mybinder org is there a possible easy mitigation perhaps advice to users like ctrl shift r fully refresh cache when visiting the binder provisioning page edit mentioned in drake slack python channel | 0 |

1,139 | 4,998,879,085 | IssuesEvent | 2016-12-09 21:20:09 | ansible/ansible-modules-core | https://api.github.com/repos/ansible/ansible-modules-core | closed | ec2: group_id doesn't seem to accept a list of security groups as documented | affects_2.1 aws bug_report cloud waiting_on_maintainer | <!--- Verify first that your issue/request is not already reported in GitHub -->

##### ISSUE TYPE

<!--- Pick one below and delete the rest: -->

- Bug Report

##### COMPONENT NAME

ec2

##### ANSIBLE VERSION

<!--- Paste verbatim output from “ansible --version” between quotes below -->

```

ansible 2.1.1.0

config file =

configured module search path = Default w/o overrides

```

##### CONFIGURATION

<!---

Mention any settings you have changed/added/removed in ansible.cfg

(or using the ANSIBLE_* environment variables).

-->

##### OS / ENVIRONMENT

<!---

Mention the OS you are running Ansible from, and the OS you are

managing, or say “N/A” for anything that is not platform-specific.

-->

##### SUMMARY

Ansible doesn't accept a list of security groups when launching an instance, while it's [documented](https://docs.ansible.com/ansible/ec2_module.html) that it does: "security group id (or list of ids) to use with the instance"

##### STEPS TO REPRODUCE

Execute the following task:

<!--- Paste example playbooks or commands between quotes below -->

```

- name: "Launch proxy instance: {{ owner }}_i_{{ env }}_dmz_2"

ec2:

region: "{{ region }}"

image: "{{ ami_id }}"

count_tag:

Name: "{{ owner }}_i_{{ env }}_dmz_2"

exact_count: 1

#wait: yes

instance_type: "t2.micro"

key_name: "{{ ssh_key_name}}"

# TODO

group_id:

- "{{ preprod_sg_ssh.group_id }}"

- "{{ preprod_sg_proxy.group_id }}"

vpc_subnet_id: "{{ preprod_subnet_dmz_2 }}"

zone: "{{ az2 }}"

instance_tags:

Name: "{{ owner }}_i_{{ env }}_dmz_2"

Env: "{{ owner }}_{{ env }}"

Tier: "{{ owner }}_{{ env }}_dmz"

register: preprod_i_dmz_2 # preprod_i_dmz_2.tagged_instances[0].id

```

<!--- You can also paste gist.github.com links for larger files -->

##### EXPECTED RESULTS

Expected that the two SGs specified would be assigned to the instance.

##### ACTUAL RESULTS

None of the two SGs were assigned to the instance. The instance had the default SG assigned.

| True | ec2: group_id doesn't seem to accept a list of security groups as documented - <!--- Verify first that your issue/request is not already reported in GitHub -->

##### ISSUE TYPE

<!--- Pick one below and delete the rest: -->

- Bug Report

##### COMPONENT NAME

ec2

##### ANSIBLE VERSION

<!--- Paste verbatim output from “ansible --version” between quotes below -->

```

ansible 2.1.1.0

config file =

configured module search path = Default w/o overrides

```

##### CONFIGURATION

<!---

Mention any settings you have changed/added/removed in ansible.cfg

(or using the ANSIBLE_* environment variables).

-->

##### OS / ENVIRONMENT

<!---

Mention the OS you are running Ansible from, and the OS you are

managing, or say “N/A” for anything that is not platform-specific.

-->

##### SUMMARY

Ansible doesn't accept a list of security groups when launching an instance, while it's [documented](https://docs.ansible.com/ansible/ec2_module.html) that it does: "security group id (or list of ids) to use with the instance"

##### STEPS TO REPRODUCE

Execute the following task:

<!--- Paste example playbooks or commands between quotes below -->

```

- name: "Launch proxy instance: {{ owner }}_i_{{ env }}_dmz_2"

ec2:

region: "{{ region }}"

image: "{{ ami_id }}"

count_tag:

Name: "{{ owner }}_i_{{ env }}_dmz_2"

exact_count: 1

#wait: yes

instance_type: "t2.micro"

key_name: "{{ ssh_key_name}}"

# TODO

group_id:

- "{{ preprod_sg_ssh.group_id }}"

- "{{ preprod_sg_proxy.group_id }}"

vpc_subnet_id: "{{ preprod_subnet_dmz_2 }}"

zone: "{{ az2 }}"

instance_tags:

Name: "{{ owner }}_i_{{ env }}_dmz_2"

Env: "{{ owner }}_{{ env }}"

Tier: "{{ owner }}_{{ env }}_dmz"

register: preprod_i_dmz_2 # preprod_i_dmz_2.tagged_instances[0].id

```

<!--- You can also paste gist.github.com links for larger files -->

##### EXPECTED RESULTS

Expected that the two SGs specified would be assigned to the instance.

##### ACTUAL RESULTS

None of the two SGs were assigned to the instance. The instance had the default SG assigned.

| main | group id doesn t seem to accept a list of security groups as documented issue type bug report component name ansible version ansible config file configured module search path default w o overrides configuration mention any settings you have changed added removed in ansible cfg or using the ansible environment variables os environment mention the os you are running ansible from and the os you are managing or say “n a” for anything that is not platform specific summary ansible doesn t accept a list of security groups when launching an instance while it s that it does security group id or list of ids to use with the instance steps to reproduce execute the following task name launch proxy instance owner i env dmz region region image ami id count tag name owner i env dmz exact count wait yes instance type micro key name ssh key name todo group id preprod sg ssh group id preprod sg proxy group id vpc subnet id preprod subnet dmz zone instance tags name owner i env dmz env owner env tier owner env dmz register preprod i dmz preprod i dmz tagged instances id expected results expected that the two sgs specified would be assigned to the instance actual results none of the two sgs were assigned to the instance the instance had the default sg assigned | 1 |

9,964 | 3,985,093,696 | IssuesEvent | 2016-05-07 17:02:28 | joomla/joomla-cms | https://api.github.com/repos/joomla/joomla-cms | closed | Guest access vanishes guest articles from the articlemanager for everyone except superusers | No Code Attached Yet | #### Steps to reproduce the issue

Inside Admin select Usermanager - Options, set Guest User Group to Guest, save&close.

Select Content/Article Manager, select Categories, and create category test with access level Guest.

Select Articles and create a new article called test, with access level guest. Click on save: now you see it.

Click on save&close: now you don’t see it anymore, and forever it wil be absent from the article manager lists.

So: you made an article, and after save&close you will no more see it in the articlemanger.

On the frontend meanwhile: you will see the article when it is published. However: it will disappear when you login to try and edit it!

#### Expected result

Inside the article manager you can see all articles that you have edit and delete rights for.

So: you made an article and would like to edit or delete it: these rights you have.

#### Actual result

You have edited and delete access to an article but because the article disappears from view after logging in. which you need to do to gain access to the backend of the site, you can no more edit it or delete it.

The current guest setting is to radical: it is intended on the frontend of the site but it also governs inside the admin of the website.

There is a difference between the intended behavior of an article with guest access on the frontend versus the same article on the backend.

The guest account setting inside the Options inside the Usermanager are intended for the frontend only!.They however also rule the behavior inside the admin of the website. There this behavior is not intended.

Solution: to differentiate between those two 'states'.

#### System information (as much as possible)

Standard joomla site, 3.3.6.

#### Additional comments

This seems to me a bug. On the other hand it is completely logical.

When the backend will be more integrated inside the frontend, there are perhaps more situations where the backend-logic and the frontend logic clash.

For now the only solution is to use the superuser access: there is an overruling mechanism that is triggered by this access-level. This solution is not preferred in situations where editors are not to be given ’absolute control’ of the entire website.

| 1.0 | Guest access vanishes guest articles from the articlemanager for everyone except superusers - #### Steps to reproduce the issue

Inside Admin select Usermanager - Options, set Guest User Group to Guest, save&close.

Select Content/Article Manager, select Categories, and create category test with access level Guest.

Select Articles and create a new article called test, with access level guest. Click on save: now you see it.

Click on save&close: now you don’t see it anymore, and forever it wil be absent from the article manager lists.

So: you made an article, and after save&close you will no more see it in the articlemanger.

On the frontend meanwhile: you will see the article when it is published. However: it will disappear when you login to try and edit it!

#### Expected result

Inside the article manager you can see all articles that you have edit and delete rights for.

So: you made an article and would like to edit or delete it: these rights you have.

#### Actual result

You have edited and delete access to an article but because the article disappears from view after logging in. which you need to do to gain access to the backend of the site, you can no more edit it or delete it.

The current guest setting is to radical: it is intended on the frontend of the site but it also governs inside the admin of the website.

There is a difference between the intended behavior of an article with guest access on the frontend versus the same article on the backend.

The guest account setting inside the Options inside the Usermanager are intended for the frontend only!.They however also rule the behavior inside the admin of the website. There this behavior is not intended.

Solution: to differentiate between those two 'states'.

#### System information (as much as possible)

Standard joomla site, 3.3.6.

#### Additional comments

This seems to me a bug. On the other hand it is completely logical.

When the backend will be more integrated inside the frontend, there are perhaps more situations where the backend-logic and the frontend logic clash.

For now the only solution is to use the superuser access: there is an overruling mechanism that is triggered by this access-level. This solution is not preferred in situations where editors are not to be given ’absolute control’ of the entire website.

| non_main | guest access vanishes guest articles from the articlemanager for everyone except superusers steps to reproduce the issue inside admin select usermanager options set guest user group to guest save close select content article manager select categories and create category test with access level guest select articles and create a new article called test with access level guest click on save now you see it click on save close now you don’t see it anymore and forever it wil be absent from the article manager lists so you made an article and after save close you will no more see it in the articlemanger on the frontend meanwhile you will see the article when it is published however it will disappear when you login to try and edit it expected result inside the article manager you can see all articles that you have edit and delete rights for so you made an article and would like to edit or delete it these rights you have actual result you have edited and delete access to an article but because the article disappears from view after logging in which you need to do to gain access to the backend of the site you can no more edit it or delete it the current guest setting is to radical it is intended on the frontend of the site but it also governs inside the admin of the website there is a difference between the intended behavior of an article with guest access on the frontend versus the same article on the backend the guest account setting inside the options inside the usermanager are intended for the frontend only they however also rule the behavior inside the admin of the website there this behavior is not intended solution to differentiate between those two states system information as much as possible standard joomla site additional comments this seems to me a bug on the other hand it is completely logical when the backend will be more integrated inside the frontend there are perhaps more situations where the backend logic and the frontend logic clash for now 
the only solution is to use the superuser access there is an overruling mechanism that is triggered by this access level this solution is not preferred in situations where editors are not to be given ’absolute control’ of the entire website | 0 |

116,924 | 9,888,717,456 | IssuesEvent | 2019-06-25 12:15:51 | kubernetes/kubernetes | https://api.github.com/repos/kubernetes/kubernetes | closed | GCE-5000: apiserver - increased cpu usage | kind/failing-test sig/scalability | <!-- Please only use this template for submitting reports about failing tests in Kubernetes CI jobs -->

**Which jobs are failing**:

gce-scale-performance

**Which test(s) are failing**:

density test

**Since when has it been failing**:

Run 1117699728771911683. 2019-04-15 10:03 CEST

**Testgrid link**:

https://testgrid.k8s.io/sig-scalability-gce#gce-scale-performance

**Reason for failure**:

Increased cpu usage of apiserver

**Anything else we need to know**:

The test is not failing, however the cpu usage increased from 10 cores to 25 cores (90th percentile).

| 1.0 | GCE-5000: apiserver - increased cpu usage - <!-- Please only use this template for submitting reports about failing tests in Kubernetes CI jobs -->

**Which jobs are failing**:

gce-scale-performance

**Which test(s) are failing**:

density test

**Since when has it been failing**:

Run 1117699728771911683. 2019-04-15 10:03 CEST

**Testgrid link**:

https://testgrid.k8s.io/sig-scalability-gce#gce-scale-performance

**Reason for failure**:

Increased cpu usage of apiserver

**Anything else we need to know**:

The test is not failing, however the cpu usage increased from 10 cores to 25 cores (90th percentile).

| non_main | gce apiserver increased cpu usage which jobs are failing gce scale performance which test s are failing density test since when has it been failing run cest testgrid link reason for failure increased cpu usage of apiserver anything else we need to know the test is not failing however the cpu usage increased from cores to cores percentile | 0 |

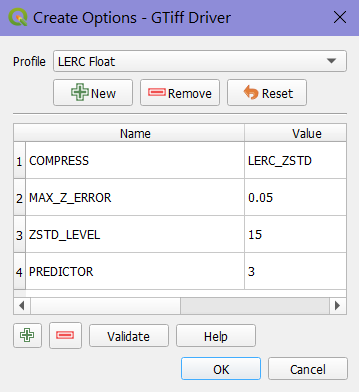

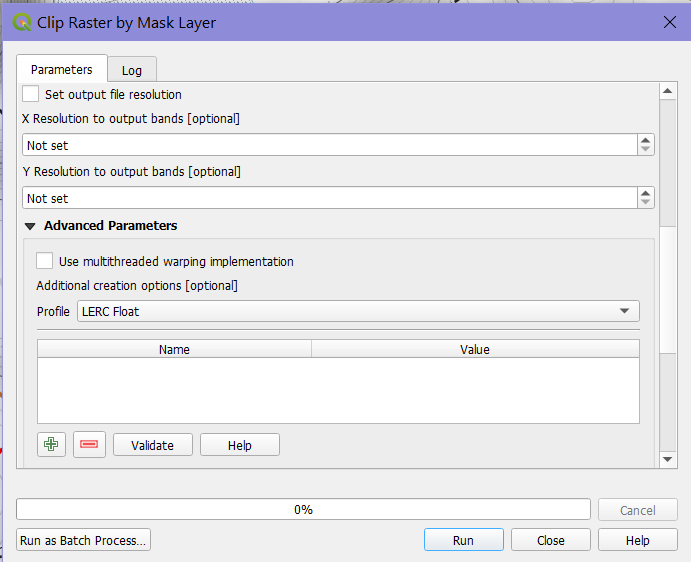

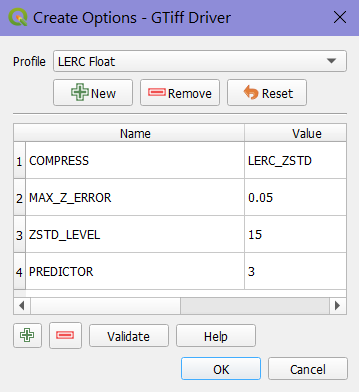

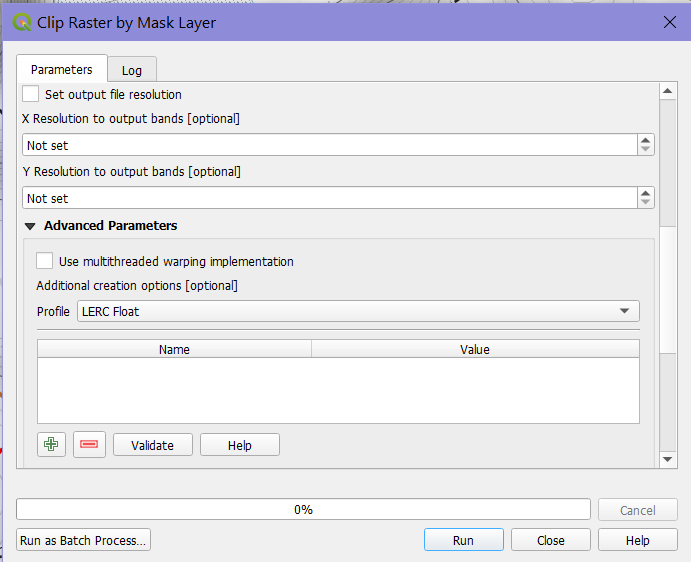

12,759 | 15,115,739,616 | IssuesEvent | 2021-02-09 05:14:47 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | New GTiff create option not offered for QGIS raster processing | Bug Processing | OSGeo4W 3.17.0 version '9fe1c17719' and also 3.16.3

Defined a new GTiff creation option set (via Options / GDAL). It is valid and works fine for e.g. exporting a raster layer from the layer tree.

However, selecting this creation option set in the Advanced Options of a QGIS raster processing algorithm (e.g. Clip Raster by Mask Layer, but also tried Warp) does not populate the parameter list, which stays empty.

Selecting one of the "out of the box" options sets, like JPEG or High Compression, does populate the list and execute properly.

I've tested and the options I'm specifying are consistent with the datatype of the raster being processed, and the options can be manually entered via the + button.

| 1.0 | New GTiff create option not offered for QGIS raster processing - OSGeo4W 3.17.0 version '9fe1c17719' and also 3.16.3

Defined a new GTiff creation option set (via Options / GDAL). It is valid and works fine for e.g. exporting a raster layer from the layer tree.

However, selecting this creation option set in the Advanced Options of a QGIS raster processing algorithm (e.g. Clip Raster by Mask Layer, but also tried Warp) does not populate the parameter list, which stays empty.

Selecting one of the "out of the box" options sets, like JPEG or High Compression, does populate the list and execute properly.

I've tested and the options I'm specifying are consistent with the datatype of the raster being processed, and the options can be manually entered via the + button.

| non_main | new gtiff create option not offered for qgis raster processing version and also defined a new gtiff creation option set via options gdal it is valid and works fine for e g exporting a raster layer from the layer tree however selecting this creation option set in the advanced options of a qgis raster processing algorithm e g clip raster by mask layer but also tried warp does not populate the parameter list which stays empty selecting one of the out of the box options sets like jpeg or high compression does populate the list and execute properly i ve tested and the options i m specifying are consistent with the datatype of the raster being processed and the options can be manually entered via the button | 0 |

929 | 4,642,761,981 | IssuesEvent | 2016-09-30 10:53:14 | ansible/ansible-modules-core | https://api.github.com/repos/ansible/ansible-modules-core | closed | win_feature always returns "Failed to add feature" on Windows Server 2016 | affects_2.1 bug_report waiting_on_maintainer windows | ##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

* `win_feature`

##### ANSIBLE VERSION

```

ansible 2.1.1.0

config file = SNIPPED/ansible/ansible.cfg

configured module search path = ['library']

```

##### CONFIGURATION

* n/a

##### OS / ENVIRONMENT

* Host: Mac OS X El Capitan 10.11.6

* Target: Windows Server 2016 Datacenter (RTM Build 14393.rs1_release.160915-0644)

##### SUMMARY

`win_feature` module is unable to add features to Windows Server 2016 targets (including trivial use cases such as `Telnet-Client`)

##### STEPS TO REPRODUCE

```

ansible -m win_feature -a 'name=AD-Domain-Services' examplehost

```

(in this case, `examplehost` is a VM running on Virtualbox 5.0.26r108824)

##### EXPECTED RESULTS

```

127.0.0.1 | SUCCESS => {

"changed": true,

"exitcode": "0",

"failed": false,

"feature_result": [],

"invocation": {

"module_name": "win_feature"

},

"msg": "Happy Happy Joy Joy",

"restart_needed": false,

"success": true

}

```

##### ACTUAL RESULTS

```

Using SNIPPED/ansible/ansible.cfg as config file

Loaded callback minimal of type stdout, v2.0

<127.0.0.1> ESTABLISH WINRM CONNECTION FOR USER: vagrant on PORT 55986 TO 127.0.0.1

<127.0.0.1> EXEC Set-StrictMode -Version Latest

(New-Item -Type Directory -Path $env:temp -Name "ansible-tmp-1475181976.06-278942010948362").FullName | Write-Host -Separator '';

<127.0.0.1> PUT "/var/folders/15/b3hfwryj5570qt_r8b9q2jpw0000gn/T/tmpAgxz8u" TO "C:\Users\vagrant\AppData\Local\Temp\ansible-tmp-1475181976.06-278942010948362\win_feature.ps1"

<127.0.0.1> EXEC Set-StrictMode -Version Latest

Try

{

& 'C:\Users\vagrant\AppData\Local\Temp\ansible-tmp-1475181976.06-278942010948362\win_feature.ps1'

}

Catch

{

$_obj = @{ failed = $true }

If ($_.Exception.GetType)

{

$_obj.Add('msg', $_.Exception.Message)

}

Else

{

$_obj.Add('msg', $_.ToString())

}

If ($_.InvocationInfo.PositionMessage)

{

$_obj.Add('exception', $_.InvocationInfo.PositionMessage)

}

ElseIf ($_.ScriptStackTrace)

{

$_obj.Add('exception', $_.ScriptStackTrace)

}

Try

{

$_obj.Add('error_record', ($_ | ConvertTo-Json | ConvertFrom-Json))

}

Catch

{

}

Echo $_obj | ConvertTo-Json -Compress -Depth 99

Exit 1

}

Finally { Remove-Item "C:\Users\vagrant\AppData\Local\Temp\ansible-tmp-1475181976.06-278942010948362" -Force -Recurse -ErrorAction SilentlyContinue }

127.0.0.1 | FAILED! => {

"changed": false,

"exitcode": "Failed",

"failed": true,

"feature_result": [],

"invocation": {

"module_name": "win_feature"

},

"msg": "Failed to add feature",

"restart_needed": false,

"success": false

}

``` | True | win_feature always returns "Failed to add feature" on Windows Server 2016 - ##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

* `win_feature`

##### ANSIBLE VERSION

```

ansible 2.1.1.0

config file = SNIPPED/ansible/ansible.cfg

configured module search path = ['library']

```

##### CONFIGURATION

* n/a

##### OS / ENVIRONMENT

* Host: Mac OS X El Capitan 10.11.6

* Target: Windows Server 2016 Datacenter (RTM Build 14393.rs1_release.160915-0644)

##### SUMMARY

`win_feature` module is unable to add features to Windows Server 2016 targets (including trivial use cases such as `Telnet-Client`)

##### STEPS TO REPRODUCE

```

ansible -m win_feature -a 'name=AD-Domain-Services' examplehost

```

(in this case, `examplehost` is a VM running on Virtualbox 5.0.26r108824)

##### EXPECTED RESULTS

```

127.0.0.1 | SUCCESS => {

"changed": true,

"exitcode": "0",

"failed": false,

"feature_result": [],

"invocation": {

"module_name": "win_feature"

},

"msg": "Happy Happy Joy Joy",

"restart_needed": false,

"success": true

}

```

##### ACTUAL RESULTS

```

Using SNIPPED/ansible/ansible.cfg as config file

Loaded callback minimal of type stdout, v2.0

<127.0.0.1> ESTABLISH WINRM CONNECTION FOR USER: vagrant on PORT 55986 TO 127.0.0.1

<127.0.0.1> EXEC Set-StrictMode -Version Latest

(New-Item -Type Directory -Path $env:temp -Name "ansible-tmp-1475181976.06-278942010948362").FullName | Write-Host -Separator '';

<127.0.0.1> PUT "/var/folders/15/b3hfwryj5570qt_r8b9q2jpw0000gn/T/tmpAgxz8u" TO "C:\Users\vagrant\AppData\Local\Temp\ansible-tmp-1475181976.06-278942010948362\win_feature.ps1"

<127.0.0.1> EXEC Set-StrictMode -Version Latest

Try

{

& 'C:\Users\vagrant\AppData\Local\Temp\ansible-tmp-1475181976.06-278942010948362\win_feature.ps1'

}

Catch

{

$_obj = @{ failed = $true }

If ($_.Exception.GetType)

{

$_obj.Add('msg', $_.Exception.Message)

}

Else

{

$_obj.Add('msg', $_.ToString())

}

If ($_.InvocationInfo.PositionMessage)

{

$_obj.Add('exception', $_.InvocationInfo.PositionMessage)

}

ElseIf ($_.ScriptStackTrace)

{

$_obj.Add('exception', $_.ScriptStackTrace)

}

Try

{

$_obj.Add('error_record', ($_ | ConvertTo-Json | ConvertFrom-Json))

}

Catch

{

}

Echo $_obj | ConvertTo-Json -Compress -Depth 99

Exit 1

}

Finally { Remove-Item "C:\Users\vagrant\AppData\Local\Temp\ansible-tmp-1475181976.06-278942010948362" -Force -Recurse -ErrorAction SilentlyContinue }

127.0.0.1 | FAILED! => {

"changed": false,

"exitcode": "Failed",

"failed": true,

"feature_result": [],

"invocation": {

"module_name": "win_feature"

},

"msg": "Failed to add feature",

"restart_needed": false,

"success": false

}

``` | main | win feature always returns failed to add feature on windows server issue type bug report component name win feature ansible version ansible config file snipped ansible ansible cfg configured module search path configuration n a os environment host mac os x el capitan target windows server datacenter rtm build release summary win feature module is unable to add features to windows server targets including trivial use cases such as telnet client steps to reproduce ansible m win feature a name ad domain services examplehost in this case examplehost is a vm running on virtualbox expected results success changed true exitcode failed false feature result invocation module name win feature msg happy happy joy joy restart needed false success true actual results using snipped ansible ansible cfg as config file loaded callback minimal of type stdout establish winrm connection for user vagrant on port to exec set strictmode version latest new item type directory path env temp name ansible tmp fullname write host separator put var folders t to c users vagrant appdata local temp ansible tmp win feature exec set strictmode version latest try c users vagrant appdata local temp ansible tmp win feature catch obj failed true if exception gettype obj add msg exception message else obj add msg tostring if invocationinfo positionmessage obj add exception invocationinfo positionmessage elseif scriptstacktrace obj add exception scriptstacktrace try obj add error record convertto json convertfrom json catch echo obj convertto json compress depth exit finally remove item c users vagrant appdata local temp ansible tmp force recurse erroraction silentlycontinue failed changed false exitcode failed failed true feature result invocation module name win feature msg failed to add feature restart needed false success false | 1 |

137,557 | 30,713,289,139 | IssuesEvent | 2023-07-27 11:19:47 | SuperTux/supertux | https://api.github.com/repos/SuperTux/supertux | closed | Leafshot is hardcoded in the Kamikaze Snowball code!? | involves:functionality category:code status:needs-work | I just learned that Leafshot's code lives in `kamikazesnowball.cpp`, which currently makes it impossible to change their sprite. They should be their own separate object, as they also have a different speed value and are freezable. | 1.0 | Leafshot is hardcoded in the Kamikaze Snowball code!? - I just learned that Leafshot's code lives in `kamikazesnowball.cpp`, which currently makes it impossible to change their sprite. They should be their own separate object, as they also have a different speed value and are freezable. | non_main | leafshot is hardcoded in the kamikaze snowball code i just learned that leafshot s code is within kamikazesnowball cpp which makes it currently impossible to change their sprite they should be either their own separate object as they also have a different speed value and are also freeze able | 0 |

359,030 | 25,214,090,216 | IssuesEvent | 2022-11-14 07:40:47 | scylladb/scylla-monitoring | https://api.github.com/repos/scylladb/scylla-monitoring | opened | docs: sizing calculator | documentation | It would be useful to represent the sizing info included here:

https://monitoring.docs.scylladb.com/stable/install/monitoring_stack.html#calculating-prometheus-minimal-disk-space-requirement

In a small sizing calculator with the inputs:

- number of Scylla cores

- data retention in days

For reference, here is a similar calculator in docs

https://docs.scylladb.com/stable/cql/consistency-calculator.html | 1.0 | docs: sizing calculator - It would be useful to represent the sizing info included here:

https://monitoring.docs.scylladb.com/stable/install/monitoring_stack.html#calculating-prometheus-minimal-disk-space-requirement

In a small sizing calculator with the inputs:

- number of Scylla cores

- data retention in days

For reference, here is a similar calculator in docs

https://docs.scylladb.com/stable/cql/consistency-calculator.html | non_main | docs sizing calculator it would be usefull to represent the sizing info incded here in a small sizing calculator with the inputs number of scylla cores data retention in days for reference here is a similar calculator in docs | 0 |
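
The calculator requested in the issue above could be sketched roughly as follows. This is only an illustration under stated assumptions: it assumes the disk requirement scales linearly with the number of Scylla cores and the retention period, and `bytes_per_core_per_day` is a hypothetical parameter whose value is not given in the issue and would have to be taken from the linked sizing page.

```python
def prometheus_disk_bytes(total_cores: int,
                          retention_days: int,
                          bytes_per_core_per_day: float) -> float:
    """Estimate Prometheus disk usage under an assumed linear model.

    bytes_per_core_per_day is a placeholder: look it up in the sizing
    documentation referenced by the issue; it is not defined here.
    """
    if total_cores < 0 or retention_days < 0 or bytes_per_core_per_day < 0:
        raise ValueError("inputs must be non-negative")
    # Assumed model: every core produces the same amount of data per day.
    return total_cores * retention_days * bytes_per_core_per_day
```

The two inputs named in the issue (number of Scylla cores, data retention in days) map directly onto the first two arguments; the third is kept explicit so that no sizing constant is invented here.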

65,377 | 7,875,008,573 | IssuesEvent | 2018-06-25 18:56:27 | Opentrons/opentrons | https://api.github.com/repos/Opentrons/opentrons | opened | PD: stop LastPass from trying to auto-fill the form | bug protocol designer | ## current behavior

When filling out a form in PD, when LastPass is installed in Chrome, LastPass will open up a buncha tabs as the user clicks into the form!

## expected behavior

Hopefully setting `autocomplete="off"` on our `<form>` elements will stop this, as long as the user has "Allow pages to disable autofill" checked

Via https://stackoverflow.com/a/28216951/556651 | 1.0 | PD: stop LastPass from trying to auto-fill the form - ## current behavior

When filling out a form in PD, when LastPass is installed in Chrome, LastPass will open up a buncha tabs as the user clicks into the form!

## expected behavior

Hopefully setting `autocomplete="off"` on our `<form>` elements will stop this, as long as the user has "Allow pages to disable autofill" checked

Via https://stackoverflow.com/a/28216951/556651 | non_main | pd stop lasspass from trying to auto fill the form current behavior when filling out a form in pd when lastpass is installed in chrome lastpass will open up a buncha tabs as the user clicks into the form expected behavior hopefully setting autocomplete off on our elements will stop this as long as the user as allow pages to disable autofill checked via | 0 |

67,793 | 28,048,486,038 | IssuesEvent | 2023-03-29 02:21:00 | MicrosoftDocs/live-share | https://api.github.com/repos/MicrosoftDocs/live-share | closed | [VS][C++] Error list not working | bug client: vs area: language services needs-repro | Error list on the guest in a Live Share session is not synced with host's error list. | 1.0 | [VS][C++] Error list not working - Error list on the guest in a Live Share session is not synced with host's error list. | non_main | error list not working error list on the guest in a live share session is not synced with host s error list | 0 |

3,719 | 15,375,755,236 | IssuesEvent | 2021-03-02 15:15:48 | zaproxy/zaproxy | https://api.github.com/repos/zaproxy/zaproxy | closed | Create AbstractHostFileScanRule | Maintainability add-on | Similar to `addOns/commonlib/src/main/java/org/zaproxy/addon/commonlib/AbstractAppFilePlugin.java` for use in HiddenFileScanRule, the ELMAH scan rule, and whatever future use cases. To ensure consistency and ease of maintenance. | True | Create AbstractHostFileScanRule - Similar to `addOns/commonlib/src/main/java/org/zaproxy/addon/commonlib/AbstractAppFilePlugin.java` for use in HiddenFileScanRule, the ELMAH scan rule, and whatever future use cases. To ensure consistency and ease of maintenance. | main | create abstracthostfilescanrule similar to addons commonlib src main java org zaproxy addon commonlib abstractappfileplugin java for use in hiddenfilescanrule the elmah scan rule and whatever future use cases to ensure consistency and ease of maintenance | 1 |

2,914 | 10,391,741,219 | IssuesEvent | 2019-09-11 08:12:08 | precice/precice | https://api.github.com/repos/precice/precice | opened | Generalize Mesh adding and filtering | maintainability | We currently have slight variations of the same code that handles adding one mesh to another.

1. `void Mesh::addMesh(Mesh const& diff);` adds the `diff` to the current mesh.

2. `void ReceivedPartition::filterMesh(mesh::Mesh &filteredMesh, const bool filterByBB)`

This adds the internal mesh to `filteredMesh` and filters vertices based on a predicate: tagged vertices, or vertices inside a bounding-box.

Both functions can be generalized to:

```cpp
// Generalized version filtering vertices based on a given unary predicate.
template<typename UnaryPredicate>
void Mesh::addMesh(Mesh const& other, UnaryPredicate p);

// Version that simply adds the Mesh
void Mesh::addMesh(Mesh const& other) {
  addMesh(other, [](mesh::Vertex const &) { return true; });
}

// The new possible implementation.
void ReceivedPartition::filterMesh(mesh::Mesh &filteredMesh, const bool filterByBB) {
  if (filterByBB) {
    filteredMesh.addMesh(_mesh,
        [this](mesh::Vertex const & v){ return this->isVertexinBB(v);});
  } else {
    filteredMesh.addMesh(_mesh,
        [](mesh::Vertex const & v){ return v.isTagged();});
  }
}
```

This makes the code DRY, easier to maintain and allows us to optimize a single function. | True | Generalize Mesh adding and filtering - We currently have slight variations of the same code that handles adding one mesh to another.

1. `void Mesh::addMesh(Mesh const& diff);` adds the `diff` to the current mesh.

2. `void ReceivedPartition::filterMesh(mesh::Mesh &filteredMesh, const bool filterByBB)`

This adds the internal mesh to `filteredMesh` and filters vertices based on a predicate: tagged vertices, or vertices inside a bounding-box.

Both functions can be generalized to:

```cpp
// Generalized version filtering vertices based on a given unary predicate.
template<typename UnaryPredicate>
void Mesh::addMesh(Mesh const& other, UnaryPredicate p);

// Version that simply adds the Mesh
void Mesh::addMesh(Mesh const& other) {
  addMesh(other, [](mesh::Vertex const &) { return true; });
}

// The new possible implementation.
void ReceivedPartition::filterMesh(mesh::Mesh &filteredMesh, const bool filterByBB) {
  if (filterByBB) {
    filteredMesh.addMesh(_mesh,
        [this](mesh::Vertex const & v){ return this->isVertexinBB(v);});
  } else {
    filteredMesh.addMesh(_mesh,
        [](mesh::Vertex const & v){ return v.isTagged();});
  }
}
```

This makes the code DRY, easier to maintain and allows us to optimize a single function. | main | generalize mesh adding and filtering we currently have slight variations of the same code that handles adding one mesh to another void mesh addmesh mesh const diff adds the diff to the current mesh void receivedpartition filtermesh mesh mesh filteredmesh const bool filterbybb this adds the internal mesh to filteredmesh and filters vertices based on a predicate tagged vertices or vertices inside a bounding box both functions can be generalized to cpp generalized version filtering vertices based on a given unary predicate template void mesh addmesh mesh const other unarypredicate p version that simply adds the mesh void mesh addmesh mesh const other addmesh other mesh vertex const return true the new possible implementation void receivedpartition filtermesh mesh mesh filteredmesh const bool filterbybb if filterbybb filteredmesh addmesh mesh mesh vertex const v return this isvertexinbb v else filteredmesh addmesh mesh mesh vertex const v return v istagged this makes the code dry easier to maintain and allows us to optimize a single function | 1 |

321,907 | 9,810,372,862 | IssuesEvent | 2019-06-12 20:19:20 | 2019-a-gr2-moviles/Museum-app-Cerezo-Murillo | https://api.github.com/repos/2019-a-gr2-moviles/Museum-app-Cerezo-Murillo | opened | Modificación de información personal de los usuarios | medium priority user story | # Descripción

Yo como usuario quiero ver y modificar mi información personal para mantenerla actualizada.

# Criterios de aceptación

- El usuario podrá ver sus datos personales: Nombre, Apellido y Correo electrónico.

- El usuario podrá modificar su correo electrónico y su contraseña. | 1.0 | Modificación de información personal de los usuarios - # Descripción

Yo como usuario quiero ver y modificar mi información personal para mantenerla actualizada.

# Criterios de aceptación

- El usuario podrá ver sus datos personales: Nombre, Apellido y Correo electrónico.

- El usuario podrá modificar su correo electrónico y su contraseña. | non_main | modificación de información personal de los usuarios descripción yo como usuario quiero ver y modificar mi información personal para mantenerla actualizada criterios de aceptación el usuario podrá ver sus datos personales nombre apellido y correo electrónico el usuario podrá modificar su correo electrónico y su contraseña | 0 |

5,316 | 26,831,755,815 | IssuesEvent | 2023-02-02 16:28:03 | mozilla/foundation.mozilla.org | https://api.github.com/repos/mozilla/foundation.mozilla.org | opened | Use consistent string formatting in JS | engineering maintain needs grooming | ## Description

Based on https://github.com/mozilla/foundation.mozilla.org/pull/10053#discussion_r1093784778

The code base uses a mix of backticks <code>`</code> and quotes `"` for string formatting in JS.

It would be nice to find a consistent standard and have the linting point out when we are inconsistent.

Which one we go with should be decided by the team.

## Acceptance criteria

- [ ] The code base uses consistent string formatting.

- [ ] Running `inv lint-js` shows errors when we use inconsistent formatting. | True | Use consistent string formatting in JS - ## Description

Based on https://github.com/mozilla/foundation.mozilla.org/pull/10053#discussion_r1093784778

The code base uses a mix of backticks <code>`</code> and quotes `"` for string formatting in JS.

It would be nice to find a consistent standard and have the linting point out when we are inconsistent.

Which one we go with should be decided by the team.

## Acceptance criteria

- [ ] The code base uses consistent string formatting.

- [ ] Running `inv lint-js` shows errors when we use inconsistent formatting. | main | use consistent string formatting in js description based on the code base uses a mix of backticks and quotes for string formatting in js it would be nice to find a consistent standard and have the linting piont out when we are inconsistent which one we go with should be decided by the team acceptance criteria the code base uses consistent string formatting running inv lint js shows errors when we use inconsistent formatting | 1 |

3,061 | 11,459,653,698 | IssuesEvent | 2020-02-07 07:53:14 | alacritty/alacritty | https://api.github.com/repos/alacritty/alacritty | closed | Performance of `cat` in GNU Screen worse than xterm’s on X11? | C - waiting on maintainer | > Which operating system does the issue occur on?

NixOS (Linux) 17.03.1844.83706dd49f (Gorilla)

> If on linux, are you using X11 or Wayland?

X11

---

If I set up _really_ fast keyboard repetition, e.g. `"-ardelay" "150" "-arinterval" "8"` in xserver’s args, and hold a letter in both xterm, and Alacritty, the latter visibly stutters, while xterm is very smooth.

Here’s my config, nothing fancy: https://github.com/michalrus/dotfiles/blob/237d1a7e94e22f6ea5c3e3c28d50452a4342e70e/dotfiles/michalrus/base/.config/alacritty/alacritty.yml | True | Performance of `cat` in GNU Screen worse than xterm’s on X11? - > Which operating system does the issue occur on?

NixOS (Linux) 17.03.1844.83706dd49f (Gorilla)

> If on linux, are you using X11 or Wayland?

X11

---

If I set up _really_ fast keyboard repetition, e.g. `"-ardelay" "150" "-arinterval" "8"` in xserver’s args, and hold a letter in both xterm, and Alacritty, the latter visibly stutters, while xterm is very smooth.

Here’s my config, nothing fancy: https://github.com/michalrus/dotfiles/blob/237d1a7e94e22f6ea5c3e3c28d50452a4342e70e/dotfiles/michalrus/base/.config/alacritty/alacritty.yml | main | performance of cat in gnu screen worse than xterm’s on which operating system does the issue occur on nixos linux gorilla if on linux are you using or wayland if i set up really fast keyboard repetition e g ardelay arinterval in xserver’s args and hold a letter in both xterm and alacritty the latter visibly stutters while xterm is very smooth here’s my config nothing fancy | 1 |

2,193 | 7,745,171,211 | IssuesEvent | 2018-05-29 17:28:04 | react-navigation/react-navigation | https://api.github.com/repos/react-navigation/react-navigation | closed | getScreenDetails no longer works after migrating to v2 | needs response from maintainer | In v1 I was using this code:

```
const AppStack = StackNavigator(
  {
    Home: HomeScreen,
    Example: ExampleContentScreen
  },
  {
    navigationOptions: {
      header: (navigationOptions) => {
        console.log(navigationOptions);
        const { scene, getScreenDetails } = navigationOptions;
        const screenDetails = getScreenDetails(scene);
        const { options } = screenDetails;
        return (
          <CustomHeader
            onLeftIconPress={options.onLeftIconPress}
            leftIconName={options.leftIconName}
            title={options.headerTitle}
          />
        );
      }
    }
  }
);
```

I changed `StackNavigator` into `createStackNavigator`.

Running this code, I get an error saying that `getScreenDetails()` doesn't exist.

What is the migration path for this function? | True | getScreenDetails no longer works after migrating to v2 - In v1 I was using this code:

```
const AppStack = StackNavigator(
  {
    Home: HomeScreen,
    Example: ExampleContentScreen
  },
  {
    navigationOptions: {
      header: (navigationOptions) => {
        console.log(navigationOptions);
        const { scene, getScreenDetails } = navigationOptions;
        const screenDetails = getScreenDetails(scene);
        const { options } = screenDetails;
        return (
          <CustomHeader
            onLeftIconPress={options.onLeftIconPress}
            leftIconName={options.leftIconName}
            title={options.headerTitle}
          />
        );
      }
    }
  }
);
```

I changed `StackNavigator` into `createStackNavigator`.

Running this code, I get an error saying that `getScreenDetails()` doesn't exist.

What is the migration path for this function? | main | getscreendetails do not works no more after migrating to in i was using this code const appstack stacknavigator home homescreen example examplecontentscreen navigationoptions header navigationoptions console log navigationoptions const scene getscreendetails navigationoptions const screendetails getscreendetails scene const options screendetails return customheader onlefticonpress options onlefticonpress lefticonname options lefticonname title options headertitle i changed stacknavigator into createstacknavigator running this code i got an error about getscreendetails that doesn t exists what the migration path for this function | 1 |

10,377 | 3,385,127,426 | IssuesEvent | 2015-11-27 09:43:06 | Automattic/wp-calypso | https://api.github.com/repos/Automattic/wp-calypso | closed | Components: Add VerticalNavItem to devdocs | Components Documentation [Type] Task | Originally reported by @scruffian.

We should add this new component to https://wpcalypso.wordpress.com/devdocs/design

@folletto noticed this is quite similar to `FoldableCard`. He also pointed that, to be generic enough, this requires support to more than just a title (i.e. My Site → Settings). | 1.0 | Components: Add VerticalNavItem to devdocs - Originally reported by @scruffian.

We should add this new component to https://wpcalypso.wordpress.com/devdocs/design

@folletto noticed this is quite similar to `FoldableCard`. He also pointed that, to be generic enough, this requires support to more than just a title (i.e. My Site → Settings). | non_main | components add verticalnavitem to devdocs originally reported by scruffian we should add this new component to folletto noticed this is quite similar to foldablecard he also pointed that to be generic enough this requires support to more than just a title i e my site → settings | 0 |

1,214 | 5,194,607,873 | IssuesEvent | 2017-01-23 04:58:40 | ansible/ansible-modules-core | https://api.github.com/repos/ansible/ansible-modules-core | closed | Git ability to clean untracked and ignored files | affects_2.3 feature_idea waiting_on_maintainer | Git module should have an option to run `git clean -f` to remove untracked files. This is useful to say build a project from a pristine repository. Currently all untracked files remain in the directory.

I think there should be two options :

- `clean_untracked` - remove files and directories

- `clean_ignored` - remove ignored files

If this sounds good, I can send a PR

| True | Git ability to clean untracked and ignored files - Git module should have an option to run `git clean -f` to remove untracked files. This is useful to say build a project from a pristine repository. Currently all untracked files remain in the directory.

I think there should be two options :

- `clean_untracked` - remove files and directories

- `clean_ignored` - remove ignored files

If this sounds good, I can send a PR

| main | git ability to clean untracked and ignored files git module should have an option to run git clean f to remove untracked files this is useful to say build a project from a pristine repository currently all untracked files remain in the directory i think there should be two options clean untracked remove files and directories clean ignored remove ignored flies if this sounds good i can send a pr | 1 |
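
One way the two options proposed in the issue above might translate into a `git clean` invocation is sketched below. The option names `clean_untracked` and `clean_ignored` come from the issue; the helper `git_clean_args` and the mapping onto the `-d`, `-x` and `-X` flags are assumptions made for illustration, not the git module's actual behaviour.

```python
from typing import List, Optional


def git_clean_args(clean_untracked: bool, clean_ignored: bool) -> Optional[List[str]]:
    """Build a `git clean` command line from the two proposed options.

    Hypothetical helper: returns None when nothing should be cleaned.
    """
    if not (clean_untracked or clean_ignored):
        return None
    # -f forces the clean; -d also removes untracked directories.
    args = ["git", "clean", "-f", "-d"]
    if clean_untracked and clean_ignored:
        # -x: do not apply ignore rules, so ignored files are removed too.
        args.append("-x")
    elif clean_ignored:
        # -X: remove only the files that git ignores.
        args.append("-X")
    return args
```

Returning the argument list instead of running it keeps the sketch free of side effects; a caller could pass the result to `subprocess.run(..., cwd=repo_path)`.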

93,722 | 3,908,718,139 | IssuesEvent | 2016-04-19 16:45:56 | docker/docker | https://api.github.com/repos/docker/docker | closed | Running out of inodes on /run | kind/bug priority/P1 | After updating to docker 1.11 I seem to run out of inodes on /run.

Inspecting the `/run/docker/libcontainerd` dirs I see that my containers have the following numbers of files:

```

/var/run/docker/libcontainerd/30b451cdf9a4b92c35e4b042715f0c560b535b0c5af4bc67acf7580baa1b2264

9334

/var/run/docker/libcontainerd/35fd9cdb1f80db3bb5fd16aadfe1b30ead0582906f992e4d4865a81798a15072

256507

/var/run/docker/libcontainerd/3a34cbef697c5b7a3865b4556d97c21427b599ff56ab6833bd02013f71aa7738

35129

/var/run/docker/libcontainerd/5e7e460fcf536f2cd75032a9a92e4843812fa4b72b8b5e13b089a90f1ef28e2a

9942

/var/run/docker/libcontainerd/5ebcfb17c26815533f4abd883fb59344554077018f04ce062a67bbdfe50f5c3d

9942

/var/run/docker/libcontainerd/776106ffe1802805469ff0078e02b71e7c9dd9e8c08e8d31951712c6b8438b49

1002

/var/run/docker/libcontainerd/91f43f1dd0252f30363337a4c073962194b9cc7fb1df632a92db4f2fa15ca551

55663

/var/run/docker/libcontainerd/b417ff5d733d5f2bc37cac57bb7af72b86bc680f44e7d5656c55d1067ce66c5d

46336

/var/run/docker/libcontainerd/bd07d50b3c3bdf7e9c8ddf6894e5a079af09f7c9d0d9e27f7d62065cdf181618

9942

/var/run/docker/libcontainerd/ce6bf9750bd4183f2b0d87cf67d11c4047e0473cf396867b70798cccd818e67f

10705

/var/run/docker/libcontainerd/d59d15e94c33556ae099232caed4d824c966e754a2ae63116f3a4249ef290507

7581

/var/run/docker/libcontainerd/docker-containerd.pid

1

/var/run/docker/libcontainerd/docker-containerd.sock

1

/var/run/docker/libcontainerd/e6f8f0e6de324d13389f167c68a31c345eb740b0b8b60b3995a170940e38d01f

26

/var/run/docker/libcontainerd/eb7baaf652b9247f9efbadebbdce9b6b3d88b1515ab71462b91b995187496373

35275

/var/run/docker/libcontainerd/event.ts

1

```

The container with the most files is one I use to 'exec' commands on for health checking within an overlay network.

**Restarting the container resolves the issue**

Listing the files within that culprit container's dir in `/var/run/docker/libcontainerd` I see piles of

```

...

123e29754fba075637b49a21362c5265e5c350b5aa516c187cdc498f8d365a01-stdin

123e29754fba075637b49a21362c5265e5c350b5aa516c187cdc498f8d365a01-stdout

...

```

I also updated kernel recently as well

Output of uname -a:

```

Linux aws-qa-node-1 4.2.0-35-generic #40~14.04.1-Ubuntu SMP Fri Mar 18 16:37:35 UTC 2016 x86_64 x86_64 x86_64 GNU/Linux

```

Trying to exec a command on a container:

```

sudo docker exec dev_consulcheck_blue_1 /bin/sh

mkfifo: /var/run/docker/libcontainerd/35fd9cdb1f80db3bb5fd16aadfe1b30ead0582906f992e4d4865a81798a15072/041ced8a2353434d55e034a37d7c096e4030bbf1878176331c69d1666af3990f-stdin no space left on device

```

Trying to run a container:

```

sudo docker run --rm -it busybox /bin/sh

Unable to find image 'busybox:latest' locally

latest: Pulling from library/busybox

385e281300cc: Pull complete

a3ed95caeb02: Pull complete

Digest: sha256:4a887a2326ec9e0fa90cce7b4764b0e627b5d6afcb81a3f73c85dc29cea00048

Status: Downloaded newer image for busybox:latest

docker: Error response from daemon: mkdir /var/run/docker/libcontainerd/557517143c7a394f2bdd0f2719274d596682b4eaa180e56c176ae2770e10e815/rootfs: no space left on device

```

**Output of `docker version`:**

```

Client:

Version: 1.11.0

API version: 1.23

Go version: go1.5.4

Git commit: 4dc5990

Built: Wed Apr 13 18:34:23 2016

OS/Arch: linux/amd64

Server:

Version: 1.11.0

API version: 1.23

Go version: go1.5.4

Git commit: 4dc5990

Built: Wed Apr 13 18:34:23 2016

OS/Arch: linux/amd64

```

**Output of `docker info`:**

```

Containers: 13

Running: 13

Paused: 0

Stopped: 0

Images: 69

Server Version: 1.11.0

Storage Driver: aufs

Root Dir: /var/lib/docker/aufs

Backing Filesystem: extfs

Dirs: 397

Dirperm1 Supported: true

Logging Driver: json-file

Cgroup Driver: cgroupfs

Plugins:

Volume: local rexray

Network: host bridge null overlay

Kernel Version: 4.2.0-35-generic

Operating System: Ubuntu 14.04.4 LTS

OSType: linux

Architecture: x86_64

CPUs: 1

Total Memory: 1.952 GiB

Name: aws-qa-node-1

ID: A5QZ:FCVL:NXOH:5V3R:SKZ4:V3EZ:55D2:EPLE:3QBM:TQXK:EXEL:MFSI

Docker Root Dir: /var/lib/docker

Debug mode (client): false

Debug mode (server): false

Registry: https://index.docker.io/v1/

WARNING: No swap limit support

Labels:

nodeindex=1

role.service=true

role.infra=true

provider=generic

ec2.instance.type.t2.small=true

Cluster store: consul://10.0.2.95:8500

Cluster advertise: 10.0.4.203:2376

```

**Additional environment details (AWS, VirtualBox, physical, etc.):**

Running on aws.

| 1.0 | Running out of inodes on /run - After updating to docker 1.11 I seem to run out of inodes on /run.

Inspecting the `/run/docker/libcontainerd` dirs I see that my containers have the following numbers of files:

```

/var/run/docker/libcontainerd/30b451cdf9a4b92c35e4b042715f0c560b535b0c5af4bc67acf7580baa1b2264

9334

/var/run/docker/libcontainerd/35fd9cdb1f80db3bb5fd16aadfe1b30ead0582906f992e4d4865a81798a15072

256507

/var/run/docker/libcontainerd/3a34cbef697c5b7a3865b4556d97c21427b599ff56ab6833bd02013f71aa7738

35129

/var/run/docker/libcontainerd/5e7e460fcf536f2cd75032a9a92e4843812fa4b72b8b5e13b089a90f1ef28e2a

9942

/var/run/docker/libcontainerd/5ebcfb17c26815533f4abd883fb59344554077018f04ce062a67bbdfe50f5c3d

9942

/var/run/docker/libcontainerd/776106ffe1802805469ff0078e02b71e7c9dd9e8c08e8d31951712c6b8438b49

1002

/var/run/docker/libcontainerd/91f43f1dd0252f30363337a4c073962194b9cc7fb1df632a92db4f2fa15ca551

55663

/var/run/docker/libcontainerd/b417ff5d733d5f2bc37cac57bb7af72b86bc680f44e7d5656c55d1067ce66c5d

46336

/var/run/docker/libcontainerd/bd07d50b3c3bdf7e9c8ddf6894e5a079af09f7c9d0d9e27f7d62065cdf181618

9942

/var/run/docker/libcontainerd/ce6bf9750bd4183f2b0d87cf67d11c4047e0473cf396867b70798cccd818e67f

10705

/var/run/docker/libcontainerd/d59d15e94c33556ae099232caed4d824c966e754a2ae63116f3a4249ef290507

7581

/var/run/docker/libcontainerd/docker-containerd.pid

1

/var/run/docker/libcontainerd/docker-containerd.sock

1

/var/run/docker/libcontainerd/e6f8f0e6de324d13389f167c68a31c345eb740b0b8b60b3995a170940e38d01f

26

/var/run/docker/libcontainerd/eb7baaf652b9247f9efbadebbdce9b6b3d88b1515ab71462b91b995187496373

35275

/var/run/docker/libcontainerd/event.ts

1

```

The container with the most files is one I use to 'exec' commands on for health checking within an overlay network.

**Restarting the container resolves the issue**

Listing the files within that culprit container's dir in `/var/run/docker/libcontainerd` I see piles of

```

...

123e29754fba075637b49a21362c5265e5c350b5aa516c187cdc498f8d365a01-stdin

123e29754fba075637b49a21362c5265e5c350b5aa516c187cdc498f8d365a01-stdout

...

```

I also updated kernel recently as well

Output of uname -a:

```

Linux aws-qa-node-1 4.2.0-35-generic #40~14.04.1-Ubuntu SMP Fri Mar 18 16:37:35 UTC 2016 x86_64 x86_64 x86_64 GNU/Linux

```

Trying to exec a command on a container:

```

sudo docker exec dev_consulcheck_blue_1 /bin/sh

mkfifo: /var/run/docker/libcontainerd/35fd9cdb1f80db3bb5fd16aadfe1b30ead0582906f992e4d4865a81798a15072/041ced8a2353434d55e034a37d7c096e4030bbf1878176331c69d1666af3990f-stdin no space left on device

```

Trying to run a container:

```

sudo docker run --rm -it busybox /bin/sh

Unable to find image 'busybox:latest' locally

latest: Pulling from library/busybox

385e281300cc: Pull complete

a3ed95caeb02: Pull complete

Digest: sha256:4a887a2326ec9e0fa90cce7b4764b0e627b5d6afcb81a3f73c85dc29cea00048

Status: Downloaded newer image for busybox:latest

docker: Error response from daemon: mkdir /var/run/docker/libcontainerd/557517143c7a394f2bdd0f2719274d596682b4eaa180e56c176ae2770e10e815/rootfs: no space left on device

```

**Output of `docker version`:**

```

Client:

Version: 1.11.0

API version: 1.23

Go version: go1.5.4

Git commit: 4dc5990

Built: Wed Apr 13 18:34:23 2016

OS/Arch: linux/amd64

Server:

Version: 1.11.0

API version: 1.23

Go version: go1.5.4

Git commit: 4dc5990

Built: Wed Apr 13 18:34:23 2016

OS/Arch: linux/amd64

```

**Output of `docker info`:**

```

Containers: 13

Running: 13

Paused: 0

Stopped: 0

Images: 69

Server Version: 1.11.0

Storage Driver: aufs

Root Dir: /var/lib/docker/aufs

Backing Filesystem: extfs

Dirs: 397

Dirperm1 Supported: true

Logging Driver: json-file

Cgroup Driver: cgroupfs

Plugins:

Volume: local rexray

Network: host bridge null overlay

Kernel Version: 4.2.0-35-generic

Operating System: Ubuntu 14.04.4 LTS

OSType: linux

Architecture: x86_64

CPUs: 1

Total Memory: 1.952 GiB

Name: aws-qa-node-1

ID: A5QZ:FCVL:NXOH:5V3R:SKZ4:V3EZ:55D2:EPLE:3QBM:TQXK:EXEL:MFSI

Docker Root Dir: /var/lib/docker

Debug mode (client): false

Debug mode (server): false

Registry: https://index.docker.io/v1/

WARNING: No swap limit support

Labels:

nodeindex=1

role.service=true

role.infra=true

provider=generic

ec2.instance.type.t2.small=true

Cluster store: consul://10.0.2.95:8500

Cluster advertise: 10.0.4.203:2376

```

**Additional environment details (AWS, VirtualBox, physical, etc.):**

Running on aws.

| non_main | running out of inodes on run after updating to docker i seem to run out of inodes on run inspecting the run docker libcontainerd dirs i see that my containers have the following numbers of of files var run docker libcontainerd var run docker libcontainerd var run docker libcontainerd var run docker libcontainerd var run docker libcontainerd var run docker libcontainerd var run docker libcontainerd var run docker libcontainerd var run docker libcontainerd var run docker libcontainerd var run docker libcontainerd var run docker libcontainerd docker containerd pid var run docker libcontainerd docker containerd sock var run docker libcontainerd var run docker libcontainerd var run docker libcontainerd event ts the container with the most files is one i use to exec commands on for health checking within an overlay network restarting the container resolves the issue listing the files within that culprit container s dir in var run docker libcontainerd i see piles of stdin stdout i also updated kernel recently as well output of uname a linux aws qa node generic ubuntu smp fri mar utc gnu linux trying to exec a command on a container sudo docker exec dev consulcheck blue bin sh mkfifo var run docker libcontainerd stdin no space left on device trying to run a container sudo docker run rm it busybox bin sh unable to find image busybox latest locally latest pulling from library busybox pull complete pull complete digest status downloaded newer image for busybox latest docker error response from daemon mkdir var run docker libcontainerd rootfs no space left on device output of docker version client version api version go version git commit built wed apr os arch linux server version api version go version git commit built wed apr os arch linux output of docker info containers running paused stopped images server version storage driver aufs root dir var lib docker aufs backing filesystem extfs dirs supported true logging driver json file cgroup driver cgroupfs 
plugins volume local rexray network host bridge null overlay kernel version generic operating system ubuntu lts ostype linux architecture cpus total memory gib name aws qa node id fcvl nxoh eple tqxk exel mfsi docker root dir var lib docker debug mode client false debug mode server false registry warning no swap limit support labels nodeindex role service true role infra true provider generic instance type small true cluster store consul cluster advertise additional environment details aws virtualbox physical etc running on aws | 0 |
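
A per-directory file count like the one listed in the issue above could be gathered with a short script such as the following. It is a sketch only: the reporter does not say how the numbers were produced, `count_entries` is a hypothetical helper, and it counts direct entries rather than recursing, which may differ from the method actually used.

```python
import os
from typing import Dict


def count_entries(root: str) -> Dict[str, int]:
    """Map each entry under root to the number of entries it contains.

    Plain files (for example a .pid or .sock file) are reported as 1,
    mirroring the shape of the listing in the issue.
    """
    counts: Dict[str, int] = {}
    with os.scandir(root) as entries:
        for entry in entries:
            if entry.is_dir(follow_symlinks=False):
                # Count only the direct children of the directory.
                with os.scandir(entry.path) as children:
                    counts[entry.name] = sum(1 for _ in children)
            else:
                counts[entry.name] = 1
    return counts
```

Sorting the result by value, for instance with `sorted(count_entries(path).items(), key=lambda kv: kv[1])`, would put the directory holding the most files last.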

1,872 | 6,577,498,977 | IssuesEvent | 2017-09-12 01:20:17 | ansible/ansible-modules-core | https://api.github.com/repos/ansible/ansible-modules-core | closed | ec2_vpc module erroneously recreates VPCs when passing loosely defined CIDR blocks | affects_2.1 aws bug_report cloud waiting_on_maintainer | ##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

ec2_vpc module

##### ANSIBLE VERSION

```

ansible 2.1.0

config file = /etc/ansible/ansible.cfg

configured module search path = Default w/o overrides

```

##### CONFIGURATION

##### OS / ENVIRONMENT

Host OS is Arch Linux, I'm building infrastructure in AWS using boto version 2.39.0, and aws-cli version 1.10.17.

##### SUMMARY

When creating VPCs, AWS automatically converts your subnet CIDR blocks to their strictest representation (10.20.30.0/16 is converted to 10.20.0.0/16). However, when performing the checks that determine whether the VPC needs to be modified (beginning at line 193 of ec2_vpc.py), Ansible uses the representation provided by the user, which can differ from the representation returned by AWS. In that case, a new VPC is erroneously created on each subsequent playbook run.
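
The mismatch described in this summary can be illustrated with Python's standard `ipaddress` module. This is a minimal sketch, not the module's actual code or a proposed patch: `normalize_cidr` and `same_network` are hypothetical helpers showing how a loosely written block could be brought into the strict form before comparing.

```python
import ipaddress


def normalize_cidr(cidr: str) -> str:
    """Return the strict form of a CIDR block, masking out host bits."""
    # strict=False accepts blocks such as 10.20.30.0/16 that have host bits set.
    return str(ipaddress.ip_network(cidr, strict=False))


def same_network(user_cidr: str, reported_cidr: str) -> bool:
    """Compare two CIDR strings after normalizing both."""
    return normalize_cidr(user_cidr) == normalize_cidr(reported_cidr)


print(normalize_cidr("10.20.30.0/16"))                # 10.20.0.0/16
print(same_network("10.20.30.0/16", "10.20.0.0/16"))  # True
```

Comparing normalized values on both sides means the user's `10.20.30.0/16` and the `10.20.0.0/16` reported back would be treated as the same network.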

##### STEPS TO REPRODUCE

<!---

For bugs, show exactly how to reproduce the problem.

For new features, show how the feature would be used.

-->

Save the following playbook as ec2_vpc-test.yml and run it with ansible-playbook ec2_vpc-test.yml

```

---
- hosts: localhost
  tasks:
    - name: "Create VPC"
      local_action:
        module: ec2_vpc
        state: present
        cidr_block: "10.20.30.0/16"
        resource_tags:
          Name: 'ec2_vpc subnet test'
        region: "eu-west-1"
    - name: "Create VPC"
      local_action:
        module: ec2_vpc
        state: present
        cidr_block: "10.20.30.0/16"
        resource_tags:
          Name: 'ec2_vpc subnet test'
        region: "eu-west-1"

```

<!--- You can also paste gist.github.com links for larger files -->

##### EXPECTED RESULTS

I expect that only one VPC will be created regardless of how many times the playbook is run

##### ACTUAL RESULTS

Two new, identical VPCs are created every time this playbook is run, despite no playbook changes being made.

```

[dwood@dawood-arch ansible]$ ansible-playbook ec2_vpc-test.yml

[WARNING]: Host file not found: /etc/ansible/hosts

[WARNING]: provided hosts list is empty, only localhost is available

PLAY [localhost] ***************************************************************

TASK [Create VPC] **************************************************************

changed: [localhost -> localhost]

TASK [Create VPC] **************************************************************

changed: [localhost -> localhost]

PLAY RECAP *********************************************************************

localhost : ok=2 changed=2 unreachable=0 failed=0

[dwood@dawood-arch ansible]$

```

| True | ec2_vpc module erroneously recreates VPCs when passing loosely defined CIDR blocks - ##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

ec2_vpc module

##### ANSIBLE VERSION

```

ansible 2.1.0

config file = /etc/ansible/ansible.cfg

configured module search path = Default w/o overrides

```

##### CONFIGURATION

##### OS / ENVIRONMENT

Host OS is Arch Linux, I'm building infrastructure in AWS using boto version 2.39.0, and aws-cli version 1.10.17.

##### SUMMARY

When creating VPCs, AWS automatically converts your subnet CIDR blocks to their strictest representation (10.20.30.0/16 is converted to 10.20.0.0/16). However, when performing checks (beginning at line 193 of ec2_vpc.py) to determine whether the VPC needs to be modified, Ansible uses the representation provided by the user, which can differ from the representation returned by AWS. In that case, a new VPC is erroneously created on each subsequent playbook run.

##### STEPS TO REPRODUCE

<!---

For bugs, show exactly how to reproduce the problem.

For new features, show how the feature would be used.

-->

Save the following playbook as ec2_vpc-test.yml and run it with ansible-playbook ec2_vpc-test.yml

```

---
- hosts: localhost
  tasks:
    - name: "Create VPC"
      local_action:
        module: ec2_vpc
        state: present
        cidr_block: "10.20.30.0/16"
        resource_tags:
          Name: 'ec2_vpc subnet test'
        region: "eu-west-1"
    - name: "Create VPC"
      local_action:
        module: ec2_vpc
        state: present
        cidr_block: "10.20.30.0/16"
        resource_tags:
          Name: 'ec2_vpc subnet test'
        region: "eu-west-1"

```

<!--- You can also paste gist.github.com links for larger files -->

##### EXPECTED RESULTS

I expect that only one VPC will be created regardless of how many times the playbook is run

##### ACTUAL RESULTS

Two new, identical VPCs are created every time this playbook is run, despite no playbook changes being made.

```

[dwood@dawood-arch ansible]$ ansible-playbook ec2_vpc-test.yml

[WARNING]: Host file not found: /etc/ansible/hosts

[WARNING]: provided hosts list is empty, only localhost is available

PLAY [localhost] ***************************************************************

TASK [Create VPC] **************************************************************

changed: [localhost -> localhost]

TASK [Create VPC] **************************************************************

changed: [localhost -> localhost]

PLAY RECAP *********************************************************************

localhost : ok=2 changed=2 unreachable=0 failed=0

[dwood@dawood-arch ansible]$

```

| main | vpc module erroneously recreates vpcs when passing loosely defined cidr blocks issue type bug report component name vpc module ansible version ansible config file etc ansible ansible cfg configured module search path default w o overrides configuration os environment host os is arch linux i m building infrastructure in aws using boto version and aws cli version summary when creating vpcs aws will automatically convert your subnet cidr blocks to it s strictest representation will be converted to however when performing checks beginning line of vpc py to determine if the vpc needs to be modified ansible uses the representation provided by the user which can differ from the representation returned by aws in this case a new vpc will be erroneously created for each subsequent playbook run steps to reproduce for bugs show exactly how to reproduce the problem for new features show how the feature would be used save the following playbook as vpc test yml and run it with ansible playbook vpc test yml hosts localhost tasks name create vpc local action module vpc state present cidr block resource tags name vpc subnet test region eu west name create vpc local action module vpc state present cidr block resource tags name vpc subnet test region eu west expected results i expect that only one vpc will be created regardless of how many times the playbook is run actual results two new identical vpcs are created every time this playbook is run despite no playbook changes being made ansible playbook vpc test yml host file not found etc ansible hosts provided hosts list is empty only localhost is available play task changed task changed play recap localhost ok changed unreachable failed | 1 |

200 | 2,832,153,909 | IssuesEvent | 2015-05-25 04:39:40 | tgstation/-tg-station | https://api.github.com/repos/tgstation/-tg-station | closed | Flag NOSHIELD is unused | Maintainability - Hinders improvements Not a bug | Defined in __DEFINES/flags.dm

The item flag NOSHIELD is meant to allow weapons to bypass the riot shield. However, although it is defined, it is not actually used anywhere in the code. | True | Flag NOSHIELD is unused - Defined in __DEFINES/flags.dm

The item flag NOSHIELD is meant to allow weapons to bypass the riot shield. However, although it is defined, it is not actually used anywhere in the code. | main | flag noshield is unused defined in defines flags dm the item flag noshield is meant to be used to allow weapons to bypass the riot shield however while it is defined it is not actually used anywhere in the code | 1 |

231 | 2,905,114,374 | IssuesEvent | 2015-06-18 21:42:32 | cattolyst/datafinisher | https://api.github.com/repos/cattolyst/datafinisher | opened | Identify common or cumbersome SQL code patterns and write functions to generate them | maintainability | Examples: select statements, join statements, date manipulation, various group concatenations. This ticket isn't about actually writing these functions, but rather collecting and prioritizing a list of candidate SQL patterns. | True | Identify common or cumbersome SQL code patterns and write functions to generate them - Examples: select statements, join statements, date manipulation, various group concatenations. This ticket isn't about actually writing these functions, but rather collecting and prioritizing a list of candidate SQL patterns. | main | identify common or cumbersome sql code patterns and write functions to generate them examples select statements join statements date manipulation various group concatenations this ticket isn t about actually writing these functions but rather collecting and prioritizing a list of candidate sql patterns | 1 |

242,100 | 20,196,931,275 | IssuesEvent | 2022-02-11 11:31:57 | getsentry/sentry-ruby | https://api.github.com/repos/getsentry/sentry-ruby | closed | Reorganize `sentry-rails`' test apps | testing sentry-rails | `sentry-rails` supports a wide range of Rails versions (from 5.0 to 7.0). This means that its test setup is complex and [full of compatibility workarounds](https://github.com/getsentry/sentry-ruby/blob/master/sentry-rails/spec/support/test_rails_app/app.rb#L155-L197). We should start thinking about a more maintainable way to build test apps (perhaps one app file for each Rails version). | 1.0 | Reorganize `sentry-rails`' test apps - `sentry-rails` supports a wide range of Rails versions (from 5.0 to 7.0). This means that its test setup is complex and [full of compatibility workarounds](https://github.com/getsentry/sentry-ruby/blob/master/sentry-rails/spec/support/test_rails_app/app.rb#L155-L197). We should start thinking about a more maintainable way to build test apps (perhaps one app file for each Rails version). | non_main | reorganize sentry rails test apps sentry rails supports a wide range of rails versions from to this means that it s test setup is complex and we should start thinking a more maintainable way to build test apps perhaps one app file for each rails version | 0 |

1,113 | 4,988,930,209 | IssuesEvent | 2016-12-08 10:06:21 | ansible/ansible-modules-core | https://api.github.com/repos/ansible/ansible-modules-core | closed | template_module: does not fail anymore when source file is absent | affects_2.1 bug_report waiting_on_maintainer | ##### ISSUE TYPE

Bug Report

##### COMPONENT NAME

template module

##### ANSIBLE VERSION

2.1.0

##### SUMMARY

Issue Type: Bug Report

Ansible Version: ansible-playbook 2.1.0 (devel 45355cd566) last updated 2016/01/08 15:07:35 (GMT +200)

Environment: Ubuntu 15.04

Problem: I think the expected behaviour is that Ansible fails at runtime when a source file is missing.

The old error looks like this:

```

fatal: [{{hostname}}] => input file not found at /{foobar}/roles/foobar/templates/etc/apt/foo/bar.j2 or /{foobar}/etc/apt/foo/bar.j2

```