| Unnamed: 0 (int64) | id (float64) | type (string) | created_at (string) | repo (string) | repo_url (string) | action (string) | title (string) | labels (string) | body (string) | index (string) | text_combine (string) | label (string) | text (string) | binary_label (int64) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

5,789 | 30,660,611,093 | IssuesEvent | 2023-07-25 14:47:12 | jupyter-naas/awesome-notebooks | https://api.github.com/repos/jupyter-naas/awesome-notebooks | closed | Pandas - Apply custom styles on column | templates maintainer | This notebook will show how to apply custom styles to a column of a Pandas DataFrame. It is useful for data analysis and data visualization.

| True | Pandas - Apply custom styles on column - This notebook will show how to apply custom styles to a column of a Pandas DataFrame. It is useful for data analysis and data visualization.

| main | pandas apply custom styles on column this notebook will show how to apply custom styles on a column of a pandas dataframe it is usefull for data analysis and data visualization | 1 |

2,263 | 7,961,970,660 | IssuesEvent | 2018-07-13 12:50:27 | cucumber/aruba | https://api.github.com/repos/cucumber/aruba | closed | Add more logging for childprocess | needs feedback by maintainer stale type: new feature | <!-- These sections are meant as guidance for you, to help you give the kind of information we'll need to help with your issue. If a section doesn't seem to fit, just skip it.

In general: Please provide as much information as you can to help us solve your problem -->

## Summary

`ChildProcess` provides a logger API for troubleshooting issues with this gem. It might be a valuable addition for Aruba to write those logs on request as well.

<!--- Provide a general summary description of the issue -->

## Expected Behavior

* Aruba has a configuration flag to activate this logger

* Those logs are written to a separate file

* The path to the file is written to the console

<!--- If you're describing a bug, tell us what should happen -->

<!--- If you're suggesting a change/improvement, tell us how it should work -->

<!--- Feel free to use Given / When / Then if that helps, but please add some plain-language context too -->

## Context & Motivation

This might help to troubleshoot issues with "ChildProcess" itself.

<!--- How has this issue affected you? What are you trying to accomplish? -->

<!--- Providing context helps us come up with a solution that is most useful in the real world -->

| True | Add more logging for childprocess - <!-- These sections are meant as guidance for you, to help you give the kind of information we'll need to help with your issue. If a section doesn't seem to fit, just skip it.

In general: Please provide as much information as you can to help us solve your problem -->

## Summary

`ChildProcess` provides a logger API for troubleshooting issues with this gem. It might be a valuable addition for Aruba to write those logs on request as well.

<!--- Provide a general summary description of the issue -->

## Expected Behavior

* Aruba has a configuration flag to activate this logger

* Those logs are written to a separate file

* The path to the file is written to the console

<!--- If you're describing a bug, tell us what should happen -->

<!--- If you're suggesting a change/improvement, tell us how it should work -->

<!--- Feel free to use Given / When / Then if that helps, but please add some plain-language context too -->

## Context & Motivation

This might help to troubleshoot issues with "ChildProcess" itself.

<!--- How has this issue affected you? What are you trying to accomplish? -->

<!--- Providing context helps us come up with a solution that is most useful in the real world -->

| main | add more logging for childprocess these sections are meant as guidance for you to help you give the kind of information we ll need to help with your issue if a section doesn t seem to fit just skip it in general please provide as much information as you can to help us solving your problem summary childprocess has an api for a logger to troubleshoot issues with this gem this might be a valuable addition for aruba to write those logs on request as well expected behavior aruba has a configuration flag to activate this logger those logs are written to a separate file the path to the file is written to console context motivation this might help to troubleshoot issues with childprocess itself | 1 |

5,608 | 28,069,213,189 | IssuesEvent | 2023-03-29 17:44:14 | OpenRefine/OpenRefine | https://api.github.com/repos/OpenRefine/OpenRefine | opened | Make it possible to skip either frontend or backend CI jobs | enhancement maintainability to be reviewed CI/CD | Many pull requests affect either the backend or the frontend, but currently one can only skip all of CI or none of it, which is especially annoying when multiple jobs get queued.

### Proposed solution

We could add two commit message keywords, along with matching conditions on the frontend- and backend-related tests/quality checks:

```yaml

if: ${{ !contains(github.event.head_commit.message, '#frontend-only') }}

```

```yaml

if: ${{ !contains(github.event.head_commit.message, '#backend-only') }}

```

This way, contributors can skip jobs that aren't relevant to their contribution. All jobs would still run on merges. | True | Make it possible to skip either frontend or backend CI jobs - Many pull requests affect either the backend or the frontend, but currently one can only skip all of CI or none of it, which is especially annoying when multiple jobs get queued.

### Proposed solution

We could add two commit message keywords, along with matching conditions on the frontend- and backend-related tests/quality checks:

```yaml

if: ${{ !contains(github.event.head_commit.message, '#frontend-only') }}

```

```yaml

if: ${{ !contains(github.event.head_commit.message, '#backend-only') }}

```

This way, contributors can skip jobs that aren't relevant to their contribution. All jobs would still run on merges. | main | make it possible to skip either frontend or backend ci jobs a lot of pull requests either affect the backend or frontend but currently one can just skip all of ci or none which is especially annoying when multiple jobs gets queued proposed solution we could add two commit message keywords and conditions to frontend respective backend related tests quality checks if contains github event head commit message frontend only if contains github event head commit message backend only so that contributors can skip jobs that aren t relevant to their contribution all jobs would still run on merges | 1 |
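The intended skip semantics of this proposal can be modeled as below. This is a hypothetical sketch, not OpenRefine's workflow code. It assumes `#frontend-only` marks a change that touches only the frontend, so backend jobs may be skipped, and `#backend-only` the reverse. The `is_merge` flag stands in for however the workflow would detect merges. Like the GitHub Actions `contains()` function, the check is a case-insensitive substring match.

```python
# Hypothetical mapping: keyword in the commit message -> kind of job it allows skipping.
SKIP_KEYWORDS = {
    "#frontend-only": "backend",
    "#backend-only": "frontend",
}


def should_run(job_kind: str, commit_message: str, is_merge: bool = False) -> bool:
    """Return True if a 'frontend' or 'backend' job should run for this commit."""
    if is_merge:
        # Per the proposal, every job still runs on merges.
        return True
    # GitHub's contains() ignores case, so compare in lowercase.
    message = commit_message.lower()
    for keyword, skipped_kind in SKIP_KEYWORDS.items():
        if keyword in message and job_kind == skipped_kind:
            return False
    return True
```

Under this reading, the `!contains(..., '#frontend-only')` condition belongs on backend jobs and `!contains(..., '#backend-only')` on frontend jobs. A commit carrying both keywords would skip both kinds of job, just as the two `if:` conditions would.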

554,081 | 16,388,596,207 | IssuesEvent | 2021-05-17 13:37:54 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.fedex.com - site is not usable | browser-firefox-ios os-ios priority-important | <!-- @browser: Firefox iOS 33.1 -->

<!-- @ua_header: Mozilla/5.0 (iPhone; CPU OS 14_4_2 like Mac OS X) AppleWebKit/605.1.15 (KHTML, like Gecko) FxiOS/33.1 Mobile/15E148 Safari/605.1.15 -->

<!-- @reported_with: mobile-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/74045 -->

**URL**: https://www.fedex.com/fedextrack/?trknbr=787095647716

**Browser / Version**: Firefox iOS 33.1

**Operating System**: iOS 14.4.2

**Tested Another Browser**: Yes Safari

**Problem type**: Site is not usable

**Description**: Page not loading correctly

**Steps to Reproduce**:

After signing into account, infinite page reload cycle m m tracking date

<details>

<summary>Browser Configuration</summary>

<ul>

<li>None</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_ | 1.0 | www.fedex.com - site is not usable - <!-- @browser: Firefox iOS 33.1 -->

<!-- @ua_header: Mozilla/5.0 (iPhone; CPU OS 14_4_2 like Mac OS X) AppleWebKit/605.1.15 (KHTML, like Gecko) FxiOS/33.1 Mobile/15E148 Safari/605.1.15 -->

<!-- @reported_with: mobile-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/74045 -->

**URL**: https://www.fedex.com/fedextrack/?trknbr=787095647716

**Browser / Version**: Firefox iOS 33.1

**Operating System**: iOS 14.4.2

**Tested Another Browser**: Yes Safari

**Problem type**: Site is not usable

**Description**: Page not loading correctly

**Steps to Reproduce**:

After signing into account, infinite page reload cycle m m tracking date

<details>

<summary>Browser Configuration</summary>

<ul>

<li>None</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_ | non_main | site is not usable url browser version firefox ios operating system ios tested another browser yes safari problem type site is not usable description page not loading correctly steps to reproduce after signing into account infinite page reload cycle m m tracking date browser configuration none from with ❤️ | 0 |

96,866 | 8,635,029,298 | IssuesEvent | 2018-11-22 19:51:54 | brave/brave-browser | https://api.github.com/repos/brave/brave-browser | closed | Manual test run on OS X for 0.56.x - Release Hotfix 2 | OS/macOS release-notes/exclude tests | ## Per release specialty tests

- [x] Upgrade to Chromium 70.0.3538.110.([#2200](https://github.com/brave/brave-browser/issues/2200))

### Installer

- [x] Check that installer is close to the size of last release

- [x] Check signature: on macOS, run `spctl --assess --verbose /Applications/Brave-Browser-Beta.app/` and make sure it returns `accepted`. On Windows, right-click `brave_installer-x64.exe`, choose Properties, open the Digital Signatures tab and double-click the signature. Make sure the popup window says "The digital signature is OK"

### Data(Upgrade from previous release)

- [x] Make sure that data from the last version appears correctly in the new version

- [x] With data from the last version, verify that

- [x] bookmarks on the bookmark toolbar and bookmark folders can be opened

- [x] cookies are preserved

- [x] installed extensions are retained and work correctly

- [x] opened tabs can be reloaded

- [x] stored passwords are preserved

- [x] unpinned tabs can be pinned

### Widevine

- [x] Verify `Widevine Notification` is shown when you visit Netflix for the first time

- [x] Test that you can stream on Netflix on a fresh profile after installing Widevine

### Geolocation

- [ ] Check that https://developer.mozilla.org/en-US/docs/Web/API/Geolocation/Using_geolocation shows correct location

- [x] Check that https://browserleaks.com/geo works and shows correct location

- [x] Check that https://html5demos.com/geo/ works but doesn't require an accurate location

### Crash Reporting

- [x] Check that loading `brave://crash` causes the new tab to crash

- [x] Check that `brave://crashes` lists all the crashes and includes both Crash Report ID & Local Crash ID

- [x] Verify the crash ID matches the report on brave stats

### Bravery settings

- [x] Verify that HTTPS Everywhere works by loading http://https-everywhere.badssl.com/

- [x] Turning HTTPS Everywhere off and shields off both disable the redirect to https://https-everywhere.badssl.com/

- [x] Verify that toggling `Ads and trackers blocked` works as expected

- [x] Visit https://testsafebrowsing.appspot.com/s/phishing.html, verify that Safe Browsing (via our Proxy) works for all the listed items

- [x] Visit https://brianbondy.com/ and then turn on script blocking; the page should not load. Allow scripts from the script-blocking UI in the URL bar and the page should load correctly

- [x] Test that 3rd party storage results are blank at https://jsfiddle.net/7ke9r14a/9/ when 3rd party cookies are blocked and not blank when 3rd party cookies are unblocked

### Fingerprint Tests

- [ ] Visit https://jsfiddle.net/bkf50r8v/13/, ensure 3 blocked items are listed in shields. Result window should show `got canvas fingerprint 0` and `got webgl fingerprint 00`

- [x] Test that audio fingerprint is blocked at https://audiofingerprint.openwpm.com/ only when `Block all fingerprinting protection` is on

- [x] Test that Brave browser isn't detected on https://extensions.inrialpes.fr/brave/

- [x] Test that https://diafygi.github.io/webrtc-ips/ doesn't leak IP address when `Block all fingerprinting protection` is on

### Content tests

- [x] Open a page with an input control and type some misspellings in a textbox, make sure they are underlined

- [x] Make sure that right clicking on a word with suggestions gives a suggestion and that clicking on the suggestion replaces the text

- [x] Test that https://mixed-script.badssl.com/ shows up as grey not red (no mixed content scripts are run)

### Session storage

- [x] Temporarily move your browser profile away and test that a new profile is created when the browser is launched

- macOS - `~/Library/Application\ Support/BraveSoftware/`

- Windows - `%userprofile%\appdata\Local\BraveSoftware\`

- Linux(Ubuntu) - `~/.config/BraveSoftware/`

- [x] Test that windows and tabs, including the active tab, are restored after the browser is closed and reopened

- [x] Ensure that the tabs in the above session are being lazy loaded when the session is restored

## Chromium upgrade tests

#### Adblock

- [x] Verify referrer blocking works properly for TLD+1. Visit `https://technology.slashdot.org/` and verify adblock works properly similar to `https://slashdot.org/`

#### Components

- [x] Delete the Adblock folder from the browser profile and restart the browser. Visit `brave://components` and verify that `Brave Ad Block Updater` downloads and updates the component. Repeat for all Brave components

| 1.0 | Manual test run on OS X for 0.56.x - Release Hotfix 2 - ## Per release specialty tests

- [x] Upgrade to Chromium 70.0.3538.110.([#2200](https://github.com/brave/brave-browser/issues/2200))

### Installer

- [x] Check that installer is close to the size of last release

- [x] Check signature: on macOS, run `spctl --assess --verbose /Applications/Brave-Browser-Beta.app/` and make sure it returns `accepted`. On Windows, right-click `brave_installer-x64.exe`, choose Properties, open the Digital Signatures tab and double-click the signature. Make sure the popup window says "The digital signature is OK"

### Data(Upgrade from previous release)

- [x] Make sure that data from the last version appears correctly in the new version

- [x] With data from the last version, verify that

- [x] bookmarks on the bookmark toolbar and bookmark folders can be opened

- [x] cookies are preserved

- [x] installed extensions are retained and work correctly

- [x] opened tabs can be reloaded

- [x] stored passwords are preserved

- [x] unpinned tabs can be pinned

### Widevine

- [x] Verify `Widevine Notification` is shown when you visit Netflix for the first time

- [x] Test that you can stream on Netflix on a fresh profile after installing Widevine

### Geolocation

- [ ] Check that https://developer.mozilla.org/en-US/docs/Web/API/Geolocation/Using_geolocation shows correct location

- [x] Check that https://browserleaks.com/geo works and shows correct location

- [x] Check that https://html5demos.com/geo/ works but doesn't require an accurate location

### Crash Reporting

- [x] Check that loading `brave://crash` causes the new tab to crash

- [x] Check that `brave://crashes` lists all the crashes and includes both Crash Report ID & Local Crash ID

- [x] Verify the crash ID matches the report on brave stats

### Bravery settings

- [x] Verify that HTTPS Everywhere works by loading http://https-everywhere.badssl.com/

- [x] Turning HTTPS Everywhere off and shields off both disable the redirect to https://https-everywhere.badssl.com/

- [x] Verify that toggling `Ads and trackers blocked` works as expected

- [x] Visit https://testsafebrowsing.appspot.com/s/phishing.html, verify that Safe Browsing (via our Proxy) works for all the listed items

- [x] Visit https://brianbondy.com/ and then turn on script blocking; the page should not load. Allow scripts from the script-blocking UI in the URL bar and the page should load correctly

- [x] Test that 3rd party storage results are blank at https://jsfiddle.net/7ke9r14a/9/ when 3rd party cookies are blocked and not blank when 3rd party cookies are unblocked

### Fingerprint Tests

- [ ] Visit https://jsfiddle.net/bkf50r8v/13/, ensure 3 blocked items are listed in shields. Result window should show `got canvas fingerprint 0` and `got webgl fingerprint 00`

- [x] Test that audio fingerprint is blocked at https://audiofingerprint.openwpm.com/ only when `Block all fingerprinting protection` is on

- [x] Test that Brave browser isn't detected on https://extensions.inrialpes.fr/brave/

- [x] Test that https://diafygi.github.io/webrtc-ips/ doesn't leak IP address when `Block all fingerprinting protection` is on

### Content tests

- [x] Open a page with an input control and type some misspellings in a textbox, make sure they are underlined

- [x] Make sure that right clicking on a word with suggestions gives a suggestion and that clicking on the suggestion replaces the text

- [x] Test that https://mixed-script.badssl.com/ shows up as grey not red (no mixed content scripts are run)

### Session storage

- [x] Temporarily move your browser profile away and test that a new profile is created when the browser is launched

- macOS - `~/Library/Application\ Support/BraveSoftware/`

- Windows - `%userprofile%\appdata\Local\BraveSoftware\`

- Linux(Ubuntu) - `~/.config/BraveSoftware/`

- [x] Test that windows and tabs, including the active tab, are restored after the browser is closed and reopened

- [x] Ensure that the tabs in the above session are being lazy loaded when the session is restored

## Chromium upgrade tests

#### Adblock

- [x] Verify referrer blocking works properly for TLD+1. Visit `https://technology.slashdot.org/` and verify adblock works properly similar to `https://slashdot.org/`

#### Components

- [x] Delete the Adblock folder from the browser profile and restart the browser. Visit `brave://components` and verify that `Brave Ad Block Updater` downloads and updates the component. Repeat for all Brave components

| non_main | manual test run on os x for x release hotfix per release specialty tests upgrade to chromium installer check that installer is close to the size of last release check signature if os run spctl assess verbose applications brave browser beta app and make sure it returns accepted if windows right click on the brave installer exe and go to properties go to the digital signatures tab and double click on the signature make sure it says the digital signature is ok in the popup window data upgrade from previous release make sure that data from the last version appears in the new version ok with data from the last version verify that bookmarks on the bookmark toolbar and bookmark folders can be opened cookies are preserved installed extensions are retained and work correctly opened tabs can be reloaded stored passwords are preserved unpinned tabs can be pinned widevine verify widevine notification is shown when you visit netflix for the first time test that you can stream on netflix on a fresh profile after installing widevine geolocation check that shows correct location check that works and shows correct location check that works but doesn t require an accurate location crash reporting check that loading brave crash causes the new tab to crash check that brave crashes lists all the crashes and includes both crash report id local crash id verify the crash id matches the report on brave stats bravery settings verify that https everywhere works by loading turning https everywhere off and shields off both disable the redirect to verify that toggling ads and trackers blocked works as expected visit verify that safe browsing via our proxy works for all the listed items visit and then turn on script blocking page should not load allow it from the script blocking ui in the url bar and it should load the page correctly test that party storage results are blank at when party cookies are blocked and not blank when party cookies are unblocked fingerprint tests visit ensure blocked items are listed in shields result window should show got canvas fingerprint and got webgl fingerprint test that audio fingerprint is blocked at only when block all fingerprinting protection is on test that brave browser isn t detected on test that doesn t leak ip address when block all fingerprinting protection is on content tests open a page with an input control and type some misspellings on a textbox make sure they are underlined make sure that right clicking on a word with suggestions gives a suggestion and that clicking on the suggestion replaces the text test that shows up as grey not red no mixed content scripts are run session storage temporarily move away your browser profile and test that a new profile is created when browser is launched macos library application support bravesoftware windows userprofile appdata local bravesoftware linux ubuntu config bravesoftware test that windows and tabs restore when closed including active tab ensure that the tabs in the above session are being lazy loaded when the session is restored chromium upgrade tests adblock verify referrer blocking works properly for tld visit and verify adblock works properly similar to components delete adblock folder from browser profile and restart browser visit brave components and verify brave ad block updater downloads and update the component repeat for all brave components | 0 |

874 | 4,540,095,870 | IssuesEvent | 2016-09-09 13:37:41 | ansible/ansible-modules-core | https://api.github.com/repos/ansible/ansible-modules-core | closed | eos eapi failed commands | affects_2.1 bug_report networking waiting_on_maintainer | <!--- Verify first that your issue/request is not already reported in GitHub -->

##### ISSUE TYPE

<!--- Pick one below and delete the rest: -->

- Bug Report

##### COMPONENT NAME

networking/eos_command

##### ANSIBLE VERSION

<!--- Paste verbatim output from “ansible --version” between quotes below -->

```

ansible 2.1.0.0

config file = /home/admin-0/ansible/ansible.cfg

configured module search path = Default w/o overrides

```

##### CONFIGURATION

<!---

-->

##### OS / ENVIRONMENT

<!---

N/A

-->

##### SUMMARY

<!--- Explain the problem briefly -->

Running the eos_command 'show version' over the eapi transport works fine. Running 'show running-config section Et1' over eapi, however, fails. Both commands work fine when using cli as the transport.

Switch is an Arista 7150S running 4.16.7M

##### STEPS TO REPRODUCE

<!---

For bugs, show exactly how to reproduce the problem.

For new features, show how the feature would be used.

-->

<!--- Paste example playbooks or commands between quotes below -->

```

```

<!--- You can also paste gist.github.com links for larger files -->

##### EXPECTED RESULTS

<!--- What did you expect to happen when running the steps above? -->

##### ACTUAL RESULTS

<!--- What actually happened? If possible run with extra verbosity (-vvvv) -->

<!--- Paste verbatim command output between quotes below -->

```

PLAYBOOK: test_arista_command.yml **********************************************

1 plays in test_arista_command.yml

PLAY [arista_test] *************************************************************

TASK [setup] *******************************************************************

<10.24.1.14> ESTABLISH LOCAL CONNECTION FOR USER: reynolds <10.24.1.14> EXEC /bin/sh -c 'LANG=C LC_ALL=C LC_MESSAGES=C /usr/bin/python && sleep 0'

<10.24.1.13> ESTABLISH LOCAL CONNECTION FOR USER: reynolds <10.24.1.13> EXEC /bin/sh -c 'LANG=C LC_ALL=C LC_MESSAGES=C /usr/bin/python && sleep 0'

ok: [myswitch]

TASK [test_arista_command : test command] **************************************

task path: /home/reynolds/wc/cfg/ansible/roles/test_arista_command/tasks/main.yml:1

<10.24.1.13> ESTABLISH LOCAL CONNECTION FOR USER: reynolds <10.24.1.13> EXEC /bin/sh -c 'LANG=C LC_ALL=C LC_MESSAGES=C /usr/bin/python && sleep 0'

fatal: [myswitch]: FAILED! => {"changed": false, "code": 1003, "commands": ["show running-config section Et1"], "data": [{}, {"errors": ["Command cannot be used over the API at this time. To see ASCII output, set format='text' in your request"]}], "failed": true, "invocation": {"module_args": {"auth_pass": null, "authorize": true, "commands": ["show running-config section Et1"], "host": "10.24.1.13", "interval": 1, "password": "VALUE_SPECIFIED_IN_NO_LOG_PARAMETER", "port": null, "provider": null, "retries": 10, "ssh_keyfile": null, "transport": "eapi", "url_password": "VALUE_SPECIFIED_IN_NO_LOG_PARAMETER", "url_username": "ansible", "use_ssl": false, "username": "ansible", "waitfor": null}, "module_name": "eos_command"}, "message": "CLI command 2 of 2 'show running-config section Et1' failed: unconverted command", "msg": "json-rpc error"}

```

| True | eos eapi failed commands - <!--- Verify first that your issue/request is not already reported in GitHub -->

##### ISSUE TYPE

<!--- Pick one below and delete the rest: -->

- Bug Report

##### COMPONENT NAME

networking/eos_command

##### ANSIBLE VERSION

<!--- Paste verbatim output from “ansible --version” between quotes below -->

```

ansible 2.1.0.0

config file = /home/admin-0/ansible/ansible.cfg

configured module search path = Default w/o overrides

```

##### CONFIGURATION

<!---

-->

##### OS / ENVIRONMENT

<!---

N/A

-->

##### SUMMARY

<!--- Explain the problem briefly -->

Running the eos_command 'show version' over the eapi transport works fine. Running 'show running-config section Et1' over eapi, however, fails. Both commands work fine when using cli as the transport.

Switch is an Arista 7150S running 4.16.7M

##### STEPS TO REPRODUCE

<!---

For bugs, show exactly how to reproduce the problem.

For new features, show how the feature would be used.

-->

<!--- Paste example playbooks or commands between quotes below -->

```

```

<!--- You can also paste gist.github.com links for larger files -->

##### EXPECTED RESULTS

<!--- What did you expect to happen when running the steps above? -->

##### ACTUAL RESULTS

<!--- What actually happened? If possible run with extra verbosity (-vvvv) -->

<!--- Paste verbatim command output between quotes below -->

```

PLAYBOOK: test_arista_command.yml **********************************************

1 plays in test_arista_command.yml

PLAY [arista_test] *************************************************************

TASK [setup] *******************************************************************

<10.24.1.14> ESTABLISH LOCAL CONNECTION FOR USER: reynolds <10.24.1.14> EXEC /bin/sh -c 'LANG=C LC_ALL=C LC_MESSAGES=C /usr/bin/python && sleep 0'

<10.24.1.13> ESTABLISH LOCAL CONNECTION FOR USER: reynolds <10.24.1.13> EXEC /bin/sh -c 'LANG=C LC_ALL=C LC_MESSAGES=C /usr/bin/python && sleep 0'

ok: [myswitch]

TASK [test_arista_command : test command] **************************************

task path: /home/reynolds/wc/cfg/ansible/roles/test_arista_command/tasks/main.yml:1

<10.24.1.13> ESTABLISH LOCAL CONNECTION FOR USER: reynolds <10.24.1.13> EXEC /bin/sh -c 'LANG=C LC_ALL=C LC_MESSAGES=C /usr/bin/python && sleep 0'

fatal: [myswitch]: FAILED! => {"changed": false, "code": 1003, "commands": ["show running-config section Et1"], "data": [{}, {"errors": ["Command cannot be used over the API at this time. To see ASCII output, set format='text' in your request"]}], "failed": true, "invocation": {"module_args": {"auth_pass": null, "authorize": true, "commands": ["show running-config section Et1"], "host": "10.24.1.13", "interval": 1, "password": "VALUE_SPECIFIED_IN_NO_LOG_PARAMETER", "port": null, "provider": null, "retries": 10, "ssh_keyfile": null, "transport": "eapi", "url_password": "VALUE_SPECIFIED_IN_NO_LOG_PARAMETER", "url_username": "ansible", "use_ssl": false, "username": "ansible", "waitfor": null}, "module_name": "eos_command"}, "message": "CLI command 2 of 2 'show running-config section Et1' failed: unconverted command", "msg": "json-rpc error"}

```

| main | eos eapi failed commands issue type bug report component name networking eos command ansible version ansible config file home admin ansible ansible cfg configured module search path default w o overrides configuration os environment n a summary running the eos command show version using the eapi transport works fine when running the command show running configuration section it returns a failure both work fine when using cli as the transport switch is an arista running steps to reproduce for bugs show exactly how to reproduce the problem for new features show how the feature would be used expected results actual results playbook test arista command yml plays in test arista command yml play task establish local connection for user reynolds exec bin sh c lang c lc all c lc messages c usr bin python sleep establish local connection for user reynolds exec bin sh c lang c lc all c lc messages c usr bin python sleep ok task task path home reynolds wc cfg ansible roles test arista command tasks main yml establish local connection for user reynolds exec bin sh c lang c lc all c lc messages c usr bin python sleep fatal failed changed false code commands data failed true invocation module args auth pass null authorize true commands host interval password value specified in no log parameter port null provider null retries ssh keyfile null transport eapi url password value specified in no log parameter url username ansible use ssl false username ansible waitfor null module name eos command message cli command of show running config section failed unconverted command msg json rpc error | 1 |
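The error message itself points at a workaround: ask eAPI for text output instead of JSON. Below is a minimal sketch of a direct eAPI call. It assumes the switch exposes the standard JSON-RPC `runCmds` method at `/command-api` over plain HTTP with basic authentication, matching `use_ssl: false` in the log. It bypasses the `eos_command` module entirely and does not show how that module would pass the option. The helper names are hypothetical.

```python
import base64
import json
import urllib.request


def build_run_cmds_payload(cmds, fmt="text", request_id="1"):
    """Build a JSON-RPC 2.0 runCmds request body for Arista eAPI."""
    return {
        "jsonrpc": "2.0",
        "method": "runCmds",
        # format="text" asks eAPI for raw CLI output, which is what the
        # error suggests for commands that have no JSON conversion.
        "params": {"version": 1, "cmds": list(cmds), "format": fmt},
        "id": request_id,
    }


def run_cmds(host, username, password, cmds, fmt="text", use_ssl=False):
    """POST the request to /command-api and return one output string per command."""
    scheme = "https" if use_ssl else "http"
    url = f"{scheme}://{host}/command-api"
    body = json.dumps(build_run_cmds_payload(cmds, fmt)).encode("utf-8")
    token = base64.b64encode(f"{username}:{password}".encode("utf-8")).decode("ascii")
    request = urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json", "Authorization": f"Basic {token}"},
    )
    with urllib.request.urlopen(request) as response:
        reply = json.load(response)
    if "error" in reply:
        raise RuntimeError(reply["error"].get("message", "eAPI error"))
    # With format="text", each result is an object holding the CLI text under "output".
    return [item.get("output", "") for item in reply["result"]]
```

For example, `run_cmds("10.24.1.13", "ansible", "<password>", ["enable", "show running-config section Et1"])[1]` would hold the section's configuration text. With `use_ssl=True`, a switch using a self-signed certificate would additionally need an SSL context that trusts it.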

379,549 | 26,375,083,876 | IssuesEvent | 2023-01-12 01:16:56 | criblio/appscope | https://api.github.com/repos/criblio/appscope | opened | 1.2.2 docs tweaks | documentation | ### Steps To Reproduce

_No response_

### Environment

```markdown

- AppScope:

- OS:

- Architecture:

- Kernel:

```

### Requested priority

None

### Relevant log output

_No response_ | 1.0 | 1.2.2 docs tweaks - ### Steps To Reproduce

_No response_

### Environment

```markdown

- AppScope:

- OS:

- Architecture:

- Kernel:

```

### Requested priority

None

### Relevant log output

_No response_ | non_main | docs tweaks steps to reproduce no response environment markdown appscope os architecture kernel requested priority none relevant log output no response | 0 |

52,043 | 12,842,776,587 | IssuesEvent | 2020-07-08 02:55:28 | inspireui/support | https://api.github.com/repos/inspireui/support | closed | pod install faild | FluxStore ios-build-fail |

** Product = Fluxstore Pro - Flutter

** version = 1.7.5

** Testing Device/Simulator = problem while building app

I can't install the pods from the Podfile. I have attached a screenshot of the command.

error::===

[!] Error installing FBAudienceNetwork

[!] /usr/bin/curl -f -L -o /var/folders/13/0hdhg6mx3w35k1893tjgb2mr0000gn/T/d20200705-1271-xeiny3/file.zip https://developers.facebook.com/resources/FBAudienceNetwork-5.8.0.zip --create-dirs --netrc-optional --retry 2

% Total % Received % Xferd Average Speed Time Time Time Current

Dload Upload Total Spent Left Speed

0 0 0 0 0 0 0 0 --:--:-- 0:00:02 --:--:-- 0

Warning: Transient problem: HTTP error Will retry in 1 seconds. 2 retries

Warning: left.

0 0 0 0 0 0 0 0 --:--:-- 0:00:01 --:--:-- 0

Warning: Transient problem: HTTP error Will retry in 2 seconds. 1 retries

Warning: left.

0 0 0 0 0 0 0 0 --:--:-- 0:00:01 --:--:-- 0

curl: (22) The requested URL returned error: 500

[!] Automatically assigning platform `iOS` with version `10.3` on target `Runner` because no platform was specified. Please specify a platform for this target in your Podfile. See `https://guides.cocoapods.org/syntax/podfile.html#platform`.

[!] Automatically assigning platform `iOS` with version `10.3` on target `OneSignalNotificationServiceExtension` because no platform was specified. Please specify a platform for this target in your Podfile. See `https://guides.cocoapods.org/syntax/podfile.html#platform`.

<img width="1440" alt="Screen Shot 2020-07-05 at 7 27 11 PM" src="https://user-images.githubusercontent.com/17203863/86536076-d6e7c580-bef5-11ea-8b81-2b98323212d2.png">

_**

| 1.0 | pod install faild -

** Product = Fluxstore Pro - Flutter

** version = 1.7.5

** Testing Device/Simulator = problem while building app

I can't install the pod file. I already attach screenshot app command.

error::===

[!] Error installing FBAudienceNetwork

[!] /usr/bin/curl -f -L -o /var/folders/13/0hdhg6mx3w35k1893tjgb2mr0000gn/T/d20200705-1271-xeiny3/file.zip https://developers.facebook.com/resources/FBAudienceNetwork-5.8.0.zip --create-dirs --netrc-optional --retry 2

% Total % Received % Xferd Average Speed Time Time Time Current

Dload Upload Total Spent Left Speed

0 0 0 0 0 0 0 0 --:--:-- 0:00:02 --:--:-- 0

Warning: Transient problem: HTTP error Will retry in 1 seconds. 2 retries

Warning: left.

0 0 0 0 0 0 0 0 --:--:-- 0:00:01 --:--:-- 0

Warning: Transient problem: HTTP error Will retry in 2 seconds. 1 retries

Warning: left.

0 0 0 0 0 0 0 0 --:--:-- 0:00:01 --:--:-- 0

curl: (22) The requested URL returned error: 500

[!] Automatically assigning platform `iOS` with version `10.3` on target `Runner` because no platform was specified. Please specify a platform for this target in your Podfile. See `https://guides.cocoapods.org/syntax/podfile.html#platform`.

[!] Automatically assigning platform `iOS` with version `10.3` on target `OneSignalNotificationServiceExtension` because no platform was specified. Please specify a platform for this target in your Podfile. See `https://guides.cocoapods.org/syntax/podfile.html#platform`.

<img width="1440" alt="Screen Shot 2020-07-05 at 7 27 11 PM" src="https://user-images.githubusercontent.com/17203863/86536076-d6e7c580-bef5-11ea-8b81-2b98323212d2.png">

_**

| non_main | pod install faild product fluxstore pro flutter version testing device simulator problem while building app i can t install the pod file i already attach screenshot app command error error installing fbaudiencenetwork usr bin curl f l o var folders t file zip create dirs netrc optional retry total received xferd average speed time time time current dload upload total spent left speed warning transient problem http error will retry in seconds retries warning left warning transient problem http error will retry in seconds retries warning left curl the requested url returned error automatically assigning platform ios with version on target runner because no platform was specified please specify a platform for this target in your podfile see automatically assigning platform ios with version on target onesignalnotificationserviceextension because no platform was specified please specify a platform for this target in your podfile see img width alt screen shot at pm src | 0 |

194,894 | 22,281,547,421 | IssuesEvent | 2022-06-11 01:01:07 | temporalio/sdk-go | https://api.github.com/repos/temporalio/sdk-go | closed | github.com/stretchr/testify-v1.7.0: 1 vulnerabilities (highest severity is: 7.5) - autoclosed | security vulnerability | <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>github.com/stretchr/testify-v1.7.0</b></p></summary>

<p></p>

<p>

<p>Found in HEAD commit: <a href="https://github.com/temporalio/sdk-go/commit/3a2b86ebed54b2f01acfa03635867e89913c3bd4">3a2b86ebed54b2f01acfa03635867e89913c3bd4</a></p></details>

## Vulnerabilities

| CVE | Severity | <img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS | Dependency | Type | Fixed in | Remediation Available |

| ------------- | ------------- | ----- | ----- | ----- | --- | --- |

| [CVE-2022-28948](https://vuln.whitesourcesoftware.com/vulnerability/CVE-2022-28948) | <img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> High | 7.5 | github.com/go-yaml/yaml-496545a6307b2a7d7a710fd516e5e16e8ab62dbc | Transitive | N/A | ❌ |

## Details

<details>

<summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> CVE-2022-28948</summary>

### Vulnerable Library - <b>github.com/go-yaml/yaml-496545a6307b2a7d7a710fd516e5e16e8ab62dbc</b></p>

<p>YAML support for the Go language.</p>

<p>

Dependency Hierarchy:

- github.com/stretchr/testify-v1.7.0 (Root Library)

- :x: **github.com/go-yaml/yaml-496545a6307b2a7d7a710fd516e5e16e8ab62dbc** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/temporalio/sdk-go/commit/3a2b86ebed54b2f01acfa03635867e89913c3bd4">3a2b86ebed54b2f01acfa03635867e89913c3bd4</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

<p></p>

### Vulnerability Details

<p>

An issue in the Unmarshal function in Go-Yaml v3 causes the program to crash when attempting to deserialize invalid input.

<p>Publish Date: 2022-05-19

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2022-28948>CVE-2022-28948</a></p>

</p>

<p></p>

### CVSS 3 Score Details (<b>7.5</b>)

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

<p></p>

### Suggested Fix

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/advisories/GHSA-hp87-p4gw-j4gq">https://github.com/advisories/GHSA-hp87-p4gw-j4gq</a></p>

<p>Release Date: 2022-05-19</p>

<p>Fix Resolution: 3.0.0</p>

</p>

<p></p>

</details>

<!-- <REMEDIATE>[{"isOpenPROnVulnerability":true,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"GO","packageName":"github.com/go-yaml/yaml","packageVersion":"496545a6307b2a7d7a710fd516e5e16e8ab62dbc","packageFilePaths":[],"isTransitiveDependency":true,"dependencyTree":"github.com/stretchr/testify:v1.7.0;github.com/go-yaml/yaml:496545a6307b2a7d7a710fd516e5e16e8ab62dbc","isMinimumFixVersionAvailable":true,"minimumFixVersion":"3.0.0","isBinary":true}],"baseBranches":["master"],"vulnerabilityIdentifier":"CVE-2022-28948","vulnerabilityDetails":"An issue in the Unmarshal function in Go-Yaml v3 causes the program to crash when attempting to deserialize invalid input.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2022-28948","cvss3Severity":"high","cvss3Score":"7.5","cvss3Metrics":{"A":"High","AC":"Low","PR":"None","S":"Unchanged","C":"None","UI":"None","AV":"Network","I":"None"},"extraData":{}}]</REMEDIATE> --> | True | github.com/stretchr/testify-v1.7.0: 1 vulnerabilities (highest severity is: 7.5) - autoclosed - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>github.com/stretchr/testify-v1.7.0</b></p></summary>

<p></p>

<p>

<p>Found in HEAD commit: <a href="https://github.com/temporalio/sdk-go/commit/3a2b86ebed54b2f01acfa03635867e89913c3bd4">3a2b86ebed54b2f01acfa03635867e89913c3bd4</a></p></details>

## Vulnerabilities

| CVE | Severity | <img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS | Dependency | Type | Fixed in | Remediation Available |

| ------------- | ------------- | ----- | ----- | ----- | --- | --- |

| [CVE-2022-28948](https://vuln.whitesourcesoftware.com/vulnerability/CVE-2022-28948) | <img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> High | 7.5 | github.com/go-yaml/yaml-496545a6307b2a7d7a710fd516e5e16e8ab62dbc | Transitive | N/A | ❌ |

## Details

<details>

<summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> CVE-2022-28948</summary>

### Vulnerable Library - <b>github.com/go-yaml/yaml-496545a6307b2a7d7a710fd516e5e16e8ab62dbc</b></p>

<p>YAML support for the Go language.</p>

<p>

Dependency Hierarchy:

- github.com/stretchr/testify-v1.7.0 (Root Library)

- :x: **github.com/go-yaml/yaml-496545a6307b2a7d7a710fd516e5e16e8ab62dbc** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/temporalio/sdk-go/commit/3a2b86ebed54b2f01acfa03635867e89913c3bd4">3a2b86ebed54b2f01acfa03635867e89913c3bd4</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

<p></p>

### Vulnerability Details

<p>

An issue in the Unmarshal function in Go-Yaml v3 causes the program to crash when attempting to deserialize invalid input.

<p>Publish Date: 2022-05-19

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2022-28948>CVE-2022-28948</a></p>

</p>

<p></p>

### CVSS 3 Score Details (<b>7.5</b>)

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

<p></p>

### Suggested Fix

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/advisories/GHSA-hp87-p4gw-j4gq">https://github.com/advisories/GHSA-hp87-p4gw-j4gq</a></p>

<p>Release Date: 2022-05-19</p>

<p>Fix Resolution: 3.0.0</p>

</p>

<p></p>

</details>

<!-- <REMEDIATE>[{"isOpenPROnVulnerability":true,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"GO","packageName":"github.com/go-yaml/yaml","packageVersion":"496545a6307b2a7d7a710fd516e5e16e8ab62dbc","packageFilePaths":[],"isTransitiveDependency":true,"dependencyTree":"github.com/stretchr/testify:v1.7.0;github.com/go-yaml/yaml:496545a6307b2a7d7a710fd516e5e16e8ab62dbc","isMinimumFixVersionAvailable":true,"minimumFixVersion":"3.0.0","isBinary":true}],"baseBranches":["master"],"vulnerabilityIdentifier":"CVE-2022-28948","vulnerabilityDetails":"An issue in the Unmarshal function in Go-Yaml v3 causes the program to crash when attempting to deserialize invalid input.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2022-28948","cvss3Severity":"high","cvss3Score":"7.5","cvss3Metrics":{"A":"High","AC":"Low","PR":"None","S":"Unchanged","C":"None","UI":"None","AV":"Network","I":"None"},"extraData":{}}]</REMEDIATE> --> | non_main | github com stretchr testify vulnerabilities highest severity is autoclosed vulnerable library github com stretchr testify found in head commit a href vulnerabilities cve severity cvss dependency type fixed in remediation available high github com go yaml yaml transitive n a details cve vulnerable library github com go yaml yaml yaml support for the go language dependency hierarchy github com stretchr testify root library x github com go yaml yaml vulnerable library found in head commit a href found in base branch master vulnerability details an issue in the unmarshal function in go yaml causes the program to crash when attempting to deserialize invalid input publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type 
upgrade version origin a href release date fix resolution istransitivedependency true dependencytree github com stretchr testify github com go yaml yaml isminimumfixversionavailable true minimumfixversion isbinary true basebranches vulnerabilityidentifier cve vulnerabilitydetails an issue in the unmarshal function in go yaml causes the program to crash when attempting to deserialize invalid input vulnerabilityurl | 0 |

538 | 3,952,606,356 | IssuesEvent | 2016-04-29 09:35:39 | duckduckgo/zeroclickinfo-spice | https://api.github.com/repos/duckduckgo/zeroclickinfo-spice | opened | Holiday: Give answers for Mother's Day, Father's Day, etc. | Maintainer Input Requested | Following a [suggestion on Twitter](https://twitter.com/daytonlowell/status/725342856852824066), it would be helpful if this triggered on things such as `when is mothers day`. This changes depending on country, however, so would probably need to incorporate locale detection as well.

------

IA Page: http://duck.co/ia/view/holiday

[Maintainer](http://docs.duckduckhack.com/maintaining/guidelines.html): @sekhavati | True | Holiday: Give answers for Mother's Day, Father's Day, etc. - Following a [suggestion on Twitter](https://twitter.com/daytonlowell/status/725342856852824066), it would be helpful if this triggered on things such as `when is mothers day`. This changes depending on country, however, so would probably need to incorporate locale detection as well.

------

IA Page: http://duck.co/ia/view/holiday

[Maintainer](http://docs.duckduckhack.com/maintaining/guidelines.html): @sekhavati | main | holiday give answers for mother s day father s day etc following a it would be helpful if this triggered on things such as when is mothers day this changes depending on country however so would probably need to incorporate locale detection as well ia page sekhavati | 1 |

2,719 | 9,595,364,911 | IssuesEvent | 2019-05-09 15:53:28 | RalfKoban/MiKo-Analyzers | https://api.github.com/repos/RalfKoban/MiKo-Analyzers | opened | Do not ignore null arguments | Area: analyzer Area: maintainability feasability unclear feature | If there is an method argument provided, and the argument is checked for null and in such case simply a return is done, then there might be an actual bug hidden underneath.

So such check would silently ignore and cloak that bug.

Therefore we need to check for that and report it as an issue.

Code example:

```C#

public void DoSomething(object o)

{

if (o is null) return;

}

``` | True | Do not ignore null arguments - If there is an method argument provided, and the argument is checked for null and in such case simply a return is done, then there might be an actual bug hidden underneath.

So such check would silently ignore and cloak that bug.

Therefore we need to check for that and report it as an issue.

Code example:

```C#

public void DoSomething(object o)

{

if (o is null) return;

}

``` | main | do not ignore null arguments if there is an method argument provided and the argument is checked for null and in such case simply a return is done then there might be an actual bug hidden underneath so such check would silently ignore and cloak that bug therefore we need to check for that and report it as an issue code example c public void dosomething object o if o is null return | 1 |

3,776 | 15,882,302,571 | IssuesEvent | 2021-04-09 15:51:10 | sympy/sympy | https://api.github.com/repos/sympy/sympy | closed | Failing Master build due to Deprecations in NumPy 1.20 | GitHub Actions Maintainability | Looking at the traceback in the failing Travis build in master, there are a lot of deprecation warnings that are causing the build to fail.

It looks like NumPy has updated their data type aliases and deprecated some of the existing ones like np.int, np.complex, etc. (numpy/numpy#14882) in their latest [1.20 release](https://numpy.org/devdocs/release/1.20.0-notes.html#deprecations). Just looking at the no of failures, it looks like we have a lot of instances of these which we need to update

| True | Failing Master build due to Deprecations in NumPy 1.20 - Looking at the traceback in the failing Travis build in master, there are a lot of deprecation warnings that are causing the build to fail.

It looks like NumPy has updated their data type aliases and deprecated some of the existing ones like np.int, np.complex, etc. (numpy/numpy#14882) in their latest [1.20 release](https://numpy.org/devdocs/release/1.20.0-notes.html#deprecations). Just looking at the no of failures, it looks like we have a lot of instances of these which we need to update

| main | failing master build due to deprecations in numpy looking at the traceback in the failing travis build in master there are a lot of deprecation warnings that are causing the build to fail it looks like numpy has updated their data type aliases and deprecated some of the existing ones like np int np complex etc numpy numpy in their latest just looking at the no of failures it looks like we have a lot of instances of these which we need to update | 1 |

4,555 | 23,725,983,160 | IssuesEvent | 2022-08-30 19:39:22 | rustsec/advisory-db | https://api.github.com/repos/rustsec/advisory-db | closed | `dotenv` crate is implicitly unmaintained | Unmaintained | As of May 21st, 2022, https://github.com/dotenv-rs/dotenv 's latest version is 0.15.0, which was published on October 22nd, 2019. And the latest commit is [3c1a77bc95821777e5ceb996c5e0b082f2a3ea38](https://github.com/dotenv-rs/dotenv/commit/3c1a77bc95821777e5ceb996c5e0b082f2a3ea38), which was pushed on Jun 27th, 2020.

On Dec 24th, 2021, someone asked the project status on [Current maintenance state · Issue #74 · dotenv-rs/dotenv](https://github.com/dotenv-rs/dotenv/issues/74) but there's no response from the maintainers.

I'm not sure how long "prolonged period" refers to, but this crate is a candidate for an "unmaintained" crate, I think. At least we should monitor how things are going there. | True | `dotenv` crate is implicitly unmaintained - As of May 21st, 2022, https://github.com/dotenv-rs/dotenv 's latest version is 0.15.0, which was published on October 22nd, 2019. And the latest commit is [3c1a77bc95821777e5ceb996c5e0b082f2a3ea38](https://github.com/dotenv-rs/dotenv/commit/3c1a77bc95821777e5ceb996c5e0b082f2a3ea38), which was pushed on Jun 27th, 2020.

On Dec 24th, 2021, someone asked the project status on [Current maintenance state · Issue #74 · dotenv-rs/dotenv](https://github.com/dotenv-rs/dotenv/issues/74) but there's no response from the maintainers.

I'm not sure how long "prolonged period" refers to, but this crate is a candidate for an "unmaintained" crate, I think. At least we should monitor how things are going there. | main | dotenv crate is implicitly unmaintained as of may s latest version is which was published on october and the latest commit is which was pushed on jun on dec someone asked the project status on but there s no response from the maintainers i m not sure how long prolonged period refers to but this crate is a candidate for an unmaintained crate i think at least we should monitor how things are going there | 1 |

51,094 | 6,147,329,942 | IssuesEvent | 2017-06-27 15:31:01 | dotnet/corefx | https://api.github.com/repos/dotnet/corefx | closed | Win32Api SetEnvironmentVariableW - Disabled tests | area-System.Runtime.Extensions disabled-test | Re-Enable all tests which are disabled on UAP/UAPAOT with reason substring "SetEnvironmentVariableW" as soon as Win32 api gets available.

https://github.com/dotnet/corefx/blob/master/src/System.Runtime.Extensions/tests/System/Environment.SetEnvironmentVariable.cs

https://github.com/dotnet/corefx/blob/master/src/System.Runtime.Extensions/tests/System/Environment.GetEnvironmentVariable.cs

https://github.com/dotnet/corefx/blob/master/src/System.Runtime.Extensions/tests/System/Environment.ExpandEnvironmentVariables.cs

| 1.0 | Win32Api SetEnvironmentVariableW - Disabled tests - Re-Enable all tests which are disabled on UAP/UAPAOT with reason substring "SetEnvironmentVariableW" as soon as Win32 api gets available.

https://github.com/dotnet/corefx/blob/master/src/System.Runtime.Extensions/tests/System/Environment.SetEnvironmentVariable.cs

https://github.com/dotnet/corefx/blob/master/src/System.Runtime.Extensions/tests/System/Environment.GetEnvironmentVariable.cs

https://github.com/dotnet/corefx/blob/master/src/System.Runtime.Extensions/tests/System/Environment.ExpandEnvironmentVariables.cs

| non_main | setenvironmentvariablew disabled tests re enable all tests which are disabled on uap uapaot with reason substring setenvironmentvariablew as soon as api gets available | 0 |

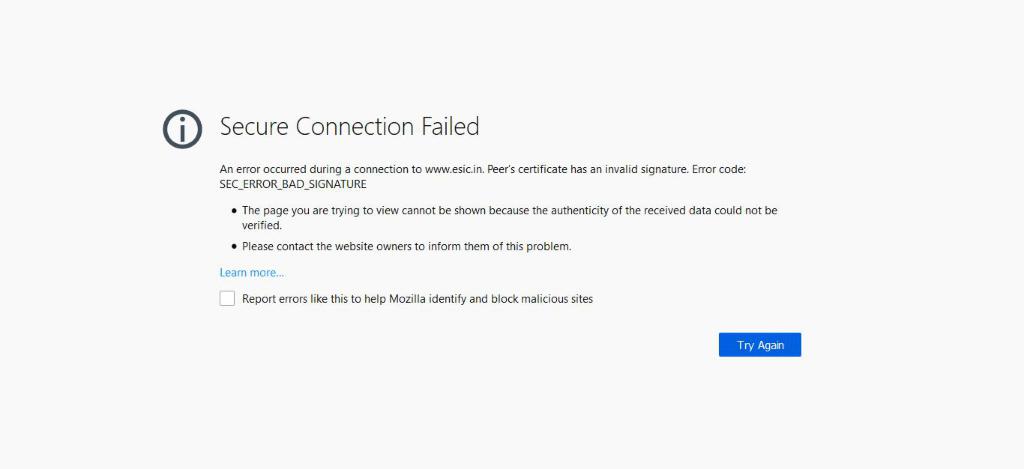

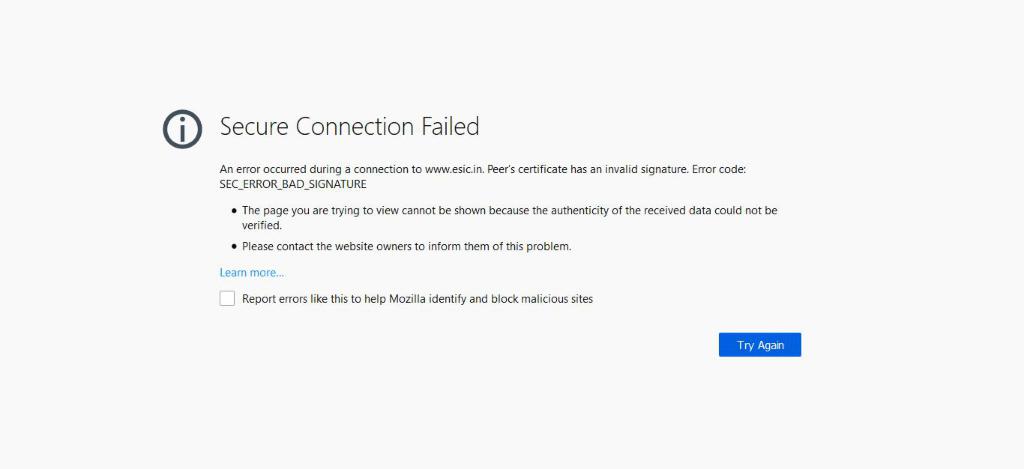

322,114 | 9,813,139,638 | IssuesEvent | 2019-06-13 07:12:09 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.esic.in - desktop site instead of mobile site | browser-firefox engine-gecko priority-normal type-connection-error-unknown | <!-- @browser: Firefox 67.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:67.0) Gecko/20100101 Firefox/67.0 -->

<!-- @reported_with: desktop-reporter -->

**URL**: https://www.esic.in/

**Browser / Version**: Firefox 67.0

**Operating System**: Windows 10

**Tested Another Browser**: Unknown

**Problem type**: Desktop site instead of mobile site

**Description**: its not open

**Steps to Reproduce**:

[](https://webcompat.com/uploads/2019/6/86aea0fb-b235-4547-a061-791119c4ef98.jpeg)

<details>

<summary>Browser Configuration</summary>

<ul>

<li>mixed active content blocked: false</li><li>image.mem.shared: true</li><li>buildID: 20190529130856</li><li>tracking content blocked: false</li><li>gfx.webrender.blob-images: true</li><li>hasTouchScreen: false</li><li>mixed passive content blocked: false</li><li>gfx.webrender.enabled: false</li><li>gfx.webrender.all: false</li><li>channel: release</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_ | 1.0 | www.esic.in - desktop site instead of mobile site - <!-- @browser: Firefox 67.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:67.0) Gecko/20100101 Firefox/67.0 -->

<!-- @reported_with: desktop-reporter -->

**URL**: https://www.esic.in/

**Browser / Version**: Firefox 67.0

**Operating System**: Windows 10

**Tested Another Browser**: Unknown

**Problem type**: Desktop site instead of mobile site

**Description**: its not open

**Steps to Reproduce**:

[](https://webcompat.com/uploads/2019/6/86aea0fb-b235-4547-a061-791119c4ef98.jpeg)

<details>

<summary>Browser Configuration</summary>

<ul>

<li>mixed active content blocked: false</li><li>image.mem.shared: true</li><li>buildID: 20190529130856</li><li>tracking content blocked: false</li><li>gfx.webrender.blob-images: true</li><li>hasTouchScreen: false</li><li>mixed passive content blocked: false</li><li>gfx.webrender.enabled: false</li><li>gfx.webrender.all: false</li><li>channel: release</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_ | non_main | desktop site instead of mobile site url browser version firefox operating system windows tested another browser unknown problem type desktop site instead of mobile site description its not open steps to reproduce browser configuration mixed active content blocked false image mem shared true buildid tracking content blocked false gfx webrender blob images true hastouchscreen false mixed passive content blocked false gfx webrender enabled false gfx webrender all false channel release from with ❤️ | 0 |

2,895 | 10,319,654,184 | IssuesEvent | 2019-08-30 18:08:22 | backdrop-ops/contrib | https://api.github.com/repos/backdrop-ops/contrib | closed | Nagios module and Application to join backdrop-contrib | Maintainer application Port in progress | I ported the Nagios module (https://github.com/MegaphoneJon/nagios/tree/1.x-1.x) to Backdrop. I only had a single site running it, but I shared it with Palante Tech, who confirms it works on their Backdrop sites as well. I'd like to submit it to the contrib repo. | True | Nagios module and Application to join backdrop-contrib - I ported the Nagios module (https://github.com/MegaphoneJon/nagios/tree/1.x-1.x) to Backdrop. I only had a single site running it, but I shared it with Palante Tech, who confirms it works on their Backdrop sites as well. I'd like to submit it to the contrib repo. | main | nagios module and application to join backdrop contrib i ported the nagios module to backdrop i only had a single site running it but i shared it with palante tech who confirms it works on their backdrop sites as well i d like to submit it to the contrib repo | 1 |

1,276 | 5,399,957,917 | IssuesEvent | 2017-02-27 20:47:43 | canadainc/sunnah10 | https://api.github.com/repos/canadainc/sunnah10 | closed | Implement RSS feed generator | enhancement invalid logic maintainability ui usability | Allow generating JSON files that can be used in the various BB10 apps for importing.

| True | Implement RSS feed generator - Allow generating JSON files that can be used in the various BB10 apps for importing.

| main | implement rss feed generator allow generating json files that can be used in the various apps for importing | 1 |

198,054 | 14,959,865,397 | IssuesEvent | 2021-01-27 04:19:38 | nasa/osal | https://api.github.com/repos/nasa/osal | closed | mqueue test program | enhancement unit-test | OSAL should include a simple mqueue test program to validate that the user has the correct settings and permissions to create/open/close/delete mqueues. Often users stumble on mqueue configuration and it is more difficult to diagnose when it's wrapped in the entirety of OSAL/cFS. | 1.0 | mqueue test program - OSAL should include a simple mqueue test program to validate that the user has the correct settings and permissions to create/open/close/delete mqueues. Often users stumble on mqueue configuration and it is more difficult to diagnose when it's wrapped in the entirety of OSAL/cFS. | non_main | mqueue test program osal should include a simple mqueue test program to validate that the user has the correct settings and permissions to create open close delete mqueues often users stumble on mqueue configuration and it is more difficult to diagnose when it s wrapped in the entirety of osal cfs | 0 |

343,665 | 30,682,389,137 | IssuesEvent | 2023-07-26 10:00:22 | iho-ohi/S-101_Portrayal-Catalogue | https://api.github.com/repos/iho-ohi/S-101_Portrayal-Catalogue | closed | New symbol for Berth features with categoryOfCargo = 7 (Dangerous or Hazardous) - ENCWG7-5.3_2022 [PSWG #112] | enhancement test PC 1.1.0 | SPEC at: https://github.com/S-101-Portrayal-subWG/Working-Documents/issues/112#issuecomment-1381193928 | 1.0 | New symbol for Berth features with categoryOfCargo = 7 (Dangerous or Hazardous) - ENCWG7-5.3_2022 [PSWG #112] - SPEC at: https://github.com/S-101-Portrayal-subWG/Working-Documents/issues/112#issuecomment-1381193928 | non_main | new symbol for berth features with categoryofcargo dangerous or hazardous spec at | 0 |

2,552 | 8,687,417,498 | IssuesEvent | 2018-12-03 13:42:50 | pbrisbin/bugsnag-haskell | https://api.github.com/repos/pbrisbin/bugsnag-haskell | opened | Integration testing | enhancement help wanted maintainability | We lack any good way to test things end-to-end: e.g. to assert on the result of some `notify(With)` call given some scenario. This makes it hard to get a regression test on #31 for example, which led to us not really fixing it.

I'm thinking about something like sticking an optional `IORef` in Settings that, when present, gets the Events appended to it instead of actually send to Bugsnag. This could even be useful to end-users who want to test Bugsnag-related paths in their own applications. | True | Integration testing - We lack any good way to test things end-to-end: e.g. to assert on the result of some `notify(With)` call given some scenario. This makes it hard to get a regression test on #31 for example, which led to us not really fixing it.

I'm thinking about something like sticking an optional `IORef` in Settings that, when present, gets the Events appended to it instead of actually send to Bugsnag. This could even be useful to end-users who want to test Bugsnag-related paths in their own applications. | main | integration testing we lack any good way to test things end to end e g to assert on the result of some notify with call given some scenario this makes it hard to get a regression test on for example which led to us not really fixing it i m thinking about something like sticking an optional ioref in settings that when present gets the events appended to it instead of actually send to bugsnag this could even be useful to end users who want to test bugsnag related paths in their own applications | 1 |

991 | 3,268,128,405 | IssuesEvent | 2015-10-23 09:31:19 | peter992233/CSCI342Project | https://api.github.com/repos/peter992233/CSCI342Project | opened | Add Proper Level System (With Difficulty) | Core Requirement | Extend on the level system by adding scaling difficulty such as shooting rate, speed of enemies and a multiplied score for each level they pass | 1.0 | Add Proper Level System (With Difficulty) - Extend on the level system by adding scaling difficulty such as shooting rate, speed of enemies and a multiplied score for each level they pass | non_main | add proper level system with difficulty extend on the level system by adding scaling difficulty such as shooting rate speed of enemies and a multiplied score for each level they pass | 0 |

388,019 | 26,748,978,092 | IssuesEvent | 2023-01-30 18:01:26 | WordPress/Advanced-administration-handbook | https://api.github.com/repos/WordPress/Advanced-administration-handbook | opened | Update page: Upgrading WordPress | documentation enhancement help wanted | File: [upgrade/upgrading.md](https://github.com/WordPress/Advanced-administration-handbook/blob/main/upgrade/upgrading.md)

This page needs a general review and update.

Needs to have some different parts. One, the simple update via the Admin panel, Two, the manual update via FTP.

Furthermore, probably, check the upgrade via WP Toolkit, or refer to it.

Plus, refer to [Upgrading (very old) WordPress](https://make.wordpress.org/hosting/handbook/upgrading/), maybe [moving all this content from the Hosting Handbook (in Markdown)](https://github.com/WordPress/hosting-handbook/blob/main/upgrading.md).

If you add documentation from another WordPress.org page, indicate it in the Changelog or in the comments of this issue.

### To-Do

- [ ] General review and updating

- [ ] Review all the process, both simple (admin) and complex (FTP / SQL)

- [ ] Upgrading (very old) WordPress | 1.0 | Update page: Upgrading WordPress - File: [upgrade/upgrading.md](https://github.com/WordPress/Advanced-administration-handbook/blob/main/upgrade/upgrading.md)

This page needs a general review and update.

Needs to have some different parts. One, the simple update via the Admin panel, Two, the manual update via FTP.

Furthermore, probably, check the upgrade via WP Toolkit, or refer to it.

Plus, refer to [Upgrading (very old) WordPress](https://make.wordpress.org/hosting/handbook/upgrading/), maybe [moving all this content from the Hosting Handbook (in Markdown)](https://github.com/WordPress/hosting-handbook/blob/main/upgrading.md).

If you add documentation from another WordPress.org page, indicate it in the Changelog or in the comments of this issue.

### To-Do

- [ ] General review and updating

- [ ] Review all the process, both simple (admin) and complex (FTP / SQL)

- [ ] Upgrading (very old) WordPress | non_main | update page upgrading wordpress file this page needs a general review and update needs to have some different parts one the simple update via the admin panel two the manual update via ftp furthermore probably check the upgrade via wp toolkit or refer to it plus refer to maybe if you add documentation from another wordpress org page indicate it in the changelog or in the comments of this issue to do general review and updating review all the process both simple admin and complex ftp sql upgrading very old wordpress | 0 |

2,873 | 10,276,031,332 | IssuesEvent | 2019-08-24 13:45:14 | arcticicestudio/arctic | https://api.github.com/repos/arcticicestudio/arctic | closed | lint-staged | context-workflow scope-dx scope-maintainability scope-quality type-feature | <p align="center"><img src="https://user-images.githubusercontent.com/7836623/63638143-c84d4280-c684-11e9-93cf-98662c6c0168.png" width="25%" /></p>

Integrate [lint-staged][gh-lint-staged] to run linters against staged Git files, preventing code that violates any style guide from being added to the code base.

<p align="center"><img src="https://user-images.githubusercontent.com/7836623/63638144-c84d4280-c684-11e9-8ba1-1cec576a8fdb.gif" width="80%" /></p>

### Configuration

The configuration file `lint-staged.config.js` will be placed in the project root and includes the commands to run for matching file extensions (globs). It will include at least the following three entries, in the order listed here:

1. `prettier --list-different` - Run Prettier (#32) against `*.{js,json,md,mdx,ts,tsx,yml}` to ensure all files are formatted correctly. The `--list-different` flag prints the files that do not conform to the Prettier configuration.

2. `eslint` - Run ESLint (#30) against `*.{js,ts,tsx}` to ensure all TypeScript and JavaScript files comply with the style guide after being formatted with Prettier.

3. `remark --no-stdout` - Run remark-lint (#27) against `*.md` to ensure all Markdown files comply with the style guide. The `--no-stdout` flag suppresses the output of the parsed file content.

## Tasks

- [x] Install [lint-staged][npm-lint-staged] package.

- [x] Implement `lint-staged.config.js` configuration file.

[gh-lint-staged]: https://github.com/okonet/lint-staged

[npm-lint-staged]: https://www.npmjs.com/package/lint-staged

| True | lint-staged - <p align="center"><img src="https://user-images.githubusercontent.com/7836623/63638143-c84d4280-c684-11e9-93cf-98662c6c0168.png" width="25%" /></p>

Integrate [lint-staged][gh-lint-staged] to run linters against staged Git files, preventing code that violates any style guide from being added to the code base.

<p align="center"><img src="https://user-images.githubusercontent.com/7836623/63638144-c84d4280-c684-11e9-8ba1-1cec576a8fdb.gif" width="80%" /></p>

### Configuration

The configuration file `lint-staged.config.js` will be placed in the project root and includes the commands to run for matching file extensions (globs). It will include at least the following three entries, in the order listed here:

1. `prettier --list-different` - Run Prettier (#32) against `*.{js,json,md,mdx,ts,tsx,yml}` to ensure all files are formatted correctly. The `--list-different` flag prints the files that do not conform to the Prettier configuration.

2. `eslint` - Run ESLint (#30) against `*.{js,ts,tsx}` to ensure all TypeScript and JavaScript files comply with the style guide after being formatted with Prettier.

3. `remark --no-stdout` - Run remark-lint (#27) against `*.md` to ensure all Markdown files comply with the style guide. The `--no-stdout` flag suppresses the output of the parsed file content.

## Tasks

- [x] Install [lint-staged][npm-lint-staged] package.

- [x] Implement `lint-staged.config.js` configuration file.

[gh-lint-staged]: https://github.com/okonet/lint-staged

[npm-lint-staged]: https://www.npmjs.com/package/lint-staged

| main | lint staged integrate to run linters against staged git files to prevent to add code that violates any style guide into the code base configuration the configuration file lint staged config js will be placed in the project root and includes the command that should be run for matching file extensions globs it will include at least the three following entries with the same order as listed here prettier list different run prettier against js json md mdx ts tsx yml to ensure all files are formatted correctly the list different prints the found files that are not conform to the prettier configuration eslint run eslint against js ts tsx to ensure all typescript and javascript files are compliant to the style guide after being formatted with prettier remark no stdout run remark lint against md to ensure all markdown files are compliant to the style guide the no stdout flag suppresses the output of the parsed file content tasks install package implement lint staged config js configuration file | 1 |

5,079 | 25,979,343,578 | IssuesEvent | 2022-12-19 17:20:46 | aws/serverless-application-model | https://api.github.com/repos/aws/serverless-application-model | closed | Lambda Versioning Issues | area/resource/function type/feature contributors/good-first-issue maintainer/need-response | Hello Team,

This is regarding the issue which I faced during lambda versioning.

We used AutoPublishAlias in the SAM template for versioning/aliasing of Lambda functions. It has the restriction that we cannot provide a custom description for versions. The intent is to pass the commit ID as the description, serving as a correlation ID between a Lambda version and the commit that generated it.

The AWS::Lambda::Version resource is not proving to be any help either: on the second execution, CloudFormation fails with the message "Update to resource type AWS::Lambda::Version is not supported." Going through several blogs, I learned that each new version to be published requires an additional AWS::Lambda::Version resource. I assume we cannot update a Lambda version because it is an immutable resource, so a new version has to be created each time.

Allowing AutoPublishAlias to accept a description would help establish a mapping between the Lambda version and the stack that created it. Alternatively, mapping the commit ID and displaying it as a tag in the Lambda console would also serve the purpose.

Many Thanks. | True | Lambda Versioning Issues - Hello Team,

This is regarding the issue which I faced during lambda versioning.

We used AutoPublishAlias in the SAM template for versioning/aliasing of Lambda functions. It has the restriction that we cannot provide a custom description for versions. The intent is to pass the commit ID as the description, serving as a correlation ID between a Lambda version and the commit that generated it.

The AWS::Lambda::Version resource is not proving to be any help either: on the second execution, CloudFormation fails with the message "Update to resource type AWS::Lambda::Version is not supported." Going through several blogs, I learned that each new version to be published requires an additional AWS::Lambda::Version resource. I assume we cannot update a Lambda version because it is an immutable resource, so a new version has to be created each time.

Allowing AutoPublishAlias to accept a description would help establish a mapping between the Lambda version and the stack that created it. Alternatively, mapping the commit ID and displaying it as a tag in the Lambda console would also serve the purpose.

Many Thanks. | main | lambda versioning issues hello team this is regarding the issue which i faced during lambda versioning we used autopublishalias in sam template for versioning aliasing of lambdas which has a restriction that we cannot provide custom description to versions intent is to pass commit id as a description as a correlation id between lambda version and commits which generated the versions resource aws lambda version is also not proving to be any help as on the second execution i cloudformation is failing with the message that update to resource type aws lambda version is not supported going through several blogs i came to know that for any new version to be published we need to define an addition aws lambda version resource i assume we cannot perform update to lambda version as it is immutable resource and we have to create new version each time autopublishalias to accept a description will help to put a relational mapping between the lambda version and the stack which created the stack or if you can map commit id and display it in lambda console as tags that will also suffice the purpose many thanks | 1 |

3,870 | 17,111,618,510 | IssuesEvent | 2021-07-10 12:32:07 | RalfKoban/MiKo-Analyzers | https://api.github.com/repos/RalfKoban/MiKo-Analyzers | closed | return statements should be preceded by a blank line | Area: analyzer Area: maintainability feature | If a return statement is the only statement on a line of code, then it should be preceded by a blank line. This makes them easy to spot. | True | return statements should be preceded by a blank line - If a return statement is the only statement on a line of code, then it should be preceded by a blank line. This makes them easy to spot. | main | return statements should be preceded by a blank line if a return statement is the only statement on a line of code then it should be preceded by a blank line this allows to easily spot them | 1 |

3,142 | 12,056,605,572 | IssuesEvent | 2020-04-15 14:41:28 | arcticicestudio/igloo | https://api.github.com/repos/arcticicestudio/igloo | opened | “taskwarrior“ & “timewarrior“ snowblock decommission | scope-maintainability snowblock-taskwarrior snowblock-timewarrior type-task | Related to #248

---

Both _snowblocks_ for [Taskwarrior][] and [Timewarrior][] are no longer required. They have been replaced with my own custom 💙 [Go][] application, which is currently private/closed source but planned to be open-sourced later on.

Both tools are great and provide a lot of features, but they are kind of overkill. I also missed the ability to integrate the data and API into my other Go applications, as well as into web-based projects with a more modern _techstack_ (_Protocol Buffers_, _NATS Messaging_, _React_ SPA etc.).

Therefore the _snowblocks_ will be removed while the data is still available through the [_Git_ repository history/logs][git-docs-hist].

[git-docs-hist]: https://git-scm.com/book/en/v2/Git-Basics-Viewing-the-Commit-History

[go]: https://go.dev

[taskwarrior]: https://taskwarrior.org

[timewarrior]: https://timewarrior.net | True | “taskwarrior“ & “timewarrior“ snowblock decommission - Related to #248

---

Both _snowblocks_ for [Taskwarrior][] and [Timewarrior][] are no longer required. They have been replaced with my own custom 💙 [Go][] application, which is currently private/closed source but planned to be open-sourced later on.

Both tools are great and provide a lot of features, but they are kind of overkill. I also missed the ability to integrate the data and API into my other Go applications, as well as into web-based projects with a more modern _techstack_ (_Protocol Buffers_, _NATS Messaging_, _React_ SPA etc.).

Therefore the _snowblocks_ will be removed while the data is still available through the [_Git_ repository history/logs][git-docs-hist].

[git-docs-hist]: https://git-scm.com/book/en/v2/Git-Basics-Viewing-the-Commit-History

[go]: https://go.dev

[taskwarrior]: https://taskwarrior.org

[timewarrior]: https://timewarrior.net | main | “taskwarrior“ “timewarrior“ snowblock decommission related to both snowblocks for and are not required anymore since they have been replaced with my own custom 💙 application that is currently private closed source bur planned to be open sourced later on both tools are great and provide a lot of features but it s kind of an overload and i missed the possibility to integrate the data and api into my other go applications as well as web based projects with a quite more modern techstack protocol buffers nats messaging react spa etc therefore the snowblocks will be removed while the data is still available through the | 1 |

76,337 | 15,495,927,271 | IssuesEvent | 2021-03-11 01:44:57 | rgordon95/github-search-redux-thunk | https://api.github.com/repos/rgordon95/github-search-redux-thunk | opened | CVE-2020-7608 (Medium) detected in yargs-parser-10.1.0.tgz, yargs-parser-11.1.1.tgz | security vulnerability | ## CVE-2020-7608 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>yargs-parser-10.1.0.tgz</b>, <b>yargs-parser-11.1.1.tgz</b></p></summary>

<p>

<details><summary><b>yargs-parser-10.1.0.tgz</b></p></summary>

<p>the mighty option parser used by yargs</p>

<p>Library home page: <a href="https://registry.npmjs.org/yargs-parser/-/yargs-parser-10.1.0.tgz">https://registry.npmjs.org/yargs-parser/-/yargs-parser-10.1.0.tgz</a></p>

<p>Path to dependency file: /github-search-redux-thunk/package.json</p>

<p>Path to vulnerable library: github-search-redux-thunk/node_modules/webpack-dev-server/node_modules/yargs-parser/package.json</p>

<p>

Dependency Hierarchy:

- react-scripts-3.0.1.tgz (Root Library)

- webpack-dev-server-3.2.1.tgz

- yargs-12.0.2.tgz

- :x: **yargs-parser-10.1.0.tgz** (Vulnerable Library)

</details>