Unnamed: 0 int64 1 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 7 112 | repo_url stringlengths 36 141 | action stringclasses 3 values | title stringlengths 3 438 | labels stringlengths 4 308 | body stringlengths 7 254k | index stringclasses 7 values | text_combine stringlengths 96 254k | label stringclasses 2 values | text stringlengths 96 246k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

64,650 | 3,213,813,138 | IssuesEvent | 2015-10-06 21:34:22 | lorentey/stagger | https://api.github.com/repos/lorentey/stagger | closed | Integrate stagger into Python's packaging system | auto-migrated Priority-Medium Type-Enhancement | ```

See "Distributing Python Modules":

http://docs.python.org/3.0/distutils/index.html

```

Original issue reported on code.google.com by `Karoly.Lorentey` on 13 Jun 2009 at 5:45 | 1.0 | Integrate stagger into Python's packaging system - ```

See "Distributing Python Modules":

http://docs.python.org/3.0/distutils/index.html

```

Original issue reported on code.google.com by `Karoly.Lorentey` on 13 Jun 2009 at 5:45 | non_main | integrate stagger into python s packaging system see distributing python modules original issue reported on code google com by karoly lorentey on jun at | 0 |

1,988 | 6,694,259,593 | IssuesEvent | 2017-10-10 00:42:11 | duckduckgo/zeroclickinfo-spice | https://api.github.com/repos/duckduckgo/zeroclickinfo-spice | closed | Maps: more specific address search | Maintainer Input Requested | Hey, is it possible to search with more specific search queries, i.e. including an address? That would be much more useful than just a simple city name matching.

IA Page: http://duck.co/ia/view/maps_maps

[Maintainer](http://docs.duckduckhack.com/maintaining/guidelines.html): @nilnilnil

| True | Maps: more specific address search - Hey, is it possible to search with more specific search queries, i.e. including an address? That would be much more useful than just a simple city name matching.

IA Page: http://duck.co/ia/view/maps_maps

[Maintainer](http://docs.duckduckhack.com/maintaining/guidelines.html): @nilnilnil

| main | maps more specific address search hey is it possible to search with more specific search queries i e including an address that would be much more useful than just a simple city name matching ia page nilnilnil | 1 |

827,243 | 31,761,502,902 | IssuesEvent | 2023-09-12 05:41:27 | RagnarokResearchLab/RagLite | https://api.github.com/repos/RagnarokResearchLab/RagLite | opened | Add more statistics to the data mining toolkit | Complexity: Low Priority: Optional Status: Accepted Type: Improvement Scope: File Formats | Found this somewhere in my old notes:

>

Number of processed files (duh)

Number of different values that occurred for each field (only variables/LUTs, depends on the data)

Min, max, avg of those values (if there are multiple)

Name of the files corresponding to min, max values

List of those values (only useful if it's not too big)

Keys of the entries whose values were always identical

Min, max, avg loading times

Name of the files corresponding to min, max loading time

Probably not too relevant right now, but if I ever feel like looking into Renewal changes again then it might come in handy. | 1.0 | Add more statistics to the data mining toolkit - Found this somewhere in my old notes:

>

Number of processed files (duh)

Number of different values that occurred for each field (only variables/LUTs, depends on the data)

Min, max, avg of those values (if there are multiple)

Name of the files corresponding to min, max values

List of those values (only useful if it's not too big)

Keys of the entries whose values were always identical

Min, max, avg loading times

Name of the files corresponding to min, max loading time

Probably not too relevant right now, but if I ever feel like looking into Renewal changes again then it might come in handy. | non_main | add more statistics to the data mining toolkit found this somewhere in my old notes number of processed files duh number of different values that occured for each field only variables luts depends on the data min max avg of those values if there are multiple name of the files corresponding to min max values list of those values only useful if it s not too big keys of the entries whose values were always identical min max avg loading times name of the files corresponding to min max loading time probably not too relevant right now but if i ever feel like looking into renewal changes again then it might come in handy | 0 |
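
The statistics listed in the issue above could be gathered roughly as follows. This is only a minimal sketch, not RagLite's actual toolkit: the `ParsedFile` record, the `summarize` function, the `max_listed` cutoff, and the assumption that every field value is numeric are all hypothetical and introduced purely for illustration.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class ParsedFile:
    """Hypothetical record for one decoded file (not part of RagLite)."""
    name: str            # file name, used to report where min/max occurred
    load_time_ms: float  # measured loading time in milliseconds
    fields: dict         # numeric field name -> value read from the file


def summarize(files, max_listed=10):
    """Aggregate per-field and loading-time statistics over parsed files."""
    if not files:
        return {"processed_files": 0, "fields": {}, "constant_fields": [], "load_time_ms": None}

    # Collect (value, file name) pairs per field so min/max can be traced back.
    samples_by_field = {}
    for parsed in files:
        for key, value in parsed.fields.items():
            samples_by_field.setdefault(key, []).append((value, parsed.name))

    field_stats = {}
    for key, samples in samples_by_field.items():
        values = [value for value, _ in samples]
        distinct = sorted(set(values))
        low = min(samples, key=lambda s: s[0])
        high = max(samples, key=lambda s: s[0])
        field_stats[key] = {
            "distinct_count": len(distinct),
            # Only list the values when the list stays readable.
            "values": distinct if len(distinct) <= max_listed else None,
            "min": low[0], "min_file": low[1],
            "max": high[0], "max_file": high[1],
            "avg": mean(values),
        }

    fastest = min(files, key=lambda f: f.load_time_ms)
    slowest = max(files, key=lambda f: f.load_time_ms)
    return {
        "processed_files": len(files),
        "fields": field_stats,
        # Fields whose value never changed across the whole batch.
        "constant_fields": sorted(k for k, s in field_stats.items() if s["distinct_count"] == 1),
        "load_time_ms": {
            "min": fastest.load_time_ms, "min_file": fastest.name,
            "max": slowest.load_time_ms, "max_file": slowest.name,
            "avg": mean(f.load_time_ms for f in files),
        },
    }
```

Keeping the file name next to each sampled value is what makes the "name of the files corresponding to min, max" items cheap to report; a field that is absent from some files is only compared across the files that contain it.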

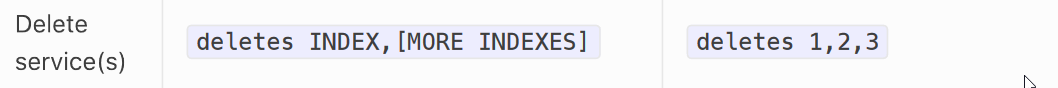

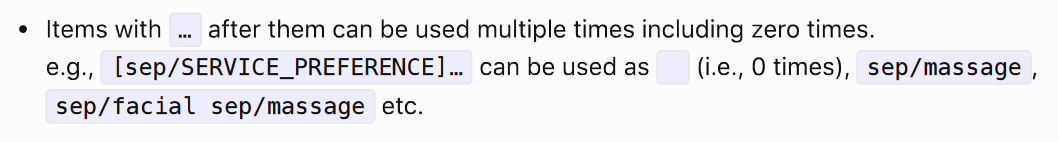

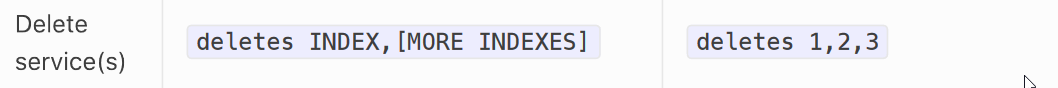

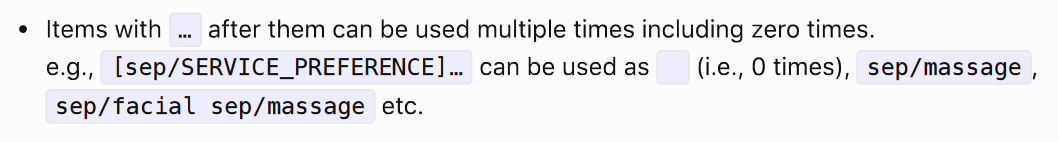

281,530 | 21,315,412,286 | IssuesEvent | 2022-04-16 07:22:03 | jaysmyname/pe | https://api.github.com/repos/jaysmyname/pe | opened | Delete command formats should have ... at the end | type.DocumentationBug severity.VeryLow |

For all the commands regarding delete, there should be a `...` at the end, signifying you can use it more than once, as demonstrated by the example `deletes 1,2,3`

<!--session: 1650087410741-ccd0034b-8cd8-4172-883c-753c24bc19a1-->

<!--Version: Web v3.4.2--> | 1.0 | Delete command formats should have ... at the end -

For all the commands regarding delete, there should be a `...` at the end, signifying you can use it more than once, as demonstrated by the example `deletes 1,2,3`

<!--session: 1650087410741-ccd0034b-8cd8-4172-883c-753c24bc19a1-->

<!--Version: Web v3.4.2--> | non_main | delete command formats should have at the end for all the commands regarding delete there should be a at the end signifying you can use it more than once as demonstrated by the example deletes | 0 |

2,163 | 7,529,464,409 | IssuesEvent | 2018-04-14 05:12:03 | ansible/ansible | https://api.github.com/repos/ansible/ansible | closed | Network Modules Running Slower After Upgrading to Ansible 2.5 | aci affects_2.5 avi bug f5 module needs_maintainer needs_triage networking nxos performance support:community support:core support:network | ##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

All network modules for all device families. E.g., ios, ios-xr, nxos, eos, etc...

##### ANSIBLE VERSION

```

ansible 2.5.0

configured module search path = [u'/user/.ansible/plugins/modules', u'/usr/share/ansible/plugins/modules']

ansible python module location = /usr/lib/python2.7/site-packages/ansible

executable location = /bin/ansible

python version = 2.7.5 (default, May 3 2017, 07:55:04) [GCC 4.8.5 20150623 (Red Hat 4.8.5-14)]

```

##### CONFIGURATION

```

# Tested without callback and strategy

DEFAULT_CALLBACK_WHITELIST(ansible/ansible.cfg) = ['timer', 'mail', 'skippy', 'profile_tasks']

DEFAULT_STRATEGY(ansible/ansible.cfg) = free

DEFAULT_TIMEOUT(ansible/ansible.cfg) = 20

PERSISTENT_CONNECT_TIMEOUT(ansible/ansible.cfg) = 90

```

##### OS / ENVIRONMENT

RHEL 7.4

3.10.0-693.11.1.el7.x86_64

##### SUMMARY

There's a significant difference in speed/performance after upgrading to 2.5 from 2.4.3. Results are unaffected by scale. I've tested most of our custom roles so far, and I've verified this is consistent in all of our environments. Also, going with/without the free strategy and callbacks we normally run does not affect performance. Processor and memory utilization is far lower, consistent with the increase in run times.

Simply put, all network roles are running significantly slower in 2.5:

##### STEPS TO REPRODUCE

Facts:

```ansible-playbook facts.yml -l cisco-ios[1:500] --forks 500```

Passwords:

```ansible-playbook password.yml -l cisco-ios[1:100] --forks 100 ```

##### EXPECTED RESULTS

**2.4.3**

Facts:

```

network_facts : collect output from ios device ------------------------- 41.10s

network_facts : set config_lines fact ---------------------------------- 33.16s

debug ------------------------------------------------------------------- 5.31s

network_facts : include cisco-ios tasks --------------------------------- 2.66s

network_facts : set config fact ----------------------------------------- 0.46s

network_facts : set version fact ---------------------------------------- 0.41s

network_facts : set model number ---------------------------------------- 0.41s

network_facts : set management interface name fact ---------------------- 0.40s

Playbook run took 0 days, 0 hours, 4 minutes, 24 seconds

```

Passwords:

```

config_localpw : Update line passwords -------------------------------- 111.60s

network_facts : collect output from ios device -------------------------- 6.12s

config_localpw : Update line passwords ---------------------------------- 0.91s

network_facts : include cisco-ios tasks --------------------------------- 0.51s

config_localpw : Update line passwords ---------------------------------- 0.50s

config_localpw : Update terminal server username doorbell --------------- 0.28s

config_localpw : debug -------------------------------------------------- 0.22s

config_localpw : Update terminal server username doorbell --------------- 0.19s

config_localpw : set_fact - Modem slot 3 -------------------------------- 0.16s

config_localpw : Update enable and username config lines ---------------- 0.16s

config_localpw : debug -------------------------------------------------- 0.13s

config_localpw : Identify if it has a modem ----------------------------- 0.13s

config_localpw : Update enable and username config lines ---------------- 0.13s

config_localpw : Update terminal server username doorbell --------------- 0.12s

config_localpw : debug -------------------------------------------------- 0.12s

config_localpw : Update terminal server username doorbell --------------- 0.11s

config_localpw : Update terminal server username doorbell --------------- 0.08s

config_localpw : Update enable and username config lines ---------------- 0.07s

config_localpw : debug -------------------------------------------------- 0.07s

config_localpw : Update line passwords ---------------------------------- 0.07s

Playbook run took 0 days, 0 hours, 3 minutes, 12 seconds

```

##### ACTUAL RESULTS

**2.5**

Facts:

```

network_facts : collect output from ios device ------------------------- 27.77s

network_facts : include cisco-ios tasks --------------------------------- 2.83s

debug ------------------------------------------------------------------- 0.26s

network_facts : set config fact ----------------------------------------- 0.12s

network_facts : set management interface name fact ---------------------- 0.12s

network_facts : set model number ---------------------------------------- 0.06s

network_facts : set version fact ---------------------------------------- 0.04s

network_facts : set config_lines fact ----------------------------------- 0.04s

Playbook run took 0 days, 0 hours, 26 minutes, 33 seconds

```

Passwords:

```

config_localpw : Update line passwords ---------------------------------- 40.38s

config_localpw : Update line passwords ---------------------------------- 26.52s

config_localpw : Update terminal server username doorbell --------------- 22.32s

config_localpw : Update enable and username config lines ---------------- 21.04s

config_localpw : Update terminal server username doorbell --------------- 16.96s

config_localpw : Update line passwords ---------------------------------- 16.87s

config_localpw : Update line passwords ---------------------------------- 16.86s

config_localpw : Update line passwords ---------------------------------- 16.68s

config_localpw : Update line passwords ---------------------------------- 15.75s

config_localpw : Update terminal server username doorbell --------------- 15.39s

config_localpw : Update line passwords ---------------------------------- 15.25s

config_localpw : Update line passwords ---------------------------------- 15.12s

config_localpw : Update line passwords ---------------------------------- 14.62s

config_localpw : Identify if it has a modem ----------------------------- 14.52s

config_localpw : Update terminal server username doorbell --------------- 13.94s

config_localpw : Update line passwords ---------------------------------- 13.78s

config_localpw : Update line passwords ---------------------------------- 13.47s

config_localpw : Identify if and where the modem is --------------------- 13.03s

config_localpw : Update enable and username config lines ---------------- 12.82s

config_localpw : Update line passwords ---------------------------------- 12.07s

Playbook run took 0 days, 0 hours, 17 minutes, 38 seconds

``` | True | Network Modules Running Slower After Upgrading to Ansible 2.5 - ##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

All network modules for all device families. E.g., ios, ios-xr, nxos, eos, etc...

##### ANSIBLE VERSION

```

ansible 2.5.0

configured module search path = [u'/user/.ansible/plugins/modules', u'/usr/share/ansible/plugins/modules']

ansible python module location = /usr/lib/python2.7/site-packages/ansible

executable location = /bin/ansible

python version = 2.7.5 (default, May 3 2017, 07:55:04) [GCC 4.8.5 20150623 (Red Hat 4.8.5-14)]

```

##### CONFIGURATION

```

# Tested without callback and strategy

DEFAULT_CALLBACK_WHITELIST(ansible/ansible.cfg) = ['timer', 'mail', 'skippy', 'profile_tasks']

DEFAULT_STRATEGY(ansible/ansible.cfg) = free

DEFAULT_TIMEOUT(ansible/ansible.cfg) = 20

PERSISTENT_CONNECT_TIMEOUT(ansible/ansible.cfg) = 90

```

##### OS / ENVIRONMENT

RHEL 7.4

3.10.0-693.11.1.el7.x86_64

##### SUMMARY

There's a significant difference in speed/performance after upgrading to 2.5 from 2.4.3. Results are unaffected by scale. I've tested most of our custom roles so far, and I've verified this is consistent in all of our environments. Also, going with/without the free strategy and callbacks we normally run does not affect performance. Processor and memory utilization is far lower, consistent with the increase in run times.

Simply put, all network roles are running significantly slower in 2.5:

##### STEPS TO REPRODUCE

Facts:

```ansible-playbook facts.yml -l cisco-ios[1:500] --forks 500```

Passwords:

```ansible-playbook password.yml -l cisco-ios[1:100] --forks 100 ```

##### EXPECTED RESULTS

**2.4.3**

Facts:

```

network_facts : collect output from ios device ------------------------- 41.10s

network_facts : set config_lines fact ---------------------------------- 33.16s

debug ------------------------------------------------------------------- 5.31s

network_facts : include cisco-ios tasks --------------------------------- 2.66s

network_facts : set config fact ----------------------------------------- 0.46s

network_facts : set version fact ---------------------------------------- 0.41s

network_facts : set model number ---------------------------------------- 0.41s

network_facts : set management interface name fact ---------------------- 0.40s

Playbook run took 0 days, 0 hours, 4 minutes, 24 seconds

```

Passwords:

```

config_localpw : Update line passwords -------------------------------- 111.60s

network_facts : collect output from ios device -------------------------- 6.12s

config_localpw : Update line passwords ---------------------------------- 0.91s

network_facts : include cisco-ios tasks --------------------------------- 0.51s

config_localpw : Update line passwords ---------------------------------- 0.50s

config_localpw : Update terminal server username doorbell --------------- 0.28s

config_localpw : debug -------------------------------------------------- 0.22s

config_localpw : Update terminal server username doorbell --------------- 0.19s

config_localpw : set_fact - Modem slot 3 -------------------------------- 0.16s

config_localpw : Update enable and username config lines ---------------- 0.16s

config_localpw : debug -------------------------------------------------- 0.13s

config_localpw : Identify if it has a modem ----------------------------- 0.13s

config_localpw : Update enable and username config lines ---------------- 0.13s

config_localpw : Update terminal server username doorbell --------------- 0.12s

config_localpw : debug -------------------------------------------------- 0.12s

config_localpw : Update terminal server username doorbell --------------- 0.11s

config_localpw : Update terminal server username doorbell --------------- 0.08s

config_localpw : Update enable and username config lines ---------------- 0.07s

config_localpw : debug -------------------------------------------------- 0.07s

config_localpw : Update line passwords ---------------------------------- 0.07s

Playbook run took 0 days, 0 hours, 3 minutes, 12 seconds

```

##### ACTUAL RESULTS

**2.5**

Facts:

```

network_facts : collect output from ios device ------------------------- 27.77s

network_facts : include cisco-ios tasks --------------------------------- 2.83s

debug ------------------------------------------------------------------- 0.26s

network_facts : set config fact ----------------------------------------- 0.12s

network_facts : set management interface name fact ---------------------- 0.12s

network_facts : set model number ---------------------------------------- 0.06s

network_facts : set version fact ---------------------------------------- 0.04s

network_facts : set config_lines fact ----------------------------------- 0.04s

Playbook run took 0 days, 0 hours, 26 minutes, 33 seconds

```

Passwords:

```

config_localpw : Update line passwords ---------------------------------- 40.38s

config_localpw : Update line passwords ---------------------------------- 26.52s

config_localpw : Update terminal server username doorbell --------------- 22.32s

config_localpw : Update enable and username config lines ---------------- 21.04s

config_localpw : Update terminal server username doorbell --------------- 16.96s

config_localpw : Update line passwords ---------------------------------- 16.87s

config_localpw : Update line passwords ---------------------------------- 16.86s

config_localpw : Update line passwords ---------------------------------- 16.68s

config_localpw : Update line passwords ---------------------------------- 15.75s

config_localpw : Update terminal server username doorbell --------------- 15.39s

config_localpw : Update line passwords ---------------------------------- 15.25s

config_localpw : Update line passwords ---------------------------------- 15.12s

config_localpw : Update line passwords ---------------------------------- 14.62s

config_localpw : Identify if it has a modem ----------------------------- 14.52s

config_localpw : Update terminal server username doorbell --------------- 13.94s

config_localpw : Update line passwords ---------------------------------- 13.78s

config_localpw : Update line passwords ---------------------------------- 13.47s

config_localpw : Identify if and where the modem is --------------------- 13.03s

config_localpw : Update enable and username config lines ---------------- 12.82s

config_localpw : Update line passwords ---------------------------------- 12.07s

Playbook run took 0 days, 0 hours, 17 minutes, 38 seconds

``` | main | network modules running slower after upgrading to ansible issue type bug report component name all network modules for all device families e g ios ios xr nxos eos etc ansible version ansible configured module search path ansible python module location usr lib site packages ansible executable location bin ansible python version default may configuration tested without callback and strategy default callback whitelist ansible ansible cfg default strategy ansible ansible cfg free default timeout ansible ansible cfg persistent connect timeout ansible ansible cfg os environment rhel summary there s a significant difference in speed performance after upgrading to from result are unaffected by scale i ve tested most of our custom roles so far and i ve verified this is consistent in all of our environments also going with without the free strategy and callbacks we normally run does not affect performance processor and memory utilization is far lower consistent with the increase in run times simply put all network roles are running significantly slower in steps to reproduce facts ansible playbook facts yml l cisco ios forks passwords ansible playbook password yml l cisco ios forks expected results facts network facts collect output from ios device network facts set config lines fact debug network facts include cisco ios tasks network facts set config fact network facts set version fact network facts set model number network facts set management interface name fact playbook run took days hours minutes seconds passwords config localpw update line passwords network facts collect output from ios device config localpw update line passwords network facts include cisco ios tasks config localpw update line passwords config localpw update terminal server username doorbell config localpw debug config localpw update terminal server username doorbell config localpw set fact modem slot config localpw update enable and username config lines config localpw debug config localpw 
identify if it has a modem config localpw update enable and username config lines config localpw update terminal server username doorbell config localpw debug config localpw update terminal server username doorbell config localpw update terminal server username doorbell config localpw update enable and username config lines config localpw debug config localpw update line passwords playbook run took days hours minutes seconds actual results facts network facts collect output from ios device network facts include cisco ios tasks debug network facts set config fact network facts set management interface name fact network facts set model number network facts set version fact network facts set config lines fact playbook run took days hours minutes seconds passwords config localpw update line passwords config localpw update line passwords config localpw update terminal server username doorbell config localpw update enable and username config lines config localpw update terminal server username doorbell config localpw update line passwords config localpw update line passwords config localpw update line passwords config localpw update line passwords config localpw update terminal server username doorbell config localpw update line passwords config localpw update line passwords config localpw update line passwords config localpw identify if it has a modem config localpw update terminal server username doorbell config localpw update line passwords config localpw update line passwords config localpw identify if and where the modem is config localpw update enable and username config lines config localpw update line passwords playbook run took days hours minutes seconds | 1 |

4,944 | 25,414,742,813 | IssuesEvent | 2022-11-22 22:31:36 | mozilla/foundation.mozilla.org | https://api.github.com/repos/mozilla/foundation.mozilla.org | closed | Create a GitHub action to add 'needs grooming' label to any ticket added to the repo | Maintain | TASK: action being added to GitHub

The requirement is that every time a ticket gets added to the repo the label 'needs grooming' automatically gets added.

**Context**:

In the 'Backlog' column currently, there's no way of defining if the ticket has or has not been groomed. This would make tracking tickets that need to be groomed easier when looking at ZenHub.

By automatically adding the label to any ticket that has been created we ensure that a manual action is required to mark the ticket as 'groomed' (by removing the label).

The expectation is that a user (product owner, dev, designer, dm) would remove the label if the ticket has been groomed.

| True | Create a GitHub action to add 'needs grooming' label to any ticket added to the repo - TASK: action being added to GitHub

The requirement is that every time a ticket gets added to the repo the label 'needs grooming' automatically gets added.

**Context**:

In the 'Backlog' column currently, there's no way of defining if the ticket has or has not been groomed. This would make tracking tickets that need to be groomed easier when looking at ZenHub.

By automatically adding the label to any ticket that has been created we ensure that a manual action is required to mark the ticket as 'groomed' (by removing the label).

The expectation is that a user (product owner, dev, designer, dm) would remove the label if the ticket has been groomed.

| main | creat a github action to add needs grooming label to any ticket added to the repo task action being added to github the requirement is that every time a ticket gets added to the repo the label needs grooming automatically gets added context in the backlog column currently there s no way of defining if the ticket has or has not been groomed this would make tracking tickets that need to be groomed easier when looking at zenhub by automatically adding the label to any ticket that has been created we ensure that a manual action is required to mark the ticket as groomed by removing the label the expectation is that a user product owner dev designer dm would remove the label if the ticket has been groomed | 1 |

3,496 | 13,646,946,517 | IssuesEvent | 2020-09-26 00:52:19 | amyjko/faculty | https://api.github.com/repos/amyjko/faculty | closed | Link bio to publication and award counts | maintainability | It's currently static, but can point to publication data. | True | Link bio to publication and award counts - It's currently static, but can point to publication data. | main | link bio to publication and award counts it s currently static but can point to publication data | 1 |

5,291 | 26,736,833,251 | IssuesEvent | 2023-01-30 10:04:48 | bazelbuild/intellij | https://api.github.com/repos/bazelbuild/intellij | closed | Golang source sets (go_source) are not supported: source files are unsynced | type: feature request lang: go topic: sync awaiting-maintainer | Bazel golang rule supports [source sets](https://github.com/bazelbuild/rules_go/blob/master/go/core.rst#go-source) and their further [embedding](https://github.com/bazelbuild/rules_go/blob/master/go/core.rst#embedding) into binaries/tests (https://github.com/bazelbuild/rules_go/blob/master/go/core.rst#embedding).

We are using this extensively and unfortunately, this is not supported by Bazel Intellij plugin: all sources defined via `go_source` & `embed` attr are "unsynced".

## Setup

### .bazelproject

```

directories:

.

derive_targets_from_directories: false

targets:

//myapp:all

additional_languages:

go

```

### Bazel build file

```

go_source(

name = "src",

srcs = glob(

include = ["*.go"],

exclude = ["*_test.go"],

),

deps = [],

)

go_binary(

name = "app",

embed = [":src"],

)

```

## Expected behavior

All source files matched by `go_source#srcs` are synced.

## Actual behavior

`go_binary#embed` is ignored and all sources are unsynced.

## Workaround

It seems that Bazel plugin doesn't understand `go_source` so all sources must be specified explicitly in `go_binary#srcs`. This syncs up correctly:

### Bazel build file

```

go_binary(

name = "app",

srcs = glob(

include = ["*.go"],

exclude = ["*_test.go"],

),

)

``` | True | Golang source sets (go_source) are not supported: source files are unsynced - Bazel golang rule supports [source sets](https://github.com/bazelbuild/rules_go/blob/master/go/core.rst#go-source) and their further [embedding](https://github.com/bazelbuild/rules_go/blob/master/go/core.rst#embedding) into binaries/tests (https://github.com/bazelbuild/rules_go/blob/master/go/core.rst#embedding).

We are using this extensively and unfortunately, this is not supported by Bazel Intellij plugin: all sources defined via `go_source` & `embed` attr are "unsynced".

## Setup

### .bazelproject

```

directories:

.

derive_targets_from_directories: false

targets:

//myapp:all

additional_languages:

go

```

### Bazel build file

```

go_source(

name = "src",

srcs = glob(

include = ["*.go"],

exclude = ["*_test.go"],

),

deps = [],

)

go_binary(

name = "app",

embed = [":src"],

)

```

## Expected behavior

All source files matched by `go_source#srcs` are synced.

## Actual behavior

`go_binary#embed` is ignored and all sources are unsynced.

## Workaround

It seems that Bazel plugin doesn't understand `go_source` so all sources must be specified explicitly in `go_binary#srcs`. This syncs up correctly:

### Bazel build file

```

go_binary(

name = "app",

srcs = glob(

include = ["*.go"],

exclude = ["*_test.go"],

),

)

``` | main | golang source sets go source are not supported source files are unsynced bazel golang rule supports and their further into binaries tests we are using this extensively and unfortunately this is not supported by bazel intellij plugin all sources defined via go source embed attr are unsynced setup bazelproject directories derive targets from directories false targets myapp all additional languages go bazel build file go source name src srcs glob include exclude deps go binary name app embed expected behavior all source files matched by go source srcs are synced actual behavior go binary embed is ignored and all sources are unsynced walkaround it seems that bazel plugin doesn t understand go source so all sources must be specified explicitly in go binary srcs this syncs up correctly bazel build file go binary name app srcs glob include exclude | 1 |

2,060 | 6,977,802,027 | IssuesEvent | 2017-12-12 15:40:36 | OpenLightingProject/ola | https://api.github.com/repos/OpenLightingProject/ola | closed | Download share is outdated | Difficulty-Medium Maintainability OpSys-Linux Type-Task | Hey guys,

It seems like the download share is very outdated: http://dl.openlighting.org/?C=M;O=A

Can we get this checked out so that it's up to date again?

Thanks | True | Download share is outdated - Hey guys,

It seems like the download share is very outdated: http://dl.openlighting.org/?C=M;O=A

Can we get this checked out so that it's up to date again?

Thanks | main | download share is outdated hey guys it seems like the download share is very outdated can we get this checked out so that its up to date again thanks | 1 |

22,882 | 7,241,977,090 | IssuesEvent | 2018-02-14 04:46:15 | caffe2/caffe2 | https://api.github.com/repos/caffe2/caffe2 | closed | undefined reference to cudaStreamCreate | build | Hi, I encounter the following error when the making process has proceeded up to 99 percent:

CMakeFiles/core_overhead_benchmark.dir/core_overhead_benchmark.cc.o: In function `BM_cudaStreamWaitEventThenStreamSynchronize(benchmark::State&)':

core_overhead_benchmark.cc:(.text+0x2e2): undefined reference to `cudaStreamCreate'

Here is the build summary:

-- ******** Summary ********

-- General:

-- Git version : v0.8.1-667-gbd5bb22-dirty

-- System : Linux

-- C++ compiler : /usr/bin/c++

-- C++ compiler version : 5.4.0

-- Protobuf compiler : /usr/bin/protoc

-- CXX flags : -fopenmp -std=c++11 -O2 -fPIC -Wno-narrowing

-- Build type : Release

-- Compile definitions :

--

-- BUILD_BINARY : ON

-- BUILD_PYTHON : ON

-- Python version : 2.7.12

-- Python library : /usr/lib/x86_64-linux-gnu/libpython2.7.so

-- BUILD_SHARED_LIBS : ON

-- BUILD_TEST : ON

-- USE_ATEN : OFF

-- USE_ASAN : OFF

-- USE_CUDA : ON

-- CUDA version : 8.0

-- CuDNN version : 6.0.21

-- USE_EIGEN_FOR_BLAS : 1

-- USE_FFMPEG : OFF

-- USE_GFLAGS : ON

-- USE_GLOG : ON

-- USE_GLOO : OFF

-- USE_LEVELDB : ON

-- LevelDB version : 1.18

-- Snappy version : 1.1.3

-- USE_LITE_PROTO : OFF

-- USE_LMDB : ON

-- LMDB version : 0.9.17

-- USE_METAL : OFF

-- USE_MKL :

-- USE_MOBILE_OPENGL : OFF

-- USE_MPI : ON

-- USE_NCCL : ON

-- USE_NERVANA_GPU : OFF

-- USE_NNPACK : ON

-- USE_OBSERVERS : ON

-- USE_OPENCV : ON

-- OpenCV version : 3.2.0

-- USE_OPENMP : ON

-- USE_REDIS : OFF

-- USE_ROCKSDB : OFF

-- USE_THREADS : ON

-- USE_ZMQ : OFF

I don't think anything is wrong with my cuda installation as I'm using it both in Caffe and other codes and it works without a problem. And also I had another caffe2 that I have git cloned around a month ago, this one also builds and passes the tests without a problem, so the new one that I have git cloned today seems to have an issue! I appreciate any help greatly. | 1.0 | undefined reference to cudaStreamCreate - Hi, I encounter the following error when the making process has proceeded up to 99 percent:

CMakeFiles/core_overhead_benchmark.dir/core_overhead_benchmark.cc.o: In function `BM_cudaStreamWaitEventThenStreamSynchronize(benchmark::State&)':

core_overhead_benchmark.cc:(.text+0x2e2): undefined reference to `cudaStreamCreate'

Here is the build summary:

-- ******** Summary ********

-- General:

-- Git version : v0.8.1-667-gbd5bb22-dirty

-- System : Linux

-- C++ compiler : /usr/bin/c++

-- C++ compiler version : 5.4.0

-- Protobuf compiler : /usr/bin/protoc

-- CXX flags : -fopenmp -std=c++11 -O2 -fPIC -Wno-narrowing

-- Build type : Release

-- Compile definitions :

--

-- BUILD_BINARY : ON

-- BUILD_PYTHON : ON

-- Python version : 2.7.12

-- Python library : /usr/lib/x86_64-linux-gnu/libpython2.7.so

-- BUILD_SHARED_LIBS : ON

-- BUILD_TEST : ON

-- USE_ATEN : OFF

-- USE_ASAN : OFF

-- USE_CUDA : ON

-- CUDA version : 8.0

-- CuDNN version : 6.0.21

-- USE_EIGEN_FOR_BLAS : 1

-- USE_FFMPEG : OFF

-- USE_GFLAGS : ON

-- USE_GLOG : ON

-- USE_GLOO : OFF

-- USE_LEVELDB : ON

-- LevelDB version : 1.18

-- Snappy version : 1.1.3

-- USE_LITE_PROTO : OFF

-- USE_LMDB : ON

-- LMDB version : 0.9.17

-- USE_METAL : OFF

-- USE_MKL :

-- USE_MOBILE_OPENGL : OFF

-- USE_MPI : ON

-- USE_NCCL : ON

-- USE_NERVANA_GPU : OFF

-- USE_NNPACK : ON

-- USE_OBSERVERS : ON

-- USE_OPENCV : ON

-- OpenCV version : 3.2.0

-- USE_OPENMP : ON

-- USE_REDIS : OFF

-- USE_ROCKSDB : OFF

-- USE_THREADS : ON

-- USE_ZMQ : OFF

I don't think anything is wrong with my cuda installation as I'm using it both in Caffe and other codes and it works without a problem. And also I had another caffe2 that I have git cloned around a month ago, this one also builds and passes the tests without a problem, so the new one that I have git cloned today seems to have an issue! I appreciate any help greatly. | non_main | undefined reference to cudastreamcreate hi i encounter the following error when the making process has proceeded up to percent cmakefiles core overhead benchmark dir core overhead benchmark cc o in function bm cudastreamwaiteventthenstreamsynchronize benchmark state core overhead benchmark cc text undefined reference to cudastreamcreate here is the build summary summary general git version dirty system linux c compiler usr bin c c compiler version protobuf compiler usr bin protoc cxx flags fopenmp std c fpic wno narrowing build type release compile definitions build binary on build python on python version python library usr lib linux gnu so build shared libs on build test on use aten off use asan off use cuda on cuda version cudnn version use eigen for blas use ffmpeg off use gflags on use glog on use gloo off use leveldb on leveldb version snappy version use lite proto off use lmdb on lmdb version use metal off use mkl use mobile opengl off use mpi on use nccl on use nervana gpu off use nnpack on use observers on use opencv on opencv version use openmp on use redis off use rocksdb off use threads on use zmq off i don t think anything is wrong with my cuda installation as i m using it both in caffe and other codes and it works without a problem and also i had another that i have git cloned around a month ago this one also builds and passes the tests without a problem so the new one that i have git cloned today seems to have an issue i appreciate any help greatly | 0 |

4,946 | 25,455,551,843 | IssuesEvent | 2022-11-24 13:55:24 | pace/bricks | https://api.github.com/repos/pace/bricks | closed | Upgrade go-pg dependency | T::Maintainance | ### Problem

We are currently using `github.com/go-pg/pg v6.14.5` which might be outdated.

As far as I can tell the only impact this has on us is a performance one. When using the `Exists()` method on a query (e.g. `db.Model(&m).Where(...).Exists()`) go-pg [performs a regular select and checks whether the number of rows returned](https://github.com/go-pg/pg/blob/v6.14.5/orm/query.go#L1054). This is far from efficient.

### Suggested solution

Upgrade to a newer version, like v8.0.4 where [this seems to be fixed](https://github.com/go-pg/pg/blob/v8.0.4/orm/query.go#L1130).

[Changelog of v.8.0.4](https://github.com/go-pg/pg/blob/v8.0.4/CHANGELOG.md). Upgrading needs adjustments in our code:

> DB.OnQueryProcessed is replaced with DB.AddQueryHook

The format of the hook changes also.

If the impact of this upgrade is too huge, we can live with or work around the problem mentioned. But we probably have to upgrade eventually. | True | Upgrade go-pg dependency - ### Problem

We are currently using `github.com/go-pg/pg v6.14.5` which might be outdated.

As far as I can tell the only impact this has on us is a performance one. When using the `Exists()` method on a query (e.g. `db.Model(&m).Where(...).Exists()`) go-pg [performs a regular select and checks whether the number of rows returned](https://github.com/go-pg/pg/blob/v6.14.5/orm/query.go#L1054). This is far from efficient.

### Suggested solution

Upgrade to a newer version, like v8.0.4 where [this seems to be fixed](https://github.com/go-pg/pg/blob/v8.0.4/orm/query.go#L1130).

[Changelog of v.8.0.4](https://github.com/go-pg/pg/blob/v8.0.4/CHANGELOG.md). Upgrading needs adjustments in our code:

> DB.OnQueryProcessed is replaced with DB.AddQueryHook

The format of the hook changes also.

If the impact of this upgrade is too huge, we can live with or work around the problem mentioned. But we probably have to upgrade eventually. | main | upgrade go pg dependency problem we are currently using github com go pg pg which might be outdated as far as i can tell the only impact this has on us is a performance one when using the exists method on a query e g db model m where exists go pg this is far from efficient suggested solution upgrade to a newer version like where upgrading needs adjustments in our code db onqueryprocessed is replaced with db addqueryhook the format of the hook changes also if the impact of this upgrade is too huge we can live with or work around the problem mentioned but we probably have to upgrade eventually | 1 |

2,664 | 9,107,472,600 | IssuesEvent | 2019-02-21 04:35:57 | prkumar/uplink | https://api.github.com/repos/prkumar/uplink | closed | Add a `retry` decorator | Feature Request Needs Maintainer Input | Here's the original use case from @liiight on [Gitter](https://gitter.im/python-uplink/Lobby?utm_source=badge&utm_medium=badge&utm_campaign=pr-badge&utm_content=badge):

> I'm implementing retries in my code, which is done using a package called retry. basically its a super simple decorator, just catch exception, and call the decoratored func until conditions apply, nothing fancy

> when using with uplink, I'm implementing it in the layer above uplink, i.e, the usage of it. i was wondering if it's possible to do it via uplink itself

> i.e, catch an error and retry the called method | True | Add a `retry` decorator - Here's the original use case from @liiight on [Gitter](https://gitter.im/python-uplink/Lobby?utm_source=badge&utm_medium=badge&utm_campaign=pr-badge&utm_content=badge):

> I'm implementing retries in my code, which is done using a package called retry. basically its a super simple decorator, just catch exception, and call the decoratored func until conditions apply, nothing fancy

> when using with uplink, I'm implementing it in the layer above uplink, i.e, the usage of it. i was wondering if it's possible to do it via uplink itself

> i.e, catch an error and retry the called method | main | add a retry decorator here s the original use case from liiight on i m implementing retries in my code which is done using a package called retry basically its a super simple decorator just catch exception and call the decoratored func until conditions apply nothing fancy when using with uplink i m implementing it in the layer above uplink i e the usage of it i was wondering if it s possible to do it via uplink itself i e catch an error and retry the called method | 1 |

2,510 | 8,655,459,903 | IssuesEvent | 2018-11-27 16:00:31 | codestation/qcma | https://api.github.com/repos/codestation/qcma | closed | QCMA disconnects in middle of file transfer | unmaintained | Ended event, code: 0xc105, id: 161

This is the last event response. | True | QCMA disconnects in middle of file transfer - Ended event, code: 0xc105, id: 161

This is the last event response. | main | qcma disconnects in middle of file transfer ended event code id this is the last event response | 1 |

815 | 4,441,581,899 | IssuesEvent | 2016-08-19 09:52:12 | ansible/ansible-modules-core | https://api.github.com/repos/ansible/ansible-modules-core | closed | Unarchive Error, No such file or directory | bug_report waiting_on_maintainer | <!--- Verify first that your issue/request is not already reported in GitHub -->

##### ISSUE TYPE

<!--- Pick one below and delete the rest: -->

- Bug Report

##### COMPONENT NAME

<!--- Name of the plugin/module/task -->

unarchive

##### ANSIBLE VERSION

<!--- Paste verbatim output from “ansible --version” between quotes below -->

```

ansible 2.2.0 (devel 3c65c03a67) last updated 2016/08/15 16:01:24 (GMT +1000)

lib/ansible/modules/core: (detached HEAD decb2ec9fa) last updated 2016/08/15 16:01:29 (GMT +1000)

lib/ansible/modules/extras: (detached HEAD 61d5fe148c) last updated 2016/08/15 16:01:29 (GMT +1000)

config file = /home/linus/Documents/ansible-playbooks/ansible.cfg

configured module search path = Default w/o overrides

```

##### CONFIGURATION

<!---

Mention any settings you have changed/added/removed in ansible.cfg

(or using the ANSIBLE_* environment variables).

-->

##### OS / ENVIRONMENT

<!---

Mention the OS you are running Ansible from, and the OS you are

managing, or say “N/A” for anything that is not platform-specific.

-->

Ubuntu management node

Centos 7 managed node

##### SUMMARY

<!--- Explain the problem briefly -->

The task fails with a "No such file or directory" error when `extra_opts: "--strip-components=2"` is added.

##### STEPS TO REPRODUCE

<!---

For bugs, show exactly how to reproduce the problem.

For new features, show how the feature would be used.

-->

<!--- Paste example playbooks or commands between quotes below -->

```

- name: unpack the artifacts
  unarchive:
    src: /usr/share/stuff.tar.gz
    dest: /usr/share/
    extra_opts: "--strip-components=2"
    owner: nginx
    group: nginx
    copy: no

```

<!--- You can also paste gist.github.com links for larger files -->

##### EXPECTED RESULTS

<!--- What did you expect to happen when running the steps above? -->

Changed

##### ACTUAL RESULTS

<!--- What actually happened? If possible run with extra verbosity (-vvvv) -->

The archive file structure is:

dist/production/files

I want to strip the first two directories

<!--- Paste verbatim command output between quotes below -->

```

fatal: [52.65.150.148]: FAILED! => {"changed": true, "dest": "/usr/share/", "extract_results": {"cmd": "/bin/gtar -C \"/usr/share/\" -xz --strip-components=2 --owner=\"nginx\" --group=\"nginx\" -f \"/usr/share/stuff.tar.gz\"", "err": "", "out": "", "rc": 0}, "failed": true, "gid": 992, "group": "nginx", "handler": "TgzArchive", "mode": "02775", "msg": "Unexpected error when accessing exploded file: [Errno 2] No such file or directory: '/usr/share/stuff/dist/production/'", "owner": "bitbucket", "size": 4096, "src": "/usr/share/stuff.tar.gz", "state": "directory", "uid": 1003}

```

| True | Unarchive Error, No such file or directory - <!--- Verify first that your issue/request is not already reported in GitHub -->

##### ISSUE TYPE

<!--- Pick one below and delete the rest: -->

- Bug Report

##### COMPONENT NAME

<!--- Name of the plugin/module/task -->

unarchive

##### ANSIBLE VERSION

<!--- Paste verbatim output from “ansible --version” between quotes below -->

```

ansible 2.2.0 (devel 3c65c03a67) last updated 2016/08/15 16:01:24 (GMT +1000)

lib/ansible/modules/core: (detached HEAD decb2ec9fa) last updated 2016/08/15 16:01:29 (GMT +1000)

lib/ansible/modules/extras: (detached HEAD 61d5fe148c) last updated 2016/08/15 16:01:29 (GMT +1000)

config file = /home/linus/Documents/ansible-playbooks/ansible.cfg

configured module search path = Default w/o overrides

```

##### CONFIGURATION

<!---

Mention any settings you have changed/added/removed in ansible.cfg

(or using the ANSIBLE_* environment variables).

-->

##### OS / ENVIRONMENT

<!---

Mention the OS you are running Ansible from, and the OS you are

managing, or say “N/A” for anything that is not platform-specific.

-->

Ubuntu management node

Centos 7 managed node

##### SUMMARY

<!--- Explain the problem briefly -->

The task fails with a "No such file or directory" error when `extra_opts: "--strip-components=2"` is added.

##### STEPS TO REPRODUCE

<!---

For bugs, show exactly how to reproduce the problem.

For new features, show how the feature would be used.

-->

<!--- Paste example playbooks or commands between quotes below -->

```

- name: unpack the artifacts
  unarchive:
    src: /usr/share/stuff.tar.gz
    dest: /usr/share/
    extra_opts: "--strip-components=2"
    owner: nginx
    group: nginx
    copy: no

```

<!--- You can also paste gist.github.com links for larger files -->

##### EXPECTED RESULTS

<!--- What did you expect to happen when running the steps above? -->

Changed

##### ACTUAL RESULTS

<!--- What actually happened? If possible run with extra verbosity (-vvvv) -->

The archive file structure is:

dist/production/files

I want to strip the first two directories

<!--- Paste verbatim command output between quotes below -->

```

fatal: [52.65.150.148]: FAILED! => {"changed": true, "dest": "/usr/share/", "extract_results": {"cmd": "/bin/gtar -C \"/usr/share/\" -xz --strip-components=2 --owner=\"nginx\" --group=\"nginx\" -f \"/usr/share/stuff.tar.gz\"", "err": "", "out": "", "rc": 0}, "failed": true, "gid": 992, "group": "nginx", "handler": "TgzArchive", "mode": "02775", "msg": "Unexpected error when accessing exploded file: [Errno 2] No such file or directory: '/usr/share/stuff/dist/production/'", "owner": "bitbucket", "size": 4096, "src": "/usr/share/stuff.tar.gz", "state": "directory", "uid": 1003}

```

| main | unarchive error no such file or directory issue type bug report component name unarchive ansible version ansible devel last updated gmt lib ansible modules core detached head last updated gmt lib ansible modules extras detached head last updated gmt config file home linus documents ansible playbooks ansible cfg configured module search path default w o overrides configuration mention any settings you have changed added removed in ansible cfg or using the ansible environment variables os environment mention the os you are running ansible from and the os you are managing or say “n a” for anything that is not platform specific ubuntu management node centos managed node summary gives no such file or directory error when added extra opts strip components steps to reproduce for bugs show exactly how to reproduce the problem for new features show how the feature would be used name unpack the artifacts unarchive src usr share stuff tar gz dest usr share extra opts strip components owner nginx group nginx copy no expected results changed actual results the archive file structure is dist production files i wan to strip the first two directories fatal failed changed true dest usr share extract results cmd bin gtar c usr share xz strip components owner nginx group nginx f usr share stuff tar gz err out rc failed true gid group nginx handler tgzarchive mode msg unexpected error when accessing exploded file no such file or directory usr share stuff dist production owner bitbucket size src usr share stuff tar gz state directory uid | 1 |

4,278 | 21,523,726,758 | IssuesEvent | 2022-04-28 16:18:48 | mozilla/foundation.mozilla.org | https://api.github.com/repos/mozilla/foundation.mozilla.org | reopened | SEO | Create a sitemap index file | engineering Maintain | # Description

Create a sitemap index file, which should be findable at https://foundation.mozilla.org/sitemap.xml.

This index would link to the sitemaps for all of the languages that the website supports.

# Acceptance criteria

- [x] /sitemap.xml should be accessible on staging/production/and review apps

- [x] sitemap should include all 10 languages included on foundation site translations

# Dev tasks

- [x] Generate Sitemap using wagtail [sitemap generator](https://docs.wagtail.org/en/stable/reference/contrib/sitemaps.html) | True | SEO | Create a sitemap index file - # Description

Create a sitemap index file, which should be findable at https://foundation.mozilla.org/sitemap.xml.

This index would link to the sitemaps for all of the languages that the website supports.

# Acceptance criteria

- [x] /sitemap.xml should be accessible on staging/production/and review apps

- [x] sitemap should include all 10 languages included on foundation site translations

# Dev tasks

- [x] Generate Sitemap using wagtail [sitemap generator](https://docs.wagtail.org/en/stable/reference/contrib/sitemaps.html) | main | seo create a sitemap index file description create a sitemap index file which should be findable at this index would link to the sitemaps for all of the languages that the website supports acceptance criteria sitemap xml should be accessible on staging production and review apps sitemap should include all languages included on foundation site translations dev tasks generate sitemap using wagtail | 1 |

18,450 | 3,062,239,861 | IssuesEvent | 2015-08-16 11:33:41 | dkpro/dkpro-jwktl | https://api.github.com/repos/dkpro/dkpro-jwktl | closed | Upgrade to Java 8 | defect | Originally reported on Google Code with ID 16

```

This is essentially possible by upgrading to the newest parent POM.

```

Reported by `chmeyer.de` on 2015-04-22 13:07:53

| 1.0 | Upgrade to Java 8 - Originally reported on Google Code with ID 16

```

This is essentially possible by upgrading to the newest parent POM.

```

Reported by `chmeyer.de` on 2015-04-22 13:07:53

| non_main | upgrade to java originally reported on google code with id this is essentially possible by upgrading to the newest parent pom reported by chmeyer de on | 0 |

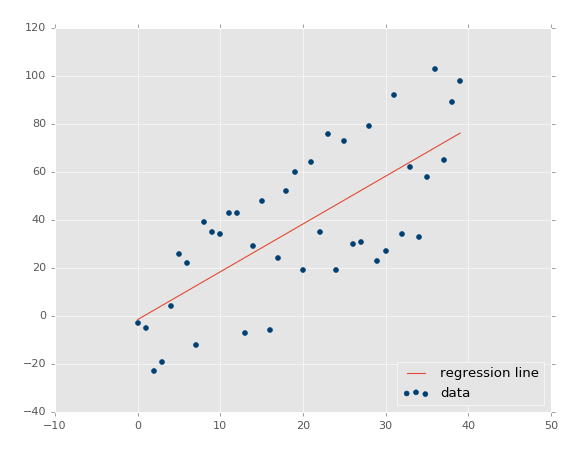

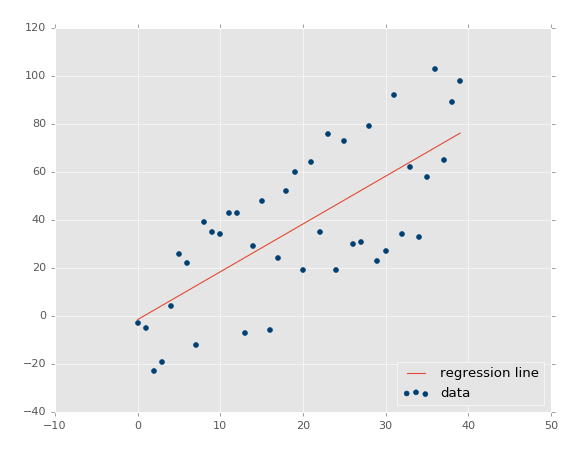

131,762 | 12,489,821,444 | IssuesEvent | 2020-05-31 20:41:41 | edwardtheharris/machines-wat-learn-good | https://api.github.com/repos/edwardtheharris/machines-wat-learn-good | closed | Regression - Intro and Data | documentation | Welcome to the introduction to the regression section of the Machine Learning with Python tutorial series. By this point, you should have Scikit-Learn already installed. If not, get it, along with Pandas and matplotlib!

If you have a pre-compiled scientific distribution of Python like ActivePython from our sponsor, you should already have numpy, scipy, scikit-learn, matplotlib, and pandas installed. If not, do:

```bash

pip install numpy scipy scikit-learn matplotlib pandas

```

Along with those tutorial-wide imports, we're also going to be making use of Quandl here, which you may need to separately install, with:

```bash

pip install quandl

```

I will note it again in the first part of the code, but the Quandl module used to be imported with an upper-case Q and is now imported with a lower-case q. In the video, and in sample code from that time, it is upper-case.

To begin, what is regression in terms of us using it with machine learning? The goal is to take continuous data, find the equation that best fits the data, and be able to forecast a specific value. With simple linear regression, you do this by creating a best-fit line.

From here, with the date on the x-axis, we can use the equation of that line to forecast what the price will be at a future date.
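To make the best-fit-line idea concrete, here is a minimal sketch in plain Python. The helper name `fit_line` and the toy day/price numbers are assumptions made up for illustration; they are not part of the tutorial's data or code. It fits `y = m*x + b` by ordinary least squares and then evaluates the line one step past the data.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = m*x + b; returns (m, b)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Slope: covariance of x and y divided by the variance of x.
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    m = sxy / sxx
    # Intercept: the fitted line passes through the point of means.
    b = mean_y - m * mean_x
    return m, b


# Hypothetical toy data: a day index on the x-axis and a price on the y-axis.
days = [0, 1, 2, 3, 4]
prices = [10.0, 12.0, 14.0, 16.0, 18.0]

m, b = fit_line(days, prices)
next_price = m * 5 + b  # evaluate the fitted line at the next day index
print(m, b, next_price)
```

Real price data will not sit exactly on a line, so the fitted line is only the closest straight-line summary of the points, and a forecast from it should be read with that in mind.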

A popular use with regression is to predict stock prices. This is done because we are considering the fluidity of price over time, and attempting to forecast the next fluid price in the future using a continuous dataset.

Regression is a form of supervised machine learning, which is where the scientist teaches the machine by presenting features and then presenting the correct answer, over and over, to teach the machine. Once the machine is taught, the scientist will usually "test" the machine on some unseen data, where the scientist still knows what the correct answer is, but the machine doesn't. The machine's answers are compared to the known answers, and the machine's accuracy can be measured. If the accuracy is high enough, the scientist may consider actually employing the algorithm in the real world.
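The teach-then-test loop described above can be sketched roughly as follows. Everything here is an assumption for illustration: the data is synthetic, `fit_line` is a hypothetical helper, and mean absolute error stands in for "accuracy", since a regression model is usually scored by how far its predictions land from the known answers.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = m*x + b; returns (m, b)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    m = sxy / sxx
    return m, mean_y - m * mean_x


# Hypothetical data: features (xs) with known correct answers (ys).
xs = list(range(10))
ys = [3 * x + 1 for x in xs]

# "Teach" on the first 8 points; hold back the last 2 as unseen test data.
train_x, test_x = xs[:8], xs[8:]
train_y, test_y = ys[:8], ys[8:]

m, b = fit_line(train_x, train_y)

# Compare the machine's answers on unseen data with the known answers.
predictions = [m * x + b for x in test_x]
mae = sum(abs(p - y) for p, y in zip(predictions, test_y)) / len(test_y)
print(mae)
```

Holding out the most recent points, rather than a random sample, is a deliberate choice in this sketch: with time-ordered data such as prices, it keeps the test closer to the real task of forecasting forward.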

Since regression is so popularly used with stock prices, we can start there with an example. To begin, we need data. Sometimes the data is easy to acquire, and sometimes you have to go out and scrape it together, like what we did in an older tutorial series using machine learning with stock fundamentals for investing. In our case, we're able to at least start with simple stock price and volume information from Quandl. To begin, we'll start with data that grabs the stock price for Alphabet (previously Google), with the ticker of GOOGL:

```python
import pandas as pd
import quandl  # lower-case in current releases; the video used `import Quandl`

df = quandl.get("WIKI/GOOGL")
print(df.head())
```

At this point, we have:

| Date | Open | High | Low | Close | Volume | Ex-Dividend |

|------------|--------|--------|--------|--------|----------|-------------|

| 2004-08-19 | 100.00 | 104.06 | 95.96 | 100.34 | 44659000 | 0 |

| 2004-08-20 | 101.01 | 109.08 | 100.50 | 108.31 | 22834300 | 0 |

| 2004-08-23 | 110.75 | 113.48 | 109.05 | 109.40 | 18256100 | 0 |

| 2004-08-24 | 111.24 | 111.60 | 103.57 | 104.87 | 15247300 | 0 |

| 2004-08-25 | 104.96 | 108.00 | 103.88 | 106.00 | 9188600 | 0 |

| Date | Split Ratio | Adj. Open | Adj. High | Adj. Low | Adj. Close |

| ---------- | ----------- | --------- | --------- | -------- | ---------- |

| 2004-08-19 | 1 | 50.000 | 52.03 | 47.980 | 50.170 |

| 2004-08-20 | 1 | 50.505 | 54.54 | 50.250 | 54.155 |

| 2004-08-23 | 1 | 55.375 | 56.74 | 54.525 | 54.700 |

| 2004-08-24 | 1 | 55.620 | 55.80 | 51.785 | 52.435 |

| 2004-08-25 | 1 | 52.480 | 54.00 | 51.940 | 53.000 |

| Date | Adj. Volume |

| ---------- | ----------- |

| 2004-08-19 | 44659000 |

| 2004-08-20 | 22834300 |

| 2004-08-23 | 18256100 |

| 2004-08-24 | 15247300 |

| 2004-08-25 | 9188600 |

Awesome, off to a good start, we have the data, but maybe a bit much. To reference the intro, there exists an entire machine learning category that aims to reduce the amount of input that we process. In our case, we have quite a few columns, many are redundant, a couple don't really change. We can most likely agree that having both the regular columns and adjusted columns is redundant. Adjusted columns are the most ideal ones. Regular columns here are prices on the day, but stocks have things called stock splits, where suddenly 1 share becomes something like 2 shares, thus the value of a share is halved, but the value of the company has not halved. Adjusted columns are adjusted for stock splits over time, which makes them more reliable for doing analysis.
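As a small worked example of why adjusted prices differ from raw ones: in the rows shown above, every adjusted price is exactly half the raw price, which is what a single later 2-for-1 split would produce. The function below is a hypothetical illustration of that arithmetic only; it is not how Quandl computes its adjusted columns, and the 2-for-1 factor is an assumption read off the sample rows.

```python
def adjust_for_split(raw_price, cumulative_split_factor):
    """Rescale a historical price to today's share count.

    cumulative_split_factor is the number of current shares that one
    historical share has turned into (2 for a single 2-for-1 split).
    """
    return raw_price / cumulative_split_factor


# First row of the sample data: raw Open and High on 2004-08-19.
adj_open = adjust_for_split(100.00, 2)
adj_high = adjust_for_split(104.06, 2)
print(adj_open, adj_high)
```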

Thus, let's go ahead and pare down our original dataframe a bit:

```python

df = df[['Adj. Open', 'Adj. High', 'Adj. Low', 'Adj. Close', 'Adj. Volume']]

```

Now we just have the adjusted columns, and the volume column. A couple major points to make here. Many people talk about or hear about machine learning as if it is some sort of dark art that somehow generates value from nothing. Machine learning can highlight value if it is there, but it has to actually be there. You need meaningful data. So how do you know if you have meaningful data? My best suggestion is to just simply use your brain. Think about it. Are historical prices indicative of future prices? Some people think so, but this has been continually disproven over time. What about historical patterns? This has a bit more merit when taken to the extremes (which machine learning can help with), but is overall fairly weak. What about the relationship between price changes and volume over time, along with historical patterns? Probably a bit better. So, as you can already see, it is not the case that the more data the merrier, but we instead want to use useful data. At the same time, raw data sometimes should be transformed.

Consider daily volatility, such as with the high minus low % change? How about daily percent change? Would you consider data that is simply the Open, High, Low, Close or data that is the Close, Spread/Volatility, %change daily to be better? I would expect the latter to be more ideal. The former is all very similar data points. The latter is created based on identical data from the former, but it brings far more valuable information to the table.

Thus, not all of the data you have is useful, and sometimes you need to do further manipulation on your data to make it even more valuable before feeding it through a machine learning algorithm. Let's go ahead and transform our data next:

```python

df['HL_PCT'] = (df['Adj. High'] - df['Adj. Low']) / df['Adj. Close'] * 100.0

```

I went ahead and recorded the video version of this, not realizing my mistake: this is high minus low divided by close. I meant to do high minus low, divided by the low. Feel free to fix that if you like.

This creates a new column that is the % spread based on the closing price, which is our crude measure of volatility. Next, we'll do daily percent change:

```python

df['PCT_change'] = (df['Adj. Close'] - df['Adj. Open']) / df['Adj. Open'] * 100.0

```
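To check what these two columns contain, here is the same arithmetic applied by hand to the first row of the sample data (2004-08-19). The function names are assumptions for illustration; the formulas mirror the `HL_PCT` and `PCT_change` expressions as written, including the divide-by-close choice for `HL_PCT`.

```python
def hl_pct(adj_high, adj_low, adj_close):
    """High-minus-low spread as a percentage of the close."""
    return (adj_high - adj_low) / adj_close * 100.0


def pct_change(adj_open, adj_close):
    """Open-to-close move as a percentage of the open."""
    return (adj_close - adj_open) / adj_open * 100.0


# Adjusted values for 2004-08-19 from the table above.
spread = hl_pct(adj_high=52.03, adj_low=47.98, adj_close=50.17)
move = pct_change(adj_open=50.0, adj_close=50.17)

print(round(spread, 2), round(move, 2))
```

So on that day the price ranged over roughly 8% of the close, while the open-to-close move was about a third of a percent.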

Now we will define a new dataframe as:

```python

df = df[['Adj. Close', 'HL_PCT', 'PCT_change', 'Adj. Volume']]

print(df.head())

```

| 1.0 | Regression - Intro and Data - Welcome to the introduction to the regression section of the Machine Learning with Python tutorial series. By this point, you should have Scikit-Learn already installed. If not, get it, along with Pandas and matplotlib!

If you have a pre-compiled scientific distribution of Python like ActivePython from our sponsor, you should already have numpy, scipy, scikit-learn, matplotlib, and pandas installed. If not, do:

```bash

pip install numpy scipy scikit-learn matplotlib pandas

```

Along with those tutorial-wide imports, we're also going to be making use of Quandl here, which you may need to separately install, with:

```bash

pip install quandl

```

I will note it again in the first part of the code, but the Quandl module used to be imported with an upper-case Q and is now imported with a lower-case q. In the video, and in sample code from that time, it is upper-case.

To begin, what is regression in terms of us using it with machine learning? The goal is to take continuous data, find the equation that best fits the data, and be able to forecast a specific value. With simple linear regression, you do this by creating a best-fit line.

From here, with the date on the x-axis, we can use the equation of that line to forecast what the price will be at a future date.

A popular use with regression is to predict stock prices. This is done because we are considering the fluidity of price over time, and attempting to forecast the next fluid price in the future using a continuous dataset.

Regression is a form of supervised machine learning, which is where the scientist teaches the machine by presenting features and then presenting the correct answer, over and over, to teach the machine. Once the machine is taught, the scientist will usually "test" the machine on some unseen data, where the scientist still knows what the correct answer is, but the machine doesn't. The machine's answers are compared to the known answers, and the machine's accuracy can be measured. If the accuracy is high enough, the scientist may consider actually employing the algorithm in the real world.

Since regression is so popularly used with stock prices, we can start there with an example. To begin, we need data. Sometimes the data is easy to acquire, and sometimes you have to go out and scrape it together, like what we did in an older tutorial series using machine learning with stock fundamentals for investing. In our case, we're able to at least start with simple stock price and volume information from Quandl. To begin, we'll start with data that grabs the stock price for Alphabet (previously Google), with the ticker of GOOGL:

```python
import pandas as pd
import quandl  # lower-case in current releases; the video used `import Quandl`

df = quandl.get("WIKI/GOOGL")
print(df.head())
```

At this point, we have:

| Date | Open | High | Low | Close | Volume | Ex-Dividend |

|------------|--------|--------|--------|--------|----------|-------------|

| 2004-08-19 | 100.00 | 104.06 | 95.96 | 100.34 | 44659000 | 0 |

| 2004-08-20 | 101.01 | 109.08 | 100.50 | 108.31 | 22834300 | 0 |

| 2004-08-23 | 110.75 | 113.48 | 109.05 | 109.40 | 18256100 | 0 |

| 2004-08-24 | 111.24 | 111.60 | 103.57 | 104.87 | 15247300 | 0 |

| 2004-08-25 | 104.96 | 108.00 | 103.88 | 106.00 | 9188600 | 0 |

| Date | Split Ratio | Adj. Open | Adj. High | Adj. Low | Adj. Close |

| ---------- | ----------- | --------- | --------- | -------- | ---------- |

| 2004-08-19 | 1 | 50.000 | 52.03 | 47.980 | 50.170 |

| 2004-08-20 | 1 | 50.505 | 54.54 | 50.250 | 54.155 |

| 2004-08-23 | 1 | 55.375 | 56.74 | 54.525 | 54.700 |

| 2004-08-24 | 1 | 55.620 | 55.80 | 51.785 | 52.435 |

| 2004-08-25 | 1 | 52.480 | 54.00 | 51.940 | 53.000 |

| Date | Adj. Volume |

| ---------- | ----------- |

| 2004-08-19 | 44659000 |

| 2004-08-20 | 22834300 |

| 2004-08-23 | 18256100 |

| 2004-08-24 | 15247300 |

| 2004-08-25 | 9188600 |

Awesome, off to a good start, we have the data, but maybe a bit much. To reference the intro, there exists an entire machine learning category that aims to reduce the amount of input that we process. In our case, we have quite a few columns, many are redundant, a couple don't really change. We can most likely agree that having both the regular columns and adjusted columns is redundant. Adjusted columns are the most ideal ones. Regular columns here are prices on the day, but stocks have things called stock splits, where suddenly 1 share becomes something like 2 shares, thus the value of a share is halved, but the value of the company has not halved. Adjusted columns are adjusted for stock splits over time, which makes them more reliable for doing analysis.

Thus, let's go ahead and pare down our original dataframe a bit:

```python

df = df[['Adj. Open', 'Adj. High', 'Adj. Low', 'Adj. Close', 'Adj. Volume']]

```

Now we just have the adjusted columns, and the volume column. A couple major points to make here. Many people talk about or hear about machine learning as if it is some sort of dark art that somehow generates value from nothing. Machine learning can highlight value if it is there, but it has to actually be there. You need meaningful data. So how do you know if you have meaningful data? My best suggestion is to just simply use your brain. Think about it. Are historical prices indicative of future prices? Some people think so, but this has been continually disproven over time. What about historical patterns? This has a bit more merit when taken to the extremes (which machine learning can help with), but is overall fairly weak. What about the relationship between price changes and volume over time, along with historical patterns? Probably a bit better. So, as you can already see, it is not the case that the more data the merrier, but we instead want to use useful data. At the same time, raw data sometimes should be transformed.

Consider daily volatility, such as with the high minus low % change? How about daily percent change? Would you consider data that is simply the Open, High, Low, Close or data that is the Close, Spread/Volatility, %change daily to be better? I would expect the latter to be more ideal. The former is all very similar data points. The latter is created based on identical data from the former, but it brings far more valuable information to the table.

Thus, not all of the data you have is useful, and sometimes you need to do further manipulation on your data to make it even more valuable before feeding it through a machine learning algorithm. Let's go ahead and transform our data next:

```python

df['HL_PCT'] = (df['Adj. High'] - df['Adj. Low']) / df['Adj. Close'] * 100.0

```

I went ahead and recorded the video version of this without realizing my mistake: the code computes high minus low, divided by the close. I meant to do high minus low, divided by the low. Feel free to fix that if you like.
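
If you do want the version divided by the low, a minimal sketch might look like the following. The numbers are made up and the column name `HL_PCT_low` is just a hypothetical label chosen here so that both versions can sit side by side.

```python
import pandas as pd

# Made-up adjusted prices, only to show the arithmetic.
sample = pd.DataFrame(
    {
        "Adj. High": [52.0, 55.0],
        "Adj. Low": [50.0, 50.0],
        "Adj. Close": [51.0, 54.0],
    }
)

# Spread between high and low, shared by both versions.
spread = sample["Adj. High"] - sample["Adj. Low"]

# Version used in the tutorial code: spread as a percentage of the close.
sample["HL_PCT"] = spread / sample["Adj. Close"] * 100.0

# Alternative: spread as a percentage of the low.
sample["HL_PCT_low"] = spread / sample["Adj. Low"] * 100.0

print(sample[["HL_PCT", "HL_PCT_low"]])
```

Both columns measure the same intraday range; they only differ in the reference price, so on most days the two values will be close to each other.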

This creates a new column that is the % spread based on the closing price, which is our crude measure of volatility. Next, we'll do daily percent change:

```python

df['PCT_change'] = (df['Adj. Close'] - df['Adj. Open']) / df['Adj. Open'] * 100.0

```

Now we will define a new dataframe as:

```python

df = df[['Adj. Close', 'HL_PCT', 'PCT_change', 'Adj. Volume']]

print(df.head())

```

| non_main | regression intro and data welcome to the introduction to the regression section of the machine learning with python tutorial series by this point you should have scikit learn already installed if not get it along with pandas and matplotlib if you have a pre compiled scientific distribution of python like activepython from our sponsor you should already have numpy scipy scikit learn matplotlib and pandas installed if not do bash pip install numpy scipy scikit learn matplotlib pandas along with those tutorial wide imports we re also going to be making use of quandl here which you may need to separately install with bash pip install quandl i will note again in the first part of the code but the quandl module used to be imported with an upper case q but is now imported with a lower cased q in the video and sample codes it is upper cased to begin what is regression in terms of us using it with machine learning the goal is to take continuous data find the equation that best fits the data and be able forecast out a specific value with simple linear regression you are just simply doing this by creating a best fit line from here we can use the equation of that line to forecast out into the future where the date is the x axis what the price will be a popular use with regression is to predict stock prices this is done because we are considering the fluidity of price over time and attempting to forecast the next fluid price in the future using a continuous dataset regression is a form of supervised machine learning which is where the scientist teaches the machine by presenting features and then presenting the correct answer over and over to teach the machine once the machine is taught the scientist will usually test the machine on some unseen data where the scientist still knows what the correct answer is but the machine doesn t the machine s answers are compared to the known answers and the machine s accuracy can be measured if the accuracy is high enough the 
scientist may consider actually employing the algorithm in the real world since regression is so popularly used with stock prices we can start there with an example to begin we need data sometimes the data is easy to acquire and sometimes you have to go out and scrape it together like what we did in an older tutorial series using machine learning with stock fundamentals for investing in our case we re able to at least start with simple stock price and volume information from quandl to begin we ll start with data that grabs the stock price for alphabet previously google with the ticker of googl python import pandas as pd import quandl df quandl get wiki googl print df head note when filmed quandl s module was referenced with an upper case q now it is a lower case q so import quandl at this point we have date open high low close volume ex dividend date split ratio adj open adj high adj low adj close date adj volume awesome off to a good start we have the data but maybe a bit much to reference the intro there exists an entire machine learning category that aims to reduce the amount of input that we process in our case we have quite a few columns many are redundant a couple don t really change we can most likely agree that having both the regular columns and adjusted columns is redundant adjusted columns are the most ideal ones regular columns here are prices on the day but stocks have things called stock splits where suddenly share becomes something like shares thus the value of a share is halved but the value of the company has not halved adjusted columns are adjusted for stock splits over time which makes them more reliable for doing analysis thus let s go ahead and pair down our original dataframe a bit python df df now we just have the adjusted columns and the volume column a couple major points to make here many people talk about or hear about machine learning as if it is some sort of dark art that somehow generates value from nothing machine learning can 
highlight value if it is there but it has to actually be there you need meaningful data so how do you know if you have meaningful data my best suggestion is to just simply use your brain think about it are historical prices indicative of future prices some people think so but this has been continually disproven over time what about historical patterns this has a bit more merit when taken to the extremes which machine learning can help with but is overall fairly weak what about the relationship between price changes and volume over time along with historical patterns probably a bit better so as you can already see it is not the case that the more data the merrier but we instead want to use useful data at the same time raw data sometimes should be transformed consider daily volatility such as with the high minus low change how about daily percent change would you consider data that is simply the open high low close or data that is the close spread volatility change daily to be better i would expect the latter to be more ideal the former is all very similar data points the latter is created based on identical data from the former but it brings far more valuable information to the table thus not all of the data you have is useful and sometimes you need to do further manipulation on your data to make it even more valuable before feeding it through a machine learning algorithm let s go ahead and transform our data next python df df df df i went ahead and recorded the video version of this not realizing my stake that it was high minus low divided by close i meant to do high low divided by the low feel free to fix that if you like this creates a new column that is the spread based on the closing price which is our crude measure of volatility next we ll do daily percent change python df df df df now we will define a new dataframe as python df df print df head | 0 |

455,439 | 13,126,831,010 | IssuesEvent | 2020-08-06 09:15:51 | The-Codin-Hole/HotWired-Bot | https://api.github.com/repos/The-Codin-Hole/HotWired-Bot | closed | Cant Launch Bot From start.py, 'module' object is not callable | priority: 1 - high type: bug | [`start.py`](https://github.com/The-Codin-Hole/HotWired-Bot/blob/f2be6e60742bcb387e80fe40131fc8d0a5f8216a/start.py) file doesn't start the bot properly and raises an exception:

```

Traceback (most recent call last):

File "G:\New Downloads\HotWired-Bot\start.py", line 5, in <module>

main()

TypeError: 'module' object is not callable

``` | 1.0 | Cant Launch Bot From start.py, 'module' object is not callable - [`start.py`](https://github.com/The-Codin-Hole/HotWired-Bot/blob/f2be6e60742bcb387e80fe40131fc8d0a5f8216a/start.py) file doesn't start the bot properly and raises an exception:

```

Traceback (most recent call last):

File "G:\New Downloads\HotWired-Bot\start.py", line 5, in <module>

main()

TypeError: 'module' object is not callable

``` | non_main | cant launch bot from start py module object is not callable file doesn t start the bot properly and raises an exception traceback most recent call last file g new downloads hotwired bot start py line in main typeerror module object is not callable | 0 |

3,093 | 11,741,740,334 | IssuesEvent | 2020-03-11 22:32:02 | alacritty/alacritty | https://api.github.com/repos/alacritty/alacritty | closed | Failed to open input method | A - deps B - bug B - crash C - waiting on maintainer DS - X11 S - winit/glutin | Which operating system does the issue occur on? Ubuntu 18.04

If on linux, are you using X11 or Wayland? X11

the error:

```

RUST_BACKTRACE=1 alacritty

thread 'main' panicked at 'Failed to open input method: PotentialInputMethods {

xmodifiers: None,

fallbacks: [

PotentialInputMethod {

name: "@im=local",

successful: Some(

false

)

},

PotentialInputMethod {

name: "@im=",

successful: Some(

false

)

}

],

_xim_servers: Err(

GetPropertyError(

TypeMismatch(

0

)

)

)

}', /home/skariel/.cargo/registry/src/github.com-1ecc6299db9ec823/winit-0.15.1/src/platform/linux/x11/mod.rs:90:17

stack backtrace:

0: <unknown>

1: <unknown>

2: <unknown>

3: <unknown>

4: <unknown>

5: <unknown>

6: <unknown>

7: <unknown>

8: <unknown>

9: __libc_start_main

10: <unknown>

```

| True | Failed to open input method - Which operating system does the issue occur on? Ubuntu 18.04

If on linux, are you using X11 or Wayland? X11

the error:

```

RUST_BACKTRACE=1 alacritty

thread 'main' panicked at 'Failed to open input method: PotentialInputMethods {

xmodifiers: None,

fallbacks: [

PotentialInputMethod {

name: "@im=local",

successful: Some(

false

)

},

PotentialInputMethod {

name: "@im=",

successful: Some(

false

)

}

],

_xim_servers: Err(

GetPropertyError(

TypeMismatch(

0

)

)

)

}', /home/skariel/.cargo/registry/src/github.com-1ecc6299db9ec823/winit-0.15.1/src/platform/linux/x11/mod.rs:90:17

stack backtrace:

0: <unknown>

1: <unknown>

2: <unknown>

3: <unknown>

4: <unknown>

5: <unknown>

6: <unknown>

7: <unknown>

8: <unknown>

9: __libc_start_main

10: <unknown>

```

| main | failed to open input method which operating system does the issue occur on ubuntu if on linux are you using or wayland the error rust backtrace alacritty thread main panicked at failed to open input method potentialinputmethods xmodifiers none fallbacks potentialinputmethod name im local successful some false potentialinputmethod name im successful some false xim servers err getpropertyerror typemismatch home skariel cargo registry src github com winit src platform linux mod rs stack backtrace libc start main | 1 |

822,043 | 30,849,806,311 | IssuesEvent | 2023-08-02 15:55:18 | DDMAL/CantusDB | https://api.github.com/repos/DDMAL/CantusDB | closed | We should remove the extra line in each row of `searchms` results | priority: low cosmetic/accessibility | Poking around OldCantus for CU-related things, I just found a feature I had never seen before but that is not implemented in NewCantus. If this is a known difference, close the issue....

The results on a `searchms` page have a details button that accordions out to include some chant information.