Unnamed: 0 int64 1 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 7 112 | repo_url stringlengths 36 141 | action stringclasses 3 values | title stringlengths 3 438 | labels stringlengths 4 308 | body stringlengths 7 254k | index stringclasses 7 values | text_combine stringlengths 96 254k | label stringclasses 2 values | text stringlengths 96 246k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

20,236 | 3,800,035,845 | IssuesEvent | 2016-03-23 17:45:36 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | opened | circleci: failed tests (15094): TestCommitWaitState | Robot test-failure | The following test appears to have failed:

[#15094](https://circleci.com/gh/cockroachdb/cockroach/15094):

```

E160323 17:44:51.857301 client/txn.go:358 failure aborting transaction: kv/txn_coord_sender.go:344: writing transaction timed out, was aborted, or ran on multiple coordinators; abort caused by: node unavailable; try another peer

W160323 17:44:51.857409 kv/dist_sender.go:676 failed to invoke AdminSplit [/Table/50/0,/Min): storage/replica_command.go:1664: split at key /Table/50 failed: node unavailable; try another peer

I160323 17:44:51.857452 client/db.go:422 failed batch: storage/replica_command.go:1664: split at key /Table/50 failed: node unavailable; try another peer

E160323 17:44:51.857510 storage/queue.go:444 [split] range=5 [/Table/14-/Max): error: storage/split_queue.go:100: unable to split range=5 [/Table/14-/Max) at key "/Table/50/0": storage/replica_command.go:1664: split at key /Table/50 failed: node unavailable; try another peer

I160323 17:44:52.857210 /go/src/google.golang.org/grpc/clientconn.go:463 grpc: Conn.resetTransport failed to create client transport: connection error: desc = "transport: dial tcp 127.0.0.1:42530: getsockopt: connection refused"; Reconnecting to "127.0.0.1:42530"

panic: test timed out after 1m10s

goroutine 26124 [running]:

panic(0x14a0e00, 0xc821015be0)

/usr/local/go/src/runtime/panic.go:464 +0x3e6

testing.startAlarm.func1()

/usr/local/go/src/testing/testing.go:725 +0x14b

created by time.goFunc

/usr/local/go/src/time/sleep.go:129 +0x3a

goroutine 1 [chan receive]:

testing.RunTests(0x1beda10, 0x2376a40, 0x48, 0x48, 0xf8317da5b7241101)

/usr/local/go/src/testing/testing.go:583 +0x8d2

testing.(*M).Run(0xc820764f08, 0x11)

/usr/local/go/src/testing/testing.go:515 +0x81

github.com/cockroachdb/cockroach/sql_test.TestMain(0xc820764f08)

/go/src/github.com/cockroachdb/cockroach/sql/main_test.go:234 +0x26

main.main()

github.com/cockroachdb/cockroach/sql/_test/_testmain.go:382 +0x114

goroutine 17 [syscall, 1 minutes, locked to thread]:

runtime.goexit()

/usr/local/go/src/runtime/asm_amd64.s:1998 +0x1

goroutine 22 [chan receive]:

github.com/cockroachdb/cockroach/util/log.(*loggingT).flushDaemon(0x264b580)

/go/src/github.com/cockroachdb/cockroach/util/log/clog.go:1003 +0x64

created by github.com/cockroachdb/cockroach/util/log.init.1

/go/src/github.com/cockroachdb/cockroach/util/log/clog.go:604 +0x8a

goroutine 25032 [IO wait]:

net.runtime_pollWait(0x7fb5b3e5d5f8, 0x72, 0xc8201bc800)

/usr/local/go/src/runtime/netpoll.go:160 +0x60

net.(*pollDesc).Wait(0xc820d0b560, 0x72, 0x0, 0x0)

/usr/local/go/src/net/fd_poll_runtime.go:73 +0x3a

net.(*pollDesc).WaitRead(0xc820d0b560, 0x0, 0x0)

/usr/local/go/src/net/fd_poll_runtime.go:78 +0x36

net.(*netFD).Read(0xc820d0b500, 0xc8201bc800, 0x400, 0x400, 0x0, 0x7fb5b3e45028, 0xc82007e0b0)

/usr/local/go/src/net/fd_unix.go:250 +0x23a

net.(*conn).Read(0xc8200262e0, 0xc8201bc800, 0x400, 0x400, 0x0, 0x0, 0x0)

/usr/local/go/src/net/net.go:172 +0xe4

--

google.golang.org/grpc.(*Server).handleRawConn(0xc82116ae00, 0x7fb5b3e5fd98, 0xc82136a580)

/go/src/google.golang.org/grpc/server.go:289 +0x4ee

created by google.golang.org/grpc.(*Server).Serve

/go/src/google.golang.org/grpc/server.go:261 +0x372

goroutine 24985 [semacquire]:

sync.runtime_Semacquire(0xc821546024)

/usr/local/go/src/runtime/sema.go:47 +0x26

sync.(*WaitGroup).Wait(0xc821546018)

/usr/local/go/src/sync/waitgroup.go:127 +0xb4

github.com/cockroachdb/cockroach/util/stop.(*Stopper).Stop(0xc821546000)

/go/src/github.com/cockroachdb/cockroach/util/stop/stopper.go:302 +0x1dd

github.com/cockroachdb/cockroach/server.(*Server).Stop(0xc8206780d0)

/go/src/github.com/cockroachdb/cockroach/server/server.go:359 +0x28

github.com/cockroachdb/cockroach/server.(*TestServer).Stop(0xc82123a030)

/go/src/github.com/cockroachdb/cockroach/server/testserver.go:318 +0x5d

--

testing.tRunner(0xc820090000, 0x23770a0)

/usr/local/go/src/testing/testing.go:473 +0x98

created by testing.RunTests

/usr/local/go/src/testing/testing.go:582 +0x892

goroutine 25065 [sleep]:

time.Sleep(0x6e51796d)

/usr/local/go/src/runtime/time.go:59 +0xf9

google.golang.org/grpc.(*Conn).resetTransport(0xc8209abb20, 0xad5200, 0x0, 0x0)

/go/src/google.golang.org/grpc/clientconn.go:461 +0x79f

google.golang.org/grpc.NewConn.func1(0xc8209abb20)

/go/src/google.golang.org/grpc/clientconn.go:317 +0x2a

created by google.golang.org/grpc.NewConn

/go/src/google.golang.org/grpc/clientconn.go:323 +0x509

goroutine 25066 [select]:

google.golang.org/grpc.(*Conn).Wait(0xc8209abb20, 0x7fb5b3e5f9a0, 0xc8209ce000, 0x0, 0x0, 0x0, 0x0)

/go/src/google.golang.org/grpc/clientconn.go:552 +0x360

google.golang.org/grpc.(*unicastPicker).Pick(0xc8209a4420, 0x7fb5b3e5f9a0, 0xc8209ce000, 0x0, 0x0, 0x0, 0x0)

/go/src/google.golang.org/grpc/picker.go:84 +0x51

google.golang.org/grpc.Invoke(0x7fb5b3e5f9a0, 0xc8209ce000, 0x1968c40, 0x1d, 0x17da680, 0xc820875e40, 0x17cc9e0, 0xc821012640, 0xc820958a00, 0x0, ...)

/go/src/google.golang.org/grpc/call.go:154 +0x6d0

github.com/cockroachdb/cockroach/rpc.(*heartbeatClient).Ping(0xc8200266d8, 0x7fb5b3e5f9a0, 0xc8209ce000, 0xc820875e40, 0x0, 0x0, 0x0, 0x401000000000000, 0x0, 0x0)

/go/src/github.com/cockroachdb/cockroach/rpc/heartbeat.pb.go:115 +0xec

github.com/cockroachdb/cockroach/rpc.(*Context).runHeartbeat(0xc820a3ab40, 0xc820958a00, 0xc821014cd0, 0xf, 0x0, 0x0)

/go/src/github.com/cockroachdb/cockroach/rpc/context.go:187 +0x2b5

--

github.com/cockroachdb/cockroach/util/stop.(*Stopper).RunWorker.func1(0xc821546000, 0xc820b36870)

/go/src/github.com/cockroachdb/cockroach/util/stop/stopper.go:139 +0x52

created by github.com/cockroachdb/cockroach/util/stop.(*Stopper).RunWorker

/go/src/github.com/cockroachdb/cockroach/util/stop/stopper.go:140 +0x62

goroutine 24992 [chan receive]:

github.com/cockroachdb/cockroach/storage/engine.(*RocksDB).Open.func1(0xc82084ea20)

/go/src/github.com/cockroachdb/cockroach/storage/engine/rocksdb.go:135 +0x3a

created by github.com/cockroachdb/cockroach/storage/engine.(*RocksDB).Open

/go/src/github.com/cockroachdb/cockroach/storage/engine/rocksdb.go:136 +0x7c9

goroutine 25002 [chan receive]:

github.com/cockroachdb/cockroach/server.RegisterAdminHandlerFromEndpoint.func1.1(0x7fb5b3e93a80, 0xc8207ba6c0, 0xc8209dc000, 0xc82095bc90, 0xf)

/go/src/github.com/cockroachdb/cockroach/server/admin.pb.gw.go:177 +0x51

created by github.com/cockroachdb/cockroach/server.RegisterAdminHandlerFromEndpoint.func1

/go/src/github.com/cockroachdb/cockroach/server/admin.pb.gw.go:181 +0x205

goroutine 25046 [select]:

google.golang.org/grpc/transport.(*http2Server).controller(0xc8202c61b0)

/go/src/google.golang.org/grpc/transport/http2_server.go:610 +0x5da

created by google.golang.org/grpc/transport.newHTTP2Server

/go/src/google.golang.org/grpc/transport/http2_server.go:134 +0x853

goroutine 25048 [select]:

google.golang.org/grpc/transport.(*http2Client).controller(0xc820b43770)

/go/src/google.golang.org/grpc/transport/http2_client.go:831 +0x5da

created by google.golang.org/grpc/transport.newHTTP2Client

/go/src/google.golang.org/grpc/transport/http2_client.go:194 +0x153b

goroutine 25049 [IO wait]:

net.runtime_pollWait(0x7fb5b3e5d178, 0x72, 0xc8210ef000)

/usr/local/go/src/runtime/netpoll.go:160 +0x60

net.(*pollDesc).Wait(0xc820d0a140, 0x72, 0x0, 0x0)

/usr/local/go/src/net/fd_poll_runtime.go:73 +0x3a

net.(*pollDesc).WaitRead(0xc820d0a140, 0x0, 0x0)

/usr/local/go/src/net/fd_poll_runtime.go:78 +0x36

net.(*netFD).Read(0xc820d0a0e0, 0xc8210ef000, 0x1000, 0x1000, 0x0, 0x7fb5b3e45028, 0xc82007e0b0)

/usr/local/go/src/net/fd_unix.go:250 +0x23a

net.(*conn).Read(0xc820026000, 0xc8210ef000, 0x1000, 0x1000, 0x0, 0x0, 0x0)

/usr/local/go/src/net/net.go:172 +0xe4

--

google.golang.org/grpc/transport.(*http2Client).reader(0xc820b43770)

/go/src/google.golang.org/grpc/transport/http2_client.go:754 +0xef

created by google.golang.org/grpc/transport.newHTTP2Client

/go/src/google.golang.org/grpc/transport/http2_client.go:200 +0x159a

goroutine 25001 [select]:

google.golang.org/grpc.(*Conn).transportMonitor(0xc8212f6380)

/go/src/google.golang.org/grpc/clientconn.go:511 +0x1d3

google.golang.org/grpc.NewConn.func1(0xc8212f6380)

/go/src/google.golang.org/grpc/clientconn.go:322 +0x1b5

created by google.golang.org/grpc.NewConn

/go/src/google.golang.org/grpc/clientconn.go:323 +0x509

FAIL github.com/cockroachdb/cockroach/sql 70.044s

=== RUN TestEval

--- PASS: TestEval (0.01s)

=== RUN TestEvalError

--- PASS: TestEvalError (0.00s)

=== RUN TestEvalComparisonExprCaching

--- PASS: TestEvalComparisonExprCaching (0.00s)

=== RUN TestSimilarEscape

--- PASS: TestSimilarEscape (0.00s)

=== RUN TestQualifiedNameString

--- PASS: TestQualifiedNameString (0.00s)

```

Please assign, take a look and update the issue accordingly. | 1.0 | circleci: failed tests (15094): TestCommitWaitState - The following test appears to have failed:

[#15094](https://circleci.com/gh/cockroachdb/cockroach/15094):

```

E160323 17:44:51.857301 client/txn.go:358 failure aborting transaction: kv/txn_coord_sender.go:344: writing transaction timed out, was aborted, or ran on multiple coordinators; abort caused by: node unavailable; try another peer

W160323 17:44:51.857409 kv/dist_sender.go:676 failed to invoke AdminSplit [/Table/50/0,/Min): storage/replica_command.go:1664: split at key /Table/50 failed: node unavailable; try another peer

I160323 17:44:51.857452 client/db.go:422 failed batch: storage/replica_command.go:1664: split at key /Table/50 failed: node unavailable; try another peer

E160323 17:44:51.857510 storage/queue.go:444 [split] range=5 [/Table/14-/Max): error: storage/split_queue.go:100: unable to split range=5 [/Table/14-/Max) at key "/Table/50/0": storage/replica_command.go:1664: split at key /Table/50 failed: node unavailable; try another peer

I160323 17:44:52.857210 /go/src/google.golang.org/grpc/clientconn.go:463 grpc: Conn.resetTransport failed to create client transport: connection error: desc = "transport: dial tcp 127.0.0.1:42530: getsockopt: connection refused"; Reconnecting to "127.0.0.1:42530"

panic: test timed out after 1m10s

goroutine 26124 [running]:

panic(0x14a0e00, 0xc821015be0)

/usr/local/go/src/runtime/panic.go:464 +0x3e6

testing.startAlarm.func1()

/usr/local/go/src/testing/testing.go:725 +0x14b

created by time.goFunc

/usr/local/go/src/time/sleep.go:129 +0x3a

goroutine 1 [chan receive]:

testing.RunTests(0x1beda10, 0x2376a40, 0x48, 0x48, 0xf8317da5b7241101)

/usr/local/go/src/testing/testing.go:583 +0x8d2

testing.(*M).Run(0xc820764f08, 0x11)

/usr/local/go/src/testing/testing.go:515 +0x81

github.com/cockroachdb/cockroach/sql_test.TestMain(0xc820764f08)

/go/src/github.com/cockroachdb/cockroach/sql/main_test.go:234 +0x26

main.main()

github.com/cockroachdb/cockroach/sql/_test/_testmain.go:382 +0x114

goroutine 17 [syscall, 1 minutes, locked to thread]:

runtime.goexit()

/usr/local/go/src/runtime/asm_amd64.s:1998 +0x1

goroutine 22 [chan receive]:

github.com/cockroachdb/cockroach/util/log.(*loggingT).flushDaemon(0x264b580)

/go/src/github.com/cockroachdb/cockroach/util/log/clog.go:1003 +0x64

created by github.com/cockroachdb/cockroach/util/log.init.1

/go/src/github.com/cockroachdb/cockroach/util/log/clog.go:604 +0x8a

goroutine 25032 [IO wait]:

net.runtime_pollWait(0x7fb5b3e5d5f8, 0x72, 0xc8201bc800)

/usr/local/go/src/runtime/netpoll.go:160 +0x60

net.(*pollDesc).Wait(0xc820d0b560, 0x72, 0x0, 0x0)

/usr/local/go/src/net/fd_poll_runtime.go:73 +0x3a

net.(*pollDesc).WaitRead(0xc820d0b560, 0x0, 0x0)

/usr/local/go/src/net/fd_poll_runtime.go:78 +0x36

net.(*netFD).Read(0xc820d0b500, 0xc8201bc800, 0x400, 0x400, 0x0, 0x7fb5b3e45028, 0xc82007e0b0)

/usr/local/go/src/net/fd_unix.go:250 +0x23a

net.(*conn).Read(0xc8200262e0, 0xc8201bc800, 0x400, 0x400, 0x0, 0x0, 0x0)

/usr/local/go/src/net/net.go:172 +0xe4

--

google.golang.org/grpc.(*Server).handleRawConn(0xc82116ae00, 0x7fb5b3e5fd98, 0xc82136a580)

/go/src/google.golang.org/grpc/server.go:289 +0x4ee

created by google.golang.org/grpc.(*Server).Serve

/go/src/google.golang.org/grpc/server.go:261 +0x372

goroutine 24985 [semacquire]:

sync.runtime_Semacquire(0xc821546024)

/usr/local/go/src/runtime/sema.go:47 +0x26

sync.(*WaitGroup).Wait(0xc821546018)

/usr/local/go/src/sync/waitgroup.go:127 +0xb4

github.com/cockroachdb/cockroach/util/stop.(*Stopper).Stop(0xc821546000)

/go/src/github.com/cockroachdb/cockroach/util/stop/stopper.go:302 +0x1dd

github.com/cockroachdb/cockroach/server.(*Server).Stop(0xc8206780d0)

/go/src/github.com/cockroachdb/cockroach/server/server.go:359 +0x28

github.com/cockroachdb/cockroach/server.(*TestServer).Stop(0xc82123a030)

/go/src/github.com/cockroachdb/cockroach/server/testserver.go:318 +0x5d

--

testing.tRunner(0xc820090000, 0x23770a0)

/usr/local/go/src/testing/testing.go:473 +0x98

created by testing.RunTests

/usr/local/go/src/testing/testing.go:582 +0x892

goroutine 25065 [sleep]:

time.Sleep(0x6e51796d)

/usr/local/go/src/runtime/time.go:59 +0xf9

google.golang.org/grpc.(*Conn).resetTransport(0xc8209abb20, 0xad5200, 0x0, 0x0)

/go/src/google.golang.org/grpc/clientconn.go:461 +0x79f

google.golang.org/grpc.NewConn.func1(0xc8209abb20)

/go/src/google.golang.org/grpc/clientconn.go:317 +0x2a

created by google.golang.org/grpc.NewConn

/go/src/google.golang.org/grpc/clientconn.go:323 +0x509

goroutine 25066 [select]:

google.golang.org/grpc.(*Conn).Wait(0xc8209abb20, 0x7fb5b3e5f9a0, 0xc8209ce000, 0x0, 0x0, 0x0, 0x0)

/go/src/google.golang.org/grpc/clientconn.go:552 +0x360

google.golang.org/grpc.(*unicastPicker).Pick(0xc8209a4420, 0x7fb5b3e5f9a0, 0xc8209ce000, 0x0, 0x0, 0x0, 0x0)

/go/src/google.golang.org/grpc/picker.go:84 +0x51

google.golang.org/grpc.Invoke(0x7fb5b3e5f9a0, 0xc8209ce000, 0x1968c40, 0x1d, 0x17da680, 0xc820875e40, 0x17cc9e0, 0xc821012640, 0xc820958a00, 0x0, ...)

/go/src/google.golang.org/grpc/call.go:154 +0x6d0

github.com/cockroachdb/cockroach/rpc.(*heartbeatClient).Ping(0xc8200266d8, 0x7fb5b3e5f9a0, 0xc8209ce000, 0xc820875e40, 0x0, 0x0, 0x0, 0x401000000000000, 0x0, 0x0)

/go/src/github.com/cockroachdb/cockroach/rpc/heartbeat.pb.go:115 +0xec

github.com/cockroachdb/cockroach/rpc.(*Context).runHeartbeat(0xc820a3ab40, 0xc820958a00, 0xc821014cd0, 0xf, 0x0, 0x0)

/go/src/github.com/cockroachdb/cockroach/rpc/context.go:187 +0x2b5

--

github.com/cockroachdb/cockroach/util/stop.(*Stopper).RunWorker.func1(0xc821546000, 0xc820b36870)

/go/src/github.com/cockroachdb/cockroach/util/stop/stopper.go:139 +0x52

created by github.com/cockroachdb/cockroach/util/stop.(*Stopper).RunWorker

/go/src/github.com/cockroachdb/cockroach/util/stop/stopper.go:140 +0x62

goroutine 24992 [chan receive]:

github.com/cockroachdb/cockroach/storage/engine.(*RocksDB).Open.func1(0xc82084ea20)

/go/src/github.com/cockroachdb/cockroach/storage/engine/rocksdb.go:135 +0x3a

created by github.com/cockroachdb/cockroach/storage/engine.(*RocksDB).Open

/go/src/github.com/cockroachdb/cockroach/storage/engine/rocksdb.go:136 +0x7c9

goroutine 25002 [chan receive]:

github.com/cockroachdb/cockroach/server.RegisterAdminHandlerFromEndpoint.func1.1(0x7fb5b3e93a80, 0xc8207ba6c0, 0xc8209dc000, 0xc82095bc90, 0xf)

/go/src/github.com/cockroachdb/cockroach/server/admin.pb.gw.go:177 +0x51

created by github.com/cockroachdb/cockroach/server.RegisterAdminHandlerFromEndpoint.func1

/go/src/github.com/cockroachdb/cockroach/server/admin.pb.gw.go:181 +0x205

goroutine 25046 [select]:

google.golang.org/grpc/transport.(*http2Server).controller(0xc8202c61b0)

/go/src/google.golang.org/grpc/transport/http2_server.go:610 +0x5da

created by google.golang.org/grpc/transport.newHTTP2Server

/go/src/google.golang.org/grpc/transport/http2_server.go:134 +0x853

goroutine 25048 [select]:

google.golang.org/grpc/transport.(*http2Client).controller(0xc820b43770)

/go/src/google.golang.org/grpc/transport/http2_client.go:831 +0x5da

created by google.golang.org/grpc/transport.newHTTP2Client

/go/src/google.golang.org/grpc/transport/http2_client.go:194 +0x153b

goroutine 25049 [IO wait]:

net.runtime_pollWait(0x7fb5b3e5d178, 0x72, 0xc8210ef000)

/usr/local/go/src/runtime/netpoll.go:160 +0x60

net.(*pollDesc).Wait(0xc820d0a140, 0x72, 0x0, 0x0)

/usr/local/go/src/net/fd_poll_runtime.go:73 +0x3a

net.(*pollDesc).WaitRead(0xc820d0a140, 0x0, 0x0)

/usr/local/go/src/net/fd_poll_runtime.go:78 +0x36

net.(*netFD).Read(0xc820d0a0e0, 0xc8210ef000, 0x1000, 0x1000, 0x0, 0x7fb5b3e45028, 0xc82007e0b0)

/usr/local/go/src/net/fd_unix.go:250 +0x23a

net.(*conn).Read(0xc820026000, 0xc8210ef000, 0x1000, 0x1000, 0x0, 0x0, 0x0)

/usr/local/go/src/net/net.go:172 +0xe4

--

google.golang.org/grpc/transport.(*http2Client).reader(0xc820b43770)

/go/src/google.golang.org/grpc/transport/http2_client.go:754 +0xef

created by google.golang.org/grpc/transport.newHTTP2Client

/go/src/google.golang.org/grpc/transport/http2_client.go:200 +0x159a

goroutine 25001 [select]:

google.golang.org/grpc.(*Conn).transportMonitor(0xc8212f6380)

/go/src/google.golang.org/grpc/clientconn.go:511 +0x1d3

google.golang.org/grpc.NewConn.func1(0xc8212f6380)

/go/src/google.golang.org/grpc/clientconn.go:322 +0x1b5

created by google.golang.org/grpc.NewConn

/go/src/google.golang.org/grpc/clientconn.go:323 +0x509

FAIL github.com/cockroachdb/cockroach/sql 70.044s

=== RUN TestEval

--- PASS: TestEval (0.01s)

=== RUN TestEvalError

--- PASS: TestEvalError (0.00s)

=== RUN TestEvalComparisonExprCaching

--- PASS: TestEvalComparisonExprCaching (0.00s)

=== RUN TestSimilarEscape

--- PASS: TestSimilarEscape (0.00s)

=== RUN TestQualifiedNameString

--- PASS: TestQualifiedNameString (0.00s)

```

Please assign, take a look and update the issue accordingly. | non_main | circleci failed tests testcommitwaitstate the following test appears to have failed client txn go failure aborting transaction kv txn coord sender go writing transaction timed out was aborted or ran on multiple coordinators abort caused by node unavailable try another peer kv dist sender go failed to invoke adminsplit table min storage replica command go split at key table failed node unavailable try another peer client db go failed batch storage replica command go split at key table failed node unavailable try another peer storage queue go range table max error storage split queue go unable to split range table max at key table storage replica command go split at key table failed node unavailable try another peer go src google golang org grpc clientconn go grpc conn resettransport failed to create client transport connection error desc transport dial tcp getsockopt connection refused reconnecting to panic test timed out after goroutine panic usr local go src runtime panic go testing startalarm usr local go src testing testing go created by time gofunc usr local go src time sleep go goroutine testing runtests usr local go src testing testing go testing m run usr local go src testing testing go github com cockroachdb cockroach sql test testmain go src github com cockroachdb cockroach sql main test go main main github com cockroachdb cockroach sql test testmain go goroutine runtime goexit usr local go src runtime asm s goroutine github com cockroachdb cockroach util log loggingt flushdaemon go src github com cockroachdb cockroach util log clog go created by github com cockroachdb cockroach util log init go src github com cockroachdb cockroach util log clog go goroutine net runtime pollwait usr local go src runtime netpoll go net polldesc wait usr local go src net fd poll runtime go net polldesc waitread usr local go src net fd poll runtime go net netfd read usr local go src net fd unix go net 
conn read usr local go src net net go google golang org grpc server handlerawconn go src google golang org grpc server go created by google golang org grpc server serve go src google golang org grpc server go goroutine sync runtime semacquire usr local go src runtime sema go sync waitgroup wait usr local go src sync waitgroup go github com cockroachdb cockroach util stop stopper stop go src github com cockroachdb cockroach util stop stopper go github com cockroachdb cockroach server server stop go src github com cockroachdb cockroach server server go github com cockroachdb cockroach server testserver stop go src github com cockroachdb cockroach server testserver go testing trunner usr local go src testing testing go created by testing runtests usr local go src testing testing go goroutine time sleep usr local go src runtime time go google golang org grpc conn resettransport go src google golang org grpc clientconn go google golang org grpc newconn go src google golang org grpc clientconn go created by google golang org grpc newconn go src google golang org grpc clientconn go goroutine google golang org grpc conn wait go src google golang org grpc clientconn go google golang org grpc unicastpicker pick go src google golang org grpc picker go google golang org grpc invoke go src google golang org grpc call go github com cockroachdb cockroach rpc heartbeatclient ping go src github com cockroachdb cockroach rpc heartbeat pb go github com cockroachdb cockroach rpc context runheartbeat go src github com cockroachdb cockroach rpc context go github com cockroachdb cockroach util stop stopper runworker go src github com cockroachdb cockroach util stop stopper go created by github com cockroachdb cockroach util stop stopper runworker go src github com cockroachdb cockroach util stop stopper go goroutine github com cockroachdb cockroach storage engine rocksdb open go src github com cockroachdb cockroach storage engine rocksdb go created by github com cockroachdb cockroach 
storage engine rocksdb open go src github com cockroachdb cockroach storage engine rocksdb go goroutine github com cockroachdb cockroach server registeradminhandlerfromendpoint go src github com cockroachdb cockroach server admin pb gw go created by github com cockroachdb cockroach server registeradminhandlerfromendpoint go src github com cockroachdb cockroach server admin pb gw go goroutine google golang org grpc transport controller go src google golang org grpc transport server go created by google golang org grpc transport go src google golang org grpc transport server go goroutine google golang org grpc transport controller go src google golang org grpc transport client go created by google golang org grpc transport go src google golang org grpc transport client go goroutine net runtime pollwait usr local go src runtime netpoll go net polldesc wait usr local go src net fd poll runtime go net polldesc waitread usr local go src net fd poll runtime go net netfd read usr local go src net fd unix go net conn read usr local go src net net go google golang org grpc transport reader go src google golang org grpc transport client go created by google golang org grpc transport go src google golang org grpc transport client go goroutine google golang org grpc conn transportmonitor go src google golang org grpc clientconn go google golang org grpc newconn go src google golang org grpc clientconn go created by google golang org grpc newconn go src google golang org grpc clientconn go fail github com cockroachdb cockroach sql run testeval pass testeval run testevalerror pass testevalerror run testevalcomparisonexprcaching pass testevalcomparisonexprcaching run testsimilarescape pass testsimilarescape run testqualifiednamestring pass testqualifiednamestring please assign take a look and update the issue accordingly | 0 |

75,468 | 9,256,369,583 | IssuesEvent | 2019-03-16 18:27:47 | flutter/flutter | https://api.github.com/repos/flutter/flutter | closed | Duplicate GlobalKey when using MaterialApp(builder) on Hot Restart | f: material design framework | Suddenly, (only) hot restarting causes this issue.

```dart

runApp(MaterialApp(builder: (_, child) => AnyBuilder(builder: (_) => AnyWidget(child: child)), ...)) // app contains functionality

```

```

Multiple widgets used the same GlobalKey.

The key [GlobalObjectKey<NavigatorState> _WidgetsAppState#bd478] was used by multiple widgets. The

parents of those widgets were:

- AnyBuilder

- AnyWidget

A GlobalKey can only be specified on one widget at a time in the widget tree.

```

In this case I tested `AnyBuilder` with `FutureBuilder` and `ValueListenableBuilder` and `AnyWidget` can even be a `Container`.

This always happens when hot restarting the application and only then. I looked at the Android Studio `Local History` of my project from before this behavior occurred and after it occurred and the `builder` in the `MaterialApp` has been unchanged. Having said that, there are many other widgets included in the actual code, e.g. `InheritedWidget`'s before `MaterialApp` etc.

It is just really weird that this happens because I am not using any `GlobalKey`'s that could cause this and the exception is just really weird because neither `AnyBuilder` nor `AnyWidget` was ever supplied with any `Key` by me. I tried to change the `builder` in `MaterialApp` in many different ways but this bug (?) remains.

Oh, and since this issue has been around my workflow is `Hot Restart -> Hot Reload` because a hot reload will fix it (otherwise the application is unusable because this `builder` sits at the top of the widget tree).

`Channel master, v1.3.10-pre.27` | 1.0 | Duplicate GlobalKey when using MaterialApp(builder) on Hot Restart - Suddenly, (only) hot restarting causes this issue.

```dart

runApp(MaterialApp(builder: (_, child) => AnyBuilder(builder: (_) => AnyWidget(child: child)), ...)) // app contains functionality

```

```

Multiple widgets used the same GlobalKey.

The key [GlobalObjectKey<NavigatorState> _WidgetsAppState#bd478] was used by multiple widgets. The

parents of those widgets were:

- AnyBuilder

- AnyWidget

A GlobalKey can only be specified on one widget at a time in the widget tree.

```

In this case I tested `AnyBuilder` with `FutureBuilder` and `ValueListenableBuilder` and `AnyWidget` can even be a `Container`.

This always happens when hot restarting the application and only then. I looked at the Android Studio `Local History` of my project from before this behavior occurred and after it occurred and the `builder` in the `MaterialApp` has been unchanged. Having said that, there are many other widgets included in the actual code, e.g. `InheritedWidget`'s before `MaterialApp` etc.

It is just really weird that this happens because I am not using any `GlobalKey`'s that could cause this and the exception is just really weird because neither `AnyBuilder` nor `AnyWidget` was ever supplied with any `Key` by me. I tried to change the `builder` in `MaterialApp` in many different ways but this bug (?) remains.

Oh, and since this issue has been around my workflow is `Hot Restart -> Hot Reload` because a hot reload will fix it (otherwise the application is unusable because this `builder` sits at the top of the widget tree).

`Channel master, v1.3.10-pre.27` | non_main | duplicate globalkey when using materialapp builder on hot restart suddenly only hot restarting causes this issue dart runapp materialapp builder child anybuilder builder anywidget child child app contains functionality multiple widgets used the same globalkey the key was used by multiple widgets the parents of those widgets were anybuilder anywidget a globalkey can only be specified on one widget at a time in the widget tree in this case i tested anybuilder with futurebuilder and valuelistenablebuilder and anywidget can even be a container this always happens when hot restarting the application and only then i looked at the android studio local history of my project from before this behavior occured and after it occured and the builder in the materialapp has been unchanged having said that there are many other widgets included in the actual code e g inheritedwidget s before materialapp etc it is just really weird that this happens because i am not using any globalkey s that could cause this and the exception is just really weird because neither anybuilder nor anywidget was ever supplied with any key by me i tried to change the builder in materialapp in many different ways but this bug remains oh and since this issue has been around my workflow is hot restart hot reload because a hot reload will fix it otherwise the application is unusable because this builder sits at the top of the widget tree channel master pre | 0 |

5,143 | 26,222,057,709 | IssuesEvent | 2023-01-04 15:33:55 | NIAEFEUP/website-niaefeup-backend | https://api.github.com/repos/NIAEFEUP/website-niaefeup-backend | closed | global: refactor DTO structure | maintainability | Until a while ago, all the DTOs we had were a direct mapping between DTO and model, so we had a `Dto` class with useful methods like `create()` and `update()`. However, we quickly realized we need more than that kind of DTO (see discussion in https://github.com/NIAEFEUP/website-niaefeup-backend/pull/54#discussion_r1038903546). The result was a few DTOs residing alongside the controller classes, which is not ideal.

The discussion above proposes the following solution:

```

dto/

model/

auth/

otherSemanticUseCase/

```

This `dto` package should either reside in the global scope of the application or inside the `controller` package, I'll leave that to discussion. It'd also be nice to consider cases of model DTOs that need more fields than the respective model's fields (e.g. creating a project with a list of account IDs to be used as its team). | True | global: refactor DTO structure - Until a while ago, all the DTOs we had were a direct mapping between DTO and model, so we had a `Dto` class with useful methods like `create()` and `update()`. However, we quickly realized we need more than that kind of DTO (see discussion in https://github.com/NIAEFEUP/website-niaefeup-backend/pull/54#discussion_r1038903546). The result was a few DTOs residing alongside the controller classes, which is not ideal.

The discussion above proposes the following solution:

```

dto/

model/

auth/

otherSemanticUseCase/

```

This `dto` package should either reside in the global scope of the application or inside the `controller` package, I'll leave that to discussion. It'd also be nice to consider cases of model DTOs that need more fields than the respective model's fields (e.g. creating a project with a list of account IDs to be used as its team). | main | global refactor dto structure until a while ago all the dtos we had were a direct mapping between dto and model so we had a dto class with useful methods like create and update however we quickly realized we need more than that kind of dto see discussion in the result was a few dtos residing alongside the controller classes which is not ideal the discussion above proposes the following solution dto model auth othersemanticusecase this dto package should either reside in the global scope of the application or inside the controller package i ll leave that to discussion it d also be nice to consider cases of model dtos that need more fields than the respective model s fields e g creating a project with a list of account ids to be used as its team | 1 |

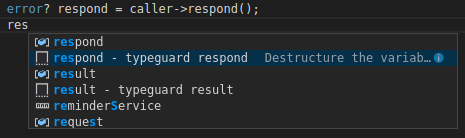

125,515 | 10,345,260,024 | IssuesEvent | 2019-09-04 13:07:23 | ballerina-platform/ballerina-lang | https://api.github.com/repos/ballerina-platform/ballerina-lang | closed | Typeguard auto complete not working as expected for union with error type | Area/Tooling BetaTesting Component/LanguageServer Priority/High Type/Bug | **Description:**

The type guard generated has an additional `else` keyword

```ballerina

error? respond = caller->respond();

if (respond is error) {

} else else {

}

```

**Steps to reproduce:**

Click on the type guard option on any variable of union type which includes only two types from which one of them is error type

i.e.: type guard generation on `boolean|int|error x = true;` works but fails for `boolean|error x = true;`

**Affected Versions:**

Ballerina 1.0.0-beta-SNAPSHOT

VSCode Version: 1.36.1 | 1.0 | Typeguard auto complete not working as expected for union with error type - **Description:**

The type guard generated has an additional `else` keyword

```ballerina

error? respond = caller->respond();

if (respond is error) {

} else else {

}

```

**Steps to reproduce:**

Click on the type guard option on any variable of union type which includes only two types from which one of them is error type

i.e.: type guard generation on `boolean|int|error x = true;` works but fails for `boolean|error x = true;`

**Affected Versions:**

Ballerina 1.0.0-beta-SNAPSHOT

VSCode Version: 1.36.1 | non_main | typeguard auto complete not working as expected for union with error type description the type guard generated has an additional else keyword ballerina error respond caller respond if respond is error else else steps to reproduce click on the type guard option on any variable of union type which includes only two types from which one of them is error type i e type guard generation on boolean int error x true works but fails for boolean error x true affected versions ballerina beta snapshot vscode version | 0 |

1,903 | 6,577,556,021 | IssuesEvent | 2017-09-12 01:44:15 | ansible/ansible-modules-core | https://api.github.com/repos/ansible/ansible-modules-core | closed | mount is not idempotent for bind mount (Ubuntu 14.04, ansible 2.0.1.0) | affects_2.0 bug_report waiting_on_maintainer | ##### Issue Type:

- Bug Report

##### Plugin Name:

mount

##### Ansible Version:

```

ansible 2.0.1.0

config file = /etc/ansible/ansible.cfg

configured module search path = Default w/o overrides

```

##### Ansible Configuration:

no changes from default

##### Environment:

Ubuntu 14.04 control host and target host, but see below for exact steps to reproduce

##### Summary:

Using the mount module to set up and mount a bind mount is not idempotent.

##### Steps To Reproduce:

The following playbook illustrates the problem:

```

---

- hosts: all

tasks:

- file: path=/tmp/1 state=directory

- file: path=/tmp/2 state=directory

- mount: src=/tmp/1 name=/tmp/2 fstype=- opts=bind state=mounted

```

Here's a detailed recipe for reproducing this if you run into trouble.

- Launch an instance in ec2 off the latest ubuntu trusty AMI. A t2.nano is sufficient

- On the AMI:

```

sudo -s

apt-get -y install software-properties-common

apt-add-repository -y ppa:ansible/ansible

apt-get update

apt-get -y install ansible

cd /tmp

cat >a.yml <<EOF

---

- hosts: all

tasks:

- file: path=/tmp/1 state=directory

- file: path=/tmp/2 state=directory

- mount: src=/tmp/1 name=/tmp/2 fstype=- opts=bind state=mounted

EOF

cat >local <<EOF

[local]

127.0.0.1 ansible_connection=local

EOF

ansible-playbook -i local a.yml

ansible-playbook -i local a.yml

```

##### Expected Results:

The second time, nothing should have changed since /tmp/1 is already mounted on /tmp/2

##### Actual Results:

The directory was mounted again. The line:

```

/tmp/1 on /tmp/2 type none (rw,bind)

```

appears twice in the output of `mount`.

| True | mount is not idempotent for bind mount (Ubuntu 14.04, ansible 2.0.1.0) - ##### Issue Type:

- Bug Report

##### Plugin Name:

mount

##### Ansible Version:

```

ansible 2.0.1.0

config file = /etc/ansible/ansible.cfg

configured module search path = Default w/o overrides

```

##### Ansible Configuration:

no changes from default

##### Environment:

Ubuntu 14.04 control host and target host, but see below for exact steps to reproduce

##### Summary:

Using the mount module to set up and mount a bind mount is not idempotent.

##### Steps To Reproduce:

The following playbook illustrates the problem:

```

---

- hosts: all

tasks:

- file: path=/tmp/1 state=directory

- file: path=/tmp/2 state=directory

- mount: src=/tmp/1 name=/tmp/2 fstype=- opts=bind state=mounted

```

Here's a detailed recipe for reproducing this if you run into trouble.

- Launch an instance in ec2 off the latest ubuntu trusty AMI. A t2.nano is sufficient

- On the AMI:

```

sudo -s

apt-get -y install software-properties-common

apt-add-repository -y ppa:ansible/ansible

apt-get update

apt-get -y install ansible

cd /tmp

cat >a.yml <<EOF

---

- hosts: all

tasks:

- file: path=/tmp/1 state=directory

- file: path=/tmp/2 state=directory

- mount: src=/tmp/1 name=/tmp/2 fstype=- opts=bind state=mounted

EOF

cat >local <<EOF

[local]

127.0.0.1 ansible_connection=local

EOF

ansible-playbook -i local a.yml

ansible-playbook -i local a.yml

```

##### Expected Results:

The second time, nothing should have changed since /tmp/1 is already mounted on /tmp/2

##### Actual Results:

The directory was mounted again. The line:

```

/tmp/1 on /tmp/2 type none (rw,bind)

```

appears twice in the output of `mount`.

| main | mount is not idempotent for bind mount ubuntu ansible issue type bug report plugin name mount ansible version ansible config file etc ansible ansible cfg configured module search path default w o overrides ansible configuration no changes from default environment ubuntu control host and target host but see below for exact steps to reproduce summary using the mount module to set up and mount a bind mount is not idempotent steps to reproduce the following playbook illustrates the problem hosts all tasks file path tmp state directory file path tmp state directory mount src tmp name tmp fstype opts bind state mounted here s a detailed recipe for reproducing this if you run into trouble launch an instance in off the latest ubuntu trusty ami a nano is sufficient on the ami sudo s apt get y install software properties common apt add repository y ppa ansible ansible apt get update apt get y install ansible cd tmp cat a yml eof hosts all tasks file path tmp state directory file path tmp state directory mount src tmp name tmp fstype opts bind state mounted eof cat local eof ansible connection local eof ansible playbook i local a yml ansible playbook i local a yml expected results the second time nothing should have changed since tmp is already mounted on tmp actual results the directory was mounted again the line tmp on tmp type none rw bind appears twice in the output of mount | 1 |

5,541 | 27,743,765,161 | IssuesEvent | 2023-03-15 15:46:52 | cosmos/ibc-rs | https://api.github.com/repos/cosmos/ibc-rs | opened | [ICS02] Split `check_misbehaviour_and_update_state` and `check_header_and_update_state` into two sets of validation/execution functions | A: breaking I: logic O: maintainability O: modularity | <!-- < < < < < < < < < < < < < < < < < < < < < < < < < < < < < < < < < ☺

v ✰ Thanks for opening an issue! ✰

v Before smashing the submit button please review the template.

v Please also ensure that this is not a duplicate issue :)

☺ > > > > > > > > > > > > > > > > > > > > > > > > > > > > > > > > > -->

## Problem Statement

Part of #173, in continuation of implementing [IBC-go/ADR006](https://github.com/cosmos/ibc-go/blob/main/docs/architecture/adr-006-02-client-refactor.md) and getting `check_misbehaviour_and_update_state()` and `check_misbehaviour_and_update_state()` compatible with the Validation/Execution design ([ADR005](https://github.com/cosmos/ibc-rs/blob/main/docs/architecture/adr-005-handlers-redesign.md))

| True | [ICS02] Split `check_misbehaviour_and_update_state` and `check_header_and_update_state` into two sets of validation/execution functions - <!-- < < < < < < < < < < < < < < < < < < < < < < < < < < < < < < < < < ☺

v ✰ Thanks for opening an issue! ✰

v Before smashing the submit button please review the template.

v Please also ensure that this is not a duplicate issue :)

☺ > > > > > > > > > > > > > > > > > > > > > > > > > > > > > > > > > -->

## Problem Statement

Part of #173, in continuation of implementing [IBC-go/ADR006](https://github.com/cosmos/ibc-go/blob/main/docs/architecture/adr-006-02-client-refactor.md) and getting `check_misbehaviour_and_update_state()` and `check_misbehaviour_and_update_state()` compatible with the Validation/Execution design ([ADR005](https://github.com/cosmos/ibc-rs/blob/main/docs/architecture/adr-005-handlers-redesign.md))

| main | split check misbehaviour and update state and check header and update state into two sets of validation execution functions ☺ v ✰ thanks for opening an issue ✰ v before smashing the submit button please review the template v please also ensure that this is not a duplicate issue ☺ problem statement part of in continuation of implementing and getting check misbehaviour and update state and check misbehaviour and update state compatible with the validation execution design | 1 |

62,434 | 17,023,922,311 | IssuesEvent | 2021-07-03 04:34:26 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | OSM website Map Key is missing "cliff" | Component: website Priority: minor Resolution: wontfix Type: defect | **[Submitted to the original trac issue database at 10.41pm, Sunday, 21st June 2015]**

The Map Key on www.openstreetmap.org/search is missing the "cliff" line type item. See screenshot for example:

[[Image(http://i.imgur.com/aOUEkQz.png)]]

You can view this map position [https://www.openstreetmap.org/search?query=san%20miguel%20de%20allende#map=16/20.8959/-100.7382 here]. | 1.0 | OSM website Map Key is missing "cliff" - **[Submitted to the original trac issue database at 10.41pm, Sunday, 21st June 2015]**

The Map Key on www.openstreetmap.org/search is missing the "cliff" line type item. See screenshot for example:

[[Image(http://i.imgur.com/aOUEkQz.png)]]

You can view this map position [https://www.openstreetmap.org/search?query=san%20miguel%20de%20allende#map=16/20.8959/-100.7382 here]. | non_main | osm website map key is missing cliff the map key on is missing the cliff line type item see screenshot for example you can view this map position | 0 |

744 | 4,349,990,219 | IssuesEvent | 2016-07-30 23:29:48 | christoff-buerger/racr | https://api.github.com/repos/christoff-buerger/racr | closed | RACR-meta: separation and testing of annotation facilities | enhancement low maintainability user interface | To simplify the core implementation of _RACR_ and, in particular, clearly identify and elaborate the essential facilities required for meta-instantiation and reasoning (cf. issues #62 and #63 ), the existing abstract syntax tree annotation functionalities should become a separate _Scheme_ library, that can be loaded additionally to `(racr-meta core)` if required.

Since unit tests for the existing annotation facilities are missing, they have to be added to ensure correct refactoring and improve code coverage. | True | RACR-meta: separation and testing of annotation facilities - To simplify the core implementation of _RACR_ and, in particular, clearly identify and elaborate the essential facilities required for meta-instantiation and reasoning (cf. issues #62 and #63 ), the existing abstract syntax tree annotation functionalities should become a separate _Scheme_ library, that can be loaded additionally to `(racr-meta core)` if required.

Since unit tests for the existing annotation facilities are missing, they have to be added to ensure correct refactoring and improve code coverage. | main | racr meta separation and testing of annotation facilities to simplify the core implementation of racr and in particular clearly identify and elaborate the essential facilities required for meta instantiation and reasoning cf issues and the existing abstract syntax tree annotation functionalities should become a separate scheme library that can be loaded additionally to racr meta core if required since unit tests for the existing annotation facilities are missing they have to be added to ensure correct refactoring and improve code coverage | 1 |

2,495 | 8,655,457,949 | IssuesEvent | 2018-11-27 16:00:18 | codestation/qcma | https://api.github.com/repos/codestation/qcma | closed | [Issues] Disconnections | unmaintained | I'm using QCMA on Win 8.1 Pro with my Vita running on the latest official firmware

Vita is connecting to PC via WiFi

I'm getting disconnections in the following situation

- Under Content Manager, I open Photos or Videos

- Under Content Manager, I leave the Vita and QCMA to run overnight to back up all my apps (not a full backup, just the Vita games)

any idea what's wrong?

I can also provide a log - just need to know where to get that

| True | [Issues] Disconnections - I'm using QCMA on Win 8.1 Pro with my Vita running on the latest official firmware

Vita is connecting to PC via WiFi

I'm getting disconnections in the following situation

- Under Content Manager, I open Photos or Videos

- Under Content Manager, I leave the Vita and QCMA to run overnight to back up all my apps (not a full backup, just the Vita games)

any idea what's wrong?

I can also provide a log - just need to know where to get that

| main | disconnections i m using qcma on win pro with my vita running on the latest official firmware vita is connecting to pc via wifi i m getting disconnections in the following situation under content manager i open photos or videos under content manager i leave the vita and qcma to run overnight to back up all my apps not a full backup just the vita games any idea what s wrong i can also provide a log just need to know where to get that | 1 |

622,413 | 19,634,682,162 | IssuesEvent | 2022-01-08 03:48:41 | TASVideos/tasvideos | https://api.github.com/repos/TASVideos/tasvideos | closed | Editing the post there's 2 previews | enhancement Priority: Low | One preview is at the top and doesn't change/update, and another one appears at the bottom when you click the Preview button. I like that it appears at the bottom so you keep seeing your actual text above it, but I don't think the static preview at the top is needed. | 1.0 | Editing the post there's 2 previews - One preview is at the top and doesn't change/update, and another one appears at the bottom when you click the Preview button. I like that it appears at the bottom so you keep seeing your actual text above it, but I don't think the static preview at the top is needed. | non_main | editing the post there s previews one preview is at the top and doesn t change update and another one appears at the bottom when you click the preview button i like that it appears at the bottom so you keep seeing your actual text above it but i don t think the static preview at the top is needed | 0 |

3,909 | 17,381,248,951 | IssuesEvent | 2021-07-31 19:07:19 | dotnet/Silk.NET | https://api.github.com/repos/dotnet/Silk.NET | opened | Use ClangSharp's autogenerated bindings tests (i.e. for blittability, size, etc) | area-SilkTouch help wanted tenet-Maintainability | This is something that is left out of the initial pass of the SilkTouch Scraper because I (knowingly and idiotically) did not add support for tests in single-file XML dumps upstream in ClangSharp.

The work is:

- [ ] Investigate adding support upstream in ClangSharp for outputting test code where the XML output mode is used with a single file

- currently, ClangSharp's OutputBuilderFactory is hardcoded to create a CSharpOutputBuilder. options are:

- return a CSharpOutputBuilder that has C# code for stuff like `CreateTests` within the XML, so it outputs something like `<bindings><tests name="DXGI_OUTPUT_DESCTests"><code></code></tests></bindings>`. This would work nicely, but there may not be enough XML markers to allow us to change the name of the class under test, for example,

- add to the XML the test "goals" i.e. `<bindings><test type="DXGI_OUTPUT_DESC" isSize32="32" isSize64="64" /></bindings>`

- [ ] Recognise the new XML elements in the SilkTouch Scraper

- [ ] Add required configuration structures, so that this functionality can be configured for each project

- [ ] Output into the test project (the path of which indicated by the configuration structure) all the test code.

cc @tannergooding | True | Use ClangSharp's autogenerated bindings tests (i.e. for blittability, size, etc) - This is something that is left out of the initial pass of the SilkTouch Scraper because I (knowingly and idiotically) did not add support for tests in single-file XML dumps upstream in ClangSharp.

The work is:

- [ ] Investigate adding support upstream in ClangSharp for outputting test code where the XML output mode is used with a single file

- currently, ClangSharp's OutputBuilderFactory is hardcoded to create a CSharpOutputBuilder. options are:

- return a CSharpOutputBuilder that has C# code for stuff like `CreateTests` within the XML, so it outputs something like `<bindings><tests name="DXGI_OUTPUT_DESCTests"><code></code></tests></bindings>`. This would work nicely, but there may not be enough XML markers to allow us to change the name of the class under test, for example,

- add to the XML the test "goals" i.e. `<bindings><test type="DXGI_OUTPUT_DESC" isSize32="32" isSize64="64" /></bindings>`

- [ ] Recognise the new XML elements in the SilkTouch Scraper

- [ ] Add required configuration structures, so that this functionality can be configured for each project

- [ ] Output into the test project (the path of which indicated by the configuration structure) all the test code.

cc @tannergooding | main | use clangsharp s autogenerated bindings tests i e for blittability size etc this is something that is left out of the initial pass of the silktouch scraper because i knowingly and idiotically did not add support for tests in single file xml dumps upstream in clangsharp the work is investigate adding support upstream in clangsharp for outputting test code where the xml output mode is used with a single file currently clangsharp s outputbuilderfactory is hardcoded to create a csharpoutputbuilder options are return a csharpoutputbuilder that has c code for stuff like createtests within the xml so it outputs something like this would work nicely but there may not be enough xml markers to allow us to change the name of the class under test for example add to the xml the test goals i e recognise the new xml elements in the silktouch scraper add required configuration structures so that this functionality can be configured for each project output into the test project the path of which indicated by the configuration structure all the test code cc tannergooding | 1 |

305,908 | 23,136,785,299 | IssuesEvent | 2022-07-28 14:54:17 | eCapitalAdvisors/Incorta_templates | https://api.github.com/repos/eCapitalAdvisors/Incorta_templates | closed | Design Document | documentation | Identified data sources: https://www.chicagobooth.edu/research/kilts/datasets/dominicks

- [x] Examine Dominick's Dataset

New Direction: I will be using the data in Incorta from the PAC Dev Instance (OnlineStoreData)

- [x] Understanding the schema structure of the dataset

- [x] Identified Analytics: Using R Studio to write sparklyr syntax

- [x] Identified software solution: Incorta | 1.0 | Design Document - Identified data sources: https://www.chicagobooth.edu/research/kilts/datasets/dominicks

- [x] Examine Dominick's Dataset

New Direction: I will be using the data in Incorta from the PAC Dev Instance (OnlineStoreData)

- [x] Understanding the schema structure of the dataset

- [x] Identified Analytics: Using R Studio to write sparklyr syntax

- [x] Identified software solution: Incorta | non_main | design document identified data sources examine dominick s dataset new direction i will be using the data in incorta from the pac dev instance onlinestoredata understanding the schema structure of the dataset identified analytics using r studio to write sparklyr syntax identified software solution incorta | 0 |

2,191 | 7,735,704,514 | IssuesEvent | 2018-05-27 18:02:30 | Chromeroni/Hera-Chatbot | https://api.github.com/repos/Chromeroni/Hera-Chatbot | closed | Store log-files in a cloud | maintainability | Currently, log files are stored localy on the execution environment. This prevents easy access to the log files for all developers.

**Change**

Implement an interface to the google drive API, so that the daily created log files can automatically be stored in the developer cloud.

**Prerequisite**

Issue #16 & #27 | True | Store log-files in a cloud - Currently, log files are stored localy on the execution environment. This prevents easy access to the log files for all developers.

**Change**

Implement an interface to the google drive API, so that the daily created log files can automatically be stored in the developer cloud.

**Prerequisite**

Issue #16 & #27 | main | store log files in a cloud currently log files are stored localy on the execution environment this prevents easy access to the log files for all developers change implement an interface to the google drive api so that the daily created log files can automatically be stored in the developer cloud prerequisite issue | 1 |

1,157 | 5,047,424,283 | IssuesEvent | 2016-12-20 09:23:18 | ansible/ansible-modules-core | https://api.github.com/repos/ansible/ansible-modules-core | closed | docker_container with empty links list always restarts | affects_2.2 bug_report cloud docker waiting_on_maintainer | ##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

docker_container

##### ANSIBLE VERSION

```

ansible 2.2.0.0

config file = /etc/ansible/ansible.cfg

configured module search path = Default w/o overrides

```

##### CONFIGURATION

*none*

##### OS / ENVIRONMENT

Ubuntu 16.04

##### SUMMARY

When using `links: []`, the container is always restarted.

##### STEPS TO REPRODUCE

Run this playbook twice:

```

- hosts: localhost

tasks:

- docker_container:

name: test

image: alpine

links: []

command: sleep 10000

```

##### EXPECTED RESULTS

The container is started once. It is not restarted when running the second time.

##### ACTUAL RESULTS

The container is restarted on the second run. When running with `-vvv --diff`:

```

TASK [docker_container] ********************************************************

[...]

changed: [localhost] => {

"ansible_facts": {},

"changed": true,

"diff": {

"differences": [

{

"expected_links": {

"container": null,

"parameter": []

}

}

]

},

"invocation": {

[...]

```

The problem seems to be that the modules compares an empty list with `None`. From `docker.log` (when enabled):

```

check differences expected_links [] vs None

primitive compare: expected_links

[...]

differences

[

{

"expected_links": {

"container": null,

"parameter": []

}

}

]

``` | True | docker_container with empty links list always restarts - ##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

docker_container

##### ANSIBLE VERSION

```

ansible 2.2.0.0

config file = /etc/ansible/ansible.cfg

configured module search path = Default w/o overrides

```

##### CONFIGURATION

*none*

##### OS / ENVIRONMENT

Ubuntu 16.04

##### SUMMARY

When using `links: []`, the container is always restarted.

##### STEPS TO REPRODUCE

Run this playbook twice:

```

- hosts: localhost

tasks:

- docker_container:

name: test

image: alpine

links: []

command: sleep 10000

```

##### EXPECTED RESULTS

The container is started once. It is not restarted when running the second time.

##### ACTUAL RESULTS

The container is restarted on the second run. When running with `-vvv --diff`:

```

TASK [docker_container] ********************************************************

[...]

changed: [localhost] => {

"ansible_facts": {},

"changed": true,

"diff": {

"differences": [

{

"expected_links": {

"container": null,

"parameter": []

}

}

]

},

"invocation": {

[...]

```

The problem seems to be that the modules compares an empty list with `None`. From `docker.log` (when enabled):

```

check differences expected_links [] vs None

primitive compare: expected_links

[...]

differences

[

{

"expected_links": {

"container": null,

"parameter": []

}

}

]

``` | main | docker container with empty links list always restarts issue type bug report component name docker container ansible version ansible config file etc ansible ansible cfg configured module search path default w o overrides configuration none os environment ubuntu summary when using links the container is always restarted steps to reproduce run this playbook twice hosts localhost tasks docker container name test image alpine links command sleep expected results the container is started once it is not restarted when running the second time actual results the container is restarted on the second run when running with vvv diff task changed ansible facts changed true diff differences expected links container null parameter invocation the problem seems to be that the modules compares an empty list with none from docker log when enabled check differences expected links vs none primitive compare expected links differences expected links container null parameter | 1 |

5,160 | 26,274,240,629 | IssuesEvent | 2023-01-06 20:11:20 | cosmos/ibc-rs | https://api.github.com/repos/cosmos/ibc-rs | closed | Add `cargo doc` check to CI | O: maintainability I: CI/CD | <!-- < < < < < < < < < < < < < < < < < < < < < < < < < < < < < < < < < ☺

v ✰ Thanks for opening an issue! ✰

v Before smashing the submit button please review the template.

v Please also ensure that this is not a duplicate issue :)

☺ > > > > > > > > > > > > > > > > > > > > > > > > > > > > > > > > > -->

## Acceptance Criteria

- CI checks to ensure that the `cargo doc` command has been successfully executed

____

## For Admin Use

- [ ] Not duplicate issue

- [ ] Appropriate labels applied

- [ ] Appropriate milestone (priority) applied

- [ ] Appropriate contributors tagged

- [ ] Contributor assigned/self-assigned

| True | Add `cargo doc` check to CI - <!-- < < < < < < < < < < < < < < < < < < < < < < < < < < < < < < < < < ☺

v ✰ Thanks for opening an issue! ✰

v Before smashing the submit button please review the template.

v Please also ensure that this is not a duplicate issue :)

☺ > > > > > > > > > > > > > > > > > > > > > > > > > > > > > > > > > -->

## Acceptance Criteria

- CI checks to ensure that the `cargo doc` command has been successfully executed

____

## For Admin Use

- [ ] Not duplicate issue

- [ ] Appropriate labels applied

- [ ] Appropriate milestone (priority) applied

- [ ] Appropriate contributors tagged

- [ ] Contributor assigned/self-assigned

| main | add cargo doc check to ci ☺ v ✰ thanks for opening an issue ✰ v before smashing the submit button please review the template v please also ensure that this is not a duplicate issue ☺ acceptance criteria ci checks to ensure that the cargo doc command has been successfully executed for admin use not duplicate issue appropriate labels applied appropriate milestone priority applied appropriate contributors tagged contributor assigned self assigned | 1 |

5,633 | 28,292,276,901 | IssuesEvent | 2023-04-09 11:09:40 | WiredForWar/machines | https://api.github.com/repos/WiredForWar/machines | opened | Machines do self-destruct right on the start of Battle / Fortress / Siege in multiplayer | Bug Maintainance UI & UX Network | The scenario https://github.com/WiredForWar/machines/blob/dev/data/seige.scn#L27 works only if the start positions are fixed (rather than random).

1. Add `FIXED_POSITIONS` property to the scenarios format

2. Adjust the scenario file to indicate that it requires fixed positions.

3. Disable the positions combobox if only fixed positions are supported by the scn.

4. It seems that there is a scenarios cache file which is not invalidated on a scenario changed. Fix/implement the invalidation. | True | Machines do self-destruct right on the start of Battle / Fortress / Siege in multiplayer - The scenario https://github.com/WiredForWar/machines/blob/dev/data/seige.scn#L27 works only if the start positions are fixed (rather than random).

1. Add `FIXED_POSITIONS` property to the scenarios format

2. Adjust the scenario file to indicate that it requires fixed positions.

3. Disable the positions combobox if only fixed positions are supported by the scn.

4. It seems that there is a scenarios cache file which is not invalidated on a scenario changed. Fix/implement the invalidation. | main | machines do self destruct right on the start of battle fortress siege in multiplayer the scenario works only if the start positions are fixed rather than random add fixed positions property to the scenarios format adjust the scenario file to indicate that it requires fixed positions disable the positions combobox if only fixed positions are supported by the scn it seems that there is a scenarios cache file which is not invalidated on a scenario changed fix implement the invalidation | 1 |

5,396 | 27,115,666,274 | IssuesEvent | 2023-02-15 18:21:50 | VA-Explorer/va_explorer | https://api.github.com/repos/VA-Explorer/va_explorer | closed | Ensure that edited VAs are subjected to re-coding | Type: Maintainance good first issue Language: Python Domain: API/ Databases Status: Inactive | **What is the expected state?**

If I change a VA, the CoD assigned or not assigned to it may also change. Since this is the case, I expect edited VAs to be considered by any code responsible for assigning CoD.

**What is the actual state?**

Edited VAs currently do not appear to be subject to CoD assignment unless they previously did not have a CoD to begin with (either due to errors or having never been considered by the CoD assignment system).

**Relevant context**

`va_explorer/va_data_management/utils/coding.py::run_coding_algorithms()`

| True | Ensure that edited VAs are subjected to re-coding - **What is the expected state?**

If I change a VA, the CoD assigned or not assigned to it may also change. Since this is the case, I expect edited VAs to be considered by any code responsible for assigning CoD.

**What is the actual state?**

Edited VAs currently do not appear to be subject to CoD assignment unless they previously did not have a CoD to begin with (either due to errors or having never been considered by the CoD assignment system).

**Relevant context**

`va_explorer/va_data_management/utils/coding.py::run_coding_algorithms()`

| main | ensure that edited vas are subjected to re coding what is the expected state if i change a va the cod assigned or not assigned to it may also change since this is the case i expect edited vas to be considered by any code responsible for assigning cod what is the actual state edited vas currently do not appear to be subject to cod assignment unless they previously did not have a cod to begin with either due to errors or having never been considered by the cod assignment system relevant context va explorer va data management utils coding py run coding algorithms | 1 |

644 | 4,158,721,236 | IssuesEvent | 2016-06-17 05:00:19 | Microsoft/DirectXTK | https://api.github.com/repos/Microsoft/DirectXTK | opened | Raise minimum supported Feature Level to 9.3 | maintainence | The DirectX Tool Kit has supported all feature levels 9.1 and up, primarily to support Surface RT and Surface RT 2 devices. Since these devices will not be getting upgraded to Windows 10, and given the fact that the vast majority of PCs have 10.0 or better hardware, we will be retiring support for FL 9.1. There are almost no 9.2 devices of relevance today, but Windows phone 8.1 support requires FL 9.3. | True | Raise minimum supported Feature Level to 9.3 - The DirectX Tool Kit has supported all feature levels 9.1 and up, primarily to support Surface RT and Surface RT 2 devices. Since these devices will not be getting upgraded to Windows 10, and given the fact that the vast majority of PCs have 10.0 or better hardware, we will be retiring support for FL 9.1. There are almost no 9.2 devices of relevance today, but Windows phone 8.1 support requires FL 9.3. | main | raise minimum supported feature level to the directx tool kit has supported all feature levels and up primarily to support surface rt and surface rt devices since these devices will not be getting upgraded to windows and given the fact that the vast majority of pcs have or better hardware we will be retiring support for fl there are almost no devices of relevance today but windows phone support requires fl | 1 |

5,327 | 26,903,221,819 | IssuesEvent | 2023-02-06 17:03:11 | centerofci/mathesar | https://api.github.com/repos/centerofci/mathesar | closed | Add logged in user's name in the app header user icon dropdown | work: frontend status: ready restricted: maintainers | <img width="251" alt="Screenshot 2022-12-07 at 3 36 44 AM" src="https://user-images.githubusercontent.com/11032856/206033602-d55d998d-a2b6-4135-94ad-934be54fc588.png">

| True | Add logged in user's name in the app header user icon dropdown - <img width="251" alt="Screenshot 2022-12-07 at 3 36 44 AM" src="https://user-images.githubusercontent.com/11032856/206033602-d55d998d-a2b6-4135-94ad-934be54fc588.png">

| main | add logged in user s name in the app header user icon dropdown img width alt screenshot at am src | 1 |

88,046 | 3,770,904,325 | IssuesEvent | 2016-03-16 15:58:17 | Vannevelj/VSDiagnostics | https://api.github.com/repos/Vannevelj/VSDiagnostics | opened | Implement LINQUsed | priority - medium type - feature | In performance-sensitive code, teams might sometimes decide to not allow any LINQ (known for its many allocations). We can write an analyzer that basically warns anytime LINQ is used.

We'll have to do some thinking about how we address this: do we just check `using` statements? Do we search for exact occurrences after we've done that?

This should be disabled by default (users have to explicitly opt-in since only a very small subset will use this). | 1.0 | Implement LINQUsed - In performance-sensitive code, teams might sometimes decide to not allow any LINQ (known for its many allocations). We can write an analyzer that basically warns anytime LINQ is used.

We'll have to do some thinking about how we address this: do we just check `using` statements? Do we search for exact occurrences after we've done that?

This should be disabled by default (users have to explicitly opt-in since only a very small subset will use this). | non_main | implement linqused in performance sensitive code teams might sometimes decide to not allow any linq known for its many allocations we can write an analyzer that basically warns anytime linq is used we ll have to do some thinking about how we address this do we just check using statements do we search for exact occurrences after we ve done that this should be disabled by default users have to explicitly opt in since only a very small subset will use this | 0 |

4,033 | 18,853,026,066 | IssuesEvent | 2021-11-12 00:09:52 | exercism/python | https://api.github.com/repos/exercism/python | opened | [Templates]: Create New Concept Document Issue Template | maintainer chore 🔧 | We should create an new issue template for [Concept Documents], similar to this issue https://github.com/exercism/python/issues/2456, but with `details` sections that can be expanded/collapsed. | True | [Templates]: Create New Concept Document Issue Template - We should create an new issue template for [Concept Documents], similar to this issue https://github.com/exercism/python/issues/2456, but with `details` sections that can be expanded/collapsed. | main | create new concept document issue template we should create an new issue template for similar to this issue but with details sections that can be expanded collapsed | 1 |

2,879 | 10,319,547,782 | IssuesEvent | 2019-08-30 17:50:12 | backdrop-ops/contrib | https://api.github.com/repos/backdrop-ops/contrib | closed | Port of Facebook OAuth (FBOAuth) | Maintainer application Port in progress | Hi there.

I recently became maintainer of FBOAuth module, thanks to Nate. I want to port it to Backdrop.

| True | Port of Facebook OAuth (FBOAuth) - Hi there.

I recently became maintainer of FBOAuth module, thanks to Nate. I want to port it to Backdrop.

| main | port of facebook oauth fboauth hi there i recently became maintainer of fboauth module thanks to nate i want to port it to backdrop | 1 |

106,480 | 13,303,751,987 | IssuesEvent | 2020-08-25 15:56:05 | LibraryOfCongress/concordia | https://api.github.com/repos/LibraryOfCongress/concordia | closed | Create user flows on reviewing transcriptions for specific personas | design | **User story/persona**

- High touch user, particularly intrigued by reviewing or wanting to participate in a challenge

- A student given an assignment

- Transcribe-a-thon participant

**Is your feature request related to a problem? Please describe.**

At this point, the team has some ideas on how a user comes to BTP and the paths they take. Mapping of potential user's journey can help the product team gain more understanding of users motives and make better design decisions.

**Acceptance Criteria**

- [ ] Create user flows for each persona listed above

| 1.0 | Create user flows on reviewing transcriptions for specific personas - **User story/persona**

- High touch user, particularly intrigued by reviewing or wanting to participate in a challenge

- A student given an assignment

- Transcribe-a-thon participant

**Is your feature request related to a problem? Please describe.**

At this point, the team has some ideas on how a user comes to BTP and the paths they take. Mapping of potential user's journey can help the product team gain more understanding of users motives and make better design decisions.

**Acceptance Criteria**

- [ ] Create user flows for each persona listed above

| non_main | create user flows on reviewing transcriptions for specific personas user story persona high touch user particularly intrigued by reviewing or wanting to participate in a challenge a student given an assignment transcribe a thon participant is your feature request related to a problem please describe at this point the team has some ideas on how a user comes to btp and the paths they take mapping of potential user s journey can help the product team gain more understanding of users motives and make better design decisions acceptance criteria create user flows for each persona listed above | 0 |

701,804 | 24,109,100,070 | IssuesEvent | 2022-09-20 09:51:08 | younginnovations/iatipublisher | https://api.github.com/repos/younginnovations/iatipublisher | closed | Bug : Drop Down Issue | type: bug priority: high Frontend Backend | Context

- Desktop

- Chrome 103.0.5060.114

Preconditions

- stage.iatipublisher.yipl.com.np

- Username: Ram

- password: 12345678

Steps

- Select the drop-down options

Actual Result

- remove the icon is missing from the drop-down options

Expected Result

- remove the icon should be in the drop-down options

https://user-images.githubusercontent.com/78422663/188442470-e3bb2ba7-67a8-4a45-bf68-27d76ebcc1b7.mp4

| 1.0 | Bug : Drop Down Issue - Context

- Desktop

- Chrome 103.0.5060.114

Preconditions

- stage.iatipublisher.yipl.com.np

- Username: Ram

- password: 12345678

Steps

- Select the drop-down options

Actual Result

- remove the icon is missing from the drop-down options

Expected Result

- remove the icon should be in the drop-down options

https://user-images.githubusercontent.com/78422663/188442470-e3bb2ba7-67a8-4a45-bf68-27d76ebcc1b7.mp4

| non_main | bug drop down issue context desktop chrome preconditions stage iatipublisher yipl com np username ram password steps select the drop down options actual result remove the icon is missing from the drop down options expected result remove the icon should be in the drop down options | 0 |

884 | 4,543,548,386 | IssuesEvent | 2016-09-10 06:13:57 | ansible/ansible-modules-core | https://api.github.com/repos/ansible/ansible-modules-core | closed | docker_container module always recreate container if combine hostname and network_mode: host | affects_2.1 bug_report cloud docker waiting_on_maintainer | ##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

docker_container

##### ANSIBLE VERSION

```

ansible 2.1.1.0

```

##### CONFIGURATION

N/A

##### OS / ENVIRONMENT

N/A

##### SUMMARY

docker_container module always recreate container if use network_mode: host and specify hostname. If you remove network_mode or hostname it will work fine.

##### STEPS TO REPRODUCE

```

- name: Simple start container

hosts: localhost

connection: local

gather_facts: no

tasks:

- name: Create simple container with network host

docker_container:

image: alpine

name: test_container

hostname: test_container

network_mode: host

command: sleep 9999

state: started

```

##### EXPECTED RESULTS

When you rerun playbook. The container shouldn't be removed

##### ACTUAL RESULTS

```

[WARNING]: Host file not found: /etc/ansible/hosts

[WARNING]: provided hosts list is empty, only localhost is available

Loaded callback default of type stdout, v2.0

PLAYBOOK: tmp.yml **************************************************************

1 plays in tmp.yml

PLAY [Simple start container] **************************************************

TASK [Create simple container with network host] *******************************

task path: /home/username/ansible/tmp.yml:18

<127.0.0.1> ESTABLISH LOCAL CONNECTION FOR USER: username

<127.0.0.1> EXEC /bin/sh -c '( umask 77 && mkdir -p "` echo $HOME/.ansible/tmp/ansible-tmp-1473347657.57-15375112345113 `" && echo ansible-tmp-1473347657.57-15375112345113="` echo $HOME/.ansible/tmp/ansible-tmp-1473347657.57-15375112345113 `" ) && sleep 0'

<127.0.0.1> PUT /tmp/tmpUnye2A TO /home/username/.ansible/tmp/ansible-tmp-1473347657.57-15375112345113/docker_container

<127.0.0.1> EXEC /bin/sh -c 'LANG=en_US.UTF-8 LC_ALL=en_US.UTF-8 LC_MESSAGES=en_US.UTF-8 /home/username/.virtualenvs/ansible/bin/python /home/username/.ansible/tmp/ansible-tmp-1473347657.57-15375112345113/docker_container; rm -rf "/home/username/.ansible/tmp/ansible-tmp-1473347657.57-15375112345113/" > /dev/null 2>&1 && sleep 0'