Unnamed: 0 (int64, 1 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 3 to 438) | labels (string, length 4 to 308) | body (string, length 7 to 254k) | index (string, 7 classes) | text_combine (string, length 96 to 254k) | label (string, 2 classes) | text (string, length 96 to 246k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

170,886 | 13,207,653,620 | IssuesEvent | 2020-08-15 00:00:15 | OneCDOnly/sherpa | https://api.github.com/repos/OneCDOnly/sherpa | closed | sherpa should permit a single instance only | enhancement testing ... | Don't want a second instance messing things up. :wink: | 1.0 | sherpa should permit a single instance only - Don't want a second instance messing things up. :wink: | non_main | sherpa should permit a single instance only don t want a second instance messing things up wink | 0 |

721,505 | 24,829,462,205 | IssuesEvent | 2022-10-26 01:07:41 | AY2223S1-CS2113-T17-1/tp | https://api.github.com/repos/AY2223S1-CS2113-T17-1/tp | closed | Change boarding gate number - both flightlist & passenger list | priority.Medium | modify gate_number FLIGHT_NUM PREV_NUM NEW_NUM

PREV_NUM must be a existing number.

NEW_NUM cannot be an existing number.

Due Date: 23rd Oct 2022 (Sunday)

| 1.0 | Change boarding gate number - both flightlist & passenger list - modify gate_number FLIGHT_NUM PREV_NUM NEW_NUM

PREV_NUM must be a existing number.

NEW_NUM cannot be an existing number.

Due Date: 23rd Oct 2022 (Sunday)

| non_main | change boarding gate number both flightlist passenger list modify gate number flight num prev num new num prev num must be a existing number new num cannot be an existing number due date oct sunday | 0 |

1,792 | 6,721,542,962 | IssuesEvent | 2017-10-16 12:10:42 | Chainsawkitten/LargeGameProjectEngine | https://api.github.com/repos/Chainsawkitten/LargeGameProjectEngine | closed | Decide assets for game | Architecture Asset | A document listing all assets to be used in game, highlighting those that are needed to be done by this sprint. | 1.0 | Decide assets for game - A document listing all assets to be used in game, highlighting those that are needed to be done by this sprint. | non_main | decide assets for game a document listing all assets to be used in game highlighting those that are needed to be done by this sprint | 0 |

12,677 | 14,970,622,060 | IssuesEvent | 2021-01-27 19:53:32 | Leaflet/Leaflet | https://api.github.com/repos/Leaflet/Leaflet | closed | marker.bindPopup() - version 1.7.1 only; on Mac Safari only - click event isn't recognized properly | bug compatibility needs investigation | An event added via `marker.bindpopup()` - version 1.7.1 only using a Mac Safari (version 14 and 13) browser only - will **only** be recognized if one makes a _long mouse-click_ of about 1s like a long tap event. If one adds such an event via `marker.on('click', function(e) {this.openPopup();});` it works properly.

| True | marker.bindPopup() - version 1.7.1 only; on Mac Safari only - click event isn't recognized properly - An event added via `marker.bindpopup()` - version 1.7.1 only using a Mac Safari (version 14 and 13) browser only - will **only** be recognized if one makes a _long mouse-click_ of about 1s like a long tap event. If one adds such an event via `marker.on('click', function(e) {this.openPopup();});` it works properly.

| non_main | marker bindpopup version only on mac safari only click event isn t recognized properly an event added via marker bindpopup version only using a mac safari version and browser only will only be recognized if one makes a long mouse click of about like a long tap event if one adds such an event via marker on click function e this openpopup it works properly | 0 |

5,295 | 26,761,214,212 | IssuesEvent | 2023-01-31 07:04:03 | bazelbuild/intellij | https://api.github.com/repos/bazelbuild/intellij | closed | No autocomplete or debugging on targets using custom rules | type: bug product: GoLand topic: debugging awaiting-maintainer | #### Description of the issue. Please be specific.

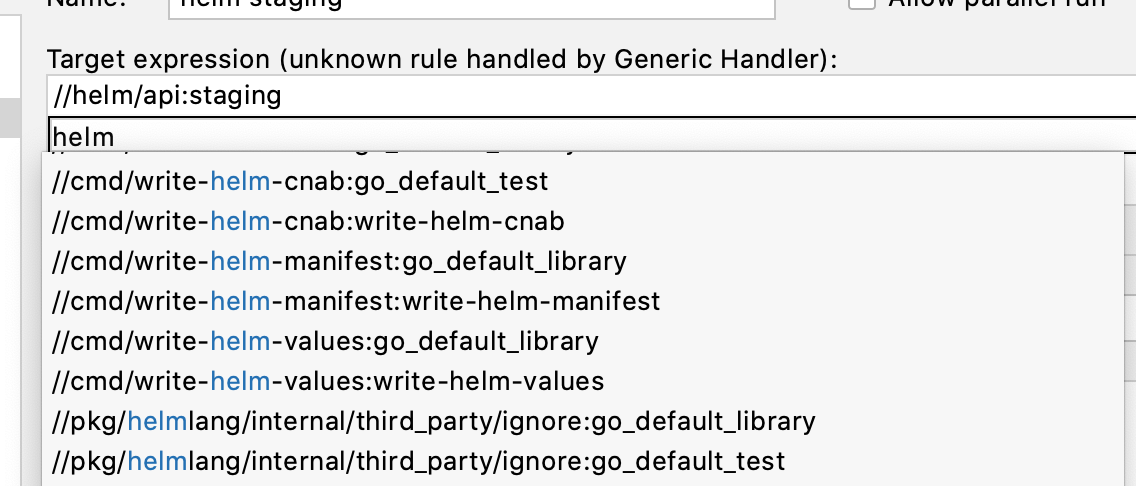

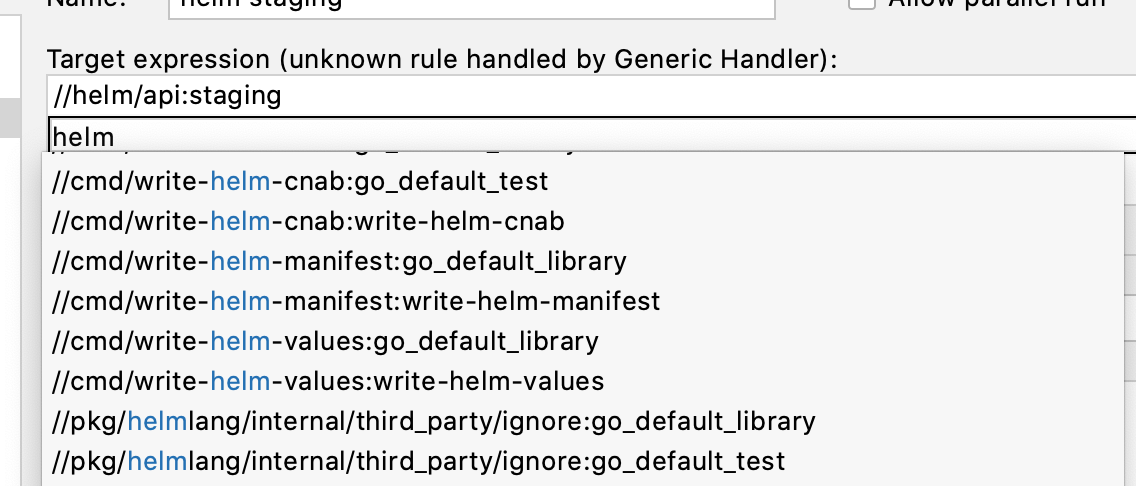

I'm trying to run and debug bazel targets in my workspace which use custom rules also defined in my workspace. I can execute these targets manually from the command line and can hardcode them when setting up a run configuration, but I don't get autocomplete when trying to find the target during configuration editing.

Note in this screenshot how I've hardcoded `//helm/api:staging` and that it doesn't show up in the autocomplete list when adding a second target. I can run this target, but the UI doesn't let me debug it which I guess is part of the same problem.

This target uses a custom rule defined in a `helm.bzl` file located in the same workspace. Looking through the docs, it seems like the bazel plugin [ignores some rules](https://ij.bazel.build/docs/project-views.html#derive_targets_from_directories) which might contribute to this.

#### What's the simplest set of steps to reproduce this issue? Please provide an example project, if possible.

I'm still working on a simple repro and will update this question once I have it figured out.

#### Version information

GoLand: 2020.1.4

Platform: Mac OS X 10.15.5

Bazel plugin: 2020.06.25.0.3

Bazel: 2.2.0

| True | No autocomplete or debugging on targets using custom rules - #### Description of the issue. Please be specific.

I'm trying to run and debug bazel targets in my workspace which use custom rules also defined in my workspace. I can execute these targets manually from the command line and can hardcode them when setting up a run configuration, but I don't get autocomplete when trying to find the target during configuration editing.

Note in this screenshot how I've hardcoded `//helm/api:staging` and that it doesn't show up in the autocomplete list when adding a second target. I can run this target, but the UI doesn't let me debug it which I guess is part of the same problem.

This target uses a custom rule defined in a `helm.bzl` file located in the same workspace. Looking through the docs, it seems like the bazel plugin [ignores some rules](https://ij.bazel.build/docs/project-views.html#derive_targets_from_directories) which might contribute to this.

#### What's the simplest set of steps to reproduce this issue? Please provide an example project, if possible.

I'm still working on a simple repro and will update this question once I have it figured out.

#### Version information

GoLand: 2020.1.4

Platform: Mac OS X 10.15.5

Bazel plugin: 2020.06.25.0.3

Bazel: 2.2.0

| main | no autocomplete or debugging on targets using custom rules description of the issue please be specific i m trying to run and debug bazel targets in my workspace which use custom rules also defined in my workspace i can execute these targets manually from the command line and can hardcode them when setting up a run configuration but i don t get autocomplete when trying to find the target during configuration editing note in this screenshot how i ve hardcoded helm api staging and that it doesn t show up in the autocomplete list when adding a second target i can run this target but the ui doesn t let me debug it which i guess is part of the same problem this target uses a custom rule defined in a helm bzl file located in the same workspace looking through the docs it seems like the bazel plugin which might contribute to this what s the simplest set of steps to reproduce this issue please provide an example project if possible i m still working on a simple repro and will update this question once i have it figured out version information goland platform mac os x bazel plugin bazel | 1 |

738 | 4,347,746,106 | IssuesEvent | 2016-07-29 20:40:49 | coniks-sys/coniks-ref-implementation | https://api.github.com/repos/coniks-sys/coniks-ref-implementation | opened | Restructure code as in coniks-go repo | maintainability | The existing code base should be reorganized into the following packages:

- crypto

- client

- merkletree

- keyserver

- protocol

- utils

These packages should be considered for the future:

- bots for third-party account verification

- storage for persistent storage backend hooks | True | Restructure code as in coniks-go repo - The existing code base should be reorganized into the following packages:

- crypto

- client

- merkletree

- keyserver

- protocol

- utils

These packages should be considered for the future:

- bots for third-party account verification

- storage for persistent storage backend hooks | main | restructure code as in coniks go repo the existing code base should be reorganized into the following packages crypto client merkletree keyserver protocol utils these packages should be considered for the future bots for third party account verification storage for persistent storage backend hooks | 1 |

5,756 | 30,513,559,197 | IssuesEvent | 2023-07-18 23:37:35 | VoronDesign/VoronUsers | https://api.github.com/repos/VoronDesign/VoronUsers | closed | 350mm issue with V2.4 Skirt switch mod by tayto-chip | Action required by maintainers | Sorry @tayto-gp there is no user with the name tayto-chip on discord which the readme says to contact with issues.

The 350mm side a skirt won't work the nub is too long.

| True | 350mm issue with V2.4 Skirt switch mod by tayto-chip - Sorry @tayto-gp there is no user with the name tayto-chip on discord which the readme says to contact with issues.

The 350mm side a skirt won't work the nub is too long.

| main | issue with skirt switch mod by tayto chip sorry tayto gp there is no user with the name tayto chip on discord which the readme says to contact with issues the side a skirt won t work the nub is too long | 1 |

92,669 | 10,760,936,158 | IssuesEvent | 2019-10-31 19:40:12 | Automattic/simplenote-electron | https://api.github.com/repos/Automattic/simplenote-electron | closed | Add Evernote notebook as a tag | documentation | <!--

Thanks for contributing to Simplenote! Pick a clear title ("Note editor: emojis not displaying correctly") and proceed.

Please review the FAQs before submitting an issue: https://github.com/Automattic/simplenote-electron/labels/FAQ

Mac users: Does your Simplenote app have a file size of less than 50 MB? Then you are using simplenote-macos, not simplenote-electron. Please post your issue here: https://github.com/Automattic/simplenote-macos

-->

#### Steps to reproduce

1. Export all notes from Evernote across notebooks via Windows desktop app

2. Import Evernote.enex file into Simplenote

#### What I expected

Notes would be tagged with the Evernote notebook that they were in

#### What happened instead

No notebook tag was applied

Looking in the `.enex` files this might be an issue with the Evernote export that it doesn't include the notebook. If there is nothing that can be done, it might be worth noting in your help that you should pre-tag everything with the notebook name in Evernote before exporting (which is quite easy to do) as it's a big hassle to delete and re-import 1000s of notes in Simplenote

#### Simplenote version

<!--

Here's the version number of our latest release: https://github.com/Automattic/simplenote-electron/releases/latest

-->

v1.3.4

#### OS version

Windows 10

#### Screenshot / Video

<!--

PLEASE NOTE

- These comments won't show up when you submit the issue.

- Everything is optional, but try to add as many details as possible.

- If requesting a new feature, explain why you'd like to see it added.

-->

| 1.0 | Add Evernote notebook as a tag - <!--

Thanks for contributing to Simplenote! Pick a clear title ("Note editor: emojis not displaying correctly") and proceed.

Please review the FAQs before submitting an issue: https://github.com/Automattic/simplenote-electron/labels/FAQ

Mac users: Does your Simplenote app have a file size of less than 50 MB? Then you are using simplenote-macos, not simplenote-electron. Please post your issue here: https://github.com/Automattic/simplenote-macos

-->

#### Steps to reproduce

1. Export all notes from Evernote across notebooks via Windows desktop app

2. Import Evernote.enex file into Simplenote

#### What I expected

Notes would be tagged with the Evernote notebook that they were in

#### What happened instead

No notebook tag was applied

Looking in the `.enex` files this might be an issue with the Evernote export that it doesn't include the notebook. If there is nothing that can be done, it might be worth noting in your help that you should pre-tag everything with the notebook name in Evernote before exporting (which is quite easy to do) as it's a big hassle to delete and re-import 1000s of notes in Simplenote

#### Simplenote version

<!--

Here's the version number of our latest release: https://github.com/Automattic/simplenote-electron/releases/latest

-->

v1.3.4

#### OS version

Windows 10

#### Screenshot / Video

<!--

PLEASE NOTE

- These comments won't show up when you submit the issue.

- Everything is optional, but try to add as many details as possible.

- If requesting a new feature, explain why you'd like to see it added.

-->

| non_main | add evernote notebook as a tag thanks for contributing to simplenote pick a clear title note editor emojis not displaying correctly and proceed please review the faqs before submitting an issue mac users does your simplenote app have a file size of less than mb then you are using simplenote macos not simplenote electron please post your issue here steps to reproduce export all notes from evernote across notebooks via windows desktop app import evernote enex file into simplenote what i expected notes would be tagged with the evernote notebook that they were in what happened instead no notebook tag was applied looking in the enex files this might be an issue with the evernote export that it doesn t include the notebook if there is nothing that can be done it might be worth noting in your help that you should pre tag everything with the notebook name in evernote before exporting which is quite easy to do as it s a big hassle to delete and re import of notes in simplenote simplenote version here s the version number of our latest release os version windows screenshot video please note these comments won t show up when you submit the issue everything is optional but try to add as many details as possible if requesting a new feature explain why you d like to see it added | 0 |

320,952 | 23,832,855,381 | IssuesEvent | 2022-09-06 00:31:54 | farhanfadila1717/slide_countdown | https://api.github.com/repos/farhanfadila1717/slide_countdown | opened | Readme Add documentation `start`, `stop`, `changeDuration`. | documentation | Ovveride StreamDuration example for `start`, `stop`, `changeDuration` countdown. | 1.0 | Readme Add documentation `start`, `stop`, `changeDuration`. - Ovveride StreamDuration example for `start`, `stop`, `changeDuration` countdown. | non_main | readme add documentation start stop changeduration ovveride streamduration example for start stop changeduration countdown | 0 |

3,444 | 13,212,219,958 | IssuesEvent | 2020-08-16 05:29:51 | ansible/ansible | https://api.github.com/repos/ansible/ansible | closed | terraform: Plan file doesn't seen in next steps | affects_2.8 bot_closed cloud collection collection:community.general module needs_collection_redirect needs_maintainer needs_triage support:community | Continue of https://github.com/ansible/ansible/issues/39611

Fix doesn't work (https://github.com/ansible/ansible/commit/9a607283aafce8f1eb424df6b4c567095844bfd7)

At this moment i specify plan file, but next step doesn't see it

##### COMPONENT NAME

terraform

Ansible version:

```

ansible 2.8.3

config file = /etc/ansible/ansible.cfg

configured module search path = [u'/home/aermakov/.ansible/plugins/modules', u'/usr/share/ansible/plugins/modules']

ansible python module location = /usr/lib/python2.7/dist-packages/ansible

executable location = /usr/bin/ansible

python version = 2.7.15+ (default, Nov 27 2018, 23:36:35) [GCC 7.3.0]

```

Can you help to handle it? @mohitkumarsharmaflux7 @ryansb

Code:

```

- name: Check plan and created elements
  terraform:
    project_path: '{{ playbook_dir }}/roles/terraform/templates/terraform'
    plan_file: '{{ playbook_dir }}/roles/terraform/templates/terraform/okd.tfplan'
    state: planned
    force_init: true

- name: Create Vms and prepare inventoryfile
  terraform:
    project_path: '{{ playbook_dir }}/roles/terraform/templates/terraform'
    state: present

``` | True | terraform: Plan file doesn't seen in next steps - Continue of https://github.com/ansible/ansible/issues/39611

Fix doesn't work (https://github.com/ansible/ansible/commit/9a607283aafce8f1eb424df6b4c567095844bfd7)

At this moment i specify plan file, but next step doesn't see it

##### COMPONENT NAME

terraform

Ansible version:

```

ansible 2.8.3

config file = /etc/ansible/ansible.cfg

configured module search path = [u'/home/aermakov/.ansible/plugins/modules', u'/usr/share/ansible/plugins/modules']

ansible python module location = /usr/lib/python2.7/dist-packages/ansible

executable location = /usr/bin/ansible

python version = 2.7.15+ (default, Nov 27 2018, 23:36:35) [GCC 7.3.0]

```

Can you help to handle it? @mohitkumarsharmaflux7 @ryansb

Code:

```

- name: Check plan and created elements
  terraform:
    project_path: '{{ playbook_dir }}/roles/terraform/templates/terraform'
    plan_file: '{{ playbook_dir }}/roles/terraform/templates/terraform/okd.tfplan'
    state: planned
    force_init: true

- name: Create Vms and prepare inventoryfile
  terraform:
    project_path: '{{ playbook_dir }}/roles/terraform/templates/terraform'
    state: present

``` | main | terraform plan file doesn t seen in next steps continue of fix doesn t work at this moment i specify plan file but next step doesn t see it component name terraform ansible version ansible config file etc ansible ansible cfg configured module search path ansible python module location usr lib dist packages ansible executable location usr bin ansible python version default nov can you help to handle it ryansb code name check plan and created elements terraform project path playbook dir roles terraform templates terraform plan file playbook dir roles terraform templates terraform okd tfplan state planned force init true name create vms and prepare inventoryfile terraform project path playbook dir roles terraform templates terraform state present | 1 |

198,247 | 22,620,974,484 | IssuesEvent | 2022-06-30 06:16:55 | ioana-nicolae/testing-functionality | https://api.github.com/repos/ioana-nicolae/testing-functionality | opened | CVE-2021-43797 (Medium) detected in netty-codec-http-4.1.48.Final.jar | security vulnerability | ## CVE-2021-43797 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>netty-codec-http-4.1.48.Final.jar</b></p></summary>

<p>Netty is an asynchronous event-driven network application framework for

rapid development of maintainable high performance protocol servers and

clients.</p>

<p>Library home page: <a href="https://netty.io/">https://netty.io/</a></p>

<p>Path to dependency file: /pom.xml</p>

<p>Path to vulnerable library: /home/wss-scanner/.m2/repository/io/netty/netty-codec-http/4.1.48.Final/netty-codec-http-4.1.48.Final.jar</p>

<p>

Dependency Hierarchy:

- aws-java-sdk-1.11.856.jar (Root Library)

- aws-java-sdk-kinesisvideo-1.11.856.jar

- :x: **netty-codec-http-4.1.48.Final.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/ioana-nicolae/testing-functionality/commit/b9cf710c94adea695ef39d08725a0ef0851297b6">b9cf710c94adea695ef39d08725a0ef0851297b6</a></p>

<p>Found in base branch: <b>main</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Netty is an asynchronous event-driven network application framework for rapid development of maintainable high performance protocol servers & clients. Netty prior to version 4.1.71.Final skips control chars when they are present at the beginning / end of the header name. It should instead fail fast as these are not allowed by the spec and could lead to HTTP request smuggling. Failing to do the validation might cause netty to "sanitize" header names before it forward these to another remote system when used as proxy. This remote system can't see the invalid usage anymore, and therefore does not do the validation itself. Users should upgrade to version 4.1.71.Final.

Mend Note: After conducting further research, Mend has determined that all versions of netty up to version 4.1.71.Final are vulnerable to CVE-2021-43797.

<p>Publish Date: 2021-12-09

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-43797>CVE-2021-43797</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: High

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="CVE-2021-43797">CVE-2021-43797</a></p>

<p>Release Date: 2021-12-09</p>

<p>Fix Resolution (io.netty:netty-codec-http): 4.1.71.Final</p>

<p>Direct dependency fix Resolution (com.amazonaws:aws-java-sdk): 1.11.875</p>

</p>

</details>

<p></p>

***

:rescue_worker_helmet: Automatic Remediation is available for this issue | True | CVE-2021-43797 (Medium) detected in netty-codec-http-4.1.48.Final.jar - ## CVE-2021-43797 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>netty-codec-http-4.1.48.Final.jar</b></p></summary>

<p>Netty is an asynchronous event-driven network application framework for

rapid development of maintainable high performance protocol servers and

clients.</p>

<p>Library home page: <a href="https://netty.io/">https://netty.io/</a></p>

<p>Path to dependency file: /pom.xml</p>

<p>Path to vulnerable library: /home/wss-scanner/.m2/repository/io/netty/netty-codec-http/4.1.48.Final/netty-codec-http-4.1.48.Final.jar</p>

<p>

Dependency Hierarchy:

- aws-java-sdk-1.11.856.jar (Root Library)

- aws-java-sdk-kinesisvideo-1.11.856.jar

- :x: **netty-codec-http-4.1.48.Final.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/ioana-nicolae/testing-functionality/commit/b9cf710c94adea695ef39d08725a0ef0851297b6">b9cf710c94adea695ef39d08725a0ef0851297b6</a></p>

<p>Found in base branch: <b>main</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Netty is an asynchronous event-driven network application framework for rapid development of maintainable high performance protocol servers & clients. Netty prior to version 4.1.71.Final skips control chars when they are present at the beginning / end of the header name. It should instead fail fast as these are not allowed by the spec and could lead to HTTP request smuggling. Failing to do the validation might cause netty to "sanitize" header names before it forward these to another remote system when used as proxy. This remote system can't see the invalid usage anymore, and therefore does not do the validation itself. Users should upgrade to version 4.1.71.Final.

Mend Note: After conducting further research, Mend has determined that all versions of netty up to version 4.1.71.Final are vulnerable to CVE-2021-43797.

<p>Publish Date: 2021-12-09

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-43797>CVE-2021-43797</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: High

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="CVE-2021-43797">CVE-2021-43797</a></p>

<p>Release Date: 2021-12-09</p>

<p>Fix Resolution (io.netty:netty-codec-http): 4.1.71.Final</p>

<p>Direct dependency fix Resolution (com.amazonaws:aws-java-sdk): 1.11.875</p>

</p>

</details>

<p></p>

***

:rescue_worker_helmet: Automatic Remediation is available for this issue | non_main | cve medium detected in netty codec http final jar cve medium severity vulnerability vulnerable library netty codec http final jar netty is an asynchronous event driven network application framework for rapid development of maintainable high performance protocol servers and clients library home page a href path to dependency file pom xml path to vulnerable library home wss scanner repository io netty netty codec http final netty codec http final jar dependency hierarchy aws java sdk jar root library aws java sdk kinesisvideo jar x netty codec http final jar vulnerable library found in head commit a href found in base branch main vulnerability details netty is an asynchronous event driven network application framework for rapid development of maintainable high performance protocol servers clients netty prior to version final skips control chars when they are present at the beginning end of the header name it should instead fail fast as these are not allowed by the spec and could lead to http request smuggling failing to do the validation might cause netty to sanitize header names before it forward these to another remote system when used as proxy this remote system can t see the invalid usage anymore and therefore does not do the validation itself users should upgrade to version final mend note after conducting further research mend has determined that all versions of netty up to version final are vulnerable to cve publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction required scope unchanged impact metrics confidentiality impact none integrity impact high availability impact none for more information on scores click a href suggested fix type upgrade version origin cve release date fix resolution io netty netty codec http final direct dependency fix resolution com 
amazonaws aws java sdk rescue worker helmet automatic remediation is available for this issue | 0 |

19,562 | 14,236,968,118 | IssuesEvent | 2020-11-18 16:37:49 | rokwire/safer-illinois-app | https://api.github.com/repos/rokwire/safer-illinois-app | closed | [USABILITY] - Add an external link icon to the Feedback button. | Type: Usability | **Describe the bug**

Add an external link icon to the Feedback button.

**To Reproduce**

Steps to reproduce the behavior:

1. Download the Safer Illinois app.

2. Complete the initial onboarding process and logged in as a University student.

3. COVID 19 screen is displayed.

4. Tap on the Settings icon.

5. On the Settings screen, tap on Submit Feedback button

**Actual Result**

The submit screen is not part of the Safer app. Adding an external link icon helps the user to understand as the feedback screen is an external screen.

**Expected behavior**

Add an external link icon to the Feedback button. | True | [USABILITY] - Add an external link icon to the Feedback button. - **Describe the bug**

Add an external link icon to the Feedback button.

**To Reproduce**

Steps to reproduce the behavior:

1. Download the Safer Illinois app.

2. Complete the initial onboarding process and logged in as a University student.

3. COVID 19 screen is displayed.

4. Tap on the Settings icon.

5. On the Settings screen, tap on Submit Feedback button

**Actual Result**

The submit screen is not part of the Safer app. Adding an external link icon helps the user to understand as the feedback screen is an external screen.

**Expected behavior**

Add an external link icon to the Feedback button. | non_main | add an external link icon to the feedback button describe the bug add an external link icon to the feedback button to reproduce steps to reproduce the behavior download the safer illinois app complete the initial onboarding process and logged in as a university student covid screen is displayed tap on the settings icon on the settings screen tap on submit feedback button actual result the submit screen is not part of the safer app adding an external link icon helps the user to understand as the feedback screen is an external screen expected behavior add an external link icon to the feedback button | 0 |

185,512 | 15,024,068,474 | IssuesEvent | 2021-02-01 19:06:29 | mermaid-js/mermaid | https://api.github.com/repos/mermaid-js/mermaid | closed | Update NPM readme | Area: Documentation Status: Approved Type: Other | As the Readme got reworked with #1045 it also needs to be updated on [npm](https://www.npmjs.com/package/mermaid). The images are broken currently because they are referenced by a relative path. They might need to be replaced with an absolute url. | 1.0 | Update NPM readme - As the Readme got reworked with #1045 it also needs to be updated on [npm](https://www.npmjs.com/package/mermaid). The images are broken currently because they are referenced by a relative path. They might need to be replaced with an absolute url. | non_main | update npm readme as the readme got reworked with it also needs to be updated on the images are broken currently because they are referenced by a relative path they might need to be replaced with an absolute url | 0 |

156,876 | 24,626,127,855 | IssuesEvent | 2022-10-16 14:45:08 | dotnet/efcore | https://api.github.com/repos/dotnet/efcore | closed | Why does EF Core pluralize table names by default? | closed-by-design customer-reported | As described in this post:

https://entityframeworkcore.com/knowledge-base/37493095/entity-framework-core-rc2-table-name-pluralization

I'm wondering why the EF Core team took the decision to use the name of the DbSet property for the SQL table name by default? This is generally going to result in plural table names, as that is the appropriate name for the DbSet properties. I thought this was considered bad practice, and that SQL table named should be singular - why this default? | 1.0 | Why does EF Core pluralize table names by default? - As described in this post:

https://entityframeworkcore.com/knowledge-base/37493095/entity-framework-core-rc2-table-name-pluralization

I'm wondering why the EF Core team took the decision to use the name of the DbSet property for the SQL table name by default? This is generally going to result in plural table names, as that is the appropriate name for the DbSet properties. I thought this was considered bad practice, and that SQL table named should be singular - why this default? | non_main | why does ef core pluralize table names by default as described in this post i m wondering why the ef core team took the decision to use the name of the dbset property for the sql table name by default this is generally going to result in plural table names as that is the appropriate name for the dbset properties i thought this was considered bad practice and that sql table named should be singular why this default | 0 |

514,899 | 14,946,342,387 | IssuesEvent | 2021-01-26 06:34:38 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | mail.google.com - site is not usable | browser-firefox engine-gecko ml-needsdiagnosis-false ml-probability-high priority-critical | <!-- @browser: Firefox 85.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:85.0) Gecko/20100101 Firefox/85.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/66239 -->

**URL**: https://mail.google.com/mail/u/0/?tab=wm

**Browser / Version**: Firefox 85.0

**Operating System**: Windows 10

**Tested Another Browser**: Yes Internet Explorer

**Problem type**: Site is not usable

**Description**: Page not loading correctly

**Steps to Reproduce**:

the page loads, but it appears blank

<details>

<summary>View the screenshot</summary>

<img alt="Screenshot" src="https://webcompat.com/uploads/2021/1/30a92310-54f8-40c8-af8b-cc5c99c5c5d1.jpg">

</details>

<details>

<summary>Browser Configuration</summary>

<ul>

<li>gfx.webrender.all: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>buildID: 20210118153634</li><li>channel: release</li><li>hasTouchScreen: false</li><li>mixed active content blocked: false</li><li>mixed passive content blocked: false</li><li>tracking content blocked: false</li>

</ul>

</details>

[View console log messages](https://webcompat.com/console_logs/2021/1/c68eefd5-7ee3-4a43-8121-4199b0e090d2)

_From [webcompat.com](https://webcompat.com/) with ❤️_ | 1.0 | mail.google.com - site is not usable - <!-- @browser: Firefox 85.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:85.0) Gecko/20100101 Firefox/85.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/66239 -->

**URL**: https://mail.google.com/mail/u/0/?tab=wm

**Browser / Version**: Firefox 85.0

**Operating System**: Windows 10

**Tested Another Browser**: Yes Internet Explorer

**Problem type**: Site is not usable

**Description**: Page not loading correctly

**Steps to Reproduce**:

the page loads, but it appears blank

<details>

<summary>View the screenshot</summary>

<img alt="Screenshot" src="https://webcompat.com/uploads/2021/1/30a92310-54f8-40c8-af8b-cc5c99c5c5d1.jpg">

</details>

<details>

<summary>Browser Configuration</summary>

<ul>

<li>gfx.webrender.all: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>buildID: 20210118153634</li><li>channel: release</li><li>hasTouchScreen: false</li><li>mixed active content blocked: false</li><li>mixed passive content blocked: false</li><li>tracking content blocked: false</li>

</ul>

</details>

[View console log messages](https://webcompat.com/console_logs/2021/1/c68eefd5-7ee3-4a43-8121-4199b0e090d2)

_From [webcompat.com](https://webcompat.com/) with ❤️_ | non_main | mail google com site is not usable url browser version firefox operating system windows tested another browser yes internet explorer problem type site is not usable description page not loading correctly steps to reproduce the page loads but it appears blank view the screenshot img alt screenshot src browser configuration gfx webrender all false gfx webrender blob images true gfx webrender enabled false image mem shared true buildid channel release hastouchscreen false mixed active content blocked false mixed passive content blocked false tracking content blocked false from with ❤️ | 0 |

3,223 | 12,367,096,845 | IssuesEvent | 2020-05-18 11:41:20 | pace/bricks | https://api.github.com/repos/pace/bricks | closed | Add log breadcrumbs to log.handleError() | EST::Hours S::Ready T::Maintainance | Currently, breadcrumbs are only attached when an http request is aborted with an error or panic. Sometimes we want to use handleError to report a message to sentry without aborting the request.

Breadcrumbs should be added there as well. | True | Add log breadcrumbs to log.handleError() - Currently, breadcrumbs are only attached when an http request is aborted with an error or panic. Sometimes we want to use handleError to report a message to sentry without aborting the request.

Breadcrumbs should be added there as well. | main | add log breadcrumbs to log handleerror currently breadcrumbs are only attached when an http request is aborted with an error or panic sometimes we want to use handleerror to report a message to sentry without aborting the request breadcrumbs should be added there as well | 1 |

1,827 | 6,577,345,978 | IssuesEvent | 2017-09-12 00:16:00 | ansible/ansible-modules-core | https://api.github.com/repos/ansible/ansible-modules-core | closed | ec2_asg ignores replace_instances if lc_check is true | affects_2.0 aws bug_report cloud waiting_on_maintainer | <!--- Verify first that your issue/request is not already reported in GitHub -->

##### ISSUE TYPE

<!--- Pick one below and delete the rest: -->

- Bug Report

##### COMPONENT NAME

<!--- Name of the plugin/module/task -->

cloud/amazon/ec2_asg

##### ANSIBLE VERSION

<!--- Paste verbatim output from “ansible --version” between quotes below -->

```

ansible 2.0.0.1

config file = <snip>

configured module search path = Default w/o overrides

```

##### CONFIGURATION

<!---

Mention any settings you have changed/added/removed in ansible.cfg

(or using the ANSIBLE_* environment variables).

-->

##### OS / ENVIRONMENT

<!---

Mention the OS you are running Ansible from, and the OS you are

managing, or say “N/A” for anything that is not platform-specific.

-->

N/A

##### SUMMARY

<!--- Explain the problem briefly -->

Running `ec2_asg` with `replace_instances` set to a single instance and `lc_check` set to `yes` against an ASG with multiple instances causes it to ignore `replace_instances` and replace a random instance in the ASG.

##### STEPS TO REPRODUCE

<!---

For bugs, show exactly how to reproduce the problem.

For new features, show how the feature would be used.

-->

- Spin up an ASG with min, max and desired > 1

- Change the launch configuration for the ASG

- Run `ec2_asg`, specifying a single instance for `replace_instances`, and `lc_check` = `yes`

It will choose a random instance from the instances in the ASG which have the old LC.

This seems to stem from [these lines](https://github.com/ansible/ansible-modules-core/blob/7314cc3867eb90bc1c098e29265ae48670ad35b1/cloud/amazon/ec2_asg.py#L628-L633) ignoring the passed-in `initial_instances` and instead producing its own list of instances to be terminated.

<!--- Paste example playbooks or commands between quotes below -->

```

ec2_asg:
  lc_check: yes
  replace_batch_size: 1
  replace_instances: my_instance_id
  name: my_asg
  min_size: 3
  max_size: 3
  desired_capacity: 3
  launch_config_name: my_lc
  region: us-west-2

```

<!--- You can also paste gist.github.com links for larger files -->

##### EXPECTED RESULTS

<!--- What did you expect to happen when running the steps above? -->

It would spin up a new instance in the ASG, and then terminate the instance I specified above

##### ACTUAL RESULTS

<!--- What actually happened? If possible run with extra verbosity (-vvvv) -->

It sometimes terminates the one I specify, other times it terminates a different one.

<!--- Paste verbatim command output between quotes below -->

```

TASK [Cycling | ec2_asg | Cycle instance (only if its launch configuration differs from that of the ASG)] ***

task path: cycle-asg-instance-with-status-check.yml:16

Wednesday 15 June 2016 15:31:18 +0000 (0:00:00.020) 0:04:47.913 ********

ESTABLISH LOCAL CONNECTION FOR USER: admin

127.0.0.1 EXEC ( umask 22 && mkdir -p "$( echo $HOME/.ansible/tmp/ansible-tmp-1466004678.61-36579860387736 )" && echo "$( echo $HOME/.ansible/tmp/ansible-tmp-1466004678.61-36579860387736 )" )

127.0.0.1 PUT /tmp/tmpTZaAaD TO /home/admin/.ansible/tmp/ansible-tmp-1466004678.61-36579860387736/ec2_asg

127.0.0.1 EXEC LANG=en_US.UTF-8 LC_ALL=en_US.UTF-8 LC_MESSAGES=en_US.UTF-8 /usr/bin/python /home/admin/.ansible/tmp/ansible-tmp-1466004678.61-36579860387736/ec2_asg; rm -rf "/home/admin/.ansible/tmp/ansible-tmp-1466004678.61-36579860387736/" > /dev/null 2>&1

changed: [localhost] => {"availability_zones": ["us-west-2c"], "changed": true, "default_cooldown": 300, "desired_capacity": 2, "health_check_period": 300, "health_check_type": "EC2", "healthy_instances": 3, "in_service_instances": 3, "instance_facts": {"i-01fca335e1e29c65c": {"health_status": "Healthy", "launch_config_name": "terraform-5nlhqhrvt5e3taugrqzifth2su", "lifecycle_state": "InService"}, "i-03ea5a0be5b5b92a5"

: {"health_status": "Healthy", "launch_config_name": "<snip>", "lifecycle_state": "InService"}, "i-0ad0d81d719fe7bc1": {"health_status": "Healthy", "launch_config_name": null, "lifecycle_state": "InService"}}, "instances": ["i-01fca335e1e29c65c", "i-03ea5a0be5b5b92a5", "i-0ad0d81d719fe7bc1"], "invocation": {"module_args": {"availability_zones": null, "aws_access_key": null, "aws_secret_key

": null, "default_cooldown": 300, "desired_capacity": 2, "ec2_url": null, "health_check_period": 300, "health_check_type": "EC2", "launch_config_name": "<snip>", "lc_check": true, "load_balancers": null, "max_size": 2, "min_size": 2, "name": "router-jljw-us-west-2c", "profile": null, "region": "us-west-2", "replace_all_instances": false, "replace_batch_size": 1, "replace_instances": ["i-00

65d89d324fe72df"], "security_token": null, "state": "present", "tags": [], "termination_policies": ["Default"], "validate_certs": true, "vpc_zone_identifier": null, "wait_for_instances": true, "wait_timeout": 300}, "module_name": "ec2_asg"}, "launch_config_name": "<snip>", "load_balancers":<snip>, "max_size": 2, "min_size": 2, "name": "my_asg", "pending_instanc

es": 0, "placement_group": null, "tags": {"cleaner-destroy-after": "2016-06-14 16:49:17 +0000"}, "terminating_instances": 0, "termination_policies": ["Default"], "unhealthy_instances": 0, "viable_instances": 3, "vpc_zone_identifier": "subnet-f453acac"}

```

| True | ec2_asg ignores replace_instances if lc_check is true - <!--- Verify first that your issue/request is not already reported in GitHub -->

##### ISSUE TYPE

<!--- Pick one below and delete the rest: -->

- Bug Report

##### COMPONENT NAME

<!--- Name of the plugin/module/task -->

cloud/amazon/ec2_asg

##### ANSIBLE VERSION

<!--- Paste verbatim output from “ansible --version” between quotes below -->

```

ansible 2.0.0.1

config file = <snip>

configured module search path = Default w/o overrides

```

##### CONFIGURATION

<!---

Mention any settings you have changed/added/removed in ansible.cfg

(or using the ANSIBLE_* environment variables).

-->

##### OS / ENVIRONMENT

<!---

Mention the OS you are running Ansible from, and the OS you are

managing, or say “N/A” for anything that is not platform-specific.

-->

N/A

##### SUMMARY

<!--- Explain the problem briefly -->

Running `ec2_asg` with `replace_instances` set to a single instance and `lc_check` set to `yes` against an ASG with multiple instances causes it to ignore `replace_instances` and replace a random instance in the ASG.

##### STEPS TO REPRODUCE

<!---

For bugs, show exactly how to reproduce the problem.

For new features, show how the feature would be used.

-->

- Spin up an ASG with min, max and desired > 1

- Change the launch configuration for the ASG

- Run `ec2_asg`, specifying a single instance for `replace_instances`, and `lc_check` = `yes`

It will choose a random instance from the instances in the ASG which have the old LC.

This seems to stem from [these lines](https://github.com/ansible/ansible-modules-core/blob/7314cc3867eb90bc1c098e29265ae48670ad35b1/cloud/amazon/ec2_asg.py#L628-L633) ignoring the passed-in `initial_instances` and instead producing its own list of instances to be terminated.

<!--- Paste example playbooks or commands between quotes below -->

```

ec2_asg:
  lc_check: yes
  replace_batch_size: 1
  replace_instances: my_instance_id
  name: my_asg
  min_size: 3
  max_size: 3
  desired_capacity: 3
  launch_config_name: my_lc
  region: us-west-2

```

<!--- You can also paste gist.github.com links for larger files -->

##### EXPECTED RESULTS

<!--- What did you expect to happen when running the steps above? -->

It would spin up a new instance in the ASG, and then terminate the instance I specified above

##### ACTUAL RESULTS

<!--- What actually happened? If possible run with extra verbosity (-vvvv) -->

It sometimes terminates the one I specify, other times it terminates a different one.

<!--- Paste verbatim command output between quotes below -->

```

TASK [Cycling | ec2_asg | Cycle instance (only if its launch configuration differs from that of the ASG)] ***

task path: cycle-asg-instance-with-status-check.yml:16

Wednesday 15 June 2016 15:31:18 +0000 (0:00:00.020) 0:04:47.913 ********

ESTABLISH LOCAL CONNECTION FOR USER: admin

127.0.0.1 EXEC ( umask 22 && mkdir -p "$( echo $HOME/.ansible/tmp/ansible-tmp-1466004678.61-36579860387736 )" && echo "$( echo $HOME/.ansible/tmp/ansible-tmp-1466004678.61-36579860387736 )" )

127.0.0.1 PUT /tmp/tmpTZaAaD TO /home/admin/.ansible/tmp/ansible-tmp-1466004678.61-36579860387736/ec2_asg

127.0.0.1 EXEC LANG=en_US.UTF-8 LC_ALL=en_US.UTF-8 LC_MESSAGES=en_US.UTF-8 /usr/bin/python /home/admin/.ansible/tmp/ansible-tmp-1466004678.61-36579860387736/ec2_asg; rm -rf "/home/admin/.ansible/tmp/ansible-tmp-1466004678.61-36579860387736/" > /dev/null 2>&1

changed: [localhost] => {"availability_zones": ["us-west-2c"], "changed": true, "default_cooldown": 300, "desired_capacity": 2, "health_check_period": 300, "health_check_type": "EC2", "healthy_instances": 3, "in_service_instances": 3, "instance_facts": {"i-01fca335e1e29c65c": {"health_status": "Healthy", "launch_config_name": "terraform-5nlhqhrvt5e3taugrqzifth2su", "lifecycle_state": "InService"}, "i-03ea5a0be5b5b92a5"

: {"health_status": "Healthy", "launch_config_name": "<snip>", "lifecycle_state": "InService"}, "i-0ad0d81d719fe7bc1": {"health_status": "Healthy", "launch_config_name": null, "lifecycle_state": "InService"}}, "instances": ["i-01fca335e1e29c65c", "i-03ea5a0be5b5b92a5", "i-0ad0d81d719fe7bc1"], "invocation": {"module_args": {"availability_zones": null, "aws_access_key": null, "aws_secret_key

": null, "default_cooldown": 300, "desired_capacity": 2, "ec2_url": null, "health_check_period": 300, "health_check_type": "EC2", "launch_config_name": "<snip>", "lc_check": true, "load_balancers": null, "max_size": 2, "min_size": 2, "name": "router-jljw-us-west-2c", "profile": null, "region": "us-west-2", "replace_all_instances": false, "replace_batch_size": 1, "replace_instances": ["i-00

65d89d324fe72df"], "security_token": null, "state": "present", "tags": [], "termination_policies": ["Default"], "validate_certs": true, "vpc_zone_identifier": null, "wait_for_instances": true, "wait_timeout": 300}, "module_name": "ec2_asg"}, "launch_config_name": "<snip>", "load_balancers":<snip>, "max_size": 2, "min_size": 2, "name": "my_asg", "pending_instanc

es": 0, "placement_group": null, "tags": {"cleaner-destroy-after": "2016-06-14 16:49:17 +0000"}, "terminating_instances": 0, "termination_policies": ["Default"], "unhealthy_instances": 0, "viable_instances": 3, "vpc_zone_identifier": "subnet-f453acac"}

```

| main | asg ignores replace instances if lc check is true issue type bug report component name cloud amazon asg ansible version ansible config file configured module search path default w o overrides configuration mention any settings you have changed added removed in ansible cfg or using the ansible environment variables os environment mention the os you are running ansible from and the os you are managing or say “n a” for anything that is not platform specific n a summary running asg with replace instances set to a single instance and lc check set to yes against an asg with multiple instances causes it to ignore replace instances and replace a random instance in the asg steps to reproduce for bugs show exactly how to reproduce the problem for new features show how the feature would be used spin up an asg with min max and desired change the launch configuration for the asg run asg specifying a single instance for replace instances and lc check yes it will choose a random instance from the instances in the asg which have the old lc this seems to stem from ignoring the passed in initial instances and instead producing its own list of instances to be terminated asg lc check yes replace batch size replace instances my instance id name my asg min size max size desired capacity launch config name my lc region us west expected results it would spin up a new instance in the asg and then terminate the instance i specified above actual results it sometimes terminates the one i specify other times it terminates a different one task task path cycle asg instance with status check yml wednesday june establish local connection for user admin exec umask mkdir p echo home ansible tmp ansible tmp echo echo home ansible tmp ansible tmp put tmp tmptzaaad to home admin ansible tmp ansible tmp asg exec lang en us utf lc all en us utf lc messages en us utf usr bin python home admin ansible tmp ansible tmp asg rm rf home admin ansible tmp ansible tmp dev null changed availability zones 
changed true default cooldown desired capacity health check period health check type healthy instances in service instances instance facts i health status healthy launch config name terraform lifecycle state inservice i health status healthy launch config name lifecycle state inservice i health status healthy launch config name null lifecycle state inservice instances invocation module args availability zones null aws access key null aws secret key null default cooldown desired capacity url null health check period health check type launch config name lc check true load balancers null max size min size name router jljw us west profile null region us west replace all instances false replace batch size replace instances i security token null state present tags termination policies validate certs true vpc zone identifier null wait for instances true wait timeout module name asg launch config name load balancers max size min size name my asg pending instanc es placement group null tags cleaner destroy after terminating instances termination policies unhealthy instances viable instances vpc zone identifier subnet | 1 |

1,344 | 5,721,693,133 | IssuesEvent | 2017-04-20 07:28:22 | tomchentw/react-google-maps | https://api.github.com/repos/tomchentw/react-google-maps | closed | HeatmapLayer Broken | CALL_FOR_MAINTAINERS | HeatmapLayer should use `google.maps.visualization.HeatmapLayer` instead of just `google.maps.HeatmapLayer` in order to construct a HeatmapLayer. | True | HeatmapLayer Broken - HeatmapLayer should use `google.maps.visualization.HeatmapLayer` instead of just `google.maps.HeatmapLayer` in order to construct a HeatmapLayer. | main | heatmaplayer broken heatmaplayer should use google maps visualization heatmaplayer instead of just google maps heatmaplayer in order to construct a heatmaplayer | 1 |

6,661 | 2,610,258,666 | IssuesEvent | 2015-02-26 19:22:29 | chrsmith/dsdsdaadf | https://api.github.com/repos/chrsmith/dsdsdaadf | opened | 深圳激光治疗痘痘好吗 | auto-migrated Priority-Medium Type-Defect | ```

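Is laser acne treatment in Shenzhen any good? [Shenzhen Hanfang Keyan national hotline 400-869-1818, 24-hour QQ 4008691818] Shenzhen Hanfang Keyan is a professional acne-removal chain. The chain is built around a Korean formula, Hanfang Keyan, an authoritative therapeutic product with a national cosmetics approval number and a fine acne remedy. The Hanfang Keyan professional acne-removal chain uses the Korean formula together with a professional "no-rebound" healthy acne-removal technique and an advanced "deluxe colored-light" device, pioneering contract-guaranteed professional treatment of comedones and acne in China, and has successfully cleared the acne from many customers' faces.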

```

-----

Original issue reported on code.google.com by `szft...@163.com` on 14 May 2014 at 8:52 | 1.0 | 深圳激光治疗痘痘好吗 - ```

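Is laser acne treatment in Shenzhen any good? [Shenzhen Hanfang Keyan national hotline 400-869-1818, 24-hour QQ 4008691818] Shenzhen Hanfang Keyan is a professional acne-removal chain. The chain is built around a Korean formula, Hanfang Keyan, an authoritative therapeutic product with a national cosmetics approval number and a fine acne remedy. The Hanfang Keyan professional acne-removal chain uses the Korean formula together with a professional "no-rebound" healthy acne-removal technique and an advanced "deluxe colored-light" device, pioneering contract-guaranteed professional treatment of comedones and acne in China, and has successfully cleared the acne from many customers' faces.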

```

-----

Original issue reported on code.google.com by `szft...@163.com` on 14 May 2014 at 8:52 | non_main | 深圳激光治疗痘痘好吗 深圳激光治疗痘痘好吗【 , 】深圳韩方科颜专业祛痘连锁机构,机构以韩�� �秘方——韩方科颜这一国妆准字号治疗型权威,祛痘佳品,� ��方科颜专业祛痘连锁机构,采用韩国秘方配合专业“不反弹 ”健康祛痘技术并结合先进“先进豪华彩光”仪,开创国内�� �业治疗粉刺、痤疮签约包治先河,成功消除了许多顾客脸上� ��痘痘。 original issue reported on code google com by szft com on may at | 0 |

186,449 | 14,394,699,316 | IssuesEvent | 2020-12-03 01:55:08 | github-vet/rangeclosure-findings | https://api.github.com/repos/github-vet/rangeclosure-findings | closed | mraksoll4/lnd: nursery_store_test.go; 3 LoC | fresh test tiny |

Found a possible issue in [mraksoll4/lnd](https://www.github.com/mraksoll4/lnd) at [nursery_store_test.go](https://github.com/mraksoll4/lnd/blob/e495a1057c2a4b9e3df37f2bac991cedcd64c89a/nursery_store_test.go#L159-L161)

The below snippet of Go code triggered static analysis which searches for goroutines and/or defer statements

which capture loop variables.

[Click here to see the code in its original context.](https://github.com/mraksoll4/lnd/blob/e495a1057c2a4b9e3df37f2bac991cedcd64c89a/nursery_store_test.go#L159-L161)

<details>

<summary>Click here to show the 3 line(s) of Go which triggered the analyzer.</summary>

```go

for _, htlcOutput := range test.htlcOutputs {
	assertCribAtExpiryHeight(t, ns, &htlcOutput)
}

```

Below is the message reported by the analyzer for this snippet of code. Beware that the analyzer only reports the first

issue it finds, so please do not limit your consideration to the contents of the below message.

> function call which takes a reference to htlcOutput at line 160 may start a goroutine

</details>

Leave a reaction on this issue to contribute to the project by classifying this instance as a **Bug** :-1:, **Mitigated** :+1:, or **Desirable Behavior** :rocket:

See the descriptions of the classifications [here](https://github.com/github-vet/rangeclosure-findings#how-can-i-help) for more information.

commit ID: e495a1057c2a4b9e3df37f2bac991cedcd64c89a

| 1.0 | mraksoll4/lnd: nursery_store_test.go; 3 LoC -

Found a possible issue in [mraksoll4/lnd](https://www.github.com/mraksoll4/lnd) at [nursery_store_test.go](https://github.com/mraksoll4/lnd/blob/e495a1057c2a4b9e3df37f2bac991cedcd64c89a/nursery_store_test.go#L159-L161)

The below snippet of Go code triggered static analysis which searches for goroutines and/or defer statements

which capture loop variables.

[Click here to see the code in its original context.](https://github.com/mraksoll4/lnd/blob/e495a1057c2a4b9e3df37f2bac991cedcd64c89a/nursery_store_test.go#L159-L161)

<details>

<summary>Click here to show the 3 line(s) of Go which triggered the analyzer.</summary>

```go

for _, htlcOutput := range test.htlcOutputs {
	assertCribAtExpiryHeight(t, ns, &htlcOutput)
}

```

Below is the message reported by the analyzer for this snippet of code. Beware that the analyzer only reports the first

issue it finds, so please do not limit your consideration to the contents of the below message.

> function call which takes a reference to htlcOutput at line 160 may start a goroutine

</details>

Leave a reaction on this issue to contribute to the project by classifying this instance as a **Bug** :-1:, **Mitigated** :+1:, or **Desirable Behavior** :rocket:

See the descriptions of the classifications [here](https://github.com/github-vet/rangeclosure-findings#how-can-i-help) for more information.

commit ID: e495a1057c2a4b9e3df37f2bac991cedcd64c89a

| non_main | lnd nursery store test go loc found a possible issue in at the below snippet of go code triggered static analysis which searches for goroutines and or defer statements which capture loop variables click here to show the line s of go which triggered the analyzer go for htlcoutput range test htlcoutputs assertcribatexpiryheight t ns htlcoutput below is the message reported by the analyzer for this snippet of code beware that the analyzer only reports the first issue it finds so please do not limit your consideration to the contents of the below message function call which takes a reference to htlcoutput at line may start a goroutine leave a reaction on this issue to contribute to the project by classifying this instance as a bug mitigated or desirable behavior rocket see the descriptions of the classifications for more information commit id | 0 |
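
The pattern flagged in the lnd finding above (passing `&htlcOutput` from inside a `range` loop) can be made safe on any Go version by copying the loop variable before taking its address. The following is only a minimal sketch: the `output` type and the `collectPointers` function are hypothetical stand-ins, not code from lnd. Before Go 1.22 the loop variable was shared across iterations, so without the copy every stored pointer would refer to the same variable.

```go
package main

import "fmt"

// output is a hypothetical stand-in for the struct iterated in the flagged test.
type output struct {
	expiry uint32
}

// collectPointers returns one pointer per element of outputs.
// The explicit copy gives each iteration its own variable, so the
// pointers stay distinct even on Go versions older than 1.22, where
// the range variable was reused across iterations.
func collectPointers(outputs []output) []*output {
	ptrs := make([]*output, 0, len(outputs))
	for _, o := range outputs {
		o := o // per-iteration copy; taking &o is now safe
		ptrs = append(ptrs, &o)
	}
	return ptrs
}

func main() {
	outputs := []output{{expiry: 10}, {expiry: 20}, {expiry: 30}}
	for _, p := range collectPointers(outputs) {
		fmt.Println(p.expiry)
	}
}
```

Whether the flagged call is an actual bug depends on whether the callee keeps the pointer beyond the call or hands it to a goroutine; if it only reads the value synchronously, the original loop behaves correctly and the copy is purely defensive.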

102,694 | 4,158,593,656 | IssuesEvent | 2016-06-17 03:53:59 | BYU-ARCLITE/Ayamel-Examples | https://api.github.com/repos/BYU-ARCLITE/Ayamel-Examples | closed | CaptionAider: Creating a new subtitle track and saving/editing it multiple times creates multiple tracks | Bug CaptionAider Mac PC Priority 1 | When I create captions in CaptionAider, it creates 2 caption tracks in both configuration and under the caption/subtitles menu. The tracks are identical unless you make any edits in CaptionAider. After that, the duplicate remains how it was before you made any edits.

| 1.0 | CaptionAider: Creating a new subtitle track and saving/editing it multiple times creates multiple tracks - When I create captions in CaptionAider, it creates 2 caption tracks in both configuration and under the caption/subtitles menu. The tracks are identical unless you make any edits in CaptionAider. After that, the duplicate remains how it was before you made any edits.

| non_main | captionaider creating a new subtitle track and saving editing it multiple times creates multiple tracks when i create captions in captionaider it creates caption tracks in both configuration and under the caption subtitles menu the tracks are identical unless you make any edits in captionaider after that the duplicate remains how it was before you made any edits | 0 |

349,352 | 10,467,866,136 | IssuesEvent | 2019-09-22 09:19:43 | SkyrimTogether/issues-game | https://api.github.com/repos/SkyrimTogether/issues-game | closed | Bug with getting respawned but immediatly hitted by an enemy and getting to the down state again | comp: client priority: 2 (medium) type: bug | ## Description

My friend ran into a bug after he was killed by a Whiterun Guard.

He went into a downed state, waiting for a revive.

When he typed the /respawn command in the console, he stood up with half of his HP.

But after being attacked by a Guard, he went into the downed state again with 1 HP.

When he tried to type the /respawn command again, the console showed a "request a revive" message or something similar,

saying that he was not dead yet and so could not be respawned again.

## Steps to reproduce

How to reproduce this issue:

1. Start the game.

2. Connect to the server.

3. Get yourself downed by an enemy.

4. Make sure that the enemy is still hitting your corpse.

5. Type the /respawn command in the console.

6. You respawn with half of your HP in the same place where you were downed.

7. The enemy attacks you again.

8. You go into the downed state again with 1 HP.

9. Type the /respawn command again and see an error message in the console.

Attaching latest dmp files:

https://drive.google.com/file/d/1WuT0timchUL3cW5YWTi8oDBIkHUGsvlC/view?usp=sharing

https://drive.google.com/open?id=1fWFId8otnBmW_xbNzhg7B97qWoWecXHq

https://drive.google.com/open?id=1abWhvPHg8mwtLy5IOqhr_prcKh8t9VQo

## Reproduction rate

Almost every time you try to respawn while your corpse is still being attacked by an enemy.

## Expected result

To be respawned at a Shrine immediately, without being attacked by an enemy.

Or to get temporary invincibility so that you can travel to the Shrine without taking any damage from an enemy.

## Your environment

* Game edition (choose on which edition do you have problems):

* The Elder Scrolls V: Skyrim Special Edition

* Skyrim Together Mod

## Evidence (optional)

Don't have an evidence for this bug, i'm sorry 👎 | 1.0 | Bug with getting respawned but immediatly hitted by an enemy and getting to the down state again - ## Description

My friend ran into a bug after he was killed by a Whiterun Guard.

He went into a downed state, waiting for a revive.

When he typed the /respawn command in the console, he stood up with half of his HP.

But after being attacked by a Guard, he went into the downed state again with 1 HP.

When he tried to type the /respawn command again, the console showed a "request a revive" message or something similar,

saying that he was not dead yet and so could not be respawned again.

## Steps to reproduce

How to reproduce this issue:

1. Start the game.

2. Connect to the server.

3. Get yourself downed by an enemy.

4. Make sure that the enemy is still hitting your corpse.

5. Type the /respawn command in the console.

6. You respawn with half of your HP in the same place where you were downed.

7. The enemy attacks you again.

8. You go into the downed state again with 1 HP.

9. Type the /respawn command again and see an error message in the console.

Attaching latest dmp files:

https://drive.google.com/file/d/1WuT0timchUL3cW5YWTi8oDBIkHUGsvlC/view?usp=sharing

https://drive.google.com/open?id=1fWFId8otnBmW_xbNzhg7B97qWoWecXHq

https://drive.google.com/open?id=1abWhvPHg8mwtLy5IOqhr_prcKh8t9VQo

## Reproduction rate

Almost every time you try to respawn while your corpse is still being attacked by an enemy.

## Expected result

To be respawned at a Shrine immediately, without being attacked by an enemy.

Or to get temporary invincibility so that you can travel to the Shrine without taking any damage from an enemy.

## Your environment

* Game edition (choose on which edition do you have problems):

* The Elder Scrolls V: Skyrim Special Edition

* Skyrim Together Mod

## Evidence (optional)

Don't have an evidence for this bug, i'm sorry 👎 | non_main | bug with getting respawned but immediatly hitted by an enemy and getting to the down state again description my friend had a bug when he was killed by a whiterun guard he went into a down state waiting for a revive when he typed respawn command in console he stud up with a half of hp but after getting attacked by a guard he went into a down state with a again when he tried to type respawn command again the console was sending a request a revive message or so saying that he s not dead yet to be respawned again steps to reproduce how to reproduce this issue start the game connect to the server got yourself downed by the enemy make sure that the enemy is still hitting your corpse type respawn command in console get yourself respawned on the same place when you got downed by the enemy with a half of hp enemy is attacking you again you re going into a down state again with type respawn command again and see an error message in a console attaching latest dmp files reproduction rate mostly all the times when you re trying to get respawned while your corpse is still getting attacked by an enemy expected result to be respawned in a shrine immediately without getting attacked by an enemy or having a temporary invincibility to be able to travel to the shrine without getting any dmg by an enemy your environment game edition choose on which edition do you have problems the elder scrolls v skyrim special edition skyrim together mod evidence optional don t have an evidence for this bug i m sorry 👎 | 0 |

77,316 | 14,784,531,990 | IssuesEvent | 2021-01-12 00:25:34 | streetcomplete/StreetComplete | https://api.github.com/repos/streetcomplete/StreetComplete | opened | Separate view data in quest data classes | code cleanup | ### Task

For the cycleway quest, the cycleway answer options are cleanly separated into two files: `Cycleway.kt` contains the "pure data" (the enum) and `CyclewayItem` contains the view-related part of a cycleway answer option.

This is better than how it is done for, for example, the surface quest (and others). There, in `Surface.kt`, the enum is not pure data because it contains references to view stuff and also Android-specific stuff. So for these quest(s), the data class should be refactored to be like the one for the cycleway quest.

### Example

So it should be....

```kotlin

enum class Surface(val value: String) { // or osmValue?
    ASPHALT("asphalt"),
    ...
}

// in SurfaceItem.kt
fun Surface.asItem(): Item<Surface> = ...

```
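
To make the idea a bit more concrete, here is a rough, self-contained sketch of the split. It is only an illustration: the `Item` class is a simplified stand-in for the app's real one, and `CONCRETE`, `osmValue`, `iconResId` and `titleResId` (including the numeric ids) are assumed names and values, not the actual code.

```kotlin
// --- Surface.kt: pure data, no view or Android references ---
enum class Surface(val osmValue: String) {
    ASPHALT("asphalt"),
    CONCRETE("concrete"), // assumed second value, for illustration only
}

// Simplified stand-in for the app's Item class (hypothetical shape).
data class Item<T>(val value: T, val iconResId: Int, val titleResId: Int)

// --- SurfaceItem.kt: everything view-related lives here ---
fun Surface.asItem(): Item<Surface> = Item(this, iconResId, titleResId)

// Placeholder ids; in the app these would be drawable/string resource ids.
private val Surface.iconResId: Int get() = when (this) {
    Surface.ASPHALT -> 1
    Surface.CONCRETE -> 2
}

private val Surface.titleResId: Int get() = when (this) {
    Surface.ASPHALT -> 101
    Surface.CONCRETE -> 102
}

fun main() {
    // The enum can be used without touching any view code...
    println(Surface.ASPHALT.osmValue)
    // ...and the view layer asks for an item only when it needs one.
    println(Surface.ASPHALT.asItem())
}
```

With this layout the enum file compiles without any Android dependency, and the `when` expressions make the compiler complain if a new enum value is added without view data.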

### Reason

Apart from it being good style, it is a step towards making #1892 a little less work to do

### Quests where this "old style" is used

- BuildingType

- Surface

### And then

And there is more. Quest types should no longer have a `String` as parameter but an enum.

Compare `AddRecyclingType` (new style) with `AddRailwayCrossingBarrier` (old style). It is okay for the enum to define an OSM value like in the example with surface above. | 1.0 | Separate view data in quest data classes - ### Task

For the cycleway quest, the cycleway answer options are cleanly separated into two files: `Cycleway.kt` contains the "pure data" (the enum) and `CyclewayItem` contains the view-related part of a cycleway answer option.

This is better than how it is done for, for example, the surface quest (and others). There, in `Surface.kt`, the enum is not pure data because it contains references to view stuff and also Android-specific stuff. So for these quest(s), the data class should be refactored to be like the one for the cycleway quest.

### Example

So it should be....

```kotlin

enum class Surface(val value: String) { // or osmValue?

ASPHALT("asphalt"),

...

}

// in SurfaceItem.kt

fun Surface.asItem(): Item<Surface> = ...

```

### Reason

Apart from it being good style, it is a step towards making #1892 a little less work to do

### Quests where this "old style" is used

- BuildingType

- Surface

### And then

And there is more. Quest types should no longer have a `String` as parameter but an enum.

Compare `AddRecyclingType` (new style) with `AddRailwayCrossingBarrier` (old style). It is okay for the enum to define an OSM value like in the example with surface above. | non_main | separate view data in quest data classes task for the cycleway quest the cycleway answer options are cleanly seperated into two files cycleway kt contains the pure data the enum and cyclewayitem contains the view related part of a cycleway answer option this is better than how it is done for for example the surface quest and others there in surface kt the enum is not pure data because is contains reference to view stuff and also android specific stuff so for these quest s the data class should be refactored to be like for the cycleway class example so it should be kotlin enum class surface val value string or osmvalue asphalt asphalt in surfaceitem kt fun surface asitem item reason apart from it being good style it is a step towards making a little less work to do quests where this old style is used buildingtype surface and then and there is more quest types should no longer have a string as parameter but an enum compare addrecyclingtype new style with addrailwaycrossingbarrier old style it is okay for the enum to define an osm value like in the example with surface above | 0 |

4,210 | 6,447,246,796 | IssuesEvent | 2017-08-14 05:55:39 | inveniosoftware/invenio-accounts | https://api.github.com/repos/inveniosoftware/invenio-accounts | closed | ext: make monkey patching optional | Service: INSPIRE Type: RFC | The release of `invenio-accounts==1.0.0b7` led to a failure in our build: https://travis-ci.org/inspirehep/inspire-next/jobs/262243957.

In particular, what seems to be failing is that the user in our acceptance tests is not logged in; that is, [our mechanism](https://github.com/inspirehep/inspire-next/blob/12da525d1b939bcd4994e36cbb52492d3e140029/tests/acceptance/conftest.py#L88-L104) for saving/restoring cookies to bypass manual authentication is no longer working. In fact, I see some commits between `invenio-accounts==1.0.0b6` and `invenio-accounts==1.0.0b7` that seem to relate to this: https://github.com/inveniosoftware/invenio-accounts/commit/c80ee61e686d8929dde6c0d7fad770729b7b12a8, https://github.com/inveniosoftware/invenio-accounts/commit/b611762ed65ce064c7743cf5847f497a32caf2f7, and https://github.com/inveniosoftware/invenio-accounts/commit/0d22f84bef21a0a25581abc1f3ac85c51a7f1055.

Note that [`login_user_via_session`](https://github.com/inveniosoftware/invenio-accounts/blob/4b1e8870ed3b6620adf35e64636a012a6492e093/invenio_accounts/testutils.py#L70-L81) won't work for us here as we have no `app.test_client` object to use, as the `acceptance` test suite is interacting through Selenium with **another** application.

One possible fix is outlined in the title: we make the above monkey patching configurable, and we disable it in the configuration of INSPIRE. Another possibility is that we revert https://github.com/inspirehep/inspire-next/commit/63043361c5508c31d7c26ed728916f678c40589c on our side, but this incurs a performance penalty in our test suite. There's probably more, but this is all I can think of right now...

CC: @lnielsen | 1.0 | ext: make monkey patching optional - The release of `invenio-accounts==1.0.0b7` led to a failure in our build: https://travis-ci.org/inspirehep/inspire-next/jobs/262243957.

In particular, what seems to be failing is that the user in our acceptance tests is not logged in; that is, [our mechanism](https://github.com/inspirehep/inspire-next/blob/12da525d1b939bcd4994e36cbb52492d3e140029/tests/acceptance/conftest.py#L88-L104) for saving/restoring cookies to bypass manual authentication is no longer working. In fact, I see some commits between `invenio-accounts==1.0.0b6` and `invenio-accounts==1.0.0b7` that seem to relate to this: https://github.com/inveniosoftware/invenio-accounts/commit/c80ee61e686d8929dde6c0d7fad770729b7b12a8, https://github.com/inveniosoftware/invenio-accounts/commit/b611762ed65ce064c7743cf5847f497a32caf2f7, and https://github.com/inveniosoftware/invenio-accounts/commit/0d22f84bef21a0a25581abc1f3ac85c51a7f1055.

Note that [`login_user_via_session`](https://github.com/inveniosoftware/invenio-accounts/blob/4b1e8870ed3b6620adf35e64636a012a6492e093/invenio_accounts/testutils.py#L70-L81) won't work for us here as we have no `app.test_client` object to use, as the `acceptance` test suite is interacting through Selenium with **another** application.

One possible fix is outlined in the title: we make the above monkey patching configurable, and we disable it in the configuration of INSPIRE. Another possibility is that we revert https://github.com/inspirehep/inspire-next/commit/63043361c5508c31d7c26ed728916f678c40589c on our side, but this incurs a performance penalty in our test suite. There's probably more, but this is all I can think of right now...

CC: @lnielsen | non_main | ext make monkey patching optional the release of invenio accounts led to a failure in our build in particular what seems to be failing is the fact that the user in our acceptance is not logged in that is for saving restoring cookies to bypass manual authentication is no longer working in fact i see some commits between invenio accounts and invenio accounts that seem to relate to this and note that won t work for us here as we have no app test client object to use as the acceptance test suite is interacting through selenium with another application one possible fix is outlined in the title we make the above monkey patching configurable and we disable it in the configuration of inspire another possibility is that we revert on our side but this incurs in a performance penalty in our test suite there s probably more but this is all i can think of right now cc lnielsen | 0 |

1,407 | 6,041,090,568 | IssuesEvent | 2017-06-10 20:38:58 | duckduckgo/zeroclickinfo-goodies | https://api.github.com/repos/duckduckgo/zeroclickinfo-goodies | closed | SVN Cheat Sheet: Add description for `svn log` | Improvement Maintainer Approved Suggestion | Hi @Juholei

Nice and helpful Goody! I've come by a few times and missed the `svn log` command for displaying commit log messages, along with some helpful arguments (`-l`, `-v`).

http://svnbook.red-bean.com/en/1.7/svn.ref.svn.c.log.html

Any thoughts on adding it?

------

IA Page: http://duck.co/ia/view/svn_cheat_sheet

[Maintainer](http://docs.duckduckhack.com/maintaining/guidelines.html): @Juholei | True | SVN Cheat Sheet: Add description for `svn log` - Hi @Juholei

Nice and helpful Goody! I've come by a few times and missed the `svn log` command for displaying commit log messages, along with some helpful arguments (`-l`, `-v`).

http://svnbook.red-bean.com/en/1.7/svn.ref.svn.c.log.html

Any thoughts on adding it?

------

IA Page: http://duck.co/ia/view/svn_cheat_sheet

[Maintainer](http://docs.duckduckhack.com/maintaining/guidelines.html): @Juholei | main | svn cheat sheet add description for svn log hi juholei nice and helpful goody i came along a few times and missed the svn log statement to display commit log messages with some helpful arguments l v any thoughts on adding it ia page juholei | 1 |

4,887 | 25,074,975,381 | IssuesEvent | 2022-11-07 14:53:47 | BioArchLinux/Packages | https://api.github.com/repos/BioArchLinux/Packages | closed | [MAINTAIN] packages influenced by openssl | maintain | <!--

Please report the error of one package in one issue! Use multi issues to report multi bugs.

Thanks!

-->

**Packages List**

<details>

- [x] htslib-1.16-2: usr/lib/htslib/plugins/hfile_s3.so (libcrypto.so.1.1)

- [x] phylosuite-1.2.2-5: usr/bin/phylosuite/libssl.so.1.1 (libcrypto.so.1.1)

- [x] python2-2.7.18-4: usr/lib/python2.7/lib-dynload/_ssl.so (libssl.so.1.1)

- [x] qt4-4.8.7-40: usr/lib/libQtNetwork.so.4.8.7 (libssl.so.1.1)

- [x] r-hdf5array-1.26.0-1: usr/lib/R/library/HDF5Array/libs/HDF5Array.so (libcrypto.so.1.1)

- [x] r-openssl-2.0.4-1: usr/lib/R/library/openssl/libs/openssl.so (libssl.so.1.1)

- [x] r-rhdf5-2.42.0-1: usr/lib/R/library/rhdf5/libs/rhdf5.so (libcrypto.so.1.1)

- [x] r-rserve-1.8.10-4: usr/lib/R/library/Rserve/libs/Rserve.so (libssl.so.1.1)

- [x] r-s2-1.1.0-2: usr/lib/R/library/s2/libs/s2.so (libcrypto.so.1.1)

- [x] seqlib-1.2.0-3: usr/bin/seqtools (libcrypto.so.1.1)

- [x] shapeit4-4.2.2-3: usr/bin/shapeit4 (libssl.so.1.1)

</details>

**Packages (please complete the following information):**

- Package Name: [e.g. iqtree]

**Description**

Add any other context about the problem here.

| True | [MAINTAIN] packages influenced by openssl - <!--

Please report the error of one package in one issue! Use multi issues to report multi bugs.

Thanks!

-->

**Packages List**

<details>

- [x] htslib-1.16-2: usr/lib/htslib/plugins/hfile_s3.so (libcrypto.so.1.1)

- [x] phylosuite-1.2.2-5: usr/bin/phylosuite/libssl.so.1.1 (libcrypto.so.1.1)

- [x] python2-2.7.18-4: usr/lib/python2.7/lib-dynload/_ssl.so (libssl.so.1.1)

- [x] qt4-4.8.7-40: usr/lib/libQtNetwork.so.4.8.7 (libssl.so.1.1)

- [x] r-hdf5array-1.26.0-1: usr/lib/R/library/HDF5Array/libs/HDF5Array.so (libcrypto.so.1.1)

- [x] r-openssl-2.0.4-1: usr/lib/R/library/openssl/libs/openssl.so (libssl.so.1.1)

- [x] r-rhdf5-2.42.0-1: usr/lib/R/library/rhdf5/libs/rhdf5.so (libcrypto.so.1.1)

- [x] r-rserve-1.8.10-4: usr/lib/R/library/Rserve/libs/Rserve.so (libssl.so.1.1)

- [x] r-s2-1.1.0-2: usr/lib/R/library/s2/libs/s2.so (libcrypto.so.1.1)

- [x] seqlib-1.2.0-3: usr/bin/seqtools (libcrypto.so.1.1)

- [x] shapeit4-4.2.2-3: usr/bin/shapeit4 (libssl.so.1.1)

</details>

**Packages (please complete the following information):**

- Package Name: [e.g. iqtree]

**Description**

Add any other context about the problem here.