Unnamed: 0 int64 1 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 7 112 | repo_url stringlengths 36 141 | action stringclasses 3 values | title stringlengths 3 438 | labels stringlengths 4 308 | body stringlengths 7 254k | index stringclasses 7 values | text_combine stringlengths 96 254k | label stringclasses 2 values | text stringlengths 96 246k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

2,508 | 8,655,459,785 | IssuesEvent | 2018-11-27 16:00:30 | codestation/qcma | https://api.github.com/repos/codestation/qcma | closed | QCMA crashes after unplugging vita | unmaintained | Pretty much every time i unplug the vita after transferring something it crashes QCMA | True | QCMA crashes after unplugging vita - Pretty much every time i unplug the vita after transferring something it crashes QCMA | main | qcma crashes after unplugging vita pretty much every time i unplug the vita after transferring something it crashes qcma | 1 |

149,991 | 19,597,828,284 | IssuesEvent | 2022-01-05 20:12:15 | edgexfoundry/go-mod-secrets | https://api.github.com/repos/edgexfoundry/go-mod-secrets | closed | Add "make lint" target and add to "make test" target | security_audit | Should enable golangci-lint with default linters + gosec.

See https://github.com/edgexfoundry/edgex-go/issues/3565 | True | Add "make lint" target and add to "make test" target - Should enable golangci-lint with default linters + gosec.

See https://github.com/edgexfoundry/edgex-go/issues/3565 | non_main | add make lint target and add to make test target should enable golangci lint with default linters gosec see | 0 |

424,631 | 29,146,758,293 | IssuesEvent | 2023-05-18 04:11:25 | nattadasu/ryuuRyuusei | https://api.github.com/repos/nattadasu/ryuuRyuusei | opened | (PY-D0002) Missing class docstring | documentation | ## Description

Class docstring is missing. If you want to ignore this, you can configure this in the `.deepsource.toml` file. Please refer to [docs](https://deepsource.io/docs/analyzer/python/#meta) for available options.

## Occurrences

There are 76 occurrences of this issue in the repository.

See all occurrences on DeepSource → [app.deepsource.com/gh/nattadasu/ryuuRyuusei/issue/PY-D0002/occurrences/](https://app.deepsource.com/gh/nattadasu/ryuuRyuusei/issue/PY-D0002/occurrences/)

| 1.0 | (PY-D0002) Missing class docstring - ## Description

Class docstring is missing. If you want to ignore this, you can configure this in the `.deepsource.toml` file. Please refer to [docs](https://deepsource.io/docs/analyzer/python/#meta) for available options.

## Occurrences

There are 76 occurrences of this issue in the repository.

See all occurrences on DeepSource → [app.deepsource.com/gh/nattadasu/ryuuRyuusei/issue/PY-D0002/occurrences/](https://app.deepsource.com/gh/nattadasu/ryuuRyuusei/issue/PY-D0002/occurrences/)

| non_main | py missing class docstring description class docstring is missing if you want to ignore this you can configure this in the deepsource toml file please refer to for available options occurrences there are occurrences of this issue in the repository see all occurrences on deepsource rarr | 0 |

2,135 | 7,334,336,466 | IssuesEvent | 2018-03-05 22:25:17 | jramell/Choice | https://api.github.com/repos/jramell/Choice | opened | Change SystemManager's Architecture | maintainability | The SystemManager doesn't allow for unconfigured transition between systems. Should implement a stack of system states, and when a new system is registered, a new entry is added on the stack. When the system unregisters, its state is popped from the stack and applied to the system as a whole. | True | Change SystemManager's Architecture - The SystemManager doesn't allow for unconfigured transition between systems. Should implement a stack of system states, and when a new system is registered, a new entry is added on the stack. When the system unregisters, its state is popped from the stack and applied to the system as a whole. | main | change systemmanager s architecture the systemmanager doesn t allow for unconfigured transition between systems should implement a stack of system states and when a new system is registered a new entry is added on the stack when the system unregisters its state is popped from the stack and applied to the system as a whole | 1 |

1,426 | 6,196,339,331 | IssuesEvent | 2017-07-05 14:33:48 | ocaml/opam-repository | https://api.github.com/repos/ocaml/opam-repository | closed | Fix zenon.{0.7.1,0.8.0} | needs maintainer action no maintainer | The packages are misbehaving which leads to obscure errors in [other parts](https://github.com/ocaml/opam-repository/issues/9690#issuecomment-312921611) of the system (which shouldn't but that's another question).

A first fix would be to make them install in their own prefix.

I could not understand what in the world led the `zenon` directory to [become a symlink](https://github.com/ocaml/opam-repository/issues/9690#issuecomment-312921164).

Unfortunately zenon has no maintainer.

| True | Fix zenon.{0.7.1,0.8.0} - The packages are misbehaving which leads to obscure errors in [other parts](https://github.com/ocaml/opam-repository/issues/9690#issuecomment-312921611) of the system (which shouldn't but that's another question).

A first fix would be to make them install in their own prefix.

I could not understand what in the world led the `zenon` directory to [become a symlink](https://github.com/ocaml/opam-repository/issues/9690#issuecomment-312921164).

Unfortunately zenon has no maintainer.

| main | fix zenon the packages are misbehaving which leads to obscure errors in of the system which shouldn t but that s another question a first fix would be to make them install in their own prefix i could not understand what in the world lead to the zenon directory unfortunately zenon has no maintainer | 1 |

1,880 | 6,577,510,511 | IssuesEvent | 2017-09-12 01:25:11 | ansible/ansible-modules-core | https://api.github.com/repos/ansible/ansible-modules-core | closed | allow iam group assignment without wiping out all other groups | affects_2.0 aws cloud feature_idea waiting_on_maintainer | ##### Issue Type:

- Feature Idea

##### Component Name:

iam module

##### Ansible Version:

```

2.0.1.0

```

##### Ansible Configuration:

NA

##### Environment:

NA

##### Summary:

It's currently impossible to assign an IAM group to a user without wiping out all other groups. The only way to assign groups is in an iam module task:

```

- iam: iam_type=user name=user_name state=present groups="{{ iam_groups }}"

```

However, this always wipes existing groups, losing data for existing users if you don't already know all of the groups assigned to the user. Other modules with group assignments use another parameter to allow appending to lists (e.g. mysql_user has "append_privs" and ec2_group has "purge_rules")

##### Steps To Reproduce:

```

vars:

groups1:

- groups1_example

groups2:

- groups2_example

tasks:

- iam: iam_type=user name=user_name state=present groups="{{ groups1 }}"

- iam: iam_type=user name=user_name state=present groups="{{ groups2 }}"

```

user will only have groups2_example group assigned.

##### Expected Results:

user would belong to both groups1_example and groups2_example

##### Actual Results:

user will only have groups2_example group assigned.

| True | allow iam group assignment without wiping out all other groups - ##### Issue Type:

- Feature Idea

##### Component Name:

iam module

##### Ansible Version:

```

2.0.1.0

```

##### Ansible Configuration:

NA

##### Environment:

NA

##### Summary:

It's currently impossible to assign an IAM group to a user without wiping out all other groups. The only way to assign groups is in an iam module task:

```

- iam: iam_type=user name=user_name state=present groups="{{ iam_groups }}"

```

However, this always wipes existing groups, losing data for existing users if you don't already know all of the groups assigned to the user. Other modules with group assignments use another parameter to allow appending to lists (e.g. mysql_user has "append_privs" and ec2_group has "purge_rules")

##### Steps To Reproduce:

```

vars:

groups1:

- groups1_example

groups2:

- groups2_example

tasks:

- iam: iam_type=user name=user_name state=present groups="{{ groups1 }}"

- iam: iam_type=user name=user_name state=present groups="{{ groups2 }}"

```

user will only have groups2_example group assigned.

##### Expected Results:

user would belong to both groups1_example and groups2_example

##### Actual Results:

user will only have groups2_example group assigned.

| main | allow iam group assignment without wiping out all other groups issue type feature idea component name iam module ansible version ansible configuration na environment na summary it s currently impossible to assign an iam group to a user without wiping out all other groups the only way to assign groups is in an iam module task iam iam type user name user name state present groups iam groups however this always wipes existing groups losing data for existing users if you don t already know all of the groups assigned to the user other modules with group assignments use another parameter to allow appending to lists e g mysql user has append privs and group has purge rules steps to reproduce vars example example tasks iam iam type user name user name state present groups iam iam type user name user name state present groups user will only have example group assigned expected results user would belong to both example and example actual results user will only have example group assigned | 1 |

66,298 | 7,986,318,334 | IssuesEvent | 2018-07-19 01:23:49 | ProcessMaker/bpm | https://api.github.com/repos/ProcessMaker/bpm | closed | Delete of shapes via crown | P2 designer | When I click on the trash can icon in the crown I'd like to have a confirmation (JavaScript confirmation) that gives me options to confirm deletion or cancel. Then have the subsequent object be removed from the canvas. | 1.0 | Delete of shapes via crown - When I click on the trash can icon in the crown I'd like to have a confirmation (JavaScript confirmation) that gives me options to confirm deletion or cancel. Then have the subsequent object be removed from the canvas. | non_main | delete of shapes via crown when i click on the trash can icon in the crown i d like to have a confirmation javascript confirmation that gives me options to confirm deletion or cancel then have the subsequent object be removed from the canvas | 0 |

5,793 | 30,693,785,838 | IssuesEvent | 2023-07-26 16:58:13 | PyCQA/flake8-bugbear | https://api.github.com/repos/PyCQA/flake8-bugbear | closed | Stop using `python setup.py bdist_wheel/sdist` | bug help wanted terrible_maintainer | Let's move to pypa/build in the upload to PyPI action.

```

python setup.py bdist_wheel

/opt/hostedtoolcache/Python/3.11.3/x64/lib/python3.11/site-packages/setuptools/config/pyprojecttoml.py:66: _BetaConfiguration: Support for `[tool.setuptools]` in `pyproject.toml` is still *beta*.

config = read_configuration(filepath, True, ignore_option_errors, dist)

running bdist_wheel

running build

running build_py

creating build

creating build/lib

copying bugbear.py -> build/lib

/opt/hostedtoolcache/Python/3.11.3/x64/lib/python3.11/site-packages/setuptools/_distutils/cmd.py:66: SetuptoolsDeprecationWarning: setup.py install is deprecated.

!!

********************************************************************************

Please avoid running ``setup.py`` directly.

Instead, use pypa/build, pypa/installer, pypa/build or

other standards-based tools.

installing to build/bdist.linux-x86_64/wheel

running install

See https://blog.ganssle.io/articles/2021/10/setup-py-deprecated.html for details.

running install_lib

********************************************************************************

!!

``` | True | Stop using `python setup.py bdist_wheel/sdist` - Let's move to pypa/build in the upload to PyPI action.

```

python setup.py bdist_wheel

/opt/hostedtoolcache/Python/3.11.3/x64/lib/python3.11/site-packages/setuptools/config/pyprojecttoml.py:66: _BetaConfiguration: Support for `[tool.setuptools]` in `pyproject.toml` is still *beta*.

config = read_configuration(filepath, True, ignore_option_errors, dist)

running bdist_wheel

running build

running build_py

creating build

creating build/lib

copying bugbear.py -> build/lib

/opt/hostedtoolcache/Python/3.11.3/x64/lib/python3.11/site-packages/setuptools/_distutils/cmd.py:66: SetuptoolsDeprecationWarning: setup.py install is deprecated.

!!

********************************************************************************

Please avoid running ``setup.py`` directly.

Instead, use pypa/build, pypa/installer, pypa/build or

other standards-based tools.

installing to build/bdist.linux-x86_64/wheel

running install

See https://blog.ganssle.io/articles/2021/10/setup-py-deprecated.html for details.

running install_lib

********************************************************************************

!!

``` | main | stop using python setup py bdist wheel sdist lets move to pypa build in the upload to pypi action python setup py bdist wheel opt hostedtoolcache python lib site packages setuptools config pyprojecttoml py betaconfiguration support for in pyproject toml is still beta config read configuration filepath true ignore option errors dist running bdist wheel running build running build py creating build creating build lib copying bugbear py build lib opt hostedtoolcache python lib site packages setuptools distutils cmd py setuptoolsdeprecationwarning setup py install is deprecated please avoid running setup py directly instead use pypa build pypa installer pypa build or other standards based tools installing to build bdist linux wheel running install see for details running install lib | 1 |

1,270 | 5,375,435,627 | IssuesEvent | 2017-02-23 04:47:22 | wojno/movie_manager | https://api.github.com/repos/wojno/movie_manager | opened | As an authenticated user, I want to view a movie record in my collection | Maintain Collection | As an `authenticated user`, I want to `view` a movie record in my `collection` so that I can see all `details` about that `movie` | True | As an authenticated user, I want to view a movie record in my collection - As an `authenticated user`, I want to `view` a movie record in my `collection` so that I can see all `details` about that `movie` | main | as an authenticated user i want to view a movie record in my collection as an authenticated user i want to view a movie record in my collection so that i can see all details about that movie | 1 |

29,957 | 8,445,333,514 | IssuesEvent | 2018-10-18 21:09:23 | hashicorp/packer | https://api.github.com/repos/hashicorp/packer | closed | KVM/QEMU Network "has no peer" and WinRM/SSH fails due to no network present | bug builder/qemu | I have a Packer KVM build that I have not run for some time locally since it usually runs on the CI server. When I tried to run it locally I initially hit an issue due to the deprecation of `-usbdevice tablet` in QEMU. After fixing this I noticed WinRM would never connect and the Windows VM had no network. The output from `PACKER_LOG=1` shows a warning from QEMU: `qemu-system-x86_64: warning: netdev user.0 has no peer` which according to the QEMU docs will result in no functional network.

Packer version: `1.3.1`

Host Platform:

```

Distributor ID: Ubuntu

Description: Ubuntu 18.04.1 LTS

Release: 18.04

Codename: bionic

```

QEMU Version:

```

QEMU emulator version 2.11.1(Debian 1:2.11+dfsg-1ubuntu7.5)

Copyright (c) 2003-2017 Fabrice Bellard and the QEMU Project developers

```

QEMU Args in json:

```json

"qemuargs": [

["-boot", "c"],

["-m", "4096M"],

["-smp", "2"],

["-usb"],

["-device", "usb-tablet"],

["-rtc", "base=localtime"]

]

```

Packer Debug log snippet:

```

==> kvm: Overriding defaults Qemu arguments with QemuArgs...

2018/10/05 12:44:48 packer: 2018/10/05 12:44:48 Executing /usr/bin/qemu-system-x86_64: []string{"-drive", "format=qcow2,file=./windows-2012r2-db/windows-2012r2-db-standard,if=virtio,cache=writeback,discard=ignore", "-boot", "c", "-m", "4096M", "-rtc", "base=localtime", "-fda", "/tmp/packer682066627", "-name", "windows-2012r2-db-standard", "-machine", "type=pc,accel=kvm", "-netdev", "user,id=user.0,hostfwd=tcp::3882-:5985", "-vnc", "127.0.0.1:11", "-smp", "2", "-usb", "-device", "usb-tablet"}

2018/10/05 12:44:48 packer: 2018/10/05 12:44:48 Started Qemu. Pid: 24112

2018/10/05 12:44:48 packer: 2018/10/05 12:44:48 Qemu stderr: WARNING: Image format was not specified for '/tmp/packer682066627' and probing guessed raw.

2018/10/05 12:44:48 packer: 2018/10/05 12:44:48 Qemu stderr: Automatically detecting the format is dangerous for raw images, write operations on block 0 will be restricted.

2018/10/05 12:44:48 packer: 2018/10/05 12:44:48 Qemu stderr: Specify the 'raw' format explicitly to remove the restrictions.

2018/10/05 12:44:48 packer: 2018/10/05 12:44:48 Qemu stderr: qemu-system-x86_64: warning: host doesn't support requested feature: CPUID.80000001H:ECX.svm [bit 2]

2018/10/05 12:44:48 packer: 2018/10/05 12:44:48 Qemu stderr: qemu-system-x86_64: warning: host doesn't support requested feature: CPUID.80000001H:ECX.svm [bit 2]

2018/10/05 12:44:48 packer: 2018/10/05 12:44:48 Qemu stderr: qemu-system-x86_64: warning: netdev user.0 has no peer

```

### Solution

Through some trial and error I managed to get a working set of `qemuargs`.

```json

"qemuargs": [

["-boot", "c"],

["-m", "4096M"],

["-smp", "2"],

["-usb"],

["-device", "usb-tablet"],

["-device", "virtio-net,netdev=user.0"],

["-rtc", "base=localtime"]

]

```

I suspect changes in QEMU have changed the required set of command instructions when setting up the network. Note the addition of `["-device", "virtio-net,netdev=user.0"]`. I tried setting `net_device` as per the packer docs but this had no effect, the above configuration was required.

I'm not sure if this constitutes a bug in Packer? Perhaps changes are required to support the new QEMU features/behaviour.

Having to manually add the `-device virtio-net` to every QEMU build does not seem like a valid long term solution. If the `netdev` ID is ever changed this will break again.

| 1.0 | KVM/QEMU Network "has no peer" and WinRM/SSH fails due to no network present - I have a Packer KVM build that I have not run for some time locally since it usually runs on the CI server. When I tried to run it locally I initially hit an issue due to the deprecation of `-usbdevice tablet` in QEMU. After fixing this I noticed WinRM would never connect and the Windows VM had no network. The output from `PACKER_LOG=1` shows a warning from QEMU: `qemu-system-x86_64: warning: netdev user.0 has no peer` which according to the QEMU docs will result in no functional network.

Packer version: `1.3.1`

Host Platform:

```

Distributor ID: Ubuntu

Description: Ubuntu 18.04.1 LTS

Release: 18.04

Codename: bionic

```

QEMU Version:

```

QEMU emulator version 2.11.1(Debian 1:2.11+dfsg-1ubuntu7.5)

Copyright (c) 2003-2017 Fabrice Bellard and the QEMU Project developers

```

QEMU Args in json:

```json

"qemuargs": [

["-boot", "c"],

["-m", "4096M"],

["-smp", "2"],

["-usb"],

["-device", "usb-tablet"],

["-rtc", "base=localtime"]

]

```

Packer Debug log snippet:

```

==> kvm: Overriding defaults Qemu arguments with QemuArgs...

2018/10/05 12:44:48 packer: 2018/10/05 12:44:48 Executing /usr/bin/qemu-system-x86_64: []string{"-drive", "format=qcow2,file=./windows-2012r2-db/windows-2012r2-db-standard,if=virtio,cache=writeback,discard=ignore", "-boot", "c", "-m", "4096M", "-rtc", "base=localtime", "-fda", "/tmp/packer682066627", "-name", "windows-2012r2-db-standard", "-machine", "type=pc,accel=kvm", "-netdev", "user,id=user.0,hostfwd=tcp::3882-:5985", "-vnc", "127.0.0.1:11", "-smp", "2", "-usb", "-device", "usb-tablet"}

2018/10/05 12:44:48 packer: 2018/10/05 12:44:48 Started Qemu. Pid: 24112

2018/10/05 12:44:48 packer: 2018/10/05 12:44:48 Qemu stderr: WARNING: Image format was not specified for '/tmp/packer682066627' and probing guessed raw.

2018/10/05 12:44:48 packer: 2018/10/05 12:44:48 Qemu stderr: Automatically detecting the format is dangerous for raw images, write operations on block 0 will be restricted.

2018/10/05 12:44:48 packer: 2018/10/05 12:44:48 Qemu stderr: Specify the 'raw' format explicitly to remove the restrictions.

2018/10/05 12:44:48 packer: 2018/10/05 12:44:48 Qemu stderr: qemu-system-x86_64: warning: host doesn't support requested feature: CPUID.80000001H:ECX.svm [bit 2]

2018/10/05 12:44:48 packer: 2018/10/05 12:44:48 Qemu stderr: qemu-system-x86_64: warning: host doesn't support requested feature: CPUID.80000001H:ECX.svm [bit 2]

2018/10/05 12:44:48 packer: 2018/10/05 12:44:48 Qemu stderr: qemu-system-x86_64: warning: netdev user.0 has no peer

```

### Solution

Through some trial and error I managed to get a working set of `qemuargs`.

```json

"qemuargs": [

["-boot", "c"],

["-m", "4096M"],

["-smp", "2"],

["-usb"],

["-device", "usb-tablet"],

["-device", "virtio-net,netdev=user.0"],

["-rtc", "base=localtime"]

]

```

I suspect changes in QEMU have changed the required set of command instructions when setting up the network. Note the addition of `["-device", "virtio-net,netdev=user.0"]`. I tried setting `net_device` as per the packer docs but this had no effect, the above configuration was required.

I'm not sure if this constitutes a bug in Packer? Perhaps changes are required to support the new QEMU features/behaviour.

Having to manually add the `-device virtio-net` to every QEMU build does not seem like a valid long term solution. If the `netdev` ID is ever changed this will break again.

| non_main | kvm qemu network has no peer and winrm ssh fails due to no network present i have a packer kvm build that i have not run for some time locally since it usually runs on the ci server when i tried to run it locally i initially hit an issue due to the deprecation of usbevice tablet in qemu after fixing this i noticed winrm would never connect and the windows vm had no network the output from packer log shows a warning from qemu qemu system warning netdev user has no peer which according to the qemu docs will result in no functional network packer version host platform distributor id ubuntu description ubuntu lts release codename bionic qemu version qemu emulator version debian dfsg copyright c fabrice bellard and the qemu project developers qemu args in json json qemuargs packer debug log snippet kvm overriding defaults qemu arguments with qemuargs packer executing usr bin qemu system string drive format file windows db windows db standard if virtio cache writeback discard ignore boot c m rtc base localtime fda tmp name windows db standard machine type pc accel kvm netdev user id user hostfwd tcp vnc smp usb device usb tablet packer started qemu pid packer qemu stderr warning image format was not specified for tmp and probing guessed raw packer qemu stderr automatically detecting the format is dangerous for raw images write operations on block will be restricted packer qemu stderr specify the raw format explicitly to remove the restrictions packer qemu stderr qemu system warning host doesn t support requested feature cpuid ecx svm packer qemu stderr qemu system warning host doesn t support requested feature cpuid ecx svm packer qemu stderr qemu system warning netdev user has no peer solution through some trial and error i managed to get a working set of qemuargs json qemuargs i suspect changes in qemu have changed the required set of command instructions when setting up the network note the addition of i tried setting net device as per the packer docs but 
this had no effect the above configuration was required i m not sure if this constitutes a bug in packer perhaps changes are required to support the new qemu features behaviour having to manually add the device virtio net to every qemu build does not seem like a valid long term solution if the netdev id is ever changed this will break again | 0 |

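148,282 | 13,232,969,729 | IssuesEvent | 2020-08-18 14:08:37 | ue-sho/camet | https://api.github.com/repos/ue-sho/camet | closed | Revise the functional specification | documentation | # CASL

## Compile feature

- [ ] Types of compile errors

~~Description of output to an object file, etc. (output format)~~

## Link feature

- [ ] Describe how to link, etc.

# COMET

~~Describe loading of object files, etc.~~

- [ ] Describe coloring the buses, etc.

| 1.0 | Revise the functional specification - # CASL

## Compile feature

- [ ] Types of compile errors

~~Description of output to an object file, etc. (output format)~~

## Link feature

- [ ] Describe how to link, etc.

# COMET

~~Describe loading of object files, etc.~~

- [ ] Describe coloring the buses, etc.

| non_main | revise the functional specification casl compile feature types of compile errors description of output to an object file etc output format link feature describe how to link etc comet describe loading of object files etc describe coloring the buses etc | 0 |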

306,615 | 9,397,194,514 | IssuesEvent | 2019-04-08 09:10:05 | wso2/product-is | https://api.github.com/repos/wso2/product-is | closed | Spaces appended on the certificate content in IAM management console service providers' edit functionality | Complexity/Low Component/Auth Framework Priority/High Severity/Critical Type/Bug | To recreate the issue

1. Create an application in the store.

2. Log in to IAM management console.

3. Under service provider click the edit button of any service providers

4. You can see extra spaces appended at the end of the certificate content in Application certificate

5. Remove the spaces and update

6. Redo the above flows and you can see the spaces appended again

If the certificate is updated (by removing the spaces) in step 4 successfully the request flow works fine but if you click the edit button again the spaces are appended, which means every time the page loads on the edit function the spaces are appended at the end of the certificate content.

<img width="1674" alt="screen shot 2019-01-29 at 9 28 13 am" src="https://user-images.githubusercontent.com/18033158/51884526-981f1900-23ad-11e9-9d22-5b1b3d1d3bb9.png">

| 1.0 | Spaces appended on the certificate content in IAM management console service providers' edit functionality - To recreate the issue

1. Create an application in the store.

2. Log in to IAM management console.

3. Under service provider click the edit button of any service providers

4. You can see extra spaces appended at the end of the certificate content in Application certificate

5. Remove the spaces and update

6. Redo the above flows and you can see the spaces appended again

If the certificate is updated (by removing the spaces) in step 4 successfully the request flow works fine but if you click the edit button again the spaces are appended, which means every time the page loads on the edit function the spaces are appended at the end of the certificate content.

<img width="1674" alt="screen shot 2019-01-29 at 9 28 13 am" src="https://user-images.githubusercontent.com/18033158/51884526-981f1900-23ad-11e9-9d22-5b1b3d1d3bb9.png">

| non_main | spaces appended on the certificate content in iam management console service providers edit functionality to recreate the issue create an application in the store log in to iam management console under service provider click the edit button of any service providers you can see extra spaces appended at the end of the certificate content in application certificate remove the spaces and update redo the above flows and you can see the spaces appended again if the certificate is updated by removing the spaces in step successfully the request flow works fine but if you click the edit button again the spaces are appended which means every time the page loads on the edit function the spaces are appended at the end of the certificate content img width alt screen shot at am src | 0 |

3,901 | 17,360,245,191 | IssuesEvent | 2021-07-29 19:30:27 | ansible/ansible | https://api.github.com/repos/ansible/ansible | closed | get_url doesn't work when running Ansible against Docker container | affects_2.12 bug cloud deprecated docker module needs_maintainer needs_triage support:community support:core | ### Summary

When I try to run a playbook with a get_url task against a Docker container, it fails with the following error, even in `-vvvvvv` verbose mode:

```

fatal: [ubuntu2004]: FAILED! => {

"changed": false,

"module_stderr": "",

"module_stdout": "",

"msg": "MODULE FAILURE\nSee stdout/stderr for the exact error",

"rc": 0

}

```

If I run my playbook with `ANSIBLE_KEEP_REMOTE_FILES=1` and then execute the AnsiballZ_get_url.py script directly, it succeeds.

`$ docker exec -i ubuntu2004 /bin/sh -c "/bin/sh -c '/usr/bin/python3 /root/.ansible/tmp/ansible-tmp-<some_nrs>/AnsiballZ_get_url.py && sleep 0'"`

The same sometimes happens during fact gathering. It gives me this error:

```

fatal: [ubuntu2004]: FAILED! => {

"ansible_facts": {},

"changed": false,

"failed_modules": {

"ansible.legacy.setup": {

"ansible_facts": {

"discovered_interpreter_python": "/usr/bin/python3"

},

"failed": true,

"module_stderr": "",

"module_stdout": "",

"msg": "MODULE FAILURE\nSee stdout/stderr for the exact error",

"rc": 0

}

},

"msg": "The following modules failed to execute: ansible.legacy.setup\n"

}

```

But if I execute the command directly, it works:

`$ docker exec -i ubuntu2004 /bin/sh -c "/bin/sh -c '/usr/bin/python3 /root/.ansible/tmp/ansible-tmp-<some_nrs>/AnsiballZ_setup.py && sleep 0'"`

I've tried Ubuntu 20.04 and CentOS 8 images from here: https://github.com/ansible/distro-test-containers

### Issue Type

Bug Report

### Component Name

get_url, docker, ubuntu

### Ansible Version

```console

$ ansible --version

ansible [core 2.11.3]

config file = /etc/ansible/ansible.cfg

configured module search path = ['/home/user1/.ansible/plugins/modules', '/usr/share/ansible/plugins/modules']

ansible python module location = /home/user1/.local/lib/python3.6/site-packages/ansible

ansible collection location = /home/user1/.ansible/collections:/usr/share/ansible/collections

executable location = /home/user1/.local/bin/ansible

python version = 3.6.8 (default, Aug 13 2020, 07:46:32) [GCC 4.8.5 20150623 (Red Hat 4.8.5-39)]

jinja version = 3.0.1

libyaml = True

```

### Configuration

```console

$ ansible-config dump --only-changed

(N/A)

```

### OS / Environment

Running Ansible on RHEL 7.9 against Ubuntu 20.04 Docker (version 1.13.1) container.

### Steps to Reproduce

I'm just running a role with a `get_url` task.

### Expected Results

I expected the file to be downloaded.

### Actual Results

```console

fatal: [ubuntu2004]: FAILED! => {

"changed": false,

"module_stderr": "",

"module_stdout": "",

"msg": "MODULE FAILURE\nSee stdout/stderr for the exact error",

"rc": 0

}

```

### Code of Conduct

- [X] I agree to follow the Ansible Code of Conduct | True | get_url doesn't work when running Ansible against Docker container - ### Summary

When I try to run a playbook with a get_url task against a Docker container, it fails with the following error, even in `-vvvvvv` verbose mode:

```

fatal: [ubuntu2004]: FAILED! => {

"changed": false,

"module_stderr": "",

"module_stdout": "",

"msg": "MODULE FAILURE\nSee stdout/stderr for the exact error",

"rc": 0

}

```

If I run my playbook with `ANSIBLE_KEEP_REMOTE_FILES=1` and then execute the AnsiballZ_get_url.py script directly, it succeeds.

`$ docker exec -i ubuntu2004 /bin/sh -c "/bin/sh -c '/usr/bin/python3 /root/.ansible/tmp/ansible-tmp-<some_nrs>/AnsiballZ_get_url.py && sleep 0'"`

The same sometimes happens during fact gathering. It gives me this error:

```

fatal: [ubuntu2004]: FAILED! => {

"ansible_facts": {},

"changed": false,

"failed_modules": {

"ansible.legacy.setup": {

"ansible_facts": {

"discovered_interpreter_python": "/usr/bin/python3"

},

"failed": true,

"module_stderr": "",

"module_stdout": "",

"msg": "MODULE FAILURE\nSee stdout/stderr for the exact error",

"rc": 0

}

},

"msg": "The following modules failed to execute: ansible.legacy.setup\n"

}

```

But if I execute the command directly, it works:

`$ docker exec -i ubuntu2004 /bin/sh -c "/bin/sh -c '/usr/bin/python3 /root/.ansible/tmp/ansible-tmp-<some_nrs>/AnsiballZ_setup.py && sleep 0'"`

I've tried Ubuntu 20.04 and CentOS 8 images from here: https://github.com/ansible/distro-test-containers

### Issue Type

Bug Report

### Component Name

get_url, docker, ubuntu

### Ansible Version

```console

$ ansible --version

ansible [core 2.11.3]

config file = /etc/ansible/ansible.cfg

configured module search path = ['/home/user1/.ansible/plugins/modules', '/usr/share/ansible/plugins/modules']

ansible python module location = /home/user1/.local/lib/python3.6/site-packages/ansible

ansible collection location = /home/user1/.ansible/collections:/usr/share/ansible/collections

executable location = /home/user1/.local/bin/ansible

python version = 3.6.8 (default, Aug 13 2020, 07:46:32) [GCC 4.8.5 20150623 (Red Hat 4.8.5-39)]

jinja version = 3.0.1

libyaml = True

```

### Configuration

```console

$ ansible-config dump --only-changed

(N/A)

```

### OS / Environment

Running Ansible on RHEL 7.9 against an Ubuntu 20.04 container (Docker version 1.13.1).

### Steps to Reproduce

I'm just running a role with a `get_url` task.

### Expected Results

I expected the file to be downloaded.

### Actual Results

```console

fatal: [ubuntu2004]: FAILED! => {

"changed": false,

"module_stderr": "",

"module_stdout": "",

"msg": "MODULE FAILURE\nSee stdout/stderr for the exact error",

"rc": 0

}

```

### Code of Conduct

- [X] I agree to follow the Ansible Code of Conduct | main | get url doesn t work when running ansible against docker container summary when im trying to run playbook with get url task against docker container it fails with following error even in vvvvvv verbose mode fatal failed changed false module stderr module stdout msg module failure nsee stdout stderr for the exact error rc if i will run my playbook with ansible keep remote files and execute the ansiballz get url py script it succeeds docker exec i bin sh c bin sh c usr bin root ansible tmp ansible tmp ansiballz get url py sleep also same goes for facts gathering sometimes it gives me error fatal failed ansible facts changed false failed modules ansible legacy setup ansible facts discovered interpreter python usr bin failed true module stderr module stdout msg module failure nsee stdout stderr for the exact error rc msg the following modules failed to execute ansible legacy setup n but if i execute the command directly it works docker exec i bin sh c bin sh c usr bin root ansible tmp ansible tmp ansiballz setup py sleep i ve tried ubuntu and centos images from here issue type bug report component name get url docker ubuntu ansible version console ansible version ansible config file etc ansible ansible cfg configured module search path ansible python module location home local lib site packages ansible ansible collection location home ansible collections usr share ansible collections executable location home local bin ansible python version default aug jinja version libyaml true configuration console ansible config dump only changed n a os environment running ansible on rhel against ubuntu docker version container steps to reproduce im just running a role with get url task expected results i expected that the file will be downloaded actual results console fatal failed changed false module stderr module stdout msg module failure nsee stdout stderr for the exact error rc code of conduct i agree to follow the 
ansible code of conduct | 1 |
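
The manual reproduction described in the report above (running the kept `AnsiballZ_*.py` script through `docker exec`) could be scripted roughly as follows. This is only a minimal sketch: the helper names `build_exec_command` and `run_module` are hypothetical, and the script path must be supplied by the caller because the temporary directory name is elided in the report.

```python
import subprocess


def build_exec_command(container, script_path, python="/usr/bin/python3"):
    """Build the `docker exec` argument list in the shape used in the report."""
    inner = f"{python} {script_path} && sleep 0"
    return [
        "docker", "exec", "-i", container,
        "/bin/sh", "-c", f"/bin/sh -c '{inner}'",
    ]


def run_module(container, script_path):
    """Run a kept AnsiballZ script in the container.

    Returns (return code, stdout, stderr) so that an empty stdout/stderr
    with rc 0, as seen in the failure output, is easy to spot.
    """
    result = subprocess.run(
        build_exec_command(container, script_path),
        capture_output=True,
        text=True,
    )
    return result.returncode, result.stdout, result.stderr
```

Calling `run_module("ubuntu2004", path_to_script)` with the actual path under `/root/.ansible/tmp/` would show whether the module produces output when it is run outside Ansible.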

3,083 | 11,708,806,744 | IssuesEvent | 2020-03-08 15:21:59 | OpenRefine/OpenRefine | https://api.github.com/repos/OpenRefine/OpenRefine | closed | Publish dependencies on Maven Central | maintainability maven | Some of our dependencies are not available on Maven Central - they are old unmaintained libraries or our own forks of them. We currently ship their jars in the repository itself, but this is a problem since it requires installing these in the user's own local Maven repository first. This impedes workflows such as importing OpenRefine in Eclipse with m2e.

We should publish all our dependencies under our own `org.openrefine` groupId. This will make #2254 easier. | True | Publish dependencies on Maven Central - Some of our dependencies are not available on Maven Central - they are old unmaintained libraries or our own forks of them. We currently ship their jars in the repository itself, but this is a problem since it requires installing these in the user's own local Maven repository first. This impedes workflows such as importing OpenRefine in Eclipse with m2e.

We should publish all our dependencies under our own `org.openrefine` groupId. This will make #2254 easier. | main | publish dependencies on maven central some of our dependencies are not available on maven central they are old unmaintained libraries or our own forks of them we currently ship their jars in the repository itself but this is a problem since it requires installing these in the user s own local maven repository first this impedes workflows such as importing openrefine in eclipse with we should publish all our dependencies under our own org openrefine groupid this will make easier | 1 |

3,254 | 12,402,316,304 | IssuesEvent | 2020-05-21 11:43:29 | ocaml/opam-repository | https://api.github.com/repos/ocaml/opam-repository | closed | Problem with mlpost 0.8.2 Package | Stale needs maintainer action | There is a problem with the mlpost 0.8.2 package provided by OPAM, which causes errors when trying to install melt.

This has been observed with the 4.04.0 and 4.04.2 compilers.

I believe the problem is that the source provided by OPAM is out of date:

http://mlpost.lri.fr/download/mlpost-0.8.2.tar.gz

Pinning OPAM to the following source seems to work fine:

https://github.com/backtracking/mlpost/releases/tag/0.8.2 | True | Problem with mlpost 0.8.2 Package - There is a problem with the mlpost 0.8.2 package provided by OPAM, which causes errors when trying to install melt.

This has been observed with the 4.04.0 and 4.04.2 compilers.

I believe the problem is that the source provided by OPAM is out of date:

http://mlpost.lri.fr/download/mlpost-0.8.2.tar.gz

Pinning OPAM to the following source seems to work fine:

https://github.com/backtracking/mlpost/releases/tag/0.8.2 | main | problem with mlpost package there is a problem with the mlpost package provided by opam which causes errors when trying to install melt this has been observed with the and compilers i believe the problem is that the source provided by opam is out of date pinning opam to the following source seems to work fine | 1 |

81,777 | 7,802,950,778 | IssuesEvent | 2018-06-10 18:10:38 | Students-of-the-city-of-Kostroma/Student-timetable | https://api.github.com/repos/Students-of-the-city-of-Kostroma/Student-timetable | closed | Develop functional test scenarios for Story 4 | Functional test Script | Develop functional test scenarios for Story #4 | 1.0 | Develop functional test scenarios for Story 4 - Develop functional test scenarios for Story #4 | non_main | develop functional test scenarios for story develop functional test scenarios for story | 0 |

2,449 | 8,639,868,976 | IssuesEvent | 2018-11-23 22:13:57 | F5OEO/rpitx | https://api.github.com/repos/F5OEO/rpitx | closed | How to tx no modulation signal? | V1 related (not maintained) | Hi. I am trying to tune the IF stage of my receiver and need an HF signal generator tuned to 10.7 MHz. The signal must be unmodulated. How can I use your program for this?

In addition, I found a problem with transmitting on an RPi 3. After starting a transmission, the signal is distorted, and after a few moments it disappears. | True | How to tx no modulation signal? - Hi. I am trying to tune the IF stage of my receiver and need an HF signal generator tuned to 10.7 MHz. The signal must be unmodulated. How can I use your program for this?

In addition, I found a problem with transmitting on an RPi 3. After starting a transmission, the signal is distorted, and after a few moments it disappears. | main | how to tx no modulation signal hi i trying tuning if in my receiver and need hf generator tuning on mhz signal must be nomod how i can use ur program for my goal in additional i found some problem with transmit on rpi after starting a tx signal have distortions and after few moments signal is disappear | 1 |

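190,727 | 15,255,299,888 | IssuesEvent | 2021-02-20 15:33:57 | hengband/hengband | https://api.github.com/repos/hengband/hengband | closed | Command-line option to output auto-generated spoilers | documentation enhancement | Currently, spoiler files can be output in-game by enabling the debug/cheat options and using the debug command `^a` → `"` key. I would like to be able to output them by passing a command-line option instead, something like `hengband --output-spoiler`.

With this feature, it also seems possible to implement things like automatically uploading the latest spoiler files somewhere.

For now, the idea is to create a function that writes a specified spoiler file under a specified file name, and then have each platform parse its command-line options and call it.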

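| 1.0 | Command-line option to output auto-generated spoilers - Currently, spoiler files can be output in-game by enabling the debug/cheat options and using the debug command `^a` → `"` key. I would like to be able to output them by passing a command-line option instead, something like `hengband --output-spoiler`.

With this feature, it also seems possible to implement things like automatically uploading the latest spoiler files somewhere.

For now, the idea is to create a function that writes a specified spoiler file under a specified file name, and then have each platform parse its command-line options and call it.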

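| non_main | command line option to output auto generated spoilers currently spoiler files can be output in game by enabling the debug cheat options and using the debug command a key i would like to be able to output them by passing a command line option instead something like hengband output spoiler with this feature it also seems possible to implement things like automatically uploading the latest spoiler files somewhere for now the idea is to create a function that writes a specified spoiler file under a specified file name and then have each platform parse its command line options and call it | 0 |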

41,738 | 10,583,959,405 | IssuesEvent | 2019-10-08 14:37:46 | zfsonlinux/zfs | https://api.github.com/repos/zfsonlinux/zfs | closed | After a week of running array, issuing zpool scrub causes system hang | Type: Defect | <!--

Thank you for reporting an issue.

*IMPORTANT* - Please search our issue tracker *before* making a new issue.

If you cannot find a similar issue, then create a new issue.

https://github.com/zfsonlinux/zfs/issues

*IMPORTANT* - This issue tracker is for *bugs* and *issues* only.

Please search the wiki and the mailing list archives before asking

questions on the mailing list.

https://github.com/zfsonlinux/zfs/wiki/Mailing-Lists

Please fill in as much of the template as possible.

-->

### System information

<!-- add version after "|" character -->

Dell R620 | Sandy Bridge

--- | ---

Distribution Name | Gentoo

Distribution Version | Rolling

Linux Kernel | 4.15.16

Architecture | x86_64

ZFS Version | 0.7.9-r0-gentoo

SPL Version | 0.7.9-r0-gentoo

<!--

Commands to find ZFS/SPL versions:

modinfo zfs | grep -iw version

modinfo spl | grep -iw version

-->

### Describe the problem you're observing

My array, which is made up of 10x5TB disks attached to an LSI 9300 SAS controller running RAIDz2, and is currently about 18.2TB full out of ~36TB of usable storage, is seeing a system hang after zpool scrub runs.

However, I can run zpool scrub on my pool after a fresh reboot, and the scrub runs to completion with no issues (and finds no problems). But if I have the scrub run out of cron once a week, as it has been running for about 2 years now, it will cause the system to become unresponsive. If I run the scrub manually after about a week of running the system, the same behavior occurs.

My system is a Dell R620 running Gentoo. It has 72GB of ECC RAM. I have checked SMART data on all the disks, and run other health checks against the RAM, and nothing has indicated a hardware issue. This started occurring after upgrading ZFS to 0.7.x at some point. I honestly don't know where the cutoff happened, since I wrote off the strange crashes as anomalies until I noticed the pattern.

### Describe how to reproduce the problem

My system just has to run for about a week doing its normal workloads (a couple of VMs, serving data to my Plex server, etc.), and then kick off a zpool scrub on the pool. This can be done via cron or interactively. Either way, same issue.

### Include any warning/errors/backtraces from the system logs

I'm in the process of getting a serial console hooked up to capture this. Because my cron job is currently scheduled to run at 2am on Sundays, I forget to get this configured until it's too late. I'm hoping someone has also seen this (Googling around did show some similar issues, but nothing specific).

<!--

*IMPORTANT* - Please mark logs and text output from terminal commands

or else Github will not display them correctly.

An example is provided below.

Example:

```

this is an example how log text should be marked (wrap it with ```)

```

-->

| 1.0 | After a week of running array, issuing zpool scrub causes system hang - <!--

Thank you for reporting an issue.

*IMPORTANT* - Please search our issue tracker *before* making a new issue.

If you cannot find a similar issue, then create a new issue.

https://github.com/zfsonlinux/zfs/issues

*IMPORTANT* - This issue tracker is for *bugs* and *issues* only.

Please search the wiki and the mailing list archives before asking

questions on the mailing list.

https://github.com/zfsonlinux/zfs/wiki/Mailing-Lists

Please fill in as much of the template as possible.

-->

### System information

<!-- add version after "|" character -->

Dell R620 | Sandy Bridge

--- | ---

Distribution Name | Gentoo

Distribution Version | Rolling

Linux Kernel | 4.15.16

Architecture | x86_64

ZFS Version | 0.7.9-r0-gentoo

SPL Version | 0.7.9-r0-gentoo

<!--

Commands to find ZFS/SPL versions:

modinfo zfs | grep -iw version

modinfo spl | grep -iw version

-->

### Describe the problem you're observing

My array, which is made up of 10x5TB disks attached to an LSI 9300 SAS controller running RAIDz2, and is currently about 18.2TB full out of ~36TB of usable storage, is seeing a system hang after zpool scrub runs.

However, I can run zpool scrub on my pool after a fresh reboot, and the scrub runs to completion with no issues (and finds no problems). But if I have the scrub run out of cron once a week, as it has been running for about 2 years now, it will cause the system to become unresponsive. If I run the scrub manually after about a week of running the system, the same behavior occurs.

My system is a Dell R620 running Gentoo. It has 72GB of ECC RAM. I have checked SMART data on all the disks, and run other health checks against the RAM, and nothing has indicated a hardware issue. This started occurring after upgrading ZFS to 0.7.x at some point. I honestly don't know where the cutoff happened, since I wrote off the strange crashes as anomalies until I noticed the pattern.

### Describe how to reproduce the problem

My system just has to run for about a week doing its normal workloads (a couple of VMs, serving data to my Plex server, etc.), and then kick off a zpool scrub on the pool. This can be done via cron or interactively. Either way, same issue.

### Include any warning/errors/backtraces from the system logs

I'm in the process of getting a serial console hooked up to capture this. Because my cron job is currently scheduled to run at 2am on Sundays, I forget to get this configured until it's too late. I'm hoping someone has also seen this (Googling around did show some similar issues, but nothing specific).

<!--

*IMPORTANT* - Please mark logs and text output from terminal commands

or else Github will not display them correctly.

An example is provided below.

Example:

```

this is an example how log text should be marked (wrap it with ```)

```

-->

| non_main | after a week of running array issuing zpool scrub causes system hang thank you for reporting an issue important please search our issue tracker before making a new issue if you cannot find a similar issue then create a new issue important this issue tracker is for bugs and issues only please search the wiki and the mailing list archives before asking questions on the mailing list please fill in as much of the template as possible system information dell sandy bridge distribution name gentoo distribution version rolling linux kernel architecture zfs version gentoo spl version gentoo commands to find zfs spl versions modinfo zfs grep iw version modinfo spl grep iw version describe the problem you re observing my array which is made up of disks attached to an lsi sas controller running and is currently about full out of of usable storage is seeing a system hang after zpool scrub runs however i can run zpool scrub on my pool after a fresh reboot and the scrub runs to completion with no issues and finds no problems but if i have the scrub run out of cron once a week as it has been running for about years now it will cause the system to become unresponsive if i run the scrub manually after about a week of running the system the same behavior occurs my system is a dell running gentoo it has of ecc ram i have checked smart data on all the disks and run other health checks against the ram and nothing has indicated a hardware issue this started occurring after upgrading zfs to x at some point i honestly don t know where the cutoff happened since i wrote off the the strange crashes as anomalies until i noticed the pattern describe how to reproduce the problem my system just has to run for about a week doing its normal workloads a couple of vms serving data to my plex server etc and then kick off a zpool scrub on the pool this can be done via cron or interactively either way same issue include any warning errors backtraces from the system logs i m in the process 
of getting a serial console hooked up to capture this because my cron job is currently scheduled to run at on sunday s i forget to get this configured until it s too late i m hoping someone has also seen this google ing around did show some similar issues but nothing specific important please mark logs and text output from terminal commands or else github will not display them correctly an example is provided below example this is an example how log text should be marked wrap it with | 0 |

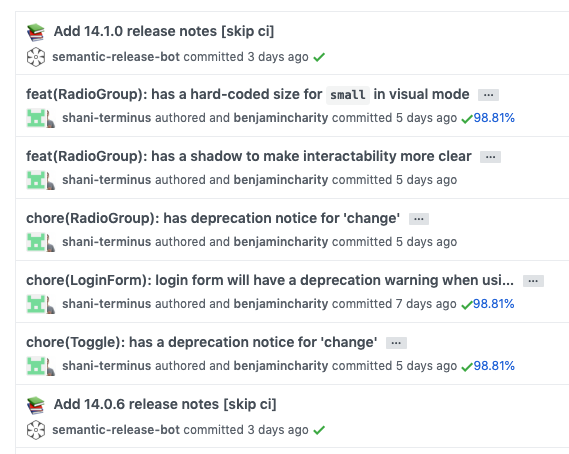

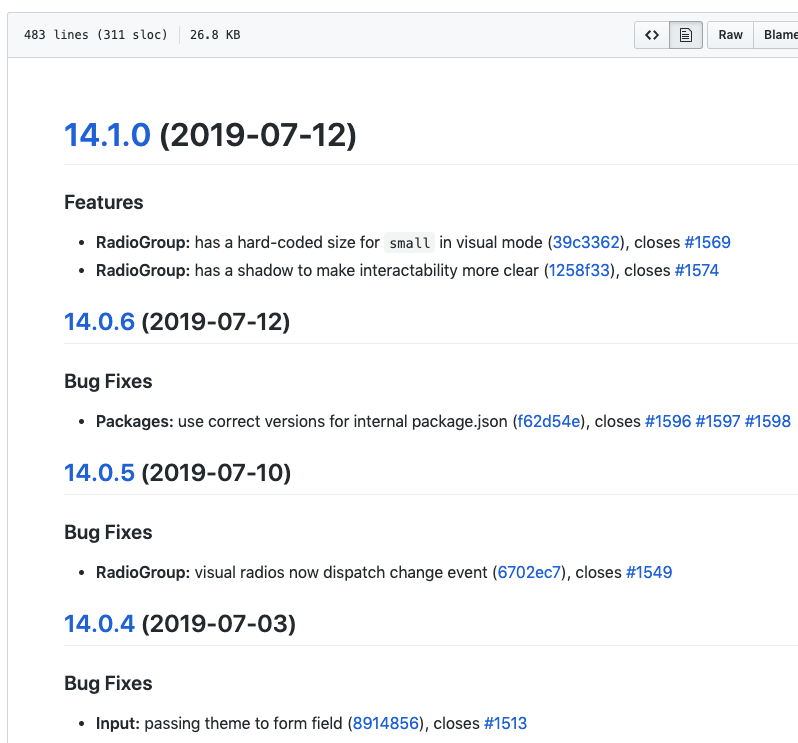

118,803 | 10,011,935,082 | IssuesEvent | 2019-07-15 11:59:37 | GetTerminus/terminus-ui | https://api.github.com/repos/GetTerminus/terminus-ui | opened | Chore commits not tied to a release do not get added to the changelog | Goal: Library Stabilization Needs: exploration Target: latest Type: bug | #### 1. What is the expected behavior?

All commits should be added to the changelog.

#### 2. What is the current behavior?

Chore commits do not seem to be added unless they are committed alongside code that triggers a release.

#### 3. What are the steps to reproduce?

Providing a reproduction is the *best* way to share your issue.

a) Create a `chore` commit and push it to master

b) Notice no changelog changes are published (which is correct).

c) Create another `chore` commit and then a `fix` commit.

d) Releasing the code, you will see both commits from step 'c' but not the commit from step 'a'.

##### Example:

These commits:

Created this changelog (notice missing commits for the toggle chore and log in chore):

#### 4. Which versions of this library, Angular, TypeScript, & browsers are affected?

- UI Library: latest

| 1.0 | Chore commits not tied to a release do not get added to the changelog - #### 1. What is the expected behavior?

All commits should be added to the changelog.

#### 2. What is the current behavior?

Chore commits do not seem to be added unless they are committed alongside code that triggers a release.

#### 3. What are the steps to reproduce?

Providing a reproduction is the *best* way to share your issue.

a) Create a `chore` commit and push it to master

b) Notice no changelog changes are published (which is correct).

c) Create another `chore` commit and then a `fix` commit.

d) Releasing the code, you will see both commits from step 'c' but not the commit from step 'a'.

##### Example:

These commits:

Created this changelog (notice missing commits for the toggle chore and log in chore):

#### 4. Which versions of this library, Angular, TypeScript, & browsers are affected?

- UI Library: latest

| non_main | chore commits not tied to a release do not get added to the changelog what is the expected behavior all commits should be added to the changelog what is the current behavior chore commits seem to not be added if not commited with code that triggers a release what are the steps to reproduce providing a reproduction is the best way to share your issue a create a chore commit and push it to master b notice no changelog changes are published which is correct c create another chore commit and then a fix commit d releasing the code you will see both commits from step c but not the commit from step a example these commits created this changelog notice missing commits for the toggle chore and log in chore which versions of this library angular typescript browsers are affected ui library latest | 0 |

177 | 2,772,038,465 | IssuesEvent | 2015-05-02 08:18:57 | spyder-ide/spyder | https://api.github.com/repos/spyder-ide/spyder | opened | Move helper widgets to `helperwidgets.py` | Maintainability Miscelleneous Usability | Refactor the code in charge of the [Check for updates PR](#2321), to move the CheckMessageBox to `helperwidgets.py` file.

Refactor code from the [arraybuilder ](https://bitbucket.org/spyder-ide/spyderlib/pull-request/98/add-array-matrix-helper-in-editor-and) to move the ToolTip to `helperwidgets.py` file. | True | Move helper widgets to `helperwidgets.py` - Refactor the code in charge of the [Check for updates PR](#2321), to move the CheckMessageBox to `helperwidgets.py` file.

Refactor code from the [arraybuilder ](https://bitbucket.org/spyder-ide/spyderlib/pull-request/98/add-array-matrix-helper-in-editor-and) to move the ToolTip to `helperwidgets.py` file. | main | move helper widgets to helperwidgets py refactor the code in charge of the to move the checkmessagebox to helperwidgets py file refactor code from the to move the tooltip to helperwidgets py file | 1 |

305,794 | 26,413,219,814 | IssuesEvent | 2023-01-13 13:59:54 | JQAstudent/TEODOR | https://api.github.com/repos/JQAstudent/TEODOR | opened | teodor.bg - When in Greek a user can enter delivery details in Cyrillic alphabet and a Bulgarian phone number | NEGATIVE TEST CASE | <html>

<body>

<!--StartFragment-->

Bug ID | BR TDR BSKT - 008

-- | --

Name | When in Greek a user can enter delivery details in Cyrillic alphabet and a Bulgarian phone number

Priority |

Severity | 3/4

Description |

Steps to reproduce |

Step 1 | Navigate to https://teodor.bg/

Step 2 | Change the language to Greek

Step 3 | Log in with valid credentials (email: ez_jqa@mail.bg, password: @ideSeg@)

Step 4 | Add any products to the basket

Step 5 | Click on "ΠΡΟΧΩΡΗΣΤΕ ΣΤΗΝ ΑΓΟΡΑ" button

Step 6 | Fill in the required fields in Cyrillic and enter an incomplete Bulgarian phone number (003598)

Step 7 | Click on "ΕΠΟΜΕΝΟ" button

|

Expected result: | The page with the delivery details is loaded in Greek. The required fields are marked and instructions are available for specific fields. Fields that require a number of a specific length and format, such as the phone number, are validated. When the fields are filled in using the Cyrillic alphabet, or when a phone number that is too short or has a different country dialing code is entered, a warning message is displayed and the user is not able to proceed with the order.

|

Actual result: | The page with the delivery details is loaded in Greek. The required fields are marked and instructions are available for specific fields. Fields that require a number of a specific length, such as the phone number and the financial identification code, are not validated. When the fields are filled in using the Cyrillic alphabet, or when a number that is too short or a non-Greek country dialing code is entered, no warning message is displayed and the user is able to proceed with the order with incorrect details entered.

Attachment | https://drive.google.com/file/d/1qKbbob3IZAhvZIMXnkpYJqee3gz_pOkw/view?usp=sharing

Status | Opened

Component | Shopping basket

Version/Build number (Found in) | 2022

Environment | Windows 7, Yandex 22.7.1.806 (64-bit)

Comments |

Date Created | 8/7/2022

Author | Emil Zahariev

Audit Log |

<!--EndFragment-->

</body>

</html>Bug ID BR TDR BSKT - 008

Name When in Greek a user can enter delivery details in Cyrillic alphabet and a Bulgarian phone number

Priority

Severity 3/4

Description

Steps to reproduce

Step 1 Navigate to https://teodor.bg/

Step 2 Change the language to Greek

Step 3 Log in with valid credentials (email: [ez_jqa@mail.bg](mailto:ez_jqa@mail.bg), password: @ideSeg@)

Step 4 Add any products to the basket

Step 5 Click on "ΠΡΟΧΩΡΗΣΤΕ ΣΤΗΝ ΑΓΟΡΑ" button

Step 6 Fill in the required fields in Cyrillic and enter an incomplete Bulgarian phone number (003598)

Step 7 Click on "ΕΠΟΜΕΝΟ" button

Expected result: The page with the delivery details is loaded in Greek. The required fields are marked and instructions are available for specific fields. Fields that require a number of a specific length and format, such as the phone number, are validated. When the fields are filled in using the Cyrillic alphabet, or when a phone number that is too short or has a different country dialing code is entered, a warning message is displayed and the user is not able to proceed with the order.

Actual result: The page with the delivery details is loaded in Greek. The required fields are marked and instructions are available for specific fields. Fields that require a number of a specific length, such as the phone number and the financial identification code, are not validated. When the fields are filled in using the Cyrillic alphabet, or when a number that is too short or a non-Greek country dialing code is entered, no warning message is displayed and the user is able to proceed with the order with incorrect details entered.

Attachment https://drive.google.com/file/d/1qKbbob3IZAhvZIMXnkpYJqee3gz_pOkw/view?usp=sharing

Status Opened

Component Shopping basket

Version/Build number (Found in) 2022

Environment Windows 7, Yandex 22.7.1.806 (64-bit)

Comments

Date Created 8/7/2022

Author --

Audit Log | 1.0 | teodor.bg - When in Greek a user can enter delivery details in Cyrillic alphabet and a Bulgarian phone number - <html>

<body>

<!--StartFragment-->

Bug ID | BR TDR BSKT - 008

-- | --

Name | When in Greek a user can enter delivery details in Cyrillic alphabet and a Bulgarian phone number

Priority |

Severity | 3/4

Description |

Steps to reproduce |

Step 1 | Navigate to https://teodor.bg/

Step 2 | Change the language to Greek

Step 3 | Log in with valid credentials (email: ez_jqa@mail.bg, password: @ideSeg@)

Step 4 | Add any products to the basket

Step 5 | Click on "ΠΡΟΧΩΡΗΣΤΕ ΣΤΗΝ ΑΓΟΡΑ" button

Step 6 | Fill in the required fields in Cyrillic and enter an incomplete Bulgarian phone number (003598)

Step 7 | Click on "ΕΠΟΜΕΝΟ" button

|

Expected result: | The page with the delivery details is loaded in Greek. The required fields are marked and instructions are available for specific fields. Fields that require a number of a specific length and format, such as the phone number, are validated. When the fields are filled in using the Cyrillic alphabet, or when a phone number that is too short or has a different country dialing code is entered, a warning message is displayed and the user is not able to proceed with the order.

|

Actual result: | The page with the delivery details is loaded in Greek. The required fields are marked and instructions are available for specific fields. Fields that require a number of a specific length, such as the phone number and the financial identification code, are not validated. When the fields are filled in using the Cyrillic alphabet, or when a number that is too short or a non-Greek country dialing code is entered, no warning message is displayed and the user is able to proceed with the order with incorrect details entered.

Attachment | https://drive.google.com/file/d/1qKbbob3IZAhvZIMXnkpYJqee3gz_pOkw/view?usp=sharing

Status | Opened

Component | Shopping basket

Version/Build number (Found in) | 2022

Environment | Windows 7, Yandex 22.7.1.806 (64-bit)

Comments |

Date Created | 8/7/2022

Author | Emil Zahariev

Audit Log |

<!--EndFragment-->

</body>

</html>Bug ID BR TDR BSKT - 008

Name When in Greek a user can enter delivery details in Cyrillic alphabet and a Bulgarian phone number

Priority

Severity 3/4

Description

Steps to reproduce

Step 1 Navigate to https://teodor.bg/

Step 2 Change the language to Greek

Step 3 Log in with valid credentials (email: [ez_jqa@mail.bg](mailto:ez_jqa@mail.bg), password: @ideSeg@)

Step 4 Add any products to the basket

Step 5 Click on "ΠΡΟΧΩΡΗΣΤΕ ΣΤΗΝ ΑΓΟΡΑ" button

Step 6 Fill in the required fields in Cyrillic and enter an incomplete Bulgarian phone number (003598)

Step 7 Click on "ΕΠΟΜΕΝΟ" button

Expected result: The page with the delivery details is loaded in Greek. The required fields are marked and instructions are available for specific fields. Fields that require a number of a specific length and format, such as the phone number, are validated. When the fields are filled in using the Cyrillic alphabet, or when a phone number that is too short or has a different country dialing code is entered, a warning message is displayed and the user is not able to proceed with the order.

Actual result: The page with the delivery details is loaded in Greek. The required fields are marked and instructions are available for specific fields. Fields that require a number of a specific length, such as the phone number and the financial identification code, are not validated. When the fields are filled in using the Cyrillic alphabet, or when a number that is too short or a non-Greek country dialing code is entered, no warning message is displayed and the user is able to proceed with the order with incorrect details entered.

Attachment https://drive.google.com/file/d/1qKbbob3IZAhvZIMXnkpYJqee3gz_pOkw/view?usp=sharing

Status Opened

Component Shopping basket

Version/Build number (Found in) 2022

Environment Windows 7, Yandex 22.7.1.806 (64-bit)

Comments

Date Created 8/7/2022

Author --

Audit Log | non_main | teodor bg when in greek a user can enter delivery details in cyrillic alphabet and a bulgarian phone number bug id br tdr bskt name when in greek a user can enter delivery details in cyrillic alphabet and a bulgarian phone number priority severity description steps to reproduce step navigate to step change the language to greek step log in with valid credentials email ez jqa mail bg password ideseg step add any products to the basket step click on προχωρηστε στην αγορα button step fill in the required fields in cyrillic and incomplete bulgarian phone number step click on επομενο button expected result the page with the delivery details is loaded in greek the required fields are marked and instructions are available for specific fields there is a verification for the fields with a specific length and format of the number such as phone number when the fields are filled in in a cyrillic alphabet or when shorter phone number is entered or a phone number with different country dialing code a warning message is displayed and the user is not able to proceed with the order actual result the page with the delivery details is loaded in greek the required fields are marked and instructions are available for a specific fields there isn t a verification for the fields with a specific length of the number such as phone number and financial identification code when the fields are filled in in a cyrillic alphabet or when shorter number or non greek country dialing code is entered not any warning message is displayed and the user is able to proceed with the order with incorrect details entered attachment status opened component shopping basket version build number found in environment windows yandex bit comments date created author emil zahariev audit log bug id br tdr bskt name when in greek a user can enter delivery details in cyrillic alphabet and a bulgarian phone number priority severity description steps to reproduce step navigate to step change the 
language to greek step log in with valid credentials email mailto ez jqa mail bg password ideseg step add any products to the basket step click on προχωρηστε στην αγορα button step fill in the required fields in cyrillic and incomplete bulgarian phone number step click on επομενο button expected result the page with the delivery details is loaded in greek the required fields are marked and instructions are available for specific fields there is a verification for the fields with a specific length and format of the number such as phone number when the fields are filled in in a cyrillic alphabet or when shorter phone number is entered or a phone number with different country dialing code a warning message is displayed and the user is not able to proceed with the order actual result the page with the delivery details is loaded in greek the required fields are marked and instructions are available for a specific fields there isn t a verification for the fields with a specific length of the number such as phone number and financial identification code when the fields are filled in in a cyrillic alphabet or when shorter number or non greek country dialing code is entered not any warning message is displayed and the user is able to proceed with the order with incorrect details entered attachment status opened component shopping basket version build number found in environment windows yandex bit comments date created author audit log | 0 |

38,596 | 19,405,705,239 | IssuesEvent | 2021-12-19 23:48:27 | ghost-fvtt/fxmaster | https://api.github.com/repos/ghost-fvtt/fxmaster | closed | Investigate performance issues | bug to be confirmed performance | ### Expected Behavior

Performance is good. In particular, the FXMaster weather effects should perform the same as the core weather effects.

### Current Behavior

Performance suffers massively in large scenes (10890x14000) and it seems to be worse for effects from FXMaster than for core weather effects.

### Steps to Reproduce

1. Create a large scene (e.g. 10890x14000)

2. Add an FXMaster weather effect to that scene

3. Observe bad performance

### Context

Feedback from discord tests of v2.0.0-rc1

### Version

v1.2.1, v2.0.0-rc1

### Foundry VTT Version

V9.235

### Operating System

unknown

### Browser / App

Native Electron App, Chrome, Firefox

### Game System

-

### Relevant Modules

- | True | Investigate performance issues - ### Expected Behavior

Performance is good. In particular, the FXMaster weather effects should perform the same as the core weather effects.

### Current Behavior

Performance suffers massively in large scenes (10890x14000) and it seems to be worse for effects from FXMaster than for core weather effects.

### Steps to Reproduce

1. Create a large scene (e.g. 10890x14000)

2. Add an FXMaster weather effect to that scene

3. Observe bad performance

### Context

Feedback from discord tests of v2.0.0-rc1

### Version

v1.2.1, v2.0.0-rc1

### Foundry VTT Version

V9.235

### Operating System

unknown

### Browser / App

Native Electron App, Chrome, Firefox

### Game System

-

### Relevant Modules

- | non_main | investigate performance issues expected behavior performance is good and in particular performance of the core weather effects should be the same as performance of the fxmaster weather effects current behavior performance suffers massively in large scenes and it seems to be worse for effects from fxmaster than for core weather effects steps to reproduce create a large scene e g add a fxmaster weather effect to that scene observer bad performance context feedback from discord tests of version foundry vtt version operating system unknown browser app native electron app chrome firefox game system relevant modules | 0 |

1,817 | 6,577,318,442 | IssuesEvent | 2017-09-12 00:04:27 | ansible/ansible-modules-core | https://api.github.com/repos/ansible/ansible-modules-core | closed | Ansible 2.1.x cloud/docker incompatible with docker-py 1.1.0 | affects_2.1 bug_report cloud docker waiting_on_maintainer | <!--- Verify first that your issue/request is not already reported in GitHub -->

##### ISSUE TYPE

<!--- Pick one below and delete the rest: -->

- Bug Report

##### COMPONENT NAME

<!--- Name of the plugin/module/task -->

_docker module

##### ANSIBLE VERSION

<!--- Paste verbatim output from “ansible --version” between quotes below -->

```

ansible 2.1.0.0

config file = /users/pikachuexe/projects/spacious/spacious-rails/ansible.cfg

configured module search path = ['./ansible/library']

```

##### CONFIGURATION

<!---

Mention any settings you have changed/added/removed in ansible.cfg

(or using the ANSIBLE_* environment variables).

-->

```

[defaults]

roles_path = ./ansible/roles

hostfile = ./ansible/inventories/localhost

filter_plugins = ./ansible/filter_plugins

library = ./ansible/library

error_on_undefined_vars = True

display_skipped_hosts = False

```

##### OS / ENVIRONMENT

<!---

Mention the OS you are running Ansible from, and the OS you are

managing, or say “N/A” for anything that is not platform-specific.

-->

"N/A"

##### SUMMARY

<!--- Explain the problem briefly -->

Ansible 2.1.x cloud/docker incompatible with docker-py 1.1.0

##### STEPS TO REPRODUCE

<!---

For bugs, show exactly how to reproduce the problem.

For new features, show how the feature would be used.

-->

I am trying to upgrade from Ansible 1.8.x.

I worked around some issues with `2.0.x` (it at least still works, with some issues left unfixed).

https://docs.ansible.com/ansible/docker_module.html

The documentation above declares that the module works with `docker-py` >= `0.3.0`.

So I ran my usual deploy playbook, which fails at the task below.

<!--- Paste example playbooks or commands between quotes below -->

```

---
- name: pull docker image by creating a tmp container
  sudo: true
  # This is the step that fails
  # No labels are specified
  action:
    module: docker
    image: "{{ docker_image_name }}:{{ docker_image_tag }}"
    state: "present"
    pull: "missing"
    # By using name, the existing container will be stopped automatically
    name: "{{ docker_container_prefix }}.tmp.pull"
    command: "bash"
    detach: yes
    username: "{{ docker_api_username | mandatory }}"
    password: "{{ docker_api_password | mandatory }}"
    email: "{{ docker_api_email | mandatory }}"
  register: pull_docker_image_result
  until: pull_docker_image_result|success
  retries: 5
  delay: 3

```

<!--- You can also paste gist.github.com links for larger files -->

##### EXPECTED RESULTS

<!--- What did you expect to happen when running the steps above? -->

Starts container without issue

##### ACTUAL RESULTS

<!--- What actually happened? If possible run with extra verbosity (-vvvv) -->

The task fails because the installed docker-py version is too old (locked at `1.1.0` for Ansible `1.8.x`).

The `labels` keyword is passed to `create_container()` without checking the docker-py version first.

<!--- Paste verbatim command output between quotes below -->

```

fatal: [app_server_02]: FAILED! => {"changed": false, "failed": true, "invocation": {"module_name": "docker"}, "module_stderr": "OpenSSH_6.2p2, OSSLShim 0.9.8r 8 Dec 2011\ndebug1: Reading configuration data /users/pikachuexe/.ssh/config\r\ndebug1: /users/pikachuexe/.ssh/config line 1: Applying options for *\r\ndebug1: /users/pikachuexe/.ssh/config line 17: Applying options for 119.81.*.*\r\ndebug1: Reading configuration data /etc/ssh_config\r\ndebug1: /etc/ssh_config line 20: Applying options for *\r\ndebug1: /etc/ssh_config line 102: Applying options for *\r\ndebug1: auto-mux: Trying existing master\r\ndebug2: fd 3 setting O_NONBLOCK\r\ndebug2: mux_client_hello_exchange: master version 4\r\ndebug3: mux_client_forwards: request forwardings: 0 local, 0 remote\r\ndebug3: mux_client_request_session: entering\r\ndebug3: mux_client_request_alive: entering\r\ndebug3: mux_client_request_alive: done pid = 87565\r\ndebug3: mux_client_request_session: session request sent\r\ndebug1: mux_client_request_session: master session id: 2\r\ndebug3: mux_client_read_packet: read header failed: Broken pipe\r\ndebug2: Received exit status from master 0\r\nShared connection to 119.81.xxx.xxx closed.\r\n", "module_stdout": "Traceback (most recent call last):\r\n File \"/tmp/ansible_q5yEWt/ansible_module_docker.py\", line 1972, in <module>\r\n main()\r\n File \"/tmp/ansible_q5yEWt/ansible_module_docker.py\", line 1938, in main\r\n present(manager, containers, count, name)\r\n File \"/tmp/ansible_q5yEWt/ansible_module_docker.py\", line 1742, in present\r\n created = manager.create_containers(delta)\r\n File \"/tmp/ansible_q5yEWt/ansible_module_docker.py\", line 1660, in create_containers\r\n containers = do_create(count, params)\r\n File \"/tmp/ansible_q5yEWt/ansible_module_docker.py\", line 1653, in do_create\r\n result = self.client.create_container(**params)\r\nTypeError: create_container() got an unexpected keyword argument 'labels'\r\n", "msg": "MODULE FAILURE", "parsed": false}

```
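
The failure above could be avoided by gating version-dependent keyword arguments before calling `create_container()`. The following is only a minimal sketch of that idea, not the module's actual code: `parse_version`, `filter_create_params` and the `min_versions` mapping are hypothetical names, and the `(1, 2, 0)` cutoff for `labels` is an assumption (the report only establishes that `1.1.0` rejects the keyword).

```python
import re


def parse_version(version):
    """Convert a version string such as '1.1.0' into a tuple of ints, e.g. (1, 1, 0)."""
    parts = []
    for piece in version.split("."):
        match = re.match(r"\d+", piece)  # keep only the leading digits of each piece
        if match is None:
            break
        parts.append(int(match.group()))
    return tuple(parts)


def filter_create_params(params, installed_version, min_versions):
    """Drop keyword arguments that the installed client library is too old to accept.

    Hypothetical helper. Unset (None) arguments are dropped silently; arguments
    the caller actually set raise an error instead of being ignored.
    """
    installed = parse_version(installed_version)
    filtered = {}
    for key, value in params.items():
        required = min_versions.get(key)
        if required is not None and installed < required:
            if value is None:
                continue  # nothing was requested, so omit the unsupported keyword
            raise ValueError(
                "%s requires a client library >= %s, found %s"
                % (key, ".".join(str(n) for n in required), installed_version)
            )
        filtered[key] = value
    return filtered


# Assumed cutoff, for illustration only; check the docker-py changelog for the real one.
MIN_VERSIONS = {"labels": (1, 2, 0)}

params = {"image": "busybox", "command": "bash", "labels": None}
safe_params = filter_create_params(params, "1.1.0", MIN_VERSIONS)
print(sorted(safe_params))
```

With a filter like this, a task that specifies no labels would never send the `labels` keyword to an old docker-py, while a task that does set labels would fail with an explicit version message rather than a `TypeError`.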

| True | Ansible 2.1.x cloud/docker incompatible with docker-py 1.1.0 - <!--- Verify first that your issue/request is not already reported in GitHub -->

##### ISSUE TYPE

<!--- Pick one below and delete the rest: -->

- Bug Report

##### COMPONENT NAME

<!--- Name of the plugin/module/task -->

_docker module

##### ANSIBLE VERSION

<!--- Paste verbatim output from “ansible --version” between quotes below -->

```

ansible 2.1.0.0

config file = /users/pikachuexe/projects/spacious/spacious-rails/ansible.cfg

configured module search path = ['./ansible/library']

```

##### CONFIGURATION

<!---

Mention any settings you have changed/added/removed in ansible.cfg

(or using the ANSIBLE_* environment variables).

-->

```

[defaults]

roles_path = ./ansible/roles

hostfile = ./ansible/inventories/localhost

filter_plugins = ./ansible/filter_plugins

library = ./ansible/library

error_on_undefined_vars = True

display_skipped_hosts = False

```

##### OS / ENVIRONMENT

<!---

Mention the OS you are running Ansible from, and the OS you are

managing, or say “N/A” for anything that is not platform-specific.

-->

"N/A"

##### SUMMARY

<!--- Explain the problem briefly -->

Ansible 2.1.x cloud/docker incompatible with docker-py 1.1.0

##### STEPS TO REPRODUCE

<!---

For bugs, show exactly how to reproduce the problem.

For new features, show how the feature would be used.

-->

I am trying to upgrade from Ansible 1.8.x.

I worked around some issues with `2.0.x` (it at least still works, with some issues left unfixed).

https://docs.ansible.com/ansible/docker_module.html

The documentation above declares that the module works with `docker-py` >= `0.3.0`.

So I ran my usual deploy playbook, which fails at the task below.

<!--- Paste example playbooks or commands between quotes below -->

```

---

- name: pull docker image by creating a tmp container

sudo: true

# This is the step that fails

# No labels are specified

action:

module: docker

image: "{{ docker_image_name }}:{{ docker_image_tag }}"

state: "present"

pull: "missing"

# By using name, the existing container will be stopped automatically

name: "{{ docker_container_prefix }}.tmp.pull"

command: "bash"

detach: yes

username: "{{ docker_api_username | mandatory }}"

password: "{{ docker_api_password | mandatory }}"