Unnamed: 0 int64 1 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 7 112 | repo_url stringlengths 36 141 | action stringclasses 3 values | title stringlengths 3 438 | labels stringlengths 4 308 | body stringlengths 7 254k | index stringclasses 7 values | text_combine stringlengths 96 254k | label stringclasses 2 values | text stringlengths 96 246k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

1,519 | 6,572,207,154 | IssuesEvent | 2017-09-11 00:02:27 | ansible/ansible-modules-extras | https://api.github.com/repos/ansible/ansible-modules-extras | closed | s3_bucket fails when loading JSON policy from a template | affects_2.1 aws bug_report cloud in progress waiting_on_maintainer | ##### Issue Type:

- Bug Report

##### Plugin Name:

s3_bucket.py

##### Ansible Version:

```

ansible 2.1.0

config file =

configured module search path = Default w/o overrides

```

##### Ansible Configuration:

N/A

##### Environment:

N/A

##### Summary:

Loading an S3 bucket policy from a file results in failure due to various silent conversions performed by the lookup function, ansible core, and the s3_bucket function itself.

##### Steps To Reproduce:

For bugs, please show exactly how to reproduce the problem. For new

features, show how the feature would be used.

Playbook:

```

vars:

domain: "example.com"

envname: "stage"

aws_account_number: "12345678"

region: "us-west-2"

s3_bucket_name: "{{domain | regex_replace('\\.', '-')}}-{{envname}}-{{aws_account_number}}"

elb_principal_mappings:

us-east-1: 127311923021

us-west-2: 797873946194

us-west-1: 027434742980

eu-west-1: 156460612806

eu-central-1: 054676820928

ap-southeast-1: 114774131450

ap-northeast-1: 582318560864

ap-southeast-2: 783225319266

ap-northeast-2: 600734575887

sa-east-1: 507241528517

- name: Create S3 asset bucket

s3_bucket:

name: "{{ s3_bucket_name }}"

region: "{{ region }}"

policy: "{{ lookup('template', './s3_bucket_policy.json.j2', convert_data=False) }}"

register: site_s3_bucket

```

Template (Could be anything but just for completeness):

```

{

"Id": "AllowELBWriteAccess",

"Version": "2012-10-17",

"Statement": [

{

"Sid": "Stmt1454671534294",

"Action": [

"s3:PutObject"

],

"Effect": "Allow",

"Resource": "arn:aws:s3:::{{domain | regex_replace('\.', '-')}}-{{envname}}-{{aws_account_number}}/accesslogs/AWSLogs/{{ aws_account_number }}/*",

"Principal": {

"AWS": [

"{{ elb_principal_mappings[region] }}"

]

}

}

]

}

```

##### Expected Results:

An S3 bucket is created

##### Actual Results:

Failure incorrectly implying that the JSON is invalid.

```

TASK [Create S3 asset bucket] **************************************************

fatal: [127.0.0.1]: FAILED! => {"changed": false, "failed": true, "msg": "Policies must be valid JSON and the first byte must be '{'"}

```

| True | s3_bucket fails when loading JSON policy from a template - ##### Issue Type:

- Bug Report

##### Plugin Name:

s3_bucket.py

##### Ansible Version:

```

ansible 2.1.0

config file =

configured module search path = Default w/o overrides

```

##### Ansible Configuration:

N/A

##### Environment:

N/A

##### Summary:

Loading an S3 bucket policy from a file results in failure due to various silent conversions performed by the lookup function, ansible core, and the s3_bucket function itself.

##### Steps To Reproduce:

For bugs, please show exactly how to reproduce the problem. For new

features, show how the feature would be used.

Playbook:

```

vars:

domain: "example.com"

envname: "stage"

aws_account_number: "12345678"

region: "us-west-2"

s3_bucket_name: "{{domain | regex_replace('\\.', '-')}}-{{envname}}-{{aws_account_number}}"

elb_principal_mappings:

us-east-1: 127311923021

us-west-2: 797873946194

us-west-1: 027434742980

eu-west-1: 156460612806

eu-central-1: 054676820928

ap-southeast-1: 114774131450

ap-northeast-1: 582318560864

ap-southeast-2: 783225319266

ap-northeast-2: 600734575887

sa-east-1: 507241528517

- name: Create S3 asset bucket

s3_bucket:

name: "{{ s3_bucket_name }}"

region: "{{ region }}"

policy: "{{ lookup('template', './s3_bucket_policy.json.j2', convert_data=False) }}"

register: site_s3_bucket

```

Template (Could be anything but just for completeness):

```

{

"Id": "AllowELBWriteAccess",

"Version": "2012-10-17",

"Statement": [

{

"Sid": "Stmt1454671534294",

"Action": [

"s3:PutObject"

],

"Effect": "Allow",

"Resource": "arn:aws:s3:::{{domain | regex_replace('\.', '-')}}-{{envname}}-{{aws_account_number}}/accesslogs/AWSLogs/{{ aws_account_number }}/*",

"Principal": {

"AWS": [

"{{ elb_principal_mappings[region] }}"

]

}

}

]

}

```

##### Expected Results:

An S3 bucket is created

##### Actual Results:

Failure incorrectly implying that the JSON is invalid.

```

TASK [Create S3 asset bucket] **************************************************

fatal: [127.0.0.1]: FAILED! => {"changed": false, "failed": true, "msg": "Policies must be valid JSON and the first byte must be '{'"}

```

| main | bucket fails when loading json policy from a template issue type bug report plugin name bucket py ansible version ansible config file configured module search path default w o overrides ansible configuration n a environment n a summary loading an bucket policy from a file results in failure due to various silent conversions performed by the lookup function ansible core and the bucket function itself steps to reproduce for bugs please show exactly how to reproduce the problem for new features show how the feature would be used playbook vars domain example com envname stage aws account number region us west bucket name domain regex replace envname aws account number elb principal mappings us east us west us west eu west eu central ap southeast ap northeast ap southeast ap northeast sa east name create asset bucket bucket name bucket name region region policy lookup template bucket policy json convert data false register site bucket template could be anything but just for completeness id allowelbwriteaccess version statement sid action putobject effect allow resource arn aws domain regex replace envname aws account number accesslogs awslogs aws account number principal aws elb principal mappings expected results an bucket is created actual results failure incorrectly implying that that the json is invalid task fatal failed changed false failed true msg policies must be valid json and the first byte must be | 1 |
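The s3_bucket report above blames silent conversions of the templated policy for the "must be valid JSON" error. The following is a minimal Python sketch of one conversion of that kind. It assumes, without confirmation from the report, that the rendered JSON text is parsed into a dict somewhere along the way and later turned back into a string with `str()`. The names `policy_text` and `is_valid_json` are hypothetical and used only for illustration.

```python
import json

# Hypothetical rendered template output: valid JSON text.
policy_text = '{"Version": "2012-10-17", "Statement": []}'


def is_valid_json(text):
    """Return True if text parses as JSON, False otherwise."""
    try:
        json.loads(text)
        return True
    except ValueError:
        return False


# Assumed silent conversion: the JSON text becomes a Python dict.
policy_dict = json.loads(policy_text)

# Stringifying the dict yields a Python repr with single quotes, not JSON.
stringified = str(policy_dict)

# Serializing explicitly with json.dumps keeps the text valid JSON.
serialized = json.dumps(policy_dict)
```

If this is indeed the mechanism, serializing explicitly before the value reaches the module would keep the policy in the form the API expects. The report itself does not say which layer performs the conversion.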

262,244 | 19,768,800,754 | IssuesEvent | 2022-01-17 07:42:39 | kubernetes-sigs/descheduler | https://api.github.com/repos/kubernetes-sigs/descheduler | closed | Docs around autohealing are misleading | lifecycle/rotten kind/documentation | The [docs around autohealing](https://github.com/kubernetes-sigs/descheduler/blob/master/docs/user-guide.md#autoheal-node-problems) are a bit misleading in my opinion.

They link to Node Problem Detector, claiming that `Node Problem Detector can detect specific Node problems and taint any Nodes which have those problems.` In fact, NPD doesn't do any tainting. It's the `TaintNodeByCondition` feature of the node controller that takes _some_ conditions and turns them into taints. However, this only works for the default node conditions: `PIDPressure`, `MemoryPressure`, `DiskPressure`, `Ready`, and some cloud-provider-specific conditions.

There is an [open PR](https://github.com/kubernetes/node-problem-detector/pull/565) on NPD that wants to add this tainting behaviour, but the maintainers seem to think it shouldn't be NPD that does the tainting.

The effect is that the autoheal cycle described doesn't actually work, at least not for custom conditions. It would be wonderful if it did, because it's quite a compelling outcome, and it would be amazing if it were offered exclusively in terms of Kubernetes first-party tooling.

At the very least, we should change the wording in the docs to make it clear that NPD doesn't really participate in the autohealing. At most, I'm hoping that by raising this issue, the fact that this cycle doesn't currently work as intended can get a little more visibility. My guess at possible solutions:

* Merge the PR linked above, so that NPD creates taints

* Extend the node controller to add condition based taints for _all_ conditions, including custom ones

* Create a new project to convert conditions into taints

* Add a new strategy in this repo that allows for descheduling based on Conditions | 1.0 | Docs around autohealing are misleading - The [docs around autohealing](https://github.com/kubernetes-sigs/descheduler/blob/master/docs/user-guide.md#autoheal-node-problems) are a bit misleading in my opinion.

They link to Node Problem Detector, claiming that `Node Problem Detector can detect specific Node problems and taint any Nodes which have those problems.` In fact, NPD doesn't do any tainting. It's the `TaintNodeByCondition` feature of the node controller that takes _some_ conditions and turns them into taints. However, this only works for the default node conditions: `PIDPressure`, `MemoryPressure`, `DiskPressure`, `Ready`, and some cloud-provider-specific conditions.

There is an [open PR](https://github.com/kubernetes/node-problem-detector/pull/565) on NPD that wants to add this tainting behaviour, but the maintainers seem to think it shouldn't be NPD that does the tainting.

The effect is that the autoheal cycle described doesn't actually work, at least not for custom conditions. It would be wonderful if it did, because it's quite a compelling outcome, and it would be amazing if it were offered exclusively in terms of Kubernetes first-party tooling.

At the very least, we should change the wording in the docs to make it clear that NPD doesn't really participate in the autohealing. At most, I'm hoping that by raising this issue, the fact that this cycle doesn't currently work as intended can get a little more visibility. My guess at possible solutions:

* Merge the PR linked above, so that NPD creates taints

* Extend the node controller to add condition based taints for _all_ conditions, including custom ones

* Create a new project to convert conditions into taints

* Add a new strategy in this repo that allows for descheduling based on Conditions | non_main | docs around autohealing are misleading the are a bit misleading in my opinion they link off to node problem detector claiming that node problem detector can detect specific node problems and taint any nodes which have those problems in fact npd doesn t do any tainting it s the taintnodebycondition feature of the node controller that takes some conditions and turns them in to taints however this only works for the default node conditions pidpressure memorypressure diskpressure ready and some cloud provider specific conditions there is an on npd that wants to add this tainting behaviour but the maintainers seem to think it shouldn t be npd that does the tainting the effect is that the autoheal cycle describe doesn t actually work at least not for custom conditions it would be wonderful if it did because it s quite a compelling outcome and it would be amazing if it were offered exclusively in terms of kubernetes first party tooling at the very least we should change the wording in the docs to make it clear that npd doesn t really participate in the autohealing at most i m hoping that by raising this issue the fact that this cycle doesn t currently work as intended can get a little more visibility my guess at possible solutions merge the pr linked above so that npd creates taints extend the node controller to add condition based taints for all conditions including custom ones create a new project to convert conditions in to taints add a new strategy in this repo that allows for descheduling based on conditions | 0 |

3,662 | 14,942,823,689 | IssuesEvent | 2021-01-25 21:55:46 | flag-camp-2020-t3/Spare4Fun-Server | https://api.github.com/repos/flag-camp-2020-t3/Spare4Fun-Server | opened | Dao Throw Exception & captured by exception handler | backend-v2.0 critical enhancement maintain / refactor | branch: ```refactor-dao-throw-exception-exception-handler``` | True | Dao Throw Exception & captured by exception handler - branch: ```refactor-dao-throw-exception-exception-handler``` | main | dao throw exception captured by exception handler branch refactor dao throw exception exception handler | 1 |

4,390 | 22,497,682,559 | IssuesEvent | 2022-06-23 09:00:59 | zoj613/polyagamma | https://api.github.com/repos/zoj613/polyagamma | closed | MAINT: Get full precision for 32 bit floating point random values. | good first issue maintainance random_polyagamma | A numpy [issue](https://github.com/numpy/numpy/issues/17478) showed that numpy's formula for generating 32-bit random uniform values was wasting bits. A PR that fixes this was merged in https://github.com/numpy/numpy/pull/20314.

It states

```

The formula to convert a 32 bit random integer to a random float32,

(next_uint32(bitgen_state) >> 9) * (1.0f / 8388608.0f)

shifts by one bit too many, resulting in uniform float32 samples always

having a 0 in the least significant bit. The formula is corrected to

(next_uint32(bitgen_state) >> 8) * (1.0f / 16777216.0f)

Occurrences of the incorrect formula in numpy/random/tests/test_direct.py

were also corrected.

```

Since the project uses this formula at https://github.com/zoj613/polyagamma/blob/fca4a8bce462066803c888708e2f109d1991c00c/src/pgm_macros.h#L54-L56

it is worth updating to get the full precision of 32-bit floats, because they are used extensively in the accept-rejection steps of the samplers. I'm positive this slight adjustment affects neither the results nor the performance, but I think it's worth updating the formula. | True | MAINT: Get full precision for 32 bit floating point random values. - A numpy [issue](https://github.com/numpy/numpy/issues/17478) showed that numpy's formula for generating 32-bit random uniform values was wasting bits. A PR that fixes this was merged in https://github.com/numpy/numpy/pull/20314.

It states

```

The formula to convert a 32 bit random integer to a random float32,

(next_uint32(bitgen_state) >> 9) * (1.0f / 8388608.0f)

shifts by one bit too many, resulting in uniform float32 samples always

having a 0 in the least significant bit. The formula is corrected to

(next_uint32(bitgen_state) >> 8) * (1.0f / 16777216.0f)

Occurrences of the incorrect formula in numpy/random/tests/test_direct.py

were also corrected.

```

Since the project uses this formula at https://github.com/zoj613/polyagamma/blob/fca4a8bce462066803c888708e2f109d1991c00c/src/pgm_macros.h#L54-L56

it is worth updating to get the full precision of 32-bit floats, because they are used extensively in the accept-rejection steps of the samplers. I'm positive this slight adjustment affects neither the results nor the performance, but I think it's worth updating the formula. | main | maint get full precision for bit floating point random values a numpy showed that numpy s formula for generating random uniform values was waiting bits there is a pr that fixes this which was merge in it states the formula to convert a bit random integer to a random next bitgen state shifts by one bit too many resulting in uniform samples always having a in the least significant bit the formula is corrected to next bitgen state occurrences of the incorrect formula in numpy random tests test direct py were also corrected since the project uses this formula at it is worth updating to get the full precision of float because they are used extensively in the accept rejection steps of the samplers im positive this slight adjustment does not affect the results nor the performance but i think it s worth updating the formula | 1 |
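The two uint32-to-float32 formulas quoted in the polyagamma issue above can be compared with a short Python sketch. It mirrors the arithmetic of the quoted C expressions rather than calling numpy, and the helper names (`float32_from_uint32_old`, `float32_from_uint32_new`, `float32_bits`) are made up for this illustration. Plain integers stand in for the output of `next_uint32(bitgen_state)`.

```python
import struct


def float32_from_uint32_old(x):
    """Old formula: keeps only the top 23 bits of the 32-bit integer."""
    return (x >> 9) * (1.0 / 8388608.0)  # 8388608 == 2**23


def float32_from_uint32_new(x):
    """Corrected formula: keeps the top 24 bits, the full float32 significand."""
    return (x >> 8) * (1.0 / 16777216.0)  # 16777216 == 2**24


def float32_bits(value):
    """Return the IEEE 754 single-precision bit pattern of value as an int."""
    return struct.unpack("<I", struct.pack("<f", value))[0]


# A few fixed 32-bit inputs standing in for random draws.
samples = [0x00000000, 0x12345678, 0x89ABCDEF, 0xFFFFFFFF]

# Least significant mantissa bit of each result under both formulas.
old_low_bits = [float32_bits(float32_from_uint32_old(x)) & 1 for x in samples]
new_low_bits = [float32_bits(float32_from_uint32_new(x)) & 1 for x in samples]
```

With the old formula the lowest mantissa bit is zero for every input, which is the wasted bit the quoted text describes. The corrected formula can set that bit while still producing values strictly below 1.0.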

5,214 | 26,464,344,104 | IssuesEvent | 2023-01-16 21:18:37 | bazelbuild/intellij | https://api.github.com/repos/bazelbuild/intellij | closed | Flag --incompatible_disable_starlark_host_transitions will break Android Studio Plugin in Bazel 7.0 | type: bug product: Android Studio topic: bazel awaiting-maintainer | Incompatible flag `--incompatible_disable_starlark_host_transitions` will be enabled by default in the next major release (Bazel 7.0), thus breaking Android Studio Plugin. Please migrate to fix this and unblock the flip of this flag.

The flag is documented here: [bazelbuild/bazel#17032](https://github.com/bazelbuild/bazel/issues/17032).

Please check the following CI builds for build and test results:

- [Ubuntu 18.04 OpenJDK 11](https://buildkite.com/bazel/bazelisk-plus-incompatible-flags/builds/1365#0185154a-dc33-4a0d-bfba-a9d0f36f4e1c)

- [Ubuntu 18.04 OpenJDK 11](https://buildkite.com/bazel/bazelisk-plus-incompatible-flags/builds/1365#0185154a-dc36-44c7-9a05-b49d4a1c32f5)

- [Ubuntu 18.04 OpenJDK 11](https://buildkite.com/bazel/bazelisk-plus-incompatible-flags/builds/1365#0185154a-dc2c-44c5-ba2f-d20d0c3515e1)

- [Ubuntu 18.04 OpenJDK 11](https://buildkite.com/bazel/bazelisk-plus-incompatible-flags/builds/1365#0185154a-dc3c-4a48-a7bc-5c475690a11b)

- [Ubuntu 18.04 OpenJDK 11](https://buildkite.com/bazel/bazelisk-plus-incompatible-flags/builds/1365#0185154a-dc39-4303-8caa-95e9935cab6d)

- [Ubuntu 18.04 OpenJDK 11](https://buildkite.com/bazel/bazelisk-plus-incompatible-flags/builds/1365#0185154a-dc3f-4bda-9815-991ef8b9d7ad)

Never heard of incompatible flags before? We have [documentation](https://docs.bazel.build/versions/master/backward-compatibility.html) that explains everything.

If you have any questions, please file an issue in https://github.com/bazelbuild/continuous-integration. | True | Flag --incompatible_disable_starlark_host_transitions will break Android Studio Plugin in Bazel 7.0 - Incompatible flag `--incompatible_disable_starlark_host_transitions` will be enabled by default in the next major release (Bazel 7.0), thus breaking Android Studio Plugin. Please migrate to fix this and unblock the flip of this flag.

The flag is documented here: [bazelbuild/bazel#17032](https://github.com/bazelbuild/bazel/issues/17032).

Please check the following CI builds for build and test results:

- [Ubuntu 18.04 OpenJDK 11](https://buildkite.com/bazel/bazelisk-plus-incompatible-flags/builds/1365#0185154a-dc33-4a0d-bfba-a9d0f36f4e1c)

- [Ubuntu 18.04 OpenJDK 11](https://buildkite.com/bazel/bazelisk-plus-incompatible-flags/builds/1365#0185154a-dc36-44c7-9a05-b49d4a1c32f5)

- [Ubuntu 18.04 OpenJDK 11](https://buildkite.com/bazel/bazelisk-plus-incompatible-flags/builds/1365#0185154a-dc2c-44c5-ba2f-d20d0c3515e1)

- [Ubuntu 18.04 OpenJDK 11](https://buildkite.com/bazel/bazelisk-plus-incompatible-flags/builds/1365#0185154a-dc3c-4a48-a7bc-5c475690a11b)

- [Ubuntu 18.04 OpenJDK 11](https://buildkite.com/bazel/bazelisk-plus-incompatible-flags/builds/1365#0185154a-dc39-4303-8caa-95e9935cab6d)

- [Ubuntu 18.04 OpenJDK 11](https://buildkite.com/bazel/bazelisk-plus-incompatible-flags/builds/1365#0185154a-dc3f-4bda-9815-991ef8b9d7ad)

Never heard of incompatible flags before? We have [documentation](https://docs.bazel.build/versions/master/backward-compatibility.html) that explains everything.

If you have any questions, please file an issue in https://github.com/bazelbuild/continuous-integration. | main | flag incompatible disable starlark host transitions will break android studio plugin in bazel incompatible flag incompatible disable starlark host transitions will be enabled by default in the next major release bazel thus breaking android studio plugin please migrate to fix this and unblock the flip of this flag the flag is documented here please check the following ci builds for build and test results never heard of incompatible flags before we have that explains everything if you have any questions please file an issue in | 1 |

507,049 | 14,679,153,479 | IssuesEvent | 2020-12-31 06:04:31 | oppia/oppia-android | https://api.github.com/repos/oppia/oppia-android | closed | Remove constraints from ScrollView in profile_reset_pin_activity | Priority: Nice-to-have Type: Improvement good first issue | Remove constraints from ScrollView in the profile_reset_pin_activity XML files, both landscape and portrait.

https://github.com/oppia/oppia-android/blob/2511715a4770cda65df593cf6821b17b7f8f3d28/app/src/main/res/layout/profile_reset_pin_activity.xml#L55

| 1.0 | Remove constraints from ScrollView in profile_reset_pin_activity - Remove constraints from ScrollView in the profile_reset_pin_activity XML files, both landscape and portrait.

https://github.com/oppia/oppia-android/blob/2511715a4770cda65df593cf6821b17b7f8f3d28/app/src/main/res/layout/profile_reset_pin_activity.xml#L55

| non_main | remove constraints from scrollview in profile reset pin activity remove constraints from scrollview in profile reset pin activity both landscape and portrait xml files | 0 |

46,944 | 24,794,622,649 | IssuesEvent | 2022-10-24 16:10:55 | iree-org/iree | https://api.github.com/repos/iree-org/iree | closed | Add `hal_inline` dialect/module for tiny environments. | runtime performance ⚡ | For environments where the execution model is known to be exclusively local and inline (embedded systems) we can have a pared-down HAL that pretty much only contains executable support. The idea is to still use HAL executable translation in the compiler but lower the stream dialect to a new lightweight dialect that pretty much only manages executables and dispatches. Most of the local/ implementation of the executable loader and the loaders themselves have no dependencies on command buffers, allocators, buffers, or devices and can be cleanly pulled into the module without bringing in the bulk of the HAL API.

It's debatable whether the allocator/buffer stuff should be included - that would allow the coming allocator types to be reused but at the cost of additional non-user-controllable overheads. Since the local executables take byte spans all buffers could just be `iree_vm_buffer_t` which is already compiled in and available for use - and since they take a custom `iree_allocator_t` it's still possible for hosting applications to manage memory however they want.

Executables themselves will still be injected on the module when created same as today, allowing for dynamic, static, vmvx, etc executables to be run this way. This allows us to separate the execution model from the deployment model at the cost of a few vtables.

Outline:

* [x] Add `hal_inline` dialect with basic ops:

* [x] `hal_inline.executable.create`

* [x] `hal_inline.executable.dispatch`

* [x] `hal_inline.executable_layout.create`? (still need this to reuse loaders/libraries)

* [x] Add `--execution-mode=` iree-compile flag to switch between `hal-async` and `hal-inline` (or w/e)

* [x] Have a new `iree-hal-inline-transformation-pipeline` that still performs interface materialization and executable translation but otherwise lowers `stream` itself

* [x] Add `iree/modules/hal_inline` runtime module that links directly against the `iree/hal/local/` libraries

* [x] Build a runner tool that uses the inline module (or make iree-run-module/etc always support it with a flag)

(could also call this the `inline` dialect or something - it's still technically a HAL though as the executables being called are abstracted across hardware - can have CPU/FPGA/DSP/etc) | True | Add `hal_inline` dialect/module for tiny environments. - For environments where the execution model is known to be exclusively local and inline (embedded systems) we can have a pared-down HAL that pretty much only contains executable support. The idea is to still use HAL executable translation in the compiler but lower the stream dialect to a new lightweight dialect that pretty much only manages executables and dispatches. Most of the local/ implementation of the executable loader and the loaders themselves have no dependencies on command buffers, allocators, buffers, or devices and can be cleanly pulled into the module without bringing in the bulk of the HAL API.

It's debatable whether the allocator/buffer stuff should be included - that would allow the coming allocator types to be reused but at the cost of additional non-user-controllable overheads. Since the local executables take byte spans all buffers could just be `iree_vm_buffer_t` which is already compiled in and available for use - and since they take a custom `iree_allocator_t` it's still possible for hosting applications to manage memory however they want.

Executables themselves will still be injected on the module when created same as today, allowing for dynamic, static, vmvx, etc executables to be run this way. This allows us to separate the execution model from the deployment model at the cost of a few vtables.

Outline:

* [x] Add `hal_inline` dialect with basic ops:

* [x] `hal_inline.executable.create`

* [x] `hal_inline.executable.dispatch`

* [x] `hal_inline.executable_layout.create`? (still need this to reuse loaders/libraries)

* [x] Add `--execution-mode=` iree-compile flag to switch between `hal-async` and `hal-inline` (or w/e)

* [x] Have a new `iree-hal-inline-transformation-pipeline` that still performs interface materialization and executable translation but otherwise lowers `stream` itself

* [x] Add `iree/modules/hal_inline` runtime module that links directly against the `iree/hal/local/` libraries

* [x] Build a runner tool that uses the inline module (or make iree-run-module/etc always support it with a flag)

(could also call this the `inline` dialect or something - it's still technically a HAL though as the executables being called are abstracted across hardware - can have CPU/FPGA/DSP/etc) | non_main | add hal inline dialect module for tiny environments for environments where the execution model is known to be exclusively local and inline embedded systems we can have a paired down hal that pretty much only contains executable support the idea is to still use hal executable translation in the compiler but lowering the stream dialect to a new lightweight dialect that pretty much only manages executables and dispatches most of the local implementation of the executable loader and the loaders themselves have no dependencies on command buffers allocators buffers or devices and can be cleanly pulled into the module without bringing in the bulk of the hal api it s debatable whether the allocator buffer stuff should be included that would allow the coming allocator types to be reused but at the cost of additional non user controllable overheads since the local executables take byte spans all buffers could just be iree vm buffer t which is already compiled in and available for use and since they take a custom iree allocator t it s still possible for hosting applications to manage memory however they want executables themselves will still be injected on the module when created same as today allowing for dynamic static vmvx etc executables to be run this way this allows us to separate the execution model from the deployment model at the cost of a few vtables outline add hal inline dialect with basic ops hal inline executable create hal inline executable dispatch hal inline executable layout create still need this to reuse loaders libraries add execution mode iree compile flag to switch between hal async and hal inline or w e have a new iree hal inline transformation pipeline that still performs interface materialization and executable translation but otherwise lowers stream 
itself add iree modules hal inline runtime module that links directly against the iree hal local libraries build a runner tool that uses the inline module or make iree run module etc always support it with a flag could also call this the inline dialect or something it s still technically a hal though as the executables being called are abstracted across hardware can have cpu fpga dsp etc | 0 |

14,714 | 3,419,369,800 | IssuesEvent | 2015-12-08 09:26:03 | centreon/centreon | https://api.github.com/repos/centreon/centreon | closed | Delete a poller doesn't delete associated Centreon Broker configuration | BetaTest Kind/Bug Status/Solved | When I delete a poller the associated Centreon Broker configuration is disabled but not deleted.

to have a clean configuration it would be good to remove the entire configuration of Centreon Broker

Regards, | 1.0 | Delete a poller doesn't delete associated Centreon Broker configuration - When I delete a poller the associated Centreon Broker configuration is disabled but not deleted.

To have a clean configuration, it would be good to remove the entire Centreon Broker configuration.

Regards, | non_main | delete a poller doesn t delete associated centreon broker configuration when i delete a poller the associated centreon broker configuration is disabled but not deleted to have a clean configuration it would be good to remove the entire configuration of centreon broker regards | 0 |

239,613 | 7,799,878,792 | IssuesEvent | 2018-06-09 01:34:37 | tine20/Tine-2.0-Open-Source-Groupware-and-CRM | https://api.github.com/repos/tine20/Tine-2.0-Open-Source-Groupware-and-CRM | closed | 0005764:

convert $_folder->cache_uidvalidity to integer (in DB) | Felamimail Mantis high priority | **Reported by pschuele on 20 Feb 2012 09:00**

convert $_folder->cache_uidvalidity to integer (in DB)

- as imap_uidvalidity is an integer, too and there are issues regarding comparison between the two when using postgresql

**Additional information:** http://www.tine20.org/forum/viewtopic.php?f=10&t=10508

| 1.0 | 0005764:

convert $_folder->cache_uidvalidity to integer (in DB) - **Reported by pschuele on 20 Feb 2012 09:00**

convert $_folder->cache_uidvalidity to integer (in DB)

- as imap_uidvalidity is an integer, too and there are issues regarding comparison between the two when using postgresql

**Additional information:** http://www.tine20.org/forum/viewtopic.php?f=10&t=10508

| non_main | convert folder cache uidvalidity to integer in db reported by pschuele on feb convert folder gt cache uidvalidity to integer in db as imap uidvalidity is an integer too and there are issues regarding comparison between the two when using postgresql additional information | 0 |

4,752 | 24,509,600,788 | IssuesEvent | 2022-10-10 19:55:50 | centerofci/mathesar | https://api.github.com/repos/centerofci/mathesar | opened | The columns endpoint results in a 500, possibly metadata related | type: bug work: backend status: ready restricted: maintainers | ## Description

Endpoint: `http://localhost:8000/api/db/v0/tables/<table_id>/columns/`

```

Environment:

Request Method: GET

Request URL: http://localhost:8000/api/db/v0/tables/5/columns/?limit=500

Django Version: 3.1.14

Python Version: 3.9.8

Installed Applications:

['django.contrib.admin',

'django.contrib.auth',

'django.contrib.contenttypes',

'django.contrib.sessions',

'django.contrib.messages',

'django.contrib.staticfiles',

'rest_framework',

'django_filters',

'django_property_filter',

'mathesar']

Installed Middleware:

['django.middleware.security.SecurityMiddleware',

'django.contrib.sessions.middleware.SessionMiddleware',

'django.middleware.common.CommonMiddleware',

'django.middleware.csrf.CsrfViewMiddleware',

'django.contrib.auth.middleware.AuthenticationMiddleware',

'django.contrib.messages.middleware.MessageMiddleware',

'django.middleware.clickjacking.XFrameOptionsMiddleware']

Traceback (most recent call last):

File "/code/mathesar/models/base.py", line 675, in __getattribute__

return super().__getattribute__(name)

During handling of the above exception ('Column' object has no attribute 'primary_key'), another exception occurred:

File "/usr/local/lib/python3.9/site-packages/sqlalchemy/sql/base.py", line 1167, in __getattr__

return self._index[key]

The above exception ('nspname') was the direct cause of the following exception:

File "/code/mathesar/models/base.py", line 675, in __getattribute__

return super().__getattribute__(name)

File "/code/mathesar/models/base.py", line 696, in _sa_column

return self.table.sa_columns[self.name]

File "/code/mathesar/models/base.py", line 362, in sa_columns

return self._enriched_column_sa_table.columns

File "/code/mathesar/models/base.py", line 350, in _enriched_column_sa_table

table=self._sa_table,

File "/code/mathesar/state/cached_property.py", line 62, in __get__

new_value = self.original_get_fn(instance)

File "/code/mathesar/models/base.py", line 331, in _sa_table

sa_table = reflect_table_from_oid(

File "/code/db/tables/operations/select.py", line 23, in reflect_table_from_oid

tables = reflect_tables_from_oids([oid], engine, metadata=metadata, connection_to_use=connection_to_use)

File "/code/db/tables/operations/select.py", line 29, in reflect_tables_from_oids

get_map_of_table_oid_to_schema_name_and_table_name(

File "/code/db/tables/operations/select.py", line 59, in get_map_of_table_oid_to_schema_name_and_table_name

select(pg_namespace.c.nspname, pg_class.c.relname, pg_class.c.oid)

File "/usr/local/lib/python3.9/site-packages/sqlalchemy/sql/base.py", line 1169, in __getattr__

util.raise_(AttributeError(key), replace_context=err)

File "/usr/local/lib/python3.9/site-packages/sqlalchemy/util/compat.py", line 207, in raise_

raise exception

During handling of the above exception (nspname), another exception occurred:

File "/usr/local/lib/python3.9/site-packages/sqlalchemy/sql/base.py", line 1167, in __getattr__

return self._index[key]

The above exception ('nspname') was the direct cause of the following exception:

File "/code/mathesar/models/base.py", line 675, in __getattribute__

return super().__getattribute__(name)

File "/code/mathesar/models/base.py", line 696, in _sa_column

return self.table.sa_columns[self.name]

File "/code/mathesar/models/base.py", line 362, in sa_columns

return self._enriched_column_sa_table.columns

File "/code/mathesar/models/base.py", line 350, in _enriched_column_sa_table

table=self._sa_table,

File "/code/mathesar/state/cached_property.py", line 62, in __get__

new_value = self.original_get_fn(instance)

File "/code/mathesar/models/base.py", line 331, in _sa_table

sa_table = reflect_table_from_oid(

File "/code/db/tables/operations/select.py", line 23, in reflect_table_from_oid

tables = reflect_tables_from_oids([oid], engine, metadata=metadata, connection_to_use=connection_to_use)

File "/code/db/tables/operations/select.py", line 29, in reflect_tables_from_oids

get_map_of_table_oid_to_schema_name_and_table_name(

File "/code/db/tables/operations/select.py", line 59, in get_map_of_table_oid_to_schema_name_and_table_name

select(pg_namespace.c.nspname, pg_class.c.relname, pg_class.c.oid)

File "/usr/local/lib/python3.9/site-packages/sqlalchemy/sql/base.py", line 1169, in __getattr__

util.raise_(AttributeError(key), replace_context=err)

File "/usr/local/lib/python3.9/site-packages/sqlalchemy/util/compat.py", line 207, in raise_

raise exception

During handling of the above exception (nspname), another exception occurred:

File "/usr/local/lib/python3.9/site-packages/sqlalchemy/sql/elements.py", line 826, in __getattr__

return getattr(self.comparator, key)

The above exception ('Comparator' object has no attribute '_sa_column') was the direct cause of the following exception:

File "/usr/local/lib/python3.9/site-packages/django/core/handlers/exception.py", line 47, in inner

response = get_response(request)

File "/usr/local/lib/python3.9/site-packages/django/core/handlers/base.py", line 181, in _get_response

response = wrapped_callback(request, *callback_args, **callback_kwargs)

File "/usr/local/lib/python3.9/site-packages/django/views/decorators/csrf.py", line 54, in wrapped_view

return view_func(*args, **kwargs)

File "/usr/local/lib/python3.9/site-packages/rest_framework/viewsets.py", line 125, in view

return self.dispatch(request, *args, **kwargs)

File "/usr/local/lib/python3.9/site-packages/rest_framework/views.py", line 509, in dispatch

response = self.handle_exception(exc)

File "/usr/local/lib/python3.9/site-packages/rest_framework/views.py", line 466, in handle_exception

response = exception_handler(exc, context)

File "/code/mathesar/exception_handlers.py", line 55, in mathesar_exception_handler

raise exc

File "/usr/local/lib/python3.9/site-packages/rest_framework/views.py", line 506, in dispatch

response = handler(request, *args, **kwargs)

File "/usr/local/lib/python3.9/site-packages/rest_framework/mixins.py", line 38, in list

queryset = self.filter_queryset(self.get_queryset())

File "/code/mathesar/api/db/viewsets/columns.py", line 33, in get_queryset

queryset = Column.objects.filter(table=self.kwargs['table_pk']).order_by('attnum')

File "/usr/local/lib/python3.9/site-packages/django/db/models/manager.py", line 85, in manager_method

return getattr(self.get_queryset(), name)(*args, **kwargs)

File "/code/mathesar/models/base.py", line 70, in get_queryset

make_sure_initial_reflection_happened()

File "/code/mathesar/state/base.py", line 8, in make_sure_initial_reflection_happened

reset_reflection()

File "/code/mathesar/state/base.py", line 27, in reset_reflection

_trigger_django_model_reflection()

File "/code/mathesar/state/base.py", line 31, in _trigger_django_model_reflection

reflect_db_objects(metadata=get_cached_metadata())

File "/code/mathesar/state/django.py", line 44, in reflect_db_objects

reflect_columns_from_tables(tables, metadata=metadata)

File "/code/mathesar/state/django.py", line 123, in reflect_columns_from_tables

models._compute_preview_template(table)

File "/code/mathesar/models/base.py", line 859, in _compute_preview_template

if column.primary_key:

File "/code/mathesar/models/base.py", line 681, in __getattribute__

return getattr(self._sa_column, name)

File "/code/mathesar/models/base.py", line 681, in __getattribute__

return getattr(self._sa_column, name)

File "/code/mathesar/models/base.py", line 681, in __getattribute__

return getattr(self._sa_column, name)

File "/usr/local/lib/python3.9/site-packages/sqlalchemy/sql/elements.py", line 828, in __getattr__

util.raise_(

File "/usr/local/lib/python3.9/site-packages/sqlalchemy/util/compat.py", line 207, in raise_

raise exception

Exception Type: AttributeError at /api/db/v0/tables/5/columns/

Exception Value: Neither 'MathesarColumn' object nor 'Comparator' object has an attribute '_sa_column'

```
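One plausible reading of the traceback above is that an `AttributeError` raised deep inside reflection (`nspname`) is swallowed by the attribute-forwarding fallback, so the final message blames `_sa_column` rather than the real cause. The following is a minimal sketch of that masking effect, not Mathesar's actual code: `FakeColumn`, `Wrapper`, `wrapped`, `reflection_ok` and `describe_failure` are hypothetical names, and the sketch uses `__getattr__` instead of the `__getattribute__` override shown in the traceback to keep it short.

```python
class FakeColumn:
    """Hypothetical stand-in for the wrapped SQLAlchemy column."""
    primary_key = True


class Wrapper:
    """Hypothetical model that forwards unknown attributes to a wrapped object."""

    def __init__(self, reflection_ok):
        self.reflection_ok = reflection_ok

    @property
    def wrapped(self):
        if not self.reflection_ok:
            # Stands in for the failing `pg_namespace.c.nspname` lookup.
            raise AttributeError("nspname")
        return FakeColumn()

    def __getattr__(self, name):
        # Python calls this after normal lookup raised AttributeError,
        # including an AttributeError raised *inside* the `wrapped` property.
        if name == "wrapped":
            raise AttributeError(
                f"{type(self).__name__!r} object has no attribute {name!r}"
            )
        return getattr(self.wrapped, name)


def describe_failure(obj, name):
    """Return the AttributeError message for obj.<name>, or None on success."""
    try:
        getattr(obj, name)
    except AttributeError as exc:
        return str(exc)
    return None


if __name__ == "__main__":
    print(Wrapper(True).primary_key)
    # The reported message names `wrapped`; the original "nspname" is hidden.
    print(describe_failure(Wrapper(False), "primary_key"))
```

If this reading is right, the more useful error to chase is the first one in the chain (`nspname` missing from the reflected `pg_namespace` table), which would fit the "possibly metadata related" note in the title.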


| True | The columns endpoint results in a 500, possibly metadata related - ## Description

Endpoint: `http://localhost:8000/api/db/v0/tables/<table_id>/columns/`

```

Environment:

Request Method: GET

Request URL: http://localhost:8000/api/db/v0/tables/5/columns/?limit=500

Django Version: 3.1.14

Python Version: 3.9.8

Installed Applications:

['django.contrib.admin',

'django.contrib.auth',

'django.contrib.contenttypes',

'django.contrib.sessions',

'django.contrib.messages',

'django.contrib.staticfiles',

'rest_framework',

'django_filters',

'django_property_filter',

'mathesar']

Installed Middleware:

['django.middleware.security.SecurityMiddleware',

'django.contrib.sessions.middleware.SessionMiddleware',

'django.middleware.common.CommonMiddleware',

'django.middleware.csrf.CsrfViewMiddleware',

'django.contrib.auth.middleware.AuthenticationMiddleware',

'django.contrib.messages.middleware.MessageMiddleware',

'django.middleware.clickjacking.XFrameOptionsMiddleware']

Traceback (most recent call last):

File "/code/mathesar/models/base.py", line 675, in __getattribute__

return super().__getattribute__(name)

During handling of the above exception ('Column' object has no attribute 'primary_key'), another exception occurred:

File "/usr/local/lib/python3.9/site-packages/sqlalchemy/sql/base.py", line 1167, in __getattr__

return self._index[key]

The above exception ('nspname') was the direct cause of the following exception:

File "/code/mathesar/models/base.py", line 675, in __getattribute__

return super().__getattribute__(name)

File "/code/mathesar/models/base.py", line 696, in _sa_column

return self.table.sa_columns[self.name]

File "/code/mathesar/models/base.py", line 362, in sa_columns

return self._enriched_column_sa_table.columns

File "/code/mathesar/models/base.py", line 350, in _enriched_column_sa_table

table=self._sa_table,

File "/code/mathesar/state/cached_property.py", line 62, in __get__

new_value = self.original_get_fn(instance)

File "/code/mathesar/models/base.py", line 331, in _sa_table

sa_table = reflect_table_from_oid(

File "/code/db/tables/operations/select.py", line 23, in reflect_table_from_oid

tables = reflect_tables_from_oids([oid], engine, metadata=metadata, connection_to_use=connection_to_use)

File "/code/db/tables/operations/select.py", line 29, in reflect_tables_from_oids

get_map_of_table_oid_to_schema_name_and_table_name(

File "/code/db/tables/operations/select.py", line 59, in get_map_of_table_oid_to_schema_name_and_table_name

select(pg_namespace.c.nspname, pg_class.c.relname, pg_class.c.oid)

File "/usr/local/lib/python3.9/site-packages/sqlalchemy/sql/base.py", line 1169, in __getattr__

util.raise_(AttributeError(key), replace_context=err)

File "/usr/local/lib/python3.9/site-packages/sqlalchemy/util/compat.py", line 207, in raise_

raise exception

During handling of the above exception (nspname), another exception occurred:

File "/usr/local/lib/python3.9/site-packages/sqlalchemy/sql/base.py", line 1167, in __getattr__

return self._index[key]

The above exception ('nspname') was the direct cause of the following exception:

File "/code/mathesar/models/base.py", line 675, in __getattribute__

return super().__getattribute__(name)

File "/code/mathesar/models/base.py", line 696, in _sa_column

return self.table.sa_columns[self.name]

File "/code/mathesar/models/base.py", line 362, in sa_columns

return self._enriched_column_sa_table.columns

File "/code/mathesar/models/base.py", line 350, in _enriched_column_sa_table

table=self._sa_table,

File "/code/mathesar/state/cached_property.py", line 62, in __get__

new_value = self.original_get_fn(instance)

File "/code/mathesar/models/base.py", line 331, in _sa_table

sa_table = reflect_table_from_oid(

File "/code/db/tables/operations/select.py", line 23, in reflect_table_from_oid

tables = reflect_tables_from_oids([oid], engine, metadata=metadata, connection_to_use=connection_to_use)

File "/code/db/tables/operations/select.py", line 29, in reflect_tables_from_oids

get_map_of_table_oid_to_schema_name_and_table_name(

File "/code/db/tables/operations/select.py", line 59, in get_map_of_table_oid_to_schema_name_and_table_name

select(pg_namespace.c.nspname, pg_class.c.relname, pg_class.c.oid)

File "/usr/local/lib/python3.9/site-packages/sqlalchemy/sql/base.py", line 1169, in __getattr__

util.raise_(AttributeError(key), replace_context=err)

File "/usr/local/lib/python3.9/site-packages/sqlalchemy/util/compat.py", line 207, in raise_

raise exception

During handling of the above exception (nspname), another exception occurred:

File "/usr/local/lib/python3.9/site-packages/sqlalchemy/sql/elements.py", line 826, in __getattr__

return getattr(self.comparator, key)

The above exception ('Comparator' object has no attribute '_sa_column') was the direct cause of the following exception:

File "/usr/local/lib/python3.9/site-packages/django/core/handlers/exception.py", line 47, in inner

response = get_response(request)

File "/usr/local/lib/python3.9/site-packages/django/core/handlers/base.py", line 181, in _get_response

response = wrapped_callback(request, *callback_args, **callback_kwargs)

File "/usr/local/lib/python3.9/site-packages/django/views/decorators/csrf.py", line 54, in wrapped_view

return view_func(*args, **kwargs)

File "/usr/local/lib/python3.9/site-packages/rest_framework/viewsets.py", line 125, in view

return self.dispatch(request, *args, **kwargs)

File "/usr/local/lib/python3.9/site-packages/rest_framework/views.py", line 509, in dispatch

response = self.handle_exception(exc)

File "/usr/local/lib/python3.9/site-packages/rest_framework/views.py", line 466, in handle_exception

response = exception_handler(exc, context)

File "/code/mathesar/exception_handlers.py", line 55, in mathesar_exception_handler

raise exc

File "/usr/local/lib/python3.9/site-packages/rest_framework/views.py", line 506, in dispatch

response = handler(request, *args, **kwargs)

File "/usr/local/lib/python3.9/site-packages/rest_framework/mixins.py", line 38, in list

queryset = self.filter_queryset(self.get_queryset())

File "/code/mathesar/api/db/viewsets/columns.py", line 33, in get_queryset

queryset = Column.objects.filter(table=self.kwargs['table_pk']).order_by('attnum')

File "/usr/local/lib/python3.9/site-packages/django/db/models/manager.py", line 85, in manager_method

return getattr(self.get_queryset(), name)(*args, **kwargs)

File "/code/mathesar/models/base.py", line 70, in get_queryset

make_sure_initial_reflection_happened()

File "/code/mathesar/state/base.py", line 8, in make_sure_initial_reflection_happened

reset_reflection()

File "/code/mathesar/state/base.py", line 27, in reset_reflection

_trigger_django_model_reflection()

File "/code/mathesar/state/base.py", line 31, in _trigger_django_model_reflection

reflect_db_objects(metadata=get_cached_metadata())

File "/code/mathesar/state/django.py", line 44, in reflect_db_objects

reflect_columns_from_tables(tables, metadata=metadata)

File "/code/mathesar/state/django.py", line 123, in reflect_columns_from_tables

models._compute_preview_template(table)

File "/code/mathesar/models/base.py", line 859, in _compute_preview_template

if column.primary_key:

File "/code/mathesar/models/base.py", line 681, in __getattribute__

return getattr(self._sa_column, name)

File "/code/mathesar/models/base.py", line 681, in __getattribute__

return getattr(self._sa_column, name)

File "/code/mathesar/models/base.py", line 681, in __getattribute__

return getattr(self._sa_column, name)

File "/usr/local/lib/python3.9/site-packages/sqlalchemy/sql/elements.py", line 828, in __getattr__

util.raise_(

File "/usr/local/lib/python3.9/site-packages/sqlalchemy/util/compat.py", line 207, in raise_

raise exception

Exception Type: AttributeError at /api/db/v0/tables/5/columns/

Exception Value: Neither 'MathesarColumn' object nor 'Comparator' object has an attribute '_sa_column'

```


| main | the columns endpoint results in a possibly metadata related description endpoint environment request method get request url django version python version installed applications django contrib admin django contrib auth django contrib contenttypes django contrib sessions django contrib messages django contrib staticfiles rest framework django filters django property filter mathesar installed middleware django middleware security securitymiddleware django contrib sessions middleware sessionmiddleware django middleware common commonmiddleware django middleware csrf csrfviewmiddleware django contrib auth middleware authenticationmiddleware django contrib messages middleware messagemiddleware django middleware clickjacking xframeoptionsmiddleware traceback most recent call last file code mathesar models base py line in getattribute return super getattribute name during handling of the above exception column object has no attribute primary key another exception occurred file usr local lib site packages sqlalchemy sql base py line in getattr return self index the above exception nspname was the direct cause of the following exception file code mathesar models base py line in getattribute return super getattribute name file code mathesar models base py line in sa column return self table sa columns file code mathesar models base py line in sa columns return self enriched column sa table columns file code mathesar models base py line in enriched column sa table table self sa table file code mathesar state cached property py line in get new value self original get fn instance file code mathesar models base py line in sa table sa table reflect table from oid file code db tables operations select py line in reflect table from oid tables reflect tables from oids engine metadata metadata connection to use connection to use file code db tables operations select py line in reflect tables from oids get map of table oid to schema name and table name file code db tables 
operations select py line in get map of table oid to schema name and table name select pg namespace c nspname pg class c relname pg class c oid file usr local lib site packages sqlalchemy sql base py line in getattr util raise attributeerror key replace context err file usr local lib site packages sqlalchemy util compat py line in raise raise exception during handling of the above exception nspname another exception occurred file usr local lib site packages sqlalchemy sql base py line in getattr return self index the above exception nspname was the direct cause of the following exception file code mathesar models base py line in getattribute return super getattribute name file code mathesar models base py line in sa column return self table sa columns file code mathesar models base py line in sa columns return self enriched column sa table columns file code mathesar models base py line in enriched column sa table table self sa table file code mathesar state cached property py line in get new value self original get fn instance file code mathesar models base py line in sa table sa table reflect table from oid file code db tables operations select py line in reflect table from oid tables reflect tables from oids engine metadata metadata connection to use connection to use file code db tables operations select py line in reflect tables from oids get map of table oid to schema name and table name file code db tables operations select py line in get map of table oid to schema name and table name select pg namespace c nspname pg class c relname pg class c oid file usr local lib site packages sqlalchemy sql base py line in getattr util raise attributeerror key replace context err file usr local lib site packages sqlalchemy util compat py line in raise raise exception during handling of the above exception nspname another exception occurred file usr local lib site packages sqlalchemy sql elements py line in getattr return getattr self comparator key the above exception 
comparator object has no attribute sa column was the direct cause of the following exception file usr local lib site packages django core handlers exception py line in inner response get response request file usr local lib site packages django core handlers base py line in get response response wrapped callback request callback args callback kwargs file usr local lib site packages django views decorators csrf py line in wrapped view return view func args kwargs file usr local lib site packages rest framework viewsets py line in view return self dispatch request args kwargs file usr local lib site packages rest framework views py line in dispatch response self handle exception exc file usr local lib site packages rest framework views py line in handle exception response exception handler exc context file code mathesar exception handlers py line in mathesar exception handler raise exc file usr local lib site packages rest framework views py line in dispatch response handler request args kwargs file usr local lib site packages rest framework mixins py line in list queryset self filter queryset self get queryset file code mathesar api db viewsets columns py line in get queryset queryset column objects filter table self kwargs order by attnum file usr local lib site packages django db models manager py line in manager method return getattr self get queryset name args kwargs file code mathesar models base py line in get queryset make sure initial reflection happened file code mathesar state base py line in make sure initial reflection happened reset reflection file code mathesar state base py line in reset reflection trigger django model reflection file code mathesar state base py line in trigger django model reflection reflect db objects metadata get cached metadata file code mathesar state django py line in reflect db objects reflect columns from tables tables metadata metadata file code mathesar state django py line in reflect columns from tables models compute 
preview template table file code mathesar models base py line in compute preview template if column primary key file code mathesar models base py line in getattribute return getattr self sa column name file code mathesar models base py line in getattribute return getattr self sa column name file code mathesar models base py line in getattribute return getattr self sa column name file usr local lib site packages sqlalchemy sql elements py line in getattr util raise file usr local lib site packages sqlalchemy util compat py line in raise raise exception exception type attributeerror at api db tables columns exception value neither mathesarcolumn object nor comparator object has an attribute sa column expected behavior to reproduce environment os eg macos fedora browser eg safari firefox browser version eg other info additional context | 1 |

130,908 | 18,213,855,857 | IssuesEvent | 2021-09-30 00:00:49 | ghc-dev/Margaret-Rose | https://api.github.com/repos/ghc-dev/Margaret-Rose | opened | CVE-2021-21295 (Medium) detected in netty-codec-http-4.1.39.Final.jar | security vulnerability | ## CVE-2021-21295 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>netty-codec-http-4.1.39.Final.jar</b></summary>

<p>Netty is an asynchronous event-driven network application framework for

rapid development of maintainable high performance protocol servers and

clients.</p>

<p>Library home page: <a href="https://netty.io/">https://netty.io/</a></p>

<p>Path to dependency file: Margaret-Rose/build.gradle</p>

<p>Path to vulnerable library: /caches/modules-2/files-2.1/io.netty/netty-codec-http/4.1.39.Final/732d06961162e27fa3ae5989541c4460853745d3/netty-codec-http-4.1.39.Final.jar</p>

<p>

Dependency Hierarchy:

- :x: **netty-codec-http-4.1.39.Final.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/ghc-dev/Margaret-Rose/commit/c4e02f67dd4676db950425e7cac2d1a3f3883f24">c4e02f67dd4676db950425e7cac2d1a3f3883f24</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Netty is an open-source, asynchronous event-driven network application framework for rapid development of maintainable high performance protocol servers & clients. In Netty (io.netty:netty-codec-http2) before version 4.1.60.Final there is a vulnerability that enables request smuggling. If a Content-Length header is present in the original HTTP/2 request, the field is not validated by `Http2MultiplexHandler` as it is propagated up. This is fine as long as the request is not proxied through as HTTP/1.1. If the request comes in as an HTTP/2 stream, gets converted into the HTTP/1.1 domain objects (`HttpRequest`, `HttpContent`, etc.) via `Http2StreamFrameToHttpObjectCodec`, and is then sent up to the child channel's pipeline and proxied through a remote peer as HTTP/1.1, this may result in request smuggling. In a proxy case, users may assume the Content-Length is validated somehow, which is not the case. If the request is forwarded to a backend channel that is an HTTP/1.1 connection, the Content-Length now has meaning and needs to be checked. An attacker can smuggle requests inside the body as it gets downgraded from HTTP/2 to HTTP/1.1. For an example attack, refer to the linked GitHub Advisory. Users are only affected if all of the following are true: `HTTP2MultiplexCodec` or `Http2FrameCodec` is used, `Http2StreamFrameToHttpObjectCodec` is used to convert to HTTP/1.1 objects, and these HTTP/1.1 objects are forwarded to another remote peer. This has been patched in 4.1.60.Final. As a workaround, users can perform the validation themselves by implementing a custom `ChannelInboundHandler` that is put in the `ChannelPipeline` behind `Http2StreamFrameToHttpObjectCodec`.

<p>Publish Date: 2021-03-09</p>

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-21295>CVE-2021-21295</a></p>

</p>

</details>
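The workaround described in the vulnerability details amounts to checking the declared Content-Length against the body that actually arrived before forwarding the request as HTTP/1.1. The following is a language-neutral sketch of that check in Python, not Netty code and not the patched implementation: the function name `content_length_matches`, the dict-of-headers shape and the lowercase `content-length` key are assumptions made for illustration.

```python
def content_length_matches(headers, body_chunks):
    """Return True if the declared Content-Length (when present) equals the body size.

    `headers` is assumed to be a dict with lowercase header names, and
    `body_chunks` an iterable of bytes objects making up the request body.
    """
    declared = headers.get("content-length")
    if declared is None:
        # No Content-Length was sent, so there is nothing to compare.
        return True
    if not (declared.isascii() and declared.isdigit()):
        # Reject anything that is not a plain non-negative decimal integer.
        return False
    return int(declared) == sum(len(chunk) for chunk in body_chunks)


if __name__ == "__main__":
    # A consistent request passes the check.
    print(content_length_matches({"content-length": "5"}, [b"he", b"llo"]))
    # A smuggling attempt: the header claims an empty body, but bytes follow.
    print(content_length_matches({"content-length": "0"}, [b"GET /x HTTP/1.1\r\n\r\n"]))
```

In a real proxy, a request that fails this check would be rejected rather than forwarded; upgrading to the fixed version listed under Suggested Fix remains the primary remedy.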

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.9</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: High

- Availability Impact: None

</p>

<p>For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/advisories/GHSA-wm47-8v5p-wjpj">https://github.com/advisories/GHSA-wm47-8v5p-wjpj</a></p>

<p>Release Date: 2021-03-09</p>

<p>Fix Resolution: io.netty:netty-all:4.1.60;io.netty:netty-codec-http:4.1.60;io.netty:netty-codec-http2:4.1.60</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":true,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"Java","groupId":"io.netty","packageName":"netty-codec-http","packageVersion":"4.1.39.Final","packageFilePaths":["/build.gradle"],"isTransitiveDependency":false,"dependencyTree":"io.netty:netty-codec-http:4.1.39.Final","isMinimumFixVersionAvailable":true,"minimumFixVersion":"io.netty:netty-all:4.1.60;io.netty:netty-codec-http:4.1.60;io.netty:netty-codec-http2:4.1.60"}],"baseBranches":["master"],"vulnerabilityIdentifier":"CVE-2021-21295","vulnerabilityDetails":"Netty is an open-source, asynchronous event-driven network application framework for rapid development of maintainable high performance protocol servers \u0026 clients. In Netty (io.netty:netty-codec-http2) before version 4.1.60.Final there is a vulnerability that enables request smuggling. If a Content-Length header is present in the original HTTP/2 request, the field is not validated by `Http2MultiplexHandler` as it is propagated up. This is fine as long as the request is not proxied through as HTTP/1.1. If the request comes in as an HTTP/2 stream, gets converted into the HTTP/1.1 domain objects (`HttpRequest`, `HttpContent`, etc.) via `Http2StreamFrameToHttpObjectCodec `and then sent up to the child channel\u0027s pipeline and proxied through a remote peer as HTTP/1.1 this may result in request smuggling. In a proxy case, users may assume the content-length is validated somehow, which is not the case. If the request is forwarded to a backend channel that is a HTTP/1.1 connection, the Content-Length now has meaning and needs to be checked. An attacker can smuggle requests inside the body as it gets downgraded from HTTP/2 to HTTP/1.1. For an example attack refer to the linked GitHub Advisory. 
Users are only affected if all of this is true: `HTTP2MultiplexCodec` or `Http2FrameCodec` is used, `Http2StreamFrameToHttpObjectCodec` is used to convert to HTTP/1.1 objects, and these HTTP/1.1 objects are forwarded to another remote peer. This has been patched in 4.1.60.Final As a workaround, the user can do the validation by themselves by implementing a custom `ChannelInboundHandler` that is put in the `ChannelPipeline` behind `Http2StreamFrameToHttpObjectCodec`.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-21295","cvss3Severity":"medium","cvss3Score":"5.9","cvss3Metrics":{"A":"None","AC":"High","PR":"None","S":"Unchanged","C":"None","UI":"None","AV":"Network","I":"High"},"extraData":{}}</REMEDIATE> --> | True | CVE-2021-21295 (Medium) detected in netty-codec-http-4.1.39.Final.jar - ## CVE-2021-21295 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>netty-codec-http-4.1.39.Final.jar</b></summary>

<p>Netty is an asynchronous event-driven network application framework for

rapid development of maintainable high performance protocol servers and

clients.</p>

<p>Library home page: <a href="https://netty.io/">https://netty.io/</a></p>

<p>Path to dependency file: Margaret-Rose/build.gradle</p>

<p>Path to vulnerable library: /caches/modules-2/files-2.1/io.netty/netty-codec-http/4.1.39.Final/732d06961162e27fa3ae5989541c4460853745d3/netty-codec-http-4.1.39.Final.jar</p>

<p>

Dependency Hierarchy:

- :x: **netty-codec-http-4.1.39.Final.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/ghc-dev/Margaret-Rose/commit/c4e02f67dd4676db950425e7cac2d1a3f3883f24">c4e02f67dd4676db950425e7cac2d1a3f3883f24</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Netty is an open-source, asynchronous event-driven network application framework for rapid development of maintainable high performance protocol servers & clients. In Netty (io.netty:netty-codec-http2) before version 4.1.60.Final there is a vulnerability that enables request smuggling. If a Content-Length header is present in the original HTTP/2 request, the field is not validated by `Http2MultiplexHandler` as it is propagated up. This is fine as long as the request is not proxied through as HTTP/1.1. If the request comes in as an HTTP/2 stream, gets converted into the HTTP/1.1 domain objects (`HttpRequest`, `HttpContent`, etc.) via `Http2StreamFrameToHttpObjectCodec `and then sent up to the child channel's pipeline and proxied through a remote peer as HTTP/1.1 this may result in request smuggling. In a proxy case, users may assume the content-length is validated somehow, which is not the case. If the request is forwarded to a backend channel that is a HTTP/1.1 connection, the Content-Length now has meaning and needs to be checked. An attacker can smuggle requests inside the body as it gets downgraded from HTTP/2 to HTTP/1.1. For an example attack refer to the linked GitHub Advisory. Users are only affected if all of this is true: `HTTP2MultiplexCodec` or `Http2FrameCodec` is used, `Http2StreamFrameToHttpObjectCodec` is used to convert to HTTP/1.1 objects, and these HTTP/1.1 objects are forwarded to another remote peer. This has been patched in 4.1.60.Final As a workaround, the user can do the validation by themselves by implementing a custom `ChannelInboundHandler` that is put in the `ChannelPipeline` behind `Http2StreamFrameToHttpObjectCodec`.

<p>Publish Date: 2021-03-09</p>

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-21295>CVE-2021-21295</a></p>

</p>

</details>
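
The workaround mentioned in the vulnerability details (validating Content-Length yourself in a custom handler) boils down to a consistency check between the declared length and the bytes actually received. The following is only a minimal sketch of that check in plain Java, not Netty code: the class `ContentLengthCheck` and its method `isConsistent` are hypothetical names introduced here for illustration, and wiring the check into a `ChannelInboundHandler` is left out. Upgrading to 4.1.60.Final remains the suggested fix.

```java
import java.util.List;

/**
 * Hypothetical helper (not part of Netty): the kind of check a custom
 * inbound handler could run before a request is forwarded as HTTP/1.1.
 */
final class ContentLengthCheck {
    private ContentLengthCheck() {}

    /**
     * Returns true when every Content-Length value is a plain non-negative
     * decimal number, all values agree with each other, and the declared
     * length equals the number of body bytes actually received.
     * A request without any Content-Length value is accepted as-is.
     */
    static boolean isConsistent(List<String> contentLengthValues, long actualBodyBytes) {
        if (contentLengthValues.isEmpty()) {
            return true; // nothing declared, so nothing to compare against
        }
        long declared = -1;
        for (String raw : contentLengthValues) {
            String s = raw.trim();
            // Accept ASCII digits only; reject signs, blanks, and other characters.
            if (s.isEmpty() || !s.chars().allMatch(c -> c >= '0' && c <= '9')) {
                return false;
            }
            long value;
            try {
                value = Long.parseLong(s);
            } catch (NumberFormatException e) {
                return false; // too large to fit in a long
            }
            if (declared != -1 && declared != value) {
                return false; // conflicting Content-Length values
            }
            declared = value;
        }
        return declared == actualBodyBytes;
    }
}
```

With this sketch, a request that declares a Content-Length of `5` but carries 12 body bytes would be rejected, as would one that carries two different Content-Length values. How a rejected request is then handled (for example, resetting the stream) is a design decision outside the scope of this sketch.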

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.9</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: High

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/advisories/GHSA-wm47-8v5p-wjpj">https://github.com/advisories/GHSA-wm47-8v5p-wjpj</a></p>

<p>Release Date: 2021-03-09</p>

<p>Fix Resolution: io.netty:netty-all:4.1.60;io.netty:netty-codec-http:4.1.60;io.netty:netty-codec-http2:4.1.60</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":true,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"Java","groupId":"io.netty","packageName":"netty-codec-http","packageVersion":"4.1.39.Final","packageFilePaths":["/build.gradle"],"isTransitiveDependency":false,"dependencyTree":"io.netty:netty-codec-http:4.1.39.Final","isMinimumFixVersionAvailable":true,"minimumFixVersion":"io.netty:netty-all:4.1.60;io.netty:netty-codec-http:4.1.60;io.netty:netty-codec-http2:4.1.60"}],"baseBranches":["master"],"vulnerabilityIdentifier":"CVE-2021-21295","vulnerabilityDetails":"Netty is an open-source, asynchronous event-driven network application framework for rapid development of maintainable high performance protocol servers \u0026 clients. In Netty (io.netty:netty-codec-http2) before version 4.1.60.Final there is a vulnerability that enables request smuggling. If a Content-Length header is present in the original HTTP/2 request, the field is not validated by `Http2MultiplexHandler` as it is propagated up. This is fine as long as the request is not proxied through as HTTP/1.1. If the request comes in as an HTTP/2 stream, gets converted into the HTTP/1.1 domain objects (`HttpRequest`, `HttpContent`, etc.) via `Http2StreamFrameToHttpObjectCodec `and then sent up to the child channel\u0027s pipeline and proxied through a remote peer as HTTP/1.1 this may result in request smuggling. In a proxy case, users may assume the content-length is validated somehow, which is not the case. If the request is forwarded to a backend channel that is a HTTP/1.1 connection, the Content-Length now has meaning and needs to be checked. An attacker can smuggle requests inside the body as it gets downgraded from HTTP/2 to HTTP/1.1. For an example attack refer to the linked GitHub Advisory. 
Users are only affected if all of this is true: `HTTP2MultiplexCodec` or `Http2FrameCodec` is used, `Http2StreamFrameToHttpObjectCodec` is used to convert to HTTP/1.1 objects, and these HTTP/1.1 objects are forwarded to another remote peer. This has been patched in 4.1.60.Final As a workaround, the user can do the validation by themselves by implementing a custom `ChannelInboundHandler` that is put in the `ChannelPipeline` behind `Http2StreamFrameToHttpObjectCodec`.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-21295","cvss3Severity":"medium","cvss3Score":"5.9","cvss3Metrics":{"A":"None","AC":"High","PR":"None","S":"Unchanged","C":"None","UI":"None","AV":"Network","I":"High"},"extraData":{}}</REMEDIATE> --> | non_main | cve medium detected in netty codec http final jar cve medium severity vulnerability vulnerable library netty codec http final jar netty is an asynchronous event driven network application framework for rapid development of maintainable high performance protocol servers and clients library home page a href path to dependency file margaret rose build gradle path to vulnerable library caches modules files io netty netty codec http final netty codec http final jar dependency hierarchy x netty codec http final jar vulnerable library found in head commit a href found in base branch master vulnerability details netty is an open source asynchronous event driven network application framework for rapid development of maintainable high performance protocol servers clients in netty io netty netty codec before version final there is a vulnerability that enables request smuggling if a content length header is present in the original http request the field is not validated by as it is propagated up this is fine as long as the request is not proxied through as http if the request comes in as an http stream gets converted into the http domain objects httprequest httpcontent etc via and then sent up to the child channel s pipeline 
and proxied through a remote peer as http this may result in request smuggling in a proxy case users may assume the content length is validated somehow which is not the case if the request is forwarded to a backend channel that is a http connection the content length now has meaning and needs to be checked an attacker can smuggle requests inside the body as it gets downgraded from http to http for an example attack refer to the linked github advisory users are only affected if all of this is true or is used is used to convert to http objects and these http objects are forwarded to another remote peer this has been patched in final as a workaround the user can do the validation by themselves by implementing a custom channelinboundhandler that is put in the channelpipeline behind publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity high privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact high availability impact none for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution io netty netty all io netty netty codec http io netty netty codec isopenpronvulnerability true ispackagebased true isdefaultbranch true packages istransitivedependency false dependencytree io netty netty codec http final isminimumfixversionavailable true minimumfixversion io netty netty all io netty netty codec http io netty netty codec basebranches vulnerabilityidentifier cve vulnerabilitydetails netty is an open source asynchronous event driven network application framework for rapid development of maintainable high performance protocol servers clients in netty io netty netty codec before version final there is a vulnerability that enables request smuggling if a content length header is present in the original http request the field is not validated by as it is propagated up this is fine as 
long as the request is not proxied through as http if the request comes in as an http stream gets converted into the http domain objects httprequest httpcontent etc via and then sent up to the child channel pipeline and proxied through a remote peer as http this may result in request smuggling in a proxy case users may assume the content length is validated somehow which is not the case if the request is forwarded to a backend channel that is a http connection the content length now has meaning and needs to be checked an attacker can smuggle requests inside the body as it gets downgraded from http to http for an example attack refer to the linked github advisory users are only affected if all of this is true or is used is used to convert to http objects and these http objects are forwarded to another remote peer this has been patched in final as a workaround the user can do the validation by themselves by implementing a custom channelinboundhandler that is put in the channelpipeline behind vulnerabilityurl | 0 |

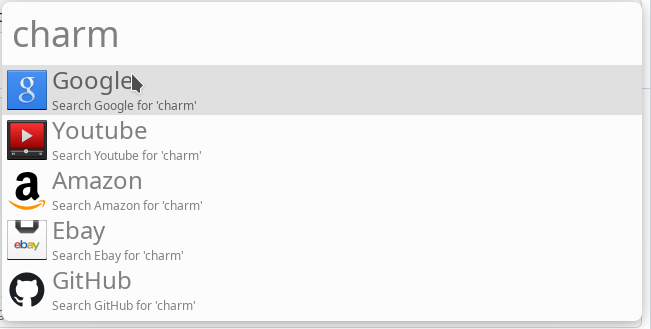

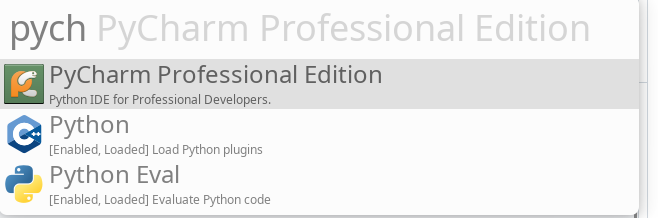

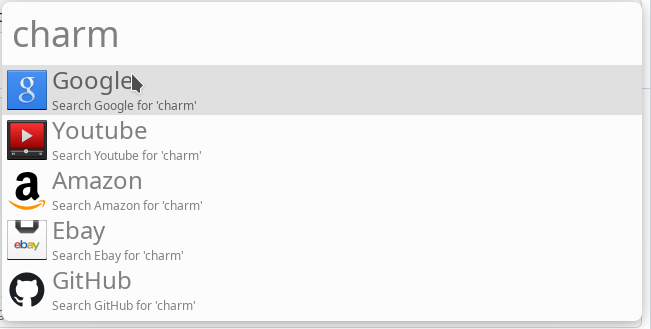

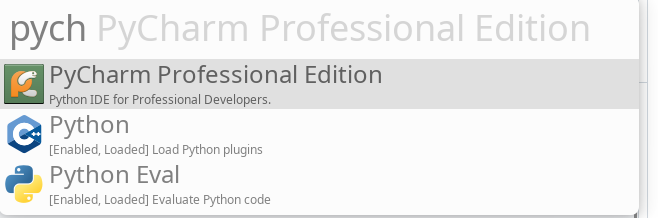

5,252 | 26,581,338,965 | IssuesEvent | 2023-01-22 13:49:14 | albertlauncher/plugins | https://api.github.com/repos/albertlauncher/plugins | closed | Applications plugins cannot find pycharm | Maintainer wanted | When I try to find `pycharm`, nothing is shown.

The desktop file looks like this:

```

[Desktop Entry]

Type=Application

Name=PyCharm Professional Edition

Icon=pycharm

Comment=Python IDE for Professional Developers.

Exec=pycharm %f

Terminal=false

Categories=Development;IDE;Python;

StartupNotify=true

StartupWMClass=jetbrains-pycharm

```

Searching for `Charm` (case-sensitive) doesn't work either. However, the entry can be found by typing `pycharm` instead (or even `professional`). The fuzzy search option has no effect.

| True | Applications plugins cannot find pycharm - When I try to find `pycharm`, nothing is shown.

The desktop file looks like this:

```

[Desktop Entry]

Type=Application

Name=PyCharm Professional Edition

Icon=pycharm

Comment=Python IDE for Professional Developers.

Exec=pycharm %f

Terminal=false

Categories=Development;IDE;Python;

StartupNotify=true

StartupWMClass=jetbrains-pycharm

```

Searching for `Charm` (case-sensitive) doesn't work either. However, the entry can be found by typing `pycharm` instead (or even `professional`). The fuzzy search option has no effect.

| main | applications plugins cannot find pycharm if i would like to try to find pycharm it doesn t show anything desktop file looks like type application name pycharm professional edition icon pycharm comment python ide for professional developers exec pycharm f terminal false categories development ide python startupnotify true startupwmclass jetbrains pycharm charm case sensitive search doesn t work either however it is possible to find by typing pycharm instead or even professional fuzzy search option has no effect | 1 |

3,756 | 15,788,683,637 | IssuesEvent | 2021-04-01 21:12:24 | carbon-design-system/carbon | https://api.github.com/repos/carbon-design-system/carbon | closed | Add a new property to component Dropdown to allow specifying the min-width of the` .bx--list-box__menu` class | status: needs triage 🕵️♀️ status: waiting for maintainer response 💬 type: enhancement 💡 |

### Summary

Add a new property to the Dropdown component to allow specifying the min-width of the `.bx--list-box__menu` class

inside the Dropdown, so that the full (not truncated) text of all values in the options list is visible.

Clarify if you are asking for design, development, or both design and

development.

### Justification

Without this property, consumers of Dropdown have to give each Dropdown a specific className and add a CSS rule that sets the min-width of the `.bx--list-box__menu` class within the scope of that className, which means maintaining specific CSS for each Dropdown.

### Desired UX and success metrics

<!--alex disable failure-->

Describe the full user experience for this feature. Also define the metrics by

which we can measure success/failure for the user.

<!--alex enable failure-->

### "Must have" functionality

Highlight any "must have" needs and functionality for the request.

This should not be a full list of functionality; the Carbon team will work with

you to define functionality based on the desired UX.

### Specific timeline issues / requests

It's a UX requirement for Cognos Analytics release 11.2.1 (probably scheduled for early next year).

<!--alex disable period-->

Do you want this work within a specific time period? Is it related to an

upcoming release?

<!--alex enable period-->

_NB: The Carbon team will try to work with your timeline, but it's not

guaranteed. The earlier you make a request in advance of a desired delivery

date, the better!_

### Available extra resources

What resources do you have to assist this effort?

_Carbon is a collaborative system. We encourage teams to build components and

submit them for integration as either add-ons or core components._

| True | Add a new property to component Dropdown to allow specifying the min-width of the` .bx--list-box__menu` class -

### Summary

Add a new property to the Dropdown component to allow specifying the min-width of the `.bx--list-box__menu` class