Unnamed: 0 int64 1 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 7 112 | repo_url stringlengths 36 141 | action stringclasses 3 values | title stringlengths 3 438 | labels stringlengths 4 308 | body stringlengths 7 254k | index stringclasses 7 values | text_combine stringlengths 96 254k | label stringclasses 2 values | text stringlengths 96 246k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

1,003 | 4,771,184,968 | IssuesEvent | 2016-10-26 17:15:39 | spyder-ide/spyder | https://api.github.com/repos/spyder-ide/spyder | reopened | Add ciocheck to test mode and update Pull Request guidelines | OS-All Type-Maintainability | https://github.com/ContinuumIO/ciocheck

Package available at: https://anaconda.org/continuumcrew/ciocheck

`conda install ciocheck -c conda-forge -c continuumcrew`

Moving to pypi and conda-forge soon. | True | Add ciocheck to test mode and update Pull Request guidelines - https://github.com/ContinuumIO/ciocheck

Package available at: https://anaconda.org/continuumcrew/ciocheck

`conda install ciocheck -c conda-forge -c continuumcrew`

Moving to pypi and conda-forge soon. | main | add ciocheck to test mode and update pull request guidelines package available at conda install ciocheck c conda forge c continuumcrew moving to pypi and conda forge soon | 1 |

1,560 | 6,572,254,684 | IssuesEvent | 2017-09-11 00:39:54 | ansible/ansible-modules-core | https://api.github.com/repos/ansible/ansible-modules-core | closed | please make lineinfile support edit in-place | affects_2.3 feature_idea waiting_on_maintainer | ##### ISSUE TYPE

Feature Idea

##### COMPONENT NAME

lineinfile module

##### ANSIBLE VERSION

N/A

##### SUMMARY

Docker makes /etc/resolv.conf a mount point, so "mv some_tmp /etc/resolv.conf" is not possible and "lineinfile" fails to update /etc/resolv.conf.

Currently I use the "shell" module to work around this issue, but that is long and ugly, and Ansible then always reports that something changed. Please consider adding an "in-place" option to "lineinfile", thanks!

| True | please make lineinfile support edit in-place - ##### ISSUE TYPE

Feature Idea

##### COMPONENT NAME

lineinfile module

##### ANSIBLE VERSION

N/A

##### SUMMARY

Docker makes /etc/resolv.conf a mount point, so "mv some_tmp /etc/resolv.conf" is not possible and "lineinfile" fails to update /etc/resolv.conf.

Currently I use the "shell" module to work around this issue, but that is long and ugly, and Ansible then always reports that something changed. Please consider adding an "in-place" option to "lineinfile", thanks!

| main | please make lineinfile support edit in place issue type feature idea component name lineinfile module ansible version n a summary docker makes etc resolv conf a mount point so that we can t do mv some tmp etc resolv conf lineinfile fails to update etc resolv conf currently i use module shell to bypass this issue but this is long and ugly and i don t like ansible always reports something changed please consider adding an in place option to lineinfile thanks | 1 |
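
For illustration only, the in-place behaviour requested in the lineinfile issue above could look roughly like the sketch below. It is not Ansible's implementation: `replace_line_in_place` and its parameters are hypothetical names, and the sketch only replaces lines that start with a given prefix. The point is that the file is rewritten through its existing inode, with no temporary file renamed over it, which is the step that fails on a bind-mounted /etc/resolv.conf.

```python
import os
import tempfile


def replace_line_in_place(path, old_prefix, new_line):
    """Rewrite `path` through its existing inode (hypothetical helper).

    Every line starting with `old_prefix` is replaced by `new_line`.
    Returns True if the file content changed, False otherwise.
    """
    with open(path, "r+", encoding="utf-8") as handle:
        original = handle.read().splitlines()
        updated = [new_line if line.startswith(old_prefix) else line
                   for line in original]
        if updated == original:
            return False  # nothing to do, so the caller can report "unchanged"
        handle.seek(0)
        handle.write("\n".join(updated) + "\n")
        handle.truncate()  # drop leftover bytes if the new content is shorter
    return True


if __name__ == "__main__":
    # Demonstrate on a scratch file rather than the real /etc/resolv.conf.
    fd, demo_path = tempfile.mkstemp()
    os.close(fd)
    with open(demo_path, "w", encoding="utf-8") as demo:
        demo.write("nameserver 1.1.1.1\nsearch example\n")
    print(replace_line_in_place(demo_path, "nameserver", "nameserver 8.8.8.8"))
    os.remove(demo_path)
```

Returning a changed/unchanged flag is what would let a caller avoid the "always reports something changed" problem described in the issue. The trade-off is that an in-place write is not atomic, unlike the temp-file-and-rename approach.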

92,270 | 10,739,745,478 | IssuesEvent | 2019-10-29 16:53:50 | nus-cs2103-AY1920S1/alpha-dev-response | https://api.github.com/repos/nus-cs2103-AY1920S1/alpha-dev-response | opened | Wakanda Forever | severity.Medium team.3 tutorial.CS2103T-F14 type.DocumentationBug | # Wakanda Falls

### Thanos and Minions Strike

Thanos and his troops have conquered Wakanda. The avengers are in a state of despair and have their backs against the wall. Can they stage a monumental comeback, or will Thanos accomplish his goal and retire in his garden home?

<hr><sub>[original: nus-cs2103-AY1920S1/alpha-interim#1314]<br/>

</sub> | 1.0 | Wakanda Forever - # Wakanda Falls

### Thanos and Minions Strike

Thanos and his troops have conquered Wakanda. The avengers are in a state of despair and have their backs against the wall. Can they stage a monumental comeback, or will Thanos accomplish his goal and retire in his garden home?

<hr><sub>[original: nus-cs2103-AY1920S1/alpha-interim#1314]<br/>

</sub> | non_main | wakanda forever wakanda falls thanos and minions strike thanos and his troops have conquered wakanda the avengers are in a state of despair and have their backs against the wall can they stage a monumental comeback or will thanos accomplish his goal and retire in his garden home | 0 |

289,813 | 21,793,203,028 | IssuesEvent | 2022-05-15 08:16:12 | scikit-rf/scikit-rf | https://api.github.com/repos/scikit-rf/scikit-rf | closed | Erroneous Demo in "Measuring a Mutiport Device with a 2-Port Network Analyzer" | Bug Documentation Calibration | In the tutorial *Measuring a Mutiport Device with a 2-Port Network Analyzer*, the section *[Test For Accuracy](https://scikit-rf.readthedocs.io/en/latest/examples/metrology/Measuring%20a%20Mutiport%20Device%20with%20a%202-Port%20Network%20Analyzer.html#Test-For-Accuracy)* appears erroneous, it has probably been broken by a regression.

Two years ago, this section read:

> Making sure our composite network is the same as our DUT

>

> [10]: composite == dut

> [10]: True

>

> Nice!. How close ?

>

> [11]: sum((composite - dut).s_mag)

> [11]: 9.917536367984054e-13

But it now reads:

> Making sure our composite network is the same as our DUT

>

> [10]: composite == dut

> [10]: False

>

> Nice!. How close ?

>

> [11]: sum((composite - dut).s_mag)

> [11]: 880.7934663060666 | 1.0 | Erroneous Demo in "Measuring a Mutiport Device with a 2-Port Network Analyzer" - In the tutorial *Measuring a Mutiport Device with a 2-Port Network Analyzer*, the section *[Test For Accuracy](https://scikit-rf.readthedocs.io/en/latest/examples/metrology/Measuring%20a%20Mutiport%20Device%20with%20a%202-Port%20Network%20Analyzer.html#Test-For-Accuracy)* appears erroneous, it has probably been broken by a regression.

Two years ago, this section read:

> Making sure our composite network is the same as our DUT

>

> [10]: composite == dut

> [10]: True

>

> Nice!. How close ?

>

> [11]: sum((composite - dut).s_mag)

> [11]: 9.917536367984054e-13

But it now reads:

> Making sure our composite network is the same as our DUT

>

> [10]: composite == dut

> [10]: False

>

> Nice!. How close ?

>

> [11]: sum((composite - dut).s_mag)

> [11]: 880.7934663060666 | non_main | erroneous demo in measuring a mutiport device with a port network analyzer in the tutorial measuring a mutiport device with a port network analyzer the section appears erroneous it has probably been broken by a regression two years ago this section reads making sure our composite network is the same as our dut composite dut true nice how close sum composite dut s mag but it now reads making sure our composite network is the same as our dut composite dut false nice how close sum composite dut s mag | 0 |

352,361 | 10,540,897,890 | IssuesEvent | 2019-10-02 09:28:42 | UniversityOfHelsinkiCS/fuksilaiterekisteri | https://api.github.com/repos/UniversityOfHelsinkiCS/fuksilaiterekisteri | closed | season over -update | enhancement high priority management | - [x] stop new regs

- [x] update ineligibility-page

- [x] send email lists of ready and wants to Pekka

| 1.0 | season over -update - - [x] stop new regs

- [x] update ineligibility-page

- [x] send email lists of ready and wants to Pekka

| non_main | season over update stop new regs update ineligibility page send email lists of ready and wants to pekka | 0 |

422 | 3,508,966,034 | IssuesEvent | 2016-01-08 20:20:39 | antigenomics/vdjdb-db | https://api.github.com/repos/antigenomics/vdjdb-db | closed | DB maintainance | maintainance | * Consistency checks via Travis

* Add CDR3 fixing functionality from vdjdb

* Merge chunks into final table (say, with pandas) | True | DB maintainance - * Consistency checks via Travis

* Add CDR3 fixing functionality from vdjdb

* Merge chunks into final table (say, with pandas) | main | db maintainance consistency checks via travis add fixing functional from vdjdb merge chunks into final table say with pandas | 1 |

514 | 3,875,242,333 | IssuesEvent | 2016-04-11 23:53:19 | spyder-ide/spyder | https://api.github.com/repos/spyder-ide/spyder | closed | Migrate to qtpy: Remove internal Qt shim used by Spyder. | Enhancement Maintainability | Remove internal Qt shim used by Spyder and replace by qtpy package. | True | Migrate to qtpy: Remove internal Qt shim used by Spyder. - Remove internal Qt shim used by Spyder and replace by qtpy package. | main | migrate to qtpy remove internal qt shim used by spyder remove internal qt shim used by spyder and replace by qtpy package | 1 |

569,795 | 17,016,187,099 | IssuesEvent | 2021-07-02 12:24:00 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | opened | Add new tunnel values to roads preset | Component: potlatch2 Priority: minor Type: enhancement | **[Submitted to the original trac issue database at 11.56am, Sunday, 2nd December 2012]**

The predefined road presets should be updated, so that the new tunnel values can be selected. See approved proposal: https://wiki.openstreetmap.org/wiki/Proposed_features/building_passage | 1.0 | Add new tunnel values to roads preset - **[Submitted to the original trac issue database at 11.56am, Sunday, 2nd December 2012]**

The predefined road presets should be updated, so that the new tunnel values can be selected. See approved proposal: https://wiki.openstreetmap.org/wiki/Proposed_features/building_passage | non_main | add new tunnel values to roads preset the predefined road presets should be updated so that the new tunnel values can be selected see approved proposal | 0 |

80,204 | 23,139,881,519 | IssuesEvent | 2022-07-28 17:23:43 | foundry-rs/foundry | https://api.github.com/repos/foundry-rs/foundry | closed | Forge caching issues | T-bug C-forge T-meta Cmd-forge-build | ### Component

Forge

### Have you ensured that all of these are up to date?

- [X] Foundry

- [X] Foundryup

### What version of Foundry are you on?

forge 0.2.0 (05e72b6 2022-05-19T00:03:30.647862736Z)

### What command(s) is the bug in?

forge build

### Operating System

Linux

### Describe the bug

This is a consolidation of bugs that are suspected to be cache related:

- https://github.com/foundry-rs/foundry/issues/1629

- https://github.com/foundry-rs/foundry/issues/1344

## Issue 1

- [ ] Check if solved

**Bug**: Sometimes `forge test` does not run any test files.

**Workaround**: You can run `forge clean` before `forge test`

## Issue 2

- [ ] Check if solved

**Bug**: Sometimes a file is changed and that edit is not picked up by `forge test`, which will then not recompile that contract. There is no reliable way to reproduce this currently. | 1.0 | Forge caching issues - ### Component

Forge

### Have you ensured that all of these are up to date?

- [X] Foundry

- [X] Foundryup

### What version of Foundry are you on?

forge 0.2.0 (05e72b6 2022-05-19T00:03:30.647862736Z)

### What command(s) is the bug in?

forge build

### Operating System

Linux

### Describe the bug

This is a consolidation of bugs that are suspected to be cache related:

- https://github.com/foundry-rs/foundry/issues/1629

- https://github.com/foundry-rs/foundry/issues/1344

## Issue 1

- [ ] Check if solved

**Bug**: Sometimes `forge test` does not run any test files.

**Workaround**: You can run `forge clean` before `forge test`

## Issue 2

- [ ] Check if solved

**Bug**: Sometimes a file is changed and that edit is not picked up by `forge test`, which will then not recompile that contract. There is no reliable way to reproduce this currently. | non_main | forge caching issues component forge have you ensured that all of these are up to date foundry foundryup what version of foundry are you on forge what command s is the bug in forge build operating system linux describe the bug this is a consolidation of bugs that are suspected to be cache related issue check if solved bug sometimes forge test does not run any test files workaround you can run forge clean before forge test issue check if solved bug sometimes a file is changed and that edit is not picked up by forge test which will then not recompile that contract there is no reliable way to reproduce this currently | 0 |

3,995 | 18,516,865,223 | IssuesEvent | 2021-10-20 11:06:57 | plaguesec/plaguesec-os | https://api.github.com/repos/plaguesec/plaguesec-os | closed | calendar versioning approach | maintainers only p3 todo tweak | Great guide for versioning a penetration testing operating system.

This project uses [Calendar Versioning](https://calver.org/).

This is our format (which is the same as Ubuntu but we have our own version).

``YY.0M.MICRO-MODIFIER.Patch``, for example ``21.10.1-alpha.1``

Cheers! 🚀🎉 | True | calendar versioning approach - Great guide for versioning a penetration testing operating system.

This project uses [Calendar Versioning](https://calver.org/).

This is our format (which is the same as Ubuntu but we have our own version).

``YY.0M.MICRO-MODIFIER.Patch``, for example ``21.10.1-alpha.1``

Cheers! 🚀🎉 | main | calendar versioning approach great guide for versioning a penetration testing operating system we use for this project is this is our format which is the same as ubuntu but we have our own version yy micro modfier patch or alpha cheers 🚀🎉 | 1 |
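
As a rough illustration of the version format in the issue above, the sketch below parses strings such as `21.10.1-alpha.1` into their parts. The issue does not specify the exact grammar, so several things here are assumptions: the modifier-and-patch suffix is treated as optional, the modifier is restricted to lowercase letters, and `CalVer` and `parse_calver` are hypothetical names.

```python
import re
from typing import NamedTuple, Optional


class CalVer(NamedTuple):
    year: int                # two-digit year (YY)
    month: int               # zero-padded month (0M)
    micro: int               # MICRO counter
    modifier: Optional[str]  # e.g. "alpha"; None when absent
    patch: Optional[int]     # patch number after the modifier; None when absent


# YY.0M.MICRO with an optional "-MODIFIER.PATCH" suffix (assumed grammar).
_PATTERN = re.compile(
    r"^(?P<year>\d{2})\.(?P<month>0[1-9]|1[0-2])\.(?P<micro>\d+)"
    r"(?:-(?P<modifier>[a-z]+)\.(?P<patch>\d+))?$"
)


def parse_calver(version: str) -> CalVer:
    """Split a version string into its calendar-versioning components."""
    match = _PATTERN.match(version)
    if match is None:
        raise ValueError(f"not a YY.0M.MICRO[-MODIFIER.PATCH] version: {version!r}")
    patch = match.group("patch")
    return CalVer(
        year=int(match.group("year")),
        month=int(match.group("month")),
        micro=int(match.group("micro")),
        modifier=match.group("modifier"),
        patch=int(patch) if patch is not None else None,
    )


if __name__ == "__main__":
    print(parse_calver("21.10.1-alpha.1"))
```

Rejecting months outside 01 to 12 at parse time keeps obviously malformed tags out of release tooling. A stricter or looser grammar may be more appropriate depending on what the project actually publishes.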

65,996 | 3,249,426,301 | IssuesEvent | 2015-10-18 05:22:58 | littlefoot32/gitiles | https://api.github.com/repos/littlefoot32/gitiles | closed | API for +log views | auto-migrated Priority-Medium Type-Enhancement | ```

-Support JSON/TEXT format for +log

-Support additional arguments similar to git log, e.g. filtering by author or

date.

```

Original issue reported on code.google.com by `dborowitz@google.com` on 11 Apr 2013 at 7:29 | 1.0 | API for +log views - ```

-Support JSON/TEXT format for +log

-Support additional arguments similar to git log, e.g. filtering by author or

date.

```

Original issue reported on code.google.com by `dborowitz@google.com` on 11 Apr 2013 at 7:29 | non_main | api for log views support json text format for log support additional arguments similar to git log e g filtering by author or date original issue reported on code google com by dborowitz google com on apr at | 0 |

3,306 | 12,806,593,279 | IssuesEvent | 2020-07-03 09:44:35 | geolexica/geolexica-server | https://api.github.com/repos/geolexica/geolexica-server | closed | Disable Liquid processing for some machine-readable pages | maintainability | Jekyll 4 allows to disable liquid processing via front matter variable: https://github.com/jekyll/jekyll/pull/6824. We should use it in favour of `{% raw %}` wherever it's appropriate, e.g. in `Jekyll::Geolexica::ConceptPage` which is meant to produce some non-Liquid content. | True | Disable Liquid processing for some machine-readable pages - Jekyll 4 allows to disable liquid processing via front matter variable: https://github.com/jekyll/jekyll/pull/6824. We should use it in favour of `{% raw %}` wherever it's appropriate, e.g. in `Jekyll::Geolexica::ConceptPage` which is meant to produce some non-Liquid content. | main | disable liquid processing for some machine readable pages jekyll allows to disable liquid processing via front matter variable we should use it in favour of raw wherever it s appropriate e g in jekyll geolexica conceptpage which is meant to produce some non liquid content | 1 |

1,697 | 6,574,278,501 | IssuesEvent | 2017-09-11 12:16:18 | ansible/ansible | https://api.github.com/repos/ansible/ansible | closed | docker_service got an unexpected keyword argument | affects_2.3 bug_report cloud docker module needs_maintainer support:core | ##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

* ansible

* ansible-playbook

* docker_service

* ansible-container

##### ANSIBLE VERSION

```

ansible 2.3.1.0

config file =

configured module search path = Default w/o overrides

python version = 2.7.12 (default, Sep 1 2016, 22:14:00) [GCC 4.8.3 20140911 (Red Hat 4.8.3-9)]

```

##### CONFIGURATION

```

```

##### OS / ENVIRONMENT

```

AWS Linux 64 bit

Python 2.7.12

pip 9.0.1 from /usr/local/lib/python2.7/site-packages (python 2.7)

ansible==2.3.1.0

-e git+https://github.com/ansible/ansible-container@0d54b0efba8a6bc205c0619226397201381e8e20#egg=ansible_container

aws-cfn-bootstrap==1.4

awscli==1.11.83

Babel==0.9.4

backports.ssl-match-hostname==3.5.0.1

boto==2.42.0

botocore==1.5.46

cached-property==1.3.0

certifi==2017.4.17

chardet==3.0.4

cloud-init==0.7.6

colorama==0.3.9

configobj==4.7.2

dictdiffer==0.6.1

docker==2.0.1

docker-compose==1.14.0

docker-pycreds==0.2.1

dockerpty==0.4.1

docopt==0.6.2

docutils==0.11

ecdsa==0.11

enum34==1.1.6

functools32==3.2.3.post2

futures==3.0.3

httplib2==0.10.3

idna==2.5

iniparse==0.3.1

ipaddress==1.0.18

Jinja2==2.9.6

jmespath==0.9.2

jsonpatch==1.2

jsonpointer==1.0

jsonschema==2.6.0

kitchen==1.1.1

kubernetes==1.0.2

lockfile==0.8

MarkupSafe==1.0

oauth2client==4.1.2

openshift==0.0.1

paramiko==1.15.1

PIL==1.1.6

ply==3.4

pyasn1==0.1.7

pyasn1-modules==0.0.9

pycrypto==2.6.1

pycurl==7.19.0

pygpgme==0.3

pyliblzma==0.5.3

pystache==0.5.3

python-daemon==1.5.2

python-dateutil==2.1

python-string-utils==0.6.0

pyxattr==0.5.0

PyYAML==3.12

requests==2.11.1

rsa==3.4.1

ruamel.ordereddict==0.4.9

ruamel.yaml==0.15.18

simplejson==3.6.5

six==1.10.0

structlog==17.2.0

texttable==0.8.8

urlgrabber==3.10

urllib3==1.21.1

virtualenv==12.0.7

websocket-client==0.44.0

yum-metadata-parser==1.1.4

```

##### SUMMARY

Playbook, generated by ansible-container, fails to run.

There were already some basic errors in the generated playbook, which I fixed.

The images now download, but then there is this error message:

```

TASK [docker_service] **********************************************************************************************

fatal: [localhost]: FAILED! => {"changed": false, "failed": true, "module_stderr": "", "module_stdout": "", "msg": "Error starting project __init__() got an unexpected keyword argument 'cpu_count'"}

to retry, use: --limit @/home/ec2-user/orson/orson.retry

```

##### STEPS TO REPRODUCE

Run this command on the playbook. You can either put other generic images in place of mine or you can contact me and I will give you temporary access to our private registry.

```

ansible-playbook --step -t start orson.yml

```

<!--- Paste example playbooks or commands between quotes below -->

```yaml

- gather_facts: false

tasks:

- docker_login: username=mchassy password=XXXXXXX email=mchassy@orsontestdata.com

tags:

- start

- docker_service:

definition:

services: &id001

tomee:

image: orsontestdata/orson-tomee:1.2.0-ansible

working_dir: /opt

entrypoint:

- /entrypoint.sh

user: orson

command:

- /usr/bin/dumb-init

- /opt/orson/tomee/bin/catalina.sh

- run

ports:

- 8080:8080

mongo:

image: orsontestdata/orson-mongo:1.2.0-ansible

working_dir: /opt

entrypoint:

- /entrypoint.sh

user: orson

volumes:

- mongo_data:/opt/orson/mongo/data

command:

- /usr/bin/dumb-init

- /opt/orson/mongo/bin/mongod --config /opt/orson/mongo/conf/mongod.conf

expose:

- 27017

version: '2'

volumes:

mongo_data: &id002 {}

state: present

project_name: orson

tags:

- start

```

##### EXPECTED RESULTS

images pulled and services started

##### ACTUAL RESULTS

images are pulled but services don't start

```

fatal: [localhost]: FAILED! => {"changed": false, "failed": true, "module_stderr": "", "module_stdout": "", "msg": "Error starting project __init__() got an unexpected keyword argument 'cpu_count'"}

to retry, use: --limit @/home/ec2-user/orson/orson.retry

```

| True | docker_service got an unexpected keyword argument - ##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

* ansible

* ansible-playbook

* docker_service

* ansible-container

##### ANSIBLE VERSION

```

ansible 2.3.1.0

config file =

configured module search path = Default w/o overrides

python version = 2.7.12 (default, Sep 1 2016, 22:14:00) [GCC 4.8.3 20140911 (Red Hat 4.8.3-9)]

```

##### CONFIGURATION

```

```

##### OS / ENVIRONMENT

```

AWS Linux 64 bit

Python 2.7.12

pip 9.0.1 from /usr/local/lib/python2.7/site-packages (python 2.7)

ansible==2.3.1.0

-e git+https://github.com/ansible/ansible-container@0d54b0efba8a6bc205c0619226397201381e8e20#egg=ansible_container

aws-cfn-bootstrap==1.4

awscli==1.11.83

Babel==0.9.4

backports.ssl-match-hostname==3.5.0.1

boto==2.42.0

botocore==1.5.46

cached-property==1.3.0

certifi==2017.4.17

chardet==3.0.4

cloud-init==0.7.6

colorama==0.3.9

configobj==4.7.2

dictdiffer==0.6.1

docker==2.0.1

docker-compose==1.14.0

docker-pycreds==0.2.1

dockerpty==0.4.1

docopt==0.6.2

docutils==0.11

ecdsa==0.11

enum34==1.1.6

functools32==3.2.3.post2

futures==3.0.3

httplib2==0.10.3

idna==2.5

iniparse==0.3.1

ipaddress==1.0.18

Jinja2==2.9.6

jmespath==0.9.2

jsonpatch==1.2

jsonpointer==1.0

jsonschema==2.6.0

kitchen==1.1.1

kubernetes==1.0.2

lockfile==0.8

MarkupSafe==1.0

oauth2client==4.1.2

openshift==0.0.1

paramiko==1.15.1

PIL==1.1.6

ply==3.4

pyasn1==0.1.7

pyasn1-modules==0.0.9

pycrypto==2.6.1

pycurl==7.19.0

pygpgme==0.3

pyliblzma==0.5.3

pystache==0.5.3

python-daemon==1.5.2

python-dateutil==2.1

python-string-utils==0.6.0

pyxattr==0.5.0

PyYAML==3.12

requests==2.11.1

rsa==3.4.1

ruamel.ordereddict==0.4.9

ruamel.yaml==0.15.18

simplejson==3.6.5

six==1.10.0

structlog==17.2.0

texttable==0.8.8

urlgrabber==3.10

urllib3==1.21.1

virtualenv==12.0.7

websocket-client==0.44.0

yum-metadata-parser==1.1.4

```

##### SUMMARY

Playbook, generated by ansible-container, fails to run.

There were already some basic errors in the generated playbook, which I fixed.

The images now download, but then there is this error message:

```

TASK [docker_service] **********************************************************************************************

fatal: [localhost]: FAILED! => {"changed": false, "failed": true, "module_stderr": "", "module_stdout": "", "msg": "Error starting project __init__() got an unexpected keyword argument 'cpu_count'"}

to retry, use: --limit @/home/ec2-user/orson/orson.retry

```

##### STEPS TO REPRODUCE

Run this command on the playbook. You can either put other generic images in place of mine or you can contact me and I will give you temporary access to our private registry.

```

ansible-playbook --step -t start orson.yml

```

<!--- Paste example playbooks or commands between quotes below -->

```yaml

- gather_facts: false

tasks:

- docker_login: username=mchassy password=XXXXXXX email=mchassy@orsontestdata.com

tags:

- start

- docker_service:

definition:

services: &id001

tomee:

image: orsontestdata/orson-tomee:1.2.0-ansible

working_dir: /opt

entrypoint:

- /entrypoint.sh

user: orson

command:

- /usr/bin/dumb-init

- /opt/orson/tomee/bin/catalina.sh

- run

ports:

- 8080:8080

mongo:

image: orsontestdata/orson-mongo:1.2.0-ansible

working_dir: /opt

entrypoint:

- /entrypoint.sh

user: orson

volumes:

- mongo_data:/opt/orson/mongo/data

command:

- /usr/bin/dumb-init

- /opt/orson/mongo/bin/mongod --config /opt/orson/mongo/conf/mongod.conf

expose:

- 27017

version: '2'

volumes:

mongo_data: &id002 {}

state: present

project_name: orson

tags:

- start

```

##### EXPECTED RESULTS

images pulled and services started

##### ACTUAL RESULTS

images are pulled but services don't start

```

fatal: [localhost]: FAILED! => {"changed": false, "failed": true, "module_stderr": "", "module_stdout": "", "msg": "Error starting project __init__() got an unexpected keyword argument 'cpu_count'"}

to retry, use: --limit @/home/ec2-user/orson/orson.retry

```

| main | docker service got an unexpected keyword argument issue type bug report component name ansible ansible playbook docker service ansible container ansible version ansible config file configured module search path default w o overrides python version default sep configuration os environment aws linux bit python pip from usr local lib site packages python ansible e git aws cfn bootstrap awscli babel backports ssl match hostname boto botocore cached property certifi chardet cloud init colorama configobj dictdiffer docker docker compose docker pycreds dockerpty docopt docutils ecdsa futures idna iniparse ipaddress jmespath jsonpatch jsonpointer jsonschema kitchen kubernetes lockfile markupsafe openshift paramiko pil ply modules pycrypto pycurl pygpgme pyliblzma pystache python daemon python dateutil python string utils pyxattr pyyaml requests rsa ruamel ordereddict ruamel yaml simplejson six structlog texttable urlgrabber virtualenv websocket client yum metadata parser summary playbook generated by ansible container fails to run there were already some basic error in the generated playbook which i fixed the images now download but then there is this error message task fatal failed changed false failed true module stderr module stdout msg error starting project init got an unexpected keyword argument cpu count to retry use limit home user orson orson retry steps to reproduce run this command on the playbook you can either put other generic images in place of mine or you can contact me and i will give you temporary access to our private registry ansible playbook step t start orson yml yaml gather facts false tasks docker login username mchassy password xxxxxxx email mchassy orsontestdata com tags start docker service definition services tomee image orsontestdata orson tomee ansible working dir opt entrypoint entrypoint sh user orson command usr bin dumb init opt orson tomee bin catalina sh run ports mongo image orsontestdata orson mongo ansible working dir opt 
entrypoint entrypoint sh user orson volumes mongo data opt orson mongo data command usr bin dumb init opt orson mongo bin mongod config opt orson mongo conf mongod conf expose version volumes mongo data state present project name orson tags start expected results images pulled and services started actual results images are pulled but services don t start fatal failed changed false failed true module stderr module stdout msg error starting project init got an unexpected keyword argument cpu count to retry use limit home user orson orson retry | 1 |

113,409 | 24,413,794,953 | IssuesEvent | 2022-10-05 14:24:12 | ClickHouse/ClickHouse | https://api.github.com/repos/ClickHouse/ClickHouse | opened | Parameterised queries only work with `SELECT` queries | unfinished code | **Describe the unexpected behaviour**

When trying to use parameterised queries for statements other than `SELECT`s, I get database exceptions about unexpected strings. Apologies if this is intended behaviour; I tried searching the issues but couldn't find anything open or closed.

**How to reproduce**

* ClickHouse 22.8.5.29

* Both HTTP and native interfaces

* Queries to run that lead to unexpected result:

```

POST http://localhost:8123/?param_uname=test&param_password=qwerty

CREATE USER {uname:Identifier} IDENITIFIED WITH plaintext_password BY {password:String}

```

**Expected behavior**

I would expect the substitutions to work in the same way `Identifier` or other data type placeholders do for `SELECT` queries.

**Error message and/or stacktrace**

Example:

```

Code: 62. DB::Exception: Syntax error: failed at position X ('{'): {uname:Identifier}. Expected one of: IF NOT EXISTS, OR REPLACE, UserNamesWithHost, identifier, string literal. (SYNTAX_ERROR)

Code: 62. DB::Exception: Syntax error: failed at position X ('{'): {password:String}. Expected one of: string literal, end of query. (SYNTAX_ERROR)

```

**Additional context**

I also found the same behaviour when creating roles and row policies, I haven't tried other statements yet, I just know it works fine for `SELECT`s.

| 1.0 | Parameterised queries only work with `SELECT` queries - **Describe the unexpected behaviour**

When trying to use parameterised queries for statements other than `SELECT`s, I get database exceptions about unexpected strings. Apologies if this is intended behaviour; I tried searching the issues but couldn't find anything open or closed.

**How to reproduce**

* ClickHouse 22.8.5.29

* Both HTTP and native interfaces

* Queries to run that lead to unexpected result:

```

POST http://localhost:8123/?param_uname=test&param_password=qwerty

CREATE USER {uname:Identifier} IDENITIFIED WITH plaintext_password BY {password:String}

```

**Expected behavior**

I would expect the substitutions to work in the same way `Identifier` or other data type placeholders do for `SELECT` queries.

**Error message and/or stacktrace**

Example:

```

Code: 62. DB::Exception: Syntax error: failed at position X ('{'): {uname:Identifier}. Expected one of: IF NOT EXISTS, OR REPLACE, UserNamesWithHost, identifier, string literal. (SYNTAX_ERROR)

Code: 62. DB::Exception: Syntax error: failed at position X ('{'): {password:String}. Expected one of: string literal, end of query. (SYNTAX_ERROR)

```

**Additional context**

I also found the same behaviour when creating roles and row policies, I haven't tried other statements yet, I just know it works fine for `SELECT`s.

| non_main | parameterised queries only work with select queries describe the unexpected behaviour when trying to use parameterised for queries other than select s i get database exceptions for unexpected strings apologies if this is intended behaviour i tried searching the issues but couldn t find anything open or closed how to reproduce clickhouse both http and native interfaces queries to run that lead to unexpected result post create user uname identifier idenitified with plaintext password by password string expected behavior i would expect the substitutions to work in the same way identifier or other data type placeholders do for select queries error message and or stacktrace example code db exception syntax error failed at position x uname identifier expected one of if not exists or replace usernameswithhost identifier string literal syntax error code db exception syntax error failed at position x password string expected one of string literal end of query syntax error additional context i also found the same behaviour when creating roles and row policies i haven t tried other statements yet i just know it works fine for select s | 0 |

1,411 | 2,753,689,192 | IssuesEvent | 2015-04-25 00:08:11 | deis/deis | https://api.github.com/repos/deis/deis | closed | Buildpack exception not canceling build | builder | I have an exception occurring when pushing an app using heroku-buildpack-ruby. The buildpack process throws and error, and yet the build goes through and deploys the app.

This is in a newly provisioned 1.4.0 cluster. | 1.0 | Buildpack exception not canceling build - I have an exception occurring when pushing an app using heroku-buildpack-ruby. The buildpack process throws and error, and yet the build goes through and deploys the app.

This is in a newly provisioned 1.4.0 cluster. | non_main | buildpack exception not canceling build i have an exception occurring when pushing an app using heroku buildpack ruby the buildpack process throws and error and yet the build goes through and deploys the app this is in a newly provisioned cluster | 0 |

11,181 | 7,460,420,362 | IssuesEvent | 2018-03-30 19:36:40 | smith-chem-wisc/MetaMorpheus | https://api.github.com/repos/smith-chem-wisc/MetaMorpheus | closed | Search stalled in new MM version and lengthy searches | Performance | Hi,

I've downloaded the new version and ran a search. search stalled in "picking MS2 spectra". when I shut the run down, I got "index out of range error". the search was done on a single file running a search and G-PTM-D pipeline.

the second question is of slow searches. before downloading the new version i've put a quant set to search running an initial search with wide ppm values and small DB, calibration and then full search and G-PTM-D.

calibration took forever - 3 files took more than 8 hours. the software is installed on a 26 core VM on a Windows Enterprise 2008 R2 version. could that be influencing the search speed?

Thanks!

David. | True | Search stalled in new MM version and lengthy searches - Hi,

I've downloaded the new version and ran a search. The search stalled in "picking MS2 spectra". When I shut the run down, I got an "index out of range" error. The search was done on a single file running a search and G-PTM-D pipeline.

The second question is about slow searches. Before downloading the new version, I set up a quant set to search, running an initial search with wide ppm values and a small DB, then calibration, and then a full search and G-PTM-D.

Calibration took forever: 3 files took more than 8 hours. The software is installed on a 26-core VM running Windows Enterprise 2008 R2. Could that be influencing the search speed?

Thanks!

David. | non_main | search stalled in new mm version and lengthy searches hi i ve downloaded the new version and ran a search search stalled in picking spectra when i shut the run down i got index out of range error the search was done on a single file running a search and g ptm d pipeline the second question is of slow searches before downloading the new version i ve put a quant set to search running an initial search with wide ppm values and small db calibration and then full search and g ptm d calibration took forever files took more than hours the software is installed on a core vm on a windows enterprise version could that be influencing the search speed thanks david | 0 |

154,372 | 13,546,865,761 | IssuesEvent | 2020-09-17 02:30:04 | openmobilityfoundation/mobility-data-specification | https://api.github.com/repos/openmobilityfoundation/mobility-data-specification | closed | Create translation tables/example scripts for reconciled state machine | Agency Provider State Machine documentation | After #506 is merged, we'll have a reconciled state machine between Agency and Provider. A follow-up task is to create additional documentation/guidance around how to translate event data from pre-1.0.0 into the newly reconciled 1.0.0 set of states.

The guidance itself should live in the [governance repo](https://github.com/openmobilityfoundation/governance) and we should link to it from the Vehicle State docs that #506 will introduce. | 1.0 | Create translation tables/example scripts for reconciled state machine - After #506 is merged, we'll have a reconciled state machine between Agency and Provider. A follow-up task is to create additional documentation/guidance around how to translate event data from pre-1.0.0 into the newly reconciled 1.0.0 set of states.

The guidance itself should live in the [governance repo](https://github.com/openmobilityfoundation/governance) and we should link to it from the Vehicle State docs that #506 will introduce. | non_main | create translation tables example scripts for reconciled state machine after is merged we ll have a reconciled state machine between agency and provider a follow up task is to create additional documentation guidance around how to translate event data from pre into the newly reconciled set of states the guidance itself should live in the and we should link to it from the vehicle state docs that will introduce | 0 |

575,876 | 17,064,639,238 | IssuesEvent | 2021-07-07 05:10:21 | nerdguyahmad/randomstuff.py | https://api.github.com/repos/nerdguyahmad/randomstuff.py | opened | Plans not working with Joke and images endpoint | Priority: MEDIUM Type: Bug | Plans do not seem to work: they return an HTTPError for a bad request when used with the jokes and images endpoints. | 1.0 | Plans not working with Joke and images endpoint - Plans do not seem to work: they return an HTTPError for a bad request when used with the jokes and images endpoints. | non_main | plans not working with joke and images endpoint plans seem to not work and return httperror for bad request when using on jokes and images endpoint | 0 |

31,422 | 14,961,388,018 | IssuesEvent | 2021-01-27 07:40:31 | FenPhoenix/AngelLoader | https://api.github.com/repos/FenPhoenix/AngelLoader | opened | Improve 7-Zip scan performance to the extent possible | performance | More and more people are releasing FMs in .7z format, so even though they will never have acceptable performance IMO, I should at least try to minimize the performance hit to the extent I can.

Things we can do:

- **Smarter .7z extract in the scanner:** Currently, the scanner simply extracts the whole .7z archive to a temp folder and thereafter treats it like an extracted FM. This works, but is *extremely* slow. We unfortunately can't just extract only the files we need, because we won't know exactly what files we'll need until we read other files (missflag.str etc.). But what we can do is just extract every file we *may possibly* need, which will at least be some amount less than the total file count.

- **Caching of readmes during scans:** Even though readme caching is reasonably performant in most cases, we can make it instantaneous by telling the scanner to simply copy the readmes from the temp extract folder into the cache folder as soon as it has them. That way only one extract is needed. See T2X.7z, where it takes a million years to scan and then immediately afterward takes another million years to cache the readme. | True | Improve 7-Zip scan performance to the extent possible - More and more people are releasing FMs in .7z format, so even though they will never have acceptable performance IMO, I should at least try to minimize the performance hit to the extent I can.

Things we can do:

- **Smarter .7z extract in the scanner:** Currently, the scanner simply extracts the whole .7z archive to a temp folder and thereafter treats it like an extracted FM. This works, but is *extremely* slow. We unfortunately can't just extract only the files we need, because we won't know exactly what files we'll need until we read other files (missflag.str etc.). But what we can do is just extract every file we *may possibly* need, which will at least be some amount less than the total file count.

- **Caching of readmes during scans:** Even though readme caching is reasonably performant in most cases, we can make it instantaneous by telling the scanner to simply copy the readmes from the temp extract folder into the cache folder as soon as it has them. That way only one extract is needed. See T2X.7z, where it takes a million years to scan and then immediately afterward takes another million years to cache the readme. | non_main | improve zip scan performance to the extent possible more and more people are releasing fms in format so even though they will never have acceptable performance imo i should at least try to minimize the performance hit to the extent i can things we can do smarter extract in the scanner currently the scanner simply extracts the whole archive to a temp folder and thereafter treats it like an extracted fm this works but is extremely slow we unfortunately can t just extract only the files we need because we won t know exactly what files we ll need until we read other files missflag str etc but what we can do is just extract every file we may possibly need which will at least be some amount less than the total file count caching of readmes during scans even though readme caching is reasonably performant in most cases we can make it instantaneous by telling the scanner to simply copy the readmes from the temp extract folder into the cache folder as soon as it has them that way only one extract is need see where it takes a million years to scan and then immediately afterward takes another million years to cache the readme | 0 |

368,836 | 10,885,206,986 | IssuesEvent | 2019-11-18 09:56:31 | kubeflow/pipelines | https://api.github.com/repos/kubeflow/pipelines | closed | System performance degrades with large numbers of runs | area/backend area/pipelines kind/bug priority/p0 | **What happened:**

On systems with lots of runs, listing experiments becomes unbearably slow.

**What did you expect to happen:**

The performance remains constant

**What steps did you take:**

I enabled slow query logging and identified the culprit query. Change #1836 attempted to address the issue but it doesn't.

1) the query that's generated by gorm is inherently inefficient. No index can make that query faster as it's currently written. In particular, the left join that does a select * from resource_references will need to materialize a temp table. That temp table will have no index for the next part of the join

Here's an explain statement from our cluster that contains ~70k resource_references:

```

mysql> explain extended SELECT subq.*, CONCAT("[",GROUP_CONCAT(r.Payload SEPARATOR ","),"]") AS refs FROM (SELECT rd.*, CONCAT("[",GROUP_CONCAT(m.Payload SEPARATOR ","),"]") AS metrics FROM (SELECT UUID, DisplayName, Name, StorageState, Namespace, Description, CreatedAtInSec, ScheduledAtInSec, FinishedAtInSec, Conditions, PipelineId, PipelineSpecManifest, WorkflowSpecManifest, Parameters, pipelineRuntimeManifest, WorkflowRuntimeManifest FROM run_details) AS rd LEFT JOIN run_metrics AS m ON rd.UUID=m.RunUUID GROUP BY rd.UUID) AS subq LEFT JOIN (select * from resource_references where ResourceType='Run') AS r ON subq.UUID=r.ResourceUUID WHERE UUID = '5e9c12b0-d27d-11e9-86a0-42010a8000e5' GROUP BY subq.UUID LIMIT 1;

+----+-------------+---------------------+------+---------------+-------------+---------+-------+-------+----------+----------------------------------------------------+

| id | select_type | table | type | possible_keys | key | key_len | ref | rows | filtered | Extra |

+----+-------------+---------------------+------+---------------+-------------+---------+-------+-------+----------+----------------------------------------------------+

| 1 | PRIMARY | <derived2> | ref | <auto_key0> | <auto_key0> | 257 | const | 10 | 100.00 | Using index condition |

| 1 | PRIMARY | <derived4> | ref | <auto_key0> | <auto_key0> | 257 | const | 10 | 100.00 | Using where |

| 4 | DERIVED | resource_references | ALL | NULL | NULL | NULL | NULL | 63229 | 100.00 | Using where |

| 2 | DERIVED | <derived3> | ALL | NULL | NULL | NULL | NULL | 19546 | 100.00 | Using temporary; Using filesort |

| 2 | DERIVED | m | ALL | PRIMARY | NULL | NULL | NULL | 1 | 100.00 | Using where; Using join buffer (Block Nested Loop) |

| 3 | DERIVED | run_details | ALL | NULL | NULL | NULL | NULL | 19546 | 100.00 | NULL |

+----+-------------+---------------------+------+---------------+-------------+---------+-------+-------+----------+----------------------------------------------------+

6 rows in set, 1 warning (6.90 sec)

```

**Anything else you would like to add:**

I'm not familiar enough with gorm to know how to force it to generate a more efficient query.

| 1.0 | System performance degrades with large numbers of runs - **What happened:**

On systems with lots of runs, listing experiments becomes unbearably slow.

**What did you expect to happen:**

The performance remains constant

**What steps did you take:**

I enabled slow query logging and identified the culprit query. Change #1836 attempted to address the issue but it doesn't.

1) the query that's generated by gorm is inherently inefficient. No index can make that query faster as it's currently written. In particular, the left join that does a select * from resource_references will need to materialize a temp table. That temp table will have no index for the next part of the join

Here's an explain statement from our cluster that contains ~70k resource_references:

```

mysql> explain extended SELECT subq.*, CONCAT("[",GROUP_CONCAT(r.Payload SEPARATOR ","),"]") AS refs FROM (SELECT rd.*, CONCAT("[",GROUP_CONCAT(m.Payload SEPARATOR ","),"]") AS metrics FROM (SELECT UUID, DisplayName, Name, StorageState, Namespace, Description, CreatedAtInSec, ScheduledAtInSec, FinishedAtInSec, Conditions, PipelineId, PipelineSpecManifest, WorkflowSpecManifest, Parameters, pipelineRuntimeManifest, WorkflowRuntimeManifest FROM run_details) AS rd LEFT JOIN run_metrics AS m ON rd.UUID=m.RunUUID GROUP BY rd.UUID) AS subq LEFT JOIN (select * from resource_references where ResourceType='Run') AS r ON subq.UUID=r.ResourceUUID WHERE UUID = '5e9c12b0-d27d-11e9-86a0-42010a8000e5' GROUP BY subq.UUID LIMIT 1;

+----+-------------+---------------------+------+---------------+-------------+---------+-------+-------+----------+----------------------------------------------------+

| id | select_type | table | type | possible_keys | key | key_len | ref | rows | filtered | Extra |

+----+-------------+---------------------+------+---------------+-------------+---------+-------+-------+----------+----------------------------------------------------+

| 1 | PRIMARY | <derived2> | ref | <auto_key0> | <auto_key0> | 257 | const | 10 | 100.00 | Using index condition |

| 1 | PRIMARY | <derived4> | ref | <auto_key0> | <auto_key0> | 257 | const | 10 | 100.00 | Using where |

| 4 | DERIVED | resource_references | ALL | NULL | NULL | NULL | NULL | 63229 | 100.00 | Using where |

| 2 | DERIVED | <derived3> | ALL | NULL | NULL | NULL | NULL | 19546 | 100.00 | Using temporary; Using filesort |

| 2 | DERIVED | m | ALL | PRIMARY | NULL | NULL | NULL | 1 | 100.00 | Using where; Using join buffer (Block Nested Loop) |

| 3 | DERIVED | run_details | ALL | NULL | NULL | NULL | NULL | 19546 | 100.00 | NULL |

+----+-------------+---------------------+------+---------------+-------------+---------+-------+-------+----------+----------------------------------------------------+

6 rows in set, 1 warning (6.90 sec)

```

**Anything else you would like to add:**

I'm not familiar enough with gorm to know how to force it to generate a more efficient query.

| non_main | system performance degrades with large numbers of runs what happened on systems with lots of runs listing experiments becomes unbearably slow what did you expect to happen the performance remains constant what steps did you take i enabled slow query logging and identified the culprit query change attempted to address the issue but it doesn t the query that s generated by gorm is inherently inefficient no index can make that query faster as it s currently written in particular the left join that does a select from resource references will need to materialize a temp table that temp table will have no index for the next part of the join here s an explain statement from our cluster that contains resource references mysql explain extended select subq concat as refs from select rd concat as metrics from select uuid displayname name storagestate namespace description createdatinsec scheduledatinsec finishedatinsec conditions pipelineid pipelinespecmanifest workflowspecmanifest parameters pipelineruntimemanifest workflowruntimemanifest from run details as rd left join run metrics as m on rd uuid m runuuid group by rd uuid as subq left join select from resource references where resourcetype run as r on subq uuid r resourceuuid where uuid group by subq uuid limit id select type table type possible keys key key len ref rows filtered extra primary ref const using index condition primary ref const using where derived resource references all null null null null using where derived all null null null null using temporary using filesort derived m all primary null null null using where using join buffer block nested loop derived run details all null null null null null rows in set warning sec anything else you would like to add i m not familiar enough with gorm to know how to force it to generate a more efficient query | 0 |

5,399 | 27,115,670,019 | IssuesEvent | 2023-02-15 18:22:00 | VA-Explorer/va_explorer | https://api.github.com/repos/VA-Explorer/va_explorer | closed | Make granularity work for more regions | Type: Maintainance Status: Inactive | **What is the expected state?**

The expected state would be to have the ability to filter down to additional granularity beyond province and district and for the code to be more generalized.

**What is the actual state?**

As it stands, things are hard-coded to "province" and "district" only.

**Relevant context**

- **```va_explorer/va_analytics/dash_apps/va_dashboard.py```**

See references to hard-coded values/comparisons of ```granularity```

| True | Make granularity work for more regions - **What is the expected state?**

The expected state would be to have the ability to filter down to additional granularity beyond province and district and for the code to be more generalized.

**What is the actual state?**

As it stands, things are hard-coded to "province" and "district" only.

**Relevant context**

- **```va_explorer/va_analytics/dash_apps/va_dashboard.py```**

See references to hard-coded values/comparisons of ```granularity```

| main | make granularity work for more regions what is the expected state the expected state would be to have the ability to filter down to additional granularity beyond province and district and for the code to be more generalized what is the actual state as it stands things are hard coded to province and district only relevant context va explorer va analytics dash apps va dashboard py see references to hard coded values comparisons of granularity | 1 |

24,401 | 12,290,680,504 | IssuesEvent | 2020-05-10 05:39:25 | conda-forge/status | https://api.github.com/repos/conda-forge/status | closed | Travis-CI ppc64le builds sometimes fail with no space left on device | degraded performance | One node on Travis-CI workers is the cause of this and restarting the build usually works.

Travis-CI has been informed. | True | Travis-CI ppc64le builds sometimes fail with no space left on device - One node on Travis-CI workers is the cause of this and restarting the build usually works.

Travis-CI has been informed. | non_main | travis ci builds sometimes fail with no space left on device one node on travis ci workers is the cause of this and restarting the build usually work travis ci has been informed | 0 |

1,109 | 2,532,226,039 | IssuesEvent | 2015-01-23 14:43:46 | AAndharia/ZIMS-School-Mgmt | https://api.github.com/repos/AAndharia/ZIMS-School-Mgmt | closed | Student Fee Receipt > Receipt number issue | bug Tested & Verified | http://screencast.com/t/MdoRXhGB

1. There is already a receipt number "201501/00003" in the database, but the new receipt shows the same receipt number.

2. I am not sure how you are assigning receipt numbers, but if receipt number 0001 exists then the next should be 0002; instead it jumped directly to 0003. Please check the logic. | 1.0 | Student Fee Receipt > Receipt number issue - http://screencast.com/t/MdoRXhGB

1. There is already a receipt number "201501/00003" in the database, but the new receipt shows the same receipt number.

2. I am not sure how you are assigning receipt numbers, but if receipt number 0001 exists then the next should be 0002; instead it jumped directly to 0003. Please check the logic. | non_main | student fee receipt receipt number issue there is already one receipt number in database but in new receipt it is showing same receipt number i am not sure how you are assigning receipt number but if there is receipt number then next should be it directly came please check the logic | 0 |

5,209 | 26,464,332,116 | IssuesEvent | 2023-01-16 21:17:43 | bazelbuild/intellij | https://api.github.com/repos/bazelbuild/intellij | closed | Flag --incompatible_disable_starlark_host_transitions will break IntelliJ UE Plugin Google in Bazel 7.0 | type: bug product: IntelliJ topic: bazel awaiting-maintainer | Incompatible flag `--incompatible_disable_starlark_host_transitions` will be enabled by default in the next major release (Bazel 7.0), thus breaking IntelliJ UE Plugin Google. Please migrate to fix this and unblock the flip of this flag.

The flag is documented here: [bazelbuild/bazel#17032](https://github.com/bazelbuild/bazel/issues/17032).

Please check the following CI builds for build and test results:

- [Ubuntu 18.04 OpenJDK 11](https://buildkite.com/bazel/bazelisk-plus-incompatible-flags/builds/1365#0185154b-1f8a-4362-8723-a4a3623eea43)

- [Ubuntu 18.04 OpenJDK 11](https://buildkite.com/bazel/bazelisk-plus-incompatible-flags/builds/1365#0185154b-1f86-4245-92df-9232ddf91098)

- [Ubuntu 18.04 OpenJDK 11](https://buildkite.com/bazel/bazelisk-plus-incompatible-flags/builds/1365#0185154b-1f95-4b12-a5b0-5b835e6d7624)

- [Ubuntu 18.04 OpenJDK 11](https://buildkite.com/bazel/bazelisk-plus-incompatible-flags/builds/1365#0185154b-1f91-4ff0-9e9e-12f7b44155d6)

- [Ubuntu 18.04 OpenJDK 11](https://buildkite.com/bazel/bazelisk-plus-incompatible-flags/builds/1365#0185154b-1f98-44a8-9f91-f363f6c96d5e)

Never heard of incompatible flags before? We have [documentation](https://docs.bazel.build/versions/master/backward-compatibility.html) that explains everything.

If you have any questions, please file an issue in https://github.com/bazelbuild/continuous-integration. | True | Flag --incompatible_disable_starlark_host_transitions will break IntelliJ UE Plugin Google in Bazel 7.0 - Incompatible flag `--incompatible_disable_starlark_host_transitions` will be enabled by default in the next major release (Bazel 7.0), thus breaking IntelliJ UE Plugin Google. Please migrate to fix this and unblock the flip of this flag.

The flag is documented here: [bazelbuild/bazel#17032](https://github.com/bazelbuild/bazel/issues/17032).

Please check the following CI builds for build and test results:

- [Ubuntu 18.04 OpenJDK 11](https://buildkite.com/bazel/bazelisk-plus-incompatible-flags/builds/1365#0185154b-1f8a-4362-8723-a4a3623eea43)

- [Ubuntu 18.04 OpenJDK 11](https://buildkite.com/bazel/bazelisk-plus-incompatible-flags/builds/1365#0185154b-1f86-4245-92df-9232ddf91098)

- [Ubuntu 18.04 OpenJDK 11](https://buildkite.com/bazel/bazelisk-plus-incompatible-flags/builds/1365#0185154b-1f95-4b12-a5b0-5b835e6d7624)

- [Ubuntu 18.04 OpenJDK 11](https://buildkite.com/bazel/bazelisk-plus-incompatible-flags/builds/1365#0185154b-1f91-4ff0-9e9e-12f7b44155d6)

- [Ubuntu 18.04 OpenJDK 11](https://buildkite.com/bazel/bazelisk-plus-incompatible-flags/builds/1365#0185154b-1f98-44a8-9f91-f363f6c96d5e)

Never heard of incompatible flags before? We have [documentation](https://docs.bazel.build/versions/master/backward-compatibility.html) that explains everything.

If you have any questions, please file an issue in https://github.com/bazelbuild/continuous-integration. | main | flag incompatible disable starlark host transitions will break intellij ue plugin google in bazel incompatible flag incompatible disable starlark host transitions will be enabled by default in the next major release bazel thus breaking intellij ue plugin google please migrate to fix this and unblock the flip of this flag the flag is documented here please check the following ci builds for build and test results never heard of incompatible flags before we have that explains everything if you have any questions please file an issue in | 1 |

715,028 | 24,584,256,159 | IssuesEvent | 2022-10-13 18:13:01 | ScicraftLearn/MineLabs | https://api.github.com/repos/ScicraftLearn/MineLabs | closed | Portal block does not work when first creating a world | bug low priority Subatomic dimension outdated | When creating a new world, the first time a portal block is used it does not work. When reloading the world this issue is fixed | 1.0 | Portal block does not work when first creating a world - When creating a new world, the first time a portal block is used it does not work. When reloading the world this issue is fixed | non_main | portal block does not work when first creating a world when creating a new world the first time a portal block is used it does not work when reloading the world this issue is fixed | 0 |

1,284 | 5,429,658,091 | IssuesEvent | 2017-03-03 19:02:12 | duckduckgo/zeroclickinfo-goodies | https://api.github.com/repos/duckduckgo/zeroclickinfo-goodies | closed | Ruby on Rails 4 Cheat Sheet: | Maintainer Input Requested Suggestion | Maybe we should update this to Rails 5 now? Alternatively we can change the trigger to `Rails 4 cheatsheet` instead of `Rails cheatsheet`

---

IA Page: http://duck.co/ia/view/rails_cheat_sheet

[Maintainer](http://docs.duckduckhack.com/maintaining/guidelines.html): @Sayanc93

| True | Ruby on Rails 4 Cheat Sheet: - Maybe we should update this to Rails 5 now? Alternatively we can change the trigger to `Rails 4 cheatsheet` instead of `Rails cheatsheet`

---

IA Page: http://duck.co/ia/view/rails_cheat_sheet

[Maintainer](http://docs.duckduckhack.com/maintaining/guidelines.html): @Sayanc93

| main | ruby on rails cheat sheet maybe we should update this to rails now alternatively we can change the trigger to rails cheatsheet instead of rails cheatsheet ia page | 1 |

109,204 | 23,738,546,208 | IssuesEvent | 2022-08-31 10:15:50 | Onelinerhub/onelinerhub | https://api.github.com/repos/Onelinerhub/onelinerhub | opened | Short solution needed: "How to overlay (merge) two images" (php-gd) | help wanted good first issue code php-gd | Please help us write the most modern and shortest code solution for this issue:

**How to overlay (merge) two images** (technology: [php-gd](https://onelinerhub.com/php-gd))

### Fast way

Just write the code solution in the comments.

### Preferred way

1. Create a [pull request](https://github.com/Onelinerhub/onelinerhub/blob/main/how-to-contribute.md) with a new code file inside the [inbox folder](https://github.com/Onelinerhub/onelinerhub/tree/main/inbox).

2. Don't forget to [use comments](https://github.com/Onelinerhub/onelinerhub/blob/main/how-to-contribute.md#code-file-md-format) to explain the solution.

3. Link to this issue in the comments of the pull request. | 1.0 | Short solution needed: "How to overlay (merge) two images" (php-gd) - Please help us write the most modern and shortest code solution for this issue:

**How to overlay (merge) two images** (technology: [php-gd](https://onelinerhub.com/php-gd))

### Fast way

Just write the code solution in the comments.

### Preferred way

1. Create a [pull request](https://github.com/Onelinerhub/onelinerhub/blob/main/how-to-contribute.md) with a new code file inside the [inbox folder](https://github.com/Onelinerhub/onelinerhub/tree/main/inbox).

2. Don't forget to [use comments](https://github.com/Onelinerhub/onelinerhub/blob/main/how-to-contribute.md#code-file-md-format) to explain the solution.

3. Link to this issue in the comments of the pull request. | non_main | short solution needed how to overlay merge two images php gd please help us write most modern and shortest code solution for this issue how to overlay merge two images technology fast way just write the code solution in the comments prefered way create with a new code file inside don t forget to explain solution link to this issue in comments of pull request | 0 |

62,493 | 8,615,548,080 | IssuesEvent | 2018-11-19 20:54:33 | Azure/azure-iot-sdk-csharp | https://api.github.com/repos/Azure/azure-iot-sdk-csharp | closed | Message.DeliveryCount property description in reference documentation seems incorrect | area-documentation bug | Hi there! I'm the docs author at the [Azure .NET SDK docs site](https://docs.microsoft.com/dotnet/azure), and a customer submitted the following issue with documentation generated from the `///` comments in your API. Please be sure to @ them in your response. Thanks!

@ali-nazem commented on [Thu Jun 07 2018](https://github.com/Azure/azure-docs-sdk-dotnet/issues/668)

"Time when the message was received by the server" seems to be an irrelevant description for a property called "DeliveryCount".

It looks like this description was copy pasted from the description of "EnqueuedTimeUtc" property, hence still unclear what DeliveryCount property provides.

Best regards,

Ali

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: b9e589d5-b37d-c203-794e-edb04529240a

* Version Independent ID: a9d44046-5cbb-5ace-835d-26c797dd9709

* Content: [Message.DeliveryCount Property (Microsoft.Azure.Devices.Client)](https://docs.microsoft.com/en-us/dotnet/api/microsoft.azure.devices.client.message.deliverycount?view=azure-dotnet#Microsoft_Azure_Devices_Client_Message_DeliveryCount)

* Content Source: [xml/Microsoft.Azure.Devices.Client/Message.xml](https://github.com/Azure/azure-docs-sdk-dotnet/blob/master/xml/Microsoft.Azure.Devices.Client/Message.xml)

* Service: **iot-hub**

* GitHub Login: @erickson-doug

* Microsoft Alias: **douge**

| 1.0 | Message.DeliveryCount property description in reference documentation seems incorrect - Hi there! I'm the docs author at the [Azure .NET SDK docs site](https://docs.microsoft.com/dotnet/azure), and a customer submitted the following issue with documentation generated from the `///` comments in your API. Please be sure to @ them in your response. Thanks!

@ali-nazem commented on [Thu Jun 07 2018](https://github.com/Azure/azure-docs-sdk-dotnet/issues/668)

"Time when the message was received by the server" seems to be an irrelevant description for a property called "DeliveryCount".

It looks like this description was copy pasted from the description of "EnqueuedTimeUtc" property, hence still unclear what DeliveryCount property provides.

Best regards,

Ali

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: b9e589d5-b37d-c203-794e-edb04529240a

* Version Independent ID: a9d44046-5cbb-5ace-835d-26c797dd9709

* Content: [Message.DeliveryCount Property (Microsoft.Azure.Devices.Client)](https://docs.microsoft.com/en-us/dotnet/api/microsoft.azure.devices.client.message.deliverycount?view=azure-dotnet#Microsoft_Azure_Devices_Client_Message_DeliveryCount)

* Content Source: [xml/Microsoft.Azure.Devices.Client/Message.xml](https://github.com/Azure/azure-docs-sdk-dotnet/blob/master/xml/Microsoft.Azure.Devices.Client/Message.xml)

* Service: **iot-hub**

* GitHub Login: @erickson-doug

* Microsoft Alias: **douge**

| non_main | message deliverycount property description in reference documentation seems incorrect hi there i m the docs author at the and a customer submitted the following issue with documentation generated from the comments in your api please be sure to them in your response thanks ali nazem commented on time when the message was received by the server seems to be an irrelevant description for a property called deliverycount it looks like this description was copy pasted from the description of enqueuedtimeutc property hence still unclear what deliverycount property provides best regards ali document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version independent id content content source service iot hub github login erickson doug microsoft alias douge | 0 |

3,465 | 13,282,885,567 | IssuesEvent | 2020-08-24 01:12:41 | amyjko/faculty | https://api.github.com/repos/amyjko/faculty | closed | Separate data from presentation | maintainability | It'd be much more convenient to edit data files without having to rebuild the js. Find a clean way to load them separately from source. | True | Separate data from presentation - It'd be much more convenient to edit data files without having to rebuild the js. Find a clean way to load them separately from source. | main | separate data from presentation it d be much more convenient to edit data files without having to rebuild the js find a clean way to load them separately from source | 1 |

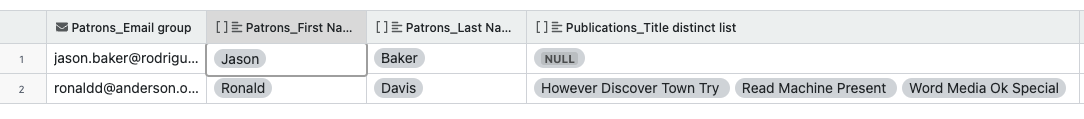

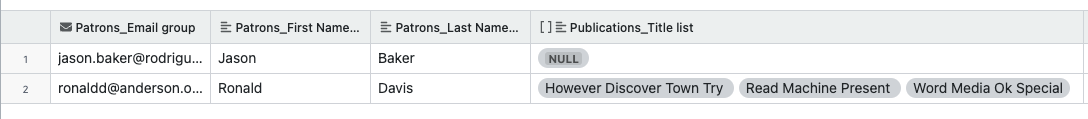

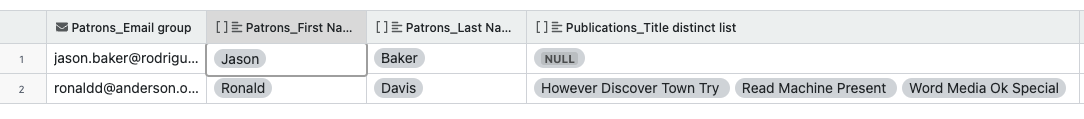

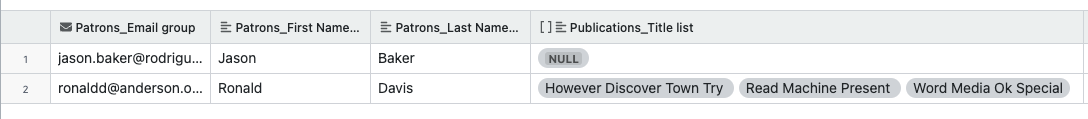

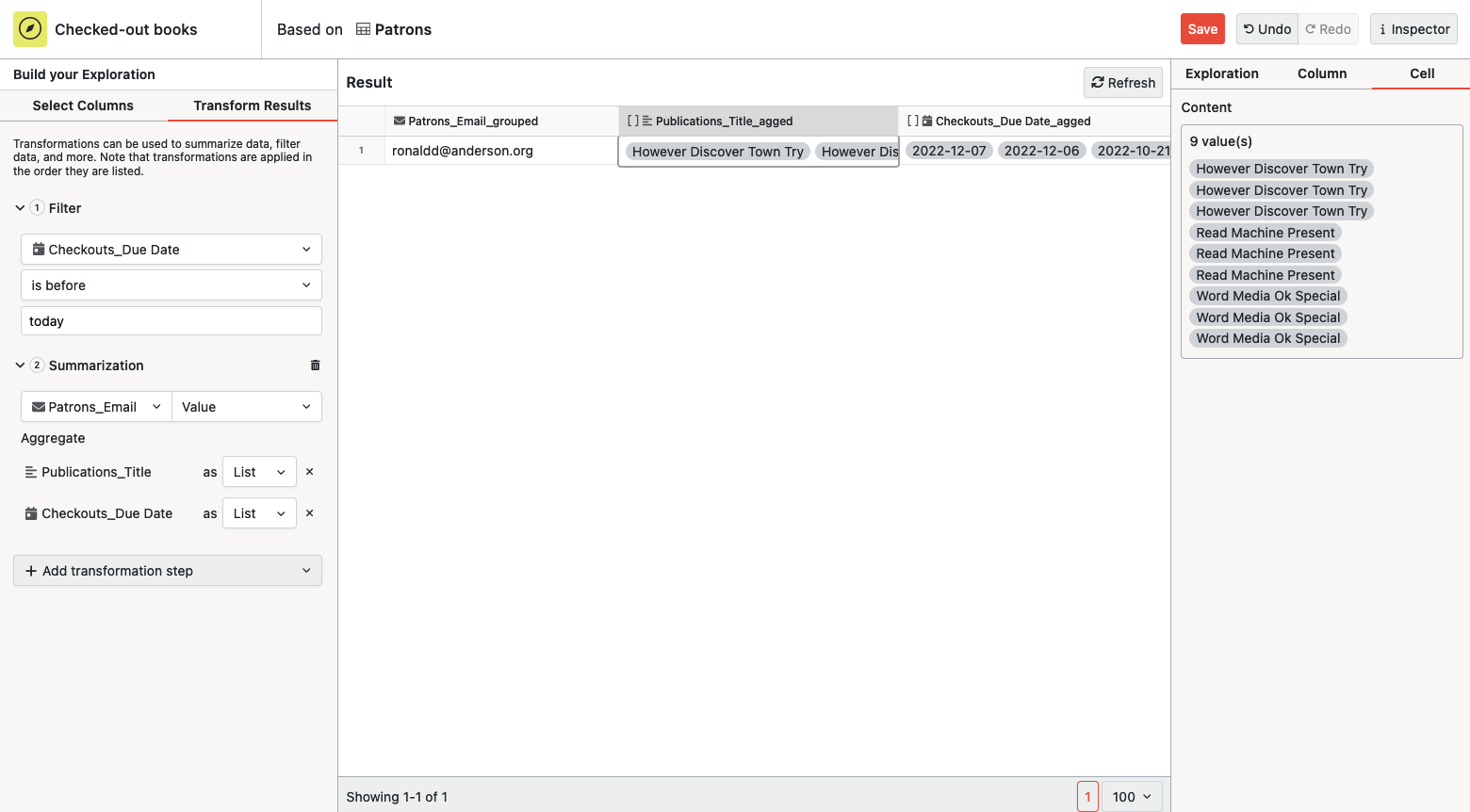

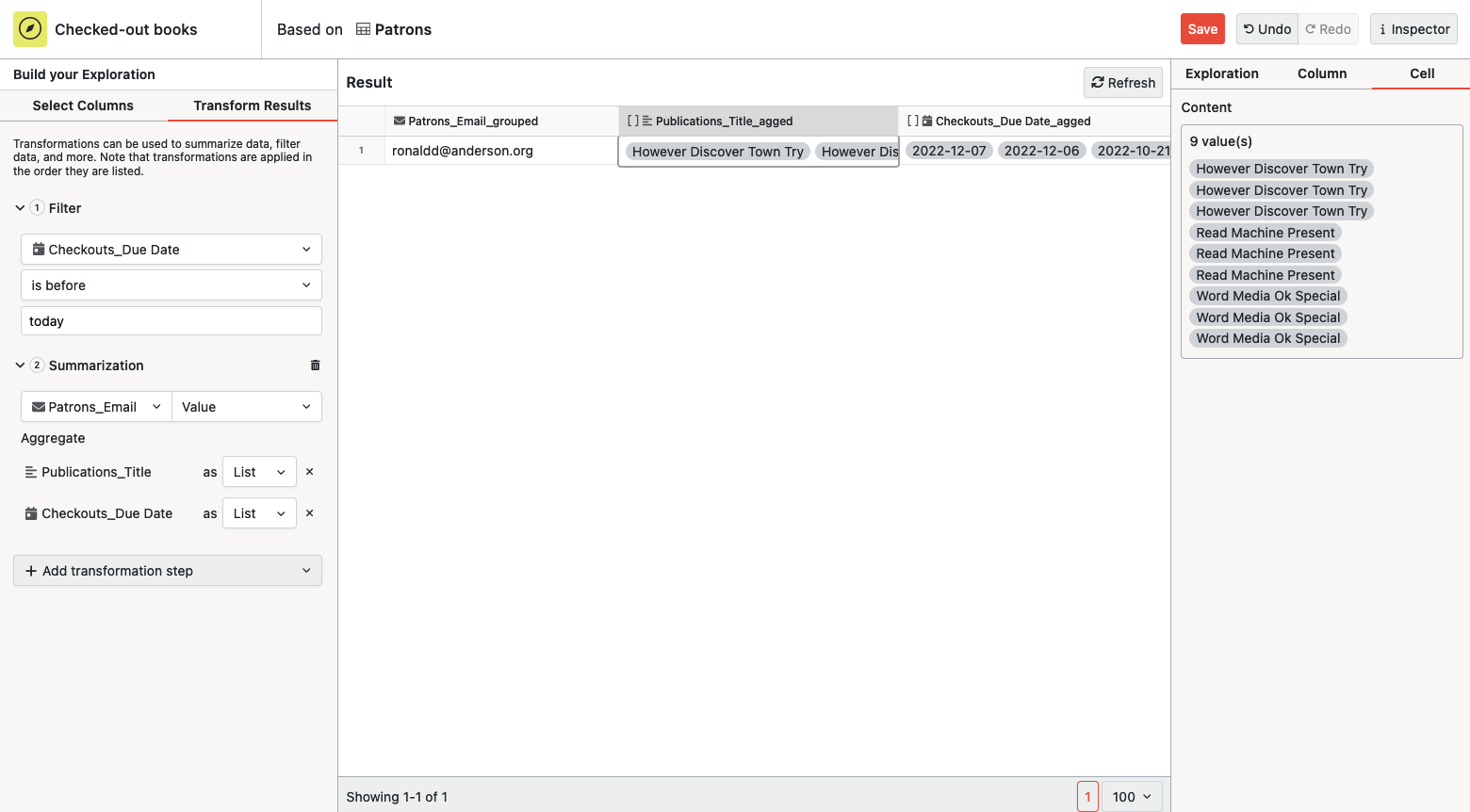

5,115 | 26,045,632,933 | IssuesEvent | 2022-12-22 14:10:45 | centerofci/mathesar | https://api.github.com/repos/centerofci/mathesar | closed | Summarization suggestion aggregates columns instead of grouping, when the base column is a unique key column | type: bug work: backend status: ready restricted: maintainers | ## Description

* Select a table with a unique key column as the base table in Data Explorer, e.g., Patrons.

* Add the unique key column, along with a few other columns, e.g., Email, first name, last name.

* Summarize by the unique key column. Expect the other columns to be grouped; instead, notice that they are aggregated as a list.

Related comment/issue: https://github.com/centerofci/mathesar/issues/2145#issuecomment-1362358413

> _Summarize based on patron email (there should be LESS than 180 rows at this point, and only "Book Title" should show up as a List)_

> For this point, we'd expect patron first name and last name to be part of the grouped columns, instead they are getting aggregated as list. Summarization suggestion aggregates them instead of grouping.

> Currently, the list has a single value but it's still an array column so it appears inside a pill, which looks like this:

> What we'd expect is this:

| True | Summarization suggestion aggregates columns instead of grouping, when the base column is a unique key column - ## Description

* Select a table with a unique key column as the base table in Data Explorer, e.g., Patrons.

* Add the unique key column, along with a few other columns, e.g., Email, first name, last name.

* Summarize by the unique key column. Expect the other columns to be grouped; instead, notice that they are aggregated as a list.

Related comment/issue: https://github.com/centerofci/mathesar/issues/2145#issuecomment-1362358413

> _Summarize based on patron email (there should be LESS than 180 rows at this point, and only "Book Title" should show up as a List)_

> For this point, we'd expect patron first name and last name to be part of the grouped columns, instead they are getting aggregated as list. Summarization suggestion aggregates them instead of grouping.

> Currently, the list has a single value but it's still an array column so it appears inside a pill, which looks like this:

> What we'd expect is this:

| main | summarization suggestion aggregates columns instead of grouping when the base column is a unique key column description select a table with a unique key column as the base table in data explorer eg patrons add the unique key column along with a few other columns eg email first name last name summarize by the unique key column expect the other columns to be grouped instead notice that they are aggregated as a list related comment issue summarize based on patron email there should be less than rows at this point and only book title should show up as a list for this point we d expect patron first name and last name to be part of the grouped columns instead they are getting aggregated as list summarization suggestion aggregates them instead of grouping currently the list has a single value but it s still an array column so it appears inside a pill which looks like this what we d expect is this | 1 |

3,566 | 14,272,421,936 | IssuesEvent | 2020-11-21 16:57:32 | MarcusWolschon/osmeditor4android | https://api.github.com/repos/MarcusWolschon/osmeditor4android | opened | Re-factor string literals in de.blau.android.osm.* | Maintainability Task | There are quite a few duplicate string literals in the OsmXml and OsmParser that should be turned into constants. | True | Re-factor string literals in de.blau.android.osm.* - There are quite a few duplicate string literals in the OsmXml and OsmParser that should be turned into constants. | main | re factor string literals in de blau android osm there are quite a few duplicate string literals in the osmxml and osmparser that should be turned into constants | 1 |

193,338 | 22,216,135,113 | IssuesEvent | 2022-06-08 01:59:34 | AlexRogalskiy/github-action-open-jscharts | https://api.github.com/repos/AlexRogalskiy/github-action-open-jscharts | closed | CVE-2021-44907 (High) detected in qs-6.5.2.tgz - autoclosed | security vulnerability | ## CVE-2021-44907 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>qs-6.5.2.tgz</b></p></summary>

<p>A querystring parser that supports nesting and arrays, with a depth limit</p>

<p>Library home page: <a href="https://registry.npmjs.org/qs/-/qs-6.5.2.tgz">https://registry.npmjs.org/qs/-/qs-6.5.2.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/npm/node_modules/qs/package.json,/node_modules/request/node_modules/qs/package.json</p>

<p>

Dependency Hierarchy:

- jest-27.0.0-next.2.tgz (Root Library)

- jest-cli-27.0.0-next.2.tgz

- jest-config-27.0.0-next.2.tgz

- jest-environment-jsdom-27.0.0-next.1.tgz

- jsdom-16.4.0.tgz

- request-2.88.2.tgz

- :x: **qs-6.5.2.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/AlexRogalskiy/github-action-open-jscharts/commit/bd85a148d84f20fa7abd580c8fce60cb02f3ba92">bd85a148d84f20fa7abd580c8fce60cb02f3ba92</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

A Denial of Service vulnerability exists in qs up to 6.8.0 due to insufficient sanitization of property in the qs.parse function. The merge() function allows the assignment of properties on an array in the query. For any property being assigned, a value in the array is converted to an object containing these properties. Essentially, this means that the property whose expected type is Array always has to be checked with Array.isArray() by the user. This may not be obvious to the user and can cause unexpected behavior.

<p>Publish Date: 2022-03-17

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-44907>CVE-2021-44907</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2021-44907">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2021-44907</a></p>

<p>Release Date: 2022-03-17</p>

<p>Fix Resolution: qs - 6.8.1</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2021-44907 (High) detected in qs-6.5.2.tgz - autoclosed - ## CVE-2021-44907 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>qs-6.5.2.tgz</b></p></summary>

<p>A querystring parser that supports nesting and arrays, with a depth limit</p>

<p>Library home page: <a href="https://registry.npmjs.org/qs/-/qs-6.5.2.tgz">https://registry.npmjs.org/qs/-/qs-6.5.2.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/npm/node_modules/qs/package.json,/node_modules/request/node_modules/qs/package.json</p>

<p>

Dependency Hierarchy:

- jest-27.0.0-next.2.tgz (Root Library)

- jest-cli-27.0.0-next.2.tgz

- jest-config-27.0.0-next.2.tgz

- jest-environment-jsdom-27.0.0-next.1.tgz

- jsdom-16.4.0.tgz

- request-2.88.2.tgz

- :x: **qs-6.5.2.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/AlexRogalskiy/github-action-open-jscharts/commit/bd85a148d84f20fa7abd580c8fce60cb02f3ba92">bd85a148d84f20fa7abd580c8fce60cb02f3ba92</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

A Denial of Service vulnerability exists in qs up to 6.8.0 due to insufficient sanitization of property in the qs.parse function. The merge() function allows the assignment of properties on an array in the query. For any property being assigned, a value in the array is converted to an object containing these properties. Essentially, this means that the property whose expected type is Array always has to be checked with Array.isArray() by the user. This may not be obvious to the user and can cause unexpected behavior.

<p>Publish Date: 2022-03-17

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-44907>CVE-2021-44907</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2021-44907">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2021-44907</a></p>

<p>Release Date: 2022-03-17</p>

<p>Fix Resolution: qs - 6.8.1</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_main | cve high detected in qs tgz autoclosed cve high severity vulnerability vulnerable library qs tgz a querystring parser that supports nesting and arrays with a depth limit library home page a href path to dependency file package json path to vulnerable library node modules npm node modules qs package json node modules request node modules qs package json dependency hierarchy jest next tgz root library jest cli next tgz jest config next tgz jest environment jsdom next tgz jsdom tgz request tgz x qs tgz vulnerable library found in head commit a href vulnerability details a denial of service vulnerability exists in qs up to due to insufficient sanitization of property in the gs parse function the merge function allows the assignment of properties on an array in the query for any property being assigned a value in the array is converted to an object containing these properties essentially this means that the property whose expected type is array always has to be checked with array isarray by the user this may not be obvious to the user and can cause unexpected behavior publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution qs step up your open source security game with whitesource | 0 |

3,194 | 12,227,801,423 | IssuesEvent | 2020-05-03 16:43:23 | gfleetwood/asteres | https://api.github.com/repos/gfleetwood/asteres | opened | ericmjl/data-testing-tutorial (36561859) | Jupyter Notebook data science maintain | https://github.com/ericmjl/data-testing-tutorial

A short tutorial for data scientists on how to write tests for code + data. | True | ericmjl/data-testing-tutorial (36561859) - https://github.com/ericmjl/data-testing-tutorial