Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

139,704 | 12,878,229,310 | IssuesEvent | 2020-07-11 15:28:26 | UC-Davis-molecular-computing/scadnano-python-package | https://api.github.com/repos/UC-Davis-molecular-computing/scadnano-python-package | opened | add CONTRIBUTING document | documentation high priority | This repo should have a CONTRIBUTING.md document, similar to that of the [web interface](https://github.com/UC-Davis-molecular-computing/scadnano/blob/master/CONTRIBUTING.md), explaining how to contribute.

It should follow the same basic model, although it does not require the full explanation of the React/Redux arc... | 1.0 | add CONTRIBUTING document - This repo should have a CONTRIBUTING.md document, similar to that of the [web interface](https://github.com/UC-Davis-molecular-computing/scadnano/blob/master/CONTRIBUTING.md), explaining how to contribute.

It should follow the same basic model, although it does not require the full explan... | non_main | add contributing document this repo should have a contributing md document similar to that of the explaining how to contribute it should follow the same basic model although it does not require the full explanation of the react redux architecture since the python package architecture is much simpler | 0 |

16,836 | 9,536,669,789 | IssuesEvent | 2019-04-30 10:20:25 | Garados007/Werwolf | https://api.github.com/repos/Garados007/Werwolf | closed | Optimize fetches on game round change | difficult performance | When the current game round changes, most of the data (e.g. chat entries) is discarded and has to be fetched again. Part of it, however, does not change in the next round and should only be hidden, or invalid registered periodic queries exist. These queries can be op... | True | Optimize fetches on game round change - When the current game round changes, most of the data (e.g. chat entries) is discarded and has to be fetched again. Part of it, however, does not change in the next round and should only be hidden, or invalid registered periodic queries... | non_main | optimize fetches on game round change when the current game round changes most of the data e g chat entries is discarded and has to be fetched again part of it however does not change in the next round and should only be hidden or invalid registered periodic queries... | 0 |

5,212 | 26,464,339,927 | IssuesEvent | 2023-01-16 21:18:18 | bazelbuild/intellij | https://api.github.com/repos/bazelbuild/intellij | closed | Flag --incompatible_disable_starlark_host_transitions will break IntelliJ Plugin Aspect Google in Bazel 7.0 | type: bug product: IntelliJ topic: bazel awaiting-maintainer | Incompatible flag `--incompatible_disable_starlark_host_transitions` will be enabled by default in the next major release (Bazel 7.0), thus breaking IntelliJ Plugin Aspect Google. Please migrate to fix this and unblock the flip of this flag.

The flag is documented here: [bazelbuild/bazel#17032](https://github.com/ba... | True | Flag --incompatible_disable_starlark_host_transitions will break IntelliJ Plugin Aspect Google in Bazel 7.0 - Incompatible flag `--incompatible_disable_starlark_host_transitions` will be enabled by default in the next major release (Bazel 7.0), thus breaking IntelliJ Plugin Aspect Google. Please migrate to fix this and... | main | flag incompatible disable starlark host transitions will break intellij plugin aspect google in bazel incompatible flag incompatible disable starlark host transitions will be enabled by default in the next major release bazel thus breaking intellij plugin aspect google please migrate to fix this and... | 1 |

2,868 | 10,275,584,891 | IssuesEvent | 2019-08-24 09:14:44 | arcticicestudio/arctic | https://api.github.com/repos/arcticicestudio/arctic | opened | ESLint | context-workflow scope-dx scope-maintainability scope-quality scope-stability type-feature | <p align="center"><img src="https://user-images.githubusercontent.com/7836623/63634799-a8555900-c65b-11e9-8988-dd0bc5d9c03b.png" /></p>

Integrate [ESLint][], the _pluggable_ and de-facto standard linting utility for JavaScript.

### Configuration Preset

The configuration presets that will be used are [@arcticic... | True | ESLint - <p align="center"><img src="https://user-images.githubusercontent.com/7836623/63634799-a8555900-c65b-11e9-8988-dd0bc5d9c03b.png" /></p>

Integrate [ESLint][], the _pluggable_ and de-facto standard linting utility for JavaScript.

### Configuration Preset

The configuration presets that will be used are [... | main | eslint integrate the pluggable and de facto standard linting utility for javascript configuration preset the configuration presets that will be used are that implements the it comes with the following peer dependencies it it built on top of that includes various rules of ... | 1 |

2,140 | 7,360,244,817 | IssuesEvent | 2018-03-10 16:37:21 | MDAnalysis/mdanalysis | https://api.github.com/repos/MDAnalysis/mdanalysis | closed | MAINT: asv custom builds | maintainability performance | It seems `asv` now has [basic support for custom builds](https://github.com/airspeed-velocity/asv/pull/611) where `setup.py`is not in the project root. We should likely investigate if this will suffice for our purposes so that we no longer have to maintain a custom branch.

If it suffices for our purposes we should a... | True | MAINT: asv custom builds - It seems `asv` now has [basic support for custom builds](https://github.com/airspeed-velocity/asv/pull/611) where `setup.py`is not in the project root. We should likely investigate if this will suffice for our purposes so that we no longer have to maintain a custom branch.

If it suffices f... | main | maint asv custom builds it seems asv now has where setup py is not in the project root we should likely investigate if this will suffice for our purposes so that we no longer have to maintain a custom branch if it suffices for our purposes we should almost certainly abandon maintaining our own asv bran... | 1 |

27,054 | 12,509,811,485 | IssuesEvent | 2020-06-02 17:33:50 | cityofaustin/atd-data-tech | https://api.github.com/repos/cityofaustin/atd-data-tech | closed | Meet with RPP Folks to Discuss Online Payments | Product: Residential Parking Permit Digitization Service: Apps Service: Product Type: Meeting Workgroup: PE | Scheduled for 6/2/2020 at 11 am

### Objective

Try to talk about RPP Online payments so that everyone is on the same page

### Participants

- Jacob, Jason, Joseph, John, Stephanie & Diana

### Agenda

- Debrief on status of Online Payments with comptroller office

- Configuration of Online Payments

- Whether this will b... | 2.0 | Meet with RPP Folks to Discuss Online Payments - Scheduled for 6/2/2020 at 11 am

### Objective

Try to talk about RPP Online payments so that everyone is on the same page

### Participants

- Jacob, Jason, Joseph, John, Stephanie & Diana

### Agenda

- Debrief on status of Online Payments with comptroller office

- Conf... | non_main | meet with rpp folks to discuss online payments scheduled for at am objective try to talk about rpp online payments so that everyone is on the same page participants jacob jason joseph john stephanie diana agenda debrief on status of online payments with comptroller office configur... | 0 |

3,123 | 11,959,670,993 | IssuesEvent | 2020-04-04 23:00:27 | microsoft/DirectXMesh | https://api.github.com/repos/microsoft/DirectXMesh | opened | Remove use of DWORD in public interface | maintainence | I changed most of the functions to take C++ Standard Types, but never fixed up ``DWORD`` out of concern for changing link signatures.

I should really change it to something standard. | True | Remove use of DWORD in public interface - I changed most of the functions to take C++ Standard Types, but never fixed up ``DWORD`` out of concern for changing link signatures.

I should really change it to something standard. | main | remove use of dword in public interface i changed most of the functions to take c standard types but never fixed up dword out of concern for changing link signatures i should really change it to something standard | 1 |

2,230 | 7,869,447,714 | IssuesEvent | 2018-06-24 14:07:04 | arcticicestudio/nord-hyper | https://api.github.com/repos/arcticicestudio/nord-hyper | opened | Add Prettier | context-workflow scope-maintainability type-improvement | <p align="center"><img src="https://prettier.io/icon.png" width="128" height="128" /></p>

[Prettier][] should be used in development with the _Prettier → ESLint_ formatting flow.

[prettier]: https://prettier.io | True | Add Prettier - <p align="center"><img src="https://prettier.io/icon.png" width="128" height="128" /></p>

[Prettier][] should be used in development with the _Prettier → ESLint_ formatting flow.

[prettier]: https://prettier.io | main | add prettier should be used in development with the prettier → eslint formatting flow | 1 |

80,554 | 15,586,295,162 | IssuesEvent | 2021-03-18 01:37:00 | attesch/myretail | https://api.github.com/repos/attesch/myretail | opened | CVE-2020-10673 (High) detected in jackson-databind-2.9.4.jar | security vulnerability | ## CVE-2020-10673 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.4.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streamin... | True | CVE-2020-10673 (High) detected in jackson-databind-2.9.4.jar - ## CVE-2020-10673 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.4.jar</b></p></summary>

<p>General... | non_main | cve high detected in jackson databind jar cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to dependency file myretail build gradle path t... | 0 |

7,674 | 9,924,791,215 | IssuesEvent | 2019-07-01 10:33:09 | sisbell/tor-android-service | https://api.github.com/repos/sisbell/tor-android-service | closed | Settings Changes | Orbot Compatibility | Changes to

- Socks Ports, DNS, IPv6Traffic, HTTP_PROXY

- Auto resolving of more ports

https://github.com/guardianproject/orbot/commit/56917567cd21a734a35f3bee0e56ba23793b6887 | True | Settings Changes - Changes to

- Socks Ports, DNS, IPv6Traffic, HTTP_PROXY

- Auto resolving of more ports

https://github.com/guardianproject/orbot/commit/56917567cd21a734a35f3bee0e56ba23793b6887 | non_main | settings changes changes to socks ports dns http proxy auto resolving of more ports | 0 |

12,227 | 3,059,488,500 | IssuesEvent | 2015-08-14 15:14:57 | mysociety/fixmystreet-international | https://api.github.com/repos/mysociety/fixmystreet-international | closed | [Design] Define logo size we require for app and MakeMyIsland site | design | - [ ] Check if the logo will be updated

- [ ] Get requirements from designers | 1.0 | [Design] Define logo size we require for app and MakeMyIsland site - - [ ] Check if the logo will be updated

- [ ] Get requirements from designers | non_main | define logo size we require for app and makemyisland site check if the logo will be updated get requirements from designers | 0 |

5,404 | 27,115,681,186 | IssuesEvent | 2023-02-15 18:22:31 | VA-Explorer/va_explorer | https://api.github.com/repos/VA-Explorer/va_explorer | closed | Calculate and highlight outlier data within VA trends | Type: Maintainance good first issue Domain: Frontend Status: Inactive | **What is the expected state?**

As a data analyst I expect to be able to tell at a glance if my trends data contains outliers so I can quickly identify them or further investigate them. I would like the trends tab chart to somehow highlight data that qualifies as an outlier.

**What is the actual state?**

The trend... | True | Calculate and highlight outlier data within VA trends - **What is the expected state?**

As a data analyst I expect to be able to tell at a glance if my trends data contains outliers so I can quickly identify them or further investigate them. I would like the trends tab chart to somehow highlight data that qualifies as... | main | calculate and highlight outlier data within va trends what is the expected state as a data analyst i expect to be able to tell at a glance if my trends data contains outliers so i can quickly identify them or further investigate them i would like the trends tab chart to somehow highlight data that qualifies as... | 1 |

2,759 | 2,642,559,175 | IssuesEvent | 2015-03-12 01:11:05 | Annexa/Moki-Ecommerce | https://api.github.com/repos/Annexa/Moki-Ecommerce | opened | Check all taxes are calculated correctly based on international location | Page design | International distros: 0% tax | 1.0 | Check all taxes are calculated correctly based on international location - International distros: 0% tax | non_main | check all taxes are calculated correctly based on international location international distros tax | 0 |

5,819 | 30,792,568,420 | IssuesEvent | 2023-07-31 17:16:33 | jupyter-naas/awesome-notebooks | https://api.github.com/repos/jupyter-naas/awesome-notebooks | closed | JSON - Handle Nested Data | templates maintainer | This notebook will show how to handle nested data in JSON format using a Python library. It is useful for organizations to quickly parse and extract data from complex JSON files.

| True | JSON - Handle Nested Data - This notebook will show how to handle nested data in JSON format using a Python library. It is useful for organizations to quickly parse and extract data from complex JSON files.

| main | json handle nested data this notebook will show how to handle nested data in json format using a python library it is useful for organizations to quickly parse and extract data from complex json files | 1 |

25,035 | 4,183,770,098 | IssuesEvent | 2016-06-23 02:20:24 | cakephp/cakephp | https://api.github.com/repos/cakephp/cakephp | closed | DboSource: Logging of query time and numRows in tests | datasource Defect | This is a (multiple allowed):

* [x] bug

* [ ] enhancement

* [ ] feature-discussion (RFC)

* CakePHP Version: 2.7.11

* Platform and Target: Apache, MySQL, PHPUnit tests

### What you did

Running PHPUnit tests and debugging performance problems with MySQL/creating fixtures.

### Expected Behavior

When setting... | 1.0 | DboSource: Logging of query time and numRows in tests - This is a (multiple allowed):

* [x] bug

* [ ] enhancement

* [ ] feature-discussion (RFC)

* CakePHP Version: 2.7.11

* Platform and Target: Apache, MySQL, PHPUnit tests

### What you did

Running PHPUnit tests and debugging performance problems with MySQL/c... | non_main | dbosource logging of query time and numrows in tests this is a multiple allowed bug enhancement feature discussion rfc cakephp version platform and target apache mysql phpunit tests what you did running phpunit tests and debugging performance problems with mysql creating... | 0 |

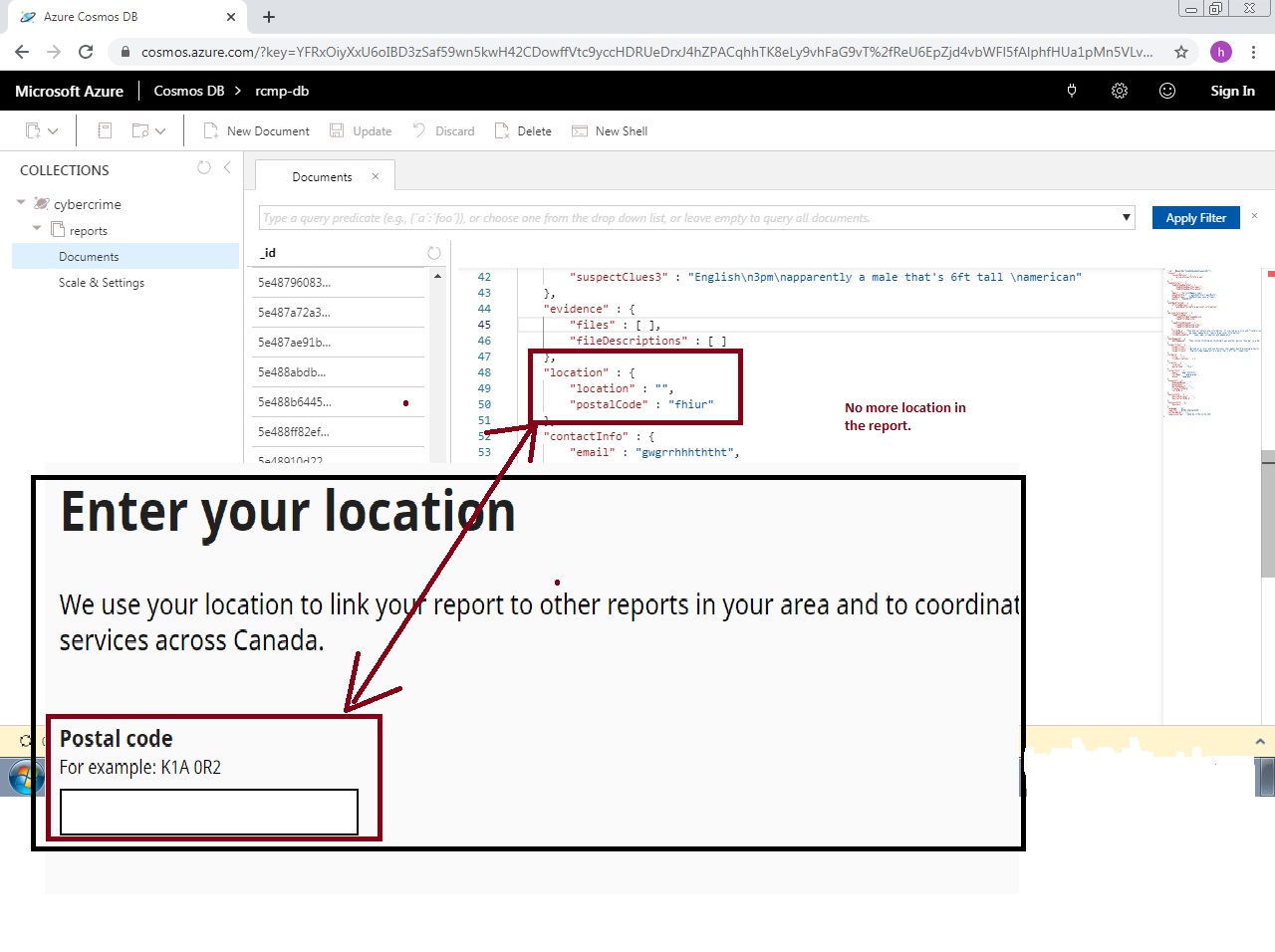

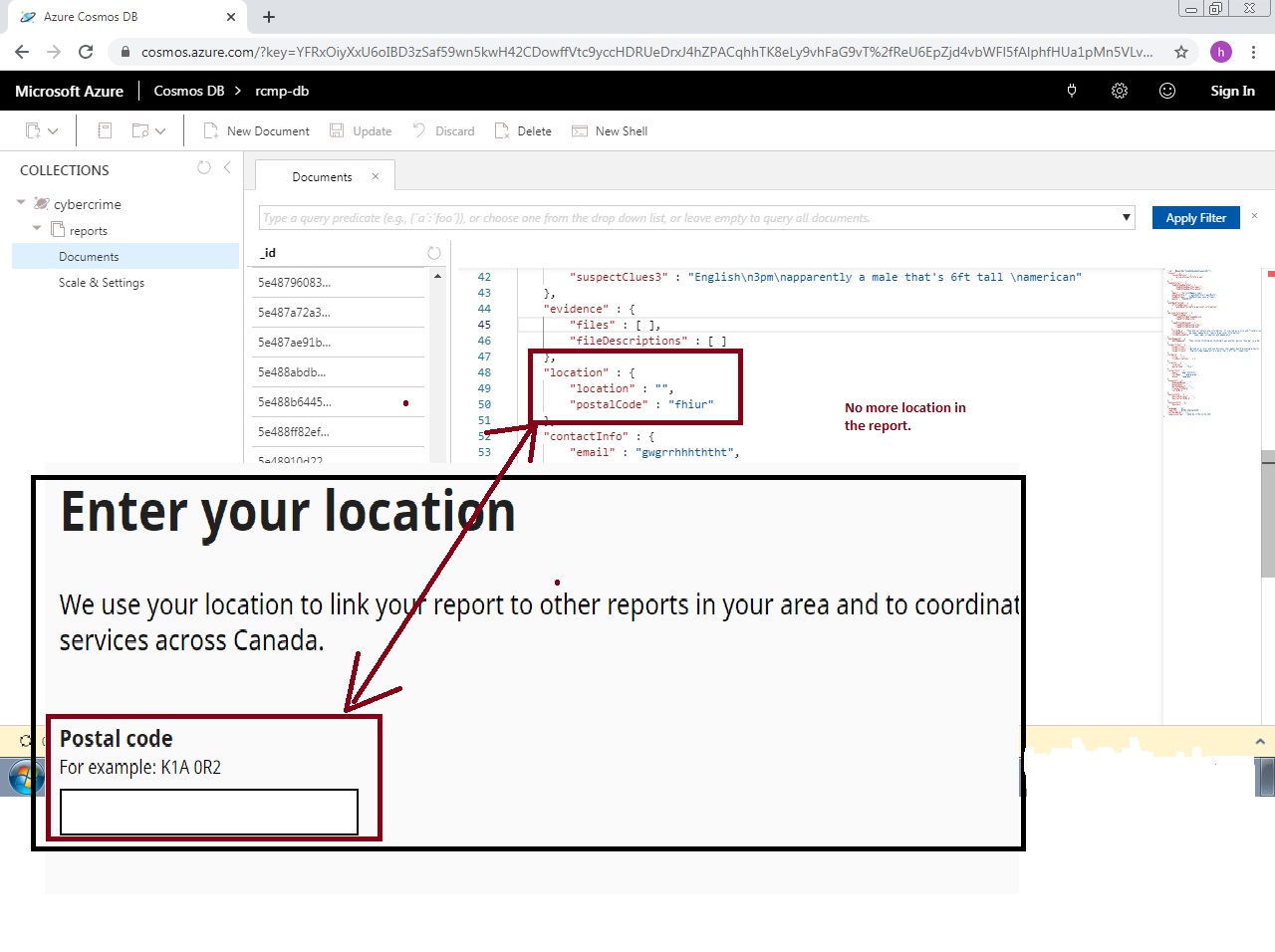

392,899 | 11,597,409,779 | IssuesEvent | 2020-02-24 20:49:03 | cds-snc/report-a-cybercrime | https://api.github.com/repos/cds-snc/report-a-cybercrime | closed | Should remove "Location" field in the database | bug medium priority | ## Summary

With a currently version, the location is removed from "Enter your location" page. Should the location be removed as well in the database?

## Steps to reproduce

> How exactly can the ... | 1.0 | Should remove "Location" field in the database - ## Summary

With a currently version, the location is removed from "Enter your location" page. Should the location be removed as well in the database?

... | non_main | should remove location field in the database summary with a currently version the location is removed from enter your location page should the location be removed as well in the database steps to reproduce how exactly can the bug be reproduced be very specific unresolved questi... | 0 |

3,590 | 14,480,928,846 | IssuesEvent | 2020-12-10 11:53:14 | utm-cssc/website | https://api.github.com/repos/utm-cssc/website | opened | 🥅 Initiative: Winter 2021 Course | Domain: User Experience Role: Maintainer Role: Product Owner | ### Motivation 🏁

<!--

A clear and concise motivation for this initiative? How will this help execute the vision of the org?

-->

The purpose of this issue is to define the goals for the Winter 2021 iteration of the CSSC Website development course.

### Initiative Overview 👁️🗨️

<!--

A clear and con... | True | 🥅 Initiative: Winter 2021 Course - ### Motivation 🏁

<!--

A clear and concise motivation for this initiative? How will this help execute the vision of the org?

-->

The purpose of this issue is to define the goals for the Winter 2021 iteration of the CSSC Website development course.

### Initiative Overvie... | main | 🥅 initiative winter course motivation 🏁 a clear and concise motivation for this initiative how will this help execute the vision of the org the purpose of this issue is to define the goals for the winter iteration of the cssc website development course initiative overview 👁️... | 1 |

62,309 | 17,023,894,364 | IssuesEvent | 2021-07-03 04:25:02 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | Andrew S. Edwards Memorial Triangle | Component: admin Priority: minor Resolution: invalid Type: defect | **[Submitted to the original trac issue database at 12.10am, Saturday, 11th January 2014]**

The Andrew S. Edwards Memorial Triangle, which is at the triangle of D Street, Louisiana Avenue, and N Capitol Street in Washington DC is in Waze maps and Google maps, but not on openstreetmap. | 1.0 | Andrew S. Edwards Memorial Triangle - **[Submitted to the original trac issue database at 12.10am, Saturday, 11th January 2014]**

The Andrew S. Edwards Memorial Triangle, which is at the triangle of D Street, Louisiana Avenue, and N Capitol Street in Washington DC is in Waze maps and Google maps, but not on openstreet... | non_main | andrew s edwards memorial triangle the andrew s edwards memorial triangle which is at the triangle of d street louisiana avenue and n capitol street in washington dc is in waze maps and google maps but not on openstreetmap | 0 |

8,653 | 6,608,849,381 | IssuesEvent | 2017-09-19 12:40:18 | Openki/Openki | https://api.github.com/repos/Openki/Openki | opened | Load testing | Big Expertise needed Performance Tech | Some Entry-points:

- https://www.mongodb.com/presentations/mongodb-europe-2016-debugging-mongodb-performance

- https://github.com/rueckstiess/mtools/wiki/mplotqueries

- Record DPP Calls --> https://chrome.google.com/webstore/detail/meteor-devtools/ippapidnnboiophakmmhkdlchoccbgje?hl=en

- Reproduce Calls --> https... | True | Load testing - Some Entry-points:

- https://www.mongodb.com/presentations/mongodb-europe-2016-debugging-mongodb-performance

- https://github.com/rueckstiess/mtools/wiki/mplotqueries

- Record DPP Calls --> https://chrome.google.com/webstore/detail/meteor-devtools/ippapidnnboiophakmmhkdlchoccbgje?hl=en

- Reproduce ... | non_main | load testing some entry points record dpp calls reproduce calls | 0 |

803 | 4,423,286,535 | IssuesEvent | 2016-08-16 07:58:20 | ansible/ansible-modules-core | https://api.github.com/repos/ansible/ansible-modules-core | closed | ansible 2.1.0, s3 module bug | aws bug_report cloud waiting_on_maintainer | ##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

s3 module

##### ANSIBLE VERSION

```

ansible 2.1.0.0

config file = /etc/ansible/ansible.cfg

configured module search path = Default w/o overrides

```

##### CONFIGURATION

Only default settings

##### OS / ENVIRONMENT

I think N/A. But we use Ubu... | True | ansible 2.1.0, s3 module bug - ##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

s3 module

##### ANSIBLE VERSION

```

ansible 2.1.0.0

config file = /etc/ansible/ansible.cfg

configured module search path = Default w/o overrides

```

##### CONFIGURATION

Only default settings

##### OS / ENVIRONME... | main | ansible module bug issue type bug report component name module ansible version ansible config file etc ansible ansible cfg configured module search path default w o overrides configuration only default settings os environment... | 1 |

27,741 | 8,031,831,597 | IssuesEvent | 2018-07-28 07:20:51 | godotengine/godot | https://api.github.com/repos/godotengine/godot | closed | On Windows at least building with a -j > 1 scons parameter fails with a file not found even though the file exists | bug confirmed platform:windows topic:buildsystem | **Operating system or device - Godot version:**

Windows 10 Pro 64-bit, Godot source 64-bit build (tested with master branch)

Visual Studio 2015 Community, Windows 10 SDK Kit 10586.

Python 2.7.11, Scons 2.5.0, and Pywin32 220 all these tools are 32-bit.

**Issue description** (what happened, and what was expected):

... | 1.0 | On Windows at least building with a -j > 1 scons parameter fails with a file not found even though the file exists - **Operating system or device - Godot version:**

Windows 10 Pro 64-bit, Godot source 64-bit build (tested with master branch)

Visual Studio 2015 Community, Windows 10 SDK Kit 10586.

Python 2.7.11, Scon... | non_main | on windows at least building with a j scons parameter fails with a file not found even though the file exists operating system or device godot version windows pro bit godot source bit build tested with master branch visual studio community windows sdk kit python scons and... | 0 |

46,449 | 11,842,775,455 | IssuesEvent | 2020-03-24 00:06:37 | AObuchow/lsp4xml-extensions-maven | https://api.github.com/repos/AObuchow/lsp4xml-extensions-maven | opened | Build is timing out on CI | build testing | Recently the CI builds have been going on for the total allocated time on GitHub actions (6 hours).

Odds are, some test is missing a timeout duration and going on until the entire build times out.

I will have to ensure all tests have a timeout duration. | 1.0 | Build is timing out on CI - Recently the CI builds have been going on for the total allocated time on GitHub actions (6 hours).

Odds are, some test is missing a timeout duration and going on until the entire build times out.

I will have to ensure all tests have a timeout duration. | non_main | build is timing out on ci recently the ci builds have been going on for the total allocated time on github actions hours odds are some test is missing a timeout duration and going on until the entire build times out i will have to ensure all tests have a timeout duration | 0 |

77,248 | 7,569,604,560 | IssuesEvent | 2018-04-23 05:39:36 | apache/incubator-superset | https://api.github.com/repos/apache/incubator-superset | closed | [js-testing] write more tests for javascripts/explore/reducers/exploreReducer.js | js-testing | current coverage: 34%. bring to 70%. | 1.0 | [js-testing] write more tests for javascripts/explore/reducers/exploreReducer.js - current coverage: 34%. bring to 70%. | non_main | write more tests for javascripts explore reducers explorereducer js current coverage bring to | 0 |

2,051 | 6,952,510,854 | IssuesEvent | 2017-12-06 17:41:37 | OpenRefine/OpenRefine | https://api.github.com/repos/OpenRefine/OpenRefine | closed | Weblate unable to push new translations | maintainability | Now that the master branch is protected, Weblate fails to push new translations. One possible solution would be to change the weblate user to administrator, but that's not really ideal… Any better ideas?

Also, it's currently failing to merge translation files. I will look into solving that. | True | Weblate unable to push new translations - Now that the master branch is protected, Weblate fails to push new translations. One possible solution would be to change the weblate user to administrator, but that's not really ideal… Any better ideas?

Also, it's currently failing to merge translation files. I will look in... | main | weblate unable to push new translations now that the master branch is protected weblate fails to push new translations one possible solution would be to change the weblate user to administrator but that s not really ideal… any better ideas also it s currently failing to merge translation files i will look in... | 1 |

1,464 | 6,363,118,697 | IssuesEvent | 2017-07-31 16:18:54 | duckduckgo/zeroclickinfo-goodies | https://api.github.com/repos/duckduckgo/zeroclickinfo-goodies | closed | Conversions: Trigger mph to kmh conversion | Category: Highest Impact Tasks Maintainer Active Topic: Conversions | These don't trigger unless you change it to "kmph":

**55 mph in kmh**

[https://duckduckgo.com/?q=55%20mph%20in%20kmh&kp=1&kad=wt_WT&kl=wt-wt](https://duckduckgo.com/?q=55%20mph%20in%20kmh&kp=1&kad=wt_WT&kl=wt-wt)

**661 mph to km/h**

[https://duckduckgo.com/?q=661%20mph%20to%20km%2Fh&kp=1&kad=wt_WT&kl=wt-wt](htt... | True | Conversions: Trigger mph to kmh conversion - These don't trigger unless you change it to "kmph":

**55 mph in kmh**

[https://duckduckgo.com/?q=55%20mph%20in%20kmh&kp=1&kad=wt_WT&kl=wt-wt](https://duckduckgo.com/?q=55%20mph%20in%20kmh&kp=1&kad=wt_WT&kl=wt-wt)

**661 mph to km/h**

[https://duckduckgo.com/?q=661%20m... | main | conversions trigger mph to kmh conversion these don t trigger unless you change it to kmph mph in kmh mph to km h ia page mintsoft | 1 |

4,225 | 20,909,147,562 | IssuesEvent | 2022-03-24 07:27:12 | pypiserver/pypiserver | https://api.github.com/repos/pypiserver/pypiserver | opened | Rename `master` branch to `main` | type.Maintainance | To be consistent with the guidelines.

The proposal is to follow the suggestions here https://github.com/github/renaming.

Personally, I believe this would be a good step, other ideas are welcome.

Planning to do this _after_ the next release is published (#419) ✌️

| True | Rename `master` branch to `main` - To be consistent with the guidelines.

The proposal is to follow the suggestions here https://github.com/github/renaming.

Personally, I believe this would be a good step, other ideas are welcome.

Planning to do this _after_ the next release is published (#419) ✌️

| main | rename master branch to main to be consistent with the guidelines the proposal is to follow the suggestions here personally i believe this would be a good step other ideas are welcome planning to do this after the next release is published ✌️ | 1 |

94,360 | 19,534,025,517 | IssuesEvent | 2021-12-31 00:18:02 | GTNewHorizons/GT-New-Horizons-Modpack | https://api.github.com/repos/GTNewHorizons/GT-New-Horizons-Modpack | closed | Witchery++ baba yaga spawn rate | Status: CodeComplete Status: Need to be Tested | #### Which modpack version are you using?

2.0.4.6

#

#### What do you suggest instead/what changes do you propose?

Change the spawn rate of the bags yaga using the crystal ball. The times I tried, I must have put 8-9 hours into the dumb fortunes and got the spawn once. It's just dumb, it's not interesting to do the ... | 1.0 | Witchery++ baba yaga spawn rate - #### Which modpack version are you using?

2.0.4.6

#

#### What do you suggest instead/what changes do you propose?

Change the spawn rate of the bags yaga using the crystal ball. The times I tried, I must have put 8-9 hours into the dumb fortunes and got the spawn once. It's just du... | non_main | witchery baba yaga spawn rate which modpack version are you using what do you suggest instead what changes do you propose change the spawn rate of the bags yaga using the crystal ball the times i tried i must have put hours into the dumb fortunes and got the spawn once it s just du... | 0 |

4,356 | 22,035,596,864 | IssuesEvent | 2022-05-28 14:23:28 | BioArchLinux/Packages | https://api.github.com/repos/BioArchLinux/Packages | closed | [MAINTAIN] sukana | maintain | **Please check all your packages requirement**

saddly, there is one package **r-metabma** when compile it, it always broke the server, please check a normal 16G RAM can compile it or not. I have rm it to the prepare

**Log of the bug**

<details>

```

-` root@bioarchlinux

... | True | [MAINTAIN] sukana - **Please check all your packages requirement**

saddly, there is one package **r-metabma** when compile it, it always broke the server, please check a normal 16G RAM can compile it or not. I have rm it to the prepare

**Log of the bug**

<details>

```

-` ... | main | sukana please check all your packages requirement saddly there is one package r metabma when compile it it always broke the server please check a normal ram can compile it or not i have rm it to the prepare log of the bug root bioarchlinux ... | 1 |

65,249 | 19,297,087,777 | IssuesEvent | 2021-12-12 19:15:26 | openzfs/zfs | https://api.github.com/repos/openzfs/zfs | closed | Installation order issue | Type: Documentation Type: Defect | I broke three fresh Fedora 34/35 installations after having installed ZFS as mentioned in your documentation here:

https://openzfs.github.io/openzfs-docs/Getting%20Started/Fedora/index.html

The command...

```

dnf install -y kernel-devel zfs

```

...causes to the following error:

```

Loading new zfs-2.1.1 DKM... | 1.0 | Installation order issue - I broke three fresh Fedora 34/35 installations after having installed ZFS as mentioned in your documentation here:

https://openzfs.github.io/openzfs-docs/Getting%20Started/Fedora/index.html

The command...

```

dnf install -y kernel-devel zfs

```

...causes to the following error:

```... | non_main | installation order issue i broke three fresh fedora installations after having installed zfs as mentioned in your documentation here the command dnf install y kernel devel zfs causes to the following error loading new zfs dkms files building for module... | 0 |

28,308 | 6,978,852,497 | IssuesEvent | 2017-12-12 19:03:45 | flutter/flutter | https://api.github.com/repos/flutter/flutter | opened | Firebase codelab uses Android Studio as example, but doesn't show IntelliJ version. | dev: docs - codelab | For installing Google Repository, on [this page](https://codelabs.developers.google.com/codelabs/flutter-firebase/index.html#4), the codelab says to use "Android Studio > Tools > Android > SDK Manager > SDK Tools", but there is a similar method on IntelliJ that it doesn't show (or have screen shots for) which starts wi... | 1.0 | Firebase codelab uses Android Studio as example, but doesn't show IntelliJ version. - For installing Google Repository, on [this page](https://codelabs.developers.google.com/codelabs/flutter-firebase/index.html#4), the codelab says to use "Android Studio > Tools > Android > SDK Manager > SDK Tools", but there is a simi... | non_main | firebase codelab uses android studio as example but doesn t show intellij version for installing google repository on the codelab says to use android studio tools android sdk manager sdk tools but there is a similar method on intellij that it doesn t show or have screen shots for which starts with... | 0 |

557 | 4,006,257,118 | IssuesEvent | 2016-05-12 14:25:19 | ESAPI/esapi-java-legacy | https://api.github.com/repos/ESAPI/esapi-java-legacy | opened | Create File Validator that checks Magic Bytes as Opposed to Extensions | Component-Validator enhancement Maintainability Validation | This Might make more sense as its own little mini-module, but we can discuss it here. Apache [Tika](https://tika.apache.org/) is a library that can be used to parse and inspect file headers to determine actual file types to mitigate the issue of say, renaming `netcat.exe` to `imsafe.docx` for the purposes of getting a... | True | Create File Validator that checks Magic Bytes as Opposed to Extensions - This Might make more sense as its own little mini-module, but we can discuss it here. Apache [Tika](https://tika.apache.org/) is a library that can be used to parse and inspect file headers to determine actual file types to mitigate the issue of ... | main | create file validator that checks magic bytes as opposed to extensions this might make more sense as its own little mini module but we can discuss it here apache is a library that can be used to parse and inspect file headers to determine actual file types to mitigate the issue of say renaming netcat exe to... | 1 |

5,322 | 26,882,654,281 | IssuesEvent | 2023-02-05 20:29:37 | IzK-ArcOS/ArcOS-Environment | https://api.github.com/repos/IzK-ArcOS/ArcOS-Environment | closed | Refactoring ArcOS & implementing build systems | enhancement maintainability | As the codebase grows, it might be time to rethink the project structure, code and build systems.

Right now, ArcOS doesn't use build systems aside from `electron-packager`, has no project-wide linter, is written in plain JavaScript and CSS without preprocessors, does not follow directory structure conventions and th... | True | Refactoring ArcOS & implementing build systems - As the codebase grows, it might be time to rethink the project structure, code and build systems.

Right now, ArcOS doesn't use build systems aside from `electron-packager`, has no project-wide linter, is written in plain JavaScript and CSS without preprocessors, does ... | main | refactoring arcos implementing build systems as the codebase grows it might be time to rethink the project structure code and build systems right now arcos doesn t use build systems aside from electron packager has no project wide linter is written in plain javascript and css without preprocessors does ... | 1 |

2,706 | 9,531,849,402 | IssuesEvent | 2019-04-29 17:01:47 | codestation/qcma | https://api.github.com/repos/codestation/qcma | closed | Request - Release with latest changes | unmaintained | No changes have been applied in the past months. Is it possible to make a release with all changes, including removal of deprecated ffmpeg flags?

Thanks. | True | Request - Release with latest changes - No changes have been applied in the past months. Is it possible to make a release with all changes, including removal of deprecated ffmpeg flags?

Thanks. | main | request release with latest changes no changes have been applied past months is there some possibility to make a release with all changes including removal of deprecated ffmpeg flags thanks | 1 |

321,491 | 9,799,309,435 | IssuesEvent | 2019-06-11 14:10:42 | python/mypy | https://api.github.com/repos/python/mypy | closed | None is allowed in generic instantiation regardless of bound | bug new-semantic-analyzer priority-1-normal topic-strict-optional topic-type-variables | This is a little (inconsequential) oddity I've noticed. It seems like `None` bypasses any generic bounds even in strict mode.

* Are you reporting a bug, or opening a feature request?

Bug.

* Please insert below the code you are checking with mypy.

```python

from typing import TypeVar, Generic

T = TypeVar... | 1.0 | None is allowed in generic instantiation regardless of bound - This is a little (inconsequential) oddity I've noticed. It seems like `None` bypasses any generic bounds even in strict mode.

* Are you reporting a bug, or opening a feature request?

Bug.

* Please insert below the code you are checking with mypy.

... | non_main | none is allowed in generic instantiation regardless of bound this is a little inconsequential oddity i ve noticed it seems like none bypasses any generic bounds even in strict mode are you reporting a bug or opening a feature request bug please insert below the code you are checking with mypy ... | 0 |

176,014 | 28,013,578,577 | IssuesEvent | 2023-03-27 20:34:15 | flutter/flutter | https://api.github.com/repos/flutter/flutter | closed | Button splash radius does not update when pressed | framework f: material design has reproducible steps found in release: 3.7 found in release: 3.8 | ## Steps to Reproduce

1. Update buttons border radius when pressed.

**Expected results:**

Buttons splash border radius updates when pressed.

**Actual results:**

Buttons splash border radius... | 1.0 | Button splash radius does not update when pressed - ## Steps to Reproduce

1. Update buttons border radius when pressed.

**Expected results:**

Buttons splash border radius updates when pressed.

... | non_main | button splash radius does not update when pressed steps to reproduce update buttons border radius when pressed expected results buttons splash border radius updates when pressed actual results buttons splash border radius does not update when pressed code sam... | 0 |

1,590 | 6,572,372,993 | IssuesEvent | 2017-09-11 01:48:35 | ansible/ansible-modules-extras | https://api.github.com/repos/ansible/ansible-modules-extras | closed | ecs_service - Application Load Balancer register | affects_2.1 aws cloud feature_idea waiting_on_maintainer | <!--- Verify first that your issue/request is not already reported in GitHub -->

##### ISSUE TYPE

<!--- Pick one below and delete the rest: -->

- Feature Idea

##### COMPONENT NAME

ecs_service

##### ANSIBLE VERSION

<!--- Paste verbatim output from “ansible --version” between quotes below -->

```

ansible 2.1.1.0

co... | True | ecs_service - Application Load Balancer register - <!--- Verify first that your issue/request is not already reported in GitHub -->

##### ISSUE TYPE

<!--- Pick one below and delete the rest: -->

- Feature Idea

##### COMPONENT NAME

ecs_service

##### ANSIBLE VERSION

<!--- Paste verbatim output from “ansible --version”... | main | ecs service application load balancer register issue type feature idea component name ecs service ansible version ansible config file etc ansible ansible cfg configured module search path default w o overrides os environment distributor id ubuntu desc... | 1 |

240,660 | 20,067,723,023 | IssuesEvent | 2022-02-04 00:06:39 | elastic/kibana | https://api.github.com/repos/elastic/kibana | opened | [test-failed]: Chrome X-Pack UI Functional Tests1.x-pack/test/functional/apps/monitoring/beats/cluster·js - Monitoring app beats cluster "before all" hook for "shows beats panel with data" | failed-test test-cloud | **Version: 8.1.0**

**Class: Chrome X-Pack UI Functional Tests1.x-pack/test/functional/apps/monitoring/beats/cluster·js**

**Stack Trace:**

```

Error: retry.try timeout: Error: expected testSubject(superDatePickerQuickMenu) to exist

at TestSubjects.existOrFail (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-te... | 2.0 | [test-failed]: Chrome X-Pack UI Functional Tests1.x-pack/test/functional/apps/monitoring/beats/cluster·js - Monitoring app beats cluster "before all" hook for "shows beats panel with data" - **Version: 8.1.0**

**Class: Chrome X-Pack UI Functional Tests1.x-pack/test/functional/apps/monitoring/beats/cluster·js**

**Stack ... | non_main | chrome x pack ui functional x pack test functional apps monitoring beats cluster·js monitoring app beats cluster before all hook for shows beats panel with data version class chrome x pack ui functional x pack test functional apps monitoring beats cluster·js stack trace error r... | 0 |

1,365 | 5,889,631,907 | IssuesEvent | 2017-05-17 13:21:32 | ansible/ansible-modules-extras | https://api.github.com/repos/ansible/ansible-modules-extras | closed | maven_artifact does not use classifier when constructing destination name of artifact | affects_2.2 bug_report waiting_on_maintainer | ##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

maven_artifact

##### ANSIBLE VERSION

```

$ ansible --version

ansible 2.2.0 (devel 1fc44e4103) last updated 2016/05/02 13:44:52 (GMT +1050)

lib/ansible/modules/core: (detached HEAD b6ad3b6773) last updated 2016/05/02 13:45:21 (GMT +1050)

lib/ansible/modules/extras... | True | maven_artifact does not use classifier when constructing destination name of artifact - ##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

maven_artifact

##### ANSIBLE VERSION

```

$ ansible --version

ansible 2.2.0 (devel 1fc44e4103) last updated 2016/05/02 13:44:52 (GMT +1050)

lib/ansible/modules/core: (detached HE... | main | maven artifact does not use classifier when constructing destination name of artifact issue type bug report component name maven artifact ansible version ansible version ansible devel last updated gmt lib ansible modules core detached head last updated ... | 1 |

72,152 | 31,167,983,340 | IssuesEvent | 2023-08-16 21:27:30 | BCDevOps/developer-experience | https://api.github.com/repos/BCDevOps/developer-experience | opened | Shrink disk on 6 Silver app nodes | *team/ DXC* *team/ ops and shared services* | **Describe the issue**

In an effort to save money, test out using 500G disks on 6 VM workers instead of 800G.

**What is the Value/Impact?**

Reduced costs

**What is the plan? How will this get completed?**

Create CHG and send notifications

Work with VMware team to rebuild the nodes with smaller disks

**Identify any d... | 1.0 | Shrink disk on 6 Silver app nodes - **Describe the issue**

In an effort to save money, test out using 500G disks on 6 VM workers instead of 800G.

**What is the Value/Impact?**

Reduced costs

**What is the plan? How will this get completed?**

Create CHG and send notifications

Work with VMware team to rebuild the nodes ... | non_main | shrink disk on silver app nodes describe the issue in an effort to save money test out using disks on vm workers instead of what is the value impact reduced costs what is the plan how will this get completed create chg and send notifications work with vmware team to rebuild the nodes with s... | 0 |

3,232 | 12,368,706,361 | IssuesEvent | 2020-05-18 14:13:29 | Kashdeya/Tiny-Progressions | https://api.github.com/repos/Kashdeya/Tiny-Progressions | closed | [Suggestion] Toggle Birthday Pickaxe Cake | Version not Maintainted | I think the Birthday Pickaxe is a great tool with an adjusted recipe. I can already do that with craft-tweaker, but what I can't change is whether or not the cake is placed and takes a massive hit of durability from it. I get that this pickaxe came from a joke, but it would be great for that to be toggleable. | True | [Suggestion] Toggle Birthday Pickaxe Cake - I think the Birthday Pickaxe is a great tool with an adjusted recipe. I can already do that with craft-tweaker, but what I can't change is whether or not the cake is placed and takes a massive hit of durability from it. I get that this pickaxe came from a joke, but it would b... | main | toggle birthday pickaxe cake i think the birthday pickaxe is a great tool with an adjusted recipe i can already do that with craft tweaker but what i can t change is whether or not the cake is placed and takes a massive hit of durability from it i get that this pickaxe came from a joke but it would be great for... | 1 |

22,714 | 32,038,498,240 | IssuesEvent | 2023-09-22 17:14:44 | bazelbuild/bazel | https://api.github.com/repos/bazelbuild/bazel | closed | 120850Release X.Y.Z - $MONTH $YEAR | P1 type: process release team-OSS | # Status of Bazel X.Y.Z

- Expected first release candidate date: [date]

- Expected release date: [date]

- [List of release blockers](link-to-milestone)

To report a release-blocking bug, please add a comment with the text `@bazel-io flag` to the issue. A release manager will triage it and add it to the mil... | 1.0 | 120850Release X.Y.Z - $MONTH $YEAR - # Status of Bazel X.Y.Z

- Expected first release candidate date: [date]

- Expected release date: [date]

- [List of release blockers](link-to-milestone)

To report a release-blocking bug, please add a comment with the text `@bazel-io flag` to the issue. A release manager... | non_main | x y z month year status of bazel x y z expected first release candidate date expected release date link to milestone to report a release blocking bug please add a comment with the text bazel io flag to the issue a release manager will triage it and add it to the milestone ... | 0 |

4,954 | 25,455,594,266 | IssuesEvent | 2022-11-24 13:57:15 | pace/bricks | https://api.github.com/repos/pace/bricks | closed | service generation: extend Makefile | T::Maintainance | When generating a new service, also generate an extended Makefile. Something like this:

```Makefile

.PHONY: all lint test integration

GOPATH?=~/go

all: bin/foobarctl bin/foobard

bin/foobarctl: cmd/foobarctl

go build -mod=vendor -o $@ ./$<

bin/foobard: cmd/foobard

go build -mod=vendor -o $@ ./$<

lin... | True | service generation: extend Makefile - When generating a new service, also generate an extended Makefile. Something like this:

```Makefile

.PHONY: all lint test integration

GOPATH?=~/go

all: bin/foobarctl bin/foobard

bin/foobarctl: cmd/foobarctl

go build -mod=vendor -o $@ ./$<

bin/foobard: cmd/foobard

... | main | service generation extend makefile when generating a new service also generate an extended makefile something like this makefile phony all lint test integration gopath go all bin foobarctl bin foobard bin foobarctl cmd foobarctl go build mod vendor o bin foobard cmd foobard ... | 1 |

90,314 | 18,107,037,987 | IssuesEvent | 2021-09-22 20:21:34 | StanfordBioinformatics/pulsar_lims | https://api.github.com/repos/StanfordBioinformatics/pulsar_lims | closed | ENCODE data submission: ENCSR079SHZ Western, marker missing | Encode IP submission | https://www.encodeproject.org/experiments/ENCSR079SHZ/

Hi Tao and Cory, can you check this experiment? I believe the biosample characterization is actually okay, but it needs labels on the marker.

I don’t think it’s in Pulsar either. Thanks, Annika | 1.0 | ENCODE data submission: ENCSR079SHZ Western, marker missing - https://www.encodeproject.org/experiments/ENCSR079SHZ/

Hi Tao and Cory, can you check this experiment? I believe the biosample characterization is actually okay, but it needs labels on the marker.

I don’t think it’s in Pulsar either. Thanks, Annika | non_main | encode data submission western marker missing hi tao and cory can you check this experiment i believe the biosample characterization is actually okay but it needs labels on the marker i don’t think it’s in pulsar either thanks annika | 0 |

4,013 | 18,736,464,756 | IssuesEvent | 2021-11-04 08:20:25 | aws/aws-sam-cli | https://api.github.com/repos/aws/aws-sam-cli | closed | Process custom global transform macros | type/feature maintainer/need-response | <!-- Make sure we don't have an existing Issue that reports the bug you are seeing (both open and closed). -->

### Describe your idea/feature/enhancement

As far as I can tell, `sam local start-api` ignores the `Transforms` section of the template. This is problematic for anybody trying to do template transforms bef... | True | Process custom global transform macros - <!-- Make sure we don't have an existing Issue that reports the bug you are seeing (both open and closed). -->

### Describe your idea/feature/enhancement

As far as I can tell, `sam local start-api` ignores the `Transforms` section of the template. This is problematic for any... | main | process custom global transform macros describe your idea feature enhancement as far as i can tell sam local start api ignores the transforms section of the template this is problematic for anybody trying to do template transforms before the serverless transform because sam wont understand custom reso... | 1 |

240,625 | 20,051,989,962 | IssuesEvent | 2022-02-03 07:54:34 | elastic/kibana | https://api.github.com/repos/elastic/kibana | opened | Failing test: Chrome X-Pack UI Functional Tests.x-pack/test/functional/apps/transform/creation_runtime_mappings·ts - transform creation with runtime mappings batch transform with unique rt_airline_lower and sort by time and runtime mappings navigates through the wizard and sets all needed fields | failed-test | A test failed on a tracked branch

```

Error: Transform id input text should be 'fq_2_1643874372355' (got '')

at Assertion.assert (/opt/local-ssd/buildkite/builds/kb-n2-4-b847c70509228dfd/elastic/kibana-hourly/kibana/node_modules/@kbn/expect/expect.js:100:11)

at Assertion.eql (/opt/local-ssd/buildkite/builds/kb... | 1.0 | Failing test: Chrome X-Pack UI Functional Tests.x-pack/test/functional/apps/transform/creation_runtime_mappings·ts - transform creation with runtime mappings batch transform with unique rt_airline_lower and sort by time and runtime mappings navigates through the wizard and sets all needed fields - A test failed on a tr... | non_main | failing test chrome x pack ui functional tests x pack test functional apps transform creation runtime mappings·ts transform creation with runtime mappings batch transform with unique rt airline lower and sort by time and runtime mappings navigates through the wizard and sets all needed fields a test failed on a tr... | 0 |

679 | 4,226,587,416 | IssuesEvent | 2016-07-02 15:21:23 | duckduckgo/zeroclickinfo-spice | https://api.github.com/repos/duckduckgo/zeroclickinfo-spice | closed | Amazon: Add shipping information | Maintainer Input Requested |

The amazon product price shown does not include shipping. Add a separate shipping field?

ie.

https://www.amazon.com/dp/0545302021

9.49 + 5.49 shipping

------

IA Page: http://duck.co/ia/view/products

[Maintainer](http://docs.duckduckhack.com/maintaining/guidelines.html): @bsstoner | True | Amazon: Add shipping information -

The amazon product price shown does not include shipping. Add a separate shipping field?

ie.

https://www.amazon.com/dp/0545302021

9.49 + 5.49 shipping

------

IA Page: http://duck.co/ia/view/products

[Maintainer](http://docs.duckduckhack.com/maintaining/guidelines.html):... | main | amazon add shipping information the amazon product price shown does not include shipping add a separate shipping field ie shipping ia page bsstoner | 1 |

33,480 | 7,720,467,561 | IssuesEvent | 2018-05-23 23:20:15 | wallabyjs/public | https://api.github.com/repos/wallabyjs/public | closed | VSCode - Show code coverage at the bottom right corner | UX VS Code feature request | Hi!

One feature that would be really nice to have until the webapp supports multiple projects is to have code coverage displayed in the bottom right corner of the VS Code extension.

**Proposition:**

Show code coverage at the bottom right by default

Add a setting that can disable code coverage.

If multi-root wor... | 1.0 | VSCode - Show code coverage at the bottom right corner - Hi!

One feature that would be really nice to have until the webapp supports multiple projects is to have code coverage displayed in the bottom right corner of the VS Code extension.

**Proposition:**

Show code coverage at the bottom right by default

Add a s... | non_main | vscode show code coverage at the bottom right corner hi one feature that would be really nice to have until the webapp support multiple projects is to have code coverage displayed in the bottom right corner of the vs code extension proposition show code coverage at the bottom right by default add a s... | 0 |

1,686 | 6,574,166,156 | IssuesEvent | 2017-09-11 11:47:18 | ansible/ansible-modules-core | https://api.github.com/repos/ansible/ansible-modules-core | closed | ec2_elb_lb misconfigures minimum size, desired size, and maximum size | affects_2.2 aws bug_report cloud waiting_on_maintainer | <!--- Verify first that your issue/request is not already reported in GitHub -->

##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

ec2_elb_lb

##### ANSIBLE VERSION

```

ansible 2.2.0.0

config file = /etc/ansible/ansible.cfg

configured module search path = Default w/o overrides

```

##### CONFIGUR... | True | ec2_elb_lb misconfigures minimum size, desired size, and maximum size - <!--- Verify first that your issue/request is not already reported in GitHub -->

##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

ec2_elb_lb

##### ANSIBLE VERSION

```

ansible 2.2.0.0

config file = /etc/ansible/ansible.cfg

conf... | main | elb lb misconfigures minimum size desired size and maximum size issue type bug report component name elb lb ansible version ansible config file etc ansible ansible cfg configured module search path default w o overrides configuration defa... | 1 |

3,680 | 15,037,148,754 | IssuesEvent | 2021-02-02 16:01:34 | IITIDIDX597/sp_2021_team1 | https://api.github.com/repos/IITIDIDX597/sp_2021_team1 | opened | Most frequently annotated content (administrator side) | Epic: 4 Personal control of information Epic: 5 Maintaining the system Story Week 3 | **Project Goal:** S Lab is a tailored integrative learning and collaboration platform for clinicians that combines the latest research and tacit knowledge gained from experience in a practical way, while at the same time foster deeper learning experiences in order to deliver better AbilityLab Patient care.

**Hill Stat... | True | Most frequently annotated content (administrator side) - **Project Goal:** S Lab is a tailored integrative learning and collaboration platform for clinicians that combines the latest research and tacit knowledge gained from experience in a practical way, while at the same time foster deeper learning experiences in orde... | main | most frequently annotated content administrator side project goal s lab is a tailored integrative learning and collaboration platform for clinicians that combines the latest research and tacit knowledge gained from experience in a practical way while at the same time foster deeper learning experiences in orde... | 1 |

4,255 | 21,096,763,045 | IssuesEvent | 2022-04-04 11:03:51 | Lissy93/dashy | https://api.github.com/repos/Lissy93/dashy | closed | [FEATURE_REQUEST] Email notification when a service is down | 🦄 Feature Request 🛑 No Response 👤 Awaiting Maintainer Response | ### Is your feature request related to a problem? If so, please describe.

Hi,

First thank you for this wonderfull piece of software, it is very handy and easy to use.

Regarding the http status check, it would be great if you could implement an email notification when a service is down.

I do not want to depl... | True | [FEATURE_REQUEST] Email notification when a service is down - ### Is your feature request related to a problem? If so, please describe.

Hi,

First, thank you for this wonderful piece of software; it is very handy and easy to use.

Regarding the http status check, it would be great if you could implement an email... | main | email notification when a service is down is your feature request related to a problem if so please describe hi first thank you for this wonderfull piece of software it is very handy and easy to use regarding the http status check it would be great if you could implement an email notification wh... | 1 |

3,014 | 11,140,135,438 | IssuesEvent | 2019-12-21 11:50:33 | ansible/ansible | https://api.github.com/repos/ansible/ansible | closed | fix terraform module support for no workspace | affects_2.9 bug cloud has_pr module needs_maintainer needs_triage python3 support:community | ##### SUMMARY

Some terraform backends don't support workspaces. The module should not fail when using them.

The module fails with messages like:

- `Failed to list Terraform workspaces:\r\nworkspaces not supported\n`

- `Failed to list Terraform workspaces:\r\nnamed states not supported\n`

Related to:

- https:/... | True | fix terraform module support for no workspace - ##### SUMMARY

Some terraform backends don't support workspaces. The module should not fail when using them.

The module fails with messages like:

- `Failed to list Terraform workspaces:\r\nworkspaces not supported\n`

- `Failed to list Terraform workspaces:\r\nnamed s... | main | fix terraform module support for no workspace summary some terraform backends don t support workspaces the module should not fail when using them the module fails with messages like failed to list terraform workspaces r nworkspaces not supported n failed to list terraform workspaces r nnamed s... | 1 |

25,644 | 12,266,741,674 | IssuesEvent | 2020-05-07 09:28:09 | PrestaShop/PrestaShop | https://api.github.com/repos/PrestaShop/PrestaShop | closed | Accented & special characters misinterpreted in customer service messages | 1.7.6.4 1.7.6.5 BO Customer service NMI | #### Describe the bug

Accented & special characters are misinterpreted in customer service messages (SAV in French).

#### Expected behavior

Accented & special characters are interpreted correctly, as the customer wrote them in the original message.

#### Steps to Reproduce

Steps to reproduce the behavior:

1. In FO, wr... | 1.0 | Accented & special characters misinterpreted in customer service messages - #### Describe the bug

Accented & special characters are misinterpreted in customer service messages (SAV in French).

#### Expected behavior

Accented & special characters are interpreted correctly, as the customer wrote them in the original message.

... | non_main | accented special characters misinterpreted in customer service messages describe the bug accented special characters misinterpreted in customer service messages sav in french expected behavior a well interpretation of accented special characters as customer wrote it in original message ... | 0 |

3,273 | 12,501,076,921 | IssuesEvent | 2020-06-02 00:08:45 | unoplatform/uno | https://api.github.com/repos/unoplatform/uno | closed | Building Uno.UI.sln from the command line does not download %TEMP%\mono-wasm-e894d683f9f | area/vswin kind/bug kind/contributor-experience kind/maintainer-experience | ## Current behavior

```

empty %TEMP%\*.*

git clone master

cd src

msbuild /m /t:restore Uno.UI.sln

msbuild /m Uno.UI.sln

```

- Compilation fails with `%TEMP%\mono-wasm-e894d683f9f` does not exist.

- Opening Visual Studio and doing right click -> build SamplesApp.Wasm will create `%TEMP%\mono-wasm-e894d683f9f`... | True | Building Uno.UI.sln from the command line does not download %TEMP%\mono-wasm-e894d683f9f - ## Current behavior

```

empty %TEMP%\*.*

git clone master

cd src

msbuild /m /t:restore Uno.UI.sln

msbuild /m Uno.UI.sln

```

- Compilation fails with `%TEMP%\mono-wasm-e894d683f9f` does not exist.

- Opening Visual Studi... | main | building uno ui sln from the command line does not download temp mono wasm current behavior empty temp git clone master cd src msbuild m t restore uno ui sln msbuild m uno ui sln compilation fails with temp mono wasm does not exist opening visual studio and doing right cl... | 1 |

3,630 | 14,679,371,940 | IssuesEvent | 2020-12-31 06:49:45 | backdrop-ops/contrib | https://api.github.com/repos/backdrop-ops/contrib | closed | Maintainer change request | Maintainer change request | Thank you for adopting an abandoned Backdrop project.

Please note the procedure to add new maintainers:

1. If you haven't already, please join the Backdrop Contrib group by submitting an application.~

2. File an issue in the current project's issue queue requesting to help maintain the project.

3. If written p... | True | Maintainer change request - Thank you for adopting an abandoned Backdrop project.

Please note the procedure to add new maintainers:

1. If you haven't already, please join the Backdrop Contrib group by submitting an application.~

2. File an issue in the current project's issue queue requesting to help maintain t... | main | maintainer change request thank you for adopting an abandoned backdrop project please note the procedure to add new maintainers if you haven t already please join the backdrop contrib group by submitting an application file an issue in the current project s issue queue requesting to help maintain t... | 1 |

1,780 | 6,575,830,198 | IssuesEvent | 2017-09-11 17:29:34 | ansible/ansible-modules-core | https://api.github.com/repos/ansible/ansible-modules-core | closed | hg: updating to empty changeset (a.k.a. revision -1 or null) doesn't work | affects_2.1 bug_report waiting_on_maintainer | ##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

hg

##### ANSIBLE VERSION

```

ansible 2.1.1.0

config file = /home/argh/.ansible.cfg

configured module search path = Default w/o overrides

```

##### CONFIGURATION

##### OS / ENVIRONMENT

Running from Debian GNU/Linux testing, managing Ubuntu 14.04.5 LTS.

##### SUMM... | True | hg: updating to empty changeset (a.k.a. revision -1 or null) doesn't work - ##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

hg

##### ANSIBLE VERSION

```

ansible 2.1.1.0

config file = /home/argh/.ansible.cfg

configured module search path = Default w/o overrides

```

##### CONFIGURATION

##### OS / ENVIRONMENT

Ru... | main | hg updating to empty changeset a k a revision or null doesn t work issue type bug report component name hg ansible version ansible config file home argh ansible cfg configured module search path default w o overrides configuration os environment ru... | 1 |

3,709 | 15,188,224,725 | IssuesEvent | 2021-02-15 14:50:15 | carbon-design-system/carbon | https://api.github.com/repos/carbon-design-system/carbon | closed | Tag component with css variables | status: needs triage 🕵️♀️ status: waiting for maintainer response 💬 type: enhancement 💡 | Hi!

As far as I can see, this example is not working: https://www.carbondesignsystem.com/components/tag/code

If I change the theme, the colors don't react, because the CSS custom property is undefined and the fallback is always active.

We are facing the same problem, because if we add this to our root scss, it's don't contains ... | True | Tag component with css variables - Hi!

As far as I can see, this example is not working: https://www.carbondesignsystem.com/components/tag/code

If I change the theme, the colors don't react, because the CSS custom property is undefined and the fallback is always active.

We are facing the same problem, because if we add this to ... | main | tag component with css variables hi as i see this example not working if i change the theme the colors don t react because the css custom variable is undefined and always the fallback is active we are facing the same problem because if we add this to our root scss it s don t contains the vars for tag ... | 1 |

4,455 | 23,184,394,518 | IssuesEvent | 2022-08-01 07:02:35 | beefproject/beef | https://api.github.com/repos/beefproject/beef | closed | Webcam HTML5 TypeError | Module Maintainability Medium | #### Environment

What version/revision of BeEF are you using? 0.4.7.0-alpha

On what version of Ruby? ruby 2.3.0p0

On what browser? Chrome Version 71.0.3578.98 (Official Build) (64-bit)

On what operating system? ubuntu 16.04 LTS

#### Configuration

Are you using a non-default configuration? yes (to h... | True | Webcam HTML5 TypeError - #### Environment

What version/revision of BeEF are you using? 0.4.7.0-alpha

On what version of Ruby? ruby 2.3.0p0

On what browser? Chrome Version 71.0.3578.98 (Official Build) (64-bit)

On what operating system? ubuntu 16.04 LTS

#### Configuration

Are you using a non-default... | main | webcam typeerror environment what version revision of beef are you using alpha on what version of ruby ruby on what browser chrome version official build bit on what operating system ubuntu lts configuration are you using a non default configuration... | 1 |

5,445 | 27,263,875,306 | IssuesEvent | 2023-02-22 16:36:37 | centerofci/mathesar | https://api.github.com/repos/centerofci/mathesar | opened | Refactor Icon component to decouple "notification dot" functionality | type: enhancement work: frontend status: draft restricted: maintainers | In #2514 we modified the `Icon` component to support a red notification dot. We'd like to move that functionality out of `Icon` and into some other mechanism so that `Icon` is kept simple. We need to give some thought to the best way to design the code for the notification dot. Should it be a Svelte action so that we c... | True | Refactor Icon component to decouple "notification dot" functionality - In #2514 we modified the `Icon` component to support a red notification dot. We'd like to move that functionality out of `Icon` and into some other mechanism so that `Icon` is kept simple. We need to give some thought to the best way to design the c... | main | refactor icon component to decouple notification dot functionality in we modified the icon component to support a red notification dot we d like to move that functionality out of icon and into some other mechanism so that icon is kept simple we need to give some thought to the best way to design the code... | 1 |

840 | 4,480,013,828 | IssuesEvent | 2016-08-28 00:31:08 | coniks-sys/coniks-java | https://api.github.com/repos/coniks-sys/coniks-java | closed | Use single logger in server and client | maintainability util | Change how logging works:

- Have a single logger class

- Use the following logging convention: "[class] error/exception message" | True | Use single logger in server and client - Change how logging works:

- Have a single logger class

- Use the following logging convention: "[class] error/exception message" | main | use single logger in server and client change how logging works have a single logger class use the following logging convention error exception message | 1 |
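
The convention requested in this issue, one shared logger whose messages read "[class] error/exception message", could be sketched roughly as follows. The target project is written in Java; this Python version is only an illustration of the idea, and the names `ClassPrefixAdapter`, `get_logger`, `Server`, and the logger name `"app"` are assumptions, not anything taken from the repository.

```python
import logging


class ClassPrefixAdapter(logging.LoggerAdapter):
    """Prefixes every message with "[ClassName] " (hypothetical helper)."""

    def process(self, msg, kwargs):
        # self.extra is the dict passed to the adapter's constructor.
        return f"[{self.extra['cls']}] {msg}", kwargs


def get_logger(obj, base_name="app"):
    """Return an adapter around one shared logger, tagged with obj's class name."""
    shared = logging.getLogger(base_name)  # same underlying logger for every caller
    return ClassPrefixAdapter(shared, {"cls": type(obj).__name__})


class Server:
    """Hypothetical component that reports an error through the shared logger."""

    def fail(self):
        get_logger(self).error("could not bind port")
```

Because every caller goes through `logging.getLogger("app")`, handlers and levels are configured in one place, while the adapter only adds the class prefix.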

50,861 | 7,641,457,893 | IssuesEvent | 2018-05-08 05:05:43 | ConsenSys/mythril | https://api.github.com/repos/ConsenSys/mythril | closed | Installation instructions problems | need documentation | Hi,

I have two issues with the installation instructions available at the README:

1. It's not mentioned there, but on macOS you need to install `leveldb` before installing `mythril` from `pip`. `brew install leveldb` worked for me. If you don't install it first, `plyvel`'s compilation will fail.

2. It's not un... | 1.0 | Installation instructions problems - Hi,

I have two issues with the installation instructions available at the README:

1. It's not mentioned there, but on macOS you need to install `leveldb` before installing `mythril` from `pip`. `brew install leveldb` worked for me. If you don't install it first, `plyvel`'s com... | non_main | installation instructions problems hi i have two issues with the installation instructions available at the readme it s not mentioned there but in macos you need to install leveldb before installing mythril from pip brew install leveldb worked for me if you don t install it first plyvel s com... | 0 |

3,912 | 17,469,305,010 | IssuesEvent | 2021-08-06 22:42:08 | SNDST00M/material-dynmap | https://api.github.com/repos/SNDST00M/material-dynmap | opened | Cross-browser testing for endpoints | context-workflow scope-maintainability type-improvement status-evaluation | ## 🏗 Feature Request

#### Is your feature request related to a problem?

Currently the CI workflow does not test whether UI elements are injected into the DOM after running the script.

#### Describe the solution you'd like

By adopting cross-browser testing in the stack, we can check that elements load as expec... | True | Cross-browser testing for endpoints - ## 🏗 Feature Request

#### Is your feature request related to a problem?

Currently the CI workflow does not test whether UI elements are injected into the DOM after running the script.

#### Describe the solution you'd like

By adopting cross-browser testing in the stack, we... | main | cross browser testing for endpoints 🏗 feature request is your feature request related to a problem currently the ci workflow does not test whether ui elements are injected into the dom after running the script describe the solution you d like by adopting cross browser testing in the stack we... | 1 |

14 | 2,515,188,394 | IssuesEvent | 2015-01-15 16:58:43 | simplesamlphp/simplesamlphp | https://api.github.com/repos/simplesamlphp/simplesamlphp | opened | Extract the consentAdmin or consentSimpleAdmin out of the repository | enhancement low maintainability | Pick one of them, the other should get its own repository and allow installation through composer. | True | Extract the consentAdmin or consentSimpleAdmin out of the repository - Pick one of them, the other should get its own repository and allow installation through composer. | main | extract the consentadmin or consentsimpleadmin out of the repository pick one of them the other should get its own repository and allow installation through composer | 1 |

306 | 3,078,425,253 | IssuesEvent | 2015-08-21 10:06:31 | pavel-pimenov/flylinkdc-r5xx | https://api.github.com/repos/pavel-pimenov/flylinkdc-r5xx | closed | r500. Illogical behavior of automatic connection-settings selection. | bug imported Maintainability Priority-Medium | _From [bobrikov](https://code.google.com/u/bobrikov/) on May 06, 2011 12:43:04_

I have a router with UPnP enabled, and in the connection settings I select Firewall with UPnP. When I connect, it writes in the log that port forwarding failed and switches to active mode on its own. (Maybe the router glitched, I don't know; in Strong everything was OK.)

Why ... | True | r500. Illogical behavior of automatic connection-settings selection. - _From [bobrikov](https://code.google.com/u/bobrikov/) on May 06, 2011 12:43:04_

I have a router with UPnP enabled, and in the connection settings I select Firewall with UPnP. When I connect, it writes in the log that port forwarding failed and switches to active mode on its own. (May... | main | illogical behavior of automatic connection settings selection from on may i have a router with upnp enabled and in the connection settings i select firewall with upnp when i connect it writes in the log that port forwarding failed and switches to active mode on its own maybe the router glitched i don t know in strong everything was ok ... | 1 |

143,301 | 21,995,887,603 | IssuesEvent | 2022-05-26 06:16:02 | stores-cedcommerce/Anthony-Store-Design | https://api.github.com/repos/stores-cedcommerce/Anthony-Store-Design | opened | The spacing from the left and right side is not equal. | Header section Mobile Design / UI / UX | **Actual result:**

The spacing from the left and right side is not equal.

**Expected result:**

The spacing can be equal from the left and right side. | 1.0 | The spacing from the left and right side is not equal. - **Actual result:**

The spacing from the left and right side is not equal.

**Expected result:**

The spacing can be equal from the left and right ... | non_main | the spacing from the left and right side is not equal actual result the spacing from the left and right side is not equal expected result the spacing can be equal from the left and right side | 0 |

35,899 | 2,793,819,833 | IssuesEvent | 2015-05-11 13:37:16 | elecoest/allevents-3-2 | https://api.github.com/repos/elecoest/allevents-3-2 | closed | Frontend - display of past events? | auto-migrated Priority-Medium Type-Enhancement | ```

Is it possible to display both upcoming and past events in the

FrontEnd, as AllEvent for Joomla 1.x does?

```

Original issue reported on code.google.com by `antoine....@gmail.com` on 10 Mar 2015 at 8:32 | 1.0 | Frontend - display of past events? - ```

Is it possible to display both upcoming and past events in the

FrontEnd, as AllEvent for Joomla 1.x does?

```

Original issue reported on code.google.com by `antoine....@gmail.com` on 10 Mar 2015 at 8:32 | non_main | frontend display of past events is it possible to display both upcoming and past events in the frontend as allevent for joomla x does original issue reported on code google com by antoine gmail com on mar at | 0 |

188,646 | 14,449,161,933 | IssuesEvent | 2020-12-08 07:37:42 | facebook/react-native | https://api.github.com/repos/facebook/react-native | reopened | App sometimes reloads the js bundle when switching from background to foreground. | Needs: Attention Needs: Repro Needs: Verify on Latest Version | ## Description

App sometimes reloads the js bundle when switching from background to foreground.

## React Native version:

0.61

## Steps To Reproduce

Provide a detailed list of steps that reproduce the issue.

Create a new react-native application. Install react-navigation. Create a stack navigator. Open app,... | 1.0 | App sometimes reloads the js bundle when switching from background to foreground. - ## Description

App sometimes reloads the js bundle when switching from background to foreground.

## React Native version:

0.61

## Steps To Reproduce

Provide a detailed list of steps that reproduce the issue.

Create a new rea... | non_main | app sometimes reloads the js bundle when switching from background to foreground description app sometimes reloads the js bundle when switching from background to foreground react native version steps to reproduce provide a detailed list of steps that reproduce the issue create a new reac... | 0 |

331,499 | 28,965,085,742 | IssuesEvent | 2023-05-10 07:18:37 | unifyai/ivy | https://api.github.com/repos/unifyai/ivy | reopened | Fix backend_handler.test_set_backend | Sub Task Failing Test | | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/4712126583/jobs/8356932101" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-success-success></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/4712126583/jobs/8356932101" rel="noopener ... | 1.0 | Fix backend_handler.test_set_backend - | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/4712126583/jobs/8356932101" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-success-success></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/471... | non_main | fix backend handler test set backend tensorflow img src torch img src numpy img src jax img src | 0 |

4,375 | 22,274,625,853 | IssuesEvent | 2022-06-10 15:23:46 | carbon-design-system/carbon | https://api.github.com/repos/carbon-design-system/carbon | closed | [Bug]: ParserError in index.scss | type: bug 🐛 status: needs triage 🕵️♀️ status: waiting for maintainer response 💬 | ### Package

@carbon/react

### Browser

Chrome

### Package version

1.3.0

### React version

16.10

### Description

Building a project that uses an SCSS file including @use 'carbon/react' fails

# yarn build

Creating an optimized production build...

Browserslist: caniuse-lite is outdated. Please run:

npx browserslist@... | True | [Bug]: ParserError in index.scss - ### Package

@carbon/react

### Browser

Chrome

### Package version

1.3.0

### React version

16.10

### Description

Building a project that uses an SCSS file including @use 'carbon/react' fails

# yarn build

Creating an optimized production build...

Browserslist: caniuse-lite is out... | main | parsererror in index scss package carbon react browser chrome package version react version description building a using a scss including use carbon react project fails yarn build creating an optimized production build browserslist caniuse lite is outdated ... | 1 |

369,517 | 10,914,202,241 | IssuesEvent | 2019-11-21 08:40:24 | devinit/D-Portal | https://api.github.com/repos/devinit/D-Portal | closed | Filter by humanitarian flag ? | feature priority | I can see on an activity page whether the ``humanitarian`` flag is declared:

eg: http://d-portal.org/q.html?aid=GB-CHC-1065972-DF168

At the d-portal search , is it possible to have a filter in o... | 1.0 | Filter by humanitarian flag ? - I can see on an activity page whether the ``humanitaria`` flag is declared :

eg: http://d-portal.org/q.html?aid=GB-CHC-1065972-DF168

At the d-portal search , is i... | non_main | filter by humanitarian flag i can see on an activity page whether the humanitaria flag is declared eg at the d portal search is it possible to have a filter in order to only see search humanitarian activities those with humanitarian or true at the iati activity element ... | 0 |

2,116 | 7,198,243,774 | IssuesEvent | 2018-02-05 12:04:31 | Chromeroni/Hera-Chatbot | https://api.github.com/repos/Chromeroni/Hera-Chatbot | opened | Implement a logging framework | Maintainability to be reviewed | Implement a logging framework, such as log4J.

Create daily logs, which will be stored on the bot's current execution environment. | True | Implement a logging framework - Implement a logging framework, such as log4J.

Create daily logs, which will be stored on the bot's current execution environment. | main | implement a logging framework implement a logging framework such as create daily logs which will be stored on the bots current execution environment | 1 |
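
The issue names log4J, a Java framework. As a language-neutral illustration of the "daily logs stored locally" requirement, here is a minimal sketch using Python's standard `logging` module instead. The function name `build_daily_logger`, the logger name `"bot"`, and the 14-day retention are assumptions, not project decisions.

```python
import logging
from logging.handlers import TimedRotatingFileHandler


def build_daily_logger(log_path, name="bot", keep_days=14):
    """Create a logger that rolls over to a new file at midnight (hypothetical setup)."""
    logger = logging.getLogger(name)
    logger.setLevel(logging.INFO)
    handler = TimedRotatingFileHandler(
        log_path,
        when="midnight",        # roll over once per day
        backupCount=keep_days,  # keep this many rotated files, delete older ones
        encoding="utf-8",
    )
    handler.setFormatter(
        logging.Formatter("%(asctime)s %(levelname)s %(name)s: %(message)s")
    )
    logger.addHandler(handler)
    return logger
```

Calling `build_daily_logger("logs/bot.log")` assumes the `logs` directory already exists on the machine the bot runs on; rotated files get a date suffix appended to the base file name.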