Unnamed: 0 (int64, 1 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (stringclasses, 3 values) | title (string, length 3 to 438) | labels (string, length 4 to 308) | body (string, length 7 to 254k) | index (stringclasses, 7 values) | text_combine (string, length 96 to 254k) | label (stringclasses, 2 values) | text (string, length 96 to 246k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

2,160 | 7,519,425,501 | IssuesEvent | 2018-04-12 11:34:33 | RalfKoban/MiKo-Analyzers | https://api.github.com/repos/RalfKoban/MiKo-Analyzers | closed | Interface methods should not return IList, IDictionary, ICollection | Area: analyzer Area: maintainability feature in progress | Interface methods or public class methods or properties should not get or return

- IList

- IDictionary

- ICollection

- ...

Instead, they should get/return the read-only/immutable counterparts.

Normally, you do not want to add or remove items or clear the list, so the interface should not allow it | True | Interface methods should not return IList, IDictionary, ICollection - Interface methods or public class methods or properties should not get or return

- IList

- IDictionary

- ICollection

- ...

Instead, they should get/return the read-only/immutable counterparts.

Normally, you do not want to add or remove items or clear th... | main | interface methods should not return ilist idictionary icollection interface methods or public class methods or properties should not get or return ilist idictionary icollection instead they should get return the read only immutable counterparts normally you do not want to add or remove items or clear th... | 1 |

4,743 | 24,480,401,021 | IssuesEvent | 2022-10-08 18:59:24 | centerofci/mathesar | https://api.github.com/repos/centerofci/mathesar | opened | Date input should close date picker when losing focus via Tab or Shift+Tab | type: bug work: frontend status: ready restricted: maintainers | ## Steps to reproduce

1. Navigate to the Record Page for one Checkouts record.

1. Click on the "Checkout Time" input field to focus it.

1. Observe the date picker to appear (good).

1. Press Tab to focus on the Due Date input.

1. Expect the Checkout Time date picker to close.

1. Instead, observe the Checkout Time date ... | True | Date input should close date picker when losing focus via Tab or Shift+Tab - ## Steps to reproduce

1. Navigate to the Record Page for one Checkouts record.

1. Click on the "Checkout Time" input field to focus it.

1. Observe the date picker to appear (good).

1. Press Tab to focus on the Due Date input.

1. Expect the Ch... | main | date input should close date picker when losing focus via tab or shift tab steps to reproduce navigate to the record page for one checkouts record click on the checkout time input field to focus it observe the date picker to appear good press tab to focus on the due date input expect the ch... | 1 |

21,127 | 6,980,965,289 | IssuesEvent | 2017-12-13 05:14:09 | hashicorp/packer | https://api.github.com/repos/hashicorp/packer | closed | Breaking change in 1.1.3 | bug builder/amazon docs | [This commit](https://github.com/hashicorp/packer/commit/a90c45d9bb3f2abd56ea77c8a456df19baaa60a7#diff-76f53be4e00c8508514464a9b9235c4e) which made it into the `1.1.3` release introduces a dependency in AWS for the `ec2:DescribeInstanceStatus` permission on the role that is building AMI's.

This broke our pipelines w... | 1.0 | Breaking change in 1.1.3 - [This commit](https://github.com/hashicorp/packer/commit/a90c45d9bb3f2abd56ea77c8a456df19baaa60a7#diff-76f53be4e00c8508514464a9b9235c4e) which made it into the `1.1.3` release introduces a dependency in AWS for the `ec2:DescribeInstanceStatus` permission on the role that is building AMI's.

... | non_main | breaking change in which made it into the release introduces a dependency in aws for the describeinstancestatus permission on the role that is building ami s this broke our pipelines which were previously working on we used the docker light image which wasn t pinned so it autom... | 0 |

5,839 | 31,023,140,908 | IssuesEvent | 2023-08-10 07:17:03 | jupyter-naas/awesome-notebooks | https://api.github.com/repos/jupyter-naas/awesome-notebooks | opened | Xero - Get Bank Transactions | templates maintainer | This notebook retrieves one or many bank transactions from Xero. It is useful for organizations to keep track of their financial data.

| True | Xero - Get Bank Transactions - This notebook retrieves one or many bank transactions from Xero. It is useful for organizations to keep track of their financial data.

| main | xero get bank transactions this notebook retrieves one or many bank transactions from xero it is useful for organizations to keep track of their financial data | 1 |

3,406 | 13,181,837,636 | IssuesEvent | 2020-08-12 14:54:03 | duo-labs/cloudmapper | https://api.github.com/repos/duo-labs/cloudmapper | closed | Add command to show what resources are supported | map unmaintained_functionality | There isn't an easy way for people to know what resources CloudMapper supports for the different commands. Let's start by showing what `prepare` supports. | True | Add command to show what resources are supported - There isn't an easy way for people to know what resources CloudMapper supports for the different commands. Let's start by showing what `prepare` supports. | main | add command to show what resources are supported there isn t an easy way for people to know what resources cloudmapper supports for the different commands let s start by showing what prepare supports | 1 |

3,994 | 18,509,642,327 | IssuesEvent | 2021-10-20 00:05:24 | aws/aws-sam-cli | https://api.github.com/repos/aws/aws-sam-cli | closed | Cannot build from source under Ubuntu 20.04 | platform/linux area/installation maintainer/need-response | <!-- Make sure we don't have an existing Issue that reports the bug you are seeing (both open and closed).

If you do find an existing Issue, re-open or add a comment to that Issue instead of creating a new one. -->

### Description:

<!-- Briefly describe the bug you are facing.-->

I'm following instructions in `.... | True | Cannot build from source under Ubuntu 20.04 - <!-- Make sure we don't have an existing Issue that reports the bug you are seeing (both open and closed).

If you do find an existing Issue, re-open or add a comment to that Issue instead of creating a new one. -->

### Description:

<!-- Briefly describe the bug you ar... | main | cannot build from source under ubuntu make sure we don t have an existing issue that reports the bug you are seeing both open and closed if you do find an existing issue re open or add a comment to that issue instead of creating a new one description i m following instructions in in... | 1 |

416,728 | 28,097,429,553 | IssuesEvent | 2023-03-30 16:47:00 | WorldEnterpriseGroup/silkcorp | https://api.github.com/repos/WorldEnterpriseGroup/silkcorp | closed | Change the images of team member and content mentioned with images | documentation help wanted content writing copywriting | Website Link: https://silkcorp.org/

**Please Follow these Instructions:**

- Change all 4 images of team members

- Make an image having a message from Team member

- Change the image beside the message.

Find the attached image for reference.

Other names:

emma

Hostname: amanda.int.prhv.afunix.org

Uuid: c6654b8f-3ca8-424f-8d0f-7c0b7d8108cc

State: Peer in... | True | gluster_volume module should parse "other names" of 'gluster peer status' command - Hi.

I have the following `gluster peer status` output:

```

Number of Peers: 2

Hostname: emma.int.prhv.afunix.org

Uuid: c536dce8-dda8-4a66-a840-2eea709572da

State: Peer in Cluster (Connected)

Other names:

emma

Hostname: am... | main | gluster volume module should parse other names of gluster peer status command hi i have the following gluster peer status output number of peers hostname emma int prhv afunix org uuid state peer in cluster connected other names emma hostname amanda int prhv afunix org u... | 1 |

18,992 | 10,312,364,811 | IssuesEvent | 2019-08-29 19:40:50 | jamijam/WebGoat-Legacy | https://api.github.com/repos/jamijam/WebGoat-Legacy | opened | CVE-2017-7525 (High) detected in jackson-databind-2.0.4.jar | security vulnerability | ## CVE-2017-7525 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.0.4.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streaming... | True | CVE-2017-7525 (High) detected in jackson-databind-2.0.4.jar - ## CVE-2017-7525 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.0.4.jar</b></p></summary>

<p>General d... | non_main | cve high detected in jackson databind jar cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api path to dependency file webgoat legacy pom xml path to vulnerable library reposi... | 0 |

645 | 4,158,831,034 | IssuesEvent | 2016-06-17 05:55:36 | StefMa/TimeTracking | https://api.github.com/repos/StefMa/TimeTracking | opened | Automatic release/publish to testers and Google Play | MAINTAINING | Currently we have to build the APK and release it ourselves.

We have to create a script which shares the APK with testers (with the dev flavor) and publishes it to the Play Store with the prod flavor. | True | Automatic release/publish to testers and Google Play - Currently we have to build the APK and release it ourselves.

We have to create a script which shares the APK with testers (with the dev flavor) and publishes it to the Play Store with the prod flavor. | main | automatic release publish to testers and google play currently we have to build the apk and release it ourselves we have to create a script which shares the apk with testers with the dev flavor and publishes it to the play store with the prod flavor | 1 |

3,106 | 11,868,468,938 | IssuesEvent | 2020-03-26 09:15:01 | chocolatey-community/chocolatey-package-requests | https://api.github.com/repos/chocolatey-community/chocolatey-package-requests | closed | RFM - freac | Status: Available For Maintainer(s) | ## Current Maintainer

- [x] I am the maintainer of the package and wish to pass it to someone else;

## I DON'T Want To Become The Maintainer

- [x] I have followed the Package Triage Process and I do NOT want to become maintainer of the package;

- [x] There is no existing open maintainer request for this packa... | True | RFM - freac - ## Current Maintainer

- [x] I am the maintainer of the package and wish to pass it to someone else;

## I DON'T Want To Become The Maintainer

- [x] I have followed the Package Triage Process and I do NOT want to become maintainer of the package;

- [x] There is no existing open maintainer request ... | main | rfm freac current maintainer i am the maintainer of the package and wish to pass it to someone else i don t want to become the maintainer i have followed the package triage process and i do not want to become maintainer of the package there is no existing open maintainer request for th... | 1 |

25,273 | 4,151,867,250 | IssuesEvent | 2016-06-15 22:04:21 | Wirlie/AllBanks-2 | https://api.github.com/repos/Wirlie/AllBanks-2 | reopened | Add a command to restart a plot. Like /plot clear | AllBanksLand bug enhancement goal high priority testing required work in progress | This command can help to restart an abandoned plot (also if you want to start from 0).

- [x] Add command: /plot clear

- [x] Add a confirmation before restarting a plot. | 1.0 | Add a command to restart a plot. Like /plot clear - This command can help to restart an abandoned plot (also if you want to start from 0).

- [x] Add command: /plot clear

- [x] Add a confirmation before restarting a plot. | non_main | add a command to restart a plot like plot clear this command can help to restart an abandoned plot also if you want to start from add command plot clear add a confirmation before restarting a plot | 0 |

166,316 | 14,047,311,892 | IssuesEvent | 2020-11-02 06:53:46 | JuanOliveros/git_web_practice | https://api.github.com/repos/JuanOliveros/git_web_practice | opened | A commit that does not follow the code convention, or a FIX to make | documentation | The code convention to follow:

- For fixes: `<Fix identifier>: <Comment>`

- For fixes with conflicts: `<Fix identifier>: <Default merge comment>`

Likewise, there are only 3 fixes to make. When one is made and completed, an issue will be created with the instructio... | 1.0 | A commit that does not follow the code convention, or a FIX to make - The code convention to follow:

- For fixes: `<Fix identifier>: <Comment>`

- For fixes with conflicts: `<Fix identifier>: <Default merge comment>`

Likewise, there are only 3 fixes to make. ... | non_main | a commit that does not follow the code convention or a fix to make the code convention to follow for fixes for fixes with conflicts likewise there are only fixes to make when one is made and completed an issue will be created with the instructions to carry out for the next one for ... | 0 |

1,836 | 6,577,368,557 | IssuesEvent | 2017-09-12 00:25:27 | ansible/ansible-modules-core | https://api.github.com/repos/ansible/ansible-modules-core | closed | ec2_vol delete volume by name | affects_2.1 aws cloud feature_idea waiting_on_maintainer | <!--- Verify first that your issue/request is not already reported in GitHub -->

##### ISSUE TYPE

- Feature Idea

##### COMPONENT NAME

cloud/amazon/ec2_vol

##### ANSIBLE VERSION

```

ansible 2.1.0.0

config file = /etc/ansible/ansible.cfg

configured module search path = Default w/o overrides

```

##### CONFIGURATION

... | True | ec2_vol delete volume by name - <!--- Verify first that your issue/request is not already reported in GitHub -->

##### ISSUE TYPE

- Feature Idea

##### COMPONENT NAME

cloud/amazon/ec2_vol

##### ANSIBLE VERSION

```

ansible 2.1.0.0

config file = /etc/ansible/ansible.cfg

configured module search path = Default w/o ov... | main | vol delete volume by name issue type feature idea component name cloud amazon vol ansible version ansible config file etc ansible ansible cfg configured module search path default w o overrides configuration nothing extra os environment n a su... | 1 |

212,344 | 7,236,081,569 | IssuesEvent | 2018-02-13 04:43:31 | GeoTIFF/geoblaze | https://api.github.com/repos/GeoTIFF/geoblaze | opened | [USER REQUEST] Describe 3rd Param for Histogram | top-priority | Currently, documentation doesn't describe that third options parameter for histogram. A user spent a long time and had to go through our source code to figure out what params to pass in with options. We need to update documentation. | 1.0 | [USER REQUEST] Describe 3rd Param for Histogram - Currently, documentation doesn't describe that third options parameter for histogram. A user spent a long time and had to go through our source code to figure out what params to pass in with options. We need to update documentation. | non_main | describe param for histogram currently documentation doesn t describe that third options parameter for histogram a user spent a long time and had to go through our source code to figure out what params to pass in with options we need to update documentation | 0 |

2,431 | 8,621,114,567 | IssuesEvent | 2018-11-20 16:33:45 | simplesamlphp/simplesamlphp | https://api.github.com/repos/simplesamlphp/simplesamlphp | closed | Double concatenation in translation string | maintainability | `lib/SimpleSAML/Error/Error.php` has the only double concatenation:

```

$moduleCode = explode(':', $this->errorCode, 2);

if (count($moduleCode) === 2) {

$this->module = $moduleCode[0];

... | True | Double concatenation in translation string - `lib/SimpleSAML/Error/Error.php` has the only double concatenation:

```

$moduleCode = explode(':', $this->errorCode, 2);

if (count($moduleCode) === 2) {

$this->module = $moduleCode[... | main | double concatenation in translation string lib simplesaml error error php has the only double concatenation modulecode explode this errorcode if count modulecode this module modulecode ... | 1 |

2,845 | 3,211,738,577 | IssuesEvent | 2015-10-06 12:33:38 | coreos/rkt | https://api.github.com/repos/coreos/rkt | closed | Rkt should support setting supplemental groups on containers | area/usability kind/enhancement | Currently it is possible to set the UID/GID on a container process, but not possible to control the supplemental groups. It would be very convenient to have supplemental group control to enable sharing volumes across containers running as different UID/GIDs. | True | Rkt should support setting supplemental groups on containers - Currently it is possible to set the UID/GID on a container process, but not possible to control the supplemental groups. It would be very convenient to have supplemental group control to enable sharing volumes across containers running as different UID/GID... | non_main | rkt should support setting supplemental groups on containers currently it is possible to set the uid gid on a container process but not possible to control the supplemental groups it would be very convenient to have supplemental group control to enable sharing volumes across containers running as different uid gid... | 0 |

137,210 | 5,299,907,306 | IssuesEvent | 2017-02-10 02:03:38 | copperhead/bugtracker | https://api.github.com/repos/copperhead/bugtracker | closed | IDS | enhancement priority-low | It would be cool to have optional built-in IDS support. It's not possible to do this well without it being built into the OS due to lack of privileges, especially as the app sandbox is hardened. It's an area where CopperheadOS could provide a real edge. Android has SafetyNet, but that's meant to protect the ecosystem a... | 1.0 | IDS - It would be cool to have optional built-in IDS support. It's not possible to do this well without it being built into the OS due to lack of privileges, especially as the app sandbox is hardened. It's an area where CopperheadOS could provide a real edge. Android has SafetyNet, but that's meant to protect the ecosy... | non_main | ids it would be cool to have optional built in ids support it s not possible to do this well without it being built into the os due to lack of privileges especially as the app sandbox is hardened it s an area where copperheados could provide a real edge android has safetynet but that s meant to protect the ecosy... | 0 |

821,973 | 30,845,819,215 | IssuesEvent | 2023-08-02 13:40:16 | plan-be/iode | https://api.github.com/repos/plan-be/iode | closed | PYTHON: Change compile options according to the compiler and build config in cythonize_iode.py | enhancement priority: high python difficulty: low | Currently, the compile options are fixed for the MSVC compiler and the Debug build config:

```

extra_compile_args=["-Zi", "/Od", "/DVC", "/DSCRPROTO", "/DREALD"],

``` | 1.0 | PYTHON: Change compile options according to the compiler and build config in cythonize_iode.py - Currently, the compile options are fixed for the MSVC compiler and the Debug build config:

```

extra_compile_args=["-Zi", "/Od", "/DVC", "/DSCRPROTO", "/DREALD"],

``` | non_main | python change compile options according to the compiler and build config in cythonize iode py currently the compile options are fixed for the msvc compiler and the debug build config extra compile args | 0 |

110,807 | 9,477,936,354 | IssuesEvent | 2019-04-19 20:32:26 | cerner/terra-core | https://api.github.com/repos/cerner/terra-core | closed | Improve icon visual regression test coverage | Orion Reviewed icon intermediate issue testing | # Feature Request

## Description

Currently, our icon visual regression coverage is very minimal. We should expand it to better capture the full icon set. We've had a couple bugs slip through related to how the SVGs have been formatted that we can catch if we set up visual regression tests for the entire icon set. | 1.0 | Improve icon visual regression test coverage - # Feature Request

## Description

Currently, our icon visual regression coverage is very minimal. We should expand it to better capture the full icon set. We've had a couple bugs slip through related to how the SVGs have been formatted that we can catch if we set up vis... | non_main | improve icon visual regression test coverage feature request description currently our icon visual regression coverage is very minimal we should expand it to better capture the full icon set we ve had a couple bugs slip through related to how the svgs have been formatted that we can catch if we set up vis... | 0 |

990 | 4,756,646,363 | IssuesEvent | 2016-10-24 14:33:53 | ansible/ansible-modules-extras | https://api.github.com/repos/ansible/ansible-modules-extras | closed | [Regression] asa_command: silently allows invalid command | affects_2.2 bug_report networking waiting_on_maintainer | ##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

asa_command

##### ANSIBLE VERSION

```

ansible 2.2.0 (devel eb33ed4219) last updated 2016/09/27 09:18:44 (GMT +100)

lib/ansible/modules/core: (devel c03697c81e) last updated 2016/09/27 09:18:49 (GMT +100)

lib/ansible/modules/extras: (devel 119bc466be) l... | True | [Regression] asa_command: silently allows invalid command - ##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

asa_command

##### ANSIBLE VERSION

```

ansible 2.2.0 (devel eb33ed4219) last updated 2016/09/27 09:18:44 (GMT +100)

lib/ansible/modules/core: (devel c03697c81e) last updated 2016/09/27 09:18:49 (... | main | asa command silently allows invalid command issue type bug report component name asa command ansible version ansible devel last updated gmt lib ansible modules core devel last updated gmt lib ansible modules extras devel ... | 1 |

4,834 | 24,912,106,884 | IssuesEvent | 2022-10-30 00:51:37 | chocolatey-community/chocolatey-package-requests | https://api.github.com/repos/chocolatey-community/chocolatey-package-requests | closed | RFM - ds4windows | Status: Available For Maintainer(s) | ## Current Maintainer

<!-- If you are not confirmed as a known maintainer, you may be asked to take additional steps to confirm your user account -->

- [x] I am the maintainer of the package and wish to pass it to someone else;

## Checklist

- [x] Issue title starts with 'RFM - '

## Existing Package Detai... | True | RFM - ds4windows - ## Current Maintainer

<!-- If you are not confirmed as a known maintainer, you may be asked to take additional steps to confirm your user account -->

- [x] I am the maintainer of the package and wish to pass it to someone else;

## Checklist

- [x] Issue title starts with 'RFM - '

## Exi... | main | rfm current maintainer i am the maintainer of the package and wish to pass it to someone else checklist issue title starts with rfm existing package details package url package source url | 1 |

3,122 | 11,956,415,536 | IssuesEvent | 2020-04-04 10:18:11 | custom-cards/flex-table-card | https://api.github.com/repos/custom-cards/flex-table-card | closed | here somehow avoid that a 'null' is string-converted | maintaining todo :spiral_notepad: | https://github.com/custom-cards/flex-table-card/blob/3ec416da586266272e06f958622158e3b5ddf9b6/flex-table-card.js#L164-L168

---

###### This issue was generated by [todo](https://todo.jasonet.co) based on a `todo` comment in 3ec416da586266272e06f958622158e3b5ddf9b6. It's been assigned to @daringer because they com... | True | here somehow avoid that a 'null' is string-converted - https://github.com/custom-cards/flex-table-card/blob/3ec416da586266272e06f958622158e3b5ddf9b6/flex-table-card.js#L164-L168

---

###### This issue was generated by [todo](https://todo.jasonet.co) based on a `todo` comment in 3ec416da586266272e06f958622158e3b5ddf9b6... | main | here somehow avoid that a null is string converted this issue was generated by based on a todo comment in it s been assigned to daringer because they committed the code | 1 |

244,631 | 18,764,165,284 | IssuesEvent | 2021-11-05 20:34:00 | satijalab/seurat | https://api.github.com/repos/satijalab/seurat | closed | Dockerfile | documentation | <!-- A clear description of what content at https://satijalab.org/seurat or in the Seurat function man pages is an issue. -->

Good morning, I was trying to find the Dockerfile in order to build the Docker image with Seurat, but the only thing I can find is https://hub.docker.com/r/satijalab/seurat the link to pull the do... | 1.0 | Dockerfile - <!-- A clear description of what content at https://satijalab.org/seurat or in the Seurat function man pages is an issue. -->

Good morning, I was trying to find the Dockerfile in order to build the Docker image with Seurat, but the only thing I can find is https://hub.docker.com/r/satijalab/seurat the link t... | non_main | dockerfile good morning i was trying to find the dockerfile in order to build the docker image with seurat but the only thing i can find is the link to pull the docker can you please provide the dockerfile thank you best luca | 0 |

165,517 | 26,183,988,518 | IssuesEvent | 2023-01-02 20:09:28 | flutter/website | https://api.github.com/repos/flutter/website | opened | Migrate to Bootstrap 5 | infrastructure design p3-low blocked e2-days e3-weeks | ### Describe the problem

Bootstrap 5 is the current release of Bootstrap, replacing Bootstrap 4. We use it heavily across the site and we want to make sure we stay up to date. This will also allow us to eventually drop jQuery since Bootstrap 5 no longer uses it. Beyond that, this will also make a dark mode slightly easie... | 1.0 | Migrate to Bootstrap 5 - ### Describe the problem

Bootstrap 5 is the current release of Bootstrap, replacing Bootstrap 4. We use it heavily across the site and we want to make sure we stay up to date. This will also allow us to eventually drop jQuery since Bootstrap 5 no longer uses it. Beyond that, this will also make a... | non_main | migrate to bootstrap describe the problem bootstrap is the current release of bootstrap replacing bootstrap we use it heavily across the site and we want to make sure we stay up to date this will also allow us to eventually drop jquery since bootstrap no longer uses it beyond that this will also make a... | 0 |

151,701 | 13,429,844,132 | IssuesEvent | 2020-09-07 03:03:41 | rorepoid/twgroup | https://api.github.com/repos/rorepoid/twgroup | closed | Challenge 1 | documentation | When starting a new Laravel project, we must carry out a series of steps to configure the project depending on its requirements. Imagine that we need a platform built on Laravel that will use a MySQL/MariaDB database engine, an SMTP mail server, and a Redis server.

What ar... | 1.0 | Challenge 1 - When starting a new Laravel project, we must carry out a series of steps to configure the project depending on its requirements. Imagine that we need a platform built on Laravel that will use a MySQL/MariaDB database engine, an SMTP mail server, and a Redis server.

... | non_main | challenge when starting a new laravel project we must carry out a series of steps to configure the project depending on its requirements imagine that we need a platform built on laravel that will use a mysql mariadb database engine an smtp mail server and a redis server ... | 0 |

1,482 | 6,416,004,678 | IssuesEvent | 2017-08-08 14:00:09 | ansible/ansible-modules-extras | https://api.github.com/repos/ansible/ansible-modules-extras | closed | vca_vapp missing network_ip option | affects_2.3 cloud feature_idea vmware waiting_on_maintainer | Unable to set IP address when creating vCloud instances due to missing 'network_ip' option.

See:

https://github.com/vmware/vca-codesamples/blob/master/ansibleworkshopVMworld2015/lessons/lesson2/library/vca_vapp.py#L100

[module: cloud/vmware/vca_vapp.py]

| True | vca_vapp missing network_ip option - Unable to set IP address when creating vCloud instances due to missing 'network_ip' option.

See:

https://github.com/vmware/vca-codesamples/blob/master/ansibleworkshopVMworld2015/lessons/lesson2/library/vca_vapp.py#L100

[module: cloud/vmware/vca_vapp.py]

| main | vca vapp missing network ip option unable to set ip address when creating vcloud instances due to missing network ip option see | 1 |

2,504 | 8,655,459,620 | IssuesEvent | 2018-11-27 16:00:29 | codestation/qcma | https://api.github.com/repos/codestation/qcma | closed | Music damaged file | unmaintained | Hi, I recently updated from 3.52 to the latest firmware (3.61). But when I try to send music from the PC to the PSVita, the music app on the PSVita tells me it is a damaged file. I tried converting the music (mp3 to mp3) with ffmpeg and vlc, but nothing helped.

I use the qcma client for Linux installed from aur.

With the official cma I... | True | Music damaged file - Hi, I recently updated from 3.52 to the latest firmware (3.61). But when I try to send music from the PC to the PSVita, the music app on the PSVita tells me it is a damaged file. I tried converting the music (mp3 to mp3) with ffmpeg and vlc, but nothing helped.

I use the qcma client for Linux installed from aur.

Wi... | main | music damaged file hi i recently updated from to the latest firmware but when i try to send music from the pc to the psvita the music app on the psvita tells me it is a damaged file i tried converting the music to with ffmpeg and vlc but nothing helped i use the qcma client for linux installed from aur with the... | 1 |

313,875 | 26,959,577,223 | IssuesEvent | 2023-02-08 17:09:11 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | roachtest: restore/tpce/8TB/aws/nodes=10/cpus=8 failed | C-test-failure O-robot O-roachtest branch-master release-blocker T-disaster-recovery | roachtest.restore/tpce/8TB/aws/nodes=10/cpus=8 [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyAwsBazel/8311515?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyAwsBazel/8311515?buildTab=artifacts#/res... | 2.0 | roachtest: restore/tpce/8TB/aws/nodes=10/cpus=8 failed - roachtest.restore/tpce/8TB/aws/nodes=10/cpus=8 [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyAwsBazel/8311515?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies... | non_main | roachtest restore tpce aws nodes cpus failed roachtest restore tpce aws nodes cpus with on master test artifacts and logs in artifacts restore tpce aws nodes cpus run monitor go wait monitor failure monitor command failure unexpected node event dead exit status ... | 0 |

1,545 | 6,572,237,119 | IssuesEvent | 2017-09-11 00:26:30 | ansible/ansible-modules-extras | https://api.github.com/repos/ansible/ansible-modules-extras | closed | New ecs_service_facts module has a return behavior that is inconsistent when compared to existing *_facts modules. | affects_2.1 aws bug_report cloud feature_idea waiting_on_maintainer | ##### ISSUE TYPE

- Feature Idea

##### COMPONENT NAME

`/ansible/ansible-modules-extras/cloud/amazon/ecs_service_facts.py`

##### ANSIBLE VERSION

```

2.1.0

```

##### SUMMARY

New ecs_service_facts module has a return behavior that is inconsistent when compared to existing *_facts modules.

The new `ecs_service_facts` mo... | True | New ecs_service_facts module has a return behavior that is inconsistent when compared to existing *_facts modules. - ##### ISSUE TYPE

- Feature Idea

##### COMPONENT NAME

`/ansible/ansible-modules-extras/cloud/amazon/ecs_service_facts.py`

##### ANSIBLE VERSION

```

2.1.0

```

##### SUMMARY

New ecs_service_facts module ... | main | new ecs service facts module has a return behavior that is inconsistent when compared to existing facts modules issue type feature idea component name ansible ansible modules extras cloud amazon ecs service facts py ansible version summary new ecs service facts module ... | 1 |

152,890 | 13,487,072,920 | IssuesEvent | 2020-09-11 10:23:12 | cksystemsgroup/monster | https://api.github.com/repos/cksystemsgroup/monster | closed | Write down a Concept | documentation | Create a "big-picture" description, how the symbolic execution engine should work. This should ideally be written in markdown and should be convertible to browsable HTML.

We want to write that in the simplest way. We should describe the engine based on a running example of C* code. This piece of code should have at m... | 1.0 | Write down a Concept - Create a "big-picture" description, how the symbolic execution engine should work. This should ideally be written in markdown and should be convertible to browsable HTML.

We want to write that in the simplest way. We should describe the engine based on a running example of C* code. This piece o... | non_main | write down a concept create a big picture description how the symbolic execution engine should work this should ideally be written in markdown and should be convertible to browsable html we want to write that in the simplest way we should describe the engine based on a running example of c code this piece o... | 0 |

3,627 | 14,672,547,338 | IssuesEvent | 2020-12-30 10:53:23 | Homebrew/homebrew-core | https://api.github.com/repos/Homebrew/homebrew-core | opened | luajit probably needs to be deprecated | help wanted maintainer feedback | - The latest release (stable OR beta) is from 2017

- It's heavily patched

- Every new macOS version requires an additional patch

- Upstream's recommendation is to “build from git HEAD”, and they won't apparently ship new releases: https://github.com/LuaJIT/LuaJIT/issues/648#issuecomment-752404043

The reason I'm n... | True | luajit probably needs to be deprecated - - The latest release (stable OR beta) is from 2017

- It's heavily patched

- Every new macOS version requires an additional patch

- Upstream's recommendation is to “build from git HEAD”, and they won't apparently ship new releases: https://github.com/LuaJIT/LuaJIT/issues/648#i... | main | luajit probably needs to be deprecated the latest release stable or beta is from it s heavily patched every new macos version requires an additional patch upstream s recommendation is to “build from git head” and they won t apparently ship new releases the reason i m not doing a pull request dir... | 1 |

83,641 | 3,638,064,985 | IssuesEvent | 2016-02-12 14:07:21 | molgenis/molgenis | https://api.github.com/repos/molgenis/molgenis | closed | Charts won't plot TypeTest ID column versus TypeTest ID column | bug molgenis-dataexplorer priority-later | ## Reproduce

Select Dataexplorer, Select TypeTest, Select charts

Create scatter plot for ID versus ID column.

## Expected

I can see the chart

## Actual

I get a somewhat obscure error:

```

18:32:36.221 [ajp-bio-8009-exec-161] ERROR org.molgenis.charts.ChartController - null

org.elasticsearch.action.search... | 1.0 | Charts won't plot TypeTest ID column versus TypeTest ID column - ## Reproduce

Select Dataexplorer, Select TypeTest, Select charts

Create scatter plot for ID versus ID column.

## Expected

I can see the chart

## Actual

I get a somewhat obscure error:

```

18:32:36.221 [ajp-bio-8009-exec-161] ERROR org.molgen... | non_main | charts won t plot typetest id column versus typetest id column reproduce select dataexplorer select typetest select charts create scatter plot for id versus id column expected i can see the chart actual i get a somewhat obscure error error org molgenis charts chartcontroller ... | 0 |

1,150 | 5,008,198,798 | IssuesEvent | 2016-12-12 18:50:41 | ansible/ansible-modules-core | https://api.github.com/repos/ansible/ansible-modules-core | closed | iam_policy not using role structure for policy_document path | affects_2.0 aws bug_report cloud waiting_on_maintainer | ##### Issue Type:

- Bug Report

##### Component Name:

iam_policy module

##### Ansible Version:

ansible 2.0.1.0

##### Ansible Configuration:

no changes to ansible.cfg

##### Environment:

control server Redhat 6.7

target server Redhat 6.7

##### Summary:

iam_policy policy_document parameter does not use role file struc... | True | iam_policy not using role structure for policy_document path - ##### Issue Type:

- Bug Report

##### Component Name:

iam_policy module

##### Ansible Version:

ansible 2.0.1.0

##### Ansible Configuration:

no changes to ansible.cfg

##### Environment:

control server Redhat 6.7

target server Redhat 6.7

##### Summary:

ia... | main | iam policy not using role structure for policy document path issue type bug report component name iam policy module ansible version ansible ansible configuration no changes to ansible cfg environment control server redhat target server redhat summary ia... | 1 |

4,167 | 19,982,012,114 | IssuesEvent | 2022-01-30 02:58:53 | thumbor/thumbor-bootcamp | https://api.github.com/repos/thumbor/thumbor-bootcamp | opened | [Bootcamp Task] Remove thumbor upload into its own project | task L3 python maintainability | ## Areas of Expertise

thumbor, open-source, testing, python, maintainability

Creating your own open source project will teach a lot of the concepts required for participating in another's open source project. Great experience!

## Summary

Create a new project `thumbor-upload` in thumbor's org and move the up... | True | [Bootcamp Task] Remove thumbor upload into its own project - ## Areas of Expertise

thumbor, open-source, testing, python, maintainability

Creating your own open source project will teach a lot of the concepts required for participating in another's open source project. Great experience!

## Summary

Create a ... | main | remove thumbor upload into its own project areas of expertise thumbor open source testing python maintainability creating your own open source project will teach a lot of the concepts required for participating in another s open source project great experience summary create a new project t... | 1 |

3,903 | 17,376,851,919 | IssuesEvent | 2021-07-30 23:28:21 | chorman0773/Clever-ISA | https://api.github.com/repos/chorman0773/Clever-ISA | closed | Long Immediate Operand references "Operand Control Structure" but the term is not defined anywhere | I-unclear S-blocked-on-maintainer X-main | Long Immediate Operand references "Operand Control Structure" but the term is not defined anywhere | True | Long Immediate Operand references "Operand Control Structure" but the term is not defined anywhere - Long Immediate Operand references "Operand Control Structure" but the term is not defined anywhere | main | long immediate operand references operand control structure but the term is not defined anywhere long immediate operand references operand control structure but the term is not defined anywhere | 1 |

227,547 | 18,068,431,798 | IssuesEvent | 2021-09-20 22:09:09 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | roachtest: decommission/mixed-versions failed [should stop after beta1] | C-test-failure O-robot O-roachtest branch-master GA-blocker | roachtest.decommission/mixed-versions [failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=3462983&tab=buildLog) with [artifacts](https://teamcity.cockroachdb.com/viewLog.html?buildId=3462983&tab=artifacts#/decommission/mixed-versions) on master @ [78c6771c7e9f7ba6431f44b067f27e0857341374](https://github.com/... | 2.0 | roachtest: decommission/mixed-versions failed [should stop after beta1] - roachtest.decommission/mixed-versions [failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=3462983&tab=buildLog) with [artifacts](https://teamcity.cockroachdb.com/viewLog.html?buildId=3462983&tab=artifacts#/decommission/mixed-versions) ... | non_main | roachtest decommission mixed versions failed roachtest decommission mixed versions with on master the test failed on branch master cloud gce test timed out see artifacts for details reproduce see cc cockroachdb kv triage | 0 |

548,064 | 16,056,442,761 | IssuesEvent | 2021-04-23 06:10:49 | ucfopen/UDOIT | https://api.github.com/repos/ucfopen/UDOIT | closed | imgAltIsDifferent triggered even if aria-label used | enhancement low priority | The imgAltIsDifferent rule is triggered even if `aria-label` is used to provide alternative text for an `<img>` tag that has an `alt` attribute set to the file name.

| 1.0 | imgAltIsDifferent triggered even if aria-label used - The imgAltIsDifferent rule is triggered even if `aria-label` is used to provide alternative text for an `<img>` tag that has an `alt` attribute set to the file name.

| I've experienced a strange shifting of 2.8 MHz upwards from the carrier. Randomly the nominal 14MHz transmission is just shifted up to 16.8 MHz... then back.

Using latest Raspbian Jessie (as of today 22/04/2016), all the update, upgrade, dist-upgrade done before. Cloned and installed rpitx just right now from github... | True | At RPi 3 rpitx randomly shifting frequency during TX - I've experienced a strange shifting of 2.8 MHz upwards from the carrier. Randomly the nominal 14MHz transmission is just shifted up to 16.8 MHz... then back.

Using latest Raspbian Jessie (as of today 22/04/2016), all the update, upgrade, dist-upgrade done before... | main | at rpi rpitx randomly shifting frequency during tx i ve experienced a strange shifting of mhz upwards from the carrier randomly the nominal transmission is just shifted up to mhz then back using latest raspbian jessie as of today all the update upgrade dist upgrade done before cloned a... | 1 |

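707,082 | 24,294,236,927 | IssuesEvent | 2022-09-29 08:47:57 | FinalProject-AIPARK/JenaPark-BE | https://api.github.com/repos/FinalProject-AIPARK/JenaPark-BE | closed | API for retrieving member information | Priority: Medium Status: Done | ## Description

API that loads member information using the token received after a successful login

## To do

- [x] Modify Controller

- [x] Modify Service

- [x] Create ResponseDto

## Other

Attach references and links.

| 1.0 | API for retrieving member information - ## Description

API that loads member information using the token received after a successful login

## To do

- [x] Modify Controller

- [x] Modify Service

- [x] Create ResponseDto

## Other

Attach references and links.

| non_main | api for retrieving member information description api that loads member information using the token received after a successful login to do modify controller modify service create responsedto other attach references and links | 0 |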

369,639 | 10,915,735,893 | IssuesEvent | 2019-11-21 11:49:43 | incognitochain/incognito-wallet | https://api.github.com/repos/incognitochain/incognito-wallet | closed | Node go from online to offline | Priority: Critical Type: Bug | Node goes from online to offline although it was online before and staked successfully | 1.0 | Node go from online to offline - Node goes from online to offline although it was online before and staked successfully | non_main | node go from online to offline node goes from online to offline although it was online before and staked successfully | 0 |

3,058 | 11,454,973,684 | IssuesEvent | 2020-02-06 18:08:23 | 18F/cg-product | https://api.github.com/repos/18F/cg-product | closed | As a federalist operator, I want to be able to migrate S3 origins in Cloud Front while the cdn broker is unavailable. | contractor-3-maintainability | The Federalist team has a backlog of sites awaiting S3 origin migration.

## Acceptance Criteria

* [ ] GIVEN an existing federalist site \

AND the need to update the origin of the site \

WHEN the federalist team invokes a migration via `cf task` \

THEN the origin is updated \

AND certification regeneration is ... | True | As a federalist operator, I want to be able to migrate S3 origins in Cloud Front while the cdn broker is unavailable. - The Federalist team has a backlog of sites awaiting S3 origin migration.

## Acceptance Criteria

* [ ] GIVEN an existing federalist site \

AND the need to update the origin of the site \

WHEN the... | main | as a federalist operator i want to be able to migrate origins in cloud front while the cdn broker is unavailable the federalist team has a backlog of sites awaiting origin migration acceptance criteria given an existing federalist site and the need to update the origin of the site when the fed... | 1 |

90,898 | 15,856,317,119 | IssuesEvent | 2021-04-08 02:03:22 | rvvergara/react-native-learning-starter | https://api.github.com/repos/rvvergara/react-native-learning-starter | opened | CVE-2021-23337 (High) detected in lodash-4.17.14.tgz, lodash-4.17.15.tgz | security vulnerability | ## CVE-2021-23337 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>lodash-4.17.14.tgz</b>, <b>lodash-4.17.15.tgz</b></p></summary>

<p>

<details><summary><b>lodash-4.17.14.tgz</b></p><... | True | CVE-2021-23337 (High) detected in lodash-4.17.14.tgz, lodash-4.17.15.tgz - ## CVE-2021-23337 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>lodash-4.17.14.tgz</b>, <b>lodash-4.17.15.... | non_main | cve high detected in lodash tgz lodash tgz cve high severity vulnerability vulnerable libraries lodash tgz lodash tgz lodash tgz lodash modular utilities library home page a href path to dependency file react native learning starter packa... | 0 |

185,362 | 21,788,706,747 | IssuesEvent | 2022-05-14 15:02:05 | GNS3/gns3-web-ui | https://api.github.com/repos/GNS3/gns3-web-ui | closed | CVE-2022-24773 (Medium) detected in node-forge-0.10.0.tgz | security vulnerability | ## CVE-2022-24773 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>node-forge-0.10.0.tgz</b></p></summary>

<p>JavaScript implementations of network transports, cryptography, ciphers, ... | True | CVE-2022-24773 (Medium) detected in node-forge-0.10.0.tgz - ## CVE-2022-24773 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>node-forge-0.10.0.tgz</b></p></summary>

<p>JavaScript im... | non_main | cve medium detected in node forge tgz cve medium severity vulnerability vulnerable library node forge tgz javascript implementations of network transports cryptography ciphers pki message digests and various utilities library home page a href path to dependency fil... | 0 |

170,620 | 14,265,909,897 | IssuesEvent | 2020-11-20 17:51:00 | postmanlabs/postman-app-support | https://api.github.com/repos/postmanlabs/postman-app-support | closed | Reorder Collection Versions | feature product/documentation | While publishing my collection with multiple versions, right now I'm not able to reorder it. Is there any way to reorder my versions at the time of publishing my collection? | 1.0 | Reorder Collection Versions - While publishing my collection with multiple versions, right now I'm not able to reorder it. Is there any way to reorder my versions at the time of publishing my collection? | non_main | reorder collection versions while publishing my collection with multiple versions right now i m not able to reorder it is there any way to reorder my versions at the time of publishing my collection | 0 |

3,795 | 16,218,791,910 | IssuesEvent | 2021-05-06 01:13:19 | truecharts/apps | https://api.github.com/repos/truecharts/apps | closed | Add Podgrab | New App Request No-Maintainer | An extremely useful application for podcasts.

> Podgrab is a self-hosted podcast manager which automatically downloads latest podcast episodes.

Application Repo: https://github.com/akhilrex/podgrab

Thank you! | True | Add Podgrab - An extremely useful application for podcasts.

> Podgrab is a self-hosted podcast manager which automatically downloads latest podcast episodes.

Application Repo: https://github.com/akhilrex/podgrab

Thank you! | main | add podgrab an extremely useful application for podcasts podgrab is a self hosted podcast manager which automatically downloads latest podcast episodes application repo thank you | 1 |

568,329 | 16,964,908,257 | IssuesEvent | 2021-06-29 09:41:42 | status-im/StatusQ | https://api.github.com/repos/status-im/StatusQ | opened | Implement `StatusWindowsToolBar` component | priority 3: important type: feature | A component to render Microsoft Windows specific window toolbars.

Here's what they look like as per current design:

<img width="683" alt="Screenshot 2021-06-29 at 11 41 06" src="https://user-images.githubusercontent.com/445106/123775738-f201d100-d8ce-11eb-8529-0e71a953f652.png">

Figma: https://www.figma.com/fi... | 1.0 | Implement `StatusWindowsToolBar` component - A component to render Microsoft Windows specific window toolbars.

Here's what they look like as per current design:

<img width="683" alt="Screenshot 2021-06-29 at 11 41 06" src="https://user-images.githubusercontent.com/445106/123775738-f201d100-d8ce-11eb-8529-0e71a953f6... | non_main | implement statuswindowstoolbar component a component to render microsoft windows specific window toolbars here s what they look like as per current design img width alt screenshot at src figma | 0 |

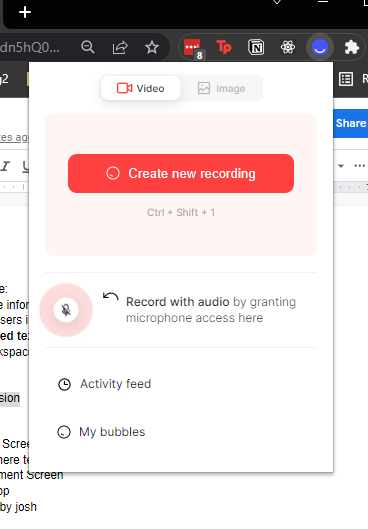

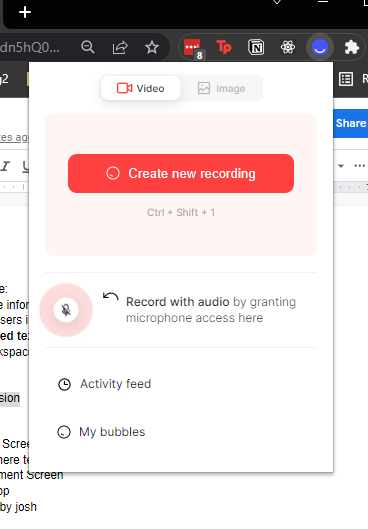

635,210 | 20,381,981,307 | IssuesEvent | 2022-02-21 23:32:50 | NerdyNomads/Text-Savvy | https://api.github.com/repos/NerdyNomads/Text-Savvy | opened | Create mock up of extension | high priority front-end | Create a UI mock up of the chrome extension dropdown panel with Figma.

Here is an example of UI design from an extension (Bubbles).

| 1.0 | Create mock up of extension - Create a UI mock up of the chrome extension dropdown panel with Figma.

Here is an example of UI design from an extension (Bubbles).

| non_main | create mock up of extension create a ui mock up of the chrome extension dropdown panel with figma here is an example of ui design from an extension bubbles | 0 |

5,539 | 27,735,433,247 | IssuesEvent | 2023-03-15 10:53:30 | precice/precice | https://api.github.com/repos/precice/precice | closed | Nightly build of dockerimage precice/precice:develop | maintainability compatibility | **Please describe the problem you are trying to solve.**

The python bindings use the docker image `precice/precice` provided via (https://github.com/precice/precice/blob/v2.3.0/.github/workflows/release-docker.yml) in their CI pipeline to create and push a docker image with the python bindings `precice/python-bindin... | True | Nightly build of dockerimage precice/precice:develop - **Please describe the problem you are trying to solve.**

The python bindings use the docker image `precice/precice` provided via (https://github.com/precice/precice/blob/v2.3.0/.github/workflows/release-docker.yml) in their CI pipeline to create and push a docke... | main | nightly build of dockerimage precice precice develop please describe the problem you are trying to solve the python bindings use the docker image precice precice provided via in their ci pipeline to create and push a docker image with the python bindings precice python bindings currently precice... | 1 |

757,551 | 26,517,531,186 | IssuesEvent | 2023-01-18 22:12:31 | SlimeVR/SlimeVR-Tracker-ESP | https://api.github.com/repos/SlimeVR/SlimeVR-Tracker-ESP | closed | [Magneto] Handle infinite samples with constant memory usage | Type: Feature Request Priority: Low Status: Unlabeled | the first thing magneto does with the input sample is first expand each sample into a 10 item row/column, treat the input as a 10xN matrix, and multiply it by its own transpose, resulting in a 10x10 matrix.

expanding out the math, it turns out that taking each sample as a 10x1 matrix, multiplying by its own transpo... | 1.0 | [Magneto] Handle infinite samples with constant memory usage - the first thing magneto does with the input sample is first expand each sample into a 10 item row/column, treat the input as a 10xN matrix, and multiply it by its own transpose, resulting in a 10x10 matrix.

expanding out the math, it turns out that taki... | non_main | handle infinite samples with constant memory usage the first thing magneto does with the input sample is first expand each sample into a item row column treat the input as a matrix and multiply it by its own transpose resulting in a matrix expanding out the math it turns out that taking each sample a... | 0 |
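
The issue text in the row above is truncated, so the following is only a hedged sketch of the identity it appears to rely on: multiplying a 10xN matrix by its own transpose gives the same 10x10 result as summing the outer product of each 10-term sample with itself, which needs constant memory no matter how many samples arrive. The 10-term expansion in `expand`, and the names `OuterProductAccumulator` and `batch_product`, are illustrative assumptions and are not taken from the SlimeVR source.

```python
def expand(x, y, z):
    """Expand one 3-axis sample into a 10-term vector.

    Assumption: this layout follows a common ellipsoid-fit formulation;
    the real firmware may order or scale the terms differently.
    """
    return [x * x, y * y, z * z,
            2 * y * z, 2 * x * z, 2 * x * y,
            2 * x, 2 * y, 2 * z, 1.0]


class OuterProductAccumulator:
    """Running sum of d * d^T, stored as a fixed n x n matrix."""

    def __init__(self, n=10):
        self.n = n
        self.s = [[0.0] * n for _ in range(n)]
        self.count = 0

    def add(self, x, y, z):
        d = expand(x, y, z)
        # Add the outer product of this sample with itself.
        for i in range(self.n):
            di = d[i]
            row = self.s[i]
            for j in range(self.n):
                row[j] += di * d[j]
        self.count += 1


def batch_product(samples):
    """Reference version: keep every expanded sample, then form D * D^T."""
    rows = [expand(*s) for s in samples]  # N rows of 10 terms each
    n = 10
    return [[sum(r[i] * r[j] for r in rows) for j in range(n)]
            for i in range(n)]
```

With this shape, memory use is one 10x10 matrix plus a counter; `batch_product` is included only so the streaming result can be compared against the store-everything approach.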

4,892 | 25,124,310,645 | IssuesEvent | 2022-11-09 10:31:21 | goharbor/community | https://api.github.com/repos/goharbor/community | closed | Pierre PÉRONNET changed employment | area/maintainer-nomination | Hi @holyhope, I see you have left OVHcloud. Are you still planning to be part of the maintainers team in the new company?

Thanks! | True | Pierre PÉRONNET changed employment - Hi @holyhope, I see you have left OVHcloud. Are you still planning to be part of the maintainers team in the new company?

Thanks! | main | pierre péronnet changed employment hi holyhope i see you have left ovhcloud are you still planning to be part of the maintainers team in the new company thanks | 1 |

19,032 | 6,664,492,524 | IssuesEvent | 2017-10-02 20:21:14 | dart-lang/build | https://api.github.com/repos/dart-lang/build | closed | Checking for existing outputs fails if an intermediate output is deleted | package:build_runner | Situation:

- There is a source file `source.dart` and two phases of builders `.dart` -> `.phase1` and `.phase1` -> `.phase2`

- The `.dart_tool` directory does not exist so there is no serialized asset graph

- `source.phase1` does *not* exist on disk, `source.phase2` *does* exist on disk.

Checking for existing out... | 1.0 | Checking for existing outputs fails if an intermediate output is deleted - Situation:

- There is a source file `source.dart` and two phases of builders `.dart` -> `.phase1` and `.phase1` -> `.phase2`

- The `.dart_tool` directory does not exist so there is no serialized asset graph

- `source.phase1` does *not* exist ... | non_main | checking for existing outputs fails if an intermediate output is deleted situation there is a source file source dart and two phases of builders dart and the dart tool directory does not exist so there is no serialized asset graph source does not exist on disk source ... | 0 |

4,082 | 19,285,632,005 | IssuesEvent | 2021-12-11 00:12:18 | aws/aws-sam-cli | https://api.github.com/repos/aws/aws-sam-cli | closed | Debug dotnetcore 2.1 in Visual Studio 2017/2019 | area/ide type/feature area/debugging stage/pm-review maintainer/need-response | ### Describe your idea/feature/enhancement

We're currently migrating from a monolith ASP.NET Core app to a serverless architecture and SAM proved to be a valuable asset. However, the team is much more comfortable with VS (currently 2017 and we're moving to 2019) and not that much with VSCode.

Now that SAM support... | True | Debug dotnetcore 2.1 in Visual Studio 2017/2019 - ### Describe your idea/feature/enhancement

We're currently migrating from a monolith ASP.NET Core app to a serverless architecture and SAM proved to be a valuable asset. However, the team is much more comfortable with VS (currently 2017 and we're moving to 2019) and ... | main | debug dotnetcore in visual studio describe your idea feature enhancement we re currently migrating from a monolith asp net core app to a serverless architecture and sam proved to be a valuable asset however the team is much more comfortable with vs currently and we re moving to and not that muc... | 1 |

516,145 | 14,975,963,202 | IssuesEvent | 2021-01-28 07:12:55 | threefoldtech/home | https://api.github.com/repos/threefoldtech/home | opened | Deployed blog appears in the deployed solutions page but not in the deployed blogs overview. | priority_major type_bug | In VDC: jetserthing (Gold, testnet) a deployed blog using the example blog source from the manual results in successful deployment. But the deployed solution pages (generic and specific) display different results.

a deployed blog using the example blog source from the manual results in successful deployment. But the deployed solution pages (generic and specific) display different results.

![image... | non_main | deployed blog appears in the deployed solutions page but not in the deployed blogs overview in vdc jetserthing gold testnet a deployed blog using the example blog source from the manual results in successful deployment but the deployed solution pages generic and specific display different results ... | 0 |

83,102 | 16,091,350,336 | IssuesEvent | 2021-04-26 17:05:31 | microsoft/vscode-jupyter | https://api.github.com/repos/microsoft/vscode-jupyter | closed | No scrollbar generated for large outputs | bug upstream-vscode vscode-notebook | ## Environment data

- VS Code version: 1.56.0-insider

- Jupyter Extension version (available under the Extensions sidebar):

- Python Extension version (available under the Extensions sidebar): v2021.6.780948196

- OS (Windows | Mac | Linux distro) and version: Ubuntu 20.04.2 LTS

- Python and/or Anacond... | 2.0 | No scrollbar generated for large outputs - ## Environment data

- VS Code version: 1.56.0-insider

- Jupyter Extension version (available under the Extensions sidebar):

- Python Extension version (available under the Extensions sidebar): v2021.6.780948196

- OS (Windows | Mac | Linux distro) and version: Ub... | non_main | no scrollbar generated for large outputs environment data vs code version insider jupyter extension version available under the extensions sidebar python extension version available under the extensions sidebar os windows mac linux distro and version ubuntu lt... | 0 |

49,968 | 12,439,282,568 | IssuesEvent | 2020-05-26 09:50:33 | docascod/DocsAsCode | https://api.github.com/repos/docascod/DocsAsCode | opened | default theme slides : add default images | enhancement fct_build | Add default (blank) images into slides default theme :

* title-page-background

* title-page-logo

* header-logo

* footer-logo

| 1.0 | default theme slides : add default images - Add default (blank) images into slides default theme :

* title-page-background

* title-page-logo

* header-logo

* footer-logo

| non_main | default theme slides add default images add default blank images into slides default theme title page background title page logo header logo footer logo | 0 |

2,663 | 9,105,550,221 | IssuesEvent | 2019-02-20 21:06:35 | lrozenblyum/chess | https://api.github.com/repos/lrozenblyum/chess | closed | IDE-specific derived resources | devenv maintainability | IDEA: Let's check whether we need the *.iml in version control.

When we change pom.xml, it's getting updated.

Find best practices.

https://intellij-support.jetbrains.com/hc/en-us/articles/206544839

- [x] IDEA: *.iml

- [x] IDEA: general storage

- [x] Eclipse: .settings

- [x] Eclipse; other

Caused by #245 | True | IDE-specific derived resources - IDEA: Let's check whether we need the *.iml in version control.

When we change pom.xml, it's getting updated.

Find best practices.

https://intellij-support.jetbrains.com/hc/en-us/articles/206544839

- [x] IDEA: *.iml

- [x] IDEA: general storage

- [x] Eclipse: .settings

- [x] Ecl... | main | ide specific derived resources idea let s check whether we need the iml in version control when we change pom xml it s getting updated find best practices idea iml idea general storage eclipse settings eclipse other caused by | 1 |

14,018 | 24,208,421,355 | IssuesEvent | 2022-09-25 15:06:36 | renovatebot/renovate | https://api.github.com/repos/renovatebot/renovate | opened | Error configuring GitLab CI_REGISTRY setting using registryAliases | type:bug status:requirements priority-5-triage | ### How are you running Renovate?

Self-hosted

### If you're self-hosting Renovate, tell us what version of Renovate you run.

whitesource/renovate-on-prem v2.5.1 (renovate 32.185.3)

### If you're self-hosting Renovate, select which platform you are using.

GitLab self-hosted

### If you're self-hosting Renovate, tel... | 1.0 | Error configuring GitLab CI_REGISTRY setting using registryAliases - ### How are you running Renovate?

Self-hosted

### If you're self-hosting Renovate, tell us what version of Renovate you run.

whitesource/renovate-on-prem v2.5.1 (renovate 32.185.3)

### If you're self-hosting Renovate, select which platform you are... | non_main | error configuring gitlab ci registry setting using registryaliases how are you running renovate self hosted if you re self hosting renovate tell us what version of renovate you run whitesource renovate on prem renovate if you re self hosting renovate select which platform you are usi... | 0 |

169,630 | 26,834,761,518 | IssuesEvent | 2023-02-02 18:34:52 | runtimeverification/haskell-backend | https://api.github.com/repos/runtimeverification/haskell-backend | closed | Validate predicate simplification rules | design cleanup | The backend should validate that predicate simplification rules (`\ceil(_) => ...`) have a predicate on the right-hand side. The related internal errors during execution may be removed. | 1.0 | Validate predicate simplification rules - The backend should validate that predicate simplification rules (`\ceil(_) => ...`) have a predicate on the right-hand side. The related internal errors during execution may be removed. | non_main | validate predicate simplification rules the backend should validate that predicate simplification rules ceil have a predicate on the right hand side the related internal errors during execution may be removed | 0 |

4,999 | 25,722,559,400 | IssuesEvent | 2022-12-07 14:38:44 | centerofci/mathesar | https://api.github.com/repos/centerofci/mathesar | opened | Data Explorer styling/frontend granular meta issue | work: frontend status: ready restricted: maintainers type: meta | This meta issue tracks pending items in Data Explorer prioritized by demo video readiness

1. **The following are essential for the demo video, since they will be visible during the demo**

- [ ] Show cell content on the Cell selection tab

- [ ] Automatically select inspector tabs based on column selection/c... | True | Data Explorer styling/frontend granular meta issue - This meta issue tracks pending items in Data Explorer prioritized by demo video readiness

1. **The following are essential for the demo video, since they will be visible during the demo**

- [ ] Show cell content on the Cell selection tab

- [ ] Automatica... | main | data explorer styling frontend granular meta issue this meta issue tracks pending items in data explorer prioritized by demo video readiness the following are essential for the demo video since they will be visible during the demo show cell content on the cell selection tab automatically ... | 1 |

170,371 | 14,257,572,580 | IssuesEvent | 2020-11-20 04:01:51 | microsoft/STL | https://api.github.com/repos/microsoft/STL | closed | README.md: The Block Diagram doesn't mention ConcRT | documentation resolved | https://github.com/microsoft/STL#block-diagram mentions VCStartup, VCRuntime, and the Universal CRT, but not ConcRT. As ConcRT's relationship with the STL is unusually circular, this should probably be mentioned. | 1.0 | README.md: The Block Diagram doesn't mention ConcRT - https://github.com/microsoft/STL#block-diagram mentions VCStartup, VCRuntime, and the Universal CRT, but not ConcRT. As ConcRT's relationship with the STL is unusually circular, this should probably be mentioned. | non_main | readme md the block diagram doesn t mention concrt mentions vcstartup vcruntime and the universal crt but not concrt as concrt s relationship with the stl is unusually circular this should probably be mentioned | 0 |

232,615 | 17,788,777,328 | IssuesEvent | 2021-08-31 14:04:36 | calliope-project/euro-calliope | https://api.github.com/repos/calliope-project/euro-calliope | closed | Explain background and purpose | documentation | Expand Sphinx documentation to describe the background and purpose of the repository

* Can build on Tim + Bryn's code sprint presentations | 1.0 | Explain background and purpose - Expand Sphinx documentation to describe the background and purpose of the repository

* Can build on Tim + Bryn's code sprint presentations | non_main | explain background and purpose expand sphinx documentation to describe the background and purpose of the repository can build on tim bryn s code sprint presentations | 0 |

27,254 | 2,691,404,673 | IssuesEvent | 2015-03-31 21:21:51 | NuGet/NuGetGallery | https://api.github.com/repos/NuGet/NuGetGallery | closed | Statistics navigation/drilldown suggestion | Priority - 2 | I found the navigation/drilldown for the existing statistics pages didn't work the way I expected (after coming back to the packages with fresh expectations after a while).

**Problem:**

You cannot drill down into the by-version statistics for a package from the package's overall statistics.

**Repro:**

1. Navi... | 1.0 | Statistics navigation/drilldown suggestion - I found the navigation/drilldown for the existing statistics pages didn't work the way I expected (after coming back to the packages with fresh expectations after a while).

**Problem:**

You cannot drill down into the by-version statistics for a package from the package's... | non_main | statistics navigation drilldown suggestion i found the navigation drilldown for the existing statistics pages didn t work the way i expected after coming back to the packages with fresh expectations after a while problem you cannot drill down into the by version statistics for a package from the package s... | 0 |

865 | 4,534,587,162 | IssuesEvent | 2016-09-08 15:00:05 | ansible/ansible-modules-core | https://api.github.com/repos/ansible/ansible-modules-core | closed | apache2_module fails for php7.0 on Ubuntu Xenial | bug_report waiting_on_maintainer | ##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

apache2_module

##### ANSIBLE VERSION

<!--- Paste verbatim output from “ansible --version” between quotes below -->

```

ansible 2.2.0 (devel 982db58aff) last updated 2016/09/08 11:50:49 (GMT +100)

lib/ansible/modules/core: (detached HEAD db38f0c876) last ... | True | apache2_module fails for php7.0 on Ubuntu Xenial - ##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

apache2_module

##### ANSIBLE VERSION

<!--- Paste verbatim output from “ansible --version” between quotes below -->

```

ansible 2.2.0 (devel 982db58aff) last updated 2016/09/08 11:50:49 (GMT +100)

lib/ans... | main | module fails for on ubuntu xenial issue type bug report component name module ansible version ansible devel last updated gmt lib ansible modules core detached head last updated gmt lib ansible modules extras detache... | 1 |

170,689 | 20,883,857,046 | IssuesEvent | 2022-03-23 01:20:49 | turkdevops/sanity-nuxt-events | https://api.github.com/repos/turkdevops/sanity-nuxt-events | opened | CVE-2021-44906 (Medium) detected in minimist-0.0.8.tgz, minimist-1.2.0.tgz | security vulnerability | ## CVE-2021-44906 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>minimist-0.0.8.tgz</b>, <b>minimist-1.2.0.tgz</b></p></summary>

<p>

<details><summary><b>minimist-0.0.8.tgz</b></p... | True | CVE-2021-44906 (Medium) detected in minimist-0.0.8.tgz, minimist-1.2.0.tgz - ## CVE-2021-44906 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>minimist-0.0.8.tgz</b>, <b>minimist-1.... | non_main | cve medium detected in minimist tgz minimist tgz cve medium severity vulnerability vulnerable libraries minimist tgz minimist tgz minimist tgz parse argument options library home page a href path to dependency file web package json path... | 0 |

2,933 | 3,255,175,199 | IssuesEvent | 2015-10-20 06:59:37 | piwik/piwik | https://api.github.com/repos/piwik/piwik | opened | Remove word "website" from website selector | c: Usability | As mentioned here https://github.com/piwik/piwik/issues/8712 I do now think as well that we should remove the word "Website" from the sites selector eg as suggested here: https://github.com/piwik/piwik/issues/8712#issuecomment-148390762

All other selectors use a proper icon instead but the selector doesn't yet.

... | True | Remove word "website" from website selector - As mentioned here https://github.com/piwik/piwik/issues/8712 I do now think as well that we should remove the word "Website" from the sites selector eg as suggested here: https://github.com/piwik/piwik/issues/8712#issuecomment-148390762

All other selectors use a proper i... | non_main | remove word website from website selector as mentioned here i do now think as well that we should remove the word website from the sites selector eg as suggested here all other selectors use a proper icon instead but the selector doesn t yet instead of the word website i d rather see an ic... | 0 |

1,501 | 2,514,821,676 | IssuesEvent | 2015-01-15 14:43:53 | eclipsesource/tabris-js | https://api.github.com/repos/eclipsesource/tabris-js | closed | Support search actions | feature priority: high | Support actions that integrate a search field with proposals in the navigation bar when executed. | 1.0 | Support search actions - Support actions that integrate a search field with proposals in the navigation bar when executed. | non_main | support search actions support actions that integrate a search field with proposals in the navigation bar when executed | 0 |

836 | 4,473,748,310 | IssuesEvent | 2016-08-26 06:17:59 | Particular/NServiceBus.SqlServer | https://api.github.com/repos/Particular/NServiceBus.SqlServer | closed | Setup multi-catalog deployment with Linked Servers with Sql Server | Tag: Maintainer Prio Type: Spike | ## Goal

Validate if that is a valid option to provide multi instance deployments without need for sharing connection strings at the endpoint configuration level

## Results

### Proof-of-concept

The proof of concept consisted of a simple application that was using direct ADO.NET to send messages on my local mac... | True | Setup multi-catalog deployment with Linked Servers with Sql Server - ## Goal

Validate if that is a valid option to provide multi instance deployments without need for sharing connection strings at the endpoint configuration level

## Results

### Proof-of-concept

The proof of concept consisted of a simple appli... | main | setup multi catalog deployment with linked servers with sql server goal validate if that is a valid option to provide multi instance deployments without need for sharing connection strings at the endpoint configuration level results proof of concept the proof of concept consisted of a simple appli... | 1 |

262,138 | 27,857,406,372 | IssuesEvent | 2023-03-21 00:56:08 | aws/eks-distro-build-tooling | https://api.github.com/repos/aws/eks-distro-build-tooling | closed | Vulnerability in golang.org/x/text/language - CVE-2022-32149 | security golang | From [Golang Security Announcement](https://groups.google.com/g/golang-announce/c/-hjNw559_tE/m/KlGTfid5CAAJ):

Version v0.3.8 of [golang.org/x/text](http://golang.org/x/text) fixes a vulnerability in the [golang.org/x/text/language](http://golang.org/x/text/language) package which could cause a denial of service.

... | True | Vulnerability in golang.org/x/text/language - CVE-2022-32149 - From [Golang Security Announcement](https://groups.google.com/g/golang-announce/c/-hjNw559_tE/m/KlGTfid5CAAJ):

Version v0.3.8 of [golang.org/x/text](http://golang.org/x/text) fixes a vulnerability in the [golang.org/x/text/language](http://golang.org/x/t... | non_main | vulnerability in golang org x text language cve from version of fixes a vulnerability in the package which could cause a denial of service an attacker can craft an accept language header which parseacceptlanguage will take significant time to parse this issue was discovered by oss f... | 0 |

51,283 | 13,635,089,318 | IssuesEvent | 2020-09-25 01:51:38 | nasifimtiazohi/openmrs-module-event-2.7.0 | https://api.github.com/repos/nasifimtiazohi/openmrs-module-event-2.7.0 | opened | CVE-2015-6524 (Medium) detected in activemq-core-5.4.3.jar | security vulnerability | ## CVE-2015-6524 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>activemq-core-5.4.3.jar</b></p></summary>

<p>The ActiveMQ Message Broker and Client implementations</p>

<p>Path to de... | True | CVE-2015-6524 (Medium) detected in activemq-core-5.4.3.jar - ## CVE-2015-6524 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>activemq-core-5.4.3.jar</b></p></summary>

<p>The ActiveM... | non_main | cve medium detected in activemq core jar cve medium severity vulnerability vulnerable library activemq core jar the activemq message broker and client implementations path to dependency file openmrs module event omod pom xml path to vulnerable library home wss scann... | 0 |

226,083 | 17,947,865,460 | IssuesEvent | 2021-09-12 06:18:10 | MetagaussInc/Blazeforms-Revamped-Frontend | https://api.github.com/repos/MetagaussInc/Blazeforms-Revamped-Frontend | closed | When control is resized to the biggest size then Make Bigger or Make smaller not appearing faded out.[Form-build] | bug high Ready For Retest | 1. When control is resized to the biggest possible size either via contextual menu or via left side panel options, the contextual option for "Make Bigger" not appearing to fade out.

2. When control is resized to the smallest possible size either via contextual menu or via left side panel options, the contextual option... | 1.0 | When control is resized to the biggest size then Make Bigger or Make smaller not appearing faded out.[Form-build] - 1. When control is resized to the biggest possible size either via contextual menu or via left side panel options, the contextual option for "Make Bigger" not appearing to fade out.

2. When control is re... | non_main | when control is resized to the biggest size then make bigger or make smaller not appearing faded out when control is resized to the biggest possible size either via contextual menu or via left side panel options the contextual option for make bigger not appearing to fade out when control is resized to th... | 0 |

18,055 | 3,664,439,003 | IssuesEvent | 2016-02-19 11:41:52 | handsontable/handsontable | https://api.github.com/repos/handsontable/handsontable | closed | Getting 2 rows of data when pasting to one row in Chrome. | Answered Merged (ready for release) Tested | Hi There

There's an issue in Handsontable when it's used inside an Angular2 application.

When you copy a row and paste it into a different row on Chrome, 2 rows are inserted instead of one.

It only happens when Handsontable is running inside Angula... | 1.0 | Getting 2 rows of data when pasting to one row in Chrome. - Hi There

There's an issue in Handsontable when it's used inside an Angular2 application.

When you copy a row and paste it into a different row on Chrome, 2 rows are inserted instead of one.

... | non_main | getting rows of data when pasting to one row in chrome hi there there s an issue in handsontable when it s used inside an application when you copy a row and paste it into a different row on chrome rows are inserted instead of one it only happens when handsontable is running inside i think... | 0 |

4,027 | 18,797,941,499 | IssuesEvent | 2021-11-09 01:43:35 | tgstation/tgstation | https://api.github.com/repos/tgstation/tgstation | closed | Admin logs on a ckey read from said mob's specific status, rather than all logs. | Maintainability/Hinders improvements Bug Administration Cleanup Flagged | This means that when looking at a ghost's logs through the player panel, it only shows ghost death logs, if you examine bodies it shows body combat logs, etc.

| True | Admin logs on a ckey read from said mob's specific status, rather than all logs. - This means that when looking at a ghost's logs through the player panel, it only shows ghost death logs, if you examine bodies it shows body combat logs, etc.

| main | admin logs on a ckey read from said mob s specific status rather than all logs this means that when looking at a ghost s logs through the player panel it only shows ghost death logs if you examine bodies it shows body combat logs etc | 1 |

692 | 4,238,514,479 | IssuesEvent | 2016-07-06 04:18:04 | duckduckgo/zeroclickinfo-spice | https://api.github.com/repos/duckduckgo/zeroclickinfo-spice | closed | Goodreads: IA not showing up | Maintainer Input Requested | When I performed a search the instant answer did not show up. I was using Microsoft Edge with DDG Beta. URL - https://beta.duckduckgo.com/?q=books+by+melanie+watt.

------