Unnamed: 0 int64 1 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 7 112 | repo_url stringlengths 36 141 | action stringclasses 3 values | title stringlengths 3 438 | labels stringlengths 4 308 | body stringlengths 7 254k | index stringclasses 7 values | text_combine stringlengths 96 254k | label stringclasses 2 values | text stringlengths 96 246k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

137,629 | 18,755,114,447 | IssuesEvent | 2021-11-05 09:44:20 | Dima2022/node-jose | https://api.github.com/repos/Dima2022/node-jose | opened | CVE-2021-33623 (High) detected in trim-newlines-1.0.0.tgz | security vulnerability | ## CVE-2021-33623 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>trim-newlines-1.0.0.tgz</b></p></summary>

<p>Trim newlines from the start and/or end of a string</p>

<p>Library home page: <a href="https://registry.npmjs.org/trim-newlines/-/trim-newlines-1.0.0.tgz">https://registry.npmjs.org/trim-newlines/-/trim-newlines-1.0.0.tgz</a></p>

<p>Path to dependency file: node-jose/package.json</p>

<p>Path to vulnerable library: node-jose/node_modules/trim-newlines/package.json</p>

<p>

Dependency Hierarchy:

- karma-coverage-1.1.2.tgz (Root Library)

- dateformat-1.0.12.tgz

- meow-3.7.0.tgz

- :x: **trim-newlines-1.0.0.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/Dima2022/node-jose/commit/402fef2668e2cefe13cada8c1eb68c22d93a6837">402fef2668e2cefe13cada8c1eb68c22d93a6837</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The trim-newlines package before 3.0.1 and 4.x before 4.0.1 for Node.js has an issue related to regular expression denial-of-service (ReDoS) for the .end() method.

<p>Publish Date: 2021-05-28

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-33623>CVE-2021-33623</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2021-33623">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2021-33623</a></p>

<p>Release Date: 2021-05-28</p>

<p>Fix Resolution: trim-newlines - 3.0.1, 4.0.1</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":true,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"javascript/Node.js","packageName":"trim-newlines","packageVersion":"1.0.0","packageFilePaths":["/package.json"],"isTransitiveDependency":true,"dependencyTree":"karma-coverage:1.1.2;dateformat:1.0.12;meow:3.7.0;trim-newlines:1.0.0","isMinimumFixVersionAvailable":true,"minimumFixVersion":"trim-newlines - 3.0.1, 4.0.1"}],"baseBranches":["master"],"vulnerabilityIdentifier":"CVE-2021-33623","vulnerabilityDetails":"The trim-newlines package before 3.0.1 and 4.x before 4.0.1 for Node.js has an issue related to regular expression denial-of-service (ReDoS) for the .end() method.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-33623","cvss3Severity":"high","cvss3Score":"7.5","cvss3Metrics":{"A":"High","AC":"Low","PR":"None","S":"Unchanged","C":"None","UI":"None","AV":"Network","I":"None"},"extraData":{}}</REMEDIATE> --> | True | CVE-2021-33623 (High) detected in trim-newlines-1.0.0.tgz - ## CVE-2021-33623 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>trim-newlines-1.0.0.tgz</b></p></summary>

<p>Trim newlines from the start and/or end of a string</p>

<p>Library home page: <a href="https://registry.npmjs.org/trim-newlines/-/trim-newlines-1.0.0.tgz">https://registry.npmjs.org/trim-newlines/-/trim-newlines-1.0.0.tgz</a></p>

<p>Path to dependency file: node-jose/package.json</p>

<p>Path to vulnerable library: node-jose/node_modules/trim-newlines/package.json</p>

<p>

Dependency Hierarchy:

- karma-coverage-1.1.2.tgz (Root Library)

- dateformat-1.0.12.tgz

- meow-3.7.0.tgz

- :x: **trim-newlines-1.0.0.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/Dima2022/node-jose/commit/402fef2668e2cefe13cada8c1eb68c22d93a6837">402fef2668e2cefe13cada8c1eb68c22d93a6837</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The trim-newlines package before 3.0.1 and 4.x before 4.0.1 for Node.js has an issue related to regular expression denial-of-service (ReDoS) for the .end() method.

<p>Publish Date: 2021-05-28

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-33623>CVE-2021-33623</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2021-33623">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2021-33623</a></p>

<p>Release Date: 2021-05-28</p>

<p>Fix Resolution: trim-newlines - 3.0.1, 4.0.1</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":true,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"javascript/Node.js","packageName":"trim-newlines","packageVersion":"1.0.0","packageFilePaths":["/package.json"],"isTransitiveDependency":true,"dependencyTree":"karma-coverage:1.1.2;dateformat:1.0.12;meow:3.7.0;trim-newlines:1.0.0","isMinimumFixVersionAvailable":true,"minimumFixVersion":"trim-newlines - 3.0.1, 4.0.1"}],"baseBranches":["master"],"vulnerabilityIdentifier":"CVE-2021-33623","vulnerabilityDetails":"The trim-newlines package before 3.0.1 and 4.x before 4.0.1 for Node.js has an issue related to regular expression denial-of-service (ReDoS) for the .end() method.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-33623","cvss3Severity":"high","cvss3Score":"7.5","cvss3Metrics":{"A":"High","AC":"Low","PR":"None","S":"Unchanged","C":"None","UI":"None","AV":"Network","I":"None"},"extraData":{}}</REMEDIATE> --> | non_main | cve high detected in trim newlines tgz cve high severity vulnerability vulnerable library trim newlines tgz trim newlines from the start and or end of a string library home page a href path to dependency file node jose package json path to vulnerable library node jose node modules trim newlines package json dependency hierarchy karma coverage tgz root library dateformat tgz meow tgz x trim newlines tgz vulnerable library found in head commit a href found in base branch master vulnerability details the trim newlines package before and x before for node js has an issue related to regular expression denial of service redos for the end method publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version 
origin a href release date fix resolution trim newlines isopenpronvulnerability true ispackagebased true isdefaultbranch true packages istransitivedependency true dependencytree karma coverage dateformat meow trim newlines isminimumfixversionavailable true minimumfixversion trim newlines basebranches vulnerabilityidentifier cve vulnerabilitydetails the trim newlines package before and x before for node js has an issue related to regular expression denial of service redos for the end method vulnerabilityurl | 0 |

4,539 | 23,621,312,120 | IssuesEvent | 2022-08-24 20:52:10 | aws/aws-sam-build-images | https://api.github.com/repos/aws/aws-sam-build-images | closed | What happened with aws-sam-cli-build-image-nodejs16.x | maintainer/need-response stage/needs-triage type/bug | ### Description:

Some months ago we were deploying our function using the nodejs16 image version. Now it's not available anymore. The repository still contains the Dockerfile for building this image, but it looks like it's excluded from being pushed. | True | What happened with aws-sam-cli-build-image-nodejs16.x - ### Description:

Some months ago we were deploying our function using the nodejs16 image version. Now it's not available anymore. The repository still contains the Dockerfile for building this image, but it looks like it's excluded from being pushed. | main | what happened with aws sam cli build image x description some month ago we was deploying our function using image version now it s not available anymore the repository still contains the dockerfile for building this image but it looks like it s excluded to be pushed | 1 |

78,787 | 9,795,148,242 | IssuesEvent | 2019-06-11 02:20:56 | flutter/flutter | https://api.github.com/repos/flutter/flutter | reopened | Let scrollbars avoid obstructing slivers and media query paddings | f: cupertino f: material design f: scrolling framework severe: new feature | Consider a CustomScrollView with a SliverAppBar and a SliverList and the user wants a scrollbar showing the position inside the SliverList's contents only.

Let the SliverAppBar report SliverGeometry describing its obstructing and max obstructing extents. Let that get aggregated by the scrollable and bubbled up with a scroll notification so the scrollbar can start below the obstructing area. | 1.0 | Let scrollbars avoid obstructing slivers and media query paddings - Consider a CustomScrollView with a SliverAppBar and a SliverList and the user wants a scrollbar showing the position inside the SliverList's contents only.

Let the SliverAppBar report SliverGeometry describing its obstructing and max obstructing extents. Let that get aggregated by the scrollable and bubbled up with a scroll notification so the scrollbar can start below the obstructing area. | non_main | let scrollbars avoid obstructing slivers and media query paddings consider a customscrollview with a sliverappbar and a sliverlist and the user wants a scrollbar showing the position inside the sliverlist s contents only let the sliverappbar report slivergeometry describing its obstructing and max obstructing extends let that get aggregated by the scrollable and bubbled up with a scroll notification so the scrollbar can start below the obstructing area | 0 |

158,609 | 24,864,761,647 | IssuesEvent | 2022-10-27 11:01:52 | WordPress/wporg-showcase-2022 | https://api.github.com/repos/WordPress/wporg-showcase-2022 | reopened | Add wporg submenu | Template: Single Template: Front Page Template: Archive Template: Submit Template: Submit Assets Need Design | We need all pages to display this menu:

<img width="498" alt="Screen Shot 2022-10-12 at 1 46 39 PM" src="https://user-images.githubusercontent.com/1657336/195252365-bd4bdcee-42d4-4ba5-a16e-533077dd7d13.png">

**Menu Items**

- Submit a site

| 1.0 | Add wporg submenu - We need all pages to display this menu:

<img width="498" alt="Screen Shot 2022-10-12 at 1 46 39 PM" src="https://user-images.githubusercontent.com/1657336/195252365-bd4bdcee-42d4-4ba5-a16e-533077dd7d13.png">

**Menu Items**

- Submit a site

| non_main | add wporg submenu we need all pages to display this menu img width alt screen shot at pm src menu items submit a site | 0 |

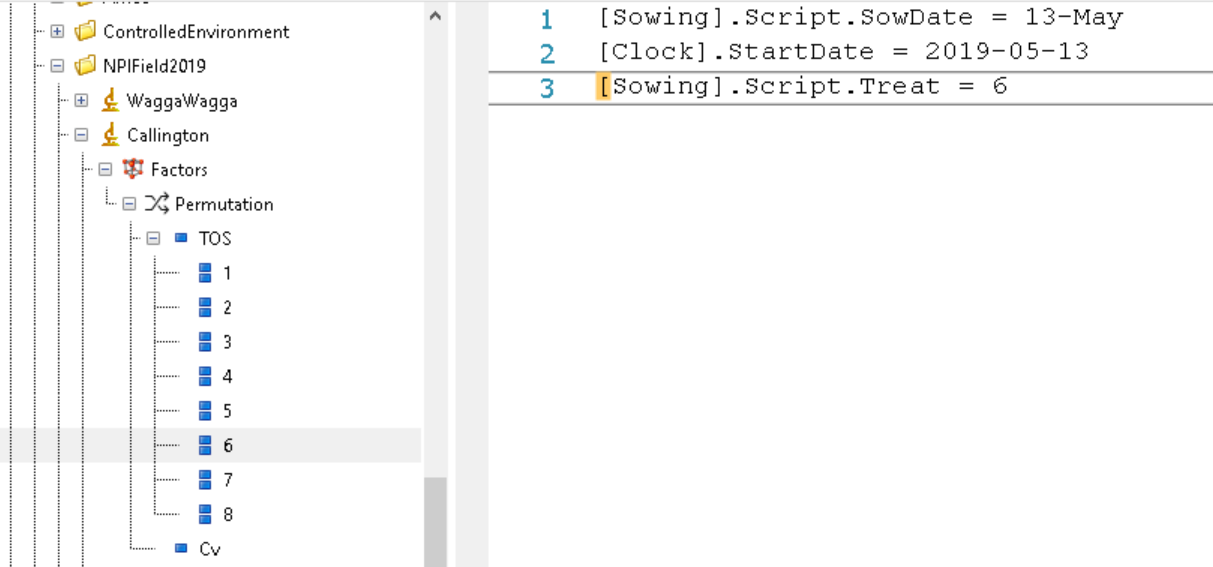

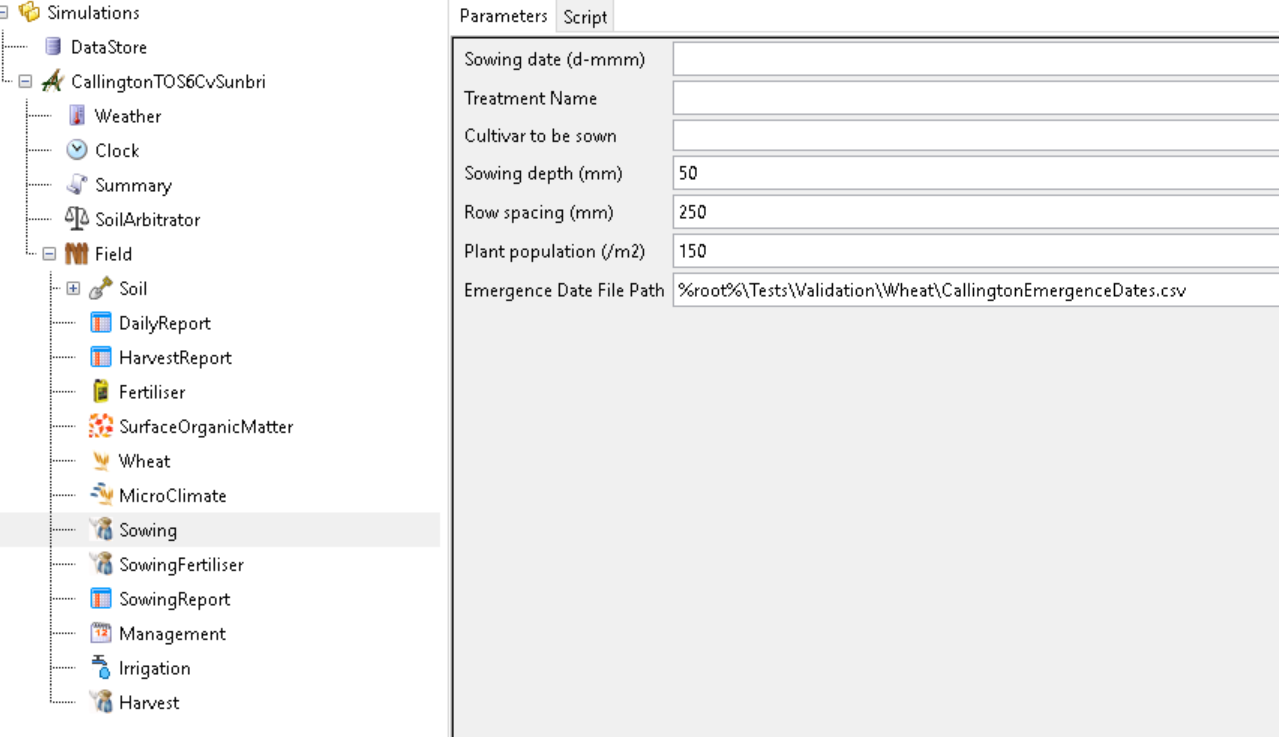

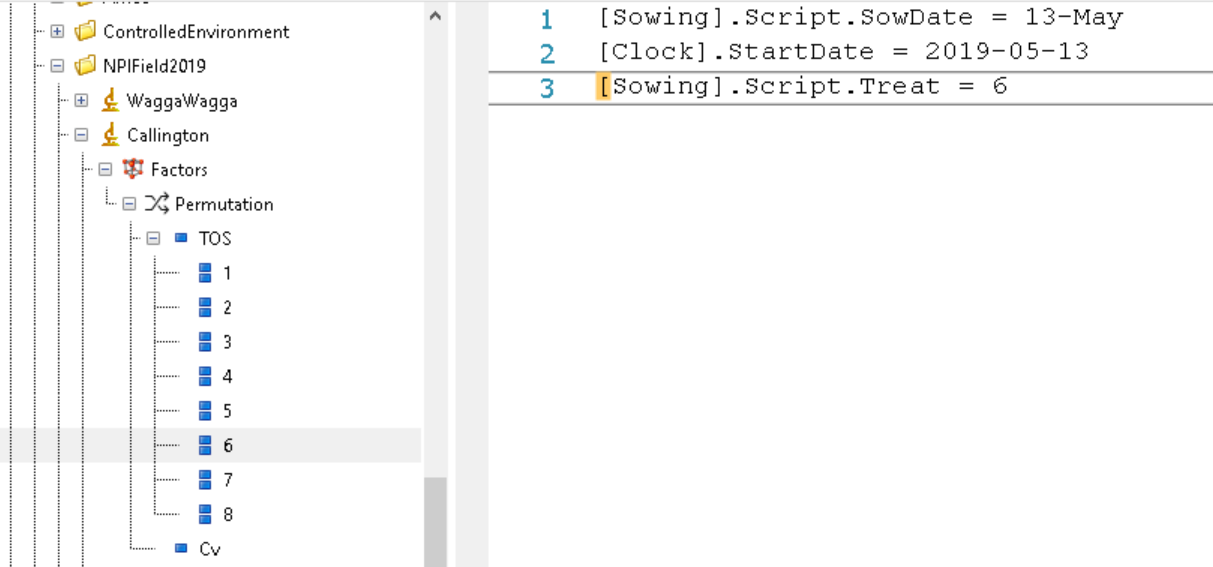

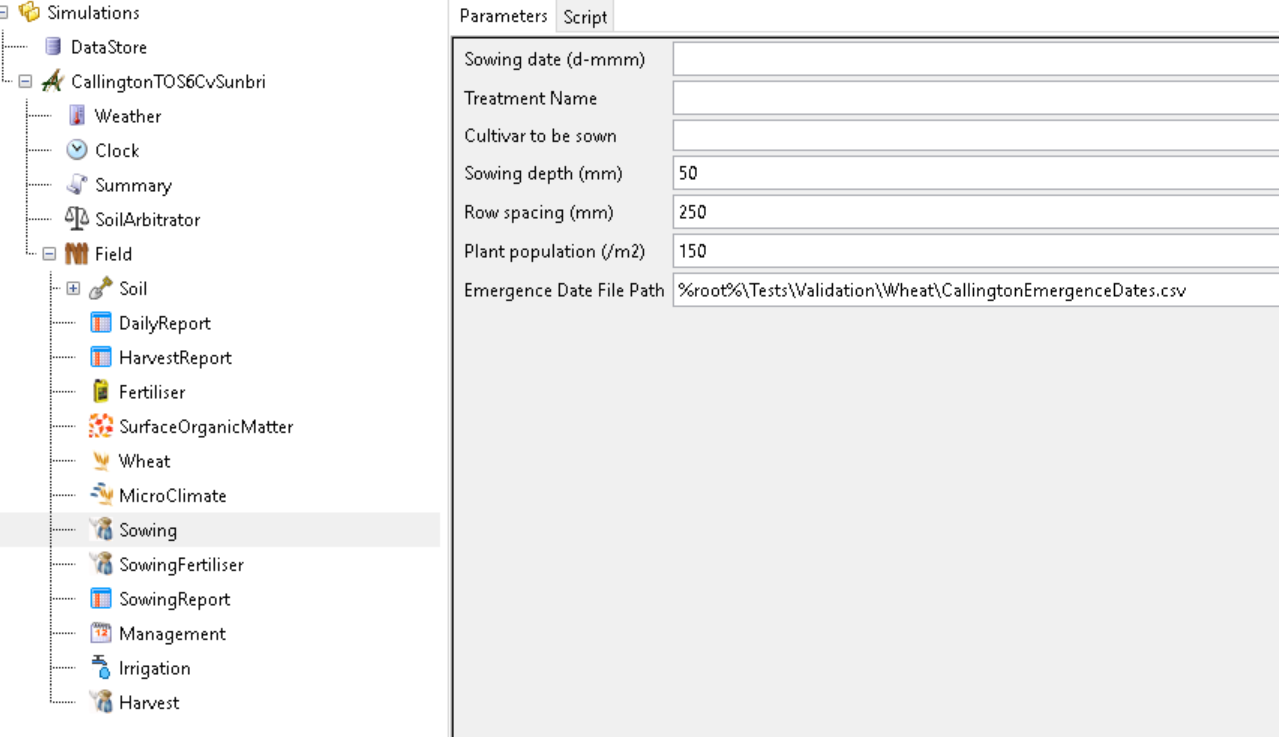

23,626 | 16,475,511,497 | IssuesEvent | 2021-05-24 04:42:19 | APSIMInitiative/ApsimX | https://api.github.com/repos/APSIMInitiative/ApsimX | closed | Error in generate apsimx file | bug interface/infrastructure | I use the master branch of ApsimX repository.

Steps to reproduce this error.

* Open the wheat.apsimx under Tests\Validation\Wheat

* Right click the Experiment `Callington` under folder `NPIField2019` to generate *.apsimx

* Open the generated file `CallingtonTOS6CvSunbri.apsimx` and run simulations

Then I get an error about `GetData`, which is related to some information not being stored in the generated apsimx file.

In the wheat.apsimx file, `TOS 6` specifies `[Sowing].Script.SowDate` and `[Sowing].Script.Treat`.

However, these two attributes are not exported into the generated apsimx file.

| 1.0 | Error in generate apsimx file - I use the master branch of ApsimX repository.

Steps to reproduce this error.

* Open the wheat.apsimx under Tests\Validation\Wheat

* Right click the Experiment `Callington` under folder `NPIField2019` to generate *.apsimx

* Open the generated file `CallingtonTOS6CvSunbri.apsimx` and run simulations

Then I get an error about `GetData`, which is related to some information not being stored in the generated apsimx file.

In the wheat.apsimx file, `TOS 6` specifies `[Sowing].Script.SowDate` and `[Sowing].Script.Treat`.

However, these two attributes are not exported into the generated apsimx file.

| non_main | error in generate apsimx file i use the master branch of apsimx repository steps to reproduce this error open the wheat apsimx under tests validation wheat right click the experiment callington under folder to generate apsimx open the generated file apsimx and run simulations then i get an error about getdata which is related with some information is not stored in the generated apsimx in the wheat apsimx file tos specified the script sowdate and script treat however these two attributes are not exported into generated apsimx file | 0 |

2,845 | 10,219,571,266 | IssuesEvent | 2019-08-15 18:56:07 | arcticicestudio/styleguide-javascript | https://api.github.com/repos/arcticicestudio/styleguide-javascript | closed | Git ignore and attribute pattern | context-workflow scope-maintainability scope-quality type-task | <p align="center"><img src="https://upload.wikimedia.org/wikipedia/commons/e/e0/Git-logo.svg" width="20%" /></p>

> Epic: #8

Update the [`.gitattributes`][a] and [`.gitignore`][i] configuration files to use the latest pattern.

[a]: https://git-scm.com/docs/gitattributes

[i]: https://git-scm.com/docs/gitignore | True | Git ignore and attribute pattern - <p align="center"><img src="https://upload.wikimedia.org/wikipedia/commons/e/e0/Git-logo.svg" width="20%" /></p>

> Epic: #8

Update the [`.gitattributes`][a] and [`.gitignore`][i] configuration files to use the latest pattern.

[a]: https://git-scm.com/docs/gitattributes

[i]: https://git-scm.com/docs/gitignore | main | git ignore and attribute pattern epic update the and configuration files to use the latest pattern | 1 |

2,698 | 9,436,330,164 | IssuesEvent | 2019-04-13 05:28:06 | invertase/react-native-firebase | https://api.github.com/repos/invertase/react-native-firebase | closed | [Proposal][WIP] Storage improvements | Hacktoberfest await-maintainer-feedback docs help-wanted ios js storage 🐞 bug 👁investigate 👉 await-user-feedback 🤖 android | Hi all 👋

`storage()` has fallen slightly behind recently and we plan on improving the module for a 5.x.x release, which includes ensuring that it provides all the functionality in an easy-to-use and reliable manner, with better documentation and bug fixes.

This issue is a placeholder that will be updated as we have more details on what will be supported and how this will be structured in the API.

Secondly, it's also here to show that we're aware of the issues that have already been raised and will be addressing them as part of this proposal. Any historic or new issues will be closed and redirected here to track all the issues that need addressing in one place.

New features to add:

- [ ] Multi-bucket support

- RNFB internals re-written to support this JS side in https://github.com/invertase/react-native-firebase/commit/7632da1809554cc5314361ec36c5ca88db6a8fa6 - just needs storage work done now.

- [ ] `StorageTask`; and support for resumable uploads/downloads

- [ ] `cancel()`

- [ ] `resume()`

- [ ] `pause()`

---

Loving `react-native-firebase` and the support we provide? Please consider supporting us with any of the below:

- 👉 Back financially via [Open Collective](https://opencollective.com/react-native-firebase/donate)

- 👉 Follow [`React Native Firebase`](https://twitter.com/rnfirebase) and [`Invertase`](https://twitter.com/invertaseio) on Twitter

- 👉 Star this repo on GitHub ⭐️ | True | [Proposal][WIP] Storage improvements - Hi all 👋

`storage()` has fallen slightly behind recently and we plan on improving the module for a 5.x.x release, which includes ensuring that it provides all the functionality in an easy-to-use and reliable manner, with better documentation and bug fixes.

This issue is a placeholder that will be updated as we have more details on what will be supported and how this will be structured in the API.

Secondly, it's also here to show that we're aware of the issues that have already been raised and will be addressing them as part of this proposal. Any historic or new issues will be closed and redirected here to track all the issues that need addressing in one place.

New features to add:

- [ ] Multi-bucket support

- RNFB internals re-written to support this JS side in https://github.com/invertase/react-native-firebase/commit/7632da1809554cc5314361ec36c5ca88db6a8fa6 - just needs storage work done now.

- [ ] `StorageTask`; and support for resumable uploads/downloads

- [ ] `cancel()`

- [ ] `resume()`

- [ ] `pause()`

---

Loving `react-native-firebase` and the support we provide? Please consider supporting us with any of the below:

- 👉 Back financially via [Open Collective](https://opencollective.com/react-native-firebase/donate)

- 👉 Follow [`React Native Firebase`](https://twitter.com/rnfirebase) and [`Invertase`](https://twitter.com/invertaseio) on Twitter

- 👉 Star this repo on GitHub ⭐️ | main | storage improvements hi all 👋 storage has fallen slightly behind recently and we plan on improving the module for a x x release which includes improving it to ensure that it provides the all the functionality in an easy to use and reliable manner with better documentation and bug fixes this issue is a placeholder that will be updated as we have more details on what will be supported and how this will be structured in the api secondly it s also here to show that we re aware of the issues that have already been raised and will be addressing them as part of this proposal any historic or new issues will be closed and redirected here to track all the issues that need addressing in one place new features to add multi bucket support rnfb internals re written to support this js side in just needs storage work done now storagetask and support for resumable uploads downloads cancel resume pause loving react native firebase and the support we provide please consider supporting us with any of the below 👉 back financially via 👉 follow and on twitter 👉 star this repo on github ⭐️ | 1 |

40,430 | 20,832,471,994 | IssuesEvent | 2022-03-19 17:19:18 | artichoke/artichoke | https://api.github.com/repos/artichoke/artichoke | closed | Add a `EncodedString::utf8` constructor | E-easy A-ruby-core A-performance C-quality | As a nit, I'd rather add a `EncodedString::utf8` constructor, since we pay to branch on the encoding in `EncodedString::new` when we don't need to.

_Originally posted by @lopopolo in https://github.com/artichoke/artichoke/pull/1678#r820325590_ | True | Add a `EncodedString::utf8` constructor - As a nit, I'd rather add a `EncodedString::utf8` constructor, since we pay to branch on the encoding in `EncodedString::new` when we don't need to.

_Originally posted by @lopopolo in https://github.com/artichoke/artichoke/pull/1678#r820325590_ | non_main | add a encodedstring constructor as a nit i d rather add a encodedstring constructor since we pay to branch on the encoding in encodedstring new when we don t need to originally posted by lopopolo in | 0 |

147,327 | 23,200,068,854 | IssuesEvent | 2022-08-01 20:28:02 | dart-lang/dartdoc | https://api.github.com/repos/dart-lang/dartdoc | closed | Allow fold out sections for more detailed/advanced docs | enhancement P3 web-design | From an [old dartdoc discussion](https://docs.google.com/document/d/1tndhthlM9jFls1kA5YvPj-LMiaNhp1uSAnRStpxB370/edit?ts=5a134f5e#heading=h.4tkns0pv6mna):

---snip---

We should also use sections inside member comments. I'm proposing that dartdoc recognizes Advanced sections and folds them by default. That is, a markdown section Advanced in a normal dartdoc comment is not visible by default, but can only be looked at by clicking on a '+' button.

```

/// Converts a double to its decimal string-representation.

///

/// # Advanced

/// The conversion algorithm must be accurate and follow the internal identity

/// requirement as specified in [Steele](http:// …).

///

/// If the output number requires more than 5 digits, it is expressed as an

/// exponential number.

/// ....String toString() { … }

```

---snip---

| 1.0 | Allow fold out sections for more detailed/advanced docs - From an [old dartdoc discussion](https://docs.google.com/document/d/1tndhthlM9jFls1kA5YvPj-LMiaNhp1uSAnRStpxB370/edit?ts=5a134f5e#heading=h.4tkns0pv6mna):

---snip---

We should also use sections inside member comments. I'm proposing that dartdoc recognizes Advanced sections and folds them by default. That is, a markdown section Advanced in a normal dartdoc comment is not visible by default, but can only be looked at by clicking on a '+' button.

```

/// Converts a double to its decimal string-representation.

///

/// # Advanced

/// The conversion algorithm must be accurate and follow the internal identity

/// requirement as specified in [Steele](http:// …).

///

/// If the output number requires more than 5 digits, it is expressed as an

/// exponential number.

/// ....String toString() { … }

```

---snip---

| non_main | allow fold out sections for more detailed advanced docs from an snip we should also use sections inside member comments i m proposing that dartdoc recognizes advanced sections and folds them by default that is a markdown section advanced in a normal dartdoc comment is not visible by default but can only be looked at by clicking on a button converts a double to its decimal string representation advanced the conversion algorithm must be accurate and follow the internal identity requirement as specified in http … if the output number requires more than digits it is expressed as an exponential number string tostring … snip | 0 |

323,229 | 23,939,243,803 | IssuesEvent | 2022-09-11 17:17:49 | supabase/supabase | https://api.github.com/repos/supabase/supabase | opened | resetPasswordForEmail() Example does not work. | documentation | # Improve documentation

## Link

[Docs Link](https://supabase.com/docs/reference/javascript/next/auth-resetpasswordforemail)

## Describe the problem

The code example in the docs passes `options` as an argument to the function, which can't work because `options: { redirectTo: "" }` is not a valid object without curly brackets, like so: `{ options: { redirectTo: "" }}`. After looking deeper at this issue, I found that the `options` key should be skipped completely. So the docs should be improved as written below under **Describe the improvement**.

```js

const { error, data } = await supabase.auth.resetPasswordForEmail(email, options: {

redirectTo: 'https://example.com/update-password',

})

```

## Describe the improvement

Remove the `options:` key from the args, to prevent users from debugging and looking for the actual object to pass.

```js

const { error, data } = await supabase.auth.resetPasswordForEmail(email, {

redirectTo: 'https://example.com/update-password',

})

``` | 1.0 | resetPasswordForEmail() Example does not work. - # Improve documentation

## Link

[Docs Link](https://supabase.com/docs/reference/javascript/next/auth-resetpasswordforemail)

## Describe the problem

The code example in the docs passes `options` as an argument to the function, which can't work because `options: { redirectTo: "" }` is not a valid object without curly brackets, like so: `{ options: { redirectTo: "" }}`. After looking deeper at this issue, I found that the `options` key should be skipped completely. So the docs should be improved as written below under **Describe the improvement**.

```js

const { error, data } = await supabase.auth.resetPasswordForEmail(email, options: {

redirectTo: 'https://example.com/update-password',

})

```

## Describe the improvement

Remove the `options:` key from the args, to prevent users from debugging and looking for the actual object to pass.

```js

const { error, data } = await supabase.auth.resetPasswordForEmail(email, {

redirectTo: 'https://example.com/update-password',

})

``` | non_main | resetpasswordforemail example does not work improve documentation link describe the problem the code example in the docs has options as argument passed down to the function which can t work as options redirectto is no valid object without curly brackets like so options redirectto after looking deeper at this issue i found out to skip the option key completely so the docs should be improved like written below under describe the improvement js const error data await supabase auth resetpasswordforemail email options redirectto describe the improvement remove the options key from args to prevent users from debugging and looking for the actual object to pass js const error data await supabase auth resetpasswordforemail email redirectto | 0 |

933 | 4,644,111,853 | IssuesEvent | 2016-09-30 15:25:17 | ansible/ansible-modules-core | https://api.github.com/repos/ansible/ansible-modules-core | closed | Will ec2_asg support "default_cooldown" and "termination_policies" options? | affects_2.0 aws cloud feature_idea waiting_on_maintainer | When trying to use both "termination_policies" and "default_cooldown" it fails as there is no support for them even though they are listed in the ASG_ATTRIBUTES string and also supported by boto downstream. Will these get added to the module? | True | Will ec2_asg support "default_cooldown" and "termination_policies" options? - When trying to use both "termination_policies" and "default_cooldown" it fails as there is no support for them even though they are listed in the ASG_ATTRIBUTES string and also supported by boto downstream. Will these get added to the module? | main | will asg support default cooldown and termination policies options when trying to use both termination policies and default cooldown it fails as there is no support for them even though they are listed in the asg attributes string and also supported by boto downstream will these get added to the module | 1 |

1,916 | 6,577,706,408 | IssuesEvent | 2017-09-12 02:45:01 | ansible/ansible-modules-core | https://api.github.com/repos/ansible/ansible-modules-core | closed | cloud/docker: recreates stopped named containers with state=started | affects_2.0 bug_report cloud docker waiting_on_maintainer | ##### Issue Type:

Bug Report

##### Plugin Name:

docker

##### Ansible Version:

```

ansible 2.0.0.2

config file = /etc/ansible/ansible.cfg

configured module search path = Default w/o overrides

```

##### Ansible Configuration:

N/A

##### Environment:

N/A

##### Summary:

Docker module recreates stopped named container with state=started

##### Steps To Reproduce:

Task description

```

- name: Start container

docker:

image: debian

name: lab

pull: missing

detach: yes

net: bridge

tty: yes

command: sleep infinity

state: started

```

Output from ansible-playbook -vv

```

TASK [start-container : Start container] ***************************************

changed: [localhost] => {"ansible_facts": {"docker_containers": [{"AppArmorProfile": "", "Args": ["infinity"], "Config": {"AttachStderr": false, "AttachStdin": false, "AttachStdout": false, "Cmd": ["sleep", "infinity"], "Domainname": "", "Entrypoint": null, "Env": ["PATH=/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin"], "Hostname": "75bb43da9afb", "Image": "android", "Labels": {}, "OnBuild": null, "OpenStdin": false, "StdinOnce": false, "Tty": true, "User": "", "Volumes": null, "WorkingDir": ""}, "Created": "2016-02-21T15:57:18.464570878Z", "Driver": "btrfs", "ExecIDs": null, "GraphDriver": {"Data": null, "Name": "btrfs"}, "HostConfig": {"Binds": null, "BlkioDeviceReadBps": null, "BlkioDeviceReadIOps": null, "BlkioDeviceWriteBps": null, "BlkioDeviceWriteIOps": null, "BlkioWeight": 0, "BlkioWeightDevice": null, "CapAdd": null, "CapDrop": null, "CgroupParent": "", "ConsoleSize": [0, 0], "ContainerIDFile": "", "CpuPeriod": 0, "CpuQuota": 0, "CpuShares": 0, "CpusetCpus": "", "CpusetMems": "", "Devices": null, "Dns": null, "DnsOptions": null, "DnsSearch": null, "ExtraHosts": null, "GroupAdd": null, "IpcMode": "", "Isolation": "", "KernelMemory": 0, "Links": null, "LogConfig": {"Config": {}, "Type": "journald"}, "Memory": 0, "MemoryReservation": 0, "MemorySwap": 0, "MemorySwappiness": -1, "NetworkMode": "bridge", "OomKillDisable": false, "OomScoreAdj": 0, "PidMode": "", "PidsLimit": 0, "PortBindings": null, "Privileged": false, "PublishAllPorts": false, "ReadonlyRootfs": false, "RestartPolicy": {"MaximumRetryCount": 0, "Name": ""}, "SecurityOpt": null, "ShmSize": 67108864, "UTSMode": "", "Ulimits": null, "VolumeDriver": "", "VolumesFrom": null}, "HostnamePath": "/var/lib/docker/containers/75bb43da9afb967ee60c095f3dd5f03183f80b3973ccb75d62d08678f0de6436/hostname", "HostsPath": "/var/lib/docker/containers/75bb43da9afb967ee60c095f3dd5f03183f80b3973ccb75d62d08678f0de6436/hosts", "Id": "75bb43da9afb967ee60c095f3dd5f03183f80b3973ccb75d62d08678f0de6436", "Image": 
"sha256:484a6a69ac0c4f06b7bff344a36745414fe57024c07ab3c90d8146b835256008", "LogPath": "", "MountLabel": "", "Mounts": [], "Name": "/lab", "NetworkSettings": {"Bridge": "", "EndpointID": "a929802ab259e1992472d2ac0383f82ca82ca19f901f565a6dcc1ccd3072f4ce", "Gateway": "172.17.0.1", "GlobalIPv6Address": "", "GlobalIPv6PrefixLen": 0, "HairpinMode": false, "IPAddress": "172.17.0.2", "IPPrefixLen": 16, "IPv6Gateway": "", "LinkLocalIPv6Address": "", "LinkLocalIPv6PrefixLen": 0, "MacAddress": "02:42:ac:11:00:02", "Networks": {"bridge": {"Aliases": null, "EndpointID": "a929802ab259e1992472d2ac0383f82ca82ca19f901f565a6dcc1ccd3072f4ce", "Gateway": "172.17.0.1", "GlobalIPv6Address": "", "GlobalIPv6PrefixLen": 0, "IPAMConfig": null, "IPAddress": "172.17.0.2", "IPPrefixLen": 16, "IPv6Gateway": "", "Links": null, "MacAddress": "02:42:ac:11:00:02", "NetworkID": "6a6c469a29d9f7d80a25d6df2e158d1f4c4ead81a463fe4ac13b20a60280ce6d"}}, "Ports": {}, "SandboxID": "6ccf812bac48ad68b87808dcc05dad84cf41054a21d03f48e2eb1dcba45414a7", "SandboxKey": "/var/run/docker/netns/6ccf812bac48", "SecondaryIPAddresses": null, "SecondaryIPv6Addresses": null}, "Path": "sleep", "ProcessLabel": "", "ResolvConfPath": "/var/lib/docker/containers/75bb43da9afb967ee60c095f3dd5f03183f80b3973ccb75d62d08678f0de6436/resolv.conf", "RestartCount": 0, "State": {"Dead": false, "Error": "", "ExitCode": 0, "FinishedAt": "0001-01-01T00:00:00Z", "OOMKilled": false, "Paused": false, "Pid": 16620, "Restarting": false, "Running": true, "StartedAt": "2016-02-21T15:57:18.649681499Z", "Status": "running"}}]}, "changed": true, "msg": "removed 1 container, started 1 container, created 1 container.", "reload_reasons": null, "summary": {"created": 1, "killed": 0, "pulled": 0, "removed": 1, "restarted": 0, "started": 1, "stopped": 0}}

```

##### Expected Results:

Named container restarts with last saved state

##### Actual Results:

Named container was recreated from image

Looks like the problem is caused by this code:

https://github.com/ansible/ansible-modules-core/blob/devel/cloud/docker/docker.py#L1690
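
The behaviour I would expect for `state=started` can be sketched in plain Python. This is only an illustration of the decision, not the module's actual code: `plan_actions` and the status strings are made-up names, and the sketch deliberately ignores the case where the image or options have changed.

```python
def plan_actions(existing_status):
    """Return the actions expected for state=started on a named container.

    existing_status is a hypothetical value: None when no container with
    that name exists, otherwise "running" or "stopped".
    """
    if existing_status is None:
        # Nothing to reuse: create the container from the image, then start it.
        return ["create", "start"]
    if existing_status == "stopped":
        # Reuse the stopped container so its last saved state is kept.
        return ["start"]
    if existing_status == "running":
        # Already in the desired state, so nothing should change.
        return []
    raise ValueError("unknown container status: %r" % (existing_status,))
```

With this logic a stopped container named `lab` would only be started, so the summary would report `started: 1` and no `removed` or `created` entries.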

| True | cloud/docker: recreates stopped named containers with state=started - ##### Issue Type:

Bug Report

##### Plugin Name:

docker

##### Ansible Version:

```
ansible 2.0.0.2
  config file = /etc/ansible/ansible.cfg
  configured module search path = Default w/o overrides
```

##### Ansible Configuration:

N/A

##### Environment:

N/A

##### Summary:

Docker module recreates stopped named container with state=started

##### Steps To Reproduce:

Task description

```
- name: Start container
  docker:
    image: debian
    name: lab
    pull: missing
    detach: yes
    net: bridge
    tty: yes
    command: sleep infinity
    state: started
```

Output from ansible-playbook -vv

```

TASK [start-container : Start container] ***************************************

changed: [localhost] => {"ansible_facts": {"docker_containers": [{"AppArmorProfile": "", "Args": ["infinity"], "Config": {"AttachStderr": false, "AttachStdin": false, "AttachStdout": false, "Cmd": ["sleep", "infinity"], "Domainname": "", "Entrypoint": null, "Env": ["PATH=/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin"], "Hostname": "75bb43da9afb", "Image": "android", "Labels": {}, "OnBuild": null, "OpenStdin": false, "StdinOnce": false, "Tty": true, "User": "", "Volumes": null, "WorkingDir": ""}, "Created": "2016-02-21T15:57:18.464570878Z", "Driver": "btrfs", "ExecIDs": null, "GraphDriver": {"Data": null, "Name": "btrfs"}, "HostConfig": {"Binds": null, "BlkioDeviceReadBps": null, "BlkioDeviceReadIOps": null, "BlkioDeviceWriteBps": null, "BlkioDeviceWriteIOps": null, "BlkioWeight": 0, "BlkioWeightDevice": null, "CapAdd": null, "CapDrop": null, "CgroupParent": "", "ConsoleSize": [0, 0], "ContainerIDFile": "", "CpuPeriod": 0, "CpuQuota": 0, "CpuShares": 0, "CpusetCpus": "", "CpusetMems": "", "Devices": null, "Dns": null, "DnsOptions": null, "DnsSearch": null, "ExtraHosts": null, "GroupAdd": null, "IpcMode": "", "Isolation": "", "KernelMemory": 0, "Links": null, "LogConfig": {"Config": {}, "Type": "journald"}, "Memory": 0, "MemoryReservation": 0, "MemorySwap": 0, "MemorySwappiness": -1, "NetworkMode": "bridge", "OomKillDisable": false, "OomScoreAdj": 0, "PidMode": "", "PidsLimit": 0, "PortBindings": null, "Privileged": false, "PublishAllPorts": false, "ReadonlyRootfs": false, "RestartPolicy": {"MaximumRetryCount": 0, "Name": ""}, "SecurityOpt": null, "ShmSize": 67108864, "UTSMode": "", "Ulimits": null, "VolumeDriver": "", "VolumesFrom": null}, "HostnamePath": "/var/lib/docker/containers/75bb43da9afb967ee60c095f3dd5f03183f80b3973ccb75d62d08678f0de6436/hostname", "HostsPath": "/var/lib/docker/containers/75bb43da9afb967ee60c095f3dd5f03183f80b3973ccb75d62d08678f0de6436/hosts", "Id": "75bb43da9afb967ee60c095f3dd5f03183f80b3973ccb75d62d08678f0de6436", "Image": 
"sha256:484a6a69ac0c4f06b7bff344a36745414fe57024c07ab3c90d8146b835256008", "LogPath": "", "MountLabel": "", "Mounts": [], "Name": "/lab", "NetworkSettings": {"Bridge": "", "EndpointID": "a929802ab259e1992472d2ac0383f82ca82ca19f901f565a6dcc1ccd3072f4ce", "Gateway": "172.17.0.1", "GlobalIPv6Address": "", "GlobalIPv6PrefixLen": 0, "HairpinMode": false, "IPAddress": "172.17.0.2", "IPPrefixLen": 16, "IPv6Gateway": "", "LinkLocalIPv6Address": "", "LinkLocalIPv6PrefixLen": 0, "MacAddress": "02:42:ac:11:00:02", "Networks": {"bridge": {"Aliases": null, "EndpointID": "a929802ab259e1992472d2ac0383f82ca82ca19f901f565a6dcc1ccd3072f4ce", "Gateway": "172.17.0.1", "GlobalIPv6Address": "", "GlobalIPv6PrefixLen": 0, "IPAMConfig": null, "IPAddress": "172.17.0.2", "IPPrefixLen": 16, "IPv6Gateway": "", "Links": null, "MacAddress": "02:42:ac:11:00:02", "NetworkID": "6a6c469a29d9f7d80a25d6df2e158d1f4c4ead81a463fe4ac13b20a60280ce6d"}}, "Ports": {}, "SandboxID": "6ccf812bac48ad68b87808dcc05dad84cf41054a21d03f48e2eb1dcba45414a7", "SandboxKey": "/var/run/docker/netns/6ccf812bac48", "SecondaryIPAddresses": null, "SecondaryIPv6Addresses": null}, "Path": "sleep", "ProcessLabel": "", "ResolvConfPath": "/var/lib/docker/containers/75bb43da9afb967ee60c095f3dd5f03183f80b3973ccb75d62d08678f0de6436/resolv.conf", "RestartCount": 0, "State": {"Dead": false, "Error": "", "ExitCode": 0, "FinishedAt": "0001-01-01T00:00:00Z", "OOMKilled": false, "Paused": false, "Pid": 16620, "Restarting": false, "Running": true, "StartedAt": "2016-02-21T15:57:18.649681499Z", "Status": "running"}}]}, "changed": true, "msg": "removed 1 container, started 1 container, created 1 container.", "reload_reasons": null, "summary": {"created": 1, "killed": 0, "pulled": 0, "removed": 1, "restarted": 0, "started": 1, "stopped": 0}}

```

##### Expected Results:

Named container restarts with last saved state

##### Actual Results:

Named container was recreated from image

Looks like the problem is caused by this code:

https://github.com/ansible/ansible-modules-core/blob/devel/cloud/docker/docker.py#L1690

| main | cloud docker recreates stopped named containers with state started issue type bug report plugin name docker ansible version ansible config file etc ansible ansible cfg configured module search path default w o overrides ansible configuration n a environment n a summary docker module recreates stopped named container with state started steps to reproduce task description name start container docker image debian name lab pull missing detach yes net bridge tty yes command sleep infinity state started output from ansible paybook vv task changed ansible facts docker containers config attachstderr false attachstdin false attachstdout false cmd domainname entrypoint null env hostname image android labels onbuild null openstdin false stdinonce false tty true user volumes null workingdir created driver btrfs execids null graphdriver data null name btrfs hostconfig binds null blkiodevicereadbps null blkiodevicereadiops null blkiodevicewritebps null blkiodevicewriteiops null blkioweight blkioweightdevice null capadd null capdrop null cgroupparent consolesize containeridfile cpuperiod cpuquota cpushares cpusetcpus cpusetmems devices null dns null dnsoptions null dnssearch null extrahosts null groupadd null ipcmode isolation kernelmemory links null logconfig config type journald memory memoryreservation memoryswap memoryswappiness networkmode bridge oomkilldisable false oomscoreadj pidmode pidslimit portbindings null privileged false publishallports false readonlyrootfs false restartpolicy maximumretrycount name securityopt null shmsize utsmode ulimits null volumedriver volumesfrom null hostnamepath var lib docker containers hostname hostspath var lib docker containers hosts id image logpath mountlabel mounts name lab networksettings bridge endpointid gateway hairpinmode false ipaddress ipprefixlen macaddress ac networks bridge aliases null endpointid gateway ipamconfig null ipaddress ipprefixlen links null macaddress ac networkid ports sandboxid sandboxkey var run 
docker netns secondaryipaddresses null null path sleep processlabel resolvconfpath var lib docker containers resolv conf restartcount state dead false error exitcode finishedat oomkilled false paused false pid restarting false running true startedat status running changed true msg removed container started container created container reload reasons null summary created killed pulled removed restarted started stopped expected results named container restarts with last saved state actual results named container was recreated from image looks like the problem caused by this code | 1 |

30,882 | 25,141,834,881 | IssuesEvent | 2022-11-10 00:03:11 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | System.IO.FileNotFoundException thrown when using System.Text.Json in mixed .Net version solution. Assembly versions do not match. | question area-Infrastructure-libraries no-recent-activity needs-author-action | ### Description

We have a fairly large code base that consists of projects targeting different .NET versions: mostly .NET 6.0 and .NET Standard 2.0, but a few .NET Framework 4.7.2 projects as well. We are migrating all of these to .NET 6.0, but it will take a long time before everything is done.

The issue we are facing is with System.Text.Json. We have installed the 6.0.5 NuGet package in the projects that require it, but since a couple of them are .NET Framework projects, they seem to get a different version of the System.Text.Json assembly. For other project types the assembly version is 6.0.0.0, but for the legacy framework it is 6.0.0.5. This causes a runtime error: "System.IO.FileNotFoundException: Could not load file or assembly 'System.Text.Json, Version=6.0.0.5, Culture=neutral, PublicKeyToken=cc7b13ffcd2ddd51'. The system cannot find the file specified." when the framework project is referenced by a .NET 6.0 project (yes, I know it's not ideal, but for the time being it is a necessary evil).

### Reproduction Steps

Create a .NET 6.0 project and a .NET Framework 4.7.2 project. Add a System.Text.Json reference to the 4.7.2 project and reference that project from the .NET 6.0 project. Run the .NET 6.0 project and it will throw a System.IO.FileNotFoundException.

Here is a sample solution for this:

[JsonReferenceIssue.zip](https://github.com/dotnet/runtime/files/9111329/JsonReferenceIssue.zip)

### Expected behavior

I would expect the System.Text.Json 6.0.5 NuGet package to have the same assembly version for all target .NET versions.

### Actual behavior

Runtime error: "System.IO.FileNotFoundException: Could not load file or assembly 'System.Text.Json, Version=6.0.0.5, Culture=neutral, PublicKeyToken=cc7b13ffcd2ddd51'. The system cannot find the file specified."

### Regression?

_No response_

### Known Workarounds

If I downgrade the projects to the 6.0.0 NuGet package, this works as expected.

In the sample solution I can also work around the issue by adding the following snippet to Program.cs, but I don't feel comfortable doing this in our production code.

```
AppDomain.CurrentDomain.AssemblyResolve += CurrentDomain_AssemblyResolve;

static System.Reflection.Assembly? CurrentDomain_AssemblyResolve(object? sender, ResolveEventArgs args)
{
    if (args.Name == "System.Text.Json, Version=6.0.0.5, Culture=neutral, PublicKeyToken=cc7b13ffcd2ddd51")
    {
        return typeof(System.Text.Json.JsonSerializer).Assembly;
    }

    return null;
}
```

### Configuration

Partial output of `dotnet --info`:

```
.NET SDK (reflecting any global.json):
 Version:   6.0.301
 Commit:    43f9b18481

Runtime Environment:
 OS Name:     Windows
 OS Version:  10.0.19044
 OS Platform: Windows
 RID:         win10-x64
 Base Path:   C:\Program Files\dotnet\sdk\6.0.301\

Host (useful for support):
 Version: 6.0.6
 Commit:  7cca709db2
```

### Other information

I'm not sure whether the difference in System.Text.Json assembly version numbers between the .NET Framework and .NET Core builds is deliberate. If it is, I would like to know how to work around this issue. | 1.0 | System.IO.FileNotFoundException thrown when using System.Text.Json in mixed .Net version solution. Assembly versions do not match. - ### Description

We have a fairly large code base that consists of projects targeting different .NET versions: mostly .NET 6.0 and .NET Standard 2.0, but a few .NET Framework 4.7.2 projects as well. We are migrating all of these to .NET 6.0, but it will take a long time before everything is done.

The issue we are facing is with System.Text.Json. We have installed the 6.0.5 NuGet package in the projects that require it, but since a couple of them are .NET Framework projects, they seem to get a different version of the System.Text.Json assembly. For other project types the assembly version is 6.0.0.0, but for the legacy framework it is 6.0.0.5. This causes a runtime error: "System.IO.FileNotFoundException: Could not load file or assembly 'System.Text.Json, Version=6.0.0.5, Culture=neutral, PublicKeyToken=cc7b13ffcd2ddd51'. The system cannot find the file specified." when the framework project is referenced by a .NET 6.0 project (yes, I know it's not ideal, but for the time being it is a necessary evil).

### Reproduction Steps

Create a .NET 6.0 project and a .NET Framework 4.7.2 project. Add a System.Text.Json reference to the 4.7.2 project and reference that project from the .NET 6.0 project. Run the .NET 6.0 project and it will throw a System.IO.FileNotFoundException.

Here is a sample solution for this:

[JsonReferenceIssue.zip](https://github.com/dotnet/runtime/files/9111329/JsonReferenceIssue.zip)

### Expected behavior

I would expect the System.Text.Json 6.0.5 NuGet package to have the same assembly version for all target .NET versions.

### Actual behavior

Runtime error: "System.IO.FileNotFoundException: Could not load file or assembly 'System.Text.Json, Version=6.0.0.5, Culture=neutral, PublicKeyToken=cc7b13ffcd2ddd51'. The system cannot find the file specified."

### Regression?

_No response_

### Known Workarounds

If I downgrade the projects to the 6.0.0 NuGet package, this works as expected.

In the sample solution I can also work around the issue by adding the following snippet to Program.cs, but I don't feel comfortable doing this in our production code.

```
AppDomain.CurrentDomain.AssemblyResolve += CurrentDomain_AssemblyResolve;

static System.Reflection.Assembly? CurrentDomain_AssemblyResolve(object? sender, ResolveEventArgs args)
{
    if (args.Name == "System.Text.Json, Version=6.0.0.5, Culture=neutral, PublicKeyToken=cc7b13ffcd2ddd51")
    {
        return typeof(System.Text.Json.JsonSerializer).Assembly;
    }

    return null;
}
```

### Configuration

Partial output of `dotnet --info`:

```
.NET SDK (reflecting any global.json):
 Version:   6.0.301
 Commit:    43f9b18481

Runtime Environment:
 OS Name:     Windows
 OS Version:  10.0.19044
 OS Platform: Windows
 RID:         win10-x64
 Base Path:   C:\Program Files\dotnet\sdk\6.0.301\

Host (useful for support):
 Version: 6.0.6
 Commit:  7cca709db2
```

### Other information

I'm not sure if the different System.Text.Json assembly version numbers between the .Net Framework and .Net Core versions is deliberate. If yes, I would like to know how to circumvent this issue. | non_main | system io filenotfoundexception thrown when using system text json in mixed net version solution assembly versions do not match description we have a fairly large code base which consist of different net version projects mostly and but a few net framework projects as well we are migrating all of these to but it will still take a lot of time before everything is done the issue we are facing is with system text json we have installed the nuget package to the projects that require it but since couple of them are net framework projects it seems that they get a different version of the system text json assembly for other project types the assembly version is but for the legacy framework it is this causes runtime error system io filenotfoundexception could not load file or assembly system text json version culture neutral publickeytoken the system cannot find the file specified when the framework project is referenced by a project yes i know it s not ideal but at the time being a necessary evil reproduction steps create a and a projects add a system text json reference to the project and reference that project from the project run the and it ll throw an system io filenotfoundexception here is a sample solution for this expected behavior i would expect the nuget for system text json to have the same assembly version for different net versions actual behavior runtime error system io filenotfoundexception could not load file or assembly system text json version culture neutral publickeytoken the system cannot find the file specified regression no response known workarounds if i downgrade the projects to use the nuget this works as expected in the sample solution i can also workaround the issue by adding the following snippet to the program cs but i don t feel 
comfortable of trying to do this in our production code appdomain currentdomain assemblyresolve currentdomain assemblyresolve static system reflection assembly currentdomain assemblyresolve object sender resolveeventargs args if args name system text json version culture neutral publickeytoken return typeof system text json jsonserializer assembly return null configuration partial output of dotnet info net sdk reflecting any global json version commit runtime environment os name windows os version os platform windows rid base path c program files dotnet sdk host useful for support version commit other information i m not sure if the different system text json assembly version numbers between the net framework and net core versions is deliberate if yes i would like to know how to circumvent this issue | 0 |

3,528 | 13,884,113,119 | IssuesEvent | 2020-10-18 14:52:43 | grey-software/org | https://api.github.com/repos/grey-software/org | opened | 🥅 Initiative: Grey Software Report Card | Domain: User Experience Role: Maintainer Role: Product Owner | ### Motivation 🏁

Students need to show employers real-world software experience in order to stand

out.

A report card that outlines a student's open-source experience at Grey Software

can allow them to stand apart from the competition.

### Initiative Overview 👁️🗨️

<!--

A clear and concise description of what the initiative is.

-->

Every contributor to Grey Software will be able to generate an authentic report

card by entering their username into grey.software/report-card

Contributors will be able to share a PDF of this report on websites like

LinkedIn.

A link-able version of their report card will also be available at

grey.software/report-card/GITHUB_USERNAME if they choose to.

**Implementation Details 🛠️**

<!--- Please share a plan to help realize this initiative -->

We'll add a page to our website under /report-card that will have an input box

for GitHub usernames.

Once users enter their usernames and click "Generate", our backend will scrape

GitHub and query its API to generate a PDF of their report card.

If they choose to (via a checkbox), they can host their report card publicly on

our website.

Additional Notes:

- Students will have to authenticate with GitHub in order to generate a verified

report card for their username

- A user's insights for a repo will have to be scraped

### Impact 💥

Students struggling to demonstrate real-world software experience will now be

able to show their open-source work in a professional manner.

### Describe alternatives you've considered 🔍

Students could link their contributor cards from the GitHub insights page for

every repo they have contributed to.

### Additional details ℹ️

N/A

| True | 🥅 Initiative: Grey Software Report Card - ### Motivation 🏁

Students need to show employers real-world software experience in order to stand

out.

A report card that outlines a student's open-source experience at Grey Software

can allow them to stand apart from the competition.

### Initiative Overview 👁️🗨️

<!--

A clear and concise description of what the initiative is.

-->

Every contributor to Grey Software will be able to generate an authentic report

card by entering their username into grey.software/report-card

Contributors will be able to share a PDF of this report on websites like

LinkedIn.

A link-able version of their report card will also be available at

grey.software/report-card/GITHUB_USERNAME if they choose to.

**Implementation Details 🛠️**

<!--- Please share a plan to help realize this initiative -->

We'll add a page to our website under /report-card that will have an input box

for GitHub usernames.

Once users enter their usernames and click "Generate", our backend will scrape

GitHub and query its API to generate a PDF of their report card.

If they choose to (via a checkbox), they can host their report card publicly on

our website.

Additional Notes:

- Students will have to authenticate with GitHub in order to generate a verified

report card for their username

- A user's insights for a repo will have to be scraped

### Impact 💥

Students struggling to demonstrate real-world software experience will now be

able to show their open-source work in a professional manner.

### Describe alternatives you've considered 🔍

Students could link their contributor cards from the GitHub insights page for

every repo they have contributed to.

### Additional details ℹ️

N/A

| main | 🥅 initiative grey software report card motivation 🏁 students need to show employers real world software experience in order to stand out a report card that outlines a student s open source experience at grey software can allow them to stand apart from the competition initiative overview 👁️🗨️ a clear and concise description of what the initiative is every contributor to grey software will be able to generate an authentic report card by entering their username into grey software report card contributors will be able to share a pdf of this report on websites like linkedin a link able version of their report card will also be available at grey software report card github username if they choose to implementation details 🛠️ we ll add a page to our website under report card that will have an input box for github usernames once users enter their usernames and click generate our backend will scrape github and query its api to generate a pdf of their report card if they choose to via a checkbox they can host their report card publicly on our website additional notes students will have to authenticate with guthub in order to generate a verified report card for their username a user s insights for a repo will have to be scraped impact 💥 students struggling to demonstrate real world software experience will now be able to show their open source work in a professional manner describe alternatives you ve considered 🔍 students could link their contributor cards from the github insights page for every repo they have contributed to additional details ℹ️ n a | 1 |

2,527 | 8,655,460,713 | IssuesEvent | 2018-11-27 16:00:36 | codestation/qcma | https://api.github.com/repos/codestation/qcma | closed | init.d script for init.d-based systems | unmaintained | Hello there!

It has been a very long time since I last wrote an init.d script, and I have forgotten the LSB tags that go at the top. But as I run my machine as a headless home server, I would like QCMA to start when the machine boots. It's an old Mac Mini running Ubuntu 18.04 now, so it would easily take scripts in the `init.d` format. Currently, I start `qcma_cli` via `screen` and just detach from the screen. This might be a viable way to "daemonize" it quickly.

Also, I could not find any CLI switches for configuring the search folders for media. Any plans on tidying up the CLI a little?

Kind regards,

Ingwie | True | init.d script for init.d-based systems - Hello there!

It has been a very long time since I last wrote an init.d script, and I have forgotten the LSB tags that go at the top. But as I run my machine as a headless home server, I would like QCMA to start when the machine boots. It's an old Mac Mini running Ubuntu 18.04 now, so it would easily take scripts in the `init.d` format. Currently, I start `qcma_cli` via `screen` and just detach from the screen. This might be a viable way to "daemonize" it quickly.

Also, I could not find any CLI switches for configuring the search folders for media. Any plans on tidying up the CLI a little?

Kind regards,

Ingwie | main | init d script for init d based systems hello there it s kinda very long ago that i have actually made an init d script especially having forgotten the lsb tags that go at the top but as i run my machine as a headless home server i would like to have qcma start up when it starts it s an old mac mini running ubuntu now so it would easily take scripts by the init d format currently i start qcma cli via screen and just detach from the screen this might be a viable method to really use for daemonizing it in a quick way also i could not seem to really find any cli switches for configuring the search folders for media any plans on tidying up the cli a little kind regards ingwie | 1 |

28,211 | 13,595,518,809 | IssuesEvent | 2020-09-22 03:20:50 | layer5io/meshery | https://api.github.com/repos/layer5io/meshery | closed | Complete nighthawk integration with meshery modules | area/performance kind/enhancement | **Current Behavior**

<!-- A brief description of what the problem is. (e.g. I need to be able to...) -->

The Nighthawk server interface has already been added, but the UI, Mesheryctl, SMPConfig and other configurations still need to be exposed.

**Desired Behavior**

<!-- A brief description of the enhancement. -->

Complete Nighthawk API Integration with all the modules of Meshery

--- | True | Complete nighthawk integration with meshery modules - **Current Behavior**

<!-- A brief description of what the problem is. (e.g. I need to be able to...) -->

The Nighthawk server interface has already been added, but the UI, Mesheryctl, SMPConfig and other configurations still need to be exposed.

**Desired Behavior**

<!-- A brief description of the enhancement. -->

Complete Nighthawk API Integration with all the modules of Meshery

--- | non_main | complete nighthawk integration with meshery modules current behavior nighthawk server interface has already been added but ui mesheryctl smpconfig other configurations needs to be exposed desired behavior complete nighthawk api integration with all the modules of meshery | 0 |

3,720 | 15,382,341,792 | IssuesEvent | 2021-03-03 00:26:19 | Homebrew/homebrew-cask | https://api.github.com/repos/Homebrew/homebrew-cask | closed | No mountable filesystems when my cask extracts an app from a .dmg.zip file | awaiting maintainer feedback stale | Hi all,

I have a problem with writing a cask for an application often used by my colleagues.

The upstream app is supplied in a zipped DMG file (suffixed by .dmg.zip).

I noticed several available casks that work simply and perfectly from such a .dmg.zip file (e.g. [creepy.rb](https://github.com/Homebrew/homebrew-cask/blob/master/Casks/creepy.rb))

I wrote something similar:

```

cask "macghostview" do

version "6.1"

sha256 "b20270fffea09bb4ca6c19fa41a18e8ef0071402a4b8ba17927f3da3c6f848d3"

url "https://www.math.tamu.edu/~tkiffe/tex/programs/MacGhostView#{version.no_dots}.dmg.zip"

appcast "https://www.math.tamu.edu/~tkiffe/macghostview.html"

name "MacGhostView"

desc "Application for previewing Postscript and encapsulated Postscript files"

homepage "https://www.math.tamu.edu/~tkiffe/macghostview.html"

app "MacGhostView.app"

end

```

Surprisingly, after downloading from the URL, `brew install --cask` fails when mounting the downloaded result:

```
Error: Failure while executing; `hdiutil attach -plist -nobrowse -readonly -mountrandom /var/folders/9p/ztbq9wsn6195z4c5d25_7b340000gq/T/d20201220-71972-19mlccu /var/folders/9p/ztbq9wsn6195z4c5d25_7b340000gq/T/d20201220-71972-19mlccu/MacGhostView61.cdr` exited with 1. Here's the output:
hdiutil: attach failed - no mountable filesystems
```

I don't know whether the `MacGhostView61.cdr` in the failure output above (rather than the .dmg that is inside the zip) is a clue to the failure. Nevertheless:

- downloading and mounting a .dmg.zip file works in other cask scripts (mentioned above)

- rewriting my cask to download the dmg file (manually unzipped with macOS Finder integrated function (Archive Utility), and uploaded to my own server) mounts perfectly.
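
To check which file names the zip really contains (and so whether the `.cdr` name comes from the archive itself or from the unpacking step), something like the following Python sketch could be used. It relies only on the standard `zipfile` module; the helper name `list_archive_members` is my own and has nothing to do with Homebrew's code.

```python
import zipfile


def list_archive_members(zip_path):
    """Return (name, uncompressed size) pairs for every entry in a zip file."""
    with zipfile.ZipFile(zip_path) as archive:
        # infolist() yields one ZipInfo per entry, in archive order.
        return [(info.filename, info.file_size) for info in archive.infolist()]
```

Calling `list_archive_members("MacGhostView61.dmg.zip")` on a locally downloaded copy (the file name here is assumed from the URL pattern in the cask) would show whether the archive holds a `.dmg`, a `.cdr`, or something else.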

I tried to play a little with `container type:` and `container nested:` (mentioned [here](https://github.com/Homebrew/homebrew-cask/blob/master/doc/cask_language_reference/all_stanzas.md)) in my cask, but to no avail.

Do you have any idea what causes the mount failure when the .dmg.zip file is used directly?

Could the zip support in the cask routines have limitations that the Finder function does not?

Any help welcome, best regards.

| True | No mountable filesystems when my cask extracts an app from a .dmg.zip file - Hi all,

I have a problem with writing a cask for an application often used by my colleagues.

The upstream app is supplied in a zipped DMG file (suffixed by .dmg.zip).

I noticed several available casks that work simply and perfectly from such a .dmg.zip file (e.g. [creepy.rb](https://github.com/Homebrew/homebrew-cask/blob/master/Casks/creepy.rb))

I wrote something similar:

```

cask "macghostview" do

version "6.1"

sha256 "b20270fffea09bb4ca6c19fa41a18e8ef0071402a4b8ba17927f3da3c6f848d3"

url "https://www.math.tamu.edu/~tkiffe/tex/programs/MacGhostView#{version.no_dots}.dmg.zip"

appcast "https://www.math.tamu.edu/~tkiffe/macghostview.html"

name "MacGhostView"

desc "Application for previewing Postscript and encapsulated Postscript files"

homepage "https://www.math.tamu.edu/~tkiffe/macghostview.html"

app "MacGhostView.app"

end

```

Surprisingly, after downloading from the URL, `brew install --cask` fails when mounting the downloaded result:

```
Error: Failure while executing; `hdiutil attach -plist -nobrowse -readonly -mountrandom /var/folders/9p/ztbq9wsn6195z4c5d25_7b340000gq/T/d20201220-71972-19mlccu /var/folders/9p/ztbq9wsn6195z4c5d25_7b340000gq/T/d20201220-71972-19mlccu/MacGhostView61.cdr` exited with 1. Here's the output:
hdiutil: attach failed - no mountable filesystems
```

I don't know whether the `MacGhostView61.cdr` in the failure output above (rather than the .dmg that is inside the zip) is a clue to the failure. Nevertheless:

- downloading and mounting a .dmg.zip file works in other cask scripts (mentioned above)

- rewriting my cask to download the dmg file (manually unzipped with macOS Finder integrated function (Archive Utility), and uploaded to my own server) mounts perfectly.

I tried to play a little with `container type:` and `container nested:` (mentioned [here](https://github.com/Homebrew/homebrew-cask/blob/master/doc/cask_language_reference/all_stanzas.md)) in my cask, but to no avail.

Do you have any idea what causes the mount failure when the .dmg.zip file is used directly?

Could the zip support in the cask routines have limitations that the Finder function does not?

Any help welcome, best regards.

| main | no mountable filesystems when my cask extracts an app from a dmg zip file hi all i have a problem with writing a cask for an application often used by my colleagues the upstream app is supplied in a zipped dmg file suffixed by dmg zip i noticed several available casks that work simply and perfectly from such a dmg zip file e g i wrote something similar cask macghostview do version url appcast name macghostview desc application for previewing postscript and encapsulated postscript files homepage app macghostview app end surprinsingly after downloading from the url brew install cask fails when mounting the downloaded result error failure while executing hdiutil attach plist nobrowse readonly mountrandom var folders t var folders t cdr exited with here s the output hdiutil attach failed no mountable filesystems don t know if the cdr in the failure output above and not dmg as it is inside the zip can be a clue to explain the failure nevertheless downloading and mounting a dmg zip file works in other cask scripts mentioned above rewriting my cask to download the dmg file manually unzipped with macos finder integrated function archive utility and uploaded to my own server mounts perfectly i tried to play a little with container type and container nested mentioned in my cask but to no avail have you any idea for the mount failure when directly using the dmp zip file would there be limitations in zip format support in cask subroutines different from the finder function any help welcome best regards | 1 |

2,512 | 8,655,459,981 | IssuesEvent | 2018-11-27 16:00:32 | codestation/qcma | https://api.github.com/repos/codestation/qcma | closed | PSTV Doesn't Detect QCMA | unmaintained | Hello, I am running QCMA on Debian Stretch ARM via Crouton on a Chromebook. I have installed VitaMTP with QCMA. Everything appears to be running, I have the icon on my panel, but I can't get my Vita to see my device. I have turned them both off and on, made sure to start QCMA before my PSTV, and even tried reinstalling. It doesn't seem to work.

Naturally, I assumed it was something funky with my computer, as I have an odd setup, but the verbose (http://pastebin.com/SgJYKwgD) seems rather normal. Any assistance is greatly appreciated. | True | PSTV Doesn't Detect QCMA - Hello, I am running QCMA on Debian Stretch ARM via Crouton on a Chromebook. I have installed VitaMTP with QCMA. Everything appears to be running, I have the icon on my panel, but I can't get my Vita to see my device. I have turned them both off and on, made sure to start QCMA before my PSTV, and even tried reinstalling. It doesn't seem to work.

Naturally, I assumed it was something funky with my computer, as I have an odd setup, but the verbose (http://pastebin.com/SgJYKwgD) seems rather normal. Any assistance is greatly appreciated. | main | pstv doesn t detect qcma hello i am running qcma on debian stretch arm via crouton on a chromebook i have installed vitamtp with qcma everything appears to be running i have the icon on my panel but i can t get my vita to see my device i have turned them both off and on made sure to start qcma before my pstv and even tried reinstalling it doesn t seem to work naturally i assumed it was something funky with my computer as i have an odd setup but the verbose seems rather normal any assistance is greatly appreciated | 1 |

41,624 | 5,345,827,309 | IssuesEvent | 2017-02-17 17:59:10 | 18F/calc | https://api.github.com/repos/18F/calc | closed | Use bugs for status indicators | in progress priority: low skill: design | For the user's own price lists, bugs like the WDS uses for their "alpha" and "beta" component indicators might be really helpful: https://standards.usa.gov/labels/

| 1.0 | Use bugs for status indicators - For the user's own price lists, bugs like the WDS uses for their "alpha" and "beta" component indicators might be really helpful: https://standards.usa.gov/labels/

| non_main | use bugs for status indicators for the user s own price lists bugs like the wds uses for their alpha and beta component indicators might be really helpful | 0 |

270,455 | 20,601,260,510 | IssuesEvent | 2022-03-06 09:44:13 | Kira272921/solidity-quickstart | https://api.github.com/repos/Kira272921/solidity-quickstart | closed | 📝docs: improve the `README.md` | documentation | Improve the [`README.md`](https://github.com/Kira272921/solidity-quickstart/blob/main/README.md) file by creating a table of contents for all the markdown guide files and the example solidity files present in the [`contract`](https://github.com/Kira272921/solidity-quickstart/tree/main/contracts) folder. | 1.0 | 📝docs: improve the `README.md` - Improve the [`README.md`](https://github.com/Kira272921/solidity-quickstart/blob/main/README.md) file by creating a table of contents for all the markdown guide files and the example solidity files present in the [`contract`](https://github.com/Kira272921/solidity-quickstart/tree/main/contracts) folder. | non_main | 📝docs improve the readme md improve the file by creating a table of contents for all the markdown guide files and the example solidity files present in the folder | 0 |

58,401 | 24,439,278,147 | IssuesEvent | 2022-10-06 13:37:17 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | .NET not supported by OpenAI? | cognitive-services/svc triaged assigned-to-author product-question Pri1 | I expected to see at least C# since GitHub CoPilot does support it.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 25c15e01-3578-c4ae-afbc-32c7172173d0

* Version Independent ID: 0d20fad8-0e13-2d85-64e5-31861fabdcdd

* Content: [Azure OpenAI Engines - Azure OpenAI](https://docs.microsoft.com/en-us/azure/cognitive-services/openai/concepts/engines)

* Content Source: [articles/cognitive-services/openai/concepts/engines.md](https://github.com/MicrosoftDocs/azure-docs/blob/main/articles/cognitive-services/openai/concepts/engines.md)

* Service: **cognitive-services**

* GitHub Login: @mrbullwinkle

* Microsoft Alias: **mbullwin** | 1.0 | .NET not supported by OpenAI? - I expected to see at least C# since GitHub CoPilot does support it.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 25c15e01-3578-c4ae-afbc-32c7172173d0

* Version Independent ID: 0d20fad8-0e13-2d85-64e5-31861fabdcdd

* Content: [Azure OpenAI Engines - Azure OpenAI](https://docs.microsoft.com/en-us/azure/cognitive-services/openai/concepts/engines)

* Content Source: [articles/cognitive-services/openai/concepts/engines.md](https://github.com/MicrosoftDocs/azure-docs/blob/main/articles/cognitive-services/openai/concepts/engines.md)

* Service: **cognitive-services**

* GitHub Login: @mrbullwinkle

* Microsoft Alias: **mbullwin** | non_main | net not supported by openai i expected to see at least c since github copilot does support it document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id afbc version independent id content content source service cognitive services github login mrbullwinkle microsoft alias mbullwin | 0 |

2,847 | 10,219,571,377 | IssuesEvent | 2019-08-15 18:56:08 | arcticicestudio/styleguide-javascript | https://api.github.com/repos/arcticicestudio/styleguide-javascript | closed | Prettier | context-workflow scope-dx scope-maintainability scope-quality type-feature | <p align="center"><img src="https://user-images.githubusercontent.com/7836623/48644231-4556d780-e9e2-11e8-862e-e8ce630fd0ba.png" width="30%" /></p>

> Epic: #8

Integrate [Prettier][], the opinionated code formatter with support for many languages and integrations with most editors. It ensures that all outputted code conforms to a consistent style.

### Configuration

This is one of the main features of Prettier: It already provides the best and recommended style configurations out-of-the-box™.

The only option we will change is the [print width][prettier-docs-pwidth]. It is set to 80 by default, which is not up to date for modern screens (it might only be relevant when working exclusively in terminals, e.g. with Vim). It'll be changed to 120, the value used by all of Arctic Ice Studio's style guides.

The `prettier.config.js` configuration file will be placed in the project root, together with a `.prettierignore` file that defines the ignore patterns.

### NPM script/task

To allow formatting all sources, a `format:pretty` npm script/task will be added and included in the main `format` script flow.

### False-Positives

To ensure incorrect examples of the style guide won't be fixed by Prettier, the affected lines must be excluded from Prettier by [adding the `<!-- prettier-ignore -->` handle for HTML][p-d-ign].

Note that this might trigger `remark-lint` when added right above a code block (`no-missing-blank-lines`). This can be fixed by [adding the `<!--lint disable no-missing-blank-lines-->` handle][rml-ign] as well.

## Tasks

- [x] Install [prettier][npm-prettier] packages.

- [x] Implement `prettier.config.js` configuration file.

- [x] Implement `.prettierignore` ignore pattern file.

- [x] Implement NPM `format:pretty` script/task.

- [x] Format current code base for the first time and fix possible style guide violations using the configured linters of the project.

- [x] Ensure compatibility with Prettier (#11) by adding required _ignore_/_disable_ handles for Prettier and `remark-lint`.

[npm-prettier]: https://www.npmjs.com/package/prettier

[prettier-docs-pwidth]: https://prettier.io/docs/en/options.html#print-width

[prettier]: https://prettier.io

[p-d-ign]: https://prettier.io/docs/en/ignore.html#html

[rml-ign]: https://github.com/remarkjs/remark-lint#configuring-remark-lint | True | Prettier - <p align="center"><img src="https://user-images.githubusercontent.com/7836623/48644231-4556d780-e9e2-11e8-862e-e8ce630fd0ba.png" width="30%" /></p>

> Epic: #8

Integrate [Prettier][], the opinionated code formatter with support for many languages and integrations with most editors. It ensures that all outputted code conforms to a consistent style.

### Configuration

This is one of the main features of Prettier: It already provides the best and recommended style configurations out-of-the-box™.

The only option we will change is the [print width][prettier-docs-pwidth]. It is set to 80 by default, which is not up to date for modern screens (it might only be relevant when working exclusively in terminals, e.g. with Vim). It'll be changed to 120, the value used by all of Arctic Ice Studio's style guides.

The `prettier.config.js` configuration file will be placed in the project root, together with a `.prettierignore` file that defines the ignore patterns.

### NPM script/task

To allow formatting all sources, a `format:pretty` npm script/task will be added and included in the main `format` script flow.

### False-Positives

To ensure incorrect examples of the style guide won't be fixed by Prettier, the affected lines must be excluded from Prettier by [adding the `<!-- prettier-ignore -->` handle for HTML][p-d-ign].

Note that this might trigger `remark-lint` when added right above a code block (`no-missing-blank-lines`). This can be fixed by [adding the `<!--lint disable no-missing-blank-lines-->` handle][rml-ign] as well.

## Tasks

- [x] Install [prettier][npm-prettier] packages.

- [x] Implement `prettier.config.js` configuration file.

- [x] Implement `.prettierignore` ignore pattern file.

- [x] Implement NPM `format:pretty` script/task.

- [x] Format current code base for the first time and fix possible style guide violations using the configured linters of the project.

- [x] Ensure compatibility with Prettier (#11) by adding required _ignore_/_disable_ handles for Prettier and `remark-lint`.

[npm-prettier]: https://www.npmjs.com/package/prettier

[prettier-docs-pwidth]: https://prettier.io/docs/en/options.html#print-width

[prettier]: https://prettier.io

[p-d-ign]: https://prettier.io/docs/en/ignore.html#html