Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

52,484 | 6,258,606,211 | IssuesEvent | 2017-07-14 15:58:24 | Microsoft/vscode | https://api.github.com/repos/Microsoft/vscode | opened | Test: Multi Root Workspaces | testplan-item | Test for: Multi Root Workspaces

Complexity: 5

- [ ] Windows

- [ ] Linux

- [ ] macOS

In this milestone we rewrote how multi root workspaces surface in VS Code. The previous solution with having a `workspace` setting in user settings is obsolete (there is no migration). See https://github.com/Microsoft/vscode/issues/396#issuecomment-315079618 for more details on our approach.

`Basics`

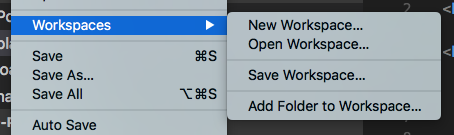

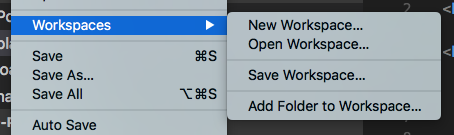

Most workspace related operations center around a new submenu under the file menu:

Try to work with multi root workspaces and play around with the available actions. Transition between empty workspaces, single folder workspaces and multi-root workspaces. Some things to keep an eye on:

* explorer and search operations work as before in any of the contexts

* you can save an "Untitled Workspace" to some location on disk and open it from there

* you can switch workspaces via `File > Open Recent` as well as the recently opened picker (F1 `>open recent`)

* you can add and remove root folders from a multi-root workspace

* you see that you are inside a workspace by a new status bar color as well as the workspace name showing up in the explorer section for folders

* workspaces that are opened will restore in the same way as folders do (you can set `window.restoreWindows: all` to restore multiple windows)

* debugging (node.js and extension host debugging) works as before

`Data`

Once you are in a workspace context, we use the workspace's identifier to associate:

* UI state (e.g. the files you have opened as tabs)

* hot-exit state (e.g. dirty files you left dirty when quitting)

* extension storage (a location on disk where extensions can store data via the [`ExtensionContext.storagePath`](https://github.com/Microsoft/vscode/blob/master/src/vs/vscode.d.ts#L3513) API)

Verify:

* UI state you have inside a workspace is restored next time you open it

* dirty files are restored when you quit and reopen the workspace

* extensions have a stable `ExtensionContext.storagePath` location per workspace

`Settings`

Once you are in a workspace context, workspace settings are no longer stored within the `.vscode` folder, but within the workspace file. Verify that you can still define workspace settings when you are in a workspace context and that settings apply as usual. Also verify that folder settings (the ones we do support, e.g. editor settings) still apply to each resource you open from that folder.

| 1.0 | Test: Multi Root Workspaces - Test for: Multi Root Workspaces

Complexity: 5

- [ ] Windows

- [ ] Linux

- [ ] macOS

In this milestone we rewrote how multi root workspaces surface in VS Code. The previous solution with having a `workspace` setting in user settings is obsolete (there is no migration). See https://github.com/Microsoft/vscode/issues/396#issuecomment-315079618 for more details on our approach.

`Basics`

Most workspace related operations center around a new submenu under the file menu:

Try to work with multi root workspaces and play around with the available actions. Transition between empty workspaces, single folder workspaces and multi-root workspaces. Some things to keep an eye on:

* explorer and search operations work as before in any of the contexts

* you can save an "Untitled Workspace" to some location on disk and open it from there

* you can switch workspaces via `File > Open Recent` as well as the recently opened picker (F1 `>open recent`)

* you can add and remove root folders from a multi-root workspace

* you see that you are inside a workspace by a new status bar color as well as the workspace name showing up in the explorer section for folders

* workspaces that are opened will restore in the same way as folders do (you can set `window.restoreWindows: all` to restore multiple windows)

* debugging (node.js and extension host debugging) works as before

`Data`

Once you are in a workspace context, we use the workspace's identifier to associate:

* UI state (e.g. the files you have opened as tabs)

* hot-exit state (e.g. dirty files you left dirty when quitting)

* extension storage (a location on disk where extensions can store data via the [`ExtensionContext.storagePath`](https://github.com/Microsoft/vscode/blob/master/src/vs/vscode.d.ts#L3513) API)

Verify:

* UI state you have inside a workspace is restored next time you open it

* dirty files are restored when you quit and reopen the workspace

* extensions have a stable `ExtensionContext.storagePath` location per workspace

`Settings`

Once you are in a workspace context, workspace settings are no longer stored within the `.vscode` folder, but within the workspace file. Verify that you can still define workspace settings when you are in a workspace context and that settings apply as usual. Also verify that folder settings (the ones we do support, e.g. editor settings) still apply to each resource you open from that folder.

| non_main | test multi root workspaces test for multi root workspaces complexity windows linux macos in this milestone we rewrote how multi root workspaces surface in vs code the previous solution with having a workspace setting in user settings is obsolete there is no migration see for more details on our approach basics most workspace related operations center around a new submenu under the file menu try to work with multi root workspaces and play around with the available actions transition between empty workspaces single folder workspaces and multi root workspaces some things to keep an eye on explorer and search operations work as before in any of the contexts you can save untitled workspace to some location on disk and open them from there you can switch workspaces via file open recent as well as the recently opened picker open recent you can add and remove root folders from a multi root workspace you see that you are inside a workspace by a new status bar color as well as the workspace name showing up in the explorer section for folders workspaces that are opened will restore in the same way as folder do you can set window restorewindows all to restore multiple windows debugging node js and extension host debugging work as before data once you are in a workspace context we use the workspaces identifier to associate ui state e g the files you have opened as tabs hot exit state e g dirty files you left dirty when quitting extension storage a location on disk where extensions can store data via the api verify ui state you have inside a workspace is restored next time you open it dirty files are restored when you quit and reopen the workspace extensions have a stable extensioncontext storagepath location per workspace settings once you are in a workspace context workspace settings are no longer stored within the vscode folder but within the workspace file verify that you can still define workspace settings when you are in a workspace context and that settings 
apply as usual also verify that folder settings the ones we do support e g editor settings still apply per resource you open of that folder | 0 |

23,598 | 4,958,082,952 | IssuesEvent | 2016-12-02 08:22:59 | Freeyourgadget/Gadgetbridge | https://api.github.com/repos/Freeyourgadget/Gadgetbridge | closed | "Acquire location" doesn't work | documentation not a bug | I'm on a Samsung Galaxy Note N7000 running CM 11-20160815-nightly (CM11 was never fully released for this phone) - Android 4.4.4. GPS works fine in other apps (e.g. maps.me, osmtracker etc.) But in the pebble settings section of GB, pressing the "acquire location" button doesn't seem to do anything - lat and lon stayed at 0 and 0, and I don't see the GPS icon showing up in the notification area, like it does when any other app accesses the GPS.

I worked around it by entering my lat/lon manually. It didn't seem to affect sunrise/sunset times right away (not sure about that though) so I rebooted. Then it affected tomorrow's sunrise/sunset times on the timeline, but not today's sunset time. | 1.0 | "Acquire location" doesn't work - I'm on a Samsung Galaxy Note N7000 running CM 11-20160815-nightly (CM11 was never fully released for this phone) - Android 4.4.4. GPS works fine in other apps (e.g. maps.me, osmtracker etc.) But in the pebble settings section of GB, pressing the "acquire location" button doesn't seem to do anything - lat and lon stayed at 0 and 0, and I don't see the GPS icon showing up in the notification area, like it does when any other app accesses the GPS.

I worked around it by entering my lat/lon manually. It didn't seem to affect sunrise/sunset times right away (not sure about that though) so I rebooted. Then it affected tomorrow's sunrise/sunset times on the timeline, but not today's sunset time. | non_main | acquire location doesn t work i m on a samsung galaxy note running cm nightly was never fully released for this phone android gps works fine in other apps e g maps me osmtracker etc but in the pebble settings section of gb pressing the acquire location button doesn t seem to do anything lat and lon stayed at and and i don t see the gps icon showing up in the notification area like it does when any other app accesses the gps i worked around it by entering my lat lon manually it didn t seem to affect sunrise sunset times right away not sure about that though so i rebooted then it affected tomorrow s sunrise sunset times on the timeline but not today s sunset time | 0 |

548 | 3,984,358,139 | IssuesEvent | 2016-05-07 04:22:30 | duckduckgo/zeroclickinfo-goodies | https://api.github.com/repos/duckduckgo/zeroclickinfo-goodies | opened | Conversions: No teaspoon/tablespoon support | Maintainer Input Requested | Queries like `teaspoons in a tablespoon` or `6 tsp in tbsp` return no Instant Answer.

I'm not sure where in `Conversions.pm` the possible units of measurement are defined, except for temperatures in `sub convert_temperatures`. Would be glad to help implement this if someone could help me follow the breadcrumbs.

------

IA Page: http://duck.co/ia/view/conversions

[Maintainer](http://docs.duckduckhack.com/maintaining/guidelines.html): @mintsoft | True | Conversions: No teaspoon/tablespoon support - Queries like `teaspoons in a tablespoon` or `6 tsp in tbsp` return no Instant Answer.

I'm not sure where in `Conversions.pm` the possible units of measurement are defined, except for temperatures in `sub convert_temperatures`. Would be glad to help implement this if someone could help me follow the breadcrumbs.

------

IA Page: http://duck.co/ia/view/conversions

[Maintainer](http://docs.duckduckhack.com/maintaining/guidelines.html): @mintsoft | main | conversions no teaspoon tablespoon support queries like teaspoons in a tablespoon or tsp in tbsp return no instant answer i m not sure where in conversions pm the possible units of measurement are defined except for temperatures in sub convert temperatures would be glad to help implement this if someone could help me follow the breadcrumbs ia page mintsoft | 1 |

1,473 | 6,396,817,982 | IssuesEvent | 2017-08-04 16:24:44 | duckduckgo/zeroclickinfo-goodies | https://api.github.com/repos/duckduckgo/zeroclickinfo-goodies | closed | Tips: Ensure a currency is always specified | Low-Hanging Fruit Maintainer Input Requested Triggering | Currently the Tips IA can handle queries such as `25% of 500`, which it shouldn't.

We should make sure that a currency is always present so we are only doing tip calculations, not arbitrary percentages. If the word 'tip' is present we can probably assume this is what they want.

---

IA Page: http://duck.co/ia/view/tips

[Maintainer](http://docs.duckduckhack.com/maintaining/guidelines.html): @mattlehning

| True | Tips: Ensure a currency is always specified - Currently the Tips IA can handle queries such as `25% of 500`, which it shouldn't.

We should make sure that a currency is always present so we are only doing tip calculations, not arbitrary percentages. If the word 'tip' is present we can probably assume this is what they want.

---

IA Page: http://duck.co/ia/view/tips

[Maintainer](http://docs.duckduckhack.com/maintaining/guidelines.html): @mattlehning

| main | tips ensure a currency is always specified currently the tips ia can handle queries such as of which it shouldn t we should make sure that a currency is always present so we are only doing tip calculations not arbitrary percentages if the word tip is present we can probably assume this is what they want ia page mattlehning | 1 |

2,582 | 8,774,513,708 | IssuesEvent | 2018-12-18 20:02:49 | arcticicestudio/nord-docs | https://api.github.com/repos/arcticicestudio/nord-docs | closed | Google Analytics | context-workflow scope-maintainability scope-quality scope-stability type-feature | <p align="center"><img src="https://user-images.githubusercontent.com/7836623/50167256-14bbd380-02e9-11e9-8aca-a31baf745cd8.png" width="20%"/></p>

> Associated epic: #86

This issue documents the implementation of [Google Analytics][ga-mark] as documented in the [“Analytics & Statistics” design concept][gh-86].

<p align="center"><img src="https://user-images.githubusercontent.com/7836623/50167593-c824c800-02e9-11e9-9b70-84b6fc40c05f.png" width="20%"/></p>

The main tool to collect and analyze data will be [Google Analytics][ga-mark]. It is a stable and proven service with a lot of useful configurable features and a reliable persistence.

_Nord Docs_ will use the latest and recommended [gtag.js][gdev-ga-gtag] library that optionally allows, next to Google Analytics itself, the integration of almost all Google Marketing services like e.g. [Google Tag Manager][gdev-tm].

The library will be integrated through [gatsby-plugin-google-gtag][gh-gb-p-ga-tag].

## Tasks

- [x] Install required packages:

- [gatsby-plugin-google-gtag][npm-gp-gtag]

- [x] Implement required internal constants.

- [x] Implement the plugin configuration.

[g-sup-anonip]: https://support.google.com/analytics/answer/2763052

[gh-gb-p-ga-tag]: https://github.com/gatsbyjs/gatsby/tree/master/packages/gatsby-plugin-google-gtag

[gh-86]: https://github.com/arcticicestudio/nord-docs/issues/86

[ga-mark]: https://marketingplatform.google.com/about/analytics

[gdev-ga-gtag]: https://developers.google.com/analytics/devguides/collection/gtagjs

[gdev-tm]: https://developers.google.com/tag-manager

[wiki-a]: https://en.wikipedia.org/wiki/Analytics

[wiki-s]: https://en.wikipedia.org/wiki/Statistics

[wiki-dnt]: https://en.wikipedia.org/wiki/Do_Not_Track

[npm-gp-gtag]: https://www.npmjs.com/package/gatsby-plugin-google-gtag

| True | Google Analytics - <p align="center"><img src="https://user-images.githubusercontent.com/7836623/50167256-14bbd380-02e9-11e9-8aca-a31baf745cd8.png" width="20%"/></p>

> Associated epic: #86

This issue documents the implementation of [Google Analytics][ga-mark] as documented in the [“Analytics & Statistics” design concept][gh-86].

<p align="center"><img src="https://user-images.githubusercontent.com/7836623/50167593-c824c800-02e9-11e9-9b70-84b6fc40c05f.png" width="20%"/></p>

The main tool to collect and analyze data will be [Google Analytics][ga-mark]. It is a stable and proven service with a lot of useful configurable features and a reliable persistence.

_Nord Docs_ will use the latest and recommended [gtag.js][gdev-ga-gtag] library that optionally allows, next to Google Analytics itself, the integration of almost all Google Marketing services like e.g. [Google Tag Manager][gdev-tm].

The library will be integrated through [gatsby-plugin-google-gtag][gh-gb-p-ga-tag].

## Tasks

- [x] Install required packages:

- [gatsby-plugin-google-gtag][npm-gp-gtag]

- [x] Implement required internal constants.

- [x] Implement the plugin configuration.

[g-sup-anonip]: https://support.google.com/analytics/answer/2763052

[gh-gb-p-ga-tag]: https://github.com/gatsbyjs/gatsby/tree/master/packages/gatsby-plugin-google-gtag

[gh-86]: https://github.com/arcticicestudio/nord-docs/issues/86

[ga-mark]: https://marketingplatform.google.com/about/analytics

[gdev-ga-gtag]: https://developers.google.com/analytics/devguides/collection/gtagjs

[gdev-tm]: https://developers.google.com/tag-manager

[wiki-a]: https://en.wikipedia.org/wiki/Analytics

[wiki-s]: https://en.wikipedia.org/wiki/Statistics

[wiki-dnt]: https://en.wikipedia.org/wiki/Do_Not_Track

[npm-gp-gtag]: https://www.npmjs.com/package/gatsby-plugin-google-gtag

| main | google analytics associated epic this issue documents the implementation of like documented in the the main tool to collect and analyze data will be it is a stable and proven service with a lot of useful configurable features and a reliable persistence nord docs will use the latest and recommended library that optionally allows next to google analytics itself the integration of almost all google marketing services like e g the library will be integrated through tasks install required packages implement required internal constants implement the plugin configuration | 1 |

169,726 | 20,841,888,408 | IssuesEvent | 2022-03-21 01:46:24 | ekediala/inventory | https://api.github.com/repos/ekediala/inventory | opened | CVE-2022-24772 (High) detected in node-forge-0.8.2.tgz | security vulnerability | ## CVE-2022-24772 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>node-forge-0.8.2.tgz</b></p></summary>

<p>JavaScript implementations of network transports, cryptography, ciphers, PKI, message digests, and various utilities.</p>

<p>Library home page: <a href="https://registry.npmjs.org/node-forge/-/node-forge-0.8.2.tgz">https://registry.npmjs.org/node-forge/-/node-forge-0.8.2.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/node-forge/package.json</p>

<p>

Dependency Hierarchy:

- laravel-mix-4.1.4.tgz (Root Library)

- webpack-dev-server-3.8.1.tgz

- selfsigned-1.10.6.tgz

- :x: **node-forge-0.8.2.tgz** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Forge (also called `node-forge`) is a native implementation of Transport Layer Security in JavaScript. Prior to version 1.3.0, RSA PKCS#1 v1.5 signature verification code does not check for trailing garbage bytes after decoding a `DigestInfo` ASN.1 structure. This can allow padding bytes to be removed and garbage data added to forge a signature when a low public exponent is being used. The issue has been addressed in `node-forge` version 1.3.0. There are currently no known workarounds.

<p>Publish Date: 2022-03-18

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2022-24772>CVE-2022-24772</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: High

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2022-24772">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2022-24772</a></p>

<p>Release Date: 2022-03-18</p>

<p>Fix Resolution: node-forge - 1.3.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2022-24772 (High) detected in node-forge-0.8.2.tgz - ## CVE-2022-24772 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>node-forge-0.8.2.tgz</b></p></summary>

<p>JavaScript implementations of network transports, cryptography, ciphers, PKI, message digests, and various utilities.</p>

<p>Library home page: <a href="https://registry.npmjs.org/node-forge/-/node-forge-0.8.2.tgz">https://registry.npmjs.org/node-forge/-/node-forge-0.8.2.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/node-forge/package.json</p>

<p>

Dependency Hierarchy:

- laravel-mix-4.1.4.tgz (Root Library)

- webpack-dev-server-3.8.1.tgz

- selfsigned-1.10.6.tgz

- :x: **node-forge-0.8.2.tgz** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Forge (also called `node-forge`) is a native implementation of Transport Layer Security in JavaScript. Prior to version 1.3.0, RSA PKCS#1 v1.5 signature verification code does not check for trailing garbage bytes after decoding a `DigestInfo` ASN.1 structure. This can allow padding bytes to be removed and garbage data added to forge a signature when a low public exponent is being used. The issue has been addressed in `node-forge` version 1.3.0. There are currently no known workarounds.

<p>Publish Date: 2022-03-18

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2022-24772>CVE-2022-24772</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: High

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2022-24772">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2022-24772</a></p>

<p>Release Date: 2022-03-18</p>

<p>Fix Resolution: node-forge - 1.3.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_main | cve high detected in node forge tgz cve high severity vulnerability vulnerable library node forge tgz javascript implementations of network transports cryptography ciphers pki message digests and various utilities library home page a href path to dependency file package json path to vulnerable library node modules node forge package json dependency hierarchy laravel mix tgz root library webpack dev server tgz selfsigned tgz x node forge tgz vulnerable library vulnerability details forge also called node forge is a native implementation of transport layer security in javascript prior to version rsa pkcs signature verification code does not check for tailing garbage bytes after decoding a digestinfo asn structure this can allow padding bytes to be removed and garbage data added to forge a signature when a low public exponent is being used the issue has been addressed in node forge version there are currently no known workarounds publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact high availability impact none for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution node forge step up your open source security game with whitesource | 0 |

101,867 | 31,717,746,432 | IssuesEvent | 2023-09-10 03:31:33 | TABConf/2023.tabconf.com | https://api.github.com/repos/TABConf/2023.tabconf.com | closed | TABconf hackathon by emeralize x PlebLab | Builder Days Project Accepted SM |

`author: ThrillerX` `author: Santos Hernandez`

# Description

### What is this project about? Please give us as many details as possible.

The purpose of the Hackathon is to promote innovation in the fields of Bitcoin, Lightning Network, Nostr, and AI. Our goal is to facilitate an environment that encourages collaboration, education, and the creation of new and exciting projects. We aim to grow the Lightning Network and Nostr communities by providing a platform for developers to showcase their skills and share their work. Above all, we want to have fun and celebrate bleeding-edge technology!

### Is it a FOSS or Open Source project?

Open Source, we want to work with the community on this to get their feedback and incorporate it.

### What would an attendee learn from visiting this project's table at Builder Days?

How to build Lightning Apps and then building a brand new project!

### Is there anything people should read up on before finding the table at Builder Day?

Yes, we can hook up folks with lots of course content to prepare them from nocode to writing a LN script to a full blown Lightning or Nostr App. It all just depends on how much time you want to put into it and what you want to do as well as how much experience you have.

### Relevant Links

Courses: https://emeralize.app/marketplace/

Docs: https://docs.zebedee.io

Book: https://book.pleblab.com

# Project Details

### Who will run the table? Are those people current contributors or maintainers who can answer questions and get people onboarded?

- Car Gonzalez (https://twitter.com/ThrillerX_)

- Santos Hernandez (https://twitter.com/5antoshernandez)

# Prize Pool

10K in total prizes. TBD on specifics.

# Strategy

- Focused categories for the participants. This will ensure that there is a fluid theme throughout the hackathon.

- Bitcoin

- Lightning Network

- AI (ChatGPT)

- Nostr

- Educational resources to prepare and market in advance to adequately prepare folks.

- Buy-in: 25,000 sats.

- Only new projects being worked on, the true essence and purpose of a hackathon.

# Agenda / Schedule

1. Start at the beginning of the conference - Friday

2. Pitch ideas

3. Team selection

4. Execution

5. Pitches

6. Judging

7. Announcements of the top 3 winners on mainstage.

# Value Proposition

- Promotion of the teams entering

- Promotion of the winners

- Interviews

- Trophy

- Nostr badge

- Podcasts

- Articles

- Promotion of projects

# What else you'll win

- Trophy

- Nostr badge issuance

## People

- Supertestnet

- Austin

- Car

- Santos

- Jure Grahek

## Company Sponsors

We're currently looking for sponsorship

## Space

- Full room

- Mainstage for announcement of winners

# Questions

- 25,000 sats buy-in: Would you be interested in this? The total could be included as bonus earnings. It puts some skin in the game.

So what does everyone think? We'd love to make this happen! Let us know below!

| 1.0 | TABconf hackathon by emeralize x PlebLab -

`author: ThrillerX` `author: Santos Hernandez`

# Description

### What is this project about? Please give us as many details as possible.

The purpose of the Hackathon is to promote innovation in the fields of Bitcoin, Lightning Network, Nostr, and AI. Our goal is to facilitate an environment that encourages collaboration, education, and the creation of new and exciting projects. We aim to grow the Lightning Network and Nostr communities by providing a platform for developers to showcase their skills and share their work. Above all, we want to have fun and celebrate bleeding-edge technology!

### Is it a FOSS or Open Source project?

Open Source, we want to work with the community on this to get their feedback and incorporate it.

### What would an attendee learn from visiting this project's table at Builder Days?

How to build Lightning Apps and then building a brand new project!

### Is there anything people should read up on before finding the table at Builder Day?

Yes, we can hook up folks with lots of course content to prepare them from nocode to writing a LN script to a full blown Lightning or Nostr App. It all just depends on how much time you want to put into it and what you want to do as well as how much experience you have.

### Relevant Links

Courses: https://emeralize.app/marketplace/

Docs: https://docs.zebedee.io

Book: https://book.pleblab.com

# Project Details

### Who will run the table? Are those people current contributors or maintainers who can answer questions and get people onboarded?

- Car Gonzalez (https://twitter.com/ThrillerX_)

- Santos Hernandez (https://twitter.com/5antoshernandez)

# Prize Pool

10K in total prizes. TBD on specifics.

# Strategy

- Focused categories for the participants. This will ensure that there is a fluid theme throughout the hackathon.

- Bitcoin

- Lightning Network

- AI (ChatGPT)

- Nostr

- Educational resources to prepare and market in advance to adequately prepare folks.

- Buy-in: 25,000 sats.

- Only new projects being worked on, the true essence and purpose of a hackathon.

# Agenda / Schedule

1. Start at the beginning of the conference - Friday

2. Pitch ideas

3. Team selection

4. Execution

5. Pitches

6. Judging

7. Announcements of the top 3 winners on mainstage.

# Value Proposition

- Promotion of the teams entering

- Promotion of the winners

- Interviews

- Trophy

- Nostr badge

- Podcasts

- Articles

- Promotion of projects

# What else you'll win

- Trophy

- Nostr badge issuance

## People

- Supertestnet

- Austin

- Car

- Santos

- Jure Grahek

## Company Sponsors

We're currently looking for sponsorship

## Space

- Full room

- Mainstage for announcement of winners

# Questions

- 25,000 sats buy-in: Would you be interested in this? The total could be included as bonus earnings. It puts some skin in the game.

So what does everyone think? We'd love to make this happen! Let us know below!

| non_main | tabconf hackathon by emeralize x pleblab author thrillerx author santos hernandez description what is this project about please give us as many details as possible the purpose of the hackathon is to promote innovation in the fields of bitcoin lightning network nostr and ai our goal is to facilitate an environment that encourages collaboration education and the creation of new and exciting projects we aim to grow the lightning network and nostr communities by providing a platform for developers to showcase their skills and share their work above all we want to have fun and celebrate bleeding edge technology is it a foss or open source project open source we want to work with the community on this to get their feedback and incorporate it what would an attendee learn from visiting this projects table at builder days how to build lightning apps and then building a brand new project is there anything people should read up on before finding the table at builder day yes we can hook up folks with lots of course content to prepare them from nocode to writing a ln script to a full blown lightning or nostr app it all just depends on how much time you want to put into it and what you want to do as well as how much experience you have relevant links courses docs book project details who will run the table are those people current contributors or maintainers who can answer questions and get people onboarded car gonzalez santos hernandez prize pool in total prizes tbd on specifics strategy focused categories for the participants this will ensure that there is a fluid theme throughout the hackathon bitcoin lightning network ai chatgpt nostr educational resources to prepare and market in advance to adequately prepare folks buy in sats only new projects being worked on the true essence and purpose of a hackathon agenda schedule start at the beginning of the conference friday pitch ideas team selection execution pitches judging announcements of the top winners on 
mainstage value proposition promotion of the teams entering promotion of the winners interviews trophy nostr badge podcasts articles promotion of projects what else you ll win trophy nostr badge issuance people supertestnet austin car santos jure grahek company sponsors we re currently looking for sponsorship space full room mainstage for announcement of winners questions sats buy in — would you be interested in this the total could be included as a bonus earnings it puts some skin in the game so what does everyone think we d love to make this happen let us know below | 0 |

1,871 | 6,577,493,724 | IssuesEvent | 2017-09-12 01:18:10 | ansible/ansible-modules-core | https://api.github.com/repos/ansible/ansible-modules-core | closed | ec2_group check mode is inaccurate | affects_2.0 aws bug_report cloud feature_idea waiting_on_maintainer | <!--- Verify first that your issue/request is not already reported in GitHub -->

##### ISSUE TYPE

- Feature Idea

##### COMPONENT NAME

ec2_group

##### ANSIBLE VERSION

```

ansible 2.0.1.0

config file = /etc/ansible/ansible.cfg

configured module search path = Default w/o overrides

```

##### CONFIGURATION

<!---

Mention any settings you have changed/added/removed in ansible.cfg

(or using the ANSIBLE_* environment variables).

-->

##### OS / ENVIRONMENT

N/A

##### SUMMARY

When running ansible with `--check`, all security groups are listed as having changes.

##### STEPS TO REPRODUCE

You should be able to reproduce this with [the example task](https://docs.ansible.com/ansible/ec2_group_module.html#examples), or anything simpler.

##### EXPECTED RESULTS

I expected the comparison to be made between the local declarations and the currently existing definitions in AWS, and only those that would normally be changed would show changes. Additionally, it'd be really nice if `--diff` produced any sort of output indicating what the diff between them is.

##### ACTUAL RESULTS

Ansible reports changes to every security group, with no additional information. Reading through [the module](https://github.com/ansible/ansible-modules-core/blob/devel/cloud/amazon/ec2_group.py), there are a bunch of places where `check_mode` just causes a conditional to be skipped, and so probably some of that logic needs to be moved out of the conditional.

| True | ec2_group check mode is inaccurate - <!--- Verify first that your issue/request is not already reported in GitHub -->

##### ISSUE TYPE

- Feature Idea

##### COMPONENT NAME

ec2_group

##### ANSIBLE VERSION

```

ansible 2.0.1.0

config file = /etc/ansible/ansible.cfg

configured module search path = Default w/o overrides

```

##### CONFIGURATION

<!---

Mention any settings you have changed/added/removed in ansible.cfg

(or using the ANSIBLE_* environment variables).

-->

##### OS / ENVIRONMENT

N/A

##### SUMMARY

When running ansible with `--check`, all security groups are listed as having changes.

##### STEPS TO REPRODUCE

You should be able to reproduce this with [the example task](https://docs.ansible.com/ansible/ec2_group_module.html#examples), or anything simpler.

##### EXPECTED RESULTS

I expected the comparison to be made between the local declarations and the currently existing definitions in AWS, and only those that would normally be changed would show changes. Additionally, it'd be really nice if `--diff` produced any sort of output indicating what the diff between them is.

##### ACTUAL RESULTS

Ansible reports changes to every security group, with no additional information. Reading through [the module](https://github.com/ansible/ansible-modules-core/blob/devel/cloud/amazon/ec2_group.py), there are a bunch of places where `check_mode` just causes a conditional to be skipped, and so probably some of that logic needs to be moved out of the conditional.

| main | group check mode is inaccurate issue type feature idea component name group ansible version ansible config file etc ansible ansible cfg configured module search path default w o overrides configuration mention any settings you have changed added removed in ansible cfg or using the ansible environment variables os environment n a summary when running ansible with check all security groups are listed as having changes steps to reproduce you should be able to reproduce this with or anything simpler expected results i expected the comparison to be made between the local declarations and the currently existing definitions in aws and only those that would normally be changed would show changes additionally it d be really nice if diff produced any sort of output indicating what the diff between them is actual results ansible reports changes to every security group with no additional information reading through there are a bunch of places where check mode just causes a conditional to be skipped and so probably some of that logic needs to be moved out of the conditional | 1 |
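
To illustrate the behaviour requested in the `ec2_group` report above, here is a minimal Python sketch of a check-mode comparison. It is not the module's actual code: the function name `plan_rule_changes` and the rule layout `(protocol, from_port, to_port, cidr)` are assumptions made for illustration. The idea is to compute the difference between the declared rules and the existing ones without applying anything, so that `changed` is true only when a real run would modify the group.

```python
def plan_rule_changes(desired_rules, current_rules):
    """Compare declared rules with existing ones without applying anything.

    Both arguments are iterables of hashable rule tuples
    (hypothetical layout: protocol, from_port, to_port, cidr).
    """
    desired = set(desired_rules)
    current = set(current_rules)
    to_add = sorted(desired - current)      # declared locally, missing remotely
    to_remove = sorted(current - desired)   # present remotely, not declared
    return {
        "changed": bool(to_add or to_remove),
        "to_add": to_add,
        "to_remove": to_remove,
    }


if __name__ == "__main__":
    existing = [("tcp", 22, 22, "10.0.0.0/8"), ("tcp", 80, 80, "0.0.0.0/0")]
    declared = [("tcp", 22, 22, "10.0.0.0/8"), ("tcp", 443, 443, "0.0.0.0/0")]
    print(plan_rule_changes(declared, existing))
```

The `to_add` and `to_remove` lists are the kind of information a `--diff` run could surface.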

2,966 | 10,651,165,243 | IssuesEvent | 2019-10-17 09:47:55 | valbergconsulting/bitcore-abc | https://api.github.com/repos/valbergconsulting/bitcore-abc | opened | Remove all use of CAmount | maintainance | Upstream refactored `CAmount` into a class (`Amount`). Replacing all use of CAmount with Amount would save time and reduce risk of bugs when merging upstream changes. | True | Remove all use of CAmount - Upstream refactored `CAmount` into a class (`Amount`). Replacing all use of CAmount with Amount would save time and reduce risk of bugs when merging upstream changes. | main | remove all use of camount upstream refactored camount into a class amount replacing all use of camount with amount would save time and reduce risk of bugs when merging upstream changes | 1 |

1,099 | 4,970,653,581 | IssuesEvent | 2016-12-05 16:33:55 | ansible/ansible-modules-extras | https://api.github.com/repos/ansible/ansible-modules-extras | closed | ec2_elb_facts should support check mode | affects_2.2 aws bug_report cloud waiting_on_maintainer | ##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

ec2_elb_facts

##### ANSIBLE VERSION

```

ansible 2.2.0.0

config file =

configured module search path = Default w/o overrides

```

##### CONFIGURATION

*N/A*

##### OS / ENVIRONMENT

*N/A*

##### SUMMARY

Since `ec2_elb_facts` is strictly a read-only operation, it should support running with `--check`.

##### STEPS TO REPRODUCE

```sh
ansible-playbook \
  -i hosts \
  -l my-elb-host \
  ec2_elb_facts_check.yml \
  -vv \
  --check
```

```yaml
- hosts: all
  connection: local
  gather_facts: no
  tasks:
    - name: Collect ELB facts
      ec2_elb_facts:
        names: "my-elb"
        region: "us-east-1"
      register: elbfacts
      tags: always
```

##### EXPECTED RESULTS

It would be expected that `ec2_elb_facts` would still fetch the instance information. Skipping the task makes it impossible to enumerate ELB instance hosts, dynamically add them to the inventory, and then run `--check` mode against what would *actually* be done.

##### ACTUAL RESULTS

```

TASK [Collect ELB facts] ***********************************************

task path: /Projects/ec2_elb_facts_check.yml:6

skipping: [my-elb-host] => {

"changed": false,

"skipped": true

}

MSG:

remote module (ec2_elb_facts) does not support check mode

``` | True | ec2_elb_facts should support check mode - ##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

ec2_elb_facts

##### ANSIBLE VERSION

```

ansible 2.2.0.0

config file =

configured module search path = Default w/o overrides

```

##### CONFIGURATION

*N/A*

##### OS / ENVIRONMENT

*N/A*

##### SUMMARY

Since `ec2_elb_facts` is strictly a read-only operation, it should support running with `--check`.

##### STEPS TO REPRODUCE

```sh
ansible-playbook \
  -i hosts \
  -l my-elb-host \
  ec2_elb_facts_check.yml \
  -vv \
  --check
```

```yaml
- hosts: all
  connection: local
  gather_facts: no
  tasks:
    - name: Collect ELB facts
      ec2_elb_facts:
        names: "my-elb"
        region: "us-east-1"
      register: elbfacts
      tags: always
```

##### EXPECTED RESULTS

It would be expected that `ec2_elb_facts` would still fetch the instance information. Skipping the task makes it impossible to enumerate ELB instance hosts, dynamically add them to the inventory, and then run `--check` mode against what would *actually* be done.

##### ACTUAL RESULTS

```

TASK [Collect ELB facts] ***********************************************

task path: /Projects/ec2_elb_facts_check.yml:6

skipping: [my-elb-host] => {

"changed": false,

"skipped": true

}

MSG:

remote module (ec2_elb_facts) does not support check mode

``` | main | elb facts should support check mode issue type bug report component name elb facts ansible version ansible config file configured module search path default w o overrides configuration n a os environment n a summary since the elb facts is strictly a read only operation it should support running with check steps to reproduce sh ansible playbook i hosts l my elb host elb facts check yml vv check yaml hosts all connection local gather facts no tasks name collect elb facts elb facts names my elb region us east register elbfacts tags always expected results it would be expected that elb facts would still fetch the instance information this being omitted prevents the ability to enumerate elb instance hosts dynamically add them to the inventory and then conduct check mode against what would actually be getting done actual results task task path projects elb facts check yml skipping changed false skipped true msg remote module elb facts does not support check mode | 1 |

1,591 | 6,572,373,105 | IssuesEvent | 2017-09-11 01:48:39 | ansible/ansible-modules-extras | https://api.github.com/repos/ansible/ansible-modules-extras | closed | bigip_selfip fails when using traffic_group parameter | affects_2.2 bug_report networking waiting_on_maintainer | <!--- Verify first that your issue/request is not already reported in GitHub -->

##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

F5 bigip (bigip_selfip)

##### ANSIBLE VERSION

<!--- Paste verbatim output from “ansible --version” between quotes below -->

```

ansible 2.2.0

config file = /etc/ansible/ansible.cfg

configured module search path = Default w/o overrides

```

##### F5 BIGIP LTM VERSION

```

Sys::Version

Main Package

Product BIG-IP

Version 12.1.1

Build 0.0.184

Edition Final

Date Thu Aug 11 17:09:01 PDT 2016

```

##### PYTHON VERSION

```

Python 2.7.12

```

##### CONFIGURATION

```

retry_files_enabled = False

host_key_checking=False

```

##### OS / ENVIRONMENT

Alpine 3.4 (Docker Container)

##### SUMMARY

<!--- Explain the problem briefly -->

If the `traffic_group` parameter in the `bigip_selfip` module is set to a valid traffic group that exists on the remote device such as `traffic-group-local-only` or `traffic-group-1`, the `bigip_selfip` module will always return the error: `The specified traffic group was not found`.

##### STEPS TO REPRODUCE

Create a task that uses the `bigip_selfip` module and specify a valid `traffic_group` parameter.

_NOTE: The default traffic group `traffic-group-local-only` will always exist, and the traffic group `traffic-group-1` will be automatically created during the process of configuring device HA through the HA Wizard._

```
- name: Assign Floating IP to external
  bigip_selfip:
    address: "1.1.1.1"
    name: "external_floating"
    netmask: "255.255.255.0"
    password: "{{ bigip_password }}"
    server: "{{ inventory_hostname }}"
    traffic_group: "traffic-group-1"
    user: "{{ bigip_username }}"
    validate_certs: "{{ validate_certs }}"
    vlan: "external"
```

##### EXPECTED RESULTS

It should set the self ip's traffic group to the valid traffic group specified.

##### ACTUAL RESULTS

The Ansible task fails with the following error: `The specified traffic group was not found`.

```

TASK [Assign Floating IP to internal] ******************************************

task path: /site/site.yaml:96

Using module file /site/library/bigip_selfip.py

<lbl11.example.com> ESTABLISH LOCAL CONNECTION FOR USER: root

<lbl11.example.com> EXEC /bin/sh -c '( umask 77 && mkdir -p "` echo $HOME/.ansible/tmp/ansible-tmp-1474482164.33-122340317119314 `" && echo ansible-tmp-1474482164.33-122340317119314="` echo $HOME/.ansibl

e/tmp/ansible-tmp-1474482164.33-122340317119314 `" ) && sleep 0'

<lbl11.example.com> PUT /tmp/tmpo34T9a TO /root/.ansible/tmp/ansible-tmp-1474482164.33-122340317119314/bigip_selfip.py

<lbl11.example.com> EXEC /bin/sh -c 'chmod u+x /root/.ansible/tmp/ansible-tmp-1474482164.33-122340317119314/ /root/.ansible/tmp/ansible-tmp-1474482164.33-122340317119314/bigip_selfip.py && sleep 0'

<lbl11.example.com> EXEC /bin/sh -c '/usr/bin/python /root/.ansible/tmp/ansible-tmp-1474482164.33-122340317119314/bigip_selfip.py; rm -rf "/root/.ansible/tmp/ansible-tmp-1474482164.33-122340317119314/" >

/dev/null 2>&1 && sleep 0'

fatal: [lbl11.example.com]: FAILED! => {

"changed": false,

"failed": true,

"invocation": {

"module_args": {

"address": "10.11.50.9",

"allow_service": null,

"name": "internal_floating",

"netmask": "255.255.254.0",

"partition": "Common",

"password": "VALUE_SPECIFIED_IN_NO_LOG_PARAMETER",

"server": "lbl11.example.com",

"server_port": 443,

"state": "present",

"traffic_group": "traffic-group-1",

"user": "VALUE_SPECIFIED_IN_NO_LOG_PARAMETER",

"validate_certs": false,

"vlan": "internal"

},

"module_name": "bigip_selfip"

},

"msg": "The specified traffic group was not found"

}

to retry, use: --limit @/site/site.retry

```

| True | bigip_selfip fails when using traffic_group parameter - <!--- Verify first that your issue/request is not already reported in GitHub -->

##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

F5 bigip (bigip_selfip)

##### ANSIBLE VERSION

<!--- Paste verbatim output from “ansible --version” between quotes below -->

```

ansible 2.2.0

config file = /etc/ansible/ansible.cfg

configured module search path = Default w/o overrides

```

##### F5 BIGIP LTM VERSION

```

Sys::Version

Main Package

Product BIG-IP

Version 12.1.1

Build 0.0.184

Edition Final

Date Thu Aug 11 17:09:01 PDT 2016

```

##### PYTHON VERSION

```

Python 2.7.12

```

##### CONFIGURATION

```

retry_files_enabled = False

host_key_checking=False

```

##### OS / ENVIRONMENT

Alpine 3.4 (Docker Container)

##### SUMMARY

<!--- Explain the problem briefly -->

If the `traffic_group` parameter in the `bigip_selfip` module is set to a valid traffic group that exists on the remote device such as `traffic-group-local-only` or `traffic-group-1`, the `bigip_selfip` module will always return the error: `The specified traffic group was not found`.

##### STEPS TO REPRODUCE

Create a task that uses the `bigip_selfip` module and specify a valid `traffic_group` parameter.

_NOTE: The default traffic group `traffic-group-local-only` will always exist, and the traffic group `traffic-group-1` will be automatically created during the process of configuring device HA through the HA Wizard._

```
- name: Assign Floating IP to external
  bigip_selfip:
    address: "1.1.1.1"
    name: "external_floating"
    netmask: "255.255.255.0"
    password: "{{ bigip_password }}"
    server: "{{ inventory_hostname }}"
    traffic_group: "traffic-group-1"
    user: "{{ bigip_username }}"
    validate_certs: "{{ validate_certs }}"
    vlan: "external"
```

##### EXPECTED RESULTS

It should set the self ip's traffic group to the valid traffic group specified.

##### ACTUAL RESULTS

The Ansible task fails with the following error: `The specified traffic group was not found`.

```

TASK [Assign Floating IP to internal] ******************************************

task path: /site/site.yaml:96

Using module file /site/library/bigip_selfip.py

<lbl11.example.com> ESTABLISH LOCAL CONNECTION FOR USER: root

<lbl11.example.com> EXEC /bin/sh -c '( umask 77 && mkdir -p "` echo $HOME/.ansible/tmp/ansible-tmp-1474482164.33-122340317119314 `" && echo ansible-tmp-1474482164.33-122340317119314="` echo $HOME/.ansibl

e/tmp/ansible-tmp-1474482164.33-122340317119314 `" ) && sleep 0'

<lbl11.example.com> PUT /tmp/tmpo34T9a TO /root/.ansible/tmp/ansible-tmp-1474482164.33-122340317119314/bigip_selfip.py

<lbl11.example.com> EXEC /bin/sh -c 'chmod u+x /root/.ansible/tmp/ansible-tmp-1474482164.33-122340317119314/ /root/.ansible/tmp/ansible-tmp-1474482164.33-122340317119314/bigip_selfip.py && sleep 0'

<lbl11.example.com> EXEC /bin/sh -c '/usr/bin/python /root/.ansible/tmp/ansible-tmp-1474482164.33-122340317119314/bigip_selfip.py; rm -rf "/root/.ansible/tmp/ansible-tmp-1474482164.33-122340317119314/" >

/dev/null 2>&1 && sleep 0'

fatal: [lbl11.example.com]: FAILED! => {

"changed": false,

"failed": true,

"invocation": {

"module_args": {

"address": "10.11.50.9",

"allow_service": null,

"name": "internal_floating",

"netmask": "255.255.254.0",

"partition": "Common",

"password": "VALUE_SPECIFIED_IN_NO_LOG_PARAMETER",

"server": "lbl11.example.com",

"server_port": 443,

"state": "present",

"traffic_group": "traffic-group-1",

"user": "VALUE_SPECIFIED_IN_NO_LOG_PARAMETER",

"validate_certs": false,

"vlan": "internal"

},

"module_name": "bigip_selfip"

},

"msg": "The specified traffic group was not found"

}

to retry, use: --limit @/site/site.retry

```

| main | bigip selfip fails when using traffic group parameter issue type bug report component name bigip bigip selfip ansible version ansible config file etc ansible ansible cfg configured module search path default w o overrides bigip ltm version sys version main package product big ip version build edition final date thu aug pdt python version python configuration retry files enabled false host key checking false os environment alpine docker container summary if the traffic group parameter in the bigip selfip module is set to a valid traffic group that exists on the remote device such as traffic group local only or traffic group the bigip selfip module will always return the error the specified traffic group was not found steps to reproduce create a task that uses the bigip selfip module and specify a valid traffic group parameter note the default traffic group traffic group local only will always exist and the traffic group traffic group will be automatically created during the process of configuring device ha through the ha wizard name assign floating ip to external bigip selfip address name external floating netmask password bigip password server inventory hostname traffic group traffic group user bigip username validate certs validate certs vlan external expected results it should set the self ip s traffic group to the valid traffic group specified actual results the ansible task fails with the following error the specified traffic group was not found task task path site site yaml using module file site library bigip selfip py establish local connection for user root exec bin sh c umask mkdir p echo home ansible tmp ansible tmp echo ansible tmp echo home ansibl e tmp ansible tmp sleep put tmp to root ansible tmp ansible tmp bigip selfip py exec bin sh c chmod u x root ansible tmp ansible tmp root ansible tmp ansible tmp bigip selfip py sleep exec bin sh c usr bin python root ansible tmp ansible tmp bigip selfip py rm rf root ansible tmp ansible tmp dev null 
sleep fatal failed changed false failed true invocation module args address allow service null name internal floating netmask partition common password value specified in no log parameter server example com server port state present traffic group traffic group user value specified in no log parameter validate certs false vlan internal module name bigip selfip msg the specified traffic group was not found to retry use limit site site retry | 1 |

5,229 | 26,517,024,708 | IssuesEvent | 2023-01-18 21:45:40 | aws/aws-lambda-builders | https://api.github.com/repos/aws/aws-lambda-builders | closed | Bug: recursively copies artifact dir when it's in source dir | type/feature maintainer/need-followup | Recursively copies artifact dir when it's in source dir until it errors out:

JSON-RPC input:

```json

{

...

"source_dir": "path/to/source",

"artifacts_dir": "path/to/source/.build/artifact",

...

}

```

Then running `lambda-builders <JSON INPUT>`

Results in

```bash

PythonPipBuilder:CopySource - [Errno 63] File name too long: '/path/to/source/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/python_dateutil-2.8.2.dist-info/top_level.txt'

```

Setting `artifacts_dir` outside `source_dir` solves this issue.

----

**Is this a bug, or is this expected? Perhaps we should add `ignore: {}` interface? I can contribute a PR for this if you guys can point me in the right direction.**

----

#### Additional environment details (Ex: Windows, Mac, Amazon Linux etc)

1. OS: Mac

2. If using SAM CLI, `sam --version`:

3. AWS region: us-east-1 (shouldn't matter)

`Add --debug flag to any SAM CLI commands you are running`

| True | Bug: recursively copies artifact dir when it's in source dir - Recursively copies artifact dir when it's in source dir until it errors out:

JSON-RPC input:

```json

{

...

"source_dir": "path/to/source",

"artifacts_dir": "path/to/source/.build/artifact",

...

}

```

Then running `lambda-builders <JSON INPUT>`

Results in

```bash

PythonPipBuilder:CopySource - [Errno 63] File name too long: '/path/to/source/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/.build/artifact/python_dateutil-2.8.2.dist-info/top_level.txt'

```

Setting `artifacts_dir` outside `source_dir` solves this issue.

----

**Is this a bug, or is this expected? Perhaps we should add `ignore: {}` interface? I can contribute a PR for this if you guys can point me in the right direction.**

----

#### Additional environment details (Ex: Windows, Mac, Amazon Linux etc)

1. OS: Mac

2. If using SAM CLI, `sam --version`:

3. AWS region: us-east-1 (shouldn't matter)

`Add --debug flag to any SAM CLI commands you are running`

| main | bug recursively copies artifact dir when it s in source dir recursively copies artifact dir when it s in source dir until it errors out json rpc input json source dir path to source artifacts dir path to source build artifact then running lambda builders results in bash pythonpipbuilder copysource file name too long path to source build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact build artifact python dateutil dist info top level txt setting artifact dir outside the source dir solves this issue is this a bug or is this expected perhaps we should add ignore interface i can contribute a pr for this if you guys can point me in the right direction additional environment details ex windows mac amazon linux etc os mac if using sam cli sam version aws region us east shouldn t matter add debug flag to any sam cli commands you are running | 1 |
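
The recursion described in the report above can be avoided by excluding the artifacts directory while copying. The following Python sketch shows one way to do that with `shutil.copytree` and its `ignore` callback. It is an illustration only, not the actual `CopySource` implementation in aws-lambda-builders; the helper name `copy_source` is hypothetical, and `dirs_exist_ok` assumes Python 3.8 or later.

```python
import os
import shutil


def copy_source(source_dir, artifacts_dir):
    """Copy source_dir into artifacts_dir, skipping artifacts_dir itself.

    Hypothetical helper: without the ignore callback, an artifacts_dir
    that already exists inside source_dir would be copied into itself
    again and again until the path becomes too long.
    """
    source_dir = os.path.abspath(source_dir)
    artifacts_dir = os.path.abspath(artifacts_dir)

    def ignore(current_dir, names):
        # Ignore exactly one entry: the artifacts directory.
        return [
            name
            for name in names
            if os.path.abspath(os.path.join(current_dir, name)) == artifacts_dir
        ]

    shutil.copytree(source_dir, artifacts_dir, ignore=ignore, dirs_exist_ok=True)
```

Note that parent directories of the artifacts directory (such as `.build` in the report) are still visited, so an empty copy of them can appear in the output. Ignoring the whole top-level build directory would be a reasonable alternative.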

119,987 | 4,778,758,126 | IssuesEvent | 2016-10-27 20:18:57 | easydigitaldownloads/easy-digital-downloads | https://api.github.com/repos/easydigitaldownloads/easy-digital-downloads | closed | problems with multiple instances of the cart widget | Bug Frontend Priority: Medium | So it appears that if more than one cart widget is on a page, the second (and beyond) widget in the HTML flow will not display or behave properly. Here's what I see so far:

* The second widget [specifically] does not display a title: http://glui.me/?i=ypc8f0vqffffcgv/2015-07-09_at_12.56_PM.png/

* Once you add an item to the cart and the widget updates (NO page refresh), the cart items are missing from the second widget and beyond: http://glui.me/?i=ga7qhlotrlt6zo9/2015-07-09_at_12.58_PM.png/

* Refresh the page and the cart items show up (still not second widget title): http://glui.me/?i=ztc92e627eq3pwo/2015-07-09_at_12.59_PM.png/ | 1.0 | problems with multiple instances of the cart widget - So it appears that if more than one cart widget is on a page, the second (and beyond) widget in the HTML flow will not display or behave properly. Here's what I see so far:

* The second widget [specifically] does not display a title: http://glui.me/?i=ypc8f0vqffffcgv/2015-07-09_at_12.56_PM.png/

* Once you add an item to the cart and the widget updates (NO page refresh), the cart items are missing from the second widget and beyond: http://glui.me/?i=ga7qhlotrlt6zo9/2015-07-09_at_12.58_PM.png/

* Refresh the page and the cart items show up (still not second widget title): http://glui.me/?i=ztc92e627eq3pwo/2015-07-09_at_12.59_PM.png/ | non_main | problems with multiple instances of the cart widget so it appears that if more than one cart widget is on a page the second and beyond widget in the html flow will not display or behave properly here s what i see so far the second widget does not display a title once you add an item to the cart and the widget updates no page refresh the cart items are missing from the second widget and beyond refresh the page and the cart items show up still not second widget title | 0 |

99,060 | 30,268,069,549 | IssuesEvent | 2023-07-07 13:23:20 | cms-sw/cmssw | https://api.github.com/repos/cms-sw/cmssw | closed | Build CMSSW_13_0_10 | release-notes-requested release-announced release-build-request slc7_amd64_gcc11-finished el8_amd64_gcc11-finished el8_aarch64_gcc11-finished el8_ppc64le_gcc11-finished el9_amd64_gcc11-finished | To start the MC production campaign for Run3 2023

The build will go in parallel with the IB tests in CMSSW_13_0_X_2023-07-05-1100, to speed up the procedure: the release will get uploaded only if those tests show no issues. | 1.0 | Build CMSSW_13_0_10 - To start the MC production campaign for Run3 2023

The build will go in parallel with the IB tests in CMSSW_13_0_X_2023-07-05-1100, to speed up the procedure: the release will get uploaded only if those tests show no issues. | non_main | build cmssw to start the mc production campaign for the build will go in parallel with the ib tests in cmssw x to speed up the procedure the release will get uploaded only if those tests show no issues | 0 |

2,368 | 8,470,346,938 | IssuesEvent | 2018-10-24 03:45:47 | AllAlgorithms/cpp | https://api.github.com/repos/AllAlgorithms/cpp | opened | Looking for a new C++ maintainer. | Hacktoberfest help wanted looking for maintainers 🙈 | Since we are a small team reviewing pull requests, we are looking for a C++ maintainer to resolve the outstanding pull requests:

Requirements:

- At least 6 months on GitHub (experience reviewing code, etc.)

- Previous C++ knowledge.

- Committed to reviewing and working on issues at least every 3 days.

- Open source enthusiast

- Must start this project :)

How to apply? Please join our [Gitter Chat](https://gitter.im/allalgorithms/cpp), and let me know in private or here! | True | Looking for a new C++ maintainer. - Since we are a small team reviewing pull requests, we are looking for a C++ maintainer to resolve the outstanding pull requests:

Requirements:

- At least 6 months on GitHub (experience reviewing code, etc.)

- Previous C++ knowledge.

- Committed to reviewing and working on issues at least every 3 days.

- Open source enthusiast

- Must start this project :)

How to apply? Please join our [Gitter Chat](https://gitter.im/allalgorithms/cpp), and let me know in private or here! | main | looking for a new c maintainer since we are a small team reviewing pull requests we are looking for a c maintainer to resolve the outstanding pull requests requirements at least months on github experience reviewing code etc previous c knowledge committed to reviewing and working on issues at least every days open source enthusiast must start this project how to apply please join our and let me know in private or here | 1 |

98,112 | 11,045,380,040 | IssuesEvent | 2019-12-09 15:01:08 | 18F/dtmo-ei | https://api.github.com/repos/18F/dtmo-ei | closed | DTMO – Mid-Point Check-In | Epic documentation | As ```a representative of DTMO``` I want to know ```what the 18F has been and is up to``` in order to ```assess if they are providing value to our organization and fulfilling the scope.``` | 1.0 | DTMO – Mid-Point Check-In - As ```a representative of DTMO``` I want to know ```what the 18F has been and is up to``` in order to ```assess if they are providing value to our organization and fulfilling the scope.``` | non_main | dtmo – mid point check in as a representative of dtmo i want to know what the has been and is up to in order to assess if they are providing value to our organization and fulfilling the scope | 0 |

3,559 | 14,237,307,961 | IssuesEvent | 2020-11-18 17:03:14 | backdrop-ops/contrib | https://api.github.com/repos/backdrop-ops/contrib | closed | Permissions change request: Search API | Maintainer change request | Can someone with appropriate permissions adjust the Github settings for `search_api`? @earlyburg is listed as a maintainer at this point but does not have write access. See:

https://github.com/backdrop-contrib/search_api/pull/11#issuecomment-727858612

Also, less urgently, he is not a maintainer on Entity Plus but indicates he does have write access there. | True | Permissions change request: Search API - Can someone with appropriate permissions adjust the Github settings for `search_api`? @earlyburg is listed as a maintainer at this point but does not have write access. See:

https://github.com/backdrop-contrib/search_api/pull/11#issuecomment-727858612

Also, less urgently, he is not a maintainer on Entity Plus but indicates he does have write access there. | main | permissions change request search api can someone with appropriate permissions adjust the github settings for search api earlyburg is listed as a maintainer at this point but does not have write access see also less urgently he is not a maintainer on entity plus but indicates he does have write access there | 1 |

4,729 | 24,411,872,431 | IssuesEvent | 2022-10-05 13:03:11 | coq/platform | https://api.github.com/repos/coq/platform | closed | Add MathComp Word to the Coq Platform | kind: package inclusion approval: has maintainer agreement | [MathComp Word](https://github.com/jasmin-lang/coqword) is a Coq library on machine words based on Mathematical Components. It is a core dependency of [SSProve](https://github.com/SSProve/ssprove) (a [candidate](https://github.com/coq/platform/issues/177) to join the Platform) and other projects such as the [Jasmin compiler](https://github.com/jasmin-lang/jasmin). Machine words are a frequently formalized concept useful in many verification projects.

To allow SSProve and other projects to more easily use MathComp Word, and based on [this discussion on Zulip](https://coq.zulipchat.com/#narrow/stream/237977-Coq-users/topic/Word.20libraries.20and.20duplication/near/289583415), I propose that MathComp Word is added to the Coq Platform.

The primary maintainers of MathComp Word are @vbgl and @strub. In accordance with the [Platform package inclusion process](https://github.com/coq/platform/blob/main/charter.md#package-inclusion-process), we would like for them to comment here that they agree on including the library. In practice, this means committing to making a Git tag for every major Coq release in the GitHub repository (this tag is then ideally packaged in the Coq opam repository).

cc: @spitters @gares (can this package use the `coq-math-comp-` prefix?) | True | Add MathComp Word to the Coq Platform - [MathComp Word](https://github.com/jasmin-lang/coqword) is a Coq library on machine words based on Mathematical Components. It is a core dependency of [SSProve](https://github.com/SSProve/ssprove) (a [candidate](https://github.com/coq/platform/issues/177) to join the Platform) and other projects such as the [Jasmin compiler](https://github.com/jasmin-lang/jasmin). Machine words are a frequently formalized concept useful in many verification projects.

To allow SSProve and other projects to more easily use MathComp Word, and based on [this discussion on Zulip](https://coq.zulipchat.com/#narrow/stream/237977-Coq-users/topic/Word.20libraries.20and.20duplication/near/289583415), I propose that MathComp Word is added to the Coq Platform.

The primary maintainers of MathComp Word are @vbgl and @strub. In accordance with the [Platform package inclusion process](https://github.com/coq/platform/blob/main/charter.md#package-inclusion-process), we would like for them to comment here that they agree on including the library. In practice, this means committing to making a Git tag for every major Coq release in the GitHub repository (this tag is then ideally packaged in the Coq opam repository).

cc: @spitters @gares (can this package use the `coq-math-comp-` prefix?) | main | add mathcomp word to the coq platform is a coq library on machine words based on mathematical components it is a core dependency of a to join the platform and other projects such as the machine words are a frequently formalized concept useful in many verification projects to allow ssprove and other projects to more easily use mathcomp word and based on i propose that mathcomp word is added to the coq platform the primary maintainers of mathcomp word are vbgl and strub in accordance with the we would like for them to comment here that they agree on including the library in practice this means committing to making a git tag for every major coq release in the github repository this tag is then ideally packaged in coq opam repository cc spitters gares can this package use the coq math comp prefix | 1 |

20,229 | 3,317,720,833 | IssuesEvent | 2015-11-06 23:16:42 | spockframework/spock | https://api.github.com/repos/spockframework/spock | closed | Cannot resolve symbol from where: section after instanceof | Module-Core not a bug Status-New Type-Defect | Originally reported on Google Code with ID 343

```

I want to do something like this:

@Unroll

def " should contain #clazz.getSimpleName() bean"() {

given:

builder.build()

when:

def component = builder.getContainerComponent(clazz)

then:

component instanceof clazz

where:

clazz << [Javers, EntityManager, TypeMapper, DiffFactory]

}

but on line 10 I get a compilation error. IntelliJ tells me that it cannot resolve

the symbol clazz, but when I use AssertJ and do something like this (on line 10):

assertThat(component) isInstanceOf(clazz)

it works ;)

I tried this in IntelliJ 12 and 13.

What version of Spock and Groovy are you using?

0.7-groovy-2.0

```

Reported by `pawel.szymczyk90` on 2014-01-31 18:50:06

| 1.0 | Cannot resolve symbol from where: section after instanceof - Originally reported on Google Code with ID 343

```

I want to do something like this:

@Unroll

def " should contain #clazz.getSimpleName() bean"() {

given:

builder.build()

when:

def component = builder.getContainerComponent(clazz)

then:

component instanceof clazz

where:

clazz << [Javers, EntityManager, TypeMapper, DiffFactory]

}

but on line 10 I get a compilation error. IntelliJ tells me that it cannot resolve

the symbol clazz, but when I use AssertJ and do something like this (on line 10):

assertThat(component) isInstanceOf(clazz)

it works ;)

I tried this in IntelliJ 12 and 13.

What version of Spock and Groovy are you using?

0.7-groovy-2.0

```

Reported by `pawel.szymczyk90` on 2014-01-31 18:50:06

| non_main | cannot resolve symbol from where section after instanceof originally reported on google code with id i want to do something like this unroll def should contain clazz getsimplename bean given builder build when def component builder getcontainercomponent clazz then component instanceof clazz where clazz but in line i have compilation problem the intellij tell s me that cannot resolve symbol clazz but when i use assertj and do something like this in line assertthat component isinstanceof clazz it works i try in intellij and what version of spock and groovy are you using groovy reported by pawel on | 0 |

1,499 | 6,488,377,257 | IssuesEvent | 2017-08-20 16:29:32 | ocaml/opam-repository | https://api.github.com/repos/ocaml/opam-repository | closed | ocamlfind fails to compile with jocaml switch | bug needs admin action needs maintainer action | When installing oasis with the jocaml switch, I get the following error message:

~~~~

[pkl@phi ocamlec]$ opam switch 4.01.0+jocaml

[pkl@phi ocamlec]$ opam install oasis

The following actions will be performed:

∗ install ocamlfind 1.7.1 [required by oasis]

∗ install ocamlmod 0.0.8 [required by oasis]

∗ install ocamlify 0.0.1 [required by oasis]

∗ install oasis 0.4.8

===== ∗ 4 =====

Do you want to continue ? [Y/n] y

=-=- Gathering sources =-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=

[oasis] Archive in cache

[ocamlfind] Archive in cache

[ocamlify] Archive in cache

[ocamlmod] Archive in cache

=-=- Processing actions -=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=

[ERROR] The compilation of ocamlfind failed at "make install".

Processing 1/4: [ocamlfind: make uninstall]

#=== ERROR while installing ocamlfind.1.7.1 ===================================#

# opam-version 1.2.2

# os linux

# command make install

# path /home/pkl/.opam/4.01.0+jocaml/build/ocamlfind.1.7.1

# compiler 4.01.0+jocaml

# exit-code 2

# env-file /home/pkl/.opam/4.01.0+jocaml/build/ocamlfind.1.7.1/ocamlfind-13726-a00279.env

# stdout-file /home/pkl/.opam/4.01.0+jocaml/build/ocamlfind.1.7.1/ocamlfind-13726-a00279.out

# stderr-file /home/pkl/.opam/4.01.0+jocaml/build/ocamlfind.1.7.1/ocamlfind-13726-a00279.err

### stdout ###

# [...]

# make[1]: Leaving directory '/home/pkl/.opam/4.01.0+jocaml/build/ocamlfind.1.7.1'

# for p in findlib; do ( cd src/$p; make install ); done

# make[1]: Entering directory '/home/pkl/.opam/4.01.0+jocaml/build/ocamlfind.1.7.1/src/findlib'

# ocamldep *.ml *.mli >depend

# mkdir -p "/home/pkl/.opam/4.01.0+jocaml/lib/findlib"

# mkdir -p "/home/pkl/.opam/4.01.0+jocaml/bin"

# test 1 -eq 0 || cp topfind "/usr/lib/ocaml"

# Makefile:122: recipe for target 'install' failed

# make[1]: Leaving directory '/home/pkl/.opam/4.01.0+jocaml/build/ocamlfind.1.7.1/src/findlib'

# Makefile:20: recipe for target 'install' failed

### stderr ###

# cp: cannot create regular file '/usr/lib/ocaml/topfind': Permission denied

# make[1]: *** [install] Error 1

# make: *** [install] Error 2

=-=- Error report -=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=

The following actions were aborted

∗ install oasis 0.4.8

∗ install ocamlify 0.0.1

∗ install ocamlmod 0.0.8

The following actions failed

∗ install ocamlfind 1.7.1

No changes have been performed

~~~~ | True | ocamlfind fails to compile with jocaml switch - When installing oasis with the jocaml switch, I get the following error message:

~~~~

[pkl@phi ocamlec]$ opam switch 4.01.0+jocaml

[pkl@phi ocamlec]$ opam install oasis

The following actions will be performed:

∗ install ocamlfind 1.7.1 [required by oasis]

∗ install ocamlmod 0.0.8 [required by oasis]

∗ install ocamlify 0.0.1 [required by oasis]

∗ install oasis 0.4.8

===== ∗ 4 =====

Do you want to continue ? [Y/n] y

=-=- Gathering sources =-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=

[oasis] Archive in cache

[ocamlfind] Archive in cache

[ocamlify] Archive in cache

[ocamlmod] Archive in cache

=-=- Processing actions -=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=

[ERROR] The compilation of ocamlfind failed at "make install".

Processing 1/4: [ocamlfind: make uninstall]

#=== ERROR while installing ocamlfind.1.7.1 ===================================#

# opam-version 1.2.2

# os linux

# command make install

# path /home/pkl/.opam/4.01.0+jocaml/build/ocamlfind.1.7.1

# compiler 4.01.0+jocaml

# exit-code 2

# env-file /home/pkl/.opam/4.01.0+jocaml/build/ocamlfind.1.7.1/ocamlfind-13726-a00279.env

# stdout-file /home/pkl/.opam/4.01.0+jocaml/build/ocamlfind.1.7.1/ocamlfind-13726-a00279.out

# stderr-file /home/pkl/.opam/4.01.0+jocaml/build/ocamlfind.1.7.1/ocamlfind-13726-a00279.err

### stdout ###

# [...]

# make[1]: Leaving directory '/home/pkl/.opam/4.01.0+jocaml/build/ocamlfind.1.7.1'

# for p in findlib; do ( cd src/$p; make install ); done

# make[1]: Entering directory '/home/pkl/.opam/4.01.0+jocaml/build/ocamlfind.1.7.1/src/findlib'

# ocamldep *.ml *.mli >depend

# mkdir -p "/home/pkl/.opam/4.01.0+jocaml/lib/findlib"

# mkdir -p "/home/pkl/.opam/4.01.0+jocaml/bin"