Unnamed: 0 (int64) | id (float64) | type (string) | created_at (string) | repo (string) | repo_url (string) | action (string) | title (string) | labels (string) | body (string) | index (string) | text_combine (string) | label (string) | text (string) | binary_label (int64) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

3,092 | 11,741,740,317 | IssuesEvent | 2020-03-11 22:32:02 | alacritty/alacritty | https://api.github.com/repos/alacritty/alacritty | closed | Mouse cursor rendered in low-res on a HiDPI Wayland output | A - deps B - bug C - waiting on maintainer DS - Wayland H - linux S - winit/glutin | > Which operating system does the issue occur on?

Linux

> If on linux, are you using X11 or Wayland?

Wayland, Sway git master roughly equivalent to 1.1rc2.

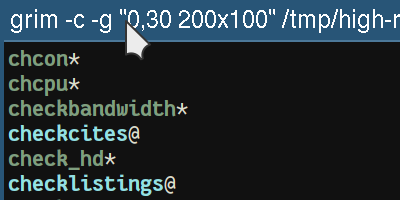

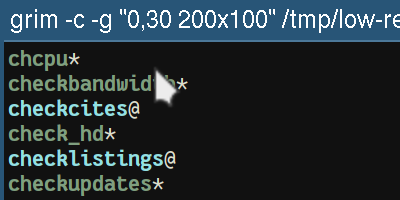

On a HiDPI output, with scaling set to 2, the mouse cursor set by Alacritty is a low-res one. I.e. it is probably rendered to a surface that has **not** had its buffer scale set. This means the compositor (Sway in this case) will scale it.

See the difference below. When the cursor is hovering over the title bar, it is rendered by Sway itself, in high-res. When it's hovering over the window content, it is rendered in low-res.

I realize it's likely this isn't a bug in Alacritty per se, but in one of its (Wayland) dependencies. | True | Mouse cursor rendered in low-res on a HiDPI Wayland output - > Which operating system does the issue occur on?

Linux

> If on linux, are you using X11 or Wayland?

Wayland, Sway git master roughly equivalent to 1.1rc2.

On a HiDPI output, with scaling set to 2, the mouse cursor set by Alacritty is a low-res one. I.e. it is probably rendered to a surface that has **not** had its buffer scale set. This means the compositor (Sway in this case) will scale it.

See the difference below. When the cursor is hovering over the title bar, it is rendered by Sway itself, in high-res. When it's hovering over the window content, it is rendered in low-res.

I realize it's likely this isn't a bug in Alacritty per se, but in one of its (Wayland) dependencies. | main | mouse cursor rendered in low res on a hidpi wayland output which operating system does the issue occur on linux if on linux are you using or wayland wayland sway git master roughly equivalent to on a hidpi output with scaling set to the mouse cursor set by alacritty is a low res one i e it is probably rendered to a surface that has not had its buffer scale set this means the compositor sway in this case will scale it see the difference below when the cursor is hovering over the title bar it is rendered by sway itself in high res when it s hovering over the window content it is rendered in low res i realize it s likely this isn t a bug in alacritty per sé but in one of its wayland dependencies | 1 |

5,488 | 27,401,720,167 | IssuesEvent | 2023-03-01 01:31:51 | aws/serverless-application-model | https://api.github.com/repos/aws/serverless-application-model | closed | Failed to publish to SAR with Error: ResultPath being null | type/bug area/step-function area/sar maintainer/need-followup | **Description:** If you deploy a `AWS::Serverless::StateMachine` with the AWS SAM CLI it works great, but you cannot publish this app/stack to SAR.

**Steps to reproduce the issue:**

1. Define a simple app with a `AWS::Serverless::StateMachine` in it

2. Run `sam package` and `sam publish`

**Observed result:**

App publication fails with this error:

> Error: SAM template is invalid. It cannot be deployed using AWS CloudFormation due to the following validation error: /Resources/powerTuningStateMachine/Type/Definition/States/Cleaner/ResultPath 'null' values are not allowed in templates

**Expected result:**

I'd expect the app to be published successfully, or at least I'd expect SAM CLI to provide a meaningful error.

| True | Failed to publish to SAR with Error: ResultPath being null - **Description:** If you deploy a `AWS::Serverless::StateMachine` with the AWS SAM CLI it works great, but you cannot publish this app/stack to SAR.

**Steps to reproduce the issue:**

1. Define a simple app with a `AWS::Serverless::StateMachine` in it

2. Run `sam package` and `sam publish`

**Observed result:**

App publication fails with this error:

> Error: SAM template is invalid. It cannot be deployed using AWS CloudFormation due to the following validation error: /Resources/powerTuningStateMachine/Type/Definition/States/Cleaner/ResultPath 'null' values are not allowed in templates

**Expected result:**

I'd expect the app to be published successfully, or at least I'd expect SAM CLI to provide a meaningful error.

| main | failed to publish to sar with error resultpath being null description if you deploy a aws serverless statemachine with the aws sam cli it works great but you cannot publish this app stack to sar steps to reproduce the issue define a simple app with a aws serverless statemachine in it run sam package and sam publish observed result app publication fails with this error error sam template is invalid it cannot be deployed using aws cloudformation due to the following validation error resources powertuningstatemachine type definition states cleaner resultpath null values are not allowed in templates expected result i d expect to app to be published successfully or at least i d expect sam cli to provide a meaningful error | 1 |

5,232 | 26,534,839,415 | IssuesEvent | 2023-01-19 14:58:55 | mozilla/foundation.mozilla.org | https://api.github.com/repos/mozilla/foundation.mozilla.org | closed | Upgrade `wagtail-inventory` to 1.6 | engineering maintain | ## Description

To unblock the upgrade of Wagtail to version 3.0 we need to upgrade `wagtail-inventory` to version 1.6.

See also: https://github.com/cfpb/wagtail-inventory/releases/tag/1.6

## Acceptance criteria

- [x] `wagtail-inventory` is upgraded to version 1.6 | True | Upgrade `wagtail-inventory` to 1.6 - ## Description

To unblock the upgrade of Wagtail to version 3.0 we need to upgrade `wagtail-inventory` to version 1.6.

See also: https://github.com/cfpb/wagtail-inventory/releases/tag/1.6

## Acceptance criteria

- [x] `wagtail-inventory` is upgraded to version 1.6 | main | upgrade wagtail inventory to description to unblock the upgrade of wagtail to version we need to upgrade wagtail inventory to version see also acceptance criteria wagtail inventory is upgraded to version | 1 |

78,413 | 22,264,383,484 | IssuesEvent | 2022-06-10 05:45:17 | foundry-rs/foundry | https://api.github.com/repos/foundry-rs/foundry | closed | Feature: SMTChecker support (a.k.a support all possible outputs) | T-feature C-forge P-normal Cmd-forge-build | ### Component

Forge

### Describe the feature you would like

As of 0.8.4, Solidity is deprecating the experimental pragma for the SMTChecker, as it runs on all files if enabled

```solidity

pragma experimental SMTChecker;

```

The new way to configure this is through the JSON config file

```json

"settings.modelChecker.targets": ["underflow", "overflow"]

```

You have to define which _engine_ it should run as well, [see https://docs.soliditylang.org/en/v0.8.11/smtchecker.html#smtchecker-engines](https://docs.soliditylang.org/en/v0.8.11/smtchecker.html#smtchecker-engines)

```json

"settings.modelChecker.solvers": ["smtlib2","z3"]

```

```

settings.modelChecker.targets=<targets>

```

And what targets to check

```

: --model-checker-targets assert,overflow

```

These options are not configurable currently for forge

Additional benefits would be when using the yul optimizer you would have access to the `ReasoningBasedSimplifier`

### Additional context

Additionally custom natspec parsing would help in tests

```js

/// @custom:smtchecker

```

Maybe this can be expanded to Slither, other tools, etc. | 1.0 | Feature: SMTChecker support (a.k.a support all possible outputs) - ### Component

Forge

### Describe the feature you would like

As of 0.8.4, Solidity is deprecating the experimental pragma for the SMTChecker, as it runs on all files if enabled

```solidity

pragma experimental SMTChecker;

```

The new way to configure this is through the JSON config file

```json

"settings.modelChecker.targets": ["underflow", "overflow"]

```

You have to define which _engine_ it should run as well, [see https://docs.soliditylang.org/en/v0.8.11/smtchecker.html#smtchecker-engines](https://docs.soliditylang.org/en/v0.8.11/smtchecker.html#smtchecker-engines)

```json

"settings.modelChecker.solvers": ["smtlib2","z3"]

```

```

settings.modelChecker.targets=<targets>

```

And what targets to check

```

: --model-checker-targets assert,overflow

```

These options are not configurable currently for forge

Additional benefits would be when using the yul optimizer you would have access to the `ReasoningBasedSimplifier`

### Additional context

Additionally custom natspec parsing would help in tests

```js

/// @custom:smtchecker

```

Maybe this can be expanded to Slither, other tools, etc. | non_main | feature smtchecker support a k a support all possible outputs component forge describe the feature you would like as of solidity is depreciating the experimental pragma for smt checker as it runs on all files if enabled solidity pragma experimental smtchecker the new way to configure this is through the json config file json settings modelchecker targets you have to define which engine it should run as well json settings modelchecker solvers settings modelchecker targets and what targets to check model checker targets assert overflow these options are not configurable currently for forge additional benefits would be when using the yul optimizer you would have access to the reasoningbasedsimplifier additional context additionally custom natspec parsing would help in tests js custom smtchecker maybe can be expanded to slither other tools etc | 0 |

290,497 | 21,882,612,196 | IssuesEvent | 2022-05-19 15:30:43 | KristyNerhaugen/password-generator | https://api.github.com/repos/KristyNerhaugen/password-generator | closed | Character Types | documentation | WHEN prompted for character types to include in the password

THEN I choose lowercase, uppercase, numeric, and/or special characters | 1.0 | Character Types - WHEN prompted for character types to include in the password

THEN I choose lowercase, uppercase, numeric, and/or special characters | non_main | character types when prompted for character types to include in the password then i choose lowercase uppercase numeric and or special characters | 0 |

4,909 | 25,249,758,264 | IssuesEvent | 2022-11-15 13:53:17 | precice/precice | https://api.github.com/repos/precice/precice | closed | Implement constants of actions as different data type (e.g. enum class instead of std::string) | enhancement maintainability | I propose changing the API, to make it clearer and cleaner, by moving from `std::string` as the identifier for actions to an `enum class` or something similar in [`src/cplscheme/Constants.cpp`](https://github.com/precice/precice/blob/bc27c26d195185e61663f580d469399c6587010f/src/cplscheme/Constants.cpp).

Major improvements:

- It limits the number of available actions in a "natural" way. An `enum class` can only take certain, pre-defined values while the `std::string` could take arbitrary values of arbitrary length.

- It separates description of what it is (an action) and what it does (writes initial data, e.g.) in a clean way. The `enum class` is called `Actions` and its members/values describe the actual action performed/checked for.

- Minimizes risk of forgetting to account for an action. One can use the `switch` statement and the compiler has the chance to warn you about handling other actions. When using the definition of `Actions` mentioned below the following code would produce a compiler warning:

```c++

bool isActionRequired( const constants::Actions action) const {

switch (action) {

case Actions::writeInitialData: { /* do something */ }

//Oh no! We forgot to handle other actions.

}

}

```
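
For comparison, a minimal sketch of a `switch` that handles every enumerator could look like the block below. The helper name `describe` and the returned strings are hypothetical and only serve as illustration; they are not part of the preCICE API. The sketch assumes a compiler such as GCC or Clang with `-Wall`, where leaving out the `default` branch lets the compiler warn once a new enumerator is added but not handled.

```cpp
#include <string>

// Hypothetical enum mirroring the proposal in this issue (not the actual preCICE API).
enum class Actions {
  writeInitialData,
  writeIterationCheckpoint,
  readIterationCheckpoint
};

// Hypothetical helper. There is deliberately no default branch, so that
// GCC/Clang with -Wall can report an enumerator that is not handled here.
std::string describe(Actions action)
{
  switch (action) {
  case Actions::writeInitialData:
    return "write-initial-data";
  case Actions::writeIterationCheckpoint:
    return "write-iteration-checkpoint";
  case Actions::readIterationCheckpoint:
    return "read-iteration-checkpoint";
  }
  // Only reached if an out-of-range value was cast to Actions.
  return "unknown";
}
```

Removing one of the `case` labels from this sketch should trigger the kind of warning described above, which is the safety net the `std::string`-based constants cannot provide.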

Minor improvements:

- Comparison should be cheaper than for strings (probably not a performance concern at the moment)

- For me personally it feels more correct in C++ to use `enum class`.

Drawback:

It will break compatibility with the API and thus is probably something for preCICE 3.0. However, one could introduce it in a future release to enable both, the `std::string`-based and the `enum class`-based version and phase out the old approach slowly.

The solution I would propose (I am playing with it myself at the moment) would look like that:

```c++

enum class Actions {

writeInitialData,

writeIterationCheckpoint,

readIterationCheckpoint

};

``` | True | Implement constants of actions as different data type (e.g. enum class instead of std::string) - I propose changing the API, to make it clearer and cleaner, by moving from `std::string` as the identifier for actions to an `enum class` or something similar in [`src/cplscheme/Constants.cpp`](https://github.com/precice/precice/blob/bc27c26d195185e61663f580d469399c6587010f/src/cplscheme/Constants.cpp).

Major improvements:

- It limits the number of available actions in a "natural" way. An `enum class` can only take certain, pre-defined values while the `std::string` could take arbitrary values of arbitrary length.

- It separates description of what it is (an action) and what it does (writes initial data, e.g.) in a clean way. The `enum class` is called `Actions` and its members/values describe the actual action performed/checked for.

- Minimizes risk of forgetting to account for an action. One can use the `switch` statement and the compiler has the chance to warn you about handling other actions. When using the definition of `Actions` mentioned below the following code would produce a compiler warning:

```c++

bool isActionRequired( const constants::Actions action) const {

switch (action) {

case Actions::writeInitialData: { /* do something */ }

//Oh no! We forgot to handle other actions.

}

}

```

Minor improvements:

- Comparison should be cheaper than for strings (probably not a performance concern at the moment)

- For me personally it feels more correct in C++ to use `enum class`.

Drawback:

It will break compatibility with the API and thus is probably something for preCICE 3.0. However, one could introduce it in a future release to enable both, the `std::string`-based and the `enum class`-based version and phase out the old approach slowly.

The solution I would propose (I am playing with it myself at the moment) would look like that:

```c++

enum class Actions {

writeInitialData,

writeIterationCheckpoint,

readIterationCheckpoint

};

``` | main | implement constants of actions as different data type e g enum class instead of std string i propose the change of the api for clearer and cleaner to move from std string as identifier for actions to enum class or something similar in major improvements it limits the number of available actions in a natural way an enum class can only take certain pre defined values while the std string could take arbitrary values of arbitrary length it separates description of what it is an action and what it does writes initial data e g in a clean way the enum class is called actions and its members values describe the actual action performed checked for minimizes risk of forgetting to account for an action one can use the switch statement and the compiler has the chance to warn you about handing other actions when using the definition of actions mentioned below the following code would produce a compiler warning c bool isactionrequired const constants actions action const switch action case actions writeinitialdata do something oh no we forgot to handle other actions minor improvements comparison should be cheaper than for strings probably not a performance concern at the moment for me personally it feels more correct in c to use enum class drawback it will break compatibility with the api and thus is probably something for precice however one could introduce it in a future release to enable both the std string based and the enum class based version and phase out the old approach slowly the solution i would propose i am playing with it myself at the moment would look like that c enum class actions writeinitialdata writeiterationcheckpoint readiterationcheckpoint | 1 |

5,829 | 21,333,609,143 | IssuesEvent | 2022-04-18 11:51:45 | red-hat-storage/ocs-ci | https://api.github.com/repos/red-hat-storage/ocs-ci | opened | Wait for project selection is namespace drop-down on OCP console | bug ui_automation | Issue seen with Run `1649886578`

Also take screenshots on important pages. | 1.0 | Wait for project selection is namespace drop-down on OCP console - Issue seen with Run `1649886578`

Also take screenshots on important pages. | non_main | wait for project selection is namespace drop down on ocp console issue seen with run also take screenshots on important pages | 0 |

798 | 4,415,180,075 | IssuesEvent | 2016-08-13 22:26:43 | ansible/ansible-modules-core | https://api.github.com/repos/ansible/ansible-modules-core | closed | Optional Parameter called Source for win_feature | feature_idea waiting_on_maintainer windows | Issue Type:

Feature Idea

Component Name:

win_feature

Ansible Version: 1.9.2

Ansible Configuration:

Stock install with Extra's modules.

Environment:

Windows 2012 R2

Summary:

When trying to add a new feature like DotNet 3.5 core (feature is called 'NET-Framework-Core') to Win 2012 R2, it fails because 'NET-Framework-Core' did not exist on the box natively or was removed by a Windows Security Update. For it to install successfully, you need to be able to pass an argument called "source" to win_feature, e.g. D:\sources\sxs or \\IP\Share\sources\sxs, so that it can pass it on to the cmdlet Install-WindowsFeature.

Steps To Reproduce:

On a Windows 2012 R2 Machine without DotNet 3.5 source, run the following:

ansible -m win_feature -a "name=NET-Framework-Core" windowsvms

Expected Results:

TASK: [Install DotNet Framework 3.5 Feature] **********************************

ok: [site-06]

ok: [site-05]

changed: [site-07]

Actual Results:

TASK: [Install DotNet Framework 3.5 Feature] **********************************

ok: [site-06]

ok: [site-05]

failed: [site-07] => {"changed": false, "exitcode": "Failed", "failed": true, "feature_result": [], "restart_needed": false, "success": false}

msg: Failed to add feature

If I get time tonight I will fix win_feature.ps1 and post the changes. | True | Optional Parameter called Source for win_feature - Issue Type:

Feature Idea

Component Name:

win_feature

Ansible Version: 1.9.2

Ansible Configuration:

Stock install with Extra's modules.

Environment:

Windows 2012 R2

Summary:

When trying to add a new feature like DotNet 3.5 core (feature is called 'NET-Framework-Core') to Win 2012 R2, it fails because 'NET-Framework-Core' did not exist on the box natively or was removed by a Windows Security Update. For it to install successfully, you need to be able to pass an argument called "source" to win_feature, e.g. D:\sources\sxs or \\IP\Share\sources\sxs, so that it can pass it on to the cmdlet Install-WindowsFeature.

Steps To Reproduce:

On a Windows 2012 R2 Machine without DotNet 3.5 source, run the following:

ansible -m win_feature -a "name=NET-Framework-Core" windowsvms

Expected Results:

TASK: [Install DotNet Framework 3.5 Feature] **********************************

ok: [site-06]

ok: [site-05]

changed: [site-07]

Actual Results:

TASK: [Install DotNet Framework 3.5 Feature] **********************************

ok: [site-06]

ok: [site-05]

failed: [site-07] => {"changed": false, "exitcode": "Failed", "failed": true, "feature_result": [], "restart_needed": false, "success": false}

msg: Failed to add feature

If I get time tonight I will fix win_feature.ps1 and post the changes. | main | optional parameter called source for win feature issue type feature idea component name win feature ansible version ansible configuration stock install with extra s modules environment windows summary when trying to add a new feature like dotnet core feature is called net framework core to win it fails because net framework core did not exist on the box natively or was removed by a windows security update for it to install successfully you need to be able to pass an argument called source to win feature ie d sources sxs or ip share sources sxs so that it can pass it onto the cmdlet install windowsfeature steps to reproduce on a windows machine without dotnet source run the following ansible m win feature a name net framework core windowsvms expected results task ok ok changed actual results task ok ok failed changed false exitcode failed failed true feature result restart needed false success false msg failed to add feature if i get time tonight i will fix win feature and post the changes | 1 |

760 | 4,357,366,964 | IssuesEvent | 2016-08-02 01:25:35 | duckduckgo/zeroclickinfo-goodies | https://api.github.com/repos/duckduckgo/zeroclickinfo-goodies | closed | Dice: "Roll a dice" search doesn't trigger Instant Answer | Improvement Maintainer Approved Needs a Developer PR Received | Even though 'Dice' is the plural of 'Die' and "roll 'a' dice" is not entirely valid, 'roll a dice' should return the result of "roll a die".

------

IA Page: http://duck.co/ia/view/dice

[Maintainer](http://docs.duckduckhack.com/maintaining/guidelines.html): @loganom | True | Dice: "Roll a dice" search doesn't trigger Instant Answer - Even though 'Dice' is the plural of 'Die' and "roll 'a' dice" is not entirely valid, 'roll a dice' should return the result of "roll a die".

------

IA Page: http://duck.co/ia/view/dice

[Maintainer](http://docs.duckduckhack.com/maintaining/guidelines.html): @loganom | main | dice roll a dice search doesn t trigger instant answer even though dice is the plural of die and roll a dice is not entirely valid roll a dice should return the result of roll a die ia page loganom | 1 |

9,355 | 11,403,458,997 | IssuesEvent | 2020-01-31 07:16:16 | jrabbit/pyborg-1up | https://api.github.com/repos/jrabbit/pyborg-1up | closed | pip installs a newer aiohttp | Compatibility Packaging | ref #106

on stock pip on `python:3.8@2d2ae8451803` on docker

`ERROR: discord-py 1.3.0 has requirement aiohttp<3.7.0,>=3.6.0, but you'll have aiohttp 4.0.0a1 which is incompatible.` | True | pip installs a newer aiohttp - ref #106

on stock pip on `python:3.8@2d2ae8451803` on docker

`ERROR: discord-py 1.3.0 has requirement aiohttp<3.7.0,>=3.6.0, but you'll have aiohttp 4.0.0a1 which is incompatible.` | non_main | pip installs a newer aiohttp ref on stock pip on python on docker error discord py has requirement aiohttp but you ll have aiohttp which is incompatible | 0 |

22 | 2,523,647,398 | IssuesEvent | 2015-01-20 12:25:11 | simplesamlphp/simplesamlphp | https://api.github.com/repos/simplesamlphp/simplesamlphp | closed | Cleanup the SimpleSAML_Session class | enhancement maintainability started | The following must be done:

* Remove the default `NULL` value for the `$authority` parameter in the `getAuthState()` method. :+1:

* Remove the `getAttribute()` method. :+1:

* Remove the `setAttribute()` method. :+1:

* Remove the `setAttributes()` method. :+1:

* Remove the `getInstance()` method. :+1:

* Refactor. Should be renamed to `getCurrentSession()`. :+1: (see comment below)

* The error handling code should disappear.

* `session.disable_fallback` defaults to TRUE and goes away (exception is always thrown)

* Some functionality to avoid recursive loops, maybe solved in `Logger::getTracktId()`.

* Cleanup session initialization. Two flows:

* load by ID

* load by `$_REQUEST`

* Remove the `getAuthority()` method. :+1:

* Remove the `getAuthnRequest()` method. :+1:

* Remove the `setAuthnRequest()` method. :+1:

* Remove the `getIdP()` method. :+1:

* Remove the `setIdP()` method. :+1:

* Remove the `getSessionIndex()` method. :+1:

* Remove the `setSessionIndex()` method. :+1:

* Remove the `getNameId()` method. :+1:

* Remove the `setNameId()` method. :+1:

* Remove the `setSessionDuration()` method. :+1:

* Remove the `remainingTime()` method. :+1:

* Remove the `isAuthenticated()` method. :+1:

* Remove the `getAuthInstant()` method. :+1:

* Remove the `getAttributes()` method. :+1:

* Remove the `getSize()` method. :+1:

* Remove the `get_sp_list()` method. :+1:

* Remove the `expireDataLogout()` method. :+1:

* Remove the `getLogoutState()` and `setLogoutState()` methods. If there are callers, replace the call with direct access to `$state['LogoutState']`. :+1:

* Remove the `$authority` property. :+1:

* Remove the `DATA_TIMEOUT_LOGOUT` constant. Check dependencies in: :+1:

* `lib/SimpleSAML/Auth/Source.php` and :+1:

* `lib/SimpleSAML/IdP.php` :+1:

* Modify the `registerLogoutHandler()` method to add the `$authority` as a parameter. This is a previous step to wrapping this functionality into `SimpleSAML_Auth_Simple`. :+1: | True | Cleanup the SimpleSAML_Session class - The following must be done:

* Remove the default `NULL` value for the `$authority` parameter in the `getAuthState()` method. :+1:

* Remove the `getAttribute()` method. :+1:

* Remove the `setAttribute()` method. :+1:

* Remove the `setAttributes()` method. :+1:

* Remove the `getInstance()` method. :+1:

* Refactor. Should be renamed to `getCurrentSession()`. :+1: (see comment below)

* The error handling code should disappear.

* `session.disable_fallback` defaults to TRUE and goes away (exception is always thrown)

* Some functionality to avoid recursive loops, maybe solved in `Logger::getTracktId()`.

* Cleanup session initialization. Two flows:

* load by ID

* load by `$_REQUEST`

* Remove the `getAuthority()` method. :+1:

* Remove the `getAuthnRequest()` method. :+1:

* Remove the `setAuthnRequest()` method. :+1:

* Remove the `getIdP()` method. :+1:

* Remove the `setIdP()` method. :+1:

* Remove the `getSessionIndex()` method. :+1:

* Remove the `setSessionIndex()` method. :+1:

* Remove the `getNameId()` method. :+1:

* Remove the `setNameId()` method. :+1:

* Remove the `setSessionDuration()` method. :+1:

* Remove the `remainingTime()` method. :+1:

* Remove the `isAuthenticated()` method. :+1:

* Remove the `getAuthInstant()` method. :+1:

* Remove the `getAttributes()` method. :+1:

* Remove the `getSize()` method. :+1:

* Remove the `get_sp_list()` method. :+1:

* Remove the `expireDataLogout()` method. :+1:

* Remove the `getLogoutState()` and `setLogoutState()` methods. If there are callers, replace the call with direct access to `$state['LogoutState']`. :+1:

* Remove the `$authority` property. :+1:

* Remove the `DATA_TIMEOUT_LOGOUT` constant. Check dependencies in: :+1:

* `lib/SimpleSAML/Auth/Source.php` and :+1:

* `lib/SimpleSAML/IdP.php` :+1:

* Modify the `registerLogoutHandler()` method to add the `$authority` as a parameter. This is a previous step to wrapping this functionality into `SimpleSAML_Auth_Simple`. :+1: | main | cleanup the simplesaml session class the following must be done remove the default null value for the authority parameter in the getauthstate method remove the getattribute method remove the setattribute method remove the setattributes method remove the getinstance method refactor should be renamed to getcurrentsession see comment below the error handling code should disappear session disable fallback defaults to true and goes away exception is always thrown some functionality to avoid recursive loops maybe solved in logger gettracktid cleanup session initialization two flows load by id load by request remove the getauthority method remove the getauthnrequest method remove the setauthnrequest method remove the getidp method remove the setidp method remove the getsessionindex method remove the setsessionindex method remove the getnameid method remove the setnameid method remove the setsessionduration method remove the remainingtime method remove the isauthenticated method remove the getauthinstant method remove the getattributes method remove the getsize method remove the get sp list method remove the expiredatalogout method remove the getlogoutstate and setlogoutstate methods if there s callers change the call with a direct access to state remove the authority property remove the data timeout logout constant check dependencies in lib simplesaml auth source php and lib simplesaml idp php modify the registerlogouthandler method to add the authority as a parameter this is a previous step to wrapping this functionality into simplesaml auth simple | 1 |

5,627 | 28,151,993,885 | IssuesEvent | 2023-04-03 02:41:20 | medic/cht-roadmap | https://api.github.com/repos/medic/cht-roadmap | closed | Server monitoring | strat: Large CHT systems maintainable by admins | Make it easy for self and Medic hosted deployments to monitor resources and statuses of CHT instances. The monitoring falls into three main categories:

1. Generic stats from container like CPU usage, memory usage, disk space, network transfers, etc. This should be available through third party tools.

2. CouchDB stats like fragmentation, requests, response error rates, doc conflicts, etc. Look for third party tools for this, eg: https://github.com/gesellix/couchdb-prometheus-exporter

3. CHT specific stats like messaging queues, sentinel backlog, feedback docs, etc. This can be sourced from [this api](https://docs.communityhealthtoolkit.org/apps/reference/api/#get-apiv2monitoring).

Regardless of how this is implemented they should be all shown in one dashboard for ease of use. | True | Server monitoring - Make it easy for self and Medic hosted deployments to monitor resources and statuses of CHT instances. The monitoring falls into three main categories:

1. Generic stats from container like CPU usage, memory usage, disk space, network transfers, etc. This should be available through third party tools.

2. CouchDB stats like fragmentation, requests, response error rates, doc conflicts, etc. Look for third party tools for this, eg: https://github.com/gesellix/couchdb-prometheus-exporter

3. CHT specific stats like messaging queues, sentinel backlog, feedback docs, etc. This can be sourced from [this api](https://docs.communityhealthtoolkit.org/apps/reference/api/#get-apiv2monitoring).

Regardless of how this is implemented they should be all shown in one dashboard for ease of use. | main | server monitoring make it easy for self and medic hosted deployments to monitor resources and statuses of cht instances the monitoring falls into three main categories generic stats from container like cpu usage memory usage disk space network transfers etc this should be available through third party tools couchdb stats like fragmentation requests response error rates doc conflicts etc look for third party tools for this eg cht specific stats like messaging queues sentinel backlog feedback docs etc this can be sourced from regardless of how this is implemented they should be all shown in one dashboard for ease of use | 1 |

3,619 | 14,630,516,979 | IssuesEvent | 2020-12-23 17:52:38 | umn-asr/courses | https://api.github.com/repos/umn-asr/courses | opened | Update README with development section | courses maintainability | Add a development section with these subsections:

- [ ] setup

- [ ] testing

- [ ] deployment | True | Update README with development section - Add a development section with these subsections:

- [ ] setup

- [ ] testing

- [ ] deployment | main | update readme with development section add a development section with these subsections setup testing deployment | 1 |

3,318 | 12,876,787,322 | IssuesEvent | 2020-07-11 07:09:25 | geolexica/geolexica-server | https://api.github.com/repos/geolexica/geolexica-server | opened | Term languages are hardcoded in search | maintainability | https://github.com/geolexica/geolexica-server/blob/189aabee07651fcc8d453131ec209dd5498b4054/assets/js/concept-search-worker.js#L5-L18

Note that many of these codes are actually incorrect, and that many are missing. It probably explains #105. Anyway, they should be taken from site configuration. | True | Term languages are hardcoded in search - https://github.com/geolexica/geolexica-server/blob/189aabee07651fcc8d453131ec209dd5498b4054/assets/js/concept-search-worker.js#L5-L18

Note that many of these codes are actually incorrect, and that many are missing. It probably explains #105. Anyway, they should be taken from site configuration. | main | term languages are hardcoded in search note that many of these codes are actually incorrect and that many are missing it probably explains anyway they should be taken from site configuration | 1 |

84,766 | 10,417,820,304 | IssuesEvent | 2019-09-15 01:58:47 | golang/go | https://api.github.com/repos/golang/go | closed | net/http: Content-Length is not set in outgoing request when using ioutil.NopCloser | Documentation | <!-- Please answer these questions before submitting your issue. Thanks! -->

### What version of Go are you using (`go version`)?

<pre>

$ go version

go version go1.13 darwin/amd64

</pre>

### Does this issue reproduce with the latest release?

Yes

### What operating system and processor architecture are you using (`go env`)?

<details><summary><code>go env</code> Output</summary><br><pre>

$ go env

GO111MODULE=""

GOARCH="amd64"

GOBIN=""

GOCACHE="/Users/1041775/Library/Caches/go-build"

GOENV="/Users/1041775/Library/Application Support/go/env"

GOEXE=""

GOFLAGS=""

GOHOSTARCH="amd64"

GOHOSTOS="darwin"

GONOPROXY=""

GONOSUMDB=""

GOOS="darwin"

GOPATH="/Users/1041775/go"

GOPRIVATE=""

GOPROXY="https://proxy.golang.org,direct"

GOROOT="/usr/local/Cellar/go/1.13/libexec"

GOSUMDB="sum.golang.org"

GOTMPDIR=""

GOTOOLDIR="/usr/local/Cellar/go/1.13/libexec/pkg/tool/darwin_amd64"

GCCGO="gccgo"

AR="ar"

CC="clang"

CXX="clang++"

CGO_ENABLED="1"

GOMOD="/Users/1041775/projects/js-scripts/go.mod"

CGO_CFLAGS="-g -O2"

CGO_CPPFLAGS=""

CGO_CXXFLAGS="-g -O2"

CGO_FFLAGS="-g -O2"

CGO_LDFLAGS="-g -O2"

PKG_CONFIG="pkg-config"

GOGCCFLAGS="-fPIC -m64 -pthread -fno-caret-diagnostics -Qunused-arguments -fmessage-length=0 -fdebug-prefix-map=/var/folders/d6/809nhvwd23nd_wryrw60j6hdms016p/T/go-build703352534=/tmp/go-build -gno-record-gcc-switches -fno-common"

</pre></details>

### What did you do?

<!--

If possible, provide a recipe for reproducing the error.

A complete runnable program is good.

A link on play.golang.org is best.

-->

Start a httpbin server locally.

<pre>

docker run -p 80:80 kennethreitz/httpbin

</pre>

Run the following program

```go
package main

import (
	"bytes"
	"io/ioutil"
	"log"
	"net/http"
)

func main() {
	// Wrapping the buffer in NopCloser hides its concrete type from http.NewRequest.
	reqBody := ioutil.NopCloser(bytes.NewBufferString(`{}`))
	req, err := http.NewRequest("POST", "http://localhost:80/post", reqBody)
	if err != nil {
		log.Fatalf("Cannot create request: %v", err)
	}

	res, err := http.DefaultClient.Do(req)
	if err != nil {
		log.Fatalf("Cannot do: %v", err)
	}
	defer res.Body.Close()

	resBody, err := ioutil.ReadAll(res.Body)
	if err != nil {
		log.Fatalf("Cannot read body: %v", err)
	}
	log.Printf("Response Body: %s", resBody)
}
```

### What did you expect to see?

Content-Length header is set when it is received by the server.

### What did you see instead?

Content-Length header is missing when it is received by the server.

<pre>

2019/09/14 12:55:00 Response Body: {

"args": {},

"data": "{}",

"files": {},

"form": {},

"headers": {

"Accept-Encoding": "gzip",

"Host": "localhost:80",

"Transfer-Encoding": "chunked",

"User-Agent": "Go-http-client/1.1"

},

"json": {},

"origin": "172.17.0.1",

"url": "http://localhost:80/post"

}

</pre>

Versus what I would receive if I use <pre>reqBody := bytes.NewBufferString(`{}`)</pre>.

<pre>

2019/09/14 12:55:22 Response Body: {

"args": {},

"data": "{}",

"files": {},

"form": {},

"headers": {

"Accept-Encoding": "gzip",

"Content-Length": "2",

"Host": "localhost:80",

"User-Agent": "Go-http-client/1.1"

},

"json": {},

"origin": "172.17.0.1",

"url": "http://localhost:80/post"

}

</pre>

| 1.0 | net/http: Content-Length is not set in outgoing request when using ioutil.NopCloser - <!-- Please answer these questions before submitting your issue. Thanks! -->

### What version of Go are you using (`go version`)?

<pre>

$ go version

go version go1.13 darwin/amd64

</pre>

### Does this issue reproduce with the latest release?

Yes

### What operating system and processor architecture are you using (`go env`)?

<details><summary><code>go env</code> Output</summary><br><pre>

$ go env

GO111MODULE=""

GOARCH="amd64"

GOBIN=""

GOCACHE="/Users/1041775/Library/Caches/go-build"

GOENV="/Users/1041775/Library/Application Support/go/env"

GOEXE=""

GOFLAGS=""

GOHOSTARCH="amd64"

GOHOSTOS="darwin"

GONOPROXY=""

GONOSUMDB=""

GOOS="darwin"

GOPATH="/Users/1041775/go"

GOPRIVATE=""

GOPROXY="https://proxy.golang.org,direct"

GOROOT="/usr/local/Cellar/go/1.13/libexec"

GOSUMDB="sum.golang.org"

GOTMPDIR=""

GOTOOLDIR="/usr/local/Cellar/go/1.13/libexec/pkg/tool/darwin_amd64"

GCCGO="gccgo"

AR="ar"

CC="clang"

CXX="clang++"

CGO_ENABLED="1"

GOMOD="/Users/1041775/projects/js-scripts/go.mod"

CGO_CFLAGS="-g -O2"

CGO_CPPFLAGS=""

CGO_CXXFLAGS="-g -O2"

CGO_FFLAGS="-g -O2"

CGO_LDFLAGS="-g -O2"

PKG_CONFIG="pkg-config"

GOGCCFLAGS="-fPIC -m64 -pthread -fno-caret-diagnostics -Qunused-arguments -fmessage-length=0 -fdebug-prefix-map=/var/folders/d6/809nhvwd23nd_wryrw60j6hdms016p/T/go-build703352534=/tmp/go-build -gno-record-gcc-switches -fno-common"

</pre></details>

### What did you do?

<!--

If possible, provide a recipe for reproducing the error.

A complete runnable program is good.

A link on play.golang.org is best.

-->

Start a httpbin server locally.

<pre>

docker run -p 80:80 kennethreitz/httpbin

</pre>

Run the following program

```go
package main

import (
	"bytes"
	"io/ioutil"
	"log"
	"net/http"
)

func main() {
	// Wrapping the buffer in NopCloser hides its concrete type from http.NewRequest.
	reqBody := ioutil.NopCloser(bytes.NewBufferString(`{}`))
	req, err := http.NewRequest("POST", "http://localhost:80/post", reqBody)
	if err != nil {
		log.Fatalf("Cannot create request: %v", err)
	}

	res, err := http.DefaultClient.Do(req)
	if err != nil {
		log.Fatalf("Cannot do: %v", err)
	}
	defer res.Body.Close()

	resBody, err := ioutil.ReadAll(res.Body)
	if err != nil {
		log.Fatalf("Cannot read body: %v", err)
	}
	log.Printf("Response Body: %s", resBody)
}
```

### What did you expect to see?

Content-Length header is set when it is received by the server.

### What did you see instead?

Content-Length header is missing when it is received by the server.

<pre>

2019/09/14 12:55:00 Response Body: {

"args": {},

"data": "{}",

"files": {},

"form": {},

"headers": {

"Accept-Encoding": "gzip",

"Host": "localhost:80",

"Transfer-Encoding": "chunked",

"User-Agent": "Go-http-client/1.1"

},

"json": {},

"origin": "172.17.0.1",

"url": "http://localhost:80/post"

}

</pre>

Versus what I would receive if I use <pre>reqBody := bytes.NewBufferString(`{}`)</pre>.

<pre>

2019/09/14 12:55:22 Response Body: {

"args": {},

"data": "{}",

"files": {},

"form": {},

"headers": {

"Accept-Encoding": "gzip",

"Content-Length": "2",

"Host": "localhost:80",

"User-Agent": "Go-http-client/1.1"

},

"json": {},

"origin": "172.17.0.1",

"url": "http://localhost:80/post"

}

</pre>

| non_main | net http content length is not set in outgoing request when using ioutil nopcloser what version of go are you using go version go version go version darwin does this issue reproduce with the latest release yes what operating system and processor architecture are you using go env go env output go env goarch gobin gocache users library caches go build goenv users library application support go env goexe goflags gohostarch gohostos darwin gonoproxy gonosumdb goos darwin gopath users go goprivate goproxy goroot usr local cellar go libexec gosumdb sum golang org gotmpdir gotooldir usr local cellar go libexec pkg tool darwin gccgo gccgo ar ar cc clang cxx clang cgo enabled gomod users projects js scripts go mod cgo cflags g cgo cppflags cgo cxxflags g cgo fflags g cgo ldflags g pkg config pkg config gogccflags fpic pthread fno caret diagnostics qunused arguments fmessage length fdebug prefix map var folders t go tmp go build gno record gcc switches fno common what did you do if possible provide a recipe for reproducing the error a complete runnable program is good a link on play golang org is best start a httpbin server locally docker run p kennethreitz httpbin run the following program go package main import bytes io ioutil log net http func main reqbody ioutil nopcloser bytes newbufferstring req err http newrequest post reqbody if err nil log fatalf cannot create request v err res err http defaultclient do req if err nil log fatalf cannot do v err defer res body close resbody err ioutil readall res body if err nil log fatalf cannot read body v err log printf response body s resbody what did you expect to see content length header is set when it is received by the server what did you see instead content length header is missing when it is received by the server response body args data files form headers accept encoding gzip host localhost transfer encoding chunked user agent go http client json origin url versus what i would receive if i use reqbody bytes 
newbufferstring response body args data files form headers accept encoding gzip content length host localhost user agent go http client json origin url | 0 |

4,735 | 24,447,046,596 | IssuesEvent | 2022-10-06 18:55:40 | backdrop-ops/contrib | https://api.github.com/repos/backdrop-ops/contrib | closed | Contrib Group Application: markabur (port of emptyparagraphkiller) | Maintainer application Port complete | Hello and welcome to the contrib application process! We're happy to have you :)

**Please indicate how you intend to help the Backdrop community by joining this group**

Option 1: I would like to contribute a project

## Based on your selection above, please provide the following information:

**(option 1) The name of your module, theme, or layout**

Empty paragraph killer

## (option 1) Please note these 3 requirements for new contrib projects:

- [x] Include a README.md file containing license and maintainer information.

You can use this example: https://raw.githubusercontent.com/backdrop-ops/contrib/master/examples/README.md

- [x] Include a LICENSE.txt file.

You can use this example: https://raw.githubusercontent.com/backdrop-ops/contrib/master/examples/LICENSE.txt.

- [x] If porting a Drupal 7 project, Maintain the Git history from Drupal.

**(option 1 -- optional) Post a link here to an issue in the drupal.org queue notifying the Drupal 7 maintainers that you are working on a Backdrop port of their project**

https://www.drupal.org/project/emptyparagraphkiller/issues/3312732

**Post a link to your new Backdrop project under your own GitHub account (option 1)**

https://github.com/markabur/emptyparagraphkiller

**If you have chosen option 2 or 1 above, do you agree to the [Backdrop Contributed Project Agreement](https://github.com/backdrop-ops/contrib#backdrop-contributed-project-agreement)**

YES

<!-- (option 1) Once we have a chance to review your project, we will check for the 3 requirements at the top of this issue. If those requirements are met, you will be invited to the @backdrop-contrib group. At that point you will be able to transfer the project. -->

<!-- (option 1) Please note that we may also include additional feedback in the code review, but anything else is only intended to be helpful, and is NOT a requirement for joining the contrib group. -->

| True | Contrib Group Application: markabur (port of emptyparagraphkiller) - Hello and welcome to the contrib application process! We're happy to have you :)

**Please indicate how you intend to help the Backdrop community by joining this group**

Option 1: I would like to contribute a project

## Based on your selection above, please provide the following information:

**(option 1) The name of your module, theme, or layout**

Empty paragraph killer

## (option 1) Please note these 3 requirements for new contrib projects:

- [x] Include a README.md file containing license and maintainer information.

You can use this example: https://raw.githubusercontent.com/backdrop-ops/contrib/master/examples/README.md

- [x] Include a LICENSE.txt file.

You can use this example: https://raw.githubusercontent.com/backdrop-ops/contrib/master/examples/LICENSE.txt.

- [x] If porting a Drupal 7 project, Maintain the Git history from Drupal.

**(option 1 -- optional) Post a link here to an issue in the drupal.org queue notifying the Drupal 7 maintainers that you are working on a Backdrop port of their project**

https://www.drupal.org/project/emptyparagraphkiller/issues/3312732

**Post a link to your new Backdrop project under your own GitHub account (option 1)**

https://github.com/markabur/emptyparagraphkiller

**If you have chosen option 2 or 1 above, do you agree to the [Backdrop Contributed Project Agreement](https://github.com/backdrop-ops/contrib#backdrop-contributed-project-agreement)**

YES

<!-- (option 1) Once we have a chance to review your project, we will check for the 3 requirements at the top of this issue. If those requirements are met, you will be invited to the @backdrop-contrib group. At that point you will be able to transfer the project. -->

<!-- (option 1) Please note that we may also include additional feedback in the code review, but anything else is only intended to be helpful, and is NOT a requirement for joining the contrib group. -->

| main | contrib group application markabur port of emptyparagraphkiller hello and welcome to the contrib application process we re happy to have you please indicate how you intend to help the backdrop community by joining this group option i would like to contribute a project based on your selection above please provide the following information option the name of your module theme or layout empty paragraph killer option please note these requirements for new contrib projects include a readme md file containing license and maintainer information you can use this example include a license txt file you can use this example if porting a drupal project maintain the git history from drupal option optional post a link here to an issue in the drupal org queue notifying the drupal maintainers that you are working on a backdrop port of their project post a link to your new backdrop project under your own github account option if you have chosen option or above do you agree to the yes | 1 |

55,851 | 23,617,448,567 | IssuesEvent | 2022-08-24 17:08:20 | operate-first/apps | https://api.github.com/repos/operate-first/apps | closed | Fybrik CRDs preventing Smaug Cluster-Resources app from syncing successfully | kind/bug area/service/argocd | ArgoCD App: https://argocd.operate-first.cloud/applications/cluster-resources-smaug?resource=sync%3AOutOfSync&operation=true

Example sync error for resources:

CustomResourceDefinition.apiextensions.k8s.io "plotters.app.fybrik.io" is invalid: status.storedVersions[0]: Invalid value: "v1alpha1": must appear in spec.versions

It is complaining about: https://github.com/operate-first/apps/blob/master/cluster-scope/base/apiextensions.k8s.io/customresourcedefinitions/plotters.app.fybrik.io/customresourcedefinition.yaml#L499

The PR in question: https://github.com/operate-first/apps/pull/2220

The kubeval test appeared to pass before I merged it, but it left another comment after the merge indicating a failure. The logs have expired, so I'm not sure whether it was complaining about this particular issue.

| 1.0 | Fybrik CRDs preventing Smaug Cluster-Resources app from syncing successfully - ArgoCD App: https://argocd.operate-first.cloud/applications/cluster-resources-smaug?resource=sync%3AOutOfSync&operation=true

Example sync error for resources:

CustomResourceDefinition.apiextensions.k8s.io "plotters.app.fybrik.io" is invalid: status.storedVersions[0]: Invalid value: "v1alpha1": must appear in spec.versions

It is complaining about: https://github.com/operate-first/apps/blob/master/cluster-scope/base/apiextensions.k8s.io/customresourcedefinitions/plotters.app.fybrik.io/customresourcedefinition.yaml#L499

The PR in question: https://github.com/operate-first/apps/pull/2220

The kubeval test appeared to pass before I merged it, but it left another comment after the merge indicating a failure. The logs have expired, so I'm not sure whether it was complaining about this particular issue.

| non_main | fybrik crds preventing smaug cluster resources app from syncing successfully argocd app example sync error for resources customresourcedefinition apiextensions io plotters app fybrik io is invalid status storedversions invalid value must appear in spec versions it is complaining about the pr in question the kubeval test seemed to indicate passing before i merged it but seemed to leave another comment after merge indicating it failed logs are expired so i m not sure if it as complaining about this particular issue | 0 |

1,926 | 6,598,715,917 | IssuesEvent | 2017-09-16 09:45:15 | caskroom/homebrew-cask | https://api.github.com/repos/caskroom/homebrew-cask | closed | Feature request: `audit --download` should check if app name is correct | awaiting maintainer feedback | ### Description of feature/enhancement

`brew cask audit --download cask` should check if a dmg contains an app like described in the Cask.

### Justification

We are already downloading the dmg to check the checksum, it should be easy to mount the dmg (the source code is there) and check if the app exists.

### Example use case

For example, in this MR the Name of the app changed:

https://github.com/caskroom/homebrew-cask/pull/37346/files

Travis would have shown a green build even if I hadn't changed the app name. A "careless" merge would have broken the Cask for everyone, as the app could not be copied during install.

- - -

I would implement it myself, but I have a really hard time getting started with Ruby, and I think it is a small feature for the hbc developers. Thank you, as always, for your hard work.

| True | Feature request: `audit --download` should check if app name is correct - ### Description of feature/enhancement

`brew cask audit --download cask` should check if a dmg contains an app like described in the Cask.

### Justification

We are already downloading the dmg to check the checksum, it should be easy to mount the dmg (the source code is there) and check if the app exists.

### Example use case

For example, in this MR the Name of the app changed:

https://github.com/caskroom/homebrew-cask/pull/37346/files

Travis would have shown a green build even if I hadn't changed the app name. A "careless" merge would have broken the Cask for everyone, as the app could not be copied during install.

- - -

I would implement it myself, but I have a really hard time getting started with Ruby, and I think it is a small feature for the hbc developers. Thank you, as always, for your hard work.

| main | feature request audit download should check if app name is correct description of feature enhancement brew cask audit download cask should check if a dmg contains an app like described in the cask justification we are already downloading the dmg to check the checksum it should be easy to mount the dmg the source code is there and check if the app exists example use case for example in this mr the name of the app changed travis would have shown a green build if i hadn t change the app name a careless merge would have broken the cask for everyone as the app could not be copied during install i would implement it myself but i have a real hard time to get started with ruby and i think it is a small feature for the hbc developers thank you as always for your hard work | 1 |

139,560 | 20,910,711,264 | IssuesEvent | 2022-03-24 09:03:33 | ASE-Projekte-WS-2021/ase-ws-21-zusammenleben | https://api.github.com/repos/ASE-Projekte-WS-2021/ase-ws-21-zusammenleben | opened | Design Prototype | important Design | Prototype of finished UI Screens (Color Theme, Fonts, Icon Style, Button Style etc.) | 1.0 | Design Prototype - Prototype of finished UI Screens (Color Theme, Fonts, Icon Style, Button Style etc.) | non_main | design prototype prototype of finished ui screens color theme fonts icon style button style etc | 0 |

50,594 | 6,403,861,355 | IssuesEvent | 2017-08-06 22:34:11 | a8cteam51/strikestart | https://api.github.com/repos/a8cteam51/strikestart | opened | On larger screens, consider moving navigation to the left-hand side | design enhancement low-priority | Might be a more effective use of space.... | 1.0 | On larger screens, consider moving navigation to the left-hand side - Might be a more effective use of space.... | non_main | on larger screens consider moving navigation to the left hand side might be a more effective use of space | 0 |

142,302 | 21,713,322,806 | IssuesEvent | 2022-05-10 15:32:30 | gordon-cs/gordon-360-ui | https://api.github.com/repos/gordon-cs/gordon-360-ui | opened | Use Tabbed UI on Involvements page | Enhancement Visual Design | Currently, the Involvements page has three cards, stacked vertically, with different views of involvements data:

1. Requests (both sent and received)

2. My Involvements

3. All Involvements

A user is generally only interested in one of these cards, and they're never interested in more than one at a time. For that reason, I think a tabbed UI would be a cleaner, more user-friendly interface for this page. | 1.0 | Use Tabbed UI on Involvements page - Currently, the Involvements page has three cards, stacked vertically, with different views of involvements data:

1. Requests (both sent and received)

2. My Involvements

3. All Involvements

A user is generally only interested in one of these cards, and they're never interested in more than one at a time. For that reason, I think a tabbed UI would be a cleaner, more user-friendly interface for this page. | non_main | use tabbed ui on involvements page currently the involvements page has three cards stacked vertically with different views of involvements data requests both sent and received my involvements all involvements a user is generally only interested in one of these cards and they re never interested in more than one at a time for that reason i think a tabbed ui would be a cleaner more user friendly interface for this page | 0 |

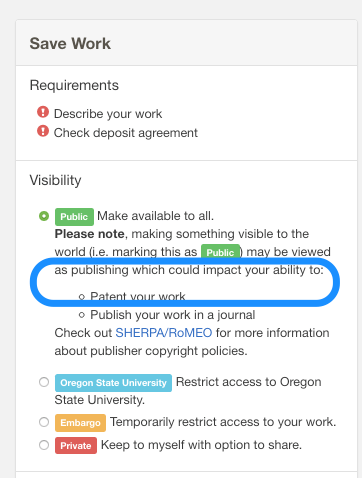

347,993 | 10,437,195,970 | IssuesEvent | 2019-09-17 21:23:20 | osulp/Scholars-Archive | https://api.github.com/repos/osulp/Scholars-Archive | closed | Remove extra space in Visibility box | Priority: Low User Interface | ### Descriptive summary

There's an extra space before the bulleted terms begin.

### Expected behavior

Space should not be there.

| 1.0 | Remove extra space in Visibility box - ### Descriptive summary

There's an extra space before the bulleted terms begin.

### Expected behavior

Space should not be there.

| non_main | remove extra space in visibility box descriptive summary there s an extra space before the bulleted terms begin expected behavior space should not be there | 0 |

5,152 | 26,252,517,782 | IssuesEvent | 2023-01-05 20:43:04 | chocolatey-community/chocolatey-package-requests | https://api.github.com/repos/chocolatey-community/chocolatey-package-requests | closed | RFM - plexmediaserver | Status: Available For Maintainer(s) | ## Current Maintainer

- [x] I am the maintainer of the package and wish to pass it to someone else;

## Checklist

- [x] Issue title starts with 'RFM - '

## Existing Package Details

Package URL: https://community.chocolatey.org/packages/plexmediaserver

Package source URL: https://github.com/mikecole/chocolatey-packages/tree/master/automatic/plexmediaserver

This is a working package with a functional AU script. I simply don't have the capacity to keep it updated as it is a popular package. Currently, there are a handful of requests to add the 64-bit version and I have not been able to field these requests. I will help as much as I can to transfer ownership. | True | RFM - plexmediaserver - ## Current Maintainer

- [x] I am the maintainer of the package and wish to pass it to someone else;

## Checklist

- [x] Issue title starts with 'RFM - '

## Existing Package Details

Package URL: https://community.chocolatey.org/packages/plexmediaserver

Package source URL: https://github.com/mikecole/chocolatey-packages/tree/master/automatic/plexmediaserver

This is a working package with a functional AU script. I simply don't have the capacity to keep it updated as it is a popular package. Currently, there are a handful of requests to add the 64-bit version and I have not been able to field these requests. I will help as much as I can to transfer ownership. | main | rfm plexmediaserver current maintainer i am the maintainer of the package and wish to pass it to someone else checklist issue title starts with rfm existing package details package url package source url this is a working package with a functional au script i simply don t have the capacity to keep it updated as it is a popular package currently there are a handful of requests to add the bit version and i have not been able to field these requests i will help as much as i can to transfer ownership | 1 |

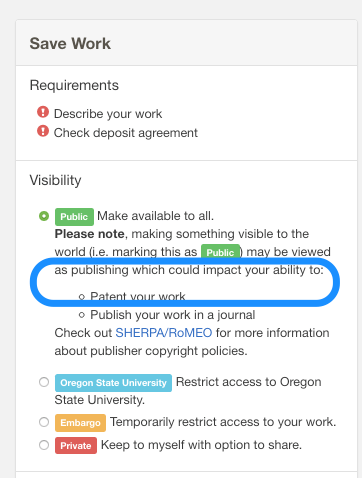

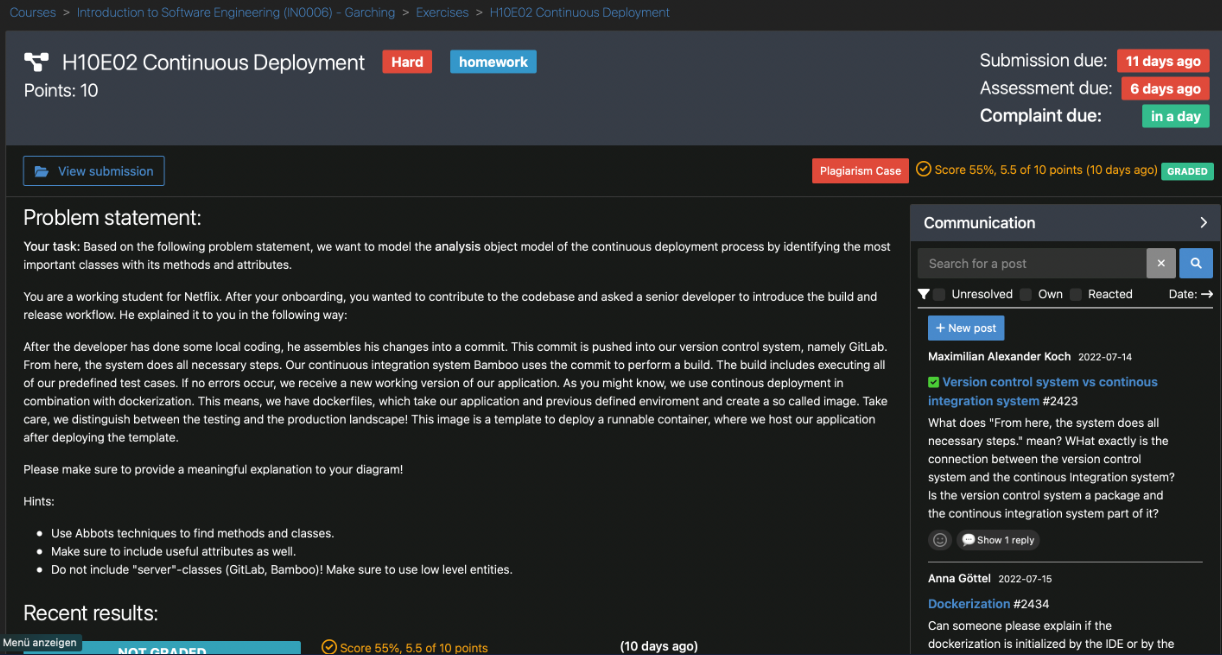

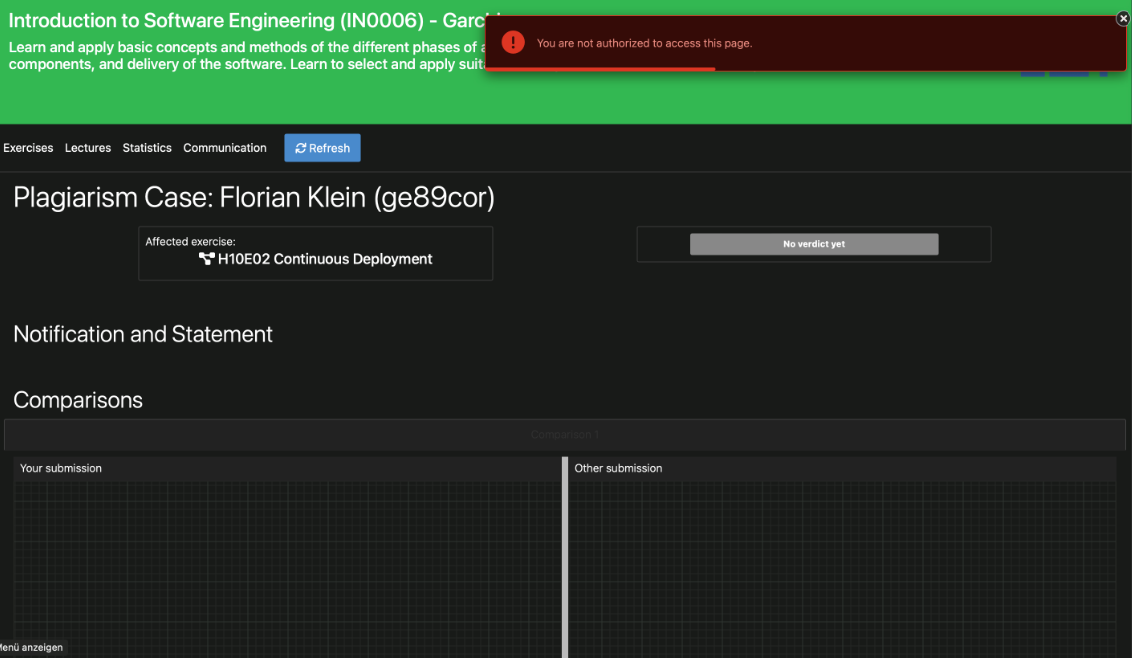

677,897 | 23,179,410,626 | IssuesEvent | 2022-07-31 22:28:37 | ls1intum/Artemis | https://api.github.com/repos/ls1intum/Artemis | closed | Students can see plagiarism accusations before they are notified | bug plagiarism detection priority:high | ### Describe the bug

In the EiSt course we noticed, that some students could see a plagiarism accusation despite the fact that they hadn't been notified. The students can see the plagiarism case button but when they want to access the page they get the message that they aren't authorised to access it (see screenshots). The students shouldn't see the button at all because they haven't been notified.

### To Reproduce

1. Create an exercise

2. Submit at least 2 similar solutions

3. Run the plagiarism check for this exercise

4. Flag the matches as plagiarism

5. Log into a student account and go to the exercise

6. Click on the button and access the page

### Expected behavior

Normally, the student shouldn't learn about the accusation before an instructor notifies them through the plagiarism cases interface. The plagiarism button and the page shouldn't be visible to the student until then.

### Screenshots

### What browsers are you seeing the problem on?

Chrome

### Additional context

_No response_

### Relevant log output

_No response_ | 1.0 | Students can see plagiarism accusations before they are notified - ### Describe the bug

In the EiSt course we noticed, that some students could see a plagiarism accusation despite the fact that they hadn't been notified. The students can see the plagiarism case button but when they want to access the page they get the message that they aren't authorised to access it (see screenshots). The students shouldn't see the button at all because they haven't been notified.

### To Reproduce

1. Create an exercise

2. Submit at least 2 similar solutions

3. Run the plagiarism check for this exercise

4. Flag the matches as plagiarism

5. Log into a student account and go to the exercise

6. Click on the button and access the page

### Expected behavior

Normally, the student shouldn't learn about the accusation before an instructor notifies them through the plagiarism cases interface. The plagiarism button and the page shouldn't be visible to the student until then.

### Screenshots

### What browsers are you seeing the problem on?

Chrome

### Additional context

_No response_

### Relevant log output

_No response_ | non_main | students can see plagiarism accusations before they are notified describe the bug in the eist course we noticed that some students could see a plagiarism accusation despite the fact that they hadn t been notified the students can see the plagiarism case button but when they want to access the page they get the message that they aren t authorised to access it see screenshots the students shouldn t see the button at all because they haven t been notified to reproduce create an exercise submit at least similar solutions run the plagiarism check for this exercise flag the matches as plagiarism log into a student account and go to the exercise click on the button and access the page expected behavior normaly the student shouldn t get notified before an instructor notifies him over the plagiarism cases interface the plagiarism button and the page shouldn t be visible to the student until then screenshots what browsers are you seeing the problem on chrome additional context no response relevant log output no response | 0 |

449,152 | 31,830,460,943 | IssuesEvent | 2023-09-14 10:14:07 | ueberdosis/tiptap | https://api.github.com/repos/ueberdosis/tiptap | opened | [Documentation]: | Type: Documentation Category: Open Source | ### What’s the URL to the page you’re sending feedback for?

https://tiptap.dev/api/utilities/suggestion

### What part of the documentation needs improvement?

https://tiptap.dev/api/utilities/suggestion

### What is helpful about that part?

It is helpful to know that we can allow spaces without closing the mentioning functionality

### What is hard to understand, missing or misleading?

The page does not explain that this also lets you type a second "@" character in the string without closing the mention. Without it, searching by email was not possible, because typing "@sara@" closed the mention on that last character.

### Anything to add? (optional)

I dont know if that was intentional or unintentional, but it was an issue that I encountered with it so really glad that it can get fixed that way! | 1.0 | [Documentation]: - ### What’s the URL to the page you’re sending feedback for?

https://tiptap.dev/api/utilities/suggestion

### What part of the documentation needs improvement?

https://tiptap.dev/api/utilities/suggestion

### What is helpful about that part?

It is helpful to know that we can allow spaces without closing the mentioning functionality

### What is hard to understand, missing or misleading?

The page does not explain that this also lets you type a second "@" character in the string without closing the mention. Without it, searching by email was not possible, because typing "@sara@" closed the mention on that last character.

### Anything to add? (optional)

I dont know if that was intentional or unintentional, but it was an issue that I encountered with it so really glad that it can get fixed that way! | non_main | what’s the url to the page you’re sending feedback for what part of the documentation needs improvement what is helpful about that part it is helpful to know that we can allow spaces without closing the mentioning functionality what is hard to understand missing or misleading it is missing to explain that it also allows you to add a second char in the string without closing the mention without it it was not possible to search by email as typing sara would close mentions on that last char input anything to add optional i dont know if that was intentional or unintentional but it was an issue that i encountered with it so really glad that it can get fixed that way | 0 |

102,938 | 12,832,496,038 | IssuesEvent | 2020-07-07 07:46:29 | Blazored/Modal | https://api.github.com/repos/Blazored/Modal | closed | Support Bootstrap/custom modal markup | Feature Request Needs: Design | Modify the BlazoredModalInstance.razor component to support bootstrap, and other templates, when emitting HTML markup.

I suggest creating a Modal.Framework enumeration that includes:

Blazored,

Bootstrap,

Custom,

etc.

When adding the BlazoredModal component to the MainLayout, the user can supply this value as an attribute (e.g. `<BlazoredModal Framework="Bootstrap">`) to configure all modals to render with the specified framework. Framework.Blazored could remain the default, preventing any breaking changes to existing implementations.

When Modal.Framework is set to Bootstrap, the BlazoredModalInstance.razor component would emit Bootstrap modal HTML elements with the appropriate structure, layout and CSS classes, instead of the default markup as currently implemented.

Also, a method could be provided to supply custom markup for the modal, allowing specialized HTML for more advanced layouts.

Alternatively, fork the branch and create Bootstrap-compatible markup and styles.
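
The enumeration-based switch proposed above could look roughly like the following. This is a language-neutral sketch written in Python, not Blazored's actual API: `Framework`, `render_modal`, `custom_template` and the `default-modal*` class names are all hypothetical, and only the Bootstrap class names (`modal`, `modal-dialog`, `modal-content`, `modal-header`, `modal-title`, `modal-body`) come from Bootstrap itself.

```python
from enum import Enum
from html import escape
from typing import Callable, Optional


class Framework(Enum):
    """Hypothetical counterpart of the proposed Modal.Framework enumeration."""
    BLAZORED = "blazored"    # default, so existing setups keep their markup
    BOOTSTRAP = "bootstrap"
    CUSTOM = "custom"


def render_modal(
    title: str,
    body: str,
    framework: Framework = Framework.BLAZORED,
    custom_template: Optional[Callable[[str, str], str]] = None,
) -> str:
    """Return modal HTML for the selected framework (illustrative only)."""
    title, body = escape(title), escape(body)

    if framework is Framework.BOOTSTRAP:
        # Standard Bootstrap modal structure and class names.
        return (
            '<div class="modal" tabindex="-1">'
            '<div class="modal-dialog"><div class="modal-content">'
            f'<div class="modal-header"><h5 class="modal-title">{title}</h5></div>'
            f'<div class="modal-body">{body}</div>'
            '</div></div></div>'
        )

    if framework is Framework.CUSTOM:
        # The caller supplies the markup; it receives the escaped title and body.
        if custom_template is None:
            raise ValueError("Framework.CUSTOM requires a custom_template")
        return custom_template(title, body)

    # Placeholder for the library's current default markup (class names assumed).
    return (
        '<div class="default-modal">'
        f'<h3 class="default-modal-title">{title}</h3>'
        f'<div class="default-modal-content">{body}</div>'
        '</div>'
    )
```

Keeping the existing markup as the fall-through branch is what would make the change non-breaking: callers that never set a framework get the same output as before.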

| 1.0 | Support Bootstrap/custom modal markup - Modify the BlazoredModalInstance.razor component to support bootstrap, and other templates, when emitting HTML markup.

I suggest creating a Modal.Framework enumeration that includes:

Blazored,

Bootstrap,

Custom,

etc.

When adding the BlazoredModal component to the MainLayout, the user can supply this value as an attribute (e.g. `<BlazoredModal Framework="Bootstrap">`) to configure all modals to render with the specified framework. Framework.Blazored could remain the default, preventing any breaking changes to existing implementations.

When Modal.Framework is set to Bootstrap, the BlazoredModalInstance.razor component would emit Bootstrap modal HTML elements with the appropriate structure, layout and CSS classes, instead of the default markup as currently implemented.

Also, a method could be provided to supply custom markup for the modal, allowing specialized HTML for more advanced layouts.

Alternatively, fork the branch and create Bootstrap-compatible markup and styles.

| non_main | support bootstrap custom modal markup modify the blazoredmodalinstance razor component to support bootstrap and other templates when emitting html markup i suggest creating a modal framework enumeration that includes blazored bootstrap custom etc when adding the blazoredmodal component to the mainlayout the user can supply this value as an attribute i e to configure all modals to render with their specified framework framework blazored could continue as the default preventing any breaking changes to existing implementations when the modal framework has been specified the blazormodalinstance razor component would emit bootstrap modal html elements with the appropriate structure layout and css classes instead of the default markup as currently implemented also a method can be provided to supply custom markup for the modal allowing for specialized layout and html for more advanced layouts alternatively fork the branch and create bootstrap compatible markup and styles | 0 |

809,912 | 30,217,358,672 | IssuesEvent | 2023-07-05 16:31:14 | bloom-works/handbook | https://api.github.com/repos/bloom-works/handbook | opened | Add content: Information for contractors | priority-2 content-new | ## Where to find the content

https://docs.google.com/document/d/1IWmW3pYjCnryU8rEQeenjtBX7VtvQeL-4AfVJHcyPXc/edit#heading=h.6iujc7r4hlx

This issue includes the subsections below, but we might consider breaking each subsection into its own issue:

- Onboarding

- Paperwork

- How to log time

- Travel on behalf of Bloom

- Offboarding

## Where the content will go

Top level, after 'Working partners and coalitions'

## Person responsible for this content

@dottiebobottie (confirm)

## Approver

_who will approve it or already did // we'll also track this via this github workflow_

| 1.0 | Add content: Information for contractors - ## Where to find the content

https://docs.google.com/document/d/1IWmW3pYjCnryU8rEQeenjtBX7VtvQeL-4AfVJHcyPXc/edit#heading=h.6iujc7r4hlx

This issue includes the subsections below, but we might consider breaking each subsection into its own issue:

- Onboarding

- Paperwork

- How to log time

- Travel on behalf of Bloom

- Offboarding

## Where the content will go

Top level, after 'Working partners and coalitions'

## Person responsible for this content

@dottiebobottie (confirm)

## Approver

_who will approve it or already did // we'll also track this via this github workflow_

| non_main | add content information for contractors where to find the content this issue includes subsections but might consider breaking each subsection into its own issue onboarding paperwork how to log time travel on behalf of bloom offboarding where the content will go top level after working partners and coalitions person responsible for this content dottiebobottie confirm approver who will approve it or already did we ll also track this via this github workflow | 0 |

120,896 | 15,819,573,363 | IssuesEvent | 2021-04-05 17:41:53 | fluxcd/flux | https://api.github.com/repos/fluxcd/flux | closed | Deal with multiple tags referring to the same image layer, and moving tags | blocked-design question vague | Many library images have tags that track e.g., major versions. That means any given image pushed will have two or three tags that refer to it; e.g., `{alpine:2, alpine:2.3, alpine:2.3.4}`.

We do in general know that these are the same, since the manifests refer to the image layer hash.

By the same token, we could tell when, e.g., a `":latest"` image has changed, by comparing the image layer hash reported by Kubernetes (or given in the manifest) against the one we see in the image registry.
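
Both ideas above can be sketched in a few lines of Python. This is not Flux code: the function names, the plain tag-to-hash dictionaries and the sample hash strings are assumptions made for illustration, and fetching the hashes from the registry or from Kubernetes is left out.

```python
from collections import defaultdict
from typing import Dict, List


def group_tags_by_digest(tag_digests: Dict[str, str]) -> Dict[str, List[str]]:
    """Group tags that point at the same image hash, e.g. 2, 2.3 and 2.3.4."""
    groups: Dict[str, List[str]] = defaultdict(list)
    for tag, digest in tag_digests.items():
        groups[digest].append(tag)
    return {digest: sorted(tags) for digest, tags in groups.items()}


def tag_has_moved(tag: str, running_digest: str, registry: Dict[str, str]) -> bool:
    """True if the registry now maps `tag` to a different hash than the one running."""
    current = registry.get(tag)
    return current is not None and current != running_digest


# Made-up registry contents: tag -> image hash.
registry = {
    "2": "sha256:aaa",
    "2.3": "sha256:aaa",
    "2.3.4": "sha256:aaa",
    "latest": "sha256:bbb",
}

same_image = group_tags_by_digest(registry)["sha256:aaa"]
latest_moved = tag_has_moved("latest", "sha256:aaa", registry)
```

With this sample data, `same_image` holds the three version tags and `latest_moved` is true, because the running hash no longer matches what `latest` points at in the registry.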

| 1.0 | Deal with multiple tags referring to the same image layer, and moving tags - Many library images have tags that track e.g., major versions. That means any given image pushed will have two or three tags that refer to it; e.g., `{alpine:2, alpine:2.3, alpine:2.3.4}`.

We do in general know that these are the same, since the manifests refer to the image layer hash.

By the same token, we could tell when, e.g., a `":latest"` image has changed, by comparing the image layer hash reported by Kubernetes (or given in the manifest) against the one we see in the image registry.

| non_main | deal with multiple tags referring to the same image layer and moving tags many library images have tags that track e g major versions that means any given image pushed will have two or three tags that refer to it e g alpine alpine alpine we do in general know that these are the same since the manifests refer to the image layer hash by the same token we could know when e g a latest image is different since we can check the image layer hash from kubernetes or given in the manifest against the one we see in the image registry | 0 |

330,826 | 28,487,240,929 | IssuesEvent | 2023-04-18 08:43:06 | elastic/kibana | https://api.github.com/repos/elastic/kibana | closed | Failing test: Chrome X-Pack UI Functional Tests.x-pack/test/functional/apps/lens/group3/rollup·ts - lens app - group 3 lens rollup tests should allow seamless transition to and from table view | Team:Visualizations failed-test Feature:Lens | A test failed on a tracked branch

```

Error: timed out waiting for assertExpectedText -- last error: TimeoutError: Waiting for element to be located By(css selector, [data-test-subj="metric_label"])

Wait timed out after 10052ms

at /var/lib/buildkite-agent/builds/kb-n2-4-spot-cd690b02a97aedf7/elastic/kibana-on-merge/kibana/node_modules/selenium-webdriver/lib/webdriver.js:929:17

at runMicrotasks (<anonymous>)

at processTicksAndRejections (node:internal/process/task_queues:96:5)

at onFailure (retry_for_truthy.ts:39:13)

at retryForSuccess (retry_for_success.ts:59:13)

at retryForTruthy (retry_for_truthy.ts:27:3)

at RetryService.waitForWithTimeout (retry.ts:45:5)

at Object.assertExpectedText (lens_page.ts:85:7)

at Object.assertLegacyMetric (lens_page.ts:1181:7)

at Context.<anonymous> (rollup.ts:76:7)

at Object.apply (wrap_function.js:73:16)

```

First failure: [CI Build - main](https://buildkite.com/elastic/kibana-on-merge/builds/28977#01878f5c-4722-4f05-8d9c-d144e7962604)

<!-- kibanaCiData = {"failed-test":{"test.class":"Chrome X-Pack UI Functional Tests.x-pack/test/functional/apps/lens/group3/rollup·ts","test.name":"lens app - group 3 lens rollup tests should allow seamless transition to and from table view","test.failCount":1}} --> | 1.0 | Failing test: Chrome X-Pack UI Functional Tests.x-pack/test/functional/apps/lens/group3/rollup·ts - lens app - group 3 lens rollup tests should allow seamless transition to and from table view - A test failed on a tracked branch

```

Error: timed out waiting for assertExpectedText -- last error: TimeoutError: Waiting for element to be located By(css selector, [data-test-subj="metric_label"])

Wait timed out after 10052ms

at /var/lib/buildkite-agent/builds/kb-n2-4-spot-cd690b02a97aedf7/elastic/kibana-on-merge/kibana/node_modules/selenium-webdriver/lib/webdriver.js:929:17

at runMicrotasks (<anonymous>)

at processTicksAndRejections (node:internal/process/task_queues:96:5)

at onFailure (retry_for_truthy.ts:39:13)

at retryForSuccess (retry_for_success.ts:59:13)

at retryForTruthy (retry_for_truthy.ts:27:3)

at RetryService.waitForWithTimeout (retry.ts:45:5)

at Object.assertExpectedText (lens_page.ts:85:7)

at Object.assertLegacyMetric (lens_page.ts:1181:7)

at Context.<anonymous> (rollup.ts:76:7)

at Object.apply (wrap_function.js:73:16)

```

First failure: [CI Build - main](https://buildkite.com/elastic/kibana-on-merge/builds/28977#01878f5c-4722-4f05-8d9c-d144e7962604)

<!-- kibanaCiData = {"failed-test":{"test.class":"Chrome X-Pack UI Functional Tests.x-pack/test/functional/apps/lens/group3/rollup·ts","test.name":"lens app - group 3 lens rollup tests should allow seamless transition to and from table view","test.failCount":1}} --> | non_main | failing test chrome x pack ui functional tests x pack test functional apps lens rollup·ts lens app group lens rollup tests should allow seamless transition to and from table view a test failed on a tracked branch error timed out waiting for assertexpectedtext last error timeouterror waiting for element to be located by css selector wait timed out after at var lib buildkite agent builds kb spot elastic kibana on merge kibana node modules selenium webdriver lib webdriver js at runmicrotasks at processticksandrejections node internal process task queues at onfailure retry for truthy ts at retryforsuccess retry for success ts at retryfortruthy retry for truthy ts at retryservice waitforwithtimeout retry ts at object assertexpectedtext lens page ts at object assertlegacymetric lens page ts at context rollup ts at object apply wrap function js first failure | 0 |

742,526 | 25,860,036,099 | IssuesEvent | 2022-12-13 16:18:24 | oceanprotocol/df-py | https://api.github.com/repos/oceanprotocol/df-py | closed | Create route/feed for allocations in purgatory. | Priority: Mid | ### Problem:

Right now, there is no way to view the items in purgatory that the user has allocated to.

This keeps the user from being able to adjust or reset their allocation.

### Candidate Solutions

Cand A

- Show the purgatory items that the user has allocated to, and require them to reallocate

- Users should then be able to reallocate away from these assets, and call "Update Allocations" once

Cand B

- Do not show items from purgatory, but create a "Reset Allocations" button that enables the user to reset their allocations

- This requires two transactions: one for the reset and one for the new allocation.
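
The data the backend would need for Cand A can be sketched as below. This is a hedged illustration rather than df-py code: `allocations_in_purgatory`, `zero_out_purgatory`, and the shape of the inputs (a mapping from asset address to allocation percentage, plus a set of purgatory addresses) are assumptions.

```python
from typing import Dict, Set


def allocations_in_purgatory(
    allocations: Dict[str, float], purgatory: Set[str]
) -> Dict[str, float]:
    """Return the user's non-zero allocations whose asset is in purgatory."""
    # Compare addresses case-insensitively, since checksummed and lowercase
    # forms of the same address may both appear.
    purgatory_lower = {addr.lower() for addr in purgatory}
    return {
        addr: pct
        for addr, pct in allocations.items()
        if pct > 0 and addr.lower() in purgatory_lower
    }


def zero_out_purgatory(
    allocations: Dict[str, float], purgatory: Set[str]
) -> Dict[str, float]:
    """Cand A helper: the same allocations with purgatory assets set to 0."""
    stuck = allocations_in_purgatory(allocations, purgatory)
    return {addr: (0.0 if addr in stuck else pct) for addr, pct in allocations.items()}


# Made-up example data.
user_allocations = {"0xAAA": 40.0, "0xbbb": 60.0}
purgatory_assets = {"0xaaa"}

stuck = allocations_in_purgatory(user_allocations, purgatory_assets)
proposed = zero_out_purgatory(user_allocations, purgatory_assets)
```

Here `stuck` is what a route or feed could return so the UI can list the affected assets, and `proposed` is a starting point the user could adjust before a single "Update Allocations" call.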

### DoD:

- [ ] BE is able to get required purgatory + allocation data in order to show to user, and have them reallocate | 1.0 | Create route/feed for allocations in purgatory. - ### Problem:

Right now, there is no way to view the items in purgatory that the user has allocated to.

This keeps the user from being able to adjust or reset their allocation.

### Candidate Solutions

Cand A

- Show the purgatory items that the user has allocated to, and require them to reallocate

- Users should then be able to reallocate away from these assets, and call "Update Allocations" once

Cand B