Unnamed: 0 (int64, 0–832k) | id (float64, 2.49B–32.1B) | type (string, 1 value) | created_at (string, length 19) | repo (string, length 5–112) | repo_url (string, length 34–141) | action (string, 3 values) | title (string, length 1–957) | labels (string, length 4–795) | body (string, length 1–259k) | index (string, 12 values) | text_combine (string, length 96–259k) | label (string, 2 values) | text (string, length 96–252k) | binary_label (int64, 0–1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

461,166 | 13,224,536,776 | IssuesEvent | 2020-08-17 19:20:16 | grey-software/Twitter-Focus | https://api.github.com/repos/grey-software/Twitter-Focus | opened | Inprove the README | Domain: Dev Experience Priority: Medium Type: Maintenance | Consult https://github.com/grey-software/LinkedInFocus

- [ ] Add webstore link

- [ ] Add local dev instructions

- [ ] Add screenshots or gif

- [ ] Add logo | 1.0 | Inprove the README - Consult https://github.com/grey-software/LinkedInFocus

- [ ] Add webstore link

- [ ] Add local dev instructions

- [ ] Add screenshots or gif

- [ ] Add logo | priority | inprove the readme consult add webstore link add local dev instructions add screenshots or gif add logo | 1 |
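If you compare the `text_combine` and `text` columns in the row above, `text` looks like a normalized copy of `text_combine`. It is lowercased, and URLs, task-list checkboxes, punctuation and tokens containing digits have been removed. The preprocessing script itself is not part of this dump. The function below is therefore a hypothetical reconstruction (the name `normalize` is an assumption). It reproduces this row, but other rows, such as ones that contain Markdown links, may have been normalized differently.

```python
import re


def normalize(text_combine: str) -> str:
    """Approximate the `text` column from `text_combine` (hypothetical reconstruction)."""
    s = re.sub(r"https?://\S+", " ", text_combine)  # drop bare URLs
    s = re.sub(r"\[[ xX]\]", " ", s)                # drop task-list checkboxes
    tokens = re.split(r"[^a-z0-9]+", s.lower())     # split on anything non-alphanumeric
    # Keep only non-empty tokens that contain no digits (e.g. "win7" and "x64" are dropped).
    return " ".join(t for t in tokens if t and not any(c.isdigit() for c in t))


row = (
    "Inprove the README - Consult https://github.com/grey-software/LinkedInFocus\n\n"
    "- [ ] Add webstore link\n\n- [ ] Add local dev instructions\n\n"
    "- [ ] Add screenshots or gif\n\n- [ ] Add logo"
)
print(normalize(row))
```

The output matches the `text` value stored for this row. The digit rule also accounts for `win7 x64(simplified chinese)` becoming `simplified chinese` in the next row.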

146,794 | 5,627,950,302 | IssuesEvent | 2017-04-05 03:58:50 | dnGrep/dnGrep | https://api.github.com/repos/dnGrep/dnGrep | closed | no supports for gb2312/utf8 encoding? | bug imported Priority-Medium | _From [mfm...@sina.com](https://code.google.com/u/107484051280825188802/) on February 25, 2013 23:15:51_

it turns out to be no supports for greping gb2312/utf8 encoded text.

1. dngrep did find the occurs, but linenos and positions of the result are very wrong

2. chinese characters displayed in result pane are unreadable, while they are good in preview pane.

3. converting gb2312/utf8 encoded text file to unicode encoding will solve this problem, but it is a tough work and not feasible

3. env: dngrep version 2.7.1, win7 x64(simplified chinese)

_Original issue: http://code.google.com/p/dngrep/issues/detail?id=177_

| 1.0 | no supports for gb2312/utf8 encoding? - _From [mfm...@sina.com](https://code.google.com/u/107484051280825188802/) on February 25, 2013 23:15:51_

it turns out to be no supports for greping gb2312/utf8 encoded text.

1. dngrep did find the occurs, but linenos and positions of the result are very wrong

2. chinese characters displayed in result pane are unreadable, while they are good in preview pane.

3. converting gb2312/utf8 encoded text file to unicode encoding will solve this problem, but it is a tough work and not feasible

3. env: dngrep version 2.7.1, win7 x64(simplified chinese)

_Original issue: http://code.google.com/p/dngrep/issues/detail?id=177_

| priority | no supports for encoding from on february it turns out to be no supports for greping encoded text dngrep did find the occurs but linenos and positions of the result are very wrong chinese characters displayed in result pane are unreadable while they are good in preview pane converting encoded text file to unicode encoding will solve this problem but it is a tough work and not feasible env dngrep version simplified chinese original issue | 1 |
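Symptoms 1 and 2 in the report above are what you would expect if the result pane mixed up bytes and characters, or decoded with the wrong codec. The dnGrep source is not shown here, so this only illustrates the general mechanism, not the confirmed cause. In GB2312 a Chinese character takes two bytes, and in UTF-8 it takes three. A byte offset is therefore not a character offset, and decoding with the wrong codec yields unreadable text. The sample string and the helper `offsets` are hypothetical.

```python
def offsets(line: str, needle: str, codec: str) -> tuple:
    """Return (character offset, byte offset) of `needle` in `line` when encoded with `codec`."""
    data = line.encode(codec)
    return line.index(needle), data.index(needle.encode(codec))


sample = "中文 text"  # hypothetical search line: two Chinese characters, then ASCII
for codec in ("gb2312", "utf-8"):
    # Character offset stays 3; byte offset is 5 in GB2312 and 7 in UTF-8.
    print(codec, offsets(sample, "text", codec))

# Decoding GB2312 bytes as UTF-8 yields replacement characters instead of Chinese text.
print(sample.encode("gb2312").decode("utf-8", errors="replace"))
```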

405,428 | 11,873,120,850 | IssuesEvent | 2020-03-26 16:49:01 | netdata/netdata | https://api.github.com/repos/netdata/netdata | closed | During the agent installation, if the ACLK fails to be built, show an error message to the user | ACLK internal priority/high priority/medium | #### Summary

Ensure that the requirement in product#282 are met.

- [x] If ACLK error reporting not set yet by #8051 define it with this issue

- [x] If ACLK build fails make it prominent to the user so he knows about it. It should not be just small line easily overlooked in log. @amoss will speak to @jacekkolasa about this separately.

- [x] Netdata should log on startup it is build without ACLK

- [x] Report failure to Cloud to same endpoint #8051

- [x] Respect DO_NOT_TRACK environment variable

(Some of this will be covered by PR 8025 but there are fresh requests at the bottom of the discussion).

| 2.0 | During the agent installation, if the ACLK fails to be built, show an error message to the user - #### Summary

Ensure that the requirement in product#282 are met.

- [x] If ACLK error reporting not set yet by #8051 define it with this issue

- [x] If ACLK build fails make it prominent to the user so he knows about it. It should not be just small line easily overlooked in log. @amoss will speak to @jacekkolasa about this separately.

- [x] Netdata should log on startup it is build without ACLK

- [x] Report failure to Cloud to same endpoint #8051

- [x] Respect DO_NOT_TRACK environment variable

(Some of this will be covered by PR 8025 but there are fresh requests at the bottom of the discussion).

| priority | during the agent installation if the aclk fails to be built show an error message to the user summary ensure that the requirement in product are met if aclk error reporting not set yet by define it with this issue if aclk build fails make it prominent to the user so he knows about it it should not be just small line easily overlooked in log amoss will speak to jacekkolasa about this separately netdata should log on startup it is build without aclk report failure to cloud to same endpoint respect do not track environment variable some of this will be covered by pr but there are fresh requests at the bottom of the discussion | 1 |

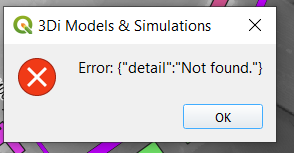

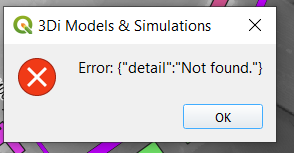

632,748 | 20,205,862,237 | IssuesEvent | 2022-02-11 20:16:28 | nens/threedi-api-qgis-client | https://api.github.com/repos/nens/threedi-api-qgis-client | closed | Download results if user has access to simulation results but not to schematisation | ⏰ Priority: 3. Medium | This currently results in the following error:

Desired behaviour: if schematisation is not found, let user choose where to store the results (Save as dialog) | 1.0 | Download results if user has access to simulation results but not to schematisation - This currently results in the following error:

Desired behaviour: if schematisation is not found, let user choose where to store the results (Save as dialog) | priority | download results if user has access to simulation results but not to schematisation this currently results in the following error desired behaviour if schematisation is not found let user choose where to store the results save as dialog | 1 |

69,655 | 3,309,741,825 | IssuesEvent | 2015-11-05 03:18:40 | cs2103aug2015-t14-2j/main | https://api.github.com/repos/cs2103aug2015-t14-2j/main | closed | As a user i want to have a GUI | priority.medium | so that I can visualize the different tasks on my screen and better conceptualize my schedule | 1.0 | As a user i want to have a GUI - so that I can visualize the different tasks on my screen and better conceptualize my schedule | priority | as a user i want to have a gui so that i can visualize the different tasks on my screen and better conceptualize my schedule | 1 |

479,370 | 13,795,364,105 | IssuesEvent | 2020-10-09 17:53:18 | medic/cht-core | https://api.github.com/repos/medic/cht-core | closed | Update `admin` to be a standalone app | Priority: 2 - Medium Type: Technical issue | **Describe the issue**

Currently, the admin app requires a significantly large number of webapp files (services, filters, directives, redux actions/reducers/selectors).

This becomes a problem with the migration to Angular 10, where the files imported from webapp will, most likely, be unusable by admin.

**Describe the improvement you'd like**

"Duplicate" all files that admin app requires from the webapp folder, preserving git line history as much as possible. (this includes their tests)

**Describe alternatives you've considered**

We could postpone this migration under the assumption that we may be able to use post-angular-10-migration webapp files as we do now, but it's a risk.

| 1.0 | Update `admin` to be a standalone app - **Describe the issue**

Currently, the admin app requires a significantly large number of webapp files (services, filters, directives, redux actions/reducers/selectors).

This becomes a problem with the migration to Angular 10, where the files imported from webapp will, most likely, be unusable by admin.

**Describe the improvement you'd like**

"Duplicate" all files that admin app requires from the webapp folder, preserving git line history as much as possible. (this includes their tests)

**Describe alternatives you've considered**

We could postpone this migration under the assumption that we may be able to use post-angular-10-migration webapp files as we do now, but it's a risk.

| priority | update admin to be a standalone app describe the issue currently the admin app requires a significantly large number of webapp files services filters directives redux actions reducers selectors this becomes a problem with the migration to angular where the files imported from webapp will most likely be unusable by admin describe the improvement you d like duplicate all files that admin app requires from the webapp folder preserving git line history as much as possible this includes their tests describe alternatives you ve considered we could postpone this migration under the assumption that we may be able to use post angular migration webapp files as we do now but it s a risk | 1 |

148,749 | 5,695,880,666 | IssuesEvent | 2017-04-16 04:23:56 | tootsuite/mastodon | https://api.github.com/repos/tootsuite/mastodon | closed | Videos in unsupported codecs cause UI problems | bug priority - medium ui | Viewing a toot containing a video in a format your browser doesn't support causes usability issues with the UI:

* there is a blank space where the thumbnail would be (see the first toot in the following screenshot)

* clicking on the space covers the screen in a lightbox, but it's empty other than a close button

These screenshots were taken of a toot containing a WebM video, on iOS, which doesn't support WebM video encoding in the browser (due to lack of hardware acceleration of that format).

I understand that it's not feasible to convert every video uploaded to Mastodon to compatible formats for every device, but I'm wondering if it's possible to detect the unsupported format and either hide the thumbnail preview and/or display a message or icon indicating the format isn't supported (in any case, the link should still be clickable to download the file because another app might support it even if the browser doesn't).

* * * *

- [X] I searched or browsed the repo’s other issues to ensure this is not a duplicate.

| 1.0 | Videos in unsupported codecs cause UI problems - Viewing a toot containing a video in a format your browser doesn't support causes usability issues with the UI:

* there is a blank space where the thumbnail would be (see the first toot in the following screenshot)

* clicking on the space covers the screen in a lightbox, but it's empty other than a close button

These screenshots were taken of a toot containing a WebM video, on iOS, which doesn't support WebM video encoding in the browser (due to lack of hardware acceleration of that format).

I understand that it's not feasible to convert every video uploaded to Mastodon to compatible formats for every device, but I'm wondering if it's possible to detect the unsupported format and either hide the thumbnail preview and/or display a message or icon indicating the format isn't supported (in any case, the link should still be clickable to download the file because another app might support it even if the browser doesn't).

* * * *

- [X] I searched or browsed the repo’s other issues to ensure this is not a duplicate.

| priority | videos in unsupported codecs cause ui problems viewing a toot containing a video in a format your browser doesn t support causes usability issues with the ui there is a blank space where the thumbnail would be see the first toot in the following screenshot clicking on the space covers the screen in a lightbox but it s empty other than a close button these screenshots were taken of a toot containing a webm video on ios which doesn t support webm video encoding in the browser due to lack of hardware acceleration of that format i understand that it s not feasible to convert every video uploaded to mastodon to compatible formats for every device but i m wondering if it s possible to detect the unsupported format and either hide the thumbnail preview and or display a message or icon indicating the format isn t supported in any case the link should still be clickable to download the file because another app might support it even if the browser doesn t i searched or browsed the repo’s other issues to ensure this is not a duplicate | 1 |

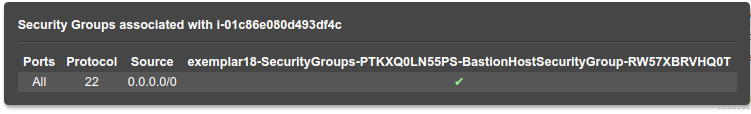

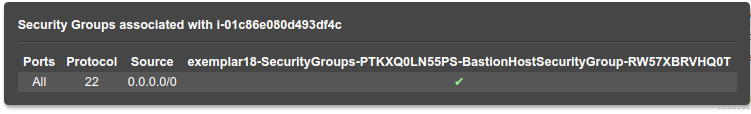

232,593 | 7,667,799,349 | IssuesEvent | 2018-05-14 00:47:04 | hackoregon/civic-devops | https://api.github.com/repos/hackoregon/civic-devops | closed | Cannot `ssh` into Bastion Host in newly created stack | Priority: medium bug | This issue was previously mentioned at issue #87

@MikeTheCanuck suspects that the security group assigned to the Bastion Host only allows certain IP addresses incoming. The below screenshot showed that this host allows `tcp/22` from anywhere but both Mike and me got `Connect time out`

| 1.0 | Cannot `ssh` into Bastion Host in newly created stack - This issue was previously mentioned at issue #87

@MikeTheCanuck suspects that the security group assigned to the Bastion Host only allows certain IP addresses incoming. The below screenshot showed that this host allows `tcp/22` from anywhere but both Mike and me got `Connect time out`

| priority | cannot ssh into bastion host in newly created stack this issue was previously mentioned at issue mikethecanuck suspects that the security group assigned to the bastion host only allows certain ip addresses incoming the below screenshot showed that this host allows tcp from anywhere but both mike and me got connect time out | 1 |

413,916 | 12,093,352,708 | IssuesEvent | 2020-04-19 19:17:09 | samiha-rahman/soen390 | https://api.github.com/repos/samiha-rahman/soen390 | closed | US-64: Select classroom as start or end destination | epic 4 priority: medium user story | As a user, I would like to be able to select a classroom as a start or end destination.

This story is created from a requirement extracted from #31 to reduce the scope of that story.

**Associated epic:** #20

**Acceptance criteria:**

Step # | Execution Procedure or Input | Expected Results/Outputs | Passed/Failed

--- | --- | --- | ---

1 | Select a room | Should provide option to select designated room as starting point or end destination | | 1.0 | US-64: Select classroom as start or end destination - As a user, I would like to be able to select a classroom as a start or end destination.

This story is created from a requirement extracted from #31 to reduce the scope of that story.

**Associated epic:** #20

**Acceptance criteria:**

Step # | Execution Procedure or Input | Expected Results/Outputs | Passed/Failed

--- | --- | --- | ---

1 | Select a room | Should provide option to select designated room as starting point or end destination | | priority | us select classroom as start or end destination as a user i would like to be able to select a classroom as a start or end destination this story is created from a requirement extracted from to reduce the scope of that story associated epic acceptance criteria step execution procedure or input expected results outputs passed failed select a room should provide option to select designated room as starting point or end destination | 1 |

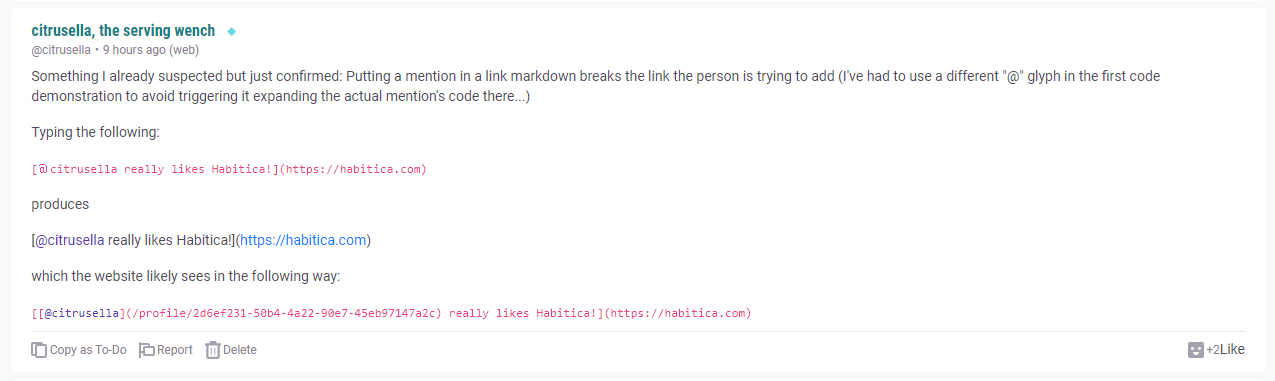

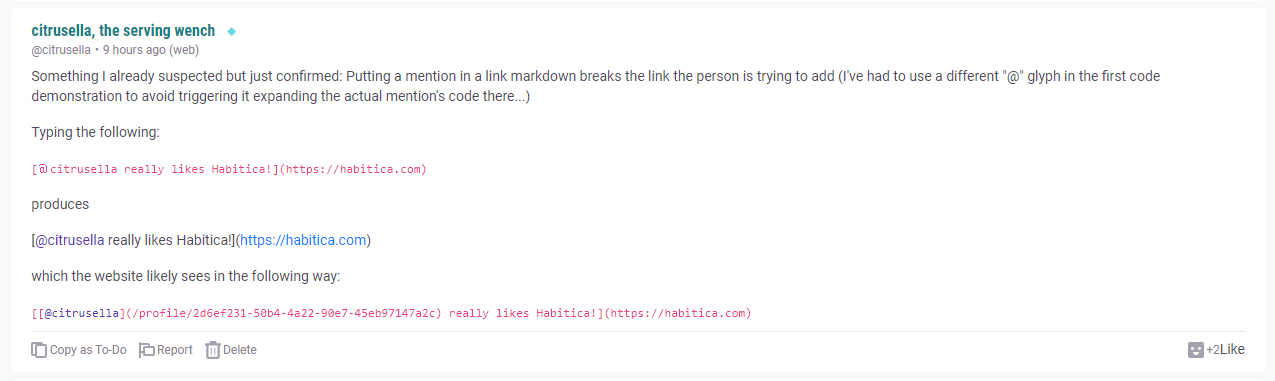

422,945 | 12,288,633,361 | IssuesEvent | 2020-05-09 17:37:10 | HabitRPG/habitica | https://api.github.com/repos/HabitRPG/habitica | closed | Username formatting breaks markdown for links in posts | help wanted priority: medium section: Guilds section: Tavern Chat |

> "Something I already suspected but just confirmed: Putting a mention in a link markdown breaks the link the person is trying to add (I've had to use a different "@" glyph in the first code demonstration to avoid triggering it expanding the actual mention's code there...)

>

> Typing the following:

>

> [@citrusella really likes Habitica!](https://habitica.com)

>

> produces

>

> [@citrusella really likes Habitica!](https://habitica.com)

>

> which the website likely sees in the following way:

>

> [[@citrusella](/profile/2d6ef231-50b4-4a22-90e7-45eb97147a2c) really likes Habitica!](https://habitica.com)"

(Image included since obviously one can't demonstrate in the same way on github! In other words, including @username in markdown links breaks it because the @mention formatting takes precedence. | 1.0 | Username formatting breaks markdown for links in posts -

> "Something I already suspected but just confirmed: Putting a mention in a link markdown breaks the link the person is trying to add (I've had to use a different "@" glyph in the first code demonstration to avoid triggering it expanding the actual mention's code there...)

>

> Typing the following:

>

> [@citrusella really likes Habitica!](https://habitica.com)

>

> produces

>

> [@citrusella really likes Habitica!](https://habitica.com)

>

> which the website likely sees in the following way:

>

> [[@citrusella](/profile/2d6ef231-50b4-4a22-90e7-45eb97147a2c) really likes Habitica!](https://habitica.com)"

(Image included since obviously one can't demonstrate in the same way on github! In other words, including @username in markdown links breaks it because the @mention formatting takes precedence. | priority | username formatting breaks markdown for links in posts something i already suspected but just confirmed putting a mention in a link markdown breaks the link the person is trying to add i ve had to use a different glyph in the first code demonstration to avoid triggering it expanding the actual mention s code there typing the following produces which the website likely sees in the following way profile really likes habitica image included since obviously one can t demonstrate in the same way on github in other words including username in markdown links breaks it because the mention formatting takes precedence | 1 |
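The report describes an ordering problem. If @mentions are expanded into Markdown links before the message is rendered, a mention that already sits inside link text ends up with nested brackets. Habitica's actual renderer is not shown here, so this Python sketch is only an illustration. The function names are hypothetical, and `/profile/<name>` stands in for the real `/profile/<user-id>` target. The first function reproduces the nesting with a naive substitution. The second avoids it by leaving existing `[text](url)` links untouched.

```python
import re

LINK = re.compile(r"\[[^\]]*\]\([^)]*\)")  # an existing [text](url) Markdown link
MENTION = re.compile(r"@(\w+)")             # an @username mention


def naive_linkify(msg: str) -> str:
    """Expand every mention, including ones inside link text (reproduces the bug)."""
    return MENTION.sub(lambda m: f"[@{m.group(1)}](/profile/{m.group(1)})", msg)


def safe_linkify(msg: str) -> str:
    """Expand mentions only in the text between existing Markdown links."""
    out, last = [], 0
    for link in LINK.finditer(msg):
        out.append(naive_linkify(msg[last:link.start()]))
        out.append(link.group(0))  # keep the existing link exactly as typed
        last = link.end()
    out.append(naive_linkify(msg[last:]))
    return "".join(out)


msg = "[@citrusella really likes Habitica!](https://habitica.com)"
print(naive_linkify(msg))  # nested: [[@citrusella](/profile/citrusella) really likes ...
print(safe_linkify(msg))   # unchanged link
```

A real renderer would more likely work on the parsed Markdown tree than on regular expressions. The regex version only shows why the order of the two transformations matters.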

776,099 | 27,246,883,097 | IssuesEvent | 2023-02-22 03:16:28 | ansible-collections/azure | https://api.github.com/repos/ansible-collections/azure | closed | azure_rm_virtualmachine fails in AzureChinaCloud | has_pr medium_priority | ##### SUMMARY

something called by azure_rm_virtualmachine does not obey AZURE_CLOUD_ENVIRONMENT and attempts to connect to AzureCloud when operating on AzureChinaCloud hosts

##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

azure_rm_virtualmachine

##### ANSIBLE VERSION

<!--- Paste verbatim output from "ansible --version" between quotes -->

```paste below
ansible-playbook [core 2.12.5.post0]
  config file = /ansible/ansible.cfg
  configured module search path = ['/home/runner/.ansible/plugins/modules', '/usr/share/ansible/plugins/modules']
  ansible python module location = /usr/local/lib/python3.8/site-packages/ansible
  ansible collection location = /home/runner/.ansible/collections:/ansible/collections
  executable location = /usr/local/bin/ansible-playbook
  python version = 3.8.13 (default, Jun 24 2022, 15:27:57) [GCC 8.5.0 20210514 (Red Hat 8.5.0-13)]
  jinja version = 3.1.2
  libyaml = True
```

##### COLLECTION VERSION

<!--- Paste verbatim output from "ansible-galaxy collection list <namespace>.<collection>" between the quotes

for example: ansible-galaxy collection list community.general

-->

```paste below
- name: azure.azcollection
  version: '==1.14.0'
```

##### CONFIGURATION

<!--- Paste verbatim output from "ansible-config dump --only-changed" between quotes -->

```paste below
ANY_ERRORS_FATAL(/ansible/ansible.cfg) = False
COLLECTIONS_PATHS(/ansible/ansible.cfg) = ['/home/runner/.ansible/collections', '/ansible/collections']
DEFAULT_FORKS(/ansible/ansible.cfg) = 50
DEFAULT_GATHER_SUBSET(/ansible/ansible.cfg) = ['all']
DEFAULT_GATHER_TIMEOUT(/ansible/ansible.cfg) = 20
DEFAULT_HASH_BEHAVIOUR(/ansible/ansible.cfg) = merge
DEFAULT_TIMEOUT(/ansible/ansible.cfg) = 20
DEFAULT_TRANSPORT(/ansible/ansible.cfg) = smart
HOST_KEY_CHECKING(/ansible/ansible.cfg) = False
INVENTORY_ENABLED(/ansible/ansible.cfg) = ['vue.azure.azure_rm', 'script', 'yaml', 'ini']
PERSISTENT_COMMAND_TIMEOUT(/ansible/ansible.cfg) = 20
```

##### OS / ENVIRONMENT

running on a recent awx-ee image that has had additional packages/modules installed

##### STEPS TO REPRODUCE

example one: setting vm tags

```yaml
- name: Set patch_version tags
  azure.azcollection.azure_rm_virtualmachine:
    resource_group: "{{ resource_group }}"
    name: "{{ name }}"
    tags:
      patch_version: "{{ ansible_date_time.iso8601 }}"
    zones: "{{ availability_zone }}"
  delegate_to: localhost
  when:
    - "'patch_version' in tags"
    - tags['patch_version'] == 'none'
```

example two: rebooting a vm via azure_rm_virtualmachine:

```
- name: Tell Azure to reboot this VM (so UAC setting change takes effect)
  azure.azcollection.azure_rm_virtualmachine:
    resource_group: "{{ resource_group }}"
    name: "{{ name }}"
    restarted: yes
    zones: "{{ availability_zone }}"
  delegate_to: localhost
  connection: local
```

##### EXPECTED RESULTS

VM tags set, or VM rebooted, no fatal errors

##### ACTUAL RESULTS

```paste below

TASK [windows : Set patch_version tags] ***************************************************************************************************************

task path: /ansible/roles/windows/tasks/patching_apply.yml:32
<localhost> ESTABLISH LOCAL CONNECTION FOR USER: root
<localhost> EXEC /bin/sh -c 'echo ~root && sleep 0'
<localhost> EXEC /bin/sh -c '( umask 77 && mkdir -p "` echo /root/.ansible/tmp `"&& mkdir "` echo /root/.ansible/tmp/ansible-tmp-1671211081.1372511-135-112305740467344 `" && echo ansible-tmp-1671211081.1372511-135-112305740467344="` echo /root/.ansible/tmp/ansible-tmp-1671211081.1372511-135-112305740467344 `" ) && sleep 0'
Using module file /home/runner/.ansible/collections/ansible_collections/azure/azcollection/plugins/modules/azure_rm_virtualmachine.py
<localhost> PUT /home/runner/.ansible/tmp/ansible-local-64whuibyec/tmpa3z1tq85 TO /root/.ansible/tmp/ansible-tmp-1671211081.1372511-135-112305740467344/AnsiballZ_azure_rm_virtualmachine.py
<localhost> EXEC /bin/sh -c 'chmod u+x /root/.ansible/tmp/ansible-tmp-1671211081.1372511-135-112305740467344/ /root/.ansible/tmp/ansible-tmp-1671211081.1372511-135-112305740467344/AnsiballZ_azure_rm_virtualmachine.py && sleep 0'
<localhost> EXEC /bin/sh -c '/usr/bin/python3 /root/.ansible/tmp/ansible-tmp-1671211081.1372511-135-112305740467344/AnsiballZ_azure_rm_virtualmachine.py && sleep 0'
<localhost> EXEC /bin/sh -c 'rm -f -r /root/.ansible/tmp/ansible-tmp-1671211081.1372511-135-112305740467344/ > /dev/null 2>&1 && sleep 0'
fatal: [************-vm0 -> localhost]: FAILED! => {
"changed": false,
"module_stderr": "ClientSecretCredential.get_token failed: Authentication failed: AADSTS500011: The resource principal named https://management.azure.com was not found in the tenant named PVUECN. This can happen if the application has not been installed by the administrator of the tenant or consented to by any user in the tenant. You might have sent your authentication request to the wrong tenant.\r\nTrace ID: ****\r\nCorrelation ID: ****\r\nTimestamp: 2022-12-16 17:18:11Z\nTraceback (most recent call last):\n File \"/root/.ansible/tmp/ansible-tmp-1671211081.1372511-135-112305740467344/AnsiballZ_azure_rm_virtualmachine.py\", line 107, in <module>\n _ansiballz_main()\n File \"/root/.ansible/tmp/ansible-tmp-1671211081.1372511-135-112305740467344/AnsiballZ_azure_rm_virtualmachine.py\", line 99, in _ansiballz_main\n invoke_module(zipped_mod, temp_path, ANSIBALLZ_PARAMS)\n File \"/root/.ansible/tmp/ansible-tmp-1671211081.1372511-135-112305740467344/AnsiballZ_azure_rm_virtualmachine.py\", line 47, in invoke_module\n runpy.run_module(mod_name='ansible_collections.azure.azcollection.plugins.modules.azure_rm_virtualmachine', init_globals=dict(_module_fqn='ansible_collections.azure.azcollection.plugins.modules.azure_rm_virtualmachine', _modlib_path=modlib_path),\n File \"/usr/lib64/python3.8/runpy.py\", line 207, in run_module\n return _run_module_code(code, init_globals, run_name, mod_spec)\n File \"/usr/lib64/python3.8/runpy.py\", line 97, in _run_module_code\n _run_code(code, mod_globals, init_globals,\n File \"/usr/lib64/python3.8/runpy.py\", line 87, in _run_code\n exec(code, run_globals)\n File \"/tmp/ansible_azure.azcollection.azure_rm_virtualmachine_payload_4tp4ffhc/ansible_azure.azcollection.azure_rm_virtualmachine_payload.zip/ansible_collections/azure/azcollection/plugins/modules/azure_rm_virtualmachine.py\", line 2344, in <module>\n File 
\"/tmp/ansible_azure.azcollection.azure_rm_virtualmachine_payload_4tp4ffhc/ansible_azure.azcollection.azure_rm_virtualmachine_payload.zip/ansible_collections/azure/azcollection/plugins/modules/azure_rm_virtualmachine.py\", line 2340, in main\n File \"/tmp/ansible_azure.azcollection.azure_rm_virtualmachine_payload_4tp4ffhc/ansible_azure.azcollection.azure_rm_virtualmachine_payload.zip/ansible_collections/azure/azcollection/plugins/modules/azure_rm_virtualmachine.py\", line 963, in __init__\n File \"/tmp/ansible_azure.azcollection.azure_rm_virtualmachine_payload_4tp4ffhc/ansible_azure.azcollection.azure_rm_virtualmachine_payload.zip/ansible_collections/azure/azcollection/plugins/module_utils/azure_rm_common.py\", line 469, in __init__\n File \"/tmp/ansible_azure.azcollection.azure_rm_virtualmachine_payload_4tp4ffhc/ansible_azure.azcollection.azure_rm_virtualmachine_payload.zip/ansible_collections/azure/azcollection/plugins/modules/azure_rm_virtualmachine.py\", line 1114, in exec_module\n File \"/usr/local/lib/python3.8/site-packages/azure/core/tracing/decorator.py\", line 78, in wrapper_use_tracer\n return func(*args, **kwargs)\n File \"/usr/local/lib/python3.8/site-packages/azure/mgmt/compute/v2021_04_01/operations/_virtual_machines_operations.py\", line 1502, in get\n pipeline_response = self._client._pipeline.run(request, stream=False, **kwargs)\n File \"/usr/local/lib/python3.8/site-packages/azure/core/pipeline/_base.py\", line 211, in run\n return first_node.send(pipeline_request) # type: ignore\n File \"/usr/local/lib/python3.8/site-packages/azure/core/pipeline/_base.py\", line 71, in send\n response = self.next.send(request)\n File \"/usr/local/lib/python3.8/site-packages/azure/core/pipeline/_base.py\", line 71, in send\n response = self.next.send(request)\n File \"/usr/local/lib/python3.8/site-packages/azure/core/pipeline/_base.py\", line 71, in send\n response = self.next.send(request)\n [Previous line repeated 2 more times]\n File 
\"/usr/local/lib/python3.8/site-packages/azure/mgmt/core/policies/_base.py\", line 47, in send\n response = self.next.send(request)\n File \"/usr/local/lib/python3.8/site-packages/azure/core/pipeline/policies/_redirect.py\", line 158, in send\n response = self.next.send(request)\n File \"/usr/local/lib/python3.8/site-packages/azure/core/pipeline/policies/_retry.py\", line 446, in send\n response = self.next.send(request)\n File \"/usr/local/lib/python3.8/site-packages/azure/core/pipeline/policies/_authentication.py\", line 116, in send\n self.on_request(request)\n File \"/usr/local/lib/python3.8/site-packages/azure/core/pipeline/policies/_authentication.py\", line 93, in on_request\n self._token = self._credential.get_token(*self._scopes)\n File \"/usr/local/lib/python3.8/site-packages/azure/identity/_internal/get_token_mixin.py\", line 76, in get_token\n token = self._request_token(*scopes, **kwargs)\n File \"/usr/local/lib/python3.8/site-packages/azure/identity/_internal/decorators.py\", line 56, in wrapper\n return fn(*args, **kwargs)\n File \"/usr/local/lib/python3.8/site-packages/azure/identity/_internal/client_credential_base.py\", line 40, in _request_token\n raise ClientAuthenticationError(message=message)\nazure.core.exceptions.ClientAuthenticationError: Authentication failed: AADSTS500011: The resource principal named https://management.azure.com was not found in the tenant named PVUECN. This can happen if the application has not been installed by the administrator of the tenant or consented to by any user in the tenant. You might have sent your authentication request to the wrong tenant.\r\nTrace ID: ****\r\nCorrelation ID: *****\r\nTimestamp: 2022-12-16 17:18:11Z\n",
"module_stdout": "",
"msg": "MODULE FAILURE\nSee stdout/stderr for the exact error",
"rc": 1
}
```

decoded:

```
ClientSecretCredential.get_token failed: Authentication failed: AADSTS500011: The resource principal named https://management.azure.com was not found in the tenant named PVUECN. This can happen if the application has not been installed by the administrator of the tenant or consented to by any user in the tenant. You might have sent your authentication request to the wrong tenant.
Trace ID: ****
Correlation ID: ****
Timestamp: 2022-12-16 16:08:27Z
Traceback (most recent call last):
  File \"/root/.ansible/tmp/ansible-tmp-1671206898.300529-135-138589534907732/AnsiballZ_azure_rm_virtualmachine.py\", line 107, in <module>
    _ansiballz_main()
  File \"/root/.ansible/tmp/ansible-tmp-1671206898.300529-135-138589534907732/AnsiballZ_azure_rm_virtualmachine.py\", line 99, in _ansiballz_main
    invoke_module(zipped_mod, temp_path, ANSIBALLZ_PARAMS)
  File \"/root/.ansible/tmp/ansible-tmp-1671206898.300529-135-138589534907732/AnsiballZ_azure_rm_virtualmachine.py\", line 47, in invoke_module
    runpy.run_module(mod_name='ansible_collections.azure.azcollection.plugins.modules.azure_rm_virtualmachine', init_globals=dict(_module_fqn='ansible_collections.azure.azcollection.plugins.modules.azure_rm_virtualmachine', _modlib_path=modlib_path),
  File \"/usr/lib64/python3.8/runpy.py\", line 207, in run_module
    return _run_module_code(code, init_globals, run_name, mod_spec)
  File \"/usr/lib64/python3.8/runpy.py\", line 97, in _run_module_code
    _run_code(code, mod_globals, init_globals,
  File \"/usr/lib64/python3.8/runpy.py\", line 87, in _run_code
    exec(code, run_globals)
  File \"/tmp/ansible_azure.azcollection.azure_rm_virtualmachine_payload_jbwif2z3/ansible_azure.azcollection.azure_rm_virtualmachine_payload.zip/ansible_collections/azure/azcollection/plugins/modules/azure_rm_virtualmachine.py\", line 2344, in <module>
  File \"/tmp/ansible_azure.azcollection.azure_rm_virtualmachine_payload_jbwif2z3/ansible_azure.azcollection.azure_rm_virtualmachine_payload.zip/ansible_collections/azure/azcollection/plugins/modules/azure_rm_virtualmachine.py\", line 2340, in main
  File \"/tmp/ansible_azure.azcollection.azure_rm_virtualmachine_payload_jbwif2z3/ansible_azure.azcollection.azure_rm_virtualmachine_payload.zip/ansible_collections/azure/azcollection/plugins/modules/azure_rm_virtualmachine.py\", line 963, in __init__
  File \"/tmp/ansible_azure.azcollection.azure_rm_virtualmachine_payload_jbwif2z3/ansible_azure.azcollection.azure_rm_virtualmachine_payload.zip/ansible_collections/azure/azcollection/plugins/module_utils/azure_rm_common.py\", line 469, in __init__
  File \"/tmp/ansible_azure.azcollection.azure_rm_virtualmachine_payload_jbwif2z3/ansible_azure.azcollection.azure_rm_virtualmachine_payload.zip/ansible_collections/azure/azcollection/plugins/modules/azure_rm_virtualmachine.py\", line 1114, in exec_module
  File \"/usr/local/lib/python3.8/site-packages/azure/core/tracing/decorator.py\", line 78, in wrapper_use_tracer
    return func(*args, **kwargs)
  File \"/usr/local/lib/python3.8/site-packages/azure/mgmt/compute/v2021_04_01/operations/_virtual_machines_operations.py\", line 1502, in get
    pipeline_response = self._client._pipeline.run(request, stream=False, **kwargs)
  File \"/usr/local/lib/python3.8/site-packages/azure/core/pipeline/_base.py\", line 211, in run
    return first_node.send(pipeline_request) # type: ignore
  File \"/usr/local/lib/python3.8/site-packages/azure/core/pipeline/_base.py\", line 71, in send
    response = self.next.send(request)
  File \"/usr/local/lib/python3.8/site-packages/azure/core/pipeline/_base.py\", line 71, in send
    response = self.next.send(request)
  File \"/usr/local/lib/python3.8/site-packages/azure/core/pipeline/_base.py\", line 71, in send
    response = self.next.send(request)
  [Previous line repeated 2 more times]
  File \"/usr/local/lib/python3.8/site-packages/azure/mgmt/core/policies/_base.py\", line 47, in send
    response = self.next.send(request)
  File \"/usr/local/lib/python3.8/site-packages/azure/core/pipeline/policies/_redirect.py\", line 158, in send
    response = self.next.send(request)
  File \"/usr/local/lib/python3.8/site-packages/azure/core/pipeline/policies/_retry.py\", line 446, in send
    response = self.next.send(request)
  File \"/usr/local/lib/python3.8/site-packages/azure/core/pipeline/policies/_authentication.py\", line 116, in send
    self.on_request(request)
  File \"/usr/local/lib/python3.8/site-packages/azure/core/pipeline/policies/_authentication.py\", line 93, in on_request
    self._token = self._credential.get_token(*self._scopes)
  File \"/usr/local/lib/python3.8/site-packages/azure/identity/_internal/get_token_mixin.py\", line 76, in get_token
    token = self._request_token(*scopes, **kwargs)
  File \"/usr/local/lib/python3.8/site-packages/azure/identity/_internal/decorators.py\", line 56, in wrapper
    return fn(*args, **kwargs)
  File \"/usr/local/lib/python3.8/site-packages/azure/identity/_internal/client_credential_base.py\", line 40, in _request_token
    raise ClientAuthenticationError(message=message)
azure.core.exceptions.ClientAuthenticationError: Authentication failed: AADSTS500011: The resource principal named https://management.azure.com was not found in the tenant named PVUECN. This can happen if the application has not been installed by the administrator of the tenant or consented to by any user in the tenant. You might have sent your authentication request to the wrong tenant.

```

| 1.0 | azure_rm_virtualmachine fails in AzureChinaCloud - ##### SUMMARY

something called by azure_rm_virtualmachine does not obey AZURE_CLOUD_ENVIRONMENT and attempts to connect to AzureCloud when operating on AzureChinaCloud hosts

##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

azure_rm_virtualmachine

##### ANSIBLE VERSION

<!--- Paste verbatim output from "ansible --version" between quotes -->

```paste below

ansible-playbook [core 2.12.5.post0]

config file = /ansible/ansible.cfg

configured module search path = ['/home/runner/.ansible/plugins/modules', '/usr/share/ansible/plugins/modules']

ansible python module location = /usr/local/lib/python3.8/site-packages/ansible

ansible collection location = /home/runner/.ansible/collections:/ansible/collections

executable location = /usr/local/bin/ansible-playbook

python version = 3.8.13 (default, Jun 24 2022, 15:27:57) [GCC 8.5.0 20210514 (Red Hat 8.5.0-13)]

jinja version = 3.1.2

libyaml = True

```

##### COLLECTION VERSION

<!--- Paste verbatim output from "ansible-galaxy collection list <namespace>.<collection>" between the quotes

for example: ansible-galaxy collection list community.general

-->

```paste below

- name: azure.azcollection

version: '==1.14.0'

```

##### CONFIGURATION

<!--- Paste verbatim output from "ansible-config dump --only-changed" between quotes -->

```paste below

ANY_ERRORS_FATAL(/ansible/ansible.cfg) = False

COLLECTIONS_PATHS(/ansible/ansible.cfg) = ['/home/runner/.ansible/collections', '/ansible/collections']

DEFAULT_FORKS(/ansible/ansible.cfg) = 50

DEFAULT_GATHER_SUBSET(/ansible/ansible.cfg) = ['all']

DEFAULT_GATHER_TIMEOUT(/ansible/ansible.cfg) = 20

DEFAULT_HASH_BEHAVIOUR(/ansible/ansible.cfg) = merge

DEFAULT_TIMEOUT(/ansible/ansible.cfg) = 20

DEFAULT_TRANSPORT(/ansible/ansible.cfg) = smart

HOST_KEY_CHECKING(/ansible/ansible.cfg) = False

INVENTORY_ENABLED(/ansible/ansible.cfg) = ['vue.azure.azure_rm', 'script', 'yaml', 'ini']

PERSISTENT_COMMAND_TIMEOUT(/ansible/ansible.cfg) = 20

```

##### OS / ENVIRONMENT

running on a recent awx-ee image that has had additional packages/modules installed

##### STEPS TO REPRODUCE

example one: setting vm tags

```yaml

- name: Set patch_version tags

azure.azcollection.azure_rm_virtualmachine:

resource_group: "{{ resource_group }}"

name: "{{ name }}"

tags:

patch_version: "{{ ansible_date_time.iso8601 }}"

zones: "{{ availability_zone }}"

delegate_to: localhost

when:

- "'patch_version' in tags"

- tags['patch_version'] == 'none'

```

example two: rebooting a vm via azure_rm_virtualmachine:

```

- name: Tell Azure to reboot this VM (so UAC setting change takes effect)

azure.azcollection.azure_rm_virtualmachine:

resource_group: "{{ resource_group }}"

name: "{{ name }}"

restarted: yes

zones: "{{ availability_zone }}"

delegate_to: localhost

connection: local

```

##### EXPECTED RESULTS

VM tags set, or VM rebooted, no fatal errors

##### ACTUAL RESULTS

```paste below

TASK [windows : Set patch_version tags] ***************************************************************************************************************

task path: /ansible/roles/windows/tasks/patching_apply.yml:32

<localhost> ESTABLISH LOCAL CONNECTION FOR USER: root

<localhost> EXEC /bin/sh -c 'echo ~root && sleep 0'

<localhost> EXEC /bin/sh -c '( umask 77 && mkdir -p "` echo /root/.ansible/tmp `"&& mkdir "` echo /root/.ansible/tmp/ansible-tmp-1671211081.1372511-135-112305740467344 `" && echo ansible-tmp-1671211081.1372511-135-112305740467344="` echo /root/.ansible/tmp/ansible-tmp-1671211081.1372511-135-112305740467344 `" ) && sleep 0'

Using module file /home/runner/.ansible/collections/ansible_collections/azure/azcollection/plugins/modules/azure_rm_virtualmachine.py

<localhost> PUT /home/runner/.ansible/tmp/ansible-local-64whuibyec/tmpa3z1tq85 TO /root/.ansible/tmp/ansible-tmp-1671211081.1372511-135-112305740467344/AnsiballZ_azure_rm_virtualmachine.py

<localhost> EXEC /bin/sh -c 'chmod u+x /root/.ansible/tmp/ansible-tmp-1671211081.1372511-135-112305740467344/ /root/.ansible/tmp/ansible-tmp-1671211081.1372511-135-112305740467344/AnsiballZ_azure_rm_virtualmachine.py && sleep 0'

<localhost> EXEC /bin/sh -c '/usr/bin/python3 /root/.ansible/tmp/ansible-tmp-1671211081.1372511-135-112305740467344/AnsiballZ_azure_rm_virtualmachine.py && sleep 0'

<localhost> EXEC /bin/sh -c 'rm -f -r /root/.ansible/tmp/ansible-tmp-1671211081.1372511-135-112305740467344/ > /dev/null 2>&1 && sleep 0'

fatal: [************-vm0 -> localhost]: FAILED! => {

"changed": false,

"module_stderr": "ClientSecretCredential.get_token failed: Authentication failed: AADSTS500011: The resource principal named https://management.azure.com was not found in the tenant named PVUECN. This can happen if the application has not been installed by the administrator of the tenant or consented to by any user in the tenant. You might have sent your authentication request to the wrong tenant.\r\nTrace ID: ****\r\nCorrelation ID: ****\r\nTimestamp: 2022-12-16 17:18:11Z\nTraceback (most recent call last):\n File \"/root/.ansible/tmp/ansible-tmp-1671211081.1372511-135-112305740467344/AnsiballZ_azure_rm_virtualmachine.py\", line 107, in <module>\n _ansiballz_main()\n File \"/root/.ansible/tmp/ansible-tmp-1671211081.1372511-135-112305740467344/AnsiballZ_azure_rm_virtualmachine.py\", line 99, in _ansiballz_main\n invoke_module(zipped_mod, temp_path, ANSIBALLZ_PARAMS)\n File \"/root/.ansible/tmp/ansible-tmp-1671211081.1372511-135-112305740467344/AnsiballZ_azure_rm_virtualmachine.py\", line 47, in invoke_module\n runpy.run_module(mod_name='ansible_collections.azure.azcollection.plugins.modules.azure_rm_virtualmachine', init_globals=dict(_module_fqn='ansible_collections.azure.azcollection.plugins.modules.azure_rm_virtualmachine', _modlib_path=modlib_path),\n File \"/usr/lib64/python3.8/runpy.py\", line 207, in run_module\n return _run_module_code(code, init_globals, run_name, mod_spec)\n File \"/usr/lib64/python3.8/runpy.py\", line 97, in _run_module_code\n _run_code(code, mod_globals, init_globals,\n File \"/usr/lib64/python3.8/runpy.py\", line 87, in _run_code\n exec(code, run_globals)\n File \"/tmp/ansible_azure.azcollection.azure_rm_virtualmachine_payload_4tp4ffhc/ansible_azure.azcollection.azure_rm_virtualmachine_payload.zip/ansible_collections/azure/azcollection/plugins/modules/azure_rm_virtualmachine.py\", line 2344, in <module>\n File 
\"/tmp/ansible_azure.azcollection.azure_rm_virtualmachine_payload_4tp4ffhc/ansible_azure.azcollection.azure_rm_virtualmachine_payload.zip/ansible_collections/azure/azcollection/plugins/modules/azure_rm_virtualmachine.py\", line 2340, in main\n File \"/tmp/ansible_azure.azcollection.azure_rm_virtualmachine_payload_4tp4ffhc/ansible_azure.azcollection.azure_rm_virtualmachine_payload.zip/ansible_collections/azure/azcollection/plugins/modules/azure_rm_virtualmachine.py\", line 963, in __init__\n File \"/tmp/ansible_azure.azcollection.azure_rm_virtualmachine_payload_4tp4ffhc/ansible_azure.azcollection.azure_rm_virtualmachine_payload.zip/ansible_collections/azure/azcollection/plugins/module_utils/azure_rm_common.py\", line 469, in __init__\n File \"/tmp/ansible_azure.azcollection.azure_rm_virtualmachine_payload_4tp4ffhc/ansible_azure.azcollection.azure_rm_virtualmachine_payload.zip/ansible_collections/azure/azcollection/plugins/modules/azure_rm_virtualmachine.py\", line 1114, in exec_module\n File \"/usr/local/lib/python3.8/site-packages/azure/core/tracing/decorator.py\", line 78, in wrapper_use_tracer\n return func(*args, **kwargs)\n File \"/usr/local/lib/python3.8/site-packages/azure/mgmt/compute/v2021_04_01/operations/_virtual_machines_operations.py\", line 1502, in get\n pipeline_response = self._client._pipeline.run(request, stream=False, **kwargs)\n File \"/usr/local/lib/python3.8/site-packages/azure/core/pipeline/_base.py\", line 211, in run\n return first_node.send(pipeline_request) # type: ignore\n File \"/usr/local/lib/python3.8/site-packages/azure/core/pipeline/_base.py\", line 71, in send\n response = self.next.send(request)\n File \"/usr/local/lib/python3.8/site-packages/azure/core/pipeline/_base.py\", line 71, in send\n response = self.next.send(request)\n File \"/usr/local/lib/python3.8/site-packages/azure/core/pipeline/_base.py\", line 71, in send\n response = self.next.send(request)\n [Previous line repeated 2 more times]\n File 
\"/usr/local/lib/python3.8/site-packages/azure/mgmt/core/policies/_base.py\", line 47, in send\n response = self.next.send(request)\n File \"/usr/local/lib/python3.8/site-packages/azure/core/pipeline/policies/_redirect.py\", line 158, in send\n response = self.next.send(request)\n File \"/usr/local/lib/python3.8/site-packages/azure/core/pipeline/policies/_retry.py\", line 446, in send\n response = self.next.send(request)\n File \"/usr/local/lib/python3.8/site-packages/azure/core/pipeline/policies/_authentication.py\", line 116, in send\n self.on_request(request)\n File \"/usr/local/lib/python3.8/site-packages/azure/core/pipeline/policies/_authentication.py\", line 93, in on_request\n self._token = self._credential.get_token(*self._scopes)\n File \"/usr/local/lib/python3.8/site-packages/azure/identity/_internal/get_token_mixin.py\", line 76, in get_token\n token = self._request_token(*scopes, **kwargs)\n File \"/usr/local/lib/python3.8/site-packages/azure/identity/_internal/decorators.py\", line 56, in wrapper\n return fn(*args, **kwargs)\n File \"/usr/local/lib/python3.8/site-packages/azure/identity/_internal/client_credential_base.py\", line 40, in _request_token\n raise ClientAuthenticationError(message=message)\nazure.core.exceptions.ClientAuthenticationError: Authentication failed: AADSTS500011: The resource principal named https://management.azure.com was not found in the tenant named PVUECN. This can happen if the application has not been installed by the administrator of the tenant or consented to by any user in the tenant. You might have sent your authentication request to the wrong tenant.\r\nTrace ID: ****\r\nCorrelation ID: *****\r\nTimestamp: 2022-12-16 17:18:11Z\n",

"module_stdout": "",

"msg": "MODULE FAILURE\nSee stdout/stderr for the exact error",

"rc": 1

}

```

decoded:

```

ClientSecretCredential.get_token failed: Authentication failed: AADSTS500011: The resource principal named https://management.azure.com was not found in the tenant named PVUECN. This can happen if the application has not been installed by the administrator of the tenant or consented to by any user in the tenant. You might have sent your authentication request to the wrong tenant.

Trace ID: ****

Correlation ID: ****

Timestamp: 2022-12-16 16:08:27Z

Traceback (most recent call last):

File \"/root/.ansible/tmp/ansible-tmp-1671206898.300529-135-138589534907732/AnsiballZ_azure_rm_virtualmachine.py\", line 107, in <module>

_ansiballz_main()

File \"/root/.ansible/tmp/ansible-tmp-1671206898.300529-135-138589534907732/AnsiballZ_azure_rm_virtualmachine.py\", line 99, in _ansiballz_main

invoke_module(zipped_mod, temp_path, ANSIBALLZ_PARAMS)

File \"/root/.ansible/tmp/ansible-tmp-1671206898.300529-135-138589534907732/AnsiballZ_azure_rm_virtualmachine.py\", line 47, in invoke_module

runpy.run_module(mod_name='ansible_collections.azure.azcollection.plugins.modules.azure_rm_virtualmachine', init_globals=dict(_module_fqn='ansible_collections.azure.azcollection.plugins.modules.azure_rm_virtualmachine', _modlib_path=modlib_path),

File \"/usr/lib64/python3.8/runpy.py\", line 207, in run_module

return _run_module_code(code, init_globals, run_name, mod_spec)

File \"/usr/lib64/python3.8/runpy.py\", line 97, in _run_module_code

_run_code(code, mod_globals, init_globals,

File \"/usr/lib64/python3.8/runpy.py\", line 87, in _run_code

exec(code, run_globals)

File \"/tmp/ansible_azure.azcollection.azure_rm_virtualmachine_payload_jbwif2z3/ansible_azure.azcollection.azure_rm_virtualmachine_payload.zip/ansible_collections/azure/azcollection/plugins/modules/azure_rm_virtualmachine.py\", line 2344, in <module>

File \"/tmp/ansible_azure.azcollection.azure_rm_virtualmachine_payload_jbwif2z3/ansible_azure.azcollection.azure_rm_virtualmachine_payload.zip/ansible_collections/azure/azcollection/plugins/modules/azure_rm_virtualmachine.py\", line 2340, in main

File \"/tmp/ansible_azure.azcollection.azure_rm_virtualmachine_payload_jbwif2z3/ansible_azure.azcollection.azure_rm_virtualmachine_payload.zip/ansible_collections/azure/azcollection/plugins/modules/azure_rm_virtualmachine.py\", line 963, in __init__

File \"/tmp/ansible_azure.azcollection.azure_rm_virtualmachine_payload_jbwif2z3/ansible_azure.azcollection.azure_rm_virtualmachine_payload.zip/ansible_collections/azure/azcollection/plugins/module_utils/azure_rm_common.py\", line 469, in __init__

File \"/tmp/ansible_azure.azcollection.azure_rm_virtualmachine_payload_jbwif2z3/ansible_azure.azcollection.azure_rm_virtualmachine_payload.zip/ansible_collections/azure/azcollection/plugins/modules/azure_rm_virtualmachine.py\", line 1114, in exec_module

File \"/usr/local/lib/python3.8/site-packages/azure/core/tracing/decorator.py\", line 78, in wrapper_use_tracer

return func(*args, **kwargs)

File \"/usr/local/lib/python3.8/site-packages/azure/mgmt/compute/v2021_04_01/operations/_virtual_machines_operations.py\", line 1502, in get

pipeline_response = self._client._pipeline.run(request, stream=False, **kwargs)

File \"/usr/local/lib/python3.8/site-packages/azure/core/pipeline/_base.py\", line 211, in run

return first_node.send(pipeline_request) # type: ignore

File \"/usr/local/lib/python3.8/site-packages/azure/core/pipeline/_base.py\", line 71, in send

response = self.next.send(request)

File \"/usr/local/lib/python3.8/site-packages/azure/core/pipeline/_base.py\", line 71, in send

response = self.next.send(request)

File \"/usr/local/lib/python3.8/site-packages/azure/core/pipeline/_base.py\", line 71, in send

response = self.next.send(request)

[Previous line repeated 2 more times]

File \"/usr/local/lib/python3.8/site-packages/azure/mgmt/core/policies/_base.py\", line 47, in send

response = self.next.send(request)

File \"/usr/local/lib/python3.8/site-packages/azure/core/pipeline/policies/_redirect.py\", line 158, in send

response = self.next.send(request)

File \"/usr/local/lib/python3.8/site-packages/azure/core/pipeline/policies/_retry.py\", line 446, in send

response = self.next.send(request)

File \"/usr/local/lib/python3.8/site-packages/azure/core/pipeline/policies/_authentication.py\", line 116, in send

self.on_request(request)

File \"/usr/local/lib/python3.8/site-packages/azure/core/pipeline/policies/_authentication.py\", line 93, in on_request

self._token = self._credential.get_token(*self._scopes)

File \"/usr/local/lib/python3.8/site-packages/azure/identity/_internal/get_token_mixin.py\", line 76, in get_token

token = self._request_token(*scopes, **kwargs)

File \"/usr/local/lib/python3.8/site-packages/azure/identity/_internal/decorators.py\", line 56, in wrapper

return fn(*args, **kwargs)

File \"/usr/local/lib/python3.8/site-packages/azure/identity/_internal/client_credential_base.py\", line 40, in _request_token

raise ClientAuthenticationError(message=message)

azure.core.exceptions.ClientAuthenticationError: Authentication failed: AADSTS500011: The resource principal named https://management.azure.com was not found in the tenant named PVUECN. This can happen if the application has not been installed by the administrator of the tenant or consented to by any user in the tenant. You might have sent your authentication request to the wrong tenant.

```

| priority | azure rm virtualmachine fails in azurechinacloud summary something called by azure rm virtualmachine does not obey azure cloud environment and attempts to connect to azurecloud when operating on azurechinacloud hosts issue type bug report component name azure rm virtualmachine ansible version paste below ansible playbook config file ansible ansible cfg configured module search path ansible python module location usr local lib site packages ansible ansible collection location home runner ansible collections ansible collections executable location usr local bin ansible playbook python version default jun jinja version libyaml true collection version between the quotes for example ansible galaxy collection list community general paste below name azure azcollection version configuration paste below any errors fatal ansible ansible cfg false collections paths ansible ansible cfg default forks ansible ansible cfg default gather subset ansible ansible cfg default gather timeout ansible ansible cfg default hash behaviour ansible ansible cfg merge default timeout ansible ansible cfg default transport ansible ansible cfg smart host key checking ansible ansible cfg false inventory enabled ansible ansible cfg persistent command timeout ansible ansible cfg os environment running on a recent awx ee image that has had additional packages modules installed steps to reproduce example one setting vm tags yaml name set patch version tags azure azcollection azure rm virtualmachine resource group resource group name name tags patch version ansible date time zones availability zone delegate to localhost when patch version in tags tags none example two rebooting a vm via azure rm virtualmachine name tell azure to reboot this vm so uac setting change takes effect azure azcollection azure rm virtualmachine resource group resource group name name restarted yes zones availability zone delegate to localhost connection local expected results vm tags set or vm rebooted no fatal 
errors actual results paste below task task path ansible roles windows tasks patching apply yml establish local connection for user root exec bin sh c echo root sleep exec bin sh c umask mkdir p echo root ansible tmp mkdir echo root ansible tmp ansible tmp echo ansible tmp echo root ansible tmp ansible tmp sleep using module file home runner ansible collections ansible collections azure azcollection plugins modules azure rm virtualmachine py put home runner ansible tmp ansible local to root ansible tmp ansible tmp ansiballz azure rm virtualmachine py exec bin sh c chmod u x root ansible tmp ansible tmp root ansible tmp ansible tmp ansiballz azure rm virtualmachine py sleep exec bin sh c usr bin root ansible tmp ansible tmp ansiballz azure rm virtualmachine py sleep exec bin sh c rm f r root ansible tmp ansible tmp dev null sleep fatal failed changed false module stderr clientsecretcredential get token failed authentication failed the resource principal named was not found in the tenant named pvuecn this can happen if the application has not been installed by the administrator of the tenant or consented to by any user in the tenant you might have sent your authentication request to the wrong tenant r ntrace id r ncorrelation id r ntimestamp ntraceback most recent call last n file root ansible tmp ansible tmp ansiballz azure rm virtualmachine py line in n ansiballz main n file root ansible tmp ansible tmp ansiballz azure rm virtualmachine py line in ansiballz main n invoke module zipped mod temp path ansiballz params n file root ansible tmp ansible tmp ansiballz azure rm virtualmachine py line in invoke module n runpy run module mod name ansible collections azure azcollection plugins modules azure rm virtualmachine init globals dict module fqn ansible collections azure azcollection plugins modules azure rm virtualmachine modlib path modlib path n file usr runpy py line in run module n return run module code code init globals run name mod spec n file usr runpy py line 
in run module code n run code code mod globals init globals n file usr runpy py line in run code n exec code run globals n file tmp ansible azure azcollection azure rm virtualmachine payload ansible azure azcollection azure rm virtualmachine payload zip ansible collections azure azcollection plugins modules azure rm virtualmachine py line in n file tmp ansible azure azcollection azure rm virtualmachine payload ansible azure azcollection azure rm virtualmachine payload zip ansible collections azure azcollection plugins modules azure rm virtualmachine py line in main n file tmp ansible azure azcollection azure rm virtualmachine payload ansible azure azcollection azure rm virtualmachine payload zip ansible collections azure azcollection plugins modules azure rm virtualmachine py line in init n file tmp ansible azure azcollection azure rm virtualmachine payload ansible azure azcollection azure rm virtualmachine payload zip ansible collections azure azcollection plugins module utils azure rm common py line in init n file tmp ansible azure azcollection azure rm virtualmachine payload ansible azure azcollection azure rm virtualmachine payload zip ansible collections azure azcollection plugins modules azure rm virtualmachine py line in exec module n file usr local lib site packages azure core tracing decorator py line in wrapper use tracer n return func args kwargs n file usr local lib site packages azure mgmt compute operations virtual machines operations py line in get n pipeline response self client pipeline run request stream false kwargs n file usr local lib site packages azure core pipeline base py line in run n return first node send pipeline request type ignore n file usr local lib site packages azure core pipeline base py line in send n response self next send request n file usr local lib site packages azure core pipeline base py line in send n response self next send request n file usr local lib site packages azure core pipeline base py line in send n response 
self next send request n n file usr local lib site packages azure mgmt core policies base py line in send n response self next send request n file usr local lib site packages azure core pipeline policies redirect py line in send n response self next send request n file usr local lib site packages azure core pipeline policies retry py line in send n response self next send request n file usr local lib site packages azure core pipeline policies authentication py line in send n self on request request n file usr local lib site packages azure core pipeline policies authentication py line in on request n self token self credential get token self scopes n file usr local lib site packages azure identity internal get token mixin py line in get token n token self request token scopes kwargs n file usr local lib site packages azure identity internal decorators py line in wrapper n return fn args kwargs n file usr local lib site packages azure identity internal client credential base py line in request token n raise clientauthenticationerror message message nazure core exceptions clientauthenticationerror authentication failed the resource principal named was not found in the tenant named pvuecn this can happen if the application has not been installed by the administrator of the tenant or consented to by any user in the tenant you might have sent your authentication request to the wrong tenant r ntrace id r ncorrelation id r ntimestamp n module stdout msg module failure nsee stdout stderr for the exact error rc decoded clientsecretcredential get token failed authentication failed the resource principal named was not found in the tenant named pvuecn this can happen if the application has not been installed by the administrator of the tenant or consented to by any user in the tenant you might have sent your authentication request to the wrong tenant trace id correlation id timestamp traceback most recent call last file root ansible tmp ansible tmp ansiballz azure rm 
virtualmachine py line in ansiballz main file root ansible tmp ansible tmp ansiballz azure rm virtualmachine py line in ansiballz main invoke module zipped mod temp path ansiballz params file root ansible tmp ansible tmp ansiballz azure rm virtualmachine py line in invoke module runpy run module mod name ansible collections azure azcollection plugins modules azure rm virtualmachine init globals dict module fqn ansible collections azure azcollection plugins modules azure rm virtualmachine modlib path modlib path file usr runpy py line in run module return run module code code init globals run name mod spec file usr runpy py line in run module code run code code mod globals init globals file usr runpy py line in run code exec code run globals file tmp ansible azure azcollection azure rm virtualmachine payload ansible azure azcollection azure rm virtualmachine payload zip ansible collections azure azcollection plugins modules azure rm virtualmachine py line in file tmp ansible azure azcollection azure rm virtualmachine payload ansible azure azcollection azure rm virtualmachine payload zip ansible collections azure azcollection plugins modules azure rm virtualmachine py line in main file tmp ansible azure azcollection azure rm virtualmachine payload ansible azure azcollection azure rm virtualmachine payload zip ansible collections azure azcollection plugins modules azure rm virtualmachine py line in init file tmp ansible azure azcollection azure rm virtualmachine payload ansible azure azcollection azure rm virtualmachine payload zip ansible collections azure azcollection plugins module utils azure rm common py line in init file tmp ansible azure azcollection azure rm virtualmachine payload ansible azure azcollection azure rm virtualmachine payload zip ansible collections azure azcollection plugins modules azure rm virtualmachine py line in exec module file usr local lib site packages azure core tracing decorator py line in wrapper use tracer return func args kwargs 
file usr local lib site packages azure mgmt compute operations virtual machines operations py line in get pipeline response self client pipeline run request stream false kwargs file usr local lib site packages azure core pipeline base py line in run return first node send pipeline request type ignore file usr local lib site packages azure core pipeline base py line in send response self next send request file usr local lib site packages azure core pipeline base py line in send response self next send request file usr local lib site packages azure core pipeline base py line in send response self next send request file usr local lib site packages azure mgmt core policies base py line in send response self next send request file usr local lib site packages azure core pipeline policies redirect py line in send response self next send request file usr local lib site packages azure core pipeline policies retry py line in send response self next send request file usr local lib site packages azure core pipeline policies authentication py line in send self on request request file usr local lib site packages azure core pipeline policies authentication py line in on request self token self credential get token self scopes file usr local lib site packages azure identity internal get token mixin py line in get token token self request token scopes kwargs file usr local lib site packages azure identity internal decorators py line in wrapper return fn args kwargs file usr local lib site packages azure identity internal client credential base py line in request token raise clientauthenticationerror message message azure core exceptions clientauthenticationerror authentication failed the resource principal named was not found in the tenant named pvuecn this can happen if the application has not been installed by the administrator of the tenant or consented to by any user in the tenant you might have sent your authentication request to the wrong tenant | 1 |

812,561 | 30,342,069,154 | IssuesEvent | 2023-07-11 13:20:27 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | [Coverity CID: 318645] Out-of-bounds access in subsys/bluetooth/controller/ll_sw/ull_adv_aux.c | bug priority: medium area: Bluetooth Coverity area: Bluetooth Controller |

Static code scan issues found in file:

https://github.com/zephyrproject-rtos/zephyr/tree/7b2034aaecc4cb2a261973b10b2fa608b29d398c/subsys/bluetooth/controller/ll_sw/ull_adv_aux.c#L842

Category: Memory - corruptions

Function: `ll_adv_aux_sr_data_set`

Component: Bluetooth

CID: [318645](https://scan9.scan.coverity.com/reports.htm#v29726/p12996/mergedDefectId=318645)

Details:

https://github.com/zephyrproject-rtos/zephyr/blob/7b2034aaecc4cb2a261973b10b2fa608b29d398c/subsys/bluetooth/controller/ll_sw/ull_adv_aux.c

```

828

829 if (op == BT_HCI_LE_EXT_ADV_OP_INTERM_FRAG ||

830 op == BT_HCI_LE_EXT_ADV_OP_LAST_FRAG) {

831 /* Append fragment to existing data */

832 hdr_add_fields |= ULL_ADV_PDU_HDR_FIELD_ADVA |

833 ULL_ADV_PDU_HDR_FIELD_AD_DATA_APPEND;

>>> CID 318645: (OVERRUN)

>>> Overrunning array "hdr_data" of 16 bytes by passing it to a function which accesses it at byte offset 20.

834 err = ull_adv_aux_pdu_set_clear(adv, sr_pdu_prev, sr_pdu,

835 hdr_add_fields,

836 0,

837 hdr_data);

838 } else {

839 /* Add AD Data and remove any prior presence of Aux Ptr */

806 */

807 *val_ptr++ = len;

808 (void)memcpy(val_ptr, &data, sizeof(data));

809 }

810

811 /* Trigger DID update */

>>> CID 318645: (OVERRUN)

>>> Overrunning array "hdr_data" of 16 bytes by passing it to a function which accesses it at byte offset 20.

812 err = ull_adv_aux_hdr_set_clear(adv, hdr_add_fields, 0U,

813 hdr_data, &pri_idx, &sec_idx);

814 if (err) {

815 return err;

816 }

817

836 0,

837 hdr_data);

838 } else {

839 /* Add AD Data and remove any prior presence of Aux Ptr */

840 hdr_add_fields |= ULL_ADV_PDU_HDR_FIELD_ADVA |

841 ULL_ADV_PDU_HDR_FIELD_AD_DATA;

>>> CID 318645: (OVERRUN)

>>> Overrunning array "hdr_data" of 16 bytes by passing it to a function which accesses it at byte offset 20.

842 err = ull_adv_aux_pdu_set_clear(adv, sr_pdu_prev, sr_pdu,

843 hdr_add_fields,

844 ULL_ADV_PDU_HDR_FIELD_AUX_PTR,

845 hdr_data);

846 }

847 #if defined(CONFIG_BT_CTLR_ADV_AUX_PDU_LINK)

```

For more information about the violation, check the [Coverity Reference](https://scan9.scan.coverity.com/doc/en/cov_checker_ref.html#static_checker_OVERRUN). ([CWE-119](http://cwe.mitre.org/data/definitions/119.html))

Please fix or provide comments in coverity using the link:

https://scan9.scan.coverity.com/reports.htm#v29271/p12996

Note: This issue was created automatically. Priority was set based on classification

of the file affected and the impact field in coverity. Assignees were set using the CODEOWNERS file.

| 1.0 | [Coverity CID: 318645] Out-of-bounds access in subsys/bluetooth/controller/ll_sw/ull_adv_aux.c -

Static code scan issues found in file:

https://github.com/zephyrproject-rtos/zephyr/tree/7b2034aaecc4cb2a261973b10b2fa608b29d398c/subsys/bluetooth/controller/ll_sw/ull_adv_aux.c#L842

Category: Memory - corruptions

Function: `ll_adv_aux_sr_data_set`

Component: Bluetooth

CID: [318645](https://scan9.scan.coverity.com/reports.htm#v29726/p12996/mergedDefectId=318645)

Details:

https://github.com/zephyrproject-rtos/zephyr/blob/7b2034aaecc4cb2a261973b10b2fa608b29d398c/subsys/bluetooth/controller/ll_sw/ull_adv_aux.c

```

828

829 if (op == BT_HCI_LE_EXT_ADV_OP_INTERM_FRAG ||

830 op == BT_HCI_LE_EXT_ADV_OP_LAST_FRAG) {

831 /* Append fragment to existing data */

832 hdr_add_fields |= ULL_ADV_PDU_HDR_FIELD_ADVA |

833 ULL_ADV_PDU_HDR_FIELD_AD_DATA_APPEND;

>>> CID 318645: (OVERRUN)

>>> Overrunning array "hdr_data" of 16 bytes by passing it to a function which accesses it at byte offset 20.

834 err = ull_adv_aux_pdu_set_clear(adv, sr_pdu_prev, sr_pdu,

835 hdr_add_fields,

836 0,

837 hdr_data);

838 } else {

839 /* Add AD Data and remove any prior presence of Aux Ptr */

806 */

807 *val_ptr++ = len;

808 (void)memcpy(val_ptr, &data, sizeof(data));

809 }

810

811 /* Trigger DID update */

>>> CID 318645: (OVERRUN)

>>> Overrunning array "hdr_data" of 16 bytes by passing it to a function which accesses it at byte offset 20.

812 err = ull_adv_aux_hdr_set_clear(adv, hdr_add_fields, 0U,

813 hdr_data, &pri_idx, &sec_idx);

814 if (err) {

815 return err;

816 }

817

836 0,

837 hdr_data);

838 } else {

839 /* Add AD Data and remove any prior presence of Aux Ptr */

840 hdr_add_fields |= ULL_ADV_PDU_HDR_FIELD_ADVA |

841 ULL_ADV_PDU_HDR_FIELD_AD_DATA;

>>> CID 318645: (OVERRUN)

>>> Overrunning array "hdr_data" of 16 bytes by passing it to a function which accesses it at byte offset 20.

842 err = ull_adv_aux_pdu_set_clear(adv, sr_pdu_prev, sr_pdu,

843 hdr_add_fields,

844 ULL_ADV_PDU_HDR_FIELD_AUX_PTR,

845 hdr_data);

846 }

847 #if defined(CONFIG_BT_CTLR_ADV_AUX_PDU_LINK)

```

For more information about the violation, check the [Coverity Reference](https://scan9.scan.coverity.com/doc/en/cov_checker_ref.html#static_checker_OVERRUN). ([CWE-119](http://cwe.mitre.org/data/definitions/119.html))

Please fix or provide comments in coverity using the link:

https://scan9.scan.coverity.com/reports.htm#v29271/p12996

Note: This issue was created automatically. Priority was set based on classification

of the file affected and the impact field in coverity. Assignees were set using the CODEOWNERS file.

| priority | out of bounds access in subsys bluetooth controller ll sw ull adv aux c static code scan issues found in file category memory corruptions function ll adv aux sr data set component bluetooth cid details if op bt hci le ext adv op interm frag op bt hci le ext adv op last frag append fragment to existing data hdr add fields ull adv pdu hdr field adva ull adv pdu hdr field ad data append cid overrun overrunning array hdr data of bytes by passing it to a function which accesses it at byte offset err ull adv aux pdu set clear adv sr pdu prev sr pdu hdr add fields hdr data else add ad data and remove any prior presence of aux ptr val ptr len void memcpy val ptr data sizeof data trigger did update cid overrun overrunning array hdr data of bytes by passing it to a function which accesses it at byte offset err ull adv aux hdr set clear adv hdr add fields hdr data pri idx sec idx if err return err hdr data else add ad data and remove any prior presence of aux ptr hdr add fields ull adv pdu hdr field adva ull adv pdu hdr field ad data cid overrun overrunning array hdr data of bytes by passing it to a function which accesses it at byte offset err ull adv aux pdu set clear adv sr pdu prev sr pdu hdr add fields ull adv pdu hdr field aux ptr hdr data if defined config bt ctlr adv aux pdu link for more information about the violation check the please fix or provide comments in coverity using the link note this issue was created automatically priority was set based on classification of the file affected and the impact field in coverity assignees were set using the codeowners file | 1 |

85,953 | 3,700,889,459 | IssuesEvent | 2016-02-29 10:38:45 | OCHA-DAP/hdx-ckan | https://api.github.com/repos/OCHA-DAP/hdx-ckan | closed | New contribute flow: Horizontal scrollbar showing | bug New Contribute Flow Priority-Medium | I tested in Linux - Firefox and Chrome in FullHD, and in Windows in Firefox:

Notice the scrollbar at the bottom | 1.0 | New contribute flow: Horizontal scrollbar showing - I tested in Linux - Firefox and Chrome in FullHD, and in Windows in Firefox:

Notice the scrollbar at the bottom | priority | new contribute flow horizontal scrollbar showing i tested in linux firefox and chrome in fullhd and in windows in firefox notice the scrollbar at the bottom | 1 |

424,564 | 12,313,098,666 | IssuesEvent | 2020-05-12 14:50:56 | ngageoint/hootenanny | https://api.github.com/repos/ngageoint/hootenanny | closed | Come up with a way to handle reviews for the feature replacement workflow | Category: Core Priority: Medium Status: New/Undefined Type: Feature | Two types of reviews could be useful during feature replacement. The first would be the regular conflate review and another could be flagging reviews for linear features snapped post conflation (not yet added; could group reviews by connected ways to reduce the number of them). Since our output is a changeset and the command works outside of the UI review workflow, there is currently no way to handle reviews and they are dropped completely. | 1.0 | Come up with a way to handle reviews for the feature replacement workflow - Two types of reviews could be useful during feature replacement. The first would be the regular conflate review and another could be flagging reviews for linear features snapped post conflation (not yet added; could group reviews by connected ways to reduce the number of them). Since our output is a changeset and the command works outside of the UI review workflow, there is currently no way to handle reviews and they are dropped completely. | priority | come up with a way to handle reviews for the feature replacement workflow two types of reviews could be useful during feature replacement the first would be the regular conflate review and another could be flagging reviews for linear features snapped post conflation not yet added could group reviews by connected ways to reduce the number of them since our output is a changeset and the command works outside of the ui review workflow there is currently no way to handle reviews and they are dropped completely | 1 |

679,463 | 23,233,245,396 | IssuesEvent | 2022-08-03 09:28:25 | owncloud/web | https://api.github.com/repos/owncloud/web | closed | Selected item glues on bottom & scrolls for ⬆️ key up | Type:Bug Priority:p3-medium GA-Blocker | ### Steps to reproduce

1. Login to https://ocis.ocis-web.latest.owncloud.works/

2. upload ~100 files into a single folder

3. select one of the 100 files

4. press key down ⬇️ until scrolling starts

5. press key ⬆️ until scrolling starts

6. Selected item glues on bottom and scrolls on every keystroke

https://user-images.githubusercontent.com/26610733/180446452-a27ec8b3-ac8d-45aa-9635-4e21cd9132b4.mp4

### Expected behaviour

selection should "walk" to the top without scrolling, then start scrolling

https://user-images.githubusercontent.com/26610733/180445840-e7deb84f-da62-41c6-b625-156e48980383.mp4

### Actual behaviour

Selected item glues on bottom and scrolls on every keystroke

| 1.0 | Selected item glues on bottom & scrolls for ⬆️ key up - ### Steps to reproduce

1. Login to https://ocis.ocis-web.latest.owncloud.works/

2. upload ~100 files into a single folder

3. select one of the 100 files

4. press key down ⬇️ until scrolling starts

5. press key ⬆️ until scrolling starts

6. Selected item glues on bottom and scrolls on every keystroke

https://user-images.githubusercontent.com/26610733/180446452-a27ec8b3-ac8d-45aa-9635-4e21cd9132b4.mp4

### Expected behaviour

selection should "walk" to the top without scrolling, then start scrolling

https://user-images.githubusercontent.com/26610733/180445840-e7deb84f-da62-41c6-b625-156e48980383.mp4

### Actual behaviour

Selected item glues on bottom and scrolls on every keystroke

| priority | selected item glues on bottom scrolls for ⬆️ key up steps to reproduce login to upload files into a single folder select one of the files press key down ⬇️ until scrolling starts press key ⬆️ until scrolling starts selected item glues on bottom and scrolls on every keystroke expected behaviour selection should walk to the top without scrolling then start scrolling actual behaviour selected item glues on bottom and scrolls on every keystroke | 1 |

205,052 | 7,093,594,910 | IssuesEvent | 2018-01-12 21:12:42 | certificate-helper/TLS-Inspector | https://api.github.com/repos/certificate-helper/TLS-Inspector | closed | Limit the number of redirects TLS Inspector will follow | CertificateKit bug easy medium priority merged | **Affected Version:**

Since 1.6.0

**Is this a Test Flight version or the App Store version?**

App Store

**Device and iOS Version:**

All

**What steps will reproduce the problem?**

1. Navigate to a web page that triggers a redirect loop

**What is the expected output?**

A specific warning about a redirect loop

**What do you see instead?**

Long loading then eventually timeout

**Please provide any additional information below.**

| 1.0 | Limit the number of redirects TLS Inspector will follow - **Affected Version:**

Since 1.6.0

**Is this a Test Flight version or the App Store version?**

App Store

**Device and iOS Version:**

All

**What steps will reproduce the problem?**

1. Navigate to a web page that triggers a redirect loop

**What is the expected output?**

A specific warning about a redirect loop

**What do you see instead?**

Long loading then eventually timeout

**Please provide any additional information below.**

| priority | limit the number of redirects tls inspector will follow affected version since is this a test flight version or the app store version app store device and ios version all what steps will reproduce the problem navigate to a web page that triggers a redirect loop what is the expected output a specific warning about a redirect loop what do you see instead long loading then eventually timeout please provide any additional information below | 1 |

134,710 | 5,232,910,807 | IssuesEvent | 2017-01-30 11:03:23 | openworm/behavioral_syntax | https://api.github.com/repos/openworm/behavioral_syntax | closed | consider alternatives to compression(MDL) | medium priority | Following Andre's presentation at the OpenWorm journal club today it might be a good idea to look into using:

1) Hidden Markov Models

2) Statistical Analysis: use p-values to see whether there's any information gain from using n grams vs (n-1) grams

3) Use Hierarchical Markov Models

| 1.0 | consider alternatives to compression(MDL) - Following Andre's presentation at the OpenWorm journal club today it might be a good idea to look into using:

1) Hidden Markov Models

2) Statistical Analysis: use p-values to see whether there's any information gain from using n grams vs (n-1) grams

3) Use Hierarchical Markov Models

| priority | consider alternatives to compression mdl following andre s presentation at the openworm journal club today it might be a good idea to look into using hidden markov models statistical analysis use p values to see whether there s any information gain from using n grams vs n grams use hierarchical markov models | 1 |

623,214 | 19,663,333,600 | IssuesEvent | 2022-01-10 19:25:46 | ScottUK/ladojrp-issues | https://api.github.com/repos/ScottUK/ladojrp-issues | closed | PA System For Police | Class: enhancement Priority: medium Scope: scripts | **Describe the feature you'd like implemented**

There should be a PA system for police to use in their vehicles so if they need to yell for a vehicle to pull over or pull closer to the side of the road they can do so by using that implemented PA system.

| 1.0 | PA System For Police - **Describe the feature you'd like implemented**

There should be a PA system for police to use in their vehicles so if they need to yell for a vehicle to pull over or pull closer to the side of the road they can do so by using that implemented PA system.