Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 5 to 112) | repo_url (stringlengths, 34 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 957) | labels (stringlengths, 4 to 795) | body (stringlengths, 1 to 259k) | index (stringclasses, 12 values) | text_combine (stringlengths, 96 to 259k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 252k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

479,441 | 13,796,699,965 | IssuesEvent | 2020-10-09 20:19:32 | wp-media/wp-rocket | https://api.github.com/repos/wp-media/wp-rocket | closed | Delay JS - IE11: Object doesn't support property or method 'forEach' | effort: [XS] module: file optimization priority: medium type: bug | **Before submitting an issue please check that you’ve completed the following steps:**

- Made sure you’re on the latest version ✅

- Used the search feature to ensure that the bug hasn’t been reported before ✅

**Describe the bug**

The "rocket-delay-js-js-after" script we use contains a `forEach` call:

https://github.com/wp-media/wp-rocket/blob/7e8d54aaf5ad8ddffda404d7011931f1a43f3a53/assets/js/lazyload-scripts.js#L59

Calling `forEach` on a NodeList is not supported in IE11, and results in the following error:

```JavaScript

Object doesn't support property or method 'forEach'

```

which breaks the feature.

I'm quoting @engahmeds3ed who was kind enough to clarify the "why" this doesn't work in IE11:

> forEach() does work in IE11; you just need to be careful how you call it.

> querySelectorAll() is a method which returns a NodeList. On Internet Explorer, forEach() only works on Array objects. (It works on a NodeList in newer browsers, but that is not supported by IE11.)
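
The workaround described in the quote can be sketched as follows. This is a minimal illustration, not the actual WP Rocket fix: `forEachNode` is a hypothetical helper name, and the `'script'` selector is only a placeholder. It borrows `Array.prototype.forEach`, which IE11 does support, and applies it to the array-like NodeList.

```javascript
// Hypothetical helper (not part of WP Rocket): iterate over any array-like
// collection, such as the NodeList returned by querySelectorAll(), without
// relying on NodeList.prototype.forEach, which IE11 lacks.
function forEachNode(nodeList, callback) {
  // Array.prototype.forEach accepts any array-like object when invoked via call().
  Array.prototype.forEach.call(nodeList, callback);
}

// Usage in a browser; the selector is a placeholder chosen for illustration.
if (typeof document !== 'undefined') {
  forEachNode(document.querySelectorAll('script'), function (script) {
    console.log(script.src);
  });
}
```

Borrowing the array method avoids adding a global polyfill to `NodeList.prototype`, which is the other approach commonly suggested for IE11.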

**To Reproduce**

Steps to reproduce the behavior:

1. Enable **Delay JavaScript execution**.

2. Visit a site using IE11 on your PC or **browserstack.com**.

3. Open the JavaScript console.

4. See error.

**Expected behavior**

The feature should work on IE.

**Screenshots**

**Additional context**

**Related ticket:** https://secure.helpscout.net/conversation/1287835559/196361?folderId=2135277

**Potential solution:** https://rimdev.io/foreach-for-ie-11/

**IE11 worldwide market share:** [2.1% the previous year]( https://gs.statcounter.com/browser-version-market-share/desktop/worldwide/#monthly-201908-202008)

**Backlog Grooming (for WP Media dev team use only)**

- [x] Reproduce the problem

- [x] Identify the root cause

- [x] Scope a solution

- [x] Estimate the effort

| 1.0 | Delay JS - IE11: Object doesn't support property or method 'forEach' - **Before submitting an issue please check that you’ve completed the following steps:**

- Made sure you’re on the latest version ✅

- Used the search feature to ensure that the bug hasn’t been reported before ✅

**Describe the bug**

The "rocket-delay-js-js-after" script we use contains a `forEach` call:

https://github.com/wp-media/wp-rocket/blob/7e8d54aaf5ad8ddffda404d7011931f1a43f3a53/assets/js/lazyload-scripts.js#L59

Calling `forEach` on a NodeList is not supported in IE11, and results in the following error:

```JavaScript

Object doesn't support property or method 'forEach'

```

which breaks the feature.

I'm quoting @engahmeds3ed who was kind enough to clarify the "why" this doesn't work in IE11:

> forEach() does work in IE11; you just need to be careful how you call it.

> querySelectorAll() is a method which returns a NodeList. On Internet Explorer, forEach() only works on Array objects. (It works on a NodeList in newer browsers, but that is not supported by IE11.)

**To Reproduce**

Steps to reproduce the behavior:

1. Enable **Delay JavaScript execution**.

2. Visit a site using IE11 on your PC or **browserstack.com**.

3. Open the JavaScript console.

4. See error.

**Expected behavior**

The feature should work on IE.

**Screenshots**

**Additional context**

**Related ticket:** https://secure.helpscout.net/conversation/1287835559/196361?folderId=2135277

**Potential solution:** https://rimdev.io/foreach-for-ie-11/

**IE11 worldwide market share:** [2.1% the previous year]( https://gs.statcounter.com/browser-version-market-share/desktop/worldwide/#monthly-201908-202008)

**Backlog Grooming (for WP Media dev team use only)**

- [x] Reproduce the problem

- [x] Identify the root cause

- [x] Scope a solution

- [x] Estimate the effort

| priority | delay js object doesn t support property or method foreach before submitting an issue please check that you’ve completed the following steps made sure you’re on the latest version ✅ used the search feature to ensure that the bug hasn’t been reported before ✅ describe the bug the rocket delay js js after script we use contains a foreach method that s not supported on when using and results in the following error javascript object doesn t support property or method foreach which breaks the feature i m quoting who was kind enough to clarify the why this doesn t work in foreach is actually working on just be careful on how you call it queryselectorall is a method which return a nodelist and on internet explorer foreach only works on array objects it works with nodelist with not supported by to reproduce steps to reproduce the behavior enable delay javascript execution visit a site using on your pc or browserstack com open the javascript console see error expected behavior the feature should work on ie screenshots additional context related ticket potential solution worldwide market share backlog grooming for wp media dev team use only reproduce the problem identify the root cause scope a solution estimate the effort | 1 |

246,416 | 7,895,200,517 | IssuesEvent | 2018-06-29 01:43:39 | aowen87/BAR | https://api.github.com/repos/aowen87/BAR | closed | Rebuild fastbit and h5part for the 2.13.0 thirdparty_shared libraries at LLNL. | Expected Use: 3 - Occasional Feature Impact: 3 - Medium OS: All Priority: Normal Support Group: Any version: 2.12.3 | Allen updated bv_fastbit and bv_h5part on the trunk, so we should rebuild those libraries for our 2.13.0 thirdparty_shared libraries.

-----------------------REDMINE MIGRATION-----------------------

This ticket was migrated from Redmine. The following information

could not be accurately captured in the new ticket:

Original author: Eric Brugger

Original creation: 01/24/2017 04:38 pm

Original update: 03/01/2018 01:11 pm

Ticket number: 2743 | 1.0 | Rebuild fastbit and h5part for the 2.13.0 thirdparty_shared libraries at LLNL. - Allen updated bv_fastbit and bv_h5part on the trunk, so we should rebuild those libraries for our 2.13.0 thirdparty_shared libraries.

-----------------------REDMINE MIGRATION-----------------------

This ticket was migrated from Redmine. The following information

could not be accurately captured in the new ticket:

Original author: Eric Brugger

Original creation: 01/24/2017 04:38 pm

Original update: 03/01/2018 01:11 pm

Ticket number: 2743 | priority | rebuild fastbit and for the thirdparty shared libraries at llnl allen updated bv fastbit and bv on the trunk so we should rebuild those libraries for our thirdparty shared libraries redmine migration this ticket was migrated from redmine the following information could not be accurately captured in the new ticket original author eric brugger original creation pm original update pm ticket number | 1 |

523,988 | 15,193,567,389 | IssuesEvent | 2021-02-16 01:04:28 | code4lib/2021.code4lib.org | https://api.github.com/repos/code4lib/2021.code4lib.org | closed | Update Conference Schedule | Priority: Medium Status: In Progress | Kathy provided an extract from Whova with finalized schedule. I'll update the information on the website over the next few days. | 1.0 | Update Conference Schedule - Kathy provided an extract from Whova with finalized schedule. I'll update the information on the website over the next few days. | priority | update conference schedule kathy provided an extract from whova with finalized schedule i ll update the information on the website over the next few days | 1 |

451,696 | 13,040,314,999 | IssuesEvent | 2020-07-28 18:17:21 | dnnsoftware/Dnn.Platform | https://api.github.com/repos/dnnsoftware/Dnn.Platform | closed | Site Import/Export fails due to pre-filled Server name on task | Area: AE > PersonaBar Ext > SiteImportExport.Web Effort: Medium Priority: Medium Status: Ready for Development Type: Bug | <!--

Please read contribution guideline first: https://github.com/dnnsoftware/Dnn.Platform/blob/development/CONTRIBUTING.md

Any potential security issues should be sent to security@dnnsoftware.com, rather than posted on GitHub

-->

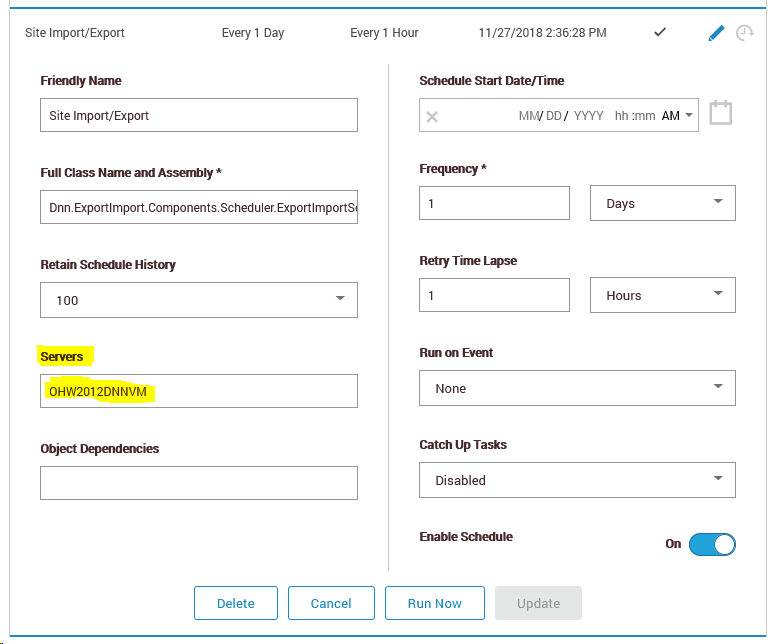

## Description of bug

On a newly installed site, the Servers box on the Site Import/Export scheduled task is pre-filled with the name of the server DNN was installed on. However, on an Azure App Service the server name frequently changes (e.g. whenever you restart the app service) which causes the scheduled task to fail.

Therefore we should consider leaving the Servers box blank (meaning run this task on the current server) on a fresh installation.

## Steps to reproduce

List the steps to reproduce the behavior:

1. Install DNN 9.2.2 on an Azure App Service

2. Restart the App Service, so the server name changes

3. Kick off a Site Export from PersonaBar > Settings > Site Import/Export

4. Note how the status moves to In Progress, but never completes

5. Visit PersonaBar > Settings > Scheduler

6. Edit the Site Import/Export scheduled task, removing anything from the Servers box.

7. Re-run the task

8. Re-visit PersonaBar > Settings > Site Import/Export and note the task will now run to completion

## Current result

Explain what the current result is.

## Expected result

Provide a clear and concise description of what you expected to happen.

## Screenshots

## Affected version

<!-- Check all that apply and add more if necessary -->

* [x] 9.2.2

(I haven't tested older versions)

## Affected browser

n/a

| 1.0 | Site Import/Export fails due to pre-filled Server name on task - <!--

Please read contribution guideline first: https://github.com/dnnsoftware/Dnn.Platform/blob/development/CONTRIBUTING.md

Any potential security issues should be sent to security@dnnsoftware.com, rather than posted on GitHub

-->

## Description of bug

On a newly installed site, the Servers box on the Site Import/Export scheduled task is pre-filled with the name of the server DNN was installed on. However, on an Azure App Service the server name frequently changes (e.g. whenever you restart the app service) which causes the scheduled task to fail.

Therefore we should consider leaving the Servers box blank (meaning run this task on the current server) on a fresh installation.

## Steps to reproduce

List the steps to reproduce the behavior:

1. Install DNN 9.2.2 on an Azure App Service

2. Restart the App Service, so the server name changes

3. Kick off a Site Export from PersonaBar > Settings > Site Import/Export

4. Note how the status moves to In Progress, but never completes

5. Visit PersonaBar > Settings > Scheduler

6. Edit the Site Import/Export scheduled task, removing anything from the Servers box.

7. Re-run the task

8. Re-visit PersonaBar > Settings > Site Import/Export and note the task will now run to completion

## Current result

Explain what the current result is.

## Expected result

Provide a clear and concise description of what you expected to happen.

## Screenshots

## Affected version

<!-- Check all that apply and add more if necessary -->

* [x] 9.2.2

(I haven't tested older versions)

## Affected browser

n/a

| priority | site import export fails due to pre filled server name on task please read contribution guideline first any potential security issues should be sent to security dnnsoftware com rather than posted on github description of bug on a newly installed site the servers box on the site import export scheduled task is pre filled with the name of the server dnn was installed on however on an azure app service the server name frequently changes e g whenever you restart the app service which causes the scheduled task to fail therefore we should consider leaving the servers box blank meaning run this task on the current server on a fresh installation steps to reproduce list the steps to reproduce the behavior install dnn on an azure app service restart the app service so the server name changes kick off a site export from personabar settings site import export note how the status moves to in progress but never completes visit personabar settings scheduler edit the site import export scheduled task removing anything from the servers box re run the task re visit personabar settings site import export and note the task will now run to completion current result explain what the current result is expected result provide a clear and concise description of what you expected to happen screenshots affected version i haven t tested older versions affected browser n a | 1 |

502,453 | 14,546,497,472 | IssuesEvent | 2020-12-15 21:20:00 | rubyforgood/casa | https://api.github.com/repos/rubyforgood/casa | closed | remove Case Contacts view from Supervisor and Admin dashboards | :clipboard: Supervisor :crown: Admin Priority: Medium | **What type of user is this for? volunteer/supervisor/admin/all OR All CASA Admin**

admins and supervisors

**Description**

The Case Contacts view can be removed for both admins and supervisors. They can already see this data by clicking on a `casa_case`, or by generating a report.

**Screenshots of current behavior, if any**

This is what the Case Contacts view looks like, except it goes on forever because there are many case contacts associated with each case. It is no longer needed.

<img width="1324" alt="Screen Shot 2020-10-24 at 3 23 33 PM" src="https://user-images.githubusercontent.com/62810851/97094794-050c2500-160d-11eb-8386-af6615fc7d3a.png">

| 1.0 | remove Case Contacts view from Supervisor and Admin dashboards - **What type of user is this for? volunteer/supervisor/admin/all OR All CASA Admin**

admins and supervisors

**Description**

The Case Contacts view can be removed for both admins and supervisors. They can already see this data by clicking on a `casa_case`, or by generating a report.

**Screenshots of current behavior, if any**

This is what the Case Contacts view looks like, except it goes on forever because there are many case contacts associated with each case. It is no longer needed.

<img width="1324" alt="Screen Shot 2020-10-24 at 3 23 33 PM" src="https://user-images.githubusercontent.com/62810851/97094794-050c2500-160d-11eb-8386-af6615fc7d3a.png">

| priority | remove case contacts view from supervisor and admin dashboards what type of user is this for volunteer supervisor admin all or all casa admin admins and supervisors description the case contacts view can be removed for both admins and supervisors they can already see this data by clicking on a casa case or by generating a report screenshots of current behavior if any this is what the case contacts view looks like except it goes on forever because there are many case contacts associated with each case it is no longer needed img width alt screen shot at pm src | 1 |

83,841 | 3,643,817,670 | IssuesEvent | 2016-02-15 05:41:01 | vega/vega-lite | https://api.github.com/repos/vega/vega-lite | opened | Do not output stroke property e.g., strokeWidth when there is no stroke fill. | bug Priority/3-Medium | (I guess the same has to be true for fill.) | 1.0 | Do not output stroke property e.g., strokeWidth when there is no stroke fill. - (I guess the same has to be true for fill.) | priority | do not output stroke property e g strokewidth when there is no stroke fill i guess the same has to be true for fill | 1 |

229,490 | 7,575,123,816 | IssuesEvent | 2018-04-23 23:54:25 | adrn/gala | https://api.github.com/repos/adrn/gala | closed | Add "fast" option to pericenter/apocenter and support multiple orbits | bug enhancement priority:medium | Right now, `.pericenter()` and `.apocenter()` are slow because they do interpolation to figure out a precise value. There should be a `fast=True` option that skips the interpolation.

We also need to support these methods for multiple orbits in the same object. | 1.0 | Add "fast" option to pericenter/apocenter and support multiple orbits - Right now, `.pericenter()` and `.apocenter()` are slow because they do interpolation to figure out a precise value. There should be a `fast=True` option that skips the interpolation.

We also need to support these methods for multiple orbits in the same object. | priority | add fast option to pericenter apocenter and support multiple orbits right now pericenter and apocenter are slow because they do interpolation to figure out a precise value there should be a fast true option that skips the interpolation we also need to support these methods for multiple orbits in the same object | 1 |

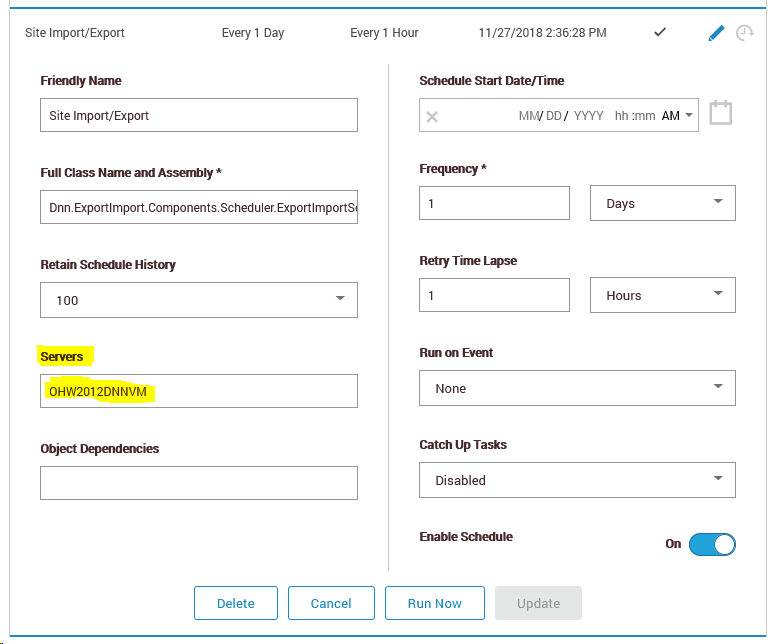

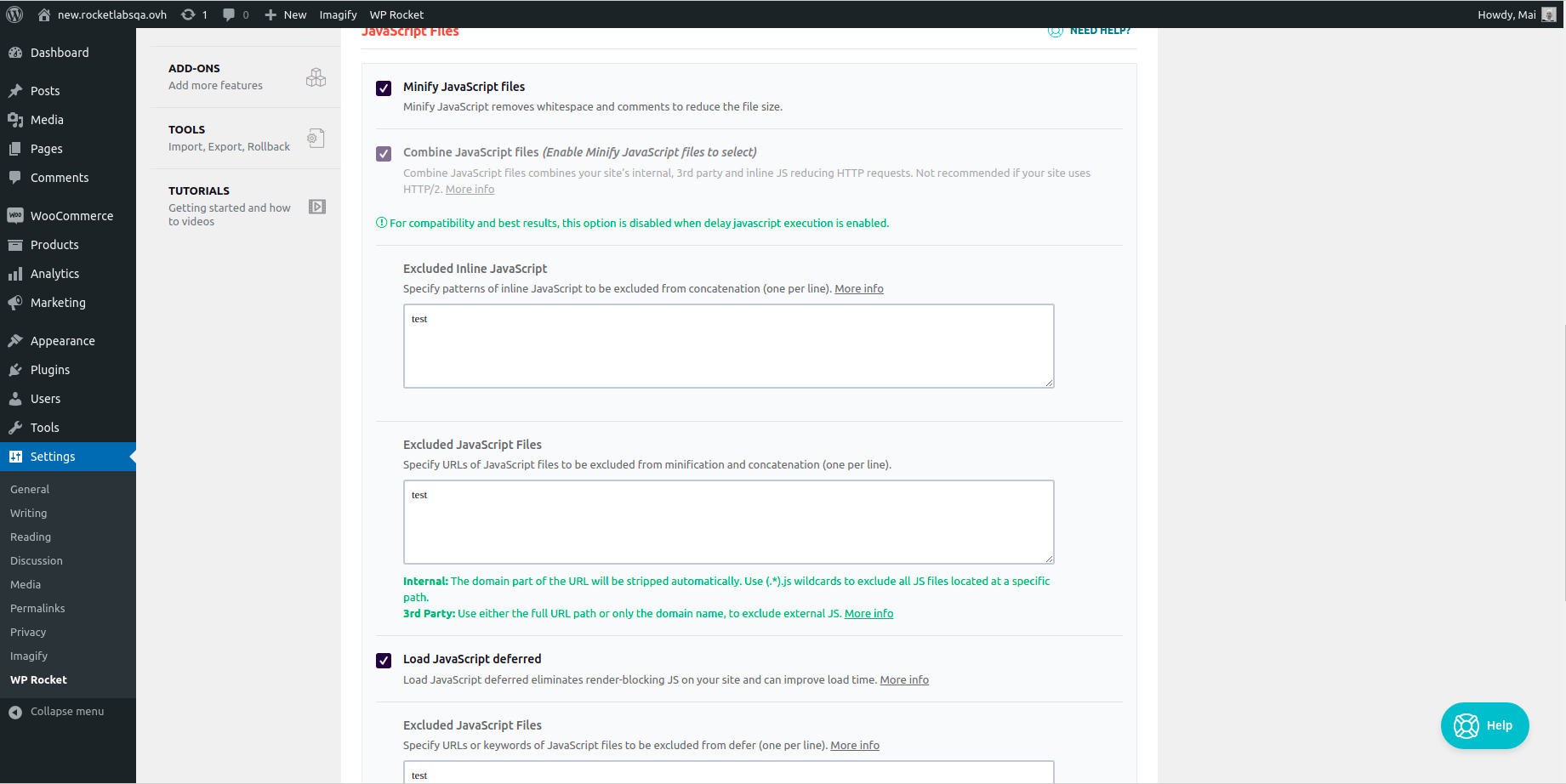

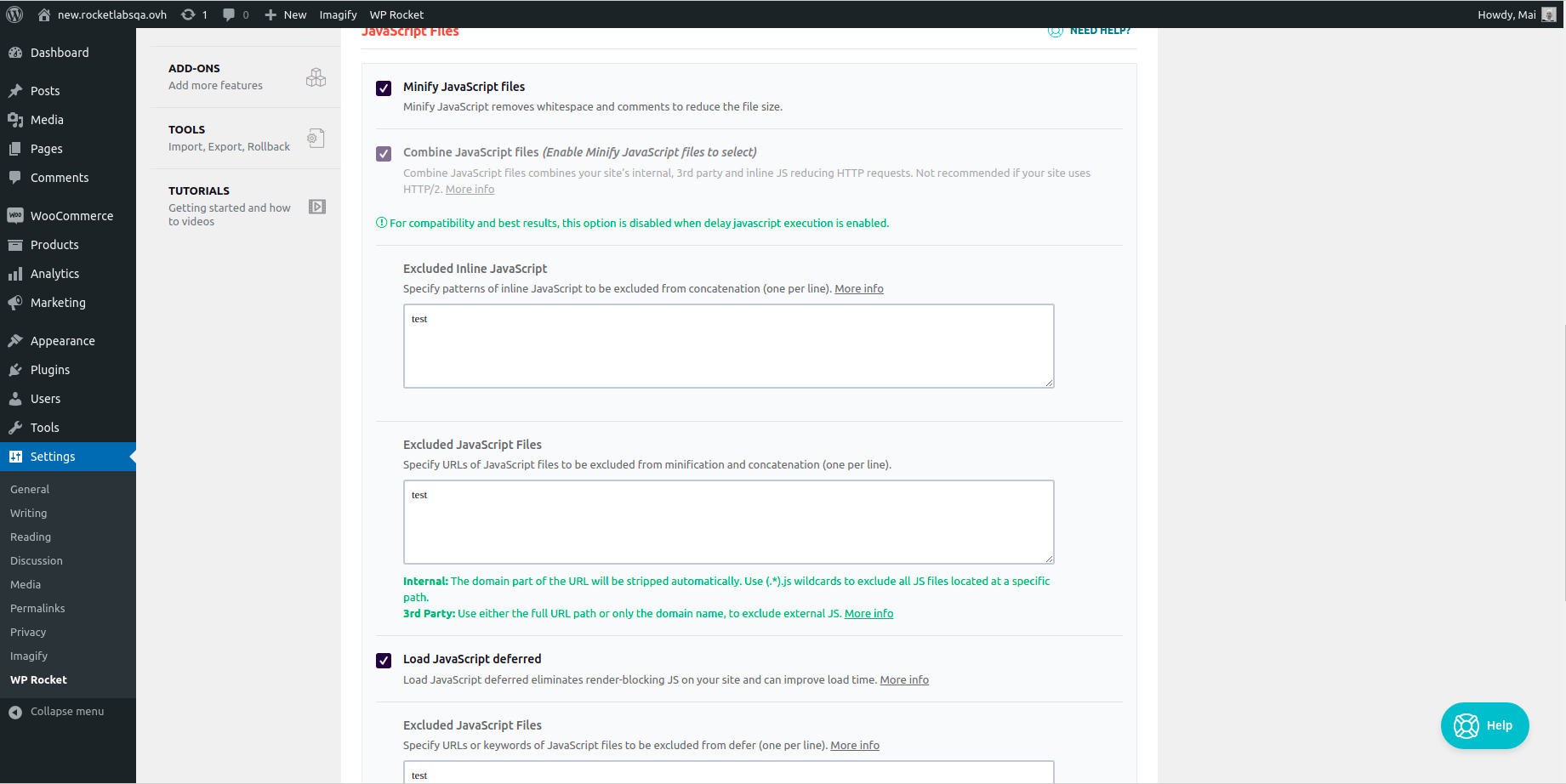

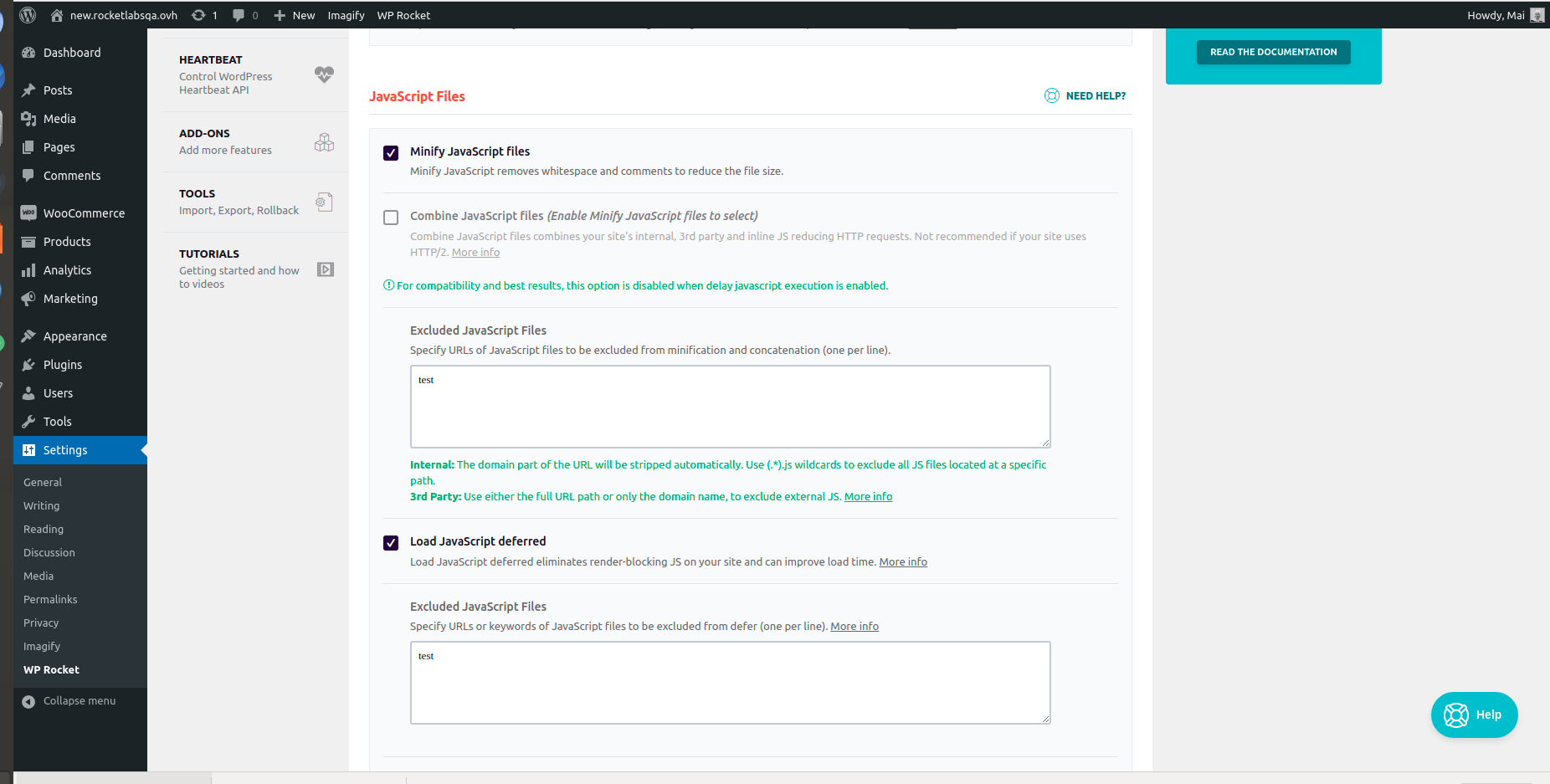

547,798 | 16,047,641,826 | IssuesEvent | 2021-04-22 15:16:47 | wp-media/wp-rocket | https://api.github.com/repos/wp-media/wp-rocket | opened | UI of combine js needs to match design when import settings having delay js and combine js on | module: combine JS module: tools priority: medium severity: minor type: bug | **Before submitting an issue please check that you’ve completed the following steps:**

- Made sure you’re on the latest version => Y

- Used the search feature to ensure that the bug hasn’t been reported before => Y

**Describe the bug**

UI of combine js needs to match design when import settings having delay js and combine js on

**To Reproduce**

Precondition:

- exported settings exist from version < 3.9 with delay js and combine js on

- WPR 3.9 installed and activated

Steps to reproduce the behavior:

1. Go to the tools tab and import settings

2. open file optimization tab

3. check the UI for combine js

**Expected behavior**

Combine js is dimmed and unchecked

**Screenshots**

If applicable, add screenshots to help explain your problem.

**Additional context**

Expected is

**Backlog Grooming (for WP Media dev team use only)**

- [ ] Reproduce the problem

- [ ] Identify the root cause

- [ ] Scope a solution

- [ ] Estimate the effort

| 1.0 | UI of combine js needs to match design when import settings having delay js and combine js on - **Before submitting an issue please check that you’ve completed the following steps:**

- Made sure you’re on the latest version => Y

- Used the search feature to ensure that the bug hasn’t been reported before => Y

**Describe the bug**

UI of combine js needs to match design when import settings having delay js and combine js on

**To Reproduce**

Precondition:

- exported settings exist from version < 3.9 with delay js and combine js on

- WPR 3.9 installed and activated

Steps to reproduce the behavior:

1. Go to the tools tab and import settings

2. open file optimization tab

3. check the UI for combine js

**Expected behavior**

Combine js is dimmed and unchecked

**Screenshots**

If applicable, add screenshots to help explain your problem.

**Additional context**

Expected is

**Backlog Grooming (for WP Media dev team use only)**

- [ ] Reproduce the problem

- [ ] Identify the root cause

- [ ] Scope a solution

- [ ] Estimate the effort

| priority | ui of combine js needs to match design when import settings having delay js and combine js on before submitting an issue please check that you’ve completed the following steps made sure you’re on the latest version y used the search feature to ensure that the bug hasn’t been reported before y describe the bug ui of combine js needs to match design when import settings having delay js and combine js on to reproduce precondition exported settings exist from version with delay js and combine js on wpr installed and activated steps to reproduce the behavior go to the tools tab and import settings open file optimization tab check the ui for combine js expected behavior combine js is dimmed and unchecked screenshots if applicable add screenshots to help explain your problem additional context expected is backlog grooming for wp media dev team use only reproduce the problem identify the root cause scope a solution estimate the effort | 1 |

77,019 | 3,506,248,209 | IssuesEvent | 2016-01-08 04:59:07 | OregonCore/OregonCore | https://api.github.com/repos/OregonCore/OregonCore | closed | Player limit (BB #79) | migrated Priority: Medium Type: Bug | This issue was migrated from bitbucket.

**Original Reporter:**

**Original Date:** 20.03.2010 16:19:10 GMT+0000

**Original Priority:** major

**Original Type:** bug

**Original State:** resolved

**Direct Link:** https://bitbucket.org/oregon/oregoncore/issues/79

<hr>

You're not allowed to set negative values for GMs.

Like, .server plimit -2 would make only Game Masters able to access the server but it simply ignores that and lets everyone still in. | 1.0 | Player limit (BB #79) - This issue was migrated from bitbucket.

**Original Reporter:**

**Original Date:** 20.03.2010 16:19:10 GMT+0000

**Original Priority:** major

**Original Type:** bug

**Original State:** resolved

**Direct Link:** https://bitbucket.org/oregon/oregoncore/issues/79

<hr>

You're not allowed to set negative values for GMs.

Like, .server plimit -2 would make only Game Masters able to access the server but it simply ignores that and lets everyone still in. | priority | player limit bb this issue was migrated from bitbucket original reporter original date gmt original priority major original type bug original state resolved direct link you re not allowed to set negative values for gms like server plimit would make only game masters able to access the server but it simply ignores that and lets everyone still in | 1 |

744,299 | 25,937,559,190 | IssuesEvent | 2022-12-16 15:24:31 | trimble-oss/website-modus.trimble.com | https://api.github.com/repos/trimble-oss/website-modus.trimble.com | closed | Submission guidelines - move them to GitHub | 2 priority:medium | RE: https://modus.trimble.com/community/submission-guidelines/

We could move the form and instructions to GitHub as a template. | 1.0 | Submission guidelines - move them to GitHub - RE: https://modus.trimble.com/community/submission-guidelines/

We could move the form and instructions to GitHub as a template. | priority | submission guidelines move them to github re we could move the form and instructions to github as a template | 1 |

698,884 | 23,995,724,738 | IssuesEvent | 2022-09-14 07:22:50 | redhat-developer/odo | https://api.github.com/repos/redhat-developer/odo | closed | add `app.openshift.io/runtime` label to resources created by odo | priority/Medium kind/user-story | /kind user-story

## User Story

As an odo and ODC user, I want to see language/framework icons in ODC topology view So that I can quickly recognize what is running and so that I have a consistent view with components created by ODC.

<img width="573" alt="Screenshot 2022-08-22 at 17 12 39" src="https://user-images.githubusercontent.com/57206/185956224-eeba324e-6057-422a-9675-9c1de05738ef.png">

The left is the component with the correct labels deployed with ODC, the right is nodejs component deployed using `odo dev`

## Acceptance Criteria

- [ ] `odo dev` should add `app.openshift.io/runtime` label to all resources created with value of `metadata.language` field in devfile.yaml

- [ ] `odo deploy` should add `app.openshift.io/runtime` label to all resources created with value of `metadata.language` field in devfile.yaml

/kind user-story

/priority medium

| 1.0 | add `app.openshift.io/runtime` label to resources created by odo - /kind user-story

## User Story

As an odo and ODC user, I want to see language/framework icons in ODC topology view So that I can quickly recognize what is running and so that I have a consistent view with components created by ODC.

<img width="573" alt="Screenshot 2022-08-22 at 17 12 39" src="https://user-images.githubusercontent.com/57206/185956224-eeba324e-6057-422a-9675-9c1de05738ef.png">

The left is the component with the correct labels deployed with ODC, the right is nodejs component deployed using `odo dev`

## Acceptance Criteria

- [ ] `odo dev` should add `app.openshift.io/runtime` label to all resources created with value of `metadata.language` field in devfile.yaml

- [ ] `odo deploy` should add `app.openshift.io/runtime` label to all resources created with value of `metadata.language` field in devfile.yaml

/kind user-story

/priority medium

| priority | add app openshift io runtime label to resources created by odo kind user story user story as an odo and odc user i want to see language framework icons in odc topology view so that i can quickly recognize what is running and so that i have a consistent view with components created by odc img width alt screenshot at src the left is the component with the correct labels deployed with odc the right is nodejs component deployed using odo dev acceptance criteria odo dev should add app openshift io runtime label to all resources created with value of metadata language field in devfile yaml odo deploy should add app openshift io runtime label to all resources created with value of metadata language field in devfile yaml kind user story priority medium | 1 |

264,250 | 8,306,910,939 | IssuesEvent | 2018-09-23 00:53:23 | pennmush/pennmush | https://api.github.com/repos/pennmush/pennmush | closed | File descriptor leak with curl | Component-HTTP bug priority medium | Doing a `@shutdown/reboot` when there are outstanding `@http` requests causes the sockets being used for those to be forgotten but still left open. This will eventually take up all available descriptors.

Fix (When I have a few minutes to write it) will probably involve setting the CLOEXEC flag on those descriptors. | 1.0 | File descriptor leak with curl - Doing a `@shutdown/reboot` when there are outstanding `@http` requests causes the sockets being used for those to be forgotten but still left open. This will eventually take up all available descriptors.

Fix (When I have a few minutes to write it) will probably involve setting the CLOEXEC flag on those descriptors. | priority | file descriptor leak with curl doing a shutdown reboot when there are outstanding http requests causes the sockets being used for those to be forgotten but still left open this will eventually take up all available descriptors fix when i have a few minutes to write it will probably involve setting the cloexec flag on those descriptors | 1 |

204,365 | 7,087,353,331 | IssuesEvent | 2018-01-11 17:31:05 | salesagility/SuiteCRM | https://api.github.com/repos/salesagility/SuiteCRM | closed | Apply Status to Case Updates | Fix Proposed Medium Priority Resolved: Next Release bug | <!--- Provide a general summary of the issue in the **Title** above -->

<!--- Before you open an issue, please check if a similar issue already exists or has been closed before. --->

#### Issue

<!--- Provide a more detailed introduction to the issue itself, and why you consider it to be a bug -->

This affects the AOP and Case flow.

The status changes configured under "Admin > AOP Setting > Case Status Changes" are not applied.

#### Expected Behavior

<!--- Tell us what should happen -->

When a new case update exists, the system must apply the state changes as established

#### Actual Behavior

<!--- Tell us what happens instead -->

The case status does not change.

#### Possible Fix

<!--- Not obligatory, but suggest a fix or reason for the bug -->

Location: .../modules/AOP_Case_Updates/CaseUpdateHook.php

Class, function: updateCaseStatus (line 299)

```

/* PPW - CODE - CORE modification */

// if (!empty($case->id)) {

if (empty($case->id)) {

```

NOTE: the "!" was deleted.

#### Steps to Reproduce

<!--- Provide a link to a live example, or an unambiguous set of steps to -->

<!--- reproduce this bug include code to reproduce, if relevant -->

1. Go to Admin > Email Settings and configure smtp mail

2. Go to Admin > Inbound Email and configure inbound mail

3. Go to Admin > AOP Setting > Enable AOP and configure "Case Status Changes"

4. Go to Cases > Create a case update

5. Go to the end client's email and reply to the mail

6. Go to the case and verify that the status has not been changed

#### Context

<!--- How has this bug affected you? What were you trying to accomplish? -->

<!--- If you feel this should be a low/medium/high priority then please state so -->

Customer Support. High priority

#### Your Environment

<!--- Include as many relevant details about the environment you experienced the bug in -->

* SuiteCRM Version used: 7.8.2

* Browser name and version (e.g. Chrome Version 51.0.2704.63 (64-bit)): All

* Environment name and version (e.g. MySQL, PHP 7): Mysql/MariaDb, PHP7

* Operating System and version (e.g Ubuntu 16.04): Ubuntu 16.04

| 1.0 | Apply Status to Case Updates - <!--- Provide a general summary of the issue in the **Title** above -->

<!--- Before you open an issue, please check if a similar issue already exists or has been closed before. --->

#### Issue

<!--- Provide a more detailed introduction to the issue itself, and why you consider it to be a bug -->

This affects the AOP and Case flow.

The status changes configured under "Admin > AOP Setting > Case Status Changes" are not applied.

#### Expected Behavior

<!--- Tell us what should happen -->

When a new case update exists, the system must apply the state changes as established

#### Actual Behavior

<!--- Tell us what happens instead -->

The case status does not change.

#### Possible Fix

<!--- Not obligatory, but suggest a fix or reason for the bug -->

Location: .../modules/AOP_Case_Updates/CaseUpdateHook.php

Class, function: updateCaseStatus (line 299)

```

/* PPW - CODE - CORE modification */

// if (!empty($case->id)) {

if (empty($case->id)) {

```

NOTE: the "!" was deleted.

#### Steps to Reproduce

<!--- Provide a link to a live example, or an unambiguous set of steps to -->

<!--- reproduce this bug include code to reproduce, if relevant -->

1. Go to Admin > Email Settings and configure smtp mail

2. Go to Admin > Inbound Email and configure inbound mail

3. Go to Admin > AOP Setting > Enable AOP and configure "Case Status Changes"

4. Go to Cases > Create a case update

5. Go to the end client's email and reply to the mail

6. Go to the case and verify that the status has not been changed

#### Context

<!--- How has this bug affected you? What were you trying to accomplish? -->

<!--- If you feel this should be a low/medium/high priority then please state so -->

Customer Support. High priority

#### Your Environment

<!--- Include as many relevant details about the environment you experienced the bug in -->

* SuiteCRM Version used: 7.8.2

* Browser name and version (e.g. Chrome Version 51.0.2704.63 (64-bit)): All

* Environment name and version (e.g. MySQL, PHP 7): Mysql/MariaDb, PHP7

* Operating System and version (e.g Ubuntu 16.04): Ubuntu 16.04

| priority | apply status to case updates issue at aop and case flow in the area admin aop setting case status changes not running expected behavior when a new case update exists the system must apply the state changes as established actual behavior not change possible fix ubicación modules aop case updates caseupdatehook php clase funcion updatecasestatus line ppw code modifiación core if empty case id if empty case id note deleted steps to reproduce go to admin email settings and configure smtp mail go to admin inbound email and configure inbound mail go to admin aop setting enable aop and configure case status changes go to cases creare a update case go to email final client and repply mail go to the case and verify that the status has not been changed context customer support high priority your environment suitecrm version used browser name and version e g chrome version bit all environment name and version e g mysql php mysql mariadb operating system and version e g ubuntu ubuntu | 1 |

331,181 | 10,061,395,662 | IssuesEvent | 2019-07-22 21:13:59 | svof/svof | https://api.github.com/repos/svof/svof | closed | Monk Transmute | bug confirmed futher analysis needed in-client medium priority | Forwarded by Andraste. Monk transmute apparently is having some issues so will need to have a look at it. | 1.0 | Monk Transmute - Forwarded by Andraste. Monk transmute apparently is having some issues so will need to have a look at it. | priority | monk transmute forwarded by andraste monk transmute apparently is having some issues so will need to have a look at it | 1 |

298,339 | 9,199,015,802 | IssuesEvent | 2019-03-07 14:04:06 | cms-gem-daq-project/gem-plotting-tools | https://api.github.com/repos/cms-gem-daq-project/gem-plotting-tools | closed | Bug Report: anaDACScans.py generates KeyError if OH0 not in chamber_config | Priority: Medium Status: Help Wanted Type: Bug | <!--- Provide a general summary of the issue in the Title above -->

## Brief summary of issue

<!--- Provide a description of the issue, including any other issues or pull requests it references -->

`anaDACScans.py` throws a `KeyError` if `0` (OH0) is not in the list of keys for `chamber_config`.

### Types of issue

<!--- Propsed labels (see CONTRIBUTING.md) to help maintainers label your issue: -->

- [X] Bug report (report an issue with the code)

- [ ] Feature request (request for change which adds functionality)

## Expected Behavior

<!--- If you're describing a bug, tell us what should happen -->

<!--- If you're suggesting a change/improvement, tell us how it should work -->

This should not throw.

I can imagine two cases:

1. data is taken and OH0 actually existed in the data but for some reason `chamber_config` was not updated correctly,

2. OH0 is assigned as a default for the case of missing links and exists as a dummy.

In case 1 we might want to still analyze this data and place it in some "uncategorised" location. In case 2 perhaps the GEM Tree format should be updated to not set this default link...

## Current Behavior

<!--- If describing a bug, tell us what happens instead of the expected behavior -->

<!--- If suggesting a change/improvement, explain the difference from current behavior -->

These lines will generate a `KeyError` if `0` is not in `chamber_config` dictionary of `chamberInfo.py`:

https://github.com/cms-gem-daq-project/gem-plotting-tools/blob/390a76897576eb0eba97eb8286c644b418891025/anaDACScan.py#L106-L112

## Possible Solution (for bugs)

<!--- Not obligatory, but suggest a fix/reason for the bug, -->

<!--- or ideas how to implement the addition or change -->

Set the chamber name as follows:

```python
# Fall back to a placeholder name when this OH has no entry in chamber_config
if oh in chamber_config:
    cName = chamber_config[oh]
else:
    cName = "unknown"
```

Then `cName` is passed to `runCommand` instead of `chamber_config[oh]`. Not sure if this is the best solution though.
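
The lookup above can also be written with `dict.get`, which returns a fallback when the key is absent. The following is only a minimal sketch, not the project's code: the contents of `chamber_config` and the helper name `chamber_name` are made up for illustration, and `"unknown"` is the placeholder suggested above.

```python
# Hypothetical stand-in for the chamber_config dictionary in chamberInfo.py.
# The keys are OH link numbers; the names here are invented for the example.
chamber_config = {1: "chamber_A", 2: "chamber_B"}


def chamber_name(oh, config, default="unknown"):
    """Return the chamber name for link `oh`, or `default` if it is missing."""
    # dict.get never raises KeyError; it returns `default` for absent keys.
    return config.get(oh, default)


print(chamber_name(0, chamber_config))  # OH0 is absent -> unknown
print(chamber_name(1, chamber_config))  # present -> chamber_A
```

Whether analysing the data under a placeholder name is the right behaviour is still the open question raised above; the sketch only avoids the exception.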

## Context (for feature requests)

<!--- How has this issue affected you? What are you trying to accomplish? -->

<!--- Providing context helps us come up with a solution that is most useful in the real world -->

Prevents data analysis of DAC scans.

## Your Environment

<!--- Include as many relevant details about the environment you experienced the bug in -->

* Version used: 59ce1e4cf86c0c9a949969f2df22958a0b8ed43f

* Shell used: `zsh`

<!--- Template thanks to https://www.talater.com/open-source-templates/#/page/98 -->

| 1.0 | Bug Report: anaDACScans.py generates KeyError if OH0 not in chamber_config - <!--- Provide a general summary of the issue in the Title above -->

## Brief summary of issue

<!--- Provide a description of the issue, including any other issues or pull requests it references -->

`anaDACScans.py` throws a `KeyError` if `0` (OH0) is not in the list of keys for `chamber_config`.

### Types of issue

<!--- Propsed labels (see CONTRIBUTING.md) to help maintainers label your issue: -->

- [X] Bug report (report an issue with the code)

- [ ] Feature request (request for change which adds functionality)

## Expected Behavior

<!--- If you're describing a bug, tell us what should happen -->

<!--- If you're suggesting a change/improvement, tell us how it should work -->

This should not throw.

I can imagine two cases:

1. data is taken and OH0 actually existed in the data but for some reason `chamber_config` was not updated correctly,

2. OH0 is assigned as a default for the case of missing links and exists as a dummy.

In case 1 we might want to still analyze this data and place it in some "uncategorised" location. In case 2 perhaps the GEM Tree format should be updated to not set this default link...

## Current Behavior

<!--- If describing a bug, tell us what happens instead of the expected behavior -->

<!--- If suggesting a change/improvement, explain the difference from current behavior -->

These lines will generate a `KeyError` if `0` is not in `chamber_config` dictionary of `chamberInfo.py`:

https://github.com/cms-gem-daq-project/gem-plotting-tools/blob/390a76897576eb0eba97eb8286c644b418891025/anaDACScan.py#L106-L112

## Possible Solution (for bugs)

<!--- Not obligatory, but suggest a fix/reason for the bug, -->

<!--- or ideas how to implement the addition or change -->

Set the chamber name as follows:

```python
# Fall back to a placeholder name when this OH has no entry in chamber_config
if oh in chamber_config:
    cName = chamber_config[oh]
else:
    cName = "unknown"
```

Then `cName` is passed to `runCommand` instead of `chamber_config[oh]`. Not sure if this is the best solution though.

## Context (for feature requests)

<!--- How has this issue affected you? What are you trying to accomplish? -->

<!--- Providing context helps us come up with a solution that is most useful in the real world -->

Prevents data analysis of DAC scans.

## Your Environment

<!--- Include as many relevant details about the environment you experienced the bug in -->

* Version used: 59ce1e4cf86c0c9a949969f2df22958a0b8ed43f

* Shell used: `zsh`

<!--- Template thanks to https://www.talater.com/open-source-templates/#/page/98 -->

| priority | bug report anadacscans py generates keyerror if not in chamber config brief summary of issue anadacscans py throws a keyerror if is not in the list of keys for chamber config types of issue bug report report an issue with the code feature request request for change which adds functionality expected behavior this should not throw i can imagine two cases data is taken and actually existed in the data but for some reason chamber config was not updated correctly is assigned as a default for the case of missing links and exists as a dummy in case we might want to still analyze this data and place it in some uncategorised location in case perhaps the gem tree format should be updated to not set this default link current behavior these lines will generate a keyerror if is not in chamber config dictionary of chamberinfo py possible solution for bugs set the chamber name as follows python if oh in chamber config keys cname chamber config else cname unknown then cname is passed to runcommand instead of chamber config not sure if this is the best solution though context for feature requests prevents data analysis of dac scans your environment version used shell used zsh | 1 |

696,087 | 23,883,832,122 | IssuesEvent | 2022-09-08 05:33:10 | space-wizards/space-station-14 | https://api.github.com/repos/space-wizards/space-station-14 | opened | Need an ingame way to list values for debugging | Priority: 3-Not Required Issue: Feature Request Difficulty: 2-Medium | Even if the UI is just generic and it requires custom code to fill in the data. Extremely useful for balancing being able to see everything at once.

E.g.

List all melee weapons with their cooldowns, range, arcs, etc. | 1.0 | Need an ingame way to list values for debugging - Even if the UI is just generic and it requires custom code to fill in the data. Extremely useful for balancing being able to see everything at once.

E.g.

List all melee weapons with their cooldowns, range, arcs, etc. | priority | need an ingame way to list values for debugging even if the ui is just generic and it requires custom code to fill in the data extremely useful for balancing being able to see everything at once e g list all melee weapons with their cooldowns range arcs etc | 1 |

16,861 | 2,615,125,371 | IssuesEvent | 2015-03-01 05:53:28 | chrsmith/google-api-java-client | https://api.github.com/repos/chrsmith/google-api-java-client | opened | A very small code required to obtain accessToken from Authorization Token | auto-migrated Priority-Medium Type-Sample | ```

Which Google API and version (e.g. Google Calendar Data API version 2)?

Google Analytics Reporting for Android

What format (e.g. JSON, Atom)?

JSON , Java

What Authentication (e.g. OAuth, OAuth 2, ClientLogin)?

OAuth 2

Java environment (e.g. Java 6, Android 2.3, App Engine)?

Java 6, Android 2.3+

External references, such as API reference guide?

Please provide any additional information below.

The main problem I am facing is that I have successfully obtained the authorization

token using the Account Manager for Analytics, but there is not a single piece of code for

getting an access token from the authorization token. Please help!

```

Original issue reported on code.google.com by `abdulreh...@gmail.com` on 27 Mar 2012 at 7:23 | 1.0 | A very small code required to obtain accessToken from Authorization Token - ```

Which Google API and version (e.g. Google Calendar Data API version 2)?

Google Analytics Reporting for Android

What format (e.g. JSON, Atom)?

JSON , Java

What Authentication (e.g. OAuth, OAuth 2, ClientLogin)?

OAuth 2

Java environment (e.g. Java 6, Android 2.3, App Engine)?

Java 6, Android 2.3+

External references, such as API reference guide?

Please provide any additional information below.

The main problem I am facing is that I have successfully obtained the authorization

token using the Account Manager for Analytics, but there is not a single piece of code for

getting an access token from the authorization token. Please help!

```

Original issue reported on code.google.com by `abdulreh...@gmail.com` on 27 Mar 2012 at 7:23 | priority | a very small code required to obtain accesstoken from authorization token which google api and version e g google calendar data api version google analytics reporting for android what format e g json atom json java what authentation e g oauth oauth clientlogin oauth java environment e g java android app engine java android external references such as api reference guide please provide any additional information below the main problem i am facing is i have successfully obtained the authorization token using account manager for analytics but there is no single code of getting of accesstoken from the authorization token please help original issue reported on code google com by abdulreh gmail com on mar at | 1 |

520,740 | 15,091,994,840 | IssuesEvent | 2021-02-06 17:45:36 | KoderKow/twitchr | https://api.github.com/repos/KoderKow/twitchr | closed | Get Clips | Difficulty: [2] Intermediate Effort: [2] Medium Priority: [1] Low Type: ★ Enhancement | Gets clip information by clip ID (one or more), broadcaster ID (one only), or game ID (one only).

Note: The clips service returns a maximum of 1000 clips.

The response has a JSON payload with a data field containing an array of clip information elements and a pagination field containing information required to query for more streams.

https://dev.twitch.tv/docs/api/reference#get-clips | 1.0 | Get Clips - Gets clip information by clip ID (one or more), broadcaster ID (one only), or game ID (one only).

Note: The clips service returns a maximum of 1000 clips.

The response has a JSON payload with a data field containing an array of clip information elements and a pagination field containing information required to query for more streams.

https://dev.twitch.tv/docs/api/reference#get-clips | priority | get clips gets clip information by clip id one or more broadcaster id one only or game id one only note the clips service returns a maximum of clips the response has a json payload with a data field containing an array of clip information elements and a pagination field containing information required to query for more streams | 1 |

26,002 | 2,684,094,621 | IssuesEvent | 2015-03-28 17:05:02 | ConEmu/old-issues | https://api.github.com/repos/ConEmu/old-issues | closed | Incorrect interpretation of the code page for EchoX | 1 star bug imported invalid Priority-Medium | _From [gigaplas...@gmail.com](https://code.google.com/u/106336574353395140522/) on June 03, 2012 00:23:30_

Windows 7 SP1 x86 ConEmu 120417 x86

Far 2.0.1777 x86

When using ConEmu, Cyrillic characters printed by the EchoX utility in code page 1251 (after first running the command "chcp 1251") are displayed incorrectly, whereas in code page 866 (without running chcp) Cyrillic is displayed correctly. "Plain" Far displays everything correctly.

The behavior was observed on the ConEmu version listed above; I tried rolling back as far as 110308a, and the behavior is the same for all intermediate versions, including 110308a. A batch file illustrating the behavior is attached.

EchoX is part of the Shell Scripting Toolkit package http://www.westmesatech.com/sst.html; the latest version of the package (sst27) is used.

The "Inject ConEmuHk" option is enabled. The Background, Lines, and Thumbs (sub)plugins have been removed (not used, although I think this doesn't matter).

**Attachment:** [test-cp.7z](http://code.google.com/p/conemu-maximus5/issues/detail?id=565)

_Original issue: http://code.google.com/p/conemu-maximus5/issues/detail?id=565_ | 1.0 | Incorrect interpretation of the code page for EchoX - _From [gigaplas...@gmail.com](https://code.google.com/u/106336574353395140522/) on June 03, 2012 00:23:30_

Windows 7 SP1 x86 ConEmu 120417 x86

Far 2.0.1777 x86

When using ConEmu, Cyrillic characters printed by the EchoX utility in code page 1251 (after first running the command "chcp 1251") are displayed incorrectly, whereas in code page 866 (without running chcp) Cyrillic is displayed correctly. "Plain" Far displays everything correctly.

The behavior was observed on the ConEmu version listed above; I tried rolling back as far as 110308a, and the behavior is the same for all intermediate versions, including 110308a. A batch file illustrating the behavior is attached.

EchoX is part of the Shell Scripting Toolkit package http://www.westmesatech.com/sst.html; the latest version of the package (sst27) is used.

The "Inject ConEmuHk" option is enabled. The Background, Lines, and Thumbs (sub)plugins have been removed (not used, although I think this doesn't matter).

**Attachment:** [test-cp.7z](http://code.google.com/p/conemu-maximus5/issues/detail?id=565)

_Original issue: http://code.google.com/p/conemu-maximus5/issues/detail?id=565_ | priority | incorrect interpretation of the code page for echox from on june windows conemu far when using conemu cyrillic characters printed by the echox utility in code page after first running the command chcp are displayed incorrectly whereas in code page without running chcp cyrillic is displayed correctly plain far displays everything correctly the behavior was observed on the conemu version listed above i tried rolling back as far as and the behavior is the same for all intermediate versions including a batch file illustrating the behavior is attached echox is part of the shell scripting toolkit package the latest version of the package is used the inject conemuhk option is enabled the background lines and thumbs sub plugins have been removed not used although i think this doesn t matter attachment original issue | 1 |

376,088 | 11,138,283,122 | IssuesEvent | 2019-12-20 21:52:33 | Apexal/late | https://api.github.com/repos/Apexal/late | closed | Block Revamping | Area: Back End Area: Front End Difficulty: Hard Priority: Medium | Blocks should be schedulable for assessments, courses, todos, and general events.

I must revamp how Vuex tracks work blocks on the frontend and how the blocks are handled on the backend. This will start with Tobias and issue #532 and then #544 | 1.0 | Block Revamping - Blocks should be schedulable for assessments, courses, todos, and general events.

I must revamp how Vuex tracks work blocks on the frontend and how the blocks are handled on the backend. This will start with Tobias and issue #532 and then #544 | priority | block revamping blocks should be schedulable for assessments courses todos and general events i must revamp how vuex tracks work blocks on the frontend and how the blocks are handled on the backend this will start with tobias and issue and then | 1 |

809,549 | 30,197,395,009 | IssuesEvent | 2023-07-04 23:53:06 | CodeSystem2022/Team-Fortran-2023 | https://api.github.com/repos/CodeSystem2022/Team-Fortran-2023 | closed | Class 2: Blocks and much more (Java) | Medium priority codigo points:1 | - [x] 1 Variable arguments

- [x] 2 Working with enumerations (enum)

- [x] 3 Enum tests, creating the Continentes enum

- [x] 4 Working with code blocks | 1.0 | Class 2: Blocks and much more (Java) - - [x] 1 Variable arguments

- [x] 2 Working with enumerations (enum)

- [x] 3 Enum tests, creating the Continentes enum

- [x] 4 Working with code blocks | priority | class blocks and much more java variable arguments working with enumerations enum enum tests creating the continentes enum working with code blocks | 1 |

811,366 | 30,285,283,397 | IssuesEvent | 2023-07-08 15:44:50 | MarcusZagorski/Coursework-Planner | https://api.github.com/repos/MarcusZagorski/Coursework-Planner | opened | [PD] Organise a study session about Time Management tools | 🏕 Priority Mandatory 🐂 Size Medium 📅 Week 4 🎯 Topic Communication 🎯 Topic Time Management 🎯 Topic Teamwork 📅 Fundamentals | From Course-Fundamentals created by [SallyMcGrath](https://github.com/SallyMcGrath): CodeYourFuture/Course-Fundamentals#12

### Coursework content

Organise a study session with the pair you were assigned to during class - check out the Google Sheet.

Think about how you manage your time and which tools you use (add some examples and suggestions from our side). If you still need to start using them, research some and bring them to this meeting.

### Estimated time in hours

2

### What is the purpose of this assignment?

- [ ] Understand how you and your pair organise your time

- [ ] Identify at least 2 time management tools each

- [ ] With your pair, write a short paragraph about your findings

- [ ] Share your findings in the "Time Management Tools" thread on your cohort Slack Channel. _Search for it on the channel. If the thread is not yet available, you can create it_

- [ ] Read your peers' text and react to it with the appropriate emoji

### How to submit

Add the link to your post on Slack on this coursework

Add a screenshot of your post on this coursework | 1.0 | [PD] Organise a study session about Time Management tools - From Course-Fundamentals created by [SallyMcGrath](https://github.com/SallyMcGrath): CodeYourFuture/Course-Fundamentals#12

### Coursework content

Organise a study session with the pair you were assigned to during class - check out the Google Sheet.

Think about how you manage your time and which tools you use (add some examples and suggestions from our side). If you still need to start using them, research some and bring them to this meeting.

### Estimated time in hours

2

### What is the purpose of this assignment?

- [ ] Understand how you and your pair organise your time

- [ ] Identify at least 2 time management tools each

- [ ] With your pair, write a short paragraph about your findings

- [ ] Share your findings in the "Time Management Tools" thread on your cohort Slack Channel. _Search for it on the channel. If the thread is not yet available, you can create it_

- [ ] Read your peers' text and react to it with the appropriate emoji

### How to submit

Add the link to your post on Slack on this coursework

Add a screenshot of your post on this coursework | priority | organise a study session about time management tools from course fundamentals created by codeyourfuture course fundamentals coursework content organise a study session with the pair you were assigned to during class check out the google sheet think about how you manage your time and which tools you use add some examples and suggestions from our side if you still need to start using them research some and bring them to this meeting estimated time in hours what is the purpose of this assignment understand how you and your pair organise your time identify at least time management tools each with your pair write a short paragraph about your findings share your findings in the time management tools thread on your cohort slack channel search for it on the channel if the thread is not yet available you can create it read your peers text and react to it the the appropriate emoji how to submit add the link to your post on slack on this coursework add a screenshot of your post on this coursework | 1 |

509,406 | 14,730,059,465 | IssuesEvent | 2021-01-06 12:34:43 | OpenMined/openmined | https://api.github.com/repos/OpenMined/openmined | closed | Pressing "enter" on Sign In will trigger the Github Login | Priority: 3 - Medium :unamused: Severity: 3 - Medium :unamused: Status: Available :wave: Type: Bug :bug: | When we hit the "enter" or "return" key on the sign in form, it triggers the Github login instead of the "Sign In" button. This is a bit confusing, and ironically, does the exact opposite for the sign up form. So the sign up form works as intended, but sign in doesn't... despite being basically identical code. | 1.0 | Pressing "enter" on Sign In will trigger the Github Login - When we hit the "enter" or "return" key on the sign in form, it triggers the Github login instead of the "Sign In" button. This is a bit confusing, and ironically, does the exact opposite for the sign up form. So the sign up form works as intended, but sign in doesn't... despite being basically identical code. | priority | pressing enter on sign in will trigger the github login when we hit the enter or return key on the sign in form it triggers the github login instead of the sign in button this is a bit confusing and ironically does the exact opposite for the sign up form so the sign up form works as intended but sign in doesn t despite being basically identical code | 1 |

271,520 | 8,484,767,948 | IssuesEvent | 2018-10-26 04:34:49 | minio/minio | https://api.github.com/repos/minio/minio | closed | Node port not opened when minio is installed using helm | priority: medium triage | <!--- Provide a general summary of the issue in the Title above -->

I installed minio using the helm chart as shown below.

helm install --set accessKey=myaccesskey,secretKey=mysecretkey stable/minio

It was successful.

[root@kube-master-0-prodes-1539172764 madhan]# kubectl get pods

NAME READY STATUS RESTARTS AGE

good-deer-minio-795c9b457d-sbnjg 1/1 Running 0 25s

[root@kube-master-0-prodes-1539172764 madhan]# kubectl get services

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

good-deer-minio ClusterIP 10.233.26.112 <none> 9000/TCP 9m

The pod deployed successfully, but I am unable to access it using a node port, since the node port was not opened.

Port forwarding is also not working.

[root@kube-master-0-prodes-1539172764 madhan]# kubectl port-forward good-deer-minio-795c9b457d-sbnjg 9000:31001

Forwarding from 127.0.0.1:9000 -> 31001

It got stuck like the above and I am unable to forward the port.

| 1.0 | Node port not opened when minio is installed using helm - <!--- Provide a general summary of the issue in the Title above -->

I installed minio using the helm chart as shown below.

helm install --set accessKey=myaccesskey,secretKey=mysecretkey stable/minio

It was successful.

[root@kube-master-0-prodes-1539172764 madhan]# kubectl get pods

NAME READY STATUS RESTARTS AGE

good-deer-minio-795c9b457d-sbnjg 1/1 Running 0 25s

[root@kube-master-0-prodes-1539172764 madhan]# kubectl get services

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

good-deer-minio ClusterIP 10.233.26.112 <none> 9000/TCP 9m

The pod deployed successfully, but I am unable to access it using a node port, since the node port was not opened.

Port forwarding is also not working.

[root@kube-master-0-prodes-1539172764 madhan]# kubectl port-forward good-deer-minio-795c9b457d-sbnjg 9000:31001

Forwarding from 127.0.0.1:9000 -> 31001

It got stuck like the above and I am unable to forward the port.

| priority | node port not opened when minio is installed using helm i installed minio using helm chart like below helm install set accesskey myaccesskey secretkey mysecretkey stable minio it was successfull kubectl get pods name ready status restarts age good deer minio sbnjg running kubectl get services name type cluster ip external ip port s age good deer minio clusterip tcp the pod deployed successfully but i am unable to acccess it using node port since the node port was not opened port forwarding also not working kubectl port forward good deer minio sbnjg forwarding from it got struck like the above and i am unable to forward the port | 1 |

92,643 | 3,872,899,338 | IssuesEvent | 2016-04-11 15:15:46 | jcgregorio/httplib2 | https://api.github.com/repos/jcgregorio/httplib2 | closed | Multiple heads in source repo make merging a pain | bug imported Priority-Medium | _From [kkvilek...@gmail.com](https://code.google.com/u/110456896135066953261/) on September 29, 2011 13:23:01_

What steps will reproduce the problem? 1. hg clone https://code.google.com/p/httplib2/ 2. cd httplib2

3. hg heads What is the expected output? What do you see instead? hg heads

*** failed to import extension hgext.qct: No module named qct

changeset: 198:6525cadfde53

tag: tip

user: Joe Gregorio <jcgregorio@google.com>

date: Thu Jun 23 15:41:24 2011 -0400

summary: Change out Go Daddy root ca for their ca bundle. Also add checks for version number matching when doing releases.

changeset: 116:ecfe07128337

branch: ivo

user: Ivo Timmermans <zxnrbl@gmail.com>

date: Fri Jul 17 10:54:15 2009 +0200

summary: Add untested GSSAPI authentication handlers for Kerberos.

changeset: 10:530da6dab120

branch: antill-bug

parent: 8:52484af43ff4

user: jcgregorio

date: Tue Feb 14 04:06:41 2006 +0000

summary: Added support for Python 2.3 What version of the product are you using? On what operating system? mercurial 0.9.1 Please provide any additional information below.

_Original issue: http://code.google.com/p/httplib2/issues/detail?id=181_ | 1.0 | Multiple heads in source repo make merging a pain - _From [kkvilek...@gmail.com](https://code.google.com/u/110456896135066953261/) on September 29, 2011 13:23:01_

What steps will reproduce the problem? 1. hg clone https://code.google.com/p/httplib2/ 2. cd httplib2

3. hg heads What is the expected output? What do you see instead? hg heads

*** failed to import extension hgext.qct: No module named qct

changeset: 198:6525cadfde53

tag: tip

user: Joe Gregorio <jcgregorio@google.com>

date: Thu Jun 23 15:41:24 2011 -0400

summary: Change out Go Daddy root ca for their ca bundle. Also add checks for version number matching when doing releases.

changeset: 116:ecfe07128337

branch: ivo

user: Ivo Timmermans <zxnrbl@gmail.com>

date: Fri Jul 17 10:54:15 2009 +0200

summary: Add untested GSSAPI authentication handlers for Kerberos.

changeset: 10:530da6dab120

branch: antill-bug

parent: 8:52484af43ff4

user: jcgregorio

date: Tue Feb 14 04:06:41 2006 +0000

summary: Added support for Python 2.3 What version of the product are you using? On what operating system? mercurial 0.9.1 Please provide any additional information below.

_Original issue: http://code.google.com/p/httplib2/issues/detail?id=181_ | priority | multiple heads in in source repo make merging a pain from on september what steps will reproduce the problem hg clone cd hg heads what is the expected output what do you see instead hg heads failed to import extension hgext qct no module named qct changeset tag tip user joe gregorio date thu jun summary change out go daddy root ca for their ca bundle also add checks for version number matching when doing releases changeset branch ivo user ivo timmermans date fri jul summary add untested gssapi authentication handlers for kerberos changeset branch antill bug parent user jcgregorio date tue feb summary added support for python what version of the product are you using on what operating system mercurial please provide any additional information below original issue | 1 |

61,175 | 3,141,500,853 | IssuesEvent | 2015-09-12 17:07:36 | neuropoly/spinalcordtoolbox | https://api.github.com/repos/neuropoly/spinalcordtoolbox | closed | sct_resample output has black lines on first slice (z=0) | bug priority: medium sct_resample | original image voxel dimension: 0.46875x0.46875x15mm

wanted voxel dimension: 0.5x0.5x15mm

path to data:

``/Volumes/folder_shared/greymattersegmentation/DATA_AMU15/all_data_3d_resampling_old_bad_res/G1_2/3d_data``

command:

``sct_resample -i G1_2_im.nii.gz -f 0.9375x0.9375x1 -o G1_2_im_test_resample.nii.gz ``
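
The `-f` value in the command above appears to be the per-axis ratio of the original voxel size to the wanted voxel size. The sketch below reproduces that arithmetic; it assumes the factor scales the number of voxels along each axis, and the helper names `resample_factors` and `factor_string` are made up for illustration, not part of the toolbox.

```python
def resample_factors(current_mm, target_mm):
    """Per-axis factor = current voxel size / wanted voxel size."""
    return tuple(c / t for c, t in zip(current_mm, target_mm))


def factor_string(factors):
    """Format the factors as an 'AxBxC' string, dropping trailing zeros."""
    return "x".join(f"{f:g}" for f in factors)


# Voxel sizes quoted in the report, in millimetres.
original = (0.46875, 0.46875, 15.0)
wanted = (0.5, 0.5, 15.0)

print(factor_string(resample_factors(original, wanted)))  # 0.9375x0.9375x1
```

A factor below 1 along x and y means fewer, larger voxels in-plane, while the factor of 1 along z leaves the slice thickness unchanged.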

original image:

Result image:

(c3d -resample-mm gives an even worse result)

| 1.0 | sct_resample output has black lines on first slice (z=0) - original image voxel dimension: 0.46875x0.46875x15mm

wanted voxel dimension: 0.5x0.5x15mm

path to data:

``/Volumes/folder_shared/greymattersegmentation/DATA_AMU15/all_data_3d_resampling_old_bad_res/G1_2/3d_data``

command:

``sct_resample -i G1_2_im.nii.gz -f 0.9375x0.9375x1 -o G1_2_im_test_resample.nii.gz ``

original image:

Result image:

(c3d -resample-mm gives an even worse result)

| priority | sct resample output has black lines on first slice z original image voxel dimension wanted voxel dimension path to data volumes folder shared greymattersegmentation data all data resampling old bad res data command sct resample i im nii gz f o im test resample nii gz original image result image resample mm gives result worst | 1 |

677,920 | 23,179,780,645 | IssuesEvent | 2022-07-31 23:41:24 | City-Bureau/city-scrapers-atl | https://api.github.com/repos/City-Bureau/city-scrapers-atl | opened | New Scraper: DeKalb County Board of Ethics | priority-medium | Create a new scraper for DeKalb County Board of Ethics

Website: https://www.dekalbcountyga.gov/meeting-calendar

Jurisdiction: DeKalb County

Classification:

The DeKalb County Board of Ethics serves to interpret the Code of Ethics adopted by the county, to apply sanctions to those in violation of the Code, and to issue advisory opinions defining appropriate behaviors according to community standards as reflected in that Code. When complaints are registered against commissioners or other county employees or appointees over whom the Board has jurisdiction, the Board addresses the matter. If appropriate, a hearing will be scheduled and held to obtain evidence on the issue. Should the party accused be deemed to have violated the Code of Ethics, the Board will recommend appropriate penalties or sanctions. (https://dekalbcountyga.granicus.com/boards/w/968f9572ef2211df/boards/7137)

| 1.0 | New Scraper: DeKalb County Board of Ethics - Create a new scraper for DeKalb County Board of Ethics

Website: https://www.dekalbcountyga.gov/meeting-calendar

Jurisdiction: DeKalb County

Classification:

The DeKalb County Board of Ethics serves to interpret the Code of Ethics adopted by the county, to apply sanctions to those in violation of the Code, and to issue advisory opinions defining appropriate behaviors according to community standards as reflected in that Code. When complaints are registered against commissioners or other county employees or appointees over whom the Board has jurisdiction, the Board addresses the matter. If appropriate, a hearing will be scheduled and held to obtain evidence on the issue. Should the party accused be deemed to have violated the Code of Ethics, the Board will recommend appropriate penalties or sanctions. (https://dekalbcountyga.granicus.com/boards/w/968f9572ef2211df/boards/7137)

| priority | new scraper dekalb county board of ethics create a new scraper for dekalb county board of ethics website jurisdiction dekalb county classification the dekalb county board of ethics serves to interpret the code of ethics adopted by the county to apply sanctions to those in violation of the code and to issue advisory opinions defining appropriate behaviors according to community standards as reflected in that code when complaints are registered against commissioners or other county employees or appointees over whom the board has jurisdiction the board addresses the matter if appropriate a hearing will be scheduled and held to obtain evidence on the issue should the party accused be deemed to have violated the code of ethics the board will recommend appropriate penalties or sanctions | 1 |

416,528 | 12,147,961,215 | IssuesEvent | 2020-04-24 13:51:54 | pa11y/pa11y-reporter-cli | https://api.github.com/repos/pa11y/pa11y-reporter-cli | closed | Replace chalk with a leaner dependency | priority: medium status: good starter issue type: enhancement | [`pa11y-reporter-cli` has a significant install size (114kB)](https://packagephobia.now.sh/result?p=pa11y-reporter-cli), mostly caused by its single dependency [`chalk`, which has an install size of 105kB](https://packagephobia.now.sh/result?p=chalk).

There are some similar packages which seem to have zero dependencies and an install size of ~10kB:

* https://packagephobia.now.sh/result?p=kleur

* https://packagephobia.now.sh/result?p=colorette

We should consider replacing this library wherever it is used in pa11y. | 1.0 | Replace chalk with a leaner dependency - [`pa11y-reporter-cli` has a significant install size (114kB)](https://packagephobia.now.sh/result?p=pa11y-reporter-cli), mostly caused by its single dependency [`chalk`, which has an install size of 105kB](https://packagephobia.now.sh/result?p=chalk).

There are some similar packages which seem to have zero dependencies and an install size of ~10kB:

* https://packagephobia.now.sh/result?p=kleur

* https://packagephobia.now.sh/result?p=colorette

We should consider replacing this library wherever it is used in pa11y. | priority | replace chalk with a leaner dependency mostly caused by its single dependency there are some similar packages which seem to have zero dependencies and an install size of we should consider replacing this library wherever it is used in | 1 |

241,616 | 7,818,137,611 | IssuesEvent | 2018-06-13 11:17:47 | Kris-LIBIS/PdfTool | https://api.github.com/repos/Kris-LIBIS/PdfTool | closed | Selection of pages from PDFs | feature priority 2: medium | This is issue 3 of the Lias_ingester:

It must be possible to make a (random) selection from the VIEW PDF to ingest as VIEW_MAIN. Provide a number of configuration options (an explicit list of pages to include, a percentage of pages (e.g. 10%), odd or even pages), in order to make random and percentage-based selections from a PDF.

| 1.0 | Selection of pages from PDFs - This is issue 3 of the Lias_ingester:

It must be possible to make a (random) selection from the VIEW PDF to ingest as VIEW_MAIN. Provide a number of configuration options (an explicit list of pages to include, a percentage of pages (e.g. 10%), odd or even pages), in order to make random and percentage-based selections from a PDF.

| priority | selection of pages from pdfs this is issue of the lias ingester it must be possible to make a random selection from the view pdf to ingest as view main provide a number of configuration options an explicit list of pages to include a percentage of pages e g odd or even pages in order to make random and percentage based selections from a pdf | 1 |
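
The page-selection options requested in the PdfTool issue above (an explicit page list, a random percentage of pages, odd or even pages) could be sketched roughly as follows. This is only an illustration in Python, not PdfTool's implementation: the function name `select_pages`, its `mode` values, and the rounding rule for the percentage are all assumptions.

```python
import math
import random


def select_pages(page_count, mode, *, pages=None, percent=None, seed=None):
    """Return a sorted list of 1-based page numbers to keep.

    Hypothetical helper: the name, modes and parameters are illustrative only.
    """
    all_pages = range(1, page_count + 1)
    if mode == "list":
        # Explicit list of pages; duplicates and out-of-range pages are dropped.
        return sorted({p for p in pages if 1 <= p <= page_count})
    if mode == "percent":
        # Random sample of `percent` % of the pages, rounded up (assumed rule),
        # with at least one page when the document is not empty.
        k = min(page_count, max(1, math.ceil(page_count * percent / 100)))
        rng = random.Random(seed)
        return sorted(rng.sample(all_pages, k))
    if mode == "odd":
        return [p for p in all_pages if p % 2 == 1]
    if mode == "even":
        return [p for p in all_pages if p % 2 == 0]
    raise ValueError(f"unknown mode: {mode!r}")
```

Passing a `seed` makes the random selection reproducible, which may be useful if the same subset has to be regenerated for a later ingest.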

410,459 | 11,991,874,991 | IssuesEvent | 2020-04-08 09:08:12 | AY1920S2-CS2103T-W16-4/main | https://api.github.com/repos/AY1920S2-CS2103T-W16-4/main | closed | As a NUS student, I would like to set my events/todos into different priority levels. | priority.Medium type.Story | so that I can look for the important ones more clearly.

| 1.0 | As a NUS student, I would like to set my events/todos into different priority levels. - so that I can look for the important ones more clearly.

| priority | as a nus student i would like to set my events todos into different priority levels so that i can look for the important ones more clearly | 1 |

813,344 | 30,454,529,800 | IssuesEvent | 2023-07-16 18:13:35 | codidact/qpixel | https://api.github.com/repos/codidact/qpixel | closed | Can't revert to a blank profile | area: ruby meta: good first issue meta: help wanted type: bug priority: medium complexity: easy | https://meta.codidact.com/posts/287128

https://meta.codidact.com/posts/287130

The "about" section of a user profile starts out blank. If you've edited it and then later want to revert to the blank state, you can't -- the "save" button is disabled. You should be able to remove content that you added in the first place (without resorting to HTML trickery).

I *suspect* that the "about" block is a post and we have a minimum length for posts. Can we create an exception for this specific post type and allow a zero-length body?

| 1.0 | Can't revert to a blank profile - https://meta.codidact.com/posts/287128

https://meta.codidact.com/posts/287130

The "about" section of a user profile starts out blank. If you've edited it and then later want to revert to the blank state, you can't -- the "save" button is disabled. You should be able to remove content that you added in the first place (without resorting to HTML trickery).

I *suspect* that the "about" block is a post and we have a minimum length for posts. Can we create an exception for this specific post type and allow a zero-length body?

| priority | can t revert to a blank profile the about section of a user profile starts out blank if you ve edited it and then later want to revert to the blank state you can t the save button is disabled you should be able to remove content that you added in the first place without resorting to html trickery i suspect that the about block is a post and we have a minimum length for posts can we create an exception for this specific post type and allow a zero length body | 1 |
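
The qpixel issue above suspects that a minimum body length is applied to every post type, including the profile "about" block. A minimal sketch of the proposed exemption is shown below, written in Python rather than QPixel's own Ruby. The threshold `MIN_BODY_LENGTH` and the post-type name `profile_about` are made up for illustration; none of this is taken from the QPixel codebase.

```python
MIN_BODY_LENGTH = 30  # hypothetical threshold, not QPixel's real value
EXEMPT_POST_TYPES = {"profile_about"}  # hypothetical post-type name


def body_is_valid(post_type, body):
    """Return True if `body` is acceptable for the given post type."""
    if post_type in EXEMPT_POST_TYPES:
        # Exempt types may be saved with an empty body.
        return True
    # All other types must meet the minimum length, ignoring outer whitespace.
    return len(body.strip()) >= MIN_BODY_LENGTH
```

Keeping the exemption in an explicit set, rather than special-casing it inline, would make it easy to see which post types are allowed to be blank.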

745,621 | 25,992,228,271 | IssuesEvent | 2022-12-20 08:39:51 | space-wizards/space-station-14 | https://api.github.com/repos/space-wizards/space-station-14 | closed | NPCs need collision avoidance again | Priority: 2-Before Release Issue: Feature Request Difficulty: 2-Medium | I have a branch using RVO2-CS that seems to work pretty decently but static body avoidance needs porting.

We don't actually 'need' it but it's the easiest way to avoid stacking. | 1.0 | NPCs need collision avoidance again - I have a branch using RVO2-CS that seems to work pretty decently but static body avoidance needs porting.

We don't actually 'need' it but it's the easiest way to avoid stacking. | priority | npcs need collision avoidance again i have a branch using cs that seems to work pretty decently but static body avoidance needs porting we don t actually need it but it s the easiest way to avoid stacking | 1 |

637,346 | 20,625,891,333 | IssuesEvent | 2022-03-07 22:29:42 | bounswe/bounswe2022group2 | https://api.github.com/repos/bounswe/bounswe2022group2 | closed | Home Wiki Page - Personal Wiki Linking | enhancement priority-medium waiting-for-others | Add the personal wiki page links of the team members to the [home wiki page](https://github.com/bounswe/bounswe2022group2/wiki).

* This issue has to wait for the completion of the other people's personal wiki pages to be closed.

* It depends on issue #5. | 1.0 | Home Wiki Page - Personal Wiki Linking - Add the personal wiki page links of the team members to the [home wiki page](https://github.com/bounswe/bounswe2022group2/wiki).

* This issue has to wait for the completion of the other people's personal wiki pages to be closed.

* It depends on issue #5. | priority | home wiki page personal wiki linking add the personal wiki page links of the team members to the this issue has to wait for the completion of the other people s personal wiki pages to be closed it depends on issue | 1 |

118,207 | 4,733,071,276 | IssuesEvent | 2016-10-19 09:56:16 | Nexteria/Nextis | https://api.github.com/repos/Nexteria/Nextis | closed | Get a default database dump for the local server | Medium priority | The point is that anyone should be able, at any moment, to create a user with admin rights and a preset username and password for testing purposes on a local deployment | 1.0 | Get a default database dump for the local server - The point is that anyone should be able, at any moment, to create a user with admin rights and a preset username and password for testing purposes on a local deployment | priority | get a default database dump for the local server the point is that anyone should be able at any moment to create a user with admin rights and a preset username and password for testing purposes on a local deployment | 1 |

140,883 | 5,425,624,161 | IssuesEvent | 2017-03-03 07:05:07 | NostraliaWoW/mangoszero | https://api.github.com/repos/NostraliaWoW/mangoszero | opened | Honor Kill | Priority - Medium System | If I recall correctly, in vanilla, a character level 51+ will grant an honor kill for a level 60. Just testing some stuff today, noticed that 2 kills on a level 53 rewarded no honor kill (both of which he dealt damage) and 1 kill on a level 60 rewarded no honor kill. Out of 6 kills today I have been awarded only 3, not sure why? I understand the honor is calculated later in the day, but 3 of the kills (and all kills should) showed up instantly, and there is no evidence of the other 3. Would appreciate a look into this matter as currently it is making it hard to grind honor points.

Paprika says,

I can confirm this, as I was the level 53 getting killed. 3 times, not 2 =(

| 1.0 | Honor Kill - If I recall correctly, in vanilla, a character level 51+ will grant an honor kill for a level 60. Just testing some stuff today, noticed that 2 kills on a level 53 rewarded no honor kill (both of which he dealt damage) and 1 kill on a level 60 rewarded no honor kill. Out of 6 kills today I have been awarded only 3, not sure why? I understand the honor is calculated later in the day, but 3 of the kills (and all kills should) showed up instantly, and there is no evidence of the other 3. Would appreciate a look into this matter as currently it is making it hard to grind honor points.

Paprika says,

I can confirm this, as I was the level 53 getting killed. 3 times, not 2 =(

| priority | honor kill if i recall correctly in vanilla a character level will grant an honor kill for a level just testing some stuff today noticed that kills on a level rewarded no honor kill both of which he dealt damage and kill on a level rewarded no honor kill out of kills today i have been awarded only not sure why i understand the honor is calculated later in the day but of the kills and all kills should showed up instantly and there is no evidence of the other would appreciate a look into this matter as currently it is making it hard to grind honor points paprika says i can confirm this as i was the level getting killed times not | 1 |

670,430 | 22,689,719,240 | IssuesEvent | 2022-07-04 18:14:02 | MrAnyx/Notice | https://api.github.com/repos/MrAnyx/Notice | opened | Update the component scss file import location | Priority: Medium Type: Feature For: Website | ## Suggested solution

Currently, the scss files are imported directly in the global js file. We need to import them in each component.ts file instead.

## Template

## Linked issue

## Todo

- [ ] Update scss file import location for lit components

- [ ] Update typescript files

- [ ] Update `encore_entry_link_tags` function in each twig file | 1.0 | Update the component scss file import location - ## Suggested solution

Currently, the scss files are imported directly in the global js file. We need to import them in each component.ts file instead.

## Template

## Linked issue

## Todo

- [ ] Update scss file import location for lit components

- [ ] Update typescript files

- [ ] Update `encore_entry_link_tags` function in each twig file | priority | update the component scss file import location suggested solution currently the scss files are imported directly in the global js file we need to import them in each component ts file instead template linked issue todo update scss file import location for lit components update typescript files update encore entry link tags function in each twig file | 1 |