Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 957 | labels stringlengths 4 795 | body stringlengths 1 259k | index stringclasses 12 values | text_combine stringlengths 96 259k | label stringclasses 2 values | text stringlengths 96 252k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

281,988 | 8,701,595,208 | IssuesEvent | 2018-12-05 12:02:53 | antonwilc0x/NewSO | https://api.github.com/repos/antonwilc0x/NewSO | closed | Basic Server Database | complexity: medium hiatus priority: high server | In order to add or extend certain features, a database needs to be established. Entity Framework, Microsoft's cross-platform ORM framework, ~~LiteDB, a noSQL database,~~ will serve as the foundation. For better flexibility, each database will be separate and operate independently of each other. In theory, this _should_ cut down on the number of writes.

## Databases

- [ ] Users (Username, ID, Password Hash, Assigned Avatar IDs)

- [ ] Avatars (Avatar Name, ID, Assigned User ID, City, Money)

- [ ] Cities (Lot, Lot location, Assigned Avatar ID, Category)

The city database is not included in this since it's the most complicated part. | 1.0 | Basic Server Database - In order to add or extend certain features, a database needs to be established. Entity Framework, Microsoft's cross-platform ORM framework, ~~LiteDB, a noSQL database,~~ will serve as the foundation. For better flexibility, each database will be separate and operate independently of each other. In theory, this _should_ cut down on the number of writes.

## Databases

- [ ] Users (Username, ID, Password Hash, Assigned Avatar IDs)

- [ ] Avatars (Avatar Name, ID, Assigned User ID, City, Money)

- [ ] Cities (Lot, Lot location, Assigned Avatar ID, Category)

The city database is not included in this since it's the most complicated part. | priority | basic server database in order to add or extend certain features a database needs to be established entity framework microsoft s cross platform orm framework litedb a nosql database will serve as the foundation for better flexibility each database will be separate and operate independently of each other in theory this should cut down on the number of writes databases users username id password hash assigned avatar ids avatars avatar name id assigned user id city money cities lot lot location assigned avatar id category the city database is not included in this since it s the most complicated part | 1 |

425,456 | 12,340,661,537 | IssuesEvent | 2020-05-14 20:21:13 | inverse-inc/packetfence | https://api.github.com/repos/inverse-inc/packetfence | closed | Missing forward_key_balanced configuration in radius config | Priority: Medium Type: Bug | **Describe the bug**

In Configuration -> System configuration -> RADIUS, the forward_key_balanced configuration parameter is missing.

| 1.0 | Missing forward_key_balanced configuration in radius config - **Describe the bug**

In Configuration -> System configuration -> RADIUS, the forward_key_balanced configuration parameter is missing.

| priority | missing forward key balanced configuration in radius config describe the bug in configuration system configuration radius it miss the forward key balanced configuration parameter | 1 |

311,575 | 9,535,613,212 | IssuesEvent | 2019-04-30 07:27:50 | dmwm/WMCore | https://api.github.com/repos/dmwm/WMCore | closed | Don't set EventsPerJob bigger than RequestNumEvents | Enhancement Medium Priority ReqMgr2 | Of course, also considering the FilterEfficiency and the usual multiple of EventsPerLumi.

This JIRA ticket reports a workflow that ends up in the failed state:

https://its.cern.ch/jira/browse/CMSCOMPPR-5394

The reason is that the TimePerEvent is so small that the job splitting is automatically set to 42 million events per job (420k lumis per job), while the workflow requests only 100k events.

| 1.0 | Don't set EventsPerJob bigger than RequestNumEvents - Of course, also considering the FilterEfficiency and the usual multiple of EventsPerLumi.

This JIRA ticket reports a workflow that ends up in the failed state:

https://its.cern.ch/jira/browse/CMSCOMPPR-5394

The reason is that the TimePerEvent is so small that the job splitting is automatically set to 42 million events per job (420k lumis per job), while the workflow requests only 100k events.

| priority | don t set eventsperjob bigger than requestnumevents of course also considering the filterefficiency and the usual multiple of eventsperlumi this jira ticket reports an workflow that goes to failed and the reason is that the timeperevent is so so small that the job splitting gets automatically set to million events per job lumis per job while it s requesting only events | 1 |
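The cap requested in the WMCore issue above can be sketched as follows. This is only an illustration under stated assumptions, not WMCore code: the helper `cap_events_per_job` and its parameter names are hypothetical, and it assumes that the number of events to generate is RequestNumEvents divided by FilterEfficiency, rounded up to a whole number of lumis. The value of 100 events per lumi is inferred from the figures quoted in the issue (42 million events, 420k lumis).

```python
import math


def cap_events_per_job(events_per_job, request_num_events,
                       filter_efficiency=1.0, events_per_lumi=100):
    """Hypothetical helper: keep EventsPerJob from exceeding what the request needs."""
    # Events that must be generated so that request_num_events survive the filter
    # (assumption: generated = requested / efficiency).
    events_needed = math.ceil(request_num_events / filter_efficiency)
    # Round that ceiling up to a whole number of lumis.
    max_events = math.ceil(events_needed / events_per_lumi) * events_per_lumi
    capped = min(events_per_job, max_events)
    # Keep the result a multiple of events_per_lumi, with at least one lumi per job.
    lumis = max(1, capped // events_per_lumi)
    return lumis * events_per_lumi
```

With the numbers from the issue, a computed splitting of 42 million events per job for a 100k-event request would be reduced to 100k events per job.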

782,727 | 27,504,944,200 | IssuesEvent | 2023-03-06 02:07:33 | AY2223S2-CS2103T-W13-3/tp | https://api.github.com/repos/AY2223S2-CS2103T-W13-3/tp | closed | As a fickle user, I want to edit a task | type.Story priority.Medium | So that I can correct mistakes without deleting a task. | 1.0 | As a fickle user, I want to edit a task - So that I can correct mistakes without deleting a task. | priority | as a fickle user i want to edit a task so that i can correct mistakes without deleting a task | 1 |

260,355 | 8,209,099,834 | IssuesEvent | 2018-09-04 06:10:27 | edenlabllc/ehealth.api | https://api.github.com/repos/edenlabllc/ehealth.api | closed | Division search (website), PROD, #J247 | kind/support priority/medium | Good day. Three divisions have been registered ("legal_entity_id": "18791c31-3281-4e5a-9ea5-2eef9a6fe18e"):

1) "id": "b4985f5a-a2e4-4a52-b65f-59c8a7a90549","Амбулаторія загальної практики сімейної медицини № 1"

2) "id": "80904c4a-80e9-4680-9101-3c8d87bad1ba", "Амбулаторія загальної практики сімейної медицини № 2"

3) "id": "ac50fb91-36dc-449c-9205-61365e725dc5", "Амбулаторія загальної практики сімейної медицини № 3"

When queried through the API they exist, their current status is "ACTIVE" and their coordinates are specified, but when searching on the site https://portal.ehealth.gov.ua/divisions.html#/ they are not visible, neither by the institution's EDRPOU code nor by ID.

The following request returns nothing, although the division GUID is registered:

https://portal.ehealth.gov.ua/divisions.html#/b4985f5a-a2e4-4a52-b65f-59c8a7a90549

Screenshot here | 1.0 | Division search (website), PROD, #J247 - Good day. Three divisions have been registered ("legal_entity_id": "18791c31-3281-4e5a-9ea5-2eef9a6fe18e"):

1) "id": "b4985f5a-a2e4-4a52-b65f-59c8a7a90549","Амбулаторія загальної практики сімейної медицини № 1"

2) "id": "80904c4a-80e9-4680-9101-3c8d87bad1ba", "Амбулаторія загальної практики сімейної медицини № 2"

3) "id": "ac50fb91-36dc-449c-9205-61365e725dc5", "Амбулаторія загальної практики сімейної медицини № 3"

When queried through the API they exist, their current status is "ACTIVE" and their coordinates are specified, but when searching on the site https://portal.ehealth.gov.ua/divisions.html#/ they are not visible, neither by the institution's EDRPOU code nor by ID.

The following request returns nothing, although the division GUID is registered:

https://portal.ehealth.gov.ua/divisions.html#/b4985f5a-a2e4-4a52-b65f-59c8a7a90549

Screenshot here | priority | division search website prod good day three divisions have been registered legal entity id id амбулаторія загальної практики сімейної медицини № id амбулаторія загальної практики сімейної медицини № id амбулаторія загальної практики сімейної медицини № when queried through the api they exist their current status is active and their coordinates are specified but when searching on the site they are not visible neither by the institution s edrpou code nor by id the following request returns nothing although the division guid is registered screenshot here | 1 |

490,517 | 14,135,343,527 | IssuesEvent | 2020-11-10 01:28:07 | visit-dav/visit | https://api.github.com/repos/visit-dav/visit | opened | Add arbitrary simple shapes to plots | enhancement impact medium likelihood medium priority | Michael Hohensee would like the ability to add arbitrary simple shapes to the plots. For example, add a sphere or a cylinder.

### Describe alternatives you've considered.

Considered the sphere/line/box tool, but those are more for analytics. Also considered annotations, but that's not gonna work.

### Additional context

I don't know if Michael wants this feature just for visualization or if he wants the arbitrary shapes to be treated as additional data.

| 1.0 | Add arbitrary simple shapes to plots - Michael Hohensee would like the ability to add arbitrary simple shapes to the plots. For example, add a sphere or a cylinder.

### Describe alternatives you've considered.

Considered the sphere/line/box tool, but those are more for analytics. Also considered annotations, but that's not gonna work.

### Additional context

I don't know if Michael wants this feature just for visualization or if he wants the arbitrary shapes to be treated as additional data.

| priority | add arbitrary simple shapes to plots michael hohensee would like the ability to add arbitrary simple shapes to the plots for example add a sphere or a cylinder describe alternatives you ve considered considered the sphere line box tool but those are more for analytics also considered annotations but that s not gonna work additional context i don t know if michael wants this feature just for visualization or if he wants the arbitrary shapes to be treated as additional data | 1 |

637,186 | 20,622,963,517 | IssuesEvent | 2022-03-07 19:18:58 | supercrafter333/theSpawn | https://api.github.com/repos/supercrafter333/theSpawn | closed | [BUG:] TPA's cannot be answered | bug TO-DO priority: medium Status: Confirmed | # Bug: TPA's cannot be answered

### Information

theSpawn Version: 1.6.1

Server-OS: Linux Ubuntu 20.04

PHP Version: 8.0.16

PocketMine-MP Version: 4.2.3+dev

### Error

None

### Steps to reproduce

1. Send a TPA to another player.

2. The other player tries to answer the TPA.

3. RESULT -> "You don't have any pending tpa" | 1.0 | [BUG:] TPA's cannot be answered - # Bug: TPA's cannot be answered

### Information

theSpawn Version: 1.6.1

Server-OS: Linux Ubuntu 20.04

PHP Version: 8.0.16

PocketMine-MP Version: 4.2.3+dev

### Error

None

### Steps to reproduce

1. Send a TPA to another player.

2. The other player tries to answer the TPA.

3. RESULT -> "You don't have any pending tpa" | priority | tpa s cannot be answered bug tpa s cannot be answered information thespawn version server os linux ubuntu php version pocketmine mp version dev error none steps to reproduce send a tpa to another player the other player tries to answer the tpa result you don t have any pending tpa | 1 |

624,120 | 19,687,212,903 | IssuesEvent | 2022-01-12 00:07:58 | PlaceOS/staff-api | https://api.github.com/repos/PlaceOS/staff-api | opened | add support for configurable booking limits on resources | type: enhancement product: placeos priority: medium focus: backend | The bookings controller provides an API for booking items (desks, car parking spaces etc)

We want to be able to put a limit on how many concurrent bookings a single user is allowed to have.

There is already code to check if there is a clash (two different people trying to book the same resource)

```crystal

# check there isn't a clashing booking

clashing_bookings = check_clashing(existing_booking)

render :conflict, json: clashing_bookings.first if clashing_bookings.size > 0

```

So we need a new bit of code at the same point that checks if the current user will go over any limits

* there might not be a limit

* if there is a limit then check the current request doesn't breach any limits

* when updating a booking the booking being updated can be ignored in the limit check (can't clash with itself)

* users can book on behalf of other users, the check needs to be against the user who is being allocated the resource

To implement this I was thinking that we

* modify the [tenant model](https://github.com/PlaceOS/staff-api/blob/master/src/models/tenant.cr#L49)

* add a [JSON column](https://github.com/PlaceOS/staff-api/blob/master/src/models/booking.cr#L53) called something like `booking_limits`

* the value of which will be `"desk" => 2` i.e. a user can book at most two desk resources at the same time

This way different domains can have different limits | 1.0 | add support for configurable booking limits on resources - The bookings controller provides an API for booking items (desks, car parking spaces etc)

We want to be able to put a limit on how many concurrent bookings a single user is allowed to have.

There is already code to check if there is a clash (two different people trying to book the same resource)

```crystal

# check there isn't a clashing booking

clashing_bookings = check_clashing(existing_booking)

render :conflict, json: clashing_bookings.first if clashing_bookings.size > 0

```

So we need a new bit of code at the same point that checks if the current user will go over any limits

* there might not be a limit

* if there is a limit then check the current request doesn't breach any limits

* when updating a booking the booking being updated can be ignored in the limit check (can't clash with itself)

* users can book on behalf of other users, the check needs to be against the user who is being allocated the resource

To implement this I was thinking that we

* modify the [tenant model](https://github.com/PlaceOS/staff-api/blob/master/src/models/tenant.cr#L49)

* add a [JSON column](https://github.com/PlaceOS/staff-api/blob/master/src/models/booking.cr#L53) called something like `booking_limits`

* the value of which will be `"desk" => 2` i.e. a user can book at most two desk resources at the same time

This way different domains can have different limits | priority | add support for configurable booking limits on resources the bookings controller provides an api for booking items desks car parking spaces etc we want to be able to put a limit on how many concurrent bookings a single user is allowed to have there is already code to check if there is a clash two different people trying to book the same resource crystal check there isn t a clashing booking clashing bookings check clashing existing booking render conflict json clashing bookings first if clashing bookings size so we need a new bit of code at the same point that checks if the current user will go over any limits there might not be a limit if there is a limit then check the current request doesn t breach any limits when updating a booking the booking being updated can be ignored in the limit check can t clash with itself users can book on behalf of other users the check needs to be against the user who is being allocated the resource to implement this i was thinking that we modify the add a called something like booking limits the value of which will be desk i e a user can book at most two desk resources at the same time this way different domains can have different limits | 1 |
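The limit check proposed in the PlaceOS issue above could look roughly like the sketch below. It is written in Python for illustration only (the project itself is Crystal), and everything in it is an assumption: the `Booking` fields, the `exceeds_limit` helper and the shape of `booking_limits` are hypothetical names, not the staff-api models. As a simplification, it counts every booking that overlaps the new one in time rather than computing the peak number of simultaneous bookings.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Booking:
    id: int
    user_id: str       # the user the resource is allocated to, not the one who made the request
    booking_type: str  # e.g. "desk"
    start: int         # unix timestamps
    end: int


def exceeds_limit(new: Booking, existing: List[Booking],
                  booking_limits: Dict[str, int]) -> bool:
    """Return True if accepting `new` would take its user over the configured limit."""
    limit = booking_limits.get(new.booking_type)
    if limit is None:
        # No limit configured for this resource type.
        return False
    overlapping = [
        b for b in existing
        if b.user_id == new.user_id
        and b.booking_type == new.booking_type
        and b.id != new.id                       # an updated booking cannot clash with itself
        and b.start < new.end and new.start < b.end
    ]
    return len(overlapping) + 1 > limit
```

With `booking_limits = {"desk": 2}`, a third overlapping desk booking for the same user would be rejected, while updating one of the two existing bookings would still pass.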

632,336 | 20,192,452,930 | IssuesEvent | 2022-02-11 07:21:17 | asyml/forte | https://api.github.com/repos/asyml/forte | closed | Handle unknown attributes and unknown types | priority: medium e/5 | This issue is blocked by https://github.com/asyml/forte/issues/405.

**Is your feature request related to a problem? Please describe.**

The Forte ontology defines the data types that can be used in the system. Sometimes the ontology evolves by adding new classes or new attributes, and some existing pipelines then break (during deserialization) because they encounter unknown types and attributes.

Example:

The old ontology may define an `EntityMention` with attribute `ner_type`. At some point we update the definition, adding a new attribute, say `score`, to `EntityMention`. The `EntityMention` class then changes from

```
class EntityMention:
    ner_type = ""
```

to

```
class EntityMention:
    ner_type = ""
    score = 0
```

Loading data with the new types or attributes will break the deserialization.

**Describe the solution you'd like**

Forte can be robust to these changes when a boolean flag is set to `on`; in that case, it should ignore unknown entry types.

In the other direction, when we load old data into a new pipeline, we can assign default `None` values to unknown entry attributes.

**Describe alternatives you've considered**

A clear and concise description of any alternative solutions or features you've considered.

**Additional context**

Add any other context or screenshots about the feature request here.

| 1.0 | Handle unknown attributes and unknown types - This issue is blocked by https://github.com/asyml/forte/issues/405.

**Is your feature request related to a problem? Please describe.**

The Forte ontology defines the data types that can be used in the system. Sometimes the ontology evolves by adding new classes or new attributes, and some existing pipelines then break (during deserialization) because they encounter unknown types and attributes.

Example:

The old ontology may define an `EntityMention` with attribute `ner_type`. At some point we update the definition, adding a new attribute, say `score`, to `EntityMention`. The `EntityMention` class then changes from

```
class EntityMention:
    ner_type = ""
```

to

```
class EntityMention:
    ner_type = ""
    score = 0
```

Loading data with the new types or attributes will break the deserialization.

**Describe the solution you'd like**

Forte can be robust to these changes when a boolean flag is set to `on`; in that case, it should ignore unknown entry types.

In the other direction, when we load old data into a new pipeline, we can assign default `None` values to unknown entry attributes.

**Describe alternatives you've considered**

A clear and concise description of any alternative solutions or features you've considered.

**Additional context**

Add any other context or screenshots about the feature request here.

| priority | handle unknown attributes and unknown types this issue is blocked by is your feature request related to a problem please describe forte ontology defines the data types that can be used in the system sometimes the ontology evolves by adding new classes or new attributes some existing pipelines will break during deserialization since they encountered unknown types and attributes example the old ontology may define an entitymention with attribute ner type at some point we updated the definition adding a new attribute say score to entitymention now the entitymention class changes from class entitymention ner type to class entitymention ner type score loading data with the new types or attributes will break the deserialization describe the solution you d like forte can be robust to these changes when a boolean flag is set to on in such cases it should ignore unknown entry types on the other direction when we load old data to a new pipeline we can assign default none values to unknown entry attributes describe alternatives you ve considered a clear and concise description of any alternative solutions or features you ve considered additional context add any other context or screenshots about the feature request here | 1 |
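The tolerant loading behaviour asked for in the Forte issue above might be sketched like this. Nothing here is Forte's actual API: `KNOWN_TYPES`, `load_entry`, the `ignore_unknown` flag and the record layout (`"type"` plus `"attributes"`) are hypothetical stand-ins chosen for the illustration.

```python
class EntityMention:
    """Stand-in for the newer ontology class from the issue."""

    def __init__(self):
        self.ner_type = ""
        self.score = 0


# Hypothetical registry of the entry types the current pipeline knows about.
KNOWN_TYPES = {"EntityMention": EntityMention}


def load_entry(record, ignore_unknown=True):
    """Build an entry from a serialized record, tolerating ontology drift."""
    cls = KNOWN_TYPES.get(record["type"])
    if cls is None:
        if ignore_unknown:
            return None  # unknown entry type: skip it
        raise ValueError(f"unknown entry type: {record['type']}")

    entry = cls()
    known = set(vars(entry))
    attrs = record.get("attributes", {})

    unknown = set(attrs) - known
    if unknown and not ignore_unknown:
        raise ValueError(f"unknown attributes: {sorted(unknown)}")

    # Attributes absent from older data fall back to None; extra ones are dropped.
    for name in known:
        setattr(entry, name, attrs.get(name))
    return entry
```

Loading an old record that only carries `ner_type` would then yield an `EntityMention` whose `score` is `None`, and a record of a type the pipeline does not know would simply be skipped while the flag is on.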

54,493 | 3,068,413,051 | IssuesEvent | 2015-08-18 15:32:15 | TroyManary/EasyPlow | https://api.github.com/repos/TroyManary/EasyPlow | reopened | Smart Phone Home Page | Function: General Priority: Medium State: In Progress Type: Bug | The look and feel is not consistent across browsers, including on smart phones. On the smart phone we even see a new bar below the header showing "Snow Storm", a clock and a share icon.

| 1.0 | Smart Phone Home Page - The look and feel is not consistent across browsers, including on smart phones. On the smart phone we even see a new bar below the header showing "Snow Storm", a clock and a share icon.

| priority | smart phone home page as with all browsers not every browser including smart phone the look and feel is not at all common with the smart phone we even see a new bar below the header indicating snow storm a clock and share icon | 1 |

126,729 | 5,003,040,353 | IssuesEvent | 2016-12-11 18:18:33 | dteviot/WebToEpub | https://api.github.com/repos/dteviot/WebToEpub | closed | Parser issue: Skythewood | bug medium priority | I noticed that the skythewood.blogspot.it parser doesn't always grab the higher resolution image.

For example: "Altina the Sword Princess" volume 10.

There is a b/w picture (0002_p017.jpg) which is 412kB if you click on the pic when reading (1st chapter); the addon grabs just the 81kB preview you see on the page.

It happens every now and then on all of the Altina volumes; it does not happen every time, since the addon usually grabs the correct picture.

I don't know if it happens with other LN on the site.

Can you look into it? Thanks. | 1.0 | Parser issue: Skythewood - I noticed that the skythewood.blogspot.it parser doesn't always grab the higher resolution image.

For example: "Altina the Sword Princess" volume 10.

There is a b/w picture (0002_p017.jpg) which is 412kB if you click on the pic when reading (1st chapter); the addon grabs just the 81kB preview you see on the page.

It happens every now and then on all of the Altina volumes; it does not happen every time, since the addon usually grabs the correct picture.

I don't know if it happens with other LN on the site.

Can you look into it? Thanks. | priority | parser issue skythewood i noticed that the skythewood blogspot it parser doesn t always grab the higher resolution image for example altina the sword princess volume there is a b w picture jpg which is if you click on the pic when reading chapter the addon grabs just the preview you see on the page it happens every now and the on all of the altina it does not happen every time since the addon usually grabs the correct picture i don t know if it happens with other ln on the site can you look into it thanks | 1 |

755,944 | 26,448,651,893 | IssuesEvent | 2023-01-16 09:31:53 | conan-io/conan | https://api.github.com/repos/conan-io/conan | closed | Warnings and other checks with symlinks | type: feature stage: in-progress priority: medium complex: low | - Compressing `tgz` files should warn if the file is not contained in the package.

- Compressing `tgz` files should convert a symlink to relative (as the file copier does) when a symlink is abs but contained in the package.

- Decompressing should warn when discarding a file: #4966

- File copier should warn/info for each thing ignored or discarded or converted.

See this branch containing some tests and incomplete/preliminary implementation: https://github.com/lasote/conan/pull/new/feature/warnings_and_tests | 1.0 | Warnings and other checks with symlinks - - Compressing `tgz` files should warn if the file is not contained in the package.

- Compressing `tgz` files should convert a symlink to relative (as the file copier does) when a symlink is abs but contained in the package.

- Decompressing should warn when discarding a file: #4966

- File copier should warn/info for each thing ignored or discarded or converted.

See this branch containing some tests and incomplete/preliminary implementation: https://github.com/lasote/conan/pull/new/feature/warnings_and_tests | priority | warnings and other checks with symlinks compressing tgz files should warn if the file is not contained in the package compressing tgz files should convert a symlink to relative as the file copier does when a symlink is abs but contained in the package decompressing should warn when discarding a file file copier should warn info for each thing ignored or discarded or converted see this branch containing some tests and incomplete preliminary implementation | 1 |
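The symlink handling listed in the conan issue above (convert absolute links that stay inside the package to relative ones, warn about the rest) can be illustrated with the standard library alone. This is a sketch, not Conan's implementation: `relativize_symlink` and its return values are hypothetical, and it assumes a POSIX-like filesystem where symlinks can be created.

```python
import os


def relativize_symlink(link_path, package_root):
    """Hypothetical helper, not part of Conan's API.

    Returns "kept" for links that are already relative, "converted" for
    absolute links rewritten as relative ones, and "outside" (after a
    warning) for links whose target is not contained in the package.
    """
    target = os.readlink(link_path)
    if not os.path.isabs(target):
        return "kept"

    root = os.path.realpath(package_root)
    real_target = os.path.realpath(target)
    if os.path.commonpath([root, real_target]) != root:
        print(f"WARN: symlink {link_path} points outside the package: {target}")
        return "outside"

    # Rewrite the link relative to the directory that contains it.
    link_dir = os.path.realpath(os.path.dirname(link_path))
    relative_target = os.path.relpath(real_target, link_dir)
    os.remove(link_path)
    os.symlink(relative_target, link_path)
    return "converted"
```

A packaging step could call this for every symlink it finds before building the `tgz`, logging each "converted" or "outside" result so that nothing is changed or dropped silently.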

594,438 | 18,045,628,760 | IssuesEvent | 2021-09-18 21:08:20 | MudBlazor/MudBlazor | https://api.github.com/repos/MudBlazor/MudBlazor | closed | MudSelect dropdown should highlight selected item | enhancement Priority: Medium | ### Feature request type

Enhance component

### Component name

MudSelect

### Is your feature request related to a problem?

When an item has been selected and I open the select dropdown list, I can't see which item I selected from the list.

### Describe the solution you'd like

The selected item should be highlighted in the list similar to how it is highlighted in the Autocomplete component.

### Have you seen this feature anywhere else?

This is a commonly used pattern that can be seen in many apps:

Google fonts:

<img width="227" alt="Screen Shot 2021-09-17 at 10 35 21 AM" src="https://user-images.githubusercontent.com/17790790/133694379-4bcb6423-dc3d-45ac-b180-3ec2c1beca8a.png">

Google flights:

<img width="230" alt="Screen Shot 2021-09-17 at 10 35 44 AM" src="https://user-images.githubusercontent.com/17790790/133694383-df04250f-f105-4a65-8e09-df6da9055eaa.png">

### Describe alternatives you've considered

_No response_

### Pull Request

- [ ] I would like to do a Pull Request

### Code of Conduct

- [X] I agree to follow this project's Code of Conduct | 1.0 | MudSelect dropdown should highlight selected item - ### Feature request type

Enhance component

### Component name

MudSelect

### Is your feature request related to a problem?

When an item has been selected and I open the select dropdown list, I can't see which item I selected from the list.

### Describe the solution you'd like

The selected item should be highlighted in the list similar to how it is highlighted in the Autocomplete component.

### Have you seen this feature anywhere else?

This is a commonly used pattern that can be seen in many apps:

Google fonts:

<img width="227" alt="Screen Shot 2021-09-17 at 10 35 21 AM" src="https://user-images.githubusercontent.com/17790790/133694379-4bcb6423-dc3d-45ac-b180-3ec2c1beca8a.png">

Google flights:

<img width="230" alt="Screen Shot 2021-09-17 at 10 35 44 AM" src="https://user-images.githubusercontent.com/17790790/133694383-df04250f-f105-4a65-8e09-df6da9055eaa.png">

### Describe alternatives you've considered

_No response_

### Pull Request

- [ ] I would like to do a Pull Request

### Code of Conduct

- [X] I agree to follow this project's Code of Conduct | priority | mudselect dropdown should highlight selected item feature request type enhance component component name mudselect is your feature request related to a problem when an item has been selected and i open the select dropdown list i can t see which item i selected from the list describe the solution you d like the selected item should be highlighted in the list similar to how it is highlighted in the autocomplete component have you seen this feature anywhere else this is a commonly used pattern that can be seen in many apps google fonts img width alt screen shot at am src google flights img width alt screen shot at am src describe alternatives you ve considered no response pull request i would like to do a pull request code of conduct i agree to follow this project s code of conduct | 1 |

716,268 | 24,626,734,044 | IssuesEvent | 2022-10-16 16:10:30 | AY2223S1-CS2103T-W08-3/tp | https://api.github.com/repos/AY2223S1-CS2103T-W08-3/tp | closed | As a lazy user, I want to be able to open the github profile page of addresses in my address book with a command | priority.Medium type.Story | so that I can view my friends/Teaching Assistants/Professors github projects easily. | 1.0 | As a lazy user, I want to be able to open the github profile page of addresses in my address book with a command - so that I can view my friends/Teaching Assistants/Professors github projects easily. | priority | as a lazy user i want to be able to open the github profile page of addresses in my address book with a command so that i can view my friends teaching assistants professors github projects easily | 1 |

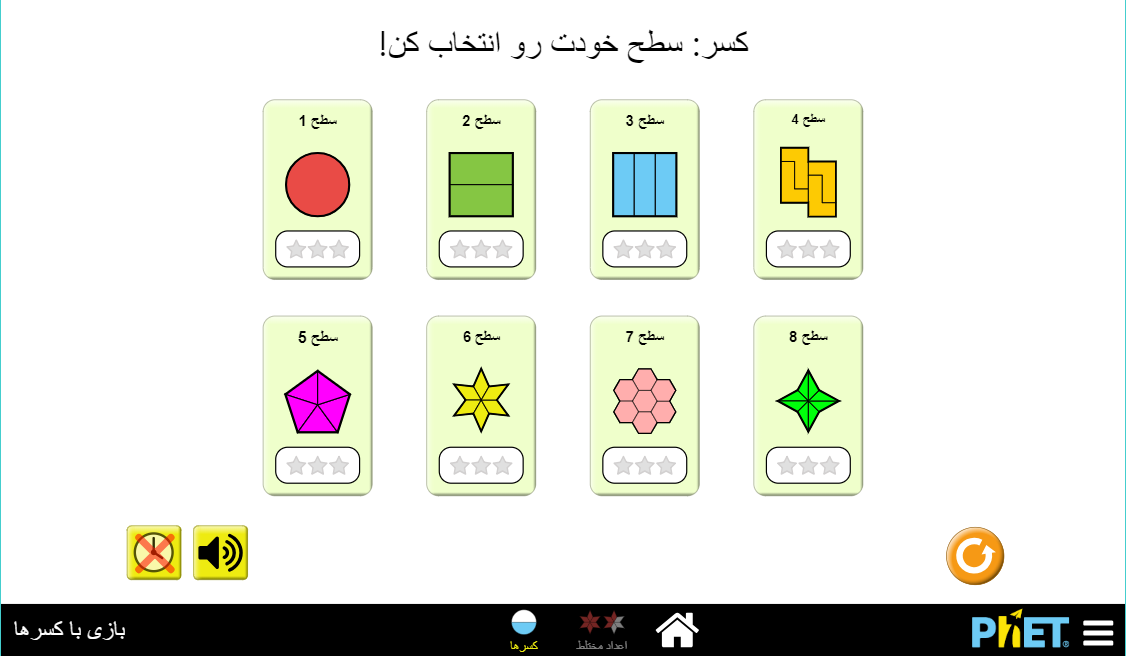

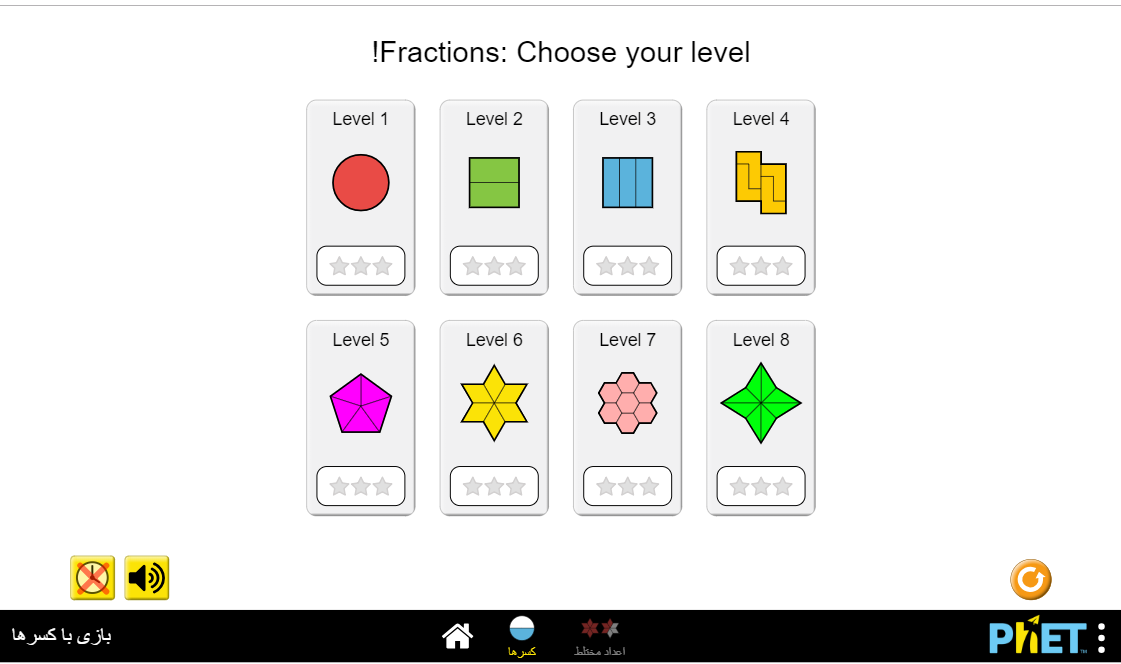

312,792 | 9,553,115,029 | IssuesEvent | 2019-05-02 18:24:16 | phetsims/fraction-matcher | https://api.github.com/repos/phetsims/fraction-matcher | closed | Some previously translated strings are no longer translated | priority:3-medium status:blocks-sim-publication status:ready-for-review | The level selection screen for the published Farsi (Persian) version of Fraction Matcher looks like this:

On the current master version, using locale=fa, it looks like this:

The reason that English words are now appearing is that a number of strings were moved from the fraction-matcher repo to fractions-common during the recent work on the fractions suite, and the translated strings weren't moved over. This should probably be fixed, otherwise the next time Fraction Matcher is published off of master, it may cause existing translations to fall back to English in several places as seen above.

I'm guessing it would be a couple hours of work max to either propagate the strings manually or write a script to do it. Either @jonathanolson or I could do it, or perhaps someone who we want to get a better understanding of how the translation utility works. Assigning to @ariel-phet for prioritization and assignment. | 1.0 | Some previously translated strings are no longer translated - The level selection screen for the published Farsi (Persian) version of Fraction Matcher looks like this:

On the current master version, using locale=fa, it looks like this:

The reason that English words are now appearing is that a number of strings were moved from the fraction-matcher repo to fractions-common during the recent work on the fractions suite, and the translated strings weren't moved over. This should probably be fixed, otherwise the next time Fraction Matcher is published off of master, it may cause existing translations to fall back to English in several places as seen above.

I'm guessing it would be a couple hours of work max to either propagate the strings manually or write a script to do it. Either @jonathanolson or I could do it, or perhaps someone who we want to get a better understanding of how the translation utility works. Assigning to @ariel-phet for prioritization and assignment. | priority | some previously translated strings are no longer translated the level selection screen for the published farsi persian version of fraction matcher looks like this on the current master version using locale fa it looks like this the reason that english words are now appearing is that a number of strings were moved from the fraction matcher repo to fractions common during the recent work on the fractions suite and the translated strings weren t moved over this should probably be fixed otherwise the next time fraction master is published off of master it may cause existing translations to fall back to english in several places as seen above i m guessing it would be a couple hours of work max to either propagate the strings manually or write a script to do it either jonathanolson or i could do it or perhaps someone who we want to get a better understanding of how the translation utility works assigning to ariel phet for prioritization and assignment | 1 |

401,550 | 11,795,107,200 | IssuesEvent | 2020-03-18 08:16:18 | buddyboss/buddyboss-platform | https://api.github.com/repos/buddyboss/buddyboss-platform | opened | The user with deleted account appears as 'Anonymous' in forum discussion. | bug priority: medium | **Describe the bug**

If a user deletes their own account, their data is not deleted from the forum; their posts are displayed as coming from an 'Anonymous' user.

https://prnt.sc/rere3y

**To Reproduce**

Steps to reproduce the behavior:

1. Log in as a test user.

2. Post in a discussion.

3. Go to Account > Delete Account

4. Check 'I understand the consequence' and click on 'Delete Account'.

5. Log in again as another user. Go to the same discussion.

6. The user appears as 'Anonymous'.

**Expected behavior**

The content should be deleted.

**Screenshots**

https://prnt.sc/rere3y

**Support ticket links**

https://buddyboss.zendesk.com/agent/tickets/63594

| 1.0 | The user with deleted account appears as 'Anonymous' in forum discussion. - **Describe the bug**

If a user deletes their own account, their data is not deleted from the forum and they are displayed as an 'Anonymous' user.

https://prnt.sc/rere3y

**To Reproduce**

Steps to reproduce the behavior:

1. Log in as a test user.

2. Post in discussion.

3. Go to Account > Delete Account

4. Check 'I understand the consequence' and click on 'Delete Account'.

5. Log in again as another user. Go to the same discussion.

6. The user appears as 'Anonymous'.

**Expected behavior**

The content should be deleted.

**Screenshots**

https://prnt.sc/rere3y

**Support ticket links**

https://buddyboss.zendesk.com/agent/tickets/63594

| priority | the user with deleted account appears as anonymous in forum discussion describe the bug if a user deleted his own account his data is not deleted from forum and displayed as anonymous user to reproduce steps to reproduce the behavior log in as a test user post in discussion go to account delete account mark check i understand the consequence and click on delete account log in again as another user go the same discussion the user appears as anonymous expected behavior the content should be deleted screenshots support ticket links | 1 |

683,978 | 23,401,577,879 | IssuesEvent | 2022-08-12 08:32:13 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | sample.drivers.flash.shell: Failed on atmel targets | bug priority: medium platform: Microchip SAM | **Describe the bug**

Seen here: https://github.com/zephyrproject-rtos/zephyr/runs/7769981753?check_suite_focus=true

`samples/drivers/flash_shell/sample.drivers.flash.shell` fails on the following targets:

- sam4s_xplained

- sam4l_ek

- sam4e_xpro

- arduino_due

**Log Error**

```

/local/mcu/zephyrproject/zephyr/drivers/flash/flash_sam.c: In function 'flash_sam_get_page':

/local/mcu/zephyrproject/zephyr/drivers/flash/flash_sam.c:88:18: error: 'IFLASH_PAGE_SIZE' undeclared (first use in this function); did you mean 'IFLASH0_PAGE_SIZE'?

88 | return offset / IFLASH_PAGE_SIZE;

| ^~~~~~~~~~~~~~~~

| IFLASH0_PAGE_SIZE

/local/mcu/zephyrproject/zephyr/drivers/flash/flash_sam.c:88:18: note: each undeclared identifier is reported only once for each function it appears in

/local/mcu/zephyrproject/zephyr/drivers/flash/flash_sam.c: In function 'flash_sam_wait_ready':

/local/mcu/zephyrproject/zephyr/drivers/flash/flash_sam.c:108:13: error: 'EEFC_FSR_FLERR' undeclared (first use in this function); did you mean 'EEFC_FSR_FRDY'?

108 | if (fsr & EEFC_FSR_FLERR) {

| ^~~~~~~~~~~~~~

| EEFC_FSR_FRDY

/local/mcu/zephyrproject/zephyr/drivers/flash/flash_sam.c: In function 'flash_sam_write':

/local/mcu/zephyrproject/zephyr/drivers/flash/flash_sam.c:204:27: error: 'IFLASH_PAGE_SIZE' undeclared (first use in this function); did you mean 'IFLASH0_PAGE_SIZE'?

204 | eop_len = -(offset | ~(IFLASH_PAGE_SIZE - 1));

| ^~~~~~~~~~~~~~~~

| IFLASH0_PAGE_SIZE

/local/mcu/zephyrproject/zephyr/drivers/flash/flash_sam.c: In function 'flash_sam_erase_block':

/local/mcu/zephyrproject/zephyr/drivers/flash/flash_sam.c:255:4: error: 'EEFC_FCR_FCMD_EPA' undeclared (first use in this function); did you mean 'EEFC_FCR_FCMD_EA'?

255 | EEFC_FCR_FCMD_EPA;

| ^~~~~~~~~~~~~~~~~

| EEFC_FCR_FCMD_EA

In file included from /local/mcu/zephyrproject/zephyr/include/zephyr/device.h:29,

from /local/mcu/zephyrproject/zephyr/drivers/flash/flash_sam.c:13:

/local/mcu/zephyrproject/zephyr/drivers/flash/flash_sam.c: In function 'flash_sam_erase':

/local/mcu/zephyrproject/zephyr/twister-out/arduino_due/samples/drivers/flash_shell/sample.drivers.flash.shell/zephyr/include/generated/devicetree_unfixed.h:2883:34: error: 'DT_N_S_soc_S_flash_controller_400e0a00_S_flash_80000_P_erase_block_size' undeclared (first use in this function); did you mean 'DT_N_S_soc_S_flash_controller_400e0a00_S_flash_80000_P_write_block_size'?

2883 | #define DT_N_INST_0_soc_nv_flash DT_N_S_soc_S_flash_controller_400e0a00_S_flash_80000

| ^~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~

```

**To Reproduce**

`twister -b -s samples/drivers/flash_shell/sample.drivers.flash.shell -p sam4s_xplained`

**Impact**

Blocking CI (https://github.com/zephyrproject-rtos/zephyr/pull/45221)

**Environment (please complete the following information):**

zephyr-v3.1.0-3289-gc93361a5bf

| 1.0 | sample.drivers.flash.shell: Failed on atmel targets - **Describe the bug**

Seen here: https://github.com/zephyrproject-rtos/zephyr/runs/7769981753?check_suite_focus=true

`samples/drivers/flash_shell/sample.drivers.flash.shell` fails on the following targets:

- sam4s_xplained

- sam4l_ek

- sam4e_xpro

- arduino_due

**Log Error**

```

/local/mcu/zephyrproject/zephyr/drivers/flash/flash_sam.c: In function 'flash_sam_get_page':

/local/mcu/zephyrproject/zephyr/drivers/flash/flash_sam.c:88:18: error: 'IFLASH_PAGE_SIZE' undeclared (first use in this function); did you mean 'IFLASH0_PAGE_SIZE'?

88 | return offset / IFLASH_PAGE_SIZE;

| ^~~~~~~~~~~~~~~~

| IFLASH0_PAGE_SIZE

/local/mcu/zephyrproject/zephyr/drivers/flash/flash_sam.c:88:18: note: each undeclared identifier is reported only once for each function it appears in

/local/mcu/zephyrproject/zephyr/drivers/flash/flash_sam.c: In function 'flash_sam_wait_ready':

/local/mcu/zephyrproject/zephyr/drivers/flash/flash_sam.c:108:13: error: 'EEFC_FSR_FLERR' undeclared (first use in this function); did you mean 'EEFC_FSR_FRDY'?

108 | if (fsr & EEFC_FSR_FLERR) {

| ^~~~~~~~~~~~~~

| EEFC_FSR_FRDY

/local/mcu/zephyrproject/zephyr/drivers/flash/flash_sam.c: In function 'flash_sam_write':

/local/mcu/zephyrproject/zephyr/drivers/flash/flash_sam.c:204:27: error: 'IFLASH_PAGE_SIZE' undeclared (first use in this function); did you mean 'IFLASH0_PAGE_SIZE'?

204 | eop_len = -(offset | ~(IFLASH_PAGE_SIZE - 1));

| ^~~~~~~~~~~~~~~~

| IFLASH0_PAGE_SIZE

/local/mcu/zephyrproject/zephyr/drivers/flash/flash_sam.c: In function 'flash_sam_erase_block':

/local/mcu/zephyrproject/zephyr/drivers/flash/flash_sam.c:255:4: error: 'EEFC_FCR_FCMD_EPA' undeclared (first use in this function); did you mean 'EEFC_FCR_FCMD_EA'?

255 | EEFC_FCR_FCMD_EPA;

| ^~~~~~~~~~~~~~~~~

| EEFC_FCR_FCMD_EA

In file included from /local/mcu/zephyrproject/zephyr/include/zephyr/device.h:29,

from /local/mcu/zephyrproject/zephyr/drivers/flash/flash_sam.c:13:

/local/mcu/zephyrproject/zephyr/drivers/flash/flash_sam.c: In function 'flash_sam_erase':

/local/mcu/zephyrproject/zephyr/twister-out/arduino_due/samples/drivers/flash_shell/sample.drivers.flash.shell/zephyr/include/generated/devicetree_unfixed.h:2883:34: error: 'DT_N_S_soc_S_flash_controller_400e0a00_S_flash_80000_P_erase_block_size' undeclared (first use in this function); did you mean 'DT_N_S_soc_S_flash_controller_400e0a00_S_flash_80000_P_write_block_size'?

2883 | #define DT_N_INST_0_soc_nv_flash DT_N_S_soc_S_flash_controller_400e0a00_S_flash_80000

| ^~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~

```

**To Reproduce**

`twister -b -s samples/drivers/flash_shell/sample.drivers.flash.shell -p sam4s_xplained`

**Impact**

Blocking CI (https://github.com/zephyrproject-rtos/zephyr/pull/45221)

**Environment (please complete the following information):**

zephyr-v3.1.0-3289-gc93361a5bf

| priority | sample drivers flash shell failed on atmel targets describe the bug seen here samples drivers flash shell sample drivers flash shell is failed on following targets xplained ek xpro arduino due log error local mcu zephyrproject zephyr drivers flash flash sam c in function flash sam get page local mcu zephyrproject zephyr drivers flash flash sam c error iflash page size undeclared first use in this function did you mean page size return offset iflash page size page size local mcu zephyrproject zephyr drivers flash flash sam c note each undeclared identifier is reported only once for each function it appears in local mcu zephyrproject zephyr drivers flash flash sam c in function flash sam wait ready local mcu zephyrproject zephyr drivers flash flash sam c error eefc fsr flerr undeclared first use in this function did you mean eefc fsr frdy if fsr eefc fsr flerr eefc fsr frdy local mcu zephyrproject zephyr drivers flash flash sam c in function flash sam write local mcu zephyrproject zephyr drivers flash flash sam c error iflash page size undeclared first use in this function did you mean page size eop len offset iflash page size page size local mcu zephyrproject zephyr drivers flash flash sam c in function flash sam erase block local mcu zephyrproject zephyr drivers flash flash sam c error eefc fcr fcmd epa undeclared first use in this function did you mean eefc fcr fcmd ea eefc fcr fcmd epa eefc fcr fcmd ea in file included from local mcu zephyrproject zephyr include zephyr device h from local mcu zephyrproject zephyr drivers flash flash sam c local mcu zephyrproject zephyr drivers flash flash sam c in function flash sam erase local mcu zephyrproject zephyr twister out arduino due samples drivers flash shell sample drivers flash shell zephyr include generated devicetree unfixed h error dt n s soc s flash controller s flash p erase block size undeclared first use in this function did you mean dt n s soc s flash controller s flash p write block size define 
dt n inst soc nv flash dt n s soc s flash controller s flash to reproduce twister b s samples drivers flash shell sample drivers flash shell p xplained impact blocking ci environment please complete the following information zephyr | 1 |

205,200 | 7,094,693,339 | IssuesEvent | 2018-01-13 07:06:12 | facelessuser/backrefs | https://api.github.com/repos/facelessuser/backrefs | closed | Ignore comments | Bug Priority - Medium Severity - Major | I never use them, but this is kind of a big oversight. `(?#comment)` should not have content processed. | 1.0 | Ignore comments - I never use them, but this is kind of a big oversight. `(?#comment)` should not have content processed. | priority | ignore comments i never use them but this is kind of a big oversight comment should not have content processed | 1 |
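As a point of comparison for the record above, here is a minimal sketch of how Python's standard `re` module treats `(?#...)` groups: the comment body is skipped entirely, even when it contains regex-like syntax. This uses only the standard library and does not call backrefs itself; the names `commented`, `plain`, and `same_behavior` are made up for illustration.

```python
import re

# Pattern with an inline comment; the comment text contains regex-like
# syntax (\d+) that must not take part in matching.
commented = re.compile(r"ab(?#\d+ is never matched)c")
plain = re.compile(r"abc")


def same_behavior(text):
    """True when both patterns agree on whether `text` fully matches."""
    return bool(commented.fullmatch(text)) == bool(plain.fullmatch(text))
```

A preprocessor that rewrites patterns before handing them to `re` would presumably need to copy such a group through unchanged to keep this equivalence.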

43,851 | 2,893,439,709 | IssuesEvent | 2015-06-15 17:59:51 | SteamDatabase/steamSummerMinigame | https://api.github.com/repos/SteamDatabase/steamSummerMinigame | closed | Autobuy Cheapest Upgrade | 2 - Medium Priority | If Clicking messes up the autoplayer and the goal is efficiency, then we need some way of the game simply buying the cheapest available upgrade to continue effective progression without crippling the script itself. | 1.0 | Autobuy Cheapest Upgrade - If Clicking messes up the autoplayer and the goal is efficiency, then we need some way of the game simply buying the cheapest available upgrade to continue effective progression without crippling the script itself. | priority | autobuy cheapest upgrade if clicking messes up the autoplayer and the goal is efficiency then we need some way of the game simply buying the cheapest available upgrade to continue effective progression without crippling the script itself | 1 |

548,759 | 16,075,210,722 | IssuesEvent | 2021-04-25 08:04:34 | dodona-edu/dodona | https://api.github.com/repos/dodona-edu/dodona | opened | DNS migration | medium priority | This issue tracks the todo's of the DNS migration

- [ ] register dodona.be

- [ ] point name servers to cloudflare

- [ ] set A and CNAME records (https://github.com/dodona-edu/dodona-ansible/wiki/DNS-records)

- [ ] add domains to ansible (let's encrypt) https://github.com/dodona-edu/dodona-ansible/pull/117

- [ ] rewrite dodona.be and www.dodona.be to dodona.ugent.be on apache

- [ ] rewrite naos.dodona.be to naos.ugent.be on apache

- [ ] rewrite mestra.dodona.be to mestra.ugent.be on apache

- [ ] add sandbox.dodona.be, naos-sandbox.dodona.be and mestra-sandbox.dodona.be to the CSP headers

- [ ] serve the exercises from the new sandbox domains | 1.0 | DNS migration - This issue tracks the todo's of the DNS migration

- [ ] register dodona.be

- [ ] point name servers to cloudflare

- [ ] set A and CNAME records (https://github.com/dodona-edu/dodona-ansible/wiki/DNS-records)

- [ ] add domains to ansible (let's encrypt) https://github.com/dodona-edu/dodona-ansible/pull/117

- [ ] rewrite dodona.be and www.dodona.be to dodona.ugent.be on apache

- [ ] rewrite naos.dodona.be to naos.ugent.be on apache

- [ ] rewrite mestra.dodona.be to mestra.ugent.be on apache

- [ ] add sandbox.dodona.be, naos-sandbox.dodona.be and mestra-sandbox.dodona.be to the CSP headers

- [ ] serve the exercises from the new sandbox domains | priority | dns migration this issue tracks the todo s of the dns migration register dodona be point name servers to cloudflare set a and cname records add domains to ansible let s encrypt rewrite dodona be and to dodona ugent be on apache rewrite naos dodona be to naos ugent be on apache rewrite mestra dodona be to mestra ugent be on apache add sandbox dodona be naos sandbox dodona be and mestra sandbox dodona be to the csp headers serve the exercises from the new sandbox domains | 1 |

317,932 | 9,671,280,169 | IssuesEvent | 2019-05-21 22:15:16 | GingerWalnut/SQBeyondPublic | https://api.github.com/repos/GingerWalnut/SQBeyondPublic | closed | SQ Tech Machines not giving blocks back if block is moving | medium priority | If a piston is pushing a block when the SQ Tech Machine tries to break it, the machine does not give the block back. This is fairly easy to see by comparing the two example machines in the screenshot breaking blocks. The one on the right pushes a block in front of the breaker each cycle, making sure that there is never a moving block in front of the breaker. This one returns all the blocks placed. The one on the left just pushes them in as fast as possible, which means that sometimes the block in front of the breaker is moving when it activates. This one loses blocks as it goes.

I think a fix might be making the breaker unable to break block 42 (or whatever the moving block thing has turned into after 1.13).

| 1.0 | SQ Tech Machines not giving blocks back if block is moving - If a piston is pushing a block when the SQ Tech Machine tries to break it, the machine does not give the block back. This is fairly easy to see by comparing the two example machines in the screenshot breaking blocks. The one on the right pushes a block in front of the breaker each cycle, making sure that there is never a moving block in front of the breaker. This one returns all the blocks placed. The one on the left just pushes them in as fast as possible, which means that sometimes the block in front of the breaker is moving when it activates. This one loses blocks as it goes.

I think a fix might be making the breaker unable to break block 42 (or whatever the moving block thing has turned into after 1.13).

| priority | sq tech machines not giving blocks back if block is moving so if a piston is pushing a block when the sq tech machine tries to break it then it does not give the block back this is fairly easy to see by comparing the two example machines in the screenshot breaking blocks the one on the right pushes a block in front to the breaker each cycle making sure that there is never a moving block in front of the breaker this one returns all the blocks placed the one on the left just pushes them in as fast as possible which means that sometimes the block in front of the breaker is moving when it activates this one looses blocks as it goes i think a fix might be making the breaker unable to break block or whatever the moving block thing has turned into after | 1 |

287,173 | 8,805,284,325 | IssuesEvent | 2018-12-26 18:39:26 | GoldenSoftwareLtd/gedemin | https://api.github.com/repos/GoldenSoftwareLtd/gedemin | closed | Add a Limit field to the assortment group. | Meat Priority-Medium Type-Enhancement | Originally reported on Google Code with ID 2189

```

If a raw material is added to a recipe as a substitute and that raw material is already in the recipe,

allow it to be added. If it is added not as a substitute but as a recipe item,

show a message that the raw material is already present.

```

Reported by `stasgm` on 2010-10-20 13:41:14

| 1.0 | Add a Limit field to the assortment group. - Originally reported on Google Code with ID 2189

```

If a raw material is added to a recipe as a substitute and that raw material is already in the recipe,

allow it to be added. If it is added not as a substitute but as a recipe item,

show a message that the raw material is already present.

```

Reported by `stasgm` on 2010-10-20 13:41:14

| priority | add a limit field to the assortment group originally reported on google code with id if a raw material is added to a recipe as a substitute and that raw material is already in the recipe allow it to be added if it is added not as a substitute but as a recipe item show a message that the raw material is already present reported by stasgm on | 1 |

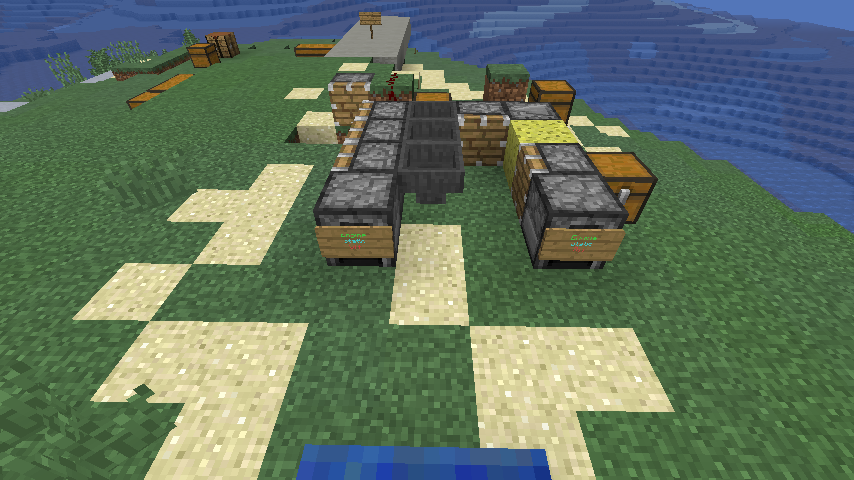

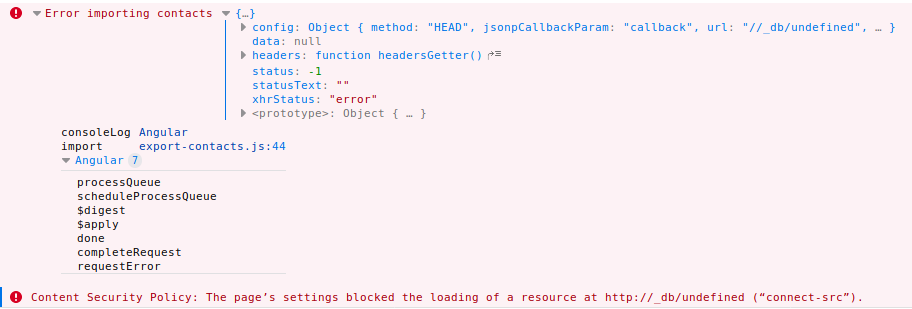

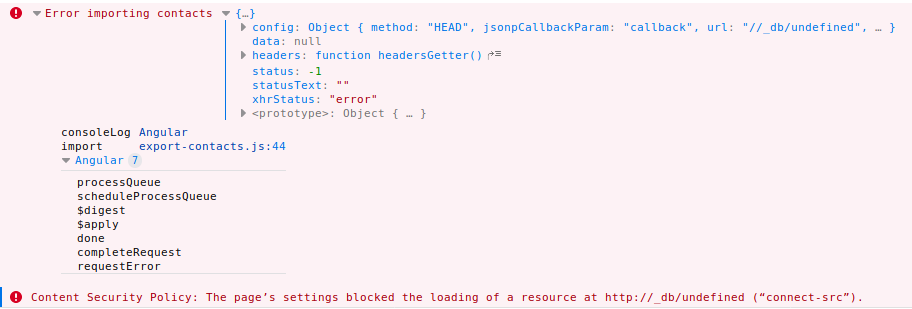

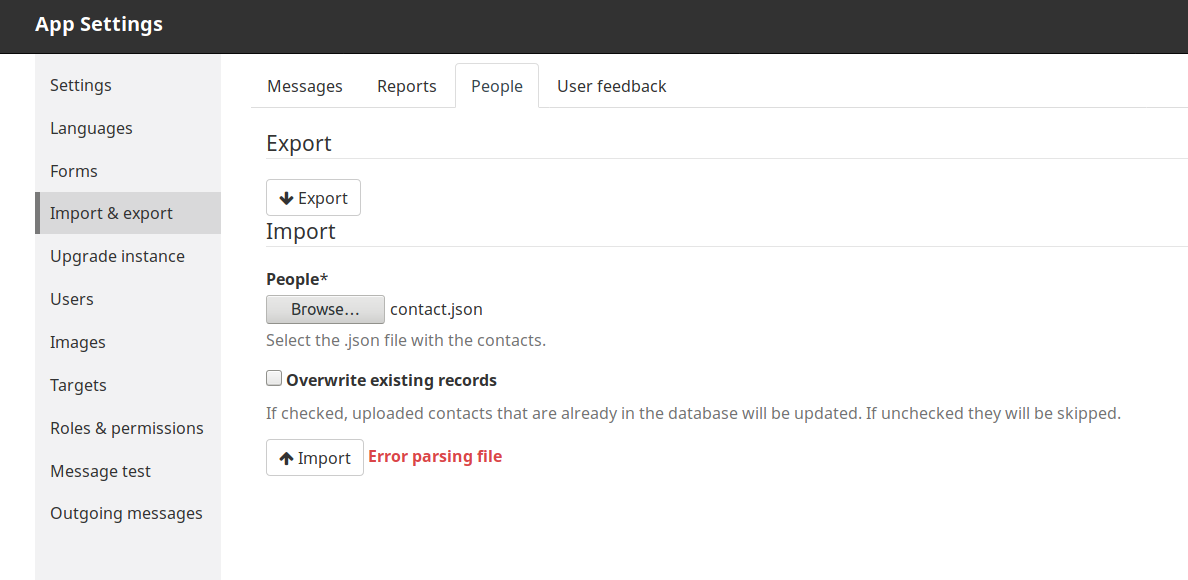

317,332 | 9,663,456,805 | IssuesEvent | 2019-05-21 00:43:47 | medic/medic | https://api.github.com/repos/medic/medic | closed | Import people blocked by CSP | Configuration Priority: 2 - Medium Type: Bug | **Describe the bug**

Uploading people using the admin app isn't working.

**To Reproduce**

1. Create a JSON file with some people data in it. A minimal example:

`[{"_id": "a", "name": "something"}]`

2. Go to Admin app > Import & Export > People

3. Click on the Browse button in the Import section

4. Select the file you created in step 1.

5. Click Submit

6. An error is shown in the UI. There's an error in the log. The person is not imported.

**Expected behavior**

No errors are shown and a contact is imported.

**Logs**

```

Content Security Policy: The page’s settings blocked the loading of a resource at http://_db/undefined (“connect-src”).

```

**Screenshots**

**Environment**

- Instance: localhost

- Browser: Firefox

- Client platform: Ubuntu

- App: admin

- Version: 3.5.0 (master)

**Additional context**

1. Before starting on this bug consider if we can drop this feature altogether. medic-conf provides similar functionality.

2. If it is worth fixing, consider refactoring it to use the existing APIs for importing people and places.

3. Also consider using bulk APIs instead of one call per contact.

| 1.0 | Import people blocked by CSP - **Describe the bug**

Uploading people using the admin app isn't working.

**To Reproduce**

1. Create a JSON file with some people data in it. A minimal example:

`[{"_id": "a", "name": "something"}]`

2. Go to Admin app > Import & Export > People

3. Click on the Browse button in the Import section

4. Select the file you created in step 1.

5. Click Submit

6. An error is shown in the UI. There's an error in the log. The person is not imported.

**Expected behavior**

No errors are shown and a contact is imported.

**Logs**

```

Content Security Policy: The page’s settings blocked the loading of a resource at http://_db/undefined (“connect-src”).

```

**Screenshots**

**Environment**

- Instance: localhost

- Browser: Firefox

- Client platform: Ubuntu

- App: admin

- Version: 3.5.0 (master)

**Additional context**

1. Before starting on this bug consider if we can drop this feature altogether. medic-conf provides similar functionality.

2. If it is worth fixing, consider refactoring it to use the existing APIs for importing people and places.

3. Also consider using bulk APIs instead of one call per contact.

| priority | import people blocked by csp describe the bug uploading people using the admin app isn t working to reproduce create a json file with some people data in it a minimal example go to admin app import export people click on the browse button in the import section select the file you created in click submit an error is shown in the ui there s an error in the log the person is not imported expected behavior no errors are shown and a contact is imported logs content security policy the page’s settings blocked the loading of a resource at “connect src” screenshots environment instance localhost browser firefox client platform ubuntu app admin version master additional context before starting on this bug consider if we can drop this feature altogether medic conf provides similar functionality if it is worth fixing consider refactoring it to use the existing apis for importing people and places also consider using bulk apis instead of one call per contact | 1 |

155,565 | 5,957,006,590 | IssuesEvent | 2017-05-28 22:04:45 | bitfighter/bitfighter | https://api.github.com/repos/bitfighter/bitfighter | closed | Windows upgrader doesn't always close bitfighter | 019c 020 bug imported Priority-Medium | _From [watusim...@bitfighter.org](https://code.google.com/u/105427273526970468779/) on December 01, 2013 04:20:37_

On some Windows machines (but not all), the upgrader does not close the running Bitfighter session. This will cause the upgrade to fail if the user does not close the window themselves.

At a minimum, we should add a message to the upgrade window telling people they need to make sure the window is closed. Also, we should figure out why this happens.

I have one machine where I can reliably reproduce.

_Original issue: http://code.google.com/p/bitfighter/issues/detail?id=322_

| 1.0 | Windows upgrader doesn't always close bitfighter - _From [watusim...@bitfighter.org](https://code.google.com/u/105427273526970468779/) on December 01, 2013 04:20:37_

On some Windows machines (but not all), the upgrader does not close the running Bitfighter session. This will cause the upgrade to fail if the user does not close the window themselves.

At a minimum, we should add a message to the upgrade window telling people they need to make sure the window is closed. Also, we should figure out why this happens.

I have one machine where I can reliably reproduce.

_Original issue: http://code.google.com/p/bitfighter/issues/detail?id=322_

| priority | windows upgrader doesn t always close bitfighter from on december on some windows machines but not all the upgrader does not close the running bitfighter session this will cause the upgrade to fail if the user does not close the window themselves at a minimum we should add a message to the upgrade window telling people they need to make sure the window is closed also we should figure out why this happens i have one machine where i can reliably reproduce original issue | 1 |

490,682 | 14,138,607,515 | IssuesEvent | 2020-11-10 08:41:12 | vmware/singleton | https://api.github.com/repos/vmware/singleton | closed | [ENHANCEMENT] Optimize VIPService by removing unused code. Automate initialization of VIPService in VIPCfg. | area/java-client kind/enhancement priority/medium | **Is your feature request related to a problem? Please describe.**

VIPService has redundant/unused properties such as productID and version properties.

Singleton pattern is not needed for VIPService because VIPService is a member of VIPCfg.

Initialization of VIPService is unnecessarily a separate API call after VIPCfg.initialize.

**Describe the solution you'd like**

Optimize VIPService by removing unused code such as productId and version properties.

No need to have a singleton instance of VIPService because VIPService is a member of VIPCfg.

Automate instantiation of VIPService in VIPCfg. Do not allow instantiation of VIPCfg with null vipServer or null/faultyHttpRequester | 1.0 | [ENHANCEMENT] Optimize VIPService by removing unused code. Automate initialization of VIPService in VIPCfg. - **Is your feature request related to a problem? Please describe.**

VIPService has redundant/unused properties such as productID and version properties.

Singleton pattern is not needed for VIPService because VIPService is a member of VIPCfg.

Initialization of VIPService is unnecessarily a separate API call after VIPCfg.initialize.

**Describe the solution you'd like**

Optimize VIPService by removing unused code such as productId and version properties.

No need to have a singleton instance of VIPService because VIPService is a member of VIPCfg.

Automate instantiation of VIPService in VIPCfg. Do not allow instantiation of VIPCfg with null vipServer or null/faultyHttpRequester | priority | optimize vipservice by removing unused code automate initialization of vipservice in vipcfg is your feature request related to a problem please describe vipservice has redundant unused properties such as productid and version properties singleton pattern is not needed for vipservice because vipservice is a member of vipcfg initialization of vipservice is unnecessarily a separate api call after vipcfg initialize describe the solution you d like optimize vipservice by removing unused code such as productid and version properties no need to have a singleton instance of vipservice because vipservice is a member of vipcfg automate instantiation of vipservice in vipcfg do not allow instantiation of vipcfg with null vipserver or null faultyhttprequester | 1 |

268,225 | 8,405,040,351 | IssuesEvent | 2018-10-11 14:21:07 | geosolutions-it/smb-app | https://api.github.com/repos/geosolutions-it/smb-app | closed | Landing View | Priority: Medium review | Create Landing view to show call to actions buttons to relevant functionalities (tracking, lost/found notifications, etc.) with short description.

This will replace the current "Tracks registration" view as the landing view.

In the future it could also host news and messages | 1.0 | Landing View - Create Landing view to show call to actions buttons to relevant functionalities (tracking, lost/found notifications, etc.) with short description.

This will replace the current "Tracks registration" view as the landing view.

In the future it could also host news and messages | priority | landing view create landing view to show call to actions buttons to relevant functionalities tracking lost found notifications etc with short description this will substituto the current tracks registration view as the landing view in the future it could also host news and messages | 1 |

22,141 | 2,645,690,087 | IssuesEvent | 2015-03-13 01:08:34 | prikhi/evoluspencil | https://api.github.com/repos/prikhi/evoluspencil | closed | Allow reordering of page tabs | 1 star bug imported Priority-Medium | _From [brownsp...@gmail.com](https://code.google.com/u/100468905672215389538/) on August 22, 2008 06:38:15_

What steps will reproduce the problem? 1. Create a new document, one page available.

2. Add a new page.

3. Add another new page, but you want the tab to appear next to the first one. What is the expected output? What do you see instead? I would like to have some sort of function to facilitate reordering of the

page tabs, for organization. Drag-and-drop just like Firefox does would be

nice. What version of the product are you using? On what operating system? 1.0 standalone on Windows XP. Please provide any additional information below.

_Original issue: http://code.google.com/p/evoluspencil/issues/detail?id=34_ | 1.0 | Allow reordering of page tabs - _From [brownsp...@gmail.com](https://code.google.com/u/100468905672215389538/) on August 22, 2008 06:38:15_

What steps will reproduce the problem? 1. Create a new document, one page available.

2. Add a new page.

3. Add another new page, but you want the tab to appear next to the first one. What is the expected output? What do you see instead? I would like to have some sort of function to facilitate reordering of the

page tabs, for organization. Drag-and-drop just like Firefox does would be

nice. What version of the product are you using? On what operating system? 1.0 standalone on Windows XP. Please provide any additional information below.

_Original issue: http://code.google.com/p/evoluspencil/issues/detail?id=34_ | priority | allow reordering of page tabs from on august what steps will reproduce the problem create a new document one page available add a new page add another new page but you want the tab to appear next to the first one what is the expected output what do you see instead i would like to have some sort of function to facilitate reordering of the page tabs for organization drag and drop just like firefox does would be nice what version of the product are you using on what operating system standalone on windows xp please provide any additional information below original issue | 1 |

345,432 | 10,367,956,770 | IssuesEvent | 2019-09-07 13:05:56 | eternialz/moeverdose | https://api.github.com/repos/eternialz/moeverdose | closed | Main menu isn't working correctly in Edge <= 18 | Bug Priority:Medium Theme:Frontend | The main-menu isn't displaying at all using Microsoft Edge | 1.0 | Main menu isn't working correctly in Edge <= 18 - The main-menu isn't displaying at all using Microsoft Edge | priority | main menu isn t working correctly in edge the main menu isn t displaying at all using microsoft edge | 1 |

115,808 | 4,682,506,958 | IssuesEvent | 2016-10-09 09:27:29 | CS2103AUG2016-F10-C2/main | https://api.github.com/repos/CS2103AUG2016-F10-C2/main | closed | Logic parser: Implement a better parser algo | priority.medium status.ongoing type.enchancement type.epic | Currently, if the user messes up the order of a valid command (such as putting the tags before the description of an add command), the parser will deem it an invalid command when in essence it is a totally valid command with all the required information to add a new task.

Thus this overhaul plans to introduce more flexibility in the parser class in order to make commands less rigid and to support the easy addition of new arguments to new and existing commands.

### Examples

---

**Example 1:** Valid command

```java

ArgumentsParser parser = new ArgumentsParser() ;

parser.addNoFlagsArgs(CommandArgs.NAME).addOptionalArg(CommandArgs.DESC).addOptionalArg(CommandArgs.TAGS) ;

parser.parse ("add hello d/hi e/tag1 e/tag2") ;

```

> parser.getArgValue (CommandArgs.NAME) = "hello" <br>

> parser.getArgValue (CommandArgs.DESC) = "hi" <br>

> parser.getArgValue (CommandArgs.TAGS) = {tag1, tag2} <br>

**Example 2:** Required Arguments not present

```java

ArgumentsParser parser = new ArgumentsParser() ;

parser.addNoFlagsArgs(CommandArgs.NAME).addOptionalArg(CommandArgs.DESC).addOptionalArg(CommandArgs.TAGS) ;

parser.parse ("add") ;

```

> IllegalValueException : Invalid command format | 1.0 | Logic parser: Implement a better parser algo - Currently, if the user messes up the order of a valid command (such as putting the tags before the description of an add command), the parser will deem it an invalid command when in essence it is a totally valid command with all the required information to add a new task.

Thus this overhaul plans to introduce more flexibility in the parser class in order to make commands less rigid and to support the easy addition of new arguments to new and existing commands.

### Examples

---

**Example 1:** Valid command

```java

ArgumentsParser parser = new ArgumentsParser() ;

parser.addNoFlagsArgs(CommandArgs.NAME).addOptionalArg(CommandArgs.DESC).addOptionalArg(CommandArgs.TAGS) ;

parser.parse ("add hello d/hi e/tag1 e/tag2") ;

```

> parser.getArgValue (CommandArgs.NAME) = "hello" <br>

> parser.getArgValue (CommandArgs.DESC) = "hi" <br>

> parser.getArgValue (CommandArgs.TAGS) = {tag1, tag2} <br>

**Example 2:** Required Arguments not present

```java

ArgumentsParser parser = new ArgumentsParser() ;

parser.addNoFlagsArgs(CommandArgs.NAME).addOptionalArg(CommandArgs.DESC).addOptionalArg(CommandArgs.TAGS) ;

parser.parse ("add") ;

```

> IllegalValueException : Invalid command format | priority | logic parser implement a better parser algo currently if the user messes up the order of an valid command such as putting the tags before the description of an add command the parser will deem it as an invalid command when in essence it is a totally valid command with all the required information to add a new task thus this overhaul plans to introduce more flexibility in the parser class in order to make commands less rigid and supports the easy addition of new arguments to new and existing commands examples example valid command java argumentsparser parser new argumentsparser parser addnoflagsargs commandargs name addoptionalarg commandargs desc addoptionalarg commandargs tags parser parse add hello d hi e e parser getargvalue commandargs name hello parser getargvalue commandargs desc hi parser getargvalue commandargs tags example required arguments not present java argumentsparser parser new argumentsparser parser addnoflagsargs commandargs name addoptionalarg commandargs desc addoptionalarg commandargs tags parser parse add illegalvalueexception invalid command format | 1 |

768,770 | 26,979,695,394 | IssuesEvent | 2023-02-09 12:12:27 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | Bluetooth: Host: Periodic scanner does not differentiate between partial and incomplete data | bug priority: medium area: Bluetooth area: Bluetooth Host | **Describe the bug**

When the periodic scanner reconstructs the periodic advertising data to report to the application, it only checks whether the data_status is marked as complete. It does not differentiate between incomplete and partial data, see [here](https://github.com/zephyrproject-rtos/zephyr/blob/main/subsys/bluetooth/host/scan.c#L766-L835). When the controller does not receive the packet that completes the data and the data_status is marked as "incomplete, no more data to come", the data of the next incoming periodic advertising event is appended to the truncated data, so incorrect data is passed to the application.

**To Reproduce**

This is quite a difficult bug to reproduce as it relies on a packet being dropped. In the test environment where this was discovered, the RSSI was just above the threshold of the radio's sensitivity, which increased the likelihood of a packet not being received.

Steps to reproduce the behavior:

1. Establish a synchronization between a periodic advertiser and scanner. The periodic advertiser should be advertising known data that is long enough to be split across multiple packets.

2. Repeatedly check the data received by the scanner and compare it with the expected advertised data.

3. When one of the packets needed to construct the data is not received, the received data should differ from the expected data.

**Expected behavior**

The incomplete data should not be passed up to the application and should be dropped.

**Impact**

The application does not receive the correct data from the host.

**Logs and console output**

Expected data:

```

\xfa\xffY\x00\x00\x01\x02\x03\x04\x05\x06\x07\x08\t\n\x0b\x0c\r\x0e\x0f\x10\x11\x12\x13\x14\x15\x16\x17\x18\x19\x1a\x1b\x1c\x1d\x1e\x1f !"#$%&\'()*+,-./0123456789:;<=>?@ABCDEFGHIJKLMNOPQRSTUVWXYZ[\\]^_`abcdefghijklmnopqrstuvwxyz{\|}~\x7f\x80\x81\x82\x83\x84\x85\x86\x87\x88\x89\x8a\x8b\x8c\x8d\x8e\x8f\x90\x91\x92\x93\x94\x95\x96\x97\x98\x99\x9a\x9b\x9c\x9d\x9e\x9f\xa0\xa1\xa2\xa3\xa4\xa5\xa6\xa7\xa8\xa9\xaa\xab\xac\xad\xae\xaf\xb0\xb1\xb2\xb3\xb4\xb5\xb6\xb7\xb8\xb9\xba\xbb\xbc\xbd\xbe\xbf\xc0\xc1\xc2\xc3\xc4\xc5\xc6\xc7\xc8\xc9\xca\xcb\xcc\xcd\xce\xcf\xd0\xd1\xd2\xd3\xd4\xd5\xd6\xd7\xd8\xd9\xda\xdb\xdc\xdd\xde\xdf\xe0\xe1\xe2\xe3\xe4\xe5\xe6\xe7\xe8\xe9\xea\xeb\xec\xed\xee\xef\xf0\xf1\xf2\xf3\xf4\xf5\xf60\xffY\x00\x00\x01\x02\x03\x04\x05\x06\x07\x08\t\n\x0b\x0c\r\x0e\x0f\x10\x11\x12\x13\x14\x15\x16\x17\x18\x19\x1a\x1b\x1c\x1d\x1e\x1f !"#$%&\'()*+,\x03'

```

Received data:

```

\xfa\xffY\x00\x00\x01\x02\x03\x04\x05\x06\x07\x08\t\n\x0b\x0c\r\x0e\x0f\x10\x11\x12\x13\x14\x15\x16\x17\x18\x19\x1a\x1b\x1c\x1d\x1e\x1f !"#$%&\'()*+,-./0123456789:;<=>?@ABCDEFGHIJKLMNOPQRSTUVWXYZ[\\]^_`abcdefghijklmnopqrstuvwxyz{\|}~\x7f\x80\x81\x82\x83\x84\x85\x86\x87\x88\x89\x8a\x8b\x8c\x8d\x8e\x8f\x90\x91\x92\x93\x94\x95\x96\x97\x98\x99\x9a\x9b\x9c\x9d\x9e\x9f\xa0\xa1\xa2\xa3\xa4\xa5\xa6\xa7\xa8\xa9\xaa\xab\xac\xad\xae\xaf\xb0\xb1\xb2\xb3\xb4\xb5\xb6\xb7\xb8\xb9\xba\xbb\xbc\xbd\xbe\xbf\xc0\xc1\xc2\xc3\xc4\xc5\xc6\xc7\xc8\xc9\xca\xcb\xcc\xcd\xce\xcf\xd0\xd1\xd2\xd3\xd4\xd5\xd6\xd7\xd8\xd9\xda\xdb\xdc\xdd\xde\xdf\xe0\xe1\xe2\xe3\xe4\xe5\xe6\xe7\xe8\xe9\xea\xeb\xec\xed\xee\xef\xf0\xf1\xf2\xf3\xf4\xf5\xfa\xffY\x00\x00\x01\x02\x03\x04\x05\x06\x07\x08\t\n\x0b\x0c\r\x0e\x0f\x10\x11\x12\x13\x14\x15\x16\x17\x18\x19\x1a\x1b\x1c\x1d\x1e\x1f !"#$%&\'()*+,-./0123456789:;<=>?@ABCDEFGHIJKLMNOPQRSTUVWXYZ[\\]^_`abcdefghijklmnopqrstuvwxyz{\|}~\x7f\x80\x81\x82\x83\x84\x85\x86\x87\x88\x89\x8a\x8b\x8c\x8d\x8e\x8f\x90\x91\x92\x93\x94\x95\x96\x97\x98\x99\x9a\x9b\x9c\x9d\x9e\x9f\xa0\xa1\xa2\xa3\xa4\xa5\xa6\xa7\xa8\xa9\xaa\xab\xac\xad\xae\xaf\xb0\xb1\xb2\xb3\xb4\xb5\xb6\xb7\xb8\xb9\xba\xbb\xbc\xbd\xbe\xbf\xc0\xc1\xc2\xc3\xc4\xc5\xc6\xc7\xc8\xc9\xca\xcb\xcc\xcd\xce\xcf\xd0\xd1\xd2\xd3\xd4\xd5\xd6\xd7\xd8\xd9\xda\xdb\xdc\xdd\xde\xdf\xe0\xe1\xe2\xe3\xe4\xe5\xe6\xe7\xe8\xe9\xea\xeb\xec\xed\xee\xef\xf0\xf1\xf2\xf3\xf4\xf5\xf60\xffY\x00\x00\x01\x02\x03\x04\x05\x06\x07\x08\t\n\x0b\x0c\r\x0e\x0f\x10\x11\x12\x13\x14\x15\x16\x17\x18\x19\x1a\x1b\x1c\x1d\x1e\x1f !"#$%&\'()*+,\x03'

```

In the following logs, the total length of the advertised data was 300 bytes, split into 3 packets of 247, 3 and 50 bytes. The data length of each incoming periodic advertisement packet was logged before reassembly. The logs show that right before the test failed, a packet of data length 50 (the final packet in the sequence) was dropped. As a result the data was 250 bytes longer than expected, because 247, 3, 247, 3 and 50 bytes were appended to the data buffer for the advertisement report. (The events of interest are the 247- and 3-byte events at 00:00:09.718, which are not followed by a 50-byte event.)

```

*** Booting Zephyr OS build v3.2.99-ncs1-1495-gaf6add53aa1f ***

[00:00:01.836,822] <inf> bt_hci_core: hci_vs_init: HW Platform: Nordic Semiconductor (0x0002)

[00:00:01.836,883] <inf> bt_hci_core: hci_vs_init: HW Variant: nRF53x (0x0003)

[00:00:01.836,914] <inf> bt_hci_core: hci_vs_init: Firmware: Standard Bluetooth controller (0x00) Version 184.62253 Build 1392531289

[00:00:01.839,080] <inf> bt_hci_core: bt_dev_show_info: Identity: D6:30:BF:22:A4:15 (random)

[00:00:01.839,111] <inf> bt_hci_core: bt_dev_show_info: HCI: version 5.3 (0x0c) revision 0x221c, manufacturer 0x0059

[00:00:01.839,141] <inf> bt_hci_core: bt_dev_show_info: LMP: version 5.3 (0x0c) subver 0x221c

[00:00:07.969,604] <err> bt_scan: bt_hci_le_per_adv_report: evt len: 247

[00:00:07.969,726] <err> bt_scan: bt_hci_le_per_adv_report: evt len: 3

[00:00:07.969,848] <err> bt_scan: bt_hci_le_per_adv_report: evt len: 50

[00:00:08.276,550] <err> bt_scan: bt_hci_le_per_adv_report: evt len: 247

[00:00:08.276,672] <err> bt_scan: bt_hci_le_per_adv_report: evt len: 3

[00:00:08.276,794] <err> bt_scan: bt_hci_le_per_adv_report: evt len: 50

[00:00:08.351,409] <err> bt_scan: bt_hci_le_per_adv_report: evt len: 247

[00:00:08.351,501] <err> bt_scan: bt_hci_le_per_adv_report: evt len: 3

[00:00:08.351,654] <err> bt_scan: bt_hci_le_per_adv_report: evt len: 50

[00:00:09.415,588] <err> bt_scan: bt_hci_le_per_adv_report: evt len: 247

[00:00:09.415,740] <err> bt_scan: bt_hci_le_per_adv_report: evt len: 3

[00:00:09.415,863] <err> bt_scan: bt_hci_le_per_adv_report: evt len: 50

[00:00:09.718,414] <err> bt_scan: bt_hci_le_per_adv_report: evt len: 247

[00:00:09.718,536] <err> bt_scan: bt_hci_le_per_adv_report: evt len: 3

[00:00:09.793,792] <err> bt_scan: bt_hci_le_per_adv_report: evt len: 247

[00:00:09.793,914] <err> bt_scan: bt_hci_le_per_adv_report: evt len: 3

[00:00:09.794,036] <err> bt_scan: bt_hci_le_per_adv_report: evt len: 50

[00:00:09.868,743] <err> bt_scan: bt_hci_le_per_adv_report: evt len: 247

[00:00:09.868,865] <err> bt_scan: bt_hci_le_per_adv_report: evt len: 3

[00:00:09.868,988] <err> bt_scan: bt_hci_le_per_adv_report: evt len: 50

```

On further investigation, I found that in repeated failures the packet before the dropped packet had its data_status set to 2 ("data incomplete, no more to come"), as expected. The host just does not handle this status.
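The difference between the current and the expected handling can be modeled in a few lines. This is a simplified sketch, not the Zephyr host code: the class `PerAdvReassemblySketch` and its methods are hypothetical, only lengths are tracked instead of bytes, and the status values 0 (complete), 1 (incomplete, more data to come) and 2 (incomplete, truncated, no more to come) follow the Data_Status field of the HCI LE Periodic Advertising Report event. The event sequence in `main` mirrors the lengths from the logs above; the status values assigned to the events other than the truncated one are assumptions.

```java
import java.util.ArrayList;
import java.util.List;

public class PerAdvReassemblySketch {
    public static final int COMPLETE = 0;   // last fragment, data is complete
    public static final int PARTIAL = 1;    // more fragments will follow
    public static final int TRUNCATED = 2;  // no more fragments, data is incomplete

    private final boolean dropTruncated;
    private final List<Integer> reports = new ArrayList<>();
    private int buffered = 0;               // bytes accumulated for the current report

    public PerAdvReassemblySketch(boolean dropTruncated) {
        this.dropTruncated = dropTruncated;
    }

    public void onReport(int dataStatus, int len) {
        if (dataStatus == TRUNCATED && dropTruncated) {
            // Expected behavior: discard everything collected so far.
            buffered = 0;
            return;
        }
        // Behavior described in this issue when dropTruncated is false:
        // a truncated fragment is treated like a partial one and kept.
        buffered += len;
        if (dataStatus == COMPLETE) {
            reports.add(buffered);          // hand the reassembled length to the application
            buffered = 0;
        }
    }

    public List<Integer> reports() {
        return reports;
    }

    public static void main(String[] args) {
        // 247 + 3 received, the final 50-byte packet is lost, then a full 247 + 3 + 50 event.
        int[][] events = {{PARTIAL, 247}, {TRUNCATED, 3}, {PARTIAL, 247}, {PARTIAL, 3}, {COMPLETE, 50}};
        PerAdvReassemblySketch current = new PerAdvReassemblySketch(false);
        PerAdvReassemblySketch expected = new PerAdvReassemblySketch(true);
        for (int[] e : events) {
            current.onReport(e[0], e[1]);
            expected.onReport(e[0], e[1]);
        }
        System.out.println("current:  " + current.reports());
        System.out.println("expected: " + expected.reports());
    }
}
```

In this model the current handling reports a single 550-byte payload (247 + 3 + 247 + 3 + 50), which matches the 250 extra bytes seen in the received data, while dropping the truncated data yields the expected 300 bytes.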

**Environment:**

- OS: Linux (Ubuntu 20.04)

- Target: nrf5340dk

- Toolchain: NCS

- SHA: [af6add53](https://github.com/zephyrproject-rtos/zephyr/tree/af6add53aa1f357e5216a6207af0572b53830783)

| 1.0 | Bluetooth: Host: Periodic scanner does not differentiate between partial and incomplete data - **Describe the bug**

When the periodic scanner reconstructs the periodic advertising data to report to the application, it only checks whether the data_status is marked as complete. It does not differentiate between incomplete and partial data, see [here](https://github.com/zephyrproject-rtos/zephyr/blob/main/subsys/bluetooth/host/scan.c#L766-L835). When the controller does not receive the packet that completes the data and the data_status is marked as "incomplete, no more data to come", the data of the next incoming periodic advertising event is appended to the truncated data, so incorrect data is passed to the application.

**To Reproduce**

This is quite a difficult bug to reproduce as it relies on a packet being dropped. In the test environment where this was discovered, the RSSI was just above the threshold of the radio's sensitivity, which increased the likelihood of a packet not being received.

Steps to reproduce the behavior:

1. Establish a synchronization between a periodic advertiser and scanner. The periodic advertiser should be advertising known data that is long enough to be split across multiple packets.

2. Repeatedly check the data received by the scanner and compare it with the expected advertised data.

3. When one of the packets needed to construct the data is not received, the received data should differ from the expected data.

**Expected behavior**

The incomplete data should not be passed up to the application and should be dropped.

**Impact**

The application does not receive the correct data from the host.

**Logs and console output**

Expected data:

```

\xfa\xffY\x00\x00\x01\x02\x03\x04\x05\x06\x07\x08\t\n\x0b\x0c\r\x0e\x0f\x10\x11\x12\x13\x14\x15\x16\x17\x18\x19\x1a\x1b\x1c\x1d\x1e\x1f !"#$%&\'()*+,-./0123456789:;<=>?@ABCDEFGHIJKLMNOPQRSTUVWXYZ[\\]^_`abcdefghijklmnopqrstuvwxyz{\|}~\x7f\x80\x81\x82\x83\x84\x85\x86\x87\x88\x89\x8a\x8b\x8c\x8d\x8e\x8f\x90\x91\x92\x93\x94\x95\x96\x97\x98\x99\x9a\x9b\x9c\x9d\x9e\x9f\xa0\xa1\xa2\xa3\xa4\xa5\xa6\xa7\xa8\xa9\xaa\xab\xac\xad\xae\xaf\xb0\xb1\xb2\xb3\xb4\xb5\xb6\xb7\xb8\xb9\xba\xbb\xbc\xbd\xbe\xbf\xc0\xc1\xc2\xc3\xc4\xc5\xc6\xc7\xc8\xc9\xca\xcb\xcc\xcd\xce\xcf\xd0\xd1\xd2\xd3\xd4\xd5\xd6\xd7\xd8\xd9\xda\xdb\xdc\xdd\xde\xdf\xe0\xe1\xe2\xe3\xe4\xe5\xe6\xe7\xe8\xe9\xea\xeb\xec\xed\xee\xef\xf0\xf1\xf2\xf3\xf4\xf5\xf60\xffY\x00\x00\x01\x02\x03\x04\x05\x06\x07\x08\t\n\x0b\x0c\r\x0e\x0f\x10\x11\x12\x13\x14\x15\x16\x17\x18\x19\x1a\x1b\x1c\x1d\x1e\x1f !"#$%&\'()*+,\x03'

```

Received data:

```

\xfa\xffY\x00\x00\x01\x02\x03\x04\x05\x06\x07\x08\t\n\x0b\x0c\r\x0e\x0f\x10\x11\x12\x13\x14\x15\x16\x17\x18\x19\x1a\x1b\x1c\x1d\x1e\x1f !"#$%&\'()*+,-./0123456789:;<=>?@ABCDEFGHIJKLMNOPQRSTUVWXYZ[\\]^_`abcdefghijklmnopqrstuvwxyz{\|}~\x7f\x80\x81\x82\x83\x84\x85\x86\x87\x88\x89\x8a\x8b\x8c\x8d\x8e\x8f\x90\x91\x92\x93\x94\x95\x96\x97\x98\x99\x9a\x9b\x9c\x9d\x9e\x9f\xa0\xa1\xa2\xa3\xa4\xa5\xa6\xa7\xa8\xa9\xaa\xab\xac\xad\xae\xaf\xb0\xb1\xb2\xb3\xb4\xb5\xb6\xb7\xb8\xb9\xba\xbb\xbc\xbd\xbe\xbf\xc0\xc1\xc2\xc3\xc4\xc5\xc6\xc7\xc8\xc9\xca\xcb\xcc\xcd\xce\xcf\xd0\xd1\xd2\xd3\xd4\xd5\xd6\xd7\xd8\xd9\xda\xdb\xdc\xdd\xde\xdf\xe0\xe1\xe2\xe3\xe4\xe5\xe6\xe7\xe8\xe9\xea\xeb\xec\xed\xee\xef\xf0\xf1\xf2\xf3\xf4\xf5\xfa\xffY\x00\x00\x01\x02\x03\x04\x05\x06\x07\x08\t\n\x0b\x0c\r\x0e\x0f\x10\x11\x12\x13\x14\x15\x16\x17\x18\x19\x1a\x1b\x1c\x1d\x1e\x1f !"#$%&\'()*+,-./0123456789:;<=>?@ABCDEFGHIJKLMNOPQRSTUVWXYZ[\\]^_`abcdefghijklmnopqrstuvwxyz{\|}~\x7f\x80\x81\x82\x83\x84\x85\x86\x87\x88\x89\x8a\x8b\x8c\x8d\x8e\x8f\x90\x91\x92\x93\x94\x95\x96\x97\x98\x99\x9a\x9b\x9c\x9d\x9e\x9f\xa0\xa1\xa2\xa3\xa4\xa5\xa6\xa7\xa8\xa9\xaa\xab\xac\xad\xae\xaf\xb0\xb1\xb2\xb3\xb4\xb5\xb6\xb7\xb8\xb9\xba\xbb\xbc\xbd\xbe\xbf\xc0\xc1\xc2\xc3\xc4\xc5\xc6\xc7\xc8\xc9\xca\xcb\xcc\xcd\xce\xcf\xd0\xd1\xd2\xd3\xd4\xd5\xd6\xd7\xd8\xd9\xda\xdb\xdc\xdd\xde\xdf\xe0\xe1\xe2\xe3\xe4\xe5\xe6\xe7\xe8\xe9\xea\xeb\xec\xed\xee\xef\xf0\xf1\xf2\xf3\xf4\xf5\xf60\xffY\x00\x00\x01\x02\x03\x04\x05\x06\x07\x08\t\n\x0b\x0c\r\x0e\x0f\x10\x11\x12\x13\x14\x15\x16\x17\x18\x19\x1a\x1b\x1c\x1d\x1e\x1f !"#$%&\'()*+,\x03'

```

In the following logs, the total length of the advertised data was 300 bytes, split into 3 packets of 247, 3 and 50 bytes. The data length of each incoming periodic advertisement packet was logged before reassembly. The logs show that right before the test failed, a packet of data length 50 (the final packet in the sequence) was dropped. As a result the data was 250 bytes longer than expected, because 247, 3, 247, 3 and 50 bytes were appended to the data buffer for the advertisement report. (The events of interest are the 247- and 3-byte events that are not followed by a 50-byte event.)

```

*** Booting Zephyr OS build v3.2.99-ncs1-1495-gaf6add53aa1f ***