| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 5 to 112) | repo_url (string, length 34 to 141) | action (string, 3 classes) | title (string, length 1 to 957) | labels (string, length 4 to 795) | body (string, length 1 to 259k) | index (string, 12 classes) | text_combine (string, length 96 to 259k) | label (string, 2 classes) | text (string, length 96 to 252k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

28,246 | 2,700,668,000 | IssuesEvent | 2015-04-04 12:34:05 | cs2103jan2015-f13-1j/main | https://api.github.com/repos/cs2103jan2015-f13-1j/main | opened | Implement simple functionality for right sidebar | priority.medium type.enhancement | Depending on toggle status of right sidebar, display floating tasks, deadline tasks and/or timed tasks (using search functionality for the time being).

Depending on the status of the right radio button, display tasks due today, tomorrow, this week or all time (floating tasks are always displayed) | 1.0 | Implement simple functionality for right sidebar - Depending on toggle status of right sidebar, display floating tasks, deadline tasks and/or timed tasks (using search functionality for the time being).

Depending on the status of the right radio button, display tasks due today, tomorrow, this week or all time (floating tasks are always displayed) | priority | implement simple functionality for right sidebar depending on toggle status of right sidebar display floating tasks deadline tasks and or timed tasks using search functionality for the time being depending on the status of the right radio button display tasks due today tomorrow this week or all time floating tasks are always displayed | 1 |

464,908 | 13,348,000,794 | IssuesEvent | 2020-08-29 16:20:37 | debops/debops | https://api.github.com/repos/debops/debops | closed | [debops.users] My user isn't added to the admins group by ansible when running a customized playbook | meta priority: medium | Preface: I'm new to Ansible/debops, and I'm doing a bit of a loopy setup, so my setup is confusing. Any help you can offer is much appreciated!

I am setting up a custom ansible playbook that I can use for my personal server infrastructure. I am re-using most of debops as the basis for my playbooks to try to leverage the existing conventions defined to avoid re-inventing the wheel.

That said, I'm not using debops as designed and I'm also using ansible-pull, which is changing things up a bit for me.

I have a mostly working playbook ready to serve as my basic setup that I can then build applications on top of. However, I have one major issue:

When I run my playbook, the user account it creates ("devin") is not being added to the "admins" group. If I run `adduser devin admins` after running ansible-pull, everything works fine. But I can't figure out how to make it work.

I don't observe any difference when running on a fresh host vs running the playbook again on the same host - should I expect a difference?

Any advice on a) what might be going wrong or b) best strategies for debugging? As I said, I'm new to ansible so any tips would help. I got as far as editing /root/.ansible/collections/ansible_collections/debops/debops/roles/users/tasks/main.yml to see if I could figure it out but didn't make any progress after 30 minutes of trying things blindly.

Code I'm using https://github.com/devvmh/ansible-playbook-core/tree/devel

Specifically, this code is not functioning as expected:

```

- role: debops.debops.system_groups

- role: debops.debops.users

vars:

- users__accounts:

- name: 'devin'

- groups: ['admins']

```

Output I get:

```

TASK [debops.debops.users : Manage additional UNIX groups for UNIX accounts] *********************************************************

skipping: [localhost] => (item={u'state': u'present', u'gecos': u'', u'name': u'devin', u'groups': u''})

```

If I remove the when block from this step in tasks/main.yml, I get this output, but it still doesn't work:

```

TASK [debops.debops.users : Manage additional UNIX groups for UNIX accounts] *********************************************************

ok: [localhost] => (item={u'state': u'present', u'gecos': u'', u'name': u'devin', u'groups': u''})

```

Thanks for any help or tips you can provide! | 1.0 | [debops.users] My user isn't added to the admins group by ansible when running a customized playbook - Preface: I'm new to Ansible/debops, and I'm doing a bit of a loopy setup, so my setup is confusing. Any help you can offer is much appreciated!

I am setting up a custom ansible playbook that I can use for my personal server infrastructure. I am re-using most of debops as the basis for my playbooks to try to leverage the existing conventions defined to avoid re-inventing the wheel.

That said, I'm not using debops as designed and I'm also using ansible-pull, which is changing things up a bit for me.

I have a mostly working playbook ready to serve as my basic setup that I can then build applications on top of. However, I have one major issue:

When I run my playbook, the user account it creates ("devin") is not being added to the "admins" group. If I run `adduser devin admins` after running ansible-pull, everything works fine. But I can't figure out how to make it work.

I don't observe any difference when running on a fresh host vs running the playbook again on the same host - should I expect a difference?

Any advice on a) what might be going wrong or b) best strategies for debugging? As I said, I'm new to ansible so any tips would help. I got as far as editing /root/.ansible/collections/ansible_collections/debops/debops/roles/users/tasks/main.yml to see if I could figure it out but didn't make any progress after 30 minutes of trying things blindly.

Code I'm using https://github.com/devvmh/ansible-playbook-core/tree/devel

Specifically, this code is not functioning as expected:

```

- role: debops.debops.system_groups

- role: debops.debops.users

vars:

- users__accounts:

- name: 'devin'

- groups: ['admins']

```

Output I get:

```

TASK [debops.debops.users : Manage additional UNIX groups for UNIX accounts] *********************************************************

skipping: [localhost] => (item={u'state': u'present', u'gecos': u'', u'name': u'devin', u'groups': u''})

```

If I remove the when block from this step in tasks/main.yml, I get this output, but it still doesn't work:

```

TASK [debops.debops.users : Manage additional UNIX groups for UNIX accounts] *********************************************************

ok: [localhost] => (item={u'state': u'present', u'gecos': u'', u'name': u'devin', u'groups': u''})

```

Thanks for any help or tips you can provide! | priority | my user isn t added to the admins group by ansible when running a customized playbook preface i m new to ansible debops and i m doing a bit of a loopy setup so my setup is confusing any help you can offer is much appreciated i am setting up a custom ansible playbook that i can use for my personal server infrastructure i am re using most of debops as the basis for my playbooks to try to leverage the existing conventions defined to avoid re inventing the wheel that said i m not using debops as designed and i m also using ansible pull which is changing things up a bit for me i have a mostly working playbook ready to serve as my basic setup that i can then build applications on top of however i have one major issue when i run my playbook the user account it creates devin is not being added to the admins group if i run adduser devin admins after running ansible pull everything works fine but i can t figure out how to make it work i don t observe any difference when running on a fresh host vs running the playbook again on the same host should i expect a difference any advice on a what might be going wrong or b best strategies for debugging as i said i m new to ansible so any tips would help i got as far as editing root ansible collections ansible collections debops debops roles users tasks main yml to see if i could figure it out but didn t make any progress after minutes of trying things blindly code i m using specifically this code is not functioning as expected role debops debops system groups role debops debops users vars users accounts name devin groups output i get task skipping item u state u present u gecos u u name u devin u groups u if i remove the when block from this step in tasks main yml i get this output but it still doesn t work task ok item u state u present u gecos u u name u devin u groups u thanks for any help or tips you can provide | 1 |
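
One possible reading of the snippet in the issue above, offered only as a hypothesis: if `- name: 'devin'` and `- groups: ['admins']` are sibling list items (the original indentation is not recoverable from this dump), a YAML parser yields two separate account entries, and the entry named `devin` carries no groups. That would be consistent with the `u'groups': u''` shown in the task output. The sketch below mimics both parses with plain Python structures; `accounts_with_groups` is a hypothetical helper for illustration, not part of debops.

```python
# Hypothetical illustration: what a YAML parser would hand to the role for
# two different indentations of the users__accounts list.

# "- name: 'devin'" and "- groups: ['admins']" as two separate list items:
accounts_as_posted = [
    {"name": "devin"},
    {"groups": ["admins"]},
]

# "groups" nested under the same list item as "name":
accounts_nested = [
    {"name": "devin", "groups": ["admins"]},
]


def accounts_with_groups(accounts):
    """Return {account name: groups} for every entry that defines a name."""
    return {
        entry["name"]: entry.get("groups", [])
        for entry in accounts
        if "name" in entry
    }


print(accounts_with_groups(accounts_as_posted))  # {'devin': []}
print(accounts_with_groups(accounts_nested))     # {'devin': ['admins']}
```

If this is the cause, writing `groups` as a key of the same list item as `name` (no leading dash) would be the thing to try first.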

3,806 | 2,540,574,898 | IssuesEvent | 2015-01-27 22:43:55 | SiCKRAGETV/sickrage-issues | https://api.github.com/repos/SiCKRAGETV/sickrage-issues | closed | POSTPROCESSER stops running | 1: Bug / issue 2: Medium Priority 3: Unconfirmed branch: master | Branch: Master

Commit Hash: (401cb666016e45b42fe675bafdf7908ad6c2b9bb)

OS: Win 7 pro 64bit

Python Version: 2.7.3 (default, Apr 10 2012, 23:24:47) [MSC v.1500 64 bit (AMD64)

I'm having a problem with automatic post processing. When I first start sickrage the post processing will work fine, but at some point it stops. Usually a day or so after starting sickrage. During the time when this is happening there are no "POSTPROCESSER" entries in the log. If I restart sickrage, post processing will resume and the backlog of completed downloads will process. Generally it will also process new downloads for about a day, then it stops again.

I'm not sure when this started but everything was running fine for months before I had a problem. I have auto updates turned on and the problem may have coincided with an update.

I have the post processing set to run every 10 minutes. Below is a log for an hour period with no POSTPROCESSER entries. I'm not sure if it will be of any help but it at least proves that post processing isn't running.

http://pastebin.com/eHHN2RbV | 1.0 | POSTPROCESSER stops running - Branch: Master

Commit Hash: (401cb666016e45b42fe675bafdf7908ad6c2b9bb)

OS: Win 7 pro 64bit

Python Version: 2.7.3 (default, Apr 10 2012, 23:24:47) [MSC v.1500 64 bit (AMD64)

I'm having a problem with automatic post processing. When I first start sickrage the post processing will work fine, but at some point it stops. Usually a day or so after starting sickrage. During the time when this is happening there are no "POSTPROCESSER" entries in the log. If I restart sickrage, post processing will resume and the backlog of completed downloads will process. Generally it will also process new downloads for about a day, then it stops again.

I'm not sure when this started but everything was running fine for months before I had a problem. I have auto updates turned on and the problem may have coincided with an update.

I have the post processing set to run every 10 minutes. Below is a log for an hour period with no POSTPROCESSER entries. I'm not sure if it will be of any help but it at least proves that post processing isn't running.

http://pastebin.com/eHHN2RbV | priority | postprocesser stops running branch master commit hash os win pro python version default apr msc v bit i m having a problem with automatic post processing when i first start sickrage the post processing will work fine but at some point it stops usually a day or so after starting sickrage during the time when this is happening there are no postprocesser entries in the log if i restart sickrage post processing will resume and the backlog of completed downloads will process generally it will also process new downloads for about a day then it stops again i m not sure when this started but everything was running fine for months before i had a problem i have auto updates turned on and the problem may have coincided with an update i have the post processing set to run every minutes below is a log for an hour period with no postprocesser entries i m not sure if it will be of any help but it at least proves that post processing isn t running | 1 |

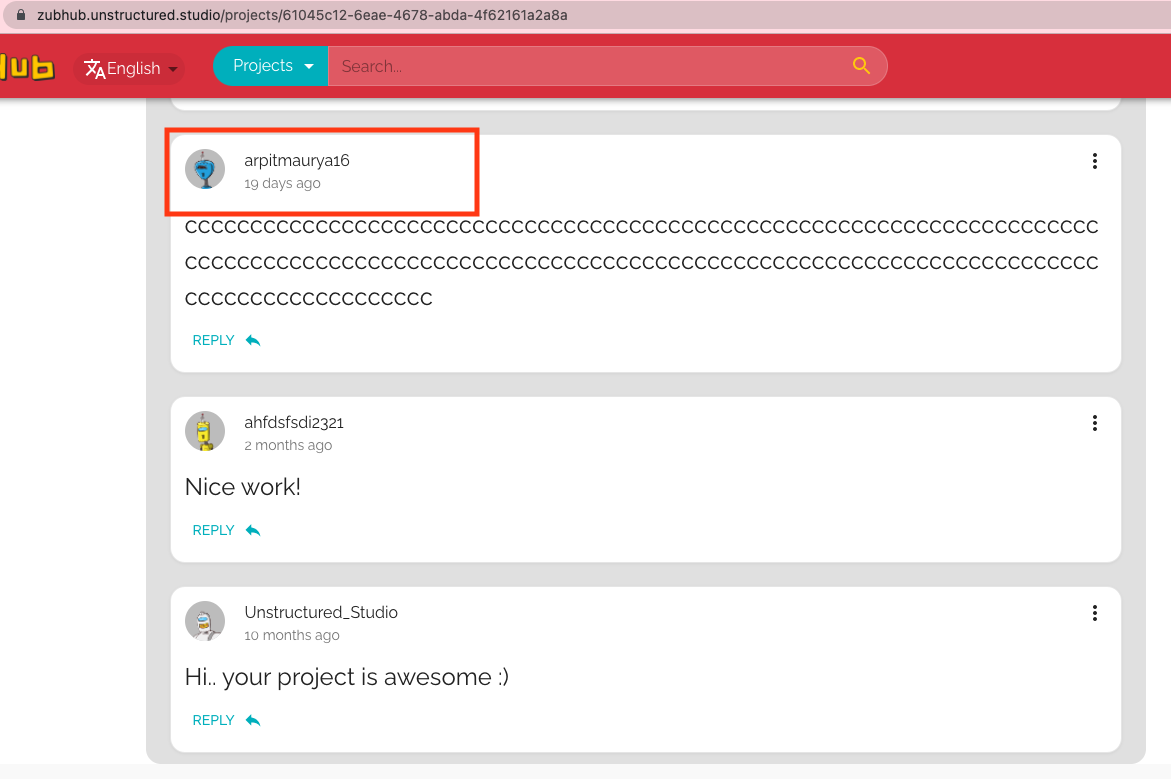

788,431 | 27,752,853,566 | IssuesEvent | 2023-03-15 22:27:05 | unstructuredstudio/zubhub | https://api.github.com/repos/unstructuredstudio/zubhub | closed | Clicking on a username in the comment box takes you to the current user's profile page | bug good first issue medium priority | **Describe the bug**

If you click on a username in the comment box, it will take you to the current user's profile page. Ideally, it should take you to the profile of the user whose avatar or username was clicked.

**To Reproduce**

Steps to reproduce the behavior:

1. Visit the following project: https://zubhub.unstructured.studio/projects/61045c12-6eae-4678-abda-4f62161a2a8a

2. Scroll to the comments section

3. Click on the username or avatar in a comment box

4. Notice that you are redirected to your own profile page

**Expected behavior**

You should be redirected to the clicked user's profile page.

| 1.0 | Clicking on a username in the comment box takes you to the current user's profile page - **Describe the bug**

If you click on a username in the comment box, it will take you to the current user's profile page. Ideally, it should take you to the profile of the user whose avatar or username was clicked.

**To Reproduce**

Steps to reproduce the behavior:

1. Visit the following project: https://zubhub.unstructured.studio/projects/61045c12-6eae-4678-abda-4f62161a2a8a

2. Scroll to the comments section

3. Click on the username or avatar in a comment box

4. Notice that you are redirected to your own profile page

**Expected behavior**

You should be redirected to the clicked user's profile page.

| priority | clicking on a username in the comment box takes you to the current user s profile page describe the bug if you click on a username in the comment box it will take you to the current user s profile page ideally it should take you to the user s profile whose avatar or username is clicked to reproduce steps to reproduce the behavior visit the following project scroll to the comments section click on the username or avatar in a comment box notice that you are redirected to your own profile page expected behavior ideally you were redirected to the clicked user s profile page | 1 |

173,566 | 6,527,887,711 | IssuesEvent | 2017-08-30 03:57:53 | orange-alliance/the-orange-alliance | https://api.github.com/repos/orange-alliance/the-orange-alliance | closed | Add year to Season on Team Page | bug Medium Priority | For consistency, the page should show the season as XXXX/YYYY (Example: 2016/2017) | 1.0 | Add year to Season on Team Page - For consistency, the page should show the season as XXXX/YYYY (Example: 2016/2017) | priority | add year to season on team page to have constancy the page should show season as xxxx yyyy example | 1 |
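
The season format requested in the issue above can be sketched as follows. This assumes a season is identified by its starting year and spans two consecutive calendar years, which matches the `2016/2017` example but is not confirmed by the source; `format_season` is a hypothetical name.

```python
def format_season(start_year: int) -> str:
    """Format the season that starts in `start_year` as 'XXXX/YYYY'."""
    return f"{start_year}/{start_year + 1}"


print(format_season(2016))  # 2016/2017
```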

118,427 | 4,744,923,981 | IssuesEvent | 2016-10-21 04:05:27 | CovertJaguar/Railcraft | https://api.github.com/repos/CovertJaguar/Railcraft | closed | Redstone Condition Incorrect on RF Loader | bug priority-medium | Description:

The "complete" redstone condition for the RF loader should be "when cart is full", but instead it's "Process until cart is empty".

Tested With:

RailCraft: 1.10.2-10.0.0-beta-3

Forge: 1.10.2-12.18.2.2099 | 1.0 | Redstone Condition Incorrect on RF Loader - Description:

The "complete" redstone condition for the RF loader should be "when cart is full", but instead it's "Process until cart is empty".

Tested With:

RailCraft: 1.10.2-10.0.0-beta-3

Forge: 1.10.2-12.18.2.2099 | priority | redstone condition incorrect on rf loader description the complete redstone condition for the rf loader should be when cart is full but instead it s process until cart is empty tested with railcraft beta forge | 1 |

395,966 | 11,699,295,092 | IssuesEvent | 2020-03-06 15:23:41 | luna/enso | https://api.github.com/repos/luna/enso | closed | Desugar Operators to Functions | Category: Compiler Category: Core Change: Breaking Difficulty: Core Contributor Priority: Medium Type: Enhancement | ### Summary

Operators in Enso are just syntactic sugar for functions, but analysis passes don't need to know about that.

### Value

Analysis passes don't want to have to deal with syntax sugar, and at this stage in the compiler implementation this is the only sugar we support.

### Specification

- [x] Implement a pass that transforms all uses of operators into standard prefix function applications.

- [x] The output of this pass is not analysis, but just a graph without operator sugar in it.

- [x] Implement operators as standard built-in functions in the interpreter to support this.

- [ ] Implement an extensible optimisation pass that treats known, fully-saturated functions with known arity specially such that they can be compiled to specific nodes. This should contain the node constructor function in the metadata from the pass, and map from arity to function name to the necessary data.

### Acceptance Criteria & Test Cases

- Operators can be successfully desugared to functions. | 1.0 | Desugar Operators to Functions - ### Summary

Operators in Enso are just syntactic sugar for functions, but analysis passes don't need to know about that.

### Value

Analysis passes don't want to have to deal with syntax sugar, and at this stage in the compiler implementation this is the only sugar we support.

### Specification

- [x] Implement a pass that transforms all uses of operators into standard prefix function applications.

- [x] The output of this pass is not analysis, but just a graph without operator sugar in it.

- [x] Implement operators as standard built-in functions in the interpreter to support this.

- [ ] Implement an extensible optimisation pass that treats known, fully-saturated functions with known arity specially such that they can be compiled to specific nodes. This should contain the node constructor function in the metadata from the pass, and map from arity to function name to the necessary data.

### Acceptance Criteria & Test Cases

- Operators can be successfully desugared to functions. | priority | desugar operators to functions summary operators in enso are just syntactic sugar for functions but analysis passes don t need to know about that value analysis passes don t want to have to deal with syntax sugar and at this stage in the compiler implementation this is the only sugar we support specification implement a pass that transforms all uses of operators into standard prefix function applications the output of this pass is not analysis but just a graph without operator sugar in it implement operators as standard builting functions in the interpreter to support this implement an extensible optimisation pass that treats known fully saturated functions with known arity specially such that they can be compiled to specific nodes this should contain the node constructor function in the metadata from the pass and map from arity to function name to the necessary data acceptance criteria test cases operators can be successfully desugared to functions | 1 |
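
The desugaring described in the specification above can be illustrated with a toy expression tree. This is a minimal sketch under stated assumptions, not the Enso compiler's IR or pass API: the node types `Name`, `Num`, `BinOp`, `App` and the function `desugar` are all hypothetical.

```python
from dataclasses import dataclass
from typing import Tuple, Union


@dataclass(frozen=True)
class Name:
    """A variable, function, or operator name."""
    value: str


@dataclass(frozen=True)
class Num:
    """An integer literal."""
    value: int


@dataclass(frozen=True)
class BinOp:
    """Sugared infix operator use, e.g. `a + b`."""
    op: str
    left: "Expr"
    right: "Expr"


@dataclass(frozen=True)
class App:
    """Prefix function application, e.g. `+ a b`."""
    fn: "Expr"
    args: Tuple["Expr", ...]


Expr = Union[Name, Num, BinOp, App]


def desugar(expr: Expr) -> Expr:
    """Rewrite every infix operator use into a prefix function application."""
    if isinstance(expr, BinOp):
        # The operator becomes an ordinary function name applied to both operands.
        return App(Name(expr.op), (desugar(expr.left), desugar(expr.right)))
    if isinstance(expr, App):
        # Recurse so that operators nested inside applications are also rewritten.
        return App(desugar(expr.fn), tuple(desugar(arg) for arg in expr.args))
    return expr


# a + 2 * b  becomes  (+ a (* 2 b))
sugared = BinOp("+", Name("a"), BinOp("*", Num(2), Name("b")))
print(desugar(sugared))
```

After this rewrite no `BinOp` node remains in the tree, so later passes only have to handle applications.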

642,790 | 20,913,520,985 | IssuesEvent | 2022-03-24 11:23:52 | netdata/netdata-cloud | https://api.github.com/repos/netdata/netdata-cloud | closed | [BUG] chart families not showing properly in overview screen | bug priority/medium visualizations-team | <!---

If you are a member of the Netdata organization, add the label 'internal submit'.

-->

**Describe the bug**

I have a room with one node. This node has about 20 anomalies collector jobs running on it, so it has lots of anomalies contexts in the menu on the right.

When I am in the node itself, I see the correct menus:

But when I go to the overview for the room, I lose those menus, and all the "System Overview" sections have been flattened out into their own menus.

**To Reproduce**

Create an agent with a lot of non-standard collectors and/or multiple jobs per collector. Change the priority of those jobs to 80-90 so that they appear at the top of the menu list.

Create a space and room with just that node. Look at the differences between the overview and the node view of the dashboard itself.

**Expected behavior**

I should see all the menu sections in the overview screen, just like I do on the node screen.

**Screenshots**

As above

**Additional context**

I'm happy to invite anyone to my room if easier to debug that way as might be a tricky one to recreate.

| 1.0 | [BUG] chart families not showing properly in overview screen - <!---

If you are a member of the Netdata organization, add the label 'internal submit'.

-->

**Describe the bug**

I have a room with one node. This node has about 20 anomalies collector jobs running on it, so it has lots of anomalies contexts in the menu on the right.

When I am in the node itself, I see the correct menus:

But when I go to the overview for the room, I lose those menus, and all the "System Overview" sections have been flattened out into their own menus.

**To Reproduce**

Create an agent with a lot of non-standard collectors and/or multiple jobs per collector. Change the priority of those jobs to 80-90 so that they appear at the top of the menu list.

Create a space and room with just that node. Look at the differences between the overview and the node view of the dashboard itself.

**Expected behavior**

I should see all the menu sections in the overview screen, just like I do on the node screen.

**Screenshots**

As above

**Additional context**

I'm happy to invite anyone to my room if easier to debug that way as might be a tricky one to recreate.

| priority | chart families not showing properly in overview screen if you are a member of the netdata organization add the label internal submit describe the bug i have a room with one node this node about anomalies collector jobs running on it and so has lots of anomalies contexts in the menu on the right when i am in the node itself i see correct menus but for some reason when i go to the overview for the room i lose those menu s and it has for some reason sort of flattened out all the system overview sections into their own menus for some reason to reproduce create a agent with a lot of non standard collectors and or multiple jobs per collector chanage the priority of those jobs to be and so appear at top of menu list create a space and room with just that node look at the differences between the overview and the node view of the dashboard itself expected behavior i could see all the menu sections in the overview screen just like i do on the node screen screenshots as above additional context i m happy to invite anyone to my room if easier to debug that way as might be a tricky one to recreate | 1 |

705,287 | 24,229,601,869 | IssuesEvent | 2022-09-26 17:02:39 | yugabyte/yugabyte-db | https://api.github.com/repos/yugabyte/yugabyte-db | closed | [YSQL] Import schema qualification fix | kind/bug area/ysql priority/medium 2.12 Backport Required 2.14 Backport Required | Jira Link: [DB-3617](https://yugabyte.atlassian.net/browse/DB-3617)

### Description

Import schema qualification fix | 1.0 | [YSQL] Import schema qualification fix - Jira Link: [DB-3617](https://yugabyte.atlassian.net/browse/DB-3617)

### Description

Import schema qualification fix | priority | import schema qualification fix jira link description import schema qualification fix | 1 |

431,302 | 12,476,988,360 | IssuesEvent | 2020-05-29 14:22:24 | ansible/ansible-lint | https://api.github.com/repos/ansible/ansible-lint | closed | auto-detect modules with collection layouts | help wanted needs_implementation priority/medium status/new type/enhancement type/proposal | Ansible-lint's ability to just work without any extra tuning can be improved by making it automatically define `ANSIBLE_LIBRARY=plugins/modules` when the variable is not already defined.

This would follow the [official collection repository layout](https://docs.ansible.com/ansible/latest/dev_guide/developing_collections.html) and make ansible-lint more likely to work without extra configuration.

This feature should be enabled only in auto-detection mode because it needs to know where the repository root is. That is because the tool could be called from any subdirectory and we expect it to give the same results.

Once implemented, this feature should allow people to remove the extra code added to files like `tox.ini` or `.pre-commit-config.yaml` that defines ANSIBLE_LIBRARY in order to be able to perform the linting. | 1.0 | auto-detect modules with collection layouts - Ansible-lint's ability to just work without any extra tuning can be improved by making it automatically define `ANSIBLE_LIBRARY=plugins/modules` when the variable is not already defined.

This would follow the [official collection repository layout](https://docs.ansible.com/ansible/latest/dev_guide/developing_collections.html) and make ansible-lint more likely to work without extra configuration.

This feature should be enabled only in auto-detection mode because it needs to know where the repository root is. That is because the tool could be called from any subdirectory and we expect it to give the same results.

Once implemented, this feature should allow people to remove the extra code added to files like `tox.ini` or `.pre-commit-config.yaml` that defines ANSIBLE_LIBRARY in order to be able to perform the linting. | priority | auto detect modules with collection layouts ansible lint ability to just work without any extra tuning can be improved by making it automatically define ansible library plugins modules when the variable is not already defined this would follow the and make ansible lint more likely work without extra configuration this feature should be enabled only with auto detection mode because it needs to know what is the repository root location that is because the tool could be called from any subdirectory and we do expect to give the same kind of results when implement this feature should allow people to remove extra code added to files like tox ini or pre commit config yaml that define ansible library in order to be able perform the linting | 1 |
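
The proposal above could be sketched roughly as follows. This is not ansible-lint's implementation: the helper names `find_repo_root` and `with_default_library` are hypothetical, and treating the nearest ancestor directory that contains `.git` as the repository root is an assumption made for illustration.

```python
import os
from pathlib import Path
from typing import Dict, Mapping, Optional


def find_repo_root(start: Path) -> Optional[Path]:
    """Walk upward from `start` until a directory containing `.git` is found."""
    start = start.resolve()
    for candidate in (start, *start.parents):
        if (candidate / ".git").exists():
            return candidate
    return None


def with_default_library(env: Mapping[str, str], start: Path) -> Dict[str, str]:
    """Return a copy of `env` with ANSIBLE_LIBRARY defaulted to
    <repo root>/plugins/modules. An existing value is never overridden, and
    nothing is set when no root or no plugins/modules directory is found."""
    result = dict(env)
    if "ANSIBLE_LIBRARY" in result:
        return result
    root = find_repo_root(start)
    if root is not None and (root / "plugins" / "modules").is_dir():
        result["ANSIBLE_LIBRARY"] = str(root / "plugins" / "modules")
    return result


if __name__ == "__main__":
    # The same value is produced no matter which subdirectory the tool starts in.
    env = with_default_library(os.environ, Path.cwd())
    print(env.get("ANSIBLE_LIBRARY", "<not set>"))
```

Resolving the path against the repository root rather than the current directory is what makes the result independent of where the tool is invoked, which is the concern the issue raises.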

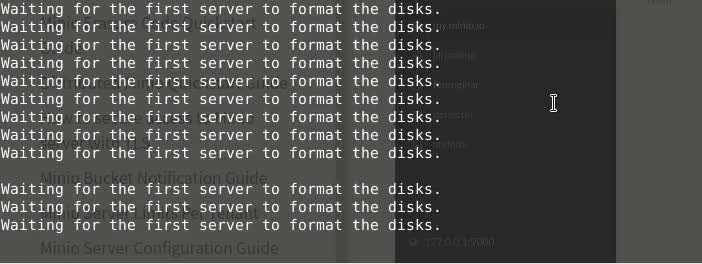

262,874 | 8,272,568,746 | IssuesEvent | 2018-09-16 21:35:13 | minio/minio | https://api.github.com/repos/minio/minio | closed | [Distributed] Disks are never formatted if instances mixed with binary and docker hosts | priority: medium won't fix | I have set up 4 minio servers in 4 different physical locations. Three are running the native linux binary and one is running in a docker container.

**All instances are using this exact version:**

- Version: 2018-08-21T00:37:20Z

- Release-Tag: RELEASE.2018-08-21T00-37-20Z

- Commit-ID: 2d84b02bc429ad059a060001bccd712491e6e6a3

I am able to ping and curl all instances from all locations and even from inside the container. The answer from CURL is the expected XML with the message "XMinioServerNotInitialized" from all 4 nodes

I have all 4 sites open via my browser so I know they have the same auth info and that they are running correctly.

But it seems they never format the disks.

Here is a video of the servers in action

[Video of all servers waiting for format ](https://pictshare.net/raw/m4szy91lqp.mp4)

However if I replace the docker container with a binary as well, everything works without a problem.

## Expected Behavior

Format should happen and servers should be initialized

## Current Behavior

forever hanging in "Waiting for the first server to format the disks."

## Possible Solution

Replace docker container with linux binary, then it works.

Also it might be noted in the docs that mixing docker and binary will cause problems even at the same version

## Steps to Reproduce (for bugs)

1. Start 3 machines (different networks, exposed port 9000) with `minio server http://server1:9000/data http://server2:9000/data http://server3:9000/data http://server4:9000/data`

2. Start one docker container `docker run -it --rm -e MINIO_ACCESS_KEY=mykey -e MINIO_SECRET_KEY=mysecret -p 9000:9000 -v /mnt:/data minio/minio server http://server1:9000/data http://server2:9000/data http://server3:9000/data http://server4:9000/data`

3. Watch them never sync

## Context

I tried to set up a distributed system on 4 physical locations with port forwarding and domains enabled. It didn't work unless I let them all run from the linux binary instead of docker.

## Your Environment

* Version used (`minio version`): 2018-08-21T00:37:20Z

* Environment name and version: Debian 8 and 9 and one digitalocean docker instance

* Server type and version: mixed native hardware, vhosts and cloud instances

| 1.0 | [Distributed] Disks are never formatted if instances mixed with binary and docker hosts - I have set up 4 minio servers in 4 different physical locations. Three are running the native linux binary and one is running in a docker container.

**All instances are using this exact version:**

- Version: 2018-08-21T00:37:20Z

- Release-Tag: RELEASE.2018-08-21T00-37-20Z

- Commit-ID: 2d84b02bc429ad059a060001bccd712491e6e6a3

I am able to ping and curl all instances from all locations and even from inside the container. The answer from CURL is the expected XML with the message "XMinioServerNotInitialized" from all 4 nodes

I have all 4 sites open via my browser so I know they have the same auth info and that they are running correctly.

But it seems they never format the disks.

Here is a video of the servers in action

[Video of all servers waiting for format ](https://pictshare.net/raw/m4szy91lqp.mp4)

However if I replace the docker container with a binary as well, everything works without a problem.

## Expected Behavior

Format should happen and servers should be initialized

## Current Behavior

forever hanging in "Waiting for the first server to format the disks."

## Possible Solution

Replace docker container with linux binary, then it works.

Also it might be noted in the docs that mixing docker and binary will cause problems even at the same version

## Steps to Reproduce (for bugs)

1. Start 3 machines (different networks, exposed port 9000) with `minio server http://server1:9000/data http://server2:9000/data http://server3:9000/data http://server4:9000/data`

2. Start one docker container `docker run -it --rm -e MINIO_ACCESS_KEY=mykey -e MINIO_SECRET_KEY=mysecret -p 9000:9000 -v /mnt:/data minio/minio server http://server1:9000/data http://server2:9000/data http://server3:9000/data http://server4:9000/data`

3. Watch them never sync

## Context

I tried to set up a distributed system on 4 physical locations with port forwarding and domains enabled. It didn't work unless I let them all run from the linux binary instead of docker.

## Your Environment

* Version used (`minio version`): 2018-08-21T00:37:20Z

* Environment name and version: Debian 8 and 9 and one digitalocean docker instance

* Server type and version: mixed native hardware, vhosts and cloud instances

| priority | disks are never formatted if instances mixed with binary and docker hosts i have set up minio servers in different physical locations are running the native linux binary and one is running in a docker container all instances are using this exact version version release tag release commit id i am able to ping and curl all instances from all locations and even from inside the container the answer from curl is the expected xml with the message xminioservernotinitialized from all nodes i have all sites open via my browser so i know they have the same auth info and that they are running correctly but it seems they never format the disks here is a video of the servers in action however if i replace the docker container with a binary as well everything works without a problem expected behavior format should happen and servers should be initialized current behavior forever hanging in waiting for the first server to format the disks possible solution replace docker container with linux binary then it works also it might be noted in the docs that mixing docker and binary will cause problems even at the same version steps to reproduce for bugs start machines different networks exposed port with minio server start one docker container docker run it rm e minio access key mykey e minio secret key mysecret p v mnt data minio minio server watch them never sync context i tried to set up a distributed system on physical locations with portforwarding and domains enabled didn t work unless i let them all run form the linux binary instead of docker your environment version used minio version environment name and version debian and and one digitalocean docker instance server type and version mixed native hardare vhosts and cloud instances | 1 |

272,860 | 8,518,511,161 | IssuesEvent | 2018-11-01 11:53:37 | GoldenSoftwareLtd/gedemin | https://api.github.com/repos/GoldenSoftwareLtd/gedemin | closed | Closing a bill with a total of 0 | Priority-Medium Type-Enhancement check | Originally reported on Google Code with ID 3219

```

Purely theoretically, an order can be placed with a total of 0. For example, with a 100% discount, or when a previously

accepted advance payment has covered the entire order amount.

Closing such an order needs to be supported.

1. Print the pre-check.

2. Allow the payment window to be opened.

3. In the payment window the bill is printed, but on the fiscal registrar no receipt should be printed if the total is 0

(although if an advance payment was accepted, such an order still needs to be closed somehow).

```

Reported by `stasgm` on 2013-08-22 14:57:12

| 1.0 | Closing a bill with a total of 0 - Originally reported on Google Code with ID 3219

```

Purely theoretically, an order can be placed with a total of 0. For example, with a 100% discount, or when a previously

accepted advance payment has covered the entire order amount.

Closing such an order needs to be supported.

1. Print the pre-check.

2. Allow the payment window to be opened.

3. In the payment window the bill is printed, but on the fiscal registrar no receipt should be printed if the total is 0

(although if an advance payment was accepted, such an order still needs to be closed somehow).

```

Reported by `stasgm` on 2013-08-22 14:57:12

| priority | closing a bill with a total of originally reported on google code with id purely theoretically an order can be placed with a total of for example with a discount or when a previously accepted advance payment has covered the entire order amount closing such an order needs to be supported print the pre check allow the payment window to be opened in the payment window the bill is printed but on the fiscal registrar no receipt should be printed if the total is although if an advance payment was accepted such an order still needs to be closed somehow reported by stasgm on | 1 |

445,654 | 12,834,617,005 | IssuesEvent | 2020-07-07 11:23:48 | NgyAnthony/APOS | https://api.github.com/repos/NgyAnthony/APOS | opened | Can't have an empty textbox for barcode | bug medium priority | Trying to make the textbox empty won't work because it tries to convert an empty string into a long value which obviously doesn't work. | 1.0 | Can't have an empty textbox for barcode - Trying to make the textbox empty won't work because it tries to convert an empty string into a long value which obviously doesn't work. | priority | can t have an empty textbox for barcode trying to make the textbox empty won t work because it tries to convert an empty string into a long value which obviously doesn t work | 1 |
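
The failure described in this issue (converting an empty string to a long value) can be illustrated with a minimal sketch. It is written in Python rather than the project's own language, and `parse_barcode` is a hypothetical helper name, not code from the APOS repository. The assumption is that an empty text box should simply mean "no barcode".

```python
from typing import Optional


def parse_barcode(raw: str) -> Optional[int]:
    """Return the barcode as an integer, or None when no usable value is present."""
    text = raw.strip()
    if not text:
        # An empty text box is treated as a valid "no barcode" state,
        # so no numeric conversion is attempted.
        return None
    try:
        return int(text)
    except ValueError:
        # Assumption for this sketch: non-numeric input is also "no barcode".
        return None
```

The key design choice is to check for the empty case before converting, so that clearing the field never reaches the numeric conversion at all.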

793,393 | 27,994,302,698 | IssuesEvent | 2023-03-27 07:20:11 | AY2223S2-CS2113-T13-3/tp | https://api.github.com/repos/AY2223S2-CS2113-T13-3/tp | closed | Commands interface | Medium priority | An interface to allow the Parser class to call commands directly. Currently, the stopgap measure is to pass in the whole object into the parser class when instantiated so that the commands can be accessed. | 1.0 | Commands interface - An interface to allow the Parser class to call commands directly. Currently, the stopgap measure is to pass in the whole object into the parser class when instantiated so that the commands can be accessed. | priority | commands interface an interface to allow the parser class to call commands directly currently the stopgap measure is to pass in the whole object into the parser class when instantiated so that the commands can be accessed | 1 |

119,382 | 4,769,225,353 | IssuesEvent | 2016-10-26 11:50:55 | Cadasta/cadasta-platform | https://api.github.com/repos/Cadasta/cadasta-platform | closed | Uploading a XLSForm with empty labels throws IntegrityError | bug medium priority | ### Steps to reproduce the error

- Create a new project

- In the wizard, upload an XLSForm with empty labels

- Finish the remaining steps in the wizards and save the project.

### Actual behavior

Saving the project throws an IntegrityError. The field `label_xlat` in `QuestionOption` models can be an empty `dict` but not `None`. [This line](https://github.com/Cadasta/cadasta-platform/blob/master/cadasta/questionnaires/managers.py#L64) reads empty values to `None`, which leads to the exception. The line should read `label_xlat=o.get('label_xlat', o.get('label', {}))` instead.

### Expected behavior

The form should be processed without throwing an exception.

| 1.0 | Uploading a XLSForm with empty labels throws IntegrityError - ### Steps to reproduce the error

- Create a new project

- In the wizard, upload an XLSForm with empty labels

- Finish the remaining steps in the wizards and save the project.

### Actual behavior

Saving the project throws an IntegrityError. The field `label_xlat` in `QuestionOption` models can be an empty `dict` but not `None`. [This line](https://github.com/Cadasta/cadasta-platform/blob/master/cadasta/questionnaires/managers.py#L64) reads empty values to `None`, which leads to the exception. The line should read `label_xlat=o.get('label_xlat', o.get('label', {}))` instead.

### Expected behavior

The form should be processed without throwing an exception.

| priority | uploading a xlsform with empty labels throws integrityerror steps to reproduce the error create a new project in the wizard upload an xlsform with empty labels finish the remaining steps in the wizards and save the project actual behavior saving the project throws an integrityerror the field label xlat in questionoption models can be an empty dict but not none reads empty values to none which leads to the exception the line should read label xlat o get label xlat o get label instead expected behavior the form should be processed without throwing an exception | 1 |
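
The fix proposed in this issue relies on the default argument of `dict.get`. A small sketch of that behavior follows; `resolve_label` is a hypothetical helper, not code from the Cadasta repository. One point worth checking: the default is only used when the key is absent. Whether the XLSForm parser omits the key or stores an explicit `None` is not stated in the issue, so the sketch handles both cases.

```python
from typing import Any


def resolve_label(option: dict) -> Any:
    """Pick the translated label, fall back to the plain label, never return None."""
    label = option.get('label_xlat', option.get('label'))
    # dict.get only applies its default when the key is missing, so an
    # explicit None value has to be replaced separately.
    return {} if label is None else label


# The default is ignored when the key exists with a None value.
stored_none = {'label': None}.get('label', {})
```

If the parser always omits empty labels, the one-line change quoted in the issue would be enough; the extra `None` check only matters when the key is present but empty.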

279,102 | 8,657,530,072 | IssuesEvent | 2018-11-27 21:36:00 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | closed | Setting x._backward_hooks to None doesn't error, doesn't clear hooks | bootcamp medium priority | ## 🐛 Bug

Steps to reproduce the behavior:

```

import torch

y = torch.ones(5, 5, requires_grad=True)

counter = [0]

def bw_hook(grad):

counter[0] += 1

z = y + y

test = z.register_hook(bw_hook)

print(z._backward_hooks)

z._backward_hooks = None

print(z._backward_hooks)

z.backward(torch.ones(5, 5), retain_graph=True)

print(counter)

```

this prints:

```

$ python hooky.py

OrderedDict([(0, <function bw_hook at 0x7f2c9ab69e18>)])

None

[1]

```

## Expected behavior

I expect to either get an error, saying you can't set `_backward_hooks` to None, or for hooks to be cleared if I do this. | 1.0 | Setting x._backward_hooks to None doesn't error, doesn't clear hooks - ## 🐛 Bug

Steps to reproduce the behavior:

```

import torch

y = torch.ones(5, 5, requires_grad=True)

counter = [0]

def bw_hook(grad):

counter[0] += 1

z = y + y

test = z.register_hook(bw_hook)

print(z._backward_hooks)

z._backward_hooks = None

print(z._backward_hooks)

z.backward(torch.ones(5, 5), retain_graph=True)

print(counter)

```

this prints:

```

$ python hooky.py

OrderedDict([(0, <function bw_hook at 0x7f2c9ab69e18>)])

None

[1]

```

## Expected behavior

I expect to either get an error, saying you can't set `_backward_hooks` to None, or for hooks to be cleared if I do this. | priority | setting x backward hooks to none doesn t error doesn t clear hooks 🐛 bug steps to reproduce the behavior import torch y torch ones requires grad true counter def bw hook grad counter z y y test z register hook bw hook print z backward hooks z backward hooks none print z backward hooks z backward torch ones retain graph true print counter this prints python hooky py ordereddict none expected behavior i expect to either get an error saying you can t set backward hooks to none or for hooks to be cleared if i do this | 1 |

48,832 | 3,000,286,755 | IssuesEvent | 2015-07-24 00:06:59 | opendatakit/opendatakit | https://api.github.com/repos/opendatakit/opendatakit | closed | cannot use livereload feature of grunt | Priority-Medium Survey Type-Other | Originally reported on Google Code with ID 1058

```

Please add a comment to this issue if you encounter this problem:

Running:

grunt

Causes the webpage to never display (it is always spinning trying to load the page

and never times out).

Running:

grunt --verbose connect:livereload:keepalive

Displays it.

This starts grunt, but disables the file-change detection mechanisms that automatically

reload an HTML page when it or any javascript file it uses has been modified.

```

Reported by `mitchellsundt` on 2014-09-05 16:15:33

| 1.0 | cannot use livereload feature of grunt - Originally reported on Google Code with ID 1058

```

Please add a comment to this issue if you encounter this problem:

Running:

grunt

Causes the webpage to never display (it is always spinning trying to load the page

and never times out).

Running:

grunt --verbose connect:livereload:keepalive

Displays it.

This starts grunt, but disables the file-change detection mechanisms that automatically

reload an HTML page when it or any javascript file it uses has been modified.

```

Reported by `mitchellsundt` on 2014-09-05 16:15:33

| priority | cannot use livereload feature of grunt originally reported on google code with id please add a comment to this issue if you encounter this problem running grunt causes the webpage to never display it is always spinning trying to load the page and never times out running grunt verbose connect livereload keepalive displays it this starts grunt but disables the file change detection mechanisms that automatically reload an html page when it or any javascript file it uses has been modified reported by mitchellsundt on | 1 |

458,921 | 13,184,163,965 | IssuesEvent | 2020-08-12 18:52:43 | LBL-EESA/TECA | https://api.github.com/repos/LBL-EESA/TECA | opened | use pre-built docker image for travis-ci | 2_medium_priority | **Is your feature request related to a problem? Please describe.**

Travis-CI runs are slow, each run wastes time by building and installing all of the dependencies from scratch every time.

**Describe the solution you'd like**

We will publish docker images containing all the dependencies on docker hub and fetch them from the tests.

https://docs.travis-ci.com/user/docker/#using-a-docker-image-from-a-repository-in-a-build

**Describe alternatives you've considered**

**Additional context**

More of an issue now that we are running each docker flavor multiple times

| 1.0 | use pre-built docker image for travis-ci - **Is your feature request related to a problem? Please describe.**

Travis-CI runs are slow, each run wastes time by building and installing all of the dependencies from scratch every time.

**Describe the solution you'd like**

We will publish docker images containing all the dependencies on docker hub and fetch them from the tests.

https://docs.travis-ci.com/user/docker/#using-a-docker-image-from-a-repository-in-a-build

**Describe alternatives you've considered**

**Additional context**

More of an issue now that we are running each docker flavor multiple times

| priority | use pre built docker image for travis ci is your feature request related to a problem please describe travis ci runs are slow each run wastes time by building and installing all of the dependencies from scratch every time describe the solution you d like we will publish docker images containing all the dependencies on docker hub and fetch them from the tests describe alternatives you ve considered additional context more of an issue now that we are running each docker flavor multiple times | 1 |

31,995 | 2,742,582,080 | IssuesEvent | 2015-04-21 17:09:06 | boxkite/ckanext-donneesqctheme | https://api.github.com/repos/boxkite/ckanext-donneesqctheme | closed | Users to be member of all groups by default | Medium Priority | I don't know if this is new with 2.3, but it seems that now a user has to be a member of a group to put datasets in that group.

In Données Québec, all users should be able to link datasets with all groups. Would it be possible to have the users linked with all the groups when created? | 1.0 | Users to be member of all groups by default - I don't know if this is new with 2.3, but it seems that now a user has to be a member of a group to put datasets in that group.

In Données Québec, all users should be able to link datasets with all groups. Would it be possible to have the users linked with all the groups when created? | priority | users to be member of all groups by default i don t know if this is new with but it seems that now a user has to be member of a group to put datasts in that group in données québec all users should be able to link datasets with all groups would it be possible to have the users linked with all the groups when created | 1 |

84,985 | 3,683,134,972 | IssuesEvent | 2016-02-24 12:50:49 | PlanHubMe/PlanHub | https://api.github.com/repos/PlanHubMe/PlanHub | closed | A way of overriding a user's planner. | enhancement medium priority | Here is how it can go:

1. Go to admin panel

2. Click user data override

3. Give unique link, (expires in 3 hrs) for user to sign in.

4. User signs in, goes to page verifying giving temporary (24 hr) account access to administrator who is helping you.

5. Administrator has various options, but **cannot edit events directly**.

6. Administrator selects "Finished" button.

7. Session is closed, and cannot be reopened without going back to step 1

Admin options:

* Erase all data

* Change user's name

* Export data (JSON)

* Sends email to user with data

* Import data (JSON)

* Sends email to user with unique link (expires in 3 hrs) for user to paste data into.

This is a lot of programming work, but it can be useful. | 1.0 | A way of overriding a user's planner. - Here is how it can go:

1. Go to admin panel

2. Click user data override

3. Give unique link, (expires in 3 hrs) for user to sign in.

4. User signs in, goes to page verifying giving temporary (24 hr) account access to administrator who is helping you.

5. Administrator has various options, but **cannot edit events directly**.

6. Administrator selects "Finished" button.

7. Session is closed, and cannot be reopened without going back to step 1

Admin options:

* Erase all data

* Change user's name

* Export data (JSON)

* Sends email to user with data

* Import data (JSON)

* Sends email to user with unique link (expires in 3 hrs) for user to paste data into.

This is a lot of programming work, but it can be useful. | priority | a way of overriding a user s planner here is how it can go go to admin panel click user data override give unique link expires in hrs for user to sign in user signs in goes to page verifying giving temporary hr account access to administrator who is helping you administrator has various options but cannot edit events directly administrator selects finished button session is closed and cannot be reopened without going back to step admin options erase all data change user s name export data json sends email to user with data import data json sends email to user with unique line expires in for user to paste data into this is a lot of programming work but it can be useful | 1 |

398,296 | 11,739,455,330 | IssuesEvent | 2020-03-11 17:44:33 | thaliawww/ThaliApp | https://api.github.com/repos/thaliawww/ThaliApp | closed | User cannot deregister after deregistration deadline | bug priority: medium | In GitLab by @pingiun on Nov 11, 2019, 13:18

### One-sentence description

User cannot deregister after deregistration deadline

### Current behaviour / Reproducing the bug

<!-- Please write what is happening and how we could reproduce it, if relevant -->

1. Register for an event

2. Wait for deregistration deadline

3. Try to deregister, knowing that you will be fined

4. There is no deregistration button

### Expected behaviour

Just like on the website, you should be able to deregister and get a fine.

<!-- Please write how what happened did not meet your expectations --> | 1.0 | User cannot deregister after deregistration deadline - In GitLab by @pingiun on Nov 11, 2019, 13:18

### One-sentence description

User cannot deregister after deregistration deadline

### Current behaviour / Reproducing the bug

<!-- Please write what is happening and how we could reproduce it, if relevant -->

1. Register for an event

2. Wait for deregistration deadline

3. Try to deregister, knowing that you will be fined

4. There is no deregistration button

### Expected behaviour

Just like on the website, you should be able to deregister and get a fine.

<!-- Please write how what happened did not meet your expectations --> | priority | user cannot deregister after deregistration deadline in gitlab by pingiun on nov one sentence description user cannot deregister after deregistration deadline current behaviour reproducing the bug register for an event wait for deregistration deadline try to deregister knowing that you will be fined there is no deregistration button expected behaviour just like on the website you should be able to deregister and get a fine | 1 |

1,930 | 2,521,824,496 | IssuesEvent | 2015-01-19 17:09:40 | oculusinfo/aperture-tiles | https://api.github.com/repos/oculusinfo/aperture-tiles | closed | Map and Layer files expect the pyramid with different labels | bug client invalid P2 - Medium Priority refactor | The map configuration expects its pyramid configuration to be labelled "PyramidConfig".

The layer configuration expects its pyramid configuration to be labelled "pyramid".

The labels should be the same in both cases.

We should go through other similar cases and make sure we're consistent across the board. | 1.0 | Map and Layer files expect the pyramid with different labels - The map configuration expects its pyramid configuration to be labelled "PyramidConfig".

The layer configuration expects its pyramid configuration to be labelled "pyramid".

The labels should be the same in both cases.

We should go through other similar cases and make sure we're consistent across the board. | priority | map and layer files expect the pyramid with different labels the map configuration expects its pyramid configuration to be labelled pyramidconfig the layer configuration expects its pyramid configuration to be labelled pyramid the labels should be the same in both cases we should go through other similar cases and make sure we re consistent across the board | 1 |

358,904 | 10,651,690,966 | IssuesEvent | 2019-10-17 10:57:09 | dotkom/onlineweb4 | https://api.github.com/repos/dotkom/onlineweb4 | closed | Receipts are sent multiple times for individual transactions | Easy Package: Payment Priority: Medium Status: Available Type: Bug | **Describe the bug**

Receipts are mailed multiple times, even though only one payment occurs. The receipts have differing IDs.

**To Reproduce**

- Purchase something in the webshop, and observe that two receipt mails are received.

- Add money to your wallet for the same behavior.

Probably also occurs when paying for events.

**Additional context**

My guess, I haven't checked that this is precisely the case: Receipts are sent when PaymentRelation objects are saved. Following the recent changes to payments using Stripe, payments get saved multiple times. Perhaps getting asked for 3D-Secure authentication results in a third mail? | 1.0 | Receipts are sent multiple times for individual transactions - **Describe the bug**

Receipts are mailed multiple times, even though only one payment occurs. The receipts have differing IDs.

**To Reproduce**

- Purchase something in the webshop, and observe that two receipt mails are received.

- Add money to your wallet for the same behavior.

Probably also occurs when paying for events.

**Additional context**

My guess, I haven't checked that this is precisely the case: Receipts are sent when PaymentRelation objects are saved. Following the recent changes to payments using Stripe, payments get saved multiple times. Perhaps getting asked for 3D-Secure authentication results in a third mail? | priority | receipts are sent multiple times for individual transactions describe the bug receipts are mailed multiple times even though only one payment occurs the receipts have differing ids to reproduce purchase something in the webshop and observe that two receipt mails are received add money to your wallet for the same behavior probably also occurs when paying for events additional context my guess i haven t checked that this is precisely the case receipts are sent when paymentrelation objects are saved following the recent changes to payments using stripe payments get saved multiple times perhaps getting asked for secure authentication results in a third mail | 1 |

244,952 | 7,880,709,382 | IssuesEvent | 2018-06-26 16:42:14 | aowen87/FOO | https://api.github.com/repos/aowen87/FOO | closed | add support for external ghosts to SAMRAI plugin | Expected Use: 3 - Occasional Impact: 3 - Medium OS: All Priority: Normal Support Group: Any Target Version: 2.13.0 feature version: 2.12.3 | cyrus has an example dataset from noah elliot | 1.0 | add support for external ghosts to SAMRAI plugin - cyrus has an example dataset from noah elliot | priority | add support for external ghosts to samrai plugin cyrus has an example dataset from noah elliot | 1 |

608,011 | 18,795,933,322 | IssuesEvent | 2021-11-08 22:19:45 | mosaicml/yahp | https://api.github.com/repos/mosaicml/yahp | opened | Expose dot notation helpers conversion between dot notation/nested syntax | enhancement Medium Priority | It would be extremely useful to be able to convert between dot notation and nested syntax in order to support new features like parameter sweeping and other configuration tools. | 1.0 | Expose dot notation helpers conversion between dot notation/nested syntax - It would be extremely useful to be able to convert between dot notation and nested syntax in order to support new features like parameter sweeping and other configuration tools. | priority | expose dot notation helpers conversion between dot notation nested syntax it would be extremely useful to be able to convert between dot notation and nested syntax in order to support new features like parameter sweeping and other configuration tools | 1 |
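
The conversion this issue asks for can be sketched as a pair of helpers. The names `flatten` and `unflatten` are hypothetical and are not part of the yahp API; the sketch also assumes that keys never contain a literal dot.

```python
from typing import Any, Dict


def flatten(nested: Dict[str, Any], prefix: str = "") -> Dict[str, Any]:
    """Convert nested dictionaries into a single level of dot-notation keys."""
    flat: Dict[str, Any] = {}
    for key, value in nested.items():
        path = f"{prefix}.{key}" if prefix else key
        if isinstance(value, dict) and value:
            # Recurse into non-empty dictionaries, extending the dotted path.
            flat.update(flatten(value, path))
        else:
            # Scalars, lists, and empty dictionaries are stored as leaves.
            flat[path] = value
    return flat


def unflatten(flat: Dict[str, Any]) -> Dict[str, Any]:
    """Rebuild the nested structure from dot-notation keys."""
    nested: Dict[str, Any] = {}
    for path, value in flat.items():
        *parents, leaf = path.split(".")
        node = nested
        for part in parents:
            # Create intermediate dictionaries on demand.
            node = node.setdefault(part, {})
        node[leaf] = value
    return nested


config = {"optimizer": {"sgd": {"lr": 0.1}}, "epochs": 3}
flat_config = flatten(config)
```

For a parameter sweep, one could override a single dotted key such as `optimizer.sgd.lr` in the flat form and call `unflatten` to get a full nested configuration back.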

462,627 | 13,250,445,670 | IssuesEvent | 2020-08-19 23:03:51 | onicagroup/runway | https://api.github.com/repos/onicagroup/runway | closed | [TODO] investigate implementing a code formatter | maintenance priority:medium | # Viable Options

- [autopep](https://github.com/hhatto/autopep8)

- [black](https://github.com/psf/black)

- [yapf](https://github.com/google/yapf)

# Considerations

- Should we implement one?

- Which one should we implement?

- How will it impact the code base?

- How will it impact the developers/maintainers? | 1.0 | [TODO] investigate implementing a code formatter - # Viable Options

- [autopep](https://github.com/hhatto/autopep8)

- [black](https://github.com/psf/black)

- [yapf](https://github.com/google/yapf)

# Considerations

- Should we implement one?

- Which one should we implement?

- How will it impact the code base?

- How will it impact the developers/maintainers? | priority | investigate implementing a code formatter viable options considerations should we implement one which one should we implement how will it impact the code base how will it impact the developers maintainers | 1 |

582,414 | 17,360,987,911 | IssuesEvent | 2021-07-29 20:35:55 | kleros/court | https://api.github.com/repos/kleros/court | closed | Email Notifications | Priority: Medium Status: Available Type: Enhancement :sparkles: | We should implement the new "Uber style" email notification that was designed by Plinio. | 1.0 | Email Notifications - We should implement the new "Uber style" email notification that was designed by Plinio. | priority | email notifications we should implement the new uber style email notification that was designed by plinio | 1 |

170,803 | 6,471,810,643 | IssuesEvent | 2017-08-17 12:37:27 | semperfiwebdesign/semperpluginstheme | https://api.github.com/repos/semperfiwebdesign/semperpluginstheme | opened | Reorganize documentation | Priority | Medium | https://semperplugins.com/documentation/

Not sure if this is a theme or plugin issue, but the documentation can be difficult to look at.

For example:

1) If there are more items for one category than another, both are pushed down (check out the space under file editor module)

2) Maybe there should be a way to easily distinguish guides from settings.

3) I wonder if API should be on its own page. | 1.0 | Reorganize documentation - https://semperplugins.com/documentation/

Not sure if this is a theme or plugin issue, but the documentation can be difficult to look at.

For example:

1) If there are more items for one category than another, both are pushed down (check out the space under file editor module)

2) Maybe there should be a way to easily distinguish guides from settings.

3) I wonder if API should be on its own page. | priority | reorganize documentation not sure if this is a theme or plugin issue but the documentation can be difficult to look at for example if there there are more items for one category than another both are pushed down check out the space under file editor module maybe there should be a way to easily distinguish guides from settings i wonder if api should be on it s own page | 1 |

344,103 | 10,340,005,168 | IssuesEvent | 2019-09-03 20:46:00 | acl-services/paprika | https://api.github.com/repos/acl-services/paprika | closed | Make Paprika storybook available publicly | Medium Priority → Task WIP | **Estimation: ~1 day**

Making paprika storybook public:

- [ ] domain for this?

- [ ] coordinate Jedi team to help with this

- [ ] script the release process for doing this. | 1.0 | Make Paprika storybook available publicly - **Estimation: ~1 day**

Making paprika storybook public:

- [ ] domain for this?

- [ ] coordinate Jedi team to help with this

- [ ] script the release process for doing this. | priority | make paprika storybook available publicly estimation day making paprika storybook public domain for this coordinate jedi team to help with this script the release process for doing this | 1 |

655,417 | 21,689,745,309 | IssuesEvent | 2022-05-09 14:25:02 | zeyneplervesarp/swe574-javagang | https://api.github.com/repos/zeyneplervesarp/swe574-javagang | closed | Change minutes to hours for time credits | enhancement backend high priority difficulty-medium | The balance of the users have been implemented with minutes, they should be hours.

Refactor the domain, dto, service classes and the faker objects in load database class. | 1.0 | Change minutes to hours for time credits - The balance of the users have been implemented with minutes, they should be hours.

Refactor the domain, dto, service classes and the faker objects in load database class. | priority | change minutes to hours for time credits the balance of the users have been implemented with minutes they should be hours refactor the domain dto service classes and the faker objects in load database class | 1 |

370,154 | 10,926,022,054 | IssuesEvent | 2019-11-22 13:53:16 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | Inaccessible titles after deleting other titles | Medium Priority QA Staging | Version: 0.8.1.4 beta

In the Registrar GUI, deleting a title higher up in the list of titles causes all titles below the deleted title to disappear from the GUI and become inaccessible. These titles still exist as titles in /titlelist, law propositions, etc but do not appear in the registrar. | 1.0 | Inaccessible titles after deleting other titles - Version: 0.8.1.4 beta

In the Registrar GUI, deleting a title higher up in the list of titles causes all titles below the deleted title to disappear from the GUI and become inaccessible. These titles still exist as titles in /titlelist, law propositions, etc but do not appear in the registrar. | priority | inaccessible titles after deleting other titles version beta in the registrar gui deleting a title higher up in the list of titles causes all titles below the deleted title to disappear from the gui and become inaccessible these titles still exist as titles in titlelist law propositions etc but do not appear in the registrar | 1 |

215,486 | 7,294,496,033 | IssuesEvent | 2018-02-26 00:11:39 | minio/minio | https://api.github.com/repos/minio/minio | closed | Unable to heal objects | priority: medium | Unable to heal objects in distributed Minio, even though the cluster has always been running, except for the upgrade to the latest version that I did before running the heal command.

I am not able to send the sosreport since it's time-consuming to clear out sensitive data, but I am willing to send you reports of specific parts if you require them. Just let me know what you need.

## Expected Behavior

Running `mc admin heal` should heal objects.

## Current Behavior

<!--- If describing a bug, tell us what happens instead of the expected behavior -->

<!--- If suggesting a change/improvement, explain the difference from current behavior -->

## Possible Solution

<!--- Not obligatory, but suggest a fix/reason for the bug, -->

<!--- or ideas how to implement the addition or change -->

## Steps to Reproduce (for bugs)

<!--- Provide a link to a live example, or an unambiguous set of steps to -->

<!--- reproduce this bug. Include code to reproduce, if relevant -->

1.

2.

3.

4.

## Context

ls -l for the different minio instances

minio01:

```

drwxr-xr-x 2 minio minio 35 24 apr 09.07 3546c1e2-b191-4b61-bdde-2411225c0625.pdf

drwxr-xr-x 2 minio minio 35 24 apr 13.26 83b17557-edea-4662-9d79-f54faeb4259c.jpg

drwxr-xr-x 2 minio minio 35 24 apr 12.57 b85be709-aa98-4e73-9b19-4a04b4861a1c.jpg

drwxr-xr-x 2 minio minio 35 24 apr 12.57 c8885a40-d5df-4f90-9872-0af7c46026c0.jpg

drwxr-xr-x 2 minio minio 35 24 apr 12.58 d8ab0052-3057-4baa-aa9a-e082271a2cc8.jpg

```

minio02:

```

drwxr-xr-x 2 minio minio 35 24 apr 13.26 00ad64d8-b767-4f6e-b7f6-5282d0e9f058.png

drwxr-xr-x 2 minio minio 35 24 apr 13.28 05dce802-9eee-446b-8c1a-39fe80bf2e92.jpg

drwxr-xr-x 2 minio minio 35 24 apr 13.28 2086f4a1-e06e-40c0-9d95-9046cb04a80e.jpg

drwxr-xr-x 2 minio minio 35 24 apr 13.28 2c88345a-1218-49ea-8be9-92e572fab683.pdf

drwxr-xr-x 2 minio minio 35 24 apr 13.26 3234d90e-be31-44f8-9ba1-cb3a3a97d51e.zip

drwxr-xr-x 2 minio minio 35 24 apr 09.07 3546c1e2-b191-4b61-bdde-2411225c0625.pdf

drwxr-xr-x 2 minio minio 35 24 apr 13.28 361594f7-d42d-4e6e-92a8-957799697c36.jpg

drwxr-xr-x 2 minio minio 35 24 apr 13.27 41ef03e5-e7f1-48fd-aa2f-a86f232fc9ca.jpg

drwxr-xr-x 2 minio minio 35 24 apr 13.28 455a68bf-10b1-4092-b3f6-170fdac06d18.jpg

drwxr-xr-x 2 minio minio 35 24 apr 13.28 48f2b7c6-3325-41ad-959b-1f45881bbd8e.jpg

drwxr-xr-x 2 minio minio 35 24 apr 13.26 550e4bb7-b0f0-4aac-862b-679db72058ca.pdf

drwxr-xr-x 2 minio minio 35 24 apr 13.26 5587c75b-b0a6-4479-b810-a705c1015c45.jpg

drwxr-xr-x 2 minio minio 35 24 apr 13.28 58a3afd6-8f6c-4460-837b-0b182b6e6435.jpg

drwxr-xr-x 2 minio minio 35 24 apr 13.28 5de5ca3e-443f-4305-8010-95a1cf6994ee.jpg

drwxr-xr-x 2 minio minio 35 24 apr 13.26 7586fba1-142d-4833-b3b6-f8a30f94c23b.jpg

drwxr-xr-x 2 minio minio 35 22 sep 07.25 83b17557-edea-4662-9d79-f54faeb4259c.jpg

drwxr-xr-x 2 minio minio 35 24 apr 13.28 881df61c-933b-42ca-9a7a-6701665b735a.jpg

drwxr-xr-x 2 minio minio 35 24 apr 13.28 88d450ef-50e7-4d58-bbbc-978631ac08dc.pdf

drwxr-xr-x 2 minio minio 35 24 apr 13.27 925a3bdc-5c93-4e27-955a-038a22f57185.jpg

drwxr-xr-x 2 minio minio 35 24 apr 13.28 9b2c5946-ccfb-4763-af36-1ccf2683baff.jpg

drwxr-xr-x 2 minio minio 35 24 apr 13.28 a05d07d6-3e35-4771-90f0-84801e4a9654.pdf

drwxr-xr-x 2 minio minio 35 24 apr 13.26 a905a220-07c1-4979-9452-1462c590b96a.jpg

drwxr-xr-x 2 minio minio 35 24 apr 13.26 b6cc1d50-d215-477c-b7b6-0a63c3358fd1.jpg

drwxr-xr-x 2 minio minio 35 24 apr 12.57 b85be709-aa98-4e73-9b19-4a04b4861a1c.jpg

drwxr-xr-x 2 minio minio 35 24 apr 13.28 c241949c-8b76-448e-826d-75453d62e6fe.pdf

drwxr-xr-x 2 minio minio 35 22 sep 07.25 c8885a40-d5df-4f90-9872-0af7c46026c0.jpg

drwxr-xr-x 2 minio minio 35 24 apr 12.58 d8ab0052-3057-4baa-aa9a-e082271a2cc8.jpg

drwxr-xr-x 2 minio minio 35 24 apr 13.28 dde3c849-7891-48a5-8c60-e547c48b8201.jpg

drwxr-xr-x 2 minio minio 35 24 apr 13.26 e1ea8118-f8ee-495f-8d8a-435139907993.jpg

drwxr-xr-x 2 minio minio 35 24 apr 13.26 e2c8ded6-524e-485f-997c-33d19e1d762b.jpg

drwxr-xr-x 2 minio minio 35 24 apr 13.28 e452af3e-9a91-4fce-b028-fba91144a521.jpg

drwxr-xr-x 2 minio minio 35 24 apr 13.28 f619c4d9-b185-4e79-a88d-f2957aec8a30.jpg

drwxr-xr-x 2 minio minio 35 24 apr 13.26 ff3921d4-6531-4dd6-ac0e-fd00296ca690.pdf

```

minio03:

```

drwxr-xr-x 2 minio minio 35 24 apr 09.07 3546c1e2-b191-4b61-bdde-2411225c0625.pdf

drwxr-xr-x 2 minio minio 35 24 apr 13.26 83b17557-edea-4662-9d79-f54faeb4259c.jpg

drwxr-xr-x 2 minio minio 35 24 apr 12.57 b85be709-aa98-4e73-9b19-4a04b4861a1c.jpg

drwxr-xr-x 2 minio minio 35 24 apr 12.57 c8885a40-d5df-4f90-9872-0af7c46026c0.jpg

drwxr-xr-x 2 minio minio 35 24 apr 12.58 d8ab0052-3057-4baa-aa9a-e082271a2cc8.jpg

```

minio04:

```

drwxr-xr-x 2 minio minio 35 24 apr 09.07 3546c1e2-b191-4b61-bdde-2411225c0625.pdf

drwxr-xr-x 2 minio minio 35 24 apr 13.26 83b17557-edea-4662-9d79-f54faeb4259c.jpg

drwxr-xr-x 2 minio minio 35 24 apr 12.57 b85be709-aa98-4e73-9b19-4a04b4861a1c.jpg

drwxr-xr-x 2 minio minio 35 24 apr 12.57 c8885a40-d5df-4f90-9872-0af7c46026c0.jpg

drwxr-xr-x 2 minio minio 35 24 apr 12.58 d8ab0052-3057-4baa-aa9a-e082271a2cc8.jpg

```

## Your Environment

* minio disk/node count: 4

* Version used (`minio version`): 2017-08-05T00:00:53Z

* Environment name and version (e.g. nginx 1.9.1): HAProxy 1.5

* Server type and version: VMware vSphere 6.5

* Operating System and version (`uname -a`): CentOS 7, Linux 3.10.0-514.10.2.el7.x86_64

* Storage: SSD SAN connected with Fibre Channel

* Link to your project:

| 1.0 | Unable to heal objects - Unable to heal objects in distributed minio, even though the cluster has always been running, except for the upgrade to the latest version that I did before running the heal command.

I am not able to send the sosreport, since it is time-consuming to clear out the sensitive data. But I am willing to send you reports of specific parts if you require them. Just let me know what you need.

## Expected Behavior

Running `mc admin heal` should heal objects.

## Current Behavior


## Possible Solution


## Steps to Reproduce (for bugs)


1.

2.

3.

4.

## Context

ls -l for the different minio instances

minio01:

```

drwxr-xr-x 2 minio minio 35 24 apr 09.07 3546c1e2-b191-4b61-bdde-2411225c0625.pdf

drwxr-xr-x 2 minio minio 35 24 apr 13.26 83b17557-edea-4662-9d79-f54faeb4259c.jpg

drwxr-xr-x 2 minio minio 35 24 apr 12.57 b85be709-aa98-4e73-9b19-4a04b4861a1c.jpg

drwxr-xr-x 2 minio minio 35 24 apr 12.57 c8885a40-d5df-4f90-9872-0af7c46026c0.jpg

drwxr-xr-x 2 minio minio 35 24 apr 12.58 d8ab0052-3057-4baa-aa9a-e082271a2cc8.jpg

```

minio02:

```

drwxr-xr-x 2 minio minio 35 24 apr 13.26 00ad64d8-b767-4f6e-b7f6-5282d0e9f058.png

drwxr-xr-x 2 minio minio 35 24 apr 13.28 05dce802-9eee-446b-8c1a-39fe80bf2e92.jpg

drwxr-xr-x 2 minio minio 35 24 apr 13.28 2086f4a1-e06e-40c0-9d95-9046cb04a80e.jpg

drwxr-xr-x 2 minio minio 35 24 apr 13.28 2c88345a-1218-49ea-8be9-92e572fab683.pdf

drwxr-xr-x 2 minio minio 35 24 apr 13.26 3234d90e-be31-44f8-9ba1-cb3a3a97d51e.zip

drwxr-xr-x 2 minio minio 35 24 apr 09.07 3546c1e2-b191-4b61-bdde-2411225c0625.pdf

drwxr-xr-x 2 minio minio 35 24 apr 13.28 361594f7-d42d-4e6e-92a8-957799697c36.jpg

drwxr-xr-x 2 minio minio 35 24 apr 13.27 41ef03e5-e7f1-48fd-aa2f-a86f232fc9ca.jpg

drwxr-xr-x 2 minio minio 35 24 apr 13.28 455a68bf-10b1-4092-b3f6-170fdac06d18.jpg

drwxr-xr-x 2 minio minio 35 24 apr 13.28 48f2b7c6-3325-41ad-959b-1f45881bbd8e.jpg

drwxr-xr-x 2 minio minio 35 24 apr 13.26 550e4bb7-b0f0-4aac-862b-679db72058ca.pdf

drwxr-xr-x 2 minio minio 35 24 apr 13.26 5587c75b-b0a6-4479-b810-a705c1015c45.jpg

drwxr-xr-x 2 minio minio 35 24 apr 13.28 58a3afd6-8f6c-4460-837b-0b182b6e6435.jpg

drwxr-xr-x 2 minio minio 35 24 apr 13.28 5de5ca3e-443f-4305-8010-95a1cf6994ee.jpg

drwxr-xr-x 2 minio minio 35 24 apr 13.26 7586fba1-142d-4833-b3b6-f8a30f94c23b.jpg

drwxr-xr-x 2 minio minio 35 22 sep 07.25 83b17557-edea-4662-9d79-f54faeb4259c.jpg

drwxr-xr-x 2 minio minio 35 24 apr 13.28 881df61c-933b-42ca-9a7a-6701665b735a.jpg

drwxr-xr-x 2 minio minio 35 24 apr 13.28 88d450ef-50e7-4d58-bbbc-978631ac08dc.pdf

drwxr-xr-x 2 minio minio 35 24 apr 13.27 925a3bdc-5c93-4e27-955a-038a22f57185.jpg

drwxr-xr-x 2 minio minio 35 24 apr 13.28 9b2c5946-ccfb-4763-af36-1ccf2683baff.jpg

drwxr-xr-x 2 minio minio 35 24 apr 13.28 a05d07d6-3e35-4771-90f0-84801e4a9654.pdf

drwxr-xr-x 2 minio minio 35 24 apr 13.26 a905a220-07c1-4979-9452-1462c590b96a.jpg

drwxr-xr-x 2 minio minio 35 24 apr 13.26 b6cc1d50-d215-477c-b7b6-0a63c3358fd1.jpg

drwxr-xr-x 2 minio minio 35 24 apr 12.57 b85be709-aa98-4e73-9b19-4a04b4861a1c.jpg

drwxr-xr-x 2 minio minio 35 24 apr 13.28 c241949c-8b76-448e-826d-75453d62e6fe.pdf

drwxr-xr-x 2 minio minio 35 22 sep 07.25 c8885a40-d5df-4f90-9872-0af7c46026c0.jpg

drwxr-xr-x 2 minio minio 35 24 apr 12.58 d8ab0052-3057-4baa-aa9a-e082271a2cc8.jpg

drwxr-xr-x 2 minio minio 35 24 apr 13.28 dde3c849-7891-48a5-8c60-e547c48b8201.jpg

drwxr-xr-x 2 minio minio 35 24 apr 13.26 e1ea8118-f8ee-495f-8d8a-435139907993.jpg

drwxr-xr-x 2 minio minio 35 24 apr 13.26 e2c8ded6-524e-485f-997c-33d19e1d762b.jpg

drwxr-xr-x 2 minio minio 35 24 apr 13.28 e452af3e-9a91-4fce-b028-fba91144a521.jpg

drwxr-xr-x 2 minio minio 35 24 apr 13.28 f619c4d9-b185-4e79-a88d-f2957aec8a30.jpg

drwxr-xr-x 2 minio minio 35 24 apr 13.26 ff3921d4-6531-4dd6-ac0e-fd00296ca690.pdf

```

minio03:

```

drwxr-xr-x 2 minio minio 35 24 apr 09.07 3546c1e2-b191-4b61-bdde-2411225c0625.pdf

drwxr-xr-x 2 minio minio 35 24 apr 13.26 83b17557-edea-4662-9d79-f54faeb4259c.jpg

drwxr-xr-x 2 minio minio 35 24 apr 12.57 b85be709-aa98-4e73-9b19-4a04b4861a1c.jpg

drwxr-xr-x 2 minio minio 35 24 apr 12.57 c8885a40-d5df-4f90-9872-0af7c46026c0.jpg

drwxr-xr-x 2 minio minio 35 24 apr 12.58 d8ab0052-3057-4baa-aa9a-e082271a2cc8.jpg

```

minio04:

```

drwxr-xr-x 2 minio minio 35 24 apr 09.07 3546c1e2-b191-4b61-bdde-2411225c0625.pdf

drwxr-xr-x 2 minio minio 35 24 apr 13.26 83b17557-edea-4662-9d79-f54faeb4259c.jpg

drwxr-xr-x 2 minio minio 35 24 apr 12.57 b85be709-aa98-4e73-9b19-4a04b4861a1c.jpg

drwxr-xr-x 2 minio minio 35 24 apr 12.57 c8885a40-d5df-4f90-9872-0af7c46026c0.jpg

drwxr-xr-x 2 minio minio 35 24 apr 12.58 d8ab0052-3057-4baa-aa9a-e082271a2cc8.jpg

```

## Your Environment

* minio disk/node count: 4

* Version used (`minio version`): 2017-08-05T00:00:53Z

* Environment name and version (e.g. nginx 1.9.1): HAProxy 1.5

* Server type and version: VMware vSphere 6.5

* Operating System and version (`uname -a`): CentOS 7, Linux 3.10.0-514.10.2.el7.x86_64

* Storage: SSD SAN connected with Fibre Channel

* Link to your project:

| priority | unable to heal objects unable to heal objects in distributed minio even if the cluster have always ben running except for the upgrade i did before the heal command to latest version i am not able to send the sosreport since its time consuming to clear sensitive data but i am willing to send you reports of specific parts if you require it just let me know what you need expected behavior running mc admin heal should heal objects current behavior possible solution steps to reproduce for bugs context ls l for the different minio instances drwxr xr x minio minio apr bdde pdf drwxr xr x minio minio apr edea jpg drwxr xr x minio minio apr jpg drwxr xr x minio minio apr jpg drwxr xr x minio minio apr jpg drwxr xr x minio minio apr png drwxr xr x minio minio apr jpg drwxr xr x minio minio apr jpg drwxr xr x minio minio apr pdf drwxr xr x minio minio apr zip drwxr xr x minio minio apr bdde pdf drwxr xr x minio minio apr jpg drwxr xr x minio minio apr jpg drwxr xr x minio minio apr jpg drwxr xr x minio minio apr jpg drwxr xr x minio minio apr pdf drwxr xr x minio minio apr jpg drwxr xr x minio minio apr jpg drwxr xr x minio minio apr jpg drwxr xr x minio minio apr jpg drwxr xr x minio minio sep edea jpg drwxr xr x minio minio apr jpg drwxr xr x minio minio apr bbbc pdf drwxr xr x minio minio apr jpg drwxr xr x minio minio apr ccfb jpg drwxr xr x minio minio apr pdf drwxr xr x minio minio apr jpg drwxr xr x minio minio apr jpg drwxr xr x minio minio apr jpg drwxr xr x minio minio apr pdf drwxr xr x minio minio sep jpg drwxr xr x minio minio apr jpg drwxr xr x minio minio apr jpg drwxr xr x minio minio apr jpg drwxr xr x minio minio apr jpg drwxr xr x minio minio apr jpg drwxr xr x minio minio apr jpg drwxr xr x minio minio apr pdf drwxr xr x minio minio apr bdde pdf drwxr xr x minio minio apr edea jpg drwxr xr x minio minio apr jpg drwxr xr x minio minio apr jpg drwxr xr x minio minio apr jpg drwxr xr x minio minio apr bdde pdf drwxr xr x minio minio apr edea jpg 
drwxr xr x minio minio apr jpg drwxr xr x minio minio apr jpg drwxr xr x minio minio apr jpg your environment minio disk node count version used minio version environment name and version e g nginx haproxy server type and version vmware vsphere operating system and version uname a centos linux storage ssd san connected with fibrechannel link to your project | 1 |

144,732 | 5,544,867,667 | IssuesEvent | 2017-03-22 20:12:31 | astropy/astropy | https://api.github.com/repos/astropy/astropy | closed | Make a pretty printer for OrderedDict | Effort-medium Feature Request Package-novice Priority-Low | `OrderedDict` is used in many places within astropy, but printing an OrderedDict object gives an ugly and difficult-to-read output. `pprint` is no better. Some quick googling led to some stackoverflow answers but nothing really helpful.

Having a readable representation of these objects would be useful. One idea is to customize `pprint.pformat` to work with `OrderedDict`, then use that as the `__repr__` or `__str__` of the `OrderedDict` class used in astropy.

If someone is bored maybe this would be a good little project...
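
As a rough illustration of the idea, here is a minimal sketch of such a formatter. It is not astropy code: the helper name `format_ordered_dict` and its `indent` parameter are made up for this example, and it only delegates leaf values to the standard `pprint.pformat`.

```python
from collections import OrderedDict
import pprint


def format_ordered_dict(od, indent=4):
    """Return a multi-line string for an OrderedDict, one item per line.

    Hypothetical helper for illustration only; not part of astropy.
    Items appear in insertion order and nested OrderedDicts are
    formatted recursively.
    """
    pad = " " * indent
    lines = ["OrderedDict(["]
    for key, value in od.items():
        if isinstance(value, OrderedDict):
            formatted = format_ordered_dict(value, indent)
        else:
            # Leaf values fall back to the standard pretty printer.
            formatted = pprint.pformat(value)
        # Indent continuation lines so nested values line up under their key.
        formatted = formatted.replace("\n", "\n" + pad)
        lines.append("{}({!r}, {}),".format(pad, key, formatted))
    lines.append("])")
    return "\n".join(lines)


if __name__ == "__main__":
    example = OrderedDict([("b", 1), ("a", OrderedDict([("x", 2)]))])
    print(format_ordered_dict(example))
```

The output keeps the `OrderedDict([...])` constructor syntax, so for simple values it can still be pasted back into Python. Whether a string like this should back `__repr__` or only `__str__` is a separate design decision.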

| 1.0 | Make a pretty printer for OrderedDict - `OrderedDict` is used in many places within astropy, but printing an OrderedDict object gives an ugly and difficult-to-read output. `pprint` is no better. Some quick googling led to some stackoverflow answers but nothing really helpful.

Having a readable representation of these objects would be useful. One idea is to customize `pprint.pformat` to work with OrderedDict, then use that as `__repr__` or `__str__` in astropy the OrderDict class.


If someone is bored maybe this would be a good little project...

| priority | make a pretty printer for ordereddict ordereddict is used in many places within astropy but printing an ordereddict object gives an ugly and difficult to read output pprint is no better some quick googling led to some stackoverflow answers but nothing really helpful having a readable representation of these objects would be useful one idea is to customize pprint pformat to work with ordereddict then use that as repr or str in astropy the orderdict class if someone is bored maybe this would be a good little project | 1 |

757,923 | 26,535,828,457 | IssuesEvent | 2023-01-19 15:35:00 | notofonts/latin-greek-cyrillic | https://api.github.com/repos/notofonts/latin-greek-cyrillic | closed | Some fonts might need update for USE and default mark zeroing behavior change in HB | in-evaluation Android Priority-Medium | In HarfBuzz 1.2.0 I change Universal Shaping Engine mark zeroing behavior to match (undocumented) Microsoft behavior: