Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 957 | labels stringlengths 4 795 | body stringlengths 1 259k | index stringclasses 12 values | text_combine stringlengths 96 259k | label stringclasses 2 values | text stringlengths 96 252k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

416,314 | 12,142,536,077 | IssuesEvent | 2020-04-24 01:59:41 | confidantstation/Confidant-Station | https://api.github.com/repos/confidantstation/Confidant-Station | closed | File transfer optimization 2 Distributed file storage and transfer between nodes | Priority: Medium Status: Accepted Status: In Progress Status: Pending enhancement | Is your feature request related to a problem? Please describe.

The current cross-node tox scheme file transfer performance is too poor, the file storage is single point storage, unreliable

Describe the solution you'd like

Refer to the distributed file storage scheme to organize a supplementary scheme suitable for the storage and transmission of our chain file data

Describe alternatives you've considered

A clear and concise description of any alternative solutions or features you've considered.

Additional context

Add any other context or screenshots about the feature request here. | 1.0 | File transfer optimization 2 Distributed file storage and transfer between nodes - Is your feature request related to a problem? Please describe.

The current cross-node tox scheme file transfer performance is too poor, the file storage is single point storage, unreliable

Describe the solution you'd like

Refer to the distributed file storage scheme to organize a supplementary scheme suitable for the storage and transmission of our chain file data

Describe alternatives you've considered

A clear and concise description of any alternative solutions or features you've considered.

Additional context

Add any other context or screenshots about the feature request here. | priority | file transfer optimization distributed file storage and transfer between nodes is your feature request related to a problem please describe the current cross node tox scheme file transfer performance is too poor the file storage is single point storage unreliable describe the solution you d like refer to the distributed file storage scheme to organize a supplementary scheme suitable for the storage and transmission of our chain file data describe alternatives you ve considered a clear and concise description of any alternative solutions or features you ve considered additional context add any other context or screenshots about the feature request here | 1 |

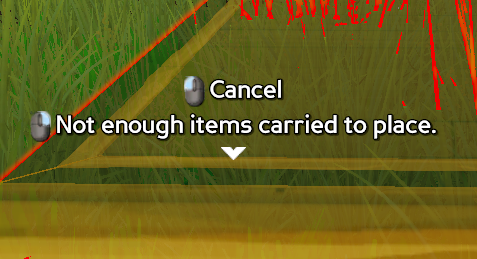

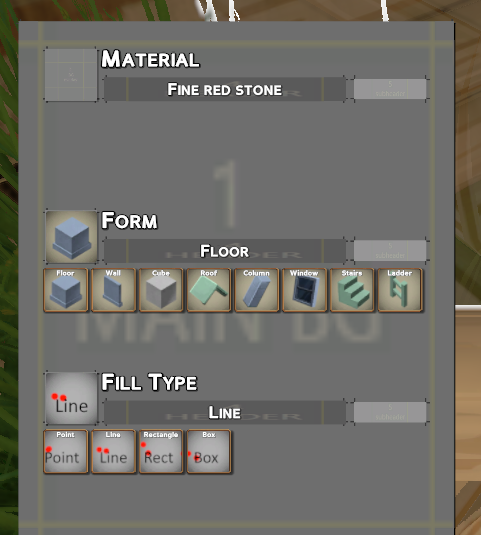

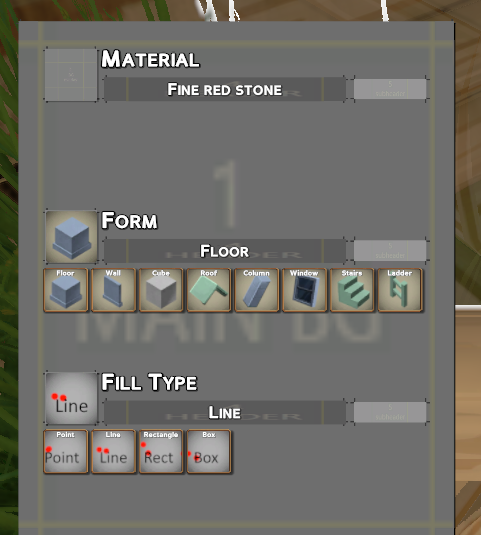

392,367 | 11,590,556,070 | IssuesEvent | 2020-02-24 07:08:11 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | Hammer Feedback | Priority: Medium Status: Fixed | - [x] Wasnt able to drop this Copper Ore block while holding hammer, probably because it has no forms

- [x] Holding shift displayed the hammer UI off screen. Need to fix anchors for various resolutions, and clamp the ui

- [ ] Selection has a number of problems still. It needs to work off a projection, so it can place second selection even in midair. IE, once you start drawing a line it should always display that line no matter where you're looking.

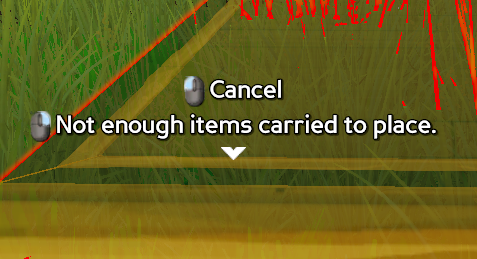

- [x] Popup text should say how many you need. 'Not enough materials (5 needed)'

- [x] After placing a block, there's a delay before you see it. Notice in the gif how it blinks away for a second. Prediction should be handling this.

- [x] Text for placing first point in a shape should be more descriptive. Ie: 'Place first floor corner' or 'Place line starting position'

- [x] 'Material' section needs to be hidden of setup properly

- [x] Text should still have controls for rotation, Q/R

- [x] Clicking these brought up the hammer selection dialog, which is good, but they also stayed highlighted

Perhaps shift should be on the 'point' and 'ladder' icons instead.

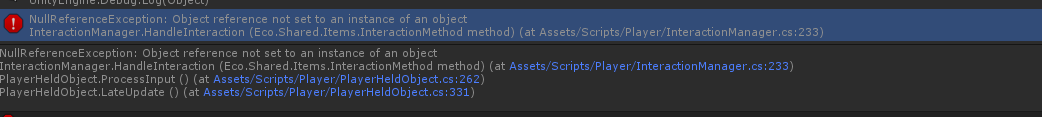

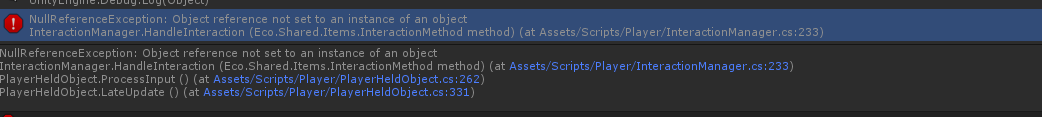

- [x] Got this client exception at one point not sure when:

| 1.0 | Hammer Feedback - - [x] Wasnt able to drop this Copper Ore block while holding hammer, probably because it has no forms

- [x] Holding shift displayed the hammer UI off screen. Need to fix anchors for various resolutions, and clamp the ui

- [ ] Selection has a number of problems still. It needs to work off a projection, so it can place second selection even in midair. IE, once you start drawing a line it should always display that line no matter where you're looking.

- [x] Popup text should say how many you need. 'Not enough materials (5 needed)'

- [x] After placing a block, there's a delay before you see it. Notice in the gif how it blinks away for a second. Prediction should be handling this.

- [x] Text for placing first point in a shape should be more descriptive. Ie: 'Place first floor corner' or 'Place line starting position'

- [x] 'Material' section needs to be hidden of setup properly

- [x] Text should still have controls for rotation, Q/R

- [x] Clicking these brought up the hammer selection dialog, which is good, but they also stayed highlighted

Perhaps shift should be on the 'point' and 'ladder' icons instead.

- [x] Got this client exception at one point not sure when:

| priority | hammer feedback wasnt able to drop this copper ore block while holding hammer probably because it has no forms holding shift displayed the hammer ui off screen need to fix anchors for various resolutions and clamp the ui selection has a number of problems still it needs to work off a projection so it can place second selection even in midair ie once you start drawing a line it should always display that line no matter where you re looking popup text should say how many you need not enough materials needed after placing a block there s a delay before you see it notice in the gif how it blinks away for a second prediction should be handling this text for placing first point in a shape should be more descriptive ie place first floor corner or place line starting position material section needs to be hidden of setup properly text should still have controls for rotation q r clicking these brought up the hammer selection dialog which is good but they also stayed highlighted perhaps shift should be on the point and ladder icons instead got this client exception at one point not sure when | 1 |

614,486 | 19,184,130,548 | IssuesEvent | 2021-12-04 22:56:26 | MarketSquare/robotframework-browser | https://api.github.com/repos/MarketSquare/robotframework-browser | closed | Document that Get Text keyword also work with <input> and <textarea> elements. | bug priority: medium | Currently `Get Text` documentation mentions that it works with elements containing text, but actually it also works with input and textarea elements. With input and textarea elements keywords returns the value property. We should document this also in the keyword. | 1.0 | Document that Get Text keyword also work with <input> and <textarea> elements. - Currently `Get Text` documentation mentions that it works with elements containing text, but actually it also works with input and textarea elements. With input and textarea elements keywords returns the value property. We should document this also in the keyword. | priority | document that get text keyword also work with and elements currently get text documentation mentions that it works with elements containing text but actually it also works with input and textarea elements with input and textarea elements keywords returns the value property we should document this also in the keyword | 1 |

757,712 | 26,525,958,468 | IssuesEvent | 2023-01-19 08:45:13 | mi6/ic-design-system | https://api.github.com/repos/mi6/ic-design-system | opened | Anchor links on high level tabs within components not working | type: bug 🐛 priority: medium | ## Summary of the bug

You are unable to use a copied link from an anchor nav within the 'Code' or 'Accessibility' tabs to then take you back to that place. It loads the 'Guidance' tab.

## 🪜 How to reproduce

Tell us the steps to reproduce the problem:

1. Go to page: Footer component, 'Accessibility' tab.

2. Go to the heading 'For Assistive Technology'

3. Use the anchor to copy a link to that section (https://design.sis.gov.uk/components/footer#for-assistive-technology)

4. Paste it back into browser, it loads the 'Guidance' tab.

## 🧐 Expected behaviour

The link should take you to https://design.sis.gov.uk/components/footer#for-assistive-technology

| 1.0 | Anchor links on high level tabs within components not working - ## Summary of the bug

You are unable to use a copied link from an anchor nav within the 'Code' or 'Accessibility' tabs to then take you back to that place. It loads the 'Guidance' tab.

## 🪜 How to reproduce

Tell us the steps to reproduce the problem:

1. Go to page: Footer component, 'Accessibility' tab.

2. Go to the heading 'For Assistive Technology'

3. Use the anchor to copy a link to that section (https://design.sis.gov.uk/components/footer#for-assistive-technology)

4. Paste it back into browser, it loads the 'Guidance' tab.

## 🧐 Expected behaviour

The link should take you to https://design.sis.gov.uk/components/footer#for-assistive-technology

| priority | anchor links on high level tabs within components not working summary of the bug you are unable to use a copied link from an anchor nav within the code or accessibility tabs to then take you back to that place it loads the guidance tab 🪜 how to reproduce tell us the steps to reproduce the problem go to page footer component accessibility tab go to the heading for assistive technology use the anchor to copy a link to that section paste it back into browser it loads the guidance tab 🧐 expected behaviour the link should take you to | 1 |

789,077 | 27,777,672,912 | IssuesEvent | 2023-03-16 18:24:48 | impactMarket/app | https://api.github.com/repos/impactMarket/app | closed | [community search] searching when not on the first page, breaks the search | priority-2: medium type: bug | "you need to be in page 1. For example, if you search for communities in Venezuela and you go to page 3. Then you decide to clear search and search for Indonesia. But you were not on page 3 (when searching for Venezuela) so it won´t show you the results for Indonesia. You need to go back to Venezuela, puts it on page 1, and then it will show the Indonesia communities" - Catarina | 1.0 | [community search] searching when not on the first page, breaks the search - "you need to be in page 1. For example, if you search for communities in Venezuela and you go to page 3. Then you decide to clear search and search for Indonesia. But you were not on page 3 (when searching for Venezuela) so it won´t show you the results for Indonesia. You need to go back to Venezuela, puts it on page 1, and then it will show the Indonesia communities" - Catarina | priority | searching when not on the first page breaks the search you need to be in page for example if you search for communities in venezuela and you go to page then you decide to clear search and search for indonesia but you were not on page when searching for venezuela so it won´t show you the results for indonesia you need to go back to venezuela puts it on page and then it will show the indonesia communities catarina | 1 |

457,160 | 13,152,658,146 | IssuesEvent | 2020-08-09 23:36:39 | ankidroid/Anki-Android | https://api.github.com/repos/ankidroid/Anki-Android | closed | Make items in long-press menu of DeckPicker more intuitively accessible | Accepted Enhancement Priority-Medium Stale | Originally reported on Google Code with ID 977

```

A lot of users can not find the "delete deck" and "rename deck" actions, because they

don't have the idea to long-press in the deck picker (and I agree it is not very intuitive).

How about putting these actions in the "More" menu of the study options too?

```

Reported by `nicolas.raoul` on 2012-01-30 08:59:49

| 1.0 | Make items in long-press menu of DeckPicker more intuitively accessible - Originally reported on Google Code with ID 977

```

A lot of users can not find the "delete deck" and "rename deck" actions, because they

don't have the idea to long-press in the deck picker (and I agree it is not very intuitive).

How about putting these actions in the "More" menu of the study options too?

```

Reported by `nicolas.raoul` on 2012-01-30 08:59:49

| priority | make items in long press menu of deckpicker more intuitively accessible originally reported on google code with id a lot of users can not find the delete deck and rename deck actions because they don t have the idea to long press in the deck picker and i agree it is not very intuitive how about putting these actions in the more menu of the study options too reported by nicolas raoul on | 1 |

66,573 | 3,255,912,568 | IssuesEvent | 2015-10-20 11:10:29 | awesome-raccoons/gqt | https://api.github.com/repos/awesome-raccoons/gqt | opened | Keyboard shortcuts require shift key to be pressed | bug medium priority | Keyboard shortcuts were added in #46, but they require the shift key to be pressed, e.g. Ctrl+Shift+N instead of Ctrl-N. | 1.0 | Keyboard shortcuts require shift key to be pressed - Keyboard shortcuts were added in #46, but they require the shift key to be pressed, e.g. Ctrl+Shift+N instead of Ctrl-N. | priority | keyboard shortcuts require shift key to be pressed keyboard shortcuts were added in but they require the shift key to be pressed e g ctrl shift n instead of ctrl n | 1 |

443,025 | 12,758,167,397 | IssuesEvent | 2020-06-29 01:10:57 | minio/minio | https://api.github.com/repos/minio/minio | closed | minio crash when accessed from All-in-One WP Migration tool | community priority: medium | ## Expected Behavior

I am trying to use the All-in-One WP Migration tool S3 client plugin to back up wordpress web sites to minio.

## Current Behavior

When I attempt to backup to minio, the plugin reports curl error 52 "nothing" returned, and the minio server logs:

```

http: panic serving 192.168.1.1:60476: runtime error: invalid memory address or nil pointer dereference","source":["github.com/minio/minio@/cmd/server-main.go:453:cmd.serverMain.func2()

goroutine 262 [running]:","source":["github.com/minio/minio@/cmd/server-main.go:453:cmd.serverMain.func2()

"net/http.(*conn).serve.func1(0xc000936000)","source":["github.com/minio/minio@/cmd/server-main.go:453:cmd.serverMain.func2()

...

etc.

```

Taking a packet trace shows that plugin issues three requests in quick succession, which are as follows. Only the third gets a response.

```

HEAD / HTTP/1.1

Host: s3.elided.uk

Accept: */*

User-Agent: All-in-One WP Migration

x-amz-date: 20200623T152202Z

x-amz-content-sha256: ...elided...

Authorization: AWS4-HMAC-SHA256 Credential=unbind-trigger-bail/20200623/oracle/s3/aws4_request,SignedHeaders=host;user-agent;x-amz-content-sha256;x-amz-date,Signature=...elided...

```

```

PUT / HTTP/1.1

Host: s3.elided.uk

Accept: */*

User-Agent: All-in-One WP Migration

Content-Type: application/xml

x-amz-date: 20200623T152202Z

x-amz-content-sha256: ...elided...

Authorization: AWS4-HMAC-SHA256 Credential=unbind-trigger-bail/20200623/oracle/s3/aws4_request,SignedHeaders=content-type;host;user-agent;x-amz-content-sha256;x-amz-date,Signature=...elided...

Content-Length: 102

<CreateBucketConfiguration><LocationConstraint>oracle</LocationConstraint></CreateBucketConfiguration>

```

```

GET / HTTP/1.1

Host: s3.elided.uk

Accept: */*

User-Agent: All-in-One WP Migration

x-amz-date: 20200623T152202Z

x-amz-content-sha256: ...elided...

Authorization: AWS4-HMAC-SHA256 Credential=unbind-trigger-bail/20200623/oracle/s3/aws4_request,SignedHeaders=host;user-agent;x-amz-content-sha256;x-amz-date,Signature=...elided...

HTTP/1.1 200 OK

Accept-Ranges: bytes

Content-Length: 461

Content-Security-Policy: block-all-mixed-content

Content-Type: application/xml

Server: MinIO/RELEASE.2020-06-22T03-12-50Z

Vary: Origin

X-Amz-Bucket-Region: oracle

X-Amz-Request-Id: 161B35815F87D1A1

X-Xss-Protection: 1; mode=block

Date: Tue, 23 Jun 2020 15:22:02 GMT

<?xml version="1.0" encoding="UTF-8"?>

<ListAllMyBucketsResult xmlns="http://s3.amazonaws.com/doc/2006-03-01/"><Owner><ID>0...elided...</ID><DisplayName></DisplayName></Owner><Buckets><Bucket><Name>leek12-com</Name><CreationDate>2020-06-23T14:19:31.497Z</CreationDate></Bucket><Bucket><Name>migrate</Name><CreationDate>2020-06-23T13:39:06.594Z</CreationDate></Bucket></Buckets></ListAllMyBucketsResult>

```

## Steps to Reproduce (for bugs)

I can reproduce using the mentioned plugin, clicking on update in the control panel causes the error. I have not yet been able to replicate using something like `curl` unfortunately.

## Your Environment

* Version used: RELEASE.2020-06-22T03-12-50Z

* Environment name and version: standalone

* Server type and version: Oracle Enterprise Linux (Oracle cloud server)

* Operating System and version: Linux ns2 4.14.35-1902.301.1.el7uek.x86_64 #2 SMP Tue Mar 31 16:50:32 PDT 2020 x86_64 x86_64 x86_64 GNU/Linux

| 1.0 | minio crash when accessed from All-in-One WP Migration tool - ## Expected Behavior

I am trying to use the All-in-One WP Migration tool S3 client plugin to back up wordpress web sites to minio.

## Current Behavior

When I attempt to backup to minio, the plugin reports curl error 52 "nothing" returned, and the minio server logs:

```

http: panic serving 192.168.1.1:60476: runtime error: invalid memory address or nil pointer dereference","source":["github.com/minio/minio@/cmd/server-main.go:453:cmd.serverMain.func2()

goroutine 262 [running]:","source":["github.com/minio/minio@/cmd/server-main.go:453:cmd.serverMain.func2()

"net/http.(*conn).serve.func1(0xc000936000)","source":["github.com/minio/minio@/cmd/server-main.go:453:cmd.serverMain.func2()

...

etc.

```

Taking a packet trace shows that plugin issues three requests in quick succession, which are as follows. Only the third gets a response.

```

HEAD / HTTP/1.1

Host: s3.elided.uk

Accept: */*

User-Agent: All-in-One WP Migration

x-amz-date: 20200623T152202Z

x-amz-content-sha256: ...elided...

Authorization: AWS4-HMAC-SHA256 Credential=unbind-trigger-bail/20200623/oracle/s3/aws4_request,SignedHeaders=host;user-agent;x-amz-content-sha256;x-amz-date,Signature=...elided...

```

```

PUT / HTTP/1.1

Host: s3.elided.uk

Accept: */*

User-Agent: All-in-One WP Migration

Content-Type: application/xml

x-amz-date: 20200623T152202Z

x-amz-content-sha256: ...elided...

Authorization: AWS4-HMAC-SHA256 Credential=unbind-trigger-bail/20200623/oracle/s3/aws4_request,SignedHeaders=content-type;host;user-agent;x-amz-content-sha256;x-amz-date,Signature=...elided...

Content-Length: 102

<CreateBucketConfiguration><LocationConstraint>oracle</LocationConstraint></CreateBucketConfiguration>

```

```

GET / HTTP/1.1

Host: s3.elided.uk

Accept: */*

User-Agent: All-in-One WP Migration

x-amz-date: 20200623T152202Z

x-amz-content-sha256: ...elided...

Authorization: AWS4-HMAC-SHA256 Credential=unbind-trigger-bail/20200623/oracle/s3/aws4_request,SignedHeaders=host;user-agent;x-amz-content-sha256;x-amz-date,Signature=...elided...

HTTP/1.1 200 OK

Accept-Ranges: bytes

Content-Length: 461

Content-Security-Policy: block-all-mixed-content

Content-Type: application/xml

Server: MinIO/RELEASE.2020-06-22T03-12-50Z

Vary: Origin

X-Amz-Bucket-Region: oracle

X-Amz-Request-Id: 161B35815F87D1A1

X-Xss-Protection: 1; mode=block

Date: Tue, 23 Jun 2020 15:22:02 GMT

<?xml version="1.0" encoding="UTF-8"?>

<ListAllMyBucketsResult xmlns="http://s3.amazonaws.com/doc/2006-03-01/"><Owner><ID>0...elided...</ID><DisplayName></DisplayName></Owner><Buckets><Bucket><Name>leek12-com</Name><CreationDate>2020-06-23T14:19:31.497Z</CreationDate></Bucket><Bucket><Name>migrate</Name><CreationDate>2020-06-23T13:39:06.594Z</CreationDate></Bucket></Buckets></ListAllMyBucketsResult>

```

## Steps to Reproduce (for bugs)

I can reproduce using the mentioned plugin, clicking on update in the control panel causes the error. I have not yet been able to replicate using something like `curl` unfortunately.

## Your Environment

* Version used: RELEASE.2020-06-22T03-12-50Z

* Environment name and version: standalone

* Server type and version: Oracle Enterprise Linux (Oracle cloud server)

* Operating System and version: Linux ns2 4.14.35-1902.301.1.el7uek.x86_64 #2 SMP Tue Mar 31 16:50:32 PDT 2020 x86_64 x86_64 x86_64 GNU/Linux

| priority | minio crash when accessed from all in one wp migration tool expected behavior i am trying to use the all in one wp migration tool client plugin to back up wordpress web sites to minio current behavior when i attempt to backup to minio the plugin reports curl error nothing returned and the minio server logs http panic serving runtime error invalid memory address or nil pointer dereference source github com minio minio cmd server main go cmd servermain goroutine source github com minio minio cmd server main go cmd servermain net http conn serve source github com minio minio cmd server main go cmd servermain etc taking a packet trace shows that plugin issues three requests in quick succession which are as follows only the third gets a response head http host elided uk accept user agent all in one wp migration x amz date x amz content elided authorization hmac credential unbind trigger bail oracle request signedheaders host user agent x amz content x amz date signature elided put http host elided uk accept user agent all in one wp migration content type application xml x amz date x amz content elided authorization hmac credential unbind trigger bail oracle request signedheaders content type host user agent x amz content x amz date signature elided content length oracle get http host elided uk accept user agent all in one wp migration x amz date x amz content elided authorization hmac credential unbind trigger bail oracle request signedheaders host user agent x amz content x amz date signature elided http ok accept ranges bytes content length content security policy block all mixed content content type application xml server minio release vary origin x amz bucket region oracle x amz request id x xss protection mode block date tue jun gmt listallmybucketsresult xmlns steps to reproduce for bugs i can reproduce using the mentioned plugin clicking on update in the control panel causes the error i have not yet been able to replicate using something like curl 
unfortunately your environment version used release environment name and version standalone server type and version oracle enterprise linux oracle cloud server operating system and version linux smp tue mar pdt gnu linux | 1 |

386,154 | 11,432,807,212 | IssuesEvent | 2020-02-04 14:41:39 | ooni/backend | https://api.github.com/repos/ooni/backend | closed | Introduce measurement count tables | enhancement ooni/pipeline priority/medium | Analysis scripts and the private API often use count() on measurements.

Investigate introducing tables that contains msm counts or extend the ones named ooexpl* to improve speed. | 1.0 | Introduce measurement count tables - Analysis scripts and the private API often use count() on measurements.

Investigate introducing tables that contains msm counts or extend the ones named ooexpl* to improve speed. | priority | introduce measurement count tables analysis scripts and the private api often use count on measurements investigate introducing tables that contains msm counts or extend the ones named ooexpl to improve speed | 1 |

166,438 | 6,304,691,065 | IssuesEvent | 2017-07-21 16:29:45 | vmware/vic | https://api.github.com/repos/vmware/vic | opened | Incorrect IP address reported by ovftool | kind/bug priority/medium product/ova | Potentially related to:

https://github.com/vmware/vic/issues/4995

Powering on VM: VIC-mike

Task Completed

Received IP address: 172.17.0.1

Completed successfully

172.17.0.1 is definitely not the right address... | 1.0 | Incorrect IP address reported by ovftool - Potentially related to:

https://github.com/vmware/vic/issues/4995

Powering on VM: VIC-mike

Task Completed

Received IP address: 172.17.0.1

Completed successfully

172.17.0.1 is definitely not the right address... | priority | incorrect ip address reported by ovftool potentially related to powering on vm vic mike task completed received ip address completed successfully is definitely not the right address | 1 |

760,403 | 26,638,431,785 | IssuesEvent | 2023-01-25 00:49:23 | gabrielagqueiroz/portifolio | https://api.github.com/repos/gabrielagqueiroz/portifolio | closed | Add my contact details | Priority: Medium Weight:3 Type: Feature | ## Information

- [ ] Linkedin

- [ ] Email

- [ ] Telefone (Whatsapp/Telegram)

- [ ] Github | 1.0 | Adicionar meus dados de contato - ## Informações

- [ ] Linkedin

- [ ] Email

- [ ] Phone (Whatsapp/Telegram)

- [ ] Github | priority | add my contact details information linkedin email phone whatsapp telegram github | 1 |

248,653 | 7,934,720,918 | IssuesEvent | 2018-07-08 22:38:35 | commercialhaskell/hindent | https://api.github.com/repos/commercialhaskell/hindent | closed | Misaligned comment at top of do-block | component: hindent priority: medium type: bug | ```

long_function x = do

-- bla

let y = z

return z

```

becomes

```

long_function x

-- bla

= do

let y = z

return z

```

Version `hindent 5.2.1`, built from current master.

---

**Update** at v5.2.2, c2ac3e3ce57c834525dc8ec5ca87d5e8d728b69b:

The output is now

```

long_function x

-- bla

= do

let y = z

return z

``` | 1.0 | Misaligned comment at top of do-block - ```

long_function x = do

-- bla

let y = z

return z

```

becomes

```

long_function x

-- bla

= do

let y = z

return z

```

Version `hindent 5.2.1`, built from current master.

---

**Update** at v5.2.2, c2ac3e3ce57c834525dc8ec5ca87d5e8d728b69b:

The output is now

```

long_function x

-- bla

= do

let y = z

return z

``` | priority | misaligned comment at top of do block long function x do bla let y z return z becomes long function x bla do let y z return z version hindent built from current master update at the output is now long function x bla do let y z return z | 1 |

426,037 | 12,366,254,406 | IssuesEvent | 2020-05-18 10:07:58 | sunpy/sunpy | https://api.github.com/repos/sunpy/sunpy | closed | map.draw_rectangle API is inconsistent with submap | Effort Medium Feature Request Hacktoberfest Package Novice Priority High map | submap uses top right and bottom left and draw rectangle uses bottom right width and height. This is stupid.

This is especially stupid as it prevents you from plotting a rectangle in the coordinates of one image over another. | 1.0 | map.draw_rectangle API is inconsistent with submap - submap uses top right and bottom left and draw rectangle uses bottom right width and height. This is stupid.

This is especially stupid as it prevents you from plotting a rectangle in the coordinates of one image over another. | priority | map draw rectangle api is inconsistent with submap submap uses top right and bottom left and draw rectangle uses bottom right width and height this is stupid this is especially stupid as it prevents you from plotting a rectangle in the coordinates of one image over another | 1 |

593,920 | 18,020,204,865 | IssuesEvent | 2021-09-16 18:23:29 | hashicorp/flight | https://api.github.com/repos/hashicorp/flight | closed | Set the value of the data-test-icon attribute to the name of the icon | priority: medium 1.0 | Small thing, but `<svg data-test-icon={{@name}} ...>` might be useful for testing? | 1.0 | Set the value of the data-test-icon attribute to the name of the icon - Small thing, but `<svg data-test-icon={{@name}} ...>` might be useful for testing? | priority | set the value of the data test icon attribute to the name of the icon small thing but might be useful for testing | 1 |

33,237 | 2,763,187,724 | IssuesEvent | 2015-04-29 07:20:34 | less/less.js | https://api.github.com/repos/less/less.js | closed | Latest less.js (2.1.1) behaves async in IE | Browser Bug Medium Priority | Latest less.js (2.1.1) behaves async in IE (ver 11) even when async:false is set explicitly.

The file processed is complex less with multiple @import commands that take about 1 second to load and process on dev machine, during which time IE shows style-free html. Firefox and Chrome behave correctly. Had to revert to 1.7.5 which behaves correctly.

Perhaps related to the recent promises changes? Perhaps setTimeout(function(){}, 0); allows IE to proceed in parallel and partially render the page? | 1.0 | Latest less.js (2.1.1) behaves async in IE - Latest less.js (2.1.1) behaves async in IE (ver 11) even when async:false is set explicitly.

The file processed is complex less with multiple @import commands that take about 1 second to load and process on dev machine, during which time IE shows style-free html. Firefox and Chrome behave correctly. Had to revert to 1.7.5 which behaves correctly.

Perhaps related to the recent promises changes? Perhaps setTimeout(function(){}, 0); allows IE to proceed in parallel and partially render the page? | priority | latest less js behaves async in ie latest less js behaves async in ie ver even when async false is set explicitly the file processed is complex less with multiple import commands that take about second to load and process on dev machine during which time ie shows style free html firefox and chrome behave correctly had to revert to which behaves correctly perhaps related to the recent promises changes perhaps settimeout function allows ie to proceed in parallel and partially render the page | 1 |

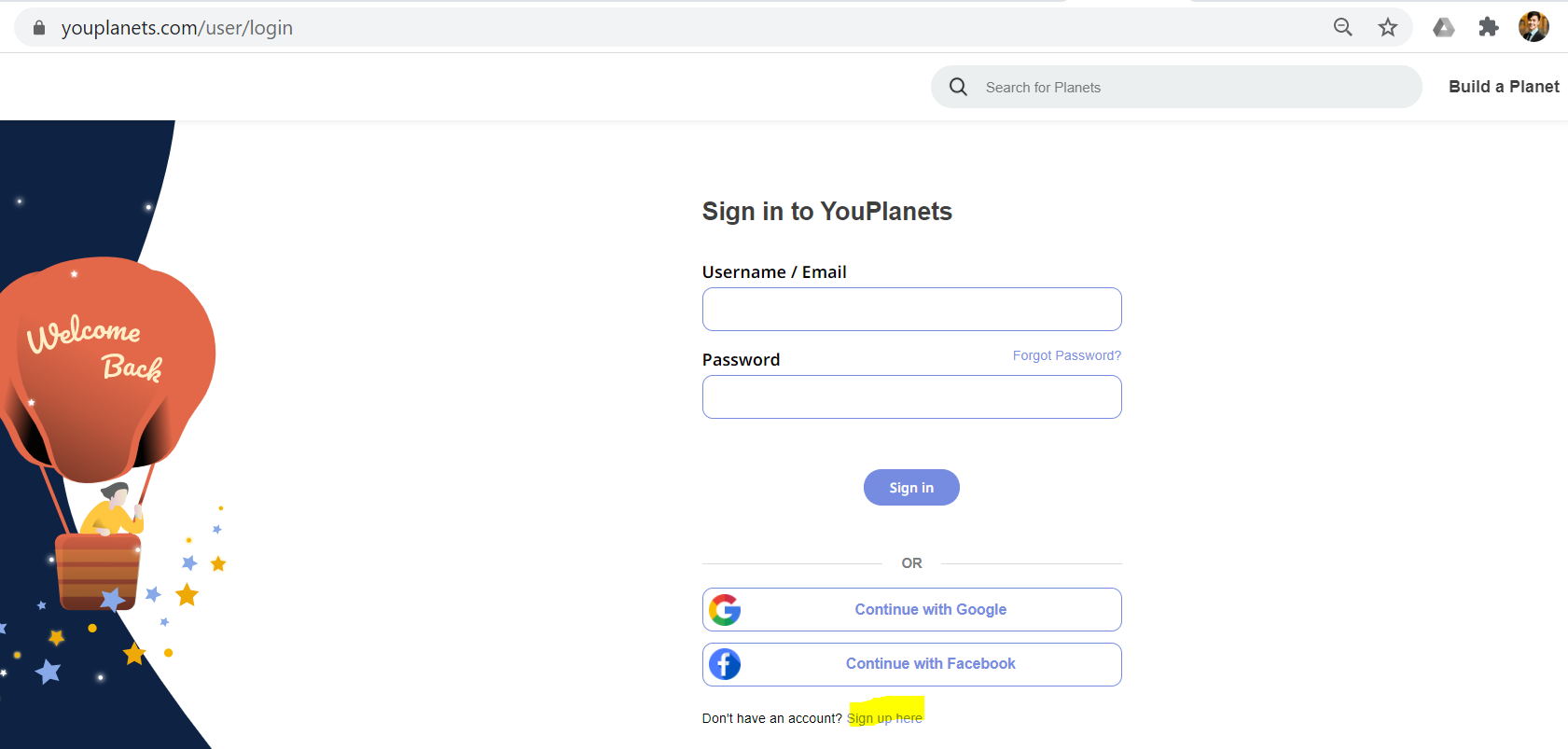

514,861 | 14,945,727,029 | IssuesEvent | 2021-01-26 04:56:28 | Plaxy-Technologies-Inc/YouPlanets-Bug-Report | https://api.github.com/repos/Plaxy-Technologies-Inc/YouPlanets-Bug-Report | closed | SIGN UP button too small - not visible on HomePage | Priority: Medium | First, it's not available on the homepage. So you already have to find it, which hinders new user enrollment.

Then, once you click on "Sign In", the sign up button is all the way down below, in small font. I missed it a couple times.

Sign-up option should be front and center, and very easy to see, because that's how we obtain new clients and new users.

| 1.0 | SIGN UP button too small - not visible on HomePage - First, it's not available on the homepage. So you already have to find it, which hinders new user enrollment.

Then, once you click on "Sign In", the sign up button is all the way down below, in small font. I missed it a couple times.

Sign-up option should be front and center, and very easy to see, because that's how we obtain new clients and new users.

| priority | sign up button too small not visible on homepage first it s not available on the homepage so you already have to find it which hinders new user enrollment then once you click on sign in the sign up button is all the way down below in small font i missed it a couple times sign up option should be front and center and very easy to see because that s how we obtain new clients and new users | 1 |

38,217 | 2,842,252,199 | IssuesEvent | 2015-05-28 08:11:15 | soi-toolkit/soi-toolkit-mule | https://api.github.com/repos/soi-toolkit/soi-toolkit-mule | closed | Introduce Catch/Rollback exception strategies for improved logging and fault handling | AffectsVersion-v0.6.0 BackwardCompatibility-MinorChange Component-tools-templates Milestone-Release0.7.0 Priority-Medium Type-Review | Original [issue 359](https://code.google.com/p/soi-toolkit/issues/detail?id=359) created by soi-toolkit on 2013-11-09T09:17:16.000Z:

The current ServiceExceptionStrategy-implementation (generated at the bottom of flows):

<custom-exception-strategy class="org.soitoolkit.commons.mule.error.ServiceExceptionStrategy"/>

lacks features:

1. Control over retry-handling: for which kind of exceptions should processing be retried/aborted?

Note: retry-handling currently falls back on individual transports, typically using JMS-inbound with retry-parameters.

2. Access to MuleMessage when exceptions occur: needed for logging error-context like message-headers, specifically correlationId for a flow.

Note: in current ServiceExceptionStrategy the MuleMessage is not available in all cases, like when a TransformerException occurs. | 1.0 | Introduce Catch/Rollback exception strategies for improved logging and fault handling - Original [issue 359](https://code.google.com/p/soi-toolkit/issues/detail?id=359) created by soi-toolkit on 2013-11-09T09:17:16.000Z:

The current ServiceExceptionStrategy-implementation (generated at the bottom of flows):

<custom-exception-strategy class="org.soitoolkit.commons.mule.error.ServiceExceptionStrategy"/>

lacks features:

1. Control over retry-handling: for which kind of exceptions should processing be retried/aborted?

Note: retry-handling currently falls back on individual transports, typically using JMS-inbound with retry-parameters.

2. Access to MuleMessage when exceptions occur: needed for logging error-context like message-headers, specifically correlationId for a flow.

Note: in current ServiceExceptionStrategy the MuleMessage is not available in all cases, like when a TransformerException occurs. | priority | introduce catch rollback exception strategies for improved logging and fault handling original created by soi toolkit on the current serviceexceptionstrategy implementation generated at the bottom of flows lt custom exception strategy class quot org soitoolkit commons mule error serviceexceptionstrategy quot gt lacks features control over retry handling for which kind of exceptions should processing be retried aborted note retry handling currently falls back on individual transports typically using jms inbound with retry parameters access to mulemessage when exceptions occur needed for logging error context like message headers specifically correlationid for a flow note in current serviceexceptionstrategy the mulemessage is not available in all cases like when a transformerexception occurs | 1 |

556,546 | 16,485,586,780 | IssuesEvent | 2021-05-24 17:27:07 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | opened | [0.9.3.4 beta release-226]Pollution spread only likes vertical | Category: Gameplay Priority: Medium Regression Type: Bug | Pollution expand seems to only really spread N/S

Brick line is pollution from 1 stockpile after 24 hours.

Reinforced concrete line is pollution from 4 stockpiles after another 24 hours. No change in East/West direction

| 1.0 | [0.9.3.4 beta release-226]Pollution spread only likes vertical - Pollution expand seems to only really spread N/S

Brick line is pollution from 1 stockpile after 24 hours.

Reinforced concrete line is pollution from 4 stockpiles after another 24 hours. No change in East/West direction

| priority | pollution spread only likes vertical pollution expand seems to only really spread n s brick line is pollution from stockpile after hours reinforced concrete line is pollution from stockpiles after another hours no change in east west direction | 1 |

84,126 | 3,654,276,145 | IssuesEvent | 2016-02-17 11:44:38 | brunoais/javadude | https://api.github.com/repos/brunoais/javadude | closed | Annotations - add binding code (for bean-bean binding, swing, swt, etc) | auto-migrated Priority-Medium Project-Annotations Type-Enhancement | ```

add binding code (for bean-bean binding, swing, swt, etc)

```

Original issue reported on code.google.com by `scott%ja...@gtempaccount.com` on 24 Dec 2008 at 10:12 | 1.0 | Annotations - add binding code (for bean-bean binding, swing, swt, etc) - ```

add binding code (for bean-bean binding, swing, swt, etc)

```

Original issue reported on code.google.com by `scott%ja...@gtempaccount.com` on 24 Dec 2008 at 10:12 | priority | annotations add binding code for bean bean binding swing swt etc add binding code for bean bean binding swing swt etc original issue reported on code google com by scott ja gtempaccount com on dec at | 1 |

585,826 | 17,535,692,291 | IssuesEvent | 2021-08-12 06:04:07 | inverse-inc/packetfence | https://api.github.com/repos/inverse-inc/packetfence | closed | web admin: SNMP version is prefixed with a useless "v" | Type: Bug Priority: Medium | **Describe the bug**

When you specify a SNMP version for a switch using GUI, you have to choose between 3 values in a dropdown:

- v1

- v2c

- v3

but API call sent doesn't contain the **v** letter and value saved in `switches.conf` too.

**To Reproduce**

Steps to reproduce the behavior:

1. Create a new switch with SNMP Version equals to `v2c`

2. Check value sent by API call: `SNMPVersion: 2c`

3. Check value saved in `switches.conf`: `SNMPVersion=2c`

**Expected behavior**

We should remove `v` letter in dropdown list of GUI.

**Additional context**

Issue seems on API side:

```json

# pfperl-api get -M OPTIONS /api/v1/config/switches | jq .meta.SNMPVersion

{

"allow_custom": false,

"allowed": [

{

"text": "",

"value": ""

},

{

"text": "v1",

"value": "1"

},

{

"text": "v2c",

"value": "2c"

},

{

"text": "v3",

"value": "3"

}

],

"default": null,

"placeholder": "1",

"required": false,

"type": "string"

}

```

| 1.0 | web admin: SNMP version is prefixed with a useless "v" - **Describe the bug**

When you specify a SNMP version for a switch using GUI, you have to choose between 3 values in a dropdown:

- v1

- v2c

- v3

but API call sent doesn't contain the **v** letter and value saved in `switches.conf` too.

**To Reproduce**

Steps to reproduce the behavior:

1. Create a new switch with SNMP Version equals to `v2c`

2. Check value sent by API call: `SNMPVersion: 2c`

3. Check value saved in `switches.conf`: `SNMPVersion=2c`

**Expected behavior**

We should remove `v` letter in dropdown list of GUI.

**Additional context**

Issue seems on API side:

```json

# pfperl-api get -M OPTIONS /api/v1/config/switches | jq .meta.SNMPVersion

{

"allow_custom": false,

"allowed": [

{

"text": "",

"value": ""

},

{

"text": "v1",

"value": "1"

},

{

"text": "v2c",

"value": "2c"

},

{

"text": "v3",

"value": "3"

}

],

"default": null,

"placeholder": "1",

"required": false,

"type": "string"

}

```

| priority | web admin snmp version is prefixed with a useless v describe the bug when you specify a snmp version for a switch using gui you have to choose between values in a dropdown but api call sent doesn t contain the v letter and value saved in switches conf too to reproduce steps to reproduce the behavior create a new switch with snmp version equals to check value sent by api call snmpversion check value saved in switches conf snmpversion expected behavior we should remove v letter in dropdown list of gui additional context issue seems on api side json pfperl api get m options api config switches jq meta snmpversion allow custom false allowed text value text value text value text value default null placeholder required false type string | 1 |

251,701 | 8,025,931,700 | IssuesEvent | 2018-07-27 00:40:35 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | Campfire Speed | Medium Priority | talked to Pam on discord about this a little.

Here is a GIF on Charred Tomato

[](https://gyazo.com/d2d07c978be78877770001a47e871927)

The TimeMult is set to 1.0 and everything else is running fine in seconds but anything in CampFire was going pretty fast, half speed basically. | 1.0 | Campfire Speed - talked to Pam on discord about this a little.

Here is a GIF on Charred Tomato

[](https://gyazo.com/d2d07c978be78877770001a47e871927)

The TimeMult is set to 1.0 and everything else is running fine in seconds but anything in CampFire was going pretty fast, half speed basically. | priority | campfire speed talked to pam on discord about this a little here is a gif on charred tomato the timemult is set to and everything else is running fine in seconds but anything in campfire was going pretty fast half speed basically | 1 |

81,253 | 3,588,165,252 | IssuesEvent | 2016-01-30 20:48:27 | marvinlabs/customer-area | https://api.github.com/repos/marvinlabs/customer-area | closed | Duplicate Notifications | enhancement Premium add-ons Priority - medium | 1) If you are the author of a conversation, and a member of the project to which the conversation is owned by, you will get two emails.

Adding $recipient_ids = array_unique($recipient_ids); to line 456 of notifications-addon.class.php resolves this

2) Functions "on_private_post_published" and "on_conversation_started" need a catch to stop an email being sent to author, similar to "on_new_reply_to_conversation" below;

if ( $user_id==$reply_author_id ) continue;

3) New conversation reply fires "on_private_post_published" and "on_new_reply_to_conversation" functions. Without the catch in point 2), the sender will get two emails as well.

| 1.0 | Duplicate Notifications - 1) If you are the author of a conversation, and a member of the project to which the conversation is owned by, you will get two emails.

Adding $recipient_ids = array_unique($recipient_ids); to line 456 of notifications-addon.class.php resolves this

2) Functions "on_private_post_published" and "on_conversation_started" need a catch to stop an email being sent to author, similar to "on_new_reply_to_conversation" below;

if ( $user_id==$reply_author_id ) continue;

3) New conversation reply fires "on_private_post_published" and "on_new_reply_to_conversation" functions. Without the catch in point 2), the sender will get two emails as well.

| priority | duplicate notifications if you are the author of a conversation and a member of the project to which the conversation is owned by you will get two emails adding recipient ids array unique recipient ids to line of notifications addon class php resolves this functions on private post published and on conversation started need a catch to stop an email being sent to author similar to on new reply to conversation below if user id reply author id continue new conversation reply fires on private post published and on new reply to conversation functions without the catch in point the sender will get two emails as well | 1 |

635,178 | 20,381,168,615 | IssuesEvent | 2022-02-21 22:04:31 | GDSCUTM-CommunityProjects/UTimeManager | https://api.github.com/repos/GDSCUTM-CommunityProjects/UTimeManager | closed | Login & Register (Backend) | Backend: Enhancement Priority: Medium | As a user, I want to log in so that I can access all my tasks for the week

Sub Tasks

- [x] Upon registration, data should be saved on the database (email, password, etc.)

- [ ] Upon registration, user information should be sanitized

Acceptance Criteria

- [x] Upon a successful login a token should be returned back to the user (including correct status codes, payload returns, etc.)

- [x] Upon a failed login the correct status code along with any payloads should be returned

Optional (Only done with the frontend)

- [ ] Emailing a user their token to activate their account | 1.0 | Login & Register (Backend) - As a user, I want to log in so that I can access all my tasks for the week

Sub Tasks

- [x] Upon registration, data should be saved on the database (email, password, etc.)

- [ ] Upon registration, user information should be sanitized

Acceptance Criteria

- [x] Upon a successful login a token should be returned back to the user (including correct status codes, payload returns, etc.)

- [x] Upon a failed login the correct status code along with any payloads should be returned

Optional (Only done with the frontend)

- [ ] Emailing a user their token to activate their account | priority | login register backend as a user i want to log in so that i can access all my tasks for the week sub tasks upon registration data should be saved on the database email password etc upon registration user information should be sanitized acceptance criteria upon a successful login a token should be returned back to the user including correct status codes payload returns etc upon a failed login the correct status code along with any payloads should be returned optional only done with the frontend emailing a user their token to activate their account | 1 |

146,649 | 5,625,618,242 | IssuesEvent | 2017-04-04 19:50:32 | phetsims/unit-rates | https://api.github.com/repos/phetsims/unit-rates | opened | Create sim primer | priority:3-medium | Tracking sim primer creation in this issue so that #1 can be closed.

Target Deadlines

- [ ] Script drafted by 4/14/17

- [ ] Script reviewed by 4/28/17

- [ ] First recording by 5/12/17

- [ ] Primer live by 6/1/17 | 1.0 | Create sim primer - Tracking sim primer creation in this issue so that #1 can be closed.

Target Deadlines

- [ ] Script drafted by 4/14/17

- [ ] Script reviewed by 4/28/17

- [ ] First recording by 5/12/17

- [ ] Primer live by 6/1/17 | priority | create sim primer tracking sim primer creation in this issue so that can be closed target deadlines script drafted by script reviewed by first recording by primer live by | 1 |

5,380 | 2,575,046,691 | IssuesEvent | 2015-02-11 20:33:24 | javalite/activejdbc | https://api.github.com/repos/javalite/activejdbc | closed | Add Expectation.shouldContain(String) | enhancement imported Priority-Medium | _Original author: ipolevoy@gmail.com (July 28, 2011 23:25:21)_

so as not to write things like

a(myString.contains("hello")).shouldBeTrue();

better syntax:

a(myString).shouldContain("hello");

_Original issue: http://code.google.com/p/activejdbc/issues/detail?id=100_ | 1.0 | Add Expectation.shouldContain(String) - _Original author: ipolevoy@gmail.com (July 28, 2011 23:25:21)_

so as not to write things like

a(myString.contains("hello")).shouldBeTrue();

better syntax:

a(myString).shouldContain("hello");

_Original issue: http://code.google.com/p/activejdbc/issues/detail?id=100_ | priority | add expectation shouldcontain string original author ipolevoy gmail com july so as not to write things like a mystring contains quot hello quot shouldbetrue better syntax a mystring shouldcontain quot hello quot original issue | 1 |

591,650 | 17,857,594,420 | IssuesEvent | 2021-09-05 10:41:09 | GIST-Petition-Site-Project/GIST-petition-web | https://api.github.com/repos/GIST-Petition-Site-Project/GIST-petition-web | opened | 페이지 이동 시 스크롤 조정 | Type: Feature/UI Type: Feature/Function Status: To Do Priority: Medium | ## Feature description

<li> 페이지를 이동할 때, 스크롤은 그대로여서 페이지를 이동할 때마다 스크롤을 맨 위로 조정함.

<li> 첫 페이지에서 메인 헤더의 g-talk g-talk 로고를 클릭하면 reload하게 만듦

<li> media query 적용한 메인 메뉴 심볼?에 마우스 커서를 올려도 반응이 없어서 cursor: pointer 적용함.

### Use cases

## Benefits

For whom and why.

## Requirements

## Links / references

| 1.0 | 페이지 이동 시 스크롤 조정 - ## Feature description

<li> 페이지를 이동할 때, 스크롤은 그대로여서 페이지를 이동할 때마다 스크롤을 맨 위로 조정함.

<li> 첫 페이지에서 메인 헤더의 g-talk g-talk 로고를 클릭하면 reload하게 만듦

<li> media query 적용한 메인 메뉴 심볼?에 마우스 커서를 올려도 반응이 없어서 cursor: pointer 적용함.

### Use cases

## Benefits

For whom and why.

## Requirements

## Links / references

| priority | 페이지 이동 시 스크롤 조정 feature description 페이지를 이동할 때 스크롤은 그대로여서 페이지를 이동할 때마다 스크롤을 맨 위로 조정함 첫 페이지에서 메인 헤더의 g talk g talk 로고를 클릭하면 reload하게 만듦 media query 적용한 메인 메뉴 심볼 에 마우스 커서를 올려도 반응이 없어서 cursor pointer 적용함 use cases benefits for whom and why requirements links references | 1 |

594,022 | 18,022,109,541 | IssuesEvent | 2021-09-16 20:54:18 | status-im/status-desktop | https://api.github.com/repos/status-im/status-desktop | closed | [Windows] Right click in task bar is gone | bug windows general priority 2: medium | 1. Install windows build and run it

2. Go to task bar -> show hidden icons

3. Right click the Status icon

**Actual result:** context menu is gone

**Expected result:** right click on Status icon opens context menu with applicable actions (right click -> quit app for example)

<img width="1552" alt="Screenshot 2021-09-10 at 10 51 05" src="https://user-images.githubusercontent.com/82375995/132820722-2bd30d9d-000a-4436-9c37-42ea0bc75964.png">

https://user-images.githubusercontent.com/82375995/132820690-1acdd498-64e3-41f0-ae65-c5d9ede4591a.mov

| 1.0 | [Windows] Right click in task bar is gone - 1. Install windows build and run it

2. Go to task bar -> show hidden icons

3. Right click the Status icon

**Actual result:** context menu is gone

**Expected result:** right click on Status icon opens context menu with applicable actions (right click -> quit app for example)

<img width="1552" alt="Screenshot 2021-09-10 at 10 51 05" src="https://user-images.githubusercontent.com/82375995/132820722-2bd30d9d-000a-4436-9c37-42ea0bc75964.png">

https://user-images.githubusercontent.com/82375995/132820690-1acdd498-64e3-41f0-ae65-c5d9ede4591a.mov

| priority | right click in task bar is gone install windows build and run it go to task bar show hidden icons right click the status icon actual result context menu is gone expected result right click on status icon opens context menu with applicable actions right click quit app for example img width alt screenshot at src | 1 |

309,535 | 9,476,618,977 | IssuesEvent | 2019-04-19 15:39:53 | CosminNechifor/IKHNAIE | https://api.github.com/repos/CosminNechifor/IKHNAIE | closed | Manager should be the only contract that can modify the state of other contracts. | Medium Priority | ## Manager contract logic and implementation

The ``Manager`` contract should be the only contract that can modify the state of the deployed ``Component``s and ``Registry``.

## Needs to be taken care of:

- When a **ChildComponent** is removed from a **ParentComponent** → manager should do the following changes:

- ~~The **ParentComponent** should change it's state into ``Broken``.~~ **REMOVED BECAUSE OF COUNTER EXAMPLE:** If we take the windows out of a car, it would not be considered broken but it should show that it's not as in the **original state**.

- **ChildComponent** should have the ``address(0)`` as parent.

- **ChildComponent** is flaged as broken:

- ~~**ParentComponent** should become ``Broken`` as well till we replace the missing component. (The propagation will be done till we reach the top level ``Component``)~~ Not sure if the propagation should go to the top. The Root component could still work normally. Example: if you take the radio out of the car, that doesn't make the car broken. Instead the logic should change a little bit.

- When a **ParentComponent** is flaged as broken only the parent component should become broken.

| 1.0 | Manager should be the only contract that can modify the state of other contracts. - ## Manager contract logic and implementation

The ``Manager`` contract should be the only contract that can modify the state of the deployed ``Component``s and ``Registry``.

## Needs to be taken care of:

- When a **ChildComponent** is removed from a **ParentComponent** → manager should do the following changes:

- ~~The **ParentComponent** should change it's state into ``Broken``.~~ **REMOVED BECAUSE OF COUNTER EXAMPLE:** If we take the windows out of a car, it would not be considered broken but it should show that it's not as in the **original state**.

- **ChildComponent** should have the ``address(0)`` as parent.

- **ChildComponent** is flaged as broken:

- ~~**ParentComponent** should become ``Broken`` as well till we replace the missing component. (The propagation will be done till we reach the top level ``Component``)~~ Not sure if the propagation should go to the top. The Root component could still work normally. Example: if you take the radio out of the car, that doesn't make the car broken. Instead the logic should change a little bit.

- When a **ParentComponent** is flaged as broken only the parent component should become broken.

| priority | manager should be the only contract that can modify the state of other contracts manager contract logic and implementation the manager contract should be the only contract that can modify the state of the deployed component s and registry needs to be taken care of when a childcomponent is removed from a parentcomponent rarr manager should do the following changes the parentcomponent should change it s state into broken removed because of counter example if we take the windows out of a car it would not be considered broken but it should show that it s not as in the original state childcomponent should have the address as parent childcomponent is flaged as broken parentcomponent should become broken as well till we replace the missing component the propagation will be done till we reach the top level component not sure if the propagation should go to the top the root component could still work normally example if you take the radio out of the car that doesn t make the car broken instead the logic should change a little bit when a parentcomponent is flaged as broken only the parent component should become broken | 1 |

416,019 | 12,138,458,525 | IssuesEvent | 2020-04-23 17:16:30 | AbsaOSS/enceladus | https://api.github.com/repos/AbsaOSS/enceladus | closed | Make sure 'Source' and 'Raw' checkpoints are present when Standardization starts | Standardization feature priority: medium | ## Background

We need to start more strict validations of incoming _INFO files.

## Feature

Make sure 'Source' and 'Raw' checkpoints are present when Standardization starts.

## Additional context

It might require changes to Atum to allow clients access to checkpoints (instance of `ControlMeasure`)

This is related to #1186 | 1.0 | Make sure 'Source' and 'Raw' checkpoints are present when Standardization starts - ## Background

We need to start more strict validations of incoming _INFO files.

## Feature

Make sure 'Source' and 'Raw' checkpoints are present when Standardization starts.

## Additional context

It might require changes to Atum to allow clients access to checkpoints (instance of `ControlMeasure`)

This is related to #1186 | priority | make sure source and raw checkpoints are present when standardization starts background we need to start more strict validations of incoming info files feature make sure source and raw checkpoints are present when standardization starts additional context it might require changes to atum to allow clients access to checkpoints instance of controlmeasure this is related to | 1 |

743,058 | 25,885,538,588 | IssuesEvent | 2022-12-14 14:19:10 | ncssar/radiolog | https://api.github.com/repos/ncssar/radiolog | closed | pywintypes error 31 (ShellExecute error) when printing | bug Priority:Medium | From the transcript:

```

183754:PRINT radio log

183754:teamFilterList=['']

183754:generating radio log pdf: C:\Users\SAR 425\Documents\RadioLog Backups\Testing_2022_12_11_183332\Testing_2022_12_11_183332_OP1.pdf

183754:length:8

183754:valid logo file C:\Users\SAR 425\RadioLog\.config\radiolog_logo.jpg

183754:Page number:1

183754:Height:43.199999999999996

183754:Pagesize:(792.0, 612.0)

183755:done drawing printLogHeaderFooter canvas

183755:end of printLogHeaderFooter

Uncaught exception

Traceback (most recent call last):

File "radiolog.py", line 5084, in accept

File "radiolog.py", line 2611, in printLog

pywintypes.error: (31, 'ShellExecute', 'A device attached to the system is not functioning.')

183819:PRINT radio log

183819:teamFilterList=['']

183819:generating radio log pdf: C:\Users\SAR 425\Documents\RadioLog Backups\Testing_2022_12_11_183332\Testing_2022_12_11_183332_OP1.pdf

183819:length:8

183819:valid logo file C:\Users\SAR 425\RadioLog\.config\radiolog_logo.jpg

183819:Page number:1

183819:Height:43.199999999999996

183819:Pagesize:(792.0, 612.0)

183819:done drawing printLogHeaderFooter canvas

183819:end of printLogHeaderFooter

Uncaught exception

Traceback (most recent call last):

File "radiolog.py", line 5084, in accept

File "radiolog.py", line 2611, in printLog

pywintypes.error: (31, 'ShellExecute', 'A device attached to the system is not functioning.')

183822:PRINT team radio logs

183822:teamFilterList=['TeamAlpha', 'TeamBravo']

183822:generating radio log pdf: C:\Users\SAR 425\Documents\RadioLog Backups\Testing_2022_12_11_183332\Testing_2022_12_11_183332_teams_OP1.pdf

183822:length:6

183822:length:3

183822:valid logo file C:\Users\SAR 425\RadioLog\.config\radiolog_logo.jpg

183822:Page number:1

183822:Height:43.199999999999996

183822:Pagesize:(792.0, 612.0)

183822:done drawing printLogHeaderFooter canvas

183822:end of printLogHeaderFooter

Uncaught exception

Traceback (most recent call last):

File "radiolog.py", line 5087, in accept

File "radiolog.py", line 2619, in printTeamLogs

File "radiolog.py", line 2611, in printLog

pywintypes.error: (31, 'ShellExecute', 'A device attached to the system is not functioning.')

183824:PRINT clue log

183824:appending: ['', 'Radio Log Begins: Sun Dec 11, 2022', '', '1833', '', '', '', '', '']

183824:Nothing to print for specified operational period 1

```

This was reported by @RadiosPRN.

A quick google of that error shows that a few folks determined that the problem was that no pdf reader application was installed, and/or it was not set as the default application for opening pdf files: https://stackoverflow.com/questions/36022695

Sure enough, if I uninstall Acrobat Reader, then printing from radiolog shows this:

```

175633:PRINT radio log

175633:teamFilterList=['']

175633:generating radio log pdf: C:\Users\caver\RadioLog\New_Incident_2022_12_11_161621\New_Incident_2022_12_11_161621_OP1.pdf

175633:length:8

175633:valid logo file C:\Users\caver\RadioLog\.config\radiolog_logo.jpg

175633:Page number:1

175633:Height:43.199999999999996

175633:Pagesize:(792.0, 612.0)

175633:done drawing printLogHeaderFooter canvas

175633:end of printLogHeaderFooter

Traceback (most recent call last):

File "C:\Users\caver\Documents\GitHub\radiolog\radiolog.py", line 5086, in accept

self.parent.printLog(opPeriod)

File "C:\Users\caver\Documents\GitHub\radiolog\radiolog.py", line 2613, in printLog

win32api.ShellExecute(0,"print",pdfName,'/d:"%s"' % win32print.GetDefaultPrinter(),".",0)

pywintypes.error: (31, 'ShellExecute', 'A device attached to the system is not functioning.')

```

The error syntax is a bit different - not sure why - but the underlying error 31 seems to be the same.

This does match the behavior of what @RadiosPRN reported - you can only save one pdf file at a time, because the print failure for any given doc causes the subsequent pdf saves to be skipped.

So this issue could have two fixes:

1) find a way to print without needing a pdf reader to be installed (and set as the default application for pdfs)

2) if a print fails, continue with the save of the other requested pdfs | 1.0 | pywintypes error 31 (ShellExecute error) when printing - From the transcript:

```

183754:PRINT radio log

183754:teamFilterList=['']

183754:generating radio log pdf: C:\Users\SAR 425\Documents\RadioLog Backups\Testing_2022_12_11_183332\Testing_2022_12_11_183332_OP1.pdf

183754:length:8

183754:valid logo file C:\Users\SAR 425\RadioLog\.config\radiolog_logo.jpg

183754:Page number:1

183754:Height:43.199999999999996

183754:Pagesize:(792.0, 612.0)

183755:done drawing printLogHeaderFooter canvas

183755:end of printLogHeaderFooter

Uncaught exception

Traceback (most recent call last):

File "radiolog.py", line 5084, in accept

File "radiolog.py", line 2611, in printLog

pywintypes.error: (31, 'ShellExecute', 'A device attached to the system is not functioning.')

183819:PRINT radio log

183819:teamFilterList=['']

183819:generating radio log pdf: C:\Users\SAR 425\Documents\RadioLog Backups\Testing_2022_12_11_183332\Testing_2022_12_11_183332_OP1.pdf

183819:length:8

183819:valid logo file C:\Users\SAR 425\RadioLog\.config\radiolog_logo.jpg

183819:Page number:1

183819:Height:43.199999999999996

183819:Pagesize:(792.0, 612.0)

183819:done drawing printLogHeaderFooter canvas

183819:end of printLogHeaderFooter

Uncaught exception

Traceback (most recent call last):

File "radiolog.py", line 5084, in accept

File "radiolog.py", line 2611, in printLog

pywintypes.error: (31, 'ShellExecute', 'A device attached to the system is not functioning.')

183822:PRINT team radio logs

183822:teamFilterList=['TeamAlpha', 'TeamBravo']

183822:generating radio log pdf: C:\Users\SAR 425\Documents\RadioLog Backups\Testing_2022_12_11_183332\Testing_2022_12_11_183332_teams_OP1.pdf

183822:length:6

183822:length:3

183822:valid logo file C:\Users\SAR 425\RadioLog\.config\radiolog_logo.jpg

183822:Page number:1

183822:Height:43.199999999999996

183822:Pagesize:(792.0, 612.0)

183822:done drawing printLogHeaderFooter canvas

183822:end of printLogHeaderFooter

Uncaught exception

Traceback (most recent call last):

File "radiolog.py", line 5087, in accept

File "radiolog.py", line 2619, in printTeamLogs

File "radiolog.py", line 2611, in printLog

pywintypes.error: (31, 'ShellExecute', 'A device attached to the system is not functioning.')

183824:PRINT clue log

183824:appending: ['', 'Radio Log Begins: Sun Dec 11, 2022', '', '1833', '', '', '', '', '']

183824:Nothing to print for specified operational period 1

```

This was reported by @RadiosPRN.

A quick google of that error shows that a few folks determined that the problem was that no pdf reader application was installed, and/or it was not set as the default application for opening pdf files: https://stackoverflow.com/questions/36022695

Sure enough, if I uninstall Acrobat Reader, then printing from radiolog shows this:

```

175633:PRINT radio log

175633:teamFilterList=['']

175633:generating radio log pdf: C:\Users\caver\RadioLog\New_Incident_2022_12_11_161621\New_Incident_2022_12_11_161621_OP1.pdf

175633:length:8

175633:valid logo file C:\Users\caver\RadioLog\.config\radiolog_logo.jpg

175633:Page number:1

175633:Height:43.199999999999996

175633:Pagesize:(792.0, 612.0)

175633:done drawing printLogHeaderFooter canvas

175633:end of printLogHeaderFooter

Traceback (most recent call last):

File "C:\Users\caver\Documents\GitHub\radiolog\radiolog.py", line 5086, in accept

self.parent.printLog(opPeriod)

File "C:\Users\caver\Documents\GitHub\radiolog\radiolog.py", line 2613, in printLog

win32api.ShellExecute(0,"print",pdfName,'/d:"%s"' % win32print.GetDefaultPrinter(),".",0)

pywintypes.error: (31, 'ShellExecute', 'A device attached to the system is not functioning.')

```

The error syntax is a bit different - not sure why - but the underlying error 31 seems to be the same.

This does match the behavior of what @RadiosPRN reported - you can only save one pdf file at a time, because the print failure for any given doc causes the subsequent pdf saves to be skipped.

So this issue could have two fixes:

1) find a way to print without needing a pdf reader to be installed (and set as the default application for pdfs)

2) if a print fails, continue with the save of the other requested pdfs | priority | pywintypes error shellexecute error when printing from the transcript print radio log teamfilterlist generating radio log pdf c users sar documents radiolog backups testing testing pdf length valid logo file c users sar radiolog config radiolog logo jpg page number height pagesize done drawing printlogheaderfooter canvas end of printlogheaderfooter uncaught exception traceback most recent call last file radiolog py line in accept file radiolog py line in printlog pywintypes error shellexecute a device attached to the system is not functioning print radio log teamfilterlist generating radio log pdf c users sar documents radiolog backups testing testing pdf length valid logo file c users sar radiolog config radiolog logo jpg page number height pagesize done drawing printlogheaderfooter canvas end of printlogheaderfooter uncaught exception traceback most recent call last file radiolog py line in accept file radiolog py line in printlog pywintypes error shellexecute a device attached to the system is not functioning print team radio logs teamfilterlist generating radio log pdf c users sar documents radiolog backups testing testing teams pdf length length valid logo file c users sar radiolog config radiolog logo jpg page number height pagesize done drawing printlogheaderfooter canvas end of printlogheaderfooter uncaught exception traceback most recent call last file radiolog py line in accept file radiolog py line in printteamlogs file radiolog py line in printlog pywintypes error shellexecute a device attached to the system is not functioning print clue log appending nothing to print for specified operational period this was reported by radiosprn a quick google of that error shows that a few folks determined that the problem was that no pdf reader application was installed and or it was not set as the default application for opening pdf files sure enough if i uninstall acrobat reader 
then printing from radiolog shows this print radio log teamfilterlist generating radio log pdf c users caver radiolog new incident new incident pdf length valid logo file c users caver radiolog config radiolog logo jpg page number height pagesize done drawing printlogheaderfooter canvas end of printlogheaderfooter traceback most recent call last file c users caver documents github radiolog radiolog py line in accept self parent printlog opperiod file c users caver documents github radiolog radiolog py line in printlog shellexecute print pdfname d s getdefaultprinter pywintypes error shellexecute a device attached to the system is not functioning the error syntax is a bit different not sure why but the underlying error seems to be the same this does match the behavior of what radiosprn reported you can only save one pdf file at a time because the print failure for any given doc causes the subsequent pdf saves to be skipped so this issue could have two fixes find a way to print without needing a pdf reader to be installed and set as the default application for pdfs if a print fails continue with the save of the other requested pdfs | 1 |

252,975 | 8,049,608,058 | IssuesEvent | 2018-08-01 10:39:32 | IBM/watson-assistant-workbench | https://api.github.com/repos/IBM/watson-assistant-workbench | opened | Use python library for parsing TOML config files | Priority: medium discussion | We use TOML config format (https://github.com/toml-lang/toml).

Think about using toml python library for parsing (https://pypi.python.org/pypi/toml). | 1.0 | Use python library for parsing TOML config files - We use TOML config format (https://github.com/toml-lang/toml).

Think about using toml python library for parsing (https://pypi.python.org/pypi/toml). | priority | use python library for parsing toml config files we use toml config format think about using toml python library for parsing | 1 |

52,295 | 3,022,484,097 | IssuesEvent | 2015-07-31 20:38:27 | information-artifact-ontology/IAO | https://api.github.com/repos/information-artifact-ontology/IAO | opened | Bibliographic metadata in IAO | imported Priority-Medium | _From [z_califo...@shiftingbalance.org](https://code.google.com/u/113769195097935438546/) on February 15, 2010 15:27:35_

I need to organize a collection of bibliographic references and I would like to consider whether IAO

ought to be expanded to allow the possibility of using it for that purpose. By "bibliographic," I mean

any kind of human expression that can be cataloged, not just books.

To attempt to invent a new standard for bibliographic metadata is not a good idea, and moreover it

would be a waste of time, since many such standards are in use today. The strategy should be to

represent these metadata standards in IAO. I see three central issues.

(1) Provide a mechanism in IAO which allows users to apply any metadata standard they want. I have a

few ideas about how to work this out which I can share with people in an IAO call.

(2) Realism about ontology. It's not clear whether the commonly used metadata standards describe

works in a way which reflects their most salient aspect from an ontological point of view. I think there

is going to have to be some compromise here, but it won't be too dear for realists.

(3) Making this work by surveying the available digital representations of the various metadata

schemes and modeling them in OWL, so that someone could enter records by hand, in Protege; and

creating parsers for importing records into an ontology en masse from the various catalogs and

indexes.

_Original issue: http://code.google.com/p/information-artifact-ontology/issues/detail?id=77_ | 1.0 | Bibliographic metadata in IAO - _From [z_califo...@shiftingbalance.org](https://code.google.com/u/113769195097935438546/) on February 15, 2010 15:27:35_

I need to organize a collection of bibliographic references and I would like to consider whether IAO

ought to be expanded to allow the possibility of using it for that purpose. By "bibliographic," I mean

any kind of human expression that can be cataloged, not just books.

To attempt to invent a new standard for bibliographic metadata is not a good idea, and moreover it

would be a waste of time, since many such standards are in use today. The strategy should be to

represent these metadata standards in IAO. I see three central issues.

(1) Provide a mechanism in IAO which allows users to apply any metadata standard they want. I have a

few ideas about how to work this out which I can share with people in an IAO call.

(2) Realism about ontology. It's not clear whether the commonly used metadata standards describe

works in a way which reflects their most salient aspect from an ontological point of view. I think there

is going to have to be some compromise here, but it won't be too dear for realists.

(3) Making this work by surveying the available digital representations of the various metadata

schemes and modeling them in OWL, so that someone could enter records by hand, in Protege; and

creating parsers for importing records into an ontology en masse from the various catalogs and

indexes.

_Original issue: http://code.google.com/p/information-artifact-ontology/issues/detail?id=77_ | priority | bibliographic metadata in iao from on february i need to organize a collection of bibliographic references and i would like to consider whether iao ought to be expanded to allow the possibility of using it for that purpose by bibliographic i mean any kind of human expression that can be cataloged not just books to attempt to invent a new standard for bibliographic metadata is not a good idea and moreover it would be a waste of time since many such standards are in use today the strategy should be to represent these metadata standards in iao i see three central issues provide a mechanism in iao which allows users to apply any metadata standard they want i have a few ideas about how to work this out which i can share with people in an iao call realism about ontology it s not clear whether the commonly used metadata standards describe works in a way which reflects their most salient aspect from an ontological point of view i think there is going to have to be some compromise here but it won t be too dear for realists making this work by surveying the available digital representations of the various metadata schemes and modeling them in owl so that someone could enter records by hand in protege and creating parsers for importing records into an ontology en masse from the various catalogs and indexes original issue | 1 |

477,564 | 13,764,587,094 | IssuesEvent | 2020-10-07 12:17:48 | buddyboss/buddyboss-platform | https://api.github.com/repos/buddyboss/buddyboss-platform | opened | Group Admin setting - can't remove the group parent | bug priority: medium | **Describe the bug**

If you have assigned the Group Parent and then if you have to remove the group parent from the backend then you can't remove the group parent it's not removing the group parent.

**To Reproduce**

Steps to reproduce the behavior:

1. Go to '...'

2. Click on '....'

3. Scroll down to '....'

4. See error

**Expected behavior**

A clear and concise description of what you expected to happen.

**Screenshots**

If applicable, add screenshots to help explain your problem.

**Support ticket links**

If applicable, add HelpScout link or ticket number where the issue was originally reported.

| 1.0 | Group Admin setting - can't remove the group parent - **Describe the bug**

If you have assigned the Group Parent and then if you have to remove the group parent from the backend then you can't remove the group parent it's not removing the group parent.

**To Reproduce**

Steps to reproduce the behavior:

1. Go to '...'

2. Click on '....'

3. Scroll down to '....'

4. See error

**Expected behavior**

A clear and concise description of what you expected to happen.

**Screenshots**

If applicable, add screenshots to help explain your problem.

**Support ticket links**

If applicable, add HelpScout link or ticket number where the issue was originally reported.

| priority | group admin setting can t remove the group parent describe the bug if you have assigned the group parent and then if you have to remove the group parent from the backend then you can t remove the group parent it s not removing the group parent to reproduce steps to reproduce the behavior go to click on scroll down to see error expected behavior a clear and concise description of what you expected to happen screenshots if applicable add screenshots to help explain your problem support ticket links if applicable add helpscout link or ticket number where the issue was originally reported | 1 |

675,232 | 23,085,442,649 | IssuesEvent | 2022-07-26 10:57:57 | COS301-SE-2022/Office-Booker | https://api.github.com/repos/COS301-SE-2022/Office-Booker | closed | Add email invite system | Priority: Medium | One a guest has been added to the guestlist, the system should automatically send them an email informing them. | 1.0 | Add email invite system - One a guest has been added to the guestlist, the system should automatically send them an email informing them. | priority | add email invite system one a guest has been added to the guestlist the system should automatically send them an email informing them | 1 |

600,501 | 18,298,544,681 | IssuesEvent | 2021-10-05 23:16:32 | cagov/design-system | https://api.github.com/repos/cagov/design-system | closed | Content principle writing: Make your tone conversationa, empathetic, and official | Medium Priority - Must | Write full documentation for the content principle _Make your tone conversationa, empathetic, and official_.

Work will take place in this [Google Doc](https://docs.google.com/document/d/1XflZYzozsFHuyyDX5G0HbygexBwQsxyLiK4wPkH9NLQ/edit?usp=sharing). | 1.0 | Content principle writing: Make your tone conversationa, empathetic, and official - Write full documentation for the content principle _Make your tone conversationa, empathetic, and official_.

Work will take place in this [Google Doc](https://docs.google.com/document/d/1XflZYzozsFHuyyDX5G0HbygexBwQsxyLiK4wPkH9NLQ/edit?usp=sharing). | priority | content principle writing make your tone conversationa empathetic and official write full documentation for the content principle make your tone conversationa empathetic and official work will take place in this | 1 |

587,514 | 17,618,077,513 | IssuesEvent | 2021-08-18 12:20:10 | knative/docs | https://api.github.com/repos/knative/docs | closed | Add documentation for BYO certificate for custom domains | triage/needs-eng-input priority/medium kind/serving | **Describe the change you'd like to see**

- https://github.com/knative/serving/issues/10530 starts BYO certs but no documentation is available yet.

- We need a new doc page or add the procedure in https://knative.dev/docs/developer/serving/services/custom-domains/ | 1.0 | Add documentation for BYO certificate for custom domains - **Describe the change you'd like to see**

- https://github.com/knative/serving/issues/10530 starts BYO certs but no documentation is available yet.