| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 5 to 112) | repo_url (string, length 34 to 141) | action (string, 3 classes) | title (string, length 1 to 957) | labels (string, length 4 to 795) | body (string, length 1 to 259k) | index (string, 12 classes) | text_combine (string, length 96 to 259k) | label (string, 2 classes) | text (string, length 96 to 252k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

617,764 | 19,404,167,081 | IssuesEvent | 2021-12-19 18:06:24 | codidact/qpixel | https://api.github.com/repos/codidact/qpixel | closed | Preview renders unsupported HTML tags, but final post (correctly) doesn't | area: html/css/js type: bug priority: medium complexity: unassessed | https://meta.codidact.com/posts/284503

https://meta.codidact.com/posts/284505

When composing a post, an unsupported HTML tag (`div` and `kbd` in these reports) was accepted and rendered. However, after submission the post doesn't render it, which is correct because we don't support the tag. This is confusing to users who haven't memorized (or don't look up) which HTML tags we do/don't support.

I know there's at least one other issue about differences between preview and final rendering, though I couldn't find it. We use different libraries in the two cases so differences aren't surprising. If we can't use the same library (or logic) to render the Markdown in both cases, is there anything we can do to provide some feedback when editing? Can we "lint" the post body and indicate if we found something? Maybe, as with missing alt text, that could be something we do when the user clicks the "post" button, so it doesn't have to be a performance drain.

| 1.0 | Preview renders unsupported HTML tags, but final post (correctly) doesn't - https://meta.codidact.com/posts/284503

https://meta.codidact.com/posts/284505

When composing a post, an unsupported HTML tag (`div` and `kbd` in these reports) was accepted and rendered. However, after submission the post doesn't render it, which is correct because we don't support the tag. This is confusing to users who haven't memorized (or don't look up) which HTML tags we do/don't support.

I know there's at least one other issue about differences between preview and final rendering, though I couldn't find it. We use different libraries in the two cases so differences aren't surprising. If we can't use the same library (or logic) to render the Markdown in both cases, is there anything we can do to provide some feedback when editing? Can we "lint" the post body and indicate if we found something? Maybe, as with missing alt text, that could be something we do when the user clicks the "post" button, so it doesn't have to be a performance drain.

| priority | preview renders unsupported html tags but final post correctly doesn t when composing a post an unsupported html tag div and kbd in these reports was accepted and rendered however after submission the post doesn t render it which is correct because we don t support the tag this is confusing to users who haven t memorized or don t look up which html tags we do don t support i know there s at least one other issue about differences between preview and final rendering though i couldn t find it we use different libraries in the two cases so differences aren t surprising if we can t use the same library or logic to render the markdown in both cases is there anything we can do to provide some feedback when editing can we lint the post body and indicate if we found something maybe as with missing alt text that could be something we do when the user clicks the post button so it doesn t have to be a performance drain | 1 |
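
The "lint the post body when the user clicks post" idea from the row above could be sketched roughly as follows. This is only an illustration under stated assumptions, not QPixel's actual code: `ALLOWED_TAGS` and `find_unsupported_tags` are hypothetical names, and the contents of the allow-list are made up, since the report does not say which tags are supported.

```python
from html.parser import HTMLParser

# Hypothetical allow-list; the real set of supported tags is not given in the issue.
ALLOWED_TAGS = {"a", "b", "i", "em", "strong", "code", "pre", "p",
                "ul", "ol", "li", "blockquote"}


class _TagCollector(HTMLParser):
    """Records the name of every start tag found in the input."""

    def __init__(self):
        super().__init__()
        self.seen = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser already lowercases tag names before calling this hook.
        self.seen.append(tag)


def find_unsupported_tags(post_body, allowed=ALLOWED_TAGS):
    """Return a sorted list of tag names in post_body that are not allowed."""
    collector = _TagCollector()
    collector.feed(post_body)
    collector.close()
    return sorted({tag for tag in collector.seen if tag not in allowed})


if __name__ == "__main__":
    draft = "Press <kbd>Ctrl</kbd> inside a <div>box</div> with <b>bold</b> text."
    print(find_unsupported_tags(draft))
```

A check like this would run once on submit rather than on every keystroke, which matches the performance concern raised in the report. Note that this sketch also flags tags that appear inside Markdown code spans, so a real linter would probably need to skip those.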

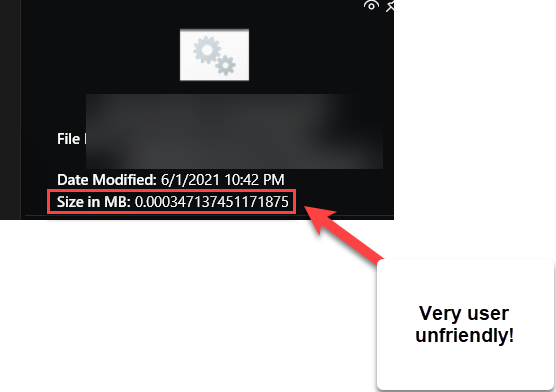

567,309 | 16,854,480,669 | IssuesEvent | 2021-06-21 03:26:56 | adirh3/Fluent-Search | https://api.github.com/repos/adirh3/Fluent-Search | closed | Change size in MB to look cleaner | Medium Priority UI/UX bug | This is a no-brainer and a standard interface convention.

Show sizes in kilobytes, megabytes, gigabytes, or terabytes instead of "Size of MB 0.03891485883838". The current output is very hard to read for all users, novice and expert alike.

| 1.0 | Change size in MB to look cleaner - This is a no-brainer and a standard interface convention.

Show sizes in kilobytes, megabytes, gigabytes, or terabytes instead of "Size of MB 0.03891485883838". The current output is very hard to read for all users, novice and expert alike.

| priority | change size in mb to look cleaner this is no brainer and standard interface kilobyte megabyte gigabyte terabyte instead of size of mb this looks very hard to read for all users novice and expert alike | 1 |
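
The unit scaling requested in the row above might look something like the sketch below. It is not Fluent Search's code: `format_size` is a hypothetical helper, and the choice of 1024-based units with one decimal place is an assumption.

```python
def format_size(num_bytes):
    """Return a short human-readable size string using 1024-based units."""
    units = ["B", "KB", "MB", "GB", "TB"]
    size = float(num_bytes)
    for unit in units:
        # Stop at the first unit where the value drops below 1024,
        # or at the largest unit we know about.
        if size < 1024 or unit == units[-1]:
            if unit == "B":
                return f"{int(size)} B"
            return f"{size:.1f} {unit}"
        size /= 1024


if __name__ == "__main__":
    for value in (512, 1536, 5 * 1024 ** 3):
        print(value, "->", format_size(value))
```

With a helper like this, a file of roughly 40 KB would be shown in kilobytes rather than as a long fractional number of megabytes.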

627,412 | 19,904,337,359 | IssuesEvent | 2022-01-25 11:10:40 | docker-mailserver/docker-mailserver | https://api.github.com/repos/docker-mailserver/docker-mailserver | opened | Can't create email accounts while enabling LDAP | kind/bug meta/needs triage priority/medium | ### Miscellaneous first checks

- [X] I checked that all ports are open and not blocked by my ISP / hosting provider.

- [X] I know that SSL errors are likely the result of a wrong setup on the user side and not caused by DMS itself. I'm confident my setup is correct.

### Affected Component(s)

Mail creation, deletion and listing

### What happened and when does this occur?

```Markdown

While trying to create an email account using setup.sh, I encounter the error below.

Waiting for dovecot to create /var/mail/deol.com/deoltito...

Waiting for dovecot to create /var/mail/deol.com/deoltito...

Waiting for dovecot to create /var/mail/deol.com/deoltito...

Waiting for dovecot to create /var/mail/deol.com/deoltito...

This message repeats until I stop it, and the email account is never created.

When I try to list the available email accounts, the following error appears.

==========

# ./setup.sh email list

Fatal: Unknown command 'quota', but plugin quota exists. Try to set mail_plugins=quota

/usr/local/bin/listmailuser: line 15: 1024 * : syntax error: operand expected (error token is "* ")

/usr/local/bin/listmailuser: line 15: 1024 * : syntax error: operand expected (error token is "* ")

* new@deol.com ( / ) [%]

==========

```

### What did you expect to happen?

```Markdown

I believe it has something to do with the LDAP integration. When I disable LDAP in the compose file, email accounts can be created, listed and deleted without any issues.

```

### How do we replicate the issue?

```Markdown

1. Try to create a compose file with LDAP enabled and integrated in it

2. Try creating an email account after that

3. Try to list or delete the email accounts too

...

```

### DMS version

v10.4.0

### What operating system is DMS running on?

Linux

### What instruction set architecture is DMS running on?

x86_64 / AMD64

### What container orchestration tool are you using?

Docker Compose

### docker-compose.yml

```yaml

version: '3.8'

services:
  mailserver:
    image: docker.io/mailserver/docker-mailserver:latest
    container_name: mailserver
    hostname: mail
    domainname: deol.com
    ports:
      - "25:25"
      - "143:143"
      - "587:587"
      - "993:993"
    volumes:
      - ./docker-data/dms/mail-data/:/var/mail/
      - ./docker-data/dms/mail-state/:/var/mail-state/
      - ./docker-data/dms/mail-logs/:/var/log/mail/
      - ./docker-data/dms/config/:/tmp/docker-mailserver/
      - /etc/localtime:/etc/localtime:ro
    environment:
      - ENABLE_SPAMASSASSIN=1
      - SPAMASSASSIN_SPAM_TO_INBOX=1
      - ENABLE_CLAMAV=1
      - ENABLE_FAIL2BAN=1
      - ENABLE_POSTGREY=1
      - ENABLE_SASLAUTHD=1
      - ONE_DIR=1
      - DMS_DEBUG=1
      - ENABLE_LDAP=1
      - LDAP_SERVER_HOST=LDAPNEW # your ldap container/IP/ServerName
      - LDAP_SEARCH_BASE=ou=people,dc=ds,dc=domain,dc=com
      - LDAP_BIND_DN=cn=admin,dc=ds,dc=domain,dc=com
      - LDAP_BIND_PW=
      - ENABLE_SASLAUTHD=1
      - SASLAUTHD_MECHANISMS=ldap
      - SASLAUTHD_LDAP_SERVER=LDAPNEW
      - SASLAUTHD_LDAP_BIND_DN=cn=admin,dc=ds,dc=domain,dc=com
      - SASLAUTHD_LDAP_PASSWORD=
      - SASLAUTHD_LDAP_SEARCH_BASE=ou=people,dc=ds,dc=domain,dc=com
      - SASLAUTHD_LDAP_FILTER=(&(objectClass=PostfixBookMailAccount)(uniqueIdentifier=%U))
      - POSTMASTER_ADDRESS=postmaster@deol.com
      - POSTFIX_MESSAGE_SIZE_LIMIT=100000000
    cap_add:
      - NET_ADMIN
      - SYS_PTRACE

```

### Relevant log output

_No response_

### Other relevant information

_No response_

### What level of experience do you have with Docker and mail servers?

- [ ] I am inexperienced with docker

- [ ] I am inexperienced with mail servers

- [ ] I am uncomfortable with the CLI

### Code of conduct

- [X] I have read this project's [Code of Conduct](https://github.com/docker-mailserver/docker-mailserver/blob/master/CODE_OF_CONDUCT.md) and I agree

- [X] I have read the [README](https://github.com/docker-mailserver/docker-mailserver/blob/master/README.md) and the [documentation](https://docker-mailserver.github.io/docker-mailserver/edge/) and I searched the [issue tracker](https://github.com/docker-mailserver/docker-mailserver/issues?q=is%3Aissue) but could not find a solution

### Improvements to this form?

_No response_ | 1.0 | Can't create email accounts while enabling LDAP - ### Miscellaneous first checks

- [X] I checked that all ports are open and not blocked by my ISP / hosting provider.

- [X] I know that SSL errors are likely the result of a wrong setup on the user side and not caused by DMS itself. I'm confident my setup is correct.

### Affected Component(s)

Mail creation, deletion and listing

### What happened and when does this occur?

```Markdown

While trying to create an email account using setup.sh, I encounter the error below.

Waiting for dovecot to create /var/mail/deol.com/deoltito...

Waiting for dovecot to create /var/mail/deol.com/deoltito...

Waiting for dovecot to create /var/mail/deol.com/deoltito...

Waiting for dovecot to create /var/mail/deol.com/deoltito...

This message repeats until I stop it, and the email account is never created.

When I try to list the available email accounts, the following error appears.

==========

# ./setup.sh email list

Fatal: Unknown command 'quota', but plugin quota exists. Try to set mail_plugins=quota

/usr/local/bin/listmailuser: line 15: 1024 * : syntax error: operand expected (error token is "* ")

/usr/local/bin/listmailuser: line 15: 1024 * : syntax error: operand expected (error token is "* ")

* new@deol.com ( / ) [%]

==========

```

### What did you expect to happen?

```Markdown

I believe it has something to do with the LDAP integration. When I disable LDAP in the compose file, email accounts can be created, listed and deleted without any issues.

```

### How do we replicate the issue?

```Markdown

1. Try to create a compose file with LDAP enabled and integrated in it

2. Try creating an email account after that

3. Try to list or delete the email accounts too

...

```

### DMS version

v10.4.0

### What operating system is DMS running on?

Linux

### What instruction set architecture is DMS running on?

x86_64 / AMD64

### What container orchestration tool are you using?

Docker Compose

### docker-compose.yml

```yaml

version: '3.8'

services:
  mailserver:
    image: docker.io/mailserver/docker-mailserver:latest
    container_name: mailserver
    hostname: mail
    domainname: deol.com
    ports:
      - "25:25"
      - "143:143"
      - "587:587"
      - "993:993"
    volumes:
      - ./docker-data/dms/mail-data/:/var/mail/
      - ./docker-data/dms/mail-state/:/var/mail-state/
      - ./docker-data/dms/mail-logs/:/var/log/mail/
      - ./docker-data/dms/config/:/tmp/docker-mailserver/
      - /etc/localtime:/etc/localtime:ro
    environment:
      - ENABLE_SPAMASSASSIN=1
      - SPAMASSASSIN_SPAM_TO_INBOX=1
      - ENABLE_CLAMAV=1
      - ENABLE_FAIL2BAN=1
      - ENABLE_POSTGREY=1
      - ENABLE_SASLAUTHD=1
      - ONE_DIR=1
      - DMS_DEBUG=1
      - ENABLE_LDAP=1
      - LDAP_SERVER_HOST=LDAPNEW # your ldap container/IP/ServerName
      - LDAP_SEARCH_BASE=ou=people,dc=ds,dc=domain,dc=com
      - LDAP_BIND_DN=cn=admin,dc=ds,dc=domain,dc=com
      - LDAP_BIND_PW=
      - ENABLE_SASLAUTHD=1
      - SASLAUTHD_MECHANISMS=ldap
      - SASLAUTHD_LDAP_SERVER=LDAPNEW
      - SASLAUTHD_LDAP_BIND_DN=cn=admin,dc=ds,dc=domain,dc=com
      - SASLAUTHD_LDAP_PASSWORD=
      - SASLAUTHD_LDAP_SEARCH_BASE=ou=people,dc=ds,dc=domain,dc=com
      - SASLAUTHD_LDAP_FILTER=(&(objectClass=PostfixBookMailAccount)(uniqueIdentifier=%U))
      - POSTMASTER_ADDRESS=postmaster@deol.com
      - POSTFIX_MESSAGE_SIZE_LIMIT=100000000
    cap_add:
      - NET_ADMIN
      - SYS_PTRACE

```

### Relevant log output

_No response_

### Other relevant information

_No response_

### What level of experience do you have with Docker and mail servers?

- [ ] I am inexperienced with docker

- [ ] I am inexperienced with mail servers

- [ ] I am uncomfortable with the CLI

### Code of conduct

- [X] I have read this project's [Code of Conduct](https://github.com/docker-mailserver/docker-mailserver/blob/master/CODE_OF_CONDUCT.md) and I agree

- [X] I have read the [README](https://github.com/docker-mailserver/docker-mailserver/blob/master/README.md) and the [documentation](https://docker-mailserver.github.io/docker-mailserver/edge/) and I searched the [issue tracker](https://github.com/docker-mailserver/docker-mailserver/issues?q=is%3Aissue) but could not find a solution

### Improvements to this form?

_No response_ | priority | can t create email accounts while enabling ldap miscellaneous first checks i checked that all ports are open and not blocked by my isp hosting provider i know that ssl errors are likely the result of a wrong setup on the user side and not caused by dms itself i m confident my setup is correct affected component s mail creation deletion and listing what happened and when does this occur markdown while trying to create the email account using the setup sh i m encountering the error below waiting for dovecot to create var mail deol com deoltito waiting for dovecot to create var mail deol com deoltito waiting for dovecot to create var mail deol com deoltito waiting for dovecot to create var mail deol com deoltito this message goes on until i stops it however the email account won t be created when i try to list the available email accounts this particular error is seen under it setup sh email list fatal unknown command quota but plugin quota exists try to set mail plugins quota usr local bin listmailuser line syntax error operand expected error token is usr local bin listmailuser line syntax error operand expected error token is new deol com what did you expect to happen markdown i believe it has something to with the ldap integration when i disable ldap in the compose file the email accounts can be created listed and deleted without any issues how do we replicate the issue markdown try to create a compose file with ldap enabled and integrated in it try creating an email account after that try to list or delete the email accounts too dms version what operating system is dms running on linux what instruction set architecture is dms running on what container orchestration tool are you using docker compose docker compose yml yaml version services mailserver image docker io mailserver docker mailserver latest container name mailserver hostname mail domainname deol com ports volumes docker data dms mail data var mail docker data dms mail state var 
mail state docker data dms mail logs var log mail docker data dms config tmp docker mailserver etc localtime etc localtime ro environment enable spamassassin spamassassin spam to inbox enable clamav enable enable postgrey enable saslauthd one dir dms debug enable ldap ldap server host ldapnew your ldap container ip servername ldap search base ou people dc ds dc domain dc com ldap bind dn cn admin dc ds dc domain dc com ldap bind pw enable saslauthd saslauthd mechanisms ldap saslauthd ldap server ldapnew saslauthd ldap bind dn cn admin dc ds dc domain dc com saslauthd ldap password saslauthd ldap search base ou people dc ds dc domain dc com saslauthd ldap filter objectclass postfixbookmailaccount uniqueidentifier u postmaster address postmaster deol com postfix message size limit cap add net admin sys ptrace relevant log output no response other relevant information no response what level of experience do you have with docker and mail servers i am inexperienced with docker i am inexperienced with mail servers i am uncomfortable with the cli code of conduct i have read this project s and i agree i have read the and the and i searched the but could not find a solution improvements to this form no response | 1 |

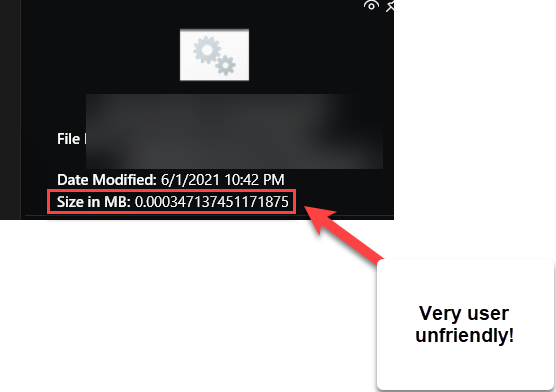

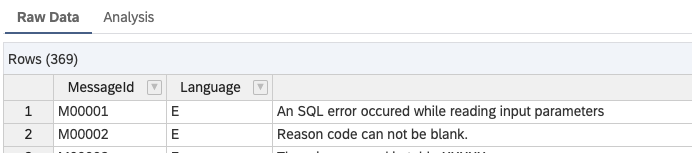

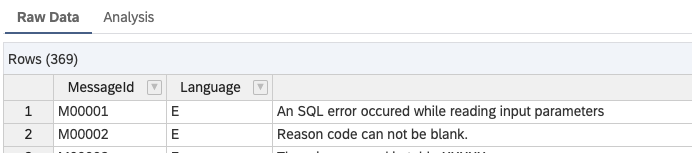

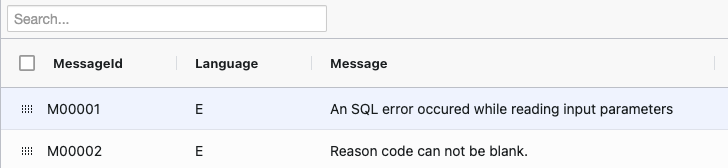

666,300 | 22,349,529,677 | IssuesEvent | 2022-06-15 10:45:34 | SAP/xsk | https://api.github.com/repos/SAP/xsk | closed | [IDE] CSV Editor - Total Records Count | enhancement priority-low effort-medium usability tooling shadow | It would be great if the CSV editor displayed the total record count somewhere:

**Sample rows count:**

**CSV Editor:**

| 1.0 | [IDE] CSV Editor - Total Records Count - It would be great if the CSV editor displayed the total record count somewhere:

**Sample rows count:**

**CSV Editor:**

| priority | csv editor total records count it would be great if the csv editor is displaying the total records count somewhere sample rows count csv editor | 1 |

92,675 | 3,872,900,201 | IssuesEvent | 2016-04-11 15:15:54 | jcgregorio/httplib2 | https://api.github.com/repos/jcgregorio/httplib2 | closed | bdist_rpm fails | bug imported Priority-Medium | _From [brian.la...@gmail.com](https://code.google.com/u/108532649133345963591/) on December 29, 2009 16:24:09_

What steps will reproduce the problem?

1. python setup.py bdist_rpm

What is the expected output? What do you see instead?

EXPECTED::

+ python setup.py build

running build

running build_py

creating build

creating build/lib

creating build/lib/httplib2

copying httplib2/__init__.py -> build/lib/httplib2

copying httplib2/iri2uri.py -> build/lib/httplib2

+ exit 0

ACTUAL RESULT::

+ python setup.py build

running build

running build_py

error: package directory 'python2/httplib2' does not exist

error: Bad exit status from /var/tmp/rpm-tmp.50855 (%build)

What version of the product are you using? On what operating system?

Latest check-in on any RPM based distro (Fedora/RHEL/Centos etc.)

Please provide any additional information below.

I believe that the RPM isn't being generated correctly with the new python2 and python3 subdirectories. I'm not a huge user of python distutils...but I'll try to look and see if I can fix.

_Original issue: http://code.google.com/p/httplib2/issues/detail?id=85_ | 1.0 | bdist_rpm fails - _From [brian.la...@gmail.com](https://code.google.com/u/108532649133345963591/) on December 29, 2009 16:24:09_

What steps will reproduce the problem?

1. python setup.py bdist_rpm

What is the expected output? What do you see instead?

EXPECTED::

+ python setup.py build

running build

running build_py

creating build

creating build/lib

creating build/lib/httplib2

copying httplib2/__init__.py -> build/lib/httplib2

copying httplib2/iri2uri.py -> build/lib/httplib2

+ exit 0

ACTUAL RESULT::

+ python setup.py build

running build

running build_py

error: package directory 'python2/httplib2' does not exist

error: Bad exit status from /var/tmp/rpm-tmp.50855 (%build)

What version of the product are you using? On what operating system?

Latest check-in on any RPM based distro (Fedora/RHEL/Centos etc.)

Please provide any additional information below.

I believe that the RPM isn't being generated correctly with the new python2 and python3 subdirectories. I'm not a huge user of python distutils...but I'll try to look and see if I can fix.

_Original issue: http://code.google.com/p/httplib2/issues/detail?id=85_ | priority | bdist rpm fails from on december what steps will reproduce the problem python setup py bdist rpm what is the expected output what do you see instead expected python setup py build running build running build py creating build creating build lib creating build lib copying init py build lib copying py build lib exit actual result python setup py build running build running build py error package directory does not exist error bad exit status from var tmp rpm tmp build what version of the product are you using on what operating system latest check in on any rpm based distro fedora rhel centos etc please provide any additional information below i believe that the rpm isn t being generated correctly with the new and subdirectories i m not a huge user of python distutils but i ll try to look and see if i can fix original issue | 1 |

171,596 | 6,491,685,465 | IssuesEvent | 2017-08-21 10:41:07 | softdevteam/krun | https://api.github.com/repos/softdevteam/krun | opened | Move masking of the core cycle counter outside the time section. | enhancement medium priority (a clear improvement but not a blocker for publication) | This loop:

https://github.com/softdevteam/krun/blob/9c38f01a7e6292669d7c9f83780d44e2abae8279/libkrun/libkruntime.c#L401

Can be moved into the getter functions, thus moving the masking operations out of the timed section. | 1.0 | Move masking of the core cycle counter outside the time section. - This loop:

https://github.com/softdevteam/krun/blob/9c38f01a7e6292669d7c9f83780d44e2abae8279/libkrun/libkruntime.c#L401

Can be moved into the getter functions, thus moving the masking operations out of the timed section. | priority | move masking of the core cycle counter outside the time section this loop can be moved into the getter functions thus moving the masking operations out of the timed section | 1 |

11,563 | 2,610,142,072 | IssuesEvent | 2015-02-26 18:44:46 | chrsmith/hedgewars | https://api.github.com/repos/chrsmith/hedgewars | closed | seed line should NOT be visible right away | auto-migrated Priority-Medium Type-Enhancement | ```

What steps will reproduce the problem?

1. latest revision offers a neat functionality, the possibility of editing the

seed line

2. this functionality is useful for testing and has been requested by users

3. however despite being in the game configuration page, it should not be

visible right away for not always the user needs to change that line and has

the high likelihood of scaring the user with too much technicality

What is the expected output? What do you see instead?

in my view there should be a nice button that when pushed either shows a popup

or simply makes visible that line

```

-----

Original issue reported on code.google.com by `vittorio...@gmail.com` on 20 Dec 2010 at 12:02

* Blocking: #115 | 1.0 | seed line should NOT be visible right away - ```

What steps will reproduce the problem?

1. latest revision offers a neat functionality, the possibility of editing the

seed line

2. this functionality is useful for testing and has been requested by users

3. however despite being in the game configuration page, it should not be

visible right away for not always the user needs to change that line and has

the high likelihood of scaring the user with too much technicality

What is the expected output? What do you see instead?

in my view there should be a nice button that when pushed either shows a popup

or simply makes visible that line

```

-----

Original issue reported on code.google.com by `vittorio...@gmail.com` on 20 Dec 2010 at 12:02

* Blocking: #115 | priority | seed line should not be visible right away what steps will reproduce the problem latest revision offers a neat functionality the possibility of editing the seed line this functionality is useful for testing and has been requested by users however despite being in the game configuration page it should not be visible right away for not always the user needs to change that line and has the high likelihood of scaring the user with too much technicality what is the expected output what do you see instead in my view there should be a nice button that when pushed either shows a popup or simply makes visible that line original issue reported on code google com by vittorio gmail com on dec at blocking | 1 |

70,631 | 3,332,949,470 | IssuesEvent | 2015-11-11 22:28:04 | angular/material | https://api.github.com/repos/angular/material | closed | menuContents has zero items | priority: medium | In menuDirective.js, the link function calls mdMenuCtrl.init.

If the md-menu-item elements are hard-coded, as in demoBasicUsage, then `menuContainer[0].querySelectorAll('md-menu-item')` in menuController.js returns the items.

If the md-menu-item elements are not hard-coded, as in demoMenuPositionModes and demoMenuWidth, then the same call in menuController.js returns zero items.

```javascript

function link(scope, element, attrs, ctrls) {
  var mdMenuCtrl = ctrls[0];
  var isInMenuBar = ctrls[1] != undefined;

  // Move everything into a md-menu-container and pass it to the controller
  var menuContainer = angular.element(
    '<div class="md-open-menu-container md-whiteframe-z2"></div>'
  );
  var menuContents = element.children()[1];
  menuContainer.append(menuContents);

  if (isInMenuBar) {
    element.append(menuContainer);
    menuContainer[0].style.display = 'none';
  }

  mdMenuCtrl.init(menuContainer, { isInMenuBar: isInMenuBar });

  scope.$on('$destroy', function() {
    mdMenuCtrl
      .destroy()
      .finally(function(){
        menuContainer.remove();
      });
  });
}

``` | 1.0 | menuContents has zero items - In menuDirective.js, the link function calls mdMenuCtrl.init.

If the md-menu-item elements are hard-coded, as in demoBasicUsage, then `menuContainer[0].querySelectorAll('md-menu-item')` in menuController.js returns the items.

If the md-menu-item elements are not hard-coded, as in demoMenuPositionModes and demoMenuWidth, then the same call in menuController.js returns zero items.

```javascript

function link(scope, element, attrs, ctrls) {
  var mdMenuCtrl = ctrls[0];
  var isInMenuBar = ctrls[1] != undefined;

  // Move everything into a md-menu-container and pass it to the controller
  var menuContainer = angular.element(
    '<div class="md-open-menu-container md-whiteframe-z2"></div>'
  );
  var menuContents = element.children()[1];
  menuContainer.append(menuContents);

  if (isInMenuBar) {
    element.append(menuContainer);
    menuContainer[0].style.display = 'none';
  }

  mdMenuCtrl.init(menuContainer, { isInMenuBar: isInMenuBar });

  scope.$on('$destroy', function() {
    mdMenuCtrl
      .destroy()
      .finally(function(){
        menuContainer.remove();
      });
  });
}

``` | priority | menucontents has zero items in menudirective js the link function call mdmenuctrl init if the md menu item is hard coded like in the case of demobasicusage then in menucontroller js this will return items menucontainer queryselectorall md menu item if the md menu item is not hard coded like in the case of demomenupositionmodes and demomenuwidth then in menucontroller js this will return zero items menucontainer queryselectorall md menu item javascript function link scope element attrs ctrls var mdmenuctrl ctrls var isinmenubar ctrls undefined move everything into a md menu container and pass it to the controller var menucontainer angular element var menucontents element children menucontainer append menucontents if isinmenubar element append menucontainer menucontainer style display none mdmenuctrl init menucontainer isinmenubar isinmenubar scope on destroy function mdmenuctrl destroy finally function menucontainer remove | 1 |

462,654 | 13,250,971,352 | IssuesEvent | 2020-08-20 00:41:55 | TB-Modeling/modeltb.org | https://api.github.com/repos/TB-Modeling/modeltb.org | opened | Professional downloadable briefs | Priority 2/3: Medium new feature new idea | ## Description

We can host professional-looking briefs on TB and other topics. | 1.0 | Professional downloadable briefs - ## Description

We can host professional-looking briefs on TB and other topics. | priority | professional downloadable briefs description we can host professional looking briefs on tb and other topics | 1 |

273,401 | 8,530,257,133 | IssuesEvent | 2018-11-03 20:34:54 | minio/minio | https://api.github.com/repos/minio/minio | closed | Crashing with Out of Memory Errors (refs 6164) | community priority: medium | I hate to be the bearer of bad news, @harshavardhana , but the fix has not been working for me. I've upgraded to all the most recent Minio.exe builds since the last post in this issue, but with no success. I've delayed responding here in case the fix was in a build later than I thought, and because I found an issue in my environment and wondered if it was related. I've determined it is not but will outline what I've found in the past month.

I noticed as time went on that Minio started reporting read errors on my V: drive, but not any of the others. Separately, I discovered while trying to copy a set of large files from my V: drive (completely unrelated to Minio) that there was at least one corrupted file in the mix. This led me to believe my V drive was experiencing data corruption, and I wondered if it was the culprit of problems all along. I replaced both my V and X drives. Because they're identical and were purchased at the same time, I replaced both just in case the X drive was soon to follow. Two brand-new 6 TB drives are in their place.

This allowed me a chance to test Minio's healing capabilities, which so far are working OK. However, I've not been able to fully test it (I've only healed about half my data set so far) because Minio continues to crash with out of memory errors after 6-7 hours of run time. I brainstormed that if this out of memory error cannot be fixed I could heal in two stages to work-around the bug. First the V and X drives, which should heal their data in this "new" format. Then delete the Minio data on W and Y and heal back to those drives. Thus getting all my data into the new format in a very round-about (and dangerous) fashion.

But like I said, because Minio continues to crash with out of memory exceptions during the heal, I've yet to accomplish this in full. My previous screenshot shows my Minio data at 294 GB, and currently I've only healed about 154 GB. The healed amount tends to change unpredictably. Sometimes it will run for hours and appear to make 0 progress (the V and X data directories are not larger), and other times they will grow by several GB. It's almost as if the process is starting over every single time and it only makes progress if it happens to run longer than last time. I'm not sure, though.

Further, even without the heal running, the W and Y drives continue to be hammered while Minio consumes more and more memory for several hours before crashing. This is puzzling to me, because with the number of times I've run Minio it has to have a total run time of at least 50 hours, maybe much more. Assuming a read/write speed of just 10 MB a second, which is very slow for a SATA drive, even platter-based, it would have been able to read/write 1.8 TB of data from each drive. Even if speed were half that at 5 MB/s, that's still 900 GB. Half that, 2.5 MB/s would be 450 GB in that time period. Given my total data set is ~300 GB, I am extremely puzzled as to why it's not done yet unless it is restarting progress every single time. Which isn't logical given that no software would try to complete 300 GB worth of work before writing out progress. That wouldn't make sense.
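
The throughput estimate in the paragraph above can be checked with a few lines of arithmetic. This is just a worked sketch: `data_moved_gb` is a hypothetical helper, the 50-hour run time and the transfer rates are the figures quoted in the report, and decimal units (1 GB = 1000 MB) are assumed.

```python
def data_moved_gb(rate_mb_per_s, hours):
    """Data transferred at a sustained rate, in decimal GB (1 GB = 1000 MB)."""
    seconds = hours * 3600
    return rate_mb_per_s * seconds / 1000


if __name__ == "__main__":
    # Rates quoted in the report, each sustained over 50 hours of run time.
    for rate in (10, 5, 2.5):
        print(f"{rate} MB/s for 50 h -> {data_moved_gb(rate, 50):.0f} GB")
```

The three cases work out to 1800 GB (1.8 TB), 900 GB and 450 GB, which agrees with the figures in the report and all exceed the roughly 300 GB data set.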

Can you shed any light into what's going on here? My last resort is to simply delete everything and restart with no data. That's not the end of the world because my most important backup copy is off-site. Having stuff locally is for a more convenient full-copy.

I've attached CPU and MEM logs that I gathered yesterday, letting it run for a few hours and _not_ healing, just doing its behind-the-scenes work. Again, it slammed usage of my W and Y drives, as well as the CPU. I stopped it before a crash to get the log output. The CPU log is shorter than the mem one due to my schedule, but it still covers a couple of hours.

Thank you.

[mem_20180918.txt](https://github.com/minio/minio/files/2397728/mem_20180918.txt)

[cpu_20180918.txt](https://github.com/minio/minio/files/2397729/cpu_20180918.txt)

_Originally posted by @tylerforsythe in https://github.com/minio/minio/issue_comments#issuecomment-422851849_ | 1.0 | Crashing with Out of Memory Errors (refs 6164) - I hate to be the bearer of bad news, @harshavardhana , but the fix has not been working for me. I've upgraded to all the most recent Minio.exe builds since the last post in this issue, but with no success. I've delayed responding here in case the fix was in a build later than I thought, and because I found an issue in my environment and wondered if it was related. I've determined it is not but will outline what I've found in the past month.

I noticed as time went on that Minio started reporting read errors on my V: drive, but not any of the others. Separately, I discovered while trying to copy a set of large files from my V: drive (completely unrelated to Minio) that there was at least one corrupted file in the mix. This led me to believe my V drive was experiencing data corruption, and I wondered if it was the culprit of problems all along. I replaced both my V and X drives. Because they're identical and were purchased at the same time, I replaced both just in case the X drive was soon to follow. Two brand-new 6 TB drives are in their place.

This allowed me a chance to test Minio's healing capabilities, which so far are working OK. However, I've not been able to fully test it (I've only healed about half my data set so far) because Minio continues to crash with out of memory errors after 6-7 hours of run time. I brainstormed that if this out of memory error cannot be fixed I could heal in two stages to work-around the bug. First the V and X drives, which should heal their data in this "new" format. Then delete the Minio data on W and Y and heal back to those drives. Thus getting all my data into the new format in a very round-about (and dangerous) fashion.

But like I said, because Minio continues to crash with out of memory exceptions during the heal, I've yet to accomplish this in full. My previous screenshot shows my Minio data at 294 GB, and currently I've only healed about 154 GB. The healed amount tends to change unpredictably. Sometimes it will run for hours and appear to make 0 progress (the V and X data directories are not larger), and other times they will grow by several GB. It's almost as if the process is starting over every single time and it only makes progress if it happens to run longer than last time. I'm not sure, though.

Further, even without the heal running, the W and Y drives continue to be hammered while Minio consumes more and more memory for several hours before crashing. This is puzzling to me, because with the number of times I've run Minio it has to have a total run time of at least 50 hours, maybe much more. Assuming a read/write speed of just 10 MB a second, which is very slow for a SATA drive, even platter-based, it would have been able to read/write 1.8 TB of data from each drive. Even if speed were half that at 5 MB/s, that's still 900 GB. Half that, 2.5 MB/s would be 450 GB in that time period. Given my total data set is ~300 GB, I am extremely puzzled as to why it's not done yet unless it is restarting progress every single time. Which isn't logical given that no software would try to complete 300 GB worth of work before writing out progress. That wouldn't make sense.

Can you shed any light on what's going on here? My last resort is to simply delete everything and restart with no data. That's not the end of the world because my most important backup copy is off-site. Having stuff locally is for a more convenient full-copy.

I've attached CPU and MEM logs that I gathered yesterday, letting it run for a few hours and _not_ healing, just doing its behind-the-scenes work. Again, it slammed usage of my W and Y drives, as well as the CPU. I stopped it before a crash to get the log output. The CPU log is shorter than the mem one due to my schedule, but it still has a couple of hours.

Thank you.

[mem_20180918.txt](https://github.com/minio/minio/files/2397728/mem_20180918.txt)

[cpu_20180918.txt](https://github.com/minio/minio/files/2397729/cpu_20180918.txt)

_Originally posted by @tylerforsythe in https://github.com/minio/minio/issue_comments#issuecomment-422851849_ | priority | crashing with out of memory errors refs i hate to be the bearer of bad news harshavardhana but the fix has not been working for me i ve upgraded to all the most recent minio exe builds since the last post in this issue but with no success i ve delayed responding here in case the fix was in a build later than i thought and because i found an issue in my environment and wondered if it was related i ve determined it is not but will outline what i ve found in the past month i noticed as time went on that minio started reporting read errors on my v drive but not any of the others separately i discovered while trying to copy a set of large files from my v drive completely unrelated to minio that there was at least one corrupted file in the mix this led me to believe my v drive was experiencing data corruption and i wondered if it was the culprit of problems all along i replaced both my v and x drives because they re identical and were purchased at the same time i replaced both just in case the x drive was soon to follow two brand new tb drives are in their place this allowed me a chance to test minio s healing capabilities which so far are working ok however i ve not been able to fully test it i ve only healed about half my data set so far because minio continues to crash with out of memory errors after hours of run time i brainstormed that if this out of memory error cannot be fixed i could heal in two stages to work around the bug first the v and x drives which should heal their data in this new format then delete the minio data on w and y and heal back to those drives thus getting all my data into the new format in a very round about and dangerous fashion but like i said because minio continues to crash with out of memory exceptions during the heal i ve yet to accomplish this in full my previous screenshot shows my minio data at gb and currently i 
ve only healed about gb the healed amount tends to change unpredictably sometimes it will run for hours and appear to make progress the v and x data directories are not larger and other times they will grow by several gb it s almost as if the process is starting over every single time and it only makes progress if it happens to run longer than last time i m not sure though further even without the heal running the w and y drives continue to be hammered while minio consumes more and more memory for several hours before crashing this is puzzling to me because with the number of times i ve run minio it has to have a total run time of at least hours maybe much more assuming a read write speed of just mb a second which is very slow for a sata drive even platter based it would have been able to read write tb of data from each drive even if speed were half that at mb s that s still gb half that mb s would be gb in that time period given my total data set is gb i am extremely puzzled as to why it s not done yet unless it is restarting progress every single time which isn t logical given that no software would try to complete gb worth of work before writing out progress that wouldn t make sense can you shed any light into what s going on here my last resort is to simply delete everything and restart with no data that s not the end of the world because my most important backup copy is off site having stuff locally is for a more convenient full copy i ve attached cpu and mem logs that i gathered yesterday letting it run for a few hours and not healing just doing it s behind the scenes work again it slammed usage of my w and y drives as well as the cpu i stopped it before a crash to get the log output the cpu log is shorter than the mem one due to my schedule but it still has a couple hours thank you originally posted by tylerforsythe in | 1 |

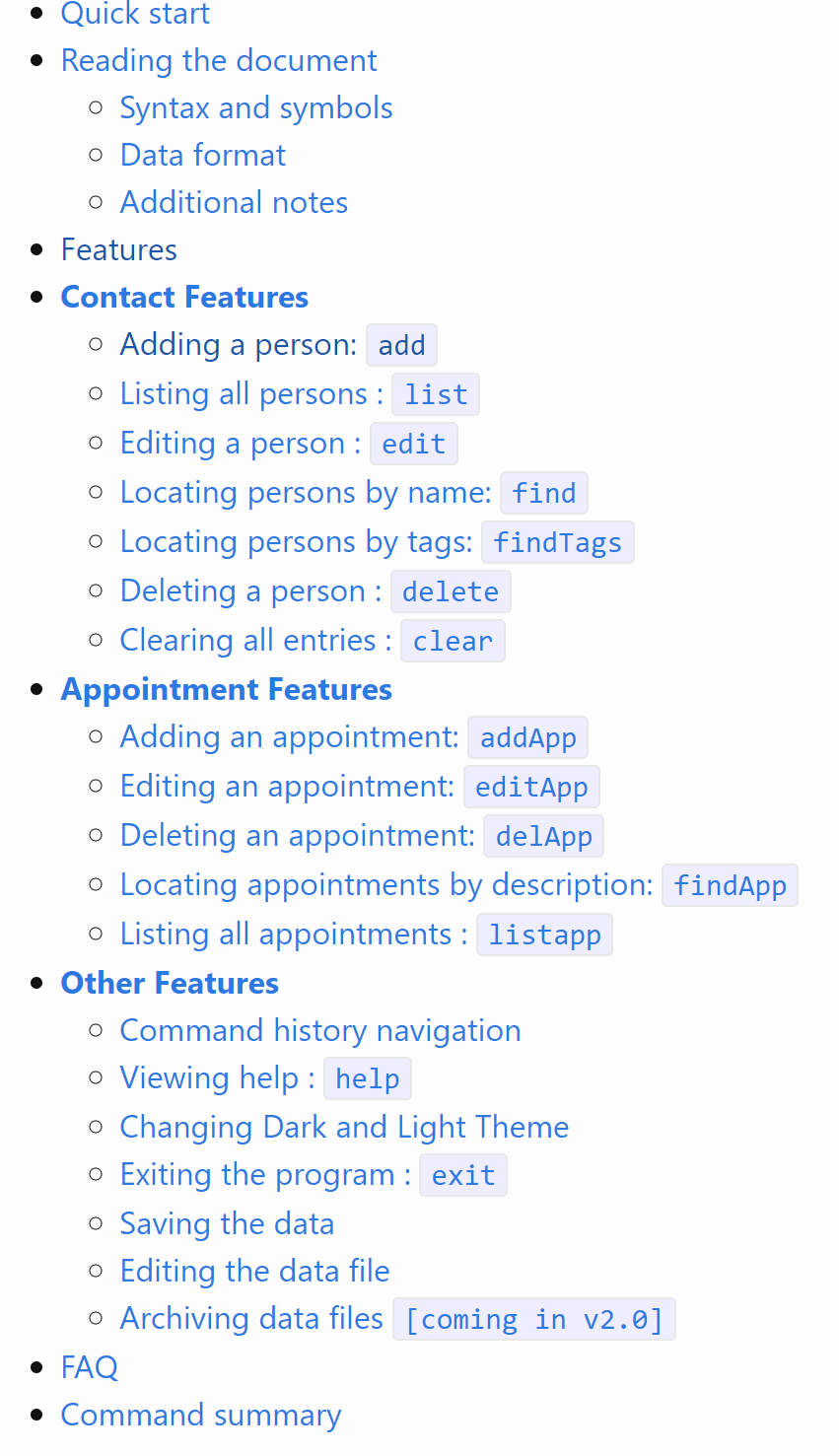

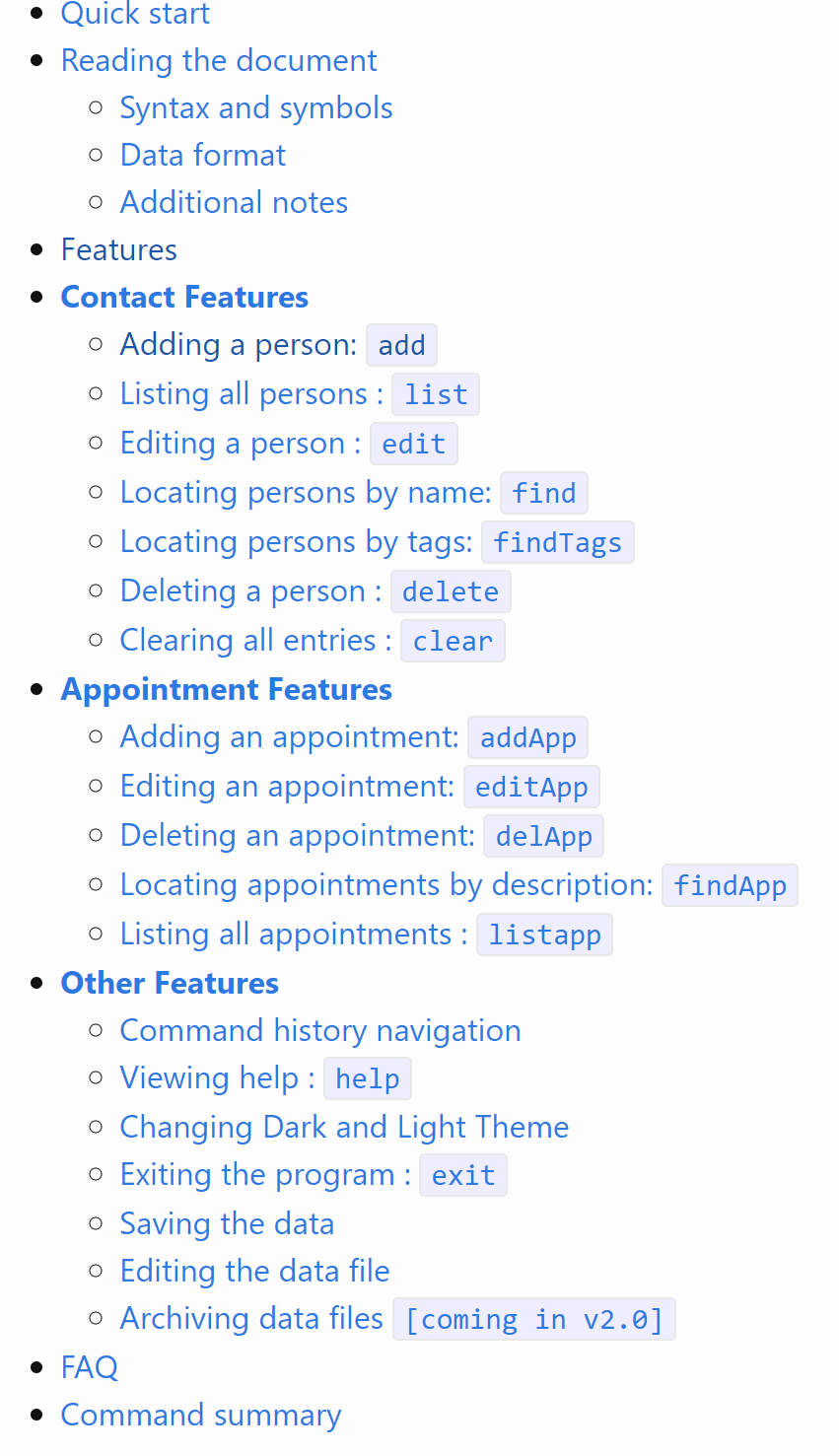

604,574 | 18,714,856,193 | IssuesEvent | 2021-11-03 02:12:14 | AY2122S1-CS2103T-T12-3/tp | https://api.github.com/repos/AY2122S1-CS2103T-T12-3/tp | closed | [PE-D] UG TOC | priority.Medium |

I think for your sectioning of TOC,

The `feature` should be an overall header for all the subsections.

Something like below

- Features

- Contact Features

- Appointment Features

- Other Features

<!--session: 1635494624855-6c4169c8-8f98-434c-bb03-2bfcaad4f18c-->

<!--Version: Web v3.4.1-->

-------------

Labels: `severity.Low` `type.DocumentationBug`

original: Timothyoung97/ped#2 | 1.0 | [PE-D] UG TOC -

I think for your sectioning of TOC,

The `feature` should be an overall header for all the subsections.

Something like below

- Features

- Contact Features

- Appointment Features

- Other Features

<!--session: 1635494624855-6c4169c8-8f98-434c-bb03-2bfcaad4f18c-->

<!--Version: Web v3.4.1-->

-------------

Labels: `severity.Low` `type.DocumentationBug`

original: Timothyoung97/ped#2 | priority | ug toc i think for your sectioning of toc the feature should be an overall header for all the subsections something like below features contact features appointment features other features labels severity low type documentationbug original ped | 1 |

727,944 | 25,060,654,219 | IssuesEvent | 2022-11-07 00:59:44 | AY2223S1-CS2103T-W08-2/tp | https://api.github.com/repos/AY2223S1-CS2103T-W08-2/tp | closed | [PE-D][Tester B] find command duplicates person | priority.High priority.Medium | Using the default data provided, running `find Irfan` displays Irfan's details correctly.

But then running `find Irfan Ibrahim` does nothing.

Then run `find Irfan`, Irfan is duplicated in the list.

To recover, the app must be reopened.

There might be a deeper issue here where clients are randomly getting duplicated in the model.

<!--session: 1666944033620-ae8585f7-7738-461c-9ea8-0b2de73b6950--><!--Version: Web v3.4.4-->

-------------

Labels: `severity.Medium` `type.FunctionalityBug`

original: Thing1Thing2/ped#10 | 2.0 | [PE-D][Tester B] find command duplicates person - Using the default data provided, running `find Irfan` displays Irfan's details correctly.

But then running `find Irfan Ibrahim` does nothing.

Then run `find Irfan`, Irfan is duplicated in the list.

To recover, the app must be reopened.

There might be a deeper issue here where clients are randomly getting duplicated in the model.

<!--session: 1666944033620-ae8585f7-7738-461c-9ea8-0b2de73b6950--><!--Version: Web v3.4.4-->

-------------

Labels: `severity.Medium` `type.FunctionalityBug`

original: Thing1Thing2/ped#10 | priority | find command duplicates person using the default data provided running find irfan displays irfan s details correclty but then running find irfan ibrahim causes does nothing then run find irfan irfan is duplicated in the list to recover the app must be reopened there might be a deeper issue here where clients are randomly getting duplicated in the model labels severity medium type functionalitybug original ped | 1 |

738,045 | 25,542,863,056 | IssuesEvent | 2022-11-29 16:28:50 | envoyproxy/gateway | https://api.github.com/repos/envoyproxy/gateway | closed | Wildcard in HttpRoute hostnames generates exact match | bug area/translator priority/medium | *Description*:

When `listeners` spec in the Gateway does not specify hosts and HttpRoute spec specifies _wildcard_ host envoy-gateway generates Exact match for the `:authority` that results in matching failures and hence 404.

E.g. for HttpRoute host name `*.example.com` it generates

```
HeaderMatches:
- Exact: '*.example.com'
  Name: :authority
  Prefix: null
  SafeRegex: null
```

The problem seems to be in [this code](https://github.com/envoyproxy/gateway/blob/cede77ebbecf25fbe8994cfd7643e8295bf75712/internal/gatewayapi/translator.go#L1166) that compares host to `*` while it probably needs to consider `*.example.com` cases

**Expected:**

Regex match is generated instead of Exact match and request passes the filter
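
The expected behaviour can be sketched in Go as follows. This is a minimal illustration, not the project's translator code: the `headerMatch` type, the `authorityMatch` helper, and the regex shape are all assumptions, chosen so that a leading `*.` label yields a regex match while any other hostname stays an exact match. It also assumes the Host value carries no port.

```go
package main

import (
	"fmt"
	"regexp"
	"strings"
)

// headerMatch loosely mirrors the Exact/SafeRegex fields seen in the IR dump;
// it is a simplified stand-in, not the project's actual type.
type headerMatch struct {
	Name      string
	Exact     string
	SafeRegex string
}

// authorityMatch is a hypothetical translation step: a hostname with a
// leading "*." label becomes a regex match, anything else stays exact.
func authorityMatch(hostname string) headerMatch {
	m := headerMatch{Name: ":authority"}
	if strings.HasPrefix(hostname, "*.") {
		// Keep ".example.com", escape it, and require at least one
		// hostname character in place of the wildcard label.
		suffix := regexp.QuoteMeta(hostname[1:])
		m.SafeRegex = `^[a-zA-Z0-9.-]+` + suffix + `$`
		return m
	}
	m.Exact = hostname
	return m
}

// matches reports whether a request's Host value satisfies the match.
func (m headerMatch) matches(host string) bool {
	if m.SafeRegex != "" {
		return regexp.MustCompile(m.SafeRegex).MatchString(host)
	}
	return m.Exact == host
}

func main() {
	m := authorityMatch("*.example.com")
	for _, host := range []string{"www.example.com", "example.com", "*.example.com"} {
		fmt.Printf("%-16s matches=%v\n", host, m.matches(host))
	}
}
```

With this shape, `www.example.com` would pass the filter, while the bare `example.com` and the literal string `*.example.com` would not.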

*Repro steps*:

Apply this Gateway manifest

```
apiVersion: gateway.networking.k8s.io/v1beta1
kind: Gateway
metadata:
  name: eg
spec:
  gatewayClassName: eg
  listeners:
    - name: http
      protocol: HTTP
      port: 80
```

Apply this HttpRoute manifest:

```
apiVersion: gateway.networking.k8s.io/v1beta1
kind: HTTPRoute
metadata:
  name: backend
spec:
  parentRefs:
    - name: eg
  hostnames:
    - "*.example.com"
  rules:
    - backendRefs:
        - name: paveliak-playground-1
          port: 8080
      matches:
        - path:
            type: PathPrefix
            value: /
```

*Environment*:

- Minikube 1.27.1

- K8s 1.25.2

- envoy-gateway (tried both `v0.2.0` and `main`)

*Logs*:

```

HTTP:

- Address: 0.0.0.0

Hostnames:

- '*'

Name: default-eg-http

Port: 10080

Routes:

- AddRequestHeaders: null

BackendWeights:

Invalid: 0

Valid: 0

Destinations:

- Host: 10.100.121.40

Port: 8080

Weight: 1

DirectResponse: null

HeaderMatches:

- Exact: '*.example.com'

Name: :authority

Prefix: null

SafeRegex: null

Name: default-backend-rule-0-match-0-*.example.com

PathMatch:

Exact: null

Name: ""

Prefix: /

SafeRegex: null

QueryParamMatches: null

Redirect: null

RemoveRequestHeaders: null

TLS: null

TCP: null

{"runner": "gateway-api", "output": "xds-ir"}

```

As a result this works `curl http://10.110.191.160 -H "Host: *.example.com"`

But this request returns 404 `curl http://10.110.191.160 -H "Host: www.example.com"`

| 1.0 | Wildcard in HttpRoute hostnames generates exact match - *Description*:

When `listeners` spec in the Gateway does not specify hosts and HttpRoute spec specifies _wildcard_ host envoy-gateway generates Exact match for the `:authority` that results in matching failures and hence 404.

E.g. for HttpRoute host name `*.example.com` it generates

```
HeaderMatches:
- Exact: '*.example.com'
  Name: :authority
  Prefix: null
  SafeRegex: null
```

The problem seems to be in [this code](https://github.com/envoyproxy/gateway/blob/cede77ebbecf25fbe8994cfd7643e8295bf75712/internal/gatewayapi/translator.go#L1166) that compares host to `*` while it probably needs to consider `*.example.com` cases

**Expected:**

Regex match is generated instead of Exact match and request passes the filter

*Repro steps*:

Apply this Gateway manifest

```
apiVersion: gateway.networking.k8s.io/v1beta1
kind: Gateway
metadata:
  name: eg
spec:
  gatewayClassName: eg
  listeners:
    - name: http
      protocol: HTTP
      port: 80
```

Apply this HttpRoute manifest:

```
apiVersion: gateway.networking.k8s.io/v1beta1
kind: HTTPRoute
metadata:
  name: backend
spec:
  parentRefs:
    - name: eg
  hostnames:
    - "*.example.com"
  rules:
    - backendRefs:
        - name: paveliak-playground-1
          port: 8080
      matches:
        - path:
            type: PathPrefix
            value: /
```

*Environment*:

- Minikube 1.27.1

- K8s 1.25.2

- envoy-gateway (tried both `v0.2.0` and `main`)

*Logs*:

```

HTTP:

- Address: 0.0.0.0

Hostnames:

- '*'

Name: default-eg-http

Port: 10080

Routes:

- AddRequestHeaders: null

BackendWeights:

Invalid: 0

Valid: 0

Destinations:

- Host: 10.100.121.40

Port: 8080

Weight: 1

DirectResponse: null

HeaderMatches:

- Exact: '*.example.com'

Name: :authority

Prefix: null

SafeRegex: null

Name: default-backend-rule-0-match-0-*.example.com

PathMatch:

Exact: null

Name: ""

Prefix: /

SafeRegex: null

QueryParamMatches: null

Redirect: null

RemoveRequestHeaders: null

TLS: null

TCP: null

{"runner": "gateway-api", "output": "xds-ir"}

```

As a result this works `curl http://10.110.191.160 -H "Host: *.example.com"`

But this request returns 404 `curl http://10.110.191.160 -H "Host: www.example.com"`

| priority | wildcard in httproute hostnames generates exact match description when listeners spec in the gateway does not specify hosts and httproute spec specifies wildcard host envoy gateway generates exact match for the authority that results in matching failures and hence e g for httproute host name example com it generates headermatches exact example com name authority prefix null saferegex null the problem seems to be in that compares host to while it probably needs to consider example com cases expected regex match is generated instead of exact match and request passes the filter repro steps apply this gateway manifest apiversion gateway networking io kind gateway metadata name eg spec gatewayclassname eg listeners name http protocol http port apply this httproute manifest apiversion gateway networking io kind httproute metadata name backend spec parentrefs name eg hostnames example com rules backendrefs name paveliak playground port matches path type pathprefix value environment minikube envoy gateway tried both and main logs http address hostnames name default eg http port routes addrequestheaders null backendweights invalid valid destinations host port weight directresponse null headermatches exact example com name authority prefix null saferegex null name default backend rule match example com pathmatch exact null name prefix saferegex null queryparammatches null redirect null removerequestheaders null tls null tcp null runner gateway api output xds ir as a result this works curl h host example com but this request returns curl h host | 1 |

302,963 | 9,300,893,328 | IssuesEvent | 2019-03-23 17:22:54 | HabitRPG/habitica | https://api.github.com/repos/HabitRPG/habitica | closed | Username searches in the Hall of Heroes should be case-insensitive | good first issue priority: medium section: Achievements/Popups/Notifications section: other status: issue: in progress | As described in issue https://github.com/HabitRPG/habitica/issues/10972 and its pull request https://github.com/HabitRPG/habitica/pull/10980 , the Hall of Heroes allows moderators to search for users by the Username. Currently that search is case-sensitive. It should be case-insensitive. I.e., searching for "examplename" would let you find a user called "ExampleName".
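
The intended matching semantics can be illustrated with a small Go sketch. It is only an illustration of case-insensitive comparison: the `findByUsername` helper and the in-memory list are hypothetical stand-ins, and the sketch does not reproduce how the Hall of Heroes search is actually implemented.

```go
package main

import (
	"fmt"
	"strings"
)

// findByUsername is a hypothetical lookup over an in-memory list that stands
// in for the real user store. It compares names case-insensitively and
// returns the username as stored.
func findByUsername(usernames []string, query string) (string, bool) {
	for _, name := range usernames {
		if strings.EqualFold(name, query) {
			return name, true
		}
	}
	return "", false
}

func main() {
	users := []string{"ExampleName", "AnotherUser"}
	name, ok := findByUsername(users, "examplename")
	fmt.Println(name, ok)
}
```

Returning the stored spelling rather than the query keeps the displayed username unchanged regardless of how the moderator typed it.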

Any contributor who wants to work on this should read the top post at https://github.com/HabitRPG/habitica/issues/10972 to learn about how the moderator search feature works and how you can access it on your local install. https://github.com/HabitRPG/habitica/pull/10980/files will show you how the current case-sensitive search is implemented.

_(NB We're not considering this to be a bug in https://github.com/HabitRPG/habitica/pull/10980. We neglected to say that the search should be case-insensitive and so that PR did implement the search feature as intended at the time!)_ | 1.0 | Username searches in the Hall of Heroes should be case-insensitive - As described in issue https://github.com/HabitRPG/habitica/issues/10972 and its pull request https://github.com/HabitRPG/habitica/pull/10980 , the Hall of Heroes allows moderators to search for users by the Username. Currently that search is case-sensitive. It should be case-insensitive. I.e., searching for "examplename" would let you find a user called "ExampleName".

Any contributor who wants to work on this should read the top post at https://github.com/HabitRPG/habitica/issues/10972 to learn about how the moderator search feature works and how you can access it on your local install. https://github.com/HabitRPG/habitica/pull/10980/files will show you how the current case-sensitive search is implemented.

_(NB We're not considering this to be a bug in https://github.com/HabitRPG/habitica/pull/10980. We neglected to say that the search should be case-insensitive and so that PR did implement the search feature as intended at the time!)_ | priority | username searches in the hall of heroes should be case insensitive as described in issue and its pull request the hall of heroes allows moderators to search for users by the username currently that search is case sensitive it should be case insensitive i e searching for examplename would let you find a user called examplename any contributor who wants to work on this should read the top post at to learn about how the moderator search feature works and how you can access it on your local install will show you how the current case sensitive search is implemented nb we re not considering this to be a bug in we neglected to say that the search should be case insensitive and so that pr did implement the search feature as intended at the time | 1 |

596,841 | 18,145,008,004 | IssuesEvent | 2021-09-25 09:06:36 | google/mozc | https://api.github.com/repos/google/mozc | closed | Missing "こと***" entries | Priority-Medium auto-migrated OpSys-All Type-Conversion | ```

I've got them automatically and fixed them manually.

Maybe there are some mistakes and you need to calculate the scores.

Check "tmp.koto" please.

e.g.

======================================================

すごいこと 2665 2235 5782 凄いこと

そういうこと 3006 2235 4853 そういうこと

======================================================

```

Original issue reported on code.google.com by `heathros...@gmail.com` on 9 Nov 2010 at 7:26

Attachments:

- [tmp.koto](https://storage.googleapis.com/google-code-attachments/mozc/issue-67/comment-0/tmp.koto)

| 1.0 | Missing "こと***" entries - ```

I've got them automatically and fixed them manually.

Maybe there are some mistakes and you need to calculate the scores.

Check "tmp.koto" please.

e.g.

======================================================

すごいこと 2665 2235 5782 凄いこと

そういうこと 3006 2235 4853 そういうこと

======================================================

```

Original issue reported on code.google.com by `heathros...@gmail.com` on 9 Nov 2010 at 7:26

Attachments:

- [tmp.koto](https://storage.googleapis.com/google-code-attachments/mozc/issue-67/comment-0/tmp.koto)

| priority | missing こと entries i ve got them automatically and fixed them manually maybe there are some mistakes and you need to calculate the scores check tmp koto please e g すごいこと 凄いこと そういうこと そういうこと original issue reported on code google com by heathros gmail com on nov at attachments | 1 |

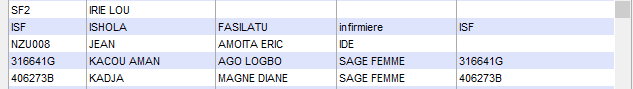

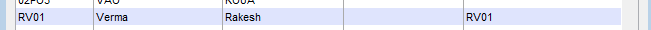

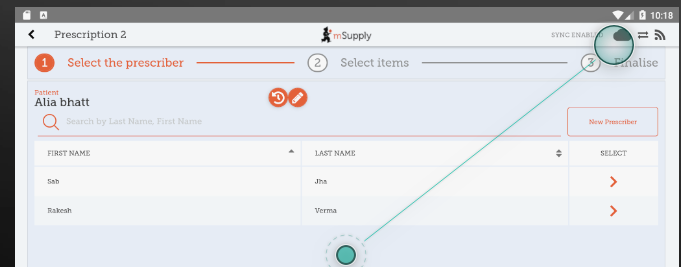

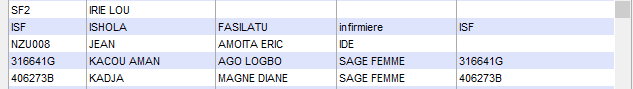

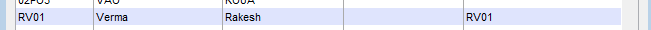

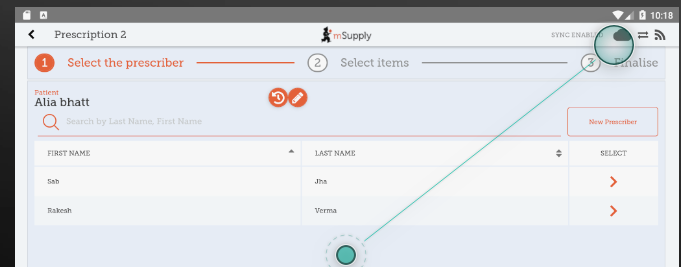

442,168 | 12,740,990,356 | IssuesEvent | 2020-06-26 04:36:10 | openmsupply/mobile | https://api.github.com/repos/openmsupply/mobile | opened | Merged Prescriber from Desktop store doesn't sync to Mobile Store | 5.0.4 Docs: not needed Effort: medium Priority: immediate | ## Describe the bug

Merged Prescriber from Desktop store doesn't sync to Mobile Store

### To reproduce

Steps to reproduce the behavior:

1. In the mobile store, create at least two prescribers.

2. Create a prescription from one of the created prescribers and sync.

3. Merge these prescribers in the Desktop store: keep the prescriber with no prescription and merge the prescriber that has the prescription.

4. Check the mobile store.

5. See the error: the prescribers are not synced.

### Expected behaviour

Sync should work as expected and Merged prescriber should be shown in Mobile as well

### Proposed Solution

Leave if you don't know how to fix/implement. Edit this issue description and explain here if you know the best path of implementing the fix within the codebase.

### Version and device info

- App version: v5.0.4

- Tablet model: API 21

- Desktop version: v412RC04

### Additional context

Add any other context about the problem here.

| 1.0 | Merged Prescriber from Desktop store doesn't sync to Mobile Store - ## Describe the bug

Merged Prescriber from Desktop store doesn't sync to Mobile Store

### To reproduce

Steps to reproduce the behavior:

1. In the mobile store, create at least two prescribers.

2. Create a prescription from one of the created prescribers and sync.

3. Merge these prescribers in the Desktop store: keep the prescriber with no prescription and merge the prescriber that has the prescription.

4. Check the mobile store.

5. See the error: the prescribers are not synced.

### Expected behaviour

Sync should work as expected and Merged prescriber should be shown in Mobile as well

### Proposed Solution

Leave if you don't know how to fix/implement. Edit this issue description and explain here if you know the best path of implementing the fix within the codebase.

### Version and device info

- App version: v5.0.4

- Tablet model: API 21

- Desktop version: v412RC04

### Additional context

Add any other context about the problem here.

| priority | merged prescriber from desktop store doesn t sync to mobile store describe the bug merged prescriber from desktop store doesn t sync to mobile store to reproduce steps to reproduce the behavior in mobile store create atleast two prescriber create prescription from one of the created prescriber and sync merge these prescriber in desktop store such that keep prescriber with no prescription and merge prescriber with prescription check mobile store see error prescribers is not sync expected behaviour sync should work as expected and merged prescriber should be shown in mobile as well proposed solution leave if you don t know how to fix implement edit this issue description and explain here if you know the best path of implementing the fix within the codebase version and device info app version tablet model api desktop version additional context add any other context about the problem here | 1 |

118,917 | 4,757,600,475 | IssuesEvent | 2016-10-24 17:02:50 | geosolutions-it/geotools | https://api.github.com/repos/geosolutions-it/geotools | opened | Communitty interaction | C009-2016-MONGODB Priority: Medium Task | Since we are preserving the current MongoDB behavior and only users that want to use complex feature will need to do something, we only need to convince the community about how we intend to support the complex features. Another point that needs to be discussed is the dependency on app-schema (if we don't manage to avoid it). | 1.0 | Communitty interaction - Since we are preserving the current MongoDB behavior and only users that want to use complex feature will need to do something, we only need to convince the community about how we intend to support the complex features. Another point that needs to be discussed is the dependency on app-schema (if we don't manage to avoid it). | priority | communitty interaction since we are preserving the current mongodb behavior and only users that want to use complex feature will need to do something we only need to convince the community about how we intend to support the complex features another point that needs to be discussed is the dependency on app schema if we don t manage to avoid it | 1 |

22,105 | 2,645,590,069 | IssuesEvent | 2015-03-13 00:06:08 | pedromorgan/flightgear-issues-test | https://api.github.com/repos/pedromorgan/flightgear-issues-test | closed | MPmap is out off sync | apt.dat bug imported mpmap pigeon Priority-Medium | _From [pedromor...@gmail.com](https://code.google.com/u/112081649209717344658/) on February 03, 2010 07:45:16_

What steps will reproduce the problem? * the nav.dat is updated in cvs, but changes are not reflected on

mmapservers

* mpmap needs to be in sync with cvs

_Original issue: http://code.google.com/p/flightgear-bugs/issues/detail?id=28_ | 1.0 | MPmap is out off sync - _From [pedromor...@gmail.com](https://code.google.com/u/112081649209717344658/) on February 03, 2010 07:45:16_

What steps will reproduce the problem? * the nav.dat is updated in cvs, but changes are not reflected on

mmapservers

* mpmap needs to be in sync with cvs

_Original issue: http://code.google.com/p/flightgear-bugs/issues/detail?id=28_ | priority | mpmap is out off sync from on february what steps will reproduce the problem the nav dat is updated in cvs but changes are not reflected on mmapservers mpmap needs to be in sync with cvs original issue | 1 |

271,612 | 8,485,749,511 | IssuesEvent | 2018-10-26 08:48:57 | cms-gem-daq-project/cmsgemos | https://api.github.com/repos/cms-gem-daq-project/cmsgemos | closed | Exception in amc_info_uhal.py if -r option used at cmd line | Priority: Medium Status: Help Wanted Type: Bug | Based on feedback from DAQ expert tried to issue a reset command to the CTP7 with command line option -r.

Generated the exception shown: [amc_info_resetExcept.txt](https://github.com/cms-gem-daq-project/cmsgemos/files/1089047/amc_info_resetExcept.txt)

| 1.0 | Exception in amc_info_uhal.py if -r option used at cmd line - Based on feedback from DAQ expert tried to issue a reset command to the CTP7 with command line option -r.

Generated the exception shown: [amc_info_resetExcept.txt](https://github.com/cms-gem-daq-project/cmsgemos/files/1089047/amc_info_resetExcept.txt)

| priority | exception in amc info uhal py if r option used at cmd line based on feedback from daq expert tried to issue a reset command to the with command line option r generated the exception shown | 1 |

465,173 | 13,358,091,790 | IssuesEvent | 2020-08-31 11:02:33 | ooni/probe | https://api.github.com/repos/ooni/probe | closed | Make the OONI Probe desktop app rely entirely on the golang engine | enhancement epic funder/otf20 ooni/probe-desktop priority/medium | We are in the process of consolidating all the code related to running network experiments inside of a new golang based engine called probe-engine, which will replace measurement-kit.

As part of this activity, we will make the OONI Probe desktop (Windows and macOS) app rely entirely on the golang engine.

We are not aiming to port the OONI Probe desktop apps to a new codebase. We are also not aiming to port the OONI CLI to a new codebase. The work we are proposing is rather to reduce the amount of C++ dependencies used by the OONI Probe CLI and the desktop apps. Below we explain in detail which components we are planning to modify, why we are doing that, and why we have chosen to start integrating these changes in the desktop apps, rather than in the mobile apps.

The current OONI Probe desktop apps for Windows and macOS are Electron-based apps implemented at github.com/ooni/probe-desktop. These apps use the CLI interface written in golang to run OONI experiments and perform other functions.

The codebase of the OONI Probe CLI interface is available at github.com/ooni/probe-cli. More in detail, we have specified how the Desktop app will exec out the CLI to perform specific tasks and we have also specified the data format emitted by the CLI when running tests.

The CLI, in turn, is based on a lower-level “engine” library written in golang and available at github.com/ooni/probe-engine. This library aims to include a unified desktop and mobile implementation of all experiments. However, inside this library, we are still linking to our old C++ engine, Measurement Kit, to implement most OONI network experiments. (Measurement Kit is composed of several GitHub repositories available at github.com/measurement-kit.)

The work described in this activity is about gradually phasing out Measurement Kit, so that in the end all experiments are written in Go rather than in C++. This will mostly happen inside the probe-engine and will mostly concern changes in the implementation of tests (i.e. rewriting from C++ to Go). We believe that the API exposed by probe-engine will not change, except for minor changes in the options supported by the new experiments (for example, we may drop an option that does not make sense in the Go context or we may add an option that did not make sense for a C++ implementation).

This should explain why we believe that writing more OONI experiments in Go as part of probe-engine does not overlap with our previous work on creating the OONI Probe desktop apps (supported by our last OTF contract). In our view, this proposed work is simply the natural continuation of what we have been working on over the past year.

The advantages of rewriting in Go are the following:

* It will reduce the code size, because we can drop the C dependencies (whereas we cannot drop the dependency from Go since we want to ship a Psiphon experiment).

* It allows us to write experiments that can easily and safely be interrupted, because Go natively support this functionality, which is instead rather tricky to get right in C++.

* It allows us to easily wrap standard library functionality, hence allowing for easier low level measurements.

* It allows us to quickly cross compile for the operating systems we care about (Windows, macOS, Linux, Android, iOS); full builds will complete in minutes rather than hours, thus enabling faster development cycles.

* It will make OONI Probe safer, because Go is memory safe and has built-in support for concurrency.

Regarding the choice of focusing on the OONI Probe desktop app first (and on the mobile apps next), the reason is simple. Since probe-engine is already being used by the OONI Probe desktop apps via probe-cli, we have estimated that the effort (and cost) of doing this for the desktop apps is lower than on mobile, where we haven’t shipped golang code in production yet. In fact, we can update the desktop apps by recompiling probe-cli to use a new version of probe-engine that reimplements all tests in Go, with no or only minimal API changes.

| 1.0 | Make the OONI Probe desktop app rely entirely on the golang engine - We are in the process of consolidating all the code related to running network experiments inside of a new golang based engine called probe-engine, which will replace measurement-kit.

As part of this activity, we will make the OONI Probe desktop (Windows and macOS) app rely entirely on the golang engine.

We are not aiming to port the OONI Probe desktop apps to a new codebase. We are also not aiming to port the OONI CLI to a new codebase. The work we are proposing is rather to reduce the amount of C++ dependencies used by the OONI Probe CLI and the desktop apps. Below we explain in detail which components we are planning to modify, why we are doing that, and why we have chosen to start integrating these changes in the desktop apps, rather than in the mobile apps.

The current OONI Probe desktop apps for Windows and macOS are Electron-based apps implemented at github.com/ooni/probe-desktop. These apps use the CLI interface written in golang to run OONI experiments and perform other functions.

The codebase of the OONI Probe CLI interface is available at github.com/ooni/probe-cli. In more detail, we have specified how the desktop app will invoke the CLI to perform specific tasks, and we have also specified the data format emitted by the CLI when running tests.

The CLI, in turn, is based on a lower-level “engine” library written in golang and available at github.com/ooni/probe-engine. This library aims to include a unified desktop and mobile implementation of all experiments. However, inside this library, we are still linking to our old C++ engine, Measurement Kit, to implement most OONI network experiments. (Measurement Kit is composed of several GitHub repositories available at github.com/measurement-kit.)

The work described in this activity is about gradually phasing out Measurement Kit, so that in the end all experiments are written in Go rather than in C++. This will mostly happen inside the probe-engine and will mostly concern changes in the implementation of tests (i.e. rewriting from C++ to Go). We believe that the API exposed by probe-engine will not change, except for minor changes in the options supported by the new experiments (for example, we may drop an option that does not make sense in the Go context or we may add an option that did not make sense for a C++ implementation).

This should explain why we believe that writing more OONI experiments in Go as part of probe-engine does not overlap with our previous work on creating the OONI Probe desktop apps (supported by our last OTF contract). In our view, this proposed work is simply the natural continuation of what we have been working on over the past year.

The advantages of rewriting in Go are the following:

* It will reduce the code size, because we can drop the C dependencies (whereas we cannot drop the dependency on Go, since we want to ship a Psiphon experiment).

* It allows us to write experiments that can easily and safely be interrupted, because Go natively supports this functionality, which is rather tricky to get right in C++.

* It allows us to easily wrap standard library functionality, hence allowing for easier low level measurements.

* It allows us to quickly cross compile for the operating systems we care about (Windows, macOS, Linux, Android, iOS); full builds will complete in minutes rather than hours, thus enabling faster development cycles.

* It will make OONI Probe safer, because Go is memory safe and has built-in support for concurrency.

Regarding the choice of focusing on the OONI Probe desktop app first (and on the mobile apps next), the reason is simple. Since probe-engine is already being used by the OONI Probe desktop apps via probe-cli, we have estimated that the effort (and cost) of doing this for the desktop apps is lower than on mobile, where we haven’t shipped golang code in production yet. In fact, we can update the desktop apps by recompiling probe-cli to use a new version of probe-engine that reimplements all tests in Go, with no or only minimal API changes.

| priority | make the ooni probe desktop app rely entirely on the golang engine we are in the process of consolidating all the code related to running network experiments inside of a new golang based engine called probe engine which will replace measurement kit as part of this activity we will make the ooni probe desktop windows and macos app rely entirely on the golang engine we are not aiming to port the ooni probe desktop apps to a new codebase we are also not aiming to port the ooni cli to a new codebase the work we are proposing is rather to reduce the amount of c dependencies used by the ooni probe cli and the desktop apps below we explain in detail which components we are planning to modify why we are doing that and why we have chosen to start integrating these changes in the desktop apps rather than in the mobile apps the current ooni probe desktop apps for windows and macos are electron based apps implemented at github com ooni probe desktop these apps use the cli interface written in golang to run ooni experiments and perform other functions the codebase of the ooni probe cli interface is available at github com ooni probe cli more in detail we have specified how the desktop app will exec out the cli to perform specific tasks and we have also specified the data format emitted by the cli when running tests the cli in turn is based on a lower level “engine” library written in golang and available at github com ooni probe engine this library aims to include a unified desktop and mobile implementation of all experiments however inside this library we are still linking to our old c engine measurement kit to implement most ooni network experiments measurement kit is composed of several github repositories available at github com measurement kit the work described in this activity is about gradually phasing out measurement kit so that in the end all experiments are written in go rather than in c this will mostly happen inside the probe engine and will mostly 
concern changes in the implementation of tests i e rewriting from c to go we believe that the api exposed by probe engine will not change except for minor changes in the options supported by the new experiments for example we may drop an option that does not make sense in the go context or we may add an option that did not make sense for a c implementation this should explain why we believe that writing more ooni experiments in go as part of probe engine does not overlap with our previous work on creating the ooni probe desktop apps supported by our last otf contract in our view this proposed work is simply the natural continuation of what we have been working on over the past year the advantages of rewriting in go are the following it will reduce the code size because we can drop the c dependencies whereas we cannot drop the dependency from go since we want to ship a psiphon experiment it allows us to write experiments that can easily and safely be interrupted because go natively support this functionality which is instead rather tricky to get right in c it allows us to easily wrap standard library functionality hence allowing for easier low level measurements it allows us to quickly cross compile for the operating systems we care about windows macos linux android ios full builds will complete in minutes rather than hours thus enabling faster development cycles it will make ooni probe safer because go is memory safe and has built in support for concurrency regarding the choice of focusing on the ooni probe desktop app first and on the mobile apps next the reason is simple since probe engine is already being used by the ooni probe desktop apps via probe cli we have estimated that the effort and cost for doing this for the desktop apps is less than doing it on mobile where we haven’t shipped golang code in production yet in fact we can update the desktop apps by recompiling probe cli to use a new version of probe engine that reimplements all tests in go with none or 
minimal api changes | 1 |

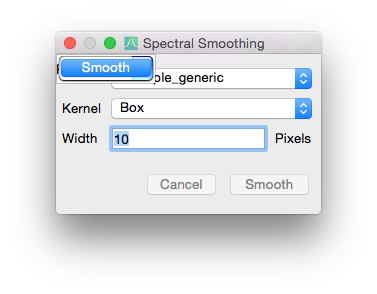

272,107 | 8,499,185,828 | IssuesEvent | 2018-10-29 16:32:48 | spacetelescope/specviz | https://api.github.com/repos/spacetelescope/specviz | closed | Smoothing fails when trying it a second time | bug gui medium-priority | Smoothing a spectrum the first time works fine. But when I try to smooth it a second time (click again on the spectrum on the left and then hit the smoothing button), the dialog window looks like this and it won't let me click anything on it.

That happens even if I delete the first smoothing spectrum. | 1.0 | Smoothing fails when trying it a second time - Smoothing a spectrum the first time works fine. But when I try to smooth it a second time (click again on the spectrum on the left and then hit the smoothing button), the dialog window looks like this and it won't let me click anything on it.

That happens even if I delete the first smoothing spectrum. | priority | smoothing fails when trying it a second time smoothing a spectrum the first time works fine but when i try to smooth it a second time click again on the spectrum on the left and the hit the smoothing button the dialog window looks like this and it won t let me click anything on it that happens even if i delete the first smoothing spectrum | 1 |

357,821 | 10,618,352,164 | IssuesEvent | 2019-10-13 03:38:10 | carbon-design-system/ibm-dotcom-library | https://api.github.com/repos/carbon-design-system/ibm-dotcom-library | opened | Masthead | Search autosuggest not working in Firefox | bug dev dotcom migrate priority: medium to be triaged | _Jeff-Chew created the following on Aug 16:_

### Detailed description

@Kenny-Lam noticed this while testing the masthead locally. Autosuggest currently isn't working in Firefox.

### Steps to reproduce the issue

1. Go to https://ibmdotcom-react.netlify.com in Firefox

2. Activate search and type in a query (minimum 3 characters)

3. Search results not appearing

### Additional information

- Currently the preview isn't there as it's not merged to the main test environment. Will update the ticket once we can reproduce there.

_Original issue: https://github.ibm.com/webstandards/digital-design/issues/1473_ | 1.0 | Masthead | Search autosuggest not working in Firefox - _Jeff-Chew created the following on Aug 16:_

### Detailed description

@Kenny-Lam noticed this while testing the masthead locally. Autosuggest currently isn't working in Firefox.

### Steps to reproduce the issue

1. Go to https://ibmdotcom-react.netlify.com in Firefox

2. Activate search and type in a query (minimum 3 characters)

3. Search results not appearing

### Additional information

- Currently the preview isn't there as it's not merged to the main test environment. Will update the ticket once we can reproduce there.

_Original issue: https://github.ibm.com/webstandards/digital-design/issues/1473_ | priority | masthead search autosuggest not working in firefox jeff chew created the following on aug detailed description kenny lam noticed this while testing the masthead locally autosuggest currently isn t working in firefox steps to reproduce the issue go to in firefox activate search and type in a query minimum characters search results not appearing additional information currently the preview isn t there as it s not merged to the main test environment will update the ticket once we can reproduce there original issue | 1 |